id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

7973fdc9-12d1-448e-a00c-642b018fde07 | trentmkelly/LessWrong-43k | LessWrong | Rationality and overriding human preferences: a combined model

A putative new idea for AI control; index here.

Previously, I presented a model in which a “rationality module” kept track of two things: how well a human was maximising their actual reward, and whether their preferences had been overridden by AI action.

The second didn’t integrate well into the first, and was tracked by a clunky extra Boolean. Since the two didn’t fit together, I was going to separate the two concepts, especially since the Boolean felt a bit too... Boolean, not allowing for grading. But then I realised that they actually fit together completely naturally, without the need for arbitrary Booleans or other tricks.

----------------------------------------

Feast or heroin famine

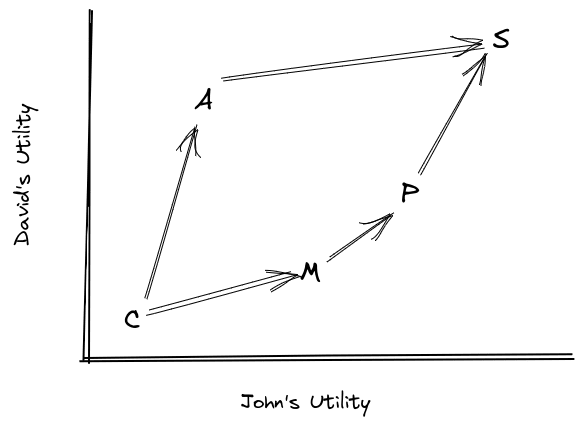

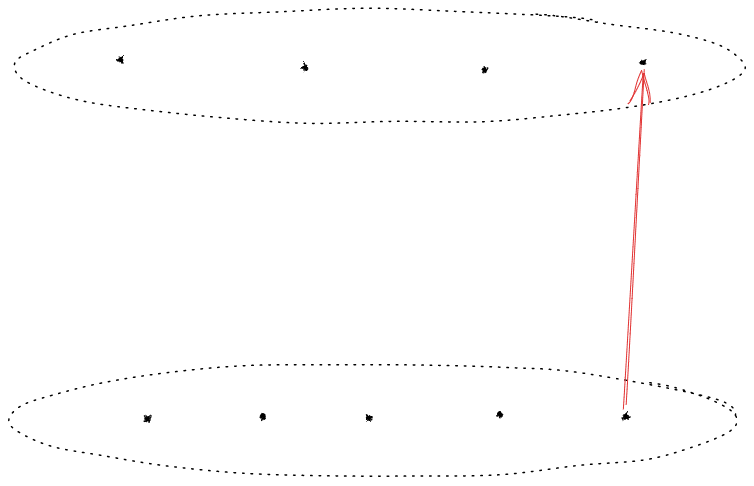

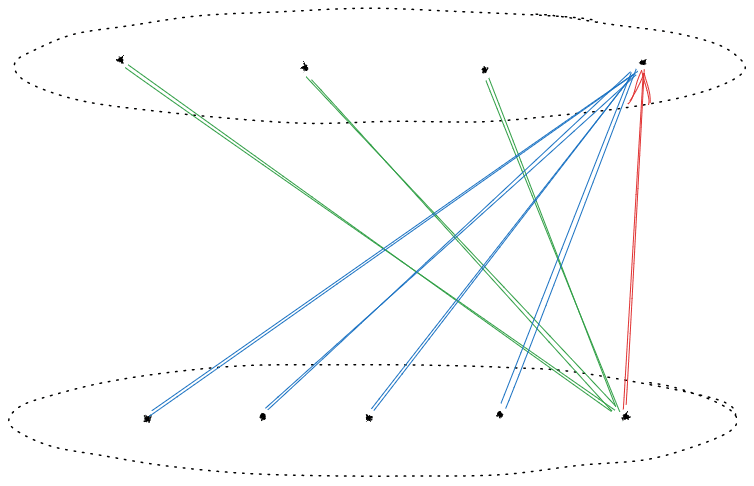

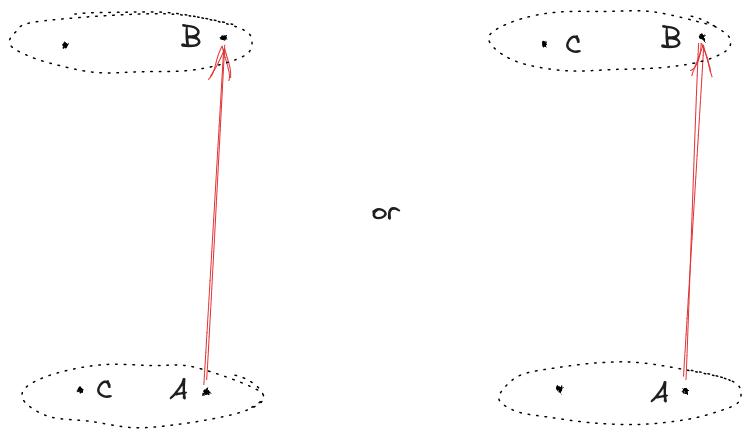

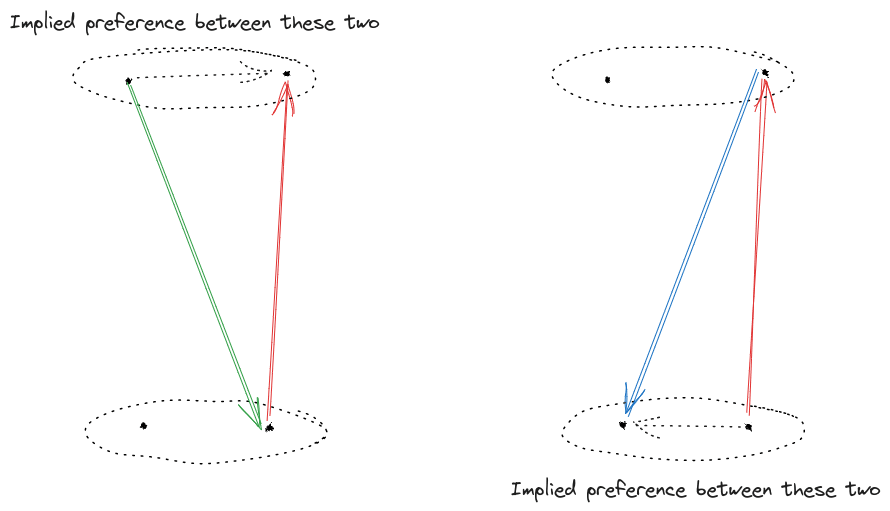

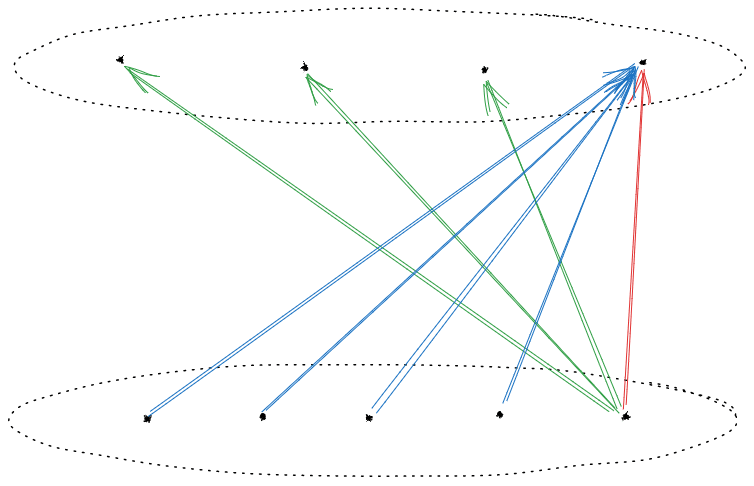

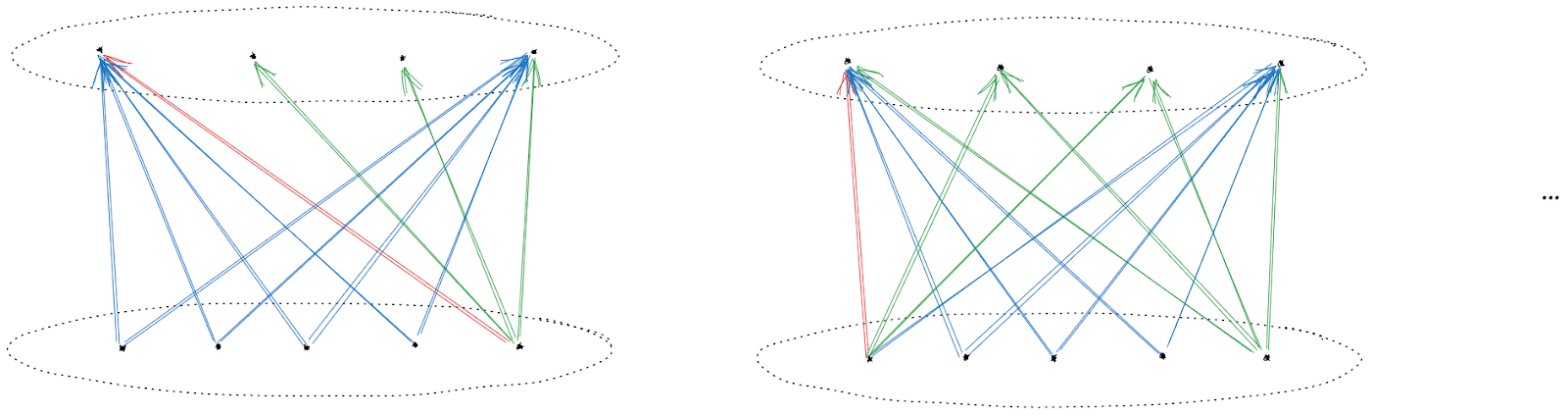

Consider the situation detailed in the following figure. An AI has the opportunity to surreptitiously inject someone with heroin (I) or not do so (¬I). If it doesn’t, the human will choose to enjoy a massive feast (F); if it does, the human will instead choose more heroin (H).

So the human policy is given by π(I)=H,π(¬I)=F. The human rationality and reward are given by a pair (m,R), where R is the human reward and m measures their rationality - how closely their actions conform with their reward.

The module m can be seen as a map from rewards to policies (or, since policies are maps from histories to actions, m can be seen as mapping histories and rewards to actions). The pair (m,R) are said to be compatible if m(R)=π, the human policy.

There are three natural Rs to consider here: Rp, a generic pleasure. Next, Re, the ‘enjoyment’ reward, where enjoyment is pleasure endorsed as ‘genuine’ by common judgement. Assume that Rp(H)=1, Rp(F)=1/3, Re(F)=1/2, and Re(H)=0. Finally, there is the twisted reward Rt, which is Rp conditional on I and Re

There are two natural ms: mr, the fully rational module. And mf, the module that is fully rational conditional on I, but always maps to H if I is chosen: m(R)(I)=H, for all R.

The pair mr(Re) is not compatible with π: it predicts tha |

3ed01fe0-c59a-49fb-b1eb-286e173e233f | trentmkelly/LessWrong-43k | LessWrong | Frequentist practice incorporates prior information all the time

I thought that the frequentist view was that you should not incorporate prior information. But looking around, this doesn't seem true in practice. It's common with the data scientists and analysts I've worked with to view probability fundamentally in terms of frequencies. But they also consider things like model-choice to be super important. For example, a good data scientist (frequentist or not) will check data for linearity, and if it is linear, they will model it with a linear or logistic regression. Choosing a linear model constrains the outcomes and relationships you can find to linear ones. If the data is non-linear, they've commited to a model that won't realize it. In this way, the statement "this relationship is linear" expresses strong prior information! Arguments over model choice can be viewed as arguments over prior distributions, and arguments over model choice abound among practitioners with frequentist views.

I'm not the first to notice this, and it might even be common knowledge: From Geweke, Understanding Non-Bayesians:

> If a frequentist uses the asymptotic approximation in a given sample, stating only the weak assumptions, he or she is implicitly ruling out those parts of the parameter space in which the asymptotic approximation, in this sample size, and with these conditioning variables, is inaccurate. These implicit assumptions are Bayesian in the sense that they invoke the researcher’s pre-sample, or prior, beliefs about which parameter values or models are likely.

E.T. "thousand-year-old vampire" Jaynes, in the preface of his book on probability, basically says that frequentist methods do incorporate known information, but do it in an ad-hoc, lossy way (bold added by me):

> In addition, frequentist methods provide no technical means to eliminate nuisance parameters or to take prior information into account, no way even to use all the information in the data when sufficient or ancillary statistics do not exist. Lacking the necessary theore |

ce440296-2f75-4210-bd55-b641648735b4 | trentmkelly/LessWrong-43k | LessWrong | Harry Potter and the Methods of Rationality discussion thread, part 14, chapter 82

The new discussion thread (part 15) is here.

This is a new thread to discuss Eliezer Yudkowsky’s Harry Potter and the Methods of Rationality and anything related to it. This thread is intended for discussing chapter 82. The previous thread passed 1000 comments as of the time of this writing, and so has long passed 500. Comment in the 13th thread until you read chapter 82.

There is now a site dedicated to the story at hpmor.com, which is now the place to go to find the authors notes and all sorts of other goodies. AdeleneDawner has kept an archive of Author’s Notes. (This goes up to the notes for chapter 76, and is now not updating. The authors notes from chapter 77 onwards are on hpmor.com.)

The first 5 discussion threads are on the main page under the harry_potter tag. Threads 6 and on (including this one) are in the discussion section using its separate tag system. Also: 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13.

As a reminder, it’s often useful to start your comment by indicating which chapter you are commenting on.

Spoiler Warning: this thread is full of spoilers. With few exceptions, spoilers for MOR and canon are fair game to post, without warning or rot13. More specifically:

> You do not need to rot13 anything about HP:MoR or the original Harry Potter series unless you are posting insider information from Eliezer Yudkowsky which is not supposed to be publicly available (which includes public statements by Eliezer that have been retracted).

>

> If there is evidence for X in MOR and/or canon then it’s fine to post about X without rot13, even if you also have heard privately from Eliezer that X is true. But you should not post that “Eliezer said X is true” unless you use rot13. |

c7650036-e753-4efe-bf07-34e3d6b756b5 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Three Stories for How AGI Comes Before FAI

*Epistemic status: fake framework*

To do effective differential technological development for AI safety, we'd like to know which combinations of AI insights are more likely to lead to FAI vs UFAI. This is an overarching strategic consideration which feeds into questions like how to think about the value of [AI capabilities research](https://www.lesswrong.com/posts/y5fYPAyKjWePCsq3Y/project-proposal-considerations-for-trading-off-capabilities).

As far as I can tell, there are actually several different stories for how we may end up with a set of AI insights which makes UFAI more likely than FAI, and these stories aren't entirely compatible with one another.

Note: In this document, when I say "FAI", I mean any superintelligent system which does a good job of helping humans (so an "aligned Task AGI" also counts).

Story #1: The Roadblock Story

=============================

Nate Soares describes the roadblock story in [this comment](https://forum.effectivealtruism.org/posts/SEL9PW8jozrvLnkb4/my-current-thoughts-on-miri-s-highly-reliable-agent-design#Z6TbXivpjxWyc8NYM):

>

> ...if a safety-conscious AGI team asked how we’d expect their project to fail, the two likeliest scenarios we’d point to are "your team runs into a capabilities roadblock and can't achieve AGI" or "your team runs into an alignment *roadblock* and *can easily tell that the system is currently misaligned, but can’t figure out how to achieve alignment in any reasonable amount of time*."

>

>

>

(emphasis mine)

The roadblock story happens if there are key safety insights that FAI needs but AGI doesn't need. In this story, the knowledge needed for FAI is a superset of the knowledge needed for AGI. If the safety insights are difficult to obtain, or no one is working to obtain them, we could find ourselves in a situation where we have all the AGI insights without having all the FAI insights.

There is subtlety here. In order to make a strong argument for the existence of insights like this, it's not enough to point to failures of existing systems, or describe hypothetical failures of future systems. You also need to explain why the insights necessary to create AGI wouldn't be sufficient to fix the problems.

Some possible ways the roadblock story could come about:

* Maybe safety insights are more or less agnostic to the chosen AGI technology and can be discovered in parallel. (Stuart Russell has pushed against this, saying that in the same way making sure bridges don't fall down is part of civil engineering, safety should be part of mainstream AI research.)

* Maybe safety insights require AGI insights as a prerequisite, leaving us in a precarious position where we will have acquired the capability to build an AGI *before* we begin critical FAI research.

+ This could be the case if the needed safety insights are mostly about how to safely assemble AGI insights into an FAI. It's possible we could do a bit of this work in advance by developing "contingency plans" for how we would construct FAI in the event of combinations of capabilities advances that seem plausible.

- Paul Christiano's IDA framework could be considered a contingency plan for the case where we develop much more powerful imitation learning.

- Contingency plans could also be helpful for directing differential technological development, since we'd get a sense of the difficulty of FAI under various tech development scenarios.

* Maybe there will be multiple subsets of the insights needed for FAI which are sufficient for AGI.

+ In this case, we'd like to speed the discovery of whichever FAI insight will be discovered last.

Story #2: The Security Story

============================

From [Security Mindset and the Logistic Success Curve](https://www.lesswrong.com/posts/cpdsMuAHSWhWnKdog/security-mindset-and-the-logistic-success-curve):

>

> CORAL: You know, back in mainstream computer security, when you propose a new way of securing a system, it's considered traditional and wise for everyone to gather around and try to come up with reasons why your idea might not work. It's understood that no matter how smart you are, most seemingly bright ideas turn out to be flawed, and that you shouldn't be touchy about people trying to shoot them down.

>

>

>

The main difference between the security story and the roadblock story is that in the security story, it's not obvious that the system is misaligned.

We can subdivide the security story based on the ease of fixing a flaw if we're able to detect it in advance. For example, vulnerability #1 on the [OWASP Top 10](https://www.cloudflare.com/learning/security/threats/owasp-top-10/) is injection, which is typically easy to patch once it's discovered. Insecure systems are often right next to secure systems in program space.

If the security story is what we are worried about, it could be wise to try & develop the AI equivalent of OWASP's [Cheat Sheet Series](https://cheatsheetseries.owasp.org/), to make it easier for people to find security problems with AI systems. Of course, many items on the cheat sheet would be speculative, since AGI doesn't actually exist yet. But it could still serve as a useful starting point for brainstorming flaws.

Differential technological development could be useful in the security story if we push for the development of AI tech that is easier to secure. However, it's not clear how confident we can be in our intuitions about what will or won't be easy to secure. In his book *Thinking Fast and Slow*, Daniel Kahneman describes his adversarial collaboration with expertise researcher Gary Klein. Kahneman was an expertise skeptic, and Klein an expertise booster:

>

> We eventually concluded that our disagreement was due in part to the fact that we had different experts in mind. Klein had spent much time with fireground commanders, clinical nurses, and other professionals who have real expertise. I had spent more time thinking about clinicians, stock pickers, and political scientists trying to make unsupportable long-term forecasts. Not surprisingly, his default attitude was trust and respect; mine was skepticism.

>

>

>

>

> ...

>

>

>

>

> When do judgments reflect true expertise? ... The answer comes from the two basic conditions for acquiring a skill:

>

>

>

>

> * an environment that is sufficiently regular to be predictable

> * an opportunity to learn these regularities through prolonged practice

>

>

>

>

> In a less regular, or low-validity, environment, the heuristics of judgment are invoked. System 1 is often able to produce quick answers to difficult

> questions by substitution, creating coherence where there is none. The question that is answered is not the one that was intended, but the answer is produced quickly and may be sufficiently plausible to pass the lax and lenient review of System 2. You may want to forecast the commercial future of a company, for example, and believe that this is what you are judging, while in fact your evaluation is dominated by your impressions of the energy and competence of its current executives. Because substitution occurs automatically, you often do not know the origin of a judgment that you (your System 2) endorse and adopt. If it is the only one that comes to mind, it may be subjectively undistinguishable from valid judgments that you make with expert confidence. This is why subjective confidence is not a good diagnostic of accuracy: judgments that answer the wrong question can also be made with high confidence.

>

>

>

Our intuitions are only as good as the data we've seen. "Gathering data" for an AI security cheat sheet could helpful for developing security intuition. But I think we should be skeptical of intuition anyway, given the speculative nature of the topic.

Story #3: The Alchemy Story

===========================

Ali Rahimi and Ben Recht [describe](http://www.argmin.net/2017/12/05/kitchen-sinks/) the alchemy story in their Test-of-time award presentation at the NeurIPS machine learning conference in 2017 ([video](https://www.youtube.com/watch?v=Qi1Yry33TQE)):

>

> Batch Norm is a technique that speeds up gradient descent on deep nets. You sprinkle it between your layers and gradient descent goes faster. I think it’s ok to use techniques we don’t understand. I only vaguely understand how an airplane works, and I was fine taking one to this conference. But *it’s always better if we build systems on top of things we do understand deeply*? This is what we know about why batch norm works well. But don’t you want to understand why reducing internal covariate shift speeds up gradient descent? Don’t you want to see evidence that Batch Norm reduces internal covariate shift? Don’t you want to know what internal covariate shift *is*? Batch Norm has become a foundational operation for machine learning. *It works amazingly well. But we know almost nothing about it.*

>

>

>

(emphasis mine)

The alchemy story has similarities to both the roadblock story and the security story.

**From the perspective of the roadblock story**, "alchemical" insights could be viewed as insights which could be useful if we only cared about creating AGI, but are too unreliable to use in an FAI. (It's possible there are other insights which fall into the "usable for AGI but not FAI" category due to something other than their alchemical nature--if you can think of any, I'd be interested to hear.)

In some ways, alchemy could be worse than a clear roadblock. It might be that not everyone agrees whether the systems are reliable enough to form the basis of an FAI, and then we're looking at a [unilateralist's curse](https://concepts.effectivealtruism.org/concepts/unilateralists-curse/) scenario.

Just like chemistry only came after alchemy, it's possible that we'll first develop the capability to create AGI via alchemical means, and only acquire the deeper understanding necessary to create a reliable FAI later. (This is a scenario from the roadblock section, where FAI insights require AGI insights as a prerequisite.) To prevent this, we could try & deepen our understanding of components we expect to fail in subtle ways, and retard the development of components we expect to "just work" without any surprises once invented.

**From the perspective of the security story**, "alchemical" insights could be viewed as components which are *clearly* prone to vulnerabilities. Alchemical components could produce failures which are hard to understand or summarize, let alone fix. From a differential technological development point of view, the best approach may be to differentially advance less alchemical, more interpretable AI paradigms, developing the AI equivalent of reliable cryptographic primitives. (Note that explainability is [inferior](https://www.nature.com/articles/s42256-019-0048-x.epdf?author_access_token=SU_TpOb-H5d3uy5KF-dedtRgN0jAjWel9jnR3ZoTv0M3t8uDwhDckroSbUOOygdba5KNHQMo_Ji2D1_SdDjVr6hjgxJXc-7jt5FQZuPTQKIAkZsBoTI4uqjwnzbltD01Z8QwhwKsbvwh-z1xL8bAcg%3D%3D) to [interpretability](https://statmodeling.stat.columbia.edu/2018/10/30/explainable-ml-versus-interpretable-ml/).)

Trying to create an FAI from alchemical components is obviously not the best idea. But it's not totally clear how much of a risk these components pose, because if the components don't work reliably, an AGI built from them may not work well enough to pose a threat. Such an AGI could work better over time if it's able to improve its own components. In this case, we might be able to program it so it periodically re-evaluates its training data as its components get upgraded, so its understanding of human values improves as its components improve.

Discussion Questions

====================

* How plausible does each story seem?

* What possibilities aren't covered by the taxonomy provided?

* What distinctions does this framework fail to capture?

* Which claims are incorrect? |

da9042ca-3fb8-4dde-b6dd-ac4f620ed5ce | StampyAI/alignment-research-dataset/blogs | Blogs | cognitive biases regarding the evaluation of AI risk when doing AI capabilities work

cognitive biases regarding the evaluation of AI risk when doing AI capabilities work

------------------------------------------------------------------------------------

i have recently encountered a few rationality failures, in the context of talking about AI risk. i will document them here for reference; they probly have already been documented elsewhere, but their application to AI risk is particularly relevant here.

### 1. forgetting to multiply

let's say i'm talking with someone about the likelyhood that working on some form of AI capability [kills everything everywhere forever](https://en.wikipedia.org/wiki/Existential_risk_from_artificial_general_intelligence). they say: "i think the risk is near 0%". i say: "i think the risk is maybe more like 10%".

would i bet that it will kill everyone? no, 10% is less than 50%. but "what i bet" isn't the only relevant thing; a proper utilitarian *multiples* likelyhood by *quality of outcome*. and X-risk is really bad. i mistakenly see some people use only the probability, forgetting to multiply; if i think everyone dying is not likely, that's enough for them. one should care that it's *extremely* unlikely.

### 2. categorizing vs average of risk

let's take the example above again. let's say you believe said likelyhood is close to 0% and i believe it's close to 10%; and let's say we each believe the other person generally tends to be as correct as oneself.

how should we come out of this? some people seem to want to pick an average between "carefully avoiding killing everyone" and "continuing as before" — which lets them more easily continue as before.

this is not how things should work. if i learn that someone who i generally consider about as likely as me to be correct about things, seriously thinks there's a 10% chance that my tap water has lead in it, my reaction is not "well, whatever, it's only 10% and only 1 out of the two of us believe this". my reaction is "what the hell?? i should look into this and stick to bottled water in the meantime". the average between risk and no risk is not "i guess maybe risk maybe no risk"; it's "lower (but still some) risk". the average between ≈0% and 10% is not "huh, well, one of those numbers is 0% so i can pick 0% and only have half a chance of being wrong"; the average is 5%. 5% is still a large risk.

this is kind of equivalent to *forgetting to multiply*, but to me it's a different problem: here, one is not just forgetting to multiply, one is forgetting that probabilities are numbers altogether, and is treating them as a set of discrete objects that they have to pick one of — and thus can justify picking the one that makes their AI capability work okay, because it's one out of the two objects.

### 3. deliberation ahead vs retroactive justification

someone says "well, i don't think the work i'm doing on AI capability is likely to kill everyone" or even "well, i think AI capability work is needed to do alignment work". that *may* be true, but how carefully did you arrive at that consideration?

did you sit down at a table with everybody, talk about what is safe and needed to do alignment work, and determine that AI capability work of the kind you're doing is the best course of actions to pursue?

or are you already committed to AI capability work and are trying to retroactively justify it?

i know the former isn't the case because there *was* no big societal sitting down at a table with everyone about cosmic AI risk. most people (including AI capability devs) don't even meaningfully *know* about cosmic AI risk; let alone deliberated on what to do about it.

this isn't to say that you're necessarily wrong; maybe by chance you happen to be right this time. but this is not how you arrive at truth, and you should be highly suspicious of such convenient retroactive justifications. and by "highly suspect" i don't mean "think mildly about it while you keep gleefully working on capability"; i mean "seriously sit down and reconsider whether what you're doing is more likely helping to save the world, or hindering saving the world".

### 4. it's not a prisoner's dilemma

some people think of alignment as a coordination problem. "well, unfortunately everyone is in a [rat race](https://slatestarcodex.com/2014/07/30/meditations-on-moloch/) to do AI capability, because if they don't they get outcompeted by others!"

this is *not* how it works. such prisoner's dilemmas work because if your opponent defects, your outcome if you defect too is worse than if you cooperate. this is **not** the case here; less people working on AI capability is pretty much strictly less probability that we all die, because it's just less people trying (and thus less people likely to randomly create an AI that kills everyone). even if literally everyone except you is working on AI capability, you should still not work on it; working on it would *still only make things worse*.

"but at that point it only makes things negligeably worse!"

…and? what's that supposed to justify? is your goal to *cause evil as long as you only cause very small amounts of evil*? shouldn't your goal be to just generally try to cause good and not cause evil?

### 5. we *are* utilitarian… right?

when situations akin to the trolley problem *actually appear*, it seems a lot of people are very reticent to actually press the lever. "i was only LARPing as a utilitarian this whole time! pressing the lever makes me feel way too bad to do it!"

i understand this and worry that i am in that situation myself. i am not sure what to say about it, other than: if you believe utilitarianism is what is *actually right*, you should try to actually *act utilitarianistically in the real world*. you should *actually press actual levers in trolley-problem-like situations in the real world*, not just nod along that pressing the lever sure is the theoretical utilitarian optimum to the trolley problem and then keep living as a soup of deontology and virtue ethics.

i'll do my best as well.

### a word of sympathy

i would love to work on AI capability. it sounds like great fun! i would love for everything to be fine; trust me, i really do.

sometimes, when we're mature adults who [take things seriously](life-refocus.html), we have to actually consider consequences and update, and make hard choices. this can be kind of fun too, if you're willing to truly engage in it. i'm not arguing with AI capabilities people out of hate or condescension. i *know* it sucks; it's *painful*. i have cried a bunch these past months. but feelings are no excuse to risk killing everyone. we **need** to do what is **right**.

shut up and multiply. |

509b002a-61d4-4ccb-a731-45f09da885ba | trentmkelly/LessWrong-43k | LessWrong | Gentleness and the artificial Other

(Cross-posted from my website. Audio version here, or search "Joe Carlsmith Audio" on your podcast app.

This is the first essay in a series that I’m calling “Otherness and control in the age of AGI.” See here for more about the series as a whole.)

When species meet

The most succinct argument for AI risk, in my opinion, is the “second species” argument. Basically, it goes like this.

> Premise 1: AGIs would be like a second advanced species on earth, more powerful than humans.

>

> Conclusion: That’s scary.

To be clear: this is very far from airtight logic.[1] But I like the intuition pump. Often, if I only have two sentences to explain AI risk, I say this sort of species stuff. “Chimpanzees should be careful about inventing humans.” Etc.[2]

People often talk about aliens here, too. “What if you learned that aliens were on their way to earth? Surely that’s scary.” Again, very far from a knock-down case (for example: we get to build the aliens in question). But it draws on something.

In particular, though: it draws on a narrative of interspecies conflict. You are meeting a new form of life, a new type of mind. But these new creatures are presented to you, centrally, as a possible threat; as competitors; as agents in whose power you might find yourself helpless.

And unfortunately: yes. But I want to start this series by acknowledging how many dimensions of interspecies-relationship this narrative leaves out, and how much I wish we could be focusing only on the other parts. To meet a new species – and especially, a new intelligent species – is not just scary. It’s incredible. I wish it was less a time for fear, and more a time for wonder and dialogue. A time to look into new eyes – and to see further.

Gentleness

> “If I took it in hand,

>

> it would melt in my hot tears—

>

> heavy autumn frost.”

>

> - Basho

Have you seen the documentary My Octopus Teacher? No problem if not, but I recommend it. Here’s the plot.

Craig Foster, a filmmaker, has been feeling b |

8dc1a783-88d4-4e4d-a730-b3bef17d040a | trentmkelly/LessWrong-43k | LessWrong | How do we know our own desires?

Sometimes I find myself longing for something, with little idea what it is.

This suggests that perceiving desire and perceiving which thing it is that is desired by the desire are separable mental actions.

In this state, I make guesses as to what I want. Am I thirsty? (I consider drinking some water and see if that feels appealing.) Do I want to have sex? (A brief fantasy informs me that sex would be good, but is not what I crave.) Do I want social comfort? (I open Facebook, maybe that has social comfort I could test with…)

If I do infer the desire in this way, I am still not directly reading it from my own mind. I am making educated guesses and testing them using my mind’s behavior.

Other times, it seems like I immediately know my own desires. When that happens, am I really receiving them introspectively, or am I merely playing the same inference game more insightfully?

We usually suppose that people are correct about their own immediate desires. They may be wrong about whether they want cookie A or cookie B, because they are misinformed about which one is delicious. But if they think they want to eat something delicious, we trust them on that.

On the model where we are mostly inferring our desires from more general feelings of wanting, we might expect people are wrong about their desires fairly often.

|

16daa3e9-6df2-48dc-918e-355e8536315a | trentmkelly/LessWrong-43k | LessWrong | The utility of information should almost never be negative

As humans, finding out facts that we would rather not be true is unpleasant. For example, I would dislike finding out that my girlfriend were cheating on me, or finding out that my parent had died, or that my bank account had been hacked and I had lost all my savings.

However, this is a consequence of the dodgily designed human brain. We don't operate with a utility function. Instead, we have separate neural circuitry for wanting and liking things, and behave according to those. If my girlfriend is cheating on me, I may want to know, but I wouldn't like knowing. In some cases, we'd rather not learn things: if I'm dying in hospital with only a few hours to live, I might rather be ignorant of another friend's death for the short remainder of my life.

However, a rational being, say an AI, would never rather not learn something, except for contrived cases like Omega offering you $100 if you can avoid learning the square of 156 for the next minute.

As far as I understand, an AI with a set of options decides by using approximately the following algorithm. This algorithm uses causal decision theory for simplicity.

"For each option, guess what will happen if you do it, and calculate the average utility. Choose the option with the highest utility."

So say Clippy is using that algorithm with his utility function of utility = number of paperclips in world.

Now imagine Clippy is on a planet making paperclips. He is considering listening to the Galactic Paperclip News radio broadcast. If he does so, there is a chance he might hear about a disaster leading to the destruction of thousands of paperclips. Would he decide in the following manner?

"If I listen to the radio show, there's maybe a 10% chance I will learn that 1000 paperclips were destroyed. My utility in from that decision would be on average reduced by 100. If I don't listen, there is no chance that I will learn about the destruction of paperclips. That is no utility reduction for me. Therefo |

56937ee3-9f54-4df2-8199-58e8194b7c60 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Washington, D.C.: Prisoner's Dilemna tournament

Discussion article for the meetup : Washington, D.C.: Prisoner's Dilemna tournament

WHEN: 13 July 2014 03:00:00PM (-0400)

WHERE: National Portrait Gallery, Washington, DC 20001, USA

We'll be meeting in the Kogod Courtyard of the National Portrait Gallery for our second Prisoner's Dilemna tournament. We'll play several different versions of iterated and one-shot games; feel free to suggest your own formats. There will be prizes (baked goods).

Discussion article for the meetup : Washington, D.C.: Prisoner's Dilemna tournament |

dc4577ad-df43-4239-a75b-63bb04ba3f77 | trentmkelly/LessWrong-43k | LessWrong | The Design Space of Minds-In-General

People ask me, "What will Artificial Intelligences be like? What will they do? Tell us your amazing story about the future."

And lo, I say unto them, "You have asked me a trick question."

ATP synthase is a molecular machine - one of three known occasions when evolution has invented the freely rotating wheel - which is essentially the same in animal mitochondria, plant chloroplasts, and bacteria. ATP synthase has not changed significantly since the rise of eukaryotic life two billion years ago. It's is something we all have in common - thanks to the way that evolution strongly conserves certain genes; once many other genes depend on a gene, a mutation will tend to break all the dependencies.

Any two AI designs might be less similar to each other than you are to a petunia.

Asking what "AIs" will do is a trick question because it implies that all AIs form a natural class. Humans do form a natural class because we all share the same brain architecture. But when you say "Artificial Intelligence", you are referring to a vastly larger space of possibilities than when you say "human". When people talk about "AIs" we are really talking about minds-in-general, or optimization processes in general. Having a word for "AI" is like having a word for everything that isn't a duck.

Imagine a map of mind design space... this is one of my standard diagrams...

All humans, of course, fit into a tiny little dot - as a sexually reproducing species, we can't be too different from one another.

This tiny dot belongs to a wider ellipse, the space of transhuman mind designs - things that might be smarter than us, or much smarter than us, but which in some sense would still be people as we understand people.

This transhuman ellipse is within a still wider volume, the space of posthuman minds, which is everything that a transhuman might grow up into.

And then the rest of the sphere is the space of minds-in-general, including possible Artificial Intelligences so odd that they |

a026176d-7625-4deb-8052-77114ea85a9f | StampyAI/alignment-research-dataset/arxiv | Arxiv | Explaining Data-Driven Decisions made by AI Systems: The Counterfactual Approach

1 Introduction

---------------

Data and predictive models are used by artificial intelligence (AI) systems to make decisions across many applications and industries. Yet, many data-rich organizations struggle when adopting AI decision-making systems because of managerial and cultural challenges, rather than issues related to data and technology (LaValle et al., [2011](#bib.bib1 "Big data, analytics and the path from insights to value")). In fact, as predictive models become more complex and difficult to understand, stakeholders often become more skeptical and reluctant to adopt or use them, even if the models have been shown to improve decision-making performance (Arnold et al., [2006](#bib.bib2 "The differential use and effect of knowledge-based system explanations in novice and expert judgment decisions"); Kayande et al., [2009](#bib.bib3 "How incorporating feedback mechanisms in a DSS affects DSS evaluations")).

Explanations are also useful for other reasons beyond increasing adoption (Martens and Provost, [2014](#bib.bib9 "Explaining data-driven document classifications")). For example, explanations may help customers understand the reasoning behind automated decisions that affect them. Users of the model, such as managers or analysts, may use explanations to obtain insights about the domain in which the system is being used. Data scientists and machine learning engineers may also use the explanations to identify, debug, and address potential flaws in the system. Many researchers have tried to reduce the gap in stakeholders’ understanding of AI systems in recent years, most notably by proposing methods for explaining predictive models and their predictions.

Methods for explaining AI models and their predictions include extracting rules that represent the inner workings (e.g., Craven and Shavlik, [1996](#bib.bib4 "Extracting tree-structured representations of trained networks"); Jacobsson, [2005](#bib.bib5 "Rule extraction from recurrent neural networks: a taxonomy and review"); Martens et al., [2007](#bib.bib6 "Comprehensible credit scoring models using rule extraction from support vector machines")) and associating weights to each feature according to their importance for model predictions (e.g., Lundberg and Lee, [2017](#bib.bib7 "A unified approach to interpreting model predictions"); Ribeiro et al., [2016](#bib.bib8 "Why should I trust you?: explaining the predictions of any classifier")). Importance weights, in particular, have become increasingly popular because “model-agnostic” methods that produce importance weights have been introduced: the weights explain predictions in terms of features, so users can understand any specific prediction without any knowledge of the underlying model or the modeling method(s) used to produce the model. For example, two of the most popular methods for explaining model predictions, LIME (Ribeiro et al., [2016](#bib.bib8 "Why should I trust you?: explaining the predictions of any classifier")) and SHAP (Lundberg and Lee, [2017](#bib.bib7 "A unified approach to interpreting model predictions")), are model-agnostic and produce importance-weight explanations.

This paper points at two fundamental reasons why importance-weight explanations may not be well-suited to explain data-driven decisions made by AI systems. First, importance weights are designed to explain model predictions, but explaining model predictions is not the same as explaining the *decisions* made using those predictions. Notably, and perhaps counter-intuitively, features that have a large impact on a prediction may not necessarily have an impact on the decision that was made using that prediction. The examples in this paper illustrate this in detail. Therefore, importance weights that are obtained with respect to model predictions may portray an inaccurate picture of how features influence system decisions.

Second, identifying (and quantifying) important features is not sufficient to explain system decisions, even when importance is assessed with respect to the decisions being explained. As an example, suppose that a credit scoring system denies credit to a loan applicant, and that feature importance weights reveal that the two most important features in the credit denial decision were annual income and loan amount. While informative, this “explanation” does not in fact explain what it was that made the system decide to deny credit. Would changing either the annual income or the loan amount be enough for the system to approve credit? Would it be necessary to change both? Or perhaps even changing both would not be enough. From the weights alone, it is not clear how the important features may influence the decision. To be fair, this is not an indictment of methods that calculate feature importance; they were not designed to explain system decisions. However, we are not aware of papers or posts that clarify this in research or in practice.

An alternative to importance-weight explanations are counterfactual explanations—explanations explicitly designed to explain system decisions proposed by Martens and Provost ([2014](#bib.bib9 "Explaining data-driven document classifications")); Provost ([2014](#bib.bib36 "Understanding decisions driven by big data")). For the question “why did the model-based system make a specific decision?”, the counterfactual approach asks specifically, “which data inputs caused the system to make its decision?”. This approach is advantageous because (i) it explains decisions rather than the outputs of the model(s) on which the decisions are based; (ii) it standardizes the form that an explanation can take; (iii) it does not require all features to be part of the explanation, and (iv) the explanations can be separated from the specifics of the model.

Martens and Provost ([2014](#bib.bib9 "Explaining data-driven document classifications")) originally applied this framework to explain document classifications, and although it has been applied to other contexts beyond document classification (Moeyersoms et al., [2016](#bib.bib11 "Explaining classification models built on high-dimensional sparse data"); Chen et al., [2017](#bib.bib10 "Enhancing transparency and control when drawing data-driven inferences about individuals"); Ramon et al., [2019](#bib.bib28 "Counterfactual explanation algorithms for behavioral and textual data")), researchers don’t all see how the framework can be generalized to settings beyond text (see, e.g., Molnar, [2019](#bib.bib12 "Interpretable machine learning, see 18.1 counterfactual explanations"); Wachter et al., [2017](#bib.bib13 "Counterfactual explanations without opening the black box: automated decisions and the GPDR"); Biran and Cotton, [2017](#bib.bib34 "Explanation and justification in machine learning: a survey")). To our knowledge, this approach has not been extended beyond classification models using sparse features in high-dimensional settings. Therefore, we introduce a multi-faceted generalization that focuses on providing explanations for general data-driven system decisions, resulting in a framework that (a) may explain decisions made by systems that incorporate multiple models, (b) is model-agnostic, (c) can address features with arbitrary data types, and (d) is scalable to very large numbers of features. We also propose and showcase a heuristic procedure that may be used to search and sort counterfactual explanations according to their context-specific relevance.

Finally, we illustrate the advantages of our proposed counterfactual approach by comparing it to SHAP (Lundberg and Lee, [2017](#bib.bib7 "A unified approach to interpreting model predictions")), an increasingly popular method to explain model predictions that unites several feature importance weighting methods. Via three business case studies that use real-world data, we detail the ways in which counterfactual explanations explain data-driven decisions better than the popular alternative of feature importance weights.

2 AI Systems and Explanations

------------------------------

In this paper, we focus specifically on explaining decisions made by systems that use predictive statistical models to support or automate decision-making (Shmueli and Koppius, [2011](#bib.bib15 "Predictive analytics in Information Systems research")), and in particular on systems that make or recommend discrete decisions. We refer to these as artificial intelligence (AI) systems.

###

2.1 Explaining system decisions

Discrete decision making is closely related to classification, and indeed the subtle distinction often can be overlooked safely—but for explaining system decisions it is important to be clear. First there is a definitional difference: a classification model might classify someone as defaulting on credit or not; a corresponding decision-making system would use this model to make a decision on whether or not to grant credit. Deciding not to grant credit is not the same (at all) as saying that the individual will default—which brings us to the technical difference.

Classification tasks usually are modeled as scoring problems, where we want our predictive models to score the observations such that those more likely to have the “correct” class will have higher scores. These scores may then be used by a system to make a decision that is related to (but usually not the same as) the classification. For example, for binary decisions (and corresponding classifications) typically the scores rank observations, and decisions are made using a chosen threshold appropriate for the problem at hand (Provost and Fawcett, [2013](#bib.bib16 "Data science for business: what you need to know about data mining and data-analytic thinking")). In many cases, estimated probabilities of class membership are computed from the models, which allows the use of decision theory to combine them with application-specific information on costs and benefits (Provost and Fawcett, [2013](#bib.bib16 "Data science for business: what you need to know about data mining and data-analytic thinking")) to produce a next stage of more nuanced scores. Thus, decision-making problems are often modeled as “classification tasks” by associating a class with each decision.

However, it is important to emphasize that the final output of the system (i.e., the decision) may not correspond to the labels in the training data. As another example, for a system deciding whether to target a customer with a promotion, scores could consist of expected profits. In this case, we could estimate a classification model to predict the probability that the customer will make a purchase and a regression model to estimate the size of the purchase (conditioned on the customer making a purchase); the expected profits would be the multiplication of these two predictions (Provost and Fawcett, [2013](#bib.bib16 "Data science for business: what you need to know about data mining and data-analytic thinking"))—and the ranking of the customers by expected profit could be different from the ranking based simply on the classification model score. The final output of the decision-making system would be whether the customer should be targeted with a promotion (and because of selection bias and other complications, we often patently would not want to learn models based on training data about who was targeted with a promotion).

Explaining the decisions made by intelligent systems has received both practical and research attention

for decades (Gregor and Benbasat, [1999](#bib.bib17 "Explanations from intelligent systems: theoretical foundations and implications for practice")). Prior work has shown that the ability for intelligent systems to explain their decisions is necessary for their effective use: when users do not understand the workings of an intelligent system, they become skeptical and reluctant to use it, even if the system is known to improve decision-making performance (Arnold et al., [2006](#bib.bib2 "The differential use and effect of knowledge-based system explanations in novice and expert judgment decisions"); Kayande et al., [2009](#bib.bib3 "How incorporating feedback mechanisms in a DSS affects DSS evaluations")). More recently, for example, a field study in a Department of Radiology showed that the use of AI systems slowed down, rather than sped up, the radiologists’ decision-making process because the AI systems often provided recommendations that conflicted with the doctors’ judgement (Lebovitz et al., [2019](#bib.bib18 "Doubting the diagnosis: how artificial intelligence increases ambiguity during professional decision making")). Lacking critical understanding of the opaque AI systems, the doctors often relied on their own diagnoses, which did not concur with the system’s. Our paper provides a methodological framework to make the decisions of such AI systems more transparent.

###

2.2 Explaining predictive models

Over the past several decades, many researchers have worked on explaining predictive models---in contrast to explaining their predictions or decisions made using them. Because symbolic models, such as decision trees, are often considered straightforward to explain when they are small,111Recent work has been revisiting this assumption, working to produce models explicitly designed to be both accurate and comprehensible; see Wang and Rudin ([2015](#bib.bib35 "Falling rule lists")) for an illustrative example. most research has focused on explaining non-symbolic (black box) models or large models.

Rule-based explanations have been a popular approach to explain black-box models.

For example, in many credit scoring applications, banking regulatory entities require banks to implement globally comprehensible predictive models (Martens et al., [2007](#bib.bib6 "Comprehensible credit scoring models using rule extraction from support vector machines")). Typical techniques to provide rule-based explanations consist of approximating the black box model with a symbolic model (Craven and Shavlik, [1996](#bib.bib4 "Extracting tree-structured representations of trained networks")), or extracting explicit if-then rules (Andrews et al., [1995](#bib.bib19 "Survey and critique of techniques for extracting rules from trained artificial neural networks")). Proposed methods are often tailored to the specifics of the models being explained, and researchers have invested significant effort attempting to make state-of-the-art black box models more transparent. For example, Jacobsson ([2005](#bib.bib5 "Rule extraction from recurrent neural networks: a taxonomy and review")) offers a review of explanation techniques for deep learning models, and Martens et al. ([2007](#bib.bib6 "Comprehensible credit scoring models using rule extraction from support vector machines")) propose a rule extraction method for SVMs. Importantly, these “global” explanations (Martens and Provost, [2014](#bib.bib9 "Explaining data-driven document classifications")) attempt to explain the model as a whole, rather than explaining particular decisions made. As Martens and Provost point out, this can be viewed as explaining every possible decision the model might make—but the methods are not tailored to explain individual decisions.

###

2.3 Explaining model predictions

A different approach, that has become quite popular recently, is to explain the predictions of complex models, framing the explanations in terms of feature importance by associating a weight to each feature in the model. Each weight can be interpreted as the proportion of the information contributed by the corresponding feature to the model prediction. The main strength of this approach is that the explanations are defined in terms of the domain (i.e., the features), separating them from the specifics of the model being explained. As a result, models can be replaced without replacing the explanation method; end users (such as customers or managers) do not need any knowledge of the underlying modeling methods to understand the explanations, and different models may be compared in terms of their explanations in settings where transparency is critical.

A common way of assessing feature importance is based on simulating lack of knowledge about features (Robnik-Šikonja and Kononenko, [2008](#bib.bib21 "Explaining classifications for individual instances"); Lemaire et al., [2008](#bib.bib20 "Contact personalization using a score understanding method")). For example, one could compare the original model’s output with the output obtained when removing a specific feature from the data and the model (e.g., by imputing a default value for the feature). If the output changes, it means that the feature was important for the model prediction. Methods that use this approach often decompose each prediction into the individual contributions of each feature and use the decompositions as explanations, allowing one to visualize explanations at the instance level.

Continuing with the earlier credit scoring example, Figure [1](#S2.F1 "Figure 1 ‣ 2.3 Explaining model predictions ‣ 2 AI Systems and Explanations ‣ Explaining Data-Driven Decisions made by AI Systems: The Counterfactual Approach") shows an importance-weight explanation for an individual who has an above-average probability of default. These importance weights were generated using SHAP (Lundberg and Lee, [2017](#bib.bib7 "A unified approach to interpreting model predictions")), which we will discuss in more detail in the following sections. Each weight in the explanation represents the impact that its respective feature had on the prediction. Thus, the weight of (roughly) 2.5% that is attributed to the loan amount feature (‘loan\_amnt’) implies that the feature increased the probability of default of that particular individual by 2.5%.

Figure 1: Example of an importance-weight explanation for a model prediction

A notable challenge, however, is that interactions between features may lead to ambiguous explanations, because the order in which features are removed may affect the importance attributed to each feature. As a result, subsequent work proposed assessing feature importance by removing all possible subsets of features (rather than only one feature at a time), retraining models without the removed features, and comparing how predictions change (Štrumbelj et al., [2009](#bib.bib22 "Explaining instance classifications with interactions of subsets of feature values")). However, such approaches may take hours of computation time even for a single prediction and have been reported to handle only up to about 200 features. Alternative formulations (such as SHAP) have attempted to reduce computation time by sampling the space of feature combinations and by using imputation to deal with removed features, resulting in sampling-based approximations of the influence of each feature on the prediction (Štrumbelj and Kononenko, [2010](#bib.bib23 "An efficient explanation of individual classifications using game theory"); Ribeiro et al., [2016](#bib.bib8 "Why should I trust you?: explaining the predictions of any classifier"); Lundberg and Lee, [2017](#bib.bib7 "A unified approach to interpreting model predictions"); Datta et al., [2016](#bib.bib24 "Algorithmic transparency via quantitative input influence: theory and experiments with learning systems")).

Nevertheless, importance weights are tailored to explain model predictions and may not be adequate to explain system decisions, namely because they don’t communicate how the features actually influence decisions. We will illustrate this with several examples below. Moreover, complex systems may incorporate many features in their decision making. In these settings, hundreds of features may have non-zero importance weights for any given instance, yet only a handful of the features may be critical for understanding the system’s decisions (Martens and Provost, [2014](#bib.bib9 "Explaining data-driven document classifications"); Chen et al., [2017](#bib.bib10 "Enhancing transparency and control when drawing data-driven inferences about individuals")).

3 Counterfactual explanations

------------------------------

The idea of using a causal perspective to explain model predictions with counterfactuals was first proposed (to our knowledge) by Martens and Provost ([2014](#bib.bib9 "Explaining data-driven document classifications")) (see also Provost ([2014](#bib.bib36 "Understanding decisions driven by big data"))). Other researchers followed with similar causal, counterfactual explanation approaches (see Molnar, [2019](#bib.bib12 "Interpretable machine learning, see 18.1 counterfactual explanations"), for examples). In this paper, we generalize the counterfactual explanations originally proposed for document classification (Martens and Provost, [2014](#bib.bib9 "Explaining data-driven document classifications")) and used subsequently to explain ad-targeting decisions (Moeyersoms et al., [2016](#bib.bib11 "Explaining classification models built on high-dimensional sparse data")), targeting decisions based on Facebook Likes (Chen et al., [2017](#bib.bib10 "Enhancing transparency and control when drawing data-driven inferences about individuals")), and classifications based on other high-dimensional, sparse data (Ramon et al., [2019](#bib.bib28 "Counterfactual explanation algorithms for behavioral and textual data")). We provide a more precise definition of counterfactual explanations below, but as with the prior work, we define explanations in terms of input data—or evidence—that would change the decision if it were not present.

###

3.1 Example: explaining the decision to flag a transaction

For illustration, suppose a credit card transaction was flagged for action by a data-driven AI system after it was registered as occurring outside the country where the cardholder lives, and suppose the system would have not flagged the transaction absent this location.222We should keep in mind the decision-rather-than-classification perspective. The decision is to flag the transaction for one or more actions, such as sending a message to the account holder to verify. Flagging may be based on a threshold on the estimated likelihood of fraud, but may also consider the existence of evidence from other transactions and the potential loss if the transaction were indeed fraudulent. In this case, it is intuitive to consider the location of the transaction as an explanation for the system decision. Of course, there could be other explanations. Perhaps the transaction also involved a consumption category outside the profile of the cardholder (e.g., a purchase at a casino), and excluding this information from the system would also change the decision to “do not flag”. Both are counterfactual explanations—they comprise evidence without which the system would have made a different decision.

A subtle implication of this perspective is that counterfactual explanations are generally applied to ‘‘non-default’’ decisions, because data-driven systems usually make default decisions in the absence of evidence suggesting that a different decision should be made. In our example, a transaction would be considered legitimate unless there is enough evidence suggesting fraud. As a result, explaining default decisions often corresponds to saying, ‘‘because there was not enough evidence of a non-default class’’.333However, this is not always the case. For example, if a credit card transaction was made in a foreign country, but the cardholder recently reported a trip abroad, the trip report could be a reasonable explanation for the transaction being classified as legitimate. So, the evidence in favor of a non-default classification may be cancelled out by other evidence in favor of a default classification. Thus, as with prior work, in this paper we focus primarily on explaining non-default decisions.

###

3.2 Defining counterfactual explanations

Following Martens and Provost ([2014](#bib.bib9 "Explaining data-driven document classifications")) and Provost ([2014](#bib.bib36 "Understanding decisions driven by big data")), we define a counterfactual explanation for a system decision as a set of features that is causal and irreducible. Being causal means that removing the set of features from the instance causes the system decision to change.444It is critical to differentiate what is causing the data-driven system to make its decisions from causal influences in the actual data-generating processes in the “real” world. Our definition of counterfactual explanations relates to the former. Irreducible means that removing any proper subset of the explanation would not change the system decision. The importance of an explanation being causal is straightforward: the decision would have been different if not for the presence of this set of features. The irreducibility condition serves to avoid including features that are superfluous, which relates to the fact that some of the features in a causal set may not be necessary for the decision to change.

More formally, consider an instance I consisting of a set of m features, I={1,2,...,m}, for which the decision-making system C:I→{1,2,...,k} gives decision c. A feature i is an attribute taking on a particular value, like income=$50,000 or country=FRANCE.

Then, a set of features E is a counterfactual explanation for C(I)=c if and only if:

| | | | |

| --- | --- | --- | --- |

| | E⊆I (the features are present in the instance) | | (1) |

| | C(I−E)≠c (the explanation is causal) | | (2) |

| | ∀E′⊂E:C(I−E′)=c (the %

explanation is irreducible) | | (3) |

As mentioned, our approach builds on the explanations proposed by Martens and Provost (2014), who developed and applied counterfactual explanations for document classifications, defining an explanation as an irreducible set of words such that removing them from a document changes its classification. Our definition generalizes their counterfactual explanations in three important ways. First, it makes explicit how the explanations may be used for broader system decisions, which may incorporate predictions from multiple predictive models. Second, their practical implementation of explanations (and subsequent work) consists of removing features by setting them to zero, whereas we generalize to arbitrary methods for removing features (and note the important relationship to methods for dealing with missing data). Third, while their approach has been applied in other contexts beyond document classification (Chen et al., [2017](#bib.bib10 "Enhancing transparency and control when drawing data-driven inferences about individuals"); Moeyersoms et al., [2016](#bib.bib11 "Explaining classification models built on high-dimensional sparse data"); Ramon et al., [2019](#bib.bib28 "Counterfactual explanation algorithms for behavioral and textual data")), these applications all have the same data structure: high-dimensional, sparse features. Our generalization applies to features with arbitrary data types.

Going back to our credit scoring example, suppose a decision-making system using the model prediction explained in Figure [1](#S2.F1 "Figure 1 ‣ 2.3 Explaining model predictions ‣ 2 AI Systems and Explanations ‣ Explaining Data-Driven Decisions made by AI Systems: The Counterfactual Approach") decides not to grant credit to that individual. Table [1](#S3.T1 "Table 1 ‣ 3.2 Defining counterfactual explanations ‣ 3 Counterfactual explanations ‣ Explaining Data-Driven Decisions made by AI Systems: The Counterfactual Approach") shows some possible counterfactual explanations for the credit denial decision. Each explanation represents a counterfactual world in which specific evidence is not considered when making the decision, resulting in a default decision (approving credit in this case).

| Explanation 1 | Credit approved if {‘loan\_amnt’} is removed. |

| --- | --- |

| Explanation 2 | Credit approved if {‘annual\_inc’} is removed. |

| Explanation 3 | Credit approved if {‘fico\_range\_high’, ‘fico\_range\_low’} are removed. |

Table 1: Examples of counterfactual explanations for a system decision

###

3.3 Removing “evidence” from the input to a data-driven decision procedure

A vital practical question that is raised by the counterfactual approach discussed here is what does it mean to “remove” evidence (i.e., features) from a data instance that will be input to a model-based decision-making procedure? Prior methods for counterfactual explanations and model sensitivity analyses have replaced input feature values with some other specified value. For example, Martens and Provost ([2014](#bib.bib9 "Explaining data-driven document classifications")) replace the presence (binary indicator, count, TFIDF value, etc.) of a word in a document with a zero. This makes sense in the context of their application, because if we consider the presence of a word as evidence for a document classification, removing that evidence---that word---would be represented by a zero for that feature.555They discuss the case where absence of a word would be evidence as well; see the original paper.

More generally, we should consider carefully the notion of removing features from the input to a data-driven model. If we step away for a moment from explaining AI systems, we can think of explaining other sorts of evidence-driven decisions within the same framework. For instance, in a murder case, we might explain our decision to bring in the suspect based on the fact that the murder weapon was found in her apartment; if there were no murder weapon, we would not have brought her in. If we would have brought her in anyway, then the presence of the weapon does not suffice as an explanation for our decision. So, in this case, we are imagining our collection of evidence with the focal piece of evidence missing. We can do the same *in principle* with data-driven decisions: we can make the feature in question be missing and ask if we would still make the same decision. Thus, we can generalize to data inputs of any kind: removing the feature means “making it missing” in the data instance.

We emphasize that we can do this “in principle” because in practice it may or may not be practicable to simply make a feature be missing. Some AI models and systems deal with missing features naturally and some do not. Importantly, note that here we are talking about dealing with missing values at the time of use of the model, not dealing with missing values during machine learning. There are different ways for dealing with missing features when applying (as opposed to learning) a predictive model (Saar-Tsechansky and Provost, [2007](#bib.bib25 "Handling missing values when applying classification models")), such as imputing default values for the missing features, using an alternative model trained with only the available features, etc.

Therefore, the generalized explanation framework we present is agnostic to which method is used to deal with the removed features—taking the position that this decision is domain and problem dependent. Within a particular domain and explanation context, the user should choose the method for dealing with missing values. For example, in settings where features are often missing at prediction time, replacing the value of a feature with a “missing” categorical value might make the most sense to simulate missingness, whereas in cases where all attributes must have values specified in order to make the decision, replacing the value with the mean or the mode might make more sense. What matters is that the decision may change when some of the features are not present at the time of decision making, and that the method for dealing with missing values allows the change in the decision to be attributed to the absence of these features.

This framework naturally incorporates other techniques used in prior counterfactual approaches: the common case of replacing a feature in a sparse setting with a zero corresponds to mode imputation; replacing a numeric feature with the mean value for that attribute corresponds to mean imputation.

In the empirical examples presented below, we use mean imputation for continuous variables and mode imputation for sparse numeric, binary, and categorical variables. Saar-Tsechansky and Provost ([2007](#bib.bib25 "Handling missing values when applying classification models")) discuss other alternatives for dealing with missing values when applying predictive models; any of them could be used in conjunction with this counterfactual explanation framework.

###

3.4 A procedure for finding useful counterfactual explanations

This definition of counterfactual explanations for system decisions allows any procedure for finding such explanations. For example, fast solvers for combinatorial problems may be used to find counterfactual explanations (Schreiber et al., [2018](#bib.bib26 "Optimal multi-way number partitioning")). For this paper, and for the examples that follow, we adopt a heuristic procedure to find the most useful explanations depending on the context.

The algorithm proposed by Martens and Provost ([2014](#bib.bib9 "Explaining data-driven document classifications")) finds counterfactual explanations by using a heuristic search that requires the decision to be based on a scoring function, such as a probability estimate from a predictive model. We also will presume that the decision making is based on comparing some score to a threshold.

This scoring function is used by the search algorithm to first consider features that, when removed, reduce the score of the predicted class the most. This heuristic may be desirable when the goal is to find the smallest explanations, such as when explaining the decisions of models that use thousands of features. Another possible heuristic is to remove features according to their overall importance for the prediction, where the importance may be computed by a feature importance explanation technique (Ramon et al., [2019](#bib.bib28 "Counterfactual explanation algorithms for behavioral and textual data")).

However, the shortest explanations are not necessarily the best explanations. For instance, users may want to use the explanations as guidelines for what to change in order to affect the system decision. As an example, suppose that a system decides to warn a man that he is at high risk of having a heart attack. An explanation that “the system would have not made the warning if the patient were not male” is of very little use as a guide for what to do about it. In practice, some features are easier to change than others, and some may be practically impossible to change.

Therefore, we allow the incorporation of a cost function as part of the heuristic procedure in order to search first for the most relevant explanations. The underlying idea is that the cost function may be used to associate costs to the removal (or adjustment) of features, so that sets of features that satisfy desirable characteristics are searched first. Importantly, the cost function is meant to be used as a mechanism to capture the relevance of explanations, so the cost of changing or removing the features might not represent an actual cost (we will show an example of this in one of the case studies below). For example, the cost may be fixed (e.g., when removing a word from a document), may be contingent on the value of the variable (e.g., when adjusting a continuous variable), contingent on the value of other features, or may even be practically infinite.

Subsequently, instead of searching for the feature combinations that change the score of the predicted class the most, the heuristic could search for the feature combinations for which the output score changes the most per unit of cost. The motivation behind this new heuristic is to find first the explanations with the lowest costs. Returning to the heart attack example, if we assign an infinite cost to changing the gender feature, the heuristic would not select feature combinations that include it, regardless of its high impact on the output score. Instead, the heuristic would prefer explanations with many modest but “cheap” changes, such as changing several daily habits. To the extent that the system also has a scoring function (which could be the result of combining several predictive models), the procedure proposed by Martens and Provost ([2014](#bib.bib9 "Explaining data-driven document classifications")) could be easily adjusted to find the most useful explanations for the problem at hand. A similar approach has been suggested for classifiers that have a known and differentiable scoring function (Lash et al., [2017](#bib.bib27 "A budget-constrained inverse classification framework for smooth classifiers")).

###

3.5 Other advantages of counterfactual explanations

Counterfactual explanations have other benefits as well. First, as with importance weights, they are defined in terms of domain knowledge (features) rather than in terms of modeling techniques. As mentioned above, this is of critical importance to explain individual decisions made by such models to users. More importantly, these explanations can be used to understand how features affect decisions, which (as we will show in next sections) is not captured well by feature importance methods. Also, because only a fraction of the features will be present in any single explanation, the present approach may be used to explain decisions from models with thousands of features (or many more). Studies show cases where such explanations can be obtained in seconds for models with tens or hundreds of thousands of features and that the explanations typically consisted of a handful to a few dozen of features at the most (Martens and Provost, [2014](#bib.bib9 "Explaining data-driven document classifications"); Moeyersoms et al., [2016](#bib.bib11 "Explaining classification models built on high-dimensional sparse data"); Chen et al., [2017](#bib.bib10 "Enhancing transparency and control when drawing data-driven inferences about individuals")).

4 Limitations of importance weights

------------------------------------

In this section, we use three simple, synthetic (but illustrative) examples to highlight two fundamental reasons why importance-weight explanations may not be well-suited to explain data-driven decisions made by AI systems. The first example (Example 1) is meant to illustrate that features that have a large impact on a prediction (and thus large importance weights) may not have any impact on the decision made using that prediction. The next two examples show that importance weights are insufficient to communicate how features actually affect decisions (even when importance is determined with respect to system decisions rather than model predictions). More specifically, we show cases in which importance weights remain the same despite substantial changes to decision making (Examples 1, 2, and 3) and in which features deemed unimportant by the weights actually affect the decision (Example 3). Similar examples to the ones discussed in this section will come up again in the case studies in Section [5](#S5 "5 Case Studies ‣ Explaining Data-Driven Decisions made by AI Systems: The Counterfactual Approach"), when comparing importance weights with counterfactual explanations using real-world data.

Throughout this section, the examples assume that we want to explain the binary decision made for three-feature instance I and decision procedure Ci as defined here:

| | | | |

| --- | --- | --- | --- |

| | I={F1=1,F2=1,F3=1}, | | (4) |

| | Ci(I)={1,if ^Yi(I)≥10,otherwise, | | (5) |

where {F1,F2,F3} are binary features, and Ci is the decision-making procedure (an AI system) that uses the scoring (or prediction) function ^Yi to make decisions. The examples that follow will employ different ^Yi. We assume that domain knowledge has guided us to replace the values of missing features with a default value of zero.

We compute importance weights using SHAP (Lundberg and Lee, [2017](#bib.bib7 "A unified approach to interpreting model predictions")), a popular approach to explain the output of machine learning models. Before we focus on the disadvantages of importance weights for explaining system decisions, let us point out that SHAP has several advantages for explaining data-driven model predictions: (i) it produces numeric “importance weights” for each feature at an instance-level, (ii) it is model-agnostic, (iii) its importance weights tie instance-level explanations to cooperative game theory, providing a solid theoretical foundation, (iv) and SHAP unites several feature importance weighting methods, including the relatively well-known LIME (Ribeiro, Singh and Guestrin, 2016).

In the case of SHAP, importance weights consist of the (approximated) Shapley values of the features for a model prediction. Shapley values correspond to the impact each feature has on the prediction, averaged over all possible joining orders of the features. A major limitation of Shapley values is that computing them becomes intractable as the number of features grows. SHAP circumvents this limitation by sampling the space of feature combinations, resulting in a sampling-based approximation of the Shapley values. There are only 3 features in the examples that follow, so the approximations are not necessary here, but they will be for the case studies discussed in Section [5](#S5 "5 Case Studies ‣ Explaining Data-Driven Decisions made by AI Systems: The Counterfactual Approach"), where the number of features is much larger. We illustrate the computation of Shapley values in more detail in the examples below.

###

4.1 Example 1: Distinguishing between predictions and decisions

All importance weighting methods (that we are aware of) are designed to explain the output of scoring functions, not system decisions. This is problematic because a large impact on the scoring function does not necessarily translate to an impact on the decision. This example illustrates this by defining ^Y1 as follows:

| | | | |

| --- | --- | --- | --- |

| | ^Y1(I)=F1+F2+10F1F3+10F2F3, | | (6) |

so the prediction and the decision for instance I are ^Y1(I)=22 and C1(I)=1 respectively.

Table [2](#S4.T2 "Table 2 ‣ 4.1 Example 1: Distinguishing between predictions and decisions ‣ 4 Limitations of importance weights ‣ Explaining Data-Driven Decisions made by AI Systems: The Counterfactual Approach") shows how to compute the Shapley values of the features with respect to ^Y1. Each row represents one of the six possible joining orders of the features, and each column corresponds to the impact of one of the three features across those joining orders. The last row shows the average impact of the features, which corresponds to the Shapley values.

| Joining orders | Impact of F1 | Impact of F2 | Impact of F3 |

| --- | --- | --- | --- |

| F1,F2,F3 | 1 | 1 | 20 |

| F1,F3,F2 | 1 | 11 | 10 |

| F2,F1,F3 | 1 | 1 | 20 |

| F2,F3,F1 | 11 | 1 | 10 |

| F3,F1,F2 | 11 | 11 | 0 |

| F3,F2,F1 | 11 | 11 | 0 |

| Shapley values | 6 | 6 | 10 |

Table 2: Shapley values for ^Y1 and all the joining orders used in their computation.

According to Table [2](#S4.T2 "Table 2 ‣ 4.1 Example 1: Distinguishing between predictions and decisions ‣ 4 Limitations of importance weights ‣ Explaining Data-Driven Decisions made by AI Systems: The Counterfactual Approach"), SHAP gives F3 a larger weight than F1 or F2 due to its large impact on ^Y1. However, if we take a closer look at C1 and ^Y1 simultaneosuly, we can see that F3 does not affect the decision-making procedure at all! More specifically F3 only affects ^Y1 if F1 or F2 are already present, but if those features are present, then increasing the score does not affect the decision because ^Y1≥1 (implying that C1=1 regardless of F3). Therefore, the large “importance” of a feature for a model prediction may not imply an impact on a decision made with that prediction.