modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

DucHaiten/DucHaitenJourney | 2023-06-15T12:58:48.000Z | [

"diffusers",

"stable-diffusion",

"text-to-image",

"image-to-image",

"en",

"license:creativeml-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | DucHaiten | null | null | DucHaiten/DucHaitenJourney | 8 | 3,377 | diffusers | 2023-03-12T16:01:27 | ---

language:

- en

tags:

- stable-diffusion

- text-to-image

- image-to-image

- diffusers

license: creativeml-openrail-m

inference: true

---

Recommended settings: DPM++ 2S a Karras sampler, CFG scale 10.

The model works better at larger resolutions such as 768x768; 512x512 gives poor quality.

Negative prompt:

illustration, painting, cartoons, sketch, (worst quality:2), (low quality:2), (normal quality:2), lowres, bad anatomy, bad hands, ((monochrome)), ((grayscale)), collapsed eyeshadow, multiple eyeblows, vaginas in breasts, (cropped), oversaturated, extra limb, missing limbs, deformed hands, long neck, long body, imperfect, (bad hands), signature, watermark, username, artist name, conjoined fingers, deformed fingers, ugly eyes, imperfect eyes, skewed eyes, unnatural face, unnatural body, error | 754 | [

[

-0.0478515625,

-0.03839111328125,

0.046173095703125,

0.0269622802734375,

-0.065673828125,

0.0039005279541015625,

0.0282440185546875,

-0.050933837890625,

0.02880859375,

0.02874755859375,

-0.048736572265625,

-0.01143646240234375,

-0.08258056640625,

0.021469116... |

wissamantoun/araelectra-base-artydiqa | 2023-03-20T13:06:59.000Z | [

"transformers",

"pytorch",

"safetensors",

"electra",

"question-answering",

"ar",

"dataset:tydiqa",

"arxiv:2012.15516",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

] | question-answering | wissamantoun | null | null | wissamantoun/araelectra-base-artydiqa | 8 | 3,376 | transformers | 2022-03-02T23:29:05 | ---

language: ar

datasets:

- tydiqa

widget:

- text: "ما هو نظام الحكم في لبنان؟"

context: "لبنان أو (رسميا: الجمهورية اللبنانية)، هي دولة عربية واقعة في الشرق الأوسط في غرب القارة الآسيوية. تحدها سوريا من الشمال و الشرق، و فلسطين المحتلة - إسرائيل من الجنوب، وتطل من جهة الغرب على البحر الأبيض المتوسط. هو بلد ديمقراطي جمهوري طوائفي. معظم سكانه من العرب المسلمين و المسيحيين. وبخلاف غالبية الدول العربية هناك وجود فعال للمسيحيين في الحياة العامة والسياسية. هاجر وانتشر أبناؤه حول العالم منذ أيام الفينيقيين، وحاليا فإن عدد اللبنانيين المهاجرين يقدر بضعف عدد اللبنانيين المقيمين. واجه لبنان منذ القدم تعدد الحضارات التي عبرت فيه أو احتلت أراضيه وذلك لموقعه الوسطي بين الشمال الأوروبي والجنوب العربي والشرق الآسيوي والغرب الأفريقي، ويعد هذا الموقع المتوسط من أبرز الأسباب لتنوع الثقافات في لبنان، وفي الوقت ذاته من الأسباب المؤدية للحروب والنزاعات على مر العصور تجلت بحروب أهلية ونزاع مصيري مع إسرائيل. ويعود أقدم دليل على استيطان الإنسان في لبنان ونشوء حضارة على أرضه إلى أكثر من 7000 سنة. في القدم، سكن الفينيقيون أرض لبنان الحالية مع جزء من أرض سوريا و فلسطين، وهؤلاء قوم ساميون اتخذوا من الملاحة والتجارة مهنة لهم، وازدهرت حضارتهم طيلة 2500 سنة تقريبا (من حوالي سنة 3000 حتى سنة 539 ق.م). وقد مرت على لبنان عدة حضارات وشعوب استقرت فيه منذ عهد الفينيقين، مثل المصريين القدماء، الآشوريين، الفرس، الإغريق، الرومان، الروم البيزنطيين، العرب، الصليبيين، الأتراك العثمانيين، فالفرنسيين."

---

<img src="https://raw.githubusercontent.com/WissamAntoun/arabic-wikipedia-qa-streamlit/main/is2alni_logo.png" width="150" align="center"/>

# Arabic QA

AraELECTRA-powered Arabic Wikipedia QA system with Streamlit: [open the Streamlit app](https://share.streamlit.io/wissamantoun/arabic-wikipedia-qa-streamlit/main)

This model was trained on the Arabic section of ArTyDiQA using [this Colab notebook](https://colab.research.google.com/drive/1hik0L_Dxg6WwJFcDPP1v74motSkst4gE?usp=sharing)

# How to use:

```bash

git clone https://github.com/aub-mind/arabert

pip install pyarabic

```

```python

from arabert.preprocess import ArabertPreprocessor

from transformers import pipeline

prep = ArabertPreprocessor("aubmindlab/araelectra-base-discriminator")  # passing an empty string behaves the same

qa_pipe = pipeline("question-answering", model="wissamantoun/araelectra-base-artydiqa")

text = " ما هو نظام الحكم في لبنان؟"

context = """

لبنان أو (رسميًّا: الجُمْهُورِيَّة اللبنانيَّة)، هي دولة عربيّة واقِعَة في الشَرق الأوسط في غرب القارة الآسيويّة. تَحُدّها سوريا من الشمال والشرق، وفلسطين المحتلة - إسرائيل من الجنوب، وتطل من جهة الغرب على البحر الأبيض المتوسط. هو بلد ديمقراطي جمهوري طوائفي. مُعظم سكانه من العرب المسلمين والمسيحيين. وبخلاف غالبيّة الدول العربيّة هناك وجود فعّال للمسيحيين في الحياة العامّة والسياسيّة. هاجر وانتشر أبناؤه حول العالم منذ أيام الفينيقيين، وحاليًّا فإن عدد اللبنانيين المهاجرين يُقدَّر بضعف عدد اللبنانيين المقيمين.

واجه لبنان منذ القدم تعدد الحضارات التي عبرت فيه أو احتلّت أراضيه وذلك لموقعه الوسطي بين الشمال الأوروبي والجنوب العربي والشرق الآسيوي والغرب الأفريقي، ويعد هذا الموقع المتوسط من أبرز الأسباب لتنوع الثقافات في لبنان، وفي الوقت ذاته من الأسباب المؤدية للحروب والنزاعات على مر العصور تجلت بحروب أهلية ونزاع مصيري مع إسرائيل. ويعود أقدم دليل على استيطان الإنسان في لبنان ونشوء حضارة على أرضه إلى أكثر من 7000 سنة.

في القدم، سكن الفينيقيون أرض لبنان الحالية مع جزء من أرض سوريا وفلسطين، وهؤلاء قوم ساميون اتخذوا من الملاحة والتجارة مهنة لهم، وازدهرت حضارتهم طيلة 2500 سنة تقريبًا (من حوالي سنة 3000 حتى سنة 539 ق.م). وقد مرّت على لبنان عدّة حضارات وشعوب استقرت فيه منذ عهد الفينيقين، مثل المصريين القدماء، الآشوريين، الفرس، الإغريق، الرومان، الروم البيزنطيين، العرب، الصليبيين، الأتراك العثمانيين، فالفرنسيين.

"""

# preprocess both the question and the context to get the best results

text = prep.preprocess(text)

context = prep.preprocess(context)

result = qa_pipe(question=text,context=context)

"""

{'answer': 'ديمقراطي جمهوري طوائفي',

'end': 241,

'score': 0.4910127818584442,

'start': 219}

"""

```
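The `start` and `end` values in the result are character offsets into the context string that was passed to the pipeline (here, the preprocessed one). The following is a minimal sketch of recovering the answer span from such a result. The helper name `answer_span`, the English context, and the result dictionary are hypothetical stand-ins, not real model output.

```python
def answer_span(context: str, result: dict) -> str:
    """Slice the answer out of the context using the character offsets."""
    return context[result["start"]:result["end"]]


# Hypothetical stand-ins for a preprocessed context and a pipeline result.
context = "Lebanon is a democratic republic."
answer = "democratic republic"
start = context.index(answer)
result = {"answer": answer, "start": start, "end": start + len(answer), "score": 0.5}

span = answer_span(context, result)
print(span)
```

Because the offsets refer to the string given to the pipeline, slice the preprocessed context rather than the raw one.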

# If you used this model, please cite us as:

```

@misc{antoun2020araelectra,

title={AraELECTRA: Pre-Training Text Discriminators for Arabic Language Understanding},

author={Wissam Antoun and Fady Baly and Hazem Hajj},

year={2020},

eprint={2012.15516},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

``` | 4,392 | [

[

-0.053863525390625,

-0.04412841796875,

0.030731201171875,

0.011627197265625,

-0.0306854248046875,

-0.01120758056640625,

0.01708984375,

-0.024139404296875,

0.037750244140625,

0.0181121826171875,

-0.02288818359375,

-0.038787841796875,

-0.0565185546875,

0.01786... |

microsoft/tapex-large-finetuned-wtq | 2023-03-14T11:51:54.000Z | [

"transformers",

"pytorch",

"bart",

"text2text-generation",

"tapex",

"table-question-answering",

"en",

"dataset:wikitablequestions",

"arxiv:2107.07653",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

] | table-question-answering | microsoft | null | null | microsoft/tapex-large-finetuned-wtq | 31 | 3,374 | transformers | 2022-03-10T05:06:08 | ---

language: en

tags:

- tapex

- table-question-answering

datasets:

- wikitablequestions

license: mit

---

# TAPEX (large-sized model)

TAPEX was proposed in [TAPEX: Table Pre-training via Learning a Neural SQL Executor](https://arxiv.org/abs/2107.07653) by Qian Liu, Bei Chen, Jiaqi Guo, Morteza Ziyadi, Zeqi Lin, Weizhu Chen, Jian-Guang Lou. The original repo can be found [here](https://github.com/microsoft/Table-Pretraining).

## Model description

TAPEX (**Ta**ble **P**re-training via **Ex**ecution) is a conceptually simple and empirically powerful pre-training approach to empower existing models with *table reasoning* skills. TAPEX realizes table pre-training by learning a neural SQL executor over a synthetic corpus, which is obtained by automatically synthesizing executable SQL queries.

TAPEX is based on the BART architecture, the transformer encoder-decoder (seq2seq) model with a bidirectional (BERT-like) encoder and an autoregressive (GPT-like) decoder.

This model is the `tapex-large` model fine-tuned on the [WikiTableQuestions](https://huggingface.co/datasets/wikitablequestions) dataset.

## Intended Uses

You can use the model for table question answering on *complex* questions. Some **solvable** questions are shown below (corresponding tables not shown):

| Question | Answer |

|:---: |:---:|

| according to the table, what is the last title that spicy horse produced? | Akaneiro: Demon Hunters |

| what is the difference in runners-up from coleraine academical institution and royal school dungannon? | 20 |

| what were the first and last movies greenstreet acted in? | The Maltese Falcon, Malaya |

| in which olympic games did arasay thondike not finish in the top 20? | 2012 |

| which broadcaster hosted 3 titles but they had only 1 episode? | Channel 4 |

### How to Use

Here is how to use this model in transformers:

```python

from transformers import TapexTokenizer, BartForConditionalGeneration

import pandas as pd

tokenizer = TapexTokenizer.from_pretrained("microsoft/tapex-large-finetuned-wtq")

model = BartForConditionalGeneration.from_pretrained("microsoft/tapex-large-finetuned-wtq")

data = {

"year": [1896, 1900, 1904, 2004, 2008, 2012],

"city": ["athens", "paris", "st. louis", "athens", "beijing", "london"]

}

table = pd.DataFrame.from_dict(data)

# tapex accepts uncased input since it is pre-trained on the uncased corpus

query = "In which year did beijing host the Olympic Games?"

encoding = tokenizer(table=table, query=query, return_tensors="pt")

outputs = model.generate(**encoding)

print(tokenizer.batch_decode(outputs, skip_special_tokens=True))

# [' 2008.0']

```
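The decoded output is a list of strings such as `[' 2008.0']`, with a leading space and integer cells rendered as floats. A small post-processing sketch, assuming you want a trimmed string with whole numbers shown without the trailing `.0`. The helper `normalize_answer` is hypothetical and not part of `transformers`.

```python
def normalize_answer(raw: str) -> str:
    """Trim whitespace and render whole-number answers without a '.0' suffix."""
    text = raw.strip()
    try:
        value = float(text)
    except ValueError:
        # Not numeric (e.g. a title or a name): return the trimmed text.
        return text
    if value.is_integer():
        return str(int(value))
    return text


decoded = [" 2008.0"]  # shape of the output shown in the example above
answers = [normalize_answer(a) for a in decoded]
print(answers)
```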

### How to Eval

Please find the eval script [here](https://github.com/huggingface/transformers/tree/main/examples/research_projects/tapex).

### BibTeX entry and citation info

```bibtex

@inproceedings{

liu2022tapex,

title={{TAPEX}: Table Pre-training via Learning a Neural {SQL} Executor},

author={Qian Liu and Bei Chen and Jiaqi Guo and Morteza Ziyadi and Zeqi Lin and Weizhu Chen and Jian-Guang Lou},

booktitle={International Conference on Learning Representations},

year={2022},

url={https://openreview.net/forum?id=O50443AsCP}

}

``` | 3,197 | [

[

-0.03851318359375,

-0.0516357421875,

0.036468505859375,

-0.009368896484375,

-0.01568603515625,

0.003597259521484375,

-0.0162506103515625,

-0.0076751708984375,

0.0289459228515625,

0.03662109375,

-0.038238525390625,

-0.041778564453125,

-0.036346435546875,

-0.0... |

timm/vit_base_patch16_224.augreg_in1k | 2023-05-06T00:00:30.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2106.10270",

"arxiv:2010.11929",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/vit_base_patch16_224.augreg_in1k | 1 | 3,372 | timm | 2022-12-22T07:24:52 | ---

tags:

- image-classification

- timm

library_name: timm

license: apache-2.0

datasets:

- imagenet-1k

---

# Model card for vit_base_patch16_224.augreg_in1k

A Vision Transformer (ViT) image classification model. Trained on ImageNet-1k (with additional augmentation and regularization) in JAX by paper authors, ported to PyTorch by Ross Wightman.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 86.6

- GMACs: 16.9

- Activations (M): 16.5

- Image size: 224 x 224

- **Papers:**

- How to train your ViT? Data, Augmentation, and Regularization in Vision Transformers: https://arxiv.org/abs/2106.10270

- An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale: https://arxiv.org/abs/2010.11929v2

- **Dataset:** ImageNet-1k

- **Original:** https://github.com/google-research/vision_transformer

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

import torch

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('vit_base_patch16_224.augreg_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```
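The last line of the example converts the logits to percentages with a softmax and keeps the five largest. A dependency-free sketch of the same computation, using a short hypothetical list of logits in place of the model's 1000-class output. The function name `top_k_percent` is an illustration, not a `timm` or `torch` API.

```python
import math


def top_k_percent(logits, k=5):
    """Return (percentages, indices) of the k most probable classes."""
    peak = max(logits)
    # Subtract the maximum before exponentiating for numerical stability.
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    probs = [100.0 * e / total for e in exps]
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    return [probs[i] for i in ranked], ranked


logits = [0.1, 2.0, -1.0, 3.5, 0.7, 1.2]  # hypothetical values
top_probs, top_idx = top_k_percent(logits, k=5)
print(top_idx)
```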

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'vit_base_patch16_224.augreg_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 197, 768) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```
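The `(1, 197, 768)` shape noted above follows from the patch grid: a 224 x 224 image cut into 16 x 16 patches gives 14 x 14 = 196 patch tokens, plus one class token. A minimal sketch of that arithmetic follows; the helper `vit_token_count` is hypothetical, and a single prefix (class) token is assumed.

```python
def vit_token_count(image_size: int, patch_size: int, num_prefix_tokens: int = 1) -> int:
    """Number of tokens a ViT produces for a square image."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    grid = image_size // patch_size
    return grid * grid + num_prefix_tokens


tokens = vit_token_count(224, 16)
print(tokens)
```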

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

## Citation

```bibtex

@article{steiner2021augreg,

title={How to train your ViT? Data, Augmentation, and Regularization in Vision Transformers},

author={Steiner, Andreas and Kolesnikov, Alexander and Zhai, Xiaohua and Wightman, Ross and Uszkoreit, Jakob and Beyer, Lucas},

journal={arXiv preprint arXiv:2106.10270},

year={2021}

}

```

```bibtex

@article{dosovitskiy2020vit,

title={An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale},

author={Dosovitskiy, Alexey and Beyer, Lucas and Kolesnikov, Alexander and Weissenborn, Dirk and Zhai, Xiaohua and Unterthiner, Thomas and Dehghani, Mostafa and Minderer, Matthias and Heigold, Georg and Gelly, Sylvain and Uszkoreit, Jakob and Houlsby, Neil},

journal={ICLR},

year={2021}

}

```

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

| 3,796 | [

[

-0.03875732421875,

-0.03106689453125,

-0.0036296844482421875,

0.006702423095703125,

-0.0298004150390625,

-0.027069091796875,

-0.0207977294921875,

-0.03448486328125,

0.0141754150390625,

0.0246124267578125,

-0.04144287109375,

-0.038238525390625,

-0.047760009765625... |

textattack/bert-base-uncased-yelp-polarity | 2021-05-20T07:49:07.000Z | [

"transformers",

"pytorch",

"jax",

"bert",

"text-classification",

"endpoints_compatible",

"has_space",

"region:us"

] | text-classification | textattack | null | null | textattack/bert-base-uncased-yelp-polarity | 2 | 3,371 | transformers | 2022-03-02T23:29:05 | ## TextAttack Model Card

This `bert-base-uncased` model was fine-tuned for sequence classification using TextAttack

and the yelp_polarity dataset loaded using the `nlp` library. The model was fine-tuned

for 5 epochs with a batch size of 16, a learning

rate of 5e-05, and a maximum sequence length of 256.

Since this was a classification task, the model was trained with a cross-entropy loss function.

The best score the model achieved on this task was 0.9699473684210527, as measured by the

eval set accuracy, found after 4 epochs.

For more information, check out [TextAttack on GitHub](https://github.com/QData/TextAttack).
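As a sanity check on the reported score, the accuracy can be converted back into a count of correct predictions. The sketch below assumes the eval set is the 38,000-example Yelp polarity test split. The card does not state the eval set size, so treat that number as an assumption.

```python
reported_accuracy = 0.9699473684210527  # from the card above
assumed_eval_size = 38_000  # assumption: size of the yelp_polarity test split

correct = round(reported_accuracy * assumed_eval_size)
errors = assumed_eval_size - correct
reconstructed = correct / assumed_eval_size

print(correct, errors, reconstructed)
```

Under that assumption, the reported value is consistent with a whole number of correctly classified examples.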

| 632 | [

[

-0.01605224609375,

-0.02587890625,

0.029693603515625,

0.0007410049438476562,

-0.039215087890625,

-0.0008273124694824219,

-0.017974853515625,

-0.033782958984375,

-0.005992889404296875,

0.0294036865234375,

-0.043243408203125,

-0.05596923828125,

-0.036346435546875,... |

timm/maxvit_base_tf_384.in1k | 2023-05-10T23:55:57.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2204.01697",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/maxvit_base_tf_384.in1k | 1 | 3,367 | timm | 2022-12-02T21:48:32 | ---

tags:

- image-classification

- timm

library_name: timm

license: apache-2.0

datasets:

- imagenet-1k

---

# Model card for maxvit_base_tf_384.in1k

An official MaxViT image classification model. Trained in TensorFlow on ImageNet-1k by paper authors.

Ported from the official TensorFlow implementation (https://github.com/google-research/maxvit) to PyTorch by Ross Wightman.

### Model Variants in [maxxvit.py](https://github.com/huggingface/pytorch-image-models/blob/main/timm/models/maxxvit.py)

MaxxViT covers a number of related model architectures that share a common structure including:

- CoAtNet - Combining MBConv (depthwise-separable) convolutional blocks in early stages with self-attention transformer blocks in later stages.

- MaxViT - Uniform blocks across all stages, each containing a MBConv (depthwise-separable) convolution block followed by two self-attention blocks with different partitioning schemes (window followed by grid).

- CoAtNeXt - A timm specific arch that uses ConvNeXt blocks in place of MBConv blocks in CoAtNet. All normalization layers are LayerNorm (no BatchNorm).

- MaxxViT - A timm specific arch that uses ConvNeXt blocks in place of MBConv blocks in MaxViT. All normalization layers are LayerNorm (no BatchNorm).

- MaxxViT-V2 - A MaxxViT variation that removes the window block attention leaving only ConvNeXt blocks and grid attention w/ more width to compensate.

Aside from the major variants listed above, there are more subtle changes from model to model. Any model name containing the string `rw` is a `timm`-specific config with modelling adjustments made to favour PyTorch eager use. These were created while training initial reproductions of the models, so there are variations.

All models with the string `tf` exactly match the TensorFlow-based models by the original paper authors, with weights ported to PyTorch. This covers a number of MaxViT models. The official CoAtNet models were never released.
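The naming rules above can be checked mechanically. The following is a rough sketch, not a `timm` API: the helper `describe_timm_name` is hypothetical, and it assumes names follow the `architecture.pretrained_tag` pattern with underscore-separated parts, as the names in this card do.

```python
def describe_timm_name(name: str) -> dict:
    """Split a model name and flag the `tf` / `rw` markers described above."""
    arch, _, tag = name.partition(".")
    parts = arch.split("_")
    return {
        "architecture": arch,
        "pretrained_tag": tag,
        "family": parts[0],
        # `tf`: weights ported from the original TensorFlow models.
        "is_tf_port": "tf" in parts,
        # `rw`, `rw2`, ...: timm-specific configs.
        "is_timm_specific": any(p.startswith("rw") for p in parts),
    }


info = describe_timm_name("maxvit_base_tf_384.in1k")
print(info)
```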

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 119.7

- GMACs: 73.8

- Activations (M): 332.9

- Image size: 384 x 384

- **Papers:**

- MaxViT: Multi-Axis Vision Transformer: https://arxiv.org/abs/2204.01697

- **Dataset:** ImageNet-1k

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

import torch

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('maxvit_base_tf_384.in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'maxvit_base_tf_384.in1k',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:

# print shape of each feature map in output

# e.g.:

# torch.Size([1, 64, 192, 192])

# torch.Size([1, 96, 96, 96])

# torch.Size([1, 192, 48, 48])

# torch.Size([1, 384, 24, 24])

# torch.Size([1, 768, 12, 12])

print(o.shape)

```
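The spatial sizes printed above are the 384-pixel input divided by each stage's reduction factor. A minimal sketch of that arithmetic follows. The reductions `(2, 4, 8, 16, 32)` are inferred from the shapes listed in the example rather than read from the model, and `feature_map_sizes` is a hypothetical helper.

```python
def feature_map_sizes(image_size: int, reductions=(2, 4, 8, 16, 32)) -> list:
    """Spatial side length of each feature map for a square input."""
    return [image_size // r for r in reductions]


sizes = feature_map_sizes(384)
print(sizes)
```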

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'maxvit_base_tf_384.in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 768, 12, 12) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Model Comparison

### By Top-1

|model |top1 |top5 |samples / sec |Params (M) |GMAC |Act (M)|

|------------------------------------------------------------------------------------------------------------------------|----:|----:|--------------:|--------------:|-----:|------:|

|[maxvit_xlarge_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_xlarge_tf_512.in21k_ft_in1k) |88.53|98.64| 21.76| 475.77|534.14|1413.22|

|[maxvit_xlarge_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_xlarge_tf_384.in21k_ft_in1k) |88.32|98.54| 42.53| 475.32|292.78| 668.76|

|[maxvit_base_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_base_tf_512.in21k_ft_in1k) |88.20|98.53| 50.87| 119.88|138.02| 703.99|

|[maxvit_large_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_large_tf_512.in21k_ft_in1k) |88.04|98.40| 36.42| 212.33|244.75| 942.15|

|[maxvit_large_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_large_tf_384.in21k_ft_in1k) |87.98|98.56| 71.75| 212.03|132.55| 445.84|

|[maxvit_base_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_base_tf_384.in21k_ft_in1k) |87.92|98.54| 104.71| 119.65| 73.80| 332.90|

|[maxvit_rmlp_base_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/maxvit_rmlp_base_rw_384.sw_in12k_ft_in1k) |87.81|98.37| 106.55| 116.14| 70.97| 318.95|

|[maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k) |87.47|98.37| 149.49| 116.09| 72.98| 213.74|

|[coatnet_rmlp_2_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_384.sw_in12k_ft_in1k) |87.39|98.31| 160.80| 73.88| 47.69| 209.43|

|[maxvit_rmlp_base_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/maxvit_rmlp_base_rw_224.sw_in12k_ft_in1k) |86.89|98.02| 375.86| 116.14| 23.15| 92.64|

|[maxxvitv2_rmlp_base_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/maxxvitv2_rmlp_base_rw_224.sw_in12k_ft_in1k) |86.64|98.02| 501.03| 116.09| 24.20| 62.77|

|[maxvit_base_tf_512.in1k](https://huggingface.co/timm/maxvit_base_tf_512.in1k) |86.60|97.92| 50.75| 119.88|138.02| 703.99|

|[coatnet_2_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_2_rw_224.sw_in12k_ft_in1k) |86.57|97.89| 631.88| 73.87| 15.09| 49.22|

|[maxvit_large_tf_512.in1k](https://huggingface.co/timm/maxvit_large_tf_512.in1k) |86.52|97.88| 36.04| 212.33|244.75| 942.15|

|[coatnet_rmlp_2_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_224.sw_in12k_ft_in1k) |86.49|97.90| 620.58| 73.88| 15.18| 54.78|

|[maxvit_base_tf_384.in1k](https://huggingface.co/timm/maxvit_base_tf_384.in1k) |86.29|97.80| 101.09| 119.65| 73.80| 332.90|

|[maxvit_large_tf_384.in1k](https://huggingface.co/timm/maxvit_large_tf_384.in1k) |86.23|97.69| 70.56| 212.03|132.55| 445.84|

|[maxvit_small_tf_512.in1k](https://huggingface.co/timm/maxvit_small_tf_512.in1k) |86.10|97.76| 88.63| 69.13| 67.26| 383.77|

|[maxvit_tiny_tf_512.in1k](https://huggingface.co/timm/maxvit_tiny_tf_512.in1k) |85.67|97.58| 144.25| 31.05| 33.49| 257.59|

|[maxvit_small_tf_384.in1k](https://huggingface.co/timm/maxvit_small_tf_384.in1k) |85.54|97.46| 188.35| 69.02| 35.87| 183.65|

|[maxvit_tiny_tf_384.in1k](https://huggingface.co/timm/maxvit_tiny_tf_384.in1k) |85.11|97.38| 293.46| 30.98| 17.53| 123.42|

|[maxvit_large_tf_224.in1k](https://huggingface.co/timm/maxvit_large_tf_224.in1k) |84.93|96.97| 247.71| 211.79| 43.68| 127.35|

|[coatnet_rmlp_1_rw2_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_1_rw2_224.sw_in12k_ft_in1k) |84.90|96.96| 1025.45| 41.72| 8.11| 40.13|

|[maxvit_base_tf_224.in1k](https://huggingface.co/timm/maxvit_base_tf_224.in1k) |84.85|96.99| 358.25| 119.47| 24.04| 95.01|

|[maxxvit_rmlp_small_rw_256.sw_in1k](https://huggingface.co/timm/maxxvit_rmlp_small_rw_256.sw_in1k) |84.63|97.06| 575.53| 66.01| 14.67| 58.38|

|[coatnet_rmlp_2_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_224.sw_in1k) |84.61|96.74| 625.81| 73.88| 15.18| 54.78|

|[maxvit_rmlp_small_rw_224.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_small_rw_224.sw_in1k) |84.49|96.76| 693.82| 64.90| 10.75| 49.30|

|[maxvit_small_tf_224.in1k](https://huggingface.co/timm/maxvit_small_tf_224.in1k) |84.43|96.83| 647.96| 68.93| 11.66| 53.17|

|[maxvit_rmlp_tiny_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_tiny_rw_256.sw_in1k) |84.23|96.78| 807.21| 29.15| 6.77| 46.92|

|[coatnet_1_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_1_rw_224.sw_in1k) |83.62|96.38| 989.59| 41.72| 8.04| 34.60|

|[maxvit_tiny_rw_224.sw_in1k](https://huggingface.co/timm/maxvit_tiny_rw_224.sw_in1k) |83.50|96.50| 1100.53| 29.06| 5.11| 33.11|

|[maxvit_tiny_tf_224.in1k](https://huggingface.co/timm/maxvit_tiny_tf_224.in1k) |83.41|96.59| 1004.94| 30.92| 5.60| 35.78|

|[coatnet_rmlp_1_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_1_rw_224.sw_in1k) |83.36|96.45| 1093.03| 41.69| 7.85| 35.47|

|[maxxvitv2_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxxvitv2_nano_rw_256.sw_in1k) |83.11|96.33| 1276.88| 23.70| 6.26| 23.05|

|[maxxvit_rmlp_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxxvit_rmlp_nano_rw_256.sw_in1k) |83.03|96.34| 1341.24| 16.78| 4.37| 26.05|

|[maxvit_rmlp_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_nano_rw_256.sw_in1k) |82.96|96.26| 1283.24| 15.50| 4.47| 31.92|

|[maxvit_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_nano_rw_256.sw_in1k) |82.93|96.23| 1218.17| 15.45| 4.46| 30.28|

|[coatnet_bn_0_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_bn_0_rw_224.sw_in1k) |82.39|96.19| 1600.14| 27.44| 4.67| 22.04|

|[coatnet_0_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_0_rw_224.sw_in1k) |82.39|95.84| 1831.21| 27.44| 4.43| 18.73|

|[coatnet_rmlp_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_nano_rw_224.sw_in1k) |82.05|95.87| 2109.09| 15.15| 2.62| 20.34|

|[coatnext_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnext_nano_rw_224.sw_in1k) |81.95|95.92| 2525.52| 14.70| 2.47| 12.80|

|[coatnet_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_nano_rw_224.sw_in1k) |81.70|95.64| 2344.52| 15.14| 2.41| 15.41|

|[maxvit_rmlp_pico_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_pico_rw_256.sw_in1k) |80.53|95.21| 1594.71| 7.52| 1.85| 24.86|

### By Throughput (samples / sec)

|model |top1 |top5 |samples / sec |Params (M) |GMAC |Act (M)|

|------------------------------------------------------------------------------------------------------------------------|----:|----:|--------------:|--------------:|-----:|------:|

|[coatnext_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnext_nano_rw_224.sw_in1k) |81.95|95.92| 2525.52| 14.70| 2.47| 12.80|

|[coatnet_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_nano_rw_224.sw_in1k) |81.70|95.64| 2344.52| 15.14| 2.41| 15.41|

|[coatnet_rmlp_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_nano_rw_224.sw_in1k) |82.05|95.87| 2109.09| 15.15| 2.62| 20.34|

|[coatnet_0_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_0_rw_224.sw_in1k) |82.39|95.84| 1831.21| 27.44| 4.43| 18.73|

|[coatnet_bn_0_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_bn_0_rw_224.sw_in1k) |82.39|96.19| 1600.14| 27.44| 4.67| 22.04|

|[maxvit_rmlp_pico_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_pico_rw_256.sw_in1k) |80.53|95.21| 1594.71| 7.52| 1.85| 24.86|

|[maxxvit_rmlp_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxxvit_rmlp_nano_rw_256.sw_in1k) |83.03|96.34| 1341.24| 16.78| 4.37| 26.05|

|[maxvit_rmlp_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_nano_rw_256.sw_in1k) |82.96|96.26| 1283.24| 15.50| 4.47| 31.92|

|[maxxvitv2_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxxvitv2_nano_rw_256.sw_in1k) |83.11|96.33| 1276.88| 23.70| 6.26| 23.05|

|[maxvit_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_nano_rw_256.sw_in1k) |82.93|96.23| 1218.17| 15.45| 4.46| 30.28|

|[maxvit_tiny_rw_224.sw_in1k](https://huggingface.co/timm/maxvit_tiny_rw_224.sw_in1k) |83.50|96.50| 1100.53| 29.06| 5.11| 33.11|

|[coatnet_rmlp_1_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_1_rw_224.sw_in1k) |83.36|96.45| 1093.03| 41.69| 7.85| 35.47|

|[coatnet_rmlp_1_rw2_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_1_rw2_224.sw_in12k_ft_in1k) |84.90|96.96| 1025.45| 41.72| 8.11| 40.13|

|[maxvit_tiny_tf_224.in1k](https://huggingface.co/timm/maxvit_tiny_tf_224.in1k) |83.41|96.59| 1004.94| 30.92| 5.60| 35.78|

|[coatnet_1_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_1_rw_224.sw_in1k) |83.62|96.38| 989.59| 41.72| 8.04| 34.60|

|[maxvit_rmlp_tiny_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_tiny_rw_256.sw_in1k) |84.23|96.78| 807.21| 29.15| 6.77| 46.92|

|[maxvit_rmlp_small_rw_224.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_small_rw_224.sw_in1k) |84.49|96.76| 693.82| 64.90| 10.75| 49.30|

|[maxvit_small_tf_224.in1k](https://huggingface.co/timm/maxvit_small_tf_224.in1k) |84.43|96.83| 647.96| 68.93| 11.66| 53.17|

|[coatnet_2_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_2_rw_224.sw_in12k_ft_in1k) |86.57|97.89| 631.88| 73.87| 15.09| 49.22|

|[coatnet_rmlp_2_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_224.sw_in1k) |84.61|96.74| 625.81| 73.88| 15.18| 54.78|

|[coatnet_rmlp_2_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_224.sw_in12k_ft_in1k) |86.49|97.90| 620.58| 73.88| 15.18| 54.78|

|[maxxvit_rmlp_small_rw_256.sw_in1k](https://huggingface.co/timm/maxxvit_rmlp_small_rw_256.sw_in1k) |84.63|97.06| 575.53| 66.01| 14.67| 58.38|

|[maxxvitv2_rmlp_base_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/maxxvitv2_rmlp_base_rw_224.sw_in12k_ft_in1k) |86.64|98.02| 501.03| 116.09| 24.20| 62.77|

|[maxvit_rmlp_base_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/maxvit_rmlp_base_rw_224.sw_in12k_ft_in1k) |86.89|98.02| 375.86| 116.14| 23.15| 92.64|

|[maxvit_base_tf_224.in1k](https://huggingface.co/timm/maxvit_base_tf_224.in1k) |84.85|96.99| 358.25| 119.47| 24.04| 95.01|

|[maxvit_tiny_tf_384.in1k](https://huggingface.co/timm/maxvit_tiny_tf_384.in1k) |85.11|97.38| 293.46| 30.98| 17.53| 123.42|

|[maxvit_large_tf_224.in1k](https://huggingface.co/timm/maxvit_large_tf_224.in1k) |84.93|96.97| 247.71| 211.79| 43.68| 127.35|

|[maxvit_small_tf_384.in1k](https://huggingface.co/timm/maxvit_small_tf_384.in1k) |85.54|97.46| 188.35| 69.02| 35.87| 183.65|

|[coatnet_rmlp_2_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_384.sw_in12k_ft_in1k) |87.39|98.31| 160.80| 73.88| 47.69| 209.43|

|[maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k) |87.47|98.37| 149.49| 116.09| 72.98| 213.74|

|[maxvit_tiny_tf_512.in1k](https://huggingface.co/timm/maxvit_tiny_tf_512.in1k) |85.67|97.58| 144.25| 31.05| 33.49| 257.59|

|[maxvit_rmlp_base_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/maxvit_rmlp_base_rw_384.sw_in12k_ft_in1k) |87.81|98.37| 106.55| 116.14| 70.97| 318.95|

|[maxvit_base_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_base_tf_384.in21k_ft_in1k) |87.92|98.54| 104.71| 119.65| 73.80| 332.90|

|[maxvit_base_tf_384.in1k](https://huggingface.co/timm/maxvit_base_tf_384.in1k) |86.29|97.80| 101.09| 119.65| 73.80| 332.90|

|[maxvit_small_tf_512.in1k](https://huggingface.co/timm/maxvit_small_tf_512.in1k) |86.10|97.76| 88.63| 69.13| 67.26| 383.77|

|[maxvit_large_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_large_tf_384.in21k_ft_in1k) |87.98|98.56| 71.75| 212.03|132.55| 445.84|

|[maxvit_large_tf_384.in1k](https://huggingface.co/timm/maxvit_large_tf_384.in1k) |86.23|97.69| 70.56| 212.03|132.55| 445.84|

|[maxvit_base_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_base_tf_512.in21k_ft_in1k) |88.20|98.53| 50.87| 119.88|138.02| 703.99|

|[maxvit_base_tf_512.in1k](https://huggingface.co/timm/maxvit_base_tf_512.in1k) |86.60|97.92| 50.75| 119.88|138.02| 703.99|

|[maxvit_xlarge_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_xlarge_tf_384.in21k_ft_in1k) |88.32|98.54| 42.53| 475.32|292.78| 668.76|

|[maxvit_large_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_large_tf_512.in21k_ft_in1k) |88.04|98.40| 36.42| 212.33|244.75| 942.15|

|[maxvit_large_tf_512.in1k](https://huggingface.co/timm/maxvit_large_tf_512.in1k) |86.52|97.88| 36.04| 212.33|244.75| 942.15|

|[maxvit_xlarge_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_xlarge_tf_512.in21k_ft_in1k) |88.53|98.64| 21.76| 475.77|534.14|1413.22|

## Citation

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

```bibtex

@article{tu2022maxvit,

title={MaxViT: Multi-Axis Vision Transformer},

author={Tu, Zhengzhong and Talebi, Hossein and Zhang, Han and Yang, Feng and Milanfar, Peyman and Bovik, Alan and Li, Yinxiao},

journal={ECCV},

year={2022},

}

```

```bibtex

@article{dai2021coatnet,

title={CoAtNet: Marrying Convolution and Attention for All Data Sizes},

author={Dai, Zihang and Liu, Hanxiao and Le, Quoc V and Tan, Mingxing},

journal={arXiv preprint arXiv:2106.04803},

year={2021}

}

```

| 22,110 | [

[

-0.05224609375,

-0.030426025390625,

0.0009055137634277344,

0.03106689453125,

-0.0245208740234375,

-0.0172119140625,

-0.0110626220703125,

-0.023651123046875,

0.054718017578125,

0.0168304443359375,

-0.04119873046875,

-0.046966552734375,

-0.047515869140625,

-0.... |

deepmind/vision-perceiver-conv | 2021-12-11T13:12:42.000Z | [

"transformers",

"pytorch",

"perceiver",

"image-classification",

"dataset:imagenet",

"arxiv:2107.14795",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

] | image-classification | deepmind | null | null | deepmind/vision-perceiver-conv | 5 | 3,359 | transformers | 2022-03-02T23:29:05 | ---

license: apache-2.0

tags:

datasets:

- imagenet

---

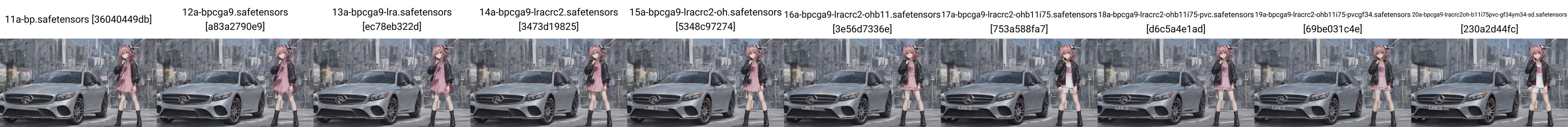

# Perceiver IO for vision (convolutional processing)

Perceiver IO model pre-trained on ImageNet (14 million images, 1,000 classes) at resolution 224x224. It was introduced in the paper [Perceiver IO: A General Architecture for Structured Inputs & Outputs](https://arxiv.org/abs/2107.14795) by Jaegle et al. and first released in [this repository](https://github.com/deepmind/deepmind-research/tree/master/perceiver).

Disclaimer: The team releasing Perceiver IO did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

Perceiver IO is a transformer encoder model that can be applied on any modality (text, images, audio, video, ...). The core idea is to employ the self-attention mechanism on a not-too-large set of latent vectors (e.g. 256 or 512), and only use the inputs to perform cross-attention with the latents. This allows for the time and memory requirements of the self-attention mechanism to not depend on the size of the inputs.
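As a rough illustration of why this matters, the sketch below counts the query-key pairs scored by a Perceiver-style encoder and by a standard Transformer that runs self-attention directly over the inputs. The function names, the 512 latents, and the 8 layers are assumptions chosen for illustration, not values read from this model's configuration.

```python
def perceiver_attention_pairs(num_inputs, num_latents, num_latent_layers):
    """Count query-key pairs scored by a Perceiver-style encoder (a rough cost proxy)."""
    cross = num_latents * num_inputs                     # latents attend to the inputs once
    latent_self = num_latent_layers * num_latents ** 2   # self-attention among latents only
    return cross + latent_self


def full_self_attention_pairs(num_inputs, num_layers):
    """Count query-key pairs when every layer attends over all inputs."""
    return num_layers * num_inputs ** 2


num_pixels = 224 * 224  # one input element per pixel
perceiver = perceiver_attention_pairs(num_pixels, num_latents=512, num_latent_layers=8)
vanilla = full_self_attention_pairs(num_pixels, num_layers=8)
print(perceiver, vanilla, round(vanilla / perceiver))
```

Only the single cross-attention term grows with the input size, and it grows linearly; the repeated latent self-attention layers cost the same regardless of how many pixels are fed in.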

To decode, the authors employ so-called decoder queries, which make it possible to flexibly decode the final hidden states of the latents into outputs of arbitrary size and semantics. For image classification, the output is a tensor containing the logits, of shape (batch_size, num_labels).

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/perceiver_architecture.jpg" alt="drawing" width="600"/>

<small> Perceiver IO architecture.</small>

As the time and memory requirements of the self-attention mechanism don't depend on the size of the inputs, the Perceiver IO authors can train the model directly on raw pixel values, rather than on patches as is done in ViT. This particular model employs a simple 2D conv+maxpool preprocessing network on the pixel values, before using the inputs for cross-attention with the latents.

By pre-training the model, it learns an inner representation of images that can then be used to extract features useful for downstream tasks: if you have a dataset of labeled images for instance, you can train a standard classifier by replacing the classification decoder.

## Intended uses & limitations

You can use the raw model for image classification. See the [model hub](https://huggingface.co/models?search=deepmind/perceiver) to look for other fine-tuned versions on a task that may interest you.

### How to use

Here is how to use this model in PyTorch:

```python

from transformers import PerceiverFeatureExtractor, PerceiverForImageClassificationConvProcessing

import requests

from PIL import Image

feature_extractor = PerceiverFeatureExtractor.from_pretrained("deepmind/vision-perceiver-conv")

model = PerceiverForImageClassificationConvProcessing.from_pretrained("deepmind/vision-perceiver-conv")

url = "http://images.cocodataset.org/val2017/000000039769.jpg"

image = Image.open(requests.get(url, stream=True).raw)

# prepare input

inputs = feature_extractor(image, return_tensors="pt").pixel_values

# forward pass

outputs = model(inputs)

logits = outputs.logits

print("Predicted class:", model.config.id2label[logits.argmax(-1).item()])

# should print: Predicted class: tabby, tabby cat

```
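To inspect more than the single best class, the logits can be turned into probabilities and ranked. This is a minimal sketch in plain Python, assuming the logits have been converted to a list (for example with `logits[0].tolist()`) and that the label mapping is `model.config.id2label`. The helper name `top_k` and the values below are made up for illustration.

```python
import math


def top_k(logits, id2label, k=3):
    """Return the k most probable (label, probability) pairs."""
    shift = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - shift) for x in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    ranked = sorted(range(len(probs)), key=probs.__getitem__, reverse=True)
    return [(id2label[i], probs[i]) for i in ranked[:k]]


# Hypothetical logits and labels, for illustration only.
example_logits = [2.0, 0.5, -1.0, 4.0]
example_labels = {0: "tiger cat", 1: "remote control", 2: "sofa", 3: "tabby, tabby cat"}
for label, prob in top_k(example_logits, example_labels, k=2):
    print(f"{label}: {prob:.3f}")
```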

## Training data

This model was pretrained on [ImageNet](http://www.image-net.org/), a dataset consisting of 14 million images and 1k classes.

## Training procedure

### Preprocessing

Images are center cropped and resized to a resolution of 224x224 and normalized across the RGB channels. Note that data augmentation was used during pre-training, as explained in Appendix H of the [paper](https://arxiv.org/abs/2107.14795).
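The arithmetic behind a center crop and per-channel normalization can be sketched in plain Python. This is an illustration under assumptions, not the feature extractor's actual code: the helper names are hypothetical, resizing is left to an image library, and the mean and standard deviation are the commonly used ImageNet statistics, which this card does not confirm for this model.

```python
def center_crop_box(width, height, size):
    """Return (left, top, right, bottom) of a centered size x size crop."""
    left = (width - size) // 2
    top = (height - size) // 2
    return left, top, left + size, top + size


def normalize_pixel(rgb, mean, std):
    """Scale an 8-bit RGB pixel to [0, 1], then standardize each channel."""
    return tuple((c / 255.0 - m) / s for c, m, s in zip(rgb, mean, std))


# Assumed statistics: the widely used ImageNet channel means and standard deviations.
MEAN = (0.485, 0.456, 0.406)
STD = (0.229, 0.224, 0.225)

print(center_crop_box(640, 480, 224))
print(normalize_pixel((124, 116, 104), MEAN, STD))
```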

### Pretraining

Hyperparameter details can be found in Appendix H of the [paper](https://arxiv.org/abs/2107.14795).

## Evaluation results

This model is able to achieve a top-1 accuracy of 82.1 on ImageNet-1k.

### BibTeX entry and citation info

```bibtex

@article{DBLP:journals/corr/abs-2107-14795,

author = {Andrew Jaegle and

Sebastian Borgeaud and

Jean{-}Baptiste Alayrac and

Carl Doersch and

Catalin Ionescu and

David Ding and

Skanda Koppula and

Daniel Zoran and

Andrew Brock and

Evan Shelhamer and

Olivier J. H{\'{e}}naff and

Matthew M. Botvinick and

Andrew Zisserman and

Oriol Vinyals and

Jo{\~{a}}o Carreira},

title = {Perceiver {IO:} {A} General Architecture for Structured Inputs {\&}

Outputs},

journal = {CoRR},

volume = {abs/2107.14795},

year = {2021},

url = {https://arxiv.org/abs/2107.14795},

eprinttype = {arXiv},

eprint = {2107.14795},

timestamp = {Tue, 03 Aug 2021 14:53:34 +0200},

biburl = {https://dblp.org/rec/journals/corr/abs-2107-14795.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

``` | 4,989 | [

[

-0.052276611328125,

-0.0478515625,

0.0245513916015625,

-0.000774383544921875,

-0.01470947265625,

-0.038818359375,

-0.0042266845703125,

-0.06121826171875,

0.01042938232421875,

0.0115203857421875,

-0.0345458984375,

-0.0175018310546875,

-0.04718017578125,

-0.00... |

DucHaiten/DucHaitenDreamWorld | 2023-03-28T15:19:02.000Z | [

"diffusers",

"stable-diffusion",

"text-to-image",

"image-to-image",

"en",

"license:creativeml-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | DucHaiten | null | null | DucHaiten/DucHaitenDreamWorld | 24 | 3,358 | diffusers | 2023-02-06T16:26:07 | ---

license: creativeml-openrail-m

language:

- en

tags:

- stable-diffusion

- text-to-image

- image-to-image

- diffusers

inference: true

---

After many days of not eating well and sleeping only 4 hours a night, version 2.4.1 of the DucHaitenDreamWorld model is finally complete. It is a huge improvement; one look at the sample image is enough to see how good it is. At least it is not as bad as the previous version :)

Dream World is my model for art like Disney, Pixar.

xformers on, no VAE (I haven't tried it with a VAE, so I don't know whether it helps or hurts)

Please support me by becoming a patron:

https://www.patreon.com/duchaitenreal

![00376-1484770875-[uploaded e621], by Pino Daeni, by Ruan Jia, by Fumiko, by Alayna Lemmer, by Carlo Galli Bibiena, solo female ((Vulpix)) with ((.png](https://s3.amazonaws.com/moonup/production/uploads/1676126509917-630b58b279d18d5e53e3a5a9.png)

| 3,380 | [

[

-0.0433349609375,

-0.0267791748046875,

0.0222015380859375,

0.01134490966796875,

-0.0307159423828125,

0.0175018310546875,

0.0214080810546875,

-0.052734375,

0.06280517578125,

0.06298828125,

-0.06005859375,

-0.02972412109375,

-0.047210693359375,

0.0109710693359... |

setu4993/LEALLA-small | 2023-10-19T06:22:00.000Z | [

"transformers",

"pytorch",

"tf",

"jax",

"safetensors",

"bert",

"feature-extraction",

"sentence_embedding",

"multilingual",

"google",

"sentence-similarity",

"lealla",

"labse",

"af",

"am",

"ar",

"as",

"az",

"be",

"bg",

"bn",

"bo",

"bs",

"ca",

"ceb",

"co",

"cs",

"c... | sentence-similarity | setu4993 | null | null | setu4993/LEALLA-small | 3 | 3,357 | transformers | 2023-05-21T08:17:47 | ---

pipeline_tag: sentence-similarity

language:

- af

- am

- ar

- as

- az

- be

- bg

- bn

- bo

- bs

- ca

- ceb

- co

- cs

- cy

- da

- de

- el

- en

- eo

- es

- et

- eu

- fa

- fi

- fr

- fy

- ga

- gd

- gl

- gu

- ha

- haw

- he

- hi

- hmn

- hr

- ht

- hu

- hy

- id

- ig

- is

- it

- ja

- jv

- ka

- kk

- km

- kn

- ko

- ku

- ky

- la

- lb

- lo

- lt

- lv

- mg

- mi

- mk

- ml

- mn

- mr

- ms

- mt

- my

- ne

- nl

- no

- ny

- or

- pa

- pl

- pt

- ro

- ru

- rw

- si

- sk

- sl

- sm

- sn

- so

- sq

- sr

- st

- su

- sv

- sw

- ta

- te

- tg

- th

- tk

- tl

- tr

- tt

- ug

- uk

- ur

- uz

- vi

- wo

- xh

- yi

- yo

- zh

- zu

tags:

- bert

- sentence_embedding

- multilingual

- google

- sentence-similarity

- lealla

- labse

license: apache-2.0

datasets:

- CommonCrawl

- Wikipedia

---

# LEALLA-small

## Model description

LEALLA is a collection of lightweight language-agnostic sentence embedding models supporting 109 languages, distilled from [LaBSE](https://ai.googleblog.com/2020/08/language-agnostic-bert-sentence.html). The model is useful for getting multilingual sentence embeddings and for bi-text retrieval.

- Model: [HuggingFace's model hub](https://huggingface.co/setu4993/LEALLA-small).

- Paper: [arXiv](https://arxiv.org/abs/2302.08387).

- Original model: [TensorFlow Hub](https://tfhub.dev/google/LEALLA/LEALLA-small/1).

- Conversion from TensorFlow to PyTorch: [GitHub](https://github.com/setu4993/convert-labse-tf-pt).

This model is migrated from the v1 model on TF Hub. The embeddings produced by both versions of the model are [equivalent](https://github.com/setu4993/convert-labse-tf-pt/blob/c0d4fbce789b0709a9664464f032d2e9f5368a86/tests/test_conversion_lealla.py#L31), though for some languages (like Japanese) the LEALLA models appear to require higher tolerances when comparing embeddings and similarities.

## Usage

Using the model:

```python

import torch

from transformers import BertModel, BertTokenizerFast

tokenizer = BertTokenizerFast.from_pretrained("setu4993/LEALLA-small")

model = BertModel.from_pretrained("setu4993/LEALLA-small")

model = model.eval()

english_sentences = [

"dog",

"Puppies are nice.",

"I enjoy taking long walks along the beach with my dog.",

]

english_inputs = tokenizer(english_sentences, return_tensors="pt", padding=True)

with torch.no_grad():
    english_outputs = model(**english_inputs)

```

To get the sentence embeddings, use the pooler output:

```python

english_embeddings = english_outputs.pooler_output

```

Output for other languages:

```python

italian_sentences = [

"cane",

"I cuccioli sono carini.",

"Mi piace fare lunghe passeggiate lungo la spiaggia con il mio cane.",

]

japanese_sentences = ["犬", "子犬はいいです", "私は犬と一緒にビーチを散歩するのが好きです"]

italian_inputs = tokenizer(italian_sentences, return_tensors="pt", padding=True)

japanese_inputs = tokenizer(japanese_sentences, return_tensors="pt", padding=True)

with torch.no_grad():
    italian_outputs = model(**italian_inputs)
    japanese_outputs = model(**japanese_inputs)

italian_embeddings = italian_outputs.pooler_output

japanese_embeddings = japanese_outputs.pooler_output

```

To compute the similarity between sentences, L2-normalizing the embeddings first is recommended:

```python

import torch.nn.functional as F

def similarity(embeddings_1, embeddings_2):
    normalized_embeddings_1 = F.normalize(embeddings_1, p=2)
    normalized_embeddings_2 = F.normalize(embeddings_2, p=2)
    return torch.matmul(
        normalized_embeddings_1, normalized_embeddings_2.transpose(0, 1)
    )

print(similarity(english_embeddings, italian_embeddings))

print(similarity(english_embeddings, japanese_embeddings))

print(similarity(italian_embeddings, japanese_embeddings))

```
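For bi-text retrieval, each source sentence is matched to the target sentence with the highest similarity. The sketch below shows that step in plain Python, assuming the similarity matrix has been converted to nested lists (for example with `.tolist()`). The helper name `retrieve` and the scores are hypothetical and are not outputs of this model.

```python
def retrieve(similarity_rows):
    """For each source sentence (row), return the index of the best-matching target."""
    return [max(range(len(row)), key=row.__getitem__) for row in similarity_rows]


# Hypothetical 3x3 similarity matrix (rows: English, columns: Italian).
scores = [
    [0.82, 0.31, 0.25],
    [0.28, 0.77, 0.35],
    [0.22, 0.33, 0.80],
]
print(retrieve(scores))
```

When the two sentence lists are parallel translations, a well-aligned model should put the largest score of each row on the diagonal.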

## Details

Details about data, training, evaluation and performance metrics are available in the [original paper](https://arxiv.org/abs/2302.08387).

### BibTeX entry and citation info

```bibtex

@inproceedings{mao-nakagawa-2023-lealla,

title = "{LEALLA}: Learning Lightweight Language-agnostic Sentence Embeddings with Knowledge Distillation",

author = "Mao, Zhuoyuan and

Nakagawa, Tetsuji",

booktitle = "Proceedings of the 17th Conference of the European Chapter of the Association for Computational Linguistics",

month = may,

year = "2023",

address = "Dubrovnik, Croatia",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2023.eacl-main.138",

doi = "10.18653/v1/2023.eacl-main.138",

pages = "1886--1894",

abstract = "Large-scale language-agnostic sentence embedding models such as LaBSE (Feng et al., 2022) obtain state-of-the-art performance for parallel sentence alignment. However, these large-scale models can suffer from inference speed and computation overhead. This study systematically explores learning language-agnostic sentence embeddings with lightweight models. We demonstrate that a thin-deep encoder can construct robust low-dimensional sentence embeddings for 109 languages. With our proposed distillation methods, we achieve further improvements by incorporating knowledge from a teacher model. Empirical results on Tatoeba, United Nations, and BUCC show the effectiveness of our lightweight models. We release our lightweight language-agnostic sentence embedding models LEALLA on TensorFlow Hub.",

}

```

| 5,554 | [

[

-0.0033512115478515625,

-0.06658935546875,

0.0447998046875,

0.012725830078125,

-0.005771636962890625,

-0.01715087890625,

-0.039703369140625,

-0.01947021484375,

0.0216064453125,

-0.00046539306640625,

-0.0287322998046875,

-0.041656494140625,

-0.043426513671875,

... |

asahi417/tner-xlm-roberta-base-ontonotes5 | 2022-11-04T03:24:37.000Z | [

"transformers",

"pytorch",

"xlm-roberta",

"token-classification",

"en",

"arxiv:2209.12616",

"arxiv:1910.09700",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

] | token-classification | asahi417 | null | null | asahi417/tner-xlm-roberta-base-ontonotes5 | 3 | 3,352 | transformers | 2022-03-02T23:29:05 |

---

language:

- en

---

# Model Card for XLM-RoBERTa for NER

XLM-RoBERTa finetuned on NER.

# Model Details

## Model Description

XLM-RoBERTa finetuned on NER.

- **Developed by:** Asahi Ushio

- **Shared by [Optional]:** Hugging Face

- **Model type:** Token Classification

- **Language(s) (NLP):** en

- **License:** More information needed

- **Related Models:** XLM-RoBERTa

- **Parent Model:** XLM-RoBERTa

- **Resources for more information:**

- [GitHub Repo](https://github.com/asahi417/tner)

- [Associated Paper](https://arxiv.org/abs/2209.12616)

- [Space](https://huggingface.co/spaces/akdeniz27/turkish-named-entity-recognition)

# Uses

## Direct Use

Token Classification

## Downstream Use [Optional]

This model can be used in conjunction with the [tner library](https://github.com/asahi417/tner).

## Out-of-Scope Use

The model should not be used to intentionally create hostile or alienating environments for people.

# Bias, Risks, and Limitations

Significant research has explored bias and fairness issues with language models (see, e.g., [Sheng et al. (2021)](https://aclanthology.org/2021.acl-long.330.pdf) and [Bender et al. (2021)](https://dl.acm.org/doi/pdf/10.1145/3442188.3445922)). Predictions generated by the model may include disturbing and harmful stereotypes across protected classes; identity characteristics; and sensitive, social, and occupational groups.

## Recommendations

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

# Training Details

## Training Data

An NER dataset contains a sequence of tokens and tags for each split (usually `train`/`validation`/`test`),

```python

{

'train': {

'tokens': [

['@paulwalk', 'It', "'s", 'the', 'view', 'from', 'where', 'I', "'m", 'living', 'for', 'two', 'weeks', '.', 'Empire', 'State', 'Building', '=', 'ESB', '.', 'Pretty', 'bad', 'storm', 'here', 'last', 'evening', '.'],

['From', 'Green', 'Newsfeed', ':', 'AHFA', 'extends', 'deadline', 'for', 'Sage', 'Award', 'to', 'Nov', '.', '5', 'http://tinyurl.com/24agj38'], ...

],

'tags': [

[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 2, 2, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0],

[0, 0, 0, 0, 3, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0], ...

]

},

'validation': ...,

'test': ...,

}

```

with a dictionary to map a label to its index (`label2id`) as below.

```python

{"O": 0, "B-ORG": 1, "B-MISC": 2, "B-PER": 3, "I-PER": 4, "B-LOC": 5, "I-ORG": 6, "I-MISC": 7, "I-LOC": 8}

```
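Predicted tag indices can be turned back into entity spans by inverting `label2id` and grouping consecutive `B-`/`I-` tags. This is a minimal sketch of that decoding step; the helper name `extract_entities` and the example sentence are hypothetical, and the tner library may implement this differently.

```python
label2id = {"O": 0, "B-ORG": 1, "B-MISC": 2, "B-PER": 3, "I-PER": 4,
            "B-LOC": 5, "I-ORG": 6, "I-MISC": 7, "I-LOC": 8}
id2label = {i: label for label, i in label2id.items()}


def extract_entities(tokens, tag_ids):
    """Group BIO-tagged tokens into (entity_type, text) spans."""
    entities, current_type, current_tokens = [], None, []
    for token, tag_id in zip(tokens, tag_ids):
        label = id2label[tag_id]
        if label.startswith("I-") and current_type == label[2:]:
            current_tokens.append(token)  # continue the open entity
            continue
        if current_type is not None:      # close the open entity, if any
            entities.append((current_type, " ".join(current_tokens)))
            current_type, current_tokens = None, []
        if label != "O":                  # B-* (or a stray I-*) opens a new entity
            current_type, current_tokens = label[2:], [token]
    if current_type is not None:
        entities.append((current_type, " ".join(current_tokens)))
    return entities


# Hypothetical sentence and tags, for illustration only.
tokens = ["Ada", "Lovelace", "visited", "Oxford", "University", "."]
tags = [3, 4, 0, 1, 6, 0]
print(extract_entities(tokens, tags))
```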

## Training Procedure

### Preprocessing

More information needed

### Speeds, Sizes, Times

**Layer_norm_eps:** 1e-05,

**Num_attention_heads:** 12,

**Num_hidden_layers:** 12,

**Vocab_size:** 250002

# Evaluation

## Testing Data, Factors & Metrics

### Testing Data

See [dataset card](https://github.com/asahi417/tner/blob/master/DATASET_CARD.md) for full dataset lists

### Factors

More information needed

### Metrics

More information needed

## Results

More information needed

# Model Examination

More information needed

# Environmental Impact

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** More information needed

- **Hours used:** More information needed

- **Cloud Provider:** More information needed

- **Compute Region:** More information needed

- **Carbon Emitted:** More information needed

# Technical Specifications [optional]

## Model Architecture and Objective

More information needed

## Compute Infrastructure

More information needed

### Hardware

More information needed

### Software

More information needed

# Citation

**BibTeX:**

```bibtex

@inproceedings{ushio-camacho-collados-2021-ner,

title = "{T}-{NER}: An All-Round Python Library for Transformer-based Named Entity Recognition",

author = "Ushio, Asahi and

Camacho-Collados, Jose",

booktitle = "Proceedings of the 16th Conference of the European Chapter of the Association for Computational Linguistics: System Demonstrations",

month = apr,

year = "2021",

address = "Online",

publisher = "Association for Computational Linguistics",

url = "https://www.aclweb.org/anthology/2021.eacl-demos.7",

pages = "53--62",

}

```

# Glossary [optional]

More information needed

# More Information [optional]

More information needed

# Model Card Authors [optional]

Asahi Ushio in collaboration with Ezi Ozoani and the Hugging Face team.

# Model Card Contact

More information needed

# How to Get Started with the Model

Use the code below to get started with the model.

<details>

<summary> Click to expand </summary>

```python

from transformers import AutoTokenizer, AutoModelForTokenClassification

tokenizer = AutoTokenizer.from_pretrained("asahi417/tner-xlm-roberta-base-ontonotes5")

model = AutoModelForTokenClassification.from_pretrained("asahi417/tner-xlm-roberta-base-ontonotes5")

```

</details>

| 5,189 | [

[

-0.039337158203125,

-0.039093017578125,

0.0180206298828125,

0.002857208251953125,

-0.011474609375,

-0.018585205078125,

-0.0199127197265625,

-0.0254058837890625,

0.0236358642578125,

0.0333251953125,

-0.039093017578125,

-0.04791259765625,

-0.05560302734375,

0.... |

IDEA-CCNL/Taiyi-CLIP-Roberta-102M-Chinese | 2023-05-25T09:44:14.000Z | [

"transformers",

"pytorch",

"safetensors",

"bert",

"text-classification",

"clip",

"zh",

"image-text",

"feature-extraction",

"arxiv:2209.02970",

"license:apache-2.0",

"endpoints_compatible",

"has_space",

"region:us"

] | feature-extraction | IDEA-CCNL | null | null | IDEA-CCNL/Taiyi-CLIP-Roberta-102M-Chinese | 45 | 3,349 | transformers | 2022-07-09T07:11:05 | ---

license: apache-2.0

# inference: false

# pipeline_tag: zero-shot-image-classification

pipeline_tag: feature-extraction

# inference:

# parameters:

tags:

- clip

- zh

- image-text

- feature-extraction

---

# Taiyi-CLIP-Roberta-102M-Chinese

- Main Page: [Fengshenbang](https://fengshenbang-lm.com/)

- Github: [Fengshenbang-LM](https://github.com/IDEA-CCNL/Fengshenbang-LM)

## 简介 Brief Introduction

首个开源的中文CLIP模型,1.23亿图文对上进行预训练的文本端RoBERTa-base。

The first open-source Chinese CLIP: a RoBERTa-base text encoder pre-trained on 123M image-text pairs.

## 模型分类 Model Taxonomy

| 需求 Demand | 任务 Task | 系列 Series | 模型 Model | 参数 Parameter | 额外 Extra |

| :----: | :----: | :----: | :----: | :----: | :----: |

| 特殊 Special | 多模态 Multimodal | 太乙 Taiyi | CLIP (Roberta) | 102M | 中文 Chinese |

## 模型信息 Model Information

我们遵循CLIP的实验设置,以获得强大的视觉-语言表征。在训练中文版的CLIP时,我们使用[chinese-roberta-wwm](https://huggingface.co/hfl/chinese-roberta-wwm-ext)作为语言的编码器,并将[CLIP](https://github.com/openai/CLIP)中的ViT-B-32应用于视觉的编码器。为了快速且稳定地进行预训练,我们冻结了视觉编码器并且只微调语言编码器。此外,我们将[Noah-Wukong](https://wukong-dataset.github.io/wukong-dataset/)数据集(100M)和[Zero](https://zero.so.com/)数据集(23M)用作预训练的数据集,训练了24个epoch,在A100x32上训练了7天。据我们所知,我们的Taiyi-CLIP是目前Huggingface社区中首个开源的中文CLIP。

We follow the experimental setup of CLIP to obtain powerful vision-language representations. To obtain a CLIP for Chinese, we use [chinese-roberta-wwm](https://huggingface.co/hfl/chinese-roberta-wwm-ext) as the language encoder and the ViT-B-32 from [CLIP](https://github.com/openai/CLIP) as the vision encoder. We freeze the vision encoder and tune only the language encoder to speed up and stabilize pre-training. We use the [Noah-Wukong](https://wukong-dataset.github.io/wukong-dataset/) dataset (100M) and the [Zero](https://zero.so.com/) dataset (23M) as pre-training data. We train for 24 epochs, which takes 7 days on A100x16. To the best of our knowledge, Taiyi-CLIP is currently the only open-source Chinese CLIP in the Hugging Face community.

### 下游效果 Performance

**Zero-Shot Classification**

| model | dataset | Top1 | Top5 |

| ---- | ---- | ---- | ---- |

| Taiyi-CLIP-Roberta-102M-Chinese | ImageNet1k-CN | 42.85% | 71.48% |

**Zero-Shot Text-to-Image Retrieval**

| model | dataset | Top1 | Top5 | Top10 |

| ---- | ---- | ---- | ---- | ---- |

| Taiyi-CLIP-Roberta-102M-Chinese | Flickr30k-CNA-test | 46.32% | 74.58% | 83.44% |

| Taiyi-CLIP-Roberta-102M-Chinese | COCO-CN-test | 47.10% | 78.53% | 87.84% |

| Taiyi-CLIP-Roberta-102M-Chinese | wukong50k | 49.18% | 81.94% | 90.27% |

## 使用 Usage

```python3

from PIL import Image

import requests


import torch

from transformers import BertForSequenceClassification, BertConfig, BertTokenizer

from transformers import CLIPProcessor, CLIPModel

import numpy as np

query_texts = ["一只猫", "一只狗",'两只猫', '两只老虎','一只老虎']  # input texts (a cat, a dog, two cats, two tigers, a tiger); replace freely

# Load the Taiyi Chinese text encoder

text_tokenizer = BertTokenizer.from_pretrained("IDEA-CCNL/Taiyi-CLIP-Roberta-102M-Chinese")

text_encoder = BertForSequenceClassification.from_pretrained("IDEA-CCNL/Taiyi-CLIP-Roberta-102M-Chinese").eval()

text = text_tokenizer(query_texts, return_tensors='pt', padding=True)['input_ids']

url = "http://images.cocodataset.org/val2017/000000039769.jpg"  # any image URL can be used here

# Load the CLIP image encoder

clip_model = CLIPModel.from_pretrained("openai/clip-vit-base-patch32")

processor = CLIPProcessor.from_pretrained("openai/clip-vit-base-patch32")

image = processor(images=Image.open(requests.get(url, stream=True).raw), return_tensors="pt")

with torch.no_grad():
    image_features = clip_model.get_image_features(**image)
    text_features = text_encoder(text).logits
    # Normalize the features
    image_features = image_features / image_features.norm(dim=1, keepdim=True)
    text_features = text_features / text_features.norm(dim=1, keepdim=True)
    # Compute cosine similarity; logit_scale is the scaling factor
    logit_scale = clip_model.logit_scale.exp()
    logits_per_image = logit_scale * image_features @ text_features.t()
    logits_per_text = logits_per_image.t()
    probs = logits_per_image.softmax(dim=-1).cpu().numpy()
    print(np.around(probs, 3))

```
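The final scoring step in the example above (normalize, take cosine similarities, scale, apply softmax) can be sketched in plain Python to show what the numbers mean. The helper names, the 3-dimensional features, and the scale of 100 are assumptions for illustration; they are not values produced by the model.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def match_probabilities(image_vec, text_vecs, logit_scale):
    """Softmax over scaled cosine similarities between one image and several texts."""
    image_unit = l2_normalize(image_vec)
    logits = [
        logit_scale * sum(a * b for a, b in zip(image_unit, l2_normalize(t)))
        for t in text_vecs
    ]
    shift = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - shift) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical features and scale, for illustration only.
image = [0.9, 0.1, 0.2]
texts = [[1.0, 0.0, 0.1], [0.0, 1.0, 0.0]]
print([round(p, 3) for p in match_probabilities(image, texts, logit_scale=100.0)])
```

A larger scale sharpens the distribution, so small differences in cosine similarity turn into confident probabilities.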

## 引用 Citation

如果您在您的工作中使用了我们的模型,可以引用我们的[论文](https://arxiv.org/abs/2209.02970):

If you are using the resource for your work, please cite our [paper](https://arxiv.org/abs/2209.02970):

```text

@article{fengshenbang,

author = {Jiaxing Zhang and Ruyi Gan and Junjie Wang and Yuxiang Zhang and Lin Zhang and Ping Yang and Xinyu Gao and Ziwei Wu and Xiaoqun Dong and Junqing He and Jianheng Zhuo and Qi Yang and Yongfeng Huang and Xiayu Li and Yanghan Wu and Junyu Lu and Xinyu Zhu and Weifeng Chen and Ting Han and Kunhao Pan and Rui Wang and Hao Wang and Xiaojun Wu and Zhongshen Zeng and Chongpei Chen},

title = {Fengshenbang 1.0: Being the Foundation of Chinese Cognitive Intelligence},

journal = {CoRR},

volume = {abs/2209.02970},

year = {2022}

}

```

也可以引用我们的[网站](https://github.com/IDEA-CCNL/Fengshenbang-LM/):

You can also cite our [website](https://github.com/IDEA-CCNL/Fengshenbang-LM/):

```text

@misc{Fengshenbang-LM,

title={Fengshenbang-LM},

author={IDEA-CCNL},

year={2021},

howpublished={\url{https://github.com/IDEA-CCNL/Fengshenbang-LM}},

}

```

| 5,287 | [

[

-0.0360107421875,

-0.056671142578125,

0.013519287109375,

0.031707763671875,

-0.036285400390625,

-0.01422882080078125,

-0.04156494140625,

-0.027069091796875,

0.034942626953125,

0.0118255615234375,

-0.040008544921875,

-0.042572021484375,

-0.043701171875,

0.003... |

digiplay/RunDiffusionFXPhotorealistic_v1 | 2023-10-20T02:46:45.000Z | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | digiplay | null | null | digiplay/RunDiffusionFXPhotorealistic_v1 | 8 | 3,347 | diffusers | 2023-06-04T16:02:01 | ---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

A realistic-style text-to-image model,

pretty good and very stable.

Model info :

https://civitai.com/models/82972/rundiffusion-fx-photorealistic

Original Author's DEMO images :

| 676 | [

[

-0.030029296875,

-0.07574462890625,

0.036529541015625,

0.0184478759765625,

-0.031341552734375,

0.002399444580078125,

-0.006015777587890625,

-0.020294189453125,

0.0303192138671875,

0.057098388671875,

-0.038421630859375,

-0.032073974609375,

-0.0233612060546875,

... |

artificialguybr/StickersRedmond | 2023-09-12T06:21:30.000Z | [

"diffusers",

"text-to-image",

"stable-diffusion",

"lora",

"license:creativeml-openrail-m",

"has_space",

"region:us"

] | text-to-image | artificialguybr | null | null | artificialguybr/StickersRedmond | 10 | 3,346 | diffusers | 2023-09-12T06:16:10 | ---

license: creativeml-openrail-m

tags:

- text-to-image

- stable-diffusion

- lora

- diffusers

base_model: stabilityai/stable-diffusion-xl-base-1.0

instance_prompt: Stickers, sticker

widget:

- text: Stickers, sticker

---

# Stickers.Redmond

Stickers.Redmond is here!

Introducing Stickers.Redmond, the ultimate LORA for creating Stickers images!

I'm grateful for the GPU time from Redmond.AI that allowed me to make this LORA! If you need GPU, then you need the great services from Redmond.AI.

It is based on SD XL 1.0 and fine-tuned on a large dataset.

The LORA has a high capacity to generate sticker images!

The tag for the model: Stickers, Sticker

I really hope you like the LORA and use it.

If you like the model and think it's worth it, you can make a donation to my Patreon or Ko-fi.

Patreon:

https://www.patreon.com/user?u=81570187

Ko-fi: https://ko-fi.com/artificialguybr

BuyMeACoffee: https://www.buymeacoffee.com/jvkape

Follow me on Twitter to be the first to know about new models:

https://twitter.com/artificialguybr/ | 1,076 | [

[

-0.0384521484375,

-0.053070068359375,

0.0297088623046875,

0.02862548828125,

-0.03173828125,

0.00215911865234375,

0.0212249755859375,

-0.051422119140625,

0.07623291015625,

0.0367431640625,

-0.03363037109375,

-0.035369873046875,

-0.025787353515625,

-0.03344726... |

EleutherAI/pythia-160m-v0 | 2023-07-09T16:03:26.000Z | [

"transformers",

"pytorch",

"safetensors",

"gpt_neox",

"text-generation",

"causal-lm",

"pythia",

"pythia_v0",

"en",

"dataset:the_pile",

"arxiv:2101.00027",

"arxiv:2201.07311",

"license:apache-2.0",

"endpoints_compatible",

"has_space",

"text-generation-inference",

"region:us"

] | text-generation | EleutherAI | null | null | EleutherAI/pythia-160m-v0 | 7 | 3,342 | transformers | 2022-10-16T17:40:11 | ---

language:

- en

tags:

- pytorch

- causal-lm

- pythia

- pythia_v0

license: apache-2.0

datasets:

- the_pile

---

The *Pythia Scaling Suite* is a collection of models developed to facilitate

interpretability research. It contains two sets of eight models of sizes

70M, 160M, 410M, 1B, 1.4B, 2.8B, 6.9B, and 12B. For each size, there are two

models: one trained on the Pile, and one trained on the Pile after the dataset

has been globally deduplicated. All 8 model sizes are trained on the exact

same data, in the exact same order. All Pythia models are available

[on Hugging Face](https://huggingface.co/models?other=pythia).

The Pythia model suite was deliberately designed to promote scientific

research on large language models, especially interpretability research.

Despite not centering downstream performance as a design goal, we find the

models <a href="#evaluations">match or exceed</a> the performance of

similar and same-sized models, such as those in the OPT and GPT-Neo suites.

Please note that all models in the *Pythia* suite were renamed in January

2023. For clarity, a <a href="#naming-convention-and-parameter-count">table

comparing the old and new names</a> is provided in this model card, together

with exact parameter counts.

## Pythia-160M

### Model Details

- Developed by: [EleutherAI](http://eleuther.ai)

- Model type: Transformer-based Language Model

- Language: English

- Learn more: [Pythia's GitHub repository](https://github.com/EleutherAI/pythia)

for training procedure, config files, and details on how to use.

- Library: [GPT-NeoX](https://github.com/EleutherAI/gpt-neox)

- License: Apache 2.0

- Contact: to ask questions about this model, join the [EleutherAI

Discord](https://discord.gg/zBGx3azzUn), and post them in `#release-discussion`.

Please read the existing *Pythia* documentation before asking about it in the

EleutherAI Discord. For general correspondence:

[contact@eleuther.ai](mailto:contact@eleuther.ai).

<figure>

| Pythia model | Non-Embedding Params | Layers | Model Dim | Heads | Batch Size | Learning Rate | Equivalent Models |
| -----------: | -------------------: | :----: | :-------: | :---: | :--------: | :-------------------: | :--------------------: |
| 70M | 18,915,328 | 6 | 512 | 8 | 2M | 1.0 x 10<sup>-3</sup> | — |
| 160M | 85,056,000 | 12 | 768 | 12 | 4M | 6.0 x 10<sup>-4</sup> | GPT-Neo 125M, OPT-125M |
| 410M | 302,311,424 | 24 | 1024 | 16 | 4M | 3.0 x 10<sup>-4</sup> | OPT-350M |
| 1.0B | 805,736,448 | 16 | 2048 | 8 | 2M | 3.0 x 10<sup>-4</sup> | — |
| 1.4B | 1,208,602,624 | 24 | 2048 | 16 | 4M | 2.0 x 10<sup>-4</sup> | GPT-Neo 1.3B, OPT-1.3B |
| 2.8B | 2,517,652,480 | 32 | 2560 | 32 | 2M | 1.6 x 10<sup>-4</sup> | GPT-Neo 2.7B, OPT-2.7B |
| 6.9B | 6,444,163,072 | 32 | 4096 | 32 | 2M | 1.2 x 10<sup>-4</sup> | OPT-6.7B |
| 12B | 11,327,027,200 | 36 | 5120 | 40 | 2M | 1.2 x 10<sup>-4</sup> | — |

<figcaption>Engineering details for the <i>Pythia Suite</i>. Deduped and

non-deduped models of a given size have the same hyperparameters. “Equivalent”

models have <b>exactly</b> the same architecture, and the same number of

non-embedding parameters.</figcaption>

</figure>
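The non-embedding parameter counts in the table can be reproduced from the layer count and model dimension alone. The sketch below is an illustration, not code from the Pythia repository: it assumes a standard transformer block with biases on every linear layer, a 4x MLP expansion, two LayerNorms per block, and one final LayerNorm. The function name `non_embedding_params` is hypothetical.

```python
def non_embedding_params(layers: int, d_model: int) -> int:
    """Estimate non-embedding parameters under the assumed block layout."""
    # Attention: fused QKV projection (3d^2 + 3d) plus output projection (d^2 + d).
    attention = 4 * d_model * d_model + 4 * d_model
    # MLP: d -> 4d (4d^2 + 4d) and 4d -> d (4d^2 + d).
    mlp = 8 * d_model * d_model + 5 * d_model
    # Two LayerNorms per block, each with a weight and a bias vector.
    layer_norms = 2 * 2 * d_model
    # One final LayerNorm after the last block.
    final_layer_norm = 2 * d_model
    return layers * (attention + mlp + layer_norms) + final_layer_norm


# Layers and model dimension for Pythia-160M, taken from the table above.
print(f"{non_embedding_params(12, 768):,}")
```

Under these assumptions the formula reduces to `layers * (12 * d**2 + 13 * d) + 2 * d`, which agrees with the table entries for the listed sizes.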

### Uses and Limitations

#### Intended Use

The primary intended use of Pythia is research on the behavior, functionality,

and limitations of large language models. This suite is intended to provide

a controlled setting for performing scientific experiments. To enable the

study of how language models change over the course of training, we provide

143 evenly spaced intermediate checkpoints per model. These checkpoints are

hosted on Hugging Face as branches. Note that branch `step143000` corresponds

exactly to the model checkpoint on the `main` branch of each model.
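As a small illustration of how the checkpoint branches are addressed, the sketch below enumerates the branch names. It assumes the `step<N>` naming used in the Quickstart section (for example `step3000`) with one checkpoint every 1000 steps up to 143000; the helper `checkpoint_revisions` is hypothetical and does not query the Hugging Face Hub.

```python
from typing import List


def checkpoint_revisions(last_step: int = 143_000, interval: int = 1_000) -> List[str]:
    """Return the assumed branch names, from `step1000` up to `step143000`."""
    return [f"step{step}" for step in range(interval, last_step + 1, interval)]


revisions = checkpoint_revisions()
# Number of branches, then the first and last names.
print(len(revisions), revisions[0], revisions[-1])
```

Any of these names can be passed as the `revision` argument of `from_pretrained` to load the corresponding intermediate checkpoint.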

You may also further fine-tune and adapt Pythia-160M for deployment,

as long as your use is in accordance with the Apache 2.0 license. Pythia

models work with the Hugging Face [Transformers

Library](https://huggingface.co/docs/transformers/index). If you decide to use

pre-trained Pythia-160M as a basis for your fine-tuned model, please

conduct your own risk and bias assessment.

#### Out-of-scope use

The Pythia Suite is **not** intended for deployment. It is not in itself

a product and cannot be used for human-facing interactions.

Pythia models are English-language only, and are not suitable for translation

or generating text in other languages.

Pythia-160M has not been fine-tuned for downstream contexts in which

language models are commonly deployed, such as writing genre prose,

or commercial chatbots. This means Pythia-160M will **not**

respond to a given prompt the way a product like ChatGPT does. This is because,

unlike this model, ChatGPT was fine-tuned using methods such as Reinforcement

Learning from Human Feedback (RLHF) to better “understand” human instructions.

#### Limitations and biases

The core functionality of a large language model is to take a string of text

and predict the next token. The token deemed statistically most likely by the

model need not produce the most “accurate” text. Never rely on

Pythia-160M to produce factually accurate output.

This model was trained on [the Pile](https://pile.eleuther.ai/), a dataset

known to contain profanity and texts that are lewd or otherwise offensive.

See [Section 6 of the Pile paper](https://arxiv.org/abs/2101.00027) for a

discussion of documented biases with regard to gender, religion, and race.

Pythia-160M may produce socially unacceptable or undesirable text, *even if*

the prompt itself does not include anything explicitly offensive.

If you plan on using text generated through, for example, the Hosted Inference

API, we recommend having a human curate the outputs of this language model

before presenting it to other people. Please inform your audience that the

text was generated by Pythia-160M.

### Quickstart

Pythia models can be loaded and used via the following code, demonstrated here

for the third `pythia-70m-deduped` checkpoint:

```python
from transformers import GPTNeoXForCausalLM, AutoTokenizer

# Load the weights saved on the `step3000` branch (the third checkpoint).
model = GPTNeoXForCausalLM.from_pretrained(
    "EleutherAI/pythia-70m-deduped",
    revision="step3000",
    cache_dir="./pythia-70m-deduped/step3000",
)

# Load the tokenizer from the same revision.
tokenizer = AutoTokenizer.from_pretrained(
    "EleutherAI/pythia-70m-deduped",
    revision="step3000",
    cache_dir="./pythia-70m-deduped/step3000",
)

# Tokenize a prompt, generate a continuation, and decode it back to text.
inputs = tokenizer("Hello, I am", return_tensors="pt")
tokens = model.generate(**inputs)
print(tokenizer.decode(tokens[0]))
```

Revision/branch `step143000` corresponds exactly to the model checkpoint on

the `main` branch of each model.<br>

For more information on how to use all Pythia models, see [documentation on

GitHub](https://github.com/EleutherAI/pythia).

### Training

#### Training data

[The Pile](https://pile.eleuther.ai/) is an 825 GiB general-purpose dataset in

English. It was created by EleutherAI specifically for training large language

models. It contains texts from 22 diverse sources, roughly broken down into

five categories: academic writing (e.g. arXiv), internet (e.g. CommonCrawl),

prose (e.g. Project Gutenberg), dialogue (e.g. YouTube subtitles), and

miscellaneous (e.g. GitHub, Enron Emails). See [the Pile

paper](https://arxiv.org/abs/2101.00027) for a breakdown of all data sources,

methodology, and a discussion of ethical implications. Consult [the

datasheet](https://arxiv.org/abs/2201.07311) for more detailed documentation

about the Pile and its component datasets. The Pile can be downloaded from

the [official website](https://pile.eleuther.ai/), or from a [community

mirror](https://the-eye.eu/public/AI/pile/).<br>

The Pile was **not** deduplicated before being used to train Pythia-160M.

#### Training procedure

All models were trained on the exact same data, in the exact same order. Each

model saw 299,892,736,000 tokens during training, and 143 checkpoints for each

model are saved every 2,097,152,000 tokens, spaced evenly throughout training.

This corresponds to training for just under 1 epoch on the Pile for

non-deduplicated models, and about 1.5 epochs on the deduplicated Pile.
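The token figures above are mutually consistent, which a few lines of arithmetic can confirm. This is a minimal sketch using only the totals quoted in this section; the batch sizes in tokens (2,097,152 for "2M" and 4,194,304 for "4M") are an assumption about how the table's batch-size column should be read, and the names are hypothetical.

```python
TOTAL_TOKENS = 299_892_736_000          # tokens seen by each model
TOKENS_PER_CHECKPOINT = 2_097_152_000   # tokens between saved checkpoints

# Number of evenly spaced checkpoints implied by the two totals.
NUM_CHECKPOINTS = TOTAL_TOKENS // TOKENS_PER_CHECKPOINT


def tokens_at_checkpoint(index: int) -> int:
    """Tokens seen when the index-th checkpoint (1-based) was saved."""
    if not 1 <= index <= NUM_CHECKPOINTS:
        raise ValueError(f"index must be between 1 and {NUM_CHECKPOINTS}")
    return index * TOKENS_PER_CHECKPOINT


def optimizer_steps(index: int, batch_tokens: int) -> int:
    """Optimizer steps taken by the index-th checkpoint for a given batch size in tokens."""
    return tokens_at_checkpoint(index) // batch_tokens


print(NUM_CHECKPOINTS)
print(optimizer_steps(1, 2_097_152), optimizer_steps(1, 4_194_304))
```

With the assumed 4M batch size, the first checkpoint falls at half as many optimizer steps as with the 2M batch size, while the number of tokens seen is identical.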

All *Pythia* models trained for the equivalent of 143000 steps at a batch size

of 2,097,152 tokens. Two batch sizes were used: 2M and 4M. Models listed with a

batch size of 4M tokens were originally trained for 71500 steps instead, with

checkpoints every 500 steps. The checkpoints on Hugging Face are renamed for

consistency with all 2M batch models, so `step1000` is the first checkpoint

for `pythia-1.4b` that was saved (corresponding to step 500 in training), and

`step1000` is likewise the first `pythia-6.9b` checkpoint that was saved