license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

mit | ['bart', 'cloze', 'distractor', 'generation'] | false | Training hyperparameters The following hyperparameters were used during training: - Pre-train language model: [facebook/bart-base](https://huggingface.co/facebook/bart-base) - Optimizer: adam - Learning rate: 0.0001 - Max length of input: 64 - Batch size: 64 - Epoch: 1 - Device: NVIDIA® Tesla T4 in Google Colab | a6ddbf634a1e16af7e4651eb17b5b5e6 |

mit | ['bart', 'cloze', 'distractor', 'generation'] | false | Testing The evaluations of this model as a Candidate Set Generator in CDGP is as follows: | P@1 | F1@3 | F1@10 | MRR | NDCG@10 | | ----- | ----- | ----- | ----- | ------- | | 14.20 | 11.07 | 11.37 | 24.29 | 31.74 | | 585b4abf2f518cf21c64ed15664b5b93 |
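The columns in the row above are standard ranking metrics. The following is a minimal sketch of how they could be computed for a single cloze question, assuming a ranked candidate list and a set of gold distractors. The function names and the toy data are hypothetical; this is not CDGP's evaluation code, and its exact definitions may differ.

```python
import math


def precision_at_k(ranked, gold, k):
    """Fraction of the top-k candidates that are gold distractors."""
    return sum(1 for c in ranked[:k] if c in gold) / k


def recall_at_k(ranked, gold, k):
    """Fraction of the gold distractors found in the top-k candidates."""
    return sum(1 for c in ranked[:k] if c in gold) / len(gold)


def f1_at_k(ranked, gold, k):
    """Harmonic mean of precision@k and recall@k."""
    p = precision_at_k(ranked, gold, k)
    r = recall_at_k(ranked, gold, k)
    return 0.0 if p + r == 0 else 2 * p * r / (p + r)


def reciprocal_rank(ranked, gold):
    """1 / rank of the first gold distractor, or 0 if none is retrieved."""
    for rank, candidate in enumerate(ranked, start=1):
        if candidate in gold:
            return 1.0 / rank
    return 0.0


def ndcg_at_k(ranked, gold, k):
    """Binary-relevance NDCG: discounted gain divided by the ideal gain."""
    dcg = sum(
        1.0 / math.log2(rank + 1)
        for rank, candidate in enumerate(ranked[:k], start=1)
        if candidate in gold
    )
    ideal = sum(1.0 / math.log2(rank + 1) for rank in range(1, min(k, len(gold)) + 1))
    return dcg / ideal if ideal else 0.0


# Toy example (made-up data): two of three gold distractors are retrieved.
ranked = ["cat", "dog", "bird", "fish"]
gold = {"dog", "fish", "horse"}
scores = {
    "P@1": precision_at_k(ranked, gold, 1),
    "F1@3": f1_at_k(ranked, gold, 3),
    "RR": reciprocal_rank(ranked, gold),
    "NDCG@10": ndcg_at_k(ranked, gold, 10),
}
print(scores)
```

MRR is the mean of `reciprocal_rank` over all questions, and the other metrics are averaged over questions in the same way.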

mit | ['bart', 'cloze', 'distractor', 'generation'] | false | Candidate Set Generator | Models | CLOTH | DGen | | ----------- | ----------------------------------------------------------------------------------- | -------------------------------------------------------------------------------- | | **BERT** | [cdgp-csg-bert-cloth](https://huggingface.co/AndyChiang/cdgp-csg-bert-cloth) | [cdgp-csg-bert-dgen](https://huggingface.co/AndyChiang/cdgp-csg-bert-dgen) | | **SciBERT** | [cdgp-csg-scibert-cloth](https://huggingface.co/AndyChiang/cdgp-csg-scibert-cloth) | [cdgp-csg-scibert-dgen](https://huggingface.co/AndyChiang/cdgp-csg-scibert-dgen) | | **RoBERTa** | [cdgp-csg-roberta-cloth](https://huggingface.co/AndyChiang/cdgp-csg-roberta-cloth) | [cdgp-csg-roberta-dgen](https://huggingface.co/AndyChiang/cdgp-csg-roberta-dgen) | | **BART** | [*cdgp-csg-bart-cloth*](https://huggingface.co/AndyChiang/cdgp-csg-bart-cloth) | [cdgp-csg-bart-dgen](https://huggingface.co/AndyChiang/cdgp-csg-bart-dgen) | | 16cdd3461e4f0f49ba52bafd054b95c2 |

mit | ['generated_from_keras_callback'] | false | deepiit98/Pub-clustered This model is a fine-tuned version of [nandysoham16/16-clustered_aug](https://huggingface.co/nandysoham16/16-clustered_aug) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.3787 - Train End Logits Accuracy: 0.8715 - Train Start Logits Accuracy: 0.8924 - Validation Loss: 0.1505 - Validation End Logits Accuracy: 1.0 - Validation Start Logits Accuracy: 0.9231 - Epoch: 0 | 8e2be07d8c15327043ebcf59dbf26fcf |

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 0.3787 | 0.8715 | 0.8924 | 0.1505 | 1.0 | 0.9231 | 0 | | 99e4f985c657ae91776cbf549c7755cf |

apache-2.0 | ['generated_from_trainer'] | false | openai/whisper-medium This model is a fine-tuned version of [openai/whisper-medium](https://huggingface.co/openai/whisper-medium) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1594 - Wer: 21.8343 | ce4d1c7c4c49ca9ee852f3d054f5da46 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 4000 - mixed_precision_training: Native AMP | 137ff3c6ae12c06910fd69fa6987f949 |
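As a rough illustration of what a `linear` scheduler with warmup does to the learning rate, here is a minimal sketch using the values listed above (learning rate 1e-05, 500 warmup steps, 4000 training steps). The function name is hypothetical, and the shape (linear ramp up, then linear decay to zero) is an assumption about the schedule rather than something stated in the card.

```python
def linear_warmup_lr(step, base_lr=1e-05, warmup_steps=500, total_steps=4000):
    """Learning rate at a given optimizer step (assumed schedule shape)."""
    if step < warmup_steps:
        # Ramp up linearly from 0 to base_lr during warmup.
        return base_lr * step / max(1, warmup_steps)
    # Then decay linearly from base_lr to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 250, 500, 2250, 4000):
    print(step, linear_warmup_lr(step))
```

Under this assumption the peak learning rate is reached at step 500 and the rate is half the peak at step 2250, midway through the decay phase.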

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.0269 | 5.0 | 500 | 0.1069 | 118.0302 | | 0.0049 | 10.01 | 1000 | 0.1263 | 135.2788 | | 0.0009 | 15.01 | 1500 | 0.1355 | 94.5731 | | 0.0001 | 20.01 | 2000 | 0.1413 | 7.5188 | | 0.0001 | 25.01 | 2500 | 0.1515 | 7.2508 | | 0.0001 | 30.02 | 3000 | 0.1568 | 24.8493 | | 0.0 | 35.02 | 3500 | 0.1588 | 22.1470 | | 0.0 | 40.02 | 4000 | 0.1594 | 21.8343 | | 301c25944988367b3e0daa0c605e7e4c |
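The Wer column above is reported as a percentage, and several values exceed 100. That is possible because word error rate counts insertions as errors while dividing by the number of reference words. A minimal sketch of the usual word-level edit-distance definition follows; the function name is hypothetical, and the evaluation script behind this card may apply text normalization that is not shown here.

```python
def word_error_rate(reference, hypothesis):
    """(substitutions + deletions + insertions) / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Levenshtein distance over words, keeping only the previous row.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution or match
            ))
        prev = curr
    return prev[-1] / len(ref)


print(word_error_rate("the cat sat", "the dog sat"))        # one substitution
print(word_error_rate("hello", "hello there my friend"))    # three insertions
```

In the second call the hypothesis adds three words to a one-word reference, so the rate is 3.0, or 300 when expressed as a percentage.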

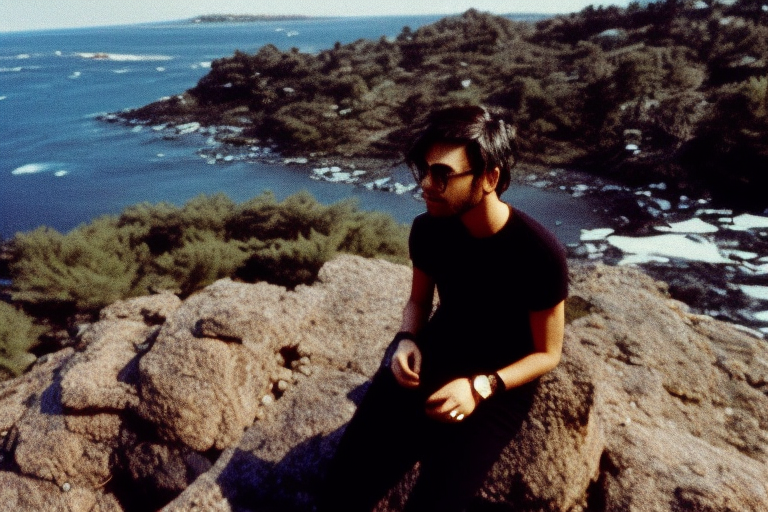

mit | [] | false | KodakVision500T on Stable Diffusion This is the `<kodakvision_500T>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). This concept was trained on **6** photographs taken with **Kodak Vision 3 500T**, through **1800** steps. Here are some generated images from the concept that you will be able to use as a `style`:     | fbb72940a5917407437bf0be23f8f8de |

apache-2.0 | ['generated_from_trainer'] | false | ELL_pretrained This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.9006 | b4b82f11465aa2d52e18fb0c66d3b2f1 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.1542 | 1.0 | 1627 | 2.1101 | | 2.0739 | 2.0 | 3254 | 2.0006 | | 2.0241 | 3.0 | 4881 | 1.7874 | | a2129cbbbdec8b9bf51bfeaf744edfd4 |

apache-2.0 | ['generated_from_trainer'] | false | t5-finetuned-for-GEC This model is a fine-tuned version of [t5-base](https://huggingface.co/t5-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3949 - Bleu: 0.3571 - Gen Len: 19.0 | e7b3778e0e39d140e7c3ac949fb70092 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:------:|:-------:| | 0.3958 | 1.0 | 4053 | 0.4236 | 0.3493 | 19.0 | | 0.3488 | 2.0 | 8106 | 0.4076 | 0.3518 | 19.0 | | 0.319 | 3.0 | 12159 | 0.3962 | 0.3523 | 19.0 | | 0.3105 | 4.0 | 16212 | 0.3951 | 0.3567 | 19.0 | | 0.3016 | 5.0 | 20265 | 0.3949 | 0.3571 | 19.0 | | 1b3cbfaa9ee6c59004bc0b5598d0ce9c |

other | ['token-classification', 'ncats'] | false | Model description **EpiExtract4GARD-v2** is a fine-tuned [BioBERT-base-cased](https://huggingface.co/dmis-lab/biobert-base-cased-v1.1) model that is ready to use for **Named Entity Recognition** of locations (LOC), epidemiologic types (EPI), and epidemiologic rates (STAT). This model was fine-tuned on EpiSet4NER-v2 for epidemiological information from rare disease abstracts. See dataset documentation for details on the weakly supervised teaching methods and dataset biases and limitations. See [EpiExtract4GARD on GitHub](https://github.com/ncats/epi4GARD/tree/master/EpiExtract4GARD | dae093ec091dcaecf5a2a2563185ae13 |

other | ['token-classification', 'ncats'] | false | How to use You can use this model with the Hosted inference API to the right with this [test sentence](https://pubmed.ncbi.nlm.nih.gov/21659675/): "27 patients have been diagnosed with PKU in Iceland since 1947. Incidence 1972-2008 is 1/8400 living births." See code below for use with Transformers *pipeline* for NER.: ~~~ from transformers import pipeline, AutoModelForTokenClassification, AutoTokenizer model = AutoModelForTokenClassification.from_pretrained("ncats/EpiExtract4GARD") tokenizer = AutoTokenizer.from_pretrained("ncats/EpiExtract4GARD") NER_pipeline = pipeline('ner', model=model, tokenizer=tokenizer,aggregation_strategy='simple') sample = "The live-birth prevalence of mucopolysaccharidoses in Estonia. Previous studies on the prevalence of mucopolysaccharidoses (MPS) in different populations have shown considerable variations. There are, however, few data with regard to the prevalence of MPSs in Fenno-Ugric populations or in north-eastern Europe, except for a report about Scandinavian countries. A retrospective epidemiological study of MPSs in Estonia was undertaken, and live-birth prevalence of MPS patients born between 1985 and 2006 was estimated. The live-birth prevalence for all MPS subtypes was found to be 4.05 per 100,000 live births, which is consistent with most other European studies. MPS II had the highest calculated incidence, with 2.16 per 100,000 live births (4.2 per 100,000 male live births), forming 53% of all diagnosed MPS cases, and was twice as high as in other studied European populations. The second most common subtype was MPS IIIA, with a live-birth prevalence of 1.62 in 100,000 live births. With 0.27 out of 100,000 live births, MPS VI had the third-highest live-birth prevalence. No cases of MPS I were diagnosed in Estonia, making the prevalence of MPS I in Estonia much lower than in other European populations. 
MPSs are the third most frequent inborn error of metabolism in Estonia after phenylketonuria and galactosemia." sample2 = "Early Diagnosis of Classic Homocystinuria in Kuwait through Newborn Screening: A 6-Year Experience. Kuwait is a small Arabian Gulf country with a high rate of consanguinity and where a national newborn screening program was expanded in October 2014 to include a wide range of endocrine and metabolic disorders. A retrospective study conducted between January 2015 and December 2020 revealed a total of 304,086 newborns have been screened in Kuwait. Six newborns were diagnosed with classic homocystinuria with an incidence of 1:50,000, which is not as high as in Qatar but higher than the global incidence. Molecular testing for five of them has revealed three previously reported pathogenic variants in the <i>CBS</i> gene, c.969G>A, p.(Trp323Ter); c.982G>A, p.(Asp328Asn); and the Qatari founder variant c.1006C>T, p.(Arg336Cys). This is the first study to review the screening of newborns in Kuwait for classic homocystinuria, starting with the detection of elevated blood methionine and providing a follow-up strategy for positive results, including plasma total homocysteine and amino acid analyses. Further, we have demonstrated an increase in the specificity of the current newborn screening test for classic homocystinuria by including the methionine to phenylalanine ratio along with the elevated methionine blood levels in first-tier testing. Here, we provide evidence that the newborn screening in Kuwait has led to the early detection of classic homocystinuria cases and enabled the affected individuals to lead active and productive lives." | edb912e2e24a197eaaa25b04333b3cc7 |

other | ['token-classification', 'ncats'] | false | Sample 1 is from: Krabbi K, Joost K, Zordania R, Talvik I, Rein R, Huijmans JG, Verheijen FV, Õunap K. The live-birth prevalence of mucopolysaccharidoses in Estonia. Genet Test Mol Biomarkers. 2012 Aug;16(8):846-9. doi: 10.1089/gtmb.2011.0307. Epub 2012 Apr 5. PMID: 22480138; PMCID: PMC3422553. | 1689a3d06f18e9f471c4099601fe631b |

other | ['token-classification', 'ncats'] | false | Sample 2 is from: Alsharhan H, Ahmed AA, Ali NM, Alahmad A, Albash B, Elshafie RM, Alkanderi S, Elkazzaz UM, Cyril PX, Abdelrahman RM, Elmonairy AA, Ibrahim SM, Elfeky YME, Sadik DI, Al-Enezi SD, Salloum AM, Girish Y, Al-Ali M, Ramadan DG, Alsafi R, Al-Rushood M, Bastaki L. Early Diagnosis of Classic Homocystinuria in Kuwait through Newborn Screening: A 6-Year Experience. Int J Neonatal Screen. 2021 Aug 17;7(3):56. doi: 10.3390/ijns7030056. PMID: 34449519; PMCID: PMC8395821. NER_pipeline(sample) NER_pipeline(sample2) ~~~ Or if you download [*classify_abs.py*](https://github.com/ncats/epi4GARD/blob/master/EpiExtract4GARD/classify_abs.py), [*extract_abs.py*](https://github.com/ncats/epi4GARD/blob/master/EpiExtract4GARD/extract_abs.py), and [*gard-id-name-synonyms.json*](https://github.com/ncats/epi4GARD/blob/master/EpiExtract4GARD/gard-id-name-synonyms.json) from GitHub then you can test with this [*additional* code](https://github.com/ncats/epi4GARD/blob/master/EpiExtract4GARD/Case%20Study.ipynb): ~~~ import pandas as pd import extract_abs import classify_abs pd.set_option('display.max_colwidth', None) NER_pipeline = extract_abs.init_NER_pipeline() GARD_dict, max_length = extract_abs.load_GARD_diseases() nlp, nlpSci, nlpSci2, classify_model, classify_tokenizer = classify_abs.init_classify_model() def search(term,num_results = 50): return extract_abs.search_term_extraction(term, num_results, NER_pipeline, GARD_dict, max_length,nlp, nlpSci, nlpSci2, classify_model, classify_tokenizer) a = search(7058) a b = search('Santos Mateus Leal syndrome') b c = search('Fellman syndrome') c d = search('GARD:0009941') d e = search('Homocystinuria') e ~~~ | 32cc779ee38a6630c9fc58e4f7f5af1e |

other | ['token-classification', 'ncats'] | false | Training data It was trained on [EpiSet4NER](https://huggingface.co/datasets/ncats/EpiSet4NER). See dataset documentation for details on the weakly supervised teaching methods and dataset biases and limitations. The training dataset distinguishes between the beginning and continuation of an entity so that if there are back-to-back entities of the same type, the model can output where the second entity begins. As in the dataset, each token will be classified as one of the following classes: Abbreviation|Description ---------|-------------- O |Outside of a named entity B-LOC | Beginning of a location I-LOC | Inside of a location B-EPI | Beginning of an epidemiologic type (e.g. "incidence", "prevalence", "occurrence") I-EPI | Epidemiologic type that is not the beginning token. B-STAT | Beginning of an epidemiologic rate I-STAT | Inside of an epidemiologic rate +More | Description pending | 53cde97667341253d1b06b61029723dc |
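To illustrate why the B-/I- distinction matters for back-to-back entities, here is a minimal sketch that groups per-token tags into entity spans. The function name and the toy sentence are hypothetical, and the sketch works on whole words, whereas the real model emits tags per subword token.

```python
def bio_to_spans(tokens, tags):
    """Group BIO-tagged tokens into (entity_type, text) spans."""
    spans = []
    current_type, current_tokens = None, []

    def flush():
        # Close the entity currently being built, if any.
        if current_tokens:
            spans.append((current_type, " ".join(current_tokens)))

    for token, tag in zip(tokens, tags):
        if tag.startswith("B-"):
            flush()  # a B- tag always starts a new entity
            current_type, current_tokens = tag[2:], [token]
        elif tag.startswith("I-") and current_type == tag[2:]:
            current_tokens.append(token)  # continue the open entity
        else:
            # "O", or an I- tag that does not continue the open entity,
            # is treated as outside any entity in this sketch.
            flush()
            current_type, current_tokens = None, []
    flush()
    return spans


tokens = ["prevalence", "in", "Estonia", "Latvia", "was", "4.05", "per", "100,000"]
tags = ["B-EPI", "O", "B-LOC", "B-LOC", "O", "B-STAT", "I-STAT", "I-STAT"]
print(bio_to_spans(tokens, tags))
```

The two adjacent `B-LOC` tags yield two separate locations; if the second were tagged `I-LOC`, the tokens would be merged into a single location.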

other | ['token-classification', 'ncats'] | false | EpiSet Statistics Beyond any limitations inherited from the EpiSet4NER dataset, this model is limited in numeracy because BERT-based models use subword embeddings. Numeracy is crucial for identifying epidemiologic rates, so this limits the entity-level results. Recent techniques in numeracy could be used to improve the model's performance without changing the underlying dataset. | 8c93e44b1d24f5cd81194f010a3f8304 |

other | ['token-classification', 'ncats'] | false | Training procedure This model was trained on an [AWS EC2 p3.2xlarge](https://aws.amazon.com/ec2/instance-types/), which utilized a single Tesla V100 GPU, with these hyperparameters: 4 epochs of training (AdamW weight decay = 0.05) with a batch size of 16. Maximum sequence length = 192. The model was fed one sentence at a time. <!--- Full config [here](https://wandb.ai/wzkariampuzha/huggingface/runs/353prhts/files/config.yaml). ---> <!--- THIS IS NOT THE UPDATED RESULTS ---> <!--- | 3c4dee3eaf868031fceca9ba4270305a |

other | ['token-classification', 'ncats'] | false | Test results ---> <!--- | Dataset for Model Training | Evaluation Level | Entity | Precision | Recall | F1 | ---> <!--- |:--------------------------:|:----------------:|:------------------:|:---------:|:------:|:-----:| ---> <!--- | EpiSet | Entity-Level | Overall | 0.556 | 0.662 | 0.605 | ---> <!--- | | | Location | 0.661 | 0.696 | 0.678 | ---> <!--- | | | Epidemiologic Type | 0.854 | 0.911 | 0.882 | ---> <!--- | | | Epidemiologic Rate | 0.143 | 0.218 | 0.173 | ---> <!--- | | Token-Level | Overall | 0.811 | 0.713 | 0.759 | ---> <!--- | | | Location | 0.949 | 0.742 | 0.833 | ---> <!--- | | | Epidemiologic Type | 0.9 | 0.917 | 0.908 | ---> <!--- | | | Epidemiologic Rate | 0.724 | 0.636 | 0.677 | ---> Thanks to [@William Kariampuzha](https://github.com/wzkariampuzha) at Axle Informatics/NCATS for contributing this model. | d725489402b56b5acd12efe7328c6ae6 |

apache-2.0 | ['generated_from_trainer'] | false | english-filipino-wav2vec2-l-xls-r-test-08 This model is a fine-tuned version of [jonatasgrosman/wav2vec2-large-xlsr-53-english](https://huggingface.co/jonatasgrosman/wav2vec2-large-xlsr-53-english) on the filipino_voice dataset. It achieves the following results on the evaluation set: - Loss: 0.5968 - Wer: 0.4255 | 09948ec977b3e494fba8e27d59a50371 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 20 - mixed_precision_training: Native AMP | 63c7a09949ccd00d0a4733867b5354b7 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.3434 | 2.09 | 400 | 2.2857 | 0.9625 | | 1.6304 | 4.19 | 800 | 1.1547 | 0.7268 | | 0.9231 | 6.28 | 1200 | 1.0252 | 0.6186 | | 0.6098 | 8.38 | 1600 | 0.9371 | 0.5494 | | 0.4922 | 10.47 | 2000 | 0.7092 | 0.5478 | | 0.3652 | 12.57 | 2400 | 0.7358 | 0.5149 | | 0.2735 | 14.66 | 2800 | 0.6270 | 0.4646 | | 0.2038 | 16.75 | 3200 | 0.5717 | 0.4506 | | 0.1552 | 18.85 | 3600 | 0.5968 | 0.4255 | | 6df2125a38879c624b04d50c2e670b5b |

mit | ['generated_from_trainer'] | false | deberta-base-CoLA This model is a fine-tuned version of [microsoft/deberta-base](https://huggingface.co/microsoft/deberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.1655 - Accuracy: 0.8482 - F1: 0.8961 - Roc Auc: 0.8987 - Mcc: 0.6288 | 8f1a931cb70408b03478e5d4851bb656 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.05 - num_epochs: 10 | 3a7c54c43e2ab7684ff815f871ca1fcf |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Roc Auc | Mcc | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|:-------:|:------:| | 0.5266 | 1.0 | 535 | 0.4138 | 0.8159 | 0.8698 | 0.8627 | 0.5576 | | 0.3523 | 2.0 | 1070 | 0.3852 | 0.8387 | 0.8880 | 0.9041 | 0.6070 | | 0.2479 | 3.0 | 1605 | 0.3981 | 0.8482 | 0.8901 | 0.9120 | 0.6447 | | 0.1712 | 4.0 | 2140 | 0.4732 | 0.8558 | 0.9008 | 0.9160 | 0.6486 | | 0.1354 | 5.0 | 2675 | 0.7181 | 0.8463 | 0.8938 | 0.9024 | 0.6250 | | 0.0876 | 6.0 | 3210 | 0.8453 | 0.8520 | 0.8992 | 0.9123 | 0.6385 | | 0.0682 | 7.0 | 3745 | 1.0282 | 0.8444 | 0.8938 | 0.9061 | 0.6189 | | 0.0431 | 8.0 | 4280 | 1.1114 | 0.8463 | 0.8960 | 0.9010 | 0.6239 | | 0.0323 | 9.0 | 4815 | 1.1663 | 0.8501 | 0.8970 | 0.8967 | 0.6340 | | 0.0163 | 10.0 | 5350 | 1.1655 | 0.8482 | 0.8961 | 0.8987 | 0.6288 | | e177fff91acaa850c3da524f1695d658 |
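For reference, accuracy, F1 and the Matthews correlation coefficient (Mcc) in the table above can all be derived from a binary confusion matrix. The sketch below shows the standard formulas; the function name and the counts are made up for illustration and are not the confusion matrix of this model.

```python
import math


def binary_metrics(tp, fp, fn, tn):
    """Accuracy, F1 and MCC from binary confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    # MCC uses all four cells, so it stays informative on imbalanced data.
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"accuracy": accuracy, "f1": f1, "mcc": mcc}


# Hypothetical counts, chosen only to exercise the formulas.
print(binary_metrics(tp=50, fp=10, fn=5, tn=35))
```

Because MCC accounts for true negatives while F1 does not, the two can diverge noticeably, which is consistent with F1 near 0.90 alongside Mcc near 0.63 in the table.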

cc-by-4.0 | ['espnet', 'audio', 'text-to-speech'] | false | `kan-bayashi/jsut_tts_train_transformer_raw_phn_jaconv_pyopenjtalk_prosody_train.loss.ave` ♻️ Imported from https://zenodo.org/record/5499040/ This model was trained by kan-bayashi using jsut/tts1 recipe in [espnet](https://github.com/espnet/espnet/). | 5ea43839e1ec570a4583be364a85deae |

apache-2.0 | ['translation'] | false | opus-mt-en-kwn * source languages: en * target languages: kwn * OPUS readme: [en-kwn](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/en-kwn/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-08.zip](https://object.pouta.csc.fi/OPUS-MT-models/en-kwn/opus-2020-01-08.zip) * test set translations: [opus-2020-01-08.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/en-kwn/opus-2020-01-08.test.txt) * test set scores: [opus-2020-01-08.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/en-kwn/opus-2020-01-08.eval.txt) | f74debd1700ea2785dc1e348c922804c |

apache-2.0 | ['automatic-speech-recognition', 'ar'] | false | exp_w2v2t_ar_vp-nl_s103 Fine-tuned [facebook/wav2vec2-large-nl-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-nl-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (ar)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | b1402e93d9f7a9a1e5defe036760d524 |

apache-2.0 | ['automatic-speech-recognition', 'de'] | false | exp_w2v2t_de_hubert_s921 Fine-tuned [facebook/hubert-large-ll60k](https://huggingface.co/facebook/hubert-large-ll60k) for speech recognition using the train split of [Common Voice 7.0 (de)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | b2cb90ac7b2599d44dbcea5feaa9c6c4 |

mit | [] | false | karl's lzx 1 on Stable Diffusion This is the `<lzx>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:     | d5d22500bb21220b66270a3e034a234a |

apache-2.0 | ['automatic-speech-recognition', 'de'] | false | exp_w2v2r_de_xls-r_age_teens-0_sixties-10_s113 Fine-tuned [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) for speech recognition using the train split of [Common Voice 7.0 (de)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 5e5c78a380b8fcf98dadc6df13af050b |

mit | ['generated_from_trainer'] | false | ES_corlec_DeepESP-gpt2-spanish This model is a fine-tuned version of [DeepESP/gpt2-spanish](https://huggingface.co/DeepESP/gpt2-spanish) on the None dataset. It achieves the following results on the evaluation set: - Loss: 4.0360 | b01c750fd3d3e330c5d0804ec7f1ee41 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-06 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 200 - num_epochs: 7 | 9771a085086b52493ebac75f8794888e |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 4.2471 | 0.4 | 2000 | 4.2111 | | 4.1503 | 0.79 | 4000 | 4.1438 | | 4.0749 | 1.19 | 6000 | 4.1077 | | 4.024 | 1.59 | 8000 | 4.0857 | | 3.9855 | 1.98 | 10000 | 4.0707 | | 3.9465 | 2.38 | 12000 | 4.0605 | | 3.9277 | 2.78 | 14000 | 4.0533 | | 3.9159 | 3.17 | 16000 | 4.0482 | | 3.8918 | 3.57 | 18000 | 4.0448 | | 3.8789 | 3.97 | 20000 | 4.0421 | | 3.8589 | 4.36 | 22000 | 4.0402 | | 3.8554 | 4.76 | 24000 | 4.0387 | | 3.8509 | 5.15 | 26000 | 4.0377 | | 3.8389 | 5.55 | 28000 | 4.0370 | | 3.8288 | 5.95 | 30000 | 4.0365 | | 3.8293 | 6.34 | 32000 | 4.0362 | | 3.8202 | 6.74 | 34000 | 4.0360 | | 08d7980d9889b8e5787e8cfd3bbe31ca |

apache-2.0 | ['Super-Resolution', 'computer-vision', 'ESRGAN', 'gan'] | false | Model Description [ESRGAN](https://arxiv.org/abs/2107.10833): ECCV18 Workshops - Enhanced SRGAN. Champion PIRM Challenge on Perceptual Super-Resolution [Paper Repo](https://github.com/xinntao/ESRGAN): Implementation of paper. | 89ca9fa6493448cf482b2de6dc92d80e |

apache-2.0 | ['Super-Resolution', 'computer-vision', 'ESRGAN', 'gan'] | false | BSRGAN Usage ```python from bsrgan import BSRGAN model = BSRGAN(weights='kadirnar/RRDB_ESRGAN_x4', device='cuda:0', hf_model=True) model.save = True pred = model.predict(img_path='data/image/test.png') ``` | 2dc13ae5327bd87edaa30db4129e928b |

apache-2.0 | ['Super-Resolution', 'computer-vision', 'ESRGAN', 'gan'] | false | BibTeX Entry and Citation Info ``` @inproceedings{zhang2021designing, title={Designing a Practical Degradation Model for Deep Blind Image Super-Resolution}, author={Zhang, Kai and Liang, Jingyun and Van Gool, Luc and Timofte, Radu}, booktitle={IEEE International Conference on Computer Vision}, pages={4791--4800}, year={2021} } ``` ``` @InProceedings{wang2018esrgan, author = {Wang, Xintao and Yu, Ke and Wu, Shixiang and Gu, Jinjin and Liu, Yihao and Dong, Chao and Qiao, Yu and Loy, Chen Change}, title = {ESRGAN: Enhanced super-resolution generative adversarial networks}, booktitle = {The European Conference on Computer Vision Workshops (ECCVW)}, month = {September}, year = {2018} } ``` | 200e0bcfc944d77ac9dcd30e9deb5e83 |

apache-2.0 | ['generated_from_trainer', 'fnet-bert-base-comparison'] | false | bert-base-cased-finetuned-qnli This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the GLUE QNLI dataset. It achieves the following results on the evaluation set: - Loss: 0.3986 - Accuracy: 0.9099 The model was fine-tuned to compare [google/fnet-base](https://huggingface.co/google/fnet-base) as introduced in [this paper](https://arxiv.org/abs/2105.03824) against [bert-base-cased](https://huggingface.co/bert-base-cased). | 4b84582ee0b77018dc4b5bf8c4038c55 |

apache-2.0 | ['generated_from_trainer', 'fnet-bert-base-comparison'] | false | !/usr/bin/bash python ../run_glue.py \\n --model_name_or_path bert-base-cased \\n --task_name qnli \\n --do_train \\n --do_eval \\n --max_seq_length 512 \\n --per_device_train_batch_size 16 \\n --learning_rate 2e-5 \\n --num_train_epochs 3 \\n --output_dir bert-base-cased-finetuned-qnli \\n --push_to_hub \\n --hub_strategy all_checkpoints \\n --logging_strategy epoch \\n --save_strategy epoch \\n --evaluation_strategy epoch \\n``` | 229358f440497eda3cef62530b9e02b9 |

apache-2.0 | ['generated_from_trainer', 'fnet-bert-base-comparison'] | false | Training results | Training Loss | Epoch | Step | Accuracy | Validation Loss | |:-------------:|:-----:|:-----:|:--------:|:---------------:| | 0.337 | 1.0 | 6547 | 0.9013 | 0.2448 | | 0.1971 | 2.0 | 13094 | 0.9143 | 0.2839 | | 0.1175 | 3.0 | 19641 | 0.9099 | 0.3986 | | 492928bceaae8dd6c63185ed51729b51 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-custom This model is a fine-tuned version of [bert-large-uncased-whole-word-masking-finetuned-squad](https://huggingface.co/bert-large-uncased-whole-word-masking-finetuned-squad) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.7808 | 938a9d87ce8a684d529ba8b38d01c9c3 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 1 - eval_batch_size: 1 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 | cd27aca69819872c0fbfe72b915f5e59 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 368 | 1.1128 | | 2.1622 | 2.0 | 736 | 0.8494 | | 1.2688 | 3.0 | 1104 | 0.7808 | | 42ae379ccaf7be2d3c1338e6a4cf3fe9 |

openrail | ['text-to-image', 'stable-diffusion'] | false | How do I use it? The keyword for this model is **vitordeluccagrs**. Try a prompt like "*portrait of vitordeluccagrs shirtless reading a book in a chair, art by artgerm*". Be creative. Specify the facial features you want, the hair style, and the environment. If it is art, say which artist's style it should be in. Want it realistic? Say it! And also say which ISO, camera, etc. Want it to look like 500px and Unsplash pictures? Say it too! Want it to look like Blade Runner? Say it. **Be creative**. USE A NEGATIVE PROMPT! Stable Diffusion works best if you use a negative prompt. With a negative prompt, you say what you don't want in the picture, like, you know... extra fingers! | 8f1c5aae61243cc53ec8abb0835f7052 |

openrail | ['text-to-image', 'stable-diffusion'] | false | Sample pictures     | 0003c2231dc8a526e15dd0dc11a28a7e |

mit | [] | false | Bob Dobbs on Stable Diffusion This is the `<bob>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:               | 268490ebae1dfc93ccc9cd88455edc54 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | restaurant_local_test_model This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the local_test_data dataset. It achieves the following results on the evaluation set: - Loss: 0.5435 - Wer: 78.5714 | b21d2ab8a499809762292b18ceca8628 |

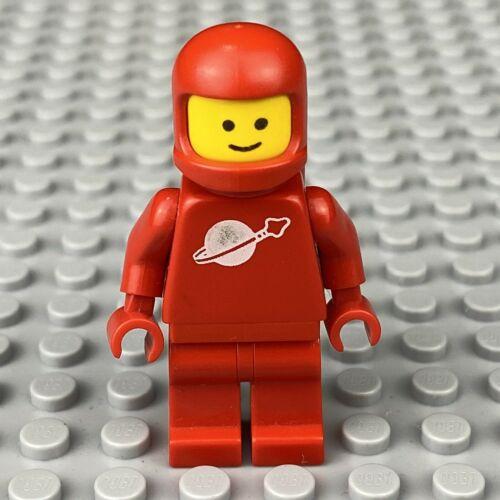

mit | [] | false | Lego astronaut on Stable Diffusion This is the `<lego-astronaut>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:     | 23f20b8f373be27a5947e67cbba08505 |

mit | [] | false | <monster-toy> on Stable Diffusion This is the `<monster-toy>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:     | 915c6b102e3279e06c755a840d36e7c2 |

mit | ['generated_from_trainer'] | false | distilcamembert-cae-feeling This model is a fine-tuned version of [cmarkea/distilcamembert-base](https://huggingface.co/cmarkea/distilcamembert-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.9307 - Precision: 0.6783 - Recall: 0.6835 - F1: 0.6767 | 1c34c5a6b785507221015dcd7db82858 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 5.0 | 79058a387a1566068ed5722e5c70df1a |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:| | 1.1901 | 1.0 | 40 | 1.0935 | 0.1963 | 0.4430 | 0.2720 | | 1.0584 | 2.0 | 80 | 0.8978 | 0.6304 | 0.6076 | 0.5776 | | 0.6805 | 3.0 | 120 | 0.8577 | 0.6918 | 0.6709 | 0.6759 | | 0.3938 | 4.0 | 160 | 1.0034 | 0.6966 | 0.6582 | 0.6586 | | 0.2713 | 5.0 | 200 | 0.9307 | 0.6783 | 0.6835 | 0.6767 | | e4525261771d0937b3bd2754400661b8 |

apache-2.0 | ['exbert'] | false | DistilBERT base model (uncased) This model is a distilled version of the [BERT base model](https://huggingface.co/bert-base-uncased). It was introduced in [this paper](https://arxiv.org/abs/1910.01108). The code for the distillation process can be found [here](https://github.com/huggingface/transformers/tree/main/examples/research_projects/distillation). This model is uncased: it does not make a difference between english and English. | 031540ab163b7fa433404050006bc32f |

apache-2.0 | ['exbert'] | false | Model description DistilBERT is a transformers model, smaller and faster than BERT, which was pretrained on the same corpus in a self-supervised fashion, using the BERT base model as a teacher. This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of publicly available data) with an automatic process to generate inputs and labels from those texts using the BERT base model. More precisely, it was pretrained with three objectives: - Distillation loss: the model was trained to return the same probabilities as the BERT base model. - Masked language modeling (MLM): this is part of the original training loss of the BERT base model. When taking a sentence, the model randomly masks 15% of the words in the input, then runs the entire masked sentence through the model and has to predict the masked words. This is different from traditional recurrent neural networks (RNNs) that usually see the words one after the other, or from autoregressive models like GPT which internally mask the future tokens. It allows the model to learn a bidirectional representation of the sentence. - Cosine embedding loss: the model was also trained to generate hidden states as close as possible to those of the BERT base model. This way, the model learns the same inner representation of the English language as its teacher model, while being faster for inference or downstream tasks. | 4162a64036f4021026aa8de0498026fa |
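The masking step of the MLM objective described above can be sketched in a few lines. This is a deliberately simplified illustration, not the actual pretraining code: it masks whole whitespace-separated words, always masks a fixed 15% share, and skips the random-replacement variants used in BERT-style training. The function name `mask_tokens` and its parameters are assumptions made for this example.

```python
import random


def mask_tokens(tokens, mask_prob=0.15, mask_token="[MASK]", seed=0):
    """Return (masked_tokens, labels) for a simplified MLM example.

    labels[i] holds the original token at masked positions and None elsewhere,
    so a model would only be scored on the positions it has to reconstruct.
    """
    if not tokens:
        return [], []
    rng = random.Random(seed)
    # Simplification: mask a fixed share of positions (at least one).
    n_mask = max(1, round(len(tokens) * mask_prob))
    positions = set(rng.sample(range(len(tokens)), n_mask))
    masked = [mask_token if i in positions else tok for i, tok in enumerate(tokens)]
    labels = [tok if i in positions else None for i, tok in enumerate(tokens)]
    return masked, labels


if __name__ == "__main__":
    words = "the quick brown fox jumps over the lazy dog near the old river bank today".split()
    masked, labels = mask_tokens(words)
    print(masked)
    print(labels)
```

Because the model sees the unmasked words on both sides of each `[MASK]`, it can use left and right context together, which is the bidirectional property the description refers to.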

apache-2.0 | ['exbert'] | false | Intended uses & limitations You can use the raw model for masked language modeling, but it's mostly intended to be fine-tuned on a downstream task. See the [model hub](https://huggingface.co/models?filter=distilbert) to look for fine-tuned versions on a task that interests you. Note that this model is primarily aimed at being fine-tuned on tasks that use the whole sentence (potentially masked) to make decisions, such as sequence classification, token classification or question answering. For tasks such as text generation you should look at a model like GPT2. | 071b16f0c8b568812eded226552c2698 |

apache-2.0 | ['exbert'] | false | How to use You can use this model directly with a pipeline for masked language modeling: ```python >>> from transformers import pipeline >>> unmasker = pipeline('fill-mask', model='distilbert-base-uncased') >>> unmasker("Hello I'm a [MASK] model.") [{'sequence': "[CLS] hello i'm a role model. [SEP]", 'score': 0.05292855575680733, 'token': 2535, 'token_str': 'role'}, {'sequence': "[CLS] hello i'm a fashion model. [SEP]", 'score': 0.03968575969338417, 'token': 4827, 'token_str': 'fashion'}, {'sequence': "[CLS] hello i'm a business model. [SEP]", 'score': 0.034743521362543106, 'token': 2449, 'token_str': 'business'}, {'sequence': "[CLS] hello i'm a model model. [SEP]", 'score': 0.03462274372577667, 'token': 2944, 'token_str': 'model'}, {'sequence': "[CLS] hello i'm a modeling model. [SEP]", 'score': 0.018145186826586723, 'token': 11643, 'token_str': 'modeling'}] ``` Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import DistilBertTokenizer, DistilBertModel tokenizer = DistilBertTokenizer.from_pretrained('distilbert-base-uncased') model = DistilBertModel.from_pretrained("distilbert-base-uncased") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` and in TensorFlow: ```python from transformers import DistilBertTokenizer, TFDistilBertModel tokenizer = DistilBertTokenizer.from_pretrained('distilbert-base-uncased') model = TFDistilBertModel.from_pretrained("distilbert-base-uncased") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='tf') output = model(encoded_input) ``` | a6fb7bd946165e90b2fc258508c2352b |

apache-2.0 | ['exbert'] | false | Limitations and bias Even if the training data used for this model could be characterized as fairly neutral, this model can have biased predictions. It also inherits some of [the bias of its teacher model](https://huggingface.co/bert-base-uncased | 258dcda7f642d60df9fe4dccdc83d8d0 |

apache-2.0 | ['exbert'] | false | limitations-and-bias). ```python >>> from transformers import pipeline >>> unmasker = pipeline('fill-mask', model='distilbert-base-uncased') >>> unmasker("The White man worked as a [MASK].") [{'sequence': '[CLS] the white man worked as a blacksmith. [SEP]', 'score': 0.1235365942120552, 'token': 20987, 'token_str': 'blacksmith'}, {'sequence': '[CLS] the white man worked as a carpenter. [SEP]', 'score': 0.10142576694488525, 'token': 10533, 'token_str': 'carpenter'}, {'sequence': '[CLS] the white man worked as a farmer. [SEP]', 'score': 0.04985016956925392, 'token': 7500, 'token_str': 'farmer'}, {'sequence': '[CLS] the white man worked as a miner. [SEP]', 'score': 0.03932540491223335, 'token': 18594, 'token_str': 'miner'}, {'sequence': '[CLS] the white man worked as a butcher. [SEP]', 'score': 0.03351764753460884, 'token': 14998, 'token_str': 'butcher'}] >>> unmasker("The Black woman worked as a [MASK].") [{'sequence': '[CLS] the black woman worked as a waitress. [SEP]', 'score': 0.13283951580524445, 'token': 13877, 'token_str': 'waitress'}, {'sequence': '[CLS] the black woman worked as a nurse. [SEP]', 'score': 0.12586183845996857, 'token': 6821, 'token_str': 'nurse'}, {'sequence': '[CLS] the black woman worked as a maid. [SEP]', 'score': 0.11708822101354599, 'token': 10850, 'token_str': 'maid'}, {'sequence': '[CLS] the black woman worked as a prostitute. [SEP]', 'score': 0.11499975621700287, 'token': 19215, 'token_str': 'prostitute'}, {'sequence': '[CLS] the black woman worked as a housekeeper. [SEP]', 'score': 0.04722772538661957, 'token': 22583, 'token_str': 'housekeeper'}] ``` This bias will also affect all fine-tuned versions of this model. | e2f6a9f7c1cc1b3b7f6854743ac0cce4 |

apache-2.0 | ['exbert'] | false | Training data DistilBERT was pretrained on the same data as BERT: [BookCorpus](https://yknzhu.wixsite.com/mbweb), a dataset consisting of 11,038 unpublished books, and [English Wikipedia](https://en.wikipedia.org/wiki/English_Wikipedia) (excluding lists, tables and headers). | 927d00ac64ef729400d71fb876c9ff9a |

apache-2.0 | ['exbert'] | false | Pretraining The model was trained on eight 16 GB V100 GPUs for 90 hours. See the [training code](https://github.com/huggingface/transformers/tree/master/examples/distillation) for all hyperparameter details. | aa15809c5afb1935b40f0da4d2aaf5d0 |

apache-2.0 | ['exbert'] | false | Evaluation results When fine-tuned on downstream tasks, this model achieves the following results: Glue test results: | Task | MNLI | QQP | QNLI | SST-2 | CoLA | STS-B | MRPC | RTE | |:----:|:----:|:----:|:----:|:-----:|:----:|:-----:|:----:|:----:| | | 82.2 | 88.5 | 89.2 | 91.3 | 51.3 | 85.8 | 87.5 | 59.9 | | 9a55e7b6baf165da6efd299b6a2a3a57 |

apache-2.0 | ['exbert'] | false | BibTeX entry and citation info ```bibtex @article{Sanh2019DistilBERTAD, title={DistilBERT, a distilled version of BERT: smaller, faster, cheaper and lighter}, author={Victor Sanh and Lysandre Debut and Julien Chaumond and Thomas Wolf}, journal={ArXiv}, year={2019}, volume={abs/1910.01108} } ``` <a href="https://huggingface.co/exbert/?model=distilbert-base-uncased"> <img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png"> </a> | b70b043e8428efd9eb62abe9b556eb78 |

mit | ['generated_from_trainer'] | false | my_awesome_wnut_model This model is a fine-tuned version of [dbmdz/bert-base-turkish-cased](https://huggingface.co/dbmdz/bert-base-turkish-cased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.2661 - Precision: 0.8999 - Recall: 0.8933 - F1: 0.8966 - Accuracy: 0.9264 | 0d2c1a29f6fd4b5df83d55c12c4c03c1 |
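As a quick sanity check on the reported metrics, F1 can be recomputed as the harmonic mean of precision and recall. This is a sketch that assumes the three numbers come from the same overall (not per-class averaged) evaluation; the helper name `f1_score` is illustrative.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    # Precision and recall as reported for the evaluation set above.
    print(f"{f1_score(0.8999, 0.8933):.4f}")
```

If the recomputed value differs noticeably from the reported F1, the metrics were probably averaged per class rather than computed over all entities at once.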

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 488 | 0.3614 | 0.8731 | 0.8606 | 0.8668 | 0.9043 | | 0.6843 | 2.0 | 976 | 0.2872 | 0.8927 | 0.8856 | 0.8891 | 0.9209 | | 0.3517 | 3.0 | 1464 | 0.2661 | 0.8999 | 0.8933 | 0.8966 | 0.9264 | | a4907a54817f29d6b6c3964e7fd9d217 |

mit | ['huggan', 'gan'] | false | Model description The [Pix2pix model](https://arxiv.org/abs/1611.07004) is a conditional adversarial network, a general-purpose solution to image-to-image translation problems. These networks not only learn the mapping from input image to output image, but also learn a loss function to train this mapping. This makes it possible to apply the same generic approach to problems that traditionally would require very different loss formulations. We demonstrate that this approach is effective at synthesizing photos from label maps, reconstructing objects from edge maps, and colorizing images, among other tasks. | a0a2615f3f3d0fe642e70b6c2df48f67 |

mit | ['huggan', 'gan'] | false | How to use ```python from torchvision.transforms import Compose, Resize, ToTensor, Normalize from PIL import Image from torchvision.utils import save_image import cv2 from huggan.pytorch.pix2pix.modeling_pix2pix import GeneratorUNet transform = Compose( [ Resize((256, 256), Image.BICUBIC), ToTensor(), Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5)), ] ) model = GeneratorUNet.from_pretrained('huggan/pix2pix-night2day') def predict_fn(img): inp = transform(img).unsqueeze(0) out = model(inp) save_image(out, 'out.png', normalize=True) return 'out.png' predict_fn(img) ``` | 70ca49dbdd297d712aaa4ecddf09b5e5 |

mit | ['huggan', 'gan'] | false | Launch training with the required parameters: `accelerate launch train.py --checkpoint_interval 5 --dataset huggan/night2day --push_to_hub --model_name pix2pix-night2day --batch_size 128 --n_epochs 50` | 4298425d18ed03ff0cfe1077265a4060 |

mit | ['huggan', 'gan'] | false | BibTeX entry and citation info ```bibtex @article{pix2pix2017, title={Image-to-Image Translation with Conditional Adversarial Networks}, author={Isola, Phillip and Zhu, Jun-Yan and Zhou, Tinghui and Efros, Alexei A}, journal={CVPR}, year={2017} } ``` | 4d630f2a41034de7769ca8d813b3feb7 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 2 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 15 - mixed_precision_training: Native AMP | c8072d1735a982c8bc6e81057957ef7d |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-imdb This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 2.2591 | 29eb4f38c74b7892105b29dede55af07 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.4216 | 1.0 | 782 | 2.2803 | | 2.3719 | 2.0 | 1564 | 2.2577 | | 2.3407 | 3.0 | 2346 | 2.2320 | | 55b0bd1857d121279d640eb23518f8b5 |
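For a masked language model, the evaluation loss is often easier to interpret as perplexity. The sketch below assumes the reported loss is a mean cross-entropy in nats, which is the usual convention but is not stated in the card; the function name `perplexity` is illustrative.

```python
import math


def perplexity(cross_entropy_loss: float) -> float:
    """Perplexity is the exponential of the mean cross-entropy loss (in nats)."""
    return math.exp(cross_entropy_loss)


if __name__ == "__main__":
    # Evaluation loss reported for this model.
    print(f"{perplexity(2.2591):.2f}")
```

Lower perplexity means the model is, on average, less uncertain about the masked tokens.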

mit | ['Stable Diffusion', 'Senko', 'Hypernetwork'] | false | Description This hypernetwork helps make your Senko-san look as if she was drawn by Rimukoro. It was trained using the [AbyssOrangeMix_base](https://huggingface.co/WarriorMama777/OrangeMixs/blob/main/Models/AbyssOrangeMix/AbyssOrangeMix_base.ckpt) model, so it should work well with that specific model or with related models. | 87f05952c8ac04bde9017a09786d5a71 |

mit | ['Stable Diffusion', 'Senko', 'Hypernetwork'] | false | Usage To use this hypernetwork, place the .pt file in your `models\hypernetworks` directory. Then, depending on your UI, either select the hypernetwork in the settings or use it directly in your positive prompt, for example `<hypernet:abyss_orange_mix_senko_by_rimukoro:1.0>` | 51029b022770f3d3955d453e8ab5f6e8 |

mit | ['Stable Diffusion', 'Senko', 'Hypernetwork'] | false | PNG Info Example masterpiece, best quality, 1girl, solo, cinematic lighting, 1girl, solo, senko \(sewayaki kitsune no senko-san\), senko-san, sewayaki kitsune no senko-san, animal ears, fox ears, fox girl, fox tail, hair flower, hair ornament, orange eyes, orange hair, rimukoro, short hair, tail, flat chest, looking at viewer, light smile, full body, forest, rain, darkness, moon, wet clothes, blush, jacket, T-shirt, skirt Negative prompt: ugly, old, amateur drawing, odd, fat, lowres, text, error, worst quality, low quality, jpeg artifacts, signature, watermark, username, (blurry:1.3), out of focus, cropped, out of frame, cloned face, mutilated, deformed, gross proportions, disfigured, mutated hands, poorly drawn hands, bad anatomy, (bad hands:1.4), missing fingers, extra digit, (extra fingers:1.3), fewer digits, poorly drawn face, fused fingers, long neck, extra limbs, broken limb, asymmetrical eyes cell shading, watercolor Steps: 30, Sampler: DDIM, CFG scale: 12, Seed: 1393421640, Size: 640x832, Model hash: ffa7b160, Model: AbyssOrangeMix_base, Hypernet: abyss_orange_mix_senko_by_rimukoro, Hypernet hash: f3753abd, Denoising strength: 0.7, Eta: 0.69, Clip skip: 2, Hires upscale: 2, Hires upscaler: Latent | d806f35d4d1607f64d673efb0213d1c6 |

mit | ['Stable Diffusion', 'Senko', 'Hypernetwork'] | false | Choosing a hypernetwork with a non-default number of steps The hypernetwork `abyss_orange_mix_senko_by_rimukoro.pt` was trained for 4000 steps (which I personally prefer). I have also published hypernetworks trained for different numbers of steps (up to 15000). You can find them in the [models folder](https://huggingface.co/NeuroSenko/abyss_orange_mix_senko_by_rimukoro_hyper/tree/main/models). To make it easier to choose a hypernetwork, I published [this grid](https://neurosenko.github.io/sd-grid-viewer/?configUrl=https://neurosenko.github.io/sd-grids/orange-senko-by-rimukoro/config.json), which you can use to compare these hypernetworks across 5 different seeds. | 34a942f426e7c728ac19cdc19b7adf8c |

mit | [] | false | This model is the **query** encoder of ANCE-Tele trained on NQ, described in the EMNLP 2022 paper ["Reduce Catastrophic Forgetting of Dense Retrieval Training with Teleportation Negatives"](https://arxiv.org/pdf/2210.17167.pdf). The associated GitHub repository is available at https://github.com/OpenMatch/ANCE-Tele. ANCE-Tele trains only with self-mined negatives (teleportation negatives), without additional negatives (e.g., BM25, other DR systems), and eliminates the dependency on filtering strategies and distillation modules. |NQ (Test)|R@5|R@20|R@100| |:---|:---|:---|:---| |ANCE-Tele|77.0|84.9|89.7| ``` @inproceedings{sun2022ancetele, title={Reduce Catastrophic Forgetting of Dense Retrieval Training with Teleportation Negatives}, author={Si Sun and Chenyan Xiong and Yue Yu and Arnold Overwijk and Zhiyuan Liu and Jie Bao}, booktitle={Proceedings of EMNLP 2022}, year={2022} } ``` | f952b98f732f9a357bd925b72180e5eb |
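The R@k columns above can be read with the help of a small sketch. It assumes the common open-domain QA convention in which R@k is the share of queries with at least one relevant passage among the top k retrieved results; the card itself does not define the metric, and the function names below are illustrative.

```python
def hit_at_k(ranked_ids, relevant_ids, k):
    """1.0 if any of the top-k retrieved ids is relevant, else 0.0."""
    relevant = set(relevant_ids)
    return 1.0 if any(doc_id in relevant for doc_id in ranked_ids[:k]) else 0.0


def mean_hit_at_k(runs, k):
    """Average hit@k over (ranked_ids, relevant_ids) pairs, as a percentage."""
    if not runs:
        return 0.0
    return 100.0 * sum(hit_at_k(r, rel, k) for r, rel in runs) / len(runs)


if __name__ == "__main__":
    # Toy data: two queries with hypothetical passage ids.
    runs = [
        (["p3", "p7", "p1"], ["p1"]),   # relevant passage at rank 3
        (["p9", "p2", "p5"], ["p4"]),   # relevant passage not retrieved
    ]
    print(mean_hit_at_k(runs, 1), mean_hit_at_k(runs, 3))
```

Under this definition the score can only stay equal or grow as k increases, which matches the increasing values in the table.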

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2245 - Accuracy: 0.92 - F1: 0.9203 | 28d307fd0ba7ca4b9a91b21b0bde4620 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8171 | 1.0 | 250 | 0.3222 | 0.907 | 0.9055 | | 0.2546 | 2.0 | 500 | 0.2245 | 0.92 | 0.9203 | | 80354c56309713b4b765bc38fd4ae8f4 |

unknown | [] | false | Example prompts `bear deer by gauzy storms`: <img src="https://huggingface.co/cyburn/gauzy_storms/resolve/main/1.png" alt="Picture." width="500"/> `pinguin by gauzy storms`: <img src="https://huggingface.co/cyburn/gauzy_storms/resolve/main/2.png" alt="Picture." width="500"/> `unicorn zebra by gauzy storms`: <img src="https://huggingface.co/cyburn/gauzy_storms/resolve/main/3.png" alt="Picture." width="500"/> | 3116e06ffcef724a4aae84d36b77c6c3 |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'animal'] | false | DreamBooth model for the caicai concept trained by chenglu. This is a Stable Diffusion model fine-tuned on the caicai concept with DreamBooth. It can be used by modifying the `instance_prompt`: **a photo of caicai dog** This model was created as part of the DreamBooth Hackathon 🔥. Visit the [organisation page](https://huggingface.co/dreambooth-hackathon) for instructions on how to take part! | fb8108021e3a2626a20c7645a939d44b |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'animal'] | false | Description This is a Stable Diffusion model fine-tuned on `dog` images for the animal theme of the Hugging Face DreamBooth Hackathon, by the HF CN Community in cooperation with HeyWhale. Thanks to @hhhxynh in the HF China community. | 2b9109c202b8acab645cff3a80bcc5b3 |

mit | ['generated_from_trainer'] | false | camembert-base-finetuned-Train_RAW10-dd This model is a fine-tuned version of [camembert-base](https://huggingface.co/camembert-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2175 - Precision: 0.8744 - Recall: 0.9056 - F1: 0.8897 - Accuracy: 0.9357 | 32fd335eb55dde4b4e83c1e067143969 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.1873 | 1.0 | 9930 | 0.2088 | 0.8652 | 0.8927 | 0.8788 | 0.9326 | | 0.1533 | 2.0 | 19860 | 0.2175 | 0.8744 | 0.9056 | 0.8897 | 0.9357 | | 62fb15dabc4a2f3f4fd18077c9f658b8 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-cola This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 0.7580 - Matthews Correlation: 0.5406 | 0707079d458a5fa089c8d6c8ae35f2bd |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.5307 | 1.0 | 535 | 0.5094 | 0.4152 | | 0.3545 | 2.0 | 1070 | 0.5230 | 0.4940 | | 0.2371 | 3.0 | 1605 | 0.6412 | 0.5087 | | 0.1777 | 4.0 | 2140 | 0.7580 | 0.5406 | | 0.1288 | 5.0 | 2675 | 0.8494 | 0.5396 | | 7a1172ea70161e94dbbab60efaaafc65 |
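The Matthews correlation reported for CoLA can be computed from a binary confusion matrix. The sketch below shows the standard formula in plain Python; the function name and the example counts are illustrative and are not taken from this model's evaluation.

```python
import math


def matthews_corrcoef(tp: int, tn: int, fp: int, fn: int) -> float:
    """Matthews correlation coefficient for binary classification.

    Ranges from -1 (total disagreement) through 0 (chance level) to +1 (perfect).
    """
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        # Convention: return 0 when a row or column of the matrix is empty.
        return 0.0
    return (tp * tn - fp * fn) / denom


if __name__ == "__main__":
    # Hypothetical confusion-matrix counts, for illustration only.
    print(matthews_corrcoef(tp=60, tn=20, fp=10, fn=10))
```

Unlike accuracy, this score stays near zero for a classifier that always predicts the majority class, which is why it is used for the imbalanced CoLA task.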

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased-issues-128 This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 2.0348 | cfdb7cbf376c0727b5580cd04641edaa |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 2.3932 | 1.0 | 1409 | 2.0750 | | 2.1659 | 2.0 | 2818 | 1.9781 | | 2.0364 | 3.0 | 4227 | 2.1215 | | 1.9399 | 4.0 | 5636 | 2.1018 | | 1.8857 | 5.0 | 7045 | 1.9919 | | 1.813 | 6.0 | 8454 | 2.2653 | | 1.7505 | 7.0 | 9863 | 2.0857 | | 1.7196 | 8.0 | 11272 | 1.9211 | | 1.672 | 9.0 | 12681 | 1.9853 | | 1.6379 | 10.0 | 14090 | 2.0391 | | 1.6037 | 11.0 | 15499 | 1.9305 | | 1.5699 | 12.0 | 16908 | 2.0291 | | 1.5363 | 13.0 | 18317 | 2.0492 | | 1.5155 | 14.0 | 19726 | 1.8807 | | 1.4999 | 15.0 | 21135 | 1.8604 | | 1.4784 | 16.0 | 22544 | 2.0348 | | 7032b3838034e9bff6e3bd7257f943f7 |

apache-2.0 | ['deep-narrow'] | false | T5-Efficient-TINY-FF6000 (Deep-Narrow version) T5-Efficient-TINY-FF6000 is a variation of [Google's original T5](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) following the [T5 model architecture](https://huggingface.co/docs/transformers/model_doc/t5). It is a *pretrained-only* checkpoint and was released with the paper **[Scale Efficiently: Insights from Pre-training and Fine-tuning Transformers](https://arxiv.org/abs/2109.10686)** by *Yi Tay, Mostafa Dehghani, Jinfeng Rao, William Fedus, Samira Abnar, Hyung Won Chung, Sharan Narang, Dani Yogatama, Ashish Vaswani, Donald Metzler*. In a nutshell, the paper indicates that a **Deep-Narrow** model architecture is favorable for **downstream** performance compared to other model architectures of similar parameter count. To quote the paper: > We generally recommend a DeepNarrow strategy where the model’s depth is preferentially increased > before considering any other forms of uniform scaling across other dimensions. This is largely due to > how much depth influences the Pareto-frontier as shown in earlier sections of the paper. Specifically, a > tall small (deep and narrow) model is generally more efficient compared to the base model. Likewise, > a tall base model might also generally more efficient compared to a large model. We generally find > that, regardless of size, even if absolute performance might increase as we continue to stack layers, > the relative gain of Pareto-efficiency diminishes as we increase the layers, converging at 32 to 36 > layers. Finally, we note that our notion of efficiency here relates to any one compute dimension, i.e., > params, FLOPs or throughput (speed). We report all three key efficiency metrics (number of params, > FLOPS and speed) and leave this decision to the practitioner to decide which compute dimension to > consider. 
To be more precise, *model depth* is defined as the number of transformer blocks that are stacked sequentially. A sequence of word embeddings is therefore processed sequentially by each transformer block. | 642c2ebe285766c01c79c955609694d6 |

apache-2.0 | ['deep-narrow'] | false | Details model architecture This model checkpoint - **t5-efficient-tiny-ff6000** - is of model type **Tiny** with the following variations: - **ff** is **6000** It has **36.55** million parameters and thus requires *ca.* **146.21 MB** of memory in full precision (*fp32*) or **73.1 MB** of memory in half precision (*fp16* or *bf16*). A summary of the *original* T5 model architectures can be seen here: | Model | nl (el/dl) | ff | dm | kv | nh | | 274c76c4ca5bcbf3190dbe840cbe2481 |
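The memory figures quoted above follow from the parameter count: each parameter takes 4 bytes in fp32 and 2 bytes in fp16 or bf16. A minimal sketch of that arithmetic, assuming 1 MB = 10^6 bytes and using the rounded count of 36.55 million parameters (the helper name is illustrative):

```python
def param_memory_mb(n_params: float, bytes_per_param: int) -> float:
    """Memory needed to hold the weights, in megabytes (1 MB = 1e6 bytes)."""
    return n_params * bytes_per_param / 1e6


if __name__ == "__main__":
    n_params = 36.55e6  # rounded parameter count from the card
    print(f"fp32: {param_memory_mb(n_params, 4):.1f} MB")
    print(f"fp16/bf16: {param_memory_mb(n_params, 2):.1f} MB")
```

The result covers the weights only; activations, optimizer state and framework overhead come on top of it.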

apache-2.0 | [] | false | Graphcore/convnext-base-ipu Optimum Graphcore is a new open-source library and toolkit that enables developers to access IPU-optimized models certified by Hugging Face. It is an extension of Transformers, providing a set of performance optimization tools enabling maximum efficiency to train and run models on Graphcore’s IPUs - a completely new kind of massively parallel processor to accelerate machine intelligence. Learn more about how to train Transformer models faster with IPUs at [hf.co/hardware/graphcore](https://huggingface.co/hardware/graphcore). Through HuggingFace Optimum, Graphcore released ready-to-use IPU-trained model checkpoints and IPU configuration files to make it easy to train models with maximum efficiency on the IPU. Optimum shortens the development lifecycle of your AI models by letting you plug-and-play any public dataset and allows seamless integration with our state-of-the-art hardware, giving you a quicker time-to-value for your AI project. | 3d25292ee7b3a1d7c8a7997165ffc7ba |

apache-2.0 | [] | false | Intended uses & limitations This model contains just the `IPUConfig` files for running the [facebook/convnext-base-224](https://huggingface.co/facebook/convnext-base-224) model on Graphcore IPUs. **This model contains no model weights, only an IPUConfig.** | 5c2439dd224404a0850da8a9199c0bc9 |

apache-2.0 | ['exbert', 'multiberts', 'multiberts-seed-2'] | false | MultiBERTs Seed 2 Checkpoint 2000k (uncased) Seed 2 intermediate checkpoint 2000k MultiBERTs (pretrained BERT) model on English language using a masked language modeling (MLM) objective. It was introduced in [this paper](https://arxiv.org/pdf/2106.16163.pdf) and first released in [this repository](https://github.com/google-research/language/tree/master/language/multiberts). This is an intermediate checkpoint. The final checkpoint can be found at [multiberts-seed-2](https://hf.co/multberts-seed-2). This model is uncased: it does not make a difference between english and English. Disclaimer: The team releasing MultiBERTs did not write a model card for this model so this model card has been written by [gchhablani](https://hf.co/gchhablani). | 62d8b1cc65224db86c1d3950f43a68f6 |

apache-2.0 | ['exbert', 'multiberts', 'multiberts-seed-2'] | false | How to use Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import BertTokenizer, BertModel tokenizer = BertTokenizer.from_pretrained('multiberts-seed-2-2000k') model = BertModel.from_pretrained("multiberts-seed-2-2000k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | d047059b680d60778a87d928241844f4 |

apache-2.0 | ['automatic-speech-recognition', 'ja'] | false | exp_w2v2t_ja_vp-it_s544 Fine-tuned [facebook/wav2vec2-large-it-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-it-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (ja)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 406f784078c40cc5f65bb0df05b66989 |

apache-2.0 | ['generated_from_trainer'] | false | recipe-lr0.0001-wd0.05-bs64 This model is a fine-tuned version of [paola-md/recipe-distilroberta-Is](https://huggingface.co/paola-md/recipe-distilroberta-Is) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.2792 - Rmse: 0.5284 - Mse: 0.2792 - Mae: 0.4268 | 0c9e98c106870b90ed6960a4990c707c |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 256 - eval_batch_size: 256 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 | eec195e2894233d29c97da6189dbc74b |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rmse | Mse | Mae | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:| | 0.2798 | 1.0 | 623 | 0.2789 | 0.5281 | 0.2789 | 0.4219 | | 0.2786 | 2.0 | 1246 | 0.2795 | 0.5287 | 0.2795 | 0.4287 | | 0.2785 | 3.0 | 1869 | 0.2792 | 0.5284 | 0.2792 | 0.4268 | | 857a65c05833a7b0cf192e262e9b265a |
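The Rmse and Mse columns above are linked by a square root, which gives a quick consistency check on the table. A minimal sketch (the helper name is illustrative):

```python
import math


def rmse_from_mse(mse: float) -> float:
    """Root mean squared error is the square root of the mean squared error."""
    return math.sqrt(mse)


if __name__ == "__main__":
    # Mse values from the three epochs in the table above.
    for mse in (0.2789, 0.2795, 0.2792):
        print(f"{mse} -> {rmse_from_mse(mse):.4f}")
```

The validation loss equals the Mse column in every row, which suggests the model was trained with a mean-squared-error objective.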

apache-2.0 | ['generated_from_keras_callback'] | false | salihkavaf/distilbert-base-uncased-finetuned-imdb This model is a fine-tuned version of [salihkavaf/distilbert-base-uncased-finetuned-imdb](https://huggingface.co/salihkavaf/distilbert-base-uncased-finetuned-imdb) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 2.6769 - Validation Loss: 2.5848 - Epoch: 0 | 4f90655d8a2a15e9fabd222da9fa6119 |
