problem | solution | answer | problem_is_valid | solution_is_valid | question_type | problem_type | problem_raw | solution_raw | metadata | uuid | id |

|---|---|---|---|---|---|---|---|---|---|---|---|

Let $a_{2}, \ldots, a_{n}$ be $n-1$ positive real numbers, where $n \geq 3$, such that $a_{2} a_{3} \cdots a_{n}=1$. Prove that $$ \left(1+a_{2}\right)^{2}\left(1+a_{3}\right)^{3} \cdots\left(1+a_{n}\right)^{n}>n^{n} . $$

|

The substitution $a_{2}=\frac{x_{2}}{x_{1}}, a_{3}=\frac{x_{3}}{x_{2}}, \ldots, a_{n}=\frac{x_{1}}{x_{n-1}}$ transforms the original problem into the inequality $$ \left(x_{1}+x_{2}\right)^{2}\left(x_{2}+x_{3}\right)^{3} \cdots\left(x_{n-1}+x_{1}\right)^{n}>n^{n} x_{1}^{2} x_{2}^{3} \cdots x_{n-1}^{n} \tag{*} $$ for all $x_{1}, \ldots, x_{n-1}>0$. To prove this, we use the AM-GM inequality for each factor of the left-hand side as follows: $$ \begin{array}{rlcl} \left(x_{1}+x_{2}\right)^{2} & & & \geq 2^{2} x_{1} x_{2} \\ \left(x_{2}+x_{3}\right)^{3} & = & \left(2\left(\frac{x_{2}}{2}\right)+x_{3}\right)^{3} & \geq 3^{3}\left(\frac{x_{2}}{2}\right)^{2} x_{3} \\ \left(x_{3}+x_{4}\right)^{4} & = & \left(3\left(\frac{x_{3}}{3}\right)+x_{4}\right)^{4} & \geq 4^{4}\left(\frac{x_{3}}{3}\right)^{3} x_{4} \\ & \vdots & \vdots & \vdots \end{array} $$ Multiplying these inequalities together gives $(*)$, with inequality sign $\geq$ instead of $>$. However, for equality to occur it is necessary that $x_{1}=x_{2}, x_{2}=2 x_{3}, \ldots, x_{n-1}=(n-1) x_{1}$, implying $x_{1}=(n-1) ! x_{1}$. This is impossible since $x_{1}>0$ and $n \geq 3$. Therefore the inequality is strict. Comment. One can avoid the substitution $a_{i}=x_{i} / x_{i-1}$. Apply the weighted AM-GM inequality to each factor $\left(1+a_{k}\right)^{k}$, with the same weights as above, to obtain $$ \left(1+a_{k}\right)^{k}=\left((k-1) \frac{1}{k-1}+a_{k}\right)^{k} \geq \frac{k^{k}}{(k-1)^{k-1}} a_{k} $$ Multiplying all these inequalities together gives $$ \left(1+a_{2}\right)^{2}\left(1+a_{3}\right)^{3} \cdots\left(1+a_{n}\right)^{n} \geq n^{n} a_{2} a_{3} \cdots a_{n}=n^{n} . $$ The same argument as in the proof above shows that equality cannot be attained.
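As a numerical sanity check of the argument above (not a proof), the following minimal sketch samples random positive $a_{2}, \ldots, a_{n}$ with product 1 and compares both sides in logarithms. The helper names `log_lhs`, `random_unit_product` and `factor_bound_holds` are hypothetical and introduced only for illustration.

```python
import math
import random


def log_lhs(a):
    """log of (1+a_2)^2 (1+a_3)^3 ... (1+a_n)^n, where a[0] is a_2."""
    return sum(k * math.log1p(ak) for k, ak in enumerate(a, start=2))


def random_unit_product(n, rng):
    """Return n-1 positive reals whose product is 1 (up to rounding)."""
    logs = [rng.uniform(-2.0, 2.0) for _ in range(n - 1)]
    shift = sum(logs) / (n - 1)  # recentre so that the logs sum to 0
    return [math.exp(t - shift) for t in logs]


def factor_bound_holds(k, ak):
    """Check (1+a_k)^k >= k^k / (k-1)^(k-1) * a_k in logarithms."""
    lhs = k * math.log1p(ak)
    rhs = k * math.log(k) - (k - 1) * math.log(k - 1) + math.log(ak)
    return lhs >= rhs - 1e-12  # small tolerance for rounding


if __name__ == "__main__":
    rng = random.Random(0)
    n = 5
    a = random_unit_product(n, rng)
    print(log_lhs(a) > n * math.log(n))
```

Working in logarithms avoids overflow for larger $n$; the per-factor check mirrors the weighted AM-GM step from the comment.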

|

proof

|

Yes

|

Yes

|

proof

|

Inequalities

|

Let $a_{2}, \ldots, a_{n}$ be $n-1$ positive real numbers, where $n \geq 3$, such that $a_{2} a_{3} \cdots a_{n}=1$. Prove that $$ \left(1+a_{2}\right)^{2}\left(1+a_{3}\right)^{3} \cdots\left(1+a_{n}\right)^{n}>n^{n} . $$

|

The substitution $a_{2}=\frac{x_{2}}{x_{1}}, a_{3}=\frac{x_{3}}{x_{2}}, \ldots, a_{n}=\frac{x_{1}}{x_{n-1}}$ transforms the original problem into the inequality $$ \left(x_{1}+x_{2}\right)^{2}\left(x_{2}+x_{3}\right)^{3} \cdots\left(x_{n-1}+x_{1}\right)^{n}>n^{n} x_{1}^{2} x_{2}^{3} \cdots x_{n-1}^{n} $$ for all $x_{1}, \ldots, x_{n-1}>0$. To prove this, we use the AM-GM inequality for each factor of the left-hand side as follows: $$ \begin{array}{rlcl} \left(x_{1}+x_{2}\right)^{2} & & & \geq 2^{2} x_{1} x_{2} \\ \left(x_{2}+x_{3}\right)^{3} & = & \left(2\left(\frac{x_{2}}{2}\right)+x_{3}\right)^{3} & \geq 3^{3}\left(\frac{x_{2}}{2}\right)^{2} x_{3} \\ \left(x_{3}+x_{4}\right)^{4} & = & \left(3\left(\frac{x_{3}}{3}\right)+x_{4}\right)^{4} & \geq 4^{4}\left(\frac{x_{3}}{3}\right)^{3} x_{4} \\ & \vdots & \vdots & \vdots \end{array} $$ Multiplying these inequalities together gives $\left({ }^{*}\right)$, with inequality sign $\geq$ instead of $>$. However for the equality to occur it is necessary that $x_{1}=x_{2}, x_{2}=2 x_{3}, \ldots, x_{n-1}=(n-1) x_{1}$, implying $x_{1}=(n-1) ! x_{1}$. This is impossible since $x_{1}>0$ and $n \geq 3$. Therefore the inequality is strict. Comment. One can avoid the substitution $a_{i}=x_{i} / x_{i-1}$. Apply the weighted AM-GM inequality to each factor $\left(1+a_{k}\right)^{k}$, with the same weights like above, to obtain $$ \left(1+a_{k}\right)^{k}=\left((k-1) \frac{1}{k-1}+a_{k}\right)^{k} \geq \frac{k^{k}}{(k-1)^{k-1}} a_{k} $$ Multiplying all these inequalities together gives $$ \left(1+a_{2}\right)^{2}\left(1+a_{3}\right)^{3} \cdots\left(1+a_{n}\right)^{n} \geq n^{n} a_{2} a_{3} \cdots a_{n}=n^{n} . $$ The same argument as in the proof above shows that the equality cannot be attained.

|

{

"resource_path": "IMO/segmented/en-IMO2012SL.jsonl",

"problem_match": null,

"solution_match": null

}

|

dbf5c9a1-f1fa-5160-9b65-96aa3f10b76e

| 24,144

|

Let $f$ and $g$ be two nonzero polynomials with integer coefficients and $\operatorname{deg} f>\operatorname{deg} g$. Suppose that for infinitely many primes $p$ the polynomial $p f+g$ has a rational root. Prove that $f$ has a rational root.

|

Since $\operatorname{deg} f>\operatorname{deg} g$, we have $|g(x) / f(x)|<1$ for sufficiently large $x$; more precisely, there is a real number $R$ such that $|g(x) / f(x)|<1$ for all $x$ with $|x|>R$. Then for all such $x$ and all primes $p$ we have $$ |p f(x)+g(x)| \geq|f(x)|\left(p-\frac{|g(x)|}{|f(x)|}\right)>0 $$ Hence all real roots of the polynomials $p f+g$ lie in the interval $[-R, R]$. Let $f(x)=a_{n} x^{n}+a_{n-1} x^{n-1}+\cdots+a_{0}$ and $g(x)=b_{m} x^{m}+b_{m-1} x^{m-1}+\cdots+b_{0}$ where $n>m, a_{n} \neq 0$ and $b_{m} \neq 0$. Upon replacing $f(x)$ and $g(x)$ by $a_{n}^{n-1} f\left(x / a_{n}\right)$ and $a_{n}^{n-1} g\left(x / a_{n}\right)$ respectively, we reduce the problem to the case $a_{n}=1$. In other words one can assume that $f$ is monic. Then the leading coefficient of $p f+g$ is $p$, and if $r=u / v$ is a rational root of $p f+g$ with $(u, v)=1$ and $v>0$, then either $v=1$ or $v=p$. First consider the case when $v=1$ infinitely many times. If $v=1$ then $|u| \leq R$, so there are only finitely many possibilities for the integer $u$. Therefore there exist distinct primes $p$ and $q$ for which we have the same value of $u$. Then the polynomials $p f+g$ and $q f+g$ share this root, implying $f(u)=g(u)=0$. So in this case $f$ and $g$ have an integer root in common. Now suppose that $v=p$ infinitely many times. By comparing the exponent of $p$ in the denominators of $p f(u / p)$ and $g(u / p)$ we get $m=n-1$ and $p f(u / p)+g(u / p)=0$ reduces to an equation of the form $$ \left(u^{n}+a_{n-1} p u^{n-1}+\ldots+a_{0} p^{n}\right)+\left(b_{n-1} u^{n-1}+b_{n-2} p u^{n-2}+\ldots+b_{0} p^{n-1}\right)=0 . $$ The equation above implies that $u^{n}+b_{n-1} u^{n-1}$ is divisible by $p$ and hence, since $(u, p)=1$, we have $u+b_{n-1}=p k$ with some integer $k$. 
On the other hand all roots of $p f+g$ lie in the interval $[-R, R]$, so that $$ \begin{gathered} \frac{\left|p k-b_{n-1}\right|}{p}=\frac{|u|}{p} \leq R, \\ |k| \leq R+\frac{\left|b_{n-1}\right|}{p}<R+\left|b_{n-1}\right| . \end{gathered} $$ Therefore the integer $k$ can attain only finitely many values. Hence there exists an integer $k$ such that the number $\frac{p k-b_{n-1}}{p}=k-\frac{b_{n-1}}{p}$ is a root of $p f+g$ for infinitely many primes $p$. For these primes we have $$ f\left(k-b_{n-1} \frac{1}{p}\right)+\frac{1}{p} g\left(k-b_{n-1} \frac{1}{p}\right)=0 . $$ So the equation $$ f\left(k-b_{n-1} x\right)+x g\left(k-b_{n-1} x\right)=0 \tag{1} $$ has infinitely many solutions of the form $x=1 / p$. Since the left-hand side is a polynomial, this implies that (1) is a polynomial identity, so it holds for all real $x$. In particular, by substituting $x=0$ in (1) we get $f(k)=0$. Thus the integer $k$ is a root of $f$. In summary, the monic polynomial $f$ obtained after the initial reduction always has an integer root. Therefore the original polynomial $f$ has a rational root.

|

proof

|

Yes

|

Yes

|

proof

|

Algebra

|

Let $f$ and $g$ be two nonzero polynomials with integer coefficients and $\operatorname{deg} f>\operatorname{deg} g$. Suppose that for infinitely many primes $p$ the polynomial $p f+g$ has a rational root. Prove that $f$ has a rational root.

|

Since $\operatorname{deg} f>\operatorname{deg} g$, we have $|g(x) / f(x)|<1$ for sufficiently large $x$; more precisely, there is a real number $R$ such that $|g(x) / f(x)|<1$ for all $x$ with $|x|>R$. Then for all such $x$ and all primes $p$ we have $$ |p f(x)+g(x)| \geq|f(x)|\left(p-\frac{|g(x)|}{|f(x)|}\right)>0 $$ Hence all real roots of the polynomials $p f+g$ lie in the interval $[-R, R]$. Let $f(x)=a_{n} x^{n}+a_{n-1} x^{n-1}+\cdots+a_{0}$ and $g(x)=b_{m} x^{m}+b_{m-1} x^{m-1}+\cdots+b_{0}$ where $n>m, a_{n} \neq 0$ and $b_{m} \neq 0$. Upon replacing $f(x)$ and $g(x)$ by $a_{n}^{n-1} f\left(x / a_{n}\right)$ and $a_{n}^{n-1} g\left(x / a_{n}\right)$ respectively, we reduce the problem to the case $a_{n}=1$. In other words one can assume that $f$ is monic. Then the leading coefficient of $p f+g$ is $p$, and if $r=u / v$ is a rational root of $p f+g$ with $(u, v)=1$ and $v>0$, then either $v=1$ or $v=p$. First consider the case when $v=1$ infinitely many times. If $v=1$ then $|u| \leq R$, so there are only finitely many possibilities for the integer $u$. Therefore there exist distinct primes $p$ and $q$ for which we have the same value of $u$. Then the polynomials $p f+g$ and $q f+g$ share this root, implying $f(u)=g(u)=0$. So in this case $f$ and $g$ have an integer root in common. Now suppose that $v=p$ infinitely many times. By comparing the exponent of $p$ in the denominators of $p f(u / p)$ and $g(u / p)$ we get $m=n-1$ and $p f(u / p)+g(u / p)=0$ reduces to an equation of the form $$ \left(u^{n}+a_{n-1} p u^{n-1}+\ldots+a_{0} p^{n}\right)+\left(b_{n-1} u^{n-1}+b_{n-2} p u^{n-2}+\ldots+b_{0} p^{n-1}\right)=0 . $$ The equation above implies that $u^{n}+b_{n-1} u^{n-1}$ is divisible by $p$ and hence, since $(u, p)=1$, we have $u+b_{n-1}=p k$ with some integer $k$. 
On the other hand all roots of $p f+g$ lie in the interval $[-R, R]$, so that $$ \begin{gathered} \frac{\left|p k-b_{n-1}\right|}{p}=\frac{|u|}{p}<R, \\ |k|<R+\frac{\left|b_{n-1}\right|}{p}<R+\left|b_{n-1}\right| . \end{gathered} $$ Therefore the integer $k$ can attain only finitely many values. Hence there exists an integer $k$ such that the number $\frac{p k-b_{n-1}}{p}=k-\frac{b_{n-1}}{p}$ is a root of $p f+g$ for infinitely many primes $p$. For these primes we have $$ f\left(k-b_{n-1} \frac{1}{p}\right)+\frac{1}{p} g\left(k-b_{n-1} \frac{1}{p}\right)=0 . $$ So the equation $$ f\left(k-b_{n-1} x\right)+x g\left(k-b_{n-1} x\right)=0 $$ has infinitely many solutions of the form $x=1 / p$. Since the left-hand side is a polynomial, this implies that (1) is a polynomial identity, so it holds for all real $x$. In particular, by substituting $x=0$ in (1) we get $f(k)=0$. Thus the integer $k$ is a root of $f$. In summary the monic polynomial $f$ obtained after the initial reduction always has an integer root. Therefore the original polynomial $f$ has a rational root.

|

{

"resource_path": "IMO/segmented/en-IMO2012SL.jsonl",

"problem_match": null,

"solution_match": null

}

|

aa5d752a-c297-5ca0-89c2-2366a5fde362

| 24,147

|

Let $f$ and $g$ be two nonzero polynomials with integer coefficients and $\operatorname{deg} f>\operatorname{deg} g$. Suppose that for infinitely many primes $p$ the polynomial $p f+g$ has a rational root. Prove that $f$ has a rational root.

|

Analogously to the first solution, there exists a real number $R$ such that the complex roots of all polynomials of the form $p f+g$ lie in the disk $|z| \leq R$. For each prime $p$ such that $p f+g$ has a rational root, by Gauss' lemma $p f+g$ is the product of two integer polynomials, one with degree 1 and the other with degree $\operatorname{deg} f-1$. Since $p$ is a prime, the leading coefficient of one of these factors divides the leading coefficient of $f$. Denote that factor by $h_{p}$. By narrowing the set of the primes used we can assume that all polynomials $h_{p}$ have the same degree and the same leading coefficient. Their complex roots lie in the disk $|z| \leq R$, hence Vieta's formulae imply that all coefficients of all polynomials $h_{p}$ form a bounded set. Since these coefficients are integers, there are only finitely many possible polynomials $h_{p}$. Hence there is a polynomial $h$ such that $h_{p}=h$ for infinitely many primes $p$. Finally, if $p$ and $q$ are distinct primes with $h_{p}=h_{q}=h$ then $h$ divides $(p-q) f$. Since $\operatorname{deg} h=1$ or $\operatorname{deg} h=\operatorname{deg} f-1$, in both cases $f$ has a rational root. Comment. Clearly the polynomial $h$ is a common factor of $f$ and $g$. If $\operatorname{deg} h=1$ then $f$ and $g$ share a rational root. Otherwise $\operatorname{deg} h=\operatorname{deg} f-1$ forces $\operatorname{deg} g=\operatorname{deg} f-1$ and $g$ divides $f$ over the rationals.

|

proof

|

Yes

|

Yes

|

proof

|

Algebra

|

Let $f$ and $g$ be two nonzero polynomials with integer coefficients and $\operatorname{deg} f>\operatorname{deg} g$. Suppose that for infinitely many primes $p$ the polynomial $p f+g$ has a rational root. Prove that $f$ has a rational root.

|

Analogously to the first solution, there exists a real number $R$ such that the complex roots of all polynomials of the form $p f+g$ lie in the disk $|z| \leq R$. For each prime $p$ such that $p f+g$ has a rational root, by GAUSs' lemma $p f+g$ is the product of two integer polynomials, one with degree 1 and the other with degree $\operatorname{deg} f-1$. Since $p$ is a prime, the leading coefficient of one of these factors divides the leading coefficient of $f$. Denote that factor by $h_{p}$. By narrowing the set of the primes used we can assume that all polynomials $h_{p}$ have the same degree and the same leading coefficient. Their complex roots lie in the disk $|z| \leq R$, hence VIETA's formulae imply that all coefficients of all polynomials $h_{p}$ form a bounded set. Since these coefficients are integers, there are only finitely many possible polynomials $h_{p}$. Hence there is a polynomial $h$ such that $h_{p}=h$ for infinitely many primes $p$. Finally, if $p$ and $q$ are distinct primes with $h_{p}=h_{q}=h$ then $h$ divides $(p-q) f$. Since $\operatorname{deg} h=1$ or $\operatorname{deg} h=\operatorname{deg} f-1$, in both cases $f$ has a rational root. Comment. Clearly the polynomial $h$ is a common factor of $f$ and $g$. If $\operatorname{deg} h=1$ then $f$ and $g$ share a rational root. Otherwise $\operatorname{deg} h=\operatorname{deg} f-1$ forces $\operatorname{deg} g=\operatorname{deg} f-1$ and $g$ divides $f$ over the rationals.

|

{

"resource_path": "IMO/segmented/en-IMO2012SL.jsonl",

"problem_match": null,

"solution_match": null

}

|

aa5d752a-c297-5ca0-89c2-2366a5fde362

| 24,147

|

Let $f$ and $g$ be two nonzero polynomials with integer coefficients and $\operatorname{deg} f>\operatorname{deg} g$. Suppose that for infinitely many primes $p$ the polynomial $p f+g$ has a rational root. Prove that $f$ has a rational root.

|

As in the first solution, there is a real number $R$ such that the real roots of all polynomials of the form $p f+g$ lie in the interval $[-R, R]$. Let $p_{1}<p_{2}<\cdots$ be an infinite sequence of primes so that for every index $k$ the polynomial $p_{k} f+g$ has a rational root $r_{k}$. The sequence $r_{1}, r_{2}, \ldots$ is bounded, so it has a convergent subsequence $r_{k_{1}}, r_{k_{2}}, \ldots$ Now replace the sequences $\left(p_{1}, p_{2}, \ldots\right)$ and $\left(r_{1}, r_{2}, \ldots\right)$ by $\left(p_{k_{1}}, p_{k_{2}}, \ldots\right)$ and $\left(r_{k_{1}}, r_{k_{2}}, \ldots\right)$; after this we can assume that the sequence $r_{1}, r_{2}, \ldots$ is convergent. Let $\alpha=\lim _{k \rightarrow \infty} r_{k}$. We show that $\alpha$ is a rational root of $f$. Over the interval $[-R, R]$, the polynomial $g$ is bounded, $|g(x)| \leq M$ with some fixed $M$. Therefore $$ \left|f\left(r_{k}\right)\right|=\left|f\left(r_{k}\right)-\frac{p_{k} f\left(r_{k}\right)+g\left(r_{k}\right)}{p_{k}}\right|=\frac{\left|g\left(r_{k}\right)\right|}{p_{k}} \leq \frac{M}{p_{k}} \rightarrow 0, $$ and $$ f(\alpha)=f\left(\lim _{k \rightarrow \infty} r_{k}\right)=\lim _{k \rightarrow \infty} f\left(r_{k}\right)=0 $$ So $\alpha$ is indeed a root of $f$. Now let $u_{k}, v_{k}$ be relatively prime integers for which $r_{k}=\frac{u_{k}}{v_{k}}$. Let $a$ be the leading coefficient of $f$, let $b=f(0)$ and $c=g(0)$ be the constant terms of $f$ and $g$, respectively. The leading coefficient of the polynomial $p_{k} f+g$ is $p_{k} a$, its constant term is $p_{k} b+c$. So $v_{k}$ divides $p_{k} a$ and $u_{k}$ divides $p_{k} b+c$. Let $p_{k} b+c=u_{k} e_{k}$ (if $p_{k} b+c=u_{k}=0$ then let $e_{k}=1$). We prove that $\alpha$ is rational by using the following fact. Let $\left(p_{n}\right)$ and $\left(q_{n}\right)$ be sequences of integers such that the sequence $\left(p_{n} / q_{n}\right)$ converges. 
If $\left(p_{n}\right)$ or $\left(q_{n}\right)$ is bounded then $\lim \left(p_{n} / q_{n}\right)$ is rational. Case 1: There is an infinite subsequence $\left(k_{n}\right)$ of indices such that $v_{k_{n}}$ divides $a$. Then $\left(v_{k_{n}}\right)$ is bounded, so $\alpha=\lim _{n \rightarrow \infty}\left(u_{k_{n}} / v_{k_{n}}\right)$ is rational. Case 2: There is an infinite subsequence $\left(k_{n}\right)$ of indices such that $v_{k_{n}}$ does not divide $a$. For such indices we have $v_{k_{n}}=p_{k_{n}} d_{k_{n}}$ where $d_{k_{n}}$ is a divisor of $a$. Then $$ \alpha=\lim _{n \rightarrow \infty} \frac{u_{k_{n}}}{v_{k_{n}}}=\lim _{n \rightarrow \infty} \frac{p_{k_{n}} b+c}{p_{k_{n}} d_{k_{n}} e_{k_{n}}}=\lim _{n \rightarrow \infty} \frac{b}{d_{k_{n}} e_{k_{n}}}+\lim _{n \rightarrow \infty} \frac{c}{p_{k_{n}} d_{k_{n}} e_{k_{n}}}=\lim _{n \rightarrow \infty} \frac{b}{d_{k_{n}} e_{k_{n}}} . $$ Because the numerator $b$ in the last limit is bounded, $\alpha$ is rational.

|

proof

|

Yes

|

Yes

|

proof

|

Algebra

|

Let $f$ and $g$ be two nonzero polynomials with integer coefficients and $\operatorname{deg} f>\operatorname{deg} g$. Suppose that for infinitely many primes $p$ the polynomial $p f+g$ has a rational root. Prove that $f$ has a rational root.

|

Like in the first solution, there is a real number $R$ such that the real roots of all polynomials of the form $p f+g$ lie in the interval $[-R, R]$. Let $p_{1}<p_{2}<\cdots$ be an infinite sequence of primes so that for every index $k$ the polynomial $p_{k} f+g$ has a rational root $r_{k}$. The sequence $r_{1}, r_{2}, \ldots$ is bounded, so it has a convergent subsequence $r_{k_{1}}, r_{k_{2}}, \ldots$ Now replace the sequences $\left(p_{1}, p_{2}, \ldots\right)$ and $\left(r_{1}, r_{2}, \ldots\right)$ by $\left(p_{k_{1}}, p_{k_{2}}, \ldots\right)$ and $\left(r_{k_{1}}, r_{k_{2}}, \ldots\right)$; after this we can assume that the sequence $r_{1}, r_{2}, \ldots$ is convergent. Let $\alpha=\lim _{k \rightarrow \infty} r_{k}$. We show that $\alpha$ is a rational root of $f$. Over the interval $[-R, R]$, the polynomial $g$ is bounded, $|g(x)| \leq M$ with some fixed $M$. Therefore $$ \left|f\left(r_{k}\right)\right|=\left|f\left(r_{k}\right)-\frac{p_{k} f\left(r_{k}\right)+g\left(r_{k}\right)}{p_{k}}\right|=\frac{\left|g\left(r_{k}\right)\right|}{p_{k}} \leq \frac{M}{p_{k}} \rightarrow 0, $$ and $$ f(\alpha)=f\left(\lim _{k \rightarrow \infty} r_{k}\right)=\lim _{k \rightarrow \infty} f\left(r_{k}\right)=0 $$ So $\alpha$ is a root of $f$ indeed. Now let $u_{k}, v_{k}$ be relative prime integers for which $r_{k}=\frac{u_{k}}{v_{k}}$. Let $a$ be the leading coefficient of $f$, let $b=f(0)$ and $c=g(0)$ be the constant terms of $f$ and $g$, respectively. The leading coefficient of the polynomial $p_{k} f+g$ is $p_{k} a$, its constant term is $p_{k} b+c$. So $v_{k}$ divides $p_{k} a$ and $u_{k}$ divides $p_{k} b+c$. Let $p_{k} b+c=u_{k} e_{k}$ (if $p_{k} b+c=u_{k}=0$ then let $e_{k}=1$ ). We prove that $\alpha$ is rational by using the following fact. Let $\left(p_{n}\right)$ and $\left(q_{n}\right)$ be sequences of integers such that the sequence $\left(p_{n} / q_{n}\right)$ converges. 
If $\left(p_{n}\right)$ or $\left(q_{n}\right)$ is bounded then $\lim \left(p_{n} / q_{n}\right)$ is rational. Case 1: There is an infinite subsequence $\left(k_{n}\right)$ of indices such that $v_{k_{n}}$ divides $a$. Then $\left(v_{k_{n}}\right)$ is bounded, so $\alpha=\lim _{n \rightarrow \infty}\left(u_{k_{n}} / v_{k_{n}}\right)$ is rational. Case 2: There is an infinite subsequence $\left(k_{n}\right)$ of indices such that $v_{k_{n}}$ does not divide $a$. For such indices we have $v_{k_{n}}=p_{k_{n}} d_{k_{n}}$ where $d_{k_{n}}$ is a divisor of $a$. Then $$ \alpha=\lim _{n \rightarrow \infty} \frac{u_{k_{n}}}{v_{k_{n}}}=\lim _{n \rightarrow \infty} \frac{p_{k_{n}} b+c}{p_{k_{n}} d_{k_{n}} e_{k_{n}}}=\lim _{n \rightarrow \infty} \frac{b}{d_{k_{n}} e_{k_{n}}}+\lim _{n \rightarrow \infty} \frac{c}{p_{k_{n}} d_{k_{n}} e_{k_{n}}}=\lim _{n \rightarrow \infty} \frac{b}{d_{k_{n}} e_{k_{n}}} . $$ Because the numerator $b$ in the last limit is bounded, $\alpha$ is rational.

|

{

"resource_path": "IMO/segmented/en-IMO2012SL.jsonl",

"problem_match": null,

"solution_match": null

}

|

aa5d752a-c297-5ca0-89c2-2366a5fde362

| 24,147

|

Let $f: \mathbb{N} \rightarrow \mathbb{N}$ be a function, and let $f^{m}$ be $f$ applied $m$ times. Suppose that for every $n \in \mathbb{N}$ there exists a $k \in \mathbb{N}$ such that $f^{2 k}(n)=n+k$, and let $k_{n}$ be the smallest such $k$. Prove that the sequence $k_{1}, k_{2}, \ldots$ is unbounded.

|

We restrict attention to the set $$ S=\left\{1, f(1), f^{2}(1), \ldots\right\} $$ Observe that $S$ is unbounded because for every number $n$ in $S$ there exists a $k>0$ such that $f^{2 k}(n)=n+k$ is in $S$. Clearly $f$ maps $S$ into itself; moreover $f$ is injective on $S$. Indeed if $f^{i}(1)=f^{j}(1)$ with $i \neq j$ then the values $f^{m}(1)$ start repeating periodically from some point on, and $S$ would be finite. Define $g: S \rightarrow S$ by $g(n)=f^{2 k_{n}}(n)=n+k_{n}$. We prove that $g$ is injective too. Suppose that $g(a)=g(b)$ with $a<b$. Then $a+k_{a}=f^{2 k_{a}}(a)=f^{2 k_{b}}(b)=b+k_{b}$ implies $k_{a}>k_{b}$. So, since $f$ is injective on $S$, we obtain $$ f^{2\left(k_{a}-k_{b}\right)}(a)=b=a+\left(k_{a}-k_{b}\right) . $$ However this contradicts the minimality of $k_{a}$ as $0<k_{a}-k_{b}<k_{a}$. Let $T$ be the set of elements of $S$ that are not of the form $g(n)$ with $n \in S$. Note that $1 \in T$ because $g(n)>n$ for all $n \in S$, so $T$ is non-empty. For each $t \in T$ denote $C_{t}=\left\{t, g(t), g^{2}(t), \ldots\right\}$; call $C_{t}$ the chain starting at $t$. Observe that distinct chains are disjoint because $g$ is injective. Each $n \in S \backslash T$ has the form $n=g\left(n^{\prime}\right)$ with $n^{\prime}<n, n^{\prime} \in S$. Repeated applications of the same observation show that $n \in C_{t}$ for some $t \in T$, i.e. $S$ is the disjoint union of the chains $C_{t}$. If $f^{n}(1)$ is in the chain $C_{t}$ starting at $t=f^{n_{t}}(1)$ then $n=n_{t}+2 a_{1}+\cdots+2 a_{j}$ with $$ f^{n}(1)=g^{j}\left(f^{n_{t}}(1)\right)=f^{2 a_{j}}\left(f^{2 a_{j-1}}\left(\cdots f^{2 a_{1}}\left(f^{n_{t}}(1)\right)\right)\right)=f^{n_{t}}(1)+a_{1}+\cdots+a_{j} . $$ Hence $$ f^{n}(1)=f^{n_{t}}(1)+\frac{n-n_{t}}{2}=t+\frac{n-n_{t}}{2} . \tag{1} $$ Now we show that $T$ is infinite. We argue by contradiction. Suppose that there are only finitely many chains $C_{t_{1}}, \ldots, C_{t_{r}}$, starting at $t_{1}<\cdots<t_{r}$. Fix $N$. 
If $f^{n}(1)$ with $1 \leq n \leq N$ is in $C_{t}$ then $f^{n}(1)=t+\frac{n-n_{t}}{2} \leq t_{r}+\frac{N}{2}$ by (1). But then the $N+1$ distinct natural numbers $1, f(1), \ldots, f^{N}(1)$ are all less than $t_{r}+\frac{N}{2}$ and hence $N+1 \leq t_{r}+\frac{N}{2}$. This is a contradiction if $N$ is sufficiently large, and hence $T$ is infinite. To complete the argument, choose any $k$ in $\mathbb{N}$ and consider the $k+1$ chains starting at the first $k+1$ numbers in $T$. Let $t$ be the greatest one among these numbers. Then each of the chains in question contains a number not exceeding $t$, and at least one of them does not contain any number among $t+1, \ldots, t+k$. So there is a number $n$ in this chain such that $g(n)-n>k$, i.e. $k_{n}>k$. In conclusion $k_{1}, k_{2}, \ldots$ is unbounded.

|

proof

|

Yes

|

Yes

|

proof

|

Number Theory

|

Let $f: \mathbb{N} \rightarrow \mathbb{N}$ be a function, and let $f^{m}$ be $f$ applied $m$ times. Suppose that for every $n \in \mathbb{N}$ there exists a $k \in \mathbb{N}$ such that $f^{2 k}(n)=n+k$, and let $k_{n}$ be the smallest such $k$. Prove that the sequence $k_{1}, k_{2}, \ldots$ is unbounded.

|

We restrict attention to the set $$ S=\left\{1, f(1), f^{2}(1), \ldots\right\} $$ Observe that $S$ is unbounded because for every number $n$ in $S$ there exists a $k>0$ such that $f^{2 k}(n)=n+k$ is in $S$. Clearly $f$ maps $S$ into itself; moreover $f$ is injective on $S$. Indeed if $f^{i}(1)=f^{j}(1)$ with $i \neq j$ then the values $f^{m}(1)$ start repeating periodically from some point on, and $S$ would be finite. Define $g: S \rightarrow S$ by $g(n)=f^{2 k_{n}}(n)=n+k_{n}$. We prove that $g$ is injective too. Suppose that $g(a)=g(b)$ with $a<b$. Then $a+k_{a}=f^{2 k_{a}}(a)=f^{2 k_{b}}(b)=b+k_{b}$ implies $k_{a}>k_{b}$. So, since $f$ is injective on $S$, we obtain $$ f^{2\left(k_{a}-k_{b}\right)}(a)=b=a+\left(k_{a}-k_{b}\right) . $$ However this contradicts the minimality of $k_{a}$ as $0<k_{a}-k_{b}<k_{a}$. Let $T$ be the set of elements of $S$ that are not of the form $g(n)$ with $n \in S$. Note that $1 \in T$ by $g(n)>n$ for $n \in S$, so $T$ is non-empty. For each $t \in T$ denote $C_{t}=\left\{t, g(t), g^{2}(t), \ldots\right\}$; call $C_{t}$ the chain starting at $t$. Observe that distinct chains are disjoint because $g$ is injective. Each $n \in S \backslash T$ has the form $n=g\left(n^{\prime}\right)$ with $n^{\prime}<n, n^{\prime} \in S$. Repeated applications of the same observation show that $n \in C_{t}$ for some $t \in T$, i. e. $S$ is the disjoint union of the chains $C_{t}$. If $f^{n}(1)$ is in the chain $C_{t}$ starting at $t=f^{n_{t}}(1)$ then $n=n_{t}+2 a_{1}+\cdots+2 a_{j}$ with $$ f^{n}(1)=g^{j}\left(f^{n_{t}}(1)\right)=f^{2 a_{j}}\left(f^{2 a_{j-1}}\left(\cdots f^{2 a_{1}}\left(f^{n_{t}}(1)\right)\right)\right)=f^{n_{t}}(1)+a_{1}+\cdots+a_{j} . $$ Hence $$ f^{n}(1)=f^{n_{t}}(1)+\frac{n-n_{t}}{2}=t+\frac{n-n_{t}}{2} . $$ Now we show that $T$ is infinite. We argue by contradiction. Suppose that there are only finitely many chains $C_{t_{1}}, \ldots, C_{t_{r}}$, starting at $t_{1}<\cdots<t_{r}$. Fix $N$. 
If $f^{n}(1)$ with $1 \leq n \leq N$ is in $C_{t}$ then $f^{n}(1)=t+\frac{n-n_{t}}{2} \leq t_{r}+\frac{N}{2}$ by (1). But then the $N+1$ distinct natural numbers $1, f(1), \ldots, f^{N}(1)$ are all less than $t_{r}+\frac{N}{2}$ and hence $N+1 \leq t_{r}+\frac{N}{2}$. This is a contradiction if $N$ is sufficiently large, and hence $T$ is infinite. To complete the argument, choose any $k$ in $\mathbb{N}$ and consider the $k+1$ chains starting at the first $k+1$ numbers in $T$. Let $t$ be the greatest one among these numbers. Then each of the chains in question contains a number not exceeding $t$, and at least one of them does not contain any number among $t+1, \ldots, t+k$. So there is a number $n$ in this chain such that $g(n)-n>k$, i. e. $k_{n}>k$. In conclusion $k_{1}, k_{2}, \ldots$ is unbounded.

|

{

"resource_path": "IMO/segmented/en-IMO2012SL.jsonl",

"problem_match": null,

"solution_match": null

}

|

f88d1469-5556-5b12-abec-818b6abf3a76

| 24,155

|

Several positive integers are written in a row. Iteratively, Alice chooses two adjacent numbers $x$ and $y$ such that $x>y$ and $x$ is to the left of $y$, and replaces the pair $(x, y)$ by either $(y+1, x)$ or $(x-1, x)$. Prove that she can perform only finitely many such iterations.

|

Note first that the allowed operation does not change the maximum $M$ of the initial sequence. Let $a_{1}, a_{2}, \ldots, a_{n}$ be the numbers obtained at some point of the process. Consider the sum $$ S=a_{1}+2 a_{2}+\cdots+n a_{n} $$ We claim that $S$ increases by a positive integer amount with every operation. Let the operation replace the pair $\left(a_{i}, a_{i+1}\right)$ by a pair $\left(c, a_{i}\right)$, where $a_{i}>a_{i+1}$ and $c=a_{i+1}+1$ or $c=a_{i}-1$. Then the new and the old value of $S$ differ by $d=\left(i c+(i+1) a_{i}\right)-\left(i a_{i}+(i+1) a_{i+1}\right)=a_{i}-a_{i+1}+i\left(c-a_{i+1}\right)$. The integer $d$ is positive since $a_{i}-a_{i+1} \geq 1$ and $c-a_{i+1} \geq 0$. On the other hand $S \leq(1+2+\cdots+n) M$ as $a_{i} \leq M$ for all $i=1, \ldots, n$. Since $S$ increases by at least 1 at each step and never exceeds the constant $(1+2+\cdots+n) M$, the process stops after a finite number of iterations.
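The monovariant argument above can be exercised with a small simulation. This is only an illustrative sketch: the function names (`weighted_sum`, `legal_moves`, `apply_move`, `run`) are hypothetical, moves are chosen at random, and the invariants from the proof are checked with assertions at every step.

```python
import random


def weighted_sum(seq):
    """S = a_1 + 2*a_2 + ... + n*a_n."""
    return sum(i * a for i, a in enumerate(seq, start=1))


def legal_moves(seq):
    """All moves (i, c): replace (x, y) at 0-based positions i, i+1 by (c, x)."""
    moves = []
    for i in range(len(seq) - 1):
        x, y = seq[i], seq[i + 1]
        if x > y:
            moves.append((i, y + 1))
            moves.append((i, x - 1))
    return moves


def apply_move(seq, move):
    """Return a new list with the chosen pair (x, y) replaced by (c, x)."""
    i, c = move
    new = list(seq)
    new[i], new[i + 1] = c, seq[i]
    return new


def run(seq, rng):
    """Play random legal moves until none remain; return (final, steps)."""
    steps = 0
    while True:
        moves = legal_moves(seq)
        if not moves:
            return seq, steps
        nxt = apply_move(seq, rng.choice(moves))
        assert weighted_sum(nxt) > weighted_sum(seq)  # S strictly increases
        assert max(nxt) == max(seq)                   # the maximum M is preserved
        seq = nxt
        steps += 1


if __name__ == "__main__":
    final, steps = run([5, 3, 4, 1, 2], random.Random(0))
    print(final, steps)
```

Because $S$ grows by at least 1 per step, the number of steps is at most $(1+2+\cdots+n) M$ minus the initial value of $S$.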

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

Several positive integers are written in a row. Iteratively, Alice chooses two adjacent numbers $x$ and $y$ such that $x>y$ and $x$ is to the left of $y$, and replaces the pair $(x, y)$ by either $(y+1, x)$ or $(x-1, x)$. Prove that she can perform only finitely many such iterations.

|

Note first that the allowed operation does not change the maximum $M$ of the initial sequence. Let $a_{1}, a_{2}, \ldots, a_{n}$ be the numbers obtained at some point of the process. Consider the sum $$ S=a_{1}+2 a_{2}+\cdots+n a_{n} $$ We claim that $S$ increases by a positive integer amount with every operation. Let the operation replace the pair ( $\left.a_{i}, a_{i+1}\right)$ by a pair $\left(c, a_{i}\right)$, where $a_{i}>a_{i+1}$ and $c=a_{i+1}+1$ or $c=a_{i}-1$. Then the new and the old value of $S$ differ by $d=\left(i c+(i+1) a_{i}\right)-\left(i a_{i}+(i+1) a_{i+1}\right)=a_{i}-a_{i+1}+i\left(c-a_{i+1}\right)$. The integer $d$ is positive since $a_{i}-a_{i+1} \geq 1$ and $c-a_{i+1} \geq 0$. On the other hand $S \leq(1+2+\cdots+n) M$ as $a_{i} \leq M$ for all $i=1, \ldots, n$. Since $S$ increases by at least 1 at each step and never exceeds the constant $(1+2+\cdots+n) M$, the process stops after a finite number of iterations.

|

{

"resource_path": "IMO/segmented/en-IMO2012SL.jsonl",

"problem_match": null,

"solution_match": null

}

|

54b5b6f8-a696-5e45-9f93-233d11b8f1a8

| 24,161

|

Several positive integers are written in a row. Iteratively, Alice chooses two adjacent numbers $x$ and $y$ such that $x>y$ and $x$ is to the left of $y$, and replaces the pair $(x, y)$ by either $(y+1, x)$ or $(x-1, x)$. Prove that she can perform only finitely many such iterations.

|

Like in the first solution note that the operations do not change the maximum $M$ of the initial sequence. Now consider the reverse lexicographical order for $n$-tuples of integers. We say that $\left(x_{1}, \ldots, x_{n}\right)<\left(y_{1}, \ldots, y_{n}\right)$ if $x_{n}<y_{n}$, or if $x_{n}=y_{n}$ and $x_{n-1}<y_{n-1}$, or if $x_{n}=y_{n}$, $x_{n-1}=y_{n-1}$ and $x_{n-2}<y_{n-2}$, etc. Each iteration creates a sequence that is greater than the previous one with respect to this order, and no sequence occurs twice during the process. On the other hand there are finitely many possible sequences because their terms are always positive integers not exceeding $M$. Hence the process cannot continue forever.
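The claim that every operation increases the sequence in reverse lexicographical order can be checked exhaustively for small cases. The sketch below assumes sequences are represented as Python tuples; `revlex_key`, `successors` and `revlex_increases_everywhere` are hypothetical names chosen for illustration.

```python
from itertools import product


def revlex_key(seq):
    """Comparing reversed tuples compares x_n first, then x_{n-1}, and so on."""
    return tuple(reversed(seq))


def successors(seq):
    """All sequences reachable from seq (a tuple) by one allowed operation."""
    out = []
    for i in range(len(seq) - 1):
        x, y = seq[i], seq[i + 1]
        if x > y:
            for c in (y + 1, x - 1):
                out.append(seq[:i] + (c, x) + seq[i + 2:])
    return out


def revlex_increases_everywhere(max_value, length):
    """Check the claim for every sequence with entries in 1..max_value."""
    for seq in product(range(1, max_value + 1), repeat=length):
        for nxt in successors(seq):
            if not revlex_key(nxt) > revlex_key(seq):
                return False
    return True


if __name__ == "__main__":
    print(revlex_increases_everywhere(4, 4))
```

The increase comes from the right-hand position of the chosen pair, where $y$ is replaced by the larger value $x$ while all positions further right are unchanged.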

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

Several positive integers are written in a row. Iteratively, Alice chooses two adjacent numbers $x$ and $y$ such that $x>y$ and $x$ is to the left of $y$, and replaces the pair $(x, y)$ by either $(y+1, x)$ or $(x-1, x)$. Prove that she can perform only finitely many such iterations.

|

Like in the first solution note that the operations do not change the maximum $M$ of the initial sequence. Now consider the reverse lexicographical order for $n$-tuples of integers. We say that $\left(x_{1}, \ldots, x_{n}\right)<\left(y_{1}, \ldots, y_{n}\right)$ if $x_{n}<y_{n}$, or if $x_{n}=y_{n}$ and $x_{n-1}<y_{n-1}$, or if $x_{n}=y_{n}$, $x_{n-1}=y_{n-1}$ and $x_{n-2}<y_{n-2}$, etc. Each iteration creates a sequence that is greater than the previous one with respect to this order, and no sequence occurs twice during the process. On the other hand there are finitely many possible sequences because their terms are always positive integers not exceeding $M$. Hence the process cannot continue forever.

|

{

"resource_path": "IMO/segmented/en-IMO2012SL.jsonl",

"problem_match": null,

"solution_match": null

}

|

54b5b6f8-a696-5e45-9f93-233d11b8f1a8

| 24,161

|

Several positive integers are written in a row. Iteratively, Alice chooses two adjacent numbers $x$ and $y$ such that $x>y$ and $x$ is to the left of $y$, and replaces the pair $(x, y)$ by either $(y+1, x)$ or $(x-1, x)$. Prove that she can perform only finitely many such iterations.

|

Let the current numbers be $a_{1}, a_{2}, \ldots, a_{n}$. Define the score $s_{i}$ of $a_{i}$ as the number of $a_{j}$'s that are less than $a_{i}$. Call the sequence $s_{1}, s_{2}, \ldots, s_{n}$ the score sequence of $a_{1}, a_{2}, \ldots, a_{n}$. Let us say that a sequence $x_{1}, \ldots, x_{n}$ dominates a sequence $y_{1}, \ldots, y_{n}$ if the first index $i$ with $x_{i} \neq y_{i}$ is such that $x_{i}<y_{i}$. We show that after each operation the new score sequence dominates the old one. Score sequences do not repeat, and there are finitely many possibilities for them, no more than $(n-1)^{n}$. Hence the process will terminate. Consider an operation that replaces $(x, y)$ by $(a, x)$, with $a=y+1$ or $a=x-1$. Suppose that $x$ was originally at position $i$. For each $j<i$ the score $s_{j}$ does not increase with the change because $y \leq a$ and $x \leq x$. If $s_{j}$ decreases for some $j<i$ then the new score sequence dominates the old one. Assume that $s_{j}$ stays the same for all $j<i$ and consider $s_{i}$. Since $x>y$ and $y \leq a \leq x$, we see that $s_{i}$ decreases by at least 1. This concludes the proof.
Comment. All three proofs work if $x$ and $y$ are not necessarily adjacent, and if the pair $(x, y)$ is replaced by any pair $(a, x)$, with $a$ an integer satisfying $y \leq a \leq x$. There is nothing special about the "weights" $1,2, \ldots, n$ in the definition of $S=\sum_{i=1}^{n} i a_{i}$ from the first solution. For any sequence $w_{1}<w_{2}<\cdots<w_{n}$ of positive integers, the sum $\sum_{i=1}^{n} w_{i} a_{i}$ increases by at least 1 with each operation. Consider the same problem, but letting Alice replace the pair $(x, y)$ by $(a, x)$, where $a$ is any positive integer less than $x$. The same conclusion holds in this version, i.e. the process stops eventually. The solution using the reverse lexicographical order works without any change. The first solution would require a special set of weights like $w_{i}=M^{i}$ for $i=1, \ldots, n$. 
Comment. The first and the second solutions provide upper bounds for the number of possible operations, respectively of order $M n^{2}$ and $M^{n}$ where $M$ is the maximum of the original sequence. The upper bound $(n-1)^{n}$ in the third solution does not depend on $M$.
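
The score-sequence argument can be verified by brute force on small cases. The sketch below is illustrative only; the function names `scores`, `dominates` and `count_violations` are assumptions introduced here. It enumerates every sequence of a given length with terms in $\{1, \ldots, M\}$ and every legal operation, and counts the operations after which the new score sequence fails to dominate the old one.

```python
from itertools import product

def scores(seq):
    """Score of each term: how many terms of the sequence are smaller than it."""
    return [sum(1 for b in seq if b < a) for a in seq]

def dominates(new, old):
    """True if, at the first index where the sequences differ, new is smaller."""
    for p, q in zip(new, old):
        if p != q:
            return p < q
    return False  # identical sequences do not dominate each other

def count_violations(length, max_value):
    """Count legal operations whose result does NOT dominate, over all small sequences."""
    violations = 0
    for seq in product(range(1, max_value + 1), repeat=length):
        seq = list(seq)
        for i in range(length - 1):
            x, y = seq[i], seq[i + 1]
            if x > y:
                for a in (y + 1, x - 1):
                    new = seq[:i] + [a, x] + seq[i + 2:]
                    if not dominates(scores(new), scores(seq)):
                        violations += 1
    return violations
```

If the argument is right, `count_violations` returns 0 for every choice of length and maximum value; the check is of course no substitute for the proof.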

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

Several positive integers are written in a row. Iteratively, Alice chooses two adjacent numbers $x$ and $y$ such that $x>y$ and $x$ is to the left of $y$, and replaces the pair $(x, y)$ by either $(y+1, x)$ or $(x-1, x)$. Prove that she can perform only finitely many such iterations.

|

Let the current numbers be $a_{1}, a_{2}, \ldots, a_{n}$. Define the score $s_{i}$ of $a_{i}$ as the number of $a_{j}$ 's that are less than $a_{i}$. Call the sequence $s_{1}, s_{2}, \ldots, s_{n}$ the score sequence of $a_{1}, a_{2}, \ldots, a_{n}$. Let us say that a sequence $x_{1}, \ldots, x_{n}$ dominates a sequence $y_{1}, \ldots, y_{n}$ if the first index $i$ with $x_{i} \neq y_{i}$ is such that $x_{i}<y_{i}$. We show that after each operation the new score sequence dominates the old one. Score sequences do not repeat, and there are finitely many possibilities for them, no more than $(n-1)^{n}$. Hence the process will terminate. Consider an operation that replaces $(x, y)$ by $(a, x)$, with $a=y+1$ or $a=x-1$. Suppose that $x$ was originally at position $i$. For each $j<i$ the score $s_{j}$ does not increase with the change because $y \leq a$ and $x \leq x$. If $s_{j}$ decreases for some $j<i$ then the new score sequence dominates the old one. Assume that $s_{j}$ stays the same for all $j<i$ and consider $s_{i}$. Since $x>y$ and $y \leq a \leq x$, we see that $s_{i}$ decreases by at least 1 . This concludes the proof. Comment. All three proofs work if $x$ and $y$ are not necessarily adjacent, and if the pair $(x, y)$ is replaced by any pair $(a, x)$, with $a$ an integer satisfying $y \leq a \leq x$. There is nothing special about the "weights" $1,2, \ldots, n$ in the definition of $S=\sum_{i=1}^{n} i a_{i}$ from the first solution. For any sequence $w_{1}<w_{2}<\cdots<w_{n}$ of positive integers, the sum $\sum_{i=1}^{n} w_{i} a_{i}$ increases by at least 1 with each operation. Consider the same problem, but letting Alice replace the pair $(x, y)$ by $(a, x)$, where $a$ is any positive integer less than $x$. The same conclusion holds in this version, i. e. the process stops eventually. The solution using the reverse lexicographical order works without any change. The first solution would require a special set of weights like $w_{i}=M^{i}$ for $i=1, \ldots, n$. 
Comment. The first and the second solutions provide upper bounds for the number of possible operations, respectively of order $M n^{2}$ and $M^{n}$ where $M$ is the maximum of the original sequence. The upper bound $(n-1)^{n}$ in the third solution does not depend on $M$.

|

{

"resource_path": "IMO/segmented/en-IMO2012SL.jsonl",

"problem_match": null,

"solution_match": null

}

|

54b5b6f8-a696-5e45-9f93-233d11b8f1a8

| 24,161

|

The columns and the rows of a $3 n \times 3 n$ square board are numbered $1,2, \ldots, 3 n$. Every square $(x, y)$ with $1 \leq x, y \leq 3 n$ is colored asparagus, byzantium or citrine according as the modulo 3 remainder of $x+y$ is 0,1 or 2 respectively. One token colored asparagus, byzantium or citrine is placed on each square, so that there are $3 n^{2}$ tokens of each color. Suppose that one can permute the tokens so that each token is moved to a distance of at most $d$ from its original position, each asparagus token replaces a byzantium token, each byzantium token replaces a citrine token, and each citrine token replaces an asparagus token. Prove that it is possible to permute the tokens so that each token is moved to a distance of at most $d+2$ from its original position, and each square contains a token with the same color as the square.

|

Without loss of generality it suffices to prove that the A-tokens can be moved to distinct $\mathrm{A}$-squares in such a way that each $\mathrm{A}$-token is moved to a distance at most $d+2$ from its original place. This means we need a perfect matching between the $3 n^{2} \mathrm{~A}$-squares and the $3 n^{2}$ A-tokens such that the distance in each pair of the matching is at most $d+2$. To find the matching, we construct a bipartite graph. The A-squares will be the vertices in one class of the graph; the vertices in the other class will be the A-tokens. Split the board into $3 \times 1$ horizontal triminos; then each trimino contains exactly one A-square. Take a permutation $\pi$ of the tokens which moves A-tokens to B-tokens, B-tokens to C-tokens, and C-tokens to A-tokens, in each case to a distance at most $d$. For each A-square $S$, and for each A-token $T$, connect $S$ and $T$ by an edge if $T, \pi(T)$ or $\pi^{-1}(T)$ is on the trimino containing $S$. We allow multiple edges; it is even possible that the same square and the same token are connected with three edges. Obviously the lengths of the edges in the graph do not exceed $d+2$. By length of an edge we mean the distance between the A-square and the A-token it connects. Each A-token $T$ is connected with the three A-squares whose triminos contain $T, \pi(T)$ and $\pi^{-1}(T)$. Therefore in the graph all tokens are of degree 3. We show that the same is true for the A-squares. Let $S$ be an arbitrary A-square, and let $T_{1}, T_{2}, T_{3}$ be the three tokens on the trimino containing $S$. For $i=1,2,3$, if $T_{i}$ is an A-token, then $S$ is connected with $T_{i}$; if $T_{i}$ is a B-token then $S$ is connected with $\pi^{-1}\left(T_{i}\right)$; finally, if $T_{i}$ is a C-token then $S$ is connected with $\pi\left(T_{i}\right)$. Hence in the graph the A-squares also are of degree 3. Since the A-squares are of degree 3, from every set $\mathcal{S}$ of A-squares exactly $3|\mathcal{S}|$ edges start. 
These edges end in at least $|\mathcal{S}|$ tokens because the A-tokens also are of degree 3. Hence every set $\mathcal{S}$ of A-squares has at least $|\mathcal{S}|$ neighbors among the A-tokens. Therefore, by Hall's marriage theorem, the graph contains a perfect matching between the two vertex classes. So there is a perfect matching between the A-squares and A-tokens with edges no longer than $d+2$. It follows that the tokens can be permuted as specified in the problem statement.
Comment 1. In the original problem proposal the board was infinite and there were only two colors. Having $n$ colors for some positive integer $n$ was an option; we chose $n=3$. Moreover, we changed the board to a finite one to avoid dealing with infinite graphs (although Hall's theorem works in the infinite case as well). With only two colors Hall's theorem is not needed. In this case we split the board into $2 \times 1$ dominos, and in the resulting graph all vertices are of degree 2. The graph consists of disjoint cycles with even length and infinite paths, so the existence of the matching is trivial. Having more than three colors would make the problem statement more complicated, because we need a matching between every two color classes of tokens. However, this would not mean a significant increase in difficulty.
Comment 2. According to Wikipedia, the color asparagus (hexadecimal code \#87A96B) is a tone of green that is named after the vegetable. Crayola created this color in 1993 as one of the 16 to be named in the Name The Color Contest. Byzantium (\#702963) is a dark tone of purple. Its first recorded use as a color name in English was in 1926. Citrine (\#E4D00A) is variously described as yellow, greenish-yellow, brownish-yellow or orange. The first known use of citrine as a color name in English was in the 14th century.
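
The Hall step, namely that a bipartite multigraph in which every vertex has degree 3 contains a perfect matching, can be illustrated on a generic example. The sketch below does not build the graph from an actual board and permutation $\pi$; as an assumption made only for illustration, it forms a 3-regular bipartite multigraph as the union of three random permutations and then finds a matching with the standard augmenting-path method (Kuhn's algorithm). The names `random_three_regular` and `perfect_matching` are hypothetical.

```python
import random

def random_three_regular(size, rng):
    """Bipartite multigraph where square s is joined to p(s) for three random permutations p.

    Every square and every token then has degree exactly 3 (multiple edges allowed).
    """
    perms = [rng.sample(range(size), size) for _ in range(3)]
    return [[p[s] for p in perms] for s in range(size)]

def perfect_matching(edges, size):
    """Kuhn's augmenting-path algorithm; edges[s] lists the tokens adjacent to square s.

    Returns a list giving, for each token, the square matched to it, or None on failure.
    """
    square_of_token = [-1] * size

    def try_assign(s, seen):
        for t in edges[s]:
            if t in seen:
                continue
            seen.add(t)
            # Take a free token, or re-route the square currently holding it.
            if square_of_token[t] == -1 or try_assign(square_of_token[t], seen):
                square_of_token[t] = s
                return True
        return False

    for s in range(size):
        if not try_assign(s, set()):
            return None
    return square_of_token
```

By Hall's theorem the search should never return `None` on such a regular graph; in the setting of the problem each matched edge would additionally have length at most $d+2$.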

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

The columns and the rows of a $3 n \times 3 n$ square board are numbered $1,2, \ldots, 3 n$. Every square $(x, y)$ with $1 \leq x, y \leq 3 n$ is colored asparagus, byzantium or citrine according as the modulo 3 remainder of $x+y$ is 0,1 or 2 respectively. One token colored asparagus, byzantium or citrine is placed on each square, so that there are $3 n^{2}$ tokens of each color. Suppose that one can permute the tokens so that each token is moved to a distance of at most $d$ from its original position, each asparagus token replaces a byzantium token, each byzantium token replaces a citrine token, and each citrine token replaces an asparagus token. Prove that it is possible to permute the tokens so that each token is moved to a distance of at most $d+2$ from its original position, and each square contains a token with the same color as the square.

|

Without loss of generality it suffices to prove that the A-tokens can be moved to distinct $\mathrm{A}$-squares in such a way that each $\mathrm{A}$-token is moved to a distance at most $d+2$ from its original place. This means we need a perfect matching between the $3 n^{2} \mathrm{~A}$-squares and the $3 n^{2}$ A-tokens such that the distance in each pair of the matching is at most $d+2$. To find the matching, we construct a bipartite graph. The A-squares will be the vertices in one class of the graph; the vertices in the other class will be the A-tokens. Split the board into $3 \times 1$ horizontal triminos; then each trimino contains exactly one Asquare. Take a permutation $\pi$ of the tokens which moves A-tokens to B-tokens, B-tokens to C-tokens, and C-tokens to A-tokens, in each case to a distance at most $d$. For each A-square $S$, and for each A-token $T$, connect $S$ and $T$ by an edge if $T, \pi(T)$ or $\pi^{-1}(T)$ is on the trimino containing $S$. We allow multiple edges; it is even possible that the same square and the same token are connected with three edges. Obviously the lengths of the edges in the graph do not exceed $d+2$. By length of an edge we mean the distance between the A-square and the A-token it connects. Each A-token $T$ is connected with the three A-squares whose triminos contain $T, \pi(T)$ and $\pi^{-1}(T)$. Therefore in the graph all tokens are of degree 3. We show that the same is true for the A-squares. Let $S$ be an arbitrary A-square, and let $T_{1}, T_{2}, T_{3}$ be the three tokens on the trimino containing $S$. For $i=1,2,3$, if $T_{i}$ is an A-token, then $S$ is connected with $T_{i}$; if $T_{i}$ is a B-token then $S$ is connected with $\pi^{-1}\left(T_{i}\right)$; finally, if $T_{i}$ is a C-token then $S$ is connected with $\pi\left(T_{i}\right)$. Hence in the graph the A-squares also are of degree 3. Since the A-squares are of degree 3 , from every set $\mathcal{S}$ of A-squares exactly $3|\mathcal{S}|$ edges start. 
These edges end in at least $|\mathcal{S}|$ tokens because the A-tokens also are of degree 3. Hence every set $\mathcal{S}$ of A-squares has at least $|\mathcal{S}|$ neighbors among the A-tokens. Therefore, by HALL's marriage theorem, the graph contains a perfect matching between the two vertex classes. So there is a perfect matching between the A-squares and A-tokens with edges no longer than $d+2$. It follows that the tokens can be permuted as specified in the problem statement. Comment 1. In the original problem proposal the board was infinite and there were only two colors. Having $n$ colors for some positive integer $n$ was an option; we chose $n=3$. Moreover, we changed the board to a finite one to avoid dealing with infinite graphs (although Hall's theorem works in the infinite case as well). With only two colors Hall's theorem is not needed. In this case we split the board into $2 \times 1$ dominos, and in the resulting graph all vertices are of degree 2. The graph consists of disjoint cycles with even length and infinite paths, so the existence of the matching is trivial. Having more than three colors would make the problem statement more complicated, because we need a matching between every two color classes of tokens. However, this would not mean a significant increase in difficulty. Comment 2. According to Wikipedia, the color asparagus (hexadecimal code \#87A96B) is a tone of green that is named after the vegetable. Crayola created this color in 1993 as one of the 16 to be named in the Name The Color Contest. Byzantium (\#702963) is a dark tone of purple. Its first recorded use as a color name in English was in 1926. Citrine (\#E4D00A) is variously described as yellow, greenish-yellow, brownish-yellow or orange. The first known use of citrine as a color name in English was in the 14th century.

|

{

"resource_path": "IMO/segmented/en-IMO2012SL.jsonl",

"problem_match": null,

"solution_match": null

}

|

c3f95224-caf8-522c-bc7b-603c743ed466

| 24,176

|

Let $k$ and $n$ be fixed positive integers. In the liar's guessing game, Amy chooses integers $x$ and $N$ with $1 \leq x \leq N$. She tells Ben what $N$ is, but not what $x$ is. Ben may then repeatedly ask Amy whether $x \in S$ for arbitrary sets $S$ of integers. Amy will always answer with yes or no, but she might lie. The only restriction is that she can lie at most $k$ times in a row. After he has asked as many questions as he wants, Ben must specify a set of at most $n$ positive integers. If $x$ is in this set he wins; otherwise, he loses. Prove that: a) If $n \geq 2^{k}$ then Ben can always win. b) For sufficiently large $k$ there exist $n \geq 1.99^{k}$ such that Ben cannot guarantee a win.

|

Consider an answer $A \in\{\text{yes}, \text{no}\}$ to a question of the kind "Is $x$ in the set $S$?" We say that $A$ is inconsistent with a number $i$ if $A=\text{yes}$ and $i \notin S$, or if $A=\text{no}$ and $i \in S$. Observe that an answer inconsistent with the target number $x$ is a lie. a) Suppose that Ben has determined a set $T$ of size $m$ that contains $x$. This is true initially with $m=N$ and $T=\{1,2, \ldots, N\}$. For $m>2^{k}$ we show how Ben can find a number $y \in T$ that is different from $x$. By performing this step repeatedly he can reduce $T$ to be of size $2^{k} \leq n$ and thus win. Since only the size $m>2^{k}$ of $T$ is relevant, assume that $T=\left\{0,1, \ldots, 2^{k}, \ldots, m-1\right\}$. Ben begins by asking repeatedly whether $x$ is $2^{k}$. If Amy answers no $k+1$ times in a row, one of these answers is truthful, and so $x \neq 2^{k}$. Otherwise Ben stops asking about $2^{k}$ at the first answer yes. He then asks, for each $i=1, \ldots, k$, if the binary representation of $x$ has a 0 in the $i$th digit. Regardless of what the $k$ answers are, they are all inconsistent with a certain number $y \in\left\{0,1, \ldots, 2^{k}-1\right\}$. The preceding answer yes about $2^{k}$ is also inconsistent with $y$. Hence $y \neq x$. Otherwise the last $k+1$ answers are not truthful, which is impossible. Either way, Ben finds a number in $T$ that is different from $x$, and the claim is proven. b) We prove that if $1<\lambda<2$ and $n=\left\lfloor(2-\lambda) \lambda^{k+1}\right\rfloor-1$ then Ben cannot guarantee a win. To complete the proof, then it suffices to take $\lambda$ such that $1.99<\lambda<2$ and $k$ large enough so that $$ n=\left\lfloor(2-\lambda) \lambda^{k+1}\right\rfloor-1 \geq 1.99^{k} $$ Consider the following strategy for Amy. First she chooses $N=n+1$ and $x \in\{1,2, \ldots, n+1\}$ arbitrarily. 
After every answer of hers Amy determines, for each $i=1,2, \ldots, n+1$, the number $m_{i}$ of consecutive answers she has given by that point that are inconsistent with $i$. To decide on her next answer, she then uses the quantity $$ \phi=\sum_{i=1}^{n+1} \lambda^{m_{i}} $$ No matter what Ben's next question is, Amy chooses the answer which minimizes $\phi$. We claim that with this strategy $\phi$ will always stay less than $\lambda^{k+1}$. Consequently no exponent $m_{i}$ in $\phi$ will ever exceed $k$, hence Amy will never give more than $k$ consecutive answers inconsistent with some $i$. In particular this applies to the target number $x$, so she will never lie more than $k$ times in a row. Thus, given the claim, Amy's strategy is legal. Since the strategy does not depend on $x$ in any way, Ben can make no deductions about $x$, and therefore he cannot guarantee a win. It remains to show that $\phi<\lambda^{k+1}$ at all times. Initially each $m_{i}$ is 0, so this condition holds in the beginning due to $1<\lambda<2$ and $n=\left\lfloor(2-\lambda) \lambda^{k+1}\right\rfloor-1$. Suppose that $\phi<\lambda^{k+1}$ at some point, and Ben has just asked if $x \in S$ for some set $S$. According as Amy answers yes or no, the new value of $\phi$ becomes $$ \phi_{1}=\sum_{i \in S} 1+\sum_{i \notin S} \lambda^{m_{i}+1} \quad \text { or } \quad \phi_{2}=\sum_{i \in S} \lambda^{m_{i}+1}+\sum_{i \notin S} 1 $$ Since Amy chooses the option minimizing $\phi$, the new $\phi$ will equal $\min \left(\phi_{1}, \phi_{2}\right)$. 
Now we have $$ \min \left(\phi_{1}, \phi_{2}\right) \leq \frac{1}{2}\left(\phi_{1}+\phi_{2}\right)=\frac{1}{2}\left(\sum_{i \in S}\left(1+\lambda^{m_{i}+1}\right)+\sum_{i \notin S}\left(\lambda^{m_{i}+1}+1\right)\right)=\frac{1}{2}(\lambda \phi+n+1) $$ Because $\phi<\lambda^{k+1}$, the assumptions $\lambda<2$ and $n=\left\lfloor(2-\lambda) \lambda^{k+1}\right\rfloor-1$ lead to $$ \min \left(\phi_{1}, \phi_{2}\right)<\frac{1}{2}\left(\lambda^{k+2}+(2-\lambda) \lambda^{k+1}\right)=\lambda^{k+1} $$ The claim follows, which completes the solution. Comment. Given a fixed $k$, let $f(k)$ denote the minimum value of $n$ for which Ben can guarantee a victory. The problem asks for a proof that for large $k$ $$ 1.99^{k} \leq f(k) \leq 2^{k} $$ A computer search shows that $f(k)=2,3,4,7,11,17$ for $k=1,2,3,4,5,6$.
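
Amy's strategy from part b) is easy to simulate. The following sketch rests on stated assumptions: Ben's questions are random subsets, $\lambda$ is passed as an exact `Fraction`, and candidates are indexed $0, \ldots, n$ instead of $1, \ldots, n+1$. The function name `amy_simulation` is hypothetical. After each answer it asserts that $\phi<\lambda^{k+1}$ and that no run of inconsistent answers exceeds $k$.

```python
import math
import random
from fractions import Fraction

def amy_simulation(lam, k, rounds, rng):
    """Play Amy's phi-minimizing strategy against random questions.

    Returns (n, longest run of answers inconsistent with any single candidate).
    """
    bound = lam ** (k + 1)
    n = math.floor((2 - lam) * bound) - 1
    m = [0] * (n + 1)   # m[i]: current run of answers inconsistent with candidate i
    longest = 0
    for _ in range(rounds):
        S = {i for i in range(n + 1) if rng.random() < 0.5}
        # "yes" is inconsistent with every i outside S, "no" with every i inside S.
        m_yes = [0 if i in S else m[i] + 1 for i in range(n + 1)]
        m_no = [m[i] + 1 if i in S else 0 for i in range(n + 1)]
        phi_yes = sum(lam ** e for e in m_yes)
        phi_no = sum(lam ** e for e in m_no)
        if phi_yes <= phi_no:
            m, phi = m_yes, phi_yes
        else:
            m, phi = m_no, phi_no
        assert phi < bound      # the claimed invariant
        assert max(m) <= k      # hence no run longer than k
        longest = max(longest, max(m))
    return n, longest
```

For instance, with $\lambda=3/2$ and $k=5$ one gets $\lfloor(2-\lambda)\lambda^{6}\rfloor-1=\lfloor 729/128\rfloor-1=4$, so the simulation runs with $N=n+1=5$ candidates.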

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

Let $k$ and $n$ be fixed positive integers. In the liar's guessing game, Amy chooses integers $x$ and $N$ with $1 \leq x \leq N$. She tells Ben what $N$ is, but not what $x$ is. Ben may then repeatedly ask Amy whether $x \in S$ for arbitrary sets $S$ of integers. Amy will always answer with yes or no, but she might lie. The only restriction is that she can lie at most $k$ times in a row. After he has asked as many questions as he wants, Ben must specify a set of at most $n$ positive integers. If $x$ is in this set he wins; otherwise, he loses. Prove that: a) If $n \geq 2^{k}$ then Ben can always win. b) For sufficiently large $k$ there exist $n \geq 1.99^{k}$ such that Ben cannot guarantee a win.

|

Consider an answer $A \in\{y e s, n o\}$ to a question of the kind "Is $x$ in the set $S$ ?" We say that $A$ is inconsistent with a number $i$ if $A=y e s$ and $i \notin S$, or if $A=n o$ and $i \in S$. Observe that an answer inconsistent with the target number $x$ is a lie. a) Suppose that Ben has determined a set $T$ of size $m$ that contains $x$. This is true initially with $m=N$ and $T=\{1,2, \ldots, N\}$. For $m>2^{k}$ we show how Ben can find a number $y \in T$ that is different from $x$. By performing this step repeatedly he can reduce $T$ to be of size $2^{k} \leq n$ and thus win. Since only the size $m>2^{k}$ of $T$ is relevant, assume that $T=\left\{0,1, \ldots, 2^{k}, \ldots, m-1\right\}$. Ben begins by asking repeatedly whether $x$ is $2^{k}$. If Amy answers no $k+1$ times in a row, one of these answers is truthful, and so $x \neq 2^{k}$. Otherwise Ben stops asking about $2^{k}$ at the first answer yes. He then asks, for each $i=1, \ldots, k$, if the binary representation of $x$ has a 0 in the $i$ th digit. Regardless of what the $k$ answers are, they are all inconsistent with a certain number $y \in\left\{0,1, \ldots, 2^{k}-1\right\}$. The preceding answer yes about $2^{k}$ is also inconsistent with $y$. Hence $y \neq x$. Otherwise the last $k+1$ answers are not truthful, which is impossible. Either way, Ben finds a number in $T$ that is different from $x$, and the claim is proven. b) We prove that if $1<\lambda<2$ and $n=\left\lfloor(2-\lambda) \lambda^{k+1}\right\rfloor-1$ then Ben cannot guarantee a win. To complete the proof, then it suffices to take $\lambda$ such that $1.99<\lambda<2$ and $k$ large enough so that $$ n=\left\lfloor(2-\lambda) \lambda^{k+1}\right\rfloor-1 \geq 1.99^{k} $$ Consider the following strategy for Amy. First she chooses $N=n+1$ and $x \in\{1,2, \ldots, n+1\}$ arbitrarily. 
After every answer of hers Amy determines, for each $i=1,2, \ldots, n+1$, the number $m_{i}$ of consecutive answers she has given by that point that are inconsistent with $i$. To decide on her next answer, she then uses the quantity $$ \phi=\sum_{i=1}^{n+1} \lambda^{m_{i}} $$ No matter what Ben's next question is, Amy chooses the answer which minimizes $\phi$. We claim that with this strategy $\phi$ will always stay less than $\lambda^{k+1}$. Consequently no exponent $m_{i}$ in $\phi$ will ever exceed $k$, hence Amy will never give more than $k$ consecutive answers inconsistent with some $i$. In particular this applies to the target number $x$, so she will never lie more than $k$ times in a row. Thus, given the claim, Amy's strategy is legal. Since the strategy does not depend on $x$ in any way, Ben can make no deductions about $x$, and therefore he cannot guarantee a win. It remains to show that $\phi<\lambda^{k+1}$ at all times. Initially each $m_{i}$ is 0 , so this condition holds in the beginning due to $1<\lambda<2$ and $n=\left\lfloor(2-\lambda) \lambda^{k+1}\right\rfloor-1$. Suppose that $\phi<\lambda^{k+1}$ at some point, and Ben has just asked if $x \in S$ for some set $S$. According as Amy answers yes or no, the new value of $\phi$ becomes $$ \phi_{1}=\sum_{i \in S} 1+\sum_{i \notin S} \lambda^{m_{i}+1} \quad \text { or } \quad \phi_{2}=\sum_{i \in S} \lambda^{m_{i}+1}+\sum_{i \notin S} 1 $$ Since Amy chooses the option minimizing $\phi$, the new $\phi$ will equal $\min \left(\phi_{1}, \phi_{2}\right)$. 
Now we have $$ \min \left(\phi_{1}, \phi_{2}\right) \leq \frac{1}{2}\left(\phi_{1}+\phi_{2}\right)=\frac{1}{2}\left(\sum_{i \in S}\left(1+\lambda^{m_{i}+1}\right)+\sum_{i \notin S}\left(\lambda^{m_{i}+1}+1\right)\right)=\frac{1}{2}(\lambda \phi+n+1) $$ Because $\phi<\lambda^{k+1}$, the assumptions $\lambda<2$ and $n=\left\lfloor(2-\lambda) \lambda^{k+1}\right\rfloor-1$ lead to $$ \min \left(\phi_{1}, \phi_{2}\right)<\frac{1}{2}\left(\lambda^{k+2}+(2-\lambda) \lambda^{k+1}\right)=\lambda^{k+1} $$ The claim follows, which completes the solution. Comment. Given a fixed $k$, let $f(k)$ denote the minimum value of $n$ for which Ben can guarantee a victory. The problem asks for a proof that for large $k$ $$ 1.99^{k} \leq f(k) \leq 2^{k} $$ A computer search shows that $f(k)=2,3,4,7,11,17$ for $k=1,2,3,4,5,6$.

|

{

"resource_path": "IMO/segmented/en-IMO2012SL.jsonl",

"problem_match": null,

"solution_match": null

}

|

2fea8430-ecf3-5002-8082-2ae72d0ea929

| 24,178

|

There are given $2^{500}$ points on a circle labeled $1,2, \ldots, 2^{500}$ in some order. Prove that one can choose 100 pairwise disjoint chords joining some of these points so that the 100 sums of the pairs of numbers at the endpoints of the chosen chords are equal.

|

The proof is based on the following general fact. Lemma. In a graph $G$ each vertex $v$ has degree $d_{v}$. Then $G$ contains an independent set $S$ of vertices such that $|S| \geq f(G)$ where $$ f(G)=\sum_{v \in G} \frac{1}{d_{v}+1} $$ Proof. Induction on $n=|G|$. The base $n=1$ is clear. For the inductive step choose a vertex $v_{0}$ in $G$ of minimum degree $d$. Delete $v_{0}$ and all of its neighbors $v_{1}, \ldots, v_{d}$ and also all edges with endpoints $v_{0}, v_{1}, \ldots, v_{d}$. This gives a new graph $G^{\prime}$. By the inductive assumption $G^{\prime}$ contains an independent set $S^{\prime}$ of vertices such that $\left|S^{\prime}\right| \geq f\left(G^{\prime}\right)$. Since no vertex in $S^{\prime}$ is a neighbor of $v_{0}$ in $G$, the set $S=S^{\prime} \cup\left\{v_{0}\right\}$ is independent in $G$. Let $d_{v}^{\prime}$ be the degree of a vertex $v$ in $G^{\prime}$. Clearly $d_{v}^{\prime} \leq d_{v}$ for every such vertex $v$, and also $d_{v_{i}} \geq d$ for all $i=0,1, \ldots, d$ by the minimal choice of $v_{0}$. Therefore $$ f\left(G^{\prime}\right)=\sum_{v \in G^{\prime}} \frac{1}{d_{v}^{\prime}+1} \geq \sum_{v \in G^{\prime}} \frac{1}{d_{v}+1}=f(G)-\sum_{i=0}^{d} \frac{1}{d_{v_{i}}+1} \geq f(G)-\frac{d+1}{d+1}=f(G)-1 . $$ Hence $|S|=\left|S^{\prime}\right|+1 \geq f\left(G^{\prime}\right)+1 \geq f(G)$, and the induction is complete. We pass on to our problem. For clarity denote $n=2^{499}$ and draw all chords determined by the given $2 n$ points. Color each chord with one of the colors $3,4, \ldots, 4 n-1$ according to the sum of the numbers at its endpoints. Chords with a common endpoint have different colors. For each color $c$ consider the following graph $G_{c}$. Its vertices are the chords of color $c$, and two chords are neighbors in $G_{c}$ if they intersect. Let $f\left(G_{c}\right)$ have the same meaning as in the lemma for all graphs $G_{c}$. 
Every chord $\ell$ divides the circle into two arcs, and one of them contains $m(\ell) \leq n-1$ given points. (In particular $m(\ell)=0$ if $\ell$ joins two consecutive points.) For each $i=0,1, \ldots, n-2$ there are $2 n$ chords $\ell$ with $m(\ell)=i$. Such a chord has degree at most $i$ in the respective graph. Indeed let $A_{1}, \ldots, A_{i}$ be all points on either arc determined by a chord $\ell$ with $m(\ell)=i$ and color $c$. Every $A_{j}$ is an endpoint of at most 1 chord colored $c, j=1, \ldots, i$. Hence at most $i$ chords of color $c$ intersect $\ell$. It follows that for each $i=0,1, \ldots, n-2$ the $2 n$ chords $\ell$ with $m(\ell)=i$ contribute at least $\frac{2 n}{i+1}$ to the sum $\sum_{c} f\left(G_{c}\right)$. Summation over $i=0,1, \ldots, n-2$ gives $$ \sum_{c} f\left(G_{c}\right) \geq 2 n \sum_{i=1}^{n-1} \frac{1}{i} $$ Because there are $4 n-3$ colors in all, averaging yields a color $c$ such that $$ f\left(G_{c}\right) \geq \frac{2 n}{4 n-3} \sum_{i=1}^{n-1} \frac{1}{i}>\frac{1}{2} \sum_{i=1}^{n-1} \frac{1}{i} $$ By the lemma there are at least $\frac{1}{2} \sum_{i=1}^{n-1} \frac{1}{i}$ pairwise disjoint chords of color $c$, i.e. with the same sum $c$ of the pairs of numbers at their endpoints. It remains to show that $\frac{1}{2} \sum_{i=1}^{n-1} \frac{1}{i} \geq 100$ for $n=2^{499}$. Indeed we have $$ \sum_{i=1}^{n-1} \frac{1}{i}>\sum_{i=1}^{2^{400}} \frac{1}{i}=1+\sum_{k=1}^{400} \sum_{i=2^{k-1}+1}^{2^{k}} \frac{1}{i}>1+\sum_{k=1}^{400} \frac{2^{k-1}}{2^{k}}=201>200 $$ This completes the solution.
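
The inductive proof of the lemma is in effect a greedy procedure: repeatedly pick a vertex of minimum degree, keep it, and delete it together with its neighbors. A minimal sketch of that procedure follows, assuming the graph is given as a dictionary of neighbor sets; the names `greedy_independent_set` and `f` are chosen here for illustration, with `f` computing $\sum_{v} \frac{1}{d_{v}+1}$ exactly.

```python
from fractions import Fraction

def f(adj):
    """The lower bound from the lemma: sum over vertices of 1 / (degree + 1)."""
    return sum(Fraction(1, len(neighbors) + 1) for neighbors in adj.values())

def greedy_independent_set(adj):
    """Follow the induction: take a minimum-degree vertex, delete it and its neighbors."""
    remaining = {v: set(neighbors) for v, neighbors in adj.items()}  # work on a copy
    chosen = []
    while remaining:
        v0 = min(remaining, key=lambda v: len(remaining[v]))
        removed = {v0} | remaining[v0]
        chosen.append(v0)
        for v in removed:
            del remaining[v]
        for neighbors in remaining.values():
            neighbors -= removed   # drop edges that led to deleted vertices
    return chosen
```

On a 5-cycle, for example, $f(G)=5/3$, so the lemma guarantees an independent set of size at least 2, which is also the largest possible there.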

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

There are given $2^{500}$ points on a circle labeled $1,2, \ldots, 2^{500}$ in some order. Prove that one can choose 100 pairwise disjoint chords joining some of these points so that the 100 sums of the pairs of numbers at the endpoints of the chosen chords are equal.

|

The proof is based on the following general fact. Lemma. In a graph $G$ each vertex $v$ has degree $d_{v}$. Then $G$ contains an independent set $S$ of vertices such that $|S| \geq f(G)$ where $$ f(G)=\sum_{v \in G} \frac{1}{d_{v}+1} $$ Proof. Induction on $n=|G|$. The base $n=1$ is clear. For the inductive step choose a vertex $v_{0}$ in $G$ of minimum degree $d$. Delete $v_{0}$ and all of its neighbors $v_{1}, \ldots, v_{d}$ and also all edges with endpoints $v_{0}, v_{1}, \ldots, v_{d}$. This gives a new graph $G^{\prime}$. By the inductive assumption $G^{\prime}$ contains an independent set $S^{\prime}$ of vertices such that $\left|S^{\prime}\right| \geq f\left(G^{\prime}\right)$. Since no vertex in $S^{\prime}$ is a neighbor of $v_{0}$ in $G$, the set $S=S^{\prime} \cup\left\{v_{0}\right\}$ is independent in $G$. Let $d_{v}^{\prime}$ be the degree of a vertex $v$ in $G^{\prime}$. Clearly $d_{v}^{\prime} \leq d_{v}$ for every such vertex $v$, and also $d_{v_{i}} \geq d$ for all $i=0,1, \ldots, d$ by the minimal choice of $v_{0}$. Therefore $$ f\left(G^{\prime}\right)=\sum_{v \in G^{\prime}} \frac{1}{d_{v}^{\prime}+1} \geq \sum_{v \in G^{\prime}} \frac{1}{d_{v}+1}=f(G)-\sum_{i=0}^{d} \frac{1}{d_{v_{i}}+1} \geq f(G)-\frac{d+1}{d+1}=f(G)-1 . $$ Hence $|S|=\left|S^{\prime}\right|+1 \geq f\left(G^{\prime}\right)+1 \geq f(G)$, and the induction is complete. We pass on to our problem. For clarity denote $n=2^{499}$ and draw all chords determined by the given $2 n$ points. Color each chord with one of the colors $3,4, \ldots, 4 n-1$ according to the sum of the numbers at its endpoints. Chords with a common endpoint have different colors. For each color $c$ consider the following graph $G_{c}$. Its vertices are the chords of color $c$, and two chords are neighbors in $G_{c}$ if they intersect. Let $f\left(G_{c}\right)$ have the same meaning as in the lemma for all graphs $G_{c}$. 
Every chord $\ell$ divides the circle into two arcs, and one of them contains $m(\ell) \leq n-1$ given points. (In particular $m(\ell)=0$ if $\ell$ joins two consecutive points.) For each $i=0,1, \ldots, n-2$ there are $2 n$ chords $\ell$ with $m(\ell)=i$. Such a chord has degree at most $i$ in the respective graph. Indeed let $A_{1}, \ldots, A_{i}$ be all points on either arc determined by a chord $\ell$ with $m(\ell)=i$ and color $c$. Every $A_{j}$ is an endpoint of at most 1 chord colored $c, j=1, \ldots, i$. Hence at most $i$ chords of color $c$ intersect $\ell$. It follows that for each $i=0,1, \ldots, n-2$ the $2 n$ chords $\ell$ with $m(\ell)=i$ contribute at least $\frac{2 n}{i+1}$ to the sum $\sum_{c} f\left(G_{c}\right)$. Summation over $i=0,1, \ldots, n-2$ gives $$ \sum_{c} f\left(G_{c}\right) \geq 2 n \sum_{i=1}^{n-1} \frac{1}{i} $$ Because there are $4 n-3$ colors in all, averaging yields a color $c$ such that $$ f\left(G_{c}\right) \geq \frac{2 n}{4 n-3} \sum_{i=1}^{n-1} \frac{1}{i}>\frac{1}{2} \sum_{i=1}^{n-1} \frac{1}{i} $$ By the lemma there are at least $\frac{1}{2} \sum_{i=1}^{n-1} \frac{1}{i}$ pairwise disjoint chords of color $c$, i. e. with the same sum $c$ of the pairs of numbers at their endpoints. It remains to show that $\frac{1}{2} \sum_{i=1}^{n-1} \frac{1}{i} \geq 100$ for $n=2^{499}$. Indeed we have $$ \sum_{i=1}^{n-1} \frac{1}{i}>\sum_{i=1}^{2^{400}} \frac{1}{i}=1+\sum_{k=1}^{400} \sum_{i=2^{k-1+1}}^{2^{k}} \frac{1}{i}>1+\sum_{k=1}^{400} \frac{2^{k-1}}{2^{k}}=201>200 $$ This completes the solution.

|

{

"resource_path": "IMO/segmented/en-IMO2012SL.jsonl",

"problem_match": null,

"solution_match": null

}

|

99ef8db9-945c-5776-8c22-da704bff3e05

| 24,181

|

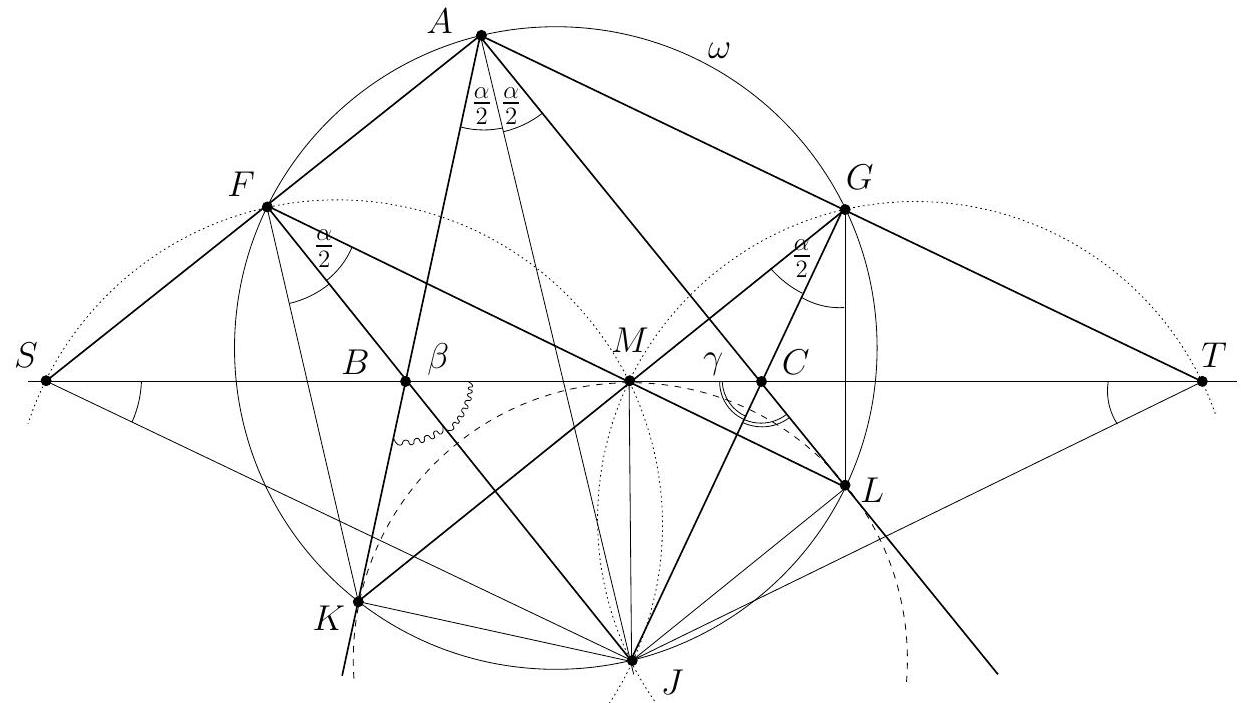

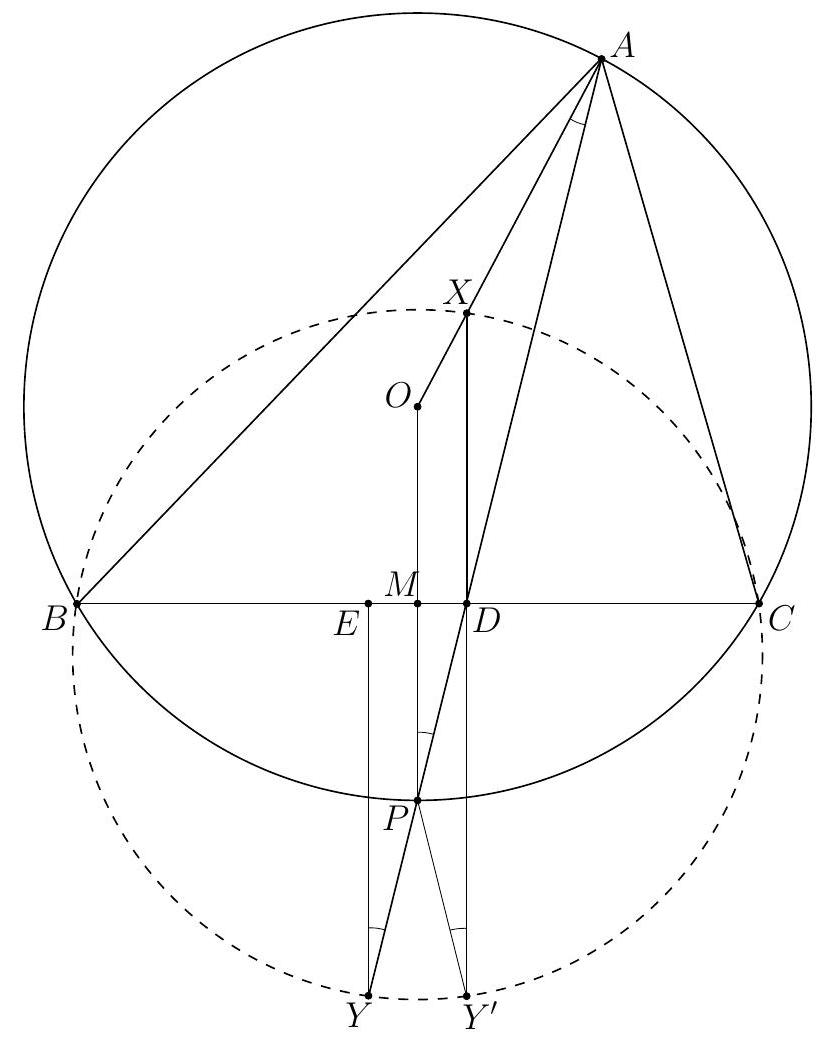

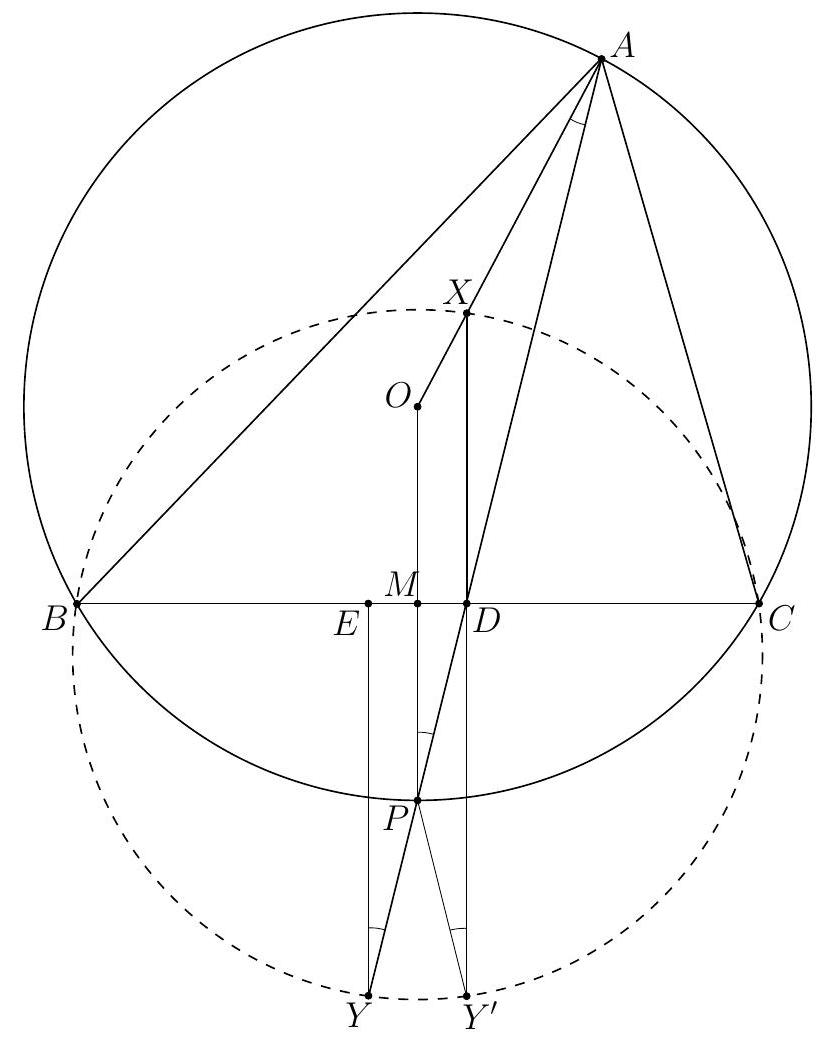

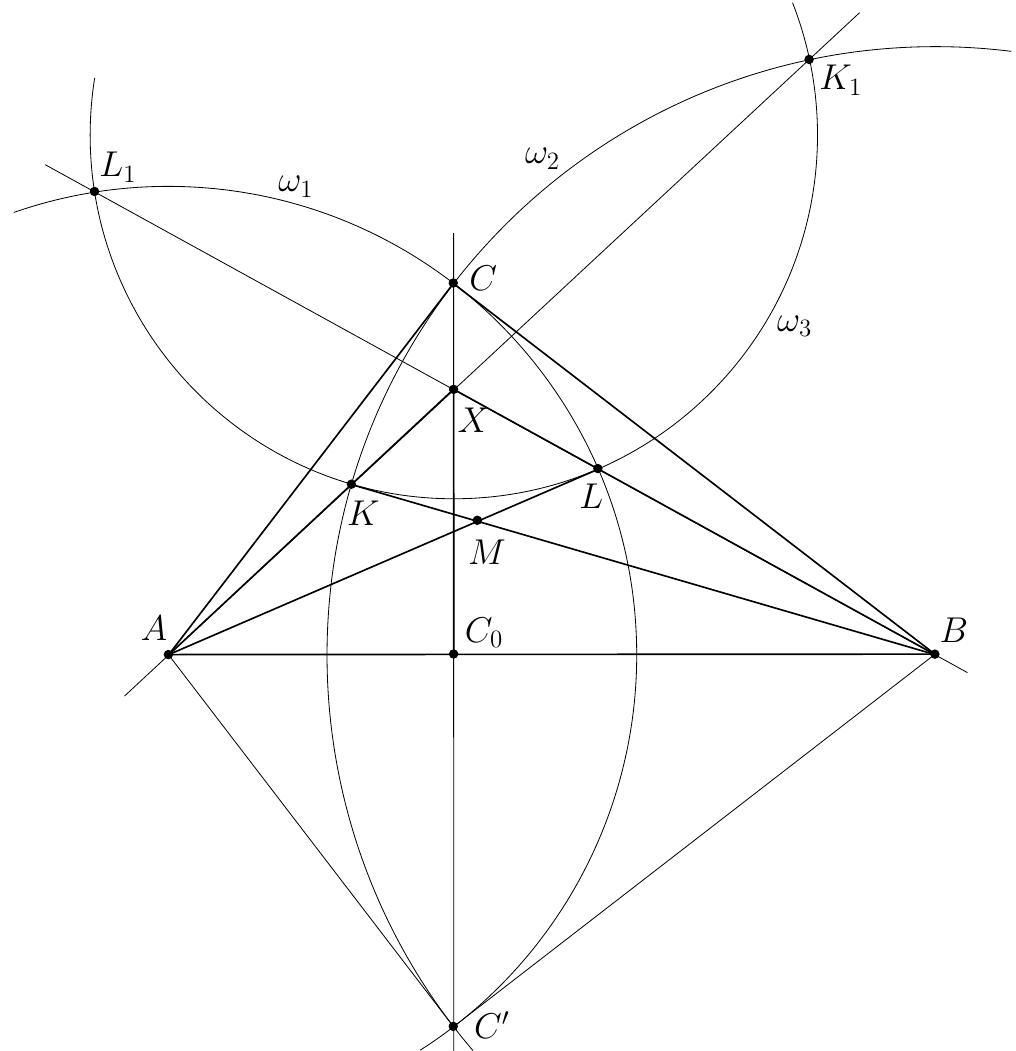

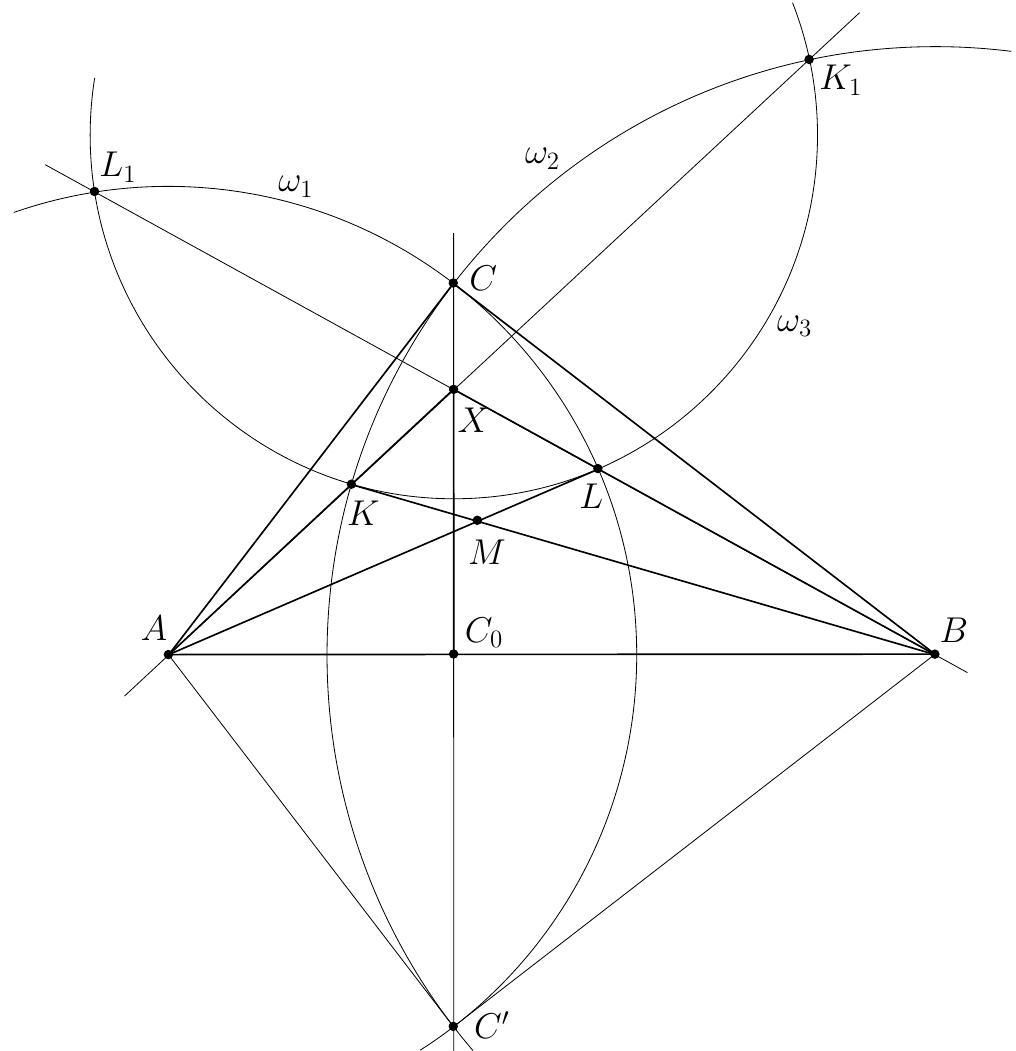

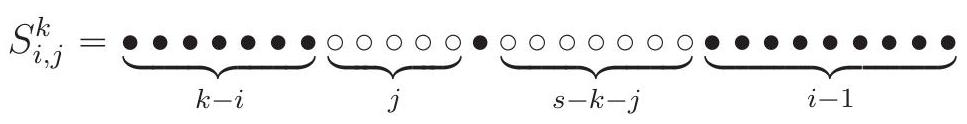

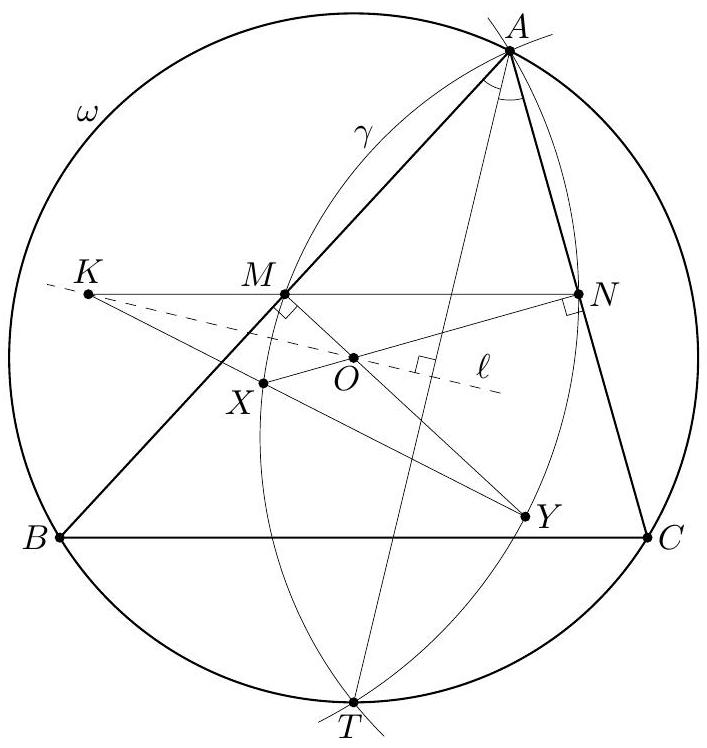

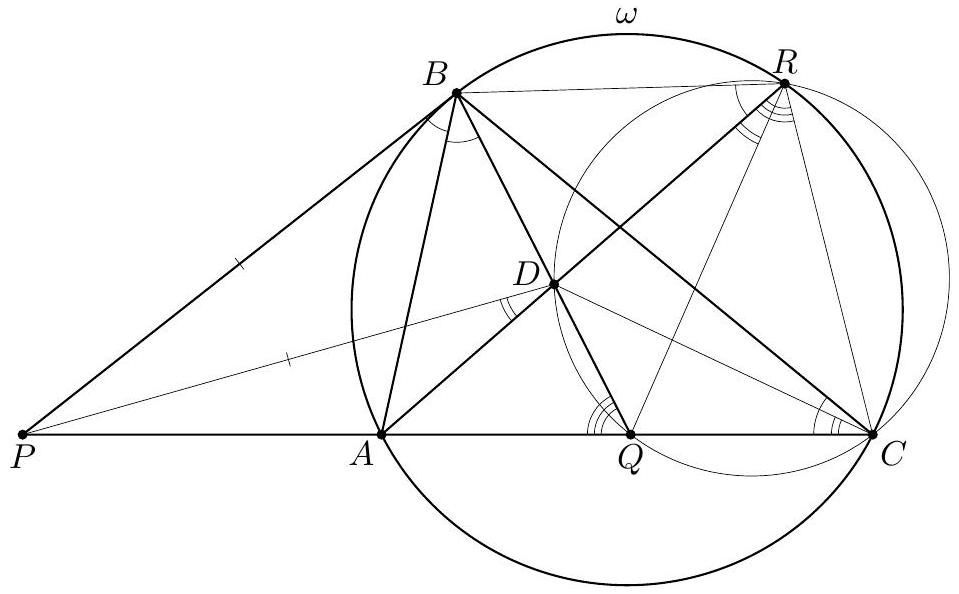

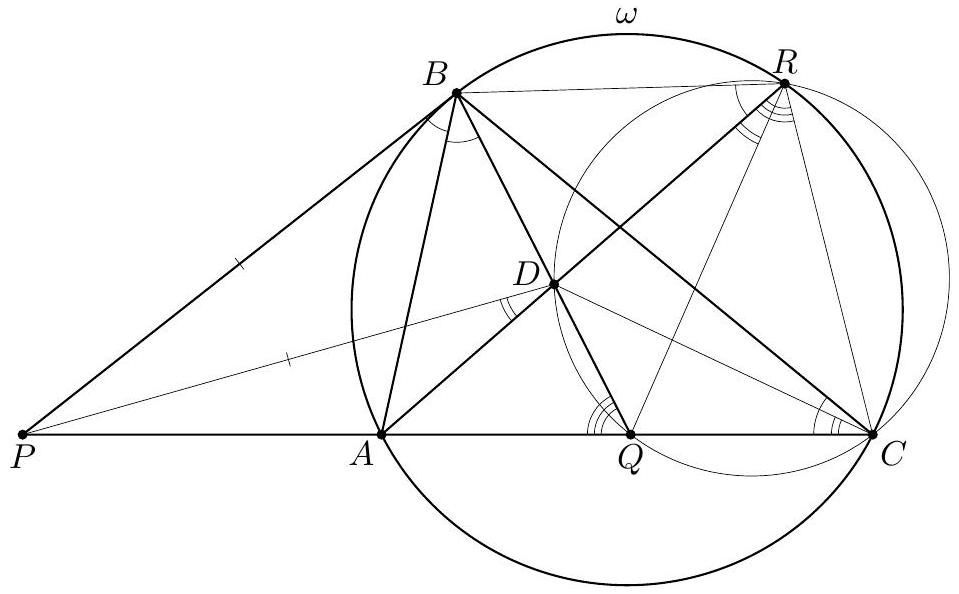

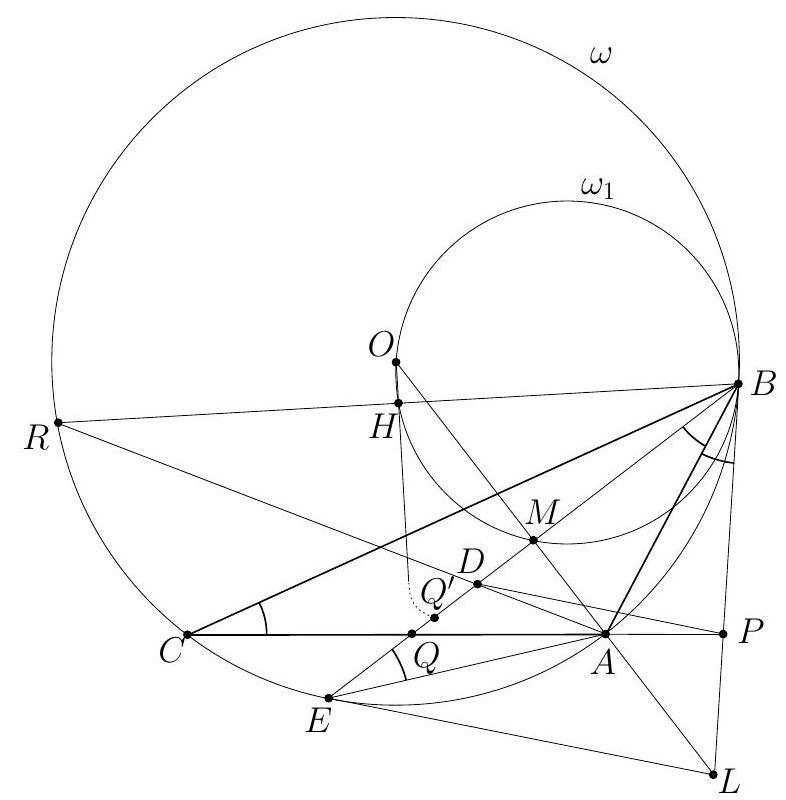

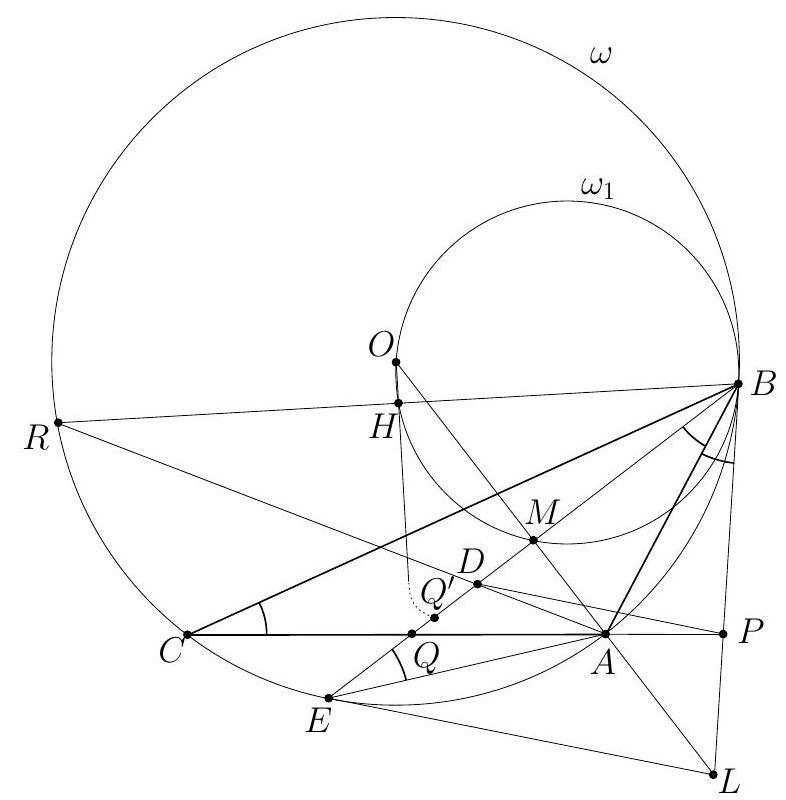

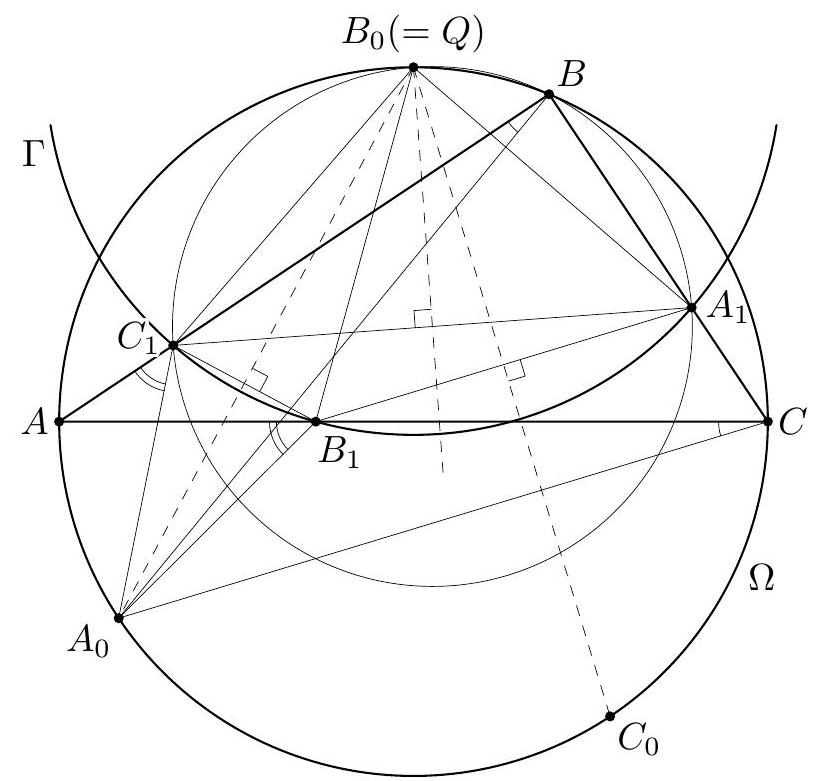

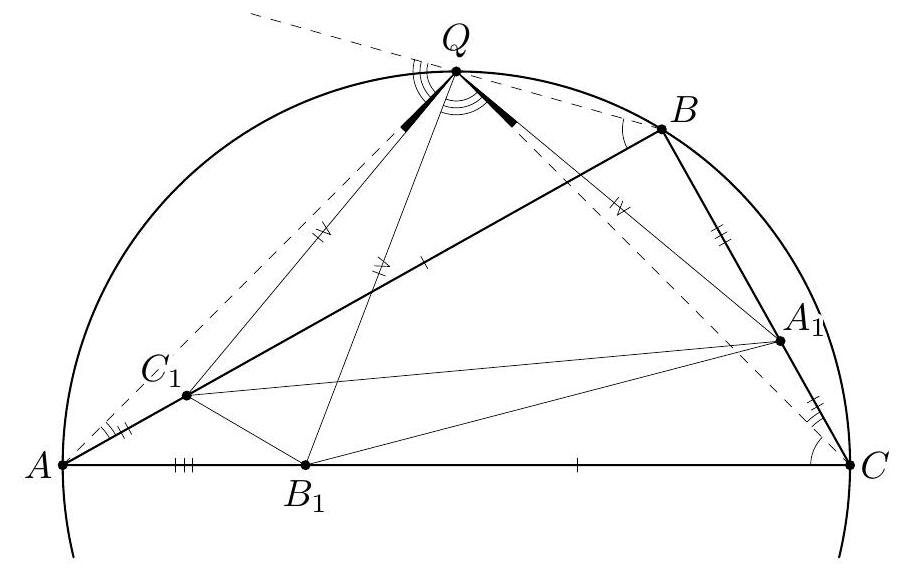

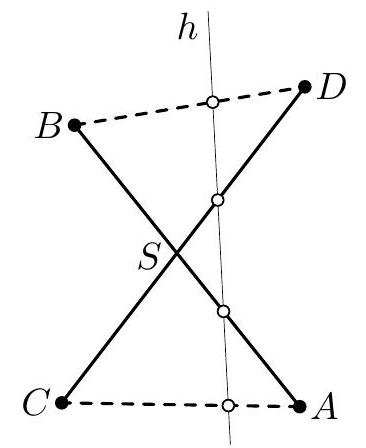

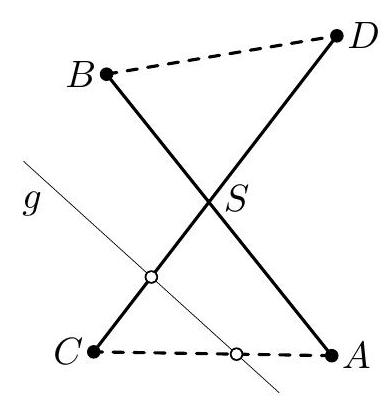

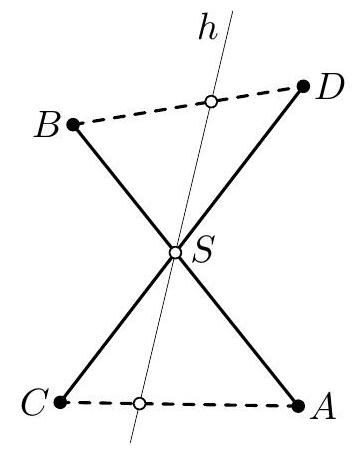

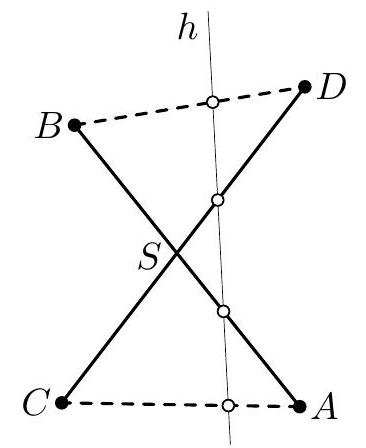

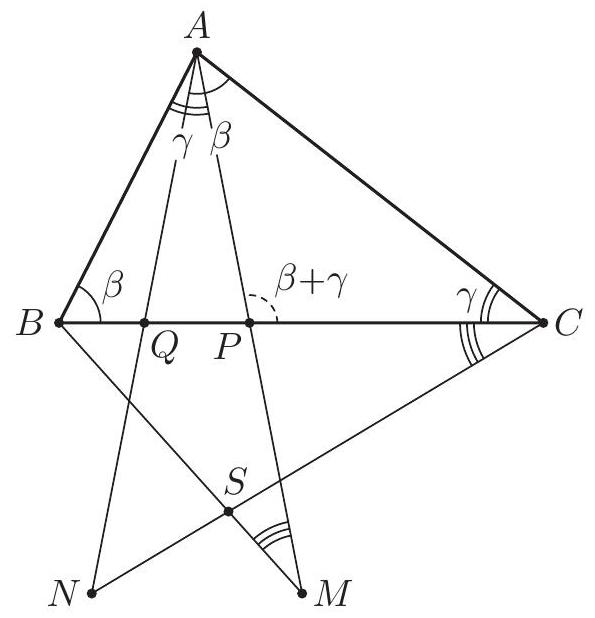

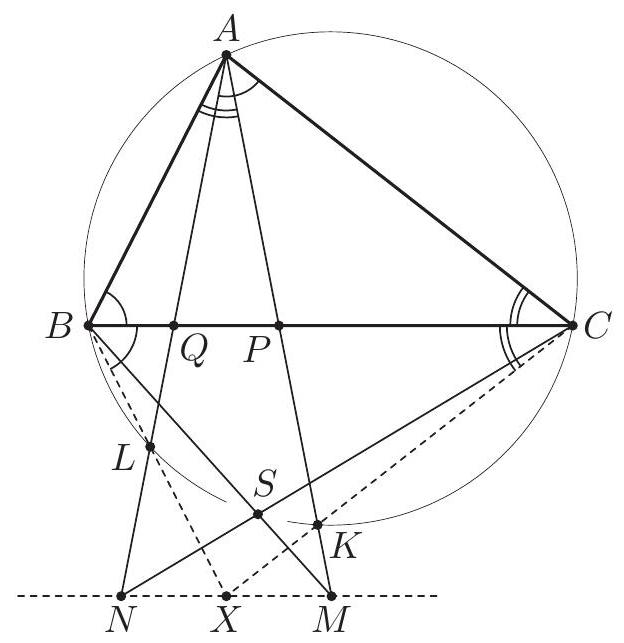

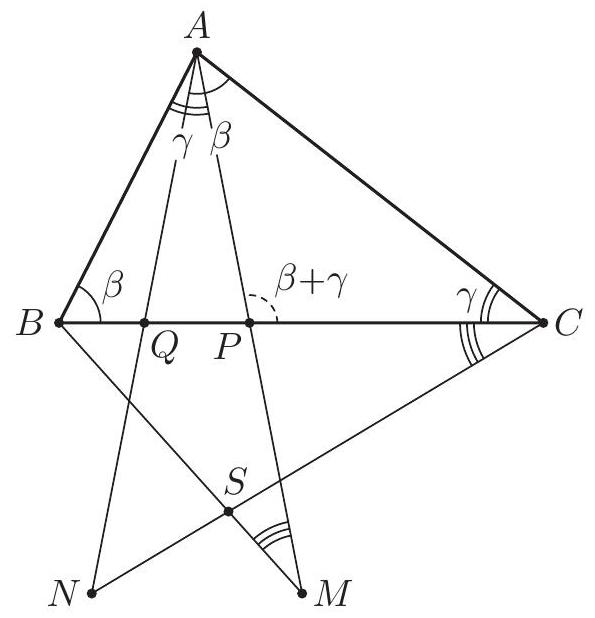

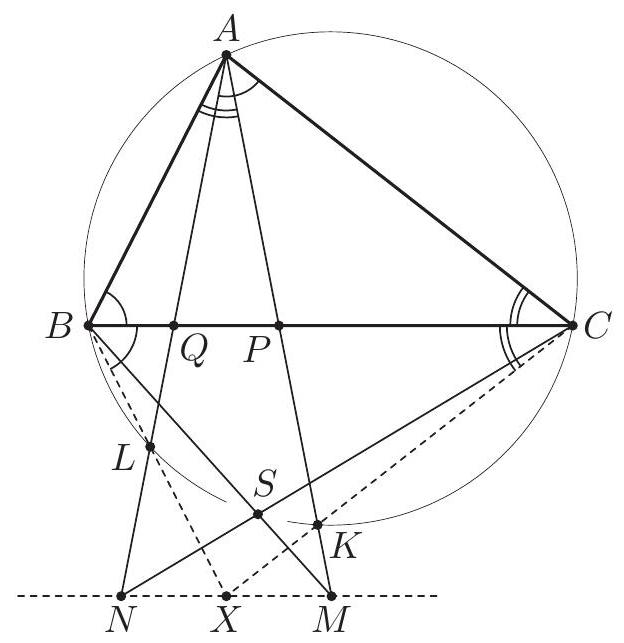

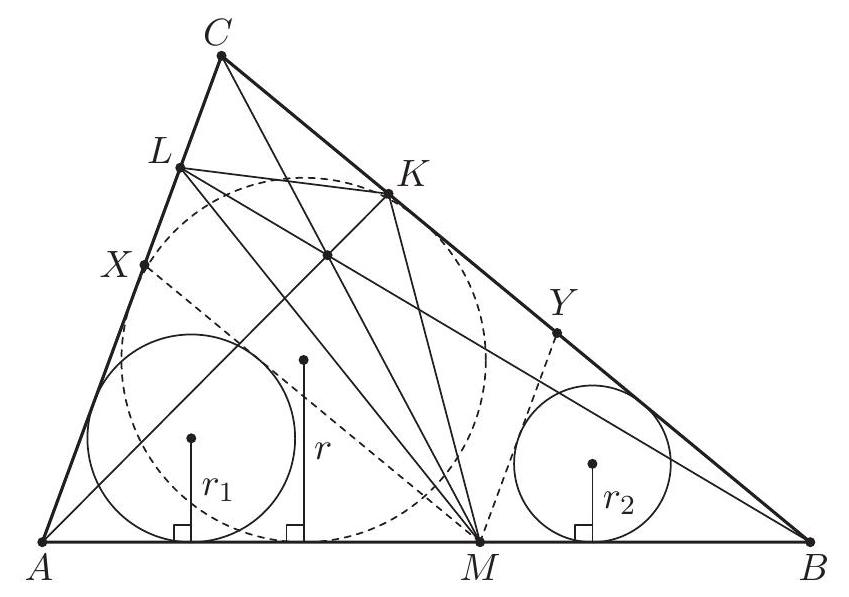

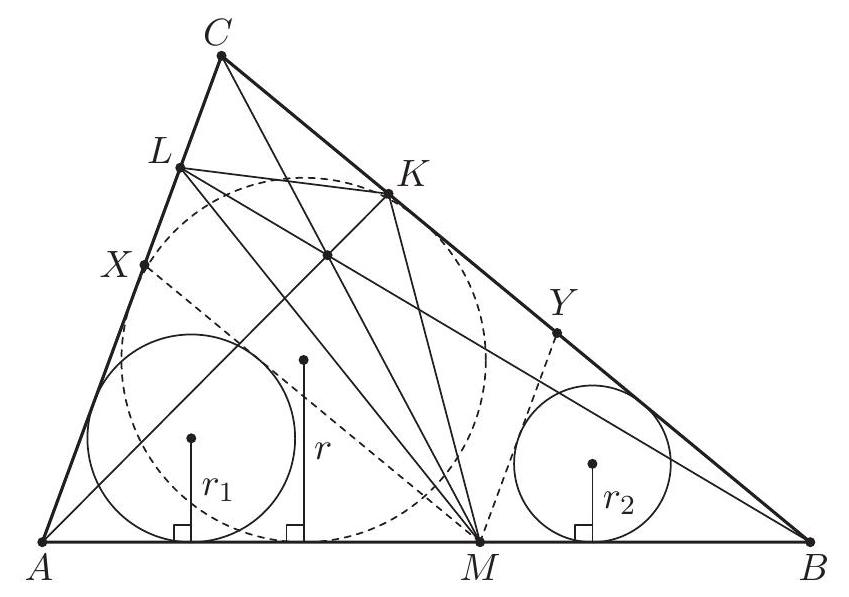

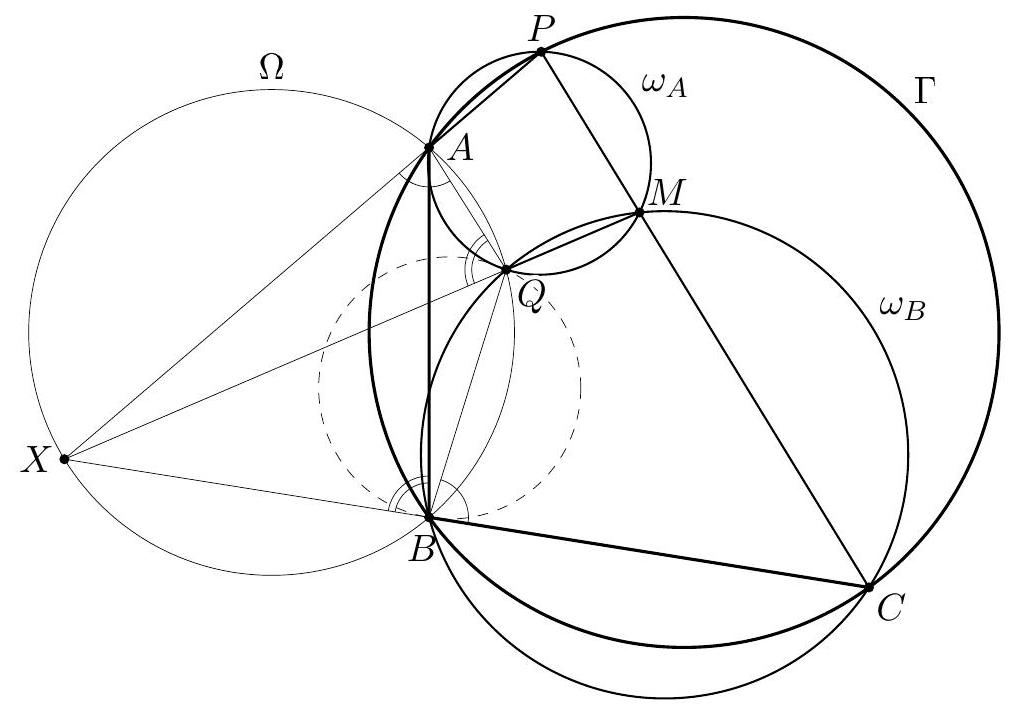

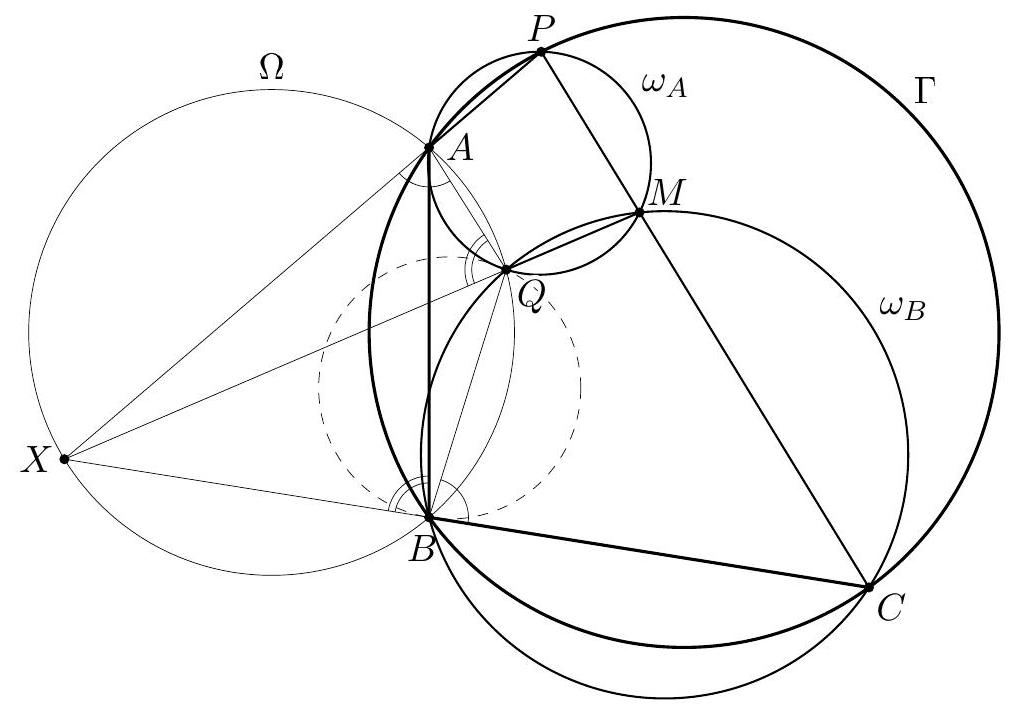

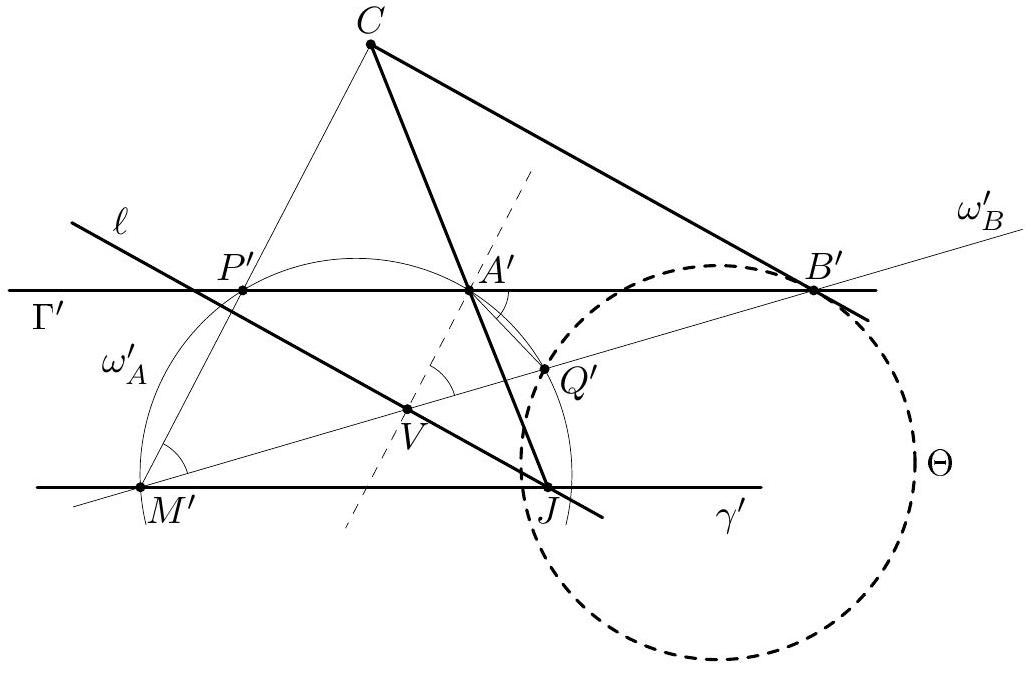

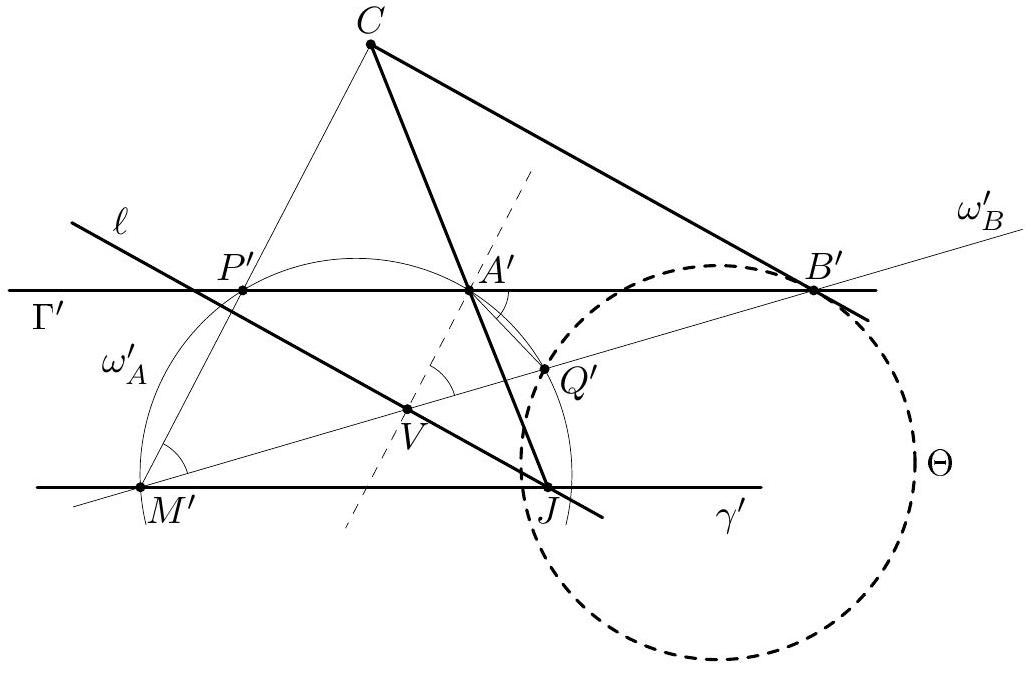

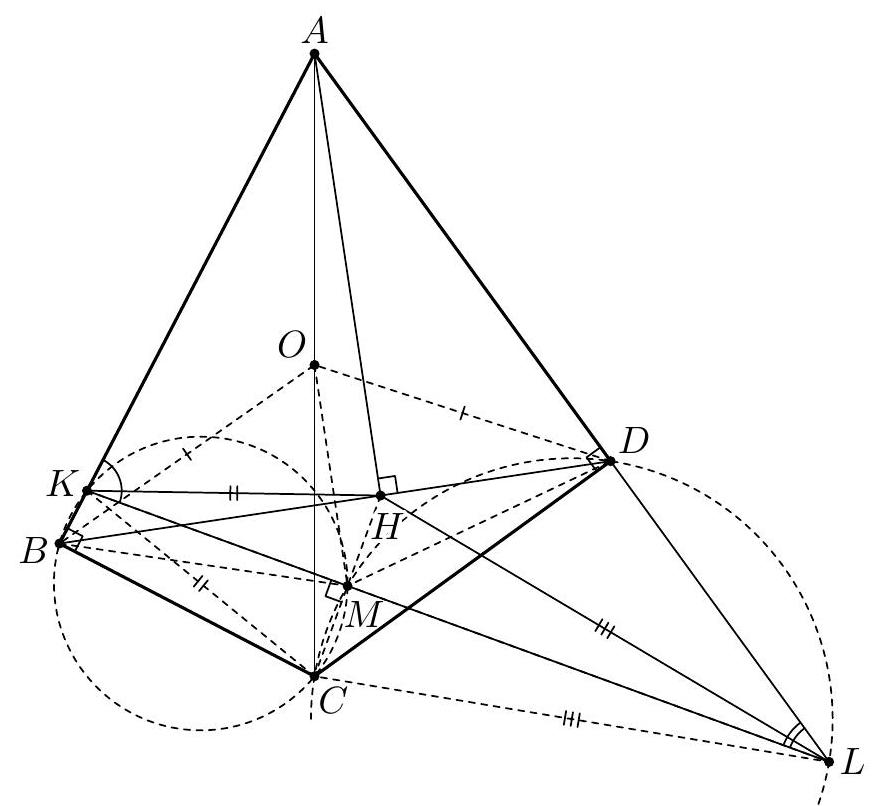

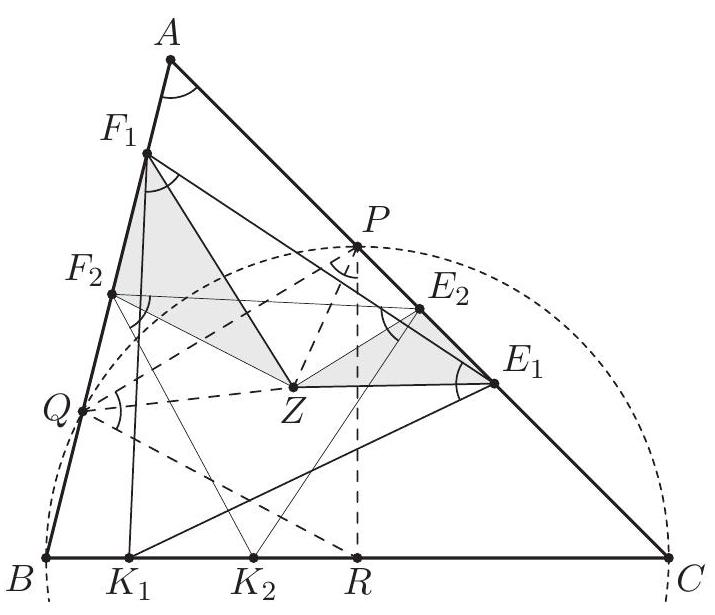

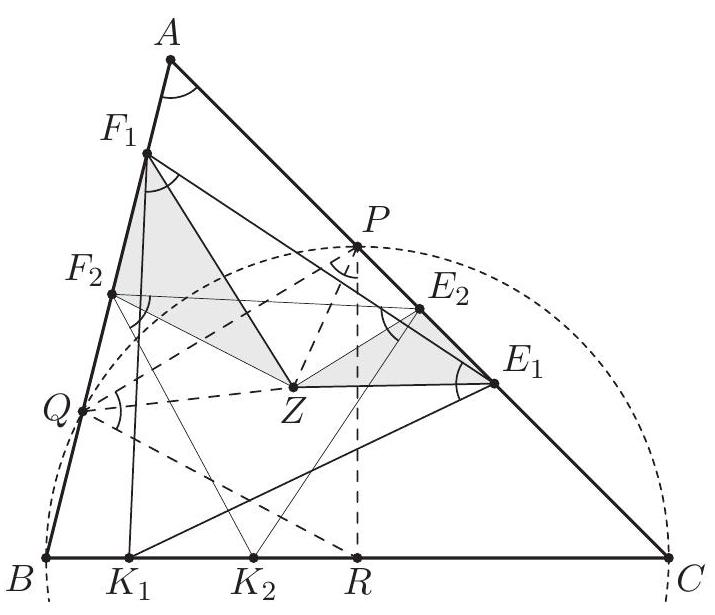

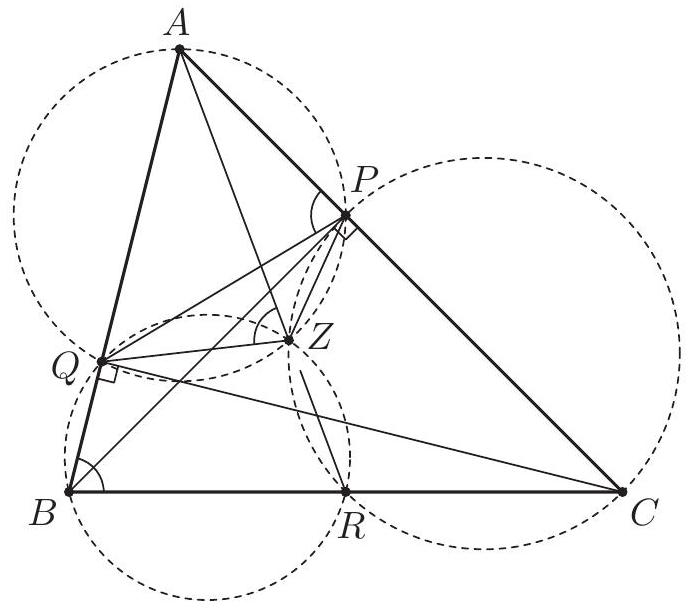

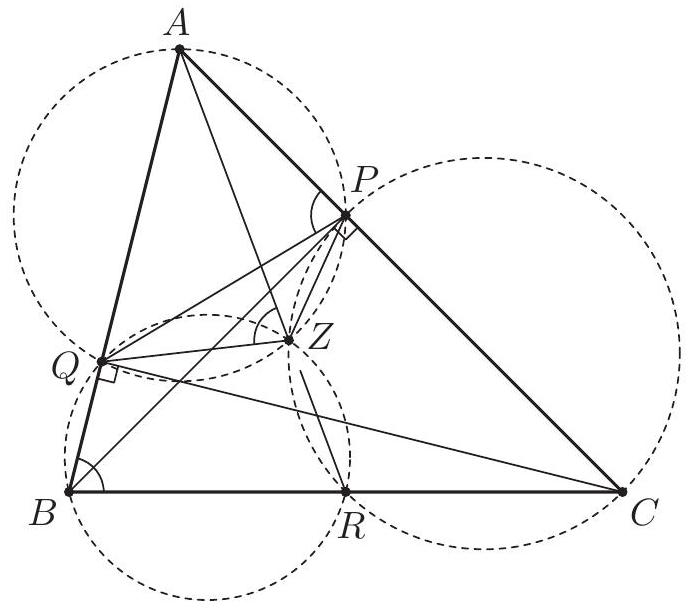

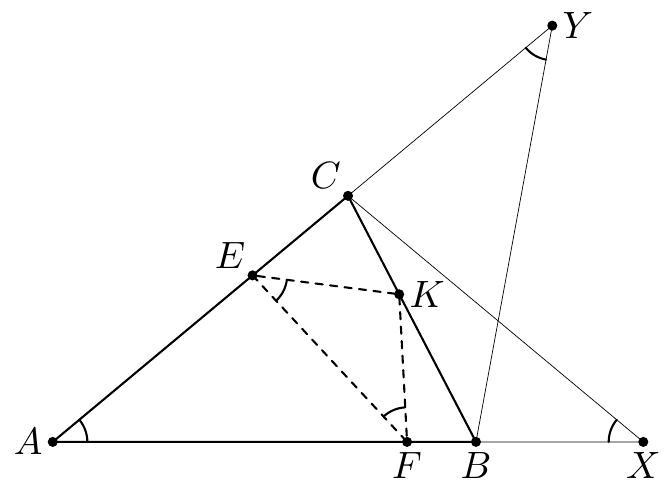

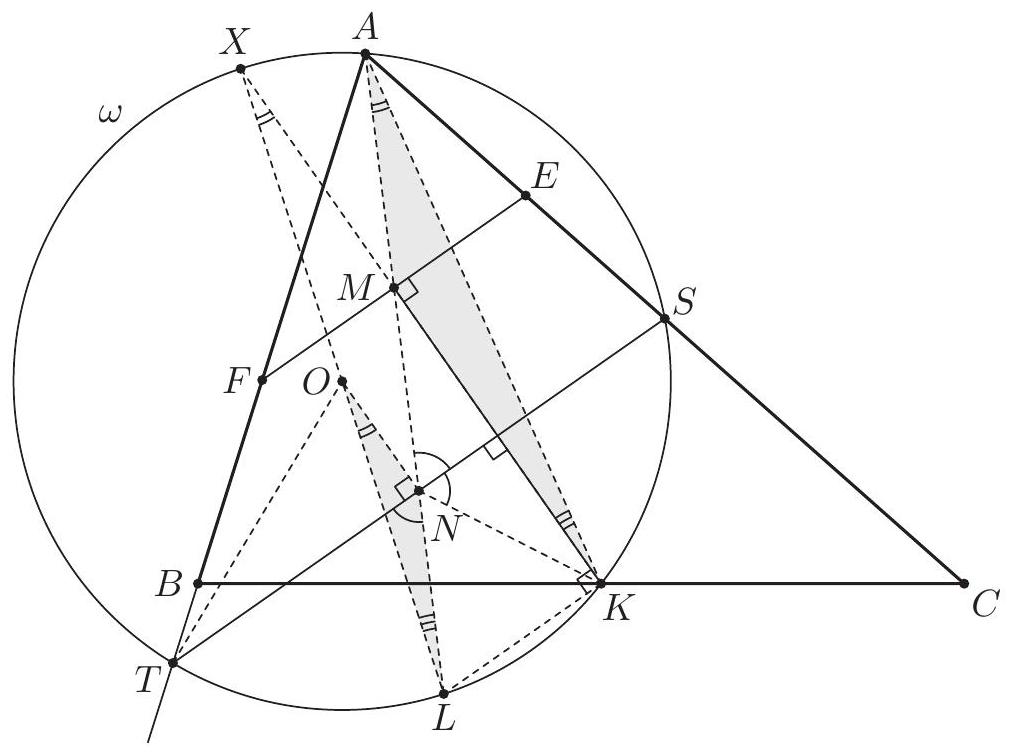

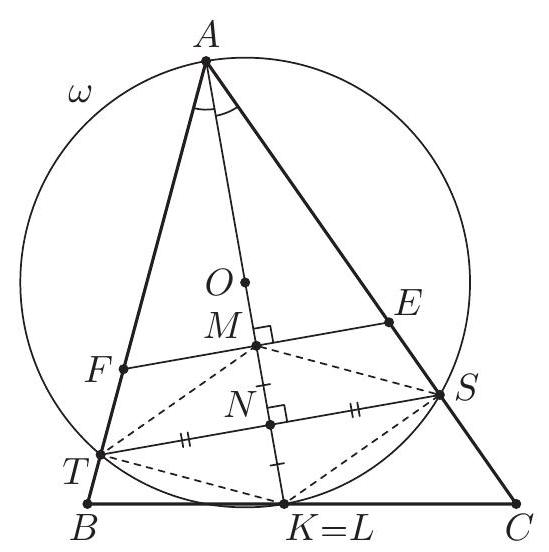

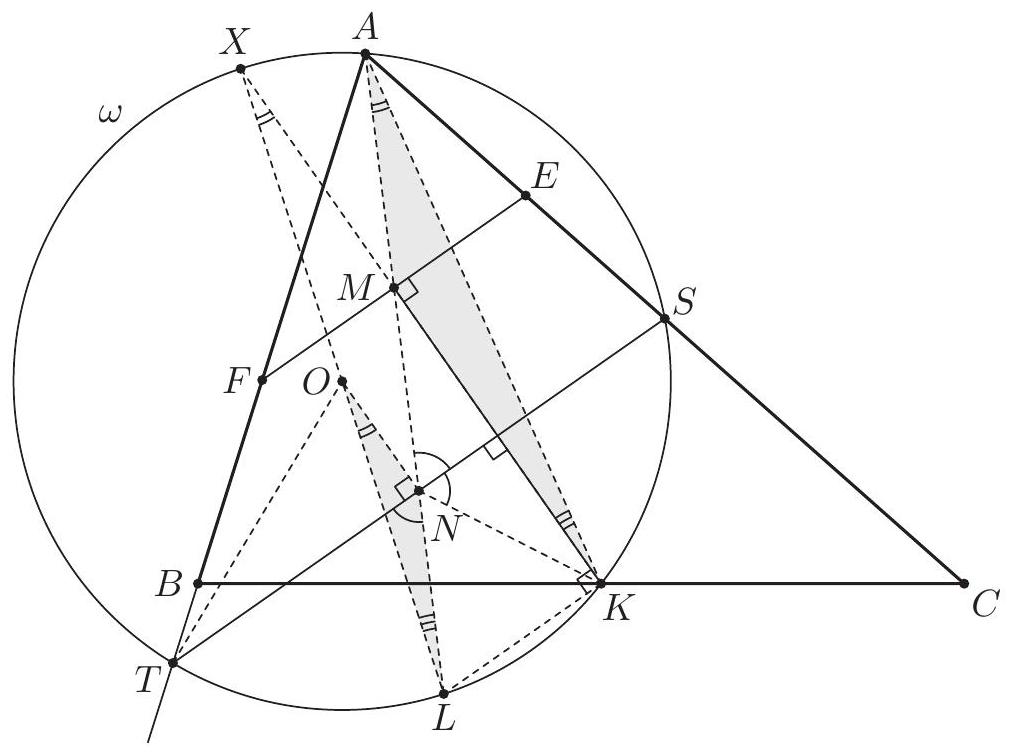

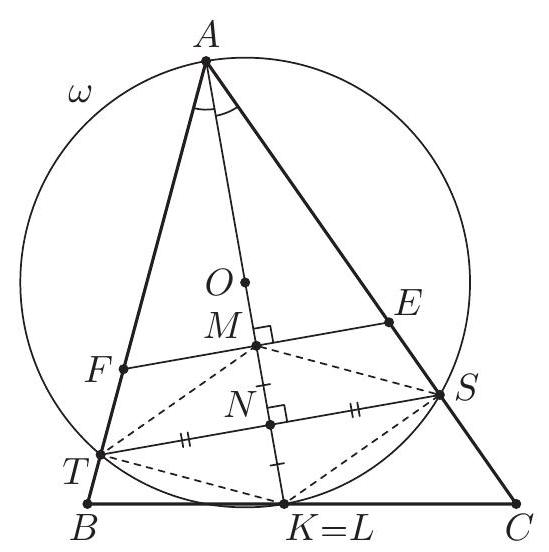

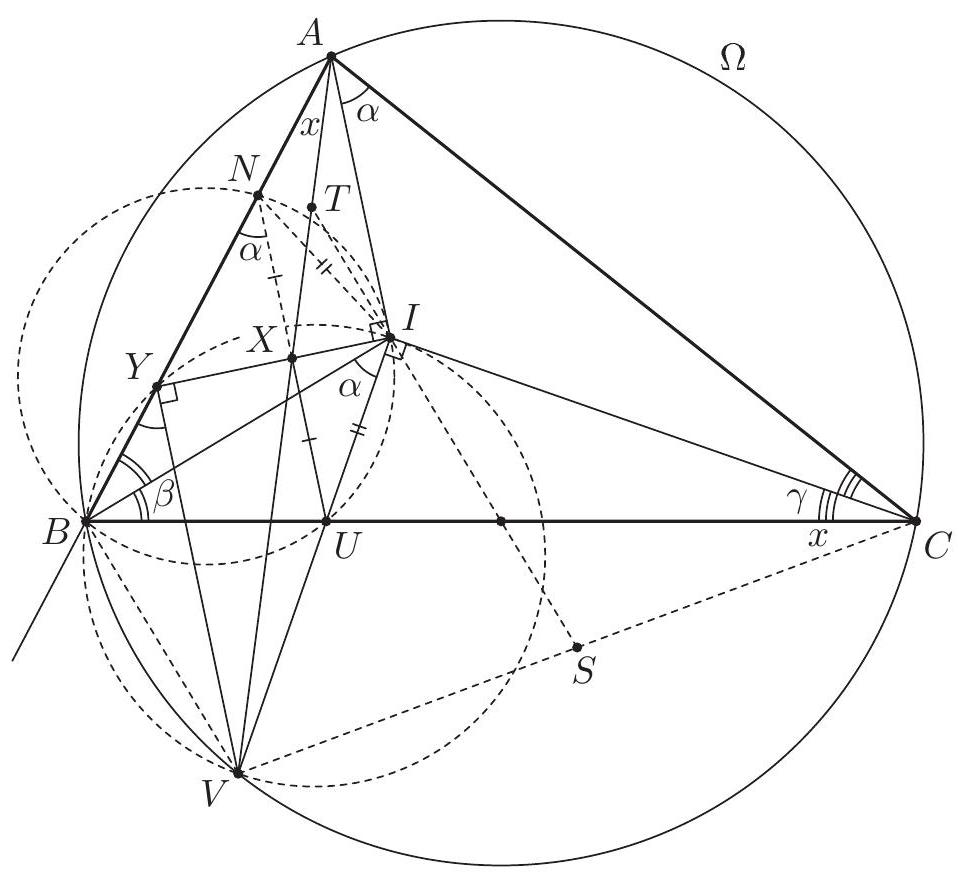

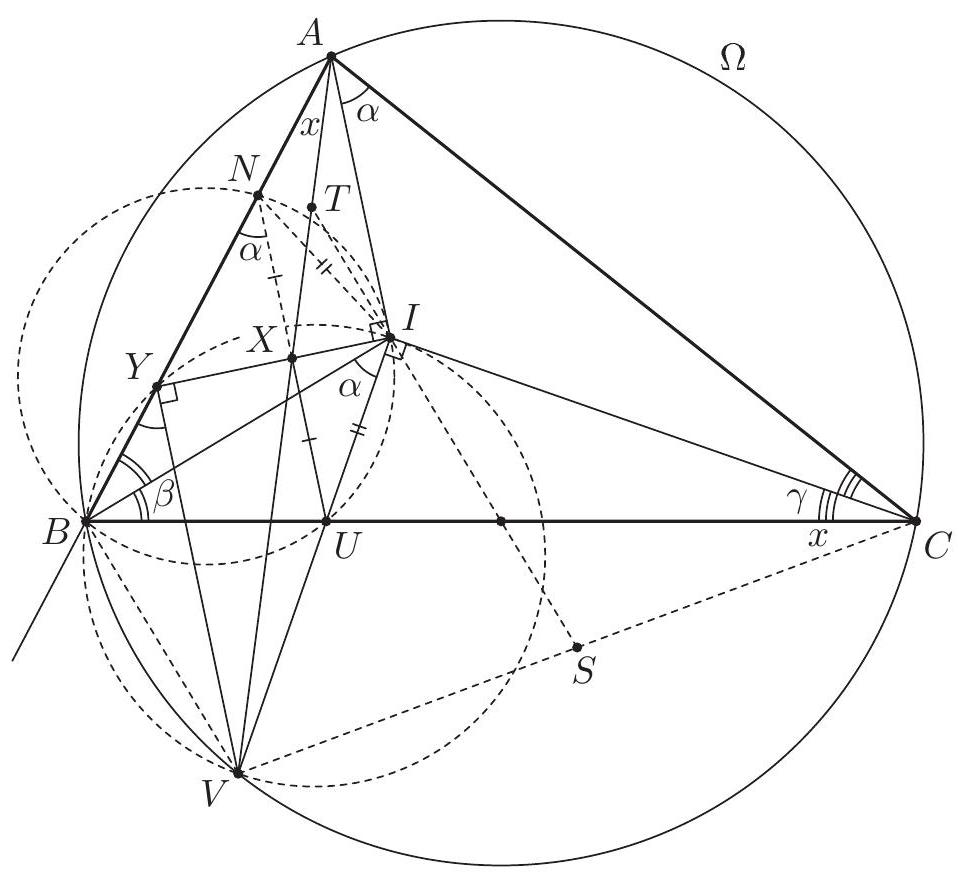

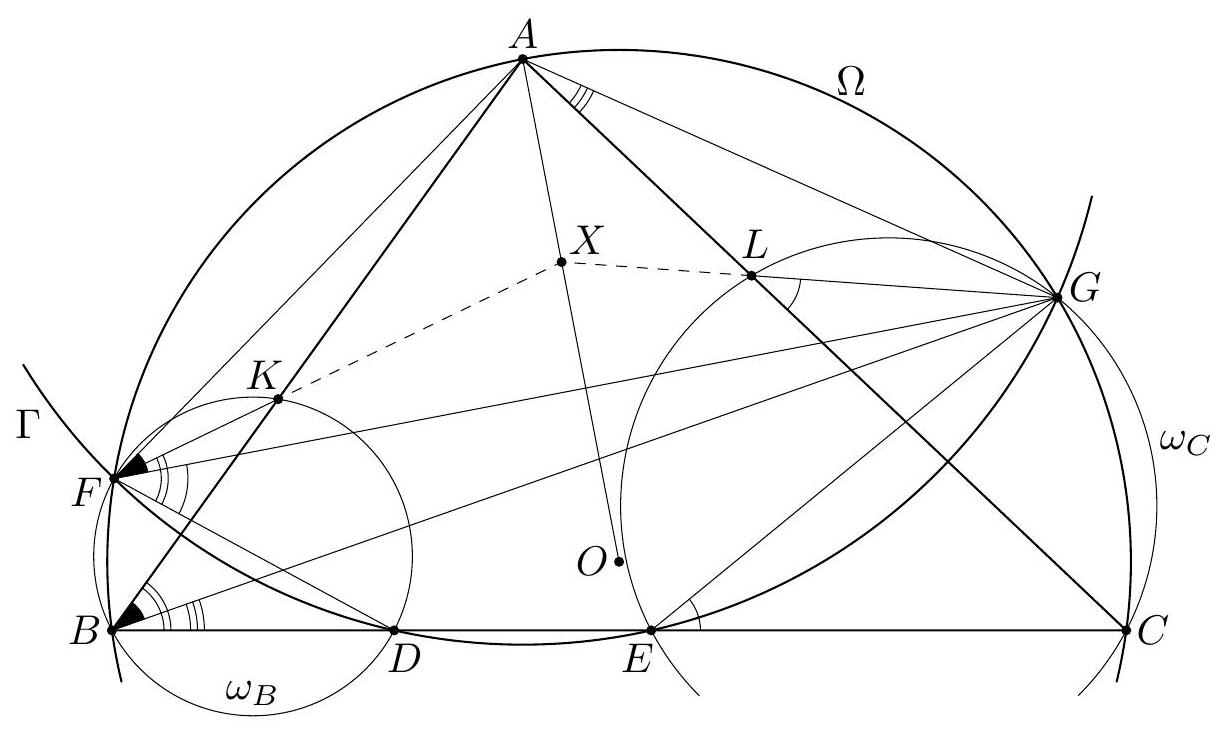

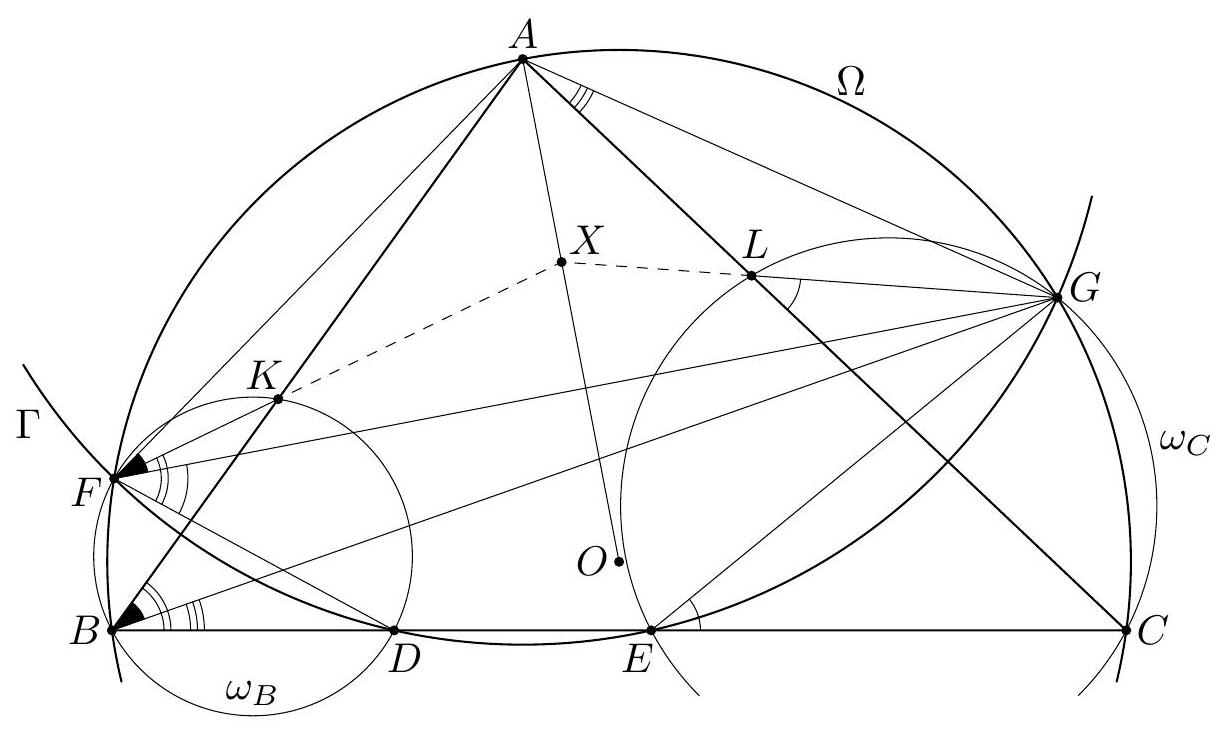

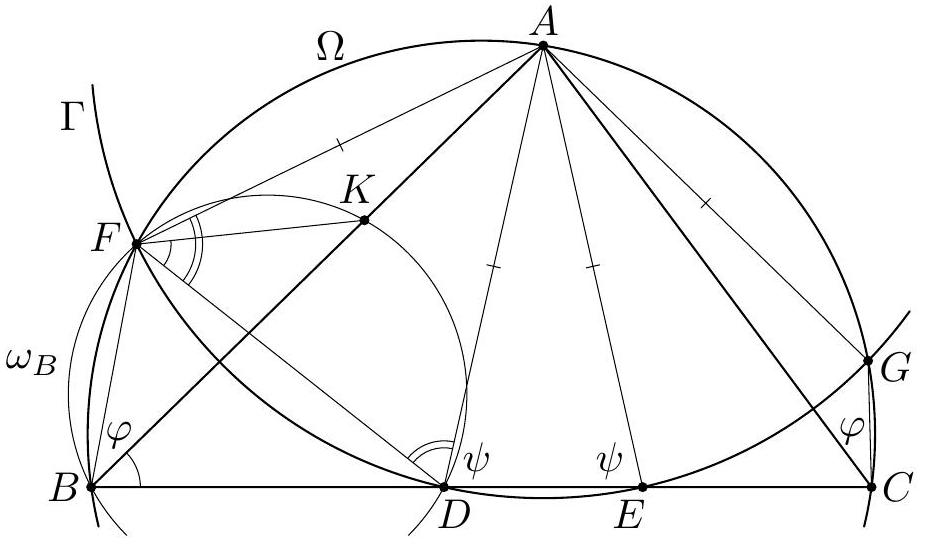

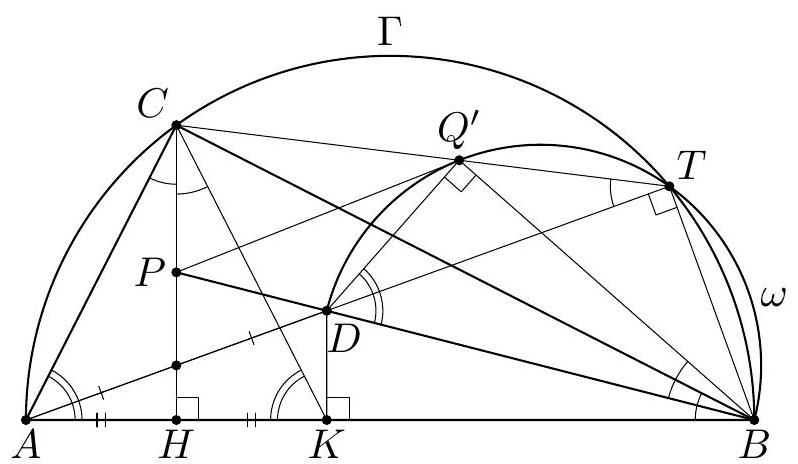

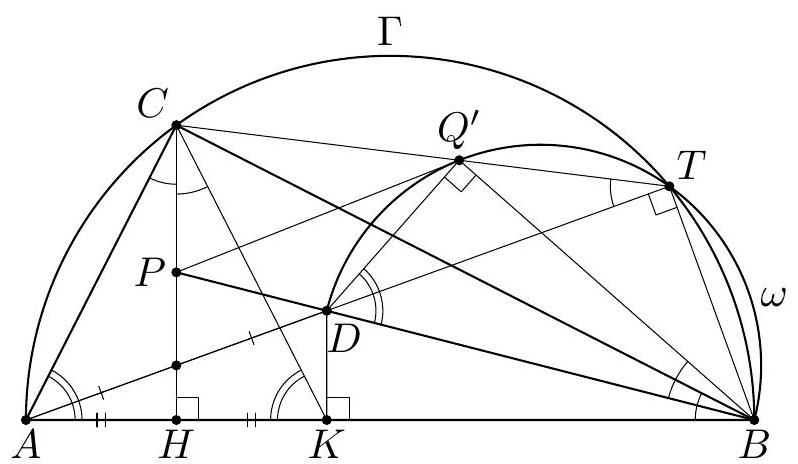

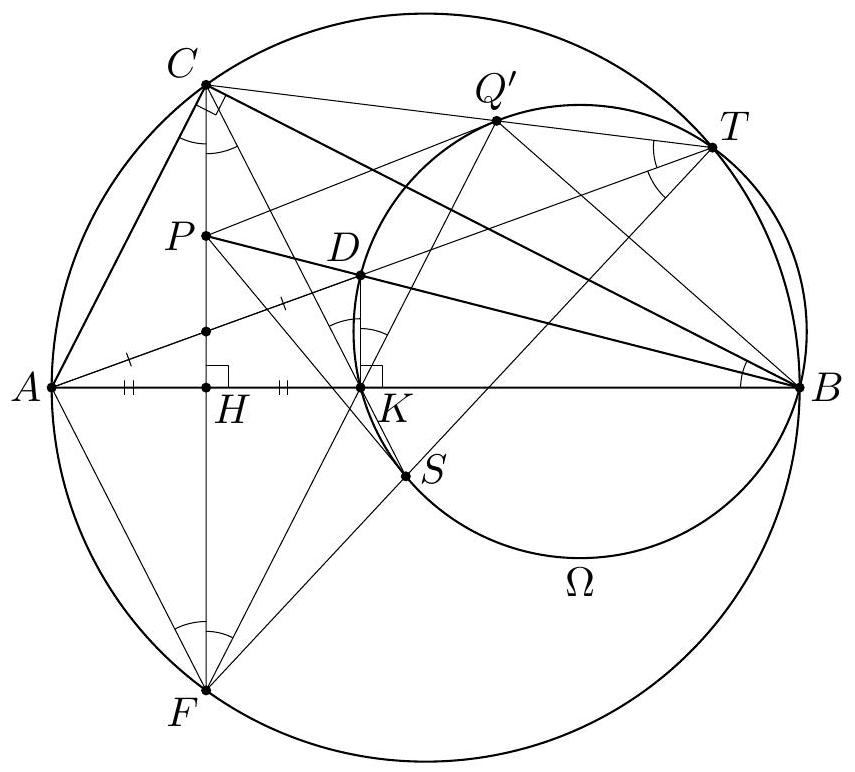

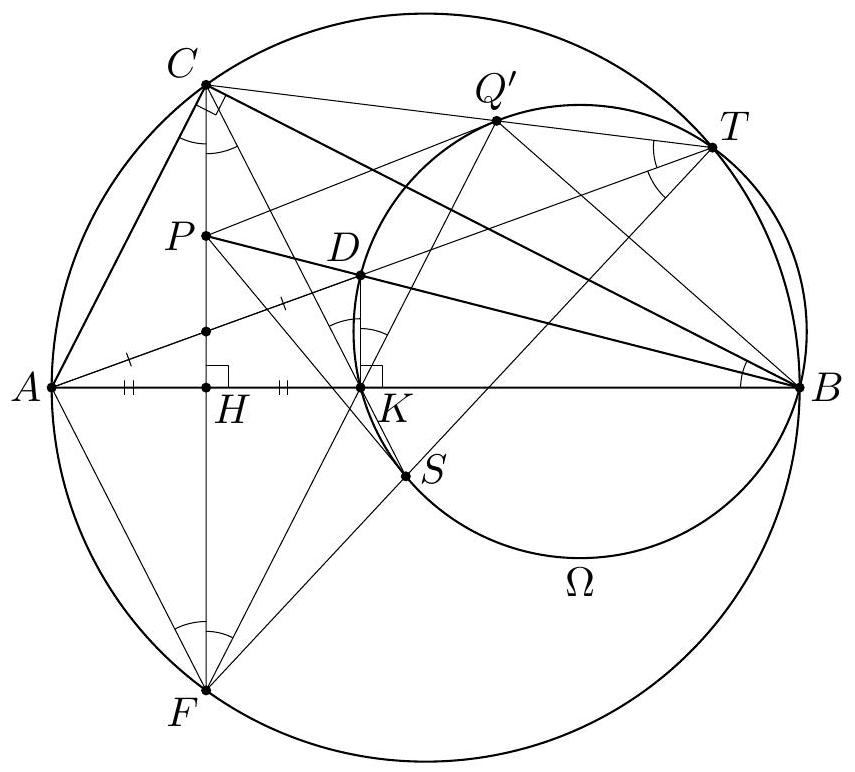

In the triangle $A B C$ the point $J$ is the center of the excircle opposite to $A$. This excircle is tangent to the side $B C$ at $M$, and to the lines $A B$ and $A C$ at $K$ and $L$ respectively. The lines $L M$ and $B J$ meet at $F$, and the lines $K M$ and $C J$ meet at $G$. Let $S$ be the point of intersection of the lines $A F$ and $B C$, and let $T$ be the point of intersection of the lines $A G$ and $B C$. Prove that $M$ is the midpoint of $S T$.

|

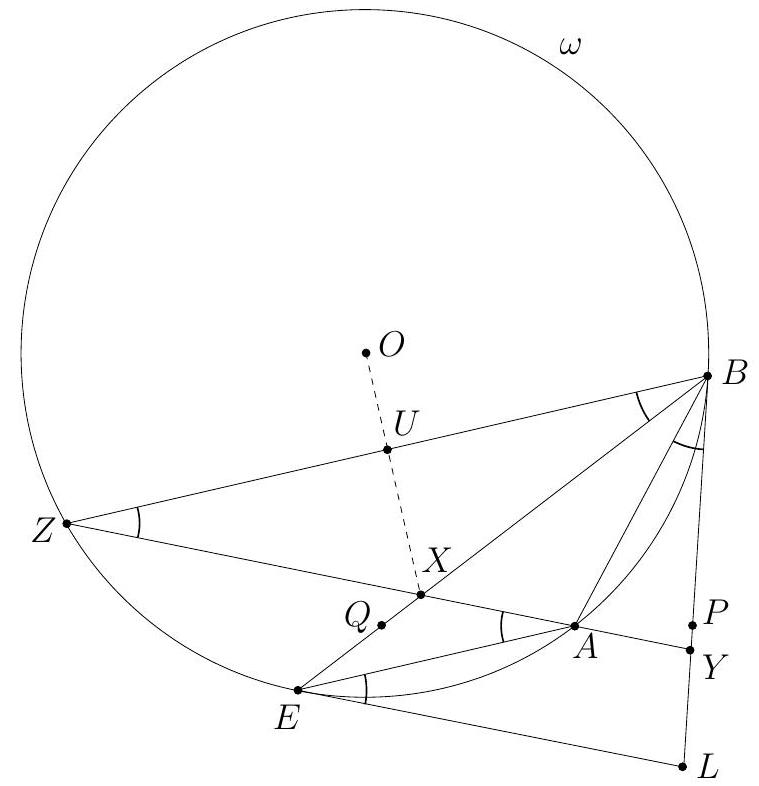

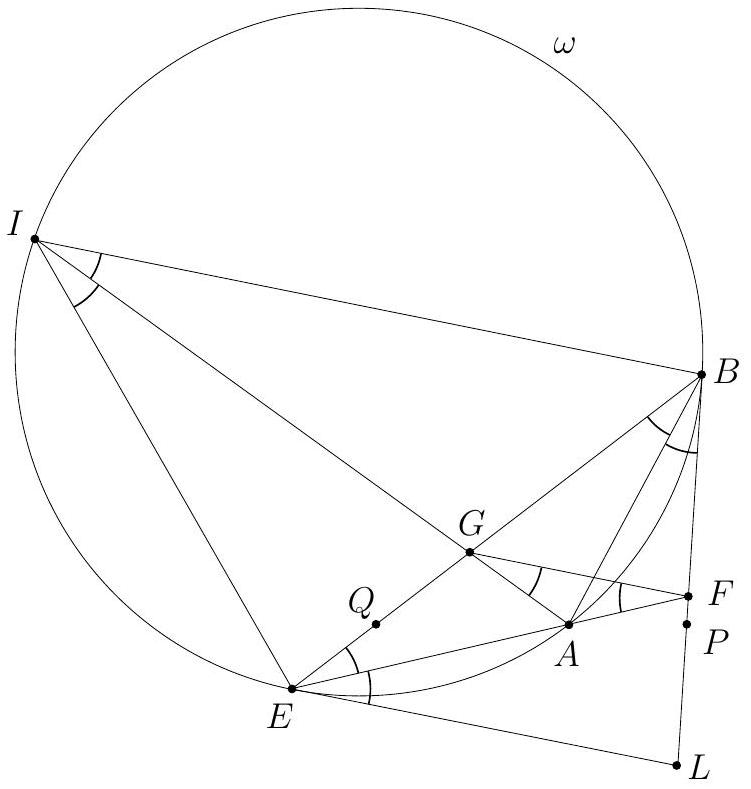

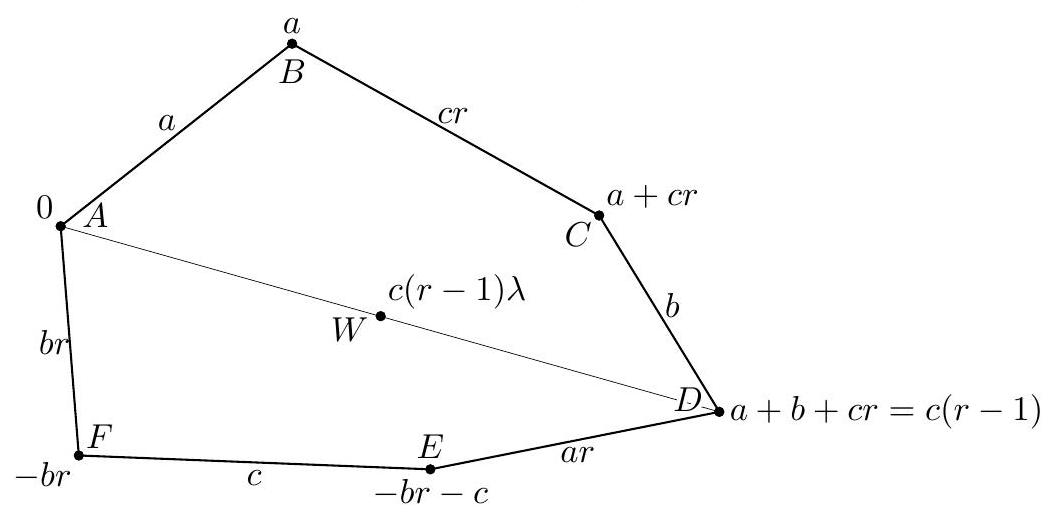

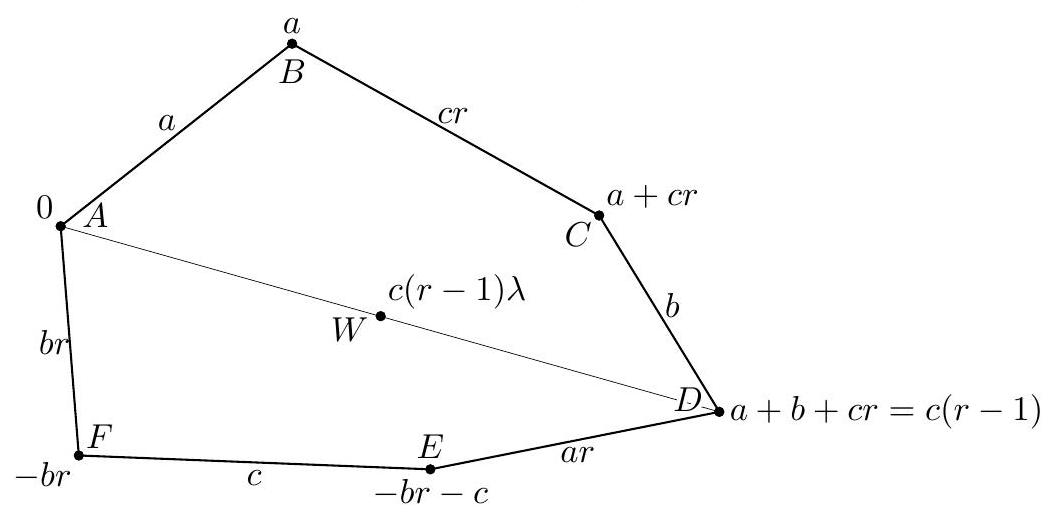

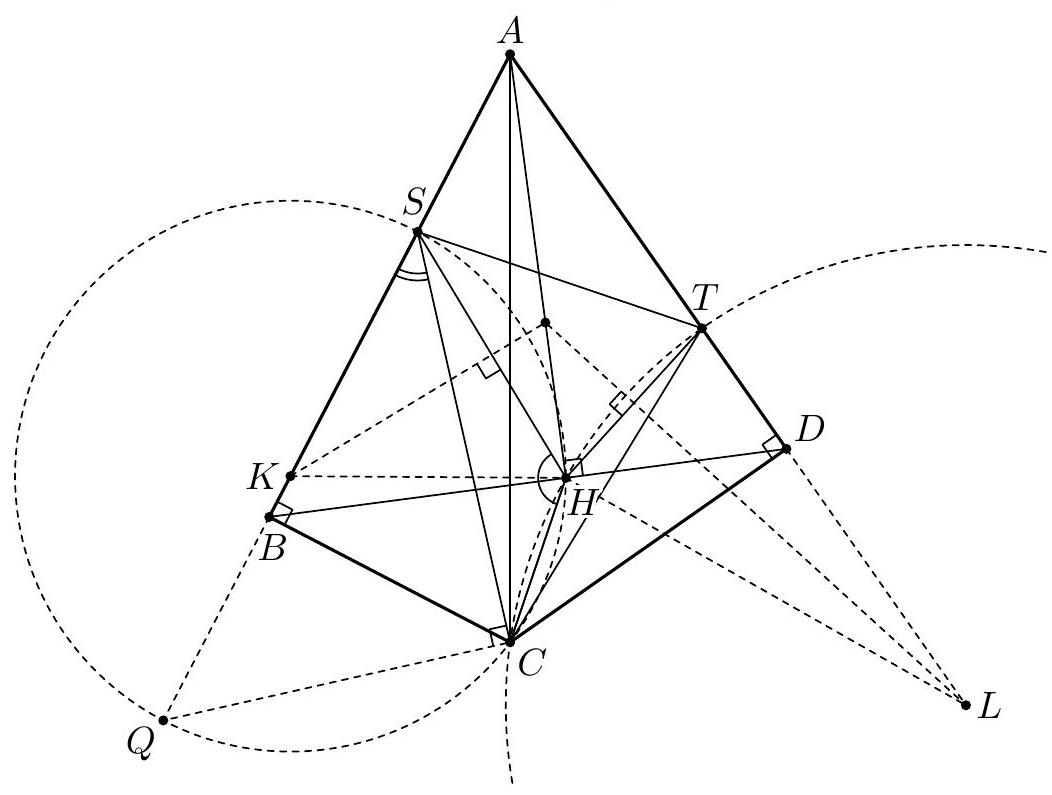

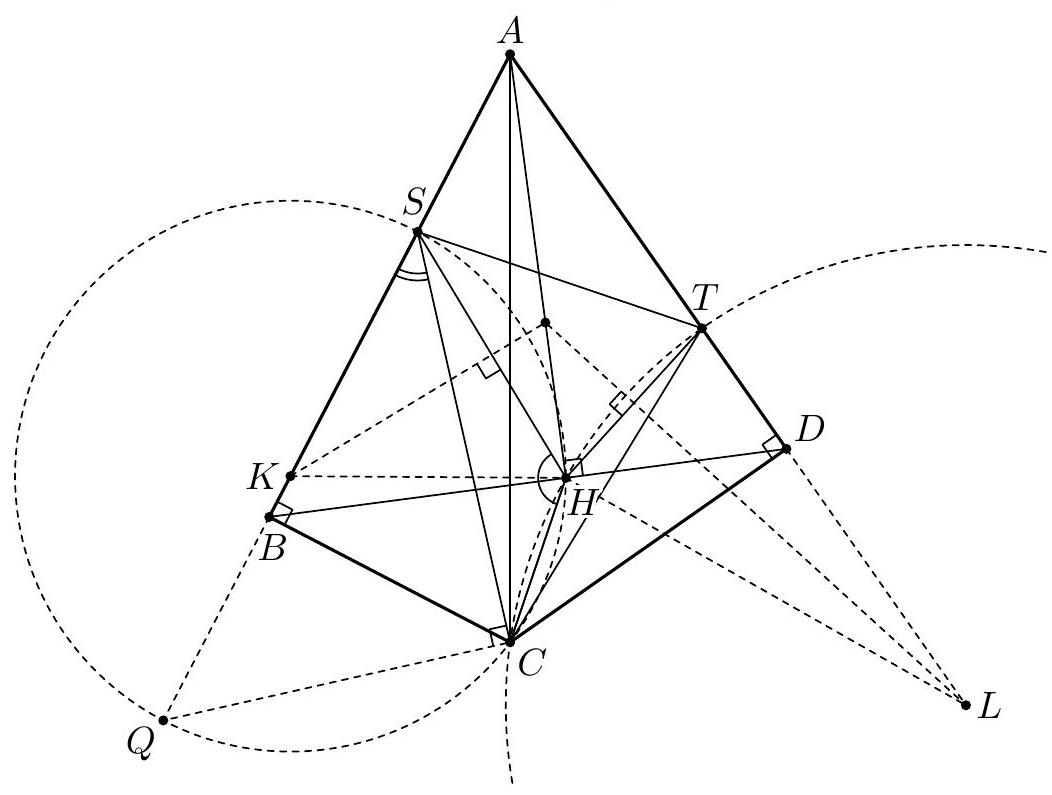

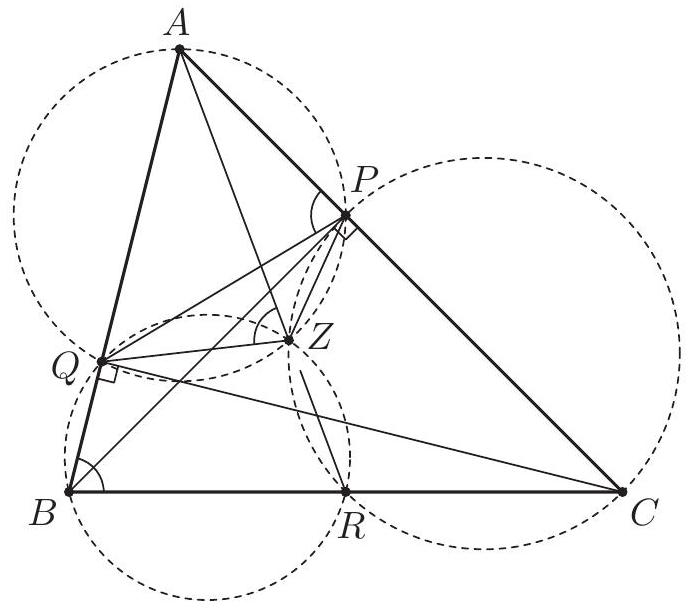

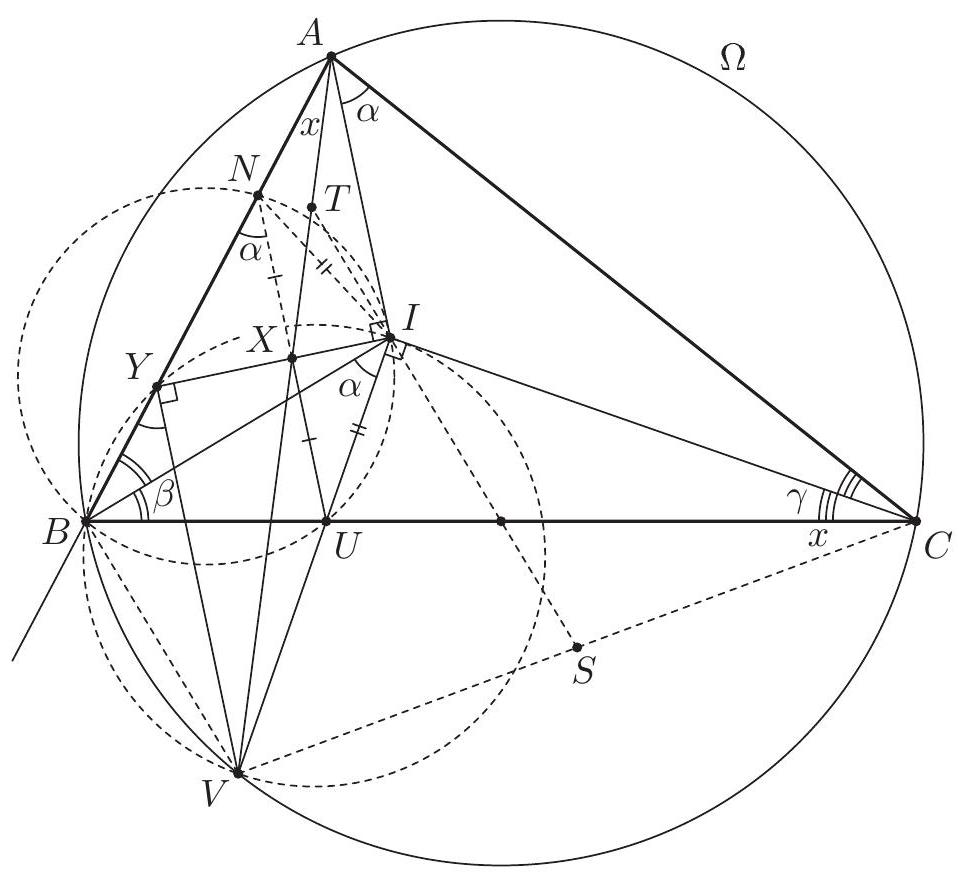

Let $\alpha=\angle C A B, \beta=\angle A B C$ and $\gamma=\angle B C A$. The line $A J$ is the bisector of $\angle C A B$, so $\angle J A K=\angle J A L=\frac{\alpha}{2}$. By $\angle A K J=\angle A L J=90^{\circ}$ the points $K$ and $L$ lie on the circle $\omega$ with diameter $A J$. The triangle $K B M$ is isosceles as $B K$ and $B M$ are tangents to the excircle. Since $B J$ is the bisector of $\angle K B M$, we have $\angle M B J=90^{\circ}-\frac{\beta}{2}$ and $\angle B M K=\frac{\beta}{2}$. Likewise $\angle M C J=90^{\circ}-\frac{\gamma}{2}$ and $\angle C M L=\frac{\gamma}{2}$. Also $\angle B M F=\angle C M L$, therefore $$ \angle L F J=\angle M B J-\angle B M F=\left(90^{\circ}-\frac{\beta}{2}\right)-\frac{\gamma}{2}=\frac{\alpha}{2}=\angle L A J . $$ Hence $F$ lies on the circle $\omega$. (By the angle computation, $F$ and $A$ are on the same side of $B C$.) Analogously, $G$ also lies on $\omega$. Since $A J$ is a diameter of $\omega$, we obtain $\angle A F J=\angle A G J=90^{\circ}$.  The lines $A B$ and $B C$ are symmetric with respect to the external bisector $B F$. Because $A F \perp B F$ and $K M \perp B F$, the segments $S M$ and $A K$ are symmetric with respect to $B F$, hence $S M=A K$. By symmetry $T M=A L$. Since $A K$ and $A L$ are equal as tangents to the excircle, it follows that $S M=T M$, and the proof is complete. Comment. After discovering the circle $A F K J L G$, there are many other ways to complete the solution. For instance, from the cyclic quadrilaterals $J M F S$ and $J M G T$ one can find $\angle T S J=\angle S T J=\frac{\alpha}{2}$. Another possibility is to use the fact that the lines $A S$ and $G M$ are parallel (both are perpendicular to the external angle bisector $B J$ ), so $\frac{M S}{M T}=\frac{A G}{G T}=1$.
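
As a numerical sanity check of the statement (not a proof), the configuration can be built in coordinates. The sketch below assumes one sample triangle and uses the standard formula for the excenter opposite $A$. The helper names `foot`, `meet` and `excircle_configuration` are hypothetical.

```python
import math


def foot(p, a, b):
    """Foot of the perpendicular from p to the line ab."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    t = ((p[0] - a[0]) * dx + (p[1] - a[1]) * dy) / (dx * dx + dy * dy)
    return (a[0] + t * dx, a[1] + t * dy)


def meet(p1, p2, p3, p4):
    """Intersection of the lines p1p2 and p3p4 (assumed not parallel)."""
    d1 = (p2[0] - p1[0], p2[1] - p1[1])
    d2 = (p4[0] - p3[0], p4[1] - p3[1])
    den = d1[0] * d2[1] - d1[1] * d2[0]
    t = ((p3[0] - p1[0]) * d2[1] - (p3[1] - p1[1]) * d2[0]) / den
    return (p1[0] + t * d1[0], p1[1] + t * d1[1])


def excircle_configuration(A, B, C):
    """Return the points M, S, T of the problem for the triangle ABC."""
    a, b, c = math.dist(B, C), math.dist(C, A), math.dist(A, B)
    w = -a + b + c
    # Excenter opposite A: (-a*A + b*B + c*C) / (-a + b + c).
    J = ((-a * A[0] + b * B[0] + c * C[0]) / w,
         (-a * A[1] + b * B[1] + c * C[1]) / w)
    # Tangency points are the feet of the perpendiculars from J.
    M, K, L = foot(J, B, C), foot(J, A, B), foot(J, A, C)
    F = meet(L, M, B, J)
    G = meet(K, M, C, J)
    S = meet(A, F, B, C)
    T = meet(A, G, B, C)
    return M, S, T


# Sample triangle (an arbitrary choice for illustration).
M, S, T = excircle_configuration((1.0, 4.0), (0.0, 0.0), (5.0, 0.0))
midpoint = ((S[0] + T[0]) / 2, (S[1] + T[1]) / 2)
print(math.dist(M, midpoint))  # expected to be zero up to rounding
```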

|

proof

|

Yes

|

Yes

|

proof

|

Geometry

|

In the triangle $A B C$ the point $J$ is the center of the excircle opposite to $A$. This excircle is tangent to the side $B C$ at $M$, and to the lines $A B$ and $A C$ at $K$ and $L$ respectively. The lines $L M$ and $B J$ meet at $F$, and the lines $K M$ and $C J$ meet at $G$. Let $S$ be the point of intersection of the lines $A F$ and $B C$, and let $T$ be the point of intersection of the lines $A G$ and $B C$. Prove that $M$ is the midpoint of $S T$.

|

Let $\alpha=\angle C A B, \beta=\angle A B C$ and $\gamma=\angle B C A$. The line $A J$ is the bisector of $\angle C A B$, so $\angle J A K=\angle J A L=\frac{\alpha}{2}$. By $\angle A K J=\angle A L J=90^{\circ}$ the points $K$ and $L$ lie on the circle $\omega$ with diameter $A J$. The triangle $K B M$ is isosceles as $B K$ and $B M$ are tangents to the excircle. Since $B J$ is the bisector of $\angle K B M$, we have $\angle M B J=90^{\circ}-\frac{\beta}{2}$ and $\angle B M K=\frac{\beta}{2}$. Likewise $\angle M C J=90^{\circ}-\frac{\gamma}{2}$ and $\angle C M L=\frac{\gamma}{2}$. Also $\angle B M F=\angle C M L$, therefore $$ \angle L F J=\angle M B J-\angle B M F=\left(90^{\circ}-\frac{\beta}{2}\right)-\frac{\gamma}{2}=\frac{\alpha}{2}=\angle L A J . $$ Hence $F$ lies on the circle $\omega$. (By the angle computation, $F$ and $A$ are on the same side of $B C$.) Analogously, $G$ also lies on $\omega$. Since $A J$ is a diameter of $\omega$, we obtain $\angle A F J=\angle A G J=90^{\circ}$.  The lines $A B$ and $B C$ are symmetric with respect to the external bisector $B F$. Because $A F \perp B F$ and $K M \perp B F$, the segments $S M$ and $A K$ are symmetric with respect to $B F$, hence $S M=A K$. By symmetry $T M=A L$. Since $A K$ and $A L$ are equal as tangents to the excircle, it follows that $S M=T M$, and the proof is complete. Comment. After discovering the circle $A F K J L G$, there are many other ways to complete the solution. For instance, from the cyclic quadrilaterals $J M F S$ and $J M G T$ one can find $\angle T S J=\angle S T J=\frac{\alpha}{2}$. Another possibility is to use the fact that the lines $A S$ and $G M$ are parallel (both are perpendicular to the external angle bisector $B J$ ), so $\frac{M S}{M T}=\frac{A G}{G T}=1$.

|

{

"resource_path": "IMO/segmented/en-IMO2012SL.jsonl",

"problem_match": null,

"solution_match": null

}

|

af8ca3ab-1067-5f94-b043-bcc229327c29

| 24,185

|

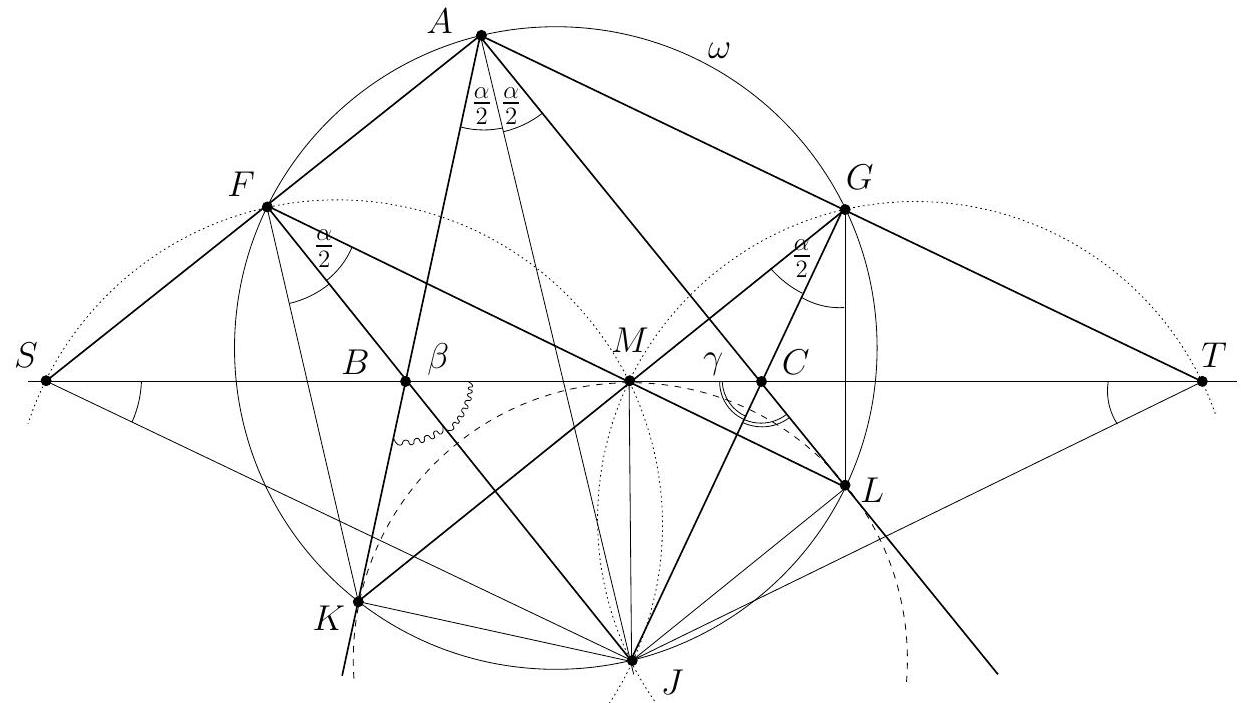

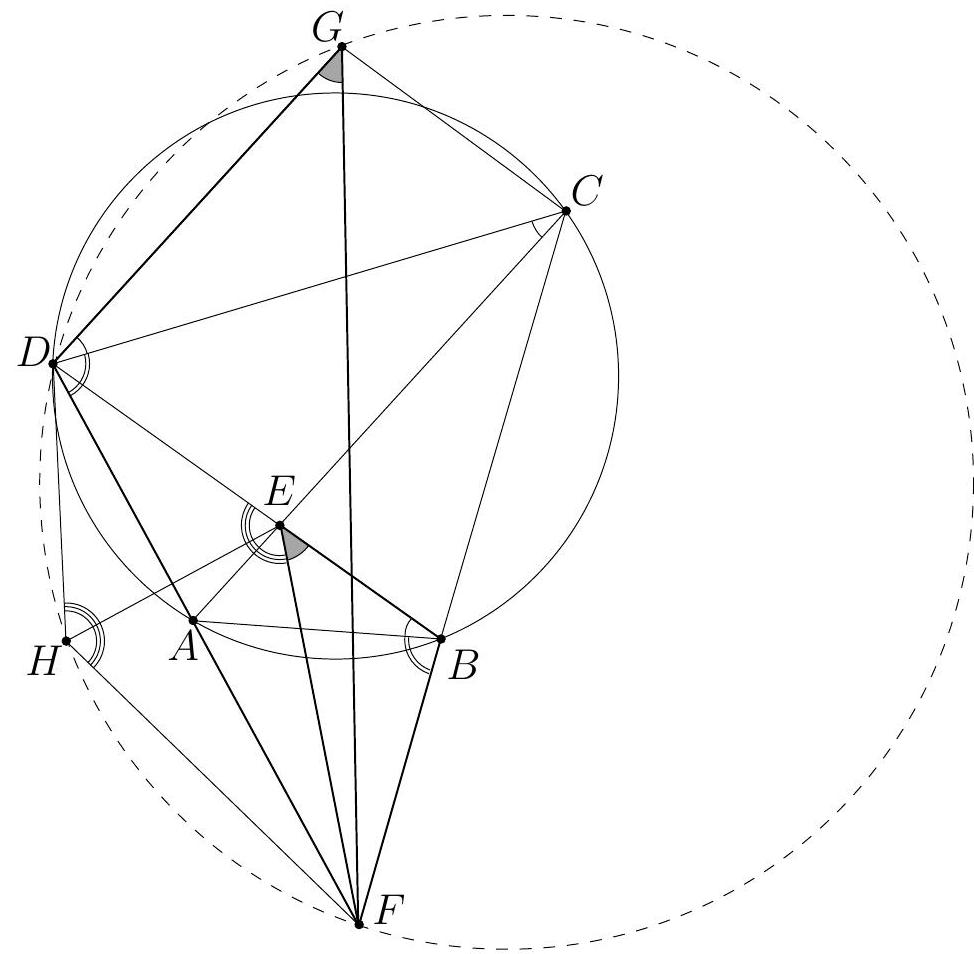

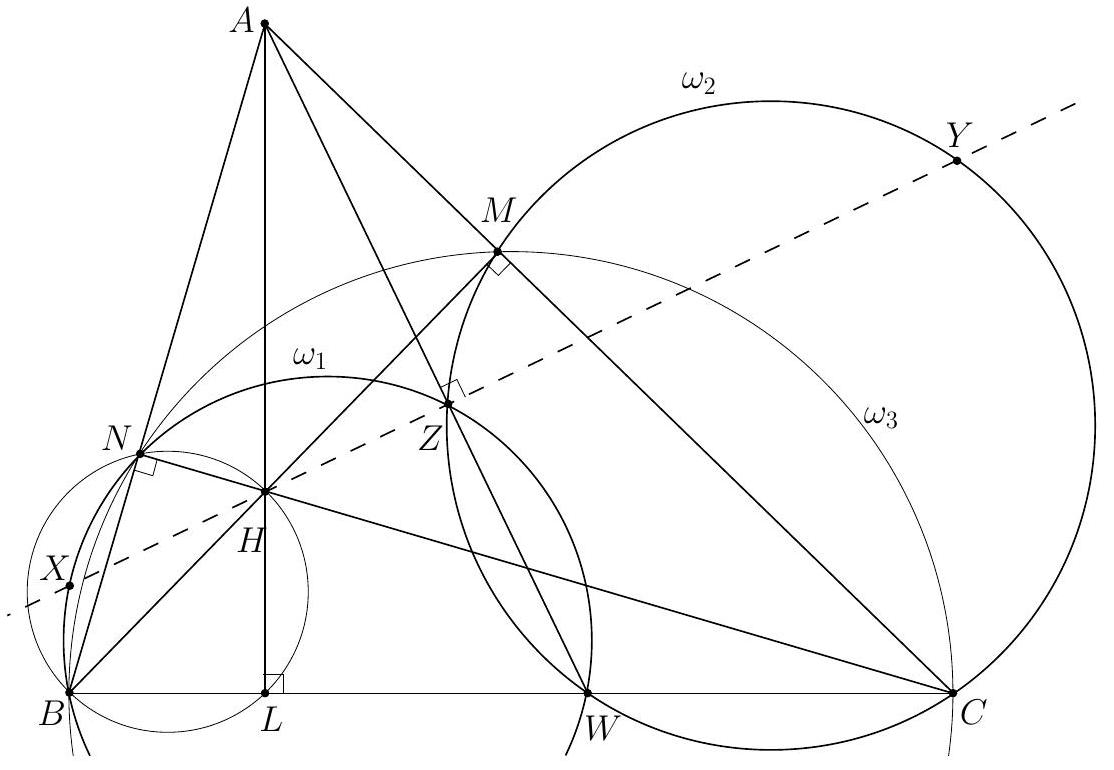

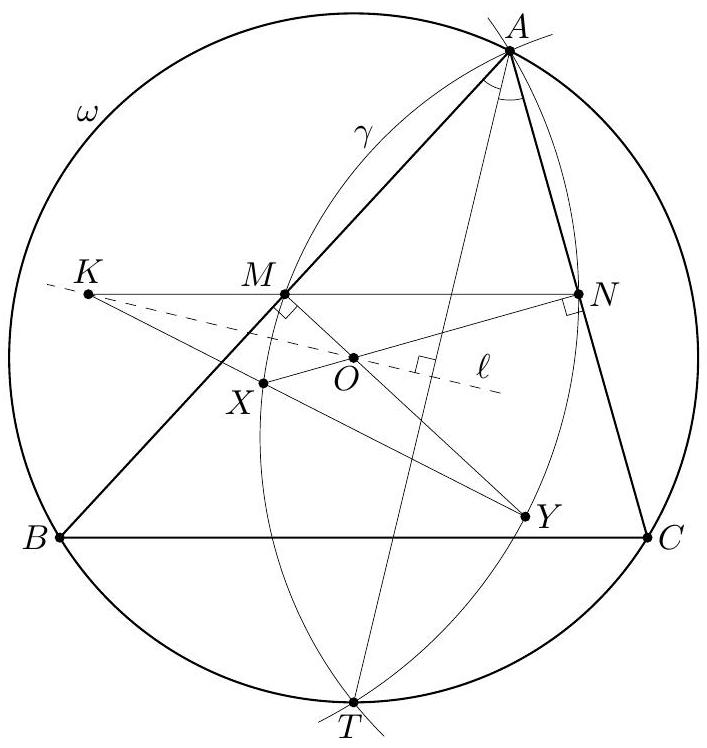

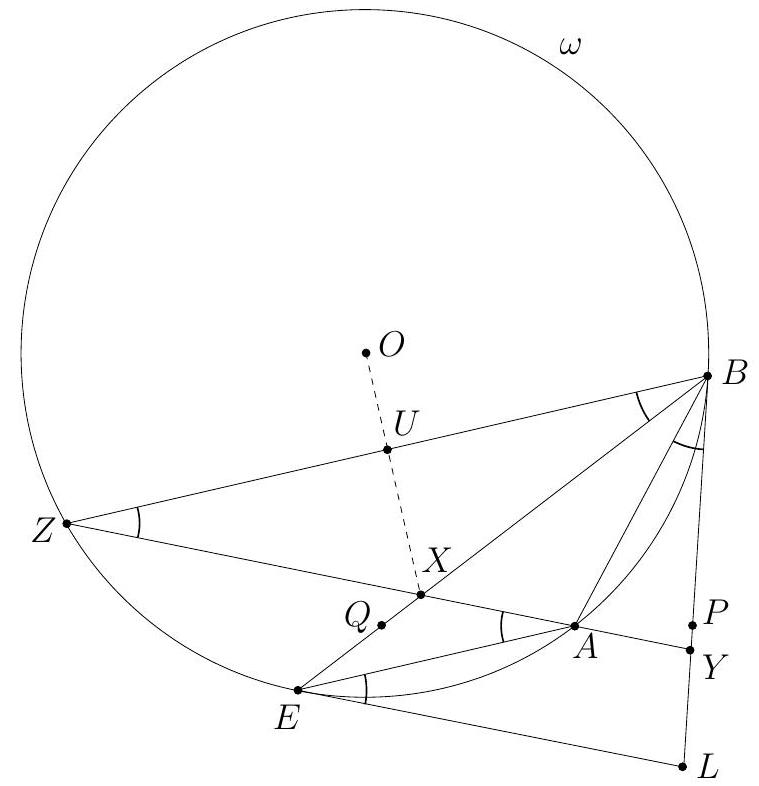

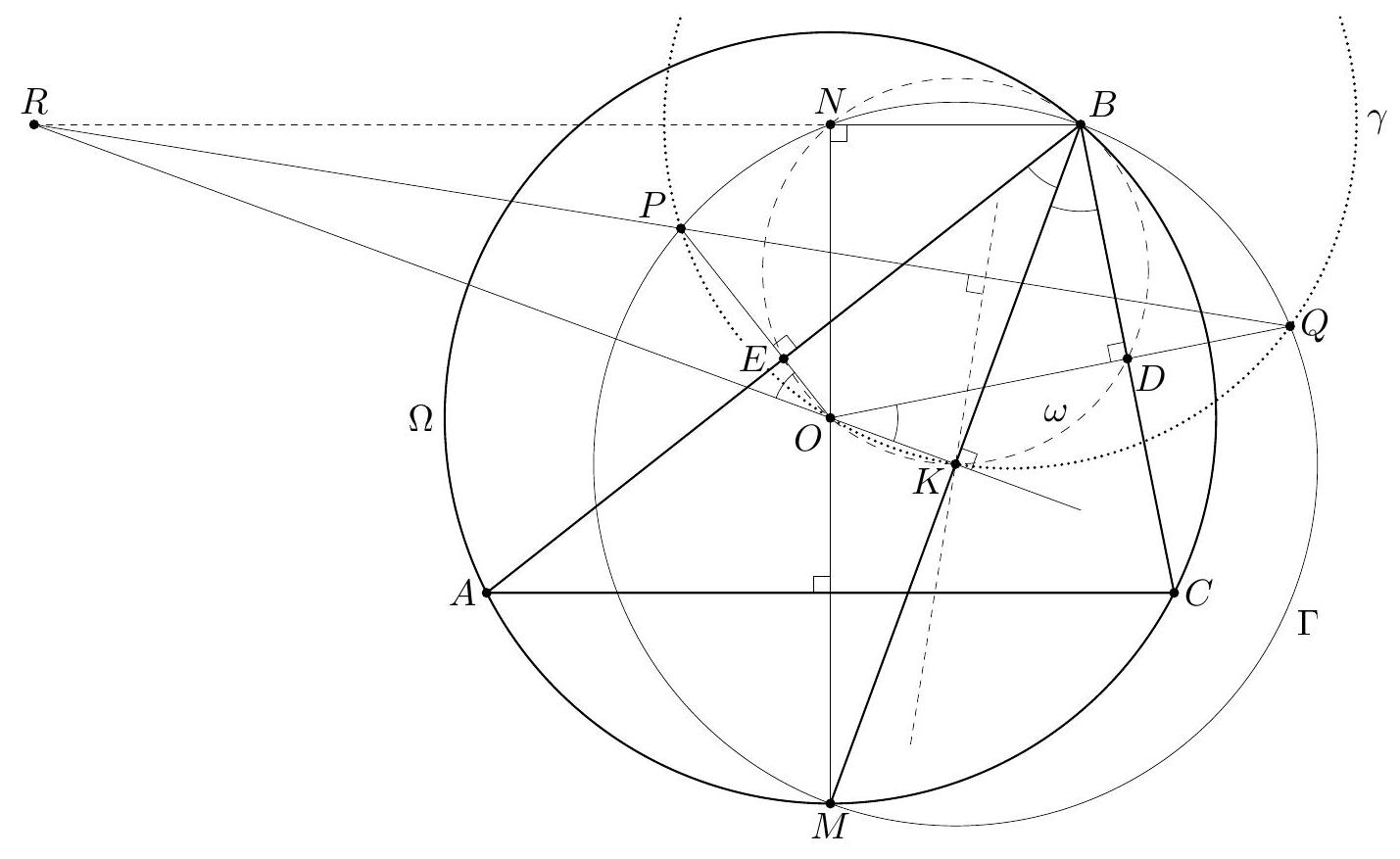

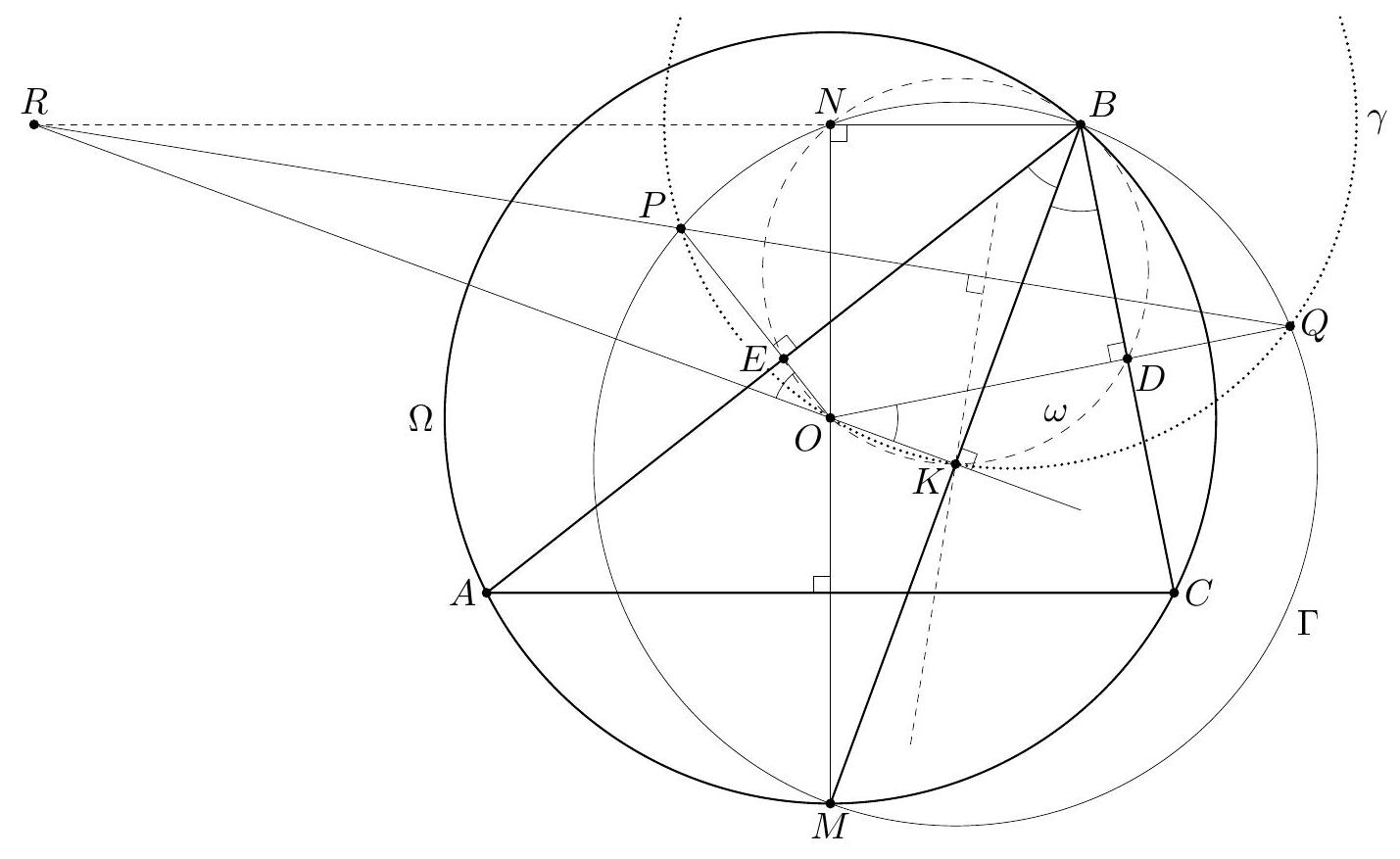

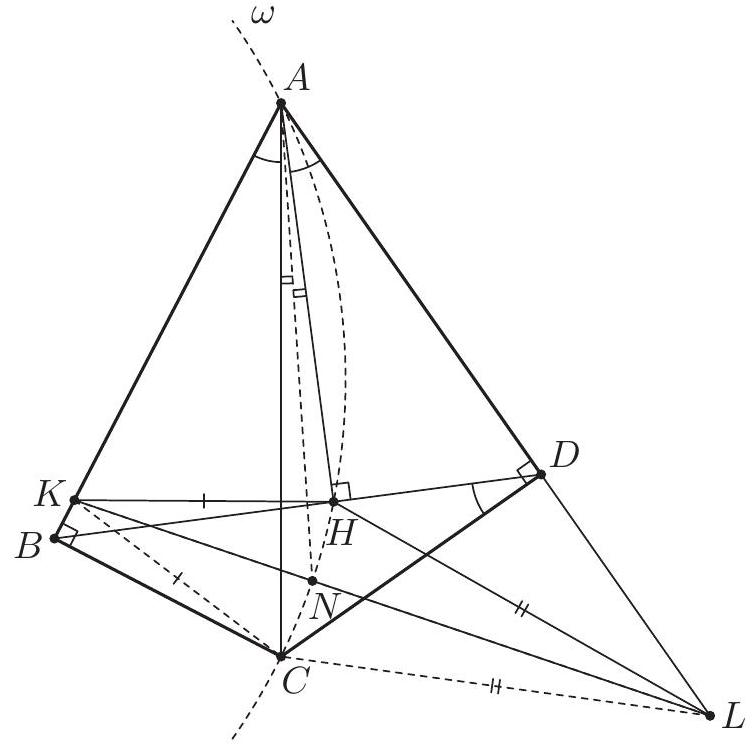

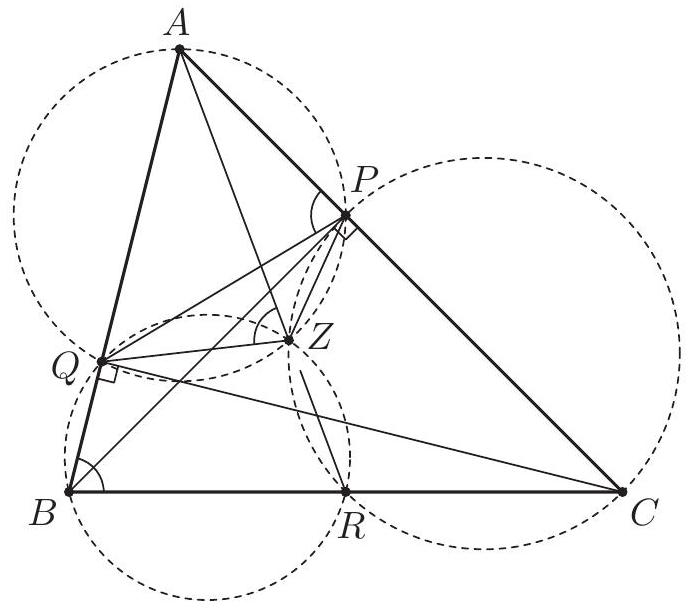

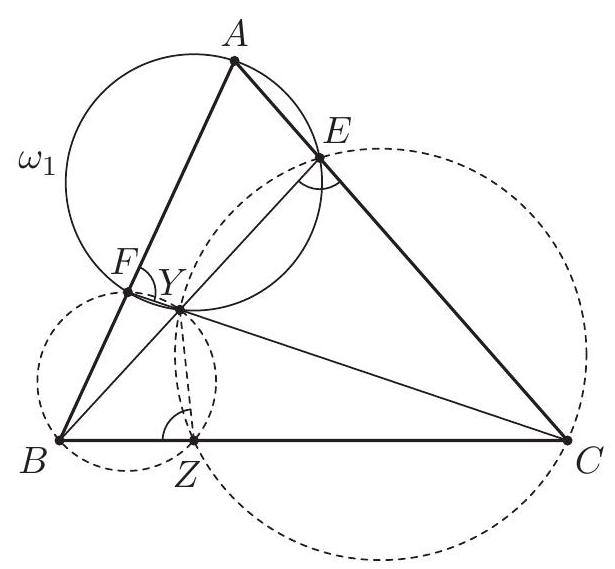

Let $A B C D$ be a cyclic quadrilateral whose diagonals $A C$ and $B D$ meet at $E$. The extensions of the sides $A D$ and $B C$ beyond $A$ and $B$ meet at $F$. Let $G$ be the point such that $E C G D$ is a parallelogram, and let $H$ be the image of $E$ under reflection in $A D$. Prove that $D, H, F, G$ are concyclic.

|

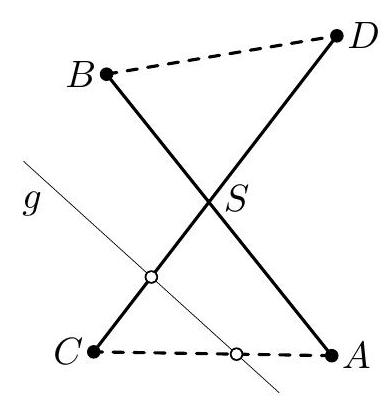

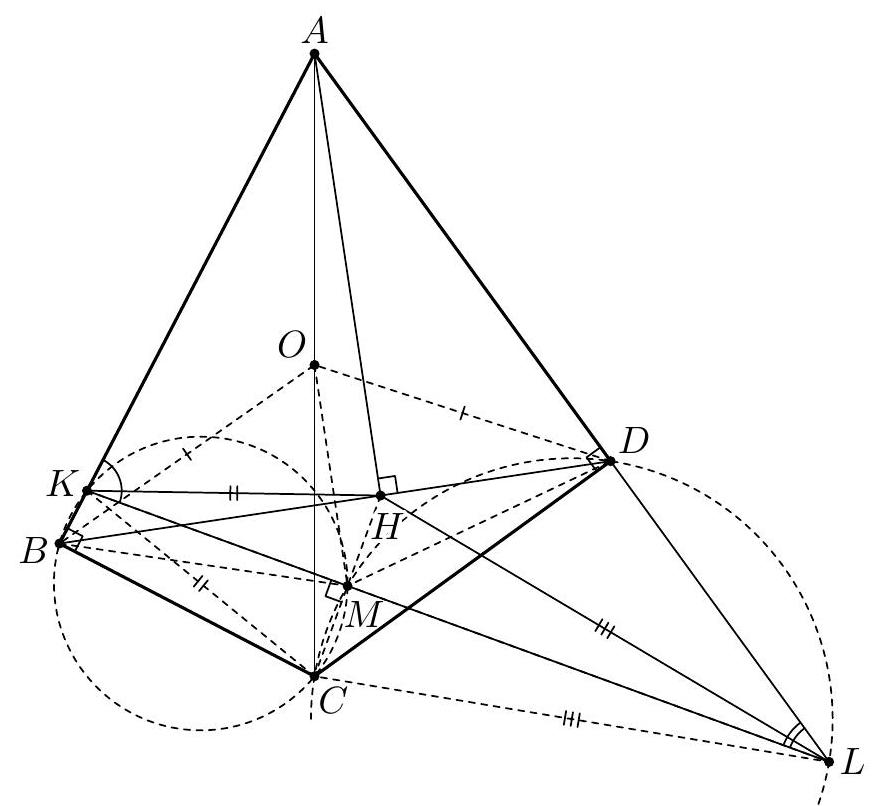

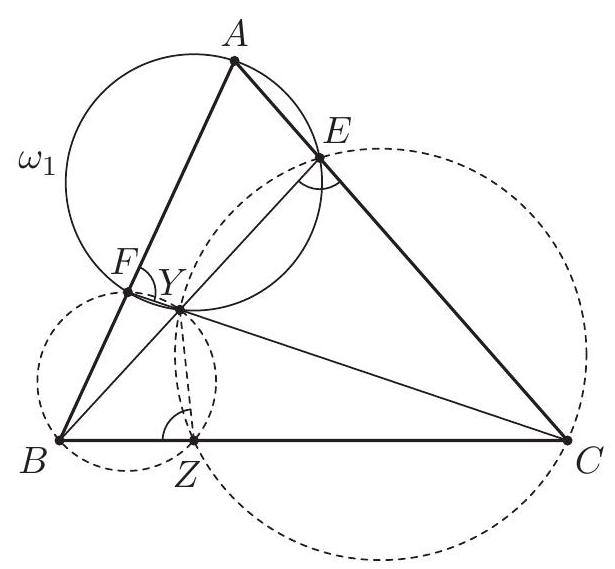

We show first that the triangles $F D G$ and $F B E$ are similar. Since $A B C D$ is cyclic, the triangles $E A B$ and $E D C$ are similar, as well as $F A B$ and $F C D$. The parallelogram $E C G D$ yields $G D=E C$ and $\angle C D G=\angle D C E$; also $\angle D C E=\angle D C A=\angle D B A$ by inscribed angles. Therefore $$ \begin{gathered} \angle F D G=\angle F D C+\angle C D G=\angle F B A+\angle A B D=\angle F B E, \\ \frac{G D}{E B}=\frac{C E}{E B}=\frac{C D}{A B}=\frac{F D}{F B} . \end{gathered} $$ It follows that $F D G$ and $F B E$ are similar, and so $\angle F G D=\angle F E B$.  Since $H$ is the reflection of $E$ with respect to $F D$, we conclude that $$ \angle F H D=\angle F E D=180^{\circ}-\angle F E B=180^{\circ}-\angle F G D . $$ This proves that $D, H, F, G$ are concyclic. Comment. Points $E$ and $G$ are always in the half-plane determined by the line $F D$ that contains $B$ and $C$, but $H$ is always in the other half-plane. In particular, $D H F G$ is cyclic if and only if $\angle F H D+\angle F G D=180^{\circ}$.
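
The claim can also be checked numerically on one concrete configuration. The sketch below is a sanity check under assumptions, not a proof: the four points are placed on the unit circle at arbitrarily chosen angles for which the lines $A D$ and $B C$ meet beyond $A$ and $B$, and the helpers `meet`, `reflect`, `circumcenter` and `on_circle` are hypothetical names.

```python
import math


def meet(p1, p2, p3, p4):
    """Intersection of the lines p1p2 and p3p4 (assumed not parallel)."""
    d1 = (p2[0] - p1[0], p2[1] - p1[1])
    d2 = (p4[0] - p3[0], p4[1] - p3[1])
    den = d1[0] * d2[1] - d1[1] * d2[0]
    t = ((p3[0] - p1[0]) * d2[1] - (p3[1] - p1[1]) * d2[0]) / den
    return (p1[0] + t * d1[0], p1[1] + t * d1[1])


def reflect(p, a, b):
    """Mirror image of p in the line ab."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    t = ((p[0] - a[0]) * dx + (p[1] - a[1]) * dy) / (dx * dx + dy * dy)
    fx, fy = a[0] + t * dx, a[1] + t * dy  # foot of the perpendicular
    return (2 * fx - p[0], 2 * fy - p[1])


def circumcenter(p, q, r):
    """Circumcenter of the triangle pqr (assumed non-degenerate)."""
    sp, sq, sr = (p[0]**2 + p[1]**2, q[0]**2 + q[1]**2, r[0]**2 + r[1]**2)
    d = 2 * (p[0] * (q[1] - r[1]) + q[0] * (r[1] - p[1]) + r[0] * (p[1] - q[1]))
    ux = (sp * (q[1] - r[1]) + sq * (r[1] - p[1]) + sr * (p[1] - q[1])) / d
    uy = (sp * (r[0] - q[0]) + sq * (p[0] - r[0]) + sr * (q[0] - p[0])) / d
    return (ux, uy)


def on_circle(deg):
    """Point of the unit circle at the given angle in degrees."""
    t = math.radians(deg)
    return (math.cos(t), math.sin(t))


# Sample cyclic quadrilateral (angles chosen only for illustration).
A, B, C, D = (on_circle(x) for x in (120, 70, -20, 200))
E = meet(A, C, B, D)
F = meet(A, D, B, C)
G = (C[0] + D[0] - E[0], C[1] + D[1] - E[1])  # ECGD is a parallelogram
H = reflect(E, A, D)

O = circumcenter(D, H, F)
R = math.dist(O, D)
deviation = abs(math.dist(O, G) - R)
print(deviation)  # expected to be zero up to rounding
```

The point $G$ is obtained from $E+G=C+D$, since the diagonals $E G$ and $C D$ of the parallelogram bisect each other.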

|

proof

|

Yes

|

Yes

|

proof

|

Geometry

|

Let $A B C D$ be a cyclic quadrilateral whose diagonals $A C$ and $B D$ meet at $E$. The extensions of the sides $A D$ and $B C$ beyond $A$ and $B$ meet at $F$. Let $G$ be the point such that $E C G D$ is a parallelogram, and let $H$ be the image of $E$ under reflection in $A D$. Prove that $D, H, F, G$ are concyclic.

|

We show first that the triangles $F D G$ and $F B E$ are similar. Since $A B C D$ is cyclic, the triangles $E A B$ and $E D C$ are similar, as well as $F A B$ and $F C D$. The parallelogram $E C G D$ yields $G D=E C$ and $\angle C D G=\angle D C E$; also $\angle D C E=\angle D C A=\angle D B A$ by inscribed angles. Therefore $$ \begin{gathered} \angle F D G=\angle F D C+\angle C D G=\angle F B A+\angle A B D=\angle F B E, \\ \frac{G D}{E B}=\frac{C E}{E B}=\frac{C D}{A B}=\frac{F D}{F B} . \end{gathered} $$ It follows that $F D G$ and $F B E$ are similar, and so $\angle F G D=\angle F E B$.  Since $H$ is the reflection of $E$ with respect to $F D$, we conclude that $$ \angle F H D=\angle F E D=180^{\circ}-\angle F E B=180^{\circ}-\angle F G D . $$ This proves that $D, H, F, G$ are concyclic. Comment. Points $E$ and $G$ are always in the half-plane determined by the line $F D$ that contains $B$ and $C$, but $H$ is always in the other half-plane. In particular, $D H F G$ is cyclic if and only if $\angle F H D+\angle F G D=180^{\circ}$.

|

{

"resource_path": "IMO/segmented/en-IMO2012SL.jsonl",

"problem_match": null,

"solution_match": null

}

|

acb9a374-c904-58cf-b986-5cbd4475ab71

| 24,187

|

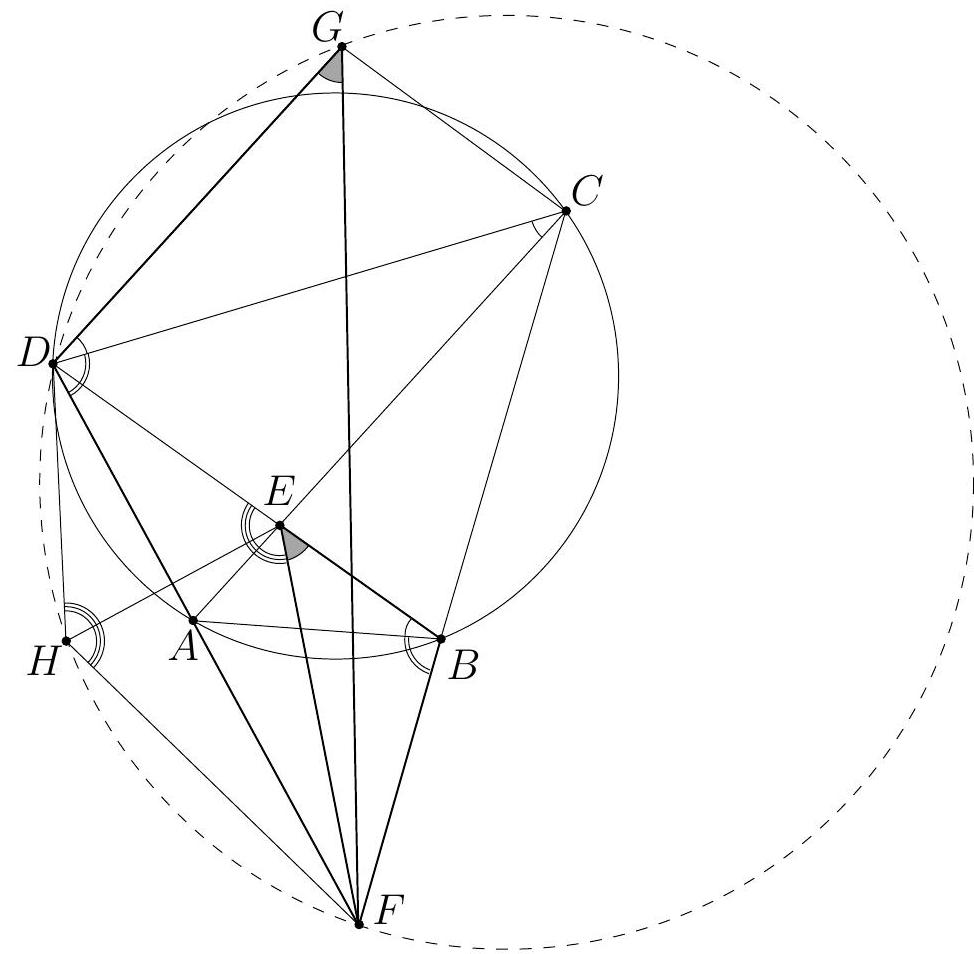

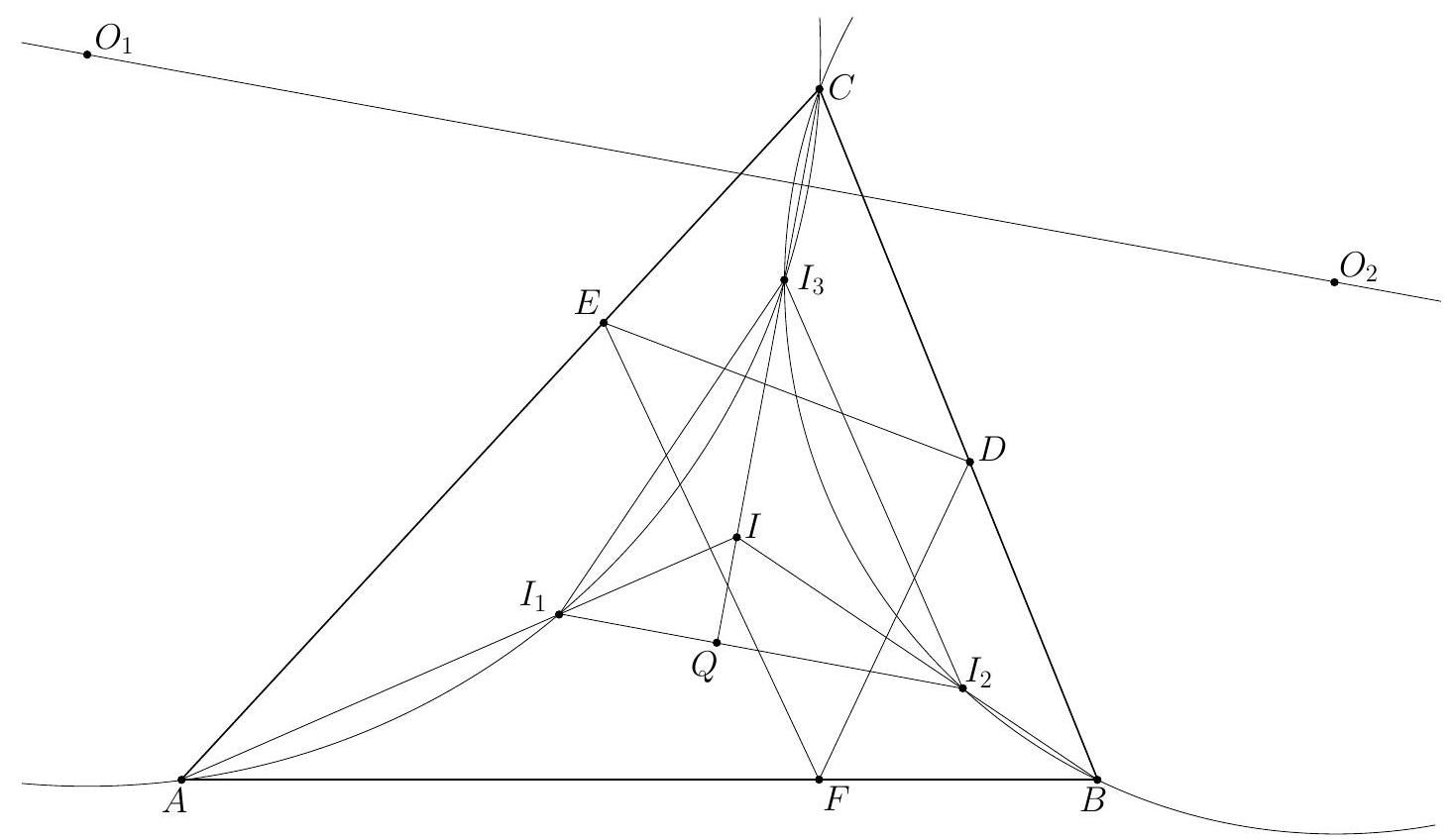

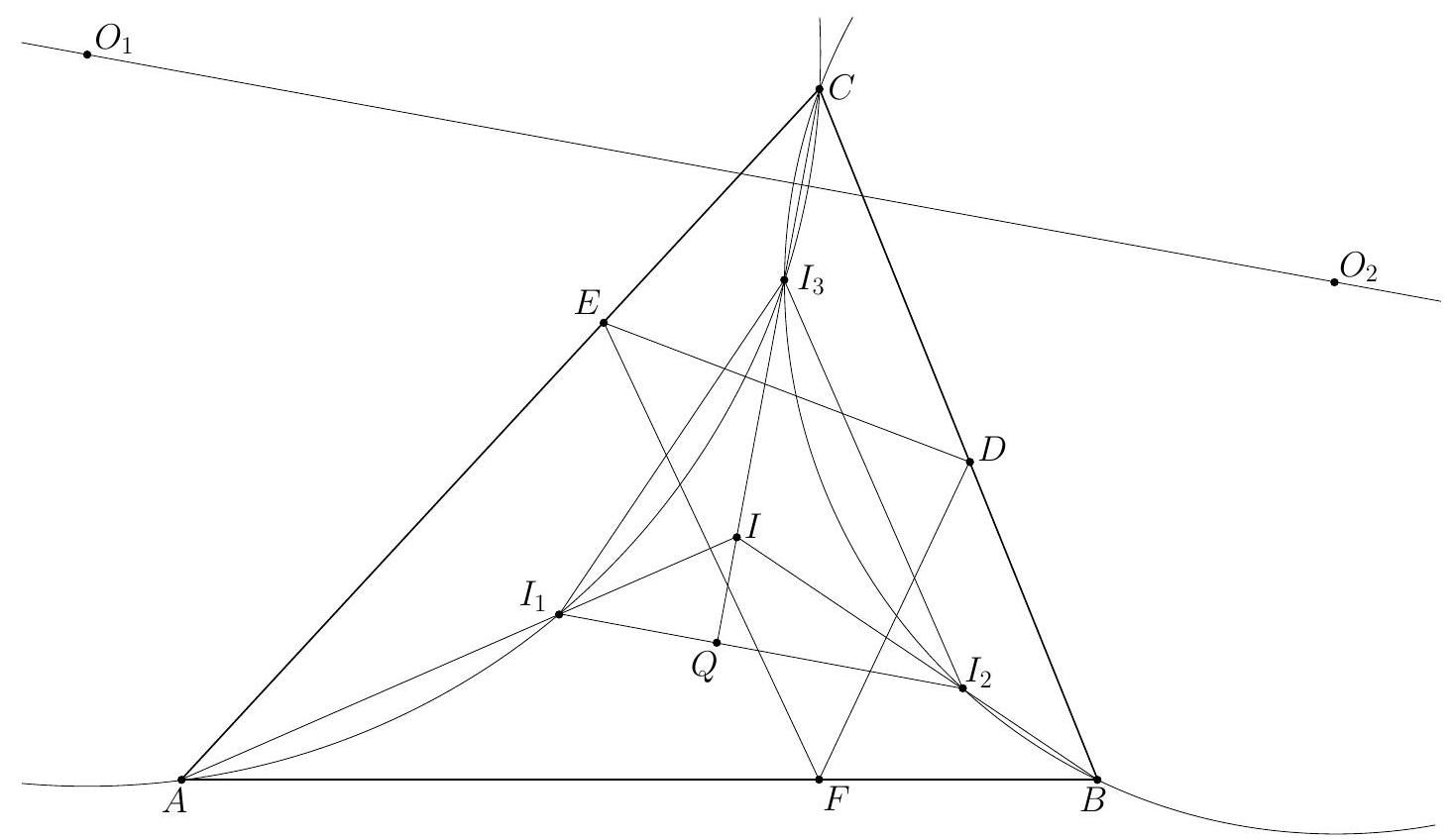

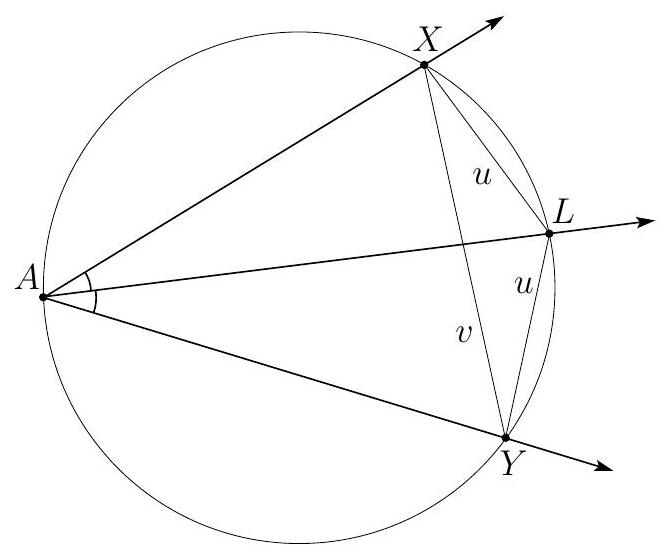

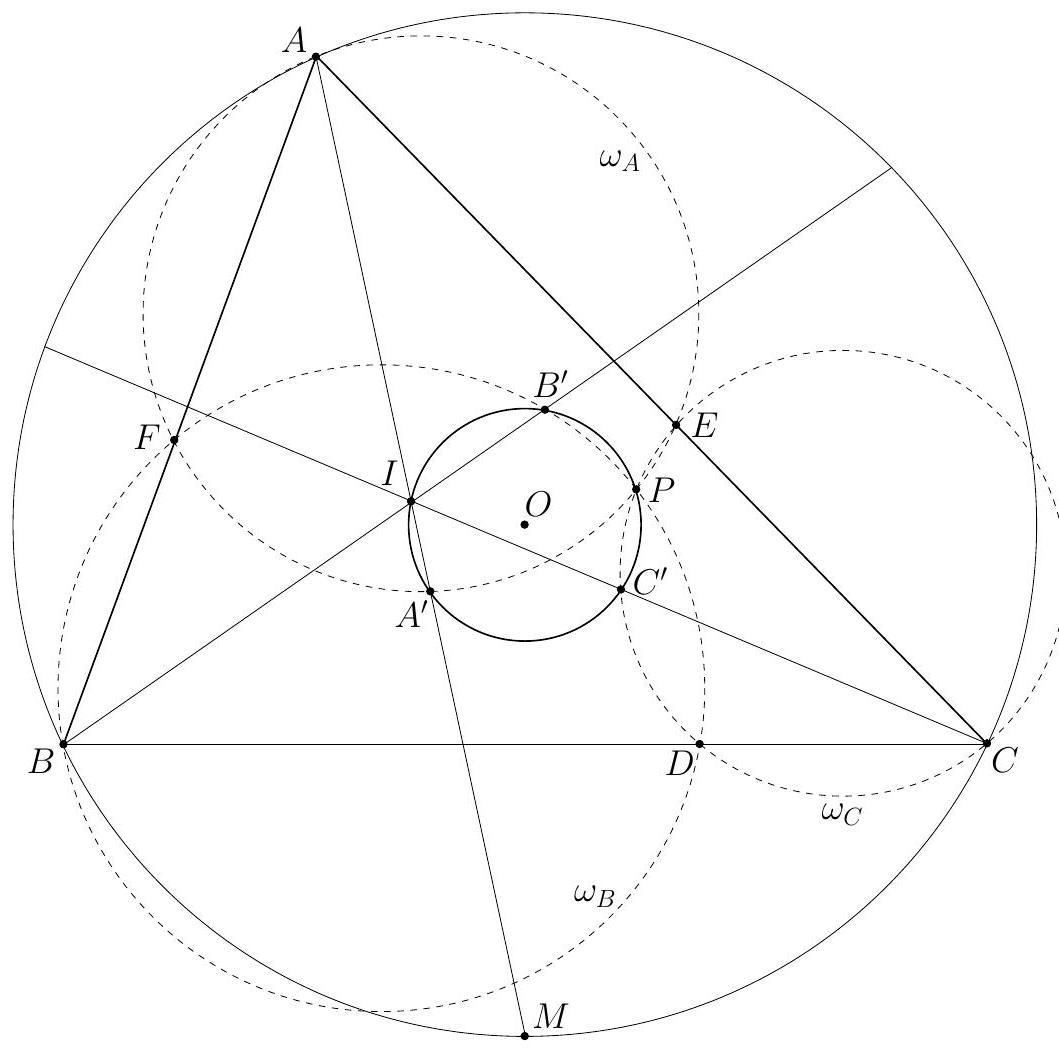

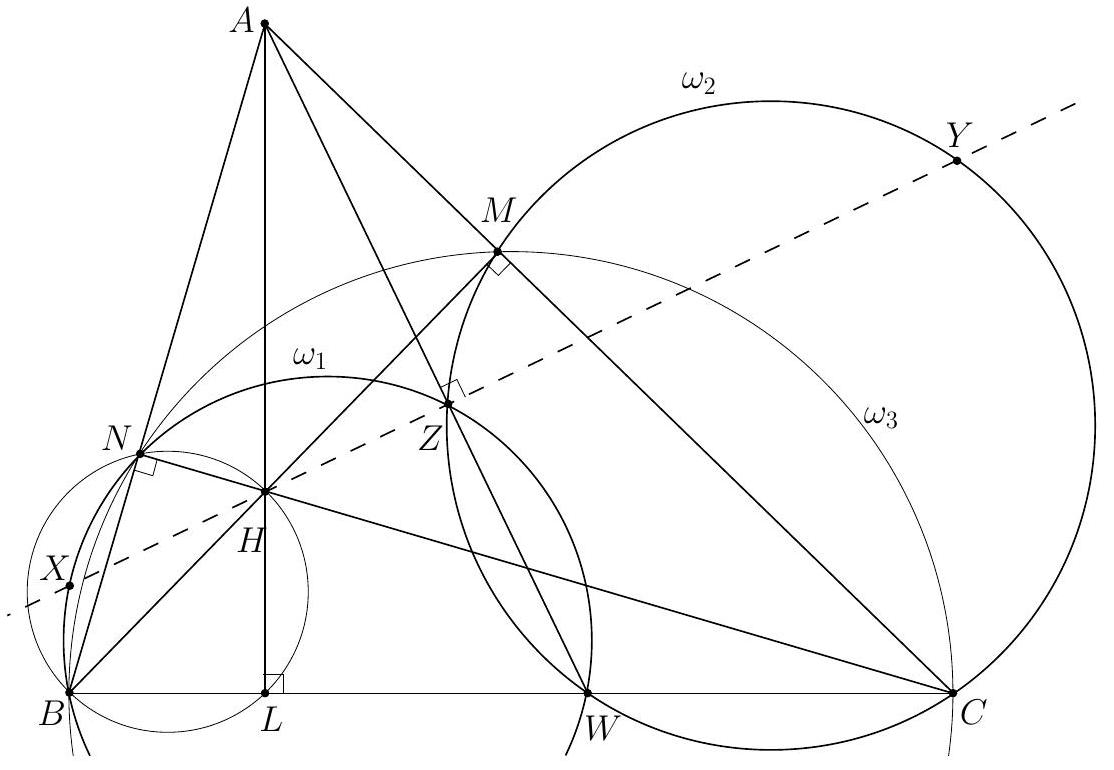

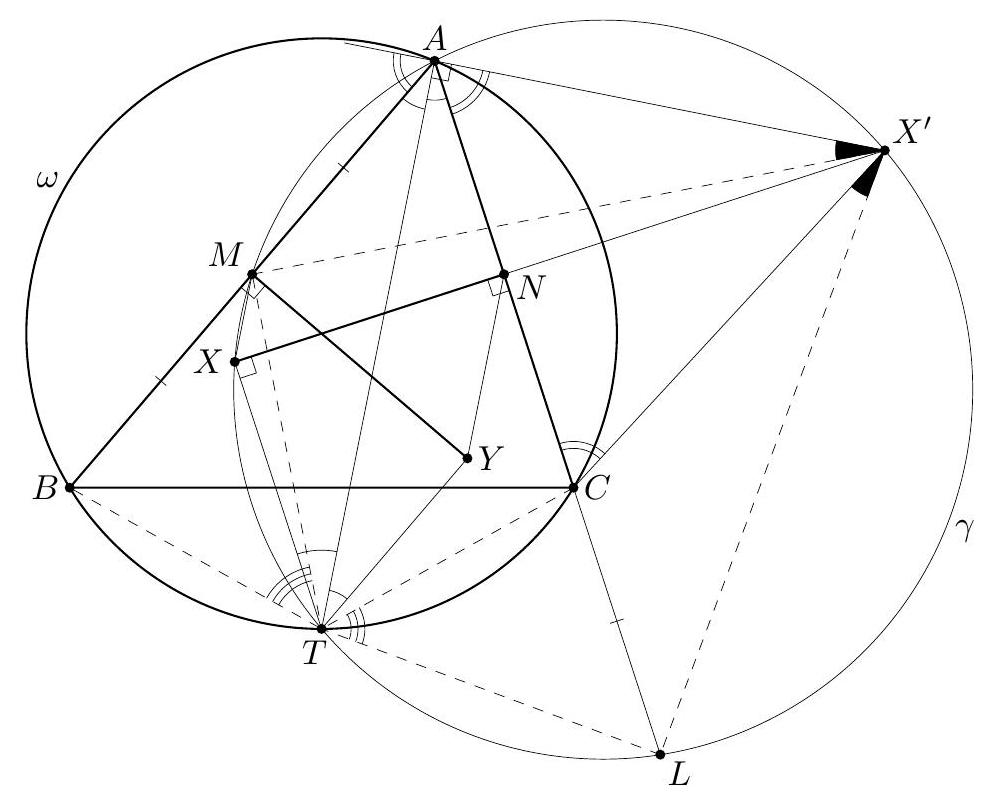

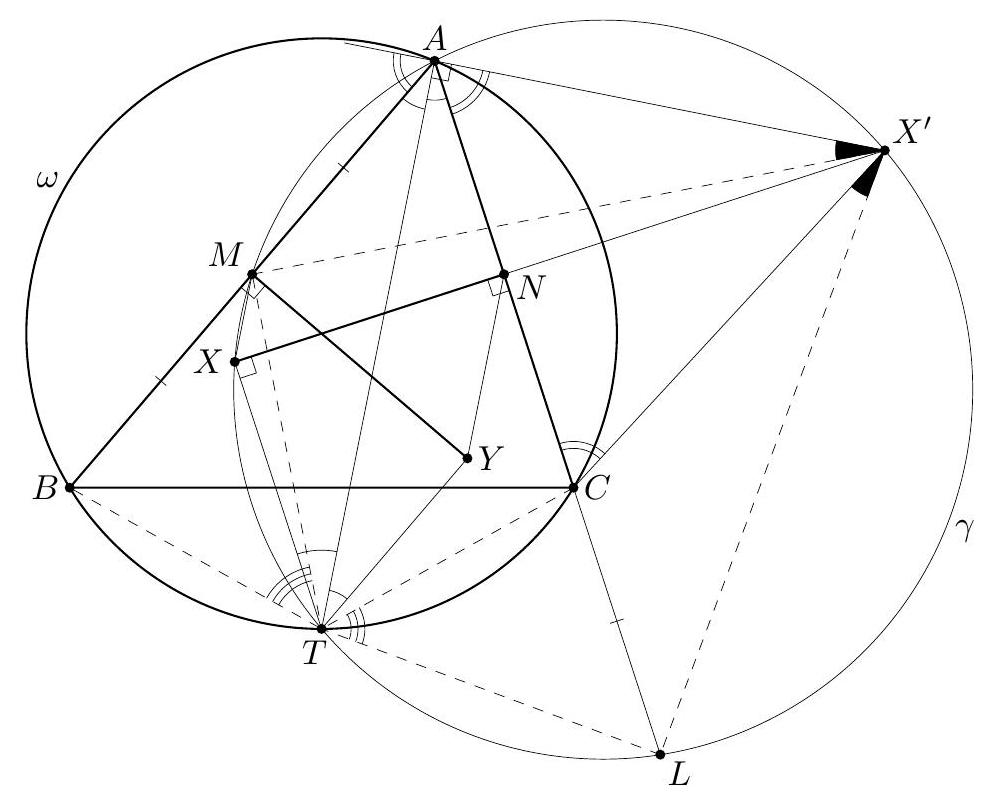

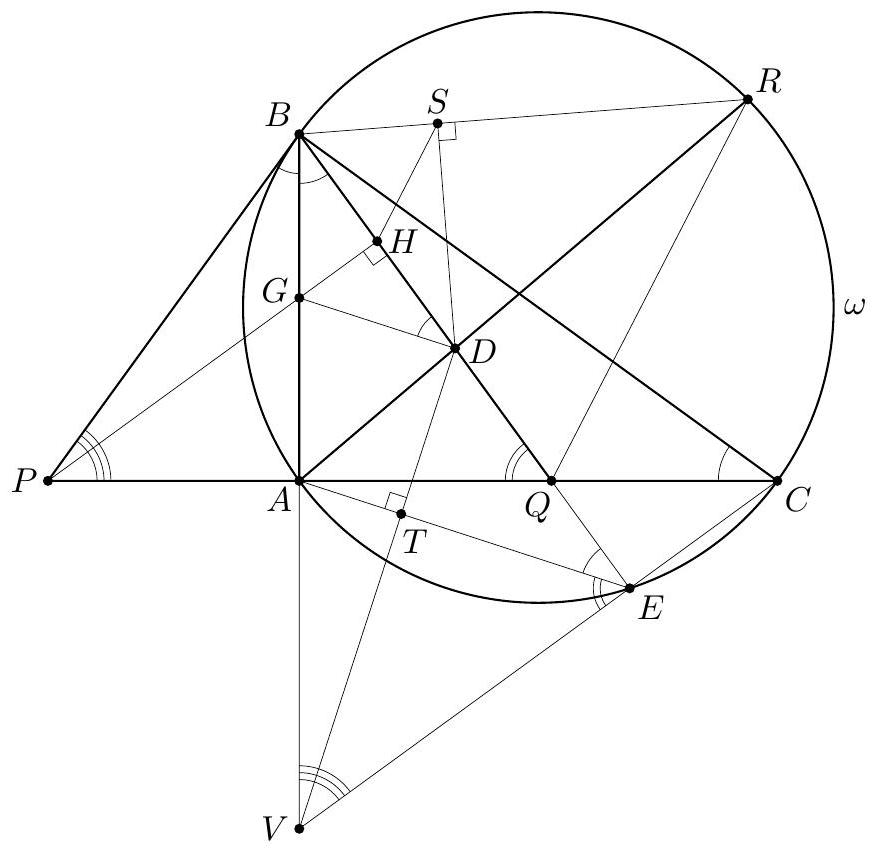

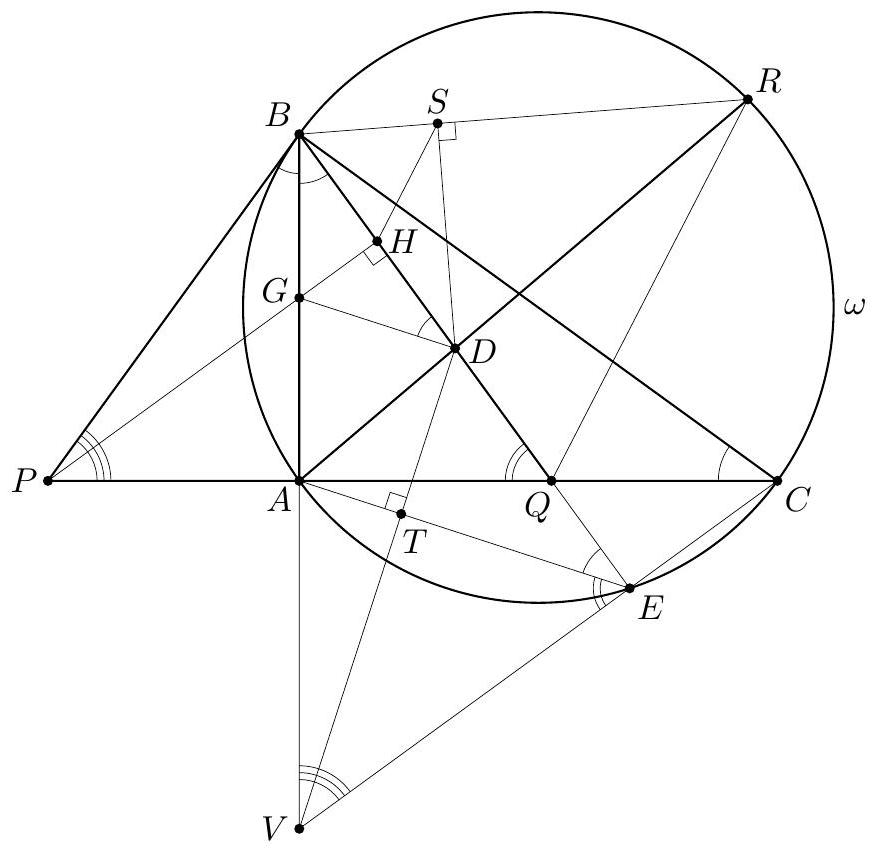

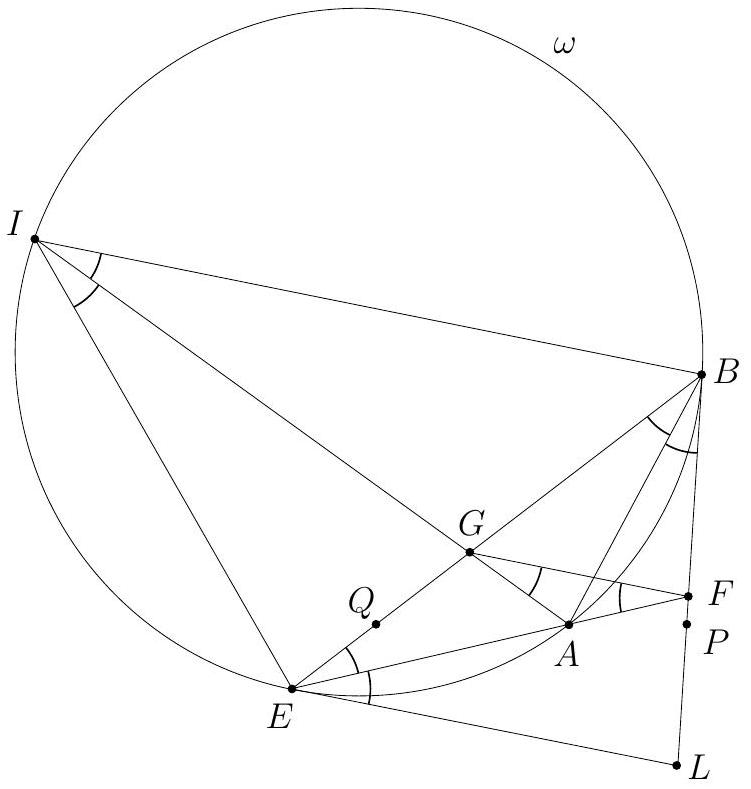

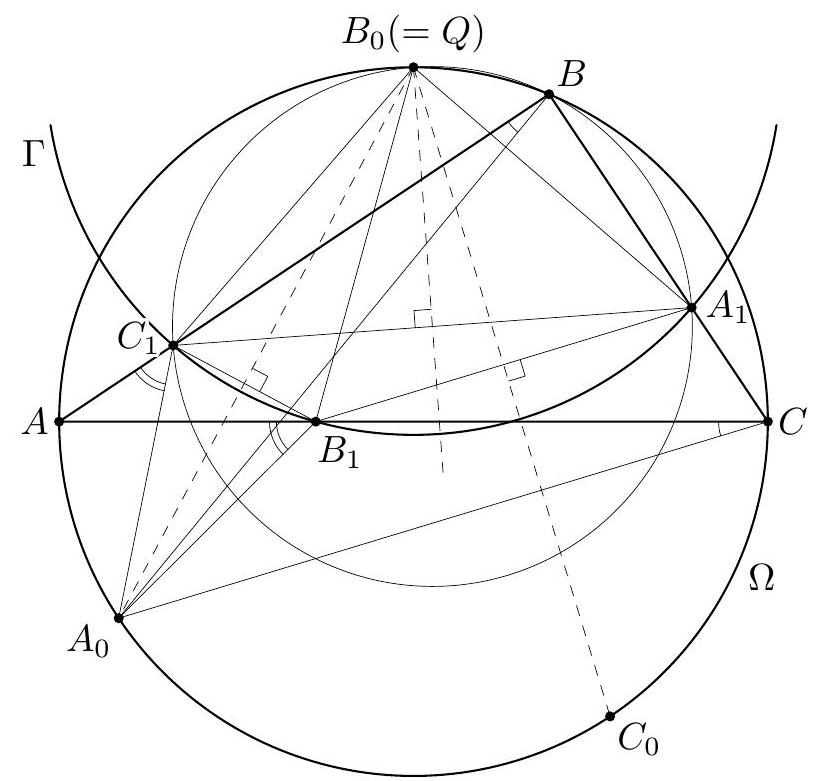

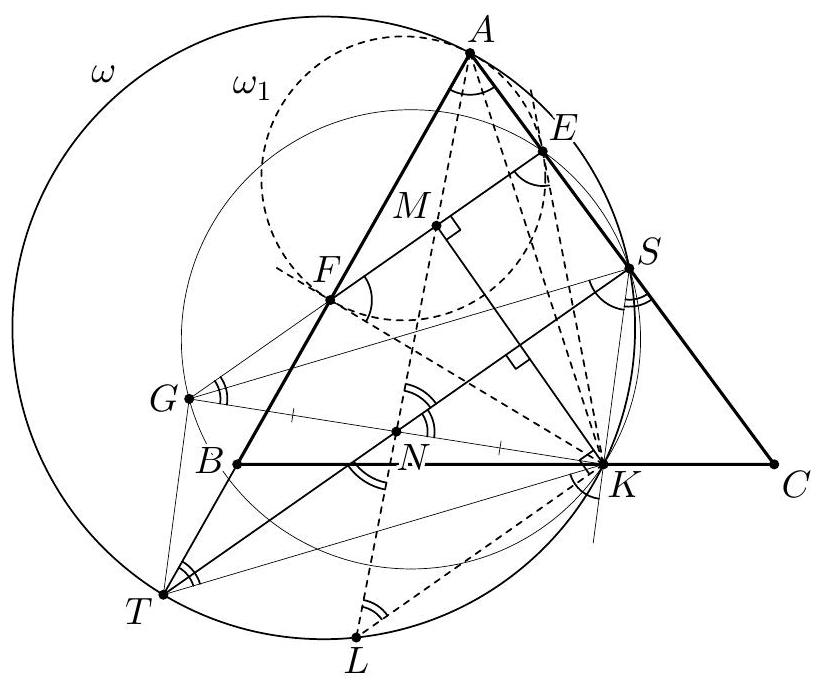

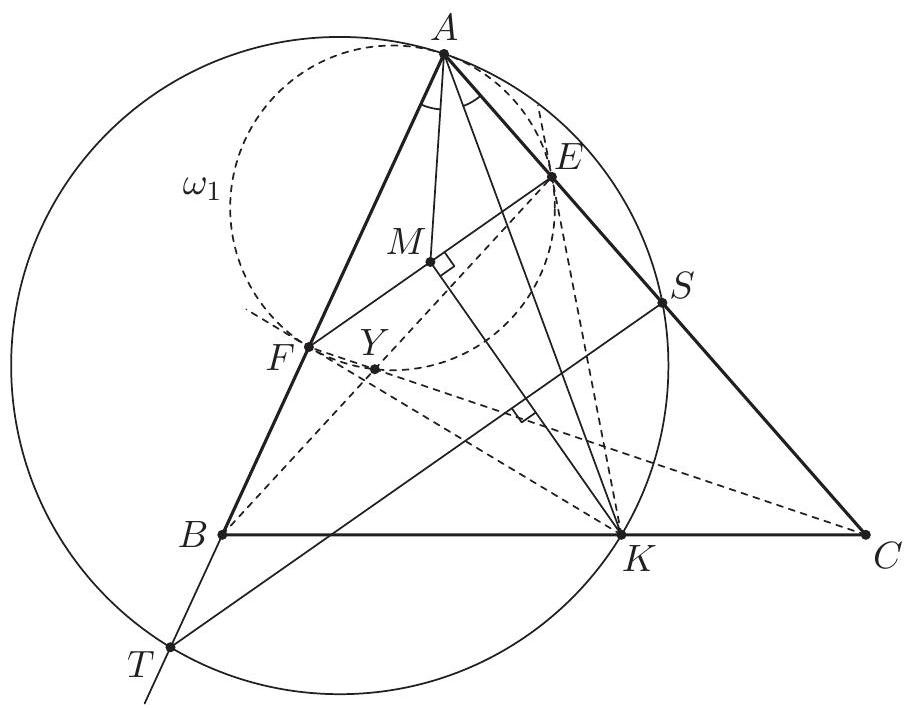

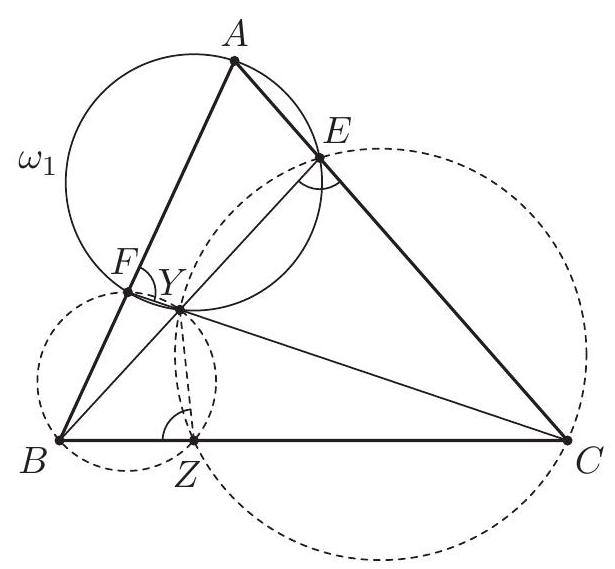

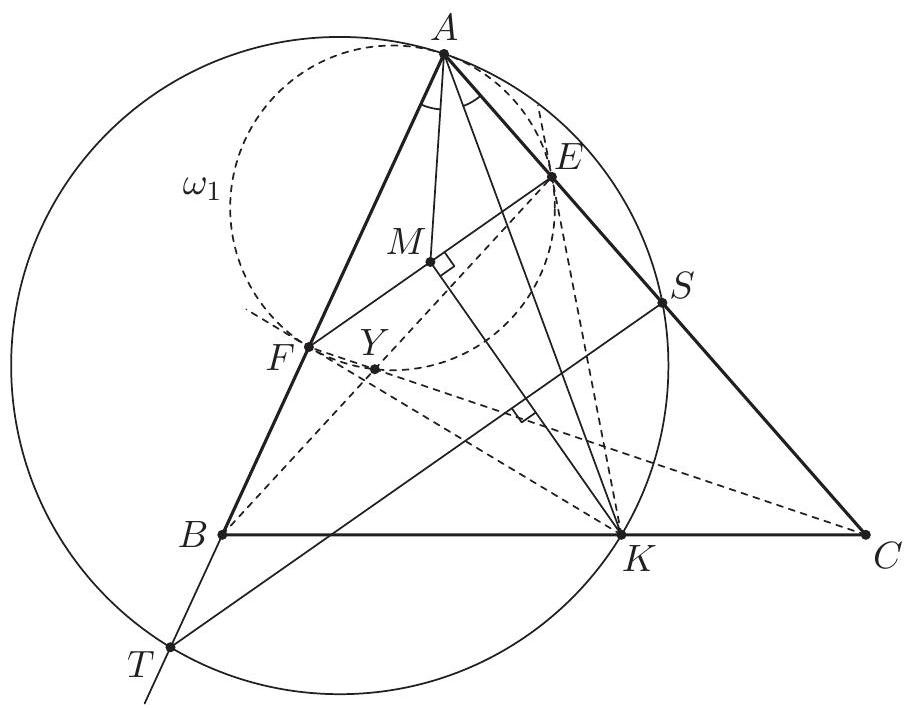

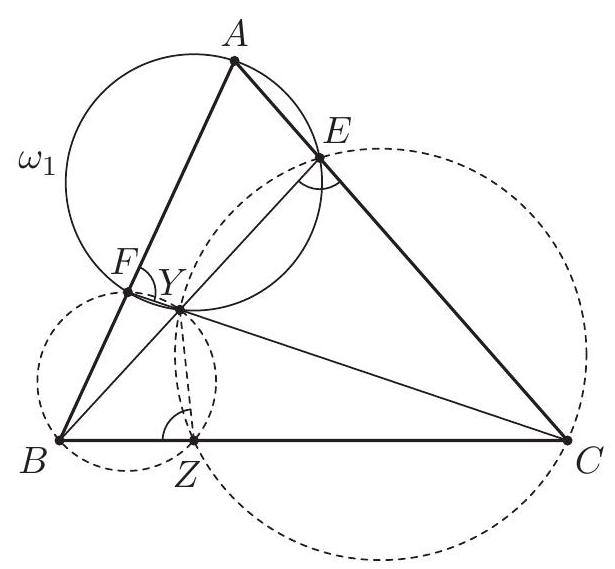

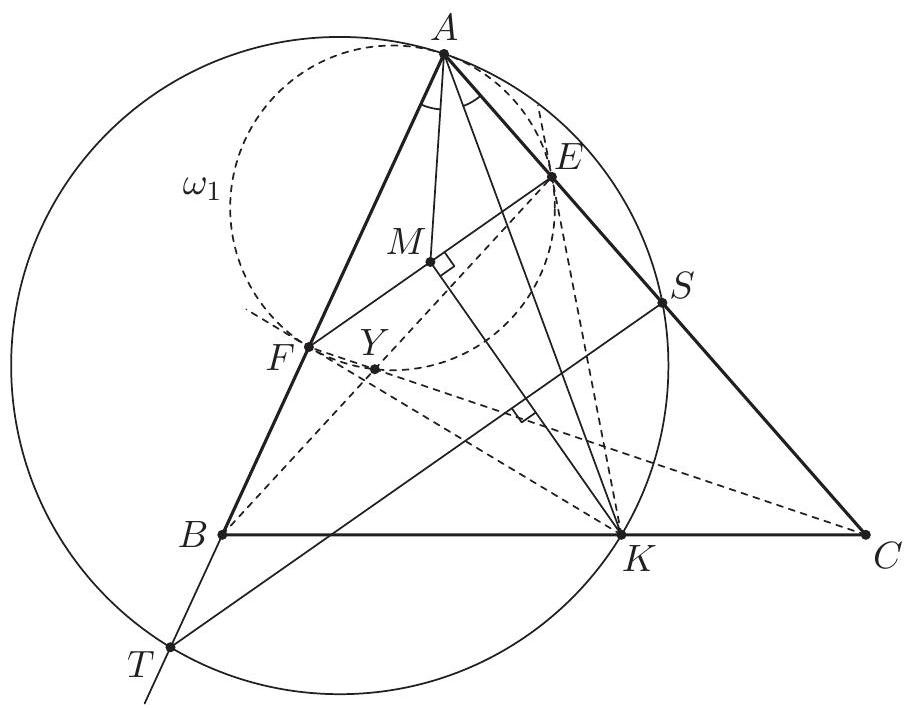

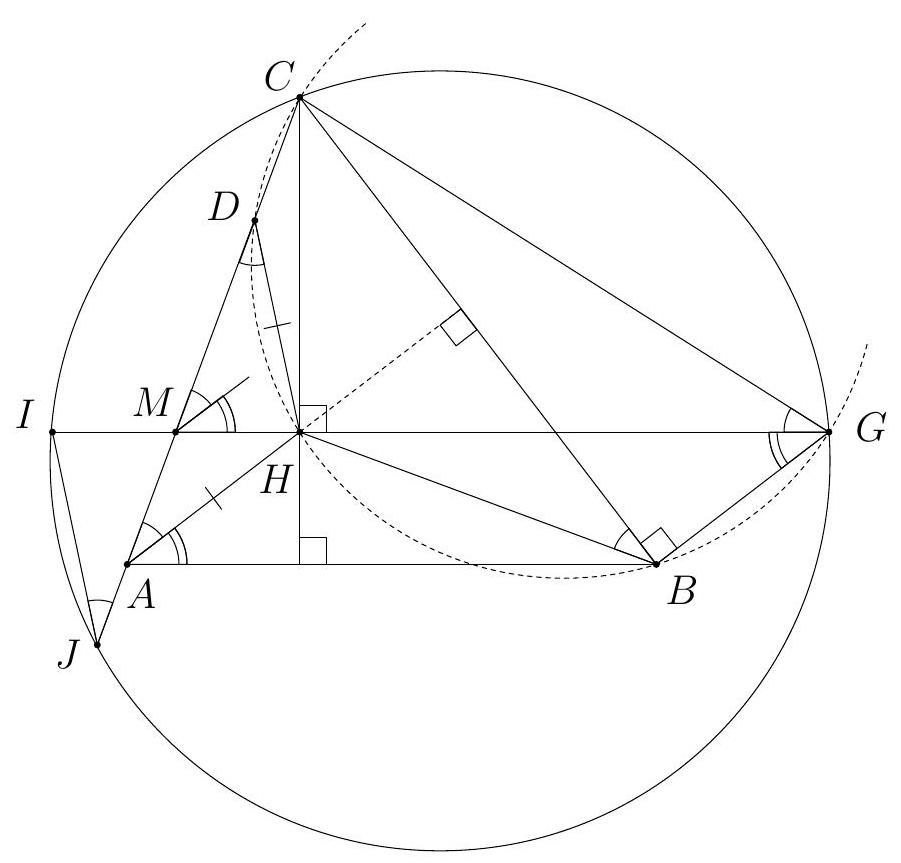

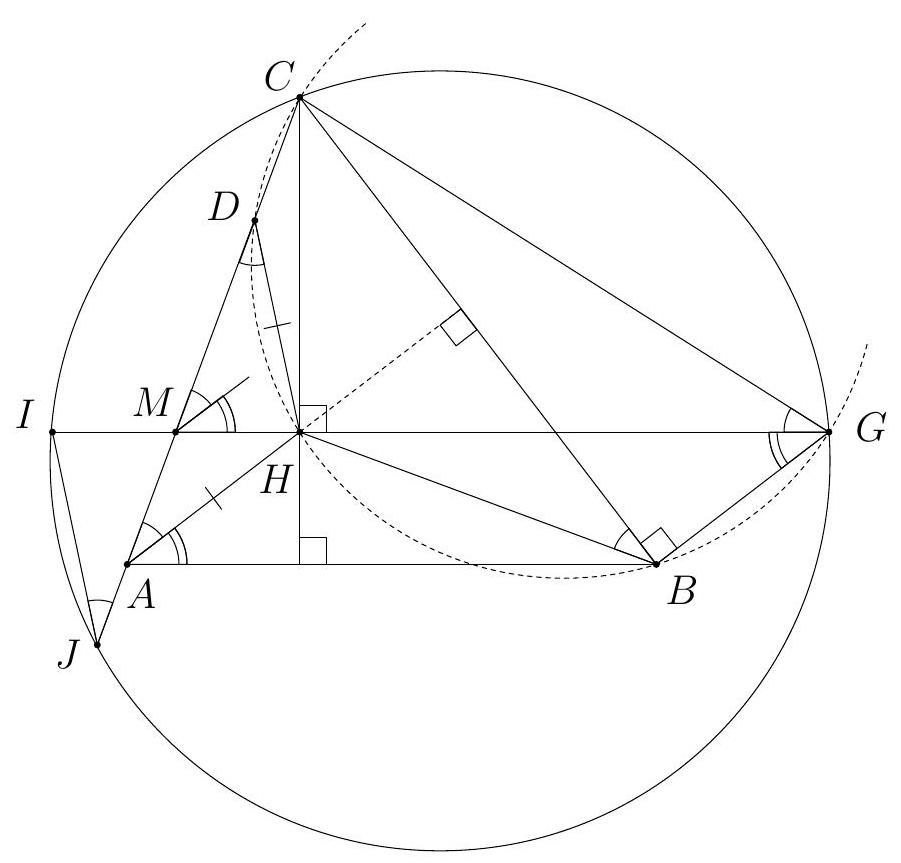

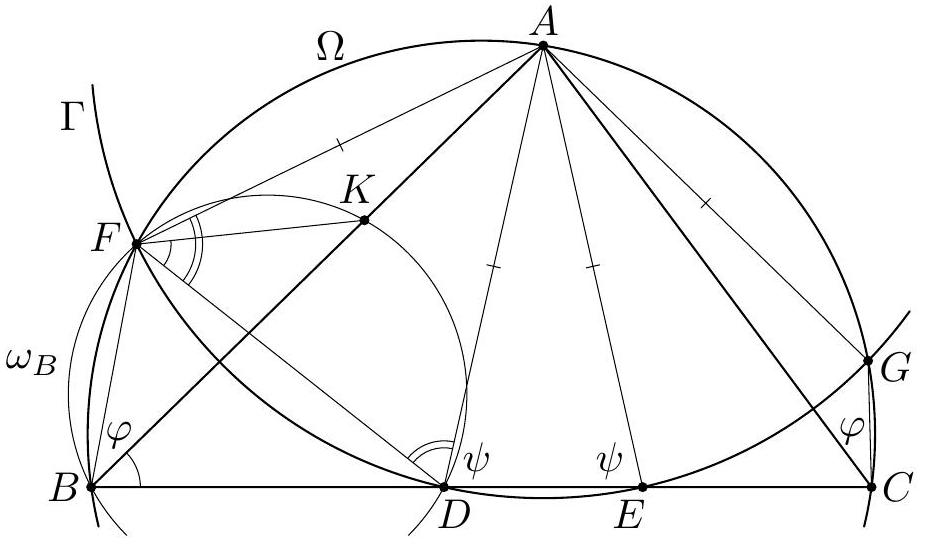

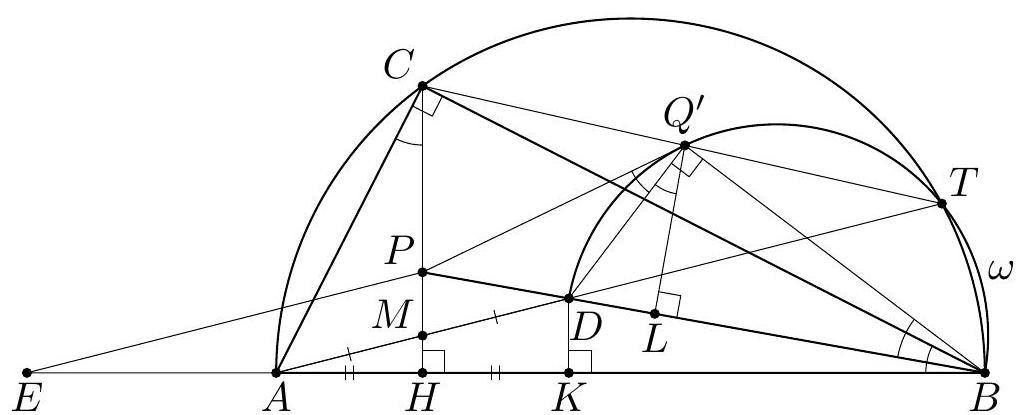

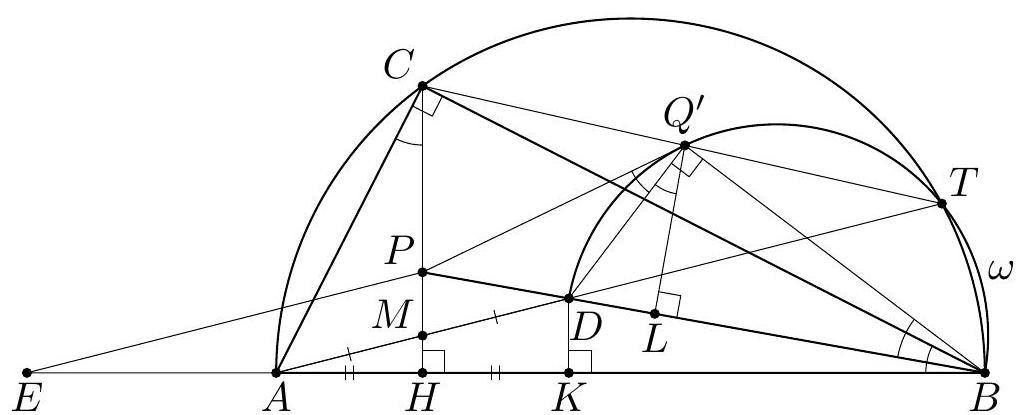

In an acute triangle $A B C$ the points $D, E$ and $F$ are the feet of the altitudes through $A$, $B$ and $C$ respectively. The incenters of the triangles $A E F$ and $B D F$ are $I_{1}$ and $I_{2}$ respectively; the circumcenters of the triangles $A C I_{1}$ and $B C I_{2}$ are $O_{1}$ and $O_{2}$ respectively. Prove that $I_{1} I_{2}$ and $O_{1} O_{2}$ are parallel.

|

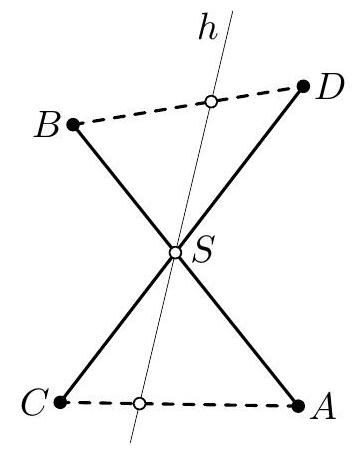

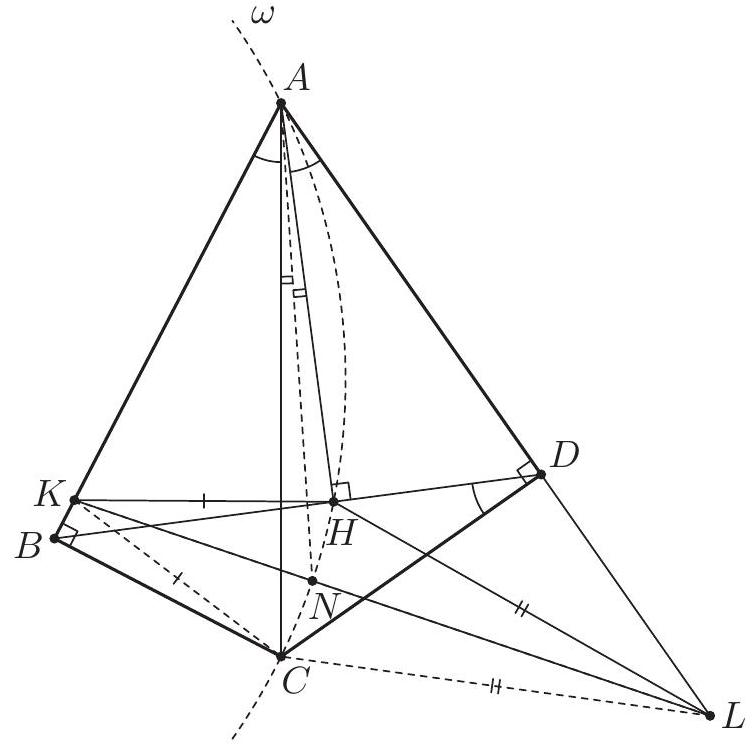

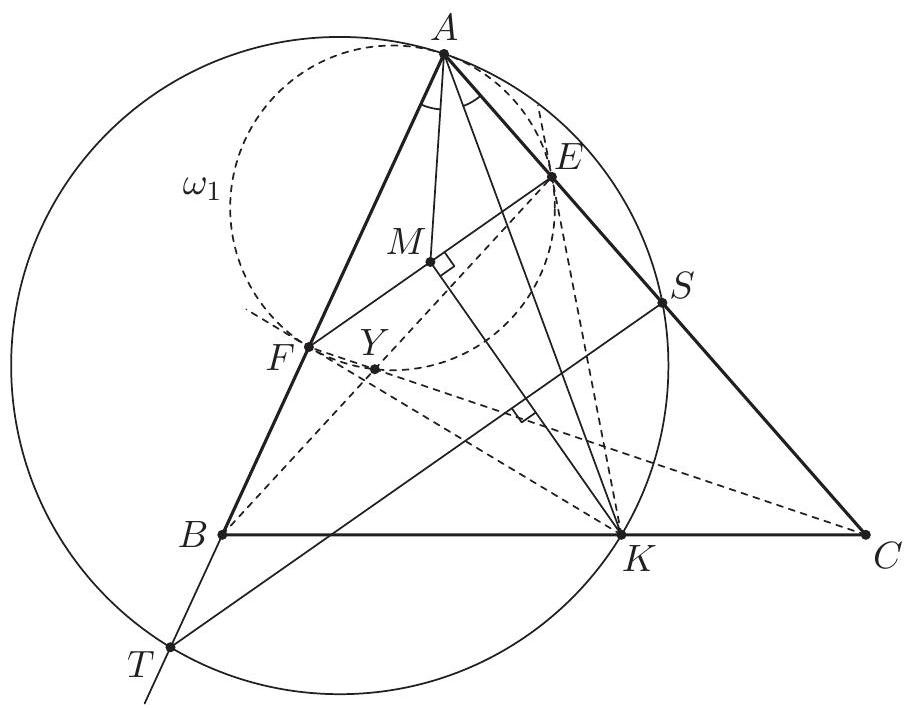

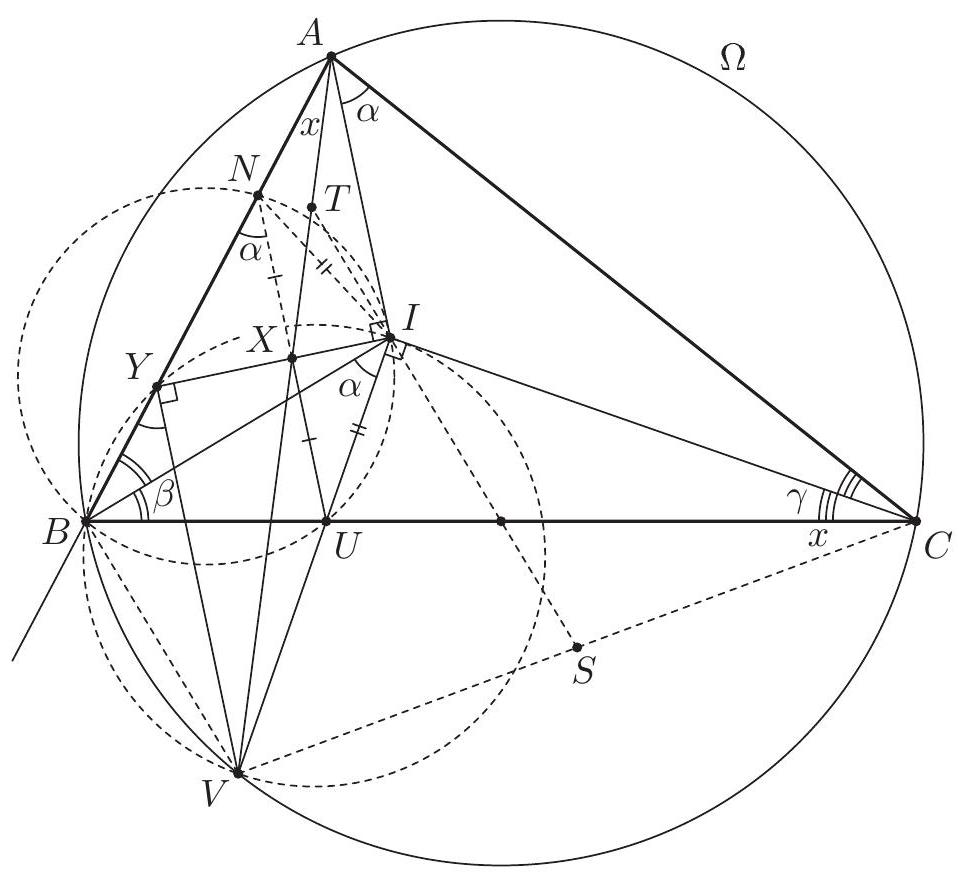

Let $\angle C A B=\alpha, \angle A B C=\beta, \angle B C A=\gamma$. We start by showing that $A, B, I_{1}$ and $I_{2}$ are concyclic. Since $A I_{1}$ and $B I_{2}$ bisect $\angle C A B$ and $\angle A B C$, their extensions beyond $I_{1}$ and $I_{2}$ meet at the incenter $I$ of the triangle. The points $E$ and $F$ are on the circle with diameter $B C$, so $\angle A E F=\angle A B C$ and $\angle A F E=\angle A C B$. Hence the triangles $A E F$ and $A B C$ are similar with ratio of similitude $\frac{A E}{A B}=\cos \alpha$. Because $I_{1}$ and $I$ are their incenters, we obtain $I_{1} A=I A \cos \alpha$ and $I I_{1}=I A-I_{1} A=2 I A \sin ^{2} \frac{\alpha}{2}$. By symmetry $I I_{2}=2 I B \sin ^{2} \frac{\beta}{2}$. The law of sines in the triangle $A B I$ gives $I A \sin \frac{\alpha}{2}=I B \sin \frac{\beta}{2}$. Hence $$ I I_{1} \cdot I A=2\left(I A \sin \frac{\alpha}{2}\right)^{2}=2\left(I B \sin \frac{\beta}{2}\right)^{2}=I I_{2} \cdot I B $$ Therefore $A, B, I_{1}$ and $I_{2}$ are concyclic, as claimed.  In addition $I I_{1} \cdot I A=I I_{2} \cdot I B$ implies that $I$ has the same power with respect to the circles $\left(A C I_{1}\right),\left(B C I_{2}\right)$ and $\left(A B I_{1} I_{2}\right)$. Then $C I$ is the radical axis of $\left(A C I_{1}\right)$ and $\left(B C I_{2}\right)$; in particular $C I$ is perpendicular to the line of centers $O_{1} O_{2}$. Now it suffices to prove that $C I \perp I_{1} I_{2}$. Let $C I$ meet $I_{1} I_{2}$ at $Q$, then it is enough to check that $\angle I I_{1} Q+\angle I_{1} I Q=90^{\circ}$. Since $\angle I_{1} I Q$ is external for the triangle $A C I$, we have $$ \angle I I_{1} Q+\angle I_{1} I Q=\angle I I_{1} Q+(\angle A C I+\angle C A I)=\angle I I_{1} I_{2}+\angle A C I+\angle C A I . $$ It remains to note that $\angle I I_{1} I_{2}=\frac{\beta}{2}$ from the cyclic quadrilateral $A B I_{1} I_{2}$, and $\angle A C I=\frac{\gamma}{2}$, $\angle C A I=\frac{\alpha}{2}$. 
Therefore $\angle I I_{1} Q+\angle I_{1} I Q=\frac{\alpha}{2}+\frac{\beta}{2}+\frac{\gamma}{2}=90^{\circ}$, completing the proof. Comment. It follows from the first part of the solution that the common point $I_{3} \neq C$ of the circles $\left(A C I_{1}\right)$ and $\left(B C I_{2}\right)$ is the incenter of the triangle $C D E$.
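
A coordinate check of the parallelism on one sample acute triangle is sketched below. It is a numerical sanity check under assumptions, not a proof, and the helper names `foot`, `incenter` and `circumcenter` as well as the chosen triangle are illustrative.

```python
import math


def foot(p, a, b):
    """Foot of the perpendicular from p to the line ab."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    t = ((p[0] - a[0]) * dx + (p[1] - a[1]) * dy) / (dx * dx + dy * dy)
    return (a[0] + t * dx, a[1] + t * dy)


def incenter(p, q, r):
    """Incenter of pqr: vertices weighted by the opposite side lengths."""
    a, b, c = math.dist(q, r), math.dist(r, p), math.dist(p, q)
    s = a + b + c
    return ((a * p[0] + b * q[0] + c * r[0]) / s,
            (a * p[1] + b * q[1] + c * r[1]) / s)


def circumcenter(p, q, r):
    """Circumcenter of the triangle pqr (assumed non-degenerate)."""
    sp, sq, sr = (p[0]**2 + p[1]**2, q[0]**2 + q[1]**2, r[0]**2 + r[1]**2)
    d = 2 * (p[0] * (q[1] - r[1]) + q[0] * (r[1] - p[1]) + r[0] * (p[1] - q[1]))
    ux = (sp * (q[1] - r[1]) + sq * (r[1] - p[1]) + sr * (p[1] - q[1])) / d
    uy = (sp * (r[0] - q[0]) + sq * (p[0] - r[0]) + sr * (q[0] - p[0])) / d
    return (ux, uy)


# Sample acute triangle (an arbitrary choice for illustration).
A, B, C = (1.0, 4.0), (0.0, 0.0), (5.0, 0.0)

# Feet of the altitudes through A, B and C.
D, E, F = foot(A, B, C), foot(B, C, A), foot(C, A, B)

I1, I2 = incenter(A, E, F), incenter(B, D, F)
O1, O2 = circumcenter(A, C, I1), circumcenter(B, C, I2)

# Two vectors are parallel exactly when their cross product vanishes.
u = (I2[0] - I1[0], I2[1] - I1[1])
v = (O2[0] - O1[0], O2[1] - O1[1])
cross = u[0] * v[1] - u[1] * v[0]
print(cross)  # expected to be zero up to rounding
```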

|

proof

|

Yes

|

Yes

|

proof

|

Geometry

|

In an acute triangle $A B C$ the points $D, E$ and $F$ are the feet of the altitudes through $A$, $B$ and $C$ respectively. The incenters of the triangles $A E F$ and $B D F$ are $I_{1}$ and $I_{2}$ respectively; the circumcenters of the triangles $A C I_{1}$ and $B C I_{2}$ are $O_{1}$ and $O_{2}$ respectively. Prove that $I_{1} I_{2}$ and $O_{1} O_{2}$ are parallel.

|