Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 855) | labels (string, length 4 to 721) | body (string, length 1 to 261k) | index (string, 13 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 240k) | binary_label (int64, 0 to 1)

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

249,907 | 7,965,270,671 | IssuesEvent | 2018-07-14 05:47:56 | esteemapp/esteem-surfer | https://api.github.com/repos/esteemapp/esteem-surfer | closed | Sort comments with number of Votes by default | high priority | Let's try default sorting to be by number of votes... encourages more comment voters

| 1.0 | Sort comments with number of Votes by default - Let's try default sorting to be by number of votes... encourages more comment voters

| priority | sort comments with number of votes by default let s try default sorting to be by number of votes encourages more comment voters | 1 |

79,589 | 3,537,431,090 | IssuesEvent | 2016-01-18 00:44:35 | pombase/canto | https://api.github.com/repos/pombase/canto | closed | SF-Trac error | high priority next | Not really Canto related but I just tried to access SF-Trac and got this error:

Error

TracError: The Trac Environment needs to be upgraded.

Run "trac-admin /data/pombase/trac-sf-copy upgrade" | 1.0 | SF-Trac error - Not really Canto related but I just tried to access SF-Trac and got this error:

Error

TracError: The Trac Environment needs to be upgraded.

Run "trac-admin /data/pombase/trac-sf-copy upgrade" | priority | sf trac error not really canto related but i just tried to access sf trac and got this error error tracerror the trac environment needs to be upgraded run trac admin data pombase trac sf copy upgrade | 1 |

492,376 | 14,201,191,467 | IssuesEvent | 2020-11-16 07:14:07 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.xvideos.com - desktop site instead of mobile site | browser-fenix engine-gecko ml-needsdiagnosis-false ml-probability-high priority-critical | <!-- @browser: Firefox Mobile 83.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:83.0) Gecko/83.0 Firefox/83.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/61890 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.xvideos.com/videos-i-like#0

**Browser / Version**: Firefox Mobile 83.0

**Operating System**: Android

**Tested Another Browser**: Yes Safari

**Problem type**: Desktop site instead of mobile site

**Description**: Desktop site instead of mobile site

**Steps to Reproduce**:

<details>

<summary>View the screenshot</summary>

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20201108174701</li><li>channel: beta</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/11/18cc2c3d-ad1b-4edc-967d-51672fd97281)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | www.xvideos.com - desktop site instead of mobile site - <!-- @browser: Firefox Mobile 83.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:83.0) Gecko/83.0 Firefox/83.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/61890 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.xvideos.com/videos-i-like#0

**Browser / Version**: Firefox Mobile 83.0

**Operating System**: Android

**Tested Another Browser**: Yes Safari

**Problem type**: Desktop site instead of mobile site

**Description**: Desktop site instead of mobile site

**Steps to Reproduce**:

<details>

<summary>View the screenshot</summary>

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20201108174701</li><li>channel: beta</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/11/18cc2c3d-ad1b-4edc-967d-51672fd97281)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | desktop site instead of mobile site url browser version firefox mobile operating system android tested another browser yes safari problem type desktop site instead of mobile site description desktop site instead of mobile site steps to reproduce view the screenshot browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel beta hastouchscreen true mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 1 |

127,996 | 5,042,256,235 | IssuesEvent | 2016-12-19 13:23:42 | robotology/icub-tests | https://api.github.com/repos/robotology/icub-tests | opened | Missing installation doc for macOS and Windows | Platform: macOS Platform: Windows Priority: High Status: In Progress Type: Enhancement | Missing installation documentation for LD_LIBRARY_PATH equivalent for macOS and Windows in

https://robotology.github.io/icub-tests/doxygen/doc/html/installation.html | 1.0 | Missing installation doc for macOS and Windows - Missing installation documentation for LD_LIBRARY_PATH equivalent for macOS and Windows in

https://robotology.github.io/icub-tests/doxygen/doc/html/installation.html | priority | missing installation doc for macos and windows missing installation documentation for ld library path equivalent for macos and windows in | 1 |

614,564 | 19,185,608,984 | IssuesEvent | 2021-12-05 05:55:30 | SLCommunity/511 | https://api.github.com/repos/SLCommunity/511 | closed | Check USER.ROLE | Feature/Function Status:Done Priority:High | ## Purpose

Route users after determining whether they are USER or Admin

## Task details

- [x] Block frontend access

- [x] Block backend API access

## Notes

I plan to look a little further into the difference between ajaxPrefilter and ajax beforeSend. | 1.0 | Check USER.ROLE - ## Purpose

Route users after determining whether they are USER or Admin

## Task details

- [x] Block frontend access

- [x] Block backend API access

## Notes

I plan to look a little further into the difference between ajaxPrefilter and ajax beforeSend. | priority | check user role purpose route users after determining whether they are user or admin task details block frontend access block backend api access notes i plan to look a little further into the difference between ajaxprefilter and ajax beforesend | 1 |

172,626 | 6,514,741,030 | IssuesEvent | 2017-08-26 05:08:53 | ncssar/radiolog | https://api.github.com/repos/ncssar/radiolog | opened | get rid of 'printing' message dialog | bug Priority:High | This window serves no purpose, and causes confusion - might have actually led the operator to hit some button that caused a crash? Anyway - just get rid of it - which would address #33 and #263 | 1.0 | get rid of 'printing' message dialog - This window serves no purpose, and causes confusion - might have actually led the operator to hit some button that caused a crash? Anyway - just get rid of it - which would address #33 and #263 | priority | get rid of printing message dialog this window serves no purpose and causes confusion might have actually led the operator to hit some button that caused a crash anyway just get rid of it which would address and | 1 |

323,873 | 9,879,770,396 | IssuesEvent | 2019-06-24 10:52:02 | telstra/open-kilda | https://api.github.com/repos/telstra/open-kilda | closed | add `diverse-with` to get /flow | feature priority/2-high | When a flows set or a single flow requested, we should return all flow IDs this flow diverse with.

Parameter name suggestion: `diverse-with` | 1.0 | add `diverse-with` to get /flow - When a flows set or a single flow requested, we should return all flow IDs this flow diverse with.

Parameter name suggestion: `diverse-with` | priority | add diverse with to get flow when a flows set or a single flow requested we should return all flow ids this flow diverse with parameter name suggestion diverse with | 1 |

282,587 | 8,708,221,793 | IssuesEvent | 2018-12-06 10:16:52 | strapi/strapi | https://api.github.com/repos/strapi/strapi | closed | GraphQL get objects by relation ID | priority: high status: confirmed type: bug 🐛 | <!-- ⚠️ If you do not respect this template your issue will be closed. -->

<!-- =============================================================================== -->

<!-- ⚠️ If you are not using the current Strapi release, you will be asked to update. -->

<!-- Please see the wiki for guides on upgrading to the latest release. -->

<!-- =============================================================================== -->

<!-- ⚠️ Make sure to browse the opened and closed issues before submitting your issue. -->

<!-- ⚠️ Before writing your issue make sure you are using:-->

<!-- Node 10.x.x -->

<!-- npm 6.x.x -->

<!-- The latest version of Strapi. -->

**Informations**

- **Node.js version**: 10.8.0

- **npm version**: 6.2.0

- **Strapi version**: alpha 14.4

- **Database**: pg

- **Operating system**: windows/wsl

**What is the current behavior?**

When I define the `where` clause of a related object for the parent ID, I get wrong information for nested properties.

**Steps to reproduce the problem**

So my model `event` consists of multiple `registrations`.

I can ask either for

1: `event(id: 6) {registrations{}}`

or for

2: `registrations(where: {event: 6}) {}`

while `event(id)` delivers the correct data, `registrations(where)` does not.

**Query 1:**

```

event(id: 6) {

registrations{

user {

id

username

}

}

}

```

**Response 1:**

```

"event": {

"registrations": [

{

"user": {

"id": "87",

"username": "kio"

}

},

{

"user": {

"id": "26",

"username": "chitatz"

}

},

{

"user": {

"id": "10",

"username": "akiru"

}

},

{

"user": {

"id": "56",

"username": "famis"

}

}

]

}

```

**Query 2:**

```

registrations(where: {event: 6}) {

id

user {

id

username

}

}

```

**Response 2:**

```

{

"registrations": [

{

"id": "17",

"user": {

"id": "39",

"username": "dawn"

}

},

{

"id": "18",

"user": {

"id": "39",

"username": "dawn"

}

},

{

"id": "19",

"user": {

"id": "39",

"username": "dawn"

}

},

{

"id": "20",

"user": {

"id": "39",

"username": "dawn"

}

}

]

}

```

**What is the expected behavior?**

relation where selectors should behave as object(id) selector

**Suggested solutions**

I believe this might be sql specific, but I can't be sure.

Make sure the related objects are properly resolved, I don't know why a user is returned that is not even in the list of registrations. | 1.0 | GraphQL get objects by relation ID - <!-- ⚠️ If you do not respect this template your issue will be closed. -->

<!-- =============================================================================== -->

<!-- ⚠️ If you are not using the current Strapi release, you will be asked to update. -->

<!-- Please see the wiki for guides on upgrading to the latest release. -->

<!-- =============================================================================== -->

<!-- ⚠️ Make sure to browse the opened and closed issues before submitting your issue. -->

<!-- ⚠️ Before writing your issue make sure you are using:-->

<!-- Node 10.x.x -->

<!-- npm 6.x.x -->

<!-- The latest version of Strapi. -->

**Informations**

- **Node.js version**: 10.8.0

- **npm version**: 6.2.0

- **Strapi version**: alpha 14.4

- **Database**: pg

- **Operating system**: windows/wsl

**What is the current behavior?**

When I define the `where` clause of a related object for the parent ID, I get wrong information for nested properties.

**Steps to reproduce the problem**

So my model `event` consists of multiple `registrations`.

I can ask either for

1: `event(id: 6) {registrations{}}`

or for

2: `registrations(where: {event: 6}) {}`

while `event(id)` delivers the correct data, `registrations(where)` does not.

**Query 1:**

```

event(id: 6) {

registrations{

user {

id

username

}

}

}

```

**Response 1:**

```

"event": {

"registrations": [

{

"user": {

"id": "87",

"username": "kio"

}

},

{

"user": {

"id": "26",

"username": "chitatz"

}

},

{

"user": {

"id": "10",

"username": "akiru"

}

},

{

"user": {

"id": "56",

"username": "famis"

}

}

]

}

```

**Query 2:**

```

registrations(where: {event: 6}) {

id

user {

id

username

}

}

```

**Response 2:**

```

{

"registrations": [

{

"id": "17",

"user": {

"id": "39",

"username": "dawn"

}

},

{

"id": "18",

"user": {

"id": "39",

"username": "dawn"

}

},

{

"id": "19",

"user": {

"id": "39",

"username": "dawn"

}

},

{

"id": "20",

"user": {

"id": "39",

"username": "dawn"

}

}

]

}

```

**What is the expected behavior?**

relation where selectors should behave as object(id) selector

**Suggested solutions**

I believe this might be sql specific, but I can't be sure.

Make sure the related objects are properly resolved, I don't know why a user is returned that is not even in the list of registrations. | priority | graphql get objects by relation id informations node js version npm version strapi version alpha database pg operating system windows wsl what is the current behavior when i define the where clause of a related object for the parent id i get wrong information for nested properties steps to reproduce the problem so my model event consists of multiple registrations i can ask either for event id registrations or for registrations where event while event id delivers the correct data registrations where does not query event id registrations user id username response event registrations user id username kio user id username chitatz user id username akiru user id username famis query registrations where event id user id username response registrations id user id username dawn id user id username dawn id user id username dawn id user id username dawn what is the expected behavior relation where selectors should behave as object id selector suggested solutions i believe this might be sql specific but i can t be sure make sure the related objects are properly resolved i don t know why a user is returned that is not even in the list of registrations | 1 |

704,686 | 24,206,105,866 | IssuesEvent | 2022-09-25 08:41:47 | AY2223S1-CS2103T-T13-4/tp | https://api.github.com/repos/AY2223S1-CS2103T-T13-4/tp | closed | As a user, I can add my contact's phone number | type.Story priority.High | so that I do not have to remember their phone number | 1.0 | As a user, I can add my contact's phone number - so that I do not have to remember their phone number | priority | as a user i can add my contact s phone number so that i do not have to remember their phone number | 1 |

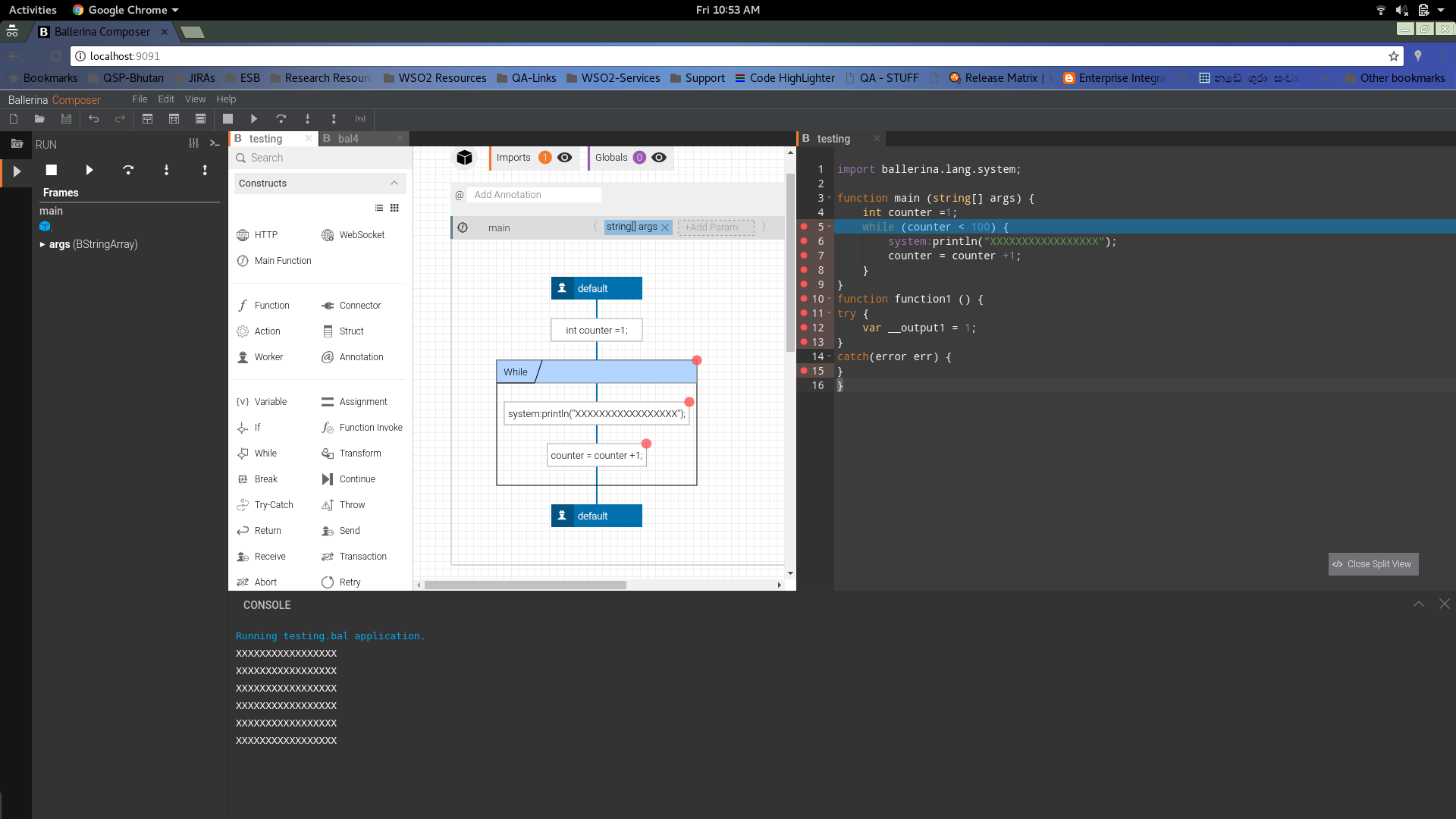

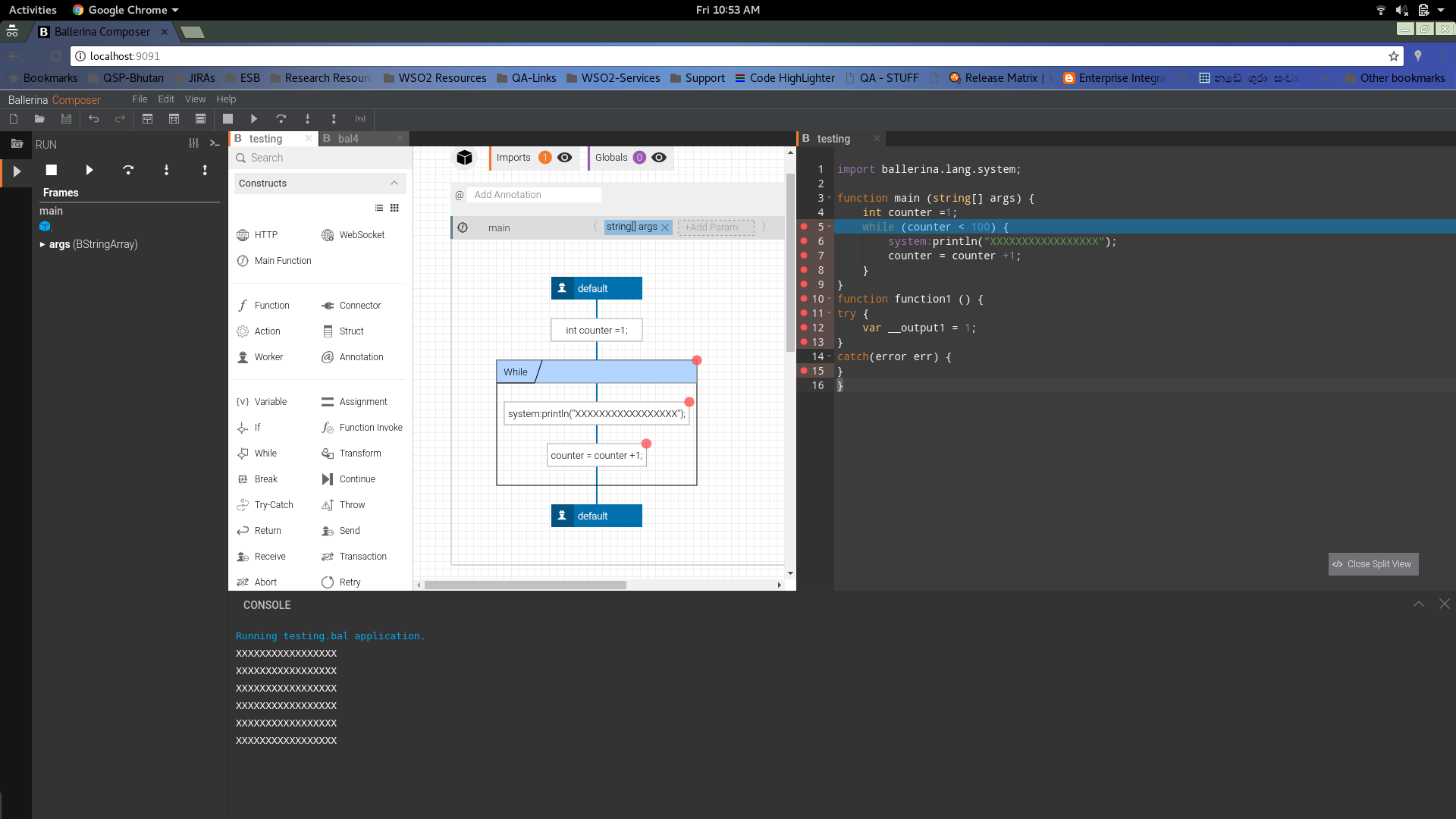

224,587 | 7,471,934,230 | IssuesEvent | 2018-04-03 10:54:39 | ballerina-lang/composer | https://api.github.com/repos/ballerina-lang/composer | closed | [Intermittent] 404 when loading the composer | Imported Priority/High Severity/Major Type/Bug cloud component/Composer | Release 0.93

404 is observed when trying to open the composer

| 1.0 | [Intermittent] 404 when loading the composer - Release 0.93

404 is observed when trying to open the composer

| priority | when loading the composer release is observed when trying to open the composer | 1 |

550,833 | 16,133,180,566 | IssuesEvent | 2021-04-29 08:25:41 | 389ds/389-ds-base | https://api.github.com/repos/389ds/389-ds-base | closed | 389ds coredump on the 1st server while installing an IPA replica | priority_high | The nightly test `test_integration/test_fips.py::TestInstallFIPS` failed in PR #[809](https://github.com/freeipa-pr-ci2/freeipa/pull/809) while installing a replica with CA.

This is similar to ipa #[8765](https://pagure.io/freeipa/issue/8765) / 389-ds #[4670](https://github.com/389ds/389-ds-base/issues/4670) but the coredump happens on the master at a different place.

```

Mar 27 21:13:08 master.ipa.test systemd-coredump[35807]: Process 34105 (ns-slapd) of user 389 dumped core.

Stack trace of thread 35774:

#0 0x00007f1ef2e250d7 dblayer_bulk_start (libback-ldbm.so + 0x260d7)

#1 0x00007f1ef2d46e06 clcache_load_buffer_bulk (libreplication-plugin.so + 0x2ce06)

#2 0x00007f1ef2d48228 clcache_load_buffer (libreplication-plugin.so + 0x2e228)

#3 0x00007f1ef2d4be76 _cl5PositionCursorForReplay (libreplication-plugin.so + 0x31e76)

#4 0x00007f1ef2d4c5e3 cl5CreateReplayIterator (libreplication-plugin.so + 0x325e3)

#5 0x00007f1ef2d5f787 repl5_inc_run (libreplication-plugin.so + 0x45787)

#6 0x00007f1ef2d66231 prot_thread_main (libreplication-plugin.so + 0x4c231)

#7 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#8 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#9 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34105:

#0 0x00007f1ef7cd9a5f __poll (libc.so.6 + 0xf6a5f)

#1 0x00007f1ef79b9d46 _pr_poll_with_poll (libnspr4.so + 0x2bd46)

#2 0x0000562234913ab8 slapd_daemon (ns-slapd + 0x87ab8)

#3 0x000056223490761a main (ns-slapd + 0x7b61a)

#4 0x00007f1ef7c0b1e2 __libc_start_main (libc.so.6 + 0x281e2)

#5 0x00005622349088ae _start (ns-slapd + 0x7c8ae)

Stack trace of thread 34106:

#0 0x00007f1ef7cdc1eb __select (libc.so.6 + 0xf91eb)

#1 0x00007f1ef7f27824 DS_Sleep (libslapd.so.0 + 0x162824)

#2 0x00007f1ef2e6b5df deadlock_threadmain (libback-ldbm.so + 0x6c5df)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34107:

#0 0x00007f1ef7cdc1eb __select (libc.so.6 + 0xf91eb)

#1 0x00007f1ef7f27824 DS_Sleep (libslapd.so.0 + 0x162824)

#2 0x00007f1ef2e70def checkpoint_threadmain (libback-ldbm.so + 0x71def)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34108:

#0 0x00007f1ef7cdc1eb __select (libc.so.6 + 0xf91eb)

#1 0x00007f1ef7f27824 DS_Sleep (libslapd.so.0 + 0x162824)

#2 0x00007f1ef2e6b427 trickle_threadmain (libback-ldbm.so + 0x6c427)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34109:

#0 0x00007f1ef7cdc1eb __select (libc.so.6 + 0xf91eb)

#1 0x00007f1ef7f27824 DS_Sleep (libslapd.so.0 + 0x162824)

#2 0x00007f1ef2e6b064 perf_threadmain (libback-ldbm.so + 0x6c064)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34111:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x00007f1ef7f12f1d slapi_wait_condvar_pt (libslapd.so.0 + 0x14df1d)

#2 0x00007f1ef2c84a51 roles_cache_wait_on_change (libroles-plugin.so + 0x6a51)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34112:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x00007f1ef7f12f1d slapi_wait_condvar_pt (libslapd.so.0 + 0x14df1d)

#2 0x00007f1ef2c84a51 roles_cache_wait_on_change (libroles-plugin.so + 0x6a51)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34113:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x00007f1ef7f12f1d slapi_wait_condvar_pt (libslapd.so.0 + 0x14df1d)

#2 0x00007f1ef2c84a51 roles_cache_wait_on_change (libroles-plugin.so + 0x6a51)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34114:

#0 0x00007f1ef79539e8 pthread_cond_timedwait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf9e8)

#1 0x000056223491682a housecleaning (ns-slapd + 0x8a82a)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34115:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x00007f1ef79afbc8 PR_WaitCondVar (libnspr4.so + 0x21bc8)

#2 0x00007f1ef7ebceab eq_loop (libslapd.so.0 + 0xf7eab)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34116:

#0 0x00007f1ef79539e8 pthread_cond_timedwait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf9e8)

#1 0x00007f1ef7ebccae eq_loop_rel (libslapd.so.0 + 0xf7cae)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34118:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34119:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34120:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34121:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34122:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34123:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34124:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34125:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34126:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34127:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34128:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x00007f1ef33afce3 __db_hybrid_mutex_suspend (libdb-5.3.so + 0x36ce3)

#2 0x00007f1ef33b00b1 __db_tas_mutex_lock_int (libdb-5.3.so + 0x370b1)

#3 0x00007f1ef345cbfe __lock_get_internal (libdb-5.3.so + 0xe3bfe)

#4 0x00007f1ef345e390 __lock_get (libdb-5.3.so + 0xe5390)

#5 0x00007f1ef34873a5 __db_lget (libdb-5.3.so + 0x10e3a5)

#6 0x00007f1ef33cc58a __bam_search (libdb-5.3.so + 0x5358a)

#7 0x00007f1ef33ba72b __bamc_search (libdb-5.3.so + 0x4172b)

#8 0x00007f1ef33bb1a8 __bamc_put (libdb-5.3.so + 0x421a8)

#9 0x00007f1ef34714fa __dbc_iput (libdb-5.3.so + 0xf84fa)

#10 0x00007f1ef347615a __db_put (libdb-5.3.so + 0xfd15a)

#11 0x00007f1ef3484fbe __db_put_pp (libdb-5.3.so + 0x10bfbe)

#12 0x00007f1ef2e73db6 bdb_public_db_op (libback-ldbm.so + 0x74db6)

#13 0x00007f1ef2d4a287 _cl5WriteOperationTxn (libreplication-plugin.so + 0x30287)

#14 0x00007f1ef2d4b57f cl5WriteOperationTxn (libreplication-plugin.so + 0x3157f)

#15 0x00007f1ef2d63009 write_changelog_and_ruv (libreplication-plugin.so + 0x49009)

#16 0x00007f1ef2d63481 multimaster_mmr_postop (libreplication-plugin.so + 0x49481)

#17 0x00007f1ef7ef618d plugin_call_mmr_plugin_postop (libslapd.so.0 + 0x13118d)

#18 0x00007f1ef2e533d7 ldbm_back_modify (libback-ldbm.so + 0x543d7)

#19 0x00007f1ef7ee588a op_shared_modify (libslapd.so.0 + 0x12088a)

#20 0x00007f1ef7ee64fd do_modify (libslapd.so.0 + 0x1214fd)

#21 0x0000562234910f8f connection_threadmain (ns-slapd + 0x84f8f)

#22 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#23 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#24 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34129:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34130:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34131:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34133:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34134:

#0 0x00007f1ef7cd9a5f __poll (libc.so.6 + 0xf6a5f)

#1 0x00007f1ef79b9d46 _pr_poll_with_poll (libnspr4.so + 0x2bd46)

#2 0x0000562234912ede accept_thread (ns-slapd + 0x86ede)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34378:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223491ba4c ps_send_results (ns-slapd + 0x8fa4c)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34382:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223491ba4c ps_send_results (ns-slapd + 0x8fa4c)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34385:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223491ba4c ps_send_results (ns-slapd + 0x8fa4c)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 35202:

#0 0x00007f1ef79539e8 pthread_cond_timedwait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf9e8)

#1 0x00007f1ef6a57783 sync_send_results (libcontentsync-plugin.so + 0x7783)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 35291:

#0 0x00007f1ef79539e8 pthread_cond_timedwait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf9e8)

#1 0x00007f1ef6a57783 sync_send_results (libcontentsync-plugin.so + 0x7783)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 35732:

#0 0x00007f1ef79539e8 pthread_cond_timedwait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf9e8)

#1 0x00007f1ef2d49650 _cl5TrimMain (libreplication-plugin.so + 0x2f650)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 35733:

#0 0x00007f1ef79539e8 pthread_cond_timedwait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf9e8)

#1 0x00007f1ef2d5b84e protocol_sleep (libreplication-plugin.so + 0x4184e)

#2 0x00007f1ef2d60dfb repl5_inc_run (libreplication-plugin.so + 0x46dfb)

#3 0x00007f1ef2d66231 prot_thread_main (libreplication-plugin.so + 0x4c231)

#4 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#5 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#6 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 35773:

#0 0x00007f1ef79539e8 pthread_cond_timedwait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf9e8)

#1 0x00007f1ef2d49650 _cl5TrimMain (libreplication-plugin.so + 0x2f650)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34110:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x00007f1ef7f12f1d slapi_wait_condvar_pt (libslapd.so.0 + 0x14df1d)

#2 0x00007f1ef6a69179 cos_cache_wait_on_change (libcos-plugin.so + 0x9179)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34132:

#0 0x00007f1ef7cdc3fb fsync (libc.so.6 + 0xf93fb)

#1 0x00007f1ef79b6988 pt_Fsync (libnspr4.so + 0x28988)

#2 0x00007f1ef7f39867 log_flush_buffer.constprop.0 (libslapd.so.0 + 0x174867)

#3 0x00007f1ef7eda37e vslapd_log_access (libslapd.so.0 + 0x11537e)

#4 0x00007f1ef7eda521 slapi_log_access (libslapd.so.0 + 0x115521)

#5 0x00007f1ef7f0a871 log_result (libslapd.so.0 + 0x145871)

#6 0x00007f1ef7f0b1ed send_ldap_result_ext (libslapd.so.0 + 0x1461ed)

#7 0x00007f1ef7f0b5ff send_ldap_result (libslapd.so.0 + 0x1465ff)

#8 0x00007f1ef7ef166d op_shared_search (libslapd.so.0 + 0x12c66d)

#9 0x0000562234920bf2 do_search (ns-slapd + 0x94bf2)

#10 0x0000562234911420 connection_threadmain (ns-slapd + 0x85420)

#11 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#12 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#13 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Mar 27 21:13:09 master.ipa.test systemd[1]: systemd-coredump@0-35806-0.service: Succeeded.

```

The installed version is 389-ds-base-2.0.3-20210327git741e7a72a.fc33.x86_64 taken from the nightly copr @389ds/389-ds-base-nightly.

Companion issue on IPA side: https://pagure.io/freeipa/issue/8778 | 1.0 | 389ds coredump on the 1st server while installing an IPA replica - The nightly test `test_integration/test_fips.py::TestInstallFIPS` failed in PR #[809](https://github.com/freeipa-pr-ci2/freeipa/pull/809) while installing a replica with CA.

This is similar to ipa #[8765](https://pagure.io/freeipa/issue/8765) / 389-ds #[4670](https://github.com/389ds/389-ds-base/issues/4670), but the coredump happens on the master at a different place.

```

Mar 27 21:13:08 master.ipa.test systemd-coredump[35807]: Process 34105 (ns-slapd) of user 389 dumped core.

Stack trace of thread 35774:

#0 0x00007f1ef2e250d7 dblayer_bulk_start (libback-ldbm.so + 0x260d7)

#1 0x00007f1ef2d46e06 clcache_load_buffer_bulk (libreplication-plugin.so + 0x2ce06)

#2 0x00007f1ef2d48228 clcache_load_buffer (libreplication-plugin.so + 0x2e228)

#3 0x00007f1ef2d4be76 _cl5PositionCursorForReplay (libreplication-plugin.so + 0x31e76)

#4 0x00007f1ef2d4c5e3 cl5CreateReplayIterator (libreplication-plugin.so + 0x325e3)

#5 0x00007f1ef2d5f787 repl5_inc_run (libreplication-plugin.so + 0x45787)

#6 0x00007f1ef2d66231 prot_thread_main (libreplication-plugin.so + 0x4c231)

#7 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#8 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#9 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34105:

#0 0x00007f1ef7cd9a5f __poll (libc.so.6 + 0xf6a5f)

#1 0x00007f1ef79b9d46 _pr_poll_with_poll (libnspr4.so + 0x2bd46)

#2 0x0000562234913ab8 slapd_daemon (ns-slapd + 0x87ab8)

#3 0x000056223490761a main (ns-slapd + 0x7b61a)

#4 0x00007f1ef7c0b1e2 __libc_start_main (libc.so.6 + 0x281e2)

#5 0x00005622349088ae _start (ns-slapd + 0x7c8ae)

Stack trace of thread 34106:

#0 0x00007f1ef7cdc1eb __select (libc.so.6 + 0xf91eb)

#1 0x00007f1ef7f27824 DS_Sleep (libslapd.so.0 + 0x162824)

#2 0x00007f1ef2e6b5df deadlock_threadmain (libback-ldbm.so + 0x6c5df)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34107:

#0 0x00007f1ef7cdc1eb __select (libc.so.6 + 0xf91eb)

#1 0x00007f1ef7f27824 DS_Sleep (libslapd.so.0 + 0x162824)

#2 0x00007f1ef2e70def checkpoint_threadmain (libback-ldbm.so + 0x71def)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34108:

#0 0x00007f1ef7cdc1eb __select (libc.so.6 + 0xf91eb)

#1 0x00007f1ef7f27824 DS_Sleep (libslapd.so.0 + 0x162824)

#2 0x00007f1ef2e6b427 trickle_threadmain (libback-ldbm.so + 0x6c427)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34109:

#0 0x00007f1ef7cdc1eb __select (libc.so.6 + 0xf91eb)

#1 0x00007f1ef7f27824 DS_Sleep (libslapd.so.0 + 0x162824)

#2 0x00007f1ef2e6b064 perf_threadmain (libback-ldbm.so + 0x6c064)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34111:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x00007f1ef7f12f1d slapi_wait_condvar_pt (libslapd.so.0 + 0x14df1d)

#2 0x00007f1ef2c84a51 roles_cache_wait_on_change (libroles-plugin.so + 0x6a51)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34112:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x00007f1ef7f12f1d slapi_wait_condvar_pt (libslapd.so.0 + 0x14df1d)

#2 0x00007f1ef2c84a51 roles_cache_wait_on_change (libroles-plugin.so + 0x6a51)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34113:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x00007f1ef7f12f1d slapi_wait_condvar_pt (libslapd.so.0 + 0x14df1d)

#2 0x00007f1ef2c84a51 roles_cache_wait_on_change (libroles-plugin.so + 0x6a51)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34114:

#0 0x00007f1ef79539e8 pthread_cond_timedwait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf9e8)

#1 0x000056223491682a housecleaning (ns-slapd + 0x8a82a)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34115:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x00007f1ef79afbc8 PR_WaitCondVar (libnspr4.so + 0x21bc8)

#2 0x00007f1ef7ebceab eq_loop (libslapd.so.0 + 0xf7eab)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34116:

#0 0x00007f1ef79539e8 pthread_cond_timedwait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf9e8)

#1 0x00007f1ef7ebccae eq_loop_rel (libslapd.so.0 + 0xf7cae)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34118:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34119:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34120:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34121:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34122:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34123:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34124:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34125:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34126:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34127:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34128:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x00007f1ef33afce3 __db_hybrid_mutex_suspend (libdb-5.3.so + 0x36ce3)

#2 0x00007f1ef33b00b1 __db_tas_mutex_lock_int (libdb-5.3.so + 0x370b1)

#3 0x00007f1ef345cbfe __lock_get_internal (libdb-5.3.so + 0xe3bfe)

#4 0x00007f1ef345e390 __lock_get (libdb-5.3.so + 0xe5390)

#5 0x00007f1ef34873a5 __db_lget (libdb-5.3.so + 0x10e3a5)

#6 0x00007f1ef33cc58a __bam_search (libdb-5.3.so + 0x5358a)

#7 0x00007f1ef33ba72b __bamc_search (libdb-5.3.so + 0x4172b)

#8 0x00007f1ef33bb1a8 __bamc_put (libdb-5.3.so + 0x421a8)

#9 0x00007f1ef34714fa __dbc_iput (libdb-5.3.so + 0xf84fa)

#10 0x00007f1ef347615a __db_put (libdb-5.3.so + 0xfd15a)

#11 0x00007f1ef3484fbe __db_put_pp (libdb-5.3.so + 0x10bfbe)

#12 0x00007f1ef2e73db6 bdb_public_db_op (libback-ldbm.so + 0x74db6)

#13 0x00007f1ef2d4a287 _cl5WriteOperationTxn (libreplication-plugin.so + 0x30287)

#14 0x00007f1ef2d4b57f cl5WriteOperationTxn (libreplication-plugin.so + 0x3157f)

#15 0x00007f1ef2d63009 write_changelog_and_ruv (libreplication-plugin.so + 0x49009)

#16 0x00007f1ef2d63481 multimaster_mmr_postop (libreplication-plugin.so + 0x49481)

#17 0x00007f1ef7ef618d plugin_call_mmr_plugin_postop (libslapd.so.0 + 0x13118d)

#18 0x00007f1ef2e533d7 ldbm_back_modify (libback-ldbm.so + 0x543d7)

#19 0x00007f1ef7ee588a op_shared_modify (libslapd.so.0 + 0x12088a)

#20 0x00007f1ef7ee64fd do_modify (libslapd.so.0 + 0x1214fd)

#21 0x0000562234910f8f connection_threadmain (ns-slapd + 0x84f8f)

#22 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#23 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#24 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34129:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34130:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34131:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34133:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223490f417 connection_threadmain (ns-slapd + 0x83417)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34134:

#0 0x00007f1ef7cd9a5f __poll (libc.so.6 + 0xf6a5f)

#1 0x00007f1ef79b9d46 _pr_poll_with_poll (libnspr4.so + 0x2bd46)

#2 0x0000562234912ede accept_thread (ns-slapd + 0x86ede)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34378:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223491ba4c ps_send_results (ns-slapd + 0x8fa4c)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34382:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223491ba4c ps_send_results (ns-slapd + 0x8fa4c)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34385:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x000056223491ba4c ps_send_results (ns-slapd + 0x8fa4c)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 35202:

#0 0x00007f1ef79539e8 pthread_cond_timedwait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf9e8)

#1 0x00007f1ef6a57783 sync_send_results (libcontentsync-plugin.so + 0x7783)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 35291:

#0 0x00007f1ef79539e8 pthread_cond_timedwait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf9e8)

#1 0x00007f1ef6a57783 sync_send_results (libcontentsync-plugin.so + 0x7783)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 35732:

#0 0x00007f1ef79539e8 pthread_cond_timedwait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf9e8)

#1 0x00007f1ef2d49650 _cl5TrimMain (libreplication-plugin.so + 0x2f650)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 35733:

#0 0x00007f1ef79539e8 pthread_cond_timedwait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf9e8)

#1 0x00007f1ef2d5b84e protocol_sleep (libreplication-plugin.so + 0x4184e)

#2 0x00007f1ef2d60dfb repl5_inc_run (libreplication-plugin.so + 0x46dfb)

#3 0x00007f1ef2d66231 prot_thread_main (libreplication-plugin.so + 0x4c231)

#4 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#5 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#6 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 35773:

#0 0x00007f1ef79539e8 pthread_cond_timedwait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf9e8)

#1 0x00007f1ef2d49650 _cl5TrimMain (libreplication-plugin.so + 0x2f650)

#2 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#3 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#4 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34110:

#0 0x00007f1ef79536c2 pthread_cond_wait@@GLIBC_2.3.2 (libpthread.so.0 + 0xf6c2)

#1 0x00007f1ef7f12f1d slapi_wait_condvar_pt (libslapd.so.0 + 0x14df1d)

#2 0x00007f1ef6a69179 cos_cache_wait_on_change (libcos-plugin.so + 0x9179)

#3 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#4 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#5 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Stack trace of thread 34132:

#0 0x00007f1ef7cdc3fb fsync (libc.so.6 + 0xf93fb)

#1 0x00007f1ef79b6988 pt_Fsync (libnspr4.so + 0x28988)

#2 0x00007f1ef7f39867 log_flush_buffer.constprop.0 (libslapd.so.0 + 0x174867)

#3 0x00007f1ef7eda37e vslapd_log_access (libslapd.so.0 + 0x11537e)

#4 0x00007f1ef7eda521 slapi_log_access (libslapd.so.0 + 0x115521)

#5 0x00007f1ef7f0a871 log_result (libslapd.so.0 + 0x145871)

#6 0x00007f1ef7f0b1ed send_ldap_result_ext (libslapd.so.0 + 0x1461ed)

#7 0x00007f1ef7f0b5ff send_ldap_result (libslapd.so.0 + 0x1465ff)

#8 0x00007f1ef7ef166d op_shared_search (libslapd.so.0 + 0x12c66d)

#9 0x0000562234920bf2 do_search (ns-slapd + 0x94bf2)

#10 0x0000562234911420 connection_threadmain (ns-slapd + 0x85420)

#11 0x00007f1ef79b9150 _pt_root (libnspr4.so + 0x2b150)

#12 0x00007f1ef794d3f9 start_thread (libpthread.so.0 + 0x93f9)

#13 0x00007f1ef7ce4b53 __clone (libc.so.6 + 0x101b53)

Mar 27 21:13:09 master.ipa.test systemd[1]: systemd-coredump@0-35806-0.service: Succeeded.

```

The installed version is 389-ds-base-2.0.3-20210327git741e7a72a.fc33.x86_64 taken from the nightly copr @389ds/389-ds-base-nightly.

Companion issue on IPA side: https://pagure.io/freeipa/issue/8778 | priority | coredump on the server while installing an ipa replica the nightly test test integration test fips py testinstallfips failed in pr while installing a replica with ca this is simlar to ipa ds but the coredump happens on the master at a different place mar master ipa test systemd coredump process ns slapd of user dumped core stack trace of thread dblayer bulk start libback ldbm so clcache load buffer bulk libreplication plugin so clcache load buffer libreplication plugin so libreplication plugin so libreplication plugin so inc run libreplication plugin so prot thread main libreplication plugin so pt root so start thread libpthread so clone libc so stack trace of thread poll libc so pr poll with poll so slapd daemon ns slapd main ns slapd libc start main libc so start ns slapd stack trace of thread select libc so ds sleep libslapd so deadlock threadmain libback ldbm so pt root so start thread libpthread so clone libc so stack trace of thread select libc so ds sleep libslapd so checkpoint threadmain libback ldbm so pt root so start thread libpthread so clone libc so stack trace of thread select libc so ds sleep libslapd so trickle threadmain libback ldbm so pt root so start thread libpthread so clone libc so stack trace of thread select libc so ds sleep libslapd so perf threadmain libback ldbm so pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so slapi wait condvar pt libslapd so roles cache wait on change libroles plugin so pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so slapi wait condvar pt libslapd so roles cache wait on change libroles plugin so pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so slapi wait condvar pt libslapd so roles cache wait on change libroles plugin so pt root so start thread 
libpthread so clone libc so stack trace of thread pthread cond timedwait glibc libpthread so housecleaning ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so pr waitcondvar so eq loop libslapd so pt root so start thread libpthread so clone libc so stack trace of thread pthread cond timedwait glibc libpthread so eq loop rel libslapd so pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so db hybrid mutex suspend libdb so db tas mutex lock 
int libdb so lock get internal libdb so lock get libdb so db lget libdb so bam search libdb so bamc search libdb so bamc put libdb so dbc iput libdb so db put libdb so db put pp libdb so bdb public db op libback ldbm so libreplication plugin so libreplication plugin so write changelog and ruv libreplication plugin so multimaster mmr postop libreplication plugin so plugin call mmr plugin postop libslapd so ldbm back modify libback ldbm so op shared modify libslapd so do modify libslapd so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so connection threadmain ns slapd pt root so start thread libpthread so clone libc so stack trace of thread poll libc so pr poll with poll so accept thread ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so ps send results ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so ps send results ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so ps send results ns slapd pt root so start thread libpthread so clone libc so stack trace of thread pthread cond timedwait glibc libpthread so sync send results libcontentsync plugin so pt root so start thread libpthread so clone libc so stack trace of thread pthread cond timedwait glibc libpthread so sync send results libcontentsync plugin so pt root so start thread libpthread so 
clone libc so stack trace of thread pthread cond timedwait glibc libpthread so libreplication plugin so pt root so start thread libpthread so clone libc so stack trace of thread pthread cond timedwait glibc libpthread so protocol sleep libreplication plugin so inc run libreplication plugin so prot thread main libreplication plugin so pt root so start thread libpthread so clone libc so stack trace of thread pthread cond timedwait glibc libpthread so libreplication plugin so pt root so start thread libpthread so clone libc so stack trace of thread pthread cond wait glibc libpthread so slapi wait condvar pt libslapd so cos cache wait on change libcos plugin so pt root so start thread libpthread so clone libc so stack trace of thread fsync libc so pt fsync so log flush buffer constprop libslapd so vslapd log access libslapd so slapi log access libslapd so log result libslapd so send ldap result ext libslapd so send ldap result libslapd so op shared search libslapd so do search ns slapd connection threadmain ns slapd pt root so start thread libpthread so clone libc so mar master ipa test systemd systemd coredump service succeeded the installed version is ds base taken from the nightly copr ds base nightly companion issue on ipa side | 1 |

118,958 | 4,758,403,224 | IssuesEvent | 2016-10-24 19:23:10 | NashTeamAlpha/BangazonWeb | https://api.github.com/repos/NashTeamAlpha/BangazonWeb | closed | Add Items from BangazonAPI Startup file | First Wave High priority | ## Feature Name

1. Add MVC service to Startup.cs file

1. Add CORS service to Startup.cs file

1. Add string path to Startup.cs file

Basically, copy and paste lines 31 through 51 (approx) from the BangazonAPI Startup.cs file to the current project's Startup.cs

| 1.0 | Add Items from BangazonAPI Startup file - ## Feature Name

1. Add MVC service to Startup.cs file

1. Add CORS service to Startup.cs file

1. Add string path to Startup.cs file

Basically, copy and paste lines 31 through 51 (approx) from the BangazonAPI Startup.cs file to the current project's Startup.cs

| priority | add items from bangazonapi startup file feature name add mvc service to startup cs file add cors service to startup cs file add string path to startup cs file basically copy and paste lines through approx from the bangazonapi startup cs file to the current project s startup cs | 1 |

442,215 | 12,741,781,118 | IssuesEvent | 2020-06-26 07:01:24 | Eastrall/Rhisis | https://api.github.com/repos/Eastrall/Rhisis | closed | Mars Mine: Mobs take no damage and drop nothing | bug priority: high srv: world sys: battle v0.4.x | # :beetle: Bug Report

**Rhisis version:** v0.4.3

## Expected Behavior

Mobs take damage and drop loot.

## Current Behavior

Mobs don't even register taking damage and drop nothing after relog.

## Steps to Reproduce

1. Enter Mars Mine.

2. Attack Mutant Feferns.

3. Relog.

4. **Mobs now take damage** but won't drop loot.

| 1.0 | Mars Mine: Mobs take no damage and drop nothing - # :beetle: Bug Report

**Rhisis version:** v0.4.3

## Expected Behavior

Mobs take damage and drop loot.

## Current Behavior

Mobs don't even register taking damage and drop nothing after relog.

## Steps to Reproduce

1. Enter Mars Mine.

2. Attack Mutant Feferns.

3. Relog.

4. **Mobs now take damage** but won't drop loot.

| priority | mars mine mobs take no damage and drop nothing beetle bug report rhisis version expected behavior mobs take damage and drop loot current behavior mobs don t even register taking damage and drop nothing after relog steps to reproduce enter mars mine attack mutant feferns relog mobs now take damage but won t drop loot | 1 |

566,607 | 16,825,275,389 | IssuesEvent | 2021-06-17 17:40:38 | ansible/awx | https://api.github.com/repos/ansible/awx | closed | Unable to view notification template in UI | component:ui priority:high state:needs_devel type:bug | <!-- Issues are for **concrete, actionable bugs and feature requests** only - if you're just asking for debugging help or technical support, please use:

- http://webchat.freenode.net/?channels=ansible-awx

- https://groups.google.com/forum/#!forum/awx-project

We have to limit this because of limited volunteer time to respond to issues! -->

##### ISSUE TYPE

- Bug Report

##### SUMMARY

<!-- Briefly describe the problem. -->

I am trying to create a webhook notification template. When I first created the notification template, I was greeted with a blank screen and these JavaScript errors:

```

worker-json.js:1 GET https://my-awx-url.com/worker-json.js 404

react-dom.production.min.js:209 TypeError: console.warning is not a function

at Ec (CodeEditor.jsx:88)

at Zo (react-dom.production.min.js:153)

at Ss (react-dom.production.min.js:261)

at vl (react-dom.production.min.js:246)

at ml (react-dom.production.min.js:246)

at sl (react-dom.production.min.js:239)

at react-dom.production.min.js:123

at t.unstable_runWithPriority (scheduler.production.min.js:19)

at Vi (react-dom.production.min.js:122)

at Yi (react-dom.production.min.js:123)

asyncToGenerator.js:6 Uncaught (in promise) TypeError: console.warning is not a function

at Ec (CodeEditor.jsx:88)

at Zo (react-dom.production.min.js:153)

at Ss (react-dom.production.min.js:261)

at vl (react-dom.production.min.js:246)

at ml (react-dom.production.min.js:246)

at sl (react-dom.production.min.js:239)

at react-dom.production.min.js:123

at t.unstable_runWithPriority (scheduler.production.min.js:19)

at Vi (react-dom.production.min.js:122)

at Yi (react-dom.production.min.js:123)

useWebsocket.js:20 WebSocket is already in CLOSING or CLOSED state.

```

However, if I go back to my AWX homepage and open the notifications screen, I see that the template has still been created. I can edit the template fine, but if I try to click the template (`#/notification_templates/1/details`), I get the same errors as above.

##### ENVIRONMENT

* AWX version: 19.1.0

* AWX install method: kubernetes

* Ansible version: X.Y.Z

* Operating System:

* Web Browser: Chrome

##### STEPS TO REPRODUCE

<!-- Please describe exactly how to reproduce the problem. -->

1. Add a new notification template and fill out all required fields (I am working with webhook notifications but it seems this problem persists across all notification types)

2. Press "save" on the create notification template screen

##### EXPECTED RESULTS

<!-- What did you expect to happen when running the steps above? -->

The notification template to be created and for me to be redirected to its page successfully (e.g. `#/notification_templates/1/details`)

##### ACTUAL RESULTS

<!-- What actually happened? -->

I get a blank screen and the javascript errors mentioned above.

##### ADDITIONAL INFORMATION

<!-- Include any links to sosreport, database dumps, screenshots or other

information. -->

| 1.0 | Unable to view notification template in UI - <!-- Issues are for **concrete, actionable bugs and feature requests** only - if you're just asking for debugging help or technical support, please use:

- http://webchat.freenode.net/?channels=ansible-awx

- https://groups.google.com/forum/#!forum/awx-project

We have to limit this because of limited volunteer time to respond to issues! -->

##### ISSUE TYPE

- Bug Report

##### SUMMARY

<!-- Briefly describe the problem. -->

I am trying to create a webhook notification template. When I first created the notification template, I was greeted with a blank screen and these JavaScript errors:

```

worker-json.js:1 GET https://my-awx-url.com/worker-json.js 404

react-dom.production.min.js:209 TypeError: console.warning is not a function

at Ec (CodeEditor.jsx:88)

at Zo (react-dom.production.min.js:153)

at Ss (react-dom.production.min.js:261)

at vl (react-dom.production.min.js:246)

at ml (react-dom.production.min.js:246)

at sl (react-dom.production.min.js:239)

at react-dom.production.min.js:123

at t.unstable_runWithPriority (scheduler.production.min.js:19)

at Vi (react-dom.production.min.js:122)

at Yi (react-dom.production.min.js:123)

asyncToGenerator.js:6 Uncaught (in promise) TypeError: console.warning is not a function

at Ec (CodeEditor.jsx:88)

at Zo (react-dom.production.min.js:153)

at Ss (react-dom.production.min.js:261)

at vl (react-dom.production.min.js:246)

at ml (react-dom.production.min.js:246)

at sl (react-dom.production.min.js:239)

at react-dom.production.min.js:123

at t.unstable_runWithPriority (scheduler.production.min.js:19)

at Vi (react-dom.production.min.js:122)

at Yi (react-dom.production.min.js:123)

useWebsocket.js:20 WebSocket is already in CLOSING or CLOSED state.

```

If I go back to my AWX homepage and go to the notifications screen however, I see that the template has still been created. I can edit the template fine, but if I try and click the template: `#/notification_templates/1/details`, I get the same results from above.

##### ENVIRONMENT

* AWX version: 19.1.0

* AWX install method: kubernetes

* Ansible version: X.Y.Z

* Operating System:

* Web Browser: Chrome

##### STEPS TO REPRODUCE

<!-- Please describe exactly how to reproduce the problem. -->

1. Add a new notification template and fill out all required fields (I am working with webhook notifications but it seems this problem persists across all notification types)

2. Press "save" on the create notification template screen

##### EXPECTED RESULTS

<!-- What did you expect to happen when running the steps above? -->

The notification template to be created and for me to be redirected to its page successfully (e.g. `#/notification_templates/1/details`)

##### ACTUAL RESULTS

<!-- What actually happened? -->

I get a blank screen and the javascript errors mentioned above.

##### ADDITIONAL INFORMATION

<!-- Include any links to sosreport, database dumps, screenshots or other

information. -->

| priority | unable to view notification template in ui issues are for concrete actionable bugs and feature requests only if you re just asking for debugging help or technical support please use we have to limit this because of limited volunteer time to respond to issues issue type bug report summary i am trying to create a webhook notification template when i first created the notification template i was greeted with a blank screen and these javascript errors worker json js get react dom production min js typeerror console warning is not a function at ec codeeditor jsx at zo react dom production min js at ss react dom production min js at vl react dom production min js at ml react dom production min js at sl react dom production min js at react dom production min js at t unstable runwithpriority scheduler production min js at vi react dom production min js at yi react dom production min js asynctogenerator js uncaught in promise typeerror console warning is not a function at ec codeeditor jsx at zo react dom production min js at ss react dom production min js at vl react dom production min js at ml react dom production min js at sl react dom production min js at react dom production min js at t unstable runwithpriority scheduler production min js at vi react dom production min js at yi react dom production min js usewebsocket js websocket is already in closing or closed state if i go back to my awx homepage and go to the notifications screen however i see that the template has still been created i can edit the template fine but if i try and click the template notification templates details i get the same results from above environment awx version awx install method kubernetes ansible version x y z operating system web browser chrome steps to reproduce add a new notification template and fill out all required fields i am working with webhook notifications but it seems this problem persists across all notification types press save on the create notification template 
screen expected results the notification template to be created and for me to be redirected to its page successfully e g notification templates details actual results i get a blank screen and the javascript errors mentioned above additional information include any links to sosreport database dumps screenshots or other information | 1 |

718,993 | 24,740,730,846 | IssuesEvent | 2022-10-21 04:44:05 | AY2223S1-CS2103T-W10-4/tp | https://api.github.com/repos/AY2223S1-CS2103T-W10-4/tp | closed | feat(tasks): Edit module associated to task | type.Story priority.High | # User Story

Tasks/Deadlines User Story 11

As a user, I can change the module of a specific task or deadline, so that I can move the tasks around if I make a mistake. | 1.0 | feat(tasks): Edit module associated to task - # User Story

Tasks/Deadlines User Story 11

As a user, I can change the module of a specific task or deadline, so that I can move the tasks around if I make a mistake. | priority | feat tasks edit module associated to task user story tasks deadlines user story as a user i can change the module of a specific task or deadline so that i can move the tasks around if i make a mistake | 1 |

145,331 | 5,565,001,028 | IssuesEvent | 2017-03-26 10:00:09 | Caleydo/mothertable | https://api.github.com/repos/Caleydo/mothertable | opened | Brush only works from top to bottom | high priority | Should also work when the user starts to brush an element and the drags upwards. | 1.0 | Brush only works from top to bottom - Should also work when the user starts to brush an element and the drags upwards. | priority | brush only works from top to bottom should also work when the user starts to brush an element and the drags upwards | 1 |

721,181 | 24,820,465,801 | IssuesEvent | 2022-10-25 16:02:07 | AY2223S1-CS2113-T17-4/tp | https://api.github.com/repos/AY2223S1-CS2113-T17-4/tp | closed | Create default class | type.Bug priority.High severity.High | Currently the backup .json doesn't get loaded into the .jar file. A `Defaults` class can be made to store this data, and when fetching has failed, can create a backup .json in the file directory. | 1.0 | Create default class - Currently the backup .json doesn't get loaded into the .jar file. A `Defaults` class can be made to store this data, and when fetching has failed, can create a backup .json in the file directory. | priority | create default class currently the backup json doesn t get loaded into the jar file a defaults class can be made to store this data and when fetching has failed can create a backup json in the file directory | 1 |

328,984 | 10,010,768,026 | IssuesEvent | 2019-07-15 08:54:15 | IATI/ckanext-iati | https://api.github.com/repos/IATI/ckanext-iati | closed | Many error messages on the Registry | High priority bug | Hi,

I tried to purge the Registry today but received a timeout error, and it didn't compete.

This data file is also showing a fair few error messages. Not sure if it's related to the purging or not? https://iatiregistry.org/dataset/ec-fpi-88

| 1.0 | Many error messages on the Registry - Hi,

I tried to purge the Registry today but received a timeout error, and it didn't compete.

This data file is also showing a fair few error messages. Not sure if it's related to the purging or not? https://iatiregistry.org/dataset/ec-fpi-88

| priority | many error messages on the registry hi i tried to purge the registry today but received a timeout error and it didn t compete this data file is also showing a fair few error messages not sure if it s related to the purging or not | 1 |

103,049 | 4,164,299,707 | IssuesEvent | 2016-06-18 17:58:29 | ALitttleBitDifferent/AmbientPrologueBugs | https://api.github.com/repos/ALitttleBitDifferent/AmbientPrologueBugs | opened | Camera not centered on Krini during the fight. | bug High Priority | The camera turned sideways during the fight. It might have been caused by trying to focus on Krini while at the same time trying to rotate it. | 1.0 | Camera not centered on Krini during the fight. - The camera turned sideways during the fight. It might have been caused by trying to focus on Krini while at the same time trying to rotate it. | priority | camera not centered on krini during the fight the camera turned sideways during the fight it might have been caused by trying to focus on krini while at the same time trying to rotate it | 1 |

125,230 | 4,954,559,903 | IssuesEvent | 2016-12-01 17:56:01 | lgblgblgb/xemu | https://api.github.com/repos/lgblgblgb/xemu | closed | Mega-65: colour RAM handling bug sometimes | bug HIGH PRIORITY MEGA65 work in progress | It seems, M65 emulator has an issue with colour RAM, sometimes wrong information is read. For example by scrolling the screen, the C65 "colour stripes" disappears as being colour on screen. The problem is given by the fact (it seems) that I use tricks to manage colour RAM at multiple places to speed up the more frequent reads (compared to writes). This should be fixed! Though I noticed now about the DMA changes, it's not DMA related, but an older bug in the general address decoding part. | 1.0 | Mega-65: colour RAM handling bug sometimes - It seems, M65 emulator has an issue with colour RAM, sometimes wrong information is read. For example by scrolling the screen, the C65 "colour stripes" disappears as being colour on screen. The problem is given by the fact (it seems) that I use tricks to manage colour RAM at multiple places to speed up the more frequent reads (compared to writes). This should be fixed! Though I noticed now about the DMA changes, it's not DMA related, but an older bug in the general address decoding part. | priority | mega colour ram handling bug sometimes it seems emulator has an issue with colour ram sometimes wrong information is read for example by scrolling the screen the colour stripes disappears as being colour on screen the problem is given by the fact it seems that i use tricks to manage colour ram at multiple places to speed up the more frequent reads compared to writes this should be fixed though i noticed now about the dma changes it s not dma related but an older bug in the general address decoding part | 1 |

471,774 | 13,610,124,020 | IssuesEvent | 2020-09-23 06:49:17 | GluuFederation/oxauth-config | https://api.github.com/repos/GluuFederation/oxauth-config | closed | OAuth - UMA Scopes | Priority-HIGH User Story | # Description

This endpoint can be used to create, view, add, search, partially update, complete update as well as deletion of a UMA scope.

# Endpoint

https://<servername:port>/api/v1/oxauth/uma/scopes/{inum}

# Supported methods