Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

253,295 | 8,053,806,660 | IssuesEvent | 2018-08-02 01:17:34 | Prospress/action-scheduler | https://api.github.com/repos/Prospress/action-scheduler | opened | Restore batch processing loop to boost default batch processing speed | enhancement priority:high | At the moment, Action Scheduler will process 25 actions per run. That's it. No more until the next loop, despite [what the `README.md` file says](https://github.com/Prospress/action-scheduler/blob/2.0.0/README.md#batch-processing).

This is because the [loop for processing actions until running out of time or memory was removed](https://github.com/Prospress/action-scheduler/commit/5145a9d66e8a12639f3ebd870a0381ca977803db) to prevent the timeout errors that have since been addressed with #134.

**To (greatly) increase the default processing speed, we should reinstate this loop.**

While we have timeout prevention in place with #134, we still need memory monitoring to safely introduce a loop.

The `WP_Background_Process` provides a good approach for how to do this with the `memory_exceeded()` method.
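
As a rough illustration of the loop being proposed, here is a minimal, language-neutral sketch in Python rather than the plugin's PHP. The names `run_queue`, `claim_batch`, `process` and `memory_used` are hypothetical and not part of Action Scheduler or `WP_Background_Process`, and the 90% headroom factor is an assumption chosen for the example.

```python
import time


def run_queue(claim_batch, process, memory_used, memory_limit,
              time_limit, clock=time.monotonic):
    """Process batches until the queue is empty or a limit is reached.

    All parameters are hypothetical stand-ins:
      claim_batch()  -> list of pending actions (empty when nothing is left)
      process(item)  -> runs one action
      memory_used()  -> current memory usage, same unit as memory_limit
      time_limit     -> seconds this run is allowed to take
    """
    started = clock()
    processed = 0
    while True:
        # Stop before the hard limit is hit (assumed 90% headroom).
        if memory_used() >= 0.9 * memory_limit:
            break
        # Stop once the time budget for this run is spent.
        if clock() - started >= time_limit:
            break
        batch = claim_batch()
        if not batch:
            break  # nothing left to do
        for action in batch:
            process(action)
            processed += 1
    return processed
```

Keeping both limit checks at the top of the loop means a runner that should not be constrained (such as a CLI runner) could pass in checks that never trip, which is the kind of override the issue has in mind.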

I checked both the [WC implementation of `WP_Background_Process::memory_exceeded()`](https://github.com/woocommerce/woocommerce/blob/3.4.0/includes/libraries/wp-background-process.php#L343-L353) and the [SkyVerge implementation](https://github.com/woocommerce/woocommerce/blob/3.4.0/includes/libraries/wp-background-process.php#L335-L353), and neither of them has added any patches to it, so the approach is likely working reliably at their scale and can be relied upon in Action Scheduler.

Once we have the memory limit in place, we should be able to implement a method like [`WC_Background_Process::batch_limit_exceeded()`](https://github.com/woocommerce/woocommerce/blob/master/includes/abstracts/class-wc-background-process.php#L80-L87) in `ActionScheduler_Abstract_QueueRunner` to control the loop. This means that method can be overridden in other runners, namely `ActionScheduler_WPCLI_QueueRunner`, that do not want to be constrained by timeouts (or likely memory usage, as it has the `stop_the_insanity()` method). | 1.0 | Restore batch processing loop to boost default batch processing speed - At the moment, Action Scheduler will process 25 actions per run. That's it. No more until the next loop, despite [what the `README.md` file says](https://github.com/Prospress/action-scheduler/blob/2.0.0/README.md#batch-processing).

This is because the [loop for processing actions until running out of time or memory was removed](https://github.com/Prospress/action-scheduler/commit/5145a9d66e8a12639f3ebd870a0381ca977803db) to prevent the timeout errors that have since been addressed with #134.

**To (greatly) increase the default processing speed, we should reinstate this loop.**

While we have timeout prevention in place with #134, we still need memory monitoring to safely introduce a loop.

The `WP_Background_Process` provides a good approach for how to do this with the `memory_exceeded()` method.

I checked both the [WC implementation of `WP_Background_Process::memory_exceeded()`](https://github.com/woocommerce/woocommerce/blob/3.4.0/includes/libraries/wp-background-process.php#L343-L353) and the [SkyVerge implementation](https://github.com/woocommerce/woocommerce/blob/3.4.0/includes/libraries/wp-background-process.php#L335-L353), and neither of them has added any patches to it, so the approach is likely working reliably at their scale and can be relied upon in Action Scheduler.

Once we have the memory limit in place, we should be able to implement a method like [`WC_Background_Process::batch_limit_exceeded()`](https://github.com/woocommerce/woocommerce/blob/master/includes/abstracts/class-wc-background-process.php#L80-L87) in `ActionScheduler_Abstract_QueueRunner` to control the loop. This means that method can be overridden in other runners, namely `ActionScheduler_WPCLI_QueueRunner`, that do not want to be constrained by timeouts (or likely memory usage, as it has the `stop_the_insanity()` method). | priority | restore batch processing loop to boost default batch processing speed at the moment action scheduler will process actions per run that s it no more until the next loop despite this is because the to prevent the timeout errors that have since been addressed with to greatly increase the default processing speed we should reinstate this loop while we have timeout prevention in place with we still need memory monitoring to safely introduce a loop the wp background process provides a good approach for how to do this with the memory exceeded method i checked both the and the and neither of this have added any additional patches to that so the approach is likely working reliably at their scale and can be relied upon in action scheduler once we have the memory limit in place we should be able to implement a method like wc background process batch limit exceeded in actionscheduler abstract queuerunner to control the loop this means that method can be overridden in other runners namely actionscheduler wpcli queuerunner that do not want to be constrained by timeouts or likely memory usage as it has the stop the insanity method | 1 |

587,647 | 17,627,640,117 | IssuesEvent | 2021-08-19 01:16:13 | parallel-finance/parallel | https://api.github.com/repos/parallel-finance/parallel | closed | Implement LiquidStaking2.0, stake-client interaction part | high priority | **Motivation**

The on-chain staking pallet needs to interact with the off-chain stake-client; here are the main methods.

Please adjust if necessary.

Let's develop from this PR, #362 @alannotnerd

**Suggested Solution**

- [x] trigger_new_era

- [x] record_reward

- [x] record_slash

- [x] record_bond_response/record_bond_extra_response/record_rebond_response/record_unbond_response

- [x] transfer_to_relaychain | 1.0 | Implement LiquidStaking2.0, stake-client interaction part - **Motivation**

The on-chain staking pallet needs to interact with the off-chain stake-client; here are the main methods.

Please adjust if necessary.

Let's develop from this PR, #362 @alannotnerd

**Suggested Solution**

- [x] trigger_new_era

- [x] record_reward

- [x] record_slash

- [x] record_bond_response/record_bond_extra_response/record_rebond_response/record_unbond_response

- [x] transfer_to_relaychain | priority | implement stake client interaction part motivation on chain staking pallet needs to interact with off chain stake client here are the main methods please adjust if necessary let s develop from this pr alannotnerd suggested solution trigger new era record reward record slash record bond response record bond extra response record rebond response record unbond response transfer to relaychain | 1 |

614,860 | 19,191,177,861 | IssuesEvent | 2021-12-06 00:45:16 | myConsciousness/duolingo4d | https://api.github.com/repos/myConsciousness/duolingo4d | opened | Explicitly specify the character encoding when converting JSON returned from the Duolingo API | Priority: high Type: improvement | <!--

Please describe the feature you'd like to see us implement along with a use

case.

-->

Modify the code to explicitly specify the character encoding when converting the JSON string returned from the Duolingo API.

```dart

jsonDecode(utf8.decode(response.body.runes.toList()));

``` | 1.0 | Explicitly specify the character encoding when converting JSON returned from the Duolingo API - <!--

Please describe the feature you'd like to see us implement along with a use

case.

-->

Modify the code to explicitly specify the character encoding when converting the JSON string returned from the Duolingo API.

```dart

jsonDecode(utf8.decode(response.body.runes.toList()));

``` | priority | explicitly specify the character encoding when converting json returned from the duolingo api please describe the feature you d like to see us implement along with a use case modify the code to explicitly specify the character encoding when converting the json string returned from the duolingo api dart jsondecode decode response body runes tolist | 1 |

439,165 | 12,678,491,696 | IssuesEvent | 2020-06-19 09:51:57 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Langlib tests are failing in windows due to exception in positionRangeCheck | Component/LangLib Component/Parser Priority/High Type/Bug | **Description:**

Disabled in commit

https://github.com/ballerina-platform/ballerina-lang/pull/24258/commits/2a79b95fe5b555ea10d2982e1f6dc5eaeaaa01f9 | 1.0 | Langlib tests are failing in windows due to exception in positionRangeCheck - **Description:**

Disabled in commit

https://github.com/ballerina-platform/ballerina-lang/pull/24258/commits/2a79b95fe5b555ea10d2982e1f6dc5eaeaaa01f9 | priority | langlib tests are failing in windows due to exception in positionrangecheck description disabled in commit | 1 |

140,411 | 5,408,640,339 | IssuesEvent | 2017-03-01 00:48:47 | ubc/compair | https://api.github.com/repos/ubc/compair | closed | Create supporting website for UBC and external users | developer suggestion enhancement front end high priority instructor request | Use Github pages to add a basic static site that supports (and promotes) the application. | 1.0 | Create supporting website for UBC and external users - Use Github pages to add a basic static site that supports (and promotes) the application. | priority | create supporting website for ubc and external users use github pages to add a basic static site that supports and promotes the application | 1 |

767,382 | 26,921,798,864 | IssuesEvent | 2023-02-07 10:59:22 | Public-Health-Scotland/source-linkage-files | https://api.github.com/repos/Public-Health-Scotland/source-linkage-files | closed | Compute age in Home care extract | bug Priority: High | When comparing SPSS vs R I have noticed that the variable age is missing from the home care R extract. This will need to be added back into the code in either the ALL home care script or the year-specific one. | 1.0 | Compute age in Home care extract - When comparing SPSS vs R I have noticed that the variable age is missing from the home care R extract. This will need to be added back into the code in either the ALL home care script or the year-specific one. | priority | compute age in home care extract when comparing spss vs r i have noticed that the variable age is missing from the home care r extract this will need added back into the code in either the all home care script or year specific | 1 |

390,874 | 11,565,103,510 | IssuesEvent | 2020-02-20 09:54:19 | localstack/localstack | https://api.github.com/repos/localstack/localstack | closed | ExtendedS3DestinationConfiguration not supported to set S3 destination on Firehose stream | feature-missing needs-triaging priority-high | When creating a new firehose stream it's not possible to set an S3 destination using the `.withExtendedS3DestinationConfiguration()` method. Currently, you have to use `.withS3DestinationConfiguration()`, but this method has been deprecated. Could you please update the code to support the new ExtendedS3DestinationConfiguration? | 1.0 | ExtendedS3DestinationConfiguration not supported to set S3 destination on Firehose stream - When creating a new firehose stream it's not possible to set an S3 destination using the `.withExtendedS3DestinationConfiguration()` method. Currently, you have to use `.withS3DestinationConfiguration()`, but this method has been deprecated. Could you please update the code to support the new ExtendedS3DestinationConfiguration? | priority | not supported to set destination on firehose stream when creating a new firehose stream it s not possible to set an destination using the method currently you have to use but this method has been deprecated could you please update the code to support the new | 1 |

137,202 | 5,299,693,370 | IssuesEvent | 2017-02-10 01:09:23 | atilatosta/dotnet-standard-sdk | https://api.github.com/repos/atilatosta/dotnet-standard-sdk | closed | [documentation] Write code samples | high-priority | Create a code samples project for examples of using services and document code samples in ReadMe for each service endpoint implemented so far.

- [x] Speech to Text

- [x] Text to Speech

- [x] Tone Analyzer

- [x] Personality Insights

- [x] Language Translation

- [ ] Discovery

- [x] Conversation

- [ ] Visual Recognition | 1.0 | [documentation] Write code samples - Create a code samples project for examples of using services and document code samples in ReadMe for each service endpoint implemented so far.

- [x] Speech to Text

- [x] Text to Speech

- [x] Tone Analyzer

- [x] Personality Insights

- [x] Language Translation

- [ ] Discovery

- [x] Conversation

- [ ] Visual Recognition | priority | write code samples create a code samples project for examples of using services and document code samples in readme for each service endpoint implemented so far speech to text text to speech tone analyzer personality insights language translation discovery conversation visual recognition | 1 |

38,040 | 2,838,412,396 | IssuesEvent | 2015-05-27 07:29:04 | Stratio/sparkta | https://api.github.com/repos/Stratio/sparkta | opened | Policies Persistence. Step 3) Composition of saved fragments | component - driver enhancement priority - high | If the user sends a post message to start a new policy and it contains fragments, these fragments should be interpreted and changed with the real JSON | 1.0 | Policies Persistence. Step 3) Composition of saved fragments - If the user sends a post message to start a new policy and it contains fragments, these fragments should be interpreted and changed with the real JSON | priority | policies persistence step composition of saved fragments if the user sends a post message to start a new policy and it contains fragments these fragments should be interpreted and changed with the real json | 1 |

229,657 | 7,582,425,030 | IssuesEvent | 2018-04-25 04:08:16 | HXLStandard/hxl-proxy | https://api.github.com/repos/HXLStandard/hxl-proxy | reopened | pcodes service generate empty file for itos | high-priority | If adm4 doesn't exist for ITOS for Guinea, the pcodes service of hxl proxy will generate an empty csv file which crashes the validation

https://beta.proxy.hxlstandard.org/data/validate?url=https%3A%2F%2Fdocs.google.com%2Fspreadsheets%2Fd%2F19oLyom0jNYSZJKXgbPKEVYbdlv8zIAuGGRYJAYttOb0%2Fedit%23gid%3D0&schema_url=https%3A%2F%2Fdocs.google.com%2Fspreadsheets%2Fd%2F1EyUzGO7M2juKyVuY2p1nPcxM29SauV1kT2vjRBnS9wU%2Fedit%23gid%3D1513801580&url=https%3A%2F%2Fdocs.google.com%2Fspreadsheets%2Fd%2F19oLyom0jNYSZJKXgbPKEVYbdlv8zIAuGGRYJAYttOb0%2Fedit%23gid%3D0

<img width="866" alt="screen shot 2018-04-24 at 12 23 13" src="https://user-images.githubusercontent.com/3865844/39181556-52d7667e-47ba-11e8-842d-2dccdbefdbbf.png">

| 1.0 | pcodes service generate empty file for itos - If adm4 doesn't exist for ITOS for Guinea, the pcodes service of hxl proxy will generate an empty csv file which crashes the validation

https://beta.proxy.hxlstandard.org/data/validate?url=https%3A%2F%2Fdocs.google.com%2Fspreadsheets%2Fd%2F19oLyom0jNYSZJKXgbPKEVYbdlv8zIAuGGRYJAYttOb0%2Fedit%23gid%3D0&schema_url=https%3A%2F%2Fdocs.google.com%2Fspreadsheets%2Fd%2F1EyUzGO7M2juKyVuY2p1nPcxM29SauV1kT2vjRBnS9wU%2Fedit%23gid%3D1513801580&url=https%3A%2F%2Fdocs.google.com%2Fspreadsheets%2Fd%2F19oLyom0jNYSZJKXgbPKEVYbdlv8zIAuGGRYJAYttOb0%2Fedit%23gid%3D0

<img width="866" alt="screen shot 2018-04-24 at 12 23 13" src="https://user-images.githubusercontent.com/3865844/39181556-52d7667e-47ba-11e8-842d-2dccdbefdbbf.png">

| priority | pcodes service generate empty file for itos if doesn t exist for itos for guinea the pcodes service of hxl proxy will generate an empty csv file which crashes the validation img width alt screen shot at src | 1 |

248,637 | 7,934,499,415 | IssuesEvent | 2018-07-08 19:57:49 | HealthRex/CDSS | https://api.github.com/repos/HealthRex/CDSS | closed | Batch unit test failures - Some library dependencies and floating point checks | Priority - 1 High help wanted | ======================================================================

ERROR: medinfo.dataconversion.test.TestEventDigraph (unittest.loader.ModuleImportFailure)

----------------------------------------------------------------------

ImportError: Failed to import test module: medinfo.dataconversion.test.TestEventDigraph

Traceback (most recent call last):

File "C:\Dev\Python27\lib\unittest\loader.py", line 254, in _find_tests

module = self._get_module_from_name(name)

File "C:\Dev\Python27\lib\unittest\loader.py", line 232, in _get_module_from_name

__import__(name)

File "C:\HealthRex\CDSS\medinfo\dataconversion\test\TestEventDigraph.py", line 6, in <module>

import networkx as nx

ImportError: No module named networkx

======================================================================

ERROR: test_dataConversion (medinfo.dataconversion.test.TestSTRIDEDxListConversion.TestSTRIDEDxListConversion)

----------------------------------------------------------------------

Traceback (most recent call last):

File "C:\HealthRex\CDSS\medinfo\dataconversion\test\TestSTRIDEDxListConversion.py", line 60, in setUp

(dataItemId, isNew) = DBUtil.findOrInsertItem("stride_dx_list", dataModel, retrieveCol="pat_id" );

File "C:\HealthRex\CDSS\medinfo\db\DBUtil.py", line 682, in findOrInsertItem

cur.execute( searchQuery, searchParams );

ProgrammingError: column "dx_icd10_code_list" does not exist

LINE 8: AND dx_icd10_code_list = ''

^

======================================================================

ERROR: test_dataConversion_maxMixtureCount (medinfo.dataconversion.test.TestSTRIDEPreAdmitMedConversion.TestSTRIDEPreAdmitMedConversion)

----------------------------------------------------------------------

Traceback (most recent call last):

File "C:\HealthRex\CDSS\medinfo\dataconversion\test\TestSTRIDEPreAdmitMedConversion.py", line 249, in test_dataConversion_maxMixtureCount

self.converter.convertSourceItems(convOptions);

File "C:\HealthRex\CDSS\medinfo\dataconversion\STRIDEPreAdmitMedConversion.py", line 48, in convertSourceItems

rxcuiDataByMedId = self.loadRXCUIData(conn=conn);

File "C:\HealthRex\CDSS\medinfo\dataconversion\STRIDEPreAdmitMedConversion.py", line 81, in loadRXCUIData

DBUtil.execute(query)

File "C:\HealthRex\CDSS\medinfo\db\DBUtil.py", line 261, in execute

cur.execute( query, parameters )

ProgrammingError: syntax error at or near "NOT"

LINE 4: ADD COLUMN IF NOT EXISTS

^

======================================================================

ERROR: test_dataConversion_normalized (medinfo.dataconversion.test.TestSTRIDEPreAdmitMedConversion.TestSTRIDEPreAdmitMedConversion)

----------------------------------------------------------------------

Traceback (most recent call last):

File "C:\HealthRex\CDSS\medinfo\dataconversion\test\TestSTRIDEPreAdmitMedConversion.py", line 124, in test_dataConversion_normalized

self.converter.convertSourceItems(convOptions);

File "C:\HealthRex\CDSS\medinfo\dataconversion\STRIDEPreAdmitMedConversion.py", line 48, in convertSourceItems

rxcuiDataByMedId = self.loadRXCUIData(conn=conn);

File "C:\HealthRex\CDSS\medinfo\dataconversion\STRIDEPreAdmitMedConversion.py", line 81, in loadRXCUIData

DBUtil.execute(query)

File "C:\HealthRex\CDSS\medinfo\db\DBUtil.py", line 261, in execute

cur.execute( query, parameters )

ProgrammingError: syntax error at or near "NOT"

LINE 4: ADD COLUMN IF NOT EXISTS

^

======================================================================

FAIL: test_addTimeCycleFeatures (medinfo.dataconversion.test.TestFeatureMatrixFactory.TestFeatureMatrixFactory)

----------------------------------------------------------------------

Traceback (most recent call last):

File "C:\HealthRex\CDSS\medinfo\dataconversion\test\TestFeatureMatrixFactory.py", line 447, in test_addTimeCycleFeatures

self.assertEqualList(resultMatrix[2:], expectedMatrix)

File "C:\HealthRex\CDSS\medinfo\common\test\Util.py", line 142, in assertEqualList

self.assertEqual(verifyItem, sampleItem)

AssertionError: Lists differ: ['-789', '-900', 'LABMETB', '2... != ['-789', '-900', 'LABMETB', '2...

First differing element 6:

'0.866025403784'

'0.8660254037844388'

['-789',

'-900',

'LABMETB',

'2009-05-06 15:00:00',

'0',

'5',

- '0.866025403784',

+ '0.8660254037844388',

? ++++

- '-0.5',

+ '-0.4999999999999998',

'15',

- '-0.707106781187',

+ '-0.7071067811865471',

? +++ +

- '-0.707106781187']

+ '-0.7071067811865479']

? +++ +

======================================================================

FAIL: test_buildFeatureMatrix_multiFlowsheet (medinfo.dataconversion.test.TestFeatureMatrixFactory.TestFeatureMatrixFactory)

----------------------------------------------------------------------

Traceback (most recent call last):

File "C:\HealthRex\CDSS\medinfo\dataconversion\test\TestFeatureMatrixFactory.py", line 405, in test_buildFeatureMatrix_multiFlowsheet

self.assertEqualList(resultMatrix[2:], expectedMatrix)

File "C:\HealthRex\CDSS\medinfo\common\test\Util.py", line 142, in assertEqualList

self.assertEqual(verifyItem, sampleItem)

AssertionError: Lists differ: ['-789', '-900', 'LABMETB', '2... != ['-789', '-900', 'LABMETB', '2...

First differing element 40:

'0.666666666667'

'0.6666666666666666'

Diff is 702 characters long. Set self.maxDiff to None to see it.

======================================================================

FAIL: test_build_FeatureMatrix_multiLabTest (medinfo.dataconversion.test.TestFeatureMatrixFactory.TestFeatureMatrixFactory)

----------------------------------------------------------------------

Traceback (most recent call last):

File "C:\HealthRex\CDSS\medinfo\dataconversion\test\TestFeatureMatrixFactory.py", line 345, in test_build_FeatureMatrix_multiLabTest

self.assertEqualTable(resultMatrix[2:], expectedMatrix)

File "C:\HealthRex\CDSS\medinfo\common\test\Util.py", line 172, in assertEqualTable

self.assertEqual(verifyItem, sampleItem)

AssertionError: '0.666666666667' != '0.6666666666666666'

----------------------------------------------------------------------

Ran 122 tests in 97.337s

FAILED (failures=3, errors=4) | 1.0 | Batch unit test failures - Some library dependencies and floating point checks - ======================================================================

ERROR: medinfo.dataconversion.test.TestEventDigraph (unittest.loader.ModuleImportFailure)

----------------------------------------------------------------------

ImportError: Failed to import test module: medinfo.dataconversion.test.TestEventDigraph

Traceback (most recent call last):

File "C:\Dev\Python27\lib\unittest\loader.py", line 254, in _find_tests

module = self._get_module_from_name(name)

File "C:\Dev\Python27\lib\unittest\loader.py", line 232, in _get_module_from_name

__import__(name)

File "C:\HealthRex\CDSS\medinfo\dataconversion\test\TestEventDigraph.py", line 6, in <module>

import networkx as nx

ImportError: No module named networkx

======================================================================

ERROR: test_dataConversion (medinfo.dataconversion.test.TestSTRIDEDxListConversion.TestSTRIDEDxListConversion)

----------------------------------------------------------------------

Traceback (most recent call last):

File "C:\HealthRex\CDSS\medinfo\dataconversion\test\TestSTRIDEDxListConversion.py", line 60, in setUp

(dataItemId, isNew) = DBUtil.findOrInsertItem("stride_dx_list", dataModel, retrieveCol="pat_id" );

File "C:\HealthRex\CDSS\medinfo\db\DBUtil.py", line 682, in findOrInsertItem

cur.execute( searchQuery, searchParams );

ProgrammingError: column "dx_icd10_code_list" does not exist

LINE 8: AND dx_icd10_code_list = ''

^

======================================================================

ERROR: test_dataConversion_maxMixtureCount (medinfo.dataconversion.test.TestSTRIDEPreAdmitMedConversion.TestSTRIDEPreAdmitMedConversion)

----------------------------------------------------------------------

Traceback (most recent call last):

File "C:\HealthRex\CDSS\medinfo\dataconversion\test\TestSTRIDEPreAdmitMedConversion.py", line 249, in test_dataConversion_maxMixtureCount

self.converter.convertSourceItems(convOptions);

File "C:\HealthRex\CDSS\medinfo\dataconversion\STRIDEPreAdmitMedConversion.py", line 48, in convertSourceItems

rxcuiDataByMedId = self.loadRXCUIData(conn=conn);

File "C:\HealthRex\CDSS\medinfo\dataconversion\STRIDEPreAdmitMedConversion.py", line 81, in loadRXCUIData

DBUtil.execute(query)

File "C:\HealthRex\CDSS\medinfo\db\DBUtil.py", line 261, in execute

cur.execute( query, parameters )

ProgrammingError: syntax error at or near "NOT"

LINE 4: ADD COLUMN IF NOT EXISTS

^

======================================================================

ERROR: test_dataConversion_normalized (medinfo.dataconversion.test.TestSTRIDEPreAdmitMedConversion.TestSTRIDEPreAdmitMedConversion)

----------------------------------------------------------------------

Traceback (most recent call last):

File "C:\HealthRex\CDSS\medinfo\dataconversion\test\TestSTRIDEPreAdmitMedConversion.py", line 124, in test_dataConversion_normalized

self.converter.convertSourceItems(convOptions);

File "C:\HealthRex\CDSS\medinfo\dataconversion\STRIDEPreAdmitMedConversion.py", line 48, in convertSourceItems

rxcuiDataByMedId = self.loadRXCUIData(conn=conn);

File "C:\HealthRex\CDSS\medinfo\dataconversion\STRIDEPreAdmitMedConversion.py", line 81, in loadRXCUIData

DBUtil.execute(query)

File "C:\HealthRex\CDSS\medinfo\db\DBUtil.py", line 261, in execute

cur.execute( query, parameters )

ProgrammingError: syntax error at or near "NOT"

LINE 4: ADD COLUMN IF NOT EXISTS

^

======================================================================

FAIL: test_addTimeCycleFeatures (medinfo.dataconversion.test.TestFeatureMatrixFactory.TestFeatureMatrixFactory)

----------------------------------------------------------------------

Traceback (most recent call last):

File "C:\HealthRex\CDSS\medinfo\dataconversion\test\TestFeatureMatrixFactory.py", line 447, in test_addTimeCycleFeatures

self.assertEqualList(resultMatrix[2:], expectedMatrix)

File "C:\HealthRex\CDSS\medinfo\common\test\Util.py", line 142, in assertEqualList

self.assertEqual(verifyItem, sampleItem)

AssertionError: Lists differ: ['-789', '-900', 'LABMETB', '2... != ['-789', '-900', 'LABMETB', '2...

First differing element 6:

'0.866025403784'

'0.8660254037844388'

['-789',

'-900',

'LABMETB',

'2009-05-06 15:00:00',

'0',

'5',

- '0.866025403784',

+ '0.8660254037844388',

? ++++

- '-0.5',

+ '-0.4999999999999998',

'15',

- '-0.707106781187',

+ '-0.7071067811865471',

? +++ +

- '-0.707106781187']

+ '-0.7071067811865479']

? +++ +

======================================================================

FAIL: test_buildFeatureMatrix_multiFlowsheet (medinfo.dataconversion.test.TestFeatureMatrixFactory.TestFeatureMatrixFactory)

----------------------------------------------------------------------

Traceback (most recent call last):

File "C:\HealthRex\CDSS\medinfo\dataconversion\test\TestFeatureMatrixFactory.py", line 405, in test_buildFeatureMatrix_multiFlowsheet

self.assertEqualList(resultMatrix[2:], expectedMatrix)

File "C:\HealthRex\CDSS\medinfo\common\test\Util.py", line 142, in assertEqualList

self.assertEqual(verifyItem, sampleItem)

AssertionError: Lists differ: ['-789', '-900', 'LABMETB', '2... != ['-789', '-900', 'LABMETB', '2...

First differing element 40:

'0.666666666667'

'0.6666666666666666'

Diff is 702 characters long. Set self.maxDiff to None to see it.

======================================================================

FAIL: test_build_FeatureMatrix_multiLabTest (medinfo.dataconversion.test.TestFeatureMatrixFactory.TestFeatureMatrixFactory)

----------------------------------------------------------------------

Traceback (most recent call last):

File "C:\HealthRex\CDSS\medinfo\dataconversion\test\TestFeatureMatrixFactory.py", line 345, in test_build_FeatureMatrix_multiLabTest

self.assertEqualTable(resultMatrix[2:], expectedMatrix)

File "C:\HealthRex\CDSS\medinfo\common\test\Util.py", line 172, in assertEqualTable

self.assertEqual(verifyItem, sampleItem)

AssertionError: '0.666666666667' != '0.6666666666666666'

----------------------------------------------------------------------

Ran 122 tests in 97.337s

FAILED (failures=3, errors=4) | priority | batch unit test failures some library dependencies and floating point checks error medinfo dataconversion test testeventdigraph unittest loader moduleimportfailure importerror failed to import test module medinfo dataconversion test testeventdigraph traceback most recent call last file c dev lib unittest loader py line in find tests module self get module from name name file c dev lib unittest loader py line in get module from name import name file c healthrex cdss medinfo dataconversion test testeventdigraph py line in import networkx as nx importerror no module named networkx error test dataconversion medinfo dataconversion test teststridedxlistconversion teststridedxlistconversion traceback most recent call last file c healthrex cdss medinfo dataconversion test teststridedxlistconversion py line in setup dataitemid isnew dbutil findorinsertitem stride dx list datamodel retrievecol pat id file c healthrex cdss medinfo db dbutil py line in findorinsertitem cur execute searchquery searchparams programmingerror column dx code list does not exist line and dx code list error test dataconversion maxmixturecount medinfo dataconversion test teststridepreadmitmedconversion teststridepreadmitmedconversion traceback most recent call last file c healthrex cdss medinfo dataconversion test teststridepreadmitmedconversion py line in test dataconversion maxmixturecount self converter convertsourceitems convoptions file c healthrex cdss medinfo dataconversion stridepreadmitmedconversion py line in convertsourceitems rxcuidatabymedid self loadrxcuidata conn conn file c healthrex cdss medinfo dataconversion stridepreadmitmedconversion py line in loadrxcuidata dbutil execute query file c healthrex cdss medinfo db dbutil py line in execute cur execute query parameters programmingerror syntax error at or near not line add column if not exists error test dataconversion normalized medinfo dataconversion test teststridepreadmitmedconversion 
teststridepreadmitmedconversion traceback most recent call last file c healthrex cdss medinfo dataconversion test teststridepreadmitmedconversion py line in test dataconversion normalized self converter convertsourceitems convoptions file c healthrex cdss medinfo dataconversion stridepreadmitmedconversion py line in convertsourceitems rxcuidatabymedid self loadrxcuidata conn conn file c healthrex cdss medinfo dataconversion stridepreadmitmedconversion py line in loadrxcuidata dbutil execute query file c healthrex cdss medinfo db dbutil py line in execute cur execute query parameters programmingerror syntax error at or near not line add column if not exists fail test addtimecyclefeatures medinfo dataconversion test testfeaturematrixfactory testfeaturematrixfactory traceback most recent call last file c healthrex cdss medinfo dataconversion test testfeaturematrixfactory py line in test addtimecyclefeatures self assertequallist resultmatrix expectedmatrix file c healthrex cdss medinfo common test util py line in assertequallist self assertequal verifyitem sampleitem assertionerror lists differ labmetb labmetb first differing element labmetb fail test buildfeaturematrix multiflowsheet medinfo dataconversion test testfeaturematrixfactory testfeaturematrixfactory traceback most recent call last file c healthrex cdss medinfo dataconversion test testfeaturematrixfactory py line in test buildfeaturematrix multiflowsheet self assertequallist resultmatrix expectedmatrix file c healthrex cdss medinfo common test util py line in assertequallist self assertequal verifyitem sampleitem assertionerror lists differ labmetb labmetb first differing element diff is characters long set self maxdiff to none to see it fail test build featurematrix multilabtest medinfo dataconversion test testfeaturematrixfactory testfeaturematrixfactory traceback most recent call last file c healthrex cdss medinfo dataconversion test testfeaturematrixfactory py line in test build featurematrix 
multilabtest self assertequaltable resultmatrix expectedmatrix file c healthrex cdss medinfo common test util py line in assertequaltable self assertequal verifyitem sampleitem assertionerror ran tests in failed failures errors | 1 |

157,712 | 6,011,378,610 | IssuesEvent | 2017-06-06 15:05:45 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | RNN CUDNN backend OOM issue | bug high priority | Hi,

I think I have stumbled upon something weird with the CUDNN backend for RNN. I am using CUDNN v5 on Cent OS 7.3.1.

```

torch.version.__version__ = e1d257bc6d472ee297df1719bf344bae359dbeaa

```

I have discussed this with @soumith as well.

The code snippet for reproducing is below. Enabling the cudnn backend increases the memory used linearly (goes OOM eventually). Disabling the backend results in expected behavior.

```python

from __future__ import absolute_import

from __future__ import division

from __future__ import print_function

from __future__ import unicode_literals

import torch

torch.backends.cudnn.enabled = False

import torch.cuda

import torch.nn as nn

from torch.autograd import Variable

import gc

print(torch.version.__version__)

def get_num_tensors():

    ctr = 0

    for obj in gc.get_objects():

        if torch.is_tensor(obj):

            ctr += 1

    return ctr

wordvec_dim = 300

hidden_dim = 256

rnn_num_layers = 1

batch_size = 10

vocab_size = 100

rnn_dropout = 0.5

model = nn.LSTM(wordvec_dim, hidden_dim, rnn_num_layers,

                dropout=rnn_dropout, batch_first=True)

# set training mode

model.cuda()

model.train()

encoded = Variable(torch.FloatTensor(batch_size, 1, wordvec_dim))

encoded = encoded.cuda()

h0 = Variable(torch.zeros(rnn_num_layers, batch_size, hidden_dim))

c0 = Variable(torch.zeros(rnn_num_layers, batch_size, hidden_dim))

h = h0.cuda()

c = c0.cuda()

print('Start:', get_num_tensors())

num_forward_passes = 10

for _i in range(num_forward_passes):

    output, (h, c) = model(encoded, (h, c))

    print(_i, get_num_tensors())

print('End:', get_num_tensors())

```

Output *with* cudnn enabled

```

e1d257bc6d472ee297df1719bf344bae359dbeaa

Start: 9

0 16

1 22

2 28

3 34

4 40

5 46

6 52

7 58

8 64

9 70

End: 70

```

Output without cudnn

```

e1d257bc6d472ee297df1719bf344bae359dbeaa

Start: 9

0 10

1 10

2 10

3 10

4 10

5 10

6 10

7 10

8 10

9 10

End: 10

``` | 1.0 | RNN CUDNN backend OOM issue - Hi,

I think I have stumbled upon something weird with the CUDNN backend for RNN. I am using CUDNN v5 on Cent OS 7.3.1.

```

torch.version.__version__ = e1d257bc6d472ee297df1719bf344bae359dbeaa

```

I have discussed this with @soumith as well.

The code snippet for reproducing is below. Enabling the cudnn backend increases the memory used linearly (goes OOM eventually). Disabling the backend results in expected behavior.

```python

from __future__ import absolute_import

from __future__ import division

from __future__ import print_function

from __future__ import unicode_literals

import torch

torch.backends.cudnn.enabled = False

import torch.cuda

import torch.nn as nn

from torch.autograd import Variable

import gc

print(torch.version.__version__)

def get_num_tensors():

    ctr = 0

    for obj in gc.get_objects():

        if torch.is_tensor(obj):

            ctr += 1

    return ctr

wordvec_dim = 300

hidden_dim = 256

rnn_num_layers = 1

batch_size = 10

vocab_size = 100

rnn_dropout = 0.5

model = nn.LSTM(wordvec_dim, hidden_dim, rnn_num_layers,

                dropout=rnn_dropout, batch_first=True)

# set training mode

model.cuda()

model.train()

encoded = Variable(torch.FloatTensor(batch_size, 1, wordvec_dim))

encoded = encoded.cuda()

h0 = Variable(torch.zeros(rnn_num_layers, batch_size, hidden_dim))

c0 = Variable(torch.zeros(rnn_num_layers, batch_size, hidden_dim))

h = h0.cuda()

c = c0.cuda()

print('Start:', get_num_tensors())

num_forward_passes = 10

for _i in range(num_forward_passes):

    output, (h, c) = model(encoded, (h, c))

    print(_i, get_num_tensors())

print('End:', get_num_tensors())

```

Output *with* cudnn enabled

```

e1d257bc6d472ee297df1719bf344bae359dbeaa

Start: 9

0 16

1 22

2 28

3 34

4 40

5 46

6 52

7 58

8 64

9 70

End: 70

```

Output without cudnn

```

e1d257bc6d472ee297df1719bf344bae359dbeaa

Start: 9

0 10

1 10

2 10

3 10

4 10

5 10

6 10

7 10

8 10

9 10

End: 10

``` | priority | rnn cudnn backend oom issue hi i think i have stumbled upon something weird with the cudnn backend for rnn i am using cudnn on cent os torch version version i have discussed this with soumith as well the code snippet for reproducing is below enabling the cudnn backend increases the memory used linearly goes oom eventually disabling the backend results in expected behavior python from future import absolute import from future import division from future import print function from future import unicode literals import torch torch backends cudnn enabled false import torch cuda import torch nn as nn from torch autograd import variable import gc print torch version version def get num tensors ctr for obj in gc get objects if torch is tensor obj ctr return ctr wordvec dim hidden dim rnn num layers batch size vocab size rnn dropout model nn lstm wordvec dim hidden dim rnn num layers dropout rnn dropout batch first true set training mode model cuda model train encoded variable torch floattensor batch size wordvec dim encoded encoded cuda variable torch zeros rnn num layers batch size hidden dim variable torch zeros rnn num layers batch size hidden dim h cuda c cuda print start get num tensors num forward passes for i in range num forward passes output h c model encoded h c print i get num tensors print end get num tensors output with cudnn enabled start end output without cudnn start end | 1 |
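The reproduction above counts live tensors by walking the objects tracked by the garbage collector. A minimal sketch of the same counting technique follows, generalized to any type so that it runs without PyTorch or a GPU. The names `count_live` and `Box` are hypothetical and used only for illustration; they are not part of the original report.

```python
import gc


def count_live(cls):
    """Count gc-tracked objects whose type is cls or a subclass of cls."""
    # type(obj) is used instead of isinstance() to avoid triggering
    # custom __class__ lookups on unusual objects.
    return sum(1 for obj in gc.get_objects() if issubclass(type(obj), cls))


class Box:
    """Stand-in for a tensor; instances of ordinary classes are gc-tracked."""
```

Calling `count_live(Box)` before and after each iteration of a loop gives the same kind of growth signal as `get_num_tensors()` in the report: a count that keeps rising while no new references are intentionally held suggests a leak.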

120,225 | 4,786,956,151 | IssuesEvent | 2016-10-29 18:32:13 | devists/projectile | https://api.github.com/repos/devists/projectile | closed | Mock User Detail for login | backend hacktoberfest Priority - High | Please provide sample username and password for testing.

This will be the username and password everyone will use to login.

Currently we are not able to login. | 1.0 | Mock User Detail for login - Please provide sample username and password for testing.

This will be the username and password everyone will use to login.

Currently we are not able to login. | priority | mock user detail for login please provide sample username and password for testing this will be the username and password everyone will use to login currently we are not able to login | 1 |

718,057 | 24,702,610,226 | IssuesEvent | 2022-10-19 16:21:54 | bounswe/bounswe2022group2 | https://api.github.com/repos/bounswe/bounswe2022group2 | opened | Initialization of the backend project | priority-high status-new back-end | ### Issue Description

As we started the implementation of the main app, we need to initialize the node project for main backend application.

We decided to use our folder structure and packages from the practice app from the last year.

Under this issue I will kickstart the project with the required packages and complete it as soon as possible so that we can begin implementation.

### Step Details

Steps that will be performed:

- [ ] Initialize the npm project.

- [ ] Load the required packages.

### Final Actions

Upon completion of this issue, backend team will start the implementation of the authorization endpoints as discussed in meeting 2

### Deadline of the Issue

20.10.2022 10:00 AM

### Reviewer

Hasan Can Erol

### Deadline for the Review

20.10.2022 10:00 PM | 1.0 | Initialization of the backend project - ### Issue Description

As we started the implementation of the main app, we need to initialize the node project for main backend application.

We decided to use our folder structure and packages from the practice app from the last year.

Under this issue I will kickstart the project with the required packages and complete it as soon as possible so that we can begin implementation.

### Step Details

Steps that will be performed:

- [ ] Initialize the npm project.

- [ ] Load the required packages.

### Final Actions

Upon completion of this issue, backend team will start the implementation of the authorization endpoints as discussed in meeting 2

### Deadline of the Issue

20.10.2022 10:00 AM

### Reviewer

Hasan Can Erol

### Deadline for the Review

20.10.2022 10:00 PM | priority | initialization of the backend project issue description as we started the implementation of the main app we need to initialize the node project for main backend application we decided to use our folder structure and packages from the practice app from the last year under this issue i will kickstart the project with required packages and complete the issue in asap so that we can begin implementation step details steps that will be performed initialize the npm project load the required packages final actions upon completion of this issue backend team will start the implementation of the authorization endpoints as discussed in meeting deadline of the issue am reviewer hasan can erol deadline for the review pm | 1 |

831,657 | 32,057,306,852 | IssuesEvent | 2023-09-24 08:30:49 | varundeepsaini/discordbot | https://api.github.com/repos/varundeepsaini/discordbot | closed | bug: the bot shows `the application didnt respond` even after sending the message | bug good first issue priority: high | Bug:

| 1.0 | bug: the bot shows `the application didnt respond` even after sending the message - Bug:

| priority | bug the bot shows the application didnt respond even after sending the message bug | 1 |

426,437 | 12,372,435,735 | IssuesEvent | 2020-05-18 20:24:23 | technologiestiftung/tsb-trees-frontend | https://api.github.com/repos/technologiestiftung/tsb-trees-frontend | closed | Cookie content | Priority HIGH | There is a local storage object being generated with following content: mapbox.eventData:ZmRua2xn:{"lastSuccess":1588594083071,"tokenU":"fdnklg"}

?? | 1.0 | Cookie content - There is a local storage object being generated with following content: mapbox.eventData:ZmRua2xn:{"lastSuccess":1588594083071,"tokenU":"fdnklg"}

?? | priority | cookie content there is a local storage object being generated with following content mapbox eventdata lastsuccess tokenu fdnklg | 1 |

705,435 | 24,234,729,763 | IssuesEvent | 2022-09-26 21:45:51 | nasa/fprime | https://api.github.com/repos/nasa/fprime | closed | Cross-compile toolchain for raspberry pi is deprecated | High Priority Easy First Issue | [Cross-compile toolchain](https://github.com/raspberrypi/tools) in [package installation](https://github.com/nasa/fprime/blob/devel/RPI/README.md#package-installation) for raspberry pi demo is listed as a deprecated project. | 1.0 | Cross-compile toolchain for raspberry pi is deprecated - [Cross-compile toolchain](https://github.com/raspberrypi/tools) in [package installation](https://github.com/nasa/fprime/blob/devel/RPI/README.md#package-installation) for raspberry pi demo is listed as a deprecated project. | priority | cross compile toolchain for raspberry pi is deprecated in for raspberry pi demo is listed as a deprecated project | 1 |

644,453 | 20,978,108,906 | IssuesEvent | 2022-03-28 17:03:37 | bcgov/foi-flow | https://api.github.com/repos/bcgov/foi-flow | closed | Closed request is showing in IAO user queue | bug high priority | **Describe the bug in current situation**

Closed request is showing in IAO user queue

**Link bug to the User Story**

**Impact of this bug**

medium - goes against ac, does not prevent users from using the system

**Chance of Occurring (high/medium/low/very low)**

high

**Pre Conditions: which Env, any pre-requisites or assumptions to execute steps?**

**Steps to Reproduce**

Steps to reproduce the behavior:

- create a request

- login as any IAO user (processing, intake, flex)

- move to open stage

- close, note the request id

- return to queue

- search for request in queue (not advanced search)

**Actual/ observed behaviour/ results**

closed request shows up

**Expected behaviour**

closed request should not show up

**Screenshots/ Visual Reference/ Source**

| 1.0 | Closed request is showing in IAO user queue - **Describe the bug in current situation**

Closed request is showing in IAO user queue

**Link bug to the User Story**

**Impact of this bug**

medium - goes against ac, does not prevent users from using the system

**Chance of Occurring (high/medium/low/very low)**

high

**Pre Conditions: which Env, any pre-requisites or assumptions to execute steps?**

**Steps to Reproduce**

Steps to reproduce the behavior:

- create a request

- login as any IAO user (processing, intake, flex)

- move to open stage

- close, note the request id

- return to queue

- search for request in queue (not advanced search)

**Actual/ observed behaviour/ results**

closed request shows up

**Expected behaviour**

closed request should not show up

**Screenshots/ Visual Reference/ Source**

| priority | closed request is showing in iao user queue describe the bug in current situation closed request is showing in iao user queue link bug to the user story impact of this bug medium goes against ac does not prevent users from using the system chance of occurring high medium low very low high pre conditions which env any pre requesites or assumptions to execute steps steps to reproduce steps to reproduce the behavior create a request login as any iao user processing intake flex move to open stage close note the request id return to queue search for request in queue not advanced search actual observed behaviour results closed request shows up expected behaviour closed request should not show up screenshots visual reference source | 1 |

430,283 | 12,450,710,964 | IssuesEvent | 2020-05-27 09:14:43 | bounswe/bounswe2020group8 | https://api.github.com/repos/bounswe/bounswe2020group8 | closed | Missing urls2.py [Shipment_Calculator branch] | Priority: High help wanted | urls2.py is missing in the shipment_calculator. To test my code, I commented the 22. line in practice_app/urls.py.

Could you please upload the urls2.py and uncomment the 22. line in practice_app/urls.py? | 1.0 | Missing urls2.py [Shipment_Calculator branch] - urls2.py is missing in the shipment_calculator. To test my code, I commented the 22. line in practice_app/urls.py.

Could you please upload the urls2.py and uncomment the 22. line in practice_app/urls.py? | priority | missing py py is missing in the shipment calculator to test my code i commented the line in practice app urls py could you please upload the py and uncomment the line in practice app urls py | 1 |

781,897 | 27,453,891,075 | IssuesEvent | 2023-03-02 19:36:43 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | opened | [all] Upgrade to Java 17 | new feature priority: high triage | ### Duplicates

- [X] I have searched the existing issues

### Is your feature request related to a problem? Please describe.

Java 11 LTS is approaching EOL

### Describe the solution you'd like

Upgrade to Java 17 LTS | 1.0 | [all] Upgrade to Java 17 - ### Duplicates

- [X] I have searched the existing issues

### Is your feature request related to a problem? Please describe.

Java 11 LTS is approaching EOL

### Describe the solution you'd like

Upgrade to Java 17 LTS | priority | upgrade to java duplicates i have searched the existing issues is your feature request related to a problem please describe java lts is approaching eol describe the solution you d like upgrade to java lts | 1 |

219,335 | 7,335,038,779 | IssuesEvent | 2018-03-06 01:45:34 | xcat2/xcat-core | https://api.github.com/repos/xcat2/xcat-core | closed | error message improvement when adding users in hierarchical configuration | priority:high sprint1 status:pending type:bug | Problem statement: error message when adding xdsh capabilities, does not reveal that the user is missing from the service nodes, in this hierarchical configuration.

Use case: A user was added to the primary xCAT server, and granted initial ability to open a node console.

> run '/opt/xcat/share/xcat/scripts/setup-local-client.sh ccuser' as 'root' to generate the client certificates

Then the policy table was updated for this local user to do xCAT commands, which included xdsh.

> chdef -t policy -o 5.7 name=ccuser commands=nodels,rpower,lsdef,xdsh rule=allow

A postscript is run to add the user to the compute nodes, and ssh, nodels, rpower, all are tested and work. Finally, I attempted to add xdsh capability.

> xdsh cn01 -K

...

> Error: xdsh plugin bug, pid 68135, process description: ‘xcatd SSL: xdsh: xdsh instance’ with error ‘cannot open file /.ssh/copy.sh

> ’ while trying to fulfill request for the following nodes: cn01

The root cause of the error was that xdsh, unlike ssh, was attempting to route through the service node (by design). I had neglected to add the user and sync the /etc/passwd files to the service nodes.

This issue request is to remove the plugin bug message and have some better message to figure out where the error is coming from, if it's MGMT node or SN. Otherwise it's quite difficult to pinpoint the error.

documentation reference for this user scenario:

http://xcat-docs.readthedocs.io/en/stable/advanced/security/security.html | 1.0 | error message improvement when adding users in hierarchical configuration - Problem statement: error message when adding xdsh capabilities, does not reveal that the user is missing from the service nodes, in this hierarchical configuration.

Use case: A user was added to the primary xCAT server, and granted initial ability to open a node console.

> run '/opt/xcat/share/xcat/scripts/setup-local-client.sh ccuser' as 'root' to generate the client certificates

Then the policy table was updated for this local user to do xCAT commands, which included xdsh.

> chdef -t policy -o 5.7 name=ccuser commands=nodels,rpower,lsdef,xdsh rule=allow

A postscript is run to add the user to the compute nodes, and ssh, nodels, rpower, all are tested and work. Finally, I attempted to add xdsh capability.

> xdsh cn01 -K

...

> Error: xdsh plugin bug, pid 68135, process description: ‘xcatd SSL: xdsh: xdsh instance’ with error ‘cannot open file /.ssh/copy.sh

> ’ while trying to fulfill request for the following nodes: cn01

The root cause of the error was that xdsh, unlike ssh, was attempting to route through the service node (by design). I had neglected to add the user and sync the /etc/passwd files to the service nodes.

This issue request is to remove the plugin bug message and have some better message to figure out where the error is coming from, if it's MGMT node or SN. Otherwise it's quite difficult to pinpoint the error.

documentation reference for this user scenario:

http://xcat-docs.readthedocs.io/en/stable/advanced/security/security.html | priority | error message improvement when adding users in hierarchical configuration problem statement error message when adding xdsh capabilities does not reveal that the user is missing from the service nodes in this hierarchical configuration use case a user was added to the primary xcat server and granted initial ability to open a node console run opt xcat share xcat scripts setup local client sh ccuser as root to generate the client certificates then the policy table was updated for this local user to do xcat commands which included xdsh chdef t policy o name ccuser commands nodels rpower lsdef xdsh rule allow a postscript is run to add the user to the compute nodes and ssh nodels rpower all are tested and work finally i attempted to add xdsh capability xdsh k error xdsh plugin bug pid process description ‘xcatd ssl xdsh xdsh instance’ with error ‘cannot open file ssh copy sh ’ while trying to fulfill request for the following nodes the root cause of the error was that xdsh unlike ssh was attempting to route through the service node by design i had neglected to add the user and sync the etc passwd files to the service nodes this issue request is to remove the plugin bug message and have some better message to figure out where the error is coming from if it s mgmt node or sn otherwise it s quite difficult to pinpoint the error documentation reference for this user scenario | 1 |

290,590 | 8,901,159,144 | IssuesEvent | 2019-01-17 01:02:06 | VIDY/embed.js | https://api.github.com/repos/VIDY/embed.js | closed | Hover: Occasionally prevents freeform movement | High Priority bug | This isn't consistent, but _does_ happen semi-regularly as of late.

On mobile (Android & iOS), when holding a vlink, the player will immediately shutdown/reset once your finger leaves the vlink's boundaries. It behaves as if it's in desktop mode, but abruptly & without the exit animation. | 1.0 | Hover: Occasionally prevents freeform movement - This isn't consistent, but _does_ happen semi-regularly as of late.

On mobile (Android & iOS), when holding a vlink, the player will immediately shutdown/reset once your finger leaves the vlink's boundaries. It behaves as if it's in desktop mode, but abruptly & without the exit animation. | priority | hover occasionally prevents freeform movement this isn t consistent but does happen semi regularly as of late on mobile android ios when holding a vlink the player will immediately shutdown reset once your finger leaves the vlink s boundaries it behaves as if it s in desktop mode but abruptly without the exit animation | 1 |

250,147 | 7,969,228,846 | IssuesEvent | 2018-07-16 08:18:00 | ISISScientificComputing/autoreduce | https://api.github.com/repos/ISISScientificComputing/autoreduce | closed | Dealing with negative RB numbers and when RB number is 0 | Bug High Priority | Currently the webapp fails when the RB number for the run is negative. This is the case for calibration runs. We should find a more elegant way to deal with this case when it occurs.

We should also ensure RB=0 is working correctly.

*This functionality was requested by Pascal*

```

Hello,

We cannot autoreduce data that is in calibration or commissioning RB as they do not appear listed on SECI when we go to change experiment.

Is it also possible to have a RB number =0 (quite a few people use that) so we can put stuff there and it can be autoprocessed.

Thanks,

P

``` | 1.0 | Dealing with negative RB numbers and when RB number is 0 - Currently the webapp fails when the RB number for the run is negative. This is the case for calibration runs. We should find a more elegant way to deal with this case when it occurs.

We should also ensure RB=0 is working correctly.

*This functionality was requested by Pascal*

```

Hello,

We cannot autoreduce data that is in calibration or commissioning RB as they do not appear listed on SECI when we go to change experiment.

Is it also possible to have a RB number =0 (quite a few people use that) so we can put stuff there and it can be autoprocessed.

Thanks,

P

``` | priority | dealing with negative rb numbers and when rb number is currently the webapp fails when the rb number for the run is negative this is the case for calibration runs we should find a more elegant way to deal with this case when it occurs we should also ensure rb is working correctly this functionality was requested by pascal hello we cannot autoreduce data that is in calibration or commissioning rb as they do not appear listed on seci when we go to change experiment is it also possible to have a rb number quite a few people use that so we can put stuff there and it can be autoprocessed thanks p | 1 |
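One way to make the handling explicit is to classify the RB number before any lookup is attempted. The following is a hedged sketch, not the project's implementation: the function name, the category labels, and the assumption that an RB number arrives as an integer or a numeric string are all illustrative. Only the link between negative numbers and calibration runs comes from the issue text.

```python
def classify_rb_number(rb_number):
    """Classify an RB number (hypothetical helper, labels are assumptions).

    Returns 'calibration' for negative values, 'unassigned' for 0,
    'experiment' for positive values, and 'invalid' for anything
    that cannot be read as an integer.
    """
    try:
        value = int(rb_number)
    except (TypeError, ValueError):
        return "invalid"
    if value < 0:
        return "calibration"
    if value == 0:
        return "unassigned"
    return "experiment"
```

With a classification step like this, the web app could route calibration and RB=0 runs to a dedicated view instead of failing on them.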

142,133 | 5,459,712,332 | IssuesEvent | 2017-03-09 01:42:33 | CS2103JAN2017-T09-B4/main | https://api.github.com/repos/CS2103JAN2017-T09-B4/main | opened | Indicate a starting and ending time for my tasks | priority.high status.ongoing type.story | So that I can keep track of events I need to attend | 1.0 | Indicate a starting and ending time for my tasks - So that I can keep track of events I need to attend | priority | indicate a starting and ending time for my tasks so that i can keep track of events i need to attend | 1 |

681,342 | 23,306,692,576 | IssuesEvent | 2022-08-08 02:20:22 | jhugon/semantic-data-taking-webapp | https://api.github.com/repos/jhugon/semantic-data-taking-webapp | closed | Cloud userfile environment variable isn’t working as it should | bug High priority | The userfile location set by the env var shows up correctly at the beginning of the log, but then appears as the default userfile.txt later in the log and when the auth functions are called | 1.0 | Cloud userfile environment variable isn’t working as it should - The userfile location set by the env var shows up correctly at the beginning of the log, but then appears as the default userfile.txt later in the log and when the auth functions are called | priority | cloud userfile environment variable isn’t working as it should the userfile location set by the env var shows up correctly at the beginning of the log but then appears as the default userfile txt later in the log and when the auth functions are called | 1 |

706,632 | 24,279,974,938 | IssuesEvent | 2022-09-28 16:31:45 | oceanprotocol/df-web | https://api.github.com/repos/oceanprotocol/df-web | opened | Lock Ocean UX - Add multi-step component to flesh out Approve+Lock (and all possible veOCEAN flows). | Priority: High | There are 3 different locking flows the user can experience

#### Creating Lock

1. Approve Ocean

2. Create Lock

#### Update Lock (if user adds an amount)

1. Approve Ocean

2. Update Lock

#### Update Lock (if user does not add an amount, only a date)

1. Update Lock

### DoD:

- [ ] We have 1 (or 3, 1 for each) multi-step component that reflects all of these flows and are contextual to the user actions

- [ ] Substitute the 1 button, for this multi-step flow | 1.0 | Lock Ocean UX - Add multi-step component to flesh out Approve+Lock (and all possible veOCEAN flows). - There are 3 different locking flows the user can experience

#### Creating Lock

1. Approve Ocean

2. Create Lock

#### Update Lock (if user adds an amount)

1. Approve Ocean

2. Update Lock

#### Update Lock (if user does not add an amount, only a date)

1. Update Lock

### DoD:

- [ ] We have 1 (or 3, 1 for each) multi-step component that reflects all of these flows and are contextual to the user actions

- [ ] Substitute the 1 button, for this multi-step flow | priority | lock ocean ux add multi step component to flesh out approve lock and all possible veocean flows there are different locking flows the user can experience creating lock approve ocean create lock update lock if user adds an amount approve ocean update lock update lock if user does not add an amount only a date update lock dod we have or for each multi step component that reflects all of these flows and are contextual to the user actions substitute the button for this multi step flow | 1 |
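The three locking flows listed above can be sketched as a single function that returns the ordered steps for the multi-step component. This is only an illustration under stated assumptions: the function name `lock_steps` and its two boolean parameters are hypothetical, and the step labels are copied from the issue text.

```python
def lock_steps(has_lock, adds_amount):
    """Return the ordered transaction steps for a veOCEAN lock action.

    has_lock:    True if the user already holds a lock (update flow).
    adds_amount: True if the user is adding OCEAN to the lock.
    """
    if not has_lock:
        # Creating a lock always needs a token approval first.
        return ["Approve Ocean", "Create Lock"]
    if adds_amount:
        # Adding an amount to an existing lock also needs approval.
        return ["Approve Ocean", "Update Lock"]
    # Changing only the date moves no tokens, so no approval step.
    return ["Update Lock"]
```

A single component driven by this list would cover all three flows, which corresponds to the "1 multi-step component" option in the definition of done.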

566,827 | 16,831,517,849 | IssuesEvent | 2021-06-18 05:59:41 | tooploox/autonomous_car_model | https://api.github.com/repos/tooploox/autonomous_car_model | closed | Physical properties of the simulated model requires checking | bug good first issue high priority | As the model behaves strangely in simulation I guess there's something wrong with its description and/or physical properties defined in URDF (`collision`, `inertia`, and `joints` tags in general should be checked).

Potentially helpful resources: http://gazebosim.org/tutorials/?tut=ros_urdf

It should make more sense to resolve #1 first. | 1.0 | Physical properties of the simulated model requires checking - As the model behaves strangely in simulation I guess there's something wrong with its description and/or physical properties defined in URDF (`collision`, `inertia`, and `joints` tags in general should be checked).

Potentially helpful resources: http://gazebosim.org/tutorials/?tut=ros_urdf

It should make more sense to resolve #1 first. | priority | physical properties of the simulated model requires checking as the model behaves strangely in simulation i guess there s something wrong with its description and or physical properties defined in urdf collision inertia and joints tags in general should be checked potentially helpful resources it should make more sense to resolve first | 1 |

717,263 | 24,668,373,097 | IssuesEvent | 2022-10-18 12:05:52 | proyectos-tsdwad/integrador-modulo-fullstack | https://api.github.com/repos/proyectos-tsdwad/integrador-modulo-fullstack | closed | #US02 As a user I want to be able to see the home page header | high priority 3 Story Point | - [x] #TK05 Design the home page header.

- [x] #TK06 Build its layout.

- [x] #TK07 Test.

| 1.0 | #US02 As a user I want to be able to see the home page header - - [x] #TK05 Design the home page header.

- [x] #TK06 Build its layout.

- [x] #TK07 Test.

| priority | as a user i want to be able to see the home page header design the home page header build its layout test | 1 |

58,993 | 3,098,436,931 | IssuesEvent | 2015-08-28 11:00:42 | artofkot/evarist | https://api.github.com/repos/artofkot/evarist | opened | How to display solutions. | high priority todo | Currently the site works like this: a student posts a solution, and reviewers read it, vote it correct or incorrect, and comment. The student then clicks "edit solution" and writes a new solution, which replaces the old one (the old solution is deleted entirely). The reviewer then withdraws their vote if necessary and votes again.

Two things need to be added:

1. When a solution is edited, its old versions should be kept, and the reviewer should no longer withdraw their vote but instead vote on the new (edited) solution. This is needed so that progress on a solution can be tracked.

2. A student should be able to submit a completely new solution to a problem (even if they already have solutions to that problem).

Roughly speaking, a student can always submit a solution. Either it is a correction of an existing solution, in which case it goes into the corresponding chain of solution versions, or it is entirely new, in which case it starts a new solution chain.

There is one significant change to the architecture: a new solution chain object is added that groups the different versions of one solution. That is, a content_block with type='problem' will now store solution chains rather than solutions, and the solution chains in turn store the solutions.

On the site, a chain looks roughly like this:

> Solution chain.

> "edit solution" button

> Solution v1.3 (latest)

> comment 1

> comment 2

> comment 3

> "comment" button

> Solution v1.2

> comment 1

> comment 2

> comment 3

> Solution v1.1

> Solution v1.0

На сайте на страничках /problem, /check, /my_solutions вместо решений и комментарий к ним будут соответственно находиться цепочки. В цепочке показывать имеет смысл только последнюю версию решения, остальные либо под collapse.js, либо фетчаться через ajax requests. | 1.0 | Как отображать решения. - Сейчас на сайте сделано так - студент постит решение, проверяющие его читают, голосуют за верно или неверно и комментируют. Далее студент нажимает "исправить решение", пишет новое решение, решение исправляется (при этом старое решение вообще удаляется). Далее проверяющий при необходимости отменяет свой голос, и голосует заново.

Нужно добавить две вещи:

1. Чтобы при исправлении решения старые версии решения сохранялись, и проверяющий потом не отменял свой голос, а голосовал уже за новое (исправленное) решение. Это нужно, чтобы можно было отследить прогресс по решению.

2. Чтобы студент мог отправить совершенно новое решение задачи (несмотря на то, что может быть у него уже есть решения этой задачи).

То есть грубо говоря, студент всегда может отправить решение, и оно либо является исправлением какого-то решения, и тогда это решение отправляется в соответствующую цепочку версий решения. Либо решение совсем новое, и оно рождает новую цепочку решений.

There is one significant architectural change: a new solution chain object is added, which groups the different versions of a single solution. That is, a content_block with type='problem' will now store solution chains rather than solutions, and the solution chains in turn store the solutions.

On the site, a chain looks roughly like this:

> Solution chain.

> "fix solution" button

> Solution v1.3 (latest)

> comment 1

> comment 2

> comment 3

> "comment" button

> Solution v1.2

> comment 1

> comment 2

> comment 3

> Solution v1.1

> Solution v1.0

On the /problem, /check, and /my_solutions pages, chains will appear in place of solutions and their comments. In a chain it makes sense to show only the latest version of the solution; the rest are either hidden under collapse.js or fetched via AJAX requests. | priority | how to display solutions currently the site works like this a student posts a solution reviewers read it vote on whether it is correct or incorrect and comment the student then clicks fix solution and writes a new solution and the solution is updated the old solution is deleted entirely after that the reviewer withdraws their vote if necessary and votes again two things need to be added when a solution is fixed the old versions should be kept and the reviewer should not withdraw their vote but instead vote on the new fixed solution this is needed so that progress on a solution can be tracked a student should be able to submit a completely new solution to a problem even if they already have solutions to that problem roughly speaking a student can always submit a solution either it is a fix to an existing solution in which case it goes into the corresponding chain of solution versions or it is entirely new and starts a new solution chain there is one significant architectural change a new solution chain object is added which groups the different versions of a single solution that is a content block with type problem will now store solution chains rather than solutions and the solution chains in turn store the solutions on the site a chain looks roughly like this solution chain fix solution button solution latest comment comment comment comment button solution comment comment comment solution solution on the problem check and my solutions pages chains will appear in place of solutions and their comments in a chain it makes sense to show only the latest version of the solution the rest are either hidden under collapse js or fetched via ajax requests | 1 |
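
The chain structure described in the issue above could be sketched roughly as follows. This is only an illustration under stated assumptions: the class names `Solution`, `SolutionChain`, and `ProblemBlock`, and the `submit` method, are hypothetical and do not come from the project's code. The sketch shows a problem block holding chains, each chain holding every version of one solution, and a submission either extending an existing chain or starting a new one.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Solution:
    """One version of a solution, with its own comments (hypothetical model)."""
    text: str
    version: int
    comments: List[str] = field(default_factory=list)


@dataclass
class SolutionChain:
    """All versions of one solution, oldest first (hypothetical model)."""
    solutions: List[Solution] = field(default_factory=list)

    def add_version(self, text: str) -> Solution:
        # Old versions are kept; the new one is appended at the end.
        solution = Solution(text=text, version=len(self.solutions))
        self.solutions.append(solution)
        return solution

    @property
    def latest(self) -> Optional[Solution]:
        # Only the latest version would be shown by default on the site.
        return self.solutions[-1] if self.solutions else None


@dataclass
class ProblemBlock:
    """Stands in for a content_block with type='problem' (hypothetical model)."""
    chains: List[SolutionChain] = field(default_factory=list)

    def submit(self, text: str, fixes: Optional[SolutionChain] = None) -> SolutionChain:
        # A submission either fixes an existing chain or starts a new one.
        if fixes is None:
            fixes = SolutionChain()
            self.chains.append(fixes)
        fixes.add_version(text)
        return fixes


block = ProblemBlock()
chain = block.submit("first attempt")
block.submit("fixed attempt", fixes=chain)   # new version in the same chain
block.submit("a different approach")         # starts a second chain
```

Keeping votes and comments on each `Solution` rather than on the chain is one way to let a reviewer vote on the fixed version without withdrawing the earlier vote; the issue itself does not specify where votes are stored.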

551,856 | 16,190,172,143 | IssuesEvent | 2021-05-04 07:16:08 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | tinder.com - see bug description | browser-firefox engine-gecko ml-needsdiagnosis-false ml-probability-high priority-normal | <!-- @browser: Firefox 89.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:89.0) Gecko/20100101 Firefox/89.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/72394 -->

**URL**: https://tinder.com/app/recs

**Browser / Version**: Firefox 89.0

**Operating System**: Windows 10

**Tested Another Browser**: Yes Chrome

**Problem type**: Something else

**Description**: just a white page appears

**Steps to Reproduce**:

With both browsers, just after the website started to load, the page switched to a blank white page and became unusable. The failure has been occurring for three days.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2021/4/8aaf98b7-291b-4ade-a0fb-52140b922056.jpg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20210429190114</li><li>channel: beta</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2021/4/801262df-b99c-420a-a04c-a5aca8d3d9a3)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | tinder.com - see bug description - <!-- @browser: Firefox 89.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:89.0) Gecko/20100101 Firefox/89.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/72394 -->

**URL**: https://tinder.com/app/recs

**Browser / Version**: Firefox 89.0

**Operating System**: Windows 10

**Tested Another Browser**: Yes Chrome

**Problem type**: Something else

**Description**: just a white page appears

**Steps to Reproduce**:

With both browsers, just after the website started to load, the page switched to a blank white page and became unusable. The failure has been occurring for three days.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2021/4/8aaf98b7-291b-4ade-a0fb-52140b922056.jpg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20210429190114</li><li>channel: beta</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2021/4/801262df-b99c-420a-a04c-a5aca8d3d9a3)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | tinder com see bug description url browser version firefox operating system windows tested another browser yes chrome problem type something else description just a white page appears steps to reproduce with both browsers just after the website started to load the page switched to a blank white page and became unusable the failure has been occurring for three days view the screenshot img alt screenshot src browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel beta hastouchscreen false mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 1 |

678,794 | 23,210,867,276 | IssuesEvent | 2022-08-02 10:00:05 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | SAML related cookie not clearing when a User's session is timed out | Priority/Highest Component/SAML bug 6.0.0-bug-fixing | When using a SAML app, after a user's session times out the user has to log in again; at that point the old samlssoTokenId is assigned instead of a new one. | 1.0 | SAML related cookie not clearing when a User's session is timed out - When using a SAML app, after a user's session times out the user has to log in again; at that point the old samlssoTokenId is assigned instead of a new one. | priority | saml related cookie not clearing when a user s session is timed out when using a saml app after a user s session times out the user has to log in again at that point the old samlssotokenid is assigned instead of a new one | 1 |

299,841 | 9,205,904,333 | IssuesEvent | 2019-03-08 12:03:26 | geosolutions-it/tdipisa | https://api.github.com/repos/geosolutions-it/tdipisa | closed | PHOTOMAP - Legend print checks | Priority: High enhancement in progress | I attached a screenshot of the print output (PDF), where the layer text (legend) is not shown in full. Would it be possible to wrap it onto multiple lines?

| 1.0 | PHOTOMAP - Legend print checks - I attached a screenshot of the print output (PDF), where the layer text (legend) is not shown in full. Would it be possible to wrap it onto multiple lines?

| priority | photomap legend print checks i attached a screenshot of the print output pdf where the layer text legend is not shown in full would it be possible to wrap it onto multiple lines | 1 |

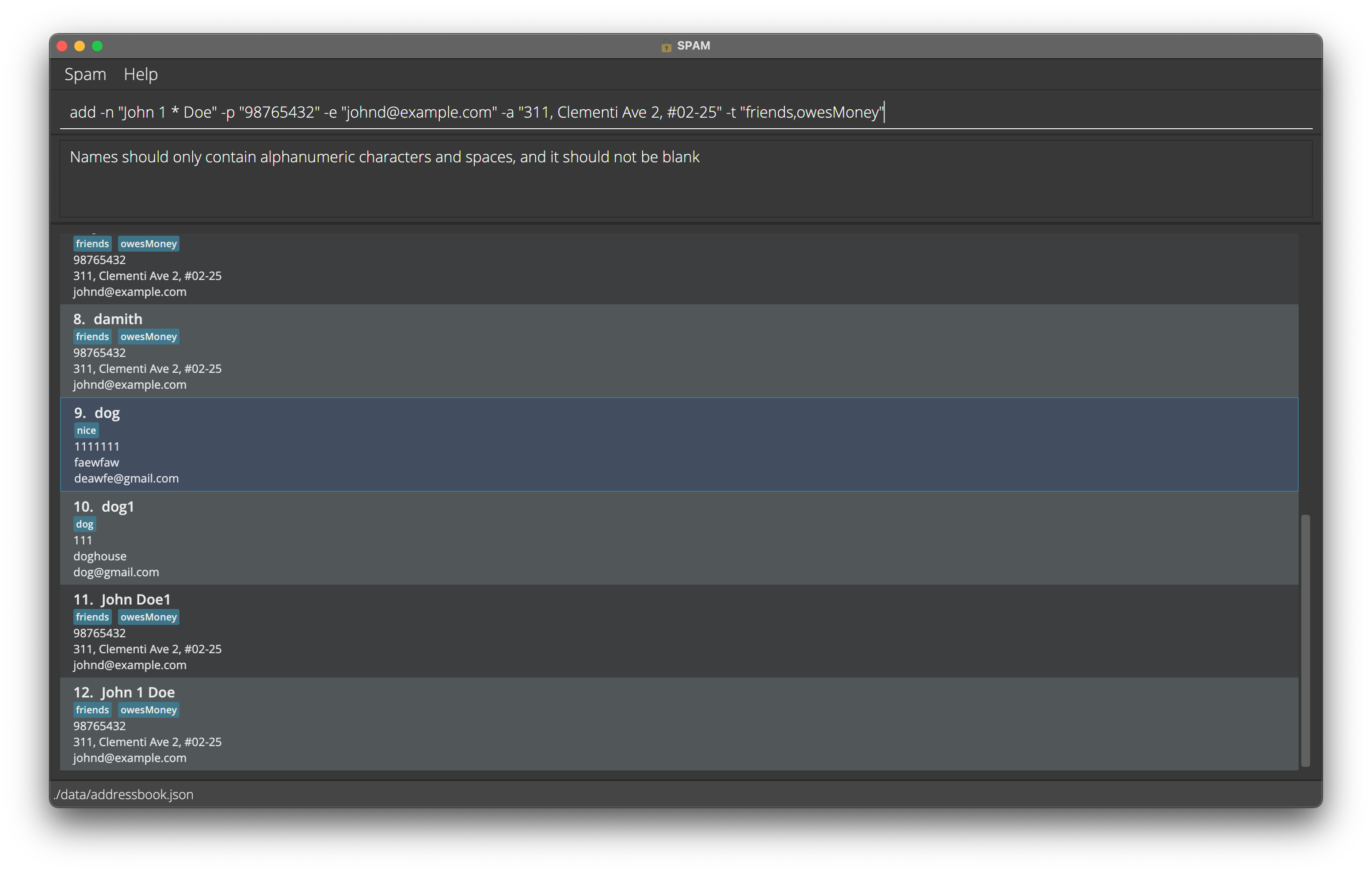

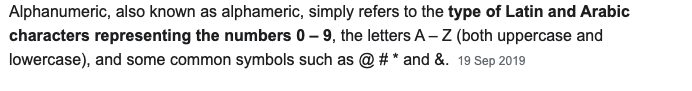

605,264 | 18,727,864,312 | IssuesEvent | 2021-11-03 18:08:35 | AY2122S1-CS2103T-W13-2/tp | https://api.github.com/repos/AY2122S1-CS2103T-W13-2/tp | closed | [PE-D] Unclear name constraints. | type.Bug priority.High severity.Medium |

The constraints say that names should only contain `alphanumeric characters and spaces`. However, based on this definition of alphanumeric characters, symbols should be allowed in names, but they are not, even though numbers are.

<!--session: 1635494539368-6e4ca0fb-c787-43c4-a16e-48a16480861f--><!--Version: Web v3.4.1-->

-------------

Labels: `type.FunctionalityBug` `severity.Medium`

original: s7u4rt99/ped#3 | 1.0 | [PE-D] Unclear name constraints. -

The constraints say that names should only contain `alphanumeric characters and spaces`. However, based on this definition of alphanumeric characters, symbols should be allowed in names, but they are not, even though numbers are.

<!--session: 1635494539368-6e4ca0fb-c787-43c4-a16e-48a16480861f--><!--Version: Web v3.4.1-->

-------------

Labels: `type.FunctionalityBug` `severity.Medium`

original: s7u4rt99/ped#3 | priority | unclear name constraints the constraints say that names should only contain alphanumeric characters and spaces however based on this definition of alphanumeric characters symbols should be allowed in names but they are not even though numbers are labels type functionalitybug severity medium original ped | 1 |
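
To make the constraint discussed in the report above concrete, here is a minimal sketch of one possible reading of "alphanumeric characters and spaces". The pattern and the function name `is_valid_name` are assumptions for illustration only; they are not taken from the project, and the sketch deliberately restricts itself to ASCII letters and digits.

```python
import re

# Assumed reading of the constraint: ASCII letters, digits, and spaces only,
# and the name must not start with a space (hypothetical rule for illustration).
NAME_PATTERN = re.compile(r"[A-Za-z0-9][A-Za-z0-9 ]*")


def is_valid_name(name: str) -> bool:
    """Return True if the whole name matches the assumed constraint."""
    return NAME_PATTERN.fullmatch(name) is not None
```

Under this reading, digits are accepted and symbols such as apostrophes or hyphens are rejected, which matches the behaviour the reporter observed. Whether that behaviour is intended depends on which definition of "alphanumeric" the constraint message relies on.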

780,115 | 27,379,739,871 | IssuesEvent | 2023-02-28 09:13:33 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | When selecting a post with the structure /%postname%/amp/, it does not work. (User end) | bug [Priority: HIGH] Ready for Review | When the user sets the structure /%postname%/amp/, it does not work in AMP, and the "post_link" action is modified depending on the selected category.

Ref ticket: https://magazine3.in/conversation/83169?folder_id=29 | 1.0 | When selecting a post with the structure /%postname%/amp/, it does not work. (User end) - When the user sets the structure /%postname%/amp/, it does not work in AMP, and the "post_link" action is modified depending on the selected category.

Ref ticket: https://magazine3.in/conversation/83169?folder_id=29 | priority | when selecting a post with the structure postname amp it does not work user end when the user sets the structure postname amp it does not work in amp and the post link action is modified depending on the selected category ref ticket | 1 |

652,742 | 21,560,351,430 | IssuesEvent | 2022-05-01 04:03:13 | bitfoundation/bitframework | https://api.github.com/repos/bitfoundation/bitframework | closed | Correct the old parameters of the Bit components used in the `TodoTemplate` project based on the new updates | area / project template high priority enhancement | Some of the Bit components have been updated and some of their parameters renamed, but the `TodoTemplate` project still uses the old names.

For example, the `Key` parameter of the `BitRadioButtonGroup` component should be changed to `Value`. | 1.0 | Correct the old parameters of the Bit components used in the `TodoTemplate` project based on the new updates - Some of the Bit components have been updated and some of their parameters renamed, but the `TodoTemplate` project still uses the old names.

For example, the `Key` parameter of the `BitRadioButtonGroup` component should be changed to `Value`. | priority | correct the old parameters of the bit components used in the todotemplate project based on the new updates some of the bit components have been updated and some of their parameters renamed but the todotemplate project still uses the old names for example the key parameter of the bitradiobuttongroup component should be changed to value | 1 |