Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 2 665 | labels stringlengths 4 554 | body stringlengths 3 235k | index stringclasses 6 values | text_combine stringlengths 96 235k | label stringclasses 2 values | text stringlengths 96 196k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

7,747 | 7,078,990,444 | IssuesEvent | 2018-01-10 07:35:52 | spring1944/spring1944 | https://api.github.com/repos/spring1944/spring1944 | closed | RFC: ditch wordpress/forum setup in favor of a static github page | infrastructure | Chatted with hoko about a direction for the site, and I think it's at least worth considering ditching our current setup.

Basically: We have three big pieces of software run on our behalf by koshi: wordpress, mediawiki, and phpbb. All of them are famously insecure, and we hardly use them (other than the wiki, which I'll get to).

I'm thinking about moving us to a static page hosted on github. Here's a quick breakdown of what we use in our current setup, and how we might replace it.

#### Wordpress --> static github project page

The few useful bits of content on the site (maps, media) can be moved over. For the other wordpress features -- we barely make use of wordpress for the authoring stuff, and the comments are mostly spam. Our image gallery is mostly dead links at this point, and changes so rarely that keeping screenshots in a branch of a repo would be perfectly fine. We're all sufficiently technical that writing newsposts by editing some markdown files and git push-ing is fine.

#### Mediawiki --> github wiki / curated content as part of the site

Mediawiki is probably the better wiki software, but porting the useful content wouldn't be very hard to do, since our wiki doesn't make extensive use of formatting, and it isn't themed in a meaningful way.

That said, I think I would promote the best bits of the wiki to proper pages on the static site, so we can polish and curate and promote the best resources in a highly visible place. Other stuff can go into the github wiki, for assorted 'things users want to contribute'.

#### Forum --> ???

This might be the most contentious point -- giving up our dedicated forum. I can grab a backup from koshi, so the archive would still be available, but I think our forum is actually fairly damaging to our image. Our repo is really active (at the moment :smiley:), but our forum is kind of a ghost town. One of the first things I do when checking out an open source game project is peek at the forums. No recent posts == no players, so I usually bail.

I think we would be better served by drawing people directly into `#s44` using the IRC bridge by having a web-IRC thingy available from the static page, maybe in addition to some public logging or other persistent messaging. I suspect that between github issues and #s44, our communication needs are pretty much covered.

Thoughts?

| 1.0 | RFC: ditch wordpress/forum setup in favor of a static github page - Chatted with hoko about a direction for the site, and I think it's at least worth considering ditching our current setup.

Basically: We have three big pieces of software run on our behalf by koshi: wordpress, mediawiki, and phpbb. All of them are famously insecure, and we hardly use them (other than the wiki, which I'll get to).

I'm thinking about moving us to a static page hosted on github. Here's a quick breakdown of what we use in our current setup, and how we might replace it.

#### Wordpress --> static github project page

The few useful bits of content on the site (maps, media) can be moved over. For the other wordpress features -- we barely make use of wordpress for the authoring stuff, and the comments are mostly spam. Our image gallery is mostly dead links at this point, and changes so rarely that keeping screenshots in a branch of a repo would be perfectly fine. We're all sufficiently technical that writing newsposts by editing some markdown files and git push-ing is fine.

#### Mediawiki --> github wiki / curated content as part of the site

Mediawiki is probably the better wiki software, but porting the useful content wouldn't be very hard to do, since our wiki doesn't make extensive use of formatting, and it isn't themed in a meaningful way.

That said, I think I would promote the best bits of the wiki to proper pages on the static site, so we can polish and curate and promote the best resources in a highly visible place. Other stuff can go into the github wiki, for assorted 'things users want to contribute'.

#### Forum --> ???

This might be the most contentious point -- giving up our dedicated forum. I can grab a backup from koshi, so the archive would still be available, but I think our forum is actually fairly damaging to our image. Our repo is really active (at the moment :smiley:), but our forum is kind of a ghost town. One of the first things I do when checking out an open source game project is peek at the forums. No recent posts == no players, so I usually bail.

I think we would be better served by drawing people directly into `#s44` using the IRC bridge by having a web-IRC thingy available from the static page, maybe in addition to some public logging or other persistent messaging. I suspect that between github issues and #s44, our communication needs are pretty much covered.

Thoughts?

| infrastructure | rfc ditch wordpress forum setup in favor of a static github page chatted with hoko about a direction for the site and i think it s at least worth considering ditching our current setup basically we have three big pieces of software run on our behalf by koshi wordpress mediawiki and phpbb all of them are famously insecure and we hardly use them other than the wiki which i ll get to i m thinking about moving us to a static page hosted on github here s a quick breakdown of what we use in our current setup and how we might replace it wordpress static github project page the few useful bits of content on the site maps media can be moved over for the other wordpress features we barely make use of wordpress for the authoring stuff and the comments are mostly spam our image gallery is mostly dead links at this point and changes so rarely that keeping screenshots in a branch of a repo would be perfectly fine we re all sufficiently technical that writing newsposts by editing some markdown files and git push ing is fine mediawiki github wiki curated content as part of the site mediawiki is probably the better wiki software but porting the useful content wouldn t be very hard to do since our wiki doesn t make extensive use of formatting and it isn t themed in a meaningful way that said i think i would promote the best bits of the wiki to proper pages on the static site so we can polish and curate and promote the best resources in a highly visible place other stuff can go into the github wiki for assorted things users want to contribute forum this might be the most contentious point giving up our dedicated forum i can grab a backup from koshi so the archive would still be available but i think our forum is actually fairly damaging to our image our repo is really active at the moment smiley but our forum is kind of a ghost town one of the first things i do when checking out an open source game project is peek at the forums no recent posts no players so i 
usually bail i think we would be better served by drawing people directly into using the irc bridge by having a web irc thingy available from the static page maybe in addition to some public logging or other persistent messaging i suspect that between github issues and our communication needs are pretty much covered thoughts | 1 |

60,823 | 8,468,406,688 | IssuesEvent | 2018-10-23 19:39:21 | devtools-html/perf.html | https://api.github.com/repos/devtools-html/perf.html | opened | Improve Enzyme best practice docs | documentation | From #1401, there were some updates to the Enzyme testing docs, but they could be better still.

@julienw wrote:

> I'd like some guidance of how to use enzyme; because enzyme is very powerful it's easy to use it in a bad way (eg: manipulating props), and I think we should take great care to test the components from the point of view of the user.

| 1.0 | Improve Enzyme best practice docs - From #1401, there were some updates to the Enzyme testing docs, but they could be better still.

@julienw wrote:

> I'd like some guidance of how to use enzyme; because enzyme is very powerful it's easy to use it in a bad way (eg: manipulating props), and I think we should take great care to test the components from the point of view of the user.

| non_infrastructure | improve enzyme best practice docs from there were some updates to the enzyme testing docs but they could be better still julienw wrote i d like some guidance of how to use enzyme because enzyme is very powerful it s easy to use it in a bad way eg manipulating props and i think we should take great care to test the components from the point of view of the user | 0 |

531,903 | 15,527,114,081 | IssuesEvent | 2021-03-13 04:19:55 | OnTopicCMS/OnTopic-Library | https://api.github.com/repos/OnTopicCMS/OnTopic-Library | closed | Bug: TrackedRecord<T> constructor doesn't accept null value | Area: Entity Priority: 3 Severity 1: Minor Status 5: Complete Type: Bug | The `TrackedRecord<T>.Value` property is nullable, but when constructing a new `TrackedRecord<T>` via the constructor, the `value` parameter is required—both in terms of its nullability annotation, as well as an explicit guard clause. The same is true of the derived `TopicReferenceRecord` and `AttributeRecord` constructors.

In practice, we generally prefer creating these via e.g. `TrackedRecord<T>.SetValue()`, which doesn't use the constructor, and can set a `null` value. But this mismatch isn't consistent with the data model. As such, the constructors should be updated to maintain parity with the underlying property return types they represent. | 1.0 | Bug: TrackedRecord<T> constructor doesn't accept null value - The `TrackedRecord<T>.Value` property is nullable, but when constructing a new `TrackedRecord<T>` via the constructor, the `value` parameter is required—both in terms of its nullability annotation, as well as an explicit guard clause. The same is true of the derived `TopicReferenceRecord` and `AttributeRecord` constructors.

In practice, we generally prefer creating these via e.g. `TrackedRecord<T>.SetValue()`, which doesn't use the constructor, and can set a `null` value. But this mismatch isn't consistent with the data model. As such, the constructors should be updated to maintain parity with the underlying property return types they represent. | non_infrastructure | bug trackedrecord constructor doesn t accept null value the trackedrecord value property is nullable but when constructing a new trackedrecord via the constructor the value parameter is required—both in terms of its nullability annotation as well as an explicit guard clause the same is true of the derived topicreferencerecord and attributerecord constructors in practice we generally prefer creating these via e g trackedrecord setvalue which doesn t use the constructor and can set a null value but this mismatch isn t consistent with the data model as such the constructors should be updated to maintain parity with the underlying property return types they represent | 0 |

11,418 | 9,181,319,045 | IssuesEvent | 2019-03-05 09:56:57 | elastic/beats | https://api.github.com/repos/elastic/beats | opened | [Metricbeat] Improve / add integration tests of Ceph | :infrastructure Metricbeat | In its current state Ceph module don't have any integration tests because Ceph containers take too long to start: over 1 minute in most situations and more than 5 even sometimes, producing a flaky test because `compose.EnsureUp` function we use defaults to 5 minutes. Probably, it has some problem if it reaches 5 minutes for starting up that wouldn't allow it to ever start properly.

`TestData` must be manually skipped for the same reason as it tries to reach the server that is not started as described here https://github.com/elastic/beats/pull/10990#discussion_r262406519 and here https://github.com/elastic/beats/pull/10993#discussion_r261627931

As soon as we have this merged https://github.com/elastic/beats/pull/10648 the module should be more friendly to add those tests. | 1.0 | [Metricbeat] Improve / add integration tests of Ceph - In its current state Ceph module don't have any integration tests because Ceph containers take too long to start: over 1 minute in most situations and more than 5 even sometimes, producing a flaky test because `compose.EnsureUp` function we use defaults to 5 minutes. Probably, it has some problem if it reaches 5 minutes for starting up that wouldn't allow it to ever start properly.

`TestData` must be manually skipped for the same reason as it tries to reach the server that is not started as described here https://github.com/elastic/beats/pull/10990#discussion_r262406519 and here https://github.com/elastic/beats/pull/10993#discussion_r261627931

As soon as we have this merged https://github.com/elastic/beats/pull/10648 the module should be more friendly to add those tests. | infrastructure | improve add integration tests of ceph in its current state ceph module don t have any integration tests because ceph containers take too long to start over minute in most situations and more than even sometimes producing a flaky test because compose ensureup function we use defaults to minutes probably it has some problem if it reaches minutes for starting up that wouldn t allow it to ever start properly testdata must be manually skipped for the same reason as it tries to reach the server that is not started as described here and here as soon as we have this merged the module should be more friendly to add those tests | 1 |

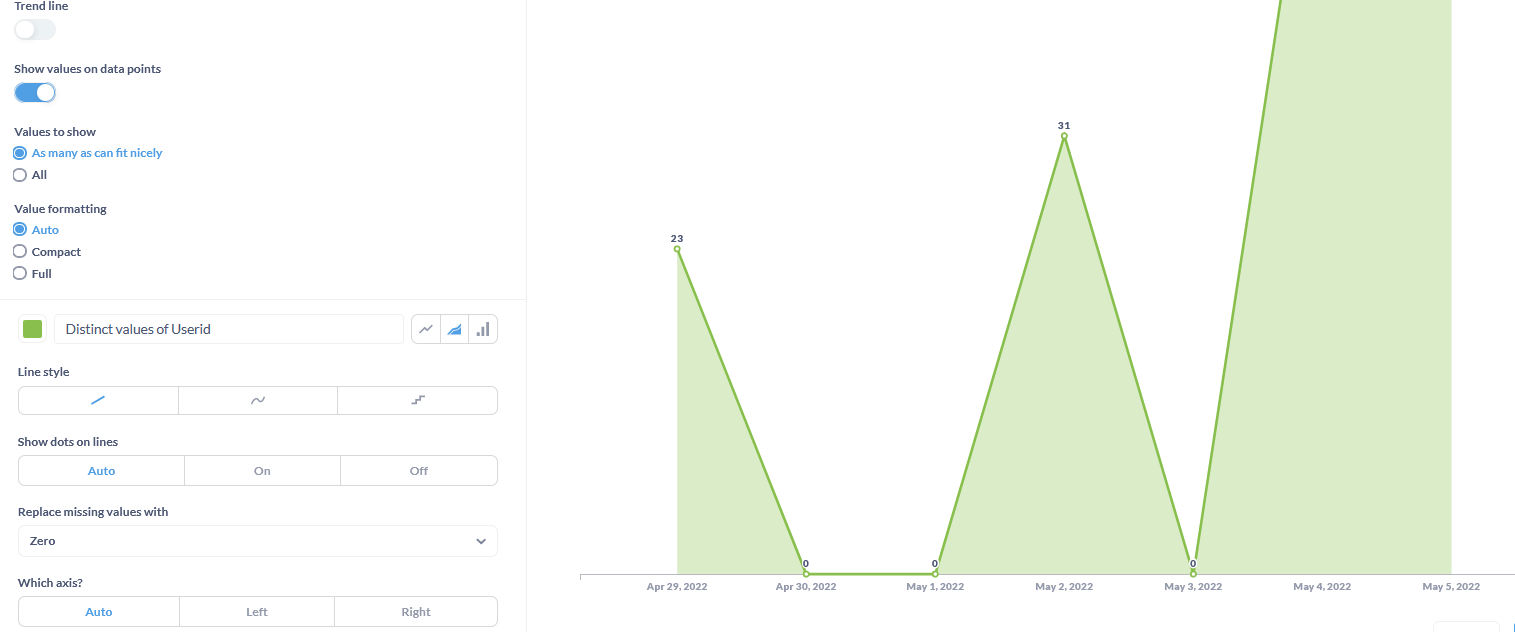

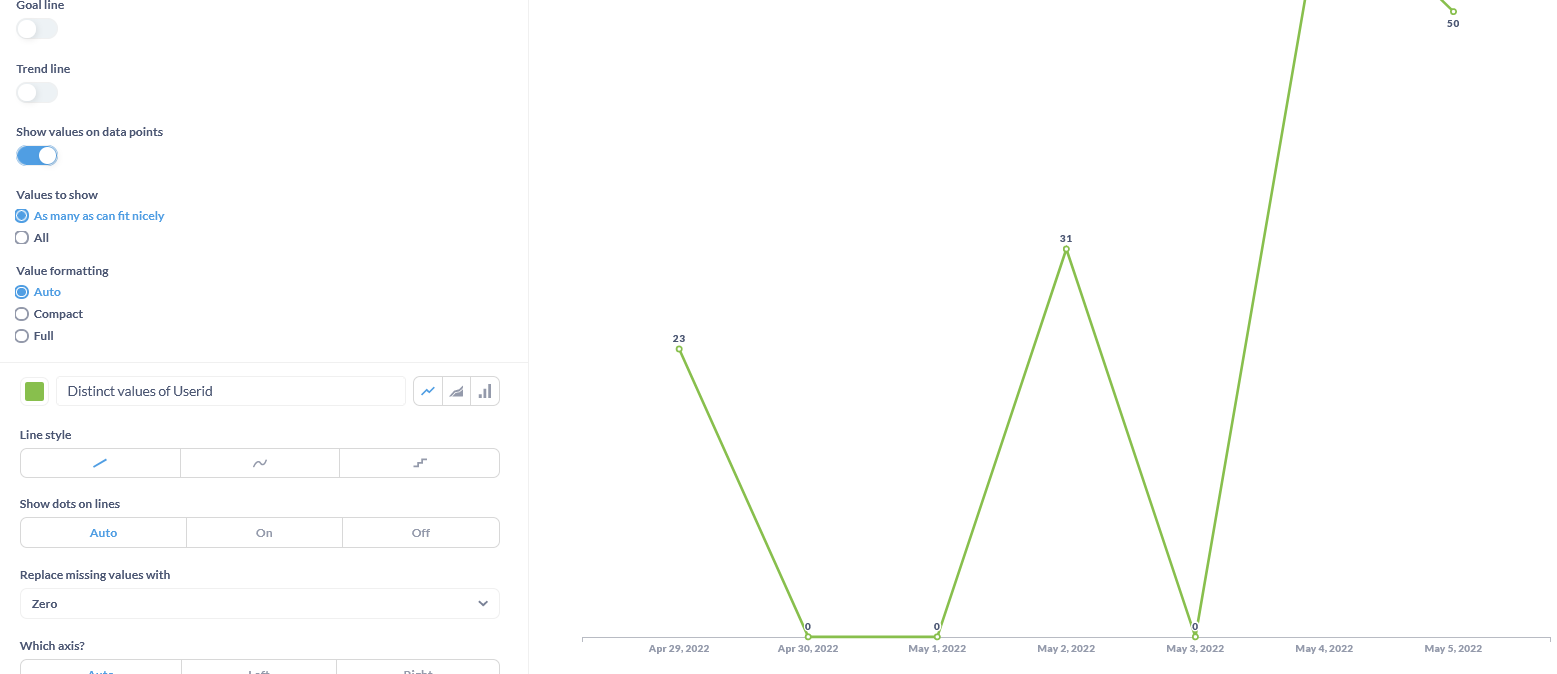

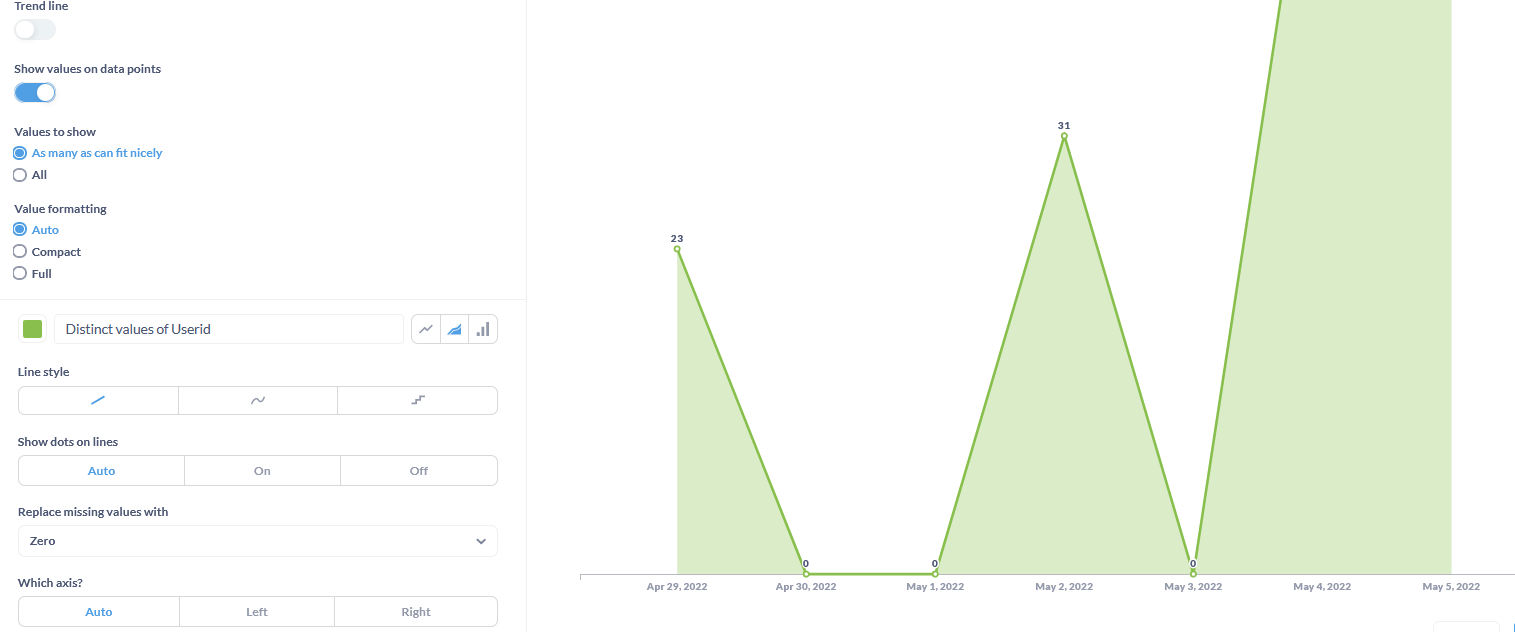

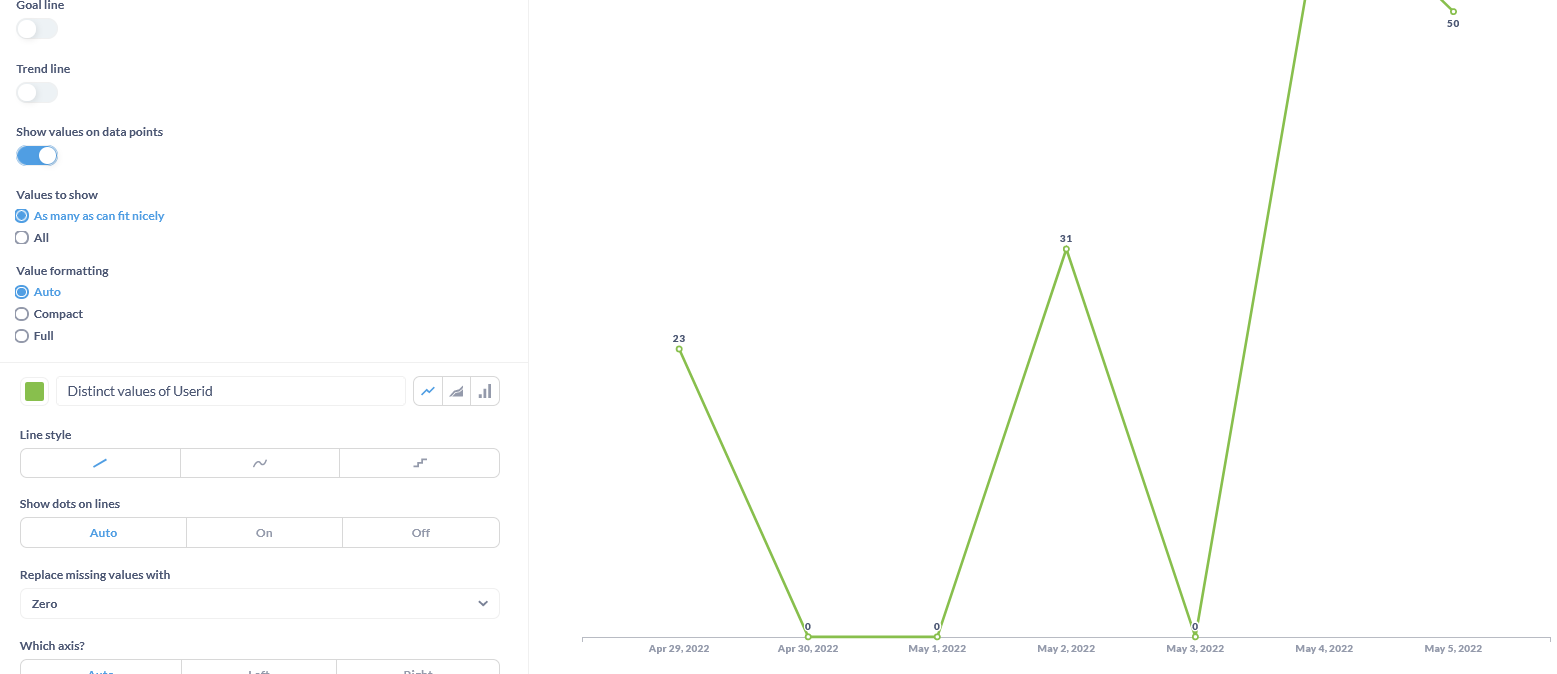

672,910 | 22,908,233,369 | IssuesEvent | 2022-07-16 00:01:29 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Visualization: Bar chart not showing 0 values unlike line and area chart | Type:Bug Priority:P3 .Frontend Visualization/Charts | This is the only option when choosing bar chart.

Whilst below images are for Line and Area chart.

This is the same as for previous metabase version that I was on around v0.3+

However, there was a workaround (just switch to line/area chart then select **"Replace missing values" with Zero** then revert to bar chart, missing values would then be replaced with 0 value) that I did back then that is now not working on my current version.

{
  "browser-info": {
    "language": "en-US",
    "platform": "Win32",
    "userAgent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:100.0) Gecko/20100101 Firefox/100.0",
    "vendor": ""
  },
  "system-info": {
    "file.encoding": "UTF-8",
    "java.runtime.name": "OpenJDK Runtime Environment",
    "java.runtime.version": "11.0.15+10-Ubuntu-0ubuntu0.18.04.1",
    "java.vendor": "Private Build",
    "java.vendor.url": "Unknown",
    "java.version": "11.0.15",
    "java.vm.name": "OpenJDK 64-Bit Server VM",
    "java.vm.version": "11.0.15+10-Ubuntu-0ubuntu0.18.04.1",
    "os.name": "Linux",
    "os.version": "5.4.0-1077-azure",
    "user.language": "en",
    "user.timezone": "Etc/UTC"
  },
  "metabase-info": {
    "databases": [
      "mysql",
      "mongo",
      "googleanalytics"
    ],
    "hosting-env": "unknown",
    "application-database": "h2",
    "application-database-details": {
      "database": {
        "name": "H2",
        "version": "1.4.197 (2018-03-18)"
      },
      "jdbc-driver": {
        "name": "H2 JDBC Driver",
        "version": "1.4.197 (2018-03-18)"
      }
    },
    "run-mode": "prod",
    "version": {
      "date": "2022-04-07",
      "tag": "v0.42.4",
      "branch": "release-x.42.x",
      "hash": "7c3ce2d"
    },
    "settings": {
      "report-timezone": "Asia/Hong_Kong"
    }
  }
}

| 1.0 | Visualization: Bar chart not showing 0 values unlike line and area chart - This is the only option when choosing bar chart.

Whilst below images are for Line and Area chart.

This is the same as for previous metabase version that I was on around v0.3+

However, there was a workaround (just switch to line/area chart then select **"Replace missing values" with Zero** then revert to bar chart, missing values would then be replaced with 0 value) that I did back then that is now not working on my current version.

{
  "browser-info": {
    "language": "en-US",
    "platform": "Win32",
    "userAgent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:100.0) Gecko/20100101 Firefox/100.0",
    "vendor": ""
  },
  "system-info": {
    "file.encoding": "UTF-8",
    "java.runtime.name": "OpenJDK Runtime Environment",
    "java.runtime.version": "11.0.15+10-Ubuntu-0ubuntu0.18.04.1",
    "java.vendor": "Private Build",
    "java.vendor.url": "Unknown",
    "java.version": "11.0.15",
    "java.vm.name": "OpenJDK 64-Bit Server VM",
    "java.vm.version": "11.0.15+10-Ubuntu-0ubuntu0.18.04.1",
    "os.name": "Linux",
    "os.version": "5.4.0-1077-azure",
    "user.language": "en",
    "user.timezone": "Etc/UTC"
  },
  "metabase-info": {
    "databases": [
      "mysql",
      "mongo",
      "googleanalytics"
    ],
    "hosting-env": "unknown",
    "application-database": "h2",
    "application-database-details": {
      "database": {
        "name": "H2",
        "version": "1.4.197 (2018-03-18)"
      },
      "jdbc-driver": {
        "name": "H2 JDBC Driver",
        "version": "1.4.197 (2018-03-18)"
      }
    },
    "run-mode": "prod",
    "version": {
      "date": "2022-04-07",
      "tag": "v0.42.4",
      "branch": "release-x.42.x",
      "hash": "7c3ce2d"
    },
    "settings": {
      "report-timezone": "Asia/Hong_Kong"
    }
  }
}

| non_infrastructure | visualization bar chart not showing values unlike line and area chart this is the only option when choosing bar chart whilst below images are for line and area chart this is the same as for previous metabase version that i was on around however there was a workaround just switch to line area chart then select replace missing values with zero then revert to bar chart missing values would then be replaced with value that i did back then that is now not working on my current version browser info language en us platform useragent mozilla windows nt rv gecko firefox vendor system info file encoding utf java runtime name openjdk runtime environment java runtime version ubuntu java vendor private build java vendor url unknown java version java vm name openjdk bit server vm java vm version ubuntu os name linux os version azure user language en user timezone etc utc metabase info databases mysql mongo googleanalytics hosting env unknown application database application database details database name version jdbc driver name jdbc driver version run mode prod version date tag branch release x x hash settings report timezone asia hong kong | 0 |

3,627 | 14,672,547,338 | IssuesEvent | 2020-12-30 10:53:23 | Homebrew/homebrew-core | https://api.github.com/repos/Homebrew/homebrew-core | opened | luajit probably needs to be deprecated | help wanted maintainer feedback | - The latest release (stable OR beta) is from 2017

- It's heavily patched

- Every new macOS version requires an additional patch

- Upstream's recommendation is to “build from git HEAD”, and they won't apparently ship new releases: https://github.com/LuaJIT/LuaJIT/issues/648#issuecomment-752404043

The reason I'm not doing a pull request directly is that a lot of things depend on luajit, so I want to open a discussion and figure out the best way to handle this. Can some of these be migrated to one of the lua formulas? | True | luajit probably needs to be deprecated - - The latest release (stable OR beta) is from 2017

- It's heavily patched

- Every new macOS version requires an additional patch

- Upstream's recommendation is to “build from git HEAD”, and they won't apparently ship new releases: https://github.com/LuaJIT/LuaJIT/issues/648#issuecomment-752404043

The reason I'm not doing a pull request directly is that a lot of things depend on luajit, so I want to open a discussion and figure out the best way to handle this. Can some of these be migrated to one of the lua formulas? | non_infrastructure | luajit probably needs to be deprecated the latest release stable or beta is from it s heavily patched every new macos version requires an additional patch upstream s recommendation is to “build from git head” and they won t apparently ship new releases the reason i m not doing a pull request directly is that a lot of things depend on luajit so i want to open a discussion and figure out the best way to handle this can some of these be migrated to one of the lua formulas | 0 |

114,416 | 11,846,416,770 | IssuesEvent | 2020-03-24 10:09:36 | kyma-incubator/documentation-component | https://api.github.com/repos/kyma-incubator/documentation-component | reopened | Provide examples in JavaScript | area/documentation enhancement stale | **Description**

- have examples with both, JS and TS like we have here https://github.com/kyma-project/kyma/blob/master/docs/kyma/04-04-cluster-installation.md#prerequisites (raw https://raw.githubusercontent.com/kyma-project/kyma/master/docs/kyma/04-04-cluster-installation.md)

- JS is always first on the list

**Reasons**

At the moment all examples for the component are in TypeScript, except of sandbox projects.

Believe it or not, but there is still a great number of javascript developers that do not need TypeScript at all to write good quality code :D https://insights.stackoverflow.com/survey/2019#technology-_-programming-scripting-and-markup-languages | 1.0 | Provide examples in JavaScript - **Description**

- have examples with both, JS and TS like we have here https://github.com/kyma-project/kyma/blob/master/docs/kyma/04-04-cluster-installation.md#prerequisites (raw https://raw.githubusercontent.com/kyma-project/kyma/master/docs/kyma/04-04-cluster-installation.md)

- JS is always first on the list

**Reasons**

At the moment all examples for the component are in TypeScript, except of sandbox projects.

Believe it or not, but there is still a great number of javascript developers that do not need TypeScript at all to write good quality code :D https://insights.stackoverflow.com/survey/2019#technology-_-programming-scripting-and-markup-languages | non_infrastructure | provide examples in javascript description have examples with both js and ts like we have here raw js is always first on the list reasons at the moment all examples for the component are in typescript except of sandbox projects believe it or not but there is still a great number of javascript developers that do not need typescript at all to write good quality code d | 0 |

11,095 | 8,924,513,643 | IssuesEvent | 2019-01-21 18:58:37 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | metricbeat - filesystem & fsstat not collecting mount path on windows machines | :Windows :infrastructure Metricbeat module | Hi,

It looks like Metricbeat is not collecting any data for devices without a drive letter on Windows machines (volumes mounted at a path). The reason is that Metricbeat relies on the wrong command:

get-psdrive -PSProvider filesystem

By design, this PowerShell command does not return mount paths.

It should rely on a different command:

Get-WmiObject Win32_Volume | Format-Table Name, Label, FreeSpace, Capacity

metricbeat 6.2.4 | 1.0 | metricbeat - filesystem & fsstat not collecting mount path on windows machines - Hi,

It looks like Metricbeat is not collecting any data for devices without a drive letter on Windows machines (volumes mounted at a path). The reason is that Metricbeat relies on the wrong command:

get-psdrive -PSProvider filesystem

By design, this PowerShell command does not return mount paths.

It should rely on a different command:

Get-WmiObject Win32_Volume | Format-Table Name, Label, FreeSpace, Capacity

metricbeat 6.2.4 | infrastructure | metricbeat filesystem fsstat not collecting mount path on windows machines hi it looks like metricbeat is not collecting any data for devices without a drive letter on windows machines volumes mounted at a path the reason is that metricbeat relies on the wrong command get psdrive psprovider filesystem by design this powershell command does not return mount paths it should rely on a different command get wmiobject volume format table name label freespace capacity metricbeat | 1 |

38,871 | 6,712,075,254 | IssuesEvent | 2017-10-13 07:55:56 | Microsoft/WindowsTemplateStudio | https://api.github.com/repos/Microsoft/WindowsTemplateStudio | closed | Minimum requirements to install WTS have changed to VS 2017 Update 3 or higher and .NET 4.7 | Documentation fall-creators-update | Currently the minimum requirements to install WTS are VS 2017 Update 3 or higher and .NET 4.7.

By now, the TargetVersion for generated projects still point to 10.0.15063.0. When SDK for FCU ready (publicly available) we will update this too.

We need to update the requirements in the documentation. | 1.0 | Minimum requirements to install WTS have changed to VS 2017 Update 3 or higher and .NET 4.7 - Currently the minimum requirements to install WTS are VS 2017 Update 3 or higher and .NET 4.7.

By now, the TargetVersion for generated projects still point to 10.0.15063.0. When SDK for FCU ready (publicly available) we will update this too.

We need to update the requirements in the documentation. | non_infrastructure | minimum requirements to install wts have changed to vs update or higher and net currently the minimum requirements to install wts are vs update or higher and net by now the targetversion for generated projects still point to when sdk for fcu ready publicly available we will update this too we need to update the requirements in the documentation | 0 |

8,616 | 7,525,090,886 | IssuesEvent | 2018-04-13 09:26:09 | OCR-D/pyocrd | https://api.github.com/repos/OCR-D/pyocrd | closed | Encapsulate merging functionality in an API | infrastructure | `merge_ocr_txt.py` is a command line script containing actual functionality which should be available via API. | 1.0 | Encapsulate merging functionality in an API - `merge_ocr_txt.py` is a command line script containing actual functionality which should be available via API. | infrastructure | encapsulate merging functionality in an api merge ocr txt py is a command line script containing actual functionality which should be available via api | 1 |

15,392 | 11,493,089,346 | IssuesEvent | 2020-02-11 22:17:57 | department-of-veterans-affairs/va.gov-cms | https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms | opened | [cms-ci] Sync files from upstream | ⭐️ Infrastructure | Sync files from upstream and have all new environments consume that tar file. Create similar command as `cms-db-download` e.g. `cms-files-download`.

This should be synced at same interval as DB, `10 * * * *`.

Current logs have a lot of "image download failed" in logs. | 1.0 | [cms-ci] Sync files from upstream - Sync files from upstream and have all new environments consume that tar file. Create similar command as `cms-db-download` e.g. `cms-files-download`.

This should be synced at same interval as DB, `10 * * * *`.

Current logs have a lot of "image download failed" in logs. | infrastructure | sync files from upstream sync files from upstream and have all new environments consume that tar file create similar command as cms db download e g cms files download this should be synced at same interval as db current logs have a lot of image download failed in logs | 1 |

14,826 | 11,184,393,250 | IssuesEvent | 2019-12-31 17:56:04 | eventespresso/event-espresso-core | https://api.github.com/repos/eventespresso/event-espresso-core | closed | Data Hydration | EDTR Prototype category:models-and-data-infrastructure | Investigate and implement logic for hydrating the Apollo Client cache using the data that is already being dumped into the DOM.

The relations data can be dumped separately as it is not stored in Apollo cache. -mw | 1.0 | Data Hydration - Investigate and implement logic for hydrating the Apollo Client cache using the data that is already being dumped into the DOM.

The relations data can be dumped separately as it is not stored in Apollo cache. -mw | infrastructure | data hydration investigate and implement logic for hydrating the apollo client cache using the data that is already being dumped into the dom the relations data can be dumped separately as it is not stored in apollo cache mw | 1 |

234,404 | 17,954,049,305 | IssuesEvent | 2021-09-13 04:10:09 | PySimpleGUI/PySimpleGUI | https://api.github.com/repos/PySimpleGUI/PySimpleGUI | closed | Problem when graphing a Line | documentation | ### Type of Issues (Enhancement, Error, Bug, Question)

Bug/Question

### Operating System

Windows 10

### Python version

3.7.0

### PySimpleGUI Port and Version

PySimpleGUIQt 0.26.0

### Code or partial code causing the problem

MainRadioCenter_elem = sg.Graph(canvas_size=(600,400),graph_bottom_left=(0,0), graph_top_right=(600,400), background_color='white' )

MainRadioCenter_elem.DrawLine(point_from=(78,240), point_to=(522,240), color='blue')

I don't quite understand whats happening or if I did something wrong but this error happens when attempting the part of the code above

| 1.0 | Problem when graphing a Line - ### Type of Issues (Enhancement, Error, Bug, Question)

Bug/Question

### Operating System

Windows 10

### Python version

3.7.0

### PySimpleGUI Port and Version

PySimpleGUIQt 0.26.0

### Code or partial code causing the problem

MainRadioCenter_elem = sg.Graph(canvas_size=(600,400),graph_bottom_left=(0,0), graph_top_right=(600,400), background_color='white' )

MainRadioCenter_elem.DrawLine(point_from=(78,240), point_to=(522,240), color='blue')

I don't quite understand whats happening or if I did something wrong but this error happens when attempting the part of the code above

| non_infrastructure | problem when graphing a line type of issues enhancement error bug question bug question operating system windows python version pysimplegui port and version pysimpleguiqt code or partial code causing the problem mainradiocenter elem sg graph canvas size graph bottom left graph top right background color white mainradiocenter elem drawline point from point to color blue i don t quite understand whats happening or if i did something wrong but this error happens when attempting the part of the code above | 0 |

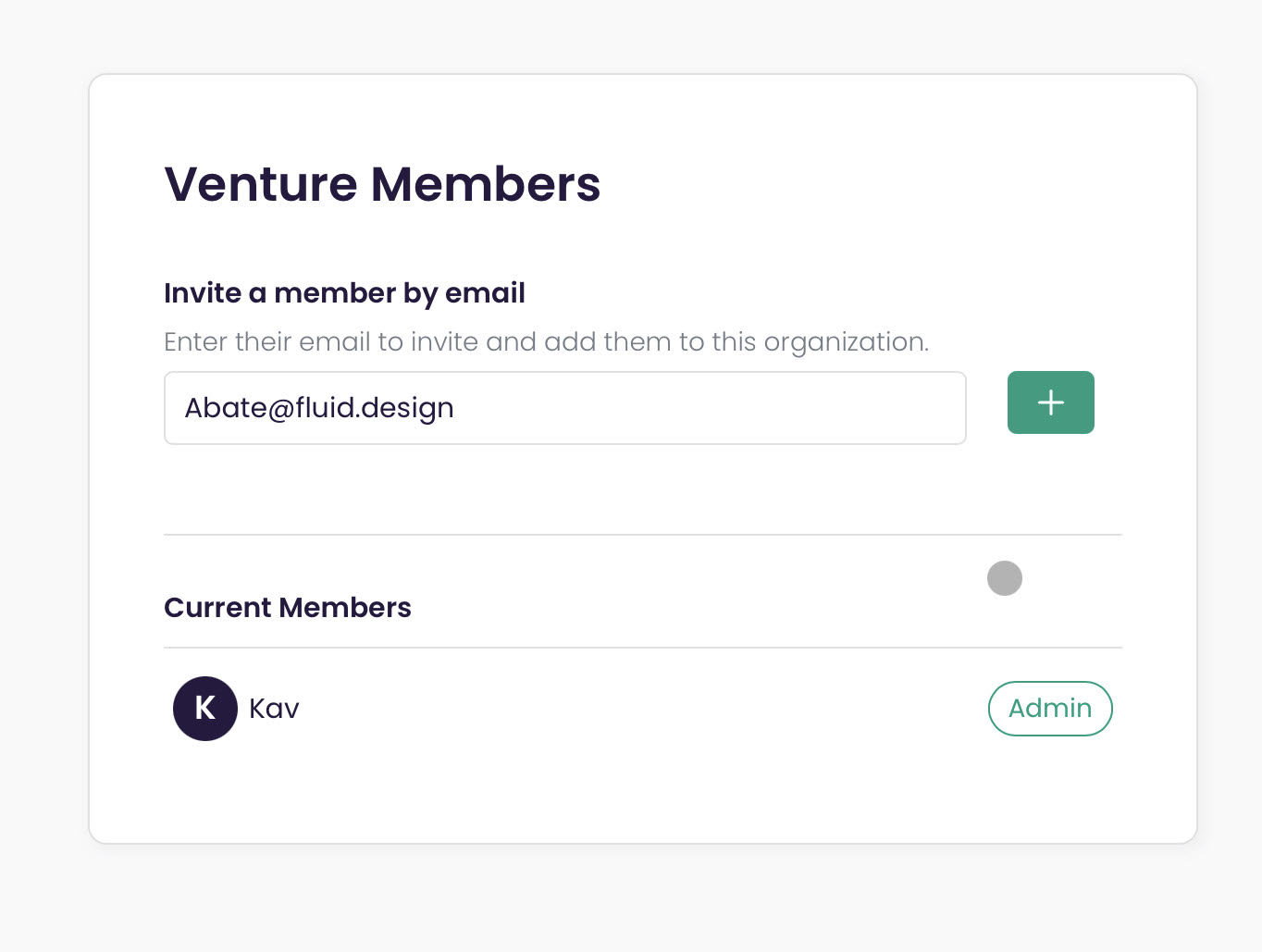

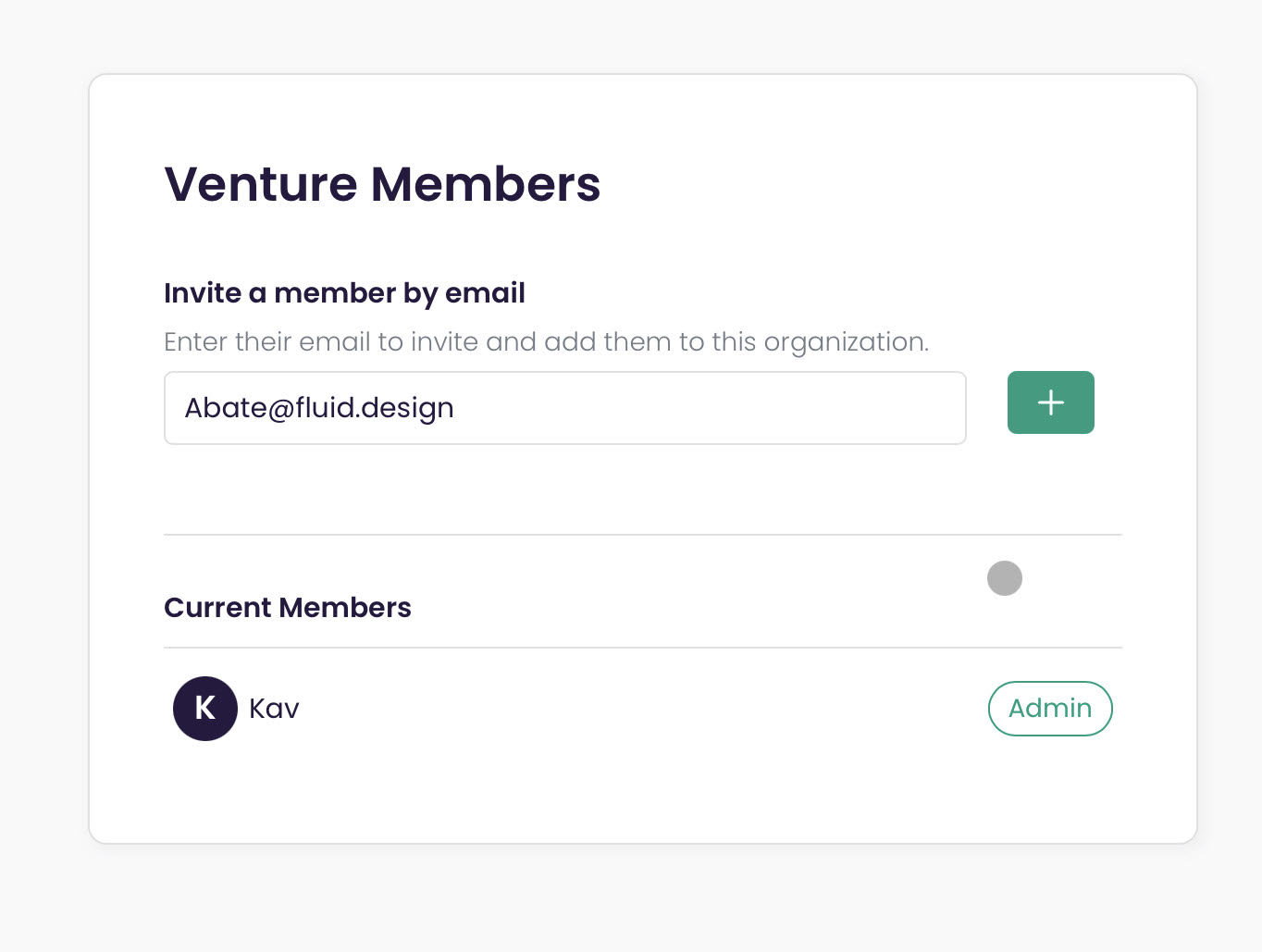

556,615 | 16,487,120,469 | IssuesEvent | 2021-05-24 19:46:35 | venturemark/webclient | https://api.github.com/repos/venturemark/webclient | closed | Can't send out invite emails | priority/high | Kav was trying to use Venturemark to invite a user and was unable to send out an invite.

***update* It appears that his comment is based on the input not being cleared after inviting user.**

| 1.0 | Can't send out invite emails - Kav was trying to use Venturemark to invite a user and was unable to send out an invite.

***update* It appears that his comment is based on the input not being cleared after inviting user.**

| non_infrastructure | can t send out invite emails kav was trying to use venturemark to invite a user and was unable to send out an invite update it appears that his comment is based on the input not being cleared after inviting user | 0 |

712,633 | 24,501,544,017 | IssuesEvent | 2022-10-10 13:11:18 | AzisabaNetwork/Kuvel | https://api.github.com/repos/AzisabaNetwork/Kuvel | closed | Handling of Duplicate Server Names for Load Balancer | kind/feature priority/high area/discovery area/load-balancer | If Kuvel try to create a load balancer with a server name that is already currently registered, it will just warn you and not be created. It should be possible to set the registration strategy for duplicate names from config.

The planned values currently include

1. Only warning ( current behavior )

2. Hijack the server name if nobody is playing on the server

3. Hijack the server name even if someone is playing on the server | 1.0 | Handling of Duplicate Server Names for Load Balancer - If Kuvel tries to create a load balancer with a server name that is already registered, it only warns you and the load balancer is not created. It should be possible to set the registration strategy for duplicate names from the config.

The planned values currently include

1. Only warning ( current behavior )

2. Hijack the server name if nobody is playing on the server

3. Hijack the server name even if someone is playing on the server | non_infrastructure | handling of duplicate server names for load balancer if kuvel try to create a load balancer with a server name that is already currently registered it will just warn you and not be created it should be possible to set the registration strategy for duplicate names from config the planned values currently include only warning current behavior hijack the server name if nobody is playing on the server hijack the server name even if someone is playing on the server | 0 |
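
The three planned strategies above can be sketched as a small decision function. This is only an illustration under stated assumptions: the enum, its values, and `should_register` are hypothetical names invented here, not Kuvel's actual configuration keys or code (Kuvel itself is not written in Python).

```python
from enum import Enum


class DuplicateNameStrategy(Enum):
    """Hypothetical config values for the three planned behaviors."""
    WARN_ONLY = "warn-only"              # 1. only warn (current behavior)
    HIJACK_IF_EMPTY = "hijack-if-empty"  # 2. take the name if nobody is playing
    HIJACK_ALWAYS = "hijack-always"      # 3. take the name even with players online


def should_register(strategy, name, registered, player_counts):
    """Return True if the load balancer may be registered under `name`.

    `registered` is the set of server names already in use and
    `player_counts` maps a server name to its current player count;
    both are assumptions made for this sketch.
    """
    if name not in registered:
        return True  # no conflict, so the strategy is irrelevant
    if strategy is DuplicateNameStrategy.WARN_ONLY:
        return False  # the caller would log a warning and skip creation
    if strategy is DuplicateNameStrategy.HIJACK_IF_EMPTY:
        return player_counts.get(name, 0) == 0
    return True  # HIJACK_ALWAYS
```

A caller would read the strategy from the config once and consult this check before creating each load balancer.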

93,049 | 11,736,611,912 | IssuesEvent | 2020-03-11 13:22:35 | emergenzeHack/covid19italia_form | https://api.github.com/repos/emergenzeHack/covid19italia_form | opened | New single form for reporting INITIATIVES | aiuto necessario backend form design | Here: https://ee.humanitarianresponse.info/x/#6KafBk33

Can you give me some feedback? @Saraveg @cristigalas | 1.0 | New single form for reporting INITIATIVES - Here: https://ee.humanitarianresponse.info/x/#6KafBk33

Can you give me some feedback? @Saraveg @cristigalas | non_infrastructure | new single form for reporting initiatives here can you give me some feedback saraveg cristigalas | 0 |

815,004 | 30,533,242,864 | IssuesEvent | 2023-07-19 15:30:57 | Consiglio-Regionale-della-Lombardia/PEM | https://api.github.com/repos/Consiglio-Regionale-della-Lombardia/PEM | closed | Display of commands in the acts summary grid | view low priority mobile | Single row

- Show the acts that require my signature

On the same row:

- Select all acts

- Expand all acts

| 1.0 | Display of commands in the acts summary grid - Single row

- Show the acts that require my signature

On the same row:

- Select all acts

- Expand all acts

| non_infrastructure | display of commands in the acts summary grid single row show the acts that require my signature on the same row select all acts expand all acts | 0 |

4,478 | 2,610,094,804 | IssuesEvent | 2015-02-26 18:28:36 | chrsmith/dsdsdaadf | https://api.github.com/repos/chrsmith/dsdsdaadf | opened | 深圳红蓝光怎么样治疗青春痘 | auto-migrated Priority-Medium Type-Defect | ```

深圳红蓝光怎么样治疗青春痘【深圳韩方科颜全国热线400-869-

1818,24小时QQ4008691818】深圳韩方科颜专业祛痘连锁机构,机��

�以韩国秘方——韩方科颜这一国妆准字号治疗型权威,祛痘�

��品,韩方科颜专业祛痘连锁机构,采用韩国秘方配合专业“

不反弹”健康祛痘技术并结合先进“先进豪华彩光”仪,开��

�国内专业治疗粉刺、痤疮签约包治先河,成功消除了许多顾�

��脸上的痘痘。

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 8:18 | 1.0 | 深圳红蓝光怎么样治疗青春痘 - ```

深圳红蓝光怎么样治疗青春痘【深圳韩方科颜全国热线400-869-

1818,24小时QQ4008691818】深圳韩方科颜专业祛痘连锁机构,机��

�以韩国秘方——韩方科颜这一国妆准字号治疗型权威,祛痘�

��品,韩方科颜专业祛痘连锁机构,采用韩国秘方配合专业“

不反弹”健康祛痘技术并结合先进“先进豪华彩光”仪,开��

�国内专业治疗粉刺、痤疮签约包治先河,成功消除了许多顾�

��脸上的痘痘。

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 8:18 | non_infrastructure | 深圳红蓝光怎么样治疗青春痘 深圳红蓝光怎么样治疗青春痘【 , 】深圳韩方科颜专业祛痘连锁机构,机�� �以韩国秘方——韩方科颜这一国妆准字号治疗型权威,祛痘� ��品,韩方科颜专业祛痘连锁机构,采用韩国秘方配合专业“ 不反弹”健康祛痘技术并结合先进“先进豪华彩光”仪,开�� �国内专业治疗粉刺、痤疮签约包治先河,成功消除了许多顾� ��脸上的痘痘。 original issue reported on code google com by szft com on may at | 0 |

690,193 | 23,648,910,181 | IssuesEvent | 2022-08-26 03:21:19 | Kong/gateway-operator | https://api.github.com/repos/Kong/gateway-operator | closed | Data race on setting the Cloud Flare logger singleton from multiple tests | bug priority/high | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

When running `make test.integration` I encountered the following:

```

==================

WARNING: DATA RACE

Write at 0x000108380f20 by goroutine 703:

github.com/cloudflare/cfssl/log.SetLogger()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/github.com/cloudflare/cfssl@v1.6.1/log/log.go:62 +0x45c

github.com/kong/gateway-operator/controllers.signCertificate()

/Users/USER/code_/gateway-operator/controllers/utils.go:110 +0x28

github.com/kong/gateway-operator/controllers.maybeCreateCertificateSecret()

/Users/USER/code_/gateway-operator/controllers/utils.go:219 +0x9c0

github.com/kong/gateway-operator/controllers.(*DataPlaneReconciler).ensureCertificate()

/Users/USER/code_/gateway-operator/controllers/dataplane_controller_reconciler_utils.go:114 +0x190

github.com/kong/gateway-operator/controllers.(*DataPlaneReconciler).Reconcile()

/Users/USER/code_/gateway-operator/controllers/dataplane_controller.go:120 +0x578

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Reconcile()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:121 +0xe4

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).reconcileHandler()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:320 +0x360

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).processNextWorkItem()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:273 +0x240

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Start.func2.2()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:234 +0x98

Previous write at 0x000108380f20 by goroutine 704:

github.com/cloudflare/cfssl/log.SetLogger()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/github.com/cloudflare/cfssl@v1.6.1/log/log.go:62 +0x45c

github.com/kong/gateway-operator/controllers.signCertificate()

/Users/USER/code_/gateway-operator/controllers/utils.go:110 +0x28

github.com/kong/gateway-operator/controllers.maybeCreateCertificateSecret()

/Users/USER/code_/gateway-operator/controllers/utils.go:219 +0x9c0

github.com/kong/gateway-operator/controllers.(*ControlPlaneReconciler).ensureCertificate()

/Users/USER/code_/gateway-operator/controllers/controlplane_controller_reconciler_utils.go:290 +0x19c

github.com/kong/gateway-operator/controllers.(*ControlPlaneReconciler).Reconcile()

/Users/USER/code_/gateway-operator/controllers/controlplane_controller.go:234 +0x1330

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Reconcile()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:121 +0xe4

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).reconcileHandler()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:320 +0x360

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).processNextWorkItem()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:273 +0x240

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Start.func2.2()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:234 +0x98

Goroutine 703 (running) created at:

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Start.func2()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:230 +0x364

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Start()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:241 +0x254

sigs.k8s.io/controller-runtime/pkg/manager.(*runnableGroup).reconcile.func1()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/manager/runnable_group.go:219 +0x158

sigs.k8s.io/controller-runtime/pkg/manager.(*runnableGroup).reconcile.func2()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/manager/runnable_group.go:222 +0x44

Goroutine 704 (running) created at:

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Start.func2()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:230 +0x364

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Start()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:241 +0x254

sigs.k8s.io/controller-runtime/pkg/manager.(*runnableGroup).reconcile.func1()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/manager/runnable_group.go:219 +0x158

sigs.k8s.io/controller-runtime/pkg/manager.(*runnableGroup).reconcile.func2()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/manager/runnable_group.go:222 +0x44

==================

```

This points to the following line in the code: https://github.com/Kong/gateway-operator/blob/efea71c1b334f4eb5d9be0e845127f54a2600834/controllers/utils.go#L110 which in turn sets a singleton to a designated value here: https://github.com/cloudflare/cfssl/blob/7614d6cad35dd6d33c8c1fc2e2db5d9ce111e56b/log/log.go#L62

### Expected Behavior

No data race happens.

### Steps To Reproduce

```markdown

1. Run `make test.integration`

2. Wait for data race to occur

```

### Kong Ingress Controller version

```shell

N/A

```

### Kubernetes version

```shell

N/A

```

### Anything else?

_No response_ | 1.0 | Data race on setting the Cloud Flare logger singleton from multiple tests - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

When running `make test.integration` I encountered the following:

```

==================

WARNING: DATA RACE

Write at 0x000108380f20 by goroutine 703:

github.com/cloudflare/cfssl/log.SetLogger()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/github.com/cloudflare/cfssl@v1.6.1/log/log.go:62 +0x45c

github.com/kong/gateway-operator/controllers.signCertificate()

/Users/USER/code_/gateway-operator/controllers/utils.go:110 +0x28

github.com/kong/gateway-operator/controllers.maybeCreateCertificateSecret()

/Users/USER/code_/gateway-operator/controllers/utils.go:219 +0x9c0

github.com/kong/gateway-operator/controllers.(*DataPlaneReconciler).ensureCertificate()

/Users/USER/code_/gateway-operator/controllers/dataplane_controller_reconciler_utils.go:114 +0x190

github.com/kong/gateway-operator/controllers.(*DataPlaneReconciler).Reconcile()

/Users/USER/code_/gateway-operator/controllers/dataplane_controller.go:120 +0x578

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Reconcile()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:121 +0xe4

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).reconcileHandler()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:320 +0x360

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).processNextWorkItem()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:273 +0x240

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Start.func2.2()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:234 +0x98

Previous write at 0x000108380f20 by goroutine 704:

github.com/cloudflare/cfssl/log.SetLogger()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/github.com/cloudflare/cfssl@v1.6.1/log/log.go:62 +0x45c

github.com/kong/gateway-operator/controllers.signCertificate()

/Users/USER/code_/gateway-operator/controllers/utils.go:110 +0x28

github.com/kong/gateway-operator/controllers.maybeCreateCertificateSecret()

/Users/USER/code_/gateway-operator/controllers/utils.go:219 +0x9c0

github.com/kong/gateway-operator/controllers.(*ControlPlaneReconciler).ensureCertificate()

/Users/USER/code_/gateway-operator/controllers/controlplane_controller_reconciler_utils.go:290 +0x19c

github.com/kong/gateway-operator/controllers.(*ControlPlaneReconciler).Reconcile()

/Users/USER/code_/gateway-operator/controllers/controlplane_controller.go:234 +0x1330

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Reconcile()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:121 +0xe4

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).reconcileHandler()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:320 +0x360

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).processNextWorkItem()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:273 +0x240

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Start.func2.2()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:234 +0x98

Goroutine 703 (running) created at:

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Start.func2()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:230 +0x364

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Start()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:241 +0x254

sigs.k8s.io/controller-runtime/pkg/manager.(*runnableGroup).reconcile.func1()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/manager/runnable_group.go:219 +0x158

sigs.k8s.io/controller-runtime/pkg/manager.(*runnableGroup).reconcile.func2()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/manager/runnable_group.go:222 +0x44

Goroutine 704 (running) created at:

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Start.func2()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:230 +0x364

sigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Start()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/internal/controller/controller.go:241 +0x254

sigs.k8s.io/controller-runtime/pkg/manager.(*runnableGroup).reconcile.func1()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/manager/runnable_group.go:219 +0x158

sigs.k8s.io/controller-runtime/pkg/manager.(*runnableGroup).reconcile.func2()

/Users/USER/.gvm/pkgsets/go1.19/global/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.3/pkg/manager/runnable_group.go:222 +0x44

==================

```

This points to the following line in the code: https://github.com/Kong/gateway-operator/blob/efea71c1b334f4eb5d9be0e845127f54a2600834/controllers/utils.go#L110 which in turn sets a singleton to a designated value here: https://github.com/cloudflare/cfssl/blob/7614d6cad35dd6d33c8c1fc2e2db5d9ce111e56b/log/log.go#L62

### Expected Behavior

No data race happens.

### Steps To Reproduce

```markdown

1. Run `make test.integration`

2. Wait for data race to occur

```

### Kong Ingress Controller version

```shell

N/A

```

### Kubernetes version

```shell

N/A

```

### Anything else?

_No response_ | non_infrastructure | data race on setting the cloud flare logger singleton from multiple tests is there an existing issue for this i have searched the existing issues current behavior when running make test integration i encountered the followig warning data race write at by goroutine github com cloudflare cfssl log setlogger users user gvm pkgsets global pkg mod github com cloudflare cfssl log log go github com kong gateway operator controllers signcertificate users user code gateway operator controllers utils go github com kong gateway operator controllers maybecreatecertificatesecret users user code gateway operator controllers utils go github com kong gateway operator controllers dataplanereconciler ensurecertificate users user code gateway operator controllers dataplane controller reconciler utils go github com kong gateway operator controllers dataplanereconciler reconcile users user code gateway operator controllers dataplane controller go sigs io controller runtime pkg internal controller controller reconcile users user gvm pkgsets global pkg mod sigs io controller runtime pkg internal controller controller go sigs io controller runtime pkg internal controller controller reconcilehandler users user gvm pkgsets global pkg mod sigs io controller runtime pkg internal controller controller go sigs io controller runtime pkg internal controller controller processnextworkitem users user gvm pkgsets global pkg mod sigs io controller runtime pkg internal controller controller go sigs io controller runtime pkg internal controller controller start users user gvm pkgsets global pkg mod sigs io controller runtime pkg internal controller controller go previous write at by goroutine github com cloudflare cfssl log setlogger users user gvm pkgsets global pkg mod github com cloudflare cfssl log log go github com kong gateway operator controllers signcertificate users user code gateway operator controllers utils go github com kong gateway operator controllers 
maybecreatecertificatesecret users user code gateway operator controllers utils go github com kong gateway operator controllers controlplanereconciler ensurecertificate users user code gateway operator controllers controlplane controller reconciler utils go github com kong gateway operator controllers controlplanereconciler reconcile users user code gateway operator controllers controlplane controller go sigs io controller runtime pkg internal controller controller reconcile users user gvm pkgsets global pkg mod sigs io controller runtime pkg internal controller controller go sigs io controller runtime pkg internal controller controller reconcilehandler users user gvm pkgsets global pkg mod sigs io controller runtime pkg internal controller controller go sigs io controller runtime pkg internal controller controller processnextworkitem users user gvm pkgsets global pkg mod sigs io controller runtime pkg internal controller controller go sigs io controller runtime pkg internal controller controller start users user gvm pkgsets global pkg mod sigs io controller runtime pkg internal controller controller go goroutine running created at sigs io controller runtime pkg internal controller controller start users user gvm pkgsets global pkg mod sigs io controller runtime pkg internal controller controller go sigs io controller runtime pkg internal controller controller start users user gvm pkgsets global pkg mod sigs io controller runtime pkg internal controller controller go sigs io controller runtime pkg manager runnablegroup reconcile users user gvm pkgsets global pkg mod sigs io controller runtime pkg manager runnable group go sigs io controller runtime pkg manager runnablegroup reconcile users user gvm pkgsets global pkg mod sigs io controller runtime pkg manager runnable group go goroutine running created at sigs io controller runtime pkg internal controller controller start users user gvm pkgsets global pkg mod sigs io controller runtime pkg internal controller 
controller go sigs io controller runtime pkg internal controller controller start users user gvm pkgsets global pkg mod sigs io controller runtime pkg internal controller controller go sigs io controller runtime pkg manager runnablegroup reconcile users user gvm pkgsets global pkg mod sigs io controller runtime pkg manager runnable group go sigs io controller runtime pkg manager runnablegroup reconcile users user gvm pkgsets global pkg mod sigs io controller runtime pkg manager runnable group go which points to the following line in code which sets a singleton to a designated value in here expected behavior no data race happens steps to reproduce markdown run make test integration wait for data race to occur kong ingress controller version shell n a kubernetes version shell n a anything else no response | 0 |

19,267 | 13,211,305,516 | IssuesEvent | 2020-08-15 22:11:04 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | pybindings - don't auto-build dependencies (╯°□°)╯︵ ┻━┻ (Trac #1032) | Incomplete Migration Migrated from Trac enhancement infrastructure | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1032">https://code.icecube.wisc.edu/projects/icecube/ticket/1032</a>, reported by nega and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-01-12T00:01:19",

"_ts": "1547251279109761",

"description": "this is fine with `make` or `make all` but when trying to do a partial/incremental build, you have to keep failing python scripts to see what pybindings you need (without looking into CMakeLists.txt)",

"reporter": "nega",

"cc": "",

"resolution": "fixed",

"time": "2015-06-25T19:00:21",

"component": "infrastructure",

"summary": "pybindings - don't auto-build dependencies (\u256f\u00b0\u25a1\u00b0)\u256f\ufe35 \u253b\u2501\u253b",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "nega",

"type": "enhancement"

}

```

</p>

</details>

| 1.0 | pybindings - don't auto-build dependencies (╯°□°)╯︵ ┻━┻ (Trac #1032) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1032">https://code.icecube.wisc.edu/projects/icecube/ticket/1032</a>, reported by nega and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-01-12T00:01:19",

"_ts": "1547251279109761",

"description": "this is fine with `make` or `make all` but when trying to do a partial/incremental build, you have to keep failing python scripts to see what pybindings you need (without looking into CMakeLists.txt)",

"reporter": "nega",

"cc": "",

"resolution": "fixed",

"time": "2015-06-25T19:00:21",

"component": "infrastructure",

"summary": "pybindings - don't auto-build dependencies (\u256f\u00b0\u25a1\u00b0)\u256f\ufe35 \u253b\u2501\u253b",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "nega",

"type": "enhancement"

}

```

</p>

</details>

| infrastructure | pybindings don t auto build dependencies ╯°□° ╯︵ ┻━┻ trac migrated from json status closed changetime ts description this is fine with make or make all but when trying to do a partial incremental build you have to keep failing python scripts to see what pybindings you need without looking into cmakelists txt reporter nega cc resolution fixed time component infrastructure summary pybindings don t auto build dependencies priority normal keywords milestone owner nega type enhancement | 1 |

27,814 | 4,330,371,486 | IssuesEvent | 2016-07-26 19:47:55 | F5Networks/f5-common-python | https://api.github.com/repos/F5Networks/f5-common-python | closed | During functional testing in 11.5.4 device goes to forced offline and does not come back online | functional test | The tests live here, and the likely culprit is: https://github.com/F5Networks/f5-common-python/blob/60abd57feb5b457e441f6d940430a50458866762/test/functional/tm/sys/test_failover.py#L75

Maybe this is just as simple as having an addfinalizer at the end of the test to force it back online in case the test fails. | 1.0 | During functional testing in 11.5.4 device goes to forced offline and does not come back online - The tests live here, and the likely culprit is: https://github.com/F5Networks/f5-common-python/blob/60abd57feb5b457e441f6d940430a50458866762/test/functional/tm/sys/test_failover.py#L75

Maybe this is just as simple as having an addfinalizer at the end of the test to force it back online in case the test fails. | non_infrastructure | during functional testing in device goes to forced offline and does not come back online the tests live here and the likely culprit is maybe this is just as simple as having an addfinalizer at the end of the test to force it back online in case the test fails | 0 |
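
The finalizer idea suggested above could look roughly like the following. It is a minimal sketch under stated assumptions: `FakeDevice` and `force_offline_with_cleanup` are hypothetical stand-ins invented for illustration and are not the f5-common-python API; the only real API relied on is `addfinalizer` on pytest's built-in `request` fixture.

```python
class FakeDevice:
    """Hypothetical stand-in for a BIG-IP failover resource (not the SDK API)."""

    def __init__(self):
        self.state = "online"

    def force_offline(self):
        self.state = "forced-offline"

    def force_online(self):
        self.state = "online"


def force_offline_with_cleanup(request, device):
    """Force the device offline and guarantee it is brought back online.

    `request` is expected to be pytest's built-in `request` fixture.
    Finalizers registered through request.addfinalizer run at teardown
    even when the test body raises or an assertion fails.
    """
    # Register the cleanup first so it is in place before the state changes.
    request.addfinalizer(device.force_online)
    device.force_offline()
    return device
```

Inside a test or fixture that accepts `request`, calling `force_offline_with_cleanup(request, device)` would leave the device online again after teardown, whether or not the assertions in the test pass.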

6,618 | 6,534,978,529 | IssuesEvent | 2017-08-31 13:05:14 | openshiftio/appdev-documentation | https://api.github.com/repos/openshiftio/appdev-documentation | opened | Deduplicate cico_build_deploy.sh and cico_build_test.sh | Component | Infrastructure Type | Enhancement | Due to #504, there is a new script called `cico_build_test.sh` in the repo root. This script is partially identical to `cico_build_deploy`, so it warrants refactoring to eliminate the code duplication. | 1.0 | Deduplicate cico_build_deploy.sh and cico_build_test.sh - Due to #504, there is a new script called `cico_build_test.sh` in the repo root. This script is partially identical to `cico_build_deploy`, so it warrants refactoring to eliminate the code duplication. | infrastructure | deduplicate cico build deploy sh and cico build test sh due to there is a new script called cico build test sh in the repo root this script is partially identical to cico build deploy so it warrants refactoring to eliminate the code duplication | 1 |

229,001 | 18,275,752,624 | IssuesEvent | 2021-10-04 18:36:20 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: sequelize failed | C-test-failure O-robot O-roachtest branch-master | roachtest.sequelize [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=3524635&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=3524635&tab=artifacts#/sequelize) on master @ [5f53feb1ce7070453e9ce52d99013934718cc9d7](https://github.com/cockroachdb/cockroach/commits/5f53feb1ce7070453e9ce52d99013934718cc9d7):

```

The test failed on branch=master, cloud=gce:

test artifacts and logs in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/sequelize/run_1

sequelize.go:101,sequelize.go:157,test_runner.go:777: all attempts failed for add nodesource repository due to error: output in run_122206.270795111_n1_curl: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod run teamcity-3524635-1633069195-81-n1cpu4:1 -- curl -sL https://deb.nodesource.com/setup_12.x | sudo -E bash - returned: exit status 20

```

<details><summary>Reproduce</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

</p>

</details>

<details><summary>Same failure on other branches</summary>

<p>

- #70981 roachtest: sequelize failed [C-test-failure O-roachtest O-robot branch-release-21.2 release-blocker]

</p>

</details>

/cc @cockroachdb/sql-experience

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*sequelize.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

| 2.0 | roachtest: sequelize failed - roachtest.sequelize [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=3524635&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=3524635&tab=artifacts#/sequelize) on master @ [5f53feb1ce7070453e9ce52d99013934718cc9d7](https://github.com/cockroachdb/cockroach/commits/5f53feb1ce7070453e9ce52d99013934718cc9d7):

```

The test failed on branch=master, cloud=gce:

test artifacts and logs in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/sequelize/run_1

sequelize.go:101,sequelize.go:157,test_runner.go:777: all attempts failed for add nodesource repository due to error: output in run_122206.270795111_n1_curl: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod run teamcity-3524635-1633069195-81-n1cpu4:1 -- curl -sL https://deb.nodesource.com/setup_12.x | sudo -E bash - returned: exit status 20

```

<details><summary>Reproduce</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

</p>

</details>

<details><summary>Same failure on other branches</summary>

<p>

- #70981 roachtest: sequelize failed [C-test-failure O-roachtest O-robot branch-release-21.2 release-blocker]

</p>

</details>

/cc @cockroachdb/sql-experience

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*sequelize.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

| non_infrastructure | roachtest sequelize failed roachtest sequelize with on master the test failed on branch master cloud gce test artifacts and logs in home agent work go src github com cockroachdb cockroach artifacts sequelize run sequelize go sequelize go test runner go all attempts failed for add nodesource repository due to error output in run curl home agent work go src github com cockroachdb cockroach bin roachprod run teamcity curl sl sudo e bash returned exit status reproduce see same failure on other branches roachtest sequelize failed cc cockroachdb sql experience | 0 |

92,553 | 11,682,359,033 | IssuesEvent | 2020-03-05 00:01:35 | quicwg/base-drafts | https://api.github.com/repos/quicwg/base-drafts | closed | Allow the Transport to Stop/Reset a Stream? | -transport design has-consensus | Per the current design for streams, the application MUST be the one to shut down a stream, because the error code space for streams is purely application layer; and the QUIC transport has no knowledge of these error codes, so it can't just pick one.

When writing a general purpose QUIC library, and integrating it into other general purpose libraries ([i.e. dotnet](https://github.com/dotnet/runtime/pull/427)) I constantly have to explain to non-QUIC folks that they can't just close their stream object/handle without first supplying an application layer specific error code; meaning any intermediate library needs input from the app even if some fatal error (memory allocation failure) happened along the way.

So, I've come to the point that it might be a good idea to allow for the transport layer to completely terminate a stream for its own reason. Whether that means we add a flag to RESET_STREAM and STOP_SENDING to indicate the transport is specifying the error code, or we add a new frame entirely doesn't matter to me. It would just be a lot cleaner for general purpose libraries to be able to kill a stream when necessary, on its own (no app layer involvement). | 1.0 | Allow the Transport to Stop/Reset a Stream? - Per the current design for streams, the application MUST be the one to shut down a stream, because the error code space for streams is purely application layer; and the QUIC transport has no knowledge of these error codes, so it can't just pick one.

When writing a general purpose QUIC library, and integrating it into other general purpose libraries ([i.e. dotnet](https://github.com/dotnet/runtime/pull/427)) I constantly have to explain to non-QUIC folks that they can't just close their stream object/handle without first supplying an application layer specific error code; meaning any intermediate library needs input from the app even if some fatal error (memory allocation failure) happened along the way.

So, I've come to the point that it might be a good idea to allow for the transport layer to completely terminate a stream for its own reason. Whether that means we add a flag to RESET_STREAM and STOP_SENDING to indicate the transport is specifying the error code, or we add a new frame entirely doesn't matter to me. It would just be a lot cleaner for general purpose libraries to be able to kill a stream when necessary, on its own (no app layer involvement). | non_infrastructure | allow the transport to stop reset a stream per the current design for streams the application must be the one to shutdown a stream because the error code space for streams is purely application layer and the quic transport has no knowledge of these error code so it can t just pick one when writing a general purpose quic library and integrating it into other general purpose libraries i constantly have to explain to non quic folks that they can t just close their stream object handle without first supplying an application layer specific error code meaning any intermediate library needs input from the app even if some fatal error memory allocation failure happened along the way so i ve come to the point that it might be a good idea to allow for the transport layer to completely terminate a stream for it s own reason whether that means we add a flag to reset stream and stop sending to indicate the transport is specifying the error code or we add a new frame entirely doesn t matter to me it would just be a lot cleaner for general purpose libraries to be able to kill a stream when necessary on its own no app layer involvement | 0 |

317,786 | 9,669,276,411 | IssuesEvent | 2019-05-21 16:57:47 | etternagame/etterna | https://api.github.com/repos/etternagame/etterna | closed | Old files with only .dwi sometimes use wrong image for song banner | Good First Issue Priority: Low Type: Bug | Some old files that only have a .dwi and not .sm or .ssc don't specify the file path to the song banner. It seems like Etterna tries to fall back by grabbing some other image from the folder, but it doesn't always grab the correct one. I think the desired behavior should be to first try and fall back to another image in the song folder that has the word "banner" in the file name, as I think specifying which image was which using the file name used to be the common practice.

Example: Red Zone in KBC3. The .ogg itself has some associated artwork (album cover?), and Etterna uses this image as the banner rather than the actual banner, which has "banner" in the file name. | 1.0 | Old files with only .dwi sometimes use wrong image for song banner - Some old files that only have a .dwi and not .sm or .ssc don't specify the file path to the song banner. It seems like Etterna tries to fall back by grabbing some other image from the folder, but it doesn't always grab the correct one. I think the desired behavior should be to first try and fall back to another image in the song folder that has the word "banner" in the file name, as I think specifying which image was which using the file name used to be the common practice.

Example: Red Zone in KBC3. The .ogg itself has some associated artwork (album cover?), and Etterna uses this image as the banner rather than the actual banner, which has "banner" in the file name. | non_infrastructure | old files with only dwi sometimes use wrong image for song banner some old files that only have a dwi and not sm or ssc don t specify the file path to the song banner it seems like etterna tries to fall back by grabbing some other image from the folder but it doesn t always grab the correct one i think the desired behavior should be to first try and fall back to another image in the song folder that has the word banner in the file name as i think specifying which image was which using the file name used to be the common practice example red zone in the ogg itself has some associated artwork album cover and etterna uses this image as the banner rather than the actual banner which has banner in the file name | 0 |

192,163 | 14,601,561,112 | IssuesEvent | 2020-12-21 08:53:05 | hbvhuwe/easy-travel | https://api.github.com/repos/hbvhuwe/easy-travel | closed | Add tests for Travel planning API | project:api test | Create a file `/tests/routes/travel.test.js` for testing Travel planning API.

Create a file `/tests/services/travel.test.js` for testing Travel planning API service.

Implementation can start once pull request #95 has been merged. | 1.0 | Add tests for Travel planning API - Create a file `/tests/routes/travel.test.js` for testing Travel planning API.

Create a file `/tests/services/travel.test.js` for testing Travel planning API service.

Implementation can start once pull request #95 has been merged. | non_infrastructure | add tests for travel planning api create a file tests routes travel test js for testing travel planning api create a file tests services travel test js for testing travel planning api service can start implementing when the pull request will be closed and merged | 0 |

12,082 | 9,582,704,396 | IssuesEvent | 2019-05-08 01:55:53 | nervosnetwork/ckb | https://api.github.com/repos/nervosnetwork/ckb | closed | Config file check and friendly error message | m:infrastructure p:should-have s:available t:enhancement | Before booting the node, check the config file first:

- Report any error with a user-friendly message.

- ~~Verify that all path points to existing files.~~ #387 | 1.0 | Config file check and friendly error message - Before booting the node, check the config file first:

- Report any error with a user-friendly message.

- ~~Verify that all path points to existing files.~~ #387 | infrastructure | config file check and friendly error message before booting the node check the config file first report any error with a user friendly message verify that all path points to existing files | 1 |

143,338 | 22,032,960,064 | IssuesEvent | 2022-05-28 05:48:24 | stores-cedcommerce/Internal---Liz-Store-dev.---31-May-2022 | https://api.github.com/repos/stores-cedcommerce/Internal---Liz-Store-dev.---31-May-2022 | closed | Slide navigation buttons getting disappear on clicking in mobile UI | Ready to test Home page content Design / UI / UX Mobile | Bug - Slide navigation buttons getting disappear on clicking in mobile UI

Exp - slide navigation buttons should not disappear when clicked.

Ref Link - https://drive.google.com/file/d/1uEBSututgfn9wpAFZkUoYB0OevucOB1e/view | 1.0 | Slide navigation buttons getting disappear on clicking in mobile UI - Bug - Slide navigation buttons getting disappear on clicking in mobile UI

Exp - slide navigation buttons should not disappear when clicked.

Ref Link - https://drive.google.com/file/d/1uEBSututgfn9wpAFZkUoYB0OevucOB1e/view | non_infrastructure | slide navigation buttons getting disappear on clicking in mobile ui bug slide navigation buttons getting disappear on clicking in mobile ui exp slide navigation button should not get disappear on clicking ref link | 0 |

6,590 | 6,525,653,887 | IssuesEvent | 2017-08-29 16:38:32 | eclipse/smarthome | https://api.github.com/repos/eclipse/smarthome | closed | Version bumps / releases | Infrastructure | migrated from Bugzilla [#478597](https://bugs.eclipse.org/bugs/show_bug.cgi?id=478597)

status UNCONFIRMED severity _normal_ in component _Infrastructure_ for _---_

Reported in version _unspecified_ on platform _All_

Assigned to: Project Inbox

On 2015-09-29 04:06:10 -0400, Markus Rathgeb wrote:

> Eclipse SmartHome is a framework to build end user solutions on top.

>

> If an "end user" would like to use that framework and do a release of his software, the software should not depend on a SNAPSHOT at all.

>

> The ESH framework itself is not bound (AFAIK) to any consumer release cycle, and this is IMHO fine.

>

> But I think it would be fine if we could produce releases from time to time so they could be used as a reference for custom products.

> I cannot depend on 0.8-SNAPSHOT all the time.

>

> I do not mean a 1.0 stable framework release without API breaks anymore...

> I just want to use a fixed reference in my sources.

>

> Using custom repositories is possible, but this means a new repository for every reference. That is not good.

>

> If the release process is a long task for Eclipse products, I would ask whether we could bump the snapshot version from time to time without an official release.

> That way, consumers could build a release themselves.

>

> I cannot build a 0.8.0, 0.8.1, ... release myself if Eclipse releases an official 0.8[.0] some time.

On 2015-09-29 04:39:35 -0400, Kai Kreuzer wrote:

> I agree that fixed releases would definitely help to have a reliable way to reference a specific code base from within a solution.

>

> Note that I am currently preparing the 0.8.0 release, see https://projects.eclipse.org/projects/iot.smarthome/releases/0.8.0/

>

> But creating such minor releases is some work; I would not expect more than 2-3 each year.

>

> Nonetheless, we could maybe check if we do more regular service releases, which should be a bit simpler (but still means work).

> We will have to check how much this can be automated (update version in sources, have documentation generated and published, adapt the build plans and download locations etc.)

On 2015-09-29 04:55:04 -0400, Kai Kreuzer wrote:

> Probably a better option than regular service releases might be to create milestones, e.g. 0.9.0M1, 0.9.0M2 etc. This seems to be possible without following the release process, and I think we also would not need to publish documentation on the website for such milestones.

On 2015-09-29 07:38:43 -0400, Markus Rathgeb wrote:

> > Probably a better option than regular service releases might be to create

> > milestones, e.g. 0.9.0M1, 0.9.0M2 etc. This seems to be possible without

> > following the release process and it I think we also would not need to

> > publish documentation on the website for such milestones.

>

> That would be enough. I just want fixed states we could point to. Whether these are alphas, betas, release candidates, milestones, releases, ... does not matter to me.

> As long as it is fixed for a specific code base.

>

> If the qualifier (M) is chosen correctly, Maven should handle this correctly, too.

> https://docs.oracle.com/middleware/1212/core/MAVEN/maven_version.htm#MAVEN400

On 2015-11-03 12:50:36 -0500, Markus Rathgeb wrote:

> I started doing it for myself:

> https://github.com/maggu2810/smarthome/commit/SHA: 04f8c9477fb896f77718a377f972f7f91876c639

>

> But is there something we can do to get a solution upstream?

| 1.0 | Version bumps / releases - migrated from Bugzilla [#478597](https://bugs.eclipse.org/bugs/show_bug.cgi?id=478597)

status UNCONFIRMED severity _normal_ in component _Infrastructure_ for _---_

Reported in version _unspecified_ on platform _All_

Assigned to: Project Inbox

On 2015-09-29 04:06:10 -0400, Markus Rathgeb wrote:

> Eclipse SmartHome is a framework to build end user solutions on top.

>

> If an "end user" would like to use that framework and do a release of his software, the software should not depend on a SNAPSHOT at all.

>

> The ESH framework itself is not bound (AFAIK) to any consumer release cycle, and this is IMHO fine.

>

> But I think it would be fine if we could produce releases from time to time so they could be used as a reference for custom products.

> I cannot depend on 0.8-SNAPSHOT all the time.

>

> I do not mean a 1.0 stable framework release without API breaks anymore...

> I just want to use a fixed reference in my sources.

>

> Using custom repositories is possible, but this means a new repository for every reference. That is not good.

>

> If the release process is a long task for Eclipse products, I would ask whether we could bump the snapshot version from time to time without an official release.

> That way, consumers could build a release themselves.

>

> I cannot build a 0.8.0, 0.8.1, ... release myself if Eclipse releases an official 0.8[.0] some time.

On 2015-09-29 04:39:35 -0400, Kai Kreuzer wrote:

> I agree that fixed releases would definitely help to have a reliable way to reference a specific code base from within a solution.

>

> Note that I am currently preparing the 0.8.0 release, see https://projects.eclipse.org/projects/iot.smarthome/releases/0.8.0/

>

> But creating such minor releases is some work; I would not expect more than 2-3 each year.

>

> Nonetheless, we could maybe check if we do more regular service releases, which should be a bit simpler (but still means work).

> We will have to check how much this can be automated (update version in sources, have documentation generated and published, adapt the build plans and download locations etc.)

On 2015-09-29 04:55:04 -0400, Kai Kreuzer wrote: