Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 844) | labels (string, length 4 to 721) | body (string, length 1 to 261k) | index (string, 12 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 248k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

80,485 | 23,220,559,311 | IssuesEvent | 2022-08-02 17:46:51 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | Build 2 for 8.4 with status FAILURE | automation ci-reported Team:Elastic-Agent-Data-Plane build-failures |

## :broken_heart: Tests Failed

<!-- BUILD BADGES-->

> _the below badges are clickable and redirect to their specific view in the CI or DOCS_

[](https://beats-ci.elastic.co/blue/organizations/jenkins/Beats%2Fbeats%2F8.4/detail/8.4/2//pipeline) [... | 1.0 | Build 2 for 8.4 with status FAILURE -

## :broken_heart: Tests Failed

<!-- BUILD BADGES-->

> _the below badges are clickable and redirect to their specific view in the CI or DOCS_

[](https://beats-ci.elastic.co/blue/organizations/jenkins/Beats%2... | non_priority | build for with status failure broken heart tests failed the below badges are clickable and redirect to their specific view in the ci or docs expand to view the summary build stats start time duration min sec test stats ... | 0 |

6,393 | 14,498,679,951 | IssuesEvent | 2020-12-11 15:48:09 | ratchetphp/Ratchet | https://api.github.com/repos/ratchetphp/Ratchet | opened | Roadmap | architecture docs enhancement feature | ## v0.5

Updates to the next release of Ratchet will be made against the [v0.5 branch](https://github.com/ratchetphp/Ratchet/tree/v0.5). This version will add some functionality, including a transition period, while keeping backwards compatibility. Key features for this version include:

- WebSocket deflate support... | 1.0 | Roadmap - ## v0.5

Updates to the next release of Ratchet will be made against the [v0.5 branch](https://github.com/ratchetphp/Ratchet/tree/v0.5). This version will add some functionality, including a transition period, while keeping backwards compatibility. Key features for this version include:

- WebSocket defla... | non_priority | roadmap updates to the next release of ratchet will be made against the this version will add some functionality including a transition period while keeping backwards compatibility key features for this version include websocket deflate support off by default a new optional parameter to be ad... | 0 |

153,445 | 19,706,434,918 | IssuesEvent | 2022-01-12 22:40:28 | KaterinaOrg/maven-modular | https://api.github.com/repos/KaterinaOrg/maven-modular | opened | CVE-2020-36187 (High) detected in jackson-databind-2.9.6.jar | security vulnerability | ## CVE-2020-36187 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.6.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2020-36187 (High) detected in jackson-databind-2.9.6.jar - ## CVE-2020-36187 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.6.jar</b></p></summary>

<p>General... | non_priority | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file pom xml path to vulnerable... | 0 |

35,223 | 7,660,753,335 | IssuesEvent | 2018-05-11 11:50:35 | jnavila/graffle2svg | https://api.github.com/repos/jnavila/graffle2svg | closed | Shapes not managed | defect | Don't know how to display Shape Bezier

Don't know how to display Shape FlattenedRectangle

Don't know how to display Shape Cloud

Don't know how to display Class "PolygonGraphic"

Don't know how to display Shape Diamond

Don't know how to display Shape Trapazoid

Don't know how to display Shape NoteShape

| 1.0 | Shapes not managed - Don't know how to display Shape Bezier

Don't know how to display Shape FlattenedRectangle

Don't know how to display Shape Cloud

Don't know how to display Class "PolygonGraphic"

Don't know how to display Shape Diamond

Don't know how to display Shape Trapazoid

Don't know how to display Shape NoteShap... | non_priority | shapes not managed don t know how to display shape bezier don t know how to display shape flattenedrectangle don t know how to display shape cloud don t know how to display class polygongraphic don t know how to display shape diamond don t know how to display shape trapazoid don t know how to display shape noteshap... | 0 |

188,965 | 15,174,163,022 | IssuesEvent | 2021-02-13 17:15:09 | jeffdc/gallformers | https://api.github.com/repos/jeffdc/gallformers | closed | Add Note & Links to Gall-Host Mapping Page That Both Host and Gall must Exist Before Mapping | documentation enhancement good first issue | I think we should add a note on the Add Gallformers page that points users to the Map Galls and Hosts page for this; I just tried to map a new gall to a genus and had to remember that I need to add one host first, then go to the other tool--not something that would be clear to another user.

_Originally posted by @Me... | 1.0 | Add Note & Links to Gall-Host Mapping Page That Both Host and Gall must Exist Before Mapping - I think we should add a note on the Add Gallformers page that points users to the Map Galls and Hosts page for this; I just tried to map a new gall to a genus and had to remember that I need to add one host first, then go to... | non_priority | add note links to gall host mapping page that both host and gall must exist before mapping i think we should add a note on the add gallformers page that points users to the map galls and hosts page for this i just tried to map a new gall to a genus and had to remember that i need to add one host first then go to... | 0 |

175,575 | 14,532,585,363 | IssuesEvent | 2020-12-14 22:42:04 | geozeke/ubuntu | https://api.github.com/repos/geozeke/ubuntu | closed | Graphics Missing Stroke | documentation | * The graphic in setup guide Part 2; Step 20 is missing a stroke.

* The graphic in setup guide Part 3; Step 13 is missing a stroke. | 1.0 | Graphics Missing Stroke - * The graphic in setup guide Part 2; Step 20 is missing a stroke.

* The graphic in setup guide Part 3; Step 13 is missing a stroke. | non_priority | graphics missing stroke the graphic in setup guide part step is missing a stroke the graphic in setup guide part step is missing a stroke | 0 |

45,081 | 12,535,364,887 | IssuesEvent | 2020-06-04 21:13:35 | SasView/sasview | https://api.github.com/repos/SasView/sasview | closed | 5.0.1 constraints between FitPages stop working | defect major | In my local build of ESS_GUI, have been trying my usual constraints between core, shell & drop microemulsion contrast series.

M2:radius=M4.radius

M3:radius=M4.radius+M4.thickness

having added and removed a few constraints, including an M2:sld_solvent=M2.sld_core, these plainly stopped working. Of course I cannot e... | 1.0 | 5.0.1 constraints between FitPages stop working - In my local build of ESS_GUI, have been trying my usual constraints between core, shell & drop microemulsion contrast series.

M2:radius=M4.radius

M3:radius=M4.radius+M4.thickness

having added and removed a few constraints, including an M2:sld_solvent=M2.sld_core, t... | non_priority | constraints between fitpages stop working in my local build of ess gui have been trying my usual constraints between core shell drop microemulsion contrast series radius radius radius radius thickness having added and removed a few constraints including an sld solvent sld core these pl... | 0 |

447,541 | 31,713,867,263 | IssuesEvent | 2023-09-09 16:08:08 | vercel/next.js | https://api.github.com/repos/vercel/next.js | closed | Docs: twitter metadata | template: documentation | ### What is the improvement or update you wish to see?

The docs show the code below,

but I think the `name` property should be the `property` property, according to the [Twitter Card markup reference](https://developer.twitter.com/en/docs/twitter-for-websites/cards/overview/markup).

input

```

export const metadata = {

twitter... | 1.0 | Docs: twitter metadata - ### What is the improvement or update you wish to see?

The docs show the code below,

but I think the `name` property should be the `property` property, according to the [Twitter Card markup reference](https://developer.twitter.com/en/docs/twitter-for-websites/cards/overview/markup).

input

```

export con... | non_priority | docs twitter metadata what is the improvement or update you wish to see doc says below the code but i think name property should be property property according to input export const metadata twitter card summary large image title next js description the r... | 0 |

41,514 | 5,343,198,126 | IssuesEvent | 2017-02-17 10:35:51 | cyclestreets/cyclescape | https://api.github.com/repos/cyclestreets/cyclescape | opened | Sidebar metadata (with unfollow) needs to be persistent | design | Because of the auto-scrolling, the unfollow link often disappears up the screen.

This can't be solved until a fuller redesign. | 1.0 | Sidebar metadata (with unfollow) needs to be persistent - Because of the auto-scrolling, the unfollow link often disappears up the screen.

This can't be solved until a fuller redesign. | non_priority | sidebar metadata with unfollow needs to be persistent because of the auto scrolling the unfollow link often disappears up the screen this can t be solved until a fuller redesign | 0 |

15,171 | 5,073,736,845 | IssuesEvent | 2016-12-27 10:19:10 | drbenvincent/delay-discounting-analysis | https://api.github.com/repos/drbenvincent/delay-discounting-analysis | closed | Decompose the Data class into smaller classes | code clean up tests | At the moment, the Data class is doing too much.

- [x] Create a DataFile class, which holds data and plot methods

- [x] Get Data class to use (maybe generate) DataFile objects.

- [x] Add a DataImporter class which takes all responsibility for importing and validating data | 1.0 | Decompose the Data class into smaller classes - At the moment, the Data class is doing too much.

- [x] Create a DataFile class, which holds data and plot methods

- [x] Get Data class to use (maybe generate) DataFile objects.

- [x] Add a DataImporter class which takes all responsibility for importing and validating... | non_priority | decompose the data class into smaller classes at the moment the data class is doing too much create a datafile class which holds data and plot methods get data class to use maybe generate datafile objects add a dataimporter class which takes all responsibility for importing and validating data | 0 |

300,988 | 26,008,593,404 | IssuesEvent | 2022-12-20 22:08:20 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | opened | Acceptance tests create operator account fallback cause later test case failure | enhancement test | ### Problem

We introduced the config property `createOperatorAccount` to create a normal account (not exempt from fees) as the operator account to run the acceptance tests, in order to work around the issue that the default operator account may be exempt from fees, which in turn causes the check of the number of crypto tra... | 1.0 | Acceptance tests create operator account fallback cause later test case failure - ### Problem

We introduced the config property `createOperatorAccount` to create a normal account (not exempt from fees) as the operator account to run the acceptance tests, in order to work around the issue that the default operator acco... | non_priority | acceptance tests create operator account fallback cause later test case failure problem we introduced the config property createoperatoraccount to create a normal account not exempt from fees as the operator account to run the acceptance tests in order to work around the issue that the default operator acco... | 0 |

352,090 | 25,047,488,691 | IssuesEvent | 2022-11-05 12:53:39 | AY2223S1-CS2103-W14-1/tp | https://api.github.com/repos/AY2223S1-CS2103-W14-1/tp | closed | [PE-D][Tester C] `add -p` command format in User Guide is missing `h/PROPERTY_TYPE` | type.Documentation PE-D.must-fix | As shown in the image below, the format of the command is missing `h/PROPERTY_TYPE`, although the argument is compulsory.

![image.png](https://raw.githubusercontent.com/TeoHaoZhi/ped/main/files/1d5a3fc5-b3db-4f09-9c33-6c4a9aa2cb7a.png)<!--session: 1666941683639-2c47a0e6-9266-459f-b30e-47ba3c034af2--><!--Version: ... | 1.0 | [PE-D][Tester C] `add -p` command format in User Guide is missing `h/PROPERTY_TYPE` - As shown in the image below, the format of the command is missing `h/PROPERTY_TYPE`, although the argument is compulsory.

detected in xercesImpl-2.8.0.jar | security vulnerability | ## CVE-2022-23437 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xercesImpl-2.8.0.jar</b></p></summary>

<p>Xerces2 is the next generation of high performance, fully compliant XML pars... | True | CVE-2022-23437 (High) detected in xercesImpl-2.8.0.jar - ## CVE-2022-23437 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xercesImpl-2.8.0.jar</b></p></summary>

<p>Xerces2 is the next... | non_priority | cve high detected in xercesimpl jar cve high severity vulnerability vulnerable library xercesimpl jar is the next generation of high performance fully compliant xml parsers in the apache xerces family this new version of xerces introduces the xerces native interface xni... | 0 |

31,700 | 6,587,219,361 | IssuesEvent | 2017-09-13 20:13:38 | CenturyLinkCloud/mdw | https://api.github.com/repos/CenturyLinkCloud/mdw | opened | Javadocs are not produced correctly by the MDW build | defect | The javadocs on our site are terribly out-of-date:

http://centurylinkcloud.github.io/mdw/docs/javadoc/

We should include in our build procedure a step to update these each time we publish a formal build.

Also, the mdw-javadoc jar on maven-central only includes docs for mdw-hub:

http://repo1.maven.org/maven2/com/c... | 1.0 | Javadocs are not produced correctly by the MDW build - The javadocs on our site are terribly out-of-date:

http://centurylinkcloud.github.io/mdw/docs/javadoc/

We should include in our build procedure a step to update these each time we publish a formal build.

Also, the mdw-javadoc jar on maven-central only includes... | non_priority | javadocs are not produced correctly by the mdw build the javadocs on our site are terribly out of date we should include in our build procedure a step to update these each time we publish a formal build also the mdw javadoc jar on maven central only includes docs for mdw hub | 0 |

58,421 | 14,274,431,889 | IssuesEvent | 2020-11-22 03:55:13 | Ghost-chu/QuickShop-Reremake | https://api.github.com/repos/Ghost-chu/QuickShop-Reremake | closed | CVE-2020-9546 (High) detected in jackson-databind-2.3.4.jar - autoclosed | Bug security vulnerability | ## CVE-2020-9546 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.3.4.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming... | True | CVE-2020-9546 (High) detected in jackson-databind-2.3.4.jar - autoclosed - ## CVE-2020-9546 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.3.4.jar</b></p></summary>

... | non_priority | cve high detected in jackson databind jar autoclosed cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api path to dependency file quickshop reremake pom xml path to vulnerable l... | 0 |

122,609 | 17,760,803,948 | IssuesEvent | 2021-08-29 16:59:00 | MidnightBSD/src | https://api.github.com/repos/MidnightBSD/src | opened | CVE-2018-17942 (High) detected in non-gnucvs-1.12.13 | security vulnerability | ## CVE-2018-17942 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>non-gnucvs-1.12.13</b></p></summary>

<p>

<p>Gnu Distributions</p>

<p>Library home page: <a href=https://ftp.gnu.org/gn... | True | CVE-2018-17942 (High) detected in non-gnucvs-1.12.13 - ## CVE-2018-17942 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>non-gnucvs-1.12.13</b></p></summary>

<p>

<p>Gnu Distributions</... | non_priority | cve high detected in non gnucvs cve high severity vulnerability vulnerable library non gnucvs gnu distributions library home page a href found in head commit a href found in base branch master vulnerable source files vul... | 0 |

42,922 | 11,101,141,658 | IssuesEvent | 2019-12-16 20:47:05 | microsoft/MixedRealityToolkit-Unity | https://api.github.com/repos/microsoft/MixedRealityToolkit-Unity | closed | Build window defaults to 2017 if both 2017 and 2019 are installed | Bug Build / Tools Build Window | ## Describe the bug

If both VS versions are installed, the build window checks for and uses 2017 first.

This can cause a build issue if Unity builds the solution expecting to use VS 2019.

As a workaround, the VS version can be explicitly set in Unity's build Window, but it should be configurable from the MRTK's ... | 2.0 | Build window defaults to 2017 if both 2017 and 2019 are installed - ## Describe the bug

If both VS versions are installed, the build window checks for and uses 2017 first.

This can cause a build issue if Unity builds the solution expecting to use VS 2019.

As a workaround, the VS version can be explicitly set in ... | non_priority | build window defaults to if both and are installed describe the bug if both vs versions are installed the build window checks for and uses first this can cause a build issue if unity builds the solution expecting to use vs as a workaround the vs version can be explicitly set in unity s build w... | 0 |

4,893 | 2,886,848,816 | IssuesEvent | 2015-06-12 11:18:46 | kodi-pvr/pvr.vbox | https://api.github.com/repos/kodi-pvr/pvr.vbox | closed | Update architecture README subsection | documentation | At least the part about the timeshift buffer is partly wrong, the implementation has already been moved to its own namespace. Probably some other things need updating as well. | 1.0 | Update architecture README subsection - At least the part about the timeshift buffer is partly wrong, the implementation has already been moved to its own namespace. Probably some other things need updating as well. | non_priority | update architecture readme subsection at least the part about the timeshift buffer is partly wrong the implementation has already been moved to its own namespace probably some other things need updating as well | 0 |

130,669 | 12,452,109,550 | IssuesEvent | 2020-05-27 11:42:31 | FRRouting/frr | https://api.github.com/repos/FRRouting/frr | closed | how can I use protobuf message. | documentation triage | The default FPM message format is netlink. I configured the FPM message format as protobuf, but it has no effect.

I configured zebra like this:

```systemctl status frr

● frr.service - FRRouting

Loaded: loaded (/usr/lib/systemd/system/frr.service; disabled; vendor preset: disabled)

   Active: active (running) sinc... | 1.0 | how can I use protobuf message. - The default FPM message format is netlink. I configured the FPM message format as protobuf, but it has no effect.

I configured zebra like this:

```systemctl status frr

● frr.service - FRRouting

Loaded: loaded (/usr/lib/systemd/system/frr.service; disabled; vendor preset: disabled)... | non_priority | how can i use protobuf message the default fpm message format is netlink i configured the fpm message format is protobuf but there is no use i configred zebra like this systemctl status frr ● frr service frrouting loaded loaded usr lib systemd system frr service disabled vendor preset disabled ... | 0 |

133,932 | 29,670,585,651 | IssuesEvent | 2023-06-11 11:03:03 | dtcxzyw/llvm-ci | https://api.github.com/repos/dtcxzyw/llvm-ci | closed | Regressions Report [rv64gcv-O3-thinlto] April 25th 2023, 6:06:24 am | regression codegen transform reasonable | ## Metadata

+ Workflow URL: https://github.com/dtcxzyw/llvm-ci/actions/runs/4793517267

## Change Logs

from 1245a1ed07bab52fd4a5501f50651d65f43b9971 to 65eedcebdc03052959508911417bac548009652a

[65eedcebdc03052959508911417bac548009652a](https://github.com/llvm/llvm-project/commit/65eedcebdc03052959508911417bac548009652a... | 1.0 | Regressions Report [rv64gcv-O3-thinlto] April 25th 2023, 6:06:24 am - ## Metadata

+ Workflow URL: https://github.com/dtcxzyw/llvm-ci/actions/runs/4793517267

## Change Logs

from 1245a1ed07bab52fd4a5501f50651d65f43b9971 to 65eedcebdc03052959508911417bac548009652a

[65eedcebdc03052959508911417bac548009652a](https://github... | non_priority | regressions report april am metadata workflow url change logs from to detensorize don t accidentally convert function entry blocks port support scalar fix length vector ntlh intrinsic with different domain wframe larger than improve error with an invalid arg... | 0 |

130,523 | 10,617,606,178 | IssuesEvent | 2019-10-12 20:20:05 | Vachok/ftpplus | https://api.github.com/repos/Vachok/ftpplus | closed | testRun [D335] | Lowest TestQuality bug mint resolution_Fixed | Execute WeeklyInternetStatsTest::testRun**testRun**

*WeeklyInternetStatsTest*

*java.lang.UnsupportedOperationException: System tray unavailable WeeklyInternetStatsTest.java: ru.vachok.networker.info.stats.WeeklyInternetStatsTest.testRun(WeeklyInternetStatsTest.java:83) expected [null] but found [java.util.concurrent.... | 1.0 | testRun [D335] - Execute WeeklyInternetStatsTest::testRun**testRun**

*WeeklyInternetStatsTest*

*java.lang.UnsupportedOperationException: System tray unavailable WeeklyInternetStatsTest.java: ru.vachok.networker.info.stats.WeeklyInternetStatsTest.testRun(WeeklyInternetStatsTest.java:83) expected [null] but found [java... | non_priority | testrun execute weeklyinternetstatstest testrun testrun weeklyinternetstatstest java lang unsupportedoperationexception system tray unavailable weeklyinternetstatstest java ru vachok networker info stats weeklyinternetstatstest testrun weeklyinternetstatstest java expected but found java lang a... | 0 |

3,160 | 2,741,657,941 | IssuesEvent | 2015-04-21 12:40:02 | gbv/paia | https://api.github.com/repos/gbv/paia | closed | Make HTTP Accept header explicit | documentation | This is not mentioned explicitly:

Accept: application/json

or

Accept: application/json, ... | 1.0 | Make HTTP Accept header explicit - This is not mentioned explicitly:

Accept: application/json

or

Accept: application/json, ... | non_priority | make http accept header explicit this is not mentioned explicitly accept application json or accept application json | 0 |

41,300 | 5,325,918,813 | IssuesEvent | 2017-02-15 01:36:10 | elegantthemes/Divi-Beta | https://api.github.com/repos/elegantthemes/Divi-Beta | closed | BlueHost :: Shortcode Trimming :: Issues with adding padding | BUG DESIGN SIGNOFF QUALITY ASSURED READY FOR REVIEW | ### Problem:

I don't see the results as they should appear on the VB. The front end works okay, and when you reload the VB page you also see the padding applied correctly, it's just not when you add it live:

## 1. どんなもの?

シミュレーションを行わずにテストパターンのテスト電力を予測する機械学習を用いた手法を提案

## 2. 先行研究と比べてどこがすごい?

様々な機械学習アルゴリズムを適用することで,高い予測精度も示しつつ,

シミュレーションベース... | 2.0 | Machine Learning-based Prediction of Test Power - ## 0. 論文

[Machine Learning-based Prediction of Test Power](https://ehimetakahashilab.slack.com/files/U2CTQRAMS/F010FNX6DV4/ets2019_machine_learning-based_prediction_of_test_power.pdf)

## 1. どんなもの?

シミュレーションを行わずにテストパターンのテスト電力を予測する機械学習を用いた手法を提案

## 2. 先行研究と比べてどこがすごい... | non_priority | machine learning based prediction of test power 論文 どんなもの? シミュレーションを行わずにテストパターンのテスト電力を予測する機械学習を用いた手法を提案 先行研究と比べてどこがすごい? 様々な機械学習アルゴリズムを適用することで,高い予測精度も示しつつ, シミュレーションベースのテスト電力を解析するものより実行時間も大幅に短縮した. 技術や手法のキモはどこ? 最近ではテストパターンの数が多く,各テストの電力解析の実行時間が長すぎるため, すべてのテストについて完全な電力解析結果を得ることは不可... | 0 |

5,678 | 3,975,542,030 | IssuesEvent | 2016-05-05 06:12:39 | kolliSuman/issues | https://api.github.com/repos/kolliSuman/issues | closed | QA_Common Errors in English_Prerequisites_p1 | Category: Usability Developed By: VLEAD Release Number: Production Severity: S2 Status: Open | Defect Description :

In the "Common Errors in English" experiment, the minimum requirement to run the experiment is not displayed in the page instead a page or Scrolling should appear providing information on minimum requirement to run this experiment, information like Bandwidth,Device Resolution,Hardware Configuration... | True | QA_Common Errors in English_Prerequisites_p1 - Defect Description :

In the "Common Errors in English" experiment, the minimum requirement to run the experiment is not displayed in the page instead a page or Scrolling should appear providing information on minimum requirement to run this experiment, information like Ban... | non_priority | qa common errors in english prerequisites defect description in the common errors in english experiment the minimum requirement to run the experiment is not displayed in the page instead a page or scrolling should appear providing information on minimum requirement to run this experiment information like band... | 0 |

11,266 | 29,492,777,602 | IssuesEvent | 2023-06-02 14:36:15 | tremor-rs/tremor-www | https://api.github.com/repos/tremor-rs/tremor-www | opened | Add a generic error handling guide | bug documentation information-architecture | Experienced production tremor users who have grown up with tremor and how it

can manage, compensate, drop or process runtime errors have had the benefit

of face time with tremor maintainers.

We need to document the development and production practices as a guide as

it can be confusing for new adopters and users o... | 1.0 | Add a generic error handling guide - Experienced production tremor users who have grown up with tremor and how it

can manage, compensate, drop or process runtime errors have had the benefit

of face time with tremor maintainers.

We need to document the development and production practices as a guide as

it can be c... | non_priority | add a generic error handling guide experienced production tremor users who have grown up with tremor and how it can manage compensate drop or process runtime errors have had the benefit of face time with tremor maintainers we need to document the development and production practices as a guide as it can be c... | 0 |

227,885 | 17,402,033,087 | IssuesEvent | 2021-08-02 21:13:12 | lightbend/akkaserverless-javascript-sdk | https://api.github.com/repos/lightbend/akkaserverless-javascript-sdk | opened | Add Replicated Entity page back to JavaScript SDK doc pages | Documentation doc-team | This task is to bring back the old documentation on replicated entities, but the content still needs to be updated to reflect the new implementation. | 1.0 | Add Replicated Entity page back to JavaScript SDK doc pages - This task is to bring back the old documentation on replicated entities, but the content still needs to be updated to reflect the new implementation. | non_priority | add replicated entity page back to javascript sdk doc pages this task is to bring back the old documentation on replicated entities but the content still needs to be updated to reflect the new implementation | 0 |

40,093 | 12,746,539,857 | IssuesEvent | 2020-06-26 16:07:23 | tech256/jobs | https://api.github.com/repos/tech256/jobs | closed | Cyber Threat Intel Analyst | Active Clearance Required Cyber Security Hiring stale |

Cyber Threat Intelligence Analyst

Vicksburg, MS

Are you a whiz at Cyber Security? Do you enjoy supporting our military? INSUVI, Inc. is looking for great talent to join our team!

What We Can Offer YOU!

Medical

Dental

Vision

Long and Short-Term Disability

Life Insurance

401(k)

Pai... | True | Cyber Threat Intel Analyst -

Cyber Threat Intelligence Analyst

Vicksburg, MS

Are you a whiz at Cyber Security? Do you enjoy supporting our military? INSUVI, Inc. is looking for great talent to join our team!

What We Can Offer YOU!

Medical

Dental

Vision

Long and Short-Term Disability

Li... | non_priority | cyber threat intel analyst cyber threat intelligence analyst vicksburg ms are you a whiz at cyber security do you enjoy supporting our military insuvi inc is looking for great talent to join our team what we can offer you medical dental vision long and short term disability li... | 0 |

13,607 | 3,163,764,985 | IssuesEvent | 2015-09-20 16:33:57 | Quaggles/Icarus | https://api.github.com/repos/Quaggles/Icarus | closed | 1: New Gameplay Mechanics | design enhancement programming | Implement new Shield Burst, Charge and Weapons. Refer to "Altered Mechanics" Document for how moves interact with each other (Rock, Paper, Scissors Balancing). | 1.0 | 1: New Gameplay Mechanics - Implement new Shield Burst, Charge and Weapons. Refer to "Altered Mechanics" Document for how moves interact with each other (Rock, Paper, Scissors Balancing). | non_priority | new gameplay mechanics implement new shield burst charge and weapons refer to altered mechanics document for how moves interact with each other rock paper scissors balancing | 0 |

93,864 | 11,813,615,906 | IssuesEvent | 2020-03-19 22:55:09 | aragon/aragon | https://api.github.com/repos/aragon/aragon | closed | Allow users to use the default (local) network as their wallet provider | design: request enhancement | See discussion in https://github.com/aragon/aragon/pull/469#discussion_r233074933:

> Maybe we should not have it by default and have a setting for that, below the provider URL, for users that know what they are doing?

```

Ethereum node:

[ wss://mainnet.eth.aragon.network/ws ]

[✓] Sign transactions with this pr... | 1.0 | Allow users to use the default (local) network as their wallet provider - See discussion in https://github.com/aragon/aragon/pull/469#discussion_r233074933:

> Maybe we should not have it by default and have a setting for that, below the provider URL, for users that know what they are doing?

```

Ethereum node:

[... | non_priority | allow users to use the default local network as their wallet provider see discussion in maybe we should not have it by default and have a setting for that below the provider url for users that know what they are doing ethereum node sign transactions with this provider ... | 0 |

142,838 | 11,496,422,504 | IssuesEvent | 2020-02-12 07:55:26 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | [CI] RegressionIT - testTwoJobsWithSameRandomizeSeedUseSameTrainingSet failing periodically | :ml >test-failure | Seen a few of these failures in the past week and was able to reproduce locally.

```

java.lang.AssertionError:

Stats were: {"id":"regression_two_jobs_with_same_randomize_seed_1","state":"analyzing","progress":[{"phase":"reindexing","progress_percent":100},{"phase":"loading_data","progress_percent":100},{"phase":"... | 1.0 | [CI] RegressionIT - testTwoJobsWithSameRandomizeSeedUseSameTrainingSet failing periodically - Seen a few of these failures in the past week and was able to reproduce locally.

```

java.lang.AssertionError:

Stats were: {"id":"regression_two_jobs_with_same_randomize_seed_1","state":"analyzing","progress":[{"phase":"... | non_priority | regressionit testtwojobswithsamerandomizeseedusesametrainingset failing periodically seen a few of these failures in the past week and was able to reproduce locally java lang assertionerror stats were id regression two jobs with same randomize seed state analyzing progress node id ... | 0 |

45,592 | 9,790,031,654 | IssuesEvent | 2019-06-10 11:32:18 | deity-io/falcon | https://api.github.com/repos/deity-io/falcon | closed | Rework Extensions | code-cleanup extension node | At this point, the `Extension` class seems to be an overhead when using within Falcon-Server.

It is possible to move the functionality of Extension into ExtensionContainer class, keeping the current level of customization for users' extensions.

| 1.0 | Rework Extensions - At this point, the `Extension` class seems to be an overhead when using within Falcon-Server.

It is possible to move the functionality of Extension into ExtensionContainer class, keeping the current level of customization for users' extensions.

| non_priority | rework extensions at this point the extension class seems to be an overhead when using within falcon server it is possible to move the functionality of extension into extensioncontainer class keeping the current level of customization for users extensions | 0 |

19,677 | 27,322,438,894 | IssuesEvent | 2023-02-24 21:14:41 | BlitterStudio/amiberry | https://api.github.com/repos/BlitterStudio/amiberry | closed | GFX Glitches | compatibility fixed in dev | **Describe the bug**

Some games do present some graphical artifacts either in menu or in-game. This is 100% reproductible. Currently the following packages are impacted:

* **PinkPanther_v1.0_0236** (does run fine on FS-uae)

* **PuttySquad_v1.3_AGA** (does run fine on FS-uae)

* **SurfNinjas_v1.0_AGA_1291** (does *... | True | GFX Glitches - **Describe the bug**

Some games do present some graphical artifacts either in menu or in-game. This is 100% reproductible. Currently the following packages are impacted:

* **PinkPanther_v1.0_0236** (does run fine on FS-uae)

* **PuttySquad_v1.3_AGA** (does run fine on FS-uae)

* **SurfNinjas_v1.0_AGA... | non_priority | gfx glitches describe the bug some games do present some graphical artifacts either in menu or in game this is reproductible currently the following packages are impacted pinkpanther does run fine on fs uae puttysquad aga does run fine on fs uae surfninjas aga do... | 0 |

5,328 | 5,631,973,192 | IssuesEvent | 2017-04-05 15:36:45 | Cadasta/cadasta-platform | https://api.github.com/repos/Cadasta/cadasta-platform | closed | Public Users can still view `records/locations` and `records/parties` | bug security | ### Steps to reproduce the error

Add a user to an organization and assign them as a Public User for a project.

Login as that user and go to that project page.

Add `/records/locations/` to the URL.

::or::

Add `/records/parties/` to the URL

### Actual behavior

They see a stripped down version of the "map" page.

... | True | Public Users can still view `records/locations` and `records/parties` - ### Steps to reproduce the error

Add a user to an organization and assign them as a Public User for a project.

Login as that user and go to that project page.

Add `/records/locations/` to the URL.

::or::

Add `/records/parties/` to the URL

#... | non_priority | public users can still view records locations and records parties steps to reproduce the error add a user to an organization and assign them as a public user for a project login as that user and go to that project page add records locations to the url or add records parties to the url ... | 0 |

7,738 | 3,600,637,174 | IssuesEvent | 2016-02-03 07:16:32 | hjwylde/werewolf | https://api.github.com/repos/hjwylde/werewolf | closed | Add an `upcoming-deaths` field to the game state | existing: enhancement kind: code state: awaiting release | This will make it easier to control when someone dies and help to fix the message ordering issue. | 1.0 | Add an `upcoming-deaths` field to the game state - This will make it easier to control when someone dies and help to fix the message ordering issue. | non_priority | add an upcoming deaths field to the game state this will make it easier to control when someone dies and help to fix the message ordering issue | 0 |

90,887 | 15,856,305,104 | IssuesEvent | 2021-04-08 02:01:43 | Molizo/FTC-Scouting-App-Skystone | https://api.github.com/repos/Molizo/FTC-Scouting-App-Skystone | opened | CVE-2021-23337 (High) detected in lodash-4.17.15.tgz | security vulnerability | ## CVE-2021-23337 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.15.tgz</b></p></summary>

<p>Lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.... | True | CVE-2021-23337 (High) detected in lodash-4.17.15.tgz - ## CVE-2021-23337 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.15.tgz</b></p></summary>

<p>Lodash modular utilitie... | non_priority | cve high detected in lodash tgz cve high severity vulnerability vulnerable library lodash tgz lodash modular utilities library home page a href path to dependency file ftc scouting app skystone skystonescouting package json path to vulnerable library ftc scouting ap... | 0 |

94,410 | 15,962,371,818 | IssuesEvent | 2021-04-16 01:10:17 | RG4421/azure-iot-platform-dotnet | https://api.github.com/repos/RG4421/azure-iot-platform-dotnet | opened | CVE-2021-23369 (Medium) detected in handlebars-4.1.0.tgz | security vulnerability | ## CVE-2021-23369 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.1.0.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic template... | True | CVE-2021-23369 (Medium) detected in handlebars-4.1.0.tgz - ## CVE-2021-23369 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.1.0.tgz</b></p></summary>

<p>Handlebars prov... | non_priority | cve medium detected in handlebars tgz cve medium severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file azure i... | 0 |

62,681 | 8,634,368,480 | IssuesEvent | 2018-11-22 16:35:57 | coursier/coursier | https://api.github.com/repos/coursier/coursier | closed | Document cache location rules and overrides | documentation | Per the source code, the rules are quite complicated, but the (deprecated) docs suggest it is a simple matter of `~/.coursier/cache` unless overridden.

Eyeballing the source says there are 4 possibilities:

1/ wherever `$COURSIER_CACHE` says

2/ wherever `-Dcoursier.cache=...` says

3/ a platform specific place like... | 1.0 | Document cache location rules and overrides - Per the source code, the rules are quite complicated, but the (deprecated) docs suggest it is a simple matter of `~/.coursier/cache` unless overridden.

Eyeballing the source says there are 4 possibilities:

1/ wherever `$COURSIER_CACHE` says

2/ wherever `-Dcoursier.cach... | non_priority | document cache location rules and overrides per the source code the rules are quite complicated but the deprecated docs suggest it is a simple matter of coursier cache unless overridden eyeballing the source says there are possibilities wherever coursier cache says wherever dcoursier cach... | 0 |
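
The lookup order this issue describes can be sketched roughly as follows. This is only an illustration, not coursier's actual code: the function name `resolve_cache_dir` and its parameters are hypothetical, and the precedence (environment variable, then system property, then platform directory) is assumed from the order in which the issue lists them. The fourth possibility is cut off in the text above and is not modeled; the final fallback is simply the `~/.coursier/cache` path the docs mention.

```python
from typing import Mapping, Optional


def resolve_cache_dir(
    env: Mapping[str, str],
    system_props: Mapping[str, str],
    platform_default: Optional[str] = None,
) -> str:
    """Return the first cache location that is set, in the assumed order."""
    # 1/ Environment variable named in the issue.
    from_env = env.get("COURSIER_CACHE")
    if from_env:
        return from_env

    # 2/ JVM system property named in the issue (-Dcoursier.cache=...).
    from_prop = system_props.get("coursier.cache")
    if from_prop:
        return from_prop

    # 3/ Platform-specific directory. The concrete path is truncated in the
    #    issue text, so the caller has to supply it (hypothetical parameter).
    if platform_default:
        return platform_default

    # Fallback: the default location the (deprecated) docs describe.
    return "~/.coursier/cache"
```

Passing the environment and properties in as plain mappings keeps the sketch testable without touching the real process environment.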

184,893 | 14,994,757,718 | IssuesEvent | 2021-01-29 13:23:45 | HubertWelp/SweetPicker3 | https://api.github.com/repos/HubertWelp/SweetPicker3 | opened | All Readme.md files are outdated | documentation enhancement | The README files of the packages and of the project have not been updated yet. | 1.0 | All Readme.md files are outdated - The README files of the packages and of the project have not been updated yet. | non_priority | all readme md files are outdated the readme files of the packages and of the project have not been updated yet | 0 |

309,960 | 26,687,631,297 | IssuesEvent | 2023-01-26 23:51:21 | status-im/status-mobile | https://api.github.com/repos/status-im/status-mobile | closed | Accessibility-id is gone from community channel name | bug e2e test blocker | # Bug Report

## Problem

Regression from https://github.com/status-im/status-mobile/pull/14799

No accessibility-ids on channels inside the community

#### Expected behavior

'chat-name-text'

#### Actual behavior

- [x] Dynamic Array

- [x] LinkedList

- [x] Double Linked List

- [x] Stack

- [x] Queue

- [ ] Priority Queue

- [ ] Union Find

- [ ] Binary Search Tree

- [ ] Hashtable

| 1.0 | complete freecodecamp youtube tutorial on data structures - [Data Structures Easy to Advanced Course - Full Tutorial from a Google Engineer](https://www.youtube.com/watch?v=RBSGKlAvoiM)

- [x] Dynamic Array

- [x] LinkedList

- [x] Double Linked List

- [x] Stack

- [x] Queue

- [ ] Priority Queue

- [ ] Union Find

... | non_priority | complete freecodecamp youtube tutorial on data structures dynamic array linkedlist double linked list stack queue priority queue union find binary search tree hashtable | 0 |

95,107 | 11,954,118,410 | IssuesEvent | 2020-04-03 22:35:10 | mozilla/foundation.mozilla.org | https://api.github.com/repos/mozilla/foundation.mozilla.org | opened | IA: gather initial website content | design | [Copy doc is here](https://docs.google.com/document/d/19Y5hNmEU4wix_gCoSvmhMKUk2F1Dht6Nwf1JQAu0lsg/edit#heading=h.jga10kqap7d8). We need to continue to update the doc section to reflect our IA, continue to ping Lotta, Anil, and others to populate the doc. | 1.0 | IA: gather initial website content - [Copy doc is here](https://docs.google.com/document/d/19Y5hNmEU4wix_gCoSvmhMKUk2F1Dht6Nwf1JQAu0lsg/edit#heading=h.jga10kqap7d8). We need to continue to update the doc section to reflect our IA, continue to ping Lotta, Anil, and others to populate the doc. | non_priority | ia gather initial website content we need to continue to update the doc section to reflect our ia continue to ping lotta anil and others to populate the doc | 0 |

401,591 | 27,333,138,720 | IssuesEvent | 2023-02-25 22:03:43 | wxWidgets/wxWidgets | https://api.github.com/repos/wxWidgets/wxWidgets | closed | May be an error in a settings.h | documentation |

I see 2 files settings.h, one in include/wx/ and an other in interface/wx.

I have the feeling that only the first one is used, so it's not really a problem.

The second one, has probably an error at line 118 because this line is not ended by a comma, so the enum is probably not correct. In the first one, the corresp... | 1.0 | May be an error in a settings.h -

I see 2 files settings.h, one in include/wx/ and an other in interface/wx.

I have the feeling that only the first one is used, so it's not really a problem.

The second one, has probably an error at line 118 because this line is not ended by a comma, so the enum is probably not corr... | non_priority | may be an error in a settings h i see files settings h one in include wx and an other in interface wx i have the feeling that only the first one is used so it s not really a problem the second one has probably an error at line because this line is not ended by a comma so the enum is probably not correc... | 0 |

144,381 | 11,614,148,682 | IssuesEvent | 2020-02-26 12:03:47 | pingcap/tidb-operator | https://api.github.com/repos/pingcap/tidb-operator | closed | e2e: "[Feature: AdvancedStatefulSet] Scaling tidb cluster with advanced statefulset " is flaky | test/e2e | ## Bug Report

https://internal.pingcap.net/idc-jenkins/blue/organizations/jenkins/operator_ghpr_e2e_test_kind/detail/operator_ghpr_e2e_test_kind/2273/tests

```

Stacktrace

/home/jenkins/agent/workspace/operator_ghpr_e2e_test_kind/go/src/github.com/pingcap/tidb-operator/tests/e2e/tidbcluster/serial.go:139

Jan 10... | 1.0 | e2e: "[Feature: AdvancedStatefulSet] Scaling tidb cluster with advanced statefulset " is flaky - ## Bug Report

https://internal.pingcap.net/idc-jenkins/blue/organizations/jenkins/operator_ghpr_e2e_test_kind/detail/operator_ghpr_e2e_test_kind/2273/tests

```

Stacktrace

/home/jenkins/agent/workspace/operator_ghpr_... | non_priority | scaling tidb cluster with advanced statefulset is flaky bug report stacktrace home jenkins agent workspace operator ghpr test kind go src github com pingcap tidb operator tests tidbcluster serial go jan unexpected error groupkind group pingcap com kind tidbc... | 0 |

304,762 | 26,331,250,591 | IssuesEvent | 2023-01-10 10:57:38 | serlo/frontend | https://api.github.com/repos/serlo/frontend | closed | visual: scrollbars on mitmachen-menu | in testing | On the landing page with the featured Spenden button the Mitmachen overlay is cropped:

<img width="574" alt="image" src="https://user-images.githubusercontent.com/1258870/210352452-77bf173f-68b4-4cfa-91eb-4808ff5963f2.png">

| 1.0 | visual: scrollbars on mitmachen-menu - On the landing page with the featured Spenden button the Mitmachen overlay is cropped:

<img width="574" alt="image" src="https://user-images.githubusercontent.com/1258870/210352452-77bf173f-68b4-4cfa-91eb-4808ff5963f2.png">

| non_priority | visual scrollbars on mitmachen menu on the landing page with the featured spenden button the mitmachen overlay is cropped img width alt image src | 0 |

129,596 | 10,579,341,766 | IssuesEvent | 2019-10-08 02:17:31 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Error on Rollback on a workload | [zube]: To Test kind/bug-qa team/ca | **What kind of request is this (question/bug/enhancement/feature request):** bug

**Steps to reproduce (least amount of steps as possible):**

1. Deploy a workload with image: `sangeetha/mytestcontainer`. Scale up the pods to 2.

2. Edit the workload, change image to `ubuntu`.

3. Pods will get recreated

4. Edit t... | 1.0 | Error on Rollback on a workload - **What kind of request is this (question/bug/enhancement/feature request):** bug

**Steps to reproduce (least amount of steps as possible):**

1. Deploy a workload with image: `sangeetha/mytestcontainer`. Scale up the pods to 2.

2. Edit the workload, change image to `ubuntu`.

3. ... | non_priority | error on rollback on a workload what kind of request is this question bug enhancement feature request bug steps to reproduce least amount of steps as possible deploy a workload with image sangeetha mytestcontainer scale up the pods to edit the workload change image to ubuntu ... | 0 |

77,564 | 10,000,921,402 | IssuesEvent | 2019-07-12 14:28:49 | systemd/systemd | https://api.github.com/repos/systemd/systemd | closed | systemctl man page needs full examples for multiple property assignment | RFE 🎁 documentation 📖 has-pr ✨ systemctl | ### Submission type

- [ ] Bug report

- [X] Request for enhancement (RFE)

### systemd version the issue has been seen with

v232

### Used distribution

Debian

See also https://bugs.debian.org/cgi-bin/bugreport.cgi?bug=807464

File: /usr/share/man/man1/systemctl.1.gz

We read:

```

--ru... | 1.0 | systemctl man page needs full examples for multiple property assignment - ### Submission type

- [ ] Bug report

- [X] Request for enhancement (RFE)

### systemd version the issue has been seen with

v232

### Used distribution

Debian

See also https://bugs.debian.org/cgi-bin/bugreport.cgi?bug=807464

... | non_priority | systemctl man page needs full examples for multiple property assignment submission type bug report request for enhancement rfe systemd version the issue has been seen with used distribution debian see also file usr share man systemctl gz we read ... | 0 |

43,753 | 5,559,379,713 | IssuesEvent | 2017-03-24 16:48:29 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Run DCS/DCJS Tests in CoreFx against uapaot. | area-Serialization os-windows-uwp test enhancement | Running tests against uapaot is not supported. The task is to make our DCS/DCJS test projects build and run against uapaot. | 1.0 | Run DCS/DCJS Tests in CoreFx against uapaot. - Running tests against uapaot is not supported. The task is to make our DCS/DCJS test projects build and run against uapaot. | non_priority | run dcs dcjs tests in corefx against uapaot running tests against uapaot is not supported the task is to make our dcs dcjs test projects build and run against uapaot | 0 |

43,740 | 5,558,873,992 | IssuesEvent | 2017-03-24 15:40:42 | MitocGroup/deep-framework | https://api.github.com/repos/MitocGroup/deep-framework | closed | [deep-security] Move custom auth context from event data to lambda context object | test delayed | Move custom auth context `_deep_auth_context_` injected by api gateway integration template when using CUSTOM authorizer from event to lambda context object. | 1.0 | [deep-security] Move custom auth context from event data to lambda context object - Move custom auth context `_deep_auth_context_` injected by api gateway integration template when using CUSTOM authorizer from event to lambda context object. | non_priority | move custom auth context from event data to lambda context object move custom auth context deep auth context injected by api gateway integration template when using custom authorizer from event to lambda context object | 0 |

207,161 | 16,067,155,417 | IssuesEvent | 2021-04-23 21:11:48 | pulibrary/dspace-development | https://api.github.com/repos/pulibrary/dspace-development | closed | Document the process of ingesting large-scale datasets into DataSpace on behalf of researchers | dataspace documentation research-data | @jrgriffiniii commented on [Mon May 04 2020](https://github.com/pulibrary/dspace-cli/issues/11)

Currently we cannot support the ingestion of data sets beyond 250MB for public download into DataSpace collections due to storage and server configuration restrictions. As a consequence, we have recently started to use Drop... | 1.0 | Document the process of ingesting large-scale datasets into DataSpace on behalf of researchers - @jrgriffiniii commented on [Mon May 04 2020](https://github.com/pulibrary/dspace-cli/issues/11)

Currently we cannot support the ingestion of data sets beyond 250MB for public download into DataSpace collections due to stor... | non_priority | document the process of ingesting large scale datasets into dataspace on behalf of researchers jrgriffiniii commented on currently we cannot support the ingestion of data sets beyond for public download into dataspace collections due to storage and server configuration restrictions as a consequence we have ... | 0 |

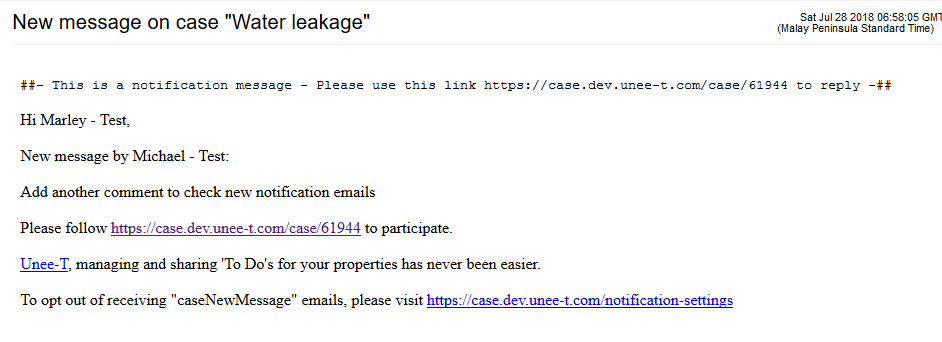

78,806 | 9,797,058,808 | IssuesEvent | 2019-06-11 09:06:54 | unee-t/frontend | https://api.github.com/repos/unee-t/frontend | closed | Notification email - first link to the case is not clickable | design/ux enhancement | # The problem:

With the rollout of PR #414 we

## Expected result:

The link to the case is clickable

## Actual result:

The first link to the case is NOT clickable (but the second link is)

| 1.0 | Notification email - first link to the case is not clickable - # The problem:

With the rollout of PR #414 we

## Expected result:

The link to the case is clickable

## Actual result:

The first link to the case is NOT clickable (but the second link is)

| security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>socket.io-2.1.13.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/socket.io-parser/package.jso... | True | socket.io-2.1.13.tgz: 1 vulnerabilities (highest severity is: 9.8) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>socket.io-2.1.13.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /package.json</p>

<p>P... | non_priority | socket io tgz vulnerabilities highest severity is vulnerable library socket io tgz path to dependency file package json path to vulnerable library node modules socket io parser package json found in head commit a href vulnerabilities cve severity cvss... | 0 |

15,350 | 8,852,562,976 | IssuesEvent | 2019-01-08 18:42:52 | webhintio/hint | https://api.github.com/repos/webhintio/hint | reopened | Add rule(s) to check for redirects | area:hint help wanted hint-category:performance | Possible checks:

- [X] Multiple redirect (bad for performance) (#641)

- [ ] Redirects from HTTPS to HTTP

- [ ] Client-side redirects (meta or JavaScript)

- [ ] Other? | True | Add rule(s) to check for redirects - Possible checks:

- [X] Multiple redirect (bad for performance) (#641)

- [ ] Redirects from HTTPS to HTTP

- [ ] Client-side redirects (meta or JavaScript)

- [ ] Other? | non_priority | add rule s to check for redirects possible checks multiple redirect bad for performance redirects from https to http client side redirects meta or javascript other | 0 |
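
Two of the checks listed in this issue (multiple redirects, and redirects from HTTPS to HTTP) can be sketched as a small function over an already-recorded redirect chain. This is a minimal illustration, not webhint's implementation: the name `check_redirect_chain`, the input shape (a list of URLs in visit order) and the default `max_redirects` limit are all assumptions. Client-side redirects (meta or JavaScript) are not covered.

```python
from typing import List
from urllib.parse import urlsplit


def check_redirect_chain(chain: List[str], max_redirects: int = 1) -> List[str]:
    """Report problems in a redirect chain.

    `chain` holds the URLs in the order they were visited, starting with the
    requested URL and ending with the final one.
    """
    problems = []

    # Every hop after the first URL is one redirect.
    redirects = len(chain) - 1
    if redirects > max_redirects:
        problems.append(f"{redirects} redirects (limit {max_redirects})")

    # Flag any hop that downgrades from HTTPS to HTTP.
    for src, dst in zip(chain, chain[1:]):
        if urlsplit(src).scheme == "https" and urlsplit(dst).scheme == "http":
            problems.append(f"HTTPS to HTTP redirect: {src} -> {dst}")

    return problems
```

Working on a recorded chain rather than issuing requests keeps the check independent of any particular HTTP client.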

396,920 | 27,141,615,220 | IssuesEvent | 2023-02-16 16:43:59 | chartjs/Chart.js | https://api.github.com/repos/chartjs/Chart.js | opened | Docs incomplete, no code examples. | type: documentation | ### Documentation Is:

- [X] Missing or needed?

- [X] Confusing

- [ ] Not sure?

### Please Explain in Detail...

There are no full examples of how to use this library and the code that has been provided is incomplete.

I am making a simple app and I just need to display a basic array as a line chart. None of the exa... | 1.0 | Docs incomplete, no code examples. - ### Documentation Is:

- [X] Missing or needed?

- [X] Confusing

- [ ] Not sure?

### Please Explain in Detail...

There are no full examples of how to use this library and the code that has been provided is incomplete.

I am making a simple app and I just need to display a basic a... | non_priority | docs incomplete no code examples documentation is missing or needed confusing not sure please explain in detail there are no full examples of how to use this library and the code that has been provided is incomplete i am making a simple app and i just need to display a basic array a... | 0 |

33,300 | 4,467,483,789 | IssuesEvent | 2016-08-25 05:02:35 | owncloud/core | https://api.github.com/repos/owncloud/core | closed | favicon.ico from custom theme is not applied | bug design feature:theming | <!--

Thanks for reporting issues back to ownCloud! This is the issue tracker of ownCloud, if you have any support question please check out https://owncloud.org/support

This is the bug tracker for the Server component. Find other components at https://github.com/owncloud/core/blob/master/CONTRIBUTING.md#guidelines

... | 1.0 | favicon.ico from custom theme is not applied - <!--

Thanks for reporting issues back to ownCloud! This is the issue tracker of ownCloud, if you have any support question please check out https://owncloud.org/support

This is the bug tracker for the Server component. Find other components at https://github.com/ownclo... | non_priority | favicon ico from custom theme is not applied thanks for reporting issues back to owncloud this is the issue tracker of owncloud if you have any support question please check out this is the bug tracker for the server component find other components at to make it possible for us to help you please f... | 0 |

34,697 | 4,940,295,649 | IssuesEvent | 2016-11-29 16:28:45 | openbakery/gradle-xcodePlugin | https://api.github.com/repos/openbakery/gradle-xcodePlugin | closed | Unit tests without simulator | bug status:testing | It's possible to run Unit tests with a real device?

configuration:

```

buildscript {

repositories {

maven { url 'https://plugins.gradle.org/m2/' } // Mirrors jcenter() and mavenCentral()

maven { url 'http://repository.openbakery.org/' }

}

dependencies {

// iOS gradle plugin

... | 1.0 | Unit tests without simulator - It's possible to run Unit tests with a real device?

configuration:

```

buildscript {

repositories {

maven { url 'https://plugins.gradle.org/m2/' } // Mirrors jcenter() and mavenCentral()

maven { url 'http://repository.openbakery.org/' }

}

dependencies {

... | non_priority | unit tests without simulator it s possible to run unit tests with a real device configuration buildscript repositories maven url mirrors jcenter and mavencentral maven url dependencies ios gradle plugin classpath org openbakery xc... | 0 |

19,599 | 11,254,098,455 | IssuesEvent | 2020-01-11 20:56:26 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | closed | [Bug] Task Orders Status is Not Current in Data Tracker | Project: Warehouse Inventory Service: Dev Type: Bug Report Workgroup: AMD | The `ACTIVE` field for task orders in the Data Tracker are not being updated. The issue is that we're not detecting this change in the integration script. [Here](https://github.com/cityofaustin/atd-data-publishing/blob/bf44df63f3f9e7c63b878667b65aa290fe9d9f74/transportation-data-publishing/data_tracker/task_orders.py#L... | 1.0 | [Bug] Task Orders Status is Not Current in Data Tracker - The `ACTIVE` field for task orders in the Data Tracker are not being updated. The issue is that we're not detecting this change in the integration script. [Here](https://github.com/cityofaustin/atd-data-publishing/blob/bf44df63f3f9e7c63b878667b65aa290fe9d9f74/tr... | non_priority | task orders status is not current in data tracker the active field for task orders in the data tracker are not being updated the issue is that we re not detecting this change in the integration script is where the script needs to be updated to check for changes in status | 0 |

327,726 | 24,149,892,043 | IssuesEvent | 2022-09-21 22:49:29 | airbytehq/airbyte | https://api.github.com/repos/airbytehq/airbyte | opened | Firestore Connector: Documentation link broken | type/bug team/databases team/documentation | In the firestore connector the link to the documentation is broken and does not exist: https://airbyte.gitbook.io/airbyte/integrations/destinations/firestore | 1.0 | Firestore Connector: Documentation link broken - In the firestore connector the link to the documentation is broken and does not exist: https://airbyte.gitbook.io/airbyte/integrations/destinations/firestore | non_priority | firestore connector documentation link broken in the firestore connector the link to the documentation is broken and does not exist | 0 |

284,993 | 31,023,087,298 | IssuesEvent | 2023-08-10 07:14:46 | whitesource-ps/ws-ignore-alerts | https://api.github.com/repos/whitesource-ps/ws-ignore-alerts | closed | requests-2.27.1-py2.py3-none-any.whl: 1 vulnerabilities (highest severity is: 6.1) - autoclosed | Mend: dependency security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>requests-2.27.1-py2.py3-none-any.whl</b></p></summary>

<p>Python HTTP for Humans.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/2d/61/080... | True | requests-2.27.1-py2.py3-none-any.whl: 1 vulnerabilities (highest severity is: 6.1) - autoclosed - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>requests-2.27.1-py2.py3-none-any.whl</b></p></summary>

<p>Python HTT... | non_priority | requests none any whl vulnerabilities highest severity is autoclosed vulnerable library requests none any whl python http for humans library home page a href found in head commit a href vulnerabilities cve severity cvss dependency type f... | 0 |

158,826 | 12,426,108,820 | IssuesEvent | 2020-05-24 19:32:06 | FriendsOfREDAXO/tricks | https://api.github.com/repos/FriendsOfREDAXO/tricks | closed | Approach for a simple rewrite scheme with parameters for rex_getUrl | question testing needed | Background: I want several GET parameters to be mapped onto a single article in a pretty URL

Thomas Blum [1 month ago]

You could implement something yourself … Hook into `URL_REWRITE` and then convert the params.

Thomas Blum [1 month ago]

This is the part for how it used to be in R4 ... | 1.0 | Approach for a simple rewrite scheme with parameters for rex_getUrl - Background: I want several GET parameters to be mapped onto a single article in a pretty URL

Thomas Blum [1 month ago]

You could implement something yourself … Hook into `URL_REWRITE` and then convert the params.

... | non_priority | approach for a simple rewrite scheme with parameters for rex geturl background i want several get parameters to be mapped onto a single article in a pretty url thomas blum you could implement something yourself … hook into the url rewrite and then convert the params thomas bl... | 0 |

55,362 | 30,713,991,660 | IssuesEvent | 2023-07-27 11:49:36 | davidhalter/jedi | https://api.github.com/repos/davidhalter/jedi | closed | iPython Jedi completion result timing depends sensitively on CWD | performance high-prio | At ipython/ipython#13866 I reported an issue in which completions inside an `astropy.units` object (`x.unit.[Tab]`) produce results in widely varying amounts of time, depending on the _current working directory_. If iPython is in a deep directory (like `~/`) Jedi actually crashes. Not-so-deep but significant (`~/code... | True | iPython Jedi completion result timing depends sensitively on CWD - At ipython/ipython#13866 I reported an issue in which completions inside an `astropy.units` object (`x.unit.[Tab]`) produce results in widely varying amounts of time, depending on the _current working directory_. If iPython is in a deep directory (like... | non_priority | ipython jedi completion result timing depends sensitively on cwd at ipython ipython i reported an issue in which completions inside an astropy units object x unit produce results in widely varying amounts of time depending on the current working directory if ipython is in a deep directory like j... | 0 |

169,937 | 14,236,906,175 | IssuesEvent | 2020-11-18 16:33:11 | SAP/openui5 | https://api.github.com/repos/SAP/openui5 | closed | API reference: constructor of all View classes should be hidden | documentation in progress | ## URL (minimal example if possible)

* https://openui5nightly.hana.ondemand.com/api/sap.ui.core.mvc.View#constructor

* https://openui5nightly.hana.ondemand.com/api/sap.ui.core.mvc.HTMLView#constructor

* https://openui5nightly.hana.ondemand.com/api/sap.ui.core.mvc.JSONView#constructor

* https://openui5nightly.hana.o... | 1.0 | API reference: constructor of all View classes should be hidden - ## URL (minimal example if possible)

* https://openui5nightly.hana.ondemand.com/api/sap.ui.core.mvc.View#constructor

* https://openui5nightly.hana.ondemand.com/api/sap.ui.core.mvc.HTMLView#constructor

* https://openui5nightly.hana.ondemand.com/api/sap... | non_priority | api reference constructor of all view classes should be hidden url minimal example if possible according to view s api reference applications should not call the constructor directly but use one of the view factories instead e g view create accordingly shouldn t they a... | 0 |

2,865 | 3,671,962,832 | IssuesEvent | 2016-02-22 10:19:17 | owncloud/client | https://api.github.com/repos/owncloud/client | closed | Client "exploring" unselected folders | Performance | Hi,

I am using version 2.0.1 of the windows sync client and I am syncing my lightroom catalogue to a local OC server.

Lightroom creates previews of every photo you have in your catalogue and saves them in a lot of directories.

In my case these are 15 GB in 52'000 directories holding 120'000 files.

I have desel... | True | Client "exploring" unselected folders - Hi,

I am using version 2.0.1 of the windows sync client and I am syncing my lightroom catalogue to a local OC server.

Lightroom creates previews of every photo you have in your catalogue and saves them in a lot of directories.

In my case these are 15 GB in 52'000 directorie... | non_priority | client exploring unselected folders hi i am using version of the windows sync client and i am syncing my lightroom catalogue to a local oc server lightroom creates previews of every photo you have in your catalogue and saves them in a lot of directories in my case these are gb in directories ho... | 0 |

22,555 | 15,269,210,596 | IssuesEvent | 2021-02-22 12:29:00 | conan-io/conan-center-index | https://api.github.com/repos/conan-io/conan-center-index | closed | [service] Build packages for Clang 10 | infrastructure | Now that https://github.com/conan-io/conan-docker-tools/pull/193 is merged, the service should generate packages for Clang 10.

If added, the wiki should be updated as well: https://github.com/conan-io/conan-center-index/wiki/Supported-Platforms-And-Configurations | 1.0 | [service] Build packages for Clang 10 - Now that https://github.com/conan-io/conan-docker-tools/pull/193 is merged, the service should generate packages for Clang 10.

If added, the wiki should be updated as well: https://github.com/conan-io/conan-center-index/wiki/Supported-Platforms-And-Configurations | non_priority | build packages for clang now that is merged the service should generate packages for clang if added the wiki should be updated as well | 0 |

196,093 | 14,798,810,687 | IssuesEvent | 2021-01-13 00:42:22 | Slimefun/Slimefun4 | https://api.github.com/repos/Slimefun/Slimefun4 | closed | LiteXpansion metal forge issues when crafting. | 🎯 Needs testing 🐞 Bug Report | <!-- FILL IN THE FORM BELOW -->

## :round_pushpin: Description (REQUIRED)

<!-- A clear and detailed description of what went wrong. -->

<!-- The more information you can provide, the easier we can handle this problem. -->

<!-- Start writing below this line -->

When attempting to make Mixed Metal Ingot in the met... | 1.0 | LiteXpansion metal forge issues when crafting. - <!-- FILL IN THE FORM BELOW -->

## :round_pushpin: Description (REQUIRED)

<!-- A clear and detailed description of what went wrong. -->

<!-- The more information you can provide, the easier we can handle this problem. -->

<!-- Start writing below this line -->

Whe... | non_priority | litexpansion metal forge issues when crafting round pushpin description required when attempting to make mixed metal ingot in the metal forge from litexpansion without using an output chest each of these combinations give the message sorry my inventory is too full message if an o... | 0 |

126,244 | 26,809,316,877 | IssuesEvent | 2023-02-01 20:55:43 | appsmithorg/appsmith | https://api.github.com/repos/appsmithorg/appsmith | closed | [Bug]: Custom JS Lib return 401 error for anonymous user | Bug High Needs Triaging Mongo BE Coders Pod Integrations Pod Custom JS Libraries | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Description

https://theappsmith.slack.com/archives/CGBPVEJ5C/p1674729997721399

### Steps To Reproduce

https://theappsmith.slack.com/archives/CGBPVEJ5C/p1674729997721399

### Public Sample App

_No response_

### Issue video log

... | 1.0 | [Bug]: Custom JS Lib return 401 error for anonymous user - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Description

https://theappsmith.slack.com/archives/CGBPVEJ5C/p1674729997721399

### Steps To Reproduce

https://theappsmith.slack.com/archives/CGBPVEJ5C/p1674729997721399

... | non_priority | custom js lib return error for anonymous user is there an existing issue for this i have searched the existing issues description steps to reproduce public sample app no response issue video log no response version cloud self hosted | 0 |

91,504 | 10,721,988,189 | IssuesEvent | 2019-10-27 08:22:14 | swsnu/swpp2019-team12 | https://api.github.com/repos/swsnu/swpp2019-team12 | opened | API changes | Backend documentation | 1. Add /profile/:id/

- GET: return a specific profile

- PATCH: update a specific profile's information

2. Remove /user/:id/

- Merged into /profile/:id/ | 1.0 | API changes - 1. Add /profile/:id/

- GET: return a specific profile

- PATCH: update a specific profile's information

2. Remove /user/:id/

- Merged into /profile/:id/ | non_priority | api changes add profile id get return a specific profile patch update a specific profile s information remove user id merged into profile id | 0 |

18,543 | 10,253,218,649 | IssuesEvent | 2019-08-21 10:44:39 | HumanCellAtlas/ingest-central | https://api.github.com/repos/HumanCellAtlas/ingest-central | closed | NGINX HTTP/2 vulnerability | security | This was [brought to our attention](https://humancellatlas.slack.com/archives/GBCT6QECF/p1564063446022500) by the security team. The NGINX version Ingest uses as ingress controller has a known vulnerability with its implementation of HTTP/2. Any version after 1.15.6 is said to have already the fix for this. | True | NGINX HTTP/2 vulnerability - This was [brought to our attention](https://humancellatlas.slack.com/archives/GBCT6QECF/p1564063446022500) by the security team. The NGINX version Ingest uses as ingress controller has a known vulnerability with its implementation of HTTP/2. Any version after 1.15.6 is said to have already ... | non_priority | nginx http vulnerability this was by the security team the nginx version ingest uses as ingress controller has a known vulnerability with its implementation of http any version after is said to have already the fix for this | 0 |

412,842 | 27,876,351,788 | IssuesEvent | 2023-03-21 16:15:12 | xarray-contrib/xpublish | https://api.github.com/repos/xarray-contrib/xpublish | closed | Add methods to API auto-documentation | documentation | Right now methods like `Rest.register_plugin()` don't get full documentation, and only the summary shows up (first line of the doc string). I noticed this when looking for the expanded docstring from the changes in #158 .

One method would be to explicitly add these methods to `api.rst` the same way `Rest.serve()` is... | 1.0 | Add methods to API auto-documentation - Right now methods like `Rest.register_plugin()` don't get full documentation, and only the summary shows up (first line of the doc string). I noticed this when looking for the expanded docstring from the changes in #158 .

One method would be to explicitly add these methods to ... | non_priority | add methods to api auto documentation right now methods like rest register plugin don t get full documentation and only the summary shows up first line of the doc string i noticed this when looking for the expanded docstring from the changes in one method would be to explicitly add these methods to a... | 0 |

123,277 | 10,261,689,094 | IssuesEvent | 2019-08-22 10:33:22 | viszerale-therapie/vt.at-drupal | https://api.github.com/repos/viszerale-therapie/vt.at-drupal | closed | Menu changes css | ready for test | *Sent by Ursula Feuerherdt. Created by [fire](https://fire.fundersclub.com/).*

---

Dear Andreas,

we have now also planned a few more usability optimizations.

Could you please take care of them as shown in the attached screenshots.

If anything is unclear, please get in touch.

Many thanks!

Kind regards,

Ursul... | 1.0 | Menu changes css - *Sent by Ursula Feuerherdt. Created by [fire](https://fire.fundersclub.com/).*

---

Dear Andreas,

we have now also planned a few more usability optimizations.

Could you please take care of them as shown in the attached screenshots.

If anything is unclear, please get in touch.

Many tha... | non_priority | menu changes css sent by ursula feuerherdt created by dear andreas we have now also planned a few more usability optimizations could you please take care of them as shown in the attached screenshots if anything is unclear please get in touch many thanks kind regards ursula ... | 0 |

350,385 | 31,883,286,854 | IssuesEvent | 2023-09-16 16:39:33 | lake-wg/edhoc | https://api.github.com/repos/lake-wg/edhoc | closed | Difference between Test Vector 1 and Test Vector 2 | Close? test vectors | What is the difference between the two test vectors - Vector 1 and Vector 2 in https://github.com/lake-wg/edhoc/blob/master/test-vectors-15/vectors-p256.txt?

Does the value of COSE header vary and thus the sizes of all the EDHOC messages? | 1.0 | Difference between Test Vector 1 and Test Vector 2 - What is the difference between the two test vectors - Vector 1 and Vector 2 in https://github.com/lake-wg/edhoc/blob/master/test-vectors-15/vectors-p256.txt?

Does the value of COSE header vary and thus the sizes of all the EDHOC messages? | non_priority | difference between test vector and test vector what is the difference between the two test vectors vector and vector in does the value of cose header vary and thus the sizes of all the edhoc messages | 0 |

96,644 | 10,958,417,736 | IssuesEvent | 2019-11-27 09:22:05 | Jogans/Gruppe1Semester4 | https://api.github.com/repos/Jogans/Gruppe1Semester4 | opened | Transfer / create a static class diagram | documentation | The idea here is to make one large class diagram that combines the classes found | 1.0 | Transfer / create a static class diagram - The idea here is to make one large class diagram that combines the classes found | non_priority | transfer create a static class diagram the idea here is to make one large class diagram that combines the classes found | 0 |

139,769 | 12,879,457,858 | IssuesEvent | 2020-07-11 22:20:24 | rrousselGit/river_pod | https://api.github.com/repos/rrousselGit/river_pod | closed | example for StreamProvider | documentation | I would like to see a more detailed example with StreamProvider in the docs. I think in the flutter / firebase context, a really nice example would be the equivalent of this from the provider way of things:

```

StreamProvider<FirebaseUser>.value(

value: FirebaseAuth.instance.onAuthStateChanged);

```

... | 1.0 | example for StreamProvider - I would like to see a more detailed example with StreamProvider in the docs. I think in the flutter / firebase context, a really nice example would be the equivalent of this from the provider way of things:

```

StreamProvider<FirebaseUser>.value(

value: FirebaseAuth.instan... | non_priority | example for streamprovider i would like to see a more detailed example with streamprovider in the docs i think in the flutter firebase context a really nice example would be the equivalent of this from the provider way of things streamprovider value value firebaseauth instance onauthstat... | 0 |

229,764 | 25,368,496,330 | IssuesEvent | 2022-11-21 08:47:36 | jmservera/opendonita-fork | https://api.github.com/repos/jmservera/opendonita-fork | closed | Pillow-9.2.0-cp37-cp37m-manylinux_2_17_x86_64.manylinux2014_x86_64.whl: 1 vulnerabilities (highest severity is: 7.5) - autoclosed | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Pillow-9.2.0-cp37-cp37m-manylinux_2_17_x86_64.manylinux2014_x86_64.whl</b></p></summary>

<p>Python Imaging Library (Fork)</p>

<p>Library home page: <a href="https://f... | True | Pillow-9.2.0-cp37-cp37m-manylinux_2_17_x86_64.manylinux2014_x86_64.whl: 1 vulnerabilities (highest severity is: 7.5) - autoclosed - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Pillow-9.2.0-cp37-cp37m-manylinux_2... | non_priority | pillow manylinux whl vulnerabilities highest severity is autoclosed vulnerable library pillow manylinux whl python imaging library fork library home page a href path to dependency file requirements txt path to vulnerable library r... | 0 |

207,366 | 23,441,862,263 | IssuesEvent | 2022-08-15 15:36:51 | KaterinaOrg/WebGoat | https://api.github.com/repos/KaterinaOrg/WebGoat | opened | CVE-2021-42550 (Medium) detected in logback-classic-1.2.3.jar, logback-core-1.2.3.jar | security vulnerability | ## CVE-2021-42550 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>logback-classic-1.2.3.jar</b>, <b>logback-core-1.2.3.jar</b></p></summary>

<p>

<details><summary><b>logback-classi... | True | CVE-2021-42550 (Medium) detected in logback-classic-1.2.3.jar, logback-core-1.2.3.jar - ## CVE-2021-42550 - Medium Severity Vulnerability