Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 1k) | labels (stringlengths, 4 to 1.38k) | body (stringlengths, 1 to 262k) | index (stringclasses, 16 values) | text_combine (stringlengths, 96 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

131,688 | 10,706,240,162 | IssuesEvent | 2019-10-24 15:03:30 | python-discord/bot | https://api.github.com/repos/python-discord/bot | opened | Writing unit tests for the bot | area: unit tests help wanted | ### This issue is work in progress

I'm currently in the process of adding individual issues for individual files. I'll update this issue as I create these issues.

---

The unit test branch coverage of our bot is currently 20% (30% including the test files). We would like to increase the test coverage of our bot t... | 1.0 | Writing unit tests for the bot - ### This issue is work in progress

I'm currently in the process of adding individual issues for individual files. I'll update this issue as I create these issues.

---

The unit test branch coverage of our bot is currently 20% (30% including the test files). We would like to increa... | non_priority | writing unit tests for the bot this issue is work in progress i m currently in the process of adding individual issues for individual files i ll update this issue as i create these issues the unit test branch coverage of our bot is currently including the test files we would like to increase... | 0 |

105,929 | 11,462,475,567 | IssuesEvent | 2020-02-07 14:13:40 | justinwilaby/spellchecker-wasm | https://api.github.com/repos/justinwilaby/spellchecker-wasm | closed | Browser example does not work | bug documentation | Hi,

I may be missing something, but in the initializeSpellchecker function, "spellchecker" is not defined.

Furthermore, when running your sample, I get this error (latest version of Chrome) :

spellchecker.js:56 Uncaught TypeError: initializeSpellchecker.then is not a function

Thanks for any help

| 1.0 | Browser example does not work - Hi,

I may be missing something, but in the initializeSpellchecker function, "spellchecker" is not defined.

Furthermore, when running your sample, I get this error (latest version of Chrome) :

spellchecker.js:56 Uncaught TypeError: initializeSpellchecker.then is not a function

... | non_priority | browser example does not work hi i may be missing something but in the initializespellchecker function spellchecker is not defined furthermore when running your sample i get this error latest version of chrome spellchecker js uncaught typeerror initializespellchecker then is not a function ... | 0 |

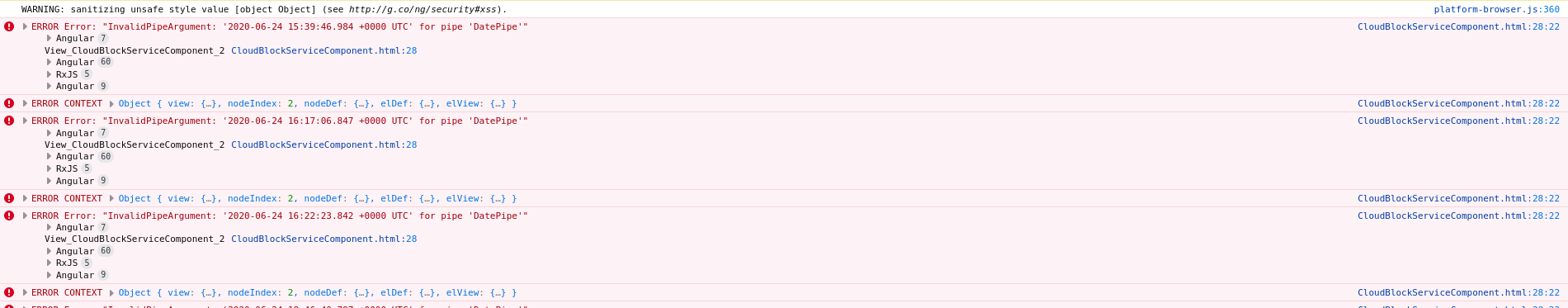

442,843 | 12,751,558,027 | IssuesEvent | 2020-06-27 11:42:31 | sodafoundation/dashboard | https://api.github.com/repos/sodafoundation/dashboard | closed | Opening the Cloud Volume page in Firefox / Edge browser breaks the UI. | Critical Priority bug | **Issue/Feature Description:**

When the dashboard is opened in Firefox or Edge, accessing the Cloud Volume page crashes the UI with JavaScript errors.

** will be available via the [MongoDB Cloud](https://www.mongodb.com/cloud) service. This will allow for remote monitoring of exceptions and operations without having to directly access the production server of this application.

### Schema ###

Th... | 1.0 | MongoDB: Log Document - ## MongoDB: Log Document ##

The Log document of **_Lum-chan!_ (2.0.0)** will be available via the [MongoDB Cloud](https://www.mongodb.com/cloud) service. This will allow for remote monitoring of exceptions and operations without having to directly access the production server of this applicatio... | priority | mongodb log document mongodb log document the log document of lum chan will be available via the service this will allow for remote monitoring of exceptions and operations without having to directly access the production server of this application schema this is a sample of t... | 1 |

33,895 | 7,762,404,263 | IssuesEvent | 2018-06-01 13:27:02 | surrsurus/edgequest | https://api.github.com/repos/surrsurus/edgequest | opened | Add screens to renderer | enhancement:code eq:core priority:low | The renderer could benefit from a `Screen` trait that has some `draw_all()` method implemented. This would allow the renderer to hold some `Box<Screen>` and allow the game to swap such screens in and out. This would allow for things such as UI screens, a main game screen, etc that could be modularly hot swapped on a bu... | 1.0 | Add screens to renderer - The renderer could benefit from a `Screen` trait that has some `draw_all()` method implemented. This would allow the renderer to hold some `Box<Screen>` and allow the game to swap such screens in and out. This would allow for things such as UI screens, a main game screen, etc that could be mod... | non_priority | add screens to renderer the renderer could benefit from a screen trait that has some draw all method implemented this would allow the renderer to hold some box and allow the game to swap such screens in and out this would allow for things such as ui screens a main game screen etc that could be modularly ... | 0 |

178,320 | 6,607,121,572 | IssuesEvent | 2017-09-19 05:04:02 | apache/incubator-openwhisk-wskdeploy | https://api.github.com/repos/apache/incubator-openwhisk-wskdeploy | closed | Two "inputs" formats create different assets | bug priority: medium | According to the samples in the specification, we support two formats for "inputs" (list of parameters):

a complex one:

```

actions:

func1:

function: actions/function.js

runtime: nodejs:6

inputs:

functionID:

type: string

description: the ID of function

vis... | 1.0 | Two "inputs" formats create different assets - According to the samples in the specification, we support two formats for "inputs" (list of parameters):

a complex one:

```

actions:

func1:

function: actions/function.js

runtime: nodejs:6

inputs:

functionID:

type: string

... | priority | two inputs formats create different assets according the samples in specification we support two formats for inputs list of parameter a complex one actions function actions function js runtime nodejs inputs functionid type string d... | 1 |

580,466 | 17,259,078,288 | IssuesEvent | 2021-07-22 03:25:23 | Thorium-Sim/thorium | https://api.github.com/repos/Thorium-Sim/thorium | opened | Copy Timeline Items Across to Other Timelines | priority/medium type/feature | ### Requested By: Bracken

### Priority: Medium

### Version: 3.3.1

What are the odds of adding the ability to copy a timeline item across to another timeline?

| 1.0 | Copy Timeline Items Across to Other Timelines - ### Requested By: Bracken

### Priority: Medium

### Version: 3.3.1

What are the odds of adding the ability to copy a timeline item across to another timeline?

| priority | copy timeline items across to other timelines requested by bracken priority medium version what are the odds of adding the ability to copy a timeline item across to another timeline | 1 |

597,285 | 18,160,021,193 | IssuesEvent | 2021-09-27 08:33:19 | creativecommons/project_creativecommons.org | https://api.github.com/repos/creativecommons/project_creativecommons.org | closed | Profile (Gutenberg editor test) | enhancement good first issue help wanted 🟥 priority: critical 🕹 aspect: interface | We recently agreed to use Gutenberg editor as much as possible to build pages for creativecommons.org. Now, we need to test how well Gutenberg default widgets support each page design.

## Task

Try to realize the creativecommons.org [Profile page mockup](https://www.figma.com/file/K6kbDVsx4Zpluhd52yEdDB/Mockups?no... | 1.0 | Profile (Gutenberg editor test) - We recently agreed to use Gutenberg editor as much as possible to build pages for creativecommons.org. Now, we need to test how well Gutenberg default widgets support each page design.

## Task

Try to realize the creativecommons.org [Profile page mockup](https://www.figma.com/file... | priority | profile gutenberg editor test we recently agreed to use gutenberg editor as much as possible to build pages for creativecommons org now we need to test how well gutenberg default widgets support each page design task try to realize the creativecommons org using only gutenberg default widgets ... | 1 |

822,909 | 30,913,817,696 | IssuesEvent | 2023-08-05 03:02:32 | space-wizards/space-station-14 | https://api.github.com/repos/space-wizards/space-station-14 | closed | Carp on uplink seems a bit too stronk | Priority: 2-Before Release Difficulty: 1-Easy Issue: Balance | e.g.

- Nukies spam it

- Make carp instant death trap

It's just a matter of whether people want to abuse it or not rn. | 1.0 | Carp on uplink seems a bit too stronk - e.g.

- Nukies spam it

- Make carp instant death trap

It's just a matter of whether people want to abuse it or not rn. | priority | carp on uplink seems a bit too stronk e g nukies spam it make carp instant death trap it s just a matter of whether people want to abuse it or not rn | 1 |

245,010 | 7,880,732,448 | IssuesEvent | 2018-06-26 16:46:30 | aowen87/FOO | https://api.github.com/repos/aowen87/FOO | closed | DBMetaData MeshForVar needs processed var names, but GetMesh, GetVar, etc get passed raw var names | Likelihood: 3 - Occasional OS: All Priority: Normal Severity: 2 - Minor Irritation Support Group: Any Target Version: 2.12.0 bug version: 2.10.0 | If you have a variable name with special chars in it (colons, brackets, etc)

You will have to use the filtered names to interact with the metadata -- even though the names requested by the pipeline appear to be the unfiltered names.

| 1.0 | DBMetaData MeshForVar needs processed var names, but GetMesh, GetVar, etc get passed raw var names - If you have a variable name with special chars in it (colons, brackets, etc)

You will have to use the filtered names to interact with the metadata -- even though the names requested by the pipeline appear to be the un... | priority | dbmetadata meshforvar needs processed var names but getmesh getvar etc get passed raw var names i you have a variable name with special chars in it colons brackets etc you will have to use the filtered names to interact with the metadata even though the names requested by the pipeline appear to be the un... | 1 |

284,228 | 8,736,725,576 | IssuesEvent | 2018-12-11 20:22:22 | aowen87/TicketTester | https://api.github.com/repos/aowen87/TicketTester | closed | Displaying curves in the pseudocolor plot as ribbons crashes the engine. | bug crash likelihood medium priority reviewed severity high wrong results | I was displaying streamlines as ribbons in the pseudocolor plot window and then engine crashed. We need this before supercomputing.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below i... | 1.0 | Displaying curves in the pseudocolor plot as ribbons crashes the engine. - I was displaying streamlines as ribbons in the pseudocolor plot window and then engine crashed. We need this before supercomputing.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As su... | priority | displaying curves in the pseudocolor plot as ribbons crashes the engine i was displaying streamlines as ribbons in the pseudocolor plot window and then engine crashed we need this before supercomputing redmine migration this ticket was migrated from redmine as su... | 1 |

637,666 | 20,674,939,513 | IssuesEvent | 2022-03-10 08:18:22 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | opened | Key Provisioning does not work | Type/Bug Priority/High APIM - 4.1.0 | ### Description:

Followed documentation [1]. Getting the below error when providing existing oauth keys and clicking the Provide button.

<img width="1680" alt="Screenshot 2022-03-10 at 13 46 14" src="https://user-images.githubusercontent.com/8557410/157617934-905757c1-6a7e-4c54-a29b-6a1d0f80a7bb.png">

```[2022... | 1.0 | Key Provisioning does not work - ### Description:

Followed documentation [1]. Getting the below error when providing existing oauth keys and clicking the Provide button.

<img width="1680" alt="Screenshot 2022-03-10 at 13 46 14" src="https://user-images.githubusercontent.com/8557410/157617934-905757c1-6a7e-4c54-a29b-6... | priority | key provisioning does not work description followed documentation getting the below error when providing existing oauth keys and clicking the provide button img width alt screenshot at src error abstractkeymanager some thing went wrong while getting oauth application for... | 1 |

148,961 | 5,704,079,507 | IssuesEvent | 2017-04-18 02:42:43 | Erigitic/TotalEconomy | https://api.github.com/repos/Erigitic/TotalEconomy | reopened | Virtual Account Support | feature request high priority | Recently issues have been coming in that would be fixed if there was support for virtual accounts. As well as that, virtual account support should allow for other plugins, such as those with towns that have their own balance, to work with Total Economy. So, with that said, this is definitely priority number one.

UPD... | 1.0 | Virtual Account Support - Recently issues have been coming in that would be fixed if there was support for virtual accounts. As well as that, virtual account support should allow for other plugins, such as those with towns that have their own balance, to work with Total Economy. So, with that said, this is definitely p... | priority | virtual account support recently issues have been coming in that would be fixed if there was support for virtual accounts as well as that virtual account support should allow for other plugins such as those with towns that have their own balance to work with total economy so with that said this is definitely p... | 1 |

164,732 | 12,812,888,063 | IssuesEvent | 2020-07-04 09:26:15 | aliasrobotics/RVD | https://api.github.com/repos/aliasrobotics/RVD | closed | RVD#2954: CWE-134 (format), If format strings can be influenced by an attacker, they can be exploi... @ s/boards/aerofc-v1/init.c:100 | CWE-134 bug components software flawfinder flawfinder_level_4 mitigated robot component: PX4 static analysis testing triage version: v1.8.0 | ```yaml

id: 2954

title: 'RVD#2954: CWE-134 (format), If format strings can be influenced by an attacker,

they can be exploi... @ s/boards/aerofc-v1/init.c:100'

type: bug

description: If format strings can be influenced by an attacker, they can be exploited

(CWE-134). Use a constant for the format specification. . H... | 1.0 | RVD#2954: CWE-134 (format), If format strings can be influenced by an attacker, they can be exploi... @ s/boards/aerofc-v1/init.c:100 - ```yaml

id: 2954

title: 'RVD#2954: CWE-134 (format), If format strings can be influenced by an attacker,

they can be exploi... @ s/boards/aerofc-v1/init.c:100'

type: bug

description:... | non_priority | rvd cwe format if format strings can be influenced by an attacker they can be exploi s boards aerofc init c yaml id title rvd cwe format if format strings can be influenced by an attacker they can be exploi s boards aerofc init c type bug description if format strings ... | 0 |

327,262 | 9,968,950,041 | IssuesEvent | 2019-07-08 16:46:30 | beelabhmc/ant_tracker | https://api.github.com/repos/beelabhmc/ant_tracker | closed | Parallelize croprotate.py | enhancement low-priority | The croprotate.py file is a significant bottleneck in the code's execution speed. With the current video setup and running on purves, it takes almost an hour to crop 8 ROIs on one video.

It would greatly increase the pipeline speed if this code gets parallelized, to run several crop actions at once. It's more import... | 1.0 | Parallelize croprotate.py - The croprotate.py file is a significant bottleneck in the code's execution speed. With the current video setup and running on purves, it takes almost an hour to crop 8 ROIs on one video.

It would greatly increase the pipeline speed if this code gets parallelized, to run several crop actio... | priority | parallelize croprotate py the croprotate py file is a significant bottleneck in the code s execution speed with the current video setup and running on purves it takes almost an hour to crop rois on one video it would greatly increase the pipeline speed if this code gets parallelized to run several crop actio... | 1 |

184,353 | 14,289,010,911 | IssuesEvent | 2020-11-23 18:34:03 | github-vet/rangeclosure-findings | https://api.github.com/repos/github-vet/rangeclosure-findings | opened | posener/wstest: dialer_test.go; 27 LoC | small test |

Found a possible issue in [posener/wstest](https://www.github.com/posener/wstest) at [dialer_test.go](https://github.com/posener/wstest/blob/e79331f65216413fbfc72b452b2ee78884c97cc6/dialer_test.go#L82-L108)

The below snippet of Go code triggered static analysis which searches for goroutines and/or defer statements

wh... | 1.0 | posener/wstest: dialer_test.go; 27 LoC -

Found a possible issue in [posener/wstest](https://www.github.com/posener/wstest) at [dialer_test.go](https://github.com/posener/wstest/blob/e79331f65216413fbfc72b452b2ee78884c97cc6/dialer_test.go#L82-L108)

The below snippet of Go code triggered static analysis which searches ... | non_priority | posener wstest dialer test go loc found a possible issue in at the below snippet of go code triggered static analysis which searches for goroutines and or defer statements which capture loop variables click here to show the line s of go which triggered the analyzer go for pair ... | 0 |

784,450 | 27,571,514,759 | IssuesEvent | 2023-03-08 09:40:03 | AdrKacz/super-duper-guacamole | https://api.github.com/repos/AdrKacz/super-duper-guacamole | opened | 📈 Delete sam-group-production stack | priority:P3 operation | # What is your idea to improve operation?

`sam-group-production` is not used anymore (**to verify**), so delete it.

# How you're idea will improve operation?

Reduce cost of maintenance.

# Why do we need your idea to improve operation? | 1.0 | 📈 Delete sam-group-production stack - # What is your idea to improve operation?

`sam-group-production` is not used anymore (**to verify**), so delete it.

# How you're idea will improve operation?

Reduce cost of maintenance.

# Why do we need your idea to improve operation? | priority | 📈 delete sam group production stack what is your idea to improve operation sam group production is not used anymore to verify so delete it how you re idea will improve operation reduce cost of maintenance why do we need your idea to improve operation | 1 |

118,629 | 15,342,905,984 | IssuesEvent | 2021-02-27 18:05:03 | plotn/coolreader | https://api.github.com/repos/plotn/coolreader | closed | Move the "Rendering options" and "DOM compatibility level" settings | design | A user [wrote](https://4pda.ru/forum/index.php?s=&showtopic=995536&view=findpost&p=104618639):

> In my opinion, "Rendering options" and "DOM compatibility level" should be moved into "Rare and experimental". An ordinary user has no chance of understanding what they are (even with the explanations), and their default... | 1.0 | Move the "Rendering options" and "DOM compatibility level" settings - A user [wrote](https://4pda.ru/forum/index.php?s=&showtopic=995536&view=findpost&p=104618639):

> In my opinion, "Rendering options" and "DOM compatibility level" should be moved into "Rare and experimental". An ordinary user... | non_priority | move the rendering options and dom compatibility level settings a user in my opinion rendering options and dom compatibility level should be moved into rare and experimental an ordinary user has no chance of understanding what they are even with the explanations and their default value... | 0 |

126,926 | 5,007,702,836 | IssuesEvent | 2016-12-12 17:25:44 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | opened | lcmt_driving_command_t Should Be Updated to Include Reference Velocity Rather Than Reference Throttle / Brake | priority: medium type: cleanup | # Problem Definition

`drake/lcmtypes/lcmt_driving_command_t.lcm` over-specifies the system's desired state by including both `throttle` and `brake` reference values.

```

struct lcmt_driving_command_t {

// The timestamp in milliseconds.

int64_t timestamp;

double steering_angle;

double throttle;

d... | 1.0 | lcmt_driving_command_t Should Be Updated to Include Reference Velocity Rather Than Reference Throttle / Brake - # Problem Definition

`drake/lcmtypes/lcmt_driving_command_t.lcm` over-specifies the system's desired state by including both `throttle` and `brake` reference values.

```

struct lcmt_driving_command_t {... | priority | lcmt driving command t should be updated to include reference velocity rather than reference throttle brake problem definition drake lcmtypes lcmt driving command t lcm over specifies the system s desired state by including both throttle and brake reference values struct lcmt driving command t ... | 1 |

82,291 | 7,836,560,329 | IssuesEvent | 2018-06-17 21:01:07 | FIDATA/gradle-semantic-release-plugin | https://api.github.com/repos/FIDATA/gradle-semantic-release-plugin | opened | Rewrite quoting arguments in tests using Gradle API | Test | `org.gradle.internal.Transformers.asSafeCommandLineArgument().transform(...)`

or

`org.gradle.internal.process.ArgWriter....` | 1.0 | Rewrite quoting arguments in tests using Gradle API - `org.gradle.internal.Transformers.asSafeCommandLineArgument().transform(...)`

or

`org.gradle.internal.process.ArgWriter....` | non_priority | rewrite quoting arguments in tests using gradle api org gradle internal transformers assafecommandlineargument transform or org gradle internal process argwriter | 0 |

4,572 | 5,194,842,007 | IssuesEvent | 2017-01-23 06:34:14 | msimerson/Mail-Toaster-6 | https://api.github.com/repos/msimerson/Mail-Toaster-6 | closed | integrate LetsEncrypt into the provisioning steps | enhancement security | ### present

As part of the base jail provisioning step, a self-signed TLS certificate is generated and left in /etc/ssl. When TLS is used (haproxy, haraka, dovecot), the files (key, certs, ca-cert) are installed into the necessary jails.

If the site has a real certificate, the sysadmin is expected to manually cop... | True | integrate LetsEncrypt into the provisioning steps - ### present

As part of the base jail provisioning step, a self-signed TLS certificate is generated and left in /etc/ssl. When TLS is used (haproxy, haraka, dovecot), the files (key, certs, ca-cert) are installed into the necessary jails.

If the site has a real c... | non_priority | integrate letsencrypt into the provisioning steps present as part of the base jail provisioning step a self signed tls certificate is generated and left in etc ssl when tls is used haproxy haraka dovecot the files key certs ca cert are installed into the necessary jails if the site has a real c... | 0 |

96,760 | 16,164,652,834 | IssuesEvent | 2021-05-01 08:31:11 | AlexRogalskiy/github-action-issue-commenter | https://api.github.com/repos/AlexRogalskiy/github-action-issue-commenter | opened | CVE-2020-11023 (Medium) detected in jquery-1.8.1.min.js | security vulnerability | ## CVE-2020-11023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.8.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="ht... | True | CVE-2020-11023 (Medium) detected in jquery-1.8.1.min.js - ## CVE-2020-11023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.8.1.min.js</b></p></summary>

<p>JavaScript librar... | non_priority | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file github action issue commenter node modules redeyed examples browser index html ... | 0 |

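313,177 | 23,460,543,926 | IssuesEvent | 2022-08-16 12:47:07 | corvvs/webserv | https://api.github.com/repos/corvvs/webserv | closed | HTTP errors and persistent connections | documentation 設計 | ## Tasks

- [x] Requests that complete normally can be processed back to back without closing the connection

- [x] After a request that completes normally has been processed, the connection times out if no further data is sent

- [x] When two requests that complete normally are sent concatenated, two responses come back in succession

- [ ] About recoverable errors

- Errors matching the following are treated as "recoverable errors":

- Among errors that occur before header parsing of the request has finished, those where the next request can be processed as-is and doing so causes no problems

- Request-matching errors

... | 1.0 | HTTP errors and persistent connections - ## Tasks

- [x] Requests that complete normally can be processed back to back without closing the connection

- [x] After a request that completes normally has been processed, the connection times out if no further data is sent

- [x] When two requests that complete normally are sent concatenated, two responses come back in succession

- [ ] About recoverable errors

- Errors matching the following are treated as "recoverable errors":

- Among errors that occur before header parsing of the request has finished, those where the next request can be processed as-is and doing so causes no problems

- Requ... | non_priority | http errors and persistent connections tasks requests that complete normally can be processed back to back without closing the connection after a request that completes normally has been processed the connection times out if no further data is sent about recoverable errors errors matching the following are treated as recoverable errors among errors that occur before header parsing of the request has finished those where the next request can be processed as is and doing so causes no problems request matching errors errors that do not match the above are unrecover... | 0 |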

249,978 | 21,220,037,784 | IssuesEvent | 2022-04-11 11:02:34 | gameserverapp/Platform | https://api.github.com/repos/gameserverapp/Platform | closed | Chat log parses same chat twice | bug status: to be tested admin tools:chat console | **Describe the bug**

Occasionally chat is being indexed twice.

Seems to happen mostly with higher-latency servers (US).

**Screenshots**

Symptom:

**Additional context**

https://discord.com/channels... | 1.0 | Chat log parses same chat twice - **Describe the bug**

Occasionally chat is being indexed twice.

Seems to happen mostly with higher-latency servers (US).

**Screenshots**

Symptom:

**Additional conte... | non_priority | chat log parses same chat twice describe the bug occasionally chat is being indexed twice seems to happen mostly with higher latency servers us screenshots symptom additional context | 0 |

53,766 | 13,205,357,455 | IssuesEvent | 2020-08-14 17:46:50 | open-telemetry/opentelemetry-java-instrumentation | https://api.github.com/repos/open-telemetry/opentelemetry-java-instrumentation | closed | Use Gradle build cache | area:build contributor experience priority:p2 release:required-for-ga | It takes a lot of time to build instrumentation project. But the majority of that time is spent building and testing tons of individual instrumentations. As developers often make changes to a specific instrumentation, there is no sense in building all other, unrelated, instrumentations. [Gradle build cache](https://doc... | 1.0 | Use Gradle build cache - It takes a lot of time to build instrumentation project. But the majority of that time is spent building and testing tons of individual instrumentations. As developers often make changes to a specific instrumentation, there is no sense in building all other, unrelated, instrumentations. [Gradle... | non_priority | use gradle build cache it takes a lot of time to build instrumentation project but the majority of that time is spent building and testing tons of individual instrumentations as developers often make changes to a specific instrumentation there is no sense in building all other unrelated instrumentations can... | 0 |

816,214 | 30,593,848,940 | IssuesEvent | 2023-07-21 19:42:14 | 42atomys/stud42 | https://api.github.com/repos/42atomys/stud42 | closed | misc: phone features postponed for consistent API Integration | state/confirmed 💜 priority/critical 🟥 aspect/backend 💻 type/chore 🛠 domain/complicated 🟨 | ### Please exprime yourself

We are currently facing a technical challenge regarding the alignment of data between webhooks and the intra API. More specifically, there is a notable inconsistency in how the phone number is synchronized between these two interfaces.

In the interest of transparency and delivering a h... | 1.0 | misc: phone features postponed for consistent API Integration - ### Please exprime yourself

We are currently facing a technical challenge regarding the alignment of data between webhooks and the intra API. More specifically, there is a notable inconsistency in how the phone number is synchronized between these two i... | priority | misc phone features postponed for consistent api integration please exprime yourself we are currently facing a technical challenge regarding the alignment of data between webhooks and the intra api more specifically there is a notable inconsistency in how the phone number is synchronized between these two i... | 1 |

84,907 | 3,681,533,816 | IssuesEvent | 2016-02-24 03:55:19 | scottaj/mocha.el | https://api.github.com/repos/scottaj/mocha.el | closed | Debugging? | Feature Low Priority | Could there be a debug function that spawns the test command in a terminal instead of a compilation buffer and runs mocha with the --debug option? Combined with the debugger keyword in js this could be pretty nice. | 1.0 | Debugging? - Could there be a debug function that spawns the test command in a terminal instead of a compilation buffer and runs mocha with the --debug option? Combined with the debugger keyword in js this could be pretty nice. | priority | debugging could there be a debug function that spawns the test command in a terminal instead of a compilation buffer and runs mocha with the debug option combined with the debugger keyword in js this could be pretty nice | 1 |

59,600 | 17,023,172,412 | IssuesEvent | 2021-07-03 00:42:03 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Bookmarks that aren't centered on the marker | Component: mapnik Priority: minor Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 8.58am, Monday, 16th July 2007]**

I have switched the link from my employer's web site to OSM now that the area looks respectable (actually much better than any other online map), and now it has a marker to denote our location:

http://www.openstreetmap.org/?mlon=-0.... | 1.0 | Bookmarks that aren't centered on the marker - **[Submitted to the original trac issue database at 8.58am, Monday, 16th July 2007]**

I have switched the link from my employer's web site to OSM now that the area looks respectable (actually much better than any other online map), and now it has a marker to denote our lo... | non_priority | bookmarks that aren t centered on the marker i have switched the link from my employer s web site to osm now that the area looks respectable actually much better than any other online map and now it has a marker to denote our location the link would be even more useful if i could shift the center of ... | 0 |

12,740 | 2,715,160,016 | IssuesEvent | 2015-04-10 10:55:35 | codenameone/CodenameOne | https://api.github.com/repos/codenameone/CodenameOne | closed | Spinner overlay applied twice on Android | Priority-Medium Type-Defect | Original [issue 711](https://code.google.com/p/codenameone/issues/detail?id=711) created by codenameone on 2013-05-16T02:32:35.000Z:

Previously you had fixed issue 629 were the spinners overlay was applied twice. I have been creating an app with includeNativeBool set to false, and the spinner looked fine, now when I ... | 1.0 | Spinner overlay applied twice on Android - Original [issue 711](https://code.google.com/p/codenameone/issues/detail?id=711) created by codenameone on 2013-05-16T02:32:35.000Z:

Previously you had fixed issue 629 were the spinners overlay was applied twice. I have been creating an app with includeNativeBool set to fals... | non_priority | spinner overlay applied twice on android original created by codenameone on previously you had fixed issue were the spinners overlay was applied twice i have been creating an app with includenativebool set to false and the spinner looked fine now when i set includenativebool to true the spinn... | 0 |

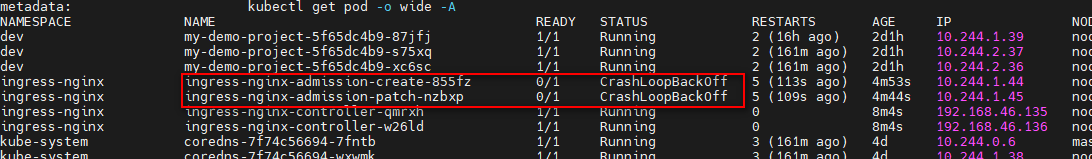

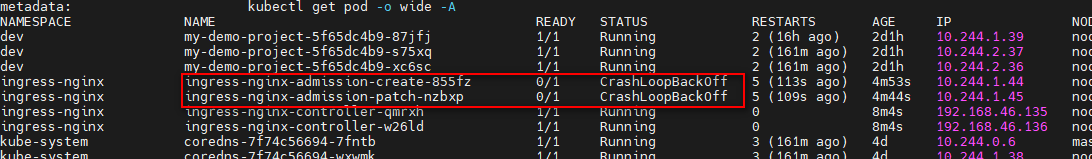

690,270 | 23,652,938,984 | IssuesEvent | 2022-08-26 08:30:40 | kubernetes/ingress-nginx | https://api.github.com/repos/kubernetes/ingress-nginx | closed | ingress-nginx-admission do not work. log show "got secret, but it did not contain a 'ca' key" | kind/bug needs-triage needs-priority |

```bash

[root@node136 tmp.9n5adjQhNn]# kubectl logs -f -n ingress-nginx ingress-nginx-admission-create-855fz

W0826 04:01:30.263082 1 client_config.go:615] Neither --kubeconfig nor --ma... | 1.0 | ingress-nginx-admission do not work. log show "got secret, but it did not contain a 'ca' key" -

```bash

[root@node136 tmp.9n5adjQhNn]# kubectl logs -f -n ingress-nginx ingress-nginx-admissio... | priority | ingress nginx admission do not work log show got secret but it did not contain a ca key bash kubectl logs f n ingress nginx ingress nginx admission create client config go neither kubeconfig nor master was specified using the inclusterconfig this mi... | 1 |

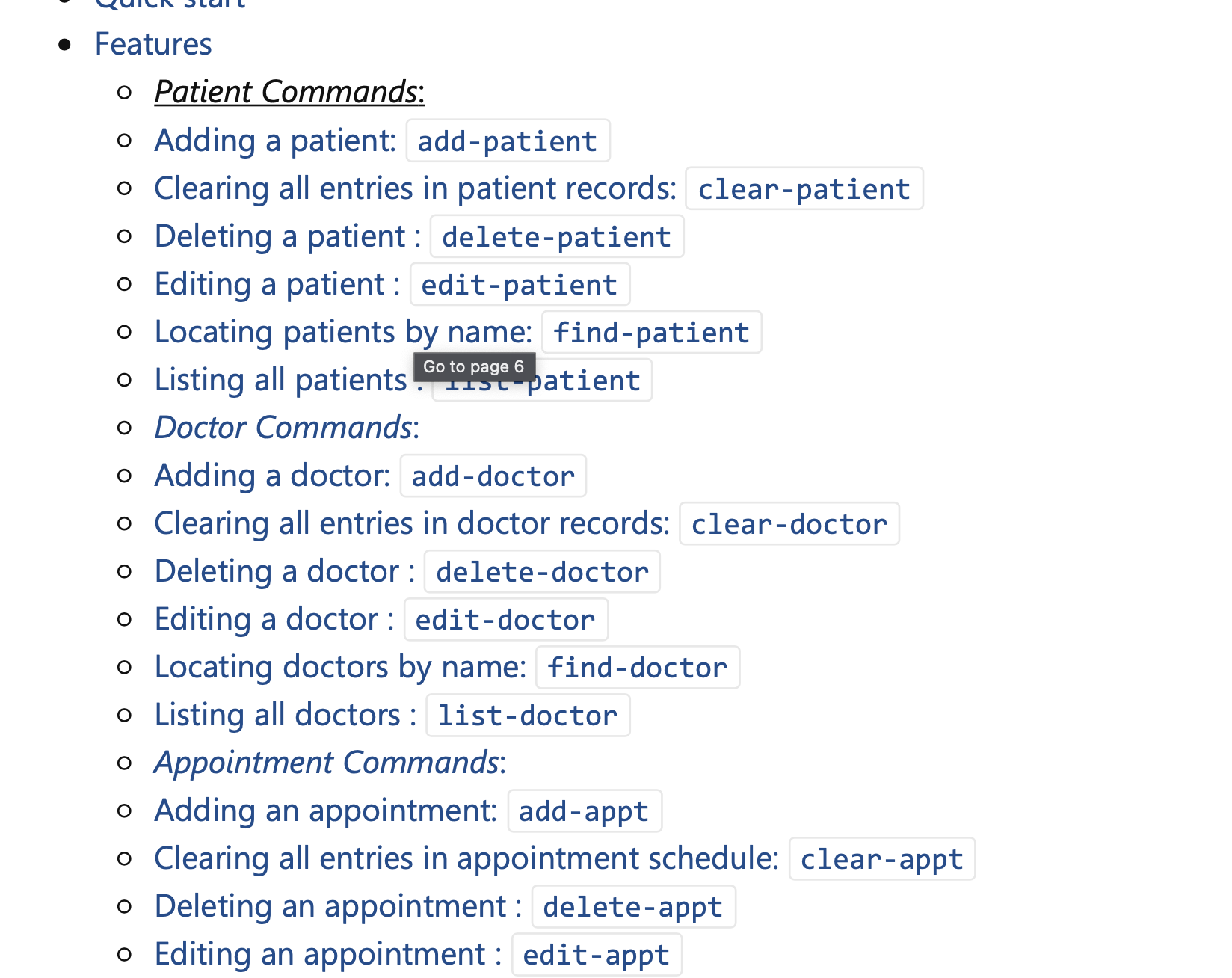

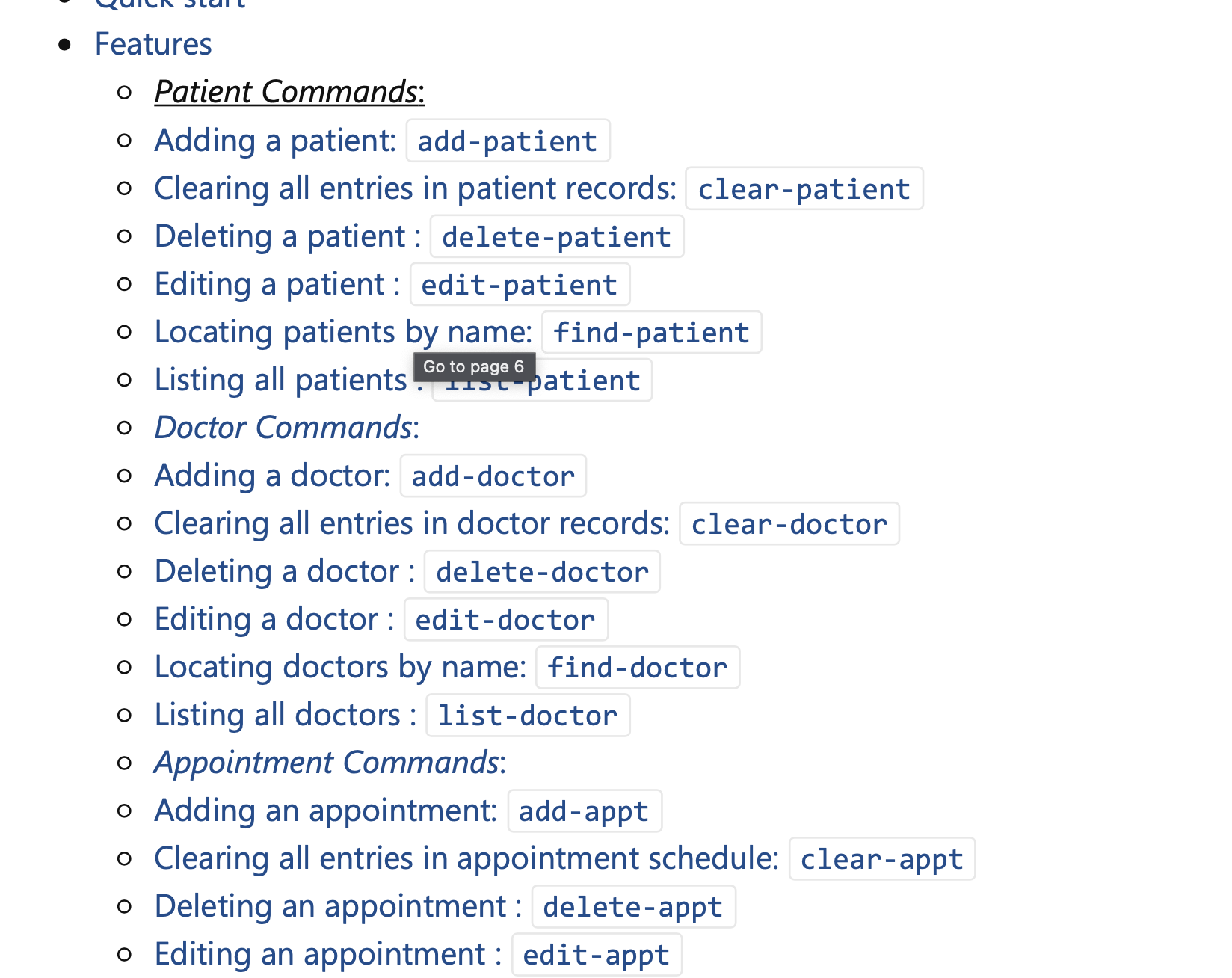

205,063 | 15,964,473,829 | IssuesEvent | 2021-04-16 06:17:30 | Maurice2n97/pe | https://api.github.com/repos/Maurice2n97/pe | opened | User Guide Bullet list doesnt seem to be indented | severity.VeryLow type.DocumentationBug |

It would be easier to search if the command types were indented by one more level, under each command type for patient, doctor etc, instead of it being all lying on the same ... | 1.0 | User Guide Bullet list doesnt seem to be indented -

It would be easier to search if the command types were indented by one more level, under each command type for patient, do... | non_priority | user guide bullet list doesnt seem to be indented it would be easier to search if the command types were indented by one more level under each command type for patient doctor etc instead of it being all lying on the same indentation level | 0 |

24,288 | 17,083,215,192 | IssuesEvent | 2021-07-08 08:31:36 | python-pillow/Pillow | https://api.github.com/repos/python-pillow/Pillow | closed | Old pypi homepage is SEO spam | Infrastructure | Taking a look at https://pypi.org/project/Pillow/2.2.1/ (a very old version I realize, but also the first google result for me), the homepage it points at is https://python-imaging.github.io/, which is some kind of computer-generated SEO garbage. Is there any way you could get this pointed at something more reasonable,... | 1.0 | Old pypi homepage is SEO spam - Taking a look at https://pypi.org/project/Pillow/2.2.1/ (a very old version I realize, but also the first google result for me), the homepage it points at is https://python-imaging.github.io/, which is some kind of computer-generated SEO garbage. Is there any way you could get this point... | non_priority | old pypi homepage is seo spam taking a look at a very old version i realize but also the first google result for me the homepage it points at is which is some kind of computer generated seo garbage is there any way you could get this pointed at something more reasonable such as alex clark said th... | 0 |

10,953 | 8,229,272,255 | IssuesEvent | 2018-09-07 08:52:02 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | new X509Certificate2 throws exception while loading certificate in Docker container | area-System.Security | SDK: Microsoft.AspNetCore.App 2.1.1

The following code throws an exception when it runs in a docker container

` var certificatePath = Path.Combine(env.ContentRootPath, "TestCertificate.pfx");

Console.WriteLine($"Certificate file exists: {File.Exists(certificatePath)}");

va... | True | new X509Certificate2 throws exception while loading certificate in Docker container - SDK: Microsoft.AspNetCore.App 2.1.1

The following code throws an exception when it runs in a docker container

` var certificatePath = Path.Combine(env.ContentRootPath, "TestCertificate.pfx");

Console.Wr... | non_priority | new throws exception while loading certificate in docker container sdk microsoft aspnetcore app the following code throws an exception when it runs in a docker container var certificatepath path combine env contentrootpath testcertificate pfx console writeline certi... | 0 |

121,058 | 15,835,719,724 | IssuesEvent | 2021-04-06 18:22:38 | ParabolInc/parabol | https://api.github.com/repos/ParabolInc/parabol | closed | Update Upgrade Page to include Enterprise | design discussion enhancement icebox | ###3790 Issue - Enhancement

Right now, the conversion prompt and the upgrade page only direct users to upgrade Pro. This forces them to go onto our website, find contact details, and write in seeking information on Enterprise if they don't feel that Pro is the right option for them. In order to increase enterprise c... | 1.0 | Update Upgrade Page to include Enterprise - ###3790 Issue - Enhancement

Right now, the conversion prompt and the upgrade page only direct users to upgrade Pro. This forces them to go onto our website, find contact details, and write in seeking information on Enterprise if they don't feel that Pro is the right option... | non_priority | update upgrade page to include enterprise issue enhancement right now the conversion prompt and the upgrade page only direct users to upgrade pro this forces them to go onto our website find contact details and write in seeking information on enterprise if they don t feel that pro is the right option fo... | 0 |

35,573 | 9,629,566,212 | IssuesEvent | 2019-05-15 09:53:16 | eclipse/kapua | https://api.github.com/repos/eclipse/kapua | closed | Travis tests random fails | bug build test | A lot of Travis builds are failing due to random errors in tests. Usually retrying the job some times fixes the builds, but this is not always the case. Also, the same branch may fail on a fork while building correctly on others.

**To Reproduce**

Steps to reproduce the behavior:

1. Launch a build in Travis

2. Che... | 1.0 | Travis tests random fails - A lot of Travis builds are failing due to random errors in tests. Usually retrying the job some times fixes the builds, but this is not always the case. Also, the same branch may fail on a fork while building correctly on others.

**To Reproduce**

Steps to reproduce the behavior:

1. Laun... | non_priority | travis tests random fails a lot of travis builds are failing due to random errors in tests usually retrying the job some times fixes the builds but this is not always the case also the same branch may fail on a fork while building correctly on others to reproduce steps to reproduce the behavior laun... | 0 |

54,875 | 3,071,468,476 | IssuesEvent | 2015-08-19 12:19:59 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | Write a complete description of the commands available to the user from the chat window. | Component-Docs enhancement imported Priority-High Usability | _From [a.rain...@gmail.com](https://code.google.com/u/117892482479228821242/) on October 20, 2010 15:11:55_

Some of the commands are already described in r5070, and the rest is scattered in bits across the help pages; the whole description needs to be gathered in one place and added to the help.

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=206_ | 1.0 | Write a complete description of the commands available to the user from the chat window. - _From [a.rain...@gmail.com](https://code.google.com/u/117892482479228821242/) on October 20, 2010 15:11:55_

Some of the commands are already described in r5070, and the rest is scattered in bits across the help pages; the whole description needs to be gathered in one place and added to the help.

_Original iss... | priority | write a complete description of the commands available to the user from the chat window from on october some of the commands are already described in and the rest is scattered in bits across the help pages the whole description needs to be gathered in one place and added to the help original issue | 1 |

389,584 | 11,504,214,243 | IssuesEvent | 2020-02-12 22:45:19 | openmsupply/mobile | https://api.github.com/repos/openmsupply/mobile | opened | Payment type not correctly sent to mSupply on SYNC | 4.0.0-rc7 API: sync Bug: development Docs: not needed Effort: small Module: dispensary Priority: high | ## Describe the bug

The Payment type is missing from the Cash payment that was done on a mobile store using `New Prescription` with a payment type selected. The prescription was Finalised and synced.

## To Reproduce

Steps to reproduce the behaviour:

On mSupply MOBILE:

1. Go to Dispensary

2. Click on any ... | 1.0 | Payment type not correctly sent to mSupply on SYNC - ## Describe the bug

The Payment type is missing from the Cash payment that was done on a mobile store using `New Prescription` with a payment type selected. The prescription was Finalised and synced.

## To Reproduce

Steps to reproduce the behaviour:

On m... | priority | payment type not correctly sent to msupply on sync describe the bug the payment type is missing from the cash payment that was done on a mobile store using new prescription with a payment type selected the prescription was finalised and synced to reproduce steps to reproduce the behaviour on m... | 1 |

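108,864 | 13,673,732,752 | IssuesEvent | 2020-09-29 10:14:20 | copilot-jp/project-sprint | https://api.github.com/repos/copilot-jp/project-sprint | closed | Add figures to the manual where appropriate | design | I think it depends on the target audience, but the manual feels very text-heavy overall, so I thought it might be nice to include at least a few illustrations. Before even getting to the content, my first impression was that the text is packed in very tightly.

Hirabayashi, Delivery | 1.0 | Add figures to the manual where appropriate - I think it depends on the target audience, but the manual feels very text-heavy overall, so I thought it might be nice to include at least a few illustrations. Before even getting to the content, my first impression was that the text is packed in very tightly.

Hirabayashi, Delivery | non_priority | add figures to the manual where appropriate i think it depends on the target audience but the manual feels very text heavy overall so i thought it might be nice to include at least a few illustrations before even getting to the content my first impression was that the text is packed in very tightly hirabayashi delivery | 0 |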

9,374 | 7,703,471,643 | IssuesEvent | 2018-05-21 08:33:45 | symfony/symfony-docs | https://api.github.com/repos/symfony/symfony-docs | closed | [Security] Typo in Security Main Page | Bug Security Status: Needs Review good first issue help wanted | Under the section

Always Check if the User is Logged In

the code:

`use Symfony\Component\Security\Core\User\UserInterface\UserInterface;`

should be

`use Symfony\Component\Security\Core\User\UserInterface;`

| True | [Security] Typo in Security Main Page - Under the section

Always Check if the User is Logged In

the code:

`use Symfony\Component\Security\Core\User\UserInterface\UserInterface;`

should be

`use Symfony\Component\Security\Core\User\UserInterface;`

| non_priority | typo in security main page under the section always check if the user is logged in the code use symfony component security core user userinterface userinterface should be use symfony component security core user userinterface | 0 |

551,492 | 16,174,154,693 | IssuesEvent | 2021-05-03 01:25:53 | azerothcore/azerothcore-wotlk | https://api.github.com/repos/azerothcore/azerothcore-wotlk | closed | GObject rotation broken [$100] | Bounty CORE Priority - Low | EXPECTED BLIZZLIKE BEHAVIOUR:

The GObjects should "rotate", change angles when defined in the DB table gameobject column rotation0, rotation1, rotation2, rotation3

Also, the command for this ingame is weird. It should offer .gobject turn [3 rotations] instead it is only offering the "...same as current character ... | 1.0 | GObject rotation broken [$100] - EXPECTED BLIZZLIKE BEHAVIOUR:

The GObjects should "rotate", change angles when defined in the DB table gameobject column rotation0, rotation1, rotation2, rotation3

Also, the command for this ingame is weird. It should offer .gobject turn [3 rotations] instead it is only offering t... | priority | gobject rotation broken expected blizzlike behaviour the gobjects should rotate change angles when defined in the db table gameobject column also the command for this ingame is weird it should offer gobject turn instead it is only offering the same as current character orientation ... | 1 |

11,667 | 3,214,178,657 | IssuesEvent | 2015-10-06 23:40:47 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | kubeproxy e2e test is flaky | area/test component/kube-proxy kind/flake priority/P0 team/cluster | ```

STEP: Hit Test with Fewer Endpoints

STEP: Hitting endpoints from host and container

STEP: dialing(udp) endpointPodIP:endpointUdpPort from node1

STEP: Dialing from node. command:for i in $(seq 1 1); do echo 'hostName' | nc -w 2 -u 10.245.3.24 8081; echo; done | grep -v '^\s*$' |sort | uniq -c | wc -l

STEP: dial... | 1.0 | kubeproxy e2e test is flaky - ```

STEP: Hit Test with Fewer Endpoints

STEP: Hitting endpoints from host and container

STEP: dialing(udp) endpointPodIP:endpointUdpPort from node1

STEP: Dialing from node. command:for i in $(seq 1 1); do echo 'hostName' | nc -w 2 -u 10.245.3.24 8081; echo; done | grep -v '^\s*$' |sort... | non_priority | kubeproxy test is flaky step hit test with fewer endpoints step hitting endpoints from host and container step dialing udp endpointpodip endpointudpport from step dialing from node command for i in seq do echo hostname nc w u echo done grep v s sort uniq c ... | 0 |

549,892 | 16,101,598,667 | IssuesEvent | 2021-04-27 09:59:12 | input-output-hk/cardano-node | https://api.github.com/repos/input-output-hk/cardano-node | closed | [FR] - CLI - Add support for Bech32 Addresses input format | cli revision enhancement priority medium shelley mainnet | **Internal**

**Describe the feature you'd like**

We need to support addresses in Bech32 format so that we can interchange between the wallet and node.

| 1.0 | [FR] - CLI - Add support for Bech32 Addresses input format - **Internal**

**Describe the feature you'd like**

We need to support addresses in Bech32 format so that we can interchange between the wallet and node.

| priority | cli add support for addresses input format internal describe the feature you d like we need to support addresses in format so that we can interchange between the wallet and node | 1 |

6,372 | 9,421,405,684 | IssuesEvent | 2019-04-11 06:41:52 | plazi/arcadia-project | https://api.github.com/repos/plazi/arcadia-project | opened | selection of articles for BLR processing: IRMNG data | Article processing | Here is the list of articles from the list of genus names in taxonomy, provided by Tony Rees. can you please create a ranking of contribution of the journals, eg how many time is a journal present in this list? This is important to decide where the most new names are

[irmng-sources-extra-Jul2017.xlsx](https://github... | 1.0 | selection of articles for BLR processing: IRMNG data - Here is the list of articles from the list of genus names in taxonomy, provided by Tony Rees. can you please create a ranking of contribution of the journals, eg how many time is a journal present in this list? This is important to decide where the most new names a... | non_priority | selection of articles for blr processing irmng data here is the list of articles from the list of genus names in taxonomy provided by tony rees can you please create a ranking of contribution of the journals eg how many time is a journal present in this list this is important to decide where the most new names a... | 0 |

141,192 | 5,431,570,426 | IssuesEvent | 2017-03-04 01:39:54 | Radarr/Radarr | https://api.github.com/repos/Radarr/Radarr | closed | Validate Folder on Net Import / Lists | bug priority:high | **Description:**

People aren't choosing a root folder when importing and are getting errors because of that. We need to validate they choose something. | 1.0 | Validate Folder on Net Import / Lists - **Description:**

People aren't choosing a root folder when importing and are getting errors because of that. We need to validate they choose something. | priority | validate folder on net import lists description people aren t choosing a root folder when importing and are getting errors because of that we need to validate they choose something | 1 |

42,468 | 11,054,095,092 | IssuesEvent | 2019-12-10 12:48:46 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | closed | Dialog: closeDynamic fails due to invalid use of data-widgetvar | defect | ## 1) Environment

* **PrimeFaces version:** 7.0.10

* **Does it work on the newest released PrimeFaces version?** No.

* **Does it work on the newest sources in GitHub?** In works in showcase.

* **Application server + version:** Apache TomEE 8.0.0 (Tomct 9.0.22, MyFaces 2.3.4)

* **Affected browsers:** all tested

... | 1.0 | Dialog: closeDynamic fails due to invalid use of data-widgetvar - ## 1) Environment

* **PrimeFaces version:** 7.0.10

* **Does it work on the newest released PrimeFaces version?** No.

* **Does it work on the newest sources in GitHub?** In works in showcase.

* **Application server + version:** Apache TomEE 8.0.0 (Tom... | non_priority | dialog closedynamic fails due to invalid use of data widgetvar environment primefaces version does it work on the newest released primefaces version no does it work on the newest sources in github in works in showcase application server version apache tomee tomc... | 0 |

203,136 | 15,351,076,036 | IssuesEvent | 2021-03-01 04:09:36 | tgstation/TerraGov-Marine-Corps | https://api.github.com/repos/tgstation/TerraGov-Marine-Corps | closed | Wraith bug | Bug In Game Exploit Test Merge Bug | <!-- Write **BELOW** The Headers and **ABOVE** The comments else it may not be viewable -->

## Testmerges:

#5240

<!-- If you're certain the issue is to be caused by a test merge, go on the TGMC discord (preferabily the #bot-abuse channel) and type '!tgs prs' (without the brackets), and then copy and paste the... | 1.0 | Wraith bug - <!-- Write **BELOW** The Headers and **ABOVE** The comments else it may not be viewable -->

## Testmerges:

#5240

<!-- If you're certain the issue is to be caused by a test merge, go on the TGMC discord (preferabily the #bot-abuse channel) and type '!tgs prs' (without the brackets), and then copy ... | non_priority | wraith bug testmerges reproduction when banish is used on dead xeno the dead annoncment is played again when used on a bursted colonist the colonist comes back to life still bursted though also please make this impossible surr i m not sure why hot hot was abusing... | 0 |

578,048 | 17,143,043,715 | IssuesEvent | 2021-07-13 11:49:52 | logisim-evolution/logisim-evolution | https://api.github.com/repos/logisim-evolution/logisim-evolution | opened | Ability to remove "Bold" attribute using font picker | bug low priority | > Thing is, even if I wanted to change the fonts one by one for each element, seems that I can't get rid of the bold aspect of them. Whatever font I chose, even if I change it to other font face and select plain, it always comes back as bold.

Taken from #444 | 1.0 | Ability to remove "Bold" attribute using font picker - > Thing is, even if I wanted to change the fonts one by one for each element, seems that I can't get rid of the bold aspect of them. Whatever font I chose, even if I change it to other font face and select plain, it always comes back as bold.

Taken from #444 | priority | ability to remove bold attribute using font picker thing is even if i wanted to change the fonts one by one for each element seems that i can t get rid of the bold aspect of them whatever font i chose even if i change it to other font face and select plain it always comes back as bold taken from | 1 |

442,744 | 12,749,591,866 | IssuesEvent | 2020-06-26 23:26:31 | NRCan/GSIP | https://api.github.com/repos/NRCan/GSIP | opened | User Story: Ontology Management | Priority: Could Have Requirement User: Administrator | As an administrator, I have a way to manage the ontology as a separate artifact to be used by the inference engine. Ideally, the configuration should be a reference to someplace on the web to pull an OWL file (so, all nodes share the same ontology), but I also want to add extra rules (as long as they don't override the... | 1.0 | User Story: Ontology Management - As an administrator, I have a way to manage the ontology as a separate artifact to be used by the inference engine. Ideally, the configuration should be a reference to someplace on the web to pull an OWL file (so, all nodes share the same ontology), but I also want to add extra rules (... | priority | user story ontology management as an administrator i have a way to manage the ontology as a separate artifact to be used by the inference engine ideally the configuration should be a reference to someplace on the web to pull an owl file so all nodes share the same ontology but i also want to add extra rules ... | 1 |

581,845 | 17,333,617,464 | IssuesEvent | 2021-07-28 07:26:59 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | closed | Enhancement Data Storing of Users Avatar/Cover Photo and Group Avatar/Cover Photo | feature: enhancement priority: medium | **Is your feature request related to a problem? Please describe.**

At this moment platform is storing all the data related to avatar/cover photos into the `avatar/USERID/.jpg` and same for the Groups as `group-avatars/GROUPID/.jpg` and it's not storing these values in the Database.

Basically avatars do not get sto... | 1.0 | Enhancement Data Storing of Users Avatar/Cover Photo and Group Avatar/Cover Photo - **Is your feature request related to a problem? Please describe.**

At this moment platform is storing all the data related to avatar/cover photos into the `avatar/USERID/.jpg` and same for the Groups as `group-avatars/GROUPID/.jpg` an... | priority | enhancement data storing of users avatar cover photo and group avatar cover photo is your feature request related to a problem please describe at this moment platform is storing all the data related to avatar cover photos into the avatar userid jpg and same for the groups as group avatars groupid jpg an... | 1 |

703,932 | 24,178,642,582 | IssuesEvent | 2022-09-23 06:32:08 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.netflix.com - video or audio doesn't play | browser-firefox priority-critical engine-gecko | <!-- @browser: Firefox 105.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile VR; rv:105.0) Gecko/105.0 Firefox/105.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/111196 -->

**URL**: https://www.netflix.com/watch/81056739?trackId=14170287&t... | 1.0 | www.netflix.com - video or audio doesn't play - <!-- @browser: Firefox 105.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile VR; rv:105.0) Gecko/105.0 Firefox/105.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/111196 -->

**URL**: https://w... | priority | video or audio doesn t play url browser version firefox operating system android tested another browser yes other problem type video or audio doesn t play description the video or audio does not play steps to reproduce wolvic on quest cannot play netflix ... | 1 |

127,552 | 5,032,210,305 | IssuesEvent | 2016-12-16 10:25:16 | ubuntudesign/snapcraft.io | https://api.github.com/repos/ubuntudesign/snapcraft.io | closed | Change --force-dangerous to --dangerous | Priority: Low | ## Summary

--force-dangerous needs to be changed to --dangerous when the next version of snapd lands in xenial

| 1.0 | Change --force-dangerous to --dangerous - ## Summary

--force-dangerous needs to be changed to --dangerous when the next version of snapd lands in xenial

| priority | change force dangerous to dangerous summary force dangerous needs to be changed to dangerous when the next version of snapd lands in xenial | 1 |

9,048 | 12,551,490,050 | IssuesEvent | 2020-06-06 14:56:42 | teddywilson/defund12.org | https://api.github.com/repos/teddywilson/defund12.org | closed | Eureka - Letter to Mayor and Council Members | meets-issue-requirements | To: sseaman@ci.eureka.ca.gov, lcastellano@ci.eureka.ca.gov, hmessner@ci.eureka.ca.gov, kbergel@ci.eureka.ca.gov, aallison@ci.eureka.ca.gov, narroyo@ci.eureka.ca.gov, cityclerk@ci.eureka.ca.gov

Subject: Commit to reallocate for social equity

Message:

To whom it may concern,

I am a resident of Eureka's [X] Wa... | 1.0 | Eureka - Letter to Mayor and Council Members - To: sseaman@ci.eureka.ca.gov, lcastellano@ci.eureka.ca.gov, hmessner@ci.eureka.ca.gov, kbergel@ci.eureka.ca.gov, aallison@ci.eureka.ca.gov, narroyo@ci.eureka.ca.gov, cityclerk@ci.eureka.ca.gov

Subject: Commit to reallocate for social equity

Message:

To whom it ma... | non_priority | eureka letter to mayor and council members to sseaman ci eureka ca gov lcastellano ci eureka ca gov hmessner ci eureka ca gov kbergel ci eureka ca gov aallison ci eureka ca gov narroyo ci eureka ca gov cityclerk ci eureka ca gov subject commit to reallocate for social equity message to whom it ma... | 0 |

323,167 | 9,850,836,659 | IssuesEvent | 2019-06-19 09:05:43 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | nextdoor.com - site is not usable | browser-firefox engine-gecko priority-normal | <!-- @browser: Firefox 62.0 -->

<!-- @ua_header: https://chase.com/Mozilla/5.0 (rv:62.0) Gecko/20100101 Firefox/62.0 -->

<!-- @reported_with: addon-reporter-firefox -->

**URL**: https://nextdoor.com/login/?session_error=true

**Browser / Version**: Firefox 62.0

**Operating System**: Unknown

**Tested Another Browser**:... | 1.0 | nextdoor.com - site is not usable - <!-- @browser: Firefox 62.0 -->

<!-- @ua_header: https://chase.com/Mozilla/5.0 (rv:62.0) Gecko/20100101 Firefox/62.0 -->

<!-- @reported_with: addon-reporter-firefox -->

**URL**: https://nextdoor.com/login/?session_error=true

**Browser / Version**: Firefox 62.0

**Operating System**:... | priority | nextdoor com site is not usable url browser version firefox operating system unknown tested another browser yes problem type site is not usable description canpt log in steps to reproduce when log in it gives error message there was an error establishing a sess... | 1 |

18,732 | 5,697,599,996 | IssuesEvent | 2017-04-16 23:21:29 | Xinnx/rsps317 | https://api.github.com/repos/Xinnx/rsps317 | closed | missing google commons dependency | code cleanup | multiple packages in the build are missing packages from com.google.common.* | 1.0 | missing google commons dependency - multiple packages in the build are missing packages from com.google.common.* | non_priority | missing google commons dependency multiple packages in the build are missing packages from com google common | 0 |

218,980 | 24,424,803,958 | IssuesEvent | 2022-10-06 01:05:23 | jasonbrown17/jasonbrown17.github.io | https://api.github.com/repos/jasonbrown17/jasonbrown17.github.io | opened | WS-2022-0320 (Medium) detected in commonmarker-0.17.13.gem | security vulnerability | ## WS-2022-0320 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commonmarker-0.17.13.gem</b></p></summary>

<p>A fast, safe, extensible parser for CommonMark. This wraps the official ... | True | WS-2022-0320 (Medium) detected in commonmarker-0.17.13.gem - ## WS-2022-0320 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commonmarker-0.17.13.gem</b></p></summary>

<p>A fast, saf... | non_priority | ws medium detected in commonmarker gem ws medium severity vulnerability vulnerable library commonmarker gem a fast safe extensible parser for commonmark this wraps the official libcmark library library home page a href path to dependency file gemfile lock path to... | 0 |

38,369 | 8,789,660,957 | IssuesEvent | 2018-12-21 05:09:41 | line/armeria | https://api.github.com/repos/line/armeria | closed | Armeria removes ContentLength header when performing PUT requests without content | defect | When performing a PUT http request without content and with Content-Length=0 with armeria, the Content-Length header gets removed [here](https://github.com/line/armeria/blob/master/core/src/main/java/com/linecorp/armeria/internal/Http1ObjectEncoder.java#L247). This was added in #226 which speaks of GET requests, but th... | 1.0 | Armeria removes ContentLength header when performing PUT requests without content - When performing a PUT http request without content and with Content-Length=0 with armeria, the Content-Length header gets removed [here](https://github.com/line/armeria/blob/master/core/src/main/java/com/linecorp/armeria/internal/Http1O... | non_priority | armeria removes contentlength header when performing put requests without content when performing a put http request without content and with content length with armeria the content length header gets removed this was added in which speaks of get requests but this logic is being applied to all request meth... | 0 |

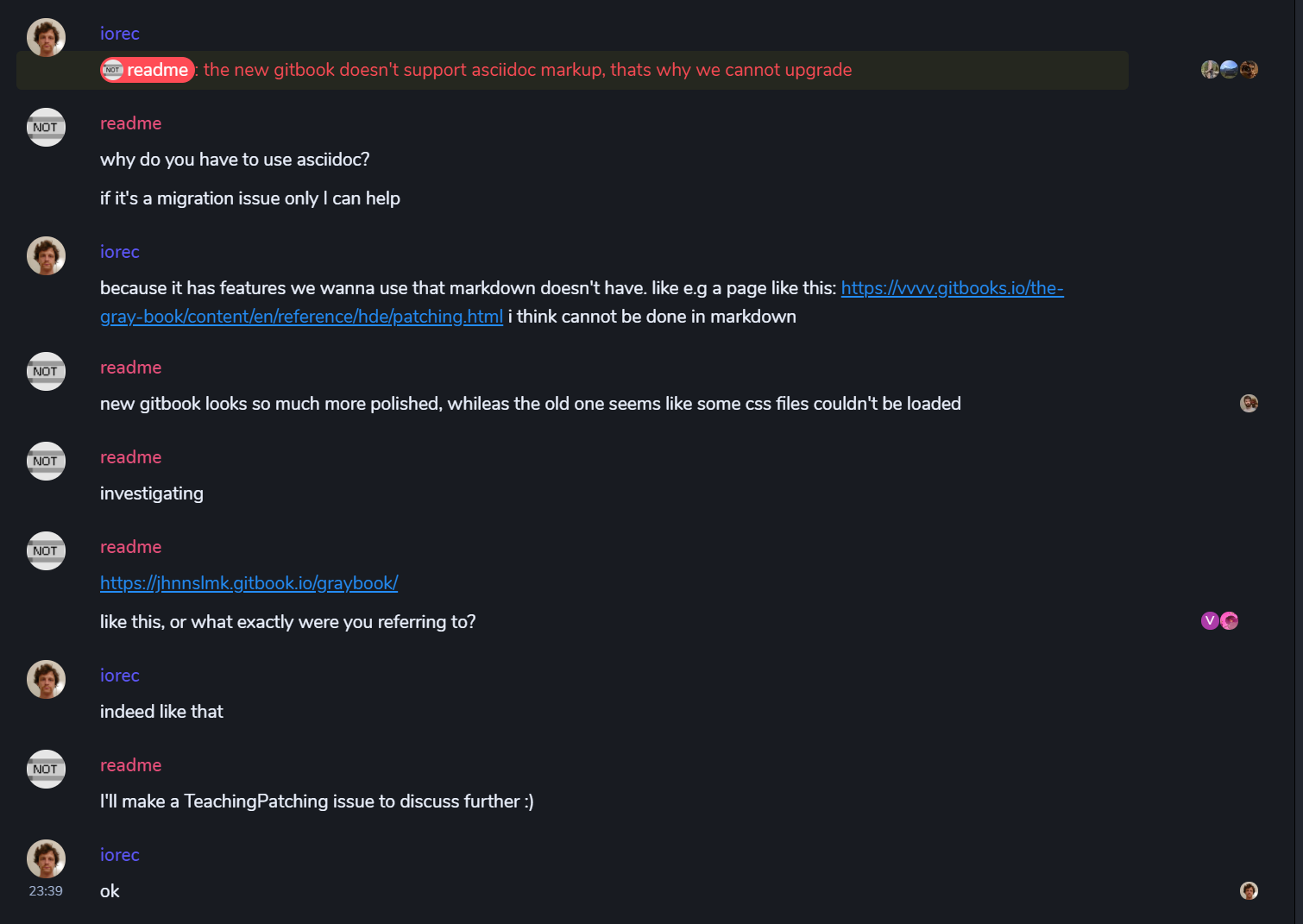

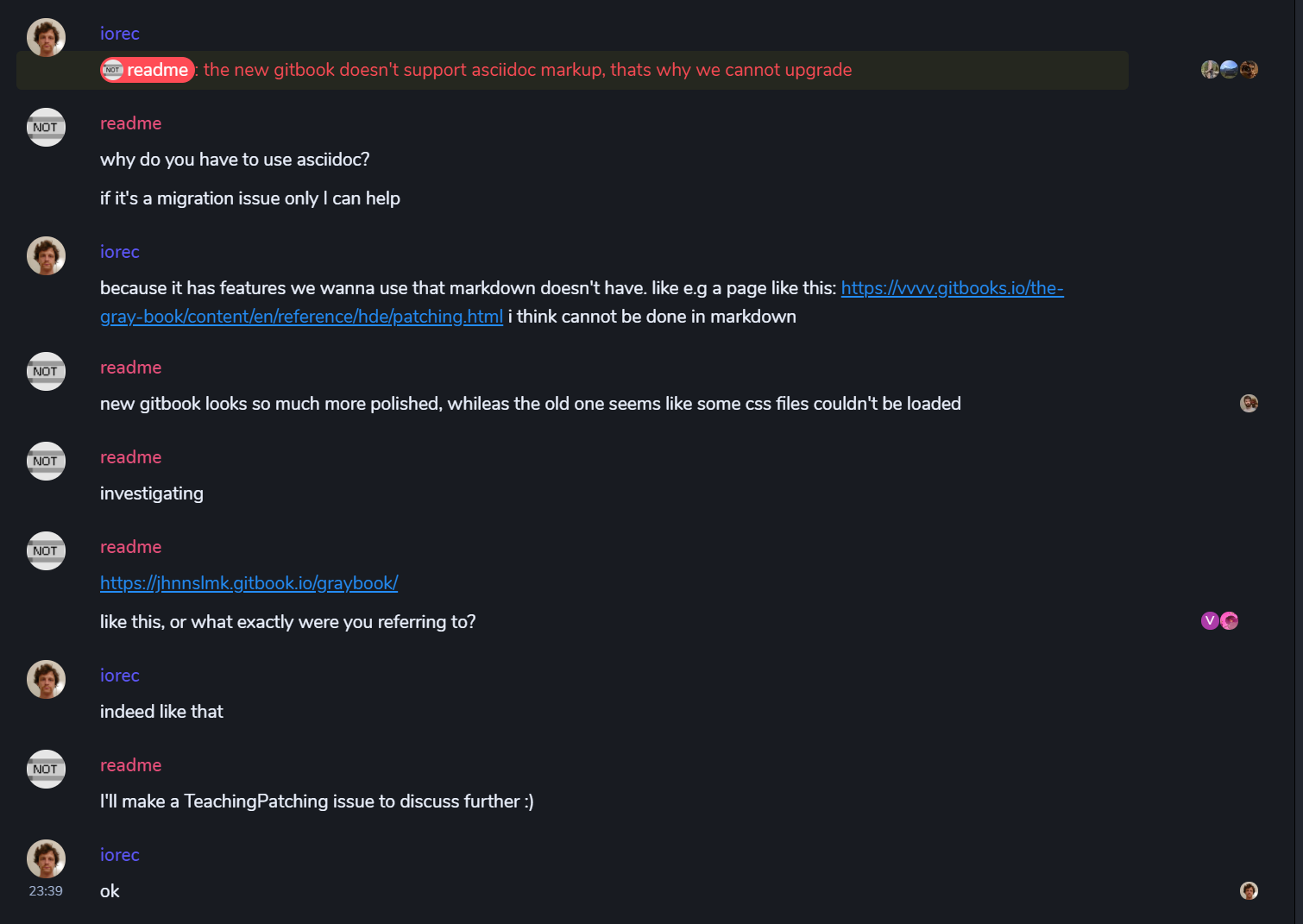

97,476 | 11,014,466,574 | IssuesEvent | 2019-12-04 22:50:25 | vvvv/TeachingPatching | https://api.github.com/repos/vvvv/TeachingPatching | opened | Migrating gray book from legacy to current | Documentation |

The link is here: https://jhnnslmk.gitbook.io/graybook/

**!!!** this is not to be intended the link to the new graybook.

***My reasoning: I personally think the new graybook looks much better than the le... | 1.0 | Migrating gray book from legacy to current -

The link is here: https://jhnnslmk.gitbook.io/graybook/

**!!!** this is not to be intended the link to the new graybook.

***My reasoning: I personally think t... | non_priority | migrating gray book from legacy to current the link is here this is not to be intended the link to the new graybook my reasoning i personally think the new graybook looks much better than the legacy one providing more readable visual structure as well also these little hint sections just... | 0 |

608,931 | 18,851,490,760 | IssuesEvent | 2021-11-11 21:32:18 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio-ui] improve sidebar explorer widget breadcrumb behavior | enhancement priority: low | # Feature Request

#### Is your feature request related to a problem? Please describe.

The explorer widget displays a bread crumb that includes the current folder. When the current folder is the first level this creates a visually redundant experience for the user (Cabinet is "Pages", Breadcrumb is "Home" and right ... | 1.0 | [studio-ui] improve sidebar explorer widget breadcrumb behavior - # Feature Request

#### Is your feature request related to a problem? Please describe.

The explorer widget displays a bread crumb that includes the current folder. When the current folder is the first level this creates a visually redundant experience... | priority | improve sidebar explorer widget breadcrumb behavior feature request is your feature request related to a problem please describe the explorer widget displays a bread crumb that includes the current folder when the current folder is the first level this creates a visually redundant experience for the u... | 1 |

246,604 | 7,895,427,195 | IssuesEvent | 2018-06-29 03:12:34 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | Add ability to specify the x axis label for curve plots. | Expected Use: 3 - Occasional Feature Impact: 3 - Medium OS: All Priority: High Support Group: Any version: 2.12.3 | Dinesh Shetty has time dependent curve data and it only labels it with x-axis. He would like it to say time. This seems like a reasonable request.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. The following information

could not be accurately captured in the ne... | 1.0 | Add ability to specify the x axis label for curve plots. - Dinesh Shetty has time dependent curve data and it only labels it with x-axis. He would like it to say time. This seems like a reasonable request.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. The follo... | priority | add ability to specify the x axis label for curve plots dinesh shetty has time dependent curve data and it only labels it with x axis he would like it to say time this seems like a reasonable request redmine migration this ticket was migrated from redmine the follo... | 1 |

225,792 | 7,495,070,627 | IssuesEvent | 2018-04-07 16:51:51 | openshift/origin | https://api.github.com/repos/openshift/origin | closed | External Kubernetes: Builder and registry attempt to hit the base Kubernetes API instead of the OpenShift API for OpenShift resources | component/imageregistry lifecycle/rotten priority/P2 | Running under Kubernetes as usual.

Here are the build errors, notice the error near the beginning about being unable to PUT builds:

```

I0317 23:14:19.031041 1 builder.go:57] Master version "v1.1.4", Builder version "v1.1.4"

I0317 23:14:19.031912 1 builder.go:145] Running build with cgroup limits: api.CGr... | 1.0 | External Kubernetes: Builder and registry attempt to hit the base Kubernetes API instead of the OpenShift API for OpenShift resources - Running under Kubernetes as usual.

Here are the build errors, notice the error near the beginning about being unable to PUT builds:

```

I0317 23:14:19.031041 1 builder.go:57] M... | priority | external kubernetes builder and registry attempt to hit the base kubernetes api instead of the openshift api for openshift resources running under kubernetes as usual here are the build errors notice the error near the beginning about being unable to put builds builder go master version... | 1 |

386,116 | 11,432,126,036 | IssuesEvent | 2020-02-04 13:33:04 | luna/ide | https://api.github.com/repos/luna/ide | closed | Drawing collapsed nodes. | Category: BaseGL API Change: Non-Breaking Difficulty: Core Contributor Priority: Highest Type: Enhancement | ### Summary

This task is only about the visual part of the nodes. It does not include interactions.

### Specification

- Ability to display collapsed nodes with:

- icons

- ports

- arrows (with labels and colors)

- expressions

- selection

- Theme management

- ability to change the theme and re-draw the scen... | 1.0 | Drawing collapsed nodes. - ### Summary

This task is only about the visual part of the nodes. It does not include interactions.

### Specification

- Ability to display collapsed nodes with:

- icons

- ports

- arrows (with labels and colors)

- expressions

- selection

- Theme management

- ability to change the... | priority | drawing collapsed nodes summary this task is only about the visual part of the nodes it does not include interactions specification ability to display collapsed nodes with icons ports arrows with labels and colors expressions selection theme management ability to change the... | 1 |

174,508 | 13,493,113,354 | IssuesEvent | 2020-09-11 19:07:02 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Rancher Dashboard doesn't work when Rancher/cluster is configured with a proxy | [zube]: To Test | <!--

Please search for existing issues first, then read https://rancher.com/docs/rancher/v2.x/en/contributing/#bugs-issues-or-questions to see what we expect in an issue

For security issues, please email security@rancher.com instead of posting a public issue in GitHub. You may (but are not required to) use the GPG ke... | 1.0 | Rancher Dashboard doesn't work when Rancher/cluster is configured with a proxy - <!--

Please search for existing issues first, then read https://rancher.com/docs/rancher/v2.x/en/contributing/#bugs-issues-or-questions to see what we expect in an issue

For security issues, please email security@rancher.com instead of p... | non_priority | rancher dashboard doesn t work when rancher cluster is configured with a proxy please search for existing issues first then read to see what we expect in an issue for security issues please email security rancher com instead of posting a public issue in github you may but are not required to use the gpg... | 0 |

360,650 | 25,301,653,845 | IssuesEvent | 2022-11-17 11:09:10 | t3-oss/create-t3-app | https://api.github.com/repos/t3-oss/create-t3-app | opened | feat: in translated docs, show if the English version is newer | 📚 documentation 🌟 enhancement | ### Is your feature request related to a problem? Please describe.

With Arabic and Russian docs translations underway, we should start thinking about how to integrate the docs well.

One common issue with docs translations is that they become out of date as the English version gets more updates. Explicitly showing ... | 1.0 | feat: in translated docs, show if the English version is newer - ### Is your feature request related to a problem? Please describe.

With Arabic and Russian docs translations underway, we should start thinking about how to integrate the docs well.

One common issue with docs translations is that they become out of d... | non_priority | feat in translated docs show if the english version is newer is your feature request related to a problem please describe with arabic and russian docs translations underway we should start thinking about how to integrate the docs well one common issue with docs translations is that they become out of d... | 0 |

137,963 | 11,171,682,516 | IssuesEvent | 2019-12-28 21:55:48 | ariya/phantomjs | https://api.github.com/repos/ariya/phantomjs | closed | Some tests failing on Windows | Need testing stale | A couple look like they might be forward-back slash related.

Master branch, Windows 10, Visual Studio 2013

```

ff..E..F......F.......f......F..f.............s................E.......F.....E....f...

basics\require: ERROR

ERROR: Test group skipped

ERROR: Error: Cannot find module 'require/require_spec.js'

basics\tim... | 1.0 | Some tests failing on Windows - A couple look like they might be forward-back slash related.

Master branch, Windows 10, Visual Studio 2013

```

ff..E..F......F.......f......F..f.............s................E.......F.....E....f...

basics\require: ERROR

ERROR: Test group skipped

ERROR: Error: Cannot find module 'requ... | non_priority | some tests failing on windows a couple look like they might be forward back slash related master branch windows visual studio ff e f f f f f s e f e f basics require error error test group skipped error error cannot find module require ... | 0 |

5,000 | 2,765,678,784 | IssuesEvent | 2015-04-29 21:50:40 | aspnet/HttpAbstractions | https://api.github.com/repos/aspnet/HttpAbstractions | opened | Consider merging the FeatureModel and Features packages | needs design | The FeatureModel package is used by Http (DefaultHttpContext), Servers (IFeatureCollection), and Hosting (new DefaultHttpContext(iFeatureCollection)).

The Features package is used by Http (DefaultHttpContext), Servers, and some middleware that access features not directly available on HttpContext. | 1.0 | Consider merging the FeatureModel and Features packages - The FeatureModel package is used by Http (DefaultHttpContext), Servers (IFeatureCollection), and Hosting (new DefaultHttpContext(iFeatureCollection)).

The Features package is used by Http (DefaultHttpContext), Servers, and some middleware that access features... | non_priority | consider merging the featuremodel and features packages the featuremodel package is used by http defaulthttpcontext servers ifeaturecollection and hosting new defaulthttpcontext ifeaturecollection the features package is used by http defaulthttpcontext servers and some middleware that access features... | 0 |

123,580 | 4,865,316,833 | IssuesEvent | 2016-11-14 20:25:42 | phetsims/tasks | https://api.github.com/repos/phetsims/tasks | closed | Investigate Chrome web store | priority:5-deferred tasks:developer | @arnabp can you look into the Chrome web store again? What would it take to put an HTML5 sim on the store? All of our HTML5 sims?

Also, @aadish mentioned Appcache manifest - investigate bookmarking html5 sim: http://www.html5rocks.com/en/tutorials/appcache/beginner/

| 1.0 | Investigate Chrome web store - @arnabp can you look into the Chrome web store again? What would it take to put an HTML5 sim on the store? All of our HTML5 sims?

Also, @aadish mentioned Appcache manifest - investigate bookmarking html5 sim: http://www.html5rocks.com/en/tutorials/appcache/beginner/

| priority | investigate chrome web store arnabp can you look into the chrome web store again what would it take to put an sim on the store all of our sims also aadish mentioned appcache manifest investigate bookmarking sim | 1 |

37,458 | 8,405,326,123 | IssuesEvent | 2018-10-11 15:00:08 | primefaces/primeng | https://api.github.com/repos/primefaces/primeng | closed | p-chips disabled issue | defect | ```

[ x] bug report => Search github for a similar issue or PR before submitting

```

I am having a problem with disabling the add chip functionality.

After adding this to my code:

`<p-chips name="tags" [disabled]="true" [(ngModel)]="model.tags"></p-chips>`

The old chips are disabled (I can't remove them), howeve... | 1.0 | p-chips disabled issue - ```

[ x] bug report => Search github for a similar issue or PR before submitting

```

I am having a problem with disabling the add chip functionality.

After adding this to my code:

`<p-chips name="tags" [disabled]="true" [(ngModel)]="model.tags"></p-chips>`

The old chips are disabled (I c... | non_priority | p chips disabled issue bug report search github for a similar issue or pr before submitting i am having a problem with disabling the add chip functionality after adding this to my code the old chips are disabled i can t remove them however the input is still present and i can add new ones... | 0 |

14,422 | 2,811,804,975 | IssuesEvent | 2015-05-18 01:36:13 | RenatoUtsch/nulldc | https://api.github.com/repos/RenatoUtsch/nulldc | closed | Enter one-line summary | auto-migrated Priority-Medium Restrict-AddIssueComment-Commit Type-Defect | ```

Please, I need lst files for the Hokuto no Ken converted game for nullDC NAOMI. I

don't know how to create an lst file. Please help.

```

Original issue reported on code.google.com by `markocur...@gmail.com` on 25 Dec 2014 at 8:42 | 1.0 | Enter one-line summary - ```

Please, I need lst files for the Hokuto no Ken converted game for nullDC NAOMI. I

don't know how to create an lst file. Please help.

```

Original issue reported on code.google.com by `markocur...@gmail.com` on 25 Dec 2014 at 8:42 | non_priority | enter one line summary please i need lst files for hokuto no ken converted game for nulldc naomi i dont know how to create lst file please help original issue reported on code google com by markocur gmail com on dec at | 0 |

233,470 | 7,698,064,911 | IssuesEvent | 2018-05-18 21:15:22 | HabitRPG/habitica | https://api.github.com/repos/HabitRPG/habitica | opened | Some promo images and text become unreadably jumbled on screen resize | help wanted priority: minor section: Login/Statics | [//]: # (Before logging this issue, please post to the Report a Bug guild from the Habitica website's Help menu. Most bugs can be handled quickly there. If a GitHub issue is needed, you will be advised of that by a moderator or staff member -- a player with a dark blue or purple name. It is recommended that you don't c... | 1.0 | Some promo images and text become unreadably jumbled on screen resize - [//]: # (Before logging this issue, please post to the Report a Bug guild from the Habitica website's Help menu. Most bugs can be handled quickly there. If a GitHub issue is needed, you will be advised of that by a moderator or staff member -- a pl... | priority | some promo images and text become unreadably jumbled on screen resize before logging this issue please post to the report a bug guild from the habitica website s help menu most bugs can be handled quickly there if a github issue is needed you will be advised of that by a moderator or staff member a playe... | 1 |

72,859 | 3,392,131,487 | IssuesEvent | 2015-11-30 18:16:15 | thesgc/chembiohub_helpdesk | https://api.github.com/repos/thesgc/chembiohub_helpdesk | closed | Search page rework.

Search page needs some redevelopment:

add ability to select multiple projects

| app: ChemReg enhancement name: Karen priority: Medium status: New | Search page rework.

Search page needs some redevelopment:

add ability to select multiple projects

move structural search type to underneath chemdoodle widget (minimally)

OR hide ChemDoodle (or separate tab?) unless user selects a new 'Search by substructure' option which makes draw window visible (in pop-up?)

add '... | 1.0 | Search page rework.

Search page needs some redevelopment:

add ability to select multiple projects

- Search page rework.

Search page needs some redevelopment:

add ability to select multiple projects

move structural search type to underneath chemdoodle widget (minimally)

OR hide ChemDoodle (or separate tab?) unless... | priority | search page rework search page needs some redevelopment add ability to select multiple projects search page rework search page needs some redevelopment add ability to select multiple projects move structural search type to underneath chemdoodle widget minimally or hide chemdoodle or separate tab unless... | 1 |

54,098 | 13,251,357,508 | IssuesEvent | 2020-08-20 01:55:14 | beaverbuilder/feature-requests | https://api.github.com/repos/beaverbuilder/feature-requests | opened | Add Publish button beside Done button | Beaver Builder | Add a Publish button beside the Done button so that people don't have to click twice to publish a page.