Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 1k) | labels (string, length 4 to 1.38k) | body (string, length 1 to 262k) | index (string, 16 classes) | text_combine (string, length 96 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

92,538 | 11,671,570,449 | IssuesEvent | 2020-03-04 03:39:11 | microsoft/nni | https://api.github.com/repos/microsoft/nni | closed | Customized trial | design discussion new feature | ## Current Design

### User Side:

* Web UI adds a "clone" button for each trial

* Web UI adds a "tag" column in the trial details table (and maybe "edit tag" buttons)

* When user wants to create a customized trial, they click "clone" and a form pops up

* User edits the parameters and the *tag* (defaults to `CUSTO... | 1.0 | Customized trial - ## Current Design

### User Side:

* Web UI adds a "clone" button for each trial

* Web UI adds a "tag" column in the trial details table (and maybe "edit tag" buttons)

* When user wants to create a customized trial, they click "clone" and a form pops up

* User edits the parameters and the *tag* ... | non_priority | customized trial current design user side web ui adds a clone button for each trial web ui adds a tag column in the trial details table and maybe edit tag buttons when user wants to create a customized trial they click clone and a form pops up user edits the parameters and the tag ... | 0 |

736,847 | 25,490,263,956 | IssuesEvent | 2022-11-27 00:39:22 | jeremilev/Soen287-project | https://api.github.com/repos/jeremilev/Soen287-project | closed | Database: Use regular queries to update grades for course and student data, since cloud functions are paid | Priority 1 | write db queries that will allow the teacher to insert grades to students, we are not using cloud functions anymore | 1.0 | Database: Use regular queries to update grades for course and student data, since cloud functions are paid - write db queries that will allow the teacher to insert grades to students, we are not using cloud functions anymore | priority | database use regular queries to update grades for course and student data since cloud functions are paid write db queries that will allow the teacher to insert grades to students we are not using cloud functions anymore | 1 |

438,405 | 12,627,410,810 | IssuesEvent | 2020-06-14 21:20:51 | domialex/Sidekick | https://api.github.com/repos/domialex/Sidekick | closed | Make windows/panels resizable | Priority: Medium Status: Review Needed Type: Enhancement | # Resizable windows

- [x] Splash screen

- [x] Price view

- [ ] ~~League view~~

- [x] Settings (vertical only)

- [x] Logs | 1.0 | Make windows/panels resizable - # Resizable windows

- [x] Splash screen

- [x] Price view

- [ ] ~~League view~~

- [x] Settings (vertical only)

- [x] Logs | priority | make windows panels resizable resizable windows splash screen price view league view settings vertical only logs | 1 |

289,845 | 25,017,695,703 | IssuesEvent | 2022-11-03 20:25:19 | cfpb/hmda-frontend | https://api.github.com/repos/cfpb/hmda-frontend | closed | [Cypress] Login bug; Load test too big | Test Automation | - I thought the 500K limit was in the generation script but rather it is a limit of the way Cypress handles file uploads.

- I missed updating the login method for on of the `HMDA Help - Institution` tests | 1.0 | [Cypress] Login bug; Load test too big - - I thought the 500K limit was in the generation script but rather it is a limit of the way Cypress handles file uploads.

- I missed updating the login method for on of the `HMDA Help - Institution` tests | non_priority | login bug load test too big i thought the limit was in the generation script but rather it is a limit of the way cypress handles file uploads i missed updating the login method for on of the hmda help institution tests | 0 |

808,816 | 30,112,334,990 | IssuesEvent | 2023-06-30 08:46:06 | fossasia/open-event-frontend | https://api.github.com/repos/fossasia/open-event-frontend | reopened | Show test/live key of Stripe under Payments section | bug Priority: High Priority: Urgent | **Describe the bug**

Show test/live key of Stripe under Payments section depending on the environment being used, if local/test.eventyay.com is used - show the test keys else show live keys. Currently, the live keys are shown in both environments.

. Right now you can submit grades for students that are not shown if you had selected them before filtering.

... | 1.0 | Do not submit grades that are not shown - ### Component

Grade submission in main screen.

### Summary

When submitting grades with partial grading enabled, we should always apply the same filter as shown (e.g. when filtering by group or student name). Right now you can submit grades for students that are not shown i... | priority | do not submit grades that are not shown component grade submission in main screen summary when submitting grades with partial grading enabled we should always apply the same filter as shown e g when filtering by group or student name right now you can submit grades for students that are not shown i... | 1 |

23,796 | 12,123,741,009 | IssuesEvent | 2020-04-22 13:12:14 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | Lazy loading React components | Team:AppArch Team:Geo Team:Platform discuss performance | I created this issue to discuss the lazy loading strategy for react components used by different registries in Kibana.

If you [build Kibana platform plugins locally and check their sizes](https://github.com/elastic/kibana/blob/master/src/core/MIGRATION.md#how-to-understand-how-big-the-bundle-size-of-my-plugin-is). You... | True | Lazy loading React components - I created this issue to discuss the lazy loading strategy for react components used by different registries in Kibana.

If you [build Kibana platform plugins locally and check their sizes](https://github.com/elastic/kibana/blob/master/src/core/MIGRATION.md#how-to-understand-how-big-the-... | non_priority | lazy loading react components i created this issue to discuss the lazy loading strategy for react components used by different registries in kibana if you you can notice that maps plugin size reaches that s mostly due to new optimizer architecture including all the plugin dependencies in the bundle ... | 0 |

596,289 | 18,102,086,265 | IssuesEvent | 2021-09-22 15:08:41 | fgpv-vpgf/fgpv-vpgf | https://api.github.com/repos/fgpv-vpgf/fgpv-vpgf | opened | Enhance Graphic SVG Regex | problem: bug bug-type: broken use case priority: low type: corrective | Function `_isUrl` in `graphicsRecord.js` contains some regex that tries to validate if a string is a valid image, either a url to an image or a data url containing encoded treats.

The data url regex will currently not match some valid data urls, including our default north pole flag lol.

This regex covers more ca... | 1.0 | Enhance Graphic SVG Regex - Function `_isUrl` in `graphicsRecord.js` contains some regex that tries to validate if a string is a valid image, either a url to an image or a data url containing encoded treats.

The data url regex will currently not match some valid data urls, including our default north pole flag lol.

... | priority | enhance graphic svg regex function isurl in graphicsrecord js contains some regex that tries to validate if a string is a valid image either a url to an image or a data url containing encoded treats the data url regex will currently not match some valid data urls including our default north pole flag lol ... | 1 |

300,256 | 25,954,948,198 | IssuesEvent | 2022-12-18 04:32:15 | nuvolaris/nuvolaris | https://api.github.com/repos/nuvolaris/nuvolaris | closed | An script to setup an OKD environment in AWS | nuvolaris-testing | Contribute an ansible script to the [nuvolaris-testing](https://github.com/nuvolaris/nuvolaris-testing) repo that builds in a test server accessible with ssh an OKD Kubernetes cluster to be usable as a test environment. It should export the kubernetes cluster access port and make available the key in /etc/kube/config, ... | 1.0 | An script to setup an OKD environment in AWS - Contribute an ansible script to the [nuvolaris-testing](https://github.com/nuvolaris/nuvolaris-testing) repo that builds in a test server accessible with ssh an OKD Kubernetes cluster to be usable as a test environment. It should export the kubernetes cluster access port a... | non_priority | an script to setup an okd environment in aws contribute an ansible script to the repo that builds in a test server accessible with ssh an okd kubernetes cluster to be usable as a test environment it should export the kubernetes cluster access port and make available the key in etc kube config so with an scp s... | 0 |

767,093 | 26,910,601,122 | IssuesEvent | 2023-02-06 23:15:01 | projectLEMDO/lemdoIssues | https://api.github.com/repos/projectLEMDO/lemdoIssues | closed | Need to constrain image height in PDF rendering | bug Priority: urgent fix committed PDF rendering | Building the latest PDF has resulted in a lot of warnings like this:

`LaTeX Warning: Float too large for page`

and it's mostly because while I'm setting the width for images to 0.9 of textwidth, I'm not setting a maximum height. This provides a useful guide:

https://infolatex.com/set-a-maximum-width-and-height... | 1.0 | Need to constrain image height in PDF rendering - Building the latest PDF has resulted in a lot of warnings like this:

`LaTeX Warning: Float too large for page`

and it's mostly because while I'm setting the width for images to 0.9 of textwidth, I'm not setting a maximum height. This provides a useful guide:

ht... | priority | need to constrain image height in pdf rendering building the latest pdf has resulted in a lot of warnings like this latex warning float too large for page and it s mostly because while i m setting the width for images to of textwidth i m not setting a maximum height this provides a useful guide ... | 1 |

641,988 | 20,864,176,743 | IssuesEvent | 2022-03-22 04:18:26 | angelewilliams/flashcards-starter | https://api.github.com/repos/angelewilliams/flashcards-starter | opened | Turn Class and Turn Test | iteration2 high priority | Should be:

Instantiated with two arguments - a string (that represents a user’s guess to the question), and a Card object for the current card in play.

returnGuess: method that returns the guess

returnCard: method that returns the Card

evaluateGuess: method that returns a boolean indicating if the user’s guess mat... | 1.0 | Turn Class and Turn Test - Should be:

Instantiated with two arguments - a string (that represents a user’s guess to the question), and a Card object for the current card in play.

returnGuess: method that returns the guess

returnCard: method that returns the Card

evaluateGuess: method that returns a boolean indicat... | priority | turn class and turn test should be instantiated with two arguments a string that represents a user’s guess to the question and a card object for the current card in play returnguess method that returns the guess returncard method that returns the card evaluateguess method that returns a boolean indicat... | 1 |

631,098 | 20,143,986,936 | IssuesEvent | 2022-02-09 04:20:12 | brave/brave-ios | https://api.github.com/repos/brave/brave-ios | closed | [feature/webauthn] Touch key modal does not show up if the key is already plugged in | security priority/P4 sec-low QA/No release-notes/exclude Epic: WebAuthn | <!-- Have you searched for similar issues on the repository?

Before submitting this issue, please visit our wiki for common ones: https://github.com/brave/browser-ios/wiki

For more, check out our community site: https://community.brave.com/ -->

### Description:

Touch key modal should show up if the lightning key... | 1.0 | [feature/webauthn] Touch key modal does not show up if the key is already plugged in - <!-- Have you searched for similar issues on the repository?

Before submitting this issue, please visit our wiki for common ones: https://github.com/brave/browser-ios/wiki

For more, check out our community site: https://community.b... | priority | touch key modal does not show up if the key is already plugged in have you searched for similar issues on the repository before submitting this issue please visit our wiki for common ones for more check out our community site description touch key modal should show up if the lightning ... | 1 |

530,786 | 15,436,692,368 | IssuesEvent | 2021-03-07 14:01:34 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | [0.9.4 Develop-203]Missing marker on map when editing Deed or marking district on Zoning office | Category: Gameplay Priority: High Regression Type: Bug | Build: 0.9.4 Develop-203

### Issues

1. When editing Deed or marking district area via Zoning Office, the map UI opens but the marker is missing. The market where you either mark an area for the selected district and increase or decrease the area of a deed.

2. When trying to claim via Deed edit, pressing and holding th... | 1.0 | [0.9.4 Develop-203]Missing marker on map when editing Deed or marking district on Zoning office - Build: 0.9.4 Develop-203

### Issues

1. When editing Deed or marking district area via Zoning Office, the map UI opens but the marker is missing. The market where you either mark an area for the selected district and incre... | priority | missing marker on map when editing deed or marking district on zoning office build develop issues when editing deed or marking district area via zoning office the map ui opens but the marker is missing the market where you either mark an area for the selected district and increase or decrease the ... | 1 |

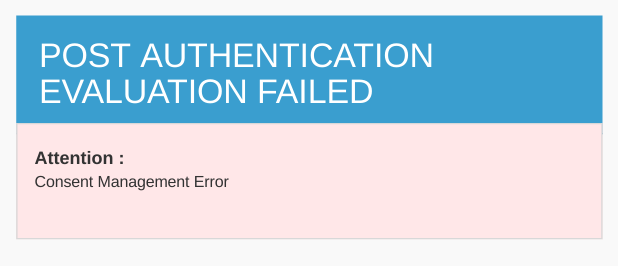

214,205 | 7,267,780,871 | IssuesEvent | 2018-02-20 07:19:06 | wso2/product-is | https://api.github.com/repos/wso2/product-is | opened | Need a meaningful error message when the user denies to share data | Affected/5.5.0-Alpha Priority/High Severity/Major Type/Bug | Need a meaningful error message when the user denies to share data. The message should mention the reason for the failure; that you cannot proceed as you cannot share the information.

| 1.0 | Need a meaningful error message when the user denies to share data - Need a meaningful error message when the user denies to share data. The message should mention the reason for the failure; that you cannot proceed as you cannot share the information.

. When I want to continute drawing a line that already has ~200+ nodes in it, each new node I draw takes about 2-3 seconds to... | True | iD performance issue with long lines - Hello,

after a short discussion in the OSM Discord group I was referred here to address my problem.

I am experiencing an issue that affects the performance of iD (Windows 10, Google Chrome). When I want to continute drawing a line that already has ~200+ nodes in it, each new... | non_priority | id performance issue with long lines hello after a short discussion in the osm discord group i was referred here to address my problem i am experiencing an issue that affects the performance of id windows google chrome when i want to continute drawing a line that already has nodes in it each new no... | 0 |

130,023 | 5,108,190,749 | IssuesEvent | 2017-01-05 16:58:42 | semihalf-berestovskyy-andriy/test2 | https://api.github.com/repos/semihalf-berestovskyy-andriy/test2 | closed | can not show fastpath and get some error | docs & examples low priority | Note: the issue was imported automatically from Bugzilla with bugzilla2issues.py tool

# Bugzilla Bug ID: 95

Date: 2016-09-20 08:32:08 +0200

From: xiaowen yang <xiaowen.1.yang@gmail.com>

To: Sorin Vultureanu <sorin.vultureanu@enea.com>

CC: xiaowen.1.yang@gmail.com

Last updated: 2016-09-30 11:17:38 +0200

## Bugzi... | 1.0 | can not show fastpath and get some error - Note: the issue was imported automatically from Bugzilla with bugzilla2issues.py tool

# Bugzilla Bug ID: 95

Date: 2016-09-20 08:32:08 +0200

From: xiaowen yang <xiaowen.1.yang@gmail.com>

To: Sorin Vultureanu <sorin.vultureanu@enea.com>

CC: xiaowen.1.yang@gmail.com

Last up... | priority | can not show fastpath and get some error note the issue was imported automatically from bugzilla with py tool bugzilla bug id date from xiaowen yang to sorin vultureanu cc xiaowen yang gmail com last updated bugzilla comment id date from ... | 1 |

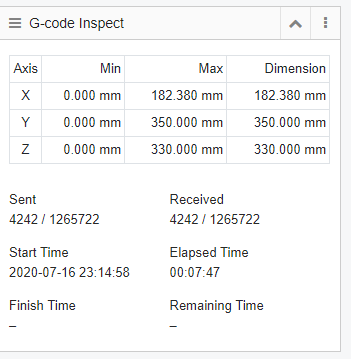

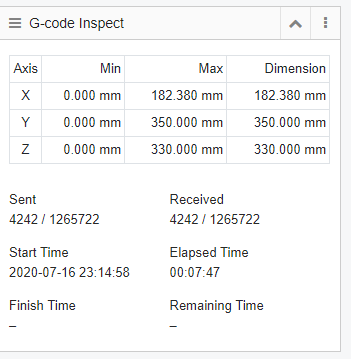

791,506 | 27,865,782,880 | IssuesEvent | 2023-03-21 10:09:19 | Snapmaker/Luban | https://api.github.com/repos/Snapmaker/Luban | closed | Bug: Show estimated remaining time when running Gcode directly from Luban | Type: Bug/Bug Fix Priority: Medium | At the moment this is what you see when starting the Gcode while connected to the machine directly from Luban:

It would be nice to get an estimated time for finish time and remaining time, this could be a si... | 1.0 | Bug: Show estimated remaining time when running Gcode directly from Luban - At the moment this is what you see when starting the Gcode while connected to the machine directly from Luban:

It would be nice to ... | priority | bug show estimated remaining time when running gcode directly from luban at the moment this is what you see when starting the gcode while connected to the machine directly from luban it would be nice to get an estimated time for finish time and remaining time this could be a simple calculation based on re... | 1 |

153,254 | 5,887,988,248 | IssuesEvent | 2017-05-17 09:00:12 | MatthijsKok/TI2806-Contextproject | https://api.github.com/repos/MatthijsKok/TI2806-Contextproject | closed | API key Research | 5h priority:high ready story | Find out if 1 API key can be used for multiple IP addresses. As we cannot estimate the implementation there is no way of telling if it can be implemented immediately

| 1.0 | API key Research - Find out if 1 API key can be used for multiple IP addresses. As we cannot estimate the implementation there is no way of telling if it can be implemented immediately

| priority | api key research find out if api key can be used for multiple ip addresses as we cannot estimate the implementation there is no way of telling if it can be implemented immediately | 1 |

30,603 | 7,236,338,346 | IssuesEvent | 2018-02-13 06:25:43 | Microsoft/vscode-python | https://api.github.com/repos/Microsoft/vscode-python | closed | Add an option to exclude certain kinds of `ctags` | awaiting 2-PR feature-code navigation good first issue type-enhancement | This is a feature request.

Currently, `ctags` generates all kinds of tags for Python code: class, function, class member, variable, import, file, etc.

When doing "Go To Symbol in Workspace" I'm only interested in definitions, such as class and function definitions and class members.

Allowing to specify what ki... | 1.0 | Add an option to exclude certain kinds of `ctags` - This is a feature request.

Currently, `ctags` generates all kinds of tags for Python code: class, function, class member, variable, import, file, etc.

When doing "Go To Symbol in Workspace" I'm only interested in definitions, such as class and function definitio... | non_priority | add an option to exclude certain kinds of ctags this is a feature request currently ctags generates all kinds of tags for python code class function class member variable import file etc when doing go to symbol in workspace i m only interested in definitions such as class and function definitio... | 0 |

225,655 | 24,881,071,391 | IssuesEvent | 2022-10-28 01:10:24 | Nivaskumark/kernel_v4.1.15 | https://api.github.com/repos/Nivaskumark/kernel_v4.1.15 | opened | CVE-2022-3646 (Medium) detected in linuxlinux-4.6 | security vulnerability | ## CVE-2022-3646 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kerne... | True | CVE-2022-3646 (Medium) detected in linuxlinux-4.6 - ## CVE-2022-3646 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>L... | non_priority | cve medium detected in linuxlinux cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in base branch master vulnerable source files fs segment c fs segment c ... | 0 |

135,529 | 5,253,992,919 | IssuesEvent | 2017-02-02 11:23:40 | hpi-swt2/workshop-portal | https://api.github.com/repos/hpi-swt2/workshop-portal | closed | Fix Fonts | High Priority needs acceptance team-hendrik | **Acceptance Criteria**

- [ ] make sure that all fonts are loaded correctly, especially "Roboto Slab" and "Open Sans"

- [ ] fix font loading via Google Fonts

- [ ] if not possible: download fonts and serve them from our own server | 1.0 | Fix Fonts - **Acceptance Criteria**

- [ ] make sure that all fonts are loaded correctly, especially "Roboto Slab" and "Open Sans"

- [ ] fix font loading via Google Fonts

- [ ] if not possible: download fonts and serve them from our own server | priority | fix fonts acceptance criteria make sure that all fonts are loaded correctly especially roboto slab and open sans fix font loading via google fonts if not possible download fonts and serve them from our own server | 1 |

386,390 | 11,437,931,354 | IssuesEvent | 2020-02-05 01:36:38 | openmsupply/mobile | https://api.github.com/repos/openmsupply/mobile | closed | Dispensary state not cleared when navigating away | Bug: development Docs: not needed Effort: small Module: dispensary Priority: high | ## Describe the bug

When navigating away from the dispensary page, the redux state is not reset

### To reproduce

Dispensary development bug

### Expected behaviour

Dispensary development bug

### Proposed Solution

Dispensary development bug

### Version and device info

Dispensary development bug... | 1.0 | Dispensary state not cleared when navigating away - ## Describe the bug

When navigating away from the dispensary page, the redux state is not reset

### To reproduce

Dispensary development bug

### Expected behaviour

Dispensary development bug

### Proposed Solution

Dispensary development bug

### V... | priority | dispensary state not cleared when navigating away describe the bug when navigating away from the dispensary page the redux state is not reset to reproduce dispensary development bug expected behaviour dispensary development bug proposed solution dispensary development bug v... | 1 |

16,528 | 6,220,578,719 | IssuesEvent | 2017-07-10 00:10:55 | atom/atom | https://api.github.com/repos/atom/atom | closed | chmod build for deb or build in place instead of /tmp | build-error linux stale | The assumption of your build scripts is that all people use the default insecure permissions provided by Ubuntu but this is not true, quite a few of us default all user `umask`'s to `0077` and use `getfacl` and `setfacl` to adjust per directory permissions inside of the home directory, this results in dpkg going nuts u... | 1.0 | chmod build for deb or build in place instead of /tmp - The assumption of your build scripts is that all people use the default insecure permissions provided by Ubuntu but this is not true, quite a few of us default all user `umask`'s to `0077` and use `getfacl` and `setfacl` to adjust per directory permissions inside ... | non_priority | chmod build for deb or build in place instead of tmp the assumption of your build scripts is that all people use the default insecure permissions provided by ubuntu but this is not true quite a few of us default all user umask s to and use getfacl and setfacl to adjust per directory permissions inside of ... | 0 |

673,447 | 22,969,886,105 | IssuesEvent | 2022-07-20 01:21:55 | stackcollision/Nebulous-BugReporting | https://api.github.com/repos/stackcollision/Nebulous-BugReporting | reopened | Missiles with Semi-active RADAR primary seekers and other different forms of RADAR backup seekers still displays errors. | bug branch-modmis incorrect behavior priority high | **Describe the bug**

Missiles with Semi-active RADAR primary seekers and other different forms of RADAR backup seekers still displays errors.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to Missile Designer

2. Create new hybrid missile (Cyclone, Atlatl) and put primary seeker as Semi-active RADAR and ... | 1.0 | Missiles with Semi-active RADAR primary seekers and other different forms of RADAR backup seekers still displays errors. - **Describe the bug**

Missiles with Semi-active RADAR primary seekers and other different forms of RADAR backup seekers still displays errors.

**To Reproduce**

Steps to reproduce the behavior:

... | priority | missiles with semi active radar primary seekers and other different forms of radar backup seekers still displays errors describe the bug missiles with semi active radar primary seekers and other different forms of radar backup seekers still displays errors to reproduce steps to reproduce the behavior ... | 1 |

125,634 | 16,822,421,870 | IssuesEvent | 2021-06-17 14:31:02 | ProjectMirador/mirador | https://api.github.com/repos/ProjectMirador/mirador | closed | Research and design a new two-up view that does not do book view calculations. | Mirador3 design needed user experience | It may be useful for Mirador users to have a two-up view within an object where the second canvas is not the back of the first canvas.

An example here is the scanning and representation of a booklike thing was scanned published in a non-IIIF `paged` compatible way. Or maybe there are two images next two each other ... | 1.0 | Research and design a new two-up view that does not do book view calculations. - It may be useful for Mirador users to have a two-up view within an object where the second canvas is not the back of the first canvas.

An example here is the scanning and representation of a booklike thing was scanned published in a no... | non_priority | research and design a new two up view that does not do book view calculations it may be useful for mirador users to have a two up view within an object where the second canvas is not the back of the first canvas an example here is the scanning and representation of a booklike thing was scanned published in a no... | 0 |

154,132 | 5,911,830,367 | IssuesEvent | 2017-05-21 01:16:37 | HaxeFoundation/intellij-haxe | https://api.github.com/repos/HaxeFoundation/intellij-haxe | closed | For in loop type inference only works for arrays | bug Priority 3 | Type inference works fine for the following because it's an array. Item will be recognized as a String and will give code completion for the "indexOf" method.

```

var array : Array<String> = ["item"];

for (item in array) {

var index = item.indexOf("e"); // works fine

}

```

However, on a map, the same thing does n... | 1.0 | For in loop type inference only works for arrays - Type inference works fine for the following because it's an array. Item will be recognized as a String and will give code completion for the "indexOf" method.

```

var array : Array<String> = ["item"];

for (item in array) {

var index = item.indexOf("e"); // works f... | priority | for in loop type inference only works for arrays type inference works fine for the following because it s an array item will be recognized as a string and will give code completion for the indexof method var array array for item in array var index item indexof e works fine how... | 1 |

772,644 | 27,130,408,412 | IssuesEvent | 2023-02-16 09:22:48 | thebaselab/codeapp | https://api.github.com/repos/thebaselab/codeapp | closed | Feature Request: SSH Improvements | enhancement priority | This app would be AMAZING if it had better SSH/Remote connections support. If you could mimic VS Code's remote connections plugin this would be great.

Beyond that minor SSH improvements would be awesome, for example pressing tab to auto complete and adding arrow keys on the keyboard to go up/down. | 1.0 | Feature Request: SSH Improvements - This app would be AMAZING if it had better SSH/Remote connections support. If you could mimic VS Code's remote connections plugin this would be great.

Beyond that minor SSH improvements would be awesome, for example pressing tab to auto complete and adding arrow keys on the keyboar... | priority | feature request ssh improvements this app would be amazing if it had better ssh remote connections support if you could mimic vs code s remote connections plugin this would be great beyond that minor ssh improvements would be awesome for example pressing tab to auto complete and adding arrow keys on the keyboar... | 1 |

245,339 | 20,763,371,290 | IssuesEvent | 2022-03-15 18:12:18 | MRC-CSO-SPHSU/jamjam | https://api.github.com/repos/MRC-CSO-SPHSU/jamjam | opened | Add a test for absolute errors | component:tests | This should be a separate utility class with auxiliary functions only. | 1.0 | Add a test for absolute errors - This should be a separate utility class with auxiliary functions only. | non_priority | add a test for absolute errors this should be a separate utility class with auxiliary functions only | 0 |

262,768 | 19,830,882,299 | IssuesEvent | 2022-01-20 11:51:42 | ngneat/falso | https://api.github.com/repos/ngneat/falso | closed | Interactive Playground within the Docs | documentation enhancement | ### Description

https://dev.to/mrmuhammadali/live-code-editing-in-docusaurus-ux-at-its-best-2hj1

### Proposed solution

_No response_

### Alternatives considered

_No response_

### Do you want to create a pull request?

No | 1.0 | Interactive Playground within the Docs - ### Description

https://dev.to/mrmuhammadali/live-code-editing-in-docusaurus-ux-at-its-best-2hj1

### Proposed solution

_No response_

### Alternatives considered

_No response_

### Do you want to create a pull request?

No | non_priority | interactive playground within the docs description proposed solution no response alternatives considered no response do you want to create a pull request no | 0 |

534,356 | 15,615,084,045 | IssuesEvent | 2021-03-19 18:38:31 | GoogleContainerTools/skaffold | https://api.github.com/repos/GoogleContainerTools/skaffold | closed | Using pre-existing deployments | area/deploy area/modules kind/feature-request priority/p1 | Hello!

Let's say I already have a shared Dev remote cluster running some deployments (say App1, App2 and App3) that all communicate with each other.

Is it possible to temporary "replace" the cluster's App2 by a local App2 using Skaffold?

I may have misunderstood the documentation but I'm afraid I'll have to re-d... | 1.0 | Using pre-existing deployments - Hello!

Let's say I already have a shared Dev remote cluster running some deployments (say App1, App2 and App3) that all communicate with each other.

Is it possible to temporary "replace" the cluster's App2 by a local App2 using Skaffold?

I may have misunderstood the documentation... | priority | using pre existing deployments hello let s say i already have a shared dev remote cluster running some deployments say and that all communicate with each other is it possible to temporary replace the cluster s by a local using skaffold i may have misunderstood the documentation but i m afraid... | 1 |

171,069 | 27,056,940,347 | IssuesEvent | 2023-02-13 16:47:30 | microsoft/pyright | https://api.github.com/repos/microsoft/pyright | closed | Cannot use type variable for the init argument type and method type | as designed | **Describe the bug**

I created a class that receives any subclass instance of `Exception` class as an argument when initializing the class.

I defined a property that returns the exception and I want to use a type variable because those two types should be the same, but the pyright error occurs.

```

Type "T@__init__... | 1.0 | Cannot use type variable for the init argument type and method type - **Describe the bug**

I created a class that receives any subclass instance of `Exception` class as an argument when initializing the class.

I defined a property that returns the exception and I want to use a type variable because those two types sh... | non_priority | cannot use type variable for the init argument type and method type describe the bug i created a class that receives any subclass instance of exception class as an argument when initializing the class i defined a property that returns the exception and i want to use a type variable because those two types sh... | 0 |

195,071 | 14,701,879,417 | IssuesEvent | 2021-01-04 12:40:16 | microcks/microcks | https://api.github.com/repos/microcks/microcks | closed | Reported test duration for asynchronous tests is not correct | component/tests component/ux kind/bug | When dealing with asynchronous tests, the total test duration that is reported is not correct.

Each and every message has a duration assigned to the maximum timeout > that's not correct

and the total test duration is a sum of message tests duration > that's not correct too.

<img width="1046" alt="Capture d’écran... | 1.0 | Reported test duration for asynchronous tests is not correct - When dealing with asynchronous tests, the total test duration that is reported is not correct.

Each and every message has a duration assigned to the maximum timeout > that's not correct

and the total test duration is a sum of message tests duration > tha... | non_priority | reported test duration for asynchronous tests is not correct when dealing with asynchronous tests the total test duration that is reported is not correct each and every message as a duration assigned to the maximum timeout that s not correct and the total test duration is a sum of message tests duration tha... | 0 |

659,724 | 21,939,289,953 | IssuesEvent | 2022-05-23 16:22:45 | hashicorp/terraform-provider-google | https://api.github.com/repos/hashicorp/terraform-provider-google | opened | Add support for `tpu_config` to `google_container_cluster` | enhancement priority/0 | <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not l... | 1.0 | Add support for `tpu_config` to `google_container_cluster` - <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the communi... | priority | add support for tpu config to google container cluster community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or me too comments they generate extra noise for issue followers and d... | 1 |

255,893 | 8,126,570,711 | IssuesEvent | 2018-08-17 03:05:11 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | Modify build_visit to use vtkjpeg with NetCDF on all platforms | Bug Likelihood: 3 - Occasional Priority: Normal Severity: 2 - Minor Irritation | There are some systems other than Macs that don't have libjpeg installed on them so we should switch to using libvtkjpeg on all platforms in build_visit.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in t... | 1.0 | Modify build_visit to use vtkjpeg with NetCDF on all platforms - There are some systems other than Macs that don't have libjpeg installed on them so we should switch to using libvtkjpeg on all platforms in build_visit.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Re... | priority | modify build visit to use vtkjpeg with netcdf on all platforms there are some systems other than macs that don t have libjpeg installed on them so we should switch to using libvtkjpeg on all platforms in build visit redmine migration this ticket was migrated from re... | 1 |

578,751 | 17,152,983,562 | IssuesEvent | 2021-07-14 00:23:25 | hep-gc/glint | https://api.github.com/repos/hep-gc/glint | closed | No error message when failing to create repository | Priority-Low enhancement | In e.g. #56 , there should be some kind of error message displayed instead of just nothing happening at all.

| 1.0 | No error message when failing to create repository - In e.g. #56 , there should be some kind of error message displayed instead of just nothing happening at all.

| priority | no error message when failing to create repository in e g there should be some kind of error message displayed instead of just nothing happening at all | 1 |

263,486 | 8,290,227,207 | IssuesEvent | 2018-09-19 16:43:27 | CyberReboot/poseidon | https://api.github.com/repos/CyberReboot/poseidon | closed | poseidon throws a logging error when the log file hits 10M | bug high-priority | poseidon[31429]: --- Logging error ---

poseidon[31429]: Traceback (most recent call last):

poseidon[31429]: File "/usr/lib/python3.6/logging/handlers.py", line 72, in emit

poseidon[31429]: self.doRollover()

poseidon[31429]: File "/usr/lib/python3.6/logging/handlers.py", line 173, in doRollover

poseidon[31429]: sel... | 1.0 | poseidon throws a logging error when the log file hits 10M - poseidon[31429]: --- Logging error ---

poseidon[31429]: Traceback (most recent call last):

poseidon[31429]: File "/usr/lib/python3.6/logging/handlers.py", line 72, in emit

poseidon[31429]: self.doRollover()

poseidon[31429]: File "/usr/lib/python3.6/loggin... | priority | poseidon throws a logging error when the log file hits poseidon logging error poseidon traceback most recent call last poseidon file usr lib logging handlers py line in emit poseidon self dorollover poseidon file usr lib logging handlers py line in dorollover poseid... | 1 |

566,955 | 16,835,047,482 | IssuesEvent | 2021-06-18 10:50:40 | Beep6581/RawTherapee | https://api.github.com/repos/Beep6581/RawTherapee | opened | Nightly builds on Windows create 5-dev-dev cache folder | priority: medium scope: distribution | As reported here https://discuss.pixls.us/t/cant-start-the-latest-dev-version-of-rawtherapee-for-win64/24746/30, using our nightly builds creates a `RawTherapee5-dev-dev` folder in `%LOCALAPPDATA%`. The expected suffix is `5-dev` as per http://rawpedia.rawtherapee.com/File_Paths.

Ping @aferrero2707 and @Beep6581, ca... | 1.0 | Nightly builds on Windows create 5-dev-dev cache folder - As reported here https://discuss.pixls.us/t/cant-start-the-latest-dev-version-of-rawtherapee-for-win64/24746/30, using our nightly builds creates a `RawTherapee5-dev-dev` folder in `%LOCALAPPDATA%`. The expected suffix is `5-dev` as per http://rawpedia.rawtherap... | priority | nightly builds on windows create dev dev cache folder as reported here using our nightly builds creates a dev dev folder in localappdata the expected suffix is dev as per ping and can you investigate comment i haven t tested your builds how do they behave contrary to what is re... | 1 |

258,745 | 8,179,392,792 | IssuesEvent | 2018-08-28 16:18:04 | LBHackney-IT/lbh-pattern-library | https://api.github.com/repos/LBHackney-IT/lbh-pattern-library | closed | Configure theme | Priority: High Status: Available Type: Enhancement | Check https://github.com/AcasDigital/acas-component-library

- [x] Hackney colour

- [x] Hackney logo

- [x] How to add/import image?

- [x] Favicon | 1.0 | Configure theme - Check https://github.com/AcasDigital/acas-component-library

- [x] Hackney colour

- [x] Hackney logo

- [x] How to add/import image?

- [x] Favicon | priority | configure theme check hackney colour hackney logo how to add import image favicon | 1 |

702,360 | 24,120,934,505 | IssuesEvent | 2022-09-20 18:38:30 | googleapis/nodejs-billing-budgets | https://api.github.com/repos/googleapis/nodejs-billing-budgets | closed | Integration Tests: should try to list billing account failed | type: bug priority: p1 api: cloudbilling flakybot: issue flakybot: flaky | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 70cac3b65e02641f0e573ed3bbf32be84729da00

b... | 1.0 | Integration Tests: should try to list billing account failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop comme... | priority | integration tests should try to list billing account failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output expected unauthenticated reques... | 1 |

28,082 | 5,184,886,053 | IssuesEvent | 2017-01-20 08:25:34 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | CachedExecutorServiceDelegateTest shutdownNow() | Team: Core Type: Defect | from https://hazelcast-l337.ci.cloudbees.com/job/new-lab-fast-pr/6849/testReport/junit/com.hazelcast.util.executor/CachedExecutorServiceDelegateTest/shutdownNow/

```

com.hazelcast.util.executor.CachedExecutorServiceDelegateTest.shutdownNow

Failing for the past 1 build (Since Failed#6849 )

Took 0.11 sec.

Error ... | 1.0 | CachedExecutorServiceDelegateTest shutdownNow() - from https://hazelcast-l337.ci.cloudbees.com/job/new-lab-fast-pr/6849/testReport/junit/com.hazelcast.util.executor/CachedExecutorServiceDelegateTest/shutdownNow/

```

com.hazelcast.util.executor.CachedExecutorServiceDelegateTest.shutdownNow

Failing for the past 1 ... | non_priority | cachedexecutorservicedelegatetest shutdownnow from com hazelcast util executor cachedexecutorservicedelegatetest shutdownnow failing for the past build since failed took sec error message expected but was stacktrace java lang assertionerror expected but was at org junit ... | 0 |

80,494 | 30,307,583,429 | IssuesEvent | 2023-07-10 10:32:11 | vector-im/element-call | https://api.github.com/repos/vector-im/element-call | opened | Glitch in tiling, in spotlight mode, when speaker has video muted | T-Defect | ### Steps to reproduce

View in spotlight mode.

The tile for speakers with video muted tends to jump position, somewhat erratically. Glitch confirmed by other call participants. Recorded below:

https://drive.google.com/file/d/17ZWPIcBQBdhdgfEXC4qUWIvkzwmrFSJ9/view?usp=sharing

Recorded at https://element-call-l... | 1.0 | Glitch in tiling, in spotlight mode, when speaker has video muted - ### Steps to reproduce

View in spotlight mode.

The tile for speakers with video muted tends to jump position, somewhat erratically. Glitch confirmed by other call participants. Recorded below:

https://drive.google.com/file/d/17ZWPIcBQBdhdgfEXC4q... | non_priority | glitch in tiling in spotlight mode when speaker has video muted steps to reproduce view in spotlight mode the tile for speakers with video muted tends to jump position somewhat erratically glitch confirmed by other call participants recorded below recorded at outcome what did you e... | 0 |

566,271 | 16,817,165,078 | IssuesEvent | 2021-06-17 08:45:45 | IgniteUI/igniteui-angular | https://api.github.com/repos/IgniteUI/igniteui-angular | closed | IgxSelect dropdown is not positioned correctly in IE11 | browser: IE-11 bug priority: low select status: resolved version: 11.1.x version: 12.0.x version: 12.1.x | ## Description

IgxSelect dropdown is not positioned correctly in IE11.

* igniteui-angular version: 11.1.3

* browser: IE11

## Steps to reproduce

1. Start the attached reproducible sample and open http://localhost:4200/ in IE11.

2. Open the dropdown.

3. Select an item around at the end of the list. For... | 1.0 | IgxSelect dropdown is not positioned correctly in IE11 - ## Description

IgxSelect dropdown is not positioned correctly in IE11.

* igniteui-angular version: 11.1.3

* browser: IE11

## Steps to reproduce

1. Start the attached reproducible sample and open http://localhost:4200/ in IE11.

2. Open the dropdo... | priority | igxselect dropdown is not positioned correctly in description igxselect dropdown is not positioned correctly in igniteui angular version browser steps to reproduce start the attached reproducible sample and open in open the dropdown select an item around at t... | 1 |

567,547 | 16,870,468,255 | IssuesEvent | 2021-06-22 03:23:53 | VEuPathDB/plot.data | https://api.github.com/repos/VEuPathDB/plot.data | closed | improve unit tests | good first issue priority 1 | check for returning num bins as well as bin width, null viewports, dates as input in addition to numbers etc | 1.0 | improve unit tests - check for returning num bins as well as bin width, null viewports, dates as input in addition to numbers etc | priority | improve unit tests check for returning num bins as well as bin width null viewports dates as input in addition to numbers etc | 1 |

1,883 | 3,025,181,446 | IssuesEvent | 2015-08-03 06:15:30 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 21523690: Safari should display UIActivityViewController when inline links are long-pressed | classification:ui/usability reproducible:always status:open | #### Description

Summary:

When an inline link is long-pressed in Safari, an action sheet with only a few built-in options is displayed. It would be much better if a UIActivityViewController was presented instead; this would allow all of the share and action extensions that the user has enabled to act on the URL being ... | True | 21523690: Safari should display UIActivityViewController when inline links are long-pressed - #### Description

Summary:

When an inline link is long-pressed in Safari, an action sheet with only a few built-in options is displayed. It would be much better if a UIActivityViewController was presented instead; this would a... | non_priority | safari should display uiactivityviewcontroller when inline links are long pressed description summary when an inline link is long pressed in safari an action sheet with only a few built in options is displayed it would be much better if a uiactivityviewcontroller was presented instead this would allow al... | 0 |

79,706 | 7,723,869,910 | IssuesEvent | 2018-05-24 13:40:50 | khartec/waltz | https://api.github.com/repos/khartec/waltz | closed | Change Initiatives: add logical flows and indicator sections | fixed (test & close) noteworthy waiting on client | `dynamicSections.logicalFlowsTabgroupSection`

| 1.0 | Change Initiatives: add logical flows and indicator sections - `dynamicSections.logicalFlowsTabgroupSection`

| non_priority | change initiatives add logical flows and indicator sections dynamicsections logicalflowstabgroupsection | 0 |

258,749 | 8,179,401,721 | IssuesEvent | 2018-08-28 16:20:27 | mozilla/addons-server | https://api.github.com/repos/mozilla/addons-server | closed | Theme name form Logs-Reviews redirects to a 404 page after deletion | component: reviewer tools priority: p4 state: wontfix triaged type: bug | Steps to reproduce:

1. Submit a theme and review it

2. Delete it

3. Go to Reviewer Tools - Logs - Reviews https://addons.allizom.org/en-US/editors/themes/logs

4. Click on the deleted theme name

Expected results:

**Theme name is no longer a link or it is a link but clicking on it reloads the same page (as it hap... | 1.0 | Theme name form Logs-Reviews redirects to a 404 page after deletion - Steps to reproduce:

1. Submit a theme and review it

2. Delete it

3. Go to Reviewer Tools - Logs - Reviews https://addons.allizom.org/en-US/editors/themes/logs

4. Click on the deleted theme name

Expected results:

**Theme name is no longer a li... | priority | theme name form logs reviews redirects to a page after deletion steps to reproduce submit a theme and review it delete it go to reviewer tools logs reviews click on the deleted theme name expected results theme name is no longer a link or it is a link but clicking on it reloads the sam... | 1 |

149,090 | 11,882,171,636 | IssuesEvent | 2020-03-27 13:59:06 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/machine_learning/anomaly_detection/advanced_job·ts - machine learning anomaly detection advanced job with categorization detector and default datafeed settings job creation displays the pick fields step | :ml blocker failed-test skipped-test | A test failed on a tracked branch

```

Error: expected testSubject(mlJobWizardStepTitlePickFields) to exist

at TestSubjects.existOrFail (/dev/shm/workspace/kibana/test/functional/services/test_subjects.ts:62:15)

```

First failure: [Jenkins Build](https://kibana-ci.elastic.co/job/elastic+kibana+7.x/3932/)

<!-- kib... | 2.0 | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/machine_learning/anomaly_detection/advanced_job·ts - machine learning anomaly detection advanced job with categorization detector and default datafeed settings job creation displays the pick fields step - A test failed on a tracked branch

```

... | non_priority | failing test chrome x pack ui functional tests x pack test functional apps machine learning anomaly detection advanced job·ts machine learning anomaly detection advanced job with categorization detector and default datafeed settings job creation displays the pick fields step a test failed on a tracked branch ... | 0 |

315,647 | 9,630,241,096 | IssuesEvent | 2019-05-15 11:34:46 | containrrr/watchtower | https://api.github.com/repos/containrrr/watchtower | closed | No logs and containers not being updated | Priority: Low Status: Awaiting user Type: Question | Hi, I use a ansible role to deploy watchtower to my server, however I notice there is no logs with `docker logs watchtower`, zero output.

And my containers are not also being updated... I know this because one of the dockers I use changed their dockerfile and it did not update.

```

- name: Create container

d... | 1.0 | No logs and containers not being updated - Hi, I use a ansible role to deploy watchtower to my server, however I notice there is no logs with `docker logs watchtower`, zero output.

And my containers are not also being updated... I know this because one of the dockers I use changed their dockerfile and it did not up... | priority | no logs and containers not being updated hi i use a ansible role to deploy watchtower to my server however i notice there is no logs with docker logs watchtower zero output and my containers are not also being updated i know this because one of the dockers i use changed their dockerfile and it did not up... | 1 |

60,642 | 17,023,480,417 | IssuesEvent | 2021-07-03 02:14:55 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | [amenity-points] Some names wrap, some don't. | Component: mapnik Priority: major Resolution: duplicate Type: defect | **[Submitted to the original trac issue database at 1.20am, Friday, 18th September 2009]**

I've noticed that some names wrap vertically, almost with a line break on every word, and some don't wrap, making a long horizontal label.

For example:

http://osm.org/go/54z6C6pPp-

You can see Hyeseong Girls' Middle Sch... | 1.0 | [amenity-points] Some names wrap, some don't. - **[Submitted to the original trac issue database at 1.20am, Friday, 18th September 2009]**

I've noticed that some names wrap vertically, almost with a line break on every word, and some don't wrap, making a long horizontal label.

For example:

http://osm.org/go/54z6... | non_priority | some names wrap some don t i ve noticed that some names wrap vertically almost with a line break on every word and some don t wrap making a long horizontal label for example you can see hyeseong girls middle school gaenjiseu and yeongnak church render nice and compactly but eokjo fish re... | 0 |

72,928 | 3,393,034,524 | IssuesEvent | 2015-11-30 22:13:29 | ualbertalib/HydraNorth | https://api.github.com/repos/ualbertalib/HydraNorth | closed | add status facet to results page | priority:high size:small | (from #841) add status facet to results page (for public etc. - not sure if this is possible, since read_access_group_ssim has values for public, university_of_alberta and registered, but not for private - would have to look at how the search results display is being populated)

| 1.0 | add status facet to results page - (from #841) add status facet to results page (for public etc. - not sure if this is possible, since read_access_group_ssim has values for public, university_of_alberta and registered, but not for private - would have to look at how the search results display is being populated)

| priority | add status facet to results page from add status facet to results page for public etc not sure if this is possible since read access group ssim has values for public university of alberta and registered but not for private would have to look at how the search results display is being populated | 1 |

33,192 | 4,817,908,807 | IssuesEvent | 2016-11-04 15:00:52 | uProxy/uproxy | https://api.github.com/repos/uProxy/uproxy | closed | Reverse backpressure | C:SOCKS C:Testing P3 T:Obsolete | Right now, we have a backpressure implementation that takes effect when a TCP socket is feeding into a DataChannel, and the DataChannel is slower. This is sufficient for most cases. However, it is not sufficient if (1) the getter is uploading a large file, (2) the link between the getter and sharer is fast, and (3) t... | 1.0 | Reverse backpressure - Right now, we have a backpressure implementation that takes effect when a TCP socket is feeding into a DataChannel, and the DataChannel is slower. This is sufficient for most cases. However, it is not sufficient if (1) the getter is uploading a large file, (2) the link between the getter and sh... | non_priority | reverse backpressure right now we have a backpressure implementation that takes effect when a tcp socket is feeding into a datachannel and the datachannel is slower this is sufficient for most cases however it is not sufficient if the getter is uploading a large file the link between the getter and sh... | 0 |

289,140 | 8,855,196,522 | IssuesEvent | 2019-01-09 05:14:58 | visit-dav/issues-test | https://api.github.com/repos/visit-dav/issues-test | closed | Windows installer should have wbronze as the default bank for the LLNL host profiles. | bug likelihood medium priority reviewed severity low | I just installed VisIt 2.9.2 on my 64 bit Windows system and bdivp still appears as the default bank when installing the LLNL open host profiles. It should be wbronze.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be... | 1.0 | Windows installer should have wbronze as the default bank for the LLNL host profiles. - I just installed VisIt 2.9.2 on my 64 bit Windows system and bdivp still appears as the default bank when installing the LLNL open host profiles. It should be wbronze.

-----------------------REDMINE MIGRATION-------------------... | priority | windows installer should have wbronze as the default bank for the llnl host profiles i just installed visit on my bit windows system and bdivp still appears as the default bank when installing the llnl open host profiles it should be wbronze redmine migration ... | 1 |

37,037 | 2,814,446,981 | IssuesEvent | 2015-05-18 20:05:45 | geoffhumphrey/brewcompetitiononlineentry | https://api.github.com/repos/geoffhumphrey/brewcompetitiononlineentry | closed | The assigned Stewards list pulls through everyone who volunteered to be stewards.Judges etc, not just assigned stewards | auto-migrated Priority-Medium Type-Enhancement | ```

What steps will reproduce the problem?

1.

2.

3.

What is the expected output? What do you see instead?

What version of the product are you using? On what operating system?

Please provide any additional information below.

```

Original issue reported on code.google.com by `lee.Imm...@btinternet.com` on 23 Apr ... | 1.0 | The assigned Stewards list pulls through everyone who volunteered to be stewards.Judges etc, not just assigned stewards - ```

What steps will reproduce the problem?

1.

2.

3.

What is the expected output? What do you see instead?

What version of the product are you using? On what operating system?

Please provide any... | priority | the assigned stewards list pulls through everyone who volunteered to be stewards judges etc not just assigned stewards what steps will reproduce the problem what is the expected output what do you see instead what version of the product are you using on what operating system please provide any... | 1 |

35,719 | 5,004,945,662 | IssuesEvent | 2016-12-12 08:58:39 | Starcounter/Starcounter | https://api.github.com/repos/Starcounter/Starcounter | closed | Create regression tests based on Client Node and PMail | test | Open a session using Client side Node and force fixed session id. Run a series of deterministic http request and check all JSON (and JSON-Patch) results using exact string match.

Use PMail on the server side.

| 1.0 | Create regression tests based on Client Node and PMail - Open a session using Client side Node and force fixed session id. Run a series of deterministic http request and check all JSON (and JSON-Patch) results using exact string match.

Use PMail on the server side.

| non_priority | create regression tests based on client node and pmail open a session using client side node and force fixed session id run a series of deterministic http request and check all json and json patch results using exact string match use pmail on the server side | 0 |

64,312 | 6,899,294,069 | IssuesEvent | 2017-11-24 13:11:16 | geosolutions-it/GeoCollect | https://api.github.com/repos/geosolutions-it/GeoCollect | reopened | Add an administrative tool to create a survey record on demand | enhancement In Test Project: C040 | The administrator should be able to create a survey record with the same info of an item.

This is to have an history record without waiting for a survey to come back from the field. | 1.0 | Add an administrative tool to create a survey record on demand - The administrator should be able to create a survey record with the same info of an item.

This is to have an history record without waiting for a survey to come back from the field. | non_priority | add an administrative tool to create a survey record on demand the administrator should be able to create a survey record with the same info of an item this is to have an history record without waiting for a survey to come back from the field | 0 |

46,179 | 13,150,873,289 | IssuesEvent | 2020-08-09 13:57:52 | shaundmorris/ddf | https://api.github.com/repos/shaundmorris/ddf | closed | CVE-2018-9159 Medium Severity Vulnerability detected by WhiteSource | security vulnerability wontfix | ## CVE-2018-9159 - Medium Severity Vulnerability

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spark-core-2.5.5.jar</b></p></summary>

<p></p>

<p>path: /root/.m2/repository/com/sparkjava/spark-core/2.5.5/... | True | CVE-2018-9159 Medium Severity Vulnerability detected by WhiteSource - ## CVE-2018-9159 - Medium Severity Vulnerability

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spark-core-2.5.5.jar</b></p></summary>

... | non_priority | cve medium severity vulnerability detected by whitesource cve medium severity vulnerability vulnerable library spark core jar path root repository com sparkjava spark core spark core jar repository com sparkjava spark core spark core jar dependency ... | 0 |

361,492 | 10,709,466,218 | IssuesEvent | 2019-10-24 22:14:33 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.downtowndoglounge.com - Streaming video appears to buffer, but never plays | browser-fenix engine-gecko priority-normal severity-important | <!-- @browser: Firefox Preview 1.3.1 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.1.0; Mobile; rv:69.0) Gecko/69.0 Firefox/69.0 -->

<!-- @reported_with: -->

**URL**: http://www.downtowndoglounge.com/webcam-slu_westpark.html

**Browser / Version**: Firefox Preview 1.3.1

**Operating System**: Android 8.1.0

**Tested Anot... | 1.0 | www.downtowndoglounge.com - Streaming video appears to buffer, but never plays - <!-- @browser: Firefox Preview 1.3.1 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.1.0; Mobile; rv:69.0) Gecko/69.0 Firefox/69.0 -->

<!-- @reported_with: -->

**URL**: http://www.downtowndoglounge.com/webcam-slu_westpark.html

**Browser / V... | priority | streaming video appears to buffer but never plays url browser version firefox preview operating system android tested another browser yes problem type video or audio doesn t play description streaming video appears to buffer but never plays steps to repro... | 1 |

376,550 | 26,205,737,566 | IssuesEvent | 2023-01-03 22:23:05 | rstudio/rstudio | https://api.github.com/repos/rstudio/rstudio | opened | Consider incorporating software vs. hardware rendering into RStudio User Guide | enhancement documentation | Issues resolved by switching to software rendering pop up every once in a while. It might be worth documenting the rendering engine by incorporating the ["Troubleshooting RStudio Rendering Errors"](https://support.posit.co/hc/en-us/articles/360017886674-Troubleshooting-RStudio-Rendering-Errors) article in the RStudio U... | 1.0 | Consider incorporating software vs. hardware rendering into RStudio User Guide - Issues resolved by switching to software rendering pop up every once in a while. It might be worth documenting the rendering engine by incorporating the ["Troubleshooting RStudio Rendering Errors"](https://support.posit.co/hc/en-us/article... | non_priority | consider incorporating software vs hardware rendering into rstudio user guide issues resolved by switching to software rendering pop up every once in a while it might be worth documenting the rendering engine by incorporating the article in the rstudio user guide with the perspective of a feature that might al... | 0 |

282,404 | 8,706,266,187 | IssuesEvent | 2018-12-06 01:57:56 | zulip/zulip | https://api.github.com/repos/zulip/zulip | opened | Add support for denying guest users access to all other users in the organization | area: settings (admin/org) difficult priority: high | Zulip's current "guest users" feature is designed to handle well the case of contractors, where you want someone to be part of your organization and able to send PMs to anyone, but limited to only a few streams.

For some use cases (e.g. customer support), folks often want to have a more limited guest user feature, w... | 1.0 | Add support for denying guest users access to all other users in the organization - Zulip's current "guest users" feature is designed to handle well the case of contractors, where you want someone to be part of your organization and able to send PMs to anyone, but limited to only a few streams.

For some use cases (e... | priority | add support for denying guest users access to all other users in the organization zulip s current guest users feature is designed to handle well the case of contractors where you want someone to be part of your organization and able to send pms to anyone but limited to only a few streams for some use cases e... | 1 |

25,113 | 12,495,545,436 | IssuesEvent | 2020-06-01 13:23:29 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | storage: large value performance degradation since switching to pebble | A-storage C-performance | **What is your situation?**

Starting May 11th, after the merging of https://github.com/cockroachdb/cockroach/pull/48145, we've begun to observe performance regressions in our large-value KV workloads (4kb and 64kb values). See

. See

but also have a specially-treated set o... | True | Default gems can't be upgraded without booting RubyGems - TruffleRuby currently lazy-loads RubyGems to improve startup. Unfortunately this means that unless RubyGems is forced to boot, default gems will not honor `gem install`ed upgrades.

Default gems are installed in the standard library (so they are trivially load... | non_priority | default gems can t be upgraded without booting rubygems truffleruby currently lazy loads rubygems to improve startup unfortunately this means that unless rubygems is forced to boot default gems will not honor gem install ed upgrades default gems are installed in the standard library so they are trivially load... | 0 |

151,479 | 5,821,020,426 | IssuesEvent | 2017-05-06 01:06:10 | paceuniversity/CS3892017team2 | https://api.github.com/repos/paceuniversity/CS3892017team2 | closed | US10 - Battle System - Implement algorithm - 8hrs | High Priority Sprint 2 Sprint 3 Task3 | Implement the algorithm/equations into code for the battle system that determines if the user wins based on the power of their army | 1.0 | US10 - Battle System - Implement algorithm - 8hrs - Implement the algorithm/equations into code for the battle system that determines if the user wins based on the power of their army | priority | battle system implement algorithm implement the algorithm equations into code for the battle system that determines if the user wins based on the power of their army | 1 |

646,518 | 21,051,067,801 | IssuesEvent | 2022-03-31 20:36:03 | model-bakers/model_bakery | https://api.github.com/repos/model-bakers/model_bakery | closed | AttributeError: 'ManyToManyRel' object has no attribute 'has_default' | bug high priority | After update from 1.3.2 to 1.3.3 started getting exception from title.

Sorry I didn't debug properly this issue and can't say why this is happening but my best guess would be because of this change https://github.com/model-bakers/model_bakery/compare/1.3.2...1.3.3#diff-e5857deb915e241f429a0c118e89e06a3388d3ce1466e3a... | 1.0 | AttributeError: 'ManyToManyRel' object has no attribute 'has_default' - After update from 1.3.2 to 1.3.3 started getting exception from title.

Sorry I didn't debug properly this issue and can't say why this is happening but my best guess would be because of this change https://github.com/model-bakers/model_bakery/co... | priority | attributeerror manytomanyrel object has no attribute has default after update from to started getting exception from title sorry i didn t debug properly this issue and can t say why this is happening but my best guess would be because of this change expected behavior baker get fi... | 1 |

209,346 | 16,190,953,596 | IssuesEvent | 2021-05-04 08:25:07 | nilearn/nilearn | https://api.github.com/repos/nilearn/nilearn | opened | Use bibtex in nilearn/decoding | Documentation Good first issue | Convert references in `nilearn/decoding/decoder.py` to use footcite / footbibliography

See #2759 | 1.0 | Use bibtex in nilearn/decoding - Convert references in `nilearn/decoding/decoder.py` to use footcite / footbibliography

See #2759 | non_priority | use bibtex in nilearn decoding convert references in nilearn decoding decoder py to use footcite footbibliography see | 0 |

627,742 | 19,913,578,207 | IssuesEvent | 2022-01-25 19:49:54 | status-im/status-desktop | https://api.github.com/repos/status-im/status-desktop | closed | [base_bc] can't leave public chat | bug priority 3: low branch: base_bc | ### Description

1. join public chat

2. right click -> leave chat

As result, nothing happens | 1.0 | [base_bc] can't leave public chat - ### Description

1. join public chat

2. right click -> leave chat

As result, nothing happens | priority | can t leave public chat description join public chat right click leave chat as result nothing happens | 1 |

340,070 | 30,492,780,919 | IssuesEvent | 2023-07-18 08:50:08 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: disk-stalled/cgroup/read-write/logs-too=false failed | C-test-failure O-robot O-roachtest branch-master release-blocker T-storage | roachtest.disk-stalled/cgroup/read-write/logs-too=false [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/10950435?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/10950435?buildTab=art... | 2.0 | roachtest: disk-stalled/cgroup/read-write/logs-too=false failed - roachtest.disk-stalled/cgroup/read-write/logs-too=false [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/10950435?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/... | non_priority | roachtest disk stalled cgroup read write logs too false failed roachtest disk stalled cgroup read write logs too false with on master cluster go start cockroach connect timeout cockroach sql url postgres root localhost sslmode disable e create schedule if not exists test only... | 0 |

89,451 | 17,912,903,636 | IssuesEvent | 2021-09-09 08:04:10 | andriy-baran/mother_ship | https://api.github.com/repos/andriy-baran/mother_ship | closed | Fix "method_complexity" issue in lib/mother_ship/builder/strategies/init.rb | codestyle | Method `call` has a Cognitive Complexity of 9 (exceeds 5 allowed). Consider refactoring.

https://codeclimate.com/github/andriy-baran/mother_ship/lib/mother_ship/builder/strategies/init.rb#issue_612f7562a428680001000033 | 1.0 | Fix "method_complexity" issue in lib/mother_ship/builder/strategies/init.rb - Method `call` has a Cognitive Complexity of 9 (exceeds 5 allowed). Consider refactoring.

https://codeclimate.com/github/andriy-baran/mother_ship/lib/mother_ship/builder/strategies/init.rb#issue_612f7562a428680001000033 | non_priority | fix method complexity issue in lib mother ship builder strategies init rb method call has a cognitive complexity of exceeds allowed consider refactoring | 0 |

675,099 | 23,078,793,350 | IssuesEvent | 2022-07-26 04:15:33 | ballerina-platform/ballerina-dev-website | https://api.github.com/repos/ballerina-platform/ballerina-dev-website | closed | `Report Issues` link in community page `get involved` section should open in a new tab | Priority/Highest Area/UIUX Type/Bug WebsiteRewrite | ## Description

$subject

| 1.0 | `Report Issues` link in community page `get involved` section should open in a new tab - ## Description

$subject

| priority | report issues link in community page get involved section should open in a new tab description subject | 1 |

336,339 | 10,186,081,934 | IssuesEvent | 2019-08-10 09:59:40 | ilakeful/LakeBot | https://api.github.com/repos/ilakeful/LakeBot | opened | Fix of the awaiting user to rejoin the VC | bug changes: patch priority: high type: issue | The feature implies fixing the issue when LakeBot leaves the VC (after the last member in the VC does) and clears the queue if it consists of only one single track. | 1.0 | Fix of the awaiting user to rejoin the VC - The feature implies fixing the issue when LakeBot leaves the VC (after the last member in the VC does) and clears the queue if it consists of only one single track. | priority | fix of the awaiting user to rejoin the vc the feature implies fixing the issue when lakebot leaves the vc after the last member in the vc does and clears the queue if it consists of only one single track | 1 |

75,191 | 9,828,339,844 | IssuesEvent | 2019-06-15 10:37:01 | opensds/documentation | https://api.github.com/repos/opensds/documentation | opened | getting started document | documentation | /kind new-documentation

**What happened**:

There is no proper getting started document available where all the other docs and information can be fetched.

Document Name: New document needed.

Document Path / Location: https://opensds.readthedocs.io/en/latest/

(Like from website/github etc - give the complete lin... | 1.0 | getting started document - /kind new-documentation

**What happened**:

There is no proper getting started document available where all the other docs and information can be fetched.

Document Name: New document needed.

Document Path / Location: https://opensds.readthedocs.io/en/latest/

(Like from website/github ... | non_priority | getting started document kind new documentation what happened there is no proper getting started document available where all the other docs and information can be fetched document name new document needed document path location like from website github etc give the complete link issue fac... | 0 |

245,280 | 7,884,102,916 | IssuesEvent | 2018-06-27 08:06:51 | LaCoolCo/LaCOOLBoard | https://api.github.com/repos/LaCoolCo/LaCOOLBoard | closed | Prevent SPIFFS corruption on low battery | bug hard high priority | **estimate:** 3d

**Steps to reproduce**

* Make a device/sketch that drains the battery real, real fast

* Use 2 kinds of battery: 2000 mAh, 6600 mAh

* Plug those batteries to unplugged COOL Boards

* Let the batteries drain and observe

**Expected**

- If voltage is 1.8V < v < 3.55V shut down immediately and d... | 1.0 | Prevent SPIFFS corruption on low battery - **estimate:** 3d

**Steps to reproduce**

* Make a device/sketch that drains the battery real, real fast

* Use 2 kinds of battery: 2000 mAh, 6600 mAh

* Plug those batteries to unplugged COOL Boards

* Let the batteries drain and observe

**Expected**

- If voltage is 1... | priority | prevent spiffs corruption on low battery estimate steps to reproduce make a device sketch that drains the battery real real fast use kinds of battery mah mah plug those batteries to unplugged cool boards let the batteries drain and observe expected if voltage is v ... | 1 |

264,787 | 8,319,444,890 | IssuesEvent | 2018-09-25 17:14:37 | mozilla/addons-server | https://api.github.com/repos/mozilla/addons-server | closed | Product-name and summary for localized add-ons are not correctly submitted to Akismet | component: admin tools component: devhub priority: p2 | STR:

1. Log in to Developer Hub

2. Submit an add-on that supports various localisations

3. With an Admin account, check the Akismet reports for the submitted add-on

Actual result:

`Product-summary` and `product-name` seem incorrectly extracted (i.e displayed as `__MSG_name__`)

Expected result:

Localized st... | 1.0 | Product-name and summary for localized add-ons are not correctly submitted to Akismet - STR:

1. Log in to Developer Hub

2. Submit an add-on that supports various localisations

3. With an Admin account, check the Akismet reports for the submitted add-on

Actual result:

`Product-summary` and `product-name` seem i... | priority | product name and summary for localized add ons are not correctly submitted to akismet str log in to developer hub submit an add on that supports various localisations with an admin account check the akismet reports for the submitted add on actual result product summary and product name seem i... | 1 |

127,434 | 5,030,408,738 | IssuesEvent | 2016-12-16 00:39:29 | MinetestForFun/server-minetestforfun-skyblock | https://api.github.com/repos/MinetestForFun/server-minetestforfun-skyblock | closed | Worldmap POI request | Priority: Low Website | 13211,x, 11301

Name: Ikoma Japanese Gardens

by: tjnenrtn

:large_orange_diamond: | 1.0 | Worldmap POI request - 13211,x, 11301

Name: Ikoma Japanese Gardens

by: tjnenrtn

:large_orange_diamond: | priority | worldmap poi request x name ikoma japanese gardens by tjnenrtn large orange diamond | 1 |

21,347 | 10,605,471,835 | IssuesEvent | 2019-10-10 20:33:13 | ros-security/aws-roadmap | https://api.github.com/repos/ros-security/aws-roadmap | closed | Run Sandbox integration tests as a batch | theme: security user-story | ## Description

"As a ROS2 Developer, I want to run all sandbox integration tests to ensure any changes are OK"

Instead of running all integration tests separately, run them all in a single bash script or pytest module. This allows easier development workflow for developers.

## Test Plan

* Test the tests, ens... | True | Run Sandbox integration tests as a batch - ## Description

"As a ROS2 Developer, I want to run all sandbox integration tests to ensure any changes are OK"

Instead of running all integration tests separately, run them all in a single bash script or pytest module. This allows easier development workflow for developers... | non_priority | run sandbox integration tests as a batch description as a developer i want to run all sandbox integration tests to ensure any changes are ok instead of running all integration tests separately run them all in a single bash script or pytest module this allows easier development workflow for developers ... | 0 |