| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 1k) | labels (stringlengths, 4 to 1.38k) | body (stringlengths, 1 to 262k) | index (stringclasses, 16 values) | text_combine (stringlengths, 96 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

471,783 | 13,610,407,937 | IssuesEvent | 2020-09-23 07:19:09 | HackYourFuture-CPH/chattie | https://api.github.com/repos/HackYourFuture-CPH/chattie | closed | List of message components | High priority User story | ## User story

**Who:** **As a** user

**What:** **I want to** see all messages on a conversation

**Why:** **so that we can** see who has created the message, what the message says and the date and time of the message

## Implementation details

- There are two distinct components (MessageList and Message) The... | 1.0 | List of message components - ## User story

**Who:** **As a** user

**What:** **I want to** see all messages on a conversation

**Why:** **so that we can** see who has created the message, what the message says and the date and time of the message

## Implementation details

- There are two distinct components ... | priority | list of message components user story who as a user what i want to see all messages on a conversation why so that we can see who has created the message what the message says and the date and time of the message implementation details there are two distinct components ... | 1 |

3,255 | 4,288,559,431 | IssuesEvent | 2016-07-17 14:54:49 | iszwnc/eapisy.js | https://api.github.com/repos/iszwnc/eapisy.js | opened | [Snyk] Build failed while vulnerability tests run | enhancement security | I follow the documentation that https://snyk.io/docs/ provides, but for some reason I cannot get my tests to run during the building process. Here is the thing that I know so far:

- If you run `snyk protect`, it will look for the `.snyk` configuration and run our patches before testing.

### What are patches?

P... | True | [Snyk] Build failed while vulnerability tests run - I follow the documentation that https://snyk.io/docs/ provides, but for some reason I cannot get my tests to run during the building process. Here is the thing that I know so far:

- If you run `snyk protect`, it will look for the `.snyk` configuration and run our p... | non_priority | build failed while vulnerability tests run i follow the documentation that provides but for some reason i cannot get my tests to run during the building process here is the thing that i know so far if you run snyk protect it will look for the snyk configuration and run our patches before testing ... | 0 |

785,345 | 27,610,170,501 | IssuesEvent | 2023-03-09 15:29:17 | bats-core/bats-core | https://api.github.com/repos/bats-core/bats-core | closed | `setup_[suite|file]` errors are not reported (tests are skipped) making them hard to debug | Type: Bug Priority: NeedsTriage | **Describe the bug**

Errors in these functions are not reported to callers. It would be nice to see an error message. `-x` doesn't reveal them (though it does for `setup`).

**To Reproduce**

```

function setup_file(){

false

}

@test blah {

true

}

```

`blah` will be skipped, but without suggestion a... | 1.0 | `setup_[suite|file]` errors are not reported (tests are skipped) making them hard to debug - **Describe the bug**

Errors in these functions are not reported to callers. It would be nice to see an error message. `-x` doesn't reveal them (though it does for `setup`).

**To Reproduce**

```

function setup_file(){

... | priority | setup errors are not reported tests are skipped making them hard to debug describe the bug errors in these functions are not reported to callers it would be nice to see an error message x doesn t reveal them though it does for setup to reproduce function setup file false ... | 1 |

519,341 | 15,049,340,074 | IssuesEvent | 2021-02-03 11:21:21 | assemblee-virtuelle/semapps | https://api.github.com/repos/assemblee-virtuelle/semapps | opened | Persist the order of OrderedCollection items with rdf:Seq | activitypub low priority | **Problem**

Currently, to manage `OrderedCollection`s, we order the items by a predicate (for example `as:published`). But normally the order of the items is persisted.

**Proposal**

- Use [rdf:Seq](https://ontola.io/blog/ordered-data-in-rdf/) to persist the order of the items.

- On pourr... | 1.0 | Persist the order of OrderedCollection items with rdf:Seq - **Problem**

Currently, to manage `OrderedCollection`s, we order the items by a predicate (for example `as:published`). But normally the order of the items is persisted.

**Proposal**

- Use [rdf:Seq](https://ontola.io/blog/or... | priority | persist the order of orderedcollection items with rdf seq problem currently to manage orderedcollection we order the items by a predicate for example as published but normally the order of the items is persisted proposal use to persist the order of the item... | 1 |

317,027 | 9,659,977,457 | IssuesEvent | 2019-05-20 14:34:03 | telerik/kendo-ui-core | https://api.github.com/repos/telerik/kendo-ui-core | closed | TreeList pager does not refresh when All pageSize is selected | Bug C: Pager C: TreeList Kendo1 Priority 1 SEV: Medium | ### Bug report

Reported in ticket with ID 1389358

### Reproduction of the problem

- page the TreeList next page/any page

- select "All" from the pageSizes dropdown

[Dojo](https://dojo.telerik.com/@bubblemaster/UNOgAzuz)

### Current behavior

### Current behavio... | priority | treelist pager does not refresh when all pagesize is selected bug report reported in ticket with id reproduction of the problem page the treelist next page any page select all from the pagesizes dropdown current behavior possibly related with expected desi... | 1 |

172,391 | 6,502,490,796 | IssuesEvent | 2017-08-23 13:49:47 | wordpress-mobile/AztecEditor-Android | https://api.github.com/repos/wordpress-mobile/AztecEditor-Android | opened | Add support for [wpvideo] shortcode | medium priority new feature | `[wpvideo] is another video shortcode that must be implemented. It does not contain a full path to the video, only a reference to videopress.com file.

More information: https://en.support.wordpress.com/videopress/ | 1.0 | Add support for [wpvideo] shortcode - `[wpvideo] is another video shortcode that must be implemented. It does not contain a full path to the video, only a reference to videopress.com file.

More information: https://en.support.wordpress.com/videopress/ | priority | add support for shortcode is another video shortcode that must be implemented it does not contain a full path to the video only a reference to videopress com file more information | 1 |

325,025 | 27,840,941,230 | IssuesEvent | 2023-03-20 12:43:58 | FuelLabs/fuels-ts | https://api.github.com/repos/FuelLabs/fuels-ts | closed | Improve confidence of Typegen tests | bug refactor tests | Sometimes the Typegen tests lose track of stuff and give errors such as this:

```

ENOENT: no such file or directory, open '/fuels-ts/packages/abi-typegen/test/fixtures/out/abis/minimal.bin'

``` | 1.0 | Improve confidence of Typegen tests - Sometimes the Typegen tests lose track of stuff and give errors such as this:

```

ENOENT: no such file or directory, open '/fuels-ts/packages/abi-typegen/test/fixtures/out/abis/minimal.bin'

``` | non_priority | improve confidence of typegen tests sometimes the typegen tests lose track of stuff and give errors such as this enoent no such file or directory open fuels ts packages abi typegen test fixtures out abis minimal bin | 0 |

510,770 | 14,815,951,472 | IssuesEvent | 2021-01-14 08:16:12 | ntop/ntopng | https://api.github.com/repos/ntop/ntopng | opened | Remove file name (pcap) from the menu layout | low-priority bug | <img width="1180" alt="Screenshot 2021-01-14 at 09 15 39" src="https://user-images.githubusercontent.com/4493366/104562785-17649080-5649-11eb-9847-dd520952f85e.png">

| 1.0 | Remove file name (pcap) from the menu layout - <img width="1180" alt="Screenshot 2021-01-14 at 09 15 39" src="https://user-images.githubusercontent.com/4493366/104562785-17649080-5649-11eb-9847-dd520952f85e.png">

| priority | remove file name pcap from the menu layout img width alt screenshot at src | 1 |

156,003 | 12,290,104,315 | IssuesEvent | 2020-05-10 01:36:38 | input-output-hk/cardano-ledger-specs | https://api.github.com/repos/input-output-hk/cardano-ledger-specs | opened | Return only the first validation error | priority medium shelley testnet | The shelley ledger is currently returning all the validation errors when a block/transaction is invalid. This can be confusing, though, in cases where one error is really the cause of the others. For example, if you supply a bad transaction input, the `ValueNotConservedUTxO` error is almost certainly also going to tri... | 1.0 | Return only the first validation error - The shelley ledger is currently returning all the validation errors when a block/transaction is invalid. This can be confusing, though, in cases where one error is really the cause of the others. For example, if you supply a bad transaction input, the `ValueNotConservedUTxO` er... | non_priority | return only the first validation error the shelley ledger is currently returning all the validation errors when a block transaction is invalid this can be confusing though in cases where one error is really the cause of the others for example if you supply a bad transaction input the valuenotconservedutxo er... | 0 |

23,763 | 7,373,920,070 | IssuesEvent | 2018-03-13 18:40:12 | TerabyteQbt/meta | https://api.github.com/repos/TerabyteQbt/meta | opened | Reproducibility Project - make standard java build process bit-for-bit reproducible | discussion enhancement help wanted standard java build process | This will require stripping out or otherwise dealing with file timestamps, etc. The end condition here is that if two different computers both execute a build of a java package (like meta_tools.release), with the exact same JDK version, then they should produce identical output (sha1s match). | 1.0 | Reproducibility Project - make standard java build process bit-for-bit reproducible - This will require stripping out or otherwise dealing with file timestamps, etc. The end condition here is that if two different computers both execute a build of a java package (like meta_tools.release), with the exact same JDK versi... | non_priority | reproducibility project make standard java build process bit for bit reproducible this will require stripping out or otherwise dealing with file timestamps etc the end condition here is that if two different computers both execute a build of a java package like meta tools release with the exact same jdk versi... | 0 |

3,422 | 2,538,379,335 | IssuesEvent | 2015-01-27 05:51:45 | GoogleCloudPlatform/kubernetes | https://api.github.com/repos/GoogleCloudPlatform/kubernetes | closed | etcd on master died due to running out of memory on day-old cluster with very little activity | area/introspection priority/P1 | Yesterday I spun up a cluster on GCE around 2pm using the 0.5.2 release. I scheduled two nginx pods on it with a replication controller, then ran kube-push using the 0.5.3 release to update the master. I then started 3 nginx pods on a different replication controller. Everything seemed to be working.

This morning I ... | 1.0 | etcd on master died due to running out of memory on day-old cluster with very little activity - Yesterday I spun up a cluster on GCE around 2pm using the 0.5.2 release. I scheduled two nginx pods on it with a replication controller, then ran kube-push using the 0.5.3 release to update the master. I then started 3 nginx... | priority | etcd on master died due to running out of memory on day old cluster with very little activity yesterday i spun up a cluster on gce around using the release i scheduled two nginx pods on it with a replication controller then ran kube push using the release to update the master i then started nginx p... | 1 |

315,238 | 27,057,959,832 | IssuesEvent | 2023-02-13 17:26:45 | inaturalist/iNaturalistAndroid | https://api.github.com/repos/inaturalist/iNaturalistAndroid | closed | Test new obs from camera flow | Test | I'd like to have an instrumented test that does the following:

1. Opens the app to My Observations (`ObservationsListActivity`)

1. Taps the FAB

1. Taps the "Take a Photo" button

1. Takes a photo and confirms the photo (or stubs this somehow)

1. Asserts that the photo exists in the photos row at the top of the ob... | 1.0 | Test new obs from camera flow - I'd like to have an instrumented test that does the following:

1. Opens the app to My Observations (`ObservationsListActivity`)

1. Taps the FAB

1. Taps the "Take a Photo" button

1. Takes a photo and confirms the photo (or stubs this somehow)

1. Asserts that the photo exists in the... | non_priority | test new obs from camera flow i d like to have an instrumented test that does the following opens the app to my observations observationslistactivity taps the fab taps the take a photo button takes a photo and confirms the photo or stubs this somehow asserts that the photo exists in the... | 0 |

8,241 | 7,309,704,921 | IssuesEvent | 2018-02-28 12:46:21 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | opened | Build of host ia32 dart in ARMv7 cross-compilation fails - result segfaults - on Debian | area-infrastructure area-vm | Building ARMv7 32-bit dart sdk on a Debian based linux fails because the x86 dart executable it builds (to generate the snapshots for the ARM sdk) crashes.

After upgrading to our local Debian distribution, and adding toolchain support with

sudo apt-get install g++-multilib

sudo apt-get install g++-arm-linux-gnueab... | 1.0 | Build of host ia32 dart in ARMv7 cross-compilation fails - result segfaults - on Debian - Building ARMv7 32-bit dart sdk on a Debian based linux fails because the x86 dart executable it builds (to generate the snapshots for the ARM sdk) crashes.

After upgrading to our local Debian distribution, and adding toolchain ... | non_priority | build of host dart in cross compilation fails result segfaults on debian building bit dart sdk on a debian based linux fails because the dart executable it builds to generate the snapshots for the arm sdk crashes after upgrading to our local debian distribution and adding toolchain support with ... | 0 |

477,699 | 13,766,659,981 | IssuesEvent | 2020-10-07 14:49:28 | NCIOCPL/cgov-digital-platform | https://api.github.com/repos/NCIOCPL/cgov-digital-platform | closed | Move "apply" button on popups | Component: Admin UX Improvement Low priority change request | Can the apply button be moved to the right, so it's more clearly connected to the search/filters on popups?

Want to avoid confusion thinking that "apply" is the go button, instead of "select"

detected in socket.io-parser-4.0.4.tgz | security vulnerability | ## CVE-2022-2421 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>socket.io-parser-4.0.4.tgz</b></p></summary>

<p>socket.io protocol parser</p>

<p>Library home page: <a href="https://re... | True | CVE-2022-2421 (High) detected in socket.io-parser-4.0.4.tgz - ## CVE-2022-2421 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>socket.io-parser-4.0.4.tgz</b></p></summary>

<p>socket.io... | non_priority | cve high detected in socket io parser tgz cve high severity vulnerability vulnerable library socket io parser tgz socket io protocol parser library home page a href path to dependency file package json path to vulnerable library node modules socket io parser packag... | 0 |

447,727 | 12,892,395,769 | IssuesEvent | 2020-07-13 19:32:33 | PREreview/rapid-prereview | https://api.github.com/repos/PREreview/rapid-prereview | closed | Searching for already added preprints by DOI doesn't yield any results | :dollar: Funded on Issuehunt COVID-19 Mozilla 2020 Sprints OASPA priority 1 bug priority | <!-- Issuehunt Badges -->

[<img alt="Issuehunt badges" src="https://img.shields.io/badge/IssueHunt-%24100%20Funded-%2300A156.svg" />](https://issuehunt.io/r/PREreview/rapid-prereview/issues/114)

<!-- /Issuehunt Badges -->

Happening both on my local build and on the deployed OSrPRE site. Can someone else corroborate?

... | 2.0 | Searching for already added preprints by DOI doesn't yield any results - <!-- Issuehunt Badges -->

[<img alt="Issuehunt badges" src="https://img.shields.io/badge/IssueHunt-%24100%20Funded-%2300A156.svg" />](https://issuehunt.io/r/PREreview/rapid-prereview/issues/114)

<!-- /Issuehunt Badges -->

Happening both on my lo... | priority | searching for already added preprints by doi doesn t yield any results happening both on my local build and on the deployed osrpre site can someone else corroborate issuehunt summary backers total submitted pull requests t... | 1 |

5,753 | 2,966,810,263 | IssuesEvent | 2015-07-12 08:39:02 | nanoc/nanoc.ws | https://api.github.com/repos/nanoc/nanoc.ws | closed | Suggestion: In conversion guide, indicate "gem install nanoc --pre" | documentation work in progress | Otherwise it's hard to install the new stuff. | 1.0 | Suggestion: In conversion guide, indicate "gem install nanoc --pre" - Otherwise it's hard to install the new stuff. | non_priority | suggestion in conversion guide indicate gem install nanoc pre otherwise it s hard to install the new stuff | 0 |

687,687 | 23,535,456,803 | IssuesEvent | 2022-08-19 20:08:18 | trimble-oss/modus-web-components | https://api.github.com/repos/trimble-oss/modus-web-components | closed | [1] Add Data Table component (barebones) | priority:medium new-component data-table | https://docs.google.com/document/d/1JwHy6Hqrj5EJY6deM5382STvM4InvV3OTdci4f1b9Ac/edit#

We may want to wrap a third party table library. Implementation details are TBD | 1.0 | [1] Add Data Table component (barebones) - https://docs.google.com/document/d/1JwHy6Hqrj5EJY6deM5382STvM4InvV3OTdci4f1b9Ac/edit#

We may want to wrap a third party table library. Implementation details are TBD | priority | add data table component barebones we may want to wrap a third party table library implementation details are tbd | 1 |

196,176 | 6,925,406,319 | IssuesEvent | 2017-11-30 15:51:12 | HabitRPG/habitica | https://api.github.com/repos/HabitRPG/habitica | closed | Checklist Sorting Issue - Wrong Checklist Deleted | help wanted priority: important section: Task Page | At least on Firefox, there is a checklist sorting bug that occurs when you move a checklist item up, and then, without saving, delete an item that used to be above it but is now below it. We've had a report that this causes

Here is how to replicate:

Add items A, B, C, D, E to checklist (in that order).

Move item ... | 1.0 | Checklist Sorting Issue - Wrong Checklist Deleted - At least on Firefox, there is a checklist sorting bug that occurs when you move a checklist item up, and then, without saving, delete an item that used to be above it but is now below it. We've had a report that this causes

Here is how to replicate:

Add items A, ... | priority | checklist sorting issue wrong checklist deleted at least on firefox there is a checklist sorting bug that occurs when you move a checklist item up and then without saving delete an item that used to be above it but is now below it we ve had a report that this causes here is how to replicate add items a ... | 1 |

40,566 | 2,868,928,465 | IssuesEvent | 2015-06-05 22:01:00 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | Help text for using a package is wrong | bug Fixed Priority-Medium | <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**

_Originally opened as dart-lang/sdk#5899_

----

It looks like: "Apps should use any as the Dart Ed... | 1.0 | Help text for using a package is wrong - <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**

_Originally opened as dart-lang/sdk#5899_

----

It looks like:... | priority | help text for using a package is wrong issue by originally opened as dart lang sdk it looks like quot apps should use any as the dart editor quot which is all kinds of wrong | 1 |

378,968 | 11,211,552,968 | IssuesEvent | 2020-01-06 15:40:46 | matthiaskoenig/pkdb | https://api.github.com/repos/matthiaskoenig/pkdb | closed | update django to django 3.0 | backend dependencies priority | I am waiting for django-elasticsearch-dsl to support django 3.x.

[[related issue](https://github.com/sabricot/django-elasticsearch-dsl/issues/232)]

| 1.0 | update django to django 3.0 - I am waiting for django-elasticsearch-dsl to support django 3.x.

[[related issue](https://github.com/sabricot/django-elasticsearch-dsl/issues/232)]

| priority | update django to django i am waiting for django elasticsearch dsl to support django x | 1 |

21,102 | 3,870,122,697 | IssuesEvent | 2016-04-11 00:25:09 | jmeas/finance-app | https://api.github.com/repos/jmeas/finance-app | closed | Add babel plugin rewire | enhancement test | This will make it much easier to test some internal algorithms I don't want to export from any file, as well as test the stuff that is getting exported | 1.0 | Add babel plugin rewire - This will make it much easier to test some internal algorithms I don't want to export from any file, as well as test the stuff that is getting exported | non_priority | add babel plugin rewire this will make it much easier to test some internal algorithms i don t want to export from any file as well as test the stuff that is getting exported | 0 |

434,428 | 30,452,901,758 | IssuesEvent | 2023-07-16 14:20:33 | JasonDsouza212/free-hit | https://api.github.com/repos/JasonDsouza212/free-hit | closed | [Docs]: Adding a small Licenses section to the readme and a MIT Licenses badge | documentation level1 gssoc23 no-issue-activity | ### what's wrong in the documentation?

The readme file of the repo should have a Licenses section to make it more professional.

### Add screenshots

### Code of Conduct

- [X] I agree to follow this project'... | 1.0 | [Docs]: Adding a small Licenses section to the readme and a MIT Licenses badge - ### what's wrong in the documentation?

The readme file of the repo should have a Licenses section to make it more professional.

### Add screenshots

getting started guide for docker includes support for running on VirtualBox and suggests using docker-machine to manage that. While the provided commands do get the kubernetes cluster up and running /within/ the VirtualBox VM, the cluster's API server is not accessible from /outside/ the VM without furthe... | 1.0 | API server is not accessible via VirtualBox/docker-machine after getting started instructions - The (awesome) getting started guide for docker includes support for running on VirtualBox and suggests using docker-machine to manage that. While the provided commands do get the kubernetes cluster up and running /within/ th... | priority | api server is not accessible via virtualbox docker machine after getting started instructions the awesome getting started guide for docker includes support for running on virtualbox and suggests using docker machine to manage that while the provided commands do get the kubernetes cluster up and running within th... | 1 |

1,656 | 2,613,420,953 | IssuesEvent | 2015-02-27 20:52:57 | EasyFarm/EasyFarm | https://api.github.com/repos/EasyFarm/EasyFarm | closed | Update FAQ to include solution for attacking wrong targets. | documentation | Update the FAQ section to inform users to disable auto-target before use. This feature messes up with EF's targeting system and can get the player killed. | 1.0 | Update FAQ to include solution for attacking wrong targets. - Update the FAQ section to inform users to disable auto-target before use. This feature messes up with EF's targeting system and can get the player killed. | non_priority | update faq to include solution for attacking wrong targets update the faq section to inform users to disable auto target before use this feature messes up with ef s targeting system and can get the player killed | 0 |

427,040 | 29,738,154,686 | IssuesEvent | 2023-06-14 03:57:33 | Pradumnasaraf/LinkFree-CLI | https://api.github.com/repos/Pradumnasaraf/LinkFree-CLI | opened | [DOCS] New demo GIF | documentation | ### Description

Add a new demo GIF to the README

### Screenshots

_No response_ | 1.0 | [DOCS] New demo GIF - ### Description

Add a new demo GIF to the README

### Screenshots

_No response_ | non_priority | new demo gif description add a new demo gif to the readme screenshots no response | 0 |

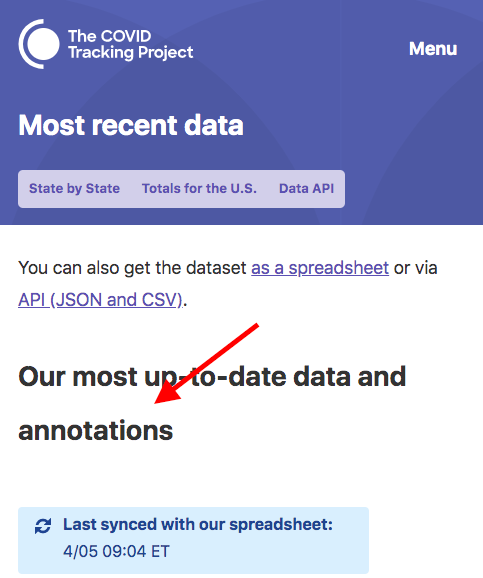

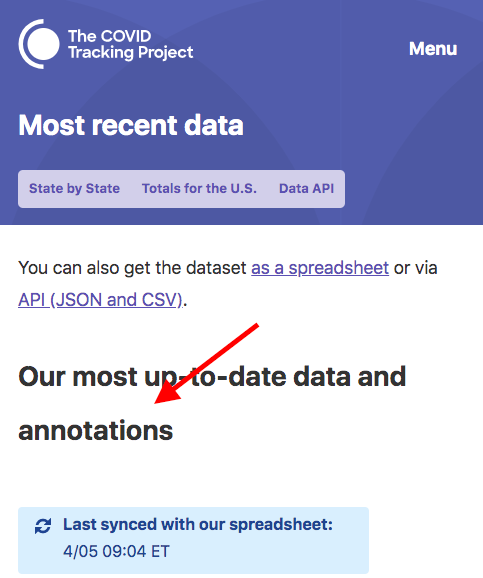

95,266 | 11,964,729,146 | IssuesEvent | 2020-04-05 20:40:40 | COVID19Tracking/website | https://api.github.com/repos/COVID19Tracking/website | closed | fix(design): Tighten header line heights | DESIGN | We need to redo line heights for headers, especially H2 in the body has way too much leading between lines. I think this is a typography.js config fix.

| 1.0 | fix(design): Tighten header line heights - We need to redo line heights for headers, especially H2 in the body has way too much leading between lines. I think this is a typography.js config fix.

| non_priority | fix design tighten header line heights we need to redo line heights for headers especially in the body has way too much leading between lines i think this is a typography js config fix | 0 |

65,523 | 3,231,736,892 | IssuesEvent | 2015-10-13 00:15:30 | aic-collections/aicdams-lakeshore | https://api.github.com/repos/aic-collections/aicdams-lakeshore | opened | AssetPresenter returns hash for status instead of ListItem | bug LOW priority | A minor problem, probably buried in Hydra::Presenter, perhaps when to_model is called. We can dig into this later if time permits. Note: the model still resolves status as a ListItem and the object of the triple is unaffected. It only affects GenericFile (i.e. assets). | 1.0 | AssetPresenter returns hash for status instead of ListItem - A minor problem, probably buried in Hydra::Presenter, perhaps when to_model is called. We can dig into this later if time permits. Note: the model still resolves status as a ListItem and the object of the triple is unaffected. It only affects GenericFile (i.e... | priority | assetpresenter returns hash for status instead of listitem a minor problem probably buried in hydra presenter perhaps when to model is called we can dig into this later if time permits note the model still resolves status as a listitem and the object of the triple is unaffected it only affects genericfile i e... | 1 |

306,046 | 9,380,012,325 | IssuesEvent | 2019-04-04 16:03:46 | mozilla/addons-server | https://api.github.com/repos/mozilla/addons-server | reopened | [Reviewer Tools] Anonymising Reviewer Emails | component: reviewer tools priority: p2 | It is a fact the developers are often not happy if their extensions are rejected during a review. While it should not cause any further complications, in reality that is not always the case.

There are instance where the dissatisfied developers resort to targeting the reviewer via other AMO systems as a consequence.

... | 1.0 | [Reviewer Tools] Anonymising Reviewer Emails - It is a fact the developers are often not happy if their extensions are rejected during a review. While it should not cause any further complications, in reality that is not always the case.

There are instance where the dissatisfied developers resort to targeting the rev... | priority | anonymising reviewer emails it is a fact the developers are often not happy if their extensions are rejected during a review while it should not cause any further complications in reality that is not always the case there are instance where the dissatisfied developers resort to targeting the reviewer via other... | 1 |

69,802 | 17,861,874,901 | IssuesEvent | 2021-09-06 02:40:57 | Vitzual/Automa | https://api.github.com/repos/Vitzual/Automa | opened | Add assemblers | Building System Alpha 1 | **High Priority**

- [x] Add smelter model

- [ ] Inherit all constructor logic

- [x] Add ability to construct items with 2 inputs / 1 output

| 1.0 | Add assemblers - **High Priority**

- [x] Add smelter model

- [ ] Inherit all constructor logic

- [x] Add ability to construct items with 2 inputs / 1 output

| non_priority | add assemblers high priority add smelter model inherit all constructor logic add ability to construct items with inputs output | 0 |

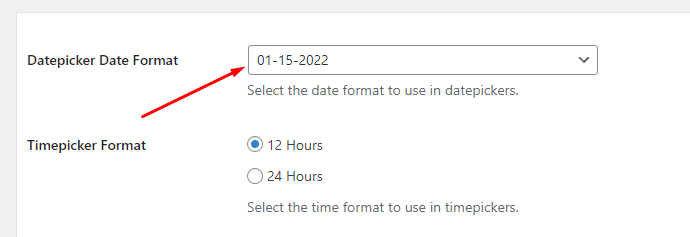

181,781 | 30,742,600,585 | IssuesEvent | 2023-07-28 12:45:06 | ncosd/food-pantry-app | https://api.github.com/repos/ncosd/food-pantry-app | closed | Change default time for a volunteer window | help wanted Needs Design volunteer-portal | # Feature Description

<!-- Describe how the feature should work, and what problem it solves. -->

- [x] Default time should be 9am - 12pm

- [x] Add target numbers 6,7,8,9,10+

| 1.0 | Change default time for a volunteer window - # Feature Description

<!-- Describe how the feature should work, and what problem it solves. -->

- [x] Default time should be 9am - 12pm

- [x] Add target numbers 6,7,8,9,10+

| non_priority | change default time for a volunteer window feature description default time should be add target numbers | 0 |

151,938 | 23,894,355,320 | IssuesEvent | 2022-09-08 13:48:16 | carbon-design-system/carbon-platform | https://api.github.com/repos/carbon-design-system/carbon-platform | closed | Update framework icons | role: design 🎨 | we need to update framework icons for resource and mini cards to the IBM grid so that they all have the same visual weight.

icons needed for dashboard as well sized like status indicators. | 1.0 | Update framework icons - we need to update framework icons for resource and mini cards to the IBM grid so that they all have the same visual weight.

icons needed for dashboard as well sized like status indicators. | non_priority | update framework icons we need to update framework icons for resource and mini cards to the ibm grid so that they all have the same visual weight icons needed for dashboard as well sized like status indicators | 0 |

156,322 | 5,967,348,799 | IssuesEvent | 2017-05-30 15:46:43 | DCS-LCSR/SignStream3 | https://api.github.com/repos/DCS-LCSR/SignStream3 | opened | Text events disappear when new segment tier created | bug priority | Use case:

Either on clicking new segment tier, or after it, the text events in a ST disappear.

(TODO: verify, etc) | 1.0 | Text events disappear when new segment tier created - Use case:

Either on clicking new segment tier, or after it, the text events in a ST disappear.

(TODO: verify, etc) | priority | text events disappear when new segment tier created use case either on clicking new segment tier or after it the text events in a st disappear todo verify etc | 1 |

545,074 | 15,935,508,284 | IssuesEvent | 2021-04-14 09:56:48 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | Fatal error occurring with the recent update Version 1.0.76.11 | NEXT UPDATE [Priority: HIGH] bug | Fatal error: Uncaught Error: Undefined constant "AIOSEO_VERSION" in C:\xampp\htdocs\sravan\wp-content\plugins\accelerated-mobile-pages\templates\features.php:3333

The error occurs when All in One SEO Pro is not activated.

Ref: https://secure.helpscout.net/conversation/1483458039/190934?folderId=2632030

issue... | 1.0 | Fatal error occurring with the recent update Version 1.0.76.11 - Fatal error: Uncaught Error: Undefined constant "AIOSEO_VERSION" in C:\xampp\htdocs\sravan\wp-content\plugins\accelerated-mobile-pages\templates\features.php:3333

The error occurs when All in One SEO Pro is not activated.

Ref: https://secure.helps... | priority | fatal error occurring with the recent update version fatal error uncaught error undefined constant aioseo version in c xampp htdocs sravan wp content plugins accelerated mobile pages templates features php the error occurs when all in one seo pro is not activated ref issue occurring in l... | 1 |

259,327 | 8,197,320,343 | IssuesEvent | 2018-08-31 13:03:48 | CSCfi/pebbles | https://api.github.com/repos/CSCfi/pebbles | closed | Clear group users after the course ends | backend feature high-priority | After a course has passed 3-6 months (period can be decided), there should be a button to clear all the users of a group EXCEPT the owner and the manager of the group.

This should be done to accommodate new iterations of a group. This feature is desirable because there are a lot of groups and people being added ever... | 1.0 | Clear group users after the course ends - After a course has passed 3-6 months (period can be decided), there should be a button to clear all the users of a group EXCEPT the owner and the manager of the group.

This should be done to accommodate new iterations of a group. This feature is desirable because there are a... | priority | clear group users after the course ends after a course has passed months period can be decided there should be a button to clear all the users of a group except the owner and the manager of the group this should be done to accommodate new iterations of a group this feature is desirable because there are a... | 1 |

190,107 | 6,808,756,835 | IssuesEvent | 2017-11-04 07:59:56 | spring-projects/spring-boot | https://api.github.com/repos/spring-projects/spring-boot | closed | Log context path at startup | priority: low type: enhancement | When starting a web application, there is a nice log message stating which port your server is running on:

`2017-05-24 09:32:50,586 - INFO : MESSAGE=[Jetty started on port(s) 7000 (http/1.1)] TID=[main] CLASS=[org.springframework.boot.context.embedded.jetty.JettyEmbeddedServletContainer]`

I've run into several occa... | 1.0 | Log context path at startup - When starting a web application, there is a nice log message stating which port your server is running on:

`2017-05-24 09:32:50,586 - INFO : MESSAGE=[Jetty started on port(s) 7000 (http/1.1)] TID=[main] CLASS=[org.springframework.boot.context.embedded.jetty.JettyEmbeddedServletContainer]`... | priority | log context path at startup when starting a web application there is a nice log message stating which port your server is running on info message tid class i ve run into several occasions though where the context path is also relevant to know since you can set it with spring boot via... | 1 |

754,689 | 26,398,506,919 | IssuesEvent | 2023-01-12 21:56:20 | B4-Group/swe_b4 | https://api.github.com/repos/B4-Group/swe_b4 | closed | Sounds | bug Priority | # What is going on

Music doesn't restart

If the game-over sound plays, the music doesn't start again after restarting the game

If you restart before you die, the music doesn't start from the beginning

# What should happen

Music needs to start from the beginning after a restart

# Steps to reproduce

Die

# Platform

... | 1.0 | Sounds - # What is going on

Music doesn't restart

If the game-over sound plays, the music doesn't start again after restarting the game

If you restart before you die, the music doesn't start from the beginning

# What should happen

Music needs to start from the beginning after a restart

# Steps to reproduce

Die

# P... | priority | sounds what is going on music dont restart if the game over sound comes the music dont start again after restarting the game if restarting before you die the music dont start from the beginning what should happen music need to start after restart from the beginning steps to reproduce die p... | 1 |

489,029 | 14,100,268,200 | IssuesEvent | 2020-11-06 03:41:02 | PMEAL/OpenPNM | https://api.github.com/repos/PMEAL/OpenPNM | closed | Add health/consistency checks as pore-scale models? | discussion enhancement low priority proposal | As I am investigating issue #1500, I am writing several functions that inspect pore and throat properties, such as finding throats diameters that are larger than their neighboring pores. This returns an Nt-by-1 array of True/False. It occurs to me that this could be a pore-scale model, that returns Trues in locations ... | 1.0 | Add health/consistency checks as pore-scale models? - As I am investigating issue #1500, I am writing several functions that inspect pore and throat properties, such as finding throats diameters that are larger than their neighboring pores. This returns an Nt-by-1 array of True/False. It occurs to me that this could b... | priority | add health consistency checks as pore scale models as i am investigating issue i am writing several functions that inspect pore and throat properties such as finding throats diameters that are larger than their neighboring pores this returns an nt by array of true false it occurs to me that this could be a... | 1 |
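
The consistency check described in the OpenPNM issue above (flagging throats whose diameter exceeds that of a neighboring pore, one True/False value per throat) can be sketched in plain Python. This is only an illustration under stated assumptions: the function name `oversized_throats`, the argument layout, and the choice to compare against the smaller of the two connected pores are hypothetical and are not taken from OpenPNM's API.

```python
def oversized_throats(pore_diameters, throat_diameters, conns):
    """Return one boolean per throat (length Nt).

    A throat is flagged True when it is wider than the smaller of the
    two pores it connects. `conns` holds one (pore_a, pore_b) index
    pair per throat. All names here are hypothetical.
    """
    flags = []
    for d_throat, (p1, p2) in zip(throat_diameters, conns):
        smallest_neighbor = min(pore_diameters[p1], pore_diameters[p2])
        flags.append(d_throat > smallest_neighbor)
    return flags


# Illustrative 3-pore, 2-throat network (made-up values).
pore_diameters = [1.0, 0.5, 2.0]
throat_diameters = [0.4, 0.8]
conns = [(0, 1), (1, 2)]
print(oversized_throats(pore_diameters, throat_diameters, conns))
```

Returning a per-throat mask rather than a single pass/fail value matches the issue's idea that such a check could be stored alongside other throat properties and recomputed like any other model.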

101,817 | 4,141,484,401 | IssuesEvent | 2016-06-14 05:43:49 | Apollo-Community/ApolloStation | https://api.github.com/repos/Apollo-Community/ApolloStation | closed | Observation Screens don't work. | 0.3 mapping oversight priority: medium | This includes the observation screens of the tribunal and the toxin launching room. There may be more. | 1.0 | Observation Screens don't work. - This includes the observation screens of the tribunal and the toxin launching room. There may be more. | priority | observation screens don t work this includes the observation screens of the tribunal and the toxin launching room there may be more | 1 |

542,401 | 15,859,604,116 | IssuesEvent | 2021-04-08 08:12:55 | mapbox/mapbox-navigation-ios | https://api.github.com/repos/mapbox/mapbox-navigation-ios | closed | High CPU when camera is not moving | - bug - performance High Priority Injection Work archived | Steps to reproduce:

1. Run example-swift app

1. Turn off navigation simulation

1. Set the simulator location to `Apple` (so it does not move)

1. Start navigation

### Expected:

CPU is near 0% because the camera is not moving.

### Actual:

CPU is near 100%.

If this line https://github.com/mapbox/mapb... | 1.0 | High CPU when camera is not moving - Steps to reproduce:

1. Run example-swift app

1. Turn off navigation simulation

1. Set the simulator location to `Apple` (so it does not move)

1. Start navigation

### Expected:

CPU is near 0% because the camera is not moving.

### Actual:

CPU is near 100%.

If thi... | priority | high cpu when camera is not moving steps to reproduce run example swift app turn off navigation simulation set the simulator location to apple so it does not move start navigation expected cpu is near because the camera is not moving actual cpu is near if this ... | 1 |

142,196 | 13,017,880,130 | IssuesEvent | 2020-07-26 14:41:47 | semi-technologies/semi-website | https://api.github.com/repos/semi-technologies/semi-website | opened | Add contributor guide | documentation | The guides are available [here](https://github.com/semi-technologies/semi-website/tree/feature/contributor-guide/_documentation/weaviate/current/contributor-guide), adding MD files will add them to the menu as well.

When it's done, you can create a PR (or already open one). | 1.0 | Add contributor guide - The guides are available [here](https://github.com/semi-technologies/semi-website/tree/feature/contributor-guide/_documentation/weaviate/current/contributor-guide), adding MD files will add them to the menu as well.

When it's done, you can create a PR (or already open one). | non_priority | add contributor guide the guides are available adding md files will add them to the menu as well when it s done you can create a pr or already open one | 0 |

102,164 | 4,151,345,950 | IssuesEvent | 2016-06-15 20:18:39 | w3c/webpayments | https://api.github.com/repos/w3c/webpayments | opened | Should the browser pass user data it has collected (email etc) to the payment app? | Priority: Medium Proposal: Payment Apps question | Migrated from https://github.com/w3c/browser-payment-api/issues/194

@mattsaxon said:

>Now that the payment options field can include the request of the email and phone number information, we need to consider how that information might be made securely available to payment applications such that for payment applic... | 1.0 | Should the browser pass user data it has collected (email etc) to the payment app? - Migrated from https://github.com/w3c/browser-payment-api/issues/194

@mattsaxon said:

>Now that the payment options field can include the request of the email and phone number information, we need to consider how that information ... | priority | should the browser pass user data it has collected email etc to the payment app migrated from mattsaxon said now that the payment options field can include the request of the email and phone number information we need to consider how that information might be made securely available to payment applicat... | 1 |

347,821 | 10,434,754,548 | IssuesEvent | 2019-09-17 15:49:16 | prysmaticlabs/prysm | https://api.github.com/repos/prysmaticlabs/prysm | closed | Multinode is currently Broken | Bug Priority: Critical | Blocks have different signing roots between peers. If one peer broadcasts a block and that block has a root of `0x123` for that peer, the other peer would get the same block and determine the root as `0x456`.

```

[2019-09-17 16:01:20] ERROR sync: Failed to handle p2p pubsub error=signature did not verify

could no... | 1.0 | Multinode is currently Broken - Blocks have different signing roots between peers. If one peer broadcasts a block and that block has a root of `0x123` for that peer, the other peer would get the same block and determine the root as `0x456`.

```

[2019-09-17 16:01:20] ERROR sync: Failed to handle p2p pubsub error=si... | priority | multinode is currently broken blocks have different signing roots between peers if one peer broadcasts a block and that block has a root of for that peer the other peer would get the same block and determine the root as error sync failed to handle pubsub error signature did not verify could ... | 1 |

10,210 | 8,851,399,561 | IssuesEvent | 2019-01-08 15:40:41 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | image_picker: add multiple images picking | p: first party p: image_picker p: self service plugin severe: new feature would be a good package | Hi guys.

One more option as a valuable update in image_picker plugin.

It would be cool to have multiple image picking option

For now - on long press we have selection of image and selected count in AppBar. Looks like default behavior. But multiple image picking disabled.

Please let me know if it is possible.

... | 1.0 | image_picker: add multiple images picking - Hi guys.

One more option as a valuable update in image_picker plugin.

It would be cool to have multiple image picking option

For now - on long press we have selection of image and selected count in AppBar. Looks like default behavior. But multiple image picking disabled.... | non_priority | image picker add multiple images picking hi guys one more option as a valuable update in image picker plugin it would be cool to have multiple image picking option for now on long press we have selection of image and selected count in appbar looks like default behavior but multiple image picking disabled ... | 0 |

7,471 | 3,090,086,542 | IssuesEvent | 2015-08-26 02:39:07 | thoughtbot/neat | https://api.github.com/repos/thoughtbot/neat | closed | Remove span-column(12) from table-grid example | documentation | `@include span-columns(12);` shouldn't have a right-margin. We shouldn't have to include omega every time we want to use a full width column. I would have made the update myself and submitted a PR but I couldn't figure out how to update the code accordingly.

I'm guessing this hasn't been an issue before now because ... | 1.0 | Remove span-column(12) from table-grid example - `@include span-columns(12);` shouldn't have a right-margin. We shouldn't have to include omega every time we want to use a full width column. I would have made the update myself and submitted a PR but I couldn't figure out how to update the code accordingly.

I'm guess... | non_priority | remove span column from table grid example include span columns shouldn t have a right margin we shouldn t have to include omega every time we want to use a full width column i would have made the update myself and submitted a pr but i couldn t figure out how to update the code accordingly i m guessin... | 0 |

407,693 | 11,935,909,189 | IssuesEvent | 2020-04-02 09:24:05 | deora-earth/tealgarden | https://api.github.com/repos/deora-earth/tealgarden | opened | Research about community interaction/ integration | 02 Medium Priority | <!--

# Simple Summary

This policy allows writing out rewards for completing required tasks. Completed tasks are paid by the deora council to the claiming member.

# How to create a new bounty?

1. To start you'll have to fill out the bounty form below.

- If the bounty spans across multiple repositories, ... | 1.0 | Research about community interaction/ integration - <!--

# Simple Summary

This policy allows writing out rewards for completing required tasks. Completed tasks are paid by the deora council to the claiming member.

# How to create a new bounty?

1. To start you'll have to fill out the bounty form below.

priority | research about community interaction integration simple summary this policy allows writing out rewards for completing required tasks completed tasks are paid by the deora council to the claiming member how to create a new bounty to start you ll have to fill out the bounty form below ... | 1 |

179,587 | 21,573,344,315 | IssuesEvent | 2022-05-02 11:00:26 | tabac-ws/JAVA-Demo-2022 | https://api.github.com/repos/tabac-ws/JAVA-Demo-2022 | opened | CVE-2017-3586 (Medium) detected in mysql-connector-java-5.1.25.jar | security vulnerability | ## CVE-2017-3586 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mysql-connector-java-5.1.25.jar</b></p></summary>

<p>MySQL JDBC Type 4 driver</p>

<p>Library home page: <a href="http... | True | CVE-2017-3586 (Medium) detected in mysql-connector-java-5.1.25.jar - ## CVE-2017-3586 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mysql-connector-java-5.1.25.jar</b></p></summary>... | non_priority | cve medium detected in mysql connector java jar cve medium severity vulnerability vulnerable library mysql connector java jar mysql jdbc type driver library home page a href path to dependency file pom xml path to vulnerable library sitory mysql mysql connector j... | 0 |

2,902 | 4,055,170,829 | IssuesEvent | 2016-05-24 14:44:06 | modxcms/revolution | https://api.github.com/repos/modxcms/revolution | closed | Require and force a back-end user to change password first | area-security feature | bertoost created Redmine issue ID 2748

This one should be very cool. We always create a customer-account and we have a simple password for that user. It will be very nice if we could say that the user needs to change the password at first when logging in. Until they do, the manager is blocked for that user... | True | Require and force a back-end user to change password first - bertoost created Redmine issue ID 2748

This one should be very cool. We always create a customer-account and we have a simple password for that user. It will be very nice if we could say that the user needs to change the password at first when logging in. ... | non_priority | require and force a back end user to change password first bertoost created redmine issue id this one should be very cool we always create a customer account and we have a simple password for that user it will be very nice if we could say that the user needs to change the password at first when logging in unt... | 0 |

37,501 | 8,407,650,346 | IssuesEvent | 2018-10-11 21:39:59 | riksanyal/et | https://api.github.com/repos/riksanyal/et | closed | Superfluous error message (Trac #54) | Migrated from Trac SimFactory defect mthomas | I submitted a job for a simulation which already existed. This led to the following output, where there is an error message about "mkdir". Even though there is an error, simfactory proceeds (it probably shouldn't). In this case, however, I assume that the error is benign because the directory existed before, and thus ... | 1.0 | Superfluous error message (Trac #54) - I submitted a job for a simulation which already existed. This led to the following output, where there is an error message about "mkdir". Even though there is an error, simfactory proceeds (it probably shouldn't). In this case, however, I assume that the error is benign because ... | non_priority | superfluous error message trac i submitted a job for a simulation which already existed this led to the following output where there is an error message about mkdir even though there is an error simfactory proceeds it probably shouldn t in this case however i assume that the error is benign because t... | 0 |

48,949 | 13,185,168,892 | IssuesEvent | 2020-08-12 20:51:32 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | icetray development email list (Trac #512) | Incomplete Migration Migrated from Trac defect tools/ports | <details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/512

, reported by blaufuss and owned by cgils</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2009-01-22T18:45:35",

"description": "create a email list for icetray development.\n\nicetray-dev or something like that... | 1.0 | icetray development email list (Trac #512) - <details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/512

, reported by blaufuss and owned by cgils</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2009-01-22T18:45:35",

"description": "create a email list for icetray devel... | non_priority | icetray development email list trac migrated from reported by blaufuss and owned by cgils json status closed changetime description create a email list for icetray development n nicetray dev or something like that n nlet s use umdgrb s mailman and avoid... | 0 |

353,818 | 10,559,289,496 | IssuesEvent | 2019-10-04 11:11:20 | nationalarchives/front-end-development-guide | https://api.github.com/repos/nationalarchives/front-end-development-guide | closed | Update development guide to explain decorative images should be CSS backgrounds, not img tags | high-priority | The DAC report has identified an instance where a decorative image has been implemented using an `<img>` tag. We should be explicit about this in the front-end-development-guide so that everyone (including new starters) and 3rd parties are aware. | 1.0 | Update development guide to explain decorative images should be CSS backgrounds, not img tags - The DAC report has identified an instance where a decorative image has been implemented using an `<img>` tag. We should be explicit about this in the front-end-development-guide so that everyone (including new starters) and... | priority | update development guide to explain decorative images should be css backgrounds not img tags the dac report has identified an instance where a decorative image has been implemented using an tag we should be explicit about this in the front end development guide so that everyone including new starters and p... | 1 |

299,557 | 22,613,164,586 | IssuesEvent | 2022-06-29 19:07:04 | SandraScherer/EntertainmentInfothek | https://api.github.com/repos/SandraScherer/EntertainmentInfothek | opened | Introduce factory to all Entry-derived classes | documentation enhancement program | - [ ] Update implementation

- [ ] Update tests

- [ ] Update documentation

- [ ] EntertainmentInfothek_EntertainmentDB.dll.vpp

- [ ] Doxygen | 1.0 | Introduce factory to all Entry-derived classes - - [ ] Update implementation

- [ ] Update tests

- [ ] Update documentation

- [ ] EntertainmentInfothek_EntertainmentDB.dll.vpp

- [ ] Doxygen | non_priority | introduce factory to all entry derived classes update implementation update tests update documentation entertainmentinfothek entertainmentdb dll vpp doxygen | 0 |

759,768 | 26,609,879,671 | IssuesEvent | 2023-01-23 22:50:25 | redhat-developer/odo | https://api.github.com/repos/redhat-developer/odo | closed | `odo delete component --running-in` | priority/Medium kind/user-story | /kind user-story

## User Story

- As an odo user

- I want to delete just `dev` resources from the cluster and keep `deploy` or just `deploy` resources and keep `dev` resources.

- So I can deploy/undeploy applications without touching `dev` instance or delete just `dev` instance without affecting `deployed` app. Th... | 1.0 | `odo delete component --running-in` - /kind user-story

## User Story

- As an odo user

- I want to delete just `dev` resources from the cluster and keep `deploy` or just `deploy` resources and keep `dev` resources.

- So I can deploy/undeploy applications without touching `dev` instance or delete just `dev` instanc... | priority | odo delete component running in kind user story user story as an odo user i want to delete just dev resources from the cluster and keep deploy or just deploy resources and keep dev resources so i can deploy undeploy applications without touching dev instance or delete just dev instanc... | 1 |

49,363 | 10,341,918,041 | IssuesEvent | 2019-09-04 04:20:30 | ssm-deepcove/deepcove-website | https://api.github.com/repos/ssm-deepcove/deepcove-website | opened | Override GetHashCode on CmsButton and TextComponent | improve code | Since we have overridden the Equals() method on these classes, we should also override GetHashCode(), so that the classes behave appropriately in hash tables.

Putting this as low priority as I do not believe that it will likely affect us, but it is good practice. | 1.0 | Override GetHashCode on CmsButton and TextComponent - Since we have overridden the Equals() method on these classes, we should also override GetHashCode(), so that the classes behave appropriately in hash tables.

Putting this as low priority as I do not believe that it will likely affect us, but it is good practice. | non_priority | override gethashcode on cmsbutton and textcomponent since we have overridden the equals method on these classes we should also override gethashcode so that the classes behave appropriately in hash tables putting this as low priority as i do not believe that it will likely affect us but it is good practice | 0 |

354,731 | 10,571,537,843 | IssuesEvent | 2019-10-07 07:25:28 | LightXEthan/uwahs-campus-map | https://api.github.com/repos/LightXEthan/uwahs-campus-map | closed | Improve password strength of Firebase Authentication | admin-front enhancement low priority | - firebase default password requirement is 6 characters min.

- research if password strength is configurable in firebase or implement our own. | 1.0 | Improve password strength of Firebase Authentication - - firebase default password requirement is 6 characters min.

- research if password strength is configurable in firebase or implement our own. | priority | improve password strength of firebase authentication firebase default password requirement is characters min research if password strength is configurable in firebase or implement our own | 1 |

615,584 | 19,268,980,137 | IssuesEvent | 2021-12-10 01:30:22 | ArctosDB/arctos | https://api.github.com/repos/ArctosDB/arctos | closed | Feature Request - make "show number of entries" consistent throughout the working session in bulkloader browse-and-edit | Priority-Normal (Not urgent) Enhancement | Issue Documentation is http://handbook.arctosdb.org/how_to/How-to-Use-Issues-in-Arctos.html

**Is your feature request related to a problem? Please describe.**

In Bulkloader browse-and-edit and when working with accessions where n>10 and using SQL or other tools, on every page reload, number of shown entries reverts... | 1.0 | Feature Request - make "show number of entries" consistent throughout the working session in bulkloader browse-and-edit - Issue Documentation is http://handbook.arctosdb.org/how_to/How-to-Use-Issues-in-Arctos.html

**Is your feature request related to a problem? Please describe.**

In Bulkloader browse-and-edit and w... | priority | feature request make show number of entries consistent throughout the working session in bulkloader browse and edit issue documentation is is your feature request related to a problem please describe in bulkloader browse and edit and when working with accessions where n and using sql or other tools ... | 1 |

67,821 | 28,056,588,911 | IssuesEvent | 2023-03-29 09:46:24 | elastic/integrations | https://api.github.com/repos/elastic/integrations | opened | [RabbitMQ] Support metrics like message publish and deliver rate for queues | Team:Service-Integrations | Currently, neither the Beats module nor the integration for RabbitMQ supports all the metrics for the queue data stream.

There is a request in the Discuss forum for metrics like rabbitmq.queue.messages.publish.rate and rabbitmq.queue.messages.deliver.rate.

It would be good to consider any such metrics that are currently missing and... | 1.0 | [RabbitMQ] Support metrics like message publish and deliver rate for queues - Currently, neither the Beats module nor the integration for RabbitMQ supports all the metrics for the queue data stream.

There is a request in the Discuss forum for metrics like rabbitmq.queue.messages.publish.rate and rabbitmq.queue.messages.deliver.rate.... | non_priority | support metrics like message publish and deliver rate for queues currently neither the beats module nor the integration for rabbitmq supports all the metrics for the queue data stream there is a request in the discuss forum for metrics like rabbitmq queue messages publish rate and rabbitmq queue messages deliver rate it woul... | 0 |

543,326 | 15,879,976,872 | IssuesEvent | 2021-04-09 13:09:37 | ansible/awx | https://api.github.com/repos/ansible/awx | closed | Version-specific documentation links broken | component:ui priority:medium state:needs_devel type:bug | ##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

- UI

##### SUMMARY

When you click the Key button from Search, you get a list of information, but the link that points to Ansible Tower is not aligned with the AWX version.

##### ENVIRONMENT

<!--

* AWX version: ... | 1.0 | Version-specific documentation links broken - ##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

- UI

##### SUMMARY

When you click the Key button from Search, you get a list of information, but the link that points to Ansible Tower is not aligned with the AWX versi... | priority | version specific documentation links broken issue type bug report component name ui summary when you click the key button from search you get a list of information but the link that points to ansible tower is not aligned with the awx version environment awx versio... | 1 |

165,044 | 20,574,097,154 | IssuesEvent | 2022-03-04 01:20:00 | JMD60260/kitkatclub | https://api.github.com/repos/JMD60260/kitkatclub | opened | WS-2022-0089 (High) detected in nokogiri-1.10.8.gem | security vulnerability | ## WS-2022-0089 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nokogiri-1.10.8.gem</b></p></summary>

<p>Nokogiri (鋸) is an HTML, XML, SAX, and Reader parser. Among

Nokogiri's many fe... | True | WS-2022-0089 (High) detected in nokogiri-1.10.8.gem - ## WS-2022-0089 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nokogiri-1.10.8.gem</b></p></summary>

<p>Nokogiri (鋸) is an HTML, ... | non_priority | ws high detected in nokogiri gem ws high severity vulnerability vulnerable library nokogiri gem nokogiri 鋸 is an html xml sax and reader parser among nokogiri s many features is the ability to search documents via xpath or selectors library home page a href pat... | 0 |

63,506 | 14,656,733,240 | IssuesEvent | 2020-12-28 14:04:41 | fu1771695yongxie/next.js | https://api.github.com/repos/fu1771695yongxie/next.js | opened | CVE-2020-7774 (High) detected in y18n-4.0.0.tgz | security vulnerability | ## CVE-2020-7774 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>y18n-4.0.0.tgz</b></p></summary>

<p>the bare-bones internationalization library used by yargs</p>

<p>Library home page:... | True | CVE-2020-7774 (High) detected in y18n-4.0.0.tgz - ## CVE-2020-7774 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>y18n-4.0.0.tgz</b></p></summary>

<p>the bare-bones internationalizati... | non_priority | cve high detected in tgz cve high severity vulnerability vulnerable library tgz the bare bones internationalization library used by yargs library home page a href path to dependency file next js node modules package json path to vulnerable library next js node ... | 0 |

693,854 | 23,792,221,093 | IssuesEvent | 2022-09-02 15:32:51 | lowRISC/opentitan | https://api.github.com/repos/lowRISC/opentitan | closed | [fusesoc] Compile DPI modules into shared libraries and link them afterwards | Component:Tooling Good First Issue Priority:P2 Type:Enhancement | Currently we compile all DPI code together with the Verilator-generated C++ code. We need to extend fusesoc to first compile the DPI modules into shared libraries, and then pass those libraries to the Verilator makefile to link all components together into one simulation.

This allows us to actually compile C code wi... | 1.0 | [fusesoc] Compile DPI modules into shared libraries and link them afterwards - Currently we compile all DPI code together with the Verilator-generated C++ code. We need to extend fusesoc to first compile the DPI modules into shared libraries, and then pass those libraries to the Verilator makefile to link all component... | priority | compile dpi modules into shared libraries and link them afterwards currently we compile all dpi code together with the verilator generated c code we need to extend fusesoc to first compile the dpi modules into shared libraries and then pass those libraries to the verilator makefile to link all components togeth... | 1 |

78,111 | 10,040,212,747 | IssuesEvent | 2019-07-18 19:22:31 | macports/macports-webapp | https://api.github.com/repos/macports/macports-webapp | closed | README.md does not describe what the code does | documentation | The top level README.md explains how to run the webapp but not what it is. A few paragraphs of explanation at the beginning would be in order. | 1.0 | README.md does not describe what the code does - The top level README.md explains how to run the webapp but not what it is. A few paragraphs of explanation at the beginning would be in order. | non_priority | readme md does not describe what the code does the top level readme md explains how to run the webapp but not what it is a few paragraphs of explanation at the beginning would be in order | 0 |

2,289 | 2,715,840,628 | IssuesEvent | 2015-04-10 15:27:12 | Gouga34/TERM1_Poker | https://api.github.com/repos/Gouga34/TERM1_Poker | closed | Add a method that places a bet | Amélioration code | The miser, suivre and relancer methods need to be refactored by writing a single method that does the following:

- setMiseCourante

- setMiseTotale

- setMisePlusHaute (with a check)

- Increment the pot

- Decrement

And use that all-in-one method in miser, relancer and suivre! | 1.0 | Add a method that places a bet - The miser, suivre and relancer methods need to be refactored by writing a single method that does the following:

- setMiseCourante

- setMiseTotale

- setMisePlusHaute (with a check)

- Increment the pot

- Decrement

And use that all-in-one method in miser, relancer and suivre! | non_priority | add a method that places a bet the miser suivre and relancer methods need to be refactored by writing a single method that does the following setmisecourante setmisetotale setmiseplushaute with a check increment the pot decrement and use that all in one method in miser relancer and suivre | 0 |

457,954 | 13,165,676,425 | IssuesEvent | 2020-08-11 07:07:46 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.gst.gov.in - design is broken | browser-firefox engine-gecko priority-normal | <!-- @browser: Firefox 80.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; rv:80.0) Gecko/20100101 Firefox/80.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/56457 -->

**URL**: https://www.gst.gov.in/

**Browser / Version**: Firefox 80.0

**Operating System**: Wi... | 1.0 | www.gst.gov.in - design is broken - <!-- @browser: Firefox 80.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; rv:80.0) Gecko/20100101 Firefox/80.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/56457 -->

**URL**: https://www.gst.gov.in/

**Browser / Version**: F... | priority | design is broken url browser version firefox operating system windows tested another browser yes chrome problem type design is broken description images not loaded steps to reproduce view the screenshot img alt screenshot src ... | 1 |

5,801 | 2,974,709,166 | IssuesEvent | 2015-07-15 03:31:22 | stan-dev/cmdstan | https://api.github.com/repos/stan-dev/cmdstan | closed | update doc for L-BFGS default, pointers to Stan language doc | bug documentation enhancement | * [ ] update doc everywhere indicating L-BFGS is default optimizer

* [ ] remove all the algorithm description and replace with pointers to Stan language model; see https://github.com/stan-dev/stan/issues/786#issuecomment-50775550 | 1.0 | update doc for L-BFGS default, pointers to Stan language doc - * [ ] update doc everywhere indicating L-BFGS is default optimizer

* [ ] remove all the algorithm description and replace with pointers to Stan language model; see https://github.com/stan-dev/stan/issues/786#issuecomment-50775550 | non_priority | update doc for l bfgs default pointers to stan language doc update doc everywhere indicating l bfgs is default optimizer remove all the algorithm description and replace with pointers to stan language model see | 0 |

28,359 | 6,988,186,138 | IssuesEvent | 2017-12-14 11:57:41 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | Problem saving article in frontend | No Code Attached Yet | ### Steps to reproduce the issue

Try to disable recaptcha plugin

go to frontend and login with an admin account

edit an article

try to save

### Expected result

saved article

### Actual result

PHP Fatal error: Call to a member function checkAnswer() on null in /home/uaug0z2o/domains/icfontanellatoef... | 1.0 | Problem saving article in frontend - ### Steps to reproduce the issue

Try to disable recaptcha plugin

go to frontend and login with an admin account

edit an article

try to save

### Expected result

saved article

### Actual result

PHP Fatal error: Call to a member function checkAnswer() on null in /h... | non_priority | problem saving article in frontend steps to reproduce the issue try to disable recaptcha plugin go to frontend and login with an admin account edit an article try to save expected result saved article actual result php fatal error call to a member function checkanswer on null in h... | 0 |

7,091 | 9,375,814,553 | IssuesEvent | 2019-04-04 05:55:26 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | reopened | incompatible_windows_style_arg_escaping: enables correct subprocess argument escaping on Windows | bazel 1.0 breaking-change-0.25 incompatible-change migration-0.24 team-Windows | # Description

The option `--incompatible_windows_style_arg_escaping` enables correct subprocess argument escaping on Windows. This flag has NO effect on other platforms.

When enabled, [WindowsSubprocessFactory will use ShellUtils.windowsEscapeArg to escape command line arguments](https://github.com/bazelbuild/baz... | True | incompatible_windows_style_arg_escaping: enables correct subprocess argument escaping on Windows - # Description

The option `--incompatible_windows_style_arg_escaping` enables correct subprocess argument escaping on Windows. This flag has NO effect on other platforms.

When enabled, [WindowsSubprocessFactory will ... | non_priority | incompatible windows style arg escaping enables correct subprocess argument escaping on windows description the option incompatible windows style arg escaping enables correct subprocess argument escaping on windows this flag has no effect on other platforms when enabled this is correct as ... | 0 |

387,990 | 26,747,603,952 | IssuesEvent | 2023-01-30 17:03:03 | Glassait/Computer_craft | https://api.github.com/repos/Glassait/Computer_craft | closed | [UPDATE] Refactor gamemode selection with strategy pattern | documentation update | ## **Update**

**Describe the solution you'd like**

Create a new class to handle multiple gamemode | 1.0 | [UPDATE] Refactor gamemode selection with strategy pattern - ## **Update**

**Describe the solution you'd like**

Create a new class to handle multiple gamemode | non_priority | refactor gamemode selection with strategy pattern update describe the solution you d like create a new class to handle multiple gamemode | 0 |

169,602 | 6,412,818,350 | IssuesEvent | 2017-08-08 05:13:58 | GoogleCloudPlatform/google-cloud-python | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-python | closed | Exception Handling Design | core priority: p2+ status: acknowledged type: enhancement | Created from https://github.com/GoogleCloudPlatform/google-cloud-python/pull/2936.

@dhermes I wasn't 100% sure if you had this all planned out or not, but if there are pieces to it or design questions we could talk about it here instead of in PRs.

The idea(to the best of my knowledge) is to better handle exceptio... | 1.0 | Exception Handling Design - Created from https://github.com/GoogleCloudPlatform/google-cloud-python/pull/2936.

@dhermes I wasn't 100% sure if you had this all planned out or not, but if there are pieces to it or design questions we could talk about it here instead of in PRs.

The idea(to the best of my knowledge) ... | priority | exception handling design created from dhermes i wasn t sure if you had this all planned out or not but if there are pieces to it or design questions we could talk about it here instead of in prs the idea to the best of my knowledge is to better handle exceptions between our services that handle multip... | 1 |

534,183 | 15,611,593,533 | IssuesEvent | 2021-03-19 14:29:14 | sopra-fs21-group-01/client | https://api.github.com/repos/sopra-fs21-group-01/client | opened | #4 Pressing the UNO button sends notification to other players | medium priority task | The notification should be kinda "alarming"

Time: 45min

Part of: #4 | 1.0 | #4 Pressing the UNO button sends notification to other players - The notification should be kinda "alarming"

Time: 45min

Part of: #4 | priority | pressing the uno button sends notification to other players the notification should be kinda alarming time part of | 1 |

223,825 | 7,461,071,079 | IssuesEvent | 2018-03-30 23:04:25 | Motoxpro/WorldCupStatsSite | https://api.github.com/repos/Motoxpro/WorldCupStatsSite | closed | Get correct date for qualifying for all Roots and Rain data. | Data/Backend Low Priority Data Issue | Roots and Rain date is for the finals only, so qualifying needs to be corrected. | 1.0 | Get correct date for qualifying for all Roots and Rain data. - Roots and Rain date is for the finals only, so qualifying needs to be corrected. | priority | get correct date for qualifying for all roots and rain data roots and rain date is for the finals only so qualifying needs to be corrected | 1 |

282,992 | 21,316,008,135 | IssuesEvent | 2022-04-16 09:32:47 | FTang21/pe | https://api.github.com/repos/FTang21/pe | opened | Overlapping lines in class diagrams make it a little confusing | severity.Low type.DocumentationBug |

For some, there is a line coming both in and out around the same location, making the diagram a bit harder to read.

I suggest spreading it out a little bit more and suggest mayb... | 1.0 | Overlapping lines in class diagrams make it a little confusing -

For some, there is a line coming both in and out around the same location, making the diagram a bit harder to re... | non_priority | overlapping lines in class diagrams make it a little confusing for some there is a line coming both in and out around the same location making the diagram a bit harder to read i suggest spreading it out a little bit more and suggest maybe using different colors to differentiate | 0 |

50,003 | 12,452,587,626 | IssuesEvent | 2020-05-27 12:33:14 | drupal-celebrations/celebrate-drupal-9 | https://api.github.com/repos/drupal-celebrations/celebrate-drupal-9 | closed | Group facets filters | backend sitebuild | Filters for videos and images (possible filters: category, country #37, celebration) are in the content region and there is no way to group/style them easily and thus make use of e.g. flex.

There is an investigation to make use of layout builder #36 but for the mvp we could start with a more simple approach (e.g. re... | 1.0 | Group facets filters - Filters for videos and images (possible filters: category, country #37, celebration) are in the content region and there is no way to group/style them easily and thus make use of e.g. flex.

There is an investigation to make use of layout builder #36 but for the mvp we could start with a more s... | non_priority | group facets filters filters for videos and images possible filters category country celebration are in the content region and there is no way to group style them easily and thus make use of e g flex there is an investigation to make use of layout builder but for the mvp we could start with a more sim... | 0 |

89,077 | 11,195,254,114 | IssuesEvent | 2020-01-03 05:31:28 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | API Designer less files should be revamped | Area/Tooling Component/APIDesigner Component/Composer Type/Improvement Type/UX | **Description:**

This issue is to track the API Designer styles and less file review improvements noted on #13305. | 1.0 | API Designer less files should be revamped - **Description:**

This issue is to track the API Designer styles and less file review improvements noted on #13305. | non_priority | api designer less files should be revamped description this issue is to track the api designer styles and less file review improvements noted on | 0 |

37,832 | 8,529,987,021 | IssuesEvent | 2018-11-03 17:49:45 | jadrake75/stamp-imageparsing | https://api.github.com/repos/jadrake75/stamp-imageparsing | closed | Unable to create files in folders with non A-Z characters such as ö | Defect | If you have a folder or country name like "Grössbachen" it will show up as a square box | 1.0 | Unable to create files in folders with non A-Z characters such as ö - If you have a folder or country name like "Grössbachen" it will show up as a square box | non_priority | unable to create files in folders with non a z characters such as ö if you have a folder or country name like grössbachen it will show up as a square box | 0 |

83,641 | 3,638,064,985 | IssuesEvent | 2016-02-12 14:07:21 | molgenis/molgenis | https://api.github.com/repos/molgenis/molgenis | closed | Charts won't plot TypeTest ID column versus TypeTest ID column | bug molgenis-dataexplorer priority-later | ## Reproduce

Select Dataexplorer, Select TypeTest, Select charts

Create scatter plot for ID versus ID column.

## Expected

I can see the chart

## Actual

I get a somewhat obscure error:

```

18:32:36.221 [ajp-bio-8009-exec-161] ERROR org.molgenis.charts.ChartController - null

org.elasticsearch.action.search... | 1.0 | Charts won't plot TypeTest ID column versus TypeTest ID column - ## Reproduce

Select Dataexplorer, Select TypeTest, Select charts

Create scatter plot for ID versus ID column.

## Expected

I can see the chart

## Actual

I get a somewhat obscure error:

```