Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19–19) | repo (stringlengths, 5–112) | repo_url (stringlengths, 34–141) | action (stringclasses, 3 values) | title (stringlengths, 1–1k) | labels (stringlengths, 4–1.38k) | body (stringlengths, 1–262k) | index (stringclasses, 16 values) | text_combine (stringlengths, 96–262k) | label (stringclasses, 2 values) | text (stringlengths, 96–252k) | binary_label (int64, 0–1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

143,366 | 11,545,408,089 | IssuesEvent | 2020-02-18 13:23:40 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | WriteBehindEntryStoreQueueReplicationTest.queued_entries_with_expirationTimes_are_replicated_when_cluster_scaled | Module: IMap Source: Internal Team: Core Type: Test-Failure | http://jenkins.hazelcast.com/job/Hazelcast-pr-builder/4021/testReport/junit/com.hazelcast.map.impl.mapstore.writebehind/WriteBehindEntryStoreQueueReplicationTest/queued_entries_with_expirationTimes_are_replicated_when_cluster_scaled_up/

```

java.lang.AssertionError: Expected 'expirationTime' to be between 157201164... | 1.0 | WriteBehindEntryStoreQueueReplicationTest.queued_entries_with_expirationTimes_are_replicated_when_cluster_scaled - http://jenkins.hazelcast.com/job/Hazelcast-pr-builder/4021/testReport/junit/com.hazelcast.map.impl.mapstore.writebehind/WriteBehindEntryStoreQueueReplicationTest/queued_entries_with_expirationTimes_are_rep... | non_priority | writebehindentrystorequeuereplicationtest queued entries with expirationtimes are replicated when cluster scaled java lang assertionerror expected expirationtime to be between and but was at org junit assert fail assert java at org junit assert asserttrue assert java at com hazelcast t... | 0 |

206,961 | 16,062,439,546 | IssuesEvent | 2021-04-23 14:18:05 | poliastro/poliastro | https://api.github.com/repos/poliastro/poliastro | closed | Review documentation classification, take 2 | documentation | Now that I work at a documentation company 🤓 I have been thinking more and more about poliastro docs, and in particular within the Diátaxis framework https://diataxis.fr/ (formerly known as "The Documentation System").

.

Currently any of endpoint security mechanism is not supported in mgw. Basic auth support should be included

### Describe your solution

Adding security details to API object and parse to enforcer in order to add basic auth credentials at runtime

### How will you implement it

JWTAuth... | 1.0 | Support Basic Auth Endpoint security - ### Describe your problem(s)

Currently any of endpoint security mechanism is not supported in mgw. Basic auth support should be included

### Describe your solution

Adding security details to API object and parse to enforcer in order to add basic auth credentials at runtime

... | priority | support basic auth endpoint security describe your problem s currently any of endpoint security mechanism is not supported in mgw basic auth support should be included describe your solution adding security details to api object and parse to enforcer in order to add basic auth credentials at runtime ... | 1 |

97,363 | 28,214,053,785 | IssuesEvent | 2023-04-05 07:36:51 | microsoft/appcenter | https://api.github.com/repos/microsoft/appcenter | reopened | iOS and Android Build Fail when Test on Real Device is Toggled on | bug build test reviewed-DRI | **What App Center service does this affect?**

React Native SDK, and Build.

**Describe the bug**

Locally Xcode is building normally, but it fails in appcenter with Test on Real Device Toggled on. When it's toggled off, the build is successful.

**Expected behavior**

Build successful.

Additional context

##[er... | 1.0 | iOS and Android Build Fail when Test on Real Device is Toggled on - **What App Center service does this affect?**

React Native SDK, and Build.

**Describe the bug**

Locally Xcode is building normally, but it fails in appcenter with Test on Real Device Toggled on. When it's toggled off, the build is successful.

*... | non_priority | ios and android build fail when test on real device is toggled on what app center service does this affect react native sdk and build describe the bug locally xcode is building normally but it fails in appcenter with test on real device toggled on when it s toggled off the build is successful ... | 0 |

235,213 | 7,735,453,222 | IssuesEvent | 2018-05-27 15:17:40 | ream/ream | https://api.github.com/repos/ream/ream | closed | Prefetch data for route components | contribution welcome enhancement priority: high | Currently we have [getInitialData](https://github.com/ream/ream/blob/master/docs/guides/preloading-data.md) but it does not inject data or props to relevant route component, hopefully we can implement a _real_ `getInitialData` which injects resolved object to component data or `getInitialProps` which injects props inst... | 1.0 | Prefetch data for route components - Currently we have [getInitialData](https://github.com/ream/ream/blob/master/docs/guides/preloading-data.md) but it does not inject data or props to relevant route component, hopefully we can implement a _real_ `getInitialData` which injects resolved object to component data or `getI... | priority | prefetch data for route components currently we have but it does not inject data or props to relevant route component hopefully we can implement a real getinitialdata which injects resolved object to component data or getinitialprops which injects props instead getinitialdata js export defaul... | 1 |

305,847 | 9,377,715,858 | IssuesEvent | 2019-04-04 11:02:07 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | m.facebook.com - see bug description | browser-firefox-mobile priority-critical | <!-- @browser: Firefox Mobile 66.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.1.1; Mobile; rv:66.0) Gecko/66.0 Firefox/66.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://m.facebook.com/story.php?story_fbid=2260305700712631&id=1160243874052158&anchor_composer=false

**Browser / Version**: Firefox Mobil... | 1.0 | m.facebook.com - see bug description - <!-- @browser: Firefox Mobile 66.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.1.1; Mobile; rv:66.0) Gecko/66.0 Firefox/66.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://m.facebook.com/story.php?story_fbid=2260305700712631&id=1160243874052158&anchor_composer=fals... | priority | m facebook com see bug description url browser version firefox mobile operating system android tested another browser yes problem type something else description i use messenger on a tablet but i am having issues with message notifications clearing from all of m... | 1 |

414,483 | 12,103,836,536 | IssuesEvent | 2020-04-20 19:07:17 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | media.interieur.gouv.fr - see bug description | browser-fenix engine-gecko ml-needsdiagnosis-false priority-normal | <!-- @browser: Firefox Mobile 75.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.0.0; Mobile; rv:75.0) Gecko/75.0 Firefox/75.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/51919 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://media.interieur.gouv.fr/deplacement-... | 1.0 | media.interieur.gouv.fr - see bug description - <!-- @browser: Firefox Mobile 75.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.0.0; Mobile; rv:75.0) Gecko/75.0 Firefox/75.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/51919 -->

<!-- @extra_labels: browser-fenix -->

**URL... | priority | media interieur gouv fr see bug description url browser version firefox mobile operating system android tested another browser no problem type something else description pdf download is blocked steps to reproduce generated pdf cannot be downloaded erro... | 1 |

266,464 | 8,367,974,659 | IssuesEvent | 2018-10-04 13:42:16 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Improve program directory related experience | Component/Composer Imported Priority/High Type/Improvement | <a href="https://github.com/kaviththiranga"><img src="https://avatars3.githubusercontent.com/u/1505855?v=4" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [kaviththiranga](https://github.com/kaviththiranga)**

_Tuesday Oct 31, 2017 at 03:12 GMT_

_Originally opened as https://github.com/ballerina-l... | 1.0 | Improve program directory related experience - <a href="https://github.com/kaviththiranga"><img src="https://avatars3.githubusercontent.com/u/1505855?v=4" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [kaviththiranga](https://github.com/kaviththiranga)**

_Tuesday Oct 31, 2017 at 03:12 GMT_

_Ori... | priority | improve program directory related experience issue by tuesday oct at gmt originally opened as when a user tries to open a packaged file within a program dir ask whether user wants to open the program dir too and open upon confirmation when a user adds a package to a file for the... | 1 |

611,988 | 18,988,281,137 | IssuesEvent | 2021-11-22 01:44:23 | boostcampwm-2021/iOS05-Escaper | https://api.github.com/repos/boostcampwm-2021/iOS05-Escaper | closed | [E8 S2 T1] Implement the ranking View. | feature High Priority | ### Epic - Story - Task

Epic : Detail page

Story : Can send the ranking for a room

Task : Implement the ranking Cell.

| 1.0 | [E8 S2 T1] Implement the ranking View. - ### Epic - Story - Task

Epic : Detail page

Story : Can send the ranking for a room

Task : Implement the ranking Cell.

| priority | implement the ranking view epic story task epic detail page story can send the ranking for a room task implement the ranking cell | 1 |

99,441 | 20,966,290,711 | IssuesEvent | 2022-03-28 07:06:35 | Validator2/MesaBox | https://api.github.com/repos/Validator2/MesaBox | opened | Mining Laser, man-portable/emplacement | code models | Original idea by "sirro"

Powered by U-235, its a modern, finalized successor to the Gluon Gun. Instead of completely vaporizing matter, it has several functions;

tbd | 1.0 | Mining Laser, man-portable/emplacement - Original idea by "sirro"

Powered by U-235, its a modern, finalized successor to the Gluon Gun. Instead of completely vaporizing matter, it has several functions;

tbd | non_priority | mining laser man portable emplacement original idea by sirro powered by u its a modern finalized successor to the gluon gun instead of completely vaporizing matter it has several functions tbd | 0 |

1,604 | 6,445,176,676 | IssuesEvent | 2017-08-12 23:23:56 | p4lang/p4-spec | https://api.github.com/repos/p4lang/p4-spec | closed | [PSA] count() operation on counters | portable switch architecture | For a 'bytes' or 'packets_and_bytes' counter type, count() having a

second parameter 'increment' that specifies how much to add to the

byte counter is a very good thing. It is best if the P4 program has

the flexibility to choose the length in bytes it wants to use for the

packet, e.g. in case it is increasing or d... | 1.0 | [PSA] count() operation on counters - For a 'bytes' or 'packets_and_bytes' counter type, count() having a

second parameter 'increment' that specifies how much to add to the

byte counter is a very good thing. It is best if the P4 program has

the flexibility to choose the length in bytes it wants to use for the

pack... | non_priority | count operation on counters for a bytes or packets and bytes counter type count having a second parameter increment that specifies how much to add to the byte counter is a very good thing it is best if the program has the flexibility to choose the length in bytes it wants to use for the packet e... | 0 |

320,160 | 9,777,097,699 | IssuesEvent | 2019-06-07 08:08:15 | DCRGraphsNet/DCROpenCaseManager | https://api.github.com/repos/DCRGraphsNet/DCROpenCaseManager | reopened | Will it be possible to add the child's ages as a guard of an activity? | Priority 3 Udviklingforslag | eg. when the young person turns 16 or 16, the activity becomes pending

So - we need the Age of the child in the process. Or date of birth - not cpr. So we can calculate age. Right now we do not have this capability. You could enter Age in a form - and solve it this way. But we cannot execute "Robot" events that take... | 1.0 | Will it be possible to add the child's ages as a guard of an activity? - eg. when the young person turns 16 or 16, the activity becomes pending

So - we need the Age of the child in the process. Or date of birth - not cpr. So we can calculate age. Right now we do not have this capability. You could enter Age in a for... | priority | will it be possible to add the child s ages as a guard of an activity eg when the young person turns or the activity becomes pending so we need the age of the child in the process or date of birth not cpr so we can calculate age right now we do not have this capability you could enter age in a form ... | 1 |

368,791 | 25,808,835,568 | IssuesEvent | 2022-12-11 17:04:26 | 12rambau/sepal_ui | https://api.github.com/repos/12rambau/sepal_ui | closed | refactor the graph in the contributor section | documentation | We are curently suggesting to use the following branching system:

It is not the one we currently use in the repository so it should be updated with a custom one (maybe using graphviz ?)

| 1.0 | refactor the graph in the contributor section - We are curently suggesting to use the following branching system:

It is not the one we currently use in the repository so it should be updated with a custom one (maybe using graphviz ?)

| non_priority | refactor the graph in the contributor section we are curently suggesting to use the following branching system it is not the one we currently use in the repository so it should be updated with a custom one maybe using graphviz | 0 |

593,496 | 18,009,412,923 | IssuesEvent | 2021-09-16 06:40:55 | GC-spigot/AdvancedEnchantments | https://api.github.com/repos/GC-spigot/AdvancedEnchantments | closed | Effect TNT not reduced by Blast Protection or DECREASE_DAMAGE | Priority: Low Bug: Confirmed Resolution: Accepted | ## Details

**Describe the bug**

The effect "TNT" cannot be reduced by vanilla Blast Protection or AE's DECREASE_DAMAGE effect (when activated by EXPLOSION).

**To Reproduce** <!-- !IMPORTANT! -->

1. Create an armor enchantment with a damaging TNT effect, activated by FALL_DAMAGE.

2. Create an armor enchantmen... | 1.0 | Effect TNT not reduced by Blast Protection or DECREASE_DAMAGE - ## Details

**Describe the bug**

The effect "TNT" cannot be reduced by vanilla Blast Protection or AE's DECREASE_DAMAGE effect (when activated by EXPLOSION).

**To Reproduce** <!-- !IMPORTANT! -->

1. Create an armor enchantment with a damaging TNT ... | priority | effect tnt not reduced by blast protection or decrease damage details describe the bug the effect tnt cannot be reduced by vanilla blast protection or ae s decrease damage effect when activated by explosion to reproduce create an armor enchantment with a damaging tnt effect activated b... | 1 |

50,021 | 26,433,021,846 | IssuesEvent | 2023-01-15 02:40:05 | GraphiteEditor/Graphite | https://api.github.com/repos/GraphiteEditor/Graphite | closed | Debounce widget inputs to minimize backend spam | Feature Web P-Medium Performance | Send fewer messages to the backend for rapidly dragged input widgets so they update the backend a little less frequently. | True | Debounce widget inputs to minimize backend spam - Send fewer messages to the backend for rapidly dragged input widgets so they update the backend a little less frequently. | non_priority | debounce widget inputs to minimize backend spam send fewer messages to the backend for rapidly dragged input widgets so they update the backend a little less frequently | 0 |

447,286 | 12,887,563,861 | IssuesEvent | 2020-07-13 11:28:31 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.0 staging-1634] Coroutine couldn't be started | Category: Tech Priority: Medium Status: Fixed Week Task | When you go to main menu:

```

Coroutine couldn't be started because the the game object 'News(Clone)' is inactive!

News.NewsRenderer:DoRendering(News)

News.NewsController:SetNews(List`1)

News.<SetNews>d__4:MoveNext()

UnityEngine.SetupCoroutine:InvokeMoveNext(IEnumerator, IntPtr)

``` | 1.0 | [0.9.0 staging-1634] Coroutine couldn't be started - When you go to main menu:

```

Coroutine couldn't be started because the the game object 'News(Clone)' is inactive!

News.NewsRenderer:DoRendering(News)

News.NewsController:SetNews(List`1)

News.<SetNews>d__4:MoveNext()

UnityEngine.SetupCoroutine:InvokeMoveNext(IE... | priority | coroutine couldn t be started when you go to main menu coroutine couldn t be started because the the game object news clone is inactive news newsrenderer dorendering news news newscontroller setnews list news d movenext unityengine setupcoroutine invokemovenext ienumerator intptr | 1 |

144,381 | 11,614,148,682 | IssuesEvent | 2020-02-26 12:03:47 | pingcap/tidb-operator | https://api.github.com/repos/pingcap/tidb-operator | closed | e2e: "[Feature: AdvancedStatefulSet] Scaling tidb cluster with advanced statefulset " is flaky | test/e2e | ## Bug Report

https://internal.pingcap.net/idc-jenkins/blue/organizations/jenkins/operator_ghpr_e2e_test_kind/detail/operator_ghpr_e2e_test_kind/2273/tests

```

Stacktrace

/home/jenkins/agent/workspace/operator_ghpr_e2e_test_kind/go/src/github.com/pingcap/tidb-operator/tests/e2e/tidbcluster/serial.go:139

Jan 10... | 1.0 | e2e: "[Feature: AdvancedStatefulSet] Scaling tidb cluster with advanced statefulset " is flaky - ## Bug Report

https://internal.pingcap.net/idc-jenkins/blue/organizations/jenkins/operator_ghpr_e2e_test_kind/detail/operator_ghpr_e2e_test_kind/2273/tests

```

Stacktrace

/home/jenkins/agent/workspace/operator_ghpr_... | non_priority | scaling tidb cluster with advanced statefulset is flaky bug report stacktrace home jenkins agent workspace operator ghpr test kind go src github com pingcap tidb operator tests tidbcluster serial go jan unexpected error groupkind group pingcap com kind tidbc... | 0 |

53,463 | 13,167,592,090 | IssuesEvent | 2020-08-11 10:32:49 | wellcomecollection/platform | https://api.github.com/repos/wellcomecollection/platform | closed | Associate CI policy for Buildkite agents IAM Role | :recycle: Builds and CI | Buildkite agents (as EC2 instances) created using the Buildkite AWS elastic stack (https://github.com/buildkite/elastic-ci-stack-for-aws) have associated IAM roles which should have policy documents mirroring our existing CI permissions. | 1.0 | Associate CI policy for Buildkite agents IAM Role - Buildkite agents (as EC2 instances) created using the Buildkite AWS elastic stack (https://github.com/buildkite/elastic-ci-stack-for-aws) have associated IAM roles which should have policy documents mirroring our existing CI permissions. | non_priority | associate ci policy for buildkite agents iam role buildkite agents as instances created using the buildkite aws elastic stack have associated iam roles which should have policy documents mirroring our existing ci permissions | 0 |

802,865 | 29,047,860,883 | IssuesEvent | 2023-05-13 20:06:58 | vdjagilev/nmap-formatter | https://api.github.com/repos/vdjagilev/nmap-formatter | closed | Update Node.js 12 -> 16 for pipelines | priority/medium type/other prop/pipeline | ```

Node.js 12 actions are deprecated. Please update the following actions to use Node.js 16: actions/checkout@v2. For more information see: https://github.blog/changelog/2022-09-22-github-actions-all-actions-will-begin-running-on-node16-instead-of-node12/

```

Need to upgrade github actions to version 16 | 1.0 | Update Node.js 12 -> 16 for pipelines - ```

Node.js 12 actions are deprecated. Please update the following actions to use Node.js 16: actions/checkout@v2. For more information see: https://github.blog/changelog/2022-09-22-github-actions-all-actions-will-begin-running-on-node16-instead-of-node12/

```

Need to upgrad... | priority | update node js for pipelines node js actions are deprecated please update the following actions to use node js actions checkout for more information see need to upgrade github actions to version | 1 |

661,913 | 22,095,763,009 | IssuesEvent | 2022-06-01 09:57:29 | OpenNebula/one | https://api.github.com/repos/OpenNebula/one | closed | Allow deleting qcow2 disk snapshot using blockcommit | Category: Drivers - Storage Type: Feature Sponsored Status: Accepted Priority: Normal | **Description**

Currently deleting disk snapshots when using qcow2 DS is very limited. Users can not delete the active snapshots and also snapshots with children, so the only way how to delete the snapshot is to revert back.

**Use case**

e.g: when users get charged in terms of snapshot numbers, they need to delete... | 1.0 | Allow deleting qcow2 disk snapshot using blockcommit - **Description**

Currently deleting disk snapshots when using qcow2 DS is very limited. Users can not delete the active snapshots and also snapshots with children, so the only way how to delete the snapshot is to revert back.

**Use case**

e.g: when users get ch... | priority | allow deleting disk snapshot using blockcommit description currently deleting disk snapshots when using ds is very limited users can not delete the active snapshots and also snapshots with children so the only way how to delete the snapshot is to revert back use case e g when users get charged in... | 1 |

29,789 | 14,265,763,425 | IssuesEvent | 2020-11-20 17:36:50 | ampproject/amp-toolbox-php | https://api.github.com/repos/ampproject/amp-toolbox-php | opened | New Transformer: BrowserHints | Performance | - [ ] Add preconnect link tag for Google fonts resources

- [ ] Add preconnect link tag to the publisher's own origin

- [ ] Preload images

- [ ] Preload AMP runtime script

- [ ] Prune duplicate resource hints

| True | New Transformer: BrowserHints - - [ ] Add preconnect link tag for Google fonts resources

- [ ] Add preconnect link tag to the publisher's own origin

- [ ] Preload images

- [ ] Preload AMP runtime script

- [ ] Prune duplicate resource hints

| non_priority | new transformer browserhints add preconnect link tag for google fonts resources add preconnect link tag to the publisher s own origin preload images preload amp runtime script prune duplicate resource hints | 0 |

88,181 | 15,800,747,050 | IssuesEvent | 2021-04-03 01:06:24 | rammatzkvosky/jenkins | https://api.github.com/repos/rammatzkvosky/jenkins | opened | CVE-2021-21348 (High) detected in xstream-1.4.15.jar | security vulnerability | ## CVE-2021-21348 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.15.jar</b></p></summary>

<p></p>

<p>Library home page: <a href="http://x-stream.github.io">http://x-stream... | True | CVE-2021-21348 (High) detected in xstream-1.4.15.jar - ## CVE-2021-21348 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.15.jar</b></p></summary>

<p></p>

<p>Library home pa... | non_priority | cve high detected in xstream jar cve high severity vulnerability vulnerable library xstream jar library home page a href path to dependency file jenkins test pom xml path to vulnerable library home wss scanner repository com thoughtworks xstream xstream xs... | 0 |

586,874 | 17,599,170,794 | IssuesEvent | 2021-08-17 09:37:18 | googleapis/nodejs-video-intelligence | https://api.github.com/repos/googleapis/nodejs-video-intelligence | opened | analyze samples: should track objects in a GCS file failed | type: bug priority: p1 flakybot: issue | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 2f7fe652af0d15621b89fc80cc22f9b2d0a2f209

b... | 1.0 | analyze samples: should track objects in a GCS file failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop comment... | priority | analyze samples should track objects in a gcs file failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output command failed node analyze js track... | 1 |

286,054 | 8,783,367,864 | IssuesEvent | 2018-12-20 05:32:54 | hotosm/tasking-manager | https://api.github.com/repos/hotosm/tasking-manager | closed | Project Clone does not copy allowed user list if private | Low Priority bug | Cloning a project keeps the private setting, but does not keep the user list. | 1.0 | Project Clone does not copy allowed user list if private - Cloning a project keeps the private setting, but does not keep the user list. | priority | project clone does not copy allowed user list if private cloning a project keeps the private setting but does not keep the user list | 1 |

37,490 | 5,117,329,407 | IssuesEvent | 2017-01-07 15:41:46 | ngageoint/hootenanny-ui | https://api.github.com/repos/ngageoint/hootenanny-ui | closed | Port refactored schema switcher code | Status: In Progress Status: Ready for Test | This feature was refactored in isolation https://github.com/brianhatchl/iD/pull/1

So may need some patching around the edges to pull out the old stuff and shoehorn the new. | 1.0 | Port refactored schema switcher code - This feature was refactored in isolation https://github.com/brianhatchl/iD/pull/1

So may need some patching around the edges to pull out the old stuff and shoehorn the new. | non_priority | port refactored schema switcher code this feature was refactored in isolation so may need some patching around the edges to pull out the old stuff and shoehorn the new | 0 |

89,076 | 17,783,607,561 | IssuesEvent | 2021-08-31 08:26:18 | zeek/spicy | https://api.github.com/repos/zeek/spicy | closed | Non-public enum type gets optimized out even though needed | Bug Codegen | If a unit depends on a non-public enum type it sometimes gets optimized out, even though it is needed.

```

$ cat x.spicy

module x;

type E = enum { a, b, c };

public type U = unit {

var e: E;

};

```

```

$ spicyc -j x.spicy -D global-optimizer

[debug/global-optimizer] disabling feature 'supports_fi... | 1.0 | Non-public enum type gets optimized out even though needed - If a unit depends on a non-public enum type it sometimes gets optimized out, even though it is needed.

```

$ cat x.spicy

module x;

type E = enum { a, b, c };

public type U = unit {

var e: E;

};

```

```

$ spicyc -j x.spicy -D global-optim... | non_priority | non public enum type gets optimized out even though needed if a unit depends on a non public enum type it sometimes gets optimized out even though it is needed cat x spicy module x type e enum a b c public type u unit var e e spicyc j x spicy d global optim... | 0 |

352,961 | 25,091,913,520 | IssuesEvent | 2022-11-08 07:10:03 | argilla-io/argilla | https://api.github.com/repos/argilla-io/argilla | closed | Docs: Replace one old UI screenshot | bug documentation | **Describe the bug**

A screenshot from Rubrix UI persists on docs .

**To Reproduce**

Steps to reproduce the behavior:

(weak labeling mode view)

https://docs.argilla.io/en/latest/reference/webapp/features.html

**Expected behavior**

Update it with a screenshot from the new UI

**Screenshots**

If applicabl... | 1.0 | Docs: Replace one old UI screenshot - **Describe the bug**

A screenshot from Rubrix UI persists on docs .

**To Reproduce**

Steps to reproduce the behavior:

(weak labeling mode view)

https://docs.argilla.io/en/latest/reference/webapp/features.html

**Expected behavior**

Update it with a screenshot from the n... | non_priority | docs replace one old ui screenshot describe the bug a screenshot from rubrix ui persists on docs to reproduce steps to reproduce the behavior weak labeling mode view expected behavior update it with a screenshot from the new ui screenshots if applicable add screenshots to hel... | 0 |

207,140 | 16,066,867,139 | IssuesEvent | 2021-04-23 20:40:18 | bounswe/2021SpringGroup12 | https://api.github.com/repos/bounswe/2021SpringGroup12 | closed | Merge Use Case Diagrams | documentation status: Review Request | First Deadline for review 23.04.2021, @18:00

Final Deadline 23.04.2021, @22:00

Others have drawn their parts on Lucid. I will merge them in one page. | 1.0 | Merge Use Case Diagrams - First Deadline for review 23.04.2021, @18:00

Final Deadline 23.04.2021, @22:00

Others have drawn their parts on Lucid. I will merge them in one page. | non_priority | merge use case diagrams first deadline for review final deadline others have drawn their parts on lucid i will merge them in one page | 0 |

721,973 | 24,845,593,829 | IssuesEvent | 2022-10-26 15:38:26 | googleapis/nodejs-pubsub | https://api.github.com/repos/googleapis/nodejs-pubsub | opened | createSubscription method throws if oidcToken is being set | priority: p2 type: bug | Thanks for stopping by to let us know something could be better!

**PLEASE READ**: If you have a support contract with Google, please create an issue in the [support console](https://cloud.google.com/support/) instead of filing on GitHub. This will ensure a timely response.

1) Is this a client library issue or a p... | 1.0 | createSubscription method throws if oidcToken is being set - Thanks for stopping by to let us know something could be better!

**PLEASE READ**: If you have a support contract with Google, please create an issue in the [support console](https://cloud.google.com/support/) instead of filing on GitHub. This will ensure a... | priority | createsubscription method throws if oidctoken is being set thanks for stopping by to let us know something could be better please read if you have a support contract with google please create an issue in the instead of filing on github this will ensure a timely response is this a client library ... | 1 |

158,288 | 6,025,001,058 | IssuesEvent | 2017-06-08 07:27:09 | VirtoCommerce/vc-platform | https://api.github.com/repos/VirtoCommerce/vc-platform | closed | Theme colors stopped working | bug Priority: High | When changing theme default definition from Blue to Dark, color schema no longer changes, only left filter changes. This is a showcase feature during the demo and needs to work. The bug is current in dev branch. | 1.0 | Theme colors stopped working - When changing theme default definition from Blue to Dark, color schema no longer changes, only left filter changes. This is a showcase feature during the demo and needs to work. The bug is current in dev branch. | priority | theme colors stopped working when changing theme default definition from blue to dark color schema no longer changes only left filter changes this is a showcase feature during the demo and needs to work the bug is current in dev branch | 1 |

26,426 | 26,853,938,009 | IssuesEvent | 2023-02-03 13:15:05 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | opened | Confusing error message: Argument is too big for formatting | usability | ```

SELECT format('{}asdfasd{}', '111')

Query id: 77df41ec-4f04-4017-863f-f7d31b92893d

0 rows in set. Elapsed: 0.031 sec.

Received exception from server (version 22.13.1):

Code: 36. DB::Exception: Received from localhost:9000. DB::Exception: Argument is too big for formatting: While processing format('{}a... | True | Confusing error message: Argument is too big for formatting - ```

SELECT format('{}asdfasd{}', '111')

Query id: 77df41ec-4f04-4017-863f-f7d31b92893d

0 rows in set. Elapsed: 0.031 sec.

Received exception from server (version 22.13.1):

Code: 36. DB::Exception: Received from localhost:9000. DB::Exception: Ar... | non_priority | confusing error message argument is too big for formatting select format asdfasd query id rows in set elapsed sec received exception from server version code db exception received from localhost db exception argument is too big for formatting whi... | 0 |

614,857 | 19,191,045,360 | IssuesEvent | 2021-12-06 00:23:20 | apcountryman/picolibrary | https://api.github.com/repos/apcountryman/picolibrary | closed | Remove reverse iterator | priority-normal status-awaiting_review type-refactoring | Remove reverse iterator (`::picolibrary::Reverse_Iterator`). `std::reverse_iterator` will be required instead. | 1.0 | Remove reverse iterator - Remove reverse iterator (`::picolibrary::Reverse_Iterator`). `std::reverse_iterator` will be required instead. | priority | remove reverse iterator remove reverse iterator picolibrary reverse iterator std reverse iterator will be required instead | 1 |

79,561 | 28,375,113,659 | IssuesEvent | 2023-04-12 20:10:30 | JohnAustinDev/xulsword | https://api.github.com/repos/JohnAustinDev/xulsword | closed | Add GUI capability to display the Greek TR words in module KJV version 2.6 lemma markup | Type-Defect Priority-Medium auto-migrated | ```

From kjv.conf

SwordVersionDate=2014-02-15

Version=2.6

History_2.6=Fixed bugs. Added Greek from TR.

Example: (mod2imp)

$$$Matthew 1:1

<w lemma="strong:G976 lemma.TR:βιβλος" morph="robinson:N-NSF" src="1">The

book</w> <w lemma="strong:G1078 lemma.TR:γενεσεως"

morph="robinson:N-GSF" src="2">of the generation</w> ... | 1.0 | Add GUI capability to display the Greek TR words in module KJV version 2.6 lemma markup - ```

From kjv.conf

SwordVersionDate=2014-02-15

Version=2.6

History_2.6=Fixed bugs. Added Greek from TR.

Example: (mod2imp)

$$$Matthew 1:1

<w lemma="strong:G976 lemma.TR:βιβλος" morph="robinson:N-NSF" src="1">The

book</w> <w lem... | non_priority | add gui capability to display the greek tr words in module kjv version lemma markup from kjv conf swordversiondate version history fixed bugs added greek from tr example matthew the book w lemma strong lemma tr γενεσεως morph robinson n gsf src of the generation ... | 0 |

59,707 | 12,013,373,137 | IssuesEvent | 2020-04-10 08:42:17 | home-assistant/brands | https://api.github.com/repos/home-assistant/brands | closed | Philips TV is missing brand images | domain-missing has-codeowner |

## The problem

The Philips TV integration does not have brand images in

this repository.

We recently started this Brands repository, to create a centralized storage of all brand-related images. These images are used on our website and the Home Assistant frontend.

The following images are missing and would ideal... | 1.0 | Philips TV is missing brand images -

## The problem

The Philips TV integration does not have brand images in

this repository.

We recently started this Brands repository, to create a centralized storage of all brand-related images. These images are used on our website and the Home Assistant frontend.

The followi... | non_priority | philips tv is missing brand images the problem the philips tv integration does not have brand images in this repository we recently started this brands repository to create a centralized storage of all brand related images these images are used on our website and the home assistant frontend the followi... | 0 |

446,697 | 12,876,716,568 | IssuesEvent | 2020-07-11 06:41:57 | luksan47/mars | https://api.github.com/repos/luksan47/mars | closed | Deleting mac addresses is not working | Priority: HIGH bug | on list.blade and and admin.internet.mac_addresses.list.blade also | 1.0 | Deleting mac addresses is not working - on list.blade and and admin.internet.mac_addresses.list.blade also | priority | deleting mac addresses is not working on list blade and and admin internet mac addresses list blade also | 1 |

709,164 | 24,369,190,466 | IssuesEvent | 2022-10-03 17:42:05 | Chatterino/chatterino2 | https://api.github.com/repos/Chatterino/chatterino2 | closed | Migrate /subscribersoff command to Helix API | Platform: Twitch Priority: Medium Deprecation: Twitch IRC Commands hacktoberfest | As part of Twitch's announced deprecation of IRC-based commands ([see here for more info](https://discuss.dev.twitch.tv/t/deprecation-of-chat-commands-through-irc/40486), the `/subscribersoff` command needs to be migrated to use the relevant Helix API endpoint.

Helix API reference: https://dev.twitch.tv/docs/api/refer... | 1.0 | Migrate /subscribersoff command to Helix API - As part of Twitch's announced deprecation of IRC-based commands ([see here for more info](https://discuss.dev.twitch.tv/t/deprecation-of-chat-commands-through-irc/40486), the `/subscribersoff` command needs to be migrated to use the relevant Helix API endpoint.

Helix API ... | priority | migrate subscribersoff command to helix api as part of twitch s announced deprecation of irc based commands the subscribersoff command needs to be migrated to use the relevant helix api endpoint helix api reference split from | 1 |

102,769 | 11,307,004,396 | IssuesEvent | 2020-01-18 17:58:34 | Luceapuce/SEPR-Project | https://api.github.com/repos/Luceapuce/SEPR-Project | closed | willCollide if spawned in Entity | documentation question | Might need to write something to handle the situation of an entity spawning within an entity.

_This is just a reminder to check this later_ | 1.0 | willCollide if spawned in Entity - Might need to write something to handle the situation of an entity spawning within an entity.

_This is just a reminder to check this later_ | non_priority | willcollide if spawned in entity might need to write something to handle the situation of an entity spawning within an entity this is just a reminder to check this later | 0 |

51,739 | 6,195,202,786 | IssuesEvent | 2017-07-05 12:00:24 | mifort-org/mifort-timesheet | https://api.github.com/repos/mifort-org/mifort-timesheet | closed | One week in month is not displayed | bug fixed on test env | When you enter new data in current week and then click on back arrow in the first column, data of previous week isn't displayed. If you reload window data is displayed again. | 1.0 | One week in month is not displayed - When you enter new data in current week and then click on back arrow in the first column, data of previous week isn't displayed. If you reload window data is displayed again. | non_priority | one week in month is not displayed when you enter new data in current week and then click on back arrow in the first column data of previous week isn t displayed if you reload window data is displayed again | 0 |

148,431 | 19,531,026,647 | IssuesEvent | 2021-12-30 16:50:03 | vital-ws/empty | https://api.github.com/repos/vital-ws/empty | closed | CVE-2016-1000236 (Medium) detected in cookie-signature-1.0.3.tgz | security vulnerability | ## CVE-2016-1000236 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>cookie-signature-1.0.3.tgz</b></p></summary>

<p>Sign and unsign cookies</p>

<p>Library home page: <a href="https:/... | True | CVE-2016-1000236 (Medium) detected in cookie-signature-1.0.3.tgz - ## CVE-2016-1000236 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>cookie-signature-1.0.3.tgz</b></p></summary>

<p... | non_priority | cve medium detected in cookie signature tgz cve medium severity vulnerability vulnerable library cookie signature tgz sign and unsign cookies library home page a href path to dependency file package json path to vulnerable library node modules cookie signature pack... | 0 |

174,885 | 27,747,982,185 | IssuesEvent | 2023-03-15 18:24:16 | Olian04/simply-reactive | https://api.github.com/repos/Olian04/simply-reactive | closed | Add createSessionAtom | further design needed | Much like `createQueryAtom` however it stores the value in `sessionStorage` instead of the query string.

```ts

const A = createSessionAtom({

key: 'a',

default: 0,

});

A.set(3);

// Reload page

A.get(); // 3

``` | 1.0 | Add createSessionAtom - Much like `createQueryAtom` however it stores the value in `sessionStorage` instead of the query string.

```ts

const A = createSessionAtom({

key: 'a',

default: 0,

});

A.set(3);

// Reload page

A.get(); // 3

``` | non_priority | add createsessionatom much like createqueryatom however it stores the value in sessionstorage instead of the query string ts const a createsessionatom key a default a set reload page a get | 0 |

56,313 | 15,020,016,603 | IssuesEvent | 2021-02-01 14:14:26 | mozilla-lockwise/lockwise-ios | https://api.github.com/repos/mozilla-lockwise/lockwise-ios | reopened | recently used doesn't work | archived defect | steps to reproduce:

- copy a username from an item

- navigate back to entries list

- sort by recently used

expected: item with copied username appears at the top

actual: not necessarily | 1.0 | recently used doesn't work - steps to reproduce:

- copy a username from an item

- navigate back to entries list

- sort by recently used

expected: item with copied username appears at the top

actual: not necessarily | non_priority | recently used doesn t work steps to reproduce copy a username from an item navigate back to entries list sort by recently used expected item with copied username appears at the top actual not necessarily | 0 |

194,890 | 6,900,397,676 | IssuesEvent | 2017-11-24 18:25:37 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | closed | admin UI: Authentication sources rules description not displayed in listing | Priority: Low Status: For review Type: Feature / Enhancement Type: Nice to have | It would be better if we could see the description of the rules in authentication sources in the listing of the actual rules rather than having to click on each of them to see it. | 1.0 | admin UI: Authentication sources rules description not displayed in listing - It would be better if we could see the description of the rules in authentication sources in the listing of the actual rules rather than having to click on each of them to see it. | priority | admin ui authentication sources rules description not displayed in listing it would be better if we could see the description of the rules in authentication sources in the listing of the actual rules rather than having to click on each of them to see it | 1 |

240,595 | 7,803,312,842 | IssuesEvent | 2018-06-10 22:18:13 | DigitalCampus/django-oppia | https://api.github.com/repos/DigitalCampus/django-oppia | closed | When creating a "ResponseResource" object via the API not all fields are required | invalid medium priority | they should be!

| 1.0 | When creating a "ResponseResource" object via the API not all fields are required - they should be!

| priority | when creating a responseresource object via the api not all fields are required they should be | 1 |

57,749 | 16,039,745,947 | IssuesEvent | 2021-04-22 06:03:14 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | New lightbox mouse wheel zoom too agressive | T-Defect | ### Description

Zooming the image by mouse wheel shows it in maximal/minimal zoom. Single wheel step should do the same thing as (+) and (-) buttons.

### Steps to reproduce

- open the lightbox clicking an image

- scrool mouse wheel one step up

- image is shown in maximal zoom

- scrool mouse wheel one step d... | 1.0 | New lightbox mouse wheel zoom too agressive - ### Description

Zooming the image by mouse wheel shows it in maximal/minimal zoom. Single wheel step should do the same thing as (+) and (-) buttons.

### Steps to reproduce

- open the lightbox clicking an image

- scrool mouse wheel one step up

- image is shown in... | non_priority | new lightbox mouse wheel zoom too agressive description zooming the image by mouse wheel shows it in maximal minimal zoom single wheel step should do the same thing as and buttons steps to reproduce open the lightbox clicking an image scrool mouse wheel one step up image is shown in... | 0 |

423,624 | 12,299,239,204 | IssuesEvent | 2020-05-11 12:01:39 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www5.ha.org.hk - site is not usable | browser-firefox engine-gecko priority-normal | <!-- @browser: Firefox 76.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:76.0) Gecko/20100101 Firefox/76.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/52664 -->

**URL**: https://www5.ha.org.hk/rcbts

**Browser / Version**: Firefox 76.0

**Operating S... | 1.0 | www5.ha.org.hk - site is not usable - <!-- @browser: Firefox 76.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:76.0) Gecko/20100101 Firefox/76.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/52664 -->

**URL**: https://www5.ha.org.hk/rcbts

**Browser /... | priority | ha org hk site is not usable url browser version firefox operating system windows tested another browser yes edge problem type site is not usable description page not loading correctly steps to reproduce secure connection failed an error occurred during a... | 1 |

653,761 | 21,626,002,868 | IssuesEvent | 2022-05-05 02:18:17 | bossbuwi/reality | https://api.github.com/repos/bossbuwi/reality | closed | Create event list view logic | enhancement logic high priority | The event list view must fetch and display data from the server. Clear any hard coded data. | 1.0 | Create event list view logic - The event list view must fetch and display data from the server. Clear any hard coded data. | priority | create event list view logic the event list view must fetch and display data from the server clear any hard coded data | 1 |

307,274 | 9,415,227,394 | IssuesEvent | 2019-04-10 12:09:29 | bio-tools/biotoolsRegistry | https://api.github.com/repos/bio-tools/biotoolsRegistry | closed | Count of search results not being cleared | GUI bug high priority | e.g. when navigating to Tool Card or other page (having done a search) the number of results remains visible in the search box - which looks bad. | 1.0 | Count of search results not being cleared - e.g. when navigating to Tool Card or other page (having done a search) the number of results remains visible in the search box - which looks bad. | priority | count of search results not being cleared e g when navigating to tool card or other page having done a search the number of results remains visible in the search box which looks bad | 1 |

254,930 | 19,277,491,629 | IssuesEvent | 2021-12-10 13:36:06 | PnX-SI/gn_module_monitoring | https://api.github.com/repos/PnX-SI/gn_module_monitoring | closed | Utilisation "filter" dans la configuration json | documentation question | Bonjour,

J'ai configuré un sous-module pour nos suivis par tente malaise et autres pièges fixes.

Je voudrais filtrer une liste de nomenclatures, j'ai vu que c'était prévu dans la doc. Mais (en tous cas avec ma conf) ça ne semble pas fonctionner. La configuration du widget est la suivante :

```

"id_trap_typ... | 1.0 | Utilisation "filter" dans la configuration json - Bonjour,

J'ai configuré un sous-module pour nos suivis par tente malaise et autres pièges fixes.

Je voudrais filtrer une liste de nomenclatures, j'ai vu que c'était prévu dans la doc. Mais (en tous cas avec ma conf) ça ne semble pas fonctionner. La configuration... | non_priority | utilisation filter dans la configuration json bonjour j ai configuré un sous module pour nos suivis par tente malaise et autres pièges fixes je voudrais filtrer une liste de nomenclatures j ai vu que c était prévu dans la doc mais en tous cas avec ma conf ça ne semble pas fonctionner la configuration... | 0 |

255,443 | 19,302,624,690 | IssuesEvent | 2021-12-13 08:06:32 | it-academyproject/ita-directory | https://api.github.com/repos/it-academyproject/ita-directory | closed | review documentation make install | bug documentation | - [ ] make install doesn't run => `make: *** No rule to make target 'install'. Stop.`

- [ ] make build bug =>

```

rm -f .env

process_begin: CreateProcess(NULL, rm -f .env, ...) failed.

make (e=2): El sistema no puede encontrar el archivo especificado.

make: *** [C:/Users/TESTER/Desktop/it-projecte/ita-director... | 1.0 | review documentation make install - - [ ] make install doesn't run => `make: *** No rule to make target 'install'. Stop.`

- [ ] make build bug =>

```

rm -f .env

process_begin: CreateProcess(NULL, rm -f .env, ...) failed.

make (e=2): El sistema no puede encontrar el archivo especificado.

make: *** [C:/Users/TES... | non_priority | review documentation make install make install doesn t run make no rule to make target install stop make build bug rm f env process begin createprocess null rm f env failed make e el sistema no puede encontrar el archivo especificado make error ... | 0 |

74,260 | 20,101,578,146 | IssuesEvent | 2022-02-07 05:16:34 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | closed | tensorflow.datasets.load() throws an exception | stat:awaiting response type:build/install stalled subtype: ubuntu/linux | <em>Please make sure that this is a bug. As per our

[GitHub Policy](https://github.com/tensorflow/tensorflow/blob/master/ISSUES.md),

we only address code/doc bugs, performance issues, feature requests and

build/installation issues on GitHub. tag:bug_template</em>

**System information**

- Have I written custom co... | 1.0 | tensorflow.datasets.load() throws an exception - <em>Please make sure that this is a bug. As per our

[GitHub Policy](https://github.com/tensorflow/tensorflow/blob/master/ISSUES.md),

we only address code/doc bugs, performance issues, feature requests and

build/installation issues on GitHub. tag:bug_template</em>

*... | non_priority | tensorflow datasets load throws an exception please make sure that this is a bug as per our we only address code doc bugs performance issues feature requests and build installation issues on github tag bug template system information have i written custom code as opposed to using a stock exa... | 0 |

315,167 | 27,051,136,237 | IssuesEvent | 2023-02-13 13:19:38 | enonic/app-contentstudio | https://api.github.com/repos/enonic/app-contentstudio | closed | Project wizard - language selector gets disabled after clicking on 'Copy from parent' button | Bug Not in Changelog Test is Failing | 1. Create a layer in Defalt project, do not select a language in the layer

2. Open the layer and select a language

3. Do not click on `Save` button in the wizard page, but click on `Copy from parent` button

**BUG** - Filter input gets disabled in the selector

is expensive for large process counts | Atmosphere bug performance | The runtime of the routine print_cost_p, which writes out the cost of each chunk to the file atm_chunk_costs.txt, is large for large process counts on Cori-KNL. For example, for an F case using the ne256pg2 mesh and 32768 processes, this output took 18 minutes to complete. As print_cost_p is now called by default, this... | True | output of chunk costs (print_cost_p) is expensive for large process counts - The runtime of the routine print_cost_p, which writes out the cost of each chunk to the file atm_chunk_costs.txt, is large for large process counts on Cori-KNL. For example, for an F case using the ne256pg2 mesh and 32768 processes, this outpu... | non_priority | output of chunk costs print cost p is expensive for large process counts the runtime of the routine print cost p which writes out the cost of each chunk to the file atm chunk costs txt is large for large process counts on cori knl for example for an f case using the mesh and processes this output took mi... | 0 |

160,215 | 6,084,950,851 | IssuesEvent | 2017-06-17 09:43:21 | climu/openstudyroom | https://api.github.com/repos/climu/openstudyroom | closed | Create a method that return all users one can play with | enhancement help wanted high priority | We need to calculate this players results for each divisions and then to filter the users with whom he didn't play the max_number of games. | 1.0 | Create a method that return all users one can play with - We need to calculate this players results for each divisions and then to filter the users with whom he didn't play the max_number of games. | priority | create a method that return all users one can play with we need to calculate this players results for each divisions and then to filter the users with whom he didn t play the max number of games | 1 |

448,857 | 31,815,653,595 | IssuesEvent | 2023-09-13 20:14:30 | proofcarryingdata/zupass | https://api.github.com/repos/proofcarryingdata/zupass | opened | example 'feed' application that demonstrates how 3rd parties are supposed to issue pcds to pcdpass users | documentation | cc @robknight | 1.0 | example 'feed' application that demonstrates how 3rd parties are supposed to issue pcds to pcdpass users - cc @robknight | non_priority | example feed application that demonstrates how parties are supposed to issue pcds to pcdpass users cc robknight | 0 |

40,782 | 8,847,362,711 | IssuesEvent | 2019-01-08 01:22:07 | pnp/pnpjs | https://api.github.com/repos/pnp/pnpjs | closed | Can't make batching work in sp-taxonomy | area: code status: details needed type: bug | ### Category

- [ ] Enhancement

- [x] Bug

- [x] Question

- [ ] Documentation gap/issue

### Version

Please specify what version of the library you are using: [1.2.7]

Please specify what version(s) of SharePoint you are targeting: [SharePoint Online]

### Expected / Desired Behavior / Question

Batching for... | 1.0 | Can't make batching work in sp-taxonomy - ### Category

- [ ] Enhancement

- [x] Bug

- [x] Question

- [ ] Documentation gap/issue

### Version

Please specify what version of the library you are using: [1.2.7]

Please specify what version(s) of SharePoint you are targeting: [SharePoint Online]

### Expected /... | non_priority | can t make batching work in sp taxonomy category enhancement bug question documentation gap issue version please specify what version of the library you are using please specify what version s of sharepoint you are targeting expected desired behavior question ba... | 0 |

323,683 | 27,746,189,647 | IssuesEvent | 2023-03-15 17:11:52 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] flaky test: YbAdminSnapshotScheduleUpgradeTestWithYsql.PgsqlTestMigrationFromEarliestSysCatalogSnapshot | kind/bug area/ysql kind/failing-test priority/medium | Jira Link: [DB-5838](https://yugabyte.atlassian.net/browse/DB-5838)

### Description

From https://detective-gcp.dev.yugabyte.com/stability/test?class=YbAdminSnapshotScheduleUpgradeTestWithYsql&name=PgsqlTestMigrationFromEarliestSysCatalogSnapshot

it shows in debug build the test frequently failed with the following e... | 1.0 | [YSQL] flaky test: YbAdminSnapshotScheduleUpgradeTestWithYsql.PgsqlTestMigrationFromEarliestSysCatalogSnapshot - Jira Link: [DB-5838](https://yugabyte.atlassian.net/browse/DB-5838)

### Description

From https://detective-gcp.dev.yugabyte.com/stability/test?class=YbAdminSnapshotScheduleUpgradeTestWithYsql&name=PgsqlTes... | non_priority | flaky test ybadminsnapshotscheduleupgradetestwithysql pgsqltestmigrationfromearliestsyscatalogsnapshot jira link description from it shows in debug build the test frequently failed with the following error src yb tools yb admin snapshot schedule test cc failed expected to find subs... | 0 |

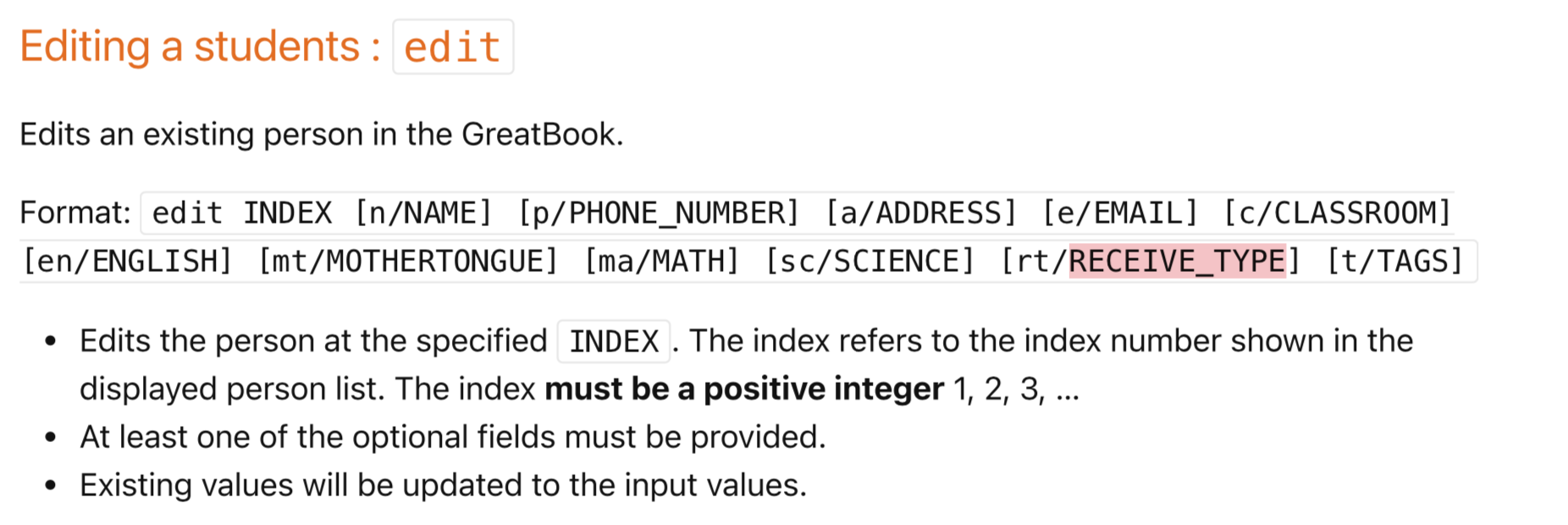

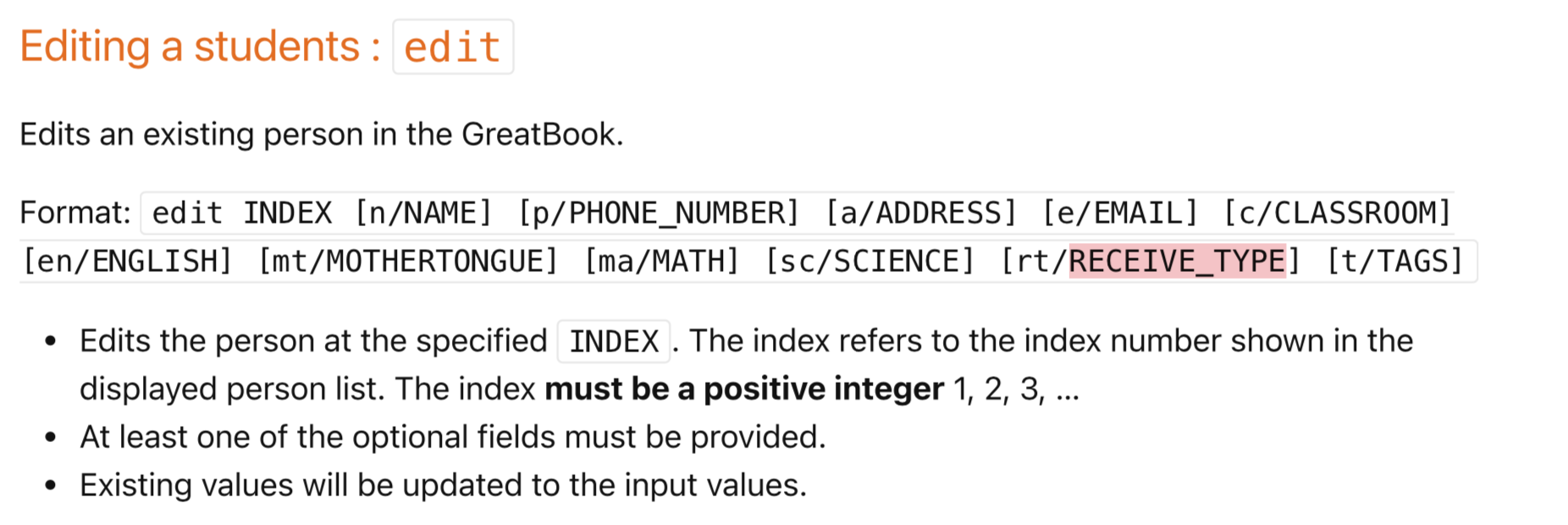

283,407 | 21,316,554,320 | IssuesEvent | 2022-04-16 11:33:14 | jr-mojito/pe | https://api.github.com/repos/jr-mojito/pe | opened | Option field for [rt/RECEIVE_TYPE] found on UG but not available for Edit function | severity.Low type.DocumentationBug | Option field for [rt/RECEIVE_TYPE] found on UG but not available for Edit function

<!--session: 1650108417268-dccfef75-89da-4d4a-87bc-8b7c9ca8a778-->

<!--Version: Web v3.4.... | 1.0 | Option field for [rt/RECEIVE_TYPE] found on UG but not available for Edit function - Option field for [rt/RECEIVE_TYPE] found on UG but not available for Edit function

<!--... | non_priority | option field for found on ug but not available for edit function option field for found on ug but not available for edit function | 0 |

85,136 | 3,686,993,306 | IssuesEvent | 2016-02-25 05:13:48 | cs2103jan2016-w13-4j/main | https://api.github.com/repos/cs2103jan2016-w13-4j/main | closed | Create method that allows removing one tag from a task | component.storage priority.medium | Something like `Task.removeTag(int id, String tag)` | 1.0 | Create method that allows removing one tag from a task - Something like `Task.removeTag(int id, String tag)` | priority | create method that allows removing one tag from a task something like task removetag int id string tag | 1 |

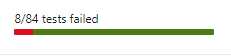

216,650 | 16,794,397,425 | IssuesEvent | 2021-06-16 00:04:01 | microsoft/appcenter | https://api.github.com/repos/microsoft/appcenter | closed | Inconclusive and Skipped tests missing in the Test run results on the main page | Portal feature request test | In the log I see

Test Count: 84, Passed: 74, Failed: 8, Warnings: 0, Inconclusive: 0, Skipped: 2

On the main page I see

Will be great to see the real count of tests on the main page. | 1.0 | Inconclusive and Skipped tests missing in the Test run results on the main page - In the log I see

Test Count: 84, Passed: 74, Failed: 8, Warnings: 0, Inconclusive: 0, Skipped: 2

On the main page I see

... | non_priority | inconclusive and skipped tests missing in the test run results on the main page in the log i see test count passed failed warnings inconclusive skipped on the main page i see will be great to see the real count of tests on the main page | 0 |

484,679 | 13,943,533,823 | IssuesEvent | 2020-10-22 23:22:26 | SkriptLang/Skript | https://api.github.com/repos/SkriptLang/Skript | closed | Can't check if item has NO name applied - Beta 4 | bug completed dev needed priority: low | I used this line of code to check if player had X regular diamonds (that had no special name or lore):

`if arg-player has arg-number of diamonds named "":`

After updating to beta 2, beta 3 i started to get this error when loading the skript:

`the 1st argument of diamond named "" is not an item type (atm_diamond.sk... | 1.0 | Can't check if item has NO name applied - Beta 4 - I used this line of code to check if player had X regular diamonds (that had no special name or lore):

`if arg-player has arg-number of diamonds named "":`

After updating to beta 2, beta 3 i started to get this error when loading the skript:

`the 1st argument of d... | priority | can t check if item has no name applied beta i used this line of code to check if player had x regular diamonds that had no special name or lore if arg player has arg number of diamonds named after updating to beta beta i started to get this error when loading the skript the argument of dia... | 1 |

94,251 | 10,817,094,205 | IssuesEvent | 2019-11-08 08:57:30 | influxdata/influxdb-client-java | https://api.github.com/repos/influxdata/influxdb-client-java | closed | Documentation: README.md documents example that should be improved | documentation | The current README.md code documents classes that were renamed.

```

RetentionRule retention = new RetentionRule();

retention.setEverySeconds(3600L);

```

Correct example is:

```

BucketRetentionRules bucketRetentionRules = new BucketRetentionRules();

bucketRetentionRules.setEv... | 1.0 | Documentation: README.md documents example that should be improved - The current README.md code documents classes that were renamed.

```

RetentionRule retention = new RetentionRule();

retention.setEverySeconds(3600L);

```

Correct example is:

```

BucketRetentionRules bucketRetentionRu... | non_priority | documentation readme md documents example that should be improved the current readme md code documents classes that were renamed retentionrule retention new retentionrule retention seteveryseconds correct example is bucketretentionrules bucketretentionrules ... | 0 |

831,536 | 32,051,727,476 | IssuesEvent | 2023-09-23 16:33:41 | space-wizards/space-station-14 | https://api.github.com/repos/space-wizards/space-station-14 | closed | Action Containers broke spellbooks + exception | Issue: Bug Priority: 2-Before Release Difficulty: 2-Medium | ## Description

<!-- Explain your issue in detail. Issues without proper explanation are liable to be closed by maintainers. -->

An exception is thrown when trying to learn spells from spellbooks. The recent action container PR broke this behavior: #20260

**Reproduction**

<!-- Include the steps to reproduce if a... | 1.0 | Action Containers broke spellbooks + exception - ## Description

<!-- Explain your issue in detail. Issues without proper explanation are liable to be closed by maintainers. -->

An exception is thrown when trying to learn spells from spellbooks. The recent action container PR broke this behavior: #20260

**Reprodu... | priority | action containers broke spellbooks exception description an exception is thrown when trying to learn spells from spellbooks the recent action container pr broke this behavior reproduction pick up a spellbook and try to learn it console will show the exception screenshots ... | 1 |

163,275 | 20,356,090,147 | IssuesEvent | 2022-02-20 00:37:34 | eclipse/che | https://api.github.com/repos/eclipse/che | closed | REST Service on the Che server-side that will manage user secrets | kind/enhancement severity/P1 lifecycle/stale area/dev-experience area/security | ### Is your enhancement related to a problem? Please describe.

Some che users need to have some secrets on personal workspace. Secrets can be:

- github / SCM token

- files that contain secrets

- Environnment variables

Eclipse CHE provide some function to handle this. All of them consume existings secrets from... | True | REST Service on the Che server-side that will manage user secrets - ### Is your enhancement related to a problem? Please describe.

Some che users need to have some secrets on personal workspace. Secrets can be:

- github / SCM token

- files that contain secrets

- Environnment variables

Eclipse CHE provide some... | non_priority | rest service on the che server side that will manage user secrets is your enhancement related to a problem please describe some che users need to have some secrets on personal workspace secrets can be github scm token files that contain secrets environnment variables eclipse che provide some... | 0 |

230,674 | 7,612,865,291 | IssuesEvent | 2018-05-01 19:05:06 | fgpv-vpgf/fgpv-vpgf | https://api.github.com/repos/fgpv-vpgf/fgpv-vpgf | closed | Reordering layers has no effect on map | bug-type: broken use case bug-type: regression priority: high problem: bug | Tested build: http://fgpv.cloudapp.net/demo/develop/prod/samples/index-fgp-en.html

Imported 2 layers (`happy.json` and then `convert.json`) and reordering them had no effect.

`happy.json` always remained below the other layer even if it was above in the legend stack.

Expected behaviour would be to see `happy.js... | 1.0 | Reordering layers has no effect on map - Tested build: http://fgpv.cloudapp.net/demo/develop/prod/samples/index-fgp-en.html

Imported 2 layers (`happy.json` and then `convert.json`) and reordering them had no effect.

`happy.json` always remained below the other layer even if it was above in the legend stack.

Exp... | priority | reordering layers has no effect on map tested build imported layers happy json and then convert json and reordering them had no effect happy json always remained below the other layer even if it was above in the legend stack expected behaviour would be to see happy json layer above convert j... | 1 |

68,398 | 13,127,364,092 | IssuesEvent | 2020-08-06 10:13:02 | hpi-swa-teaching/Algernon-Launcher | https://api.github.com/repos/hpi-swa-teaching/Algernon-Launcher | closed | Cleanup Git(-hub) | DevOps non-code | Make the Git (-hub) ready for the next team.

This includes:

- [x] Remove Merged/stale/dangling Branches

- [x] Archive/Complete Milestones

- [x] Look through open issues add/remove description/labels/etc if needed | 1.0 | Cleanup Git(-hub) - Make the Git (-hub) ready for the next team.

This includes:

- [x] Remove Merged/stale/dangling Branches

- [x] Archive/Complete Milestones

- [x] Look through open issues add/remove description/labels/etc if needed | non_priority | cleanup git hub make the git hub ready for the next team this includes remove merged stale dangling branches archive complete milestones look through open issues add remove description labels etc if needed | 0 |

422,215 | 12,268,448,667 | IssuesEvent | 2020-05-07 12:32:59 | AxonFramework/AxonFramework | https://api.github.com/repos/AxonFramework/AxonFramework | closed | Missing aggregateIdentifier() method reported by an exception | Priority 4: Would Status: In Progress Type: Bug | When I was writing my tests the other day I happened to encounter an `EventStoreException` with message "You probably want to use aggregateIdentifier() on your fixture".

The solution was to create a separate fixture for each independent unit test, but I could not find the `aggregateIdentifier()` method on any of th... | 1.0 | Missing aggregateIdentifier() method reported by an exception - When I was writing my tests the other day I happened to encounter an `EventStoreException` with message "You probably want to use aggregateIdentifier() on your fixture".

The solution was to create a separate fixture for each independent unit test, but ... | priority | missing aggregateidentifier method reported by an exception when i was writing my tests the other day i happened to encounter an eventstoreexception with message you probably want to use aggregateidentifier on your fixture the solution was to create a separate fixture for each independent unit test but ... | 1 |

414,126 | 12,099,647,314 | IssuesEvent | 2020-04-20 12:33:18 | rubyforgood/casa | https://api.github.com/repos/rubyforgood/casa | closed | Add contact medium to new case contact success page | :raised_hands: Volunteer Priority: High Status: Available | relates to epic #3

**What type of user is this for? [volunteer/supervisor/admin/all]**

**Where does/should this occur**

On the page a volunteer views after successfully creating a new `case_contact`.

**Description**

_Contact medium_ should display underneath _Contact type:_

**Screenshots**

<img width="12... | 1.0 | Add contact medium to new case contact success page - relates to epic #3

**What type of user is this for? [volunteer/supervisor/admin/all]**

**Where does/should this occur**

On the page a volunteer views after successfully creating a new `case_contact`.

**Description**

_Contact medium_ should display underne... | priority | add contact medium to new case contact success page relates to epic what type of user is this for where does should this occur on the page a volunteer views after successfully creating a new case contact description contact medium should display underneath contact type screen... | 1 |

98,284 | 12,305,950,794 | IssuesEvent | 2020-05-11 23:58:13 | alice-i-cecile/Fonts-of-Power | https://api.github.com/repos/alice-i-cecile/Fonts-of-Power | closed | Rework attacks of opportunity to fix melee stalemate | bug design | 10:03 PM] Alice: Alright, here we go:

- combat in 5e (and FoP) feels really stale because melee combatants don't try to move

- once you're in melee combat, being the first one to blink just gets you smacked in the face, and nothing else

- this is less bad in FoP, because a) Disengage is a minor action b) if you can ... | 1.0 | Rework attacks of opportunity to fix melee stalemate - 10:03 PM] Alice: Alright, here we go:

- combat in 5e (and FoP) feels really stale because melee combatants don't try to move

- once you're in melee combat, being the first one to blink just gets you smacked in the face, and nothing else

- this is less bad in FoP... | non_priority | rework attacks of opportunity to fix melee stalemate pm alice alright here we go combat in and fop feels really stale because melee combatants don t try to move once you re in melee combat being the first one to blink just gets you smacked in the face and nothing else this is less bad in fop b... | 0 |

427,085 | 29,795,857,404 | IssuesEvent | 2023-06-16 02:14:19 | inventree/InvenTree | https://api.github.com/repos/inventree/InvenTree | closed | units parameters | question report documentation | ### Body of the issue

is there a way to print the units of the parameters in the labels. behind the value i want the unit to print but don't know how

`<p class="text">

{% if parameters.voltage %}

{{parameters.voltage}}

{% endif %}

</p>` | 1.0 | units parameters - ### Body of the issue

is there a way to print the units of the parameters in the labels. behind the value i want the unit to print but don't know how

`<p class="text">

{% if parameters.voltage %}

{{parameters.voltage}}

{% endif %}

</p>` | non_priority | units parameters body of the issue is there a way to print the units of the parameters in the labels behind the value i want the unit to print but don t know how if parameters voltage parameters voltage endif | 0 |

104,600 | 11,415,273,942 | IssuesEvent | 2020-02-02 09:52:53 | engnogueira/webdjango | https://api.github.com/repos/engnogueira/webdjango | closed | 3.5.3 - Implementando um Breadcrumb | documentation | Nessa aula implementamos a funcionalidade de breadcrumb para melhorar a navegalibilidade das aulas no website.

[Implementando um Breadcrumb](https://www.python.pro.br/modulos/django/topicos/implementando-um-breadcrumb)

Link com documentação do Twitter Bootstrap:

https://getbootstrap.com/docs/4.4/components/bread... | 1.0 | 3.5.3 - Implementando um Breadcrumb - Nessa aula implementamos a funcionalidade de breadcrumb para melhorar a navegalibilidade das aulas no website.

[Implementando um Breadcrumb](https://www.python.pro.br/modulos/django/topicos/implementando-um-breadcrumb)

Link com documentação do Twitter Bootstrap:

https://getb... | non_priority | implementando um breadcrumb nessa aula implementamos a funcionalidade de breadcrumb para melhorar a navegalibilidade das aulas no website link com documentação do twitter bootstrap | 0 |

490,200 | 14,116,621,813 | IssuesEvent | 2020-11-08 04:19:50 | AY2021S1-CS2103-T16-2/tp | https://api.github.com/repos/AY2021S1-CS2103-T16-2/tp | opened | Check Code Quality for Joven's Features | priority.Medium | Will only close when the documentation for code quality improvements are made. | 1.0 | Check Code Quality for Joven's Features - Will only close when the documentation for code quality improvements are made. | priority | check code quality for joven s features will only close when the documentation for code quality improvements are made | 1 |

163,294 | 13,914,727,812 | IssuesEvent | 2020-10-20 22:47:44 | nexusformat/definitions | https://api.github.com/repos/nexusformat/definitions | closed | DOC: ! LaTeX Error: Too deeply nested. | documentation | When building the documentation, this error is reported by the pdflatex build.

It means that we have requested more than six levels of a latex ``/begin{quote}`` (or other) block. The issue could be with how we:

1. extract the rst from the NXDL files

1. format specific documentation (indentation) in the NXDL fil... | 1.0 | DOC: ! LaTeX Error: Too deeply nested. - When building the documentation, this error is reported by the pdflatex build.

It means that we have requested more than six levels of a latex ``/begin{quote}`` (or other) block. The issue could be with how we:

1. extract the rst from the NXDL files

1. format specific do... | non_priority | doc latex error too deeply nested when building the documentation this error is reported by the pdflatex build it means that we have requested more than six levels of a latex begin quote or other block the issue could be with how we extract the rst from the nxdl files format specific do... | 0 |

65,983 | 19,846,070,635 | IssuesEvent | 2022-01-21 06:31:41 | vector-im/element-ios | https://api.github.com/repos/vector-im/element-ios | opened | Cannot share a video from iOS | T-Defect | ### Steps to reproduce

Steps to reproduce:

1. Have a video present in chat with someone

2. Click on the video and hold

3. Click more in the bottom left corner

4. Select share and select a user to send it to someone

### Outcome

Expected the video to be shared to the other user.

Actual result: The process gets ... | 1.0 | Cannot share a video from iOS - ### Steps to reproduce

Steps to reproduce:

1. Have a video present in chat with someone

2. Click on the video and hold

3. Click more in the bottom left corner

4. Select share and select a user to send it to someone

### Outcome

Expected the video to be shared to the other user.

... | non_priority | cannot share a video from ios steps to reproduce steps to reproduce have a video present in chat with someone click on the video and hold click more in the bottom left corner select share and select a user to send it to someone outcome expected the video to be shared to the other user ... | 0 |

12,630 | 4,513,229,528 | IssuesEvent | 2016-09-04 05:27:52 | Jeremy-Barnes/Critters | https://api.github.com/repos/Jeremy-Barnes/Critters | opened | Server: Create Landmark/NPC | Code feature Server | Create endpoints to allow:

Random dialog fetching for distinct pages (NPCs)

Quest management

| 1.0 | Server: Create Landmark/NPC - Create endpoints to allow:

Random dialog fetching for distinct pages (NPCs)

Quest management

| non_priority | server create landmark npc create endpoints to allow random dialog fetching for distinct pages npcs quest management | 0 |

14,164 | 3,807,048,174 | IssuesEvent | 2016-03-25 04:33:06 | stevegrunwell/mcavoy | https://api.github.com/repos/stevegrunwell/mcavoy | closed | Introduce a formal change log | documentation | Once the plugin gets past 0.1.0, a formal change log should be kept based on the [Keep a Changelog standard](http://keepachangelog.com/). | 1.0 | Introduce a formal change log - Once the plugin gets past 0.1.0, a formal change log should be kept based on the [Keep a Changelog standard](http://keepachangelog.com/). | non_priority | introduce a formal change log once the plugin gets past a formal change log should be kept based on the | 0 |

617,407 | 19,349,655,247 | IssuesEvent | 2021-12-15 14:29:24 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | att-yahoo.att.net - see bug description | browser-firefox-mobile priority-normal engine-gecko | <!-- @browser: Firefox Mobile 96.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:96.0) Gecko/96.0 Firefox/96.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/96773 -->

**URL**: https://att-yahoo.att.net/FIM/sps/auth?SPRelayState=https%3A%2F%2Fmail.yahoo.c... | 1.0 | att-yahoo.att.net - see bug description - <!-- @browser: Firefox Mobile 96.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:96.0) Gecko/96.0 Firefox/96.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/96773 -->

**URL**: https://att-yahoo.att.net/FIM/sps/au... | priority | att yahoo att net see bug description url browser version firefox mobile operating system android tested another browser yes chrome problem type something else description has timed out for about now steps to reproduce keeps saying timed out i turned off ... | 1 |

568,720 | 16,987,209,797 | IssuesEvent | 2021-06-30 15:37:39 | bcgov/entity | https://api.github.com/repos/bcgov/entity | closed | NR Extension Date not updated in NRO | ENTITY OPS Priority1 | ServiceNow incident: INC0098013

Contact information

Staff Name: Debbie Blythe

Staff Email:

Description

NR Extension has been applied in NAMEX but is not updated in NRO. Update Extension Date in NRO to July 31, 2021.

Email from Client:

Patrick can you create a high priority bug for this please that needs to be wo... | 1.0 | NR Extension Date not updated in NRO - ServiceNow incident: INC0098013

Contact information

Staff Name: Debbie Blythe

Staff Email:

Description

NR Extension has been applied in NAMEX but is not updated in NRO. Update Extension Date in NRO to July 31, 2021.

Email from Client:

Patrick can you create a high priority ... | priority | nr extension date not updated in nro servicenow incident contact information staff name debbie blythe staff email description nr extension has been applied in namex but is not updated in nro update extension date in nro to july email from client patrick can you create a high priority bug for this ... | 1 |