Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5–112) | repo_url (string, length 34–141) | action (string, 3 classes) | title (string, length 1–1k) | labels (string, length 4–1.38k) | body (string, length 1–262k) | index (string, 16 classes) | text_combine (string, length 96–262k) | label (string, 2 classes) | text (string, length 96–252k) | binary_label (int64, 0–1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

337,848 | 10,220,226,065 | IssuesEvent | 2019-08-15 20:44:31 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.nytimes.com - desktop site instead of mobile site | browser-fenix engine-gecko priority-important | <!-- @browser: Firefox Mobile 69.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:69.0) Gecko/69.0 Firefox/69.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.nytimes.com/2003/10/26/books/who-killed-mary-phagan.html

**Browser / Version**: Firefox Mobile 69.0

**Operat... | 1.0 | www.nytimes.com - desktop site instead of mobile site - <!-- @browser: Firefox Mobile 69.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:69.0) Gecko/69.0 Firefox/69.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.nytimes.com/2003/10/26/books/who-killed-mary-phagan.h... | priority | desktop site instead of mobile site url browser version firefox mobile operating system android tested another browser no problem type desktop site instead of mobile site description claims that i am in private mode thus can t read the article steps to reproduce... | 1 |

24,605 | 2,669,425,474 | IssuesEvent | 2015-03-23 15:26:48 | aseprite/aseprite | https://api.github.com/repos/aseprite/aseprite | closed | Move colours in palette editor | colorbar enhancement imported medium priority | _From [Corporal...@gmail.com](https://code.google.com/u/103014587505849798163/) on July 31, 2011 18:08:24_

What do you need to do? Move palette colours in the palette editor to different positions, making it easier to manually sort palettes. How would you like to do it? Using the arrow keys, which are presently not us... | 1.0 | Move colours in palette editor - _From [Corporal...@gmail.com](https://code.google.com/u/103014587505849798163/) on July 31, 2011 18:08:24_

What do you need to do? Move palette colours in the palette editor to different positions, making it easier to manually sort palettes. How would you like to do it? Using the arrow... | priority | move colours in palette editor from on july what do you need to do move palette colours in the palette editor to different positions making it easier to manually sort palettes how would you like to do it using the arrow keys which are presently not used by the palette editor original issue ... | 1 |

152,286 | 13,450,411,240 | IssuesEvent | 2020-09-08 18:29:08 | ossia/score | https://api.github.com/repos/ossia/score | closed | JS in v3 | documentation ergonomy | Values from a vector fed into an JS inlet are tricky to retrieve.

calling value on an inlet receiving an array of 2 value returns

```

Debug: QVariant(QVector2D, QVector2D(0.5, 0.283333))

```

But neither .x or [0] returns anything | 1.0 | JS in v3 - Values from a vector fed into an JS inlet are tricky to retrieve.

calling value on an inlet receiving an array of 2 value returns

```

Debug: QVariant(QVector2D, QVector2D(0.5, 0.283333))

```

But neither .x or [0] returns anything | non_priority | js in values from a vector fed into an js inlet are tricky to retrieve calling value on an inlet receiving an array of value returns debug qvariant but neither x or returns anything | 0 |

161,233 | 25,308,789,735 | IssuesEvent | 2022-11-17 15:55:40 | kodadot/nft-gallery | https://api.github.com/repos/kodadot/nft-gallery | closed | Redesign toast notification | $ p3 redesign | It can be triggered whenever you copy address for example

for example here Share -> Copy Link

https://deploy-preview-4330--koda-nuxt.netlify.app/bsx/gallery/2548799063-43?redesign=true

<img width="291" alt="image" src="https://user-images.githubusercontent.com/5887929/202200464-cea24880-df12-4872-9b34-757176fa98... | 1.0 | Redesign toast notification - It can be triggered whenever you copy address for example

for example here Share -> Copy Link

https://deploy-preview-4330--koda-nuxt.netlify.app/bsx/gallery/2548799063-43?redesign=true

<img width="291" alt="image" src="https://user-images.githubusercontent.com/5887929/202200464-cea2... | non_priority | redesign toast notification it can be triggered whenever you copy address for example for example here share copy link img width alt image src as always exezbcz will drop some designs 😎✨ | 0 |

52,095 | 27,370,444,384 | IssuesEvent | 2023-02-27 22:58:09 | mxmlnkn/pragzip | https://api.github.com/repos/mxmlnkn/pragzip | opened | Add optimized file access that also works for non-seekable files | enhancement performance | There are some use cases that want to create the index while downloading, so something like `wget | tee downloaded-file | pragzip --export-index downlaoded-file.gzindex`.

I think this should be solvable with a new non-seekable `FileReader` derived class with these two main ideas:

- A working implementation could... | True | Add optimized file access that also works for non-seekable files - There are some use cases that want to create the index while downloading, so something like `wget | tee downloaded-file | pragzip --export-index downlaoded-file.gzindex`.

I think this should be solvable with a new non-seekable `FileReader` derived cl... | non_priority | add optimized file access that also works for non seekable files there are some use cases that want to create the index while downloading so something like wget tee downloaded file pragzip export index downlaoded file gzindex i think this should be solvable with a new non seekable filereader derived cl... | 0 |

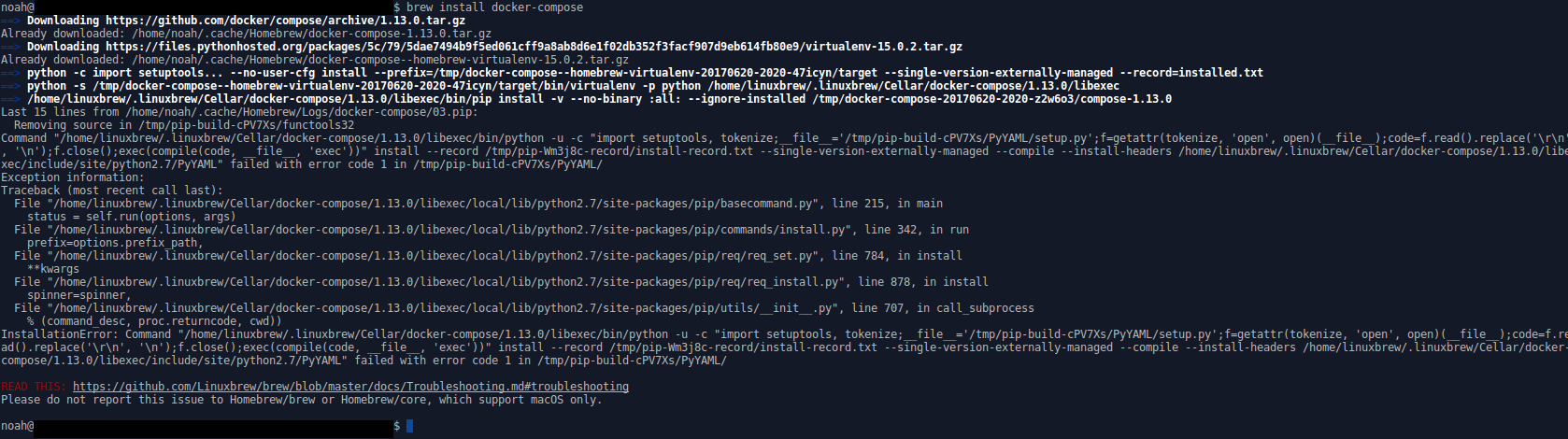

16,108 | 6,105,540,365 | IssuesEvent | 2017-06-21 00:10:24 | Linuxbrew/homebrew-core | https://api.github.com/repos/Linuxbrew/homebrew-core | closed | Error: brew install docker-compose | build-error | Tried `brew update` twice and `brew doctor` to no avail.

Gist log: https://gist.github.com/anonymous/cf2c93921517039a6fe56a78af7d555d

Screenshot of error:

| 1.0 | Error: brew install docker-compose - Tried `brew update` twice and `brew doctor` to no avail.

Gist log: https://gist.github.com/anonymous/cf2c93921517039a6fe56a78af7d555d

Screenshot of error:

detected in axios-0.17.1.tgz | security vulnerability | ## CVE-2019-10742 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>axios-0.17.1.tgz</b></p></summary>

<p>Promise based HTTP client for the browser and node.js</p>

<p>Library home page: ... | True | CVE-2019-10742 (High) detected in axios-0.17.1.tgz - ## CVE-2019-10742 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>axios-0.17.1.tgz</b></p></summary>

<p>Promise based HTTP client f... | non_priority | cve high detected in axios tgz cve high severity vulnerability vulnerable library axios tgz promise based http client for the browser and node js library home page a href path to dependency file uchi sidebar clone package json path to vulnerable library uchi sidebar ... | 0 |

645,092 | 20,994,596,828 | IssuesEvent | 2022-03-29 12:29:31 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | opened | run.logging-manual.main_test: test_with_cloud_headers failed | priority: p1 type: bug flakybot: issue | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 029e84c9e54cce4995acfa167e39d8b565f664d8

b... | 1.0 | run.logging-manual.main_test: test_with_cloud_headers failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop comme... | priority | run logging manual main test test with cloud headers failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output traceback most recent call last ... | 1 |

476,171 | 13,734,759,361 | IssuesEvent | 2020-10-05 09:09:44 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | m.rediff.com - desktop site instead of mobile site | browser-focus-geckoview engine-gecko priority-important | <!-- @browser: Firefox Mobile 81.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:81.0) Gecko/81.0 Firefox/81.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/59285 -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://m.rediff.com/

**Brows... | 1.0 | m.rediff.com - desktop site instead of mobile site - <!-- @browser: Firefox Mobile 81.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:81.0) Gecko/81.0 Firefox/81.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/59285 -->

<!-- @extra_labels: browser-focus-g... | priority | m rediff com desktop site instead of mobile site url browser version firefox mobile operating system android tested another browser yes chrome problem type desktop site instead of mobile site description desktop site instead of mobile site steps to reproduce s... | 1 |

133,241 | 10,799,949,063 | IssuesEvent | 2019-11-06 13:18:34 | gitfun-party/this-repo-has-issues | https://api.github.com/repos/gitfun-party/this-repo-has-issues | opened | [test-issue-47] Copying cross-platform system | test | Gibbous stench cat shunned swarthy non-euclidean unmentionable. Antediluvian gambrel manuscript fungus. Furtive fungus squamous antediluvian blasphemous accursed dank. Madness daemoniac singular cat. Furtive nameless amorphous unmentionable.\n\nUlulate tenebrous hideous comprehension fungus noisome unnamable cyclopean.... | 1.0 | [test-issue-47] Copying cross-platform system - Gibbous stench cat shunned swarthy non-euclidean unmentionable. Antediluvian gambrel manuscript fungus. Furtive fungus squamous antediluvian blasphemous accursed dank. Madness daemoniac singular cat. Furtive nameless amorphous unmentionable.\n\nUlulate tenebrous hideous c... | non_priority | copying cross platform system gibbous stench cat shunned swarthy non euclidean unmentionable antediluvian gambrel manuscript fungus furtive fungus squamous antediluvian blasphemous accursed dank madness daemoniac singular cat furtive nameless amorphous unmentionable n nululate tenebrous hideous comprehension f... | 0 |

657,135 | 21,786,573,448 | IssuesEvent | 2022-05-14 08:12:23 | bossbuwi/reality | https://api.github.com/repos/bossbuwi/reality | closed | Add rules | enhancement ui logic high priority | Rules for system use must be added on the dashboard. The implementation must be aesthetically pleasing.

_The server needs to implement this as well._ | 1.0 | Add rules - Rules for system use must be added on the dashboard. The implementation must be aesthetically pleasing.

_The server needs to implement this as well._ | priority | add rules rules for system use must be added on the dashboard the implementation must be aesthetically pleasing the server needs to implement this as well | 1 |

338,561 | 10,231,634,779 | IssuesEvent | 2019-08-18 11:20:01 | codetapacademy/codetap.academy | https://api.github.com/repos/codetapacademy/codetap.academy | opened | feat: create a route that will load the play-video component | Priority: High Status: Available Type: Enhancement | create a route **/video/:[youtubeVideoId]** that will load the **play-video** component

This is part of ** feat: create play video component route** #143 | 1.0 | feat: create a route that will load the play-video component - create a route **/video/:[youtubeVideoId]** that will load the **play-video** component

This is part of ** feat: create play video component route** #143 | priority | feat create a route that will load the play video component create a route video that will load the play video component this is part of feat create play video component route | 1 |

95,932 | 10,906,347,653 | IssuesEvent | 2019-11-20 12:45:38 | kyma-project/kyma | https://api.github.com/repos/kyma-project/kyma | closed | Improve Service Programming Model document | area/documentation area/eventing | **Description**

Affected document: https://kyma-project.io/docs/components/event-bus/#details-service-programming-model-event-delivery

To do:

* reconsider changing the structure to the following

* Event delivery flow

* Successful delivery

* Event structure

* Metadata (+ parameters)

* Payloa... | 1.0 | Improve Service Programming Model document - **Description**

Affected document: https://kyma-project.io/docs/components/event-bus/#details-service-programming-model-event-delivery

To do:

* reconsider changing the structure to the following

* Event delivery flow

* Successful delivery

* Event structure

... | non_priority | improve service programming model document description affected document to do reconsider changing the structure to the following event delivery flow successful delivery event structure metadata parameters payload payload example included in this section s... | 0 |

250,400 | 7,976,422,688 | IssuesEvent | 2018-07-17 12:39:35 | pyrocms/pyrocms | https://api.github.com/repos/pyrocms/pyrocms | closed | [upload-field_type/files-module] String replace does not support G. | Priority: High Type: Bug Report | **Describe the bug**

Both upload field type and files module use str_replace('M', '' ...) to get the current upload max. This does not support gigabyte shorthand as set in nginx, for example.

**To Reproduce**

Steps to reproduce the behavior:

1. Set nginx / PHP max upload size to 2G or similar

2. Attempt to uploa... | 1.0 | [upload-field_type/files-module] String replace does not support G. - **Describe the bug**

Both upload field type and files module use str_replace('M', '' ...) to get the current upload max. This does not support gigabyte shorthand as set in nginx, for example.

**To Reproduce**

Steps to reproduce the behavior:

1.... | priority | string replace does not support g describe the bug both upload field type and files module use str replace m to get the current upload max this does not support gigabyte shorthand as set in nginx for example to reproduce steps to reproduce the behavior set nginx php max upload siz... | 1 |

46,345 | 24,486,080,538 | IssuesEvent | 2022-10-09 13:02:04 | ItJustWorksTM/EiffelVis | https://api.github.com/repos/ItJustWorksTM/EiffelVis | opened | Keep a event and link type index | Performance Backend | Currently we do not send the link type to the frontend by default, this may limit some use cases.

The reason it is not being send is due to performance requirements, as the types are all strings they would clog the internet tubes needlessly.

To optimize this and to unlock the ability to send over more information... | True | Keep a event and link type index - Currently we do not send the link type to the frontend by default, this may limit some use cases.

The reason it is not being send is due to performance requirements, as the types are all strings they would clog the internet tubes needlessly.

To optimize this and to unlock the ab... | non_priority | keep a event and link type index currently we do not send the link type to the frontend by default this may limit some use cases the reason it is not being send is due to performance requirements as the types are all strings they would clog the internet tubes needlessly to optimize this and to unlock the ab... | 0 |

60,128 | 3,120,781,477 | IssuesEvent | 2015-09-05 01:58:04 | framingeinstein/issues-test | https://api.github.com/repos/framingeinstein/issues-test | closed | SRP-4: S-2251 finishes missing | priority:normal resolution:will-not-fix type:bug | We have 5 finishes live for S-2251 but only 3 are showing on the product page | 1.0 | SRP-4: S-2251 finishes missing - We have 5 finishes live for S-2251 but only 3 are showing on the product page | priority | srp s finishes missing we have finishes live for s but only are showing on the product page | 1 |

249,085 | 7,953,759,174 | IssuesEvent | 2018-07-12 03:38:18 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | USER ISSUE: 64 bit server in 32 bit game version | Medium Priority | **Version:** 0.7.1.2 beta

**Steps to Reproduce:**

in the 32 bit game version is a 64 bit server so you cant play the game in singelplayer

**Expected behavior:**

**Actual behavior:**

| 1.0 | USER ISSUE: 64 bit server in 32 bit game version - **Version:** 0.7.1.2 beta

**Steps to Reproduce:**

in the 32 bit game version is a 64 bit server so you cant play the game in singelplayer

**Expected behavior:**

**Actual behavior:**

| priority | user issue bit server in bit game version version beta steps to reproduce in the bit game version is a bit server so you cant play the game in singelplayer expected behavior actual behavior | 1 |

114,862 | 4,647,370,071 | IssuesEvent | 2016-10-01 13:03:50 | ericcastoldi/safie | https://api.github.com/repos/ericcastoldi/safie | closed | Login | Cadastro de cliente | frontend priority store | após fechar a compra, caso a Cliente não esteja autenticada aparece a página para login ou novo cadastro. O login deve oferecer opções de autenticação externas ao site (Google, Facebook, etc). No cabeçalho do site será exibido o nome da cliente autenticada. | 1.0 | Login | Cadastro de cliente - após fechar a compra, caso a Cliente não esteja autenticada aparece a página para login ou novo cadastro. O login deve oferecer opções de autenticação externas ao site (Google, Facebook, etc). No cabeçalho do site será exibido o nome da cliente autenticada. | priority | login cadastro de cliente após fechar a compra caso a cliente não esteja autenticada aparece a página para login ou novo cadastro o login deve oferecer opções de autenticação externas ao site google facebook etc no cabeçalho do site será exibido o nome da cliente autenticada | 1 |

463,852 | 13,302,412,475 | IssuesEvent | 2020-08-25 14:14:19 | fossasia/open-event-frontend | https://api.github.com/repos/fossasia/open-event-frontend | opened | Schedule Calendar View: Changing Timezone can Result in Disappearing Sessions from Schedule | Priority: High bug | If a user changes the timezone to a time that is not covered in the original calendar time the sessions disappear from the calendar view.

Compare https://eventyay.com/e/16fa59c7/schedule

and void FileDescriptor::sync() const | good first issue test | These two functions are not called in the test code. Make sure they work as expected | 1.0 | Add code to tests/FileDescriptor_test.cpp to test FileDescriptor(const int) and void FileDescriptor::sync() const - These two functions are not called in the test code. Make sure they work as expected | non_priority | add code to tests filedescriptor test cpp to test filedescriptor const int and void filedescriptor sync const these two functions are not called in the test code make sure they work as expected | 0 |

98,132 | 11,046,010,265 | IssuesEvent | 2019-12-09 16:06:21 | shtillorg/ML0719-Issues | https://api.github.com/repos/shtillorg/ML0719-Issues | closed | Document evaluation results. | documentation | Should contain an overview of used tools, software, platforms, etc.; reason and purpose. | 1.0 | Document evaluation results. - Should contain an overview of used tools, software, platforms, etc.; reason and purpose. | non_priority | document evaluation results should contain an overview of used tools software platforms etc reason and purpose | 0 |

75,711 | 3,471,331,702 | IssuesEvent | 2015-12-23 14:47:45 | aic-collections/aicdams-lakeshore | https://api.github.com/repos/aic-collections/aicdams-lakeshore | opened | Move Document Type input field to the top in asset edit view | MEDIUM priority presentation | Move Document Type to the top, before Title. | 1.0 | Move Document Type input field to the top in asset edit view - Move Document Type to the top, before Title. | priority | move document type input field to the top in asset edit view move document type to the top before title | 1 |

127,589 | 17,315,193,428 | IssuesEvent | 2021-07-27 04:30:27 | the-deep/deeper | https://api.github.com/repos/the-deep/deeper | closed | Add Lead: Visual cue for drag and drop | design-ui related-client | When a user drags a folder/file over DEEP, it should indicate that it accepts drop behavior in add leads page. | 1.0 | Add Lead: Visual cue for drag and drop - When a user drags a folder/file over DEEP, it should indicate that it accepts drop behavior in add leads page. | non_priority | add lead visual cue for drag and drop when a user drags a folder file over deep it should indicate that it accepts drop behavior in add leads page | 0 |

142,043 | 11,453,403,405 | IssuesEvent | 2020-02-06 15:20:53 | wet-boew/cdts-sgdc | https://api.github.com/repos/wet-boew/cdts-sgdc | opened | Vérifier dans appfooter_transactional-en.shtml | testing | Issue moved from GCCode: https://gccode.ssc-spc.gc.ca/iitb-dgiit/nw-ws/sgdc-cdts/issues/129

@StdGit created the issue

https://ssl-templates.services.gc.ca/app/cls/WET/gcweb/v4_0_32/cdts/appTop/appfooter_transactional-en.shtml

Vérifier si les links sont modifiables, si oui changer l'exemple

and associate to CAT_A_EN

Create an English menu item "List All Categories"

Create an Italian menu item "List All Categories"

... | 1.0 | Language filter not working for categories with subcategories in "List All Categories" menu item - #### Steps to reproduce the issue

BACKEND:

Setup the site as multilingual.

Create a category CAT_A_EN with language English

Create a category CAT_A_IT with another language (ex. Italian) and associate to CAT_A_EN

Cre... | non_priority | language filter not working for categories with subcategories in list all categories menu item steps to reproduce the issue backend setup the site as multilingual create a category cat a en with language english create a category cat a it with another language ex italian and associate to cat a en cre... | 0 |

565,025 | 16,747,376,668 | IssuesEvent | 2021-06-11 17:21:03 | airshipit/vino | https://api.github.com/repos/airshipit/vino | closed | Provide Secure VNC support for VMs created by ViNO | enhancement priority/low size l | **Proposed change**

Provide the capability to configure VNC configuration for VMs created when deploying a ViNO CR

- Provide capability to enable VNC via ViNO CR

- Provide capability to secure VNC endpoints with password authentication

| 1.0 | Provide Secure VNC support for VMs created by ViNO - **Proposed change**

Provide the capability to configure VNC configuration for VMs created when deploying a ViNO CR

- Provide capability to enable VNC via ViNO CR

- Provide capability to secure VNC endpoints with password authentication

| priority | provide secure vnc support for vms created by vino proposed change provide the capability to configure vnc configuration for vms created when deploying a vino cr provide capability to enable vnc via vino cr provide capability to secure vnc endpoints with password authentication | 1 |

58,993 | 14,520,326,962 | IssuesEvent | 2020-12-14 05:13:02 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | opened | Can't Install TF on Apple M1 | type:build/install | **Issue Creation**

On trying to install TF 2.3 using `pip install tensorflow` from the terminal, I got a success message.

I could see tensorflow 2.3.1 in the list of installed packages by using either `conda list` or `pip list`

When I run python from the terminal and trying to import tensorflow, it returns this er... | 1.0 | Can't Install TF on Apple M1 - **Issue Creation**

On trying to install TF 2.3 using `pip install tensorflow` from the terminal, I got a success message.

I could see tensorflow 2.3.1 in the list of installed packages by using either `conda list` or `pip list`

When I run python from the terminal and trying to import... | non_priority | can t install tf on apple issue creation on trying to install tf using pip install tensorflow from the terminal i got a success message i could see tensorflow in the list of installed packages by using either conda list or pip list when i run python from the terminal and trying to import ... | 0 |

98,187 | 20,622,276,079 | IssuesEvent | 2022-03-07 18:37:02 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | [4.1] TODO list: Duplicate Queries | No Code Attached Yet | To fix in 4.1

In Joomla Admin:

- [ ] Edit user - 42 statements were executed, 11 of which were duplicates, 31 unique

- [ ] Edit Article - 45 statements were executed, 6 of which were duplicates, 39 unique

- [ ] Site Modules - 36 statements were executed, 2 of which were duplicates, 34 unique

- [ ] Main Men... | 1.0 | [4.1] TODO list: Duplicate Queries - To fix in 4.1

In Joomla Admin:

- [ ] Edit user - 42 statements were executed, 11 of which were duplicates, 31 unique

- [ ] Edit Article - 45 statements were executed, 6 of which were duplicates, 39 unique

- [ ] Site Modules - 36 statements were executed, 2 of which were d... | non_priority | todo list duplicate queries to fix in in joomla admin edit user statements were executed of which were duplicates unique edit article statements were executed of which were duplicates unique site modules statements were executed of which were duplicates uni... | 0 |

14,599 | 3,411,364,948 | IssuesEvent | 2015-12-05 01:59:34 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | Test failure in CI build 9855 | test-failure | The following test appears to have failed:

[#9855](https://circleci.com/gh/cockroachdb/cockroach/9855):

```

I1204 02:18:56.957873 681 stopper.go:241 draining; tasks left:

2 storage/replica.go:1437

1 storage/replica_command.go:1440

I1204 02:18:56.958892 681 stopper.go:241 draining; tasks left:

2 stora... | 1.0 | Test failure in CI build 9855 - The following test appears to have failed:

[#9855](https://circleci.com/gh/cockroachdb/cockroach/9855):

```

I1204 02:18:56.957873 681 stopper.go:241 draining; tasks left:

2 storage/replica.go:1437

1 storage/replica_command.go:1440

I1204 02:18:56.958892 681 stopper.go:241 dr... | non_priority | test failure in ci build the following test appears to have failed stopper go draining tasks left storage replica go storage replica command go stopper go draining tasks left storage replica go panic rangelookup dispatched to correct range bu... | 0 |

534,411 | 15,618,336,417 | IssuesEvent | 2021-03-20 00:42:39 | way-of-elendil/3.3.5 | https://api.github.com/repos/way-of-elendil/3.3.5 | closed | NPC: Géant de pierre ensorcelé | bug priority-low type-quest | **Description**

11352 - [La rune de commandement]

11348 - [La rune de commandement]

23725 - [Géant de pierre]

24346 - [Géant de pierre ensorcelé]

33796 - [Rune de commandement]

Lorsque l'on utilise Rune de commandement sur un géant de pierre, le Géant de pierre ensorcelé spawn et attaque tous les NPCs... | 1.0 | NPC: Géant de pierre ensorcelé - **Description**

11352 - [La rune de commandement]

11348 - [La rune de commandement]

23725 - [Géant de pierre]

24346 - [Géant de pierre ensorcelé]

33796 - [Rune de commandement]

Lorsque l'on utilise Rune de commandement sur un géant de pierre, le Géant de pierre ensorce... | priority | npc géant de pierre ensorcelé description lorsque l on utilise rune de commandement sur un géant de pierre le géant de pierre ensorcelé spawn et attaque tous les npcs qui lui sont hostiles neutres comportement attendu le géant de pierre ensorcelé n attaq... | 1 |

824,391 | 31,153,727,367 | IssuesEvent | 2023-08-16 11:47:44 | consta-design-system/uikit | https://api.github.com/repos/consta-design-system/uikit | closed | TextField: переработать компонент | feature 🔥🔥 priority major | Сейчас основные пропсы берутся из HTMLDivElement, нужно же завязываться на HTMLInputElement, для избежания проблем с пропсами | 1.0 | TextField: переработать компонент - Сейчас основные пропсы берутся из HTMLDivElement, нужно же завязываться на HTMLInputElement, для избежания проблем с пропсами | priority | textfield переработать компонент сейчас основные пропсы берутся из htmldivelement нужно же завязываться на htmlinputelement для избежания проблем с пропсами | 1 |

707,808 | 24,319,995,204 | IssuesEvent | 2022-09-30 09:51:29 | JPGallegos1/crypto-monorepo | https://api.github.com/repos/JPGallegos1/crypto-monorepo | closed | Final documentation project | enhancement priority:HIGH | As a developer, I want to see some documentation about how to start the project on another machine

- [x] Make the final documentation before other people can see it | 1.0 | Final documentation project - As a developer, I want to see some documentation about how to start the project on another machine

- [x] Make the final documentation before other people can see it | priority | final documentation project as a developer i want to see some documentation about how to start the project on another machine make the final documentation before other people can see it | 1 |

499,658 | 14,475,471,336 | IssuesEvent | 2020-12-10 01:42:36 | googleapis/python-logging | https://api.github.com/repos/googleapis/python-logging | opened | Add an HTTPRequest log sample | good first issue priority: p2 | Add a new HTTPRequest log sample to [logging samples page](https://cloud.google.com/logging/docs/samples) with region tag `logging_write_request_entry`

**Criteria**

1. Entry sample should contain `requestMethod`, `Url`, and `status` which are minimally required fields for rendering summary fields in the Log Viewer.... | 1.0 | Add an HTTPRequest log sample - Add a new HTTPRequest log sample to [logging samples page](https://cloud.google.com/logging/docs/samples) with region tag `logging_write_request_entry`

**Criteria**

1. Entry sample should contain `requestMethod`, `Url`, and `status` which are minimally required fields for rendering s... | priority | add an httprequest log sample add a new httprequest log sample to with region tag logging write request entry criteria entry sample should contain requestmethod url and status which are minimally required fields for rendering summary fields in the log viewer note any fields in the final ... | 1 |

191,034 | 6,824,986,627 | IssuesEvent | 2017-11-08 08:54:57 | sahana/SAMBRO | https://api.github.com/repos/sahana/SAMBRO | opened | Tagging Alerts of Interest | enhancement Low Priority | The use case from the WA-COP project has presented a case where authorized users could bookmark or tag alerts of interest. The UI (desktop or mobile app) should prioritize such information in the presentation layers.

An additional value is that SAMBRO could adopt machine learning techniques to make use of the bookma... | 1.0 | Tagging Alerts of Interest - The use case from the WA-COP project has presented a case where authorized users could bookmark or tag alerts of interest. The UI (desktop or mobile app) should prioritize such information in the presentation layers.

An additional value is that SAMBRO could adopt machine learning techniq... | priority | tagging alerts of interest the use case from the wa cop project has presented a case where authorized users could bookmark or tag alerts of interest the ui desktop or mobile app should prioritize such information in the presentation layers an additional value is that sambro could adopt machine learning techniq... | 1 |

378,370 | 11,201,071,866 | IssuesEvent | 2020-01-04 00:30:09 | lowRISC/opentitan | https://api.github.com/repos/lowRISC/opentitan | closed | [doc] top_earlgrey doc doesn't represent pinout | Component:Doc Priority:P1 Type:Cleanup | Current version of top_earlgrey doc doesn't represent the pinout of the chip,

need to fix. Plan is to map it to the generated top level, with discussion of the

actual pins of the FPGA target. | 1.0 | [doc] top_earlgrey doc doesn't represent pinout - Current version of top_earlgrey doc doesn't represent the pinout of the chip,

need to fix. Plan is to map it to the generated top level, with discussion of the

actual pins of the FPGA target. | priority | top earlgrey doc doesn t represent pinout current version of top earlgrey doc doesn t represent the pinout of the chip need to fix plan is to map it to the generated top level with discussion of the actual pins of the fpga target | 1 |

94,216 | 19,515,825,781 | IssuesEvent | 2021-12-29 10:02:59 | Onelinerhub/onelinerhub | https://api.github.com/repos/Onelinerhub/onelinerhub | closed | Short solution needed: "Select database with PHP PDO" (php-pdo) | help wanted good first issue code php-pdo | Please help us write most modern and shortest code solution for this issue:

**Select database with PHP PDO** (technology: [php-pdo](https://onelinerhub.com/php-pdo))

### Fast way

Just write the code solution in the comments.

### Preferred way

1. Create pull request with a new code file inside [inbox folder](https://gi... | 1.0 | Short solution needed: "Select database with PHP PDO" (php-pdo) - Please help us write most modern and shortest code solution for this issue:

**Select database with PHP PDO** (technology: [php-pdo](https://onelinerhub.com/php-pdo))

### Fast way

Just write the code solution in the comments.

### Preferred way

1. Create ... | non_priority | short solution needed select database with php pdo php pdo please help us write most modern and shortest code solution for this issue select database with php pdo technology fast way just write the code solution in the comments prefered way create pull request with a new code file inside... | 0 |

742,045 | 25,834,169,662 | IssuesEvent | 2022-12-12 18:15:01 | thoth-station/python | https://api.github.com/repos/thoth-station/python | opened | `packaging` deprecates `LegacyVersion` causing failure if `packaging` is upgraded | kind/bug priority/backlog | ## Bug description

<!-- A clear and concise description of what the bug is. -->

Version 0.22 of `packaging` deprecates `LegacyVersion` and `LegacySpecifier` which are used in this module.

### Steps to Reproduce

1. upgrade to latest version of packaging

2. run anything

### Actual behavior

<!-- What happens? I... | 1.0 | `packaging` deprecates `LegacyVersion` causing failure if `packaging` is upgraded - ## Bug description

<!-- A clear and concise description of what the bug is. -->

Version 0.22 of `packaging` deprecates `LegacyVersion` and `LegacySpecifier` which are used in this module.

### Steps to Reproduce

1. upgrade to lates... | priority | packaging deprecates legacyversion causing failure if packaging is upgraded bug description version of packaging deprecates legacyversion and legacyspecifier which are used in this module steps to reproduce upgrade to latest version of packaging run anything actual behavi... | 1 |

444,792 | 12,821,167,366 | IssuesEvent | 2020-07-06 07:33:06 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.netflix.com - design is broken | browser-focus-geckoview engine-gecko priority-critical | <!-- @browser: Firefox Mobile 78.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.0.0; Mobile; rv:78.0) Gecko/78.0 Firefox/78.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/55100 -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://www.netflix.com/lb/login

... | 1.0 | www.netflix.com - design is broken - <!-- @browser: Firefox Mobile 78.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.0.0; Mobile; rv:78.0) Gecko/78.0 Firefox/78.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/55100 -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL*... | priority | design is broken url browser version firefox mobile operating system android tested another browser yes safari problem type design is broken description items not fully visible steps to reproduce 💔😣😣😣😣😣😣😣😣😣😣😣 browser configuratio... | 1 |

458,471 | 13,175,590,858 | IssuesEvent | 2020-08-12 02:07:53 | trustwallet/wallet-core | https://api.github.com/repos/trustwallet/wallet-core | closed | [Keystore] Support side chain | enhancement priority: high | To better support binance smart chain or other side chains, we could probably extend current keystore json like

```json

{
    "sidechain": [
        {
            "name": "Smart Chain",
            "type": "Ethereum",
            "address": "0x"
        }
    ]
}

``` | 1.0 | [Keystore] Support side chain - To better support binance smart chain or other side chains, we could probably extend current keystore json like

```json

{
    "sidechain": [
        {
            "name": "Smart Chain",
            "type": "Ethereum",
            "address": "0x"
        }
    ]
}

``` | priority | support side chain to better support binance smart chain or other side chains we could probably extend current keystore json like json sidechain name smart chain type ethereum address | 1 |

58,701 | 14,344,300,295 | IssuesEvent | 2020-11-28 13:51:11 | uniquelyparticular/sync-moltin-to-algolia | https://api.github.com/repos/uniquelyparticular/sync-moltin-to-algolia | opened | CVE-2020-26226 (High) detected in semantic-release-15.13.14.tgz | security vulnerability | ## CVE-2020-26226 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>semantic-release-15.13.14.tgz</b></p></summary>

<p>Automated semver compliant package publishing</p>

<p>Library home p... | True | CVE-2020-26226 (High) detected in semantic-release-15.13.14.tgz - ## CVE-2020-26226 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>semantic-release-15.13.14.tgz</b></p></summary>

<p>A... | non_priority | cve high detected in semantic release tgz cve high severity vulnerability vulnerable library semantic release tgz automated semver compliant package publishing library home page a href path to dependency file sync moltin to algolia package json path to vulnerable libr... | 0 |

407,278 | 11,911,500,550 | IssuesEvent | 2020-03-31 08:44:07 | lowRISC/opentitan | https://api.github.com/repos/lowRISC/opentitan | closed | [DIF] tests | Component:SW Hotlist:SW Opinion Priority:P3 | Just want to start the discussion on DIF testing. From previous conversations it seems that the consensus was to have several types of testing such as "mock" and DV.

I think DIF are ideally suited for "mock" testing, as all/most of the API calls will result in a single or multiple register read/write. A such it woul... | 1.0 | [DIF] tests - Just want to start the discussion on DIF testing. From previous conversations it seems that the consensus was to have several types of testing such as "mock" and DV.

I think DIF are ideally suited for "mock" testing, as all/most of the API calls will result in a single or multiple register read/write. ... | priority | tests just want to start the discussion on dif testing from previous conversations it seems that the consensus was to have several types of testing such as mock and dv i think dif are ideally suited for mock testing as all most of the api calls will result in a single or multiple register read write a su... | 1 |

165,664 | 20,613,314,585 | IssuesEvent | 2022-03-07 10:45:07 | serhii73/place2live.com | https://api.github.com/repos/serhii73/place2live.com | opened | CVE-2021-28658 (Medium) detected in Django-2.2.9-py3-none-any.whl | security vulnerability | ## CVE-2021-28658 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Django-2.2.9-py3-none-any.whl</b></p></summary>

<p>A high-level Python Web framework that encourages rapid developme... | True | CVE-2021-28658 (Medium) detected in Django-2.2.9-py3-none-any.whl - ## CVE-2021-28658 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Django-2.2.9-py3-none-any.whl</b></p></summary>

... | non_priority | cve medium detected in django none any whl cve medium severity vulnerability vulnerable library django none any whl a high level python web framework that encourages rapid development and clean pragmatic design library home page a href path to dependency file req... | 0 |

44,182 | 12,025,551,698 | IssuesEvent | 2020-04-12 09:50:23 | tiangolo/jbrout | https://api.github.com/repos/tiangolo/jbrout | closed | Window version exits on startup | OpSys-Windows Priority-Low Type-Defect auto-migrated | ```

What steps will reproduce the problem?

1. Install windows version.

2. Attempt to launch brout...

3.

What is the expected output? What do you see instead?

Error log:

You should install pyexiv2 (>=0.1.2)

What version of the product are you using? On what operating system?

winxp brout 0.3.828

Please provide any ad... | 1.0 | Window version exits on startup - ```

What steps will reproduce the problem?

1. Install windows version.

2. Attempt to launch brout...

3.

What is the expected output? What do you see instead?

Error log:

You should install pyexiv2 (>=0.1.2)

What version of the product are you using? On what operating system?

winxp br... | non_priority | window version exits on startup what steps will reproduce the problem install windows version attempt to launch brout what is the expected output what do you see instead error log you should install what version of the product are you using on what operating system winxp brout ... | 0 |

98,117 | 29,485,271,608 | IssuesEvent | 2023-06-02 09:15:11 | apache/incubator-pegasus | https://api.github.com/repos/apache/incubator-pegasus | closed | Github actions run out of disk space while building ASAN | github scripts build | Previously `Build Release` and `Build with jemalloc` failed due to running out of disk space (see https://github.com/apache/incubator-pegasus/issues/1485). Recently, `Build ASAN` also failed due to the same reason (Unhandled exception. System.IO.IOException: No space left on device):

. Recently, `Build ASAN` also failed due to the same reason (Unhandled exception. System.IO.IOException: N... | non_priority | github actions run out of disk space while building asan previously build release and build with jemalloc failed due to running out of disk space see recently build asan also failed due to the same reason unhandled exception system io ioexception no space left on device this means we have t... | 0 |

789,168 | 27,781,437,148 | IssuesEvent | 2023-03-16 21:26:59 | PrefectHQ/prefect | https://api.github.com/repos/PrefectHQ/prefect | closed | Flow with many mapped tasks fails | bug priority:high status:in-progress from:sales | ### First check

- [X] I added a descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the Prefect documentation for this issue.

- [X] I checked that this issue is related to Prefect and not one of its dependencies.

### Bug summary

When I run a fl... | 1.0 | Flow with many mapped tasks fails - ### First check

- [X] I added a descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the Prefect documentation for this issue.

- [X] I checked that this issue is related to Prefect and not one of its dependencie... | priority | flow with many mapped tasks fails first check i added a descriptive title to this issue i used the github search to find a similar issue and didn t find it i searched the prefect documentation for this issue i checked that this issue is related to prefect and not one of its dependencies ... | 1 |

782,545 | 27,499,750,739 | IssuesEvent | 2023-03-05 15:05:14 | brandondombrowsky/BastCastle | https://api.github.com/repos/brandondombrowsky/BastCastle | closed | Write a script to demo for Google Integration | priority-medium | As a developer I want to showcase my work so my client knows I am on track.

Can be super duper simple, just any step above "Hey Google turn on my light"; Something more like "Hey Google run 'demo light script'" | 1.0 | Write a script to demo for Google Integration - As a developer I want to showcase my work so my client knows I am on track.

Can be super duper simple, just any step above "Hey Google turn on my light"; Something more like "Hey Google run 'demo light script'" | priority | write a script to demo for google integration as a developer i want to showcase my work so my client knows i am on track can be super duper simple just any step above hey google turn on my light something more like hey google run demo light script | 1 |

366,286 | 10,819,292,779 | IssuesEvent | 2019-11-08 14:06:01 | coreywebber/bdw | https://api.github.com/repos/coreywebber/bdw | closed | Redsky Maintenance | priority source-database | - [x] Rerun all processes

- [x] Full Pull Component

- [ ] Full Pull Component Properties | 1.0 | Redsky Maintenance - - [x] Rerun all processes

- [x] Full Pull Component

- [ ] Full Pull Component Properties | priority | redsky maintenance rerun all processes full pull component full pull component properties | 1 |

4,899 | 2,610,160,256 | IssuesEvent | 2015-02-26 18:50:51 | chrsmith/republic-at-war | https://api.github.com/repos/chrsmith/republic-at-war | closed | Map Issue | auto-migrated Priority-Medium Type-Defect | ```

Geonosis

Weird light issue on top corner of map

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 31 Jan 2011 at 2:24 | 1.0 | Map Issue - ```

Geonosis

Weird light issue on top corner of map

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 31 Jan 2011 at 2:24 | non_priority | map issue geonosis weird light issue on top corner of map original issue reported on code google com by gmail com on jan at | 0 |

186,218 | 6,734,460,856 | IssuesEvent | 2017-10-18 18:07:43 | octobercms/october | https://api.github.com/repos/octobercms/october | closed | Attachments inside a Relation "One to One" don't work | Priority: Medium Status: Abandoned Status: Review Needed Type: Unconfirmed Bug | Hello guys,

i have created two entities and i have connected them with a relation one to one. When i have attached a file in the second entities (connected with the first through a one to one partial) it doesn't store the file.

To be precise the system adds the value in the "system_files" table but it doesn't store t... | 1.0 | Attachments inside a Relation "One to One" don't work - Hello guys,

i have created two entities and i have connected them with a relation one to one. When i have attached a file in the second entities (connected with the first through a one to one partial) it doesn't store the file.

To be precise the system adds the ... | priority | attachments inside a relation one to one don t work hello guys i have created two entities and i have connected them with a relation one to one when i have attached a file in the second entities connected with the first through a one to one partial it doesn t store the file to be precise the system adds the ... | 1 |

56,481 | 32,028,191,478 | IssuesEvent | 2023-09-22 10:19:42 | keras-team/keras | https://api.github.com/repos/keras-team/keras | closed | High memory consumption with model.fit in TF 2.x | type:bug/performance | Moved from Tensorflow repository

https://github.com/tensorflow/tensorflow/issues/40942

@gdudziuk opened this issue in TF repo.

System information

Have I written custom code: Yes

OS Platform and Distribution: CentOS Linux 7

Mobile device: Not verified on mobile devices

TensorFlow installed from: binary, vi... | True | High memory consumption with model.fit in TF 2.x - Moved from Tensorflow repository

https://github.com/tensorflow/tensorflow/issues/40942

@gdudziuk opened this issue in TF repo.

System information

Have I written custom code: Yes

OS Platform and Distribution: CentOS Linux 7

Mobile device: Not verified on mo... | non_priority | high memory consumption with model fit in tf x moved from tensorflow repository gdudziuk opened this issue in tf repo system information have i written custom code yes os platform and distribution centos linux mobile device not verified on mobile devices tensorflow installed from binary via... | 0 |

220,389 | 17,192,203,107 | IssuesEvent | 2021-07-16 12:40:02 | FundacionParaguaya/stoplight-web | https://api.github.com/repos/FundacionParaguaya/stoplight-web | closed | Create new input text component | tested | The new input component should resemble this design

Connect to formik for validation | 1.0 | Create new input text component - The new input component should resemble this design

Connect to formik for validation | non_priority | create new input text component the new input component should resemble this design connect to formik for validation | 0 |

61,050 | 3,137,535,471 | IssuesEvent | 2015-09-11 03:38:59 | DivineRPG/DivineRPG | https://api.github.com/repos/DivineRPG/DivineRPG | closed | Raw and cooked empowered meat have the same textures | bug low-priority | Shouldn't they have different textures? The only way to tell the difference right now is to mouse over it. | 1.0 | Raw and cooked empowered meat have the same textures - Shouldn't they have different textures? The only way to tell the difference right now is to mouse over it. | priority | raw and cooked empowered meat have the same textures shouldn t they have different textures the only way to tell the difference right now is to mouse over it | 1 |

187,611 | 22,045,817,136 | IssuesEvent | 2022-05-30 01:29:06 | utopikkad/my-Todo-List | https://api.github.com/repos/utopikkad/my-Todo-List | closed | WS-2021-0039 (Low) detected in core-7.2.16.tgz, core-9.0.0.tgz - autoclosed | security vulnerability | ## WS-2021-0039 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>core-7.2.16.tgz</b>, <b>core-9.0.0.tgz</b></p></summary>

<p>

<details><summary><b>core-7.2.16.tgz</b></p></summary>

<p... | True | WS-2021-0039 (Low) detected in core-7.2.16.tgz, core-9.0.0.tgz - autoclosed - ## WS-2021-0039 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>core-7.2.16.tgz</b>, <b>core-9.0.0.tgz</b>... | non_priority | ws low detected in core tgz core tgz autoclosed ws low severity vulnerability vulnerable libraries core tgz core tgz core tgz angular the core framework library home page a href dependency hierarchy x core tgz vulner... | 0 |

181,262 | 6,657,796,948 | IssuesEvent | 2017-09-30 10:43:32 | status-im/status-react | https://api.github.com/repos/status-im/status-react | closed | No password validation on Unsigned transactions screen [0.9.11] | bug high-priority | ### Description

*Type*: Bug

*Summary*: I can sign transaction with any password I like and it is being sent and shown on Ropsten Testnet

#### Expected behavior

Password validation should be present when signing transactions

#### Actual behavior

No password validation, I can sign and send transaction with... | 1.0 | No password validation on Unsigned transactions screen [0.9.11] - ### Description

*Type*: Bug

*Summary*: I can sign transaction with any password I like and it is being sent and shown on Ropsten Testnet

#### Expected behavior

Password validation should be present when signing transactions

#### Actual beha... | priority | no password validation on unsigned transactions screen description type bug summary i can sign transaction with any password i like and it is being sent and shown on ropsten testnet expected behavior password validation should be present when sigining transactions actual behavior n... | 1 |

202,874 | 23,120,022,626 | IssuesEvent | 2022-07-27 20:25:13 | fecgov/openFEC | https://api.github.com/repos/fecgov/openFEC | closed | [SNYK: Medium] org.bouncycastle:bcprov-jdk15on Cryptographic Issues & io.netty:netty-handler Improper Certificate Validation (Due 08/28/2022) | Security: moderate Security: general | 1. org.bouncycastle:bcprov-jdk15on Cryptographic Issues

[org.bouncycastle:bcprov-jdk15on](https://mvnrepository.com/artifact/org.bouncycastle/bcprov-jdk15on) is a Java implementation of cryptographic algorithms.

Affected versions of this package are vulnerable to Cryptographic Issues via weak key-hash message authent... | True | [SNYK: Medium] org.bouncycastle:bcprov-jdk15on Cryptographic Issues & io.netty:netty-handler Improper Certificate Validation (Due 08/28/2022) - 1. org.bouncycastle:bcprov-jdk15on Cryptographic Issues

[org.bouncycastle:bcprov-jdk15on](https://mvnrepository.com/artifact/org.bouncycastle/bcprov-jdk15on) is a Java imple... | non_priority | org bouncycastle bcprov cryptographic issues io netty netty handler improper certificate validation due org bouncycastle bcprov cryptographic issues is a java implementation of cryptographic algorithms affected versions of this package are vulnerable to cryptographic issues via weak key ha... | 0 |

107,846 | 16,762,431,545 | IssuesEvent | 2021-06-14 02:00:58 | atlslscsrv-app/https-net-atlsecsrv-org.github.io-dev.azure.portal.dashboard- | https://api.github.com/repos/atlslscsrv-app/https-net-atlsecsrv-org.github.io-dev.azure.portal.dashboard- | opened | CVE-2020-11023 (Medium) detected in jquery-1.11.1.js | security vulnerability | ## CVE-2020-11023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.11.1.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https... | True | CVE-2020-11023 (Medium) detected in jquery-1.11.1.js - ## CVE-2020-11023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.11.1.js</b></p></summary>

<p>JavaScript library for ... | non_priority | cve medium detected in jquery js cve medium severity vulnerability vulnerable library jquery js javascript library for dom operations library home page a href path to dependency file tmp ws scm security alerts atlslscsrv app node modules unix crypt td js test test html... | 0 |

256,973 | 27,561,749,354 | IssuesEvent | 2023-03-07 22:43:57 | samqws-marketing/box_mojito | https://api.github.com/repos/samqws-marketing/box_mojito | closed | CVE-2022-1471 (High) detected in snakeyaml-1.26.jar - autoclosed | Mend: dependency security vulnerability | ## CVE-2022-1471 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>snakeyaml-1.26.jar</b></p></summary>

<p>YAML 1.1 parser and emitter for Java</p>

<p>Library home page: <a href="http://... | True | CVE-2022-1471 (High) detected in snakeyaml-1.26.jar - autoclosed - ## CVE-2022-1471 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>snakeyaml-1.26.jar</b></p></summary>

<p>YAML 1.1 par... | non_priority | cve high detected in snakeyaml jar autoclosed cve high severity vulnerability vulnerable library snakeyaml jar yaml parser and emitter for java library home page a href path to dependency file restclient pom xml path to vulnerable library home wss scanner repo... | 0 |

78,885 | 9,808,442,682 | IssuesEvent | 2019-06-12 15:37:50 | fecgov/FEC | https://api.github.com/repos/fecgov/FEC | closed | Request: Make styling of glossary words more prominent in text | theme: general design | ## What were you trying to do and how can we improve it?

Read glossary terms

## General feedback?

Glossary terms: can they be highlighted or underlined? It's tough to distinguish them from the other wording with the same size and font lettering. The book icon blends in

## Details

- URL: https://beta.fec.gov/

- Us... | 1.0 | Request: Make styling of glossary words more prominent in text - ## What were you trying to do and how can we improve it?

Read glossary terms

## General feedback?

Glossary terms: can they be highlighted or underlined? It's tough to distinguish them from the other wording with the same size and font lettering. The... | non_priority | request make styling of glossary words more prominent in text what were you trying to do and how can we improve it read glossary terms general feedback glossary terms can they be highlighted or underlined it s tough to distinguish them from the other wording with the same size and font lettering the ... | 0 |

1,851 | 2,576,742,266 | IssuesEvent | 2015-02-12 12:35:41 | victor--/viss-bugs | https://api.github.com/repos/victor--/viss-bugs | closed | No nice-looking popup for action area | 54713 bug Ready for test | On the station, I click on select action area

The pop-up box that opens does not look like the new design

The pop-up box that opens does not look like the new design

- have a pre-filter checkbox on the main home page search box | 2.0 | Investigate options for facet by peer-reviewed or open access - Some ideas from design

- move the peer-reviewed and open access facets up to the top of the facet list

- make the peer-reviewed and open access facets into a different style (like a check box)

- have a pre-filter checkbox on the main home page search bo... | non_priority | investigate options for facet by peer reviewed or open access some ideas from design move the peer reviewed and open access facets up to the top of the facet list make the peer reviewed and open access facets into a different style like a check box have a pre filter checkbox on the main home page search bo... | 0 |

303,780 | 26,228,126,065 | IssuesEvent | 2023-01-04 20:48:40 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | sql: TestDropTableInterleavedDeleteData failed | C-test-failure O-robot branch-master | [(sql).TestDropTableInterleavedDeleteData failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2390920&tab=buildLog) on [master@75234294d22cc9a6e12724b043f49e78f0ac92d2](https://github.com/cockroachdb/cockroach/commits/75234294d22cc9a6e12724b043f49e78f0ac92d2):

Fatal error:

```

panic: pebble: closed

```

Sta... | 1.0 | sql: TestDropTableInterleavedDeleteData failed - [(sql).TestDropTableInterleavedDeleteData failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2390920&tab=buildLog) on [master@75234294d22cc9a6e12724b043f49e78f0ac92d2](https://github.com/cockroachdb/cockroach/commits/75234294d22cc9a6e12724b043f49e78f0ac92d2):

... | non_priority | sql testdroptableinterleaveddeletedata failed on fatal error panic pebble closed stack goroutine github com cockroachdb pebble db apply go src github com cockroachdb cockroach vendor github com cockroachdb pebble db go github com cockroachdb pebble batch co... | 0 |

645,286 | 21,000,612,426 | IssuesEvent | 2022-03-29 17:04:12 | bcgov/digital-journeys | https://api.github.com/repos/bcgov/digital-journeys | closed | File uploads should support .msg, .docx file types | Development high priority | The client wants to add the following file formats to the upload file functionality:

.msg, .docx | 1.0 | File uploads should support .msg, .docx file types - The client wants to add the following file formats to the upload file functionality:

.msg, .docx | priority | file uploads should support msg docx file types the client wants to add the following file formats to the upload file functionality msg docx | 1 |

538,663 | 15,775,083,877 | IssuesEvent | 2021-04-01 02:14:13 | bacuarabrasil/krenak-api | https://api.github.com/repos/bacuarabrasil/krenak-api | closed | US03 - Update user | F01 - Cadastro priority:medium | As a user, I want to edit my data to make the correct and most recent information available to the Krenak app team.

Acceptance criteria:

API:

- [x] Allows editing of first name, last name, and date of birth

- [x] Must allow editing both via the REST API and via the administrator dashboard

App:

... | 1.0 | US03 - Update user - As a user, I want to edit my data to make the correct and most recent information available to the Krenak app team.

Acceptance criteria:

API:

- [x] Allows editing of first name, last name, and date of birth

- [x] Must allow editing both via the REST API and via the dashboar... | priority | update user as a user i want to edit my data to make the correct and most recent information available to the krenak app team acceptance criteria api allows editing of first name last name and date of birth must allow editing both via the rest api and via the dashboard of the ad... | 1 |

390,329 | 11,542,108,768 | IssuesEvent | 2020-02-18 06:28:08 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | Can create API categories with same name in different case | Priority/Normal Type/Bug | ### Description:

category name case sensitivity at the moment depends on DB.

### Steps to reproduce:

Create an api category with name 'test'.

Create an api category with name 'Test'

This is successful.

### Affected Product Version:

3.1.0 beta | 1.0 | Can create API categories with same name in different case - ### Description:

category name case sensitivity at the moment depends on DB.

### Steps to reproduce:

Create an api category with name 'test'.

Create an api category with name 'Test'

This is successful.

### Affected Product Version:

3.1.0 beta | priority | can create api categories with same name in different case description category name case sensitivity at the moment depends on db steps to reproduce create an api category with name test create an api category with name test this is successful affected product version beta | 1 |

497,246 | 14,366,606,871 | IssuesEvent | 2020-12-01 04:51:49 | marbl/MetagenomeScope | https://api.github.com/repos/marbl/MetagenomeScope | closed | Accept any type of ID strings | highpriorityfeature | _From @fedarko on July 26, 2017 22:50_

Or at least allow the user to specify a prefix to be removed (e.g. `NODE_` or `tig`).

Right now I've just been handling these cases manually (e.g. detecting a `tig` prefix on nodes and removing it) in the GFA parsing code in `collate.py`, but it'd be ideal to extend this funct... | 1.0 | Accept any type of ID strings - _From @fedarko on July 26, 2017 22:50_

Or at least allow the user to specify a prefix to be removed (e.g. `NODE_` or `tig`).

Right now I've just been handling these cases manually (e.g. detecting a `tig` prefix on nodes and removing it) in the GFA parsing code in `collate.py`, but it... | priority | accept any type of id strings from fedarko on july or at least allow the user to specify a prefix to be removed e g node or tig right now i ve just been handling these cases manually e g detecting a tig prefix on nodes and removing it in the gfa parsing code in collate py but it d be ... | 1 |

276,753 | 20,999,113,260 | IssuesEvent | 2022-03-29 15:46:57 | valeriupredoi/valeriupredoi.github.io | https://api.github.com/repos/valeriupredoi/valeriupredoi.github.io | closed | Meeting about site 10 December, 2021 and discussion on how to add content | documentation enhancement | @fadloff Sophie and myself we'll have a meeting on 10 Dec discuss the site, a few points before the meeting:

meeting location: https://ncas.zoom.us/j/8081337740?pwd=eVVkNGg1ZUp4bFJDUWVTb00vYkVuUT09

**So far**

- I put together a [README](https://github.com/valeriupredoi/valeriupredoi.github.io#readme) that expla... | 1.0 | Meeting about site 10 December, 2021 and discussion on how to add content - @fadloff Sophie and myself we'll have a meeting on 10 Dec discuss the site, a few points before the meeting:

meeting location: https://ncas.zoom.us/j/8081337740?pwd=eVVkNGg1ZUp4bFJDUWVTb00vYkVuUT09

**So far**

- I put together a [README]... | non_priority | meeting about site december and discussion on how to add content fadloff sophie and myself we ll have a meeting on dec discuss the site a few points before the meeting meeting location so far i put together a that explains alot of stuff that need to be done to get jekyll installed buil... | 0 |

44,022 | 13,047,867,053 | IssuesEvent | 2020-07-29 11:30:20 | rsoreq/zaproxy | https://api.github.com/repos/rsoreq/zaproxy | opened | CVE-2019-17531 (High) detected in jackson-databind-2.9.10.jar, jackson-databind-2.9.2.jar | security vulnerability | ## CVE-2019-17531 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.9.10.jar</b>, <b>jackson-databind-2.9.2.jar</b></p></summary>

<p>

<details><summary><b>jackson-da... | True | CVE-2019-17531 (High) detected in jackson-databind-2.9.10.jar, jackson-databind-2.9.2.jar - ## CVE-2019-17531 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.9.10.j... | non_priority | cve high detected in jackson databind jar jackson databind jar cve high severity vulnerability vulnerable libraries jackson databind jar jackson databind jar jackson databind jar general data binding functionality for jackson works on core streami... | 0 |

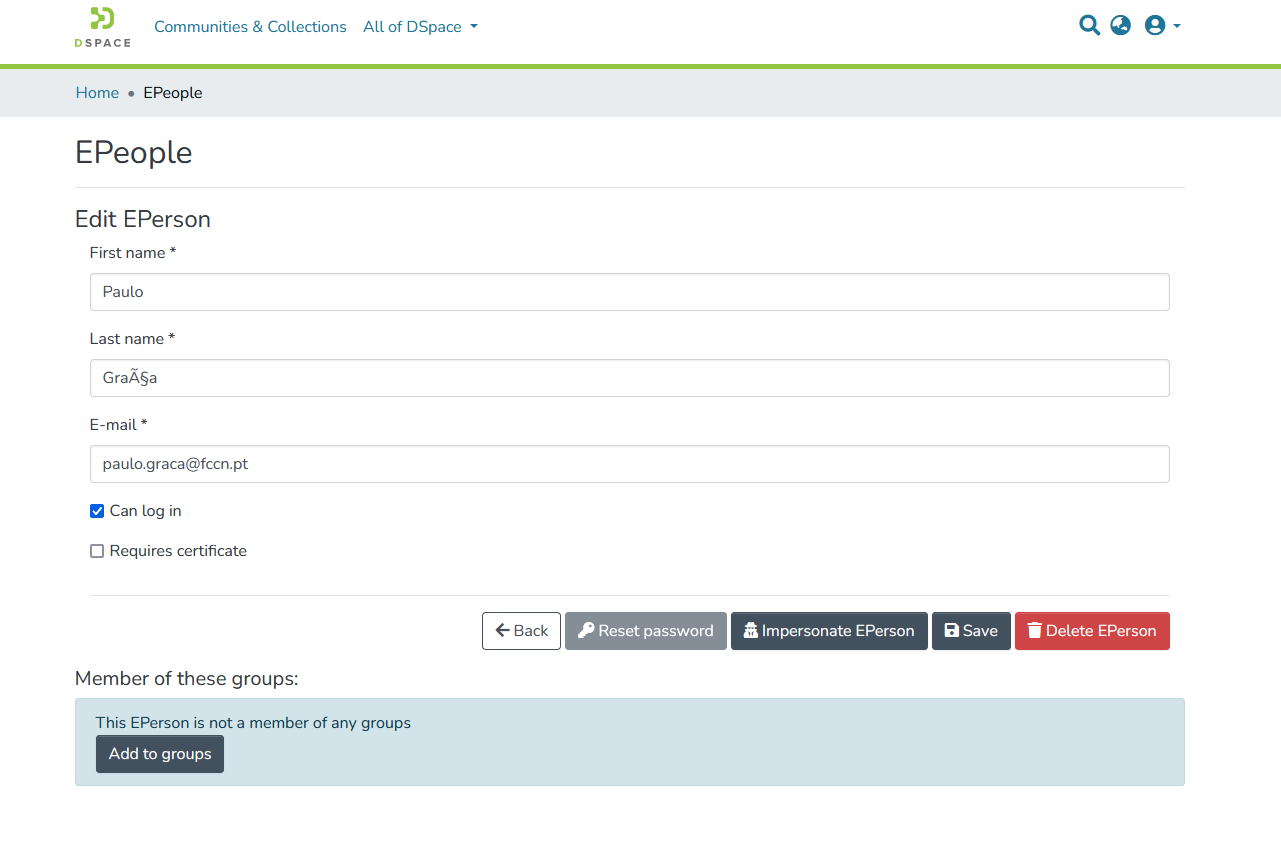

718,504 | 24,719,724,132 | IssuesEvent | 2022-10-20 09:44:03 | DSpace/dspace-angular | https://api.github.com/repos/DSpace/dspace-angular | closed | Probably a caching issue for impersonate feature on an admin user | bug help wanted high priority authorization performance / caching e/14 | **Describe the bug**

After a user was created, as an admin I've assign him the admin profile and went to see the user profile.

Then I assign him the admin group

detected in linux-yocto-4.1v4.1.17 | security vulnerability | ## CVE-2017-14340 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yocto-4.1v4.1.17</b></p></summary>

<p>

<p>[no description]</p>

<p>Library home page: <a href=https://git.yocto... | True | CVE-2017-14340 (Medium) detected in linux-yocto-4.1v4.1.17 - ## CVE-2017-14340 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yocto-4.1v4.1.17</b></p></summary>

<p>

<p>[no des... | non_priority | cve medium detected in linux yocto cve medium severity vulnerability vulnerable library linux yocto library home page a href found in head commit a href found in base branch master vulnerable source files fs xfs xfs linux h ... | 0 |

258,163 | 19,546,499,678 | IssuesEvent | 2022-01-02 00:31:00 | timoast/signac | https://api.github.com/repos/timoast/signac | closed | metadata missing | documentation | Hi Signac,

The file of "per_barcode_matrices.csv" is missing from the output of cellranger-arc aggr on multiple samples analyzed by cellranger-arc. Do you have any idea for how to generate a metadata in this case? I know it should be the issue with cellranger but just check to see if there are any other methods to ... | 1.0 | metadata missing - Hi Signac,

The file of "per_barcode_matrices.csv" is missing from the output of cellranger-arc aggr on multiple samples analyzed by cellranger-arc. Do you have any idea for how to generate a metadata in this case? I know it should be the issue with cellranger but just check to see if there are an... | non_priority | metadata missing hi signac the file of per barcode matrices csv is missing from the output of cellranger arc aggr on multiple samples analyzed by cellranger arc do you have any idea for how to generate a metadata in this case i know it should be the issue with cellranger but just check to see if there are an... | 0 |

395,043 | 11,670,724,305 | IssuesEvent | 2020-03-04 00:50:04 | autodo-app/autodo | https://api.github.com/repos/autodo-app/autodo | opened | Deploy 0.3.1 as com.autodo.autodo on official google account | Logistics priority: high | Need to move the app over to the autodoapp@gmail.com account and the app id will need to be updated in the firebase setup. | 1.0 | Deploy 0.3.1 as com.autodo.autodo on official google account - Need to move the app over to the autodoapp@gmail.com account and the app id will need to be updated in the firebase setup. | priority | deploy as com autodo autodo on official google account need to move the app over to the autodoapp gmail com account and the app id will need to be updated in the firebase setup | 1 |

185,778 | 6,730,347,464 | IssuesEvent | 2017-10-18 00:18:27 | NuGet/Home | https://api.github.com/repos/NuGet/Home | opened | Address localization for new strings in nuget.exe | Area:PackageSigning Needs loc Priority:0 | we are currently adding new commands to nuget.exe. Currently these strings are not being localized. we should fix this before shipping. | 1.0 | Address localization for new strings in nuget.exe - we are currently adding new commands to nuget.exe. Currently these strings are not being localized. we should fix this before shipping. | priority | address localization for new strings in nuget exe we are currently adding new commands to nuget exe currently these strings are not being localized we should fix this before shipping | 1 |

152,023 | 12,068,796,754 | IssuesEvent | 2020-04-16 15:14:31 | kubernetes-sigs/metrics-server | https://api.github.com/repos/kubernetes-sigs/metrics-server | closed | Travis CI doesn't report results | priority/critical-urgent sig/testing | On couple of recent PRs there is a missing report from Travis CI. For example https://github.com/kubernetes-sigs/metrics-server/pull/481 has failing tests https://travis-ci.org/github/kubernetes-sigs/metrics-server/jobs/670686705.

This means that failing tests are no longer blocker for merging PRs.

Currently I... | 1.0 | Travis CI doesn't report results - On couple of recent PRs there is a missing report from Travis CI. For example https://github.com/kubernetes-sigs/metrics-server/pull/481 has failing tests https://travis-ci.org/github/kubernetes-sigs/metrics-server/jobs/670686705.

This means that failing tests are no longer blocker... | non_priority | travis ci doesn t report results on couple of recent prs there is a missing report from travis ci for example has failing tests this means that failing tests are no longer blocker for merging prs currently i was not able to pinpoint root cause there is no logs or warning that would inform about error ... | 0 |

7,529 | 25,046,122,936 | IssuesEvent | 2022-11-05 09:08:56 | ThinkingEngine-net/PickleTestSuite | https://api.github.com/repos/ThinkingEngine-net/PickleTestSuite | closed | Selenium Drive URL Moved - Update env check Link | bug Browser Automation | The URL for downloading selenium chrome drivers has moved. Update the failed check URL to reflect https://sites.google.com/chromium.org/driver/downloads | 1.0 | Selenium Drive URL Moved - Update env check Link - The URL for downloading selenium chrome drivers has moved. Update the failed check URL to reflect https://sites.google.com/chromium.org/driver/downloads | non_priority | selenium drive url moved update env check link the url for downloading selenium chrome drivers has moved update the failed check url to reflect | 0 |

167,398 | 26,494,855,896 | IssuesEvent | 2023-01-18 04:04:20 | phetsims/number-suite-common | https://api.github.com/repos/phetsims/number-suite-common | closed | Problems with Ten Frame "Return" button in Lab screen | type:bug design:general status:ready-for-review | The Lab screen has an Undo button for each Ten Frame. Pressing it returns one object in the Ten Frame to its toolbox.

<img width="434" alt="screenshot_2116" src="https://user-images.githubusercontent.com/3046552/210843821-48392bc7-09ef-4a20-af3c-70facec4d731.png">

Problems:

(1) BUG: The Undo button does not ap... | 1.0 | Problems with Ten Frame "Return" button in Lab screen - The Lab screen has an Undo button for each Ten Frame. Pressing it returns one object in the Ten Frame to its toolbox.

<img width="434" alt="screenshot_2116" src="https://user-images.githubusercontent.com/3046552/210843821-48392bc7-09ef-4a20-af3c-70facec4d731.pn... | non_priority | problems with ten frame return button in lab screen the lab screen has an undo button for each ten frame pressing it returns one object in the ten frame to its toolbox img width alt screenshot src problems bug the undo button does not appear when you add an object to the ten frame it d... | 0 |

777,784 | 27,294,064,988 | IssuesEvent | 2023-02-23 18:46:54 | NeurodataWithoutBorders/pynwb | https://api.github.com/repos/NeurodataWithoutBorders/pynwb | closed | [Bug]: Sphinx TypeError in check external links action | category: bug priority: high | ### What happened?

See https://github.com/NeurodataWithoutBorders/pynwb/actions/runs/4120974015/jobs/7116231197

```

/home/runner/work/pynwb/pynwb/docs/source/export.rst:31: ERROR: Unknown interpreted text role "py:code".

Exception occurred:

File "/opt/hostedtoolcache/Python/3.8.16/x64/lib/python3.8/site-pack... | 1.0 | [Bug]: Sphinx TypeError in check external links action - ### What happened?

See https://github.com/NeurodataWithoutBorders/pynwb/actions/runs/4120974015/jobs/7116231197

```

/home/runner/work/pynwb/pynwb/docs/source/export.rst:31: ERROR: Unknown interpreted text role "py:code".

Exception occurred:

File "/opt/... | priority | sphinx typeerror in check external links action what happened see home runner work pynwb pynwb docs source export rst error unknown interpreted text role py code exception occurred file opt hostedtoolcache python lib site packages sphinx ext extlinks py line in rol... | 1 |

702,539 | 24,124,971,813 | IssuesEvent | 2022-09-20 22:50:21 | FuelLabs/swayswap | https://api.github.com/repos/FuelLabs/swayswap | closed | Refactoring pool logics | page:pools priority:high page:rm_liquidity | - Improve swap create pool / add liquidity to be more clear, we are going to add xstate | 1.0 | Refactoring pool logics - - Improve swap create pool / add liquidity to be more clear, we are going to add xstate | priority | refactoring pool logics improve swap create pool add liquidity to be more clear we are going to add xstate | 1 |

732 | 2,511,241,912 | IssuesEvent | 2015-01-14 04:36:56 | AmpersandJS/amp | https://api.github.com/repos/AmpersandJS/amp | closed | iteratee needs tests? | bug test | the test file right now is a stub it seems, thanks to @jvduf for noticing | 1.0 | iteratee needs tests? - the test file right now is a stub it seems, thanks to @jvduf for noticing | non_priority | iteratee needs tests the test file right now is a stub it seems thanks to jvduf for noticing | 0 |

22,109 | 4,774,010,848 | IssuesEvent | 2016-10-27 04:01:47 | baidu/Paddle | https://api.github.com/repos/baidu/Paddle | opened | Documentation about how to write documentation. | documentation | Write a documentation to show:

* How paddle docs are organized.

* How to write `paddle docs`

* How to build `paddle docs` and `preview documentation locally`

* How does `www.paddlepaddle.org` update documentation. | 1.0 | Documentation about how to write documentation. - Write a documentation to show:

* How paddle docs are organized.

* How to write `paddle docs`

* How to build `paddle docs` and `preview documentation locally`

* How does `www.paddlepaddle.org` update documentation. | non_priority | documentation about how to write documentation write a documentation to show how paddle docs are organized how to write paddle docs how to build paddle docs and preview documentation locally how does update documentation | 0 |

172,837 | 13,348,984,568 | IssuesEvent | 2020-08-29 21:37:45 | digitallinguistics/app | https://api.github.com/repos/digitallinguistics/app | closed | update to Cypress 5.0 | dev tests | Cypress now supports test retries! This could be really helpful for dealing with some of the race conditions we run into.