Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 1k | labels stringlengths 4 1.38k | body stringlengths 1 262k | index stringclasses 16 values | text_combine stringlengths 96 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

291,465 | 8,925,527,268 | IssuesEvent | 2019-01-21 23:11:04 | mat3rial-dev/page_rwanda_memorial | https://api.github.com/repos/mat3rial-dev/page_rwanda_memorial | opened | Implement admin view | priority-high | Page used by database owner to administer admin users.

These administrative users will be in charge of curating the data

entered by the general public. This page requires an authentication

system. | 1.0 | Implement admin view - Page used by database owner to administer admin users.

These administrative users will be in charge of curating the data

entered by the general public. This page requires an authentication

system. | priority | implement admin view page used by database owner to administer admin users these administrative users will be in charge of curating the data entered by the general public this page requires an authentication system | 1 |

33,488 | 2,765,734,500 | IssuesEvent | 2015-04-29 22:10:00 | biocore/qiita | https://api.github.com/repos/biocore/qiita | closed | re-defining the required fields for the study and prep templates to facilitate addition of new data | database changes priority: high refactor | In working on [documentation of the sample and prep templates](https://github.com/biocore/qiita/wiki/Preparing-Qiita-template-files) @rob-knight, @adamrp, @wasade, @josenavas and I discussed updating the requirements for sample and prep template fields.

These fields should no longer be required:

- [ ] ``emp_statu... | 1.0 | re-defining the required fields for the study and prep templates to facilitate addition of new data - In working on [documentation of the sample and prep templates](https://github.com/biocore/qiita/wiki/Preparing-Qiita-template-files) @rob-knight, @adamrp, @wasade, @josenavas and I discussed updating the requirements f... | priority | re defining the required fields for the study and prep templates to facilitate addition of new data in working on rob knight adamrp wasade josenavas and i discussed updating the requirements for sample and prep template fields these fields should no longer be required emp status run... | 1 |

348,098 | 10,438,771,318 | IssuesEvent | 2019-09-18 03:30:52 | nmiodice/terraform-azure-devops-hack | https://api.github.com/repos/nmiodice/terraform-azure-devops-hack | closed | AzDO Client Implementation | High Priority | # Description

As contributor to the Azure DevOps Terraform Provider, I want to use an authenticated client to interact with AzDO

# Acceptance Criteria

- [x] AzDO client written in Go can authenticate with AzDO using a personal access token

- [x] Client can be used to provision resources within AzDO

- [ ] Test... | 1.0 | AzDO Client Implementation - # Description

As contributor to the Azure DevOps Terraform Provider, I want to use an authenticated client to interact with AzDO

# Acceptance Criteria

- [x] AzDO client written in Go can authenticate with AzDO using a personal access token

- [x] Client can be used to provision resou... | priority | azdo client implementation description as contributor to the azure devops terraform provider i want to use an authenticated client to interact with azdo acceptance criteria azdo client written in go can authenticate with azdo using a personal access token client can be used to provision resources... | 1 |

184,510 | 14,289,501,215 | IssuesEvent | 2020-11-23 19:21:43 | github-vet/rangeclosure-findings | https://api.github.com/repos/github-vet/rangeclosure-findings | closed | ipsn/go-ipfs: gxlibs/github.com/libp2p/go-libp2p-swarm/swarm_test.go; 111 LoC | fresh large test |

Found a possible issue in [ipsn/go-ipfs](https://www.github.com/ipsn/go-ipfs) at [gxlibs/github.com/libp2p/go-libp2p-swarm/swarm_test.go](https://github.com/ipsn/go-ipfs/blob/8b9b72514244155bc38ab21eac7f4d950ea97c28/gxlibs/github.com/libp2p/go-libp2p-swarm/swarm_test.go#L111-L221)

The below snippet of Go code trigger... | 1.0 | ipsn/go-ipfs: gxlibs/github.com/libp2p/go-libp2p-swarm/swarm_test.go; 111 LoC -

Found a possible issue in [ipsn/go-ipfs](https://www.github.com/ipsn/go-ipfs) at [gxlibs/github.com/libp2p/go-libp2p-swarm/swarm_test.go](https://github.com/ipsn/go-ipfs/blob/8b9b72514244155bc38ab21eac7f4d950ea97c28/gxlibs/github.com/libp2... | non_priority | ipsn go ipfs gxlibs github com go swarm swarm test go loc found a possible issue in at the below snippet of go code triggered static analysis which searches for goroutines and or defer statements which capture loop variables click here to show the line s of go which triggered the analyz... | 0 |

813,016 | 30,442,468,136 | IssuesEvent | 2023-07-15 08:23:55 | kubernetes/minikube | https://api.github.com/repos/kubernetes/minikube | closed | Docker sunsets Free Team Organizations affecting Kicbase | priority/critical-urgent lifecycle/rotten | Following the same issue as kind https://github.com/kubernetes-sigs/kind/issues/3124

Just got the notice, we have Until April 14th to resolve this.

here is a list of organizations we use in build and release of minikube, even though minikube uses docker hub as a fail over, it is essential for users not having acce... | 1.0 | Docker sunsets Free Team Organizations affecting Kicbase - Following the same issue as kind https://github.com/kubernetes-sigs/kind/issues/3124

Just got the notice, we have Until April 14th to resolve this.

here is a list of organizations we use in build and release of minikube, even though minikube uses docker h... | priority | docker sunsets free team organizations affecting kicbase following the same issue as kind just got the notice we have until april to resolve this here is a list of organizations we use in build and release of minikube even though minikube uses docker hub as a fail over it is essential for users not havi... | 1 |

108,964 | 4,365,084,533 | IssuesEvent | 2016-08-03 09:29:50 | google/paco | https://api.github.com/repos/google/paco | opened | iOS - Last experiment on invitations page is not touchable | Component-iOS Component-UI Priority-Medium | With many experiments, more than one screen full, the last experiment is visible when scrolling done, but the screen stops and scrolls back up to hide it when you quit scrolling. This makes it hidden and untouchable. | 1.0 | iOS - Last experiment on invitations page is not touchable - With many experiments, more than one screen full, the last experiment is visible when scrolling done, but the screen stops and scrolls back up to hide it when you quit scrolling. This makes it hidden and untouchable. | priority | ios last experiment on invitations page is not touchable with many experiments more than one screen full the last experiment is visible when scrolling done but the screen stops and scrolls back up to hide it when you quit scrolling this makes it hidden and untouchable | 1 |

380,704 | 11,270,039,923 | IssuesEvent | 2020-01-14 10:06:47 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | Importing ogg vorbis files causes a crash in 3.1 stable | bug confirmed high priority topic:import | Opening a project with ogg vorbis files, or importing them, causes the editor to immediately crash. Here's what I get from running the editor in the command line:

```

ERROR: set_data: Condition ' ogg_stream == __null ' is true.

At: modules/stb_vorbis/audio_stream_ogg_vorbis.cpp:196.

ERROR: instance_playback: Con... | 1.0 | Importing ogg vorbis files causes a crash in 3.1 stable - Opening a project with ogg vorbis files, or importing them, causes the editor to immediately crash. Here's what I get from running the editor in the command line:

```

ERROR: set_data: Condition ' ogg_stream == __null ' is true.

At: modules/stb_vorbis/audio... | priority | importing ogg vorbis files causes a crash in stable opening a project with ogg vorbis files or importing them causes the editor to immediately crash here s what i get from running the editor in the command line error set data condition ogg stream null is true at modules stb vorbis audio... | 1 |

237,194 | 7,757,200,169 | IssuesEvent | 2018-05-31 15:39:27 | web-platform-tests/wpt | https://api.github.com/repos/web-platform-tests/wpt | opened | Migrate Travis CI to GitHub Apps | infra priority:roadmap | Like https://github.com/whatwg/meta/issues/96, and possible we can learn from that issue, or vice versa. | 1.0 | Migrate Travis CI to GitHub Apps - Like https://github.com/whatwg/meta/issues/96, and possible we can learn from that issue, or vice versa. | priority | migrate travis ci to github apps like and possible we can learn from that issue or vice versa | 1 |

270,825 | 8,470,853,911 | IssuesEvent | 2018-10-24 06:31:54 | kyma-project/kyma | https://api.github.com/repos/kyma-project/kyma | closed | Enable nightly links validation on repositories | area/community enhancement priority/critical | <!-- Thank you for your contribution. Before you submit the issue:

1. Search open and closed issues for duplicates.

2. Read the contributing guidelines.

-->

**Description**

Currently governance job is executed on master when PR is merged. That validation should be disabled, because it sometimes blocks PR when ... | 1.0 | Enable nightly links validation on repositories - <!-- Thank you for your contribution. Before you submit the issue:

1. Search open and closed issues for duplicates.

2. Read the contributing guidelines.

-->

**Description**

Currently governance job is executed on master when PR is merged. That validation should... | priority | enable nightly links validation on repositories thank you for your contribution before you submit the issue search open and closed issues for duplicates read the contributing guidelines description currently governance job is executed on master when pr is merged that validation should... | 1 |

254,002 | 21,720,037,553 | IssuesEvent | 2022-05-10 22:29:56 | trinodb/trino | https://api.github.com/repos/trinodb/trino | closed | Flaky TestHiveFaultTolerantExecutionConnectorTest.close | bug test | ```

2022-04-13T03:08:12.254-0500 INFO pool-3-thread-1 io.trino.testng.services.LogTestDurationListener Test io.trino.plugin.hive.TestHiveFaultTolerantExecutionConnectorTest.close took 30.49s

Error: Tests run: 754, Failures: 1, Errors: 0, Skipped: 25, Time elapsed: 919.723 s <<< FAILURE! - in TestSuite

Error: io.tr... | 1.0 | Flaky TestHiveFaultTolerantExecutionConnectorTest.close - ```

2022-04-13T03:08:12.254-0500 INFO pool-3-thread-1 io.trino.testng.services.LogTestDurationListener Test io.trino.plugin.hive.TestHiveFaultTolerantExecutionConnectorTest.close took 30.49s

Error: Tests run: 754, Failures: 1, Errors: 0, Skipped: 25, Time el... | non_priority | flaky testhivefaulttolerantexecutionconnectortest close info pool thread io trino testng services logtestdurationlistener test io trino plugin hive testhivefaulttolerantexecutionconnectortest close took error tests run failures errors skipped time elapsed s fail... | 0 |

359,645 | 25,248,701,291 | IssuesEvent | 2022-11-15 13:09:24 | airalab/robonomics-wiki | https://api.github.com/repos/airalab/robonomics-wiki | closed | [Issue]: Add Use Intro page | documentation | ### Issue description

This page is to describe the three modules of the Use block: Use Cases, Proof of Concept and Playground.

It would be great to add three buttons leading to these modules.

### Doc Page

https://github.com/airalab/robonomics-wiki/blob/test-code/data/sidebar_docs.yaml#L90

| 1.0 | [Issue]: Add Use Intro page - ### Issue description

This page is to describe the three modules of the Use block: Use Cases, Proof of Concept and Playground.

It would be great to add three buttons leading to these modules.

### Doc Page

https://github.com/airalab/robonomics-wiki/blob/test-code/data/sidebar_docs... | non_priority | add use intro page issue description this page is to describe the three modules of the use block use cases proof of concept and playground it would be great to add three buttons leading to these modules doc page | 0 |

492,758 | 14,219,455,195 | IssuesEvent | 2020-11-17 13:18:46 | neuropoly/axondeepseg | https://api.github.com/repos/neuropoly/axondeepseg | opened | Dash app for morphometrics analysis | feature gui priority:MEDIUM | ## Pre-planning stage

* [ ] Attend live Plotly session on the topic

* https://go.plotly.com/dash-image-processing/

* Registration requires.

* [ ] Explore examples of similar features already available on the web

* https://eoss-image-processing.github.io/2020/11/10/region-properties-exploration.html

* [ ]... | 1.0 | Dash app for morphometrics analysis - ## Pre-planning stage

* [ ] Attend live Plotly session on the topic

* https://go.plotly.com/dash-image-processing/

* Registration requires.

* [ ] Explore examples of similar features already available on the web

* https://eoss-image-processing.github.io/2020/11/10/reg... | priority | dash app for morphometrics analysis pre planning stage attend live plotly session on the topic registration requires explore examples of similar features already available on the web follow tutorials to get an idea of how to properly plan app documentation page ... | 1 |

116,989 | 9,905,221,601 | IssuesEvent | 2019-06-27 11:02:03 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | teamcity: failed test: TestChangefeedMonitoring | C-test-failure O-robot | The following tests appear to have failed on master (testrace): TestChangefeedMonitoring, TestChangefeedMonitoring/enterprise

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+TestChangefeedMonitoring).

[#1361910](https://teamcity.cockroachdb.com/viewLog.ht... | 1.0 | teamcity: failed test: TestChangefeedMonitoring - The following tests appear to have failed on master (testrace): TestChangefeedMonitoring, TestChangefeedMonitoring/enterprise

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+TestChangefeedMonitoring).

[#13... | non_priority | teamcity failed test testchangefeedmonitoring the following tests appear to have failed on master testrace testchangefeedmonitoring testchangefeedmonitoring enterprise you may want to check testchangefeedmonitoring fail testrace testchangefeedmonitoring testchangefeedmonitoring en... | 0 |

218,149 | 16,959,784,747 | IssuesEvent | 2021-06-29 00:56:26 | RPTools/maptool | https://api.github.com/repos/RPTools/maptool | closed | Macros moved/copied between Macro Groups & Panels are always player-editable | bug macro changes tested | **Describe the bug**

When moving a macro between a macro group, or when copying a macro from one panel to another, the 'Allow Players to Edit Macro' checkbox is always reset to 'checked'.

**To Reproduce**

Steps to reproduce the behavior:

1. Create a campaign macro and edit it so 'Allow Players to Edit Macro' is *... | 1.0 | Macros moved/copied between Macro Groups & Panels are always player-editable - **Describe the bug**

When moving a macro between a macro group, or when copying a macro from one panel to another, the 'Allow Players to Edit Macro' checkbox is always reset to 'checked'.

**To Reproduce**

Steps to reproduce the behavior... | non_priority | macros moved copied between macro groups panels are always player editable describe the bug when moving a macro between a macro group or when copying a macro from one panel to another the allow players to edit macro checkbox is always reset to checked to reproduce steps to reproduce the behavior... | 0 |

462,484 | 13,247,979,474 | IssuesEvent | 2020-08-19 18:10:00 | open-telemetry/opentelemetry-specification | https://api.github.com/repos/open-telemetry/opentelemetry-specification | closed | Span name: Both low-cardinality (grouping key) and human-readable (display name) | area:api area:sampling priority:p1 release:required-for-ga spec:trace | This came up when I suggested the handler name instead of the route name for the HTTP semantic conventions (#548). I think that the handler name is a better grouping key than the route, but the route is a better display name, as @yurishkuro [pointed out](https://github.com/open-telemetry/opentelemetry-specification/pul... | 1.0 | Span name: Both low-cardinality (grouping key) and human-readable (display name) - This came up when I suggested the handler name instead of the route name for the HTTP semantic conventions (#548). I think that the handler name is a better grouping key than the route, but the route is a better display name, as @yurishk... | priority | span name both low cardinality grouping key and human readable display name this came up when i suggested the handler name instead of the route name for the http semantic conventions i think that the handler name is a better grouping key than the route but the route is a better display name as yurishkur... | 1 |

811,125 | 30,275,335,085 | IssuesEvent | 2023-07-07 19:02:54 | virtualcell/vcell | https://api.github.com/repos/virtualcell/vcell | closed | Update reactome old to new id map, access resource as stream instead of file. | bug High Priority VCell-7.5.0 VCell-7.5.1 | Map was 6 years old.

Open as file in Release fails, trying as stream.

Improved robustness, better error messages. | 1.0 | Update reactome old to new id map, access resource as stream instead of file. - Map was 6 years old.

Open as file in Release fails, trying as stream.

Improved robustness, better error messages. | priority | update reactome old to new id map access resource as stream instead of file map was years old open as file in release fails trying as stream improved robustness better error messages | 1 |

590,207 | 17,773,751,207 | IssuesEvent | 2021-08-30 16:28:33 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | closed | bug: extra slash in docker registry other than index.docker.io | type:bug priority-3-normal good first issue datasource:docker status:ready reproduction:provided | ### How are you running Renovate?

Self-hosted

### Please select which platform you are using if self-hosting.

GitHub Enterprise Server

### If you're self-hosting Renovate, tell us what version of Renovate you run.

25.73.0-986e1c1f9

### Describe the bug

Unable to retrieve tags for docker registry ot... | 1.0 | bug: extra slash in docker registry other than index.docker.io - ### How are you running Renovate?

Self-hosted

### Please select which platform you are using if self-hosting.

GitHub Enterprise Server

### If you're self-hosting Renovate, tell us what version of Renovate you run.

25.73.0-986e1c1f9

### D... | priority | bug extra slash in docker registry other than index docker io how are you running renovate self hosted please select which platform you are using if self hosting github enterprise server if you re self hosting renovate tell us what version of renovate you run describe th... | 1 |

815 | 8,208,334,570 | IssuesEvent | 2018-09-04 00:54:24 | xcat2/xcat-core | https://api.github.com/repos/xcat2/xcat-core | closed | rpm build failed on build xCAT-probe | component:automation status:pending | August 31st: *linux_rpm* build of branch `devel` ==> *FAILED*

BUILD_MACHINE=`c910f04x33@xcat.build.server`

BUILD_DIR=`/xcat/build/xcat2_autobuild_daily_builds/20180831.0615/linux_rpm

```

Error: build of the following RPMs failed: xCAT-probe

2018-08-31 10:17:51,909 buildxcat WARNING:Wide character in pr... | 1.0 | rpm build failed on build xCAT-probe - August 31st: *linux_rpm* build of branch `devel` ==> *FAILED*

BUILD_MACHINE=`c910f04x33@xcat.build.server`

BUILD_DIR=`/xcat/build/xcat2_autobuild_daily_builds/20180831.0615/linux_rpm

```

Error: build of the following RPMs failed: xCAT-probe

2018-08-31 10:17:51,909... | non_priority | rpm build failed on build xcat probe august linux rpm build of branch devel failed build machine xcat build server build dir xcat build autobuild daily builds linux rpm error build of the following rpms failed xcat probe buildxcat warning wide character in... | 0 |

39,851 | 9,691,523,621 | IssuesEvent | 2019-05-24 11:27:47 | cakephp/bake | https://api.github.com/repos/cakephp/bake | closed | 4.x - Can't bake custom view template | Defect | ## What you did

I baked my plugin and copied across the templates as per the documentation.

```bash

~/Sites/CakePHP4 $ bin/cake bake template -f --theme=CakeBootstrap --prefix=Admin Questions view

Baking `view` view template file...

File `/Users/davidyell/Sites/CakePHP4/src/../templates/Admin/Questions/view.... | 1.0 | 4.x - Can't bake custom view template - ## What you did

I baked my plugin and copied across the templates as per the documentation.

```bash

~/Sites/CakePHP4 $ bin/cake bake template -f --theme=CakeBootstrap --prefix=Admin Questions view

Baking `view` view template file...

File `/Users/davidyell/Sites/CakePHP... | non_priority | x can t bake custom view template what you did i baked my plugin and copied across the templates as per the documentation bash sites bin cake bake template f theme cakebootstrap prefix admin questions view baking view view template file file users davidyell sites src templ... | 0 |

300,375 | 9,210,151,316 | IssuesEvent | 2019-03-09 02:06:15 | ac2cz/FoxTelem | https://api.github.com/repos/ac2cz/FoxTelem | closed | FCD Pro- Bad Behavior on RPi | Fox In A Box/RaspPi fcd low priority wontfix | This is from Barry, WD4ASW. He did not realize that the original FCDP (which I call FCDP-) did not work, so he plugged it into an FIAB. It did not appear as a source, so he chose RTL-SDR (?) and started. He got an error box saying the device was not found, but clicking OK just brought up the error box again, so he c... | 1.0 | FCD Pro- Bad Behavior on RPi - This is from Barry, WD4ASW. He did not realize that the original FCDP (which I call FCDP-) did not work, so he plugged it into an FIAB. It did not appear as a source, so he chose RTL-SDR (?) and started. He got an error box saying the device was not found, but clicking OK just brought ... | priority | fcd pro bad behavior on rpi this is from barry he did not realize that the original fcdp which i call fcdp did not work so he plugged it into an fiab it did not appear as a source so he chose rtl sdr and started he got an error box saying the device was not found but clicking ok just brought up th... | 1 |

85,009 | 3,683,650,228 | IssuesEvent | 2016-02-24 14:50:35 | emoncms/MyHomeEnergyPlanner | https://api.github.com/repos/emoncms/MyHomeEnergyPlanner | closed | Top bar figures not changing after measures applied. | bug High priority | In "63 Nicholas rd V4" having improved several elements the heat losses have dropped but the space heat demand , primary energy etc have not. | 1.0 | Top bar figures not changing after measures applied. - In "63 Nicholas rd V4" having improved several elements the heat losses have dropped but the space heat demand , primary energy etc have not. | priority | top bar figures not changing after measures applied in nicholas rd having improved several elements the heat losses have dropped but the space heat demand primary energy etc have not | 1 |

417,063 | 28,110,078,034 | IssuesEvent | 2023-03-31 06:18:47 | jerrrren/ped | https://api.github.com/repos/jerrrren/ped | opened | Missing explanation for input in UG | severity.Medium type.DocumentationBug | The UG sheed explain the command input, specifically [ct/{ind|env}] syntax is not explained in the UG.

<!--session: 1680243402490-26cf3b09-9882-4ab8-a78c-2fd363fb09d5-->

<!--Version: Web v3.4.7--> | 1.0 | Missing explanation for input in UG - The UG sheed explain the command input, specifically [ct/{ind|env}] syntax is not explained in the UG.

<!--session: 1680243402490-26cf3b09-9882-4ab8-a78c-2fd363fb09d5-->

<!--Version: Web v3.4.7--> | non_priority | missing explanation for input in ug the ug sheed explain the command input specifically syntax is not explained in the ug | 0 |

6,400 | 14,502,894,231 | IssuesEvent | 2020-12-11 21:45:52 | sg-s/xolotl | https://api.github.com/repos/sg-s/xolotl | opened | why is getFullStateSize a function? | C++ weak-architecture | isn't it sufficient to make it a property? that is public? | 1.0 | why is getFullStateSize a function? - isn't it sufficient to make it a property? that is public? | non_priority | why is getfullstatesize a function isn t it sufficient to make it a property that is public | 0 |

213,814 | 16,539,106,486 | IssuesEvent | 2021-05-27 14:48:52 | alvgomper1/Acme-Planner | https://api.github.com/repos/alvgomper1/Acme-Planner | closed | Task-004: Test crear shout y publicarlo sin spam(Anonymous) | test | Write a shout and publish it as long as it is not considered spam (Probar solo la parte de spam). | 1.0 | Task-004: Test crear shout y publicarlo sin spam(Anonymous) - Write a shout and publish it as long as it is not considered spam (Probar solo la parte de spam). | non_priority | task test crear shout y publicarlo sin spam anonymous write a shout and publish it as long as it is not considered spam probar solo la parte de spam | 0 |

42,075 | 9,127,062,926 | IssuesEvent | 2019-02-25 02:09:38 | EdenServer/community | https://api.github.com/repos/EdenServer/community | closed | Trade for Puppetry Tobe not working | in-code-review | Dhima Polevhia isn't taking the items for Puppetry Tobe.

1 Ruby

1 Moblinweave

1 Scarlet Linen

1 Wamoura Cloth

1 Imperial Gold Piece

The other 2 AF piece trades worked fine. | 1.0 | Trade for Puppetry Tobe not working - Dhima Polevhia isn't taking the items for Puppetry Tobe.

1 Ruby

1 Moblinweave

1 Scarlet Linen

1 Wamoura Cloth

1 Imperial Gold Piece

The other 2 AF piece trades worked fine. | non_priority | trade for puppetry tobe not working dhima polevhia isn t taking the items for puppetry tobe ruby moblinweave scarlet linen wamoura cloth imperial gold piece the other af piece trades worked fine | 0 |

377,480 | 11,171,474,088 | IssuesEvent | 2019-12-28 20:04:16 | clinwiki-org/clinwiki | https://api.github.com/repos/clinwiki-org/clinwiki | closed | Implement Type Ahead Using Facets | Priority 1 | --from email exchange--

At the last meeting we spoke about being able to limit the number of facets in the autocomplete. This simplifies the problem and allows us to use the current API. There is already an api that enables us to filter on one facet. We can reuse this endpoint calling it for each of the facets and t... | 1.0 | Implement Type Ahead Using Facets - --from email exchange--

At the last meeting we spoke about being able to limit the number of facets in the autocomplete. This simplifies the problem and allows us to use the current API. There is already an api that enables us to filter on one facet. We can reuse this endpoint cal... | priority | implement type ahead using facets from email exchange at the last meeting we spoke about being able to limit the number of facets in the autocomplete this simplifies the problem and allows us to use the current api there is already an api that enables us to filter on one facet we can reuse this endpoint cal... | 1 |

481,405 | 13,885,701,801 | IssuesEvent | 2020-10-18 21:09:23 | conan-io/conan | https://api.github.com/repos/conan-io/conan | closed | [feature] conan config install a single file | Hacktoberfest complex: low good first issue priority: low stage: queue type: feature | When using `conan config install`, allow a single configuration file to be installed.

Normally, the command requires a git repository, a local folder, or a zip file that contains the file(s) to be installed. If I have a single file I want installed, I would have to put it into a folder or zip it into a file.

The... | 1.0 | [feature] conan config install a single file - When using `conan config install`, allow a single configuration file to be installed.

Normally, the command requires a git repository, a local folder, or a zip file that contains the file(s) to be installed. If I have a single file I want installed, I would have to put... | priority | conan config install a single file when using conan config install allow a single configuration file to be installed normally the command requires a git repository a local folder or a zip file that contains the file s to be installed if i have a single file i want installed i would have to put it into... | 1 |

217,433 | 16,855,775,160 | IssuesEvent | 2021-06-21 06:24:53 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | raftstore::test_replication_mode::test_dr_auto_sync failed | component/test-bench | raftstore::test_replication_mode::test_dr_auto_sync

Latest failed builds:

https://internal.pingcap.net/idc-jenkins/job/tikv_ghpr_test/22703/consoleFull

| 1.0 | raftstore::test_replication_mode::test_dr_auto_sync failed - raftstore::test_replication_mode::test_dr_auto_sync

Latest failed builds:

https://internal.pingcap.net/idc-jenkins/job/tikv_ghpr_test/22703/consoleFull

| non_priority | raftstore test replication mode test dr auto sync failed raftstore test replication mode test dr auto sync latest failed builds | 0 |

483,148 | 13,919,394,004 | IssuesEvent | 2020-10-21 09:01:19 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | reopened | Identify maxItems cfg option removed | Good first issue Priority: Medium user feedback | ### Description

Hi

In one of my localConfig.json I have set maxItems for the Identify plugin

{

"name": "Identify",

"cfg": {

"showHighlightFeatureButton": true,

"draggable": false,

"dock": true,

... | 1.0 | Identify maxItems cfg option removed - ### Description

Hi

In one of my localConfig.json I have set maxItems for the Identify plugin

{

"name": "Identify",

"cfg": {

"showHighlightFeatureButton": true,

"draggable": false,

... | priority | identify maxitems cfg option removed description hi in one of my localconfig json i have set maxitems for the identify plugin name identify cfg showhighlightfeaturebutton true draggable false ... | 1 |

35,540 | 17,113,197,471 | IssuesEvent | 2021-07-10 19:28:12 | aperta-principium/Interclip | https://api.github.com/repos/aperta-principium/Interclip | closed | Add Redis | enhancement performance | Add a Redis server and use it for retrieving clips, as it could drastically improve load times. | True | Add Redis - Add a Redis server and use it for retrieving clips, as it could drastically improve load times. | non_priority | add redis add a redis server and use it for retrieving clips as it could drastically improve load times | 0 |

493,940 | 14,241,796,646 | IssuesEvent | 2020-11-19 00:13:03 | sButtons/sbuttons | https://api.github.com/repos/sButtons/sbuttons | closed | [BUTTON IDEA]: Funky Button | Hacktoberfest Priority: Low button-idea good first issue help wanted stale-issue | **1. Name of button**: Funky Button

**2. Description**: A button that's color is originally outside the border but on hover becomes inside

**3. Button Type (Animated, Special, etc...)**: Animated

**4. Will you work on it?**: No if you want to work on it please comment to be assigned.

Here's an example of wh... | 1.0 | [BUTTON IDEA]: Funky Button - **1. Name of button**: Funky Button

**2. Description**: A button that's color is originally outside the border but on hover becomes inside

**3. Button Type (Animated, Special, etc...)**: Animated

**4. Will you work on it?**: No if you want to work on it please comment to be assign... | priority | funky button name of button funky button description a button that s color is originally outside the border but on hover becomes inside button type animated special etc animated will you work on it no if you want to work on it please comment to be assigned here ... | 1 |

36,426 | 6,533,697,426 | IssuesEvent | 2017-08-31 07:43:54 | linkedresearch/linkedresearch.org | https://api.github.com/repos/linkedresearch/linkedresearch.org | opened | Specify data shape for annotation notifications | documentation RDF | Annotations can be subdivided to Web Annotation motivations. Some relevant ones listed in ballpark priority that should be specified:

* [ ] assessing

* [ ] replying

* [ ] commenting

* [ ] describing

* [ ] linking

* [ ] moderating

* [ ] tagging

* [ ] questioning

.. | 1.0 | Specify data shape for annotation notifications - Annotations can be subdivided to Web Annotation motivations. Some relevant ones listed in ballpark priority that should be specified:

* [ ] assessing

* [ ] replying

* [ ] commenting

* [ ] describing

* [ ] linking

* [ ] moderating

* [ ] tagging

* [ ] questionin... | non_priority | specify data shape for annotation notifications annotations can be subdivided to web annotation motivations some relevant ones listed in ballpark priority that should be specified assessing replying commenting describing linking moderating tagging questioning | 0 |

274,741 | 30,123,016,300 | IssuesEvent | 2023-06-30 16:46:52 | bcgov/cloud-pathfinder | https://api.github.com/repos/bcgov/cloud-pathfinder | closed | Security review of AWS services (EMR Serverless, Redshift, Kinesis, EC2 ) identified through GDX-Analytics CAPS | Security Privacy | **Describe the issue**

We identified some AWS services like EMR (Elastic Map Reduce) Serverless, Redshift, Kinesis, EC2 used by GDX-Analytics's Core Analytics Pipeline Service (CAPS).

We are looking forward to carrying out compliance work for these services and marking them as reviewed in the list of services availab... | True | Security review of AWS services (EMR Serverless, Redshift, Kinesis, EC2 ) identified through GDX-Analytics CAPS - **Describe the issue**

We identified some AWS services like EMR (Elastic Map Reduce) Serverless, Redshift, Kinesis, EC2 used by GDX-Analytics's Core Analytics Pipeline Service (CAPS).

We are looking forwa... | non_priority | security review of aws services emr serverless redshift kinesis identified through gdx analytics caps describe the issue we identified some aws services like emr elastic map reduce serverless redshift kinesis used by gdx analytics s core analytics pipeline service caps we are looking forward t... | 0 |

24,337 | 3,900,778,595 | IssuesEvent | 2016-04-18 08:04:05 | geetsisbac/WCVVENIXYFVIRBXH3BYTI6TE | https://api.github.com/repos/geetsisbac/WCVVENIXYFVIRBXH3BYTI6TE | closed | G+r36omqGgVrc/X5y5fJdTnXUmSIeGZe3b02fvTlAfPOt5YhvyMxbH/tnvENVEAF+AjOYxHn414qNi2ohu05AaLnyceCYQ1cTEdaXgZipiNxav4yoscVis7LFc3oxXZPZWeWgzYn36i5y7bs6+1cQ7RkGYD/MW2FkMXoG5IILUs= | design | VEFAi63WMg7q48WvQkRKMoq8yYiNqX1PLeogTSc2f9r8D4X+1XmMbqMaFD4sUTEYmGzyOJ40kUKBNOzNy1JUbYZBZJcEe0QAEWty4hMR4rLaF4J7d1hV3ApDoUbSZ7ltsAm6yVKxBfjwKhEQMCguVmmrnbd0LaJr1XiXCgGP6IAlj+KZA4bMLheMsp8oA0cANQu1WUQ7YNkn+jKZioSmkMlr2G2lP2W0zfHw2VIIel1CHMKLGBzK4f5V+9W17RaFUqADXdOLR5P5eIxRpdhAN6GQ7rWy/pxhwK3D5s6Mb0AU8G1a3OzypqVUkTJ/D6eX... | 1.0 | G+r36omqGgVrc/X5y5fJdTnXUmSIeGZe3b02fvTlAfPOt5YhvyMxbH/tnvENVEAF+AjOYxHn414qNi2ohu05AaLnyceCYQ1cTEdaXgZipiNxav4yoscVis7LFc3oxXZPZWeWgzYn36i5y7bs6+1cQ7RkGYD/MW2FkMXoG5IILUs= - VEFAi63WMg7q48WvQkRKMoq8yYiNqX1PLeogTSc2f9r8D4X+1XmMbqMaFD4sUTEYmGzyOJ40kUKBNOzNy1JUbYZBZJcEe0QAEWty4hMR4rLaF4J7d1hV3ApDoUbSZ7ltsAm6yVKxBfjwKhEQM... | non_priority | g tnvenveaf fhmi kjkhiuivfuvx obuveui l | 0 |

382,556 | 11,307,907,860 | IssuesEvent | 2020-01-19 00:45:27 | buddyqc69/infernalwowproject | https://api.github.com/repos/buddyqc69/infernalwowproject | closed | Quêtes : L'arène de sang : l'ultime défi | Bugfix-Quest Bugfix-creatures Priority-Medium Resolu-Completed legion-7.3.5 | niveaux 65 l'arène de sang quand on tue le pnj sela ne compte pas pour la quete

https://fr.wowhead.com/quest=9977/lar%C3%A8ne-de-sang-lultime-d%C3%A9fi | 1.0 | Quêtes : L'arène de sang : l'ultime défi - niveaux 65 l'arène de sang quand on tue le pnj sela ne compte pas pour la quete

https://fr.wowhead.com/quest=9977/lar%C3%A8ne-de-sang-lultime-d%C3%A9fi | priority | quêtes l arène de sang l ultime défi niveaux l arène de sang quand on tue le pnj sela ne compte pas pour la quete | 1 |

241,117 | 7,808,920,830 | IssuesEvent | 2018-06-11 21:52:12 | jdextraze/go-gesclient | https://api.github.com/repos/jdextraze/go-gesclient | closed | Running publisher example (with -race flag) yields warning | bug low-priority | Running `go run -race ./examples/publisher/main.go` produces the following:

```

2017/12/03 22:58:01 -> 'Default': &{eventId:8764a574-1b19-4bd3-b733-7b22c2bd40ac type:TestEvent isJson:true data:[...] metadata:[...]}

2017/12/03 22:58:01 Connected: &{remoteEndpoint:127.0.0.1:1113, connection:AllCatchUpSubscriber}

201... | 1.0 | Running publisher example (with -race flag) yields warning - Running `go run -race ./examples/publisher/main.go` produces the following:

```

2017/12/03 22:58:01 -> 'Default': &{eventId:8764a574-1b19-4bd3-b733-7b22c2bd40ac type:TestEvent isJson:true data:[...] metadata:[...]}

2017/12/03 22:58:01 Connected: &{remoteE... | priority | running publisher example with race flag yields warning running go run race examples publisher main go produces the following default eventid type testevent isjson true data metadata connected remoteendpoint connection allcatchupsubscriber ... | 1 |

108,511 | 9,309,584,781 | IssuesEvent | 2019-03-25 16:47:38 | mozilla/iris | https://api.github.com/repos/mozilla/iris | closed | Fix download_paused_unpaused | test case |

download_paused_unpaused - The download can be paused/unpaused. By: rrobotin

--

1 | 4:30:58 AM | Progress information is displayed.

2 | 4:31:13 AM | Download was paused. - [Actual]: False [Expected]: True

| 1.0 | Fix download_paused_unpaused -

download_paused_unpaused - The download can be paused/unpaused. By: rrobotin

--

1 | 4:30:58 AM | Progress information is displayed.

2 | 4:31:13 AM | Download was paused. - [Actual]: False [Expected]: True

| non_priority | fix download paused unpaused download paused unpaused the download can be paused unpaused by rrobotin am progress information is displayed am download was paused false true | 0 |

145,941 | 19,377,648,900 | IssuesEvent | 2021-12-17 01:02:14 | TIBCOSoftware/jasperreports-server-ce | https://api.github.com/repos/TIBCOSoftware/jasperreports-server-ce | opened | CVE-2021-45046 (Low) detected in log4j-core-2.13.3.jar | security vulnerability | ## CVE-2021-45046 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.13.3.jar</b></p></summary>

<p>The Apache Log4j Implementation</p>

<p>Library home page: <a href="https://l... | True | CVE-2021-45046 (Low) detected in log4j-core-2.13.3.jar - ## CVE-2021-45046 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.13.3.jar</b></p></summary>

<p>The Apache Log4j Im... | non_priority | cve low detected in core jar cve low severity vulnerability vulnerable library core jar the apache implementation library home page a href dependency hierarchy x core jar vulnerable library found in base branch master vulnerab... | 0 |

273,395 | 8,530,219,247 | IssuesEvent | 2018-11-03 20:10:16 | MyMICDS/MyMICDS-v2 | https://api.github.com/repos/MyMICDS/MyMICDS-v2 | opened | Exposed stickynotes data | bug effort: easy priority: it can wait work length: short | We expose the `_id` and `user` properties in the get stickynotes endpoint, which might be an indicator that we're directly responding with the database document-- We don't need to be responding with these properties, and we don't want to respond directly with document in case we add private data in those documents in t... | 1.0 | Exposed stickynotes data - We expose the `_id` and `user` properties in the get stickynotes endpoint, which might be an indicator that we're directly responding with the database document-- We don't need to be responding with these properties, and we don't want to respond directly with document in case we add private d... | priority | exposed stickynotes data we expose the id and user properties in the get stickynotes endpoint which might be an indicator that we re directly responding with the database document we don t need to be responding with these properties and we don t want to respond directly with document in case we add private d... | 1 |

270,829 | 20,611,236,931 | IssuesEvent | 2022-03-07 08:51:28 | estruyf/vscode-front-matter | https://api.github.com/repos/estruyf/vscode-front-matter | opened | Enhancement: Create new `isPublishDate` and `isModifiedDate` property to define date types | bug documentation | ### Discussed in https://github.com/estruyf/vscode-front-matter/discussions/278

<div type='discussions-op-text'>

<sup>Originally posted by **jmatthewpryor** March 5, 2022</sup>

Hi

I've followed the documentation to try & get custom publish and last mod dates working - so far without success

My workspace e... | 1.0 | Enhancement: Create new `isPublishDate` and `isModifiedDate` property to define date types - ### Discussed in https://github.com/estruyf/vscode-front-matter/discussions/278

<div type='discussions-op-text'>

<sup>Originally posted by **jmatthewpryor** March 5, 2022</sup>

Hi

I've followed the documentation to t... | non_priority | enhancement create new ispublishdate and ismodifieddate property to define date types discussed in originally posted by jmatthewpryor march hi i ve followed the documentation to try get custom publish and last mod dates working so far without success my workspace editor setti... | 0 |

2,710 | 3,968,118,685 | IssuesEvent | 2016-05-03 18:33:09 | google/end-to-end | https://api.github.com/repos/google/end-to-end | reopened | S2K uses small c/bytecount, inconsistent suite of S2K-KDF-SHA1 (160b) and AES-256 | bug crypto imported logic security |

_From [coruus@gmail.com](https://code.google.com/u/coruus@gmail.com/) on July 05, 2014 23:47:38_

**Is this report about the crypto library or the extension?**

Crypto library. javascript/crypto/e2e/openpgp/encryptedcipher.js

What is the security bug? The library assumes that the hash algorithm used for th... | True | S2K uses small c/bytecount, inconsistent suite of S2K-KDF-SHA1 (160b) and AES-256 -

_From [coruus@gmail.com](https://code.google.com/u/coruus@gmail.com/) on July 05, 2014 23:47:38_

**Is this report about the crypto library or the extension?**

Crypto library. javascript/crypto/e2e/openpgp/encryptedcipher.js

... | non_priority | uses small c bytecount inconsistent suite of kdf and aes from on july is this report about the crypto library or the extension crypto library javascript crypto openpgp encryptedcipher js what is the security bug the library assumes that the hash algorithm used for... | 0 |

268,711 | 8,410,785,937 | IssuesEvent | 2018-10-12 11:51:26 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | newsprofin.com - site is not usable | browser-firefox priority-normal | <!-- @browser: Firefox 64.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:64.0) Gecko/20100101 Firefox/64.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: http://newsprofin.com/t1/?&geocode=en-in&hero=12&tmplcode=igzt&instsmall=1&cep=FsCgAX6OQmTUXP_9L9pg3YxtqheNLusSI6O-2xiUhNMiaAaFG8tmyIm4-... | 1.0 | newsprofin.com - site is not usable - <!-- @browser: Firefox 64.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:64.0) Gecko/20100101 Firefox/64.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: http://newsprofin.com/t1/?&geocode=en-in&hero=12&tmplcode=igzt&instsmall=1&cep=FsCgAX6OQmTUXP_9L9p... | priority | newsprofin com site is not usable url browser version firefox operating system windows tested another browser unknown problem type site is not usable description site opens automatically steps to reproduce browser configuration mixed active conte... | 1 |

819,635 | 30,746,674,638 | IssuesEvent | 2023-07-28 15:32:55 | AspectOfJerry/BapUtils | https://api.github.com/repos/AspectOfJerry/BapUtils | closed | party takeover | enhancement qol priority commands wip | **Describe your idea**

Add a command to take someone's party.

**Describe the solution you'd like**

Implement a trust feature to only allow trusted players to take the party from you.

**Additional context**

Already a work in progress

| 1.0 | party takeover - **Describe your idea**

Add a command to take someone's party.

**Describe the solution you'd like**

Implement a trust feature to only allow trusted players to take the party from you.

**Additional context**

Already a work in progress

| priority | party takeover describe your idea add a command to take someone s party describe the solution you d like implement a trust feature to only allow trusted players to take the party from you additional context already a work in progress | 1 |

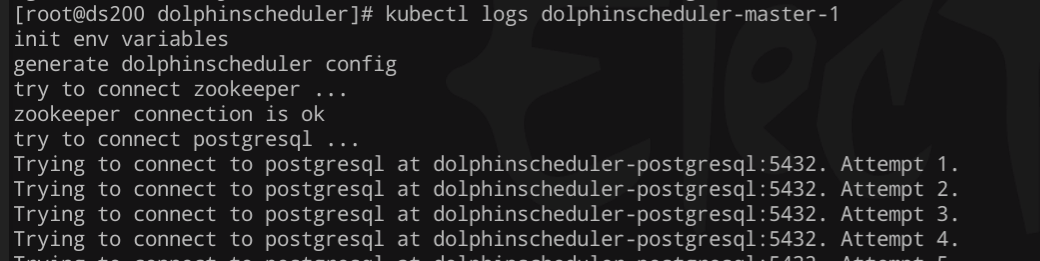

647,616 | 21,132,169,202 | IssuesEvent | 2022-04-06 00:18:17 | apache/dolphinscheduler | https://api.github.com/repos/apache/dolphinscheduler | closed | [Bug] [k8s] start server failed because can't connect to postgresql | bug Stale priority:low | ### Search before asking

- [X] I had searched in the [issues](https://github.com/apache/dolphinscheduler/issues?q=is%3Aissue) and found no similar issues.

### What happened

### What you expected to hap... | 1.0 | [Bug] [k8s] start server failed because can't connect to postgresql - ### Search before asking

- [X] I had searched in the [issues](https://github.com/apache/dolphinscheduler/issues?q=is%3Aissue) and found no similar issues.

### What happened

checklist | area/HA area/upgrades kind/enhancement priority/important-soon triaged | The following is a checklist for kubeadm support for deploying HA-clusters. This a distillation of action items from:

https://docs.google.com/document/d/1lH9OKkFZMSqXCApmSXemEDuy9qlINdm5MfWWGrK3JYc/edit#heading=h.8hdxw3quu67g

but there may be more.

New Features:

- [x] Option enable active<>passive locking v... | 1.0 | Kubeadm HA ( high availability ) checklist - The following is a checklist for kubeadm support for deploying HA-clusters. This a distillation of action items from:

https://docs.google.com/document/d/1lH9OKkFZMSqXCApmSXemEDuy9qlINdm5MfWWGrK3JYc/edit#heading=h.8hdxw3quu67g

but there may be more.

New Features:... | priority | kubeadm ha high availability checklist the following is a checklist for kubeadm support for deploying ha clusters this a distillation of action items from but there may be more new features option enable active passive locking via configmaps pr enable support to componentsc... | 1 |

94,226 | 3,923,097,529 | IssuesEvent | 2016-04-22 09:45:15 | HGustavs/LenaSYS | https://api.github.com/repos/HGustavs/LenaSYS | closed | Can't create new item on a course | highPriority | When you create a new item on a course and select the type code you get the following error.

"Returned from setup: Debug: Error occur at line 453 in file /var/www/lenasys/4/CodeViewer/editorService.php. There are no examples or the ID of example is incorrect."

| 1.0 | Can't create new item on a course - When you create a new item on a course and select the type code you get the following error.

"Returned from setup: Debug: Error occur at line 453 in file /var/www/lenasys/4/CodeViewer/editorService.php. There are no examples or the ID of example is incorrect."

| priority | can t create new item on a course when you create a new item on a course and select the type code you get the following error returned from setup debug error occur at line in file var www lenasys codeviewer editorservice php there are no examples or the id of example is incorrect | 1 |

785,321 | 27,609,376,843 | IssuesEvent | 2023-03-09 15:02:05 | frequenz-floss/frequenz-sdk-python | https://api.github.com/repos/frequenz-floss/frequenz-sdk-python | closed | Implement BatteryPool | priority:high type:enhancement part:data-pipeline | ### What's needed?

User should be able to subscribe for data from some pool of Batteries.

BatteryPool should communicate with user using channels. This approach would be consisntent with LogicanMeter and EvChargerPool.

## What data are needed:

All these values will be packed in the class and send with one chann... | 1.0 | Implement BatteryPool - ### What's needed?

User should be able to subscribe for data from some pool of Batteries.

BatteryPool should communicate with user using channels. This approach would be consisntent with LogicanMeter and EvChargerPool.

## What data are needed:

All these values will be packed in the class... | priority | implement batterypool what s needed user should be able to subscribe for data from some pool of batteries batterypool should communicate with user using channels this approach would be consisntent with logicanmeter and evchargerpool what data are needed all these values will be packed in the class... | 1 |

759,298 | 26,587,929,355 | IssuesEvent | 2023-01-23 04:42:35 | googleapis/nodejs-spanner | https://api.github.com/repos/googleapis/nodejs-spanner | closed | Error parsing STRUCT with JSON "keys" field | type: bug priority: p2 api: spanner | # Environment details

- OS: `Mac OS Ventura 13.1`

- Node.js version: `14.17.6`

- npm version: `6.14.15`

- `@google-cloud/spanner` version: `6.7.0`

---

# Error

### Steps to reproduce

The error occurs when the queried column either is or includes a `STRUCT` with a child field of `type: "JSON"` a... | 1.0 | Error parsing STRUCT with JSON "keys" field - # Environment details

- OS: `Mac OS Ventura 13.1`

- Node.js version: `14.17.6`

- npm version: `6.14.15`

- `@google-cloud/spanner` version: `6.7.0`

---

# Error

### Steps to reproduce

The error occurs when the queried column either is or includes a `... | priority | error parsing struct with json keys field environment details os mac os ventura node js version npm version google cloud spanner version error steps to reproduce the error occurs when the queried column either is or includes a struc... | 1 |

521,830 | 15,117,091,077 | IssuesEvent | 2021-02-09 07:55:46 | rchain/rchain | https://api.github.com/repos/rchain/rchain | opened | Missing response to ApprovedBlockRequest keeps the node waiting forever | Priority-Low enhancement | On 0.10.0 release, NodeA makes a request for `ApprovedBlockRequest` from NodeB. NodeB responds with `ApprovedBlock`, however, NodeA never receives the block, making NodeA wait forever for the block. It would be good to have a timeout and retry N times before exiting.

| 1.0 | Missing response to ApprovedBlockRequest keeps the node waiting forever - On 0.10.0 release, NodeA makes a request for `ApprovedBlockRequest` from NodeB. NodeB responds with `ApprovedBlock`, however, NodeA never receives the block, making NodeA wait forever for the block. It would be good to have a timeout and retry ... | priority | missing response to approvedblockrequest keeps the node waiting forever on release nodea makes a request for approvedblockrequest from nodeb nodeb responds with approvedblock however nodea never receives the block making nodea wait forever for the block it would be good to have a timeout and retry n... | 1 |

159,456 | 24,994,757,850 | IssuesEvent | 2022-11-02 22:27:34 | Agoric/agoric-sdk | https://api.github.com/repos/Agoric/agoric-sdk | closed | QA the wallet UX | wallet needs-design Inter-protocol MUST-HAVE | ## What is the Problem Being Solved?

Wallet needs QA of the UX.

## Description of the Design

- Go through user flows and make sure there aren't any kinks.

- Go through other docs of issues

- [UX Issues (from Econ Testing)](https://docs.google.com/document/d/1Kho6aLrNaD-qxG7StOQXLSMPTZMHGHQLbV7x70jEEDk/edi... | 1.0 | QA the wallet UX - ## What is the Problem Being Solved?

Wallet needs QA of the UX.

## Description of the Design

- Go through user flows and make sure there aren't any kinks.

- Go through other docs of issues

- [UX Issues (from Econ Testing)](https://docs.google.com/document/d/1Kho6aLrNaD-qxG7StOQXLSMPTZMH... | non_priority | qa the wallet ux what is the problem being solved wallet needs qa of the ux description of the design go through user flows and make sure there aren t any kinks go through other docs of issues triage what needs to be fixed security considerations test plan | 0 |

15,712 | 6,028,274,375 | IssuesEvent | 2017-06-08 15:24:59 | CleverRaven/Cataclysm-DDA | https://api.github.com/repos/CleverRaven/Cataclysm-DDA | closed | Problem compiling with Visual Studio | Build | Using Visual Studio 2015 on Windows 7 can't compile latest SDL tiles version.

Here is the log with /verbose (today I learn what that is)

[Cataclysm.txt](https://github.com/CleverRaven/Cataclysm-DDA/files/1043248/Cataclysm.txt)

After spending all this day looking what the linker error were, and after manually che... | 1.0 | Problem compiling with Visual Studio - Using Visual Studio 2015 on Windows 7 can't compile latest SDL tiles version.

Here is the log with /verbose (today I learn what that is)

[Cataclysm.txt](https://github.com/CleverRaven/Cataclysm-DDA/files/1043248/Cataclysm.txt)

After spending all this day looking what the li... | non_priority | problem compiling with visual studio using visual studio on windows can t compile latest sdl tiles version here is the log with verbose today i learn what that is after spending all this day looking what the linker error were and after manually checking commits i discovered later that i could have... | 0 |

6,726 | 3,041,980,609 | IssuesEvent | 2015-08-08 05:22:34 | latex3/svn-mirror | https://api.github.com/repos/latex3/svn-mirror | closed | incorrect rounding in l3fp | documentation l3fp | The rounding function doesn't work correctly for 2 (tested with a current texlive 2015):

~~~~~~

\documentclass{article}

\usepackage{expl3}

\begin{document}

\ExplSyntaxOn

\fp_eval:n{round(1.115,2)}\par %OK = 1.12

\fp_eval:n{round(1.125,2)}\par %wrong = 1.12 (instead of 1.13

\fp_eval:n{round(1.135,2)} %O... | 1.0 | incorrect rounding in l3fp - The rounding function doesn't work correctly for 2 (tested with a current texlive 2015):

~~~~~~

\documentclass{article}

\usepackage{expl3}

\begin{document}

\ExplSyntaxOn

\fp_eval:n{round(1.115,2)}\par %OK = 1.12

\fp_eval:n{round(1.125,2)}\par %wrong = 1.12 (instead of 1.13

\fp_... | non_priority | incorrect rounding in the rounding function doesn t work correctly for tested with a current texlive documentclass article usepackage begin document explsyntaxon fp eval n round par ok fp eval n round par wrong instead of fp eval n round ... | 0 |

72,370 | 3,384,866,826 | IssuesEvent | 2015-11-27 07:49:03 | sphereio/sphere-jvm-sdk | https://api.github.com/repos/sphereio/sphere-jvm-sdk | opened | Add new payment fields | priority task | see http://dev.commercetools.com/release-notes.html#all-releases

```

[SPHERE.IO API] Transactions in PaymentsBETA has the new fields id and state. The field timestamp is now optional.

[SPHERE.IO API] Payment has three new update actions for transactions: changeTransactionState, changeTransactionTimestamp and chang... | 1.0 | Add new payment fields - see http://dev.commercetools.com/release-notes.html#all-releases

```

[SPHERE.IO API] Transactions in PaymentsBETA has the new fields id and state. The field timestamp is now optional.

[SPHERE.IO API] Payment has three new update actions for transactions: changeTransactionState, changeTrans... | priority | add new payment fields see transactions in paymentsbeta has the new fields id and state the field timestamp is now optional payment has three new update actions for transactions changetransactionstate changetransactiontimestamp and changetransactioninteractionid new message paymenttransactionst... | 1 |

238,083 | 19,697,146,829 | IssuesEvent | 2022-01-12 13:20:54 | backend-br/vagas | https://api.github.com/repos/backend-br/vagas | closed | [REMOTO] Back-end Developer Ruby on Rails @ James Delivery | CLT JavaScript Ruby Remoto AWS PostgreSQL Testes Unitários Git Rest Linux Stale | ## James Delivery

Por meio do meu app levo praticidade e conforto à vida das pessoas! Com apenas alguns cliques, ofereço a maneira mais prática de receber seu delivery, que vai muito além de comida. É do produto que você quiser e de qualquer lugar da cidade! E, para que isso tudo funcione da melhor forma, estamos em... | 1.0 | [REMOTO] Back-end Developer Ruby on Rails @ James Delivery - ## James Delivery

Por meio do meu app levo praticidade e conforto à vida das pessoas! Com apenas alguns cliques, ofereço a maneira mais prática de receber seu delivery, que vai muito além de comida. É do produto que você quiser e de qualquer lugar da cidad... | non_priority | back end developer ruby on rails james delivery james delivery por meio do meu app levo praticidade e conforto à vida das pessoas com apenas alguns cliques ofereço a maneira mais prática de receber seu delivery que vai muito além de comida é do produto que você quiser e de qualquer lugar da cidade e p... | 0 |

206,310 | 15,724,819,528 | IssuesEvent | 2021-03-29 09:15:29 | ubtue/DatenProbleme | https://api.github.com/repos/ubtue/DatenProbleme | closed | Brill ORCID | Einspielung_Zotero_AUTO Zotero_AUTO_RSS ready for testing | **URL**

https://doi.org/10.1163/15700747-bja10031

IxTheo#2021-03-25#EDC621C8CBE1FE9435F1AA4F37C1DFCBBA304D79

**Ausführliche Problembeschreibung**

Hier wurde die ORCID-ID nicht als Hinweis ins entsprechende Feld eingespielt. | 1.0 | Brill ORCID - **URL**

https://doi.org/10.1163/15700747-bja10031

IxTheo#2021-03-25#EDC621C8CBE1FE9435F1AA4F37C1DFCBBA304D79

**Ausführliche Problembeschreibung**

Hier wurde die ORCID-ID nicht als Hinweis ins entsprechende Feld eingespielt. | non_priority | brill orcid url ixtheo ausführliche problembeschreibung hier wurde die orcid id nicht als hinweis ins entsprechende feld eingespielt | 0 |

120,886 | 25,887,258,407 | IssuesEvent | 2022-12-14 15:21:51 | pulumi/pulumi | https://api.github.com/repos/pulumi/pulumi | closed | Improve validation and linting for schema as part of codegen | kind/enhancement impact/usability area/codegen | ## Hello!

<!-- Please leave this section as-is, it's designed to help others in the community know how to interact with our GitHub issues. -->

- Vote on this issue by adding a 👍 reaction

- If you want to implement this feature, comment to let us know (we'll work with you on design, scheduling, etc.)

## Issue d... | 1.0 | Improve validation and linting for schema as part of codegen - ## Hello!

<!-- Please leave this section as-is, it's designed to help others in the community know how to interact with our GitHub issues. -->

- Vote on this issue by adding a 👍 reaction

- If you want to implement this feature, comment to let us know ... | non_priority | improve validation and linting for schema as part of codegen hello vote on this issue by adding a 👍 reaction if you want to implement this feature comment to let us know we ll work with you on design scheduling etc issue details this is a follow up from the postmortem for where credenti... | 0 |

595,937 | 18,091,069,252 | IssuesEvent | 2021-09-22 01:43:18 | knative/docs | https://api.github.com/repos/knative/docs | closed | [1.0] Fully document pingsources.sources.knative.dev | priority/high kind/eventing | @n3wscott commented on [Mon Feb 22 2021](https://github.com/knative/eventing/issues/4916)

Related to https://github.com/knative/docs/issues/3268

The following work needs to be validated for pingsources.sources.knative.dev to be ready for 1.0:

- [x] Getting Started demo using the component.

- [x] Sample minimum of c... | 1.0 | [1.0] Fully document pingsources.sources.knative.dev - @n3wscott commented on [Mon Feb 22 2021](https://github.com/knative/eventing/issues/4916)

Related to https://github.com/knative/docs/issues/3268

The following work needs to be validated for pingsources.sources.knative.dev to be ready for 1.0:

- [x] Getting Start... | priority | fully document pingsources sources knative dev commented on related to the following work needs to be validated for pingsources sources knative dev to be ready for getting started demo using the component sample minimum of component sample complete resource of component fully do... | 1 |

49,880 | 20,970,921,463 | IssuesEvent | 2022-03-28 11:17:25 | microsoft/vscode-cpptools | https://api.github.com/repos/microsoft/vscode-cpptools | closed | Error when using MACRO with VA_ARGS | bug Language Service more info needed not reproing | vscode version used: 1.61.2

C/C++ version used: v1.7.1

When we use a MACRO with VA_ARGS with just the 1 argument, the IntelliSense gives an Error:

`expected an expression`

```

static void func(const char *format, ...)

{

va_list args;

va_start(args,format);

vfprintf(stdout,format,args);

va_... | 1.0 | Error when using MACRO with VA_ARGS - vscode version used: 1.61.2

C/C++ version used: v1.7.1

When we use a MACRO with VA_ARGS with just the 1 argument, the IntelliSense gives an Error:

`expected an expression`

```

static void func(const char *format, ...)

{

va_list args;

va_start(args,format);

... | non_priority | error when using macro with va args vscode version used c c version used when we use a macro with va args with just the argument the intellisense gives an error expected an expression static void func const char format va list args va start args format vf... | 0 |

8,810 | 4,328,527,184 | IssuesEvent | 2016-07-26 14:19:33 | GitTools/GitVersion | https://api.github.com/repos/GitTools/GitVersion | closed | Cache broken with GitVersionTask 3.6.0 | pr-open Type: MSBuild Task | I've just updated the MSBuild task package and apparently the cache is broken. Each target (`WriteVersionInfoToBuildLog`, `WriteVersionInfoToBuildLog` and `GitVersion`) in each built project recalculates the version number from scratch. Every time I see

```

Cache file C:\TeamCity\buildAgent\work\aaa244402599d927\.... | 1.0 | Cache broken with GitVersionTask 3.6.0 - I've just updated the MSBuild task package and apparently the cache is broken. Each target (`WriteVersionInfoToBuildLog`, `WriteVersionInfoToBuildLog` and `GitVersion`) in each built project recalculates the version number from scratch. Every time I see

```

Cache file C:\Te... | non_priority | cache broken with gitversiontask i ve just updated the msbuild task package and apparently the cache is broken each target writeversioninfotobuildlog writeversioninfotobuildlog and gitversion in each built project recalculates the version number from scratch every time i see cache file c te... | 0 |

20,135 | 6,822,400,632 | IssuesEvent | 2017-11-07 19:56:56 | htacg/tidy-html5 | https://api.github.com/repos/htacg/tidy-html5 | closed | `sprtf` library should work cross platform | Build/Install | Although this is intended for debugging, its features should be platform agnostic. It doesn't affect release builds of Tidy, so this isn't a hard milestone.

| 1.0 | `sprtf` library should work cross platform - Although this is intended for debugging, its features should be platform agnostic. It doesn't affect release builds of Tidy, so this isn't a hard milestone.

| non_priority | sprtf library should work cross platform although this is intended for debugging its features should be platform agnostic it doesn t affect release builds of tidy so this isn t a hard milestone | 0 |

86,848 | 17,091,076,464 | IssuesEvent | 2021-07-08 17:32:18 | danieljharvey/mimsa | https://api.github.com/repos/danieljharvey/mimsa | closed | add let pattern | as a treat code hygiene | Allow destructuring with a `let` binding using patterns from pattern matching. Means we can bin off `LetPair`.

```haskell

let { dog: d, cat: c } = { dog: 1, cat: "poo" }

```

This would be like doing `let d = 1; let c = "poo"`

Only works when the pattern is exhaustive on it's own, this would not work because ... | 1.0 | add let pattern - Allow destructuring with a `let` binding using patterns from pattern matching. Means we can bin off `LetPair`.

```haskell

let { dog: d, cat: c } = { dog: 1, cat: "poo" }

```

This would be like doing `let d = 1; let c = "poo"`

Only works when the pattern is exhaustive on it's own, this would... | non_priority | add let pattern allow destructuring with a let binding using patterns from pattern matching means we can bin off letpair haskell let dog d cat c dog cat poo this would be like doing let d let c poo only works when the pattern is exhaustive on it s own this would... | 0 |

175,876 | 14,543,752,236 | IssuesEvent | 2020-12-15 17:15:48 | gabbhack/deser | https://api.github.com/repos/gabbhack/deser | opened | More information of {.flat.} behavior | documentation | Currently an important, but not obvious thing is not described - `deser` can override "global" pragmas:

- https://github.com/gabbhack/deser/blob/1dbdf74fde55d1add9fbaf213c3590e9a9c288e6/tests/serialize/flat/rename_all/tlevels_replace.nim

- https://github.com/gabbhack/deser/blob/1dbdf74fde55d1add9fbaf213c3590e9a9c288... | 1.0 | More information of {.flat.} behavior - Currently an important, but not obvious thing is not described - `deser` can override "global" pragmas:

- https://github.com/gabbhack/deser/blob/1dbdf74fde55d1add9fbaf213c3590e9a9c288e6/tests/serialize/flat/rename_all/tlevels_replace.nim

- https://github.com/gabbhack/deser/blo... | non_priority | more information of flat behavior currently an important but not obvious thing is not described deser can override global pragmas etc | 0 |

263,937 | 23,091,672,708 | IssuesEvent | 2022-07-26 15:41:33 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | TestLinkedListRemoveOlderNoAllocs – github.com/elastic/beats/v7/x-pack/auditbeat/module/system/socket/helper | flaky-test Team:Security-External Integrations | ## Flaky Test

* **Test Name:** `TestLinkedListRemoveOlderNoAllocs – github.com/elastic/beats/v7/x-pack/auditbeat/module/system/socket/helper`

* **Link:** Link to file/line number in github.

* **Branch:** Git branch the test was seen in. If a PR, the branch the PR was based off.

* **Artifact Link:** https://githu... | 1.0 | TestLinkedListRemoveOlderNoAllocs – github.com/elastic/beats/v7/x-pack/auditbeat/module/system/socket/helper - ## Flaky Test

* **Test Name:** `TestLinkedListRemoveOlderNoAllocs – github.com/elastic/beats/v7/x-pack/auditbeat/module/system/socket/helper`

* **Link:** Link to file/line number in github.

* **Branch:**... | non_priority | testlinkedlistremoveoldernoallocs – github com elastic beats x pack auditbeat module system socket helper flaky test test name testlinkedlistremoveoldernoallocs – github com elastic beats x pack auditbeat module system socket helper link link to file line number in github branch g... | 0 |

421,085 | 12,248,562,127 | IssuesEvent | 2020-05-05 17:43:00 | grpc/grpc | https://api.github.com/repos/grpc/grpc | closed | Unity build linker error for Windows Standalone/HoloLens 1 & 2 ( x86 & x86_64 ) | kind/bug lang/C# priority/P2 | ### What version of gRPC and what language are you using?

* C#

* 1.25-dev

* Unity 2019.2.6f1

### What operating system (Linux, Windows,...) and version?

* Windows 10

* SDK 10.0.17763.0 (x86)

* SDK 10.0.18362.0 (x64)

### What runtime / compiler are you using (e.g. python version or version of gcc)

* V... | 1.0 | Unity build linker error for Windows Standalone/HoloLens 1 & 2 ( x86 & x86_64 ) - ### What version of gRPC and what language are you using?

* C#

* 1.25-dev

* Unity 2019.2.6f1

### What operating system (Linux, Windows,...) and version?

* Windows 10

* SDK 10.0.17763.0 (x86)

* SDK 10.0.18362.0 (x64)

### Wh... | priority | unity build linker error for windows standalone hololens what version of grpc and what language are you using c dev unity what operating system linux windows and version windows sdk sdk what runtime compiler are ... | 1 |

740,039 | 25,733,538,586 | IssuesEvent | 2022-12-07 22:22:05 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | opened | make generate-gh-issue-templates changes the current components list | bug priority:p2 | ### Component(s)

_No response_

### What happened?

We need to update it and investigate why https://github.com/open-telemetry/opentelemetry-collector-contrib/blob/551e5c97b32588c33a3852f2635052813e727c2e/.github/workflows/build-and-test.yml#L186 is not blocking the CI

### Collector version

latest

### Environment i... | 1.0 | make generate-gh-issue-templates changes the current components list - ### Component(s)

_No response_

### What happened?

We need to update it and investigate why https://github.com/open-telemetry/opentelemetry-collector-contrib/blob/551e5c97b32588c33a3852f2635052813e727c2e/.github/workflows/build-and-test.yml#L186 i... | priority | make generate gh issue templates changes the current components list component s no response what happened we need to update it and investigate why is not blocking the ci collector version latest environment information no response opentelemetry collector configuration no respons... | 1 |

150,248 | 11,953,519,580 | IssuesEvent | 2020-04-03 21:05:10 | open-cluster-management/rhacm-docs | https://api.github.com/repos/open-cluster-management/rhacm-docs | closed | Sample Subscription YAML - timeWindow | bug squad:doc testathon | <!--

Use the [summary.md](https://github.com/open-cluster-management/rhacm-docs/blob/doc_stage/summary.md) file as the table of contents. The `summary.md` file provides direct linking within the repository to the corresponding files.

1. Provide detailed descriptions of the changes. If you provide your contact in... | 1.0 | Sample Subscription YAML - timeWindow - <!--

Use the [summary.md](https://github.com/open-cluster-management/rhacm-docs/blob/doc_stage/summary.md) file as the table of contents. The `summary.md` file provides direct linking within the repository to the corresponding files.

1. Provide detailed descriptions of the... | non_priority | sample subscription yaml timewindow use the file as the table of contents the summary md file provides direct linking within the repository to the corresponding files provide detailed descriptions of the changes if you provide your contact information we can contact you with any questions r... | 0 |

4,005 | 4,154,033,617 | IssuesEvent | 2016-06-16 10:00:22 | bartdag/py4j | https://api.github.com/repos/bartdag/py4j | closed | Replace check_connection by so_linger | performance | Essentially, so linger at true with timeout 0 will send a RST to indicate that the connection ended with an error. This should only be used on timeout. We should never use it for other reasons.

This will make a send fails without needing to read first.

I believe this should make the performance go back to what it... | True | Replace check_connection by so_linger - Essentially, so linger at true with timeout 0 will send a RST to indicate that the connection ended with an error. This should only be used on timeout. We should never use it for other reasons.

This will make a send fails without needing to read first.

I believe this should... | non_priority | replace check connection by so linger essentially so linger at true with timeout will send a rst to indicate that the connection ended with an error this should only be used on timeout we should never use it for other reasons this will make a send fails without needing to read first i believe this should... | 0 |

334,980 | 30,001,722,825 | IssuesEvent | 2023-06-26 09:43:59 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Backspacing after searching for non-existent feed source seems to "hang" search | bug priority/P5 QA/Yes QA/Test-Plan-Specified OS/Desktop feature/brave-news | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | Backspacing after searching for non-existent feed source seems to "hang" search - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO... | non_priority | backspacing after searching for non existent feed source seems to hang search have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info... | 0 |

621,997 | 19,603,481,047 | IssuesEvent | 2022-01-06 05:53:21 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | closed | [multibody] Hydroelastics with polygon contact surfaces crash continuous systems. | type: bug priority: low team: dynamics component: multibody plant | After PR #16238 was merged, users of hydroelastic contact models can freely mix-and-match {continuous, discrete} system with {triangle,polygon} contact surfaces by using this switch in MultibodyPlant:

```C++

// Use polygon contact surfaces.

plant.set_contact_surface_representation(

geometry::Hydroel... | 1.0 | [multibody] Hydroelastics with polygon contact surfaces crash continuous systems. - After PR #16238 was merged, users of hydroelastic contact models can freely mix-and-match {continuous, discrete} system with {triangle,polygon} contact surfaces by using this switch in MultibodyPlant:

```C++

// Use polygon contact... | priority | hydroelastics with polygon contact surfaces crash continuous systems after pr was merged users of hydroelastic contact models can freely mix and match continuous discrete system with triangle polygon contact surfaces by using this switch in multibodyplant c use polygon contact surfaces ... | 1 |

743,548 | 25,904,135,585 | IssuesEvent | 2022-12-15 08:53:54 | vyper-protocol/vyper-cli | https://api.github.com/repos/vyper-protocol/vyper-cli | closed | Pyth Rate plugin support | enhancement high priority | - Create new Pyth rate plugin

- Fetch existing pyth rate plugin

Ref: #24 | 1.0 | Pyth Rate plugin support - - Create new Pyth rate plugin

- Fetch existing pyth rate plugin

Ref: #24 | priority | pyth rate plugin support create new pyth rate plugin fetch existing pyth rate plugin ref | 1 |

18,909 | 5,732,917,803 | IssuesEvent | 2017-04-21 15:55:09 | google/dagger | https://api.github.com/repos/google/dagger | closed | provided Map and Sets will be recreated in Subcomponents | area: code-generation status: researching type: bug | This component does create extra providers for set and maps in the subcomponent, although they are not used.

If you have many or large provided maps or sets and your subcomponent is used with a scope that is created on request, dagger recreates all the maps and sets on each request, although they are never injected.

... | 1.0 | provided Map and Sets will be recreated in Subcomponents - This component does create extra providers for set and maps in the subcomponent, although they are not used.

If you have many or large provided maps or sets and your subcomponent is used with a scope that is created on request, dagger recreates all the maps an... | non_priority | provided map and sets will be recreated in subcomponents this component does create extra providers for set and maps in the subcomponent although they are not used if you have many or large provided maps or sets and your subcomponent is used with a scope that is created on request dagger recreates all the maps an... | 0 |

373,359 | 11,042,653,784 | IssuesEvent | 2019-12-09 09:36:36 | xwikisas/application-forum | https://api.github.com/repos/xwikisas/application-forum | closed | Creating and commenting answers events are not displayed properly in Notifications list after Forum Pro is uninstalled | Priority: Minor Type: Bug | STEPS TO REPRODUCE

1. Login as Admin

2. Set Pages Notifications to ON (and Watch the wiki)

3. Create an user (U1)

4. Login with U1

5. Create a forum, add a topic, add an answer on that topic, add a comment to the answer

6. Login with Admin

7. Observe the Notifications list

8. Uninstall Forum Pro

9. Observe t... | 1.0 | Creating and commenting answers events are not displayed properly in Notifications list after Forum Pro is uninstalled - STEPS TO REPRODUCE

1. Login as Admin

2. Set Pages Notifications to ON (and Watch the wiki)

3. Create an user (U1)

4. Login with U1

5. Create a forum, add a topic, add an answer on that topic, ... | priority | creating and commenting answers events are not displayed properly in notifications list after forum pro is uninstalled steps to reproduce login as admin set pages notifications to on and watch the wiki create an user login with create a forum add a topic add an answer on that topic ad... | 1 |