Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5–112) | repo_url (string, length 34–141) | action (string, 3 classes) | title (string, length 1–1k) | labels (string, length 4–1.38k) | body (string, length 1–262k) | index (string, 16 classes) | text_combine (string, length 96–262k) | label (string, 2 classes) | text (string, length 96–252k) | binary_label (int64, 0–1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

5,960 | 13,397,846,787 | IssuesEvent | 2020-09-03 12:17:06 | openpower-cores/a2i | https://api.github.com/repos/openpower-cores/a2i | closed | The order of writing the GPRs | core RTL/Architecture | Hi All,

In the pipeline, the A2I core writes the data into GPRs (general purpose registers) after the ex7 stage which is named as ex8 or rf1. My current understanding of A2I is that different instructions of one thread can actually write the data into the register file out-of-order.

For example, there are two instructions, i.e., "Load RegA, addr1"; and "Add RegB, RegC, RegD". If the load instruction misses in the L1 cache, it will be stored in the LMQ and wait to access the L2 cache. In this case, if the add instruction keeps executing, it will write the data into GPR before the load instruction. If this is the case, the order of writing the register file for these two instructions, i.e., load and add, is out of sequence. Is this understanding correct?

If I understand correctly, how can A2I guarantee the precise interrupt or exception? For example, one exception happens after the add instruction writing the GPRs.

Thanks! | 1.0 | The order of writing the GPRs - Hi All,

In the pipeline, the A2I core writes the data into GPRs (general purpose registers) after the ex7 stage which is named as ex8 or rf1. My current understanding of A2I is that different instructions of one thread can actually write the data into the register file out-of-order.

For example, there are two instructions, i.e., "Load RegA, addr1"; and "Add RegB, RegC, RegD". If the load instruction misses in the L1 cache, it will be stored in the LMQ and wait to access the L2 cache. In this case, if the add instruction keeps executing, it will write the data into GPR before the load instruction. If this is the case, the order of writing the register file for these two instructions, i.e., load and add, is out of sequence. Is this understanding correct?

If I understand correctly, how can A2I guarantee the precise interrupt or exception? For example, one exception happens after the add instruction writing the GPRs.

Thanks! | non_priority | the order of writing the gprs hi all in the pipeline the core writes the data into gprs general purpose registers after the stage which is named as or my current understanding of is that different instructions of one thread can actually write the data into the register file out of order for example there are two instructions i e load rega and add regb regc regd if the load instruction misses in the cache it will be stored in the lmq and waiting to access the cache in this case if the add instruction keeps executing it will write the data into gpr before the load instruction if this is the case the order of writing the register file for these two instructions i e load and add is out of sequence is it the right understanding if i understand correctly how can guarantee the precise interrupt or exception for example one exception happens after the add instruction writing the gprs thanks | 0 |

209,374 | 16,191,904,646 | IssuesEvent | 2021-05-04 09:40:09 | microsoft/microsoft-ui-xaml | https://api.github.com/repos/microsoft/microsoft-ui-xaml | closed | Can you please keep the roadmap more up to date? | documentation question winui3preview | The latest preview is not on the [roadmap](https://github.com/microsoft/microsoft-ui-xaml/blob/master/docs/roadmap.md).

The "[Feature Roadmap](https://github.com/microsoft/microsoft-ui-xaml/blob/master/docs/roadmap.md#winui-30-feature-roadmap)" only has two categories left: "Reunion 0.5" (in two weeks) and "Planned for a future update" (which could not be any vaguer.)

I understand not wanting to promise too much. But at this point you are promising nothing that is more than 2 weeks away from shipping.

Are there going to be future previews? I guess so; I remember @ryandemopoulos speaking about roughly monthly previews at some point.

Maybe the "Milestones" in GitHub could be used to track some of the progress? (Currently they are clearly [very outdated](https://github.com/microsoft/microsoft-ui-xaml/milestones).)

(Btw. the project [reunion roadmap](https://github.com/microsoft/ProjectReunion/blob/main/docs/roadmap.md) contains the "unpackaged desktop apps WinUI 3" as part of the Q4 2021 milestone, but the WinUI 3 roadmap does not give any date for that.)

| 1.0 | Can you please keep the roadmap more up to date? - The latest preview is not on the [roadmap](https://github.com/microsoft/microsoft-ui-xaml/blob/master/docs/roadmap.md).

The "[Feature Roadmap](https://github.com/microsoft/microsoft-ui-xaml/blob/master/docs/roadmap.md#winui-30-feature-roadmap)" only has two categories left: "Reunion 0.5" (in two weeks) and "Planned for a future update" (which could not be any vaguer.)

I understand not wanting to promise too much. But at this point you are promising nothing that is more than 2 weeks away from shipping.

Are there going to be future previews? I guess so; I remember @ryandemopoulos speaking about roughly monthly previews at some point.

Maybe the "Milestones" in GitHub could be used to track some of the progress? (Currently they are clearly [very outdated](https://github.com/microsoft/microsoft-ui-xaml/milestones).)

(Btw. the project [reunion roadmap](https://github.com/microsoft/ProjectReunion/blob/main/docs/roadmap.md) contains the "unpackaged desktop apps WinUI 3" as part of the Q4 2021 milestone, but the WinUI 3 roadmap does not give any date for that.)

| non_priority | can you please keep the roadmap more up to date the latest preview is not on the the only has two categories left reunion in two weeks and planned for a future update which could not be any vaguer i understand not wanting to promise too much but at this point you are promising nothing that is more than weeks away from shipping are there going to be future previews i guess so i remember ryandemopoulos speaking about roughly monthly previews at some point maybe the milestones in github could be used to track some of the progress currently they are clearly btw the project contains the unpackaged desktop apps winui as part of the milestone but the winui roadmap does not give and date for that | 0 |

694,063 | 23,800,597,512 | IssuesEvent | 2022-09-03 08:06:23 | kubernetes/ingress-nginx | https://api.github.com/repos/kubernetes/ingress-nginx | closed | Allow SSL certificate expiration warning threshold to be adjusted | kind/feature lifecycle/rotten triage/accepted needs-priority | <!--

Welcome to ingress-nginx! For a smooth feature request process, try to

answer the following questions. Don't worry if they're not all applicable; just

try to include what you can :-)

If you need to include code snippets or logs, please put them in fenced code

blocks. If they're super-long, please use the details tag like

<details><summary>super-long log</summary> lots of stuff </details>

-->

<!-- What do you want to happen? -->

Some PKI implementations like https://github.com/smallstep/certificates issue aggressively short-lived certificates by default (24 hours, for example). In situations where this is the desired/intended configuration for Ingress TLS certs, this causes a disproportionate amount of warning messages.

```

W0122 17:44:59.804433 9 controller.go:1339] SSL certificate for server "prometheus.k8s.home.arpa" is about to expire (2022-01-23 16:50:14 +0000 UTC)

W0122 17:44:59.804670 9 controller.go:1339] SSL certificate for server "grafana.k8s.home.arpa" is about to expire (2022-01-23 16:50:15 +0000 UTC)

W0122 17:44:59.804801 9 controller.go:1339] SSL certificate for server "alertmanager.k8s.home.arpa" is about to expire (2022-01-23 16:50:15 +0000 UTC)

```

The warning threshold is currently hard-coded here:

https://github.com/kubernetes/ingress-nginx/blob/abdece6e80b6d54d177cf3f51e43d1f8220c1b1c/internal/ingress/controller/controller.go#L1349

It would be useful to make this an adjustable value.
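For illustration only, here is a minimal sketch of what an adjustable threshold could look like. It is written in Python rather than the controller's Go, and the names `DEFAULT_WARN_BEFORE`, `warn_before`, and `is_about_to_expire` are hypothetical, as is the 10-day default; none of them are taken from the ingress-nginx source.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical default warning window; the value hard-coded in the real
# controller may differ.
DEFAULT_WARN_BEFORE = timedelta(days=10)


def is_about_to_expire(not_after, now, warn_before=DEFAULT_WARN_BEFORE):
    """Return True when the certificate expires within `warn_before` of `now`."""
    return not_after - now <= warn_before


# A certificate with roughly 23 hours left, as in the log lines above.
now = datetime(2022, 1, 22, 17, 44, 59, tzinfo=timezone.utc)
not_after = datetime(2022, 1, 23, 16, 50, 14, tzinfo=timezone.utc)

# With the assumed default window, the 24-hour certificate always warns.
print(is_about_to_expire(not_after, now))
# With a window shorter than the remaining lifetime, it does not.
print(is_about_to_expire(not_after, now, warn_before=timedelta(hours=8)))
```

With short-lived certificates, a window set below the renewal interval would presumably silence the warning until a renewal is actually overdue.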

<!-- Is there currently another issue associated with this? -->

<!-- Does it require a particular kubernetes version? -->

<!-- If this is actually about documentation, uncomment the following block -->

<!--

/kind documentation

/remove-kind feature

--> | 1.0 | Allow SSL certificate expiration warning threshold to be adjusted - <!--

Welcome to ingress-nginx! For a smooth feature request process, try to

answer the following questions. Don't worry if they're not all applicable; just

try to include what you can :-)

If you need to include code snippets or logs, please put them in fenced code

blocks. If they're super-long, please use the details tag like

<details><summary>super-long log</summary> lots of stuff </details>

-->

<!-- What do you want to happen? -->

Some PKI implementations like https://github.com/smallstep/certificates issue aggressively short-lived certificates by default (24 hours, for example). In situations where this is the desired/intended configuration for Ingress TLS certs, this causes a disproportionate amount of warning messages.

```

W0122 17:44:59.804433 9 controller.go:1339] SSL certificate for server "prometheus.k8s.home.arpa" is about to expire (2022-01-23 16:50:14 +0000 UTC)

W0122 17:44:59.804670 9 controller.go:1339] SSL certificate for server "grafana.k8s.home.arpa" is about to expire (2022-01-23 16:50:15 +0000 UTC)

W0122 17:44:59.804801 9 controller.go:1339] SSL certificate for server "alertmanager.k8s.home.arpa" is about to expire (2022-01-23 16:50:15 +0000 UTC)

```

The warning threshold is currently hard-coded here:

https://github.com/kubernetes/ingress-nginx/blob/abdece6e80b6d54d177cf3f51e43d1f8220c1b1c/internal/ingress/controller/controller.go#L1349

It would be useful to make this an adjustable value.

<!-- Is there currently another issue associated with this? -->

<!-- Does it require a particular kubernetes version? -->

<!-- If this is actually about documentation, uncomment the following block -->

<!--

/kind documentation

/remove-kind feature

--> | priority | allow ssl certificate expiration warning threshold to be adjusted welcome to ingress nginx for a smooth feature request process try to answer the following questions don t worry if they re not all applicable just try to include what you can if you need to include code snippets or logs please put them in fenced code blocks if they re super long please use the details tag like super long log lots of stuff some pki implementations like issue aggressively short lived certificates by default hours for example in situations where this is the desired intended configuration for ingress tls certs this causes a disproportionate amount of warning messages controller go ssl certificate for server prometheus home arpa is about to expire utc controller go ssl certificate for server grafana home arpa is about to expire utc controller go ssl certificate for server alertmanager home arpa is about to expire utc the warning threshold is currently hard coded here it would be useful to make this an adjustable value kind documentation remove kind feature | 1 |

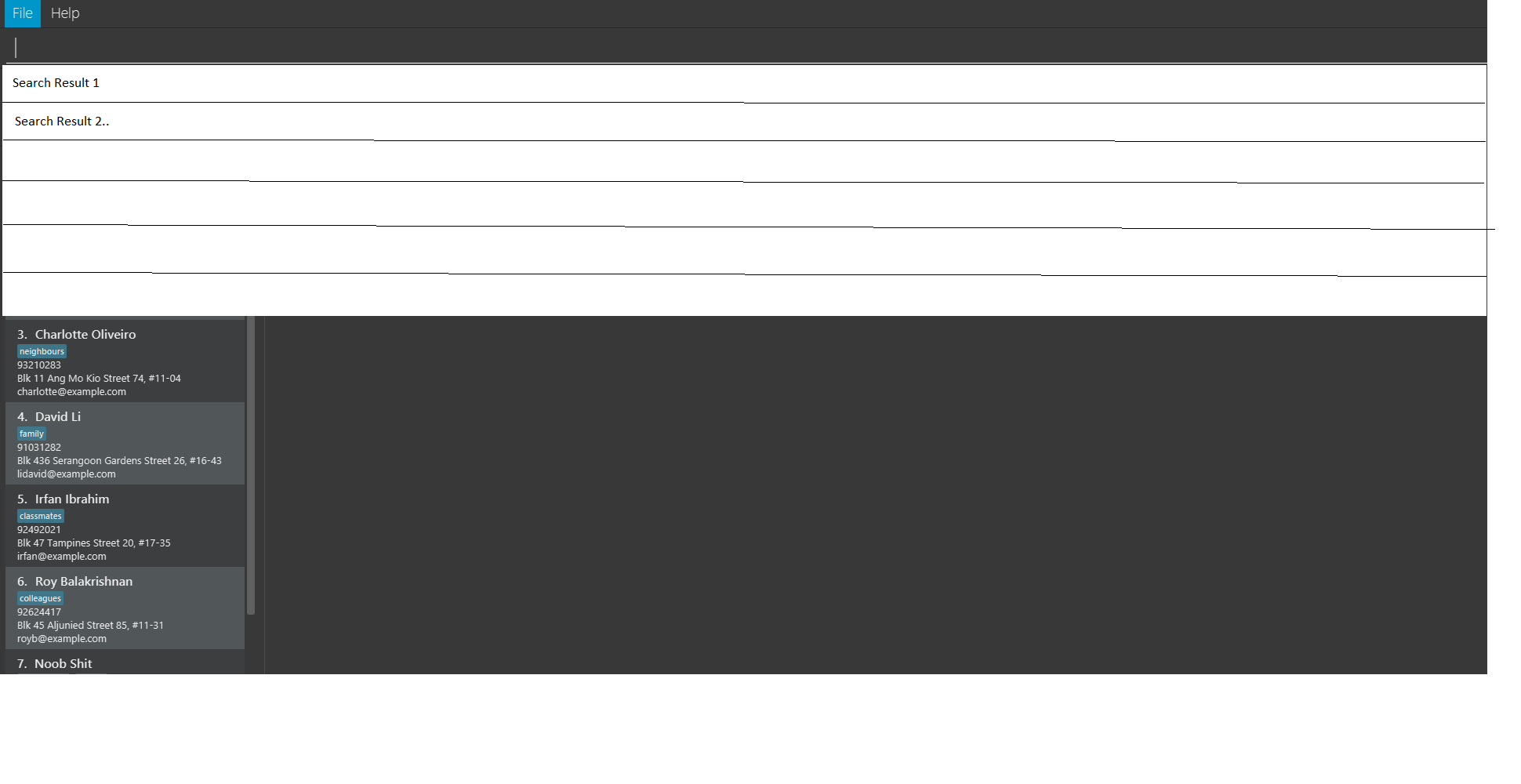

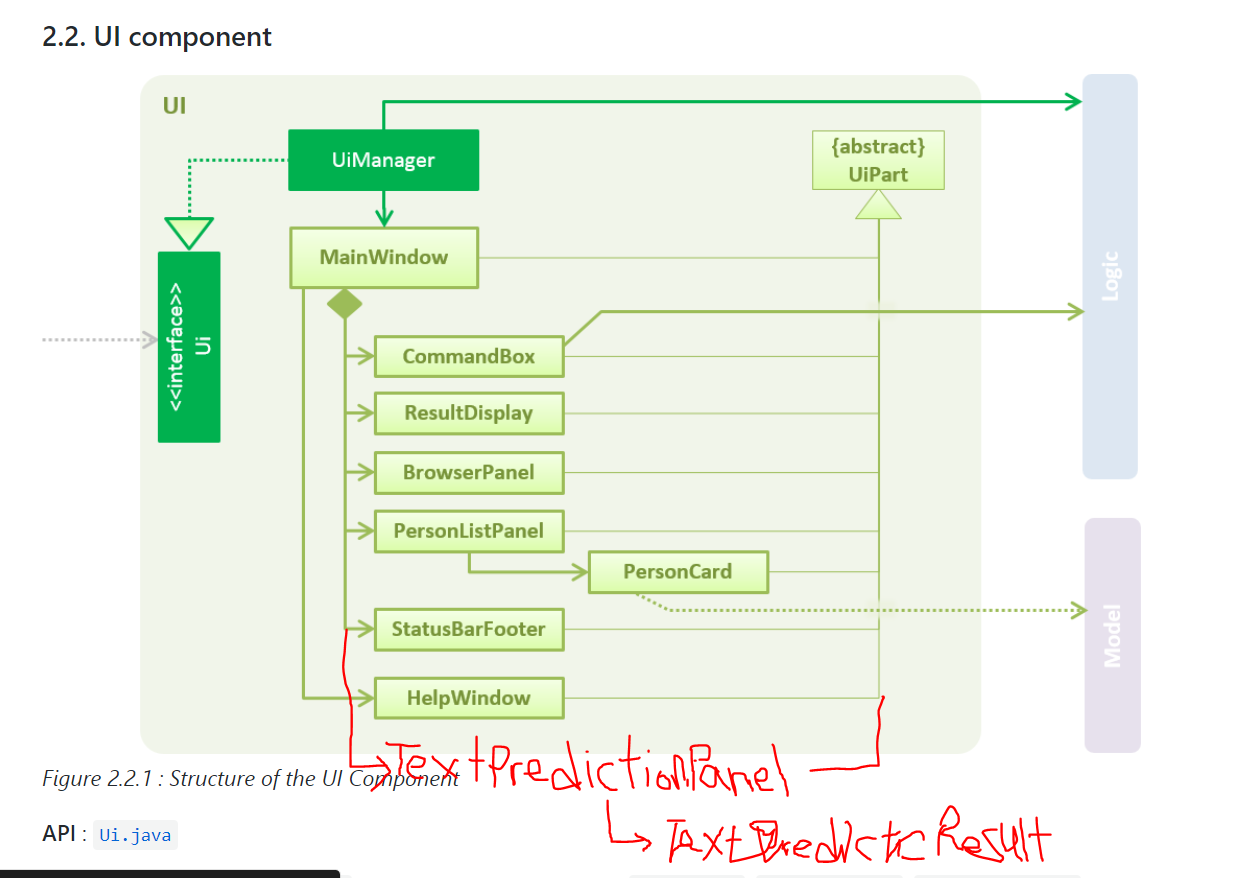

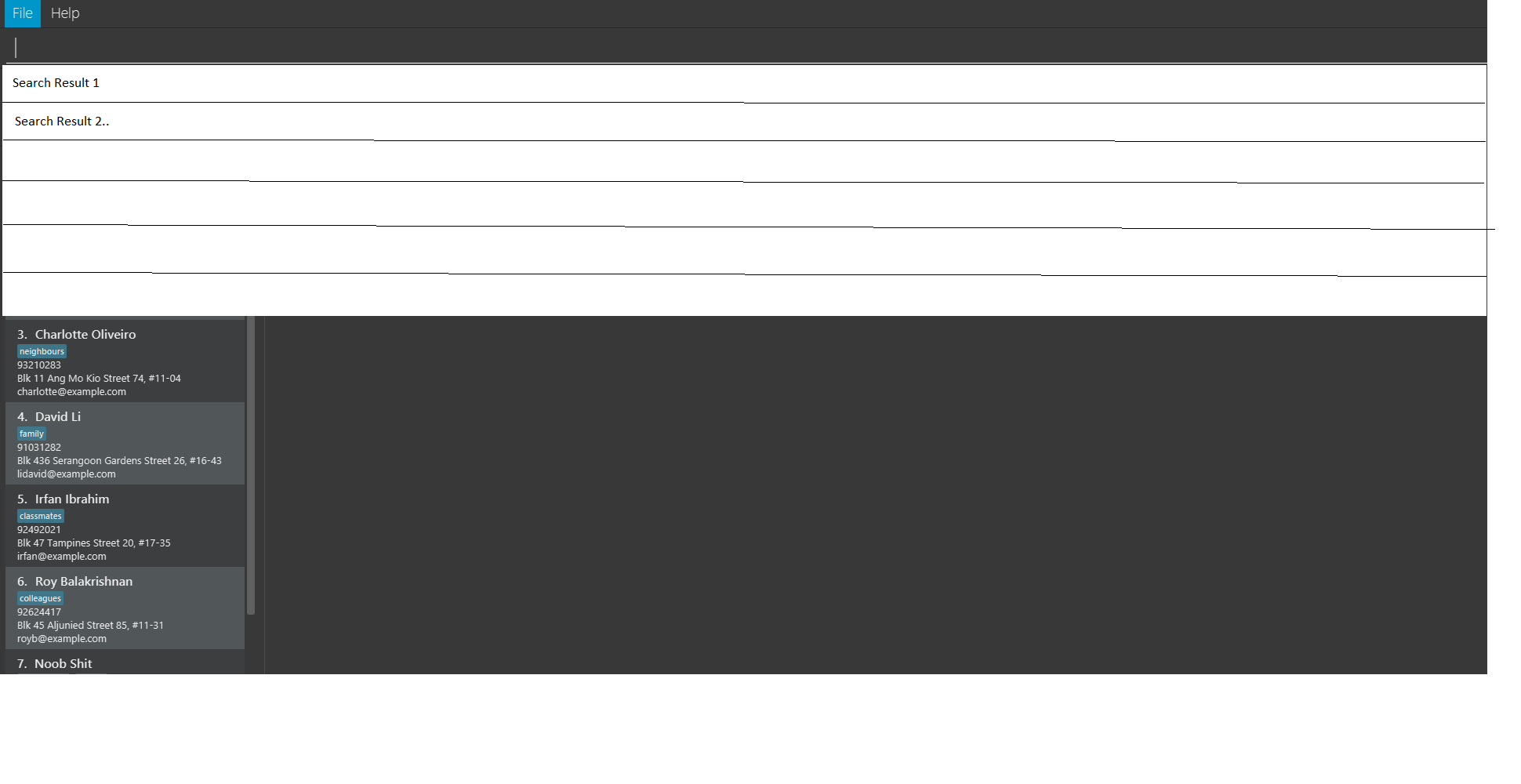

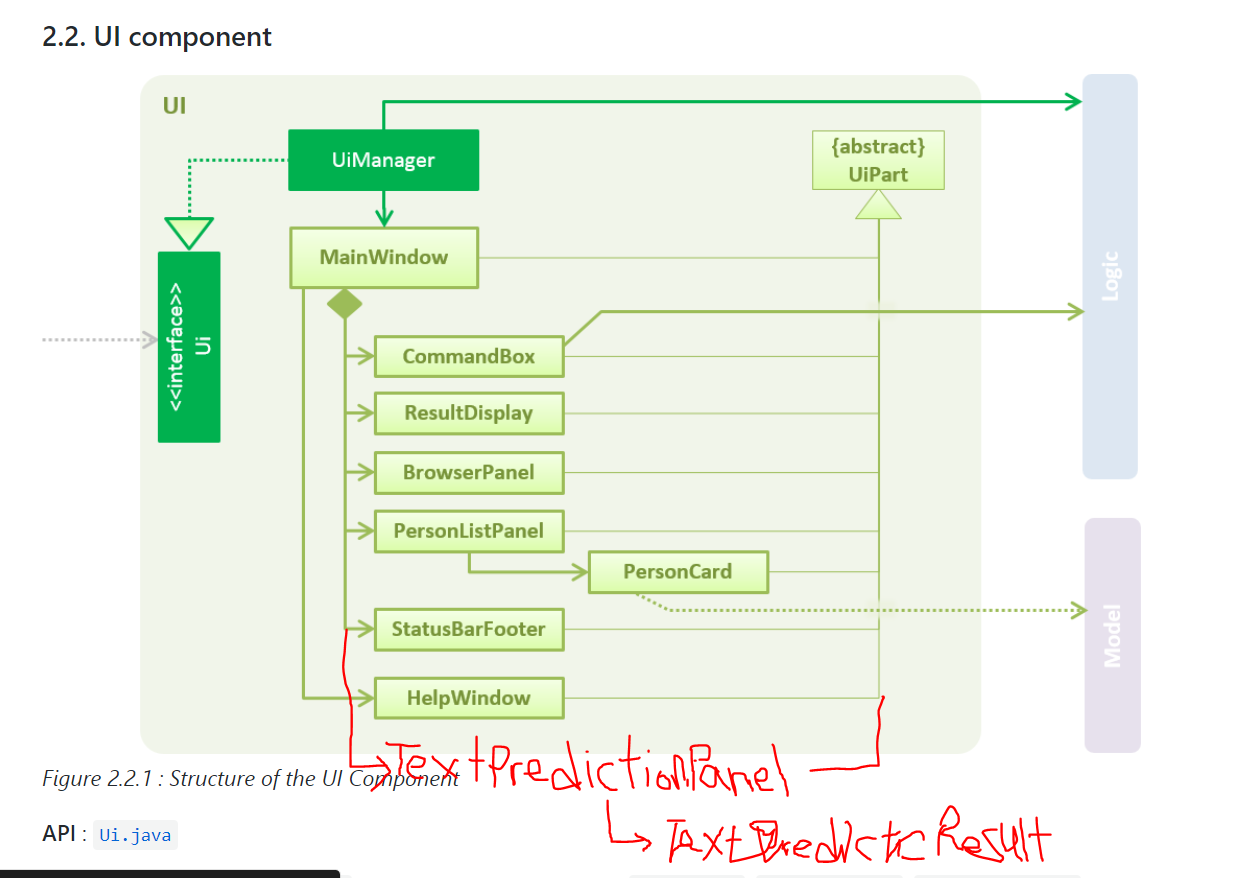

192,207 | 6,847,630,952 | IssuesEvent | 2017-11-13 15:58:24 | CS2103AUG2017-W15-B2/main | https://api.github.com/repos/CS2103AUG2017-W15-B2/main | closed | Command Prediction for CommandBox | new feature Priority: HIGH | ## TODO

- [x] Implement basic functionality

- [x] Rename components to `CommandPrediction` for clarity (instead of `SearchPrediction` or `TextPrediction`)

- [x] Add tests

- [x] Add more cases to support new commands that will be introduced up till V1.5

- [x] Update UG and DG

### Motivation

The command box is going to be the main way of navigating through CYNC.

It'd be great if we can make the text bar more powerful with text prediction / autocomplete.

### Description

I'ma do this in a few parts.

First part will only be involved with searching through commands,

Next part will be involved with making the find command more powerful

I'll try my best to touch only the UI component.

### UI Mockup

### Class Diagram

| 1.0 | Command Prediction for CommandBox - ## TODO

- [x] Implement basic functionality

- [x] Rename components to `CommandPrediction` for clarity (instead of `SearchPrediction` or `TextPrediction`)

- [x] Add tests

- [x] Add more cases to support new commands that will be introduced up till V1.5

- [x] Update UG and DG

### Motivation

The command box is going to be the main way of navigating through CYNC.

It'd be great if we can make the text bar more powerful with text prediction / autocomplete.

### Description

I'ma do this in a few parts.

First part will only be involved with searching through commands,

Next part will be involved with making the find command more powerful

I'll try my best to touch only the UI component.

### UI Mockup

### Class Diagram

| priority | command prediction for commandbox todo implement basic functionality rename components to commandprediction for clarity instead of searchprediction or textprediction add tests add more cases to support new commands that will be introduced up till update ug and dg motivation the command box is going to be the main way of navigating through cync it d be great if we can make the text bar more powerful with text prediction autocomplete description i ma do this in a few parts first part will only be involved with searching through commands next part will be involved with making the find command more powerful i ll try my best to touch only the ui component ui mockup class diagram | 1 |

291,414 | 8,924,955,792 | IssuesEvent | 2019-01-21 20:40:14 | alakajam-team/alakajam | https://api.github.com/repos/alakajam-team/alakajam | closed | PSQL errors are no longer caught | bug high priority | I can't seem to be able to catch PSQL errors anymore, to display 500s in a pretty page.

I've had the same issue with multer as well, but I've been able to [wrap multer](https://github.com/alakajam-team/alakajam/blob/master/controllers/index.js#L170) to force catching any errors.

I feel something's wrong with our integration of `express-promise-router` but I can't put my finger on it. Back to jamming for now... | 1.0 | PSQL errors are no longer caught - I can't seem to be able to catch PSQL errors anymore, to display 500s in a pretty page.

I've had the same issue with multer as well, but I've been able to [wrap multer](https://github.com/alakajam-team/alakajam/blob/master/controllers/index.js#L170) to force catching any errors.

I feel something's wrong with our integration of `express-promise-router` but I can't put my finger on it. Back to jamming for now... | priority | psql errors are no longer caught i can t seem to be able to catch psql errors anymore to display in a pretty page i ve had the same issue with multer as well but i ve been able to to force catching any errors i feel something s wrong with our integration of express promise router but i can t put my finger on it back to jamming for now | 1 |

19,001 | 2,616,017,148 | IssuesEvent | 2015-03-02 00:59:11 | jasonhall/bwapi | https://api.github.com/repos/jasonhall/bwapi | closed | Get Order Type from Tech Type | auto-migrated Component-Logic Component-Persistence Priority-High Type-Enhancement | ```

This is mostly for internal use so we don't have huge duplicate switch

statements just to map orders to techs.

```

Original issue reported on code.google.com by `AHeinerm` on 9 Jun 2011 at 7:03 | 1.0 | Get Order Type from Tech Type - ```

This is mostly for internal use so we don't have huge duplicate switch

statements just to map orders to techs.

```

Original issue reported on code.google.com by `AHeinerm` on 9 Jun 2011 at 7:03 | priority | get order type from tech type this is mostly for internal use so we don t have huge duplicate switch statements just to map orders to techs original issue reported on code google com by aheinerm on jun at | 1 |

115,135 | 11,866,018,992 | IssuesEvent | 2020-03-26 02:19:59 | laurenriddle/Hive-Mind-Back-End-Capstone-Client | https://api.github.com/repos/laurenriddle/Hive-Mind-Back-End-Capstone-Client | closed | Update README.md for Client | documentation | Add ERD, Wireframes, Technologies, Pictures of App with instructions | 1.0 | Update README.md for Client - Add ERD, Wireframes, Technologies, Pictures of App with instructions | non_priority | update readme md for client add erd wireframes technologies pictures of app with instructions | 0 |

629,763 | 20,052,333,974 | IssuesEvent | 2022-02-03 08:20:12 | Climate-Refugee-Stories/crs-website | https://api.github.com/repos/Climate-Refugee-Stories/crs-website | opened | Netlify: swap account for CMS | low priority | change account to Main owner's account for netlify integration and GH Oauth app. | 1.0 | Netlify: swap account for CMS - change account to Main owner's account for netlify integration and GH Oauth app. | priority | netlify swap account for cms change account to main owner s account for netlify integration and gh oauth app | 1 |

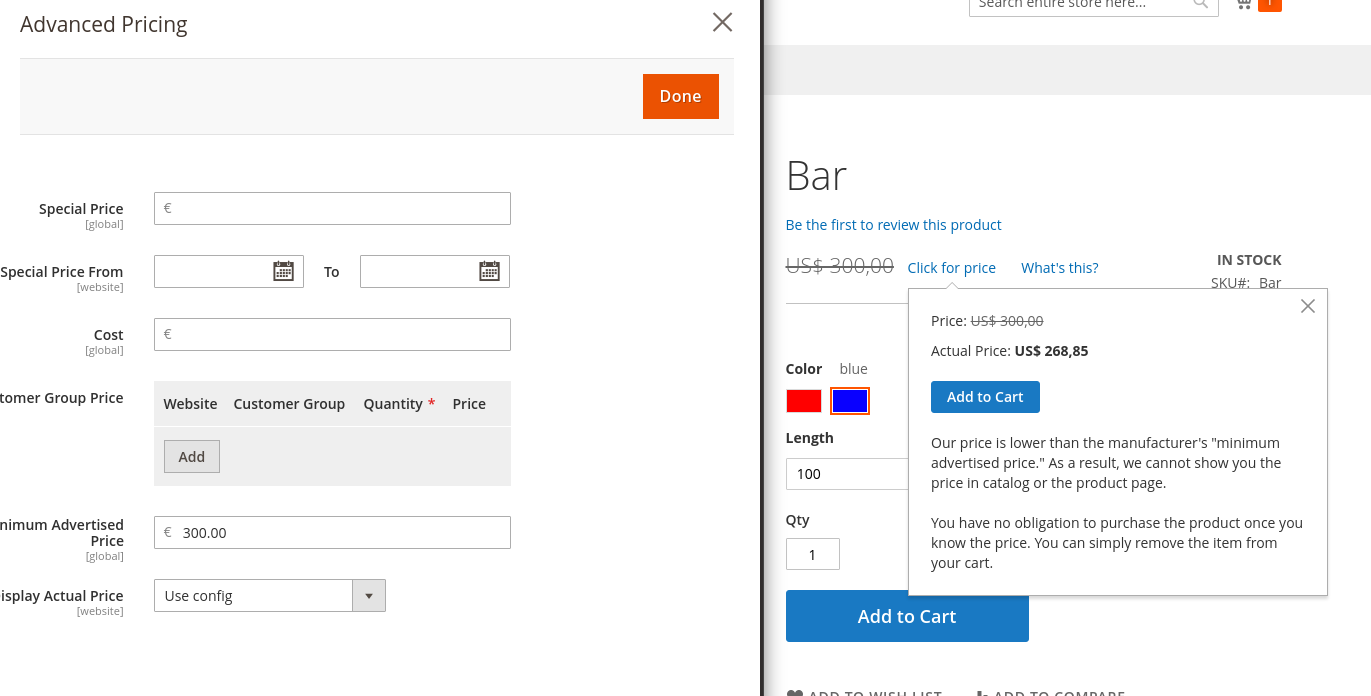

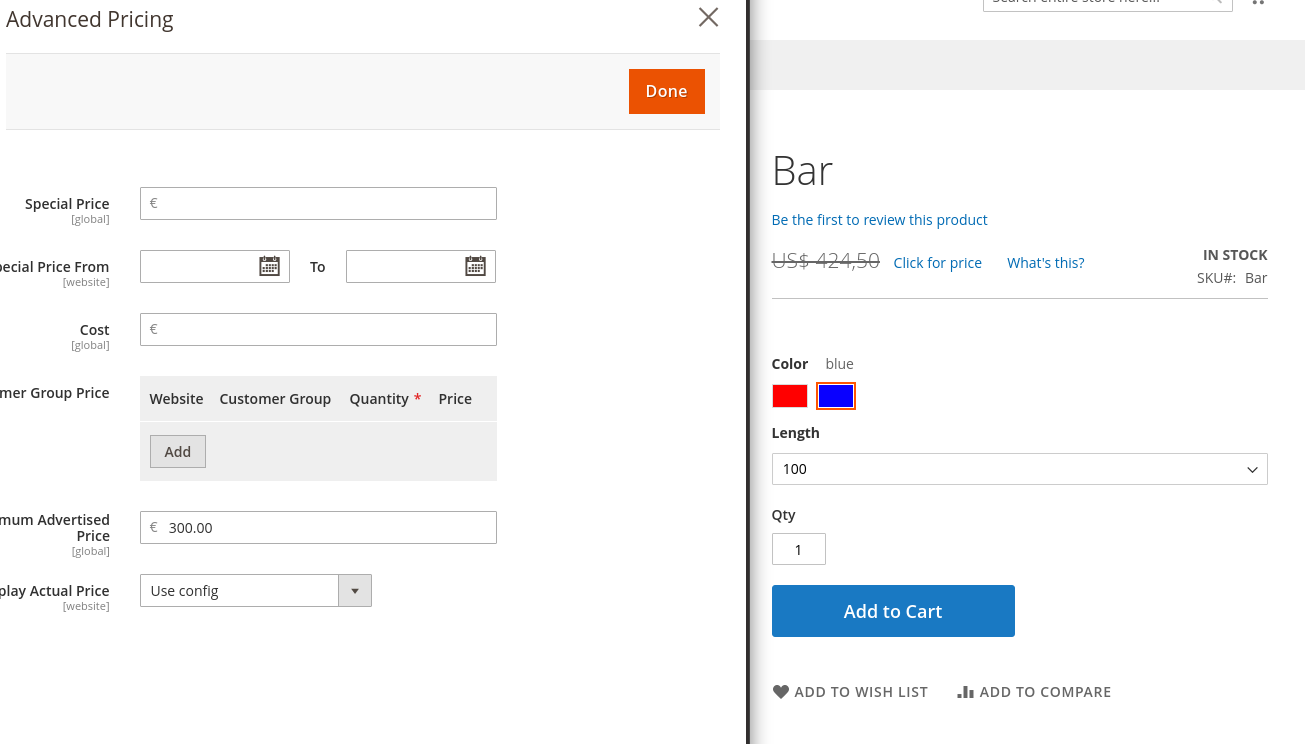

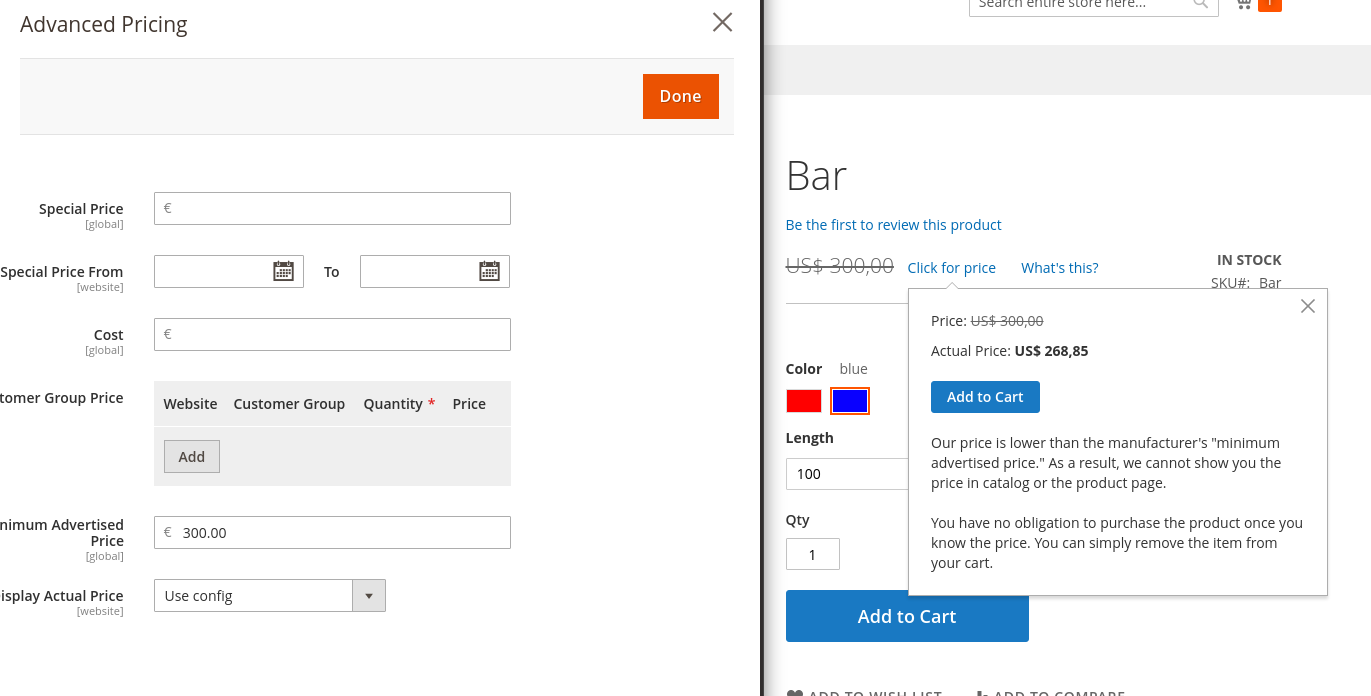

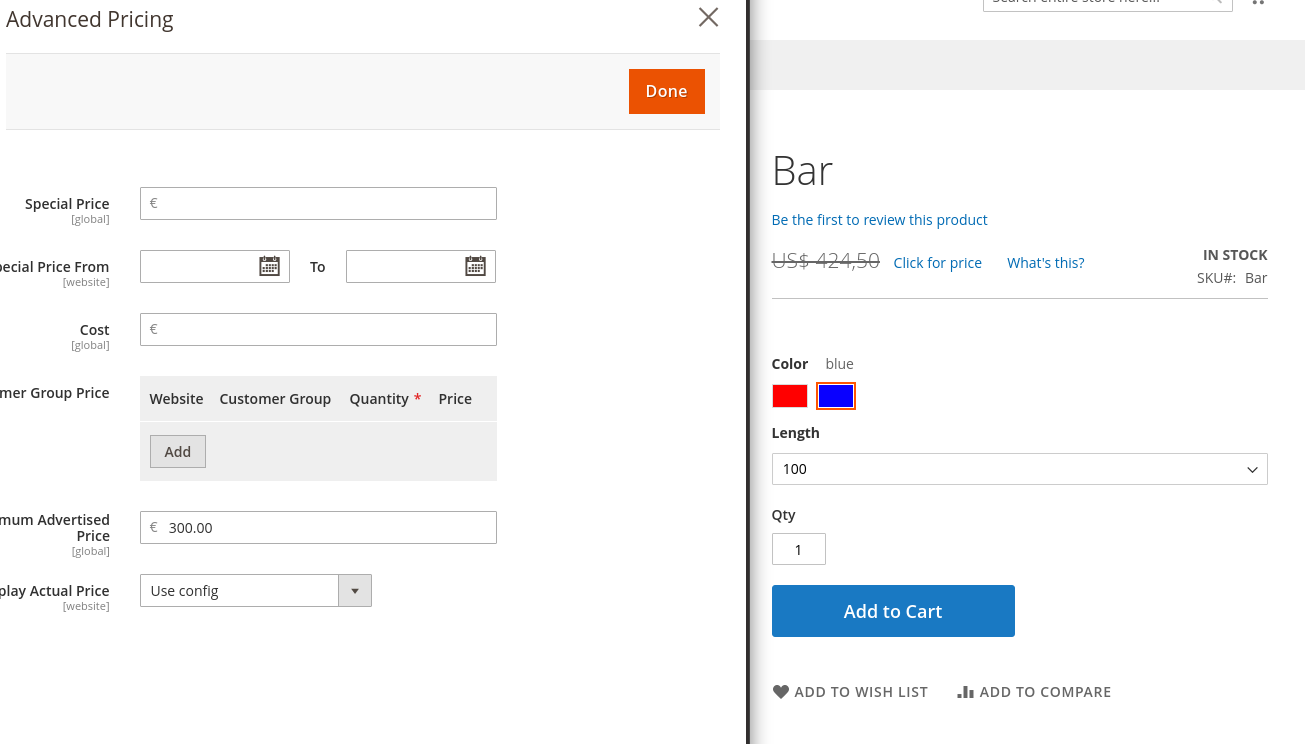

461,066 | 13,222,993,917 | IssuesEvent | 2020-08-17 16:26:18 | magento/magento2 | https://api.github.com/repos/magento/magento2 | opened | [Issue] Convert MSRP currency of configurable product options | Component: ConfigurableProduct Priority: P2 Severity: S2 | This issue is automatically created based on existing pull request: magento/magento2#27446: Convert MSRP currency of configurable product options

---------

### Description

The MSRP of configurable products is updated in Javascript when an option is chosen. Javascript uses an object with prices of the options. The prices in this object are converted to the chosen currency. MSRP prices however were not converted, leading to the price being displayed in a wrong currency.
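The idea can be sketched as follows. This is a hedged illustration in Python, not Magento's PHP or JavaScript; the field names `finalPrice` and `msrpPrice`, the helper names, and the 1.10 rate are assumptions made for the example.

```python
from decimal import Decimal, ROUND_HALF_UP


def convert_price(amount, rate):
    """Convert a base-currency amount with the given rate, rounded to cents."""
    return (amount * rate).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def build_option_prices(base_prices, rate):
    """Convert every price field of an option, MSRP included."""
    return {name: convert_price(value, rate) for name, value in base_prices.items()}


# Hypothetical option prices in the base currency and a hypothetical rate.
option = {"finalPrice": Decimal("80.00"), "msrpPrice": Decimal("100.00")}
converted = build_option_prices(option, Decimal("1.10"))
print(converted["finalPrice"], converted["msrpPrice"])
```

The point of the sketch is only that the MSRP goes through the same conversion as the other prices instead of being passed through unchanged.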

### Manual testing scenarios

1. Enable MAP (Configuration > Sales > Sales > Minimum Advertised Price > Enable MAP).

2. Enable multiple currencies (Configuration > General > Currency Setup > Allowed Currencies)

3. Create a configurable product with options that have an MSRP

4. Go to the frontend and choose a currency that is not the base currency

5. View the product on the frontend and choose an option.

Expected result: The MSRP is converted to the chosen currency

Actual result: The MSRP is not converted but it is labeled as the chosen currency

Note that the MSRP is configured in euro but displayed in dollars with the euro amount.

After applying the change the MSRP is converted:

Rates in table directory_currency_rate:

| 1.0 | [Issue] Convert MSRP currency of configurable product options - This issue is automatically created based on existing pull request: magento/magento2#27446: Convert MSRP currency of configurable product options

---------

### Description

The MSRP of configurable products is updated in Javascript when an option is chosen. Javascript uses an object with prices of the options. The prices in this object are converted to the chosen currency. MSRP prices however were not converted, leading to the price being displayed in a wrong currency.

### Manual testing scenarios

1. Enable MAP (Configuration > Sales > Sales > Minimum Advertised Price > Enable MAP).

2. Enable multiple currencies (Configuration > General > Currency Setup > Allowed Currencies)

3. Create a configurable product with options that have an MSRP

4. Go to the frontend and choose a currency that is not the base currency

5. View the product on the frontend and choose an option.

Expected result: The MSRP is converted to the chosen currency

Actual result: The MSRP is not converted but it is labeled as the chosen currency

Note that the MSRP is configured in euro but displayed in dollars with the euro amount.

After applying the change the MSRP is converted:

Rates in table directory_currency_rate:

| priority | convert msrp currency of configurable product options this issue is automatically created based on existing pull request magento convert msrp currency of configurable product options description the msrp of configurable products is updated in javascript when an option is chosen javascript uses an object with prices of the options the prices in this object are converted to the chosen currency msrp prices however were not converted leading to the price being displayed in a wrong currency manual testing scenarios enable map configuration sales sales minimum advertised price enable map enable multiple currencies configuration general currency setup allowed currencies create a configurable product with options that have an msrp go to the frontend and choose a currency that is not the base currency view the product on the frontend a choose an option expected result the msrp is converted to the chosen currency actual result the msrp is not converted but it is labeled as the chosen currency note that the msrp is configured in euro but displayed in dollars with the euro amount after applying the change the msrp is converted rates in table directory currency rate | 1 |

7,425 | 9,669,439,696 | IssuesEvent | 2019-05-21 17:22:36 | jenkinsci/configuration-as-code-plugin | https://api.github.com/repos/jenkinsci/configuration-as-code-plugin | closed | provide build_timestamp configuration demo | plugin-compatibility | [jenkins-jira]: https://issues.jenkins-ci.org

[dashboard]: https://issues.jenkins.io/secure/Dashboard.jspa?selectPageId=18341

[contributing]: ../blob/master/docs/CONTRIBUTING.md

[compatibility]: ../blob/master/docs/COMPATIBILITY.md

### Your checklist for this issue

🚨 Please review the [guidelines for contributing][contributing] to this repository.

💡 To better understand plugin compatibility issues, you can [read more here][compatibility]

<!--

Here is a link to get you started with creating the issue over at Jenkins JIRA

https://issues.jenkins-ci.org/secure/CreateIssueDetails!init.jspa?pid=10172&issuetype=1&summary=Cannot+configure+X+plugin+with+JCasC&labels=jcasc-compatibility

-->

- [ ] Create an issue on [issues.jenkins-ci.org][jenkins-jira], set the component to the plugin you are reporting it against

- [ ] Before creating an issue on [Jenkins JIRA][jenkins-jira], check for [an existing one via dashboard][dashboard]

- [ ] Link to [Jenkins JIRA issue][jenkins-jira]

- [ ] Ensure [Jenkins JIRA issue][jenkins-jira] has the label `jcasc-compatibility`

- [ ] Link to plugin's GitHub repository

- [x] Link to Plugin Compatibility Tracker #809

<!--

Put an `x` into the [ ] to show you have filled the information below

Describe your issue below

-->

### Description

Please add the https://wiki.jenkins.io/display/JENKINS/Build+Timestamp+Plugin

to the casc demo and to the plugin itself.

| True | provide build_timestamp configuration demo - [jenkins-jira]: https://issues.jenkins-ci.org

[dashboard]: https://issues.jenkins.io/secure/Dashboard.jspa?selectPageId=18341

[contributing]: ../blob/master/docs/CONTRIBUTING.md

[compatibility]: ../blob/master/docs/COMPATIBILITY.md

### Your checklist for this issue

🚨 Please review the [guidelines for contributing][contributing] to this repository.

💡 To better understand plugin compatibility issues, you can [read more here][compatibility]

<!--

Here is a link to get you started with creating the issue over at Jenkins JIRA

https://issues.jenkins-ci.org/secure/CreateIssueDetails!init.jspa?pid=10172&issuetype=1&summary=Cannot+configure+X+plugin+with+JCasC&labels=jcasc-compatibility

-->

- [ ] Create an issue on [issues.jenkins-ci.org][jenkins-jira], set the component to the plugin you are reporting it against

- [ ] Before creating an issue on [Jenkins JIRA][jenkins-jira], check for [an existing one via dashboard][dashboard]

- [ ] Link to [Jenkins JIRA issue][jenkins-jira]

- [ ] Ensure [Jenkins JIRA issue][jenkins-jira] has the label `jcasc-compatibility`

- [ ] Link to plugin's GitHub repository

- [x] Link to Plugin Compatibility Tracker #809

<!--

Put an `x` into the [ ] to show you have filled the information below

Describe your issue below

-->

### Description

Please add the https://wiki.jenkins.io/display/JENKINS/Build+Timestamp+Plugin

to the casc demo and to the plugin itself.

| non_priority | provide build timestamp configuration demo blob master docs contributing md blob master docs compatibility md your checklist for this issue 🚨 please review the to this repository 💡 to better understand plugin compatibility issues you can here is a link to get you started with creating the issue over at jenkins jira create an issue on set the component to the plugin you are reporting it against before creating an issue on check for link to ensure has the label jcasc compatibility link to plugin s github repository link to plugin compatibility tracker put an x into the to show you have filled the information below describe your issue below description please add the to the casc demo and to the plugin itself | 0 |

20,203 | 15,112,109,359 | IssuesEvent | 2021-02-08 21:21:54 | stripe/vscode-stripe | https://api.github.com/repos/stripe/vscode-stripe | opened | Allow user to easily skip https verification on `listen` command | usability | From customer:

"I use the extension to run the `listen` command, but then I cancel, press ctrl-p to get last command, and add --skip-verify, because I use https:// for my local server. Would be nice if I could tick a box to "skip https verification". See https://github.com/stripe/stripe-cli/issues/108#issuecomment-521806984" | True | Allow user to easily skip https verification on `listen` command - From customer:

"I use the extension to run the `listen` command, but then I cancel, press ctrl-p to get last command, and add --skip-verify, because I use https:// for my local server. Would be nice if I could tick a box to "skip https verification". See https://github.com/stripe/stripe-cli/issues/108#issuecomment-521806984" | non_priority | allow user to easily skip https verification on listen command from customer i use the extension to run the listen command but then i cancel press ctrl p to get last command and add skip verify because i use https for my local server would be nice if i could tick a box to skip https verification see | 0 |

129,070 | 18,070,769,057 | IssuesEvent | 2021-09-21 02:26:43 | JoePep09/WebGoat | https://api.github.com/repos/JoePep09/WebGoat | opened | CVE-2021-3805 (Medium) detected in object-path-0.9.2.tgz | security vulnerability | ## CVE-2021-3805 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>object-path-0.9.2.tgz</b></p></summary>

<p>Access deep properties using a path</p>

<p>Library home page: <a href="https://registry.npmjs.org/object-path/-/object-path-0.9.2.tgz">https://registry.npmjs.org/object-path/-/object-path-0.9.2.tgz</a></p>

<p>Path to dependency file: WebGoat/docs/package.json</p>

<p>Path to vulnerable library: WebGoat/docs/node_modules/object-path/package.json</p>

<p>

Dependency Hierarchy:

- browser-sync-2.26.3.tgz (Root Library)

- eazy-logger-3.0.2.tgz

- tfunk-3.1.0.tgz

- :x: **object-path-0.9.2.tgz** (Vulnerable Library)

<p>Found in base branch: <b>develop</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

object-path is vulnerable to Improperly Controlled Modification of Object Prototype Attributes ('Prototype Pollution')

<p>Publish Date: 2021-09-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-3805>CVE-2021-3805</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: N/A

- Attack Complexity: N/A

- Privileges Required: N/A

- User Interaction: N/A

- Scope: N/A

- Impact Metrics:

- Confidentiality Impact: N/A

- Integrity Impact: N/A

- Availability Impact: N/A

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://huntr.dev/bounties/571e3baf-7c46-46e3-9003-ba7e4e623053/">https://huntr.dev/bounties/571e3baf-7c46-46e3-9003-ba7e4e623053/</a></p>

<p>Release Date: 2021-09-17</p>

<p>Fix Resolution: object-path - 0.11.8</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2021-3805 (Medium) detected in object-path-0.9.2.tgz - ## CVE-2021-3805 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>object-path-0.9.2.tgz</b></p></summary>

<p>Access deep properties using a path</p>

<p>Library home page: <a href="https://registry.npmjs.org/object-path/-/object-path-0.9.2.tgz">https://registry.npmjs.org/object-path/-/object-path-0.9.2.tgz</a></p>

<p>Path to dependency file: WebGoat/docs/package.json</p>

<p>Path to vulnerable library: WebGoat/docs/node_modules/object-path/package.json</p>

<p>

Dependency Hierarchy:

- browser-sync-2.26.3.tgz (Root Library)

- eazy-logger-3.0.2.tgz

- tfunk-3.1.0.tgz

- :x: **object-path-0.9.2.tgz** (Vulnerable Library)

<p>Found in base branch: <b>develop</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

object-path is vulnerable to Improperly Controlled Modification of Object Prototype Attributes ('Prototype Pollution')

<p>Publish Date: 2021-09-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-3805>CVE-2021-3805</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: N/A

- Attack Complexity: N/A

- Privileges Required: N/A

- User Interaction: N/A

- Scope: N/A

- Impact Metrics:

- Confidentiality Impact: N/A

- Integrity Impact: N/A

- Availability Impact: N/A

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://huntr.dev/bounties/571e3baf-7c46-46e3-9003-ba7e4e623053/">https://huntr.dev/bounties/571e3baf-7c46-46e3-9003-ba7e4e623053/</a></p>

<p>Release Date: 2021-09-17</p>

<p>Fix Resolution: object-path - 0.11.8</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_priority | cve medium detected in object path tgz cve medium severity vulnerability vulnerable library object path tgz access deep properties using a path library home page a href path to dependency file webgoat docs package json path to vulnerable library webgoat docs node modules object path package json dependency hierarchy browser sync tgz root library eazy logger tgz tfunk tgz x object path tgz vulnerable library found in base branch develop vulnerability details object path is vulnerable to improperly controlled modification of object prototype attributes prototype pollution publish date url a href cvss score details base score metrics exploitability metrics attack vector n a attack complexity n a privileges required n a user interaction n a scope n a impact metrics confidentiality impact n a integrity impact n a availability impact n a for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution object path step up your open source security game with whitesource | 0 |

59,807 | 24,879,034,168 | IssuesEvent | 2022-10-27 22:09:09 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | "Authenticate the app" section tells you to follow principle of least privilege, but doesn't do that itself | service-bus-messaging/svc triaged assigned-to-author docs-experience Pri1 | In the "Authenticate the app to Azure" section, in the "passwordless" alternative, it says

"The following example assigns the Azure Service Bus Data Owner role to your user account, which provides full access to Azure Service Bus resources. In a real scenario, follow the [Principle of Least Privilege](https://learn.microsoft.com/en-us/azure/active-directory/develop/secure-least-privileged-access)"

... so why doesn't it just say what the minimum things you need are? At the moment, this means it's making life harder for the people who are trying to do things properly: they will all have to do further searches to find the answer (good luck with that on a topic that you're just learning). And it's worth saying that I don't have permission to grant permissions on our Azure, so I can't even go and look what's in there. Which makes it very hard to ask the administrator to grant me permissions that I don't know.

I think I've found it here: https://learn.microsoft.com/en-us/azure/service-bus-messaging/service-bus-sas#rights-required-for-service-bus-operations

and it looks like I need "Send" to send messages and "Listen" to receive them; and "Manage" if I wanted to do admin-like things.

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: 443c9051-c55e-1202-7b75-ce86f6977017

* Version Independent ID: 677b6375-196f-fed8-7300-b9823c1031d5

* Content: [Get started with Azure Service Bus topics (.NET) - Azure Service Bus](https://learn.microsoft.com/en-us/azure/service-bus-messaging/service-bus-dotnet-how-to-use-topics-subscriptions?tabs=passwordless%2Croles-azure-portal%2Csign-in-azure-cli%2Cidentity-visual-studio#authenticate-the-app-to-azure)

* Content Source: [articles/service-bus-messaging/service-bus-dotnet-how-to-use-topics-subscriptions.md](https://github.com/MicrosoftDocs/azure-docs/blob/main/articles/service-bus-messaging/service-bus-dotnet-how-to-use-topics-subscriptions.md)

* Service: **service-bus-messaging**

* GitHub Login: @spelluru

* Microsoft Alias: **spelluru** | 1.0 | "Authenticate the app" section tells you to follow principle of least privilege, but doesn't do that itself - In the "Authenticate the app to Azure" section, in the "passwordless" alternative, it says

"The following example assigns the Azure Service Bus Data Owner role to your user account, which provides full access to Azure Service Bus resources. In a real scenario, follow the [Principle of Least Privilege](https://learn.microsoft.com/en-us/azure/active-directory/develop/secure-least-privileged-access)"

... so why doesn't it just say what the minimum things you need are? At the moment, this means it's making life harder for the people who are trying to do things properly: they will all have to do further searches to find the answer (good luck with that on a topic that you're just learning). And it's worth saying that I don't have permission to grant permissions on our Azure, so I can't even go and look what's in there. Which makes it very hard to ask the administrator to grant me permissions that I don't know.

I think I've found it here: https://learn.microsoft.com/en-us/azure/service-bus-messaging/service-bus-sas#rights-required-for-service-bus-operations

and it looks like I need "Send" to send messages and "Listen" to receive them; and "Manage" if I wanted to do admin-like things.

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: 443c9051-c55e-1202-7b75-ce86f6977017

* Version Independent ID: 677b6375-196f-fed8-7300-b9823c1031d5

* Content: [Get started with Azure Service Bus topics (.NET) - Azure Service Bus](https://learn.microsoft.com/en-us/azure/service-bus-messaging/service-bus-dotnet-how-to-use-topics-subscriptions?tabs=passwordless%2Croles-azure-portal%2Csign-in-azure-cli%2Cidentity-visual-studio#authenticate-the-app-to-azure)

* Content Source: [articles/service-bus-messaging/service-bus-dotnet-how-to-use-topics-subscriptions.md](https://github.com/MicrosoftDocs/azure-docs/blob/main/articles/service-bus-messaging/service-bus-dotnet-how-to-use-topics-subscriptions.md)

* Service: **service-bus-messaging**

* GitHub Login: @spelluru

* Microsoft Alias: **spelluru** | non_priority | authenticate the app section tells you to follow principle of least privilege but doesn t do that itself in the authenticate the app to azure section in the passwordless alternative it says the following example assigns the azure service bus data owner role to your user account which provides full access to azure service bus resources in a real scenario follow the so why doesn t it just say what the minimum things you need are at the moment this means it s making life harder for the people who are trying to do things properly they will all have to do further searches to find the answer good luck with that on a topic that you re just learning and it s worth saying that i don t have permission to grant permissions on our azure so i can t even go and look what s in there which makes it very hard to ask the administrator to grant me permissions that i don t know i think i ve found it here and it looks like i need send to send messages and listen to receive them and manage if i wanted to do admin like things document details ⚠ do not edit this section it is required for learn microsoft com ➟ github issue linking id version independent id content content source service service bus messaging github login spelluru microsoft alias spelluru | 0 |

349,797 | 31,831,810,835 | IssuesEvent | 2023-09-14 11:05:33 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachprod: azure.List should retry on "ExpiredAuthenticationToken" | C-bug X-stale A-roachprod no-issue-activity T-testeng O-cloudreport | The Azure provider currently uses the CLI authorizer (see `auth.NewAuthorizerFromCLI`), which essentially executes `az account get-access-token`. According to the official documentation [1], the returned auth token may be valid for as little as 5 minutes:

> The token will be valid for at least 5 minutes with the maximum at 60 minutes. If the subscription argument isn't specified, the current account is used.

Below, we see that VMs took longer than 5 minutes to start (see `SyncedCluster.Wait`). Subsequently, `cleanupFailedCreate` is invoked which in turn invokes `azure.List`. The latter fails because the auth token has already expired. Consequently, `DestroyCluster` is skipped, leaving the resources dangling.

```

07:23:24 cluster_cloud.go:155: stan-cldrprt23-Standard-E32s-v5-3228080358-125-1-0001 10.1.0.28 10.1.0.28 20.55.66.124

07:23:24 cluster_cloud.go:155: stan-cldrprt23-Standard-E32s-v5-3228080358-125-1-0002 10.1.0.29 10.1.0.29 20.55.66.159

07:23:24 cluster_cloud.go:155: stan-cldrprt23-Standard-E32s-v5-3228080358-125-1-0003 10.1.0.31 10.1.0.31 20.55.66.178

07:23:24 cluster_cloud.go:155: stan-cldrprt23-Standard-E32s-v5-3228080358-125-1-0004 10.1.0.30 10.1.0.30 20.55.66.160

stan-cldrprt23-Standard-E32s-v5-3228080358-125-1: waiting for nodes to start...

08:18:29 cluster_synced.go:775: 3: timed out after 5m

Cleaning up partially-created cluster (prev err: not all nodes booted successfully)

Error while cleaning up partially-created cluster: compute.VirtualMachinesClient#ListAll: Failure responding to request: StatusCode=401 -- Original Error: autorest/azure: Service returned an error. Status=401 Code="ExpiredAuthenticationToken" Message="The access token expiry UTC time '2/20/2022 8:14:57 AM' is earlier than current UTC time '2/20/2022 8:18:29 AM'."

Error: UNCLASSIFIED_PROBLEM: not all nodes booted successfully

(1) UNCLASSIFIED_PROBLEM

Wraps: (2) attached stack trace

-- stack trace:

| github.com/cockroachdb/cockroach/pkg/roachprod/install.(*SyncedCluster).Wait

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/pkg/roachprod/install/cluster_synced.go:780

| github.com/cockroachdb/cockroach/pkg/roachprod.SetupSSH

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/pkg/roachprod/roachprod.go:587

| github.com/cockroachdb/cockroach/pkg/roachprod.Create

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/pkg/roachprod/roachprod.go:1247

| main.glob..func1

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachprod/main.go:129

| main.wrap.func1

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachprod/main.go:68

| github.com/spf13/cobra.(*Command).execute

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/vendor/github.com/spf13/cobra/command.go:860

| github.com/spf13/cobra.(*Command).ExecuteC

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/vendor/github.com/spf13/cobra/command.go:974

| github.com/spf13/cobra.(*Command).Execute

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/vendor/github.com/spf13/cobra/command.go:902

| main.main

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachprod/main.go:988

| runtime.main

| /usr/local/go/src/runtime/proc.go:255

| runtime.goexit

| /usr/local/go/src/runtime/asm_amd64.s:1581

Wraps: (3) not all nodes booted successfully

```
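The retry behavior requested in the title can be sketched as follows. This is a minimal illustration of the pattern only, written in Python for brevity; roachprod itself is Go, and every name here (`ExpiredTokenError`, `call_with_token_refresh`, `FakeAzureClient`) is hypothetical rather than part of roachprod or the Azure SDK. It assumes the caller can detect the expired-token response and obtain a fresh token before retrying.

```python
import time


class ExpiredTokenError(Exception):
    """Hypothetical stand-in for a 401 whose code is ExpiredAuthenticationToken."""


def call_with_token_refresh(operation, refresh_token, max_attempts=2, backoff_seconds=0.0):
    """Run operation(); on an expired-token error, refresh the token and retry.

    Any other exception propagates immediately. If the final attempt also
    fails with an expired token, that error is re-raised to the caller.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except ExpiredTokenError:
            if attempt == max_attempts:
                raise
            refresh_token()
            time.sleep(backoff_seconds)


class FakeAzureClient:
    """Hypothetical client whose token starts out expired."""

    def __init__(self):
        self.token_valid = False
        self.refresh_calls = 0

    def refresh(self):
        # In a real client this would re-acquire a token from the authorizer.
        self.token_valid = True
        self.refresh_calls += 1

    def list_vms(self):
        if not self.token_valid:
            raise ExpiredTokenError("ExpiredAuthenticationToken")
        return ["node-0001", "node-0002"]


client = FakeAzureClient()
vms = call_with_token_refresh(client.list_vms, client.refresh)
print(vms)
```

With a wrapper of this shape around the list call, the cleanup path would get one more chance to enumerate and destroy the partially created cluster instead of leaving resources dangling.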

[1] https://docs.microsoft.com/en-us/cli/azure/account?view=azure-cli-latest

Epic: CRDB-10428

Jira issue: CRDB-13679 | 1.0 | roachprod: azure.List should retry on "ExpiredAuthenticationToken" - The Azure provider currently uses the CLI authorizer (see `auth.NewAuthorizerFromCLI`), which essentially executes `az account get-access-token`. According to the official documentation [1], the returned auth token may be valid for as little as 5 minutes:

> The token will be valid for at least 5 minutes with the maximum at 60 minutes. If the subscription argument isn't specified, the current account is used.

Below, we see that VMs took longer than 5 minutes to start (see `SyncedCluster.Wait`). Subsequently, `cleanupFailedCreate` is invoked which in turn invokes `azure.List`. The latter fails because the auth token has already expired. Consequently, `DestroyCluster` is skipped, leaving the resources dangling.

```

07:23:24 cluster_cloud.go:155: stan-cldrprt23-Standard-E32s-v5-3228080358-125-1-0001 10.1.0.28 10.1.0.28 20.55.66.124

07:23:24 cluster_cloud.go:155: stan-cldrprt23-Standard-E32s-v5-3228080358-125-1-0002 10.1.0.29 10.1.0.29 20.55.66.159

07:23:24 cluster_cloud.go:155: stan-cldrprt23-Standard-E32s-v5-3228080358-125-1-0003 10.1.0.31 10.1.0.31 20.55.66.178

07:23:24 cluster_cloud.go:155: stan-cldrprt23-Standard-E32s-v5-3228080358-125-1-0004 10.1.0.30 10.1.0.30 20.55.66.160

stan-cldrprt23-Standard-E32s-v5-3228080358-125-1: waiting for nodes to start...

08:18:29 cluster_synced.go:775: 3: timed out after 5m

Cleaning up partially-created cluster (prev err: not all nodes booted successfully)

Error while cleaning up partially-created cluster: compute.VirtualMachinesClient#ListAll: Failure responding to request: StatusCode=401 -- Original Error: autorest/azure: Service returned an error. Status=401 Code="ExpiredAuthenticationToken" Message="The access token expiry UTC time '2/20/2022 8:14:57 AM' is earlier than current UTC time '2/20/2022 8:18:29 AM'."

Error: UNCLASSIFIED_PROBLEM: not all nodes booted successfully

(1) UNCLASSIFIED_PROBLEM

Wraps: (2) attached stack trace

-- stack trace:

| github.com/cockroachdb/cockroach/pkg/roachprod/install.(*SyncedCluster).Wait

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/pkg/roachprod/install/cluster_synced.go:780

| github.com/cockroachdb/cockroach/pkg/roachprod.SetupSSH

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/pkg/roachprod/roachprod.go:587

| github.com/cockroachdb/cockroach/pkg/roachprod.Create

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/pkg/roachprod/roachprod.go:1247

| main.glob..func1

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachprod/main.go:129

| main.wrap.func1

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachprod/main.go:68

| github.com/spf13/cobra.(*Command).execute

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/vendor/github.com/spf13/cobra/command.go:860

| github.com/spf13/cobra.(*Command).ExecuteC

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/vendor/github.com/spf13/cobra/command.go:974

| github.com/spf13/cobra.(*Command).Execute

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/vendor/github.com/spf13/cobra/command.go:902

| main.main

| /home/srosenberg/go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachprod/main.go:988

| runtime.main

| /usr/local/go/src/runtime/proc.go:255

| runtime.goexit

| /usr/local/go/src/runtime/asm_amd64.s:1581

Wraps: (3) not all nodes booted successfully

```

[1] https://docs.microsoft.com/en-us/cli/azure/account?view=azure-cli-latest

Epic: CRDB-10428

Jira issue: CRDB-13679 | non_priority | roachprod azure list should retry on expiredauthenticationtoken azure provider currently uses cli authorizer see auth newauthorizerfromcli which essentially executes az account get access token the returned auth token can expire after minutes according to official doc the token will be valid for at least minutes with the maximum at minutes if the subscription argument isn t specified the current account is used below we see that vms took longer than minutes to start see syncedcluster wait subsequently cleanupfailedcreate is invoked which in turn invokes azure list the latter fails because the auth token has already expired consequently destroycluster is skipped leaving the resources dangling cluster cloud go stan standard cluster cloud go stan standard cluster cloud go stan standard cluster cloud go stan standard stan standard waiting for nodes to start cluster synced go timed out after cleaning up partially created cluster prev err not all nodes booted successfully error while cleaning up partially created cluster compute virtualmachinesclient listall failure responding to request statuscode original error autorest azure service returned an error status code expiredauthenticationtoken message the access token expiry utc time am is earlier than current utc time am error unclassified problem not all nodes booted successfully unclassified problem wraps attached stack trace stack trace github com cockroachdb cockroach pkg roachprod install syncedcluster wait home srosenberg go src github com cockroachdb cockroach pkg roachprod install cluster synced go github com cockroachdb cockroach pkg roachprod setupssh home srosenberg go src github com cockroachdb cockroach pkg roachprod roachprod go github com cockroachdb cockroach pkg roachprod create home srosenberg go src github com cockroachdb cockroach pkg roachprod roachprod go main glob home srosenberg go src github com cockroachdb cockroach pkg cmd roachprod main go main wrap home 
srosenberg go src github com cockroachdb cockroach pkg cmd roachprod main go github com cobra command execute home srosenberg go src github com cockroachdb cockroach vendor github com cobra command go github com cobra command executec home srosenberg go src github com cockroachdb cockroach vendor github com cobra command go github com cobra command execute home srosenberg go src github com cockroachdb cockroach vendor github com cobra command go main main home srosenberg go src github com cockroachdb cockroach pkg cmd roachprod main go runtime main usr local go src runtime proc go runtime goexit usr local go src runtime asm s wraps not all nodes booted successfully epic crdb jira issue crdb | 0 |

49,967 | 26,410,678,994 | IssuesEvent | 2023-01-13 11:58:11 | conda-forge/status | https://api.github.com/repos/conda-forge/status | closed | Feedstock uploads need token reset | degraded performance | Our admin migrations bots lost a significant number of feedstock tokens, disabling uploads of feedstock builds. If your feedstock is affected, please send a request for a token reset to <a href="https://github.com/conda-forge/admin-requests">conda-forge/admin-requests</a>. We are working to reset the tokens ourselves but do not know when this will be complete. | True | Feedstock uploads need token reset - Our admin migrations bots lost a significant number of feedstock tokens, disabling uploads of feedstock builds. If your feedstock is affected, please send a request for a token reset to <a href="https://github.com/conda-forge/admin-requests">conda-forge/admin-requests</a>. We are working to reset the tokens ourselves but do not know when this will be complete. | non_priority | feedstock uploads need token reset our admin migrations bots lost a significant number of feedstock tokens disabling uploads of feedstock builds if your feedstock is affected please send a request for a token reset to a href we are working to reset the tokens ourselves but do not know when this will be complete | 0 |

212,284 | 16,437,892,826 | IssuesEvent | 2021-05-20 11:21:44 | ethersphere/bee | https://api.github.com/repos/ethersphere/bee | closed | TestDelivery is flaking | flaky-test | ```

ok github.com/ethersphere/bee/pkg/resolver/multiresolver/multierror 0.030s

=== RUN TestDelivery

##[error] retrieval_test.go:133: unexpected balance on server. want 10 got 0

--- FAIL: TestDelivery (0.00s)

FAIL

```

from the CI | 1.0 | TestDelivery is flaking - ```

ok github.com/ethersphere/bee/pkg/resolver/multiresolver/multierror 0.030s

=== RUN TestDelivery

##[error] retrieval_test.go:133: unexpected balance on server. want 10 got 0

--- FAIL: TestDelivery (0.00s)

FAIL

```

from the CI | non_priority | testdelivery is flaking ok github com ethersphere bee pkg resolver multiresolver multierror run testdelivery retrieval test go unexpected balance on server want got fail testdelivery fail from the ci | 0 |

180,748 | 6,652,990,981 | IssuesEvent | 2017-09-29 05:58:31 | ocf/slackbridge | https://api.github.com/repos/ocf/slackbridge | closed | Make IRC bots show as away when the Slack user is inactive | enhancement medium-priority | Using [this API method](https://api.slack.com/events/presence_change), it could probably be done. | 1.0 | Make IRC bots show as away when the Slack user is inactive - Using [this API method](https://api.slack.com/events/presence_change), it could probably be done. | priority | make irc bots show as away when the slack user is inactive using it could probably be done | 1 |

257,096 | 27,561,768,595 | IssuesEvent | 2023-03-07 22:45:10 | samqws-marketing/walmartlabs-concord | https://api.github.com/repos/samqws-marketing/walmartlabs-concord | closed | CVE-2022-24785 (High) detected in moment-2.24.0.tgz - autoclosed | security vulnerability | ## CVE-2022-24785 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>moment-2.24.0.tgz</b></p></summary>

<p>Parse, validate, manipulate, and display dates</p>

<p>Library home page: <a href="https://registry.npmjs.org/moment/-/moment-2.24.0.tgz">https://registry.npmjs.org/moment/-/moment-2.24.0.tgz</a></p>

<p>Path to dependency file: /console2/package.json</p>

<p>Path to vulnerable library: /console2/node_modules/moment/package.json</p>

<p>

Dependency Hierarchy:

- semantic-ui-calendar-react-0.15.3.tgz (Root Library)

- :x: **moment-2.24.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/samqws-marketing/walmartlabs-concord/commit/b9420f3b9e73a9d381266ece72f7afb756f35a76">b9420f3b9e73a9d381266ece72f7afb756f35a76</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Moment.js is a JavaScript date library for parsing, validating, manipulating, and formatting dates. A path traversal vulnerability impacts npm (server) users of Moment.js between versions 1.0.1 and 2.29.1, especially if a user-provided locale string is directly used to switch moment locale. This problem is patched in 2.29.2, and the patch can be applied to all affected versions. As a workaround, sanitize the user-provided locale name before passing it to Moment.js.

<p>Publish Date: 2022-04-04

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-24785>CVE-2022-24785</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/moment/moment/security/advisories/GHSA-8hfj-j24r-96c4">https://github.com/moment/moment/security/advisories/GHSA-8hfj-j24r-96c4</a></p>

<p>Release Date: 2022-04-04</p>

<p>Fix Resolution: moment - 2.29.2</p>

</p>

</details>

<p></p>

| True | CVE-2022-24785 (High) detected in moment-2.24.0.tgz - autoclosed - ## CVE-2022-24785 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>moment-2.24.0.tgz</b></p></summary>

<p>Parse, validate, manipulate, and display dates</p>

<p>Library home page: <a href="https://registry.npmjs.org/moment/-/moment-2.24.0.tgz">https://registry.npmjs.org/moment/-/moment-2.24.0.tgz</a></p>

<p>Path to dependency file: /console2/package.json</p>

<p>Path to vulnerable library: /console2/node_modules/moment/package.json</p>

<p>

Dependency Hierarchy:

- semantic-ui-calendar-react-0.15.3.tgz (Root Library)

- :x: **moment-2.24.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/samqws-marketing/walmartlabs-concord/commit/b9420f3b9e73a9d381266ece72f7afb756f35a76">b9420f3b9e73a9d381266ece72f7afb756f35a76</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Moment.js is a JavaScript date library for parsing, validating, manipulating, and formatting dates. A path traversal vulnerability impacts npm (server) users of Moment.js between versions 1.0.1 and 2.29.1, especially if a user-provided locale string is directly used to switch moment locale. This problem is patched in 2.29.2, and the patch can be applied to all affected versions. As a workaround, sanitize the user-provided locale name before passing it to Moment.js.

<p>Publish Date: 2022-04-04

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-24785>CVE-2022-24785</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/moment/moment/security/advisories/GHSA-8hfj-j24r-96c4">https://github.com/moment/moment/security/advisories/GHSA-8hfj-j24r-96c4</a></p>

<p>Release Date: 2022-04-04</p>

<p>Fix Resolution: moment - 2.29.2</p>

</p>

</details>

<p></p>

| non_priority | cve high detected in moment tgz autoclosed cve high severity vulnerability vulnerable library moment tgz parse validate manipulate and display dates library home page a href path to dependency file package json path to vulnerable library node modules moment package json dependency hierarchy semantic ui calendar react tgz root library x moment tgz vulnerable library found in head commit a href found in base branch master vulnerability details moment js is a javascript date library for parsing validating manipulating and formatting dates a path traversal vulnerability impacts npm server users of moment js between versions and especially if a user provided locale string is directly used to switch moment locale this problem is patched in and the patch can be applied to all affected versions as a workaround sanitize the user provided locale name before passing it to moment js publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact high availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution moment | 0 |

830,663 | 32,020,446,121 | IssuesEvent | 2023-09-22 03:46:17 | dimensionhq/infralink | https://api.github.com/repos/dimensionhq/infralink | reopened | `deploy` Command | todo high-priority | This deploys the stack required to host your application using the previously generated terraform configuration.

This step is also responsible for uploading the required Docker images that were previously generated and hosting it on the cloud provider of choice. | 1.0 | `deploy` Command - This deploys the stack required to host your application using the previously generated terraform configuration.

This step is also responsible for uploading the required Docker images that were previously generated and hosting it on the cloud provider of choice. | priority | deploy command this deploys the stack required to host your application using the previously generated terraform configuration this step is also responsible for uploading the required docker images that were previously generated and hosting it on the cloud provider of choice | 1 |

86,397 | 3,711,498,949 | IssuesEvent | 2016-03-02 10:35:16 | CS2103jan2016-w13-3j/main | https://api.github.com/repos/CS2103jan2016-w13-3j/main | opened | Storage unit to store the completed tasks into a separate file | priority.medium type.enhancement | ...so that the file reading process speeds up. | 1.0 | Storage unit to store the completed tasks into a separate file - ...so that the file reading process speeds up. | priority | storage unit to store the completed tasks into a separate file so that the file reading process speeds up | 1 |

295,145 | 25,457,934,428 | IssuesEvent | 2022-11-24 15:41:52 | Jamesflynn1/CS344-Opponent-Modelling-Poker | https://api.github.com/repos/Jamesflynn1/CS344-Opponent-Modelling-Poker | closed | Define what game exactly is being used for testing | Project Management Research Testing | What Poker ruleset? How many players? How many rounds? What kind of opponents?

### ANSWER

What I am doing:

Limit Texas Hold 'em (stretch goal would be the full NL HE but this is almost certainly intractable on DCS machines and 2 days of compute time).

2 players: this is the minimum number of players we can choose; increasing it would again add a lot of complexity to the project. My resources are limited to the DCS batch compute system.

Computer opponents: this allows for efficient learning and circumvents potential ethical and administrative issues in running such tests. See #17 for more details. | 1.0 | Define what game exactly is being used for testing - What Poker ruleset? How many players? How many rounds? What kind of opponents?

### ANSWER

What I am doing:

Limit Texas Hold 'em (stretch goal would be the full NL HE but this is almost certainly intractable on DCS machines and 2 days of compute time).

2 players: this is the minimum number of players we can choose; increasing it would again add a lot of complexity to the project. My resources are limited to the DCS batch compute system.

Computer opponents: this allows for efficient learning and circumvents potential ethical and administrative issues in running such tests. See #17 for more details. | non_priority | define what game exactly is being used for testing what poker ruleset how many players how many rounds what kind of opponents answer what i am doing limit texas hold em stretch goal would be the full nl he but this is almost certainly intractable on dcs machines and days of compute time player this is the minimum number of players that we can chose increasing this number would again add alot of complexity to the project i have resources limited to the dcs batch compute system computer opponents this will allow for efficient learning and circuments potential ethical issues and admin issues in administering such tests see for more details | 0 |

648,891 | 21,212,551,733 | IssuesEvent | 2022-04-11 01:51:32 | EeeeG-Inc/OKR-web-app | https://api.github.com/repos/EeeeG-Inc/OKR-web-app | opened | Allow the app name to be set from the settings screen | priority: low | <img width="1248" alt="Screenshot 2022-04-11 10 49 57" src="https://user-images.githubusercontent.com/27577954/162651894-c639a25a-4fd5-44f9-92fd-42379c161ea8.png">

The app name is currently read from the value in `.env`; for the app, it would be better if it could be referenced from the settings screen.

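| 1.0 | Allow the app name to be set from the settings screen - <img width="1248" alt="Screenshot 2022-04-11 10 49 57" src="https://user-images.githubusercontent.com/27577954/162651894-c639a25a-4fd5-44f9-92fd-42379c161ea8.png">

The app name is currently read from the value in `.env`; for the app, it would be better if it could be referenced from the settings screen.

| priority | allow the app name to be set from the settings screen img width alt screenshot src the app name is currently read from the value in env for the app it would be better if it could be referenced from the settings screen | 1 |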

673,184 | 22,951,599,253 | IssuesEvent | 2022-07-19 07:56:35 | celo-org/celo-monorepo | https://api.github.com/repos/celo-org/celo-monorepo | closed | [Protocol Economics] Cap on cUSD CP-DOTO tank size | economics Priority: P2 stablecoin-issuance | ### Expected Behavior

As a safeguard against oracle manipulation or other issues related to an incorrect cUSD/CELO exchange rate, there should be a cap on the cUSD tank size. The rationale is that if other safeguards fail, the impact is limited

### Current Behavior

There is no cap.

### Definition of Done

- We have a design doc detailing why the cap is necessary and how we come up with a value for it

- Cap is implemented and live on mainnet.

| 1.0 | [Protocol Economics] Cap on cUSD CP-DOTO tank size - ### Expected Behavior

As a safeguard against oracle manipulation or other issues related to an incorrect cUSD/CELO exchange rate, there should be a cap on the cUSD tank size. The rationale is that if other safeguards fail, the impact is limited

### Current Behavior

There is no cap.

### Definition of Done

- We have a design doc detailing why the cap is necessary and how we come up with a value for it

- Cap is implemented and live on mainnet.

| priority | cap on cusd cp doto tank size expected behavior as a safeguard against oracle manipulation or other issues related to an incorrect cusd celo exchange rate there should be a cap on the cusd tank size the rationale is that if other safeguards fail the impact is limited current behavior there is no cap definition of done we have a design doc detailing why the cap is necessary and how we come up with a value for it cap is implemented and live on mainnet | 1 |

148,117 | 11,838,976,340 | IssuesEvent | 2020-03-23 16:26:45 | istio/istio | https://api.github.com/repos/istio/istio | closed | StartupProbe's are removed from Deployments | area/config area/test and release area/user experience lifecycle/needs-triage | **Bug description**

When a Deployment is created in Kubernetes with a StartupProbe included, the sidecar injector service (I believe), which rewrites the readiness/liveness probes, removes the StartupProbe. I think this issue is related to https://github.com/istio/istio/issues/19324.

**Expected behavior**

StartupProbes are able to be set against a Deployment

**Steps to reproduce the bug**

Create a Deployment with a StartupProbe set, check the created Deployment, and notice the StartupProbe is no longer there.

**Version (include the output of `istioctl version --remote` and `kubectl version` and `helm version` if you used Helm)**

```

> istioctl version --remote

client version: 1.4.3

citadel version: 1.4.3

citadel version: 1.4.3

citadel version: 1.4.3

galley version: 1.4.3

galley version: 1.4.3

galley version: 1.4.3

ingressgateway version: 1.4.3

ingressgateway version: 1.4.3

ingressgateway version: 1.4.3

nodeagent version:

nodeagent version:

nodeagent version:

pilot version: 1.4.3

pilot version: 1.4.3

pilot version: 1.4.3

policy version: 1.4.3

policy version: 1.4.3

policy version: 1.4.3

sidecar-injector version: 1.4.3

sidecar-injector version: 1.4.3

sidecar-injector version: 1.4.3

telemetry version: 1.4.3

telemetry version: 1.4.3

telemetry version: 1.4.3

data plane version: 1.4.3 (32 proxies)

```

**How was Istio installed?**

Legacy shipped helm charts inside the istio/istio repository

**Environment where bug was observed (cloud vendor, OS, etc)**

Kubernetes v1.17.0 running on CoreOS virtualised inside VMware | 1.0 | StartupProbe's are removed from Deployments - **Bug description**

When a Deployment is created in Kubernetes with a StartupProbe included, the sidecar injector service (I believe), which rewrites the readiness/liveness probes, removes the StartupProbe. I think this issue is related to https://github.com/istio/istio/issues/19324.

**Expected behavior**

StartupProbes are able to be set against a Deployment

**Steps to reproduce the bug**

Create a Deployment with a StartupProbe set, check the created Deployment, and notice the StartupProbe is no longer there.

**Version (include the output of `istioctl version --remote` and `kubectl version` and `helm version` if you used Helm)**

```

> istioctl version --remote

client version: 1.4.3

citadel version: 1.4.3

citadel version: 1.4.3

citadel version: 1.4.3

galley version: 1.4.3

galley version: 1.4.3

galley version: 1.4.3

ingressgateway version: 1.4.3

ingressgateway version: 1.4.3

ingressgateway version: 1.4.3

nodeagent version:

nodeagent version:

nodeagent version:

pilot version: 1.4.3

pilot version: 1.4.3

pilot version: 1.4.3

policy version: 1.4.3

policy version: 1.4.3

policy version: 1.4.3

sidecar-injector version: 1.4.3

sidecar-injector version: 1.4.3

sidecar-injector version: 1.4.3

telemetry version: 1.4.3

telemetry version: 1.4.3

telemetry version: 1.4.3

data plane version: 1.4.3 (32 proxies)

```

**How was Istio installed?**

Legacy shipped helm charts inside the istio/istio repository

**Environment where bug was observed (cloud vendor, OS, etc)**

Kubernetes v1.17.0 running on CoreOS virtualised inside VMware | non_priority | startupprobe s are removed from deployments bug description when creating a deployment in kubernetes with a startupprobe included the as i believe sidecar injector service which rewrites the readiness liveness probes will remove the startupprobe i think this issue is related to expected behavior startupprobes are able to be set against a deployment steps to reproduce the bug create a deployment with a startupprobe set check the created deployment and notice the startupprobe is no longer then version include the output of istioctl version remote and kubectl version and helm version if you used helm istioctl version remote client version citadel version citadel version citadel version galley version galley version galley version ingressgateway version ingressgateway version ingressgateway version nodeagent version nodeagent version nodeagent version pilot version pilot version pilot version policy version policy version policy version sidecar injector version sidecar injector version sidecar injector version telemetry version telemetry version telemetry version data plane version proxies how was istio installed legacy shipped helm charts inside the istio istio repository environment where bug was observed cloud vendor os etc kubernetes running on coreos virtualised inside vmware | 0 |

377,132 | 11,164,581,225 | IssuesEvent | 2019-12-27 05:45:22 | space-wizards/RobustToolbox | https://api.github.com/repos/space-wizards/RobustToolbox | closed | Transforms should be linked to their grid | Area: Entities Priority: 2-medium Type: Refactor | Transforms should be made relative to a grid when a transform is located on that grid, and when a transform moves off that grid it should switch to using world space instead.
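
The idea can be illustrated with a minimal sketch, which is not RobustToolbox code: the `Grid` and `Transform` classes and their fields are hypothetical names invented for this example, and rotation is ignored for brevity. It assumes a transform stores a position relative to its grid while attached, and a world-space position otherwise.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Vec = Tuple[float, float]


@dataclass
class Grid:
    """Hypothetical grid; `origin` is its position in world space."""
    origin: Vec


@dataclass
class Transform:
    """Hypothetical transform; `local` is grid-relative while attached."""
    local: Vec
    grid: Optional[Grid] = None

    def world_position(self) -> Vec:
        # Off-grid transforms already store world-space coordinates.
        if self.grid is None:
            return self.local
        return (self.grid.origin[0] + self.local[0],
                self.grid.origin[1] + self.local[1])

    def attach(self, grid: Grid) -> None:
        # Keep the world position fixed; re-express it relative to the grid.
        wx, wy = self.world_position()
        self.grid = grid
        self.local = (wx - grid.origin[0], wy - grid.origin[1])

    def detach(self) -> None:
        # Leaving the grid: switch back to world-space coordinates.
        self.local = self.world_position()
        self.grid = None


grid = Grid(origin=(10.0, 0.0))
entity = Transform(local=(1.0, 2.0), grid=grid)
grid.origin = (20.0, 5.0)       # move the grid only
print(entity.local)             # (1.0, 2.0): the stored transform is untouched
print(entity.world_position())  # (21.0, 7.0): the entity moved with the grid
```

In this sketch, moving a grid costs one assignment no matter how many transforms sit on it, because nothing stored on the entities changes.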

What that allows: Grids themselves become able to move without affecting the transform of anything on the grid itself. | 1.0 | Transforms should be linked to their grid - Transforms should be made relative to a grid when a transform is located on that grid, and when a transform moves off that grid it should switch to using world space instead.

What that allows: Grids themselves become able to move without affecting the transform of anything on the grid itself. | priority | transforms should be linked to their grid transforms should be made relative to a grid when a transform is located on that grid and when a transform moves off that grid it should switch to using world space instead what that allows grids themselves become able to move without affecting the transform of anything on the grid itself | 1 |

15,204 | 19,278,265,956 | IssuesEvent | 2021-12-10 14:24:03 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | opened | Upgrading from 20.3 to 21.3: Partition key cannot contain non-deterministic functions, but contains function now | backward compatibility | **Describe the issue**

When non-deterministic function is used in 20.3, upgrade to 21.3 can't be performed

**How to reproduce**

* When upgrading from 20.3.21.2 to 21.3.18.4

* Interface: clickhouse-client

* No unusual settings (used config.xml and users.xml from [20.3](https://github.com/ClickHouse/ClickHouse/tree/20.3/programs/server) without any other files)

Do this on 20.3:

* `CREATE TABLE test_coalescence (id Int, name String) Engine=MergeTree() ORDER BY id PARTITION BY toDate(coalesce(id, now()));`

* `INSERT INTO test_coalescence VALUES (5, 'hello')`

Upgrade to 21.3, start the server, and select:

* `INSERT INTO test_coalescence`

**Error message and/or stacktrace**

```

2021.12.10 17:09:04.517045 [ 4509 ] {} <Error> Application: Caught exception while loading metadata: Code: 36, e.displayText() = DB::Exception: Partition key cannot contain non-deterministic functions, but contains function now: Cannot attach table `default`.`test_coalescence` from metadata file /var/lib/clickhouse/metadata/default/test_coalescence.sql from query ATTACH TABLE default.test_coalescence (`id` Int32, `name` String) ENGINE = MergeTree PARTITION BY toDate(coalesce(id, now())) ORDER BY id SETTINGS index_granularity = 8192: while loading database `default` from path /var/lib/clickhouse/metadata/default, Stack trace (when copying this message, always include the lines below):

0. ? @ 0xf39fb87 in /usr/bin/clickhouse

1. DB::MergeTreeData::checkPartitionKeyAndInitMinMax(DB::KeyDescription const&) @ 0xf39b74d in /usr/bin/clickhouse

2. DB::MergeTreeData::MergeTreeData(DB::StorageID const&, std::__1::basic_string<char, std::__1::char_traits<char>, std::__1::allocator<char> > const&, DB::StorageInMemoryMetadata const&, DB::Context&, std::__1::basic_string<char, std::__1::char_traits<char>, std::__1::allocator<char> > const&, DB::MergeTreeData::MergingParams const&, std::__1::unique_ptr<DB::MergeTreeSettings, std::__1::default_delete<DB::MergeTreeSettings> >, bool, bool, std::__1::function<void (std::__1::basic_string<char, std::__1::char_traits<char>, std::__1::allocator<char> > const&)>) @ 0xf396435 in /usr/bin/clickhouse

3. DB::StorageMergeTree::StorageMergeTree(DB::StorageID const&, std::__1::basic_string<char, std::__1::char_traits<char>, std::__1::allocator<char> > const&, DB::StorageInMemoryMetadata const&, bool, DB::Context&, std::__1::basic_string<char, std::__1::char_traits<char>, std::__1::allocator<char> > const&, DB::MergeTreeData::MergingParams const&, std::__1::unique_ptr<DB::MergeTreeSettings, std::__1::default_delete<DB::MergeTreeSettings> >, bool) @ 0xf16170e in /usr/bin/clickhouse

4. ? @ 0xf59730d in /usr/bin/clickhouse

5. DB::StorageFactory::get(DB::ASTCreateQuery const&, std::__1::basic_string<char, std::__1::char_traits<char>, std::__1::allocator<char> > const&, DB::Context&, DB::Context&, DB::ColumnsDescription const&, DB::ConstraintsDescription const&, bool) const @ 0xf0dbe19 in /usr/bin/clickhouse

6. DB::createTableFromAST(DB::ASTCreateQuery, std::__1::basic_string<char, std::__1::char_traits<char>, std::__1::allocator<char> > const&, std::__1::basic_string<char, std::__1::char_traits<char>, std::__1::allocator<char> > const&, DB::Context&, bool) @ 0xe782789 in /usr/bin/clickhouse

7. ? @ 0xe781911 in /usr/bin/clickhouse

8. ThreadPoolImpl<ThreadFromGlobalPool>::worker(std::__1::__list_iterator<ThreadFromGlobalPool, void*>) @ 0x853bac8 in /usr/bin/clickhouse

9. ThreadFromGlobalPool::ThreadFromGlobalPool<void ThreadPoolImpl<ThreadFromGlobalPool>::scheduleImpl<void>(std::__1::function<void ()>, int, std::__1::optional<unsigned long>)::'lambda1'()>(void&&, void ThreadPoolImpl<ThreadFromGlobalPool>::scheduleImpl<void>(std::__1::function<void ()>, int, std::__1::optional<unsigned long>)::'lambda1'()&&...)::'lambda'()::operator()() @ 0x853d4df in /usr/bin/clickhouse

10. ThreadPoolImpl<std::__1::thread>::worker(std::__1::__list_iterator<std::__1::thread, void*>) @ 0x853911f in /usr/bin/clickhouse

11. ? @ 0x853c593 in /usr/bin/clickhouse

12. start_thread @ 0x76db in /lib/x86_64-linux-gnu/libpthread-2.27.so

13. __clone @ 0x12171f in /lib/x86_64-linux-gnu/libc-2.27.so

(version 21.3.18.4 (official build))

```

| True | Upgrading from 20.3 to 21.3: Partition key cannot contain non-deterministic functions, but contains function now - **Describe the issue**

When a non-deterministic function is used in a partition key on 20.3, the upgrade to 21.3 cannot be performed: the server fails to load the table's metadata.

**How to reproduce**

* When upgrading from 20.3.21.2 to 21.3.18.4

* Interface: clickhouse-client

* No unusual settings (used config.xml and users.xml from [20.3](https://github.com/ClickHouse/ClickHouse/tree/20.3/programs/server) without any other files)

Do this on 20.3:

* `CREATE TABLE test_coalescence (id Int, name String) Engine=MergeTree() ORDER BY id PARTITION BY toDate(coalesce(id, now()));`

* `INSERT INTO test_coalescence VALUES (5, 'hello')`

Upgrade to 21.3, start the server, and select:

* `INSERT INTO test_coalescence`

**Error message and/or stacktrace**

```