Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 1k | labels stringlengths 4 1.38k | body stringlengths 1 262k | index stringclasses 16 values | text_combine stringlengths 96 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

129,980 | 17,950,361,115 | IssuesEvent | 2021-09-12 16:00:10 | decred/politeiagui | https://api.github.com/repos/decred/politeiagui | closed | [redesign] Post-vote instructions | Needs design specs | When proposal successfully votes, instruct its owner what to do next. For example, send him an email linking to a relevant docs page that tells how and when to invoice. Open an issue in dcrdocs for that page.

Later we may also add some guidance what to do if proposal failed. E.g. if it failed at 40-50%, it means the... | 1.0 | [redesign] Post-vote instructions - When proposal successfully votes, instruct its owner what to do next. For example, send him an email linking to a relevant docs page that tells how and when to invoice. Open an issue in dcrdocs for that page.

Later we may also add some guidance what to do if proposal failed. E.g. ... | non_priority | post vote instructions when proposal successfully votes instruct its owner what to do next for example send him an email linking to a relevant docs page that tells how and when to invoice open an issue in dcrdocs for that page later we may also add some guidance what to do if proposal failed e g if it fai... | 0 |

29,058 | 5,515,183,470 | IssuesEvent | 2017-03-17 16:48:48 | EOxServer/eoxserver | https://api.github.com/repos/EOxServer/eoxserver | opened | wrong assertion in xmltools.py | Priority: trivial Type: defect | After the recent 0.4 merges the Django commands started complaining about the wrong assertion:

```

/usr/local/eoxserver/eoxserver/core/util/xmltools.py:144: SyntaxWarning: assertion is always true, perhaps remove parentheses?

```

The actual code was added in cef1df1a5a065619473c393cf417158588f2bd75 and it does:

``... | 1.0 | wrong assertion in xmltools.py - After the recent 0.4 merges the Django commands started complaining about the wrong assertion:

```

/usr/local/eoxserver/eoxserver/core/util/xmltools.py:144: SyntaxWarning: assertion is always true, perhaps remove parentheses?

```

The actual code was added in cef1df1a5a065619473c393c... | non_priority | wrong assertion in xmltools py after the recent merges the django commands started complaining about the wrong assertion usr local eoxserver eoxserver core util xmltools py syntaxwarning assertion is always true perhaps remove parentheses the actual code was added in and it does a... | 0 |

596,014 | 18,094,375,723 | IssuesEvent | 2021-09-22 07:22:46 | elan-ev/opencast-editor | https://api.github.com/repos/elan-ev/opencast-editor | closed | Screen reader error in "Save and Process" | bug accessibility priority:low | The button "Start processing" is read two times. Also the buttons are weirdly named. -Wien | 1.0 | Screen reader error in "Save and Process" - The button "Start processing" is read two times. Also the buttons are weirdly named. -Wien | priority | screen reader error in save and process the button start processing is read two times also the buttons are weirdly named wien | 1 |

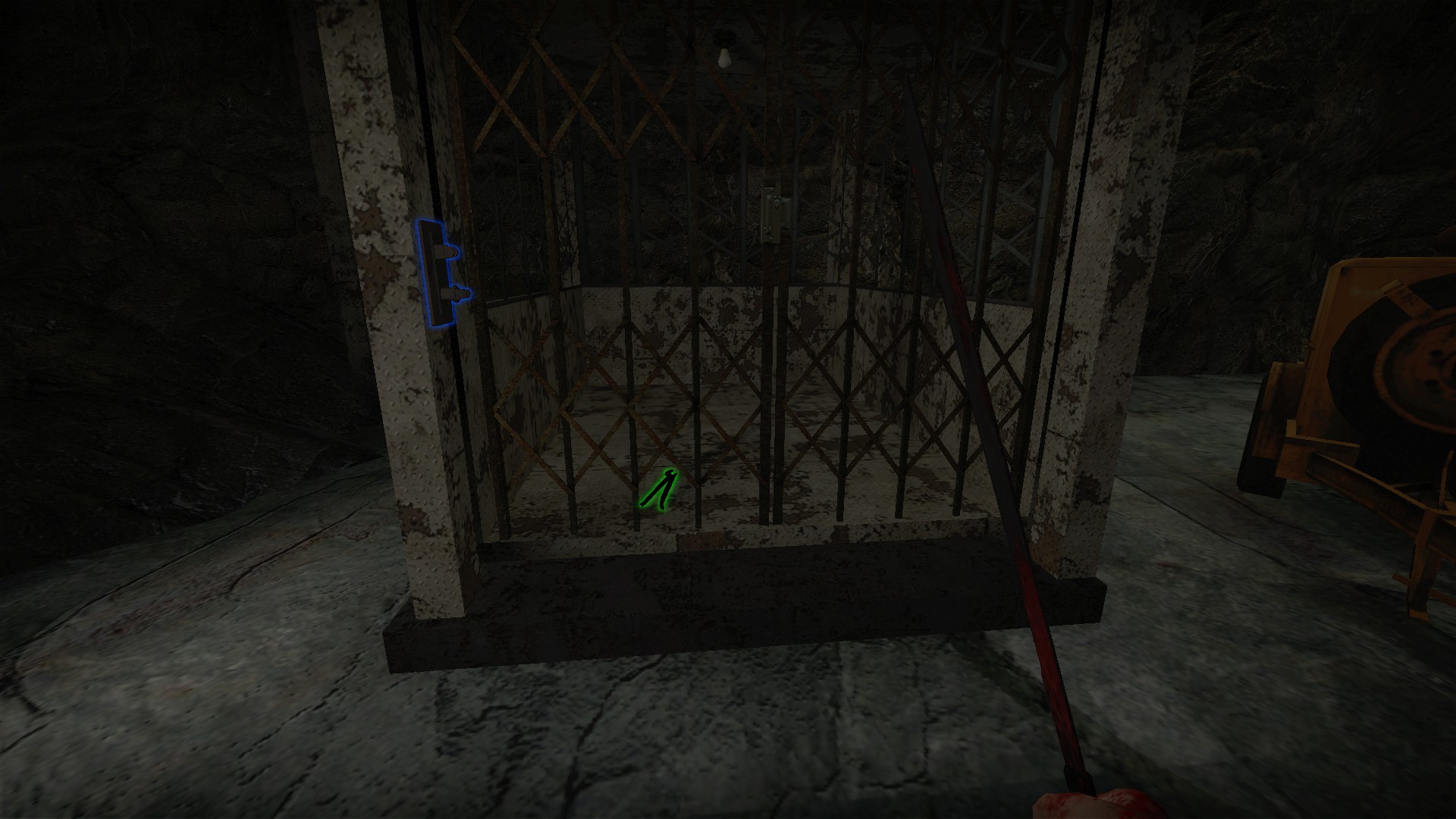

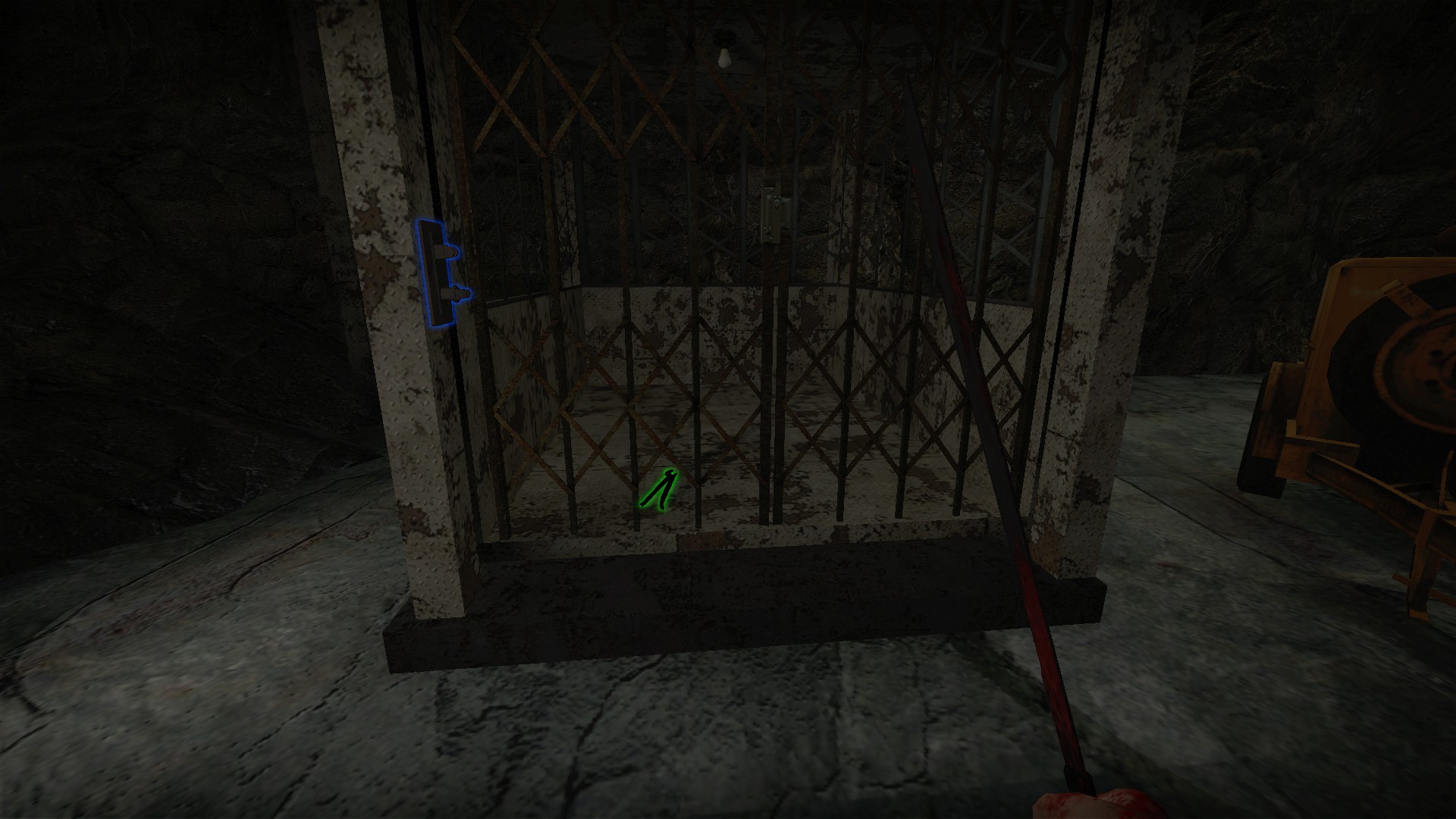

116,465 | 4,702,590,496 | IssuesEvent | 2016-10-13 03:07:42 | Kaezon/Unreal-SCP | https://api.github.com/repos/Kaezon/Unreal-SCP | opened | Add obstruction check to inSight | Blueprint enhancement High Priority | Currently, SCP-173 cannot move if the player is looking at it regardless of whether something is in the way or not.

@asegavac suggested doing some ray casting as a cheap way to check for obstructions. | 1.0 | Add obstruction check to inSight - Currently, SCP-173 cannot move if the player is looking at it regardless of whether something is in the way or not.

@asegavac suggested doing some ray casting as a cheap way to check for obstructions. | priority | add obstruction check to insight currently scp cannot move if the player is looking at it regardless of whether something is in the way or not asegavac suggested doing some ray casting as a cheap way to check for obstructions | 1 |

351,683 | 10,522,092,724 | IssuesEvent | 2019-09-30 07:55:38 | mozilla/addons-server | https://api.github.com/repos/mozilla/addons-server | closed | `docker-compose up -d` should not start addons-frontend and selenium/firefox | env: local dev priority: p3 state: stale | ### Describe the problem and steps to reproduce it:

See the install steps at: https://addons-server.readthedocs.io/en/latest/topics/install/docker.html

### What happened?

The addons-frontend and selenium-firefox containers are started when running `docker-compose up [-d]`.

### What did you expect to happen?... | 1.0 | `docker-compose up -d` should not start addons-frontend and selenium/firefox - ### Describe the problem and steps to reproduce it:

See the install steps at: https://addons-server.readthedocs.io/en/latest/topics/install/docker.html

### What happened?

The addons-frontend and selenium-firefox containers are start... | priority | docker compose up d should not start addons frontend and selenium firefox describe the problem and steps to reproduce it see the install steps at what happened the addons frontend and selenium firefox containers are started when running docker compose up what did you expect to hap... | 1 |

3,062 | 2,536,312,035 | IssuesEvent | 2015-01-26 12:59:12 | nlbdev/nordic-epub3-dtbook-migrator | https://api.github.com/repos/nlbdev/nordic-epub3-dtbook-migrator | opened | Make nordic13a work for multi-document fileset | 2 - High priority bug epub3-validator | ("All books must have frontmatter and bodymatter")

This rule currently only works for the single-HTML representation.

nordic13b might need a similar update. | 1.0 | Make nordic13a work for multi-document fileset - ("All books must have frontmatter and bodymatter")

This rule currently only works for the single-HTML representation.

nordic13b might need a similar update. | priority | make work for multi document fileset all books must have frontmatter and bodymatter this rule currently only works for the single html representation might need a similar update | 1 |

225,131 | 7,478,593,571 | IssuesEvent | 2018-04-04 12:13:43 | akroma-project/akroma.io | https://api.github.com/repos/akroma-project/akroma.io | closed | Transaction Page - Confirmations | enhancement top priority | Transaction Page - Confirmations - display on transaction page (blockHeight - blockNumber = confirmations) | 1.0 | Transaction Page - Confirmations - Transaction Page - Confirmations - display on transaction page (blockHeight - blockNumber = confirmations) | priority | transaction page confirmations transaction page confirmations display on transaction page blockheight blocknumber confirmations | 1 |

224,973 | 17,209,463,737 | IssuesEvent | 2021-07-19 00:14:09 | seancorfield/next-jdbc | https://api.github.com/repos/seancorfield/next-jdbc | closed | Migration docs need to warn about c.j.j ops inside n.j/with-transaction | documentation | Because c.j.j ops attempt to create a (c.j.j) TX by default, if you use them inside `next.jdbc/with-transaction` operations will still be auto-committed.

You can either pass `:transaction? false` to all such nested c.j.j ops or convert them to `next.jdbc` calls (recommended). | 1.0 | Migration docs need to warn about c.j.j ops inside n.j/with-transaction - Because c.j.j ops attempt to create a (c.j.j) TX by default, if you use them inside `next.jdbc/with-transaction` operations will still be auto-committed.

You can either pass `:transaction? false` to all such nested c.j.j ops or convert them to... | non_priority | migration docs need to warn about c j j ops inside n j with transaction because c j j ops attempt to create a c j j tx by default if you use them inside next jdbc with transaction operations will still be auto committed you can either pass transaction false to all such nested c j j ops or convert them to... | 0 |

270,360 | 8,454,959,133 | IssuesEvent | 2018-10-21 10:04:36 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | coco.fr - see bug description | browser-chrome priority-normal | <!-- @browser: Chrome 69.0.3497 -->

<!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/69.0.3497.100 Safari/537.36 -->

<!-- @reported_with: -->

**URL**: http://coco.fr/

**Browser / Version**: Chrome 69.0.3497

**Operating System**: Linux

**Tested Another Browser**: No

**Pr... | 1.0 | coco.fr - see bug description - <!-- @browser: Chrome 69.0.3497 -->

<!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/69.0.3497.100 Safari/537.36 -->

<!-- @reported_with: -->

**URL**: http://coco.fr/

**Browser / Version**: Chrome 69.0.3497

**Operating System**: Linux

**Te... | priority | coco fr see bug description url browser version chrome operating system linux tested another browser no problem type something else description test steps to reproduce from with ❤️ | 1 |

60,236 | 12,070,184,115 | IssuesEvent | 2020-04-16 17:13:23 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | closed | Do not simplify away field as clause - it breaks compilation | 4 - In Review Area-IDE Bug IDE-CodeStyle | **Version Used**:

codeanalysis 3.5.0

**Steps to Reproduce**:

On a Visual Basic

1. Call Reduce on a single variable field with an As clause and an initializer

```vbnet

<Workspace>

<Project Language="Visual Basic" CommonReferences="true">

<Document>

Imports System

Imp... | 1.0 | Do not simplify away field as clause - it breaks compilation - **Version Used**:

codeanalysis 3.5.0

**Steps to Reproduce**:

On a Visual Basic

1. Call Reduce on a single variable field with an As clause and an initializer

```vbnet

<Workspace>

<Project Language="Visual Basic" CommonReferences="true">

... | non_priority | do not simplify away field as clause it breaks compilation version used codeanalysis steps to reproduce on a visual basic call reduce on a single variable field with an as clause and an initializer vbnet imports system imports i sys... | 0 |

832,281 | 32,077,457,363 | IssuesEvent | 2023-09-25 11:59:48 | googleapis/google-cloud-ruby | https://api.github.com/repos/googleapis/google-cloud-ruby | closed | [Nightly CI Failures] Failures detected for google-cloud-bigquery-storage | type: bug priority: p1 nightly failure | At 2023-09-09 09:39:44 UTC, detected failures in google-cloud-bigquery-storage for: test.

The CI logs can be found [here](https://github.com/googleapis/google-cloud-ruby/actions/runs/6129868852)

report_key_e0627aab1a8c51af683a5975db459b06 | 1.0 | [Nightly CI Failures] Failures detected for google-cloud-bigquery-storage - At 2023-09-09 09:39:44 UTC, detected failures in google-cloud-bigquery-storage for: test.

The CI logs can be found [here](https://github.com/googleapis/google-cloud-ruby/actions/runs/6129868852)

report_key_e0627aab1a8c51af683a5975db459b06 | priority | failures detected for google cloud bigquery storage at utc detected failures in google cloud bigquery storage for test the ci logs can be found report key | 1 |

96,663 | 27,970,960,001 | IssuesEvent | 2023-03-25 02:25:47 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | opened | Build source osx error | build | > Make sure that `git diff` result is empty and you've just pulled fresh master. Try cleaning up cmake cache. Just in case, official build instructions are published here: https://clickhouse.com/docs/en/development/build/

**Operating system**

macOS 12.2.1

> OS kind or distribution, specific version/release, no... | 1.0 | Build source osx error - > Make sure that `git diff` result is empty and you've just pulled fresh master. Try cleaning up cmake cache. Just in case, official build instructions are published here: https://clickhouse.com/docs/en/development/build/

**Operating system**

macOS 12.2.1

> OS kind or distribution, sp... | non_priority | build source osx error make sure that git diff result is empty and you ve just pulled fresh master try cleaning up cmake cache just in case official build instructions are published here operating system macos os kind or distribution specific version release non standard kernel if an... | 0 |

51,891 | 27,294,876,862 | IssuesEvent | 2023-02-23 19:26:55 | enso-org/enso | https://api.github.com/repos/enso-org/enso | closed | Pre-emptive shader compilation | -gui --low-performance | Now that we have static information about shaders, we should compile them all at the beginning of application startup. | True | Pre-emptive shader compilation - Now that we have static information about shaders, we should compile them all at the beginning of application startup. | non_priority | pre emptive shader compilation now that we have static information about shaders we should compile them all at the beginning of application startup | 0 |

254,570 | 8,075,329,823 | IssuesEvent | 2018-08-07 05:00:52 | CarbonLDP/carbonldp-website | https://api.github.com/repos/CarbonLDP/carbonldp-website | closed | Move formal vocabulary into website so terms are resolvable by URI | in progress priority2: required type: task | The vocabulary needs to be resolvable by URI at:

https://carbonldp.com/ns/v1/platform# | 1.0 | Move formal vocabulary into website so terms are resolvable by URI - The vocabulary needs to be resolvable by URI at:

https://carbonldp.com/ns/v1/platform# | priority | move formal vocabulary into website so terms are resolvable by uri the vocabulary needs to be resolvable by uri at | 1 |

168,357 | 6,370,332,113 | IssuesEvent | 2017-08-01 13:59:25 | tardis-sn/tardis | https://api.github.com/repos/tardis-sn/tardis | closed | Add flexibility to callback mechanism of Simulation object | easy feature request priority - low | Requested feature:

Let the callback function know if it is ran after plasma or after the spectral run (the last run/iteration).

Possible implementations:

- Add a separate set of callback functions that are executed after the last iteration `Simulation.add_last_callback(...)` or something similiar

- Modify the exi... | 1.0 | Add flexibility to callback mechanism of Simulation object - Requested feature:

Let the callback function know if it is ran after plasma or after the spectral run (the last run/iteration).

Possible implementations:

- Add a separate set of callback functions that are executed after the last iteration `Simulation.ad... | priority | add flexibility to callback mechanism of simulation object requested feature let the callback function know if it is ran after plasma or after the spectral run the last run iteration possible implementations add a separate set of callback functions that are executed after the last iteration simulation ad... | 1 |

76,901 | 3,505,724,823 | IssuesEvent | 2016-01-08 00:33:55 | Code60Home/fbs | https://api.github.com/repos/Code60Home/fbs | closed | Parachute icons don't have a side | bug priority 4 | Parachute icons don't show what side they belong to, so it's hard to tell who I have to go rescue | 1.0 | Parachute icons don't have a side - Parachute icons don't show what side they belong to, so it's hard to tell who I have to go rescue | priority | parachute icons don t have a side parachute icons don t show what side they belong to so it s hard to tell who i have to go rescue | 1 |

347,618 | 31,235,272,466 | IssuesEvent | 2023-08-20 07:34:39 | softeerbootcamp-2nd/A1-PoongCha | https://api.github.com/repos/softeerbootcamp-2nd/A1-PoongCha | opened | BE/08.20/추가 질문 기능 구현 | ✨ Feature 📃 Docs 📬 API ✅ Test | ### Domain

Backend

### 어떠한 작업을 진행하실 건가요?

- [ ] 추가 질문 생성

- [ ] 추가 질문 전체 ID 조회

- [ ] 추가 질문 식별자 목록으로 조회

### 고려사항

_No response_

### Priority Label Setting

- [X] Yes!

### Projects Card

- [X] Yes! | 1.0 | BE/08.20/추가 질문 기능 구현 - ### Domain

Backend

### 어떠한 작업을 진행하실 건가요?

- [ ] 추가 질문 생성

- [ ] 추가 질문 전체 ID 조회

- [ ] 추가 질문 식별자 목록으로 조회

### 고려사항

_No response_

### Priority Label Setting

- [X] Yes!

### Projects Card

- [X] Yes! | non_priority | be 추가 질문 기능 구현 domain backend 어떠한 작업을 진행하실 건가요 추가 질문 생성 추가 질문 전체 id 조회 추가 질문 식별자 목록으로 조회 고려사항 no response priority label setting yes projects card yes | 0 |

357,466 | 10,606,810,233 | IssuesEvent | 2019-10-11 01:00:36 | AY1920S1-CS2113T-T09-1/main | https://api.github.com/repos/AY1920S1-CS2113T-T09-1/main | opened | Work on an outline of the class diagram for Hustler v1.4 | priority.High type.Task | Create a general outline into which variables and functions can be filled. | 1.0 | Work on an outline of the class diagram for Hustler v1.4 - Create a general outline into which variables and functions can be filled. | priority | work on an outline of the class diagram for hustler create a general outline into which variables and functions can be filled | 1 |

73,013 | 3,398,840,466 | IssuesEvent | 2015-12-02 07:27:53 | ckaiser79/webtest-rb | https://api.github.com/repos/ckaiser79/webtest-rb | closed | Use jquery datatables in report for easier filtering | enhancement Priority-Medium | The file log/last_run/run.log.html should be easier searchable. By using datatables, the columns should be orderable and filterable by text | 1.0 | Use jquery datatables in report for easier filtering - The file log/last_run/run.log.html should be easier searchable. By using datatables, the columns should be orderable and filterable by text | priority | use jquery datatables in report for easier filtering the file log last run run log html should be easier searchable by using datatables the columns should be orderable and filterable by text | 1 |

46,540 | 5,820,977,468 | IssuesEvent | 2017-05-06 00:47:23 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | ci-kubernetes-e2e-gke-gci-1.4-gci-1.5-upgrade-cluster: broken test run | kind/flake priority/P1 team/test-infra | https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/logs/ci-kubernetes-e2e-gke-gci-1.4-gci-1.5-upgrade-cluster/17/

Multiple broken tests:

Failed: [k8s.io] V1Job should fail a job [Slow] {Kubernetes e2e suite}

```

/go/src/k8s.io/kubernetes/_output/dockerized/go/src/k8s.io/kubernetes/test/e2e/batch_v1_jobs.go:... | 1.0 | ci-kubernetes-e2e-gke-gci-1.4-gci-1.5-upgrade-cluster: broken test run - https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/logs/ci-kubernetes-e2e-gke-gci-1.4-gci-1.5-upgrade-cluster/17/

Multiple broken tests:

Failed: [k8s.io] V1Job should fail a job [Slow] {Kubernetes e2e suite}

```

/go/src/k8s.io/kubernet... | non_priority | ci kubernetes gke gci gci upgrade cluster broken test run multiple broken tests failed should fail a job kubernetes suite go src io kubernetes output dockerized go src io kubernetes test batch jobs go expected error s timed out waiting for the condition ... | 0 |

280,156 | 24,281,023,986 | IssuesEvent | 2022-09-28 17:24:42 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: cdc/pubsub-sink/assume-role failed | C-test-failure O-robot O-roachtest release-blocker branch-release-22.2 | roachtest.cdc/pubsub-sink/assume-role [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6661627?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6661627?buildTab=artifacts#/cdc/pubsub-s... | 2.0 | roachtest: cdc/pubsub-sink/assume-role failed - roachtest.cdc/pubsub-sink/assume-role [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6661627?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyG... | non_priority | roachtest cdc pubsub sink assume role failed roachtest cdc pubsub sink assume role with on release test artifacts and logs in artifacts cdc pubsub sink assume role run monitor go cdc go cdc go test runner go monitor failure monitor task failed dial tcp connect conn... | 0 |

121,809 | 26,035,858,044 | IssuesEvent | 2022-12-22 04:51:44 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | LSRA Reg Optional: Folding of operations using a tree temp | enhancement tenet-performance area-CodeGen-coreclr optimization | Say we have the following expr

a = (b + (c + (d+ (e+f))))

in LIR form

t0 = e+f

t1 = t0+d

t2 = t1+c

a = t2+b

Say it is profitable to not to allocate a reg to Use position of '+' in (e+f). That is tree temp t0 needs to be spilled to memory. Furthermore it is profitable to not to allocate a reg to both use and def ... | 1.0 | LSRA Reg Optional: Folding of operations using a tree temp - Say we have the following expr

a = (b + (c + (d+ (e+f))))

in LIR form

t0 = e+f

t1 = t0+d

t2 = t1+c

a = t2+b

Say it is profitable to not to allocate a reg to Use position of '+' in (e+f). That is tree temp t0 needs to be spilled to memory. Furthermore i... | non_priority | lsra reg optional folding of operations using a tree temp say we have the following expr a b c d e f in lir form e f d c a b say it is profitable to not to allocate a reg to use position of in e f that is tree temp needs to be spilled to memory furthermore it is pr... | 0 |

408,186 | 11,942,883,039 | IssuesEvent | 2020-04-02 21:53:50 | wazuh/wazuh-kibana-app | https://api.github.com/repos/wazuh/wazuh-kibana-app | closed | Pagination is not shown in table-type visualizations | bug priority/high | | Wazuh | Elastic | Rev |

| ----- | ------- | --- |

| 3.12.0 | 7.6.1 | n/a |

**Description**

The pagination controls in the table-type Kibana visualizations are not shown.

**Steps to reproduce**

1. Go to 'Overview > Security Events'

2. Navigate to the Alerts Summary table

3. See error

**Screensho... | 1.0 | Pagination is not shown in table-type visualizations - | Wazuh | Elastic | Rev |

| ----- | ------- | --- |

| 3.12.0 | 7.6.1 | n/a |

**Description**

The pagination controls in the table-type Kibana visualizations are not shown.

**Steps to reproduce**

1. Go to 'Overview > Security Events'

2. Navigate t... | priority | pagination is not shown in table type visualizations wazuh elastic rev n a description the pagination controls in the table type kibana visualizations are not shown steps to reproduce go to overview security events navigate to... | 1 |

151,235 | 5,808,230,256 | IssuesEvent | 2017-05-04 10:04:53 | learnweb/moodle-mod_ratingallocate | https://api.github.com/repos/learnweb/moodle-mod_ratingallocate | closed | Feature Request: delete own rating (or even selected student ratings) | Effort: Medium Priority: High | Some teachers tend to test their acitivities before publishing them. If they try to rate the choices by simulating student role (or by using test students) this test ratings will be used for allocation and therefore disturb the allocation algorithm.

Therefore it should be possible to delete ratings. In my opinion ther... | 1.0 | Feature Request: delete own rating (or even selected student ratings) - Some teachers tend to test their acitivities before publishing them. If they try to rate the choices by simulating student role (or by using test students) this test ratings will be used for allocation and therefore disturb the allocation algorithm... | priority | feature request delete own rating or even selected student ratings some teachers tend to test their acitivities before publishing them if they try to rate the choices by simulating student role or by using test students this test ratings will be used for allocation and therefore disturb the allocation algorithm... | 1 |

356,000 | 10,587,328,696 | IssuesEvent | 2019-10-08 21:50:01 | flextype/flextype | https://api.github.com/repos/flextype/flextype | closed | Flextype Core: Entries API - improve and_where & or_where for fetchAll method | priority: medium type: improvement | We should have ability to path unlimited and_where & or_where expressions

Query example:

<img width="668" alt="Screenshot 2019-09-23 at 23 59 53" src="https://user-images.githubusercontent.com/477114/65463605-b5cf5000-de60-11e9-98df-e7598b5cfdfa.png">

| 1.0 | Flextype Core: Entries API - improve and_where & or_where for fetchAll method - We should have ability to path unlimited and_where & or_where expressions

Query example:

<img width="668" alt="Screenshot 2019-09-23 at 23 59 53" src="https://user-images.githubusercontent.com/477114/65463605-b5cf5000-de60-11e9-98... | priority | flextype core entries api improve and where or where for fetchall method we should have ability to path unlimited and where or where expressions query example img width alt screenshot at src | 1 |

7,349 | 6,916,269,003 | IssuesEvent | 2017-11-29 01:35:24 | zulip/zulip | https://api.github.com/repos/zulip/zulip | closed | Fix traceback error output from test_realm_scenarios | area: testing-infrastructure bug priority: high | I think this error output is the result of our having recently changed the queue processors to run the `consume` methods when `queue_json_publish` is called in tests. Not sure yet.

```

Running zerver.tests.test_messages.TestCrossRealmPMs.test_realm_scenarios

2017-10-27 23:29:50.575 ERR [] Error queueing interna... | 1.0 | Fix traceback error output from test_realm_scenarios - I think this error output is the result of our having recently changed the queue processors to run the `consume` methods when `queue_json_publish` is called in tests. Not sure yet.

```

Running zerver.tests.test_messages.TestCrossRealmPMs.test_realm_scenarios

... | non_priority | fix traceback error output from test realm scenarios i think this error output is the result of our having recently changed the queue processors to run the consume methods when queue json publish is called in tests not sure yet running zerver tests test messages testcrossrealmpms test realm scenarios ... | 0 |

608,571 | 18,842,671,619 | IssuesEvent | 2021-11-11 11:26:13 | googleapis/google-api-python-client | https://api.github.com/repos/googleapis/google-api-python-client | closed | MediaIoBaseDownload next_chunk() Range header off-by-one | type: bug priority: p2 status: investigating | #### Environment details

- OS type and version:

```

Ubuntu 20.04.3 LTS

```

- Python version: `python --version`

```

Python 3.9.7

```

- pip version: `pip --version`

```

pip 21.3.1

```

- `google-api-python-client` version: `pip show google-api-python-client`

```

Name: google... | 1.0 | MediaIoBaseDownload next_chunk() Range header off-by-one - #### Environment details

- OS type and version:

```

Ubuntu 20.04.3 LTS

```

- Python version: `python --version`

```

Python 3.9.7

```

- pip version: `pip --version`

```

pip 21.3.1

```

- `google-api-python-client` version:... | priority | mediaiobasedownload next chunk range header off by one environment details os type and version ubuntu lts python version python version python pip version pip version pip google api python client version p... | 1 |

300,825 | 9,212,871,212 | IssuesEvent | 2019-03-10 06:05:19 | CS2103-AY1819S2-W10-1/main | https://api.github.com/repos/CS2103-AY1819S2-W10-1/main | opened | As an offline user, I can view a saved link in a browser while offline | priority.High type.Story | so that I can read the content of links I’ve saved.

Part of epics:

#28 Offline support | 1.0 | As an offline user, I can view a saved link in a browser while offline - so that I can read the content of links I’ve saved.

Part of epics:

#28 Offline support | priority | as an offline user i can view a saved link in a browser while offline so that i can read the content of links i’ve saved part of epics offline support | 1 |

662,998 | 22,159,469,740 | IssuesEvent | 2022-06-04 09:15:19 | application-research/estuary | https://api.github.com/repos/application-research/estuary | closed | Current filecoin-ffi submodule version fails to build on linux-arm machines | Bug Priority: high | Commit version `5d00bb4` of filecoin-ffi does not build on linux arm.

Commit version `8b32252` builds fine but I can't get estuary to build whilst using this version. Help would be greatly appreciated. | 1.0 | Current filecoin-ffi submodule version fails to build on linux-arm machines - Commit version `5d00bb4` of filecoin-ffi does not build on linux arm.

Commit version `8b32252` builds fine but I can't get estuary to build whilst using this version. Help would be greatly appreciated. | priority | current filecoin ffi submodule version fails to build on linux arm machines commit version of filecoin ffi does not build on linux arm commit version builds fine but i can t get estuary to build whilst using this version help would be greatly appreciated | 1 |

609,125 | 18,854,993,713 | IssuesEvent | 2021-11-12 04:18:20 | hoffstadt/DearPyGui | https://api.github.com/repos/hoffstadt/DearPyGui | closed | "Input_float" widget cannot be disabled | state: ready priority: high type: bug | ## Version of Dear PyGui

Version: 1.0.2

Python: 3.9.7

Operating System: SolusOS 64bit

## My Issue

The "input_float" widget cannot be disabled, both via the definition of the widget (see code example), and via the function "disable_item(item).

## To Reproduce

Steps to reproduce the behavior:

```pytho... | 1.0 | "Input_float" widget cannot be disabled - ## Version of Dear PyGui

Version: 1.0.2

Python: 3.9.7

Operating System: SolusOS 64bit

## My Issue

The "input_float" widget cannot be disabled, both via the definition of the widget (see code example), and via the function "disable_item(item).

## To Reproduce

St... | priority | input float widget cannot be disabled version of dear pygui version python operating system solusos my issue the input float widget cannot be disabled both via the definition of the widget see code example and via the function disable item item to reproduce steps ... | 1 |

701,143 | 24,088,053,694 | IssuesEvent | 2022-09-19 12:42:53 | AY2223S1-CS2103T-T09-2/tp | https://api.github.com/repos/AY2223S1-CS2103T-T09-2/tp | opened | Update AboutUs page | priority.High task | This page (in the `/docs` folder) is used for module admin purposes.

[ ] Add your own details. Include a suitable photo as described here.

There is no need to mention the the tutor/lecturer, but OK to do so too.

The filename of the profile photo should be docs/images/github_username_in_lower_case.png

Note the need... | 1.0 | Update AboutUs page - This page (in the `/docs` folder) is used for module admin purposes.

[ ] Add your own details. Include a suitable photo as described here.

There is no need to mention the the tutor/lecturer, but OK to do so too.

The filename of the profile photo should be docs/images/github_username_in_lower_c... | priority | update aboutus page this page in the docs folder is used for module admin purposes add your own details include a suitable photo as described here there is no need to mention the the tutor lecturer but ok to do so too the filename of the profile photo should be docs images github username in lower cas... | 1 |

416,567 | 12,148,579,972 | IssuesEvent | 2020-04-24 14:48:26 | TykTechnologies/tyk | https://api.github.com/repos/TykTechnologies/tyk | closed | Requesting the notion of "Applications" within the portal | Priority: High customer request enhancement wontfix | **Do you want to request a *feature* or report a *bug*?**

*feature*

**What is the current behavior?**

API consumers are consuming Policies in the portal. Keys are tied to the policy.

**What is the expected behavior?**

Ideally, the following behavior should be accomplished:

✔️ As a consumer, I can sign up fo... | 1.0 | Requesting the notion of "Applications" within the portal - **Do you want to request a *feature* or report a *bug*?**

*feature*

**What is the current behavior?**

API consumers are consuming Policies in the portal. Keys are tied to the policy.

**What is the expected behavior?**

Ideally, the following behavior s... | priority | requesting the notion of applications within the portal do you want to request a feature or report a bug feature what is the current behavior api consumers are consuming policies in the portal keys are tied to the policy what is the expected behavior ideally the following behavior s... | 1 |

160,489 | 6,098,557,940 | IssuesEvent | 2017-06-20 07:54:39 | McStasMcXtrace/iFit | https://api.github.com/repos/McStasMcXtrace/iFit | closed | Models: Phonons: recent PhonoPy has issue to get mas | bug priority |

```

s=sqw_phonons([ ifitpath 'Data/POSCAR_Al'],'metal','emt');

qh=linspace(0.01,.5,50);qk=qh; ql=qh; w=linspace(0.01,50,51);

f=iData(s,[],qh,qk,ql',w);

```

fails with:

```

Traceback (most recent call last):

File "/tmp/tpea7560eb_ef46_49a5_8071_74f86e168658/sqw_phonons_eval.py", line 17, in <module>

... | 1.0 | Models: Phonons: recent PhonoPy has issue to get mas -

```

s=sqw_phonons([ ifitpath 'Data/POSCAR_Al'],'metal','emt');

qh=linspace(0.01,.5,50);qk=qh; ql=qh; w=linspace(0.01,50,51);

f=iData(s,[],qh,qk,ql',w);

```

fails with:

```

Traceback (most recent call last):

File "/tmp/tpea7560eb_ef46_49a5_8071_74f... | priority | models phonons recent phonopy has issue to get mas s sqw phonons metal emt qh linspace qk qh ql qh w linspace f idata s qh qk ql w fails with traceback most recent call last file tmp sqw phonons eval py line in omega kn po... | 1 |

60,885 | 3,135,240,286 | IssuesEvent | 2015-09-10 14:28:14 | minetest/minetest | https://api.github.com/repos/minetest/minetest | closed | Locale directory regression | bug Low priority | On the forums, user mahmutelmas06 [points out](https://forum.minetest.net/viewtopic.php?f=42&p=190382#p190382) that the main menu isn't translated anymore. Most likely his binary has been compiled with `RUN_IN_PLACE=false`, and he experiences the regression of commit 645e2086734e3d2d1ec95f50faa39f0f24304761 that the lo... | 1.0 | Locale directory regression - On the forums, user mahmutelmas06 [points out](https://forum.minetest.net/viewtopic.php?f=42&p=190382#p190382) that the main menu isn't translated anymore. Most likely his binary has been compiled with `RUN_IN_PLACE=false`, and he experiences the regression of commit 645e2086734e3d2d1ec95f... | priority | locale directory regression on the forums user that the main menu isn t translated anymore most likely his binary has been compiled with run in place false and he experiences the regression of commit that the locale isn t detected anymore if run in place false and the locale isn t present at the absolu... | 1 |

550,388 | 16,110,938,741 | IssuesEvent | 2021-04-27 21:05:55 | ExoCTK/exoctk | https://api.github.com/repos/ExoCTK/exoctk | opened | Request development web server | 1: HIGH PRIORITY Tool: exoctk_app | ExoCTK currently has a test and production web server. By adding a development web server, we would be able to test out changes on the fly, and leave the test and production servers to be used only when pushing major releases. | 1.0 | Request development web server - ExoCTK currently has a test and production web server. By adding a development web server, we would be able to test out changes on the fly, and leave the test and production servers to be used only when pushing major releases. | priority | request development web server exoctk currently has a test and production web server by adding a development web server we would be able to test out changes on the fly and leave the test and production servers to be used only when pushing major releases | 1 |

444,439 | 12,812,656,937 | IssuesEvent | 2020-07-04 07:55:21 | shakram02/PiFloor | https://api.github.com/repos/shakram02/PiFloor | closed | Make an emulator for the detection and communication functionality | Medium Priority enhancement | It'll be convenient to make a desktop application that can facilitate testing, which has the following features

1. Websocket & http server

2. Directory to serve the page from

3. A method to select the invisible tile

The downside to the current pipeline is that the person doing development needs to have android ... | 1.0 | Make an emulator for the detection and communication functionality - It'll be convenient to make a desktop application that can facilitate testing, which has the following features

1. Websocket & http server

2. Directory to serve the page from

3. A method to select the invisible tile

The downside to the current... | priority | make an emulator for the detection and communication functionality it ll be convenient to make a desktop application that can facilitate testing which has the following features websocket http server directory to serve the page from a method to select the invisible tile the downside to the current... | 1 |

718,199 | 24,707,347,544 | IssuesEvent | 2022-10-19 20:18:26 | TimZoet/cppql | https://api.github.com/repos/TimZoet/cppql | closed | Add NULL ordering to OrderBy expression | feature low priority | Add support for ordering NULLS first/last to OrderBy expression. See also [sqlite doc](https://www.sqlite.org/lang_select.html). | 1.0 | Add NULL ordering to OrderBy expression - Add support for ordering NULLS first/last to OrderBy expression. See also [sqlite doc](https://www.sqlite.org/lang_select.html). | priority | add null ordering to orderby expression add support for ordering nulls first last to orderby expression see also | 1 |

232,714 | 7,674,145,340 | IssuesEvent | 2018-05-15 02:08:55 | Ryan6578/TTS-Codenames | https://api.github.com/repos/Ryan6578/TTS-Codenames | closed | Save State | enhancement feature request high priority | On a rewind, load the previous game's save state. This is so that the script doesn't completely break on rewinding. | 1.0 | Save State - On a rewind, load the previous game's save state. This is so that the script doesn't completely break on rewinding. | priority | save state on a rewind load the previous game s save state this is so that the script doesn t completely break on rewinding | 1 |

642,500 | 20,905,694,357 | IssuesEvent | 2022-03-24 01:45:43 | GoogleContainerTools/skaffold | https://api.github.com/repos/GoogleContainerTools/skaffold | reopened | Security Policy violation Binary Artifacts | java priority/p1 kind/todo area/example allstar | _This issue was automatically created by [Allstar](https://github.com/ossf/allstar/)._

**Security Policy Violation**

Project is out of compliance with Binary Artifacts policy: binaries present in source code

**Rule Description**

Binary Artifacts are an increased security risk in your repository. Binary artifacts cann... | 1.0 | Security Policy violation Binary Artifacts - _This issue was automatically created by [Allstar](https://github.com/ossf/allstar/)._

**Security Policy Violation**

Project is out of compliance with Binary Artifacts policy: binaries present in source code

**Rule Description**

Binary Artifacts are an increased security r... | priority | security policy violation binary artifacts this issue was automatically created by security policy violation project is out of compliance with binary artifacts policy binaries present in source code rule description binary artifacts are an increased security risk in your repository binary artifacts c... | 1 |

245,938 | 18,799,131,614 | IssuesEvent | 2021-11-09 04:03:16 | IBM/aihwkit | https://api.github.com/repos/IBM/aihwkit | closed | How to access the source code? | documentation | Hi,

Thanks to the IBM developers for this great package. Can you please help me to access the source code of the package? I could not find the original source code from the docs page. | 1.0 | How to access the source code? - Hi,

Thanks to the IBM developers for this great package. Can you please help me to access the source code of the package? I could not find the original source code from the docs page. | non_priority | how to access the source code hi thanks to the ibm developers for this great package can you please help me to access the source code of the package i could not find the original source code from the docs page | 0 |

457,780 | 13,162,199,875 | IssuesEvent | 2020-08-10 21:05:13 | jenkins-x/jx | https://api.github.com/repos/jenkins-x/jx | closed | Cannot upgrade jx client without a functioning server install | area/cli area/upgrade kind/bug lifecycle/rotten priority/important-longterm | ### Summary

`jx upgrade client` has started failing if there is no active server install associated.

```

➜ jx upgrade cli

error: failed to load TeamSettings: failed to create the jx client: Get https://35.189.218.13/api/v1/namespaces/jx: dial tcp 35.189.218.13:443: connect: connection refused

```

### Jx ver... | 1.0 | Cannot upgrade jx client without a functioning server install - ### Summary

`jx upgrade client` has started failing if there is no active server install associated.

```

➜ jx upgrade cli

error: failed to load TeamSettings: failed to create the jx client: Get https://35.189.218.13/api/v1/namespaces/jx: dial tcp 35.... | priority | cannot upgrade jx client without a functioning server install summary jx upgrade client has started failing if there is no active server install associated ➜ jx upgrade cli error failed to load teamsettings failed to create the jx client get dial tcp connect connection refused ... | 1 |

60,379 | 3,125,879,748 | IssuesEvent | 2015-09-08 04:59:55 | AtlasOfLivingAustralia/biocache-hubs | https://api.github.com/repos/AtlasOfLivingAustralia/biocache-hubs | closed | Print CSS file needed | enhancement in progress priority-high | Printing results page is a mess. Needs a separate print.css file to hide most of the divs so we just get the results list and search meta data.

Should also work with record pages. | 1.0 | Print CSS file needed - Printing results page is a mess. Needs a separate print.css file to hide most of the divs so we just get the results list and search meta data.

Should also work with record pages. | priority | print css file needed printing results page is a mess needs a separate print css file to hide most of the divs so we just get the results list and search meta data should also work with record pages | 1 |

10,676 | 7,274,667,547 | IssuesEvent | 2018-02-21 10:47:49 | gradle/kotlin-dsl | https://api.github.com/repos/gradle/kotlin-dsl | opened | KT-22977 - Kotlin compilation should produce the same result when compiling the same source files | a:bug by:jetbrains in:kt-compiler re:performance | See https://youtrack.jetbrains.com/issue/KT-22977

This may have an impact on embedded script compilation caching and scripts compiled by the `kotlin-gradle-plugin` as in #666 | True | KT-22977 - Kotlin compilation should produce the same result when compiling the same source files - See https://youtrack.jetbrains.com/issue/KT-22977

This may have an impact on embedded script compilation caching and scripts compiled by the `kotlin-gradle-plugin` as in #666 | non_priority | kt kotlin compilation should produce the same result when compiling the same source files see this may have an impact on embedded script compilation caching and scripts compiled by the kotlin gradle plugin as in | 0 |

211,235 | 7,199,468,003 | IssuesEvent | 2018-02-05 16:02:12 | Jumpscale/prefab9 | https://api.github.com/repos/Jumpscale/prefab9 | closed | Allow to use prefab.web.portal.configure() to set membership to distinct value | priority_major state_verification type_feature | #### Installation information

- jumpscale version: 9.2.0

Also see: https://github.com/Jumpscale/prefab9/issues/87

#### Detailed description

Currently if you use `prefab.web.portal.configure()` to configure the OAuth settings of JumpScale Portal you cannot set a value for `memberof` in `oauth.client_scope` t... | 1.0 | Allow to use prefab.web.portal.configure() to set membership to distinct value - #### Installation information

- jumpscale version: 9.2.0

Also see: https://github.com/Jumpscale/prefab9/issues/87

#### Detailed description

Currently if you use `prefab.web.portal.configure()` to configure the OAuth settings o... | priority | allow to use prefab web portal configure to set membership to distinct value installation information jumpscale version also see detailed description currently if you use prefab web portal configure to configure the oauth settings of jumpscale portal you cannot set a value for... | 1 |

17,046 | 10,594,225,259 | IssuesEvent | 2019-10-09 16:18:31 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Code samples not valid for in-page cloud shell | Pri2 cognitive-services/svc cxp docs-experience immersive-reader/subsvc triaged | The code samples on this page are specific to Azure **Powershell**, get the `Try It` buttons around them launch the in-page Azure Cloud Shell **using bash**. This is extremely confusing. Recommend fixing one of these issues so readers can actually utilize the code as they read the doc.

---

#### Document Details

⚠ *Do... | 1.0 | Code samples not valid for in-page cloud shell - The code samples on this page are specific to Azure **Powershell**, get the `Try It` buttons around them launch the in-page Azure Cloud Shell **using bash**. This is extremely confusing. Recommend fixing one of these issues so readers can actually utilize the code as the... | non_priority | code samples not valid for in page cloud shell the code samples on this page are specific to azure powershell get the try it buttons around them launch the in page azure cloud shell using bash this is extremely confusing recommend fixing one of these issues so readers can actually utilize the code as the... | 0 |

383,855 | 26,566,556,107 | IssuesEvent | 2023-01-20 20:52:26 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Props incorrectly documented for FocalPointPicker | [Type] Developer Documentation Good First Issue [Status] In Progress [Package] Components | **Describe the bug**

The docs for `components/focal-point-picker` state that `dimensions` is both a property, and required.

It appears that that prop was replaced with an internal function to get the dimensions from the DOM itself.

This should probably be removed to avoid confusion.

**The relevant portion of ... | 1.0 | Props incorrectly documented for FocalPointPicker - **Describe the bug**

The docs for `components/focal-point-picker` state that `dimensions` is both a property, and required.

It appears that that prop was replaced with an internal function to get the dimensions from the DOM itself.

This should probably be remov... | non_priority | props incorrectly documented for focalpointpicker describe the bug the docs for components focal point picker state that dimensions is both a property and required it appears that that prop was replaced with an internal function to get the dimensions from the dom itself this should probably be remov... | 0 |

88,241 | 11,047,433,718 | IssuesEvent | 2019-12-09 18:57:56 | codeforboston/plogalong | https://api.github.com/repos/codeforboston/plogalong | opened | Replace nav with svgs | behavior visual design |

Replace nav icons with the following:

https://www.dropbox.com/sh/wrzsq7mntxsny87/AAAW9XL4kVdP_Jf8HbTx-SOua/nav?dl=0

Use purple as selected state, i.e. if I'm on the history page, history icon should be purple | 1.0 | Replace nav with svgs -

Replace nav icons with the following:

https://www.dropbox.com/sh/wrzsq7mntxsny87/AAAW9XL4kVdP_Jf8HbTx-SOua/nav?dl=0

Use purple as selected state, i.e. if I'm on the history page, history icon should be purple | non_priority | replace nav with svgs replace nav icons with the following use purple as selected state i e if i m on the history page history icon should be purple | 0 |

673,693 | 23,027,826,428 | IssuesEvent | 2022-07-22 10:57:41 | microsoft/PowerToys | https://api.github.com/repos/microsoft/PowerToys | closed | Always On Top Square border with Windows 11 | Issue-Bug Resolution-Fix-Committed Area-User Interface Product-Always On Top Priority-2 | ### Microsoft PowerToys version

0.51.1

### Running as admin

- [ ] Yes

### Area(s) with issue?

Always on Top

### Steps to reproduce

Toggle a window to Always On Top on a Windows 11 Build

### ✔️ Expected Behavior

Coloured border should wrap with the curve of the window

### ❌ Actual Behavior

Coloured border is ... | 1.0 | Always On Top Square border with Windows 11 - ### Microsoft PowerToys version

0.51.1

### Running as admin

- [ ] Yes

### Area(s) with issue?

Always on Top

### Steps to reproduce

Toggle a window to Always On Top on a Windows 11 Build

### ✔️ Expected Behavior

Coloured border should wrap with the curve of the wind... | priority | always on top square border with windows microsoft powertoys version running as admin yes area s with issue always on top steps to reproduce toggle a window to always on top on a windows build ✔️ expected behavior coloured border should wrap with the curve of the window ... | 1 |

1,681 | 24,395,824,043 | IssuesEvent | 2022-10-04 19:09:34 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | Hot threads should treat `other=` time on transport workers as idle time | >enhancement :Core/Infra/Core Team:Core/Infra Supportability | Transport worker threads should basically always be in state `RUNNABLE` which means that today they are reported by the hot threads API as 100% hot even when completely idle:

```

100.0% [cpu=0.0%, other=100.0%] (500ms out of 500ms) cpu usage by thread 'elasticsearch[instance-0000000004][transport_worker][T#1]'

... | True | Hot threads should treat `other=` time on transport workers as idle time - Transport worker threads should basically always be in state `RUNNABLE` which means that today they are reported by the hot threads API as 100% hot even when completely idle:

```

100.0% [cpu=0.0%, other=100.0%] (500ms out of 500ms) cpu us... | non_priority | hot threads should treat other time on transport workers as idle time transport worker threads should basically always be in state runnable which means that today they are reported by the hot threads api as hot even when completely idle out of cpu usage by thread elasticsearch ... | 0 |

158,576 | 24,858,631,197 | IssuesEvent | 2022-10-27 06:09:48 | Kanika637/amazon-clone | https://api.github.com/repos/Kanika637/amazon-clone | closed | Subtotal Container floating on top of the footer | bug enhancement good first issue invalid UX Design hacktoberfest | ### Describe the bug

No Response

### Expected behaviour

Expected to be in the same place

### Screenshots

https://user-images.githubusercontent.com/97578285/196969299-3295f2e1-c6d5-4037-9d91-16a201b2915b.mp4

### Additional context

No Response | 1.0 | Subtotal Container floating on top of the footer - ### Describe the bug

No Response

### Expected behaviour

Expected to be in the same place

### Screenshots

https://user-images.githubusercontent.com/97578285/196969299-3295f2e1-c6d5-4037-9d91-16a201b2915b.mp4

### Additional context

No Response | non_priority | subtotal container floating on top of the footer describe the bug no response expected behaviour expected to be in the same place screenshots additional context no response | 0 |

401,120 | 27,317,459,079 | IssuesEvent | 2023-02-24 16:48:06 | vercel/next.js | https://api.github.com/repos/vercel/next.js | opened | Docs: Beta Docs JSON-LD recommendation is insecure by default | template: documentation | ### What is the improvement or update you wish to see?

The beta SEO docs suggest `dangerouslySetInnerHTML={{ __html: JSON.stringify(jsonLd) }}`.

Turns out this is vulnerable to XSS and not actually compliant with JSON-LD. e.g. a `name: "abc</script><MOREHTML>"` is valid JSON that stringifies without an escaped `</s... | 1.0 | Docs: Beta Docs JSON-LD recommendation is insecure by default - ### What is the improvement or update you wish to see?

The beta SEO docs suggest `dangerouslySetInnerHTML={{ __html: JSON.stringify(jsonLd) }}`.

Turns out this is vulnerable to XSS and not actually compliant with JSON-LD. e.g. a `name: "abc</script><MO... | non_priority | docs beta docs json ld recommendation is insecure by default what is the improvement or update you wish to see the beta seo docs suggest dangerouslysetinnerhtml html json stringify jsonld turns out this is vulnerable to xss and not actually compliant with json ld e g a name abc is vali... | 0 |

45,158 | 11,597,056,586 | IssuesEvent | 2020-02-24 20:05:42 | dotnet/source-build | https://api.github.com/repos/dotnet/source-build | opened | Transport package creation | area-build area-infra | - Add transport package generation/publishing logic

- Choose a leaf repo to initially generate transport package

- Roll out to all repos from leaves up to core-sdk | 1.0 | Transport package creation - - Add transport package generation/publishing logic

- Choose a leaf repo to initially generate transport package

- Roll out to all repos from leaves up to core-sdk | non_priority | transport package creation add transport package generation publishing logic choose a leaf repo to initially generate transport package roll out to all repos from leaves up to core sdk | 0 |

59,997 | 17,023,307,436 | IssuesEvent | 2021-07-03 01:20:47 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Grey background on living_street | Component: osmarender Priority: minor Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 4.46pm, Wednesday, 8th October 2008]**

The end of a "living_street" tagged highway looks a bit strange, it has got an grey edge, no other "highway" shows this behavior, so there might be something wrong.

You can see it here:

http://www.openstreetmap.org/?lat=51.1... | 1.0 | Grey background on living_street - **[Submitted to the original trac issue database at 4.46pm, Wednesday, 8th October 2008]**

The end of a "living_street" tagged highway looks a bit strange, it has got an grey edge, no other "highway" shows this behavior, so there might be something wrong.

You can see it here:

htt... | non_priority | grey background on living street the end of a living street tagged highway looks a bit strange it has got an grey edge no other highway shows this behavior so there might be something wrong you can see it here the end of am gymnasium | 0 |

424,586 | 12,313,364,510 | IssuesEvent | 2020-05-12 15:12:27 | balena-io/balena-supervisor | https://api.github.com/repos/balena-io/balena-supervisor | reopened | Send an event when we poll with details of whether it gave us a new target state | Medium Priority area/state-engine type/enhancement | Using this information, we can decrease the poll interval if we find that it's rarely helpful, but currently we have no information on it. | 1.0 | Send an event when we poll with details of whether it gave us a new target state - Using this information, we can decrease the poll interval if we find that it's rarely helpful, but currently we have no information on it. | priority | send an event when we poll with details of whether it gave us a new target state using this information we can decrease the poll interval if we find that it s rarely helpful but currently we have no information on it | 1 |

594,823 | 18,054,842,896 | IssuesEvent | 2021-09-20 06:38:31 | nmrih/source-game | https://api.github.com/repos/nmrih/source-game | closed | [public-1.11.4] nmo_underground ; key item into the elevator before activating, the game get stuck | Status: Reviewed Type: Map Priority: Minimal | I can not get pliers.

| 1.0 | [public-1.11.4] nmo_underground ; key item into the elevator before activating, the game get stuck - I can not get pliers.

| priority | nmo underground key item into the elevator before activating the game get stuck i can not get pliers | 1 |

95,427 | 19,698,557,324 | IssuesEvent | 2022-01-12 14:34:31 | google/web-stories-wp | https://api.github.com/repos/google/web-stories-wp | closed | Code Quality: Consolidate useCombinedRefs & useComposeRefs usage | P2 Type: Code Quality Pod: WP & Infra | These two hooks seem to duplicate the same functionality. This ticket is to just use one of them and replace the other everywhere else.

https://github.com/google/web-stories-wp/blob/main/packages/react/src/useCombinedRefs.js

https://github.com/google/web-stories-wp/blob/main/packages/design-system/src/utils/useCompos... | 1.0 | Code Quality: Consolidate useCombinedRefs & useComposeRefs usage - These two hooks seem to duplicate the same functionality. This ticket is to just use one of them and replace the other everywhere else.

https://github.com/google/web-stories-wp/blob/main/packages/react/src/useCombinedRefs.js

https://github.com/google/... | non_priority | code quality consolidate usecombinedrefs usecomposerefs usage these two hooks seem to duplicate the same functionality this ticket is to just use one of them and replace the other everywhere else probably best to keep whichever hook we consolidate too inside the react package because of the current dep... | 0 |

145,061 | 13,133,307,666 | IssuesEvent | 2020-08-06 20:39:11 | jenkins-infra/jenkins.io | https://api.github.com/repos/jenkins-infra/jenkins.io | closed | Migrate 'Product requirements' page from wiki | documentation | ## Essential information

Page to Migrate: https://wiki.jenkins.io/display/JENKINS/Product+requirements#

Watch the tutorial to learn more about each page migration process:

[](https://www.youtube.com/watch?v=KB-NPlRvLoY)

## Additional informat... | 1.0 | Migrate 'Product requirements' page from wiki - ## Essential information

Page to Migrate: https://wiki.jenkins.io/display/JENKINS/Product+requirements#

Watch the tutorial to learn more about each page migration process:

[](https://www.youtube.co... | non_priority | migrate product requirements page from wiki essential information page to migrate watch the tutorial to learn more about each page migration process additional information note check jenkins io first to see if there s content for this already possibly there s some useful content on the ... | 0 |

284,953 | 31,017,874,134 | IssuesEvent | 2023-08-10 01:05:28 | amaybaum-dev/verademo2 | https://api.github.com/repos/amaybaum-dev/verademo2 | opened | jackson-databind-2.11.0.jar: 4 vulnerabilities (highest severity is: 7.5) | Mend: dependency security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.11.0.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http:/... | True | jackson-databind-2.11.0.jar: 4 vulnerabilities (highest severity is: 7.5) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.11.0.jar</b></p></summary>

<p>General data-binding functionality for Ja... | non_priority | jackson databind jar vulnerabilities highest severity is vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to vulnerable library app target verademo web inf lib jackson databind ... | 0 |

225,608 | 7,493,729,922 | IssuesEvent | 2018-04-07 00:16:43 | AdChain/AdChainRegistryDapp | https://api.github.com/repos/AdChain/AdChainRegistryDapp | opened | Re-Design Claim Rewards in Governance Module | Priority: Medium Type: UX Enhancement | # Problem

The claim rewards container in the governance module isn't working correctly. The purpose of the container is to alert the user when they have ADT rewards to claim from them voting on the winning side when a parameter proposal is in question.

# Solution

Foobar | 1.0 | Re-Design Claim Rewards in Governance Module - # Problem

The claim rewards container in the governance module isn't working correctly. The purpose of the container is to alert the user when they have ADT rewards to claim from them voting on the winning side when a parameter proposal is in question.

# Solution

Foo... | priority | re design claim rewards in governance module problem the claim rewards container in the governance module isn t working correctly the purpose of the container is to alert the user when they have adt rewards to claim from them voting on the winning side when a parameter proposal is in question solution foo... | 1 |

184,778 | 32,044,491,569 | IssuesEvent | 2023-09-22 23:08:35 | department-of-veterans-affairs/vets-design-system-documentation | https://api.github.com/repos/department-of-veterans-affairs/vets-design-system-documentation | opened | H1 white font is incorrect | bug platform-design-system-team | # Bug Report

- [ ] I’ve searched for any related issues and avoided creating a duplicate issue.

## What happened

The `H1 white` is set in `Helvetica` instead of `Bitter` in the Component Library text styles.

| It seems that EXPLAIN clause is missing in the Cypher manual. Should we add it? | 1.0 | Should we add EXPLAIN to Cypher manual? - It seems that EXPLAIN clause is missing in the Cypher manual. Should we add it? | priority | should we add explain to cypher manual it seems that explain clause is missing in the cypher manual should we add it | 1 |

17,491 | 24,105,245,579 | IssuesEvent | 2022-09-20 06:51:07 | Juuxel/Adorn | https://api.github.com/repos/Juuxel/Adorn | closed | Drawers create a hole in the floor and sides of other blocks (Screenshot attached) / Sodium - Iris - Continuity - CITResewn etc. | bug wontfix mod compatibility fabric | ### Adorn version

3.6.1+1.19

### Minecraft version

1.19.2

### Mod loader

Fabric

### Mod loader version

Fabric Loader 0.14.9 - Fabric Api 0.60.0+1.19.2

### Describe the bug

Using the Oak Drawer, it removes a 4x16 pixel part of any floor directly below the protruding drawers, revealing the map below. They also r... | True | Drawers create a hole in the floor and sides of other blocks (Screenshot attached) / Sodium - Iris - Continuity - CITResewn etc. - ### Adorn version

3.6.1+1.19

### Minecraft version

1.19.2

### Mod loader

Fabric

### Mod loader version

Fabric Loader 0.14.9 - Fabric Api 0.60.0+1.19.2

### Describe the bug

Using th... | non_priority | drawers create a hole in the floor and sides of other blocks screenshot attached sodium iris continuity citresewn etc adorn version minecraft version mod loader fabric mod loader version fabric loader fabric api describe the bug using the oak... | 0 |

636,908 | 20,612,716,184 | IssuesEvent | 2022-03-07 10:12:47 | deltaDAO/mvg-portal | https://api.github.com/repos/deltaDAO/mvg-portal | closed | [Enhancement] Switch to correct network button should have disabled style | Priority: Mid Type: Enhancement | ## Motivation / Problem

When the user is connected through a different network compared to the asset displayed, the button to automatically switch to the correct network looks like it can't be clicked.

<img width="552" alt="Screenshot 2022-03-04 at 11 34 56" src="https://user-images.githubusercontent.com/20192135/156... | 1.0 | [Enhancement] Switch to correct network button should have disabled style - ## Motivation / Problem

When the user is connected through a different network compared to the asset displayed, the button to automatically switch to the correct network looks like it can't be clicked.

<img width="552" alt="Screenshot 2022-0... | priority | switch to correct network button should have disabled style motivation problem when the user is connected through a different network compared to the asset displayed the button to automatically switch to the correct network looks like it can t be clicked img width alt screenshot at src ... | 1 |

17,218 | 11,801,088,487 | IssuesEvent | 2020-03-18 18:45:22 | MakeInBelgium/babbelbox | https://api.github.com/repos/MakeInBelgium/babbelbox | closed | FAQ-pagina toevoegen | usability | Er zijn wel enkele vragen die beantwoord zullen moeten worden.

Deze zijn al opgelijst in een document. 10 vragen en hun antwoorden.

Zie: https://docs.google.com/presentation/d/1UzhwKhPo_CY9kIW727_CnjXgZJkMBVx88KJvqBDf8y0/edit#slide=id.g7f216d806c_19_0

Het zou goed zijn als deze in de tool beschikbaar komen.

Per... | True | FAQ-pagina toevoegen - Er zijn wel enkele vragen die beantwoord zullen moeten worden.

Deze zijn al opgelijst in een document. 10 vragen en hun antwoorden.

Zie: https://docs.google.com/presentation/d/1UzhwKhPo_CY9kIW727_CnjXgZJkMBVx88KJvqBDf8y0/edit#slide=id.g7f216d806c_19_0

Het zou goed zijn als deze in de tool be... | non_priority | faq pagina toevoegen er zijn wel enkele vragen die beantwoord zullen moeten worden deze zijn al opgelijst in een document vragen en hun antwoorden zie het zou goed zijn als deze in de tool beschikbaar komen persoonlijk zou ik gaan voor een aparte pagina nieuw tabblad geen download van pdf en niet ... | 0 |

678,242 | 23,190,839,384 | IssuesEvent | 2022-08-01 12:32:03 | SAP/xsk | https://api.github.com/repos/SAP/xsk | closed | $.util.zip to support $.web.Body as source type | wontfix API priority-low effort-low incomplete | Related to #684

Support providing $.web.Body as source type as shown in the [SAP documentation](https://help.sap.com/doc/3de842783af24336b6305a3c0223a369/2.0.03/en-US/$.util.Zip.html#:~:text=WebRequest/WebResponse%20Body%20Integration).

If instance of $.web.Body just take the arrayBuffer | 1.0 | $.util.zip to support $.web.Body as source type - Related to #684

Support providing $.web.Body as source type as shown in the [SAP documentation](https://help.sap.com/doc/3de842783af24336b6305a3c0223a369/2.0.03/en-US/$.util.Zip.html#:~:text=WebRequest/WebResponse%20Body%20Integration).

If instance of $.web.Body ju... | priority | util zip to support web body as source type related to support providing web body as source type as shown in the if instance of web body just take the arraybuffer | 1 |

427,674 | 12,397,943,787 | IssuesEvent | 2020-05-21 00:14:40 | eclipse-ee4j/glassfish | https://api.github.com/repos/eclipse-ee4j/glassfish | closed | More than maximum number of characters can be entered for create-file-user | Component: security ERR: Assignee Priority: Minor Stale Type: Bug | More than maximum number of characters can be entered in the UserID, Group List and Password for create-file-user on command or Admin console.

1.Open admin GUI. [http://localhost:4848](http://localhost:4848)

2.Configurations > server-config > Security > Realms > file

3.Click Manage Users button.

4.In File Users, click... | 1.0 | More than maximum number of characters can be entered for create-file-user - More than maximum number of characters can be entered in the UserID, Group List and Password for create-file-user on command or Admin console.

1.Open admin GUI. [http://localhost:4848](http://localhost:4848)

2.Configurations > server-config >... | priority | more than maximum number of characters can be entered for create file user more than maximum number of characters can be entered in the userid group list and password for create file user on command or admin console open admin gui configurations server config security realms file click manage us... | 1 |

76,668 | 9,479,092,166 | IssuesEvent | 2019-04-20 04:41:56 | merenlab/anvio | https://api.github.com/repos/merenlab/anvio | closed | Adding collections of PCs to pan genomes | design enhancement feature request pangenomic workflow time-out / fade-out | Is there a way to choose and export pangenome collections via the command line? It would be very useful to be able export all PCs that are found in a certain genomes without having to highlight them via the GUI. Highlighting the PCs via the GUI is prone to error. For example, it would be excellent to be able to easily ... | 1.0 | Adding collections of PCs to pan genomes - Is there a way to choose and export pangenome collections via the command line? It would be very useful to be able export all PCs that are found in a certain genomes without having to highlight them via the GUI. Highlighting the PCs via the GUI is prone to error. For example, ... | non_priority | adding collections of pcs to pan genomes is there a way to choose and export pangenome collections via the command line it would be very useful to be able export all pcs that are found in a certain genomes without having to highlight them via the gui highlighting the pcs via the gui is prone to error for example ... | 0 |

54,233 | 11,205,229,983 | IssuesEvent | 2020-01-05 12:50:57 | rubinera1n/blog | https://api.github.com/repos/rubinera1n/blog | opened | LeetCode-102-Binary Tree Level Order Traversal | Algorithm LCT-Breadth-first Search LCT-Depth-first Search LCT-Tree LeetCode-Medium | ERROR: type should be string, got "https://leetcode.com/problems/binary-tree-level-order-traversal/description/\r\n\r\n* algorithms\r\n* Medium (48.77%)\r\n* Source Code: 102.binary-tree-level-order-traversal.py\r\n* Total Accepted: 487K\r\n* Total Submissions: 945.8K\r\n* Testcase Example: '[3,9,20,null,null,15,7]'\r\n\r\nGiven a binary tree, return the level order traversal of its nodes' values. (ie, from left to right, level by level\r\n).\r\n\r\n\r\nFor example:\r\nGiven binary tree `[3,9,20,null,null,15,7]`,\r\n\r\n```\r\n 3\r\n / \\\r\n 9 20\r\n / \\\r\n 15 7\r\n```\r\n\r\nreturn its level order traversal as:\r\n```\r\n[\r\n [3],\r\n [9,20],\r\n [15,7]\r\n]\r\n```\r\n\r\n### C++\r\n```cpp\r\nclass Solution {\r\npublic:\r\n vector<vector<int>> levelOrder(TreeNode* root) {\r\n vector<vector<int>> ans;\r\n DFS(root, 0, ans);\r\n return ans;\r\n }\r\nprivate:\r\n void DFS(TreeNode* root, int depth, vector<vector<int>>& ans) {\r\n if (!root) return;\r\n if (ans.size() <= depth) ans.push_back({});\r\n ans[depth].push_back(root->val);\r\n DFS(root->left, depth+1, ans);\r\n DFS(root->right, depth+1, ans);\r\n }\r\n};\r\n```\r\n\r\n### Python\r\n```python\r\nclass Solution:\r\n \"\"\"\r\n def levelOrder(self, root: TreeNode) -> List[List[int]]:\r\n res = []\r\n self.dfs(root, 0, res)\r\n return res\r\n # DFS\r\n def dfs(self, root, level, res):\r\n if not root:\r\n return \r\n if len(res) < level + 1:\r\n res.append([])\r\n res[level].append(root.val)\r\n self.dfs(root.left, level + 1, res)\r\n self.dfs(root.right, level + 1, res)\r\n \"\"\"\r\n \r\n \r\n def levelOrder(self, root: TreeNode) -> List[List[int]]:\r\n # BFS + queue\r\n res, queue = [], [(root, 0)]\r\n while queue:\r\n curr, level = 
queue.pop(0)\r\n if curr:\r\n if len(res) < level + 1:\r\n res.append([])\r\n res[level].append(curr.val)\r\n queue.append((curr.left, level+1))\r\n queue.append((curr.right, level+1))\r\n return res\r\n```" | 1.0 | LeetCode-102-Binary Tree Level Order Traversal - https://leetcode.com/problems/binary-tree-level-order-traversal/description/

* algorithms

* Medium (48.77%)

* Source Code: 102.binary-tree-level-order-traversal.py

* Total Accepted: 487K

* Total Submissions: 945.8K

* Testcase Example: '[3,9,20,null,null... | non_priority | leetcode binary tree level order traversal algorithms medium source code binary tree level order traversal py total accepted total submissions testcase example given a binary tree return the level order traversal of its nodes values ie from left to righ... | 0 |

630,953 | 20,121,708,821 | IssuesEvent | 2022-02-08 03:30:17 | threefoldtech/vgrid | https://api.github.com/repos/threefoldtech/vgrid | opened | get_nodes_by_city_country not working | priority_minor | examples/get_nodes_by_city_country.v

doesn't seem to work

## also need to deal with case insensitivity

- need to make sure, search is all lower case, do we need to change this in DB?

- and we need to lower the name we look for as well to match

| 1.0 | get_nodes_by_city_country not working - examples/get_nodes_by_city_country.v

doesn't seem to work

## also need to deal with case insensitivity

- need to make sure, search is all lower case, do we need to change this in DB?

- and we need to lower the name we look for as well to match

| priority | get nodes by city country not working examples get nodes by city country v doesn t seem to work also need to deal with case insensitivity need to make sure search is all lower case do we need to change this in db and we need to lower the name we look for as well to match | 1 |

324,185 | 23,987,478,727 | IssuesEvent | 2022-09-13 20:29:55 | airbytehq/airbyte | https://api.github.com/repos/airbytehq/airbyte | closed | Document the Job Orchestrator. | area/documentation type/enhancement team/oss autoteam team/documentation | ### Tell us about the documentation you'd like us to add or update.

Airbyte Kubernetes has the Job Orchestrator to decouple worker deploys from job execution. We should document this.

### If applicable, add links to the relevant docs that should be updated

- [Link to relevant docs page](https://docs.airbyte.io) | 2.0 | Document the Job Orchestrator. - ### Tell us about the documentation you'd like us to add or update.

Airbyte Kubernetes has the Job Orchestrator to decouple worker deploys from job execution. We should document this.

### If applicable, add links to the relevant docs that should be updated

- [Link to relevant doc... | non_priority | document the job orchestrator tell us about the documentation you d like us to add or update airbyte kubernetes has the job orchestrator to decouple worker deploys from job execution we should document this if applicable add links to the relevant docs that should be updated | 0 |

1,100 | 2,595,143,282 | IssuesEvent | 2015-02-20 11:52:06 | keyboardsurfer/blinkendroid | https://api.github.com/repos/keyboardsurfer/blinkendroid | opened | Abmelden von Client funktioniert nicht | auto-migrated Priority-Medium Type-Defect | ```

Ich starte Client und Server auf getrennten Geraeten.

Auf dem Server erscheint der Client in der Liste (connectionOpenend() wird

aufgerufen).

Ich beende den Client mit "Back".

Auf dem Server bleibt der Client in der Liste stehen (connectionClosed()

wird NICHT aufgerufen).

```

-----

Original issue reported on c... | 1.0 | Abmelden von Client funktioniert nicht - ```

Ich starte Client und Server auf getrennten Geraeten.

Auf dem Server erscheint der Client in der Liste (connectionOpenend() wird

aufgerufen).

Ich beende den Client mit "Back".

Auf dem Server bleibt der Client in der Liste stehen (connectionClosed()

wird NICHT aufgerufen).... | non_priority | abmelden von client funktioniert nicht ich starte client und server auf getrennten geraeten auf dem server erscheint der client in der liste connectionopenend wird aufgerufen ich beende den client mit back auf dem server bleibt der client in der liste stehen connectionclosed wird nicht aufgerufen ... | 0 |

38,673 | 2,849,991,260 | IssuesEvent | 2015-05-31 06:01:14 | GLolol/PyLink | https://api.github.com/repos/GLolol/PyLink | closed | Add shared functions for sending messages to users (ircmsgs.py) | feature priority:medium | This is a lot simpler than writing `_sendFromUser('PRIVMSG person :hello!')` every time you want to send a message from a pseudoclient. | 1.0 | Add shared functions for sending messages to users (ircmsgs.py) - This is a lot simpler than writing `_sendFromUser('PRIVMSG person :hello!')` every time you want to send a message from a pseudoclient. | priority | add shared functions for sending messages to users ircmsgs py this is a lot simpler than writing sendfromuser privmsg person hello every time you want to send a message from a pseudoclient | 1 |

673,318 | 22,957,819,855 | IssuesEvent | 2022-07-19 13:07:48 | NREL/EnergyPlus | https://api.github.com/repos/NREL/EnergyPlus | closed | SHDGSS odd code | SeverityMedium PriorityLow | This code in SHDGSS seems odd/inefficient and possibly not as intended:

``` cpp

NVR = HCNV( 1 );

for ( N = 1; N <= NumVertInShadowOrClippedSurface; ++N ) {

for ( M = 1; M <= NVR; ++M ) {

if ( std::abs( HCX( M, 1 ) - HCX( N, NS3 ) ) > 6 ) continue;

if ( std::abs( HCY( M, 1 ) - HCY( N, NS3 ) ) > 6 ) conti... | 1.0 | SHDGSS odd code - This code in SHDGSS seems odd/inefficient and possibly not as intended:

``` cpp

NVR = HCNV( 1 );

for ( N = 1; N <= NumVertInShadowOrClippedSurface; ++N ) {

for ( M = 1; M <= NVR; ++M ) {

if ( std::abs( HCX( M, 1 ) - HCX( N, NS3 ) ) > 6 ) continue;

if ( std::abs( HCY( M, 1 ) - HCY( N, N... | priority | shdgss odd code this code in shdgss seems odd inefficient and possibly not as intended cpp nvr hcnv for n n numvertinshadoworclippedsurface n for m m nvr m if std abs hcx m hcx n continue if std abs hcy m hcy n ... | 1 |

239,616 | 26,231,972,950 | IssuesEvent | 2023-01-05 01:35:19 | keanhankins/ranger | https://api.github.com/repos/keanhankins/ranger | opened | CVE-2021-35515 (High) detected in commons-compress-1.8.1.jar | security vulnerability | ## CVE-2021-35515 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-compress-1.8.1.jar</b></p></summary>