Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

177

| 2,587,552,739

|

IssuesEvent

|

2015-02-17 19:11:57

|

GsDevKit/gsDevKitHome

|

https://api.github.com/repos/GsDevKit/gsDevKitHome

|

closed

|

1.0.0 to 2.0.0 compat ideas

|

in process

|

- [ ] symbolic link from tode/sys/default/clients to tode/clients (similar link for server(?))

- [ ] composition that includes tode/home for home directories

- [ ] directory map of tode/sys documenting the directory structure

- [ ] anything else that preserves changes users made in the old tode structure

- [ ] tag 1.0.0 before merge ... next release 2.0.0

- [ ] script for building /sys structure for existing stones

|

1.0

|

1.0.0 to 2.0.0 compat ideas - - [ ] symbolic link from tode/sys/default/clients to tode/clients (similar link for server(?))

- [ ] composition that includes tode/home for home directories

- [ ] directory map of tode/sys documenting the directory structure

- [ ] anything else that preserves changes users made in the old tode structure

- [ ] tag 1.0.0 before merge ... next release 2.0.0

- [ ] script for building /sys structure for existing stones

|

process

|

to compat ideas symbolic link from tode sys default clients to tode clients similar link for server composition that includes tode home for home directories directory map of tode sys documenting the directory structure anything else that preserves changes users made in the old tode structure tag before merge next release script for building sys structure for exsiting stones

| 1

|
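In every row of this excerpt, `binary_label` is 1 when `label` is `process` and 0 when it is `non_process`, and `text` appears to be a normalized copy of `text_combine`: lower-cased, URLs and `[...]` spans dropped, split on punctuation, with any token containing a digit removed. The preprocessing itself is not documented here, so the Python sketch below is an approximation inferred from these rows (the names `BINARY_LABEL` and `normalize` are hypothetical). It does not reproduce the HTML/XML tag and entity handling that the real pipeline evidently also applies.

```python
import re

# Mapping observed in this excerpt: label -> binary_label.
BINARY_LABEL = {"process": 1, "non_process": 0}

# URLs stop at whitespace, "]" or ")" so Markdown link syntax is not swallowed.
URL_RE = re.compile(r"https?://[^\s\])]+")
# Bracketed spans such as "[ ]" checkboxes or "[this line]" link text.
BRACKET_RE = re.compile(r"\[[^\]]*\]")
# Anything that is not a letter or digit (underscores included) separates tokens.
SPLIT_RE = re.compile(r"[\W_]+")


def normalize(text_combine: str) -> str:
    """Approximate the `text` column from `text_combine` (inferred, not official)."""
    text = URL_RE.sub(" ", text_combine)
    text = BRACKET_RE.sub(" ", text)
    tokens = SPLIT_RE.split(text.lower())
    # Drop empty strings and any token that contains a digit (e.g. "1", "tbk1").
    return " ".join(t for t in tokens if t and not any(c.isdigit() for c in t))
```

For example, `normalize("Tag 1.0.0 before merge ... next release 2.0.0")` gives `"tag before merge next release"`, which matches the corresponding fragment of the first row's `text`.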

111

| 3,460,514,519

|

IssuesEvent

|

2015-12-19 07:09:52

|

Automattic/wp-calypso

|

https://api.github.com/repos/Automattic/wp-calypso

|

closed

|

People: Redirect followers to frontend of site after accepting follow invite

|

People Management [Type] Task

|

Based on [this line](https://github.com/Automattic/wp-calypso/blob/master/client/accept-invite/logged-out-invite/signup-form.jsx#L53), we would redirect a logged-out follower who signs up to the Reader.

But we'll also need to handle users who only want to follow via email. Let's redirect these users to the front end of the site they followed.

|

1.0

|

People: Redirect followers to frontend of site after accepting follow invite - Based on [this line](https://github.com/Automattic/wp-calypso/blob/master/client/accept-invite/logged-out-invite/signup-form.jsx#L53), we would redirect a logged-out follower who signs up to the Reader.

But we'll also need to handle users who only want to follow via email. Let's redirect these users to the front end of the site they followed.

|

non_process

|

people redirect followers to frontend of site after accepting follow invite based on we would redirect a logged out follow that signs up to the reader but we ll also need to handle the case for users that only want to follow via email for these users let s redirect them to the front end of the site that they followed

| 0

|

14,488

| 17,602,756,093

|

IssuesEvent

|

2021-08-17 13:45:12

|

geneontology/go-ontology

|

https://api.github.com/repos/geneontology/go-ontology

|

closed

|

Changes to viral term 'GO:0039723 suppression by virus of host TBK1 activity'

|

multi-species process

|

KW-1223 defines this keyword as 'Viral protein involved in the evasion of host innate defenses by inhibiting the TBK1 kinase. Upon viral infection, Toll-like receptors (TLRs) or DNA recognition receptors recognize foreign material and transmit the signal to TBK1 that in turn phosphorylates and activates IRF3 and IRF7. Once phosphorylated, IRF3 and/or IRF7 translocate into the nucleus to drive transcription of interferons. Several viruses including herpes simplex virus 1 or vaccinia virus interact directly with and inhibit TBK1 to prevent IRFs activation.' (https://www.uniprot.org/keywords/KW-1223)

Should we keep this term and move it, or merge it into 'suppression by virus of host toll-like receptor signaling pathway',

AND annotate the proteins annotated to the KW to the MF 'protein serine/threonine kinase inhibitor activity'?

If we keep the term, there are two possible parents for this term:

- 'evasion of host immune response', defined as 'A process by which an organism avoids the effects of the host organism's immune response.'

- 'suppression by virus of host toll-like receptor signaling pathway', which is not 'evasion' but 'suppression'

- [ ] remove comment that refers to obsolete term: "Comment: When TBK1 acts as part of a TBK1-IKBKE-DDX3 complex, consider also annotating to: suppression by virus of host TBK1-IKBKE-DDX3 complex activity ; GO:0039659."

@pmasson55 what do you think is the best solution?

Thanks, Pascale

|

1.0

|

Changes to viral term 'GO:0039723 suppression by virus of host TBK1 activity' - KW-1223 defines this keyword as 'Viral protein involved in the evasion of host innate defenses by inhibiting the TBK1 kinase. Upon viral infection, Toll-like receptors (TLRs) or DNA recognition receptors recognize foreign material and transmit the signal to TBK1 that in turn phosphorylates and activates IRF3 and IRF7. Once phosphorylated, IRF3 and/or IRF7 translocate into the nucleus to drive transcription of interferons. Several viruses including herpes simplex virus 1 or vaccinia virus interact directly with and inhibit TBK1 to prevent IRFs activation.' (https://www.uniprot.org/keywords/KW-1223)

Should we keep this term and move it, or merge it into 'suppression by virus of host toll-like receptor signaling pathway',

AND annotate the proteins annotated to the KW to the MF 'protein serine/threonine kinase inhibitor activity'?

If we keep the term, there are two possible parents for this term:

- 'evasion of host immune response', defined as 'A process by which an organism avoids the effects of the host organism's immune response.'

- 'suppression by virus of host toll-like receptor signaling pathway', which is not 'evasion' but 'suppression'

- [ ] remove comment that refers to obsolete term: "Comment: When TBK1 acts as part of a TBK1-IKBKE-DDX3 complex, consider also annotating to: suppression by virus of host TBK1-IKBKE-DDX3 complex activity ; GO:0039659."

@pmasson55 what do you think is the best solution?

Thanks, Pascale

|

process

|

changes to viral term go suppression by virus of host activity kw defines this keyword as viral protein involved in the evasion of host innate defenses by inhibiting the kinase upon viral infection toll like receptors tlrs or dna recognition receptors recognize foreign material and transmit the signal to that in turn phosphorylates and activates and once phosphorylated and or translocate into the nucleus to drive transcription of interferons several viruses including herpes simplex virus or vaccinia virus interact directly with and inhibit to prevent irfs activation should we keep this term and move it or merge it into suppression by virus of host toll like receptor signaling pathway and annotate the proteins annotated to the kw to mf protein serine threonine kinase inhibitor activity if we keep the term there are two possible parents for this term evasion of host immune response defined as a process by which an organism avoids the effects of the host organism s immune response suppression by virus of host toll like receptor signaling pathway which is not evasion but suppression remove comment that refers to obsolete term comment when acts as part of a ikbke complex consider also annotating to suppression by virus of host ikbke complex activity go what do you think is the best solution thanks pascale

| 1

|

5,502

| 8,368,990,172

|

IssuesEvent

|

2018-10-04 16:03:59

|

allinurl/goaccess

|

https://api.github.com/repos/allinurl/goaccess

|

closed

|

tail -f access.log: when logrotate runs, real-time-html stops updating

|

log-processing question

|

When I use the command

`tail -f /var/log/nginx/access.log | /usr/local/bin/goaccess -o /home/wwwroot/default/report.html --real-time-html --log-format='%^ %^[%d:%t %^] "%r" %s %b "%R" "%u" "%h"' --date-format=%d/%b/%Y --time-format=%T --keep-db-files --load-from-disk --db-path=/home/wwwroot/default/ `

it works normally.

But when logrotate runs (daily), the **report.html** data stops updating, so I have to rerun the command above (`tail -f ....`).

|

1.0

|

tail -f access.log: when logrotate runs, real-time-html stops updating - When I use the command

`tail -f /var/log/nginx/access.log | /usr/local/bin/goaccess -o /home/wwwroot/default/report.html --real-time-html --log-format='%^ %^[%d:%t %^] "%r" %s %b "%R" "%u" "%h"' --date-format=%d/%b/%Y --time-format=%T --keep-db-files --load-from-disk --db-path=/home/wwwroot/default/ `

it works normally.

But when logrotate runs (daily), the **report.html** data stops updating, so I have to rerun the command above (`tail -f ....`).

|

process

|

tail f access log when logrotate run real time html will not update when i use cmd tail f var log nginx access log usr local bin goaccess o home wwwroot default report html real time html log format r s b r u h date format d b y time format t keep db files load from disk db path home wwwroot default work normal but when logrotate run daily run report html data will not update so i have to rerun above cmd tailf f

| 1

|
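In the goaccess report above, `tail -f` follows the open file descriptor, so once logrotate renames `access.log` the pipe keeps reading the rotated file and goaccess receives nothing new. GNU tail's `-F` (`--follow=name --retry`) instead reopens the path when it is replaced, which is likely the smaller fix. The class below is a hypothetical Python sketch of the same follow-by-name idea (the name `FollowByName` is an assumption, not part of goaccess): it compares the inode behind the path with the inode of the open file and switches over after rotation, and it also rewinds if the file is truncated in place, as logrotate's `copytruncate` mode does.

```python
import os


class FollowByName:
    """Return lines appended to *path*, surviving log rotation.

    Hypothetical `tail -F`-style sketch: reads from the start of the file,
    reopens the path when it points at a new inode (rename-based rotation),
    and rewinds when the file shrinks in place (copytruncate rotation).
    """

    def __init__(self, path):
        self.path = path
        self._fh = None
        self._inode = None
        self._pending = b""  # trailing partial line, waiting for its newline
        self._reopen()

    def _reopen(self):
        try:
            fh = open(self.path, "rb")
        except FileNotFoundError:
            return False  # rotated away and not recreated yet
        if self._fh is not None:
            self._fh.close()
        self._fh = fh
        self._inode = os.fstat(fh.fileno()).st_ino
        self._pending = b""  # never join a partial line across two files
        return True

    def _drain(self):
        # Read whatever is available now and keep any incomplete last line.
        self._pending += self._fh.read()
        *complete, self._pending = self._pending.split(b"\n")
        return [line.decode("utf-8", "replace") for line in complete]

    def poll(self):
        """Return the complete lines written since the previous call."""
        lines = []
        if self._fh is not None:
            lines.extend(self._drain())  # finish the file we already hold
        try:
            st = os.stat(self.path)
        except FileNotFoundError:
            return lines
        if st.st_ino != self._inode:
            if self._reopen():  # path now names a different file
                lines.extend(self._drain())
        elif st.st_size < self._fh.tell():
            self._fh.seek(0)  # same file, truncated in place
            self._pending = b""
            lines.extend(self._drain())
        return lines

    def close(self):
        if self._fh is not None:
            self._fh.close()
        self._fh = None
        self._inode = None
```

In a pipeline, something like this would sit in a loop that calls `poll()` every second or so and writes the lines to goaccess's standard input. Draining the old file before switching means lines written just before rotation are not lost.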

8,994

| 12,103,679,101

|

IssuesEvent

|

2020-04-20 18:50:06

|

metabase/metabase

|

https://api.github.com/repos/metabase/metabase

|

closed

|

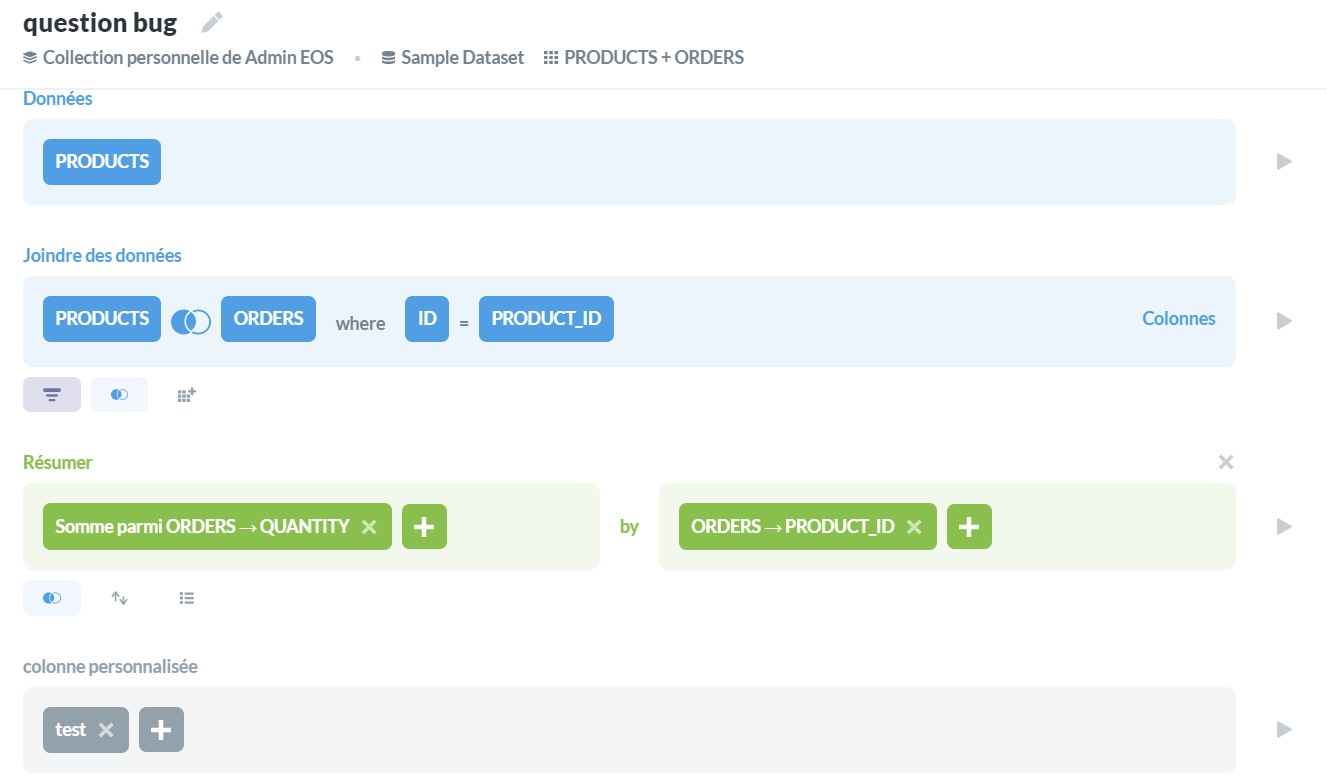

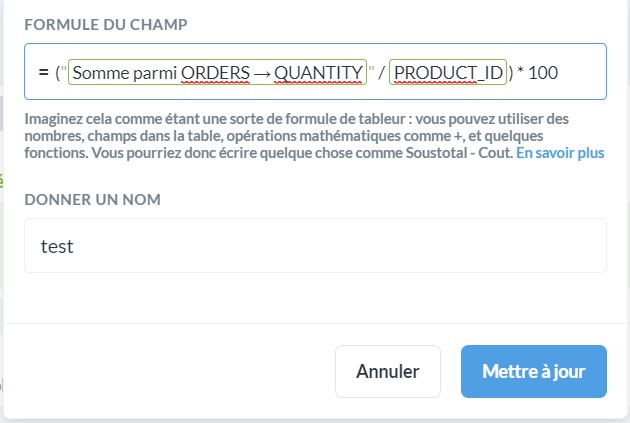

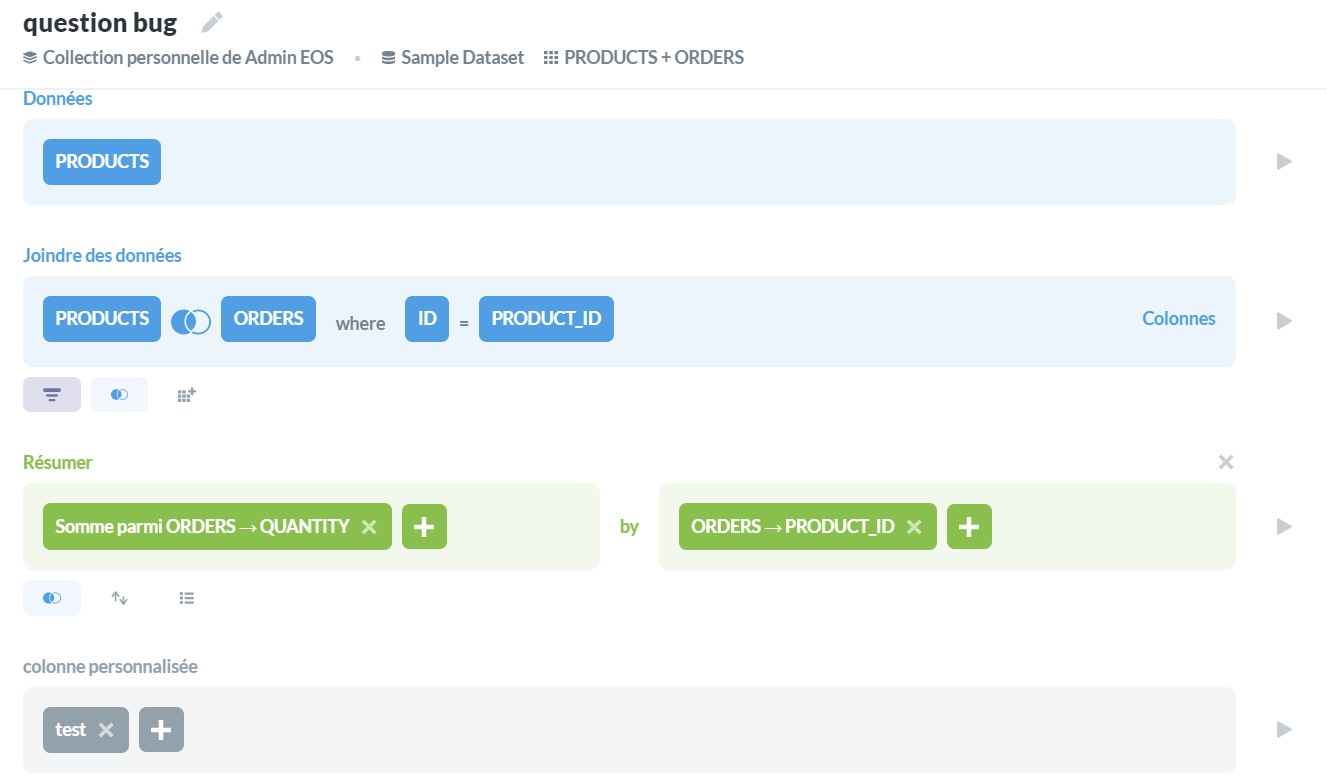

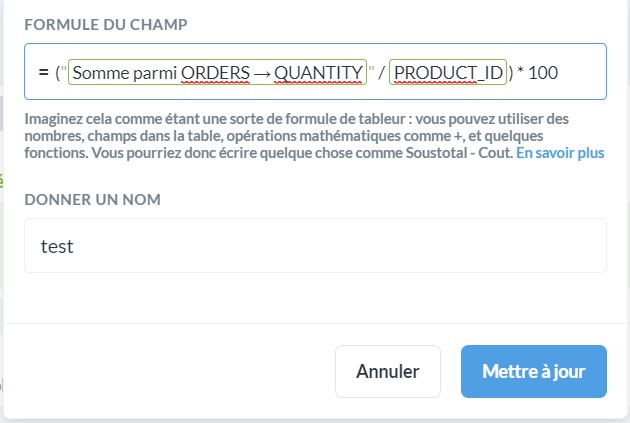

Version 0.35 regression : column not found error after custom column

|

Priority:P2 Querying/Notebook Querying/Processor Type:Bug

|

**Describe the bug**

When creating a question with a summurize and a Custom Column based on the summurize the error "column not found" appears

**To Reproduce**

you just have to create a query in 0.35.0 or 0.35-RC2 like this one to reproduce the problem (the same query works in 0.34.3 :

Thanks

|

1.0

|

Version 0.35 regression: column not found error after custom column - **Describe the bug**

When creating a question with a Summarize step and a Custom Column based on that summarization, the error "column not found" appears.

**To Reproduce**

You just have to create a query like this one in 0.35.0 or 0.35-RC2 to reproduce the problem (the same query works in 0.34.3):

Thanks

|

process

|

version regression column not found error after custom column describe the bug when creating a question with a summurize and a custom column based on the summurize the error column not found appears to reproduce you just have to create a query in or like this one to reproduce the problem the same query works in thanks

| 1

|

7,760

| 10,879,828,895

|

IssuesEvent

|

2019-11-17 05:37:47

|

google/ground-android

|

https://api.github.com/repos/google/ground-android

|

opened

|

[Feature] Code styling check

|

priority: p2 type: cleanup type: feature request type: process

|

- [ ] Checkstyle gradle plugin for code styling

- [ ] Run check on GCB with every commit and fail if necessary

- [ ] Generate reports

|

1.0

|

[Feature] Code styling check - - [ ] Checkstyle gradle plugin for code styling

- [ ] Run check on GCB with every commit and fail if necessary

- [ ] Generate reports

|

process

|

code styling check checkstyle gradle plugin for code styling run check on gcb with every commit and fail if necessary generate reports

| 1

|

374,793

| 11,095,582,851

|

IssuesEvent

|

2019-12-16 09:24:48

|

unep-grid/map-x-mgl

|

https://api.github.com/repos/unep-grid/map-x-mgl

|

closed

|

Problems with the "download" function

|

bug done priority 2

|

When downloading a dataset, the zip file is no longer downloaded automatically, as it used to be.

Users must now click the green "click here" text at the bottom of the download window once the download process finishes. This is a problem because:

1. The download window doesn't scroll automatically to the end of the text, where the user would see "click here to download"; it shows only the beginning of the text, which is not user-friendly.

2. It is much less intuitive.

_Suggestion_: the dataset should be downloaded automatically at the end of the download process, without the user having to click anything.

Also, users receive an email containing a link to download the data, but the link doesn't work and shows (for me) "Cette page ne fonctionne pas" ("This page isn't working").

Link: http://api.mapx.org:443/download/mx_dl_5x1d2_rL6mL_FMGfj_iTwKb.zip

Console: there is nothing written in the console

|

1.0

|

Problems with the "download" function - When downloading a dataset, the zip file is no longer downloaded automatically, as it used to be.

Users must now click the green "click here" text at the bottom of the download window once the download process finishes. This is a problem because:

1. The download window doesn't scroll automatically to the end of the text, where the user would see "click here to download"; it shows only the beginning of the text, which is not user-friendly.

2. It is much less intuitive.

_Suggestion_: the dataset should be downloaded automatically at the end of the download process, without the user having to click anything.

Also, users receive an email containing a link to download the data, but the link doesn't work and shows (for me) "Cette page ne fonctionne pas" ("This page isn't working").

Link: http://api.mapx.org:443/download/mx_dl_5x1d2_rL6mL_FMGfj_iTwKb.zip

Console: there is nothing written in the console

|

non_process

|

problems with the download function when downloading a dataset the zip folder is not downloaded automatically as it used to be users must now click on the click here green sentence at the bottom of the download window at the end of the download process it is a problem because the content of the download window doesn t scroll down automatically to the end of the text where the user could see the sentence click here to download the window displays only the beginning of the text which may not be user friendly it is much less intuitive suggestion the dataset should be downloaded automatically at the end of the download process without clicking on anything also users receive an email containing a link to download the data but the link doesn t work and says for me cette page ne fonctionne pas link console there is nothing written in the console

| 0

|

6,570

| 9,654,194,092

|

IssuesEvent

|

2019-05-19 12:08:06

|

brandon1roadgears/Interpreter-of-programming-language-of-Turing-Machine

|

https://api.github.com/repos/brandon1roadgears/Interpreter-of-programming-language-of-Turing-Machine

|

closed

|

Checking that the program works.

|

Testing process Work in process bug

|

### We need to verify that the answers produced by the program are correct.

To do this, we need to find several problems with answers online, enter their rules into the program, and compare the answers from the resource with the answers produced by our program.

I think this resource will be enough: [https://docplayer.ru/32397731-Mashina-tyuringa-i-algoritmy-markova-reshenie-zadach.html](url)

|

2.0

|

Checking that the program works. - ### We need to verify that the answers produced by the program are correct.

To do this, we need to find several problems with answers online, enter their rules into the program, and compare the answers from the resource with the answers produced by our program.

I think this resource will be enough: [https://docplayer.ru/32397731-Mashina-tyuringa-i-algoritmy-markova-reshenie-zadach.html](url)

|

process

|

checking that the program works we need to verify that the answers produced by the program are correct to do this we need to find several problems with answers online enter their rules into the program and compare the answers from the resource with the answers produced by our program i think this resource will be enough url

| 1

|

260,076

| 22,589,691,410

|

IssuesEvent

|

2022-06-28 18:32:31

|

danbudris/vulnerabilityProcessor

|

https://api.github.com/repos/danbudris/vulnerabilityProcessor

|

closed

|

LOW vulnerability in arn:aws:ecr:us-east-1:555555555555:repository/myrepo/sha256:7308b29228bde15a52a49b2f4a4cf95d5e2610e5ca67cdae32430e4b18effd91

|

hey there test severity/LOW

|

Issue auto cut by Vulnerability Processor version `v0.0.1-dev`

Message Source: `EventBridge`

Finding Source: `inspectorV2`

LOW Vulnerability CVE-2016-9085 detected in arn:aws:ecr:us-east-1:555555555555:repository/myrepo/sha256:7308b29228bde15a52a49b2f4a4cf95d5e2610e5ca67cdae32430e4b18effd91

Associated Pull Requests:

- https://github.com/danbudris/vulnerabilityProcessor/pull/106

|

1.0

|

LOW vulnerability in arn:aws:ecr:us-east-1:555555555555:repository/myrepo/sha256:7308b29228bde15a52a49b2f4a4cf95d5e2610e5ca67cdae32430e4b18effd91 - Issue auto cut by Vulnerability Processor version `v0.0.1-dev`

Message Source: `EventBridge`

Finding Source: `inspectorV2`

LOW Vulnerability CVE-2016-9085 detected in arn:aws:ecr:us-east-1:555555555555:repository/myrepo/sha256:7308b29228bde15a52a49b2f4a4cf95d5e2610e5ca67cdae32430e4b18effd91

Associated Pull Requests:

- https://github.com/danbudris/vulnerabilityProcessor/pull/106

|

non_process

|

low vulnerability in arn aws ecr us east repository myrepo issue auto cut by vulnerability processor version dev message source eventbridge finding source low vulnerability cve detected in arn aws ecr us east repository myrepo associated pull requests

| 0

|

54

| 2,515,308,901

|

IssuesEvent

|

2015-01-15 17:44:19

|

dita-ot/dita-ot

|

https://api.github.com/repos/dita-ot/dita-ot

|

reopened

|

Validation for @cols attribute too drastic [DITA OT 2.0]

|

feature P2 preprocess won't fix

|

The Java method "ValidationFilter.validateCols" should not try to validate a `@cols` attribute value when it is set on a `tgroup` inside a `table` element that has a `conref`.

For example, this topic could be published to HTML with an older version of DITA OT:

<?xml version='1.0' encoding='UTF-8'?>
<!DOCTYPE topic PUBLIC "-//OASIS//DTD DITA Topic//EN" "http://docs.oasis-open.org/dita/v1.1/OS/dtd/topic.dtd">
<topic id="introduction">
  <title>Introduction</title>
  <body>
    <p>
      <table conref="#introduction/table_ndw_gjy_qq" id="table_dkx_hjy_qq">
        <tgroup cols="cols_rgx_hjy_qq">
          <tbody>
            <row>
              <entry/>
            </row>
          </tbody>
        </tgroup>
      </table>
      <table frame="all" rowsep="1" colsep="1" id="table_ndw_gjy_qq">
        <title>abc</title>
        <tgroup cols="2">
          <colspec colname="c1" colnum="1" colwidth="1.0*"/>
          <colspec colname="c2" colnum="2" colwidth="1.0*"/>
          <thead>
            <row>
              <entry/>
              <entry/>
            </row>
          </thead>
          <tbody>
            <row>
              <entry/>
              <entry/>
            </row>
            <row>
              <entry/>
              <entry/>
            </row>
            <row>
              <entry/>
              <entry/>
            </row>
          </tbody>
        </tgroup>
      </table>
    </p>
  </body>
</topic>

|

1.0

|

Validation for @cols attribute too drastic [DITA OT 2.0] - The Java method "ValidationFilter.validateCols" should not try to validate a `@cols` attribute value when it is set on a `tgroup` inside a `table` element that has a `conref`.

For example, this topic could be published to HTML with an older version of DITA OT:

<?xml version='1.0' encoding='UTF-8'?>
<!DOCTYPE topic PUBLIC "-//OASIS//DTD DITA Topic//EN" "http://docs.oasis-open.org/dita/v1.1/OS/dtd/topic.dtd">
<topic id="introduction">
  <title>Introduction</title>
  <body>
    <p>
      <table conref="#introduction/table_ndw_gjy_qq" id="table_dkx_hjy_qq">
        <tgroup cols="cols_rgx_hjy_qq">
          <tbody>
            <row>
              <entry/>
            </row>
          </tbody>
        </tgroup>
      </table>
      <table frame="all" rowsep="1" colsep="1" id="table_ndw_gjy_qq">
        <title>abc</title>
        <tgroup cols="2">
          <colspec colname="c1" colnum="1" colwidth="1.0*"/>
          <colspec colname="c2" colnum="2" colwidth="1.0*"/>
          <thead>
            <row>
              <entry/>
              <entry/>
            </row>
          </thead>
          <tbody>
            <row>
              <entry/>
              <entry/>
            </row>
            <row>
              <entry/>
              <entry/>
            </row>
            <row>
              <entry/>
              <entry/>
            </row>
          </tbody>
        </tgroup>
      </table>
    </p>
  </body>
</topic>

|

process

|

validation for cols attribute too drastic the java method validationfilter validatecols should not try to validate a cols attribute value if it is set on a tgroup from a table element which has a conref for example this topic could be output to html using an older dita ot doctype topic public oasis dtd dita topic en introduction abc

| 1

|
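The DITA issue above asks that `@cols` validation be skipped when the enclosing `table` has a `conref`, because the referencing table is replaced by the referenced one when the conref is resolved. DITA OT's Java `ValidationFilter` is not shown in the issue, so the function below is a hypothetical Python illustration of that rule using the standard-library ElementTree; `invalid_cols` is an assumed name, and it treats any `@cols` value that is not a positive integer (including a missing one) as invalid.

```python
import xml.etree.ElementTree as ET


def invalid_cols(xml_text: str) -> list:
    """Return the @cols values that fail validation in a DITA fragment.

    A tgroup whose parent <table> has @conref is skipped, because that table
    is replaced by the referenced table when the conref is resolved.
    Otherwise @cols must be a positive integer.
    """
    root = ET.fromstring(xml_text)
    bad = []
    for table in root.iter("table"):
        if table.get("conref") is not None:
            continue  # placeholder content; the referenced table is checked itself
        for tgroup in table.findall("tgroup"):
            cols = tgroup.get("cols", "")
            if not cols.isdecimal() or int(cols) < 1:
                bad.append(cols)
    return bad
```

Applied to a trimmed copy of the topic in the issue, the placeholder value `cols_rgx_hjy_qq` is ignored and the referenced table's `cols="2"` passes, so nothing is reported.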

248,328

| 7,929,370,897

|

IssuesEvent

|

2018-07-06 14:52:06

|

containous/traefik

|

https://api.github.com/repos/containous/traefik

|

closed

|

Allow configuration of auth.headerField via labels

|

area/authentication kind/enhancement priority/P2

|

<!--

DO NOT FILE ISSUES FOR GENERAL SUPPORT QUESTIONS.

The issue tracker is for reporting bugs and feature requests only.

For end-user related support questions, refer to one of the following:

- Stack Overflow (using the "traefik" tag): https://stackoverflow.com/questions/tagged/traefik

- the Traefik community Slack channel: https://traefik.herokuapp.com

-->

This is a feature request. In configuring authentication, I can pass the authenticated user to the frontend via an HTTP header using configuration like this:

```toml
# traefik.toml
[entryPoints]
  [entryPoints.http]
  address = ":80"
    [entryPoints.http.auth.basic]
    users = ["test:$apr1$H6uskkkW$IgXLP6ewTrSuBkTrqE8wj/"]
    [entryPoints.http.auth]
    headerField = "X-Webauth-User"
```

This works great when I specify the basic auth users in the entrypoint, but fails when I remove the `[entryPoints.http.auth.basic]` block in order to move that configuration back into a label specified in docker-compose.yml. I'd like to be able to set a custom headerField for each application using a docker label such as:

```yml
# docker-compose.yml
myservice:
  image: myimage
  labels:
    traefik.frontend.auth.headerField: "X-Webauth-User"
    traefik.frontend.auth.basic: "test:$$apr1$$H6uskkkW$$IgXLP6ewTrSuBkTrqE8wj/"
```

### Output of `traefik version`:

```
Version: v1.3.8
Codename: raclette
Go version: go1.8.3
Built: 2017-09-07_08:46:19PM
OS/Arch: linux/amd64
```

### What is your environment & configuration (arguments, toml, provider, platform, ...)?

```toml
defaultEntryPoints = ["http", "https"]

[entryPoints]
  [entryPoints.http]
  address = ":80"
    [entryPoints.http.redirect]
    entryPoint= "https"
  [entryPoints.https]
  address = ":8011"
    [entryPoints.https.auth.basic]
    #[entryPoints.https.tls]

[web]
address=":8080"

[docker]
domain="docker.local"
watch=true
exposedbydefault = false
```

|

1.0

|

Allow configuration of auth.headerField via labels - <!--

DO NOT FILE ISSUES FOR GENERAL SUPPORT QUESTIONS.

The issue tracker is for reporting bugs and feature requests only.

For end-user related support questions, refer to one of the following:

- Stack Overflow (using the "traefik" tag): https://stackoverflow.com/questions/tagged/traefik

- the Traefik community Slack channel: https://traefik.herokuapp.com

-->

This is a feature request. In configuring authentication, I can pass the authenticated user to the frontend via an HTTP header using configuration like this:

```toml
# traefik.toml
[entryPoints]
  [entryPoints.http]
  address = ":80"
    [entryPoints.http.auth.basic]
    users = ["test:$apr1$H6uskkkW$IgXLP6ewTrSuBkTrqE8wj/"]
    [entryPoints.http.auth]
    headerField = "X-Webauth-User"
```

This works great when I specify the basic auth users in the entrypoint, but fails when I remove the `[entryPoints.http.auth.basic]` block in order to move that configuration back into a label specified in docker-compose.yml. I'd like to be able to set a custom headerField for each application using a docker label such as:

```yml
# docker-compose.yml
myservice:
  image: myimage
  labels:
    traefik.frontend.auth.headerField: "X-Webauth-User"
    traefik.frontend.auth.basic: "test:$$apr1$$H6uskkkW$$IgXLP6ewTrSuBkTrqE8wj/"
```

### Output of `traefik version`:

```
Version: v1.3.8
Codename: raclette
Go version: go1.8.3
Built: 2017-09-07_08:46:19PM
OS/Arch: linux/amd64
```

### What is your environment & configuration (arguments, toml, provider, platform, ...)?

```toml
defaultEntryPoints = ["http", "https"]

[entryPoints]
  [entryPoints.http]
  address = ":80"
    [entryPoints.http.redirect]
    entryPoint= "https"
  [entryPoints.https]
  address = ":8011"
    [entryPoints.https.auth.basic]
    #[entryPoints.https.tls]

[web]
address=":8080"

[docker]
domain="docker.local"
watch=true
exposedbydefault = false
```

|

non_process

|

allow configuration of auth headerfield via labels do not file issues for general support questions the issue tracker is for reporting bugs and feature requests only for end user related support questions refer to one of the following stack overflow using the traefik tag the traefik community slack channel this is a feature request in configuring authentication i can pass the authenticated user to the frontend via an http header using configuration like this toml traefik toml address users headerfield x webauth user this works great when i specify the basic auth users in the entrypoint but fails when i remove the block in order to move that configuration back into a label specified in docker compose yml i d like to be able to set a custom headerfield for each application using a docker label such as yml docker compose yml myservice image myimage labels traefik frontend auth headerfield x webauth user traefik frontend auth basic test output of traefik version version codename raclette go version built os arch linux what is your environment configuration arguments toml provider platform toml defaultentrypoints address entrypoint https address address domain docker local watch true exposedbydefault false

| 0

|

11,130

| 13,957,688,580

|

IssuesEvent

|

2020-10-24 08:09:34

|

alexanderkotsev/geoportal

|

https://api.github.com/repos/alexanderkotsev/geoportal

|

opened

|

RO: Question related to harvest results with id INSPIRE-7edbed58-ddbc-11e4-b469-52540004b857_20190610-084632 - Issue while initializing the Discovery Metadata Operation

|

Geoportal Harvesting process RO - Romania

|

Dear Angelo,

Dear Davide,

After the harvesting process we have an issue while initializing the Discovery Metadata Operation.

Could you help us fix this error? The interaction with the remote service at "http://geoportal.gov.ro/Geoportal_INIS/csw202/discovery?service=csw&version=2.0.2&request=GetCapabilities" ended with the following error: "javax.xml.stream.FactoryConfigurationError"

Result of the interaction with the Discovery Service:

Resources available for discovery: 159, Expected Resource Count: 140, Actual Resource Count: 140.

Link Evaluation Report: http://inspire-geoportal.ec.europa.eu/sandbox/resources/INSPIRE-7edbed58-ddbc-11e4-b469-52540004b857_20190610-084632/services/1/PullResults/.

Best regards,

Simona Bunea

|

1.0

|

RO: Question related to harvest results with id INSPIRE-7edbed58-ddbc-11e4-b469-52540004b857_20190610-084632 - Issue while initializing the Discovery Metadata Operation - Dear Angelo,

Dear Davide,

After the harvesting process we have an issue while initializing the Discovery Metadata Operation.

Could you help us fix this error? The interaction with the remote service at "http://geoportal.gov.ro/Geoportal_INIS/csw202/discovery?service=csw&version=2.0.2&request=GetCapabilities" ended with the following error: "javax.xml.stream.FactoryConfigurationError"

Result of the interaction with the Discovery Service:

Resources available for discovery: 159, Expected Resource Count: 140, Actual Resource Count: 140.

Link Evaluation Report: http://inspire-geoportal.ec.europa.eu/sandbox/resources/INSPIRE-7edbed58-ddbc-11e4-b469-52540004b857_20190610-084632/services/1/PullResults/.

Best regards,

Simona Bunea

|

process

|

ro question related to harvest results with id inspire ddbc issue while initializing the discover matadata operation dear angelo dear davide after the harvesting process we have an issue while initializing the discovery metadata operation could you help us to fix this error the interaction with the remote service at quot ended with the following error quot javax xml stream factoryconfigurationerror quot result of the interaction with the discovery service resources available for discovery expected resource count actual resource count link evaluation report best regards simona bunea

| 1

|

15,693

| 11,661,944,684

|

IssuesEvent

|

2020-03-03 08:04:57

|

ampproject/amp.dev

|

https://api.github.com/repos/ampproject/amp.dev

|

opened

|

Upgrade uuid in amp-analytics API to v7

|

Category: Infrastructure P2: Medium Type: Update

|

uuid introduced breaking changes in v7 that we need to adapt to before updating. See https://github.com/ampproject/amp.dev/pull/3635 for details.

|

1.0

|

Upgrade uuid in amp-analytics API to v7 - uuid introduced breaking changes in v7 that we need to adapt to before updating. See https://github.com/ampproject/amp.dev/pull/3635 for details.

|

non_process

|

upgrade uuid in amp analytics api to uuid introduced breaking changes with we need to adapt to prior updating see for details

| 0

|

18,970

| 11,102,232,773

|

IssuesEvent

|

2019-12-16 23:21:55

|

Azure/azure-cli

|

https://api.github.com/repos/Azure/azure-cli

|

closed

|

azure-cli-acs module requires some strict type checking for all the input parameters

|

AKS Service Attention

|

**Describe the bug**

`azure-cli\src\command_modules\azure-cli-acs\azure\cli\command_modules\acs\custom.py`

has functions that cast input parameters to int; these parameters need stricter type checking when the input is first parsed.

Example: `count=int(node_count),`

**To Reproduce**

Code improvement to add strict type checking.

**Expected behavior**

Invalid input should not lead to runtime errors caused by casting.

**Environment summary**

Install Method (e.g. pip, interactive script, apt-get, Docker, MSI, edge build) / CLI version (`az --version`) / OS version / Shell Type (e.g. bash, cmd.exe, Bash on Windows)

**Additional context**

|

1.0

|

azure-cli-acs module requires some strict type checking for all the input parameters - **Describe the bug**

`azure-cli\src\command_modules\azure-cli-acs\azure\cli\command_modules\acs\custom.py`

has functions that cast input parameters to int; these parameters need stricter type checking when the input is first parsed.

Example: `count=int(node_count),`

**To Reproduce**

Code improvement to add strict type checking.

**Expected behavior**

Invalid input should not lead to runtime errors caused by casting.

**Environment summary**

Install Method (e.g. pip, interactive script, apt-get, Docker, MSI, edge build) / CLI version (`az --version`) / OS version / Shell Type (e.g. bash, cmd.exe, Bash on Windows)

**Additional context**

|

non_process

|

azure cli acs module requires some strict type checking for all the input parameters describe the bug azure cli src command modules azure cli acs azure cli command modules acs custom py has functions which type cast to int this needs to have a stricter type checking during input parameter itself example count int node count to reproduce code improvement to have strict type checking expected behavior not lead to run time errors due to casting environment summary install method e g pip interactive script apt get docker msi edge build cli version az version os version shell type e g bash cmd exe bash on windows additional context

| 0

|
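The azure-cli issue above asks for `node_count` and similar parameters to be validated when the input is parsed, rather than cast with `int(...)` later. azure-cli's own argument-registration and validator API is not shown in the issue, so this is a plain-`argparse` sketch of the idea; `positive_int`, `build_parser`, and the `aks-create-sketch` program name are hypothetical.

```python
import argparse


def positive_int(value):
    """Parse *value* as a whole number >= 1, or raise a clear argparse error.

    Used as an argparse ``type=`` callable so bad input is rejected while the
    command line is parsed, instead of failing later at ``int(...)``.
    """
    try:
        number = int(str(value).strip())
    except ValueError:
        raise argparse.ArgumentTypeError(
            f"expected a whole number, got {value!r}") from None
    if number < 1:
        raise argparse.ArgumentTypeError(f"expected a value >= 1, got {number}")
    return number


def build_parser():
    # Hypothetical command: "--node-count" mirrors the node_count parameter
    # named in the issue, but this is not azure-cli's registration code.
    parser = argparse.ArgumentParser(prog="aks-create-sketch")
    parser.add_argument("--node-count", type=positive_int, default=3)
    return parser
```

With this parser, `--node-count abc` is rejected during parsing: argparse prints the usage line and the error message and exits with status 2, instead of a `ValueError` surfacing deep inside the command.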

201,302

| 22,948,163,991

|

IssuesEvent

|

2022-07-19 03:39:36

|

elikkatzgit/TestingPOM

|

https://api.github.com/repos/elikkatzgit/TestingPOM

|

reopened

|

CVE-2020-36187 (High) detected in jackson-databind-2.7.2.jar

|

security vulnerability

|

## CVE-2020-36187 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.7.2.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>

Dependency Hierarchy:

- :x: **jackson-databind-2.7.2.jar** (Vulnerable Library)

<p>Found in base branch: <b>dev</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.8 mishandles the interaction between serialization gadgets and typing, related to org.apache.tomcat.dbcp.dbcp.datasources.SharedPoolDataSource.

<p>Publish Date: 2021-01-06

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-36187>CVE-2020-36187</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2021-01-06</p>

<p>Fix Resolution: 2.9.10.8</p>

</p>

</details>

<p></p>
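The report flags `jackson-databind-2.7.2.jar` against a Fix Resolution of 2.9.10.8. Versions like these have to be compared component by component as numbers, not as strings. The following is an illustrative sketch of that comparison, not how WhiteSource actually evaluates versions. The helper names are hypothetical, and non-numeric qualifiers (such as `-SNAPSHOT`) are not handled.

```python
from typing import Tuple


def parse_version(version: str) -> Tuple[int, ...]:
    """Split a purely numeric dotted version such as '2.9.10.8' into integers."""
    return tuple(int(part) for part in version.split("."))


def is_below(installed: str, fixed: str) -> bool:
    """Return True if `installed` is older than `fixed`, comparing component by component."""
    a, b = parse_version(installed), parse_version(fixed)
    # Pad the shorter tuple with zeros so that '2.9.10' compares equal to '2.9.10.0'.
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a < b


print(is_below("2.7.2", "2.9.10.8"))     # True: the detected jar predates the fix
print(is_below("2.9.10.8", "2.9.10.8"))  # False: already on the fixed release
print("2.10.0" < "2.9.10.8")             # True: plain string comparison gets this wrong
```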

|

True

|

CVE-2020-36187 (High) detected in jackson-databind-2.7.2.jar - ## CVE-2020-36187 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.7.2.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>

Dependency Hierarchy:

- :x: **jackson-databind-2.7.2.jar** (Vulnerable Library)

<p>Found in base branch: <b>dev</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.8 mishandles the interaction between serialization gadgets and typing, related to org.apache.tomcat.dbcp.dbcp.datasources.SharedPoolDataSource.

<p>Publish Date: 2021-01-06

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-36187>CVE-2020-36187</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2021-01-06</p>

<p>Fix Resolution: 2.9.10.8</p>

</p>

</details>

<p></p>

|

non_process

|

cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href dependency hierarchy x jackson databind jar vulnerable library found in base branch dev vulnerability details fasterxml jackson databind x before mishandles the interaction between serialization gadgets and typing related to org apache tomcat dbcp dbcp datasources sharedpooldatasource publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version release date fix resolution

| 0

|

86,801

| 3,733,664,717

|

IssuesEvent

|

2016-03-08 01:30:23

|

openshift/origin

|

https://api.github.com/repos/openshift/origin

|

opened

|

Builder tags should be sorted as numbers when possible in UI

|

component/web kind/bug priority/P3

|

Builder wildfly:10.0 should appear after wildfly:9.0. Tags aren't always numbers, but we should compare them as numbers when sorting if possible.

<img width="380" alt="openshift_web_console" src="https://cloud.githubusercontent.com/assets/1167259/13589056/f8bb137e-e4a2-11e5-9ca9-318f7f320c25.png">

/cc @bparees @jwforres
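Comparing tags "as numbers when possible" is usually done with a natural-sort key: runs of digits compare numerically and everything else compares as text. The web console itself is not written in Python, so this is only an illustrative sketch of the comparison. `tag_sort_key` is a hypothetical name, and placing numeric chunks before text chunks (so `latest` lands last) is an arbitrary choice.

```python
import re
from typing import List, Tuple, Union

KeyPart = Tuple[int, Union[int, str]]


def tag_sort_key(tag: str) -> List[KeyPart]:
    """Natural-sort key: runs of digits compare as numbers, everything else as text.

    '9.0' becomes [(0, 9), (1, '.'), (0, 0)], so '10.0' sorts after '9.0'.
    The leading 0/1 flag keeps Python from ever comparing an int with a str.
    """
    key: List[KeyPart] = []
    for chunk in re.findall(r"\d+|\D+", tag):
        if chunk.isdecimal():
            key.append((0, int(chunk)))
        else:
            key.append((1, chunk))
    return key


tags = ["10.0", "9.0", "8.1", "latest"]
print(sorted(tags))                    # ['10.0', '8.1', '9.0', 'latest']
print(sorted(tags, key=tag_sort_key))  # ['8.1', '9.0', '10.0', 'latest']
```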

|

1.0

|

Builder tags should be sorted as numbers when possible in UI - Builder wildfly:10.0 should appear after wildfly:9.0. Tags aren't always numbers, but we should compare them as numbers when sorting if possible.

<img width="380" alt="openshift_web_console" src="https://cloud.githubusercontent.com/assets/1167259/13589056/f8bb137e-e4a2-11e5-9ca9-318f7f320c25.png">

/cc @bparees @jwforres

|

non_process

|

builder tags should be sorted as numbers when possible in ui builder wildfly should appear after wildfly tags aren t always numbers but we should compare them as numbers when sorting if possible img width alt openshift web console src cc bparees jwforres

| 0

|

11,289

| 9,081,903,389

|

IssuesEvent

|

2019-02-17 06:55:45

|

amethyst/amethyst

|

https://api.github.com/repos/amethyst/amethyst

|

closed

|

CI does not check json feature

|

diff: easy pri: important status: ready team: engine team: infrastructure type: bug

|

We're currently not testing the `json` feature, and it turns out it doesn't compile:

https://github.com/amethyst/amethyst/blob/38877ed47c5bb9a7869276fdea545085ade0d81e/amethyst_assets/src/formats.rs#L48-L49

`chain_err` is not defined. Is there any convention for how to migrate to the new error handling @udoprog? I can't find it in the doc.

|

1.0

|

CI does not check json feature - We're currently not testing the `json` feature, and it turns out it doesn't compile:

https://github.com/amethyst/amethyst/blob/38877ed47c5bb9a7869276fdea545085ade0d81e/amethyst_assets/src/formats.rs#L48-L49

`chain_err` is not defined. Is there any convention for how to migrate to the new error handling @udoprog? I can't find it in the doc.

|

non_process

|

ci does not check json feature we re currently not testing the json feature and it turns out it doesn t compile chain err is not defined is there any convention for how to migrate to the new error handling udoprog i can t find it in the doc

| 0

|

526,050

| 15,278,867,378

|

IssuesEvent

|

2021-02-23 02:32:11

|

space-wizards/space-station-14

|

https://api.github.com/repos/space-wizards/space-station-14

|

closed

|

MedicalScanner needs collision fixed

|

Difficulty: 2 - Medium Priority: 2-medium Size: 1 - Tiny Type: Improvement

|

Ideally it should be passable to mobs but impassable to walls etc. (in case it gets moved around), so adjust the collision mask and layers as needed.

This is needed because a player who moves out of the scanner will immediately clip.

|

1.0

|

MedicalScanner needs collision fixed - Ideally it should be passable to mobs but impassable to walls etc. (in case it gets moved around), so adjust the collision mask and layers as needed.

This is needed because a player who moves out of the scanner will immediately clip.

|

non_process

|

medicalscanner needs collision fixed ideally it s passable to mobs but impassable to walls etc if it gets moved around so do with the mask and layers what needs doing need it because if a player moves out of the scanner they will immediately clip

| 0

|

22,412

| 31,142,293,567

|

IssuesEvent

|

2023-08-16 01:44:54

|

cypress-io/cypress

|

https://api.github.com/repos/cypress-io/cypress

|

closed

|

Flaky test: <OpenBrowserList /> throws when activeBrowser is null

|

OS: linux process: flaky test topic: flake ❄️ stage: flake stale

|

### Current behavior

This is our top flaky test on Linux, having flaked 440 times in the last 30 days at time of writing. See [Dashboard Insights](https://app.circleci.com/insights/github/cypress-io/cypress/workflows/linux-x64/tests?branch=develop&reporting-window=last-30-days)

We're trying to test an error state, but the error itself is causing the test to flake:

<img width="1423" alt="Screen Shot 2022-08-03 at 2 55 03 PM" src="https://user-images.githubusercontent.com/26726429/182719393-dfaca960-7315-4245-bf39-8406be88ffa5.png">

```
cy.once('uncaught:exception', (err) => {
  expect(err.message).to.include('Missing activeBrowser in selectedBrowserId')
  done()
})
```

Looks like an easy fix: we just need to tweak the logic for asserting the error.

### Desired behavior

No more flake 😃

### Test code to reproduce

https://github.com/cypress-io/cypress/blob/develop/packages/launchpad/src/setup/OpenBrowserList.cy.tsx#L134

### Cypress Version

10.4.0

### Other

https://github.com/cypress-io/cypress/issues/23099

|

1.0

|

Flaky test: <OpenBrowserList /> throws when activeBrowser is null - ### Current behavior

This is our top flaky test on Linux, having flaked 440 times in the last 30 days at time of writing. See [Dashboard Insights](https://app.circleci.com/insights/github/cypress-io/cypress/workflows/linux-x64/tests?branch=develop&reporting-window=last-30-days)

We're trying to test an error state, but the error itself is causing the test to flake:

<img width="1423" alt="Screen Shot 2022-08-03 at 2 55 03 PM" src="https://user-images.githubusercontent.com/26726429/182719393-dfaca960-7315-4245-bf39-8406be88ffa5.png">

```
cy.once('uncaught:exception', (err) => {
  expect(err.message).to.include('Missing activeBrowser in selectedBrowserId')
  done()
})
```

Looks like an easy fix: we just need to tweak the logic for asserting the error.

### Desired behavior

No more flake 😃

### Test code to reproduce

https://github.com/cypress-io/cypress/blob/develop/packages/launchpad/src/setup/OpenBrowserList.cy.tsx#L134

### Cypress Version

10.4.0

### Other

https://github.com/cypress-io/cypress/issues/23099

|

process

|

flaky test throws when activebrowser is null current behavior this is our top flaky test on linux having flaked times in the last days at time of writing see we re trying to test an error state but the error itself is causing the test to flake img width alt screen shot at pm src cy once uncaught exception err expect err message to include missing activebrowser in selectedbrowserid done looks like an easy fix just need to tweak the logic for asserting the error desired behavior no more flake 😃 test code to reproduce cypress version other

| 1

|

7,327

| 10,468,917,986

|

IssuesEvent

|

2019-09-22 17:02:24

|

produvia/ai-platform

|

https://api.github.com/repos/produvia/ai-platform

|

closed

|

Sentiment Analysis

|

natural-language-processing task wontfix

|

# Goal(s)

- Classify the polarity of a given text

# Input(s)

- Text

# Output(s)

- Text

# Objective Function(s)

- TBD
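The objective function is still TBD, so a trivial rule-based baseline can help make the Text → Text contract concrete. This sketch uses lexicon counting, a swapped-in baseline technique rather than a proposed model. The word lists and the `classify_polarity` name are hypothetical placeholders.

```python
import re

# Tiny illustrative lexicon; a real system would use a trained model or a
# curated lexicon rather than these hand-picked words.
POSITIVE = {"good", "great", "excellent", "love", "happy"}
NEGATIVE = {"bad", "terrible", "awful", "hate", "sad"}


def classify_polarity(text: str) -> str:
    """Return 'positive', 'negative', or 'neutral' by counting lexicon hits."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(classify_polarity("I love this, it is great"))  # positive
print(classify_polarity("What an awful, sad day"))    # negative
```

Such a baseline ignores negation ("not good") and context, which is one reason the objective function matters once a learned model is considered.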

|

1.0

|

Sentiment Analysis - # Goal(s)

- Classify the polarity of a given text

# Input(s)

- Text

# Output(s)

- Text

# Objective Function(s)

- TBD

|

process

|

sentiment analysis goal s classify the polarity of a given text input s text output s text objective function s tbd

| 1

|

74,274

| 9,011,520,865

|

IssuesEvent

|

2019-02-05 14:53:22

|

iagodahlem/tiempo

|

https://api.github.com/repos/iagodahlem/tiempo

|

opened

|

Design Debits

|

design help wanted

|

- Optimize it to look better on desktop view.

- Find better icons to keep the look n' feel of the app.

- Avoid content jumping on the timer numbers.

|

1.0

|

Design Debits - - Optimize it to look better on desktop view.

- Find better icons to keep the look n' feel of the app.

- Avoid content jumping on the timer numbers.

|

non_process

|

design debits optimize it to look better on desktop view find better icons to keep the look n feel of the app avoid content jumping on the timer numbers

| 0

|

369,449

| 25,847,981,276

|

IssuesEvent

|

2022-12-13 08:19:48

|

ophub/amlogic-s9xxx-armbian

|

https://api.github.com/repos/ophub/amlogic-s9xxx-armbian

|

closed

|

M401A: boot drive created, but the screen stays black and it won't boot

|

documentation

|

**Device Information**

- SOC: s905l3a (asked the seller)

- Model: 魔百和 M401A

**Armbian Version**

- Kernel Version: 5.15.82

- Release: jammy

**Describe the bug**

I downloaded and flashed the following images:

Armbian_23.02.0_amlogic_s905l3a_jammy_5.15.82_server_2022.12.09.img.gz

Armbian_23.02.0_amlogic_s905l3a_jammy_6.0.12_server_2022.12.09.img.gz

Connected with `adb connect IP`

`adb shell reboot update`

Then I inserted the USB drive; the screen reported no video input and stayed stuck there.

Without the USB drive inserted, it shows an update-failed message and a reboot menu.

What methods can I use to debug and diagnose this?

|

1.0

|

M401A: boot drive created, but the screen stays black and it won't boot -

**Device Information**

- SOC: s905l3a (asked the seller)

- Model: 魔百和 M401A

**Armbian Version**

- Kernel Version: 5.15.82

- Release: jammy

**Describe the bug**

I downloaded and flashed the following images:

Armbian_23.02.0_amlogic_s905l3a_jammy_5.15.82_server_2022.12.09.img.gz

Armbian_23.02.0_amlogic_s905l3a_jammy_6.0.12_server_2022.12.09.img.gz

Connected with `adb connect IP`

`adb shell reboot update`

Then I inserted the USB drive; the screen reported no video input and stayed stuck there.

Without the USB drive inserted, it shows an update-failed message and a reboot menu.

What methods can I use to debug and diagnose this?

|

non_process

|

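boot drive created but the screen stays black and it won t boot device information soc asked the seller model 魔百和 armbian version kernel version release jammy describe the bug i downloaded and flashed the following images armbian amlogic jammy server img gz armbian amlogic jammy server img gz connected with adb connect ip adb shell reboot update then i inserted the usb drive the screen reported no video input and stayed stuck there without the usb drive inserted it shows an update failed message and a reboot menu what methods can i use to debug and diagnose this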

| 0

|

303,261

| 22,961,376,628

|

IssuesEvent

|

2022-07-19 15:38:23

|

JanssenProject/jans

|

https://api.github.com/repos/JanssenProject/jans

|

opened

|

docs: add artifact link

|

area-documentation

|

- [x] Add artifact link for janssen to the main readme

- [x] add latest release link to the main readme

|

1.0

|

docs: add artifact link - - [x] Add artifact link for janssen to the main readme

- [x] add latest release link to the main readme

|

non_process

|

docs add artifact link add artifact link for janssen to the main readme add latest release link to the main readme

| 0

|

235,078

| 18,041,333,645

|

IssuesEvent

|

2021-09-18 04:52:46

|

girlscript/winter-of-contributing

|

https://api.github.com/repos/girlscript/winter-of-contributing

|

opened

|

Python: sets

|

documentation GWOC21 Python Video Audio

|

### Description :

Briefly explain sets in python
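The issue asks for a brief explanation of sets in Python. A short example along these lines could anchor that explanation. The variable names are illustrative only.

```python
# A set is an unordered collection of unique, hashable elements.
languages = {"python", "go", "rust", "python"}
print(len(languages))  # 3: the duplicate "python" is stored only once

# Membership tests are hash-based, so they take O(1) time on average.
print("go" in languages)  # True

a = {1, 2, 3, 4}
b = {3, 4, 5}
print(a | b)  # union: {1, 2, 3, 4, 5}
print(a & b)  # intersection: {3, 4}
print(a - b)  # difference (in a but not in b): {1, 2}
print(a ^ b)  # symmetric difference (in exactly one of them): {1, 2, 5}

# {} creates an empty dict, so an empty set must be written as set().
seen = set()
seen.add(10)
seen.discard(99)  # discard() ignores missing elements; remove() would raise KeyError
print(seen)  # {10}

# Elements must be hashable: a list cannot go in a set, but a tuple can.
seen.add((1, 2))
```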

### Note :

- Changes should be made inside the `Python/` directory & `Python` branch.

- Issue only for `GWOC'21` contributors.

- Issues will be assigned on a **first-come, first-served basis**; **1 Issue == 1 PR.**

- In the PR, keep this issue's title as your PR's title.

#### Must Follow : [Contributing Guidelines](https://github.com/girlscript/winter-of-contributing/blob/main/.github/CONTRIBUTING.md) & [Code of Conduct](https://github.com/girlscript/winter-of-contributing/blob/main/.github/CODE_OF_CONDUCT.md) before you start contributing.

|

1.0

|

Python: sets - ### Description :

Briefly explain sets in python

### Note :

- Changes should be made inside the `Python/` directory & `Python` branch.

- Issue only for `GWOC'21` contributors.

- Issues will be assigned on a **first-come, first-served basis**; **1 Issue == 1 PR.**

- In the PR, keep this issue's title as your PR's title.

#### Must Follow : [Contributing Guidelines](https://github.com/girlscript/winter-of-contributing/blob/main/.github/CONTRIBUTING.md) & [Code of Conduct](https://github.com/girlscript/winter-of-contributing/blob/main/.github/CODE_OF_CONDUCT.md) before you start contributing.

|

non_process

|

python sets description briefly explain sets in python note changes should be made inside the python directory python branch issue only for gwoc contributors issue will be assigned on a first come first serve basis issue pr in the pr keep this issue s title as your pr s title must follow before start contributing

| 0

|

14,316

| 17,333,624,174

|

IssuesEvent

|

2021-07-28 07:27:31

|

2i2c-org/team-compass

|

https://api.github.com/repos/2i2c-org/team-compass

|

closed

|

Pilot Hubs should have a single non-2i2c admin user manually specified

|

:label: team-process type: enhancement

|

# Summary

We have run into some confusion with setting admin users on some of our hubs, where people didn't know whether they were admins and were asking 2i2c for admin user status.

This is some extra noise that we have to deal with, and it would be simpler if there were a single, repeatable policy for who gets admin user status on a hub.

I propose that we adopt the following practice:

- All 2i2c engineers get admin user status on hubs via **manual configuration** (ideally this would be automatically interpolated from config somewhere)

- The Community Representative gets admin user status on hubs via **manual configuration**

- All other Hub Administrators must be added via the JupyterHub UI **by the Community Representative**.

# Important information

- Some discussion in slack: https://2i2c.slack.com/archives/C01DB2JRP8W/p1626768120154300

- An issue that led to this conversation https://github.com/2i2c-org/pilot-hubs/issues/529

# Tasks to complete

- [ ] Discuss and/or agree with this policy

- [ ] Write it up into the pilot hubs documentation

- [ ] Done!

Note: I think we can leave our *current* hubs as-is, and adopt this for any new hubs.

|

1.0

|

Pilot Hubs should have a single non-2i2c admin user manually specified - # Summary

We have run into some confusion with setting admin users on some of our hubs, where people didn't know whether they were admins and were asking 2i2c for admin user status.

This is some extra noise that we have to deal with, and it would be simpler if there were a single, repeatable policy for who gets admin user status on a hub.

I propose that we adopt the following practice:

- All 2i2c engineers get admin user status on hubs via **manual configuration** (ideally this would be automatically interpolated from config somewhere)

- The Community Representative gets admin user status on hubs via **manual configuration**

- All other Hub Administrators must be added via the JupyterHub UI **by the Community Representative**.

# Important information

- Some discussion in slack: https://2i2c.slack.com/archives/C01DB2JRP8W/p1626768120154300

- An issue that led to this conversation https://github.com/2i2c-org/pilot-hubs/issues/529

# Tasks to complete

- [ ] Discuss and/or agree with this policy

- [ ] Write it up into the pilot hubs documentation

- [ ] Done!

Note: I think we can leave our *current* hubs as-is, and adopt this for any new hubs.

|

process

|

pilot hubs should have a single non admin user manually specified summary we have run into some confusion with setting admin users on some of our hubs where people didn t know whether they were admins and were asking for admin users status this is some extra noise that we have to deal with and it would be simpler if there were a single repeatable policy for who gets admin user status on a hub i propose that we adopt the following practice all engineers get admin user status on hubs via manual configuration ideally this would be automatically interpolated from config somewhere the community representative gets admin user status on hubs via manual configuration all other hub administrators must be added via the jupyterhub ui by the community representative important information some discussion in slack an issue that led to this conversation tasks to complete discuss and or agree with this policy write it up into the pilot hubs documentation done note i think we can leave our current hubs as is and adopt this for any new hubs

| 1

|

18,392

| 24,529,890,469

|

IssuesEvent

|

2022-10-11 15:37:05

|

bazelbuild/bazel

|

https://api.github.com/repos/bazelbuild/bazel

|

closed

|

incompatible_enforce_config_setting_visibility

|

P2 type: process team-Configurability incompatible-change migration-ready breaking-change-6.0

|

Visibility on `config_setting` has historically not been enforced. This is purely for legacy reasons. There's no philosophical reason to distinguish them.

This flag starts the process of removing the distinction.

Values:

* `--incompatible_enforce_config_setting_visibility=off`: every `config_setting` is visible to every target, regardless of visibility settings

* `--incompatible_enforce_config_setting_visibility=on`: `config_setting` follows the policy set by `--incompatible_config_setting_private_default_visibility` (https://github.com/bazelbuild/bazel/issues/12933).

**Incompatibility error:**

`ERROR: myapp/BUILD:4:1: in config_setting rule //myapp:my_config: target 'myapp:my_config' is not visible from target '//some:other_target. Check the visibility declaration of the former target if you think the dependency is legitimate`

**Migration:**

Treat all `config_setting`s as if they follow standard visibility logic at https://docs.bazel.build/versions/master/visibility.html: have them set visibility explicitly if they'll be used anywhere outside their own package. The ultimate goal of this migration is to fully enforce that expectation.

|

1.0

|

incompatible_enforce_config_setting_visibility - Visibility on `config_setting` has historically not been enforced. This is purely for legacy reasons. There's no philosophical reason to distinguish them.

This flag starts the process of removing the distinction.

Values:

* `--incompatible_enforce_config_setting_visibility=off`: every `config_setting` is visible to every target, regardless of visibility settings

* `--incompatible_enforce_config_setting_visibility=on`: `config_setting` follows the policy set by `--incompatible_config_setting_private_default_visibility` (https://github.com/bazelbuild/bazel/issues/12933).

**Incompatibility error:**

`ERROR: myapp/BUILD:4:1: in config_setting rule //myapp:my_config: target 'myapp:my_config' is not visible from target '//some:other_target. Check the visibility declaration of the former target if you think the dependency is legitimate`

**Migration:**

Treat all `config_setting`s as if they follow standard visibility logic at https://docs.bazel.build/versions/master/visibility.html: have them set visibility explicitly if they'll be used anywhere outside their own package. The ultimate goal of this migration is to fully enforce that expectation.

|

process

|

incompatible enforce config setting visibility visibility on config setting isn t historically enforced this is purely for legacy reasons there s no philosophical reason to distinguish them this flag starts the process of removing the distinction values incompatible enforce config setting visibility off every config setting is visible to every target regardless of visibility settings incompatible enforce config setting visibility on config setting follows the policy set by incompatible config setting private default visibility incompatibility error error myapp build in config setting rule myapp my config target myapp my config is not visible from target some other target check the visibility declaration of the former target if you think the dependency is legitimate migration treat all config setting s as if they follow standard visibility logic at have them set visibility explicitly if they ll be used anywhere outside their own package the ultimate goal of this migration is to fully enforce that expectation

| 1

|

445,437

| 31,238,102,623

|

IssuesEvent

|

2023-08-20 14:20:17

|

KrisztanZero/terra-custos

|

https://api.github.com/repos/KrisztanZero/terra-custos

|

closed

|

Internationalization and Localization

|

documentation

|

### If the application operates in multiple languages, design the internationalization and localization processes. Handling text and date formatting based on different cultural preferences ensures widespread usability.

- [ ] Internationalization and Localization

|

1.0

|

Internationalization and Localization - ### If the application operates in multiple languages, design the internationalization and localization processes. Handling text and date formatting based on different cultural preferences ensures widespread usability.

- [ ] Internationalization and Localization

|

non_process

|

internationalization and localization if the application operates in multiple languages design the internationalization and localization processes handling text and date formatting based on different cultural preferences ensures widespread usability internationalization and localization

| 0

|

22,356

| 31,032,990,460

|

IssuesEvent

|

2023-08-10 13:37:11

|

metabase/metabase

|

https://api.github.com/repos/metabase/metabase

|

opened

|

[MLv2] Column not found errors when joining cards

|

.Backend .metabase-lib .Team/QueryProcessor :hammer_and_wrench:

|

We have two failing E2E tests on the [joins FE branch](https://github.com/metabase/metabase/pull/32912) because the BE now returns an error when running a query with joined cards:

```

Column "Question 5 - Products → Created At: Month.CREATED_AT" not found;

SQL statement: ...

```

**Failing tests**

- [should join two saved questions with the same implicit/explicit grouped field (metabase#18512)](https://github.com/metabase/metabase/blob/master/e2e/test/scenarios/joins/reproductions/18512-cannot-join-two-saved-questions-with-same-implicit-explicit-grouped-field.cy.spec.js)

- [should join saved questions that themselves contain joins (metabase#12928)](https://github.com/metabase/metabase/blob/master/e2e/test/scenarios/joins/joins.cy.spec.js)

|

1.0

|

[MLv2] Column not found errors when joining cards - We have two failing E2E tests on the [joins FE branch](https://github.com/metabase/metabase/pull/32912) because the BE now returns an error when running a query with joined cards:

```

Column "Question 5 - Products → Created At: Month.CREATED_AT" not found;

SQL statement: ...

```

**Failing tests**

- [should join two saved questions with the same implicit/explicit grouped field (metabase#18512)](https://github.com/metabase/metabase/blob/master/e2e/test/scenarios/joins/reproductions/18512-cannot-join-two-saved-questions-with-same-implicit-explicit-grouped-field.cy.spec.js)

- [should join saved questions that themselves contain joins (metabase#12928)](https://github.com/metabase/metabase/blob/master/e2e/test/scenarios/joins/joins.cy.spec.js)

|

process

|

column not found errors when joining cards we have two failing tests on the when a be now returns an error when running a query with joined cards column question products → created at month created at not found sql statement failing tests

| 1

|

5,752

| 8,597,470,669

|

IssuesEvent

|

2018-11-15 18:46:57

|

googleapis/google-cloud-python

|

https://api.github.com/repos/googleapis/google-cloud-python

|

opened

|

Firestore: support features blacklisted in conformance tests

|

api: firestore type: process

|

PR #6290 blacklisted a number of conformance tests because we do not currently support the use cases they exercise:

- `get-*` tests (because we use the `BatchGetDocuments` API rather than the `GetDocument` API).

- `listen-*` tests exercise the "watch" features (since landed in PR #6191).

- `update_paths-*` tests (they've been excluded forever, with a note that Python lacked the support).

- Tests involving unimplemented / incorrectly implemented "transforms" (`DELETE`, `ARRAY_REMOVE`, `ARRAY_UNION`).

- `query-*` tests have been (inadvertently) skipped since being copied in from `google-cloud-common` in PR #5351.

This is a tracking issue for removing those skips / blacklist entries:

- [ ] Update `Document.get` to use the `GetDocument` API, and re-enable the (one) `get-basic.textproto` test.

- [ ] Enable the `listen-*` tests and make changes needed for them to pass.

- [ ] Enable the `query-*` tests and make changes needed for them to pass.

- [ ] Figure out how to support the "update with paths" use case, and enable those tests.

- [ ] Fix the support for the `DELETE` transform (one failing test) and enable those tests.

- [ ] Figure out how to support the `ARRAY_REMOVE` transform, and enable those tests.

- [ ] Figure out how to support the `ARRAY_UNION` transform, and enable those tests.
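For reference, the `ARRAY_UNION` and `ARRAY_REMOVE` conformance tests check server-side semantics that can be modeled in a few lines. Union appends each given element that is not already present, and remove drops every instance of each given element. This pure-Python model is a sketch, not the google-cloud-firestore client code. The function names are hypothetical, it assumes the field already holds an array, and Python `==` only approximates Firestore value equality (for example, `1 == True` in Python).

```python
from typing import Any, List


def array_union(current: List[Any], elements: List[Any]) -> List[Any]:
    """Model of ARRAY_UNION: append each given element not already in the array."""
    result = list(current)  # copy so the caller's list is left untouched
    for element in elements:
        if element not in result:
            result.append(element)
    return result


def array_remove(current: List[Any], elements: List[Any]) -> List[Any]:
    """Model of ARRAY_REMOVE: drop every instance of each given element."""
    return [item for item in current if item not in elements]


print(array_union(["a", "b"], ["b", "c"]))        # ['a', 'b', 'c']
print(array_remove(["a", "b", "a", "c"], ["a"]))  # ['b', 'c']
```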

|

1.0

|

Firestore: support features blacklisted in conformance tests - PR #6290 blacklisted a number of conformance tests because we do not currently support the use cases they exercise:

- `get-*` tests (because we use the `BatchGetDocuments` API rather than the `GetDocument` API).

- `listen-*` tests exercise the "watch" features (since landed in PR #6191).

- `update_paths-*` tests (they've been excluded forever, with a note that Python lacked the support).

- Tests involving unimplemented / incorrectly implemented "transforms" (`DELETE`, `ARRAY_REMOVE`, `ARRAY_UNION`).

- `query-*` tests have been (inadvertently) skipped since being copied in from `google-cloud-common` in PR #5351.

This is a tracking issue for removing those skips / blacklist entries:

- [ ] Update `Document.get` to use the `GetDocument` API, and re-enable the (one) `get-basic.textproto` test.

- [ ] Enable the `listen-*` tests and make changes needed for them to pass.

- [ ] Enable the `query-*` tests and make changes needed for them to pass.

- [ ] Figure out how to support the "update with paths" use case, and enable those tests.

- [ ] Fix the support for the `DELETE` transform (one failing test) and enable those tests.

- [ ] Figure out how to support the `ARRAY_REMOVE` transform, and enable those tests.

- [ ] Figure out how to support the `ARRAY_UNION` transform, and enable those tests.

|

process

|

firestore support features blacklisted in conformance tests pr blacklisted a number of conformance tests because we do not currently support the usecases they support get tests because we use batchgetdocuments api rather than the getdocument api listen tests exercise the watch features since landed in pr update paths tests they ve been excluded forever with a note that python lacked the support tests involving unimplemented incorrectly implemented transforms delete array remove array union query tests have been inadvertently skipped since being copied in from google cloud common in pr this is a tracking issue for removing those skips blacklist entries update document get to use the getdocument api and re enable the one get basic textproto test enable the listen tests and make changes needed for them to pass enable the query tests and make changes needed for them to pass figure how to support the update with paths use case and enable those tests fix the support for the delete transform one failing test and enable those tests figure out how to support the array remove transform and enable those tests figure out how to support the array union transform and enable those tests

| 1

|

18,587

| 24,567,937,441

|

IssuesEvent

|

2022-10-13 05:58:32

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[Android] Comprehension test is not getting failed in the updated consent flow

|

Bug P0 Android Process: Fixed Process: Tested QA Process: Tested dev

|

**Steps:**

1. Sign in and enroll in the study

2. Now, go to SB and update the consent for enrolled participants

3. Open the mobile app and complete the eligibility section

4. Navigate to the comprehension section

5. Fail the comprehension section and observe

**AR:** The comprehension test does not fail in the updated consent flow

**ER:** The comprehension test should fail in the updated consent flow

https://user-images.githubusercontent.com/86007179/188439152-cb26d4dd-671f-40c5-9895-50dbd016ac70.mp4

|

3.0

|

[Android] Comprehension test is not getting failed in the updated consent flow - **Steps:**

1. Sign in and enroll in the study

2. Now, go to SB and update the consent for enrolled participants

3. Open the mobile app and complete the eligibility section

4. Navigate to the comprehension section

5. Fail the comprehension section and observe

**AR:** Comprehension test is not getting failed in the updated consent flow

**ER:** Comprehension test should be failed in the updated consent flow

https://user-images.githubusercontent.com/86007179/188439152-cb26d4dd-671f-40c5-9895-50dbd016ac70.mp4

|

process

|

comprehension test is not getting failed in the updated consent flow steps sign in and enroll to the study now go to sb and update the consent for enrolled participants open the mobile app and complete the eligibility section navigate to comprehension section fail the comprehension section and observe ar comprehension test is not getting failed in the updated consent flow er comprehension test should be failed in the updated consent flow

| 1

|

20,586

| 27,245,929,762

|

IssuesEvent

|

2023-02-22 02:00:09

|

lizhihao6/get-daily-arxiv-noti

|

https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti

|

opened

|

New submissions for Wed, 22 Feb 23

|

event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB

|

## Keyword: events

There is no result

## Keyword: event camera

There is no result

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

There is no result

## Keyword: ISP

### ViGU: Vision GNN U-Net for Fast MRI

- **Authors:** Jiahao Huang, Angelica Aviles-Rivero, Carola-Bibiane Schonlieb, Guang Yang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2302.10273

- **Pdf link:** https://arxiv.org/pdf/2302.10273

- **Abstract**

Deep learning models have been widely applied for fast MRI. The majority of existing deep learning models, e.g., convolutional neural networks, work on data with Euclidean or regular grid structures. However, high-dimensional features extracted from MR data could be encapsulated in non-Euclidean manifolds. This disparity between the go-to assumption of existing models and data requirements limits the flexibility to capture irregular anatomical features in MR data. In this work, we introduce a novel Vision GNN type network for fast MRI called Vision GNN U-Net (ViGU). More precisely, the pixel array is first embedded into patches and then converted into a graph. Secondly, a U-shape network is developed using several graph blocks in symmetrical encoder and decoder paths. Moreover, we show that the proposed ViGU can also benefit from Generative Adversarial Networks, yielding its variant ViGU-GAN. We demonstrate, through numerical and visual experiments, that the proposed ViGU and GAN variant outperform existing CNN and GAN-based methods. Moreover, we show that the proposed network readily competes with approaches based on Transformers while requiring a fraction of the computational cost. More importantly, the graph structure of the network reveals how the network extracts features from MR images, providing intuitive explainability.

### Semantic Feature Integration network for Fine-grained Visual Classification

- **Authors:** Hui Wang, Yueyang li, Haichi Luo

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2302.10275

- **Pdf link:** https://arxiv.org/pdf/2302.10275

- **Abstract**

Fine-Grained Visual Classification (FGVC) is known as a challenging task due to subtle differences among subordinate categories. Many current FGVC approaches focus on identifying and locating discriminative regions by using the attention mechanism, but neglect the presence of unnecessary features that hinder the understanding of object structure. These unnecessary features, including 1) ambiguous parts resulting from the visual similarity in object appearances and 2) noninformative parts (e.g., background noise), can have a significant adverse impact on classification results. In this paper, we propose the Semantic Feature Integration network (SFI-Net) to address the above difficulties. By eliminating unnecessary features and reconstructing the semantic relations among discriminative features, our SFI-Net has achieved satisfying performance. The network consists of two modules: 1) the multi-level feature filter (MFF) module is proposed to remove unnecessary features with different receptive fields, and then concatenate the preserved features on pixel level for subsequent disposal; 2) the semantic information reconstitution (SIR) module is presented to further establish semantic relations among discriminative features obtained from the MFF module. These two modules are carefully designed to be lightweight and can be trained end-to-end in a weakly-supervised way. Extensive experiments on four challenging fine-grained benchmarks demonstrate that our proposed SFI-Net achieves state-of-the-art performance. In particular, the classification accuracy of our model on CUB-200-2011 and Stanford Dogs reaches 92.64% and 93.03%, respectively.

### Combining Blockchain and Biometrics: A Survey on Technical Aspects and a First Legal Analysis

- **Authors:** Mahdi Ghafourian, Bilgesu Sumer, Ruben Vera-Rodriguez, Julian Fierrez, Ruben Tolosana, Aythami Moralez, Els Kindt

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Cryptography and Security (cs.CR); Distributed, Parallel, and Cluster Computing (cs.DC); Machine Learning (cs.LG)

- **Arxiv link:** https://arxiv.org/abs/2302.10883

- **Pdf link:** https://arxiv.org/pdf/2302.10883

- **Abstract**