| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

430

| 2,862,172,125

|

IssuesEvent

|

2015-06-04 01:42:44

|

rancherio/rancher

|

https://api.github.com/repos/rancherio/rancher

|

closed

|

Name is not set for containers deployed as part of a service.

|

area/service status/blocker status/to-test

|

Server version - rancher/server:v0.23.0-rc2

Steps to reproduce the problem:

Create a service providing name.

Activate service.

When containers get created, they have no name set.

|

1.0

|

Name is not set for containers deployed as part of a service. - Server version - rancher/server:v0.23.0-rc2

Steps to reproduce the problem:

Create a service providing name.

Activate service.

When containers get created, they have no name set.

|

non_process

|

name is not set for containers deployed as part of a service server version rancher server steps to reproduce the problem create a service providing name activate service when containers get created they have no name set

| 0

|

17,806

| 6,517,012,795

|

IssuesEvent

|

2017-08-27 17:24:19

|

mikeboers/PyAV

|

https://api.github.com/repos/mikeboers/PyAV

|

closed

|

Python3 on source scripts/activate.sh

|

bug build

|

```

[w495@localhost PyAV]$ python --version

Python 3.6.1 :: Continuum Analytics, Inc.

[w495@localhost PyAV]$

[w495@localhost PyAV]$ source scripts/activate.sh

File "<string>", line 1

import sys; print sys.prefix

^

SyntaxError: invalid syntax

No $PYAV_LIBRARY_NAME set; defaulting to ffmpeg

No $PYAV_LIBRARY_VERSION set; defaulting to 3.2

File "<string>", line 1

import sys; print "%d.%d" % sys.version_info[:2]

^

SyntaxError: invalid syntax

```
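The two `SyntaxError`s above are what Python 3 reports for Python 2 style `print` statements. A minimal sketch of equivalents that parse under both major versions follows; the function names are hypothetical and are not taken from `scripts/activate.sh`, and it is only an assumption that the script needs exactly these two values.

```python
import sys


def prefix_line():
    # Same value as the failing `print sys.prefix`, returned instead of printed.
    return sys.prefix


def version_line():
    # "major.minor" of the running interpreter, e.g. "3.6".
    return "%d.%d" % sys.version_info[:2]


if __name__ == "__main__":
    # print(...) with parentheses is valid in Python 2 and Python 3 alike.
    print(prefix_line())
    print(version_line())
```

Calling `print` with parentheses and a single argument behaves the same on both interpreters, so no `__future__` import is needed for this case.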

|

1.0

|

Python3 on source scripts/activate.sh - ```

[w495@localhost PyAV]$ python --version

Python 3.6.1 :: Continuum Analytics, Inc.

[w495@localhost PyAV]$

[w495@localhost PyAV]$ source scripts/activate.sh

File "<string>", line 1

import sys; print sys.prefix

^

SyntaxError: invalid syntax

No $PYAV_LIBRARY_NAME set; defaulting to ffmpeg

No $PYAV_LIBRARY_VERSION set; defaulting to 3.2

File "<string>", line 1

import sys; print "%d.%d" % sys.version_info[:2]

^

SyntaxError: invalid syntax

```

|

non_process

|

on source scripts activate sh python version python continuum analytics inc source scripts activate sh file line import sys print sys prefix syntaxerror invalid syntax no pyav library name set defaulting to ffmpeg no pyav library version set defaulting to file line import sys print d d sys version info syntaxerror invalid syntax

| 0

|

784,433

| 27,570,590,688

|

IssuesEvent

|

2023-03-08 08:58:54

|

canonical/vanilla-framework

|

https://api.github.com/repos/canonical/vanilla-framework

|

closed

|

Side navigation drawer on small screens doesn't trap the focus

|

Priority: Medium Bug 🐛 Accessibility

|

via @petermakowski comment via @petermakowski comment https://github.com/canonical/juju.is/pull/431#issuecomment-1231665391

> [...] the side navigation is missing a focus trap (if you keep pressing `Tab` you'll eventually start tabbing through elements on the page outside of it).

**Describe the bug**

The side navigation drawer "popup" on small screen doesn't trap the focus. Ideally it should move back up to "Toggle" side navigation button on top once you focus out of last link in the side nav (but only on small screens where side nav is collapsible).

|

1.0

|

Side navigation drawer on small screens doesn't trap the focus - via @petermakowski comment via @petermakowski comment https://github.com/canonical/juju.is/pull/431#issuecomment-1231665391

> [...] the side navigation is missing a focus trap (if you keep pressing `Tab` you'll eventually start tabbing through elements on the page outside of it).

**Describe the bug**

The side navigation drawer "popup" on small screen doesn't trap the focus. Ideally it should move back up to "Toggle" side navigation button on top once you focus out of last link in the side nav (but only on small screens where side nav is collapsible).

|

non_process

|

side navigation drawer on small screens doesn t trap the focus via petermakowski comment via petermakowski comment the side navigation is missing a focus trap if you keep pressing tab you ll eventually start tabbing through elements on the page outside of it describe the bug the side navigation drawer popup on small screen doesn t trap the focus ideally it should move back up to toggle side navigation button on top once you focus out of last link in the side nav but only on small screens where side nav is collapsible

| 0

|

5,061

| 7,867,139,482

|

IssuesEvent

|

2018-06-23 04:08:57

|

StrikeNP/trac_test

|

https://api.github.com/repos/StrikeNP/trac_test

|

closed

|

Include Lscale as a new panel in the default plotgen plots (Trac #496)

|

Migrated from Trac bladornr@uwm.edu enhancement post_processing

|

We include a lot of variables in our plotgen plots, but we omit one important one, namely, Lscale, which is output in clubb's zt files. Let's output Lscale for all our CLUBB cases on the standard plotgen plots.

The variables to plot in plotgen are specified in the case files. For instance, for RICO, the case file is [http://carson.math.uwm.edu/trac/clubb/browser/trunk/postprocessing/plotgen/cases/clubb/rico.case here]. More information about plotgen and the case files is given in the TWiki.

Note: there is no Lscale output by SAM, WRF, COAMPS, etc., and so we can't include a thick red benchmark line.

Attachments:

[plot_explicit_ta_configs.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_explicit_ta_configs.maff)

[plot_new_pdf_config_1_plot_2.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_new_pdf_config_1_plot_2.maff)

[plot_combo_pdf_run_3.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_combo_pdf_run_3.maff)

[plot_input_fields_rtp3_thlp3_1.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_input_fields_rtp3_thlp3_1.maff)

[plot_new_pdf_20180522_test_1.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_new_pdf_20180522_test_1.maff)

[plot_attempts_8_10.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_attempts_8_10.maff)

[plot_attempt_8_only.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_attempt_8_only.maff)

[plot_beta_1p3.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_beta_1p3.maff)

[plot_beta_1p3_all.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_beta_1p3_all.maff)

Migrated from http://carson.math.uwm.edu/trac/clubb/ticket/496

```json

{

"status": "closed",

"changetime": "2012-06-28T21:55:53",

"description": "We include a lot of variables in our plotgen plots, but we omit one important one, namely, Lscale, which is output in clubb's zt files. Let's output Lscale for all our CLUBB cases on the standard plotgen plots. \n\nThe variables to plot in plotgen are specified in the case files. For instance, for RICO, the case file is [http://carson.math.uwm.edu/trac/clubb/browser/trunk/postprocessing/plotgen/cases/clubb/rico.case here]. More information about plotgen and the case files is given in the TWiki.\n\nNote: there is no Lscale output by SAM, WRF, COAMPS, etc., and so we can't include a thick red benchmark line.\n\n\n\n",

"reporter": "vlarson@uwm.edu",

"cc": "vlarson@uwm.edu",

"resolution": "fixed",

"_ts": "1340920553955391",

"component": "post_processing",

"summary": "Include Lscale as a new panel in the default plotgen plots",

"priority": "minor",

"keywords": "",

"time": "2012-02-07T20:54:59",

"milestone": "",

"owner": "bladornr@uwm.edu",

"type": "enhancement"

}

```

|

1.0

|

Include Lscale as a new panel in the default plotgen plots (Trac #496) - We include a lot of variables in our plotgen plots, but we omit one important one, namely, Lscale, which is output in clubb's zt files. Let's output Lscale for all our CLUBB cases on the standard plotgen plots.

The variables to plot in plotgen are specified in the case files. For instance, for RICO, the case file is [http://carson.math.uwm.edu/trac/clubb/browser/trunk/postprocessing/plotgen/cases/clubb/rico.case here]. More information about plotgen and the case files is given in the TWiki.

Note: there is no Lscale output by SAM, WRF, COAMPS, etc., and so we can't include a thick red benchmark line.

Attachments:

[plot_explicit_ta_configs.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_explicit_ta_configs.maff)

[plot_new_pdf_config_1_plot_2.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_new_pdf_config_1_plot_2.maff)

[plot_combo_pdf_run_3.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_combo_pdf_run_3.maff)

[plot_input_fields_rtp3_thlp3_1.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_input_fields_rtp3_thlp3_1.maff)

[plot_new_pdf_20180522_test_1.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_new_pdf_20180522_test_1.maff)

[plot_attempts_8_10.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_attempts_8_10.maff)

[plot_attempt_8_only.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_attempt_8_only.maff)

[plot_beta_1p3.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_beta_1p3.maff)

[plot_beta_1p3_all.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_beta_1p3_all.maff)

Migrated from http://carson.math.uwm.edu/trac/clubb/ticket/496

```json

{

"status": "closed",

"changetime": "2012-06-28T21:55:53",

"description": "We include a lot of variables in our plotgen plots, but we omit one important one, namely, Lscale, which is output in clubb's zt files. Let's output Lscale for all our CLUBB cases on the standard plotgen plots. \n\nThe variables to plot in plotgen are specified in the case files. For instance, for RICO, the case file is [http://carson.math.uwm.edu/trac/clubb/browser/trunk/postprocessing/plotgen/cases/clubb/rico.case here]. More information about plotgen and the case files is given in the TWiki.\n\nNote: there is no Lscale output by SAM, WRF, COAMPS, etc., and so we can't include a thick red benchmark line.\n\n\n\n",

"reporter": "vlarson@uwm.edu",

"cc": "vlarson@uwm.edu",

"resolution": "fixed",

"_ts": "1340920553955391",

"component": "post_processing",

"summary": "Include Lscale as a new panel in the default plotgen plots",

"priority": "minor",

"keywords": "",

"time": "2012-02-07T20:54:59",

"milestone": "",

"owner": "bladornr@uwm.edu",

"type": "enhancement"

}

```

|

process

|

include lscale as a new panel in the default plotgen plots trac we include a lot of variables in our plotgen plots but we omit one important one namely lscale which is output in clubb s zt files let s output lscale for all our clubb cases on the standard plotgen plots the variables to plot in plotgen are specified in the case files for instance for rico the case file is more information about plotgen and the case files is given in the twiki note there is no lscale output by sam wrf coamps etc and so we can t include a thick red benchmark line attachments migrated from json status closed changetime description we include a lot of variables in our plotgen plots but we omit one important one namely lscale which is output in clubb s zt files let s output lscale for all our clubb cases on the standard plotgen plots n nthe variables to plot in plotgen are specified in the case files for instance for rico the case file is more information about plotgen and the case files is given in the twiki n nnote there is no lscale output by sam wrf coamps etc and so we can t include a thick red benchmark line n n n n reporter vlarson uwm edu cc vlarson uwm edu resolution fixed ts component post processing summary include lscale as a new panel in the default plotgen plots priority minor keywords time milestone owner bladornr uwm edu type enhancement

| 1

|

547,320

| 16,041,177,771

|

IssuesEvent

|

2021-04-22 08:05:38

|

webcompat/web-bugs

|

https://api.github.com/repos/webcompat/web-bugs

|

closed

|

openvellum.ecollege.com - site is not usable

|

browser-firefox engine-gecko os-ios priority-normal

|

<!-- @browser: Firefox iOS 33.0 -->

<!-- @ua_header: Mozilla/5.0 (iPhone; CPU OS 14_4_2 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) FxiOS/33.0 Mobile/15E148 Safari/605.1.15 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/71508 -->

**URL**: https://openvellum.ecollege.com/course.html?courseId=16507825

**Browser / Version**: Firefox iOS 33.0

**Operating System**: iOS 14.4.2

**Tested Another Browser**: No

**Problem type**: Site is not usable

**Description**: Browser unsupported

**Steps to Reproduce**:

doesn’t allow mobile firefox, which is disappointing.

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

1.0

|

openvellum.ecollege.com - site is not usable - <!-- @browser: Firefox iOS 33.0 -->

<!-- @ua_header: Mozilla/5.0 (iPhone; CPU OS 14_4_2 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) FxiOS/33.0 Mobile/15E148 Safari/605.1.15 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/71508 -->

**URL**: https://openvellum.ecollege.com/course.html?courseId=16507825

**Browser / Version**: Firefox iOS 33.0

**Operating System**: iOS 14.4.2

**Tested Another Browser**: No

**Problem type**: Site is not usable

**Description**: Browser unsupported

**Steps to Reproduce**:

doesn’t allow mobile firefox, which is disappointing.

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

non_process

|

openvellum ecollege com site is not usable url browser version firefox ios operating system ios tested another browser no problem type site is not usable description browser unsupported steps to reproduce doesn’t allow mobile firefox which is disappointing browser configuration none from with ❤️

| 0

|

1,343

| 3,901,647,139

|

IssuesEvent

|

2016-04-18 11:48:48

|

geneontology/go-ontology

|

https://api.github.com/repos/geneontology/go-ontology

|

closed

|

mRNA stability/turnover/degradation

|

auto-migrated PomBase RNA processes

|

1\.

regulation of mRNA stability \(add related synonym mRNA turnover\)?

Any process that modulates the propensity of mRNA molecules to degradation. Includes processes that both stabilize and destabilize mRNAs.

has no relationship to

mRNA catabolic process

The chemical reactions and pathways resulting in the breakdown of mRNA, messenger RNA, which is responsible for carrying the coded genetic 'message', transcribed from DNA, to sites of protein assembly at the ribosomes.

2\. Deadenylated and/or decapped mRNAs are subject to degradation \(5' 3' degradation by the exosome\), so should mRNA deadenylation/mRNA decapping be a child of

regulation of mRNA stability or regulation of catabolism, or should this be captured by concurrent annotations?

Reported by: ValWood

Original Ticket: [geneontology/ontology-requests/6697](https://sourceforge.net/p/geneontology/ontology-requests/6697)

|

1.0

|

mRNA stability/turnover/degradation - 1\.

regulation of mRNA stability \(add related synonym mRNA turnover\)?

Any process that modulates the propensity of mRNA molecules to degradation. Includes processes that both stabilize and destabilize mRNAs.

has no relationship to

mRNA catabolic process

The chemical reactions and pathways resulting in the breakdown of mRNA, messenger RNA, which is responsible for carrying the coded genetic 'message', transcribed from DNA, to sites of protein assembly at the ribosomes.

2\. Deadenylated and/or decapped mRNAs are subject to degradation \(5' 3' degradation by the exosome\), so should mRNA deadenylation/mRNA decapping be a child of

regulation of mRNA stability or regulation of catabolism, or should this be captured by concurrent annotations?

Reported by: ValWood

Original Ticket: [geneontology/ontology-requests/6697](https://sourceforge.net/p/geneontology/ontology-requests/6697)

|

process

|

mrna stability turnover degradation regulation of mrna stability add related synonym mrna turnover any process that modulates the propensity of mrna molecules to degradation includes processes that both stabilize and destabilize mrnas has no relationship to mrna catabolic process the chemical reactions and pathways resulting in the breakdown of mrna messenger rna which is responsible for carrying the coded genetic message transcribed from dna to sites of protein assembly at the ribosomes deadenylated and or decapped mrnas are subject to degradation degradation by the exosome so should mrna deadenylation mrna decapping be a child of regulation of mrna stability or regulation of catabolism or should this be captured by concurrent annotations reported by valwood original ticket

| 1

|

8,531

| 11,705,511,057

|

IssuesEvent

|

2020-03-07 16:20:43

|

Jeffail/benthos

|

https://api.github.com/repos/Jeffail/benthos

|

closed

|

Add flatten as a json operator

|

enhancement processors

|

```json

{

"a": "b",

"c": { "d": "e", "f": "g" },

"h": [

{ "i": "j" },

{ "k": "l" }

]

}

```

becomes

```

{

"a": "b",

"c.d": "e",

"c.f": "g",

"h.0.i": "j",

"h.1.k": "l"

}

```

Not sure on the best representation of arrays, but a feature that flattened the JSON would be valuable, e.g. for outputting a fully nested JSON struct to one Kafka topic while at the same time writing a flat JSON output to a separate topic.
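To make the requested transformation concrete, here is a minimal sketch in Python. It is not Benthos code and says nothing about how the operator would be implemented there; the `flatten` function name, the dot separator, and the use of numeric indexes for array elements are assumptions that simply mirror the example above.

```python
def flatten(value, prefix=""):
    """Flatten nested dicts and lists into one dict with dot-separated keys."""
    flat = {}
    if isinstance(value, dict):
        items = value.items()
    elif isinstance(value, list):
        # Array elements are keyed by their index, as in "h.0.i".
        items = ((str(i), v) for i, v in enumerate(value))
    else:
        # Leaf value: store it under the path accumulated so far.
        flat[prefix] = value
        return flat
    for key, child in items:
        path = f"{prefix}.{key}" if prefix else str(key)
        flat.update(flatten(child, path))
    return flat


if __name__ == "__main__":
    nested = {"a": "b", "c": {"d": "e", "f": "g"}, "h": [{"i": "j"}, {"k": "l"}]}
    print(flatten(nested))
```

Two limits of this sketch: empty objects and arrays vanish from the output, and keys that already contain a dot become ambiguous after flattening.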

|

1.0

|

Add flatten as a json operator - ```json

{

"a": "b",

"c": { "d": "e", "f": "g" },

"h": [

{ "i": "j" },

{ "k": "l" }

]

}

```

becomes

```

{

"a": "b",

"c.d": "e",

"c.f": "g",

"h.0.i": "j",

"h.1.k": "l"

}

```

Not sure on the best representation of arrays, but a feature that flattened the JSON would be valuable, e.g. for outputting a fully nested JSON struct to one Kafka topic while at the same time writing a flat JSON output to a separate topic.

|

process

|

add flatten as a json operator json a b c d e f g h i j k l becomes a b c d e c f g h i j h k l not sure on the best representation of arrays but a feature that flattened the json would be valuable for outputting a fully nested json struct to a kafka e g and at the same time have a separate topic with a flat json output

| 1

|

10,927

| 13,726,946,577

|

IssuesEvent

|

2020-10-04 03:14:00

|

SpencerTSterling/AdvancedWebsite

|

https://api.github.com/repos/SpencerTSterling/AdvancedWebsite

|

closed

|

Set up Continuous Integration with GitHub Actions

|

dev process

|

GitHub actions should be used to build the project on each commit

|

1.0

|

Set up Continuous Integration with GitHub Actions - GitHub actions should be used to build the project on each commit

|

process

|

set up continuous integration with github actions github actions should be used to build the project on each commit

| 1

|

237,342

| 19,617,870,523

|

IssuesEvent

|

2022-01-07 00:07:51

|

kubernetes/test-infra

|

https://api.github.com/repos/kubernetes/test-infra

|

closed

|

Feature request: ability to search through component logs from CI runs

|

area/prow sig/testing kind/feature lifecycle/rotten

|

<!-- Please only use this template for submitting enhancement requests -->

/area prow

**What would you like to be added**:

Search capability for CI logs so I can search for jobs that match certain failure text. For example, I would really like to be able to search for `Observed a panic` in component logs and find all matching jobs and files.

Gubernator (https://storage.googleapis.com/k8s-gubernator/triage/index.html) is great but doesn't allow me to search node or component logs, only the test output.

**Why is this needed**:

Right now it is impossible to proactively check for failures in CI artifacts; someone has to notice the problem. There is no aggregate way to search node logs.
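As a rough illustration of the kind of search being requested, the sketch below scans a local directory tree for a failure string. It assumes the CI artifacts have already been downloaded to disk; the `find_matches` name and the directory layout are hypothetical, and this is not how Prow or Gubernator work.

```python
import os


def find_matches(root, needle):
    """Return sorted (path, line_number) pairs for lines that contain needle."""
    matches = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            # errors="replace" keeps the scan going over non-UTF-8 log bytes.
            with open(path, "r", errors="replace") as handle:
                for number, line in enumerate(handle, start=1):
                    if needle in line:
                        matches.append((path, number))
    return sorted(matches)


if __name__ == "__main__":
    for path, number in find_matches(".", "Observed a panic"):
        print(f"{path}:{number}")
```

A linear scan like this only works on a local copy of the logs; searching across all jobs would need some form of index, which is the gap this request describes.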

|

1.0

|

Feature request: ability to search through component logs from CI runs - <!-- Please only use this template for submitting enhancement requests -->

/area prow

**What would you like to be added**:

Search capability for CI logs so I can search for jobs that match certain failure text. For example, I would really like to be able to search for `Observed a panic` in component logs and find all matching jobs and files.

Gubernator (https://storage.googleapis.com/k8s-gubernator/triage/index.html) is great but doesn't allow me to search node or component logs, only the test output.

**Why is this needed**:

Right now it is impossible to proactively check for failures in CI artifacts; someone has to notice the problem. There is no aggregate way to search node logs.

|

non_process

|

feature request ability to search through component logs from ci runs area prow what would you like to be added search capability for ci logs so i can search for jobs that match certain failure text for example i would really like to be able to search for observed a panic in component logs and find all matching jobs and files guberator is great but doesn t allow me to search node or component logs only the test output why is this needed right now it is impossible to proactively check for failures in ci artifacts someone has to notice the problem there is no aggregate way to search node logs

| 0

|

12,514

| 14,963,807,346

|

IssuesEvent

|

2021-01-27 11:04:20

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[PM] [Audit Logs] 'studyId','siteId' and 'appId' is incorrect for the events

|

Bug P2 Participant manager datastore Process: Fixed Process: Tested dev

|

**Issue 1: 'studyId','siteId' and 'appId' is incorrect for the events**

1. SITE_ADDED_FOR_STUDY

2. PARTICIPANT_EMAIL_ADDED

3. PARTICIPANTS_EMAIL_LIST_IMPORTED

4. PARTICIPANTS_EMAIL_LIST_IMPORT_FAILED

5. PARTICIPANTS_EMAIL_LIST_IMPORT_PARTIAL_FAILED

6. SITE_DECOMMISSIONED_FOR_STUDY

7. SITE_ACTIVATED_FOR_STUDY

8. PARTICIPANT_INVITATION_DISABLED

9. CONSENT_DOCUMENT_DOWNLOADED

10. INVITATION_EMAIL_SENT

11. INVITATION_EMAIL_FAILED

12. PARTICIPANT_INVITATION_ENABLED

13. ENROLLMENT_TARGET_UPDATED

14. SITE_PARTICIPANT_REGISTRY_VIEWED

**Issue 2: 'studyId' and 'appId' is incorrect for the event**

15. STUDY_PARTICIPANT_REGISTRY_VIEWED

**Issue 3: 'appId' is incorrect for the event**

16. APP_PARTICIPANT_REGISTRY_VIEWED

Actual: 'studyId', 'siteId' and 'appId' display the DB values.

Expected: 'studyId', 'siteId' and 'appId' should be custom IDs, i.e. the IDs entered by the user in the SB/PM web app.

Sample snippet for the `SITE_ADDED_FOR_STUDY` event:

```

{

"insertId": "epzd98g1b9f0co",

"jsonPayload": {

"correlationId": "2eb996c6-4d84-435b-b438-35caf65ae6b2",

"userAccessLevel": null,

"eventCode": "SITE_ADDED_FOR_STUDY",

"platformVersion": "1.0",

"source": "PARTICIPANT MANAGER",

"occurred": 1611313124346,

"mobilePlatform": "UNKNOWN",

"userId": "2c9180897689364401768a08f0060000",

"studyId": "2c91808876fa9409017706d12918002a",

"destinationApplicationVersion": "1.0",

"participantId": null,

"appVersion": "v0.1",

"studyVersion": null,

"siteId": "2c91808977290f8f017729bf13eb0006",

"sourceApplicationVersion": "1.0",

"destination": "PARTICIPANT USER DATASTORE",

"resourceServer": null,

"userIp": "117.211.20.33",

"description": "Site added to study (site ID- 2c91808977290f8f017729bf13eb0006).",

"appId": "2c91808876fa9409017706d11ea10028"

}

```

|

2.0

|

[PM] [Audit Logs] 'studyId','siteId' and 'appId' is incorrect for the events - **Issue 1: 'studyId','siteId' and 'appId' is incorrect for the events**

1. SITE_ADDED_FOR_STUDY

2. PARTICIPANT_EMAIL_ADDED

3. PARTICIPANTS_EMAIL_LIST_IMPORTED

4. PARTICIPANTS_EMAIL_LIST_IMPORT_FAILED

5. PARTICIPANTS_EMAIL_LIST_IMPORT_PARTIAL_FAILED

6. SITE_DECOMMISSIONED_FOR_STUDY

7. SITE_ACTIVATED_FOR_STUDY

8. PARTICIPANT_INVITATION_DISABLED

9. CONSENT_DOCUMENT_DOWNLOADED

10. INVITATION_EMAIL_SENT

11. INVITATION_EMAIL_FAILED

12. PARTICIPANT_INVITATION_ENABLED

13. ENROLLMENT_TARGET_UPDATED

14. SITE_PARTICIPANT_REGISTRY_VIEWED

**Issue 2: 'studyId' and 'appId' is incorrect for the event**

15. STUDY_PARTICIPANT_REGISTRY_VIEWED

**Issue 3: 'appId' is incorrect for the event**

16. APP_PARTICIPANT_REGISTRY_VIEWED

Actual: 'studyId', 'siteId' and 'appId' display the DB values.

Expected: 'studyId', 'siteId' and 'appId' should be custom IDs, i.e. the IDs entered by the user in the SB/PM web app.

Sample snippet for the `SITE_ADDED_FOR_STUDY` event:

```

{

"insertId": "epzd98g1b9f0co",

"jsonPayload": {

"correlationId": "2eb996c6-4d84-435b-b438-35caf65ae6b2",

"userAccessLevel": null,

"eventCode": "SITE_ADDED_FOR_STUDY",

"platformVersion": "1.0",

"source": "PARTICIPANT MANAGER",

"occurred": 1611313124346,

"mobilePlatform": "UNKNOWN",

"userId": "2c9180897689364401768a08f0060000",

"studyId": "2c91808876fa9409017706d12918002a",

"destinationApplicationVersion": "1.0",

"participantId": null,

"appVersion": "v0.1",

"studyVersion": null,

"siteId": "2c91808977290f8f017729bf13eb0006",

"sourceApplicationVersion": "1.0",

"destination": "PARTICIPANT USER DATASTORE",

"resourceServer": null,

"userIp": "117.211.20.33",

"description": "Site added to study (site ID- 2c91808977290f8f017729bf13eb0006).",

"appId": "2c91808876fa9409017706d11ea10028"

}

```

|

process

|

studyid siteid and appid is incorrect for the events issue studyid siteid and appid is incorrect for the events site added for study participant email added participants email list imported participants email list import failed participants email list import partial failed site decommissioned for study site activated for study participant invitation disabled consent document downloaded invitation email sent invitation email failed participant invitation enabled enrollment target updated site participant registry viewed issue studyid and appid is incorrect for the event study participant registry viewed issue appid is incorrect for the event app participant registry viewed actual studyid siteid and appid displaying db value expected studyid siteid and appid should be custom ids i e ids entered from user in sb pm web app sample snippet for event site added for study event insertid jsonpayload correlationid useraccesslevel null eventcode site added for study platformversion source participant manager occurred mobileplatform unknown userid studyid destinationapplicationversion participantid null appversion studyversion null siteid sourceapplicationversion destination participant user datastore resourceserver null userip description site added to study site id appid

| 1

|

68,129

| 8,216,809,553

|

IssuesEvent

|

2018-09-05 10:18:42

|

WordPress/gutenberg

|

https://api.github.com/repos/WordPress/gutenberg

|

closed

|

Transform "Fix Toolbar to Top" into "Focus Mode"

|

Needs Design Feedback [Type] Enhancement

|

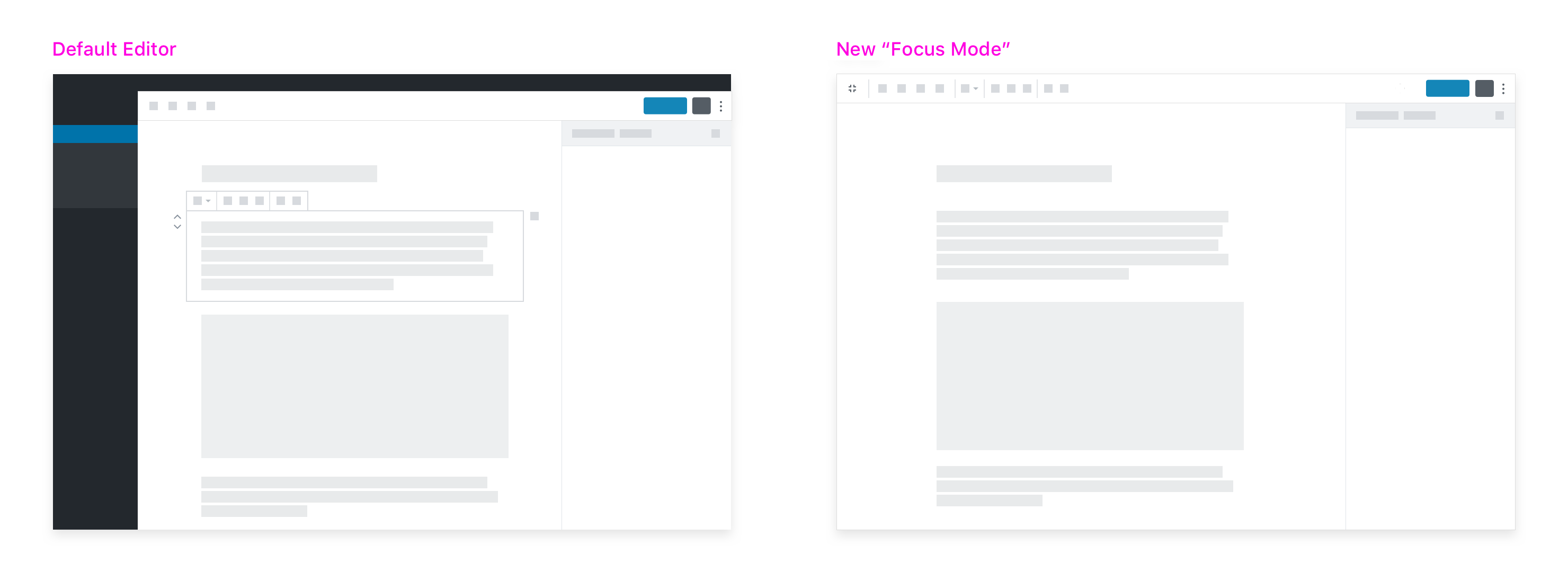

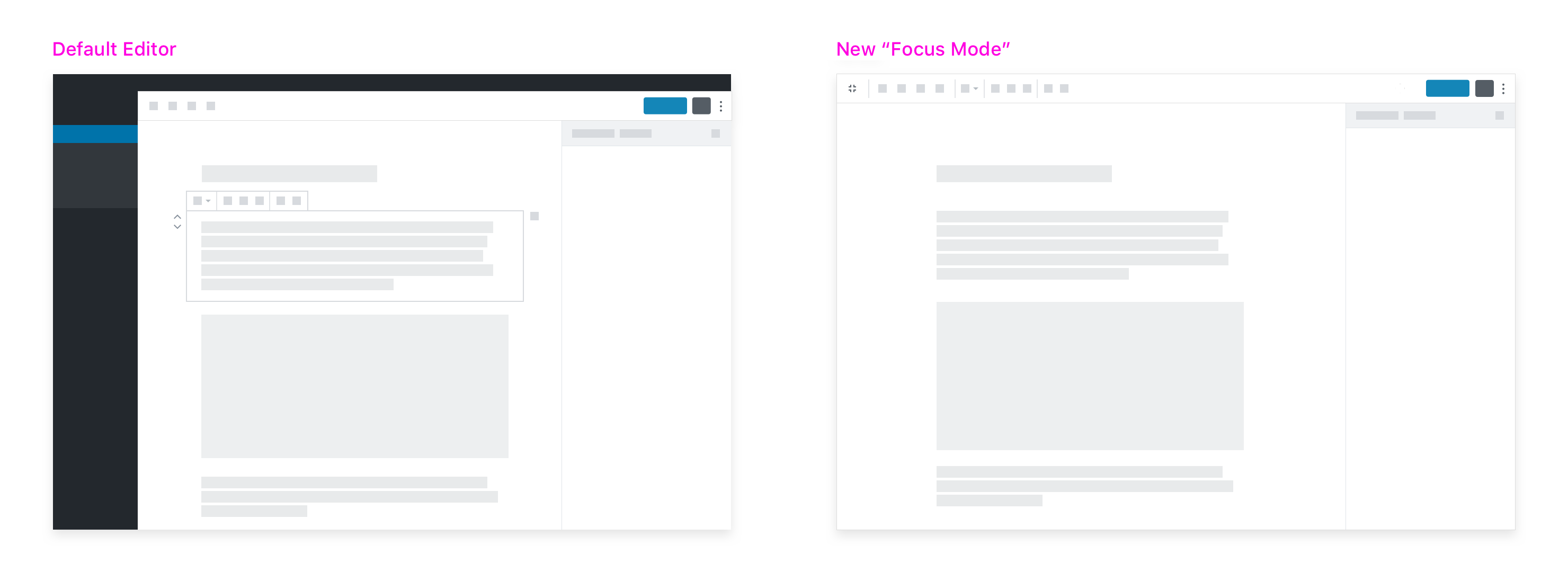

Inspired by the "Focus" mode that @mtias introduced in #8920, I'd like to propose we try a different kind of Focus Mode.

## Problem

A key feedback point we hear is that Gutenberg’s interface can be a little overwhelming. This often comes from users who more commonly focus on "writing" versus "building" their posts. They find the contextual block controls and block hover states to be distracting: When they're focused on writing, they don't necessarily want to think about blocks — they just want to write.

Oftentimes, this subset of users also miss the common "formatting toolbar at the top of the page" paradigm that's present in Google Docs, Microsoft Word, and the Classic Editor.

I think we can introduce an alternate editing mode that addresses both these concerns for them.

## Suggested Solution

We already have a "Fix Toolbar to Top" option that moves the contextual block toolbar to the top of the page. For the user I described above, this is already a step towards the interface they're used to. It's also a good first step to decluttering the writing interface — relocating heavy UI to a less-distracting area of the screen.

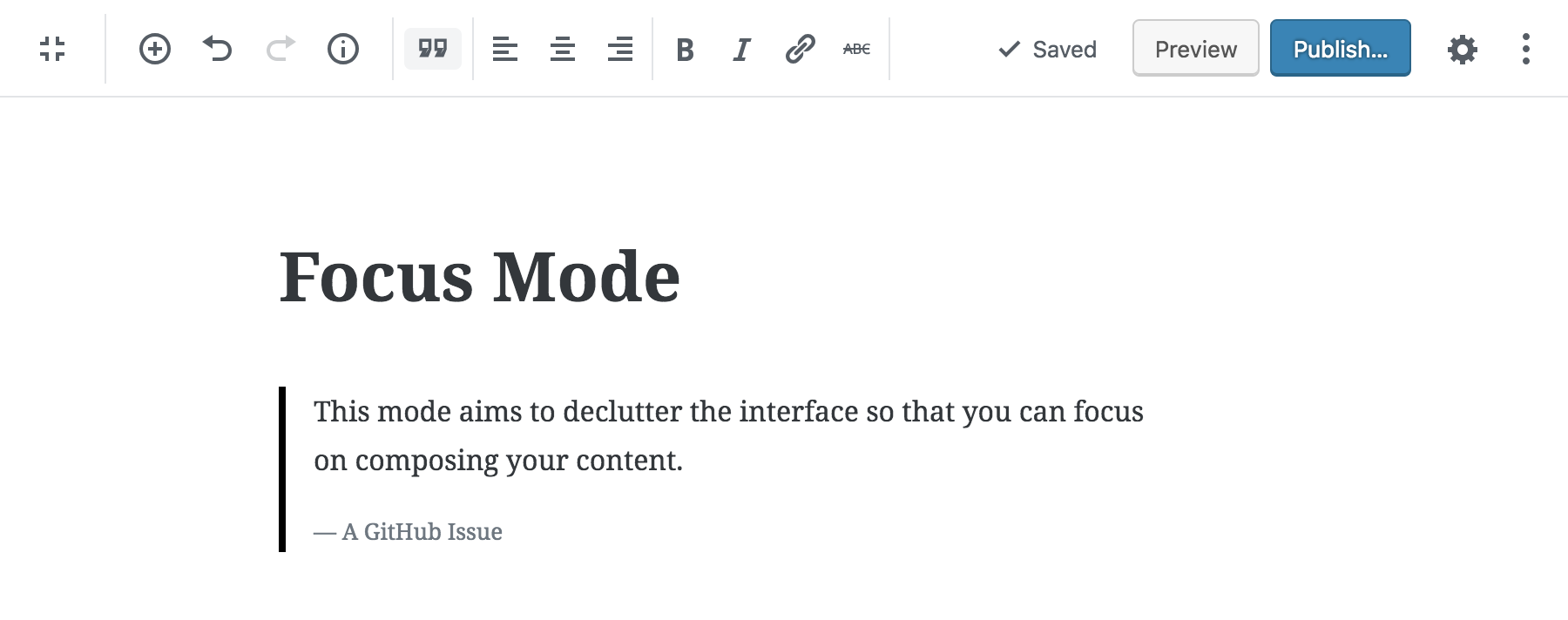

I suggest we take that option further, and adapt it into a more complete "Focus Mode":

This new editing mode would consist of a collection of UI updates aimed at decluttering the interface so that the user can focus on writing their content.

▶️ **Video demo:** https://cloudup.com/cMr22auRtXC

## Details

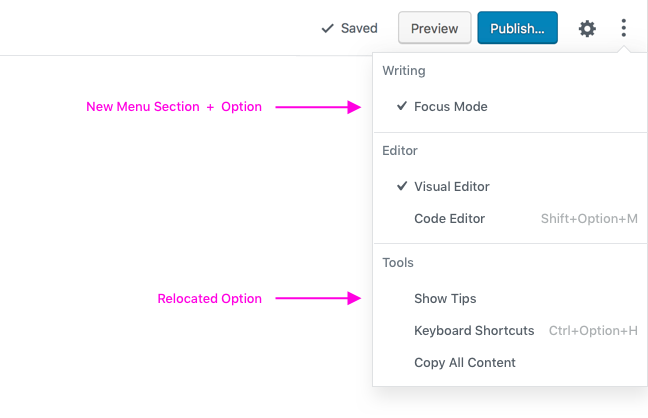

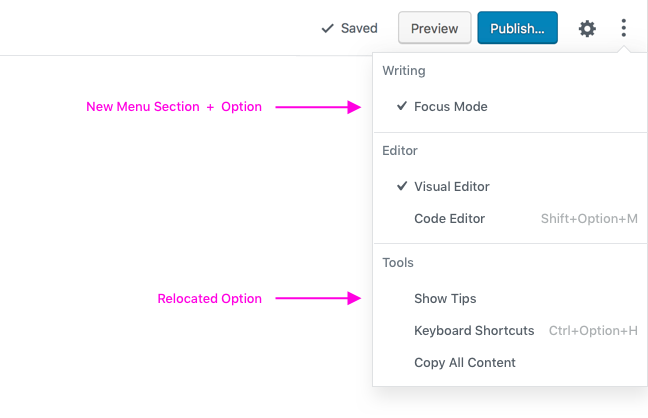

Focus Mode would be activated via the "More" menu. To accommodate this new mode, I propose renaming the "Fix Toolbar to Top" option to "Focus Mode" and including this as a new "Writing" option:

_(Since this would leave "Show Tips" all alone under "Settings", I suggest moving it into the "Tools" section at the bottom)_

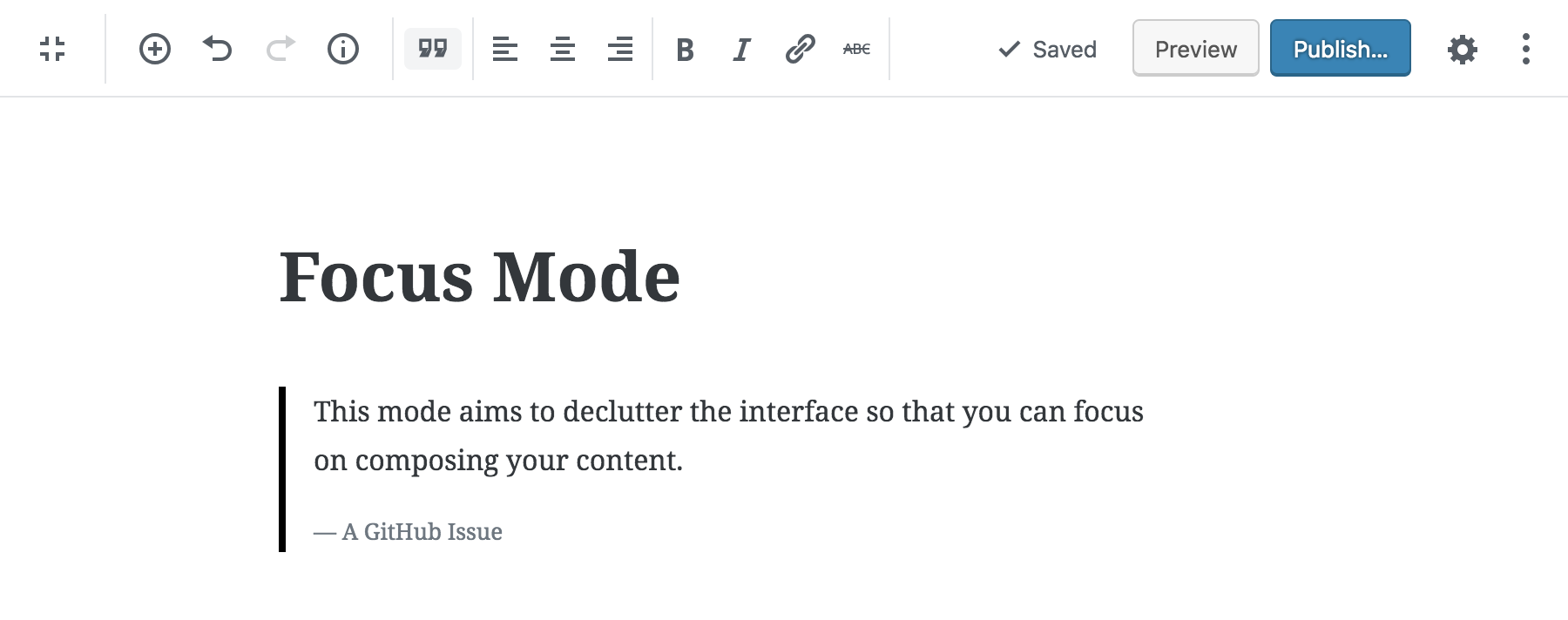

When users have this new mode active, the editor would include the following UI updates:

### 1. The block toolbar would be pinned to the top of the screen.

(This is an existing feature.)

### 2. The editor would be full screen.

This is one of the highest impact changes, and would be the default for this mode. Users could exit out of full screen mode — and retain all other features of Focus Mode — via a new toggle in the upper left of the toolbar.

### 3. Block outlines would be removed for both hover and selected states.

I initially thought this change would be confusing, but (as a power user myself) I find it quite usable. Since this is an optional mode, and this is a high-impact change in terms of eliminating distractions, I'm all for it.

### 4. The block label would appear on a delay, and be toned down visually.

This label is less essential in this mode, but including it will help with wayfinding. (The delay aspect of this change is already in progress in #9197)

### 5. Block mover + block options would also appear on a delay.

(For non-selected blocks). When a block is selected, they'll appear just as quickly as they usually do.

_Non-selected Blocks_

_Selected Blocks (This is the same as our current behavior)_

---

In case you missed it above, here's a short video demo to convey how these changes work in practice:

▶️ https://cloudup.com/cMr22auRtXC

## FAQ

I foresee a few likely questions to this approach, so I'll try to address them in advance:

- **How does this relate to the "Focus Mode" in #8920?** These can work together. The pattern of focusing in on a single block is compatible with both writing modes, so we could either allow it to be available in both modes, or we could make it an add-on feature for this Focus mode.

- **There are accessibility issues with not showing block borders.** That's very likely true. I'd reinforce the fact that this is a non-default, opt-in mode. If block borders and contextual controls are important to your use of Gutenberg, the default option will still be available. This direction would _not_ take that away.

- **Nested blocks will still require borders in this mode**. Yes, maybe they will! We'll need to address this, and I think it's reasonable to include some sort of minimal border in this case.

- **What about mobile?** We currently don't offer the "Fix Toolbar to Top" option on small screens, due to a [Safari issue noted here](https://github.com/WordPress/gutenberg/issues/7479#issuecomment-410988762). That will still be a problem for us. The other enhancements in this mode (full screen, hover state changes, etc.) would have little to no effect on mobile. For those reasons I suggest we limit this to larger screens only at this point.

Looking forward to thoughts and reactions. 🙂

|

1.0

|

Transform "Fix Toolbar to Top" into "Focus Mode" - Inspired by the "Focus" mode that @mtias introduced in #8920, I'd like to propose we try a different kind of Focus Mode.

## Problem

A key feedback point we hear is that Gutenberg’s interface can be a little overwhelming. This often comes from users who more commonly focus on "writing" versus "building" their posts. They find the contextual block controls and block hover states to be distracting: When they're focused on writing, they don't necessarily want to think about blocks — they just want to write.

Oftentimes, this subset of users also misses the common "formatting toolbar at the top of the page" paradigm that's present in Google Docs, Microsoft Word, and the Classic Editor.

I think we can introduce an alternate editing mode that addresses both these concerns for them.

## Suggested Solution

We already have a "Fix Toolbar to Top" option that moves the contextual block toolbar to the top of the page. For the user I described above, this is already a step towards the interface they're used to. It's also a good first step to decluttering the writing interface, relocating heavy UI to a less-distracting area of the screen.

I suggest we take that option further, and adapt it into a more complete "Focus Mode":

This new editing mode would consist of a collection of UI updates aimed at decluttering the interface so that the user can focus on writing their content.

▶️ **Video demo:** https://cloudup.com/cMr22auRtXC

## Details

Focus Mode would be activated via the "More" menu. To accommodate this new mode, I propose renaming the "Fix Toolbar to Top" option to "Focus Mode" and including this as a new "Writing" option:

_(Since this would leave "Show Tips" all alone under "Settings", I suggest moving it into the "Tools" section at the bottom)_

When users have this new mode active, the editor would include the following UI updates:

### 1. The block toolbar would be pinned to the top of the screen.

(This is an existing feature.)

### 2. The editor would be full screen.

This is one of the highest impact changes, and would be the default for this mode. Users could exit out of full screen mode — and retain all other features of Focus Mode — via a new toggle in the upper left of the toolbar.

### 3. Block outlines would be removed for both hover and selected states.

I initially thought this change would be confusing, but (as a power user myself) I find it quite usable. Since this is an optional mode, and this is a high-impact change in terms of eliminating distractions, I'm all for it.

### 4. The block label would appear on a delay, and be toned down visually.

This label is less essential in this mode, but including it will help with wayfinding. (The delay aspect of this change is already in progress in #9197)

### 5. Block mover + block options would also appear on a delay.

(For non-selected blocks). When a block is selected, they'll appear just as quickly as they usually do.

_Non-selected Blocks_

_Selected Blocks (This is the same as our current behavior)_

---

In case you missed it above, here's a short video demo to convey how these changes work in practice:

▶️ https://cloudup.com/cMr22auRtXC

## FAQ

I foresee a few likely questions to this approach, so I'll try to address them in advance:

- **How does this relate to the "Focus Mode" in #8920?** These can work together. The pattern of focusing in on a single block is compatible with both writing modes, so we could either allow it to be available in both modes, or we could make it an add-on feature for this Focus mode.

- **There are accessibility issues with not showing block borders.** That's very likely true. I'd reinforce the fact that this is a non-default, opt-in mode. If block borders and contextual controls are important to your use of Gutenberg, the default option will still be available. This direction would _not_ take that away.

- **Nested blocks will still require borders in this mode**. Yes, maybe they will! We'll need to address this, and I think it's reasonable to include some sort of minimal border in this case.

- **What about mobile?** We currently don't offer the "Fix Toolbar to Top" option on small screens, due to a [Safari issue noted here](https://github.com/WordPress/gutenberg/issues/7479#issuecomment-410988762). That will still be a problem for us. The other enhancements in this mode (full screen, hover state changes, etc.) would have little to no effect on mobile. For those reasons I suggest we limit this to larger screens only at this point.

Looking forward to thoughts and reactions. 🙂

|

non_process

|

transform fix toolbar to top into focus mode inspired by the focus mode that mtias introduced in i d like to propose we try a different kind of focus mode problem a key feedback point we hear is that gutenberg’s interface can be a little overwhelming this often comes from users who more commonly focus on writing versus building their posts they find the contextual block controls and block hover states to be distracting when they re focused on writing they don t necessarily want to think about blocks — they just want to write oftentimes this subset of users also miss the common formatting toolbar at the top of the page paradigm that s present in google docs microsoft word and the classic editor i think we can introduce an alternate editing mode that addresses both these concerns for them suggested solution we already have a fix toolbar to top option that moves the contextual block toolbar to the top of the page for the user i described above this is already a step towards the interface they re used to it s also a good first step to decluttering the writing interface — relocating heavy ui to a less disctracting area of the screen i suggest we take that option further and adapt it into a more complete focus mode this new editing mode would consist of a collection of ui updates aimed at decluttering the interface so that the user can focus on writing their content ▶️ video demo details focus mode would be activated via the more menu to accomodate this new mode i propose renaming the fix toolbar to top option to focus mode and including this as a new writing option since this would leave show tips all alone under settings i suggest moving it into the tools section at the bottom when users have this new mode active the editor would include the following ui updates the block toolbar would be pinned to the top of the screen this is an existing feature the editor would be full screen this is one of the highest impact changes and would be the default for this mode users 
could exit out of full screen mode — and retain all other features of focus mode — via a new toggle in the upper left of the toolbar block outlines would be removed for both hover and selected states i initially thought this change would be confusing but as a power user myself i find it quite usable since this is an optional mode and this is a high impact change in terms of eliminating distractions i m all for it the block label would appear on a delay and be toned down visually this label is less essential in this mode but including it will help with wayfinding the delay aspect of this change is already in progress in block mover block options would also appear on a delay for non selected blocks when a block is selected they ll appear just as quickly as they usually do non selected blocks selected blocks this is the same as our current behavior in case you missed it above here s a short video demo to convey how these changes work in practice ▶️ faq i foresee a few likely questions to this approach so i ll try to address them in advance how does this relate to the focus mode in these can work together the pattern of focusing in on a single block is compatible with both writing modes so we could either allow it to be available in both modes or we could make it an add on feature for this focus mode there are acessibility issues with not showing block borders that s very likely true i d reinforce the fact that this is a non default opt in mode if block borders and contextual controls are important to your use of gutenberg the default option will still be available this direction would not take that away nested blocks will still require borders in this mode yes maybe they will we ll need to address this and i think it s reasonable to include some sort of minimal border in this case what about mobile we currently don t offer the fix toolbar to top option on small screens due to a that will still be a problem for us the other enhancements in this mode full screen hover 
state changes etc would have little to no effect on mobile for those reasons i suggest we limit this to larger screens only at this point looking forward to thoughts and reactions 🙂

| 0

|

7,615

| 4,020,461,369

|

IssuesEvent

|

2016-05-16 18:30:00

|

mitchellh/packer

|

https://api.github.com/repos/mitchellh/packer

|

opened

|

Azure: Where's the Best Error Message

|

builder/azure

|

A customer experienced an issue when the *capture_name_prefix* was set to an unacceptable value (#3535). The method signature for the API call returns an HTTP response and an error. The Azure builder code discards the HTTP response, and checks the error only. The error's message is not intuitive, and does not return any information to help to debug the issue. The HTTP response is more helpful, but it is discarded.

The fix is for the builder code to check and surface both. (Checking the error only is sufficient to indicate there is an error with the API call.) I am tracking the [issue](https://github.com/Azure/azure-sdk-for-go/issues/328) with the Azure SDK team too, to see what their recommendations are.

|

1.0

|

Azure: Where's the Best Error Message - A customer experienced an issue when the *capture_name_prefix* was set to an unacceptable value (#3535). The method signature for the API call returns an HTTP response and an error. The Azure builder code discards the HTTP response, and checks the error only. The error's message is not intuitive, and does not return any information to help to debug the issue. The HTTP response is more helpful, but it is discarded.

The fix is for the builder code to check and surface both. (Checking the error only is sufficient to indicate there is an error with the API call.) I am tracking the [issue](https://github.com/Azure/azure-sdk-for-go/issues/328) with the Azure SDK team too, to see what their recommendations are.

|

non_process

|

azure where s the best error message a customer experienced an issue when the capture name prefix was set to an unacceptable value the method signature for the api call returns an http response and an error the azure builder code discards the http response and checks the error only the error s message is not intuitive and does not return any information to help to debug the issue the http response is more helpful but it is discarded the fix is for the builder code to check and surface both checking the error only is sufficient to indicate there is an error with the api call i am tracking the with the azure sdk team too to see what their recommendations are

| 0

|

19,053

| 25,068,200,200

|

IssuesEvent

|

2022-11-07 10:01:30

|

ESMValGroup/ESMValCore

|

https://api.github.com/repos/ESMValGroup/ESMValCore

|

closed

|

Anomaly calculation for OBS got broken early march.

|

bug preprocessor

|

**Describe the bug**

Unfortunately, it seems that none of the tests flagged this (something to look into later, I would say!), but for several observational datasets the calculation of anomalies goes wrong, with non-physical values coming out of the preprocessor. I could track the problem down to 3-4 March 2020: everything works fine on 3 March (`git checkout 'master@{2020-03-03}'`), and results are wrong from 4 March onwards (`git checkout 'master@{2020-03-04}'`). To create the plots and run the recipe, one needs a specific ESMValTool branch: `git checkout C3S_511_MPQB`. Reproducing it and simply inspecting the NetCDF output files would work as well, of course. Since the changes in the `anomalies` preprocessor were authored by @jvegasbsc, my hope is that he can solve this issue. It would be a good additional check if someone could reproduce the error (@hb326, @BenMGeo, or @mattiarighi). The error also occurred for `ERA5`, a dataset that is more widely used. Tagging @hirschim just to keep you updated.

Fine:

_Generated using Copernicus Climate Change Service information 2020_

Wrong:

_Generated using Copernicus Climate Change Service information 2020_

Excerpt from the differences of `ESMValCore` between 3rd and 4th of March 2020:

```

diff --git a/esmvalcore/preprocessor/_time.py b/esmvalcore/preprocessor/_time.py

index 1e29395e3..c1cf2071b 100644

--- a/esmvalcore/preprocessor/_time.py

+++ b/esmvalcore/preprocessor/_time.py

@@ -462,16 +462,15 @@ def anomalies(cube, period, reference):

cube_time = cube.coord('time')

ref = {}

for ref_slice in reference.slices_over(ref_coord):

- ref[ref_slice.coord(ref_coord).points[0]] = da.ravel(

- ref_slice.core_data())

+ ref[ref_slice.coord(ref_coord).points[0]] = ref_slice.core_data()

+

cube_coord_dim = cube.coord_dims(cube_coord)[0]

+ slicer = [slice(None)] * len(data.shape)

+ new_data = []

for i in range(cube_time.shape[0]):

- time = cube_time.points[i]

- indexes = cube_time.points == time

- indexes = iris.util.broadcast_to_shape(indexes, data.shape,

- (cube_coord_dim, ))

- data[indexes] = data[indexes] - ref[cube_coord.points[i]]

-

+ slicer[cube_coord_dim] = i

+ new_data.append(data[tuple(slicer)] - ref[cube_coord.points[i]])

+ data = da.stack(new_data, axis=cube_coord_dim)

cube = cube.copy(data)

cube.remove_coord(cube_coord)

return cube

commit f61cc0e946dd4cd1de02fb738046f5273db16025

Author: Javier Vegas <javier.vegas@bsc.es>

Date: Tue Jan 21 16:31:42 2020 +0100

Remove print and extra coordinate

diff --git a/esmvalcore/preprocessor/_time.py b/esmvalcore/preprocessor/_time.py

index 612bfa47a..1e29395e3 100644

--- a/esmvalcore/preprocessor/_time.py

+++ b/esmvalcore/preprocessor/_time.py

@@ -411,7 +411,6 @@ def climate_statistics(cube, operator='mean', period='full'):

operator = get_iris_analysis_operation(operator)

clim_cube = cube.aggregated_by(clim_coord, operator)

clim_cube.remove_coord('time')

- print(clim_cube)

if clim_cube.coord(clim_coord.name()).is_monotonic():

iris.util.promote_aux_coord_to_dim_coord(clim_cube, clim_coord.name())

else:

@@ -474,6 +473,7 @@ def anomalies(cube, period, reference):

data[indexes] = data[indexes] - ref[cube_coord.points[i]]

cube = cube.copy(data)

+ cube.remove_coord(cube_coord)

return cube

```
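For anyone trying to reproduce this, a small reference calculation may help when inspecting the NetCDF output. The following is a minimal NumPy sketch of what a monthly anomaly is expected to be (each time step minus the climatology of its calendar month). It is not the ESMValCore implementation and makes no claim about the cause of the bug; the function names `monthly_climatology` and `monthly_anomalies` and their arguments are hypothetical, chosen only for illustration.

```python
import numpy as np


def monthly_climatology(data, months, time_axis=0):
    """Return {month: mean field over all time steps of that month}.

    Hypothetical helper for illustration; not part of ESMValCore.
    """
    months = np.asarray(months)
    clim = {}
    for month in np.unique(months):
        # Indices of every time step that falls in this calendar month.
        idx = np.flatnonzero(months == month)
        clim[int(month)] = np.take(data, idx, axis=time_axis).mean(axis=time_axis)
    return clim


def monthly_anomalies(data, months, reference, time_axis=0):
    """Subtract the matching monthly climatology from every time step.

    data:      array with a time dimension at ``time_axis``
    months:    month number (1-12) of each time step
    reference: dict mapping month number to a climatology field whose
               shape equals ``data`` with the time axis removed
    """
    slicer = [slice(None)] * data.ndim
    fields = []
    for i, month in enumerate(months):
        slicer[time_axis] = i  # select one time step, keep all other dims
        fields.append(data[tuple(slicer)] - reference[month])
    # Re-insert the time dimension at its original position.
    return np.stack(fields, axis=time_axis)
```

When the reference period equals the full period of the data, the anomalies of each calendar month should average to zero, which gives a quick sanity check on the preprocessor output.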

Recipe:

```

# ESMValTool

# recipe_anom_bug.yml

---

documentation:

description: |

Recipe for demonstrating a bug. To get wrong results run `git checkout 'master@{2020-03-04}'` in ESMValCore dir. To get good results run `git checkout 'master@{2020-03-03}'` in ESMValCore dir. Use branch `C3S_511_MPQB` from ESMValTool.

authors:

- crezee_bas

################################################

# Define some default parameters using anchors #

################################################

commongrid: &commongrid

regrid:

target_grid: 0.25x0.25

scheme: nearest

regrid_time: # this is needed for a fully homogeneous time coordinate

frequency: mon

icefreeland: &icefreeland

mask_landsea:

mask_out: sea

mask_glaciated:

mask_out: glaciated

commonmask: &commonmask # should be preceded by commongrid

mask_fillvalues:

threshold_fraction: 0.0 # keep all missing values

min_value: -1e20 # small enough not to alter the data

nonnegative: &nonnegative

clip:

minimum: 0.0

################################################

################################################

################################################

datasets_from_1992_2019: &datasets_from_1992_2019

additional_datasets:

- {dataset: CDS-SATELLITE-SOIL-MOISTURE, type: sat, project: OBS, mip: Lmon,

version: CUSTOM-TCDR-ICDR-20200602, tier: 3, start_year: 2015, end_year: 2018}

# - {dataset: CDS-SATELLITE-SOIL-MOISTURE, project: OBS, tier: 3, type: sat,

# version: CUSTOM-TCDR-ICDR-20200602, start_year: 1992, end_year: 2019, mip: Lmon}

# - {dataset: cds-era5-land-monthly, type: reanaly, project: OBS, mip: Lmon,

# version: 1, tier: 3, start_year: 1992, end_year: 2019}

# - {dataset: cds-era5-monthly, type: reanaly, project: OBS, mip: Lmon,

# version: 1, tier: 3, start_year: 1992, end_year: 2019}

# - {dataset: MERRA2, type: reanaly, project: OBS6, mip: Lmon,

# version: 5.12.4, tier: 3, start_year: 1992, end_year: 2019}

preprocessors:

pp_lineplots_ano:

custom_order: true

<<: *icefreeland

<<: *commongrid

<<: *commonmask

<<: *nonnegative

anomalies:

period: monthly

reference: [2015,2018]

standardize: false

area_statistics:

operator: mean

diagnostics:

lineplots_ano:

variables:

sm:

preprocessor: pp_lineplots_ano

mip: Lmon

scripts:

lineplot:

script: mpqb/mpqb_lineplot.py

<<: *datasets_from_1992_2019

```

|

1.0

|

Anomaly calculation for OBS got broken early march. - **Describe the bug**

Unfortunately, it seems that none of the tests flagged this (something to look into later, I would say!), but for several observational datasets the calculation of anomalies goes wrong, with non-physical values coming out of the preprocessor. I could track the problem down to 3-4 March 2020: everything works fine on 3 March (`git checkout 'master@{2020-03-03}'`), and results are wrong from 4 March onwards (`git checkout 'master@{2020-03-04}'`). To create the plots and run the recipe, one needs a specific ESMValTool branch: `git checkout C3S_511_MPQB`. Reproducing it and simply inspecting the NetCDF output files would work as well, of course. Since the changes in the `anomalies` preprocessor were authored by @jvegasbsc, my hope is that he can solve this issue. It would be a good additional check if someone could reproduce the error (@hb326, @BenMGeo, or @mattiarighi). The error also occurred for `ERA5`, a dataset that is more widely used. Tagging @hirschim just to keep you updated.

Fine:

_Generated using Copernicus Climate Change Service information 2020_

Wrong:

_Generated using Copernicus Climate Change Service information 2020_

Excerpt from the differences of `ESMValCore` between 3rd and 4th of March 2020:

```

diff --git a/esmvalcore/preprocessor/_time.py b/esmvalcore/preprocessor/_time.py

index 1e29395e3..c1cf2071b 100644

--- a/esmvalcore/preprocessor/_time.py

+++ b/esmvalcore/preprocessor/_time.py

@@ -462,16 +462,15 @@ def anomalies(cube, period, reference):

cube_time = cube.coord('time')

ref = {}

for ref_slice in reference.slices_over(ref_coord):

- ref[ref_slice.coord(ref_coord).points[0]] = da.ravel(

- ref_slice.core_data())

+ ref[ref_slice.coord(ref_coord).points[0]] = ref_slice.core_data()

+

cube_coord_dim = cube.coord_dims(cube_coord)[0]

+ slicer = [slice(None)] * len(data.shape)

+ new_data = []

for i in range(cube_time.shape[0]):

- time = cube_time.points[i]

- indexes = cube_time.points == time

- indexes = iris.util.broadcast_to_shape(indexes, data.shape,

- (cube_coord_dim, ))

- data[indexes] = data[indexes] - ref[cube_coord.points[i]]

-

+ slicer[cube_coord_dim] = i

+ new_data.append(data[tuple(slicer)] - ref[cube_coord.points[i]])

+ data = da.stack(new_data, axis=cube_coord_dim)

cube = cube.copy(data)

cube.remove_coord(cube_coord)

return cube

commit f61cc0e946dd4cd1de02fb738046f5273db16025

Author: Javier Vegas <javier.vegas@bsc.es>

Date: Tue Jan 21 16:31:42 2020 +0100

Remove print and extra coordinate

diff --git a/esmvalcore/preprocessor/_time.py b/esmvalcore/preprocessor/_time.py

index 612bfa47a..1e29395e3 100644

--- a/esmvalcore/preprocessor/_time.py

+++ b/esmvalcore/preprocessor/_time.py

@@ -411,7 +411,6 @@ def climate_statistics(cube, operator='mean', period='full'):

operator = get_iris_analysis_operation(operator)

clim_cube = cube.aggregated_by(clim_coord, operator)

clim_cube.remove_coord('time')

- print(clim_cube)

if clim_cube.coord(clim_coord.name()).is_monotonic():

iris.util.promote_aux_coord_to_dim_coord(clim_cube, clim_coord.name())

else:

@@ -474,6 +473,7 @@ def anomalies(cube, period, reference):

data[indexes] = data[indexes] - ref[cube_coord.points[i]]

cube = cube.copy(data)

+ cube.remove_coord(cube_coord)

return cube

```

Recipe:

```

# ESMValTool

# recipe_anom_bug.yml

---

documentation:

description: |

Recipe for demonstrating a bug. To get wrong results run `git checkout 'master@{2020-03-04}'` in ESMValCore dir. To get good results run `git checkout 'master@{2020-03-03}'` in ESMValCore dir. Use branch `C3S_511_MPQB` from ESMValTool.

authors:

- crezee_bas

################################################

# Define some default parameters using anchors #

################################################

commongrid: &commongrid

regrid:

target_grid: 0.25x0.25

scheme: nearest

regrid_time: # this is needed for a fully homogeneous time coordinate

frequency: mon

icefreeland: &icefreeland

mask_landsea:

mask_out: sea

mask_glaciated:

mask_out: glaciated

commonmask: &commonmask # should be preceded by commongrid

mask_fillvalues:

threshold_fraction: 0.0 # keep all missing values

min_value: -1e20 # small enough not to alter the data

nonnegative: &nonnegative

clip:

minimum: 0.0

################################################

################################################

################################################

datasets_from_1992_2019: &datasets_from_1992_2019

additional_datasets:

- {dataset: CDS-SATELLITE-SOIL-MOISTURE, type: sat, project: OBS, mip: Lmon,

version: CUSTOM-TCDR-ICDR-20200602, tier: 3, start_year: 2015, end_year: 2018}

# - {dataset: CDS-SATELLITE-SOIL-MOISTURE, project: OBS, tier: 3, type: sat,

# version: CUSTOM-TCDR-ICDR-20200602, start_year: 1992, end_year: 2019, mip: Lmon}

# - {dataset: cds-era5-land-monthly, type: reanaly, project: OBS, mip: Lmon,

# version: 1, tier: 3, start_year: 1992, end_year: 2019}

# - {dataset: cds-era5-monthly, type: reanaly, project: OBS, mip: Lmon,

# version: 1, tier: 3, start_year: 1992, end_year: 2019}

# - {dataset: MERRA2, type: reanaly, project: OBS6, mip: Lmon,

# version: 5.12.4, tier: 3, start_year: 1992, end_year: 2019}

preprocessors:

pp_lineplots_ano:

custom_order: true

<<: *icefreeland

<<: *commongrid

<<: *commonmask

<<: *nonnegative

anomalies:

period: monthly

reference: [2015,2018]

standardize: false

area_statistics:

operator: mean

diagnostics:

lineplots_ano:

variables:

sm:

preprocessor: pp_lineplots_ano

mip: Lmon

scripts:

lineplot:

script: mpqb/mpqb_lineplot.py

<<: *datasets_from_1992_2019

```

|

process

|

anomaly calculation for obs got broken early march describe the bug unfortunately it seems like none of the tests has flagged something to look into later i would say but for several observational datasets the calculation of anomalies goes wrong with non physical values coming out of the preprocessor i could track down the problems to march with everything working fine on the of march git checkout master and wrong results from march onwards git checkout master to create the plots and run the recipe one needs a specific esmvaltool branch git checkout mpqb but reproducing it and simply inspecting netcdf output files would work as well of course since the changes in the anomalies preprocessor were authored by jvegasbsc my hope is that he can solve this issue it would be a good additional check if someone can reproduce the error benmgeo or mattiarighi the error also occurred for a dataset that is more widely used tagging hirschim just to keep you updated fine generated using copernicus climate change service information wrong generated using copernicus climate change service information excerpt from the differences of esmvalcore between and of march diff git a esmvalcore preprocessor time py b esmvalcore preprocessor time py index a esmvalcore preprocessor time py b esmvalcore preprocessor time py def anomalies cube period reference cube time cube coord time ref for ref slice in reference slices over ref coord ref da ravel ref slice core data ref ref slice core data cube coord dim cube coord dims cube coord slicer len data shape new data for i in range cube time shape time cube time points indexes cube time points time indexes iris util broadcast to shape indexes data shape cube coord dim data data ref slicer i new data append data ref data da stack new data axis cube coord dim cube cube copy data cube remove coord cube coord return cube commit author javier vegas date tue jan remove print and extra coordinate diff git a esmvalcore preprocessor time py b esmvalcore 
preprocessor time py index a esmvalcore preprocessor time py b esmvalcore preprocessor time py def climate statistics cube operator mean period full operator get iris analysis operation operator clim cube cube aggregated by clim coord operator clim cube remove coord time print clim cube if clim cube coord clim coord name is monotonic iris util promote aux coord to dim coord clim cube clim coord name else def anomalies cube period reference data data ref cube cube copy data cube remove coord cube coord return cube recipe esmvaltool recipe anom bug yml documentation description recipe for demonstrating a bug to get wrong results run git checkout master in esmvalcore dir to get good results run git checkout master in esmvalcore dir use branch mpqb from esmvaltool authors crezee bas define some default parameters using anchors commongrid commongrid regrid target grid scheme nearest regrid time this is needed for a fully homogeneous time coordinate frequency mon icefreeland icefreeland mask landsea mask out sea mask glaciated mask out glaciated commonmask commonmask should be preceded by commongrid mask fillvalues threshold fraction keep all missing values min value small enough not to alter the data nonnegative nonnegative clip minimum datasets from datasets from additional datasets dataset cds satellite soil moisture type sat project obs mip lmon version custom tcdr icdr tier start year end year dataset cds satellite soil moisture project obs tier type sat version custom tcdr icdr start year end year mip lmon dataset cds land monthly type reanaly project obs mip lmon version tier start year end year dataset cds monthly type reanaly project obs mip lmon version tier start year end year dataset type reanaly project mip lmon version tier start year end year preprocessors pp lineplots ano custom order true icefreeland commongrid commonmask nonnegative anomalies period monthly reference standardize false area statistics operator mean diagnostics lineplots ano variables sm 
preprocessor pp lineplots ano mip lmon scripts lineplot script mpqb mpqb lineplot py datasets from

| 1

|

20,781

| 27,518,422,464

|

IssuesEvent

|

2023-03-06 13:34:04

|

oda-hub/dispatcher-app

|

https://api.github.com/repos/oda-hub/dispatcher-app

|

opened

|

if we have several frontends, each requesting the same dispatcher, what is the proper value for product_url option?

|

multi-process

|

asked by @dsavchenko

https://github.com/oda-hub/oda_api/issues/189

|

1.0

|

if we have several frontends, each requesting the same dispatcher, what is the proper value for product_url option? - asked by @dsavchenko

https://github.com/oda-hub/oda_api/issues/189

|

process

|

if we have several frontends each requesting the same dispatcher what is the proper value for product url option asked by dsavchenko

| 1

|

22,690

| 15,378,073,333

|

IssuesEvent

|

2021-03-02 17:51:26

|

department-of-veterans-affairs/va.gov-team

|

https://api.github.com/repos/department-of-veterans-affairs/va.gov-team

|

closed

|

Salesforce-GIBFT Connection for vets-api in STAGING not working

|

backend external-request infrastructure operations security

|

## Description

Salesforce-GIBFT Connection for vets-api in STAGING is not working and VSP Operations DevOps engineers have been brought in to help debug and fix the connection. Users of the front end application are receiving an error when trying to submit data through the GI Bill Feedback Tool (https://staging.va.gov/education/submit-school-feedback/introduction)

## Background/context/resources

- rotating variable consumer key

- consumer key gets updated periodically (monthly?)

- STAGING to UAT environment was previous connection

- STAGING to REG environment is new connection

- [currently open pr/branch in devops with new changes](https://github.com/department-of-veterans-affairs/devops/pull/8449/files)

- [merged pr in vets-api to update environment naming scheme](https://github.com/department-of-veterans-affairs/vets-api/pull/5807)

- [knowledge dump left for us by Johnny Holton](https://github.com/department-of-veterans-affairs/va.gov-team/issues/14921)

## Technical notes

- @jbritt1 has worked w/ @lihanli, @LindseySaari, and @dginther to try and debug the connection

- Jeremy has been in touch with external teams to try and resolve this issue

- Consumer key is now up to date with exact value provided by Salesforce

- The [cert signing key](https://github.com/department-of-veterans-affairs/devops/blob/master/ansible/deployment/config/vets-api-server-vagov-staging.yml#L182) also had to be changed from the one we use in STAGING, to the one that we use for DEV (ref: https://dsva.slack.com/archives/CJYRZK2HH/p1611956508150000?thread_ts=1611872226.101200&cid=CJYRZK2HH)

- [Invalid header article found on stackexchange](https://salesforce.stackexchange.com/questions/234316/messageinvalid-header-type-errorcodeinvalid-auth-header-received)

- [Sentry errors found that may be related](http://sentry.vfs.va.gov/organizations/vsp/issues/5210/?environment=staging&query=is%3Aunresolved&statsPeriod=24h)

- This connection WORKS when using the rails console to trigger the action (forcing a query / POST from rails console)

- Example of past rails console commands that have worked:

```

Gibft::Service::CONSUMER_KEY = 'REDACTED'

Gibft::Service::SALESFORCE_USERNAME = 'vetsgov-devops-ci-feedback@listserv.gsa.gov.reg'

Gibft::Configuration::SALESFORCE_INSTANCE_URL = 'https://va--reg.my.salesforce.com/'

service = Gibft::Service.new

body = service.send(:request, :post, '', service.oauth_params).body

```

---

## Tasks

- [ ] External team wants to meet again to continue troubleshooting

## Definition of Done

- [ ] Salesforce-GIBFT connection for vets-api will be working in STAGING

---

### Reminders

- [X] Please attach your team label and any other appropriate label(s)

- [X] Please attach the needs grooming tag if needed

- [X] Please connect to an epic

|

1.0

|

Salesforce-GIBFT Connection for vets-api in STAGING not working - ## Description

Salesforce-GIBFT Connection for vets-api in STAGING is not working and VSP Operations DevOps engineers have been brought in to help debug and fix the connection. Users of the front end application are receiving an error when trying to submit data through the GI Bill Feedback Tool (https://staging.va.gov/education/submit-school-feedback/introduction)

## Background/context/resources

- rotating variable consumer key

- consumer key gets updated periodically (monthly?)

- STAGING to UAT environment was previous connection

- STAGING to REG environment is new connection

- [currently open pr/branch in devops with new changes](https://github.com/department-of-veterans-affairs/devops/pull/8449/files)

- [merged pr in vets-api to update environment naming scheme](https://github.com/department-of-veterans-affairs/vets-api/pull/5807)

- [knowledge dump left for us by Johnny Holton](https://github.com/department-of-veterans-affairs/va.gov-team/issues/14921)

## Technical notes

- @jbritt1 has worked w/ @lihanli, @LindseySaari, and @dginther to try and debug the connection

- Jeremy has been in touch with external teams to try and resolve this issue

- Consumer key is now up to date with exact value provided by Salesforce

- The [cert signing key](https://github.com/department-of-veterans-affairs/devops/blob/master/ansible/deployment/config/vets-api-server-vagov-staging.yml#L182) also had to be changed from the one we use in STAGING, to the one that we use for DEV (ref: https://dsva.slack.com/archives/CJYRZK2HH/p1611956508150000?thread_ts=1611872226.101200&cid=CJYRZK2HH)

- [Invalid header article found on stackexchange](https://salesforce.stackexchange.com/questions/234316/messageinvalid-header-type-errorcodeinvalid-auth-header-received)

- [Sentry errors found that may be related](http://sentry.vfs.va.gov/organizations/vsp/issues/5210/?environment=staging&query=is%3Aunresolved&statsPeriod=24h)

- This connection WORKS when using the rails console to trigger the action (forcing a query / POST from rails console)

- Example of past rails console commands that have worked:

```

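# Override the configured values for this console session only.
# Ruby prints an "already initialized constant" warning for each reassignment.
Gibft::Service::CONSUMER_KEY = 'REDACTED'
Gibft::Service::SALESFORCE_USERNAME = 'vetsgov-devops-ci-feedback@listserv.gsa.gov.reg'
Gibft::Configuration::SALESFORCE_INSTANCE_URL = 'https://va--reg.my.salesforce.com/'

service = Gibft::Service.new

# `send` invokes `request` even if it is not a public method.
body = service.send(:request, :post, '', service.oauth_params).body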

```

---

## Tasks

- [ ] External team wants to meet again to continue troubleshooting

## Definition of Done

- [ ] Salesforce-GIBFT connection for vets-api will be working in STAGING

---

### Reminders

- [X] Please attach your team label and any other appropriate label(s)

- [X] Please attach the needs grooming tag if needed

- [X] Please connect to an epic

|

non_process

|

salesforce gibft connection for vets api in staging not working description salesforce gibft connection for vets api in staging is not working and vsp operations devops engineers have been brought in to help debug and fix the connection users of the front end application are receiving an error when trying to submit data through the gi bill feedback tool background context resources rotating variable consumer key consumer key gets updated periodically monthly staging to uat environment was previous connection staging to reg environment is new connection technical notes has worked w lihanli lindseysaari and dginther to try and debug the connection jeremy has been in touch with external teams to try and resolve this issue consumer key is now up to date with exact value provided by salesforce the also had to be changed from the one we use in staging to the one that we use for dev ref this connection works when using the rails console to trigger the action forcing a query post from rails console example of past rails console commands that have worked gibft service consumer key redacted gibft service salesforce username vetsgov devops ci feedback listserv gsa gov reg gibft configuration salesforce instance url service gibft service new body service send request post service oauth params body tasks external team wants to meet again to continue troubleshooting definition of done salesforce gibft connection for vets api will be working in staging reminders please attach your team label and any other appropriate label s please attach the needs grooming tag if needed please connect to an epic

| 0

|

44,709

| 11,493,613,457

|

IssuesEvent

|

2020-02-11 23:27:30

|

ShabadOS/database

|

https://api.github.com/repos/ShabadOS/database

|

opened

|

Bot transformations on data via command line commit?

|

Priority: 2 Medium Scope: Build Status: In Research Type: Question

|

Let's say someone wants to make a mass change across all of the gurmukhi (renaming the field from "gurmukhi" to "bani" or such), or fixes a typo in the author's name. It affects like 4000 files, the transformation is applied locally, and then committed and diffed on GH.

Anyone looking at this might be confused on how/why, and we can't easily check that there wasn't a problem during this transformation, short of spotting something wrong with the data (which requires going through all the lines in the commit and this takes a very long time...)

So, can we design a process whereby someone instead commits what they'd like to happen, and a machine then does the transformation in a separate commit?

Then, the intent is clear, and you're able to focus on reviewing the transformer, instead of going through a tonne of lines individually.

_Interpreted by @Harjot1Singh_
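
To make the idea concrete, here is a minimal Python sketch of an "intent" being committed and a machine applying it. Everything in it is an assumption for illustration: the `INTENT` format, the `rename_field` operation name, and the helpers `apply_intent` and `transform_all` are hypothetical and not part of the ShabadOS database tooling, and real records would be read from the data files rather than built inline.

```python
from typing import Any, Dict, List

# Hypothetical intent record: the small, reviewable thing a contributor commits
# instead of thousands of hand-edited lines.
INTENT = {"op": "rename_field", "from": "gurmukhi", "to": "bani"}


def apply_intent(record: Dict[str, Any], intent: Dict[str, str]) -> Dict[str, Any]:
    """Return a new record with the intent applied; the input is left untouched."""
    if intent["op"] != "rename_field":
        raise ValueError(f"unsupported op: {intent['op']}")
    old, new = intent["from"], intent["to"]
    if old in record and new in record:
        # Refuse to silently overwrite an existing field.
        raise ValueError(f"cannot rename {old!r}: {new!r} already exists")
    # Rebuild the dict so the renamed key keeps its original position,
    # which keeps the resulting diff limited to the key name.
    return {(new if key == old else key): value for key, value in record.items()}


def transform_all(records: List[Dict[str, Any]], intent: Dict[str, str]) -> List[Dict[str, Any]]:
    """Apply one intent to every record; this is the step the bot would commit."""
    return [apply_intent(record, intent) for record in records]
```

With something like this, a reviewer reads the few lines of `INTENT` and the transformer once, rather than every changed line in the generated commit.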

|

1.0

|

Bot transformations on data via command line commit? - Let's say someone wants to make a mass change across all of the gurmukhi (renaming the field from "gurmukhi" to "bani" or such), or fixes a typo in the author's name. It affects like 4000 files, the transformation is applied locally, and then committed and diffed on GH.

Anyone looking at this might be confused on how/why, and we can't easily check that there wasn't a problem during this transformation, short of spotting something wrong with the data (which requires going through all the lines in the commit and this takes a very long time...)

So, can we design a process whereby someone instead commits what they'd like to happen, and a machine then does the transformation in a separate commit?

Then, the intent is clear, and you're able to focus on reviewing the transformer, instead of going through a tonne of lines individually.

_Interpreted by @Harjot1Singh_

|

non_process

|

bot transformations on data via command line commit let s say someone wants to make a mass change across all of the gurmukhi renaming the field from gurmukhi to bani or such or fixes a typo in the author s name it affects like files the transformation is applied locally and then committed and diffed on gh anyone looking at this might be confused on how why and we can t easily check that there wasn t a problem during this transformation short of spotting something wrong with the data which requires going through all the lines in the commit and this takes a very long time so can we design a process whereby someone instead commits what they d like to happen and let a machine do the transformation in a separate commit then the intent is clear and you re able to focus on reviewing the transformer instead of going through a tonne of lines individually interpreted by

| 0

|

9,790

| 12,805,793,127

|

IssuesEvent

|

2020-07-03 08:13:58

|

prisma/prisma

|

https://api.github.com/repos/prisma/prisma

|

closed

|

Add new line checks to integration tests

|

kind/improvement process/candidate team/typescript topic: tests

|

Due to differences in operating systems / environment, I could not get the recent change of [adding trailing empty lines](https://github.com/prisma/prisma-engines/commit/ea035543e59571161e00ccd4063f5638283bfba7) working in the integration tests.

Instead, for now, I added a `.trim()` call to those tests to make them work.

We should look into it and find a way to get the tests running without `.trim()`.
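
One possible direction, sketched in Python purely for illustration: normalize line endings before comparing, so the trailing empty line is still checked instead of being trimmed away. This assumes the OS differences come down to CRLF versus LF line endings, which this issue does not confirm. The integration tests themselves are TypeScript, and `normalize_newlines` and `outputs_match` are hypothetical helpers, not part of any Prisma package.

```python
def normalize_newlines(text: str) -> str:
    # Map Windows (CRLF) and bare CR line endings to LF.
    # Unlike trim(), this keeps leading and trailing blank lines intact.
    return text.replace("\r\n", "\n").replace("\r", "\n")


def outputs_match(actual: str, expected: str) -> bool:
    # Exact comparison after normalization: a missing trailing newline still fails.
    return normalize_newlines(actual) == normalize_newlines(expected)
```

The point of the design is that only the line-ending style is forgiven; the presence or absence of the trailing empty line remains part of the assertion.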

|

1.0

|

Add new line checks to integration tests - Due to differences in operating systems / environment, I could not get the recent change of [adding trailing empty lines](https://github.com/prisma/prisma-engines/commit/ea035543e59571161e00ccd4063f5638283bfba7) working in the integration tests.

Instead, for now, I added a `.trim()` call to those tests to make them work.

We should look into it and find a way to get the tests running without `.trim()`.

|

process

|

add new line checks to integration tests due to differences in operating systems environment i could not get the recent change of working in the integration tests instead for now i added a trim call to those tests to make them work we should look into it and find a way to get the tests running without trim

| 1

|

22,753

| 32,074,922,908

|

IssuesEvent

|

2023-09-25 10:21:31

|

equinor/flyt

|

https://api.github.com/repos/equinor/flyt

|

closed

|

Add Threat Modeling to issue templates

|

process improvement

|

Add threat modeling as a development checklist point in issue templates to make sure we continuously perform threat modeling.

|

1.0

|

Add Threat Modeling to issue templates - Add threat modeling as a development checklist point in issue templates to make sure we continuously perform threat modeling.

|

process

|

add threat modeling to issue templates add threat modeling as a development checklist point in issue templates to make sure we continuously perform threat modeling

| 1

|

3,189

| 6,259,501,777

|

IssuesEvent

|

2017-07-14 18:13:12

|

PeaceGeeksSociety/salesforce

|

https://api.github.com/repos/PeaceGeeksSociety/salesforce

|

opened

|

Publicize PeaceTalks, hackathons, volunteer/job opportunities to garner participants

|

Community Processes Recruitment Processes

|