| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

8,961

| 12,069,013,267

|

IssuesEvent

|

2020-04-16 15:31:23

|

MicrosoftDocs/azure-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-docs

|

closed

|

Typos in example docs

|

Pri2 cxp doc-bug machine-learning/svc team-data-science-process/subsvc triaged

|

1. All of the steps are `1.` instead of 1,2,3,...

2. The variable `LOCALFILE` should be `LOCALFILENAME`

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 2f45a6b5-0fea-7fbb-5d4d-37e0e2583fd7

* Version Independent ID: 7be6f792-09f8-c22f-86b6-d0f690e9b3a4

* Content: [Explore data in Azure blob storage with pandas - Team Data Science Process](https://docs.microsoft.com/en-us/azure/machine-learning/team-data-science-process/explore-data-blob)

* Content Source: [articles/machine-learning/team-data-science-process/explore-data-blob.md](https://github.com/Microsoft/azure-docs/blob/master/articles/machine-learning/team-data-science-process/explore-data-blob.md)

* Service: **machine-learning**

* Sub-service: **team-data-science-process**

* GitHub Login: @marktab

* Microsoft Alias: **tdsp**

|

1.0

|

Typos in example docs - 1. All of the steps are `1.` instead of 1,2,3,...

2. The variable `LOCALFILE` should be `LOCALFILENAME`

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 2f45a6b5-0fea-7fbb-5d4d-37e0e2583fd7

* Version Independent ID: 7be6f792-09f8-c22f-86b6-d0f690e9b3a4

* Content: [Explore data in Azure blob storage with pandas - Team Data Science Process](https://docs.microsoft.com/en-us/azure/machine-learning/team-data-science-process/explore-data-blob)

* Content Source: [articles/machine-learning/team-data-science-process/explore-data-blob.md](https://github.com/Microsoft/azure-docs/blob/master/articles/machine-learning/team-data-science-process/explore-data-blob.md)

* Service: **machine-learning**

* Sub-service: **team-data-science-process**

* GitHub Login: @marktab

* Microsoft Alias: **tdsp**

|

process

|

typos in example docs all of the steps are instead of the variable localfile should be localfilename document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service machine learning sub service team data science process github login marktab microsoft alias tdsp

| 1

|

42,112

| 6,964,248,007

|

IssuesEvent

|

2017-12-08 20:49:24

|

18F/federalist

|

https://api.github.com/repos/18F/federalist

|

closed

|

Add reference to Federalist blog posts on doc site

|

documentation

|

- https://18f.gsa.gov/2016/07/11/conversation-about-static-dynamic-websites/

- https://18f.gsa.gov/2016/05/18/why-were-moving-18f-gsa-gov-to-federalist/

- https://18f.gsa.gov/2015/09/15/federalist-platform-launch/ (need to edit out the content editor piece here)

|

1.0

|

Add reference to Federalist blog posts on doc site - - https://18f.gsa.gov/2016/07/11/conversation-about-static-dynamic-websites/

- https://18f.gsa.gov/2016/05/18/why-were-moving-18f-gsa-gov-to-federalist/

- https://18f.gsa.gov/2015/09/15/federalist-platform-launch/ (need to edit out the content editor piece here)

|

non_process

|

add reference to federalist blog posts on doc site need to edit out the content editor piece here

| 0

|

573,355

| 17,023,635,780

|

IssuesEvent

|

2021-07-03 03:02:15

|

tomhughes/trac-tickets

|

https://api.github.com/repos/tomhughes/trac-tickets

|

closed

|

Presets for religion=* and denomination=*

|

Component: potlatch (flash editor) Priority: minor Resolution: fixed Type: enhancement

|

**[Submitted to the original trac issue database at 2.09pm, Monday, 20th September 2010]**

The Potlatch presets do not include any presets for religion=* and denomination=* with amenity=place_of_worship. Potlatch does not seem to know these tags at all and does not suggest them during autocompletion.

As a result, some mappers do not enter these important additional tags (especially religion).

|

1.0

|

Presets for religion=* and denomination=* - **[Submitted to the original trac issue database at 2.09pm, Monday, 20th September 2010]**

The Potlatch presets do not include any presets for religion=* and denomination=* with amenity=place_of_worship. Potlatch does not seem to know these tags at all and does not suggest them during autocompletion.

As a result, some mappers do not enter these important additional tags (especially religion).

|

non_process

|

presets for religion and denomination the potlatch presets do not include any presets for religion and denomination with amenity place of worship potlatch does not seem to know these tags at all and does not suggest them during autocompletion as a result some mappers do not enter these important additional tags especially religion

| 0

|

15,660

| 19,846,977,687

|

IssuesEvent

|

2022-01-21 07:54:25

|

ooi-data/RS01SBPS-PC01A-4A-DOSTAD103-streamed-do_stable_sample

|

https://api.github.com/repos/ooi-data/RS01SBPS-PC01A-4A-DOSTAD103-streamed-do_stable_sample

|

opened

|

🛑 Processing failed: ValueError

|

process

|

## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T07:54:23.731352.

## Details

Flow name: `RS01SBPS-PC01A-4A-DOSTAD103-streamed-do_stable_sample`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, got 0)

<details>

<summary>Traceback</summary>

```

Traceback (most recent call last):

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/pipeline.py", line 165, in processing

final_path = finalize_data_stream(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 84, in finalize_data_stream

append_to_zarr(mod_ds, final_store, enc, logger=logger)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 357, in append_to_zarr

_append_zarr(store, mod_ds)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/utils.py", line 187, in _append_zarr

existing_arr.append(var_data.values)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 519, in values

return _as_array_or_item(self._data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 259, in _as_array_or_item

data = np.asarray(data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 1541, in __array__

x = self.compute()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 288, in compute

(result,) = compute(self, traverse=False, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 571, in compute

results = schedule(dsk, keys, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/threaded.py", line 79, in get

results = get_async(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 507, in get_async

raise_exception(exc, tb)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 315, in reraise

raise exc

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 220, in execute_task

result = _execute_task(task, data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/core.py", line 119, in _execute_task

return func(*(_execute_task(a, cache) for a in args))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 116, in getter

c = np.asarray(c)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 357, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 551, in __array__

self._ensure_cached()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 548, in _ensure_cached

self.array = NumpyIndexingAdapter(np.asarray(self.array))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 521, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/coding/variables.py", line 70, in __array__

return self.func(self.array)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/coding/variables.py", line 137, in _apply_mask

data = np.asarray(data, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/backends/zarr.py", line 73, in __getitem__

return array[key.tuple]

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 673, in __getitem__

return self.get_basic_selection(selection, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 798, in get_basic_selection

return self._get_basic_selection_nd(selection=selection, out=out,

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 841, in _get_basic_selection_nd

return self._get_selection(indexer=indexer, out=out, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 1135, in _get_selection

lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)

ValueError: not enough values to unpack (expected 3, got 0)

```

</details>
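The last frame of the traceback unpacks `zip(*indexer)` into three names. A minimal sketch of how that statement produces this exact message is shown below. It assumes the indexer yielded no chunks at all, which is consistent with "got 0" but is not confirmed by the report. The function names `unpack_chunks` and `safe_unpack_chunks` are hypothetical and are not part of zarr.

```python
def unpack_chunks(indexer):
    """Mimic the failing statement: split an iterable of 3-tuples into 3 sequences."""
    lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)
    return lchunk_coords, lchunk_selection, lout_selection


def safe_unpack_chunks(indexer):
    """Hypothetical guard: return three empty tuples when the indexer is empty."""
    items = list(indexer)
    if not items:
        return (), (), ()
    return tuple(zip(*items))


# An empty indexer makes zip() yield nothing, so the 3-way unpack fails.
try:
    unpack_chunks([])
except ValueError as exc:
    error_message = str(exc)

# A non-empty indexer unpacks into three parallel tuples.
coords, selections, outs = unpack_chunks(
    [((0,), slice(0, 2), slice(0, 2)), ((1,), slice(0, 2), slice(2, 4))]
)
```

The guard only illustrates one way to avoid the exception. Whether an empty selection should be treated as valid here depends on why the appended variable resolved to zero chunks.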

|

1.0

|

🛑 Processing failed: ValueError - ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T07:54:23.731352.

## Details

Flow name: `RS01SBPS-PC01A-4A-DOSTAD103-streamed-do_stable_sample`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, got 0)

<details>

<summary>Traceback</summary>

```

Traceback (most recent call last):

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/pipeline.py", line 165, in processing

final_path = finalize_data_stream(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 84, in finalize_data_stream

append_to_zarr(mod_ds, final_store, enc, logger=logger)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 357, in append_to_zarr

_append_zarr(store, mod_ds)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/utils.py", line 187, in _append_zarr

existing_arr.append(var_data.values)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 519, in values

return _as_array_or_item(self._data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 259, in _as_array_or_item

data = np.asarray(data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 1541, in __array__

x = self.compute()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 288, in compute

(result,) = compute(self, traverse=False, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 571, in compute

results = schedule(dsk, keys, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/threaded.py", line 79, in get

results = get_async(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 507, in get_async

raise_exception(exc, tb)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 315, in reraise

raise exc

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 220, in execute_task

result = _execute_task(task, data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/core.py", line 119, in _execute_task

return func(*(_execute_task(a, cache) for a in args))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 116, in getter

c = np.asarray(c)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 357, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 551, in __array__

self._ensure_cached()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 548, in _ensure_cached

self.array = NumpyIndexingAdapter(np.asarray(self.array))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 521, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/coding/variables.py", line 70, in __array__

return self.func(self.array)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/coding/variables.py", line 137, in _apply_mask

data = np.asarray(data, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/backends/zarr.py", line 73, in __getitem__

return array[key.tuple]

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 673, in __getitem__

return self.get_basic_selection(selection, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 798, in get_basic_selection

return self._get_basic_selection_nd(selection=selection, out=out,

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 841, in _get_basic_selection_nd

return self._get_selection(indexer=indexer, out=out, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 1135, in _get_selection

lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)

ValueError: not enough values to unpack (expected 3, got 0)

```

</details>

|

process

|

🛑 processing failed valueerror overview valueerror found in processing task task during run ended on details flow name streamed do stable sample task name processing task error type valueerror error message not enough values to unpack expected got traceback traceback most recent call last file srv conda envs notebook lib site packages ooi harvester processor pipeline py line in processing final path finalize data stream file srv conda envs notebook lib site packages ooi harvester processor init py line in finalize data stream append to zarr mod ds final store enc logger logger file srv conda envs notebook lib site packages ooi harvester processor init py line in append to zarr append zarr store mod ds file srv conda envs notebook lib site packages ooi harvester processor utils py line in append zarr existing arr append var data values file srv conda envs notebook lib site packages xarray core variable py line in values return as array or item self data file srv conda envs notebook lib site packages xarray core variable py line in as array or item data np asarray data file srv conda envs notebook lib site packages dask array core py line in array x self compute file srv conda envs notebook lib site packages dask base py line in compute result compute self traverse false kwargs file srv conda envs notebook lib site packages dask base py line in compute results schedule dsk keys kwargs file srv conda envs notebook lib site packages dask threaded py line in get results get async file srv conda envs notebook lib site packages dask local py line in get async raise exception exc tb file srv conda envs notebook lib site packages dask local py line in reraise raise exc file srv conda envs notebook lib site packages dask local py line in execute task result execute task task data file srv conda envs notebook lib site packages dask core py line in execute task return func execute task a cache for a in args file srv conda envs notebook lib site packages dask array core py line 
in getter c np asarray c file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray self array dtype dtype file srv conda envs notebook lib site packages xarray core indexing py line in array self ensure cached file srv conda envs notebook lib site packages xarray core indexing py line in ensure cached self array numpyindexingadapter np asarray self array file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray self array dtype dtype file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray array dtype none file srv conda envs notebook lib site packages xarray coding variables py line in array return self func self array file srv conda envs notebook lib site packages xarray coding variables py line in apply mask data np asarray data dtype dtype file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray array dtype none file srv conda envs notebook lib site packages xarray backends zarr py line in getitem return array file srv conda envs notebook lib site packages zarr core py line in getitem return self get basic selection selection fields fields file srv conda envs notebook lib site packages zarr core py line in get basic selection return self get basic selection nd selection selection out out file srv conda envs notebook lib site packages zarr core py line in get basic selection nd return self get selection indexer indexer out out fields fields file srv conda envs notebook lib site packages zarr core py line in get selection lchunk coords lchunk selection lout selection zip indexer valueerror not enough values to unpack expected got

| 1

|

718,035

| 24,701,459,547

|

IssuesEvent

|

2022-10-19 15:34:11

|

authelia/authelia

|

https://api.github.com/repos/authelia/authelia

|

opened

|

Web app sessions don't persist

|

priority/4/normal type/bug/unconfirmed status/needs-triage

|

### Version

v4.36.9

### Deployment Method

Bare-metal

### Reverse Proxy

NGINX

### Reverse Proxy Version

1.18.0

### Description

Hi there,

I'm running a Java web app that uses Apache Shiro for auth. I'm fronting it with nginx and Authelia and utilising trusted headers to instruct Shiro. The problem is that the Java session does not appear to persist between HTTP calls. If I disable Authelia by commenting out the proxy redirect in the nginx site conf it works fine; however, if requests are authed by Authelia the Java session is blank for each request.

### Reproduction

This minimal example also doesn't work, but possibly with a slightly different failure mode to mine. When attempting to log in to the Shiro app it hangs and eventually issues a 500. I'm hoping they're related!

git clone https://github.com/apache/shiro.git

cd shiro/samples/web

mvn jetty:run &

#configure /etc/hosts & nginx ssl virtual host for shiro e.g. shiro.example.com

#configure user access for shiro virtual host in authelia

navigate https://shiro.example.com/login.jsp

enter authelia creds

hangs with 500 server error

### Expectations

The application should be able to set cookies for its own auth.

### Logs

```shell

Oct 19 14:03:35 test2 authelia[25714]: time="2022-10-19T14:03:35Z" level=warning msg="Session destroyed for user 'simon' after exceeding configured session inactivity and not being marked as remembered" method=GET path=/api/verify remote_ip=10.88.142.1

Oct 19 14:03:35 test2 authelia[25714]: time="2022-10-19T14:03:35Z" level=debug msg="Check authorization of subject username= groups= ip=10.88.142.1 and object https://app.example.com/ (method GET)."

Oct 19 14:03:35 test2 authelia[25714]: time="2022-10-19T14:03:35Z" level=info msg="Access to https://app.example.com/ (method GET) is not authorized to user <anonymous>, responding with status code 401" method=GET path=/api/verify remote_ip=10.88.142.1

Oct 19 14:03:47 test2 authelia[25714]: time="2022-10-19T14:03:47Z" level=debug msg="Mark 1FA authentication attempt made by user 'simon'" method=POST path=/api/firstfactor remote_ip=10.88.142.1

Oct 19 14:03:47 test2 authelia[25714]: time="2022-10-19T14:03:47Z" level=debug msg="Successful 1FA authentication attempt made by user 'simon'" method=POST path=/api/firstfactor remote_ip=10.88.142.1

Oct 19 14:03:47 test2 authelia[25714]: time="2022-10-19T14:03:47Z" level=debug msg="Check authorization of subject username=simon groups=admins,dev ip=10.88.142.1 and object https://app.example.com/ (method )."

Oct 19 14:03:47 test2 authelia[25714]: time="2022-10-19T14:03:47Z" level=debug msg="Required level for the URL https://app.example.com/ is 1" method=POST path=/api/firstfactor remote_ip=10.88.142.1

Oct 19 14:03:47 test2 authelia[25714]: time="2022-10-19T14:03:47Z" level=debug msg="Redirection URL https://app.example.com/ is safe" method=POST path=/api/firstfactor remote_ip=10.88.142.1

Oct 19 14:03:48 test2 authelia[25714]: time="2022-10-19T14:03:48Z" level=debug msg="Check authorization of subject username=simon groups=admins,dev ip=10.88.142.1 and object https://app.example.com/ (method GET)."

Oct 19 14:03:50 test2 authelia[25714]: time="2022-10-19T14:03:50Z" level=debug msg="Check authorization of subject username=simon groups=admins,dev ip=10.88.142.1 and object https://app.example.com/static/bootstrap/css/bootstrap.min.css (method GET)."

Oct 19 14:03:50 test2 authelia[25714]: time="2022-10-19T14:03:50Z" level=debug msg="Check authorization of subject username=simon groups=admins,dev ip=10.88.142.1 and object https://app.example.com/static/bootstrap-ladda/ladda-themeless.min.css (method GET)."

Oct 19 14:03:50 test2 authelia[25714]: time="2022-10-19T14:03:50Z" level=debug msg="Check authorization of subject username=simon groups=admins,dev ip=10.88.142.1 and object https://app.example.com/static/css/font-awesome.min.css (method GET)."

Oct 19 14:03:50 test2 authelia[25714]: time="2022-10-19T14:03:50Z" level=debug msg="Check authorization of subject username=simon groups=admins,dev ip=10.88.142.1 and object https://app.example.com/static/css/ng-table.css (method GET)."

```
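The log lines above use a `key=value` layout with double-quoted values, which makes it straightforward to pull out fields such as `level`, `path`, and `msg` when looking for the session-inactivity warning. The sketch below is one way to do that with the standard library. The helper name `parse_logfmt` is hypothetical, and the parsing rules are an assumption based only on the lines shown here, not on a documented Authelia log format.

```python
import shlex


def parse_logfmt(line: str) -> dict:
    """Split a key=value log line into a dict; quoted values may contain spaces."""
    fields = {}
    for token in shlex.split(line):
        key, sep, value = token.partition("=")
        if sep:  # skip tokens without "=", such as the syslog prefix
            fields[key] = value
    return fields


# First log line from the report, including its syslog prefix.
SAMPLE = (
    'Oct 19 14:03:35 test2 authelia[25714]: time="2022-10-19T14:03:35Z" '
    'level=warning msg="Session destroyed for user \'simon\' after exceeding '
    'configured session inactivity and not being marked as remembered" '
    'method=GET path=/api/verify remote_ip=10.88.142.1'
)

record = parse_logfmt(SAMPLE)
is_inactivity_warning = (
    record["level"] == "warning" and "session inactivity" in record["msg"]
)
```

Filtering on `is_inactivity_warning` across a log file would show how often sessions are being destroyed by the `inactivity` setting rather than by the application itself.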

### Configuration

```yaml

# yamllint disable rule:comments-indentation
---
theme: light
jwt_secret: a_very_important_secret
default_2fa_method: ""
server:
  host: 0.0.0.0
  port: 9091
  path: ""
  enable_pprof: false
  enable_expvars: false
  disable_healthcheck: false
  headers:
    csp_template: ""
log:
  level: debug
telemetry:
  metrics:
    enabled: false
    address: tcp://0.0.0.0:9959
totp:
  disable: false
  issuer: authelia.com
  algorithm: sha1
  digits: 6
  period: 30
  skew: 1
  secret_size: 32
webauthn:
  disable: false
  timeout: 60s
  display_name: Authelia
  user_verification: preferred
duo_api:
  disable: false
  hostname: api-123456789.example.com
  integration_key: ABCDEF
  secret_key: 1234567890abcdefghifjkl
  enable_self_enrollment: false
ntp:
  address: "time.cloudflare.com:123"
  version: 4
  max_desync: 3s
  disable_startup_check: false
  disable_failure: false
authentication_backend:
  password_reset:
    custom_url: ""
  refresh_interval: 5m
  file:
    path: /etc/authelia/user_database.yml
    password:
      algorithm: argon2id
      iterations: 1
      key_length: 32
      salt_length: 16
      memory: 64
      parallelism: 8
password_policy:
  standard:
    enabled: false
    min_length: 8
    max_length: 0
    require_uppercase: true
    require_lowercase: true
    require_number: true
    require_special: true
access_control:
  default_policy: deny
  rules:
    - domain: 'shiro.example.com'
      policy: one_factor
session:
  name: authelia_session
  domain: example.com
  same_site: lax
  secret: insecure_session_secret123123
  expiration: 1h
  inactivity: 5m
  remember_me_duration: 1M
regulation:
  max_retries: 3
  find_time: 2m
  ban_time: 5m
storage:
  encryption_key: you_must_generate_a_random_string_of_more_than_twenty_chars_and_configure_this
  postgres:
    host: 127.0.0.1
    port: 5432
    database: authelia
    schema: public
    username: authelia
    ## Password can also be set using a secret: https://www.authelia.com/c/secrets
    password: password
    timeout: 5s
    ssl:
      mode: disable
      root_certificate: disable
      certificate: disable
      key: disable
notifier:
  filesystem:
    filename: /etc/authelia/notification.txt
...

```

### Documentation

_No response_

|

1.0

|

Web app sessions don't persist - ### Version

v4.36.9

### Deployment Method

Bare-metal

### Reverse Proxy

NGINX

### Reverse Proxy Version

1.18.0

### Description

Hi there,

I'm running a Java web app that uses Apache Shiro for auth. I'm fronting it with nginx and Authelia and utilising trusted headers to instruct Shiro. The problem is that the Java session does not appear to persist between HTTP calls. If I disable Authelia by commenting out the proxy redirect in the nginx site conf it works fine; however, if requests are authed by Authelia the Java session is blank for each request.

### Reproduction

This minimal example also doesn't work, but possibly with a slightly different failure mode to mine. When attempting to log in to the Shiro app it hangs and eventually issues a 500. I'm hoping they're related!

git clone https://github.com/apache/shiro.git

cd shiro/samples/web

mvn jetty:run &

#configure /etc/hosts & nginx ssl virtual host for shiro e.g. shiro.example.com

#configure user access for shiro virtual host in authelia

navigate https://shiro.example.com/login.jsp

enter authelia creds

hangs with 500 server error

### Expectations

The application should be able to set cookies for its own auth.

### Logs

```shell

Oct 19 14:03:35 test2 authelia[25714]: time="2022-10-19T14:03:35Z" level=warning msg="Session destroyed for user 'simon' after exceeding configured session inactivity and not being marked as remembered" method=GET path=/api/verify remote_ip=10.88.142.1

Oct 19 14:03:35 test2 authelia[25714]: time="2022-10-19T14:03:35Z" level=debug msg="Check authorization of subject username= groups= ip=10.88.142.1 and object https://app.example.com/ (method GET)."

Oct 19 14:03:35 test2 authelia[25714]: time="2022-10-19T14:03:35Z" level=info msg="Access to https://app.example.com/ (method GET) is not authorized to user <anonymous>, responding with status code 401" method=GET path=/api/verify remote_ip=10.88.142.1

Oct 19 14:03:47 test2 authelia[25714]: time="2022-10-19T14:03:47Z" level=debug msg="Mark 1FA authentication attempt made by user 'simon'" method=POST path=/api/firstfactor remote_ip=10.88.142.1

Oct 19 14:03:47 test2 authelia[25714]: time="2022-10-19T14:03:47Z" level=debug msg="Successful 1FA authentication attempt made by user 'simon'" method=POST path=/api/firstfactor remote_ip=10.88.142.1

Oct 19 14:03:47 test2 authelia[25714]: time="2022-10-19T14:03:47Z" level=debug msg="Check authorization of subject username=simon groups=admins,dev ip=10.88.142.1 and object https://app.example.com/ (method )."

Oct 19 14:03:47 test2 authelia[25714]: time="2022-10-19T14:03:47Z" level=debug msg="Required level for the URL https://app.example.com/ is 1" method=POST path=/api/firstfactor remote_ip=10.88.142.1

Oct 19 14:03:47 test2 authelia[25714]: time="2022-10-19T14:03:47Z" level=debug msg="Redirection URL https://app.example.com/ is safe" method=POST path=/api/firstfactor remote_ip=10.88.142.1

Oct 19 14:03:48 test2 authelia[25714]: time="2022-10-19T14:03:48Z" level=debug msg="Check authorization of subject username=simon groups=admins,dev ip=10.88.142.1 and object https://app.example.com/ (method GET)."

Oct 19 14:03:50 test2 authelia[25714]: time="2022-10-19T14:03:50Z" level=debug msg="Check authorization of subject username=simon groups=admins,dev ip=10.88.142.1 and object https://app.example.com/static/bootstrap/css/bootstrap.min.css (method GET)."

Oct 19 14:03:50 test2 authelia[25714]: time="2022-10-19T14:03:50Z" level=debug msg="Check authorization of subject username=simon groups=admins,dev ip=10.88.142.1 and object https://app.example.com/static/bootstrap-ladda/ladda-themeless.min.css (method GET)."

Oct 19 14:03:50 test2 authelia[25714]: time="2022-10-19T14:03:50Z" level=debug msg="Check authorization of subject username=simon groups=admins,dev ip=10.88.142.1 and object https://app.example.com/static/css/font-awesome.min.css (method GET)."

Oct 19 14:03:50 test2 authelia[25714]: time="2022-10-19T14:03:50Z" level=debug msg="Check authorization of subject username=simon groups=admins,dev ip=10.88.142.1 and object https://app.example.com/static/css/ng-table.css (method GET)."

```

### Configuration

```yaml

# yamllint disable rule:comments-indentation
---
theme: light
jwt_secret: a_very_important_secret
default_2fa_method: ""
server:
  host: 0.0.0.0
  port: 9091
  path: ""
  enable_pprof: false
  enable_expvars: false
  disable_healthcheck: false
  headers:
    csp_template: ""
log:
  level: debug
telemetry:
  metrics:
    enabled: false
    address: tcp://0.0.0.0:9959
totp:
  disable: false
  issuer: authelia.com
  algorithm: sha1
  digits: 6
  period: 30
  skew: 1
  secret_size: 32
webauthn:
  disable: false
  timeout: 60s
  display_name: Authelia
  user_verification: preferred
duo_api:
  disable: false
  hostname: api-123456789.example.com
  integration_key: ABCDEF
  secret_key: 1234567890abcdefghifjkl
  enable_self_enrollment: false
ntp:
  address: "time.cloudflare.com:123"
  version: 4
  max_desync: 3s
  disable_startup_check: false
  disable_failure: false
authentication_backend:
  password_reset:
    custom_url: ""
  refresh_interval: 5m
  file:
    path: /etc/authelia/user_database.yml
    password:
      algorithm: argon2id
      iterations: 1
      key_length: 32
      salt_length: 16
      memory: 64
      parallelism: 8
password_policy:
  standard:
    enabled: false
    min_length: 8
    max_length: 0
    require_uppercase: true
    require_lowercase: true
    require_number: true
    require_special: true
access_control:
  default_policy: deny
  rules:
    - domain: 'shiro.example.com'
      policy: one_factor
session:
  name: authelia_session
  domain: example.com
  same_site: lax
  secret: insecure_session_secret123123
  expiration: 1h
  inactivity: 5m
  remember_me_duration: 1M
regulation:
  max_retries: 3
  find_time: 2m
  ban_time: 5m
storage:
  encryption_key: you_must_generate_a_random_string_of_more_than_twenty_chars_and_configure_this
  postgres:
    host: 127.0.0.1
    port: 5432
    database: authelia
    schema: public
    username: authelia
    ## Password can also be set using a secret: https://www.authelia.com/c/secrets
    password: password
    timeout: 5s
    ssl:
      mode: disable
      root_certificate: disable
      certificate: disable
      key: disable
notifier:
  filesystem:
    filename: /etc/authelia/notification.txt
...

```

### Documentation

_No response_

|

non_process

|

web app sessions don t persist version deployment method bare metal reverse proxy nginx reverse proxy version description hi there i m running a java webapp which uses apache shiro for auth i m fronting it with nginx and authelia and untilising trusted headers to instruct shiro the problem is that the java session appears to not persist between http calls if i disable authelia by commenting the proxy redirect in the nginx site conf it works fine however if requests are authed by authelia the java session is blank for each request reproduction this minimal example also doesn t work but possibly with a slightly different failure more to mine when attempting to log in to the shiro app it hangs and eventual issues a i m hoping they re related git clone cd shiro samples web mvn jetty run configure etc hosts nginx ssl virtual host for shiro e g shiro example com configure user access for shiro virtual host in authelia navigate enter authelia creds hangs with server error expectations the application should be able to set cookies for it s own auth logs shell oct authelia time level warning msg session destroyed for user simon after exceeding configured session inactivity and not being marked as remembered method get path api verify remote ip oct authelia time level debug msg check authorization of subject username groups ip and object method get oct authelia time level info msg access to method get is not authorized to user responding with status code method get path api verify remote ip oct authelia time level debug msg mark authentication attempt made by user simon method post path api firstfactor remote ip oct authelia time level debug msg successful authentication attempt made by user simon method post path api firstfactor remote ip oct authelia time level debug msg check authorization of subject username simon groups admins dev ip and object method oct authelia time level debug msg required level for the url is method post path api firstfactor remote ip oct authelia 
time level debug msg redirection url is safe method post path api firstfactor remote ip oct authelia time level debug msg check authorization of subject username simon groups admins dev ip and object method get oct authelia time level debug msg check authorization of subject username simon groups admins dev ip and object method get oct authelia time level debug msg check authorization of subject username simon groups admins dev ip and object method get oct authelia time level debug msg check authorization of subject username simon groups admins dev ip and object method get oct authelia time level debug msg check authorization of subject username simon groups admins dev ip and object method get configuration yaml yamllint disable rule comments indentation theme light jwt secret a very important secret default method server host port path enable pprof false enable expvars false disable healthcheck false headers csp template log level debug telemetry metrics enabled false address tcp totp disable false issuer authelia com algorithm digits period skew secret size webauthn disable false timeout display name authelia user verification preferred duo api disable false hostname api example com integration key abcdef secret key enable self enrollment false ntp address time cloudflare com version max desync disable startup check false disable failure false authentication backend password reset custom url refresh interval file path etc authelia user database yml password algorithm iterations key length salt length memory parallelism password policy standard enabled false min length max length require uppercase true require lowercase true require number true require special true access control default policy deny rules domain shiro example com policy one factor session name authelia session domain example com same site lax secret insecure session expiration inactivity remember me duration regulation max retries find time ban time storage encryption key you must generate a 
random string of more than twenty chars and configure this postgres host port database authelia schema public username authelia password can also be set using a secret password password timeout ssl mode disable root certificate disable certificate disable key disable notifier filesystem filename etc authelia notification txt documentation no response

| 0

|

5,276

| 8,065,886,986

|

IssuesEvent

|

2018-08-04 08:11:34

|

dita-ot/dita-ot

|

https://api.github.com/repos/dita-ot/dita-ot

|

closed

|

NPE when adding a keyref on a DITA element which does not allow one

|

P3 bug preprocess/keyref

|

This is also a question related to the DITA 1.3 specs, so maybe @robander can help a little bit here.

Let's say someone creates a DITA DTD specialization and adds a new element which extends the DITA <p> element. But their new element is allowed to have the keyref attribute on it. The DITA OT processing only expects the keyref attribute to be defined on specific DITA elements, see "org.dita.dost.writer.KeyrefPaser.keyrefInfos". But I do not see anything in the DITA 1.3 specification stating that only certain element types can have the keyref attribute specified on them.

So right now the publishing breaks with a NullPointerException when you have a DITA element with an unexpected keyref set on it.

As a quick experiment, let's say that inside a DITA topic somewhere in a paragraph I add this non-existing DITA element:

<booboo class="- topic/p topic/booboo " keyref="bulb"/>

and then I publish to HTML5 setting the "validate=false" parameter, I will get a NullPointerException reported with DITA OT 3.1 (but also with older DITA OTs):

org.dita.base\build_preprocess.xml:280: java.lang.NullPointerException

at org.dita.dost.writer.KeyrefPaser.processElement(KeyrefPaser.java:478)

at org.dita.dost.writer.KeyrefPaser.startElement(KeyrefPaser.java:468)

at org.xml.sax.helpers.XMLFilterImpl.startElement(Unknown Source)

at org.dita.dost.writer.TopicFragmentFilter.startElement(TopicFragmentFilter.java:79)

at org.dita.dost.writer.ConkeyrefFilter.startElement(ConkeyrefFilter.java:83)

at org.apache.xerces.parsers.AbstractSAXParser.startElement(Unknown Source)
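
The failure mode described above can be sketched roughly as follows. This is an illustrative Python sketch, not the DITA-OT Java code: the table contents and the names `KEYREF_INFOS`, `find_keyref_info`, and `process_element` are hypothetical. It assumes the processor looks up per-element keyref handling by class token and shows how an unknown element could be passed through instead of triggering a null dereference.

```python
# Hypothetical stand-in for a keyref lookup table keyed by DITA class token.
# The entries are illustrative and are not taken from the DITA-OT source.
KEYREF_INFOS = {
    "topic/xref": {"ref_attr": "href"},
    "topic/image": {"ref_attr": "href"},
}


def find_keyref_info(class_attr):
    """Return the first table entry whose class token appears in class_attr, else None."""
    tokens = class_attr.split()
    for token, info in KEYREF_INFOS.items():
        if token in tokens:
            return info
    return None


def process_element(class_attr, keyref):
    """Resolve keyref handling for an element, tolerating unknown element types."""
    info = find_keyref_info(class_attr)
    if info is None:
        # Unknown element type: leave it unresolved instead of dereferencing None.
        return {"keyref": keyref, "resolved": False}
    return {"keyref": keyref, "resolved": True, "ref_attr": info["ref_attr"]}
```

With a guard like this, the `booboo` element from the experiment would simply be left unresolved rather than aborting the build.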

|

1.0

|

NPE when adding a keyref on a DITA element which does not allow one - This is also a question related to the DITA 1.3 specs, so maybe @robander can help a little bit here.

Let's say someone creates a DITA DTD specialization and adds a new element which extends the DITA <p> element. But their new element is allowed to have the keyref attribute on it. The DITA OT processing only expects the keyref attribute to be defined on specific DITA elements, see "org.dita.dost.writer.KeyrefPaser.keyrefInfos". But I do not see anything in the DITA 1.3 specification stating that only certain element types can have the keyref attribute specified on them.

So right now the publishing breaks with a NullPointerException when you have a DITA element with an unexpected keyref set on it.

As a quick experiment, let's say that inside a DITA topic somewhere in a paragraph I add this non-existing DITA element:

<booboo class="- topic/p topic/booboo " keyref="bulb"/>

and then I publish to HTML5 setting the "validate=false" parameter, I will get a NullPointerException reported with DITA OT 3.1 (but also with older DITA OTs):

org.dita.base\build_preprocess.xml:280: java.lang.NullPointerException

at org.dita.dost.writer.KeyrefPaser.processElement(KeyrefPaser.java:478)

at org.dita.dost.writer.KeyrefPaser.startElement(KeyrefPaser.java:468)

at org.xml.sax.helpers.XMLFilterImpl.startElement(Unknown Source)

at org.dita.dost.writer.TopicFragmentFilter.startElement(TopicFragmentFilter.java:79)

at org.dita.dost.writer.ConkeyrefFilter.startElement(ConkeyrefFilter.java:83)

at org.apache.xerces.parsers.AbstractSAXParser.startElement(Unknown Source)

|

process

|

npe when adding a keyref on a dita element which does not allow one this is also a question related to the dita specs so maybe robander can help a little bit here let s say someone creates a dita dtd specialization and adds a new element which extends the dita element but their new element is allowed to have the keyref attribute on it the dita ot processing only expects the keyref attribute to be defined on specific dita elements see org dita dost writer keyrefpaser keyrefinfos but i do not see anything in the dita specification stating that only certain element types can have the keyref attribute specified on them so right now the publishing breaks with a nullpointerexception when you have a dita element with an unexpected keyref set on it as a quick experiment let s say that inside a dita topic somewhere in a paragraph i add this non existing dita element and then i publish to setting the validate false parameter i will get a nullpointerexception reported with dita ot but also with older dita ots org dita base build preprocess xml java lang nullpointerexception at org dita dost writer keyrefpaser processelement keyrefpaser java at org dita dost writer keyrefpaser startelement keyrefpaser java at org xml sax helpers xmlfilterimpl startelement unknown source at org dita dost writer topicfragmentfilter startelement topicfragmentfilter java at org dita dost writer conkeyreffilter startelement conkeyreffilter java at org apache xerces parsers abstractsaxparser startelement unknown source

| 1

|

4,557

| 7,389,343,361

|

IssuesEvent

|

2018-03-16 08:17:57

|

KetchPartners/kmsprint2

|

https://api.github.com/repos/KetchPartners/kmsprint2

|

opened

|

Create Trigger

|

Axure Prototype Process enhancement

|

# Baseline - Create tasks to initiate activities with key fields.

<img width="363" alt="base4" src="https://user-images.githubusercontent.com/29525920/37510264-eccf1b4a-28d0-11e8-8d0c-fb6c5c8f5db1.png">

|

1.0

|

Create Trigger - # Baseline - Create tasks to initiate activities with key fields.

<img width="363" alt="base4" src="https://user-images.githubusercontent.com/29525920/37510264-eccf1b4a-28d0-11e8-8d0c-fb6c5c8f5db1.png">

|

process

|

create trigger baseline create tasks to initiate activities with key fields img width alt src

| 1

|

266,933

| 20,171,874,432

|

IssuesEvent

|

2022-02-10 11:08:14

|

dianna-ai/dianna

|

https://api.github.com/repos/dianna-ai/dianna

|

closed

|

Make sure all tutorials show up correctly in the docs

|

documentation

|

Add the links to tutorials at the right place

- [x] create links under docs/tutorials

- [x] make sure the header levels are correct

|

1.0

|

Make sure all tutorials show up correctly in the docs - Add the links to tutorials at the right place

- [x] create links under docs/tutorials

- [x] make sure the header levels are correct

|

non_process

|

make sure all tutorials show up correctly in the docs add the links to tutorials at the right place create links under docs tutorials make sure the header levels are correct

| 0

|

536,466

| 15,709,447,106

|

IssuesEvent

|

2021-03-26 22:33:37

|

argoproj/argo-cd

|

https://api.github.com/repos/argoproj/argo-cd

|

closed

|

Create App fails if repo is Helm OCI and revision is a hash

|

bug bug/priority:medium bug/severity:minor component:config-management

|

Checklist:

* [x ] I've searched in the docs and FAQ for my answer: https://bit.ly/argocd-faq.

* [x ] I've included steps to reproduce the bug.

* [x ] I've pasted the output of `argocd version`.

**Describe the bug**

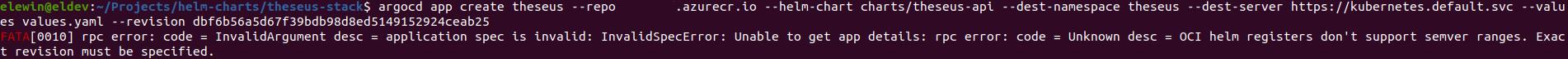

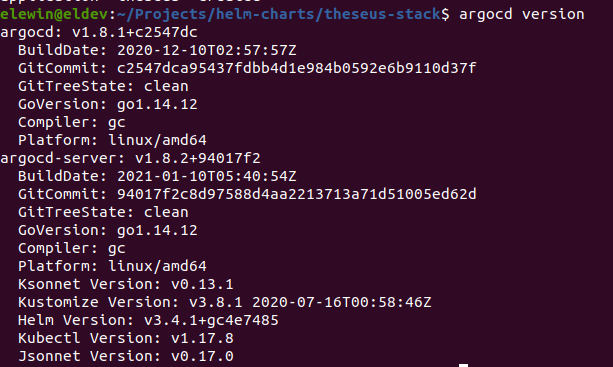

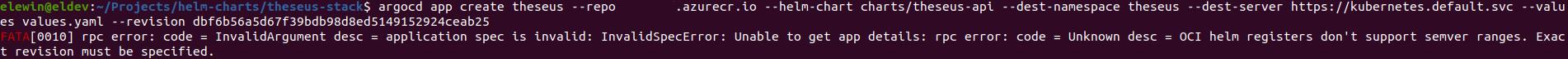

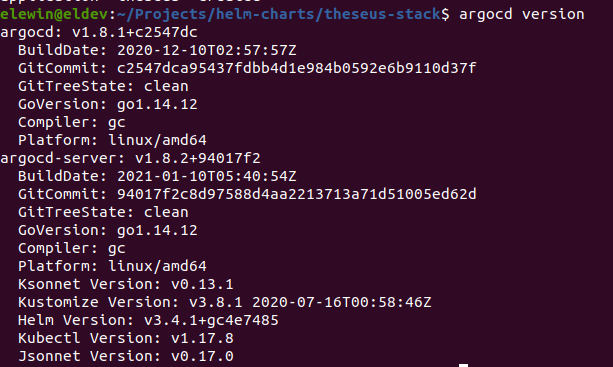

Using a hash as the revision when creating an app from a chart hosted on an OCI registry fails (the error seems to suggest the hash is being interpreted as a semver range). With the same parameters, it works if I provide a semver version number instead.
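
To illustrate the distinction, here is a minimal Python sketch of how a revision string could be classified before any semver parsing is attempted. It is not Argo CD code: the function name `classify_revision` is hypothetical, and it assumes the hash is an OCI digest of the form `sha256:` followed by 64 hex characters.

```python
import re

# Assumed formats: an OCI digest such as "sha256:<64 hex chars>" and a plain
# "MAJOR.MINOR.PATCH" version. Real semver ranges are richer than this.
DIGEST_RE = re.compile(r"^sha256:[0-9a-f]{64}$")
EXACT_VERSION_RE = re.compile(r"^v?\d+\.\d+\.\d+$")


def classify_revision(revision):
    """Classify a revision string as a digest, an exact version, or something else."""
    if DIGEST_RE.match(revision):
        return "digest"
    if EXACT_VERSION_RE.match(revision):
        return "version"
    return "range-or-unknown"
```

Checking for a digest first would keep a hash from ever reaching the semver range parser.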

**To Reproduce**

**Expected behavior**

It should create the app, since a hash should suffice as a unique identifier.

**Version**

|

1.0

|

Create App fails if repo is Helm OCI and revision is a hash - Checklist:

* [x ] I've searched in the docs and FAQ for my answer: https://bit.ly/argocd-faq.

* [x ] I've included steps to reproduce the bug.

* [x ] I've pasted the output of `argocd version`.

**Describe the bug**

Using a hash as the revision when creating an app from a chart hosted on an OCI registry fails (the error seems to suggest the hash is being interpreted as a semver range). With the same parameters, it works if I provide a semver version number instead.

**To Reproduce**

**Expected behavior**

It should create the app, since a hash should suffice as a unique identifier.

**Version**

|

non_process

|

create app fails if repo is helm oci and revision is a hash checklist i ve searched in the docs and faq for my answer i ve included steps to reproduce the bug i ve pasted the output of argocd version describe the bug using a hash as revision number when creating an app from a chart hosted on an oci registry fails error seems to suggest it s interpreting it as a semver range as you can see below it works with the same parameters if i provide a semver number to reproduce expected behavior should create an app since a hash should suffice as a unique identifier version

| 0

|

832,049

| 32,070,660,523

|

IssuesEvent

|

2023-09-25 07:47:32

|

steedos/steedos-platform

|

https://api.github.com/repos/steedos/steedos-platform

|

opened

|

[Bug]: Mobile client shows a white screen after unpkg is configured as a relative path

|

bug priority: High

|

### Description

### Steps To Reproduce

1. Set STEEDOS_UNPKG_URL=/unpkg/

2. Log in using the mobile client

### Version

2.5

|

1.0

|

[Bug]: Mobile client shows a white screen after unpkg is configured as a relative path - ### Description

### Steps To Reproduce

1. Set STEEDOS_UNPKG_URL=/unpkg/

2. Log in using the mobile client

### Version

2.5

|

non_process

|

mobile client shows a white screen after unpkg is configured as a relative path description steps to reproduce set steedos unpkg url unpkg log in using the mobile client version

| 0

|

19,292

| 25,466,362,809

|

IssuesEvent

|

2022-11-25 05:05:01

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[IDP] [PM] Admin > Edit admin > Edit admin details screen > UI issue

|

Bug P2 Participant manager Process: Fixed Process: Tested QA Process: Tested dev

|

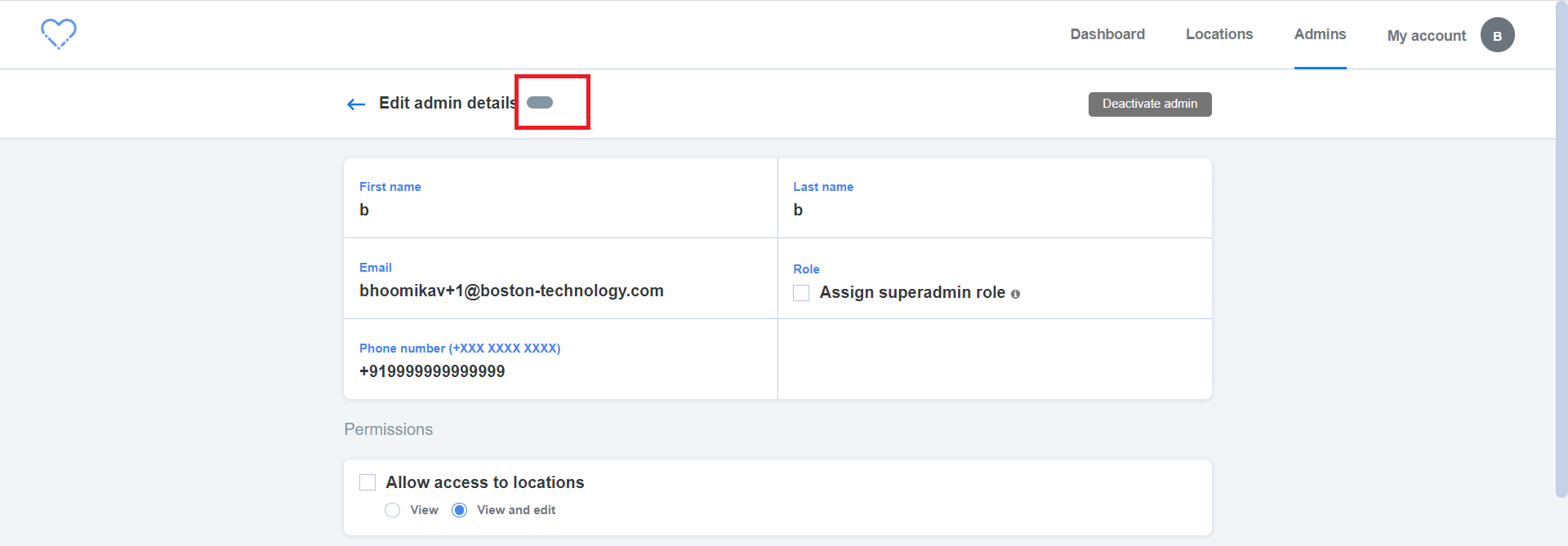

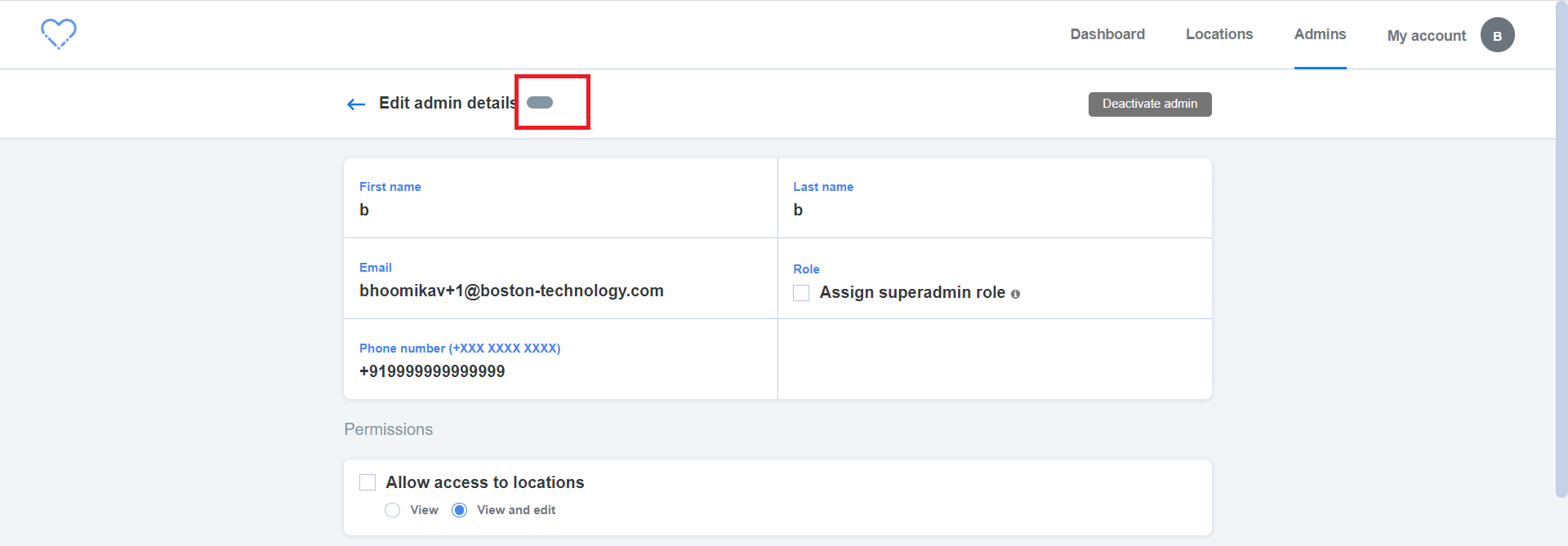

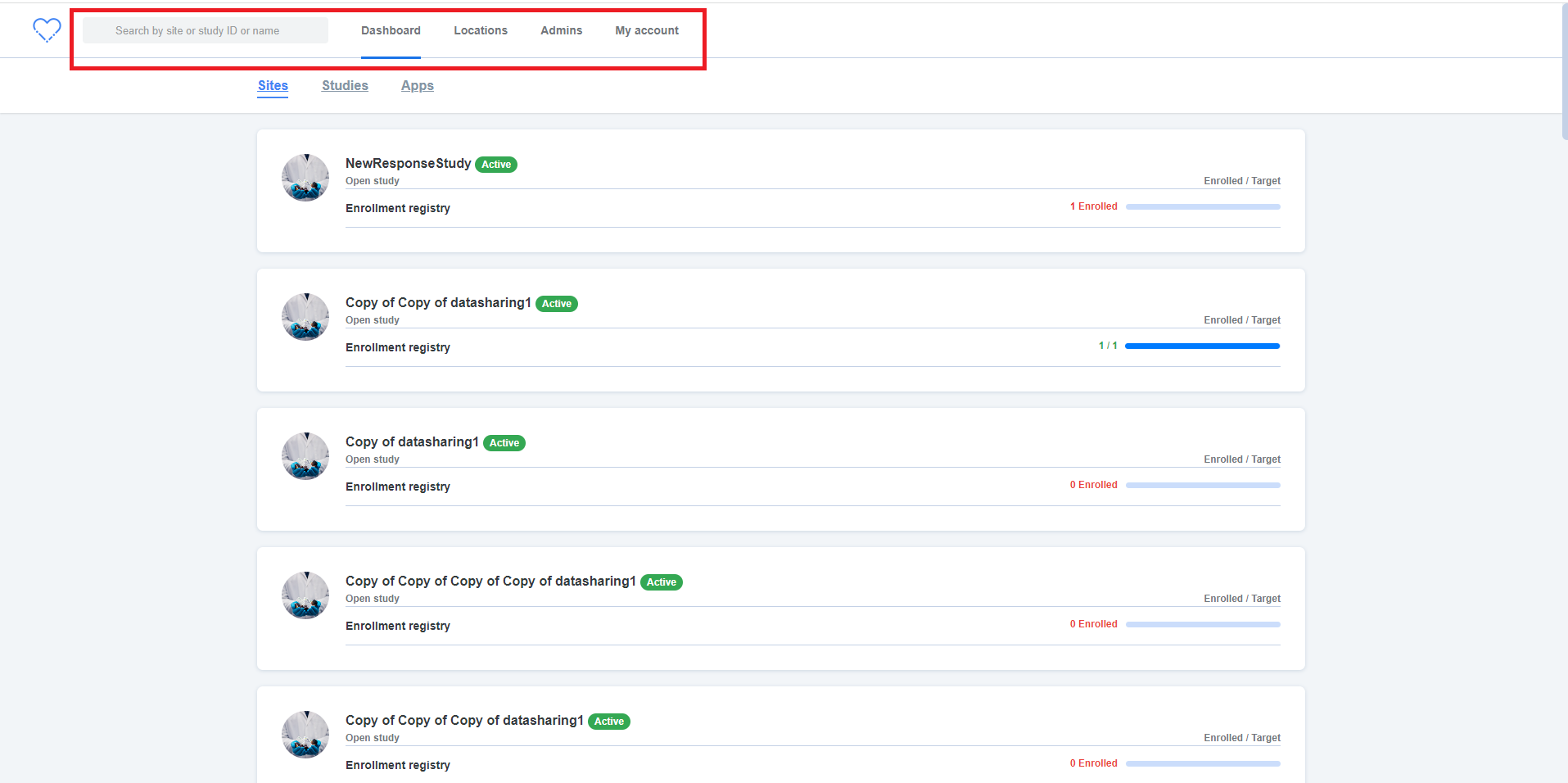

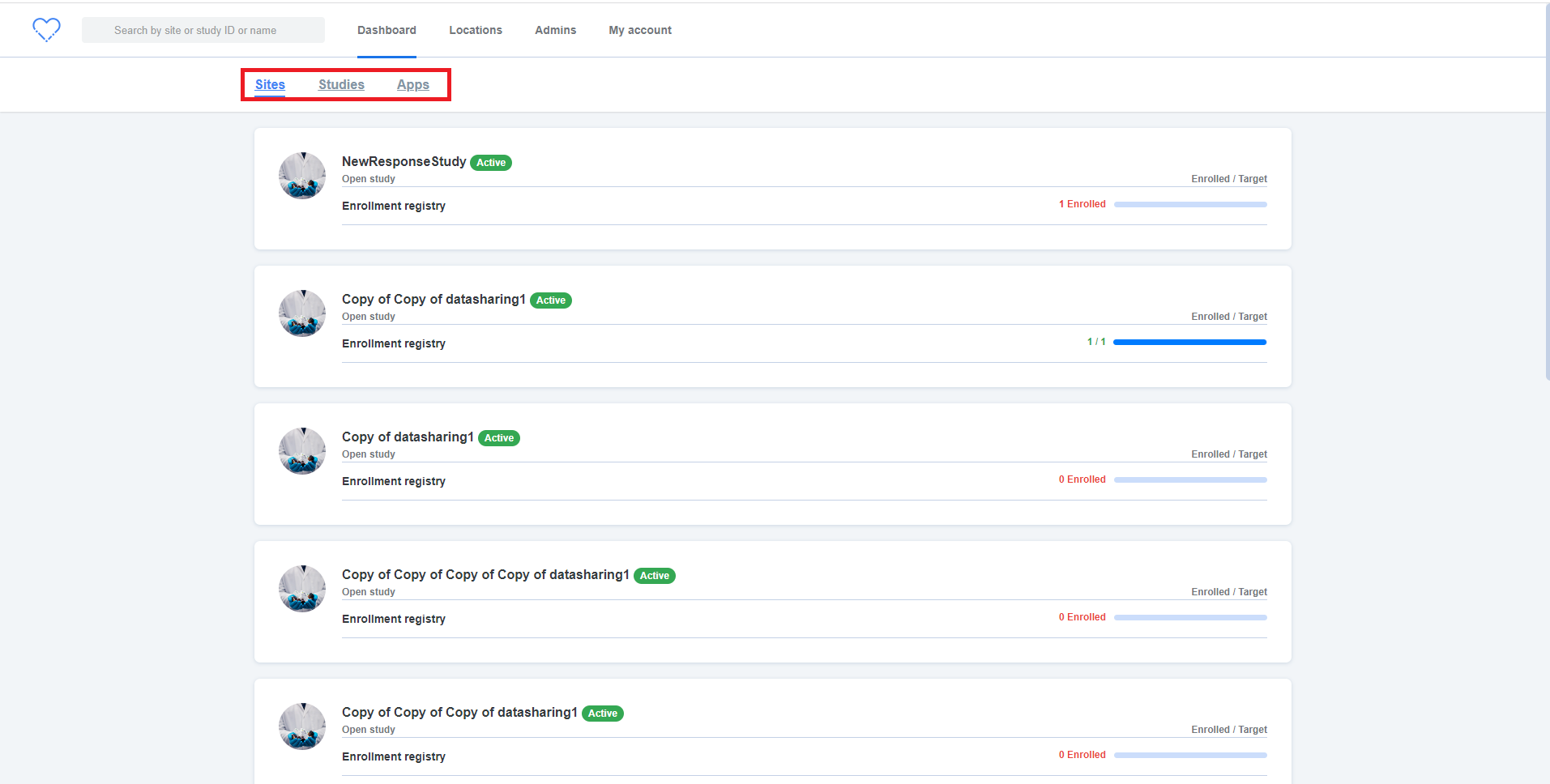

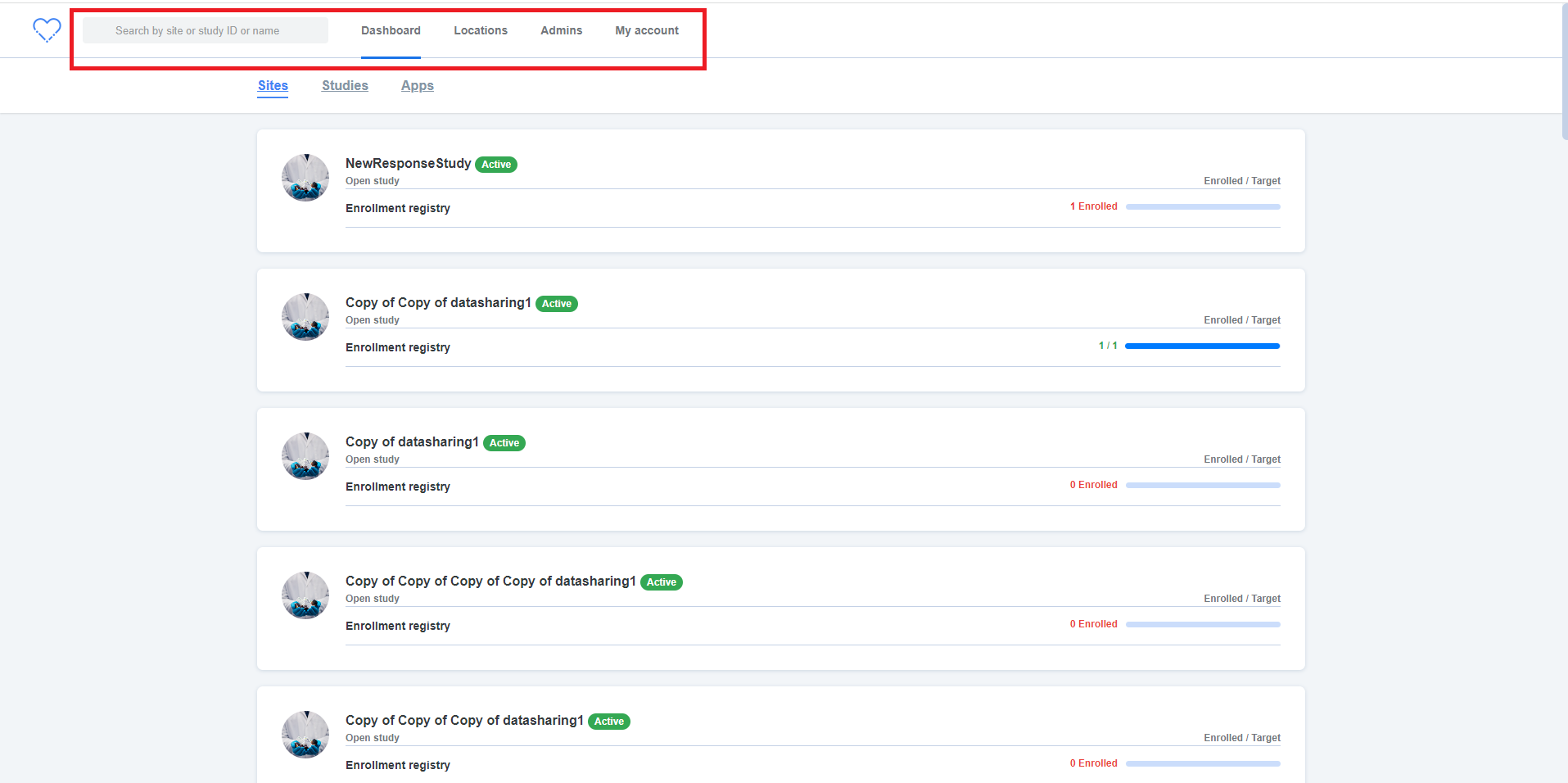

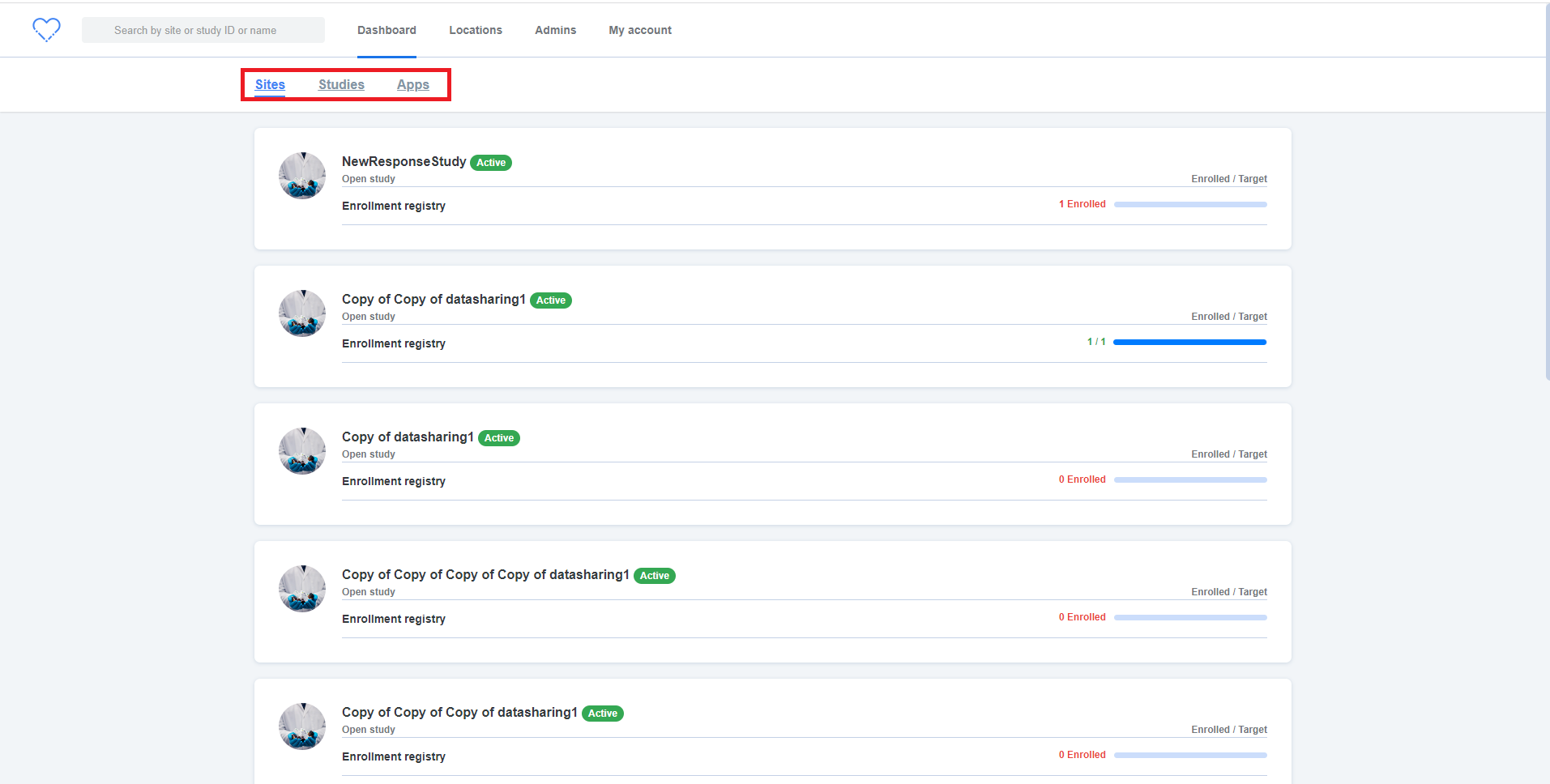

**Steps:**

1. Login to PM

2. Click on 'Admins' tab

3. Edit admin in the list

4. Enter the phone number (Eg: +919999999999999)

5. Click on 'Save' button and Verify

**AR:** UI issue is observed as attached in the below screenshot

**ER:** Status of the admin should get displayed

|

3.0

|

[IDP] [PM] Admin > Edit admin > Edit admin details screen > UI issue - **Steps:**

1. Login to PM

2. Click on 'Admins' tab

3. Edit admin in the list

4. Enter the phone number (Eg: +919999999999999)

5. Click on 'Save' button and Verify

**AR:** UI issue is observed as attached in the below screenshot

**ER:** Status of the admin should get displayed

|

process

|

admin edit admin edit admin details screen ui issue steps login to pm click on admins tab edit admin in the list enter the phone number eg click on save button and verify ar ui issue is observed as attached in the below screenshot er status of the admin should get displayed

| 1

|

281,004

| 8,689,384,720

|

IssuesEvent

|

2018-12-03 18:33:30

|

googleapis/google-api-python-client

|

https://api.github.com/repos/googleapis/google-api-python-client

|

closed

|

Unable to grant owner access to User members

|

priority: p2 status: blocked type: question

|

I wasn't able to add a new member in GCP (IAM) with the owner role using the gcloud command. The command below fails:

`gcloud projects add-iam-policy-binding linuxacademy-3 --member user:rohithmn3@gmail.com --role roles/owner`

With the following error:

```

ERROR: (gcloud.projects.add-iam-policy-binding) INVALID_ARGUMENT: Request contains an invalid argument.

- '@type': type.googleapis.com/google.cloudresourcemanager.v1.ProjectIamPolicyError

member: user:rohithmn3@gmail.com

role: roles/owner

type: SOLO_MUST_INVITE_OWNERS

```

However, the same command works for other roles such as viewer and browser; it only fails for "owner". Is there an alternative for this? If so, how can I do it from my Python code? Please help.

Thank you!

Regards,

Rohith
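
For the Python side of the question, the usual read-modify-write pattern on an IAM policy can be sketched as below. This is a minimal sketch under stated assumptions: it only edits a policy dictionary with the `bindings` / `role` / `members` shape, the helper name `add_member_to_policy` is hypothetical, and fetching and writing the policy through the Resource Manager API is left out. The `SOLO_MUST_INVITE_OWNERS` error type suggests that granting `roles/owner` may need an invitation flow rather than a direct binding, so writing this binding for the owner role would probably fail the same way.

```python
def add_member_to_policy(policy, role, member):
    """Add member to the binding for role in an IAM policy dict.

    Creates the binding if the role is not present yet, and avoids adding
    the same member twice. The policy dict is modified in place and returned.
    """
    bindings = policy.setdefault("bindings", [])
    for binding in bindings:
        if binding.get("role") == role:
            members = binding.setdefault("members", [])
            if member not in members:
                members.append(member)
            return policy
    # No binding for this role yet: create one.
    bindings.append({"role": role, "members": [member]})
    return policy
```

For example, `add_member_to_policy(policy, "roles/viewer", "user:someone@example.com")` would update a policy fetched earlier, which would then be written back in a separate call.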

|

1.0

|

Unable to grant owner access to User members - I wasn't able to add a new member in GCP (IAM) with the owner role using the gcloud command. The command below fails:

`gcloud projects add-iam-policy-binding linuxacademy-3 --member user:rohithmn3@gmail.com --role roles/owner`

With the following error:

```

ERROR: (gcloud.projects.add-iam-policy-binding) INVALID_ARGUMENT: Request contains an invalid argument.

- '@type': type.googleapis.com/google.cloudresourcemanager.v1.ProjectIamPolicyError

member: user:rohithmn3@gmail.com

role: roles/owner

type: SOLO_MUST_INVITE_OWNERS

```

However, the same command works for other roles such as viewer and browser; it only fails for "owner". Is there an alternative for this? If so, how can I do it from my Python code? Please help.

Thank you!

Regards,

Rohith

|

non_process

|

unable to grant owner access to user members i wasn t able to add a new member in gcp iam with the role owner using the gcloud command the below command fails gcloud projects add iam policy binding linuxacademy member user gmail com role roles owner with the below error error gcloud projects add iam policy binding invalid argument request contains an invalid argument type type googleapis com google cloudresourcemanager projectiampolicyerror member user gmail com role roles owner type solo must invite owners but the same command works well for other roles like viewer browser it just doesn t work for owner is there any alternative for this if yes how to add this in my python code please help me here thank you regards rohith

| 0

|

3,710

| 6,732,524,795

|

IssuesEvent

|

2017-10-18 11:52:05

|

lockedata/rcms

|

https://api.github.com/repos/lockedata/rcms

|

opened

|

Manage speaker submission

|

conference team osem processes

|

## Detailed task

- Review submissions

- Accept/reject sessions

- Email speakers

## Assessing the task

Try to perform the task. Use Google and the system documentation to help - part of what we're trying to assess is how easy it is for people to work out how to do tasks.

Use a 👍 (`:+1:`) reaction to this task if you were able to perform the task. Use a 👎 (`:-1:`) reaction to the task if you could not complete it. Add a reply with any comments or feedback.

## Extra Info

- Site: [osem](https://intense-shore-93790.herokuapp.com/)

- System documentation: [osem docs](http://osem.io/)

- Role: Conference team

- Area: Processes

|

1.0

|

Manage speaker submission - ## Detailed task

- Review submissions

- Accept/reject sessions

- Email speakers

## Assessing the task

Try to perform the task. Use Google and the system documentation to help - part of what we're trying to assess is how easy it is for people to work out how to do tasks.

Use a 👍 (`:+1:`) reaction to this task if you were able to perform the task. Use a 👎 (`:-1:`) reaction to the task if you could not complete it. Add a reply with any comments or feedback.

## Extra Info

- Site: [osem](https://intense-shore-93790.herokuapp.com/)

- System documentation: [osem docs](http://osem.io/)

- Role: Conference team

- Area: Processes

|

process

|

manage speaker submission detailed task review submissions accept reject sessions email speakers assessing the task try to perform the task use google and the system documentation to help part of what we re trying to assess is how easy it is for people to work out how to do tasks use a 👍 reaction to this task if you were able to perform the task use a 👎 reaction to the task if you could not complete it add a reply with any comments or feedback extra info site system documentation role conference team area processes

| 1

|

274,377

| 30,015,706,850

|

IssuesEvent

|

2023-06-26 18:32:15

|

DevSecOpsTrainingAz/epshoppublic

|

https://api.github.com/repos/DevSecOpsTrainingAz/epshoppublic

|

opened

|

UnitTests-1.0.0: 5 vulnerabilities (highest severity is: 7.8)

|

Mend: dependency security vulnerability

|

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>UnitTests-1.0.0</b></p></summary>

<p></p>

<p>Path to vulnerable library: /home/wss-scanner/.nuget/packages/nuget.common/6.2.1/nuget.common.6.2.1.nupkg</p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/DevSecOpsTrainingAz/epshoppublic/commit/87e4ac53883973c1d1a552462c45e3a8f0a183a2">87e4ac53883973c1d1a552462c45e3a8f0a183a2</a></p></details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in (UnitTests version) | Remediation Available |

| ------------- | ------------- | ----- | ----- | ----- | ------------- | --- |

| [CVE-2022-41032](https://www.mend.io/vulnerability-database/CVE-2022-41032) | <img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> High | 7.8 | nuget.protocol.5.11.0.nupkg | Transitive | N/A* | ❌ |

| [CVE-2018-8292](https://www.mend.io/vulnerability-database/CVE-2018-8292) | <img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> High | 7.5 | system.net.http.4.3.0.nupkg | Transitive | N/A* | ❌ |

| [CVE-2019-0820](https://www.mend.io/vulnerability-database/CVE-2019-0820) | <img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> High | 7.5 | system.text.regularexpressions.4.3.0.nupkg | Transitive | N/A* | ❌ |

| [CVE-2023-29337](https://www.mend.io/vulnerability-database/CVE-2023-29337) | <img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> High | 7.1 | detected in multiple dependencies | Transitive | N/A* | ❌ |

| [CVE-2022-34716](https://www.mend.io/vulnerability-database/CVE-2022-34716) | <img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Medium | 5.9 | system.security.cryptography.xml.4.5.0.nupkg | Transitive | N/A* | ❌ |

<p>*For some transitive vulnerabilities, there is no version of direct dependency with a fix. Check the "Details" section below to see if there is a version of transitive dependency where vulnerability is fixed.</p>

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> CVE-2022-41032</summary>

### Vulnerable Library - <b>nuget.protocol.5.11.0.nupkg</b></p>

<p>NuGet's implementation for interacting with feeds. Contains functionality for all feed types.</p>

<p>Library home page: <a href="https://api.nuget.org/packages/nuget.protocol.5.11.0.nupkg">https://api.nuget.org/packages/nuget.protocol.5.11.0.nupkg</a></p>

<p>Path to dependency file: /src/PublicApi/PublicApi.csproj</p>

<p>Path to vulnerable library: /home/wss-scanner/.nuget/packages/nuget.protocol/5.11.0/nuget.protocol.5.11.0.nupkg</p>

<p>

Dependency Hierarchy:

- UnitTests-1.0.0 (Root Library)

- Web-1.0.0

- microsoft.visualstudio.web.codegeneration.design.6.0.7.nupkg

- microsoft.visualstudio.web.codegenerators.mvc.6.0.7.nupkg

- microsoft.visualstudio.web.codegeneration.6.0.7.nupkg

- microsoft.visualstudio.web.codegeneration.entityframeworkcore.6.0.7.nupkg

- microsoft.visualstudio.web.codegeneration.core.6.0.7.nupkg

- microsoft.visualstudio.web.codegeneration.templating.6.0.7.nupkg

- microsoft.visualstudio.web.codegeneration.utils.6.0.7.nupkg

- microsoft.dotnet.scaffolding.shared.6.0.7.nupkg

- nuget.projectmodel.5.11.0.nupkg

- nuget.dependencyresolver.core.5.11.0.nupkg

- :x: **nuget.protocol.5.11.0.nupkg** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/DevSecOpsTrainingAz/epshoppublic/commit/87e4ac53883973c1d1a552462c45e3a8f0a183a2">87e4ac53883973c1d1a552462c45e3a8f0a183a2</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

NuGet Client Elevation of Privilege Vulnerability.

<p>Publish Date: 2022-10-11

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-41032>CVE-2022-41032</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>7.8</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2022-10-11</p>

<p>Fix Resolution: NuGet.CommandLine - 4.9.6,5.7.3,5.9.3,5.11.3,6.0.3,6.2.2,6.3.1;NuGet.Commands - 4.9.6,5.7.3,5.9.3,5.11.3,6.0.3,6.2.2,6.3.1;NuGet.Protocol - 4.9.6,5.7.3,5.9.3,5.11.3,6.0.3,6.2.2,6.3.1

</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details><details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> CVE-2018-8292</summary>

### Vulnerable Library - <b>system.net.http.4.3.0.nupkg</b></p>

<p>Provides a programming interface for modern HTTP applications, including HTTP client components that allow applications to consume web services over HTTP and HTTP components that can be used by both clients and servers for parsing HTTP headers.

</p>

<p>Library home page: <a href="https://api.nuget.org/packages/system.net.http.4.3.0.nupkg">https://api.nuget.org/packages/system.net.http.4.3.0.nupkg</a></p>

<p>Path to dependency file: /src/PublicApi/PublicApi.csproj</p>

<p>Path to vulnerable library: /home/wss-scanner/.nuget/packages/system.net.http/4.3.0/system.net.http.4.3.0.nupkg</p>

<p>

Dependency Hierarchy:

- UnitTests-1.0.0 (Root Library)

- microsoft.aspnetcore.mvc.2.2.0.nupkg

- microsoft.aspnetcore.mvc.taghelpers.2.2.0.nupkg

- microsoft.aspnetcore.mvc.razor.2.2.0.nupkg

- microsoft.aspnetcore.mvc.viewfeatures.2.2.0.nupkg

- newtonsoft.json.bson.1.0.1.nupkg

- netstandard.library.1.6.1.nupkg

- :x: **system.net.http.4.3.0.nupkg** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/DevSecOpsTrainingAz/epshoppublic/commit/87e4ac53883973c1d1a552462c45e3a8f0a183a2">87e4ac53883973c1d1a552462c45e3a8f0a183a2</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

An information disclosure vulnerability exists in .NET Core when authentication information is inadvertently exposed in a redirect, aka ".NET Core Information Disclosure Vulnerability." This affects .NET Core 2.1, .NET Core 1.0, .NET Core 1.1, PowerShell Core 6.0.

<p>Publish Date: 2018-10-10

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2018-8292>CVE-2018-8292</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>7.5</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2018-10-10</p>

<p>Fix Resolution: System.Net.Http - 4.3.4;Microsoft.PowerShell.Commands.Utility - 6.1.0-rc.1</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details><details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> CVE-2019-0820</summary>

### Vulnerable Library - <b>system.text.regularexpressions.4.3.0.nupkg</b></p>

<p>Provides the System.Text.RegularExpressions.Regex class, an implementation of a regular expression e...</p>

<p>Library home page: <a href="https://api.nuget.org/packages/system.text.regularexpressions.4.3.0.nupkg">https://api.nuget.org/packages/system.text.regularexpressions.4.3.0.nupkg</a></p>

<p>Path to dependency file: /src/PublicApi/PublicApi.csproj</p>

<p>Path to vulnerable library: /home/wss-scanner/.nuget/packages/system.text.regularexpressions/4.3.0/system.text.regularexpressions.4.3.0.nupkg</p>

<p>

Dependency Hierarchy:

- UnitTests-1.0.0 (Root Library)

- microsoft.aspnetcore.mvc.2.2.0.nupkg

- microsoft.aspnetcore.mvc.taghelpers.2.2.0.nupkg

- microsoft.aspnetcore.mvc.razor.2.2.0.nupkg

- microsoft.aspnetcore.mvc.viewfeatures.2.2.0.nupkg

- newtonsoft.json.bson.1.0.1.nupkg

- netstandard.library.1.6.1.nupkg

- system.xml.xdocument.4.3.0.nupkg

- system.xml.readerwriter.4.3.0.nupkg

- :x: **system.text.regularexpressions.4.3.0.nupkg** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/DevSecOpsTrainingAz/epshoppublic/commit/87e4ac53883973c1d1a552462c45e3a8f0a183a2">87e4ac53883973c1d1a552462c45e3a8f0a183a2</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

A denial of service vulnerability exists when .NET Framework and .NET Core improperly process RegEx strings, aka '.NET Framework and .NET Core Denial of Service Vulnerability'. This CVE ID is unique from CVE-2019-0980, CVE-2019-0981.

Mend Note: After conducting further research, Mend has determined that CVE-2019-0820 only affects environments with versions 4.3.0 and 4.3.1 only on netcore50 environment of system.text.regularexpressions.nupkg.

<p>Publish Date: 2019-05-16

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2019-0820>CVE-2019-0820</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>7.5</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-cmhx-cq75-c4mj">https://github.com/advisories/GHSA-cmhx-cq75-c4mj</a></p>

<p>Release Date: 2019-05-16</p>

<p>Fix Resolution: System.Text.RegularExpressions - 4.3.1</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details><details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> CVE-2023-29337</summary>

### Vulnerable Libraries - <b>nuget.protocol.5.11.0.nupkg</b>, <b>nuget.common.6.2.1.nupkg</b></p>

<p>

### <b>nuget.protocol.5.11.0.nupkg</b></p>

<p>NuGet's implementation for interacting with feeds. Contains functionality for all feed types.</p>

<p>Library home page: <a href="https://api.nuget.org/packages/nuget.protocol.5.11.0.nupkg">https://api.nuget.org/packages/nuget.protocol.5.11.0.nupkg</a></p>

<p>Path to dependency file: /src/PublicApi/PublicApi.csproj</p>

<p>Path to vulnerable library: /home/wss-scanner/.nuget/packages/nuget.protocol/5.11.0/nuget.protocol.5.11.0.nupkg</p>

<p>

Dependency Hierarchy:

- UnitTests-1.0.0 (Root Library)

- Web-1.0.0

- microsoft.visualstudio.web.codegeneration.design.6.0.7.nupkg

- microsoft.visualstudio.web.codegenerators.mvc.6.0.7.nupkg

- microsoft.visualstudio.web.codegeneration.6.0.7.nupkg

- microsoft.visualstudio.web.codegeneration.entityframeworkcore.6.0.7.nupkg

- microsoft.visualstudio.web.codegeneration.core.6.0.7.nupkg

- microsoft.visualstudio.web.codegeneration.templating.6.0.7.nupkg

- microsoft.visualstudio.web.codegeneration.utils.6.0.7.nupkg

- microsoft.dotnet.scaffolding.shared.6.0.7.nupkg

- nuget.projectmodel.5.11.0.nupkg

- nuget.dependencyresolver.core.5.11.0.nupkg

- :x: **nuget.protocol.5.11.0.nupkg** (Vulnerable Library)

### <b>nuget.common.6.2.1.nupkg</b></p>

<p>Common utilities and interfaces for all NuGet libraries.</p>

<p>Library home page: <a href="https://api.nuget.org/packages/nuget.common.6.2.1.nupkg">https://api.nuget.org/packages/nuget.common.6.2.1.nupkg</a></p>

<p>Path to dependency file: /src/PublicApi/PublicApi.csproj</p>

<p>Path to vulnerable library: /home/wss-scanner/.nuget/packages/nuget.common/6.2.1/nuget.common.6.2.1.nupkg</p>

<p>

Dependency Hierarchy:

- UnitTests-1.0.0 (Root Library)

- Web-1.0.0

- microsoft.visualstudio.web.codegeneration.design.6.0.7.nupkg

- microsoft.visualstudio.web.codegenerators.mvc.6.0.7.nupkg

- nuget.packaging.6.2.1.nupkg

- nuget.configuration.6.2.1.nupkg

- :x: **nuget.common.6.2.1.nupkg** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/DevSecOpsTrainingAz/epshoppublic/commit/87e4ac53883973c1d1a552462c45e3a8f0a183a2">87e4ac53883973c1d1a552462c45e3a8f0a183a2</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

NuGet Client Remote Code Execution Vulnerability

<p>Publish Date: 2023-06-14

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-29337>CVE-2023-29337</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>7.1</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: Low

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>
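
The 7.1 base score follows from the metrics listed above. As a rough check, here is a minimal sketch of the CVSS v3 base-score formula for the Scope: Unchanged case, using the metric weights published in the FIRST specification. The function and dictionary names are illustrative and are not part of any Mend or NuGet tooling; the rounding helper follows the integer-based `Roundup` described in CVSS v3.1, which is an assumption here because the report links to the v3.0 calculator.

```python
import math

# Metric weights from the CVSS v3 specification (Scope: Unchanged only).
ATTACK_VECTOR = {"Network": 0.85, "Adjacent": 0.62, "Local": 0.55, "Physical": 0.2}
ATTACK_COMPLEXITY = {"Low": 0.77, "High": 0.44}
PRIVILEGES_REQUIRED = {"None": 0.85, "Low": 0.62, "High": 0.27}
USER_INTERACTION = {"None": 0.85, "Required": 0.62}
IMPACT = {"High": 0.56, "Low": 0.22, "None": 0.0}


def round_up(value):
    """Round up to one decimal place, avoiding floating-point drift."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000
    return (math.floor(scaled / 10000) + 1) / 10


def base_score(av, ac, pr, ui, c, i, a):
    """Base score for a vector with Scope: Unchanged."""
    iss = 1 - (1 - IMPACT[c]) * (1 - IMPACT[i]) * (1 - IMPACT[a])
    impact = 6.42 * iss
    exploitability = (8.22 * ATTACK_VECTOR[av] * ATTACK_COMPLEXITY[ac]
                      * PRIVILEGES_REQUIRED[pr] * USER_INTERACTION[ui])
    if impact <= 0:
        return 0.0
    return round_up(min(impact + exploitability, 10))


# Metrics as listed for CVE-2023-29337 in this report.
print(base_score("Network", "High", "Low", "Required", "High", "High", "High"))
```

The same function applied to the CVE-2022-34716 metrics in this report (Network, High, None, None, High, None, None) gives 5.9.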

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-6qmf-mmc7-6c2p">https://github.com/advisories/GHSA-6qmf-mmc7-6c2p</a></p>

<p>Release Date: 2023-06-14</p>

<p>Fix Resolution: NuGet.CommandLine - 6.0.5,6.2.4,6.3.3,6.4.2,6.5.1,6.6.1, NuGet.Commands - 6.0.5,6.2.4,6.3.3,6.4.2,6.5.1,6.6.1, NuGet.Common - 6.0.5,6.2.4,6.3.3,6.4.2,6.5.1,6.6.1, NuGet.PackageManagement - 6.0.5,6.2.4,6.3.3,6.4.2,6.5.1,6.6.1, NuGet.Protocol - 6.0.5,6.2.4,6.3.3,6.4.2,6.5.1,6.6.1</p>

</p>

<p></p>
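
The fix resolution above lists one patched release per supported minor line. A minimal sketch of how an installed version could be mapped to a suggested upgrade follows. The helper names and the selection heuristic (prefer the fix in the same `major.minor` line, otherwise the lowest listed version above the installed one) are assumptions for illustration, not Mend's or NuGet's actual resolution logic.

```python
# Patched versions listed in the fix resolution for CVE-2023-29337.
FIXED_VERSIONS = ["6.0.5", "6.2.4", "6.3.3", "6.4.2", "6.5.1", "6.6.1"]


def parse(version):
    """Turn '6.2.1' into (6, 2, 1) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def suggest_upgrade(installed, fixed_versions=FIXED_VERSIONS):
    """Return a suggested patched version, or None if none is needed.

    Heuristic (an assumption): stay on the same major.minor line when a
    fix exists for it; otherwise take the lowest listed fix above the
    installed version.
    """
    current = parse(installed)
    same_line = [v for v in fixed_versions if parse(v)[:2] == current[:2]]
    if same_line:
        fix = same_line[0]
        return fix if current < parse(fix) else None
    newer = [v for v in fixed_versions if parse(v) > current]
    return min(newer, key=parse) if newer else None


# Versions flagged in this report.
print(suggest_upgrade("6.2.1"))   # nuget.common 6.2.1
print(suggest_upgrade("5.11.0"))  # nuget.protocol 5.11.0
```

Because both packages are transitive dependencies here, applying the suggested version would usually mean upgrading the direct dependency that pulls them in or referencing the patched package explicitly in the project file.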

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details><details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> CVE-2022-34716</summary>

### Vulnerable Library - <b>system.security.cryptography.xml.4.5.0.nupkg</b></p>

<p>Provides classes to support the creation and validation of XML digital signatures. The classes in th...</p>

<p>Library home page: <a href="https://api.nuget.org/packages/system.security.cryptography.xml.4.5.0.nupkg">https://api.nuget.org/packages/system.security.cryptography.xml.4.5.0.nupkg</a></p>

<p>Path to dependency file: /tests/UnitTests/UnitTests.csproj</p>

<p>Path to vulnerable library: /home/wss-scanner/.nuget/packages/system.security.cryptography.xml/4.5.0/system.security.cryptography.xml.4.5.0.nupkg</p>

<p>

Dependency Hierarchy:

- UnitTests-1.0.0 (Root Library)

- microsoft.aspnetcore.mvc.2.2.0.nupkg

- microsoft.aspnetcore.mvc.taghelpers.2.2.0.nupkg

- microsoft.aspnetcore.mvc.razor.2.2.0.nupkg

- microsoft.aspnetcore.mvc.viewfeatures.2.2.0.nupkg

- microsoft.aspnetcore.antiforgery.2.2.0.nupkg

- microsoft.aspnetcore.dataprotection.2.2.0.nupkg

- :x: **system.security.cryptography.xml.4.5.0.nupkg** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/DevSecOpsTrainingAz/epshoppublic/commit/87e4ac53883973c1d1a552462c45e3a8f0a183a2">87e4ac53883973c1d1a552462c45e3a8f0a183a2</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

Microsoft is releasing this security advisory to provide information about a vulnerability in .NET Core 3.1 and .NET 6.0. An information disclosure vulnerability exists in .NET Core 3.1 and .NET 6.0 that could lead to unauthorized access of privileged information.

## Affected software

* Any .NET 6.0 application running on .NET 6.0.7 or earlier.

* Any .NET Core 3.1 application running on .NET Core 3.1.27 or earlier.

## Patches

* If you're using .NET 6.0, you should download and install Runtime 6.0.8 or SDK 6.0.108 (for Visual Studio 2022 v17.1) from https://dotnet.microsoft.com/download/dotnet-core/6.0.

* If you're using .NET Core 3.1, you should download and install Runtime 3.1.28 (for Visual Studio 2019 v16.9) from https://dotnet.microsoft.com/download/dotnet-core/3.1.

<p>Publish Date: 2022-08-09</p>

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-34716>CVE-2022-34716</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>5.9</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-2m65-m22p-9wjw">https://github.com/advisories/GHSA-2m65-m22p-9wjw</a></p>

<p>Release Date: 2022-08-09</p>

<p>Fix Resolution: Microsoft.AspNetCore.App.Runtime.linux-arm - 3.1.28,6.0.8;Microsoft.AspNetCore.App.Runtime.linux-arm64 - 3.1.28,6.0.8;Microsoft.AspNetCore.App.Runtime.linux-musl-arm - 3.1.28,6.0.8;Microsoft.AspNetCore.App.Runtime.linux-musl-arm64 - 3.1.28,6.0.8;Microsoft.AspNetCore.App.Runtime.linux-musl-x64 - 3.1.28,6.0.8;Microsoft.AspNetCore.App.Runtime.linux-x64 - 3.1.28,6.0.8;Microsoft.AspNetCore.App.Runtime.osx-x64 - 3.1.28,6.0.8;Microsoft.AspNetCore.App.Runtime.win-arm - 3.1.28,6.0.8;Microsoft.AspNetCore.App.Runtime.win-arm64 - 3.1.28,6.0.8;Microsoft.AspNetCore.App.Runtime.win-x64 - 3.1.28,6.0.8;Microsoft.AspNetCore.App.Runtime.win-x86 - 3.1.28,6.0.8;System.Security.Cryptography.Xml - 4.7.1,6.0.1</p>

</p>

<p></p>


</details>

|

True

|

UnitTests-1.0.0: 5 vulnerabilities (highest severity is: 7.8) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>UnitTests-1.0.0</b></p></summary>

<p></p>

<p>Path to vulnerable library: /home/wss-scanner/.nuget/packages/nuget.common/6.2.1/nuget.common.6.2.1.nupkg</p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/DevSecOpsTrainingAz/epshoppublic/commit/87e4ac53883973c1d1a552462c45e3a8f0a183a2">87e4ac53883973c1d1a552462c45e3a8f0a183a2</a></p></details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in (UnitTests version) | Remediation Available |

| ------------- | ------------- | ----- | ----- | ----- | ------------- | --- |

| [CVE-2022-41032](https://www.mend.io/vulnerability-database/CVE-2022-41032) | <img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> High | 7.8 | nuget.protocol.5.11.0.nupkg | Transitive | N/A* | ❌ |

| [CVE-2018-8292](https://www.mend.io/vulnerability-database/CVE-2018-8292) | <img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> High | 7.5 | system.net.http.4.3.0.nupkg | Transitive | N/A* | ❌ |

| [CVE-2019-0820](https://www.mend.io/vulnerability-database/CVE-2019-0820) | <img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> High | 7.5 | system.text.regularexpressions.4.3.0.nupkg | Transitive | N/A* | ❌ |

| [CVE-2023-29337](https://www.mend.io/vulnerability-database/CVE-2023-29337) | <img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> High | 7.1 | detected in multiple dependencies | Transitive | N/A* | ❌ |

| [CVE-2022-34716](https://www.mend.io/vulnerability-database/CVE-2022-34716) | <img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Medium | 5.9 | system.security.cryptography.xml.4.5.0.nupkg | Transitive | N/A* | ❌ |

<p>*For some transitive vulnerabilities, no version of the direct dependency includes a fix. Check the "Details" section below to see whether a version of the transitive dependency fixes the vulnerability.</p>

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> CVE-2022-41032</summary>

### Vulnerable Library - <b>nuget.protocol.5.11.0.nupkg</b></p>

<p>NuGet's implementation for interacting with feeds. Contains functionality for all feed types.</p>

<p>Library home page: <a href="https://api.nuget.org/packages/nuget.protocol.5.11.0.nupkg">https://api.nuget.org/packages/nuget.protocol.5.11.0.nupkg</a></p>

<p>Path to dependency file: /src/PublicApi/PublicApi.csproj</p>