| Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2,691

| 2,702,777,021

|

IssuesEvent

|

2015-04-06 12:23:02

|

twosigma/beaker-notebook

|

https://api.github.com/repos/twosigma/beaker-notebook

|

opened

|

Note tutorial - there's no way to interrupt the execution

|

bug Documentation

|

and when you change page you get an exception in the JS console

|

1.0

|

Note tutorial - there's no way to interrupt the execution - and when you change page you get an exception in the JS console

|

non_process

|

note tutorial there s no way to interrupt the execution and when you change page you get an exception in the js console

| 0

|

6,968

| 10,119,727,586

|

IssuesEvent

|

2019-07-31 12:13:32

|

linnovate/root

|

https://api.github.com/repos/linnovate/root

|

opened

|

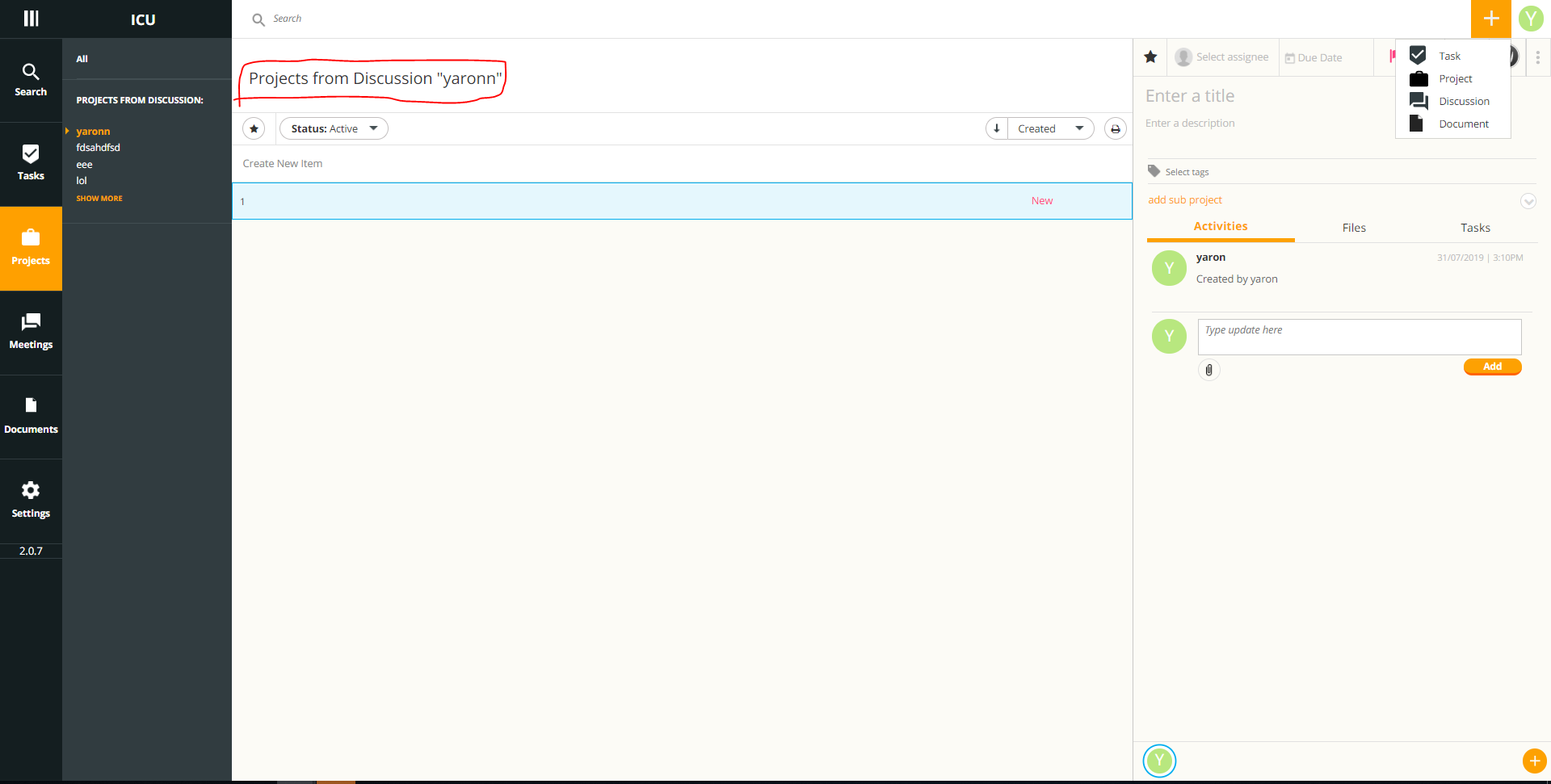

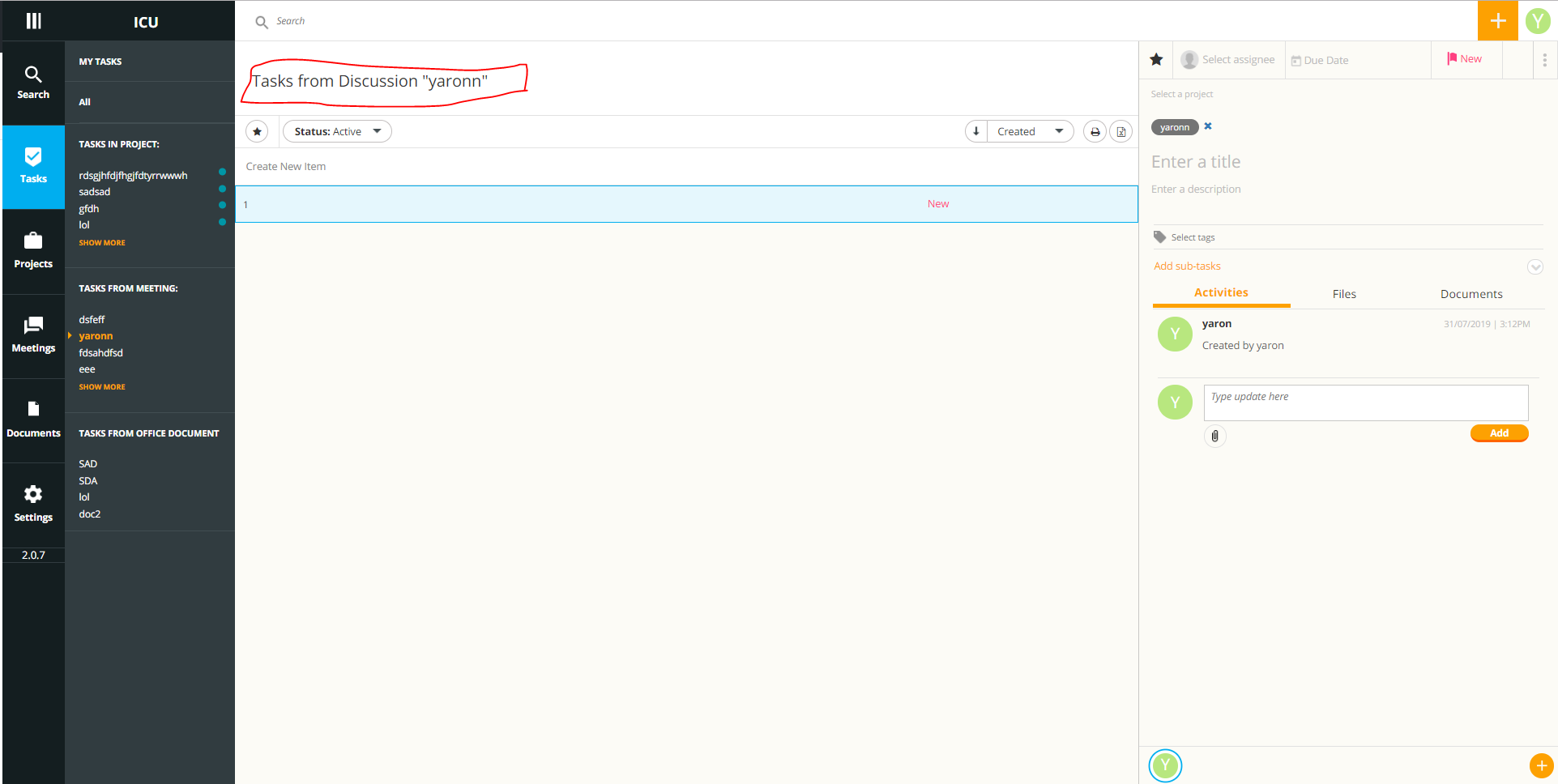

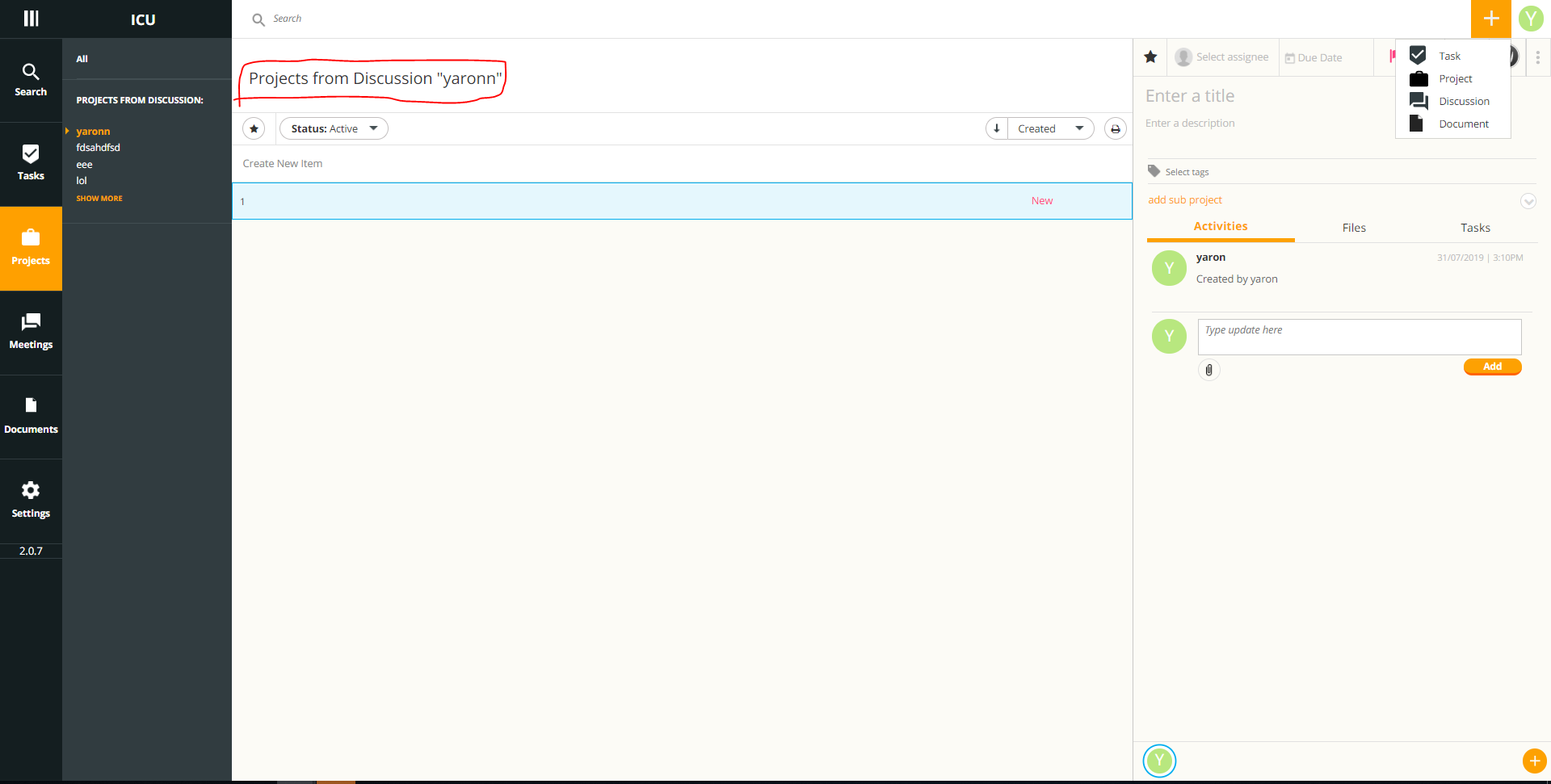

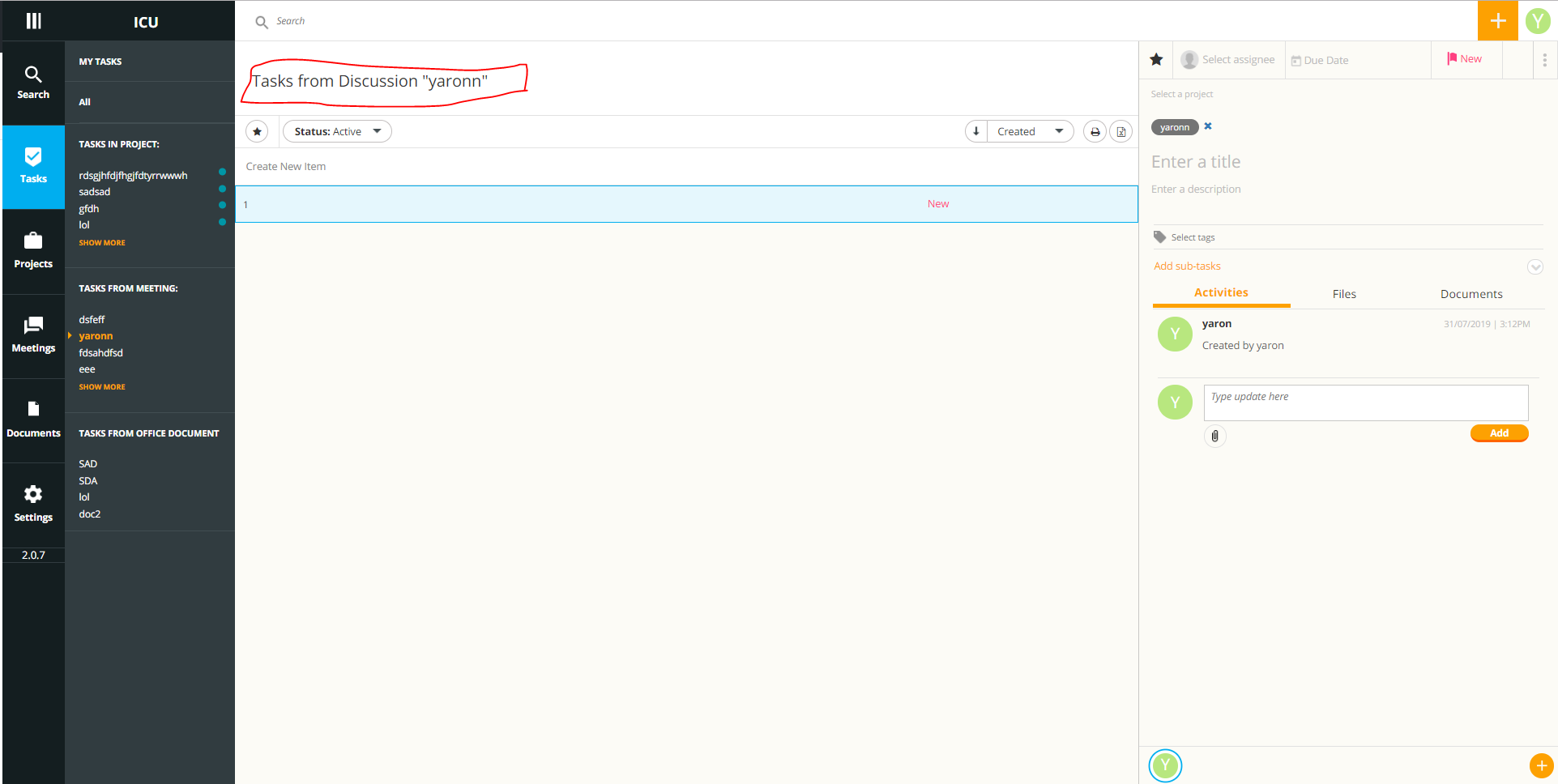

plus button task from project (from discussion) opens task from discussion instead

|

Process bug Projects

|

go to meetings

create a new meeting and name it

go to projects

click on the fitting discussion from the projects from discussion tab

create a project from the discussion

from the plus button create a new task

instead of a task from project being opened, a task from discussion is created

|

1.0

|

plus button task from project (from discussion) opens task from discussion instead - go to meetings

create a new meeting and name it

go to projects

click on the fitting discussion from the projects from discussion tab

create a project from the discussion

from the plus button create a new task

instead of a task from project being opened, a task from discussion is created

|

process

|

plus button task from project from discussion opens task from discussion instead go to meetings create a new meeting and name it go to projects click on the fitting discussion from the projects from discussion tab create a project from the discussion from the plus button create a new task instead of a task from project being opened a task from discussion is created

| 1

|

67,367

| 14,862,163,875

|

IssuesEvent

|

2021-01-19 01:05:23

|

fufunoyu/example-maven-travis

|

https://api.github.com/repos/fufunoyu/example-maven-travis

|

opened

|

CVE-2020-36189 (High) detected in jackson-databind-2.9.10.7.jar

|

security vulnerability

|

## CVE-2020-36189 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.10.7.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: example-maven-travis/pom.xml</p>

<p>Path to vulnerable library: canner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.9.10.7/jackson-databind-2.9.10.7.jar</p>

<p>

Dependency Hierarchy:

- :x: **jackson-databind-2.9.10.7.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/fufunoyu/example-maven-travis/commit/de1c84bba50d30975d47b84e2be0ec5feb00419d">de1c84bba50d30975d47b84e2be0ec5feb00419d</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.8 mishandles the interaction between serialization gadgets and typing, related to com.newrelic.agent.deps.ch.qos.logback.core.db.DriverManagerConnectionSource.

<p>Publish Date: 2021-01-06

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-36189>CVE-2020-36189</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/FasterXML/jackson-databind/issues/2996">https://github.com/FasterXML/jackson-databind/issues/2996</a></p>

<p>Release Date: 2021-01-06</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.9.10.8</p>

</p>

</details>

<p></p>

|

True

|

CVE-2020-36189 (High) detected in jackson-databind-2.9.10.7.jar - ## CVE-2020-36189 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.10.7.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: example-maven-travis/pom.xml</p>

<p>Path to vulnerable library: canner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.9.10.7/jackson-databind-2.9.10.7.jar</p>

<p>

Dependency Hierarchy:

- :x: **jackson-databind-2.9.10.7.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/fufunoyu/example-maven-travis/commit/de1c84bba50d30975d47b84e2be0ec5feb00419d">de1c84bba50d30975d47b84e2be0ec5feb00419d</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.8 mishandles the interaction between serialization gadgets and typing, related to com.newrelic.agent.deps.ch.qos.logback.core.db.DriverManagerConnectionSource.

<p>Publish Date: 2021-01-06

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-36189>CVE-2020-36189</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/FasterXML/jackson-databind/issues/2996">https://github.com/FasterXML/jackson-databind/issues/2996</a></p>

<p>Release Date: 2021-01-06</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.9.10.8</p>

</p>

</details>

<p></p>

|

non_process

|

cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file example maven travis pom xml path to vulnerable library canner repository com fasterxml jackson core jackson databind jackson databind jar dependency hierarchy x jackson databind jar vulnerable library found in head commit a href vulnerability details fasterxml jackson databind x before mishandles the interaction between serialization gadgets and typing related to com newrelic agent deps ch qos logback core db drivermanagerconnectionsource publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution com fasterxml jackson core jackson databind

| 0

|

22,333

| 30,921,890,134

|

IssuesEvent

|

2023-08-06 02:00:09

|

lizhihao6/get-daily-arxiv-noti

|

https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti

|

opened

|

New submissions for Fri, 4 Aug 23

|

event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB

|

## Keyword: events

### Reconstructing Three-Dimensional Models of Interacting Humans

- **Authors:** Mihai Fieraru, Mihai Zanfir, Elisabeta Oneata, Alin-Ionut Popa, Vlad Olaru, Cristian Sminchisescu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01854

- **Pdf link:** https://arxiv.org/pdf/2308.01854

- **Abstract**

Understanding 3d human interactions is fundamental for fine-grained scene analysis and behavioural modeling. However, most of the existing models predict incorrect, lifeless 3d estimates, that miss the subtle human contact aspects--the essence of the event--and are of little use for detailed behavioral understanding. This paper addresses such issues with several contributions: (1) we introduce models for interaction signature estimation (ISP) encompassing contact detection, segmentation, and 3d contact signature prediction; (2) we show how such components can be leveraged to ensure contact consistency during 3d reconstruction; (3) we construct several large datasets for learning and evaluating 3d contact prediction and reconstruction methods; specifically, we introduce CHI3D, a lab-based accurate 3d motion capture dataset with 631 sequences containing $2,525$ contact events, $728,664$ ground truth 3d poses, as well as FlickrCI3D, a dataset of $11,216$ images, with $14,081$ processed pairs of people, and $81,233$ facet-level surface correspondences. Finally, (4) we propose methodology for recovering the ground-truth pose and shape of interacting people in a controlled setup and (5) annotate all 3d interaction motions in CHI3D with textual descriptions. Motion data in multiple formats (GHUM and SMPLX parameters, Human3.6m 3d joints) is made available for research purposes at \url{https://ci3d.imar.ro}, together with an evaluation server and a public benchmark.

## Keyword: event camera

There is no result

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

### Multimodal Adaptation of CLIP for Few-Shot Action Recognition

- **Authors:** Jiazheng Xing, Mengmeng Wang, Xiaojun Hou, Guang Dai, Jingdong Wang, Yong Liu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01532

- **Pdf link:** https://arxiv.org/pdf/2308.01532

- **Abstract**

Applying large-scale pre-trained visual models like CLIP to few-shot action recognition tasks can benefit performance and efficiency. Utilizing the "pre-training, fine-tuning" paradigm makes it possible to avoid training a network from scratch, which can be time-consuming and resource-intensive. However, this method has two drawbacks. First, limited labeled samples for few-shot action recognition necessitate minimizing the number of tunable parameters to mitigate over-fitting, also leading to inadequate fine-tuning that increases resource consumption and may disrupt the generalized representation of models. Second, the video's extra-temporal dimension challenges few-shot recognition's effective temporal modeling, while pre-trained visual models are usually image models. This paper proposes a novel method called Multimodal Adaptation of CLIP (MA-CLIP) to address these issues. It adapts CLIP for few-shot action recognition by adding lightweight adapters, which can minimize the number of learnable parameters and enable the model to transfer across different tasks quickly. The adapters we design can combine information from video-text multimodal sources for task-oriented spatiotemporal modeling, which is fast, efficient, and has low training costs. Additionally, based on the attention mechanism, we design a text-guided prototype construction module that can fully utilize video-text information to enhance the representation of video prototypes. Our MA-CLIP is plug-and-play, which can be used in any different few-shot action recognition temporal alignment metric.

## Keyword: ISP

### Reconstructing Three-Dimensional Models of Interacting Humans

- **Authors:** Mihai Fieraru, Mihai Zanfir, Elisabeta Oneata, Alin-Ionut Popa, Vlad Olaru, Cristian Sminchisescu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01854

- **Pdf link:** https://arxiv.org/pdf/2308.01854

- **Abstract**

Understanding 3d human interactions is fundamental for fine-grained scene analysis and behavioural modeling. However, most of the existing models predict incorrect, lifeless 3d estimates, that miss the subtle human contact aspects--the essence of the event--and are of little use for detailed behavioral understanding. This paper addresses such issues with several contributions: (1) we introduce models for interaction signature estimation (ISP) encompassing contact detection, segmentation, and 3d contact signature prediction; (2) we show how such components can be leveraged to ensure contact consistency during 3d reconstruction; (3) we construct several large datasets for learning and evaluating 3d contact prediction and reconstruction methods; specifically, we introduce CHI3D, a lab-based accurate 3d motion capture dataset with 631 sequences containing $2,525$ contact events, $728,664$ ground truth 3d poses, as well as FlickrCI3D, a dataset of $11,216$ images, with $14,081$ processed pairs of people, and $81,233$ facet-level surface correspondences. Finally, (4) we propose methodology for recovering the ground-truth pose and shape of interacting people in a controlled setup and (5) annotate all 3d interaction motions in CHI3D with textual descriptions. Motion data in multiple formats (GHUM and SMPLX parameters, Human3.6m 3d joints) is made available for research purposes at \url{https://ci3d.imar.ro}, together with an evaluation server and a public benchmark.

## Keyword: image signal processing

There is no result

## Keyword: image signal process

There is no result

## Keyword: compression

### TDMD: A Database for Dynamic Color Mesh Subjective and Objective Quality Explorations

- **Authors:** Qi Yang, Joel Jung, Timon Deschamps, Xiaozhong Xu, Shan Liu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Image and Video Processing (eess.IV)

- **Arxiv link:** https://arxiv.org/abs/2308.01499

- **Pdf link:** https://arxiv.org/pdf/2308.01499

- **Abstract**

Dynamic colored meshes (DCM) are widely used in various applications; however, these meshes may undergo different processes, such as compression or transmission, which can distort them and degrade their quality. To facilitate the development of objective metrics for DCMs and study the influence of typical distortions on their perception, we create the Tencent - dynamic colored mesh database (TDMD) containing eight reference DCM objects with six typical distortions. Using processed video sequences (PVS) derived from the DCM, we have conducted a large-scale subjective experiment that resulted in 303 distorted DCM samples with mean opinion scores, making the TDMD the largest available DCM database to our knowledge. This database enabled us to study the impact of different types of distortion on human perception and offer recommendations for DCM compression and related tasks. Additionally, we have evaluated three types of state-of-the-art objective metrics on the TDMD, including image-based, point-based, and video-based metrics, on the TDMD. Our experimental results highlight the strengths and weaknesses of each metric, and we provide suggestions about the selection of metrics in practical DCM applications. The TDMD will be made publicly available at the following location: https://multimedia.tencent.com/resources/tdmd.

### MVFlow: Deep Optical Flow Estimation of Compressed Videos with Motion Vector Prior

- **Authors:** Shili Zhou, Xuhao Jiang, Weimin Tan, Ruian He, Bo Yan

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01568

- **Pdf link:** https://arxiv.org/pdf/2308.01568

- **Abstract**

In recent years, many deep learning-based methods have been proposed to tackle the problem of optical flow estimation and achieved promising results. However, they hardly consider that most videos are compressed and thus ignore the pre-computed information in compressed video streams. Motion vectors, one of the compression information, record the motion of the video frames. They can be directly extracted from the compression code stream without computational cost and serve as a solid prior for optical flow estimation. Therefore, we propose an optical flow model, MVFlow, which uses motion vectors to improve the speed and accuracy of optical flow estimation for compressed videos. In detail, MVFlow includes a key Motion-Vector Converting Module, which ensures that the motion vectors can be transformed into the same domain of optical flow and then be utilized fully by the flow estimation module. Meanwhile, we construct four optical flow datasets for compressed videos containing frames and motion vectors in pairs. The experimental results demonstrate the superiority of our proposed MVFlow, which can reduce the AEPE by 1.09 compared to existing models or save 52% time to achieve similar accuracy to existing models.

### A Novel Tensor Decomposition of arbitrary order based on Block Convolution with Reflective Boundary Conditions for Multi-Dimensional Data Analysis

- **Authors:** Mahdi Molavi, Mansoor Rezghi, Tayyebeh Saeedi

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01768

- **Pdf link:** https://arxiv.org/pdf/2308.01768

- **Abstract**

Tensor decompositions are powerful tools for analyzing multi-dimensional data in their original format. Besides tensor decompositions like Tucker and CP, Tensor SVD (t-SVD) which is based on the t-product of tensors is another extension of SVD to tensors that recently developed and has found numerous applications in analyzing high dimensional data. This paper offers a new insight into the t-Product and shows that this product is a block convolution of two tensors with periodic boundary conditions. Based on this viewpoint, we propose a new tensor-tensor product called the $\star_c{}\text{-Product}$ based on Block convolution with reflective boundary conditions. Using a tensor framework, this product can be easily extended to tensors of arbitrary order. Additionally, we introduce a tensor decomposition based on our $\star_c{}\text{-Product}$ for arbitrary order tensors. Compared to t-SVD, our new decomposition has lower complexity, and experiments show that it yields higher-quality results in applications such as classification and compression.

## Keyword: RAW

### PPI-NET: End-to-End Parametric Primitive Inference

- **Authors:** Liang Wang, Xiaogang Wang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01521

- **Pdf link:** https://arxiv.org/pdf/2308.01521

- **Abstract**

In engineering applications, line, circle, arc, and point are collectively referred to as primitives, and they play a crucial role in path planning, simulation analysis, and manufacturing. When designing CAD models, engineers typically start by sketching the model's orthographic view on paper or a whiteboard and then translate the design intent into a CAD program. Although this design method is powerful, it often involves challenging and repetitive tasks, requiring engineers to perform numerous similar operations in each design. To address this conversion process, we propose an efficient and accurate end-to-end method that avoids the inefficiency and error accumulation issues associated with using auto-regressive models to infer parametric primitives from hand-drawn sketch images. Since our model samples match the representation format of standard CAD software, they can be imported into CAD software for solving, editing, and applied to downstream design tasks.

### Data Augmentation for Human Behavior Analysis in Multi-Person Conversations

- **Authors:** Kun Li, Dan Guo, Guoliang Chen, Feiyang Liu, Meng Wang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01526

- **Pdf link:** https://arxiv.org/pdf/2308.01526

- **Abstract**

In this paper, we present the solution of our team HFUT-VUT for the MultiMediate Grand Challenge 2023 at ACM Multimedia 2023. The solution covers three sub-challenges: bodily behavior recognition, eye contact detection, and next speaker prediction. We select Swin Transformer as the baseline and exploit data augmentation strategies to address the above three tasks. Specifically, we crop the raw video to remove the noise from other parts. At the same time, we utilize data augmentation to improve the generalization of the model. As a result, our solution achieves the best results of 0.6262 for bodily behavior recognition in terms of mean average precision and the accuracy of 0.7771 for eye contact detection on the corresponding test set. In addition, our approach also achieves comparable results of 0.5281 for the next speaker prediction in terms of unweighted average recall.

### Multimodal Adaptation of CLIP for Few-Shot Action Recognition

- **Authors:** Jiazheng Xing, Mengmeng Wang, Xiaojun Hou, Guang Dai, Jingdong Wang, Yong Liu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01532

- **Pdf link:** https://arxiv.org/pdf/2308.01532

- **Abstract**

Applying large-scale pre-trained visual models like CLIP to few-shot action recognition tasks can benefit performance and efficiency. Utilizing the "pre-training, fine-tuning" paradigm makes it possible to avoid training a network from scratch, which can be time-consuming and resource-intensive. However, this method has two drawbacks. First, limited labeled samples for few-shot action recognition necessitate minimizing the number of tunable parameters to mitigate over-fitting, also leading to inadequate fine-tuning that increases resource consumption and may disrupt the generalized representation of models. Second, the video's extra-temporal dimension challenges few-shot recognition's effective temporal modeling, while pre-trained visual models are usually image models. This paper proposes a novel method called Multimodal Adaptation of CLIP (MA-CLIP) to address these issues. It adapts CLIP for few-shot action recognition by adding lightweight adapters, which can minimize the number of learnable parameters and enable the model to transfer across different tasks quickly. The adapters we design can combine information from video-text multimodal sources for task-oriented spatiotemporal modeling, which is fast, efficient, and has low training costs. Additionally, based on the attention mechanism, we design a text-guided prototype construction module that can fully utilize video-text information to enhance the representation of video prototypes. Our MA-CLIP is plug-and-play, which can be used in any different few-shot action recognition temporal alignment metric.

### A Novel Convolutional Neural Network Architecture with a Continuous Symmetry

- **Authors:** Yao Liu, Hang Shao, Bing Bai

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Machine Learning (cs.LG); Neural and Evolutionary Computing (cs.NE)

- **Arxiv link:** https://arxiv.org/abs/2308.01621

- **Pdf link:** https://arxiv.org/pdf/2308.01621

- **Abstract**

This paper introduces a new Convolutional Neural Network (ConvNet) architecture inspired by a class of partial differential equations (PDEs) called quasi-linear hyperbolic systems. With comparable performance on image classification task, it allows for the modification of the weights via a continuous group of symmetry. This is a significant shift from traditional models where the architecture and weights are essentially fixed. We wish to promote the (internal) symmetry as a new desirable property for a neural network, and to draw attention to the PDE perspective in analyzing and interpreting ConvNets in the broader Deep Learning community.

### Weakly Supervised 3D Instance Segmentation without Instance-level Annotations

- **Authors:** Shichao Dong, Guosheng Lin

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01721

- **Pdf link:** https://arxiv.org/pdf/2308.01721

- **Abstract**

3D semantic scene understanding tasks have achieved great success with the emergence of deep learning, but often require a huge amount of manually annotated training data. To alleviate the annotation cost, we propose the first weakly-supervised 3D instance segmentation method that only requires categorical semantic labels as supervision, and we do not need instance-level labels. The required semantic annotations can be either dense or extreme sparse (e.g. 0.02% of total points). Even without having any instance-related ground-truth, we design an approach to break point clouds into raw fragments and find the most confident samples for learning instance centroids. Furthermore, we construct a recomposed dataset using pseudo instances, which is used to learn our defined multilevel shape-aware objectness signal. An asymmetrical object inference algorithm is followed to process core points and boundary points with different strategies, and generate high-quality pseudo instance labels to guide iterative training. Experiments demonstrate that our method can achieve comparable results with recent fully supervised methods. By generating pseudo instance labels from categorical semantic labels, our designed approach can also assist existing methods for learning 3D instance segmentation at reduced annotation cost.

## Keyword: raw image

There is no result

|

2.0

|

New submissions for Fri, 4 Aug 23 - ## Keyword: events

### Reconstructing Three-Dimensional Models of Interacting Humans

- **Authors:** Mihai Fieraru, Mihai Zanfir, Elisabeta Oneata, Alin-Ionut Popa, Vlad Olaru, Cristian Sminchisescu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01854

- **Pdf link:** https://arxiv.org/pdf/2308.01854

- **Abstract**

Understanding 3d human interactions is fundamental for fine-grained scene analysis and behavioural modeling. However, most of the existing models predict incorrect, lifeless 3d estimates, that miss the subtle human contact aspects--the essence of the event--and are of little use for detailed behavioral understanding. This paper addresses such issues with several contributions: (1) we introduce models for interaction signature estimation (ISP) encompassing contact detection, segmentation, and 3d contact signature prediction; (2) we show how such components can be leveraged to ensure contact consistency during 3d reconstruction; (3) we construct several large datasets for learning and evaluating 3d contact prediction and reconstruction methods; specifically, we introduce CHI3D, a lab-based accurate 3d motion capture dataset with 631 sequences containing $2,525$ contact events, $728,664$ ground truth 3d poses, as well as FlickrCI3D, a dataset of $11,216$ images, with $14,081$ processed pairs of people, and $81,233$ facet-level surface correspondences. Finally, (4) we propose methodology for recovering the ground-truth pose and shape of interacting people in a controlled setup and (5) annotate all 3d interaction motions in CHI3D with textual descriptions. Motion data in multiple formats (GHUM and SMPLX parameters, Human3.6m 3d joints) is made available for research purposes at \url{https://ci3d.imar.ro}, together with an evaluation server and a public benchmark.

## Keyword: event camera

There is no result

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

### Multimodal Adaptation of CLIP for Few-Shot Action Recognition

- **Authors:** Jiazheng Xing, Mengmeng Wang, Xiaojun Hou, Guang Dai, Jingdong Wang, Yong Liu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01532

- **Pdf link:** https://arxiv.org/pdf/2308.01532

- **Abstract**

Applying large-scale pre-trained visual models like CLIP to few-shot action recognition tasks can benefit performance and efficiency. Utilizing the "pre-training, fine-tuning" paradigm makes it possible to avoid training a network from scratch, which can be time-consuming and resource-intensive. However, this method has two drawbacks. First, limited labeled samples for few-shot action recognition necessitate minimizing the number of tunable parameters to mitigate over-fitting, also leading to inadequate fine-tuning that increases resource consumption and may disrupt the generalized representation of models. Second, the video's extra-temporal dimension challenges few-shot recognition's effective temporal modeling, while pre-trained visual models are usually image models. This paper proposes a novel method called Multimodal Adaptation of CLIP (MA-CLIP) to address these issues. It adapts CLIP for few-shot action recognition by adding lightweight adapters, which can minimize the number of learnable parameters and enable the model to transfer across different tasks quickly. The adapters we design can combine information from video-text multimodal sources for task-oriented spatiotemporal modeling, which is fast, efficient, and has low training costs. Additionally, based on the attention mechanism, we design a text-guided prototype construction module that can fully utilize video-text information to enhance the representation of video prototypes. Our MA-CLIP is plug-and-play, which can be used in any different few-shot action recognition temporal alignment metric.

## Keyword: ISP

### Reconstructing Three-Dimensional Models of Interacting Humans

- **Authors:** Mihai Fieraru, Mihai Zanfir, Elisabeta Oneata, Alin-Ionut Popa, Vlad Olaru, Cristian Sminchisescu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01854

- **Pdf link:** https://arxiv.org/pdf/2308.01854

- **Abstract**

Understanding 3d human interactions is fundamental for fine-grained scene analysis and behavioural modeling. However, most of the existing models predict incorrect, lifeless 3d estimates, that miss the subtle human contact aspects--the essence of the event--and are of little use for detailed behavioral understanding. This paper addresses such issues with several contributions: (1) we introduce models for interaction signature estimation (ISP) encompassing contact detection, segmentation, and 3d contact signature prediction; (2) we show how such components can be leveraged to ensure contact consistency during 3d reconstruction; (3) we construct several large datasets for learning and evaluating 3d contact prediction and reconstruction methods; specifically, we introduce CHI3D, a lab-based accurate 3d motion capture dataset with 631 sequences containing $2,525$ contact events, $728,664$ ground truth 3d poses, as well as FlickrCI3D, a dataset of $11,216$ images, with $14,081$ processed pairs of people, and $81,233$ facet-level surface correspondences. Finally, (4) we propose methodology for recovering the ground-truth pose and shape of interacting people in a controlled setup and (5) annotate all 3d interaction motions in CHI3D with textual descriptions. Motion data in multiple formats (GHUM and SMPLX parameters, Human3.6m 3d joints) is made available for research purposes at \url{https://ci3d.imar.ro}, together with an evaluation server and a public benchmark.

## Keyword: image signal processing

There is no result

## Keyword: image signal process

There is no result

## Keyword: compression

### TDMD: A Database for Dynamic Color Mesh Subjective and Objective Quality Explorations

- **Authors:** Qi Yang, Joel Jung, Timon Deschamps, Xiaozhong Xu, Shan Liu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Image and Video Processing (eess.IV)

- **Arxiv link:** https://arxiv.org/abs/2308.01499

- **Pdf link:** https://arxiv.org/pdf/2308.01499

- **Abstract**

Dynamic colored meshes (DCM) are widely used in various applications; however, these meshes may undergo different processes, such as compression or transmission, which can distort them and degrade their quality. To facilitate the development of objective metrics for DCMs and study the influence of typical distortions on their perception, we create the Tencent - dynamic colored mesh database (TDMD) containing eight reference DCM objects with six typical distortions. Using processed video sequences (PVS) derived from the DCM, we have conducted a large-scale subjective experiment that resulted in 303 distorted DCM samples with mean opinion scores, making the TDMD the largest available DCM database to our knowledge. This database enabled us to study the impact of different types of distortion on human perception and offer recommendations for DCM compression and related tasks. Additionally, we have evaluated three types of state-of-the-art objective metrics on the TDMD, including image-based, point-based, and video-based metrics. Our experimental results highlight the strengths and weaknesses of each metric, and we provide suggestions about the selection of metrics in practical DCM applications. The TDMD will be made publicly available at the following location: https://multimedia.tencent.com/resources/tdmd.

### MVFlow: Deep Optical Flow Estimation of Compressed Videos with Motion Vector Prior

- **Authors:** Shili Zhou, Xuhao Jiang, Weimin Tan, Ruian He, Bo Yan

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01568

- **Pdf link:** https://arxiv.org/pdf/2308.01568

- **Abstract**

In recent years, many deep learning-based methods have been proposed to tackle the problem of optical flow estimation and have achieved promising results. However, they hardly consider that most videos are compressed and thus ignore the pre-computed information in compressed video streams. Motion vectors, one kind of compression information, record the motion of the video frames. They can be directly extracted from the compression code stream without computational cost and serve as a solid prior for optical flow estimation. Therefore, we propose an optical flow model, MVFlow, which uses motion vectors to improve the speed and accuracy of optical flow estimation for compressed videos. In detail, MVFlow includes a key Motion-Vector Converting Module, which ensures that the motion vectors can be transformed into the same domain as optical flow and then be utilized fully by the flow estimation module. Meanwhile, we construct four optical flow datasets for compressed videos containing frames and motion vectors in pairs. The experimental results demonstrate the superiority of our proposed MVFlow, which can reduce the AEPE by 1.09 compared to existing models or save 52% of the time while achieving accuracy similar to existing models.

### A Novel Tensor Decomposition of arbitrary order based on Block Convolution with Reflective Boundary Conditions for Multi-Dimensional Data Analysis

- **Authors:** Mahdi Molavi, Mansoor Rezghi, Tayyebeh Saeedi

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01768

- **Pdf link:** https://arxiv.org/pdf/2308.01768

- **Abstract**

Tensor decompositions are powerful tools for analyzing multi-dimensional data in their original format. Besides tensor decompositions like Tucker and CP, Tensor SVD (t-SVD), which is based on the t-product of tensors, is another extension of SVD to tensors that was recently developed and has found numerous applications in analyzing high-dimensional data. This paper offers a new insight into the t-product and shows that this product is a block convolution of two tensors with periodic boundary conditions. Based on this viewpoint, we propose a new tensor-tensor product called the $\star_c{}\text{-Product}$ based on block convolution with reflective boundary conditions. Using a tensor framework, this product can be easily extended to tensors of arbitrary order. Additionally, we introduce a tensor decomposition based on our $\star_c{}\text{-Product}$ for arbitrary order tensors. Compared to t-SVD, our new decomposition has lower complexity, and experiments show that it yields higher-quality results in applications such as classification and compression.

## Keyword: RAW

### PPI-NET: End-to-End Parametric Primitive Inference

- **Authors:** Liang Wang, Xiaogang Wang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01521

- **Pdf link:** https://arxiv.org/pdf/2308.01521

- **Abstract**

In engineering applications, line, circle, arc, and point are collectively referred to as primitives, and they play a crucial role in path planning, simulation analysis, and manufacturing. When designing CAD models, engineers typically start by sketching the model's orthographic view on paper or a whiteboard and then translate the design intent into a CAD program. Although this design method is powerful, it often involves challenging and repetitive tasks, requiring engineers to perform numerous similar operations in each design. To address this conversion process, we propose an efficient and accurate end-to-end method that avoids the inefficiency and error accumulation issues associated with using auto-regressive models to infer parametric primitives from hand-drawn sketch images. Since our model samples match the representation format of standard CAD software, they can be imported into CAD software for solving, editing, and applied to downstream design tasks.

### Data Augmentation for Human Behavior Analysis in Multi-Person Conversations

- **Authors:** Kun Li, Dan Guo, Guoliang Chen, Feiyang Liu, Meng Wang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01526

- **Pdf link:** https://arxiv.org/pdf/2308.01526

- **Abstract**

In this paper, we present the solution of our team HFUT-VUT for the MultiMediate Grand Challenge 2023 at ACM Multimedia 2023. The solution covers three sub-challenges: bodily behavior recognition, eye contact detection, and next speaker prediction. We select Swin Transformer as the baseline and exploit data augmentation strategies to address the above three tasks. Specifically, we crop the raw video to remove the noise from other parts. At the same time, we utilize data augmentation to improve the generalization of the model. As a result, our solution achieves the best results of 0.6262 for bodily behavior recognition in terms of mean average precision and the accuracy of 0.7771 for eye contact detection on the corresponding test set. In addition, our approach also achieves comparable results of 0.5281 for the next speaker prediction in terms of unweighted average recall.

### Multimodal Adaptation of CLIP for Few-Shot Action Recognition

- **Authors:** Jiazheng Xing, Mengmeng Wang, Xiaojun Hou, Guang Dai, Jingdong Wang, Yong Liu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01532

- **Pdf link:** https://arxiv.org/pdf/2308.01532

- **Abstract**

Applying large-scale pre-trained visual models like CLIP to few-shot action recognition tasks can benefit performance and efficiency. Utilizing the "pre-training, fine-tuning" paradigm makes it possible to avoid training a network from scratch, which can be time-consuming and resource-intensive. However, this method has two drawbacks. First, limited labeled samples for few-shot action recognition necessitate minimizing the number of tunable parameters to mitigate over-fitting, also leading to inadequate fine-tuning that increases resource consumption and may disrupt the generalized representation of models. Second, the video's extra-temporal dimension challenges few-shot recognition's effective temporal modeling, while pre-trained visual models are usually image models. This paper proposes a novel method called Multimodal Adaptation of CLIP (MA-CLIP) to address these issues. It adapts CLIP for few-shot action recognition by adding lightweight adapters, which can minimize the number of learnable parameters and enable the model to transfer across different tasks quickly. The adapters we design can combine information from video-text multimodal sources for task-oriented spatiotemporal modeling, which is fast, efficient, and has low training costs. Additionally, based on the attention mechanism, we design a text-guided prototype construction module that can fully utilize video-text information to enhance the representation of video prototypes. Our MA-CLIP is plug-and-play, which can be used in any different few-shot action recognition temporal alignment metric.

### A Novel Convolutional Neural Network Architecture with a Continuous Symmetry

- **Authors:** Yao Liu, Hang Shao, Bing Bai

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Machine Learning (cs.LG); Neural and Evolutionary Computing (cs.NE)

- **Arxiv link:** https://arxiv.org/abs/2308.01621

- **Pdf link:** https://arxiv.org/pdf/2308.01621

- **Abstract**

This paper introduces a new Convolutional Neural Network (ConvNet) architecture inspired by a class of partial differential equations (PDEs) called quasi-linear hyperbolic systems. With comparable performance on image classification tasks, it allows for the modification of the weights via a continuous group of symmetry. This is a significant shift from traditional models where the architecture and weights are essentially fixed. We wish to promote the (internal) symmetry as a new desirable property for a neural network, and to draw attention to the PDE perspective in analyzing and interpreting ConvNets in the broader Deep Learning community.

### Weakly Supervised 3D Instance Segmentation without Instance-level Annotations

- **Authors:** Shichao Dong, Guosheng Lin

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2308.01721

- **Pdf link:** https://arxiv.org/pdf/2308.01721

- **Abstract**

3D semantic scene understanding tasks have achieved great success with the emergence of deep learning, but often require a huge amount of manually annotated training data. To alleviate the annotation cost, we propose the first weakly-supervised 3D instance segmentation method that only requires categorical semantic labels as supervision, and we do not need instance-level labels. The required semantic annotations can be either dense or extremely sparse (e.g. 0.02% of total points). Even without having any instance-related ground-truth, we design an approach to break point clouds into raw fragments and find the most confident samples for learning instance centroids. Furthermore, we construct a recomposed dataset using pseudo instances, which is used to learn our defined multilevel shape-aware objectness signal. An asymmetrical object inference algorithm is followed to process core points and boundary points with different strategies, and generate high-quality pseudo instance labels to guide iterative training. Experiments demonstrate that our method can achieve comparable results with recent fully supervised methods. By generating pseudo instance labels from categorical semantic labels, our designed approach can also assist existing methods for learning 3D instance segmentation at reduced annotation cost.

## Keyword: raw image

There is no result

|

process

|

new submissions for fri aug keyword events reconstructing three dimensional models of interacting humans authors mihai fieraru mihai zanfir elisabeta oneata alin ionut popa vlad olaru cristian sminchisescu subjects computer vision and pattern recognition cs cv arxiv link pdf link abstract understanding human interactions is fundamental for fine grained scene analysis and behavioural modeling however most of the existing models predict incorrect lifeless estimates that miss the subtle human contact aspects the essence of the event and are of little use for detailed behavioral understanding this paper addresses such issues with several contributions we introduce models for interaction signature estimation isp encompassing contact detection segmentation and contact signature prediction we show how such components can be leveraged to ensure contact consistency during reconstruction we construct several large datasets for learning and evaluating contact prediction and reconstruction methods specifically we introduce a lab based accurate motion capture dataset with sequences containing contact events ground truth poses as well as a dataset of images with processed pairs of people and facet level surface correspondences finally we propose methodology for recovering the ground truth pose and shape of interacting people in a controlled setup and annotate all interaction motions in with textual descriptions motion data in multiple formats ghum and smplx parameters joints is made available for research purposes at url together with an evaluation server and a public benchmark keyword event camera there is no result keyword events camera there is no result keyword white balance there is no result keyword color contrast there is no result keyword awb multimodal adaptation of clip for few shot action recognition authors jiazheng xing mengmeng wang xiaojun hou guang dai jingdong wang yong liu subjects computer vision and pattern recognition cs cv arxiv link pdf link abstract 
applying large scale pre trained visual models like clip to few shot action recognition tasks can benefit performance and efficiency utilizing the pre training fine tuning paradigm makes it possible to avoid training a network from scratch which can be time consuming and resource intensive however this method has two drawbacks first limited labeled samples for few shot action recognition necessitate minimizing the number of tunable parameters to mitigate over fitting also leading to inadequate fine tuning that increases resource consumption and may disrupt the generalized representation of models second the video s extra temporal dimension challenges few shot recognition s effective temporal modeling while pre trained visual models are usually image models this paper proposes a novel method called multimodal adaptation of clip ma clip to address these issues it adapts clip for few shot action recognition by adding lightweight adapters which can minimize the number of learnable parameters and enable the model to transfer across different tasks quickly the adapters we design can combine information from video text multimodal sources for task oriented spatiotemporal modeling which is fast efficient and has low training costs additionally based on the attention mechanism we design a text guided prototype construction module that can fully utilize video text information to enhance the representation of video prototypes our ma clip is plug and play which can be used in any different few shot action recognition temporal alignment metric keyword isp reconstructing three dimensional models of interacting humans authors mihai fieraru mihai zanfir elisabeta oneata alin ionut popa vlad olaru cristian sminchisescu subjects computer vision and pattern recognition cs cv arxiv link pdf link abstract understanding human interactions is fundamental for fine grained scene analysis and behavioural modeling however most of the existing models predict incorrect lifeless estimates that 
miss the subtle human contact aspects the essence of the event and are of little use for detailed behavioral understanding this paper addresses such issues with several contributions we introduce models for interaction signature estimation isp encompassing contact detection segmentation and contact signature prediction we show how such components can be leveraged to ensure contact consistency during reconstruction we construct several large datasets for learning and evaluating contact prediction and reconstruction methods specifically we introduce a lab based accurate motion capture dataset with sequences containing contact events ground truth poses as well as a dataset of images with processed pairs of people and facet level surface correspondences finally we propose methodology for recovering the ground truth pose and shape of interacting people in a controlled setup and annotate all interaction motions in with textual descriptions motion data in multiple formats ghum and smplx parameters joints is made available for research purposes at url together with an evaluation server and a public benchmark keyword image signal processing there is no result keyword image signal process there is no result keyword compression tdmd a database for dynamic color mesh subjective and objective quality explorations authors qi yang joel jung timon deschamps xiaozhong xu shan liu subjects computer vision and pattern recognition cs cv image and video processing eess iv arxiv link pdf link abstract dynamic colored meshes dcm are widely used in various applications however these meshes may undergo different processes such as compression or transmission which can distort them and degrade their quality to facilitate the development of objective metrics for dcms and study the influence of typical distortions on their perception we create the tencent dynamic colored mesh database tdmd containing eight reference dcm objects with six typical distortions using processed video sequences pvs 
derived from the dcm we have conducted a large scale subjective experiment that resulted in distorted dcm samples with mean opinion scores making the tdmd the largest available dcm database to our knowledge this database enabled us to study the impact of different types of distortion on human perception and offer recommendations for dcm compression and related tasks additionally we have evaluated three types of state of the art objective metrics on the tdmd including image based point based and video based metrics on the tdmd our experimental results highlight the strengths and weaknesses of each metric and we provide suggestions about the selection of metrics in practical dcm applications the tdmd will be made publicly available at the following location mvflow deep optical flow estimation of compressed videos with motion vector prior authors shili zhou xuhao jiang weimin tan ruian he bo yan subjects computer vision and pattern recognition cs cv arxiv link pdf link abstract in recent years many deep learning based methods have been proposed to tackle the problem of optical flow estimation and achieved promising results however they hardly consider that most videos are compressed and thus ignore the pre computed information in compressed video streams motion vectors one of the compression information record the motion of the video frames they can be directly extracted from the compression code stream without computational cost and serve as a solid prior for optical flow estimation therefore we propose an optical flow model mvflow which uses motion vectors to improve the speed and accuracy of optical flow estimation for compressed videos in detail mvflow includes a key motion vector converting module which ensures that the motion vectors can be transformed into the same domain of optical flow and then be utilized fully by the flow estimation module meanwhile we construct four optical flow datasets for compressed videos containing frames and motion vectors in pairs 
the experimental results demonstrate the superiority of our proposed mvflow which can reduce the aepe by compared to existing models or save time to achieve similar accuracy to existing models a novel tensor decomposition of arbitrary order based on block convolution with reflective boundary conditions for multi dimensional data analysis authors mahdi molavi mansoor rezghi tayyebeh saeedi subjects computer vision and pattern recognition cs cv arxiv link pdf link abstract tensor decompositions are powerful tools for analyzing multi dimensional data in their original format besides tensor decompositions like tucker and cp tensor svd t svd which is based on the t product of tensors is another extension of svd to tensors that recently developed and has found numerous applications in analyzing high dimensional data this paper offers a new insight into the t product and shows that this product is a block convolution of two tensors with periodic boundary conditions based on this viewpoint we propose a new tensor tensor product called the star c text product based on block convolution with reflective boundary conditions using a tensor framework this product can be easily extended to tensors of arbitrary order additionally we introduce a tensor decomposition based on our star c text product for arbitrary order tensors compared to t svd our new decomposition has lower complexity and experiments show that it yields higher quality results in applications such as classification and compression keyword raw ppi net end to end parametric primitive inference authors liang wang xiaogang wang subjects computer vision and pattern recognition cs cv arxiv link pdf link abstract in engineering applications line circle arc and point are collectively referred to as primitives and they play a crucial role in path planning simulation analysis and manufacturing when designing cad models engineers typically start by sketching the model s orthographic view on paper or a whiteboard and then 
translate the design intent into a cad program although this design method is powerful it often involves challenging and repetitive tasks requiring engineers to perform numerous similar operations in each design to address this conversion process we propose an efficient and accurate end to end method that avoids the inefficiency and error accumulation issues associated with using auto regressive models to infer parametric primitives from hand drawn sketch images since our model samples match the representation format of standard cad software they can be imported into cad software for solving editing and applied to downstream design tasks data augmentation for human behavior analysis in multi person conversations authors kun li dan guo guoliang chen feiyang liu meng wang subjects computer vision and pattern recognition cs cv arxiv link pdf link abstract in this paper we present the solution of our team hfut vut for the multimediate grand challenge at acm multimedia the solution covers three sub challenges bodily behavior recognition eye contact detection and next speaker prediction we select swin transformer as the baseline and exploit data augmentation strategies to address the above three tasks specifically we crop the raw video to remove the noise from other parts at the same time we utilize data augmentation to improve the generalization of the model as a result our solution achieves the best results of for bodily behavior recognition in terms of mean average precision and the accuracy of for eye contact detection on the corresponding test set in addition our approach also achieves comparable results of for the next speaker prediction in terms of unweighted average recall multimodal adaptation of clip for few shot action recognition authors jiazheng xing mengmeng wang xiaojun hou guang dai jingdong wang yong liu subjects computer vision and pattern recognition cs cv arxiv link pdf link abstract applying large scale pre trained visual models like clip to few shot 
action recognition tasks can benefit performance and efficiency utilizing the pre training fine tuning paradigm makes it possible to avoid training a network from scratch which can be time consuming and resource intensive however this method has two drawbacks first limited labeled samples for few shot action recognition necessitate minimizing the number of tunable parameters to mitigate over fitting also leading to inadequate fine tuning that increases resource consumption and may disrupt the generalized representation of models second the video s extra temporal dimension challenges few shot recognition s effective temporal modeling while pre trained visual models are usually image models this paper proposes a novel method called multimodal adaptation of clip ma clip to address these issues it adapts clip for few shot action recognition by adding lightweight adapters which can minimize the number of learnable parameters and enable the model to transfer across different tasks quickly the adapters we design can combine information from video text multimodal sources for task oriented spatiotemporal modeling which is fast efficient and has low training costs additionally based on the attention mechanism we design a text guided prototype construction module that can fully utilize video text information to enhance the representation of video prototypes our ma clip is plug and play which can be used in any different few shot action recognition temporal alignment metric a novel convolutional neural network architecture with a continuous symmetry authors yao liu hang shao bing bai subjects computer vision and pattern recognition cs cv machine learning cs lg neural and evolutionary computing cs ne arxiv link pdf link abstract this paper introduces a new convolutional neural network convnet architecture inspired by a class of partial differential equations pdes called quasi linear hyperbolic systems with comparable performance on image classification task it allows for the 
modification of the weights via a continuous group of symmetry this is a significant shift from traditional models where the architecture and weights are essentially fixed we wish to promote the internal symmetry as a new desirable property for a neural network and to draw attention to the pde perspective in analyzing and interpreting convnets in the broader deep learning community weakly supervised instance segmentation without instance level annotations authors shichao dong guosheng lin subjects computer vision and pattern recognition cs cv arxiv link pdf link abstract semantic scene understanding tasks have achieved great success with the emergence of deep learning but often require a huge amount of manually annotated training data to alleviate the annotation cost we propose the first weakly supervised instance segmentation method that only requires categorical semantic labels as supervision and we do not need instance level labels the required semantic annotations can be either dense or extreme sparse e g of total points even without having any instance related ground truth we design an approach to break point clouds into raw fragments and find the most confident samples for learning instance centroids furthermore we construct a recomposed dataset using pseudo instances which is used to learn our defined multilevel shape aware objectness signal an asymmetrical object inference algorithm is followed to process core points and boundary points with different strategies and generate high quality pseudo instance labels to guide iterative training experiments demonstrate that our method can achieve comparable results with recent fully supervised methods by generating pseudo instance labels from categorical semantic labels our designed approach can also assist existing methods for learning instance segmentation at reduced annotation cost keyword raw image there is no result

| 1

|

10,921

| 13,724,347,743

|

IssuesEvent

|

2020-10-03 13:51:53

|

MicrosoftDocs/azure-devops-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-devops-docs

|

closed

|

IP ranges to access non-AKS cluster

|

Pri2 devops-cicd-process/tech devops/prod

|

When adding a Kubernetes resource which is not AKS, the resource details are retrieved from IPs not found on any public list of service tag for whitelisting (example: 40.74.28.3 for West Europe).

Are there any fixed IPs that can be added to the firewall so that access to the Kubernetes API can be limited to Azure DevOps only?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 7730ae4d-4101-9c83-1823-4ff43ff161ce

* Version Independent ID: 20a7e263-4819-783e-c984-c4f3b459e22f

* Content: [Environment - Kubernetes resource - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/environments-kubernetes?view=azure-devops)

* Content Source: [docs/pipelines/process/environments-kubernetes.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/master/docs/pipelines/process/environments-kubernetes.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @juliakm

* Microsoft Alias: **jukullam**

|

1.0

|

IP ranges to access non-AKS cluster - When adding a Kubernetes resource which is not AKS, the resource details are retrieved from IPs not found on any public list of service tag for whitelisting (example: 40.74.28.3 for West Europe).

Are there any fixed IPs that can be added to the firewall so that access to the Kubernetes API can be limited to Azure DevOps only?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 7730ae4d-4101-9c83-1823-4ff43ff161ce

* Version Independent ID: 20a7e263-4819-783e-c984-c4f3b459e22f

* Content: [Environment - Kubernetes resource - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/environments-kubernetes?view=azure-devops)

* Content Source: [docs/pipelines/process/environments-kubernetes.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/master/docs/pipelines/process/environments-kubernetes.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @juliakm

* Microsoft Alias: **jukullam**

|

process

|

ip ranges to access non aks cluster when adding a kubernetes resource which is not aks the resource details are retrieved from ips not found on any public list of service tag for whitelisting example for west europe are there any fixed ips that can be added to the firewall so that access to the kubernetes api can be limited to azure devops only document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source product devops technology devops cicd process github login juliakm microsoft alias jukullam

| 1

|

79,251

| 10,114,876,801

|

IssuesEvent

|

2019-07-30 20:17:48

|

uiowa/uiowa01

|

https://api.github.com/repos/uiowa/uiowa01

|

opened

|

Add documentation for removing feature branch created by blt uis

|

documentation

|

`git checkout master`

`git branch -D initialize-sitename`

`git push origin :initialize-sitename`

|

1.0

|

Add documentation for removing feature branch created by blt uis - `git checkout master`

`git branch -D initialize-sitename`

`git push origin :initialize-sitename`

|

non_process

|

add documentation for removing feature branch created by blt uis git checkout master git branch d initialize sitename git push origin initialize sitename

| 0

|

11,200

| 13,957,702,949

|

IssuesEvent

|

2020-10-24 08:13:46

|

alexanderkotsev/geoportal

|

https://api.github.com/repos/alexanderkotsev/geoportal

|

opened

|

MT: Harvest

|

Geoportal Harvesting process MT - Malta

|

Good morning Angelo,

Can you kindly perform a harvest on the Maltese CSW as we made some changes and would like to check the results. Thanks in advance for your support.

Regards,

Rene

|

1.0

|

MT: Harvest - Good morning Angelo,

Can you kindly perform a harvest on the Maltese CSW as we made some changes and would like to check the results. Thanks in advance for your support.

Regards,

Rene

|

process

|

mt harvest good morning angelo can you kindly perform a harvest on the maltese csw as we made some changes and would like to check the results thanks in advance for your support regards rene

| 1

|

15,646

| 19,846,247,944

|

IssuesEvent

|

2022-01-21 06:49:45

|

ooi-data/CE04OSSM-RID26-06-PHSEND000-recovered_inst-phsen_abcdef_instrument

|

https://api.github.com/repos/ooi-data/CE04OSSM-RID26-06-PHSEND000-recovered_inst-phsen_abcdef_instrument

|

opened

|

🛑 Processing failed: ValueError

|

process

|

## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T06:49:44.709173.

## Details

Flow name: `CE04OSSM-RID26-06-PHSEND000-recovered_inst-phsen_abcdef_instrument`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, got 0)

<details>

<summary>Traceback</summary>

```

Traceback (most recent call last):

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/pipeline.py", line 165, in processing

final_path = finalize_data_stream(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 84, in finalize_data_stream

append_to_zarr(mod_ds, final_store, enc, logger=logger)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 357, in append_to_zarr

_append_zarr(store, mod_ds)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/utils.py", line 187, in _append_zarr

existing_arr.append(var_data.values)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 519, in values

return _as_array_or_item(self._data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 259, in _as_array_or_item

data = np.asarray(data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 1541, in __array__

x = self.compute()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 288, in compute

(result,) = compute(self, traverse=False, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 571, in compute

results = schedule(dsk, keys, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/threaded.py", line 79, in get

results = get_async(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 507, in get_async

raise_exception(exc, tb)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 315, in reraise

raise exc

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 220, in execute_task

result = _execute_task(task, data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/core.py", line 119, in _execute_task

return func(*(_execute_task(a, cache) for a in args))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 116, in getter

c = np.asarray(c)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 357, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 551, in __array__

self._ensure_cached()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 548, in _ensure_cached

self.array = NumpyIndexingAdapter(np.asarray(self.array))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 521, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/backends/zarr.py", line 73, in __getitem__

return array[key.tuple]

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 673, in __getitem__

return self.get_basic_selection(selection, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 798, in get_basic_selection

return self._get_basic_selection_nd(selection=selection, out=out,

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 841, in _get_basic_selection_nd

return self._get_selection(indexer=indexer, out=out, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 1135, in _get_selection

lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)

ValueError: not enough values to unpack (expected 3, got 0)

```

</details>
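
A minimal sketch of how the last frame of the traceback can produce this message, assuming only what the traceback itself shows: the line `lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)` unpacks three sequences from `indexer`. If `indexer` yields no items (for example, when a selection covers no chunks), `zip()` returns an empty iterator and the three-way unpack fails. The helper names `unpack_chunk_triples` and `try_unpack` are hypothetical and are not part of zarr or ooi_harvester; whether an empty selection is the actual cause in this run is not confirmed by the report.

```python
def unpack_chunk_triples(indexer):
    """Mimic the failing line: split (coords, selection, out_selection) triples
    into three parallel tuples. `indexer` is any iterable of 3-tuples."""
    lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)
    return lchunk_coords, lchunk_selection, lout_selection


def try_unpack(indexer):
    """Return the unpacked tuples, or the error message if unpacking fails."""
    try:
        return unpack_chunk_triples(indexer)
    except ValueError as exc:
        return str(exc)


# An indexer that yields nothing reproduces the message from the report.
empty_result = try_unpack([])

# An indexer with one chunk triple unpacks without error.
ok_result = try_unpack([((0,), slice(0, 2), slice(0, 2))])

print(empty_result)
print(ok_result)
```

If this is the cause, checking that the selection being read from the existing store is non-empty before calling `.values` would be one place to start looking; that is a suggestion under the stated assumption, not a confirmed fix.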

|

1.0

|

🛑 Processing failed: ValueError - ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T06:49:44.709173.

## Details

Flow name: `CE04OSSM-RID26-06-PHSEND000-recovered_inst-phsen_abcdef_instrument`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, got 0)

<details>

<summary>Traceback</summary>

```

Traceback (most recent call last):

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/pipeline.py", line 165, in processing

final_path = finalize_data_stream(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 84, in finalize_data_stream

append_to_zarr(mod_ds, final_store, enc, logger=logger)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 357, in append_to_zarr

_append_zarr(store, mod_ds)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/utils.py", line 187, in _append_zarr

existing_arr.append(var_data.values)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 519, in values

return _as_array_or_item(self._data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 259, in _as_array_or_item

data = np.asarray(data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 1541, in __array__

x = self.compute()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 288, in compute

(result,) = compute(self, traverse=False, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 571, in compute

results = schedule(dsk, keys, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/threaded.py", line 79, in get

results = get_async(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 507, in get_async

raise_exception(exc, tb)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 315, in reraise

raise exc

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 220, in execute_task

result = _execute_task(task, data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/core.py", line 119, in _execute_task

return func(*(_execute_task(a, cache) for a in args))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 116, in getter

c = np.asarray(c)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 357, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 551, in __array__

self._ensure_cached()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 548, in _ensure_cached

self.array = NumpyIndexingAdapter(np.asarray(self.array))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 521, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/backends/zarr.py", line 73, in __getitem__

return array[key.tuple]

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 673, in __getitem__

return self.get_basic_selection(selection, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 798, in get_basic_selection

return self._get_basic_selection_nd(selection=selection, out=out,

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 841, in _get_basic_selection_nd

return self._get_selection(indexer=indexer, out=out, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 1135, in _get_selection

lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)

ValueError: not enough values to unpack (expected 3, got 0)

```

</details>

|

process

|