| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

142,122

| 13,016,726,320

|

IssuesEvent

|

2020-07-26 08:22:14

|

anitab-org/anitab-org.github.io

|

https://api.github.com/repos/anitab-org/anitab-org.github.io

|

closed

|

Improvement Of README.md File.

|

Category: Documentation/Training First Timers Only

|

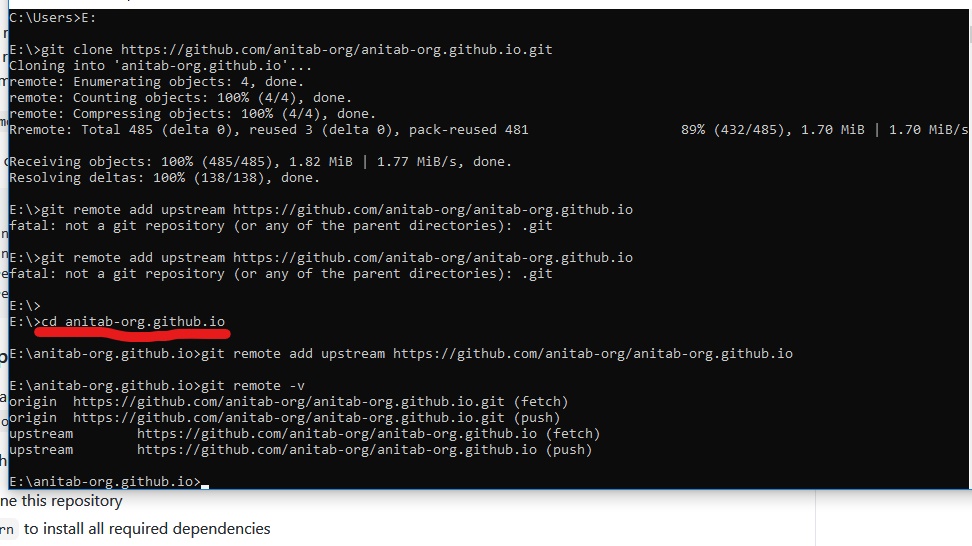

### Description

As a developer,

While setting up the remote upstream, documentation was unclear.

The README.md file describes the following.

"When a repository is cloned, it has a default remote named origin that points to your fork on GitHub, not the original repository it was forked from. To keep track of the original repository, you should add another remote named upstream. For this project, it can be done by running the following command -

git remote add upstream https://github.com/anitab-org/anitab-org.github.io."

### Expected Result

"When a repository is cloned, it has a default remote named origin that points to your fork on GitHub, not the original repository it was forked from. To keep track of the original repository, you should add another remote named upstream. For this project, it can be done by running the following command -

**cd anitab-org.github.io**

git remote add upstream https://github.com/anitab-org/anitab-org.github.io"

### Mocks

|

1.0

|

Improvement Of README.md File. - ### Description

As a developer,

While setting up the remote upstream, documentation was unclear.

The README.md file describes the following.

"When a repository is cloned, it has a default remote named origin that points to your fork on GitHub, not the original repository it was forked from. To keep track of the original repository, you should add another remote named upstream. For this project, it can be done by running the following command -

git remote add upstream https://github.com/anitab-org/anitab-org.github.io."

### Expected Result

"When a repository is cloned, it has a default remote named origin that points to your fork on GitHub, not the original repository it was forked from. To keep track of the original repository, you should add another remote named upstream. For this project, it can be done by running the following command -

**cd anitab-org.github.io**

git remote add upstream https://github.com/anitab-org/anitab-org.github.io"

### Mocks

|

non_process

|

improvement of readme md file description as a developer while setting up the remote upstream documentation was unclear the readme md file describes the following when a repository is cloned it has a default remote named origin that points to your fork on github not the original repository it was forked from to keep track of the original repository you should add another remote named upstream for this project it can be done by running the following command git remote add upstream expected result when a repository is cloned it has a default remote named origin that points to your fork on github not the original repository it was forked from to keep track of the original repository you should add another remote named upstream for this project it can be done by running the following command cd anitab org github io git remote add upstream mocks

| 0

|

13,376

| 15,837,949,036

|

IssuesEvent

|

2021-04-06 21:35:06

|

googleapis/python-pubsub

|

https://api.github.com/repos/googleapis/python-pubsub

|

closed

|

Unit tests must not use/expect credentials from the environment

|

api: pubsub type: process

|

```bash

$ env | grep GOOGLE && echo YES || echo NO

NO

$ git log -1

commit 469ebaa3c449c881089dfc657da5902c1d031803 (HEAD -> master, origin/master, origin/HEAD)

Author: Peter Lamut <plamut@users.noreply.github.com>

Date: Fri Apr 2 09:26:10 2021 +0200

chore: regenerate GAPIC layer with latest changes (#345)

$ nox -e unit-3.8

nox > Running session unit-3.8

nox > Creating virtual environment (virtualenv) using python3.8 in .nox/unit-3-8

nox > pip install asyncmock pytest-asyncio

nox > pip install mock pytest pytest-cov

nox > pip install -e .

nox > py.test --quiet --junitxml=unit_3.8_sponge_log.xml --cov=google/cloud --cov=tests/unit --cov-append --cov-config=.coveragerc --cov-report= --cov-fail-under=0 tests/unit

........................................................................ [ 8%]

........................................................................ [ 17%]

........................................................................ [ 26%]

........................................................................ [ 34%]

........................................................................ [ 43%]

........................................................................ [ 52%]

........................................................................ [ 61%]

........................................................................ [ 69%]

........................................................................ [ 78%]

........................................................................ [ 87%]

........................................................................ [ 96%]

............................... [100%]

=============================== warnings summary ===============================

...

tests/unit/pubsub_v1/publisher/test_publisher_client.py: 4 warnings

tests/unit/pubsub_v1/subscriber/test_subscriber_client.py: 9 warnings

/home/tseaver/projects/agendaless/Google/src/python-pubsub/.nox/unit-3-8/lib/python3.8/site-packages/google/auth/_default.py:70: UserWarning: Your application has authenticated using end user credentials from Google Cloud SDK without a quota project. You might receive a "quota exceeded" or "API not enabled" error. We recommend you rerun `gcloud auth application-default login` and make sure a quota project is added. Or you can use service accounts instead. For more information about service accounts, see https://cloud.google.com/docs/authentication/

warnings.warn(_CLOUD_SDK_CREDENTIALS_WARNING)

-- Docs: https://docs.pytest.org/en/stable/warnings.html

- generated xml file: /home/tseaver/projects/agendaless/Google/src/python-pubsub/unit_3.8_sponge_log.xml -

823 passed, 15 warnings in 13.94s

```

Unit tests should always pass explicit dummy credentials (e.g., see [`test_init`](https://github.com/googleapis/python-pubsub/blob/469ebaa3c449c881089dfc657da5902c1d031803/tests/unit/pubsub_v1/publisher/test_publisher_client.py#L54-L56)).
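
The pattern asked for here can be illustrated with a minimal, standard-library-only sketch. `FakeClient` is a hypothetical stand-in for the real client class, not part of `google-cloud-pubsub`, and the mock object only plays the role of a credentials instance; the linked `test_init` remains the reference for how the project does it.

```python
import unittest
from unittest import mock


class FakeClient:
    """Hypothetical stand-in for a client that takes a credentials argument."""

    def __init__(self, credentials=None):
        if credentials is None:
            # A real client would look up ambient credentials at this point;
            # the stand-in fails loudly so a test cannot depend on that.
            raise RuntimeError("no explicit credentials passed")
        self.credentials = credentials


class TestFakeClient(unittest.TestCase):
    def test_init_with_explicit_credentials(self):
        # Dummy credentials object: no network access, no environment lookup.
        creds = mock.Mock(name="dummy_credentials")
        client = FakeClient(credentials=creds)
        self.assertIs(client.credentials, creds)

    def test_init_without_credentials_fails(self):
        # Omitting credentials must not silently succeed.
        with self.assertRaises(RuntimeError):
            FakeClient()
```

Because every test constructs the client with an explicit dummy, the suite behaves the same whether or not the developer's machine has Cloud SDK credentials configured.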

|

1.0

|

Unit tests must not use/expect credentials from the environment - ```bash

$ env | grep GOOGLE && echo YES || echo NO

NO

$ git log -1

commit 469ebaa3c449c881089dfc657da5902c1d031803 (HEAD -> master, origin/master, origin/HEAD)

Author: Peter Lamut <plamut@users.noreply.github.com>

Date: Fri Apr 2 09:26:10 2021 +0200

chore: regenerate GAPIC layer with latest changes (#345)

$ nox -e unit-3.8

nox > Running session unit-3.8

nox > Creating virtual environment (virtualenv) using python3.8 in .nox/unit-3-8

nox > pip install asyncmock pytest-asyncio

nox > pip install mock pytest pytest-cov

nox > pip install -e .

nox > py.test --quiet --junitxml=unit_3.8_sponge_log.xml --cov=google/cloud --cov=tests/unit --cov-append --cov-config=.coveragerc --cov-report= --cov-fail-under=0 tests/unit

........................................................................ [ 8%]

........................................................................ [ 17%]

........................................................................ [ 26%]

........................................................................ [ 34%]

........................................................................ [ 43%]

........................................................................ [ 52%]

........................................................................ [ 61%]

........................................................................ [ 69%]

........................................................................ [ 78%]

........................................................................ [ 87%]

........................................................................ [ 96%]

............................... [100%]

=============================== warnings summary ===============================

...

tests/unit/pubsub_v1/publisher/test_publisher_client.py: 4 warnings

tests/unit/pubsub_v1/subscriber/test_subscriber_client.py: 9 warnings

/home/tseaver/projects/agendaless/Google/src/python-pubsub/.nox/unit-3-8/lib/python3.8/site-packages/google/auth/_default.py:70: UserWarning: Your application has authenticated using end user credentials from Google Cloud SDK without a quota project. You might receive a "quota exceeded" or "API not enabled" error. We recommend you rerun `gcloud auth application-default login` and make sure a quota project is added. Or you can use service accounts instead. For more information about service accounts, see https://cloud.google.com/docs/authentication/

warnings.warn(_CLOUD_SDK_CREDENTIALS_WARNING)

-- Docs: https://docs.pytest.org/en/stable/warnings.html

- generated xml file: /home/tseaver/projects/agendaless/Google/src/python-pubsub/unit_3.8_sponge_log.xml -

823 passed, 15 warnings in 13.94s

```

Unit tests should always pass explicit dummy credentials (e.g., see [`test_init`](https://github.com/googleapis/python-pubsub/blob/469ebaa3c449c881089dfc657da5902c1d031803/tests/unit/pubsub_v1/publisher/test_publisher_client.py#L54-L56)).

|

process

|

unit tests must not use expect credentials from the environment bash env grep google echo yes echo no no git log commit head master origin master origin head author peter lamut date fri apr chore regenerate gapic layer with latest changes nox e unit nox running session unit nox creating virtual environment virtualenv using in nox unit nox pip install asyncmock pytest asyncio nox pip install mock pytest pytest cov nox pip install e nox py test quiet junitxml unit sponge log xml cov google cloud cov tests unit cov append cov config coveragerc cov report cov fail under tests unit warnings summary tests unit pubsub publisher test publisher client py warnings tests unit pubsub subscriber test subscriber client py warnings home tseaver projects agendaless google src python pubsub nox unit lib site packages google auth default py userwarning your application has authenticated using end user credentials from google cloud sdk without a quota project you might receive a quota exceeded or api not enabled error we recommend you rerun gcloud auth application default login and make sure a quota project is added or you can use service accounts instead for more information about service accounts see warnings warn cloud sdk credentials warning docs generated xml file home tseaver projects agendaless google src python pubsub unit sponge log xml passed warnings in unit tests should always pass explicit dummy credentials e g see

| 1

|

621,349

| 19,583,498,279

|

IssuesEvent

|

2022-01-05 01:49:08

|

webcompat/web-bugs

|

https://api.github.com/repos/webcompat/web-bugs

|

closed

|

www.donateblood.com.au - Design is broken

|

browser-firefox priority-normal severity-critical engine-gecko

|

<!-- @browser: Firefox 90.0 -->

<!-- @ua_header: Mozilla/5.0 (Macintosh; Intel Mac OS X 10.15; rv:90.0) Gecko/20100101 Firefox/90.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/81122 -->

**URL**: https://www.donateblood.com.au/

**Browser / Version**: Firefox 90.0

**Operating System**: Mac OS X 10.15

**Tested Another Browser**: Yes Safari

**Problem type**: Design is broken

**Description**: Items are overlapped

**Steps to Reproduce**:

I loaded the website by copying the URL https://www.donateblood.com.au/ into the URL bar in Firefox and hitting the ENTER key.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2021/7/2d88002f-203b-4de6-848c-dcdbaa651611.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

1.0

|

www.donateblood.com.au - Design is broken - <!-- @browser: Firefox 90.0 -->

<!-- @ua_header: Mozilla/5.0 (Macintosh; Intel Mac OS X 10.15; rv:90.0) Gecko/20100101 Firefox/90.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/81122 -->

**URL**: https://www.donateblood.com.au/

**Browser / Version**: Firefox 90.0

**Operating System**: Mac OS X 10.15

**Tested Another Browser**: Yes Safari

**Problem type**: Design is broken

**Description**: Items are overlapped

**Steps to Reproduce**:

I loaded the website by copying the URL https://www.donateblood.com.au/ into the URL bar in Firefox and hitting the ENTER key.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2021/7/2d88002f-203b-4de6-848c-dcdbaa651611.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

non_process

|

design is broken url browser version firefox operating system mac os x tested another browser yes safari problem type design is broken description items are overlapped steps to reproduce i loaded the website by copying the url into the url bar in firefox and hitting the enter key view the screenshot img alt screenshot src browser configuration none from with ❤️

| 0

|

9,718

| 12,716,601,222

|

IssuesEvent

|

2020-06-24 02:26:46

|

OUDcollective/twenty20times

|

https://api.github.com/repos/OUDcollective/twenty20times

|

opened

|

Understanding the GitHub flow · GitHub Guides

|

workflow-process

|

## Deploy

With GitHub, you can deploy from a branch for final testing in production before merging to master.

---

**Source URL**:

[https://guides.github.com/introduction/flow/](https://guides.github.com/introduction/flow/)

<table><tr><td><strong>Browser</strong></td><td>Chrome 84.0.4147.56</td></tr><tr><td><strong>OS</strong></td><td>Windows 10 64-bit</td></tr><tr><td><strong>Screen Size</strong></td><td>2560x1080</td></tr><tr><td><strong>Viewport Size</strong></td><td>2560x888</td></tr><tr><td><strong>Pixel Ratio</strong></td><td>@1x</td></tr><tr><td><strong>Zoom Level</strong></td><td>100%</td></tr></table>

|

1.0

|

Understanding the GitHub flow · GitHub Guides -

## Deploy

With GitHub, you can deploy from a branch for final testing in production before merging to master.

---

**Source URL**:

[https://guides.github.com/introduction/flow/](https://guides.github.com/introduction/flow/)

<table><tr><td><strong>Browser</strong></td><td>Chrome 84.0.4147.56</td></tr><tr><td><strong>OS</strong></td><td>Windows 10 64-bit</td></tr><tr><td><strong>Screen Size</strong></td><td>2560x1080</td></tr><tr><td><strong>Viewport Size</strong></td><td>2560x888</td></tr><tr><td><strong>Pixel Ratio</strong></td><td>@1x</td></tr><tr><td><strong>Zoom Level</strong></td><td>100%</td></tr></table>

|

process

|

understanding the github flow · github guides deploy with github you can deploy from a branch for final testing in production before merging to master source url browser chrome os windows bit screen size viewport size pixel ratio zoom level

| 1

|

11,660

| 14,525,321,069

|

IssuesEvent

|

2020-12-14 12:46:53

|

elastic/beats

|

https://api.github.com/repos/elastic/beats

|

closed

|

Add de-dot processor that converts dotted field names to nested objects

|

:Processors Filebeat Team:Services enhancement

|

**Background**

This is a requirement that came up in the https://github.com/elastic/ecs-logging initiative. In summary, we're trying to make logging simpler by logging ECS-compliant JSON to a file that Filebeat can just forward to Elasticsearch.

However, due to readability, performance, and other technical reasons, it's not always possible for the loggers to produce a correctly nested JSON structure. Some fields, like `log.logger` may be represented via a field name containing a dot (`"log.logger": "INFO"`) while others may be nested (`"foo": { "bar": "baz"}`).

More context here: https://github.com/elastic/ecs-logging-java/issues/51

**The problem**

When processing log data, for example with an Elasticsearch Ingest pipeline, we need all fields to be nested. Otherwise, the user doesn't know whether to access a field via `doc["foo.bar"]` or via `doc["foo"]["bar"]`. We don't want users to have knowledge about which fields are nested vs dotted as this is an implementation detail that can vary with different `ecs-logging` implementations and may even change for the same implementation.

Also, ECS defines that fields should be always nested.

**Describe the enhancement:**

We'd like to have a Filebeat processor that expands all dotted field names to nested objects. This would decouple the representation in the log file from how the documents are supposed to look once they hit the ingest node processing pipeline.

**Concerns**

- Performance: This might be a performance hit but I suspect other processors, like grok, to be much more processing intensive.

- If the JSON is already fully nested, we could short-circuit the processing

- We could require that once we're in a nested context, dots are no longer replaced with dotting.

- Allowed: `"foo.bar": {"baz": "qux"}`

- Disallowed: `"foo": {"bar.baz": "qux"}`

- Given this restriction, the de-dotting can be done very efficiently by sorting the keys alphabetically and processing the JSON similar to how a SAX-parser works (@urso's idea).

**Open Questions**

What to do when conflicts occur.

- Incompatible mappings: `"foo.bar": "baz"`, `"foo": "bar"` (foo is both an object and a string).

- Duplicate keys: `"foo.bar": "baz"`, `"foo.bar": "qux"`
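
The requested behaviour can be pictured with a short Python sketch. It is not the Filebeat implementation (Beats is written in Go); `expand_dotted_keys` is a hypothetical name, and raising `ValueError` on a conflict is only one possible answer to the open question above. Duplicate keys cannot occur in a Python `dict`, so that case is not modelled.

```python
import copy
from typing import Any, Dict


def expand_dotted_keys(event: Dict[str, Any]) -> Dict[str, Any]:
    """Expand top-level dotted keys into nested dicts.

    Hypothetical helper for illustration. Following the restriction
    proposed in the issue, keys inside nested objects are left untouched.
    """
    result: Dict[str, Any] = {}
    # Sorting places a prefix such as "foo" before "foo.bar",
    # so conflicts show up while walking down the path.
    for key in sorted(event):
        parts = key.split(".")
        node = result
        for part in parts[:-1]:
            child = node.setdefault(part, {})
            if not isinstance(child, dict):
                raise ValueError(f"conflict at {key!r}: {part!r} is not an object")
            node = child
        leaf = parts[-1]
        if leaf in node:
            raise ValueError(f"conflict at {key!r}: {leaf!r} is already set")
        # Deep copy so later insertions never mutate the caller's event.
        node[leaf] = copy.deepcopy(event[key])
    return result
```

For example, `expand_dotted_keys({"log.logger": "INFO", "foo": {"bar": "baz"}})` yields a fully nested document, while the incompatible pair `"foo.bar": "baz"` and `"foo": "bar"` raises `ValueError`.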

|

1.0

|

Add de-dot processor that converts dotted field names to nested objects - **Background**

This is a requirement that came up in the https://github.com/elastic/ecs-logging initiative. In summary, we're trying to make logging simpler by logging ECS-compliant JSON to a file that Filebeat can just forward to Elasticsearch.

However, due to readability, performance, and other technical reasons, it's not always possible for the loggers to produce a correctly nested JSON structure. Some fields, like `log.logger` may be represented via a field name containing a dot (`"log.logger": "INFO"`) while others may be nested (`"foo": { "bar": "baz"}`).

More context here: https://github.com/elastic/ecs-logging-java/issues/51

**The problem**

When processing log data, for example with an Elasticsearch Ingest pipeline, we need all fields to be nested. Otherwise, the user doesn't know whether to access a field via `doc["foo.bar"]` or via `doc["foo"]["bar"]`. We don't want users to have knowledge about which fields are nested vs dotted as this is an implementation detail that can vary with different `ecs-logging` implementations and may even change for the same implementation.

Also, ECS defines that fields should be always nested.

**Describe the enhancement:**

We'd like to have a Filebeat processor that expands all dotted field names to nested objects. This would decouple the representation in the log file from how the documents are supposed to look once they hit the ingest node processing pipeline.

**Concerns**

- Performance: This might be a performance hit but I suspect other processors, like grok, to be much more processing intensive.

- If the JSON is already fully nested, we could short-circuit the processing

- We could require that once we're in a nested context, dots are no longer replaced with dotting.

- Allowed: `"foo.bar": {"baz": "qux"}`

- Disallowed: `"foo": {"bar.baz": "qux"}`

- Given this restriction, the de-dotting can be done very efficiently by sorting the keys alphabetically and processing the JSON similar to how a SAX-parser works (@urso's idea).

**Open Questions**

What to do when conflicts occur.

- Incompatible mappings: `"foo.bar": "baz"`, `"foo": "bar"` (foo is both an object and a string).

- Duplicate keys: `"foo.bar": "baz"`, `"foo.bar": "qux"`

|

process

|

add de dot processor that converts dotted field names to nested objects background this is a requirement that came up in the initiative in summary we re trying to make logging simpler by logging ecs compliant json to a file that filebeat can just forward to elasticsearch however due to readability performance and other technical reasons it s not always possible for the loggers to produce a correctly nested json structure some fields like log logger may be represented via a field name containing a dot log logger info while others may be nested foo bar baz more context here the problem when processing log data for example with an elasticsearch ingest pipeline we need all fields to be nested otherwise the user doesn t know whether to access a field via doc or via doc we don t want users to have knowledge about which fields are nested vs dotted as this is an implementation detail that can vary with different ecs logging implementations and may even change for the same implementation also ecs defines that fields should be always nested describe the enhancement we d like to have a filebeat processor that expands all dotted field names to nested objects this would decouple the representation in the log file from how the documents are supposed to look once they hit the ingest node processing pipeline concerns performance this might be a performance hit but i suspect other processors like grok to be much more processing intensive if the json is already fully nested we could short circuit the processing we could require that once we re in a nested context dots are no longer replaced with dotting allowed foo bar baz qux disallowed foo bar baz qux given this restricion the de dotting can be done very efficiently by sorting the keys alphabetically and processing the json similar to how a sax parser works urso s idea open questions what to do when conflicts occur incompatible mappings foo bar baz foo bar foo is both an object and a string duplicate keys foo bar baz foo bar qux

| 1

|

10,999

| 13,788,690,448

|

IssuesEvent

|

2020-10-09 07:40:11

|

DevExpress/testcafe-hammerhead

|

https://api.github.com/repos/DevExpress/testcafe-hammerhead

|

closed

|

Uncaught TypeError: Illegal invocation

|

SYSTEM: iframe processing TYPE: bug support center

|

The page is not loaded, there is the following error in DevTools console:

```

Uncaught TypeError: Illegal invocation

at Window.addEventListener (hammerhead.js:7)

at <anonymous>:2355:89162

at Object.<anonymous> (<anonymous>:2355:89829)

at Object.jQuery (<anonymous>:2355:89881)

at n (<anonymous>:15:228)

at Object.<anonymous> (<anonymous>:2355:87883)

at Object.jQuery (<anonymous>:2355:88125)

at n (<anonymous>:15:228)

at Object.jQuery (<anonymous>:2355:87042)

at n (<anonymous>:15:228)

```

Please see the [T915177](https://supportcenter.devexpress.com/internal/ticket/details/T915177) private ticket for details.

testcafe-hammerhead version: 17.1.11

|

1.0

|

Uncaught TypeError: Illegal invocation - The page is not loaded, there is the following error in DevTools console:

```

Uncaught TypeError: Illegal invocation

at Window.addEventListener (hammerhead.js:7)

at <anonymous>:2355:89162

at Object.<anonymous> (<anonymous>:2355:89829)

at Object.jQuery (<anonymous>:2355:89881)

at n (<anonymous>:15:228)

at Object.<anonymous> (<anonymous>:2355:87883)

at Object.jQuery (<anonymous>:2355:88125)

at n (<anonymous>:15:228)

at Object.jQuery (<anonymous>:2355:87042)

at n (<anonymous>:15:228)

```

Please see the [T915177](https://supportcenter.devexpress.com/internal/ticket/details/T915177) private ticket for details.

testcafe-hammerhead version: 17.1.11

|

process

|

uncaught typeerror illegal invocation the page is not loaded there is the following error in devtools console uncaught typeerror illegal invocation at window addeventlistener hammerhead js at at object at object jquery at n at object at object jquery at n at object jquery at n please see the private ticket for details testcafe hammerhead version

| 1

|

214,966

| 24,126,376,888

|

IssuesEvent

|

2022-09-21 01:04:29

|

dmartinez777/Tracking

|

https://api.github.com/repos/dmartinez777/Tracking

|

closed

|

WS-2021-0013 (Medium) detected in laravel/framework-v5.8.35 - autoclosed

|

security vulnerability

|

## WS-2021-0013 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>laravel/framework-v5.8.35</b></p></summary>

<p>The Laravel Framework.</p>

<p>Library home page: <a href="https://api.github.com/repos/laravel/framework/zipball/5a9e4d241a8b815e16c9d2151e908992c38db197">https://api.github.com/repos/laravel/framework/zipball/5a9e4d241a8b815e16c9d2151e908992c38db197</a></p>

<p>

Dependency Hierarchy:

- :x: **laravel/framework-v5.8.35** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Laravel is a web application framework. Versions of Laravel before 6.20.14, 7.30.4 and 8.24.0 contain a query binding exploitation.

If a request is crafted where a field that is normally a non-array value is an array, and that input is not validated or cast to its expected type before being passed to the query builder, an unexpected number of query bindings can be added to the query. In some situations, this will simply lead to no results being returned by the query builder; however, it is possible certain queries could be affected in a way that causes the query to return unexpected results.

<p>Publish Date: 2021-02-02

<p>URL: <a href=https://github.com/laravel/framework/commit/2d9b970257bca7a176be897ec18dd5f6ffc5497f>WS-2021-0013</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: Low

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-x7p5-p2c9-phvg">https://github.com/advisories/GHSA-x7p5-p2c9-phvg</a></p>

<p>Release Date: 2021-02-02</p>

<p>Fix Resolution: laravel/framework - 6.20.14, 7.30.4, 8.24.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

WS-2021-0013 (Medium) detected in laravel/framework-v5.8.35 - autoclosed - ## WS-2021-0013 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>laravel/framework-v5.8.35</b></p></summary>

<p>The Laravel Framework.</p>

<p>Library home page: <a href="https://api.github.com/repos/laravel/framework/zipball/5a9e4d241a8b815e16c9d2151e908992c38db197">https://api.github.com/repos/laravel/framework/zipball/5a9e4d241a8b815e16c9d2151e908992c38db197</a></p>

<p>

Dependency Hierarchy:

- :x: **laravel/framework-v5.8.35** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Laravel is a web application framework. Versions of Laravel before 6.20.14, 7.30.4 and 8.24.0 contain a query binding exploitation.

If a request is crafted where a field that is normally a non-array value is an array, and that input is not validated or cast to its expected type before being passed to the query builder, an unexpected number of query bindings can be added to the query. In some situations, this will simply lead to no results being returned by the query builder; however, it is possible certain queries could be affected in a way that causes the query to return unexpected results.

<p>Publish Date: 2021-02-02

<p>URL: <a href=https://github.com/laravel/framework/commit/2d9b970257bca7a176be897ec18dd5f6ffc5497f>WS-2021-0013</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: Low

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-x7p5-p2c9-phvg">https://github.com/advisories/GHSA-x7p5-p2c9-phvg</a></p>

<p>Release Date: 2021-02-02</p>

<p>Fix Resolution: laravel/framework - 6.20.14, 7.30.4, 8.24.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

ws medium detected in laravel framework autoclosed ws medium severity vulnerability vulnerable library laravel framework the laravel framework library home page a href dependency hierarchy x laravel framework vulnerable library vulnerability details laravel is a web application framework versions of laravel before and contain a query binding exploitation if a request is crafted where a field that is normally a non array value is an array and that input is not validated or cast to its expected type before being passed to the query builder an unexpected number of query bindings can be added to the query in some situations this will simply lead to no results being returned by the query builder however it is possible certain queries could be affected in a way that causes the query to return unexpected results publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required low user interaction required scope changed impact metrics confidentiality impact high integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution laravel framework step up your open source security game with mend

| 0

|

19,566

| 25,887,826,902

|

IssuesEvent

|

2022-12-14 15:43:29

|

pytorch/pytorch

|

https://api.github.com/repos/pytorch/pytorch

|

closed

|

DISABLED test_cuda_simple (__main__.TestMultiprocessing)

|

module: multiprocessing module: cuda triaged module: flaky-tests skipped

|

Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_cuda_simple&suite=TestMultiprocessing) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/8793519436).

Over the past 3 hours, it has been determined flaky in 1 workflow(s) with 1 failures and 1 successes.

**Debugging instructions (after clicking on the recent samples link):**

DO NOT BE ALARMED IF THE CI IS GREEN. We now shield flaky tests from developers so CI will thus be green but it will be harder to parse the logs.

To find relevant log snippets:

1. Click on the workflow logs linked above

2. Click on the Test step of the job so that it is expanded. Otherwise, the grepping will not work.

3. Grep for `test_cuda_simple`

4. There should be several instances run (as flaky tests are rerun in CI) from which you can study the logs.
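The grep step above can also be done offline on a downloaded log. The following is a minimal sketch, assuming the log has been saved as plain text; the helper name `grep_log` and the sample log lines are hypothetical and not part of the PyTorch CI tooling.

```python
def grep_log(log_text, test_name, context=0):
    """Return (line_number, surrounding_lines) for each line mentioning test_name.

    line_number is 1-based; context is how many lines to keep on each side.
    """
    lines = log_text.splitlines()
    hits = []
    for index, line in enumerate(lines):
        if test_name in line:
            start = max(0, index - context)
            end = min(len(lines), index + context + 1)
            hits.append((index + 1, lines[start:end]))
    return hits


if __name__ == "__main__":
    # Hypothetical log excerpt; real CI logs will look different.
    sample = "setup\ntest_cuda_simple FAILED\nrerun\ntest_cuda_simple PASSED"
    for number, snippet in grep_log(sample, "test_cuda_simple"):
        print(number, snippet)
```

Because flaky tests are rerun, several hits for the same test name are expected; comparing the failing and passing instances is usually the useful part.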

cc @VitalyFedyunin @ngimel

|

1.0

|

DISABLED test_cuda_simple (__main__.TestMultiprocessing) - Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_cuda_simple&suite=TestMultiprocessing) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/8793519436).

Over the past 3 hours, it has been determined flaky in 1 workflow(s) with 1 failures and 1 successes.

**Debugging instructions (after clicking on the recent samples link):**

DO NOT BE ALARMED IF THE CI IS GREEN. We now shield flaky tests from developers so CI will thus be green but it will be harder to parse the logs.

To find relevant log snippets:

1. Click on the workflow logs linked above

2. Click on the Test step of the job so that it is expanded. Otherwise, the grepping will not work.

3. Grep for `test_cuda_simple`

4. There should be several instances run (as flaky tests are rerun in CI) from which you can study the logs.

cc @VitalyFedyunin @ngimel

|

process

|

disabled test cuda simple main testmultiprocessing platforms linux this test was disabled because it is failing in ci see and the most recent trunk over the past hours it has been determined flaky in workflow s with failures and successes debugging instructions after clicking on the recent samples link do not be alarmed if the ci is green we now shield flaky tests from developers so ci will thus be green but it will be harder to parse the logs to find relevant log snippets click on the workflow logs linked above click on the test step of the job so that it is expanded otherwise the grepping will not work grep for test cuda simple there should be several instances run as flaky tests are rerun in ci from which you can study the logs cc vitalyfedyunin ngimel

| 1

|

60,140

| 3,120,782,434

|

IssuesEvent

|

2015-09-05 01:59:04

|

framingeinstein/issues-test

|

https://api.github.com/repos/framingeinstein/issues-test

|

opened

|

SRP-17: Create Custom Admin Test Functions

|

priority:normal resolution:in-progress type:enhancement

|

Hi Meghan,

It looks like we can create some custom code to set up an admin-only payment method that will allow you to create test orders to send test emails. This would take up to an hour of billable work. If you want us to proceed, just let me know, and I'll get it in the queue (but not for 1.1, at least as of now).

Let me know if you have any questions,

Thanks,

Abe

|

1.0

|

SRP-17: Create Custom Admin Test Functions - Hi Meghan,

It looks like we can create some custom code to set up an admin-only payment method that will allow you to create test orders to send test emails. This would take up to an hour of billable work. If you want us to proceed, just let me know, and I'll get it in the queue (but not for 1.1, at least as of now).

Let me know if you have any questions,

Thanks,

Abe

|

non_process

|

srp create custom admin test functions hi meghan it looks like we can create some custom code to setup an admin only payment method that will allow you to create test orders to send test emails this would take up to an hour of billable work if you want us to proceed just let me know and i ll get it in the que but not for at least as of now let me know if you have any questions thanks abe

| 0

|

89,138

| 3,790,062,917

|

IssuesEvent

|

2016-03-21 20:08:05

|

ReactiveX/rxjs

|

https://api.github.com/repos/ReactiveX/rxjs

|

opened

|

Mono-repo, many packages

|

priority: critical

|

We want to convert the repository into a single repository from which we publish many packages. This means a reorganization of the repository to something more suitable.

Any and all ideas here are welcome. Especially those that come from prior art.

|

1.0

|

Mono-repo, many packages - We want to convert the repository into a single repository from which we publish many packages. This means a reorganization of the repository to something more suitable.

Any and all ideas here are welcome. Especially those that come from prior art.

|

non_process

|

mono repo many packages we want to convert the repository into a single repository from which we publish many packages this means a reorganization of the repository to something more suitable any and all ideas here are welcome especially those that come from prior art

| 0

|

445,094

| 12,826,084,727

|

IssuesEvent

|

2020-07-06 15:57:43

|

eclipse/dirigible

|

https://api.github.com/repos/eclipse/dirigible

|

closed

|

[EDM] Projection Entity type to be introduced

|

component-ide efforts-low priority-medium templates usability web-ide

|

An Entity that belongs to an external model (file) and is used only as a projection.

No editing of any attribute or property is allowed in this case.

No generation of any output artefact is expected for this type.

|

1.0

|

[EDM] Projection Entity type to be introduced - An Entity that belongs to an external model (file) and is used only as a projection.

No editing of any attribute or property is allowed in this case.

No generation of any output artefact is expected for this type.

|

non_process

|

projection entity type to be introduced an entity which belongs to an external model file and it is used only as a projection no editing of any attribute or property is allowed in this case no generation of any output artefact is expected for this type

| 0

|

107,278

| 23,382,330,095

|

IssuesEvent

|

2022-08-11 10:38:58

|

dotnet/runtime

|

https://api.github.com/repos/dotnet/runtime

|

closed

|

Test failure JIT/HardwareIntrinsics/Arm/AdvSimd.Arm64/AdvSimd.Arm64_Part3_r/AdvSimd.Arm64_Part3_r.sh

|

arch-arm64 os-mac-os-x GCStress area-CodeGen-coreclr blocking-clean-ci-optional

|

Run: [runtime-coreclr gcstress-extra 20220724.1](https://dev.azure.com/dnceng/public/_build/results?buildId=1900569&view=ms.vss-test-web.build-test-results-tab&runId=49457008&paneView=debug&resultId=108429)

Failed test:

```

coreclr OSX arm64 Checked gcstress0xc_zapdisable_heapverify1 @ OSX.1200.ARM64.Open

- JIT/HardwareIntrinsics/Arm/AdvSimd.Arm64/AdvSimd.Arm64_Part3_r/AdvSimd.Arm64_Part3_r.sh

```

**Error message:**

```

[createdump] Invalid process id: task_for_pid(4529) FAILED (os/kern) failure (5)

[createdump] This failure may be because createdump or the application is not properly signed and entitled.

[createdump] Failure took 0ms

/private/tmp/helix/working/A9B009AA/w/B9F709BA/e/JIT/HardwareIntrinsics/Arm/AdvSimd.Arm64/AdvSimd.Arm64_Part3_r/AdvSimd.Arm64_Part3_r.sh: line 373: 4529 Segmentation fault: 11 (core dumped) $LAUNCHER $ExePath "${CLRTestExecutionArguments[@]}"

Return code: 1

Raw output file: /tmp/helix/working/A9B009AA/w/B9F709BA/uploads/Reports/JIT.HardwareIntrinsics/Arm/AdvSimd.Arm64/AdvSimd.Arm64_Part3_r/AdvSimd.Arm64_Part3_r.output.txt

Raw output:

BEGIN EXECUTION

/tmp/helix/working/A9B009AA/p/corerun -p System.Reflection.Metadata.MetadataUpdater.IsSupported=false AdvSimd.Arm64_Part3_r.dll ''

Supported ISAs:

AdvSimd: True

Aes: True

ArmBase: True

Crc32: True

Dp: True

Rdm: True

Sha1: True

Sha256: True

Beginning test case MaxNumberPairwise.Vector128.Single at 7/24/2022 4:35:16 PM

Random seed: 20010415; set environment variable CORECLR_SEED to this value to repro

Beginning scenario: RunBasicScenario_UnsafeRead

Beginning scenario: RunBasicScenario_Load

Beginning scenario: RunReflectionScenario_UnsafeRead

Beginning scenario: RunReflectionScenario_Load

Beginning scenario: RunClsVarScenario

Beginning scenario: RunClsVarScenario_Load

Beginning scenario: RunLclVarScenario_UnsafeRead

Beginning scenario: RunLclVarScenario_Load

Beginning scenario: RunClassLclFldScenario

Beginning scenario: RunClassLclFldScenario_Load

Beginning scenario: RunClassFldScenario

Beginning scenario: RunClassFldScenario_Load

Beginning scenario: RunStructLclFldScenario

Beginning scenario: RunStructLclFldScenario_Load

Beginning scenario: RunStructFldScenario

Beginning scenario: RunStructFldScenario_Load

Ending test case at 7/24/2022 4:35:26 PM

Beginning test case MaxNumberPairwiseScalar.Vector64.Single at 7/24/2022 4:35:26 PM

Random seed: 20010415; set environment variable CORECLR_SEED to this value to repro

Beginning scenario: RunBasicScenario_UnsafeRead

Beginning scenario: RunBasicScenario_Load

Beginning scenario: RunReflectionScenario_UnsafeRead

Beginning scenario: RunReflectionScenario_Load

Beginning scenario: RunClsVarScenario

Beginning scenario: RunClsVarScenario_Load

Beginning scenario: RunLclVarScenario_UnsafeRead

Beginning scenario: RunLclVarScenario_Load

Beginning scenario: RunClassLclFldScenario

Beginning scenario: RunClassLclFldScenario_Load

Beginning scenario: RunClassFldScenario

Beginning scenario: RunClassFldScenario_Load

Beginning scenario: RunStructLclFldScenario

Beginning scenario: RunStructLclFldScenario_Load

Beginning scenario: RunStructFldScenario

Beginning scenario: RunStructFldScenario_Load

Ending test case at 7/24/2022 4:35:28 PM

Beginning test case MaxNumberPairwiseScalar.Vector128.Double at 7/24/2022 4:35:28 PM

Random seed: 20010415; set environment variable CORECLR_SEED to this value to repro

Beginning scenario: RunBasicScenario_UnsafeRead

Beginning scenario: RunBasicScenario_Load

Beginning scenario: RunReflectionScenario_UnsafeRead

Beginning scenario: RunReflectionScenario_Load

Beginning scenario: RunClsVarScenario

Beginning scenario: RunClsVarScenario_Load

Beginning scenario: RunLclVarScenario_UnsafeRead

Beginning scenario: RunLclVarScenario_Load

Beginning scenario: RunClassLclFldScenario

Beginning scenario: RunClassLclFldScenario_Load

Beginning scenario: RunClassFldScenario

Beginning scenario: RunClassFldScenario_Load

Beginning scenario: RunStructLclFldScenario

Beginning scenario: RunStructLclFldScenario_Load

Beginning scenario: RunStructFldScenario

Beginning scenario: RunStructFldScenario_Load

Ending test case at 7/24/2022 4:35:30 PM

Beginning test case MaxPairwise.Vector128.Byte at 7/24/2022 4:35:30 PM

Random seed: 20010415; set environment variable CORECLR_SEED to this value to repro

Beginning scenario: RunBasicScenario_UnsafeRead

Beginning scenario: RunBasicScen

Stack trace

at JIT_HardwareIntrinsics._Arm_AdvSimd_Arm64_AdvSimd_Arm64_Part3_r_AdvSimd_Arm64_Part3_r_._Arm_AdvSimd_Arm64_AdvSimd_Arm64_Part3_r_AdvSimd_Arm64_Part3_r_sh()

at System.RuntimeMethodHandle.InvokeMethod(Object target, Void** arguments, Signature sig, Boolean isConstructor)

at System.Reflection.MethodInvoker.Invoke(Object obj, IntPtr* args, BindingFlags invokeAttr)

```
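The log above says to set `CORECLR_SEED` to the printed seed to reproduce the run. A minimal sketch of pulling that seed out of a saved log and preparing an environment for a rerun follows; the function name `repro_environment` is hypothetical, and the sketch only assumes the "Random seed: ...; set environment variable ..." line format shown in the log.

```python
import os
import re

# Matches lines such as:
# "Random seed: 20010415; set environment variable CORECLR_SEED to this value to repro"
SEED_PATTERN = re.compile(r"Random seed: (\d+); set environment variable (\w+)")


def repro_environment(log_text, base_env=None):
    """Return a copy of the environment with the seed variable from the log set."""
    match = SEED_PATTERN.search(log_text)
    if match is None:
        raise ValueError("no random seed line found in log")
    seed, variable = match.groups()
    env = dict(os.environ if base_env is None else base_env)
    env[variable] = seed
    return env


if __name__ == "__main__":
    line = ("Random seed: 20010415; set environment variable "
            "CORECLR_SEED to this value to repro")
    print(repro_environment(line, base_env={}))
```

The returned dictionary can be passed as the `env` argument of `subprocess.run` when launching the test script locally; the exact command to launch depends on the local test layout and is not shown here.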

|

1.0

|

Test failure JIT/HardwareIntrinsics/Arm/AdvSimd.Arm64/AdvSimd.Arm64_Part3_r/AdvSimd.Arm64_Part3_r.sh - Run: [runtime-coreclr gcstress-extra 20220724.1](https://dev.azure.com/dnceng/public/_build/results?buildId=1900569&view=ms.vss-test-web.build-test-results-tab&runId=49457008&paneView=debug&resultId=108429)

Failed test:

```

coreclr OSX arm64 Checked gcstress0xc_zapdisable_heapverify1 @ OSX.1200.ARM64.Open

- JIT/HardwareIntrinsics/Arm/AdvSimd.Arm64/AdvSimd.Arm64_Part3_r/AdvSimd.Arm64_Part3_r.sh

```

**Error message:**

```

[createdump] Invalid process id: task_for_pid(4529) FAILED (os/kern) failure (5)

[createdump] This failure may be because createdump or the application is not properly signed and entitled.

[createdump] Failure took 0ms

/private/tmp/helix/working/A9B009AA/w/B9F709BA/e/JIT/HardwareIntrinsics/Arm/AdvSimd.Arm64/AdvSimd.Arm64_Part3_r/AdvSimd.Arm64_Part3_r.sh: line 373: 4529 Segmentation fault: 11 (core dumped) $LAUNCHER $ExePath "${CLRTestExecutionArguments[@]}"

Return code: 1

Raw output file: /tmp/helix/working/A9B009AA/w/B9F709BA/uploads/Reports/JIT.HardwareIntrinsics/Arm/AdvSimd.Arm64/AdvSimd.Arm64_Part3_r/AdvSimd.Arm64_Part3_r.output.txt

Raw output:

BEGIN EXECUTION

/tmp/helix/working/A9B009AA/p/corerun -p System.Reflection.Metadata.MetadataUpdater.IsSupported=false AdvSimd.Arm64_Part3_r.dll ''

Supported ISAs:

AdvSimd: True

Aes: True

ArmBase: True

Crc32: True

Dp: True

Rdm: True

Sha1: True

Sha256: True

Beginning test case MaxNumberPairwise.Vector128.Single at 7/24/2022 4:35:16 PM

Random seed: 20010415; set environment variable CORECLR_SEED to this value to repro

Beginning scenario: RunBasicScenario_UnsafeRead

Beginning scenario: RunBasicScenario_Load

Beginning scenario: RunReflectionScenario_UnsafeRead

Beginning scenario: RunReflectionScenario_Load

Beginning scenario: RunClsVarScenario

Beginning scenario: RunClsVarScenario_Load

Beginning scenario: RunLclVarScenario_UnsafeRead

Beginning scenario: RunLclVarScenario_Load

Beginning scenario: RunClassLclFldScenario

Beginning scenario: RunClassLclFldScenario_Load

Beginning scenario: RunClassFldScenario

Beginning scenario: RunClassFldScenario_Load

Beginning scenario: RunStructLclFldScenario

Beginning scenario: RunStructLclFldScenario_Load

Beginning scenario: RunStructFldScenario

Beginning scenario: RunStructFldScenario_Load

Ending test case at 7/24/2022 4:35:26 PM

Beginning test case MaxNumberPairwiseScalar.Vector64.Single at 7/24/2022 4:35:26 PM

Random seed: 20010415; set environment variable CORECLR_SEED to this value to repro

Beginning scenario: RunBasicScenario_UnsafeRead

Beginning scenario: RunBasicScenario_Load

Beginning scenario: RunReflectionScenario_UnsafeRead

Beginning scenario: RunReflectionScenario_Load

Beginning scenario: RunClsVarScenario

Beginning scenario: RunClsVarScenario_Load

Beginning scenario: RunLclVarScenario_UnsafeRead

Beginning scenario: RunLclVarScenario_Load

Beginning scenario: RunClassLclFldScenario

Beginning scenario: RunClassLclFldScenario_Load

Beginning scenario: RunClassFldScenario

Beginning scenario: RunClassFldScenario_Load

Beginning scenario: RunStructLclFldScenario

Beginning scenario: RunStructLclFldScenario_Load

Beginning scenario: RunStructFldScenario

Beginning scenario: RunStructFldScenario_Load

Ending test case at 7/24/2022 4:35:28 PM

Beginning test case MaxNumberPairwiseScalar.Vector128.Double at 7/24/2022 4:35:28 PM

Random seed: 20010415; set environment variable CORECLR_SEED to this value to repro

Beginning scenario: RunBasicScenario_UnsafeRead

Beginning scenario: RunBasicScenario_Load

Beginning scenario: RunReflectionScenario_UnsafeRead

Beginning scenario: RunReflectionScenario_Load

Beginning scenario: RunClsVarScenario

Beginning scenario: RunClsVarScenario_Load

Beginning scenario: RunLclVarScenario_UnsafeRead

Beginning scenario: RunLclVarScenario_Load

Beginning scenario: RunClassLclFldScenario

Beginning scenario: RunClassLclFldScenario_Load

Beginning scenario: RunClassFldScenario

Beginning scenario: RunClassFldScenario_Load

Beginning scenario: RunStructLclFldScenario

Beginning scenario: RunStructLclFldScenario_Load

Beginning scenario: RunStructFldScenario

Beginning scenario: RunStructFldScenario_Load

Ending test case at 7/24/2022 4:35:30 PM

Beginning test case MaxPairwise.Vector128.Byte at 7/24/2022 4:35:30 PM

Random seed: 20010415; set environment variable CORECLR_SEED to this value to repro

Beginning scenario: RunBasicScenario_UnsafeRead

Beginning scenario: RunBasicScen

Stack trace

at JIT_HardwareIntrinsics._Arm_AdvSimd_Arm64_AdvSimd_Arm64_Part3_r_AdvSimd_Arm64_Part3_r_._Arm_AdvSimd_Arm64_AdvSimd_Arm64_Part3_r_AdvSimd_Arm64_Part3_r_sh()

at System.RuntimeMethodHandle.InvokeMethod(Object target, Void** arguments, Signature sig, Boolean isConstructor)

at System.Reflection.MethodInvoker.Invoke(Object obj, IntPtr* args, BindingFlags invokeAttr)

```

|

non_process

|

test failure jit hardwareintrinsics arm advsimd advsimd r advsimd r sh run failed test coreclr osx checked zapdisable osx open jit hardwareintrinsics arm advsimd advsimd r advsimd r sh error message invalid process id task for pid failed os kern failure this failure may be because createdump or the application is not properly signed and entitled failure took private tmp helix working w e jit hardwareintrinsics arm advsimd advsimd r advsimd r sh line segmentation fault core dumped launcher exepath clrtestexecutionarguments return code raw output file tmp helix working w uploads reports jit hardwareintrinsics arm advsimd advsimd r advsimd r output txt raw output begin execution tmp helix working p corerun p system reflection metadata metadataupdater issupported false advsimd r dll supported isas advsimd true aes true armbase true true dp true rdm true true true beginning test case maxnumberpairwise single at pm random seed set environment variable coreclr seed to this value to repro beginning scenario runbasicscenario unsaferead beginning scenario runbasicscenario load beginning scenario runreflectionscenario unsaferead beginning scenario runreflectionscenario load beginning scenario runclsvarscenario beginning scenario runclsvarscenario load beginning scenario runlclvarscenario unsaferead beginning scenario runlclvarscenario load beginning scenario runclasslclfldscenario beginning scenario runclasslclfldscenario load beginning scenario runclassfldscenario beginning scenario runclassfldscenario load beginning scenario runstructlclfldscenario beginning scenario runstructlclfldscenario load beginning scenario runstructfldscenario beginning scenario runstructfldscenario load ending test case at pm beginning test case maxnumberpairwisescalar single at pm random seed set environment variable coreclr seed to this value to repro beginning scenario runbasicscenario unsaferead beginning scenario runbasicscenario load beginning scenario runreflectionscenario unsaferead 
beginning scenario runreflectionscenario load beginning scenario runclsvarscenario beginning scenario runclsvarscenario load beginning scenario runlclvarscenario unsaferead beginning scenario runlclvarscenario load beginning scenario runclasslclfldscenario beginning scenario runclasslclfldscenario load beginning scenario runclassfldscenario beginning scenario runclassfldscenario load beginning scenario runstructlclfldscenario beginning scenario runstructlclfldscenario load beginning scenario runstructfldscenario beginning scenario runstructfldscenario load ending test case at pm beginning test case maxnumberpairwisescalar double at pm random seed set environment variable coreclr seed to this value to repro beginning scenario runbasicscenario unsaferead beginning scenario runbasicscenario load beginning scenario runreflectionscenario unsaferead beginning scenario runreflectionscenario load beginning scenario runclsvarscenario beginning scenario runclsvarscenario load beginning scenario runlclvarscenario unsaferead beginning scenario runlclvarscenario load beginning scenario runclasslclfldscenario beginning scenario runclasslclfldscenario load beginning scenario runclassfldscenario beginning scenario runclassfldscenario load beginning scenario runstructlclfldscenario beginning scenario runstructlclfldscenario load beginning scenario runstructfldscenario beginning scenario runstructfldscenario load ending test case at pm beginning test case maxpairwise byte at pm random seed set environment variable coreclr seed to this value to repro beginning scenario runbasicscenario unsaferead beginning scenario runbasicscen stack trace at jit hardwareintrinsics arm advsimd advsimd r advsimd r arm advsimd advsimd r advsimd r sh at system runtimemethodhandle invokemethod object target void arguments signature sig boolean isconstructor at system reflection methodinvoker invoke object obj intptr args bindingflags invokeattr

| 0

|

11,757

| 14,591,552,135

|

IssuesEvent

|

2020-12-19 13:34:16

|

symfony/symfony

|

https://api.github.com/repos/symfony/symfony

|

closed

|

symfony/process returns empty outputs on IIS

|

Bug Process Status: Needs Review

|

| Q | A

| ---------------- | -----

| Bug report? | yes

| Feature request? | no

| BC Break report? | no

| RFC? | no

| Symfony version | 3.3.11

Hello,

I'm using PHP 7.1 on IIS 7.5 and symfony/process always returns an empty output.

Here is a test case:

```php

<?php

use Symfony\Component\Process\Process;

require_once __DIR__.'/vendor/autoload.php';

//This returns an output

var_dump(shell_exec('dir'));

$process = new Process('dir');

$process->mustRun();

//These return empty strings

var_dump(

$process->getOutput(),

$process->getErrorOutput()

);

```

What's strange is that it works when calling PHP (same binary) from the command line.

And of course the same code works fine on my Linux/Apache server.

I tested various other commands (`cd`, `Python.exe`) and I always get the same empty result.

`mustRun()` does throw an exception if the command does not exist:

```

PHP Fatal error: Uncaught Symfony\Component\Process\Exception\ProcessFailedException: The command "foobar" failed.

Exit Code: 1(General error)

Working directory: C:\inetpub\wwwroot

Output:

================

Error Output:

================

in C:\inetpub\wwwroot\vendor\symfony\process\Process.php:241

Stack trace:

#0 C:\inetpub\wwwroot\test.php(11): Symfony\Component\Process\Process->mustRun()

#1 {main}

thrown in C:\inetpub\wwwroot\vendor\symfony\process\Process.php on line 241

```

Edit: I also tried configuring IIS to use another PHP binary (installed with Chocolatey) and I get the same issue, so I guess it is linked to IIS itself.

|

1.0

|

symfony/process returns empty outputs on IIS - | Q | A

| ---------------- | -----

| Bug report? | yes

| Feature request? | no

| BC Break report? | no

| RFC? | no

| Symfony version | 3.3.11

Hello,

I'm using PHP 7.1 on IIS 7.5 and symfony/process always returns an empty output.

Here is a test case:

```php

<?php

use Symfony\Component\Process\Process;

require_once __DIR__.'/vendor/autoload.php';

//This returns an output

var_dump(shell_exec('dir'));

$process = new Process('dir');

$process->mustRun();

//These return empty strings

var_dump(

$process->getOutput(),

$process->getErrorOutput()

);

```

What's strange is that it works when calling PHP (same binary) from the command line.

And of course the same code works fine on my Linux/Apache server.

I tested various other commands (`cd`, `Python.exe`) and I always get the same empty result.

`mustRun()` does throw an exception if the command does not exist:

```

PHP Fatal error: Uncaught Symfony\Component\Process\Exception\ProcessFailedException: The command "foobar" failed.

Exit Code: 1(General error)

Working directory: C:\inetpub\wwwroot

Output:

================

Error Output:

================

in C:\inetpub\wwwroot\vendor\symfony\process\Process.php:241

Stack trace:

#0 C:\inetpub\wwwroot\test.php(11): Symfony\Component\Process\Process->mustRun()

#1 {main}

thrown in C:\inetpub\wwwroot\vendor\symfony\process\Process.php on line 241

```

Edit: I also tried configuring IIS to use another PHP binary (installed with Chocolatey) and I get the same issue, so I guess it is linked to IIS itself.

|

process

|

symfony process returns empty outputs on iis q a bug report yes feature request no bc break report no rfc no symfony version hello i m using php on iis and symfony process always returns an empty output here is a test case php php use symfony component process process require once dir vendor autoload php this returns an output var dump shell exec dir process new process dir process mustrun these return empty strings var dump process getoutput process geterroroutput what s strange is that it works when calling php same binary from the commandline and of course the same code works fine on my linux apache server i tested various other commands cd python exe and i always get the same empty result mustrun does throw an exception if the command does not exist php fatal error uncaught symfony component process exception processfailedexception the command foobar failed exit code general error working directory c inetpub wwwroot output error output in c inetpub wwwroot vendor symfony process process php stack trace c inetpub wwwroot test php symfony component process process mustrun main thrown in c inetpub wwwroot vendor symfony process process php on line edit i also tried configuring iis to use another php binary installed with chocolatey and i get the same issue so i guess it is linked to iis itself

| 1

|

92,620

| 8,373,219,296

|

IssuesEvent

|

2018-10-05 09:39:34

|

andrewwood2/acebook-gazelle

|

https://api.github.com/repos/andrewwood2/acebook-gazelle

|

closed

|

B. Set up front end feature testing framework

|

in progress test

|

Set up feature testing for the React front end, using Mocha, Zombie, or a similar tool.

|

1.0

|

B. Set up front end feature testing framework - Set up feature testing for the React front end, using Mocha, Zombie, or a similar tool.

|

non_process

|

b set up front end feature testing framework set up feature testing for react front end either mocha zombie etc

| 0

|

41,197

| 10,331,326,860

|

IssuesEvent

|

2019-09-02 17:33:01

|

davidjamesca/ctypesgen

|

https://api.github.com/repos/davidjamesca/ctypesgen

|

closed

|

Tests failing to find libc.so.6 and libm.so.6 on 64bit Ubuntu (SVN r147)

|

Priority-Medium Type-Defect auto-migrated

|

```

What steps will reproduce the problem?

1. Checkout the code

2. Go into the test directory

3. Run "./testsuite.py"

What is the expected output? What do you see instead?

I expect to see all tests pass instead I see 11 errors all like this:

======================================================================

ERROR: test_bad_args_string_not_number (__main__.MathTest)

Based on math_functions.py

----------------------------------------------------------------------

Traceback (most recent call last):

File "./testsuite.py", line 252, in setUp

self.module, output = ctypesgentest.test(header_str, libraries=libraries, all_headers=True)

File "/home/jlisee/projects/ctypesgen-read-only/test/ctypesgentest.py", line 52, in test

module = __import__("temp")

File "/home/jlisee/projects/ctypesgen-read-only/test/temp.py", line 598, in <module>

_libs["libm.so.6"] = load_library("libm.so.6")

File "/home/jlisee/projects/ctypesgen-read-only/test/temp.py", line 367, in load_library

raise ImportError("%s not found." % libname)

ImportError: libm.so.6 not found

What version of the product are you using? On what operating system?

Ubuntu 12.04 64bit, SVN r147.

Please provide any additional information below.

I have attached a patch to fix the issue. There are still 3 tests failing with

"AttributeError: type object 'c_uint' has no attribute '_fields_'" in the

generated "temp.py" file.

```

Original issue reported on code.google.com by `jli...@gmail.com` on 28 Feb 2013 at 3:02

Attachments:

- [Ubuntu_64bit_fix.patch](https://storage.googleapis.com/google-code-attachments/ctypesgen/issue-39/comment-0/Ubuntu_64bit_fix.patch)
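The traceback above comes from a loader that raises `ImportError` when `libm.so.6` cannot be located. As an illustration only (this is not the attached patch and not ctypesgen's own loader), one way to make such a lookup more tolerant is to try several candidate names in order. The helper names `load_first_available` and `libm_candidates` are hypothetical.

```python
import ctypes
import ctypes.util


def load_first_available(candidates, loader=ctypes.CDLL):
    """Return (name, handle) for the first candidate the loader accepts.

    Empty or None candidates are skipped; OSError from the loader means
    "try the next one". ImportError is raised if nothing loads.
    """
    errors = []
    for name in candidates:
        if not name:
            continue
        try:
            return name, loader(name)
        except OSError as exc:
            errors.append(f"{name}: {exc}")
    raise ImportError("no candidate could be loaded: " + "; ".join(errors))


def libm_candidates():
    """Candidate names for the C math library, most specific lookup first."""
    # find_library consults the system's own search mechanism and may return None.
    return [ctypes.util.find_library("m"), "libm.so.6"]


if __name__ == "__main__":
    name, handle = load_first_available(libm_candidates())
    print("loaded", name)
```

Letting the dynamic linker resolve the bare name, rather than probing fixed directories, is what tends to matter on multiarch 64-bit systems, where libraries may live under architecture-specific paths.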

|

1.0

|

Tests failing to find libc.so.6 and libm.so.6 on 64bit Ubuntu (SVN r147) - ```

What steps will reproduce the problem?

1. Checkout the code

2. Go into the test directory

3. Run "./testsuite.py"

What is the expected output? What do you see instead?

I expect to see all tests pass instead I see 11 errors all like this:

======================================================================

ERROR: test_bad_args_string_not_number (__main__.MathTest)

Based on math_functions.py

----------------------------------------------------------------------

Traceback (most recent call last):

File "./testsuite.py", line 252, in setUp

self.module, output = ctypesgentest.test(header_str, libraries=libraries, all_headers=True)

File "/home/jlisee/projects/ctypesgen-read-only/test/ctypesgentest.py", line 52, in test

module = __import__("temp")

File "/home/jlisee/projects/ctypesgen-read-only/test/temp.py", line 598, in <module>

_libs["libm.so.6"] = load_library("libm.so.6")

File "/home/jlisee/projects/ctypesgen-read-only/test/temp.py", line 367, in load_library

raise ImportError("%s not found." % libname)

ImportError: libm.so.6 not found

What version of the product are you using? On what operating system?

Ubuntu 12.04 64bit, SVN r147.

Please provide any additional information below.

I have attached a patch to fix the issue. There are still 3 tests failing with

"AttributeError: type object 'c_uint' has no attribute '_fields_'" in the

generated "temp.py" file.

```

Original issue reported on code.google.com by `jli...@gmail.com` on 28 Feb 2013 at 3:02

Attachments:

- [Ubuntu_64bit_fix.patch](https://storage.googleapis.com/google-code-attachments/ctypesgen/issue-39/comment-0/Ubuntu_64bit_fix.patch)

|

non_process

|

tests failing to find libc so and libm so on ubuntu svn what steps will reproduce the problem checkout the code go into the test directory run testsuite py what is the expected output what do you see instead i expect to see all tests pass instead i see errors all like this error test bad args string not number main mathtest based on math functions py traceback most recent call last file testsuite py line in setup self module output ctypesgentest test header str libraries libraries all headers true file home jlisee projects ctypesgen read only test ctypesgentest py line in test module import temp file home jlisee projects ctypesgen read only test temp py line in libs load library libm so file home jlisee projects ctypesgen read only test temp py line in load library raise importerror s not found libname importerror libm so not found what version of the product are you using on what operating system ubuntu svn please provide any additional information below i have attached a patch to fix the issue there are still tests failing with attributeerror type object c uint has no attribute fields in the generated temp py file original issue reported on code google com by jli gmail com on feb at attachments

| 0

|

757,685

| 26,524,390,024

|

IssuesEvent

|

2023-01-19 07:18:54

|

pystardust/ani-cli

|

https://api.github.com/repos/pystardust/ani-cli

|

closed

|

Episodes not released yet!

|

type: bug priority 2: medium

|

Version: 3.4.0

OS: Debian 11

Shell: Bash 5.1.4

Anime: JoJo

I get an error "Episodes not released yet!" no matter the anime I choose

1. Run `ani-cli JoJo`

2. Choose 1

3. Episodes not released yet!

|

1.0

|

Episodes not released yet! - Version: 3.4.0

OS: Debian 11

Shell: Bash 5.1.4

Anime: JoJo

I get an error "Episodes not released yet!" no matter the anime I choose

1. Run `ani-cli JoJo`

2. Choose 1

3. Episodes not released yet!

|

non_process

|

episodes not released yet version os debian shell bash anime jojo i get an error episodes not released yet no matter the anime i choose run ani cli jojo choose episodes not released yet

| 0

|

276,637

| 20,993,384,598

|

IssuesEvent

|

2022-03-29 11:25:21

|

Sitecore/developer-portal

|

https://api.github.com/repos/Sitecore/developer-portal

|

closed

|

Create DevOps guide for Managed Cloud Containers

|

documentation

|

Need an article added to the Getting Started section (`/learn/getting-started`) area that can pull together all the documentation and steps a developer may need to get started with container GitOps for Managed Cloud Containers.

We also don't have a Managed Cloud product area on the site yet, so that will need to be added as well so that this guide can be found.

|

1.0

|

Create DevOps guide for Managed Cloud Containers - An article needs to be added to the Getting Started section (`/learn/getting-started`) that pulls together all the documentation and steps a developer may need to get started with container GitOps for Managed Cloud Containers.

We also don't have a Managed Cloud product area on the site yet, so that will need to be added as well so that this guide can be found.

|

non_process

|

create devops guide for managed cloud containers need an article added to the getting started section learn getting started area that can pull together all the documentation and steps a developer may need to get started with container gitops for managed cloud containers we also don t have a managed cloud product area on the site yet so that will need to be added as well so that this guide can be found

| 0

|

10,920

| 13,697,018,917

|

IssuesEvent

|

2020-10-01 01:46:37

|

opendistro-for-elasticsearch/opendistro-build

|

https://api.github.com/repos/opendistro-for-elasticsearch/opendistro-build

|

closed

|

Release ODFE 1.10.1 based on ES 7.9.1

|

in process infra new release

|

ODFE 1.10.1 based on ES 7.9.1

**(Note: ODFE 1.10.0 is skipped for now, as we prefer to release ODFE 1.10.1 for ES 7.9.1. This is to avoid a memory leak in Lucene 8.6.0 and 8.6.1 (ES 7.9.1 has Lucene 8.6.2) https://github.com/elastic/elasticsearch/issues/61512)**

Release Engineering / Build Repo Key Changes:

* KNNLib will now use wildcard to resolve hardcoded version issues ([#359](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/359))

* Docker allows elasticsearch user to access logs under supervisord folder ([#271](https://github.com/opendistro-for-elasticsearch/performance-analyzer-rca/pull/271), [#146](https://github.com/opendistro-for-elasticsearch/performance-analyzer/pull/146), [#320](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/320))

* Implement Version Cuts for consistent distribution release builds ([#357](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/357))

* Add descriptions for several scripts with usage documentation ([#334](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/334))

* Update opendistro-build github repo issues link ([#382](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/382))

* Disable optimizations for KNNLib compilation in docker image creation ([#384](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/384))

* HELM allows customizing docker registry, thanks @tareqhs ([#358](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/358))

* HELM Kibana ingress path fix, thanks @Hokwang ([#340](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/340))

* HELM master nodes allow extraVolumeMounts when securityconfig is disabled, thanks @aplhk ([#366](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/366))

* HELM Readme Update, thanks @dmpe ([#380](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/380) [#385](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/385))

* Kibana has new cookie settings for security kibana plugin 2.0 framework ([#397](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/397))

------

### ODFE 1.10.1 Released as of 2020/09/30

* Downloads [Here](https://opendistro.github.io/for-elasticsearch/downloads.html)

* [1.10.1 Release Notes](https://github.com/opendistro-for-elasticsearch/opendistro-build/blob/master/release-notes/opendistro-for-elasticsearch-release-notes-1.10.1.md)

* [1.10.1 Blog Post](https://opendistro.github.io/for-elasticsearch/blog/odfe-updates/2020/09/Open-Distro-for-Elasticsearch-1.10.1-is-released/)

------

ODFE 1.10.0 post for backup purposes: https://github.com/opendistro-for-elasticsearch/opendistro-build/issues/350

|

1.0

|

Release ODFE 1.10.1 based on ES 7.9.1 - ODFE 1.10.1 based on ES 7.9.1

**(Note: ODFE 1.10.0 is skipped for now, as we prefer to release ODFE 1.10.1 for ES 7.9.1. This is to avoid a memory leak in Lucene 8.6.0 and 8.6.1 (ES 7.9.1 has Lucene 8.6.2) https://github.com/elastic/elasticsearch/issues/61512)**

Release Engineering / Build Repo Key Changes:

* KNNLib will now use wildcard to resolve hardcoded version issues ([#359](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/359))

* Docker allows elasticsearch user to access logs under supervisord folder ([#271](https://github.com/opendistro-for-elasticsearch/performance-analyzer-rca/pull/271), [#146](https://github.com/opendistro-for-elasticsearch/performance-analyzer/pull/146), [#320](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/320))

* Implement Version Cuts for consistent distribution release builds ([#357](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/357))

* Add descriptions for several scripts with usage documentation ([#334](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/334))

* Update opendistro-build github repo issues link ([#382](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/382))

* Disable optimizations for KNNLib compilation in docker image creation ([#384](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/384))

* HELM allows customizing docker registry, thanks @tareqhs ([#358](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/358))

* HELM Kibana ingress path fix, thanks @Hokwang ([#340](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/340))

* HELM master nodes allow extraVolumeMounts when securityconfig is disabled, thanks @aplhk ([#366](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/366))

* HELM Readme Update, thanks @dmpe ([#380](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/380) [#385](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/385))

* Kibana has new cookie settings for security kibana plugin 2.0 framework ([#397](https://github.com/opendistro-for-elasticsearch/opendistro-build/pull/397))

------

### ODFE 1.10.1 Released as of 2020/09/30

* Downloads [Here](https://opendistro.github.io/for-elasticsearch/downloads.html)

* [1.10.1 Release Notes](https://github.com/opendistro-for-elasticsearch/opendistro-build/blob/master/release-notes/opendistro-for-elasticsearch-release-notes-1.10.1.md)

* [1.10.1 Blog Post](https://opendistro.github.io/for-elasticsearch/blog/odfe-updates/2020/09/Open-Distro-for-Elasticsearch-1.10.1-is-released/)

------

ODFE 1.10.0 post for backup purposes: https://github.com/opendistro-for-elasticsearch/opendistro-build/issues/350

|

process

|

release odfe based on es odfe based on es note odfe is skipped right now as we prefer to release odfe for es this is to avoid memory leak in lucene and es has lucene release engineering build repo key changes knnlib will now use wildcard to resolve hardcoded version issues docker allows elasticsearch user to access logs under supervisord folder implement version cuts for consistent distribution release builds add descriptions for several scripts with usage documentations update opendistro build github repo issues link disable optimizations for knnlib compilation in docker image creation helm allows customizing docker registry thanks tareqhs helm kibana ingress path fix thanks hokwang helm master nodes allows extravolumemounts when securityconfig disabled thanks aplhk helm readme update thanks dmpe kibana has new cookie settings for security kibana plugin framework odfe released as of downloads odfe post for backup purposes

| 1

|

69,037

| 7,122,685,713

|

IssuesEvent

|

2018-01-19 12:49:36

|

nodejs/node

|

https://api.github.com/repos/nodejs/node

|

opened

|

Flaky http2-settings-flood

|

CI / flaky test freebsd

|

Multiple timeouts on this test:

https://ci.nodejs.org/job/node-test-commit-freebsd/14756/nodes=freebsd10-64/console

https://ci.nodejs.org/job/node-test-commit-freebsd/14746/nodes=freebsd10-64/console

```

not ok 2035 sequential/test-http2-settings-flood

---

duration_ms: 120.105

severity: fail

stack: |-

timeout

```

|

1.0

|

Flaky http2-settings-flood - Multiple timeouts on this test:

https://ci.nodejs.org/job/node-test-commit-freebsd/14756/nodes=freebsd10-64/console

https://ci.nodejs.org/job/node-test-commit-freebsd/14746/nodes=freebsd10-64/console

```

not ok 2035 sequential/test-http2-settings-flood

---

duration_ms: 120.105

severity: fail

stack: |-

timeout

```

|

non_process

|

flaky settings flood multiple timeouts on this test not ok sequential test settings flood duration ms severity fail stack timeout

| 0

|

618,568

| 19,474,854,104

|

IssuesEvent

|

2021-12-24 10:07:40

|

MartinXPN/profound.academy

|

https://api.github.com/repos/MartinXPN/profound.academy

|

closed