Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 7

112

| repo_url

stringlengths 36

141

| action

stringclasses 3

values | title

stringlengths 1

744

| labels

stringlengths 4

574

| body

stringlengths 9

211k

| index

stringclasses 10

values | text_combine

stringlengths 96

211k

| label

stringclasses 2

values | text

stringlengths 96

188k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

221

| 2,649,432,275

|

IssuesEvent

|

2015-03-14 22:21:53

|

eiskaltdcpp/eiskaltdcpp

|

https://api.github.com/repos/eiskaltdcpp/eiskaltdcpp

|

opened

|

gcc -Wall + Qt UI

|

imported Type-DevProcess

|

_From [egikpetrov](https://code.google.com/u/egikpetrov/) on March 04, 2012 02:18:49_

было бы неплохо пофиксить все варнинги для -Wall в qt ui

**Attachment:** [-Wall.list](http://code.google.com/p/eiskaltdc/issues/detail?id=1295)

_Original issue: http://code.google.com/p/eiskaltdc/issues/detail?id=1295_

|

1.0

|

gcc -Wall + Qt UI - _From [egikpetrov](https://code.google.com/u/egikpetrov/) on March 04, 2012 02:18:49_

было бы неплохо пофиксить все варнинги для -Wall в qt ui

**Attachment:** [-Wall.list](http://code.google.com/p/eiskaltdc/issues/detail?id=1295)

_Original issue: http://code.google.com/p/eiskaltdc/issues/detail?id=1295_

|

process

|

gcc wall qt ui from on march было бы неплохо пофиксить все варнинги для wall в qt ui attachment original issue

| 1

|

4,692

| 7,527,852,472

|

IssuesEvent

|

2018-04-13 18:33:24

|

w3c/distributed-tracing

|

https://api.github.com/repos/w3c/distributed-tracing

|

closed

|

What is the milestone of this spec?

|

process

|

I am aware there are definitely plenty details to discuss for this spec. But, I suggest we can do some works in implementation level. For do that, we should set a milestone, like 0.1 or 1.0, as you like. So the tracer/APM-agents can try to implement the spec, and find out what is next for everyone.

@adriancole @bogdandrutu @SergeyKanzhelev

|

1.0

|

What is the milestone of this spec? - I am aware there are definitely plenty details to discuss for this spec. But, I suggest we can do some works in implementation level. For do that, we should set a milestone, like 0.1 or 1.0, as you like. So the tracer/APM-agents can try to implement the spec, and find out what is next for everyone.

@adriancole @bogdandrutu @SergeyKanzhelev

|

process

|

what is the milestone of this spec i am aware there are definitely plenty details to discuss for this spec but i suggest we can do some works in implementation level for do that we should set a milestone like or as you like so the tracer apm agents can try to implement the spec and find out what is next for everyone adriancole bogdandrutu sergeykanzhelev

| 1

|

12,497

| 14,961,147,116

|

IssuesEvent

|

2021-01-27 07:15:01

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[iOS] [Audit Logs] "participantId" is displayed null for the events

|

Bug P2 Process: Fixed Process: Tested dev iOS

|

**Events:**

Module: **Consent Service(Participant Datastore):**

1. USER_ENROLLED_INTO_STUDY

2. READ_OPERATION_SUCCEEDED_FOR_SIGNED_CONSENT_DOCUMENT

3. SIGNED_CONSENT_DOCUMENT_SAVED

Module: **Enroll(Participant Datastore)**

4. STUDY_STATE_SAVED_OR_UPDATED_FOR_PARTICIPANT

5. READ_OPERATION_SUCCEEDED_FOR_STUDY_INFO

Module: **Response Datastore**

6. PARTICIPANT_ID_GENERATED

Sample snippet for event `PARTICIPANT_ID_GENERATED` event

```

{

"insertId": "1s3rqdog25k19og",

"jsonPayload": {

"occurred": 1608726951718,

"userIp": "35.222.67.4",

"sourceApplicationVersion": "1.0",

"source": "PARTICIPANT USER DATASTORE",

"studyId": null,

"siteId": null,

"appVersion": "1.0.146",

"mobilePlatform": "IOS",

"eventCode": "PARTICIPANT_ID_GENERATED",

"userAccessLevel": null,

"resourceServer": null,

"userId": "ad4b3ff0n3178t484bl8bdch9bd1ba58d9f8",

"correlationId": "EC2192BC-A5B3-4040-AD48-EC1530795682",

"participantId": null,

"destinationApplicationVersion": "1.0",

"studyVersion": null,

"platformVersion": "1.0",

"appId": "",

"destination": "RESPONSE DATASTORE",

"description": null

},

"resource": {

"type": "global",

"labels": {

"project_id": "mystudies-open-impl-track1-dev"

}

},

"timestamp": "2020-12-23T12:35:51.718Z",

"severity": "INFO",

"logName": "projects/mystudies-open-impl-track1-dev/logs/application-audit-log",

"receiveTimestamp": "2020-12-23T12:35:51.864217708Z"

}

```

|

2.0

|

[iOS] [Audit Logs] "participantId" is displayed null for the events - **Events:**

Module: **Consent Service(Participant Datastore):**

1. USER_ENROLLED_INTO_STUDY

2. READ_OPERATION_SUCCEEDED_FOR_SIGNED_CONSENT_DOCUMENT

3. SIGNED_CONSENT_DOCUMENT_SAVED

Module: **Enroll(Participant Datastore)**

4. STUDY_STATE_SAVED_OR_UPDATED_FOR_PARTICIPANT

5. READ_OPERATION_SUCCEEDED_FOR_STUDY_INFO

Module: **Response Datastore**

6. PARTICIPANT_ID_GENERATED

Sample snippet for event `PARTICIPANT_ID_GENERATED` event

```

{

"insertId": "1s3rqdog25k19og",

"jsonPayload": {

"occurred": 1608726951718,

"userIp": "35.222.67.4",

"sourceApplicationVersion": "1.0",

"source": "PARTICIPANT USER DATASTORE",

"studyId": null,

"siteId": null,

"appVersion": "1.0.146",

"mobilePlatform": "IOS",

"eventCode": "PARTICIPANT_ID_GENERATED",

"userAccessLevel": null,

"resourceServer": null,

"userId": "ad4b3ff0n3178t484bl8bdch9bd1ba58d9f8",

"correlationId": "EC2192BC-A5B3-4040-AD48-EC1530795682",

"participantId": null,

"destinationApplicationVersion": "1.0",

"studyVersion": null,

"platformVersion": "1.0",

"appId": "",

"destination": "RESPONSE DATASTORE",

"description": null

},

"resource": {

"type": "global",

"labels": {

"project_id": "mystudies-open-impl-track1-dev"

}

},

"timestamp": "2020-12-23T12:35:51.718Z",

"severity": "INFO",

"logName": "projects/mystudies-open-impl-track1-dev/logs/application-audit-log",

"receiveTimestamp": "2020-12-23T12:35:51.864217708Z"

}

```

|

process

|

participantid is displayed null for the events events module consent service participant datastore user enrolled into study read operation succeeded for signed consent document signed consent document saved module enroll participant datastore study state saved or updated for participant read operation succeeded for study info module response datastore participant id generated sample snippet for event participant id generated event insertid jsonpayload occurred userip sourceapplicationversion source participant user datastore studyid null siteid null appversion mobileplatform ios eventcode participant id generated useraccesslevel null resourceserver null userid correlationid participantid null destinationapplicationversion studyversion null platformversion appid destination response datastore description null resource type global labels project id mystudies open impl dev timestamp severity info logname projects mystudies open impl dev logs application audit log receivetimestamp

| 1

|

276,916

| 24,031,682,295

|

IssuesEvent

|

2022-09-15 15:29:16

|

runtimeverification/k

|

https://api.github.com/repos/runtimeverification/k

|

closed

|

Bisimulation

|

testing

|

The [bisimulation tests](https://github.com/kframework/k/tree/master/k-distribution/tests/regression-new/bisimulation) use features from `KREFLECTION` which will not be supported, but it should be simple to rewrite the test to use pattern matching.

|

1.0

|

Bisimulation - The [bisimulation tests](https://github.com/kframework/k/tree/master/k-distribution/tests/regression-new/bisimulation) use features from `KREFLECTION` which will not be supported, but it should be simple to rewrite the test to use pattern matching.

|

non_process

|

bisimulation the use features from kreflection which will not be supported but it should be simple to rewrite the test to use pattern matching

| 0

|

7,836

| 11,011,726,085

|

IssuesEvent

|

2019-12-04 16:49:02

|

90301/TextReplace

|

https://api.github.com/repos/90301/TextReplace

|

closed

|

Text Log Filterer / Stats

|

Log Processor

|

Have something that can handle logs

Process line by line.

Able to find text insdie quotes

in line filtering code

program creation capability.

Extra functionality:

- [x] Multi-Line-Output Block

--- Documentation

ORDER OF OPERATIONS MATTERS A LOT

Settings can be changed in the middle of processing text.

for debugging, all outputs of intermediate steps can be traced.

## Processor Settings

TEXTUNIT([LINE,FULLDOC]) - turns this from a line my line processor to a full text processor

## Filtering

MustContain("SomeText". [Optional] REGEX Support) - the finds all rows that match

IncludeExtra(int above,int below) - must be above the operation / op group

NotContain("SomeText". [Optional] REGEX Support)

## Collection

Inside(:) - Gets the text past (to the right of) the first instance of :

ContainedInside(") - Gets all text inside of a specific tag / character. EX toasy was a "marko" toaster would return "marko"

|

1.0

|

Text Log Filterer / Stats - Have something that can handle logs

Process line by line.

Able to find text insdie quotes

in line filtering code

program creation capability.

Extra functionality:

- [x] Multi-Line-Output Block

--- Documentation

ORDER OF OPERATIONS MATTERS A LOT

Settings can be changed in the middle of processing text.

for debugging, all outputs of intermediate steps can be traced.

## Processor Settings

TEXTUNIT([LINE,FULLDOC]) - turns this from a line my line processor to a full text processor

## Filtering

MustContain("SomeText". [Optional] REGEX Support) - the finds all rows that match

IncludeExtra(int above,int below) - must be above the operation / op group

NotContain("SomeText". [Optional] REGEX Support)

## Collection

Inside(:) - Gets the text past (to the right of) the first instance of :

ContainedInside(") - Gets all text inside of a specific tag / character. EX toasy was a "marko" toaster would return "marko"

|

process

|

text log filterer stats have something that can handle logs process line by line able to find text insdie quotes in line filtering code program creation capability extra functionality multi line output block documentation order of operations matters a lot settings can be changed in the middle of processing text for debugging all outputs of intermediate steps can be traced processor settings textunit turns this from a line my line processor to a full text processor filtering mustcontain sometext regex support the finds all rows that match includeextra int above int below must be above the operation op group notcontain sometext regex support collection inside gets the text past to the right of the first instance of containedinside gets all text inside of a specific tag character ex toasy was a marko toaster would return marko

| 1

|

645,039

| 20,992,915,437

|

IssuesEvent

|

2022-03-29 10:58:55

|

AY2122S2-CS2103T-W15-2/tp

|

https://api.github.com/repos/AY2122S2-CS2103T-W15-2/tp

|

closed

|

As a user, I can choose which client to delete if there are clients with similar names

|

type.Story priority.High

|

So I can accurately delete clients from my HustleBook

|

1.0

|

As a user, I can choose which client to delete if there are clients with similar names - So I can accurately delete clients from my HustleBook

|

non_process

|

as a user i can choose which client to delete if there are clients with similar names so i can accurately delete clients from my hustlebook

| 0

|

43,561

| 13,020,407,562

|

IssuesEvent

|

2020-07-27 02:59:20

|

LightC0der/arunbhandari.github.io

|

https://api.github.com/repos/LightC0der/arunbhandari.github.io

|

opened

|

CVE-2015-9251 (Medium) detected in jquery-1.9.1.min.js

|

security vulnerability

|

## CVE-2015-9251 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.9.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.9.1/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.9.1/jquery.min.js</a></p>

<p>Path to vulnerable library: /arunbhandari.github.io/assets/js/vendor/jquery-1.9.1.min.js</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.9.1.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/LightC0der/arunbhandari.github.io/commit/241096b6dd14739925eca764bd8ab9a25a8003c6">241096b6dd14739925eca764bd8ab9a25a8003c6</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

jQuery before 3.0.0 is vulnerable to Cross-site Scripting (XSS) attacks when a cross-domain Ajax request is performed without the dataType option, causing text/javascript responses to be executed.

<p>Publish Date: 2018-01-18

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2015-9251>CVE-2015-9251</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2015-9251">https://nvd.nist.gov/vuln/detail/CVE-2015-9251</a></p>

<p>Release Date: 2018-01-18</p>

<p>Fix Resolution: jQuery - v3.0.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2015-9251 (Medium) detected in jquery-1.9.1.min.js - ## CVE-2015-9251 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.9.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.9.1/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.9.1/jquery.min.js</a></p>

<p>Path to vulnerable library: /arunbhandari.github.io/assets/js/vendor/jquery-1.9.1.min.js</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.9.1.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/LightC0der/arunbhandari.github.io/commit/241096b6dd14739925eca764bd8ab9a25a8003c6">241096b6dd14739925eca764bd8ab9a25a8003c6</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

jQuery before 3.0.0 is vulnerable to Cross-site Scripting (XSS) attacks when a cross-domain Ajax request is performed without the dataType option, causing text/javascript responses to be executed.

<p>Publish Date: 2018-01-18

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2015-9251>CVE-2015-9251</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2015-9251">https://nvd.nist.gov/vuln/detail/CVE-2015-9251</a></p>

<p>Release Date: 2018-01-18</p>

<p>Fix Resolution: jQuery - v3.0.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to vulnerable library arunbhandari github io assets js vendor jquery min js dependency hierarchy x jquery min js vulnerable library found in head commit a href vulnerability details jquery before is vulnerable to cross site scripting xss attacks when a cross domain ajax request is performed without the datatype option causing text javascript responses to be executed publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution jquery step up your open source security game with whitesource

| 0

|

16,938

| 22,286,954,483

|

IssuesEvent

|

2022-06-11 19:40:16

|

sparc4-dev/astropop

|

https://api.github.com/repos/sparc4-dev/astropop

|

closed

|

Routine to compute only shifts in image registration

|

enhancement image-processing

|

Make a function to compute the shifts separated in image registration.

|

1.0

|

Routine to compute only shifts in image registration - Make a function to compute the shifts separated in image registration.

|

process

|

routine to compute only shifts in image registration make a function to compute the shifts separated in image registration

| 1

|

8,737

| 11,866,330,340

|

IssuesEvent

|

2020-03-26 03:20:35

|

trynmaps/metrics-mvp

|

https://api.github.com/repos/trynmaps/metrics-mvp

|

closed

|

Make our contribution process more obvious

|

Process

|

At the top of the readme, link to our onboarding docs:

* https://docs.google.com/document/d/1qzzBKpQPkcKkz9b47yJHAB95ip8d3HjYV1wPIYaUlBU/edit#heading=h.reybhpkqyi34

* https://docs.google.com/document/d/1WZt0LkGS6AsotYjF7VSMIWD1dXrzsKX4lRYKE_uhJWE/edit

We need to reduce the number of new people who start working on projects and never finish them, and don't introduce themselves to us. People who jump on one issue, start it, and never finish really slow us down. So we should be more explicit about asking people to join our Slack and call into meetings, etc. before we let them contribute -- and a good first step is to put that more obviously in the readme.

|

1.0

|

Make our contribution process more obvious - At the top of the readme, link to our onboarding docs:

* https://docs.google.com/document/d/1qzzBKpQPkcKkz9b47yJHAB95ip8d3HjYV1wPIYaUlBU/edit#heading=h.reybhpkqyi34

* https://docs.google.com/document/d/1WZt0LkGS6AsotYjF7VSMIWD1dXrzsKX4lRYKE_uhJWE/edit

We need to reduce the number of new people who start working on projects and never finish them, and don't introduce themselves to us. People who jump on one issue, start it, and never finish really slow us down. So we should be more explicit about asking people to join our Slack and call into meetings, etc. before we let them contribute -- and a good first step is to put that more obviously in the readme.

|

process

|

make our contribution process more obvious at the top of the readme link to our onboarding docs we need to reduce the number of new people who start working on projects and never finish them and don t introduce themselves to us people who jump on one issue start it and never finish really slow us down so we should be more explicit about asking people to join our slack and call into meetings etc before we let them contribute and a good first step is to put that more obviously in the readme

| 1

|

42,087

| 12,876,211,977

|

IssuesEvent

|

2020-07-11 03:17:44

|

tt9133github/zkui

|

https://api.github.com/repos/tt9133github/zkui

|

opened

|

CVE-2017-3586 (Medium) detected in mysql-connector-java-5.1.31.jar

|

security vulnerability

|

## CVE-2017-3586 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mysql-connector-java-5.1.31.jar</b></p></summary>

<p>MySQL JDBC Type 4 driver</p>

<p>Library home page: <a href="http://dev.mysql.com/doc/connector-j/en/">http://dev.mysql.com/doc/connector-j/en/</a></p>

<p>Path to dependency file: /tmp/ws-scm/zkui/pom.xml</p>

<p>Path to vulnerable library: canner/.m2/repository/mysql/mysql-connector-java/5.1.31/mysql-connector-java-5.1.31.jar</p>

<p>

Dependency Hierarchy:

- :x: **mysql-connector-java-5.1.31.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/tt9133github/zkui/commit/68cd62cead44c0c462f92c8bc9fc2b02708bab32">68cd62cead44c0c462f92c8bc9fc2b02708bab32</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Vulnerability in the MySQL Connectors component of Oracle MySQL (subcomponent: Connector/J). Supported versions that are affected are 5.1.41 and earlier. Easily "exploitable" vulnerability allows low privileged attacker with network access via multiple protocols to compromise MySQL Connectors. While the vulnerability is in MySQL Connectors, attacks may significantly impact additional products. Successful attacks of this vulnerability can result in unauthorized update, insert or delete access to some of MySQL Connectors accessible data as well as unauthorized read access to a subset of MySQL Connectors accessible data. CVSS 3.0 Base Score 6.4 (Confidentiality and Integrity impacts). CVSS Vector: (CVSS:3.0/AV:N/AC:L/PR:L/UI:N/S:C/C:L/I:L/A:N).

<p>Publish Date: 2017-04-24

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-3586>CVE-2017-3586</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.4</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://bugzilla.redhat.com/show_bug.cgi?id=1444406">https://bugzilla.redhat.com/show_bug.cgi?id=1444406</a></p>

<p>Release Date: 2017-04-24</p>

<p>Fix Resolution: 5.1.42</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2017-3586 (Medium) detected in mysql-connector-java-5.1.31.jar - ## CVE-2017-3586 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mysql-connector-java-5.1.31.jar</b></p></summary>

<p>MySQL JDBC Type 4 driver</p>

<p>Library home page: <a href="http://dev.mysql.com/doc/connector-j/en/">http://dev.mysql.com/doc/connector-j/en/</a></p>

<p>Path to dependency file: /tmp/ws-scm/zkui/pom.xml</p>

<p>Path to vulnerable library: canner/.m2/repository/mysql/mysql-connector-java/5.1.31/mysql-connector-java-5.1.31.jar</p>

<p>

Dependency Hierarchy:

- :x: **mysql-connector-java-5.1.31.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/tt9133github/zkui/commit/68cd62cead44c0c462f92c8bc9fc2b02708bab32">68cd62cead44c0c462f92c8bc9fc2b02708bab32</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Vulnerability in the MySQL Connectors component of Oracle MySQL (subcomponent: Connector/J). Supported versions that are affected are 5.1.41 and earlier. Easily "exploitable" vulnerability allows low privileged attacker with network access via multiple protocols to compromise MySQL Connectors. While the vulnerability is in MySQL Connectors, attacks may significantly impact additional products. Successful attacks of this vulnerability can result in unauthorized update, insert or delete access to some of MySQL Connectors accessible data as well as unauthorized read access to a subset of MySQL Connectors accessible data. CVSS 3.0 Base Score 6.4 (Confidentiality and Integrity impacts). CVSS Vector: (CVSS:3.0/AV:N/AC:L/PR:L/UI:N/S:C/C:L/I:L/A:N).

<p>Publish Date: 2017-04-24

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-3586>CVE-2017-3586</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.4</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://bugzilla.redhat.com/show_bug.cgi?id=1444406">https://bugzilla.redhat.com/show_bug.cgi?id=1444406</a></p>

<p>Release Date: 2017-04-24</p>

<p>Fix Resolution: 5.1.42</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in mysql connector java jar cve medium severity vulnerability vulnerable library mysql connector java jar mysql jdbc type driver library home page a href path to dependency file tmp ws scm zkui pom xml path to vulnerable library canner repository mysql mysql connector java mysql connector java jar dependency hierarchy x mysql connector java jar vulnerable library found in head commit a href vulnerability details vulnerability in the mysql connectors component of oracle mysql subcomponent connector j supported versions that are affected are and earlier easily exploitable vulnerability allows low privileged attacker with network access via multiple protocols to compromise mysql connectors while the vulnerability is in mysql connectors attacks may significantly impact additional products successful attacks of this vulnerability can result in unauthorized update insert or delete access to some of mysql connectors accessible data as well as unauthorized read access to a subset of mysql connectors accessible data cvss base score confidentiality and integrity impacts cvss vector cvss av n ac l pr l ui n s c c l i l a n publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required low user interaction none scope changed impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource

| 0

|

57,889

| 14,239,349,916

|

IssuesEvent

|

2020-11-18 20:00:05

|

elastic/elasticsearch

|

https://api.github.com/repos/elastic/elasticsearch

|

closed

|

Can't run tests in Eclipse

|

:Delivery/Build >bug Team:Delivery needs:triage

|

In #64841 started validating the codebases existed in our security policy. This broke running tests in Eclipse because we include a special policy for IntelliJ if we're not running in gradle. Eclipse doesn't run in gradle and doesn't have the IntelliJ policy.

|

1.0

|

Can't run tests in Eclipse - In #64841 started validating the codebases existed in our security policy. This broke running tests in Eclipse because we include a special policy for IntelliJ if we're not running in gradle. Eclipse doesn't run in gradle and doesn't have the IntelliJ policy.

|

non_process

|

can t run tests in eclipse in started validating the codebases existed in our security policy this broke running tests in eclipse because we include a special policy for intellij if we re not running in gradle eclipse doesn t run in gradle and doesn t have the intellij policy

| 0

|

8,320

| 11,487,336,665

|

IssuesEvent

|

2020-02-11 11:44:50

|

geneontology/go-ontology

|

https://api.github.com/repos/geneontology/go-ontology

|

closed

|

PAMP-triggered immunity

|

high priority multi-species process

|

PAMP- triggered immunity (PTI) usually first line of defence

Upon association with PAMPs, the pattern-recognition receptors activate a downstream mitogen-activated protein kinase signaling cascade that culminates in transcriptional activation and generation of the innate immune responses.

For PTI

I find . GO:0002221 pattern recognition receptor signaling pathway

Any series of molecular signals generated as a consequence of a pattern recognition receptor (PRR) binding to one of its physiological ligands. PRRs bind pathogen-associated molecular pattern (PAMPs), structures conserved among microbial species, or damage-associated molecular pattern (DAMPs), endogenous molecules released from damaged cells. PMID:15199967

so NTR could be a child of GO:0002221

NTR PAMP-triggered immunity signalling pathway

Any series of molecular signals generated as a consequence of a pattern recognition receptor (PRR) binding to one of its physiological ligands to activate a plant innate immune response. PAMP-triggered immunity PRRs bind pathogen-associated molecular pattern (PAMPs), structures conserved among microbial species.

exact synonym PTI signalling

~I would also be happy for this to be a synonym. BUT I'm a bit concerned about the mention of damage-associated molecular pattern (DAMPs) because these AFAIK are not innate immune response, they are inflammatory response. I really think these should be split.~

the DAMPs are also regulating the immune response via damage detection

Note that I could also use:

GO:0035420 MAPK cascade involved in innate immune response (0 annotations)

it might be best to get rid of this ?

|

1.0

|

PAMP-triggered immunity - PAMP- triggered immunity (PTI) usually first line of defence

Upon association with PAMPs, the pattern-recognition receptors activate a downstream mitogen-activated protein kinase signaling cascade that culminates in transcriptional activation and generation of the innate immune responses.

For PTI

I find . GO:0002221 pattern recognition receptor signaling pathway

Any series of molecular signals generated as a consequence of a pattern recognition receptor (PRR) binding to one of its physiological ligands. PRRs bind pathogen-associated molecular pattern (PAMPs), structures conserved among microbial species, or damage-associated molecular pattern (DAMPs), endogenous molecules released from damaged cells. PMID:15199967

so NTR could be a child of GO:0002221

NTR PAMP-triggered immunity signalling pathway

Any series of molecular signals generated as a consequence of a pattern recognition receptor (PRR) binding to one of its physiological ligands to activate a plant innate immune response. PAMP-triggered immunity PRRs bind pathogen-associated molecular pattern (PAMPs), structures conserved among microbial species.

exact synonym PTI signalling

~I would also be happy for this to be a synonym. BUT I'm a bit concerned about the mention of damage-associated molecular pattern (DAMPs) because these AFAIK are not innate immune response, they are inflammatory response. I really think these should be split.~

the DAMPs are also regulating the immune response via damage detection

Note that I could also use:

GO:0035420 MAPK cascade involved in innate immune response (0 annotations)

it might be best to get rid of this ?

|

process

|

pamp triggered immunity pamp triggered immunity pti usually first line of defence

upon association with pamps the pattern recognition receptors activate a downstream mitogen activated protein kinase signaling cascade that culminates in transcriptional activation and generation of the innate immune responses for pti i find go pattern recognition receptor signaling pathway any series of molecular signals generated as a consequence of a pattern recognition receptor prr binding to one of its physiological ligands prrs bind pathogen associated molecular pattern pamps structures conserved among microbial species or damage associated molecular pattern damps endogenous molecules released from damaged cells pmid so ntr could be a child of go ntr pamp triggered immunity signalling pathway any series of molecular signals generated as a consequence of a pattern recognition receptor prr binding to one of its physiological ligands to activate a plant innate immune response pamp triggered immunity prrs bind pathogen associated molecular pattern pamps structures conserved among microbial species exact synonym pti signalling i would also be happy for this to be a synonym but i m a bit concerned about the mention of damage associated molecular pattern damps because these afaik are not innate immune response they are inflammatory response i really think these should be split the damps are also regulating the immune response via damage detection note that i could also use go mapk cascade involved in innate immune response annotations it might be best to get rid of this

| 1

|

16,077

| 20,249,040,126

|

IssuesEvent

|

2022-02-14 16:11:53

|

Bone008/orbiteye

|

https://api.github.com/repos/Bone008/orbiteye

|

opened

|

Create TypeScript interface for data import

|

data processing

|

- [ ] Define and document TypeScript interface for the data

- [ ] Create mappings from shortcodes to full strings (e.g. Owner/Country, Launch Site)

|

1.0

|

Create TypeScript interface for data import - - [ ] Define and document TypeScript interface for the data

- [ ] Create mappings from shortcodes to full strings (e.g. Owner/Country, Launch Site)

|

process

|

create typescript interface for data import define and document typescript interface for the data create mappings from shortcodes to full strings e g owner country launch site

| 1

|

38,459

| 19,276,165,560

|

IssuesEvent

|

2021-12-10 12:07:01

|

grafana/grafana

|

https://api.github.com/repos/grafana/grafana

|

closed

|

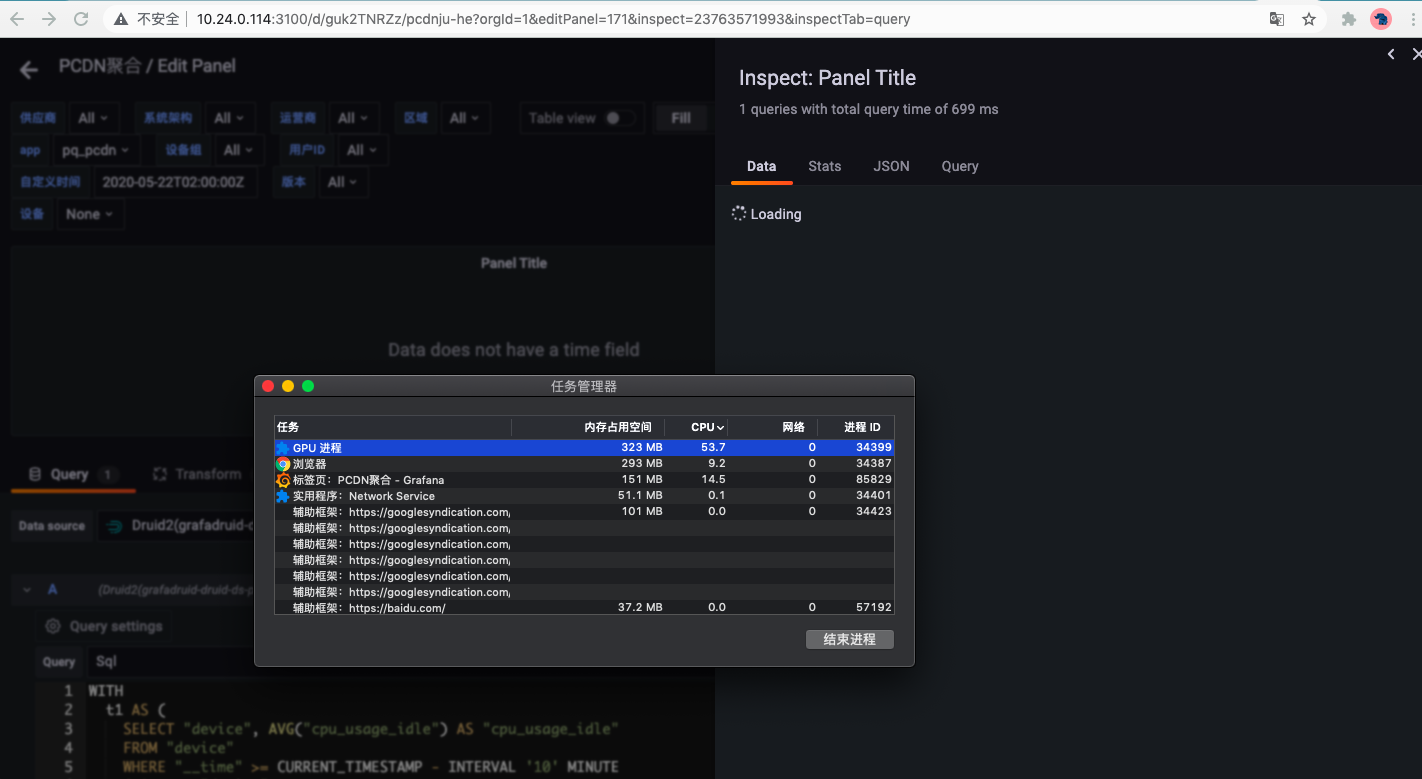

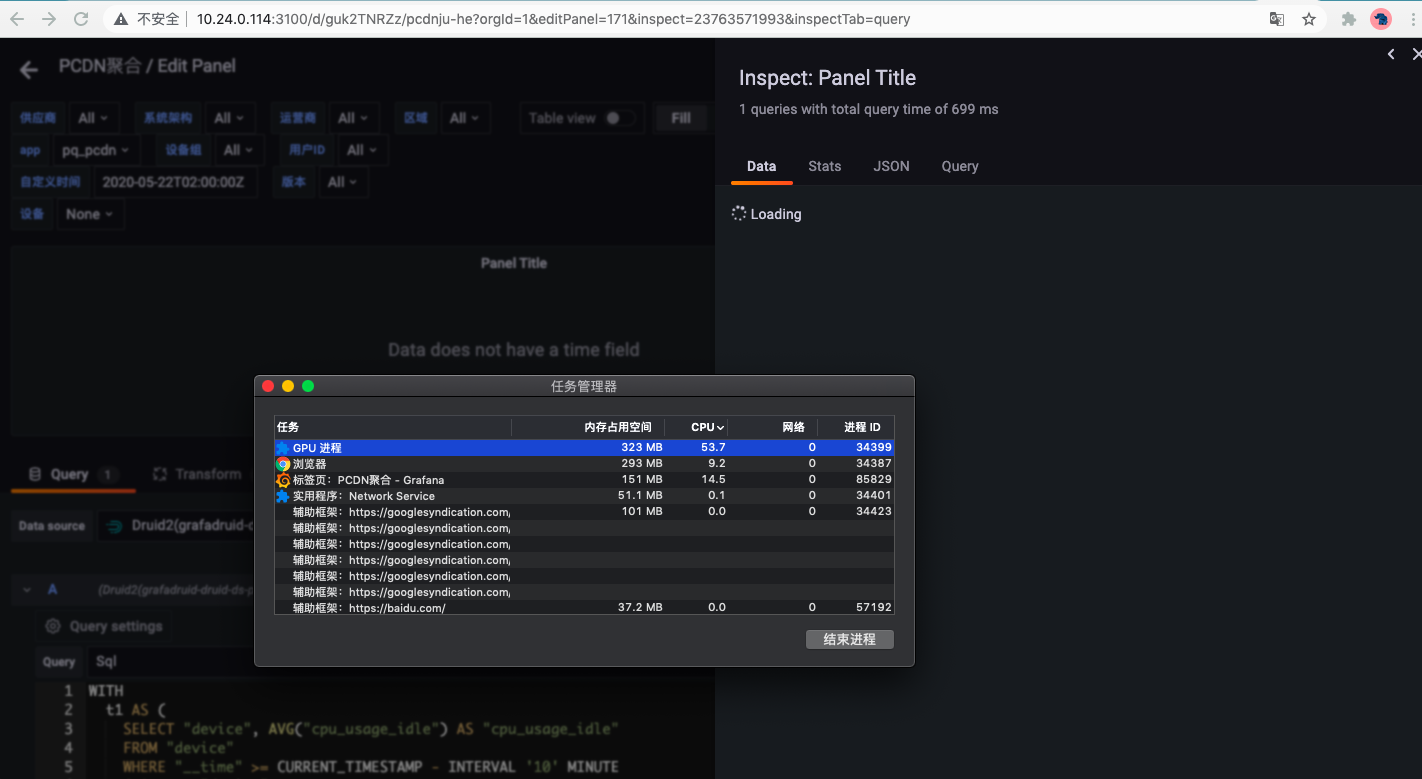

Grafana 8.1.3 Web UI GPU usage is too high

|

needs more info type/performance bot/no new info

|

<!--

Please use this template to create your bug report. By providing as much info as possible you help us understand the issue, reproduce it and resolve it for you quicker. Therefore take a couple of extra minutes to make sure you have provided all info needed.

PROTIP: record your screen and attach it as a gif to showcase the issue.

- Questions should be posted to: https://community.grafana.com

- Use query inspector to troubleshoot issues: https://bit.ly/2XNF6YS

- How to record and attach gif: https://bit.ly/2Mi8T6K

-->

**What happened**:

**What you expected to happen**:

When the new window that pops up in the webUI is displayed in the foreground, it sometimes causes the Grafana tab to fill up the CPU.

Through the analysis of the task manager of the Chrome browser, the GPU operation is too frequent at this time.

When closing the new window that Grafana pops up, or closing the Grafana tab, or letting the browser work in the background, the CPU utilization will drop to a normal level.

**How to reproduce it (as minimally and precisely as possible)**:

**Anything else we need to know?**:

**Environment**:

- Grafana version: 8.1.3

- Data source type & version: mysql8

- OS Grafana is installed on: MacOs 10.14.6

- User OS & Browser: Chrome 95.0.4638.69(正式版本) (x86_64)

- Grafana plugins:

- vertamedia-clickhouse-plugin_linux_amd64

- grafadruid-druid-datasource_linux_amd64

- Others:

|

True

|

Grafana 8.1.3 Web UI GPU usage is too high - <!--

Please use this template to create your bug report. By providing as much info as possible you help us understand the issue, reproduce it and resolve it for you quicker. Therefore take a couple of extra minutes to make sure you have provided all info needed.

PROTIP: record your screen and attach it as a gif to showcase the issue.

- Questions should be posted to: https://community.grafana.com

- Use query inspector to troubleshoot issues: https://bit.ly/2XNF6YS

- How to record and attach gif: https://bit.ly/2Mi8T6K

-->

**What happened**:

**What you expected to happen**:

When the new window that pops up in the webUI is displayed in the foreground, it sometimes causes the Grafana tab to fill up the CPU.

Through the analysis of the task manager of the Chrome browser, the GPU operation is too frequent at this time.

When closing the new window that Grafana pops up, or closing the Grafana tab, or letting the browser work in the background, the CPU utilization will drop to a normal level.

**How to reproduce it (as minimally and precisely as possible)**:

**Anything else we need to know?**:

**Environment**:

- Grafana version: 8.1.3

- Data source type & version: mysql8

- OS Grafana is installed on: MacOs 10.14.6

- User OS & Browser: Chrome 95.0.4638.69(正式版本) (x86_64)

- Grafana plugins:

- vertamedia-clickhouse-plugin_linux_amd64

- grafadruid-druid-datasource_linux_amd64

- Others:

|

non_process

|

grafana web ui gpu usage is too high please use this template to create your bug report by providing as much info as possible you help us understand the issue reproduce it and resolve it for you quicker therefore take a couple of extra minutes to make sure you have provided all info needed protip record your screen and attach it as a gif to showcase the issue questions should be posted to use query inspector to troubleshoot issues how to record and attach gif what happened what you expected to happen when the new window that pops up in the webui is displayed in the foreground it sometimes causes the grafana tab to fill up the cpu through the analysis of the task manager of the chrome browser the gpu operation is too frequent at this time when closing the new window that grafana pops up or closing the grafana tab or letting the browser work in the background the cpu utilization will drop to a normal level how to reproduce it as minimally and precisely as possible anything else we need to know environment grafana version data source type version os grafana is installed on macos user os browser chrome (正式版本) grafana plugins vertamedia clickhouse plugin linux grafadruid druid datasource linux others

| 0

|

1,881

| 4,712,135,815

|

IssuesEvent

|

2016-10-14 15:49:33

|

CERNDocumentServer/cds

|

https://api.github.com/repos/CERNDocumentServer/cds

|

closed

|

Process: Initialise webhooks on cds

|

avc_processing

|

Make sure that the webhooks the commits

- <https://github.com/CERNDocumentServer/cds/commit/1375c895e3c92c0fec428333e2e4a53c8b968cc8>

- <https://github.com/CERNDocumentServer/cds/commit/3fa7d068acc6e98b3742bd29fa132a1b0ed9e4e6>

- <https://github.com/CERNDocumentServer/cds/commit/9b95232438d5f8d0d2a459b4cacfa880ab71819c>

are well integrated on CDS and the endpoints are working as designed

|

1.0

|

Process: Initialise webhooks on cds - Make sure that the webhooks the commits

- <https://github.com/CERNDocumentServer/cds/commit/1375c895e3c92c0fec428333e2e4a53c8b968cc8>

- <https://github.com/CERNDocumentServer/cds/commit/3fa7d068acc6e98b3742bd29fa132a1b0ed9e4e6>

- <https://github.com/CERNDocumentServer/cds/commit/9b95232438d5f8d0d2a459b4cacfa880ab71819c>

are well integrated on CDS and the endpoints are working as designed

|

process

|

process initialise webhooks on cds make sure that the webhooks the commits are well integrated on cds and the endpoints are working as designed

| 1

|

16,215

| 20,742,598,443

|

IssuesEvent

|

2022-03-14 19:13:06

|

kitzeslab/opensoundscape

|

https://api.github.com/repos/kitzeslab/opensoundscape

|

opened

|

ImgToTensor and ImgToTensorGreyscale should be one class

|

preprocessing 0.7.0

|

can have a "mode" parameter for "RGB" or "L"

|

1.0

|

ImgToTensor and ImgToTensorGreyscale should be one class - can have a "mode" parameter for "RGB" or "L"

|

process

|

imgtotensor and imgtotensorgreyscale should be one class can have a mode parameter for rgb or l

| 1

|

2,078

| 4,892,266,247

|

IssuesEvent

|

2016-11-18 19:11:36

|

nodejs/node

|

https://api.github.com/repos/nodejs/node

|

closed

|

Stack Trace on Depreciation Warnings

|

process question

|

<!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

Platform: output of `uname -a` (UNIX), or version and 32 or 64-bit (Windows)

Subsystem: if known, please specify affected core module name

If possible, please provide code that demonstrates the problem, keeping it as

simple and free of external dependencies as you are able.

-->

* **Version**: v7.0.0

* **Platform**: Darwin bret-mbr.local 16.1.0 Darwin Kernel Version 16.1.0: Thu Oct 13 21:26:57 PDT 2016; root:xnu-3789.21.3~60/RELEASE_X86_64 x86_64

* **Subsystem**:

<!-- Enter your issue details below this comment. -->

It would be handy if warnings provided a stack trace when thrown, so that I can track down the source of the warning and possibly PR upstream fixes. Right now, a lot of modules are throwing the missing `new Buffer` warning, but don't provide any hints at where to look to make the fix.

@mikeal mentioned that this should be pretty easy to do possibly: https://twitter.com/mikeal/status/796072579563851776

|

1.0

|

Stack Trace on Depreciation Warnings - <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

Platform: output of `uname -a` (UNIX), or version and 32 or 64-bit (Windows)

Subsystem: if known, please specify affected core module name

If possible, please provide code that demonstrates the problem, keeping it as

simple and free of external dependencies as you are able.

-->

* **Version**: v7.0.0

* **Platform**: Darwin bret-mbr.local 16.1.0 Darwin Kernel Version 16.1.0: Thu Oct 13 21:26:57 PDT 2016; root:xnu-3789.21.3~60/RELEASE_X86_64 x86_64

* **Subsystem**:

<!-- Enter your issue details below this comment. -->

It would be handy if warnings provided a stack trace when thrown, so that I can track down the source of the warning and possibly PR upstream fixes. Right now, a lot of modules are throwing the missing `new Buffer` warning, but don't provide any hints at where to look to make the fix.

@mikeal mentioned that this should be pretty easy to do possibly: https://twitter.com/mikeal/status/796072579563851776

|

process

|

stack trace on depreciation warnings thank you for reporting an issue this issue tracker is for bugs and issues found within node js core if you require more general support please file an issue on our help repo please fill in as much of the template below as you re able version output of node v platform output of uname a unix or version and or bit windows subsystem if known please specify affected core module name if possible please provide code that demonstrates the problem keeping it as simple and free of external dependencies as you are able version platform darwin bret mbr local darwin kernel version thu oct pdt root xnu release subsystem it would be handy if warnings provided a stack trace when thrown so that i can track down the source of the warning and possibly pr upstream fixes right now a lot of modules are throwing the missing new buffer warning but don t provide any hints at where to look to make the fix mikeal mentioned that this should be pretty easy to do possibly

| 1

|

20,035

| 26,520,107,612

|

IssuesEvent

|

2023-01-19 01:17:59

|

CSE201-project/PaperFriend-desktop-app

|

https://api.github.com/repos/CSE201-project/PaperFriend-desktop-app

|

closed

|

Code the add friend functionality

|

file processing frontend

|

Same as for activities. Once it's done for activities it should be easy to do it with friends.

You can use function templates or overloading ;-)

|

1.0

|

Code the add friend functionality - Same as for activities. Once it's done for activities it should be easy to do it with friends.

You can use function templates or overloading ;-)

|

process

|

code the add friend functionality same as for activities once it s done for activities it should be easy to do it with friends you can use function templates or overloading

| 1

|

19,454

| 25,737,029,083

|

IssuesEvent

|

2022-12-08 01:50:54

|

GoogleCloudPlatform/emblem

|

https://api.github.com/repos/GoogleCloudPlatform/emblem

|

closed

|

Design: Determine trace span creation, collection, and propagation tooling

|

type: process priority: p2 component: website component: content-api persona: developer component: delivery

|

Identify the appropriate tracing toolchain for this application. We need to balance library ecosystem maturity with longevity. OpenTelemetry is recommended but not necessarily ready, OpenCensus is mature but is expected to be replaced by OpenTelemetry.

Our use cases include:

* Propagating trace between all systems that are part of the flow in processing a request

* Easy creation of custom spans around long-running or potentially complex operations

* Injection of trace details into application logs (something structured logging may provide separately)

* Ensuring telemetry data reaches Cloud Tracing before container termination

Part of #43

|

1.0

|

Design: Determine trace span creation, collection, and propagation tooling - Identify the appropriate tracing toolchain for this application. We need to balance library ecosystem maturity with longevity. OpenTelemetry is recommended but not necessarily ready, OpenCensus is mature but is expected to be replaced by OpenTelemetry.

Our use cases include:

* Propagating trace between all systems that are part of the flow in processing a request

* Easy creation of custom spans around long-running or potentially complex operations

* Injection of trace details into application logs (something structured logging may provide separately)

* Ensuring telemetry data reaches Cloud Tracing before container termination

Part of #43

|

process

|

design determine trace span creation collection and propagation tooling identify the appropriate tracing toolchain for this application we need to balance library ecosystem maturity with longevity opentelemetry is recommended but not necessarily ready opencensus is mature but is expected to be replaced by opentelemetry our use cases include propagating trace between all systems that are part of the flow in processing a request easy creation of custom spans around long running or potentially complex operations injection of trace details into application logs something structured logging may provide separately ensuring telemetry data reaches cloud tracing before container termination part of

| 1

|

20,010

| 26,483,791,225

|

IssuesEvent

|

2023-01-17 16:27:06

|

qgis/QGIS

|

https://api.github.com/repos/qgis/QGIS

|

closed

|

qgis_process: bypass the need to manually create QGIS project for algorithms with expressions

|

Processing Feature Request

|

### Feature description

Certain algorithms center on the use of QGIS expressions to generate new values or new geometries, e.g. `native:fieldcalculator` and `native:geometrybyexpression`. The functions in these expressions refer to layers and layer fields that must be present in the QGIS project, e.g. in `get_feature('test_line', 'fid', '1')` or in `$geometry` (referring the selected input layer). A similar case is the use of expressions in [data-defined overrides](https://docs.qgis.org/testing/en/docs/user_manual/introduction/general_tools.html#the-data-defined-override-widget) for parameters of a processing algorithm (e.g. for `DISTANCE` in `native:buffer`).

In reproducible scripting outside of QGIS and Python, `qgis_process` is called in specific steps (e.g. in bash, or in R using package [qgisprocess](https://github.com/paleolimbot/qgisprocess)). When calling `qgis_process` in this context to process geospatial layers that just live in a **file** (e.g. GeoPackage), the above algorithms will only work when a QGIS project is first created interactively that contains these layers. This is not ideal from the scripting perspective (see _Additional context_).

Would one of the following **solutions** be sensible and feasible to add, in order to bypass the manual creation of a QGIS project for the algorithms that use expressions:

- providing an algorithm (that can be run with `qgis_process`) that creates a simple QGIS project. As input it would need filepath+layer references, a CRS and a project file name. Perhaps restricted (if needed) to the case of layers with the same explicit CRS (or none, assuming the same).

- extending the QGIS expressions framework so that layers and fields can be referred from filepath+layer.

### Additional context

From the perspective of reproducible scripting outside of QGIS and Python, the need for a QGIS project in above algorithms poses a problem compared to the many algorithms not needing a QGIS project. AFAIK such project creation cannot be scripted using a `qgis_process` algorithm. Hence (irreproducible) manual intervention is needed – creating a QGIS project.

AFAIK the only current solution is to store a manually crafted QGIS project file next to the script, but it feels like overkill for scripted geospatial processing. Also, the processing step that uses QGIS expressions will often be used on intermediate results from earlier scripting, which will in general be different when the same script is used with new or updated input data. The latter implies that care must be taken that the QGIS project is referring the updated intermediate results.

With relation to data-defined overrides, it's currently unclear how to pass them to `qgis_process`; see #50482 for that.

This idea originated in https://github.com/paleolimbot/qgisprocess/issues/104.

|

1.0

|

qgis_process: bypass the need to manually create QGIS project for algorithms with expressions - ### Feature description

Certain algorithms center on the use of QGIS expressions to generate new values or new geometries, e.g. `native:fieldcalculator` and `native:geometrybyexpression`. The functions in these expressions refer to layers and layer fields that must be present in the QGIS project, e.g. in `get_feature('test_line', 'fid', '1')` or in `$geometry` (referring the selected input layer). A similar case is the use of expressions in [data-defined overrides](https://docs.qgis.org/testing/en/docs/user_manual/introduction/general_tools.html#the-data-defined-override-widget) for parameters of a processing algorithm (e.g. for `DISTANCE` in `native:buffer`).

In reproducible scripting outside of QGIS and Python, `qgis_process` is called in specific steps (e.g. in bash, or in R using package [qgisprocess](https://github.com/paleolimbot/qgisprocess)). When calling `qgis_process` in this context to process geospatial layers that just live in a **file** (e.g. GeoPackage), the above algorithms will only work when a QGIS project is first created interactively that contains these layers. This is not ideal from the scripting perspective (see _Additional context_).

Would one of the following **solutions** be sensible and feasible to add, in order to bypass the manual creation of a QGIS project for the algorithms that use expressions:

- providing an algorithm (that can be run with `qgis_process`) that creates a simple QGIS project. As input it would need filepath+layer references, a CRS and a project file name. Perhaps restricted (if needed) to the case of layers with the same explicit CRS (or none, assuming the same).

- extending the QGIS expressions framework so that layers and fields can be referred from filepath+layer.

### Additional context

From the perspective of reproducible scripting outside of QGIS and Python, the need for a QGIS project in above algorithms poses a problem compared to the many algorithms not needing a QGIS project. AFAIK such project creation cannot be scripted using a `qgis_process` algorithm. Hence (irreproducible) manual intervention is needed – creating a QGIS project.

AFAIK the only current solution is to store a manually crafted QGIS project file next to the script, but it feels like overkill for scripted geospatial processing. Also, the processing step that uses QGIS expressions will often be used on intermediate results from earlier scripting, which will in general be different when the same script is used with new or updated input data. The latter implies that care must be taken that the QGIS project is referring the updated intermediate results.

With relation to data-defined overrides, it's currently unclear how to pass them to `qgis_process`; see #50482 for that.

This idea originated in https://github.com/paleolimbot/qgisprocess/issues/104.

|

process

|

qgis process bypass the need to manually create qgis project for algorithms with expressions feature description certain algorithms center on the use of qgis expressions to generate new values or new geometries e g native fieldcalculator and native geometrybyexpression the functions in these expressions refer to layers and layer fields that must be present in the qgis project e g in get feature test line fid or in geometry referring the selected input layer a similar case is the use of expressions in for parameters of a processing algorithm e g for distance in native buffer in reproducible scripting outside of qgis and python qgis process is called in specific steps e g in bash or in r using package when calling qgis process in this context to process geospatial layers that just live in a file e g geopackage the above algorithms will only work when a qgis project is first created interactively that contains these layers this is not ideal from the scripting perspective see additional context would one of the following solutions be sensible and feasible to add in order to bypass the manual creation of a qgis project for the algorithms that use expressions providing an algorithm that can be run with qgis process that creates a simple qgis project as input it would need filepath layer references a crs and a project file name perhaps restricted if needed to the case of layers with the same explicit crs or none assuming the same extending the qgis expressions framework so that layers and fields can be referred from filepath layer additional context from the perspective of reproducible scripting outside of qgis and python the need for a qgis project in above algorithms poses a problem compared to the many algorithms not needing a qgis project afaik such project creation cannot be scripted using a qgis process algorithm hence irreproducible manual intervention is needed – creating a qgis project afaik the only current solution is to store a manually crafted qgis project file next to the script but it feels like overkill for scripted geospatial processing also the processing step that uses qgis expressions will often be used on intermediate results from earlier scripting which will in general be different when the same script is used with new or updated input data the latter implies that care must be taken that the qgis project is referring the updated intermediate results with relation to data defined overrides it s currently unclear how to pass them to qgis process see for that this idea originated in

| 1

|

94,979

| 27,348,342,422

|

IssuesEvent

|

2023-02-27 07:34:13

|

expo/expo

|

https://api.github.com/repos/expo/expo

|

closed

|

[EAS][Android][SDK 46] Android .apk crashes after launch

|

needs validation incomplete issue: missing or invalid repro Development Builds

|

### Summary

Hi everyone.

Runs fine on emulator, but .apk builds made with `eas build` keep crashing, looks like there's no js linked to them. Same result after running `./gradlew assembleDebug` locally. Need help.

No issues with iOS.

Thanks!

### Managed or bare workflow?

bare

### What platform(s) does this occur on?

Android

### Package versions

_No response_

### Environment

```

expo-env-info 1.0.5 environment info:

System:

OS: macOS 13.2.1

Shell: 5.8.1 - /bin/zsh

Binaries:

Node: 18.9.1 - /usr/local/bin/node

Yarn: 1.22.17 - /usr/local/bin/yarn

npm: 8.19.1 - /usr/local/bin/npm

Watchman: 2022.09.19.00 - /usr/local/bin/watchman

Managers:

CocoaPods: 1.11.3 - /Users/usr/.rbenv/shims/pod

SDKs:

iOS SDK:

Platforms: DriverKit 22.2, iOS 16.2, macOS 13.1, tvOS 16.1, watchOS 9.1

Android SDK:

API Levels: 23, 24, 25, 26, 27, 28, 29, 30, 31, 33

Build Tools: 28.0.3, 29.0.2, 29.0.3, 30.0.2, 30.0.3, 31.0.0, 32.1.0, 33.0.0

System Images: android-30 | Google APIs Intel x86 Atom, android-30 | Google Play Intel x86 Atom, android-32 | Google APIs Intel x86 Atom_64

IDEs:

Android Studio: 2022.1 AI-221.6008.13.2211.9514443

Xcode: 14.2/14C18 - /usr/bin/xcodebuild

npmGlobalPackages:

expo-cli: 6.1.0

Expo Workflow: managed

```

### Reproducible demo

eas.json config for apk (actually, tried multiple different configurations):

```

"development": {

"channel": "production",

"android": {

"autoIncrement": "versionCode",

"buildType": "apk"

},

"ios": {

"resourceClass": "m1-medium"

}

}

```

### Stacktrace (if a crash is involved)

What I'm getting via logcat when debugging .apk:

`java.lang.RuntimeException: Unable to load script. Make sure you're either running Metro (run 'npx react-native start') or that your bundle 'index.android.bundle' is packaged correctly for release.

`

|

1.0

|

[EAS][Android][SDK 46] Android .apk crashes after launch - ### Summary

Hi everyone.

Runs fine on emulator, but .apk builds made with `eas build` keep crashing, looks like there's no js linked to them. Same result after running `./gradlew assembleDebug` locally. Need help.

No issues with iOS.

Thanks!

### Managed or bare workflow?

bare

### What platform(s) does this occur on?

Android

### Package versions

_No response_

### Environment

```

expo-env-info 1.0.5 environment info:

System:

OS: macOS 13.2.1

Shell: 5.8.1 - /bin/zsh

Binaries:

Node: 18.9.1 - /usr/local/bin/node

Yarn: 1.22.17 - /usr/local/bin/yarn

npm: 8.19.1 - /usr/local/bin/npm

Watchman: 2022.09.19.00 - /usr/local/bin/watchman

Managers:

CocoaPods: 1.11.3 - /Users/usr/.rbenv/shims/pod

SDKs:

iOS SDK:

Platforms: DriverKit 22.2, iOS 16.2, macOS 13.1, tvOS 16.1, watchOS 9.1

Android SDK:

API Levels: 23, 24, 25, 26, 27, 28, 29, 30, 31, 33

Build Tools: 28.0.3, 29.0.2, 29.0.3, 30.0.2, 30.0.3, 31.0.0, 32.1.0, 33.0.0

System Images: android-30 | Google APIs Intel x86 Atom, android-30 | Google Play Intel x86 Atom, android-32 | Google APIs Intel x86 Atom_64

IDEs:

Android Studio: 2022.1 AI-221.6008.13.2211.9514443

Xcode: 14.2/14C18 - /usr/bin/xcodebuild

npmGlobalPackages:

expo-cli: 6.1.0

Expo Workflow: managed

```

### Reproducible demo

eas.json config for apk (actually, tried multiple different configurations):

```

"development": {

"channel": "production",

"android": {

"autoIncrement": "versionCode",

"buildType": "apk"

},

"ios": {

"resourceClass": "m1-medium"

}

}

```

### Stacktrace (if a crash is involved)

What I'm getting via logcat when debugging .apk:

`java.lang.RuntimeException: Unable to load script. Make sure you're either running Metro (run 'npx react-native start') or that your bundle 'index.android.bundle' is packaged correctly for release.

`

|

non_process

|

android apk crashes after launch summary hi everyone runs fine on emulator but apk builds made with eas build keep crashing looks like there s no js linked to them same result after running gradlew assembledebug locally need help no issues with ios thanks managed or bare workflow bare what platform s does this occur on android package versions no response environment expo env info environment info system os macos shell bin zsh binaries node usr local bin node yarn usr local bin yarn npm usr local bin npm watchman usr local bin watchman managers cocoapods users usr rbenv shims pod sdks ios sdk platforms driverkit ios macos tvos watchos android sdk api levels build tools system images android google apis intel atom android google play intel atom android google apis intel atom ides android studio ai xcode usr bin xcodebuild npmglobalpackages expo cli expo workflow managed reproducible demo eas json config for apk actually tried multiple different configurations development channel production android autoincrement versioncode buildtype apk ios resourceclass medium stacktrace if a crash is involved what i m getting via logcat when debugging apk java lang runtimeexception unable to load script make sure you re either running metro run npx react native start or that your bundle index android bundle is packaged correctly for release

| 0

|

208,663

| 16,133,611,264

|

IssuesEvent

|

2021-04-29 08:55:41

|

mikecao/umami

|

https://api.github.com/repos/mikecao/umami

|

closed

|

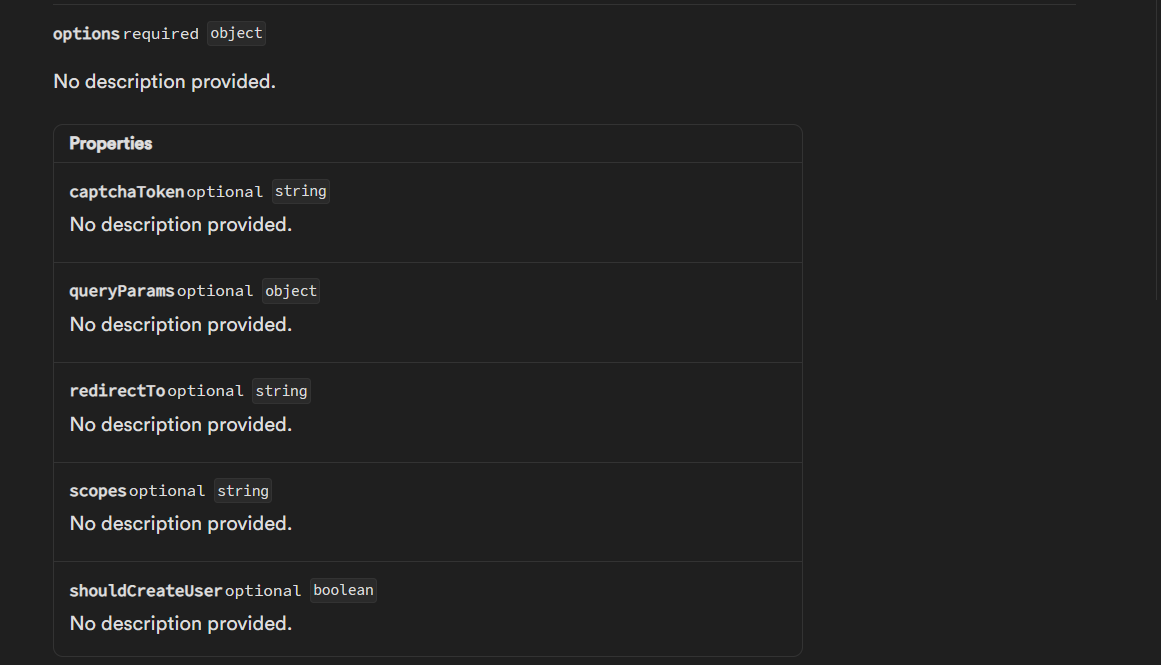

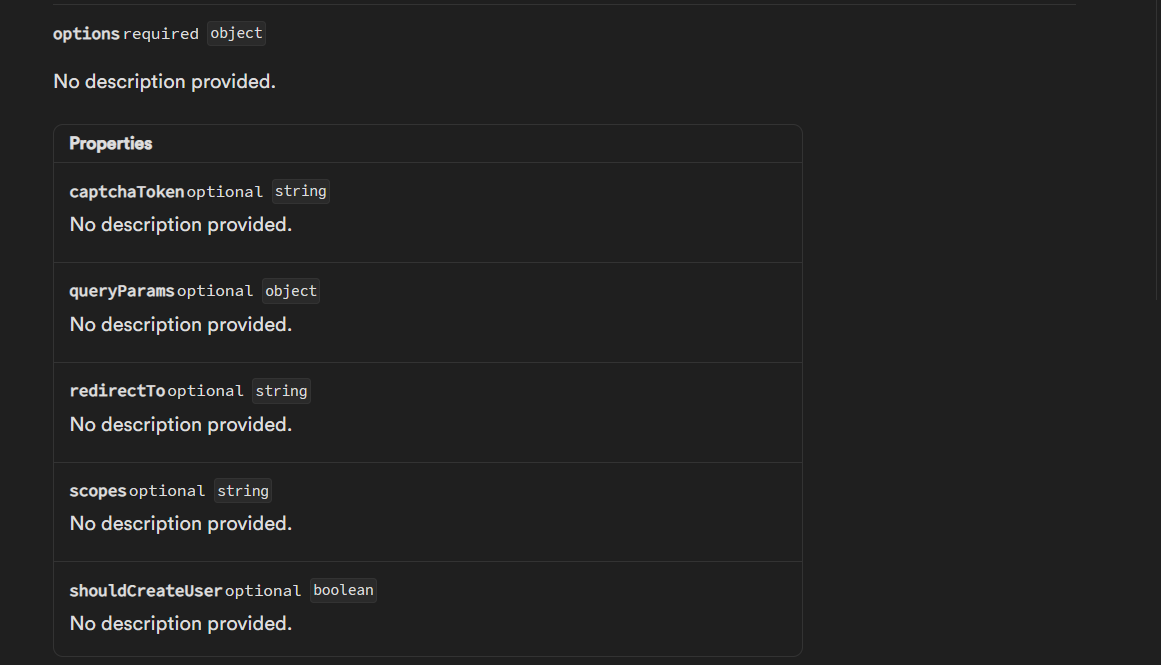

API Docs

|

documentation

|

Would you consider having API Docs?

I just started using Umami and love it already, though for me I'd need to send custom requests if someone visited `example.com/twitter`, which is currently handled by a express `res.redirect()` and a rendered page.

If those'd exist, I'd consider writing a express middleware so that it could be used similar to:

```js

let umami = require('express-umami')

let stats = new umami({

host: "url",

website_id: "id"

})

app.use(stats)

```

IMO this'd make it easier to implement it into more websites and quite possibly even a lot easier for someone to collect usage stats for their API

|

1.0

|

API Docs - Would you consider having API Docs?

I just started using Umami and love it already, though for me I'd need to send custom requests if someone visited `example.com/twitter`, which is currently handled by a express `res.redirect()` and a rendered page.

If those'd exist, I'd consider writing a express middleware so that it could be used similar to:

```js

let umami = require('express-umami')

let stats = new umami({

host: "url",

website_id: "id"

})

app.use(stats)

```

IMO this'd make it easier to implement it into more websites and quite possibly even a lot easier for someone to collect usage stats for their API

|

non_process

|

api docs would you consider having api docs i just started using umami and love it already though for me i d need to send custom requests if someone visited example com twitter which is currently handled by a express res redirect and a rendered page if those d exist i d consider writing a express middleware so that it could be used similar to js let umami require express umami let stats new umami host url website id id app use stats imo this d make it easier to implement it into more websites and quite possibly even a lot easier for someone to collect usage stats for their api

| 0

|

6,113

| 8,971,968,753

|

IssuesEvent

|

2019-01-29 17:05:07

|

prusa3d/Slic3r

|

https://api.github.com/repos/prusa3d/Slic3r

|

closed

|

"Send to printer" does not wait for post-processing scripts to exit before sending file to printer.

|

OctoPrint post processing scripts

|

### Version

Slic3r-1.39.1-beta-prusa3d-linux64-full-201802131106

### Operating system type + version

lsb_release -a

No LSB modules are available.

Distributor ID: Ubuntu

Description: Ubuntu 14.04.5 LTS

Release: 14.04

Codename: trusty

uname -a

Linux betlog 3.13.0-142-generic #191-Ubuntu SMP Fri Feb 2 12:13:35 UTC 2018 x86_64 x86_64 x86_64 GNU/Linux

### Behavior

* _Describe the problem_

"Send to printer" does not wait for post-processing scripts to exit before sending file to printer.

* _Steps needed to reproduce the problem_

Add a script requiring user input to the post-processing scripts field.

Send a file to printer.

* _If this is a command-line slicing issue, include the options used_

* _Is this a new feature request?_

Maybe.

I am not sure if this is expected behaviour or an oversight.

* _Expected Results_

Post-processing scripts should wait for errorcode/exit status 0 before sending the gcode to printer.

Optionally each post-processing script in the postporcessing-script field could be followed by "&&" or similar to indicate that a clean exit is required before continuing, and if not then an error message to appear, and sending to printer is aborted.

* _Actual Results_

Press "Send to printer".

Script terminal appears, user enters input, script completes and exits.

Script effect is not present in the gcode on printer.

#### STL/Config (.ZIP) where problem occurs

Example M25 insertion script that requires user input.

[M25-at-layer-postprocessor.zip](https://github.com/prusa3d/Slic3r/files/1765037/M25-at-layer-postprocessor.zip)

|

1.0

|

"Send to printer" does not wait for post-processing scripts to exit before sending file to printer. - ### Version

Slic3r-1.39.1-beta-prusa3d-linux64-full-201802131106

### Operating system type + version

lsb_release -a

No LSB modules are available.

Distributor ID: Ubuntu

Description: Ubuntu 14.04.5 LTS

Release: 14.04

Codename: trusty

uname -a

Linux betlog 3.13.0-142-generic #191-Ubuntu SMP Fri Feb 2 12:13:35 UTC 2018 x86_64 x86_64 x86_64 GNU/Linux

### Behavior

* _Describe the problem_

"Send to printer" does not wait for post-processing scripts to exit before sending file to printer.

* _Steps needed to reproduce the problem_

Add a script requiring user input to the post-processing scripts field.

Send a file to printer.

* _If this is a command-line slicing issue, include the options used_

* _Is this a new feature request?_

Maybe.

I am not sure if this is expected behaviour or an oversight.

* _Expected Results_

Post-processing scripts should wait for errorcode/exit status 0 before sending the gcode to printer.

Optionally each post-processing script in the postporcessing-script field could be followed by "&&" or similar to indicate that a clean exit is required before continuing, and if not then an error message to appear, and sending to printer is aborted.

* _Actual Results_

Press "Send to printer".

Script terminal appears, user enters input, script completes and exits.

Script effect is not present in the gcode on printer.

#### STL/Config (.ZIP) where problem occurs

Example M25 insertion script that requires user input.

[M25-at-layer-postprocessor.zip](https://github.com/prusa3d/Slic3r/files/1765037/M25-at-layer-postprocessor.zip)

|

process

|

send to printer does not wait for post processing scripts to exit before sending file to printer version beta full operating system type version lsb release a no lsb modules are available distributor id ubuntu description ubuntu lts release codename trusty uname a linux betlog generic ubuntu smp fri feb utc gnu linux behavior describe the problem send to printer does not wait for post processing scripts to exit before sending file to printer steps needed to reproduce the problem add a script requiring user input to the post processing scripts field send a file to printer if this is a command line slicing issue include the options used is this a new feature request maybe i am not sure if this is expected behaviour or an oversight expected results post processing scripts should wait for errorcode exit status before sending the gcode to printer optionally each post processing script in the postporcessing script field could be followed by or similar to indicate that a clean exit is required before continuing and if not then an error message to appear and sending to printer is aborted actual results press send to printer script terminal appears user enters input script completes and exits script effect is not present in the gcode on printer stl config zip where problem occurs example insertion script that requires user input

| 1

|

16,931

| 22,274,747,077

|

IssuesEvent

|

2022-06-10 15:30:17

|

googleapis/google-cloud-python

|

https://api.github.com/repos/googleapis/google-cloud-python

|

closed

|

Client libraries need out-of-band tests against prereleases of third party dependencies

|

type: process

|

/cc @busunkim96, @tswast, @crwilcox

I had originally worked to add `--pre` to the `testing/constraints-3.9.txt` files of the "manual" libraries:

- https://github.com/googleapis/python-api-core/pull/247

- https://github.com/googleapis/python-cloud-core/pull/129

- https://github.com/googleapis/google-resumable-media-python/pull/250

- https://github.com/googleapis/python-firestore/pull/415

- https://github.com/googleapis/python-bigtable/pull/402

- https://github.com/googleapis/python-datastore/pull/207

- https://github.com/googleapis/python-storage/pull/534

- https://github.com/googleapis/python-spanner/pull/479

However, in today's meeting the consensus was that such testing needs to happend out-of-band from the normal PR presubmit testing. I have therefore reverted the already-merged PRs which had only that change:

- https://github.com/googleapis/python-firestore/pull/426

- https://github.com/googleapis/python-bigtable/pull/406

- https://github.com/googleapis/python-datastore/pull/213

For the already-merged PRs which contained more changes, I have created PRs which back out only the constraint change:

- https://github.com/googleapis/python-cloud-core/pull/132

- https://github.com/googleapis/python-spanner/pull/527

For the not-yet-merged PRs, I have backed out the constraint change, and re-titled them to signal the remaining changes:

- https://github.com/googleapis/python-api-core/pull/247

- https://github.com/googleapis/google-resumable-media-python/pull/250

- https://github.com/googleapis/python-storage/pull/534

Going forward, we need to work out how to do this testing out-of-band.

- https://github.com/googleapis/python-bigquery/pull/449 added a `prerelease_deps` session to `noxfile.py` (not run by default, because `noxfile.py` lists [explicit default sessions](https://github.com/tswast/python-bigquery/blob/97eb986001b2fbe13b3ffcbf1a8241e1302f2948/noxfile.py#L33-L45) That PR sets up a separate `presubmit` job to run that specific `nox` session.

- Should we instead run such tests in nightly (`continuous`) builds?

|

1.0

|

Client libraries need out-of-band tests against prereleases of third party dependencies - /cc @busunkim96, @tswast, @crwilcox

I had originally worked to add `--pre` to the `testing/constraints-3.9.txt` files of the "manual" libraries: