Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 7

112

| repo_url

stringlengths 36

141

| action

stringclasses 3

values | title

stringlengths 1

744

| labels

stringlengths 4

574

| body

stringlengths 9

211k

| index

stringclasses 10

values | text_combine

stringlengths 96

211k

| label

stringclasses 2

values | text

stringlengths 96

188k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

22,491

| 31,465,103,849

|

IssuesEvent

|

2023-08-30 00:54:45

|

h4sh5/npm-auto-scanner

|

https://api.github.com/repos/h4sh5/npm-auto-scanner

|

opened

|

truffle-environment 0.1.10 has 1 guarddog issues

|

npm-silent-process-execution

|

```{"npm-silent-process-execution":[{"code":" return spawn(\"node\", [chainPath, ipcNetwork, base64OptionsString], {\n detached: true,\n stdio: \"ignore\"\n });","location":"package/develop.js:36","message":"This package is silently executing another executable"}]}```

|

1.0

|

truffle-environment 0.1.10 has 1 guarddog issues - ```{"npm-silent-process-execution":[{"code":" return spawn(\"node\", [chainPath, ipcNetwork, base64OptionsString], {\n detached: true,\n stdio: \"ignore\"\n });","location":"package/develop.js:36","message":"This package is silently executing another executable"}]}```

|

process

|

truffle environment has guarddog issues npm silent process execution n detached true n stdio ignore n location package develop js message this package is silently executing another executable

| 1

|

20,390

| 27,046,747,953

|

IssuesEvent

|

2023-02-13 10:18:34

|

bazelbuild/bazel

|

https://api.github.com/repos/bazelbuild/bazel

|

closed

|

macOS: local_config_cc / wrapped_clang causing /cores to fill up

|

P4 type: support / not a bug (process) team-Rules-CPP stale

|

### Description of the problem / feature request:

Every fresh invocation of Bazel on my MBP leads to generating two core files in /cores, one for processwrapper and another for wrapped_clang. wrapped_clang aborts because it's invoked without the DEVELOPER_DIR environment variable set.

### Bugs: what's the simplest, easiest way to reproduce this bug? Please provide a minimal example if possible.

```console

$ touch WORKSPACE BUILD

$ bazel build @local_config_cc//...

$ ls -l /cores

```

### What operating system are you running Bazel on?

macOS 10.14.6

### What's the output of `bazel info release`?

```console

$ bazel info release

release 1.1.0+vmware

```

I can also reproduce this on the same machine using Bazel 1.1.0 from Homebrew (`release 1.1.0-homebrew`).

### If `bazel info release` returns "development version" or "(@non-git)", tell us how you built Bazel.

na

### What's the output of `git remote get-url origin ; git rev-parse master ; git rev-parse HEAD` ?

na

### Have you found anything relevant by searching the web?

- https://github.com/bazelbuild/bazel/blob/master/tools/osx/crosstool/wrapped_clang.cc#L145-L154

### Any other information, logs, or outputs that you want to share?

```

(lldb) bt

* thread #1, stop reason = signal SIGSTOP

frame #0: 0x00007fff643642c6 libsystem_kernel.dylib`__pthread_kill + 10

frame #1: 0x00007fff6441fbf1 libsystem_pthread.dylib`pthread_kill + 284

frame #2: 0x00007fff642ce6a6 libsystem_c.dylib`abort + 127

frame #3: 0x0000000101d51383 wrapped_clang`(anonymous namespace)::GetMandatoryEnvVar(var_name="DEVELOPER_DIR") at wrapped_clang.cc:151

* frame #4: 0x0000000101d4be45 wrapped_clang`main(argc=5, argv=0x00007ffeedeb4798) at wrapped_clang.cc:188

frame #5: 0x00007fff642293d5 libdyld.dylib`start + 1

frame #6: 0x00007fff642293d5 libdyld.dylib`start + 1

```

```

(lldb) parray 5 argv

(char **) $9 = 0x00007ffeedeb4798 {

(char *) [0] = 0x00007ffeedeb49d0 ".../f3e0a6fc08eb51ae28b80f418d80a78f/external/local_config_cc/wrapped_clang"

(char *) [1] = 0x00007ffeedeb4a42 "-E"

(char *) [2] = 0x00007ffeedeb4a45 "-xc++"

(char *) [3] = 0x00007ffeedeb4a4b "-"

(char *) [4] = 0x00007ffeedeb4a4d "-v"

}

```

Judging from the command line, I'm guessing it comes from https://github.com/bazelbuild/bazel/blob/4afaed055ccac3ef624c445524c0385c0d43770b/tools/cpp/unix_cc_configure.bzl#L127.

|

1.0

|

macOS: local_config_cc / wrapped_clang causing /cores to fill up - ### Description of the problem / feature request:

Every fresh invocation of Bazel on my MBP leads to generating two core files in /cores, one for processwrapper and another for wrapped_clang. wrapped_clang aborts because it's invoked without the DEVELOPER_DIR environment variable set.

### Bugs: what's the simplest, easiest way to reproduce this bug? Please provide a minimal example if possible.

```console

$ touch WORKSPACE BUILD

$ bazel build @local_config_cc//...

$ ls -l /cores

```

### What operating system are you running Bazel on?

macOS 10.14.6

### What's the output of `bazel info release`?

```console

$ bazel info release

release 1.1.0+vmware

```

I can also reproduce this on the same machine using Bazel 1.1.0 from Homebrew (`release 1.1.0-homebrew`).

### If `bazel info release` returns "development version" or "(@non-git)", tell us how you built Bazel.

na

### What's the output of `git remote get-url origin ; git rev-parse master ; git rev-parse HEAD` ?

na

### Have you found anything relevant by searching the web?

- https://github.com/bazelbuild/bazel/blob/master/tools/osx/crosstool/wrapped_clang.cc#L145-L154

### Any other information, logs, or outputs that you want to share?

```

(lldb) bt

* thread #1, stop reason = signal SIGSTOP

frame #0: 0x00007fff643642c6 libsystem_kernel.dylib`__pthread_kill + 10

frame #1: 0x00007fff6441fbf1 libsystem_pthread.dylib`pthread_kill + 284

frame #2: 0x00007fff642ce6a6 libsystem_c.dylib`abort + 127

frame #3: 0x0000000101d51383 wrapped_clang`(anonymous namespace)::GetMandatoryEnvVar(var_name="DEVELOPER_DIR") at wrapped_clang.cc:151

* frame #4: 0x0000000101d4be45 wrapped_clang`main(argc=5, argv=0x00007ffeedeb4798) at wrapped_clang.cc:188

frame #5: 0x00007fff642293d5 libdyld.dylib`start + 1

frame #6: 0x00007fff642293d5 libdyld.dylib`start + 1

```

```

(lldb) parray 5 argv

(char **) $9 = 0x00007ffeedeb4798 {

(char *) [0] = 0x00007ffeedeb49d0 ".../f3e0a6fc08eb51ae28b80f418d80a78f/external/local_config_cc/wrapped_clang"

(char *) [1] = 0x00007ffeedeb4a42 "-E"

(char *) [2] = 0x00007ffeedeb4a45 "-xc++"

(char *) [3] = 0x00007ffeedeb4a4b "-"

(char *) [4] = 0x00007ffeedeb4a4d "-v"

}

```

Judging from the command line, I'm guessing it comes from https://github.com/bazelbuild/bazel/blob/4afaed055ccac3ef624c445524c0385c0d43770b/tools/cpp/unix_cc_configure.bzl#L127.

|

process

|

macos local config cc wrapped clang causing cores to fill up description of the problem feature request every fresh invocation of bazel on my mbp leads to generating two core files in cores one for processwrapper and another for wrapped clang wrapped clang aborts because it s invoked without the developer dir environment variable set bugs what s the simplest easiest way to reproduce this bug please provide a minimal example if possible console touch workspace build bazel build local config cc ls l cores what operating system are you running bazel on macos what s the output of bazel info release console bazel info release release vmware i can also reproduce this on the same machine using bazel from homebrew release homebrew if bazel info release returns development version or non git tell us how you built bazel na what s the output of git remote get url origin git rev parse master git rev parse head na have you found anything relevant by searching the web any other information logs or outputs that you want to share lldb bt thread stop reason signal sigstop frame libsystem kernel dylib pthread kill frame libsystem pthread dylib pthread kill frame libsystem c dylib abort frame wrapped clang anonymous namespace getmandatoryenvvar var name developer dir at wrapped clang cc frame wrapped clang main argc argv at wrapped clang cc frame libdyld dylib start frame libdyld dylib start lldb parray argv char char external local config cc wrapped clang char e char xc char char v judging from the command line i m guessing it comes from

| 1

|

152,870

| 24,031,454,234

|

IssuesEvent

|

2022-09-15 15:19:42

|

nunit/nunit-console

|

https://api.github.com/repos/nunit/nunit-console

|

closed

|

NUnit Console can't be run on a Mapped Network Drive

|

Bug Needs Design

|

@CharliePoole commented on [Sun Oct 26 2014](https://github.com/nunit/nunit/issues/311)

This was originally an nunit-console issue...

nunit-console.exe throws the following error while i tried to execute from Mapped Network Drive

Unhandled Exception: System.TypeInitializationException: The type initializer for 'NUnit.ConsoleRunner.Runner' threw an exception. ---> System.Security.SecurityException: That assembly does not allow partially trusted callers. at NUnit.ConsoleRunner.Runner..cctor() The action that failed was: LinkDemand The assembly or AppDomain that failed was: nunit-console-runner, Version=2.6.3.13283, Culture=neutral, PublicKeyToken=96d09a1eb7f44a77 The method that caused the failure was: NUnit.Core.Logger GetLogger(System.Type) The Zone of the assembly that failed was: Internet The Url of the assembly that failed was: file:///Z:/jenkinsworkspace/workspace/FlashUpload/tools/NUnit/lib/nunit-console-runner.DLL --- End of inner exception stack trace --- at NUnit.ConsoleRunner.Runner.Main(String[] args) at NUnit.ConsoleRunner.Class1.Main(String[] args)

i tried the following methods to fix.

added loadFromRemoteSources enabled="true" in nunit-console.exe.config

But the change did not solve the problem.

---

@CharliePoole commented on [Thu Jan 29 2015](https://github.com/nunit/nunit/issues/311#issuecomment-71943718)

This has been a long-standing situation with NUnit. The only way to run NUnit from a mapped drive is to specifically enable full trust on your machine.

---

@ChrisMaddock commented on [Tue Jan 23 2018](https://github.com/nunit/nunit/issues/311#issuecomment-359921117)

I believe this just came up here: https://stackoverflow.com/questions/48402851/nunit-console-incorrect-parameter

Moving this issue to the console repo. I'm not sure if it's something we will deal with any time soon - but we should keep it tracked.

|

1.0

|

NUnit Console can't be run on a Mapped Network Drive - @CharliePoole commented on [Sun Oct 26 2014](https://github.com/nunit/nunit/issues/311)

This was originally an nunit-console issue...

nunit-console.exe throws the following error while i tried to execute from Mapped Network Drive

Unhandled Exception: System.TypeInitializationException: The type initializer for 'NUnit.ConsoleRunner.Runner' threw an exception. ---> System.Security.SecurityException: That assembly does not allow partially trusted callers. at NUnit.ConsoleRunner.Runner..cctor() The action that failed was: LinkDemand The assembly or AppDomain that failed was: nunit-console-runner, Version=2.6.3.13283, Culture=neutral, PublicKeyToken=96d09a1eb7f44a77 The method that caused the failure was: NUnit.Core.Logger GetLogger(System.Type) The Zone of the assembly that failed was: Internet The Url of the assembly that failed was: file:///Z:/jenkinsworkspace/workspace/FlashUpload/tools/NUnit/lib/nunit-console-runner.DLL --- End of inner exception stack trace --- at NUnit.ConsoleRunner.Runner.Main(String[] args) at NUnit.ConsoleRunner.Class1.Main(String[] args)

i tried the following methods to fix.

added loadFromRemoteSources enabled="true" in nunit-console.exe.config

But the change did not solve the problem.

---

@CharliePoole commented on [Thu Jan 29 2015](https://github.com/nunit/nunit/issues/311#issuecomment-71943718)

This has been a long-standing situation with NUnit. The only way to run NUnit from a mapped drive is to specifically enable full trust on your machine.

---

@ChrisMaddock commented on [Tue Jan 23 2018](https://github.com/nunit/nunit/issues/311#issuecomment-359921117)

I believe this just came up here: https://stackoverflow.com/questions/48402851/nunit-console-incorrect-parameter

Moving this issue to the console repo. I'm not sure if it's something we will deal with any time soon - but we should keep it tracked.

|

non_process

|

nunit console can t be run on a mapped network drive charliepoole commented on this was originally an nunit console issue nunit console exe throws the following error while i tried to execute from mapped network drive unhandled exception system typeinitializationexception the type initializer for nunit consolerunner runner threw an exception system security securityexception that assembly does not allow partially trusted callers at nunit consolerunner runner cctor the action that failed was linkdemand the assembly or appdomain that failed was nunit console runner version culture neutral publickeytoken the method that caused the failure was nunit core logger getlogger system type the zone of the assembly that failed was internet the url of the assembly that failed was file z jenkinsworkspace workspace flashupload tools nunit lib nunit console runner dll end of inner exception stack trace at nunit consolerunner runner main string args at nunit consolerunner main string args i tried the following methods to fix added loadfromremotesources enabled true in nunit console exe config but the change did not solve the problem charliepoole commented on this has been a long standing situation with nunit the only way to run nunit from a mapped drive is to specifically enable full trust on your machine chrismaddock commented on i believe this just came up here moving this issue to the console repo i m not sure if it s something we will deal with any time soon but we should keep it tracked

| 0

|

87,161

| 17,154,126,164

|

IssuesEvent

|

2021-07-14 03:06:24

|

stlink-org/stlink

|

https://api.github.com/repos/stlink-org/stlink

|

opened

|

[feature] Add multi-core support for devices like STM32H745/755

|

code/feature-request

|

Some of the new STM32H7 devices are multi core devices. ST's official tools support it and it would be nice to have that here

|

1.0

|

[feature] Add multi-core support for devices like STM32H745/755 - Some of the new STM32H7 devices are multi core devices. ST's official tools support it and it would be nice to have that here

|

non_process

|

add multi core support for devices like some of the new devices are multi core devices st s official tools support it and it would be nice to have that here

| 0

|

15,654

| 19,846,832,900

|

IssuesEvent

|

2022-01-21 07:42:09

|

ooi-data/CE04OSSM-SBD12-05-WAVSSA000-recovered_host-wavss_a_dcl_fourier_recovered

|

https://api.github.com/repos/ooi-data/CE04OSSM-SBD12-05-WAVSSA000-recovered_host-wavss_a_dcl_fourier_recovered

|

opened

|

🛑 Processing failed: ValueError

|

process

|

## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T07:42:08.463886.

## Details

Flow name: `CE04OSSM-SBD12-05-WAVSSA000-recovered_host-wavss_a_dcl_fourier_recovered`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, got 0)

<details>

<summary>Traceback</summary>

```

Traceback (most recent call last):

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/pipeline.py", line 165, in processing

final_path = finalize_data_stream(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 84, in finalize_data_stream

append_to_zarr(mod_ds, final_store, enc, logger=logger)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 357, in append_to_zarr

_append_zarr(store, mod_ds)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/utils.py", line 187, in _append_zarr

existing_arr.append(var_data.values)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 519, in values

return _as_array_or_item(self._data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 259, in _as_array_or_item

data = np.asarray(data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 1541, in __array__

x = self.compute()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 288, in compute

(result,) = compute(self, traverse=False, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 571, in compute

results = schedule(dsk, keys, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/threaded.py", line 79, in get

results = get_async(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 507, in get_async

raise_exception(exc, tb)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 315, in reraise

raise exc

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 220, in execute_task

result = _execute_task(task, data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/core.py", line 119, in _execute_task

return func(*(_execute_task(a, cache) for a in args))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 116, in getter

c = np.asarray(c)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 357, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 551, in __array__

self._ensure_cached()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 548, in _ensure_cached

self.array = NumpyIndexingAdapter(np.asarray(self.array))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 521, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/backends/zarr.py", line 73, in __getitem__

return array[key.tuple]

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 673, in __getitem__

return self.get_basic_selection(selection, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 798, in get_basic_selection

return self._get_basic_selection_nd(selection=selection, out=out,

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 841, in _get_basic_selection_nd

return self._get_selection(indexer=indexer, out=out, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 1135, in _get_selection

lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)

ValueError: not enough values to unpack (expected 3, got 0)

```

</details>

|

1.0

|

🛑 Processing failed: ValueError - ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T07:42:08.463886.

## Details

Flow name: `CE04OSSM-SBD12-05-WAVSSA000-recovered_host-wavss_a_dcl_fourier_recovered`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, got 0)

<details>

<summary>Traceback</summary>

```

Traceback (most recent call last):

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/pipeline.py", line 165, in processing

final_path = finalize_data_stream(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 84, in finalize_data_stream

append_to_zarr(mod_ds, final_store, enc, logger=logger)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/__init__.py", line 357, in append_to_zarr

_append_zarr(store, mod_ds)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/ooi_harvester/processor/utils.py", line 187, in _append_zarr

existing_arr.append(var_data.values)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 519, in values

return _as_array_or_item(self._data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/variable.py", line 259, in _as_array_or_item

data = np.asarray(data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 1541, in __array__

x = self.compute()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 288, in compute

(result,) = compute(self, traverse=False, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/base.py", line 571, in compute

results = schedule(dsk, keys, **kwargs)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/threaded.py", line 79, in get

results = get_async(

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 507, in get_async

raise_exception(exc, tb)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 315, in reraise

raise exc

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/local.py", line 220, in execute_task

result = _execute_task(task, data)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/core.py", line 119, in _execute_task

return func(*(_execute_task(a, cache) for a in args))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/dask/array/core.py", line 116, in getter

c = np.asarray(c)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 357, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 551, in __array__

self._ensure_cached()

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 548, in _ensure_cached

self.array = NumpyIndexingAdapter(np.asarray(self.array))

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 521, in __array__

return np.asarray(self.array, dtype=dtype)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/core/indexing.py", line 422, in __array__

return np.asarray(array[self.key], dtype=None)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/xarray/backends/zarr.py", line 73, in __getitem__

return array[key.tuple]

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 673, in __getitem__

return self.get_basic_selection(selection, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 798, in get_basic_selection

return self._get_basic_selection_nd(selection=selection, out=out,

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 841, in _get_basic_selection_nd

return self._get_selection(indexer=indexer, out=out, fields=fields)

File "/srv/conda/envs/notebook/lib/python3.9/site-packages/zarr/core.py", line 1135, in _get_selection

lchunk_coords, lchunk_selection, lout_selection = zip(*indexer)

ValueError: not enough values to unpack (expected 3, got 0)

```

</details>

|

process

|

🛑 processing failed valueerror overview valueerror found in processing task task during run ended on details flow name recovered host wavss a dcl fourier recovered task name processing task error type valueerror error message not enough values to unpack expected got traceback traceback most recent call last file srv conda envs notebook lib site packages ooi harvester processor pipeline py line in processing final path finalize data stream file srv conda envs notebook lib site packages ooi harvester processor init py line in finalize data stream append to zarr mod ds final store enc logger logger file srv conda envs notebook lib site packages ooi harvester processor init py line in append to zarr append zarr store mod ds file srv conda envs notebook lib site packages ooi harvester processor utils py line in append zarr existing arr append var data values file srv conda envs notebook lib site packages xarray core variable py line in values return as array or item self data file srv conda envs notebook lib site packages xarray core variable py line in as array or item data np asarray data file srv conda envs notebook lib site packages dask array core py line in array x self compute file srv conda envs notebook lib site packages dask base py line in compute result compute self traverse false kwargs file srv conda envs notebook lib site packages dask base py line in compute results schedule dsk keys kwargs file srv conda envs notebook lib site packages dask threaded py line in get results get async file srv conda envs notebook lib site packages dask local py line in get async raise exception exc tb file srv conda envs notebook lib site packages dask local py line in reraise raise exc file srv conda envs notebook lib site packages dask local py line in execute task result execute task task data file srv conda envs notebook lib site packages dask core py line in execute task return func execute task a cache for a in args file srv conda envs notebook lib site packages dask array core py line in getter c np asarray c file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray self array dtype dtype file srv conda envs notebook lib site packages xarray core indexing py line in array self ensure cached file srv conda envs notebook lib site packages xarray core indexing py line in ensure cached self array numpyindexingadapter np asarray self array file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray self array dtype dtype file srv conda envs notebook lib site packages xarray core indexing py line in array return np asarray array dtype none file srv conda envs notebook lib site packages xarray backends zarr py line in getitem return array file srv conda envs notebook lib site packages zarr core py line in getitem return self get basic selection selection fields fields file srv conda envs notebook lib site packages zarr core py line in get basic selection return self get basic selection nd selection selection out out file srv conda envs notebook lib site packages zarr core py line in get basic selection nd return self get selection indexer indexer out out fields fields file srv conda envs notebook lib site packages zarr core py line in get selection lchunk coords lchunk selection lout selection zip indexer valueerror not enough values to unpack expected got

| 1

|

825

| 3,295,496,649

|

IssuesEvent

|

2015-11-01 00:36:08

|

t3kt/vjzual2

|

https://api.github.com/repos/t3kt/vjzual2

|

opened

|

changing parameters of the multi delay module causes fps drop

|

bug video processing

|

it's a problem. some of it is from the param component (see #112), but a bunch of it is probably from the replicator and other such stuff

|

1.0

|

changing parameters of the multi delay module causes fps drop - it's a problem. some of it is from the param component (see #112), but a bunch of it is probably from the replicator and other such stuff

|

process

|

changing parameters of the multi delay module causes fps drop it s a problem some of it is from the param component see but a bunch of it is probably from the replicator and other such stuff

| 1

|

112,575

| 17,092,395,069

|

IssuesEvent

|

2021-07-08 19:23:14

|

vyas0189/CougarCS-Client

|

https://api.github.com/repos/vyas0189/CougarCS-Client

|

opened

|

CVE-2021-23364 (Medium) detected in browserslist-4.14.2.tgz

|

security vulnerability

|

## CVE-2021-23364 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>browserslist-4.14.2.tgz</b></p></summary>

<p>Share target browsers between different front-end tools, like Autoprefixer, Stylelint and babel-env-preset</p>

<p>Library home page: <a href="https://registry.npmjs.org/browserslist/-/browserslist-4.14.2.tgz">https://registry.npmjs.org/browserslist/-/browserslist-4.14.2.tgz</a></p>

<p>Path to dependency file: CougarCS-Client/package.json</p>

<p>Path to vulnerable library: CougarCS-Client/node_modules/browserslist</p>

<p>

Dependency Hierarchy:

- react-scripts-4.0.3.tgz (Root Library)

- react-dev-utils-11.0.4.tgz

- :x: **browserslist-4.14.2.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/vyas0189/CougarCS-Client/commit/47a52f8e977fa1725a202abf8ba2826e5236ca8b">47a52f8e977fa1725a202abf8ba2826e5236ca8b</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package browserslist from 4.0.0 and before 4.16.5 are vulnerable to Regular Expression Denial of Service (ReDoS) during parsing of queries.

<p>Publish Date: 2021-04-28

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23364>CVE-2021-23364</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23364">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23364</a></p>

<p>Release Date: 2021-04-28</p>

<p>Fix Resolution: browserslist - 4.16.5</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2021-23364 (Medium) detected in browserslist-4.14.2.tgz - ## CVE-2021-23364 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>browserslist-4.14.2.tgz</b></p></summary>

<p>Share target browsers between different front-end tools, like Autoprefixer, Stylelint and babel-env-preset</p>

<p>Library home page: <a href="https://registry.npmjs.org/browserslist/-/browserslist-4.14.2.tgz">https://registry.npmjs.org/browserslist/-/browserslist-4.14.2.tgz</a></p>

<p>Path to dependency file: CougarCS-Client/package.json</p>

<p>Path to vulnerable library: CougarCS-Client/node_modules/browserslist</p>

<p>

Dependency Hierarchy:

- react-scripts-4.0.3.tgz (Root Library)

- react-dev-utils-11.0.4.tgz

- :x: **browserslist-4.14.2.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/vyas0189/CougarCS-Client/commit/47a52f8e977fa1725a202abf8ba2826e5236ca8b">47a52f8e977fa1725a202abf8ba2826e5236ca8b</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package browserslist from 4.0.0 and before 4.16.5 are vulnerable to Regular Expression Denial of Service (ReDoS) during parsing of queries.

<p>Publish Date: 2021-04-28

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23364>CVE-2021-23364</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23364">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23364</a></p>

<p>Release Date: 2021-04-28</p>

<p>Fix Resolution: browserslist - 4.16.5</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in browserslist tgz cve medium severity vulnerability vulnerable library browserslist tgz share target browsers between different front end tools like autoprefixer stylelint and babel env preset library home page a href path to dependency file cougarcs client package json path to vulnerable library cougarcs client node modules browserslist dependency hierarchy react scripts tgz root library react dev utils tgz x browserslist tgz vulnerable library found in head commit a href found in base branch master vulnerability details the package browserslist from and before are vulnerable to regular expression denial of service redos during parsing of queries publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact low for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution browserslist step up your open source security game with whitesource

| 0

|

81,129

| 23,393,984,266

|

IssuesEvent

|

2022-08-11 20:52:11

|

pixiebrix/pixiebrix-extension

|

https://api.github.com/repos/pixiebrix/pixiebrix-extension

|

closed

|

Dropdown with labels errors if you don't interact with the component in the form

|

bug form builder document builder

|

Context

---

- https://pixiebrix.slack.com/archives/C02CN01JXAA/p1659539496451749

|

2.0

|

Dropdown with labels errors if you don't interact with the component in the form - Context

---

- https://pixiebrix.slack.com/archives/C02CN01JXAA/p1659539496451749

|

non_process

|

dropdown with labels errors if you don t interact with the component in the form context

| 0

|

794,038

| 28,020,145,643

|

IssuesEvent

|

2023-03-28 04:18:23

|

HaDuve/TravelCostNative

|

https://api.github.com/repos/HaDuve/TravelCostNative

|

closed

|

Add A toggle setting button

|

Enhancement 1 - High Priority AA - Easy/Medium

|

Save boolean or enum settings in async store

Add a possibility to save boolean or enum settings online under update user, fetch it at root and save it in userContext

|

1.0

|

Add A toggle setting button - Save boolean or enum settings in async store

Add a possibility to save boolean or enum settings online under update user, fetch it at root and save it in userContext

|

non_process

|

add a toggle setting button save boolean or enum settings in async store add a possibility to save boolean or enum settings online under update user fetch it at root and save it in usercontext

| 0

|

73,424

| 7,333,977,405

|

IssuesEvent

|

2018-03-05 21:11:26

|

eclipse/jetty.project

|

https://api.github.com/repos/eclipse/jetty.project

|

opened

|

Refactor WebSocket tests to not use EventQueue.awaitEventCount() to reduce CPU usage

|

Test

|

Currently the WebSocket tests use a lot of CPU for no good reason.

That no good reason is EventQueue.awaitEventCount().

Refactor the WebSocket tests to use a LinkedBlockingQueue with offer / poll techniques instead.

|

1.0

|

Refactor WebSocket tests to not use EventQueue.awaitEventCount() to reduce CPU usage - Currently the WebSocket tests use a lot of CPU for no good reason.

That no good reason is EventQueue.awaitEventCount().

Refactor the WebSocket tests to use a LinkedBlockingQueue with offer / poll techniques instead.

|

non_process

|

refactor websocket tests to not use eventqueue awaiteventcount to reduce cpu usage currently the websocket tests use a lot of cpu for no good reason that no good reason is eventqueue awaiteventcount refactor the websocket tests to use a linkedblockingqueue with offer poll techniques instead

| 0

|

138,054

| 30,803,503,298

|

IssuesEvent

|

2023-08-01 04:46:54

|

TeamSteam-11/TeamSteam

|

https://api.github.com/repos/TeamSteam-11/TeamSteam

|

closed

|

매칭 관련 코드 리팩토링 및 TestCode 작성

|

🛠refactoring 📝testcode

|

### Refactor

- [x] MatchingController 리팩토링

- [x] MatchingService 리팩토링

### TestCode

**MatchingController**

- [x] 매칭 등록

- [x] 매칭 삭제

|

1.0

|

매칭 관련 코드 리팩토링 및 TestCode 작성 - ### Refactor

- [x] MatchingController 리팩토링

- [x] MatchingService 리팩토링

### TestCode

**MatchingController**

- [x] 매칭 등록

- [x] 매칭 삭제

|

non_process

|

매칭 관련 코드 리팩토링 및 testcode 작성 refactor matchingcontroller 리팩토링 matchingservice 리팩토링 testcode matchingcontroller 매칭 등록 매칭 삭제

| 0

|

87,089

| 17,142,889,872

|

IssuesEvent

|

2021-07-13 11:39:20

|

mozilla/addons-frontend

|

https://api.github.com/repos/mozilla/addons-frontend

|

closed

|

Remove InfoDialog and associated code

|

component: code quality priority: p4

|

The [`InfoDialog`](https://github.com/mozilla/addons-frontend/tree/master/src/amo/components/InfoDialog) component is no longer needed. We should remove it and any code that references it. This has been discussed in detail on Slack.

|

1.0

|

Remove InfoDialog and associated code - The [`InfoDialog`](https://github.com/mozilla/addons-frontend/tree/master/src/amo/components/InfoDialog) component is no longer needed. We should remove it and any code that references it. This has been discussed in detail on Slack.

|

non_process

|

remove infodialog and associated code the component is no longer needed we should remove it and any code that references it this has been discussed in detail on slack

| 0

|

92,866

| 10,763,599,503

|

IssuesEvent

|

2019-11-01 04:48:49

|

madanalogy/ped

|

https://api.github.com/repos/madanalogy/ped

|

opened

|

DeleteTutorial Command Usage Message

|

severity.Low type.DocumentationBug

|

additional usages shown as example in message not displayed in user guide or message constraint description

|

1.0

|

DeleteTutorial Command Usage Message - additional usages shown as example in message not displayed in user guide or message constraint description

|

non_process

|

deletetutorial command usage message additional usages shown as example in message not displayed in user guide or message constraint description

| 0

|

14,454

| 17,533,228,748

|

IssuesEvent

|

2021-08-12 01:46:31

|

qgis/QGIS

|

https://api.github.com/repos/qgis/QGIS

|

closed

|

Distance matrix error for Standard (N x T) in QGIS 3.4.10

|

Feedback stale Processing Bug

|

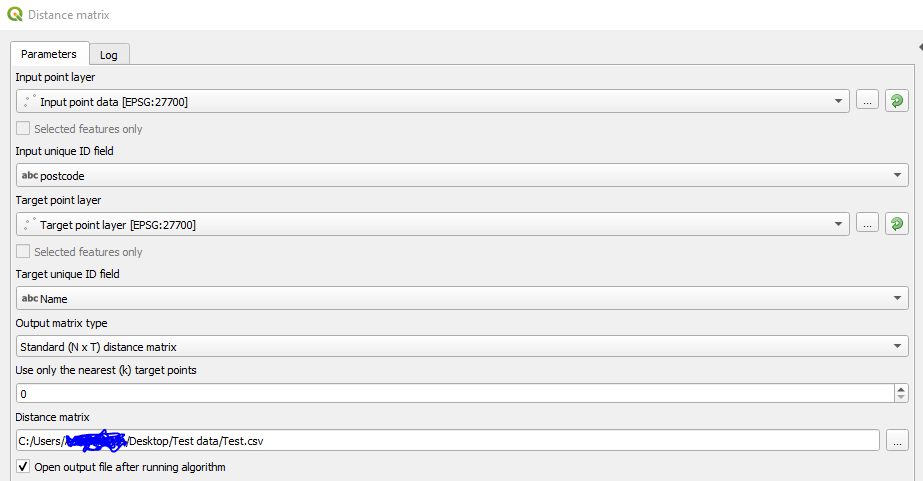

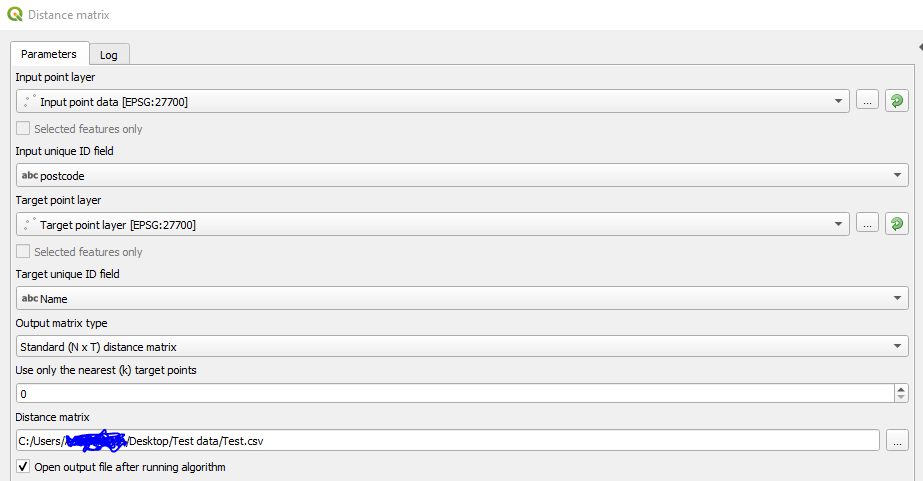

### **Summary**

When running the distance matrix in QGIS 3.4.10 with the Standard (N x T) matrix I am receiving the following error message after 0.33 seconds in the algorithm log panel.

I have tested the same data in a QGIS 2 build, specifically 2.18.28, and it ran successfully with the desired result.

**Error**

`Input parameters:

{ 'INPUT' : 'C:\\Users\\myname\\Desktop\\Test data\\Input point data.shp', 'INPUT_FIELD' : 'postcode', 'MATRIX_TYPE' : 1, 'NEAREST_POINTS' : 0, 'OUTPUT' : 'C:/Users/myname/Desktop/Test data/Test.csv', 'TARGET' : 'C:\\Users\\myname\\Desktop\\Test data\\Target point layer.shp', 'TARGET_FIELD' : 'Name' `}`

`Traceback (most recent call last):

File "C:/PROGRA~1/QGIS3~1.4/apps/qgis-ltr/./python/plugins\processing\algs\qgis\PointDistance.py", line 145, in processAlgorithm

nPoints, feedback)

File "C:/PROGRA~1/QGIS3~1.4/apps/qgis-ltr/./python/plugins\processing\algs\qgis\PointDistance.py", line 270, in regularMatrix

fields, source.wkbType(), source.sourceCrs())

Exception: unknown`

`Execution failed after 0.33 seconds`

### **Data and algorithm parameters used**

I have attached both SHP files used and the QGIS project file to this post for testing if needed. Below I have listed the ID fields used for each SHP file and included an image for ease.

- The input unique ID was set to "postcode"

- The target unique ID was set to "Name"

### **Other observations that may be useful**

1. I receive the same error no matter what the output format is.

2. If I run the tool with a temporary layer output then the algorithm runs and a layer is created in the layers panel. However, when I go to view the attribute table QGIS crashes.

3. I have tried re-saving and converting them to single-point (even though they already are) to no avail.

4. They are both projected to ESPG: 27700 BNG.

4. I have noticed two similar issues on GIS Stack Exchange and no definitive answers have been given for the cause. These can be found here:

[https://gis.stackexchange.com/questions/337410/error-when-running-distance-matrix-in-qgis-3-4-10?noredirect=1#comment550490_337410](url)

[https://gis.stackexchange.com/questions/278609/qgis-distance-matrix-execution-fails](url)

**Link to data**

[https://www.dropbox.com/sh/33v4dtbl6nk98j8/AADDbSo_x5EChM34-B0aWT4Ma?dl=0](url)

|

1.0

|

Distance matrix error for Standard (N x T) in QGIS 3.4.10 - ### **Summary**

When running the distance matrix in QGIS 3.4.10 with the Standard (N x T) matrix I am receiving the following error message after 0.33 seconds in the algorithm log panel.

I have tested the same data in a QGIS 2 build, specifically 2.18.28, and it ran successfully with the desired result.

**Error**

`Input parameters:

{ 'INPUT' : 'C:\\Users\\myname\\Desktop\\Test data\\Input point data.shp', 'INPUT_FIELD' : 'postcode', 'MATRIX_TYPE' : 1, 'NEAREST_POINTS' : 0, 'OUTPUT' : 'C:/Users/myname/Desktop/Test data/Test.csv', 'TARGET' : 'C:\\Users\\myname\\Desktop\\Test data\\Target point layer.shp', 'TARGET_FIELD' : 'Name' `}`

`Traceback (most recent call last):

File "C:/PROGRA~1/QGIS3~1.4/apps/qgis-ltr/./python/plugins\processing\algs\qgis\PointDistance.py", line 145, in processAlgorithm

nPoints, feedback)

File "C:/PROGRA~1/QGIS3~1.4/apps/qgis-ltr/./python/plugins\processing\algs\qgis\PointDistance.py", line 270, in regularMatrix

fields, source.wkbType(), source.sourceCrs())

Exception: unknown`

`Execution failed after 0.33 seconds`

### **Data and algorithm parameters used**

I have attached both SHP files used and the QGIS project file to this post for testing if needed. Below I have listed the ID fields used for each SHP file and included an image for ease.

- The input unique ID was set to "postcode"

- The target unique ID was set to "Name"

### **Other observations that may be useful**

1. I receive the same error no matter what the output format is.

2. If I run the tool with a temporary layer output then the algorithm runs and a layer is created in the layers panel. However, when I go to view the attribute table QGIS crashes.

3. I have tried re-saving and converting them to single-point (even though they already are) to no avail.

4. They are both projected to ESPG: 27700 BNG.

4. I have noticed two similar issues on GIS Stack Exchange and no definitive answers have been given for the cause. These can be found here:

[https://gis.stackexchange.com/questions/337410/error-when-running-distance-matrix-in-qgis-3-4-10?noredirect=1#comment550490_337410](url)

[https://gis.stackexchange.com/questions/278609/qgis-distance-matrix-execution-fails](url)

**Link to data**

[https://www.dropbox.com/sh/33v4dtbl6nk98j8/AADDbSo_x5EChM34-B0aWT4Ma?dl=0](url)

|

process

|

distance matrix error for standard n x t in qgis summary when running the distance matrix in qgis with the standard n x t matrix i am receiving the following error message after seconds in the algorithm log panel i have tested the same data in a qgis build specifically and it ran successfully with the desired result error input parameters input c users myname desktop test data input point data shp input field postcode matrix type nearest points output c users myname desktop test data test csv target c users myname desktop test data target point layer shp target field name traceback most recent call last file c progra apps qgis ltr python plugins processing algs qgis pointdistance py line in processalgorithm npoints feedback file c progra apps qgis ltr python plugins processing algs qgis pointdistance py line in regularmatrix fields source wkbtype source sourcecrs exception unknown execution failed after seconds data and algorithm parameters used i have attached both shp files used and the qgis project file to this post for testing if needed below i have listed the id fields used for each shp file and included an image for ease the input unique id was set to postcode the target unique id was set to name other observations that may be useful i receive the same error no matter what the output format is if i run the tool with a temporary layer output then the algorithm runs and a layer is created in the layers panel however when i go to view the attribute table qgis crashes i have tried re saving and converting them to single point even though they already are to no avail they are both projected to espg bng i have noticed two similar issues on gis stack exchange and no definitive answers have been given for the cause these can be found here url url link to data url

| 1

|

432,706

| 12,497,056,661

|

IssuesEvent

|

2020-06-01 15:51:14

|

unoplatform/uno

|

https://api.github.com/repos/unoplatform/uno

|

closed

|

ContentControl's ContentTemplate attribute bingding a null ContentTemplate,but content is not null,the content shows in Andriod but show nothing in ios.

|

area/ios kind/bug priority/backlog

|

<!--

PLEASE HELP US PROCESS GITHUB ISSUES FASTER BY PROVIDING THE FOLLOWING INFORMATION.

ISSUES MISSING IMPORTANT INFORMATION MAY BE CLOSED WITHOUT INVESTIGATION.

-->

## I'm submitting a...

<!-- Please uncomment one or more that apply to this issue -->

<!-- - Regression (a behavior that used to work and stopped working in a new release) -->

Bug report (I searched for similar issues and did not find one)

<!-- - Feature request -->

<!-- - Sample app request -->

<!-- - Documentation issue or request -->

<!-- - Question of Support request => Please do not submit support request here, instead see https://github.com/nventive/Uno/blob/master/README.md#have-questions-feature-requests-issues -->

## Current behavior

<!-- Describe how the issue manifests. -->

I defined an usercontrol ,the ContentTemplate using an templatebinding,but the binding template is null. In Andriod ,the content of this control shows well,but in ios,it show nothing.

## Expected behavior

<!-- Describe what the desired behavior would be. -->

I wish it show the same just like in Androd when it run in ios.

## Minimal reproduction of the problem with instructions

<!--

For bug reports please provide a *MINIMAL REPRO PROJECT* and the *STEPS TO REPRODUCE*

-->

## Environment

<!-- For bug reports Check one or more of the following options with "x" -->

```

Nuget Package:

Package Version(s):

Affected platform(s):

- [ ] iOS

- [ ] Android

- [ ] WebAssembly

- [ ] Windows

- [ ] Build tasks

Visual Studio

- [ enterprise ] 2017 (version: 15.7.1)

- [ ] 2017 Preview (version: )

- [ ] for Mac (version: )

Relevant plugins

- [ ] Resharper (version: )

```

|

1.0

|

ContentControl's ContentTemplate attribute bingding a null ContentTemplate,but content is not null,the content shows in Andriod but show nothing in ios. - <!--

PLEASE HELP US PROCESS GITHUB ISSUES FASTER BY PROVIDING THE FOLLOWING INFORMATION.

ISSUES MISSING IMPORTANT INFORMATION MAY BE CLOSED WITHOUT INVESTIGATION.

-->

## I'm submitting a...

<!-- Please uncomment one or more that apply to this issue -->

<!-- - Regression (a behavior that used to work and stopped working in a new release) -->

Bug report (I searched for similar issues and did not find one)

<!-- - Feature request -->

<!-- - Sample app request -->

<!-- - Documentation issue or request -->

<!-- - Question of Support request => Please do not submit support request here, instead see https://github.com/nventive/Uno/blob/master/README.md#have-questions-feature-requests-issues -->

## Current behavior

<!-- Describe how the issue manifests. -->

I defined an usercontrol ,the ContentTemplate using an templatebinding,but the binding template is null. In Andriod ,the content of this control shows well,but in ios,it show nothing.

## Expected behavior

<!-- Describe what the desired behavior would be. -->

I wish it show the same just like in Androd when it run in ios.

## Minimal reproduction of the problem with instructions

<!--

For bug reports please provide a *MINIMAL REPRO PROJECT* and the *STEPS TO REPRODUCE*

-->

## Environment

<!-- For bug reports Check one or more of the following options with "x" -->

```

Nuget Package:

Package Version(s):

Affected platform(s):

- [ ] iOS

- [ ] Android

- [ ] WebAssembly

- [ ] Windows

- [ ] Build tasks

Visual Studio

- [ enterprise ] 2017 (version: 15.7.1)

- [ ] 2017 Preview (version: )

- [ ] for Mac (version: )

Relevant plugins

- [ ] Resharper (version: )

```

|

non_process

|

contentcontrol s contenttemplate attribute bingding a null contenttemplate but content is not null the content shows in andriod but show nothing in ios please help us process github issues faster by providing the following information issues missing important information may be closed without investigation i m submitting a bug report i searched for similar issues and did not find one please do not submit support request here instead see current behavior i defined an usercontrol the contenttemplate using an templatebinding but the binding template is null in andriod the content of this control shows well but in ios it show nothing expected behavior i wish it show the same just like in androd when it run in ios minimal reproduction of the problem with instructions for bug reports please provide a minimal repro project and the steps to reproduce environment nuget package package version s affected platform s ios android webassembly windows build tasks visual studio version preview version for mac version relevant plugins resharper version

| 0

|

1,915

| 4,751,258,985

|

IssuesEvent

|

2016-10-22 19:47:21

|

paulkornikov/Pragonas

|

https://api.github.com/repos/paulkornikov/Pragonas

|

closed

|

Fonction de recherche de dernier processus par type de processus

|

a-new feature processus workload III

|

Filtrer sur le résultat également pour ne retenir que les résultats succès.

|

1.0

|

Fonction de recherche de dernier processus par type de processus - Filtrer sur le résultat également pour ne retenir que les résultats succès.

|

process

|

fonction de recherche de dernier processus par type de processus filtrer sur le résultat également pour ne retenir que les résultats succès

| 1

|

13,694

| 16,449,970,935

|

IssuesEvent

|

2021-05-21 03:17:51

|

microsoft/react-native-windows

|

https://api.github.com/repos/microsoft/react-native-windows

|

closed

|

Link to curated release notes in patch release notes

|

Area: Release Process enhancement good first issue

|

Right now our patch releases look like the below

We have a high enough release frequency that the last major release is often off the first page. This makes it hard to find the manually curated documentation for an overall release. We can fix this by adding a description to the generated release notes, with a link to the curated release notes.

|

1.0

|

Link to curated release notes in patch release notes - Right now our patch releases look like the below

We have a high enough release frequency that the last major release is often off the first page. This makes it hard to find the manually curated documentation for an overall release. We can fix this by adding a description to the generated release notes, with a link to the curated release notes.

|

process

|

link to curated release notes in patch release notes right now our patch releases look like the below we have a high enough release frequency that the last major release is often off the first page this makes it hard to find the manually curated documentation for an overall release we can fix this by adding a description to the generated release notes with a link to the curated release notes

| 1

|

19,348

| 25,479,553,089

|

IssuesEvent

|

2022-11-25 18:27:14

|

kdgregory/log4j-aws-appenders

|

https://api.github.com/repos/kdgregory/log4j-aws-appenders

|

closed

|

CloudWatchLogWriter initialization takes excessively long when creating new stream

|

bug in-process

|

[CloudWatchLogWriter.createLogStream()](https://github.com/kdgregory/log4j-aws-appenders/blob/trunk/library/logwriters/src/main/java/com/kdgregory/logging/aws/cloudwatch/CloudWatchLogWriter.java#L233) waits for the stream to become ready by retrieving the next sequence token. However, this is null for a new stream, which means that it will keep retrying until the retry manager times-out.

This was not a visible issue in previous versions because the retry manager used a duration-based timeout, and simply gave up. With 3.1.0, the retry manager now uses a timestamp-based timeout, which means that it would keep trying for 60 seconds and then throw, even though the stream was available.

This will require some fairly extensive changes: adding a `findLogStream()` to the facade, and using a separate flag variable to indicate that the sequence number should be null (otherwise the issue will simply move to `sendBatch()`, and the logwriter will always time-out before sending a batch).

|

1.0

|

CloudWatchLogWriter initialization takes excessively long when creating new stream - [CloudWatchLogWriter.createLogStream()](https://github.com/kdgregory/log4j-aws-appenders/blob/trunk/library/logwriters/src/main/java/com/kdgregory/logging/aws/cloudwatch/CloudWatchLogWriter.java#L233) waits for the stream to become ready by retrieving the next sequence token. However, this is null for a new stream, which means that it will keep retrying until the retry manager times-out.

This was not a visible issue in previous versions because the retry manager used a duration-based timeout, and simply gave up. With 3.1.0, the retry manager now uses a timestamp-based timeout, which means that it would keep trying for 60 seconds and then throw, even though the stream was available.

This will require some fairly extensive changes: adding a `findLogStream()` to the facade, and using a separate flag variable to indicate that the sequence number should be null (otherwise the issue will simply move to `sendBatch()`, and the logwriter will always time-out before sending a batch).

|

process

|

cloudwatchlogwriter initialization takes excessively long when creating new stream waits for the stream to become ready by retrieving the next sequence token however this is null for a new stream which means that it will keep retrying until the retry manager times out this was not a visible issue in previous versions because the retry manager used a duration based timeout and simply gave up with the retry manager now uses a timestamp based timeout which means that it would keep trying for seconds and then throw even though the stream was available this will require some fairly extensive changes adding a findlogstream to the facade and using a separate flag variable to indicate that the sequence number should be null otherwise the issue will simply move to sendbatch and the logwriter will always time out before sending a batch

| 1

|

396,349

| 11,708,283,923

|

IssuesEvent

|

2020-03-08 12:23:34

|

kubernetes/minikube

|

https://api.github.com/repos/kubernetes/minikube

|

closed

|

Upgrade CNI to support version 0.4.0

|

area/cni area/guest-vm kind/feature priority/important-longterm

|

`ERRO[0000] Error adding network: incompatible CNI versions; config is "0.4.0", plugin supports ["0.1.0" "0.2.0" "0.3.0" "0.3.1"] `

We are running 0.6.0 (Aug 2017), should upgrade to 0.7.0 (Apr 2019) - or 0.7.1

And we probably have to build it from source, in order to do so (no more binaries)

Required for `podman run`

|

1.0

|

Upgrade CNI to support version 0.4.0 - `ERRO[0000] Error adding network: incompatible CNI versions; config is "0.4.0", plugin supports ["0.1.0" "0.2.0" "0.3.0" "0.3.1"] `

We are running 0.6.0 (Aug 2017), should upgrade to 0.7.0 (Apr 2019) - or 0.7.1

And we probably have to build it from source, in order to do so (no more binaries)

Required for `podman run`

|

non_process

|

upgrade cni to support version erro error adding network incompatible cni versions config is plugin supports we are running aug should upgrade to apr or and we probably have to build it from source in order to do so no more binaries required for podman run

| 0

|

321,299

| 27,520,610,315

|

IssuesEvent

|

2023-03-06 14:49:15

|

slsa-framework/slsa-github-generator

|

https://api.github.com/repos/slsa-framework/slsa-github-generator

|

opened

|

[feature] [test] add pull_request_target workflow for verify-token

|

type:feature area:tests

|

**Is your feature request related to a problem? Please describe.**

A maintainer-triggered pull_request_target workflow for verify-token with OIDC permissions

https://github.com/slsa-framework/slsa-github-generator/pull/1726#issuecomment-1455269281

**Describe the solution you'd like**

A clear and concise description of what you want to happen.

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

|

1.0

|

[feature] [test] add pull_request_target workflow for verify-token - **Is your feature request related to a problem? Please describe.**

A maintainer-triggered pull_request_target workflow for verify-token with OIDC permissions

https://github.com/slsa-framework/slsa-github-generator/pull/1726#issuecomment-1455269281

**Describe the solution you'd like**

A clear and concise description of what you want to happen.

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

|

non_process

|

add pull request target workflow for verify token is your feature request related to a problem please describe a maintainer triggered pull request target workflow for verify token with oidc permissions describe the solution you d like a clear and concise description of what you want to happen describe alternatives you ve considered a clear and concise description of any alternative solutions or features you ve considered additional context add any other context or screenshots about the feature request here

| 0

|

255,412

| 19,301,156,525

|

IssuesEvent

|

2021-12-13 05:50:35

|

wakystuf/ESG-Mod

|

https://api.github.com/repos/wakystuf/ESG-Mod

|

opened

|

Seek the Unique Bugfixes

|

bug finished needs documentation

|

Certain quest paths were skipping multiple chapters; now redirected

Also fixed a GUI/tooltip bug in the dust per assimilated minor

|

1.0

|

Seek the Unique Bugfixes - Certain quest paths were skipping multiple chapters; now redirected

Also fixed a GUI/tooltip bug in the dust per assimilated minor

|

non_process

|

seek the unique bugfixes certain quest paths were skipping multiple chapters now redirected also fixed a gui tooltip bug in the dust per assimilated minor

| 0

|

6,274

| 9,231,176,024

|

IssuesEvent

|

2019-03-13 01:09:05

|

EthVM/EthVM

|

https://api.github.com/repos/EthVM/EthVM

|

closed

|

Create unit tests to properly verify kafka streams processing on ethereum data

|

enhancement milestone:2 project:processing

|

With the upcoming refactor to the main architecture we need to test properly if our processors are working as expected.

Things to take care of:

- [x] Simple Ether transfers

- [x] Contract creations

- [x] Contract suicides

- [x] Contract classification

- [ ] [Transaction dropped & replaced](https://etherscancom.freshdesk.com/support/solutions/articles/35000048526-transaction-dropped-replaced-)

- [x] ERC20 balance tracking

- [x] ERC721 balance tracking

- [x] Correct handling of network forks

|

1.0

|

Create unit tests to properly verify kafka streams processing on ethereum data - With the upcoming refactor to the main architecture we need to test properly if our processors are working as expected.

Things to take care of:

- [x] Simple Ether transfers

- [x] Contract creations

- [x] Contract suicides

- [x] Contract classification

- [ ] [Transaction dropped & replaced](https://etherscancom.freshdesk.com/support/solutions/articles/35000048526-transaction-dropped-replaced-)

- [x] ERC20 balance tracking

- [x] ERC721 balance tracking

- [x] Correct handling of network forks

|

process

|

create unit tests to properly verify kafka streams processing on ethereum data with the upcoming refactor to the main architecture we need to test properly if our processors are working as expected things to take care of simple ether transfers contract creations contract suicides contract classification balance tracking balance tracking correct handling of network forks

| 1

|

253,558

| 21,688,770,159

|

IssuesEvent

|

2022-05-09 13:42:05

|

damccorm/test-migration-target

|

https://api.github.com/repos/damccorm/test-migration-target

|

opened

|

tox: use isolated builds (PEP 517 and 518)

|

bug P3 sdk-py-core testing

|

See description in:

https://github.com/apache/beam/pull/10038

Imported from Jira [BEAM-8954](https://issues.apache.org/jira/browse/BEAM-8954). Original Jira may contain additional context.

Reported by: udim.

|

1.0

|

tox: use isolated builds (PEP 517 and 518) - See description in:

https://github.com/apache/beam/pull/10038

Imported from Jira [BEAM-8954](https://issues.apache.org/jira/browse/BEAM-8954). Original Jira may contain additional context.

Reported by: udim.

|

non_process

|

tox use isolated builds pep and see description in imported from jira original jira may contain additional context reported by udim

| 0

|

4,303

| 7,197,070,919

|

IssuesEvent

|

2018-02-05 07:26:49

|

uccser/verto

|

https://api.github.com/repos/uccser/verto

|

closed

|

Change file path for interactive thumbnails

|

Django processor implementation update

|

Currently the thumbnail file path is `interactive-name/thumbnail.png`, but this is not how we store images in our Django systems that use Verto. Two proposed solutions:

- The file path needs altering slightly to become `interactives/interactive-name/thumbnail.png`, or

- The Verto user needs to be able to specify the file path for interactive thumbnails themselves (similar to how they can specify their own html templates).

|

1.0

|

Change file path for interactive thumbnails - Currently the thumbnail file path is `interactive-name/thumbnail.png`, but this is not how we store images in our Django systems that use Verto. Two proposed solutions:

- The file path needs altering slightly to become `interactives/interactive-name/thumbnail.png`, or

- The Verto user needs to be able to specify the file path for interactive thumbnails themselves (similar to how they can specify their own html templates).

|

process

|

change file path for interactive thumbnails currently the thumbnail file path is interactive name thumbnail png but this is not how we store images in our django systems that use verto two proposed solutions the file path needs altering slightly to become interactives interactive name thumbnail png or the verto user needs to be able to specify the file path for interactive thumbnails themselves similar to how they can specify their own html templates

| 1

|

65,290

| 27,047,294,760

|

IssuesEvent

|

2023-02-13 10:40:40

|

aws-controllers-k8s/community

|

https://api.github.com/repos/aws-controllers-k8s/community

|

opened

|

EventBridge `Pipes` service controller

|

Service Controller

|

## New ACK Service Controller

Support for EventBridge [`Pipes`](https://github.com/aws/aws-sdk-go/tree/main/models/apis/pipes/2015-10-07). This is an issue to also discuss whether it should be a separate controller (common approach) or be implemented under the `EventBridge` controller as the use cases, resources and API models are quiet similar. If it's a separate controller, it would be good to reuse the API group `eventbridge.services.k8s.aws`. Is this possible with the current code-gen?

As EventBridge is expanding to become a framework/building blocks for event-driven systems, this question might come up again, for example EventBridge `Scheduler` controller.

### List of API resources

[TBD]

|

1.0

|

EventBridge `Pipes` service controller - ## New ACK Service Controller

Support for EventBridge [`Pipes`](https://github.com/aws/aws-sdk-go/tree/main/models/apis/pipes/2015-10-07). This is an issue to also discuss whether it should be a separate controller (common approach) or be implemented under the `EventBridge` controller as the use cases, resources and API models are quiet similar. If it's a separate controller, it would be good to reuse the API group `eventbridge.services.k8s.aws`. Is this possible with the current code-gen?

As EventBridge is expanding to become a framework/building blocks for event-driven systems, this question might come up again, for example EventBridge `Scheduler` controller.

### List of API resources

[TBD]

|

non_process

|

eventbridge pipes service controller new ack service controller support for eventbridge this is an issue to also discuss whether it should be a separate controller common approach or be implemented under the eventbridge controller as the use cases resources and api models are quiet similar if it s a separate controller it would be good to reuse the api group eventbridge services aws is this possible with the current code gen as eventbridge is expanding to become a framework building blocks for event driven systems this question might come up again for example eventbridge scheduler controller list of api resources

| 0

|

804,349

| 29,484,756,986

|

IssuesEvent

|

2023-06-02 08:54:00

|

svthalia/concrexit

|

https://api.github.com/repos/svthalia/concrexit

|

opened

|

Google Workplace users don't seem to be suspended

|

priority: medium bug

|

### Describe the bug

Google Workplace users don't seem to be suspended.

For example, Sebastiaan Versteeg's account remained (I suspended it by hand to test things out). I have looked for some other people and they still all have accounts so I think it's just broken

### How to reproduce

Yeah that's the thing

### Expected behaviour

Properly remove accounts when people are no longer active anymore.

I think it would be good improve the logic a little bit: make a model in some app with a one-to-on field to a member, registering the fact that this person should have an account (or not), and possible a date that the account should be removed. Then the sync logic can at least be made more readable, more versatile and the sync can be made idempotent.

### Screenshots

### Additional context

|

1.0

|

Google Workplace users don't seem to be suspended - ### Describe the bug

Google Workplace users don't seem to be suspended.

For example, Sebastiaan Versteeg's account remained (I suspended it by hand to test things out). I have looked for some other people and they still all have accounts so I think it's just broken

### How to reproduce

Yeah that's the thing

### Expected behaviour

Properly remove accounts when people are no longer active anymore.

I think it would be good improve the logic a little bit: make a model in some app with a one-to-on field to a member, registering the fact that this person should have an account (or not), and possible a date that the account should be removed. Then the sync logic can at least be made more readable, more versatile and the sync can be made idempotent.

### Screenshots

### Additional context

|

non_process

|

google workplace users don t seem to be suspended describe the bug google workplace users don t seem to be suspended for example sebastiaan versteeg s account remained i suspended it by hand to test things out i have looked for some other people and they still all have accounts so i think it s just broken how to reproduce yeah that s the thing expected behaviour properly remove accounts when people are no longer active anymore i think it would be good improve the logic a little bit make a model in some app with a one to on field to a member registering the fact that this person should have an account or not and possible a date that the account should be removed then the sync logic can at least be made more readable more versatile and the sync can be made idempotent screenshots additional context

| 0

|

2,860

| 5,680,740,448

|

IssuesEvent

|

2017-04-13 02:41:04

|

BVLC/caffe

|

https://api.github.com/repos/BVLC/caffe

|

closed

|

Add support for opencl

|

compatibility interface

|

Hi Theano is adding support to opencl[1] trought CLBLAS[2].

Can caffe use this kind of solution to add opencl (more vendor neutral) support?

[1]https://github.com/Theano/libgpuarray

[2]https://github.com/clMathLibraries/clBLAS

|

True

|

Add support for opencl - Hi Theano is adding support to opencl[1] trought CLBLAS[2].

Can caffe use this kind of solution to add opencl (more vendor neutral) support?

[1]https://github.com/Theano/libgpuarray

[2]https://github.com/clMathLibraries/clBLAS

|

non_process

|

add support for opencl hi theano is adding support to opencl trought clblas can caffe use this kind of solution to add opencl more vendor neutral support

| 0

|

121,912

| 12,137,041,965

|

IssuesEvent

|

2020-04-23 15:11:08

|

PyTorchLightning/pytorch-lightning

|

https://api.github.com/repos/PyTorchLightning/pytorch-lightning

|

opened

|

Docstring for `on_after_backward`

|

documentation

|

## 📚 Documentation

Hi !

In the docstring for `on_after_backward` there is a puzzling piece of code that is suggested ([link](https://github.com/PyTorchLightning/pytorch-lightning/blob/2ab2f7d08df4e4f913e229caf92bbd92f31f6f93/pytorch_lightning/core/hooks.py#L98)) :

```

# example to inspect gradient information in tensorboard

if self.trainer.global_step % 25 == 0: # don't make the tf file huge

params = self.state_dict()

for k, v in params.items():

grads = v

name = k

self.logger.experiment.add_histogram(tag=name, values=grads,

global_step=self.trainer.global_step)

```

It isn't reported in Pytorch documentation that enumerating the state dict key-values gives the gradient: it is usually used to load a saved model weights (thus `grads` would be the weights and not the grads).

Adding a reference (which I couldn't find) would probably help pick up the logic behind it.

|

1.0

|

Docstring for `on_after_backward` - ## 📚 Documentation

Hi !

In the docstring for `on_after_backward` there is a puzzling piece of code that is suggested ([link](https://github.com/PyTorchLightning/pytorch-lightning/blob/2ab2f7d08df4e4f913e229caf92bbd92f31f6f93/pytorch_lightning/core/hooks.py#L98)) :

```

# example to inspect gradient information in tensorboard

if self.trainer.global_step % 25 == 0: # don't make the tf file huge

params = self.state_dict()

for k, v in params.items():

grads = v

name = k

self.logger.experiment.add_histogram(tag=name, values=grads,