Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

819,659 | 30,748,040,349 | IssuesEvent | 2023-07-28 16:36:00 | BeyondMafia/BeyondMafia-Integration | https://api.github.com/repos/BeyondMafia/BeyondMafia-Integration | closed | Remove Ranked Approval | refactor high priority site/moderation | The ranked approval process is a pretty unnecessary hurdle at the moment.

Better way moving forward is to remove this restriction, and simply ranked-ban players who break game rules. | 1.0 | Remove Ranked Approval - The ranked approval process is a pretty unnecessary hurdle at the moment.

Better way moving forward is to remove this restriction, and simply ranked-ban players who break game rules. | non_process | remove ranked approval the ranked approval process is a pretty unnecessary hurdle at the moment better way moving forward is to remove this restriction and simply ranked ban players who break game rules | 0 |

783,918 | 27,551,184,099 | IssuesEvent | 2023-03-07 15:02:28 | dotnet/msbuild | https://api.github.com/repos/dotnet/msbuild | closed | Resolved project and package references in some cases are faulty | bug needs-triage Priority:2 author-responded Iteration:2023February | ### Issue Description

Suppose there is a `Main` project reference a multi-targeting `Lib` project, and to every `Lib` project's target framework, there are other different projects and packages referenced by `Lib`. The result is build success but run fail.

The dependencies that copy to local are faulty.

### Steps ... | 1.0 | Resolved project and package references in some cases are faulty - ### Issue Description

Suppose there is a `Main` project reference a multi-targeting `Lib` project, and to every `Lib` project's target framework, there are other different projects and packages referenced by `Lib`. The result is build success but run f... | non_process | resolved project and package references in some cases are faulty issue description suppose there is a main project reference a multi targeting lib project and to every lib project s target framework there are other different projects and packages referenced by lib the result is build success but run f... | 0 |

26,628 | 27,036,373,829 | IssuesEvent | 2023-02-12 20:34:28 | tailscale/tailscale | https://api.github.com/repos/tailscale/tailscale | closed | Handle Okta 'user is not assigned' errors more gracefully | L3 Some users P3 Can't get started T5 Usability identity bug | ### What is the issue?

When a user exists in an Okta tenant, but is not assigned to the Tailscale app (either directly or via a group), Okta returns an 'access denied' error, with no further information.

We should match this error code and description and direct to a KB article that lists appropriate mitigation ste... | True | Handle Okta 'user is not assigned' errors more gracefully - ### What is the issue?

When a user exists in an Okta tenant, but is not assigned to the Tailscale app (either directly or via a group), Okta returns an 'access denied' error, with no further information.

We should match this error code and description and ... | non_process | handle okta user is not assigned errors more gracefully what is the issue when a user exists in an okta tenant but is not assigned to the tailscale app either directly or via a group okta returns an access denied error with no further information we should match this error code and description and ... | 0 |

64 | 3,317,794,188 | IssuesEvent | 2015-11-06 23:47:31 | rancher/rancher | https://api.github.com/repos/rancher/rancher | opened | test_dynamic_port fails with "ConnectionError: " when trying to access exposed port. | kind/bug setup/automation | Server build from master - Nov 6

test_dynamic_port fails with "ConnectionError: " when trying to access exposed port.

```

=================================== FAILURES ===================================

______________________________ test_dynamic_port _______________________________

client = <cattle.Client obj... | 1.0 | test_dynamic_port fails with "ConnectionError: " when trying to access exposed port. - Server build from master - Nov 6

test_dynamic_port fails with "ConnectionError: " when trying to access exposed port.

```

=================================== FAILURES ===================================

________________________... | non_process | test dynamic port fails with connectionerror when trying to access exposed port server build from master nov test dynamic port fails with connectionerror when trying to access exposed port failures ... | 0 |

4,360 | 7,260,514,076 | IssuesEvent | 2018-02-18 10:53:18 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [FEATURE][processing] algorithm to fix invalid geometries using native

makeValid() implementation | Automatic new feature Processing | Original commit: https://github.com/qgis/QGIS/commit/0293bc76a0bb6e7ec55df59b8bb2764204552247 by alexbruy

Unfortunately this naughty coder did not write a description... :-( | 1.0 | [FEATURE][processing] algorithm to fix invalid geometries using native

makeValid() implementation - Original commit: https://github.com/qgis/QGIS/commit/0293bc76a0bb6e7ec55df59b8bb2764204552247 by alexbruy

Unfortunately this naughty coder did not write a description... :-( | process | algorithm to fix invalid geometries using native makevalid implementation original commit by alexbruy unfortunately this naughty coder did not write a description | 1 |

380,191 | 11,255,057,190 | IssuesEvent | 2020-01-12 05:48:30 | potion-cellar/14ersForecast | https://api.github.com/repos/potion-cellar/14ersForecast | closed | Map: Tooltips can show Trace" (inches tick) | bug feature-maps low priority | If value is Trace, the tooltip should not show the `"` symbol. | 1.0 | Map: Tooltips can show Trace" (inches tick) - If value is Trace, the tooltip should not show the `"` symbol. | non_process | map tooltips can show trace inches tick if value is trace the tooltip should not show the symbol | 0 |

3,251 | 3,098,713,435 | IssuesEvent | 2015-08-28 12:59:36 | projectatomic/atomic-reactor | https://api.github.com/repos/projectatomic/atomic-reactor | opened | squashing: provide id of base image to the tool | bug post-build plugin priority 1 | so it will squash just the built image, not multiple layers from base image | 1.0 | squashing: provide id of base image to the tool - so it will squash just the built image, not multiple layers from base image | non_process | squashing provide id of base image to the tool so it will squash just the built image not multiple layers from base image | 0 |

14,937 | 18,365,763,139 | IssuesEvent | 2021-10-10 02:25:45 | varabyte/kobweb | https://api.github.com/repos/varabyte/kobweb | opened | Refactor some KobwebProject code back into the Application plugin (or some common module?) | process | The project parsing (KobwebProject.parseData) code probably makes sense to be Gradle only since only Gradle knows the "pages" and "group" values. Then we can remove the embeddedcompiler dependency from the CLI.

| 1.0 | Refactor some KobwebProject code back into the Application plugin (or some common module?) - The project parsing (KobwebProject.parseData) code probably makes sense to be Gradle only since only Gradle knows the "pages" and "group" values. Then we can remove the embeddedcompiler dependency from the CLI.

| process | refactor some kobwebproject code back into the application plugin or some common module the project parsing kobwebproject parsedata code probably makes sense to be gradle only since only gradle knows the pages and group values then we can remove the embeddedcompiler dependency from the cli | 1 |

204,917 | 15,955,134,262 | IssuesEvent | 2021-04-15 14:18:03 | gt-coar/gt-coar-lab | https://api.github.com/repos/gt-coar/gt-coar-lab | closed | add ipydrawio | deps documentation | [ipydrawio 1.0.1](https://github.com/conda-forge/ipydrawio-feedstock) is up, and ready for integration.

- [ ] add to the development environment

- [ ] add to the shipped lab | 1.0 | add ipydrawio - [ipydrawio 1.0.1](https://github.com/conda-forge/ipydrawio-feedstock) is up, and ready for integration.

- [ ] add to the development environment

- [ ] add to the shipped lab | non_process | add ipydrawio is up and ready for integration add to the development environment add to the shipped lab | 0 |

246,204 | 7,893,243,502 | IssuesEvent | 2018-06-28 17:24:12 | GCTC-NTGC/TalentCloud | https://api.github.com/repos/GCTC-NTGC/TalentCloud | closed | Admin - Populate Live Site with Fake "Real" Content for Demo | FED High Priority Medium Complexity Task | # Description

For the demo, it's important that we have content that looks as though it were real. This includes populating a Hiring Manager's profile, as well as creating 3 different job posters to help provide variety during the demo.

# Required for Completion

Add/remove others as necessary for this specific tas... | 1.0 | Admin - Populate Live Site with Fake "Real" Content for Demo - # Description

For the demo, it's important that we have content that looks as though it were real. This includes populating a Hiring Manager's profile, as well as creating 3 different job posters to help provide variety during the demo.

# Required for C... | non_process | admin populate live site with fake real content for demo description for the demo it s important that we have content that looks as though it were real this includes populating a hiring manager s profile as well as creating different job posters to help provide variety during the demo required for c... | 0 |

7,024 | 10,173,217,042 | IssuesEvent | 2019-08-08 12:38:34 | heim-rs/heim | https://api.github.com/repos/heim-rs/heim | closed | process::Process::status for Windows | A-process C-enhancement O-windows | It requires digging through the `winternl.h` stuff, so it will be a very interesting problem. As for now, stub is implemented, which returns `Status::Running` all the time for all processes. | 1.0 | process::Process::status for Windows - It requires digging through the `winternl.h` stuff, so it will be a very interesting problem. As for now, stub is implemented, which returns `Status::Running` all the time for all processes. | process | process process status for windows it requires digging through the winternl h stuff so it will be a very interesting problem as for now stub is implemented which returns status running all the time for all processes | 1 |

134,433 | 19,188,901,168 | IssuesEvent | 2021-12-05 17:17:00 | Wonderland-Mobile/Issue-Tracker | https://api.github.com/repos/Wonderland-Mobile/Issue-Tracker | closed | Consider making "The Search for the Rainbow Spirits" unlockable by completing "Deeper Into Wonderland". | enhancement wontfix As Designed | A lot of mobile games that fans call "pay to win" are winnable without paying a single cent. However, from what i can tell, "The Search for the Rainbow Spirits" is only unlockable by paying $5.99. While i understand that this would help increase the games revenue, this technically makes the game pay to win. I would hat... | 1.0 | Consider making "The Search for the Rainbow Spirits" unlockable by completing "Deeper Into Wonderland". - A lot of mobile games that fans call "pay to win" are winnable without paying a single cent. However, from what i can tell, "The Search for the Rainbow Spirits" is only unlockable by paying $5.99. While i understan... | non_process | consider making the search for the rainbow spirits unlockable by completing deeper into wonderland a lot of mobile games that fans call pay to win are winnable without paying a single cent however from what i can tell the search for the rainbow spirits is only unlockable by paying while i understand... | 0 |

886 | 3,350,762,291 | IssuesEvent | 2015-11-17 15:56:52 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | child_process.exec TypeError: Cannot read property 'addListener' of undefined | child_process confirmed-bug | Here's a code sample that triggers the error condition:

```javascript

var child_process = require('child_process'),

exec = child_process.exec,

children = [],

exitHandler = function(i,err,stdout,stderr){

console.log(i,err,stdout,stderr);

},

i = 0;

for(i=0;i<1000;i++){

childr... | 1.0 | child_process.exec TypeError: Cannot read property 'addListener' of undefined - Here's a code sample that triggers the error condition:

```javascript

var child_process = require('child_process'),

exec = child_process.exec,

children = [],

exitHandler = function(i,err,stdout,stderr){

console.log... | process | child process exec typeerror cannot read property addlistener of undefined here s a code sample that triggers the error condition javascript var child process require child process exec child process exec children exithandler function i err stdout stderr console log ... | 1 |

7,466 | 10,563,218,056 | IssuesEvent | 2019-10-04 20:22:11 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | closed | Firestore: 'test_watch_collection' systest flakes | api: firestore flaky testing type: process | Similar to #6605, #6921.

From this [Kokoro Firestore run](https://source.cloud.google.com/results/invocations/6579f747-286a-4545-b959-00d5fd1e530c/targets/cloud-devrel%2Fclient-libraries%2Fgoogle-cloud-python%2Fpresubmit%2Ffirestore/log):

```python

____________________________ test_watch_collection _____________... | 1.0 | Firestore: 'test_watch_collection' systest flakes - Similar to #6605, #6921.

From this [Kokoro Firestore run](https://source.cloud.google.com/results/invocations/6579f747-286a-4545-b959-00d5fd1e530c/targets/cloud-devrel%2Fclient-libraries%2Fgoogle-cloud-python%2Fpresubmit%2Ffirestore/log):

```python

____________... | process | firestore test watch collection systest flakes similar to from this python test watch collection client cleanup def test watch collection client cleanup db client doc ref db collection u users ... | 1 |

5,781 | 8,631,773,783 | IssuesEvent | 2018-11-22 08:54:52 | dzhw/zofar | https://api.github.com/repos/dzhw/zofar | opened | Abs 12.3 survey termination (Dez 18) | category: services category: technical.processes prio: ? type: backlog.task | ### **the survey ends on Monday 17.12.**

- [ ] final return statistic (@andreaschu )

- [ ] reroute the link (@vdick or @dzhwmeisner )

- [ ] undeploy survey (@vdick or @dzhwmeisner )

- [ ] prepare export (@andreaschu ) | 1.0 | Abs 12.3 survey termination (Dez 18) - ### **the survey ends on Monday 17.12.**

- [ ] final return statistic (@andreaschu )

- [ ] reroute the link (@vdick or @dzhwmeisner )

- [ ] undeploy survey (@vdick or @dzhwmeisner )

- [ ] prepare export (@andreaschu ) | process | abs survey termination dez the survey ends on monday final return statistic andreaschu reroute the link vdick or dzhwmeisner undeploy survey vdick or dzhwmeisner prepare export andreaschu | 1 |

14,520 | 17,618,522,904 | IssuesEvent | 2021-08-18 12:50:14 | zammad/zammad | https://api.github.com/repos/zammad/zammad | closed | Mix of binary encoded ISO-8859-1 data in header fields (e.g. to) fails mail processing | bug verified prioritised by payment mail processing | <!--

Hi there - thanks for filing an issue. Please ensure the following things before creating an issue - thank you! 🤓

Since november 15th we handle all requests, except real bugs, at our community board.

Full explanation: https://community.zammad.org/t/major-change-regarding-github-issues-community-board/21

P... | 1.0 | Mix of binary encoded ISO-8859-1 data in header fields (e.g. to) fails mail processing - <!--

Hi there - thanks for filing an issue. Please ensure the following things before creating an issue - thank you! 🤓

Since november 15th we handle all requests, except real bugs, at our community board.

Full explanation: ht... | process | mix of binary encoded iso data in header fields e g to fails mail processing hi there thanks for filing an issue please ensure the following things before creating an issue thank you 🤓 since november we handle all requests except real bugs at our community board full explanation ple... | 1 |

248,239 | 26,784,984,413 | IssuesEvent | 2023-02-01 01:30:16 | hoodies-training/landing-page | https://api.github.com/repos/hoodies-training/landing-page | opened | CVE-2022-25881 (Medium) detected in http-cache-semantics-3.8.1.tgz, http-cache-semantics-4.1.0.tgz | security vulnerability | ## CVE-2022-25881 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>http-cache-semantics-3.8.1.tgz</b>, <b>http-cache-semantics-4.1.0.tgz</b></p></summary>

<p>

<details><summary><b>h... | True | CVE-2022-25881 (Medium) detected in http-cache-semantics-3.8.1.tgz, http-cache-semantics-4.1.0.tgz - ## CVE-2022-25881 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>http-cache-sem... | non_process | cve medium detected in http cache semantics tgz http cache semantics tgz cve medium severity vulnerability vulnerable libraries http cache semantics tgz http cache semantics tgz http cache semantics tgz parses cache control and other headers helps... | 0 |

180,994 | 14,849,268,899 | IssuesEvent | 2021-01-18 00:40:21 | nkdAgility/azure-devops-migration-tools | https://api.github.com/repos/nkdAgility/azure-devops-migration-tools | closed | How to properly exclude source work items with NodeBasePaths | DocumentationException no-issue-activity | From the documentation it appears that the NodeBasePaths in a WorkItemMigrationConfig are suppose to filter out and not migrate work items in the source that are not in or under those paths. That is not the behavior I am seeing. Work items in the source that are neither in or under the area/iteration paths I specify in... | 1.0 | How to properly exclude source work items with NodeBasePaths - From the documentation it appears that the NodeBasePaths in a WorkItemMigrationConfig are suppose to filter out and not migrate work items in the source that are not in or under those paths. That is not the behavior I am seeing. Work items in the source th... | non_process | how to properly exclude source work items with nodebasepaths from the documentation it appears that the nodebasepaths in a workitemmigrationconfig are suppose to filter out and not migrate work items in the source that are not in or under those paths that is not the behavior i am seeing work items in the source th... | 0 |

7,629 | 10,730,180,367 | IssuesEvent | 2019-10-28 16:55:30 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Provide documentation for setting up VSCode for contribution | process: contributing | I tried to understand the code in the driver and server package in order to fix this issue correctly: https://github.com/cypress-io/cypress/issues/5367

So I started by searching for the definition of cy.visit to understand what's going on there. After finding navigation.coffee, I wanted to navigate through the code.... | 1.0 | Provide documentation for setting up VSCode for contribution - I tried to understand the code in the driver and server package in order to fix this issue correctly: https://github.com/cypress-io/cypress/issues/5367

So I started by searching for the definition of cy.visit to understand what's going on there. After fi... | process | provide documentation for setting up vscode for contribution i tried to understand the code in the driver and server package in order to fix this issue correctly so i started by searching for the definition of cy visit to understand what s going on there after finding navigation coffee i wanted to navigate th... | 1 |

391,127 | 26,881,193,743 | IssuesEvent | 2023-02-05 17:01:44 | ManevilleF/hexx | https://api.github.com/repos/ManevilleF/hexx | closed | Missing documentation for from/to vector methods | documentation | The conversion methods from/to `Vec2` and `IVec2` implemented for `Hex` don't make it clear these methods shouldn't be used for converting from/to world coordinates.

# Solution

Give pointers to the `HexLayout` struct and it's uses in the documentation for these methods | 1.0 | Missing documentation for from/to vector methods - The conversion methods from/to `Vec2` and `IVec2` implemented for `Hex` don't make it clear these methods shouldn't be used for converting from/to world coordinates.

# Solution

Give pointers to the `HexLayout` struct and it's uses in the documentation for these met... | non_process | missing documentation for from to vector methods the conversion methods from to and implemented for hex don t make it clear these methods shouldn t be used for converting from to world coordinates solution give pointers to the hexlayout struct and it s uses in the documentation for these methods | 0 |

147,804 | 11,808,292,253 | IssuesEvent | 2020-03-19 13:08:20 | OpenLiberty/open-liberty | https://api.github.com/repos/OpenLiberty/open-liberty | closed | Test Failure: DelayExecutionFATTest.testRescheduleUnderConfigUpdateRun_Lite feature update hung for over 27 minutes | team:Zombie Apocalypse test bug | Test Failure (20200316-0224): com.ibm.ws.concurrent.persistent.delayexec.DelayExecutionFATTest.testRescheduleUnderConfigUpdateRun_Lite

```

junit.framework.AssertionFailedError: 2020-03-16-09:11:44:318 Missing success message in output. PersistentExecutorsTestServlet is starting testTaskDoesRun<br>

<pre>ERROR in te... | 1.0 | Test Failure: DelayExecutionFATTest.testRescheduleUnderConfigUpdateRun_Lite feature update hung for over 27 minutes - Test Failure (20200316-0224): com.ibm.ws.concurrent.persistent.delayexec.DelayExecutionFATTest.testRescheduleUnderConfigUpdateRun_Lite

```

junit.framework.AssertionFailedError: 2020-03-16-09:11:44:3... | non_process | test failure delayexecutionfattest testrescheduleunderconfigupdaterun lite feature update hung for over minutes test failure com ibm ws concurrent persistent delayexec delayexecutionfattest testrescheduleunderconfigupdaterun lite junit framework assertionfailederror missing success me... | 0 |

7,030 | 10,191,948,670 | IssuesEvent | 2019-08-12 09:46:15 | Ultimate-Hosts-Blacklist/whitelist | https://api.github.com/repos/Ultimate-Hosts-Blacklist/whitelist | closed | microsoft.com | whitelisting process | *@mitchellkrogza commented on May 30, 2019, 10:51 AM UTC:*

**Domains or links**

microsoft.com

**More Information**

**Have you requested removal from other sources?**

Please include all relevant links to your existing removals / whitelistings.

**Additional context**

Add any other context about the problem here.

<g-... | 1.0 | microsoft.com - *@mitchellkrogza commented on May 30, 2019, 10:51 AM UTC:*

**Domains or links**

microsoft.com

**More Information**

**Have you requested removal from other sources?**

Please include all relevant links to your existing removals / whitelistings.

**Additional context**

Add any other context about the pr... | process | microsoft com mitchellkrogza commented on may am utc domains or links microsoft com more information have you requested removal from other sources please include all relevant links to your existing removals whitelistings additional context add any other context about the problem ... | 1 |

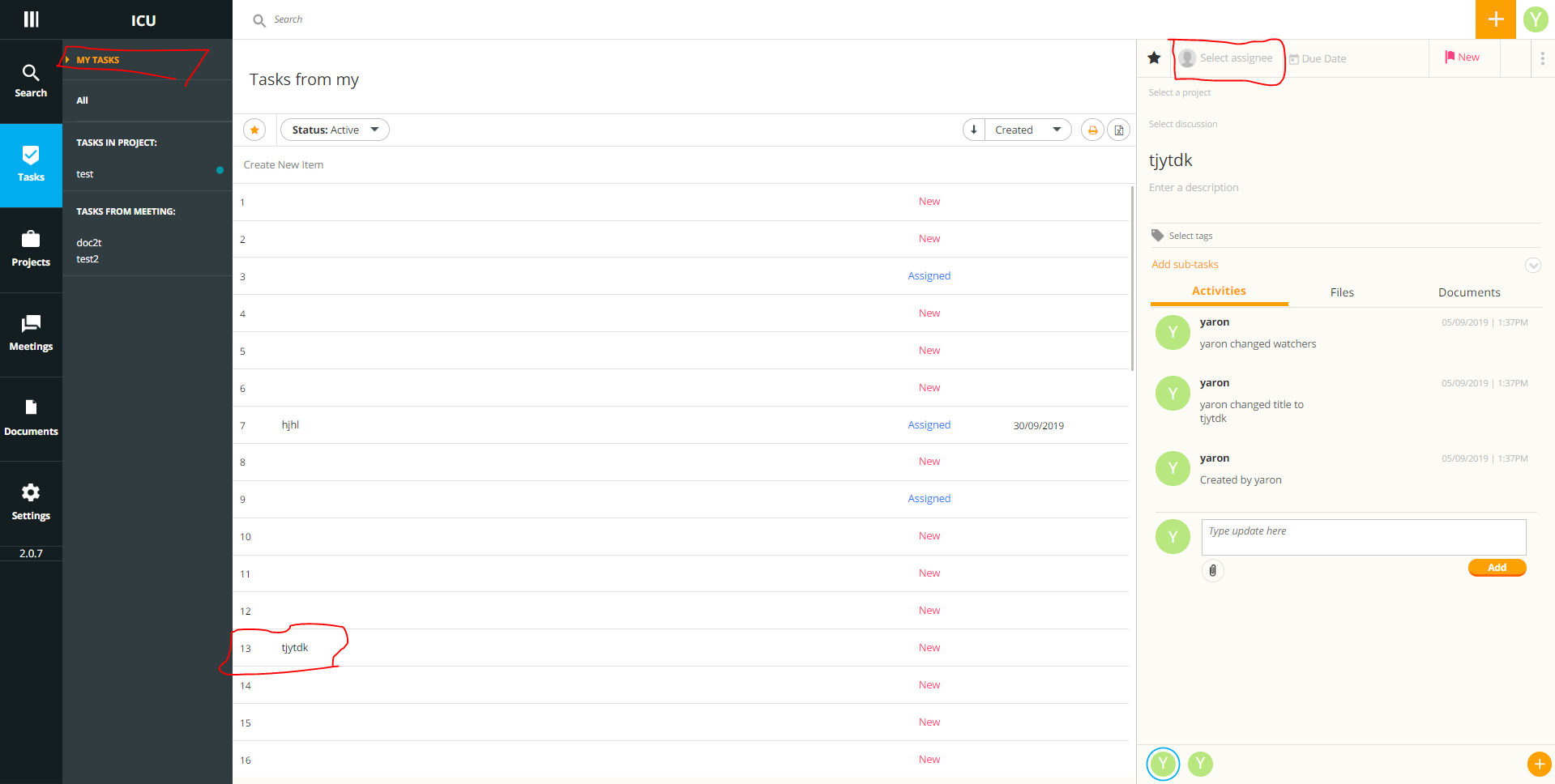

7,226 | 10,353,154,218 | IssuesEvent | 2019-09-05 10:52:24 | linnovate/root | https://api.github.com/repos/linnovate/root | opened | in "my tasks", the list updates to every task the user created, after adding watchers to a created my task, sorting by favorites | Process bug Tasks | go to tasks

click on my task

create a task

add a watcher to it/ sort by favorite

the app loads a list of every list the user created, and not necessarily assigned to:

| 1.0 | in "my tasks", the list updates to every task the user created, after adding watchers to a created my task, sorting by favorites - go to tasks

click on my task

create a task

add a watcher to it/ sort by favorite

the app loads a list of every list the user created, and not necessarily assigned to:

Should look more like this to allow for a bit of breathing space

| 1.0 | increase spacing between profit and loss change and and total on your overview - The build looks too tight

Should look more like this to allow for a bit of breathing space

:

## Example

```

.timestamp_string = strftime(.timestamp, "%F")

```

Would result in:

```js

{

"timestamp_string": "2020-08-03"

}

``` | 1.0 | New `strftime` remap function - A `strftime` function takes a timestamp as argument and formats it according to [Rust's strftime format](https://docs.rs/chrono/0.3.1/chrono/format/strftime/index.html):

## Example

```

.timestamp_string = strftime(.timestamp, "%F")

```

Would result in:

```js

{

"timestam... | process | new strftime remap function a strftime function takes a timestamp as argument and formats it according to example timestamp string strftime timestamp f would result in js timestamp string | 1 |

150,475 | 11,962,546,907 | IssuesEvent | 2020-04-05 12:51:21 | Rocologo/BagOfGold | https://api.github.com/repos/Rocologo/BagOfGold | closed | Bugs with drop-money-on-ground-itemtype set as ITEM ? | Fixed - To be tested | Hi @Rocologo

You'll find a copy/paste from my forum post bellow.

But as you anwered about "gringotts_style" mode, I have a question : ITEM mode do not support split and stacks ? Only gringotts_style supports it ?

Also, you have to know that I decided to switch from SKULLS to ITEMS because I had problems with ... | 1.0 | Bugs with drop-money-on-ground-itemtype set as ITEM ? - Hi @Rocologo

You'll find a copy/paste from my forum post bellow.

But as you anwered about "gringotts_style" mode, I have a question : ITEM mode do not support split and stacks ? Only gringotts_style supports it ?

Also, you have to know that I decided to... | non_process | bugs with drop money on ground itemtype set as item hi rocologo you ll find a copy paste from my forum post bellow but as you anwered about gringotts style mode i have a question item mode do not support split and stacks only gringotts style supports it also you have to know that i decided to... | 0 |

121,745 | 17,662,359,745 | IssuesEvent | 2021-08-21 19:28:54 | ghc-dev/Joshua-Braun | https://api.github.com/repos/ghc-dev/Joshua-Braun | opened | CVE-2020-1753 (Medium) detected in ansible-2.9.9.tar.gz | security vulnerability | ## CVE-2020-1753 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansible-2.9.9.tar.gz</b></p></summary>

<p>Radically simple IT automation</p>

<p>Library home page: <a href="https://f... | True | CVE-2020-1753 (Medium) detected in ansible-2.9.9.tar.gz - ## CVE-2020-1753 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansible-2.9.9.tar.gz</b></p></summary>

<p>Radically simple ... | non_process | cve medium detected in ansible tar gz cve medium severity vulnerability vulnerable library ansible tar gz radically simple it automation library home page a href path to dependency file joshua braun requirements txt path to vulnerable library requirements txt de... | 0 |

11,556 | 14,436,734,829 | IssuesEvent | 2020-12-07 10:31:59 | MelissaMorales13/4a_bien | https://api.github.com/repos/MelissaMorales13/4a_bien | closed | fill_size_estimating_template | process dashboard | -fill in the size estimating template for lines of code in Process Dashboard

-run the PROBE wizard | 1.0 | fill_size_estimating_template - -fill in the size estimating template for lines of code in Process Dashboard

-run the PROBE wizard | process | fill size estimating template fill in the size estimating template for lines of code in process dashboard run the probe wizard | 1 |

27,625 | 6,889,586,649 | IssuesEvent | 2017-11-22 10:53:15 | openshiftio/openshift.io | https://api.github.com/repos/openshiftio/openshift.io | opened | Extend API for interaction with Che workspaces | area/che area/codebases | There is need to expose Che workspace API (https://github.com/fabric8-services/fabric8-wit/blob/master/codebase/che/client.go) with the following items:

- [ ] ability to stop workspace

- [ ] ability to create workspace pointing the repository branch ? (maybe here changes for codebases are needed as well to be identif... | 1.0 | Extend API for interaction with Che workspaces - There is need to expose Che workspace API (https://github.com/fabric8-services/fabric8-wit/blob/master/codebase/che/client.go) with the following items:

- [ ] ability to stop workspace

- [ ] ability to create workspace pointing the repository branch ? (maybe here chang... | non_process | extend api for interaction with che workspaces there is need to expose che workspace api with the following items ability to stop workspace ability to create workspace pointing the repository branch maybe here changes for codebases are needed as well to be identified by source location branch ... | 0 |

155,845 | 5,961,827,765 | IssuesEvent | 2017-05-29 19:14:29 | dmusican/Elegit | https://api.github.com/repos/dmusican/Elegit | opened | Move to git flow workflow | enhancement priority high | I was wrong; @grahamearley and @kileymaki were right. We should be using git flow as a git management model. I'm tangled up in knots on the current release.

Move the repo to a git flow strategy. | 1.0 | Move to git flow workflow - I was wrong; @grahamearley and @kileymaki were right. We should be using git flow as a git management model. I'm tangled up in knots on the current release.

Move the repo to a git flow strategy. | non_process | move to git flow workflow i was wrong grahamearley and kileymaki were right we should be using git flow as a git management model i m tangled up in knots on the current release move the repo to a git flow strategy | 0 |

72,301 | 15,225,240,561 | IssuesEvent | 2021-02-18 06:57:47 | devikab2b/whites5 | https://api.github.com/repos/devikab2b/whites5 | opened | CVE-2021-20190 (High) detected in jackson-databind-2.6.7.3.jar | security vulnerability | ## CVE-2021-20190 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.6.7.3.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core stream... | True | CVE-2021-20190 (High) detected in jackson-databind-2.6.7.3.jar - ## CVE-2021-20190 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.6.7.3.jar</b></p></summary>

<p>Gen... | non_process | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file pom xml path to vulnera... | 0 |

10,552 | 13,338,994,685 | IssuesEvent | 2020-08-28 12:06:34 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | gdal2tiles fails when the input raster is very small (7x8) | Bug Feedback Processing | I want to export tiles from a very small raster (7x8) and the tiles are all transparent.

I found out that if I translate the raster to increase its size (say give it a width of 100), then the output is as expected.

Here's my code (I'm using gdal2tiles python bindings but I've also reproduced this issue with multi... | 1.0 | gdal2tiles fails when the input raster is very small (7x8) - I want to export tiles from a very small raster (7x8) and the tiles are all transparent.

I found out that if I translate the raster to increase its size (say give it a width of 100), then the output is as expected.

Here's my code (I'm using gdal2tiles p... | process | fails when the input raster is very small i want to export tiles from a very small raster and the tiles are all transparent i found out that if i translate the raster to increase its size say give it a width of then the output is as expected here s my code i m using python bindings but i ve ... | 1 |

13,783 | 16,541,559,008 | IssuesEvent | 2021-05-27 17:28:42 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | QGIS crashes when editing Processing script | Bug Crash/Data Corruption Processing | Found this on master while testing this other ticket

https://github.com/qgis/QGIS/issues/41719

basically a Processing script can be edited without issues, but if the editor dialog is open and the script run, then a subsequent edit causes an instant crash. | 1.0 | QGIS crashes when editing Processing script - Found this on master while testing this other ticket

https://github.com/qgis/QGIS/issues/41719

basically a Processing script can be edited without issues, but if the editor dialog is open and the script run, then a subsequent edit causes an instant crash. | process | qgis crashes when editing processing script found this on master while testing this other ticket basically a processing script can be edited without issues but if the editor dialog is open and the script run then a subsequent edit causes an instant crash | 1 |

17,807 | 23,730,052,186 | IssuesEvent | 2022-08-31 00:20:44 | FTBTeam/FTB-App | https://api.github.com/repos/FTBTeam/FTB-App | closed | ftb app stoped launching ftb university over night | subprocess bug:unconfirmed awaiting response possibly minecraft status: stale | ### What Operating System

Windows 11

### App Version

202112101445-bdf8bdbaca-release

### UI Version

bdf8bdbaca

### Log Files

https://pste.ch/qiqigozeva

### Debug Code

FTB-DBGXAHAYIRIJA

### Describe the bug

app was working perfectly last night but today its not i have changed nothing at all

### Steps to rep... | 1.0 | ftb app stoped launching ftb university over night - ### What Operating System

Windows 11

### App Version

202112101445-bdf8bdbaca-release

### UI Version

bdf8bdbaca

### Log Files

https://pste.ch/qiqigozeva

### Debug Code

FTB-DBGXAHAYIRIJA

### Describe the bug

app was working perfectly last night but today its... | process | ftb app stoped launching ftb university over night what operating system windows app version release ui version log files debug code ftb dbgxahayirija describe the bug app was working perfectly last night but today its not i have changed nothing at all steps to repro... | 1 |

22,428 | 31,160,039,251 | IssuesEvent | 2023-08-16 15:23:27 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [processor/k8sattribute] e2e test fails to setup pod in required time limit | bug processor/k8sattributes flaky test | ### Component(s)

processor/k8sattributes

### What happened?

## Description

Logs from test failure:

```

=== RUN TestE2E

k8s_telemetrygen.go:64:

Error Trace: /home/runner/work/opentelemetry-collector-contrib/opentelemetry-collector-contrib/internal/k8stest/k8s_telemetrygen.go:64

... | 1.0 | [processor/k8sattribute] e2e test fails to setup pod in required time limit - ### Component(s)

processor/k8sattributes

### What happened?

## Description

Logs from test failure:

```

=== RUN TestE2E

k8s_telemetrygen.go:64:

Error Trace: /home/runner/work/opentelemetry-collector-contrib/opentele... | process | test fails to setup pod in required time limit component s processor what happened description logs from test failure run telemetrygen go error trace home runner work opentelemetry collector contrib opentelemetry collector contrib internal telemetry... | 1 |

15,166 | 18,923,734,521 | IssuesEvent | 2021-11-17 06:55:05 | googleapis/google-cloud-ruby | https://api.github.com/repos/googleapis/google-cloud-ruby | reopened | storage: error in sample test | api: storage type: process samples | There are [sample test failures](https://source.cloud.google.com/results/invocations/6f7d2e57-41b3-46eb-b42b-230875c38009/log) in google-cloud-storage. This was noticed in PR [16028](https://github.com/googleapis/google-cloud-ruby/pull/16028) | 1.0 | storage: error in sample test - There are [sample test failures](https://source.cloud.google.com/results/invocations/6f7d2e57-41b3-46eb-b42b-230875c38009/log) in google-cloud-storage. This was noticed in PR [16028](https://github.com/googleapis/google-cloud-ruby/pull/16028) | process | storage error in sample test there are in google cloud storage this was noticed in pr | 1 |

241,183 | 7,809,470,141 | IssuesEvent | 2018-06-12 00:47:40 | debtcollective/parent | https://api.github.com/repos/debtcollective/parent | closed | Yes/No option does not show required fields when "Yes" is selected and the "save and close" button is used to save the form | bug disputes priority: medium | ## Steps to reproduce

1) Begin a new Wage Garnishment dispute with option C

2) Open the information form

3) Answer "yes" to the "Are you a FFEL Loan Holder" question

4) Click "save and close" in the upper right hand corner (do not use the button at the bottom to complete the form)

5) Refresh the page

6) Open the form ... | 1.0 | Yes/No option does not show required fields when "Yes" is selected and the "save and close" button is used to save the form - ## Steps to reproduce

1) Begin a new Wage Garnishment dispute with option C

2) Open the information form

3) Answer "yes" to the "Are you a FFEL Loan Holder" question

4) Click "save and close" i... | non_process | yes no option does not show required fields when yes is selected and the save and close button is used to save the form steps to reproduce begin a new wage garnishment dispute with option c open the information form answer yes to the are you a ffel loan holder question click save and close i... | 0 |

500,735 | 14,512,996,339 | IssuesEvent | 2020-12-13 03:24:20 | MelbourneDeveloper/Device.Net | https://api.github.com/repos/MelbourneDeveloper/Device.Net | closed | USB Control transactions | High Priority | I am trying to create a .netcoreapp (2.1) to update firmware on ST devices i.e. http://eliasoenal.com/st%20website/17068.pdf. All of the examples I have seen use control transactions e.g.

```

UsbSetupPacket setup = new UsbSetupPacket()

{

RequestType = (byte)(DFU_RequestType |... | 1.0 | USB Control transactions - I am trying to create a .netcoreapp (2.1) to update firmware on ST devices i.e. http://eliasoenal.com/st%20website/17068.pdf. All of the examples I have seen use control transactions e.g.

```

UsbSetupPacket setup = new UsbSetupPacket()

{

RequestType... | non_process | usb control transactions i am trying to create a netcoreapp to update firmware on st devices i e all of the examples i have seen use control transactions e g usbsetuppacket setup new usbsetuppacket requesttype byte dfu requesttype usb dir in ... | 0 |

9,247 | 12,281,226,456 | IssuesEvent | 2020-05-08 15:28:38 | pelias/whosonfirst | https://api.github.com/repos/pelias/whosonfirst | closed | Allow user to whitelist postalcode countries | processed | The whosonfirst importer currently forces a user looking to import postalcodes to download and import postal codes from literally every single one of the 213 countries. That seems a bit excessive if, say, they're only interested in importing German postal codes. Perhaps the importer can look for an array in pelias ... | 1.0 | Allow user to whitelist postalcode countries - The whosonfirst importer currently forces a user looking to import postalcodes to download and import postal codes from literally every single one of the 213 countries. That seems a bit excessive if, say, they're only interested in importing German postal codes. Perhap... | process | allow user to whitelist postalcode countries the whosonfirst importer currently forces a user looking to import postalcodes to download and import postal codes from literally every single one of the countries that seems a bit excessive if say they re only interested in importing german postal codes perhaps ... | 1 |

71,896 | 23,844,827,892 | IssuesEvent | 2022-09-06 13:18:20 | martinrotter/rssguard | https://api.github.com/repos/martinrotter/rssguard | opened | [BUG]: Appimage does not work on Ubuntu Jammy | Type-Defect | ### Brief description of the issue

./rssguard-4.2.4-1f6d7c0b-linux64.AppImage

/tmp/.mount_rssgualweSWh/AppRun.wrapped: /lib/x86_64-linux-gnu/libc.so.6: version `GLIBC_2.28' not found (required by /tmp/.mount_rssgualweSWh/usr/bin/../lib/libQt6Core.so.6)

/tmp/.mount_rssgualweSWh/AppRun.wrapped: /lib/x86_64-linux-gnu/... | 1.0 | [BUG]: Appimage does not work on Ubuntu Jammy - ### Brief description of the issue

./rssguard-4.2.4-1f6d7c0b-linux64.AppImage

/tmp/.mount_rssgualweSWh/AppRun.wrapped: /lib/x86_64-linux-gnu/libc.so.6: version `GLIBC_2.28' not found (required by /tmp/.mount_rssgualweSWh/usr/bin/../lib/libQt6Core.so.6)

/tmp/.mount_rss... | non_process | appimage does not work on ubuntu jammy brief description of the issue rssguard appimage tmp mount rssgualweswh apprun wrapped lib linux gnu libc so version glibc not found required by tmp mount rssgualweswh usr bin lib so tmp mount rssgualweswh apprun wrapped lib... | 0 |

4,803 | 7,696,902,742 | IssuesEvent | 2018-05-18 16:49:18 | cityofaustin/techstack | https://api.github.com/repos/cityofaustin/techstack | opened | automating Image Gallery component for AAC foster process page | Feature: Foster Application Feature: In-page Images Feature: Process Size: S Team: Dev | @cthibaultatx is designing the Image Gallery component for the AAC foster Process page.

There may be an opportunity for us to automate the updating of images in this gallery. Before our meeting with Erin and Lorien from AAC on 5/29, let's see what we can learn about the data sourcing options to see if this is even f... | 1.0 | automating Image Gallery component for AAC foster process page - @cthibaultatx is designing the Image Gallery component for the AAC foster Process page.

There may be an opportunity for us to automate the updating of images in this gallery. Before our meeting with Erin and Lorien from AAC on 5/29, let's see what we c... | process | automating image gallery component for aac foster process page cthibaultatx is designing the image gallery component for the aac foster process page there may be an opportunity for us to automate the updating of images in this gallery before our meeting with erin and lorien from aac on let s see what we ca... | 1 |

184,352 | 14,288,941,529 | IssuesEvent | 2020-11-23 18:27:38 | openzfs/zfs | https://api.github.com/repos/openzfs/zfs | closed | Test case: rsend_024_pos | Component: Test Suite Status: Stale | ### System information

<!-- add version after "|" character -->

Type | Version/Name

--- | ---

Distribution Name | all

Distribution Version | all

Linux Kernel | all

Architecture | all

ZFS Version ... | 1.0 | Test case: rsend_024_pos - ### System information

<!-- add version after "|" character -->

Type | Version/Name

--- | ---

Distribution Name | all

Distribution Version | all

Linux Kernel | all

Architecture ... | non_process | test case rsend pos system information type version name distribution name all distribution version all linux kernel all architecture all zfs version ... | 0 |

737,768 | 25,530,825,306 | IssuesEvent | 2022-11-29 08:16:23 | pystardust/ani-cli | https://api.github.com/repos/pystardust/ani-cli | closed | Add feature to be able to use 9anime as provider | type: feature request priority 4: wishlist | **Is your feature request related to a problem? Please describe.**

I believe you should be able to switch to 9anime cuz why not

**Describe the solution you'd like**

add 9anime as stream link

**Describe alternatives you've considered**

- Ignoring this and using other sites already supported

**Additional cont... | 1.0 | Add feature to be able to use 9anime as provider - **Is your feature request related to a problem? Please describe.**

I believe you should be able to switch to 9anime cuz why not

**Describe the solution you'd like**

add 9anime as stream link

**Describe alternatives you've considered**

- Ignoring this and using... | non_process | add feature to be able to use as provider is your feature request related to a problem please describe i believe you should be able to switch to cuz why not describe the solution you d like add as stream link describe alternatives you ve considered ignoring this and using other sites al... | 0 |

155,827 | 24,526,554,553 | IssuesEvent | 2022-10-11 13:32:36 | hypothesis/frontend-shared | https://api.github.com/repos/hypothesis/frontend-shared | opened | Make it possible to "turn sizing off" for `Button`, perhaps other sized components | component design pattern | Padding and spacing within buttons can be adjusted via the `size` prop, which applies some canned padding and spacing for `sm`, `md`, `lg` values, e.g. However, we have an awful lot of legacy buttons with custom or mis-matched padding and spacing.

To match current custom button styling using `Button`, it's currently... | 1.0 | Make it possible to "turn sizing off" for `Button`, perhaps other sized components - Padding and spacing within buttons can be adjusted via the `size` prop, which applies some canned padding and spacing for `sm`, `md`, `lg` values, e.g. However, we have an awful lot of legacy buttons with custom or mis-matched padding ... | non_process | make it possible to turn sizing off for button perhaps other sized components padding and spacing within buttons can be adjusted via the size prop which applies some canned padding and spacing for sm md lg values e g however we have an awful lot of legacy buttons with custom or mis matched padding ... | 0 |

13,177 | 15,604,555,610 | IssuesEvent | 2021-03-19 04:11:35 | klarEDA/klar-EDA | https://api.github.com/repos/klarEDA/klar-EDA | closed | Implement a format identifier method for date in csv data preprocessor | data-preprocessing enhancement gssoc21 | **Description**

> The method should be able to identify and convert the date into a specific static format.

> The functionality of the method can be described as below -

> 1. Take in any type of date as input (for example - 2021-11-13)

> 2. Identify the format (for example - YYYY-MM-DD)

> 3. Convert the date in... | 1.0 | Implement a format identifier method for date in csv data preprocessor - **Description**

> The method should be able to identify and convert the date into a specific static format.

> The functionality of the method can be described as below -

> 1. Take in any type of date as input (for example - 2021-11-13)

> 2.... | process | implement a format identifier method for date in csv data preprocessor description the method should be able to identify and convert the date into a specific static format the functionality of the method can be described as below take in any type of date as input for example iden... | 1 |
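
The three steps listed in the issue above (take a date, identify its format, convert it to a fixed format) could be sketched roughly as follows. This is only an illustrative sketch, not code from klar-EDA: the names `CANDIDATE_FORMATS`, `identify_date_format`, and `convert_date`, the list of candidate formats, and the target format are all assumptions. Ambiguous inputs such as `01/02/2021` resolve to whichever candidate matches first.

```python
from datetime import datetime

# Hypothetical candidate formats, tried in order (assumption; extend as needed).
CANDIDATE_FORMATS = {
    "YYYY-MM-DD": "%Y-%m-%d",
    "YYYY/MM/DD": "%Y/%m/%d",
    "DD/MM/YYYY": "%d/%m/%Y",
    "MM-DD-YYYY": "%m-%d-%Y",
}

# Hypothetical static output format.
TARGET_FORMAT = "%Y-%m-%d"


def identify_date_format(value):
    """Return the label of the first candidate format that parses value, else None."""
    text = value.strip()
    for label, pattern in CANDIDATE_FORMATS.items():
        try:
            datetime.strptime(text, pattern)
        except ValueError:
            continue  # this candidate does not fit; try the next one
        return label
    return None


def convert_date(value, target=TARGET_FORMAT):
    """Parse value with its identified format and re-emit it in the target format."""
    label = identify_date_format(value)
    if label is None:
        raise ValueError(f"unrecognized date format: {value!r}")
    parsed = datetime.strptime(value.strip(), CANDIDATE_FORMATS[label])
    return parsed.strftime(target)
```

For instance, `identify_date_format("2021-11-13")` would report the `YYYY-MM-DD` label, and `convert_date` would rewrite a `DD/MM/YYYY` value into the target format.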

28,720 | 5,533,696,321 | IssuesEvent | 2017-03-21 13:57:03 | brenns10/slacksoc | https://api.github.com/repos/brenns10/slacksoc | closed | Concurrency considerations | documentation | I don't have strong documentation yet (well, not even a stable plugin interface). But this is important to document.

If you're creating a plugin which will do some long term work (web request, sleep, etc), you need to start your own goroutine. This means you'll need to synchronize access to your plugin struct and th... | 1.0 | Concurrency considerations - I don't have strong documentation yet (well, not even a stable plugin interface). But this is important to document.

If you're creating a plugin which will do some long term work (web request, sleep, etc), you need to start your own goroutine. This means you'll need to synchronize access... | non_process | concurrency considerations i don t have strong documentation yet well not even a stable plugin interface but this is important to document if you re creating a plugin which will do some long term work web request sleep etc you need to start your own goroutine this means you ll need to synchronize access... | 0 |

628,868 | 20,016,554,474 | IssuesEvent | 2022-02-01 12:40:53 | ramp4-pcar4/story-ramp | https://api.github.com/repos/ramp4-pcar4/story-ramp | opened | Check into map extent alignment issues in different resolutions | StoryRAMP Viewer RAMP Needs: estimate Priority: High | Clients have raised that at different browser resolutions, they're getting default map extents that are blocked by the legend pane.

This task is to investigate the map extents at different browser sizes, and make necessary config adjustments so that the data is as fully displayed as possible and not blocked by inte... | 1.0 | Check into map extent alignment issues in different resolutions - Clients have raised that at different browser resolutions, they're getting default map extents that are blocked by the legend pane.

This task is to investigate the map extents at different browser sizes, and make necessary config adjustments so that ... | non_process | check into map extent alignment issues in different resolutions clients have raised that at different browser resolutions they re getting default map extents that are blocked by the legend pane this task is to investigate the map extents at different browser sizes and make necessary config adjustments so that ... | 0 |

20,941 | 27,802,019,187 | IssuesEvent | 2023-03-17 16:32:24 | varabyte/kotter | https://api.github.com/repos/varabyte/kotter | closed | Add a test for Terminal#width | good first issue process | See issue #95 which happened because I was lax about overriding the width value for native terminals.

At this point, I'm fairly confident width logic works right because I have so much existing code using this feature. But it would be really nice, still, to have a test for it.

Step 1) Allow passing the width valu... | 1.0 | Add a test for Terminal#width - See issue #95 which happened because I was lax about overriding the width value for native terminals.

At this point, I'm fairly confident width logic works right because I have so much existing code using this feature. But it would be really nice, still, to have a test for it.

Step... | process | add a test for terminal width see issue which happened because i was lax about overriding the width value for native terminals at this point i m fairly confident width logic works right because i have so much existing code using this feature but it would be really nice still to have a test for it step ... | 1 |

11,129 | 13,957,688,532 | IssuesEvent | 2020-10-24 08:09:33 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | IE: potential harvesting issues with new IE CWS service | Geoportal Harvesting process IE - Ireland | From: Margaret Twynam Muldoon <Margaret.TwynamMuldoon@housing.gov.ie>

Sent: 11 June 2019 11:13

To: JRC INSPIRE SUPPORT

Cc: Inspire_IE

Subject: [Geoportal Helpdesk]

Dear JRC INSPIRE Support,

We are in the process of upgrading our Geoportal and made a switch on 29th March 2019 (new Geoportal CSW endpoint [https:/... | 1.0 | IE: potential harvesting issues with new IE CWS service - From: Margaret Twynam Muldoon <Margaret.TwynamMuldoon@housing.gov.ie>

Sent: 11 June 2019 11:13

To: JRC INSPIRE SUPPORT

Cc: Inspire_IE

Subject: [Geoportal Helpdesk]

Dear JRC INSPIRE Support,

We are in the process of upgrading our Geoportal and made a swit... | process | ie potential harvesting issues with new ie cws service from margaret twynam muldoon lt margaret twynammuldoon housing gov ie gt sent june to jrc inspire support cc inspire ie subject dear jrc inspire support we are in the process of upgrading our geoportal and made a switch on march new geop... | 1 |

28,948 | 23,622,294,566 | IssuesEvent | 2022-08-24 22:00:18 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | unable to "dotnet restore" to use my own build of coreclr | question help wanted area-Infrastructure-coreclr | I am following https://github.com/dotnet/coreclr/blob/master/Documentation/workflow/UsingDotNetCli.md but can't get it to work; please help

<img width="2560" alt="screen shot 2018-09-22 at 9 23 47 pm" src="https://user-images.githubusercontent.com/36571333/45917687-dd868380-bead-11e8-8f3c-0166768596cd.png">

| 1.0 | unable to "dotnet restore" to use my own build of coreclr - I am following https://github.com/dotnet/coreclr/blob/master/Documentation/workflow/UsingDotNetCli.md but can't get it to work; please help

<img width="2560" alt="screen shot 2018-09-22 at 9 23 47 pm" src="https://user-images.githubusercontent.com/36571333/4591768... | non_process | unable to dotnet restore to use my own build of coreclr i am following but can t get it to work please help img width alt screen shot at pm src | 0 |

21,387 | 29,202,231,238 | IssuesEvent | 2023-05-21 00:37:31 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Remote] Test Analyst at Coodesh | SALVADOR TESTE BANCO DE DADOS SQL REQUISITOS SELENIUM CUCUMBER REMOTO PROCESSOS GITHUB INGLÊS SEGURANÇA UMA QUALIDADE TESTES AUTOMATIZADOS METODOLOGIAS ÁGEIS NEGÓCIOS AUTOMAÇÃO DE TESTES TESTES MANUAIS Stale | ## Job description:

This is a position from a partner of the Coodesh platform; by applying you will get access to the complete information about the company and benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/vagas/test-analyst-172621911?utm_source=git... | 1.0 | [Remote] Test Analyst at Coodesh - ## Job description:

This is a position from a partner of the Coodesh platform; by applying you will get access to the complete information about the company and benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/vagas/te... | process | test analyst at coodesh job description this is a position from a partner of the coodesh platform by applying you will get access to the complete information about the company and benefits watch for the redirect that will take you to a url with the personalized application pop up 👋 o gr... | 1 |

2,477 | 2,615,170,509 | IssuesEvent | 2015-03-01 06:52:19 | chrsmith/html5rocks | https://api.github.com/repos/chrsmith/html5rocks | closed | Copy for Business section | auto-migrated Milestone-Q42011-1 New Priority-P2 redesign Type-Bug | ```

Create the content for the /enterprise page of html5rocks.com

```

Original issue reported on code.google.com by `ericbide...@html5rocks.com` on 19 Oct 2011 at 3:47

* Blocking: #663 | 1.0 | Copy for Business section - ```

Create the content for the /enterprise page of html5rocks.com

```

Original issue reported on code.google.com by `ericbide...@html5rocks.com` on 19 Oct 2011 at 3:47

* Blocking: #663 | non_process | copy for business section create the content for the enterprise page of com original issue reported on code google com by ericbide com on oct at blocking | 0 |

987 | 3,022,649,760 | IssuesEvent | 2015-07-31 21:42:24 | catapult-project/catapult | https://api.github.com/repos/catapult-project/catapult | closed | Add a presubmit check for csslint and gjslint | Infrastructure P2 | <a href="https://github.com/natduca"><img src="https://avatars.githubusercontent.com/u/412396?v=3" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [natduca](https://github.com/natduca)**

_Monday Sep 22, 2014 at 19:56 GMT_

_Originally opened as https://github.com/google/trace-viewer/issues/49_

---... | 1.0 | Add a presubmit check for csslint and gjslint - <a href="https://github.com/natduca"><img src="https://avatars.githubusercontent.com/u/412396?v=3" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [natduca](https://github.com/natduca)**

_Monday Sep 22, 2014 at 19:56 GMT_

_Originally opened as https:... | non_process | add a presubmit check for csslint and gjslint issue by monday sep at gmt originally opened as from on june when trace viewer was in the chrome repo we picked up presubmit checks for both javascript and css lint we should try to bring those back online original iss... | 0 |

3,862 | 6,808,629,759 | IssuesEvent | 2017-11-04 05:51:27 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | reopened | Document 'hidden' command line options | apps-all status-inprocess tools-all type-enhancement | Some tools have hidden command line options. For example, the --silent option on grabABI. These are for use in special cases, and do not warrant inclusion in the help text sent to the screen, but they should be documented. Here's a current list, that is probably out of date since this issue was posted.

./apps/grabAB... | 1.0 | Document 'hidden' command line options - Some tools have hidden command line options. For example, the --silent option on grabABI. These are for use in special cases, and do not warrant inclusion in the help text sent to the screen, but they should be documented. Here's a current list, that is probably out of date sinc... | process | document hidden command line options some tools have hidden command line options for example the silent option on grababi these are for use in special cases and do not warrant inclusion in the help text sent to the screen but they should be documented here s a current list that is probably out of date sinc... | 1 |

13,363 | 15,826,731,237 | IssuesEvent | 2021-04-06 07:49:03 | ultimate-pa/ultimate | https://api.github.com/repos/ultimate-pa/ultimate | closed | Problem with validator from SVCOMP 2021 | external processes investigation needed possible bug | Hi,

I'm using the version of UAutomizer from the archive2021 repo of SVCOMP and I'm getting an error when trying to validate a witness. I'm running the following command:

```

./Ultimate.py --spec ~/git/sv-benchmarks/c/properties/unreach-call.prp --file ~/git/sv-benchmarks/c/pthread-wmm/mix000_power.oepc.i --architectu... | 1.0 | Problem with validator from SVCOMP 2021 - Hi,

I'm using the version of UAutomizer from the archive2021 repo of SVCOMP and I'm getting an error when trying to validate a witness. I'm running the following command:

```

./Ultimate.py --spec ~/git/sv-benchmarks/c/properties/unreach-call.prp --file ~/git/sv-benchmarks/c/pt... | process | problem with validator from svcomp hi i m using the version of uautomizer from the repo of svcomp and i m getting an error when trying to validate a witness i m running the following command ultimate py spec git sv benchmarks c properties unreach call prp file git sv benchmarks c pthread wmm p... | 1 |

18,776 | 24,678,486,338 | IssuesEvent | 2022-10-18 19:05:11 | dtcenter/MET | https://api.github.com/repos/dtcenter/MET | closed | Enhance ASCII2NC to read NDBC buoy data | requestor: NOAA/EMC type: new feature reporting: DTC NOAA R2O requestor: NOAA/OPC MET: PreProcessing Tools (Point) priority: high | ## Describe the New Feature ##

The desire to use buoy data in ASCII format for verification within MET has come up twice recently as seen in this [GitHub discussion](https://github.com/dtcenter/METplus/discussions/1747). This functionality is needed by both NOAA/EMC and NOAA/OPC. While the interim solution is using py... | 1.0 | Enhance ASCII2NC to read NDBC buoy data - ## Describe the New Feature ##

The desire to use buoy data in ASCII format for verification within MET has come up twice recently as seen in this [GitHub discussion](https://github.com/dtcenter/METplus/discussions/1747). This functionality is needed by both NOAA/EMC and NOAA/O... | process | enhance to read ndbc buoy data describe the new feature the desire to use buoy data in ascii format for verification within met has come up twice recently as seen in this this functionality is needed by both noaa emc and noaa opc while the interim solution is using python embedding of point observations... | 1 |

5,641 | 8,499,512,297 | IssuesEvent | 2018-10-29 17:21:00 | material-components/material-components-ios | https://api.github.com/repos/material-components/material-components-ios | closed | [Banner] Define the Banner MVP | [Banner] type:Process | Definition of done:

- There is a Banner design doc listed at go/material-ios-design-docs.

- The design doc includes a list of MVP features and a separate list of non-MVP features.

- The team has had a chance to review and LGTM the set of MVP features.

<!-- Auto-generated content below, do not modify -->

---

##... | 1.0 | [Banner] Define the Banner MVP - Definition of done:

- There is a Banner design doc listed at go/material-ios-design-docs.

- The design doc includes a list of MVP features and a separate list of non-MVP features.

- The team has had a chance to review and LGTM the set of MVP features.

<!-- Auto-generated content... | process | define the banner mvp definition of done there is a banner design doc listed at go material ios design docs the design doc includes a list of mvp features and a separate list of non mvp features the team has had a chance to review and lgtm the set of mvp features internal data associ... | 1 |

18,024 | 24,032,795,259 | IssuesEvent | 2022-09-15 16:19:31 | googleapis/java-pubsub-group-kafka-connector | https://api.github.com/repos/googleapis/java-pubsub-group-kafka-connector | opened | Your .repo-metadata.json file has a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'pubsub-group-kafka-connector' invalid in .repo-metadata.json

☝️ Once you address these problems, you can close this issue.

### Need help?

* [Schema definition](https://github.com/googleapis/repo-automation-bots/blob/main/pac... | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'pubsub-group-kafka-connector' invalid in .repo-metadata.json

☝️ Once you address these problems, you can close this issue.

### Need help?

* [Schema definition](https://github.... | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 api shortname pubsub group kafka connector invalid in repo metadata json ☝️ once you address these problems you can close this issue need help lists valid options for each fi... | 1 |

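8,426 | 11,594,005,019 | IssuesEvent | 2020-02-24 14:37:40 | SE-Garden/tms-webserver | https://api.github.com/repos/SE-Garden/tms-webserver | opened | Implement the OJT report search API | kind:機能 process:MK/UT | ## Overview

Implement the OJT report search API

## Goal

- Implement the OJT report search API

- Unit tests for the OJT report search API will not be carried out

## Deliverables

- Source code

## Related issues

- None | 1.0 | Implement the OJT report search API - ## Overview

Implement the OJT report search API

## Goal

- Implement the OJT report search API

- Unit tests for the OJT report search API will not be carried out

## Deliverables

- Source code

## Related issues

- None | process | implement the ojt report search api overview implement the ojt report search api goal implement the ojt report search api unit tests for the ojt report search api will not be carried out deliverables source code related issues none | 1 |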

28,037 | 5,427,813,172 | IssuesEvent | 2017-03-03 14:24:58 | DevExpress/testcafe | https://api.github.com/repos/DevExpress/testcafe | opened | Change debugging logging style | AREA: client AREA: server DOCUMENTATION: required SYSTEM: runner TYPE: enhancement | ### Are you requesting a feature or reporting a bug?

feature

### What is the current behavior?

debugging messages are added one by one in console (for each debugging stop point)

### What is the expected behavior?

We should show one debugging message and update it on each debugging stop | 1.0 | Change debugging logging style - ### Are you requesting a feature or reporting a bug?

feature

### What is the current behavior?

debugging messages are added one by one in console (for each debugging stop point)

### What is the expected behavior?

We should show one debugging message and update it on each... | non_process | change debugging logging style are you requesting a feature or reporting a bug feature what is the current behavior debugging messages are added one by one in console for each debugging stop point what is the expected behavior we should show one debugging message and update it on each... | 0 |

26,779 | 7,868,873,283 | IssuesEvent | 2018-06-24 05:57:17 | zeebe-io/zeebe | https://api.github.com/repos/zeebe-io/zeebe | opened | Access violation in ZeebeClientTest | bug unstable build | Had yesterday two access violations in the ZeebeClientTest.

```java

[INFO] Running io.zeebe.client.workflow.WorkflowRepositoryTest

[INFO] Tests run: 7, Failures: 0, Errors: 0, Skipped: 0, Time elapsed: 0.322 s - in io.zeebe.client.workflow.WorkflowRepositoryTest

[INFO] Running io.zeebe.client.ZeebeClientTest

[WA... | 1.0 | Access violation in ZeebeClientTest - Had yesterday two access violations in the ZeebeClientTest.

```java

[INFO] Running io.zeebe.client.workflow.WorkflowRepositoryTest

[INFO] Tests run: 7, Failures: 0, Errors: 0, Skipped: 0, Time elapsed: 0.322 s - in io.zeebe.client.workflow.WorkflowRepositoryTest

[INFO] Runni... | non_process | access violation in zeebeclienttest had yesterday two access violations in the zeebeclienttest java running io zeebe client workflow workflowrepositorytest tests run failures errors skipped time elapsed s in io zeebe client workflow workflowrepositorytest running io zeebe clien... | 0 |

1,804 | 4,540,544,765 | IssuesEvent | 2016-09-09 14:57:42 | MModel/MetaModel | https://api.github.com/repos/MModel/MetaModel | closed | Alias support | [Difficulty] Easy [Propose] Enhancement [Status] In Process | Like Ruby on Rails, MetaModel needs aliases to act quickly.

+ meta init => meta i

+ meta generate => meta g

+ meta build => meta b

+ meta clean => meta c | 1.0 | Alias support - Like Ruby on Rails, MetaModel needs aliases to act quickly.

+ meta init => meta i

+ meta generate => meta g

+ meta build => meta b

+ meta clean => meta c | process | alias support like ruby on rails metamodel needs aliases to act quickly meta init meta i meta generate meta g meta build meta b meta clean meta c | 1 |

15,469 | 19,682,388,175 | IssuesEvent | 2022-01-11 18:04:16 | prisma/prisma-engines | https://api.github.com/repos/prisma/prisma-engines | closed | Make connector-test-kit run with indexes on mongodb | process/candidate kind/tech team/migrations team/client topic: mongodb | TL;DR : the migration engine `SchemaPush` command was implemented for MongoDB in https://github.com/prisma/prisma-engines/pull/2287

It is the command that is used by the query engine to set up databases for tests in connector-test-kit. As a result of the command actually creating indexes now, CI started failing on Q... | 1.0 | Make connector-test-kit run with indexes on mongodb - TL;DR : the migration engine `SchemaPush` command was implemented for MongoDB in https://github.com/prisma/prisma-engines/pull/2287

It is the command that is used by the query engine to set up databases for tests in connector-test-kit. As a result of the command ... | process | make connector test kit run with indexes on mongodb tl dr the migration engine schemapush command was implemented for mongodb in it is the command that is used by the query engine to set up databases for tests in connector test kit as a result of the command actually creating indexes now ci started failing... | 1 |

103,447 | 11,356,610,088 | IssuesEvent | 2020-01-24 23:21:17 | pokusew/node-pcsclite | https://api.github.com/repos/pokusew/node-pcsclite | closed | Cannot install on Windows 10 x64 with Node.js 6.11.2 | documentation question | **Hello, I receive the following message when trying to install your module (npm install nfc-pcsc):**

598 silly install printInstalled

599 verbose stack Error: @pokusew/pcsclite@0.4.17 install: `node-gyp rebuild`

599 verbose stack Exit status 1

599 verbose stack at EventEmitter.<anonymous> (C:\job\node\node_m... | 1.0 | Cannot install on Windows 10 x64 with Node.js 6.11.2 - **Hello, I receive the following message when trying to install your module (npm install nfc-pcsc):**

598 silly install printInstalled

599 verbose stack Error: @pokusew/pcsclite@0.4.17 install: `node-gyp rebuild`

599 verbose stack Exit status 1

599 verbose st... | non_process | cannot install on windows with node js hello i receive the following message when trying to install your module npm install nfc pcsc silly install printinstalled verbose stack error pokusew pcsclite install node gyp rebuild verbose stack exit status verbose stack at ev... | 0 |

7,429 | 10,547,058,302 | IssuesEvent | 2019-10-02 23:28:02 | OCFL/spec | https://api.github.com/repos/OCFL/spec | closed | Create an RFC process | Process/Extensions/Related | Create a github repository in the OCFL organization for the purpose of community review and publishing of individual OCFL-related RFCs. Make any decisions related to policies and ongoing maintenance.

## Background

At the 8/14 [OCFL community meeting](https://github.com/OCFL/spec/wiki/2019.08.14-Community-Meeting#r... | 1.0 | Create an RFC process - Create a github repository in the OCFL organization for the purpose of community review and publishing of individual OCFL-related RFCs. Make any decisions related to policies and ongoing maintenance.

## Background

At the 8/14 [OCFL community meeting](https://github.com/OCFL/spec/wiki/2019.0... | process | create an rfc process create a github repository in the ocfl organization for the purpose of community review and publishing of individual ocfl related rfcs make any decisions related to policies and ongoing maintenance background at the there was interest in creating an rfc process separate from t... | 1 |

212,875 | 7,243,582,785 | IssuesEvent | 2018-02-14 12:17:34 | jrantamaki/supertimemachine | https://api.github.com/repos/jrantamaki/supertimemachine | closed | Bug: Calculation of elapsed time is wrong | bug frontend priority: high | Used Duration does not work properly when timestamps are for different dates. | 1.0 | Bug: Calculation of elapsed time is wrong - Used Duration does not work properly when timestamps are for different dates. | non_process | bug calculation of elapsed time is wrong used duration does not work properly when timestamps are for different dates | 0 |

142,549 | 13,033,675,150 | IssuesEvent | 2020-07-28 07:24:00 | Maxi35/pyrelay | https://api.github.com/repos/Maxi35/pyrelay | closed | Is there any documentation or tutorials available? | documentation | I am mildly proficient with python, but am struggling to pick up your syntax just from the single plugin bundled with the project.

The whole thing works phenomenally so far, and I would really like to get into some interesting developments soon. Is there anyway you can post more plugins so I can learn by example if... | 1.0 | Is there any documentation or tutorials available? - I am mildly proficient with python, but am struggling to pick up your syntax just from the single plugin bundled with the project.

The whole thing works phenomenally so far, and I would really like to get into some interesting developments soon. Is there anyway y... | non_process | is there any documentation or tutorials available i am mildly proficient with python but am struggling to pick up your syntax just from the single plugin bundled with the project the whole thing works phenomenally so far and i would really like to get into some interesting developments soon is there anyway y... | 0 |

587,137 | 17,605,391,149 | IssuesEvent | 2021-08-17 16:23:57 | status-im/status-desktop | https://api.github.com/repos/status-im/status-desktop | closed | Autoreplacing text with emoji prevents from links editing | bug Chat priority 2: medium | There could be more cases, but an obvious one: when I want to edit a link in a text box, removing `/` replaces the `:/ ` with emoji, which is unwanted in this case. I think we need to make suggestions for such replacements and allow the user to decide whether he needs it or not

**To reproduce:**

1. open any chat

2. p... | 1.0 | Autoreplacing text with emoji prevents from links editing - There could be more cases, but an obvious one: when I want to edit a link in a text box, removing `/` replaces the `:/ ` with emoji, which is unwanted in this case. I think we need to make suggestions for such replacements and allow the user to decide whether he ne... | non_process | autoreplacing text with emoji prevents from links editing there could be more cases but an obvious one when i want to edit a link in a text box removing replaces the with emoji which is unwanted in this case i think we need to make suggestions for such replacements and allow the user to decide whether he ne... | 0 |

377,823 | 11,184,853,817 | IssuesEvent | 2019-12-31 20:38:01 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.0 staging-1299] Animals: Client crash at some point | High Priority | Hard to reproduce. My steps were:

1. Take a bow and arrows

2. Type /safe

3. Fly around and shoot some deer and goats

4. Have a crash at some point

```

<size=60.00%>Exception: NullReferenceException

Message:Object reference not set to an instance of an object.

Source:Eco.Simulation

System.NullReferenceExcept... | 1.0 | [0.9.0 staging-1299] Animals: Client crash at some point - Hard to reproduce. My steps were:

1. Take a bow and arrows

2. Type /safe

3. Fly around and shoot some deer and goats

4. Have a crash at some point

```

<size=60.00%>Exception: NullReferenceException

Message:Object reference not set to an instance of an ... | non_process | animals client crash at some point hard to reproduce my steps were take a bow and arrows type safe fly around and shoot some deer and goats have a crash at some point exception nullreferenceexception message object reference not set to an instance of an object source eco simulation ... | 0 |

654,980 | 21,675,267,531 | IssuesEvent | 2022-05-08 16:02:16 | chaotic-aur/packages | https://api.github.com/repos/chaotic-aur/packages | closed | [Request] MyPy | request:new-pkg priority:low | ### Link to the package(s) in the AUR

https://aur.archlinux.org/packages/mypy-git

### Utility this package has for you

Python development, static typing.

### Do you consider the package(s) to be useful for every Chaotic-AUR user?

No, but for a few.

### Do you consider the package to be useful for feature testing/... | 1.0 | [Request] MyPy - ### Link to the package(s) in the AUR

https://aur.archlinux.org/packages/mypy-git

### Utility this package has for you

Python development, static typing.

### Do you consider the package(s) to be useful for every Chaotic-AUR user?

No, but for a few.

### Do you consider the package to be useful for... | non_process | mypy link to the package s in the aur utility this package has for you python development static typing do you consider the package s to be useful for every chaotic aur user no but for a few do you consider the package to be useful for feature testing preview yes have you t... | 0 |

20,103 | 26,638,256,986 | IssuesEvent | 2023-01-25 00:36:04 | keras-team/keras-cv | https://api.github.com/repos/keras-team/keras-cv | closed | Repeated augmentation layer | preprocessing roadmap | Repeated augmentation layer [1] has also become an important recipe to train SoTA image classification models. The abstract of [1] pretty much sums up what it is:

> Large-batch SGD is important for scaling training of deep neural networks. However, without fine-tuning hyperparameter schedules, the generalization of ... | 1.0 | Repeated augmentation layer - Repeated augmentation layer [1] has also become an important recipe to train SoTA image classification models. The abstract of [1] pretty much sums up what it is:

> Large-batch SGD is important for scaling training of deep neural networks. However, without fine-tuning hyperparameter sch... | process | repeated augmentation layer repeated augmentation layer has also become an important recipe to train sota image classification models the abstract of pretty much sums up what it is large batch sgd is important for scaling training of deep neural networks however without fine tuning hyperparameter schedul... | 1 |

4,493 | 7,346,179,194 | IssuesEvent | 2018-03-07 19:50:10 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Azure AD > App Registrations > Settings > Properties > Required Permissions | active-directory cxp in-process triaged | Step 1 Register Application, Point 8 (Grant permissions across your tenant for your application): "Required Permissions" now has its own tab in Settings under the category "API Access" and is no longer below Settings > Properties

Wrong: Settings > Properties > Required Permissions