Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

238,011 | 18,215,436,961 | IssuesEvent | 2021-09-30 03:16:34 | SynBioHub/Plugin-Submit-Excel-Library | https://api.github.com/repos/SynBioHub/Plugin-Submit-Excel-Library | closed | Document how to locally run "Submit excel library" | documentation | Similar request to https://github.com/SynBioHub/Plugin-Submit-Excel-Composition/issues/6#issue-696251251.

It would be helpful to document how this add-on can be ran locally. | 1.0 | Document how to locally run "Submit excel library" - Similar request to https://github.com/SynBioHub/Plugin-Submit-Excel-Composition/issues/6#issue-696251251.

It would be helpful to document how this add-on can be ran locally. | non_process | document how to locally run submit excel library similar request to it would be helpful to document how this add on can be ran locally | 0 |

416,739 | 28,097,845,128 | IssuesEvent | 2023-03-30 17:04:47 | microsoft/torchgeo | https://api.github.com/repos/microsoft/torchgeo | closed | torchgeo install in google colab | documentation | ### Description

I wanted to check a potential bug with a reproducible example in a colab notebook and found that there is some installation issue.

```

%pip install torchgeo

from torchgeo.trainers import ClassificationTask

```

`ContextualVersionConflict: (Pygments 2.6.1 (/usr/local/lib/python3.8/dist-package... | 1.0 | torchgeo install in google colab - ### Description

I wanted to check a potential bug with a reproducible example in a colab notebook and found that there is some installation issue.

```

%pip install torchgeo

from torchgeo.trainers import ClassificationTask

```

`ContextualVersionConflict: (Pygments 2.6.1 (/u... | non_process | torchgeo install in google colab description i wanted to check a potential bug with a reproducible example in a colab notebook and found that there is some installation issue pip install torchgeo from torchgeo trainers import classificationtask contextualversionconflict pygments u... | 0 |

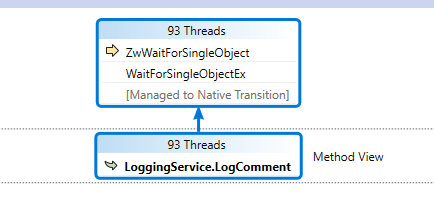

41,096 | 21,476,328,635 | IssuesEvent | 2022-04-26 13:57:12 | dotnet/msbuild | https://api.github.com/repos/dotnet/msbuild | closed | LoggingService.LogComment causes large amounts of contention between unrelated evaluation threads. | performance | ### Issue Description

LoggingService.LogComment causes large amounts of contention between unrelated evaluation threads.

### Steps to Reproduce

- clean solution VS temp files (.vs)

- clean solution (ms... | True | LoggingService.LogComment causes large amounts of contention between unrelated evaluation threads. - ### Issue Description

LoggingService.LogComment causes large amounts of contention between unrelated evaluation threads.

` from all examples | kind/docs process/next-milestone | In order to simplify examples we should remove the `await photon.connect()` call and rely on the lazy connect behavior of Photon.js by default. Additionally we should **document** how to explicitly connect to your data source e.g. as an optimization strategy. | 1.0 | Remove `photon.connect()` from all examples - In order to simplify examples we should remove the `await photon.connect()` call and rely on the lazy connect behavior of Photon.js by default. Additionally we should **document** how to explicitly connect to your data source e.g. as an optimization strategy. | process | remove photon connect from all examples in order to simplify examples we should remove the await photon connect call and rely on the lazy connect behavior of photon js by default additionally we should document how to explicitly connect to your data source e g as an optimization strategy | 1 |

170,624 | 20,883,786,688 | IssuesEvent | 2022-03-23 01:12:44 | snowdensb/dependabot-core | https://api.github.com/repos/snowdensb/dependabot-core | reopened | CVE-2015-8315 (High) detected in ms-0.6.2.tgz | security vulnerability | ## CVE-2015-8315 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ms-0.6.2.tgz</b></p></summary>

<p>Tiny ms conversion utility</p>

<p>Library home page: <a href="https://registry.npmjs.... | True | CVE-2015-8315 (High) detected in ms-0.6.2.tgz - ## CVE-2015-8315 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ms-0.6.2.tgz</b></p></summary>

<p>Tiny ms conversion utility</p>

<p>Lib... | non_process | cve high detected in ms tgz cve high severity vulnerability vulnerable library ms tgz tiny ms conversion utility library home page a href path to dependency file npm and yarn spec fixtures projects path dependency deps etag package json path to vulnerable library ... | 0 |

462,355 | 13,245,684,753 | IssuesEvent | 2020-08-19 14:42:30 | carbon-design-system/ibm-dotcom-library | https://api.github.com/repos/carbon-design-system/ibm-dotcom-library | opened | Web Component: Develop Callout (internal) of the React version | dev package: web components priority: high | #### User Story

<!-- {{Provide a detailed description of the user's need here, but avoid any type of solutions}} -->

> As a `[user role below]`:

IBM.com Library developer

> I need to:

create the `Callout (internal)`

> so that I can:

provide ibm.com adopter developers a web component version for every react v... | 1.0 | Web Component: Develop Callout (internal) of the React version - #### User Story

<!-- {{Provide a detailed description of the user's need here, but avoid any type of solutions}} -->

> As a `[user role below]`:

IBM.com Library developer

> I need to:

create the `Callout (internal)`

> so that I can:

provide ib... | non_process | web component develop callout internal of the react version user story as a ibm com library developer i need to create the callout internal so that i can provide ibm com adopter developers a web component version for every react version available in the ibm com library a... | 0 |

18,768 | 24,674,277,745 | IssuesEvent | 2022-10-18 15:46:19 | keras-team/keras-cv | https://api.github.com/repos/keras-team/keras-cv | closed | Add RandomSunFlare preprocessing layer | preprocessing | ## Weather Augmentation

One of the real-world scenarios that pose challenges for training neural networks for Autonomous vehicle

Ref. Imple.

- https://github.com/UjjwalSaxena/Automold--Road... | 1.0 | Add RandomSunFlare preprocessing layer - ## Weather Augmentation

One of the real-world scenarios that pose challenges for training neural networks for Autonomous vehicle

Ref. Imple.

- https... | process | add randomsunflare preprocessing layer weather augmentation one of the real world scenarios that pose challenges for training neural networks for autonomous vehicle ref imple | 1 |

17,592 | 23,416,245,101 | IssuesEvent | 2022-08-13 02:18:14 | pycaret/pycaret | https://api.github.com/repos/pycaret/pycaret | closed | [BUG] Why is the inverse transformation of the target not applied? | bug time_series preprocessing priority_high | ### Discussed in https://github.com/pycaret/pycaret/discussions/2706

<div type='discussions-op-text'>

<sup>Originally posted by **acartro** July 4, 2022</sup>

Good morning,

I am doing a time series experiment with the new 3.0 library. I have found that when I set the setup to do a logarithmic transformation ... | 1.0 | [BUG] Why is the inverse transformation of the target not applied? - ### Discussed in https://github.com/pycaret/pycaret/discussions/2706

<div type='discussions-op-text'>

<sup>Originally posted by **acartro** July 4, 2022</sup>

Good morning,

I am doing a time series experiment with the new 3.0 library. I hav... | process | why is the inverse transformation of the target not applied discussed in originally posted by acartro july good morning i am doing a time series experiment with the new library i have found that when i set the setup to do a logarithmic transformation of the target transform tar... | 1 |

71,390 | 23,606,276,517 | IssuesEvent | 2022-08-24 08:32:34 | primefaces/primeng | https://api.github.com/repos/primefaces/primeng | closed | FileUpload | The error message does not disappear correctly when removing file(s), to match your file limit. | defect | ### Describe the bug

if you choose files more than fileLimit you see an alert message, but when you remove a file and the fileLimit is correct now, the alert message stays.It should go but it is not.

Also when you press cancel button and delete all files by doing so.Alert message stays.It should ideally go.

Can ... | 1.0 | FileUpload | The error message does not disappear correctly when removing file(s), to match your file limit. - ### Describe the bug

if you choose files more than fileLimit you see an alert message, but when you remove a file and the fileLimit is correct now, the alert message stays.It should go but it is not.

Als... | non_process | fileupload the error message does not disappear correctly when removing file s to match your file limit describe the bug if you choose files more than filelimit you see an alert message but when you remove a file and the filelimit is correct now the alert message stays it should go but it is not als... | 0 |

17,802 | 23,728,560,753 | IssuesEvent | 2022-08-30 22:21:25 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | opened | Provide consistent deprecated behavior and reduce downstream breakage | Enhancement Process | **Need consistent behavior for deprecated objects**

The behavior of builds varies based on the type object that is deprecated and also varies based on the toolchain used.

For example, adding a `label` property to the qemu_x86.dts file results in a warning when running west:

```

$ west build -b qemu_x86 samples/... | 1.0 | Provide consistent deprecated behavior and reduce downstream breakage - **Need consistent behavior for deprecated objects**

The behavior of builds varies based on the type object that is deprecated and also varies based on the toolchain used.

For example, adding a `label` property to the qemu_x86.dts file results i... | process | provide consistent deprecated behavior and reduce downstream breakage need consistent behavior for deprecated objects the behavior of builds varies based on the type object that is deprecated and also varies based on the toolchain used for example adding a label property to the qemu dts file results in ... | 1 |

5,089 | 7,876,462,731 | IssuesEvent | 2018-06-26 01:13:54 | Jacksgong/okcat | https://api.github.com/repos/Jacksgong/okcat | closed | adb connection is lost | processing | **device** & **adb** is connected

when I execute cmd `okcat -y darwin.yml` , I got

```

using config on /Users/sep/.okcat/darwin.yml

using config on /Users/sep/.okcat/logx.yml

find regex: ['data', 'time', 'process', 'thread', 'level', 'tag', 'message'] with (.\S*) *(.\S*) *(\d*) *(\d*) *([A-Z]) *([^:]*): *(.... | 1.0 | adb connection is lost - **device** & **adb** is connected

when I execute cmd `okcat -y darwin.yml` , I got

```

using config on /Users/sep/.okcat/darwin.yml

using config on /Users/sep/.okcat/logx.yml

find regex: ['data', 'time', 'process', 'thread', 'level', 'tag', 'message'] with (.\S*) *(.\S*) *(\d*) *(\d... | process | adb connection is lost device adb is connected when i execute cmd okcat y darwin yml i got using config on users sep okcat darwin yml using config on users sep okcat logx yml find regex with s s d d adb connection is lost how to re... | 1 |

3,865 | 6,808,638,327 | IssuesEvent | 2017-11-04 06:00:27 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | reopened | binary files should use myExitHandler | apps-all status-inprocess tools-all type-bug | All apps and tools that write to the binary cache should register an onExit function to properly remove the .lck files. blockScrape for one, does not. | 1.0 | binary files should use myExitHandler - All apps and tools that write to the binary cache should register an onExit function to properly remove the .lck files. blockScrape for one, does not. | process | binary files should use myexithandler all apps and tools that write to the binary cache should register an onexit function to properly remove the lck files blockscrape for one does not | 1 |

33,066 | 7,018,636,687 | IssuesEvent | 2017-12-21 14:28:14 | primefaces/primeng | https://api.github.com/repos/primefaces/primeng | closed | Lightbox content behind the overlay mask when opened from a Dialog | defect discussion pending-review | ### There is no guarantee in receiving a response in GitHub Issue Tracker, If you'd like to secure our response, you may consider *PrimeNG PRO Support* where support is provided within 4 business hours

**I'm submitting a ...** (check one with "x")

```

[x] bug report => Search github for a similar issue or PR befo... | 1.0 | Lightbox content behind the overlay mask when opened from a Dialog - ### There is no guarantee in receiving a response in GitHub Issue Tracker, If you'd like to secure our response, you may consider *PrimeNG PRO Support* where support is provided within 4 business hours

**I'm submitting a ...** (check one with "x")... | non_process | lightbox content behind the overlay mask when opened from a dialog there is no guarantee in receiving a response in github issue tracker if you d like to secure our response you may consider primeng pro support where support is provided within business hours i m submitting a check one with x ... | 0 |

12,845 | 3,655,879,771 | IssuesEvent | 2016-02-17 17:46:57 | ExcaliburZero/tempconvert-c | https://api.github.com/repos/ExcaliburZero/tempconvert-c | closed | Add reporting bugs section to manual file | documentation | A section should be added to the manual file with information on how to report bugs.

See `man ls` for an example. | 1.0 | Add reporting bugs section to manual file - A section should be added to the manual file with information on how to report bugs.

See `man ls` for an example. | non_process | add reporting bugs section to manual file a section should be added to the manual file with information on how to report bugs see man ls for an example | 0 |

5,156 | 7,933,325,977 | IssuesEvent | 2018-07-08 04:07:50 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | closed | getAccounts may be more useful than it currently is | status-inprocess tools-getAccounts type-enhancement | Carlos suggests that getAccounts can be more useful than it is. For example, backing up public/private keys. Concern is that this is ultra-sentsitive data. If we mishandle it it would be disastrous. | 1.0 | getAccounts may be more useful than it currently is - Carlos suggests that getAccounts can be more useful than it is. For example, backing up public/private keys. Concern is that this is ultra-sentsitive data. If we mishandle it it would be disastrous. | process | getaccounts may be more useful than it currently is carlos suggests that getaccounts can be more useful than it is for example backing up public private keys concern is that this is ultra sentsitive data if we mishandle it it would be disastrous | 1 |

624,981 | 19,715,194,682 | IssuesEvent | 2022-01-13 10:17:41 | o3de/o3de | https://api.github.com/repos/o3de/o3de | opened | Script Canvas Promote to Variable action causes unexpected issues with the node | kind/bug needs-triage sig/content priority/minor | **Describe the bug**

The following issues can be observed after performing the _Promote to Variable_ action on a data pin:

- The data pin name is changed to _(1)_ (or a higher number, depending on how many Variables are created in the graph).

- Undoing and redoing such action causes a redundant data pin of the same ... | 1.0 | Script Canvas Promote to Variable action causes unexpected issues with the node - **Describe the bug**

The following issues can be observed after performing the _Promote to Variable_ action on a data pin:

- The data pin name is changed to _(1)_ (or a higher number, depending on how many Variables are created in the g... | non_process | script canvas promote to variable action causes unexpected issues with the node describe the bug the following issues can be observed after performing the promote to variable action on a data pin the data pin name is changed to or a higher number depending on how many variables are created in the g... | 0 |

320,082 | 9,769,306,767 | IssuesEvent | 2019-06-06 08:14:09 | brian-team/brian2 | https://api.github.com/repos/brian-team/brian2 | closed | SpikeGeneratorGroup not correctly working after restore | bug high priority | A user report an issue on the [mailing list](https://groups.google.com/d/msg/briansupport/SSdLkRigOJU/br__yN0IBAAJ). The following code leads to a broadcasting error with the `numpy` target (and leads to incorrect values on Cython/weave):

```Python

nb_sim = 2 # number of successive simulatio... | 1.0 | SpikeGeneratorGroup not correctly working after restore - A user report an issue on the [mailing list](https://groups.google.com/d/msg/briansupport/SSdLkRigOJU/br__yN0IBAAJ). The following code leads to a broadcasting error with the `numpy` target (and leads to incorrect values on Cython/weave):

```Python

nb_sim = 2 ... | non_process | spikegeneratorgroup not correctly working after restore a user report an issue on the the following code leads to a broadcasting error with the numpy target and leads to incorrect values on cython weave python nb sim number of successive simulations time simulation m... | 0 |

2,222 | 5,071,651,939 | IssuesEvent | 2016-12-26 15:09:28 | mitchellh/packer | https://api.github.com/repos/mitchellh/packer | closed | checksum post-processor ignores "output" parameter if multiple artifacts and then disregards keep_input_artifacts=false | post-processor/checksum | Packer 0.12.0 on Windows

When using the checksum post-processor with something like the following with virtualbox-iso (and probably others):

```

{

"type": "checksum",

"checksum_types": [

"md5",

"sha256"

],

"output": "{{template_dir}}/vbox.checksums",

"keep_input_artifact": false

... | 1.0 | checksum post-processor ignores "output" parameter if multiple artifacts and then disregards keep_input_artifacts=false - Packer 0.12.0 on Windows

When using the checksum post-processor with something like the following with virtualbox-iso (and probably others):

```

{

"type": "checksum",

"checksum_type... | process | checksum post processor ignores output parameter if multiple artifacts and then disregards keep input artifacts false packer on windows when using the checksum post processor with something like the following with virtualbox iso and probably others type checksum checksum types... | 1 |

4,789 | 3,886,470,050 | IssuesEvent | 2016-04-14 01:11:16 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 19926911: Products with the same name should be visually differentiated in the navigator | classification:ui/usability reproducible:always status:open | #### Description

Summary:

If a project file includes two targest that specify a product with the same name, then the name should be differentitated such that it's obvious which one corresponds to which target.

Steps to Reproduce:

1. Open attached Test App.xcodeproj

2. Expand the "Products" section.

3. Observe t... | True | 19926911: Products with the same name should be visually differentiated in the navigator - #### Description

Summary:

If a project file includes two targest that specify a product with the same name, then the name should be differentitated such that it's obvious which one corresponds to which target.

Steps to Repro... | non_process | products with the same name should be visually differentiated in the navigator description summary if a project file includes two targest that specify a product with the same name then the name should be differentitated such that it s obvious which one corresponds to which target steps to reproduce ... | 0 |

55,445 | 14,008,921,712 | IssuesEvent | 2020-10-29 00:58:34 | mwilliams7197/bootstrap | https://api.github.com/repos/mwilliams7197/bootstrap | closed | CVE-2018-20190 (Medium) detected in multiple libraries - autoclosed | security vulnerability | ## CVE-2018-20190 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>node-sass-4.11.0.tgz</b>, <b>opennmsopennms-source-25.1.0-1</b>, <b>opennmsopennms-source-24.1.2-1</b></p></summary... | True | CVE-2018-20190 (Medium) detected in multiple libraries - autoclosed - ## CVE-2018-20190 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>node-sass-4.11.0.tgz</b>, <b>opennmsopennms-s... | non_process | cve medium detected in multiple libraries autoclosed cve medium severity vulnerability vulnerable libraries node sass tgz opennmsopennms source opennmsopennms source node sass tgz wrapper around libsass library home page a href path to de... | 0 |

54,824 | 13,456,130,192 | IssuesEvent | 2020-09-09 07:23:30 | curl/curl | https://api.github.com/repos/curl/curl | closed | linking curl fails with ../lib/.libs/libcurl.so: undefined reference to Curl_base64_encode | build | I tried to compile curl with `--disable-http-auth --disable-ldap --disable-doh` and all ssl and ssh related options disabled. Basically all options to ensure that the `#if ...` at the beginning of `lib/base64.c` is false.

No building fails with `../lib/.libs/libcurl.so: undefined reference to Curl_base64_encode` be... | 1.0 | linking curl fails with ../lib/.libs/libcurl.so: undefined reference to Curl_base64_encode - I tried to compile curl with `--disable-http-auth --disable-ldap --disable-doh` and all ssl and ssh related options disabled. Basically all options to ensure that the `#if ...` at the beginning of `lib/base64.c` is false.

No... | non_process | linking curl fails with lib libs libcurl so undefined reference to curl encode i tried to compile curl with disable http auth disable ldap disable doh and all ssl and ssh related options disabled basically all options to ensure that the if at the beginning of lib c is false no building ... | 0 |

17,628 | 23,444,802,504 | IssuesEvent | 2022-08-15 18:28:14 | googleapis/python-certificate-manager | https://api.github.com/repos/googleapis/python-certificate-manager | closed | Update release level to stable | type: process | [GA release template](https://github.com/googleapis/google-cloud-common/issues/287)

## Required

- [ ] 28 days elapsed since last beta release with new API surface **RELEASE ON/AFTER: April 30 2022**

- [x] Server API is GA

- [x] Package API is stable, and we can commit to backward compatibility

- [x] All depende... | 1.0 | Update release level to stable - [GA release template](https://github.com/googleapis/google-cloud-common/issues/287)

## Required

- [ ] 28 days elapsed since last beta release with new API surface **RELEASE ON/AFTER: April 30 2022**

- [x] Server API is GA

- [x] Package API is stable, and we can commit to backward... | process | update release level to stable required days elapsed since last beta release with new api surface release on after april server api is ga package api is stable and we can commit to backward compatibility all dependencies are ga | 1 |

381 | 2,823,565,140 | IssuesEvent | 2015-05-21 09:36:35 | austundag/testing | https://api.github.com/repos/austundag/testing | closed | Patient Header (Toolbar) allergies are not in severity order | enhancement in process | Also the full Allergies presentation that comes up when toolbar is clicked do not have the severities and not in order either. | 1.0 | Patient Header (Toolbar) allergies are not in severity order - Also the full Allergies presentation that comes up when toolbar is clicked do not have the severities and not in order either. | process | patient header toolbar allergies are not in severity order also the full allergies presentation that comes up when toolbar is clicked do not have the severities and not in order either | 1 |

5,323 | 8,139,225,923 | IssuesEvent | 2018-08-20 17:00:20 | cityofaustin/techstack | https://api.github.com/repos/cityofaustin/techstack | closed | CMS - Edit Page Styling | Content type: Process Page Content type: Service Page Feature: Service Page Template Joplin MVP Size: M Team: Dev | - [x] components (existing)

- [x] Steps component will stay WYSIWYG

- ~Style guide sidebar will be present #467~

- ~help text w/ scroll to links to corresponding style guide section~

- [x] secondary informational headers

- [x] add question mark icons, move style guide links to icon click

| 1.0 | CMS - Edit Page Styling - - [x] components (existing)

- [x] Steps component will stay WYSIWYG

- ~Style guide sidebar will be present #467~

- ~help text w/ scroll to links to corresponding style guide section~

- [x] secondary informational headers

- [x] add question mark icons, move style guide links to icon cl... | process | cms edit page styling components existing steps component will stay wysiwyg style guide sidebar will be present help text w scroll to links to corresponding style guide section secondary informational headers add question mark icons move style guide links to icon click | 1 |

264,331 | 28,144,380,355 | IssuesEvent | 2023-04-02 10:09:52 | automation-staging-ghe-cloud/3452551_2401 | https://api.github.com/repos/automation-staging-ghe-cloud/3452551_2401 | opened | CVE-2021-20180 (Medium) detected in ansible-2.9.9.tar.gz | Mend: dependency security vulnerability | ## CVE-2021-20180 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansible-2.9.9.tar.gz</b></p></summary>

<p>Radically simple IT automation</p>

<p>Library home page: <a href="https://... | True | CVE-2021-20180 (Medium) detected in ansible-2.9.9.tar.gz - ## CVE-2021-20180 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansible-2.9.9.tar.gz</b></p></summary>

<p>Radically simpl... | non_process | cve medium detected in ansible tar gz cve medium severity vulnerability vulnerable library ansible tar gz radically simple it automation library home page a href path to dependency file requirements txt path to vulnerable library requirements txt dependency hie... | 0 |

225,877 | 24,909,107,937 | IssuesEvent | 2022-10-29 16:35:39 | AlexRogalskiy/ws-documents | https://api.github.com/repos/AlexRogalskiy/ws-documents | opened | CVE-2022-21724 (High) detected in postgresql-42.2.23.jar | security vulnerability | ## CVE-2022-21724 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>postgresql-42.2.23.jar</b></p></summary>

<p>PostgreSQL JDBC Driver Postgresql</p>

<p>Library home page: <a href="https... | True | CVE-2022-21724 (High) detected in postgresql-42.2.23.jar - ## CVE-2022-21724 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>postgresql-42.2.23.jar</b></p></summary>

<p>PostgreSQL JDBC... | non_process | cve high detected in postgresql jar cve high severity vulnerability vulnerable library postgresql jar postgresql jdbc driver postgresql library home page a href dependency hierarchy x postgresql jar vulnerable library found in head commit a href ... | 0 |

7,525 | 10,599,527,851 | IssuesEvent | 2019-10-10 08:09:49 | linnovate/root | https://api.github.com/repos/linnovate/root | closed | folder from offices add tags not update on activities | 2.0.8 Process bug | go to office

open new office

go to folder from office

add tags

result : the activities not update

| 1.0 | folder from offices add tags not update on activities - go to office

open new office

go to folder from office

add tags

result : the activities not update

| process | folder from offices add tags not update on activities go to office open new office go to folder from office add tags result the activities not update | 1 |

12,162 | 14,741,522,342 | IssuesEvent | 2021-01-07 10:45:04 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Late Fee - Single Billing cycle Preventing previous late charges | anc-process anp-urgent ant-bug ant-parent/primary | In GitLab by @kdjstudios on Jan 23, 2019, 08:52

**Submitted by:** @pchaudhary

**Helpdesk:** NA

**Server:** All

**Client/Site:** All

**Account:** All

**Issue:**

- Let's say there is a Billing Cycle A having an invoice I-1 of $ 100.

- Then we create a new Billing Cycle B & create an invoice I-2 of $ 0.

- Now... | 1.0 | Late Fee - Single Billing cycle Preventing previous late charges - In GitLab by @kdjstudios on Jan 23, 2019, 08:52

**Submitted by:** @pchaudhary

**Helpdesk:** NA

**Server:** All

**Client/Site:** All

**Account:** All

**Issue:**

- Let's say there is a Billing Cycle A having an invoice I-1 of $ 100.

- Then we ... | process | late fee single billing cycle preventing previous late charges in gitlab by kdjstudios on jan submitted by pchaudhary helpdesk na server all client site all account all issue let s say there is a billing cycle a having an invoice i of then we create a... | 1 |

21,409 | 29,351,205,997 | IssuesEvent | 2023-05-27 00:34:44 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Remoto] Data Analyst na Coodesh | SALVADOR PJ BANCO DE DADOS SQL POSTGRESQL AWS ETL REQUISITOS REMOTO PROCESSOS GITHUB UMA QUALIDADE MODELAGEM DE DADOS MANUTENÇÃO PIPELINE MONITORAMENTO Stale | ## Descrição da vaga:

Esta é uma vaga de um parceiro da plataforma Coodesh, ao candidatar-se você terá acesso as informações completas sobre a empresa e benefícios.

Fique atento ao redirecionamento que vai te levar para uma url [https://coodesh.com](https://coodesh.com/vagas/data-analyst-164223531?utm_source=git... | 1.0 | [Remoto] Data Analyst na Coodesh - ## Descrição da vaga:

Esta é uma vaga de um parceiro da plataforma Coodesh, ao candidatar-se você terá acesso as informações completas sobre a empresa e benefícios.

Fique atento ao redirecionamento que vai te levar para uma url [https://coodesh.com](https://coodesh.com/vagas/da... | process | data analyst na coodesh descrição da vaga esta é uma vaga de um parceiro da plataforma coodesh ao candidatar se você terá acesso as informações completas sobre a empresa e benefícios fique atento ao redirecionamento que vai te levar para uma url com o pop up personalizado de candidatura 👋 a go... | 1 |

12,026 | 14,738,544,628 | IssuesEvent | 2021-01-07 05:04:02 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Lucky Lincoln Gaming 123-E1616 | anc-ops anc-process anp-important ant-bug ant-support has attachment | In GitLab by @kdjstudios on Jun 8, 2018, 14:53

**Submitted by:** "Kimberly Gagner" <kimberly.gagner@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-06-08-19323

Vericheck HD: http://www.servicedesk.answernet.com/profiles/ticket/2018-06-13-16448/conversation

**Server:** Internal... | 1.0 | Lucky Lincoln Gaming 123-E1616 - In GitLab by @kdjstudios on Jun 8, 2018, 14:53

**Submitted by:** "Kimberly Gagner" <kimberly.gagner@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-06-08-19323

Vericheck HD: http://www.servicedesk.answernet.com/profiles/ticket/2018-06-13-16448/c... | process | lucky lincoln gaming in gitlab by kdjstudios on jun submitted by kimberly gagner helpdesk vericheck hd server internal client site account issue i just attempted to process an echeck payment for lucky lincoln in the amount of and receiving the fo... | 1 |

2,992 | 5,968,996,141 | IssuesEvent | 2017-05-30 19:18:49 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Desktop: System.ServiceProcess.Tests.ServiceControllerTests.Start_NullArg_ThrowsArgumentNullException failed with "Xunit.Sdk.EqualException" | area-System.ServiceProcess test-run-desktop | Failed test: System.ServiceProcess.Tests.ServiceControllerTests.Start_NullArg_ThrowsArgumentNullException

Detail: https://ci.dot.net/job/dotnet_corefx/job/master/job/outerloop_netfx_windows_nt_debug/66/testReport/System.ServiceProcess.Tests/ServiceControllerTests/Start_NullArg_ThrowsArgumentNullException/

Config... | 1.0 | Desktop: System.ServiceProcess.Tests.ServiceControllerTests.Start_NullArg_ThrowsArgumentNullException failed with "Xunit.Sdk.EqualException" - Failed test: System.ServiceProcess.Tests.ServiceControllerTests.Start_NullArg_ThrowsArgumentNullException

Detail: https://ci.dot.net/job/dotnet_corefx/job/master/job/outerlo... | process | desktop system serviceprocess tests servicecontrollertests start nullarg throwsargumentnullexception failed with xunit sdk equalexception failed test system serviceprocess tests servicecontrollertests start nullarg throwsargumentnullexception detail configuration outerloop netfx windows nt debug mes... | 1 |

490,069 | 14,115,123,731 | IssuesEvent | 2020-11-07 19:16:17 | bounswe/bounswe2020group4 | https://api.github.com/repos/bounswe/bounswe2020group4 | opened | (WEB) Homepage, Navbar UI | Effort: Medium Frontend Priority: Medium Status: Pending | - UI implementation of the homepage and the navbar and the modular product car component

- Implementation of the hide/show navbar action

Deadline: 15.11.2020 23:59 | 1.0 | (WEB) Homepage, Navbar UI - - UI implementation of the homepage and the navbar and the modular product car component

- Implementation of the hide/show navbar action

Deadline: 15.11.2020 23:59 | non_process | web homepage navbar ui ui implementation of the homepage and the navbar and the modular product car component implementation of the hide show navbar action deadline | 0 |

93,341 | 11,773,766,049 | IssuesEvent | 2020-03-16 08:07:32 | dynatrace-oss/barista | https://api.github.com/repos/dynatrace-oss/barista | opened | Barista: Migrate Strapi database | P2 design-system | For better scalability and backups we have to migrate the Strapi database from sqlite to postgres. This has to be done manually as an automated migration seems not to work. | 1.0 | Barista: Migrate Strapi database - For better scalability and backups we have to migrate the Strapi database from sqlite to postgres. This has to be done manually as an automated migration seems not to work. | non_process | barista migrate strapi database for better scalability and backups we have to migrate the strapi database from sqlite to postgres this has to be done manually as an automated migration seems not to work | 0 |

17,582 | 23,392,148,696 | IssuesEvent | 2022-08-11 18:57:08 | ArneBinder/pie-utils | https://api.github.com/repos/ArneBinder/pie-utils | closed | collect and show distribution of text lengths (num tokens) | document processor | Add a document processor that tokenizes the text (e.g. with a Huggingface tokenizer), collects the lengths of the documents in means of token numbers and displays that information in a way that it is easy to digest. | 1.0 | collect and show distribution of text lengths (num tokens) - Add a document processor that tokenizes the text (e.g. with a Huggingface tokenizer), collects the lengths of the documents in means of token numbers and displays that information in a way that it is easy to digest. | process | collect and show distribution of text lengths num tokens add a document processor that tokenizes the text e g with a huggingface tokenizer collects the lengths of the documents in means of token numbers and displays that information in a way that it is easy to digest | 1 |

338,630 | 10,232,495,594 | IssuesEvent | 2019-08-18 17:57:49 | tideland/go | https://api.github.com/repos/tideland/go | opened | together/cells: Add factories package | priority / b / normal status / a / available type / b / enhancement | Idea of the package is to provide functions for the creation of larger cell meshes.

- Package name is `factories`

- Factory functions follow the signature pattern `CreateXyz(<mesh>, <id>, <params>) (Cells, error)`

- Parameters are individual structs with their arguments, possible behavior helpers, and possible con... | 1.0 | together/cells: Add factories package - Idea of the package is to provide functions for the creation of larger cell meshes.

- Package name is `factories`

- Factory functions follow the signature pattern `CreateXyz(<mesh>, <id>, <params>) (Cells, error)`

- Parameters are individual structs with their arguments, pos... | non_process | together cells add factories package idea of the package is to provide functions for the creation of larger cell meshes package name is factories factory functions follow the signature pattern createxyz cells error parameters are individual structs with their arguments possible behavior ... | 0 |

14,499 | 17,604,292,652 | IssuesEvent | 2021-08-17 15:13:32 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | Missing docs for "Extract Shapefile encoding" and "Set layer encoding" processing algorithms | Processing Alg | ## Description

Missing docs for "Extract Shapefile encoding" (`native:shpencodinginfo`) and "Set layer encoding" (`native:setlayerencoding`) algorithms added to the "Vector general" processing group with commit https://github.com/qgis/QGIS/commit/8cec5d0686c0e2800a1c63935ff8ac6608056a1f (PR https://github.com/qgis/Q... | 1.0 | Missing docs for "Extract Shapefile encoding" and "Set layer encoding" processing algorithms - ## Description

Missing docs for "Extract Shapefile encoding" (`native:shpencodinginfo`) and "Set layer encoding" (`native:setlayerencoding`) algorithms added to the "Vector general" processing group with commit https://git... | process | missing docs for extract shapefile encoding and set layer encoding processing algorithms description missing docs for extract shapefile encoding native shpencodinginfo and set layer encoding native setlayerencoding algorithms added to the vector general processing group with commit pr ... | 1 |

523,316 | 15,178,153,350 | IssuesEvent | 2021-02-14 14:19:24 | wevote/WebApp | https://api.github.com/repos/wevote/WebApp | closed | Add interface for uploading your own Profile Photo and Profile Banner | Difficulty: Medium Priority: 1 | Please implement the React interface for these on the "Settings" > "General Settings" page:

1. uploading your own profile photo

2. your own profile banner

3. Choosing which profile photo you would like to be displayed

4. Choose which profile banner you would like to have displayed

(There is no need to implement the... | 1.0 | Add interface for uploading your own Profile Photo and Profile Banner - Please implement the React interface for these on the "Settings" > "General Settings" page:

1. uploading your own profile photo

2. your own profile banner

3. Choosing which profile photo you would like to be displayed

4. Choose with profile ban... | non_process | add interface for uploading your own profile photo and profile banner please implement the react interface for these on the settings general settings page uploading your own profile photo your own profile banner choosing which profile photo you would like to be displayed choose with profile ban... | 0 |

148,998 | 23,411,654,732 | IssuesEvent | 2022-08-12 18:14:55 | department-of-veterans-affairs/vets-design-system-documentation | https://api.github.com/repos/department-of-veterans-affairs/vets-design-system-documentation | closed | Promo banner is not tracked on va.gov analytics | vsp-design-system-team va-promo-banner | ## Description

It was [brought to our attention](https://dsva.slack.com/archives/C01DBGX4P45/p1660260310590559) that the `<va-promo-banner>` was not being tracked on google analytics for va.gov. With the recent PACT news being communicated through the promo banner, having insight into the clicks on the banner would... | 1.0 | Promo banner is not tracked on va.gov analytics - ## Description

It was [brought to our attention](https://dsva.slack.com/archives/C01DBGX4P45/p1660260310590559) that the `<va-promo-banner>` was not being tracked on google analytics for va.gov. With the recent PACT news being communicated through the promo banner, ... | non_process | promo banner is not tracked on va gov analytics description it was that the was not being tracked on google analytics for va gov with the recent pact news being communicated through the promo banner having insight into the clicks on the banner would be useful details this isn t a problem with... | 0 |

14,411 | 2,806,432,003 | IssuesEvent | 2015-05-15 02:20:53 | rocky/python3-trepan | https://api.github.com/repos/rocky/python3-trepan | closed | cannot install trepan on python3.3 | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. pip install trepan

2.

3.

What is the expected output? What do you see instead?

successful install.

Downloading/unpacking trepan

Could not fetch URL http://code.google.com/p/trepan/ (from https://pypi.python.org/simple/trepan/): HTTP Error 404: Not Found

Will skip URL h... | 1.0 | cannot install trepan on python3.3 - ```

What steps will reproduce the problem?

1. pip install trepan

2.

3.

What is the expected output? What do you see instead?

successful install.

Downloading/unpacking trepan

Could not fetch URL http://code.google.com/p/trepan/ (from https://pypi.python.org/simple/trepan/): HTTP E... | non_process | cannot install trepan on what steps will reproduce the problem pip install trepan what is the expected output what do you see instead successful install downloading unpacking trepan could not fetch url from http error not found will skip url when looking for download links for trep... | 0 |

15,832 | 5,188,984,742 | IssuesEvent | 2017-01-20 21:42:09 | BruceJohnJennerLawso/scrap | https://api.github.com/repos/BruceJohnJennerLawso/scrap | closed | function to take levelId argument and filter non watMu seasons by levelId teams list | app codebase console enhancement | Console will need to load everything, but not everything wanted for nhl/wha outputs, so the seasons list needs to be filtered by levelId seasons list in task calls. | 1.0 | function to take levelId argument and filter non watMu seasons by levelId teams list - Console will need to load everything, but not everything wanted for nhl/wha outputs, so the seasons list needs to be filtered by levelId seasons list in task calls. | non_process | function to take levelid argument and filter non watmu seasons by levelid teams list console will need to load everything but not everything wanted for nhl wha outputs so the seasons list needs to be filtered by levelid seasons list in task calls | 0 |

11,220 | 14,000,470,549 | IssuesEvent | 2020-10-28 12:22:23 | timberio/vector | https://api.github.com/repos/timberio/vector | closed | Replace the `reduce` transform's `ends_when` option with Remap conditionals | domain: processing transform: reduce type: enhancement | Throughout Vector we have a concept of "conditions" that let users express conditional statements through a variety of options. This is present in the `reduce` transform's `ends_when` option:

```toml

[transforms.reduce]

type = "reduce"

ends_when.type = "check_fields"

ends_when."message.regex" = "\w"

```

... | 1.0 | Replace the `reduce` transform's `ends_when` option with Remap conditionals - Throughout Vector we have a concept of "conditions" that let users express conditional statements through a variety of options. This is present in the `reduce` transform's `ends_when` option:

```toml

[transforms.reduce]

type = "reduce"... | process | replace the reduce transform s ends when option with remap conditionals throughout vector we have a concept of conditions that let users express conditional statements through a variety of options this is present in the reduce transform s ends when option toml type reduce ends when type... | 1 |

2,669 | 5,468,243,446 | IssuesEvent | 2017-03-10 05:02:19 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | opened | Failure in System.Diagnostics.Tests.ProcessTests.TestRootGetProcessById in CI | area-System.Diagnostics.Process test-run-core | https://ci.dot.net/job/dotnet_corefx/job/master/job/osx_debug_prtest/4347/consoleFull#-6109453532d31e50d-1517-49fc-92b3-2ca637122019

.

```

System.Diagnostics.Tests.ProcessTests.TestRootGetProcessById [FAIL]

19:19:29 Assert.True() Failure

19:19:29 Expected: True

19:19:29 Actual: Fals... | 1.0 | Failure in System.Diagnostics.Tests.ProcessTests.TestRootGetProcessById in CI - https://ci.dot.net/job/dotnet_corefx/job/master/job/osx_debug_prtest/4347/consoleFull#-6109453532d31e50d-1517-49fc-92b3-2ca637122019

.

```

System.Diagnostics.Tests.ProcessTests.TestRootGetProcessById [FAIL]

19:19:29 Assert.... | process | failure in system diagnostics tests processtests testrootgetprocessbyid in ci system diagnostics tests processtests testrootgetprocessbyid assert true failure expected true actual false stack trace users dotnet bot... | 1 |

8,278 | 11,432,871,836 | IssuesEvent | 2020-02-04 14:47:48 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | fix synonym relation in GO:0009870 | multi-species process | follow on from https://github.com/geneontology/go-ontology/issues/18707

GO:0009870 defense response signaling pathway, resistance gene-dependent

this is the plant term that means "ETI signalling"

I don't know if it is the best name based on current thinking, but it is the historical name. I can ask about this ... | 1.0 | fix synonym relation in GO:0009870 - follow on from https://github.com/geneontology/go-ontology/issues/18707

GO:0009870 defense response signaling pathway, resistance gene-dependent

this is the plant term that means "ETI signalling"

I don't know if it is the best name based on current thinking, but it is the ... | process | fix synonym relation in go follow on from go defense response signaling pathway resistance gene dependent this is the plant term that means eti signalling i don t know if it is the best name based on current thinking but it is the historical name i can ask about this at the phi base rga unless an... | 1 |

21,171 | 28,141,720,892 | IssuesEvent | 2023-04-02 02:00:08 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Fri, 31 Mar 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

### What, when, and where? -- Self-Supervised Spatio-Temporal Grounding in Untrimmed Multi-Action Videos from Narrated Instructions

- **Authors:** Brian Chen, Nina Shvetsova, Andrew Rouditchenko, Daniel Kondermann, Samuel Thomas, Shih-Fu Chang, Rogerio Feris, James Glass, Hilde Kuehne

- **Subjects... | 2.0 | New submissions for Fri, 31 Mar 23 - ## Keyword: events

### What, when, and where? -- Self-Supervised Spatio-Temporal Grounding in Untrimmed Multi-Action Videos from Narrated Instructions

- **Authors:** Brian Chen, Nina Shvetsova, Andrew Rouditchenko, Daniel Kondermann, Samuel Thomas, Shih-Fu Chang, Rogerio Feris, Ja... | process | new submissions for fri mar keyword events what when and where self supervised spatio temporal grounding in untrimmed multi action videos from narrated instructions authors brian chen nina shvetsova andrew rouditchenko daniel kondermann samuel thomas shih fu chang rogerio feris jame... | 1 |

344,864 | 10,349,698,393 | IssuesEvent | 2019-09-04 23:32:23 | oslc-op/oslc-specs | https://api.github.com/repos/oslc-op/oslc-specs | opened | TRS base member predicate may introduce incompatibilities with TRS 1.0 | Core: TRS Priority: High Xtra: Jira | The TRS 1.0 specification did not define a trs:Base class, but rather referred to the object of a trs:base predicate as an "RDF container". The Base Resources does not specify and rdf:type for the base resource. The members of this RDF container are captured using rdfs:member predicates.

TRS 2.0 also does not define ... | 1.0 | TRS base member predicate may introduce incompatibilities with TRS 1.0 - The TRS 1.0 specification did not define a trs:Base class, but rather referred to the object of a trs:base predicate as an "RDF container". The Base Resources does not specify and rdf:type for the base resource. The members of this RDF container a... | non_process | trs base member predicate may introduce incompatibilities with trs the trs specification did not define a trs base class but rather referred to the object of a trs base predicate as an rdf container the base resources does not specify and rdf type for the base resource the members of this rdf container a... | 0 |

8,285 | 11,452,143,048 | IssuesEvent | 2020-02-06 13:07:59 | prisma/prisma2 | https://api.github.com/repos/prisma/prisma2 | closed | Improve documentation around securing your database connection with SSL | kind/docs process/candidate | Recently I tried setting up prisma2 with a SSL-secured postgres database.

There is some documentation about it [here](https://github.com/prisma/prisma2/blob/master/docs/core/connectors/postgresql.md).

However with the information provided here I was not able to get it to work for me.

I am not sure which of th... | 1.0 | Improve documentation around securing your database connection with SSL - Recently I tried setting up prisma2 with a SSL-secured postgres database.

There is some documentation about it [here](https://github.com/prisma/prisma2/blob/master/docs/core/connectors/postgresql.md).

However with the information provided ... | process | improve documentation around securing your database connection with ssl recently i tried setting up with a ssl secured postgres database there is some documentation about it however with the information provided here i was not able to get it to work for me i am not sure which of the parameters are r... | 1 |

72,998 | 15,252,076,885 | IssuesEvent | 2021-02-20 01:26:21 | jgeraigery/haystack | https://api.github.com/repos/jgeraigery/haystack | opened | CVE-2020-28500 (Medium) detected in lodash-4.17.15.tgz | security vulnerability | ## CVE-2020-28500 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.15.tgz</b></p></summary>

<p>Lodash modular utilities.</p>

<p>Library home page: <a href="https://registr... | True | CVE-2020-28500 (Medium) detected in lodash-4.17.15.tgz - ## CVE-2020-28500 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.15.tgz</b></p></summary>

<p>Lodash modular util... | non_process | cve medium detected in lodash tgz cve medium severity vulnerability vulnerable library lodash tgz lodash modular utilities library home page a href path to dependency file haystack docsite package json path to vulnerable library haystack docsite node modules lodash ... | 0 |

65,654 | 7,892,486,079 | IssuesEvent | 2018-06-28 15:07:37 | lbryio/lbry-app | https://api.github.com/repos/lbryio/lbry-app | closed | Long URLs cut off in search/URL bar | area: viewer redesign type: improvement | <!--

Thanks for reporting an issue to LBRY and helping us improve!

To make it possible for us to help you, please fill out below information carefully.

Before reporting any issues, please make sure that you're using the latest version.

- App releases: https://github.com/lbryio/lbry-app/releases

- Standalone da... | 1.0 | Long URLs cut off in search/URL bar - <!--

Thanks for reporting an issue to LBRY and helping us improve!

To make it possible for us to help you, please fill out below information carefully.

Before reporting any issues, please make sure that you're using the latest version.

- App releases: https://github.com/lbr... | non_process | long urls cut off in search url bar thanks for reporting an issue to lbry and helping us improve to make it possible for us to help you please fill out below information carefully before reporting any issues please make sure that you re using the latest version app releases standalone daemon... | 0 |

59,424 | 14,589,148,093 | IssuesEvent | 2020-12-19 00:41:36 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | opened | Illegal instruction on older CPUs under version 2.4.0 | type:build/install |

**System information**

- Ubuntu 18.04 and 20.04, Scientific Linux 7

- binary installed via pip

- version 2.4.0

- Python 3.8

- installed via pip (either inside or not inside a Conda environment)

- various CPU-only and GPU-hosting machines

**Describe the problem**

`import tensorflow` produces "Illegal instr... | 1.0 | Illegal instruction on older CPUs under version 2.4.0 -

**System information**

- Ubuntu 18.04 and 20.04, Scientific Linux 7

- binary installed via pip

- version 2.4.0

- Python 3.8

- installed via pip (either inside or not inside a Conda environment)

- various CPU-only and GPU-hosting machines

**Describe the... | non_process | illegal instruction on older cpus under version system information ubuntu and scientific linux binary installed via pip version python installed via pip either inside or not inside a conda environment various cpu only and gpu hosting machines describe the pro... | 0 |

192,343 | 14,614,822,906 | IssuesEvent | 2020-12-22 10:30:39 | dusk-network/dusk-blockchain | https://api.github.com/repos/dusk-network/dusk-blockchain | closed | Modify mempool testing file to avoid using a shared context | area:testing type:refactor | This shared context object often causes issues when amendments need to be made to the mempool, and hinders progress. Besides this, it strikes me as an anti-pattern to share a context object between unit tests, which should ideally be isolated and fine to be ran concurrently without usage of mutexes or anything of the s... | 1.0 | Modify mempool testing file to avoid using a shared context - This shared context object often causes issues when amendments need to be made to the mempool, and hinders progress. Besides this, it strikes me as an anti-pattern to share a context object between unit tests, which should ideally be isolated and fine to be ... | non_process | modify mempool testing file to avoid using a shared context this shared context object often causes issues when amendments need to be made to the mempool and hinders progress besides this it strikes me as an anti pattern to share a context object between unit tests which should ideally be isolated and fine to be ... | 0 |

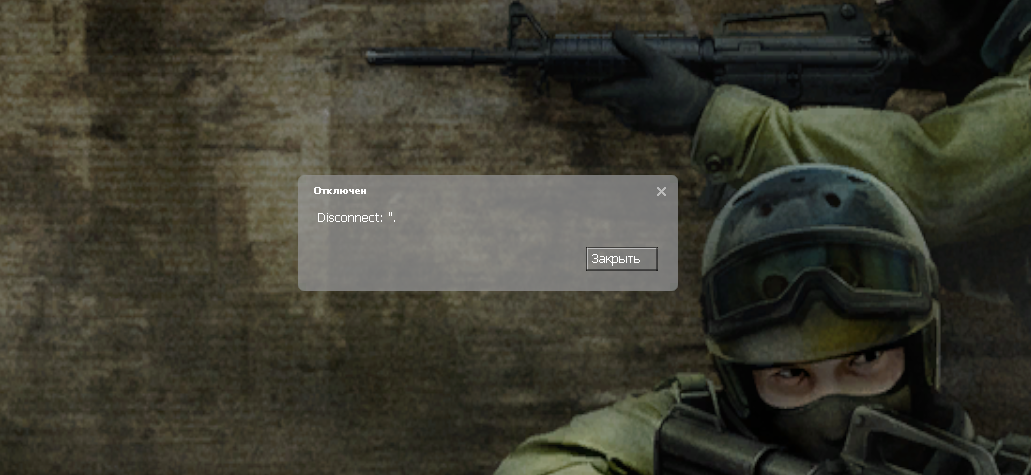

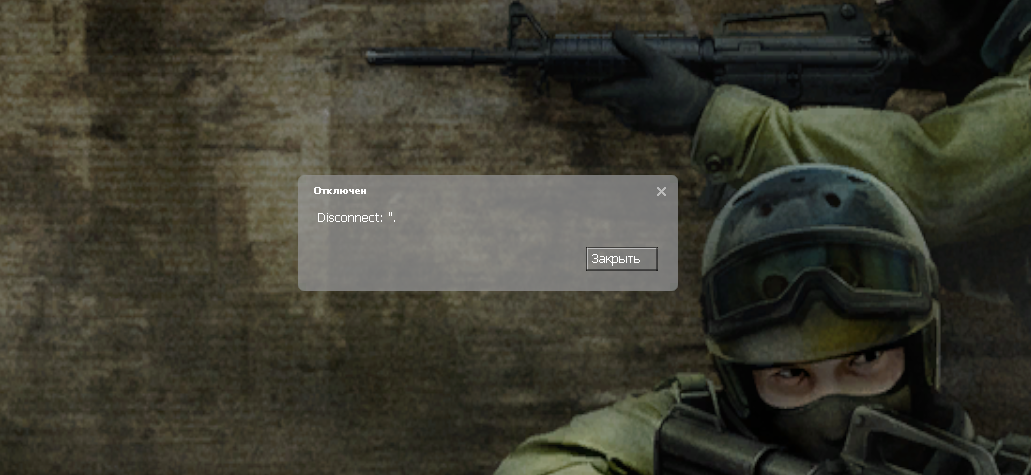

7,079 | 10,229,177,582 | IssuesEvent | 2019-08-17 10:16:33 | SB-MaterialAdmin/Web | https://api.github.com/repos/SB-MaterialAdmin/Web | reopened | Ban from the WEB panel | In process | 

It would be good if it displayed the steam_id in a different format: STEAM_X:X:XXXXXX

When you get a ban from the web p... | 1.0 | Ban from the WEB panel - 

It would be good if it displayed the steam_id in a different format: STEAM_X:X:XXXXXX

When you g... | process | ban from web panel it would be good if it displayed the steam id in a different format steam x x xxxxxx when you get a ban from the web panel it kicks you from the server with this dialog box it would be good if the description were more detailed ban time reason ban system site or better a custom one specified when banning from ... | 1 |

14,001 | 16,772,783,485 | IssuesEvent | 2021-06-14 16:44:57 | googleapis/sloth | https://api.github.com/repos/googleapis/sloth | closed | (Re)assign serverless APIs to CAKE | type: process | @tequilarista to action when appropriate resources are in place

- [ ] Rollback #845

- [ ] Add Workflows API | 1.0 | (Re)assign serverless APIs to CAKE - @tequilarista to action when appropriate resources are in place

- [ ] Rollback #845

- [ ] Add Workflows API | process | re assign serverless apis to cake tequilarista to action when appropriate resources are in place rollback add workflows api | 1 |

201,918 | 15,229,383,534 | IssuesEvent | 2021-02-18 12:50:20 | WeiXian042901/fyp_repository | https://api.github.com/repos/WeiXian042901/fyp_repository | opened | FU_057_Quiz Play Page FIQ Awarded And Time Specific Question(Select Wrong Answer) | Acceptance Test Quiz User | **Test Scenario**

- User selects the wrong answer

**Test Case**

- Check that the wrong answer is highlighted as red

**Pre-Conditions**

- User has successfully entered the application

- User clicked on the “Quizzes” Option

- User selected the “Testing title(FIQ and Time)” quiz option

- User clicked... | 1.0 | FU_057_Quiz Play Page FIQ Awarded And Time Specific Question(Select Wrong Answer) - **Test Scenario**

- User selects the wrong answer

**Test Case**

- Check that the wrong answer is highlighted as red

**Pre-Conditions**

- User has successfully entered the application

- User clicked on the “Quizzes” Opt... | non_process | fu quiz play page fiq awarded and time specific question select wrong answer test scenario user selects the wrong answer test case check that the wrong answer is highlighted as red pre conditions user has successfully entered the application user clicked on the “quizzes” optio... | 0 |

776,749 | 27,264,619,558 | IssuesEvent | 2023-02-22 17:05:29 | ascheid/itsg33-pbmm-issue-gen | https://api.github.com/repos/ascheid/itsg33-pbmm-issue-gen | opened | IR-3(2): Incident Response Testing | Coordination With Related Plans | Priority: P3 Suggested Assignment: IT Security Function ITSG-33 Class: Operational Control: IR-3 | # Control Definition

INCIDENT RESPONSE TESTING | COORDINATION WITH RELATED PLANS

The organization coordinates incident response testing with organizational elements responsible for related plans.

# Class

Operational

# Supplemental Guidance

Organizational plans related to incident response testing include, for exampl... | 1.0 | IR-3(2): Incident Response Testing | Coordination With Related Plans - # Control Definition

INCIDENT RESPONSE TESTING | COORDINATION WITH RELATED PLANS

The organization coordinates incident response testing with organizational elements responsible for related plans.

# Class

Operational

# Supplemental Guidance

Organi... | non_process | ir incident response testing coordination with related plans control definition incident response testing coordination with related plans the organization coordinates incident response testing with organizational elements responsible for related plans class operational supplemental guidance organi... | 0 |

20,661 | 27,334,331,628 | IssuesEvent | 2023-02-26 02:00:08 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Fri, 24 Feb 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

There is no result

## Keyword: event camera

There is no result

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

There is no result

## Keyword: ISP

### Real-Time Damage Detection in Fiber Lifti... | 2.0 | New submissions for Fri, 24 Feb 23 - ## Keyword: events

There is no result

## Keyword: event camera

There is no result

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

There is no result

## Keyword: ISP

### Real... | process | new submissions for fri feb keyword events there is no result keyword event camera there is no result keyword events camera there is no result keyword white balance there is no result keyword color contrast there is no result keyword awb there is no result keyword isp real t... | 1 |

230,545 | 18,675,949,812 | IssuesEvent | 2021-10-31 15:08:06 | kubernetes-csi/csi-driver-smb | https://api.github.com/repos/kubernetes-csi/csi-driver-smb | closed | fix the integration test failure when provision secret is provided | lifecycle/rotten sig/testing | **Is your feature request related to a problem?/Why is this needed**

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

**Describe the solution you'd like in detail**

<!-- A clear and concise description of what you want to happen. -->

https://github.com/kubern... | 1.0 | fix the integration test failure when provision secret is provided - **Is your feature request related to a problem?/Why is this needed**

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

**Describe the solution you'd like in detail**

<!-- A clear and concise des... | non_process | fix the integration test failure when provision secret is provided is your feature request related to a problem why is this needed describe the solution you d like in detail cgo enabled goos linux goarch go build a ldflags x github com kubernetes csi csi driver smb pkg smb driverv... | 0 |

197,841 | 6,965,134,641 | IssuesEvent | 2017-12-09 02:10:48 | lucaslioli/payless | https://api.github.com/repos/lucaslioli/payless | closed | Fix establishment's display screen bug and implement pull-to-refresh | bug high priority | Sometimes, when the user opens an establishment's info page, the information retrieved via the web service is not shown, despite the information being received from the server.

Obs.: Probably the page is just not being updated with the new information, or it is being updated before the information has arrived.

Our appl... | 1.0 | Fix establishment's display screen bug and implement pull-to-refresh - Sometimes, when the user opens an establishment's info page, the information retrieved via the web service is not shown, despite the information being received from the server.

Obs.: Probably the page is just not being updated with the new information... | non_process | fix establishment s display screen bug and implement pull to refresh sometimes when the user opens an establishment s info page the information retrieved via the web service is not shown despite the information being received from the server obs probably the page is just not being updated with the new information... | 0 |

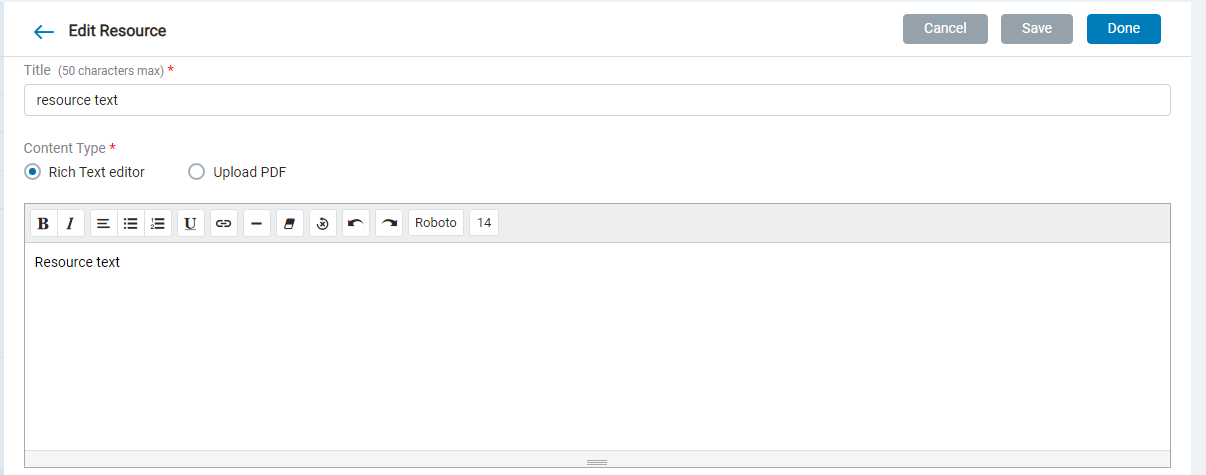

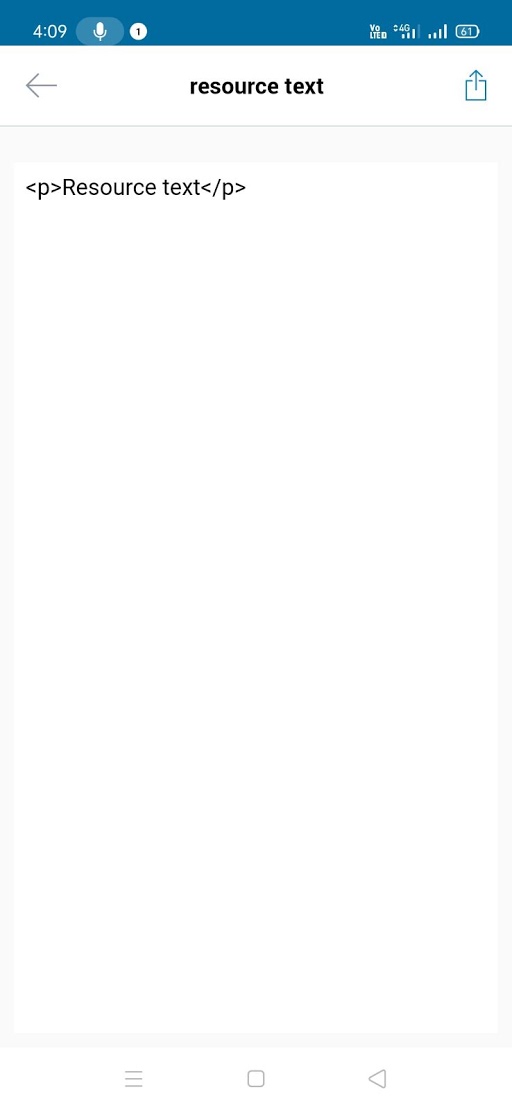

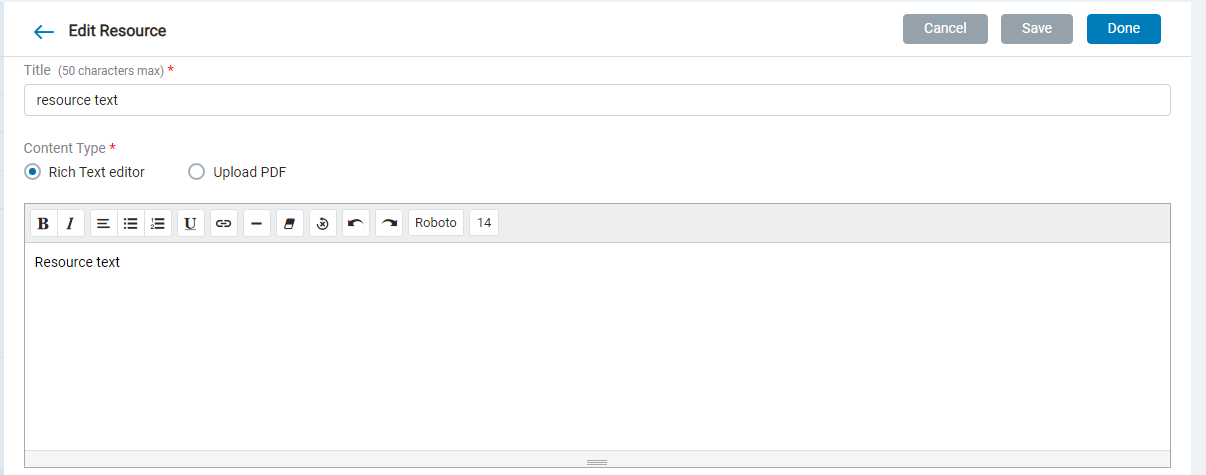

12,239 | 14,743,840,937 | IssuesEvent | 2021-01-07 14:29:32 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Mobile] Html breaking in Resources Text | Bug P1 Process: Dev Process: Tested dev Unknown backend | Entered text in resource is breaking in mobile

| 2.0 | [Mobile] Html breaking in Resources Text - Entered text in resource is breaking in mobile

| help wanted performance | #### Issue description:

In a larger system with lots of disks and drives, larger crafting operations cause a lot of render updates because of the lights flickering between full and near-full.

Mind you I do not know the exact impact it has on performance, I've just been taught that if csampler shows something continuo... | True | [1.10.2 - Minor] Performance issue caused by renderupdates from disk-drives(?) - #### Issue description:

In a larger system with lots of disks and drives, larger crafting operations cause a lot of render updates because of the lights flickering between full and near-full.

Mind you I do not know the exact impact it ha... | non_process | performance issue caused by renderupdates from disk drives issue description in a larger system with lots of disks and drives larger crafting operations cause a lot of render updates because of the lights flickering between full and near full mind you i do not know the exact impact it has on performanc... | 0 |

5,587 | 8,442,541,178 | IssuesEvent | 2018-10-18 13:30:44 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | shell-local post-processor fails for command that includes comma | post-processor/shell-local question | FOR BUGS:

Describe the problem and include the following information:

- Packer version from `packer version`

Packer v1.3.1

- Host platform

$ cat /etc/os-release

NAME="Ubuntu"

VERSION="18.04.1 LTS (Bionic Beaver)"

ID=ubuntu

ID_LIKE=debian

PRETTY_NAME="Ubuntu 18.04.1 LTS"

VERSION_ID="18.04"

HOME_URL="h... | 1.0 | shell-local post-processor fails for command that includes comma - FOR BUGS:

Describe the problem and include the following information:

- Packer version from `packer version`

Packer v1.3.1

- Host platform

$ cat /etc/os-release

NAME="Ubuntu"

VERSION="18.04.1 LTS (Bionic Beaver)"

ID=ubuntu

ID_LIKE=debian... | process | shell local post processor fails for command that includes comma for bugs describe the problem and include the following information packer version from packer version packer host platform cat etc os release name ubuntu version lts bionic beaver id ubuntu id like debian p... | 1 |

10,661 | 13,453,144,070 | IssuesEvent | 2020-09-09 00:04:24 | GoogleCloudPlatform/cloud-ops-sandbox | https://api.github.com/repos/GoogleCloudPlatform/cloud-ops-sandbox | closed | Install cloud monitoring in Custom Cloud Shell Image | priority: p2 type: process | Currently there's a line in `install.sh` which installs Cloud Monitoring: ` python3 -m pip install google-cloud-monitoring`.

It would make more sense in terms of workflow to install it in the Custom Cloud Shell image, which already handles terraform and python3 installations. | 1.0 | Install cloud monitoring in Custom Cloud Shell Image - Currently there's a line in `install.sh` which installs Cloud Monitoring: ` python3 -m pip install google-cloud-monitoring`.

It would make more sense in terms of workflow to install it in the Custom Cloud Shell image, which already handles terraform and python3 ... | process | install cloud monitoring in custom cloud shell image currently there s a line in install sh which installs cloud monitoring m pip install google cloud monitoring it would make more sense in terms of workflow to install it in the custom cloud shell image which already handles terraform and installation... | 1 |

120,651 | 17,644,250,816 | IssuesEvent | 2021-08-20 02:03:18 | fbennets/HCLC-GDPR-Bot | https://api.github.com/repos/fbennets/HCLC-GDPR-Bot | opened | CVE-2021-29616 (High) detected in tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl | security vulnerability | ## CVE-2021-29616 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learni... | True | CVE-2021-29616 (High) detected in tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl - ## CVE-2021-29616 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-2.1.0-cp27-cp27mu-... | non_process | cve high detected in tensorflow whl cve high severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file hclc gdpr bot requirements tx... | 0 |

371,506 | 25,954,444,451 | IssuesEvent | 2022-12-18 02:29:56 | tf-libsonnet/core | https://api.github.com/repos/tf-libsonnet/core | closed | Create a CI job to ensure the docsonnets are always generated | documentation enhancement | Right now, it is a manual process to always remember to run `docsonnet` to generate the docs. Instead, we should make sure it is baked into the CI process and automatically commit if there is a diff. | 1.0 | Create a CI job to ensure the docsonnets are always generated - Right now, it is a manual process to always remember to run `docsonnet` to generate the docs. Instead, we should make sure it is baked into the CI process and automatically commit if there is a diff. | non_process | create a ci job to ensure the docsonnets are always generated right now it is a manual process to always remember to run docsonnet to generate the docs instead we should make sure it is baked into the ci process and automatically commit if there is a diff | 0 |

102,407 | 21,960,079,496 | IssuesEvent | 2022-05-24 15:07:25 | Onelinerhub/onelinerhub | https://api.github.com/repos/Onelinerhub/onelinerhub | opened | Short solution needed: "How to plot heatmap" (python-matplotlib) | help wanted good first issue code python-matplotlib | Please help us write most modern and shortest code solution for this issue:

**How to plot heatmap** (technology: [python-matplotlib](https://onelinerhub.com/python-matplotlib))

### Fast way

Just write the code solution in the comments.

### Prefered way

1. Create [pull request](https://github.com/Onelinerhub/onelinerh... | 1.0 | Short solution needed: "How to plot heatmap" (python-matplotlib) - Please help us write most modern and shortest code solution for this issue:

**How to plot heatmap** (technology: [python-matplotlib](https://onelinerhub.com/python-matplotlib))

### Fast way

Just write the code solution in the comments.

### Prefered wa... | non_process | short solution needed how to plot heatmap python matplotlib please help us write most modern and shortest code solution for this issue how to plot heatmap technology fast way just write the code solution in the comments prefered way create with a new code file inside don t for... | 0 |

11,293 | 14,101,157,021 | IssuesEvent | 2020-11-06 06:12:15 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | multiprocess pickle error | module: multiprocessing module: serialization triaged | I run in multiprocess mode, report error.

```

import torch.multiprocessing as mp

mp.spawn(

self._process,

nprocs=self.gpu_nums)

```

error info:

```

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "/usr/local/python/lib/python3.7/multiprocessing/spawn.py", line... | 1.0 | multiprocess pickle error - I run in multiprocess mode, report error.

```

import torch.multiprocessing as mp

mp.spawn(

self._process,

nprocs=self.gpu_nums)

```

error info:

```

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "/usr/local/python/lib/python3.7/mul... | process | multiprocess pickle error i run in multiprocess mode report error import torch multiprocessing as mp mp spawn self process nprocs self gpu nums error info traceback most recent call last file line in file usr local python lib multiprocessing spawn p... | 1 |
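The traceback in the record above is truncated, so the actual cause is not visible. A common reason for pickle errors with the `spawn` start method is passing a bound method such as `self._process`: pickling it also pickles `self`, including any attribute that cannot be pickled. The sketch below only illustrates that mechanism with the standard `pickle` module. The `Trainer` class, its `lock` attribute, and the `worker` function are hypothetical names, not taken from the report.

```python
import pickle
import threading


class Trainer:
    """Hypothetical stand-in for the class that owns `_process` in the report."""

    def __init__(self, gpu_nums):
        self.gpu_nums = gpu_nums
        # Assumption: the real object holds something unpicklable
        # (a lock, an open file, a CUDA handle, ...).
        self.lock = threading.Lock()

    def _process(self, rank):
        return rank


def worker(rank, gpu_nums):
    """Module-level entry point; only its plain arguments must be pickled."""
    return rank, gpu_nums


def can_pickle(obj):
    """Return True if `obj` survives pickle.dumps, False otherwise."""
    try:
        pickle.dumps(obj)
        return True
    except Exception:
        return False


trainer = Trainer(gpu_nums=2)

# Pickling the bound method drags the whole instance (and its lock) along.
bound_method_ok = can_pickle(trainer._process)

# Plain arguments for a module-level worker pickle without the instance.
args_ok = can_pickle((0, trainer.gpu_nums))

print("bound method picklable:", bound_method_ok)
print("plain args picklable:", args_ok)
```

If this is indeed the cause, the call could be restructured as `mp.spawn(worker, args=(gpu_nums,), nprocs=gpu_nums)`, where `torch.multiprocessing.spawn` invokes `worker(i, *args)` in each process.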

66,324 | 27,416,766,190 | IssuesEvent | 2023-03-01 14:17:02 | hashicorp/nomad | https://api.github.com/repos/hashicorp/nomad | reopened | Large number of warning logs with nomad service provider | type/bug stage/accepted theme/service-discovery/nomad | <!--

Hi there,

Thank you for opening an issue. Please note that we try to keep the Nomad issue

tracker reserved for bug reports and feature requests. For general usage

questions, please see: https://www.nomadproject.io/community

-->

### Nomad version

1.4.4

### Issue

## logs( journalctl -fu nomad )

```... | 1.0 | Large number of warning logs with nomad service provider - <!--

Hi there,

Thank you for opening an issue. Please note that we try to keep the Nomad issue

tracker reserved for bug reports and feature requests. For general usage

questions, please see: https://www.nomadproject.io/community

-->

### Nomad versio... | non_process | large number of warning logs with nomad service provider hi there thank you for opening an issue please note that we try to keep the nomad issue tracker reserved for bug reports and feature requests for general usage questions please see nomad version issue logs jour... | 0 |

163,932 | 12,750,698,608 | IssuesEvent | 2020-06-27 06:20:31 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: X-Pack Jest Tests.x-pack/plugins/lens/public/indexpattern_datasource - loader loadInitialState should load a default state when lastUsedIndexPatternId is not found in indexPatternRefs | Team:KibanaApp blocker failed-test skipped-test v8.0.0 | A test failed on a tracked branch

```

Error: expect(received).toMatchObject(expected)

- Expected - 1

+ Received + 0

@@ -66,7 +66,6 @@

"timeFieldName": "timestamp",

"title": "my-fake-index-pattern",

},

},

"layers": Object {},

- "showEmptyFields": false,

}

at Object.it (/dev/shm... | 2.0 | Failing test: X-Pack Jest Tests.x-pack/plugins/lens/public/indexpattern_datasource - loader loadInitialState should load a default state when lastUsedIndexPatternId is not found in indexPatternRefs - A test failed on a tracked branch

```

Error: expect(received).toMatchObject(expected)

- Expected - 1

+ Received + 0

... | non_process | failing test x pack jest tests x pack plugins lens public indexpattern datasource loader loadinitialstate should load a default state when lastusedindexpatternid is not found in indexpatternrefs a test failed on a tracked branch error expect received tomatchobject expected expected received ... | 0 |

390,384 | 26,860,457,767 | IssuesEvent | 2023-02-03 17:54:05 | danielsp13/SuperCatch | https://api.github.com/repos/danielsp13/SuperCatch | closed | [M1 - Dev] Document the choice of NLTK | documentation | As described at the end of #35, the library that will support language processing will be `NLTK`. Other candidates could have been considered, but the choice of this tool is clear.

The reason must be justified in the project documentation, in a file.

****

It must incl... | 1.0 | [M1 - Dev] Document the choice of NLTK - As described at the end of #35, the library that will support language processing will be `NLTK`. Other candidates could have been considered, but the choice of this tool is clear.

The reason must be justified in the project documentation, in ... | non_process | document the choice of nltk as described at the end of the library that will support language processing will be nltk other candidates could have been considered but the choice of this tool is clear the reason must be justified in the project documentation in a file... | 0 |

20,695 | 27,367,951,483 | IssuesEvent | 2023-02-27 20:46:45 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | opened | OS packages out of date | bug process | ### Description

The OS packages within the containers are out of date despite running apt commands to update them

### Steps to reproduce

1. docker build

### Additional context

_No response_

### Hedera network

other

### Version

main

### Operating system

None | 1.0 | OS packages out of date - ### Description

The OS packages within the containers are out of date despite running apt commands to update them

### Steps to reproduce

1. docker build

### Additional context

_No response_

### Hedera network

other

### Version

main

### Operating system

None | process | os packages out of date description the os packages within the containers are out of date despite running apt commands to update them steps to reproduce docker build additional context no response hedera network other version main operating system none | 1 |

7,155 | 10,298,493,466 | IssuesEvent | 2019-08-28 16:29:33 | PPHubApp/PPHub-Feedback | https://api.github.com/repos/PPHubApp/PPHub-Feedback | closed | v1.9.3.113 - 1 Please add a dark mode

2 Please add... | Feature 🤔 Processing 👨🏻💻🚧 | 1 Please add a dark mode

2 Please add the ability to view releases

Environment: iPad Air 2 - iOS13.0 - v1.9.3.113 | 1.0 | v1.9.3.113 - 1 Please add a dark mode

2 Please add... - 1 Please add a dark mode

2 Please add the ability to view releases

Environment: iPad Air 2 - iOS13.0 - v1.9.3.113 | process | please add a dark mode please add please add a dark mode please add the ability to view releases environment ipad air | 1 |