Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 value) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 values) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 values) | text_combine (string, length 96 to 211k) | label (string, 2 values) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

258,245 | 22,295,795,432 | IssuesEvent | 2022-06-13 01:18:23 | backend-br/vagas | https://api.github.com/repos/backend-br/vagas | closed | [remoto] Pessoa Desenvolvedora Web Sênior(Fullstack com vivência em PHP) @ Feedz | PJ PHP DevOps Presencial Testes automatizados CI CTO FullStack Stale | 🚀 A FEEDZ:

Nosso propósito é criar ambientes de trabalho mais felizes! Por isso, começar pelo nosso próprio ninho é tão importante! Somos Parrots determinados e que se importam uns com os outros, a sede de resultado é latente e o feedback sincero é rotina.

Aqui temos um ambiente de trabalho focado na cooperação en... | 1.0 | [remoto] Pessoa Desenvolvedora Web Sênior(Fullstack com vivência em PHP) @ Feedz - 🚀 A FEEDZ:

Nosso propósito é criar ambientes de trabalho mais felizes! Por isso, começar pelo nosso próprio ninho é tão importante! Somos Parrots determinados e que se importam uns com os outros, a sede de resultado é latente e o feedb... | non_process | pessoa desenvolvedora web sênior fullstack com vivência em php feedz 🚀 a feedz nosso propósito é criar ambientes de trabalho mais felizes por isso começar pelo nosso próprio ninho é tão importante somos parrots determinados e que se importam uns com os outros a sede de resultado é latente e o feedback sin... | 0 |

19,561 | 25,884,837,239 | IssuesEvent | 2022-12-14 13:52:44 | aiidateam/aiida-core | https://api.github.com/repos/aiidateam/aiida-core | opened | Restrict or officially support process inputs storing data in process node attributes | requires discussion type/feature request priority/nice-to-have topic/processes | In #5801 a new keyword `is_metadata` was added to the `InputPort` constructor. By default it is `False`, but when set to `True`, it signals that inputs to this port will not be linked up as `Data` nodes to the process node, but the data will be stored on the process node itself, somehow. The port is currently only used... | 1.0 | Restrict or officially support process inputs storing data in process node attributes - In #5801 a new keyword `is_metadata` was added to the `InputPort` constructor. By default it is `False`, but when set to `True`, it signals that inputs to this port will not be linked up as `Data` nodes to the process node, but the ... | process | restrict or officially support process inputs storing data in process node attributes in a new keyword is metadata was added to the inputport constructor by default it is false but when set to true it signals that inputs to this port will not be linked up as data nodes to the process node but the dat... | 1 |

86,704 | 24,930,474,919 | IssuesEvent | 2022-10-31 11:10:35 | cilium/cilium | https://api.github.com/repos/cilium/cilium | closed | Image Signing | kind/feature area/build cncf/mentorship | ## Proposal / RFE

**Is your feature request related to a problem?**

Kubernetes itself is currently adding support for signing release artifacts (including cluster component images) using [cosign](https://github.com/sigstore/cosign). It would be excellent if Cilium could sign their release images as well during the ... | 1.0 | Image Signing - ## Proposal / RFE

**Is your feature request related to a problem?**

Kubernetes itself is currently adding support for signing release artifacts (including cluster component images) using [cosign](https://github.com/sigstore/cosign). It would be excellent if Cilium could sign their release images as ... | non_process | image signing proposal rfe is your feature request related to a problem kubernetes itself is currently adding support for signing release artifacts including cluster component images using it would be excellent if cilium could sign their release images as well during the build pipeline so that tho... | 0 |

297,843 | 9,182,304,000 | IssuesEvent | 2019-03-05 12:30:40 | servicemesher/istio-official-translation | https://api.github.com/repos/servicemesher/istio-official-translation | closed | content/docs/setup/kubernetes/multicluster-install/gateways/index.md | lang/zh pending priority/P0 sync/update version/1.1 | 文件路径:content/docs/setup/kubernetes/multicluster-install/gateways/index.md

[源码](https://github.com/istio/istio.github.io/tree/master/content/docs/setup/kubernetes/multicluster-install/gateways/index.md)

[网址](https://istio.io//docs/setup/kubernetes/multicluster-install/gateways/index.htm)

```diff

diff --git a/content... | 1.0 | content/docs/setup/kubernetes/multicluster-install/gateways/index.md - 文件路径:content/docs/setup/kubernetes/multicluster-install/gateways/index.md

[源码](https://github.com/istio/istio.github.io/tree/master/content/docs/setup/kubernetes/multicluster-install/gateways/index.md)

[网址](https://istio.io//docs/setup/kubernetes/... | non_process | content docs setup kubernetes multicluster install gateways index md 文件路径:content docs setup kubernetes multicluster install gateways index md diff diff git a content docs setup kubernetes multicluster install gateways index md b content docs setup kubernetes multicluster install gateways index md in... | 0 |

105,276 | 9,049,734,336 | IssuesEvent | 2019-02-12 06:08:36 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Use cluster elasticsearch pod as project logging target | area/logging kind/bug priority/2 status/resolved status/to-test team/cn version/2.0 | **Rancher versions:**

2.0.2

**Infrastructure Stack versions:**

Google Cloud Kubernetes 1.10 cluster

**Steps to Reproduce:**

We tried to install 6.2.4 version of elasticsearch. It works, and is ready to accept connexions. But we cannot use this elasticsearch endpoint, within our cluster project, as target for... | 1.0 | Use cluster elasticsearch pod as project logging target - **Rancher versions:**

2.0.2

**Infrastructure Stack versions:**

Google Cloud Kubernetes 1.10 cluster

**Steps to Reproduce:**

We tried to install 6.2.4 version of elasticsearch. It works, and is ready to accept connexions. But we cannot use this elastic... | non_process | use cluster elasticsearch pod as project logging target rancher versions infrastructure stack versions google cloud kubernetes cluster steps to reproduce we tried to install version of elasticsearch it works and is ready to accept connexions but we cannot use this elastics... | 0 |

22,678 | 31,925,974,651 | IssuesEvent | 2023-09-19 01:49:28 | prusa3d/Prusa-Firmware | https://api.github.com/repos/prusa3d/Prusa-Firmware | closed | MK2.5S 3.6.0 & some 3.7.0RC1 firmware Issues | testing MK2.5 FW 3.7.0 processing stale-issue | Hello Firmware team,

I hope this message is placed on the proper place for you guys to see.

Yesterday i made the following post on the Prusa Community FB Group and since github is the place for firmware i decided to raise it to issue.

OK, seems i am out of ideas and need the mindhives brainstorming:

On our MK2.5 we... | 1.0 | MK2.5S 3.6.0 & some 3.7.0RC1 firmware Issues - Hello Firmware team,

I hope this message is placed on the proper place for you guys to see.

Yesterday i made the following post on the Prusa Community FB Group and since github is the place for firmware i decided to raise it to issue.

OK, seems i am out of ideas and nee... | process | some firmware issues hello firmware team i hope this message is placed on the proper place for you guys to see yesterday i made the following post on the prusa community fb group and since github is the place for firmware i decided to raise it to issue ok seems i am out of ideas and need the ... | 1 |

433,594 | 12,507,453,622 | IssuesEvent | 2020-06-02 14:09:03 | rucio/rucio | https://api.github.com/repos/rucio/rucio | opened | Logging in protocols | Core & Internals Monitoring & Logging Priority: High | Motivation

----------

This issue tracks of all issues related to logging in the protocols. It is follow up on #3472

- [ ] xrootd

- [ ] srm

- [ ] storm

- [ ] gsiftp

- [ ] webdav

- [ ] gfal implementation

- [ ] others

| 1.0 | Logging in protocols - Motivation

----------

This issue tracks of all issues related to logging in the protocols. It is follow up on #3472

- [ ] xrootd

- [ ] srm

- [ ] storm

- [ ] gsiftp

- [ ] webdav

- [ ] gfal implementation

- [ ] others

| non_process | logging in protocols motivation this issue tracks of all issues related to logging in the protocols it is follow up on xrootd srm storm gsiftp webdav gfal implementation others | 0 |

20,239 | 26,845,669,903 | IssuesEvent | 2023-02-03 06:40:52 | sebastianbergmann/phpunit-documentation-english | https://api.github.com/repos/sebastianbergmann/phpunit-documentation-english | closed | Document the development process for the PHPUnit documentation | process | - [x] Figure out a strategy (branching and tagging) for maintaining multiple versions of the documentation (ReadTheDocs does not render branches other than `master` and requires tags for alternative versions)

- [x] Document how to contribute to an existing language edition of the documentation

- [ ] Document how to b... | 1.0 | Document the development process for the PHPUnit documentation - - [x] Figure out a strategy (branching and tagging) for maintaining multiple versions of the documentation (ReadTheDocs does not render branches other than `master` and requires tags for alternative versions)

- [x] Document how to contribute to an existi... | process | document the development process for the phpunit documentation figure out a strategy branching and tagging for maintaining multiple versions of the documentation readthedocs does not render branches other than master and requires tags for alternative versions document how to contribute to an existing l... | 1 |

5,202 | 7,976,141,289 | IssuesEvent | 2018-07-17 11:42:15 | wcmc-its/ReCiter | https://api.github.com/repos/wcmc-its/ReCiter | closed | For each of an author’s aliases, modify initial query based on lexical rules | On Hold Phase: Information Retrieval Phase: Preprocessing | ### Background and approach

We have identified five special circumstances that may pose challenges when using authors’ names to identify publications. These are 1) the author has a nickname; 2) the author’s last name has changed, most often due to marriage; 3) the author’s name has a suffix; 4) the author’s last name ... | 1.0 | For each of an author’s aliases, modify initial query based on lexical rules - ### Background and approach

We have identified five special circumstances that may pose challenges when using authors’ names to identify publications. These are 1) the author has a nickname; 2) the author’s last name has changed, most often... | process | for each of an author’s aliases modify initial query based on lexical rules background and approach we have identified five special circumstances that may pose challenges when using authors’ names to identify publications these are the author has a nickname the author’s last name has changed most often... | 1 |

7,266 | 10,421,312,183 | IssuesEvent | 2019-09-16 05:35:58 | trynmaps/metrics-mvp | https://api.github.com/repos/trynmaps/metrics-mvp | closed | Refactor the codebase to pull out all SF-specific strings in constants files | Backend Process | Part of the OT For Any City project; [see the spec](https://docs.google.com/document/d/1VoGpaReLsnudHk2LOR-BaGZAAb1p_jI1Le8cugpSj1U/edit#).

Basically, make it so that we could easily swap this to any other city with little more than some string changes. | 1.0 | Refactor the codebase to pull out all SF-specific strings in constants files - Part of the OT For Any City project; [see the spec](https://docs.google.com/document/d/1VoGpaReLsnudHk2LOR-BaGZAAb1p_jI1Le8cugpSj1U/edit#).

Basically, make it so that we could easily swap this to any other city with little more than some ... | process | refactor the codebase to pull out all sf specific strings in constants files part of the ot for any city project basically make it so that we could easily swap this to any other city with little more than some string changes | 1 |

69,071 | 30,028,671,595 | IssuesEvent | 2023-06-27 08:09:03 | gradido/gradido | https://api.github.com/repos/gradido/gradido | opened | 🔧 [Refactor][frontend] - ContributionMessagesFormular.vue Doctor | refactor service: admin frontend | <!-- You can find the latest issue templates here https://github.com/ulfgebhardt/issue-templates -->

## 🔧 Refactor ticket

❗ @vue/runtime-dom missing for Vue 2

Vue 2 does not have JSX types definitions, so template type checking will not work correctly. You can resolve this problem by installing @vue/runtime-dom a... | 1.0 | 🔧 [Refactor][frontend] - ContributionMessagesFormular.vue Doctor - <!-- You can find the latest issue templates here https://github.com/ulfgebhardt/issue-templates -->

## 🔧 Refactor ticket

❗ @vue/runtime-dom missing for Vue 2

Vue 2 does not have JSX types definitions, so template type checking will not work corr... | non_process | 🔧 contributionmessagesformular vue doctor 🔧 refactor ticket ❗ vue runtime dom missing for vue vue does not have jsx types definitions so template type checking will not work correctly you can resolve this problem by installing vue runtime dom and adding it to your project s devdependencies ... | 0 |

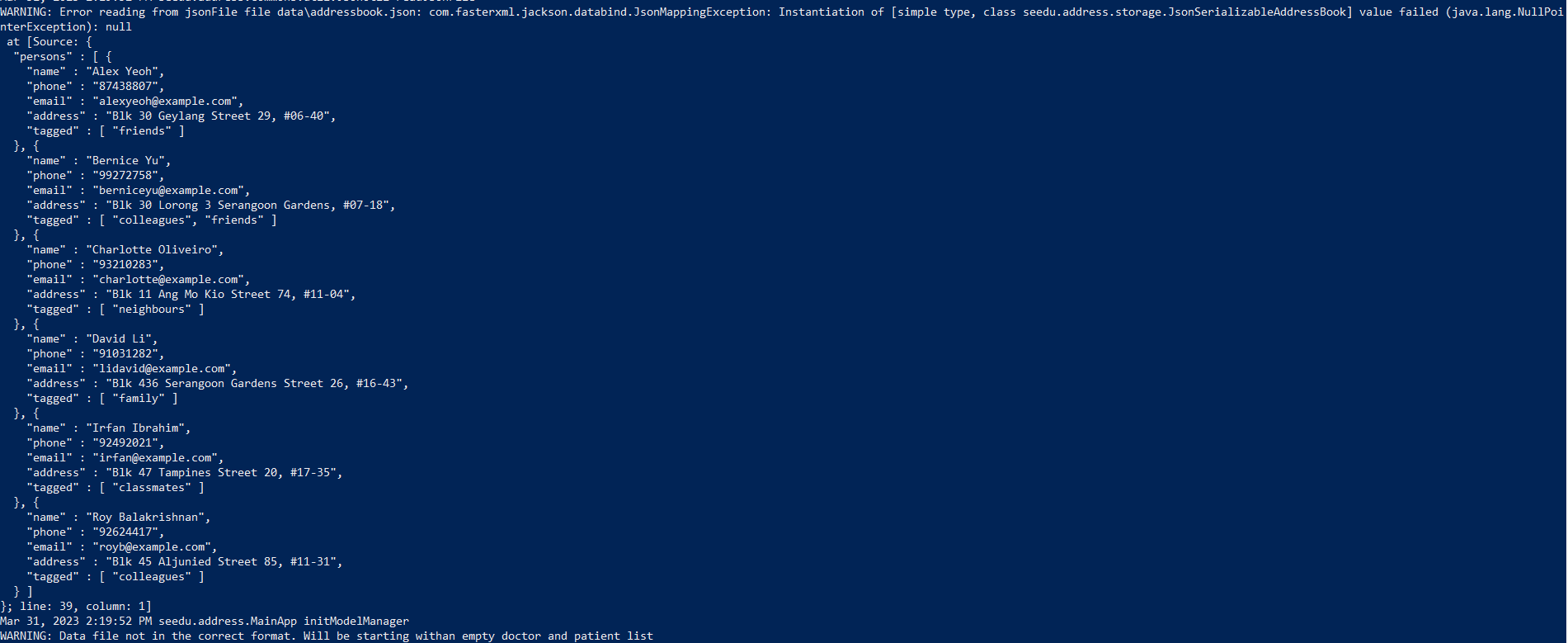

329,328 | 28,215,661,627 | IssuesEvent | 2023-04-05 08:46:34 | AY2223S2-CS2103T-F12-1/tp | https://api.github.com/repos/AY2223S2-CS2103T-F12-1/tp | reopened | [PE-D][Tester A] Default AddressBook unable to load upon opening jar file | wontfix Tester A | When executing `java -jar docedex.jar`, the following warning pops up:

As such, a blank doctor and patient list is provided instead as shown instead of the photo given in User Guide under Quick Start s... | 1.0 | [PE-D][Tester A] Default AddressBook unable to load upon opening jar file - When executing `java -jar docedex.jar`, the following warning pops up:

As such, a blank doctor and patient list is provided i... | non_process | default addressbook unable to load upon opening jar file when executing java jar docedex jar the following warning pops up as such a blank doctor and patient list is provided instead as shown instead of the photo given in user guide under quick start section labels typ... | 0 |

1,556 | 4,159,631,598 | IssuesEvent | 2016-06-17 09:50:22 | openvstorage/framework | https://api.github.com/repos/openvstorage/framework | closed | Remove Rollback from GUI | process_wontfix type_feature | Remove rollback vDisk and vMachine from the GUI. Users should use the clone functionality to accomplish the same result. | 1.0 | Remove Rollback from GUI - Remove rollback vDisk and vMachine from the GUI. Users should use the clone functionality to accomplish the same result. | process | remove rollback from gui remove rollback vdisk and vmachine from the gui users should use the clone functionality to accomplish the same result | 1 |

297,783 | 22,392,685,150 | IssuesEvent | 2022-06-17 09:15:48 | samuel-watson/glmmr | https://api.github.com/repos/samuel-watson/glmmr | opened | Update help files | bug documentation | @ypan1988 Could you add any errors or required changes to the help files to this thread? | 1.0 | Update help files - @ypan1988 Could you add any errors or required changes to the help files to this thread? | non_process | update help files could you add any errors or required changes to the help files to this thread | 0 |

191,805 | 6,843,073,450 | IssuesEvent | 2017-11-12 11:13:41 | Z3r0byte/Magis | https://api.github.com/repos/Z3r0byte/Magis | closed | Auto silent permission | bug high priority | The background service sometimes crashes because it doesnt have permission to change the do not disturb state. | 1.0 | Auto silent permission - The background service sometimes crashes because it doesnt have permission to change the do not disturb state. | non_process | auto silent permission the background service sometimes crashes because it doesnt have permission to change the do not disturb state | 0 |

549,879 | 16,101,471,837 | IssuesEvent | 2021-04-27 09:50:08 | dotnet/templating | https://api.github.com/repos/dotnet/templating | closed | As a templating owner, I want to move to System.CommandLine, to modernize and simplify the codebase | Cost:M Priority:1 User Story parent:1240372 triaged | The _user story_ collects all the work required to move to use of System.CommandLine.

Issues to be done after migration to System.CommandLine:

- [ ] https://github.com/dotnet/templating/issues/2348

- [ ] [feature] auto-completion for template parameters

- [ ] implement the sub commands

- [ ] https://github.com... | 1.0 | As a templating owner, I want to move to System.CommandLine, to modernize and simplify the codebase - The _user story_ collects all the work required to move to use of System.CommandLine.

Issues to be done after migration to System.CommandLine:

- [ ] https://github.com/dotnet/templating/issues/2348

- [ ] [featur... | non_process | as a templating owner i want to move to system commandline to modernize and simplify the codebase the user story collects all the work required to move to use of system commandline issues to be done after migration to system commandline auto completion for template parameters implement t... | 0 |

10,435 | 13,220,066,632 | IssuesEvent | 2020-08-17 11:42:42 | km4ack/pi-build | https://api.github.com/repos/km4ack/pi-build | closed | Conky started incorrectly | in process | I'm creating a separate issue for this, which was mentioned in #60

Conky is currently being started from user pi's crontab. That assumes autologin and all sorts of other badness (like locking things down to the pi user) and race conditions.

The proper way to start applications that should fire up upon login is u... | 1.0 | Conky started incorrectly - I'm creating a separate issue for this, which was mentioned in #60

Conky is currently being started from user pi's crontab. That assumes autologin and all sorts of other badness (like locking things down to the pi user) and race conditions.

The proper way to start applications that sh... | process | conky started incorrectly i m creating a separate issue for this which was mentioned in conky is currently being started from user pi s crontab that assumes autologin and all sorts of other badness like locking things down to the pi user and race conditions the proper way to start applications that sho... | 1 |

9,817 | 12,826,393,193 | IssuesEvent | 2020-07-06 16:28:36 | obinnaokechukwu/internship-2020 | https://api.github.com/repos/obinnaokechukwu/internship-2020 | opened | Test OBS multicasting with multiple streaming services | process | Test OBS multicasting with multiple streaming services YouTube, FaceBook Live, etc.

| 1.0 | Test OBS multicasting with multiple streaming services - Test OBS multicasting with multiple streaming services YouTube, FaceBook Live, etc.

| process | test obs multicasting with multiple streaming services test obs multicasting with multiple streaming services youtube facebook live etc | 1 |

627,597 | 19,909,527,348 | IssuesEvent | 2022-01-25 15:53:10 | enviroCar/enviroCar-app | https://api.github.com/repos/enviroCar/enviroCar-app | closed | BUG while deleting the car, if no options selected | bug 3 - Done Priority - 3 - Low | There is a bug when you do not select an option in the middle of deleting the car, and the radio button unchecks.

possible solution:

uncheck the radio button only when the car is getting deleted

https://user-images.githubusercontent.com/75211982/143727223-19126b72-7575-490c-bbf4-47e45aff52a1.mp4 | 1.0 | BUG while deleting the car, if no options selected - There is a bug when you do not select an option in the middle of deleting the car, and the radio button unchecks.

possible solution:

uncheck the radio button only when the car is getting deleted

https://user-images.githubusercontent.com/75211982/143727223-191... | non_process | bug while deleting the car if no options selected there is a bug when you do not select an option in the middle of deleting the car and the radio button unchecks possible solution uncheck the radio button only when the car is getting deleted | 0 |

7,824 | 10,997,065,873 | IssuesEvent | 2019-12-03 08:21:02 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | Missing docs for copy_fields and truncate_fields processors | :Processors libbeat needs_docs | There is no documentation [listed](https://www.elastic.co/guide/en/beats/filebeat/7.2/defining-processors.html#processors) for the [`copy_fields`](https://github.com/elastic/beats/pull/11303) and [`truncate_fields`](https://github.com/elastic/beats/pull/11297) processors.

| 1.0 | Missing docs for copy_fields and truncate_fields processors - There is no documentation [listed](https://www.elastic.co/guide/en/beats/filebeat/7.2/defining-processors.html#processors) for the [`copy_fields`](https://github.com/elastic/beats/pull/11303) and [`truncate_fields`](https://github.com/elastic/beats/pull/1129... | process | missing docs for copy fields and truncate fields processors there is no documentation for the and processors | 1 |

2,691 | 5,540,276,797 | IssuesEvent | 2017-03-22 09:41:47 | g8os/ays_template_g8os | https://api.github.com/repos/g8os/ays_template_g8os | closed | Actor template for G8OS Store | process_wontfix type_feature | - [x] node.g8os

- [x] container.g8os

- [x] ardb-server

- [x] ardb-cluster

- [x] disk.g8os

- [ ] volume.blockstor | 1.0 | Actor template for G8OS Store - - [x] node.g8os

- [x] container.g8os

- [x] ardb-server

- [x] ardb-cluster

- [x] disk.g8os

- [ ] volume.blockstor | process | actor template for store node container ardb server ardb cluster disk volume blockstor | 1 |

9,987 | 13,036,168,734 | IssuesEvent | 2020-07-28 11:45:15 | solid/process | https://api.github.com/repos/solid/process | closed | Record roadmap and agree on process | process proposal | There are various kanban boards, diagrams and links explaining what various parties are working on. For example, [here](https://www.w3.org/DesignIssues/diagrams/solid/2018-soild-work.svg), [here](https://github.com/solid/specification/projects/1), and [here](https://github.com/solid/process/projects/1).

There is a r... | 1.0 | Record roadmap and agree on process - There are various kanban boards, diagrams and links explaining what various parties are working on. For example, [here](https://www.w3.org/DesignIssues/diagrams/solid/2018-soild-work.svg), [here](https://github.com/solid/specification/projects/1), and [here](https://github.com/sol... | process | record roadmap and agree on process there are various kanban boards diagrams and links explaining what various parties are working on for example and there is a repository that was set up some time ago called roadmap however it was not clear what the scope of that repository was or how to co cre... | 1 |

13,453 | 15,930,548,755 | IssuesEvent | 2021-04-14 01:09:37 | googleapis/google-cloud-ruby | https://api.github.com/repos/googleapis/google-cloud-ruby | closed | Several libraries have lingering rubocop issues after the Ruby 3 migration | type: process | It seems to affect handwritten parts (samples, acceptance tests) of generated libraries. I know google-cloud-vision is affected, and there was at least one other. | 1.0 | Several libraries have lingering rubocop issues after the Ruby 3 migration - It seems to affect handwritten parts (samples, acceptance tests) of generated libraries. I know google-cloud-vision is affected, and there was at least one other. | process | several libraries have lingering rubocop issues after the ruby migration it seems to affect handwritten parts samples acceptance tests of generated libraries i know google cloud vision is affected and there was at least one other | 1 |

15,098 | 18,835,501,353 | IssuesEvent | 2021-11-11 00:06:14 | crim-ca/weaver | https://api.github.com/repos/crim-ca/weaver | closed | [Feature] Cache WPS-1/2 endpoint | triage/enhancement process/wps1 process/wps2 triage/feature | In order to avoid uselessly recreating the PyWPS Service which requires re-fetch and re-creation of every underlying DB processes on each WPS-1/2 request, we could use caching to quickly return the matching response.

Things that this caching needs to consider (maybe others also... to test) :

- request parameters ... | 2.0 | [Feature] Cache WPS-1/2 endpoint - In order to avoid uselessly recreating the PyWPS Service which requires re-fetch and re-creation of every underlying DB processes on each WPS-1/2 request, we could use caching to quickly return the matching response.

Things that this caching needs to consider (maybe others also...... | process | cache wps endpoint in order to avoid uselessly recreating the pywps service which requires re fetch and re creation of every underlying db processes on each wps request we could use caching to quickly return the matching response things that this caching needs to consider maybe others also to test... | 1 |

11,551 | 14,434,433,215 | IssuesEvent | 2020-12-07 07:04:19 | slok/kahoy | https://api.github.com/repos/slok/kahoy | closed | Allow waiting with a custom command | manager processor resources | At this moment we are able to sleep a specific amount of time between group applies. Would be nice to have a user-specified way of waiting, for example using raw kubectl or a script. | 1.0 | Allow waiting with a custom command - At this moment we are able to sleep a specific amount of time between group applies. Would be nice to have a user-specified way of waiting, for example using raw kubectl or a script. | process | allow waiting with a custom command at this moment we are able to sleep a specific amount of time between group applies would be nice to have a user specified way of waiting for example using raw kubectl or a script | 1 |

73,085 | 9,640,048,019 | IssuesEvent | 2019-05-16 14:44:39 | microsoftgraph/microsoft-graph-docs | https://api.github.com/repos/microsoftgraph/microsoft-graph-docs | closed | immutable-id | area: outlook request: documentation | Good to know: The message ID is not something you want to rely on. I found this announcement after searching for a while:

https://developer.microsoft.com/en-us/outlook/blogs/announcing-immutable-id-for-outlook-resources-in-microsoft-graph/

Summary: Add this in the headers of your request: Prefer: IdType="ImmutableId" ... | 1.0 | immutable-id - Good to know: The message ID is not something you want to rely on. I found this announcement after searching for a while:

https://developer.microsoft.com/en-us/outlook/blogs/announcing-immutable-id-for-outlook-resources-in-microsoft-graph/

Summary: Add this in the headers of your request: Prefer: IdType... | non_process | immutable id good to know the message id is not something you want to rely on i found this announcement after searching for a while summary add this in the headers of your request prefer idtype immutableid to get more stable id s that will only change in case of archiving or a actual move to another mail... | 0 |

14,795 | 18,071,657,954 | IssuesEvent | 2021-09-21 04:06:26 | googleapis/google-cloud-ruby | https://api.github.com/repos/googleapis/google-cloud-ruby | opened | Firestore: Fill in missing samples for custom objects and array updates | type: process api: firestore samples | On https://firebase.google.com/docs/firestore/manage-data/add-data if you switch the sample tabs to Ruby, you'll see that samples are missing for custom objects and array updates. I'm not sure whether we actually have these features implemented for Ruby. Can you determine the status, and if these are implemented, creat... | 1.0 | Firestore: Fill in missing samples for custom objects and array updates - On https://firebase.google.com/docs/firestore/manage-data/add-data if you switch the sample tabs to Ruby, you'll see that samples are missing for custom objects and array updates. I'm not sure whether we actually have these features implemented f... | process | firestore fill in missing samples for custom objects and array updates on if you switch the sample tabs to ruby you ll see that samples are missing for custom objects and array updates i m not sure whether we actually have these features implemented for ruby can you determine the status and if these are implem... | 1 |

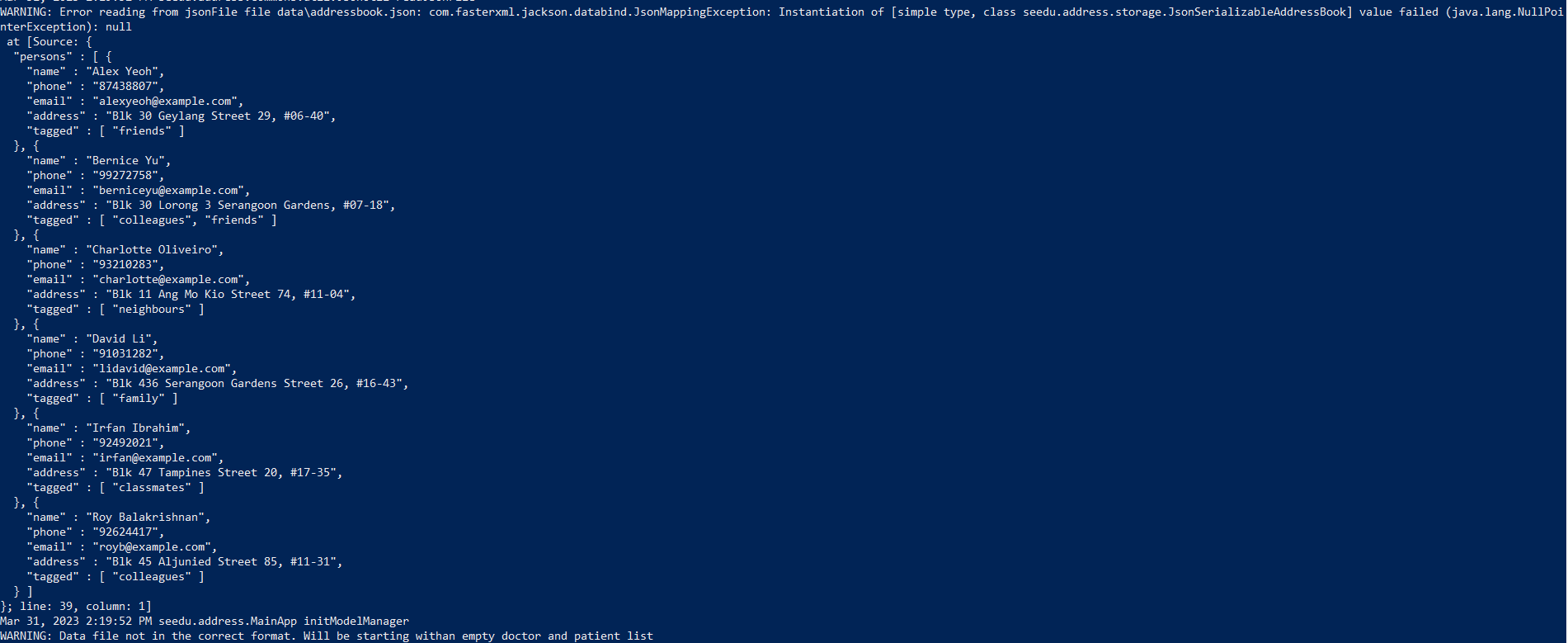

12,277 | 14,790,006,775 | IssuesEvent | 2021-01-12 11:23:05 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Mobile] Email template > App name should be displayed | Bug P2 Process: Tested dev | AR : App id is displayed in the email template

ER : App name should be displayed in the email template for the following

1. Account lock

2. Forgot password

| 1.0 | [Mobile] Email template > App name should be displayed - AR : App id is displayed in the email template

ER : App name should be displayed in the email template for the following

1. Account lock

2. Forgot password

felt the same way. This is a low-priority cleanup fix, but would be nice t... | 1.0 | Get rid of ">" in quick start instructions? - I feel that the ">" at the start of shell commands in the quick start instructions is more troublesome than helpful, because it means you can't copy and paste commands. At least one other person (I think it was Cecile Hannay, but I may be wrong) felt the same way. This is a... | non_process | get rid of in quick start instructions i feel that the at the start of shell commands in the quick start instructions is more troublesome than helpful because it means you can t copy and paste commands at least one other person i think it was cecile hannay but i may be wrong felt the same way this is a... | 0 |

8,354 | 11,503,118,744 | IssuesEvent | 2020-02-12 20:26:57 | ESIPFed/sweet | https://api.github.com/repos/ESIPFed/sweet | opened | [BUG] Issues with periglacial semantics | Cryosphere alignment bug enhancement phenomena (macroscale) process (microscale) | A bug report assumes that you are not trying to introduced a new feature, but instead change an existing one... hopefully correcting some latent error in the process.

## Description

SWEET's phenCryo TTL file includes :

```

### http://sweetontology.net/phenCryo/Glaciation

sophcr:Glaciation rdf:type owl:Class... | 1.0 | [BUG] Issues with periglacial semantics - A bug report assumes that you are not trying to introduced a new feature, but instead change an existing one... hopefully correcting some latent error in the process.

## Description

SWEET's phenCryo TTL file includes :

```

### http://sweetontology.net/phenCryo/Glacia... | process | issues with periglacial semantics a bug report assumes that you are not trying to introduced a new feature but instead change an existing one hopefully correcting some latent error in the process description sweet s phencryo ttl file includes sophcr glaciation rdf type owl class ... | 1 |

6,786 | 9,920,706,895 | IssuesEvent | 2019-06-30 12:00:55 | Project-Cartographer/H2PC_TagExtraction | https://api.github.com/repos/Project-Cartographer/H2PC_TagExtraction | closed | Undo processing on bitmap | bug post-processing | Copied from #10

* Appears to be missing bitmap data a majority of the time among other things. Seems to be an issue with the extractor not loading the resource maps. Fixed by 0600328

* Has issues beyond this where even if the bitmap data is internal then it may not be written for whatever reason.

* Something... | 1.0 | Undo processing on bitmap - Copied from #10

* Appears to be missing bitmap data a majority of the time among other things. Seems to be an issue with the extractor not loading the resource maps. Fixed by 0600328

* Has issues beyond this where even if the bitmap data is internal then it may not be written for wha... | process | undo processing on bitmap copied from appears to be missing bitmap data a majority of the time among other things seems to be an issue with the extractor not loading the resource maps fixed by has issues beyond this where even if the bitmap data is internal then it may not be written for whatever r... | 1 |

12,489 | 14,952,666,109 | IssuesEvent | 2021-01-26 15:47:50 | ORNL-AMO/AMO-Tools-Desktop | https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop | closed | Explore Opps Loss error on first open | Process Heating bug | For a newly created/imported mod, the phast object doesn't have "exploreOppsShow____" yet, causing error. | 1.0 | Explore Opps Loss error on first open - For a newly created/imported mod, the phast object doesn't have "exploreOppsShow____" yet, causing error. | process | explore opps loss error on first open for a newly created imported mod the phast object doesn t have exploreoppsshow yet causing error | 1 |

10,556 | 13,341,116,808 | IssuesEvent | 2020-08-28 15:25:46 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | stageDependencies example is incorrect | Pri2 devops-cicd-process/tech devops/prod doc-bug | I wasted a bunch of time because this stageDependencies example is incorrect:

```yaml

- stage: B

condition: and(succeeded(), ne(dependencies.A.A1.outputs['printvar.skipsubsequent'], 'true'))

dependsOn: A

jobs:

- job: B1

steps:

- script: echo hello from Stage B

- job: B2

condition: ne(s... | 1.0 | stageDependencies example is incorrect - I wasted a bunch of time because this stageDependencies example is incorrect:

```yaml

- stage: B

condition: and(succeeded(), ne(dependencies.A.A1.outputs['printvar.skipsubsequent'], 'true'))

dependsOn: A

jobs:

- job: B1

steps:

- script: echo hello from ... | process | stagedependencies example is incorrect i wasted a bunch of time because this stagedependencies example is incorrect yaml stage b condition and succeeded ne dependencies a outputs true dependson a jobs job steps script echo hello from stage b job con... | 1 |

256,735 | 8,128,496,914 | IssuesEvent | 2018-08-17 12:03:30 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | OS X dylibs have the wrong dylib names after installation, sometimes the wrong name | Bug Likelihood: 5 - Always Priority: Normal Severity: 3 - Major Irritation | When building VisIt on OS X against QT previously installed elsewhere,

the names inside many of the plugins end up incorrect, like:

@executable_path@executable_path/../lib/QtGui.framework/Versions/4/QtGui @executable_path@executable_path/../lib/QtOpenGL.framework/Versions/4/QtOpenGL @executable_path@executable_path... | 1.0 | OS X dylibs have the wrong dylib names after installation, sometimes the wrong name - When building VisIt on OS X against QT previously installed elsewhere,

the names inside many of the plugins end up incorrect, like:

@executable_path@executable_path/../lib/QtGui.framework/Versions/4/QtGui @executable_path@executab... | non_process | os x dylibs have the wrong dylib names after installation sometimes the wrong name when building visit on os x against qt previously installed elsewhere the names inside many of the plugins end up incorrect like executable path executable path lib qtgui framework versions qtgui executable path executab... | 0 |

859 | 3,317,658,071 | IssuesEvent | 2015-11-06 22:53:00 | pwittchen/ReactiveNetwork | https://api.github.com/repos/pwittchen/ReactiveNetwork | closed | Release v. 0.1.3 | release process | **Note**: Consider resolving remaining open tasks before releasing new version.

**Initial release notes**:

- fixed bug with incorrect status after going back from background inside the sample app reported in issue #31

- fixed RxJava usage in sample app

- fixed RxJava usage in code snippets in `README.md`

- added... | 1.0 | Release v. 0.1.3 - **Note**: Consider resolving remaining open tasks before releasing new version.

**Initial release notes**:

- fixed bug with incorrect status after going back from background inside the sample app reported in issue #31

- fixed RxJava usage in sample app

- fixed RxJava usage in code snippets in `... | process | release v note consider resolving remaining open tasks before releasing new version initial release notes fixed bug with incorrect status after going back from background inside the sample app reported in issue fixed rxjava usage in sample app fixed rxjava usage in code snippets in r... | 1 |

158,238 | 12,407,007,089 | IssuesEvent | 2020-05-21 20:14:18 | PointCloudLibrary/pcl | https://api.github.com/repos/PointCloudLibrary/pcl | closed | kfpcs_ia unit test is failing spuriously on multiple platforms | module: registration module: test status: stale | I've seen it happening on the Ubuntu 16.04 and Windows x64 images.

```

2018-12-05T03:04:22.2409061Z test 86

2018-12-05T03:04:22.2409768Z Start 86: kfpcs_ia

2018-12-05T03:04:22.2411330Z

2018-12-05T03:04:22.2411626Z 86: Test command: D:\a\build\bin\test_kfpcs_ia.exe "D:/a/1/s/test/office1_keypoints.pcd" "D... | 1.0 | kfpcs_ia unit test is failing spuriously on multiple platforms - I've seen it happening on the Ubuntu 16.04 and Windows x64 images.

```

2018-12-05T03:04:22.2409061Z test 86

2018-12-05T03:04:22.2409768Z Start 86: kfpcs_ia

2018-12-05T03:04:22.2411330Z

2018-12-05T03:04:22.2411626Z 86: Test command: D:\a\bu... | non_process | kfpcs ia unit test is failing spuriously on multiple platforms i ve seen it happening on the ubuntu and windows images test start kfpcs ia test command d a build bin test kfpcs ia exe d a s test keypoints pcd d a s test ... | 0 |

6,985 | 3,072,199,927 | IssuesEvent | 2015-08-19 15:48:31 | saltstack/salt | https://api.github.com/repos/saltstack/salt | closed | Document `master_finger` more prominently | Bug Documentation High Severity P2 | Technically without either pre-seeding the master public key to new minions, or setting `master_finger`, minions are susceptible to a MITM attack at first connection. This is a convenience trade off, but a community member pointed out that this trade-off is not featured prominently in our documentation, nor the solutio... | 1.0 | Document `master_finger` more prominently - Technically without either pre-seeding the master public key to new minions, or setting `master_finger`, minions are susceptible to a MITM attack at first connection. This is a convenience trade off, but a community member pointed out that this trade-off is not featured promi... | non_process | document master finger more prominently technically without either pre seeding the master public key to new minions or setting master finger minions are susceptible to a mitm attack at first connection this is a convenience trade off but a community member pointed out that this trade off is not featured promi... | 0 |

251,558 | 27,182,442,934 | IssuesEvent | 2023-02-18 20:07:47 | PalisadoesFoundation/talawa | https://api.github.com/repos/PalisadoesFoundation/talawa | closed | Bump lint from 1.6.0 to 2.0.1 | bug good first issue points 02 security dependencies | Bump lint from 1.6.0 to 2.0.1

We need to do this upgrade to improve the performance, reliability and security of the code base.

1. This upgrade will require you to fix other dependencies. You can verify this with the command `flutter pub get`

1. All tests will need to pass after this is completed

1. All existin... | True | Bump lint from 1.6.0 to 2.0.1 - Bump lint from 1.6.0 to 2.0.1

We need to do this upgrade to improve the performance, reliability and security of the code base.

1. This upgrade will require you to fix other dependencies. You can verify this with the command `flutter pub get`

1. All tests will need to pass after t... | non_process | bump lint from to bump lint from to we need to do this upgrade to improve the performance reliability and security of the code base this upgrade will require you to fix other dependencies you can verify this with the command flutter pub get all tests will need to pass after t... | 0 |

9,336 | 12,341,054,014 | IssuesEvent | 2020-05-14 21:06:56 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | opened | Business Metrics | P3 enhancement process | **Problem**

We are missing a lot of business level metrics that would be useful to us and various other teams. We should add this to mirror node so anyone that runs it can verify the network status with their own application and data.

**Solution**

Add the following metrics:

- Add type=balance to `hedera.mirror.pa... | 1.0 | Business Metrics - **Problem**

We are missing a lot of business level metrics that would be useful to us and various other teams. We should add this to mirror node so anyone that runs it can verify the network status with their own application and data.

**Solution**

Add the following metrics:

- Add type=balance t... | process | business metrics problem we are missing a lot of business level metrics that would be useful to us and various other teams we should add this to mirror node so anyone that runs it can verify the network status with their own application and data solution add the following metrics add type balance t... | 1 |

9,873 | 12,885,802,732 | IssuesEvent | 2020-07-13 08:31:36 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Strings end with '\n' cause Chrome only test failures | browser: chrome pkg/driver process: tests stage: work in progress type: bug | ### Current behavior:

The following tests from `tests/integration/commands/actions/type_spec.coffee` fail on Chrome 73 but pass on Electron 59:

* `can wrap cursor to next line in [contenteditable] with {rightarrow}`

* `can wrap cursor to prev line in [contenteditable] with {leftarrow}failed`

* `can use {rightar... | 1.0 | Strings end with '\n' cause Chrome only test failures - ### Current behavior:

The following tests from `tests/integration/commands/actions/type_spec.coffee` fail on Chrome 73 but pass on Electron 59:

* `can wrap cursor to next line in [contenteditable] with {rightarrow}`

* `can wrap cursor to prev line in [conte... | process | strings end with n cause chrome only test failures current behavior the following tests from tests integration commands actions type spec coffee fail on chrome but pass on electron can wrap cursor to next line in with rightarrow can wrap cursor to prev line in with leftarrow failed... | 1 |

88,326 | 15,800,773,173 | IssuesEvent | 2021-04-03 01:13:23 | SmartBear/readyapi-swagger-assertion-plugin | https://api.github.com/repos/SmartBear/readyapi-swagger-assertion-plugin | opened | CVE-2021-21350 (High) detected in xstream-1.3.1.jar | security vulnerability | ## CVE-2021-21350 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.3.1.jar</b></p></summary>

<p></p>

<p>Path to dependency file: readyapi-swagger-assertion-plugin/pom.xml</p>

... | True | CVE-2021-21350 (High) detected in xstream-1.3.1.jar - ## CVE-2021-21350 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.3.1.jar</b></p></summary>

<p></p>

<p>Path to dependenc... | non_process | cve high detected in xstream jar cve high severity vulnerability vulnerable library xstream jar path to dependency file readyapi swagger assertion plugin pom xml path to vulnerable library home wss scanner repository thoughtworks xstream xstream jar de... | 0 |

11,007 | 4,128,041,026 | IssuesEvent | 2016-06-10 02:54:30 | TEAMMATES/teammates | https://api.github.com/repos/TEAMMATES/teammates | closed | Re-organize FileHelper classes | a-CodeQuality m.Aspect | There are two `FileHelper`s, one for production (reading input stream etc.) and one for non-production (reading files etc.), but they're not very well-organized right now. Also, there are some self-defined functions that can actually fit in either one of these classes. | 1.0 | Re-organize FileHelper classes - There are two `FileHelper`s, one for production (reading input stream etc.) and one for non-production (reading files etc.), but they're not very well-organized right now. Also, there are some self-defined functions that can actually fit in either one of these classes. | non_process | re organize filehelper classes there are two filehelper s one for production reading input stream etc and one for non production reading files etc but they re not very well organized right now also there are some self defined functions that can actually fit in either one of these classes | 0 |

38,931 | 12,624,098,717 | IssuesEvent | 2020-06-14 03:45:48 | scriptex/react-svg-donuts | https://api.github.com/repos/scriptex/react-svg-donuts | closed | CVE-2020-13822 (High) detected in elliptic-6.5.2.tgz | security vulnerability | ## CVE-2020-13822 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>elliptic-6.5.2.tgz</b></p></summary>

<p>EC cryptography</p>

<p>Library home page: <a href="https://registry.npmjs.org/... | True | CVE-2020-13822 (High) detected in elliptic-6.5.2.tgz - ## CVE-2020-13822 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>elliptic-6.5.2.tgz</b></p></summary>

<p>EC cryptography</p>

<p>... | non_process | cve high detected in elliptic tgz cve high severity vulnerability vulnerable library elliptic tgz ec cryptography library home page a href path to dependency file tmp ws scm react svg donuts package json path to vulnerable library tmp ws scm react svg donuts node m... | 0 |

6,562 | 9,648,880,214 | IssuesEvent | 2019-05-17 17:30:39 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | closed | Update text on Next Steps page of application | Apply Process Approved Requirements Ready State Dept. | Who: Internship applicants

What: Reviewing next steps

Why: To properly state what is required and not for their application

Acceptance criteria:

Issue: The next steps page indicates that references are required but they are actually not.

Please update the next steps page Step 2 as follows:

Tell us about ... | 1.0 | Update text on Next Steps page of application - Who: Internship applicants

What: Reviewing next steps

Why: To properly state what is required and not for their application

Acceptance criteria:

Issue: The next steps page indicates that references are required but they are actually not.

Please update the nex... | process | update text on next steps page of application who internship applicants what reviewing next steps why to properly state what is required and not for their application acceptance criteria issue the next steps page indicates that references are required but they are actually not please update the nex... | 1 |

17,055 | 22,474,525,761 | IssuesEvent | 2022-06-22 11:03:57 | opensafely-core/job-server | https://api.github.com/repos/opensafely-core/job-server | opened | Add Project Number to Applications List | application-process | When looking at the list of applications it would be useful to show Project.number for applications which have an approved project with a number.

We should also be able to search on that page (and the home page) for project numbers. | 1.0 | Add Project Number to Applications List - When looking at the list of applications it would be useful to show Project.number for applications which have an approved project with a number.

We should also be able to search on that page (and the home page) for project numbers. | process | add project number to applications list when looking at the list of applications it would be useful to show project number for applications which have an approved project with a number we should also be able to search on that page and the home page for project numbers | 1 |

285,862 | 31,155,707,356 | IssuesEvent | 2023-08-16 13:00:41 | nidhi7598/linux-4.1.15_CVE-2018-5873 | https://api.github.com/repos/nidhi7598/linux-4.1.15_CVE-2018-5873 | opened | CVE-2017-17852 (High) detected in linuxlinux-4.1.52 | Mend: dependency security vulnerability | ## CVE-2017-17852 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.1.52</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.ker... | True | CVE-2017-17852 (High) detected in linuxlinux-4.1.52 - ## CVE-2017-17852 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.1.52</b></p></summary>

<p>

<p>The Linux Kernel</p>

... | non_process | cve high detected in linuxlinux cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in base branch master vulnerable source files kernel bpf verifier c kernel bpf verifier c... | 0 |

171,146 | 20,925,842,343 | IssuesEvent | 2022-03-24 22:47:05 | scriptex/material-tetris | https://api.github.com/repos/scriptex/material-tetris | opened | CVE-2021-44906 (High) detected in minimist-1.2.5.tgz | security vulnerability | ## CVE-2021-44906 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>minimist-1.2.5.tgz</b></p></summary>

<p>parse argument options</p>

<p>Library home page: <a href="https://registry.npm... | True | CVE-2021-44906 (High) detected in minimist-1.2.5.tgz - ## CVE-2021-44906 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>minimist-1.2.5.tgz</b></p></summary>

<p>parse argument options<... | non_process | cve high detected in minimist tgz cve high severity vulnerability vulnerable library minimist tgz parse argument options library home page a href path to dependency file package json path to vulnerable library node modules minimist package json dependency hiera... | 0 |

9,320 | 12,338,259,540 | IssuesEvent | 2020-05-14 16:10:39 | DiSSCo/user-stories | https://api.github.com/repos/DiSSCo/user-stories | opened | interoperability between my CMS and UCAS | 1. NH museum 2. Collection Management 4. Data processing ICEDIG-SURVEY Specimen level | As a Curator I want to add annotated information from an Unified Curation and Annotation System (UCAS) to my collection management system (CMS) so that I can update infomation on my specimens in my CMS for this I need interoperability between my CMS and UCAS | 1.0 | interoperability between my CMS and UCAS - As a Curator I want to add annotated information from an Unified Curation and Annotation System (UCAS) to my collection management system (CMS) so that I can update infomation on my specimens in my CMS for this I need interoperability between my CMS and UCAS | process | interoperability between my cms and ucas as a curator i want to add annotated information from an unified curation and annotation system ucas to my collection management system cms so that i can update infomation on my specimens in my cms for this i need interoperability between my cms and ucas | 1 |

19,739 | 26,087,548,038 | IssuesEvent | 2022-12-26 06:11:12 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | java.lang.UnsatisfiedLinkError: dlopen failed: "/data/user/0/com.google.mediapipe.examples.facemesh/incrementallib/libmediapipe_jni.so" is 32-bit instead of 64-bit | more data needed type: support / not a bug (process) |

Hello,

Any one have an idea on this

i have been using android-ndk-r21b-linux-x86_64 NDK in Ubuntu 20.02 64 bit. | 1.0 | java.lang.UnsatisfiedLinkError: dlopen failed: "/data/user/0/com.google.mediapipe.examples.facemesh/incrementallib/libmediapipe_jni.so" is 32-bit instead of 64-bit -

Hello,

Any one have... | process | java lang unsatisfiedlinkerror dlopen failed data user com google mediapipe examples facemesh incrementallib libmediapipe jni so is bit instead of bit hello any one have an idea on this i have been using android ndk linux ndk in ubuntu bit | 1 |

10,905 | 13,684,880,957 | IssuesEvent | 2020-09-30 06:04:05 | prisma/migrate | https://api.github.com/repos/prisma/migrate | closed | Prisma doesn't create tables in SQLite | process/candidate | ## Bug description

<!-- A clear and concise description of what the bug is. -->

When I want to do a query from `user` table (or any other tables) `prisma` says the table does not exist.

**error**:

```

The table `dev.User` does not exist in the current database.

at iy.request (/home/hamidb80/Documents/pr... | 1.0 | Prisma doesn't create tables in SQLite - ## Bug description

<!-- A clear and concise description of what the bug is. -->

When I want to do a query from `user` table (or any other tables) `prisma` says the table does not exist.

**error**:

```

The table `dev.User` does not exist in the current database.

a... | process | prisma doesn t create tables in sqlite bug description when i want to do a query from user table or any other tables prisma says the table does not exist error the table dev user does not exist in the current database at iy request home documents programming projects dairyt... | 1 |

180,952 | 13,979,404,908 | IssuesEvent | 2020-10-27 00:02:57 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | ☂️ Migrate framework tests to null safety | P3 a: null-safety a: tests framework passed first triage | Prerequisites (cc @jonahwilliams):

- [x] migrate the framework _[in progress by @a14n]_

- [x] publish platform/file/process in SDK allowlist, publish on pub, _[in progress by @jonahwilliams]_

- [x] migrate flutter_goldens/flutter_goldens_client, https://github.com/flutter/flutter/issues/53908 _[@jonahwilliams sign... | 1.0 | ☂️ Migrate framework tests to null safety - Prerequisites (cc @jonahwilliams):

- [x] migrate the framework _[in progress by @a14n]_

- [x] publish platform/file/process in SDK allowlist, publish on pub, _[in progress by @jonahwilliams]_

- [x] migrate flutter_goldens/flutter_goldens_client, https://github.com/flutte... | non_process | ☂️ migrate framework tests to null safety prerequisites cc jonahwilliams migrate the framework publish platform file process in sdk allowlist publish on pub migrate flutter goldens flutter goldens client migrate flutter test it doesn t make sense to start migrating... | 0 |

163,284 | 13,914,549,207 | IssuesEvent | 2020-10-20 22:24:29 | KSP-KOS/KOS | https://api.github.com/repos/KSP-KOS/KOS | closed | file open error results in crash. | documentation | When I do `local f is open("0:/craft/survey.csv").` for a file that is absent, I get my script crashed and an error printed in the kOS terminal, contrary to the docs. Which behavior is correct? (I guess the docs probably are)

```

OPEN(PATH)

Will return a VolumeFile or VolumeDirectory representing the item pointe... | 1.0 | file open error results in crash. - When I do `local f is open("0:/craft/survey.csv").` for a file that is absent, I get my script crashed and an error printed in the kOS terminal, contrary to the docs. Which behavior is correct? (I guess the docs probably are)

```

OPEN(PATH)

Will return a VolumeFile or VolumeDi... | non_process | file open error results in crash when i do local f is open craft survey csv for a file that is absent i get my script crashed and an error printed in the kos terminal contrary to the docs which behavior is correct i guess the docs probably are open path will return a volumefile or volumedi... | 0 |

1,911 | 4,746,363,368 | IssuesEvent | 2016-10-21 10:46:43 | opentrials/opentrials | https://api.github.com/repos/opentrials/opentrials | opened | Extract clinical study reports from EMA | Collectors help wanted Processors | Yesterday [EMA announced it would give access to its clinical study reports](http://www.ema.europa.eu/ema/index.jsp?curl=pages/news_and_events/news/2016/10/news_detail_002624.jsp&mid=WC0b01ac058004d5c1). This is great news! Now we need to extract this information and provide it through OpenTrials. | 1.0 | Extract clinical study reports from EMA - Yesterday [EMA announced it would give access to its clinical study reports](http://www.ema.europa.eu/ema/index.jsp?curl=pages/news_and_events/news/2016/10/news_detail_002624.jsp&mid=WC0b01ac058004d5c1). This is great news! Now we need to extract this information and provide it... | process | extract clinical study reports from ema yesterday this is great news now we need to extract this information and provide it through opentrials | 1 |

625 | 3,091,438,586 | IssuesEvent | 2015-08-26 13:13:06 | e-government-ua/i | https://api.github.com/repos/e-government-ua/i | closed | На главном портале реализовать валидацию поля, которое промаркеровано как Электронный адрес + подкрашивать красной рамочкой такие поля (в т.ч. по номеру телефона) и справа отображать текст ошибки, если поле таки не валидно. | active hi priority In process of testing test | ВАЖНО: сделать точь-в точь как в задаче: https://github.com/e-government-ua/i/issues/375

только уже не для номера телефона

(по сути механизм уже там готовый будет использоваться, останется дописать кусок валидации формата телефона)

в файле:

\i\central-js\client\app\service\built-in\controllers\servicebuiltinban... | 1.0 | На главном портале реализовать валидацию поля, которое промаркеровано как Электронный адрес + подкрашивать красной рамочкой такие поля (в т.ч. по номеру телефона) и справа отображать текст ошибки, если поле таки не валидно. - ВАЖНО: сделать точь-в точь как в задаче: https://github.com/e-government-ua/i/issues/375

то... | process | на главном портале реализовать валидацию поля которое промаркеровано как электронный адрес подкрашивать красной рамочкой такие поля в т ч по номеру телефона и справа отображать текст ошибки если поле таки не валидно важно сделать точь в точь как в задаче только уже не для номера телефона по сути меха... | 1 |

7,640 | 10,736,590,585 | IssuesEvent | 2019-10-29 11:13:32 | Viir/Kalmit | https://api.github.com/repos/Viir/Kalmit | closed | Expand automatic tests of the web host to cover sending different content-type with HTTP responses | interface-between-process-and-host | Similar to the tests in https://github.com/Viir/Kalmit/commit/27d0c1036719ff30b8e020fb81769be512422dbe | 1.0 | Expand automatic tests of the web host to cover sending different content-type with HTTP responses - Similar to the tests in https://github.com/Viir/Kalmit/commit/27d0c1036719ff30b8e020fb81769be512422dbe | process | expand automatic tests of the web host to cover sending different content type with http responses similar to the tests in | 1 |

11,136 | 13,957,691,926 | IssuesEvent | 2020-10-24 08:10:33 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | RO - Harvesting request | Geoportal Harvesting process RO - Romania | Dear Angelo,

Can you please start a new harvesting from Romanian Geoportal? We did some changes and we want to see the outcome.

Thank you in advance for your help.

Best Regards,

Simona Bunea | 1.0 | RO - Harvesting request - Dear Angelo,

Can you please start a new harvesting from Romanian Geoportal? We did some changes and we want to see the outcome.

Thank you in advance for your help.

Best Regards,

Simona Bunea | process | ro harvesting request dear angelo can you please start a new harvesting from romanian geoportal we did some changes and we want to see the outcome thank you in advance for your help best regards simona bunea | 1 |

15,179 | 18,952,573,956 | IssuesEvent | 2021-11-18 16:33:40 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | [iOS/tvOS] System.Diagnostics.Tests.ProcessTests.TestGetProcesses fails on devices | area-System.Diagnostics.Process os-ios os-tvos | This [test](https://github.com/dotnet/runtime/blob/defa26b9e1159f1a2a3470c52084410edff982b6/src/libraries/System.Diagnostics.Process/tests/ProcessTests.cs#L1108-L1124) fails with:

```

System.ComponentModel.Win32Exception : Could not get all running Process IDs.

Stack trace

at Interop.libproc.proc_listallpids... | 1.0 | [iOS/tvOS] System.Diagnostics.Tests.ProcessTests.TestGetProcesses fails on devices - This [test](https://github.com/dotnet/runtime/blob/defa26b9e1159f1a2a3470c52084410edff982b6/src/libraries/System.Diagnostics.Process/tests/ProcessTests.cs#L1108-L1124) fails with:

```

System.ComponentModel.Win32Exception : Could no... | process | system diagnostics tests processtests testgetprocesses fails on devices this fails with system componentmodel could not get all running process ids stack trace at interop libproc proc listallpids at system diagnostics processmanager getprocessinfos string machinename at system di... | 1 |

21,067 | 28,015,034,228 | IssuesEvent | 2023-03-27 21:49:01 | GoogleCloudPlatform/vertex-ai-samples | https://api.github.com/repos/GoogleCloudPlatform/vertex-ai-samples | closed | Custom training that uses BigQuery Python library should use an explicit PROJECT_ID | type: process type: cleanup | ## Expected Behavior

Some notebooks that demonstrate custom training use an inferred project:

```

from google.cloud import bigquery

client = bigquery.Client()

```

This will cause permission issues. See:

See https://cloud.google.com/vertex-ai/docs/workbench/managed/executor#explicit-project-selection.

... | 1.0 | Custom training that uses BigQuery Python library should use an explicit PROJECT_ID - ## Expected Behavior

Some notebooks that demonstrate custom training use an inferred project:

```

from google.cloud import bigquery

client = bigquery.Client()

```

This will cause permission issues. See:

See https://clou... | process | custom training that uses bigquery python library should use an explicit project id expected behavior some notebooks that demonstrate custom training use an inferred project from google cloud import bigquery client bigquery client this will cause permission issues see see solu... | 1 |

285,587 | 24,679,213,163 | IssuesEvent | 2022-10-18 19:42:39 | celestiaorg/test-infra | https://api.github.com/repos/celestiaorg/test-infra | closed | monorepo: multiple test-plans implementation in a monorepo | enhancement question test | We need to think about either it is viable to start creating `test-infra` into a monorepo that contains all test-plans implementation | 1.0 | monorepo: multiple test-plans implementation in a monorepo - We need to think about either it is viable to start creating `test-infra` into a monorepo that contains all test-plans implementation | non_process | monorepo multiple test plans implementation in a monorepo we need to think about either it is viable to start creating test infra into a monorepo that contains all test plans implementation | 0 |

39,059 | 8,575,195,580 | IssuesEvent | 2018-11-12 16:38:48 | yiisoft/yii2 | https://api.github.com/repos/yiisoft/yii2 | closed | Error on database configuration in second test running in codeception unit test | Codeception | ### What steps will reproduce the problem?

I created a test class like below:

```php

<?php

namespace common\tests\unit\models;

use common\models\Developer;

use common\tests\fixtures\DeveloperFixture;

use Faker\Factory;

class DeveloperTest extends \Codeception\Test\Unit

{

/**

* @var \common\tes... | 1.0 | Error on database configuration in second test running in codeception unit test - ### What steps will reproduce the problem?

I created a test class like below:

```php

<?php

namespace common\tests\unit\models;

use common\models\Developer;

use common\tests\fixtures\DeveloperFixture;

use Faker\Factory;

class... | non_process | error on database configuration in second test running in codeception unit test what steps will reproduce the problem i created a test class like below php php namespace common tests unit models use common models developer use common tests fixtures developerfixture use faker factory class... | 0 |

5,750 | 8,596,850,027 | IssuesEvent | 2018-11-15 16:58:35 | cityofaustin/techstack | https://api.github.com/repos/cityofaustin/techstack | closed | Get Permittingatx analytics | Content Type: Process Page Size: XS Team: Content | Reach out to Rachel Crist to get access to the analytics for permittingatx to better inform the IA on alpha as we transition. | 1.0 | Get Permittingatx analytics - Reach out to Rachel Crist to get access to the analytics for permittingatx to better inform the IA on alpha as we transition. | process | get permittingatx analytics reach out to rachel crist to get access to the analytics for permittingatx to better inform the ia on alpha as we transition | 1 |

211,089 | 7,198,282,040 | IssuesEvent | 2018-02-05 12:13:39 | kubernetes/federation | https://api.github.com/repos/kubernetes/federation | closed | Address outstanding DNS review comments in #26694 | area/federation lifecycle/stale milestone/removed priority/backlog sig/multicluster team/control-plane (deprecated - do not use) | <a href="https://github.com/quinton-hoole"><img src="https://avatars0.githubusercontent.com/u/10390785?v=4" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [quinton-hoole](https://github.com/quinton-hoole)**

_Monday Jun 06, 2016 at 23:42 GMT_

_Originally opened as https://github.com/kubernetes/kub... | 1.0 | Address outstanding DNS review comments in #26694 - <a href="https://github.com/quinton-hoole"><img src="https://avatars0.githubusercontent.com/u/10390785?v=4" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [quinton-hoole](https://github.com/quinton-hoole)**

_Monday Jun 06, 2016 at 23:42 GMT_

_Or... | non_process | address outstanding dns review comments in issue by monday jun at gmt originally opened as see for details specifically don t call ensurednsrecords if the dns provider has not been initialized don t discard errors returned by getclusterzonenames cc mfanjie fyi | 0 |

12,775 | 15,162,005,239 | IssuesEvent | 2021-02-12 09:57:13 | panther-labs/panther | https://api.github.com/repos/panther-labs/panther | closed | [BE] Salesforce log support MVP set | p0 team:data processing | ## Description

As an analyst, I want to be able to pull important Salesforce audit logs via a SaaS log experience.

## Acceptance Criteria

- Support for MVP set of Salesforce log types

| 1.0 | [BE] Salesforce log support MVP set - ## Description

As an analyst, I want to be able to pull important Salesforce audit logs via a SaaS log experience.

## Acceptance Criteria

- Support for MVP set of Salesforce log types

| process | salesforce log support mvp set description as an analyst i want to be able to pull important salesforce audit logs via a saas log experience acceptance criteria support for mvp set of salesforce log types | 1 |

11,110 | 13,957,680,134 | IssuesEvent | 2020-10-24 08:07:02 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | SE: Validation in Inspire Geoportal and Reference validator | Geoportal Harvesting process SE - Sweden | We are now working quite hard to get all metadata, services and data to be approved by Inspire Geoportal.

But we have some doubts on what will happen during the monitoring in December.

So this ticket is more to get some clarifications

We understand the recent upgrades for Reference validator have not added any additi... | 1.0 | SE: Validation in Inspire Geoportal and Reference validator - We are now working quite hard to get all metadata, services and data to be approved by Inspire Geoportal.

But we have some doubts on what will happen during the monitoring in December.

So this ticket is more to get some clarifications

We understand the rec... | process | se validation in inspire geoportal and reference validator we are now working quite hard to get all metadata services and data to be approved by inspire geoportal but we have some doubts on what will happen during the monitoring in december so this ticket is more to get some clarifications we understand the rec... | 1 |

10,839 | 13,621,675,695 | IssuesEvent | 2020-09-24 01:23:26 | nion-software/nionswift | https://api.github.com/repos/nion-software/nionswift | closed | Add a processing command to combine sources into an RGB image | f - displays f - processing feature type - enhancement | Ideally each input should allow you to choose what color it is mapped to and whether the data is scaled or used as direct values. You should be able to add/remove channels.

Notes 2020-08-28:

- handle inputs of two different sizes

- handle complex data or RGB image inputs

- handle collections that have 2D datum... | 1.0 | Add a processing command to combine sources into an RGB image - Ideally each input should allow you to choose what color it is mapped to and whether the data is scaled or used as direct values. You should be able to add/remove channels.

Notes 2020-08-28:

- handle inputs of two different sizes

- handle complex d... | process | add a processing command to combine sources into an rgb image ideally each input should allow you to choose what color it is mapped to and whether the data is scaled or used as direct values you should be able to add remove channels notes handle inputs of two different sizes handle complex data o... | 1 |

20,755 | 27,488,877,438 | IssuesEvent | 2023-03-04 11:19:08 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Ubuntu: `stdout` and `stderr` data is not emitted on child processes (with piped `stdio`) | child_process | ### Version

`v18.0.0-nightly20220311d8c4e375f2`

### Platform

`Linux parallels-Parallels-Virtual-Platform 5.13.0-37-generic #42~20.04.1-Ubuntu SMP Tue Mar 15 15:44:28 UTC 2022 x86_64 x86_64 x86_64 GNU/Linux`

### Subsystem

_No response_

### What steps will reproduce the bug?

Run `node main.js` having... | 1.0 | Ubuntu: `stdout` and `stderr` data is not emitted on child processes (with piped `stdio`) - ### Version

`v18.0.0-nightly20220311d8c4e375f2`

### Platform

`Linux parallels-Parallels-Virtual-Platform 5.13.0-37-generic #42~20.04.1-Ubuntu SMP Tue Mar 15 15:44:28 UTC 2022 x86_64 x86_64 x86_64 GNU/Linux`

### Subsy... | process | ubuntu stdout and stderr data is not emitted on child processes with piped stdio version platform linux parallels parallels virtual platform generic ubuntu smp tue mar utc gnu linux subsystem no response what steps will re... | 1 |

255,335 | 27,484,911,010 | IssuesEvent | 2023-03-04 01:33:36 | panasalap/linux-4.1.15 | https://api.github.com/repos/panasalap/linux-4.1.15 | closed | CVE-2020-27152 (Medium) detected in linux-stable-rtv4.1.33 - autoclosed | security vulnerability | ## CVE-2020-27152 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home p... | True | CVE-2020-27152 (Medium) detected in linux-stable-rtv4.1.33 - autoclosed - ## CVE-2020-27152 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p... | non_process | cve medium detected in linux stable autoclosed cve medium severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulner... | 0 |

2,047 | 4,857,090,318 | IssuesEvent | 2016-11-12 12:06:20 | lxde/lxqt | https://api.github.com/repos/lxde/lxqt | reopened | kalarm crash after closing in LXQT | invalid/dup/rejected wont-process-this | **How to reproduce:**

1. Start Kalarm

2. Close Kalarm.

3. get Crash

**Software used**

1. Fedora 25

2. lxqt 0.11

3. Qt 5.7

4. kalarm-16.08.2-1.fc25

**Expected behavior**

Kalarm exit without crash

**Actual behavior**

kalarm crashes after exit

Backtrace is here: http://pastebin.com/3ay0FxxL

More inf... | 1.0 | kalarm crash after closing in LXQT - **How to reproduce:**

1. Start Kalarm

2. Close Kalarm.

3. get Crash

**Software used**

1. Fedora 25

2. lxqt 0.11

3. Qt 5.7

4. kalarm-16.08.2-1.fc25

**Expected behavior**

Kalarm exit without crash

**Actual behavior**

kalarm crashes after exit

Backtrace is here: h... | process | kalarm crash after closing in lxqt how to reproduce start kalarm close kalarm get crash software used fedora lxqt qt kalarm expected behavior kalarm exit without crash actual behavior kalarm crashes after exit backtrace is here more ... | 1 |

11,324 | 14,140,458,334 | IssuesEvent | 2020-11-10 11:14:01 | kubeflow/website | https://api.github.com/repos/kubeflow/website | closed | [Release 1.1] Release Website 1.1 | area/docs kind/process lifecycle/stale priority/p0 | Opening this issue to track doing a release of the website for Kubeflow 1.1

* We still need to find an owner to drive this (see kubeflow/kubeflow#5022)

* The first step is cutting a 1.0 branch from master providing a stable link to the docs so we can begin updating the docs on master.

* Docs for versioning the... | 1.0 | [Release 1.1] Release Website 1.1 - Opening this issue to track doing a release of the website for Kubeflow 1.1

* We still need to find an owner to drive this (see kubeflow/kubeflow#5022)

* The first step is cutting a 1.0 branch from master providing a stable link to the docs so we can begin updating the docs on ... | process | release website opening this issue to track doing a release of the website for kubeflow we still need to find an owner to drive this see kubeflow kubeflow the first step is cutting a branch from master providing a stable link to the docs so we can begin updating the docs on master do... | 1 |

18,733 | 24,627,815,100 | IssuesEvent | 2022-10-16 18:44:57 | benthosdev/benthos | https://api.github.com/repos/benthosdev/benthos | closed | why deprecate the parquet component | question processors needs more info | Hello,

I see in the document that the request is marked as deprecated. This component is being used in our project. What is the main reason for deprecated.

| 1.0 | why deprecate the parquet component - Hello,

I see in the document that the request is marked as deprecated. This component is being used in our project. What is the main reason for deprecated.

| process | why deprecate the parquet component hello i see in the document that the request is marked as deprecated this component is being used in our project what is the main reason for deprecated | 1 |

7,566 | 10,682,242,740 | IssuesEvent | 2019-10-22 04:27:55 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [FEATURE][processing] Add new algorithm "Print layout map extent to layer" | 3.8 Automatic new feature Processing Alg | Original commit: https://github.com/qgis/QGIS/commit/92c8fddac2bca80c60ff0978d34dc01e9db5bb79 by nyalldawson

This algorithm creates a polygon layer containing the extent

of a print layout map item, with attributes specifying the map

size (in layout units), scale and rotatation.

The main use case is when you want to c... | 1.0 | [FEATURE][processing] Add new algorithm "Print layout map extent to layer" - Original commit: https://github.com/qgis/QGIS/commit/92c8fddac2bca80c60ff0978d34dc01e9db5bb79 by nyalldawson

This algorithm creates a polygon layer containing the extent

of a print layout map item, with attributes specifying the map

size (in ... | process | add new algorithm print layout map extent to layer original commit by nyalldawson this algorithm creates a polygon layer containing the extent of a print layout map item with attributes specifying the map size in layout units scale and rotatation the main use case is when you want to create an advanced... | 1 |

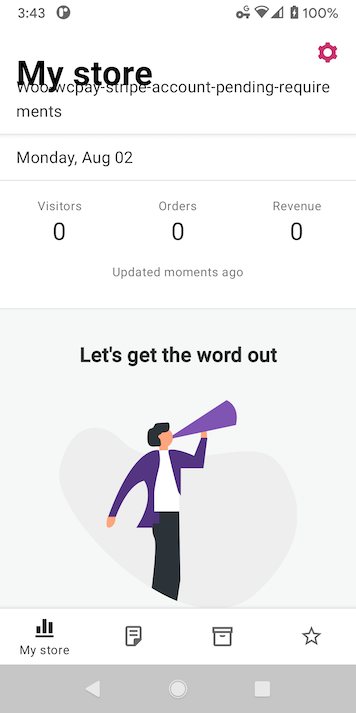

156,209 | 24,583,862,853 | IssuesEvent | 2022-10-13 17:52:24 | woocommerce/woocommerce-android | https://api.github.com/repos/woocommerce/woocommerce-android | closed | Long store names in My Store | type: bug feature: stats good first issue category: design | If a store has a name long enough to wrap to another line, it overlaps the "My Store" heading.

| 1.0 | Long store names in My Store - If a store has a name long enough to wrap to another line, it overlaps the "My Store" heading.

| non_process | long store names in my store if a store has a name long enough to wrap to another line it overlaps the my store heading | 0 |

16,086 | 20,254,340,740 | IssuesEvent | 2022-02-14 21:18:24 | scikit-learn/scikit-learn | https://api.github.com/repos/scikit-learn/scikit-learn | closed | Multi-target GPR predicts only 1 std when normalize_y=False | Bug module:gaussian_process | ### Describe the bug

Supposed to have been fixed in [#20761](https://github.com/scikit-learn/scikit-learn/pull/20761)?

See #22199