Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

8,924 | 12,032,525,281 | IssuesEvent | 2020-04-13 12:21:17 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | Testing new preprocessor | enhancement preprocessor | We should add tests for the new backend.

A lot of work has already been done, most recently @jvegasbsc added to the tests here:

https://github.com/ESMValGroup/ESMValTool/tree/REFACTORING_fixes/tests | 1.0 | Testing new preprocessor - We should add tests for the new backend.

A lot of work has already been done, most recently @jvegasbsc added to the tests here:

https://github.com/ESMValGroup/ESMValTool/tree/REFACTORING_fixes/tests | process | testing new preprocessor we should add tests for the new backend a lot of work has already been done most recently jvegasbsc added to the tests here | 1 |

714,921 | 24,580,890,901 | IssuesEvent | 2022-10-13 15:31:50 | WFP-VAM/prism-app | https://api.github.com/repos/WFP-VAM/prism-app | closed | Failed GetCapabilities request from WFP GeoServer prevents app from loading | bug priority:high | Something has gone wrong with the WMS GetCapabilities response from WFP's GeoServer. But instead of just failing and allowing the application to load, the loading circle is continuously spinning and the app is unusable.

This is an active issue on this surge deployment:

https://prism-moz.surge.sh/?

This request ... | 1.0 | Failed GetCapabilities request from WFP GeoServer prevents app from loading - Something has gone wrong with the WMS GetCapabilities response from WFP's GeoServer. But instead of just failing and allowing the application to load, the loading circle is continuously spinning and the app is unusable.

This is an active ... | non_process | failed getcapabilities request from wfp geoserver prevents app from loading something has gone wrong with the wms getcapabilities response from wfp s geoserver but instead of just failing and allowing the application to load the loading circle is continuously spinning and the app is unusable this is an active ... | 0 |

21,068 | 28,017,151,570 | IssuesEvent | 2023-03-28 00:14:56 | nephio-project/sig-release | https://api.github.com/repos/nephio-project/sig-release | opened | Create Nephio docker registries and make sure builds pushing images to them | area/process-mgmt sig/release | We need to make sure we have the docker registry available to push and pull images. We need to create the resgistry and ensure build pipelines can push/pull from them. | 1.0 | Create Nephio docker registries and make sure builds pushing images to them - We need to make sure we have the docker registry available to push and pull images. We need to create the resgistry and ensure build pipelines can push/pull from them. | process | create nephio docker registries and make sure builds pushing images to them we need to make sure we have the docker registry available to push and pull images we need to create the resgistry and ensure build pipelines can push pull from them | 1 |

6,052 | 8,872,423,843 | IssuesEvent | 2019-01-11 15:23:32 | kiwicom/orbit-components | https://api.github.com/repos/kiwicom/orbit-components | closed | <Stepper> component | Enhancement Processing | ## Description

Stepper allows users to easily change the amount of something by increments. Is great to use for passengers or baggage.

## Visual style

Zeplin: https://zpl.io/aRE0XMn

### Interactio... | 1.0 | <Stepper> component - ## Description

Stepper allows users to easily change the amount of something by increments. Is great to use for passengers or baggage.

## Visual style

Zeplin: https://zpl.io/aRE... | process | component description stepper allows users to easily change the amount of something by increments is great to use for passengers or baggage visual style zeplin interactions just button same as default functional specs it s based on stepper from search passenger popover ... | 1 |

135,499 | 19,584,483,941 | IssuesEvent | 2022-01-05 03:52:53 | JaydenDev/Catalyst | https://api.github.com/repos/JaydenDev/Catalyst | closed | Scroll cutoff in Preferences | bug help wanted good first issue design frontend high priority | If you drag the window and make it smaller, then open settings, then you can scroll down, but then it is cut off where it says "bookmarks". | 1.0 | Scroll cutoff in Preferences - If you drag the window and make it smaller, then open settings, then you can scroll down, but then it is cut off where it says "bookmarks". | non_process | scroll cutoff in preferences if you drag the window and make it smaller then open settings then you can scroll down but then it is cut off where it says bookmarks | 0 |

20,873 | 27,659,299,361 | IssuesEvent | 2023-03-12 10:41:28 | firebase/firebase-cpp-sdk | https://api.github.com/repos/firebase/firebase-cpp-sdk | closed | [C++] Nightly Integration Testing Report | type: process nightly-testing | Note: This report excludes firestore. Please also check **[the report for firestore](https://github.com/firebase/firebase-cpp-sdk/issues/1178)**

***

<hidden value="integration-test-status-comment"></hidden>

### ❌ [build against repo] Integration test FAILED

Requested by @DellaBitta on commit cb719bd3b53128ac6e... | 1.0 | [C++] Nightly Integration Testing Report - Note: This report excludes firestore. Please also check **[the report for firestore](https://github.com/firebase/firebase-cpp-sdk/issues/1178)**

***

<hidden value="integration-test-status-comment"></hidden>

### ❌ [build against repo] Integration test FAILED

Requested ... | process | nightly integration testing report note this report excludes firestore please also check ❌ nbsp integration test failed requested by dellabitta on commit last updated sat mar pst failures configs database add flaky tests to ... | 1 |

94,204 | 15,962,352,168 | IssuesEvent | 2021-04-16 01:07:22 | RG4421/crayons | https://api.github.com/repos/RG4421/crayons | opened | CVE-2021-23341 (High) detected in prismjs-1.17.1.tgz, prismjs-1.20.0.tgz | security vulnerability | ## CVE-2021-23341 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>prismjs-1.17.1.tgz</b>, <b>prismjs-1.20.0.tgz</b></p></summary>

<p>

<details><summary><b>prismjs-1.17.1.tgz</b></p><... | True | CVE-2021-23341 (High) detected in prismjs-1.17.1.tgz, prismjs-1.20.0.tgz - ## CVE-2021-23341 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>prismjs-1.17.1.tgz</b>, <b>prismjs-1.20.0.... | non_process | cve high detected in prismjs tgz prismjs tgz cve high severity vulnerability vulnerable libraries prismjs tgz prismjs tgz prismjs tgz lightweight robust elegant syntax highlighting a spin off project from dabblet library home page a href ... | 0 |

295,948 | 9,102,655,504 | IssuesEvent | 2019-02-20 14:16:42 | NickBurneConsulting-GivePanel/givepanel | https://api.github.com/repos/NickBurneConsulting-GivePanel/givepanel | opened | Total raised column on Fundraiser Table | Priority | Should not include Gift Aid.

This will make it easier when onboarding non-UK countries.

How Gift Aid works on the Fundraiser details panel is fine. | 1.0 | Total raised column on Fundraiser Table - Should not include Gift Aid.

This will make it easier when onboarding non-UK countries.

How Gift Aid works on the Fundraiser details panel is fine. | non_process | total raised column on fundraiser table should not include gift aid this will make it easier when onboarding non uk countries how gift aid works on the fundraiser details panel is fine | 0 |

109,421 | 16,845,820,036 | IssuesEvent | 2021-06-19 13:08:46 | mukul-seagate11/cortx-s3server | https://api.github.com/repos/mukul-seagate11/cortx-s3server | closed | CVE-2020-24616 (High) detected in jackson-databind-2.6.6.jar | security vulnerability | ## CVE-2020-24616 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.6.6.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2020-24616 (High) detected in jackson-databind-2.6.6.jar - ## CVE-2020-24616 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.6.6.jar</b></p></summary>

<p>General... | non_process | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file cortx auth utils jclient pom ... | 0 |

13,325 | 15,788,046,099 | IssuesEvent | 2021-04-01 20:10:32 | modm-io/modm | https://api.github.com/repos/modm-io/modm | closed | Tags and Releases are missing. | process 📊 | [Some](https://github.com/modm-io/modm/commit/c442b5ce1fc4630a37f2b3bf7e126ead8091f816) commits are marked as releases, although no Github [Releases](https://github.com/modm-io/modm/releases) and not [tags](https://github.com/modm-io/modm/tags) are defined. | 1.0 | Tags and Releases are missing. - [Some](https://github.com/modm-io/modm/commit/c442b5ce1fc4630a37f2b3bf7e126ead8091f816) commits are marked as releases, although no Github [Releases](https://github.com/modm-io/modm/releases) and not [tags](https://github.com/modm-io/modm/tags) are defined. | process | tags and releases are missing commits are marked as releases although no github and not are defined | 1 |

17,167 | 22,743,401,261 | IssuesEvent | 2022-07-07 06:56:44 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | opened | Refactor StreamProcessor / Engine | kind/toil team/distributed team/process-automation | **Description**

Part of #9600

**Todo:**

- [ ] Find a good name for the StreamProcessor

- [ ] Rename the current StreamProcessor, e.g. StreamingPlatform (see #9602)

- [ ] Introduce a new StreamProcessor interface, which covers the reality (this needs to be adjusted during progressing with #9600 )

... | 1.0 | Refactor StreamProcessor / Engine - **Description**

Part of #9600

**Todo:**

- [ ] Find a good name for the StreamProcessor

- [ ] Rename the current StreamProcessor, e.g. StreamingPlatform (see #9602)

- [ ] Introduce a new StreamProcessor interface, which covers the reality (this needs to be adjusted d... | process | refactor streamprocessor engine description part of todo find a good name for the streamprocessor rename the current streamprocessor e g streamingplatform see introduce a new streamprocessor interface which covers the reality this needs to be adjusted during progre... | 1 |

386,359 | 11,437,523,221 | IssuesEvent | 2020-02-05 00:15:29 | Alluxio/alluxio | https://api.github.com/repos/Alluxio/alluxio | closed | Remove unnecessary OSS logging due to deleteFileIfExists | area-ufs priority-low type-bug | **Alluxio Version:**

2.1.1

**Describe the bug**

Excessive unnecessary logging on checking object existence due to `deleteFileIfExists` using OSS

```

2020-02-04 22:02:03,816 WARN OSSUnderFileSystem - Failed to get Object io-test/.alluxio_ufs_blocks.alluxio.0x1D91AC0E01AB0165.tmp/234377707520, return null

co... | 1.0 | Remove unnecessary OSS logging due to deleteFileIfExists - **Alluxio Version:**

2.1.1

**Describe the bug**

Excessive unnecessary logging on checking object existence due to `deleteFileIfExists` using OSS

```

2020-02-04 22:02:03,816 WARN OSSUnderFileSystem - Failed to get Object io-test/.alluxio_ufs_blocks.a... | non_process | remove unnecessary oss logging due to deletefileifexists alluxio version describe the bug excessive unnecessary logging on checking object existence due to deletefileifexists using oss warn ossunderfilesystem failed to get object io test alluxio ufs blocks alluxio t... | 0 |

17,623 | 23,442,845,593 | IssuesEvent | 2022-08-15 16:31:03 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Unable to delete Obj1 if Obj2 references it with Action: SetNull | bug/0-unknown kind/bug process/candidate topic: broken query topic: relations team/client topic: referential actions topic: referentialIntegrity | ### Bug description

When using the `Action: SetNull` referential action for a model relationship the deletion fails and throws an error about violating a foreign key constraint:

```

Invalid `prisma.hub.delete()` invocation:\n\n\n Foreign key constraint failed on the field: `BatteryLevel_hubId_fkey (index)`

```

... | 1.0 | Unable to delete Obj1 if Obj2 references it with Action: SetNull - ### Bug description

When using the `Action: SetNull` referential action for a model relationship the deletion fails and throws an error about violating a foreign key constraint:

```

Invalid `prisma.hub.delete()` invocation:\n\n\n Foreign key const... | process | unable to delete if references it with action setnull bug description when using the action setnull referential action for a model relationship the deletion fails and throws an error about violating a foreign key constraint invalid prisma hub delete invocation n n n foreign key constraint ... | 1 |

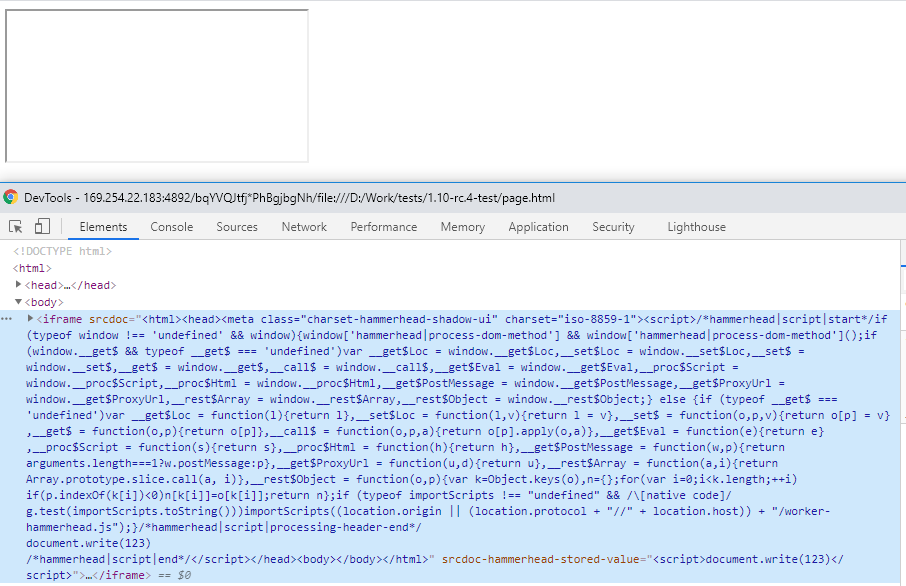

11,520 | 14,401,109,274 | IssuesEvent | 2020-12-03 13:18:32 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | Scripts in iframe's srcdoc not executing | AREA: client SYSTEM: iframe processing TYPE: bug | ### What is your Scenario?

An iframe with srcdoc containing a script.

### What is the Current behavior?

The script does not execute under TestCafe.

### What is the Expected beh... | 1.0 | Scripts in iframe's srcdoc not executing - ### What is your Scenario?

An iframe with srcdoc containing a script.

### What is the Current behavior?

The script does not execute under TestCafe.

instead of `assert_log_level(caplog, 'warning')`

* It's potentially unnecessary to have to pass `caplog` to `assert_log_level`. I think we might be able to just use a fixture. `def test_somthing(assert_logs_warning... | 1.0 | Better `assert_log_level` - * Dont like that that you pass the log level as an int and not a string, ie `assert_log_level(caplog,30) instead of `assert_log_level(caplog, 'warning')`

* It's potentially unnecessary to have to pass `caplog` to `assert_log_level`. I think we might be able to just use a fixture. `def test_... | non_process | better assert log level dont like that that you pass the log level as an int and not a string ie assert log level caplog instead of assert log level caplog warning it s potentially unnecessary to have to pass caplog to assert log level i think we might be able to just use a fixture def test s... | 0 |

13,599 | 8,293,055,846 | IssuesEvent | 2018-09-20 04:31:03 | Microsoft/visualfsharp | https://api.github.com/repos/Microsoft/visualfsharp | closed | IL Generation: struct and struct records methods are slower | Feature Improvement Ready Tenet-Performance | Doing a diff between structs and struct records generated code, I noticed that an extra copy is done in methods using copy and update.

#### Repro steps

- Create a struct type

- Create a struct record

- implement the same method that copy and update the type

- compare IL both

``` F#

[<Struct>]

type Structu... | True | IL Generation: struct and struct records methods are slower - Doing a diff between structs and struct records generated code, I noticed that an extra copy is done in methods using copy and update.

#### Repro steps

- Create a struct type

- Create a struct record

- implement the same method that copy and update t... | non_process | il generation struct and struct records methods are slower doing a diff between structs and struct records generated code i noticed that an extra copy is done in methods using copy and update repro steps create a struct type create a struct record implement the same method that copy and update t... | 0 |

31,045 | 11,865,707,942 | IssuesEvent | 2020-03-26 01:17:48 | freedomofpress/securedrop-workstation | https://api.github.com/repos/freedomofpress/securedrop-workstation | closed | Remove non securedrop-client entries from sd-svs app menus | security | followup from https://github.com/freedomofpress/securedrop-workstation/issues/198:

> we have an icon for "securedrop-client" in the menu, but it's buried among the many other entries. To resolve this issue, let's update the app menu for sd-svs to contain only the relevant entry | True | Remove non securedrop-client entries from sd-svs app menus - followup from https://github.com/freedomofpress/securedrop-workstation/issues/198:

> we have an icon for "securedrop-client" in the menu, but it's buried among the many other entries. To resolve this issue, let's update the app menu for sd-svs to contain o... | non_process | remove non securedrop client entries from sd svs app menus followup from we have an icon for securedrop client in the menu but it s buried among the many other entries to resolve this issue let s update the app menu for sd svs to contain only the relevant entry | 0 |

12,433 | 3,075,274,400 | IssuesEvent | 2015-08-20 12:49:42 | mesosphere/marathon | https://api.github.com/repos/mesosphere/marathon | opened | Advanced JSON editing mode in app modal | design enhancement gui | As a user, I want to create an app using [all fields](http://mesosphere.github.io/marathon/docs/rest-api.html#post-v2-apps) in its definition from the GUI.

Currently the app modal does not expose all the fields available in the API. This means that a user cannot, for example, specify `forcePullImage=true` on a dock... | 1.0 | Advanced JSON editing mode in app modal - As a user, I want to create an app using [all fields](http://mesosphere.github.io/marathon/docs/rest-api.html#post-v2-apps) in its definition from the GUI.

Currently the app modal does not expose all the fields available in the API. This means that a user cannot, for exampl... | non_process | advanced json editing mode in app modal as a user i want to create an app using in its definition from the gui currently the app modal does not expose all the fields available in the api this means that a user cannot for example specify forcepullimage true on a docker application the modal would b... | 0 |

149,627 | 11,907,219,252 | IssuesEvent | 2020-03-30 21:50:00 | pantsbuild/pants | https://api.github.com/repos/pantsbuild/pants | closed | TestArtifactCache.test_local_backed_remote_cache is flaky | flaky-test test-skipped | Looks like:

```

tests/python/pants_test/cache:artifact_cache stdout:

============================= test session starts ==============================

platform linux -- Python 3.6.8, pytest-5.3.5, py-1.8.1, pluggy-0.13.1

rootdir: /b/f/w

plugins: cov-2.8.1, timeout-1.3.4

collected 13 items

pants_test/cache/test_a... | 2.0 | TestArtifactCache.test_local_backed_remote_cache is flaky - Looks like:

```

tests/python/pants_test/cache:artifact_cache stdout:

============================= test session starts ==============================

platform linux -- Python 3.6.8, pytest-5.3.5, py-1.8.1, pluggy-0.13.1

rootdir: /b/f/w

plugins: cov-2.8.1... | non_process | testartifactcache test local backed remote cache is flaky looks like tests python pants test cache artifact cache stdout test session starts platform linux python pytest py pluggy rootdir b f w plugins cov ... | 0 |

757,862 | 26,532,814,758 | IssuesEvent | 2023-01-19 13:44:12 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Crash on wallet creation | crash priority/P2 QA/Yes release-notes/include feature/web3/wallet OS/Android | There is a crash from time to time on wallet creation with such a stack trace:

```

2023-01-13 14:04:07.700 28199-28199 System.err pid-28199 W java.lang.NullPointerException: Attempt to invoke virtual method 'void org.chromium.brave_wallet.mojom.BraveWalletService_Internal$Brav... | 1.0 | Crash on wallet creation - There is a crash from time to time on wallet creation with such a stack trace:

```

2023-01-13 14:04:07.700 28199-28199 System.err pid-28199 W java.lang.NullPointerException: Attempt to invoke virtual method 'void org.chromium.brave_wallet.mojom.Brave... | non_process | crash on wallet creation there is a crash from time to time on wallet creation with such a stack trace system err pid w java lang nullpointerexception attempt to invoke virtual method void org chromium brave wallet mojom bravewalletservice internal... | 0 |

348,655 | 31,707,815,771 | IssuesEvent | 2023-09-09 00:19:26 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | closed | It is better to update the tooltip of 'Select All' to 'Select All in page' in blob explorer | :heavy_check_mark: merged 🧪 testing :gear: blobs :beetle: regression :gear: adls gen2 | **Storage Explorer Version**: 1.32.0-dev (93)

**Build Number**: 20230906.9

**Branch**: main

**Platform/OS**: Windows 10/Linux Ubuntu 20.04/MacOS Ventura 13.5 (Apple M1 Pro)

**Architecture**: x64/x64/x64

**How Found**: Exploratory testing

**Regression From**: Previous release (1.30.2)

## Steps to Reproduce ##

... | 1.0 | It is better to update the tooltip of 'Select All' to 'Select All in page' in blob explorer - **Storage Explorer Version**: 1.32.0-dev (93)

**Build Number**: 20230906.9

**Branch**: main

**Platform/OS**: Windows 10/Linux Ubuntu 20.04/MacOS Ventura 13.5 (Apple M1 Pro)

**Architecture**: x64/x64/x64

**How Found**: Exp... | non_process | it is better to update the tooltip of select all to select all in page in blob explorer storage explorer version dev build number branch main platform os windows linux ubuntu macos ventura apple pro architecture how found exploratory testing ... | 0 |

38,912 | 19,598,671,936 | IssuesEvent | 2022-01-05 21:20:53 | OpenNeuroOrg/openneuro | https://api.github.com/repos/OpenNeuroOrg/openneuro | closed | Server side rendering for very large dataset snapshots is slow | bug performance | **Describe the bug**

It does load but client side rendering for ds002685 takes about two seconds and server side is around ten seconds. Server is often faster for small datasets, so this could be optimized.

**To Reproduce**

Steps to reproduce the behavior:

1. Load a dataset with a high file count with server rend... | True | Server side rendering for very large dataset snapshots is slow - **Describe the bug**

It does load but client side rendering for ds002685 takes about two seconds and server side is around ten seconds. Server is often faster for small datasets, so this could be optimized.

**To Reproduce**

Steps to reproduce the beh... | non_process | server side rendering for very large dataset snapshots is slow describe the bug it does load but client side rendering for takes about two seconds and server side is around ten seconds server is often faster for small datasets so this could be optimized to reproduce steps to reproduce the behavior ... | 0 |

25,470 | 3,932,862,157 | IssuesEvent | 2016-04-25 17:08:04 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | closed | C# Language Design Review, Apr 22, 2015 | Area-Language Design Design Notes Language-C# Language-VB | # C# Language Design Review, Apr 22, 2015

## Agenda

See #1921 for an explanation of design reviews and how they differ from design meetings.

1. Expression tree extension

2. Nullable reference types

3. Facilitating wire formats

4. Bucketing

# Expression Trees

Expression trees are currently lagging be... | 2.0 | C# Language Design Review, Apr 22, 2015 - # C# Language Design Review, Apr 22, 2015

## Agenda

See #1921 for an explanation of design reviews and how they differ from design meetings.

1. Expression tree extension

2. Nullable reference types

3. Facilitating wire formats

4. Bucketing

# Expression Trees

... | non_process | c language design review apr c language design review apr agenda see for an explanation of design reviews and how they differ from design meetings expression tree extension nullable reference types facilitating wire formats bucketing expression trees expression... | 0 |

2,467 | 5,243,092,474 | IssuesEvent | 2017-01-31 19:46:07 | opentrials/opentrials | https://api.github.com/repos/opentrials/opentrials | closed | Custom processor to get trial source count data to CSV | enhancement Processors | # Description

For each trial, write a record of:

- ID

- SOURCE_COUNT (# of sources for that trial)

- PRIMARY_ID

- SECONDARY_ID{_1;_2;etc} - iterate over the ids to create columns

- {SOURCE_NAME} ("Yes" or "No") indicating if THIS source provided data.

Example:

```

ID | SOURCE_COUNT | PRIMARY_ID | SECONDARY_ID_1 | SE... | 1.0 | Custom processor to get trial source count data to CSV - # Description

For each trial, write a record of:

- ID

- SOURCE_COUNT (# of sources for that trial)

- PRIMARY_ID

- SECONDARY_ID{_1;_2;etc} - iterate over the ids to create columns

- {SOURCE_NAME} ("Yes" or "No") indicating if THIS source provided data.

Example:

... | process | custom processor to get trial source count data to csv description for each trial write a record of id source count of sources for that trial primary id secondary id etc iterate over the ids to create columns source name yes or no indicating if this source provided data example ... | 1 |

9,867 | 12,880,748,231 | IssuesEvent | 2020-07-12 07:59:09 | ruby-processing/propane | https://api.github.com/repos/ruby-processing/propane | closed | There is expected to be an issue with the video library on macosx | waiting for vanilla processing | ### Problem

MacOS binaries should be in the `macosx` folder, but the video library is in `macosx64` folder

### Suggested fix

Re name the native binaries `macosx64` folder to `macosx` (in the library you downloaded to `~/.propane` folder)

You should note that the library loader is scheduled for a major overhau... | 1.0 | There is expected to be an issue with the video library on macosx - ### Problem

MacOS binaries should be in the `macosx` folder, but the video library is in `macosx64` folder

### Suggested fix

Re name the native binaries `macosx64` folder to `macosx` (in the library you downloaded to `~/.propane` folder)

You ... | process | there is expected to be an issue with the video library on macosx problem macos binaries should be in the macosx folder but the video library is in folder suggested fix re name the native binaries folder to macosx in the library you downloaded to propane folder you should note th... | 1 |

222,326 | 17,407,512,484 | IssuesEvent | 2021-08-03 08:08:45 | Azure/azure-sdk-for-js | https://api.github.com/repos/Azure/azure-sdk-for-js | closed | Azure Web PubSub Samples Issue | Client WebPubSub needs-team-triage test-manual-pass | 1.

Section [link1](https://github.com/Azure/azure-sdk-for-js/blob/main/sdk/web-pubsub/web-pubsub/samples/v1/javascript/directMessage.js#L20),[link2](https://github.com/Azure/azure-sdk-for-js/blob/main/sdk/web-pubsub/web-pubsub/samples/v1/typescript/src/directMessage.ts#L20),[link3](https://github.com/Azure/azure-sdk-f... | 1.0 | Azure Web PubSub Samples Issue - 1.

Section [link1](https://github.com/Azure/azure-sdk-for-js/blob/main/sdk/web-pubsub/web-pubsub/samples/v1/javascript/directMessage.js#L20),[link2](https://github.com/Azure/azure-sdk-for-js/blob/main/sdk/web-pubsub/web-pubsub/samples/v1/typescript/src/directMessage.ts#L20),[link3](ht... | non_process | azure web pubsub samples issue section reason according to the commments it should use sendtoconnection suggestion update sendtouser to sendtoconnection lilyjma ramya rao a nickzhums bterlson and jongio for notification | 0 |

298,265 | 25,809,740,813 | IssuesEvent | 2022-12-11 18:45:25 | Spacha/PoliisiautoServer | https://api.github.com/repos/Spacha/PoliisiautoServer | closed | Add feature tests to the server | testing | * Test all endpoints - basically all the endpoints that are added in Postman

* Authentication and security in general | 1.0 | Add feature tests to the server - * Test all endpoints - basically all the endpoints that are added in Postman

* Authentication and security in general | non_process | add feature tests to the server test all endpoints basically all the endpoints that are added in postman authentication and security in general | 0 |

170,567 | 6,447,769,964 | IssuesEvent | 2017-08-14 09:00:35 | Caleydo/ordino | https://api.github.com/repos/Caleydo/ordino | opened | bug: welcome arrow not visible | bug low priority | * Release number or git hash: latest dev

* Web browser version and OS: firefox, win10

* Environment (local or deployed): deployed

see

| 1.0 | bug: welcome arrow not visible - * Release number or git hash: latest dev

* Web browser version and OS: firefox, win10

* Environment (local or deployed): deployed

see

| non_process | bug welcome arrow not visible release number or git hash latest dev web browser version and os firefox environment local or deployed deployed see | 0 |

465 | 2,903,456,853 | IssuesEvent | 2015-06-18 13:33:06 | pwittchen/prefser | https://api.github.com/repos/pwittchen/prefser | closed | Deploy new version of the library to Maven Central Repository | release process | Deploy library v. 1.0.5. after updating version. | 1.0 | Deploy new version of the library to Maven Central Repository - Deploy library v. 1.0.5. after updating version. | process | deploy new version of the library to maven central repository deploy library v after updating version | 1 |

22,267 | 30,820,377,984 | IssuesEvent | 2023-08-01 15:55:31 | symfony/symfony | https://api.github.com/repos/symfony/symfony | closed | ProcessTest::testWaitStoppedDeadProcess() passing even though it should actually fail | Bug Process Status: Needs Review | ### Symfony version(s) affected

*

### Description

The `ProcessTest::testWaitStoppedDeadProcess()` uses the `ErrorProcessInitiator.php` which itself includes the Composer autoload.php. This file, however, does not exist at all in `symfony/symfony`.

### How to reproduce

```

public function testWaitStoppedDead... | 1.0 | ProcessTest::testWaitStoppedDeadProcess() passing even though it should actually fail - ### Symfony version(s) affected

*

### Description

The `ProcessTest::testWaitStoppedDeadProcess()` uses the `ErrorProcessInitiator.php` which itself includes the Composer autoload.php. This file, however, does not exist at all in ... | process | processtest testwaitstoppeddeadprocess passing even though it should actually fail symfony version s affected description the processtest testwaitstoppeddeadprocess uses the errorprocessinitiator php which itself includes the composer autoload php this file however does not exist at all in ... | 1 |

309,625 | 26,671,135,193 | IssuesEvent | 2023-01-26 10:22:35 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/lens/group2/dashboard·ts - lens app - group 2 lens dashboard tests CSV export action exists in panel context menu | Team:Visualizations failed-test | A test failed on a tracked branch

```

Error: expected testSubject(embeddablePanelAction-ACTION_EXPORT_CSV) to exist

at TestSubjects.existOrFail (test_subjects.ts:71:13)

at Context.<anonymous> (dashboard.ts:158:7)

at Object.apply (wrap_function.js:73:16)

```

First failure: [CI Build - main](https://buildki... | 1.0 | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/lens/group2/dashboard·ts - lens app - group 2 lens dashboard tests CSV export action exists in panel context menu - A test failed on a tracked branch

```

Error: expected testSubject(embeddablePanelAction-ACTION_EXPORT_CSV) to exist

at Test... | non_process | failing test chrome x pack ui functional tests x pack test functional apps lens dashboard·ts lens app group lens dashboard tests csv export action exists in panel context menu a test failed on a tracked branch error expected testsubject embeddablepanelaction action export csv to exist at testsubje... | 0 |

1,948 | 4,770,934,480 | IssuesEvent | 2016-10-26 16:31:31 | mozilla/tofino | https://api.github.com/repos/mozilla/tofino | closed | The commandline API doesn't properly check if running in a test or development environment | backend:main-process bug in progress | The following line in app/main/command-line.js:

```process.env.NODE_ENV !== 'development' && process.env.NODE_ENV !== 'test'```

is completely broken since the node environment can never be "test" (instead, a `TEST` flag exists on the node environment variables), and the "development" build needs to be tested... | 1.0 | The commandline API doesn't properly check if running in a test or development environment - The following line in app/main/command-line.js:

```process.env.NODE_ENV !== 'development' && process.env.NODE_ENV !== 'test'```

is completely broken since the node environment can never be "test" (instead, a `TEST` f... | process | the commandline api doesn t properly check if running in a test or development environment the following line in app main command line js process env node env development process env node env test is completely broken since the node environment can never be test instead a test f... | 1 |

15,601 | 19,723,955,613 | IssuesEvent | 2022-01-13 17:58:57 | dtcenter/MET | https://api.github.com/repos/dtcenter/MET | closed | Modify the interpretation of the message_type_group_map values to support the use of regular expressions. | type: enhancement priority: high requestor: Community reporting: DTC NCAR Base required: FOR OFFICIAL RELEASE MET: PreProcessing Tools (Point) | ## Describe the Enhancement ##

This issue arose via METplus Discussions dtcenter/METplus#1232. While the user was able to run madis2nc to compute time summaries, he was NOT able to get Point-Stat to read them to verify forecasts of daily temperature min/max.

I was able to replicate the problem using the sample data... | 1.0 | Modify the interpretation of the message_type_group_map values to support the use of regular expressions. - ## Describe the Enhancement ##

This issue arose via METplus Discussions dtcenter/METplus#1232. While the user was able to run madis2nc to compute time summaries, he was NOT able to get Point-Stat to read them to... | process | modify the interpretation of the message type group map values to support the use of regular expressions describe the enhancement this issue arose via metplus discussions dtcenter metplus while the user was able to run to compute time summaries he was not able to get point stat to read them to verify fo... | 1 |

16,706 | 21,843,253,392 | IssuesEvent | 2022-05-18 00:15:00 | lbryio/scribe | https://api.github.com/repos/lbryio/scribe | closed | Scribe writer does not use `multi_get` api | area: block processor type: feature request | The writer can be made a good bit faster by batching the RevertablePut and RevertableDelete ops given to `RevertableOpStack.extend_ops`, internally combining them into fewer `multi_get` calls instead of verifying integrity on each key. | 1.0 | Scribe writer does not use `multi_get` api - The writer can be made a good bit faster by batching the RevertablePut and RevertableDelete ops given to `RevertableOpStack.extend_ops`, internally combining them into fewer `multi_get` calls instead of verifying integrity on each key. | process | scribe writer does not use multi get api the writer can be made a good bit faster by batching the revertableput and revertabledelete ops given to revertableopstack extend ops internally combining them into fewer multi get calls instead of verifying integrity on each key | 1 |
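
The batching idea in the row above can be sketched roughly as follows. This is only an illustration under stated assumptions: `CountingStore`, `verify_one_by_one`, and `verify_batched` are hypothetical names invented here and are not part of scribe or of any real key-value store API; the store is a plain dictionary that counts how many lookups it receives.

```python
class CountingStore:
    """Hypothetical in-memory store that counts lookup round trips."""

    def __init__(self, data):
        self._data = dict(data)
        self.round_trips = 0

    def get(self, key):
        # One round trip per key.
        self.round_trips += 1
        return self._data.get(key)

    def multi_get(self, keys):
        # One round trip for the whole batch of keys.
        self.round_trips += 1
        return [self._data.get(key) for key in keys]


def verify_one_by_one(store, expected):
    """Check each expected key/value pair with its own lookup."""
    return all(store.get(key) == value for key, value in expected.items())


def verify_batched(store, expected):
    """Check all expected key/value pairs with a single batched lookup."""
    keys = list(expected)
    values = store.multi_get(keys)
    return all(value == expected[key] for key, value in zip(keys, values))


expected = {b"a": b"1", b"b": b"2", b"c": b"3"}

slow_store = CountingStore(expected)
slow_ok = verify_one_by_one(slow_store, expected)

fast_store = CountingStore(expected)
fast_ok = verify_batched(fast_store, expected)

print(slow_ok, slow_store.round_trips)
print(fast_ok, fast_store.round_trips)
```

Both checks reach the same verdict; the batched version simply issues fewer lookups, which is the saving the issue is asking for.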

7,367 | 10,511,370,358 | IssuesEvent | 2019-09-27 15:18:37 | prisma/studio | https://api.github.com/repos/prisma/studio | closed | Easy browser-based development setup | kind/improvement process/candidate | - live reload

- easy local linking

- example data sets

- preview deployments (e.g. Netlify) | 1.0 | Easy browser-based development setup - - live reload

- easy local linking

- example data sets

- preview deployments (e.g. Netlify) | process | easy browser based development setup live reload easy local linking example data sets preview deployments e g netlify | 1 |

21,422 | 29,359,592,438 | IssuesEvent | 2023-05-28 00:36:59 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Hybrid / Belo Horizonte] Data Analyst at Coodesh | SALVADOR BANCO DE DADOS DATA SCIENCE PYTHON SQL DJANGO REQUISITOS PROCESSOS GITHUB INGLÊS SEGURANÇA UMA POWER BI MODELAGEM DE DADOS pandas ALOCADO Stale | ## Job description:

This is a job opening from a partner of the Coodesh platform; by applying you will get access to the full information about the company and its benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/vagas/analista-de-dados-152349096?utm_sourc... | 1.0 | [Hybrid / Belo Horizonte] Data Analyst at Coodesh - ## Job description:

This is a job opening from a partner of the Coodesh platform; by applying you will get access to the full information about the company and its benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://co... | process | data analyst at coodesh job description this is a job opening from a partner of the coodesh platform by applying you will get access to the full information about the company and its benefits watch for the redirect that will take you to a url with the personalized application pop up 👋 a ... | 1 |

508,454 | 14,700,539,949 | IssuesEvent | 2021-01-04 10:22:04 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | m.chaturbate.com - video or audio doesn't play | browser-fenix engine-gecko ml-needsdiagnosis-false priority-important | <!-- @browser: Firefox Mobile 86.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:86.0) Gecko/86.0 Firefox/86.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/64710 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://m.chaturbate... | 1.0 | m.chaturbate.com - video or audio doesn't play - <!-- @browser: Firefox Mobile 86.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:86.0) Gecko/86.0 Firefox/86.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/64710 -->

<!-- @extra_labels:... | non_process | m chaturbate com video or audio doesn t play url browser version firefox mobile operating system android tested another browser yes opera problem type video or audio doesn t play description media controls are broken or missing steps to reproduce video does no... | 0 |

6,309 | 9,311,282,864 | IssuesEvent | 2019-03-25 20:57:58 | googleapis/google-cloud-cpp | https://api.github.com/repos/googleapis/google-cloud-cpp | closed | Define support level for pkg-config based builds. | type: process | The current CMake configuration supports using [`pkg-config`](https://www.freedesktop.org/wiki/Software/pkg-config/) to discover the flags for our dependencies. Basically the CMake file calls `pkg-config(1)` to discover what compiler and linker flags are needed to find `libcurl`, or `OpenSSL`, or `protobuf`.

In som... | 1.0 | Define support level for pkg-config based builds. - The current CMake configuration supports using [`pkg-config`](https://www.freedesktop.org/wiki/Software/pkg-config/) to discover the flags for our dependencies. Basically the CMake file calls `pkg-config(1)` to discover what compiler and linker flags are needed to fi... | process | define support level for pkg config based builds the current cmake configuration supports using to discover the flags for our dependencies basically the cmake file calls pkg config to discover what compiler and linker flags are needed to find libcurl or openssl or protobuf in some linux distri... | 1 |

357,289 | 10,604,699,613 | IssuesEvent | 2019-10-10 18:46:04 | OpenLiberty/ci.maven | https://api.github.com/repos/OpenLiberty/ci.maven | closed | liberty:run doesn't deploy the application | high priority vNext | Per @sdaschner:

> One issue that my updated getting started guide (in the PR) still has is that the minimal example doesn't work with :run, only with :dev OOTB. It doesn't deploy the application (the WAR file) that's being produced by Maven and defined in the server.xml. Calling :deploy explicitly complains that I n... | 1.0 | liberty:run doesn't deploy the application - Per @sdaschner:

> One issue that my updated getting started guide (in the PR) still has is that the minimal example doesn't work with :run, only with :dev OOTB. It doesn't deploy the application (the WAR file) that's being produced by Maven and defined in the server.xml. ... | non_process | liberty run doesn t deploy the application per sdaschner one issue that my updated getting started guide in the pr still has is that the minimal example doesn t work with run only with dev ootb it doesn t deploy the application the war file that s being produced by maven and defined in the server xml ... | 0 |

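423,782 | 28,933,347,745 | IssuesEvent | 2023-05-09 02:37:53 | jaenyeong/Teach_Wanted-PreOnBoarding-Backend-Challenge | https://api.github.com/repos/jaenyeong/Teach_Wanted-PreOnBoarding-Backend-Challenge | opened | [Pre-assignment submission] | documentation | # Wanted Pre-Onboarding Backend Challenge, June course: pre-assignment submission

It's fine if you can't answer everything :)

Don't feel pressured; please work through it at your own pace

## Question

**Have you ever read a Java introductory book (such as '이것이 자바다' or '자바의 정석') cover to cover? Describe what you remember!**

>

**Have you ever spent 10 minutes or more looking through the official Java documentation? If so, what did you look at?**

>

**Describe the difference between interpretation and compilation.**

>

**Describe the difference between a process and a thread.**

>

**... | 1.0 | [Pre-assignment submission] - # Wanted Pre-Onboarding Backend Challenge, June course: pre-assignment submission

It's fine if you can't answer everything :)

Don't feel pressured; please work through it at your own pace

## Question

**Have you ever read a Java introductory book (such as '이것이 자바다' or '자바의 정석') cover to cover? Describe what you remember!**

>

**Have you ever spent 10 minutes or more looking through the official Java documentation? If so, what did you look at?**

>

**Describe the difference between interpretation and compilation.**

>

**Describe the difference between a process and a thread... | non_process | wanted pre onboarding backend challenge course pre assignment submission it s fine if you can t answer everything don t feel pressured please work through it at your own pace question have you ever read a java introductory book such as 이것이 자바다 or 자바의 정석 cover to cover describe what you remember have you ever spent or more looking through the official java documentation if so what did you look at describe the difference between interpretation and compilation describe the difference between a process and a thread ... | 0 |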

19,467 | 25,762,750,681 | IssuesEvent | 2022-12-08 22:05:41 | IHE/publications | https://api.github.com/repos/IHE/publications | closed | Delayed Document Assembly vol 1 and 2 conflict on hash value | CP-processing | Volume 1 says hash and size are 0

Volume 2 says size is 0, and hash is the valid hash value of a zero length file.

Recommend that Volume 1 be fixed, as this solution allows hash to always be calculated properly.

https://profiles.ihe.net/ITI/TF/Volume1/ch-10.html#10.2.10 | 1.0 | Delayed Document Assembly vol 1 and 2 conflict on hash value - Volume 1 says hash and size are 0

Volume 2 says size is 0, and hash is the valid hash value of a zero length file.

Recommend that Volume 1 be fixed, as this solution allows hash to always be calculated properly.

https://profiles.ihe.net/ITI/TF/Volume... | process | delayed document assembly vol and conflict on hash value volume says hash and size are volume says size is and hash is the valid hash value of a zero length file recommend that volume be fixed as this solution allows hash to always be calculated properly | 1 |

13,528 | 16,060,961,407 | IssuesEvent | 2021-04-23 12:30:06 | CERT-Polska/drakvuf-sandbox | https://api.github.com/repos/CERT-Polska/drakvuf-sandbox | closed | RVAs of apicalls | certpl drakrun/postprocessing enhancement priority:medium | I have a list of 1024 apicalls, which come from 36 DLLs.

For each snapshot (or karton task) I need RVAs of this apicalls inside the DLLs.

I prefer to get these RVAs from karton tasks, I would have everything in one place.

Since there are only 1024 of these functions, it shouldn't be a problem?

But it's not a "mus... | 1.0 | RVAs of apicalls - I have a list of 1024 apicalls, which come from 36 DLLs.

For each snapshot (or karton task) I need RVAs of this apicalls inside the DLLs.

I prefer to get these RVAs from karton tasks, I would have everything in one place.

Since there are only 1024 of these functions, it shouldn't be a problem?

... | process | rvas of apicalls i have a list of apicalls which come from dlls for each snapshot or karton task i need rvas of this apicalls inside the dlls i prefer to get these rvas from karton tasks i would have everything in one place since there are only of these functions it shouldn t be a problem but it ... | 1 |

243,830 | 26,289,301,277 | IssuesEvent | 2023-01-08 07:35:24 | kaidisn/encore | https://api.github.com/repos/kaidisn/encore | closed | CVE-2015-9251 (Medium) detected in github.com/golang/tools-gopls/v0.5.0-pre1 - autoclosed | security vulnerability | ## CVE-2015-9251 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/golang/tools-gopls/v0.5.0-pre1</b></p></summary>

<p>[mirror] Go Tools</p>

<p>

Dependency Hierarchy:

- :... | True | CVE-2015-9251 (Medium) detected in github.com/golang/tools-gopls/v0.5.0-pre1 - autoclosed - ## CVE-2015-9251 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/golang/tools-go... | non_process | cve medium detected in github com golang tools gopls autoclosed cve medium severity vulnerability vulnerable library github com golang tools gopls go tools dependency hierarchy x github com golang tools gopls vulnerable library found in head com... | 0 |

52,458 | 7,763,959,254 | IssuesEvent | 2018-06-01 18:28:19 | CICE-Consortium/CICE | https://api.github.com/repos/CICE-Consortium/CICE | closed | document basalstress scheme and ellipse-related changes | CICEdyn Documentation | We need an overview description of the fast-ice scheme, referencing publication(s), and including full documentation of the new namelist variables. Also describe hwater, bathymetry, and normalization of principal stresses. Remove prs_sig from the namelist index, and add sigP. | 1.0 | document basalstress scheme and ellipse-related changes - We need an overview description of the fast-ice scheme, referencing publication(s), and including full documentation of the new namelist variables. Also describe hwater, bathymetry, and normalization of principal stresses. Remove prs_sig from the namelist inde... | non_process | document basalstress scheme and ellipse related changes we need an overview description of the fast ice scheme referencing publication s and including full documentation of the new namelist variables also describe hwater bathymetry and normalization of principal stresses remove prs sig from the namelist inde... | 0 |

6,354 | 9,414,577,474 | IssuesEvent | 2019-04-10 10:28:27 | meumobi/sitebuilder | https://api.github.com/repos/meumobi/sitebuilder | opened | Extract yt API key from src code | process-remote-media | ### Expected behaviour

Should migrate it on config

### Actual behaviour

API key is hard coded on `./src/sitebuilder/lib/meumobi/sitebuilder/services/ProcessRemoteMedia/YoutubeHandler.php`

### Expected responses

- How to fix it

- How to test

| 1.0 | Extract yt API key from src code - ### Expected behaviour

Should migrate it on config

### Actual behaviour

API key is hard coded on `./src/sitebuilder/lib/meumobi/sitebuilder/services/ProcessRemoteMedia/YoutubeHandler.php`

### Expected responses

- How to fix it

- How to test

| process | extract yt api key from src code expected behaviour should migrate it on config actual behaviour api key is hard coded on src sitebuilder lib meumobi sitebuilder services processremotemedia youtubehandler php expected responses how to fix it how to test | 1 |

401,621 | 11,795,244,740 | IssuesEvent | 2020-03-18 08:34:00 | thaliawww/concrexit | https://api.github.com/repos/thaliawww/concrexit | opened | Missing singlepages translations | priority: low technical change | In GitLab by @joren485 on Mar 13, 2020, 16:27

### Description

Add all missing singlepages translations.

After I run `../manage.py makemessages --locale nl --no-obsolete` in `website/singlepages` 170+ translations seem to be missing. | 1.0 | Missing singlepages translations - In GitLab by @joren485 on Mar 13, 2020, 16:27

### Description

Add all missing singlepages translations.

After I run `../manage.py makemessages --locale nl --no-obsolete` in `website/singlepages` 170+ translations seem to be missing. | non_process | missing singlepages translations in gitlab by on mar description add all missing singlepages translations after i run manage py makemessages locale nl no obsolete in website singlepages translations seem to be missing | 0 |

69,593 | 22,552,759,411 | IssuesEvent | 2022-06-27 07:32:48 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | closed | Dialog Framework : onHide option is not working | :lady_beetle: defect | ### Describe the bug

`onHide` option is listed in the full list of configuration options at the following URL, but it is not working.

https://primefaces.github.io/primefaces/11_0_0/#/core/dialogframework

### Reproducer

_No response_

### Expected behavior

The process set by the onHide option is executed when the d... | 1.0 | Dialog Framework : onHide option is not working - ### Describe the bug

`onHide` option is listed in the full list of configuration options at the following URL, but it is not working.

https://primefaces.github.io/primefaces/11_0_0/#/core/dialogframework

### Reproducer

_No response_

### Expected behavior

The proc... | non_process | dialog framework onhide option is not working describe the bug onhide option is listed in the full list of configuration options at the following url but it is not working reproducer no response expected behavior the process set by the onhide option is executed when the dialog is hidden ... | 0 |

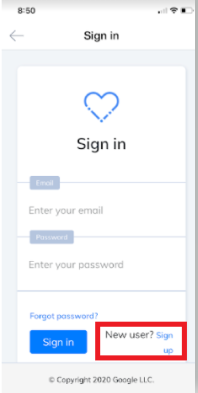

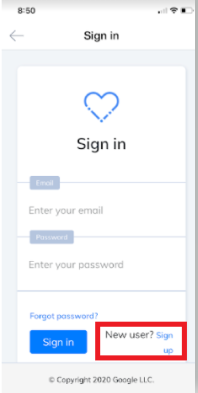

16,468 | 21,391,667,233 | IssuesEvent | 2022-04-21 07:46:04 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] Sign in screen > UI issue | Bug P1 iOS UI Process: Fixed Process: Tested dev Process: Reopened | Sign in screen > 'New User? Sign up' is getting wrapped into two lines

[Note: Issue observed in iPhone XS]

| 3.0 | [iOS] Sign in screen > UI issue - Sign in screen > 'New User? Sign up' is getting wrapped into two lines

[Note: Issue observed in iPhone XS]

| process | sign in screen ui issue sign in screen new user sign up is getting wrapped into two lines | 1 |

25,055 | 24,645,576,470 | IssuesEvent | 2022-10-17 14:38:58 | VirtusLab/git-machete | https://api.github.com/repos/VirtusLab/git-machete | closed | Gif in README is too fast (?) | docs usability | Too much text appears, I tried to show the gif as a demo to my folks and couldn't keep up with what happens 😅 | True | Gif in README is too fast (?) - Too much text appears, I tried to show the gif as a demo to my folks and couldn't keep up with what happens 😅 | non_process | gif in readme is too fast too much text appears i tried to show the gif as a demo to my folks and couldn t keep up with what happens 😅 | 0 |

41,801 | 6,948,570,854 | IssuesEvent | 2017-12-06 01:03:56 | microsoftgraph/microsoft-graph-docs | https://api.github.com/repos/microsoftgraph/microsoft-graph-docs | closed | Hyperlink issue because id="search" is both attached to the section and the search button | bug: documentation | Issue:

In the documentation "Use query parameters to customize responses", try to click on the `$search` hyperlink from the parameter table. You will notice it will not link to the related paragraph, but to the search button. This is because both the `<h2>` tag of the `$search` section and the search button have the ... | 1.0 | Hyperlink issue because id="search" is both attached to the section and the search button - Issue:

In the documentation "Use query parameters to customize responses", try to click on the `$search` hyperlink from the parameter table. You will notice it will not link to the related paragraph, but to the search button. ... | non_process | hyperlink issue because id search is both attached to the section and the search button issue in the documentation use query parameters to customize responses try to click on the search hyperlink from the parameter table you will notice it will not link to the related paragraph but to the search button ... | 0 |

20,997 | 27,864,133,838 | IssuesEvent | 2023-03-21 09:04:18 | GoogleCloudPlatform/dotnet-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/dotnet-docs-samples | closed | Fix Spanner Samples Conflicting Tests | type: process priority: p1 api: spanner samples | There are a bunch of Spanner samples tests that share a common resource (the Albums table in this case), which causes flakiness in the tests. To resolve the issue, the conflicting samples tests are updated to use their dedicated database.

Some of the conflicting tests include:

- [UpdateDataAsyncTest](http... | 1.0 | Fix Spanner Samples Conflicting Tests - There are a bunch of Spanner samples tests that share a common resource (the Albums table in this case), which causes flakiness in the tests. To resolve the issue, the conflicting samples tests are updated to use their dedicated database.

Some of the conflicting tests i... | process | fix spanner samples conflicting tests there are a bunch of spanner samples tests that share a common resource the albums table in this case which causes flakiness in the tests to resolve the issue the conflicting samples tests are updated to use their dedicated database some of the conflicting tests i... | 1 |

2,640 | 5,415,328,709 | IssuesEvent | 2017-03-01 21:19:02 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Native Queries w/ an initial null causes the type of a column to be marked as "type/*" | Bug Priority/P2 Query Processor | When doing Month over Month (or any time period over previous time period) metrics, the first value is often null.

This makes the end column unchartable, and requires the use of a weirdo subselect

```sql

SELECT * from

(MYREALQUERY) a

WHERE column IS NOT NULL

```

which is kind of ghetto. | 1.0 | Native Queries w/ an initial null causes the type of a column to be marked as "type/*" - When doing Month over Month (or any time period over previous time period) metrics, the first value is often null.

This makes the end column unchartable, and requires the use of a weirdo subselect

```sql

SELECT * from

(M... | process | native queries w an initial null causes the type of a column to be marked as type when doing month over month or any time period over previous time period metrics the first value is often null this makes the end column unchartable and requires the use of a weirdo subselect sql select from m... | 1 |
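
The subselect workaround quoted in the row above can be tried out with a minimal sketch like the one below. It uses Python's built-in `sqlite3` purely for illustration; the table `monthly` and the column `growth` are made-up names, and the sketch says nothing about how Metabase itself infers column types.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE monthly (month TEXT, growth REAL)")
conn.executemany(
    "INSERT INTO monthly VALUES (?, ?)",
    [
        ("2017-01", None),   # first period has no previous period, so NULL
        ("2017-02", 0.10),
        ("2017-03", -0.05),
    ],
)

# Stand-in for the "real" query whose first value is NULL.
inner_query = "SELECT month, growth FROM monthly"

# Wrap the real query in a subselect and drop the NULL rows.
wrapped_query = (
    f"SELECT * FROM ({inner_query}) a "
    "WHERE growth IS NOT NULL ORDER BY month"
)

all_rows = conn.execute(inner_query + " ORDER BY month").fetchall()
filtered_rows = conn.execute(wrapped_query).fetchall()

print(all_rows)
print(filtered_rows)
conn.close()
```

The wrapped query returns only the rows with a value, so the leading NULL no longer appears as the first entry of the column.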

575,427 | 17,030,894,904 | IssuesEvent | 2021-07-04 14:40:32 | vdjagilev/nmap-formatter | https://api.github.com/repos/vdjagilev/nmap-formatter | opened | Add more use-cases with jq | priority/low type/other | How to use this tool with jq (show hosts that are up, show only http service ports, show only filtered ports, count ports for each host, et cetera) | 1.0 | Add more use-cases with jq - How to use this tool with jq (show hosts that are up, show only http service ports, show only filtered ports, count ports for each host, et cetera) | non_process | add more use cases with jq how to use this tool with jq show hosts that are up show only http service ports show only filtered ports count ports for each host et cetera | 0 |

12,958 | 15,339,443,135 | IssuesEvent | 2021-02-27 02:01:33 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | Inputs should not be cleared after reloading in Firefox | AREA: client BROWSER: Firefox STATE: Stale SYSTEM: client side processing TYPE: enhancement | Firefox preserves values and states of inputs after reloading, but under Hammerhead inputs get empty. E.g. a checked checkbox should remain checked, and text inputs should have the same text. | 1.0 | Inputs should not be cleared after reloading in Firefox - Firefox preserves values and states of inputs after reloading, but under Hammerhead inputs get empty. E.g. a checked checkbox should remain checked, and text inputs should have the same text. | process | inputs should not be cleared after reloading in firefox firefox preserves values and states of inputs after reloading but under hammerhead inputs get empty e g a checked checkbox should remain checked and text inputs should have the same text | 1 |

22,512 | 31,563,822,793 | IssuesEvent | 2023-09-03 15:16:55 | n0tknowing/chibicc | https://api.github.com/repos/n0tknowing/chibicc | closed | Macro expansion consumes GiB of memory | preprocessor | Not surprised with the current compiler design.

Here's result from `memusage` and `perf` when run https://github.com/swansontec/map-macro.

`memusage` results:

```

Memory usage summary: heap total: 1141447160, heap peak: 1141349037, stack peak: 4768

total calls total memory failed calls

malloc| ... | 1.0 | Macro expansion consumes GiB of memory - Not surprised with the current compiler design.

Here's result from `memusage` and `perf` when run https://github.com/swansontec/map-macro.

`memusage` results:

```

Memory usage summary: heap total: 1141447160, heap peak: 1141349037, stack peak: 4768

total calls ... | process | macro expansion consumes gib of memory not surprised with the current compiler design here s result from memusage and perf when run memusage results memory usage summary heap total heap peak stack peak total calls total memory failed calls malloc ... | 1 |

21,713 | 30,214,563,829 | IssuesEvent | 2023-07-05 14:47:00 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | [MLv2] Port summarization sidebar GUI | .Frontend Querying/GUI .metabase-lib .Team/QueryProcessor :hammer_and_wrench: | We need to port this UI for aggregations and breakouts:

<img width="309" alt="image" src="https://github.com/metabase/metabase/assets/1455846/c341c0ce-ef11-41be-9e08-ea639b7d9625">

You get here by clicking the `Summarize` button in the upper-right when looking at query results.

We should probably sequence this... | 1.0 | [MLv2] Port summarization sidebar GUI - We need to port this UI for aggregations and breakouts:

<img width="309" alt="image" src="https://github.com/metabase/metabase/assets/1455846/c341c0ce-ef11-41be-9e08-ea639b7d9625">

You get here by clicking the `Summarize` button in the upper-right when looking at query resu... | process | port summarization sidebar gui we need to port this ui for aggregations and breakouts img width alt image src you get here by clicking the summarize button in the upper right when looking at query results we should probably sequence this after we finish the notebook editor versions of this st... | 1 |

8,718 | 11,855,127,448 | IssuesEvent | 2020-03-25 03:11:19 | kubeflow/pipelines | https://api.github.com/repos/kubeflow/pipelines | closed | Released code in PyPI doesn't match tagged version + release from github | area/release kind/bug kind/process priority/p1 status/triaged | ### What steps did you take:

I'm installing the kubeflow pipelines sdk (`kfp=0.2.5`) through the conda feedstock https://github.com/conda-forge/kfp-feedstock/blob/master/recipe/meta.yaml , which just repackages the archive from https://pypi.org/project/kfp/#files . However, the contents of that archive do not match ... | 1.0 | Released code in PyPI doesn't match tagged version + release from github - ### What steps did you take:

I'm installing the kubeflow pipelines sdk (`kfp=0.2.5`) through the conda feedstock https://github.com/conda-forge/kfp-feedstock/blob/master/recipe/meta.yaml , which just repackages the archive from https://pypi.o... | process | released code in pypi doesn t match tagged version release from github what steps did you take i m installing the kubeflow pipelines sdk kfp through the conda feedstock which just repackages the archive from however the contents of that archive do not match the contents of the github rele... | 1 |

9,064 | 12,138,294,811 | IssuesEvent | 2020-04-23 17:00:47 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | CircleCI exits as passing when Cypress tests have failed in our development | priority: high❗️ process: tests stage: needs review | ### Current behavior:

For our own internal tests, sometimes the CircleCI job will pass even though there was a failure within a Cypress test. The CircleCI is falsely passing. There is some suspicion that maybe `lerna` is suppressing the exit code.

Example: https://github.com/cypress-io/cypress/pull/7014

Circ... | 1.0 | CircleCI exits as passing when Cypress tests have failed in our development - ### Current behavior:

For our own internal tests, sometimes the CircleCI job will pass even though there was a failure within a Cypress test. The CircleCI is falsely passing. There is some suspicion that maybe `lerna` is suppressing the e... | process | circleci exits as passing when cypress tests have failed in our development current behavior for our own internal tests sometimes the circleci job will pass even though there was a failure within a cypress test the circleci is falsely passing there is some suspicion that maybe lerna is suppressing the e... | 1 |

219,472 | 16,832,996,760 | IssuesEvent | 2021-06-18 08:14:42 | ihrapsa/KlipperWrt | https://api.github.com/repos/ihrapsa/KlipperWrt | opened | [guide] Add timelapse instructions | documentation enhancement | Update guide with instructions to install timelapse moonraker component + dependencies | 1.0 | [guide] Add timelapse instructions - Update guide with instructions to install timelapse moonraker component + dependencies | non_process | add timelapse instructions update guide with instructions to install timelapse moonraker component dependencies | 0 |

20,314 | 26,957,913,079 | IssuesEvent | 2023-02-08 16:05:14 | googleapis/python-bigquery | https://api.github.com/repos/googleapis/python-bigquery | closed | increase minimum version of google-cloud-core to 1.6.0 | api: bigquery status: blocked type: process priority: p3 | Once enough time has passed to give people time to upgrade (TBD how long that is), we should increase the minimum version and clean up any logic that switches based on package version that was added in https://github.com/googleapis/python-bigquery/pull/492. | 1.0 | increase minimum version of google-cloud-core to 1.6.0 - Once enough time has passed to give people time to upgrade (TBD how long that is), we should increase the minimum version and clean up any logic that switches based on package version that was added in https://github.com/googleapis/python-bigquery/pull/492. | process | increase minimum version of google cloud core to once enough time has passed to give people time to upgrade tbd how long that is we should increase the minimum version and clean up any logic that switches based on package version that was added in | 1 |

17,215 | 22,822,317,082 | IssuesEvent | 2022-07-12 04:31:04 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Python 3.6 Out of Support but listed as "recommended". Does this need to be updated? | automation/svc triaged cxp doc-enhancement process-automation/subsvc Pri1 | Hi, Python 3.6 has been out of support for quite some time. Why is this unsupported Python version recommended per:

https://docs.microsoft.com/en-us/azure/automation/automation-runbook-types#advantages-3:~:text=For%20Python%203%20Hybrid%20jobs%20on%20Linux%20machines%2C%20we%20depend%20on%20the%20Python%203%20versi... | 1.0 | Python 3.6 Out of Support but listed as "recommended". Does this need to be updated? - Hi, Python 3.6 has been out of support for quite some time. Why is this unsupported Python version recommended per:

https://docs.microsoft.com/en-us/azure/automation/automation-runbook-types#advantages-3:~:text=For%20Python%203%... | process | python out of support but listed as recommended does this need to be updated hi python has been out of support for quite some time why is this python unsupported version recommended per thank you mark document details ⚠ do not edit this section it is required for docs micro... | 1 |

21,912 | 30,441,307,842 | IssuesEvent | 2023-07-15 05:00:31 | zotero/zotero | https://api.github.com/repos/zotero/zotero | opened | Revert change to Bibliography style updating? | Word Processor Integration Regression | https://forums.zotero.org/discussion/106215/zotero-7-refreshing-the-bibliography-will-overwrite-the-bibliography-style-in-word

It seemed like that code was there for a reason… | 1.0 | Revert change to Bibliography style updating? - https://forums.zotero.org/discussion/106215/zotero-7-refreshing-the-bibliography-will-overwrite-the-bibliography-style-in-word

It seemed like that code was there for a reason… | process | revert change to bibliography style updating it seemed like that code was there for a reason… | 1 |

9,337 | 12,341,256,900 | IssuesEvent | 2020-05-14 21:31:52 | paul-buerkner/brms | https://api.github.com/repos/paul-buerkner/brms | closed | emmeans interface | feature post-processing | I was alerted to some discussion on interfacing to the **emmeans** package in the Google group. I was going to contribute to the discussion there, but (based on browser warnings) was unable to find a way to securely log in. So I will comment on a few things here, in hopes that it will clarify some things

> **emmeans... | 1.0 | emmeans interface - I was alerted to some discussion on interfacing to the **emmeans** package in the Google group. I was going to contribute to the discussion there, but (based on browser warnings) was unable to find a way to securely log in. So I will comment on a few things here, in hopes that it will clarify some t... | process | emmeans interface i was alerted to some discussion on interfacing to the emmeans package in the google group i was going to contribute to the discussion there but based on browser warnings was unable to find a way to securely log in so i will comment on a few things here in hopes that it will clarify some t... | 1 |

7,251 | 10,418,460,370 | IssuesEvent | 2019-09-15 08:49:52 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | add geometry attributes fails on multipoint layers | Bug Crash/Data Corruption High Priority Processing | Author Name: **Alain FERRATON** (@FERRATON)

Original Redmine Issue: [21352](https://issues.qgis.org/issues/21352)

Affected QGIS version: 3.7(master)

Redmine category:processing/qgis

Assignee: Nyall Dawson

---

see attached layer.

the algorithm works if you use before

multipart to single part

algorithm works in... | 1.0 | add geometry attributes fails on multipoint layers - Author Name: **Alain FERRATON** (@FERRATON)

Original Redmine Issue: [21352](https://issues.qgis.org/issues/21352)

Affected QGIS version: 3.7(master)

Redmine category:processing/qgis

Assignee: Nyall Dawson

---

see attached layer.

the algorithm works if you use bef... | process | add geometry attributes fails on multipoint layers author name alain ferraton ferraton original redmine issue affected qgis version master redmine category processing qgis assignee nyall dawson see attached layer the algorithm works if you use before multipart to single part algorit... | 1 |

798,329 | 28,244,366,152 | IssuesEvent | 2023-04-06 09:35:56 | ppy/osu-web | https://api.github.com/repos/ppy/osu-web | closed | Add the ability to edit online tags | area:admin area:beatmap-info priority:2 | online tags, only editable by NAT/GMT on old website, are used to add additional tags to Ranked and Loved maps to improve searchability without altering the status of the map, we currently have to go through the old website to do that which is a hassle.

it'd be really nice to have a basic online tags editor availabl... | 1.0 | Add the ability to edit online tags - online tags, only editable by NAT/GMT on old website, are used to add additional tags to Ranked and Loved maps to improve searchability without altering the status of the map, we currently have to go through the old website to do that which is a hassle.

it'd be really nice to ha... | non_process | add the ability to edit online tags online tags only editable by nat gmt on old website are used to add additional tags to ranked and loved maps to improve searchability without altering the status of the map we currently have to go through the old website to do that which is a hassle it d be really nice to ha... | 0 |

47,726 | 25,159,151,802 | IssuesEvent | 2022-11-10 15:35:02 | WHOIGit/ifcbdb | https://api.github.com/repos/WHOIGit/ifcbdb | closed | change default for include_coordinates on api_bin to "false" | bug performance | the default behavior (which the UI might be using instead of explicitly setting include_coordinates to "true", so a mod would be needed there as well) causes a mosaic generation backlog when scripts are calling `/api/bin`.

https://github.com/WHOIGit/ifcbdb/blob/dfe8798eaf1a5bc87e526089426c8650849b2a8f/ifcbdb/dashboa... | True | change default for include_coordinates on api_bin to "false" - the default behavior (which the UI might be using instead of explicitly setting include_coordinates to "true", so a mod would be needed there as well) causes a mosaic generation backlog when scripts are calling `/api/bin`.

https://github.com/WHOIGit/ifcb... | non_process | change default for include coordinates on api bin to false the default behavior which the ui might be using instead of explicitly setting include coordinates to true so a mod would be needed there as well causes a mosaic generation backlog when scripts are calling api bin | 0 |

25,988 | 4,187,812,840 | IssuesEvent | 2016-06-23 18:39:51 | metadatacenter/cedar-project | https://api.github.com/repos/metadatacenter/cedar-project | closed | Develop and implement test plan for all REST calls | test | Based on comprehensive documentation of all REST calls in metadatacenter/cedar-template-server#2 create GitHub issues for each set of tests. Goal is to have tests for every REST call PUT/POST/DELETE/GET and parameter combinations. | 1.0 | Develop and implement test plan for all REST calls - Based on comprehensive documentation of all REST calls in metadatacenter/cedar-template-server#2 create GitHub issues for each set of tests. Goal is to have tests for every REST call PUT/POST/DELETE/GET and parameter combinations. | non_process | develop and implement test plan for all rest calls based on comprehensive documentation of all rest calls in metadatacenter cedar template server create github issues for each set of tests goal is to have tests for every rest call put post delete get and parameter combinations | 0 |

5,379 | 8,205,082,425 | IssuesEvent | 2018-09-03 08:59:55 | openvstorage/framework-cinder-plugin | https://api.github.com/repos/openvstorage/framework-cinder-plugin | closed | Cinder / nova plugin loose ends | process_wontfix | Follow up on https://github.com/openvstorage/framework-cinder-plugin/issues/17 where features of cinder backup and nova migration are not implemented/tested, so following items need to be taken into account when continuing the work on this.

- [ ] Package the OVS FWK rest client

- [ ] Think about possible integration ... | 1.0 | Cinder / nova plugin loose ends - Follow up on https://github.com/openvstorage/framework-cinder-plugin/issues/17 where features of cinder backup and nova migration are not implemented/tested, so following items need to be taken into account when continuing the work on this.

- [ ] Package the OVS FWK rest client

- [ ]... | process | cinder nova plugin loose ends follow up on where features of cinder backup and nova migration are not implemented tested so following items need to be taken into account when continuing the work on this package the ovs fwk rest client think about possible integration of ovs template capability up... | 1 |

9,470 | 12,466,468,162 | IssuesEvent | 2020-05-28 15:31:22 | Fracappo87/XBTs_classification | https://api.github.com/repos/Fracappo87/XBTs_classification | closed | Add bad data filtering and non-standard name mappings to preprocessing | preprocessing | Initial data exploration has shown some previously unknown issues with the data. There are not many but they do cause problems for the explorations and classification code that are not easily resolved automatically. For now therefore we can simply exclude observations with these issues, so the code runs. Current issues... | 1.0 | Add bad data filtering and non-standard name mappings to preprocessing - Initial data exploration has shown some previously unknown issues with the data. There are not many but they do cause problems for the explorations and classification code that are not easily resolved automatically. For now therefore we can simply... | process | add bad data filtering and non standard name mappings to preprocessing initial data exploration has shown some previously unknown issues with the data there are not many but they do cause problems for the explorations and classification code that are not easily resolved automatically for now therefore we can simply... | 1 |

408,722 | 27,704,835,975 | IssuesEvent | 2023-03-14 10:30:03 | squidfunk/mkdocs-material | https://api.github.com/repos/squidfunk/mkdocs-material | closed | Bad hyperlink | documentation | ### Description

There is a bad hyperlink in this [section of the documentation](https://squidfunk.github.io/mkdocs-material/reference/icons-emojis/#configuration). The [Emoji with custom icons](https://squidfunk.github.io/mkdocs-material/setup/extensions/python-markdown-extensions/#custom-icons) under the configuratio... | 1.0 | Bad hyperlink - ### Description

There is a bad hyperlink in this [section of the documentation](https://squidfunk.github.io/mkdocs-material/reference/icons-emojis/#configuration). The [Emoji with custom icons](https://squidfunk.github.io/mkdocs-material/setup/extensions/python-markdown-extensions/#custom-icons) under ... | non_process | bad hyperlink description there is a bad hyperlink in this the under the configuration options list links to a non existent permalink related links bad permalink should link to this proposed change change before submitting i have read and... | 0 |

55,999 | 11,494,138,411 | IssuesEvent | 2020-02-12 00:44:58 | toolbox-team/reddit-moderator-toolbox | https://api.github.com/repos/toolbox-team/reddit-moderator-toolbox | opened | Convert `subredditColor` options to a general setting | code quality | Currently, the setting that controls whether or not things get color-coded per subreddit is a setting in queue tools. It gets duplicated to the profile pro module, and it applies even outside of queues, so I think it makes sense to migrate it to a general setting that all modules can pull from. | 1.0 | Convert `subredditColor` options to a general setting - Currently, the setting that controls whether or not things get color-coded per subreddit is a setting in queue tools. It gets duplicated to the profile pro module, and it applies even outside of queues, so I think it makes sense to migrate it to a general setting ... | non_process | convert subredditcolor options to a general setting currently the setting that controls whether or not things get color coded per subreddit is a setting in queue tools it gets duplicated to the profile pro module and it applies even outside of queues so i think it makes sense to migrate it to a general setting ... | 0 |

769,810 | 27,018,826,319 | IssuesEvent | 2023-02-10 22:25:37 | googleapis/nodejs-automl | https://api.github.com/repos/googleapis/nodejs-automl | closed | Automl Natural Language Entity Extraction Predict Test: should predict failed | type: bug priority: p1 api: automl flakybot: issue | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 97411a2bb514b9921bb3932543a2d895c452d5c6

b... | 1.0 | Automl Natural Language Entity Extraction Predict Test: should predict failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and