Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

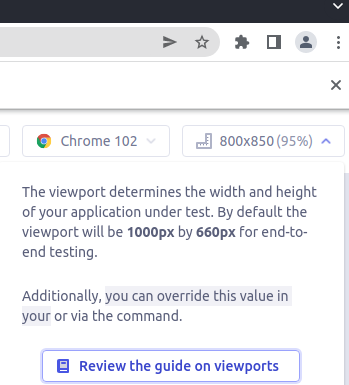

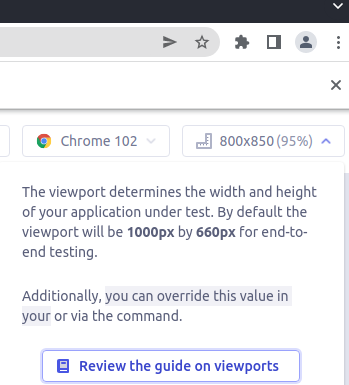

666,089 | 22,342,078,341 | IssuesEvent | 2022-06-15 02:35:39 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Words missing in viewport help message. | type: bug E2E-core stage: fire watch epic:ui-ux-improvements priority: highest | ### Current behavior

When clicking the viewport size message, it seems some words are missing.

What is `your`?

I guess it's `config file`, right?

### Desired behavior

Correct help message should be... | 1.0 | Words missing in viewport help message. - ### Current behavior

When clicking the viewport size message, it seems some words are missing.

What is `your`?

I guess it's `config file`, right?

### Desire... | non_process | words missing in viewport help message current behavior when clicking the viewport size message it seems some words are missing what is your i guess it s config file right desired behavior correct help message should be shown test code to reproduce click viewport button ... | 0 |

102,247 | 16,550,508,701 | IssuesEvent | 2021-05-28 08:02:29 | AlexRogalskiy/typescript-tools | https://api.github.com/repos/AlexRogalskiy/typescript-tools | closed | CVE-2021-32640 (Medium) detected in ws-5.2.2.tgz | Status: Invalid security vulnerability | ## CVE-2021-32640 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ws-5.2.2.tgz</b></p></summary>

<p>Simple to use, blazing fast and thoroughly tested websocket client and server for ... | True | CVE-2021-32640 (Medium) detected in ws-5.2.2.tgz - ## CVE-2021-32640 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ws-5.2.2.tgz</b></p></summary>

<p>Simple to use, blazing fast and... | non_process | cve medium detected in ws tgz cve medium severity vulnerability vulnerable library ws tgz simple to use blazing fast and thoroughly tested websocket client and server for node js library home page a href path to dependency file typescript tools package json path to v... | 0 |

16,846 | 22,098,242,144 | IssuesEvent | 2022-06-01 11:53:34 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | closed | Export all records to ES by default | kind/feature scope/broker area/observability team/process-automation | **Is your feature request related to a problem? Please describe.**

Currently, we use the Zeebe Elasticsearch export in Camunda Cloud to export some records to ES that are consumed by Operate, Tasklist, or Optimize. But we don't export all records by default.

In addition to these applications, we could use the recor... | 1.0 | Export all records to ES by default - **Is your feature request related to a problem? Please describe.**

Currently, we use the Zeebe Elasticsearch export in Camunda Cloud to export some records to ES that are consumed by Operate, Tasklist, or Optimize. But we don't export all records by default.

In addition to thes... | process | export all records to es by default is your feature request related to a problem please describe currently we use the zeebe elasticsearch export in camunda cloud to export some records to es that are consumed by operate tasklist or optimize but we don t export all records by default in addition to thes... | 1 |

1,109 | 3,588,369,427 | IssuesEvent | 2016-01-30 23:45:54 | sysown/proxysql | https://api.github.com/repos/sysown/proxysql | closed | Create global variable mysql-connpool_default_max | ADMIN CONNECTION POOL cxx_pa development MYSQL PROTOCOL QUERY PROCESSOR ROUTING | This variable will define the total number of connections per hostgroup.

In a future version will be possible to fine tune the number of connections based on username/schema/hostgroup | 1.0 | Create global variable mysql-connpool_default_max - This variable will define the total number of connections per hostgroup.

In a future version will be possible to fine tune the number of connections based on username/schema/hostgroup | process | create global variable mysql connpool default max this variable will define the total number of connections per hostgroup in a future version will be possible to fine tune the number of connections based on username schema hostgroup | 1 |
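
The row above is short enough to show how the derived columns appear to relate to the raw ones: `text_combine` looks like `title`, a ` - ` separator, then `body`; `text` looks like a lowercased copy with URLs and non-letter characters replaced by spaces; and `binary_label` is 1 for `process` and 0 for `non_process`. The sketch below is a minimal reconstruction under those assumptions. The function names are hypothetical, and the cleaning rules are inferred from a few visible rows, not taken from the dataset's actual preprocessing code (other rows also show HTML comments and bare domain names removed, which this sketch does not attempt).

```python
import re

# Assumption: URLs are dropped before any other cleaning.
_URL = re.compile(r"https?://\S+")
# Assumption: every run of non-letter characters collapses to one space.
_NON_LETTERS = re.compile(r"[^A-Za-z]+")


def combine_text(title: str, body: str) -> str:
    """Join title and body the way the text_combine column appears to."""
    return f"{title} - {body}"


def normalize_text(text_combine: str) -> str:
    """Approximate the text column: strip URLs, keep letters only, lowercase."""
    without_urls = _URL.sub(" ", text_combine)
    return _NON_LETTERS.sub(" ", without_urls).lower().strip()


def to_binary_label(label: str) -> int:
    """Map the label column to binary_label (process -> 1, anything else -> 0)."""
    return 1 if label == "process" else 0


# Values copied from the proxysql row above.
title = "Create global variable mysql-connpool_default_max"
body = (
    "This variable will define the total number of connections per hostgroup.\n\n"
    "In a future version will be possible to fine tune the number of connections "
    "based on username/schema/hostgroup"
)

print(normalize_text(combine_text(title, body)))
print(to_binary_label("process"), to_binary_label("non_process"))
```

A check like this is mainly useful for spotting rows where the derived columns drift from the raw ones; it should not be read as the pipeline that produced the table.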

2,911 | 5,902,715,419 | IssuesEvent | 2017-05-19 02:49:11 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | shell_exec does not work correctly when using "awk" before generating report | html report log-processing question | Hi Support,

I'm facing the problem with "awk" and "goaccess"

**My PHP script**:

```

$cmd = "awk '$7 ~ /schedule/' /var/logs/httpd/access_log* | goaccess -a -o /var/www/html/log/generate_report.html"

$output = shell_exec($cmd);

```

**Error Log**:

> AH01215: awk: cmd. line:1: (FILENAME=/var/logs/httpd/acc... | 1.0 | shell_exec does not work correctly when using "awk" before generating report - Hi Support,

I'm facing the problem with "awk" and "goaccess"

**My PHP script**:

```

$cmd = "awk '$7 ~ /schedule/' /var/logs/httpd/access_log* | goaccess -a -o /var/www/html/log/generate_report.html"

$output = shell_exec($cmd);

```

... | process | shell exec does not work correctly when using awk before generating report hi support i m facing the problem with awk and goaccess my php script cmd awk schedule var logs httpd access log goaccess a o var www html log generate report html output shell exec cmd ... | 1 |

283,674 | 21,327,293,906 | IssuesEvent | 2022-04-18 01:41:20 | aws/aws-iot-device-sdk-js-v2 | https://api.github.com/repos/aws/aws-iot-device-sdk-js-v2 | opened | Very confusing key | documentation needs-triage | ### Describe the issue

Every time I try IoT, I struggle with certificates.

Most examples and documents use certificates generated by the AWS console, which works but is still confusing.

For example:

On the AWS GUI:

there are two options to create a certificate.

Gecko/59.0 Firefox/59.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: http://www.canadiantire.ca/en/store-locator.html

**Browser / Version**: Firefox Mobile 59.0

**Operating System**: Android 7.0

**Tested Anothe... | 1.0 | www.canadiantire.ca - site is not usable - <!-- @browser: Firefox Mobile 59.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.0; Mobile; rv:59.0) Gecko/59.0 Firefox/59.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: http://www.canadiantire.ca/en/store-locator.html

**Browser / Version**: Firefox Mobile 59.0

**Oper... | non_process | site is not usable url browser version firefox mobile operating system android tested another browser yes problem type site is not usable description site is blank on mobile mode steps to reproduce none just tried to load page from with ❤️ | 0 |

20,460 | 27,128,505,760 | IssuesEvent | 2023-02-16 07:58:47 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Get rid of cmd_helper | P4 type: process untriaged stale team-Rules-API | It has been marked as deprecated for a long time and it's not really useful: https://docs.bazel.build/versions/master/skylark/lib/cmd_helper.html

cc @c-parsons | 1.0 | Get rid of cmd_helper - It has been marked as deprecated for a long time and it's not really useful: https://docs.bazel.build/versions/master/skylark/lib/cmd_helper.html

cc @c-parsons | process | get rid of cmd helper it has been marked as deprecated for a long time and it s not really useful cc c parsons | 1 |

104,315 | 8,970,567,023 | IssuesEvent | 2019-01-29 13:58:32 | raiden-network/raiden | https://api.github.com/repos/raiden-network/raiden | opened | Make development setup documentation executable. | test review item | ## Problem Definition

[Suggested](https://github.com/raiden-network/raiden/tree/test-review/ob-review#suggestion) to us during the review of our tests.

Essentially instead of documenting the steps needed to setup a raiden development environment like we already do [here](https://github.com/raiden-network/raiden/b... | 1.0 | Make development setup documentation executable. - ## Problem Definition

[Suggested](https://github.com/raiden-network/raiden/tree/test-review/ob-review#suggestion) to us during the review of our tests.

Essentially instead of documenting the steps needed to setup a raiden development environment like we already d... | non_process | make development setup documentation executable problem definition to us during the review of our tests essentially instead of documenting the steps needed to setup a raiden development environment like we already do the suggestion is to maintain a tox file that setups and describes the environme... | 0 |

243,466 | 7,858,061,750 | IssuesEvent | 2018-06-21 12:53:55 | robotology/icub-main | https://api.github.com/repos/robotology/icub-main | closed | ICUB devel is not configuring with latest YARP devel | Complexity: Low Priority: High Severity: Normal Type: Cleanup | Configuring latest ICUB devel with latest YARP devel, there are a lot of CMake errors like:

~~~

CMake Error at CMakeLists.txt:98 (include):

include could not find load file:

YarpInstallationHelpers

-- Setting up installation of iCub.ini to /home/straversaro/src/robotology-superbuild/build/install/sh... | 1.0 | ICUB devel is not configuring with latest YARP devel - Configuring latest ICUB devel with latest YARP devel, there are a lot of CMake errors like:

~~~

CMake Error at CMakeLists.txt:98 (include):

include could not find load file:

YarpInstallationHelpers

-- Setting up installation of iCub.ini to /home... | non_process | icub devel is not configuring with latest yarp devel configuring latest icub devel with latest yarp devel there are a lot of cmake errors like cmake error at cmakelists txt include include could not find load file yarpinstallationhelpers setting up installation of icub ini to home ... | 0 |

80,106 | 15,355,072,047 | IssuesEvent | 2021-03-01 10:36:46 | gitpod-io/gitpod | https://api.github.com/repos/gitpod-io/gitpod | closed | Support content store backed user storage in Code | editor: code | In Code extensions can write files which are expected live beyond a single workspace (e.g. settings or caches). With the advent of the content store (#2001), we should make use of the content store for storing this data. | 1.0 | Support content store backed user storage in Code - In Code extensions can write files which are expected live beyond a single workspace (e.g. settings or caches). With the advent of the content store (#2001), we should make use of the content store for storing this data. | non_process | support content store backed user storage in code in code extensions can write files which are expected live beyond a single workspace e g settings or caches with the advent of the content store we should make use of the content store for storing this data | 0 |

19,471 | 25,769,811,641 | IssuesEvent | 2022-12-09 06:47:54 | Agility-Internship/Tai-nguyen-html-css | https://api.github.com/repos/Agility-Internship/Tai-nguyen-html-css | closed | Setup structure project | in-process | - [x] Create images

- [x] Create fonts

- [x] Create videos

- [x] Edit README.md

- [x] Install parcel

- [x] Update gitignore | 1.0 | Setup structure project - - [x] Create images

- [x] Create fonts

- [x] Create videos

- [x] Edit README.md

- [x] Install parcel

- [x] Update gitignore | process | setup structure project create images create fonts create videos edit readme md install parcel update gitignore | 1 |

265,522 | 20,101,113,248 | IssuesEvent | 2022-02-07 04:21:55 | faunists/deal-go | https://api.github.com/repos/faunists/deal-go | closed | Update `README.md` with examples of how to use `deal` | documentation good first issue hacktoberfest | We need to put some usage examples of deal:

- [ ] Normal client

- [ ] Stub client

- [ ] Server test

As inspiration you can see our example project [faunists/deal-go-example](https://github.com/faunists/deal-go-example) | 1.0 | Update `README.md` with examples of how to use `deal` - We need to put some usage examples of deal:

- [ ] Normal client

- [ ] Stub client

- [ ] Server test

As inspiration you can see our example project [faunists/deal-go-example](https://github.com/faunists/deal-go-example) | non_process | update readme md with examples of how to use deal we need to put some usage examples of deal normal client stub client server test as inspiration you can see our example project | 0 |

17,254 | 23,038,439,000 | IssuesEvent | 2022-07-22 22:08:56 | retaildevcrews/pib-cli | https://api.github.com/repos/retaildevcrews/pib-cli | closed | azure capacity issues | Process Demo | - the new subscription has azure capacity issues

- VM SKUs aren't available in any region I've found

- can someone check with Azure capacity and find a 4 core SKU with SSD that's available in a region?

- we'll need 8 core machines for voe-fleet

anne -> Assuming I'm looking in the right place, I see Standard_D8s_v... | 1.0 | azure capacity issues - - the new subscription has azure capacity issues

- VM SKUs aren't available in any region I've found

- can someone check with Azure capacity and find a 4 core SKU with SSD that's available in a region?

- we'll need 8 core machines for voe-fleet

anne -> Assuming I'm looking in the right pla... | process | azure capacity issues the new subscription has azure capacity issues vm skus aren t available in any region i ve found can someone check with azure capacity and find a core sku with ssd that s available in a region we ll need core machines for voe fleet anne assuming i m looking in the right pla... | 1 |

22,741 | 32,056,196,867 | IssuesEvent | 2023-09-24 05:19:53 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [processor/logstransform] Issue with replace_pattern transform function | Stale processor/logstransform closed as inactive | ### Component(s)

processor/logstransform

### Describe the issue you're reporting

We are using below transform processor rule to replace phone number. It is working fine with regex101 but some issues with splunk client v0.61.0 or v0.63.0 process (AKS). Here are the details. Please check and advice

rule: - re... | 1.0 | [processor/logstransform] Issue with replace_pattern transform function - ### Component(s)

processor/logstransform

### Describe the issue you're reporting

We are using below transform processor rule to replace phone number. It is working fine with regex101 but some issues with splunk client v0.61.0 or v0.63.0 ... | process | issue with replace pattern transform function component s processor logstransform describe the issue you re reporting we are using below transform processor rule to replace phone number it is working fine with but some issues with splunk client or process aks here are the details... | 1 |

9,083 | 12,151,622,803 | IssuesEvent | 2020-04-24 20:20:57 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Regions not listed as supported. | Pri2 automation/svc process-automation/subsvc | Why is it that you don't mention locations such as "southcentralus" as being supported, however it's listed in allowed values for the "location" property of the ARM template which addresses deployment of a workspace and linking of an automation account on this page: https://docs.microsoft.com/en-us/azure/automation/aut... | 1.0 | Regions not listed as supported. - Why is it that you don't mention locations such as "southcentralus" as being supported, however it's listed in allowed values for the "location" property of the ARM template which addresses deployment of a workspace and linking of an automation account on this page: https://docs.micro... | process | regions not listed as supported why is it that you don t mention locations such as southcentralus as being supported however it s listed in allowed values for the location property of the arm template which addresses deployment of a workspace and linking of an automation account on this page if the location... | 1 |

16,049 | 20,192,886,550 | IssuesEvent | 2022-02-11 07:51:02 | soederpop/active-mdx-software-project-test-repo | https://api.github.com/repos/soederpop/active-mdx-software-project-test-repo | closed | A customer should be able to pay with a gift card | story-created epic-payment-processing | # A customer should be able to pay with a gift card

As a customer I want to be able to pay with a gift card so I can complete my order

| 1.0 | A customer should be able to pay with a gift card - # A customer should be able to pay with a gift card

As a customer I want to be able to pay with a gift card so I can complete my order

| process | a customer should be able to pay with a gift card a customer should be able to pay with a gift card as a customer i want to be able to pay with a gift card so i can complete my order | 1 |

269,075 | 23,417,181,251 | IssuesEvent | 2022-08-13 05:42:37 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | ccl/sqlproxyccl/tenant: TestDeleteTenant failed | C-test-failure O-robot branch-master | ccl/sqlproxyccl/tenant.TestDeleteTenant [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/6079885?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/6079885?buildTab=artifacts#/) on master @ [0dd438d3dc0b425438904... | 1.0 | ccl/sqlproxyccl/tenant: TestDeleteTenant failed - ccl/sqlproxyccl/tenant.TestDeleteTenant [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/6079885?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/6079885?buildT... | non_process | ccl sqlproxyccl tenant testdeletetenant failed ccl sqlproxyccl tenant testdeletetenant with on master run testdeletetenant test log scope go test logs captured to artifacts tmp tmp directory cache test go starting tenant directory cache test go tenant started... | 0 |

7,234 | 10,384,005,399 | IssuesEvent | 2019-09-10 10:57:33 | RIOT-OS/RIOT | https://api.github.com/repos/RIOT-OS/RIOT | closed | RFC: merge candev API into netdev API | Area: drivers Discussion: RFC Process: API change State: stale | Looking at the newly merged candev API I still don't see, why this has to be its own API apart from netdev:

* The receive mechanism is nearly the same (except that there is no receive function, I'm guessing the frame is passed via the event callback).

* The only difference in the two `send()` is that `can` gives th... | 1.0 | RFC: merge candev API into netdev API - Looking at the newly merged candev API I still don't see, why this has to be its own API apart from netdev:

* The receive mechanism is nearly the same (except that there is no receive function, I'm guessing the frame is passed via the event callback).

* The only difference in... | process | rfc merge candev api into netdev api looking at the newly merged candev api i still don t see why this has to be its own api apart from netdev the receive mechanism is nearly the same except that there is no receive function i m guessing the frame is passed via the event callback the only difference in... | 1 |

13,522 | 16,058,127,930 | IssuesEvent | 2021-04-23 08:39:26 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Error: [libs/datamodel/connectors/dml/src/model.rs:161:64] Could not find relation field clubs_clubsTousers on model User. | bug/1-repro-available kind/bug process/candidate team/migrations | <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma introspect`

Version: `2.21.2`

Binary Version: `e421996c87d5f3c8f7eeadd502d4ad402c89464d`

Report: https://prisma-errors.netlify.app/report/13240

OS: `x64 linux 5.8.0-50-generic`

JS Stacktrace:

```

Error: [libs/datamod... | 1.0 | Error: [libs/datamodel/connectors/dml/src/model.rs:161:64] Could not find relation field clubs_clubsTousers on model User. - <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma introspect`

Version: `2.21.2`

Binary Version: `e421996c87d5f3c8f7eeadd502d4ad402c89464d`

Report: ... | process | error could not find relation field clubs clubstousers on model user command prisma introspect version binary version report os linux generic js stacktrace error could not find relation field clubs clubstousers on model user at childprocess home y... | 1 |

6,597 | 9,669,645,176 | IssuesEvent | 2019-05-21 17:53:36 | bazelbuild/rules_swift | https://api.github.com/repos/bazelbuild/rules_swift | closed | Determine if protoc build artifacts can be cached by Travis | P2 type: process | A significant amount of the time spent running checks on Travis is from compiling `protoc` from source for use by the `swift_proto_library` rule. We don't need to build this every time we run our tests—we should only be doing it if it changes (which we have control over, because we lock in a version in our repository r... | 1.0 | Determine if protoc build artifacts can be cached by Travis - A significant amount of the time spent running checks on Travis is from compiling `protoc` from source for use by the `swift_proto_library` rule. We don't need to build this every time we run our tests—we should only be doing it if it changes (which we have ... | process | determine if protoc build artifacts can be cached by travis a significant amount of the time spent running checks on travis is from compiling protoc from source for use by the swift proto library rule we don t need to build this every time we run our tests—we should only be doing it if it changes which we have ... | 1 |

11,916 | 14,701,870,398 | IssuesEvent | 2021-01-04 12:39:20 | prisma/prisma-client-js | https://api.github.com/repos/prisma/prisma-client-js | closed | Generated types (index.d.ts) contains invalid expressions | bug/2-confirmed kind/bug process/candidate team/client tech/engines | ## Bug description

The generated types file (`node_modules/.prisma/client/index.d.ts`) generates type definitions with invalid signatures. These signatures seem to come from schema definitions that have multiple unique fields. Here's an example of an invalid signature from my code:

```

node_modules/.prisma/clien... | 1.0 | Generated types (index.d.ts) contains invalid expressions - ## Bug description

The generated types file (`node_modules/.prisma/client/index.d.ts`) generates type definitions with invalid signatures. These signatures seem to come from schema definitions that have multiple unique fields. Here's an example of an invali... | process | generated types index d ts contains invalid expressions bug description the generated types file node modules prisma client index d ts generates type definitions with invalid signatures these signatures seem to come from schema definitions that have multiple unique fields here s an example of an invali... | 1 |

10,102 | 13,044,162,124 | IssuesEvent | 2020-07-29 03:47:29 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `UTCTimestampWithArg` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `UTCTimestampWithArg` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @andylokandy

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query... | 2.0 | UCP: Migrate scalar function `UTCTimestampWithArg` from TiDB -

## Description

Port the scalar function `UTCTimestampWithArg` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @andylokandy

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

-... | process | ucp migrate scalar function utctimestampwitharg from tidb description port the scalar function utctimestampwitharg from tidb to coprocessor score mentor s andylokandy recommended skills rust programming learning materials already implemented expressions ported from tidb ... | 1 |

20,779 | 27,516,250,994 | IssuesEvent | 2023-03-06 12:11:18 | zotero/zotero | https://api.github.com/repos/zotero/zotero | closed | Make "Add Note" button small and move elsewhere in toolbar | Papercuts Word Processor Integration | Too many people are clicking "Add Note" while trying to insert a citation (and "Note" is confusing for some citation styles). | 1.0 | Make "Add Note" button small and move elsewhere in toolbar - Too many people are clicking "Add Note" while trying to insert a citation (and "Note" is confusing for some citation styles). | process | make add note button small and move elsewhere in toolbar too many people are clicking add note while trying to insert a citation and note is confusing for some citation styles | 1 |

10,484 | 13,252,919,533 | IssuesEvent | 2020-08-20 06:34:01 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | Re-design coprocessor busy detection logic | sig/coprocessor status/discussion type/enhancement | We met a case that there are not many coprocessor requests, but with each one took a long time. In such cases, our current endpoint busy logic is not useful anymore, which presumed that requests are handled in a short time.

We need to implement a more accurate busy detection logic. For example, maybe we should use "... | 1.0 | Re-design coprocessor busy detection logic - We met a case that there are not many coprocessor requests, but with each one took a long time. In such cases, our current endpoint busy logic is not useful anymore, which presumed that requests are handled in a short time.

We need to implement a more accurate busy detect... | process | re design coprocessor busy detection logic we met a case that there are not many coprocessor requests but with each one took a long time in such cases our current endpoint busy logic is not useful anymore which presumed that requests are handled in a short time we need to implement a more accurate busy detect... | 1 |

17,949 | 23,940,914,060 | IssuesEvent | 2022-09-11 22:05:06 | GregTechCEu/gt-ideas | https://api.github.com/repos/GregTechCEu/gt-ideas | opened | More Masochistic Yttrium Barium Cuprate Chain | processing chain | ## Details

Currently, YBC is produced simply by mixing the dusts together and putting it in a blast furnace. This processing chain suggestion is shamelessly copied directly from GCYL. Because I think GCYL has a lot of cool thingies in it that could be salvaged.

## Products

Main Product:

Side Product(s):

## Ste... | 1.0 | More Masochistic Yttrium Barium Cuprate Chain - ## Details

Currently, YBC is produced simply by mixing the dusts together and putting it in a blast furnace. This processing chain suggestion is shamelessly copied directly from GCYL. Because I think GCYL has a lot of cool thingies in it that could be salvaged.

## Pro... | process | more masochistic yttrium barium cuprate chain details currently ybc is produced simply by mixing the dusts together and putting it in a blast furnace this processing chain suggestion is shamelessly copied directly from gcyl because i think gcyl has a lot of cool thingies in it that could be salvaged pro... | 1 |

138,735 | 11,215,041,851 | IssuesEvent | 2020-01-07 00:37:19 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | "Learn more" under "Congratulations" screen pointing to MM Zenhub | QA/Test-Plan-Specified QA/Yes branding feature/crypto-wallets priority/P2 regression support | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | "Learn more" under "Congratulations" screen pointing to MM Zenhub - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ... | non_process | learn more under congratulations screen pointing to mm zenhub have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info will get the ... | 0 |

743,477 | 25,900,919,198 | IssuesEvent | 2022-12-15 05:32:14 | wso2/api-manager | https://api.github.com/repos/wso2/api-manager | closed | Releasing MI and MI-Dashboard 4.2.0 alpha | Type/Task Priority/Normal Component/MI Component/MIDashboard 4.2.0-alpha | ### Description

Perform MI and MI-Dashboard 4.2.0 alpha release

### Affected Component

MI

### Version

4.2.0-alpha

### Related Issues

_No response_

### Suggested Labels

_No response_ | 1.0 | Releasing MI and MI-Dashboard 4.2.0 alpha - ### Description

Perform MI and MI-Dashboard 4.2.0 alpha release

### Affected Component

MI

### Version

4.2.0-alpha

### Related Issues

_No response_

### Suggested Labels

_No response_ | non_process | releasing mi and mi dashboard alpha description perform mi and mi dashboard alpha release affected component mi version alpha related issues no response suggested labels no response | 0 |

15,254 | 11,429,242,891 | IssuesEvent | 2020-02-04 07:24:53 | clarity-h2020/csis | https://api.github.com/repos/clarity-h2020/csis | reopened | myclimateservice.eu DNS Entries | BB: Infrastructure | Register (sub) domain names for myclimateservice.eu and point DNS entries to IP of csis.clarity-h2020.eu

- myclimateservice.eu

- www.myclimateservice.eu

- csis.myclimateservice.eu.eu

- geoserver.myclimateservice.eu

- erdapp.myclimateservice.eu

- tiles.myclimateservice.eu

- api.myclimateservice.eu

- geonode.my... | 1.0 | myclimateservice.eu DNS Entries - Register (sub) domain names for myclimateservice.eu and point DNS entries to IP of csis.clarity-h2020.eu

- myclimateservice.eu

- www.myclimateservice.eu

- csis.myclimateservice.eu.eu

- geoserver.myclimateservice.eu

- erdapp.myclimateservice.eu

- tiles.myclimateservice.eu

- api... | non_process | myclimateservice eu dns entries register sub domain names for myclimateservice eu and point dns entries to ip of csis clarity eu myclimateservice eu csis myclimateservice eu eu geoserver myclimateservice eu erdapp myclimateservice eu tiles myclimateservice eu api myclimateservice eu ge... | 0 |

3,657 | 6,694,215,680 | IssuesEvent | 2017-10-10 00:23:06 | ncbo/bioportal-project | https://api.github.com/repos/ncbo/bioportal-project | closed | MP: uploaded but failed to process | ontology processing problem | The latest submission of [MP](http://bioportal.bioontology.org/ontologies/MP) shows a status of "uploaded", but nothing else. The UI also shows a blank release date in the submissions table. The parsing log indicates that processing started, then halted with no error message:

```

# Logfile created on 2017-08-28 18:... | 1.0 | MP: uploaded but failed to process - The latest submission of [MP](http://bioportal.bioontology.org/ontologies/MP) shows a status of "uploaded", but nothing else. The UI also shows a blank release date in the submissions table. The parsing log indicates that processing started, then halted with no error message:

```... | process | mp uploaded but failed to process the latest submission of shows a status of uploaded but nothing else the ui also shows a blank release date in the submissions table the parsing log indicates that processing started then halted with no error message logfile created on by logger r... | 1 |

34,762 | 9,460,294,103 | IssuesEvent | 2019-04-17 10:34:51 | Scirra/Construct-3-bugs | https://api.github.com/repos/Scirra/Construct-3-bugs | opened | Invalid project name causes build failure | Build Service | ## Problem description

Via: https://www.construct.net/en/forum/construct-3/general-discussion-7/build-failed-143270

Using an invalid project name will cause an Android debug APK build to fail.

## Attach a .c3p

Use built-in Ghost Shooter example

## Steps to reproduce

1. Rename project to `1`

2. Build ... | 1.0 | Invalid project name causes build failure - ## Problem description

Via: https://www.construct.net/en/forum/construct-3/general-discussion-7/build-failed-143270

Using an invalid project name will cause an Android debug APK build to fail.

## Attach a .c3p

Use built-in Ghost Shooter example

## Steps to repr... | non_process | invalid project name causes build failure problem description via using an invalid project name will cause an android debug apk build to fail attach a use built in ghost shooter example steps to reproduce rename project to build as debug apk observed result build ... | 0 |

190,582 | 14,562,862,212 | IssuesEvent | 2020-12-17 01:04:14 | microsoft/STL | https://api.github.com/repos/microsoft/STL | closed | tests/std: Harness CUDA/NVCC | help wanted test | We need to add `nvcc` to the set of configurations we test.

- We need to determine which version we need to support.

- CUDA 10.1 unblocked VS2019 users, however 10.1 Update 2 added `if constexpr` support. Which we want.

- We need to actually add tests which use `nvcc`.

- We have some internally but they aren'... | 1.0 | tests/std: Harness CUDA/NVCC - We need to add `nvcc` to the set of configurations we test.

- We need to determine which version we need to support.

- CUDA 10.1 unblocked VS2019 users, however 10.1 Update 2 added `if constexpr` support. Which we want.

- We need to actually add tests which use `nvcc`.

- We have... | non_process | tests std harness cuda nvcc we need to add nvcc to the set of configurations we test we need to determine which version we need to support cuda unblocked users however update added if constexpr support which we want we need to actually add tests which use nvcc we have some i... | 0 |

9,133 | 12,202,801,439 | IssuesEvent | 2020-04-30 09:32:17 | w3c/aria-at | https://api.github.com/repos/w3c/aria-at | closed | Use linear git history | process | See https://www.bitsnbites.eu/a-tidy-linear-git-history/ -- I think there are advantages to a linear git history. In particular, when reviewing what has happened in the past, a clean linear history is much easier to work with. Right now there are a few merge commits in master.

In GitHub, this can be enforced by:

... | 1.0 | Use linear git history - See https://www.bitsnbites.eu/a-tidy-linear-git-history/ -- I think there are advantages to a linear git history. In particular, when reviewing what has happened in the past, a clean linear history is much easier to work with. Right now there are a few merge commits in master.

In GitHub, thi... | process | use linear git history see i think there are advantages to a linear git history in particular when reviewing what has happened in the past a clean linear history is much easier to work with right now there are a few merge commits in master in github this can be enforced by uncheck allow merge com... | 1 |

16,920 | 22,266,562,202 | IssuesEvent | 2022-06-10 08:03:28 | apache/arrow-rs | https://api.github.com/repos/apache/arrow-rs | opened | Larger CI Runners to Prevent MIRI OOMing and Improve CI Times | question enhancement development-process | **Is your feature request related to a problem or challenge? Please describe what you are trying to do.**

Since updating MIRI in #1828 it is periodically OOMing - https://github.com/apache/arrow-rs/actions/workflows/miri.yaml

| Feature Post-VS2017-Migration Priority 2 | The current implementation in the Ordering Domain Model has almost no Validations in place. This is a very important area in a Domain Model as Domain Entities and Aggregates are responsible for their "always valid" state.

Invariant enforcement is the responsibility of the domain entity itself (especially of the Aggreg... | 1.0 | Need to implement Validations on every Domain Entity constructor and update method (Ordering Domain Model) - The current implementation in the Ordering Domain Model has almost no Validations in place. This is a very important area in a Domain Model as Domain Entities and Aggregates are responsible for their "always val... | non_process | need to implement validations on every domain entity constructor and update method ordering domain model the current implementation in the ordering domain model has almost no validations in place this is a very important area in a domain model as domain entities and aggregates are responsible for their always val... | 0 |

13,090 | 15,440,018,495 | IssuesEvent | 2021-03-08 02:08:53 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | Use the Public Suffix List for domain validation | AREA: client AREA: server STATE: Stale SYSTEM: URL processing SYSTEM: cookie TYPE: enhancement | * cookie validation

* same-origin check

https://publicsuffix.org/

https://publicsuffix.org/list/public_suffix_list.dat

https://github.com/wrangr/psl

| 1.0 | Use the Public Suffix List for domain validation - * cookie validation

* same-origin check

https://publicsuffix.org/

https://publicsuffix.org/list/public_suffix_list.dat

https://github.com/wrangr/psl

| process | use the public suffix list for domain validation cookie validation same origin check | 1 |

6,836 | 9,979,036,985 | IssuesEvent | 2019-07-09 21:30:10 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | The force-unique parameter does not work when the duplicated topicref is chunked | bug preprocess/chunking priority/medium stale |

- I believe this to be a bug, not a question about using DITA-OT.

- I read the [CONTRIBUTING][] file.

```xml

<map>

<title>Map</title>

<topicref href="topic.dita"/>

<topicref href="topic.dita" chunk="to-content">

<topicref href="subtopic.dita"/>

</topicref>

</map>

```

When transf... | 1.0 | The force-unique parameter does not work when the duplicated topicref is chunked -

- I believe this to be a bug, not a question about using DITA-OT.

- I read the [CONTRIBUTING][] file.

```xml

<map>

<title>Map</title>

<topicref href="topic.dita"/>

<topicref href="topic.dita" chunk="to-content">

... | process | the force unique parameter does not work when the duplicated topicref is chunked i believe this to be a bug not a question about using dita ot i read the file xml map when transforming the above dita map to xhtml with the force unique paramete... | 1 |

90,316 | 15,856,106,199 | IssuesEvent | 2021-04-08 01:32:05 | KingdomB/liri-node-app | https://api.github.com/repos/KingdomB/liri-node-app | opened | CVE-2020-7754 (High) detected in npm-user-validate-1.0.0.tgz | security vulnerability | ## CVE-2020-7754 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>npm-user-validate-1.0.0.tgz</b></p></summary>

<p>User validations for npm</p>

<p>Library home page: <a href="https://re... | True | CVE-2020-7754 (High) detected in npm-user-validate-1.0.0.tgz - ## CVE-2020-7754 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>npm-user-validate-1.0.0.tgz</b></p></summary>

<p>User va... | non_process | cve high detected in npm user validate tgz cve high severity vulnerability vulnerable library npm user validate tgz user validations for npm library home page a href path to dependency file liri node app package json path to vulnerable library liri node app node mod... | 0 |

5,229 | 8,029,977,014 | IssuesEvent | 2018-07-27 17:55:40 | GoogleCloudPlatform/google-cloud-python | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-python | closed | Bigtable: 'list_instances' systest flakes w/ simultaneous runs | api: bigtable flaky testing type: process | See: https://circleci.com/gh/GoogleCloudPlatform/google-cloud-python/7441

The fix from #5476 didn't account for the fact that the "other" test run might be deleting instances, too. | 1.0 | Bigtable: 'list_instances' systest flakes w/ simultaneous runs - See: https://circleci.com/gh/GoogleCloudPlatform/google-cloud-python/7441

The fix from #5476 didn't account for the fact that the "other" test run might be deleting instances, too. | process | bigtable list instances systest flakes w simultaneous runs see the fix from didn t account for the fact that the other test run might be deleting instances too | 1 |

650,906 | 21,435,889,225 | IssuesEvent | 2022-04-24 01:38:07 | ChrisNZL/Tallowmere2 | https://api.github.com/repos/ChrisNZL/Tallowmere2 | closed | Tilted: Gold and souls sometimes unable to be collected? | 🦟 bug ⚠ priority ✨ alterants ⚛ physics | Manual report. 0.2.3. Feedback ID: 20210202-DJVRP

Claiming Tilted room modifier sometimes has mob's coins, hearts, and souls being stuck in the walls, unable to be retrieved.

I _think_ only Keys have the robust world-position checking happening; not so much for coins, hearts, and souls – need to investigate.

| 1.0 | Tilted: Gold and souls sometimes unable to be collected? - Manual report. 0.2.3. Feedback ID: 20210202-DJVRP

Claiming Tilted room modifier sometimes has mob's coins, hearts, and souls being stuck in the walls, unable to be retrieved.

I _think_ only Keys have the robust world-position checking happening; not so mu... | non_process | tilted gold and souls sometimes unable to be collected manual report feedback id djvrp claiming tilted room modifier sometimes has mob s coins hearts and souls being stuck in the walls unable to be retrieved i think only keys have the robust world position checking happening not so much for ... | 0 |

150,542 | 11,965,324,715 | IssuesEvent | 2020-04-05 22:55:18 | reactive-firewall/Pocket-PiAP | https://api.github.com/repos/reactive-firewall/Pocket-PiAP | closed | ACL issue on older upgrades causes lockout by 406 error | Blocker Bug (Regression) Testing Wanted | # Basic Info

>> When reporting an issue, please list the version of Pocket you are using and any relevant information about your software environment:

Version: Pocket v0.3.5

env: multiple

- OS type

>> `uname -a`

- [x] linux

- [x] Mac OS X (darwin)

- `piaplib` version:

>> `sudo -u pocket-admin python3 -m... | 1.0 | ACL issue on older upgrades causes lockout by 406 error - # Basic Info

>> When reporting an issue, please list the version of Pocket you are using and any relevant information about your software environment:

Version: Pocket v0.3.5

env: multiple

- OS type

>> `uname -a`

- [x] linux

- [x] Mac OS X (darwin)

... | non_process | acl issue on older upgrades causes lockout by error basic info when reporting an issue please list the version of pocket you are using and any relevant information about your software environment version pocket env multiple os type uname a linux mac os x darwin pia... | 0 |

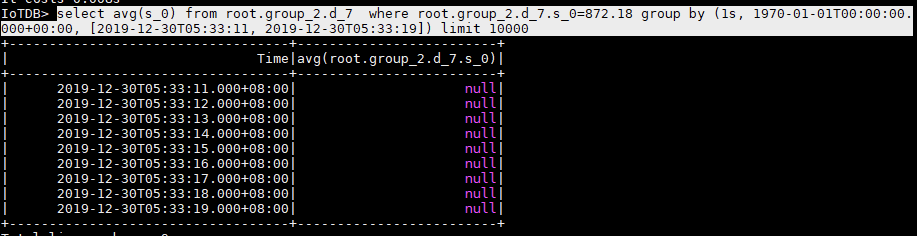

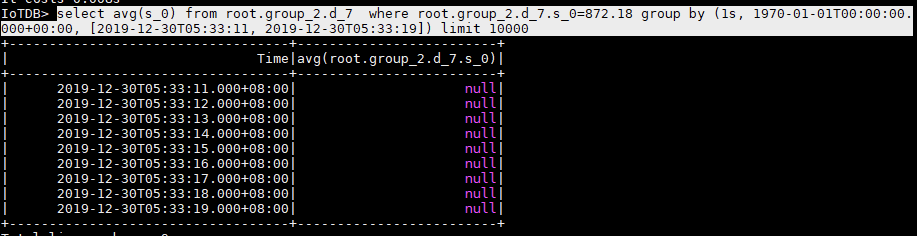

13,751 | 16,503,145,138 | IssuesEvent | 2021-05-25 16:12:17 | apache/iotdb | https://api.github.com/repos/apache/iotdb | closed | Do not return null in group by time query | Easy-Fixed Improvement Module - Query Processing Module - SQL | If all points in one row are null, we'd better give users the choice not to return this row.

In group by time queries, let users choose not to return empty rows.

| 1.0 | Do not return null in group by time query - If all points in one row are null, we'd better give users the choice not to return this row.

In group by time queries, let users choose not to return empty rows.

| process | do not return null in group by time query if all points in one row are null we d better give users the choice not to return this row in group by time queries let users choose not to return empty rows | 1 |

72,526 | 9,596,556,756 | IssuesEvent | 2019-05-09 18:54:50 | backdrop-ops/backdropcms.org | https://api.github.com/repos/backdrop-ops/backdropcms.org | closed | Improve "Upgrade from Drupal 7" documentation | content - documentation | On the page https://backdropcms.org/upgrade-from-drupal we have detailed step-by-step instructions about upgrading a Drupal 7 site to Backdrop. I've recently upgraded a D7 site to Backdrop. I had some problems to get the upgrade process running, but at the end I managed to do the upgrade. The instructions were generall... | 1.0 | Improve "Upgrade from Drupal 7" documentation - On the page https://backdropcms.org/upgrade-from-drupal we have detailed step-by-step instructions about upgrading a Drupal 7 site to Backdrop. I've recently upgraded a D7 site to Backdrop. I had some problems to get the upgrade process running, but at the end I managed t... | non_process | improve upgrade from drupal documentation on the page we have detailed step by step instructions about upgrading a drupal site to backdrop i ve recently upgraded a site to backdrop i had some problems to get the upgrade process running but at the end i managed to do the upgrade the instructions were gen... | 0 |

99,396 | 30,444,681,160 | IssuesEvent | 2023-07-15 14:07:51 | Krypton-Suite/Standard-Toolkit | https://api.github.com/repos/Krypton-Suite/Standard-Toolkit | closed | [Bug]: Nightly "Build" option causes erros in build sequences | bug build system | Using latest VS preview:

and alpha branch:

| 1.0 | [Bug]: Nightly "Build" option causes erros in build sequences - Using latest VS preview:

and alpha branch:

| non_process | nightly build option causes erros in build sequences using latest vs preview and alpha branch | 0 |

20,361 | 27,020,691,849 | IssuesEvent | 2023-02-11 01:26:36 | MikaylaFischler/cc-mek-scada | https://api.github.com/repos/MikaylaFischler/cc-mek-scada | closed | Unit Ready Condition Should Include PLC Data Received | bug supervisor stability process control | Process start before struct received crashes the supervisor since struct is an empty array | 1.0 | Unit Ready Condition Should Include PLC Data Received - Process start before struct received crashes the supervisor since struct is an empty array | process | unit ready condition should include plc data received process start before struct received crashes the supervisor since struct is an empty array | 1 |

2,809 | 5,738,519,486 | IssuesEvent | 2017-04-23 05:07:27 | SIMEXP/niak | https://api.github.com/repos/SIMEXP/niak | closed | fade of the overlay in the QC of the fMRI preprocessing | enhancement preprocessing quality control | @illdopejake wrote:

I find myself modifying the "fade" of the overlay every time. When the program opens, the overlay is set to be 50% each image. I always find myself bring it toward the left, so its about 75% anatomical(pane 2) and 25% from pane 1. This is because the image in Pane 1 default color scale is a heat map... | 1.0 | fade of the overlay in the QC of the fMRI preprocessing - @illdopejake wrote:

I find myself modifying the "fade" of the overlay every time. When the program opens, the overlay is set to be 50% each image. I always find myself bring it toward the left, so its about 75% anatomical(pane 2) and 25% from pane 1. This is bec... | process | fade of the overlay in the qc of the fmri preprocessing illdopejake wrote i find myself modifying the fade of the overlay every time when the program opens the overlay is set to be each image i always find myself bring it toward the left so its about anatomical pane and from pane this is becaus... | 1 |

468,036 | 13,460,232,635 | IssuesEvent | 2020-09-09 13:21:47 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | vm-mpassard.rezopole.net - SVG mouse event not happening with element | browser-firefox engine-gecko form-v2-experiment os-linux priority-normal severity-minor | <!-- @browser: Firefox 72.0 -->

<!-- @ua_header: Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:72.0) Gecko/20100101 Firefox/72.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: form-v2-experiment -->

**URL**: http://vm-mpassard.rezopole.net/index.php?id=1111

**Browser / Version**: Firefox 72.0

**Operating System**: Ub... | 1.0 | vm-mpassard.rezopole.net - SVG mouse event not happening with element - <!-- @browser: Firefox 72.0 -->

<!-- @ua_header: Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:72.0) Gecko/20100101 Firefox/72.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: form-v2-experiment -->

**URL**: http://vm-mpassard.rezopole.net/index.p... | non_process | vm mpassard rezopole net svg mouse event not happening with element url browser version firefox operating system ubuntu tested another browser yes chrome problem type something else description svg mouse event not happening with element steps to reproduce i ha... | 0 |

65,935 | 14,761,967,302 | IssuesEvent | 2021-01-09 01:11:44 | TIBCOSoftware/bw-sample-for-amazon-sns | https://api.github.com/repos/TIBCOSoftware/bw-sample-for-amazon-sns | opened | CVE-2020-36188 (Medium) detected in jackson-databind-2.6.6.jar | security vulnerability | ## CVE-2020-36188 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.6.6.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core stream... | True | CVE-2020-36188 (Medium) detected in jackson-databind-2.6.6.jar - ## CVE-2020-36188 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.6.6.jar</b></p></summary>

<p>Gen... | non_process | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to vulnerable library bw sample for amazon sns... | 0 |

35,130 | 2,789,809,746 | IssuesEvent | 2015-05-08 21:37:59 | google/google-visualization-api-issues | https://api.github.com/repos/google/google-visualization-api-issues | opened | Allow user to change the size of a column in a table | Priority-Low Type-Enhancement | Original [issue 171](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=171) created by orwant on 2010-01-23T00:29:59.000Z:

<b>What would you like to see us add to this API?</b>

A lot of data tables have very long column header but very short column

value, therefore it is very inconvenient ... | 1.0 | Allow user to change the size of a column in a table - Original [issue 171](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=171) created by orwant on 2010-01-23T00:29:59.000Z:

<b>What would you like to see us add to this API?</b>

A lot of data tables have very long column header but very s... | non_process | allow user to change the size of a column in a table original created by orwant on what would you like to see us add to this api a lot of data tables have very long column header but very short column value therefore it is very inconvenient for user to see the real data it will be nice if... | 0 |

382,316 | 26,492,504,117 | IssuesEvent | 2023-01-18 00:37:42 | beercss/beercss | https://api.github.com/repos/beercss/beercss | closed | discussions | documentation | what about an official discord server so we can talk all things _beercss_, help each other, etc ? ;-) | 1.0 | discussions - what about an official discord server so we can talk all things _beercss_, help each other, etc ? ;-) | non_process | discussions what about an official discord server so we can talk all things beercss help each other etc | 0 |

9,427 | 12,418,368,037 | IssuesEvent | 2020-05-22 23:59:39 | nion-software/nionswift | https://api.github.com/repos/nion-software/nionswift | opened | Add ability to map 1d and 2d operations to collections/sequences | f - computations f - processing feature stage - planning type - enhancement | e.g. take FFT of every item in collection. | 1.0 | Add ability to map 1d and 2d operations to collections/sequences - e.g. take FFT of every item in collection. | process | add ability to map and operations to collections sequences e g take fft of every item in collection | 1 |

6,772 | 9,912,973,026 | IssuesEvent | 2019-06-28 10:22:46 | wso2/docs-ei | https://api.github.com/repos/wso2/docs-ei | closed | Adding initial infrastructure for GitHub docs | Priority/Highest Severity/Blocker ballerina micro-integrator stream-processor | This involves getting the set of configurations from the template repo and adding it into the docs-ei repo. | 1.0 | Adding initial infrastructure for GitHub docs - This involves getting the set of configurations from the template repo and adding it into the docs-ei repo. | process | adding initial infrastructure for github docs this involves getting the set of configurations from the template repo and adding it into the docs ei repo | 1 |

4,640 | 2,610,135,931 | IssuesEvent | 2015-02-26 18:42:51 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | You can not let go of the Rope while pressing Up & Left at the same time | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Shoot a Rope

2. Press up & left at the same time

3. Try to release the Rope (it works when you press up & right)

What is the expected output? What do you see instead?

That you can let go of it of course :)

What version of the product are you using? On what operating syste... | 1.0 | You can not let go of the Rope while pressing Up & Left at the same time - ```

What steps will reproduce the problem?

1. Shoot a Rope

2. Press up & left at the same time

3. Try to release the Rope (it works when you press up & right)

What is the expected output? What do you see instead?

That you can let go of it of co... | non_process | you can not let go of the rope while pressing up left at the same time what steps will reproduce the problem shoot a rope press up left at the same time try to release the rope it works when you press up right what is the expected output what do you see instead that you can let go of it of co... | 0 |

21,613 | 30,016,340,848 | IssuesEvent | 2023-06-26 19:02:10 | Home-modules/webapp-android | https://api.github.com/repos/Home-modules/webapp-android | closed | Remembering hub IP | enhancement In Process | Currently the app will ask every time about the hub's IP address and port.

It has to remember the IP and launch the web app directly on subsequent starts. | 1.0 | Remembering hub IP - Currently the app will ask every time about the hub's IP address and port.

It has to remember the IP and launch the web app directly on subsequent starts. | process | remembering hub ip currently the app will ask every time about the hub s ip address and port it has to remember the ip and launch the web app directly on subsequent starts | 1 |

16,315 | 20,969,961,725 | IssuesEvent | 2022-03-28 10:24:02 | huutho77/CNPMNC_ThayAi | https://api.github.com/repos/huutho77/CNPMNC_ThayAi | opened | Coding UI for Home page, Login page and Register page | dev/thnguyen processing | The User Interface is built on the design | 1.0 | Coding UI for Home page, Login page and Register page - The User Interface is built on the design | process | coding ui for home page login page and register page the user interface is built on the design | 1 |

19,592 | 25,934,542,919 | IssuesEvent | 2022-12-16 13:01:07 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Release 5.4.0 - December 2022 | P1 type: process release team-OSS | # Status of Bazel 5.4.0

- Expected release date: 2022-12-15

- [List of release blockers](https://github.com/bazelbuild/bazel/milestone/45)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to the issue. A release manager will triage it and add it to the milestone.

To ch... | 1.0 | Release 5.4.0 - December 2022 - # Status of Bazel 5.4.0

- Expected release date: 2022-12-15

- [List of release blockers](https://github.com/bazelbuild/bazel/milestone/45)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to the issue. A release manager will triage it and a... | process | release december status of bazel expected release date to report a release blocking bug please add a comment with the text bazel io flag to the issue a release manager will triage it and add it to the milestone to cherry pick a mainline commit into simply send... | 1 |

105,581 | 13,196,955,998 | IssuesEvent | 2020-08-13 21:47:23 | phetsims/natural-selection | https://api.github.com/repos/phetsims/natural-selection | closed | Are overlapping plots in the Population graph a significant usability problem? | design:interviews | In #101, we identified overlapping plots as a potential usability problem:

> * If plots overlap, one or more sets of data will be hidden. For example, if there are equal numbers of bunnies with white fur and brown fur, only one data set will be visible.

In https://github.com/phetsims/natural-selection/issues/101#... | 1.0 | Are overlapping plots in the Population graph a significant usability problem? - In #101, we identified overlapping plots as a potential usability problem:

> * If plots overlap, one or more sets of data will be hidden. For example, if there are equal numbers of bunnies with white fur and brown fur, only one data set... | non_process | are overlapping plots in the population graph a significant usability problem in we identified overlapping plots as a potential usability problem if plots overlap one or more sets of data will be hidden for example if there are equal numbers of bunnies with white fur and brown fur only one data set w... | 0 |

31,575 | 7,395,910,237 | IssuesEvent | 2018-03-18 04:53:34 | bcgov/api-specs | https://api.github.com/repos/bcgov/api-specs | closed | Users prefer a single text box to enter occupant names or addresses | GEOCODER api | Currently geocoder/occupants/addresses assumes an occupant name and geocoder/addresses assumes an address. Is there a way of looking for both occupants and addresses at the same time? Maybe there could be a new maxOccupantResults where maxResults <= maxResults. For example, if maxOccupantResults=5 and maxResults=10 t... | 1.0 | Users prefer a single text box to enter occupant names or addresses - Currently geocoder/occupants/addresses assumes an occupant name and geocoder/addresses assumes an address. Is there a way of looking for both occupants and addresses at the same time? Maybe there could be a new maxOccupantResults where maxResults <... | non_process | users prefer a single text box to enter occupant names or addresses currently geocoder occupants addresses assumes an occupant name and geocoder addresses assumes an address is there a way of looking for both occupants and addresses at the same time maybe there could be a new maxoccupantresults where maxresults ... | 0 |

9,150 | 12,203,235,337 | IssuesEvent | 2020-04-30 10:15:10 | MHRA/products | https://api.github.com/repos/MHRA/products | reopened | PARs form - Upload / amend | EPIC - PARs process HIGH PRIORITY :arrow_double_up: PARKED 🚗 STORY :book: | ### User want

As a Medical Writer I would like to upload and amend PARs to the Products website, in an easy format so that I can ensure the latest versions of the PARs are always available on the site.

(Linked to #397 and #398)

**Customer acceptance criteria**

1. Medical writers can upload a file to the websit... | 1.0 | PARs form - Upload / amend - ### User want

As a Medical Writer I would like to upload and amend PARs to the Products website, in an easy format so that I can ensure the latest versions of the PARs are always available on the site.

(Linked to #397 and #398)

**Customer acceptance criteria**

1. Medical writers ca... | process | pars form upload amend user want as a medical writer i would like to upload and amend pars to the products website in an easy format so that i can ensure the latest versions of the pars are always available on the site linked to and customer acceptance criteria medical writers can up... | 1 |

299,689 | 25,918,381,614 | IssuesEvent | 2022-12-15 19:26:45 | w3c/mathml-core | https://api.github.com/repos/w3c/mathml-core | opened | Remove mathvariant from core (except 'normal') | need polyfill need specification update needs-tests | I'm starting a new issue based on a side topic mentioned in #181:

> Regarding mathvariant, this is controversial because really only the automatic italicization of the single-char <mi> can be characterized as "stylistic". We failed to convince the CSS WG to extend text-transform for other attribute values, so we didn'... | 1.0 | Remove mathvariant from core (except 'normal') - I'm starting a new issue based on a side topic mentioned in #181:

> Regarding mathvariant, this is controversial because really only the automatic italicization of the single-char <mi> can be characterized as "stylistic". We failed to convince the CSS WG to extend text-... | non_process | remove mathvariant from core except normal i m starting a new issue based on a side topic mentioned in regarding mathvariant this is controversial because really only the automatic italicization of the single char can be characterized as stylistic we failed to convince the css wg to extend text trans... | 0 |

32,084 | 6,711,928,911 | IssuesEvent | 2017-10-13 07:14:33 | RIOT-OS/RIOT | https://api.github.com/repos/RIOT-OS/RIOT | closed | core: thread_flags: THREAD_FLAG_MUTEX_UNLOCKED, THREAD_FLAG_TIMEOUT are not used | core quality defect timer | Follow up to #7533. I found that there are two other predefined constants which are not used in the tree.

- `THREAD_FLAG_MUTEX_UNLOCKED`

- `THREAD_FLAG_TIMEOUT`

I think the constants may be intended for `xtimer_msg_timeout`, which mentions core_thread_flags in the documentation, but does not in fact use thread f... | 1.0 | core: thread_flags: THREAD_FLAG_MUTEX_UNLOCKED, THREAD_FLAG_TIMEOUT are not used - Follow up to #7533. I found that there are two other predefined constants which are not used in the tree.

- `THREAD_FLAG_MUTEX_UNLOCKED`

- `THREAD_FLAG_TIMEOUT`

I think the constants may be intended for `xtimer_msg_timeout`, which... | non_process | core thread flags thread flag mutex unlocked thread flag timeout are not used follow up to i found that there are two other predefined constants which are not used in the tree thread flag mutex unlocked thread flag timeout i think the constants may be intended for xtimer msg timeout which me... | 0 |

5,015 | 7,844,925,286 | IssuesEvent | 2018-06-19 11:16:45 | lbassin/okty | https://api.github.com/repos/lbassin/okty | closed | Add projects templates | enhancement in process | **Describe the solution you'd like**

Add some templates to avoid creating standards containers | 1.0 | Add projects templates - **Describe the solution you'd like**

Add some templates to avoid creating standards containers | process | add projects templates describe the solution you d like add some templates to avoid creating standards containers | 1 |

410,436 | 11,991,624,244 | IssuesEvent | 2020-04-08 08:41:46 | muccg/rdrf | https://api.github.com/repos/muccg/rdrf | closed | Another issue with CICLungProms login | area/cic area/lung cancer area/proms priority/p0 | ```

Request Method: | GET

-- | --

https://rdrf.ccgapps.com.au/ciclungproms/admin/

2.1.15

NoReverseMatch

Reverse for 'custom_action' not found. 'custom_action' is not a valid view function or pattern name.

/env/lib/python3.7/site-packages/django/urls/resolvers.py in _reverse_with_prefix, line 622

/env/bin/uwsg... | 1.0 | Another issue with CICLungProms login - ```

Request Method: | GET

-- | --

https://rdrf.ccgapps.com.au/ciclungproms/admin/

2.1.15

NoReverseMatch

Reverse for 'custom_action' not found. 'custom_action' is not a valid view function or pattern name.

/env/lib/python3.7/site-packages/django/urls/resolvers.py in _reve... | non_process | another issue with ciclungproms login request method get noreversematch reverse for custom action not found custom action is not a valid view function or pattern name env lib site packages django urls resolvers py in reverse with prefix line env bin uwsgi tu... | 0 |

85,968 | 10,699,930,094 | IssuesEvent | 2019-10-23 22:13:02 | lyft/envoy-mobile | https://api.github.com/repos/lyft/envoy-mobile | closed | roadmap: v0.2 release deliverable breakdown | design proposal no stalebot | **The deliverables for v0.2 and their respective owners are available in [this Google Doc](https://docs.google.com/document/d/1eLbJEXog2Rn7wTBEbDIjpDV7dHecV_DV_dw8P5ZgbL4/edit).**

This doc represents a subset of the functionality detailed in our [ongoing roadmap](https://docs.google.com/document/d/1N0ZFJktK8m01uqqgf... | 1.0 | roadmap: v0.2 release deliverable breakdown - **The deliverables for v0.2 and their respective owners are available in [this Google Doc](https://docs.google.com/document/d/1eLbJEXog2Rn7wTBEbDIjpDV7dHecV_DV_dw8P5ZgbL4/edit).**

This doc represents a subset of the functionality detailed in our [ongoing roadmap](https:/... | non_process | roadmap release deliverable breakdown the deliverables for and their respective owners are available in this doc represents a subset of the functionality detailed in our issue | 0 |

7,190 | 10,330,382,316 | IssuesEvent | 2019-09-02 14:32:33 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | Extract odd-shaped (irregular) regions | preprocessor | I would like to extract regions in the preprocessor of the ESMValTool which are not rectangular but as they are defined in the IPCC AR5 or AR6. For example to create the panels for a figure like this:

Is... | 1.0 | Extract odd-shaped (irregular) regions - I would like to extract regions in the preprocessor of the ESMValTool which are not rectangular but as they are defined in the IPCC AR5 or AR6. For example to create the panels for a figure like this:

In [34]: df = pd.DataFrame()

In [35]: df['bar'] = range(10)

In [36]: df['foo'] = cat

In [37]: df

Out[37]:

bar foo

0 0 a

1 1 b

2 2 b

3 3 a

4 4 b

5 5... | 1.0 | BUG: inserting a Categorical with the wrong length into a DataFrame is allowed - This leaves the DataFrame in a very weird/buggy state:

```

In [33]: cat = pd.Categorical.from_codes([0, 1, 1, 0, 1, 2], ['a', 'b', 'c'])

In [34]: df = pd.DataFrame()

In [35]: df['bar'] = range(10)

In [36]: df['foo'] = cat

In [37]: df

... | non_process | bug inserting a categorical with the wrong length into a dataframe is allowed this leaves the dataframe in a very weird buggy state in cat pd categorical from codes in df pd dataframe in df range in df cat in df out bar foo a b b ... | 0 |

11,207 | 3,193,179,500 | IssuesEvent | 2015-09-30 02:29:05 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | TestProcWithExceededActionQueueDepth is flaky | area/platform/mesos kind/flake priority/P0 team/test-infra | @jdef @karlkfi @davidopp

Can we get this fixed ASAP?

Thanks!

```

proc_test.go:288: starting test case nested at 2015-09-25 00:21:13.875176228 +0000 UTC

proc_test.go:304: delegate chain invoked for nested at 2015-09-25 00:21:13.930546473 +0000 UTC

proc_test.go:323: executing deferred action: nested at 2015-... | 1.0 | TestProcWithExceededActionQueueDepth is flaky - @jdef @karlkfi @davidopp

Can we get this fixed ASAP?

Thanks!

```

proc_test.go:288: starting test case nested at 2015-09-25 00:21:13.875176228 +0000 UTC

proc_test.go:304: delegate chain invoked for nested at 2015-09-25 00:21:13.930546473 +0000 UTC

proc_test.go... | non_process | testprocwithexceededactionqueuedepth is flaky jdef karlkfi davidopp can we get this fixed asap thanks proc test go starting test case nested at utc proc test go delegate chain invoked for nested at utc proc test go executing deferred action nested at ... | 0 |

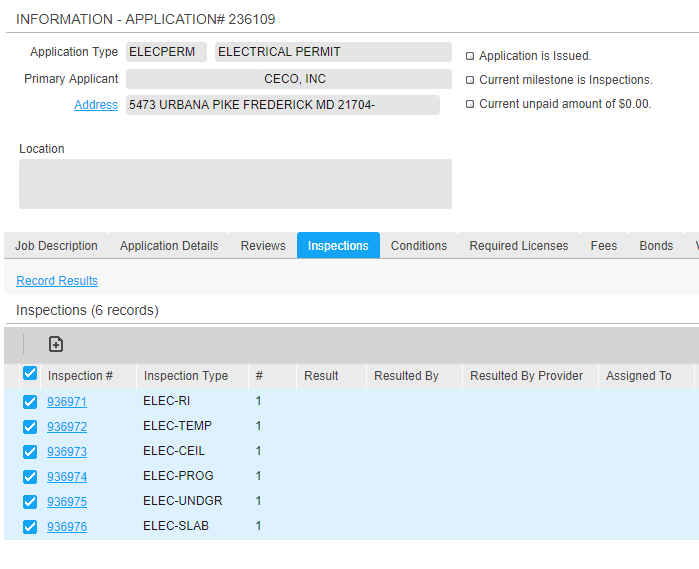

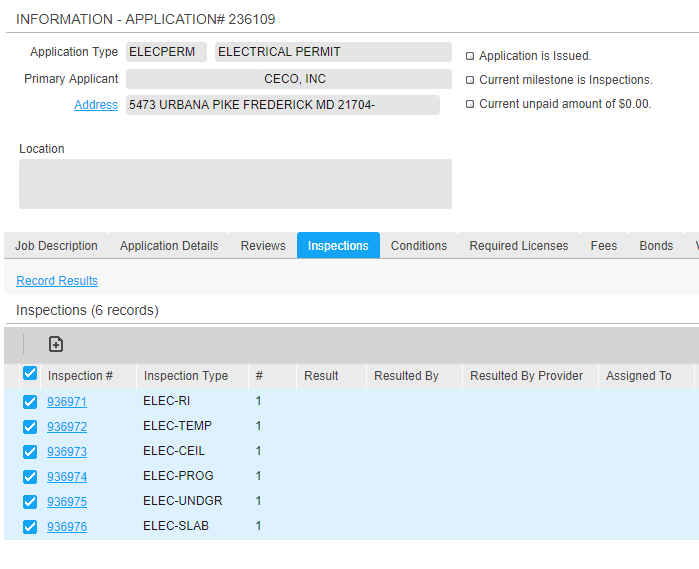

107,044 | 9,200,738,996 | IssuesEvent | 2019-03-07 17:47:28 | kcigeospatial/Fred_Co_Land-Management | https://api.github.com/repos/kcigeospatial/Fred_Co_Land-Management | closed | Building - Electrical Permit - Building Mounted Sign | Ready For Retest | In the Inspection milestone, the inspection that should populate is Final.

AP# 236109

Logged by K. Vanaman on 2/7

| 1.0 | Building - Electrical Permit - Building Mounted Sign - In the Inspection milestone, the inspection that should populate is Final.

AP# 236109

Logged by K. Vanaman on 2/7

| non_process | building electrical permit building mounted sign in the inspection milestone the inspection that should populate is final ap logged by k vanaman on | 0 |

100,802 | 11,205,063,221 | IssuesEvent | 2020-01-05 11:34:24 | mribrgr/StuRa-Mitgliederdatenbank | https://api.github.com/repos/mribrgr/StuRa-Mitgliederdatenbank | opened | Should the change history be filterable? | Stakeholder documentation | Should the history of all changes made to the database be filterable (e.g. by user, time, type of change, ...)? | 1.0 | Should the change history be filterable? - Should the history of all changes made to the database be filterable (e.g. by user, time, type of change, ...)? | non_process | should the change history be filterable should the history of all changes made to the database be filterable e g by user time type of change | 0 |

76,657 | 14,660,146,367 | IssuesEvent | 2020-12-28 22:36:59 | opentibiabr/otservbr-global | https://api.github.com/repos/opentibiabr/otservbr-global | closed | Guildwar Emblems and Npc's Speechbubbble | Type: Bug Where: Code | 1) the guildwar emblems only appear after re-login, also when the guildwar ends the emblems only disappear after re-login

**Expected behavior**

emblems must appear and disappear without the need of re-login

2) the speechbubble doesn't appear when the server starts, but it does when you use the /n command, and a... | 1.0 | Guildwar Emblems and Npc's Speechbubbble - 1) the guildwar emblems only appear after re-login, also when the guildwar ends the emblems only disappear after re-login

**Expected behavior**

emblems must appear and disappear without the need of re-login

2) the speechbubble doesn't appear when the server starts, but... | non_process | guildwar emblems and npc s speechbubbble the guildwar emblems only appear after re login also when the guildwar ends the emblems only disappear after re login expected behavior emblems must appear and disappear without the need of re login the speechbubble doesn t appear when the server starts but... | 0 |

21,457 | 29,497,046,132 | IssuesEvent | 2023-06-02 17:56:10 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Release 6.2.1 - June 2023 | P1 type: process release team-OSS | # Status of Bazel 6.2.1

- Expected first release candidate date: 2023-05-26

- Expected release date: 2023-06-02

- [List of release blockers](https://github.com/bazelbuild/bazel/milestone/54)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to the issue. A release manag... | 1.0 | Release 6.2.1 - June 2023 - # Status of Bazel 6.2.1

- Expected first release candidate date: 2023-05-26

- Expected release date: 2023-06-02

- [List of release blockers](https://github.com/bazelbuild/bazel/milestone/54)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` t... | process | release june status of bazel expected first release candidate date expected release date to report a release blocking bug please add a comment with the text bazel io flag to the issue a release manager will triage it and add it to the milestone to cherr... | 1 |

165,882 | 12,882,288,581 | IssuesEvent | 2020-07-12 16:12:43 | osquery/osquery | https://api.github.com/repos/osquery/osquery | closed | Fix FSEventsTests.test_fsevents_fire_event which crashes on macOS | bug flaky test macOS test | FSEventsTests.test_fsevents_fire_event sometimes crashes on the macOS agent of the CI, in a Debug build:

```

70: Test command: /Users/runner/runners/2.160.0/work/1/b/build/osquery/events/tests/osquery_events_tests_fseventstests-test

70: Test timeout computed to be: 10000000

70: Running main() from /Users/runner/run... | 2.0 | Fix FSEventsTests.test_fsevents_fire_event which crashes on macOS - FSEventsTests.test_fsevents_fire_event sometimes crashes on the macOS agent of the CI, in a Debug build:

```

70: Test command: /Users/runner/runners/2.160.0/work/1/b/build/osquery/events/tests/osquery_events_tests_fseventstests-test

70: Test timeout... | non_process | fix fseventstests test fsevents fire event which crashes on macos fseventstests test fsevents fire event sometimes crashes on the macos agent of the ci in a debug build test command users runner runners work b build osquery events tests osquery events tests fseventstests test test timeout com... | 0 |

172,980 | 27,365,299,277 | IssuesEvent | 2023-02-27 18:40:50 | webstudio-is/webstudio-builder | https://api.github.com/repos/webstudio-is/webstudio-builder | closed | new CSS Value List Item component | type:enhancement complexity:medium area:design system prio:1 | This component is needed for the Backgrounds section #877 and other future sections.

The thumbnail is a nested **Thumbnail** component. It's nothing special, I just organized it this way so the thumbnail could be changed on the list item without breaking the instance in figma. Let me know if I need to make a separa... | 1.0 | new CSS Value List Item component - This component is needed for the Backgrounds section #877 and other future sections.

The thumbnail is a nested **Thumbnail** component. It's nothing special, I just organized it this way so the thumbnail could be changed on the list item without breaking the instance in figma. Le... | non_process | new css value list item component this component is needed for the backgrounds section and other future sections the thumbnail is a nested thumbnail component it s nothing special i just organized it this way so the thumbnail could be changed on the list item without breaking the instance in figma let ... | 0 |

10,780 | 13,608,969,813 | IssuesEvent | 2020-09-23 03:53:43 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | closed | Dependency Dashboard | type: process | This issue contains a list of Renovate updates and their statuses.

## Repository problems

These problems occurred while renovating this repository.

- WARN: Failed to read setup.py file

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-b... | 1.0 | Dependency Dashboard - This issue contains a list of Renovate updates and their statuses.

## Repository problems

These problems occurred while renovating this repository.

- WARN: Failed to read setup.py file

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any... | process | dependency dashboard this issue contains a list of renovate updates and their statuses repository problems these problems occurred while renovating this repository warn failed to read setup py file open these updates have all been created already click a checkbox below to force a retry rebase of any... | 1 |

6,352 | 9,412,095,117 | IssuesEvent | 2019-04-10 02:28:35 | plazi/arcadia-project | https://api.github.com/repos/plazi/arcadia-project | closed | problem with running QCTool: interefernence with batch | Article processing GoldenGate | @gsautter after installing the QCTool, there is an interference with the batch tool. That means I can't run the batch too anymore

Microsoft Windows [Version 10.0.17134.648]

(c) 2018 Microsoft Corporation. All rights reserved.

D:\GoldenGateImagine20170823>java -jar -Xmx10240m GgImagineBatch.jar "E:\diglib\eur... | 1.0 | problem with running QCTool: interefernence with batch - @gsautter after installing the QCTool, there is an interference with the batch tool. That means I can't run the batch too anymore

Microsoft Windows [Version 10.0.17134.648]

(c) 2018 Microsoft Corporation. All rights reserved.

D:\GoldenGateImagine201708... | process | problem with running qctool interefernence with batch gsautter after installing the qctool there is an interference with the batch tool that means i can t run the batch too anymore microsoft windows c microsoft corporation all rights reserved d java jar ggimaginebatch jar e diglib euro... | 1 |