Unnamed: 0 (int64) | id (float64) | type (string) | created_at (string) | repo (string) | repo_url (string) | action (string) | title (string) | labels (string) | body (string) | index (string) | text_combine (string) | label (string) | text (string) | binary_label (int64) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

12,218 | 14,743,058,355 | IssuesEvent | 2021-01-07 13:20:10 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Multiple Payment scenario - Payment Deletion | anc-process anp-1 ant-enhancement grt-payments | In GitLab by @kdjstudios on Jul 9, 2019, 10:00

**Submitted by:** Gary

**Helpdesk:** http://gitlab.aavaz.biz/AnswerNet/SABilling/issues/1361#note_43716

**Server:** All

**Client/Site:** All

**Account:** All

**Issue:**

We either:

1. Display a notice "You are about to delete a payment on an account that is not th... | 1.0 | Multiple Payment scenario - Payment Deletion - In GitLab by @kdjstudios on Jul 9, 2019, 10:00

**Submitted by:** Gary

**Helpdesk:** http://gitlab.aavaz.biz/AnswerNet/SABilling/issues/1361#note_43716

**Server:** All

**Client/Site:** All

**Account:** All

**Issue:**

We either:

1. Display a notice "You are about t... | process | multiple payment scenario payment deletion in gitlab by kdjstudios on jul submitted by gary helpdesk server all client site all account all issue we either display a notice you are about to delete a payment on an account that is not the latest payment this ... | 1 |

149,591 | 19,581,701,390 | IssuesEvent | 2022-01-04 22:21:22 | timf-deleteme/ng1 | https://api.github.com/repos/timf-deleteme/ng1 | opened | CVE-2020-15095 (Medium) detected in npm-3.10.10.tgz | security vulnerability | ## CVE-2020-15095 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>npm-3.10.10.tgz</b></p></summary>

<p>a package manager for JavaScript</p>

<p>Library home page: <a href="https://reg... | True | CVE-2020-15095 (Medium) detected in npm-3.10.10.tgz - ## CVE-2020-15095 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>npm-3.10.10.tgz</b></p></summary>

<p>a package manager for Jav... | non_process | cve medium detected in npm tgz cve medium severity vulnerability vulnerable library npm tgz a package manager for javascript library home page a href path to dependency file package json path to vulnerable library node modules npm package json dependency hierar... | 0 |

22,238 | 30,789,370,279 | IssuesEvent | 2023-07-31 15:09:27 | ovh/public-cloud-roadmap | https://api.github.com/repos/ovh/public-cloud-roadmap | closed | Notebooks for Apache Spark - Alpha | Data Processing Available in Alpha Spark | ## User story

As a customer,

I want to process data through Jupyter Notebooks

so that

I could explore my data in an interactive way

## Acceptance criteria

- I can launch notebook through the Manager.

- I can manage my notebook with the Manager.

- I can access the Jupyterlab of my notebook.

- I can use Apache... | 1.0 | Notebooks for Apache Spark - Alpha - ## User story

As a customer,

I want to process data through Jupyter Notebooks

so that

I could explore my data in an interactive way

## Acceptance criteria

- I can launch notebook through the Manager.

- I can manage my notebook with the Manager.

- I can access the Jupyterla... | process | notebooks for apache spark alpha user story as a customer i want to process data through jupyter notebooks so that i could explore my data in an interactive way acceptance criteria i can launch notebook through the manager i can manage my notebook with the manager i can access the jupyterla... | 1 |

159,694 | 20,085,893,856 | IssuesEvent | 2022-02-05 01:08:11 | AkshayMukkavilli/Tensorflow | https://api.github.com/repos/AkshayMukkavilli/Tensorflow | opened | CVE-2021-41204 (Medium) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl | security vulnerability | ## CVE-2021-41204 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learni... | True | CVE-2021-41204 (Medium) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl - ## CVE-2021-41204 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27... | non_process | cve medium detected in tensorflow whl cve medium severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file tensorflow src requireme... | 0 |

266,428 | 23,237,804,076 | IssuesEvent | 2022-08-03 13:18:05 | OpenLiberty/open-liberty | https://api.github.com/repos/OpenLiberty/open-liberty | closed | Test Failure: testPollIntervalStable insufficient attempts | team:Zombie Apocalypse test bug | Test Failure: com.ibm.ws.concurrent.persistent.fat.autonomicalpolling1serv.AutonomicalPolling1ServerTest.testPollIntervalStable

```

testPollIntervalStable:junit.framework.AssertionFailedError: 2021-12-30-19:47:02:916 testPollIntervalStable failed after multiple attemps. This likely means the the autonomical poll in... | 1.0 | Test Failure: testPollIntervalStable insufficient attempts - Test Failure: com.ibm.ws.concurrent.persistent.fat.autonomicalpolling1serv.AutonomicalPolling1ServerTest.testPollIntervalStable

```

testPollIntervalStable:junit.framework.AssertionFailedError: 2021-12-30-19:47:02:916 testPollIntervalStable failed after mu... | non_process | test failure testpollintervalstable insufficient attempts test failure com ibm ws concurrent persistent fat testpollintervalstable testpollintervalstable junit framework assertionfailederror testpollintervalstable failed after multiple attemps this likely means the the autonomical poll i... | 0 |

392,713 | 26,955,391,090 | IssuesEvent | 2023-02-08 14:35:36 | biaslab/RxInfer.jl | https://api.github.com/repos/biaslab/RxInfer.jl | closed | Stuck on MarginalRuleMethodError warning | documentation | I am trying to modify the GP regression example to create a local level (forecasting) model. I tried to follow the Missing Data example to handle the missing data. However I get a warning about MarginalRuleMethodError and cannot find documentation/examples on how to proceed. I would echo issue #15 about having more exa... | 1.0 | Stuck on MarginalRuleMethodError warning - I am trying to modify the GP regression example to create a local level (forecasting) model. I tried to follow the Missing Data example to handle the missing data. However I get a warning about MarginalRuleMethodError and cannot find documentation/examples on how to proceed. ... | non_process | stuck on marginalrulemethoderror warning i am trying to modify the gp regression example to create a local level forecasting model i tried to follow the missing data example to handle the missing data however i get a warning about marginalrulemethoderror and cannot find documentation examples on how to proceed ... | 0 |

21,157 | 28,132,167,187 | IssuesEvent | 2023-04-01 01:31:44 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | [MLv2] [Bug] `orderable-columns` should work if query contains two columns with the same name from different tables | Type:Bug .Backend .metabase-lib .Team/QueryProcessor :hammer_and_wrench: | ```clj

(-> (lib/query-for-table-name meta/metadata-provider "VENUES")

(lib/join (-> (lib/join-clause

(meta/table-metadata :categories)

(lib/=

(lib/field "VENUES" "CATEGORY_ID")

(lib/field "CATEGORIES" "ID")))

... | 1.0 | [MLv2] [Bug] `orderable-columns` should work if query contains two columns with the same name from different tables - ```clj

(-> (lib/query-for-table-name meta/metadata-provider "VENUES")

(lib/join (-> (lib/join-clause

(meta/table-metadata :categories)

(lib/=

... | process | orderable columns should work if query contains two columns with the same name from different tables clj lib query for table name meta metadata provider venues lib join lib join clause meta table metadata categories lib ... | 1 |

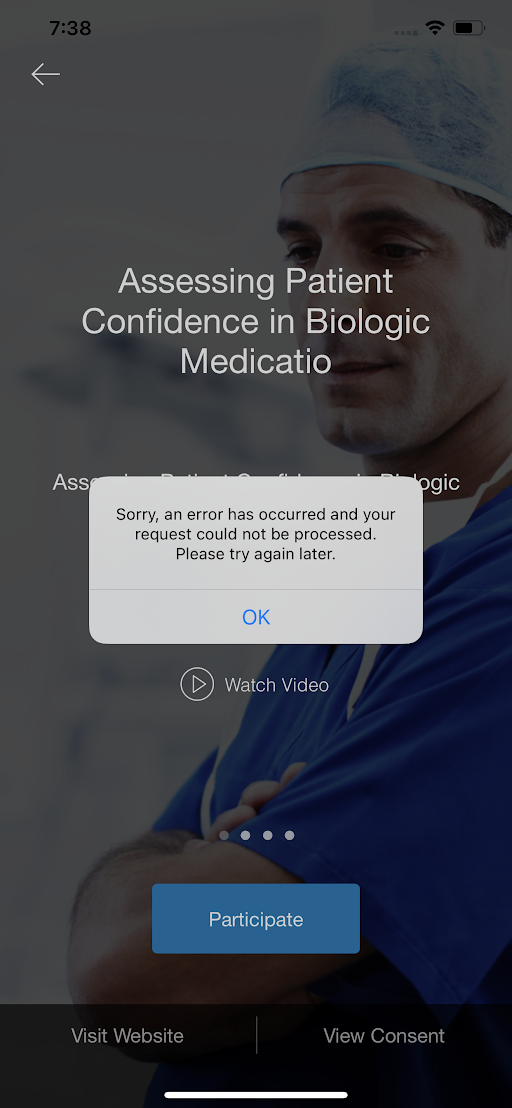

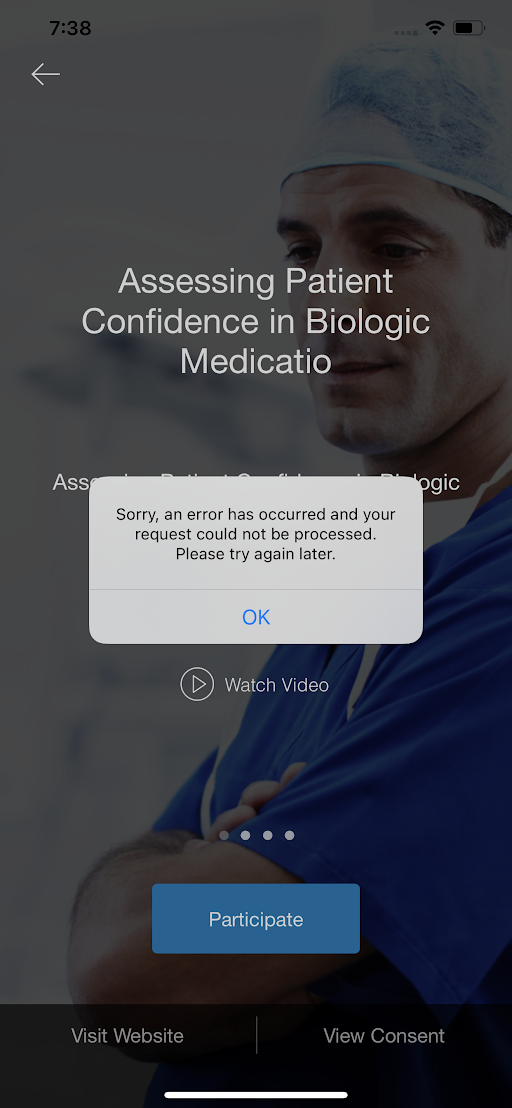

18,515 | 24,551,720,990 | IssuesEvent | 2022-10-12 13:06:37 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] Occasionally some activities are getting saved in the Resume status | Bug P0 iOS Process: Fixed Process: Tested dev | Occasionally some activities are getting saved in the Resume status even though the participant has completed the activities successfully

Refer the below-mentioned study

Study id: newstudyon25_08

Study name: Imported iosupdate1(newstudyon25_08)

| Priority-Medium Survey Type-Other | Originally reported on Google Code with ID 850

```

When trying to get form "Common Javascript Framework" (version 20140308) in ODK Survey

(version 2.0 Alpha rev 105) receive error message: "open failed: ENOENT (No such file

or directory)." Same error message received when trying to get "Example Form" (version

20130408)... | 1.0 | ODK Survey: ENOENT (no such file or directory) - Originally reported on Google Code with ID 850

```

When trying to get form "Common Javascript Framework" (version 20140308) in ODK Survey

(version 2.0 Alpha rev 105) receive error message: "open failed: ENOENT (No such file

or directory)." Same error message received whe... | non_process | odk survey enoent no such file or directory originally reported on google code with id when trying to get form common javascript framework version in odk survey version alpha rev receive error message open failed enoent no such file or directory same error message received when trying to... | 0 |

334,443 | 24,419,486,539 | IssuesEvent | 2022-10-05 18:57:20 | transit-analytics-lab/spur | https://api.github.com/repos/transit-analytics-lab/spur | closed | Simple example with working data (Toronto's Sheppard line) | documentation | Package a set of required data files to simulate a basic 2-way subway schedule based on GTFS data. | 1.0 | Simple example with working data (Toronto's Sheppard line) - Package a set of required data files to simulate a basic 2-way subway schedule based on GTFS data. | non_process | simple example with working data toronto s sheppard line package a set of required data files to simulate a basic way subway schedule based on gtfs data | 0 |

9,549 | 12,513,071,907 | IssuesEvent | 2020-06-03 00:46:47 | nanoframework/Home | https://api.github.com/repos/nanoframework/Home | closed | Creating enum in global namespace causes MDP fail | Area: Metadata Processor Priority: Medium Type: Bug |

### Details about Problem

**nanoFramework area:** Visual Studio extension

**VS version<!--(if relevant)-->:** 2017

**VS extension version<!--(if relevant)-->:** 2017.2.0.10

**Target<!--(if relevant)-->:**

**Firmware image version<!--(if relevant)-->:**

**Device capabilities output<!--(if relevant)--... | 1.0 | Creating enum in global namespace causes MDP fail -

### Details about Problem

**nanoFramework area:** Visual Studio extension

**VS version<!--(if relevant)-->:** 2017

**VS extension version<!--(if relevant)-->:** 2017.2.0.10

**Target<!--(if relevant)-->:**

**Firmware image version<!--(if relevant)-->:*... | process | creating enum in global namespace causes mdp fail details about problem nanoframework area visual studio extension vs version vs extension version target firmware image version device capabilities output description creating enum in global... | 1 |

58,711 | 24,534,291,495 | IssuesEvent | 2022-10-11 19:13:45 | hashicorp/terraform-provider-azurerm | https://api.github.com/repos/hashicorp/terraform-provider-azurerm | closed | azurerm >= 3.15.0: Error: parsing "/subscriptions/…/resourceGroups/…/providers/Microsoft.ServiceBus/namespaces/…/AuthorizationRules/…": parsing segment "staticAuthorizationRules": expected the segment "AuthorizationRules" to be "authorizationRules" | bug regression service/service-bus | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Community Note

<!--- Please keep this note for the community --->

* Please vote on this issue by adding a :thumbsup: [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to th... | 2.0 | azurerm >= 3.15.0: Error: parsing "/subscriptions/…/resourceGroups/…/providers/Microsoft.ServiceBus/namespaces/…/AuthorizationRules/…": parsing segment "staticAuthorizationRules": expected the segment "AuthorizationRules" to be "authorizationRules" - ### Is there an existing issue for this?

- [X] I have searched the... | non_process | azurerm error parsing subscriptions … resourcegroups … providers microsoft servicebus namespaces … authorizationrules … parsing segment staticauthorizationrules expected the segment authorizationrules to be authorizationrules is there an existing issue for this i have searched the ex... | 0 |

18,054 | 24,066,712,971 | IssuesEvent | 2022-09-17 15:46:36 | apache/arrow-rs | https://api.github.com/repos/apache/arrow-rs | closed | Docs CI test is broken with latest nightly | bug development-process | **Describe the bug**

The docs CI test is failing on master

**To Reproduce**

https://github.com/apache/arrow-rs/actions/runs/3062071013/jobs/4942618618

```

error: internal compiler error: no errors encountered even though `delay_span_bug` issued

error: internal compiler error: broken MIR in DefId(

...

```

... | 1.0 | Docs CI test is broken with latest nightly - **Describe the bug**

The docs CI test is failing on master

**To Reproduce**

https://github.com/apache/arrow-rs/actions/runs/3062071013/jobs/4942618618

```

error: internal compiler error: no errors encountered even though `delay_span_bug` issued

error: internal co... | process | docs ci test is broken with latest nightly describe the bug the docs ci test is failing on master to reproduce error internal compiler error no errors encountered even though delay span bug issued error internal compiler error broken mir in defid expected behavior te... | 1 |

540,877 | 15,818,827,846 | IssuesEvent | 2021-04-05 16:35:40 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio-ui] Don't reload if there is no change in ICE | enhancement priority: medium | Turning ICE on/off causes the guest page to reload. Only reload if there has been a change to the content during the ICE session. | 1.0 | [studio-ui] Don't reload if there is no change in ICE - Turning ICE on/off causes the guest page to reload. Only reload if there has been a change to the content during the ICE session. | non_process | don t reload if there is no change in ice turning ice on off causes the guest page to reload only reload if there has been a change to the content during the ice session | 0 |

6,980 | 10,131,444,050 | IssuesEvent | 2019-08-01 19:33:24 | qri-io/desktop | https://api.github.com/repos/qri-io/desktop | closed | [3] Qri Desktop should launch Qri backend | main process | Migrate code from Qri frontend to launch the Qri backend when desktop is launched and shutdown on quit | 1.0 | [3] Qri Desktop should launch Qri backend - Migrate code from Qri frontend to launch the Qri backend when desktop is launched and shutdown on quit | process | qri desktop should launch qri backend migrate code from qri frontend to launch the qri backend when desktop is launched and shutdown on quit | 1 |

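11,898 | 14,689,863,470 | IssuesEvent | 2021-01-02 12:17:50 | MrPeterJin/MrPeterJin.github.io | https://api.github.com/repos/MrPeterJin/MrPeterJin.github.io | closed | A summary of the mathematical principles of image processing under traditional methods (1) - P's Memo | /post/conventional-imaging-process-review-1/ Gitalk | https://www.601b.codes/post/conventional-imaging-process-review-1/

Cover image credit: From Digital Image Processing 4E, Global Edition

As everyone knows, in image processing, before neural networks came into large-scale use, it was various mathematical principles that held up half the field (though mathematics is still important today). This... | 1.0 | A summary of the mathematical principles of image processing under traditional methods (1) - P's Memo - https://www.601b.codes/post/conventional-imaging-process-review-1/

Cover image credit: From Digital Image Processing 4E, Global Edition

As everyone knows, in image processing, before neural networks came into large-scale use, it was various mathematical principles that held up half the field (though mathematics is still important today). This... | process | a summary of the mathematical principles of image processing under traditional methods p s memo cover image credit from digital image processing global edition as everyone knows in image processing before neural networks came into large scale use it was various mathematical principles that held up half the field though mathematics is still important today this | 1 |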

422,014 | 28,369,699,839 | IssuesEvent | 2023-04-12 16:03:47 | Analog-Devices-MSDK/msdk | https://api.github.com/repos/Analog-Devices-MSDK/msdk | closed | MXC_SYS Missing EXT_CLK Support | bug documentation | Hi, is there a bug in the MXC_SYS implementation for the MAX32670?

In the [UG](https://www.analog.com/media/en/technical-documentation/user-guides/max32670max32671-user-guide.pdf), the clock tree (page 38) and GCR_CLKCTRL register description (page 66-67) both document an external clock as an available system clock ... | 1.0 | MXC_SYS Missing EXT_CLK Support - Hi, is there a bug in the MXC_SYS implementation for the MAX32670?

In the [UG](https://www.analog.com/media/en/technical-documentation/user-guides/max32670max32671-user-guide.pdf), the clock tree (page 38) and GCR_CLKCTRL register description (page 66-67) both document an external c... | non_process | mxc sys missing ext clk support hi is there a bug in the mxc sys implementation for the in the the clock tree page and gcr clkctrl register description page both document an external clock as an available system clock option however the implementation in sys c has support for extclk removed ... | 0 |

45,377 | 7,179,986,871 | IssuesEvent | 2018-01-31 21:35:01 | brunobuzzi/BpmFlow | https://api.github.com/repos/brunobuzzi/BpmFlow | opened | Check search procedure in WAOrbeonProcessBrowser | critical bug critical enhancement documentation frontoffice | Checks:

* When searching by app and process name --> only search in current users assignments.

* When search by field value --> search in all processes.

* When search by process id --> search in all matrix.

The criteria must be unified or add componente to select the boundary. (user/system/???)

Other check:

W... | 1.0 | Check search procedure in WAOrbeonProcessBrowser - Checks:

* When searching by app and process name --> only search in current users assignments.

* When search by field value --> search in all processes.

* When search by process id --> search in all matrix.

The criteria must be unified or add componente to select... | non_process | check search procedure in waorbeonprocessbrowser checks when searching by app and process name only search in current users assignments when search by field value search in all processes when search by process id search in all matrix the criteria must be unified or add componente to select... | 0 |

160,746 | 25,225,378,302 | IssuesEvent | 2022-11-14 15:37:06 | DeveloperAcademy-POSTECH/MacC-Team-Spacer | https://api.github.com/repos/DeveloperAcademy-POSTECH/MacC-Team-Spacer | closed | [FEAT] Connect to CafeDetailView when a cell is selected in SearchListView | 🐯 오션 🎨 Design ⭐️ Feature | # ISSUE

## Type

Select the ISSUE type

- [ ] Code Review

- [x] New Feature

- [ ] Bug Fix

- [ ] Setup

## Title

- Connect to CafeDetailView when a cell is selected in SearchListView

## Content

- Selecting a cell in SearchListView navigates to CafeDetailView

## Checklist

- [x] Selecting a cell in SearchListView navigates to CafeDetailView

... | 1.0 | [FEAT] Connect to CafeDetailView when a cell is selected in SearchListView - # ISSUE

## Type

Select the ISSUE type

- [ ] Code Review

- [x] New Feature

- [ ] Bug Fix

- [ ] Setup

## Title

- Connect to CafeDetailView when a cell is selected in SearchListView

## Content

- Selecting a cell in SearchListView navigates to CafeDetailView

## Checklist

- [x] Searc... | non_process | connect to cafedetailview when a cell is selected in searchlistview issue type select the issue type code review new feature bug fix setup title connect to cafedetailview when a cell is selected in searchlistview content selecting a cell in searchlistview navigates to cafedetailview checklist in searchlistview cel... | 0 |

3,412 | 6,523,907,534 | IssuesEvent | 2017-08-29 10:30:13 | w3c/w3process | https://api.github.com/repos/w3c/w3process | closed | Consistency issue: is it 28 days or four weeks? | Editorial improvements Process2018Candidate | The time limits for candidate recommendations is set to four weeks, as in:

> must specify the deadline for further comments, which *MUST* be **at least** four weeks after publication,

In section 6.4.1. Just a few lines below (in 6.5), it says:

> deadline for Advisory Committee review, which _MUST_ be **at lea... | 1.0 | Consistency issue: is it 28 days or four weeks? - The time limits for candidate recommendations is set to four weeks, as in:

> must specify the deadline for further comments, which *MUST* be **at least** four weeks after publication,

In section 6.4.1. Just a few lines below (in 6.5), it says:

> deadline for A... | process | consistency issue is it days or four weeks the time limits for candidate recommendations is set to four weeks as in must specify the deadline for further comments which must be at least four weeks after publication in section just a few lines below in it says deadline for ad... | 1 |

5,871 | 8,691,574,393 | IssuesEvent | 2018-12-04 01:58:55 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | doc: process.stdout.fd is mentioned but undocumented | doc process question | `process.stdout.fd` is mentioned in these 2 sections:

https://nodejs.org/api/async_hooks.html#async_hooks_printing_in_asynchooks_callbacks

https://nodejs.org/api/async_hooks.html#async_hooks_asynchronous_context_example

But it is not documented in [`process.stdout`](https://nodejs.org/api/process.html#process_pr... | 1.0 | doc: process.stdout.fd is mentioned but undocumented - `process.stdout.fd` is mentioned in these 2 sections:

https://nodejs.org/api/async_hooks.html#async_hooks_printing_in_asynchooks_callbacks

https://nodejs.org/api/async_hooks.html#async_hooks_asynchronous_context_example

But it is not documented in [`process.... | process | doc process stdout fd is mentioned but undocumented process stdout fd is mentioned in these sections but it is not documented in or in a section of any mentioned prototype net socket duplex or writable stream should it be documented with process stdin fd and process stderr fd | 1 |

14,639 | 17,770,737,345 | IssuesEvent | 2021-08-30 13:23:13 | googleapis/python-bigquery | https://api.github.com/repos/googleapis/python-bigquery | reopened | Dependency Dashboard | api: bigquery type: process | This issue provides visibility into Renovate updates and their statuses. [Learn more](https://docs.renovatebot.com/key-concepts/dashboard/)

## Edited/Blocked

These updates have been manually edited so Renovate will no longer make changes. To discard all commits and start over, click on a checkbox.

- [ ] <!-- rebase... | 1.0 | Dependency Dashboard - This issue provides visibility into Renovate updates and their statuses. [Learn more](https://docs.renovatebot.com/key-concepts/dashboard/)

## Edited/Blocked

These updates have been manually edited so Renovate will no longer make changes. To discard all commits and start over, click on a checkb... | process | dependency dashboard this issue provides visibility into renovate updates and their statuses edited blocked these updates have been manually edited so renovate will no longer make changes to discard all commits and start over click on a checkbox pull google api core google auth goo... | 1 |

21,135 | 28,106,563,076 | IssuesEvent | 2023-03-31 01:32:42 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Remove --genrule_strategy | P3 type: process team-Local-Exec stale | I'm planning to deprecate and remove the --genrule_strategy flag in early 2019.

As of e6263c3b0b9a467d315b91eac14162cf7915dcfd, it is no longer necessary to set --genrule_strategy in addition to --spawn_strategy if both are set to the same value. If --genrule_strategy is not set, it now defaults to --spawn_strategy.... | 1.0 | Remove --genrule_strategy - I'm planning to deprecate and remove the --genrule_strategy flag in early 2019.

As of e6263c3b0b9a467d315b91eac14162cf7915dcfd, it is no longer necessary to set --genrule_strategy in addition to --spawn_strategy if both are set to the same value. If --genrule_strategy is not set, it now d... | process | remove genrule strategy i m planning to deprecate and remove the genrule strategy flag in early as of it is no longer necessary to set genrule strategy in addition to spawn strategy if both are set to the same value if genrule strategy is not set it now defaults to spawn strategy this makes ... | 1 |

47,707 | 13,248,507,680 | IssuesEvent | 2020-08-19 19:05:51 | kenferrara/cbp-theme | https://api.github.com/repos/kenferrara/cbp-theme | opened | WS-2020-0091 (High) detected in http-proxy-1.15.2.tgz | security vulnerability | ## WS-2020-0091 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>http-proxy-1.15.2.tgz</b></p></summary>

<p>HTTP proxying for the masses</p>

<p>Library home page: <a href="https://regis... | True | WS-2020-0091 (High) detected in http-proxy-1.15.2.tgz - ## WS-2020-0091 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>http-proxy-1.15.2.tgz</b></p></summary>

<p>HTTP proxying for the... | non_process | ws high detected in http proxy tgz ws high severity vulnerability vulnerable library http proxy tgz http proxying for the masses library home page a href path to dependency file tmp ws scm cbp theme cbp theme package json path to vulnerable library tmp ws scm cbp t... | 0 |

115,042 | 9,779,417,344 | IssuesEvent | 2019-06-07 14:28:04 | SatelliteQE/robottelo | https://api.github.com/repos/SatelliteQE/robottelo | closed | UI - ActiveDirectoryUserGroupTestCase failing due to missed cherry-pick | 6.2 High Priority UI test-failure | we're missing this fix: https://github.com/SatelliteQE/robottelo/pull/5116

also, there's one other issue with locating the 'search' bar on the hostgroups page - if we're looking for a HG and there are no Hostgorups, the search bar is ~~empty~~ hidden and we fail to locate it.

- also, we do some unnecessary searches i... | 1.0 | UI - ActiveDirectoryUserGroupTestCase failing due to missed cherry-pick - we're missing this fix: https://github.com/SatelliteQE/robottelo/pull/5116

also, there's one other issue with locating the 'search' bar on the hostgroups page - if we're looking for a HG and there are no Hostgorups, the search bar is ~~empty~~ h... | non_process | ui activedirectoryusergrouptestcase failing due to missed cherry pick we re missing this fix also there s one other issue with locating the search bar on the hostgroups page if we re looking for a hg and there are no hostgorups the search bar is empty hidden and we fail to locate it also we do so... | 0 |

9,351 | 12,365,586,412 | IssuesEvent | 2020-05-18 09:04:07 | Arch666Angel/mods | https://api.github.com/repos/Arch666Angel/mods | closed | Migration for garden mutation | Angels Bio Processing Bug | For garden mutation, we could research the new tech and unlocking the mutations as well, it should be no issue as this is only early game, hence why I didn't do it in #223, however, when playing with tech overhaul, having to build an old tech tier for that might be tedious. | 1.0 | Migration for garden mutation - For garden mutation, we could research the new tech and unlocking the mutations as well, it should be no issue as this is only early game, hence why I didn't do it in #223, however, when playing with tech overhaul, having to build an old tech tier for that might be tedious. | process | migration for garden mutation for garden mutation we could research the new tech and unlocking the mutations as well it should be no issue as this is only early game hence why i didn t do it in however when playing with tech overhaul having to build an old tech tier for that might be tedious | 1 |

768,750 | 26,978,853,853 | IssuesEvent | 2023-02-09 11:34:55 | openghg/openghg | https://api.github.com/repos/openghg/openghg | opened | Update compression for current object store - move to Zarr | question low-priority | This might be low priority but if we have a large object store with the current NetCDF write system, could we include the functionality to read the current data, compress it and replace the existing NetCDFs with Zarr stores? | 1.0 | Update compression for current object store - move to Zarr - This might be low priority but if we have a large object store with the current NetCDF write system, could we include the functionality to read the current data, compress it and replace the existing NetCDFs with Zarr stores? | non_process | update compression for current object store move to zarr this might be low priority but if we have a large object store with the current netcdf write system could we include the functionality to read the current data compress it and replace the existing netcdfs with zarr stores | 0 |

16,389 | 21,155,678,581 | IssuesEvent | 2022-04-07 02:46:35 | yupix/Mi.py | https://api.github.com/repos/yupix/Mi.py | closed | potential future feature? | kind/feature Medium process/candidate priority/medium |

I'd love for the ability to provide images via local directory and have the bot upload this image along with the text.

or perhaps have an object that can contain both image and text to be sent and then i can manually load the image into the object before sending it.

I'd attempt this myself if i was perhaps a litt... | 1.0 | potential future feature? -

I'd love for the ability to provide images via local directory and have the bot upload this image along with the text.

or perhaps have an object that can contain both image and text to be sent and then i can manually load the image into the object before sending it.

I'd attempt this my... | process | potential future feature i d love for the ability to provide images via local directory and have the bot upload this image along with the text or perhaps have an object that can contain both image and text to be sent and then i can manually load the image into the object before sending it i d attempt this my... | 1 |

807,466 | 30,004,463,253 | IssuesEvent | 2023-06-26 11:30:49 | War-Brokers/War-Brokers | https://api.github.com/repos/War-Brokers/War-Brokers | opened | Add vehicle speedometer | priority:3 - low type:suggestion area:UI/UX | > What if both air and land vehicles had a speedometer. both in km and miles and that it was in a corner and if you want you can remove it.

- [Original Report](https://discordapp.com/channels/324984733102768128/393643849785802753/722484918256402432) - War Brokers Discord Server | 1.0 | Add vehicle speedometer - > What if both air and land vehicles had a speedometer. both in km and miles and that it was in a corner and if you want you can remove it.

- [Original Report](https://discordapp.com/channels/324984733102768128/393643849785802753/722484918256402432) - War Brokers Discord Server | non_process | add vehicle speedometer what if both air and land vehicles had a speedometer both in km and miles and that it was in a corner and if you want you can remove it war brokers discord server | 0 |

11,497 | 14,370,282,555 | IssuesEvent | 2020-12-01 10:53:24 | syncfusion/ej2-javascript-ui-controls | https://api.github.com/repos/syncfusion/ej2-javascript-ui-controls | closed | simultaneous saveAsBlob('Docx') trigger an error | word-processor | I noticed when I have 2 `saveAsBlob('Docx')` happening almost simultaneously, the first one fails with the following error :

```

Error: Uncaught (in promise): TypeError: Cannot read property 'length' of undefined

TypeError: Cannot read property 'length' of undefined

at ZipArchive.push../node_modules/@syncfus... | 1.0 | simultaneous saveAsBlob('Docx') trigger an error - I noticed when I have 2 `saveAsBlob('Docx')` happening almost simultaneously, the first one fails with the following error :

```

Error: Uncaught (in promise): TypeError: Cannot read property 'length' of undefined

TypeError: Cannot read property 'length' of undefi... | process | simultaneous saveasblob docx trigger an error i noticed when i have saveasblob docx happening almost simultaneously the first one fails with the following error error uncaught in promise typeerror cannot read property length of undefined typeerror cannot read property length of undefi... | 1 |

11,727 | 14,567,536,680 | IssuesEvent | 2020-12-17 10:23:05 | MarcElrick/level-4-individual-project | https://api.github.com/repos/MarcElrick/level-4-individual-project | closed | Tie loading bar to progress of data analysis | data processing gui | Not entirely sure how we can know how long processing will take yet | 1.0 | Tie loading bar to progress of data analysis - Not entirely sure how we can know how long processing will take yet | process | tie loading bar to progress of data analysis not entirely sure how we can know how long processing will take yet | 1 |

3,196 | 6,261,684,731 | IssuesEvent | 2017-07-15 02:15:36 | gaocegege/Processing.R | https://api.github.com/repos/gaocegege/Processing.R | closed | howto.md: Document the new way | community/processing difficulty/low for-new-contributors priority/p0 size/medium status/to-be-claimed type/enhancement | Now we could download the mode from release page, so there is no need to build the mode from source code.

My suggestion is to split two files, one is for users, other is for developers. We could recommend users to download the mode from release page. And move the instructions about building from source code to devel... | 1.0 | howto.md: Document the new way - Now we could download the mode from release page, so there is no need to build the mode from source code.

My suggestion is to split two files, one is for users, other is for developers. We could recommend users to download the mode from release page. And move the instructions about b... | process | howto md document the new way now we could download the mode from release page so there is no need to build the mode from source code my suggestion is to split two files one is for users other is for developers we could recommend users to download the mode from release page and move the instructions about b... | 1 |

12,337 | 14,882,738,426 | IssuesEvent | 2021-01-20 12:20:36 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Mobile] [Dev] Unable to rejoin into Open study once withdrawn | Blocker Bug P0 Process: Dev Process: Fixed Process: Tested dev Unknown backend | Unable to rejoin into Open study once withdrawn for Android and iOS

| 3.0 | [Mobile] [Dev] Unable to rejoin into Open study once withdrawn - Unable to rejoin into Open study once withdrawn for Android and iOS

| process | unable to rejoin into open study once withdrawn unable to rejoin into open study once withdrawn for android and ios | 1 |

8,326 | 11,490,029,689 | IssuesEvent | 2020-02-11 16:25:19 | xatkit-bot-platform/xatkit-runtime | https://api.github.com/repos/xatkit-bot-platform/xatkit-runtime | opened | Pre/Post processor to detect the input language | Enhancement Processors | In some use cases the user may ask a question in a language not supported by the bot. It would be nice to at least catch this language to return an appropriate reply. | 1.0 | Pre/Post processor to detect the input language - In some use cases the user may ask a question in a language not supported by the bot. It would be nice to at least catch this language to return an appropriate reply. | process | pre post processor to detect the input language in some use cases the user may ask a question in a language not supported by the bot it would be nice to at least catch this language to return an appropriate reply | 1 |

274,265 | 20,829,849,941 | IssuesEvent | 2022-03-19 08:38:01 | intel/dffml | https://api.github.com/repos/intel/dffml | opened | docs: missing screenshot of grading rubric | documentation | The screenshot of the grading rubric seems to be missing in https://intel.github.io/dffml/master/contributing/gsoc/rubric.html and is nowhere to be found in the repository.

| 1.0 | docs: missing screenshot of grading rubric - The screenshot of the grading rubric seems to be missing in https://intel.github.io/dffml/master/contributing/gsoc/rubric.html and is nowhere to be found in the repository.

| non_process | docs missing screenshot of grading rubric the screenshot of the grading rubric seems to be missing in and is nowhere to be found in the repository | 0 |

723 | 3,211,165,664 | IssuesEvent | 2015-10-06 09:12:10 | rofrischmann/react-look | https://api.github.com/repos/rofrischmann/react-look | opened | Mixins as plain functions | improvement processor | Instead of having MixinTypes and all that stuff we could just have a method that gets called on every key,value pair (This even improves performance).

e.g.

```

let mixin = (property, value, {newProps}) => {

if (property === 'css') {

newProps.className = newProps.className ? newProps.className : '' + val... | 1.0 | Mixins as plain functions - Instead of having MixinTypes and all that stuff we could just have a method that gets called on every key,value pair (This even improves performance).

e.g.

```

let mixin = (property, value, {newProps}) => {

if (property === 'css') {

newProps.className = newProps.className ? n... | process | mixins as plain functions instead of having mixintypes and all that stuff we could just have a method that gets called on every key value pair this even improves performance e g let mixin property value newprops if property css newprops classname newprops classname n... | 1 |

16,226 | 20,762,488,015 | IssuesEvent | 2022-03-15 17:23:01 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Layer could not be generated | Feedback Processing Bug | ### What is the bug or the crash?

I am getting this error when using the Saga Thiessen polygon command. Output layer is not created.

I am getting this error on all SAGA commands. I saved output layer to different fields but it is not created. (save to file)

Qgis 3.16.0

QGIS code revision: 43b64b13f3

I use win10

... | 1.0 | Layer could not be generated - ### What is the bug or the crash?

I am getting this error when using the Saga Thiessen polygon command. Output layer is not created.

I am getting this error on all SAGA commands. I saved output layer to different fields but it is not created. (save to file)

Qgis 3.16.0

QGIS code rev... | process | layer could not be generated what is the bug or the crash i am getting this error when using the saga thiessen polygon command output layer is not created i am getting this error on all saga commands i saved output layer to different fields but it is not created save to file qgis qgis code revi... | 1 |

11,026 | 13,822,490,026 | IssuesEvent | 2020-10-13 05:12:40 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | ntr: CMG & MCM complex processes | New term request PomBase cell cycle and DNA processes | id: GO:new1

name: CMG complex assembly

def: The aggregation, arrangement and bonding together of a set of components to form the CMG complex, a protein complex that contains the GINS complex, Cdc45p, and the heterohexameric MCM complex, and that is involved in unwinding DNA during replication. The process begins when... | 1.0 | ntr: CMG & MCM complex processes - id: GO:new1

name: CMG complex assembly

def: The aggregation, arrangement and bonding together of a set of components to form the CMG complex, a protein complex that contains the GINS complex, Cdc45p, and the heterohexameric MCM complex, and that is involved in unwinding DNA during r... | process | ntr cmg mcm complex processes id go name cmg complex assembly def the aggregation arrangement and bonding together of a set of components to form the cmg complex a protein complex that contains the gins complex and the heterohexameric mcm complex and that is involved in unwinding dna during replicati... | 1 |

408,053 | 11,941,048,920 | IssuesEvent | 2020-04-02 17:45:09 | DevotedMC/JukeAlert | https://api.github.com/repos/DevotedMC/JukeAlert | closed | From Gjum: Some snitch entries do not have item representation in /ja GUI | Priority: Low Type: Bug | I'll direct Gjum here to add more details | 1.0 | From Gjum: Some snitch entries do not have item representation in /ja GUI - I'll direct Gjum here to add more details | non_process | from gjum some snitch entries do not have item representation in ja gui i ll direct gjum here to add more details | 0 |

22,344 | 31,020,764,387 | IssuesEvent | 2023-08-10 05:03:32 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | Release Checklist 0.85 | enhancement process | ### Problem

We need a checklist to verify the release is rolled out successfully.

### Solution

## Preparation

- [x] Milestone field populated on relevant [issues](https://github.com/hashgraph/hedera-mirror-node/issues?q=is%3Aclosed+no%3Amilestone+sort%3Aupdated-desc)

- [x] Nothing open for [milestone](https://gi... | 1.0 | Release Checklist 0.85 - ### Problem

We need a checklist to verify the release is rolled out successfully.

### Solution

## Preparation

- [x] Milestone field populated on relevant [issues](https://github.com/hashgraph/hedera-mirror-node/issues?q=is%3Aclosed+no%3Amilestone+sort%3Aupdated-desc)

- [x] Nothing open f... | process | release checklist problem we need a checklist to verify the release is rolled out successfully solution preparation milestone field populated on relevant nothing open for github checks for branch are passing no pre release or snapshot dependencies present in build file... | 1 |

11,687 | 14,542,868,831 | IssuesEvent | 2020-12-15 16:12:30 | Blazebit/blaze-persistence | https://api.github.com/repos/Blazebit/blaze-persistence | closed | Optional parameter detection in annotation processor doesn't handle constant concatenation | component: entity-view component: entity-view-annotation-processor kind: bug worth: high | When using something like `":" + CONSTANT` in a view filter provider we determine an empty alias in the annotation processor. This should be improved. We should either detect the name or simply don't generate the apply method as it results in a compiler error anyway. | 1.0 | Optional parameter detection in annotation processor doesn't handle constant concatenation - When using something like `":" + CONSTANT` in a view filter provider we determine an empty alias in the annotation processor. This should be improved. We should either detect the name or simply don't generate the apply method a... | process | optional parameter detection in annotation processor doesn t handle constant concatenation when using something like constant in a view filter provider we determine an empty alias in the annotation processor this should be improved we should either detect the name or simply don t generate the apply method a... | 1 |

7,081 | 10,229,683,903 | IssuesEvent | 2019-08-17 14:49:58 | ION28/BLUESPAWN | https://api.github.com/repos/ION28/BLUESPAWN | opened | Code Execution and Lateral Movement Detection Opportunities | basic enhancement epic logs processes services | “Offensive Lateral Movement” by Ryan Hausknecht https://link.medium.com/XjbyLXzfeZ

This covers a number of techniques in attack and contains some basic iocs we can implement in the first implementation of our detection functions. | 1.0 | Code Execution and Lateral Movement Detection Opportunities - “Offensive Lateral Movement” by Ryan Hausknecht https://link.medium.com/XjbyLXzfeZ

This covers a number of techniques in attack and contains some basic iocs we can implement in the first implementation of our detection functions. | process | code execution and lateral movement detection opportunities “offensive lateral movement” by ryan hausknecht this covers a number of techniques in attack and contains some basic iocs we can implement in the first implementation of our detection functions | 1 |

263,446 | 23,058,806,475 | IssuesEvent | 2022-07-25 08:03:24 | elastic/e2e-testing | https://api.github.com/repos/elastic/e2e-testing | closed | Support reading environment variables from ".env" | Team:Automation area:test priority:low size:S triaged | There are plenty of libraries reading configs from env vars from a well-known location (i.e. the `.env` dir), adding a chain of priority for multiple files (production, testing, local, etc)

We could leverage this kind of tools to simplify the execution of the tests. | 1.0 | Support reading environment variables from ".env" - There are plenty of libraries reading configs from env vars from a well-known location (i.e. the `.env` dir), adding a chain of priority for multiple files (production, testing, local, etc)

We could leverage this kind of tools to simplify the execution of the tests... | non_process | support reading environment variables from env there are plenty of libraries reading configs from env vars from a well known location i e the env dir adding a chain of priority for multiple files production testing local etc we could leverage this kind of tools to simplify the execution of the tests... | 0 |

8,729 | 11,863,293,689 | IssuesEvent | 2020-03-25 19:27:07 | prisma/vscode | https://api.github.com/repos/prisma/vscode | opened | Add tag/release on publishing | kind/feature process/candidate | When a new version is released, the commit should be tagged so we can understand what exactly got released and the releases are listed in the "Releases" tab. | 1.0 | Add tag/release on publishing - When a new version is released, the commit should be tagged so we can understand what exactly got released and the releases are listed in the "Releases" tab. | process | add tag release on publishing when a new version is released the commit should be tagged so we can understand what exactly got released and the releases are listed in the releases tab | 1 |

417,329 | 12,158,436,169 | IssuesEvent | 2020-04-26 03:53:04 | radareorg/radare2 | https://api.github.com/repos/radareorg/radare2 | opened | [XX] db/cmd/write wen 3 writable bin - happens from time to time on some PRs | high-priority r2r | ```

[XX] db/cmd/write wen 3 writable bin

R2_NOPLUGINS=1 radare2 -escr.utf8=0 -escr.color=0 -escr.interactive=0 -N -Qc 'mkdir .tmp

cp bins/mach0/mac-ls2 .tmp/ls-wen

o .tmp/ls-wen

r

wen 3

r

oo+

r

wen 3

r

rm .tmp/ls-wen

' --

-- stdout

--- .a 2020-04-25 22:11:09.364560136 +0000

+++ .b 2020-04-25 22:11:09.36... | 1.0 | [XX] db/cmd/write wen 3 writable bin - happens from time to time on some PRs - ```

[XX] db/cmd/write wen 3 writable bin

R2_NOPLUGINS=1 radare2 -escr.utf8=0 -escr.color=0 -escr.interactive=0 -N -Qc 'mkdir .tmp

cp bins/mach0/mac-ls2 .tmp/ls-wen

o .tmp/ls-wen

r

wen 3

r

oo+

r

wen 3

r

rm .tmp/ls-wen

' --

-- s... | non_process | db cmd write wen writable bin happens from time to time on some prs db cmd write wen writable bin noplugins escr escr color escr interactive n qc mkdir tmp cp bins mac tmp ls wen o tmp ls wen r wen r oo r wen r rm tmp ls wen stdout a ... | 0 |

57,805 | 14,219,792,008 | IssuesEvent | 2020-11-17 13:45:51 | LalithK90/nandanaMotors | https://api.github.com/repos/LalithK90/nandanaMotors | opened | CVE-2020-10693 (Medium) detected in hibernate-validator-6.0.18.Final.jar | security vulnerability | ## CVE-2020-10693 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>hibernate-validator-6.0.18.Final.jar</b></p></summary>

<p>Hibernate's Bean Validation (JSR-380) reference implementa... | True | CVE-2020-10693 (Medium) detected in hibernate-validator-6.0.18.Final.jar - ## CVE-2020-10693 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>hibernate-validator-6.0.18.Final.jar</b></... | non_process | cve medium detected in hibernate validator final jar cve medium severity vulnerability vulnerable library hibernate validator final jar hibernate s bean validation jsr reference implementation library home page a href path to dependency file nandanamotors build gra... | 0 |

243,190 | 20,369,039,617 | IssuesEvent | 2022-02-21 09:25:53 | hydrocode-de/RUINSapp | https://api.github.com/repos/hydrocode-de/RUINSapp | closed | build e2e test, if somehow possible | tests | This is a bit of a challenge. There seems to be no native way, how a streamlit app can be unit-tested. This makes maintenance way more complicated.

However, few resources I found:

- https://discuss.streamlit.io/t/access-app-errors-in-pytest/8015/3

Idea is to at least start streamlit apps and check that no `s... | 1.0 | build e2e test, if somehow possible - This is a bit of a challenge. There seems to be no native way, how a streamlit app can be unit-tested. This makes maintenance way more complicated.

However, few resources I found:

- https://discuss.streamlit.io/t/access-app-errors-in-pytest/8015/3

Idea is to at least sta... | non_process | build test if somehow possible this is a bit of a challenge there seems to be no native way how a streamlit app can be unit tested this makes maintenance way more complicated however few resources i found idea is to at least start streamlit apps and check that no streamlit exception output i... | 0 |

43,200 | 5,583,656,219 | IssuesEvent | 2017-03-29 01:18:37 | SEED-platform/seed | https://api.github.com/repos/SEED-platform/seed | closed | Move Filtering and Sorting to Front End | Filtering Needs Design | Any time you sort or filter a column it calls a function called search_buildings within the search service. This should be handled on the front end using Angular.

The program should be sorting and filtering on the full data set.

Look at NYC data that has 12,000 records -- will there be a performance hit by putting t... | 1.0 | Move Filtering and Sorting to Front End - Any time you sort or filter a column it calls a functioncalled search_buildings within the search service. This should be handled on the front end using Angular.

The program should be sorting and filtering on the full data set.

Look at NYC data that has 12,000 records -- wi... | non_process | move filtering and sorting to front end any time you sort or filter a column it calls a function called search buildings within the search service this should be handled on the front end using angular the program should be sorting and filtering on the full data set look at nyc data that has records will ... | 0 |

25,060 | 12,216,960,402 | IssuesEvent | 2020-05-01 16:12:13 | microsoft/BotFramework-WebChat | https://api.github.com/repos/microsoft/BotFramework-WebChat | opened | WebChat OAuth SSO doesn't continue after Login | Bot Services Bug customer-reported | Hey,

The issue we're having right now is that once we click on the `Login` button in the OAuthPrompt from the webchat, the SSO takes over and the Sign in Happens but when the flow returns to the webchat, nothing happens and the bot just hangs.

In the Microsoft Bot Framework (v4) bot that we're building, we have ... | 1.0 | WebChat OAuth SSO doesn't continue after Login - Hey,

The issue we're having right now is that once we click on the `Login` button in the OAuthPrompt from the webchat, the SSO takes over and the Sign in Happens but when the flow returns to the webchat, nothing happens and the bot just hangs.

In the Microsoft Bot... | non_process | webchat oauth sso doesn t continue after login hey the issue we re having right now is that once we click on the login button in the oauthprompt from the webchat the sso takes over and the sign in happens but when the flow returns to the webchat nothing happens and the bot just hangs in the microsoft bot... | 0 |

33,984 | 28,062,634,507 | IssuesEvent | 2023-03-29 13:33:37 | nf-core/tools | https://api.github.com/repos/nf-core/tools | closed | Implement tests for 'nf-core subworkflows create-test-yml' command | enhancement infrastructure | ### Description of feature

Linked to #1883 | 1.0 | Implement tests for 'nf-core subworkflows create-test-yml' command - ### Description of feature

Linked to #1883 | non_process | implement tests for nf core subworkflows create test yml command description of feature linked to | 0 |

2,108 | 4,940,433,163 | IssuesEvent | 2016-11-29 16:48:16 | pelias/pelias | https://api.github.com/repos/pelias/pelias | closed | Street fallback | epic glorious future processed | In cases where no street numbers exist for a certain street, or there are few numbers on that street it would be ideal to simply return the name of the street with a centroid of the polyline.

An example would be if for 'Main Street' we only had numbers 1,2 and 42. A user should still be able to type 'Main Street' and ... | 1.0 | Street fallback - In cases where no street numbers exist for a certain street, or there are few numbers on that street it would be ideal to simply return the name of the street with a centroid of the polyline.

An example would be if for 'Main Street' we only had numbers 1,2 and 42. A user should still be able to type ... | process | street fallback in cases where no street numbers exist for a certain street or there are few numbers on that street it would be ideal to simply return the name of the street with a centroid of the polyline an example would be if for main street we only had numbers and a user should still be able to type ... | 1 |

379,143 | 11,216,378,736 | IssuesEvent | 2020-01-07 06:07:54 | apache/incubator-echarts | https://api.github.com/repos/apache/incubator-echarts | closed | group support dragging in graphic component | difficulty: normal enhancement priority: high | <!--

To help us reproduce and fix the bug, please describe your problem following the template below.

-->

With draggable: 'true' on a group that contains several children, it seems the group cannot be dragged as a whole. How can this be solved?

### One-line summary

### Version & Environment

+ ECharts version: latest version

+ Browser version:

+ OS Version:

### Expected behaviour

##... | 1.0 | group support dragging in graphic component - <!--

To help us reproduce and fix the bug, please describe your problem following the template below.

-->

With draggable: 'true' on a group that contains several children, it seems the group cannot be dragged as a whole. How can this be solved?

### One-line summary

### Version & Environment

+ ECharts version: latest version

+ Browser version:

+ OS Version:

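... | non_process | group support dragging in graphic component to help us reproduce and fix the bug please describe your problem following the template below with draggable true on a group that contains several children it seems the group cannot be dragged as a whole how can this be solved one line summary version environment echarts version latest version browser version os version expected behaviour ... | 0 |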

21,990 | 30,485,525,583 | IssuesEvent | 2023-07-18 01:38:19 | pingcap/tidb | https://api.github.com/repos/pingcap/tidb | closed | Coprocessor cache evict monitor always shows 0 in TiDB | type/bug component/coprocessor severity/moderate | ## Bug Report

Please answer these questions before submitting your issue. Thanks!

### 1. Minimal reproduce step (Required)

<!-- a step by step guide for reproducing the bug. -->

### 2. What did you expect to see? (Required)

The coprocessor cache should be properly evicted, and the monitor should show non... | 1.0 | Coprocessor cache evict monitor always shows 0 in TiDB - ## Bug Report

Please answer these questions before submitting your issue. Thanks!

### 1. Minimal reproduce step (Required)

<!-- a step by step guide for reproducing the bug. -->

### 2. What did you expect to see? (Required)

The coprocessor cache sh... | process | coprocessor cache evict monitor always shows in tidb bug report please answer these questions before submitting your issue thanks minimal reproduce step required what did you expect to see required the coprocessor cache should be properly evicted and the monitor should show... | 1 |

341,004 | 10,281,411,839 | IssuesEvent | 2019-08-26 08:26:57 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | verified.capitalone.com - see bug description | browser-fenix engine-gecko priority-important | <!-- @browser: Firefox Mobile 69.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:69.0) Gecko/69.0 Firefox/69.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://verified.capitalone.com/auth/signin

**Browser / Version**: Firefox Mobile 69.0

**Operating System**: Android

**T... | 1.0 | verified.capitalone.com - see bug description - <!-- @browser: Firefox Mobile 69.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:69.0) Gecko/69.0 Firefox/69.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://verified.capitalone.com/auth/signin

**Browser / Version**: Firef... | non_process | verified capitalone com see bug description url browser version firefox mobile operating system android tested another browser yes problem type something else description can t paste into empty field steps to reproduce on the login form for capitalone com i am u... | 0 |

3,362 | 13,033,007,647 | IssuesEvent | 2020-07-28 05:53:15 | arcticicestudio/nord | https://api.github.com/repos/arcticicestudio/nord | closed | Repo label system | context-workflow resolution-answered scope-maintainability type-question | This has nothing to do with the wonderful Nord theme (that I've been using everywhere I can), but more about the label system you have in place for the repo.

I find it more useful than the usual (default) label system that has Github and I want to implement this system (and colors) for my own repos. Is there any kin... | True | Repo label system - This has nothing to do with the wonderful Nord theme (that I've been using everywhere I can), but more about the label system you have in place for the repo.

I find it more useful than the usual (default) label system that has Github and I want to implement this system (and colors) for my own rep... | non_process | repo label system this has nothing to do with the wonderful nord theme that i ve been using everywhere i can but more about the label system you have in place for the repo i find it more useful than the usual default label system that has github and i want to implement this system and colors for my own rep... | 0 |

131,669 | 18,248,623,541 | IssuesEvent | 2021-10-01 22:42:50 | ghc-dev/Natalie-Smith | https://api.github.com/repos/ghc-dev/Natalie-Smith | opened | CVE-2020-7656 (Medium) detected in jquery-1.8.1.min.js | security vulnerability | ## CVE-2020-7656 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.8.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="htt... | True | CVE-2020-7656 (Medium) detected in jquery-1.8.1.min.js - ## CVE-2020-7656 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.8.1.min.js</b></p></summary>

<p>JavaScript library ... | non_process | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file natalie smith node modules redeyed examples browser index html path to vulner... | 0 |

5,208 | 7,979,112,861 | IssuesEvent | 2018-07-17 20:35:11 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | closed | getTraceCount | libs-etherlib status-inprocess type-enhancement | Some code uses getTraceCount to decide whether or not to process traces, thus:

if (getTraceCount() < 250) {

CTraceArray traces;

getTraces(traces, trans->hash);

....

}

I.E. only get traces if there are not that many of them.

This is a bug since it silently ignores internal tr... | 1.0 | getTraceCount - Some code uses getTraceCount to decide whether or not to process traces, thus:

if (getTraceCount() < 250) {

CTraceArray traces;

getTraces(traces, trans->hash);

....

}

I.E. only get traces if there are not that many of them.

This is a bug since it silently ign... | process | gettracecount some code uses gettracecount to decide whether or not to process traces thus if gettracecount ctracearray traces gettraces traces trans hash i e only get traces if there are not that many of them this is a bug since it silently ignor... | 1 |

130,208 | 12,425,130,924 | IssuesEvent | 2020-05-24 14:58:48 | weso/hercules-ontology | https://api.github.com/repos/weso/hercules-ontology | closed | [HOI-0190] Analysis: Use of evOWLuator | affects: documentation affects: ontology status: awaiting-triage | Version v0.1.1 of evOWLuator - a cross-platform, energy aware evaluation tool for OWL reasoners - was released a few days ago. We should try this tool and analyse whether it could be added to our generated documentation or not.

Github repository: https://github.com/sisinflab-swot/evowluator

Documentation: http://si... | 1.0 | [HOI-0190] Analysis: Use of evOWLuator - Version v0.1.1 of evOWLuator - a cross-platform, energy aware evaluation tool for OWL reasoners - was released a few days ago. We should try this tool and analyse whether it could be added to our generated documentation or not.

Github repository: https://github.com/sisinflab-... | non_process | analysis use of evowluator version of evowluator a cross platform energy aware evaluation tool for owl reasoners was released a few days ago we should try this tool and analyse whether it could be added to our generated documentation or not github repository documentation | 0 |

292,054 | 25,196,089,066 | IssuesEvent | 2022-11-12 14:33:07 | Test-Automation-Crash-Course-24-10-22/team_04 | https://api.github.com/repos/Test-Automation-Crash-Course-24-10-22/team_04 | opened | Check the function of comparison | Test Case | **Descriptions:**

Check out the multi-product comparison feature

**Preconditions:**

Open https://rozetka.com.ua/ua/

Log in to your account

In the wishlist must be saved several products of the same group (for example, mobile phones)

**Test steps**

| Step | Test Data | Expected result |

| ------------... | 1.0 | Check the function of comparison - **Descriptions:**

Check out the multi-product comparison feature

**Preconditions:**

Open https://rozetka.com.ua/ua/

Log in to your account

In the wishlist must be saved several products of the same group (for example, mobile phones)

**Test steps**

| Step | Test Data ... | non_process | check the function of comparison descriptions check out the multi product comparison feature preconditions open log in to your account in the wishlist must be saved several products of the same group for example mobile phones test steps step test data expected result ... | 0 |

7,811 | 10,964,369,491 | IssuesEvent | 2019-11-27 22:22:04 | codeuniversity/smag-mvp | https://api.github.com/repos/codeuniversity/smag-mvp | closed | Create face recognition endpoint and worker | Image Processing | - read internal_image_url, post_id and boundries from kafka topic

- call face recognition GRPC endpoint with image url

- take internal_image_url and boundries to crop image, creating image_crop_url for image proxy

- write array of faces with boundries and encoding to kafka topic | 1.0 | Create face recognition endpoint and worker - - read internal_image_url, post_id and boundries from kafka topic

- call face recognition GRPC endpoint with image url

- take internal_image_url and boundries to crop image, creating image_crop_url for image proxy

- write array of faces with boundries and encoding to kafka ... | process | create face recognition endpoint and worker read internal image url post id and boundries from kafka topic call face recognition grpc endpoint with image url take internal image url and boundries to crop image creating image crop url for image proxy write array of faces with boundries and encoding to kafka ... | 1 |

373,882 | 26,089,706,750 | IssuesEvent | 2022-12-26 09:30:14 | mentors-service/mentors-service-FE | https://api.github.com/repos/mentors-service/mentors-service-FE | closed | [FE] Design system work | documentation frontend feat | Design system work for use in the project

Elements to consider: fonts, spacing, width, colors, and so on...

ex) Colors

```

export const colors = {

$primary: '#FF055C',

$secondary: '#0BBFAD',

$black: '#0D0D0D',

$white: '#F2F2F2',

};

```

ex) Fonts

```

export const fonts = {

$xs: '14px',

$sm: '16px',

$base: '18px',

$lg: '20px',

$xl: '22px',

... | 1.0 | [FE] Design system work - Design system work for use in the project

Elements to consider: fonts, spacing, width, colors, and so on...

ex) Colors

```

export const colors = {

$primary: '#FF055C',

$secondary: '#0BBFAD',

$black: '#0D0D0D',

$white: '#F2F2F2',

};

```

ex) Fonts

```

export const fonts = {

$xs: '14px',

$sm: '16px',

$base: '18px',

$lg: '20px'... | non_process | 디자인 시스템 작업 프로젝트에 사용할 디자인 시스템 작업 고려할 요소들 폰트 여백 너비 컬러 기타 등등 ex 색상 export const colors primary secondary black white ex 폰트 export const fonts xs sm base lg xl 구글 폰트 영어... | 0 |

13,589 | 16,162,950,032 | IssuesEvent | 2021-05-01 01:26:53 | tdwg/chrono | https://api.github.com/repos/tdwg/chrono | closed | Add IRI-value terms section to term list document | Process - prepare for Executive review | I see the statement "Once ratified, the Darwin Core RDF Guide will be updated to include the IRI versions of appropriate terms from this vocabulary, in the chronoiri: namespace, for use in RDF (e.g., as Linked Open Data)." in [the issue above](https://github.com/tdwg/chrono/issues/18), however, this feels like somethin... | 1.0 | Add IRI-value terms section to term list document - I see the statement "Once ratified, the Darwin Core RDF Guide will be updated to include the IRI versions of appropriate terms from this vocabulary, in the chronoiri: namespace, for use in RDF (e.g., as Linked Open Data)." in [the issue above](https://github.com/tdwg/... | process | add iri value terms section to term list document i see the statement once ratified the darwin core rdf guide will be updated to include the iri versions of appropriate terms from this vocabulary in the chronoiri namespace for use in rdf e g as linked open data in however this feels like something tha... | 1 |

5,258 | 8,051,791,503 | IssuesEvent | 2018-08-01 17:12:42 | dealii/dealii | https://api.github.com/repos/dealii/dealii | closed | Address comments in #6994 | Post-processing | It would be good for us to attend to the few remaining comments listed in #6994. | 1.0 | Address comments in #6994 - It would be good for us to attend to the few remaining comments listed in #6994. | process | address comments in it would be good for us to attend to the few remaining comments listed in | 1 |

22,322 | 30,884,968,413 | IssuesEvent | 2023-08-03 20:52:15 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | opened | Release 6.3.2 - Aug 2023 | P1 type: process release team-OSS | # Status of Bazel 6.3.2

- Expected first release candidate date: 2023-08-03

- Expected release date: 2023-08-07

- [List of release blockers](https://github.com/bazelbuild/bazel/milestone/59)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to the issue. A release manag... | 1.0 | Release 6.3.2 - Aug 2023 - # Status of Bazel 6.3.2

- Expected first release candidate date: 2023-08-03

- Expected release date: 2023-08-07

- [List of release blockers](https://github.com/bazelbuild/bazel/milestone/59)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to... | process | release aug status of bazel expected first release candidate date expected release date to report a release blocking bug please add a comment with the text bazel io flag to the issue a release manager will triage it and add it to the milestone to cherry... | 1 |

7,603 | 10,720,284,492 | IssuesEvent | 2019-10-26 16:34:12 | kavics/SnTraceViewer | https://api.github.com/repos/kavics/SnTraceViewer | opened | JOIN command | PROCESSOR | Write a command that can join trace files by any reader. Options:

- All

- Per session

| 1.0 | JOIN command - Write a command that can join trace files by any reader. Options:

- All

- Per session

| process | join command write a command that can join trace files by any reader options all per session | 1 |

3,771 | 6,742,012,028 | IssuesEvent | 2017-10-20 05:03:33 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | Vagrant post processor changes disk size | need-more-info post-processor/vagrant question | Using the following JSON file the virtual box is built with the correct disk space of approx 11GB.

However, when it passes through the vagrant post processor, the size is reset to 4GB, which is a default value.

The preseed.cfg file is below too

````

{

"builders": [{

"type": "qemu",

"iso_url": "{{use... | 1.0 | Vagrant post processor changes disk size - Using the following JSON file the virtual box is built with the correct disk space of approx 11GB.

However, when it passes through the vagrant post processor, the size is reset to 4GB, which is a default value.

The preseed.cfg file is below too

````

{

"builders": [{

... | process | vagrant post processor changes disk size using the following json file the virtual box is built with the correct disk space of approx however when is passes through the vagrant post processor the size is reset to which is a default value the preseed cfg file is below too builders ... | 1 |

17,240 | 22,969,303,996 | IssuesEvent | 2022-07-20 00:21:02 | googleapis/google-cloud-go | https://api.github.com/repos/googleapis/google-cloud-go | opened | storage: propagate retry config to grpc methods | api: storage type: process | While doing #6370 I noticed that `ShouldRetry` is referenced directly from some methods in grpc_client.go. This means that these methods do not honor the user-configured WithErrorFunc RetryOption, if it has been passed. Filing an issue to fix this up.

cc @noahdietz

| 1.0 | storage: propagate retry config to grpc methods - While doing #6370 I noticed that `ShouldRetry` is referenced directly from some methods in grpc_client.go. This means that these methods do not honor the user-configured WithErrorFunc RetryOption, if it has been passed. Filing an issue to fix this up.

cc @noahdietz

... | process | storage propagate retry config to grpc methods while doing i noticed that shouldretry is referenced directly from some methods in grpc client go this means that these methods do not honor the user configured witherrorfunc retryoption if it has been passed filing an issue to fix this up cc noahdietz | 1 |

309,459 | 26,662,736,539 | IssuesEvent | 2023-01-25 22:53:24 | MPMG-DCC-UFMG/F01 | https://api.github.com/repos/MPMG-DCC-UFMG/F01 | closed | Generalization test for the tag Despesas com diárias - Despesas com diárias - Itabirito | generalization test development template - ABO (21) tag - Despesas com diárias subtag - Despesas com diárias | DoD: Run the generalization test of the validator for the tag Despesas com diárias - Despesas com diárias for the municipality of Itabirito. | 1.0 | Generalization test for the tag Despesas com diárias - Despesas com diárias - Itabirito - DoD: Run the generalization test of the validator for the tag Despesas com diárias - Despesas com diárias for the municipality of Itabirito. | non_process | generalization test for the tag despesas com diárias despesas com diárias itabirito dod run the generalization test of the validator for the tag despesas com diárias despesas com diárias for the municipality of itabirito | 0 |

330,727 | 28,484,909,306 | IssuesEvent | 2023-04-18 07:08:58 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | closed | Fix miscellaneous.test_numpy_copysign | NumPy Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4728598063/jobs/8390290760" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4728598063/jobs/8390290760" rel="noopener ... | 1.0 | Fix miscellaneous.test_numpy_copysign - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4728598063/jobs/8390290760" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/47... | non_process | fix miscellaneous test numpy copysign tensorflow img src torch img src numpy img src jax img src | 0 |

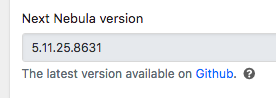

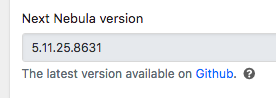

32,861 | 7,611,173,768 | IssuesEvent | 2018-05-01 12:43:39 | chrisblakley/Nebula | https://api.github.com/repos/chrisblakley/Nebula | opened | "Next Nebula Version" data is stuck | :beetle: Bug :fire: High Priority Backend (Server) WP Admin / Shortcode / Widget | The Nebula theme updater does not appear to be updating the remote Nebula theme version from Github.

It's stuck at `5.11.25.8631` even after manually updating the theme. | 1.0 | "Next Nebula Version" data is stuck - The Nebula theme updater does not appear to be updating the remote Nebula theme version from Github.

It's stuck at `5.11.25.8631` even ... | non_process | next nebula version data is stuck the nebula theme updater does not appear to be updating the remote nebula theme version from github it s stuck at even after manually updating the theme | 0 |

20,690 | 27,361,353,764 | IssuesEvent | 2023-02-27 16:04:02 | helmholtz-analytics/heat | https://api.github.com/repos/helmholtz-analytics/heat | closed | [Bug]: convolve with distributed kernel on multiple GPUs | bug :bug: signal processing communication signal | ### What happened?

convolve does not work if the kernel is distributed when more than one GPU is available.

### Code snippet triggering the error

```python

import heat as ht

dis_signal = ht.arange(0, 16, split=0, device='gpu', dtype=ht.int)

dis_kernel_odd = ht.ones(3, split=0, dtype=ht.int, device='gpu')

... | 1.0 | [Bug]: convolve with distributed kernel on multiple GPUs - ### What happened?

convolve does not work if the kernel is distributed when more than one GPU is available.

### Code snippet triggering the error

```python

import heat as ht

dis_signal = ht.arange(0, 16, split=0, device='gpu', dtype=ht.int)

dis_ke... | process | convolve with distributed kernel on multiple gpus what happened convolve does not work if the kernel is distributed when more than one gpu is available code snippet triggering the error python import heat as ht dis signal ht arange split device gpu dtype ht int dis kernel ... | 1 |

14,255 | 17,190,889,688 | IssuesEvent | 2021-07-16 10:46:10 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | opened | Status of Bazel 5.0.0-pre.20210708.4 | P1 release team-XProduct type: process |

- Expected release date: 2021-07-16

Task list:

- [ ] Pick release baseline: [ca1d20fd](https://github.com/bazelbuild/bazel/commit/ca1d20fdfa95dad533c64aba08ba9d7d98be41b7) with cherrypicks [802901e6](https://github.com/bazelbuild/bazel/commit/802901e697015ee6a56ac36cd0000c1079207d12) [aa768ada](https://github.com/ba... | 1.0 | Status of Bazel 5.0.0-pre.20210708.4 -

- Expected release date: 2021-07-16

Task list:

- [ ] Pick release baseline: [ca1d20fd](https://github.com/bazelbuild/bazel/commit/ca1d20fdfa95dad533c64aba08ba9d7d98be41b7) with cherrypicks [802901e6](https://github.com/bazelbuild/bazel/commit/802901e697015ee6a56ac36cd0000c10792... | process | status of bazel pre expected release date task list pick release baseline with cherrypicks create release candidate post submit push the release update the | 1 |

699,823 | 24,033,702,350 | IssuesEvent | 2022-09-15 17:06:16 | mlibrary/heliotrope | https://api.github.com/repos/mlibrary/heliotrope | closed | Set a default press for a user | dashboard roles low priority | **STORY**

As a user with rights to multiple presses, I would like to be able to set a default press for myself, so that I don't constantly have to select a press for each action I perform.

**DETAILS**

This could be a session-level selection that I make when I log in, or a setting that I could change in my user profile... | 1.0 | Set a default press for a user - **STORY**

As a user with rights to multiple presses, I would like to be able to set a default press for myself, so that I don't constantly have to select a press for each action I perform.

**DETAILS**