Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

14,864 | 18,273,429,697 | IssuesEvent | 2021-10-04 15:59:29 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Re-introspection support using `db pull` with MongoDB | kind/feature process/candidate topic: re-introspection tech/engines tech/typescript topic: mongodb | Introspection support for MongoDB (Preview) was shipped with Prisma version 3.2.0 without re-introspection. | 1.0 | Re-introspection support using `db pull` with MongoDB - Introspection support for MongoDB (Preview) was shipped with Prisma version 3.2.0 without re-introspection. | process | re introspection support using db pull with mongodb introspection support for mongodb preview was shipped with prisma version without re introspection | 1 |
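
Judging from the rows shown here, `text_combine` looks like the title and body joined with " - ", `text` looks like a lowercased copy with links, punctuation, and digit-bearing tokens stripped, and `binary_label` is 1 exactly when `label` is `process`. The preprocessing that produced these columns is not documented in this preview, so the following is only a minimal sketch of rules inferred from the visible rows; the function names `combine`, `normalize`, and `to_binary` are hypothetical, and non-ASCII text may be handled differently by the real pipeline.

```python
import re
import string

# Map every ASCII punctuation character (including "_" and "`") to a space.
_PUNCT_TO_SPACE = str.maketrans({c: " " for c in string.punctuation})


def combine(title: str, body: str) -> str:
    """Rebuild `text_combine` as it appears in the rows: title, " - ", body."""
    return f"{title} - {body}"


def normalize(text_combine: str) -> str:
    """Approximate the `text` column (assumed rules, inferred from the rows)."""
    # Drop markdown links and images wholesale, then any bare URLs.
    s = re.sub(r"!?\[[^\]]*\]\([^)]*\)", " ", text_combine)
    s = re.sub(r"https?://\S+", " ", s)
    # Turn punctuation into spaces so "re-introspection" splits into two tokens.
    s = s.translate(_PUNCT_TO_SPACE)
    # Discard every token that contains a digit and lowercase the rest.
    tokens = [t.lower() for t in s.split() if not any(ch.isdigit() for ch in t)]
    return " ".join(tokens)


def to_binary(label: str) -> int:
    """Assumed mapping: `process` -> 1, anything else (`non_process`) -> 0."""
    return 1 if label == "process" else 0
```

Applied to the title and body of the Prisma row above, `normalize(combine(title, body))` yields that row's `text` value; rows with truncated bodies cannot be checked this way.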

766 | 3,252,741,960 | IssuesEvent | 2015-10-19 16:02:30 | beesmart-it/trend-hrm | https://api.github.com/repos/beesmart-it/trend-hrm | closed | View selection process | client company requirement selection process | Access selection process view to see candidates by step, add candidates, manage process | 1.0 | View selection process - Access selection process view to see candidates by step, add candidates, manage process | process | view selection process access selection process view to see candidates by step add candidates manage process | 1 |

638,064 | 20,712,178,022 | IssuesEvent | 2022-03-12 03:57:03 | aitos-io/BoAT-X-Framework | https://api.github.com/repos/aitos-io/BoAT-X-Framework | closed | An error occurs when test case InitEthWalletGenerationKey is run. | bug Severity/moderate Priority/P2 | `01Wallet.c:496:F:wallet_api:test_002InitWallet_0013InitEthWalletGenerationKey:0: Assertion 'rtnVal == NULL' failed: rtnVal == 0x80007a870` | 1.0 | An error occurs when test case InitEthWalletGenerationKey is run. - `01Wallet.c:496:F:wallet_api:test_002InitWallet_0013InitEthWalletGenerationKey:0: Assertion 'rtnVal == NULL' failed: rtnVal == 0x80007a870` | non_process | an error occurs when test case initethwalletgenerationkey is run c f wallet api test assertion rtnval null failed rtnval | 0 |

15,348 | 2,850,649,105 | IssuesEvent | 2015-05-31 19:10:11 | damonkohler/sl4a | https://api.github.com/repos/damonkohler/sl4a | opened | ERROR/sl4a.FileUtils:121(2049): Failed to create directory. | auto-migrated Priority-Medium Type-Defect | _From @GoogleCodeExporter on May 31, 2015 11:29_

```

What device(s) are you experiencing the problem on?

miumiu 9 (android 2.1)

What firmware version are you running on the device?

What steps will reproduce the problem?

1. install sl4a_r3.apk using adb

2. try to run it

3.

What is the expected output? What do you s... | 1.0 | ERROR/sl4a.FileUtils:121(2049): Failed to create directory. - _From @GoogleCodeExporter on May 31, 2015 11:29_

```

What device(s) are you experiencing the problem on?

miumiu 9 (android 2.1)

What firmware version are you running on the device?

What steps will reproduce the problem?

1. install sl4a_r3.apk using adb

2... | non_process | error fileutils failed to create directory from googlecodeexporter on may what device s are you experiencing the problem on miumiu android what firmware version are you running on the device what steps will reproduce the problem install apk using adb try to run it ... | 0 |

217,209 | 16,682,218,015 | IssuesEvent | 2021-06-08 02:17:15 | bevyengine/bevy | https://api.github.com/repos/bevyengine/bevy | closed | Bevy ECS crate needs a readme | documentation ecs | Many people would like to use bevy_ecs outside of the context of Bevy, but it isn't immediately clear that it can be used standalone (#2002). The crates.io page is pretty bare:

We should give `bevy_ecs` ... | 1.0 | Bevy ECS crate needs a readme - Many people would like to use bevy_ecs outside of the context of Bevy, but it isn't immediately clear that it can be used standalone (#2002). The crates.io page is pretty bare:

| documentation priority: high | Add a working example of package binding and dependency section specified in [dependency under usecases](https://github.com/apache/incubator-openwhisk-wskdeploy/tree/master/tests/usecases/dependency).

Delete dependency folder from use cases folder. | 1.0 | Integration Test - Dependency (package binding) - Add a working example of package binding and dependency section specified in [dependency under usecases](https://github.com/apache/incubator-openwhisk-wskdeploy/tree/master/tests/usecases/dependency).

Delete dependency folder from use cases folder. | non_process | integration test dependency package binding add a working example of package binding and dependency section specified in delete dependency folder from use cases folder | 0 |

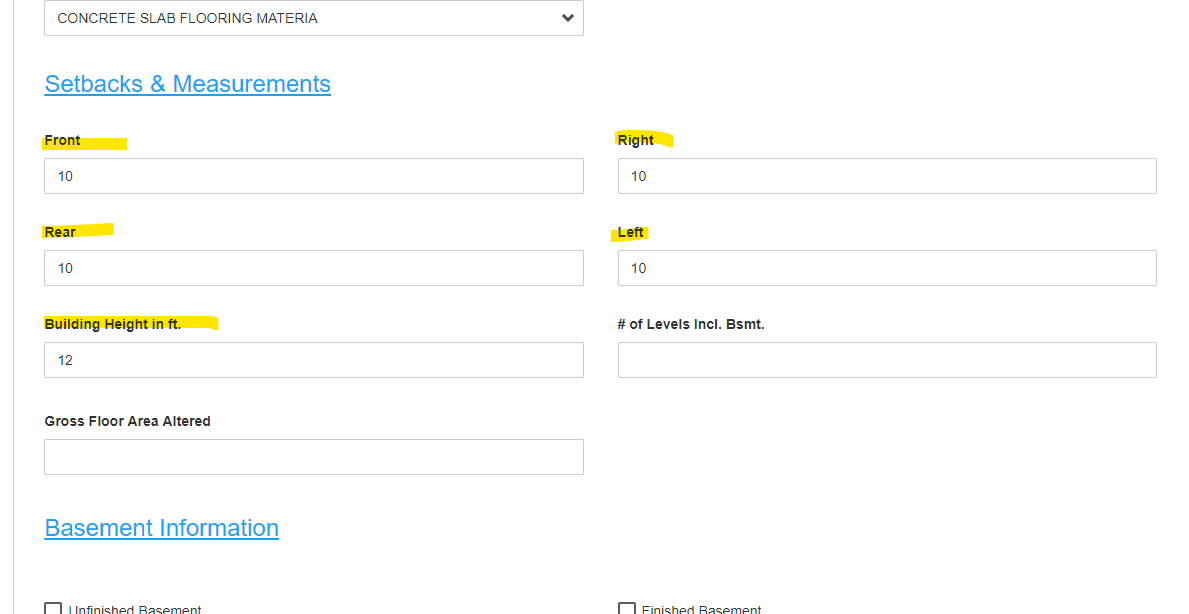

113,645 | 9,659,884,918 | IssuesEvent | 2019-05-20 14:22:59 | kcigeospatial/Fred_Co_Land-Management | https://api.github.com/repos/kcigeospatial/Fred_Co_Land-Management | closed | R4C-ResUse Detail Page, Setbacks | Ready For Retest | The Setbacks should have "in ft." next to them just as the Building Height does. -aw

| 1.0 | R4C-ResUse Detail Page, Setbacks - The Setbacks should have "in ft." next to them just as the Building Height does. -aw

| non_process | resuse detail page setbacks the setbacks should have in ft next to them just as the building height does aw | 0 |

6,217 | 9,126,909,042 | IssuesEvent | 2019-02-25 01:13:32 | emacs-ess/ESS | https://api.github.com/repos/emacs-ess/ESS | closed | Unable to interrupt inferior process | bug process | I haven't had time to look at this in much detail yet, but I'm not able to interrupt the inferior process with e.g. C-c C-c. The simplest way I could reproduce is with this R script:

```

cat("\n");Sys.sleep(100)

```

then C-c C-c to evaluate, switch to the inferior process and try to C-c C-c. I get a message about... | 1.0 | Unable to interrupt inferior process - I haven't had time to look at this in much detail yet, but I'm not able to interrupt the inferior process with e.g. C-c C-c. The simplest way I could reproduce is with this R script:

```

cat("\n");Sys.sleep(100)

```

then C-c C-c to evaluate, switch to the inferior process an... | process | unable to interrupt inferior process i haven t had time to look at this in much detail yet but i m not able to interrupt the inferior process with e g c c c c the simplest way i could reproduce is with this r script cat n sys sleep then c c c c to evaluate switch to the inferior process and ... | 1 |

7,285 | 9,544,285,414 | IssuesEvent | 2019-05-01 13:45:28 | ORelio/Minecraft-Console-Client | https://api.github.com/repos/ORelio/Minecraft-Console-Client | closed | Implement 1.14 support | a:enhancement in:protocol-compatibility in:terrain-handling resolved | Hi!

I'm just curious if the latest version of MinecraftClient.exe supports Minecraft 1.14. Thanks! | True | Implement 1.14 support - Hi!

I'm just curious if the latest version of MinecraftClient.exe supports Minecraft 1.14. Thanks! | non_process | implement support hi i m just curious if the latest version of minecraftclient exe supports minecraft thanks | 0 |

8,329 | 11,490,371,586 | IssuesEvent | 2020-02-11 16:58:33 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | Preprocessing overlapping data not implemented yet | preprocessor | Hi,

I am having an issue with the preprocessing when trying to run a diagnostic which uses two variables from a single model:

https://github.com/ESMValGroup/ESMValTool/blob/MAGIC_BSC/esmvaltool/recipes/recipe_diurnal_temperature_index_wp7.yml

An unexpected problem prevented concatenation.

Expected only a s... | 1.0 | Preprocessing overlapping data not implemented yet - Hi,

I am having an issue with the preprocessing when trying to run a diagnostic which uses two variables from a single model:

https://github.com/ESMValGroup/ESMValTool/blob/MAGIC_BSC/esmvaltool/recipes/recipe_diurnal_temperature_index_wp7.yml

An unexpected ... | process | preprocessing overlapping data not implemented yet hi i am having an issue with the preprocessing when trying to run a diagnostic which uses two variables from a single model an unexpected problem prevented concatenation expected only a single cube found i guess this is just a minor error in ... | 1 |

2,884 | 5,848,169,551 | IssuesEvent | 2017-05-10 20:15:45 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Update copy-html to work like copy-image, with parameter for destination directory | feature P3 preprocess | In preprocess we have the `copy-image` task, which copies images from the source directory. For X/HTML this goes straight to the output directory. The task sets a property to figure out the destination directory. I've taken advantage of this by initializing the `copy-image.todir` property in a plugin: for one output ty... | 1.0 | Update copy-html to work like copy-image, with parameter for destination directory - In preprocess we have the `copy-image` task, which copies images from the source directory. For X/HTML this goes straight to the output directory. The task sets a property to figure out the destination directory. I've taken advantage o... | process | update copy html to work like copy image with parameter for destination directory in preprocess we have the copy image task which copies images from the source directory for x html this goes straight to the output directory the task sets a property to figure out the destination directory i ve taken advantage o... | 1 |

404,520 | 27,489,496,480 | IssuesEvent | 2023-03-04 12:46:14 | ra3xdh/qucs_s | https://api.github.com/repos/ra3xdh/qucs_s | closed | Legacy Qucs equations versus Nutmeg (ngspice ) | question documentation | I have 700 Qucs schematics with Equation blocks that have been "ported" over to Qucs-S. The vast majority of Equation blocks worked without converting them to Nutmeg equations. The 3rd example uses v(Output) versus Output.v.

Is there a way to know what Qucs functions don't work in Qucs-S (ngspice)?

The first thr... | 1.0 | Legacy Qucs equations versus Nutmeg (ngspice ) - I have 700 Qucs schematics with Equation blocks that have been "ported" over to Qucs-S. The vast majority of Equation blocks worked without converting them to Nutmeg equations. The 3rd example uses v(Output) versus Output.v.

Is there a way to know what Qucs functions... | non_process | legacy qucs equations versus nutmeg ngspice i have qucs schematics with equation blocks that have been ported over to qucs s the vast majority of equation blocks worked without converting them to nutmeg equations the example uses v output versus output v is there a way to know what qucs functions don... | 0 |

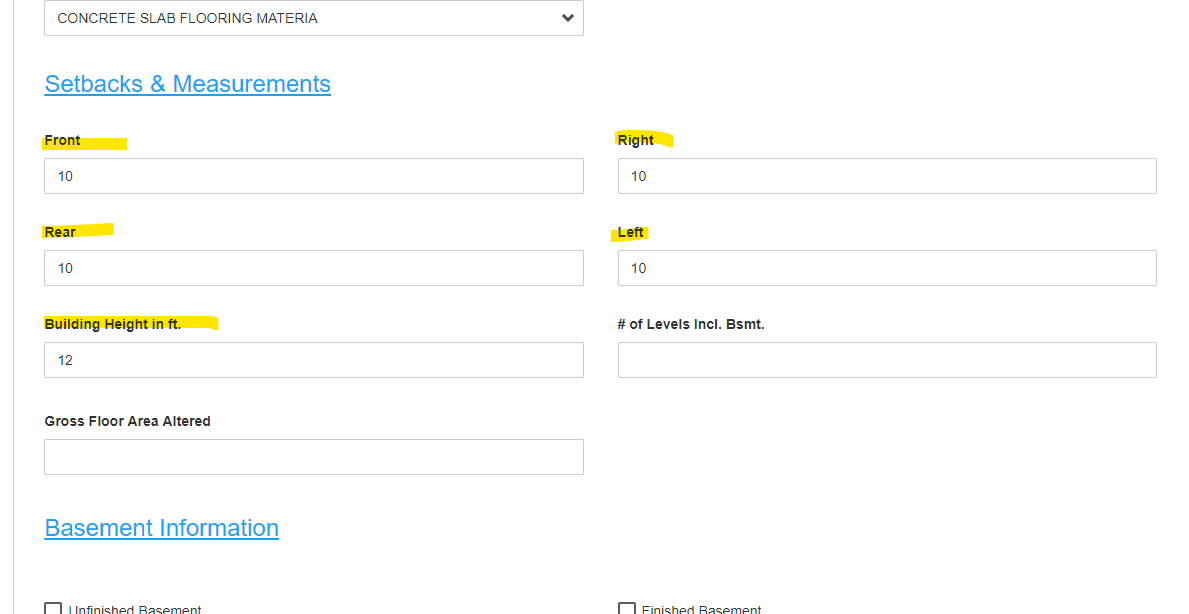

15,399 | 19,591,866,935 | IssuesEvent | 2022-01-05 13:52:05 | remnoteio/remnote-issues | https://api.github.com/repos/remnoteio/remnote-issues | closed | Added images not shown in native MacOS app, but shown within browser | in-replication-process image-uploading to explore checked waiting for response check for duplicates | **Describe the bug**

When reading through the document within the native MacOS app, I can't see my images at all, see here:

But the same document within the browser works fi... | 1.0 | Added images not shown in native MacOS app, but shown within browser - **Describe the bug**

When reading through the document within the native MacOS app, I can't see my images at all, see here:

To replicate, play the Canyon Offensive d... | True | MAX - Huge performance hit/freeze from exploding barrels - Was playing Canyon Offensive to test out some of my combat improvements I was working on, and I noticed that the engine comes to a screeching halt whenever a barrel explodes. (Especially the clustered up groups of barrels, when they all go off it causes a 1-2 s... | non_process | max huge performance hit freeze from exploding barrels was playing canyon offensive to test out some of my combat improvements i was working on and i noticed that the engine comes to a screeching halt whenever a barrel explodes especially the clustered up groups of barrels when they all go off it causes a s... | 0 |

358,970 | 25,210,890,687 | IssuesEvent | 2022-11-14 03:42:17 | risingwavelabs/risingwave-docs | https://api.github.com/repos/risingwavelabs/risingwave-docs | closed | Document where the data is stored in the tutorials | documentation | ### Related code PR

_No response_

### Which part(s) of the docs might be affected or should be updated? And how?

All of our tutorials, we do not mention where the data of the MVs is stored. For our demo cluster, the data is stored in the MinIO instance that is running in the demo cluster. Please reflect this in all ... | 1.0 | Document where the data is stored in the tutorials - ### Related code PR

_No response_

### Which part(s) of the docs might be affected or should be updated? And how?

All of our tutorials, we do not mention where the data of the MVs is stored. For our demo cluster, the data is stored in the MinIO instance that is run... | non_process | document where the data is stored in the tutorials related code pr no response which part s of the docs might be affected or should be updated and how all of our tutorials we do not mention where the data of the mvs is stored for our demo cluster the data is stored in the minio instance that is run... | 0 |

726,733 | 25,009,432,813 | IssuesEvent | 2022-11-03 14:16:52 | Signbank/Global-signbank | https://api.github.com/repos/Signbank/Global-signbank | closed | Change text on login page for registration | enhancement high priority | The registration option now says "Register for free to provide feedback on the Signbank." That's long outdated, users have better reasons now to get an account: to get access to datasets. Therefore, please change to "Register in order to get access to datasets" | 1.0 | Change text on login page for registration - The registration option now says "Register for free to provide feedback on the Signbank." That's long outdated, users have better reasons now to get an account: to get access to datasets. Therefore, please change to "Register in order to get access to datasets" | non_process | change text on login page for registration the registration option now says register for free to provide feedback on the signbank that s long outdated users have better reasons now to get an account to get access to datasets therefore please change to register in order to get access to datasets | 0 |

8,071 | 11,251,358,819 | IssuesEvent | 2020-01-11 00:04:59 | googleapis/java-monitoring-dashboards | https://api.github.com/repos/googleapis/java-monitoring-dashboards | opened | Promote to GA | type: process | Package name: **google-cloud-monitoring-dashboard**

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

- [ ] 28 days el... | 1.0 | Promote to GA - Package name: **google-cloud-monitoring-dashboard**

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

... | process | promote to ga package name google cloud monitoring dashboard current release beta proposed release ga instructions check the lists below adding tests documentation as required once all the required boxes are ticked please create a release and close this issue required ... | 1 |

17,227 | 22,844,205,573 | IssuesEvent | 2022-07-13 03:06:43 | NCAR/ucomp-pipeline | https://api.github.com/repos/NCAR/ucomp-pipeline | closed | Process 20220313 and 20220316 with just RCAM and just TCAM | process | To determine if new issues with UCoMP images are due to one of the cameras, process 20220313 and 20220316 with just RCAM and just TCAM.

#### RCAM

- [x] 20220313

- [x] 20220316

- [x] 20220709

#### TCAM

- [x] 20220313

- [x] 20220316

- [x] 20220709

| 1.0 | Process 20220313 and 20220316 with just RCAM and just TCAM - To determine if new issues with UCoMP images are due to one of the cameras, process 20220313 and 20220316 with just RCAM and just TCAM.

#### RCAM

- [x] 20220313

- [x] 20220316

- [x] 20220709

#### TCAM

- [x] 20220313

- [x] 20220316

- [x] 202207... | process | process and with just rcam and just tcam to determine if new issues with ucomp images are due to one of the cameras process and with just rcam and just tcam rcam tcam | 1 |

19,155 | 25,235,542,029 | IssuesEvent | 2022-11-15 00:21:24 | ethereum/EIPs | https://api.github.com/repos/ethereum/EIPs | closed | How should we pick EIP numbers? | w-stale question r-ci r-process | In light of recent events, the discussion on how to assign numbers has come up again. This issue is _solely_ for discussing assigning EIP numbers, and other topics will be deleted.

I'll try to summarize the proposals here, in preparation for the next EIPIP meeting. I'm going to give each proposal a `short-name` for ... | 1.0 | How should we pick EIP numbers? - In light of recent events, the discussion on how to assign numbers has come up again. This issue is _solely_ for discussing assigning EIP numbers, and other topics will be deleted.

I'll try to summarize the proposals here, in preparation for the next EIPIP meeting. I'm going to give... | process | how should we pick eip numbers in light of recent events the discussion on how to assign numbers has come up again this issue is solely for discussing assigning eip numbers and other topics will be deleted i ll try to summarize the proposals here in preparation for the next eipip meeting i m going to give... | 1 |

721,097 | 24,817,938,008 | IssuesEvent | 2022-10-25 14:24:42 | COS301-SE-2022/Office-Booker | https://api.github.com/repos/COS301-SE-2022/Office-Booker | closed | Cannot delete certain bookings | Priority: Low Type: Fix Status: On-hold Status: Ready | Some bookings have a bookingvotedon entry associated with them, which prevents deletion. | 1.0 | Cannot delete certain bookings - Some bookings have a bookingvotedon entry associated with them, which prevents deletion. | non_process | cannot delete certain bookings some bookings have a bookingvotedon entry associated with them which prevents deletion | 0 |

15,357 | 19,530,500,073 | IssuesEvent | 2021-12-30 15:53:08 | redwoodjs/redwood | https://api.github.com/repos/redwoodjs/redwood | closed | LogFormatter does not output script exec logs | triage/processing release:fix | Reported by @simoncrypta

The new LogFormatter that pipes dev and api server logs to pretty print is not used when running scripts.

| 1.0 | LogFormatter does not output script exec logs - Reported by @simoncrypta

The new LogFormatter that pipes dev and api server logs to pretty print is not used when running scripts.

| process | logformatter does not output script exec logs reported by simoncrypta the new logformatter that pipes dev and api server logs to pretty print is not used when running scripts | 1 |

21,362 | 29,194,079,763 | IssuesEvent | 2023-05-20 00:31:42 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Hibrido / Caieiras, São Paulo, Brazil] Fullstack Developer .NET/Angular (Híbrido) na Coodesh | SALVADOR PJ FULL-STACK MVC HTML PLENO GIT REST ANGULAR REQUISITOS ASP.NET PROCESSOS BACKEND GITHUB UMA C RAZOR AUTOMAÇÃO DE PROCESSOS HIBRIDO ALOCADO Stale | ## Descrição da vaga:

Esta é uma vaga de um parceiro da plataforma Coodesh, ao candidatar-se você terá acesso as informações completas sobre a empresa e benefícios.

Fique atento ao redirecionamento que vai te levar para uma url [https://coodesh.com](https://coodesh.com/vagas/fullstack-developer-netangular-hibrid... | 2.0 | [Hibrido / Caieiras, São Paulo, Brazil] Fullstack Developer .NET/Angular (Híbrido) na Coodesh - ## Descrição da vaga:

Esta é uma vaga de um parceiro da plataforma Coodesh, ao candidatar-se você terá acesso as informações completas sobre a empresa e benefícios.

Fique atento ao redirecionamento que vai te levar pa... | process | fullstack developer net angular híbrido na coodesh descrição da vaga esta é uma vaga de um parceiro da plataforma coodesh ao candidatar se você terá acesso as informações completas sobre a empresa e benefícios fique atento ao redirecionamento que vai te levar para uma url com o pop up personaliz... | 1 |

13,259 | 15,728,470,805 | IssuesEvent | 2021-03-29 13:51:28 | modm-io/modm | https://api.github.com/repos/modm-io/modm | closed | Create a linter tool to ensure source code formatting | feature 🚧 process 📊 | While most source code roughly follows the guidelines, it has occured to me that there are many cases of inconsistent formatting across the code base. This is annoying, but good news is that it is easily automatable (so that we can focus on more important things, such as my fiber PR #439 :)

Coding convention is here... | 1.0 | Create a linter tool to ensure source code formatting - While most source code roughly follows the guidelines, it has occured to me that there are many cases of inconsistent formatting across the code base. This is annoying, but good news is that it is easily automatable (so that we can focus on more important things, ... | process | create a linter tool to ensure source code formatting while most source code roughly follows the guidelines it has occured to me that there are many cases of inconsistent formatting across the code base this is annoying but good news is that it is easily automatable so that we can focus on more important things ... | 1 |

297,162 | 9,161,301,946 | IssuesEvent | 2019-03-01 10:05:48 | RavenProject/ravenwallet-ios | https://api.github.com/repos/RavenProject/ravenwallet-ios | closed | Qt - Wallet shows protocol raven:<address>&amount=22... etc | FIXED / DONE Low Priority enhancement | The iPhone scan should honor this protocol and fill in a send with the field info. | 1.0 | Qt - Wallet shows protocol raven:<address>&amount=22... etc - The iPhone scan should honor this protocol and fill in a send with the field info. | non_process | qt wallet shows protocol raven amount etc the iphone scan should honor this protocol and fill in a send with the field info | 0 |

109,737 | 16,890,355,185 | IssuesEvent | 2021-06-23 08:30:47 | epam/TimeBase | https://api.github.com/repos/epam/TimeBase | closed | CVE-2018-14042 (Medium) detected in bootstrap-3.3.7.min.js - autoclosed | security vulnerability | ## CVE-2018-14042 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.3.7.min.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile f... | True | CVE-2018-14042 (Medium) detected in bootstrap-3.3.7.min.js - autoclosed - ## CVE-2018-14042 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.3.7.min.js</b></p></summary>

<... | non_process | cve medium detected in bootstrap min js autoclosed cve medium severity vulnerability vulnerable library bootstrap min js the most popular front end framework for developing responsive mobile first projects on the web library home page a href path to dependency file ... | 0 |

5,563 | 8,403,826,868 | IssuesEvent | 2018-10-11 10:56:58 | googleapis/google-cloud-dotnet | https://api.github.com/repos/googleapis/google-cloud-dotnet | closed | Travis error on realpath | type: process | We're seeing this in Travis:

> toolversions.sh: line 6: realpath: command not found

I'm not sure why, but we should look into it. | 1.0 | Travis error on realpath - We're seeing this in Travis:

> toolversions.sh: line 6: realpath: command not found

I'm not sure why, but we should look into it. | process | travis error on realpath we re seeing this in travis toolversions sh line realpath command not found i m not sure why but we should look into it | 1 |

10,060 | 13,044,161,778 | IssuesEvent | 2020-07-29 03:47:26 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `AddDateIntInt` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `AddDateIntInt` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @lonng

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_exp... | 2.0 | UCP: Migrate scalar function `AddDateIntInt` from TiDB -

## Description

Port the scalar function `AddDateIntInt` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @lonng

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.co... | process | ucp migrate scalar function adddateintint from tidb description port the scalar function adddateintint from tidb to coprocessor score mentor s lonng recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

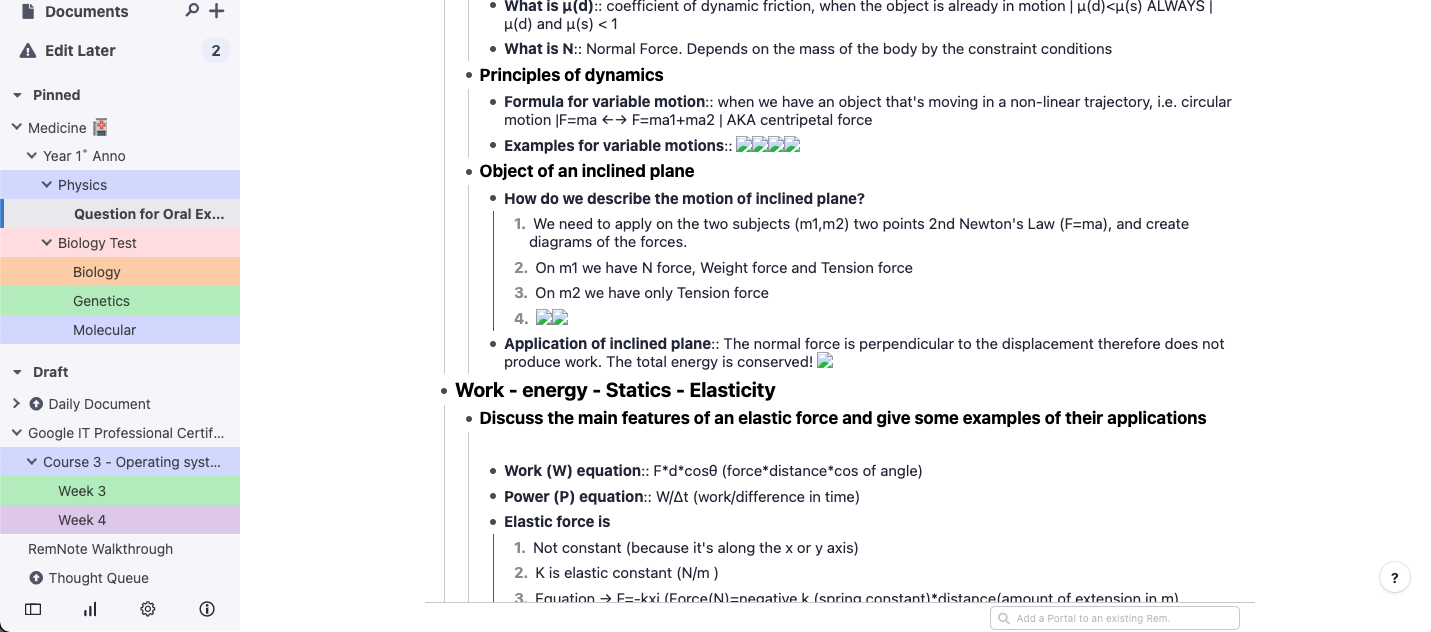

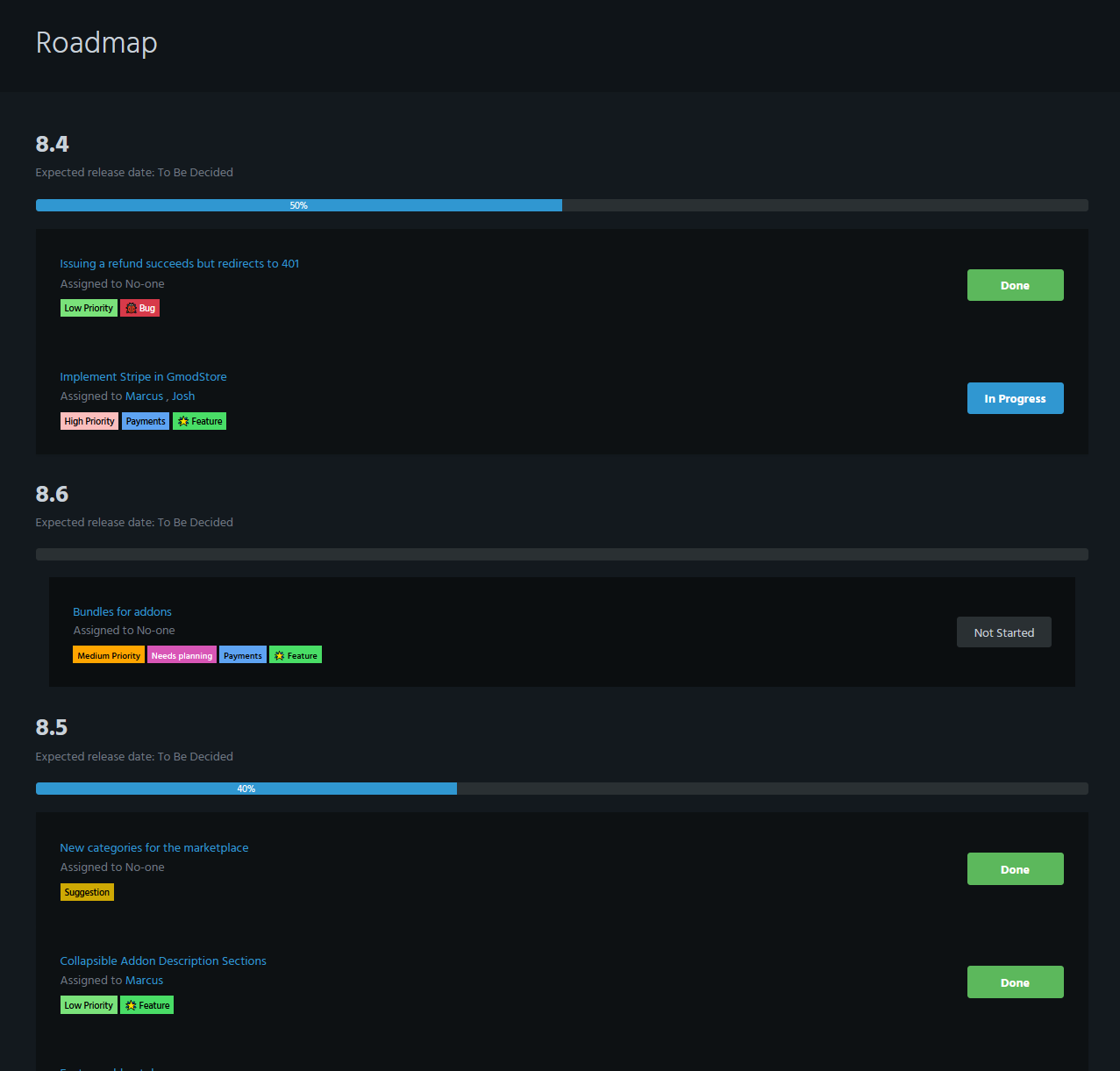

537,295 | 15,726,757,359 | IssuesEvent | 2021-03-29 11:46:24 | everyday-as/gmodstore-issues | https://api.github.com/repos/everyday-as/gmodstore-issues | closed | Roadmap isn't ordered properly | Low Priority 🐞 Bug | ## Expected Behavior

Future versions on the roadmap should be ordered by name ascending

## Actual Behavior

They're currently ordered randomly, see:

## Specifications

- GmodStore version (see foo... | 1.0 | Roadmap isn't ordered properly - ## Expected Behavior

Future versions on the roadmap should be ordered by name ascending

## Actual Behavior

They're currently ordered randomly, see:

## Specifications

... | non_process | roadmap isn t ordered properly expected behavior future versions on the roadmap should be ordered by name ascending actual behavior they re currently ordered randomly see specifications gmodstore version see footer example url browser event id | 0 |

25,361 | 12,237,705,642 | IssuesEvent | 2020-05-04 18:29:33 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | domainJoinOptions has no Explanation | Pri2 active-directory/svc assigned-to-author domain-services/subsvc product-question triaged |

In the ARM template is `domainJoinOptions` setting. I think this documentation should provide possible values or a link with more details.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 2568296a-984a-5dd0-d455-96359880a5e5

* Ver... | 1.0 | domainJoinOptions has no Explanation -

In the ARM template is `domainJoinOptions` setting. I think this documentation should provide possible values or a link with more details.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 2568... | non_process | domainjoinoptions has no explanation in the arm template is domainjoinoptions setting i think this documentation should provide possible values or a link with more details document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id ... | 0 |

11,769 | 14,598,956,257 | IssuesEvent | 2020-12-21 02:40:26 | e4exp/paper_manager_abstract | https://api.github.com/repos/e4exp/paper_manager_abstract | closed | The EOS Decision and Length Extrapolation | 2020 EMNLP Natural Language Processing _read_later | * https://arxiv.org/abs/2010.07174

* Blackbox NLP Workshop at EMNLP 2020

見たことのないシーケンスの長さへの外挿は、言語のニューラル生成モデルにとっての課題である。

この研究では、見落とされがちなモデル化の決定である、特別なEOS(End-of-sequence)語彙項目の使用による生成プロセスの終了予測の長さ外挿の効果を特徴づける。

我々は、EOSを予測するように訓練されたネットワーク(+EOS)と訓練されていないネットワーク(-EOS)の長さ外挿の挙動を比較するために、テスト時に正しいシーケンス長にモデルを生成するように強制するというオラクル設定... | 1.0 | The EOS Decision and Length Extrapolation - * https://arxiv.org/abs/2010.07174

* Blackbox NLP Workshop at EMNLP 2020

見たことのないシーケンスの長さへの外挿は、言語のニューラル生成モデルにとっての課題である。

この研究では、見落とされがちなモデル化の決定である、特別なEOS(End-of-sequence)語彙項目の使用による生成プロセスの終了予測の長さ外挿の効果を特徴づける。

我々は、EOSを予測するように訓練されたネットワーク(+EOS)と訓練されていないネットワーク(-EOS)の長さ外挿の挙動を比較す... | process | the eos decision and length extrapolation blackbox nlp workshop at emnlp 見たことのないシーケンスの長さへの外挿は、言語のニューラル生成モデルにとっての課題である。 この研究では、見落とされがちなモデル化の決定である、特別なeos(end of sequence)語彙項目の使用による生成プロセスの終了予測の長さ外挿の効果を特徴づける。 我々は、eosを予測するように訓練されたネットワーク eos と訓練されていないネットワーク eos の長さ外挿の挙動を比較するために、テスト時に正しいシーケンス長にモデルを生成するように強制す... | 1 |

13,951 | 16,725,432,124 | IssuesEvent | 2021-06-10 12:28:22 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | The startup query `SELECT @@socket` should be ignored when connecting to a remote database | bug/2-confirmed kind/bug process/candidate team/client topic: connections | ## Bug description

I have a server that is using the [TDSQL for MySQL](https://intl.cloud.tencent.com/product/dcdb) distribution database provided by Tencent Cloud.

The problem is Prisma Client will get a error when startup:

```

2021-03-23T17:11:16.362175968+08:00 prisma:info Starting a mysql pool with 91 connect... | 1.0 | The startup query `SELECT @@socket` should be ignored when connecting to a remote database - ## Bug description

I have a server that is using the [TDSQL for MySQL](https://intl.cloud.tencent.com/product/dcdb) distribution database provided by Tencent Cloud.

The problem is Prisma Client will get a error when startup... | process | the startup query select socket should be ignored when connecting to a remote database bug description i have a server that is using the distribution database provided by tencent cloud the problem is prisma client will get a error when startup prisma info starting a mysql pool w... | 1 |

532,796 | 15,571,577,037 | IssuesEvent | 2021-03-17 05:17:42 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | Error Occurring when Executing 3.2.0 to 4.0.0 MSSQL Database Migration Script | API-M 4.0.0 Priority/Normal Type/Bug migration-4.0.0 migration-4.0.0-docs | ### Description:

When executing the 3.2.0 to 4.0.0 MSSQL database migration script [1] from the terminal, the following error is encountered.

```

Msg 1779, Level 16, State 1, Server dca5a503261b, Line 28

Table 'AM_API_CLIENT_CERTIFICATE' already has a primary key defined on it.

Msg 1750, Level 16, State 1, Server ... | 1.0 | Error Occurring when Executing 3.2.0 to 4.0.0 MSSQL Database Migration Script - ### Description:

When executing the 3.2.0 to 4.0.0 MSSQL database migration script [1] from the terminal, the following error is encountered.

```

Msg 1779, Level 16, State 1, Server dca5a503261b, Line 28

Table 'AM_API_CLIENT_CERTIFICATE... | non_process | error occurring when executing to mssql database migration script description when executing the to mssql database migration script from the terminal the following error is encountered msg level state server line table am api client certificate already has a pr... | 0 |

97,682 | 4,004,553,399 | IssuesEvent | 2016-05-12 07:51:19 | coreos/rkt | https://api.github.com/repos/coreos/rkt | opened | Function stage1/init/common#appToSystemd needs overhaul | kind/cleanup priority/P2 | The function `appToSystemd` needs an overhaul: it is ~200 LOC, includes a lot of logic, and has many parameters.

I suggest refactoring it, maybe in "[functional options](http://dave.cheney.net/2014/10/17/functional-options-for-friendly-apis)" style for better future maintainability. | 1.0 | Function stage1/init/common#appToSystemd needs overhaul - The function `appToSystemd` needs an overhaul: it is ~200 LOC, includes a lot of logic, and has many parameters.

I suggest refactoring it, maybe in "[functional options](http://dave.cheney.net/2014/10/17/functional-options-for-friendly-apis)" style for better f... | non_process | function init common apptosystemd needs overhaul the function apptosystemd needs an overhaul it is loc includes a lot of logic has many parameters i suggest to refactor it maybe in style for better future maintainability | 0 |

1,675 | 4,312,780,465 | IssuesEvent | 2016-07-22 07:41:39 | matz-e/lobster | https://api.github.com/repos/matz-e/lobster | opened | Factor plotting and dashboard out into a monitor plugin like ELK | enhancement monitoring processing | We should have the "traditional" plotting have a more modular approach, like ELK currently has. Ideally, I think we should be able to specify what monitoring we want to use like this:

```

config = Config(

…

monitoring=[Elk(…), Plots(plotdir='…'), Dashboard()],

…

)

```

Benefits:

* smaller core Lob... | 1.0 | Factor plotting and dashboard out into a monitor plugin like ELK - We should have the "traditional" plotting have a more modular approach, like ELK currently has. Ideally, I think we should be able to specify what monitoring we want to use like this:

```

config = Config(

…

monitoring=[Elk(…), Plots(plotdir=... | process | factor plotting and dashboard out into a monitor plugin like elk we should have the traditional plotting have a more modular approach like elk currently has ideally i think we should be able to specify what monitoring we want to use like this config config … monitoring … be... | 1 |
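
A minimal sketch of the plugin idea from the Lobster issue above, assuming a small hook interface. The `Monitor` base class, the hook names `on_task_done` and `finish`, and the `notify` helper are hypothetical and are not Lobster's actual API; only the `Config(monitoring=[...])` shape comes from the issue.

```python
class Monitor:
    """Hypothetical plugin base class: every hook is a no-op by default."""

    def on_task_done(self, task):
        pass

    def finish(self):
        pass


class Plots(Monitor):
    """Illustrative stand-in for the 'traditional' plotting backend."""

    def __init__(self, plotdir):
        self.plotdir = plotdir
        self.runtimes = []

    def on_task_done(self, task):
        # Collect the data a plot would later be drawn from.
        self.runtimes.append(task["runtime"])

    def finish(self):
        return "{}: {} tasks".format(self.plotdir, len(self.runtimes))


class Dashboard(Monitor):
    """Illustrative stand-in for a dashboard backend that counts failures."""

    def __init__(self):
        self.failed = 0

    def on_task_done(self, task):
        if task["exit_code"] != 0:
            self.failed += 1


class Config:
    """Holds the configured monitors and forwards events to each of them."""

    def __init__(self, monitoring=()):
        self.monitoring = list(monitoring)

    def notify(self, hook, *args):
        # Call the named hook on every monitor, in configuration order.
        return [getattr(m, hook)(*args) for m in self.monitoring]
```

With this shape the core only ever calls `config.notify(...)`, so a backend can be added or dropped by editing the `monitoring` list alone.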

7,369 | 10,512,605,195 | IssuesEvent | 2019-09-27 18:20:48 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | closed | Open Opps - Applicant Status updates | Apply Process Approved Requirements Ready State Dept. | Who: Student intern applicants

What: Status updates

Why: to show when an intern has applied or been selected

Acceptance Criteria:

Applicant status for unpaid internships will display on the landing page as follows:

- In progress - The applicant has started the application process and saved but not submitted

- A... | 1.0 | Open Opps - Applicant Status updates - Who: Student intern applicants

What: Status updates

Why: to show when an intern has applied or been selected

Acceptance Criteria:

Applicant status for unpaid internships will display on the landing page as follows:

- In progress - The applicant has started the application p... | process | open opps applicant status updates who student intern applicants what status updates why to show when an intern has applied or been selected acceptance criteria applicant status for unpaid internships will display on the landing page as follows in progress the applicant has started the application p... | 1 |

387,927 | 26,744,951,597 | IssuesEvent | 2023-01-30 15:24:28 | h5py/h5py | https://api.github.com/repos/h5py/h5py | closed | h5py was built without ROS3 support, can't use ros3 driver | documentation | Operating System : Windows 10

Python version : 3.10

h5py version :

D:\Program Files\Python310>pip uninstall h5py

Found existing installation: h5py 3.7.0

Exception occurred: ValueError

h5py was built without ROS3 support, can't use ros3 driver

File "D:\hdf5Test.py", line 71, in read_hdf5

f = h5py.File(h5_path, 'r', d... | 1.0 | h5py was built without ROS3 support, can't use ros3 driver - Operating System : Windows 10

Python version : 3.10

h5py version :

D:\Program Files\Python310>pip uninstall h5py

Found existing installation: h5py 3.7.0

Exception occurred: ValueError

h5py was built without ROS3 support, can't use ros3 driver

File "D:\hdf5Test.... | non_process | was built without support can t use driver operating system windows python version version d program files pip uninstall found existing installation exception occurred valueerror was built without support can t use driver file d py line in read f file pat... | 0 |

13,633 | 16,243,924,285 | IssuesEvent | 2021-05-07 12:49:24 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Segmentation fault in dataloader after upgrading to pytorch v1.8.0 | high priority module: crash module: dataloader module: multiprocessing module: regression triaged | ## 🐛 Bug

I get a segmentation fault in the dataloader after upgrading to pytorch v1.8.0.

The workers crash immediately.

With pytorch v1.7.1 it works without any issues.

Is there anything substantial that changed and needs to be accounted for?

## Expected behavior

No segmentation fault.

## Environment

```

C... | 1.0 | Segmentation fault in dataloader after upgrading to pytorch v1.8.0 - ## 🐛 Bug

I get a segmentation fault in the dataloader after upgrading to pytorch v1.8.0.

The workers crash immediately.

With pytorch v1.7.1 it works without any issues.

Is there anything substantial that changed and needs to be accounted for?

## ... | process | segmentation fault in dataloader after upgrading to pytorch 🐛 bug i get a segmentation fault in the dataloader upgrading to pytorch the worker crash immediately with pytorch it works without any issues is there anything substantial that changed and needs to be accounted for exp... | 1 |

43,209 | 11,569,199,051 | IssuesEvent | 2020-02-20 17:07:23 | NREL/EnergyPlus | https://api.github.com/repos/NREL/EnergyPlus | closed | "Other" heating subcategory appears (incorrectly) | Defect | Issue overview

--------------

See IDF below where >12 GJ of energy appears in an "Other" heating subcategory in the End Uses By Subcategory output table. The subcategory is being automatically created. Across several hundred test IDFs, this is the _only_ file where this subcategory appears. In every other test file, ... | 1.0 | "Other" heating subcategory appears (incorrectly) - Issue overview

--------------

See IDF below where >12 GJ of energy appears in an "Other" heating subcategory in the End Uses By Subcategory output table. The subcategory is being automatically created. Across several hundred test IDFs, this is the _only_ file where ... | non_process | other heating subcategory appears incorrectly issue overview see idf below where gj of energy appears in an other heating subcategory in the end uses by subcategory output table the subcategory is being automatically created across several hundred test idfs this is the only file where t... | 0 |

4,433 | 7,308,529,931 | IssuesEvent | 2018-02-28 08:42:48 | UKHomeOffice/dq-aws-transition | https://api.github.com/repos/UKHomeOffice/dq-aws-transition | closed | Configure Maytech Mock Connectivity from NotProd Ingest Linux Server | DQ Data Ingest DQ Tranche 1 Production SSM processing | Private Key Migration

- [x] Private Key for Linux Ingest NotProd (/home/SSM/.ssh/id_rsa) for Mock Maytech Server

Configure NotProd ssh_remote_* parameters in sftp_oag_client_maytech.py

- [x] ssh_remote_host: <mock SFTP server>

- [x] ssh_remote_user: <mock oag user>

- [x] ssh_remote_key: /home/SSM/.ssh/id_rsa | 1.0 | Configure Maytech Mock Connectivity from NotProd Ingest Linux Server - Private Key Migration

- [x] Private Key for Linux Ingest NotProd (/home/SSM/.ssh/id_rsa) for Mock Maytech Server

Configure NotProd ssh_remote_* parameters in sftp_oag_client_maytech.py

- [x] ssh_remote_host: <mock SFTP server>

- [x] ssh_remo... | process | configure maytech mock connectivity from notprod ingest linux server private key migration private key for linux ingest notprod home ssm ssh id rsa for mock maytech server configure notprod ssh remote parameters in sftp oag client maytech py ssh remote host ssh remote user ssh rem... | 1 |

20,251 | 26,869,036,918 | IssuesEvent | 2023-02-04 07:54:10 | threefoldtech/builders | https://api.github.com/repos/threefoldtech/builders | closed | docker install script fails on a grid full vm | type_bug process_wontfix | ```sh

root@VM376afe27:/code/threefoldtech/builders/scripts/installers# ./docker.sh

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

E: Unable to locate package docker-engine

++ sudo apt-get update

Hit:1 http://archive.ubuntu.com/ubuntu jammy InRelease

Hit:2 http:/... | 1.0 | docker install script fails on a grid full vm - ```sh

root@VM376afe27:/code/threefoldtech/builders/scripts/installers# ./docker.sh

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

E: Unable to locate package docker-engine

++ sudo apt-get update

Hit:1 http://archive... | process | docker install script fails on a grid full vm sh root code threefoldtech builders scripts installers docker sh reading package lists done building dependency tree done reading state information done e unable to locate package docker engine sudo apt get update hit jammy inrelease hit ... | 1 |

46,857 | 2,965,311,737 | IssuesEvent | 2015-07-10 21:58:13 | riot/riot | https://api.github.com/repos/riot/riot | closed | Riot is not usable from inside web workers | low priority question to verify | I've discovered that riot can't be used from inside web workers due to init logic on the window object.

It seems to be solved by changing the initializing closure call to use self instead of undefined, like this:

})(typeof window != 'undefined' ? window : self);

But I'm not sure if that breaks something else... | 1.0 | Riot is not usable from inside web workers - I've discovered that riot can't be used from inside web workers due to init logic on the window object.

It seems to be solved by changing the initializing closure call to use self instead of undefined, like this:

})(typeof window != 'undefined' ? window : self);

B... | non_process | riot is not usable from inside web workers i ve discovered that riot can t be used from inside web workers due to init logic on the window object it seems to be solved by changing the initializing closure call to use self instead of undefined like this typeof window undefined window self b... | 0 |

5,972 | 8,792,467,319 | IssuesEvent | 2018-12-21 16:12:42 | zammad/zammad | https://api.github.com/repos/zammad/zammad | closed | EMails cannot be imported from imap | mail processing waiting for feedback | Infos:

Used Zammad version: 2.8

Installation method (source, package, ..): package

Operating system: Ubuntu Server 16.04 LTS

Database + version: 10.0.36-MariaDB-0ubuntu0.16.04.1 Ubuntu 16.04

Elasticsearch version: 5.6.9

Browser + version: any

Error:

Channel: Email::Account in Can't u... | 1.0 | EMails cannot be imported from imap - Infos:

Used Zammad version: 2.8

Installation method (source, package, ..): package

Operating system: Ubuntu Server 16.04 LTS

Database + version: 10.0.36-MariaDB-0ubuntu0.16.04.1 Ubuntu 16.04

Elasticsearch version: 5.6.9

Browser + version: any

Erro... | process | emails cannot be imported from imap infos used zammad version installation method source package package operating system ubuntu server lts database version mariadb ubuntu elasticsearch version browser version any error channel em... | 1 |

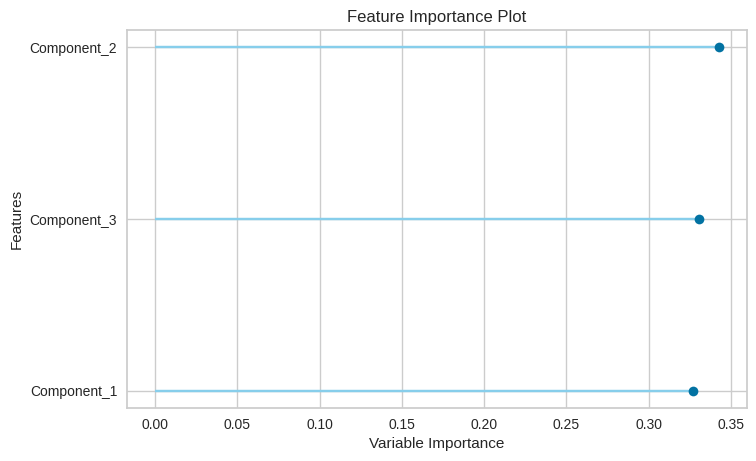

16,371 | 21,083,026,210 | IssuesEvent | 2022-04-03 07:21:14 | pycaret/pycaret | https://api.github.com/repos/pycaret/pycaret | closed | How to see PCA components name? | enhancement preprocessing pca | I applied PCA and then feature importance but it shows important features with Component_1,2 and 3 so how can I know/understand which parameters are corresponding to these components?

| 1.0 | How to see PCA components name? - I applied PCA and then feature importance but it shows important features with Component_1,2 and 3 so how can I know/understand which parameters are corresponding to these components?

{

console.error(

... | 1.0 | Better error handling for Introspection when JSON-RPC doesn't have an id - See

https://github.com/prisma/migrate/blob/9eec2a09d3722e81b563e0f6afccb773948e9869/src/LiftEngine.ts#L108

and

https://github.com/prisma/prisma2/blob/b80e2028f0af6e7c45aa66645f2c37e8f6b1126c/cli/sdk/src/IntrospectionEngine.ts#L121

Curr... | process | better error handling for introspection when json rpc doesn t have an id see and current example if result id console error response json stringify result doesn t have an id and i can t handle that yet | 1 |
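
One way the missing-`id` case from the Prisma issue above could be handled, sketched in Python rather than the engine's TypeScript. The function name `classify` and the returned labels are assumptions for illustration; the rules follow the JSON-RPC 2.0 specification, where a request without `id` is a notification and an error response carries a null `id` when the request id could not be determined.

```python
import json


def classify(raw):
    """Classify one JSON-RPC 2.0 message read from an engine process.

    Hypothetical helper: returns one of "result", "error",
    "notification", "unmatched_error" or "unknown".
    """
    msg = json.loads(raw)

    # A response that can be matched to a pending request has a non-null id.
    # Note that 0 is a valid id, so test for None instead of truthiness.
    if msg.get("id") is not None:
        return "error" if "error" in msg else "result"

    # A request object without an id is a notification (no reply expected).
    if "method" in msg:
        return "notification"

    # An error with a null or missing id cannot be tied to a request,
    # so it should be surfaced to the user instead of being dropped.
    if "error" in msg:
        return "unmatched_error"

    return "unknown"
```

Treating `unmatched_error` as a user-facing failure, instead of only logging that the response has no id, is a design choice of this sketch and not something the issue prescribes.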

35,034 | 7,887,543,491 | IssuesEvent | 2018-06-27 18:49:55 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | Hackers have infiltrated com_contact through XSS and [POST:jform] | No Code Attached Yet | ### Steps to reproduce the issue

Upgraded to 3.8.10. As soon as I did, hackers from Germany hit com_contact with XSS attacks and [POST:jform] and sent multiple emails through this component. I don't even use this component on my site.

### Expected result

No hacking and XSS attack of com_contact

### Actual r... | 1.0 | Hackers have infiltrated com_contact through XSS and [POST:jform] - ### Steps to reproduce the issue

Upgraded to 3.8.10. As soon as I did, hackers from Germany hit com_contact with XSS attacks and [POST:jform] and sent multiple emails through this component. I don't even use this component on my site.

### Expecte... | non_process | hackers have infaltrated com contact through xss and steps to reproduce the issue upgraded to as soon as i did hackers from germany hit com contact with xss attacks and and send multiple emails through this component i don t even use this component on my site expected result no hacking ... | 0 |

34,302 | 9,331,423,524 | IssuesEvent | 2019-03-28 09:43:36 | golang/go | https://api.github.com/repos/golang/go | closed | x/build/env/android: gomote debugging with Android builders is painful | Builders Documentation | @griesemer discovered that using gomote to debug things on Android is painful.

We should have a wiki page with some example sessions, including how to build the exec wrapper, and how to run go tests.

| 1.0 | x/build/env/android: gomote debugging with Android builders is painful - @griesemer discovered that using gomote to debug things on Android is painful.

We should have a wiki page with some example sessions, including how to build the exec wrapper, and how to run go tests.

| non_process | x build env android gomote debugging with android builders is painful griesemer discovered that using gomote to debug things on android is painful we should have a wiki page with some example sessions including how to build the exec wrapper and how to run go tests | 0 |

2,551 | 5,310,583,929 | IssuesEvent | 2017-02-12 21:17:28 | jlm2017/jlm-video-subtitles | https://api.github.com/repos/jlm2017/jlm-video-subtitles | closed | [subtitles] [fr] « Il faut sortir du nucléaire » - Jean-Luc Mélenchon | Language: French Process: [6] Approved | # Video title

« Il faut sortir du nucléaire » - Jean-Luc Mélenchon

# URL

https://www.youtube.com/watch?v=TYmeiHryrCE

# Youtube subtitles language

French

# Duration

1:56

# Subtitles URL

https://www.youtube.com/timedtext_editor?ref=player&lang=fr&v=TYmeiHryrCE&tab=captions&action_mde_edit_form=1&bl=vmp&u... | 1.0 | [subtitles] [fr] « Il faut sortir du nucléaire » - Jean-Luc Mélenchon - # Video title

« Il faut sortir du nucléaire » - Jean-Luc Mélenchon

# URL

https://www.youtube.com/watch?v=TYmeiHryrCE

# Youtube subtitles language

French

# Duration

1:56

# Subtitles URL

https://www.youtube.com/timedtext_editor?ref=p... | process | « il faut sortir du nucléaire » jean luc mélenchon video title « il faut sortir du nucléaire » jean luc mélenchon url youtube subtitles language french duration subtitles url | 1 |

6,501 | 9,574,761,668 | IssuesEvent | 2019-05-07 03:17:06 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | opened | Parameter is missing in the runbook code | automation/svc process-automation/subsvc | Hi team, I noticed this runbook was updated recently. However, the parameter part is missing after the update. Without the parameter part, the runbook will fail. Please update the doc accordingly, thanks.

=====================

[OutputType("PSAzureOperationResponse")]

param

(

[Parameter (Mandatory=$false)]

[object] $Webhoo... | 1.0 | Parameter is missing in the runbook code - Hi team, I noticed this runbook was updated recently. However, the parameter part is missing after the update. Without the parameter part, the runbook will fail. Please update the doc accordingly, thanks.

=====================

[OutputType("PSAzureOperationResponse")]

param

(

[Parame... | process | parameter is missing in the runbook code hi team i noticed this runbook was updated recently however the parameter part is missing after update witouout parameter part runbook will fail please update doc accordingly thanks param webhookdata erroractionpreference ... | 1 |

6,376 | 9,428,563,404 | IssuesEvent | 2019-04-12 01:46:41 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | 1.14 Merge Order | area: Process | This Issue is to track which changes should try and get merged in which order early on so we can reduce churn & conflicts:

Topic | Merge Status | impact to code base | PR | Owner (s) |

----- | -------------| ------------------- | ---|-----------|

Move SoC code to top-level | **Merged**| impacts new SoC supports in... | 1.0 | 1.14 Merge Order - This Issue is to track which changes should try and get merged in which order early on so we can reduce churn & conflicts:

Topic | Merge Status | impact to code base | PR | Owner (s) |

----- | -------------| ------------------- | ---|-----------|

Move SoC code to top-level | **Merged**| impacts ... | process | merge order this issue is to track which changes should try and get merged in which order early on so we can reduce churn conflicts topic merge status impact to code base pr owner s move soc code to top level merged impacts n... | 1 |

17,607 | 23,427,793,778 | IssuesEvent | 2022-08-14 16:53:25 | vortexntnu/Vortex-CV | https://api.github.com/repos/vortexntnu/Vortex-CV | closed | Hough transform manifolding as base layer (Feature Detection) | enhancement feature moderate priority Image Processing | **Time estimate:** 10 hours

**Deadline:** 01.02.22

**Description of task:**

The colour-shape-point-fitting based object detection that works reliably in normal environments, does not work well underwater, especially over the distances of over 3-4 meters. The cause is seemingly the blue-shift that happens over t... | 1.0 | Hough transform manifolding as base layer (Feature Detection) - **Time estimate:** 10 hours

**Deadline:** 01.02.22

**Description of task:**

The colour-shape-point-fitting based object detection that works reliably in normal environments, does not work well underwater, especially over the distances of over 3-4 m... | process | hough transform manifolding as base layer feature detection time estimate hours deadline description of task the colour shape point fitting based object detection that works reliably in normal environments does not work well underwater especially over the distances of over meter... | 1 |

119,016 | 4,759,495,532 | IssuesEvent | 2016-10-24 22:48:03 | kdahlquist/GRNmap | https://api.github.com/repos/kdahlquist/GRNmap | opened | Data Analysis Team tasks for Week of 10/24 | data analysis logistics priority 0 | The tasks for this week:

* @kdahlquist has "review requested" for

- [ ] #230 (degree distribution charts)

- [ ] #245, generating and vetting input workbooks (after requested changes are made)

* @bklein7 and @Nwilli31 are working on the following:

- [ ] #245, generating and vetting input workbooks, fi... | 1.0 | Data Analysis Team tasks for Week of 10/24 - The tasks for this week:

* @kdahlquist has "review requested" for

- [ ] #230 (degree distribution charts)

- [ ] #245, generating and vetting input workbooks (after requested changes are made)

* @bklein7 and @Nwilli31 are working on the following:

- [ ] #24... | non_process | data analysis team tasks for week of the tasks for this week kdahlquist has review requested for degree distribution charts generating and vetting input workbooks after requested changes are made and are working on the following generating and vetting i... | 0 |

18,311 | 3,041,566,814 | IssuesEvent | 2015-08-07 22:15:48 | francoisferland/casiousbmididriver | https://api.github.com/repos/francoisferland/casiousbmididriver | closed | Not compatible with Yosemite | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Try installing the driver on a Yosemite mac

2. Connect Casio Keyboard and open a DAW like Logic Pro X

3.

What is the expected output? What do you see instead?

Keyboard isn't recognized and doesn't show up in Audio MIDI setup

What version of the product are you using? On ... | 1.0 | Not compatible with Yosemite - ```

What steps will reproduce the problem?

1. Try installing the driver on a Yosemite mac

2. Connect Casio Keyboard and open a DAW like Logic Pro X

3.

What is the expected output? What do you see instead?

Keyboard isn't recognized and doesn't show up in Audio MIDI setup

What version of... | non_process | not compatible with yosemite what steps will reproduce the problem try installing the driver on a yosemite mac connect casio keyboard and open a daw like logic pro x what is the expected output what do you see instead keyboard isn t recognized and doesn t show up in audio midi setup what version of... | 0 |

153,655 | 24,168,882,775 | IssuesEvent | 2022-09-22 17:19:39 | MetaMask/metamask-mobile | https://api.github.com/repos/MetaMask/metamask-mobile | closed | Unable to re-add account nickname after making it an empty string | type-bug needs-design Sev2-normal Priority - High stability-team | **Describe the bug**

If i were to remove my account nick name i.e. leave it blank, I am unable to add a new nickname to my account.

see [recording](http://recordit.co/blCne5klOv )

**To Reproduce**

- launch the app

- create/import your wallet

- while on the wallet view

- tap and hold the account nickname to... | 1.0 | Unable to re-add account nickname after making it an empty string - **Describe the bug**

If i were to remove my account nick name i.e. leave it blank, I am unable to add a new nickname to my account.

see [recording](http://recordit.co/blCne5klOv )

**To Reproduce**

- launch the app

- create/import your wallet... | non_process | unable to re add account nickname after making it an empty string describe the bug if i were to remove my account nick name i e leave it blank i am unable to add a new nickname to my account see to reproduce launch the app create import your wallet while on the wallet view tap an... | 0 |

327,839 | 9,981,949,569 | IssuesEvent | 2019-07-10 08:45:13 | jenkins-x/jx | https://api.github.com/repos/jenkins-x/jx | closed | Revert goreleaser from updating jx brew package | area/versions in progress kind/fox priority/important-soon | goreleaser is being used as a temporary measure to release new jx versions to brew, this is not being released in accordance with our CI/CD model.

the homebrew-jx package will be updated by `jx step create pr brew` once merged. | 1.0 | Revert goreleaser from updating jx brew package - goreleaser is being used as a temporary measure to release new jx versions to brew, this is not being released in accordance with our CI/CD model.

the homebrew-jx package will be updated by `jx step create pr brew` once merged. | non_process | revert goreleaser from updating jx brew package goreleaser is being used as a temporary measure to release new jx versions to brew this is not being released in accordance with our ci cd model the homebrew jx package will be updated by jx step create pr brew once merged | 0 |

179,005 | 6,620,758,902 | IssuesEvent | 2017-09-21 16:35:55 | gonetz/GLideN64 | https://api.github.com/repos/gonetz/GLideN64 | closed | Zelda: OoT missing fences | Priority-High Regression | You can see the original report of this issue (including mupen64plus save state) here:

https://github.com/m64p/mupen64plus-GLideN64/issues/13

I verified the issue, and bisected it to this commit: 187f9ef390052906e3516e1aaa71078125d099de | 1.0 | Zelda: OoT missing fences - You can see the original report of this issue (including mupen64plus save state) here:

https://github.com/m64p/mupen64plus-GLideN64/issues/13

I verified the issue, and bisected it to this commit: 187f9ef390052906e3516e1aaa71078125d099de | non_process | zelda oot missing fences you can see the original report of this issue including save state here i verified the issue and bisected it to this commit | 0 |

7,980 | 11,170,031,210 | IssuesEvent | 2019-12-28 10:35:27 | konlpy/konlpy | https://api.github.com/repos/konlpy/konlpy | closed | Using konlpy with PyTorch's data loader (jpype error) | Keyword/multiprocess_thread Status/help wanted question | I'm using multiple processes in PyTorch's data loader,

and I get the following error :(

The import itself works fine, though.. :( (the OS is CentOS :( )

Traceback (most recent call last):

File "/usr/lib/python2.7/multiprocessing/process.py", line 258, in _bootstrap

self.run()

File "/usr/lib/python2.7/multiprocessing/process.py", line 114, in run

sel... | 1.0 | Using konlpy with PyTorch's data loader (jpype error) - I'm using multiple processes in PyTorch's data loader,

and I get the following error :(

The import itself works fine, though.. :( (the OS is CentOS :( )

Traceback (most recent call last):

File "/usr/lib/python2.7/multiprocessing/process.py", line 258, in _bootstrap

self.run()

File "/usr/lib/python2.7/multip... | process | using konlpy with pytorch s data loader jpype error i m using multiple processes in pytorch s data loader and i get the following error the import itself works fine though the os is centos traceback most recent call last file usr lib multiprocessing process py line in bootstrap self run file usr lib multiprocessing proc... | 1 |

69,443 | 30,283,614,435 | IssuesEvent | 2023-07-08 11:43:42 | microsoft/vscode-cpptools | https://api.github.com/repos/microsoft/vscode-cpptools | closed | FR: Colorized output | Language Service Feature Request help wanted more votes needed Feature: Colorization | ### Feature Request

If a compilation/execution is performed in the Terminal window, the output is colorized. But for the compilation, the output in "Output" is not. Interactive, colorized text in "Output" would be great. | 1.0 | FR: Colorized output - ### Feature Request

If a compilation/execution is performed in the Terminal window, the output is colorized. But for the compilation, the output in "Output" is not. Interactive, colorized text in "Output" would be great. | non_process | fr colorized output feature request if a compilation execution is performed in the terminal window the output is colorized but for the compilation the output in output is not interactive colorized text in output would be great | 0 |

31,055 | 5,902,378,019 | IssuesEvent | 2017-05-19 01:05:30 | grpc/grpc | https://api.github.com/repos/grpc/grpc | closed | Confusion around use/guarantees of grpc.RpcError in Python | documentation python question | Modifying the Python `helloworld` example to add exception handling produces some confusing results.

Changing the `run()` function from https://github.com/grpc/grpc/blob/master/examples/python/helloworld/greeter_client.py to

def run():

channel = grpc.insecure_channel('localhost:50051')

... | 1.0 | Confusion around use/guarantees of grpc.RpcError in Python - Modifying the Python `helloworld` example to add exception handling produces some confusing results.

Changing the `run()` function from https://github.com/grpc/grpc/blob/master/examples/python/helloworld/greeter_client.py to

def run():

... | non_process | confusion around use guarantees of grpc rpcerror in python modifying the python helloworld example to add exception handling produces some confusing results changing modifying the run function from to def run channel grpc insecure channel localhost stub helloworld grpc g... | 0 |

4,434 | 7,308,600,203 | IssuesEvent | 2018-02-28 08:58:30 | UKHomeOffice/dq-aws-transition | https://api.github.com/repos/UKHomeOffice/dq-aws-transition | reopened | Add data-transfer job for OAG data to S3 archive | DQ Data Pipeline DQ Tranche 1 Production SSM processing | Add Data Transfer job for OAG data to S3 archive | 1.0 | Add data-transfer job for OAG data to S3 archive - Add Data Transfer job for OAG data to S3 archive | process | add data transfer job for oag data to archive add data transfer job for oag data to archive | 1 |

383 | 2,823,574,458 | IssuesEvent | 2015-05-21 09:39:51 | austundag/testing | https://api.github.com/repos/austundag/testing | closed | Add Allergies info to eHMP Toolbar | enhancement in process | 1. It should be next to CARE TEAM INFORMATION and should have similarly three lines with same fonts.

2. The title should say 'ALLERGIES' and it should be red.

3. The second line should say "Local: <allergy name> <allergy name> ,,,"

4. The third line should say "Remote: <allergy name> <allergy name> ,,," | 1.0 | Add Allergies info to eHMP Toolbar - 1. It should be next to CARE TEAM INFORMATION and should have similarly three lines with same fonts.

2. The title should say 'ALLERGIES' and it should be red.

3. The second line should say "Local: <allergy name> <allergy name> ,,,"

4. The third line should say "Remote: <allergy n... | process | add allergies info to ehmp toolbar it should be next to care team information and should have similarly three lines with same fonts the title should say allergies and it should be red the second line should say local the third line should say remote | 1 |

235,432 | 19,346,259,027 | IssuesEvent | 2021-12-15 11:07:37 | mozilla-mobile/focus-ios | https://api.github.com/repos/mozilla-mobile/focus-ios | opened | [XCUITest] Create new automated tests to cover Pro Tips | eng:ui-test eng:automation | So that there is a similar automated test to the existing [manual test](https://testrail.stage.mozaws.net/index.php?/cases/view/1514601) | 1.0 | [XCUITest] Create new automated tests to cover Pro Tips - So that there is a similar automated test to the existing [manual test](https://testrail.stage.mozaws.net/index.php?/cases/view/1514601) | non_process | create new automated tests to cover pro tips so that there is a similar automated test to the existing | 0 |

126,249 | 26,810,215,999 | IssuesEvent | 2023-02-01 21:40:47 | starperov-net/universal_flash_cards_chatbot | https://api.github.com/repos/starperov-net/universal_flash_cards_chatbot | opened | unite duplicate code in /study and /selftest | Yana code_refactoring | Move the duplicated code in study.py and quick_self_test.py into separate functions. | 1.0 | unite duplicate code in /study and /selftest - Move the duplicated code in study.py and quick_self_test.py into separate functions. | non_process | unite duplicate code in study and selftest move the duplicated code in study py and quick self test py into separate functions | 0 |

57,015 | 3,081,230,526 | IssuesEvent | 2015-08-22 14:18:01 | bitfighter/bitfighter | https://api.github.com/repos/bitfighter/bitfighter | closed | Load Map option on level editor | 020 enhancement imported Priority-Medium wontfix | _From [Cory.Pou...@gmail.com](https://code.google.com/u/105220164791991617712/) on September 16, 2013 17:11:56_

So i think a load map option on the level editor would be nice as you would not have to quit the level editor then open it again to get a new map.

_Original issue: http://code.google.com/p/bitfighter/issues... | 1.0 | Load Map option on level editor - _From [Cory.Pou...@gmail.com](https://code.google.com/u/105220164791991617712/) on September 16, 2013 17:11:56_

So i think a load map option on the level editor would be nice as you would not have to quit the level editor then open it again to get a new map.

_Original issue: http://c... | non_process | load map option on level editor from on september so i think a load map option on the level editor would be nice as you would not have to quit the level editor then open it again to get a new map original issue | 0 |

15,563 | 19,703,504,190 | IssuesEvent | 2022-01-12 19:08:03 | googleapis/java-shell | https://api.github.com/repos/googleapis/java-shell | opened | Your .repo-metadata.json file has a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* release_level must be equal to one of the allowed values in .repo-metadata.json

* api_shortname 'shell' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can close this issue.

Reach out to **go/github-automation** if y... | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* release_level must be equal to one of the allowed values in .repo-metadata.json

* api_shortname 'shell' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can close this i... | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 release level must be equal to one of the allowed values in repo metadata json api shortname shell invalid in repo metadata json ☝️ once you correct these problems you can close this i... | 1 |

28,417 | 4,104,127,006 | IssuesEvent | 2016-06-05 05:35:49 | fossasia/engelsystem | https://api.github.com/repos/fossasia/engelsystem | opened | UI/UX Design : Register Page | design enhancement | 1-Changing the layout , currently the layout is unordered and I plan to make it more ordered and user-friendly. As we can see the layout looks a bit confusing

I will be divi... | 1.0 | UI/UX Design : Register Page - 1-Changing the layout , currently the layout is unordered and I plan to make it more ordered and user-friendly. As we can see the layout looks a bit confusing

HD: http://www.servicedesk.answernet.com/profiles/ticket/2018-10-03-33348/conversation | 1.0 | El Paso - SA Billing - Late Fee Account List - In GitLab by @kdjstudios on Oct 3, 2018, 11:02

[El_Paso.xlsx](/uploads/212b7d3ab2a040017c72b5cab156a385/El_Paso.xlsx)

HD: http://www.servicedesk.answernet.com/profiles/ticket/2018-10-03-33348/conversation | process | el paso sa billing late fee account list in gitlab by kdjstudios on oct uploads el paso xlsx hd | 1 |

1,391 | 3,960,929,993 | IssuesEvent | 2016-05-02 09:57:46 | opentrials/opentrials | https://api.github.com/repos/opentrials/opentrials | opened | Make trials.recruitment_status enumerable | API Processors | http://www.who.int/ictrp/network/trds/en/

> 18. Recruitment Status

> Recruitment status of this trial:

> Pending: participants are not yet being recruited or enrolled at any site

> Recruiting: participants are currently being recruited and enrolled

> Suspended: there is a temporary halt in recruitment and enrolm... | 1.0 | Make trials.recruitment_status enumerable - http://www.who.int/ictrp/network/trds/en/

> 18. Recruitment Status

> Recruitment status of this trial:

> Pending: participants are not yet being recruited or enrolled at any site

> Recruiting: participants are currently being recruited and enrolled

> Suspended: there i... | process | make trials recruitment status enumerable recruitment status recruitment status of this trial pending participants are not yet being recruited or enrolled at any site recruiting participants are currently being recruited and enrolled suspended there is a temporary halt in recruitment and enr... | 1 |

17,815 | 23,741,281,479 | IssuesEvent | 2022-08-31 12:39:45 | km4ack/patmenu2 | https://api.github.com/repos/km4ack/patmenu2 | closed | check for internet before downloading gateway list | bug enhancement in process | If a pi boots into hotspot mode before connecting to a known good SSID, the gateway list download will fail if it is run at boot from cron. To avoid this, we need to add a check for internet when the [getardoplist-cron](https://github.com/km4ack/patmenu2/blob/master/.getardoplist-cron) script is called. This code shoul... | 1.0 | check for internet before downloading gateway list - If a pi boots into hotspot mode before connecting to a known good SSID, the gateway list download will fail if it is run at boot from cron. To avoid this, we need to add a check for internet when the [getardoplist-cron](https://github.com/km4ack/patmenu2/blob/master/... | process | check for internet before downloading gateway list if a pi boots into hotspot mode before connecting to a known good ssid the gateway list download will fail if it is run at boot from cron to avoid this we need to add a check for internet when the script is called this code should work at the beginning of the... | 1 |

14,196 | 17,099,062,027 | IssuesEvent | 2021-07-09 08:39:03 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Error: Error in migration engine. Reason: [migration-engine/connectors/sql-migration-connector/src/sql_schema_calculator/sql_schema_calculator_flavour.rs:34:9] internal error: entered unreachable code: unreachable enum_column_type | bug/1-repro-available kind/bug process/candidate team/migrations | <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma migrate dev --name unique`

Version: `2.26.0`

Binary Version: `9b816b3aa13cc270074f172f30d6eda8a8ce867d`

Report: https://prisma-errors.netlify.app/report/13408

OS: `x64 darwin 20.3.0`

JS Stacktrace:

```

Error: Error i... | 1.0 | Error: Error in migration engine. Reason: [migration-engine/connectors/sql-migration-connector/src/sql_schema_calculator/sql_schema_calculator_flavour.rs:34:9] internal error: entered unreachable code: unreachable enum_column_type - <!-- If required, please update the title to be clear and descriptive -->

Command: ... | process | error error in migration engine reason internal error entered unreachable code unreachable enum column type command prisma migrate dev name unique version binary version report os darwin js stacktrace error error in migration engine reason internal er... | 1 |

11,435 | 14,249,240,884 | IssuesEvent | 2020-11-19 14:00:57 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | cop: panic by assertion failure in BatchTableScanExecutor | severity/Moderate sig/coprocessor type/bug | ## Bug Report

<!-- Thanks for your bug report! Don't worry if you can't fill out all the sections. -->

### What version of TiKV are you using?

<!-- You can run `tikv-server --version` -->

master with commit `4d8e469860292b10636f1b25bf7a19da84e0b067`

### What operating system and CPU are you using?

<!-- If y... | 1.0 | cop: panic by assertion failure in BatchTableScanExecutor - ## Bug Report

<!-- Thanks for your bug report! Don't worry if you can't fill out all the sections. -->

### What version of TiKV are you using?

<!-- You can run `tikv-server --version` -->

master with commit `4d8e469860292b10636f1b25bf7a19da84e0b067`

... | process | cop panic by assertion failure in batchtablescanexecutor bug report what version of tikv are you using master with commit what operating system and cpu are you using steps to reproduce tidb lightning integration test fails in create table tikv panic log as follows ... | 1 |

12,685 | 15,050,188,545 | IssuesEvent | 2021-02-03 12:32:16 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | reopened | Zonal statistics not working when raster and vector in different CRS | Bug Processing Regression | Author Name: **matteo ghetta** (@ghtmtt)

Original Redmine Issue: [19027](https://issues.qgis.org/issues/19027)

Affected QGIS version: 3.1(master)

Redmine category:processing/qgis

---

If the CRS is different the algorithm runs but the result is empty

| 1.0 | Zonal statistics not working when raster and vector in different CRS - Author Name: **matteo ghetta** (@ghtmtt)

Original Redmine Issue: [19027](https://issues.qgis.org/issues/19027)

Affected QGIS version: 3.1(master)

Redmine category:processing/qgis

---

If the CRS is different the algorithm runs but the result is emp... | process | zonal statistics not working when raster and vector in different crs author name matteo ghetta ghtmtt original redmine issue affected qgis version master redmine category processing qgis if the crs is different the algorithm runs but the result is empty | 1 |

207,849 | 7,134,209,546 | IssuesEvent | 2018-01-22 20:02:59 | particle-iot/firmware | https://api.github.com/repos/particle-iot/firmware | closed | Publishing in a multi-thread application running on UDP device during OTA results in hard fault | PR SUBMITTED bug priority track | ```

Given I have a UDP device running application firmware with multithreading enabled

And the application firmware publishes events to the cloud at regular intervals

When I OTA flash the device with another application firmware

And the device publishes an event while the OTA is in progress

Then the OTA will be ir... | 1.0 | Publishing in a multi-thread application running on UDP device during OTA results in hard fault - ```

Given I have a UDP device running application firmware with multithreading enabled

And the application firmware publishes events to the cloud at regular intervals

When I OTA flash the device with another application... | non_process | publishing in a multi thread application running on udp device during ota results in hard fault given i have a udp device running application firmware with multithreading enabled and the application firmware publishes events to the cloud at regular intervals when i ota flash the device with another application... | 0 |

259,821 | 27,728,652,336 | IssuesEvent | 2023-03-15 05:47:09 | vasind/try-ember | https://api.github.com/repos/vasind/try-ember | closed | CVE-2022-21670 (Medium) detected in markdown-it-8.4.2.tgz | security vulnerability no-issue-activity | ## CVE-2022-21670 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>markdown-it-8.4.2.tgz</b></p></summary>

<p>Markdown-it - modern pluggable markdown parser.</p>

<p>Library home page:... | True | CVE-2022-21670 (Medium) detected in markdown-it-8.4.2.tgz - ## CVE-2022-21670 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>markdown-it-8.4.2.tgz</b></p></summary>

<p>Markdown-it -... | non_process | cve medium detected in markdown it tgz cve medium severity vulnerability vulnerable library markdown it tgz markdown it modern pluggable markdown parser library home page a href path to dependency file package json path to vulnerable library node modules markdow... | 0 |

385,338 | 11,418,829,902 | IssuesEvent | 2020-02-03 06:07:59 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | Error occurred when manually sourcing the Oracle sql script | Priority/Highest Severity/Blocker Type/Bug | Environment

DB - Oracle 12.

wso2is-5.9.0-alpha pack

Priority: High

Severity: Medium

Steps:

Took the latest oracle script

Source that oracle (/wso2is-5.9.0-alpha/dbscripts)

**Error**

Error starting at line : 783 in command -

CREATE INDEX SYSTEM_ROLE_IND_BY_RN_TI ON UM_SYSTEM_ROLE(UM_ROLE_NAME, UM_TENAN... | 1.0 | Error occurred when manually sourcing the Oracle sql script - Environment

DB - Oracle 12.

wso2is-5.9.0-alpha pack

Priority: High

Severity: Medium

Steps:

Took the latest oracle script

Source that oracle (/wso2is-5.9.0-alpha/dbscripts)

**Error**

Error starting at line : 783 in command -

CREATE INDEX SYS... | non_process | error occurred when manually sourcing the oracle sql script environment db oracle alpha pack priority high severity medium steps took the latest oracle script source that oracle alpha dbscripts error error starting at line in command create index system role ind ... | 0 |

285,049 | 24,639,576,199 | IssuesEvent | 2022-10-17 10:32:08 | sourcegraph/sourcegraph | https://api.github.com/repos/sourcegraph/sourcegraph | opened | scaletesting: Update Sourcegraph to 4.0.1 | devx/q3b1/scaletesting/devops | ```

✅ Fetched deployed version

✅ Done updating list of commits

💡 Live on "scaletesting": e18d1344e0ee (built on 2022-09-23)

```

Absolute latest version is v4.0.1 | 1.0 | scaletesting: Update Sourcegraph to 4.0.1 - ```

✅ Fetched deployed version

✅ Done updating list of commits

💡 Live on "scaletesting": e18d1344e0ee (built on 2022-09-23)

```

Absolute latest version is v4.0.1 | non_process | scaletesting update sourcegraph to ✅ fetched deployed version ✅ done updating list of commits 💡 live on scaletesting built on absolute latest version is | 0 |

133,142 | 18,279,651,171 | IssuesEvent | 2021-10-05 00:24:28 | ghc-dev/Thomas-Stone | https://api.github.com/repos/ghc-dev/Thomas-Stone | opened | CVE-2020-1738 (Low) detected in ansible-2.9.9.tar.gz | security vulnerability | ## CVE-2020-1738 - Low Severity Vulnerability