Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 7

112

| repo_url

stringlengths 36

141

| action

stringclasses 3

values | title

stringlengths 1

744

| labels

stringlengths 4

574

| body

stringlengths 9

211k

| index

stringclasses 10

values | text_combine

stringlengths 96

211k

| label

stringclasses 2

values | text

stringlengths 96

188k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

689,340

| 23,617,233,954

|

IssuesEvent

|

2022-08-24 16:56:51

|

bigbinary/neeto-editor-tiptap

|

https://api.github.com/repos/bigbinary/neeto-editor-tiptap

|

closed

|

Insert nodes created from FixedMenu at the end

|

working PR low-priority 0.5D

|

Elements from the fixed menu are inserted at the beginning of the content at the moment. This can be cumbersome for the user when there is already some content in the editor. Ideally, such elements should be inserted at the end.

https://vimeo.com/737784830/c7a0074df8

@AbhayVAshokan _a Please take a look.

|

1.0

|

Insert nodes created from FixedMenu at the end - Elements from the fixed menu are inserted at the beginning of the content at the moment. This can be cumbersome for the user when there is already some content in the editor. Ideally, such elements should be inserted at the end.

https://vimeo.com/737784830/c7a0074df8

@AbhayVAshokan _a Please take a look.

|

non_process

|

insert nodes created from fixedmenu at the end elements from the fixed menu are inserted at the beginning of the content at the moment this can be cumbersome for the user when there is already some content in the editor ideally such elements should be inserted at the end abhayvashokan a please take a look

| 0

|

537,853

| 15,755,540,373

|

IssuesEvent

|

2021-03-31 01:57:49

|

OnTopicCMS/OnTopic-Editor-AspNetCore

|

https://api.github.com/repos/OnTopicCMS/OnTopic-Editor-AspNetCore

|

closed

|

Default Export Options

|

Area: Interface Priority: 3 Severity 0: Nice to Have Status 5: Complete Type: Improvement

|

When exporting a topic (tree) from the OnTopic Editor, set the following defaults on the options:

- [x] Include Child Topics?

- [x] Include Nested Topics?

- [ ] Include External Associations?

- [ ] Translate Legacy Topic Pointers?

|

1.0

|

Default Export Options - When exporting a topic (tree) from the OnTopic Editor, set the following defaults on the options:

- [x] Include Child Topics?

- [x] Include Nested Topics?

- [ ] Include External Associations?

- [ ] Translate Legacy Topic Pointers?

|

non_process

|

default export options when exporting a topic tree from the ontopic editor set the following defaults on the options include child topics include nested topics include external associations translate legacy topic pointers

| 0

|

300,917

| 26,002,566,947

|

IssuesEvent

|

2022-12-20 16:25:45

|

cockroachdb/cockroach

|

https://api.github.com/repos/cockroachdb/cockroach

|

closed

|

sql/importer: TestImportDefaultWithResume failed [ingestKVs hangs with BulkAdderFlushesEveryBatch]

|

C-test-failure O-robot branch-master T-disaster-recovery

|

sql/importer.TestImportDefaultWithResume [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/7804159?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/7804159?buildTab=artifacts#/) on master @ [7cb778506d75bbef2eb90abccaa75b9dc7e3fb91](https://github.com/cockroachdb/cockroach/commits/7cb778506d75bbef2eb90abccaa75b9dc7e3fb91):

```

test_log_scope.go:161: test logs captured to: /artifacts/tmp/_tmp/73dfce30b9a5630b1b4dabed3c94b32c/logTestImportDefaultWithResume2888010391

test_log_scope.go:79: use -show-logs to present logs inline

=== CONT TestImportDefaultWithResume

import_stmt_test.go:4712: -- test log scope end --

import_stmt_test.go:4712: Leaked goroutine: goroutine 298969 [semacquire]:

sync.runtime_Semacquire(0x11bbf05?)

GOROOT/src/runtime/sema.go:62 +0x25

sync.(*WaitGroup).Wait(0xc0043bbdf0?)

GOROOT/src/sync/waitgroup.go:139 +0x52

golang.org/x/sync/errgroup.(*Group).Wait(0xc00487fbc0)

golang.org/x/sync/errgroup/external/org_golang_x_sync/errgroup/errgroup.go:53 +0x27

github.com/cockroachdb/cockroach/pkg/util/ctxgroup.Group.Wait({0xc00487fbc0?, {0x6b20dd0?, 0xc00487fb80?}})

github.com/cockroachdb/cockroach/pkg/util/ctxgroup/ctxgroup.go:144 +0x4a

github.com/cockroachdb/cockroach/pkg/sql/importer.runImport({0x6b20e78, 0xc007ce73e0}, 0xc002c47400, 0xc005abb8d0, 0xc008b5ba40, 0xc00487fa40?)

github.com/cockroachdb/cockroach/pkg/sql/importer/read_import_base.go:127 +0x7b9

github.com/cockroachdb/cockroach/pkg/sql/importer.(*readImportDataProcessor).Start.func1()

github.com/cockroachdb/cockroach/pkg/sql/importer/import_processor.go:183 +0x8b

created by github.com/cockroachdb/cockroach/pkg/sql/importer.(*readImportDataProcessor).Start

github.com/cockroachdb/cockroach/pkg/sql/importer/import_processor.go:181 +0xeb

Leaked goroutine: goroutine 298971 [semacquire]:

sync.runtime_Semacquire(0x11bbf05?)

GOROOT/src/runtime/sema.go:62 +0x25

sync.(*WaitGroup).Wait(0xc002c8b808?)

GOROOT/src/sync/waitgroup.go:139 +0x52

golang.org/x/sync/errgroup.(*Group).Wait(0xc00487fcc0)

golang.org/x/sync/errgroup/external/org_golang_x_sync/errgroup/errgroup.go:53 +0x27

github.com/cockroachdb/cockroach/pkg/util/ctxgroup.Group.Wait({0xc00487fcc0?, {0x6b20dd0?, 0xc00487fc80?}})

github.com/cockroachdb/cockroach/pkg/util/ctxgroup/ctxgroup.go:144 +0x4a

github.com/cockroachdb/cockroach/pkg/sql/importer.ingestKvs({0x6b20dd0?, 0xc00487fb80?}, 0xc002c47400, 0xc005abb8d0, 0xc008b5ba40, 0xc004855ec0)

github.com/cockroachdb/cockroach/pkg/sql/importer/import_processor.go:553 +0x1028

github.com/cockroachdb/cockroach/pkg/sql/importer.runImport.func2({0x6b20dd0, 0xc00487fb80})

github.com/cockroachdb/cockroach/pkg/sql/importer/read_import_base.go:108 +0x6a

github.com/cockroachdb/cockroach/pkg/util/ctxgroup.Group.GoCtx.func1()

github.com/cockroachdb/cockroach/pkg/util/ctxgroup/ctxgroup.go:168 +0x25

golang.org/x/sync/errgroup.(*Group).Go.func1()

golang.org/x/sync/errgroup/external/org_golang_x_sync/errgroup/errgroup.go:75 +0x64

created by golang.org/x/sync/errgroup.(*Group).Go

golang.org/x/sync/errgroup/external/org_golang_x_sync/errgroup/errgroup.go:72 +0xa5

Leaked goroutine: goroutine 298973 [chan send]:

github.com/cockroachdb/cockroach/pkg/sql/importer.ingestKvs.func3()

github.com/cockroachdb/cockroach/pkg/sql/importer/import_processor.go:468 +0x311

github.com/cockroachdb/cockroach/pkg/sql/importer.ingestKvs.func5({0x6b20dd0, 0xc00487fc80})

github.com/cockroachdb/cockroach/pkg/sql/importer/import_processor.go:547 +0x2cf

github.com/cockroachdb/cockroach/pkg/util/ctxgroup.Group.GoCtx.func1()

github.com/cockroachdb/cockroach/pkg/util/ctxgroup/ctxgroup.go:168 +0x25

golang.org/x/sync/errgroup.(*Group).Go.func1()

golang.org/x/sync/errgroup/external/org_golang_x_sync/errgroup/errgroup.go:75 +0x64

created by golang.org/x/sync/errgroup.(*Group).Go

golang.org/x/sync/errgroup/external/org_golang_x_sync/errgroup/errgroup.go:72 +0xa5

--- FAIL: TestImportDefaultWithResume (5.74s)

```

<p>Parameters: <code>TAGS=bazel,gss</code>

</p>

<details><summary>Help</summary>

<p>

See also: [How To Investigate a Go Test Failure \(internal\)](https://cockroachlabs.atlassian.net/l/c/HgfXfJgM)

</p>

</details>

/cc @cockroachdb/sql-experience

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*TestImportDefaultWithResume.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

Jira issue: CRDB-22045

|

1.0

|

sql/importer: TestImportDefaultWithResume failed [ingestKVs hangs with BulkAdderFlushesEveryBatch] - sql/importer.TestImportDefaultWithResume [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/7804159?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/7804159?buildTab=artifacts#/) on master @ [7cb778506d75bbef2eb90abccaa75b9dc7e3fb91](https://github.com/cockroachdb/cockroach/commits/7cb778506d75bbef2eb90abccaa75b9dc7e3fb91):

```

test_log_scope.go:161: test logs captured to: /artifacts/tmp/_tmp/73dfce30b9a5630b1b4dabed3c94b32c/logTestImportDefaultWithResume2888010391

test_log_scope.go:79: use -show-logs to present logs inline

=== CONT TestImportDefaultWithResume

import_stmt_test.go:4712: -- test log scope end --

import_stmt_test.go:4712: Leaked goroutine: goroutine 298969 [semacquire]:

sync.runtime_Semacquire(0x11bbf05?)

GOROOT/src/runtime/sema.go:62 +0x25

sync.(*WaitGroup).Wait(0xc0043bbdf0?)

GOROOT/src/sync/waitgroup.go:139 +0x52

golang.org/x/sync/errgroup.(*Group).Wait(0xc00487fbc0)

golang.org/x/sync/errgroup/external/org_golang_x_sync/errgroup/errgroup.go:53 +0x27

github.com/cockroachdb/cockroach/pkg/util/ctxgroup.Group.Wait({0xc00487fbc0?, {0x6b20dd0?, 0xc00487fb80?}})

github.com/cockroachdb/cockroach/pkg/util/ctxgroup/ctxgroup.go:144 +0x4a

github.com/cockroachdb/cockroach/pkg/sql/importer.runImport({0x6b20e78, 0xc007ce73e0}, 0xc002c47400, 0xc005abb8d0, 0xc008b5ba40, 0xc00487fa40?)

github.com/cockroachdb/cockroach/pkg/sql/importer/read_import_base.go:127 +0x7b9

github.com/cockroachdb/cockroach/pkg/sql/importer.(*readImportDataProcessor).Start.func1()

github.com/cockroachdb/cockroach/pkg/sql/importer/import_processor.go:183 +0x8b

created by github.com/cockroachdb/cockroach/pkg/sql/importer.(*readImportDataProcessor).Start

github.com/cockroachdb/cockroach/pkg/sql/importer/import_processor.go:181 +0xeb

Leaked goroutine: goroutine 298971 [semacquire]:

sync.runtime_Semacquire(0x11bbf05?)

GOROOT/src/runtime/sema.go:62 +0x25

sync.(*WaitGroup).Wait(0xc002c8b808?)

GOROOT/src/sync/waitgroup.go:139 +0x52

golang.org/x/sync/errgroup.(*Group).Wait(0xc00487fcc0)

golang.org/x/sync/errgroup/external/org_golang_x_sync/errgroup/errgroup.go:53 +0x27

github.com/cockroachdb/cockroach/pkg/util/ctxgroup.Group.Wait({0xc00487fcc0?, {0x6b20dd0?, 0xc00487fc80?}})

github.com/cockroachdb/cockroach/pkg/util/ctxgroup/ctxgroup.go:144 +0x4a

github.com/cockroachdb/cockroach/pkg/sql/importer.ingestKvs({0x6b20dd0?, 0xc00487fb80?}, 0xc002c47400, 0xc005abb8d0, 0xc008b5ba40, 0xc004855ec0)

github.com/cockroachdb/cockroach/pkg/sql/importer/import_processor.go:553 +0x1028

github.com/cockroachdb/cockroach/pkg/sql/importer.runImport.func2({0x6b20dd0, 0xc00487fb80})

github.com/cockroachdb/cockroach/pkg/sql/importer/read_import_base.go:108 +0x6a

github.com/cockroachdb/cockroach/pkg/util/ctxgroup.Group.GoCtx.func1()

github.com/cockroachdb/cockroach/pkg/util/ctxgroup/ctxgroup.go:168 +0x25

golang.org/x/sync/errgroup.(*Group).Go.func1()

golang.org/x/sync/errgroup/external/org_golang_x_sync/errgroup/errgroup.go:75 +0x64

created by golang.org/x/sync/errgroup.(*Group).Go

golang.org/x/sync/errgroup/external/org_golang_x_sync/errgroup/errgroup.go:72 +0xa5

Leaked goroutine: goroutine 298973 [chan send]:

github.com/cockroachdb/cockroach/pkg/sql/importer.ingestKvs.func3()

github.com/cockroachdb/cockroach/pkg/sql/importer/import_processor.go:468 +0x311

github.com/cockroachdb/cockroach/pkg/sql/importer.ingestKvs.func5({0x6b20dd0, 0xc00487fc80})

github.com/cockroachdb/cockroach/pkg/sql/importer/import_processor.go:547 +0x2cf

github.com/cockroachdb/cockroach/pkg/util/ctxgroup.Group.GoCtx.func1()

github.com/cockroachdb/cockroach/pkg/util/ctxgroup/ctxgroup.go:168 +0x25

golang.org/x/sync/errgroup.(*Group).Go.func1()

golang.org/x/sync/errgroup/external/org_golang_x_sync/errgroup/errgroup.go:75 +0x64

created by golang.org/x/sync/errgroup.(*Group).Go

golang.org/x/sync/errgroup/external/org_golang_x_sync/errgroup/errgroup.go:72 +0xa5

--- FAIL: TestImportDefaultWithResume (5.74s)

```

<p>Parameters: <code>TAGS=bazel,gss</code>

</p>

<details><summary>Help</summary>

<p>

See also: [How To Investigate a Go Test Failure \(internal\)](https://cockroachlabs.atlassian.net/l/c/HgfXfJgM)

</p>

</details>

/cc @cockroachdb/sql-experience

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*TestImportDefaultWithResume.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

Jira issue: CRDB-22045

|

non_process

|

sql importer testimportdefaultwithresume failed sql importer testimportdefaultwithresume with on master test log scope go test logs captured to artifacts tmp tmp test log scope go use show logs to present logs inline cont testimportdefaultwithresume import stmt test go test log scope end import stmt test go leaked goroutine goroutine sync runtime semacquire goroot src runtime sema go sync waitgroup wait goroot src sync waitgroup go golang org x sync errgroup group wait golang org x sync errgroup external org golang x sync errgroup errgroup go github com cockroachdb cockroach pkg util ctxgroup group wait github com cockroachdb cockroach pkg util ctxgroup ctxgroup go github com cockroachdb cockroach pkg sql importer runimport github com cockroachdb cockroach pkg sql importer read import base go github com cockroachdb cockroach pkg sql importer readimportdataprocessor start github com cockroachdb cockroach pkg sql importer import processor go created by github com cockroachdb cockroach pkg sql importer readimportdataprocessor start github com cockroachdb cockroach pkg sql importer import processor go leaked goroutine goroutine sync runtime semacquire goroot src runtime sema go sync waitgroup wait goroot src sync waitgroup go golang org x sync errgroup group wait golang org x sync errgroup external org golang x sync errgroup errgroup go github com cockroachdb cockroach pkg util ctxgroup group wait github com cockroachdb cockroach pkg util ctxgroup ctxgroup go github com cockroachdb cockroach pkg sql importer ingestkvs github com cockroachdb cockroach pkg sql importer import processor go github com cockroachdb cockroach pkg sql importer runimport github com cockroachdb cockroach pkg sql importer read import base go github com cockroachdb cockroach pkg util ctxgroup group goctx github com cockroachdb cockroach pkg util ctxgroup ctxgroup go golang org x sync errgroup group go golang org x sync errgroup external org golang x sync errgroup errgroup go created by golang org x sync errgroup group go golang org x sync errgroup external org golang x sync errgroup errgroup go leaked goroutine goroutine github com cockroachdb cockroach pkg sql importer ingestkvs github com cockroachdb cockroach pkg sql importer import processor go github com cockroachdb cockroach pkg sql importer ingestkvs github com cockroachdb cockroach pkg sql importer import processor go github com cockroachdb cockroach pkg util ctxgroup group goctx github com cockroachdb cockroach pkg util ctxgroup ctxgroup go golang org x sync errgroup group go golang org x sync errgroup external org golang x sync errgroup errgroup go created by golang org x sync errgroup group go golang org x sync errgroup external org golang x sync errgroup errgroup go fail testimportdefaultwithresume parameters tags bazel gss help see also cc cockroachdb sql experience jira issue crdb

| 0

|

199,751

| 6,994,045,001

|

IssuesEvent

|

2017-12-15 13:58:01

|

go-gitea/gitea

|

https://api.github.com/repos/go-gitea/gitea

|

opened

|

Repository home page show error file last commit message.

|

kind/bug priority/critical

|

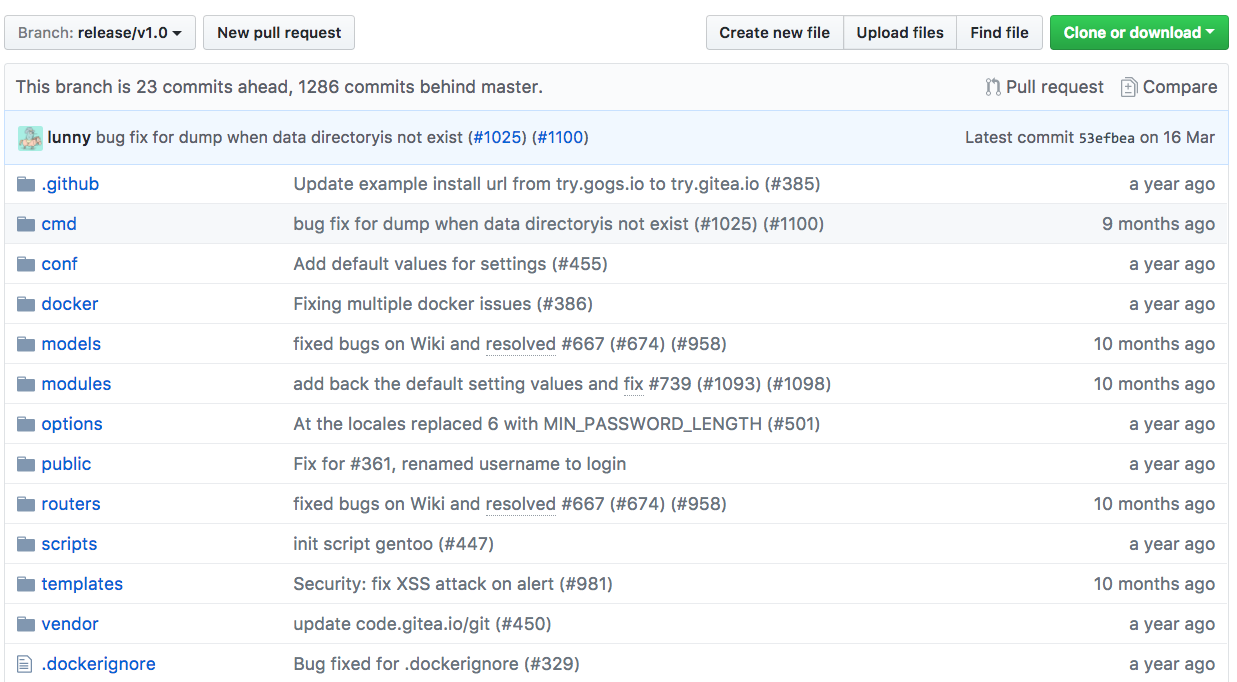

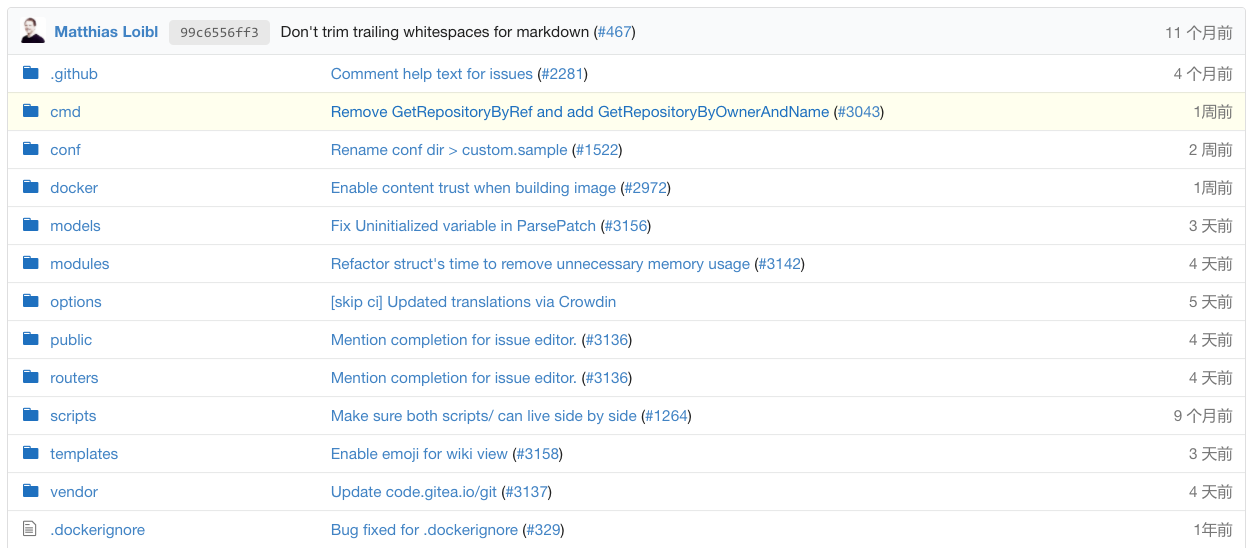

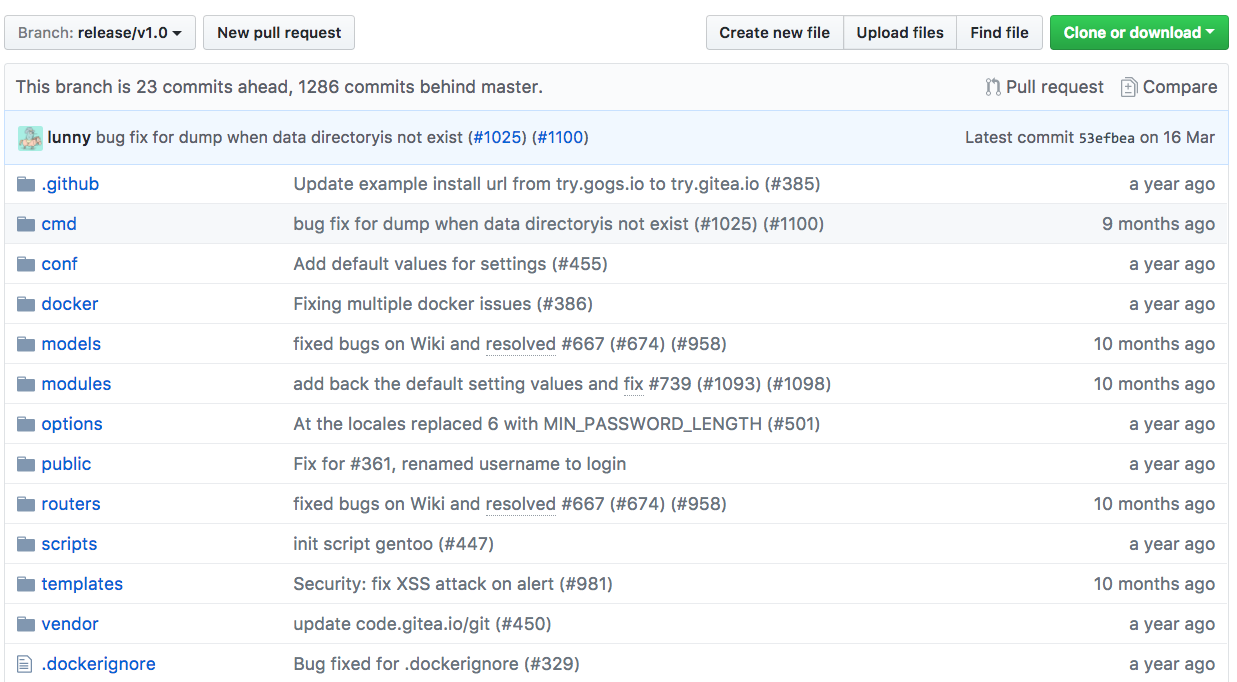

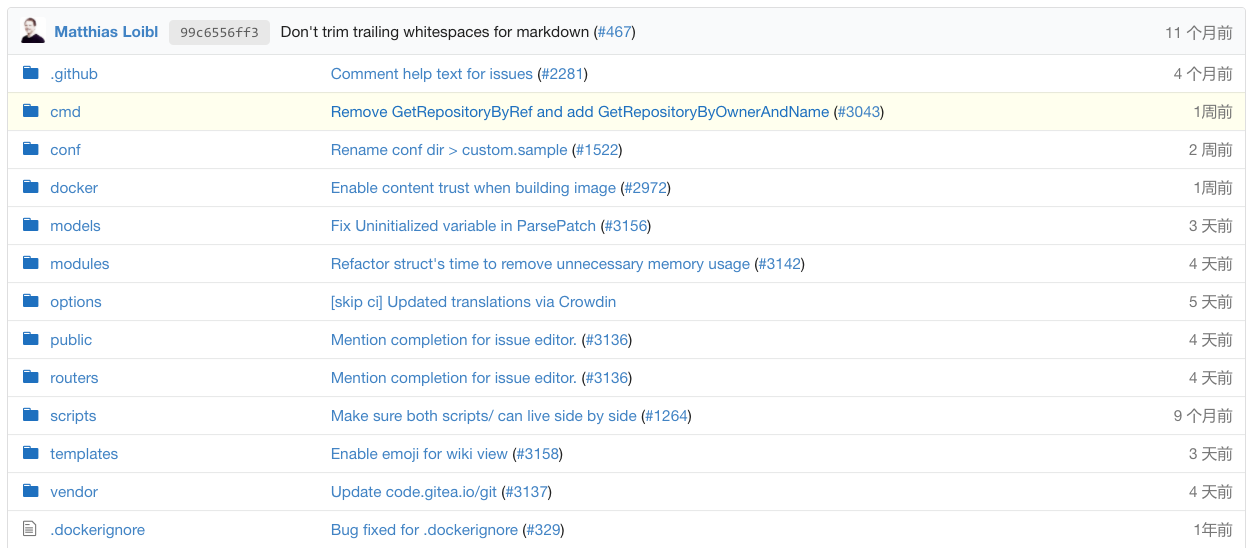

Compare github's home page

https://github.com/go-gitea/gitea/tree/release/v1.0

vs https://try.gitea.io/gitea/gitea/src/branch/release/v1.0

|

1.0

|

Repository home page show error file last commit message. - Compare github's home page

https://github.com/go-gitea/gitea/tree/release/v1.0

vs https://try.gitea.io/gitea/gitea/src/branch/release/v1.0

|

non_process

|

repository home page show error file last commit message compare github s home page vs

| 0

|

21,788

| 30,297,176,715

|

IssuesEvent

|

2023-07-10 00:32:30

|

h4sh5/pypi-auto-scanner

|

https://api.github.com/repos/h4sh5/pypi-auto-scanner

|

opened

|

dbretina 2.2.10 has 1 GuardDog issues

|

guarddog silent-process-execution

|

https://pypi.org/project/dbretina

https://inspector.pypi.io/project/dbretina

```{

"dependency": "dbretina",

"version": "2.2.10",

"result": {

"issues": 1,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "kSpider2/ks_filter.py/kSpider2/ks_filter.py:15",

"code": " subprocess.run([\"awk\"], stdin=subprocess.DEVNULL,\n stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)",

"message": "This package is silently executing an external binary, redirecting stdout, stderr and stdin to /dev/null"

}

]

},

"path": "/tmp/tmp6f_pfe0h/dbretina"

}

}```

|

1.0

|

dbretina 2.2.10 has 1 GuardDog issues - https://pypi.org/project/dbretina

https://inspector.pypi.io/project/dbretina

```{

"dependency": "dbretina",

"version": "2.2.10",

"result": {

"issues": 1,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "kSpider2/ks_filter.py/kSpider2/ks_filter.py:15",

"code": " subprocess.run([\"awk\"], stdin=subprocess.DEVNULL,\n stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)",

"message": "This package is silently executing an external binary, redirecting stdout, stderr and stdin to /dev/null"

}

]

},

"path": "/tmp/tmp6f_pfe0h/dbretina"

}

}```

|

process

|

dbretina has guarddog issues dependency dbretina version result issues errors results silent process execution location ks filter py ks filter py code subprocess run stdin subprocess devnull n stdout subprocess devnull stderr subprocess devnull message this package is silently executing an external binary redirecting stdout stderr and stdin to dev null path tmp dbretina

| 1

|

17,890

| 23,864,912,348

|

IssuesEvent

|

2022-09-07 10:10:01

|

jasp-stats/jasp-issues

|

https://api.github.com/repos/jasp-stats/jasp-issues

|

closed

|

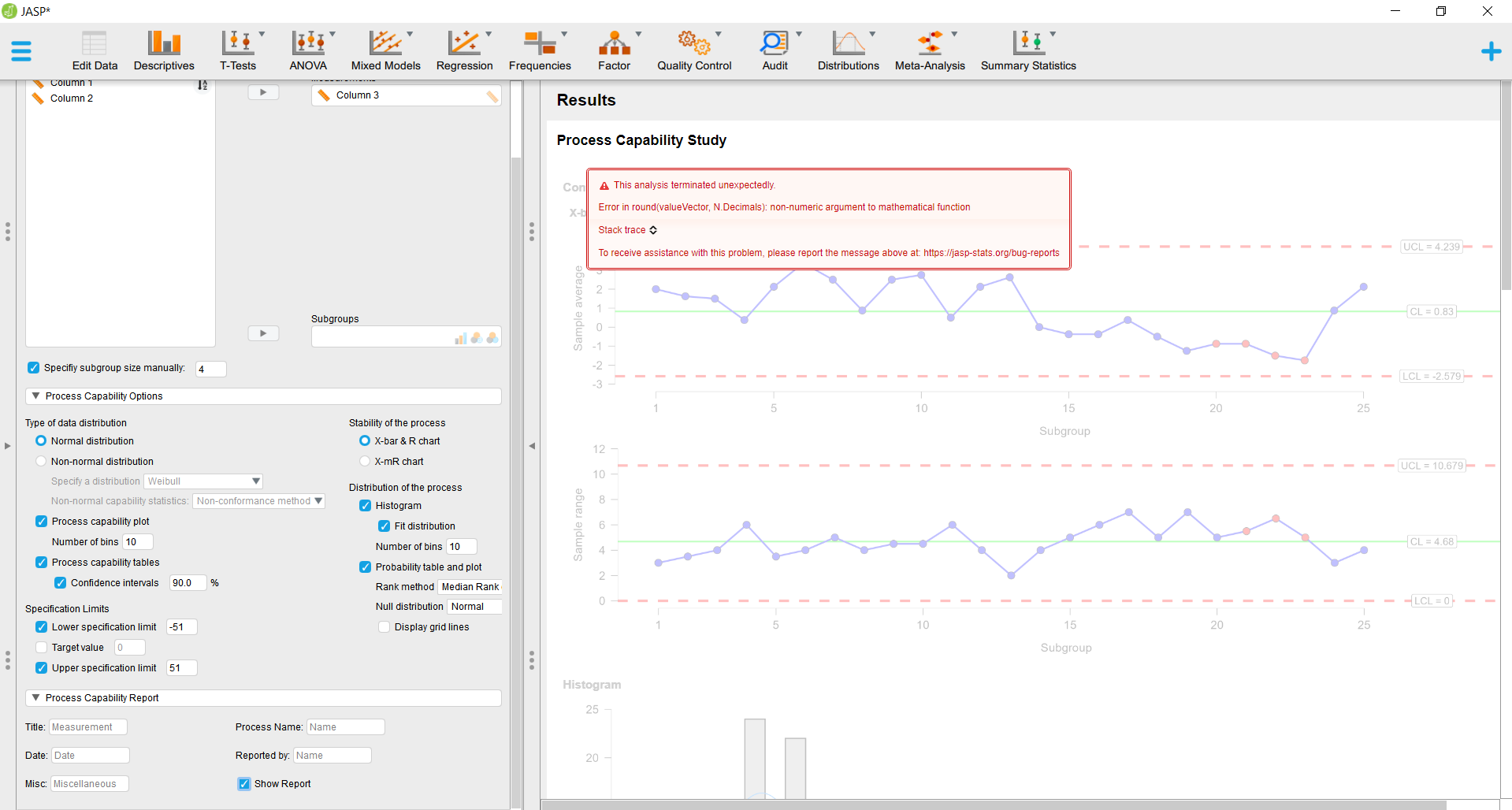

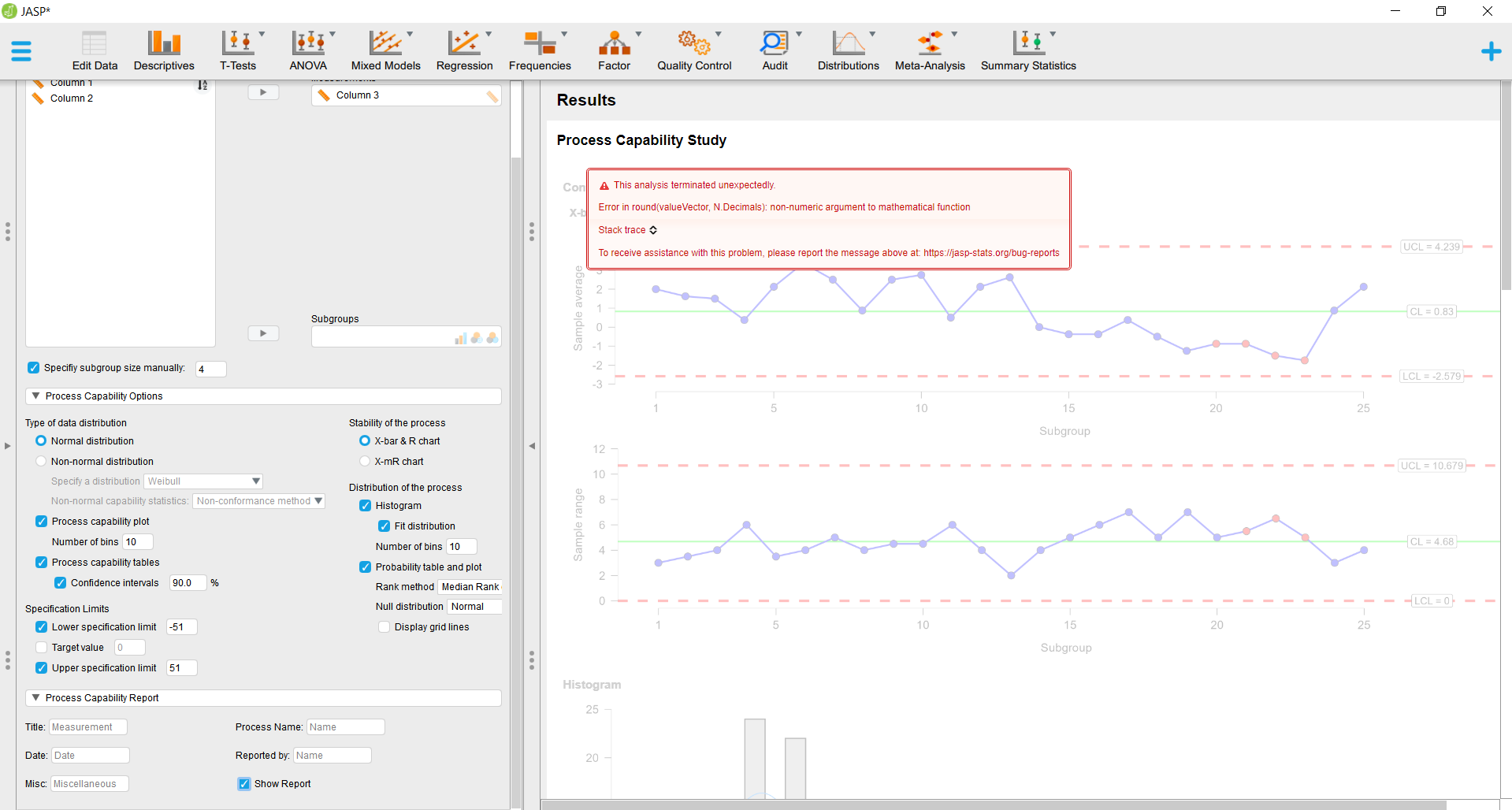

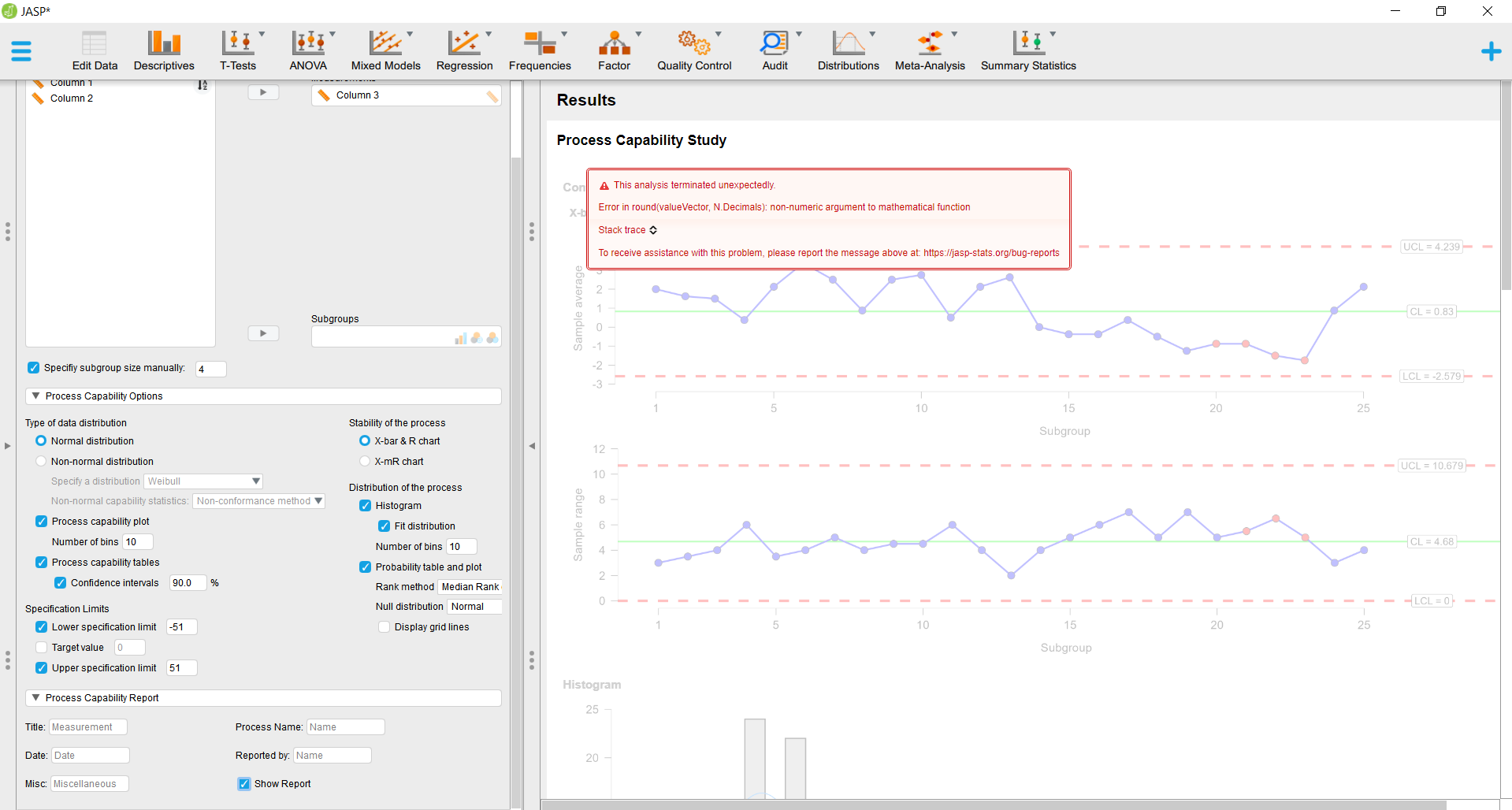

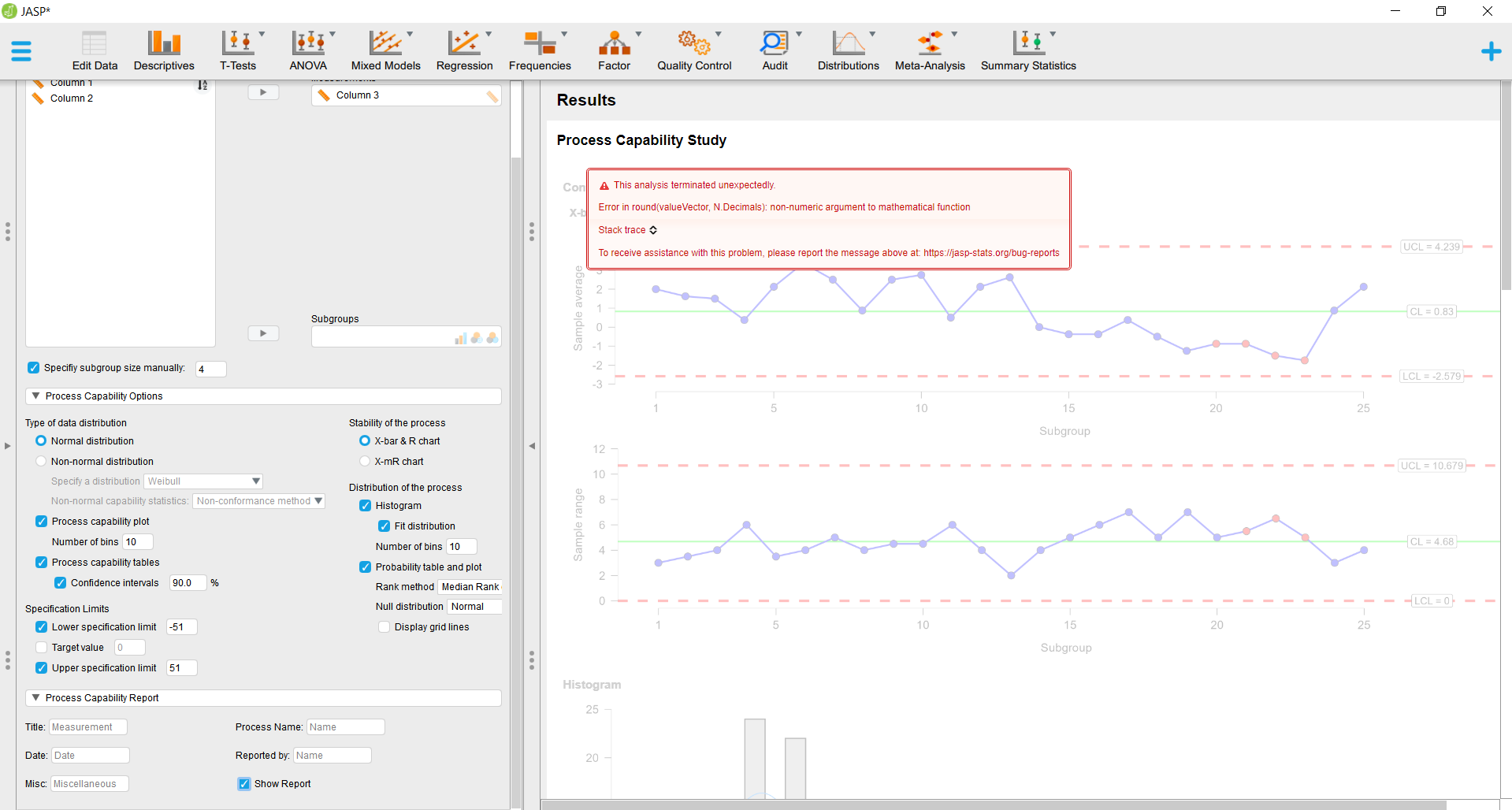

[Bug]: unexpected error in Process Capability Study of jaspProcessControl

|

waiting for requester Module: jaspProcessControl

|

### JASP Version

0.16

### Commit ID

_No response_

### JASP Module

jaspNetwork

### What analysis are you seeing the problem on?

_No response_

### What OS are you seeing the problem on?

windows 7

### Bug Description

IMPOSSIBLE TO SHOW UP THE RESULTS. I NEED TO FIX IT ASAP

### Expected Behaviour

[](url)

### Steps to Reproduce

1.

2.

3.

...

### Log (if any)

_No response_

### Final Checklist

- [X] I have included a screenshot showcasing the issue, if any.

- [X] I have included a JASP file that causes the crash/bug, if any.

- [X] I have accurately described the bug, and steps to reproduce it.

|

1.0

|

[Bug]: unexpected error in Process Capability Study of jaspProcessControl - ### JASP Version

0.16

### Commit ID

_No response_

### JASP Module

jaspNetwork

### What analysis are you seeing the problem on?

_No response_

### What OS are you seeing the problem on?

windows 7

### Bug Description

IMPOSSIBLE TO SHOW UP THE RESULTS. I NEED TO FIX IT ASAP

### Expected Behaviour

[](url)

### Steps to Reproduce

1.

2.

3.

...

### Log (if any)

_No response_

### Final Checklist

- [X] I have included a screenshot showcasing the issue, if any.

- [X] I have included a JASP file that causes the crash/bug, if any.

- [X] I have accurately described the bug, and steps to reproduce it.

|

process

|

unexpected error in process capability study of jaspprocesscontrol jasp version commit id no response jasp module jaspnetwork what analysis are you seeing the problem on no response what os are you seeing the problem on windows bug description impossible to show up the results i need to fix it asap expected behaviour url steps to reproduce log if any no response final checklist i have included a screenshot showcasing the issue if any i have included a jasp file that causes the crash bug if any i have accurately described the bug and steps to reproduce it

| 1

|

217,069

| 24,312,769,084

|

IssuesEvent

|

2022-09-30 01:17:23

|

sesong11/example

|

https://api.github.com/repos/sesong11/example

|

opened

|

CVE-2020-1935 (Medium) detected in tomcat-embed-core-9.0.21.jar

|

security vulnerability

|

## CVE-2020-1935 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tomcat-embed-core-9.0.21.jar</b></p></summary>

<p>Core Tomcat implementation</p>

<p>Library home page: <a href="https://tomcat.apache.org/">https://tomcat.apache.org/</a></p>

<p>Path to dependency file: /example/spring-statemachine-jpa/pom.xml</p>

<p>Path to vulnerable library: /root/.m2/repository/org/apache/tomcat/embed/tomcat-embed-core/9.0.21/tomcat-embed-core-9.0.21.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-web-2.1.6.RELEASE.jar (Root Library)

- spring-boot-starter-tomcat-2.1.6.RELEASE.jar

- :x: **tomcat-embed-core-9.0.21.jar** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In Apache Tomcat 9.0.0.M1 to 9.0.30, 8.5.0 to 8.5.50 and 7.0.0 to 7.0.99 the HTTP header parsing code used an approach to end-of-line parsing that allowed some invalid HTTP headers to be parsed as valid. This led to a possibility of HTTP Request Smuggling if Tomcat was located behind a reverse proxy that incorrectly handled the invalid Transfer-Encoding header in a particular manner. Such a reverse proxy is considered unlikely.

<p>Publish Date: 2020-02-24

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-1935>CVE-2020-1935</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-6v7p-v754-j89v">https://github.com/advisories/GHSA-6v7p-v754-j89v</a></p>

<p>Release Date: 2020-02-24</p>

<p>Fix Resolution (org.apache.tomcat.embed:tomcat-embed-core): 9.0.31</p>

<p>Direct dependency fix Resolution (org.springframework.boot:spring-boot-starter-web): 2.1.13.RELEASE</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2020-1935 (Medium) detected in tomcat-embed-core-9.0.21.jar - ## CVE-2020-1935 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tomcat-embed-core-9.0.21.jar</b></p></summary>

<p>Core Tomcat implementation</p>

<p>Library home page: <a href="https://tomcat.apache.org/">https://tomcat.apache.org/</a></p>

<p>Path to dependency file: /example/spring-statemachine-jpa/pom.xml</p>

<p>Path to vulnerable library: /root/.m2/repository/org/apache/tomcat/embed/tomcat-embed-core/9.0.21/tomcat-embed-core-9.0.21.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-web-2.1.6.RELEASE.jar (Root Library)

- spring-boot-starter-tomcat-2.1.6.RELEASE.jar

- :x: **tomcat-embed-core-9.0.21.jar** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In Apache Tomcat 9.0.0.M1 to 9.0.30, 8.5.0 to 8.5.50 and 7.0.0 to 7.0.99 the HTTP header parsing code used an approach to end-of-line parsing that allowed some invalid HTTP headers to be parsed as valid. This led to a possibility of HTTP Request Smuggling if Tomcat was located behind a reverse proxy that incorrectly handled the invalid Transfer-Encoding header in a particular manner. Such a reverse proxy is considered unlikely.

<p>Publish Date: 2020-02-24

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-1935>CVE-2020-1935</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-6v7p-v754-j89v">https://github.com/advisories/GHSA-6v7p-v754-j89v</a></p>

<p>Release Date: 2020-02-24</p>

<p>Fix Resolution (org.apache.tomcat.embed:tomcat-embed-core): 9.0.31</p>

<p>Direct dependency fix Resolution (org.springframework.boot:spring-boot-starter-web): 2.1.13.RELEASE</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in tomcat embed core jar cve medium severity vulnerability vulnerable library tomcat embed core jar core tomcat implementation library home page a href path to dependency file example spring statemachine jpa pom xml path to vulnerable library root repository org apache tomcat embed tomcat embed core tomcat embed core jar dependency hierarchy spring boot starter web release jar root library spring boot starter tomcat release jar x tomcat embed core jar vulnerable library vulnerability details in apache tomcat to to and to the http header parsing code used an approach to end of line parsing that allowed some invalid http headers to be parsed as valid this led to a possibility of http request smuggling if tomcat was located behind a reverse proxy that incorrectly handled the invalid transfer encoding header in a particular manner such a reverse proxy is considered unlikely publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution org apache tomcat embed tomcat embed core direct dependency fix resolution org springframework boot spring boot starter web release step up your open source security game with mend

| 0

|

90,803

| 10,697,802,933

|

IssuesEvent

|

2019-10-23 17:18:40

|

jan-molak/serenity-js

|

https://api.github.com/repos/jan-molak/serenity-js

|

closed

|

Suggestion required: Restricting Abilities based on Persona

|

documentation question

|

Hi @jan-molak ,

We have started using SerenityJs 2.0 in our Projects. We have figured out personas to be used by teams for our Workflow testing. We do not want everyone to create his or her own personas, instead use from the list defined by stakeholders.

We currently implemented this by creating a core package with Personas and their abilities and plan is to create a package and publish so that every team is using defined set of personas and abilities.

**-- Our Current Thought Process --

core/persona.ts**

```

/**

* List of Personas that can be used in framework

*/

export enum Persona {

ABC,

/**

* ABC: a Patient

*/

XYZ,

/**

* XYZ: Is an Doctor

*/

MNO,

/**

* MNO: Is an Nurse

*/

}

```

**Preparing Actor with abilities**

_**core/customestage.ts**_

```

export class customStage implements DressingRoom {

prepare(actor: Actor): Actor {

switch (actor.name) {

case 'ABC':

return Actor.named(actor.name).whoCan(

BrowseTheWeb.using(protractor.browser));

case 'MNO':

return Actor.named(actor.name).whoCan(

BrowseTheWeb.using(protractor.browser));

case 'XYZ':

return Actor.named(actor.name).whoCan(

BrowseTheWeb.using(protractor.browser),

Search.with("ourapplication", ''));//custom ability

default:

return Actor.named(actor.name).whoCan(

BrowseTheWeb.using(protractor.browser),);

}

}

}

```

**_core/setup.ts_**

```

export function customactor(persona: string): Actor {

return serenity.callToStageFor(new customStage()).theActorCalled(persona)

}

setWorldConstructor(customactor)

```

**_stepdefination.ts_**

```

let xyz = customactor(Persona[Persona.XYZ])

Given(/^that XYZ is an authorized user of our system$/, async ()=> {

return xyz.attemptsTo(Be.onOurSystem())

});

```

Need your inputs on if this is the correct approach. With above implementation, we lose flexibility to reuse step_definition as we are preparing actor and providing them with the abilities based on the names in persona enum. But can enforce that no one creating their own random personas/abilities across organization.

If we go with the feature file and step_definition shown in your example, It can work for any actor. Wondering how we can handle the restrictions we want to impose in similar way. Also we wanted to keep step definition precise ` return xyz.attemptsTo(Be.onOurSystem())`

```

Given(/(.*) decides to use the Super Calculator/, function (this: WithStage, actorName: string) {

return this.stage.theActorCalled(actorName).attemptsTo(

Navigate.to('/protractor-demo/'),

);

});

```

Thank You for all your great work and help!

|

1.0

|

Suggestion required: Restricting Abilities based on Persona - Hi @jan-molak ,

We have started using SerenityJs 2.0 in our Projects. We have figured out personas to be used by teams for our Workflow testing. We do not want everyone to create his or her own personas, instead use from the list defined by stakeholders.

We currently implemented this by creating a core package with Personas and their abilities and plan is to create a package and publish so that every team is using defined set of personas and abilities.

**-- Our Current Thought Process --

core/persona.ts**

```

/**

* List of Personas that can be used in framework

*/

export enum Persona {

ABC,

/**

* ABC: a Patient

*/

XYZ,

/**

* XYZ: Is an Doctor

*/

MNO,

/**

* MNO: Is an Nurse

*/

}

```

**Preparing Actor with abilities**

_**core/customestage.ts**_

```

export class customStage implements DressingRoom {

prepare(actor: Actor): Actor {

switch (actor.name) {

case 'ABC':

return Actor.named(actor.name).whoCan(

BrowseTheWeb.using(protractor.browser));

case 'MNO':

return Actor.named(actor.name).whoCan(

BrowseTheWeb.using(protractor.browser));

case 'XYZ':

return Actor.named(actor.name).whoCan(

BrowseTheWeb.using(protractor.browser),

Search.with("ourapplication", ''));//custom ability

default:

return Actor.named(actor.name).whoCan(

BrowseTheWeb.using(protractor.browser),);

}

}

}

```

**_core/setup.ts_**

```

export function customactor(persona: string): Actor {

return serenity.callToStageFor(new customStage()).theActorCalled(persona)

}

setWorldConstructor(customactor)

```

**_stepdefination.ts_**

```

let xyz = customactor(Persona[Persona.XYZ])

Given(/^that XYZ is an authorized user of our system$/, async ()=> {

return xyz.attemptsTo(Be.onOurSystem())

});

```

Need your inputs on if this is the correct approach. With above implementation, we lose flexibility to reuse step_definition as we are preparing actor and providing them with the abilities based on the names in persona enum. But can enforce that no one creating their own random personas/abilities across organization.

If we go with the feature file and step_definition shown in your example, It can work for any actor. Wondering how we can handle the restrictions we want to impose in similar way. Also we wanted to keep step definition precise ` return xyz.attemptsTo(Be.onOurSystem())`

```

Given(/(.*) decides to use the Super Calculator/, function (this: WithStage, actorName: string) {

return this.stage.theActorCalled(actorName).attemptsTo(

Navigate.to('/protractor-demo/'),

);

});

```

Thank You for all your great work and help!

|

non_process

|

suggestion required restricting abilities based on persona hi jan molak we have started using serenityjs in our projects we have figured out personas to be used by teams for our workflow testing we do not want everyone to create his or her own personas instead use from the list defined by stakeholders we currently implemented this by creating a core package with personas and their abilities and plan is to create a package and publish so that every team is using defined set of personas and abilities our current thought process core persona ts list of personas that can be used in framework export enum persona abc abc a patient xyz xyz is an doctor mno mno is an nurse preparing actor with abilities core customestage ts export class customstage implements dressingroom prepare actor actor actor switch actor name case abc return actor named actor name whocan browsetheweb using protractor browser case mno return actor named actor name whocan browsetheweb using protractor browser case xyz return actor named actor name whocan browsetheweb using protractor browser search with ourapplication custom ability default return actor named actor name whocan browsetheweb using protractor browser core setup ts export function customactor persona string actor return serenity calltostagefor new customstage theactorcalled persona setworldconstructor customactor stepdefination ts let xyz customactor persona given that xyz is an authorized user of our system async return xyz attemptsto be onoursystem need your inputs on if this is the correct approach with above implementation we lose flexibility to reuse step definition as we are preparing actor and providing them with the abilities based on the names in persona enum but can enforce that no one creating their own random personas abilities across organization if we go with the feature file and step definition shown in your example it can work for any actor wondering how we can handle the restrictions we want to impose in similar way also we wanted to keep step definition precise return xyz attemptsto be onoursystem given decides to use the super calculator function this withstage actorname string return this stage theactorcalled actorname attemptsto navigate to protractor demo thank you for all your great work and help

| 0

|

46,481

| 19,252,476,886

|

IssuesEvent

|

2021-12-09 07:36:16

|

DimoDimchev/Trivial

|

https://api.github.com/repos/DimoDimchev/Trivial

|

closed

|

Research asynchronous operations between different services and external API's

|

microservices

|

Decide where and if async functionality is needed for communication between cervices and async API's.

* Django and Celery

* Flask needs research

|

1.0

|

Research asynchronous operations between different services and external API's - Decide where and if async functionality is needed for communication between cervices and async API's.

* Django and Celery

* Flask needs research

|

non_process

|

research asynchronous operations between different services and external api s decide where and if async functionality is needed for communication between cervices and async api s django and celery flask needs research

| 0

|

11,117

| 13,957,683,594

|

IssuesEvent

|

2020-10-24 08:08:05

|

alexanderkotsev/geoportal

|

https://api.github.com/repos/alexanderkotsev/geoportal

|

opened

|

ES: Discovery service unavailable

|

ES - Spain Geoportal Harvesting process

|

Dear Paloma,

we are unable to harvest from your registered endpoint. It returns a HTTP status 503 UNAVAILABLE.

We already tried on Dec 13th, 16th and today, and each time, after four attempts with a increasing timeout (up to 18sec), the service replies HTTP status 503 Service Unavailable to our POST GetRecords request.

Since we follow the specification inside the GetCapabilities document

http://www.idee.es/csw-codsi-idee/srv/spa/csw?request=GetCapabilities&service=CSW&version=2.0.2

we notice that every provided URLs contain the specification of the port 8080.

<ows:Operation name="GetRecords">

<ows:DCP>

<ows:HTTP>

<ows:Get xlink:href="http://www.idee.es:8080/csw-codsi-idee/srv/eng/csw" />

<ows:Post xlink:href="http://www.idee.es:8080/csw-codsi-idee/srv/eng/csw" />

</ows:HTTP>

</ows:DCP>

Just for test, we tried to execute a POST GetRecords without the mentioned port and it succeed.

Could you please investigate from your side and fix it? We may suggest to update the GetCapabilities URLs by removing the "internal" port specification.

Best regards,

Davide on behalf of JRC INSPIRE Support team

|

1.0

|

ES: Discovery service unavailable - Dear Paloma,

we are unable to harvest from your registered endpoint. It returns a HTTP status 503 UNAVAILABLE.

We already tried on Dec 13th, 16th and today, and each time, after four attempts with a increasing timeout (up to 18sec), the service replies HTTP status 503 Service Unavailable to our POST GetRecords request.

Since we follow the specification inside the GetCapabilities document

http://www.idee.es/csw-codsi-idee/srv/spa/csw?request=GetCapabilities&service=CSW&version=2.0.2

we notice that every provided URLs contain the specification of the port 8080.

<ows:Operation name="GetRecords">

<ows:DCP>

<ows:HTTP>

<ows:Get xlink:href="http://www.idee.es:8080/csw-codsi-idee/srv/eng/csw" />

<ows:Post xlink:href="http://www.idee.es:8080/csw-codsi-idee/srv/eng/csw" />

</ows:HTTP>

</ows:DCP>

Just for test, we tried to execute a POST GetRecords without the mentioned port and it succeed.

Could you please investigate from your side and fix it? We may suggest to update the GetCapabilities URLs by removing the "internal" port specification.

Best regards,

Davide on behalf of JRC INSPIRE Support team

|

process

|

es discovery service unavailable dear paloma we are unable to harvest from your registered endpoint it returns a http status unavailable we already tried on dec and today and each time after four attempts with a increasing timeout up to the service replies http status service unavailable to our post getrecords request since we follow the specification inside the getcapabilities document we notice that every provided urls contain the specification of the port lt ows operation name quot getrecords quot gt lt ows dcp gt lt ows http gt lt ows get xlink href quot gt lt ows post xlink href quot gt lt ows http gt lt ows dcp gt just for test we tried to execute a post getrecords without the mentioned port and it succeed could you please investigate from your side and fix it we may suggest to update the getcapabilities urls by removing the quot internal quot port specification best regards davide on behalf of jrc inspire support team

| 1

|

152,863

| 12,128,299,035

|

IssuesEvent

|

2020-04-22 20:15:00

|

physiopy/phys2bids

|

https://api.github.com/repos/physiopy/phys2bids

|

closed

|

Add Windows CI testing

|

Testing

|

We are currently not testing (nor developing) `phys2bids` on Windows systems, leading to issues like e.g., #171. I think we should look into adding Windows CI testing using a service like [AppVeyor](https://www.appveyor.com/) or [Azure Pipelines](https://azure.microsoft.com/en-us/services/devops/pipelines/) (since TravisCI currently only has [minimal](https://docs.travis-ci.com/user/reference/windows/) Windows support).

## Context / Motivation

We shouldn't limit our users to those researchers working on Mac or Linux systems, so adding AppVeyor or Azure support / testing so that we can ensure our primary workflows are viable on Windows is critical.

## Possible Implementation

`nipy/nibabel` recently moved to Azure Pipelines from AppVeyor (relevant files: [1](https://github.com/nipy/nibabel/blob/master/.azure-pipelines/windows.yml) [2](https://github.com/nipy/nibabel/blob/master/azure-pipelines.yml)); we could look into simply copying the relevant portions of their framework for `phys2bids`.

|

1.0

|

Add Windows CI testing - We are currently not testing (nor developing) `phys2bids` on Windows systems, leading to issues like e.g., #171. I think we should look into adding Windows CI testing using a service like [AppVeyor](https://www.appveyor.com/) or [Azure Pipelines](https://azure.microsoft.com/en-us/services/devops/pipelines/) (since TravisCI currently only has [minimal](https://docs.travis-ci.com/user/reference/windows/) Windows support).

## Context / Motivation

We shouldn't limit our users to those researchers working on Mac or Linux systems, so adding AppVeyor or Azure support / testing so that we can ensure our primary workflows are viable on Windows is critical.

## Possible Implementation

`nipy/nibabel` recently moved to Azure Pipelines from AppVeyor (relevant files: [1](https://github.com/nipy/nibabel/blob/master/.azure-pipelines/windows.yml) [2](https://github.com/nipy/nibabel/blob/master/azure-pipelines.yml)); we could look into simply copying the relevant portions of their framework for `phys2bids`.

|

non_process

|

add windows ci testing we are currently not testing nor developing on windows systems leading to issues like e g i think we should look into adding windows ci testing using a service like or since travisci currently only has windows support context motivation we shouldn t limit our users to those researchers working on mac or linux systems so adding appveyor or azure support testing so that we can ensure our primary workflows are viable on windows is critical possible implementation nipy nibabel recently moved to azure pipelines from appveyor relevant files we could look into simply copying the relevant portions of their framework for

| 0

|

3,389

| 6,515,961,352

|

IssuesEvent

|

2017-08-26 23:31:44

|

nodejs/node

|

https://api.github.com/repos/nodejs/node

|

closed

|

test: implement a way to regularly test `abort` behaviour on all platform

|

process test

|

<!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

Platform: output of `uname -a` (UNIX), or version and 32 or 64-bit (Windows)

Subsystem: if known, please specify affected core module name

If possible, please provide code that demonstrates the problem, keeping it as

simple and free of external dependencies as you are able.

-->

* **Version**: *

* **Platform**: *

* **Subsystem**: process,test

<!-- Enter your issue details below this comment. -->

Since testing `abort()` and `--abort-on-uncaught-exception` generate core-dump that take allot of storage they have been moved to `test/abort/` and are not regularly tested on the full repertoire of platforms that we support.

/cc @nodejs/testing @nodejs/build

|

1.0

|

test: implement a way to regularly test `abort` behaviour on all platform - <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

Platform: output of `uname -a` (UNIX), or version and 32 or 64-bit (Windows)

Subsystem: if known, please specify affected core module name

If possible, please provide code that demonstrates the problem, keeping it as

simple and free of external dependencies as you are able.

-->

* **Version**: *

* **Platform**: *

* **Subsystem**: process,test

<!-- Enter your issue details below this comment. -->

Since testing `abort()` and `--abort-on-uncaught-exception` generate core-dump that take allot of storage they have been moved to `test/abort/` and are not regularly tested on the full repertoire of platforms that we support.

/cc @nodejs/testing @nodejs/build

|

process

|

test implement a way to regularly test abort behaviour on all platform thank you for reporting an issue this issue tracker is for bugs and issues found within node js core if you require more general support please file an issue on our help repo please fill in as much of the template below as you re able version output of node v platform output of uname a unix or version and or bit windows subsystem if known please specify affected core module name if possible please provide code that demonstrates the problem keeping it as simple and free of external dependencies as you are able version platform subsystem process test since testing abort and abort on uncaught exception generate core dump that take allot of storage they have been moved to test abort and are not regularly tested on the full repertoire of platforms that we support cc nodejs testing nodejs build

| 1

|

227,336

| 17,380,694,735

|

IssuesEvent

|

2021-07-31 16:47:14

|

open-contracting/standard

|

https://api.github.com/repos/open-contracting/standard

|

opened

|

Worked example: Minimal updates in change history

|

Focus - Documentation

|

#1302 rewrites the Getting Started section as a Primer, which included removing the "Repeating previous information" section from the previous version of the Releases and Records page, accessible here: https://github.com/open-contracting/standard/blob/106f4c4/docs/getting_started/releases_and_records.md#repeating-previous-information

This content should be re-introduced as a worked example.

|

1.0

|

Worked example: Minimal updates in change history - #1302 rewrites the Getting Started section as a Primer, which included removing the "Repeating previous information" section from the previous version of the Releases and Records page, accessible here: https://github.com/open-contracting/standard/blob/106f4c4/docs/getting_started/releases_and_records.md#repeating-previous-information

This content should be re-introduced as a worked example.

|

non_process

|

worked example minimal updates in change history rewrites the getting started section as a primer which included removing the repeating previous information section from the previous version of the releases and records page accessible here this content should be re introduced as a worked example

| 0

|

304,546

| 26,287,240,688

|

IssuesEvent

|

2023-01-08 00:36:47

|

devssa/onde-codar-em-salvador

|

https://api.github.com/repos/devssa/onde-codar-em-salvador

|

closed

|

[TESTES] [ESPECIALISTA] [REMOTO] Testes Automatizados (Especialista) na [SOFIST]

|

BANCO DE DADOS JAVASCRIPT BDD TESTE DE INTEGRAÇÃO TYPESCRIPT REMOTO TESTES AUTOMATIZADOS HELP WANTED ESPECIALISTA TESTES DE API TESTES DE UNIDADE Stale

|

<!--

==================================================

POR FAVOR, SÓ POSTE SE A VAGA FOR PARA SALVADOR E CIDADES VIZINHAS!

Use: "Desenvolvedor Front-end" ao invés de

"Front-End Developer" \o/

Exemplo: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolvedor Front-End na [NOME DA EMPRESA]`

==================================================

-->

## Descrição da vaga

- Sabemos que testes de regressão precisam ser executados rapidamente. Quando realizados 100% manualmente, os testes de regressão impedem os QAs de realmente realizarem o que importa, que é encontrar novas formas de explorar novos bugs nos aplicativos. Imagine agora produtos que são utilizados por milhões de pessoas, em centenas de dispositivos distintos: há muitas situações para serem automatizadas! Seu papel será apoiar nosso time de QA automatizando cada vez mais testes e inúmeras situações de uso de aplicativos Android e iOS e sistemas WEB, de forma a acelerar o processo de liberação de novas versões dos apps a cada sprint. Faremos nosso trabalho utilizando BDD (Cucumber), Appium, Selenium Webdriver (com Page Objects) e, quando aplicável, nuvens de dispositivos, portanto saber aplicar isto é muito importante para o sucesso de todos no projeto.

- Na Sofist valorizamos pessoas que saibam trabalhar em equipe, entendam como o trabalho de cada um contribui para que os resultados sejam alcançados, estejam em constante aprimoramento profissional, sejam apaixonadas pelo que fazem e entendam que ações (não ideias) podem mudar a vida das pessoas.

RESPONSABILIDADES E ATRIBUIÇÕES

DESCRIÇÃO DO TRABALHO

- Automatizar testes em projetos de apps Android e iOS e sistemas WEB utilizando BDD (Cucumber), Selenium Webdriver, Appium e nuvens de dispositivos

- Apoiar o desenvolvimento de features e APIs que simulem ações dos usuários para uso durante os testes automatizados e testes exploratórios manuais

- Ajudar na manutenção dos ambientes de testes de integração

- Auxiliar na capacitação de outros membros do time no processo e tecnologias usadas

- Propor ao time melhorias no processo que aprimorem o resultado que entregamos

- Introduzir ferramentas e metodologias que tornem o trabalho mais eficiente

## Local

- Remoto

## Benefícios

- Vale Refeição

- Vale Alimentação

- Plano de saúde (Unimed)

- Plano odontológico (Amil)

- Gympass

- Seguro de vida

- PLR

- Horário Flexível

- Auxílio Creche

- Licença Maternidade e Paternidade Estendida

- Day Off

## Requisitos

**Obrigatórios:**

- Ter atuado com linguagens JavaScript e/ou TypeScript

- Conhecimento prévio para elaboração e escrita dos cenários utilizando BDD

- Ter atuado com Testes de Unidade

- Ter atuado com Testes de API

- Ter atuado com Testes de Integração

- Conhecimento em Banco de dados

- Conhecimento na utilização de Docker

**Diferenciais:**

- Conhecimentos a práticas de CI/CD

- Conhecimento na utilização do Gitlab,

- Conhecimento em Testes Unitários

- Conhecimento em Mocks e Stubs

- Conhecimento em Testes de Contrato)

## Contratação

- a combinar

## Nossa empresa

- Somos uma empresa que promove troca de conhecimento entre os colaboradores, entendemos que cada Sofister tem uma habilidade que nos ajuda a crescer.

- Somos feitos por pessoas, por isso nossa prioridade é cuidar delas. Não é a toa que fomos, pela terceira vez consecutiva, certificados como um Great Place to Work. E foi com muito orgulho que conquistamos um espaço entre as 20 melhores empresa para trabalhar na nossa categoria, em 2019.

- Aqui você encontrará um horário de trabalho flexível, day off de aniversário, café da manhã na empresa, não terá que se preocupar com dress code, e temos um ambiente que promove a diversidade, focado no respeito e colaboração. Aliás, é com enorme orgulho que ressaltamos que em 2020 ficamos com nota 100 (máxima) no GPTW em itens como: as pessoas aqui são bem tratadas independentemente de sua orientação sexual, cor, etnia, sexo e idade.

- Isso só reforça nosso empenho e preocupação genuína com as pessoas.

- Seja durante sua atuação remota ou no escritório físico, nosso clima organizacional é pautado em uma cultura de relações saudáveis, promovendo o desenvolvimento e a autonomia.

- Trabalhando aqui você fará parte de um time que se ajuda e apoia, com liberdade para colocar suas ideias em prática, além de um programa de desenvolvimento apoiado por mentores técnicos, que são os melhores QA’s do mercado!

- Se você ama o universo QA, você vai amar ser um(a) Sofister. Somos parceiros de grandes nomes do mercado de tecnologia, participamos de projetos incríveis, que vão te desafiar todos os dias a enriquecer seu conhecimento sobre o mundo de testes.

- Vem pra Sofist!

## Como se candidatar

- [Clique aqui para se candidatar](https://vemprasofist.gupy.io/jobs/487457?jobBoardSource=gupy_public_page)

|

4.0

|

[TESTES] [ESPECIALISTA] [REMOTO] Testes Automatizados (Especialista) na [SOFIST] - <!--

==================================================

POR FAVOR, SÓ POSTE SE A VAGA FOR PARA SALVADOR E CIDADES VIZINHAS!

Use: "Desenvolvedor Front-end" ao invés de

"Front-End Developer" \o/

Exemplo: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolvedor Front-End na [NOME DA EMPRESA]`

==================================================

-->

## Descrição da vaga

- Sabemos que testes de regressão precisam ser executados rapidamente. Quando realizados 100% manualmente, os testes de regressão impedem os QAs de realmente realizarem o que importa, que é encontrar novas formas de explorar novos bugs nos aplicativos. Imagine agora produtos que são utilizados por milhões de pessoas, em centenas de dispositivos distintos: há muitas situações para serem automatizadas! Seu papel será apoiar nosso time de QA automatizando cada vez mais testes e inúmeras situações de uso de aplicativos Android e iOS e sistemas WEB, de forma a acelerar o processo de liberação de novas versões dos apps a cada sprint. Faremos nosso trabalho utilizando BDD (Cucumber), Appium, Selenium Webdriver (com Page Objects) e, quando aplicável, nuvens de dispositivos, portanto saber aplicar isto é muito importante para o sucesso de todos no projeto.

- Na Sofist valorizamos pessoas que saibam trabalhar em equipe, entendam como o trabalho de cada um contribui para que os resultados sejam alcançados, estejam em constante aprimoramento profissional, sejam apaixonadas pelo que fazem e entendam que ações (não ideias) podem mudar a vida das pessoas.

RESPONSABILIDADES E ATRIBUIÇÕES

DESCRIÇÃO DO TRABALHO

- Automatizar testes em projetos de apps Android e iOS e sistemas WEB utilizando BDD (Cucumber), Selenium Webdriver, Appium e nuvens de dispositivos

- Apoiar o desenvolvimento de features e APIs que simulem ações dos usuários para uso durante os testes automatizados e testes exploratórios manuais

- Ajudar na manutenção dos ambientes de testes de integração

- Auxiliar na capacitação de outros membros do time no processo e tecnologias usadas

- Propor ao time melhorias no processo que aprimorem o resultado que entregamos

- Introduzir ferramentas e metodologias que tornem o trabalho mais eficiente

## Local

- Remoto

## Benefícios

- Vale Refeição

- Vale Alimentação

- Plano de saúde (Unimed)

- Plano odontológico (Amil)

- Gympass

- Seguro de vida

- PLR

- Horário Flexível

- Auxílio Creche

- Licença Maternidade e Paternidade Estendida

- Day Off

## Requisitos

**Obrigatórios:**

- Ter atuado com linguagens JavaScript e/ou TypeScript

- Conhecimento prévio para elaboração e escrita dos cenários utilizando BDD

- Ter atuado com Testes de Unidade

- Ter atuado com Testes de API

- Ter atuado com Testes de Integração

- Conhecimento em Banco de dados

- Conhecimento na utilização de Docker

**Diferenciais:**

- Conhecimentos a práticas de CI/CD

- Conhecimento na utilização do Gitlab,

- Conhecimento em Testes Unitários

- Conhecimento em Mocks e Stubs

- Conhecimento em Testes de Contrato)

## Contratação

- a combinar

## Nossa empresa

- Somos uma empresa que promove troca de conhecimento entre os colaboradores, entendemos que cada Sofister tem uma habilidade que nos ajuda a crescer.

- Somos feitos por pessoas, por isso nossa prioridade é cuidar delas. Não é a toa que fomos, pela terceira vez consecutiva, certificados como um Great Place to Work. E foi com muito orgulho que conquistamos um espaço entre as 20 melhores empresa para trabalhar na nossa categoria, em 2019.

- Aqui você encontrará um horário de trabalho flexível, day off de aniversário, café da manhã na empresa, não terá que se preocupar com dress code, e temos um ambiente que promove a diversidade, focado no respeito e colaboração. Aliás, é com enorme orgulho que ressaltamos que em 2020 ficamos com nota 100 (máxima) no GPTW em itens como: as pessoas aqui são bem tratadas independentemente de sua orientação sexual, cor, etnia, sexo e idade.

- Isso só reforça nosso empenho e preocupação genuína com as pessoas.

- Seja durante sua atuação remota ou no escritório físico, nosso clima organizacional é pautado em uma cultura de relações saudáveis, promovendo o desenvolvimento e a autonomia.

- Trabalhando aqui você fará parte de um time que se ajuda e apoia, com liberdade para colocar suas ideias em prática, além de um programa de desenvolvimento apoiado por mentores técnicos, que são os melhores QA’s do mercado!

- Se você ama o universo QA, você vai amar ser um(a) Sofister. Somos parceiros de grandes nomes do mercado de tecnologia, participamos de projetos incríveis, que vão te desafiar todos os dias a enriquecer seu conhecimento sobre o mundo de testes.

- Vem pra Sofist!

## Como se candidatar

- [Clique aqui para se candidatar](https://vemprasofist.gupy.io/jobs/487457?jobBoardSource=gupy_public_page)

|

non_process

|

testes automatizados especialista na por favor só poste se a vaga for para salvador e cidades vizinhas use desenvolvedor front end ao invés de front end developer o exemplo desenvolvedor front end na descrição da vaga sabemos que testes de regressão precisam ser executados rapidamente quando realizados manualmente os testes de regressão impedem os qas de realmente realizarem o que importa que é encontrar novas formas de explorar novos bugs nos aplicativos imagine agora produtos que são utilizados por milhões de pessoas em centenas de dispositivos distintos há muitas situações para serem automatizadas seu papel será apoiar nosso time de qa automatizando cada vez mais testes e inúmeras situações de uso de aplicativos android e ios e sistemas web de forma a acelerar o processo de liberação de novas versões dos apps a cada sprint faremos nosso trabalho utilizando bdd cucumber appium selenium webdriver com page objects e quando aplicável nuvens de dispositivos portanto saber aplicar isto é muito importante para o sucesso de todos no projeto na sofist valorizamos pessoas que saibam trabalhar em equipe entendam como o trabalho de cada um contribui para que os resultados sejam alcançados estejam em constante aprimoramento profissional sejam apaixonadas pelo que fazem e entendam que ações não ideias podem mudar a vida das pessoas responsabilidades e atribuições descrição do trabalho automatizar testes em projetos de apps android e ios e sistemas web utilizando bdd cucumber selenium webdriver appium e nuvens de dispositivos apoiar o desenvolvimento de features e apis que simulem ações dos usuários para uso durante os testes automatizados e testes exploratórios manuais ajudar na manutenção dos ambientes de testes de integração auxiliar na capacitação de outros membros do time no processo e tecnologias usadas propor ao time melhorias no processo que aprimorem o resultado que entregamos introduzir ferramentas e metodologias que tornem o trabalho mais eficiente local remoto benefícios vale refeição vale alimentação plano de saúde unimed plano odontológico amil gympass seguro de vida plr horário flexível auxílio creche licença maternidade e paternidade estendida day off requisitos obrigatórios ter atuado com linguagens javascript e ou typescript conhecimento prévio para elaboração e escrita dos cenários utilizando bdd ter atuado com testes de unidade ter atuado com testes de api ter atuado com testes de integração conhecimento em banco de dados conhecimento na utilização de docker diferenciais conhecimentos a práticas de ci cd conhecimento na utilização do gitlab conhecimento em testes unitários conhecimento em mocks e stubs conhecimento em testes de contrato contratação a combinar nossa empresa somos uma empresa que promove troca de conhecimento entre os colaboradores entendemos que cada sofister tem uma habilidade que nos ajuda a crescer somos feitos por pessoas por isso nossa prioridade é cuidar delas não é a toa que fomos pela terceira vez consecutiva certificados como um great place to work e foi com muito orgulho que conquistamos um espaço entre as melhores empresa para trabalhar na nossa categoria em aqui você encontrará um horário de trabalho flexível day off de aniversário café da manhã na empresa não terá que se preocupar com dress code e temos um ambiente que promove a diversidade focado no respeito e colaboração aliás é com enorme orgulho que ressaltamos que em ficamos com nota máxima no gptw em itens como as pessoas aqui são bem tratadas independentemente de sua orientação sexual cor etnia sexo e idade isso só reforça nosso empenho e preocupação genuína com as pessoas seja durante sua atuação remota ou no escritório físico nosso clima organizacional é pautado em uma cultura de relações saudáveis promovendo o desenvolvimento e a autonomia trabalhando aqui você fará parte de um time que se ajuda e apoia com liberdade para colocar suas ideias em prática além de um programa de desenvolvimento apoiado por mentores técnicos que são os melhores qa’s do mercado se você ama o universo qa você vai amar ser um a sofister somos parceiros de grandes nomes do mercado de tecnologia participamos de projetos incríveis que vão te desafiar todos os dias a enriquecer seu conhecimento sobre o mundo de testes vem pra sofist como se candidatar

| 0

|

742,720

| 25,867,124,987

|

IssuesEvent

|

2022-12-13 21:58:55

|

jesus-collective/mobile

|

https://api.github.com/repos/jesus-collective/mobile

|

closed

|

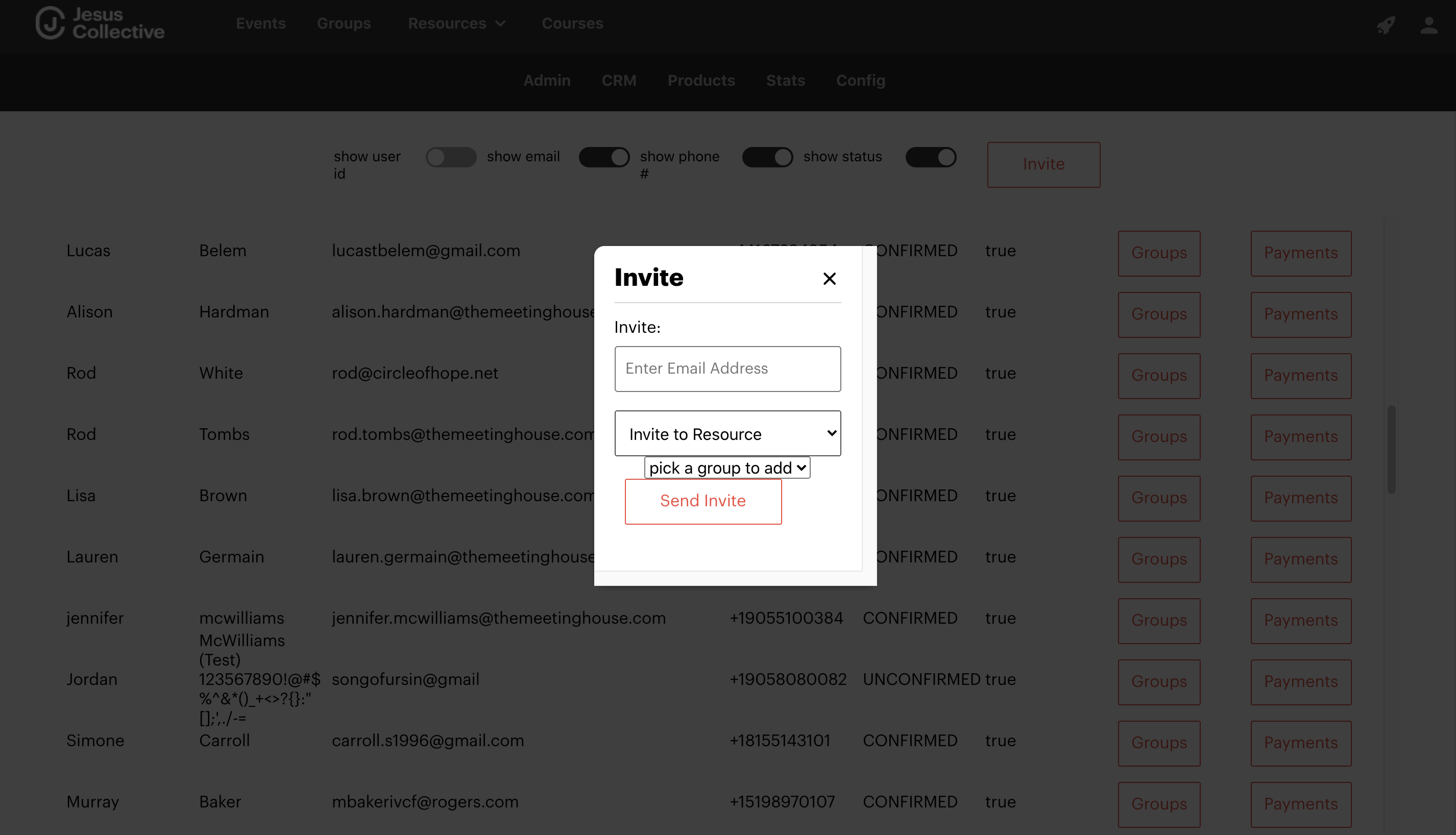

How to invite user to specific membership

|

Secondary Priority login 50-60 Hrs

|

* [ ] Allow admin user to create custom emails for different platform invites

* [ ] Allow invites to specific products

* [ ] Admin user needs to be able to connect a custom email to a product

* [ ] When a user is invited to a specific product, they face a specific product payment screen

* [ ] Admin should be able to choose whether to invite an org or an individual

┆Issue is synchronized with this [Wrike Task](https://www.wrike.com/open.htm?id=713148447)

|

1.0

|

How to invite user to specific membership - * [ ] Allow admin user to create custom emails for different platform invites

* [ ] Allow invites to specific products

* [ ] Admin user needs to be able to connect a custom email to a product

* [ ] When a user is invited to a specific product, they face a specific product payment screen

* [ ] Admin should be able to choose whether to invite an org or an individual

┆Issue is synchronized with this [Wrike Task](https://www.wrike.com/open.htm?id=713148447)

|

non_process

|

how to invite user to specific membership allow admin user to create custom emails for different platform invites allow invites to specific products admin user needs to be able to connect a custom email to a product when a user is invited to a specific product they face a specific product payment screen admin should be able to choose whether to invite an org or an individual ┆issue is synchronized with this

| 0

|

359

| 2,794,984,374

|

IssuesEvent

|

2015-05-11 19:34:21

|

scieloorg/search-journals

|

https://api.github.com/repos/scieloorg/search-journals

|

opened

|

Capacidade de remover registro inexistentes

|

Processamento Tarefa

|

Atualmente o processamento do sistema de busca não tem a capacidade de remover e otimizar os registros, portanto para que não tenhamos registros fantasma no índice é importante incorporar essas opções no processamento, essa remoção deve considera o resultado da diferença entre o article meta e o índice.

Sugiro o parâmetro ``-o`` para otimizar e o parâmetro ``-r`` para remover.

|

1.0

|

Capacidade de remover registro inexistentes - Atualmente o processamento do sistema de busca não tem a capacidade de remover e otimizar os registros, portanto para que não tenhamos registros fantasma no índice é importante incorporar essas opções no processamento, essa remoção deve considera o resultado da diferença entre o article meta e o índice.

Sugiro o parâmetro ``-o`` para otimizar e o parâmetro ``-r`` para remover.

|

process

|

capacidade de remover registro inexistentes atualmente o processamento do sistema de busca não tem a capacidade de remover e otimizar os registros portanto para que não tenhamos registros fantasma no índice é importante incorporar essas opções no processamento essa remoção deve considera o resultado da diferença entre o article meta e o índice sugiro o parâmetro o para otimizar e o parâmetro r para remover

| 1

|

237,254

| 19,604,668,958

|

IssuesEvent

|

2022-01-06 07:49:38

|

elastic/kibana

|

https://api.github.com/repos/elastic/kibana

|

closed

|

Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/transform/creation_runtime_mappings·ts - transform creation with runtime mappings batch transform with unique rt_airline_lower and sort by time and runtime mappings runs the transform and displays it correctly in Discover page

|

:ml failed-test skipped-test

|

A test failed on a tracked branch

```

Error: Timeout of 360000ms exceeded. For async tests and hooks, ensure "done()" is called; if returning a Promise, ensure it resolves. (/var/lib/buildkite-agent/builds/kb-cigroup-6-04cd8299cf7a5667/elastic/kibana-hourly/kibana/x-pack/test/functional/apps/transform/creation_runtime_mappings.ts)

at listOnTimeout (internal/timers.js:557:17)

at processTimers (internal/timers.js:500:7)

```

First failure: [CI Build](https://buildkite.com/elastic/kibana-hourly/builds/916#20f30ec4-c3c1-4444-9914-d0cbb40a8b12)

<!-- kibanaCiData = {"failed-test":{"test.class":"Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/transform/creation_runtime_mappings·ts","test.name":"transform creation with runtime mappings batch transform with unique rt_airline_lower and sort by time and runtime mappings runs the transform and displays it correctly in Discover page","test.failCount":18}} -->

|

2.0

|

Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/transform/creation_runtime_mappings·ts - transform creation with runtime mappings batch transform with unique rt_airline_lower and sort by time and runtime mappings runs the transform and displays it correctly in Discover page - A test failed on a tracked branch

```

Error: Timeout of 360000ms exceeded. For async tests and hooks, ensure "done()" is called; if returning a Promise, ensure it resolves. (/var/lib/buildkite-agent/builds/kb-cigroup-6-04cd8299cf7a5667/elastic/kibana-hourly/kibana/x-pack/test/functional/apps/transform/creation_runtime_mappings.ts)

at listOnTimeout (internal/timers.js:557:17)

at processTimers (internal/timers.js:500:7)

```

First failure: [CI Build](https://buildkite.com/elastic/kibana-hourly/builds/916#20f30ec4-c3c1-4444-9914-d0cbb40a8b12)

<!-- kibanaCiData = {"failed-test":{"test.class":"Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/transform/creation_runtime_mappings·ts","test.name":"transform creation with runtime mappings batch transform with unique rt_airline_lower and sort by time and runtime mappings runs the transform and displays it correctly in Discover page","test.failCount":18}} -->

|

non_process

|

failing test chrome x pack ui functional tests x pack test functional apps transform creation runtime mappings·ts transform creation with runtime mappings batch transform with unique rt airline lower and sort by time and runtime mappings runs the transform and displays it correctly in discover page a test failed on a tracked branch error timeout of exceeded for async tests and hooks ensure done is called if returning a promise ensure it resolves var lib buildkite agent builds kb cigroup elastic kibana hourly kibana x pack test functional apps transform creation runtime mappings ts at listontimeout internal timers js at processtimers internal timers js first failure

| 0

|

20,439

| 27,099,901,118

|

IssuesEvent

|

2023-02-15 07:45:23

|

qgis/QGIS

|

https://api.github.com/repos/qgis/QGIS

|

closed

|

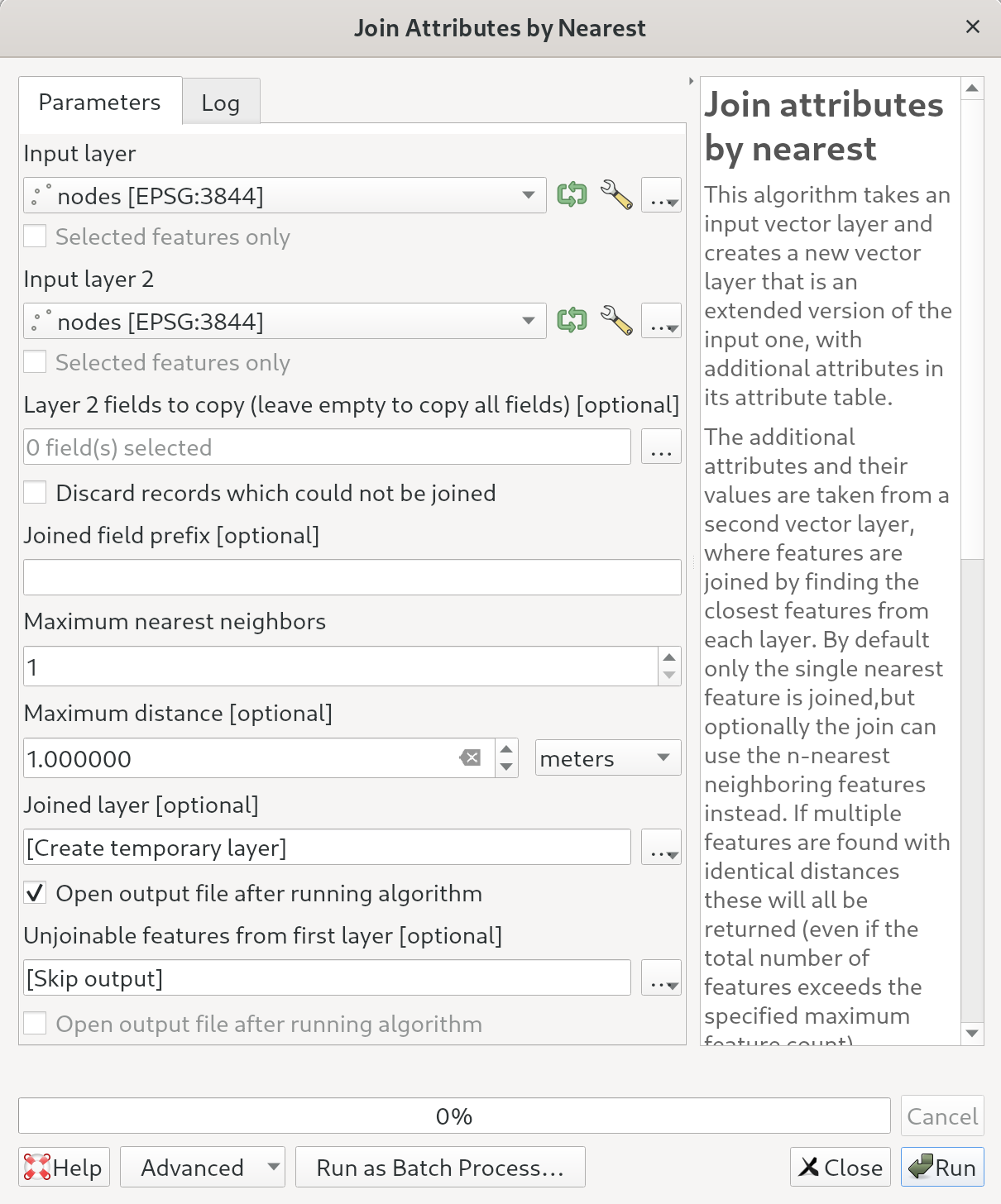

"Join by nearest" regression on 3.28 (from 3.22) when multiple geometry colums are present

|

Processing Regression Bug

|

### What is the bug or the crash?

Having a nodes layer with multiple geometries I usually did on 3.22 LTR the join by nearest algorithm to find out nodes that are closer than 0.003 m.

With 3.28.2 there's an error now:

```

Feature could not be written to Joined_layer_.....: Could not store attribute "geometry_alt1": Could not convert value "" to target type "string"

Could not write feature into OUTPUT

Execution failed after 0.11 seconds

```

### Steps to reproduce the issue

1. Add the following SQL to your PostgreSQL database:

```

CREATE TABLE public.nodes (

id integer NOT NULL,

fk_district integer,

fk_pressurezone integer,

fk_printmap integer[],

fk_precision integer,

fk_precisionalti integer,

fk_object_reference integer,

altitude numeric(10,3) DEFAULT NULL::numeric,

_printmaps text,

_geometry_alt1_used boolean,

_geometry_alt2_used boolean,

_pipe_orientation double precision DEFAULT 0,

_pipe_schema_visible boolean DEFAULT false,

geometry public.geometry(PointZ,3844) NOT NULL,

geometry_alt1 public.geometry(PointZ,3844),

geometry_alt2 public.geometry(PointZ,3844)

);

INSERT INTO public.nodes VALUES (58957, NULL, NULL, '{}', 101, 101, 101, NULL, NULL, false, false, -8.627881391886133, true, '01010000A0040F00000000000018451B4100000000044018410000000000000000', NULL, NULL);

INSERT INTO public.nodes VALUES (58971, NULL, NULL, '{}', 101, 101, 101, NULL, NULL, false, false, -18.95382931168005, true, '01010000A0040F00000000000018451B4100000000084018410000000000000000', NULL, NULL);

ALTER TABLE ONLY public.nodes

ADD CONSTRAINT node_pkey PRIMARY KEY (id);

CREATE INDEX node_geoidx ON public.nodes USING gist (geometry);

CREATE INDEX node_geoidx_alt1 ON public.nodes USING gist (geometry_alt1);

CREATE INDEX node_geoidx_alt2 ON public.nodes USING gist (geometry_alt2);

```

2. Run the join by nearest algo:

3. The error is shown:

```

Feature could not be written to Joined_layer_.....: Could not store attribute "geometry_alt1": Could not convert value "" to target type "string"

Could not write feature into OUTPUT

Execution failed after 0.11 seconds

```

### Versions

<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.0//EN" "http://www.w3.org/TR/REC-html40/strict.dtd">

<html><head><meta http-equiv="Content-Type" content="text/html; charset=utf-8" /><style type="text/css">

p, li { white-space: pre-wrap; }

</style></head><body>

QGIS version | 3.28.2-Firenze | QGIS code revision | d33b8750f3

-- | -- | -- | --

Qt version | 5.15.8

Python version | 3.11.1

GDAL/OGR version | 3.5.2

PROJ version | 9.0.1

EPSG Registry database version | v10.064 (2022-05-19)

GEOS version | 3.11.0-CAPI-1.17.0

SQLite version | 3.40.0

PDAL version | 2.4.3

PostgreSQL client version | unknown

SpatiaLite version | 5.0.1

QWT version | 6.1.5

QScintilla2 version | 2.13.0

OS version | Fedora Linux 37 (Thirty Seven)

| | |

Active Python plugins

slyr_community | 4.0.6

QuickWKT | 3.1

project_export_inspire | 1.0.0

water_topology | 0.1

plugin_reloader | 0.9.3

db_manager | 0.1.20

processing | 2.12.99

sagaprovider | 2.12.99

grassprovider | 2.12.99

MetaSearch | 0.3.6

</body></html>QGIS version

3.28.2-Firenze

QGIS code revision

[d33b8750f3](https://github.com/qgis/QGIS/commit/d33b8750f3)

Qt version

5.15.8

Python version

3.11.1

GDAL/OGR version

3.5.2

PROJ version

9.0.1

EPSG Registry database version

v10.064 (2022-05-19)

GEOS version

3.11.0-CAPI-1.17.0

SQLite version

3.40.0

PDAL version

2.4.3

PostgreSQL client version

unknown

SpatiaLite version

5.0.1

QWT version

6.1.5

QScintilla2 version

2.13.0

OS version

Fedora Linux 37 (Thirty Seven)

Active Python plugins

slyr_community

4.0.6

QuickWKT

3.1

project_export_inspire

1.0.0

water_topology

0.1

plugin_reloader

0.9.3

db_manager

0.1.20

processing

2.12.99

sagaprovider

2.12.99

grassprovider

2.12.99

MetaSearch

0.3.6

### Supported QGIS version

- [X] I'm running a supported QGIS version according to the roadmap.

### New profile

- [X] I tried with a new QGIS profile

### Additional context

_No response_

|

1.0

|

"Join by nearest" regression on 3.28 (from 3.22) when multiple geometry colums are present - ### What is the bug or the crash?