| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

21,030

| 27,969,937,306

|

IssuesEvent

|

2023-03-25 00:20:06

|

darktable-org/darktable

|

https://api.github.com/repos/darktable-org/darktable

|

closed

|

New blending layers mode

|

feature: new scope: image processing no-issue-activity

|

Is it possible to add a value+saturation and lightness+chroma blend mode?

Basically this copies the hue from the bottom layer

|

1.0

|

New blending layers mode - Is it possible to add a value+saturation and lightness+chroma blend mode?

Basically this copies the hue from the bottom layer

|

process

|

new blending layers mode is it possible to add a value saturation and lightness chroma blend mode basically this copies the hue from the bottom layer

| 1

|

216,619

| 7,310,207,563

|

IssuesEvent

|

2018-02-28 14:23:00

|

AmpersandTarski/Ampersand

|

https://api.github.com/repos/AmpersandTarski/Ampersand

|

opened

|

MYSQL error 1069: Too many keys specified; max 64 keys allowed in query

|

bug component:prototype generator priority:high

|

Consider the following script:

~~~

CONTEXT Issue758

r1 :: C * C1 [UNI] r2 :: C * C2 [UNI] r3 :: C * C3 [UNI] r4 :: C * C4 [UNI] r5 :: C * C5 [UNI]

r6 :: C * C6 [UNI] r7 :: C * C7 [UNI] r8 :: C * C8 [UNI] r9 :: C * C9 [UNI] r10 :: C * C0 [UNI]

r11 :: C * C1 [UNI] r12 :: C * C2 [UNI] r13 :: C * C3 [UNI] r14 :: C * C4 [UNI] r15 :: C * C5 [UNI]

r16 :: C * C6 [UNI] r17 :: C * C7 [UNI] r18 :: C * C8 [UNI] r19 :: C * C9 [UNI] r20 :: C * C0 [UNI]

r21 :: C * C1 [UNI] r22 :: C * C2 [UNI] r23 :: C * C3 [UNI] r24 :: C * C4 [UNI] r25 :: C * C5 [UNI]

r26 :: C * C6 [UNI] r27 :: C * C7 [UNI] r28 :: C * C8 [UNI] r29 :: C * C9 [UNI] r30 :: C * C0 [UNI]

r31 :: C * C1 [UNI] r32 :: C * C2 [UNI] r33 :: C * C3 [UNI] r34 :: C * C4 [UNI] r35 :: C * C5 [UNI]

r36 :: C * C6 [UNI] r37 :: C * C7 [UNI] r38 :: C * C8 [UNI] r39 :: C * C9 [UNI] r40 :: C * C0 [UNI]

r41 :: C * C1 [UNI] r42 :: C * C2 [UNI] r43 :: C * C3 [UNI] r44 :: C * C4 [UNI] r45 :: C * C5 [UNI]

r46 :: C * C6 [UNI] r47 :: C * C7 [UNI] r48 :: C * C8 [UNI] r49 :: C * C9 [UNI] r50 :: C * C0 [UNI]

r51 :: C * C1 [UNI] r52 :: C * C2 [UNI] r53 :: C * C3 [UNI] r54 :: C * C4 [UNI] r55 :: C * C5 [UNI]

r56 :: C * C6 [UNI] r57 :: C * C7 [UNI] r58 :: C * C8 [UNI] r59 :: C * C9 [UNI] r60 :: C * C0 [UNI]

r61 :: C * C1 [UNI] r62 :: C * C2 [UNI] r63 :: C * C3 [UNI] r64 :: C * C4 [UNI] r65 :: C * C0 [UNI]

ENDCONTEXT

~~~

If I compile it with Ampersand-v3.9.1 [development:b051de7], the database is not created properly. In the ERROR log, I see lines such as:

~~~

[2018-02-28 15:06:45] API.ERROR: MYSQL error 1069: Too many keys specified; max 64 keys allowed in query:CREATE INDEX "C_r63" ON "C" ("r63") [] []

~~~

As a consequence, applications that have this many (univalent) relations will not run. This currently happens in projects that we do, where we need to create simple/flat forms, and as a consequence, there are many relations that have SRC=Form and TGT=SomeOtherConcept.

A (cumbersome) workaround for this is to remove `CREATE INDEX` statements from `mysql-installer.json`, such that for every concept, no more than 63 (or 64) keys are left over. After all, these keys seem to only affect performance.

It would be nice if the generator would not generate more than these 63/64 keys, so that such applications would (continue to) run.

|

1.0

|

MYSQL error 1069: Too many keys specified; max 64 keys allowed in query - Consider the following script:

~~~

CONTEXT Issue758

r1 :: C * C1 [UNI] r2 :: C * C2 [UNI] r3 :: C * C3 [UNI] r4 :: C * C4 [UNI] r5 :: C * C5 [UNI]

r6 :: C * C6 [UNI] r7 :: C * C7 [UNI] r8 :: C * C8 [UNI] r9 :: C * C9 [UNI] r10 :: C * C0 [UNI]

r11 :: C * C1 [UNI] r12 :: C * C2 [UNI] r13 :: C * C3 [UNI] r14 :: C * C4 [UNI] r15 :: C * C5 [UNI]

r16 :: C * C6 [UNI] r17 :: C * C7 [UNI] r18 :: C * C8 [UNI] r19 :: C * C9 [UNI] r20 :: C * C0 [UNI]

r21 :: C * C1 [UNI] r22 :: C * C2 [UNI] r23 :: C * C3 [UNI] r24 :: C * C4 [UNI] r25 :: C * C5 [UNI]

r26 :: C * C6 [UNI] r27 :: C * C7 [UNI] r28 :: C * C8 [UNI] r29 :: C * C9 [UNI] r30 :: C * C0 [UNI]

r31 :: C * C1 [UNI] r32 :: C * C2 [UNI] r33 :: C * C3 [UNI] r34 :: C * C4 [UNI] r35 :: C * C5 [UNI]

r36 :: C * C6 [UNI] r37 :: C * C7 [UNI] r38 :: C * C8 [UNI] r39 :: C * C9 [UNI] r40 :: C * C0 [UNI]

r41 :: C * C1 [UNI] r42 :: C * C2 [UNI] r43 :: C * C3 [UNI] r44 :: C * C4 [UNI] r45 :: C * C5 [UNI]

r46 :: C * C6 [UNI] r47 :: C * C7 [UNI] r48 :: C * C8 [UNI] r49 :: C * C9 [UNI] r50 :: C * C0 [UNI]

r51 :: C * C1 [UNI] r52 :: C * C2 [UNI] r53 :: C * C3 [UNI] r54 :: C * C4 [UNI] r55 :: C * C5 [UNI]

r56 :: C * C6 [UNI] r57 :: C * C7 [UNI] r58 :: C * C8 [UNI] r59 :: C * C9 [UNI] r60 :: C * C0 [UNI]

r61 :: C * C1 [UNI] r62 :: C * C2 [UNI] r63 :: C * C3 [UNI] r64 :: C * C4 [UNI] r65 :: C * C0 [UNI]

ENDCONTEXT

~~~

If I compile it with Ampersand-v3.9.1 [development:b051de7], the database is not created properly. In the ERROR log, I see lines such as:

~~~

[2018-02-28 15:06:45] API.ERROR: MYSQL error 1069: Too many keys specified; max 64 keys allowed in query:CREATE INDEX "C_r63" ON "C" ("r63") [] []

~~~

As a consequence, applications that have this many (univalent) relations will not run. This currently happens in projects that we do, where we need to create simple/flat forms, and as a consequence, there are many relations that have SRC=Form and TGT=SomeOtherConcept.

A (cumbersome) workaround for this is to remove `CREATE INDEX` statements from `mysql-installer.json`, such that for every concept, no more than 63 (or 64) keys are left over. After all, these keys seem to only affect performance.

It would be nice if the generator would not generate more than these 63/64 keys, so that such applications would (continue to) run.

|

non_process

|

mysql error too many keys specified max keys allowed in query consider the following script context c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c c endcontext if i compile it with ampersand the database is not created properly in the error log i see lines such as api error mysql error too many keys specified max keys allowed in query create index c on c as a consequence applications that have this many univalent relations will not run this currently happens in projects that we do where we need to create simple flat forms and as a consequence there are many relations that have src form and tgt someotherconcept a cumbersome workaround for this is to remove create index statements from mysql installer json such that for every concept no more than or keys are left over after all these keys seem to only affect performance it would be nice if the generator would not generate more than these keys so that such applications would continue to run

| 0

|

302,468

| 22,825,308,226

|

IssuesEvent

|

2022-07-12 08:03:36

|

livepeer/livepeer-studio-docs

|

https://api.github.com/repos/livepeer/livepeer-studio-docs

|

closed

|

Fix -- “About Streaming Protocols” links to MistServer

|

documentation

|

“About Streaming Protocols” links to MistServer with no context.

|

1.0

|

Fix -- “About Streaming Protocols” links to MistServer - “About Streaming Protocols” links to MistServer with no context.

|

non_process

|

fix “about streaming protocols” links to mistserver “about streaming protocols” links to mistserver with no context

| 0

|

370,969

| 10,959,472,248

|

IssuesEvent

|

2019-11-27 11:28:52

|

kubernetes-sigs/azuredisk-csi-driver

|

https://api.github.com/repos/kubernetes-sigs/azuredisk-csi-driver

|

opened

|

incremental snapshot support

|

kind/feature priority/important-longterm

|

**Is your feature request related to a problem? Please describe.**

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

**Describe the solution you'd like in detail**

<!-- A clear and concise description of what you want to happen. -->

Current snapshot is full snapshot, incremental snapshot is in Preview, we should support that in near future.

https://azure.microsoft.com/en-us/blog/introducing-cost-effective-increment-snapshots-of-azure-managed-disks-in-preview/

**Describe alternatives you've considered**

<!-- A clear and concise description of any alternative solutions or features you've considered. -->

**Additional context**

<!-- Add any other context or screenshots about the feature request here. -->

|

1.0

|

incremental snapshot support - **Is your feature request related to a problem? Please describe.**

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

**Describe the solution you'd like in detail**

<!-- A clear and concise description of what you want to happen. -->

Current snapshot is full snapshot, incremental snapshot is in Preview, we should support that in near future.

https://azure.microsoft.com/en-us/blog/introducing-cost-effective-increment-snapshots-of-azure-managed-disks-in-preview/

**Describe alternatives you've considered**

<!-- A clear and concise description of any alternative solutions or features you've considered. -->

**Additional context**

<!-- Add any other context or screenshots about the feature request here. -->

|

non_process

|

incremental snapshot support is your feature request related to a problem please describe describe the solution you d like in detail current snapshot is full snapshot incremental snapshot is in preview we should support that in near future describe alternatives you ve considered additional context

| 0

|

4,514

| 3,870,587,897

|

IssuesEvent

|

2016-04-11 05:02:20

|

lionheart/openradar-mirror

|

https://api.github.com/repos/lionheart/openradar-mirror

|

opened

|

22881933: Siri taxes users with initial delay only when headphones are plugged in

|

classification:ui/usability reproducible:always status:open

|

#### Description

Summary:

I just discovered and celebrated (http://bitsplitting.org/2015/09/28/if-it-aint-fixed-break-it/) the fact that with iPhone 6s, Siri's ability to listen instantly means that there is no longer even a need for an audible or vibration signal that it's ready to process speech. Users can just press the button and start speaking instantly, and it works. And it's awesome.

One gotcha I've run into though, is in the particular case that headphones are plugged in, my phone DOES give a moment's delay before processing Siri instructions, and also plays an audible chime when it's ready. I can't tell if it's the (unnecessary?) chime that is itself delaying Siri's ability to process, or if the fact that headphones are installed somehow defies Siri's ability to be instantly attentive. In any case, this is an unfortunate penalty for using headphones, and destroys the otherwise admirable universal ability to count on Siri to be attentive immediately upon pressing the home button.

Steps to Reproduce:

1. Plug in headphones to an iPhone 6s.

2. Press and hold the home button while IMMEDIATELY asking Siri something e.g. "What time is it?"

Expected Results:

Siri should process and handle the request "What time is it?"

Actual Results:

Siri only gets the tail end of the request, if any, depending on how quickly the chime has been played. In my tests, it often just ends up in "OK, go ahead and ask me something" mode, having totally lost the spoken sentence.

Version:

iOS 9.0.1

Notes:

NOTE: Even if the phone is not silenced, pressing and holding the home button doesn't cause a chime, and doesn't impede Siri's immediate processing of spoken instructions.

Configuration:

iPhone 6s Verizon 128GB.

Attachments:

-

Product Version: 9.0.1

Created: 2015-09-28T18:20:59.147980

Originated: 2015-09-28T14:13:00

Open Radar Link: http://www.openradar.me/22881933

|

True

|

22881933: Siri taxes users with initial delay only when headphones are plugged in - #### Description

Summary:

I just discovered and celebrated (http://bitsplitting.org/2015/09/28/if-it-aint-fixed-break-it/) the fact that with iPhone 6s, Siri's ability to listen instantly means that there is no longer even a need for an audible or vibration signal that it's ready to process speech. Users can just press the button and start speaking instantly, and it works. And it's awesome.

One gotcha I've run into though, is in the particular case that headphones are plugged in, my phone DOES give a moment's delay before processing Siri instructions, and also plays an audible chime when it's ready. I can't tell if it's the (unnecessary?) chime that is itself delaying Siri's ability to process, or if the fact that headphones are installed somehow defies Siri's ability to be instantly attentive. In any case, this is an unfortunate penalty for using headphones, and destroys the otherwise admirable universal ability to count on Siri to be attentive immediately upon pressing the home button.

Steps to Reproduce:

1. Plug in headphones to an iPhone 6s.

2. Press and hold the home button while IMMEDIATELY asking Siri something e.g. "What time is it?"

Expected Results:

Siri should process and handle the request "What time is it?"

Actual Results:

Siri only gets the tail end of the request, if any, depending on how quickly the chime has been played. In my tests, it often just ends up in "OK, go ahead and ask me something" mode, having totally lost the spoken sentence.

Version:

iOS 9.0.1

Notes:

NOTE: Even if the phone is not silenced, pressing and holding the home button doesn't cause a chime, and doesn't impede Siri's immediate processing of spoken instructions.

Configuration:

iPhone 6s Verizon 128GB.

Attachments:

-

Product Version: 9.0.1

Created: 2015-09-28T18:20:59.147980

Originated: 2015-09-28T14:13:00

Open Radar Link: http://www.openradar.me/22881933

|

non_process

|

siri taxes users with initial delay only when headphones are plugged in description summary i just discovered and celebrated the fact that with iphone siri s ability to listen instantly means that there is no longer even a need for an audible or vibration signal that it s ready to process speech users can just press the button and start speaking instantly and it works and it s awesome one gotcha i ve run into though is in the particular case that headphones are plugged in my phone does give a moment s delay before processing siri instructions and also plays an audible chime when it s ready i can t tell if it s the unnecessary chime that is itself delaying siri s ability to process or if the fact that headphones are installed somehow defies siri s ability to be instantly attentive in any case this is an unfortunate penalty for using headphones and destroys the otherwise admirable universal ability to count on siri to be attentive immediately upon pressing the home button steps to reproduce plug in headphones to an iphone press and hold the home button while immediately asking siri something e g what time is it expected results siri should process and handle the request what time is it actual results siri only gets the tail end of the request if any depending on how quickly the chime has been played in my tests it often just ends up in ok go ahead and ask me something mode having totally lost the spoken sentence version ios notes note even if the phone is not silenced pressing and holding the home button doesn t cause a chime and doesn t impede siri s immediate processing of spoken instructions configuration iphone verizon attachments product version created originated open radar link

| 0

|

2,543

| 5,300,988,853

|

IssuesEvent

|

2017-02-10 07:53:28

|

mitchellh/packer

|

https://api.github.com/repos/mitchellh/packer

|

closed

|

Add an "inline" option for passing Vagrantfile template to Vagrant post-processor

|

enhancement post-processor/vagrant

|

This outlines a possible enhancement to the vagrant post-processor.

Just like the shell provisioner/post-processor, it would be really nice to have the option to pass an "inline" vagrantfile_template configuration option to the vagrant post-processor instead of an external file.

The use case for this would be to try to create a more self-contained packer template and try to minimize the number of external files needed to build a box.

|

1.0

|

Add an "inline" option for passing Vagrantfile template to Vagrant post-processor - This outlines a possible enhancement to the vagrant post-processor.

Just like the shell provisioner/post-processor, it would be really nice to have the option to pass an "inline" vagrantfile_template configuration option to the vagrant post-processor instead of an external file.

The use case for this would be to try to create a more self-contained packer template and try to minimize the number of external files needed to build a box.

|

process

|

add an inline option for passing vagrantfile template to vagrant post processor this outlines a possible enhancement to the vagrant post processor just like the shell provisioner post processor it would be really nice to have the option to pass an inline vagrantfile template configuration option to the vagrant post processor instead of an external file the use case for this would be to try to create a more self contained packer template and try to minimize the number of external files needed to build a box

| 1

|

66,595

| 16,658,542,509

|

IssuesEvent

|

2021-06-06 00:25:21

|

spack/spack

|

https://api.github.com/repos/spack/spack

|

closed

|

veloc 1.4, 1.3: build fails: transfer_module.cpp: too many arguments to function 'int AXL_Init()'

|

build-error e4s ecp

|

`veloc@1.4` (and `@1.3`) fails to build using:

* spack@develop (087110bcb013566f6ba392d4c271e891f4b3a2b1 from `Thu Apr 29 16:43:01 2021 +0200`)

* Ubuntu 20.04 - GCC 9.3.0

* Ubuntu 18.04 - GCC 7.5.0

* RHEL 8 - GCC 8.3.1

* RHEL 7 - GCC 9.3.0

Using container: `ecpe4s/ubuntu20.04-runner-x86_64:2021-03-10`

Concrete spec: [veloc-oqsntu.spec.yaml.txt](https://github.com/spack/spack/files/6400144/veloc-oqsntu.spec.yaml.txt)

Build log: [veloc-build-out.txt](https://github.com/spack/spack/files/6400165/veloc-build-out.txt)

```

$> spack mirror add E4S https://cache.e4s.io

$> spack buildcache keys -it

$> spack install --cache-only --only dependencies --include-build-deps -f ./veloc-oqsntu.spec.yaml

... OK

$> spack install --no-cache -f ./veloc-oqsntu.spec.yaml

...

==> Installing veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y

==> Fetching https://spack-llnl-mirror.s3-us-west-2.amazonaws.com/_source-cache/archive/d5/d5d12aedb9e97f079c4428aaa486bfa4e31fe1db547e103c52e76c8ec906d0a8.zip

############################################################################################################################################################################################ 100.0%

==> No patches needed for veloc

==> veloc: Executing phase: 'cmake'

==> veloc: Executing phase: 'build'

==> Error: ProcessError: Command exited with status 2:

'make' '-j16'

4 errors found in build log:

75 [ 47%] Linking C executable heatdis_original

76 cd /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-build-oqsntu5/test && /opt/spack/opt/spack/linux-ubuntu20.04-x86_64/gcc-9.3.0/cmake-3.19.7-7zkgd

4xkg62fl5x2upq4mof5dkkkg3u4/bin/cmake -E cmake_link_script CMakeFiles/heatdis_original.dir/link.txt --verbose=1

77 /opt/spack/lib/spack/env/gcc/gcc -O2 -g -DNDEBUG CMakeFiles/heatdis_original.dir/heatdis_original.c.o -o heatdis_original -Wl,-rpath,/opt/spack/opt/spack/linux-ubuntu20.04-x86_64/gc

c-9.3.0/mpich-3.4.1-hm77n22t37spis2wa4wssqtmqnvuhfz6/lib -lm /opt/spack/opt/spack/linux-ubuntu20.04-x86_64/gcc-9.3.0/mpich-3.4.1-hm77n22t37spis2wa4wssqtmqnvuhfz6/lib/libmpi.so

78 make[2]: Leaving directory '/tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-build-oqsntu5'

79 [ 47%] Built target heatdis_original

80 /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.cpp: In constructor 'transfer_module_t::transfer_module_t(const con

fig_t&)':

>> 81 /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.cpp:54:28: error: too many arguments to function 'int AXL_Init()'

82 54 | int ret = AXL_Init(NULL);

83 | ^

84 In file included from /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.hpp:12,

85 from /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.cpp:1:

86 /opt/spack/opt/spack/linux-ubuntu20.04-x86_64/gcc-9.3.0/axl-0.4.0-kv7mn663t4uj5aw6ssv26zgfzzgt3xev/include/axl.h:58:5: note: declared here

87 58 | int AXL_Init (void);

88 | ^~~~~~~~

89 /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.cpp: In function 'int axl_transfer_file(axl_xfer_t, const string&,

const string&)':

>> 90 /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.cpp:68:45: error: too few arguments to function 'int AXL_Create(axl

_xfer_t, const char*, const char*)'

91 68 | int id = AXL_Create(type, source.c_str());

92 | ^

93 In file included from /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.hpp:12,

94 from /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.cpp:1:

95 /opt/spack/opt/spack/linux-ubuntu20.04-x86_64/gcc-9.3.0/axl-0.4.0-kv7mn663t4uj5aw6ssv26zgfzzgt3xev/include/axl.h:73:5: note: declared here

96 73 | int AXL_Create (axl_xfer_t xtype, const char* name, const char* state_file);

97 | ^~~~~~~~~~

>> 98 make[2]: *** [src/modules/CMakeFiles/veloc-modules.dir/build.make:111: src/modules/CMakeFiles/veloc-modules.dir/transfer_module.cpp.o] Error 1

99 make[2]: *** Waiting for unfinished jobs....

100 make[2]: Leaving directory '/tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-build-oqsntu5'

>> 101 make[1]: *** [CMakeFiles/Makefile2:281: src/modules/CMakeFiles/veloc-modules.dir/all] Error 2

102 make[1]: Leaving directory '/tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-build-oqsntu5'

103 make: *** [Makefile:163: all] Error 2

See build log for details:

/tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-build-out.txt

```

@gonsie

|

1.0

|

veloc 1.4, 1.3: build fails: transfer_module.cpp: too many arguments to function 'int AXL_Init()' - `veloc@1.4` (and `@1.3`) fails to build using:

* spack@develop (087110bcb013566f6ba392d4c271e891f4b3a2b1 from `Thu Apr 29 16:43:01 2021 +0200`)

* Ubuntu 20.04 - GCC 9.3.0

* Ubuntu 18.04 - GCC 7.5.0

* RHEL 8 - GCC 8.3.1

* RHEL 7 - GCC 9.3.0

Using container: `ecpe4s/ubuntu20.04-runner-x86_64:2021-03-10`

Concrete spec: [veloc-oqsntu.spec.yaml.txt](https://github.com/spack/spack/files/6400144/veloc-oqsntu.spec.yaml.txt)

Build log: [veloc-build-out.txt](https://github.com/spack/spack/files/6400165/veloc-build-out.txt)

```

$> spack mirror add E4S https://cache.e4s.io

$> spack buildcache keys -it

$> spack install --cache-only --only dependencies --include-build-deps -f ./veloc-oqsntu.spec.yaml

... OK

$> spack install --no-cache -f ./veloc-oqsntu.spec.yaml

...

==> Installing veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y

==> Fetching https://spack-llnl-mirror.s3-us-west-2.amazonaws.com/_source-cache/archive/d5/d5d12aedb9e97f079c4428aaa486bfa4e31fe1db547e103c52e76c8ec906d0a8.zip

############################################################################################################################################################################################ 100.0%

==> No patches needed for veloc

==> veloc: Executing phase: 'cmake'

==> veloc: Executing phase: 'build'

==> Error: ProcessError: Command exited with status 2:

'make' '-j16'

4 errors found in build log:

75 [ 47%] Linking C executable heatdis_original

76 cd /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-build-oqsntu5/test && /opt/spack/opt/spack/linux-ubuntu20.04-x86_64/gcc-9.3.0/cmake-3.19.7-7zkgd

4xkg62fl5x2upq4mof5dkkkg3u4/bin/cmake -E cmake_link_script CMakeFiles/heatdis_original.dir/link.txt --verbose=1

77 /opt/spack/lib/spack/env/gcc/gcc -O2 -g -DNDEBUG CMakeFiles/heatdis_original.dir/heatdis_original.c.o -o heatdis_original -Wl,-rpath,/opt/spack/opt/spack/linux-ubuntu20.04-x86_64/gc

c-9.3.0/mpich-3.4.1-hm77n22t37spis2wa4wssqtmqnvuhfz6/lib -lm /opt/spack/opt/spack/linux-ubuntu20.04-x86_64/gcc-9.3.0/mpich-3.4.1-hm77n22t37spis2wa4wssqtmqnvuhfz6/lib/libmpi.so

78 make[2]: Leaving directory '/tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-build-oqsntu5'

79 [ 47%] Built target heatdis_original

80 /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.cpp: In constructor 'transfer_module_t::transfer_module_t(const con

fig_t&)':

>> 81 /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.cpp:54:28: error: too many arguments to function 'int AXL_Init()'

82 54 | int ret = AXL_Init(NULL);

83 | ^

84 In file included from /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.hpp:12,

85 from /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.cpp:1:

86 /opt/spack/opt/spack/linux-ubuntu20.04-x86_64/gcc-9.3.0/axl-0.4.0-kv7mn663t4uj5aw6ssv26zgfzzgt3xev/include/axl.h:58:5: note: declared here

87 58 | int AXL_Init (void);

88 | ^~~~~~~~

89 /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.cpp: In function 'int axl_transfer_file(axl_xfer_t, const string&,

const string&)':

>> 90 /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.cpp:68:45: error: too few arguments to function 'int AXL_Create(axl

_xfer_t, const char*, const char*)'

91 68 | int id = AXL_Create(type, source.c_str());

92 | ^

93 In file included from /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.hpp:12,

94 from /tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-src/src/modules/transfer_module.cpp:1:

95 /opt/spack/opt/spack/linux-ubuntu20.04-x86_64/gcc-9.3.0/axl-0.4.0-kv7mn663t4uj5aw6ssv26zgfzzgt3xev/include/axl.h:73:5: note: declared here

96 73 | int AXL_Create (axl_xfer_t xtype, const char* name, const char* state_file);

97 | ^~~~~~~~~~

>> 98 make[2]: *** [src/modules/CMakeFiles/veloc-modules.dir/build.make:111: src/modules/CMakeFiles/veloc-modules.dir/transfer_module.cpp.o] Error 1

99 make[2]: *** Waiting for unfinished jobs....

100 make[2]: Leaving directory '/tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-build-oqsntu5'

>> 101 make[1]: *** [CMakeFiles/Makefile2:281: src/modules/CMakeFiles/veloc-modules.dir/all] Error 2

102 make[1]: Leaving directory '/tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-build-oqsntu5'

103 make: *** [Makefile:163: all] Error 2

See build log for details:

/tmp/root/spack-stage/spack-stage-veloc-1.4-oqsntu54uhbqae6uw3vlvkdbxzzaet5y/spack-build-out.txt

```

@gonsie

|

non_process

|

veloc build fails transfer module cpp too many arguments to function int axl init veloc and fails to build using spack develop from thu apr ubuntu gcc ubuntu gcc rhel gcc rhel gcc using container runner concrete spec build log spack mirror add spack buildcache keys it spack install cache only only dependencies include build deps f veloc oqsntu spec yaml ok spack install no cache f veloc oqsntu spec yaml installing veloc fetching no patches needed for veloc veloc executing phase cmake veloc executing phase build error processerror command exited with status make errors found in build log linking c executable heatdis original cd tmp root spack stage spack stage veloc spack build test opt spack opt spack linux gcc cmake bin cmake e cmake link script cmakefiles heatdis original dir link txt verbose opt spack lib spack env gcc gcc g dndebug cmakefiles heatdis original dir heatdis original c o o heatdis original wl rpath opt spack opt spack linux gc c mpich lib lm opt spack opt spack linux gcc mpich lib libmpi so make leaving directory tmp root spack stage spack stage veloc spack build built target heatdis original tmp root spack stage spack stage veloc spack src src modules transfer module cpp in constructor transfer module t transfer module t const con fig t tmp root spack stage spack stage veloc spack src src modules transfer module cpp error too many arguments to function int axl init int ret axl init null in file included from tmp root spack stage spack stage veloc spack src src modules transfer module hpp from tmp root spack stage spack stage veloc spack src src modules transfer module cpp opt spack opt spack linux gcc axl include axl h note declared here int axl init void tmp root spack stage spack stage veloc spack src src modules transfer module cpp in function int axl transfer file axl xfer t const string const string tmp root spack stage spack stage veloc spack src src modules transfer module cpp error too few arguments to function int axl create axl xfer t 
const char const char int id axl create type source c str in file included from tmp root spack stage spack stage veloc spack src src modules transfer module hpp from tmp root spack stage spack stage veloc spack src src modules transfer module cpp opt spack opt spack linux gcc axl include axl h note declared here int axl create axl xfer t xtype const char name const char state file make error make waiting for unfinished jobs make leaving directory tmp root spack stage spack stage veloc spack build make error make leaving directory tmp root spack stage spack stage veloc spack build make error see build log for details tmp root spack stage spack stage veloc spack build out txt gonsie

| 0

|

251,365

| 8,014,171,160

|

IssuesEvent

|

2018-07-25 04:56:45

|

wso2/devstudio-tooling-ei

|

https://api.github.com/repos/wso2/devstudio-tooling-ei

|

closed

|

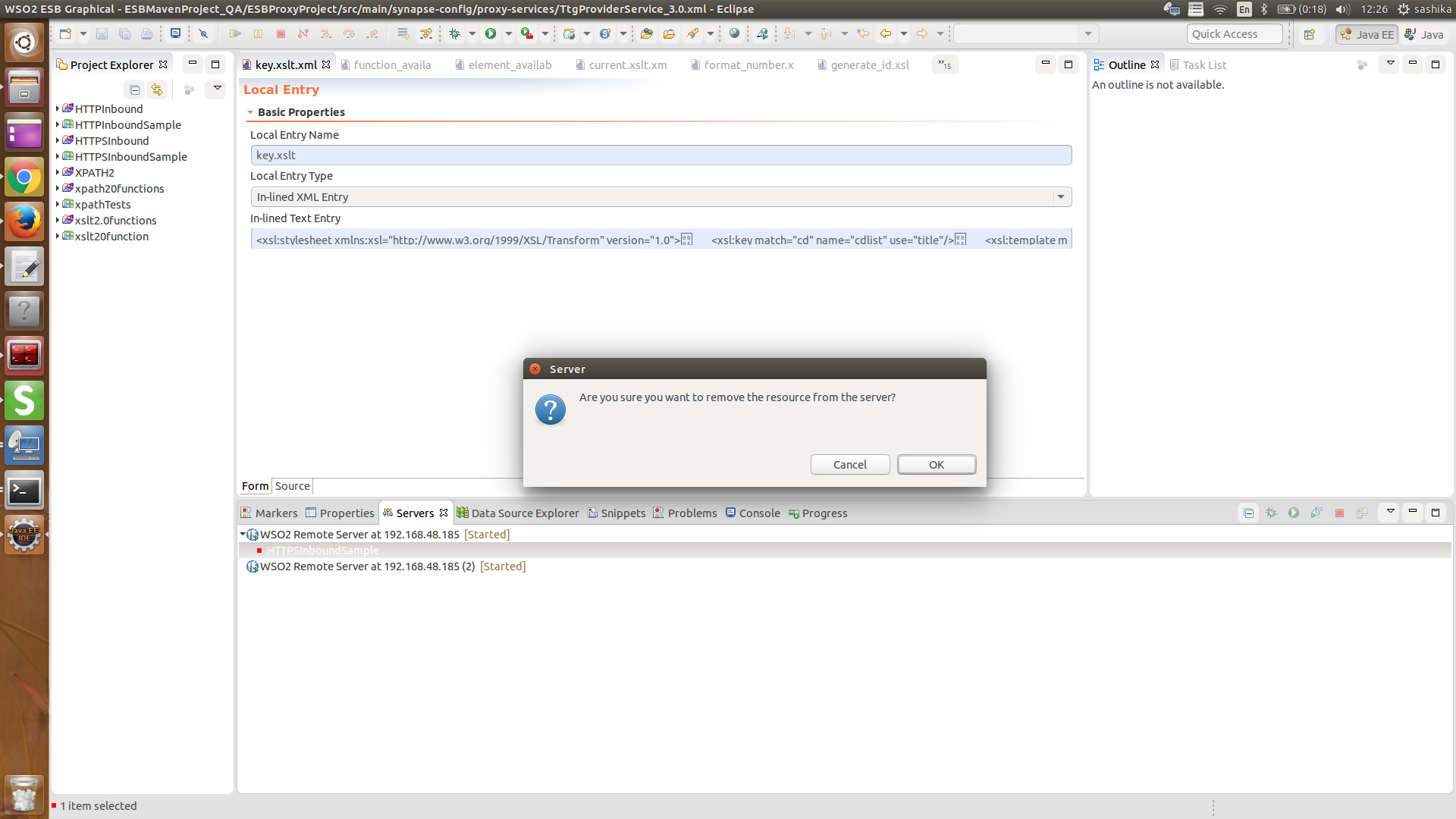

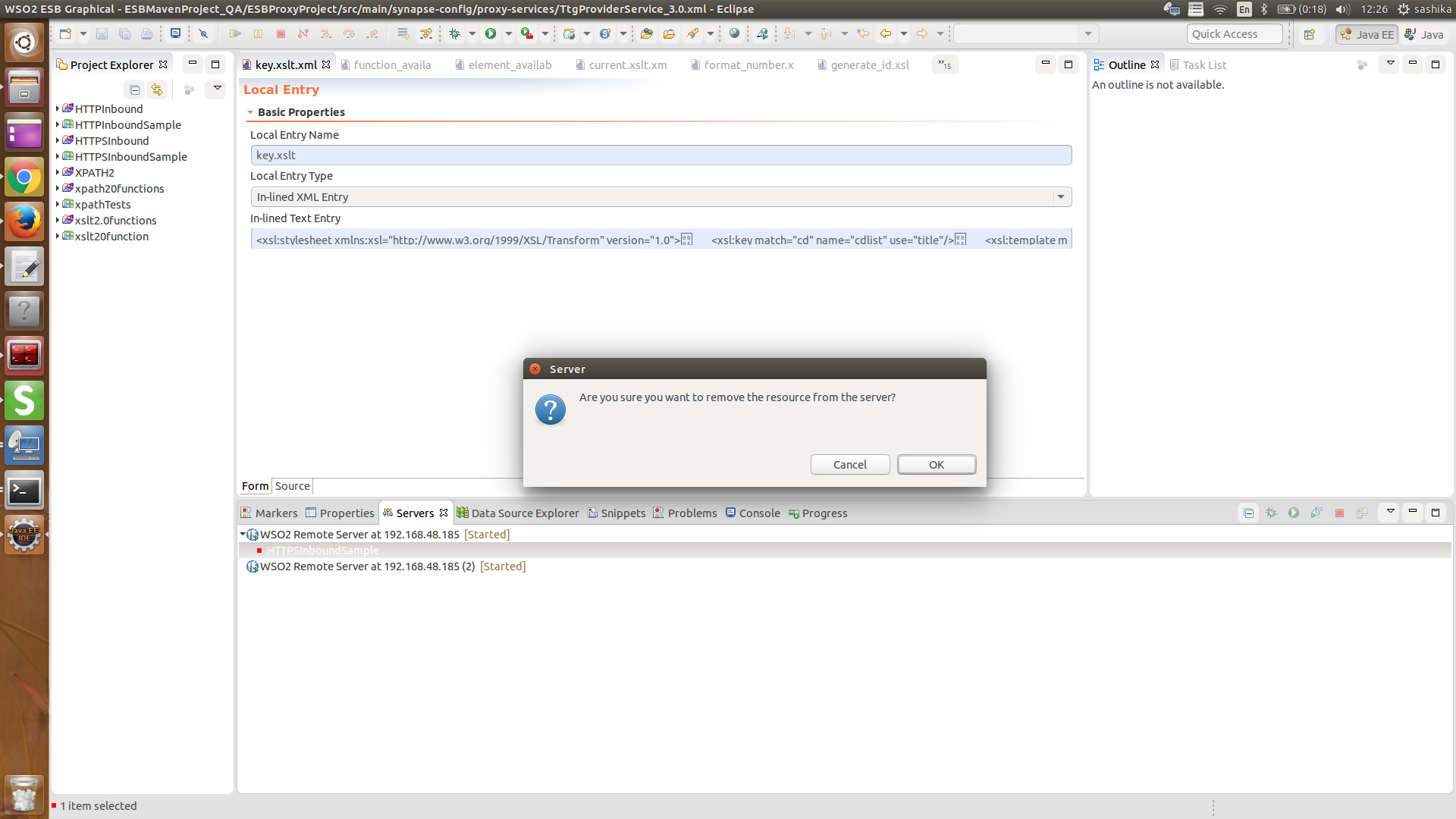

Resources removed through the dev studio did not removed from the remote server

|

Priority/Normal

|

Environment

OS - ubuntu 15.10

Java - JDK jdk1.8.0_66

Dev studio with updates (EI611)

Remote Server - Running in windows server 2012 R2

**Steps to recreate the issue**

1. Create a new Remote Server connection with an artefact to deploy

2. Verify that the artefact deployed successfully in the server

3. Remove the resource from the Dev Studio

**Observations**

Resource did not remove from the remote server. But removed from the Dev Studio window

|

1.0

|

Resources removed through the dev studio did not removed from the remote server - Environment

OS - ubuntu 15.10

Java - JDK jdk1.8.0_66

Dev studio with updates (EI611)

Remote Server - Running in windows server 2012 R2

**Steps to recreate the issue**

1. Create a new Remote Server connection with an artefact to deploy

2. Verify that the artefact deployed successfully in the server

3. Remove the resource from the Dev Studio

**Observations**

The resource was not removed from the remote server, although it was removed from the Dev Studio window.

|

non_process

|

resources removed through the dev studio did not removed from the remote server environment os ubuntu java jdk dev studio with updates remote server running in windows server steps to recreate the issue create a new remote server connection with an artefact to deploy verify that the artefact deployed successfully in the server remove the resource from the dev studio observations resource did not remove from the remote server but removed from the dev studio window

| 0

|

125,622

| 17,836,454,500

|

IssuesEvent

|

2021-09-03 02:15:43

|

kstring/traefik

|

https://api.github.com/repos/kstring/traefik

|

opened

|

CVE-2021-37713 (High) detected in tar-4.4.8.tgz

|

security vulnerability

|

## CVE-2021-37713 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tar-4.4.8.tgz</b></p></summary>

<p>tar for node</p>

<p>Library home page: <a href="https://registry.npmjs.org/tar/-/tar-4.4.8.tgz">https://registry.npmjs.org/tar/-/tar-4.4.8.tgz</a></p>

<p>

Dependency Hierarchy:

- mocha-webpack-2.0.0-beta.0.tgz (Root Library)

- chokidar-2.1.8.tgz

- fsevents-1.2.9.tgz

- node-pre-gyp-0.12.0.tgz

- :x: **tar-4.4.8.tgz** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The npm package "tar" (aka node-tar) before versions 4.4.18, 5.0.10, and 6.1.9 has an arbitrary file creation/overwrite and arbitrary code execution vulnerability. node-tar aims to guarantee that any file whose location would be outside of the extraction target directory is not extracted. This is, in part, accomplished by sanitizing absolute paths of entries within the archive, skipping archive entries that contain `..` path portions, and resolving the sanitized paths against the extraction target directory. This logic was insufficient on Windows systems when extracting tar files that contained a path that was not an absolute path, but specified a drive letter different from the extraction target, such as `C:some\path`. If the drive letter does not match the extraction target, for example `D:\extraction\dir`, then the result of `path.resolve(extractionDirectory, entryPath)` would resolve against the current working directory on the `C:` drive, rather than the extraction target directory. Additionally, a `..` portion of the path could occur immediately after the drive letter, such as `C:../foo`, and was not properly sanitized by the logic that checked for `..` within the normalized and split portions of the path. This only affects users of `node-tar` on Windows systems. These issues were addressed in releases 4.4.18, 5.0.10 and 6.1.9. The v3 branch of node-tar has been deprecated and did not receive patches for these issues. If you are still using a v3 release we recommend you update to a more recent version of node-tar. There is no reasonable way to work around this issue without performing the same path normalization procedures that node-tar now does. Users are encouraged to upgrade to the latest patched versions of node-tar, rather than attempt to sanitize paths themselves.

<p>Publish Date: 2021-08-31

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-37713>CVE-2021-37713</a></p>

</p>

</details>
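
The drive-letter behaviour described above can be reproduced with Node's `path.win32` API, which applies Windows path rules on any platform. The following is a minimal sketch, not node-tar's actual fix: the two path values are the examples from the advisory text, and `isInsideTarget` is a hypothetical helper introduced only for illustration.

```javascript
// Sketch only: illustrates the drive-relative path problem from the advisory.
// This is NOT node-tar's patch; `isInsideTarget` is a hypothetical helper.
const path = require('node:path');

// Example values taken from the advisory text.
const extractionDirectory = 'D:\\extraction\\dir';
const entryPath = 'C:some\\path';

// `C:some\path` is not absolute, but it names a different drive, so the
// extraction directory on `D:` is skipped during resolution.
const resolved = path.win32.resolve(extractionDirectory, entryPath);

// Returns true only when `candidate` is the target directory or lies inside it.
function isInsideTarget(targetDir, candidate) {
  const rel = path.win32.relative(targetDir, candidate);
  if (rel === '') return true;                 // same directory
  if (path.win32.isAbsolute(rel)) return false; // different drive or root
  return rel !== '..' && !rel.startsWith('..\\');
}

console.log(resolved);
console.log(isInsideTarget(extractionDirectory, resolved));
```

A containment check like this only shows why the resolved path escapes the target; the advisory still recommends upgrading to a patched release rather than sanitizing paths by hand.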

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.2</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/npm/node-tar/security/advisories/GHSA-5955-9wpr-37jh">https://github.com/npm/node-tar/security/advisories/GHSA-5955-9wpr-37jh</a></p>

<p>Release Date: 2021-08-31</p>

<p>Fix Resolution: tar - 4.4.18, 5.0.10, 6.1.9</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2021-37713 (High) detected in tar-4.4.8.tgz - ## CVE-2021-37713 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tar-4.4.8.tgz</b></p></summary>

<p>tar for node</p>

<p>Library home page: <a href="https://registry.npmjs.org/tar/-/tar-4.4.8.tgz">https://registry.npmjs.org/tar/-/tar-4.4.8.tgz</a></p>

<p>

Dependency Hierarchy:

- mocha-webpack-2.0.0-beta.0.tgz (Root Library)

- chokidar-2.1.8.tgz

- fsevents-1.2.9.tgz

- node-pre-gyp-0.12.0.tgz

- :x: **tar-4.4.8.tgz** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The npm package "tar" (aka node-tar) before versions 4.4.18, 5.0.10, and 6.1.9 has an arbitrary file creation/overwrite and arbitrary code execution vulnerability. node-tar aims to guarantee that any file whose location would be outside of the extraction target directory is not extracted. This is, in part, accomplished by sanitizing absolute paths of entries within the archive, skipping archive entries that contain `..` path portions, and resolving the sanitized paths against the extraction target directory. This logic was insufficient on Windows systems when extracting tar files that contained a path that was not an absolute path, but specified a drive letter different from the extraction target, such as `C:some\path`. If the drive letter does not match the extraction target, for example `D:\extraction\dir`, then the result of `path.resolve(extractionDirectory, entryPath)` would resolve against the current working directory on the `C:` drive, rather than the extraction target directory. Additionally, a `..` portion of the path could occur immediately after the drive letter, such as `C:../foo`, and was not properly sanitized by the logic that checked for `..` within the normalized and split portions of the path. This only affects users of `node-tar` on Windows systems. These issues were addressed in releases 4.4.18, 5.0.10 and 6.1.9. The v3 branch of node-tar has been deprecated and did not receive patches for these issues. If you are still using a v3 release we recommend you update to a more recent version of node-tar. There is no reasonable way to work around this issue without performing the same path normalization procedures that node-tar now does. Users are encouraged to upgrade to the latest patched versions of node-tar, rather than attempt to sanitize paths themselves.

<p>Publish Date: 2021-08-31

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-37713>CVE-2021-37713</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.2</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/npm/node-tar/security/advisories/GHSA-5955-9wpr-37jh">https://github.com/npm/node-tar/security/advisories/GHSA-5955-9wpr-37jh</a></p>

<p>Release Date: 2021-08-31</p>

<p>Fix Resolution: tar - 4.4.18, 5.0.10, 6.1.9</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve high detected in tar tgz cve high severity vulnerability vulnerable library tar tgz tar for node library home page a href dependency hierarchy mocha webpack beta tgz root library chokidar tgz fsevents tgz node pre gyp tgz x tar tgz vulnerable library found in base branch master vulnerability details the npm package tar aka node tar before versions and has an arbitrary file creation overwrite and arbitrary code execution vulnerability node tar aims to guarantee that any file whose location would be outside of the extraction target directory is not extracted this is in part accomplished by sanitizing absolute paths of entries within the archive skipping archive entries that contain path portions and resolving the sanitized paths against the extraction target directory this logic was insufficient on windows systems when extracting tar files that contained a path that was not an absolute path but specified a drive letter different from the extraction target such as c some path if the drive letter does not match the extraction target for example d extraction dir then the result of path resolve extractiondirectory entrypath would resolve against the current working directory on the c drive rather than the extraction target directory additionally a portion of the path could occur immediately after the drive letter such as c foo and was not properly sanitized by the logic that checked for within the normalized and split portions of the path this only affects users of node tar on windows systems these issues were addressed in releases and the branch of node tar has been deprecated and did not receive patches for these issues if you are still using a release we recommend you update to a more recent version of node tar there is no reasonable way to work around this issue without performing the same path normalization procedures that node tar now does users are encouraged to upgrade to the latest patched versions of node tar rather than attempt to sanitize paths themselves 
publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact high integrity impact high availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution tar step up your open source security game with whitesource

| 0

|

15,286

| 19,286,431,409

|

IssuesEvent

|

2021-12-11 02:51:45

|

allinurl/goaccess

|

https://api.github.com/repos/allinurl/goaccess

|

closed

|

Failed Requests counter not working

|

question log-processing

|

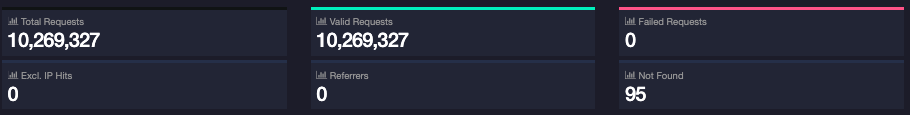

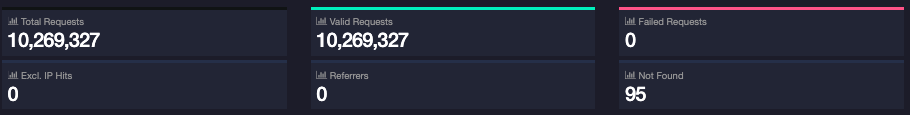

Hi,

I have recently started using GoAccess. Everything is working great, except for one thing.

Failed Requests counter always shows 0, but in status codes, I can see that there are many failed requests by status code.

I tried to find a config option related to this, but with no luck. The version used is 1.5.1. Please advise.

|

1.0

|

Failed Requests counter not working - Hi,

I have recently started using GoAccess. Everything is working great, except for one thing.

Failed Requests counter always shows 0, but in status codes, I can see that there are many failed requests by status code.

I tried to find a config option related to this, but with no luck. The version used is 1.5.1. Please advise.

|

process

|

failed requests counter not working hi i have recently started using goaccess everything is working great except for one thing failed requests counter always shows but in status codes i can see that there are many failed requests by status code i tried to find some config related to this but with no luck the version used is please advice

| 1

|

9,354

| 12,366,367,301

|

IssuesEvent

|

2020-05-18 10:19:28

|

DiSSCo/user-stories

|

https://api.github.com/repos/DiSSCo/user-stories

|

opened

|

contribution indicators as citizen scientist

|

1. NH museum 4. Data processing ICEDIG-SURVEY Research Specimen level

|

As a citizen scientist, I want to be recognized as a contributor so that I can apply for funding to digitize my own collections. For this I need contribution indicators as a contributor.

|

1.0

|

contribution indicators as citizen scientist - As a citizen scientist, I want to be recognized as a contributor so that I can apply for funding to digitize my own collections. For this I need contribution indicators as a contributor.

|

process

|

contribution indicators as citizen scientist as a citizen scientist i want to be recognized as contributor so that i can apply for funding to digitize my own collections for this i need contribution indicators as contributor

| 1

|

16,783

| 21,969,940,302

|

IssuesEvent

|

2022-05-25 02:02:23

|

quark-engine/quark-engine

|

https://api.github.com/repos/quark-engine/quark-engine

|

closed

|

Fix README content

|

issue-processing-state-06

|

The README content below needs to be updated.

The Quark version in the demo image is outdated, and the title doesn't fit the content.

> ## Available In

> <img src="https://i.imgur.com/nz4m8kr.png"/>

>

> [](https://asciinema.org/a/416810)

|

1.0

|

Fix README content - The README content below needs to be updated.

The Quark version in the demo image is outdated, and the title doesn't fit the content.

> ## Available In

> <img src="https://i.imgur.com/nz4m8kr.png"/>

>

> [](https://asciinema.org/a/416810)

|

process

|

fix readme content the readme content below needs to update the quark version in the demo image is outdated and the title doesn t fit the content available in img src

| 1

|

13,328

| 15,788,808,176

|

IssuesEvent

|

2021-04-01 21:25:26

|

bazelbuild/bazel

|

https://api.github.com/repos/bazelbuild/bazel

|

opened

|

Add capability to serve head docs as https://docs.bazel.build/versions/head/...

|

team-XProduct type: process untriaged

|

This would be a convenient first step towards #12200

- versions/master/... and versions/head/... would both serve the same document

- this allows us to change all of the /docs/... tree and bazel-website before renaming the branch itself.

Next steps after all .md files are fixed

- in Bazel CI, push tarball under the new branch name

- eventually, stop serving the old links.

@philwo @floriographygoth

|

1.0

|

Add capability to serve head docs as https://docs.bazel.build/versions/head/... - This would be a convenient first step towards #12200

- versions/master/... and versions/head/... would both serve the same document

- this allows us to change all of the /docs/... tree and bazel-website before renaming the branch itself.

Next steps after all .md files are fixed

- in Bazel CI, push tarball under the new branch name

- eventually, stop serving the old links.

@philwo @floriographygoth

|

process

|

add capability to serve head docs as this is a would be a convenient first step towards versions master and versions head would both serve the same document this allows us to change all of the docs tree and bazel website before renaming the branch itself next steps after all md files are fixed in bazel ci push tarball under the new branch name eventually stop serving the old links philwo floriographygoth

| 1

|

380,241

| 26,409,815,372

|

IssuesEvent

|

2023-01-13 11:12:48

|

kula-app/OnLaunch-iOS-Client

|

https://api.github.com/repos/kula-app/OnLaunch-iOS-Client

|

opened

|

Add CONTRIBUTING guide

|

documentation

|

Many Open Source projects on GitHub use a special file called `CONTRIBUTING.md` to describe how the contribution process is defined. This might need to be written according to grants.

Docs:

- https://docs.github.com/en/communities/setting-up-your-project-for-healthy-contributions/setting-guidelines-for-repository-contributors

- https://docs.github.com/en/communities/setting-up-your-project-for-healthy-contributions/creating-a-default-community-health-file

Examples:

- https://github.com/Alamofire/Alamofire/blob/master/CONTRIBUTING.md

- https://github.com/yonaskolb/XcodeGen/blob/master/CONTRIBUTING.md

|

1.0

|

Add CONTRIBUTING guide - Many Open Source projects on GitHub use a special file called `CONTRIBUTING.md` to describe how the contribution process is defined. This might need to be written according to grants.

Docs:

- https://docs.github.com/en/communities/setting-up-your-project-for-healthy-contributions/setting-guidelines-for-repository-contributors

- https://docs.github.com/en/communities/setting-up-your-project-for-healthy-contributions/creating-a-default-community-health-file

Examples:

- https://github.com/Alamofire/Alamofire/blob/master/CONTRIBUTING.md

- https://github.com/yonaskolb/XcodeGen/blob/master/CONTRIBUTING.md

|

non_process

|

add contributing guide many open source projects on github use a special file called contributing md to describe how the contribution process is defined this might need to be written according to grants docs examples

| 0

|

22,721

| 32,040,520,504

|

IssuesEvent

|

2023-09-22 18:54:24

|

metabase/metabase

|

https://api.github.com/repos/metabase/metabase

|

closed

|

Can't aggregate with a custom column that uses coalesce

|

Type:Bug Priority:P2 .Regression Querying/Notebook/Custom Column .Team/QueryProcessor :hammer_and_wrench:

|

### Describe the bug

When a custom column is a number produced by a coalesce expression, you cannot choose it in the Summarize section for math aggregations.

### To Reproduce

1. Go to Sample Data

2. Click on Orders

3. Join to the People table

4. Create a custom column, "Zero":

<img width="357" alt="image" src="https://github.com/metabase/metabase/assets/22856340/3df8074c-00c1-4e11-9098-e9092f99a57f">

5. Create another custom column, "Total with Zeros":

<img width="305" alt="image" src="https://github.com/metabase/metabase/assets/22856340/5b2a6daf-fc57-4b45-b4db-913f37318768">

6. Go to the Summarize section, choose "Sum of" and attempt to pick "Total with Zeros"

### Expected behavior

It should allow you to pick a custom column that is a number created with a coalesce function

### Logs

_No response_

### Information about your Metabase installation

```JSON

It does not work on master or 47

It does work in 1.45.3.1 and 1.46.6.1-latest patch

```

### Severity

It's pretty annoying. Also not sure what happens if you upgrade - will these reports stop working?

### Additional context

_No response_

|

1.0

|

Can't aggregate with a custom column that uses coalesce - ### Describe the bug

When a custom column is a number produced by a coalesce expression, you cannot choose it in the Summarize section for math aggregations.

### To Reproduce

1. Go to Sample Data

2. Click on Orders

3. Join to the People table

4. Create a custom column, "Zero":

<img width="357" alt="image" src="https://github.com/metabase/metabase/assets/22856340/3df8074c-00c1-4e11-9098-e9092f99a57f">

5. Create another custom column, "Total with Zeros":

<img width="305" alt="image" src="https://github.com/metabase/metabase/assets/22856340/5b2a6daf-fc57-4b45-b4db-913f37318768">

6. Go to the Summarize section, choose "Sum of" and attempt to pick "Total with Zeros"

### Expected behavior

It should allow you to pick a custom column that is a number created with a coalesce function

### Logs

_No response_

### Information about your Metabase installation

```JSON

It does not work on master or 47

It does work in 1.45.3.1 and 1.46.6.1-latest patch

```

### Severity

It's pretty annoying. Also not sure what happens if you upgrade - will these reports stop working?

### Additional context

_No response_

|

process

|

can t aggregate with a custom column that uses coalesce describe the bug when you have a custom column that is a number created by a coalesce in the custom column you can not choose it in the summarize section for math aggregations to reproduce go to sample data click on orders join to the people table create a custom column zero img width alt image src create another custom column total with zeros img width alt image src go to the summarize section choose sum of and attempt to pick total with zeros expected behavior it should allow you to pick a custom column that is a number created with a coalesce function logs no response information about your metabase installation json it does not work on master or it does work in and latest patch severity it s pretty annoying also not sure what happens if you upgrade will these reports stop working additional context no response

| 1

|

21,314

| 28,508,018,921

|

IssuesEvent

|

2023-04-19 00:02:06

|

metabase/metabase

|

https://api.github.com/repos/metabase/metabase

|

closed

|

[MLv2] Finish implementing temporal bucketing

|

.metabase-lib .Team/QueryProcessor :hammer_and_wrench:

|

- We have `lib.temporal-bucket/temporal-bucket*` right now but we should make sure you can actually use it in real life given the sorts of `:metadata/field` objects the FE lib will actually be using.

- Need a corresponding multimethod to get the temporal bucket associated with a `:metadata/field` or `:field` ref. For `:metadata/field`, it should be saved with a namespaced key.

- Need to be able to remove a temporal bucketing unit

- Need a function to get available units for a given field/field metadata/etc.

- all temporal unit manipulation should happen thru this instead of accessing options or specific keys in the metadata directly.

- If metadata has a temporal unit, then generated `:field-ref`s should include it.

- Temporal unit SHOULD NOT be propagated to the next stage of a query.

- Base type should be updated appropriately based on the unit.

|

1.0

|

[MLv2] Finish implementing temporal bucketing - - We have `lib.temporal-bucket/temporal-bucket*` right now but we should make sure you can actually use it in real life given the sorts of `:metadata/field` objects the FE lib will actually be using.

- Need a corresponding multimethod to get the temporal bucket associated with a `:metadata/field` or `:field` ref. For `:metadata/field`, it should be saved with a namespaced key.

- Need to be able to remove a temporal bucketing unit

- Need a function to get available units for a given field/field metadata/etc.

- all temporal unit manipulation should happen thru this instead of accessing options or specific keys in the metadata directly.

- If metadata has a temporal unit, then generated `:field-ref`s should include it.

- Temporal unit SHOULD NOT be propagated to the next stage of a query.

- Base type should be updated appropriately based on the unit.

|

process

|

finish implementing temporal bucketing we have lib temporal bucket temporal bucket right now but we should make sure you can actually use it in real life given the sorts of metadata field objects the fe lib will actually be using need a corresponding multimethod to get the temporal bucket associated with a metadata field or field ref for metadata field it should be saved with a namespaced key need to be able to remove a temporal bucketing unit need a function to get available units for a given field field metadata etc all temporal unit manipulation should happen thru this instead of accessing options or specific keys in the metadata directly if metadata has a temporal unit then generated field ref s should include it temporal unit should not be propagated to the next stage of a query base type should be updated appropriately based on the unit

| 1

|

40,120

| 20,594,841,731

|

IssuesEvent

|

2022-03-05 10:18:40

|

git-baboo/easy-review

|

https://api.github.com/repos/git-baboo/easy-review

|

closed

|

Fix slow screen transitions after authentication

|

performance

|

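## ✨ Overview

Screen transitions after authentication feel sluggish, so improve them so that they run smoothly.

## 🔥 Goal

<!-- e.g. "can do X", "do Y when Z happens" -->

- Screen transitions after authentication are smooth (each transition should take at most 1 second)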

|

True

|

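Fix slow screen transitions after authentication - ## ✨ Overview

Screen transitions after authentication feel sluggish, so improve them so that they run smoothly.

## 🔥 Goal

<!-- e.g. "can do X", "do Y when Z happens" -->

- Screen transitions after authentication are smooth (each transition should take at most 1 second)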

|

non_process

|

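fix slow screen transitions after authentication ✨ overview screen transitions after authentication feel sluggish so improve them so that they run smoothly 🔥 goal screen transitions after authentication are smooth ( )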

| 0

|

6,276

| 9,247,577,251

|

IssuesEvent

|

2019-03-15 01:29:31

|

googleapis/google-http-java-client

|

https://api.github.com/repos/googleapis/google-http-java-client

|

closed

|

Remove dependency on apache artifact

|

type: process

|

We removed backwards compatibility in `google-http-client`; see #606 for more detail. Once we release a new major version, remove the direct dependency between `google-http-client` and `google-http-client-apache`.

|

1.0

|

Remove dependency on apache artifact - We removed backwards compatibility in `google-http-client`; see #606 for more detail. Once we release a new major version, remove the direct dependency between `google-http-client` and `google-http-client-apache`.

|

process

|

remove dependency on apache artifact we removed backwards compatibility in google http client see for more detail once we release a new major version remove the direct dependency between google http client and google http client apache

| 1

|

17,316

| 23,138,168,261

|

IssuesEvent

|

2022-07-28 15:53:12

|

ORNL-AMO/AMO-Tools-Desktop

|

https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop

|

closed

|

Process Heating Result units

|

bug Process Heating important

|

When independent result units are set (custom results?), the results switch to MMBTU/hr and will not change.

|

1.0

|

Process Heating Result units - When independent result units are set (custom results?), the results switch to MMBTU/hr and will not change.

|

process

|

process heating result units when set independent result units custom results switches to mmbtu hr and will not change

| 1

|

14,934

| 18,360,792,789

|

IssuesEvent

|

2021-10-09 06:57:11

|

yandali-damian/LIM015-social-network

|

https://api.github.com/repos/yandali-damian/LIM015-social-network

|

reopened

|

USER HISTORY - 003

|

Good issues Process

|

As a logged-in user, I must be able to publish, view, edit, and delete a post

- tasks

- [x] HTML structure of home

> - [x] navigation bar

> - [x] profile

> > - [x] show personal posts

> > - [x] Add button to edit photo and name

> > - [x] add, show, edit, delete

> >> - [x] Input for creating a post

> >> - [x] top button on each post to edit and delete

> >> - [x] Modal alert before deleting a post

> - [x] home posts

> > - [x] Create a button to show the modal

> >> - [x] Function to show the modal

> > - [x] Create a modal for publishing a post

> >> - [x] Add textarea for the post content

> >> - [x] Add publish button

> >> - [x] Add close button

> >> - [x] Add option to attach a photo

> >> - [x] Function to publish a post

> >> - [x] Function to close the post

> > - [x] add, show, edit, delete (personal posts)

> > - [x] Show posts in the home view

> >> - [x] top button on each personal post to edit and delete

> >> - [x] Modal alert before deleting a post

> >> - [x] hide the top button on posts for editing and deleting

- [x] responsive home

|

1.0

|

USER HISTORY - 003 - As a logged-in user, I must be able to publish, view, edit, and delete a post

- tasks

- [x] HTML structure of home

> - [x] navigation bar

> - [x] profile

> > - [x] show personal posts

> > - [x] Add button to edit photo and name

> > - [x] add, show, edit, delete

> >> - [x] Input for creating a post

> >> - [x] top button on each post to edit and delete

> >> - [x] Modal alert before deleting a post

> - [x] home posts

> > - [x] Create a button to show the modal

> >> - [x] Function to show the modal

> > - [x] Create a modal for publishing a post

> >> - [x] Add textarea for the post content

> >> - [x] Add publish button

> >> - [x] Add close button

> >> - [x] Add option to attach a photo

> >> - [x] Function to publish a post

> >> - [x] Function to close the post

> > - [x] add, show, edit, delete (personal posts)

> > - [x] Show posts in the home view

> >> - [x] top button on each personal post to edit and delete

> >> - [x] Modal alert before deleting a post

> >> - [x] hide the top button on posts for editing and deleting

- [x] responsive home

|

process

|

user history as a logged in user i must be able to publish view edit and delete a post tasks html structure of home navigation bar profile show personal posts add button to edit photo and name add show edit delete input for creating a post top button on each post to edit and delete modal alert before deleting a post home posts create a button to show the modal function to show the modal create a modal for publishing a post add textarea for the post content add publish button add close button add option to attach a photo function to publish a post function to close the post add show edit delete personal posts show posts in the home view top button on each personal post to edit and delete modal alert before deleting a post hide the top button on posts for editing and deleting responsive home

| 1

|

18,162

| 24,199,270,830

|

IssuesEvent

|

2022-09-24 10:16:35

|

vladimiry/ElectronMail

|

https://api.github.com/repos/vladimiry/ElectronMail

|

closed

|

Error message on Linux app

|

glitch workaround-exists env-dependent env: linux timeout: webview load timeout: main process

|

Hi,

I get error messages with the Linux AppImage, version 4.13.2 (I also had them before with 4.13.1).

I use Debian unstable. The messages do not appear at startup, but later.

Invocation timeout of calling "dbGetAccountMetadata" method on "electron-mail:ipcMain-api" channel with 25000ms timeout

And several times this :

Uncaught (in promise): Error: Failed to wait for "webview" service provider initialization (timeout: 15000ms).

Error: Failed to wait for "webview" service provider initialization (timeout: 15000ms).

at file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:34027:47

at doInnerSub (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:27768:68)

at outerNext (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:27762:38)

at OperatorSubscriber._this._next (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:26507:13)

at OperatorSubscriber.Subscriber.next (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:25633:97)

at AsyncAction.<anonymous> (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:26434:24)

at AsyncAction._execute (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:29637:16)

at AsyncAction.execute (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:29630:26)

at AsyncScheduler.flush (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:29687:30)

at args.<computed> (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:32939:37)

So, if you can sort it out.

I'm not a specialist, so I don't know what's going on.

Good luck, and thank you for your work, this is an application that I really like.

Thouareg

|

1.0

|

Error message on Linux app - Hi,

I get error messages with the Linux AppImage, version 4.13.2 (I also had them before with 4.13.1).

I use Debian unstable. The messages do not appear at startup, but later.

Invocation timeout of calling "dbGetAccountMetadata" method on "electron-mail:ipcMain-api" channel with 25000ms timeout

And several times this:

Uncaught (in promise): Error: Failed to wait for "webview" service provider initialization (timeout: 15000ms).

Error: Failed to wait for "webview" service provider initialization (timeout: 15000ms).

at file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:34027:47

at doInnerSub (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:27768:68)

at outerNext (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:27762:38)

at OperatorSubscriber._this._next (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:26507:13)

at OperatorSubscriber.Subscriber.next (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:25633:97)

at AsyncAction.<anonymous> (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:26434:24)

at AsyncAction._execute (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:29637:16)

at AsyncAction.execute (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:29630:26)

at AsyncScheduler.flush (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:29687:30)

at args.<computed> (file:///tmp/.mount_electrUbjAFi/resources/app.asar/app/web/browser-window/index.mjs:32939:37)

So, if you can sort it out.

I'm not a specialist, so I don't know what's going on.

Good luck, and thank you for your work, this is an application that I really like.

Thouareg

|

process

|

error message on linux app hi i have error message with the linux app image but i have too before with i use a debian unstable the messages not appear at the start but later invocation timeout of calling dbgetaccountmetadata method on electron mail ipcmain api channel with timeout and several times this uncaught in promise error failed to wait for webview service provider initialization timeout error failed to wait for webview service provider initialization timeout at file tmp mount electrubjafi resources app asar app web browser window index mjs at doinnersub file tmp mount electrubjafi resources app asar app web browser window index mjs at outernext file tmp mount electrubjafi resources app asar app web browser window index mjs at operatorsubscriber this next file tmp mount electrubjafi resources app asar app web browser window index mjs at operatorsubscriber subscriber next file tmp mount electrubjafi resources app asar app web browser window index mjs at asyncaction file tmp mount electrubjafi resources app asar app web browser window index mjs at asyncaction execute file tmp mount electrubjafi resources app asar app web browser window index mjs at asyncaction execute file tmp mount electrubjafi resources app asar app web browser window index mjs at asyncscheduler flush file tmp mount electrubjafi resources app asar app web browser window index mjs at args file tmp mount electrubjafi resources app asar app web browser window index mjs so if you can sort it out i m not a specialist so i don t know what s going on good luck and thank you for your work this is an application that i really like thouareg

| 1

|

18,043

| 24,053,583,381

|

IssuesEvent

|

2022-09-16 14:48:29

|

hashicorp/terraform-cdk

|

https://api.github.com/repos/hashicorp/terraform-cdk

|

opened

|

Add Github Action to build API docs for PRs

|

enhancement dev-process

|

<!--- Please keep this note for the community --->

### Community Note

- Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

- Please do not leave "+1" or other comments that do not add relevant new information or questions, they generate extra noise for issue followers and do not help prioritize the request

- If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

### Description

Currently we have a pre-commit hook that runs `yarn generate-docs:api` (if changes were made to the `cdktf` package). It takes between 3 and 6 minutes and interrupts the workflow.

We should change this to a GitHub workflow that runs on PRs and makes a commit if the docs were outdated (i.e. if running that command produced changes).
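
The "commit only if the command produced changes" step could be sketched roughly as below. This is a minimal sketch, assuming the workflow step calls a small Python script. The function names and the commit message are hypothetical and not part of this repository; only the `yarn generate-docs:api` command comes from the description above.

```python
import subprocess


def has_changes(porcelain_output: str) -> bool:
    """Return True if `git status --porcelain` output lists any changed path."""
    return any(line.strip() for line in porcelain_output.splitlines())


def regenerate_and_commit(commit_message: str = "chore: update API docs") -> bool:
    """Regenerate the docs and commit only when files changed (hypothetical helper)."""
    # Command taken from the issue description.
    subprocess.run(["yarn", "generate-docs:api"], check=True)

    # An empty porcelain listing means the working tree is clean.
    status = subprocess.run(
        ["git", "status", "--porcelain"],
        check=True,
        capture_output=True,
        text=True,
    ).stdout

    if not has_changes(status):
        return False  # docs were already up to date, nothing to commit

    subprocess.run(["git", "add", "--all"], check=True)
    subprocess.run(["git", "commit", "-m", commit_message], check=True)
    return True
```

Pushing the commit back to the PR branch is left out here, since that depends on how the workflow checks out the branch and which token it uses.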

<!--- Please leave a helpful description of the feature request here. --->

<!--- Information about code formatting: https://help.github.com/articles/basic-writing-and-formatting-syntax/#quoting-code --->

### References

<!---

Information about referencing Github Issues: https://help.github.com/articles/basic-writing-and-formatting-syntax/#referencing-issues-and-pull-requests

Are there any other GitHub issues (open or closed) or pull requests that should be linked here? Vendor blog posts or documentation?

--->

|

1.0

|

Add Github Action to build API docs for PRs - <!--- Please keep this note for the community --->

### Community Note

- Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

- Please do not leave "+1" or other comments that do not add relevant new information or questions, they generate extra noise for issue followers and do not help prioritize the request

- If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

### Description

Currently we have a pre-commit hook that runs `yarn generate-docs:api` (if changes were made to the `cdktf` package). It takes between 3 and 6 minutes and interrupts the workflow.

We should change this to a GitHub workflow that runs on PRs and makes a commit if the docs were outdated (i.e. if running that command produced changes).

<!--- Please leave a helpful description of the feature request here. --->

<!--- Information about code formatting: https://help.github.com/articles/basic-writing-and-formatting-syntax/#quoting-code --->

### References

<!---

Information about referencing Github Issues: https://help.github.com/articles/basic-writing-and-formatting-syntax/#referencing-issues-and-pull-requests

Are there any other GitHub issues (open or closed) or pull requests that should be linked here? Vendor blog posts or documentation?

--->

|

process

|

add github action to build api docs for prs community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or other comments that do not add relevant new information or questions they generate extra noise for issue followers and do not help prioritize the request if you are interested in working on this issue or have submitted a pull request please leave a comment description currently we do have a precommit hook that runs yarn generate docs api if changes were made to the cdktf package this runs between to minutes and interrupts the workflow we should change this to be a github workflow that runs on prs and makes a commit if the docs were outdated i e if running that command produced changes references information about referencing github issues are there any other github issues open or closed or pull requests that should be linked here vendor blog posts or documentation

| 1

|

15,170

| 18,941,718,299

|

IssuesEvent

|

2021-11-18 04:15:46

|

neuropsychology/NeuroKit

|

https://api.github.com/repos/neuropsychology/NeuroKit

|

opened

|

Generic function for 1/f noise

|

signal processing :chart_with_upwards_trend: Complexity/Chaos :bomb:

|

It would be good to have a generic `signal_1f` function to measure 1/f noise, in particular for EEG and ECG signals (many papers have been published on this; e.g., see [example](https://www.jneurosci.org/content/jneuro/35/38/13257.full.pdf))

To-do:

- [ ] Dissociate the functionalities for our existing [fractal_psdslope](https://github.com/neuropsychology/NeuroKit/blob/master/neurokit2/complexity/fractal_psdslope.py#L8) - slope itself should be computed in `signal_1f` and this can then be embedded into `fractal_psdslope` which converts to an estimate of fractal dimension

- [ ] Additional parameter considerations for computing 1/f (ref [FOOOF](https://github.com/fooof-tools/fooof) tool)
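
As a rough illustration of the slope computation that `signal_1f` would isolate, here is a minimal sketch, assuming a plain FFT periodogram and an ordinary least-squares fit in log-log space. The name `spectral_slope` and its parameters are hypothetical; this is not the existing `fractal_psdslope` code and does not reproduce the FOOOF parameterization.

```python
import numpy as np


def spectral_slope(signal, sampling_rate, freq_range=None):
    """Estimate the slope of log10(power) against log10(frequency).

    A slope near 0 suggests white noise, near -1 suggests 1/f (pink) noise,
    and near -2 suggests Brownian (random-walk) noise.
    """
    signal = np.asarray(signal, dtype=float)
    signal = signal - signal.mean()  # remove the DC offset

    # Raw periodogram from the real-valued FFT.
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / sampling_rate)
    power = np.abs(np.fft.rfft(signal)) ** 2

    # Drop the zero-frequency bin and, optionally, restrict the fitting band.
    keep = (freqs > 0) & (power > 0)
    if freq_range is not None:
        keep &= (freqs >= freq_range[0]) & (freqs <= freq_range[1])

    # Straight-line fit in log-log space; the first coefficient is the slope.
    slope, _intercept = np.polyfit(np.log10(freqs[keep]), np.log10(power[keep]), 1)
    return slope
```

The optional `freq_range` argument reflects the second to-do item only loosely: the fitted band strongly affects the estimate, so it is exposed here as an explicit parameter rather than fixed.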

|

1.0

|