Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 7

112

| repo_url

stringlengths 36

141

| action

stringclasses 3

values | title

stringlengths 1

744

| labels

stringlengths 4

574

| body

stringlengths 9

211k

| index

stringclasses 10

values | text_combine

stringlengths 96

211k

| label

stringclasses 2

values | text

stringlengths 96

188k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

5,570

| 8,407,409,989

|

IssuesEvent

|

2018-10-11 20:51:46

|

SynBioDex/SEPs

|

https://api.github.com/repos/SynBioDex/SEPs

|

reopened

|

SEP 005 -- SBOL Voting Procedure

|

Accepted Active Type: Process

|

# SEP 005 -- SBOL Voting Procedure

| SEP | 005 |

| --- | --- |

| **Title** | SBOL voting procedure |

| **Authors** | Raik Gruenberg |

| **Editor** | Raik Gruenberg |

| **Type** | Process |

| **Status** | Draft |

| **Created** | 24-Jan-2016 |

| **Last modified** | 02-Feb-2016 |

## Abstract

This proposal describes the voting procedure used to accept or reject changes to the SBOL data model or to SBOL community rules.

## 1. Rationale

With the introduction of SEPs, SBOL voting rules need to be updated. Previously, voting could be initiated by any two members of the sbol-dev mailing list on any issue of choice. According to the new proposal, voting can be initiated only on issues that first have been documented as an SBOL Enhancement Proposals (SEPs).

## 2. Specification

### 2.1 Changes to SBOL governance document

This SEP replaces two sections within the governance document (http://sbolstandard.org/development/gov/): "Voting process" and "Voting form". Note: Election rules remain unchanged.

### 2.2 Voting Process

1. Any member of the SBOL Developers Group can submit an SBOL Enhancement Proposal (SEP) to the editors and/or to the community at large.

2. The SBOL editors are expected to move a given SEP draft to a vote once they agree that there has been sufficient debate. However, any member of the SBOL Developers Group can initiate the vote as long as one other member of the group seconds the motion.

3. SBOL Editors post a voting form (see below), for a final discussion period of 2 working days.

4. Voting runs for 5 working days, starting at the end of the discussion period. All members of the SBOL Development Group are eligible to vote.

5. The SBOL Editors may extend the voting period by up to an additional 5 working days when they feel that an insufficient number of votes have been obtained.

6. SBOL Editors tally and call the vote. First vote will be judged by a 67% majority to indicate "rough consensus".

1. If rough consensus is not reached, discussion of 3 working days is to follow. SEP authors can modify or withdraw their proposal during that time.

2. The reasons for decisions must be recorded with the results of the vote.

3. Any second followup vote will be ruled by 50% majority and will be treated as the decision

### 2.3 Voting form

The voting form must:

1. State clearly the SEP number and title being voted on and provide a link to this SEP.

2. State the eligibility criteria for voting, “All members of the SBOL Developers Group are eligible to vote.”

3. Provide the following options for the vote:

- accept -- vote to accept the SEP

- reject -- vote to reject the SEP

- abstain -- no opinion, abstain votes will not be counted when determining majorities

- defer / table for further discussion -- keep the SEP in draft stage (in contrast to "abstain" this vote will be counted when determining majorities).

4. include a field for entering the e-mail address of the voter

5. include a field for further comments.

## 3. Discussion

The voting procedure is still very similar to the previous rules. New is the idea of SEPs and that it should, preferably, be the editors who move proposals for a vote. However, the proposal also still retains the previous practice -- anyone on the list can initiate the vote, as long as it is seconded by any other developer. Editors can therefore not block the vote on any issue.

Voting for election purposes remains unchanged and is described in SEP #3.

## Copyright

<p xmlns:dct="http://purl.org/dc/terms/" xmlns:vcard="http://www.w3.org/2001/vcard-rdf/3.0#">

<a rel="license"

href="http://creativecommons.org/publicdomain/zero/1.0/">

<img src="http://i.creativecommons.org/p/zero/1.0/88x31.png" style="border-style: none;" alt="CC0" />

</a>

<br />

To the extent possible under law,

<a rel="dct:publisher"

href="sbolstandard.org">

<span property="dct:title">SBOL developers</span></a>

has waived all copyright and related or neighboring rights to

<span property="dct:title">SEP 005</span>.

This work is published from:

<span property="vcard:Country" datatype="dct:ISO3166"

content="US" about="sbolstandard.org">

United States</span>.

</p>

|

1.0

|

SEP 005 -- SBOL Voting Procedure - # SEP 005 -- SBOL Voting Procedure

| SEP | 005 |

| --- | --- |

| **Title** | SBOL voting procedure |

| **Authors** | Raik Gruenberg |

| **Editor** | Raik Gruenberg |

| **Type** | Process |

| **Status** | Draft |

| **Created** | 24-Jan-2016 |

| **Last modified** | 02-Feb-2016 |

## Abstract

This proposal describes the voting procedure used to accept or reject changes to the SBOL data model or to SBOL community rules.

## 1. Rationale

With the introduction of SEPs, SBOL voting rules need to be updated. Previously, voting could be initiated by any two members of the sbol-dev mailing list on any issue of choice. According to the new proposal, voting can be initiated only on issues that first have been documented as an SBOL Enhancement Proposals (SEPs).

## 2. Specification

### 2.1 Changes to SBOL governance document

This SEP replaces two sections within the governance document (http://sbolstandard.org/development/gov/): "Voting process" and "Voting form". Note: Election rules remain unchanged.

### 2.2 Voting Process

1. Any member of the SBOL Developers Group can submit an SBOL Enhancement Proposal (SEP) to the editors and/or to the community at large.

2. The SBOL editors are expected to move a given SEP draft to a vote once they agree that there has been sufficient debate. However, any member of the SBOL Developers Group can initiate the vote as long as one other member of the group seconds the motion.

3. SBOL Editors post a voting form (see below), for a final discussion period of 2 working days.

4. Voting runs for 5 working days, starting at the end of the discussion period. All members of the SBOL Development Group are eligible to vote.

5. The SBOL Editors may extend the voting period by up to an additional 5 working days when they feel that an insufficient number of votes have been obtained.

6. SBOL Editors tally and call the vote. First vote will be judged by a 67% majority to indicate "rough consensus".

1. If rough consensus is not reached, discussion of 3 working days is to follow. SEP authors can modify or withdraw their proposal during that time.

2. The reasons for decisions must be recorded with the results of the vote.

3. Any second followup vote will be ruled by 50% majority and will be treated as the decision

### 2.3 Voting form

The voting form must:

1. State clearly the SEP number and title being voted on and provide a link to this SEP.

2. State the eligibility criteria for voting, “All members of the SBOL Developers Group are eligible to vote.”

3. Provide the following options for the vote:

- accept -- vote to accept the SEP

- reject -- vote to reject the SEP

- abstain -- no opinion, abstain votes will not be counted when determining majorities

- defer / table for further discussion -- keep the SEP in draft stage (in contrast to "abstain" this vote will be counted when determining majorities).

4. include a field for entering the e-mail address of the voter

5. include a field for further comments.

## 3. Discussion

The voting procedure is still very similar to the previous rules. New is the idea of SEPs and that it should, preferably, be the editors who move proposals for a vote. However, the proposal also still retains the previous practice -- anyone on the list can initiate the vote, as long as it is seconded by any other developer. Editors can therefore not block the vote on any issue.

Voting for election purposes remains unchanged and is described in SEP #3.

## Copyright

<p xmlns:dct="http://purl.org/dc/terms/" xmlns:vcard="http://www.w3.org/2001/vcard-rdf/3.0#">

<a rel="license"

href="http://creativecommons.org/publicdomain/zero/1.0/">

<img src="http://i.creativecommons.org/p/zero/1.0/88x31.png" style="border-style: none;" alt="CC0" />

</a>

<br />

To the extent possible under law,

<a rel="dct:publisher"

href="sbolstandard.org">

<span property="dct:title">SBOL developers</span></a>

has waived all copyright and related or neighboring rights to

<span property="dct:title">SEP 005</span>.

This work is published from:

<span property="vcard:Country" datatype="dct:ISO3166"

content="US" about="sbolstandard.org">

United States</span>.

</p>

|

process

|

sep sbol voting procedure sep sbol voting procedure sep title sbol voting procedure authors raik gruenberg editor raik gruenberg type process status draft created jan last modified feb abstract this proposal describes the voting procedure used to accept or reject changes to the sbol data model or to sbol community rules rationale with the introduction of seps sbol voting rules need to be updated previously voting could be initiated by any two members of the sbol dev mailing list on any issue of choice according to the new proposal voting can be initiated only on issues that first have been documented as an sbol enhancement proposals seps specification changes to sbol governance document this sep replaces two sections within the governance document voting process and voting form note election rules remain unchanged voting process any member of the sbol developers group can submit an sbol enhancement proposal sep to the editors and or to the community at large the sbol editors are expected to move a given sep draft to a vote once they agree that there has been sufficient debate however any member of the sbol developers group can initiate the vote as long as one other member of the group seconds the motion sbol editors post a voting form see below for a final discussion period of working days voting runs for working days starting at the end of the discussion period all members of the sbol development group are eligible to vote the sbol editors may extend the voting period by up to an additional working days when they feel that an insufficient number of votes have been obtained sbol editors tally and call the vote first vote will be judged by a majority to indicate rough consensus if rough consensus is not reached discussion of working days is to follow sep authors can modify or withdraw their proposal during that time the reasons for decisions must be recorded with the results of the vote any second followup vote will be ruled by majority and will be treated as the decision voting form the voting form must state clearly the sep number and title being voted on and provide a link to this sep state the eligibility criteria for voting “all members of the sbol developers group are eligible to vote ” provide the following options for the vote accept vote to accept the sep reject vote to reject the sep abstain no opinion abstain votes will not be counted when determining majorities defer table for further discussion keep the sep in draft stage in contrast to abstain this vote will be counted when determining majorities include a field for entering the e mail address of the voter include a field for further comments discussion the voting procedure is still very similar to the previous rules new is the idea of seps and that it should preferably be the editors who move proposals for a vote however the proposal also still retains the previous practice anyone on the list can initiate the vote as long as it is seconded by any other developer editors can therefore not block the vote on any issue voting for election purposes remains unchanged and is described in sep copyright p xmlns dct xmlns vcard a rel license href to the extent possible under law a rel dct publisher href sbolstandard org sbol developers has waived all copyright and related or neighboring rights to sep this work is published from span property vcard country datatype dct content us about sbolstandard org united states

| 1

|

11,434

| 14,248,489,782

|

IssuesEvent

|

2020-11-19 13:01:00

|

googleapis/repo-automation-bots

|

https://api.github.com/repos/googleapis/repo-automation-bots

|

opened

|

GitHub Actions CI flakiness

|

type: process

|

See https://github.com/googleapis/repo-automation-bots/runs/1424133746?check_suite_focus=true.

```

Error: Command failed: git diff --name-only origin/

fatal: ambiguous argument 'origin/': unknown revision or path not in the working tree.

Use '--' to separate paths from revisions, like this:

'git <command> [<revision>...] -- [<file>...]'

```

Seems to be related to the new setup from #626.

cc @JustinBeckwith @bcoe @tmatsuo

|

1.0

|

GitHub Actions CI flakiness - See https://github.com/googleapis/repo-automation-bots/runs/1424133746?check_suite_focus=true.

```

Error: Command failed: git diff --name-only origin/

fatal: ambiguous argument 'origin/': unknown revision or path not in the working tree.

Use '--' to separate paths from revisions, like this:

'git <command> [<revision>...] -- [<file>...]'

```

Seems to be related to the new setup from #626.

cc @JustinBeckwith @bcoe @tmatsuo

|

process

|

github actions ci flakiness see error command failed git diff name only origin fatal ambiguous argument origin unknown revision or path not in the working tree use to separate paths from revisions like this git seems to be related to the new setup from cc justinbeckwith bcoe tmatsuo

| 1

|

14,371

| 17,395,583,706

|

IssuesEvent

|

2021-08-02 13:06:37

|

DevExpress/testcafe-hammerhead

|

https://api.github.com/repos/DevExpress/testcafe-hammerhead

|

closed

|

Actions can't be performed in iframe on fiddle.net

|

AREA: client SYSTEM: URL processing SYSTEM: iframe processing TYPE: bug

|

### What is your Test Scenario?

I'm trying to record actions in a result iframe on fiddle.net after click on a `Run` button

https://github.com/DevExpress/testcafe-studio/issues/2052

### What is the Current behavior?

Error raises `TestCafeDriver doesn't exist in the iframe`

### What is the Expected behavior?

I expect TestCafeDriver exists in the iframe

### Steps to Reproduce:

The easiest way to reproduce is to run a following test:

```js

import { Selector } from 'testcafe';

fixture `f`

.page `https://jsfiddle.net/`;

test('t', async t => {

await t

.debug() //resume after loading is complete

.click(Selector('#run'))

.debug() // resume after result iframe has a white background

.switchToIframe(Selector('[name="result"]'))

.click(Selector('body')); // error raises 'Content of the iframe in which the test is currently operating did not load.'

});

```

On the second `debug` action you can check that TestCafeDriver instance doesn't exist in iframe

### Your Environment details:

* testcafe version: 0.23.3

|

2.0

|

Actions can't be performed in iframe on fiddle.net - ### What is your Test Scenario?

I'm trying to record actions in a result iframe on fiddle.net after click on a `Run` button

https://github.com/DevExpress/testcafe-studio/issues/2052

### What is the Current behavior?

Error raises `TestCafeDriver doesn't exist in the iframe`

### What is the Expected behavior?

I expect TestCafeDriver exists in the iframe

### Steps to Reproduce:

The easiest way to reproduce is to run a following test:

```js

import { Selector } from 'testcafe';

fixture `f`

.page `https://jsfiddle.net/`;

test('t', async t => {

await t

.debug() //resume after loading is complete

.click(Selector('#run'))

.debug() // resume after result iframe has a white background

.switchToIframe(Selector('[name="result"]'))

.click(Selector('body')); // error raises 'Content of the iframe in which the test is currently operating did not load.'

});

```

On the second `debug` action you can check that TestCafeDriver instance doesn't exist in iframe

### Your Environment details:

* testcafe version: 0.23.3

|

process

|

actions can t be performed in iframe on fiddle net what is your test scenario i m trying to record actions in a result iframe on fiddle net after click on a run button what is the current behavior error raises testcafedriver doesn t exist in the iframe what is the expected behavior i expect testcafedriver exists in the iframe steps to reproduce the easiest way to reproduce is to run a following test js import selector from testcafe fixture f page test t async t await t debug resume after loading is complete click selector run debug resume after result iframe has a white background switchtoiframe selector click selector body error raises content of the iframe in which the test is currently operating did not load on the second debug action you can check that testcafedriver instance doesn t exist in iframe your environment details testcafe version

| 1

|

838

| 3,305,436,722

|

IssuesEvent

|

2015-11-04 04:45:34

|

dita-ot/dita-ot

|

https://api.github.com/repos/dita-ot/dita-ot

|

closed

|

abbreviated-form and term keyref links are not resolved when chunk="to-content" [DOT 1.8 and 2.0]]

|

bug preprocess/chunking

|

Let's say I have a DITA Map like:

<!DOCTYPE map PUBLIC "-//OASIS//DTD DITA Map//EN" "http://docs.oasis-open.org/dita/v1.1/OS/dtd/map.dtd">

<map title="Growing Flowers">

<topicref href="topics/introduction.dita"/>

<topicref href="glossary/glossary_overview.dita" chunk="to-content">

<topicref href="glossary/ot.dita" keys="opentoolkit"/>

</topicref>

</map>

with "introduction.dita" having the content:

<!DOCTYPE topic PUBLIC "-//OASIS//DTD DITA Topic//EN" "http://docs.oasis-open.org/dita/v1.1/OS/dtd/topic.dtd">

<topic id="introduction">

<title>Introduction</title>

<body>

<p>Look out for <term keyref="opentoolkit"/> and for <abbreviated-form keyref="opentoolkit"/> but not for <xref keyref="opentoolkit"/>.</p>

</body>

</topic>

and "glossary_overview.dita" having the content:

<!DOCTYPE topic PUBLIC "-//OASIS//DTD DITA Topic//EN" "topic.dtd">

<topic id="topic_dfl_sn4_3q">

<title>TEST</title>

<body>

<p></p>

</body>

</topic>

and "ot.dita" having the content:

<!DOCTYPE glossentry PUBLIC "-//OASIS//DTD DITA Glossary//EN" "glossary.dtd">

<glossentry id="wmd">

<glossterm>Weapons of Mass

Destruction</glossterm>

<glossBody>

<glossSurfaceForm>Weapons of Mass

Destruction (WMD)</glossSurfaceForm>

<glossAlt>

<glossAcronym>WMD</glossAcronym>

</glossAlt>

</glossBody>

</glossentry>

Publishing the DITA Map to XHTML, both the abbreviated-form and term keyrefs are not properly solved. The xref with the same keyref is properly solved.

The console output contains:

[xslt] C:\Users\radu_coravu\Desktop\DOT2.0 final\DITA-OT2.0\plugins\org.dita.xhtml\xsl\xslhtml\dita2htmlImpl.xsl:1372: Error! I/O error reported by XML parser processing file:/C:/Users/radu_coravu/Desktop/tomjohnson/flowers/temp/xhtml/oxygen_dita_temp/topics/../glossary/ot.dita: C:\Users\radu_coravu\Desktop\tomjohnson\flowers\temp\xhtml\oxygen_dita_temp\topics\..\glossary\ot.dita (The system cannot find the file specified) Cause: java.io.FileNotFoundException: C:\Users\radu_coravu\Desktop\tomjohnson\flowers\temp\xhtml\oxygen_dita_temp\topics\..\glossary\ot.dita (The system cannot find the file specified)

[xslt] C:\Users\radu_coravu\Desktop\DOT2.0 final\DITA-OT2.0\plugins\org.dita.xhtml\xsl\xslhtml\dita2htmlImpl.xsl:4136: Error! Document has been marked not available: file:/C:/Users/radu_coravu/Desktop/tomjohnson/flowers/temp/xhtml/oxygen_dita_temp/topics/../glossary/ot.dita

|

1.0

|

abbreviated-form and term keyref links are not resolved when chunk="to-content" [DOT 1.8 and 2.0]] - Let's say I have a DITA Map like:

<!DOCTYPE map PUBLIC "-//OASIS//DTD DITA Map//EN" "http://docs.oasis-open.org/dita/v1.1/OS/dtd/map.dtd">

<map title="Growing Flowers">

<topicref href="topics/introduction.dita"/>

<topicref href="glossary/glossary_overview.dita" chunk="to-content">

<topicref href="glossary/ot.dita" keys="opentoolkit"/>

</topicref>

</map>

with "introduction.dita" having the content:

<!DOCTYPE topic PUBLIC "-//OASIS//DTD DITA Topic//EN" "http://docs.oasis-open.org/dita/v1.1/OS/dtd/topic.dtd">

<topic id="introduction">

<title>Introduction</title>

<body>

<p>Look out for <term keyref="opentoolkit"/> and for <abbreviated-form keyref="opentoolkit"/> but not for <xref keyref="opentoolkit"/>.</p>

</body>

</topic>

and "glossary_overview.dita" having the content:

<!DOCTYPE topic PUBLIC "-//OASIS//DTD DITA Topic//EN" "topic.dtd">

<topic id="topic_dfl_sn4_3q">

<title>TEST</title>

<body>

<p></p>

</body>

</topic>

and "ot.dita" having the content:

<!DOCTYPE glossentry PUBLIC "-//OASIS//DTD DITA Glossary//EN" "glossary.dtd">

<glossentry id="wmd">

<glossterm>Weapons of Mass

Destruction</glossterm>

<glossBody>

<glossSurfaceForm>Weapons of Mass

Destruction (WMD)</glossSurfaceForm>

<glossAlt>

<glossAcronym>WMD</glossAcronym>

</glossAlt>

</glossBody>

</glossentry>

Publishing the DITA Map to XHTML, both the abbreviated-form and term keyrefs are not properly solved. The xref with the same keyref is properly solved.

The console output contains:

[xslt] C:\Users\radu_coravu\Desktop\DOT2.0 final\DITA-OT2.0\plugins\org.dita.xhtml\xsl\xslhtml\dita2htmlImpl.xsl:1372: Error! I/O error reported by XML parser processing file:/C:/Users/radu_coravu/Desktop/tomjohnson/flowers/temp/xhtml/oxygen_dita_temp/topics/../glossary/ot.dita: C:\Users\radu_coravu\Desktop\tomjohnson\flowers\temp\xhtml\oxygen_dita_temp\topics\..\glossary\ot.dita (The system cannot find the file specified) Cause: java.io.FileNotFoundException: C:\Users\radu_coravu\Desktop\tomjohnson\flowers\temp\xhtml\oxygen_dita_temp\topics\..\glossary\ot.dita (The system cannot find the file specified)

[xslt] C:\Users\radu_coravu\Desktop\DOT2.0 final\DITA-OT2.0\plugins\org.dita.xhtml\xsl\xslhtml\dita2htmlImpl.xsl:4136: Error! Document has been marked not available: file:/C:/Users/radu_coravu/Desktop/tomjohnson/flowers/temp/xhtml/oxygen_dita_temp/topics/../glossary/ot.dita

|

process

|

abbreviated form and term keyref links are not resolved when chunk to content let s say i have a dita map like doctype map public oasis dtd dita map en with introduction dita having the content doctype topic public oasis dtd dita topic en introduction look out for and for but not for and glossary overview dita having the content test and ot dita having the content weapons of mass destruction weapons of mass destruction wmd wmd publishing the dita map to xhtml both the abbreviated form and term keyrefs are not properly solved the xref with the same keyref is properly solved the console output contains c users radu coravu desktop final dita plugins org dita xhtml xsl xslhtml xsl error i o error reported by xml parser processing file c users radu coravu desktop tomjohnson flowers temp xhtml oxygen dita temp topics glossary ot dita c users radu coravu desktop tomjohnson flowers temp xhtml oxygen dita temp topics glossary ot dita the system cannot find the file specified cause java io filenotfoundexception c users radu coravu desktop tomjohnson flowers temp xhtml oxygen dita temp topics glossary ot dita the system cannot find the file specified c users radu coravu desktop final dita plugins org dita xhtml xsl xslhtml xsl error document has been marked not available file c users radu coravu desktop tomjohnson flowers temp xhtml oxygen dita temp topics glossary ot dita

| 1

|

203,360

| 23,146,955,644

|

IssuesEvent

|

2022-07-29 02:39:01

|

istio/istio

|

https://api.github.com/repos/istio/istio

|

closed

|

Istio JWT validation happens even if RequestAuthentication is not applied to the workload

|

area/security

|

### Bug Description

### Context:

I have two `httpbin` deployments under `foo` namespace:

1. `httpbin` – deployed with the sidecar proxy

2. `httpbin-no-auth` – deployed without sidecar proxy

I also configured `RequestAuthentication` to be applied to the `httpbin` workload:

```yaml

apiVersion: security.istio.io/v1beta1

kind: RequestAuthentication

metadata:

name: jwt-example

namespace: foo

spec:

selector:

matchLabels:

app: httpbin

jwtRules:

- issuer: https://accounts.google.com

```

Now, I want to create a `VirtualService`, which will route requests to `httpbin` if the `x-use-auth: true` header is present, or otherwise, route requests to `httpbin-no-auth`:

```yaml

apiVersion: networking.istio.io/v1beta1

kind: VirtualService

metadata:

name: httpbin

namespace: foo

spec:

gateways:

- httpbin

- mesh

hosts:

- httpbin.foo.svc.cluster.local

http:

- match:

- headers:

x-use-auth:

exact: 'true'

route:

- destination:

host: httpbin.foo.svc.cluster.local

port:

number: 8000

- route:

- destination:

host: httpbin-no-auth.foo.svc.cluster.local

port:

number: 8000

```

I also have a `Gateway` to pass the ingress traffic to the mesh:

```yaml

apiVersion: networking.istio.io/v1beta1

kind: Gateway

metadata:

name: httpbin

namespace: foo

spec:

selector:

istio: ingressgateway

servers:

- port:

number: 80

name: http

protocol: HTTP

hosts:

- '*'

```

### Expected Behaviour:

```shell

~ curl -H 'Host: httpbin.foo.svc.cluster.local' -H "x-use-auth: true" -H "Authorization: Bearer invalid.token" http://${INGRESS_IP}/ip -v

<redacted>

* Mark bundle as not supporting multiuse

< HTTP/1.1 401 Unauthorized

< www-authenticate: Bearer realm="http://httpbin.foo.svc.cluster.local/ip", error="invalid_token"

<

Jwt is not in the form of Header.Payload.Signature with two dots and 3 sections%

```

```shell

~ curl -H 'Host: httpbin.foo.svc.cluster.local' -H "Authorization: Bearer invalid.token" http://${INGRESS_IP}/ip -v

<redacted>

* Mark bundle as not supporting multiuse

< HTTP/1.1 200 OK

<

{

"origin": "<ip_address>"

}

```

### Actual Behaviour:

```shell

~ curl -H 'Host: httpbin.foo.svc.cluster.local' -H "Authorization: Bearer invalid.token" http://${INGRESS_IP}/ip -v

<redacted>

* Mark bundle as not supporting multiuse

< HTTP/1.1 401 Unauthorized

< www-authenticate: Bearer realm="http://httpbin.foo.svc.cluster.local/ip", error="invalid_token"

<

Jwt is not in the form of Header.Payload.Signature with two dots and 3 sections%

```

### Details:

The reason I'm trying to achieve described behaviour is because I have a legacy version of an API service, which is using OAuth2 `access_token` for authentication. Now, I want to migrate this service to OIDC with JWT, without changing hostname/path under which this API is accessible.

### Version

```prose

➜ ~ istioctl version

client version: 1.14.1

control plane version: 1.13.3

data plane version: 1.13.3 (2 proxies)

➜ ~ k version --short

Client Version: v1.23.3

Server Version: v1.22.8-gke.202

```

### Additional Information

_No response_

|

True

|

Istio JWT validation happens even if RequestAuthentication is not applied to the workload - ### Bug Description

### Context:

I have two `httpbin` deployments under `foo` namespace:

1. `httpbin` – deployed with the sidecar proxy

2. `httpbin-no-auth` – deployed without sidecar proxy

I also configured `RequestAuthentication` to be applied to the `httpbin` workload:

```yaml

apiVersion: security.istio.io/v1beta1

kind: RequestAuthentication

metadata:

name: jwt-example

namespace: foo

spec:

selector:

matchLabels:

app: httpbin

jwtRules:

- issuer: https://accounts.google.com

```

Now, I want to create a `VirtualService`, which will route requests to `httpbin` if the `x-use-auth: true` header is present, or otherwise, route requests to `httpbin-no-auth`:

```yaml

apiVersion: networking.istio.io/v1beta1

kind: VirtualService

metadata:

name: httpbin

namespace: foo

spec:

gateways:

- httpbin

- mesh

hosts:

- httpbin.foo.svc.cluster.local

http:

- match:

- headers:

x-use-auth:

exact: 'true'

route:

- destination:

host: httpbin.foo.svc.cluster.local

port:

number: 8000

- route:

- destination:

host: httpbin-no-auth.foo.svc.cluster.local

port:

number: 8000

```

I also have a `Gateway` to pass the ingress traffic to the mesh:

```yaml

apiVersion: networking.istio.io/v1beta1

kind: Gateway

metadata:

name: httpbin

namespace: foo

spec:

selector:

istio: ingressgateway

servers:

- port:

number: 80

name: http

protocol: HTTP

hosts:

- '*'

```

### Expected Behaviour:

```shell

~ curl -H 'Host: httpbin.foo.svc.cluster.local' -H "x-use-auth: true" -H "Authorization: Bearer invalid.token" http://${INGRESS_IP}/ip -v

<redacted>

* Mark bundle as not supporting multiuse

< HTTP/1.1 401 Unauthorized

< www-authenticate: Bearer realm="http://httpbin.foo.svc.cluster.local/ip", error="invalid_token"

<

Jwt is not in the form of Header.Payload.Signature with two dots and 3 sections%

```

```shell

~ curl -H 'Host: httpbin.foo.svc.cluster.local' -H "Authorization: Bearer invalid.token" http://${INGRESS_IP}/ip -v

<redacted>

* Mark bundle as not supporting multiuse

< HTTP/1.1 200 OK

<

{

"origin": "<ip_address>"

}

```

### Actual Behaviour:

```shell

~ curl -H 'Host: httpbin.foo.svc.cluster.local' -H "Authorization: Bearer invalid.token" http://${INGRESS_IP}/ip -v

<redacted>

* Mark bundle as not supporting multiuse

< HTTP/1.1 401 Unauthorized

< www-authenticate: Bearer realm="http://httpbin.foo.svc.cluster.local/ip", error="invalid_token"

<

Jwt is not in the form of Header.Payload.Signature with two dots and 3 sections%

```

### Details:

The reason I'm trying to achieve described behaviour is because I have a legacy version of an API service, which is using OAuth2 `access_token` for authentication. Now, I want to migrate this service to OIDC with JWT, without changing hostname/path under which this API is accessible.

### Version

```prose

➜ ~ istioctl version

client version: 1.14.1

control plane version: 1.13.3

data plane version: 1.13.3 (2 proxies)

➜ ~ k version --short

Client Version: v1.23.3

Server Version: v1.22.8-gke.202

```

### Additional Information

_No response_

|

non_process

|

istio jwt validation happens even if requestauthentication is not applied to the workload bug description context i have two httpbin deployments under foo namespace httpbin – deployed with the sidecar proxy httpbin no auth – deployed without sidecar proxy i also configured requestauthentication to be applied to the httpbin workload yaml apiversion security istio io kind requestauthentication metadata name jwt example namespace foo spec selector matchlabels app httpbin jwtrules issuer now i want to create a virtualservice which will route requests to httpbin if the x use auth true header is present or otherwise route requests to httpbin no auth yaml apiversion networking istio io kind virtualservice metadata name httpbin namespace foo spec gateways httpbin mesh hosts httpbin foo svc cluster local http match headers x use auth exact true route destination host httpbin foo svc cluster local port number route destination host httpbin no auth foo svc cluster local port number i also have a gateway to pass the ingress traffic to the mesh yaml apiversion networking istio io kind gateway metadata name httpbin namespace foo spec selector istio ingressgateway servers port number name http protocol http hosts expected behaviour shell curl h host httpbin foo svc cluster local h x use auth true h authorization bearer invalid token v mark bundle as not supporting multiuse http unauthorized www authenticate bearer realm error invalid token jwt is not in the form of header payload signature with two dots and sections shell curl h host httpbin foo svc cluster local h authorization bearer invalid token v mark bundle as not supporting multiuse http ok origin actual behaviour shell curl h host httpbin foo svc cluster local h authorization bearer invalid token v mark bundle as not supporting multiuse http unauthorized www authenticate bearer realm error invalid token jwt is not in the form of header payload signature with two dots and sections details the reason i m trying to achieve described behaviour is because i have a legacy version of an api service which is using access token for authentication now i want to migrate this service to oidc with jwt without changing hostname path under which this api is accessible version prose ➜ istioctl version client version control plane version data plane version proxies ➜ k version short client version server version gke additional information no response

| 0

|

8,116

| 11,302,415,055

|

IssuesEvent

|

2020-01-17 17:36:43

|

geneontology/go-ontology

|

https://api.github.com/repos/geneontology/go-ontology

|

closed

|

2 small fixes in pathogen host branch

|

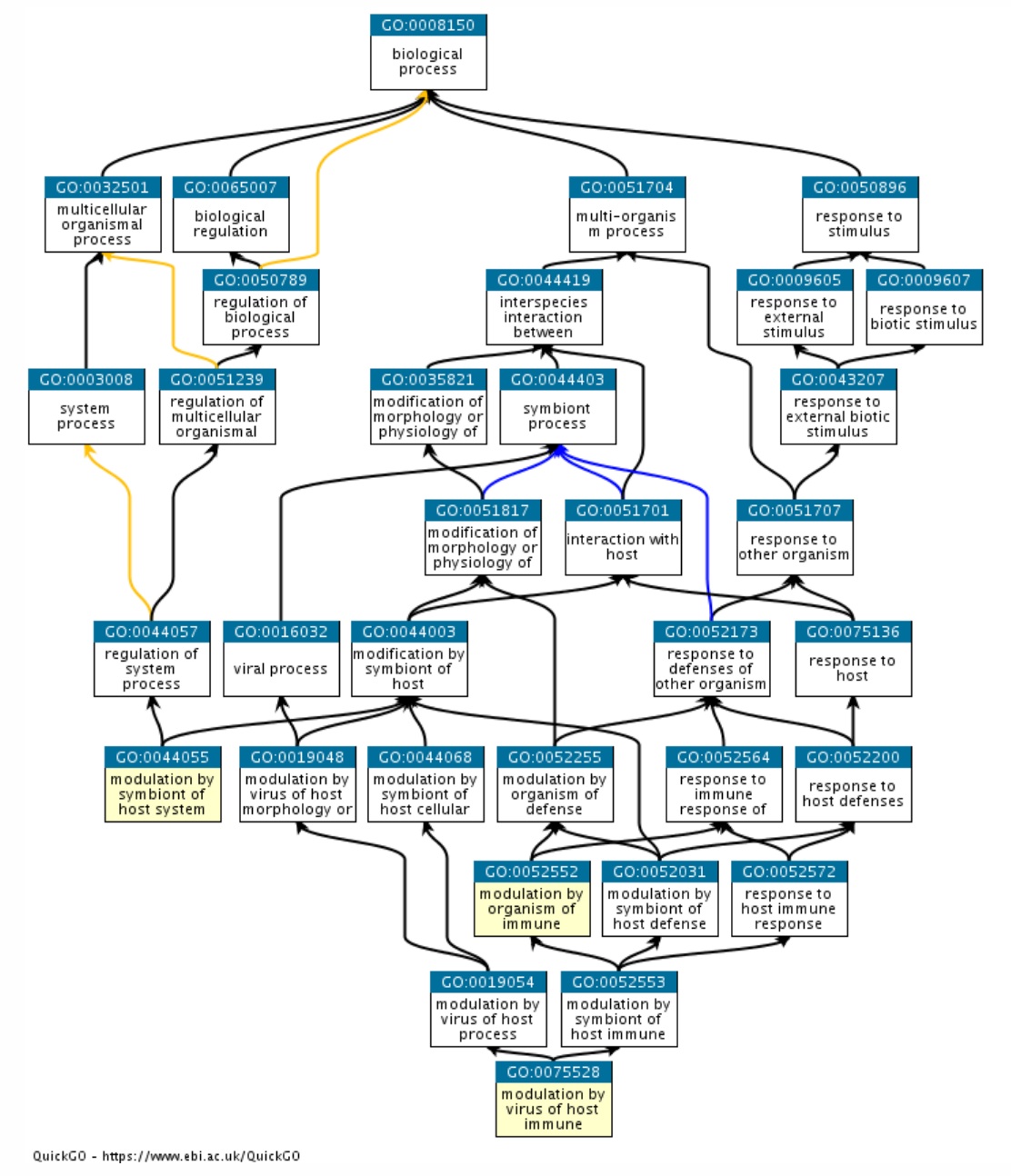

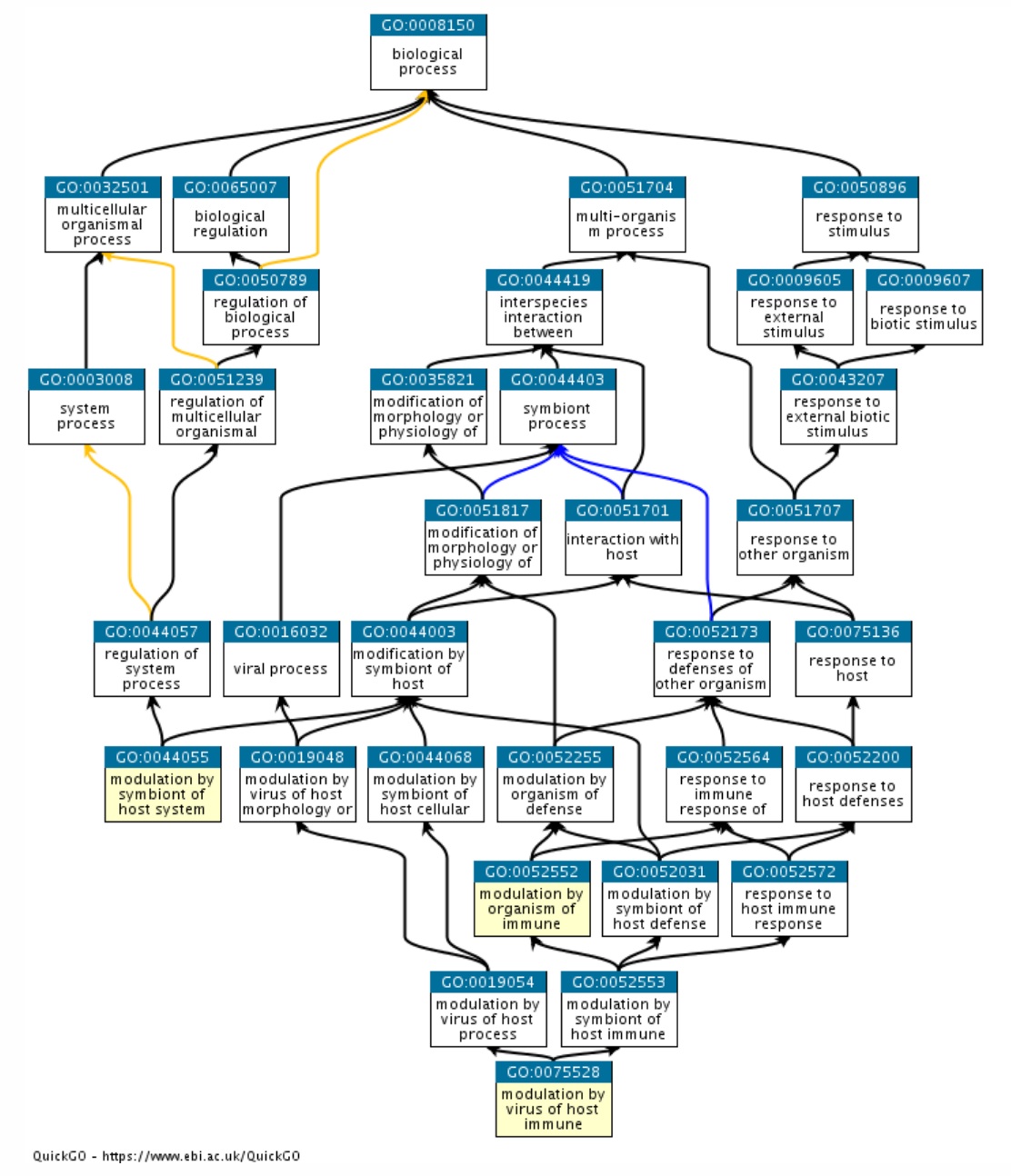

PomBase missing parentage multi-species process parent relationship query term merge

|

add

- [x]

GO:0044068 modulation by symbiont of host cellular process

as a parent of

GO:0052552 modulation by organism of immune response of other organism involved in symbiotic interaction

- [x] and rename to

GO:0052552 modulation by symbiont of host immune response

i.e now it will be named like the descendant term

GO:0075528 modulation by virus of host immune response

|

1.0

|

2 small fixes in pathogen host branch -

add

- [x]

GO:0044068 modulation by symbiont of host cellular process

as a parent of

GO:0052552 modulation by organism of immune response of other organism involved in symbiotic interaction

- [x] and rename to

GO:0052552 modulation by symbiont of host immune response

i.e now it will be named like the descendant term

GO:0075528 modulation by virus of host immune response

|

process

|

small fixes in pathogen host branch add go modulation by symbiont of host cellular process as a parent of go modulation by organism of immune response of other organism involved in symbiotic interaction and rename to go modulation by symbiont of host immune response i e now it will be named like the descendant term go modulation by virus of host immune response

| 1

|

7,033

| 10,192,805,100

|

IssuesEvent

|

2019-08-12 12:11:16

|

linnovate/root

|

https://api.github.com/repos/linnovate/root

|

closed

|

partner names should be seen to every user in the entity

|

2.0.9 Process bug

|

after adding or being added to an entity the name of the other partners should be visible on tooltip after clicking on their avatar ( watcher and commenter cant see)

|

1.0

|

partner names should be seen to every user in the entity - after adding or being added to an entity the name of the other partners should be visible on tooltip after clicking on their avatar ( watcher and commenter cant see)

|

process

|

partner names should be seen to every user in the entity after adding or being added to an entity the name of the other partners should be visible on tooltip after clicking on their avatar watcher and commenter cant see

| 1

|

11,287

| 14,079,905,109

|

IssuesEvent

|

2020-11-04 15:27:32

|

googleapis/google-cloud-ruby

|

https://api.github.com/repos/googleapis/google-cloud-ruby

|

closed

|

bigquery: CI samples task consistently fails

|

api: bigquery samples type: process

|

google-cloud-bigquery samples task consistently fails. This is probably due to an expectation that the added datasets will appear in the first page of results, and in reality there are many old datasets.

```

1) Failure:

List datasets#test_0001_lists datasets in a project [/tmpfs/src/github/google-cloud-ruby/google-cloud-bigquery/samples/snippets/acceptance/list_datasets_test.rb:27]:

Expected /test_dataset1_1603835162_1753c908/ to match "Datasets in project :\n\tgcloud_ruby_acceptance_2020_08_28t07_36_28z_523e3659_dataset_view\n\tgcloud_ruby_acceptance_2020_08_28t07_36_28z_523e3659_dataset_with_access\n\tgcloud_ruby_acceptance_2020_10_05t07_48_15z_93d1dd10_dataset\n\tgcloud_ruby_acceptance_2020_10_05t07_48_15z_93d1dd10_dataset_2\n\tgcloud_ruby_acceptance_2020_10_05t07_48_15z_93d1dd10_dataset_location\n\tgcloud_ruby_acceptance_2020_10_05t07_48_15z_93d1dd10_dataset_view\n\tgcloud_ruby_acceptance_2020_10_05t07_48_15z_93d1dd10_dataset_with_access\n\tgcloud_ruby_acceptance_2020_10_05t09_20_52z_b4f07038_dataset\n\tgcloud_ruby_acceptance_2020_10_05t09_20_52z_b4f07038_dataset_2\n\tgcloud_ruby_acceptance_2020_10_05t09_20_52z_b4f07038_dataset_location\n\tgcloud_ruby_acceptance_2020_10_05t09_20_52z_b4f07038_dataset_view\n\tgcloud_ruby_acceptance_2020_10_05t09_20_52z_b4f07038_dataset_with_access\n\tgcloud_ruby_acceptance_2020_10_06t08_43_28z_ab38893e_dataset\n\tgcloud_ruby_acceptance_2020_10_06t08_43_28z_ab38893e_dataset_2\n\tgcloud_ruby_acceptance_2020_10_06t08_43_28z_ab38893e_dataset_location\n\tgcloud_ruby_acceptance_2020_10_06t08_43_28z_ab38893e_dataset_view\n\tgcloud_ruby_acceptance_2020_10_06t08_43_28z_ab38893e_dataset_with_access\n\tgcloud_ruby_acceptance_2020_10_10t10_49_19z_bf7e3447_dataset\n\tgcloud_ruby_acceptance_2020_10_10t10_49_19z_bf7e3447_dataset_2\n\tgcloud_ruby_acceptance_2020_10_10t10_49_19z_bf7e3447_dataset_location\n\tgcloud_ruby_acceptance_2020_10_10t10_49_19z_bf7e3447_dataset_view\n\tgcloud_ruby_acceptance_2020_10_10t10_49_19z_bf7e3447_dataset_with_access\n\tgcloud_ruby_acceptance_2020_10_15t07_39_24z_919a6b6e_dataset\n\tgcloud_ruby_acceptance_2020_10_15t07_39_24z_919a6b6e_dataset_2\n\tgcloud_ruby_acceptance_2020_10_15t07_39_24z_919a6b6e_dataset_location\n\tgcloud_ruby_acceptance_2020_10_15t07_39_24z_919a6b6e_dataset_view\n\tgcloud_ruby_acceptance_2020_10_15t07_39_24z_919a6b6e_dataset_with_access\n\tgcloud_ruby_acceptance_2020_10_20t08_47_54z_b1a68b92_dataset\n\tgcloud_ruby_acceptance_2020_10_20t08_47_54z_b1a68b92_dataset_2\n\tgcloud_ruby_acceptance_2020_10_20t08_47_54z_b1a68b92_dataset_location\n\tgcloud_ruby_acceptance_2020_10_20t08_47_54z_b1a68b92_dataset_view\n\tgcloud_ruby_acceptance_2020_10_20t08_47_54z_b1a68b92_dataset_with_access\n\tgcloud_ruby_acceptance_2020_10_24t07_36_36z_9e954fb9_dataset\n\tgcloud_ruby_acceptance_2020_10_24t07_36_36z_9e954fb9_dataset_2\n\tgcloud_ruby_acceptance_2020_10_24t07_36_36z_9e954fb9_dataset_location\n\tgcloud_ruby_acceptance_2020_10_24t07_36_36z_9e954fb9_dataset_view\n\tgcloud_ruby_acceptance_2020_10_24t07_36_36z_9e954fb9_dataset_with_access\n\truby_asset_sample_898c3ad7d3cd5c3e2ea132420da283ca\n\ttest_dataset1_1592391068\n\ttest_dataset1_1592391079\n\ttest_dataset1_1592391081\n\ttest_dataset1_1592391087\n\ttest_dataset1_1592391111\n\ttest_dataset1_1592469664\n\ttest_dataset1_1592469668\n\ttest_dataset1_1592469689\n\ttest_dataset1_1592522636\n\ttest_dataset1_1592522814\n\ttest_dataset1_1592525505\n\ttest_dataset1_1592525551\n".

18 runs, 47 assertions, 1 failures, 0 errors, 0 skips

```

|

1.0

|

bigquery: CI samples task consistently fails - google-cloud-bigquery samples task consistently fails. This is probably due to an expectation that the added datasets will appear in the first page of results, and in reality there are many old datasets.

```

1) Failure:

List datasets#test_0001_lists datasets in a project [/tmpfs/src/github/google-cloud-ruby/google-cloud-bigquery/samples/snippets/acceptance/list_datasets_test.rb:27]:

Expected /test_dataset1_1603835162_1753c908/ to match "Datasets in project :\n\tgcloud_ruby_acceptance_2020_08_28t07_36_28z_523e3659_dataset_view\n\tgcloud_ruby_acceptance_2020_08_28t07_36_28z_523e3659_dataset_with_access\n\tgcloud_ruby_acceptance_2020_10_05t07_48_15z_93d1dd10_dataset\n\tgcloud_ruby_acceptance_2020_10_05t07_48_15z_93d1dd10_dataset_2\n\tgcloud_ruby_acceptance_2020_10_05t07_48_15z_93d1dd10_dataset_location\n\tgcloud_ruby_acceptance_2020_10_05t07_48_15z_93d1dd10_dataset_view\n\tgcloud_ruby_acceptance_2020_10_05t07_48_15z_93d1dd10_dataset_with_access\n\tgcloud_ruby_acceptance_2020_10_05t09_20_52z_b4f07038_dataset\n\tgcloud_ruby_acceptance_2020_10_05t09_20_52z_b4f07038_dataset_2\n\tgcloud_ruby_acceptance_2020_10_05t09_20_52z_b4f07038_dataset_location\n\tgcloud_ruby_acceptance_2020_10_05t09_20_52z_b4f07038_dataset_view\n\tgcloud_ruby_acceptance_2020_10_05t09_20_52z_b4f07038_dataset_with_access\n\tgcloud_ruby_acceptance_2020_10_06t08_43_28z_ab38893e_dataset\n\tgcloud_ruby_acceptance_2020_10_06t08_43_28z_ab38893e_dataset_2\n\tgcloud_ruby_acceptance_2020_10_06t08_43_28z_ab38893e_dataset_location\n\tgcloud_ruby_acceptance_2020_10_06t08_43_28z_ab38893e_dataset_view\n\tgcloud_ruby_acceptance_2020_10_06t08_43_28z_ab38893e_dataset_with_access\n\tgcloud_ruby_acceptance_2020_10_10t10_49_19z_bf7e3447_dataset\n\tgcloud_ruby_acceptance_2020_10_10t10_49_19z_bf7e3447_dataset_2\n\tgcloud_ruby_acceptance_2020_10_10t10_49_19z_bf7e3447_dataset_location\n\tgcloud_ruby_acceptance_2020_10_10t10_49_19z_bf7e3447_dataset_view\n\tgcloud_ruby_acceptance_2020_10_10t10_49_19z_bf7e3447_dataset_with_access\n\tgcloud_ruby_acceptance_2020_10_15t07_39_24z_919a6b6e_dataset\n\tgcloud_ruby_acceptance_2020_10_15t07_39_24z_919a6b6e_dataset_2\n\tgcloud_ruby_acceptance_2020_10_15t07_39_24z_919a6b6e_dataset_location\n\tgcloud_ruby_acceptance_2020_10_15t07_39_24z_919a6b6e_dataset_view\n\tgcloud_ruby_acceptance_2020_10_15t07_39_24z_919a6b6e_dataset_with_access\n\tgcloud_ruby_acceptance_2020_10_20t08_47_54z_b1a68b92_dataset\n\tgcloud_ruby_acceptance_2020_10_20t08_47_54z_b1a68b92_dataset_2\n\tgcloud_ruby_acceptance_2020_10_20t08_47_54z_b1a68b92_dataset_location\n\tgcloud_ruby_acceptance_2020_10_20t08_47_54z_b1a68b92_dataset_view\n\tgcloud_ruby_acceptance_2020_10_20t08_47_54z_b1a68b92_dataset_with_access\n\tgcloud_ruby_acceptance_2020_10_24t07_36_36z_9e954fb9_dataset\n\tgcloud_ruby_acceptance_2020_10_24t07_36_36z_9e954fb9_dataset_2\n\tgcloud_ruby_acceptance_2020_10_24t07_36_36z_9e954fb9_dataset_location\n\tgcloud_ruby_acceptance_2020_10_24t07_36_36z_9e954fb9_dataset_view\n\tgcloud_ruby_acceptance_2020_10_24t07_36_36z_9e954fb9_dataset_with_access\n\truby_asset_sample_898c3ad7d3cd5c3e2ea132420da283ca\n\ttest_dataset1_1592391068\n\ttest_dataset1_1592391079\n\ttest_dataset1_1592391081\n\ttest_dataset1_1592391087\n\ttest_dataset1_1592391111\n\ttest_dataset1_1592469664\n\ttest_dataset1_1592469668\n\ttest_dataset1_1592469689\n\ttest_dataset1_1592522636\n\ttest_dataset1_1592522814\n\ttest_dataset1_1592525505\n\ttest_dataset1_1592525551\n".

18 runs, 47 assertions, 1 failures, 0 errors, 0 skips

```

|

process

|

bigquery ci samples task consistently fails google cloud bigquery samples task consistently fails this is probably due to an expectation that the added datasets will appear in the first page of results and in reality there are many old datasets failure list datasets test lists datasets in a project expected test to match datasets in project n tgcloud ruby acceptance dataset view n tgcloud ruby acceptance dataset with access n tgcloud ruby acceptance dataset n tgcloud ruby acceptance dataset n tgcloud ruby acceptance dataset location n tgcloud ruby acceptance dataset view n tgcloud ruby acceptance dataset with access n tgcloud ruby acceptance dataset n tgcloud ruby acceptance dataset n tgcloud ruby acceptance dataset location n tgcloud ruby acceptance dataset view n tgcloud ruby acceptance dataset with access n tgcloud ruby acceptance dataset n tgcloud ruby acceptance dataset n tgcloud ruby acceptance dataset location n tgcloud ruby acceptance dataset view n tgcloud ruby acceptance dataset with access n tgcloud ruby acceptance dataset n tgcloud ruby acceptance dataset n tgcloud ruby acceptance dataset location n tgcloud ruby acceptance dataset view n tgcloud ruby acceptance dataset with access n tgcloud ruby acceptance dataset n tgcloud ruby acceptance dataset n tgcloud ruby acceptance dataset location n tgcloud ruby acceptance dataset view n tgcloud ruby acceptance dataset with access n tgcloud ruby acceptance dataset n tgcloud ruby acceptance dataset n tgcloud ruby acceptance dataset location n tgcloud ruby acceptance dataset view n tgcloud ruby acceptance dataset with access n tgcloud ruby acceptance dataset n tgcloud ruby acceptance dataset n tgcloud ruby acceptance dataset location n tgcloud ruby acceptance dataset view n tgcloud ruby acceptance dataset with access n truby asset sample n ttest n ttest n ttest n ttest n ttest n ttest n ttest n ttest n ttest n ttest n ttest n ttest n runs assertions failures errors skips

| 1

|

10,471

| 13,246,254,586

|

IssuesEvent

|

2020-08-19 15:27:07

|

pystatgen/sgkit

|

https://api.github.com/repos/pystatgen/sgkit

|

closed

|

Investigate mergify

|

process + tools

|

I will close this issue, and create a separate one for `mergify`.

_Originally posted by @ravwojdyla in https://github.com/pystatgen/sgkit/issues/26#issuecomment-673381620_

Related: https://github.com/pystatgen/sgkit/issues/26#issuecomment-655656832

|

1.0

|

Investigate mergify - I will close this issue, and create a separate one for `mergify`.

_Originally posted by @ravwojdyla in https://github.com/pystatgen/sgkit/issues/26#issuecomment-673381620_

Related: https://github.com/pystatgen/sgkit/issues/26#issuecomment-655656832

|

process

|

investigate mergify i will close this issue and create a separate one for mergify originally posted by ravwojdyla in related

| 1

|

18,728

| 24,622,700,670

|

IssuesEvent

|

2022-10-16 05:20:22

|

Open-Data-Product-Initiative/open-data-product-spec-1.1dev

|

https://api.github.com/repos/Open-Data-Product-Initiative/open-data-product-spec-1.1dev

|

opened

|

Check Fair Data Economy "Dataset Terms of Use [Template]" requirements for data products

|

enhancement Unprocessed

|

**Idea Description**

The rulebook for a fair data economy is a guide for creators of fair data economy networks. Agreement templates and other tools make it easier to build and join new data networks which highlight transparency in data sharing.

Review the rule book for the dataset terms of use and find out which parts are already covered by the open data product spec and which are not. Document all the findings. Based on this work we can design needed changes to the Open Data Product Specification. See attached Rule book.

[rulebook-for-a-fair-data-economy-part-2.pdf](https://github.com/Open-Data-Product-Initiative/open-data-product-spec-1.1dev/files/9793651/rulebook-for-a-fair-data-economy-part-2.pdf)

More information about the rule book from here https://www.sitra.fi/en/publications/rulebook-for-a-fair-data-economy/#preface-and-templates

|

1.0

|

Check Fair Data Economy "Dataset Terms of Use [Template]" requirements for data products - **Idea Description**

The rulebook for a fair data economy is a guide for creators of fair data economy networks. Agreement templates and other tools make it easier to build and join new data networks which highlight transparency in data sharing.

Review the rule book for the dataset terms of use and find out which parts are already covered by the open data product spec and which are not. Document all the findings. Based on this work we can design needed changes to the Open Data Product Specification. See attached Rule book.

[rulebook-for-a-fair-data-economy-part-2.pdf](https://github.com/Open-Data-Product-Initiative/open-data-product-spec-1.1dev/files/9793651/rulebook-for-a-fair-data-economy-part-2.pdf)

More information about the rule book from here https://www.sitra.fi/en/publications/rulebook-for-a-fair-data-economy/#preface-and-templates

|

process

|

check fair data economy dataset terms of use requirements for data products idea description the rulebook for a fair data economy is a guide for creators of fair data economy networks agreement templates and other tools make it easier to build and join new data networks which highlight transparency in data sharing review the rule book for the dataset terms of use and find out which parts are already covered by the open data product spec and which are not document all the findings based on this work we can design needed changes to the open data product specification see attached rule book more information about the rule book from here

| 1

|

7,692

| 2,920,215,036

|

IssuesEvent

|

2015-06-24 17:51:02

|

Dolu1990/ElectricalAge

|

https://api.github.com/repos/Dolu1990/ElectricalAge

|

closed

|

Disapearing blocks

|

bug in the pipeline under test

|

I've had a problem that sometimes when I get back on my world all the blocks(but not items) are gone. This issue may be caused by the other mods in the pack but I don't know. The pack by the way is on the AT launcher and is called Buildpak (the code is "buildpak" if you want to try it yourself.) Thanks

|

1.0

|

Disapearing blocks - I've had a problem that sometimes when I get back on my world all the blocks(but not items) are gone. This issue may be caused by the other mods in the pack but I don't know. The pack by the way is on the AT launcher and is called Buildpak (the code is "buildpak" if you want to try it yourself.) Thanks

|

non_process

|

disapearing blocks i ve had a problem that sometimes when i get back on my world all the blocks but not items are gone this issue may be caused by the other mods in the pack but i don t know the pack by the way is on the at launcher and is called buildpak the code is buildpak if you want to try it yourself thanks

| 0

|

3,410

| 6,523,899,111

|

IssuesEvent

|

2017-08-29 10:28:04

|

w3c/w3process

|

https://api.github.com/repos/w3c/w3process

|

closed

|

Director can dismis a AB or TAG participant without giving a cause?

|

Active Process2018Candidate

|

Transferred from https://www.w3.org/community/w3process/track/issues/160

State: Raised

|

1.0

|

Director can dismis a AB or TAG participant without giving a cause? - Transferred from https://www.w3.org/community/w3process/track/issues/160

State: Raised

|

process

|

director can dismis a ab or tag participant without giving a cause transferred from state raised

| 1

|

5,920

| 8,742,309,477

|

IssuesEvent

|

2018-12-12 16:08:16

|

prusa3d/Slic3r

|

https://api.github.com/repos/prusa3d/Slic3r

|

closed

|

[Request] Estimated print time in gcode file name

|

background processing enhancement

|

I love the print time estimation in the new alpha. What would make it even better is if the print time could be incorporated in the filename.

(What would make it downright amazing, is if the print time could be somehow communicated to the printer, and shown on the display during printing.)

|

1.0

|

[Request] Estimated print time in gcode file name - I love the print time estimation in the new alpha. What would make it even better is if the print time could be incorporated in the filename.

(What would make it downright amazing, is if the print time could be somehow communicated to the printer, and shown on the display during printing.)

|

process

|

estimated print time in gcode file name i love the print time estimation in the new alpha what would make it even better is if the print time could be incorporated in the filename what would make it downright amazing is if the print time could be somehow communicated to the printer and shown on the display during printing

| 1

|

258,502

| 8,176,610,359

|

IssuesEvent

|

2018-08-28 08:08:29

|

mozilla/addons-frontend

|

https://api.github.com/repos/mozilla/addons-frontend

|

closed

|

Show a warning when viewing a non-public add-on

|

component: ux contrib: welcome priority: p4 size: S triaged type: papercut

|

### Describe the problem and steps to reproduce it:

<!-- Please include as many details as possible. -->

As an admin, log in to AMO and view the detail page for a disabled add-on

*or*

As a developer, upload an add-on, make it non-public, log in to AMO and view its detail page

### What happened?

You see a complete detail page as if nothing was different

### What did you expect to happen?

You should see a warning saying that the add-on is not public but you're seeing it anyway because you have elevated privileges.

### Anything else we should know?

<!-- Please include a link to the page, screenshots and any relevant files. -->

This has created confusion a couple of times from admins or developers who are not expecting to see a public listing.

It should be pretty easy to fix:

```diff

diff --git a/src/amo/components/Addon/index.js b/src/amo/components/Addon/index.js

index a2d4eec21..52e2b4d4e 100644

--- a/src/amo/components/Addon/index.js

+++ b/src/amo/components/Addon/index.js

@@ -53,6 +53,7 @@ import Card from 'ui/components/Card';

import Icon from 'ui/components/Icon';

import LoadingText from 'ui/components/LoadingText';

import ShowMoreCard from 'ui/components/ShowMoreCard';

+import Notice from 'ui/components/Notice';

import './styles.scss';

@@ -537,6 +538,11 @@ export class AddonBase extends React.Component {

reason={compatibility.reason}

/>

) : null}

+ {addon && addon.status !== 'public' ? (

+ <Notice type="error">

+ {i18n.gettext('This is not a public listing. You are only seeing it because of elevated permissions.')}

+ </Notice>

+ ) : null}

<header className="Addon-header">

{this.headerImage({ compatible: isCompatible })}

```

|

1.0

|

Show a warning when viewing a non-public add-on - ### Describe the problem and steps to reproduce it:

<!-- Please include as many details as possible. -->

As an admin, log in to AMO and view the detail page for a disabled add-on

*or*

As a developer, upload an add-on, make it non-public, log in to AMO and view its detail page

### What happened?

You see a complete detail page as if nothing was different

### What did you expect to happen?

You should see a warning saying that the add-on is not public but you're seeing it anyway because you have elevated privileges.

### Anything else we should know?

<!-- Please include a link to the page, screenshots and any relevant files. -->

This has created confusion a couple of times from admins or developers who are not expecting to see a public listing.

It should be pretty easy to fix:

```diff

diff --git a/src/amo/components/Addon/index.js b/src/amo/components/Addon/index.js

index a2d4eec21..52e2b4d4e 100644

--- a/src/amo/components/Addon/index.js

+++ b/src/amo/components/Addon/index.js

@@ -53,6 +53,7 @@ import Card from 'ui/components/Card';

import Icon from 'ui/components/Icon';

import LoadingText from 'ui/components/LoadingText';

import ShowMoreCard from 'ui/components/ShowMoreCard';

+import Notice from 'ui/components/Notice';

import './styles.scss';

@@ -537,6 +538,11 @@ export class AddonBase extends React.Component {

reason={compatibility.reason}

/>

) : null}

+ {addon && addon.status !== 'public' ? (

+ <Notice type="error">

+ {i18n.gettext('This is not a public listing. You are only seeing it because of elevated permissions.')}

+ </Notice>

+ ) : null}

<header className="Addon-header">

{this.headerImage({ compatible: isCompatible })}

```

|

non_process

|

show a warning when viewing a non public add on describe the problem and steps to reproduce it as an admin log in to amo and view the detail page for a disabled add on or as a developer upload an add on make it non public log in to amo and view its detail page what happened you see a complete detail page as if nothing was different what did you expect to happen you should see a warning saying that the add on is not public but you re seeing it anyway because you have elevated privileges anything else we should know this has created confusion a couple of times from admins or developers who are not expecting to see a public listing it should be pretty easy to fix diff diff git a src amo components addon index js b src amo components addon index js index a src amo components addon index js b src amo components addon index js import card from ui components card import icon from ui components icon import loadingtext from ui components loadingtext import showmorecard from ui components showmorecard import notice from ui components notice import styles scss export class addonbase extends react component reason compatibility reason null addon addon status public gettext this is not a public listing you are only seeing it because of elevated permissions null this headerimage compatible iscompatible

| 0

|

41,349

| 12,831,922,434

|

IssuesEvent

|

2020-07-07 06:37:48

|

rvvergara/fazebuk-api

|

https://api.github.com/repos/rvvergara/fazebuk-api

|

closed

|

CVE-2020-10663 (High) detected in json-2.2.0.gem

|

security vulnerability

|

## CVE-2020-10663 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>json-2.2.0.gem</b></p></summary>

<p>This is a JSON implementation as a Ruby extension in C.</p>

<p>Library home page: <a href="https://rubygems.org/gems/json-2.2.0.gem">https://rubygems.org/gems/json-2.2.0.gem</a></p>

<p>

Dependency Hierarchy:

- koala-3.0.0.gem (Root Library)

- :x: **json-2.2.0.gem** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/rvvergara/fazebuk-api/commit/87f552cd8f4c8dbd427d01637ba54b85f1c43af1">87f552cd8f4c8dbd427d01637ba54b85f1c43af1</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The JSON gem through 2.2.0 for Ruby, as used in Ruby 2.4 through 2.4.9, 2.5 through 2.5.7, and 2.6 through 2.6.5, has an Unsafe Object Creation Vulnerability. This is quite similar to CVE-2013-0269, but does not rely on poor garbage-collection behavior within Ruby. Specifically, use of JSON parsing methods can lead to creation of a malicious object within the interpreter, with adverse effects that are application-dependent.

<p>Publish Date: 2020-04-28

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-10663>CVE-2020-10663</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.ruby-lang.org/en/news/2020/03/19/json-dos-cve-2020-10663/">https://www.ruby-lang.org/en/news/2020/03/19/json-dos-cve-2020-10663/</a></p>

<p>Release Date: 2020-03-28</p>

<p>Fix Resolution: 2.3.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2020-10663 (High) detected in json-2.2.0.gem - ## CVE-2020-10663 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>json-2.2.0.gem</b></p></summary>

<p>This is a JSON implementation as a Ruby extension in C.</p>

<p>Library home page: <a href="https://rubygems.org/gems/json-2.2.0.gem">https://rubygems.org/gems/json-2.2.0.gem</a></p>

<p>

Dependency Hierarchy:

- koala-3.0.0.gem (Root Library)

- :x: **json-2.2.0.gem** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/rvvergara/fazebuk-api/commit/87f552cd8f4c8dbd427d01637ba54b85f1c43af1">87f552cd8f4c8dbd427d01637ba54b85f1c43af1</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The JSON gem through 2.2.0 for Ruby, as used in Ruby 2.4 through 2.4.9, 2.5 through 2.5.7, and 2.6 through 2.6.5, has an Unsafe Object Creation Vulnerability. This is quite similar to CVE-2013-0269, but does not rely on poor garbage-collection behavior within Ruby. Specifically, use of JSON parsing methods can lead to creation of a malicious object within the interpreter, with adverse effects that are application-dependent.

<p>Publish Date: 2020-04-28

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-10663>CVE-2020-10663</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.ruby-lang.org/en/news/2020/03/19/json-dos-cve-2020-10663/">https://www.ruby-lang.org/en/news/2020/03/19/json-dos-cve-2020-10663/</a></p>

<p>Release Date: 2020-03-28</p>

<p>Fix Resolution: 2.3.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve high detected in json gem cve high severity vulnerability vulnerable library json gem this is a json implementation as a ruby extension in c library home page a href dependency hierarchy koala gem root library x json gem vulnerable library found in head commit a href vulnerability details the json gem through for ruby as used in ruby through through and through has an unsafe object creation vulnerability this is quite similar to cve but does not rely on poor garbage collection behavior within ruby specifically use of json parsing methods can lead to creation of a malicious object within the interpreter with adverse effects that are application dependent publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact high availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource

| 0

|

171,874

| 27,192,443,553

|

IssuesEvent

|

2023-02-19 23:31:46

|

hackforla/expunge-assist

|

https://api.github.com/repos/hackforla/expunge-assist

|

closed

|

[Audit Colors] in Onboarding flow in LG

|

role: design priority: high size: 1pt feature: figma design system feature: figma wireframes

|

### Overview

Extension of #823 . Audit mobile and desktop wireframes under "Design System Audit" on Figma.

### Action Items

- [x] Select each individual "atom" on a wireframe

- [x] Draw an arrow to each "atom" and type out the HEX code or Color style being used

- [x] If "atom" is not using a color style, use a yellow (HEX # #F6841B) arrow and note the HEX code being used

- [x] Note any inconsistencies to share with the team

- [x] Close issue

### Resources/Instructions

- Includes "Welcome", "Before you begin", "Advice" pages

- Ask @anitadesigns or @RenaNicole if you have any questions

|

2.0

|

[Audit Colors] in Onboarding flow in LG - ### Overview

Extension of #823 . Audit mobile and desktop wireframes under "Design System Audit" on Figma.

### Action Items

- [x] Select each individual "atom" on a wireframe

- [x] Draw an arrow to each "atom" and type out the HEX code or Color style being used

- [x] If "atom" is not using a color style, use a yellow (HEX # #F6841B) arrow and note the HEX code being used

- [x] Note any inconsistencies to share with the team

- [x] Close issue

### Resources/Instructions

- Includes "Welcome", "Before you begin", "Advice" pages

- Ask @anitadesigns or @RenaNicole if you have any questions

|

non_process

|

in onboarding flow in lg overview extension of audit mobile and desktop wireframes under design system audit on figma action items select each individual atom on a wireframe draw an arrow to each atom and type out the hex code or color style being used if atom is not using a color style use a yellow hex arrow and note the hex code being used note any inconsistencies to share with the team close issue resources instructions includes welcome before you begin advice pages ask anitadesigns or renanicole if you have any questions

| 0

|

67,034

| 8,070,547,671

|

IssuesEvent

|

2018-08-06 10:05:56

|

JohnSegerstedt/Game1

|

https://api.github.com/repos/JohnSegerstedt/Game1

|

closed

|

Redesign StartMenu animation to match that of the game

|

redesign shelved

|

**ACCEPTANCE CRITERIA:**

* The player shapes share the same Material as the real player models.

* The ground shares the same Material as the real ground model.

* The edges of the ground has been correctly added.

|

1.0

|

Redesign StartMenu animation to match that of the game - **ACCEPTANCE CRITERIA:**

* The player shapes share the same Material as the real player models.

* The ground shares the same Material as the real ground model.

* The edges of the ground has been correctly added.

|

non_process

|

redesign startmenu animation to match that of the game acceptance criteria the player shapes share the same material as the real player models the ground shares the same material as the real ground model the edges of the ground has been correctly added

| 0

|

3,360

| 6,487,982,652

|

IssuesEvent

|

2017-08-20 13:19:17

|

gaocegege/Processing.R

|

https://api.github.com/repos/gaocegege/Processing.R

|

closed

|

Cast more functions from double to int

|

community/processing difficulty/low priority/p1 size/small status/to-be-claimed type/enhancement

|

- Anything named "mode":

- blendMode()

- colorMode()

- ellipseMode()

- imageMode()

- rectMode()

- shapeMode()

- textureMode()

- textMode()

- Other configuration-setting functions:

- pixelDensity()

- strokeCap()

- strokeJoin()

- textureWrap()

|

1.0

|

Cast more functions from double to int - - Anything named "mode":

- blendMode()

- colorMode()

- ellipseMode()

- imageMode()

- rectMode()

- shapeMode()

- textureMode()

- textMode()

- Other configuration-setting functions:

- pixelDensity()

- strokeCap()

- strokeJoin()

- textureWrap()

|

process

|

cast more functions from double to int anything named mode blendmode colormode ellipsemode imagemode rectmode shapemode texturemode textmode other configuration setting functions pixeldensity strokecap strokejoin texturewrap

| 1

|

18,195

| 24,248,270,342

|

IssuesEvent

|

2022-09-27 12:24:42

|

quark-engine/quark-engine

|

https://api.github.com/repos/quark-engine/quark-engine

|

closed

|

Core Member Page Update

|

issue-processing-state-06

|

Hey guys,

I think we should update the [core member page](https://quark-engine.readthedocs.io/en/latest/contribution.html#core-members).

Suggested Categories are:

* Core Member

* Alumni

* Consultant

|

1.0

|

Core Member Page Update - Hey guys,

I think we should update the [core member page](https://quark-engine.readthedocs.io/en/latest/contribution.html#core-members).

Suggested Categories are:

* Core Member

* Alumni

* Consultant

|

process

|

core member page update hey guys i think we should update the suggested categories are core member alumni consultant

| 1

|

14,658

| 17,783,604,584

|

IssuesEvent

|

2021-08-31 08:26:07

|

googleapis/google-cloud-dotnet

|

https://api.github.com/repos/googleapis/google-cloud-dotnet

|

closed

|

Dependency Dashboard

|

priority: p2 type: process

|

This issue provides visibility into Renovate updates and their statuses. [Learn more](https://docs.renovatebot.com/key-concepts/dashboard/)

## Ignored or Blocked

These are blocked by an existing closed PR and will not be recreated unless you click a checkbox below.

- [ ] <!-- recreate-branch=renovate/microsoft.netframework.referenceassemblies-1.x -->[chore(deps): update dependency microsoft.netframework.referenceassemblies to v1.0.2](../pull/6811)

- [ ] <!-- recreate-branch=renovate/microsoft.aspnetcore.mvc.core-2.x -->[chore(deps): update dependency microsoft.aspnetcore.mvc.core to v2.2.5](../pull/6895)

- [ ] <!-- recreate-branch=renovate/xunit.combinatorial-1.x -->[chore(deps): update dependency xunit.combinatorial to v1.4.1](../pull/6857)

---

- [ ] <!-- manual job -->Check this box to trigger a request for Renovate to run again on this repository

|

1.0

|

Dependency Dashboard - This issue provides visibility into Renovate updates and their statuses. [Learn more](https://docs.renovatebot.com/key-concepts/dashboard/)

## Ignored or Blocked

These are blocked by an existing closed PR and will not be recreated unless you click a checkbox below.

- [ ] <!-- recreate-branch=renovate/microsoft.netframework.referenceassemblies-1.x -->[chore(deps): update dependency microsoft.netframework.referenceassemblies to v1.0.2](../pull/6811)

- [ ] <!-- recreate-branch=renovate/microsoft.aspnetcore.mvc.core-2.x -->[chore(deps): update dependency microsoft.aspnetcore.mvc.core to v2.2.5](../pull/6895)

- [ ] <!-- recreate-branch=renovate/xunit.combinatorial-1.x -->[chore(deps): update dependency xunit.combinatorial to v1.4.1](../pull/6857)

---

- [ ] <!-- manual job -->Check this box to trigger a request for Renovate to run again on this repository

|

process

|

dependency dashboard this issue provides visibility into renovate updates and their statuses ignored or blocked these are blocked by an existing closed pr and will not be recreated unless you click a checkbox below pull pull pull check this box to trigger a request for renovate to run again on this repository

| 1

|

3,152

| 6,204,369,976

|

IssuesEvent

|

2017-07-06 14:05:23

|

SpongePowered/Mixin

|

https://api.github.com/repos/SpongePowered/Mixin

|

closed

|

Equivalent of @Override/@Overwrite for non-obfuscated methods

|

accepted annotation processor core enhancement

|

Currently there is no way to do any compiletime checks to make sure the method I'm overwriting exists when it's non obfuscated one/doesn't have mappings.

|

1.0

|

Equivalent of @Override/@Overwrite for non-obfuscated methods - Currently there is no way to do any compiletime checks to make sure the method I'm overwriting exists when it's non obfuscated one/doesn't have mappings.

|

process

|

equivalent of override overwrite for non obfuscated methods currently there is no way to do any compiletime checks to make sure the method i m overwriting exists when it s non obfuscated one doesn t have mappings

| 1

|

235,279

| 7,736,098,237

|

IssuesEvent

|

2018-05-27 22:26:05

|

WohlSoft/PGE-Project

|

https://api.github.com/repos/WohlSoft/PGE-Project

|

opened

|

[Editor] Plugins system

|

Priority - major enhancement

|

Editor even has the complex and wide functionality, will be more handy with having the plugins system. The current base of the Editor is not so good for implementation of the deep plugins system. Deep plugins system means the giving a complex binding into JavaScript which will allow plugins to manipulate editor's internals. That will be possible after the comming internal architecture restructurization.

The only is possible with current base to implement next things:

# Extra properties of every element

- [ ] Implement the access to the custom properties set stored in the special meta field inside of every element that will be saved into LVLX files.