Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 7

112

| repo_url

stringlengths 36

141

| action

stringclasses 3

values | title

stringlengths 1

744

| labels

stringlengths 4

574

| body

stringlengths 9

211k

| index

stringclasses 10

values | text_combine

stringlengths 96

211k

| label

stringclasses 2

values | text

stringlengths 96

188k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

21,190

| 28,209,306,322

|

IssuesEvent

|

2023-04-05 01:48:15

|

bitfocus/companion-module-requests

|

https://api.github.com/repos/bitfocus/companion-module-requests

|

opened

|

RTS Intercom

|

NOT YET PROCESSED

|

- [Yes ] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control:

RTS Intercom/ [ODIN](https://products.rtsintercoms.com/na/en/odin/)

[OMNEO digital intercom](https://products.rtsintercoms.com/na/en/odin/)

What you would like to be able to make it do from Companion:

Have a Intercom with this protocols: ST 2110, AES67, AES70

Direct links or attachments to the ethernet control protocol or API:

https://ocaalliance.com/resources/standards-specifications/

|

1.0

|

RTS Intercom - - [Yes ] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control:

RTS Intercom/ [ODIN](https://products.rtsintercoms.com/na/en/odin/)

[OMNEO digital intercom](https://products.rtsintercoms.com/na/en/odin/)

What you would like to be able to make it do from Companion:

Have a Intercom with this protocols: ST 2110, AES67, AES70

Direct links or attachments to the ethernet control protocol or API:

https://ocaalliance.com/resources/standards-specifications/

|

process

|

rts intercom i have researched the list of existing companion modules and requests and have determined this has not yet been requested the name of the device hardware or software you would like to control rts intercom what you would like to be able to make it do from companion have a intercom with this protocols st direct links or attachments to the ethernet control protocol or api

| 1

|

118,637

| 15,343,992,948

|

IssuesEvent

|

2021-02-27 22:51:44

|

arwes/arwes

|

https://api.github.com/repos/arwes/arwes

|

opened

|

Add application sounds starter package

|

app: playground app: website complexity: medium package: core type: feature type: sound design type: ui/ux design

|

The project should provide a free sounds starter package for an average Arwes application. This would be the same sounds used for the website application and playground sandboxes.

Since this requires the components to be built and the website user experience to be mostly completed to properly define them, the outcome of this task is to be updated as development progresses.

|

2.0

|

Add application sounds starter package - The project should provide a free sounds starter package for an average Arwes application. This would be the same sounds used for the website application and playground sandboxes.

Since this requires the components to be built and the website user experience to be mostly completed to properly define them, the outcome of this task is to be updated as development progresses.

|

non_process

|

add application sounds starter package the project should provide a free sounds starter package for an average arwes application this would be the same sounds used for the website application and playground sandboxes since this requires the components to be built and the website user experience to be mostly completed to properly define them the outcome of this task is to be updated as development progresses

| 0

|

24,671

| 12,367,987,056

|

IssuesEvent

|

2020-05-18 13:10:23

|

unisonweb/unison

|

https://api.github.com/repos/unisonweb/unison

|

closed

|

Initial pull of base uses a lot of memory

|

memory-usage performance

|

Here's a transcript:

```ucm

.> pull git@github.com:unisonweb/base:.trunk base

```

Just from watching activity monitor:

* Initial memory usage is about 14-15MB.

* Usage climbs to about 5.5GB during the "Importing downloaded files into local codebase" phase.

* After completion, memory usage stays at that level even after I do further commands to hopefully trigger some GC.

* After restarting on the same codebase, memory usage is much lower (300-500MB, see #1550).

* These results are with caching turned off - I turned off by hardcoding `nullCache` in `Main`, not relying on the config setting.

@aryairani I could have sworn this took very little memory at one point in the development of this new syncing algorithm

If I had to guess, it's some sort of space leak in `SlimCopyRegenerateIndex`. A couple things I tried (which are all in debug/1560 branch):

* Turned on `{-# Language Strict, StrictData -#}` in SlimCopyRegenerateIndex (total guess).

* Did a `ByteString.copy` everywhere in `V1.hs` where `getBytes` is called - by default, there was one in `getHash` and one in `getText`. Reasoning: `ByteString` values are just offsets into a single array of bytes. To allow that array of bytes to be GC'd you need to copy the bytestring to its own storage.

The fact that memory usage doesn't go back down again seems like a clue and is unexpected to me...

|

True

|

Initial pull of base uses a lot of memory - Here's a transcript:

```ucm

.> pull git@github.com:unisonweb/base:.trunk base

```

Just from watching activity monitor:

* Initial memory usage is about 14-15MB.

* Usage climbs to about 5.5GB during the "Importing downloaded files into local codebase" phase.

* After completion, memory usage stays at that level even after I do further commands to hopefully trigger some GC.

* After restarting on the same codebase, memory usage is much lower (300-500MB, see #1550).

* These results are with caching turned off - I turned off by hardcoding `nullCache` in `Main`, not relying on the config setting.

@aryairani I could have sworn this took very little memory at one point in the development of this new syncing algorithm

If I had to guess, it's some sort of space leak in `SlimCopyRegenerateIndex`. A couple things I tried (which are all in debug/1560 branch):

* Turned on `{-# Language Strict, StrictData -#}` in SlimCopyRegenerateIndex (total guess).

* Did a `ByteString.copy` everywhere in `V1.hs` where `getBytes` is called - by default, there was one in `getHash` and one in `getText`. Reasoning: `ByteString` values are just offsets into a single array of bytes. To allow that array of bytes to be GC'd you need to copy the bytestring to its own storage.

The fact that memory usage doesn't go back down again seems like a clue and is unexpected to me...

|

non_process

|

initial pull of base uses a lot of memory here s a transcript ucm pull git github com unisonweb base trunk base just from watching activity monitor initial memory usage is about usage climbs to about during the importing downloaded files into local codebase phase after completion memory usage stays at that level even after i do further commands to hopefully trigger some gc after restarting on the same codebase memory usage is much lower see these results are with caching turned off i turned off by hardcoding nullcache in main not relying on the config setting aryairani i could have sworn this took very little memory at one point in the development of this new syncing algorithm if i had to guess it s some sort of space leak in slimcopyregenerateindex a couple things i tried which are all in debug branch turned on language strict strictdata in slimcopyregenerateindex total guess did a bytestring copy everywhere in hs where getbytes is called by default there was one in gethash and one in gettext reasoning bytestring values are just offsets into a single array of bytes to allow that array of bytes to be gc d you need to copy the bytestring to its own storage the fact that memory usage doesn t go back down again seems like a clue and is unexpected to me

| 0

|

3,674

| 4,641,905,219

|

IssuesEvent

|

2016-09-30 07:34:07

|

signmeup/signmeup

|

https://api.github.com/repos/signmeup/signmeup

|

opened

|

Implement Shib logout

|

enhancement security

|

Restricted mode is risky to start if the user forgets to log out of Banner to end their Shib session. Instead, we should redirect them to the logout page to trigger this.

|

True

|

Implement Shib logout - Restricted mode is risky to start if the user forgets to log out of Banner to end their Shib session. Instead, we should redirect them to the logout page to trigger this.

|

non_process

|

implement shib logout restricted mode is risky to start if the user forgets to log out of banner to end their shib session instead we should redirect them to the logout page to trigger this

| 0

|

123,363

| 26,247,330,483

|

IssuesEvent

|

2023-01-05 16:14:24

|

Azure/azure-dev

|

https://api.github.com/repos/Azure/azure-dev

|

closed

|

VS Code <-> Azd integration requires additional `az login` for local app development use-cases

|

blocker vscode design

|

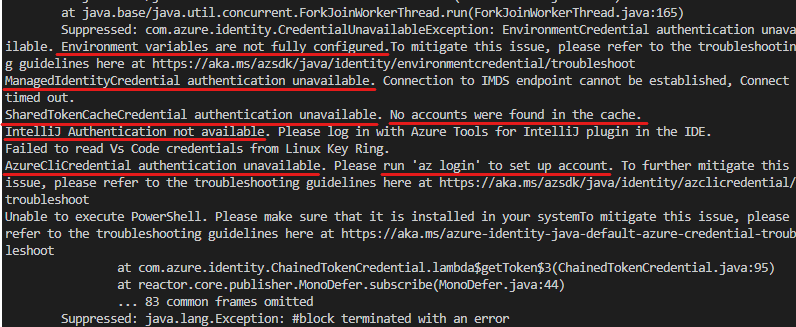

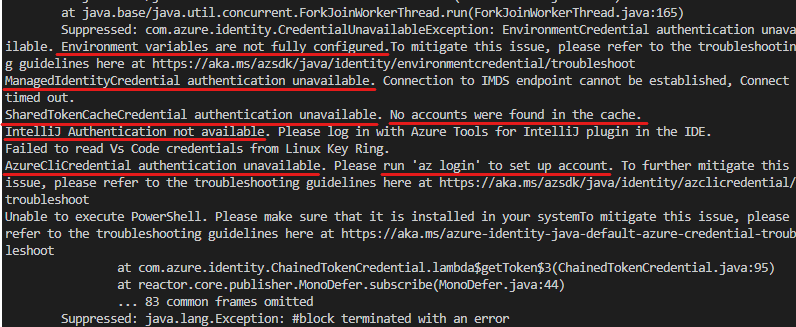

**Describe the issue:**

Task on the api requires authentication.

**Repro Steps:**

1. Open the project in VS Code.

2. Login with `azd login`.

3. Hit F1, Run Task, Start API and Web. During the api startup process, you will get the error as below:

Besides, if we execute `az login` according to the promption, this error can be fixed. But this operation is a bit strange because we have performed `azd login`.

**Environment:**

OS: Codespaces, WSL, Windows desktop, MacOS desktop, Linux desktop, Devcontainer in VS Code

Template: All templates.

Branch: [pr/1153](https://github.com/Azure/azure-dev/pull/1153)

Azd version: 0.4.0-beta.1-pr.1988386 (commit 7b6248aa6788d586bcf0b9a9491187866dad5cca)

**Expected behavior:**

After we `azd login`, we can start the api without other authentication.

@rajeshkamal5050 for notification.

|

1.0

|

VS Code <-> Azd integration requires additional `az login` for local app development use-cases - **Describe the issue:**

Task on the api requires authentication.

**Repro Steps:**

1. Open the project in VS Code.

2. Login with `azd login`.

3. Hit F1, Run Task, Start API and Web. During the api startup process, you will get the error as below:

Besides, if we execute `az login` according to the promption, this error can be fixed. But this operation is a bit strange because we have performed `azd login`.

**Environment:**

OS: Codespaces, WSL, Windows desktop, MacOS desktop, Linux desktop, Devcontainer in VS Code

Template: All templates.

Branch: [pr/1153](https://github.com/Azure/azure-dev/pull/1153)

Azd version: 0.4.0-beta.1-pr.1988386 (commit 7b6248aa6788d586bcf0b9a9491187866dad5cca)

**Expected behavior:**

After we `azd login`, we can start the api without other authentication.

@rajeshkamal5050 for notification.

|

non_process

|

vs code azd integration requires additional az login for local app development use cases describe the issue task on the api requires authentication repro steps open the project in vs code login with azd login hit run task start api and web during the api startup process you will get the error as below besides if we execute az login according to the promption this error can be fixed but this operation is a bit strange because we have performed azd login environment os codespaces wsl windows desktop macos desktop linux desktop devcontainer in vs code template all templates branch azd version beta pr commit expected behavior after we azd login we can start the api without other authentication for notification

| 0

|

11,106

| 7,058,580,615

|

IssuesEvent

|

2018-01-04 20:59:20

|

coreos/bugs

|

https://api.github.com/repos/coreos/bugs

|

closed

|

Ignition S3 Region Detection

|

area/usability component/ignition kind/bug platform/aws team/tools

|

The region detection used when retrieving S3 assets doesn't work in all regions, most specifically `us-gov-west-1`.

It could be easily retrieved with

`curl -s http://169.254.169.254/latest/dynamic/instance-identity/document | jq -r .region`

and using that as the `regionHint` parameter in

https://github.com/coreos/ignition/blob/294826a9d880b5ddc1a900011100ce9ce2134c28/internal/resource/url.go#L334.

|

True

|

Ignition S3 Region Detection - The region detection used when retrieving S3 assets doesn't work in all regions, most specifically `us-gov-west-1`.

It could be easily retrieved with

`curl -s http://169.254.169.254/latest/dynamic/instance-identity/document | jq -r .region`

and using that as the `regionHint` parameter in

https://github.com/coreos/ignition/blob/294826a9d880b5ddc1a900011100ce9ce2134c28/internal/resource/url.go#L334.

|

non_process

|

ignition region detection the region detection used when retrieving assets doesn t work in all regions most specifically us gov west it could be easily retrieved with curl s jq r region and using that as the regionhint parameter in

| 0

|

56,293

| 23,743,593,164

|

IssuesEvent

|

2022-08-31 14:18:14

|

miranda-ng/miranda-ng

|

https://api.github.com/repos/miranda-ng/miranda-ng

|

closed

|

VoiceService: do not grab focus on incoming call

|

bug regression VoiceService

|

> [21:49] deadsend: и диалог при входящем звонке начал перехватывать фокус, чего до этого не было и это было фичей

|

1.0

|

VoiceService: do not grab focus on incoming call - > [21:49] deadsend: и диалог при входящем звонке начал перехватывать фокус, чего до этого не было и это было фичей

|

non_process

|

voiceservice do not grab focus on incoming call deadsend и диалог при входящем звонке начал перехватывать фокус чего до этого не было и это было фичей

| 0

|

44,536

| 12,235,475,927

|

IssuesEvent

|

2020-05-04 14:56:37

|

scipy/scipy

|

https://api.github.com/repos/scipy/scipy

|

closed

|

oaconvolve(a,b,'same') differs in shape from convolve(a,b,'same') when len(a)==1 and len(b)>1

|

defect scipy.signal

|

<!--

Thank you for taking the time to file a bug report.

Please fill in the fields below, deleting the sections that

don't apply to your issue. You can view the final output

by clicking the preview button above.

Note: This is a comment, and won't appear in the output.

-->

I wrote a matricial version of `convolve`, and was testing it against both `scipy.signal.convolve` and `scipy.signal.oaconvolve`. I realized that for very specific inputs **the two results differ in shape**. I suppose that they should return the same result (to machine precision), so I thought it'd be good to tell.

This happens only when:

- 1st argument is a 1 element vector

- 2nd argument is a longer vector

- mode is 'same'

This doesn't seem to happen when both vectors have a meaningful length (although 1 is meaningful as a limit case). I.e., when len(a)>=2, results are consistent.

(I have not tested for inputs of higher dimension)

#### Reproducing code example:

<!--

If you place your code between the triple backticks below,

it will be rendered as a code block.

-->

```

import scipy.signal as scisig

oaconv = lambda a,b: scipy.oaconvolve(a,b,'same')

conv = lambda a,b: scisig.convolve(a,b,'same')

conv([1],[1,-1]), oaconv([1],[1,-1]) # should be equal, I get (array([1]), array([ 1, -1]))

conv([1,-1],[1]), oaconv([1,-1],[1]) # these instead are fine: (array([ 1, -1]), array([ 1, -1]))

```

#### Error message:

<!-- If any, paste the *full* error message inside a code block

as above (starting from line Traceback)

-->

```

(No error message)

```

#### Scipy/Numpy/Python version information:

<!-- You can simply run the following and paste the result in a code block

```

import sys, scipy, numpy; print(scipy.__version__, numpy.__version__, sys.version_info)

```

-->

```

1.4.1 1.18.1 sys.version_info(major=3, minor=7, micro=6, releaselevel='final', serial=0)

```

|

1.0

|

oaconvolve(a,b,'same') differs in shape from convolve(a,b,'same') when len(a)==1 and len(b)>1 - <!--

Thank you for taking the time to file a bug report.

Please fill in the fields below, deleting the sections that

don't apply to your issue. You can view the final output

by clicking the preview button above.

Note: This is a comment, and won't appear in the output.

-->

I wrote a matricial version of `convolve`, and was testing it against both `scipy.signal.convolve` and `scipy.signal.oaconvolve`. I realized that for very specific inputs **the two results differ in shape**. I suppose that they should return the same result (to machine precision), so I thought it'd be good to tell.

This happens only when:

- 1st argument is a 1 element vector

- 2nd argument is a longer vector

- mode is 'same'

This doesn't seem to happen when both vectors have a meaningful length (although 1 is meaningful as a limit case). I.e., when len(a)>=2, results are consistent.

(I have not tested for inputs of higher dimension)

#### Reproducing code example:

<!--

If you place your code between the triple backticks below,

it will be rendered as a code block.

-->

```

import scipy.signal as scisig

oaconv = lambda a,b: scipy.oaconvolve(a,b,'same')

conv = lambda a,b: scisig.convolve(a,b,'same')

conv([1],[1,-1]), oaconv([1],[1,-1]) # should be equal, I get (array([1]), array([ 1, -1]))

conv([1,-1],[1]), oaconv([1,-1],[1]) # these instead are fine: (array([ 1, -1]), array([ 1, -1]))

```

#### Error message:

<!-- If any, paste the *full* error message inside a code block

as above (starting from line Traceback)

-->

```

(No error message)

```

#### Scipy/Numpy/Python version information:

<!-- You can simply run the following and paste the result in a code block

```

import sys, scipy, numpy; print(scipy.__version__, numpy.__version__, sys.version_info)

```

-->

```

1.4.1 1.18.1 sys.version_info(major=3, minor=7, micro=6, releaselevel='final', serial=0)

```

|

non_process

|

oaconvolve a b same differs in shape from convolve a b same when len a and len b thank you for taking the time to file a bug report please fill in the fields below deleting the sections that don t apply to your issue you can view the final output by clicking the preview button above note this is a comment and won t appear in the output i wrote a matricial version of convolve and was testing it against both scipy signal convolve and scipy signal oaconvolve i realized that for very specific inputs the two results differ in shape i suppose that they should return the same result to machine precision so i thought it d be good to tell this happens only when argument is a element vector argument is a longer vector mode is same this doesn t seem to happen when both vectors have a meaningful length although is meaningful as a limit case i e when len a results are consistent i have not tested for inputs of higher dimension reproducing code example if you place your code between the triple backticks below it will be rendered as a code block import scipy signal as scisig oaconv lambda a b scipy oaconvolve a b same conv lambda a b scisig convolve a b same conv oaconv should be equal i get array array conv oaconv these instead are fine array array error message if any paste the full error message inside a code block as above starting from line traceback no error message scipy numpy python version information you can simply run the following and paste the result in a code block import sys scipy numpy print scipy version numpy version sys version info sys version info major minor micro releaselevel final serial

| 0

|

5,685

| 8,559,032,457

|

IssuesEvent

|

2018-11-08 20:01:01

|

integr8ly/tutorial-web-app

|

https://api.github.com/repos/integr8ly/tutorial-web-app

|

closed

|

Request: Lock Dependency Versions

|

enhancement process

|

As the web app progresses I would like to see the dependencies listed in the `package.json` file be locked down to specific versions, rather than using the `^`. The `yarn.lock` file should not have to change unless there is a specific need for an introduction of a new dependency, or a vital version update.

|

1.0

|

Request: Lock Dependency Versions - As the web app progresses I would like to see the dependencies listed in the `package.json` file be locked down to specific versions, rather than using the `^`. The `yarn.lock` file should not have to change unless there is a specific need for an introduction of a new dependency, or a vital version update.

|

process

|

request lock dependency versions as the web app progresses i would like to see the dependencies listed in the package json file be locked down to specific versions rather than using the the yarn lock file should not have to change unless there is a specific need for an introduction of a new dependency or a vital version update

| 1

|

85,061

| 15,731,184,236

|

IssuesEvent

|

2021-03-29 16:46:08

|

wrbejar/bag-of-holding

|

https://api.github.com/repos/wrbejar/bag-of-holding

|

opened

|

WS-2018-0590 (High) detected in diff-1.4.0.tgz

|

security vulnerability

|

## WS-2018-0590 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>diff-1.4.0.tgz</b></p></summary>

<p>A javascript text diff implementation.</p>

<p>Library home page: <a href="https://registry.npmjs.org/diff/-/diff-1.4.0.tgz">https://registry.npmjs.org/diff/-/diff-1.4.0.tgz</a></p>

<p>Path to dependency file: bag-of-holding/package.json</p>

<p>Path to vulnerable library: bag-of-holding/node_modules/diff/package.json</p>

<p>

Dependency Hierarchy:

- gulp-sass-1.3.3.tgz (Root Library)

- node-sass-2.1.1.tgz

- mocha-2.5.3.tgz

- :x: **diff-1.4.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/wrbejar/bag-of-holding/commit/6087cf643d57f8f112ae650913c59bfc0a1033d6">6087cf643d57f8f112ae650913c59bfc0a1033d6</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A vulnerability was found in diff before v3.5.0, the affected versions of this package are vulnerable to Regular Expression Denial of Service (ReDoS) attacks.

<p>Publish Date: 2018-03-05

<p>URL: <a href=https://bugzilla.redhat.com/show_bug.cgi?id=1552148>WS-2018-0590</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>7.0</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/kpdecker/jsdiff/commit/2aec4298639bf30fb88a00b356bf404d3551b8c0">https://github.com/kpdecker/jsdiff/commit/2aec4298639bf30fb88a00b356bf404d3551b8c0</a></p>

<p>Release Date: 2019-06-11</p>

<p>Fix Resolution: 3.5.0</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"diff","packageVersion":"1.4.0","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"gulp-sass:1.3.3;node-sass:2.1.1;mocha:2.5.3;diff:1.4.0","isMinimumFixVersionAvailable":true,"minimumFixVersion":"3.5.0"}],"baseBranches":["master"],"vulnerabilityIdentifier":"WS-2018-0590","vulnerabilityDetails":"A vulnerability was found in diff before v3.5.0, the affected versions of this package are vulnerable to Regular Expression Denial of Service (ReDoS) attacks.","vulnerabilityUrl":"https://bugzilla.redhat.com/show_bug.cgi?id\u003d1552148","cvss2Severity":"high","cvss2Score":"7.0","extraData":{}}</REMEDIATE> -->

|

True

|

WS-2018-0590 (High) detected in diff-1.4.0.tgz - ## WS-2018-0590 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>diff-1.4.0.tgz</b></p></summary>

<p>A javascript text diff implementation.</p>

<p>Library home page: <a href="https://registry.npmjs.org/diff/-/diff-1.4.0.tgz">https://registry.npmjs.org/diff/-/diff-1.4.0.tgz</a></p>

<p>Path to dependency file: bag-of-holding/package.json</p>

<p>Path to vulnerable library: bag-of-holding/node_modules/diff/package.json</p>

<p>

Dependency Hierarchy:

- gulp-sass-1.3.3.tgz (Root Library)

- node-sass-2.1.1.tgz

- mocha-2.5.3.tgz

- :x: **diff-1.4.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/wrbejar/bag-of-holding/commit/6087cf643d57f8f112ae650913c59bfc0a1033d6">6087cf643d57f8f112ae650913c59bfc0a1033d6</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A vulnerability was found in diff before v3.5.0, the affected versions of this package are vulnerable to Regular Expression Denial of Service (ReDoS) attacks.

<p>Publish Date: 2018-03-05

<p>URL: <a href=https://bugzilla.redhat.com/show_bug.cgi?id=1552148>WS-2018-0590</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>7.0</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/kpdecker/jsdiff/commit/2aec4298639bf30fb88a00b356bf404d3551b8c0">https://github.com/kpdecker/jsdiff/commit/2aec4298639bf30fb88a00b356bf404d3551b8c0</a></p>

<p>Release Date: 2019-06-11</p>

<p>Fix Resolution: 3.5.0</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"diff","packageVersion":"1.4.0","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"gulp-sass:1.3.3;node-sass:2.1.1;mocha:2.5.3;diff:1.4.0","isMinimumFixVersionAvailable":true,"minimumFixVersion":"3.5.0"}],"baseBranches":["master"],"vulnerabilityIdentifier":"WS-2018-0590","vulnerabilityDetails":"A vulnerability was found in diff before v3.5.0, the affected versions of this package are vulnerable to Regular Expression Denial of Service (ReDoS) attacks.","vulnerabilityUrl":"https://bugzilla.redhat.com/show_bug.cgi?id\u003d1552148","cvss2Severity":"high","cvss2Score":"7.0","extraData":{}}</REMEDIATE> -->

|

non_process

|

ws high detected in diff tgz ws high severity vulnerability vulnerable library diff tgz a javascript text diff implementation library home page a href path to dependency file bag of holding package json path to vulnerable library bag of holding node modules diff package json dependency hierarchy gulp sass tgz root library node sass tgz mocha tgz x diff tgz vulnerable library found in head commit a href found in base branch master vulnerability details a vulnerability was found in diff before the affected versions of this package are vulnerable to regular expression denial of service redos attacks publish date url a href cvss score details base score metrics not available suggested fix type upgrade version origin a href release date fix resolution isopenpronvulnerability true ispackagebased true isdefaultbranch true packages istransitivedependency true dependencytree gulp sass node sass mocha diff isminimumfixversionavailable true minimumfixversion basebranches vulnerabilityidentifier ws vulnerabilitydetails a vulnerability was found in diff before the affected versions of this package are vulnerable to regular expression denial of service redos attacks vulnerabilityurl

| 0

|

196,085

| 14,798,606,933

|

IssuesEvent

|

2021-01-13 00:11:13

|

openshift/odo

|

https://api.github.com/repos/openshift/odo

|

closed

|

[Flake] create, push and delete reports invalid configuration

|

area/component area/testing kind/flake lifecycle/rotten priority/Medium

|

[kind/bug]

<!--

Welcome! - We kindly ask you to:

1. Fill out the issue template below

2. Use the Google group if you have a question rather than a bug or feature request.

The group is at: https://groups.google.com/forum/#!forum/odo-users

Thanks for understanding, and for contributing to the project!

-->

## What versions of software are you using?

- Operating System:

- Output of `odo version`: master

## How did you run odo exactly?

On OpenShift CI

## Actual behavior

Error out

## Expected behavior

Should pass

## Any logs, error output, etc?

```

odo sub component command tests when component is in the current directory and --project flag is used

creates and pushes local nodejs component and then deletes --all

/go/src/github.com/openshift/odo/tests/integration/component.go:329

Created dir: /tmp/724815433

Creating a new project: texjxuxufc

Running odo with args [odo project create texjxuxufc -w -v4]

[odo] I0904 13:18:24.552396 19818 preference.go:118] The path for preference file is /tmp/724815433/config.yaml

[odo] I0904 13:18:24.552472 19818 occlient.go:479] Trying to connect to server api.ci-op-d1whffy3-f09f4.origin-ci-int-aws.dev.rhcloud.com:6443

[odo] I0904 13:18:24.567450 19818 occlient.go:486] Server https://api.ci-op-d1whffy3-f09f4.origin-ci-int-aws.dev.rhcloud.com:6443 is up

[odo] I0904 13:18:24.631580 19818 occlient.go:409] isLoggedIn err: <nil>

[odo] output: "developer"

[odo] • Waiting for project to come up ...

[odo] ✓ Waiting for project to come up [353ms]

[odo] ✓ Project 'texjxuxufc' is ready for use

[odo] ✓ New project created and now using project : texjxuxufc

[odo] I0904 13:18:25.006691 19818 odo.go:70] Could not get the latest release information in time. Never mind, exiting gracefully :)

Current working dir: /go/src/github.com/openshift/odo/tests/integration

Setting current dir to: /tmp/724815433

Running odo with args [odo component create nodejs my-component --app app --project texjxuxufc --env key=value,key1=value1]

[odo] • Validating component ...

[odo] ✓ Validating component [37ms]

[odo] Please use `odo push` command to create the component with source deployed

[odo]

Running odo with args [odo component push --context /tmp/724815433]

[odo] ✗ invalid configuration: [context was not found for specified context: jgjryhzmbp/api-ci-op-d1whffy3-f09f4-origin-ci-int-aws-dev-rhcloud-com:6443/developer, cluster has no server defined]

[odo] Please login to your server:

[odo]

[odo] odo login https://mycluster.mydomain.com

[odo]

Setting current dir to: /go/src/github.com/openshift/odo/tests/integration

Deleting project: texjxuxufc

Running odo with args [odo project delete texjxuxufc -f]

[odo] • Deleting project texjxuxufc ...

[odo] ✓ Deleting project texjxuxufc [5s]

[odo] ✓ Deleted project : texjxuxufc

Deleting dir: /tmp/724815433

• Failure [6.659 seconds]

odo sub component command tests

/go/src/github.com/openshift/odo/tests/integration/cmd_cmp_sub_test.go:13

when component is in the current directory and --project flag is used

/go/src/github.com/openshift/odo/tests/integration/component.go:306

creates and pushes local nodejs component and then deletes --all [It]

/go/src/github.com/openshift/odo/tests/integration/component.go:329

No future change is possible. Bailing out early after 0.102s.

Running odo with args [odo component push --context /tmp/724815433]

Expected

<int>: 1

to match exit code:

<int>: 0

/go/src/github.com/openshift/odo/tests/helper/helper_run.go:32

```

|

1.0

|

[Flake] create, push and delete reports invalid configuration - [kind/bug]

<!--

Welcome! - We kindly ask you to:

1. Fill out the issue template below

2. Use the Google group if you have a question rather than a bug or feature request.

The group is at: https://groups.google.com/forum/#!forum/odo-users

Thanks for understanding, and for contributing to the project!

-->

## What versions of software are you using?

- Operating System:

- Output of `odo version`: master

## How did you run odo exactly?

On OpenShift CI

## Actual behavior

Error out

## Expected behavior

Should pass

## Any logs, error output, etc?

```

odo sub component command tests when component is in the current directory and --project flag is used

creates and pushes local nodejs component and then deletes --all

/go/src/github.com/openshift/odo/tests/integration/component.go:329

Created dir: /tmp/724815433

Creating a new project: texjxuxufc

Running odo with args [odo project create texjxuxufc -w -v4]

[odo] I0904 13:18:24.552396 19818 preference.go:118] The path for preference file is /tmp/724815433/config.yaml

[odo] I0904 13:18:24.552472 19818 occlient.go:479] Trying to connect to server api.ci-op-d1whffy3-f09f4.origin-ci-int-aws.dev.rhcloud.com:6443

[odo] I0904 13:18:24.567450 19818 occlient.go:486] Server https://api.ci-op-d1whffy3-f09f4.origin-ci-int-aws.dev.rhcloud.com:6443 is up

[odo] I0904 13:18:24.631580 19818 occlient.go:409] isLoggedIn err: <nil>

[odo] output: "developer"

[odo] • Waiting for project to come up ...

[odo] ✓ Waiting for project to come up [353ms]

[odo] ✓ Project 'texjxuxufc' is ready for use

[odo] ✓ New project created and now using project : texjxuxufc

[odo] I0904 13:18:25.006691 19818 odo.go:70] Could not get the latest release information in time. Never mind, exiting gracefully :)

Current working dir: /go/src/github.com/openshift/odo/tests/integration

Setting current dir to: /tmp/724815433

Running odo with args [odo component create nodejs my-component --app app --project texjxuxufc --env key=value,key1=value1]

[odo] • Validating component ...

[odo] ✓ Validating component [37ms]

[odo] Please use `odo push` command to create the component with source deployed

[odo]

Running odo with args [odo component push --context /tmp/724815433]

[odo] ✗ invalid configuration: [context was not found for specified context: jgjryhzmbp/api-ci-op-d1whffy3-f09f4-origin-ci-int-aws-dev-rhcloud-com:6443/developer, cluster has no server defined]

[odo] Please login to your server:

[odo]

[odo] odo login https://mycluster.mydomain.com

[odo]

Setting current dir to: /go/src/github.com/openshift/odo/tests/integration

Deleting project: texjxuxufc

Running odo with args [odo project delete texjxuxufc -f]

[odo] • Deleting project texjxuxufc ...

[odo] ✓ Deleting project texjxuxufc [5s]

[odo] ✓ Deleted project : texjxuxufc

Deleting dir: /tmp/724815433

• Failure [6.659 seconds]

odo sub component command tests

/go/src/github.com/openshift/odo/tests/integration/cmd_cmp_sub_test.go:13

when component is in the current directory and --project flag is used

/go/src/github.com/openshift/odo/tests/integration/component.go:306

creates and pushes local nodejs component and then deletes --all [It]

/go/src/github.com/openshift/odo/tests/integration/component.go:329

No future change is possible. Bailing out early after 0.102s.

Running odo with args [odo component push --context /tmp/724815433]

Expected

<int>: 1

to match exit code:

<int>: 0

/go/src/github.com/openshift/odo/tests/helper/helper_run.go:32

```

|

non_process

|

create push and delete reports invalid configuration welcome we kindly ask you to fill out the issue template below use the google group if you have a question rather than a bug or feature request the group is at thanks for understanding and for contributing to the project what versions of software are you using operating system output of odo version master how did you run odo exactly on openshift ci actual behavior error out expected behavior should pass any logs error output etc odo sub component command tests when component is in the current directory and project flag is used creates and pushes local nodejs component and then deletes all go src github com openshift odo tests integration component go created dir tmp creating a new project texjxuxufc running odo with args preference go the path for preference file is tmp config yaml occlient go trying to connect to server api ci op origin ci int aws dev rhcloud com occlient go server is up occlient go isloggedin err output developer • waiting for project to come up ✓ waiting for project to come up ✓ project texjxuxufc is ready for use ✓ new project created and now using project texjxuxufc odo go could not get the latest release information in time never mind exiting gracefully current working dir go src github com openshift odo tests integration setting current dir to tmp running odo with args • validating component ✓ validating component please use odo push command to create the component with source deployed running odo with args ✗ invalid configuration please login to your server odo login setting current dir to go src github com openshift odo tests integration deleting project texjxuxufc running odo with args • deleting project texjxuxufc ✓ deleting project texjxuxufc ✓ deleted project texjxuxufc deleting dir tmp • failure odo sub component command tests go src github com openshift odo tests integration cmd cmp sub test go when component is in the current directory and project flag is used go src github com openshift odo tests integration component go creates and pushes local nodejs component and then deletes all go src github com openshift odo tests integration component go no future change is possible bailing out early after running odo with args expected to match exit code go src github com openshift odo tests helper helper run go

| 0

|

4,117

| 7,059,054,645

|

IssuesEvent

|

2018-01-04 23:09:07

|

chenhowa/chess-app

|

https://api.github.com/repos/chenhowa/chess-app

|

opened

|

Unit test tracking

|

process problem

|

Need a better way to track whether unit testing is currently adequate for a particular class or function. Currently testing is pretty good, but new additions to the code base are not always followed up with a corresponding unit test, and I might forget about it later.

|

1.0

|

Unit test tracking - Need a better way to track whether unit testing is currently adequate for a particular class or function. Currently testing is pretty good, but new additions to the code base are not always followed up with a corresponding unit test, and I might forget about it later.

|

process

|

unit test tracking need a better way to track whether unit testing is currently adequate for a particular class or function currently testing is pretty good but new additions to the code base are not always followed up with a corresponding unit test and i might forget about it later

| 1

|

603,390

| 18,545,180,302

|

IssuesEvent

|

2021-10-21 21:03:57

|

RTXteam/RTX-KG2

|

https://api.github.com/repos/RTXteam/RTX-KG2

|

opened

|

Potential candidate for a new node property "is toxic"

|

enhancement low priority

|

Biolink model 2.2.5 includes a node property, "is toxic", that could be useful in KG2

https://github.com/biolink/biolink-model/blob/d77172050122bf4d5b48cd1d487fb58a8b163620/biolink-model.yaml#L1231

```

is toxic:

description: >-

is_a: node property

multivalued: false

range: boolean

```

|

1.0

|

Potential candidate for a new node property "is toxic" - Biolink model 2.2.5 includes a node property, "is toxic", that could be useful in KG2

https://github.com/biolink/biolink-model/blob/d77172050122bf4d5b48cd1d487fb58a8b163620/biolink-model.yaml#L1231

```

is toxic:

description: >-

is_a: node property

multivalued: false

range: boolean

```

|

non_process

|

potential candidate for a new node property is toxic biolink model includes a node property is toxic that could be useful in is toxic description is a node property multivalued false range boolean

| 0

|

9,071

| 12,140,171,060

|

IssuesEvent

|

2020-04-23 20:04:59

|

pelias/whosonfirst

|

https://api.github.com/repos/pelias/whosonfirst

|

closed

|

Refactor bundleList and tests to not contact live website

|

good first issue high priority processed

|

The bundleList code [contacts WOF](https://github.com/pelias/whosonfirst/blob/master/src/bundleList.js#L64) for the bundle list. This should be refactored to inject the bundle list URL so that the test can operate offline.

|

1.0

|

Refactor bundleList and tests to not contact live website - The bundleList code [contacts WOF](https://github.com/pelias/whosonfirst/blob/master/src/bundleList.js#L64) for the bundle list. This should be refactored to inject the bundle list URL so that the test can operate offline.

|

process

|

refactor bundlelist and tests to not contact live website the bundlelist code for the bundle list this should be refactored to inject the bundle list url so that the test can operate offline

| 1

|

112,558

| 11,771,213,729

|

IssuesEvent

|

2020-03-15 22:51:44

|

matteobruni/tsparticles

|

https://api.github.com/repos/matteobruni/tsparticles

|

opened

|

tsParticles Default values

|

bug documentation enhancement good first issue help wanted up-for-grabs

|

The default values are ugly as hell. Some nicer are needed to init library with a good default effect.

Less options enabled is the best solution, so they don't create conflicts.

|

1.0

|

tsParticles Default values - The default values are ugly as hell. Some nicer are needed to init library with a good default effect.

Less options enabled is the best solution, so they don't create conflicts.

|

non_process

|

tsparticles default values the default values are ugly as hell some nicer are needed to init library with a good default effect less options enabled is the best solution so they don t create conflicts

| 0

|

633

| 3,092,121,639

|

IssuesEvent

|

2015-08-26 16:14:58

|

e-government-ua/iBP

|

https://api.github.com/repos/e-government-ua/iBP

|

opened

|

Надвірнянська РДА - Надання довідки про наявність у Державному земельному кадастрі відомостей про одержання у власність земельної ділянки у межах норм безоплатної приватизації за певним видом її цільового призначення (використання)

|

in process of creating

|

существующий процесс - https://drive.google.com/a/privatbank.ua/file/d/0B4vk1jpTDb_5aFA1X0xBdnRDM3M/view

предлагаемый процесс - https://drive.google.com/a/privatbank.ua/file/d/0B4vk1jpTDb_5SS1uc2F3YTk1UEE/view

|

1.0

|

Надвірнянська РДА - Надання довідки про наявність у Державному земельному кадастрі відомостей про одержання у власність земельної ділянки у межах норм безоплатної приватизації за певним видом її цільового призначення (використання) -

существующий процесс - https://drive.google.com/a/privatbank.ua/file/d/0B4vk1jpTDb_5aFA1X0xBdnRDM3M/view

предлагаемый процесс - https://drive.google.com/a/privatbank.ua/file/d/0B4vk1jpTDb_5SS1uc2F3YTk1UEE/view

|

process

|

надвірнянська рда надання довідки про наявність у державному земельному кадастрі відомостей про одержання у власність земельної ділянки у межах норм безоплатної приватизації за певним видом її цільового призначення використання существующий процесс предлагаемый процесс

| 1

|

14,830

| 18,168,278,105

|

IssuesEvent

|

2021-09-27 16:51:37

|

ORNL-AMO/AMO-Tools-Desktop

|

https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop

|

closed

|

PHAST - operating costs small calculator

|

enhancement Process Heating Intern To Do

|

make calculator to help user calculate weighted average of fuel costs if using more than one fuel

modal -

fuel A Fraction _____ Cost ____

fuel B Fraction _____ Cost_____

Result = sum (fraction * cost)

Kristina - Do I need to make a mock up ?

|

1.0

|

PHAST - operating costs small calculator - make calculator to help user calculate weighted average of fuel costs if using more than one fuel

modal -

fuel A Fraction _____ Cost ____

fuel B Fraction _____ Cost_____

Result = sum (fraction * cost)

Kristina - Do I need to make a mock up ?

|

process

|

phast operating costs small calculator make calculator to help user calculate weighted average of fuel costs if using more than one fuel modal fuel a fraction cost fuel b fraction cost result sum fraction cost kristina do i need to make a mock up

| 1

|

320,232

| 27,428,498,531

|

IssuesEvent

|

2023-03-01 22:27:53

|

tgstation/tgstation

|

https://api.github.com/repos/tgstation/tgstation

|

closed

|

Passive slime extracts do not work in modsuit storage

|

Bug Tested/Reproduced

|

<!-- Write **BELOW** The Headers and **ABOVE** The comments else it may not be viewable -->

## Round ID:

<!--- **INCLUDE THE ROUND ID**

If you discovered this issue from playing tgstation hosted servers:

[Round ID]: # (It can be found in the Status panel or retrieved from https://sb.atlantaned.space/rounds ! The round id let's us look up valuable information and logs for the round the bug happened.)-->

## Testmerges:

<!-- If you're certain the issue is to be caused by a test merge [OOC tab -> Show Server Revision], report it in the pull request's comment section rather than on the tracker(If you're unsure you can refer to the issue number by prefixing said number with #. The issue number can be found beside the title after submitting it to the tracker).If no testmerges are active, feel free to remove this section. -->

## Reproduction:

Tested with light pink stabilised. Put it in a medical modsuit. Did not run fast. Held the extract in hand or in pocket. Ran fast.

|

1.0

|

Passive slime extracts do not work in modsuit storage - <!-- Write **BELOW** The Headers and **ABOVE** The comments else it may not be viewable -->

## Round ID:

<!--- **INCLUDE THE ROUND ID**

If you discovered this issue from playing tgstation hosted servers:

[Round ID]: # (It can be found in the Status panel or retrieved from https://sb.atlantaned.space/rounds ! The round id let's us look up valuable information and logs for the round the bug happened.)-->

## Testmerges:

<!-- If you're certain the issue is to be caused by a test merge [OOC tab -> Show Server Revision], report it in the pull request's comment section rather than on the tracker(If you're unsure you can refer to the issue number by prefixing said number with #. The issue number can be found beside the title after submitting it to the tracker).If no testmerges are active, feel free to remove this section. -->

## Reproduction:

Tested with light pink stabilised. Put it in a medical modsuit. Did not run fast. Held the extract in hand or in pocket. Ran fast.

|

non_process

|

passive slime extracts do not work in modsuit storage round id include the round id if you discovered this issue from playing tgstation hosted servers it can be found in the status panel or retrieved from the round id let s us look up valuable information and logs for the round the bug happened testmerges reproduction tested with light pink stabilised put it in a medical modsuit did not run fast held the extract in hand or in pocket ran fast

| 0

|

10,699

| 13,493,659,816

|

IssuesEvent

|

2020-09-11 20:02:49

|

kubernetes/minikube

|

https://api.github.com/repos/kubernetes/minikube

|

closed

|

add make targets with gopogh

|

good first issue kind/process priority/important-soon

|

we should have a make target that runs integration tests and then converst the test restult to gopogh and puts it in /out/testout.html

and the raw test output should be in ./out/testout.txt

simmilar to how we do it in jenkins

https://github.com/medyagh/minikube/blob/0d33242daef60bd07e5b39fd6491e52d04eac207/hack/jenkins/common.sh#L352-L353

that way users can run integraiton tests and then have the test results in human readable way

|

1.0

|

add make targets with gopogh - we should have a make target that runs integration tests and then converst the test restult to gopogh and puts it in /out/testout.html

and the raw test output should be in ./out/testout.txt

simmilar to how we do it in jenkins

https://github.com/medyagh/minikube/blob/0d33242daef60bd07e5b39fd6491e52d04eac207/hack/jenkins/common.sh#L352-L353

that way users can run integraiton tests and then have the test results in human readable way

|

process

|

add make targets with gopogh we should have a make target that runs integration tests and then converst the test restult to gopogh and puts it in out testout html and the raw test output should be in out testout txt simmilar to how we do it in jenkins that way users can run integraiton tests and then have the test results in human readable way

| 1

|

831,479

| 32,050,430,138

|

IssuesEvent

|

2023-09-23 13:39:24

|

RbAvci/My-Coursework-Planner

|

https://api.github.com/repos/RbAvci/My-Coursework-Planner

|

opened

|

[PD] Budget for shift work (only for people without fixed income)

|

🐇 Size Small 📅 HTML-CSS 🏝️ Priority Stretch 📅 Week 2

|

### Coursework content

In a Google sheet, make a budget of how much money you make on average on your shift work, including the hours you work and all the expenses related to it (transportation, fuel, repair costs).

_Is your shift work worthwhile doing compared to other types of work?_

Check out [this link](https://www.jazzhr.com/blog/freelancer-vs-contractor-vs-permanent-employee-what-you-should-know-for-2021/#:~:text=Freelancers%20and%20contractors%20are%20self,work%20for%20a%20single%20company) to understand the differences.

Reflect on what changes you might need to bring to your life.

- Summary of my current situation

- My current plan

- What distractions do I have / My energy levels during the study

- Original plans I had after I finished the training

- Define them in short/medium/long-term goals

### Estimated time in hours

0.5

### What is the purpose of this assignment?

This exercise is for you to get a job in tech, whilst focussing on the right things and still having enough money to pay your bills.

### How to submit

**Optional**: you can discuss it with a peer or volunteer to get their feedback and insights.

### Anything else?

n/a

|

1.0

|

[PD] Budget for shift work (only for people without fixed income) - ### Coursework content

In a Google sheet, make a budget of how much money you make on average on your shift work, including the hours you work and all the expenses related to it (transportation, fuel, repair costs).

_Is your shift work worthwhile doing compared to other types of work?_

Check out [this link](https://www.jazzhr.com/blog/freelancer-vs-contractor-vs-permanent-employee-what-you-should-know-for-2021/#:~:text=Freelancers%20and%20contractors%20are%20self,work%20for%20a%20single%20company) to understand the differences.

Reflect on what changes you might need to bring to your life.

- Summary of my current situation

- My current plan

- What distractions do I have / My energy levels during the study

- Original plans I had after I finished the training

- Define them in short/medium/long-term goals

### Estimated time in hours

0.5

### What is the purpose of this assignment?

This exercise is for you to get a job in tech, whilst focussing on the right things and still having enough money to pay your bills.

### How to submit

**Optional**: you can discuss it with a peer or volunteer to get their feedback and insights.

### Anything else?

n/a

|

non_process

|

budget for shift work only for people without fixed income coursework content in a google sheet make a budget of how much money you make on average on your shift work including the hours you work and all the expenses related to it transportation fuel repair costs is your shift work worthwhile doing compared to other types of work check out to understand the differences reflect on what changes you might need to bring to your life summary of my current situation my current plan what distractions do i have my energy levels during the study original plans i had after i finished the training define them in short medium long term goals estimated time in hours what is the purpose of this assignment this exercise is for you to get a job in tech whilst focussing on the right things and still having enough money to pay your bills how to submit optional you can discuss it with a peer or volunteer to get their feedback and insights anything else n a

| 0

|

9,764

| 12,749,176,623

|

IssuesEvent

|

2020-06-26 21:59:15

|

googleapis/synthtool

|

https://api.github.com/repos/googleapis/synthtool

|

closed

|

Remove usage of `repos.json`

|

priority: p2 type: process

|

For node.js, python, and java [we currently use](https://github.com/googleapis/synthtool/blob/969a2340e74c73227e7c1638ed7650abcac22ee4/autosynth/providers/python.py#L46) [repos.json](https://github.com/googleapis/sloth/blob/master/repos.json) to identify all relevant repositories where autosynth should run.

We would like to [delete this file](https://github.com/googleapis/sloth/issues/730). Thinking out loud - I'm not sure why we would need repos.json. I was thinking that each repository that requires synth should own it's own processes for updating, and use a kokoro job on a cron to run `autosynth` instead of relying on a top level configuration. There could be other answers :)

|

1.0

|

Remove usage of `repos.json` - For node.js, python, and java [we currently use](https://github.com/googleapis/synthtool/blob/969a2340e74c73227e7c1638ed7650abcac22ee4/autosynth/providers/python.py#L46) [repos.json](https://github.com/googleapis/sloth/blob/master/repos.json) to identify all relevant repositories where autosynth should run.

We would like to [delete this file](https://github.com/googleapis/sloth/issues/730). Thinking out loud - I'm not sure why we would need repos.json. I was thinking that each repository that requires synth should own it's own processes for updating, and use a kokoro job on a cron to run `autosynth` instead of relying on a top level configuration. There could be other answers :)

|

process

|

remove usage of repos json for node js python and java to identify all relevant repositories where autosynth should run we would like to thinking out loud i m not sure why we would need repos json i was thinking that each repository that requires synth should own it s own processes for updating and use a kokoro job on a cron to run autosynth instead of relying on a top level configuration there could be other answers

| 1

|

4,066

| 2,610,086,835

|

IssuesEvent

|

2015-02-26 18:26:23

|

chrsmith/dsdsdaadf

|

https://api.github.com/repos/chrsmith/dsdsdaadf

|

opened

|

深圳除去痘痘

|

auto-migrated Priority-Medium Type-Defect

|

```

深圳除去痘痘【深圳韩方科颜全国热线400-869-1818,24小时QQ4008

691818】深圳韩方科颜专业祛痘连锁机构,机构以韩国秘方—��

�韩方科颜这一国妆准字号治疗型权威,祛痘佳品,韩方科颜�

��业祛痘连锁机构,采用韩国秘方配合专业“不反弹”健康祛

痘技术并结合先进“先进豪华彩光”仪,开创国内专业治疗��

�刺、痤疮签约包治先河,成功消除了许多顾客脸上的痘痘。

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 7:15

|

1.0

|

深圳除去痘痘 - ```

深圳除去痘痘【深圳韩方科颜全国热线400-869-1818,24小时QQ4008

691818】深圳韩方科颜专业祛痘连锁机构,机构以韩国秘方—��

�韩方科颜这一国妆准字号治疗型权威,祛痘佳品,韩方科颜�

��业祛痘连锁机构,采用韩国秘方配合专业“不反弹”健康祛

痘技术并结合先进“先进豪华彩光”仪,开创国内专业治疗��

�刺、痤疮签约包治先河,成功消除了许多顾客脸上的痘痘。

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 7:15

|

non_process

|

深圳除去痘痘 深圳除去痘痘【 , 】深圳韩方科颜专业祛痘连锁机构,机构以韩国秘方—�� �韩方科颜这一国妆准字号治疗型权威,祛痘佳品,韩方科颜� ��业祛痘连锁机构,采用韩国秘方配合专业“不反弹”健康祛 痘技术并结合先进“先进豪华彩光”仪,开创国内专业治疗�� �刺、痤疮签约包治先河,成功消除了许多顾客脸上的痘痘。 original issue reported on code google com by szft com on may at

| 0

|

17,144

| 22,691,369,668

|

IssuesEvent

|

2022-07-04 20:55:39

|

procrastinate-org/procrastinate

|

https://api.github.com/repos/procrastinate-org/procrastinate

|

closed

|

Configure Renovate to automerge simple PRs (for devDependencies & incremental deps)

|

Issue type: Bug 🐞 Issue type: Process ⚙️ Issue status: Blocked ⛔️

|

See [renovate doc](https://docs.renovatebot.com/configuration-options/) for details. It's about "autoMerge" and "lockfileMaintenance"

|

1.0

|

Configure Renovate to automerge simple PRs (for devDependencies & incremental deps) - See [renovate doc](https://docs.renovatebot.com/configuration-options/) for details. It's about "autoMerge" and "lockfileMaintenance"

|

process

|

configure renovate to automerge simple prs for devdependencies incremental deps see for details it s about automerge and lockfilemaintenance

| 1

|

16,509

| 21,518,607,401

|

IssuesEvent

|

2022-04-28 12:21:11

|

camunda/zeebe

|

https://api.github.com/repos/camunda/zeebe

|

opened

|

Resolving an incident should make job activatable by writing an event

|

kind/toil scope/broker team/process-automation area/maintainability

|

**Description**

Currently, when an incident on a service task is resolved, the job is made activatable again only in the state. This is done by the IncidentResolvedEventApplier. However, such a state change should be done by a Job event applier.

Originally this was part of #9219, but extracted to this issue to reduce the scope and focus on fixing the bug in that PR.

We'll probably need a new JobIntent (e.g. `ACTIVATABLE` which also already exists as state in JobState) and an associated event applier. This would bring the event appliers logic closer to idempotently placing the event's data in the state. It would also allow exporter consumers to know when a job has become activatable again.

|

1.0

|

Resolving an incident should make job activatable by writing an event - **Description**

Currently, when an incident on a service task is resolved, the job is made activatable again only in the state. This is done by the IncidentResolvedEventApplier. However, such a state change should be done by a Job event applier.

Originally this was part of #9219, but extracted to this issue to reduce the scope and focus on fixing the bug in that PR.

We'll probably need a new JobIntent (e.g. `ACTIVATABLE` which also already exists as state in JobState) and an associated event applier. This would bring the event appliers logic closer to idempotently placing the event's data in the state. It would also allow exporter consumers to know when a job has become activatable again.

|

process

|

resolving an incident should make job activatable by writing an event description currently when an incident on a service task is resolved the job is made activatable again only in the state this is done by the incidentresolvedeventapplier however such a state change should be done by a job event applier originally this was part of but extracted to this issue to reduce the scope and focus on fixing the bug in that pr we ll probably need a new jobintent e g activatable which also already exists as state in jobstate and an associated event applier this would bring the event appliers logic closer to idempotently placing the event s data in the state it would also allow exporter consumers to know when a job has become activatable again

| 1

|

417,025

| 12,154,900,597

|

IssuesEvent

|

2020-04-25 10:35:37

|

mm-masahiro/profile-portfolio

|

https://api.github.com/repos/mm-masahiro/profile-portfolio

|

reopened

|

README.mdの改善

|

high priority improvement

|

## 何をやるのか

- デザインのリンクを貼るんじゃなくて、スクショを用意して表示する用意する(ポートフォリオを見る側が見辛い)

- 使用技術を書く

- その他書いた方が良さそうなことを自分で考えて書く

## なぜそれをやるのか

- ポートフォリオは最終的に人に見てもらうものだからしっかりと体裁を整えて見やすい形にしておく必要がある

## 参考リンク(あれば)

- 全然聞いてないけどこんなんあった

https://www.youtube.com/watch?v=vpldv8TZQtY

|

1.0

|

README.mdの改善 - ## 何をやるのか

- デザインのリンクを貼るんじゃなくて、スクショを用意して表示する用意する(ポートフォリオを見る側が見辛い)

- 使用技術を書く

- その他書いた方が良さそうなことを自分で考えて書く

## なぜそれをやるのか

- ポートフォリオは最終的に人に見てもらうものだからしっかりと体裁を整えて見やすい形にしておく必要がある

## 参考リンク(あれば)

- 全然聞いてないけどこんなんあった

https://www.youtube.com/watch?v=vpldv8TZQtY

|

non_process

|

readme mdの改善 何をやるのか デザインのリンクを貼るんじゃなくて、スクショを用意して表示する用意する(ポートフォリオを見る側が見辛い) 使用技術を書く その他書いた方が良さそうなことを自分で考えて書く なぜそれをやるのか ポートフォリオは最終的に人に見てもらうものだからしっかりと体裁を整えて見やすい形にしておく必要がある 参考リンク(あれば) 全然聞いてないけどこんなんあった

| 0

|

13,025

| 15,380,251,560

|

IssuesEvent

|

2021-03-02 20:49:36

|

metabase/metabase

|

https://api.github.com/repos/metabase/metabase

|

closed

|

Pivot queries aren't recorded to query execution log

|

Priority:P2 Querying/Processor Type:Bug

|

Because we're using `qp/process-query` instead of `process-query-and-save-execution!`

|

1.0

|

Pivot queries aren't recorded to query execution log - Because we're using `qp/process-query` instead of `process-query-and-save-execution!`

|

process

|

pivot queries aren t recorded to query execution log because we re using qp process query instead of process query and save execution

| 1

|

52,713

| 22,355,213,841

|

IssuesEvent

|

2022-06-15 15:06:34

|

cityofaustin/atd-data-tech

|

https://api.github.com/repos/cityofaustin/atd-data-tech

|

closed

|

Update Technician - Direct cost in Signs and Markings

|

Workgroup: SMB Type: IT Support Service: Apps Product: Signs & Markings

|

<!-- Email -->

<!-- christina.tremel@austintexas.gov -->

> What application are you using?

Signs & Markings Operations

> Describe the problem.

Need to update Technician - Direct cost to potentially include more decimal places. Price needs to be 41.4684007692308 to match up with the FY22 cost calculator that was created by Finance. We submitted a very similar request ( https://github.com/cityofaustin/atd-data-tech/issues/8777) to update the Technician - Indirect cost.

> How soon do you need this?

Soon — This week

> Requested By

Christina T.

Request ID: DTS22-104220

|

1.0

|

Update Technician - Direct cost in Signs and Markings - <!-- Email -->

<!-- christina.tremel@austintexas.gov -->

> What application are you using?

Signs & Markings Operations

> Describe the problem.

Need to update Technician - Direct cost to potentially include more decimal places. Price needs to be 41.4684007692308 to match up with the FY22 cost calculator that was created by Finance. We submitted a very similar request ( https://github.com/cityofaustin/atd-data-tech/issues/8777) to update the Technician - Indirect cost.

> How soon do you need this?

Soon — This week

> Requested By

Christina T.

Request ID: DTS22-104220

|

non_process

|

update technician direct cost in signs and markings what application are you using signs markings operations describe the problem need to update technician direct cost to potentially include more decimal places price needs to be to match up with the cost calculator that was created by finance we submitted a very similar request to update the technician indirect cost how soon do you need this soon — this week requested by christina t request id

| 0

|

5,526

| 8,381,095,024

|

IssuesEvent

|

2018-10-07 21:16:25

|

saguaroib/saguaro

|

https://api.github.com/repos/saguaroib/saguaro

|

closed

|

Deletion class doesn't check for ghost bumping.

|

Administrative Post/text processing Revisit

|

TO-DO:

Prevent users from ghost bumping. Deletion timers maybe?

|

1.0

|

Deletion class doesn't check for ghost bumping. - TO-DO:

Prevent users from ghost bumping. Deletion timers maybe?

|

process

|

deletion class doesn t check for ghost bumping to do prevent users from ghost bumping deletion timers maybe

| 1

|

11,635

| 14,493,566,176

|

IssuesEvent

|

2020-12-11 08:42:05

|

GoogleCloudPlatform/dotnet-docs-samples

|

https://api.github.com/repos/GoogleCloudPlatform/dotnet-docs-samples

|

closed

|

[Storage] Deactivate GoogleCloudSamples.StorageTest.TestDownloadObjectRequesterPays because of flakiness.

|

api: storage priority: p1 samples type: process

|

It's not clear why it's failing.

Have deactivate in PR #1061.

|

1.0

|

[Storage] Deactivate GoogleCloudSamples.StorageTest.TestDownloadObjectRequesterPays because of flakiness. - It's not clear why it's failing.

Have deactivate in PR #1061.

|

process

|

deactivate googlecloudsamples storagetest testdownloadobjectrequesterpays because of flakiness it s not clear why it s failing have deactivate in pr

| 1

|

305,170

| 26,367,685,488

|

IssuesEvent

|

2023-01-11 17:52:04

|

influxdata/influxdb_iox

|

https://api.github.com/repos/influxdata/influxdb_iox

|

closed

|

Flaky test: `end_to_end_cases::querier::kafkaless_rpc_write::basic_on_parquet`

|

flaky test

|

Example in https://app.circleci.com/pipelines/github/influxdata/influxdb_iox/27431/workflows/95af4f59-5560-4d30-bded-fd2d987a186f/jobs/245010 :

```text

thread 'end_to_end_cases::querier::kafkaless_rpc_write::basic_on_parquet' panicked at 'did not get additional Parquet files in the catalog: Elapsed(())', /home/rust/project/test_helpers_end_to_end/src/client.rs:148:6

stack backtrace:

0: rust_begin_unwind

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/std/src/panicking.rs:575:5

1: core::panicking::panic_fmt

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/panicking.rs:65:14

2: core::result::unwrap_failed

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/result.rs:1791:5

3: core::result::Result<T,E>::expect

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/result.rs:1070:23

4: test_helpers_end_to_end::client::wait_for_new_parquet_file::{{closure}}

at /home/rust/project/test_helpers_end_to_end/src/client.rs:124:5

5: <core::future::from_generator::GenFuture<T> as core::future::future::Future>::poll

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/future/mod.rs:91:19

6: test_helpers_end_to_end::steps::StepTest::run::{{closure}}

at /home/rust/project/test_helpers_end_to_end/src/steps.rs:205:21

7: <core::future::from_generator::GenFuture<T> as core::future::future::Future>::poll

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/future/mod.rs:91:19

8: end_to_end::end_to_end_cases::querier::kafkaless_rpc_write::basic_on_parquet::{{closure}}

at ./tests/end_to_end_cases/querier.rs:922:9

9: <core::future::from_generator::GenFuture<T> as core::future::future::Future>::poll

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/future/mod.rs:91:19

10: <core::pin::Pin<P> as core::future::future::Future>::poll

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/future/future.rs:124:9

11: tokio::runtime::scheduler::current_thread::CoreGuard::block_on::{{closure}}::{{closure}}::{{closure}}

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/scheduler/current_thread.rs:531:57

12: tokio::runtime::coop::with_budget

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/coop.rs:102:5

13: tokio::runtime::coop::budget

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/coop.rs:68:5

14: tokio::runtime::scheduler::current_thread::CoreGuard::block_on::{{closure}}::{{closure}}

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/scheduler/current_thread.rs:531:25

15: tokio::runtime::scheduler::current_thread::Context::enter

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/scheduler/current_thread.rs:340:19

16: tokio::runtime::scheduler::current_thread::CoreGuard::block_on::{{closure}}

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/scheduler/current_thread.rs:530:36

17: tokio::runtime::scheduler::current_thread::CoreGuard::enter::{{closure}}

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/scheduler/current_thread.rs:601:57

18: tokio::macros::scoped_tls::ScopedKey<T>::set

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/macros/scoped_tls.rs:61:9

19: tokio::runtime::scheduler::current_thread::CoreGuard::enter

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/scheduler/current_thread.rs:601:27

20: tokio::runtime::scheduler::current_thread::CoreGuard::block_on

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/scheduler/current_thread.rs:520:19

21: tokio::runtime::scheduler::current_thread::CurrentThread::block_on

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/scheduler/current_thread.rs:154:24

22: tokio::runtime::runtime::Runtime::block_on

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/runtime.rs:279:47

23: end_to_end::end_to_end_cases::querier::kafkaless_rpc_write::basic_on_parquet

at ./tests/end_to_end_cases/querier.rs:901:9

24: end_to_end::end_to_end_cases::querier::kafkaless_rpc_write::basic_on_parquet::{{closure}}

at ./tests/end_to_end_cases/querier.rs:892:11

25: core::ops::function::FnOnce::call_once

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/ops/function.rs:251:5

26: core::ops::function::FnOnce::call_once

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/ops/function.rs:251:5

```

|

1.0

|

Flaky test: `end_to_end_cases::querier::kafkaless_rpc_write::basic_on_parquet` - Example in https://app.circleci.com/pipelines/github/influxdata/influxdb_iox/27431/workflows/95af4f59-5560-4d30-bded-fd2d987a186f/jobs/245010 :

```text

thread 'end_to_end_cases::querier::kafkaless_rpc_write::basic_on_parquet' panicked at 'did not get additional Parquet files in the catalog: Elapsed(())', /home/rust/project/test_helpers_end_to_end/src/client.rs:148:6

stack backtrace:

0: rust_begin_unwind

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/std/src/panicking.rs:575:5

1: core::panicking::panic_fmt

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/panicking.rs:65:14

2: core::result::unwrap_failed

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/result.rs:1791:5

3: core::result::Result<T,E>::expect

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/result.rs:1070:23

4: test_helpers_end_to_end::client::wait_for_new_parquet_file::{{closure}}

at /home/rust/project/test_helpers_end_to_end/src/client.rs:124:5

5: <core::future::from_generator::GenFuture<T> as core::future::future::Future>::poll

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/future/mod.rs:91:19

6: test_helpers_end_to_end::steps::StepTest::run::{{closure}}

at /home/rust/project/test_helpers_end_to_end/src/steps.rs:205:21

7: <core::future::from_generator::GenFuture<T> as core::future::future::Future>::poll

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/future/mod.rs:91:19

8: end_to_end::end_to_end_cases::querier::kafkaless_rpc_write::basic_on_parquet::{{closure}}

at ./tests/end_to_end_cases/querier.rs:922:9

9: <core::future::from_generator::GenFuture<T> as core::future::future::Future>::poll

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/future/mod.rs:91:19

10: <core::pin::Pin<P> as core::future::future::Future>::poll

at /rustc/69f9c33d71c871fc16ac445211281c6e7a340943/library/core/src/future/future.rs:124:9

11: tokio::runtime::scheduler::current_thread::CoreGuard::block_on::{{closure}}::{{closure}}::{{closure}}

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/scheduler/current_thread.rs:531:57

12: tokio::runtime::coop::with_budget

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/coop.rs:102:5

13: tokio::runtime::coop::budget

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/coop.rs:68:5

14: tokio::runtime::scheduler::current_thread::CoreGuard::block_on::{{closure}}::{{closure}}

at /usr/local/cargo/registry/src/github.com-1ecc6299db9ec823/tokio-1.22.0/src/runtime/scheduler/current_thread.rs:531:25

15: tokio::runtime::scheduler::current_thread::Context::enter