Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

16,295

| 20,926,103,226

|

IssuesEvent

|

2022-03-24 23:12:24

|

metabase/metabase

|

https://api.github.com/repos/metabase/metabase

|

closed

|

Error when filtering date by current quarter in Postgres

|

Type:Bug Priority:P2 Database/Postgres Querying/Processor Querying/MBQL .Limitation Querying/Parameters & Variables .Reproduced

|

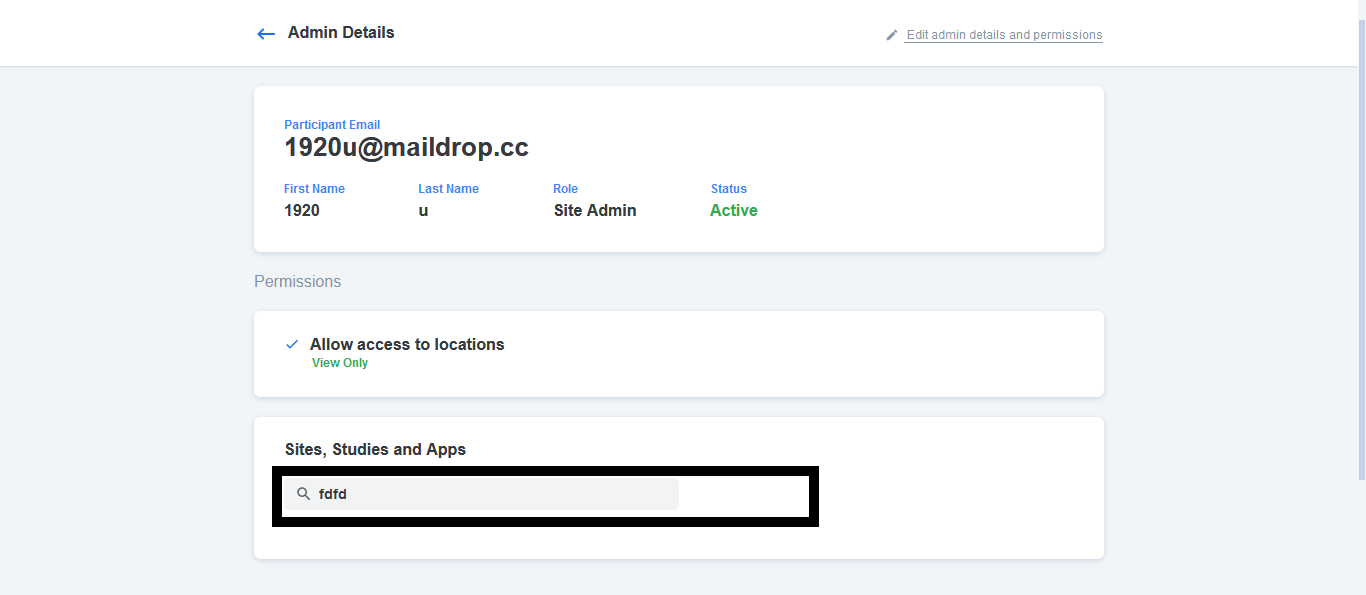

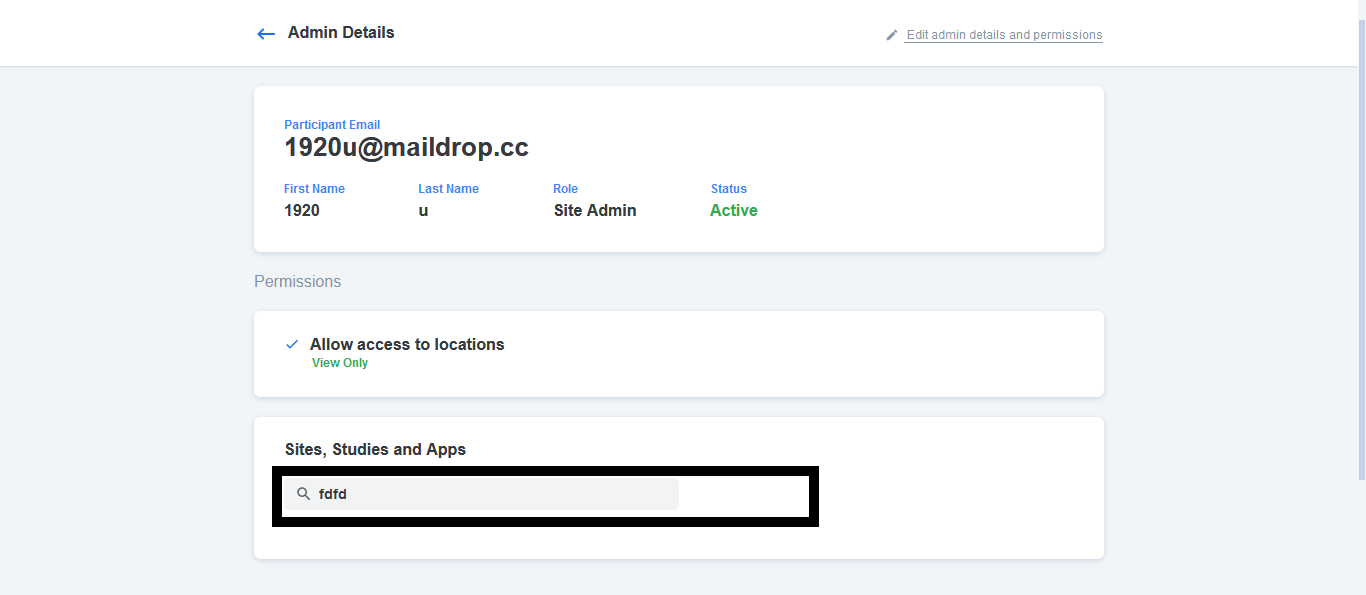

**Describe the bug**

When filtering by current quarter on a Postgres database the following error appears:

`ERROR: invalid input syntax for type interval: "1 quarter" Position: 1550`

**Logs**

[metabase.log](https://github.com/metabase/metabase/files/8119658/metabase.log)

**To Reproduce**

Steps to reproduce the behavior:

1. Create any regular question

2. Create a filter using a date and filter by current quarter

**Expected behavior**

Filter by current quarter is actually filtering instead of giving an error

**Screenshots**

<img width="486" alt="image" src="https://user-images.githubusercontent.com/159423/155204725-6acc9ae0-3c18-4970-8b7a-04bb77719bf0.png">

**Metabase Diagnostic Info**

```json

{

"browser-info": {

"language": "en-US",

"platform": "MacIntel",

"userAgent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/98.0.4758.102 Safari/537.36",

"vendor": "Google Inc."

},

"system-info": {

"file.encoding": "UTF-8",

"java.runtime.name": "OpenJDK Runtime Environment",

"java.runtime.version": "11.0.14.1+1",

"java.vendor": "Eclipse Adoptium",

"java.vendor.url": "https://adoptium.net/",

"java.version": "11.0.14.1",

"java.vm.name": "OpenJDK 64-Bit Server VM",

"java.vm.version": "11.0.14.1+1",

"os.name": "Linux",

"os.version": "4.9.43-17.39.amzn1.x86_64",

"user.language": "en",

"user.timezone": "GMT"

},

"metabase-info": {

"databases": [

"postgres"

],

"hosting-env": "unknown",

"application-database": "postgres",

"application-database-details": {

"database": {

"name": "PostgreSQL",

"version": "10.14"

},

"jdbc-driver": {

"name": "PostgreSQL JDBC Driver",

"version": "42.2.23"

}

},

"run-mode": "prod",

"version": {

"date": "2022-02-17",

"tag": "v0.42.1",

"branch": "release-x.42.x",

"hash": "629f4de"

},

"settings": {

"report-timezone": "US/Pacific"

}

}

}

```

**Severity**

Only way to work around this right now is to create native queries. We use quarterly queries quite often.

:arrow_down: Please click the :+1: reaction instead of leaving a `+1` or `update?` comment

|

1.0

|

Error when filtering date by current quarter in Postgres - **Describe the bug**

When filtering by current quarter on a Postgres database the following error appears:

`ERROR: invalid input syntax for type interval: "1 quarter" Position: 1550`

**Logs**

[metabase.log](https://github.com/metabase/metabase/files/8119658/metabase.log)

**To Reproduce**

Steps to reproduce the behavior:

1. Create any regular question

2. Create a filter using a date and filter by current quarter

**Expected behavior**

Filter by current quarter is actually filtering instead of giving an error

**Screenshots**

<img width="486" alt="image" src="https://user-images.githubusercontent.com/159423/155204725-6acc9ae0-3c18-4970-8b7a-04bb77719bf0.png">

**Metabase Diagnostic Info**

```json

{

"browser-info": {

"language": "en-US",

"platform": "MacIntel",

"userAgent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/98.0.4758.102 Safari/537.36",

"vendor": "Google Inc."

},

"system-info": {

"file.encoding": "UTF-8",

"java.runtime.name": "OpenJDK Runtime Environment",

"java.runtime.version": "11.0.14.1+1",

"java.vendor": "Eclipse Adoptium",

"java.vendor.url": "https://adoptium.net/",

"java.version": "11.0.14.1",

"java.vm.name": "OpenJDK 64-Bit Server VM",

"java.vm.version": "11.0.14.1+1",

"os.name": "Linux",

"os.version": "4.9.43-17.39.amzn1.x86_64",

"user.language": "en",

"user.timezone": "GMT"

},

"metabase-info": {

"databases": [

"postgres"

],

"hosting-env": "unknown",

"application-database": "postgres",

"application-database-details": {

"database": {

"name": "PostgreSQL",

"version": "10.14"

},

"jdbc-driver": {

"name": "PostgreSQL JDBC Driver",

"version": "42.2.23"

}

},

"run-mode": "prod",

"version": {

"date": "2022-02-17",

"tag": "v0.42.1",

"branch": "release-x.42.x",

"hash": "629f4de"

},

"settings": {

"report-timezone": "US/Pacific"

}

}

}

```

**Severity**

Only way to work around this right now is to create native queries. We use quarterly queries quite often.

:arrow_down: Please click the :+1: reaction instead of leaving a `+1` or `update?` comment

|

process

|

error when filtering date by current quarter in postgres describe the bug when filtering by current quarter on a postgres database the following error appears error invalid input syntax for type interval quarter position logs to reproduce steps to reproduce the behavior create any regular question create a filter using a date and filter by current quarter expected behavior filter by current quarter is actually filtering instead of giving an error screenshots img width alt image src metabase diagnostic info json browser info language en us platform macintel useragent mozilla macintosh intel mac os x applewebkit khtml like gecko chrome safari vendor google inc system info file encoding utf java runtime name openjdk runtime environment java runtime version java vendor eclipse adoptium java vendor url java version java vm name openjdk bit server vm java vm version os name linux os version user language en user timezone gmt metabase info databases postgres hosting env unknown application database postgres application database details database name postgresql version jdbc driver name postgresql jdbc driver version run mode prod version date tag branch release x x hash settings report timezone us pacific severity only way to work around this right now is to create native queries we use quarterly queries quite often arrow down please click the reaction instead of leaving a or update comment

| 1

|

29,243

| 4,479,841,579

|

IssuesEvent

|

2016-08-27 21:40:09

|

mapbox/mapbox-gl-native

|

https://api.github.com/repos/mapbox/mapbox-gl-native

|

closed

|

Test setting style properties to functions

|

iOS macOS runtime styling tests

|

The generated unit tests for style property setters and getters only exercise constant values, not functions. We should also test roundtripping functions too. That would’ve caught #6161.

/cc @frederoni

|

1.0

|

Test setting style properties to functions - The generated unit tests for style property setters and getters only exercise constant values, not functions. We should also test roundtripping functions too. That would’ve caught #6161.

/cc @frederoni

|

non_process

|

test setting style properties to functions the generated unit tests for style property setters and getters only exercise constant values not functions we should also test roundtripping functions too that would’ve caught cc frederoni

| 0

|

54,205

| 23,200,297,113

|

IssuesEvent

|

2022-08-01 20:41:57

|

cityofaustin/atd-data-tech

|

https://api.github.com/repos/cityofaustin/atd-data-tech

|

closed

|

Panel Review Digital Scoring

|

Type: Feature Impact: 2-Major Service: Apps Need: 1-Must Have Workgroup: SMO Project: Public-Private Partnership P3 Management

|

### Judging Criteria

- Quality/Appropriateness

- Desirable

- Feasible

- Viable

- Community Priorities

### Reviewer Rating

- Yes

- No

- Not Sure

- N/A

- [x] create new object: `request_object`

- similar to SSPR Rate Competencies (radio buttons)

- `Overall rating`: green, yellow, red - multi-choice

- `Questions` - rich text

- `Final Comments` - rich text

|

1.0

|

Panel Review Digital Scoring - ### Judging Criteria

- Quality/Appropriateness

- Desirable

- Feasible

- Viable

- Community Priorities

### Reviewer Rating

- Yes

- No

- Not Sure

- N/A

- [x] create new object: `request_object`

- similar to SSPR Rate Competencies (radio buttons)

- `Overall rating`: green, yellow, red - multi-choice

- `Questions` - rich text

- `Final Comments` - rich text

|

non_process

|

panel review digital scoring judging criteria quality appropriateness desirable feasible viable community priorities reviewer rating yes no not sure n a create new object request object similar to sspr rate competencies radio buttons overall rating green yellow red multi choice questions rich text final comments rich text

| 0

|

3,970

| 6,901,277,252

|

IssuesEvent

|

2017-11-25 04:34:58

|

Great-Hill-Corporation/quickBlocks

|

https://api.github.com/repos/Great-Hill-Corporation/quickBlocks

|

closed

|

ethslurp range management

|

apps-all status-inprocess tools-all type-bug

|

When we run:

./ethslurp -b:4186279:-1 0x63c8c29af409bd31ec7ddeea58ff14f21e8980b0

(Notice that I put -1 on purpose)

How it considers the -1?? How we deal with upper range if it is not higher than lower? Do we slurp everything?

|

1.0

|

ethslurp range management - When we run:

./ethslurp -b:4186279:-1 0x63c8c29af409bd31ec7ddeea58ff14f21e8980b0

(Notice that I put -1 on purpose)

How it considers the -1?? How we deal with upper range if it is not higher than lower? Do we slurp everything?

|

process

|

ethslurp range management when we run ethslurp b notice that i put on purpose how it considers the how we deal with upper range if it is not higher than lower do we slurp everything

| 1

|

4,417

| 7,299,829,713

|

IssuesEvent

|

2018-02-26 21:25:59

|

UKHomeOffice/dq-aws-transition

|

https://api.github.com/repos/UKHomeOffice/dq-aws-transition

|

closed

|

Inventory ACL Processing

|

DQ Data Ingest DQ Data Pipeline DQ Tranche 1 DQ Tranche 2 Production SSM processing

|

Document configuration.

- [ ] Get Python version

- [ ] Check for custom monitoring setup

- [ ] Check scheduled tasks/crontab

- [ ] Check local users/groups

- [ ] Check directory structure

- [ ] Check jobs

- [ ] job1

- [ ] job2

- [ ] Check local scripts

- [ ] Compare scripts on Gitlab and servers and note differences

- [ ] script1

- [ ] script2

- [ ] Note anything else

### Acceptance criteria

- [ ] All the above completed

|

1.0

|

Inventory ACL Processing - Document configuration.

- [ ] Get Python version

- [ ] Check for custom monitoring setup

- [ ] Check scheduled tasks/crontab

- [ ] Check local users/groups

- [ ] Check directory structure

- [ ] Check jobs

- [ ] job1

- [ ] job2

- [ ] Check local scripts

- [ ] Compare scripts on Gitlab and servers and note differences

- [ ] script1

- [ ] script2

- [ ] Note anything else

### Acceptance criteria

- [ ] All the above completed

|

process

|

inventory acl processing document configuration get python version check for custom monitoring setup check scheduled tasks crontab check local users groups check directory structure check jobs check local scripts compare scripts on gitlab and servers and note differences note anything else acceptance criteria all the above completed

| 1

|

52,060

| 27,359,741,759

|

IssuesEvent

|

2023-02-27 15:08:20

|

playcanvas/engine

|

https://api.github.com/repos/playcanvas/engine

|

opened

|

Be able to set quality targets for assets/projects

|

performance requires: discussion

|

This is quite a broad topic as there are many areas that would need to be considered and is not limited to the engine

The holistic goal would be to have different textures/materials and rendering settings based on the performance or hardware of the target device.

For example, a low end mobile would use smaller textures and less material channels than a high end device.

Materials seem to be more difficult to manage issue where having variants per quality level would help?

|

True

|

Be able to set quality targets for assets/projects - This is quite a broad topic as there are many areas that would need to be considered and is not limited to the engine

The holistic goal would be to have different textures/materials and rendering settings based on the performance or hardware of the target device.

For example, a low end mobile would use smaller textures and less material channels than a high end device.

Materials seem to be more difficult to manage issue where having variants per quality level would help?

|

non_process

|

be able to set quality targets for assets projects this is quite a broad topic as there are many areas that would need to be considered and is not limited to the engine the holistic goal would be to have different textures materials and rendering settings based on the performance or hardware of the target device for example a low end mobile would use smaller textures and less material channels than a high end device materials seem to be more difficult to manage issue where having variants per quality level would help

| 0

|

3,417

| 2,685,396,256

|

IssuesEvent

|

2015-03-29 23:53:13

|

PlayWithMagic/PlayWithMagic

|

https://api.github.com/repos/PlayWithMagic/PlayWithMagic

|

closed

|

Workup a product home page

|

bug Design

|

I've got an initial product home page built here: http://playwithmagic.github.io/PlayWithMagic/

...it still has some broken links. I'll fix `em in the morning.

|

1.0

|

Workup a product home page - I've got an initial product home page built here: http://playwithmagic.github.io/PlayWithMagic/

...it still has some broken links. I'll fix `em in the morning.

|

non_process

|

workup a product home page i ve got an initial product home page built here it still has some broken links i ll fix em in the morning

| 0

|

196,413

| 6,927,728,916

|

IssuesEvent

|

2017-12-01 00:20:04

|

DMS-Aus/Roam

|

https://api.github.com/repos/DMS-Aus/Roam

|

opened

|

Image widget doesn't set empty validation correctly

|

bug :( priority/mid

|

Setting the image widget will still show the red * for required even though it has been set.

|

1.0

|

Image widget doesn't set empty validation correctly - Setting the image widget will still show the red * for required even though it has been set.

|

non_process

|

image widget doesn t set empty validation correctly setting the image widget will still show the red for required even though it has been set

| 0

|

738

| 4,148,278,764

|

IssuesEvent

|

2016-06-15 10:21:40

|

durhamatletico/durhamatletico-cms

|

https://api.github.com/repos/durhamatletico/durhamatletico-cms

|

opened

|

Suggested UI for Summer Cup

|

high priority information architecture

|

Thinking about a simple registration procedure.

1. We tell captain to go to durhamatletico.com/.....

2. She's given a choice of English or Spanish for the form

3. She's given the following information:

Cost is $250 per team

To register a team/country, the captain should pay $50 deposit with balance due at first game

Captain is given the following prompts:

- Name?

- Birthdate?

- Phone and email?

- ZIP code?

- Name of team?

- Does she have enough players? Does she want us to help populate her team?

After submitting info, an email is sent to captain with confirmation and a link to make a payment. The email should be bilingual and include an option for the captain to make a cash deposit with David.

Doing it this way, we will just collect captain's info. We can capture info from other players on site, with the tablet. (One issue will be speed -- it will probably take two minutes to capture info on each player. I could bring a laptop as backup during the first week. I'll be able to tether it to the tablet.)

What do you think?

|

1.0

|

Suggested UI for Summer Cup - Thinking about a simple registration procedure.

1. We tell captain to go to durhamatletico.com/.....

2. She's given a choice of English or Spanish for the form

3. She's given the following information:

Cost is $250 per team

To register a team/country, the captain should pay $50 deposit with balance due at first game

Captain is given the following prompts:

- Name?

- Birthdate?

- Phone and email?

- ZIP code?

- Name of team?

- Does she have enough players? Does she want us to help populate her team?

After submitting info, an email is sent to captain with confirmation and a link to make a payment. The email should be bilingual and include an option for the captain to make a cash deposit with David.

Doing it this way, we will just collect captain's info. We can capture info from other players on site, with the tablet. (One issue will be speed -- it will probably take two minutes to capture info on each player. I could bring a laptop as backup during the first week. I'll be able to tether it to the tablet.)

What do you think?

|

non_process

|

suggested ui for summer cup thinking about a simple registration procedure we tell captain to go to durhamatletico com she s given a choice of english or spanish for the form she s given the following information cost is per team to register a team country the captain should pay deposit with balance due at first game captain is given the following prompts name birthdate phone and email zip code name of team does she have enough players does she want us to help populate her team after submitting info an email is sent to captain with confirmation and a link to make a payment the email should be bilingual and include an option for the captain to make a cash deposit with david doing it this way we will just collect captain s info we can capture info from other players on site with the tablet one issue will be speed it will probably take two minutes to capture info on each player i could bring a laptop as backup during the first week i ll be able to tether it to the tablet what do you think

| 0

|

58,170

| 6,576,378,415

|

IssuesEvent

|

2017-09-11 19:36:26

|

PowerShell/PowerShell

|

https://api.github.com/repos/PowerShell/PowerShell

|

closed

|

Build is green if an MSI could not be produced due to e.g. a WiX compilation error

|

Area-Test

|

[Here](https://ci.appveyor.com/project/PowerShell/powershell/build/6.0.0-beta.6-5027/artifacts) is an example of a build that is green but should have failed because it could not produce an MSI due to a WiX compilation error.

The WiX compilation error can also not be found in the log.

|

1.0

|

Build is green if an MSI could not be produced due to e.g. a WiX compilation error - [Here](https://ci.appveyor.com/project/PowerShell/powershell/build/6.0.0-beta.6-5027/artifacts) is an example of a build that is green but should have failed because it could not produce an MSI due to a WiX compilation error.

The WiX compilation error can also not be found in the log.

|

non_process

|

build is green if an msi could not be produced due to e g a wix compilation error is an example of a build that is green but should have failed because it could not produce an msi due to a wix compilation error the wix compilation error can also not be found in the log

| 0

|

34,625

| 30,231,795,734

|

IssuesEvent

|

2023-07-06 07:30:17

|

spring-projects/spring-modulith

|

https://api.github.com/repos/spring-projects/spring-modulith

|

closed

|

Cannot resolve Spring Framework dependencies

|

in: infrastructure type: question resolution: invalid

|

```

spring-modulith\spring-modulith-examples\spring-modulith-example-full>mvn package

[INFO] Scanning for projects...

[INFO]

[INFO] -------------------------< org.springframework.modulith:spring-modulith-example-full >--------------------------

[INFO] Building Spring Modulith - Examples - Full Example 1.0.0-SNAPSHOT

[INFO] from pom.xml

[INFO] ----------------------------------------------------[ jar ]-----------------------------------------------------

Downloading from spring-milestone: https://repo.spring.io/milestone/org/apache/tomcat/embed/tomcat-embed-core/10.1.10/tomcat-embed-core-10.1.10.pom

Downloading from central: https://repo.maven.apache.org/maven2/org/apache/tomcat/embed/tomcat-embed-core/10.1.10/tomcat-embed-core-10.1.10.pom

Downloaded from central: https://repo.maven.apache.org/maven2/org/apache/tomcat/embed/tomcat-embed-core/10.1.10/tomcat-embed-core-10.1.10.pom (1.8 kB at 11 kB/s)

Downloading from spring-milestone: https://repo.spring.io/milestone/org/springframework/boot/spring-boot-starter-actuator/3.1.1/spring-boot-starter-actuator-3.1.1.pom

......

Downloaded from central: https://repo.maven.apache.org/maven2/com/structurizr/structurizr-export/1.8.3/structurizr-export-1.8.3.pom (1.7 kB at 66 kB/s)

[INFO] ----------------------------------------------------------------------------------------------------------------

[INFO] BUILD FAILURE

[INFO] ----------------------------------------------------------------------------------------------------------------

[INFO] Total time: 28.010 s

[INFO] Finished at: 2023-06-27T13:20:26+05:30

[INFO] ----------------------------------------------------------------------------------------------------------------

[ERROR] Failed to execute goal on project spring-modulith-example-full: Could not resolve dependencies for project org.springframework.modulith:spring-modulith-example-full:jar:1.0.0-SNAPSHOT: Failed to collect dependencies at org.springframework.boot:spring-boot-starter-data-jpa:jar:3.1.1 -> org.springframework:spring-aspects:jar:6.0.10: Failed to read artifact descriptor for org.springframework:spring-aspects:jar:6.0.10: The following artifacts could not be resolved: org.springframework:spring-aspects:pom:6.0.10 (absent): Could not transfer artifact org.springframework:spring-aspects:pom:6.0.10 from/to central (https://repo.maven.apache.org/maven2): C:\Users\nagku\.m2\repository\org\springframework\spring-aspects\6.0.10\spring-aspects-6.0.10.pom.632571530660644704.tmp -> C:\Users\nagku\.m2\repository\org\springframework\spring-aspects\6.0.10\spring-aspects-6.0.10.pom -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the '-e' switch

[ERROR] Re-run Maven using the '-X' switch to enable verbose output

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/DependencyResolutionException

C:\temp\spring-modulith\spring-modulith-examples\spring-modulith-example-full>mvn upgrade

[INFO] Scanning for projects...

[INFO]

[INFO] -------------------------< org.springframework.modulith:spring-modulith-example-full >--------------------------

[INFO] Building Spring Modulith - Examples - Full Example 1.0.0-SNAPSHOT

[INFO] from pom.xml

[INFO] ----------------------------------------------------[ jar ]-----------------------------------------------------

[INFO] ----------------------------------------------------------------------------------------------------------------

[INFO] BUILD FAILURE

[INFO] ----------------------------------------------------------------------------------------------------------------

[INFO] Total time: 0.629 s

[INFO] Finished at: 2023-06-27T13:21:08+05:30

[INFO] ----------------------------------------------------------------------------------------------------------------

[ERROR] Unknown lifecycle phase "upgrade". You must specify a valid lifecycle phase or a goal in the format <plugin-prefix>:<goal> or <plugin-group-id>:<plugin-artifact-id>[:<plugin-version>]:<goal>. Available lifecycle phases are: pre-clean, clean, post-clean, validate, initialize, generate-sources, process-sources, generate-resources, process-resources, compile, process-classes, generate-test-sources, process-test-sources, generate-test-resources, process-test-resources, test-compile, process-test-classes, test, prepare-package, package, pre-integration-test, integration-test, post-integration-test, verify, install, deploy, pre-site, site, post-site, site-deploy, wrapper. -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the '-e' switch

[ERROR] Re-run Maven using the '-X' switch to enable verbose output

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/LifecyclePhaseNotFoundException

```

|

1.0

|

Cannot resolve Spring Framework dependencies - ```

spring-modulith\spring-modulith-examples\spring-modulith-example-full>mvn package

[INFO] Scanning for projects...

[INFO]

[INFO] -------------------------< org.springframework.modulith:spring-modulith-example-full >--------------------------

[INFO] Building Spring Modulith - Examples - Full Example 1.0.0-SNAPSHOT

[INFO] from pom.xml

[INFO] ----------------------------------------------------[ jar ]-----------------------------------------------------

Downloading from spring-milestone: https://repo.spring.io/milestone/org/apache/tomcat/embed/tomcat-embed-core/10.1.10/tomcat-embed-core-10.1.10.pom

Downloading from central: https://repo.maven.apache.org/maven2/org/apache/tomcat/embed/tomcat-embed-core/10.1.10/tomcat-embed-core-10.1.10.pom

Downloaded from central: https://repo.maven.apache.org/maven2/org/apache/tomcat/embed/tomcat-embed-core/10.1.10/tomcat-embed-core-10.1.10.pom (1.8 kB at 11 kB/s)

Downloading from spring-milestone: https://repo.spring.io/milestone/org/springframework/boot/spring-boot-starter-actuator/3.1.1/spring-boot-starter-actuator-3.1.1.pom

......

Downloaded from central: https://repo.maven.apache.org/maven2/com/structurizr/structurizr-export/1.8.3/structurizr-export-1.8.3.pom (1.7 kB at 66 kB/s)

[INFO] ----------------------------------------------------------------------------------------------------------------

[INFO] BUILD FAILURE

[INFO] ----------------------------------------------------------------------------------------------------------------

[INFO] Total time: 28.010 s

[INFO] Finished at: 2023-06-27T13:20:26+05:30

[INFO] ----------------------------------------------------------------------------------------------------------------

[ERROR] Failed to execute goal on project spring-modulith-example-full: Could not resolve dependencies for project org.springframework.modulith:spring-modulith-example-full:jar:1.0.0-SNAPSHOT: Failed to collect dependencies at org.springframework.boot:spring-boot-starter-data-jpa:jar:3.1.1 -> org.springframework:spring-aspects:jar:6.0.10: Failed to read artifact descriptor for org.springframework:spring-aspects:jar:6.0.10: The following artifacts could not be resolved: org.springframework:spring-aspects:pom:6.0.10 (absent): Could not transfer artifact org.springframework:spring-aspects:pom:6.0.10 from/to central (https://repo.maven.apache.org/maven2): C:\Users\nagku\.m2\repository\org\springframework\spring-aspects\6.0.10\spring-aspects-6.0.10.pom.632571530660644704.tmp -> C:\Users\nagku\.m2\repository\org\springframework\spring-aspects\6.0.10\spring-aspects-6.0.10.pom -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the '-e' switch

[ERROR] Re-run Maven using the '-X' switch to enable verbose output

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/DependencyResolutionException

C:\temp\spring-modulith\spring-modulith-examples\spring-modulith-example-full>mvn upgrade

[INFO] Scanning for projects...

[INFO]

[INFO] -------------------------< org.springframework.modulith:spring-modulith-example-full >--------------------------

[INFO] Building Spring Modulith - Examples - Full Example 1.0.0-SNAPSHOT

[INFO] from pom.xml

[INFO] ----------------------------------------------------[ jar ]-----------------------------------------------------

[INFO] ----------------------------------------------------------------------------------------------------------------

[INFO] BUILD FAILURE

[INFO] ----------------------------------------------------------------------------------------------------------------

[INFO] Total time: 0.629 s

[INFO] Finished at: 2023-06-27T13:21:08+05:30

[INFO] ----------------------------------------------------------------------------------------------------------------

[ERROR] Unknown lifecycle phase "upgrade". You must specify a valid lifecycle phase or a goal in the format <plugin-prefix>:<goal> or <plugin-group-id>:<plugin-artifact-id>[:<plugin-version>]:<goal>. Available lifecycle phases are: pre-clean, clean, post-clean, validate, initialize, generate-sources, process-sources, generate-resources, process-resources, compile, process-classes, generate-test-sources, process-test-sources, generate-test-resources, process-test-resources, test-compile, process-test-classes, test, prepare-package, package, pre-integration-test, integration-test, post-integration-test, verify, install, deploy, pre-site, site, post-site, site-deploy, wrapper. -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the '-e' switch

[ERROR] Re-run Maven using the '-X' switch to enable verbose output

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/LifecyclePhaseNotFoundException

```

|

non_process

|

cannot resolve spring framework dependencies spring modulith spring modulith examples spring modulith example full mvn package scanning for projects building spring modulith examples full example snapshot from pom xml downloading from spring milestone downloading from central downloaded from central kb at kb s downloading from spring milestone downloaded from central kb at kb s build failure total time s finished at failed to execute goal on project spring modulith example full could not resolve dependencies for project org springframework modulith spring modulith example full jar snapshot failed to collect dependencies at org springframework boot spring boot starter data jpa jar org springframework spring aspects jar failed to read artifact descriptor for org springframework spring aspects jar the following artifacts could not be resolved org springframework spring aspects pom absent could not transfer artifact org springframework spring aspects pom from to central c users nagku repository org springframework spring aspects spring aspects pom tmp c users nagku repository org springframework spring aspects spring aspects pom to see the full stack trace of the errors re run maven with the e switch re run maven using the x switch to enable verbose output for more information about the errors and possible solutions please read the following articles c temp spring modulith spring modulith examples spring modulith example full mvn upgrade scanning for projects building spring modulith examples full example snapshot from pom xml build failure total time s finished at unknown lifecycle phase upgrade you must specify a valid lifecycle phase or a goal in the format or available lifecycle phases are pre clean clean post clean validate initialize generate sources process sources generate resources process resources compile process classes generate test sources process test sources generate test resources process test resources test compile process test classes test prepare 
package package pre integration test integration test post integration test verify install deploy pre site site post site site deploy wrapper to see the full stack trace of the errors re run maven with the e switch re run maven using the x switch to enable verbose output for more information about the errors and possible solutions please read the following articles

| 0

|

12,233

| 14,743,658,156

|

IssuesEvent

|

2021-01-07 14:14:06

|

kdjstudios/SABillingGitlab

|

https://api.github.com/repos/kdjstudios/SABillingGitlab

|

closed

|

Site 118 - SAB CC Issue

|

anc-process anp-1 ant-bug ant-child/secondary has attachment

|

In GitLab by @kdjstudios on Sep 6, 2019, 16:12

**Submitted by:** "Amanda Jennings" <amanda.jennings@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-09-06-52892/conversation

**Server:** Internal

**Client/Site:** 118

**Account:** 84600

**Issue:**

I would like to look into this CC issue. This is acct 118-SR 84600 – Get Interactive Media. We did not get payment since it was declined, so I called the client and he is arguing with me. The client has told me that he contacted the bank and the bank shows us never trying to run the card, etc.

First we tried running it in SAB, and it said declined. So we tried again in the CC processing site, and it was declined there too (the report side). These are the errors we got.

This one was from CC processing site:

Account Number: XXXX0290

Expiration: 0323

Auth code:

Card Holder: Michael LaPorte

Invoice: 118-082920190943

Payment media:

Status code: Declined

Amount: 225.00

Trans Type: Capture

Trans Date: 08-29-2019

Trans Time: 09:43:00

Transaction Code:

This one was from CC processing site:

Account Number: XXXX0290

Expiration: 0323

Auth code:

Card Holder: Michael LaPorte

Invoice: 118-082920190951

Payment media:

Status code: Declined

Amount: 225.00

Trans Type: Capture

Trans Date: 08-29-2019

Trans Time: 09:51:25

Transaction Code:

This is the one from SAB side:

Account Number: 0290

Expiration: 03/2023

Auth code:

Card Holder: Michael LaPorte

Invoice: SAB-bad188c1-27eb-4334-a8c3-9fdfec8eb4ae

Payment media: VISA

Status code: Declined

Amount: 225.00

Trans Type: Capture

Trans Date: 08-29-2019

Trans Time: 13:42:12

Transaction Code: 0

Here is what SAB shows under Payment history:

And this shows under Failed CC Transactions: - it shows successful but declined everywhere else!

|

1.0

|

Site 118 - SAB CC Issue - In GitLab by @kdjstudios on Sep 6, 2019, 16:12

**Submitted by:** "Amanda Jennings" <amanda.jennings@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-09-06-52892/conversation

**Server:** Internal

**Client/Site:** 118

**Account:** 84600

**Issue:**

I would like to look into this CC issue. This is acct 118-SR 84600 – Get Interactive Media. We did not get payment since it was declined, so I called the client and he is arguing with me. The client has told me that he contacted the bank and the bank shows us never trying to run the card, etc.

First we tried running it in SAB, and it said declined. So we tried again in the CC processing site, and it was declined there too (the report side). These are the errors we got.

This one was from CC processing site:

Account Number: XXXX0290

Expiration: 0323

Auth code:

Card Holder: Michael LaPorte

Invoice: 118-082920190943

Payment media:

Status code: Declined

Amount: 225.00

Trans Type: Capture

Trans Date: 08-29-2019

Trans Time: 09:43:00

Transaction Code:

This one was from CC processing site:

Account Number: XXXX0290

Expiration: 0323

Auth code:

Card Holder: Michael LaPorte

Invoice: 118-082920190951

Payment media:

Status code: Declined

Amount: 225.00

Trans Type: Capture

Trans Date: 08-29-2019

Trans Time: 09:51:25

Transaction Code:

This is the one from SAB side:

Account Number: 0290

Expiration: 03/2023

Auth code:

Card Holder: Michael LaPorte

Invoice: SAB-bad188c1-27eb-4334-a8c3-9fdfec8eb4ae

Payment media: VISA

Status code: Declined

Amount: 225.00

Trans Type: Capture

Trans Date: 08-29-2019

Trans Time: 13:42:12

Transaction Code: 0

Here is what SAB shows under Payment history:

And this shows under Failed CC Transactions: - it shows successful but declined everywhere else!

|

process

|

site sab cc issue in gitlab by kdjstudios on sep submitted by amanda jennings helpdesk server internal client site account issue i would like to look into this cc issue this is acct sr – get interactive media we did not get payment since it was decline so i called client and he is arguing me the client has told me that he contacted the bank and the bank shows us never trying to run card etc first we tried running in sab it said decline so we tried again in the cc processing site they declined too the report side these are the errors we got this one was from cc processing site account number expiration auth code card holder michael laporte invoice payment media status code declined amount trans type capture trans date trans time transaction code this one was from cc processing site account number expiration auth code card holder michael laporte invoice payment media status code declined amount trans type capture trans date trans time transaction code this is the one from sab side account number expiration auth code card holder michael laporte invoice sab payment media visa status code declined amount trans type capture trans date trans time transaction code here is what sab shows under payment history uploads image png and this shows under failed cc transactions it shows successful but declined everywhere else uploads image png

| 1

|

229,952

| 25,402,123,049

|

IssuesEvent

|

2022-11-22 12:54:38

|

elastic/cloudbeat

|

https://api.github.com/repos/elastic/cloudbeat

|

opened

|

Implement an AWS EC2 fetcher

|

8.7 Candidate Team:Cloud Security Posture

|

**Motivation**

Create a fetcher to collect the EC2 resources to be evaluated by OPA.

**Definition of done**

What needs to be completed at the end of this task

- [ ] Use the third-party defsec library to fetch the data from the AWS account.

- [ ] The required EC2 data is collected.

- [ ] Fetcher's implementation is provider agnostic.

**Related tasks/epics**

|

True

|

Implement an AWS EC2 fetcher - **Motivation**

Create a fetcher to collect the EC2 resources to be evaluated by OPA.

**Definition of done**

What needs to be completed at the end of this task

- [ ] Use the third-party defsec library to fetch the data from the AWS account.

- [ ] The required EC2 data is collected.

- [ ] Fetcher's implementation is provider agnostic.

**Related tasks/epics**

|

non_process

|

implement an aws fetcher motivation create a fetcher to collect the resources to be evaluated by opa definition of done what needs to be completed at the end of this task use defsec party to fetch the data from the aws account the required data is collected fetcher s implementation is provider agnostic related tasks epics

| 0

|

432,141

| 30,269,761,844

|

IssuesEvent

|

2023-07-07 14:29:50

|

aeon-toolkit/aeon

|

https://api.github.com/repos/aeon-toolkit/aeon

|

opened

|

[DOC] Add example to InceptionTime docstring for classification and regression

|

documentation deep learning

|

### Describe the issue linked to the documentation

There are no InceptionTime docstring examples.

### Suggest a potential alternative/fix

Something like this, @hadifawaz1999?

Examples

--------

>>> from aeon.classification.deep_learning.fcn import FCNClassifier

>>> from aeon.datasets import load_unit_test

>>> X_train, y_train = load_unit_test(split="train", return_X_y=True)

>>> X_test, y_test = load_unit_test(split="test", return_X_y=True)

>>> fcn = FCNClassifier(n_epochs=20,batch_size=4) # doctest: +SKIP

>>> fcn.fit(X_train, y_train) # doctest: +SKIP

FCNClassifier(...)

|

1.0

|

[DOC] Add example to InceptionTime docstring for classification and regression - ### Describe the issue linked to the documentation

There are no InceptionTime docstring examples.

### Suggest a potential alternative/fix

Something like this, @hadifawaz1999?

Examples

--------

>>> from aeon.classification.deep_learning.fcn import FCNClassifier

>>> from aeon.datasets import load_unit_test

>>> X_train, y_train = load_unit_test(split="train", return_X_y=True)

>>> X_test, y_test = load_unit_test(split="test", return_X_y=True)

>>> fcn = FCNClassifier(n_epochs=20,batch_size=4) # doctest: +SKIP

>>> fcn.fit(X_train, y_train) # doctest: +SKIP

FCNClassifier(...)

|

non_process

|

add example to inceptiontime docstring for classification and regression describe the issue linked to the documentation there are no inception time docstring examples suggest a potential alternative fix something like this examples from aeon classification deep learning fcn import fcnclassifier from aeon datasets import load unit test x train y train load unit test split train return x y true x test y test load unit test split test return x y true fcn fcnclassifier n epochs batch size doctest skip fcn fit x train y train doctest skip fcnclassifier

| 0

|

20,033

| 26,517,284,097

|

IssuesEvent

|

2023-01-18 21:58:50

|

MicrosoftDocs/azure-devops-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-devops-docs

|

closed

|

Support expressions for agent `demands`

|

doc-enhancement devops/prod Pri1 devops-cicd-process/tech

|

If we are supporting this:

```

demands:

- Agent.OS -equals Linux # Check if Agent.OS == Linux

```

can we also support expressions like this?

```

demands:

- in(agent.name, 'Agent1', 'Agent2', 'Agent3', 'Agent4')

```

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: e7541ee6-d2bb-84c0-fead-1aa8ee7d2372

* Version Independent ID: 5cf7c51e-37e1-6c67-e6c6-80262c4eb662

* Content: [Demands - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/demands?view=azure-devops&tabs=yaml#feedback)

* Content Source: [docs/pipelines/process/demands.md](https://github.com/MicrosoftDocs/vsts-docs/blob/master/docs/pipelines/process/demands.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @steved0x

* Microsoft Alias: **sdanie**

|

1.0

|

Support expressions for agent `demands` - If we are supporting this:

```

demands:

- Agent.OS -equals Linux # Check if Agent.OS == Linux

```

can we also support expressions like this?

```

demands:

- in(agent.name, 'Agent1', 'Agent2', 'Agent3', 'Agent4')

```

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: e7541ee6-d2bb-84c0-fead-1aa8ee7d2372

* Version Independent ID: 5cf7c51e-37e1-6c67-e6c6-80262c4eb662

* Content: [Demands - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/demands?view=azure-devops&tabs=yaml#feedback)

* Content Source: [docs/pipelines/process/demands.md](https://github.com/MicrosoftDocs/vsts-docs/blob/master/docs/pipelines/process/demands.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @steved0x

* Microsoft Alias: **sdanie**

|

process

|

support expressions for agent demands if we are supporting this demands agent os equals linux check if agent os linux can we also support expressions like this demands in agent name document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id fead version independent id content content source product devops technology devops cicd process github login microsoft alias sdanie

| 1

|

26,149

| 6,755,404,852

|

IssuesEvent

|

2017-10-24 00:17:44

|

jascam/CodePlexFoo

|

https://api.github.com/repos/jascam/CodePlexFoo

|

closed

|

Create Example: CSVstoServerDocument

|

bug CodePlexMigrationInitiated impact: Low

|

This sample demonstrates how to use the ServerDocument class to extract information from a VSTO customized Word document or Excel Workbook; and also how to programmatically add / remove VSTO customizations.

#### Migrated CodePlex Work Item Details

CodePlex Work Item ID: '2710'

Vote count: '1'

|

1.0

|

Create Example: CSVstoServerDocument - This sample demonstrates how to use the ServerDocument class to extract information from a VSTO customized Word document or Excel Workbook; and also how to programmatically add / remove VSTO customizations.

#### Migrated CodePlex Work Item Details

CodePlex Work Item ID: '2710'

Vote count: '1'

|

non_process

|

create example csvstoserverdocument this sample demonstrates how to use the serverdocument class to extract information from a vsto customized word document or excel workbook and also how to programmatically add remove vsto customizations migrated codeplex work item details codeplex work item id vote count

| 0

|

223,941

| 17,146,018,374

|

IssuesEvent

|

2021-07-13 14:40:36

|

geosolutions-it/MapStore2

|

https://api.github.com/repos/geosolutions-it/MapStore2

|

opened

|

Updated User Guide with Theme and Edit Style options

|

C124-BRGM-2021-SUPPORT Documentation User Guide

|

Update of the following sections:

* **Application Context** section with #6986

* **Managing Layer Settings** with #6879 and #6967

|

1.0

|

Updated User Guide with Theme and Edit Style options - Update of the following sections:

* **Application Context** section with #6986

* **Managing Layer Settings** with #6879 and #6967

|

non_process

|

updated user guide with theme and edit style options update of the following sections application context section with managing layer settings with and

| 0

|

18,910

| 24,848,829,807

|

IssuesEvent

|

2022-10-26 18:10:21

|

apache/arrow-rs

|

https://api.github.com/repos/apache/arrow-rs

|

closed

|

failed to pass test cases while enabling feature chrono-tz

|

bug development-process

|

```bash

cargo test --features chrono-tz

```

```bash

failures:

---- compute::kernels::cast::tests::test_cast_timestamp_to_string stdout ----

[arrow/src/compute/kernels/cast.rs:3852] &array = PrimitiveArray<Timestamp(Millisecond, None)>

[

1997-05-19T00:00:00.005,

2018-12-25T00:00:00.001,

null,

]

thread 'compute::kernels::cast::tests::test_cast_timestamp_to_string' panicked at 'assertion failed: `(left == right)`

left: `"1997-05-19 00:00:00.005"`,

right: `"1997-05-19 00:00:00.005 +00:00"`', arrow/src/compute/kernels/cast.rs:3856:9

---- compute::kernels::cast::tests::test_timestamp_cast_utf8 stdout ----

thread 'compute::kernels::cast::tests::test_timestamp_cast_utf8' panicked at 'assertion failed: `(left == right)`

left: `StringArray

[

"1970-01-01 20:30:00 +10:00",

null,

"1970-01-02 09:58:59 +10:00",

]`,

right: `StringArray

[

"1970-01-01 20:30:00",

null,

"1970-01-02 09:58:59",

]`', arrow/src/compute/kernels/cast.rs:5762:9

---- csv::writer::tests::test_export_csv_timestamps stdout ----

thread 'csv::writer::tests::test_export_csv_timestamps' panicked at 'assertion failed: `(left == right)`

left: `Some("c1,c2\n2019-04-18T20:54:47.378000000+10:00,2019-04-18T10:54:47.378000000+00:00\n2021-10-30T17:59:07.000000000+11:00,2021-10-30T06:59:07.000000000+00:00\n")`,

right: `Some("c1,c2\n2019-04-18T20:54:47.378000000+10:00,2019-04-18T10:54:47.378000000\n2021-10-30T17:59:07.000000000+11:00,2021-10-30T06:59:07.000000000\n")`', arrow/src/csv/writer.rs:652:9

---- csv::writer::tests::test_write_csv stdout ----

thread 'csv::writer::tests::test_write_csv' panicked at 'assertion failed: `(left == right)`

left: `"c1,c2,c3,c4,c5,c6,c7\nLorem ipsum dolor sit amet,123.564532,3,true,,00:20:34,cupcakes\nconsectetur adipiscing elit,,2,false,2019-04-18T10:54:47.378000000+00:00,06:51:20,cupcakes\nsed do eiusmod tempor,-556132.25,1,,2019-04-18T02:45:55.555000000+00:00,23:46:03,foo\nLorem ipsum dolor sit amet,123.564532,3,true,,00:20:34,cupcakes\nconsectetur adipiscing elit,,2,false,2019-04-18T10:54:47.378000000+00:00,06:51:20,cupcakes\nsed do eiusmod tempor,-556132.25,1,,2019-04-18T02:45:55.555000000+00:00,23:46:03,foo\n"`,

right: `"c1,c2,c3,c4,c5,c6,c7\nLorem ipsum dolor sit amet,123.564532,3,true,,00:20:34,cupcakes\nconsectetur adipiscing elit,,2,false,2019-04-18T10:54:47.378000000,06:51:20,cupcakes\nsed do eiusmod tempor,-556132.25,1,,2019-04-18T02:45:55.555000000,23:46:03,foo\nLorem ipsum dolor sit amet,123.564532,3,true,,00:20:34,cupcakes\nconsectetur adipiscing elit,,2,false,2019-04-18T10:54:47.378000000,06:51:20,cupcakes\nsed do eiusmod tempor,-556132.25,1,,2019-04-18T02:45:55.555000000,23:46:03,foo\n"`', arrow/src/csv/writer.rs:546:9

failures:

compute::kernels::cast::tests::test_cast_timestamp_to_string

compute::kernels::cast::tests::test_timestamp_cast_utf8

csv::writer::tests::test_export_csv_timestamps

csv::writer::tests::test_write_csv

test result: FAILED. 751 passed; 4 failed; 0 ignored; 0 measured; 0 filtered out; finished in 1.21s

```

|

1.0

|

failed to pass test cases while enabling feature chrono-tz - ```bash

cargo test --features chrono-tz

```

```bash

failures:

---- compute::kernels::cast::tests::test_cast_timestamp_to_string stdout ----

[arrow/src/compute/kernels/cast.rs:3852] &array = PrimitiveArray<Timestamp(Millisecond, None)>

[

1997-05-19T00:00:00.005,

2018-12-25T00:00:00.001,

null,

]

thread 'compute::kernels::cast::tests::test_cast_timestamp_to_string' panicked at 'assertion failed: `(left == right)`

left: `"1997-05-19 00:00:00.005"`,

right: `"1997-05-19 00:00:00.005 +00:00"`', arrow/src/compute/kernels/cast.rs:3856:9

---- compute::kernels::cast::tests::test_timestamp_cast_utf8 stdout ----

thread 'compute::kernels::cast::tests::test_timestamp_cast_utf8' panicked at 'assertion failed: `(left == right)`

left: `StringArray

[

"1970-01-01 20:30:00 +10:00",

null,

"1970-01-02 09:58:59 +10:00",

]`,

right: `StringArray

[

"1970-01-01 20:30:00",

null,

"1970-01-02 09:58:59",

]`', arrow/src/compute/kernels/cast.rs:5762:9

---- csv::writer::tests::test_export_csv_timestamps stdout ----

thread 'csv::writer::tests::test_export_csv_timestamps' panicked at 'assertion failed: `(left == right)`

left: `Some("c1,c2\n2019-04-18T20:54:47.378000000+10:00,2019-04-18T10:54:47.378000000+00:00\n2021-10-30T17:59:07.000000000+11:00,2021-10-30T06:59:07.000000000+00:00\n")`,

right: `Some("c1,c2\n2019-04-18T20:54:47.378000000+10:00,2019-04-18T10:54:47.378000000\n2021-10-30T17:59:07.000000000+11:00,2021-10-30T06:59:07.000000000\n")`', arrow/src/csv/writer.rs:652:9

---- csv::writer::tests::test_write_csv stdout ----

thread 'csv::writer::tests::test_write_csv' panicked at 'assertion failed: `(left == right)`

left: `"c1,c2,c3,c4,c5,c6,c7\nLorem ipsum dolor sit amet,123.564532,3,true,,00:20:34,cupcakes\nconsectetur adipiscing elit,,2,false,2019-04-18T10:54:47.378000000+00:00,06:51:20,cupcakes\nsed do eiusmod tempor,-556132.25,1,,2019-04-18T02:45:55.555000000+00:00,23:46:03,foo\nLorem ipsum dolor sit amet,123.564532,3,true,,00:20:34,cupcakes\nconsectetur adipiscing elit,,2,false,2019-04-18T10:54:47.378000000+00:00,06:51:20,cupcakes\nsed do eiusmod tempor,-556132.25,1,,2019-04-18T02:45:55.555000000+00:00,23:46:03,foo\n"`,

right: `"c1,c2,c3,c4,c5,c6,c7\nLorem ipsum dolor sit amet,123.564532,3,true,,00:20:34,cupcakes\nconsectetur adipiscing elit,,2,false,2019-04-18T10:54:47.378000000,06:51:20,cupcakes\nsed do eiusmod tempor,-556132.25,1,,2019-04-18T02:45:55.555000000,23:46:03,foo\nLorem ipsum dolor sit amet,123.564532,3,true,,00:20:34,cupcakes\nconsectetur adipiscing elit,,2,false,2019-04-18T10:54:47.378000000,06:51:20,cupcakes\nsed do eiusmod tempor,-556132.25,1,,2019-04-18T02:45:55.555000000,23:46:03,foo\n"`', arrow/src/csv/writer.rs:546:9

failures:

compute::kernels::cast::tests::test_cast_timestamp_to_string

compute::kernels::cast::tests::test_timestamp_cast_utf8

csv::writer::tests::test_export_csv_timestamps

csv::writer::tests::test_write_csv

test result: FAILED. 751 passed; 4 failed; 0 ignored; 0 measured; 0 filtered out; finished in 1.21s

```

|

process

|

failed to pass test cases while enabling feature chrono tz bash cargo test features chrono tz bash failures compute kernels cast tests test cast timestamp to string stdout array primitivearray null thread compute kernels cast tests test cast timestamp to string panicked at assertion failed left right left right arrow src compute kernels cast rs compute kernels cast tests test timestamp cast stdout thread compute kernels cast tests test timestamp cast panicked at assertion failed left right left stringarray null right stringarray null arrow src compute kernels cast rs csv writer tests test export csv timestamps stdout thread csv writer tests test export csv timestamps panicked at assertion failed left right left some n right some n arrow src csv writer rs csv writer tests test write csv stdout thread csv writer tests test write csv panicked at assertion failed left right left nlorem ipsum dolor sit amet true cupcakes nconsectetur adipiscing elit false cupcakes nsed do eiusmod tempor foo nlorem ipsum dolor sit amet true cupcakes nconsectetur adipiscing elit false cupcakes nsed do eiusmod tempor foo n right nlorem ipsum dolor sit amet true cupcakes nconsectetur adipiscing elit false cupcakes nsed do eiusmod tempor foo nlorem ipsum dolor sit amet true cupcakes nconsectetur adipiscing elit false cupcakes nsed do eiusmod tempor foo n arrow src csv writer rs failures compute kernels cast tests test cast timestamp to string compute kernels cast tests test timestamp cast csv writer tests test export csv timestamps csv writer tests test write csv test result failed passed failed ignored measured filtered out finished in

| 1

|

6,577

| 9,660,217,293

|

IssuesEvent

|

2019-05-20 15:02:33

|

nodejs/node

|

https://api.github.com/repos/nodejs/node

|

closed

|

Fix documentation for `childProcess.kill(signal)`: `signal` can be a number

|

child_process doc good first issue

|

With [`childProcess.kill(signal)`](https://nodejs.org/api/child_process.html#child_process_subprocess_kill_signal), `signal` is documented as being a `string`.

However in the code, `signal` can be [either a string](https://github.com/nodejs/node/blob/908292cf1f551c614a733d858528ffb13fb3a524/lib/internal/child_process.js#L435) or [a number](https://github.com/nodejs/node/blob/908292cf1f551c614a733d858528ffb13fb3a524/lib/internal/util.js#L220).

|

1.0

|

Fix documentation for `childProcess.kill(signal)`: `signal` can be a number - With [`childProcess.kill(signal)`](https://nodejs.org/api/child_process.html#child_process_subprocess_kill_signal), `signal` is documented as being a `string`.

However in the code, `signal` can be [either a string](https://github.com/nodejs/node/blob/908292cf1f551c614a733d858528ffb13fb3a524/lib/internal/child_process.js#L435) or [a number](https://github.com/nodejs/node/blob/908292cf1f551c614a733d858528ffb13fb3a524/lib/internal/util.js#L220).

|

process

|

fix documentation for childprocess kill signal signal can be a number with signal is documented as being a string however in the code signal can be or

| 1

|

13,275

| 15,758,909,363

|

IssuesEvent

|

2021-03-31 07:20:23

|

scikit-learn/scikit-learn

|

https://api.github.com/repos/scikit-learn/scikit-learn

|

closed

|

Weighted variance computation for sparse data is not numerically stable

|

Bug Moderate help wanted module:linear_model module:preprocessing

|

This issue was discovered when adding tests for #19527 (currently marked XFAIL).

Here is a minimal reproduction case using the underlying private API:

https://gist.github.com/ogrisel/bd2cf3350fff5bbd5a0899fa6baf3267

The results are the following (macOS / arm64 / Python 3.9.1 / Cython 0.29.21 / clang 11.0.1):

```

## dtype=float64

_incremental_mean_and_var [100.] [0.]

csr_mean_variance_axis0 [100.] [-2.18040566e-11]

incr_mean_variance_axis0 csr [100.] [-2.18040566e-11]

csc_mean_variance_axis0 [100.] [-2.18040566e-11]

incr_mean_variance_axis0 csc [100.] [-2.18040566e-11]

## dtype=float32

_incremental_mean_and_var [100.00000577] [3.32692735e-11]

csr_mean_variance_axis0 [99.99997] [0.00123221]

incr_mean_variance_axis0 csr [99.99997] [0.00123221]

csc_mean_variance_axis0 [99.99997] [0.00123221]

incr_mean_variance_axis0 csc [99.99997] [0.00123221]

```

So the `sklearn.utils.extmath._incremental_mean_and_var` function for dense numpy arrays is numerically stable both in float64 and float32 (~1e-11 is much less than `np.finfo(np.float32).eps`), but the sparse counterparts, whether incremental or not, are all wrong in the same way.

So the gist above should be adapted to write a new series of tests for these Cython functions, and the fix will probably involve adapting the algorithm implemented in `sklearn.utils.extmath._incremental_mean_and_var` to the sparse case.

Note: there is another issue opened for the numerical stability of `StandardScaler`: #5602 / #11549 but it is related to the computation of the (unweighted) mean in incremental mode (in `partial_fit`) vs full batch mode (in `fit`).
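
For readers unfamiliar with this class of problem, here is a minimal pure-Python sketch of how a variance computation can lose accuracy on a constant column. It is an assumption for illustration only, not a description of what the Cython functions actually do: the helper names `weighted_var_naive` and `weighted_var_two_pass`, the constant value 100 and the weights are all hypothetical. The naive formula subtracts two nearly equal large numbers, which can leave a small spurious (possibly negative) variance, whereas centering on the mean first cannot go negative.

```python
def weighted_var_naive(values, weights):
    """E_w[x^2] - E_w[x]^2: subtracts two nearly equal numbers (cancellation)."""
    total = sum(weights)
    mean = sum(w * x for x, w in zip(values, weights)) / total
    mean_sq = sum(w * x * x for x, w in zip(values, weights)) / total
    return mean, mean_sq - mean * mean


def weighted_var_two_pass(values, weights):
    """Center on the weighted mean first, then average the squared deviations."""
    total = sum(weights)
    mean = sum(w * x for x, w in zip(values, weights)) / total
    # Every term is non-negative, so the result can never be below zero.
    var = sum(w * (x - mean) ** 2 for x, w in zip(values, weights)) / total
    return mean, var


# Hypothetical data: a constant column, so the true variance is exactly 0.
values = [100.0] * 1000
weights = [1.0 + (i % 7) / 10.0 for i in range(1000)]

print("naive   ", weighted_var_naive(values, weights))
print("two-pass", weighted_var_two_pass(values, weights))
```

The two-pass form needs a second traversal of the data, which is why incremental variants (such as the one in `_incremental_mean_and_var`) are usually preferred when the data arrives in chunks.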

|

1.0

|

Weighted variance computation for sparse data is not numerically stable - This issue was discovered when adding tests for #19527 (currently marked XFAIL).

Here is a minimal reproduction case using the underlying private API:

https://gist.github.com/ogrisel/bd2cf3350fff5bbd5a0899fa6baf3267

The results are the following (macOS / arm64 / Python 3.9.1 / Cython 0.29.21 / clang 11.0.1):

```

## dtype=float64

_incremental_mean_and_var [100.] [0.]

csr_mean_variance_axis0 [100.] [-2.18040566e-11]

incr_mean_variance_axis0 csr [100.] [-2.18040566e-11]

csc_mean_variance_axis0 [100.] [-2.18040566e-11]

incr_mean_variance_axis0 csc [100.] [-2.18040566e-11]

## dtype=float32

_incremental_mean_and_var [100.00000577] [3.32692735e-11]

csr_mean_variance_axis0 [99.99997] [0.00123221]

incr_mean_variance_axis0 csr [99.99997] [0.00123221]

csc_mean_variance_axis0 [99.99997] [0.00123221]

incr_mean_variance_axis0 csc [99.99997] [0.00123221]

```

So the `sklearn.utils.extmath._incremental_mean_and_var` function for dense numpy arrays is numerically stable both in float64 and float32 (~1e-11 is much less than `np.finfo(np.float32).eps`), but the sparse counterparts, whether incremental or not, are all wrong in the same way.

So the gist above should be adapted to write a new series of tests for these Cython functions, and the fix will probably involve adapting the algorithm implemented in `sklearn.utils.extmath._incremental_mean_and_var` to the sparse case.

Note: there is another issue opened for the numerical stability of `StandardScaler`: #5602 / #11549 but it is related to the computation of the (unweighted) mean in incremental mode (in `partial_fit`) vs full batch mode (in `fit`).

|

process

|

weighted variance computation for sparse data is not numerically stable this issue was discovered when adding tests for currently marked xfail here is minimal reproduction case using the underlying private api the results are the following macos python cython clang dtype incremental mean and var csr mean variance incr mean variance csr csc mean variance incr mean variance csc dtype incremental mean and var csr mean variance incr mean variance csr csc mean variance incr mean variance csc so the sklearn utils extmath incremental mean and var function for dense numpy arrays is numerically stable both in and is much less then np finfo np eps but the sparse counterparts either incremental are not are all wrong in the same way so the gist above should be adapted to write a new series of new tests for these cython functions and the fix will probably involve adapting the algorithm implemented in sklearn utils extmath incremental mean and var to the sparse case note there is another issue opened for the numerical stability of standardscaler but it is related to the computation of the unweighted mean in incremental model in partial fit vs full batch mode in fit

| 1

|

22,636

| 31,885,101,005

|

IssuesEvent

|

2023-09-16 21:17:23

|

bitfocus/companion-module-requests

|

https://api.github.com/repos/bitfocus/companion-module-requests

|

opened

|

Lightshark LS CORE/LS 1 feedback/Sync

|

NOT YET PROCESSED

|

- [ ] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

Lightshark LS Core/LS 1, LightShark Software: Sync/dynamic/feedback

Has anyone had any luck with getting Sync Executors feedback on the Lightshark instance? I have control over the Executors which is amazing but not the feedback. I can only see port 8000 for incoming within companion but not the outgoing port 9000.

<img width="1400" alt="Screenshot 2023-09-16 at 2 25 26 pm" src="https://github.com/bitfocus/companion-module-requests/assets/65983295/a9adcb8b-ea0d-4e6d-b089-20deaeebcfa3">

<img width="1401" alt="Screenshot 2023-09-16 at 2 25 56 pm" src="https://github.com/bitfocus/companion-module-requests/assets/65983295/44aafc40-f84e-4672-a00c-84332a51fb99">

[Lightshark OSC.pdf](https://github.com/bitfocus/companion-module-requests/files/12641583/Lightshark.OSC.pdf)

|

1.0

|

Lightshark LS CORE/LS 1 feedback/Sync - - [ ] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

Lightshark LS Core/LS 1, LightShark Software: Sync/dynamic/feedback

Has anyone had any luck with getting Sync Executors feedback on the Lightshark instance? I have control over the Executors which is amazing but not the feedback. I can only see port 8000 for incoming within companion but not the outgoing port 9000.

<img width="1400" alt="Screenshot 2023-09-16 at 2 25 26 pm" src="https://github.com/bitfocus/companion-module-requests/assets/65983295/a9adcb8b-ea0d-4e6d-b089-20deaeebcfa3">

<img width="1401" alt="Screenshot 2023-09-16 at 2 25 56 pm" src="https://github.com/bitfocus/companion-module-requests/assets/65983295/44aafc40-f84e-4672-a00c-84332a51fb99">

[Lightshark OSC.pdf](https://github.com/bitfocus/companion-module-requests/files/12641583/Lightshark.OSC.pdf)

|

process

|

lightshark ls core ls feedback sync i have researched the list of existing companion modules and requests and have determined this has not yet been requested lightshark ls core ls lightshark software sync dynamic feedback has anyone had any luck with getting sync executors feedback on the lightshark instance i have control over the executors which is amazing but not the feedback i can only see port for incoming within companion but not the outgoing port img width alt screenshot at pm src img width alt screenshot at pm src

| 1

|

171,895

| 14,347,093,445

|

IssuesEvent

|

2020-11-29 04:58:06

|

swordily/SPERMAPYTHON

|

https://api.github.com/repos/swordily/SPERMAPYTHON

|

reopened

|

When is the update?

|

documentation

|

I don't want to and won't update this cheat; I have other projects such as SPERMAWARE, SPERMASENSE, SPERMALOADER. In Python I can do far less than in C++. All the basic functions are there, along with auto-update for the latest CS:GO update. Anyone who wants to add features can download the sources and add them; I won't be adding anything.

|

1.0

|

When is the update? - I don't want to and won't update this cheat; I have other projects such as SPERMAWARE, SPERMASENSE, SPERMALOADER. In Python I can do far less than in C++. All the basic functions are there, along with auto-update for the latest CS:GO update. Anyone who wants to add features can download the sources and add them; I won't be adding anything.

|

non_process

|

when is the update i don t want to and won t update this cheat i have other projects such as spermaware spermasense spermaloader in python i can do far less than in c all the basic functions are there along with auto update for the latest cs go update anyone who wants to add features can download the sources and add them i won t be adding anything

| 0

|

9,699

| 12,701,028,447

|

IssuesEvent

|

2020-06-22 17:23:02

|

prisma/prisma

|

https://api.github.com/repos/prisma/prisma

|

closed

|

JSON field not compatible with built-in query batching

|

2.1.0-dev.52 bug/2-confirmed kind/bug process/candidate

|

<!--

Thanks for helping us improve Prisma! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by setting the `DEBUG="*"` environment variable and enabling additional logging output in Prisma Client.

Learn more about writing proper bug reports here: https://pris.ly/d/bug-reports

-->

## Bug description

#1470 introduced batching/dataloader pattern for `findOne`. It appears this is not compatible with `Json` fields for some reason (specifically the nullable `Json?` field)

I can confirm this is most likely the case because the exact same code succeeds when there's only one `findOne` call.

## How to reproduce

Consider table `A` with a column of `Json?` type.

Imagine we have the following code:

```

const ids = [1, 2, ...];

const items = await Promise.all(ids.map(id => prisma.A.findOne({where: { id }})));

```

If the ids array has length 1, it will succeed.

If the ids array has length > 1, it will fail with the following error:

```

SyntaxError: Unexpected end of JSON input

at JSON.parse (<anonymous>)

at IncomingMessage.<anonymous> (engine-core/dist/h1client.js:48:1)

at IncomingMessage.emit (events.js:322:22)

at IncomingMessage.EventEmitter.emit (domain.js:482:12)

at endReadableNT (_stream_readable.js:1187:12)

at processTicksAndRejections (internal/process/task_queues.js:84:21)

```

**Note** At this point I have to restart the entire server, subsequent queries of any kind continue to fail.

## Expected behaviour

It should not crash.

## Prisma information

Notably with `log: ["query"]` it doesn't even have a chance to output the query before crashing.

## Environment & setup

OS: Latest Mac OS

Database: Postgres 9.6

Prisma Version: 2.1.0-dev.52

Node.JS Version: 12.16.3

|

1.0

|

JSON field not compatible with built-in query batching - <!--

Thanks for helping us improve Prisma! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by setting the `DEBUG="*"` environment variable and enabling additional logging output in Prisma Client.

Learn more about writing proper bug reports here: https://pris.ly/d/bug-reports

-->

## Bug description

#1470 introduced batching/dataloader pattern for `findOne`. It appears this is not compatible with `Json` fields for some reason (specifically the nullable `Json?` field)

I can confirm this is most likely the case because the exact same code succeeds when there's only one `findOne` call.

## How to reproduce

Consider table `A` with a column of `Json?` type.

Imagine we have the following code:

```

const ids = [1, 2, ...];

const items = await Promise.all(ids.map(id => prisma.A.findOne({where: { id }})));

```

If the ids array has length 1, it will succeed.

If the ids array has length > 1, it will fail with the following error:

```

SyntaxError: Unexpected end of JSON input

at JSON.parse (<anonymous>)

at IncomingMessage.<anonymous> (engine-core/dist/h1client.js:48:1)

at IncomingMessage.emit (events.js:322:22)

at IncomingMessage.EventEmitter.emit (domain.js:482:12)

at endReadableNT (_stream_readable.js:1187:12)

at processTicksAndRejections (internal/process/task_queues.js:84:21)

```

**Note** At this point I have to restart the entire server, subsequent queries of any kind continue to fail.

## Expected behaviour

It should not crash.

## Prisma information

Notably with `log: ["query"]` it doesn't even have a chance to output the query before crashing.

## Environment & setup

OS: Latest Mac OS

Database: Postgres 9.6

Prisma Version: 2.1.0-dev.52

Node.JS Version: 12.16.3

|

process

|

json field not compatible with built in query batching thanks for helping us improve prisma 🙏 please follow the sections in the template and provide as much information as possible about your problem e g by setting the debug environment variable and enabling additional logging output in prisma client learn more about writing proper bug reports here bug description introduced batching dataloader pattern for findone it appears this is not compatible with json fields for some reason specifically the nullable json field i can confirm this is most likely the case because the exact same code succeeds when there s only one findone call how to reproduce consider table a with a column of json type imagine we have the following code const ids const items await promise all ids map id prisma a findone where id if the ids array has length it will succeed if the ids array has length it will fail with the following error syntaxerror unexpected end of json input at json parse at incomingmessage engine core dist js at incomingmessage emit events js at incomingmessage eventemitter emit domain js at endreadablent stream readable js at processticksandrejections internal process task queues js note at this point i have to restart the entire server subsequent queries of any kind continue to fail expected behaviour it should not crash prisma information notably with log it doesn t even have a chance to output the query before crashing environment setup os latest mac os database postgres prisma version dev node js version

| 1

|

7,350

| 10,482,995,270

|

IssuesEvent

|

2019-09-24 13:08:10

|

OI-wiki/OI-wiki

|

https://api.github.com/repos/OI-wiki/OI-wiki

|

closed

|

bzoj problem link issue

|

中优先级 / P2 需要处理 / Need Processing 需要帮助 / help wanted 需要讨论 / discussion

|

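bzoj recently announced that it will go offline temporarily for half a month due to problem-set maintenance.

Given that site traffic is high during the National Day holiday and the site contains many bzoj problems, the broken links could significantly affect the user experience.

One fairly feasible solution for now is to replace all bzoj problem links with the corresponding links on [OI Archive](https://oi-archive.wa-am.com/).

Everyone is welcome to discuss this issue.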

|

1.0

|

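bzoj problem link issue - bzoj recently announced that it will go offline temporarily for half a month due to problem-set maintenance.

Given that site traffic is high during the National Day holiday and the site contains many bzoj problems, the broken links could significantly affect the user experience.

One fairly feasible solution for now is to replace all bzoj problem links with the corresponding links on [OI Archive](https://oi-archive.wa-am.com/).

Everyone is welcome to discuss this issue.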

|

process

|

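bzoj problem link issue bzoj recently announced that it will go offline temporarily for half a month due to problem set maintenance given that site traffic is high during the national day holiday and the site contains many bzoj problems the broken links could significantly affect the user experience one fairly feasible solution for now is to replace all bzoj problem links with the corresponding links on everyone is welcome to discuss this issue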

| 1

|

158,599

| 13,737,163,444

|

IssuesEvent

|

2020-10-05 12:49:42

|

UnBArqDsw/2020.1_G2_TCLDL

|

https://api.github.com/repos/UnBArqDsw/2020.1_G2_TCLDL

|

closed

|

BackEnd Dynamic Diagrams

|

documentation dynamic-2

|

**_Issue_ type**

DOC X - Issue title [Documentation]

**Description**

make the dynamic diagrams for the entire BackEnd structure and choose the most appropriate diagrams for each feature.

Examples of diagrams to be made:

- Sequence Diagram

- Communication Diagram

- Activity Diagram

- State Diagram

**Screenshots**

**Tasks**

- [ ] reference the documentation

- [ ] make all the diagrams cited in the description of the issue

- [ ] look for more possible diagrams that could be done for this module

- [ ] make the artifacts available here tracked

**Acceptance Criteria**

- [ ] Requirements tracking

- [ ] Versioning

- [ ] Documentation on ghpages

|

1.0

|

BackEnd Dynamic Diagrams - **_Issue_ type**

DOC X - Issue title [Documentation]

**Description**

make the dynamic diagrams for the entire BackEnd structure and choose the most appropriate diagrams for each feature.

Examples of diagrams to be made:

- Sequence Diagram

- Communication Diagram

- Activity Diagram

- State Diagram

**Screenshots**

**Tasks**

- [ ] reference the documentation

- [ ] make all the diagrams cited in the description of the issue

- [ ] look for more possible diagrams that could be done for this module

- [ ] make the artifacts available here tracked

**Acceptance Criteria**

- [ ] Requirements tracking

- [ ] Versioning

- [ ] Documentation on ghpages

|

non_process

|

backend dynamic diagrams issue type doc x issue title description make the dynamic diagrams for the entire backend structure and choose the most appropriate diagrams for each feature examples of diagrams to be made sequence diagram communication diagram activity diagram state diagram screenshots tasks reference the documentation make all the diagrams cited in the description of the issue look for more possible diagrams that could be done for this module make the artifacts available here tracked acceptance criteria requirements tracking versioning documentation on ghpages

| 0

|

13,038