Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 7

112

| repo_url

stringlengths 36

141

| action

stringclasses 3

values | title

stringlengths 1

744

| labels

stringlengths 4

574

| body

stringlengths 9

211k

| index

stringclasses 10

values | text_combine

stringlengths 96

211k

| label

stringclasses 2

values | text

stringlengths 96

188k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

186,487

| 21,944,184,418

|

IssuesEvent

|

2022-05-23 21:40:15

|

CMSgov/cms-carts-seds

|

https://api.github.com/repos/CMSgov/cms-carts-seds

|

closed

|

SHF - cms-carts-seds - main - MEDIUM - Instance i-0fc3aad1e5600f745 is vulnerable to CVE-2021-4193

|

security-hub main

|

**************************************************************

__This issue was generated from Security Hub data and is managed through automation.__

Please do not edit the title or body of this issue, or remove the security-hub tag. All other edits/comments are welcome.

Finding Id: inspector/us-east-1/519095364708/81b9be79d70bb03ee622ece378452294cf5c1149

**************************************************************

## Type of Issue:

- [x] Security Hub Finding

## Title:

Instance i-0fc3aad1e5600f745 is vulnerable to CVE-2021-4193

## Id:

inspector/us-east-1/519095364708/81b9be79d70bb03ee622ece378452294cf5c1149

(You may use this ID to lookup this finding's details in Security Hub)

## Description

vim is vulnerable to Out-of-bounds Read

## Remediation

undefined

## AC:

- The security hub finding is resolved or suppressed, indicated by a Workflow Status of Resolved or Suppressed.

|

True

|

SHF - cms-carts-seds - main - MEDIUM - Instance i-0fc3aad1e5600f745 is vulnerable to CVE-2021-4193 - **************************************************************

__This issue was generated from Security Hub data and is managed through automation.__

Please do not edit the title or body of this issue, or remove the security-hub tag. All other edits/comments are welcome.

Finding Id: inspector/us-east-1/519095364708/81b9be79d70bb03ee622ece378452294cf5c1149

**************************************************************

## Type of Issue:

- [x] Security Hub Finding

## Title:

Instance i-0fc3aad1e5600f745 is vulnerable to CVE-2021-4193

## Id:

inspector/us-east-1/519095364708/81b9be79d70bb03ee622ece378452294cf5c1149

(You may use this ID to lookup this finding's details in Security Hub)

## Description

vim is vulnerable to Out-of-bounds Read

## Remediation

undefined

## AC:

- The security hub finding is resolved or suppressed, indicated by a Workflow Status of Resolved or Suppressed.

|

non_process

|

shf cms carts seds main medium instance i is vulnerable to cve this issue was generated from security hub data and is managed through automation please do not edit the title or body of this issue or remove the security hub tag all other edits comments are welcome finding id inspector us east type of issue security hub finding title instance i is vulnerable to cve id inspector us east you may use this id to lookup this finding s details in security hub description vim is vulnerable to out of bounds read remediation undefined ac the security hub finding is resolved or suppressed indicated by a workflow status of resolved or suppressed

| 0

|

19,941

| 10,563,661,009

|

IssuesEvent

|

2019-10-04 21:39:01

|

SNLComputation/Albany

|

https://api.github.com/repos/SNLComputation/Albany

|

closed

|

'Gather Extruded 2D Nodal Parameter' routine much slower with Epetra than Tpetra

|

LandIce performance

|

In doing performance runs, I discovered that 'Gather Extruded 2D Nodal Parameter' is much slower in Epetra than Tpetra. This seems to show up for problems with tetrahedral meshes for ALI much more so than hexahedral meshes. Here are some data:

Epetra:

```

Phalanx: Evaluator 5: [<Residual>] Gather Extruded 2D Nodal Parameter: 21.205 - 28.6921% [23025] {min=20.9137, max=40.8765, std dev=1.55442}

Phalanx: Evaluator 85: [<Jacobian>] Gather Extruded 2D Nodal Parameter: 12.6985 - 19.9394% [13330] {min=12.5126, max=25.1912, std dev=1.01228}

```

Tpetra:

```

Phalanx: Evaluator 5: [<Residual>] Gather Extruded 2D Nodal Parameter: 0.443377 - 2.6535% [23025] {min=0.427363, max=0.693777, std dev=0.0190795}

Phalanx: Evaluator 85: [<Jacobian>] Gather Extruded 2D Nodal Parameter: 0.262684 - 0.8327% [13330] {min=0.252668, max=0.422806, std dev=0.0126421}

```

@bartgol , @mperego : can one of you please have a look at this? I haven't checked if the issue was happening earlier prior to some of the Thyra refactors - I suspect it's likely not necessary to do that, but I can if you think it would be helpful.

|

True

|

'Gather Extruded 2D Nodal Parameter' routine much slower with Epetra than Tpetra - In doing performance runs, I discovered that 'Gather Extruded 2D Nodal Parameter' is much slower in Epetra than Tpetra. This seems to show up for problems with tetrahedral meshes for ALI much more so than hexahedral meshes. Here are some data:

Epetra:

```

Phalanx: Evaluator 5: [<Residual>] Gather Extruded 2D Nodal Parameter: 21.205 - 28.6921% [23025] {min=20.9137, max=40.8765, std dev=1.55442}

Phalanx: Evaluator 85: [<Jacobian>] Gather Extruded 2D Nodal Parameter: 12.6985 - 19.9394% [13330] {min=12.5126, max=25.1912, std dev=1.01228}

```

Tpetra:

```

Phalanx: Evaluator 5: [<Residual>] Gather Extruded 2D Nodal Parameter: 0.443377 - 2.6535% [23025] {min=0.427363, max=0.693777, std dev=0.0190795}

Phalanx: Evaluator 85: [<Jacobian>] Gather Extruded 2D Nodal Parameter: 0.262684 - 0.8327% [13330] {min=0.252668, max=0.422806, std dev=0.0126421}

```

@bartgol , @mperego : can one of you please have a look at this? I haven't checked if the issue was happening earlier prior to some of the Thyra refactors - I suspect it's likely not necessary to do that, but I can if you think it would be helpful.

|

non_process

|

gather extruded nodal parameter routine much slower with epetra than tpetra in doing performance runs i discovered that gather extruded nodal parameter is much slower in epetra than tpetra this seems to show up for problems with tetrahedral meshes for ali much more so than hexahedral meshes here are some data epetra phalanx evaluator gather extruded nodal parameter min max std dev phalanx evaluator gather extruded nodal parameter min max std dev tpetra phalanx evaluator gather extruded nodal parameter min max std dev phalanx evaluator gather extruded nodal parameter min max std dev bartgol mperego can one of you please have a look at this i haven t checked if the issue was happening earlier prior to some of the thyra refactors i suspect it s likely not necessary to do that but i can if you think it would be helpful

| 0

|

49,031

| 12,268,289,951

|

IssuesEvent

|

2020-05-07 12:16:12

|

lbl-srg/modelica-buildings

|

https://api.github.com/repos/lbl-srg/modelica-buildings

|

closed

|

Add missing comments and info section in the OBC classes

|

OpenBuildingControl

|

Running [`modelica-json`](https://github.com/lbl-srg/modelica-json) tool as

```

node app.js -f ../modelica-buildings/Buildings/Controls/OBC -o json -m modelica -d out

```

gives a list of warnings showing that some classes in `Controls.OBC` package have missing comments, empty equation section, or misplaced info section.

This is to fix them.

|

1.0

|

Add missing comments and info section in the OBC classes - Running [`modelica-json`](https://github.com/lbl-srg/modelica-json) tool as

```

node app.js -f ../modelica-buildings/Buildings/Controls/OBC -o json -m modelica -d out

```

gives a list of warnings showing that some classes in `Controls.OBC` package have missing comments, empty equation section, or misplaced info section.

This is to fix them.

|

non_process

|

add missing comments and info section in the obc classes running tool as node app js f modelica buildings buildings controls obc o json m modelica d out gives a list of warnings showing that some classes in controls obc package have missing comments empty equation section or misplaced info section this is to fix them

| 0

|

109,896

| 13,865,385,322

|

IssuesEvent

|

2020-10-16 04:10:50

|

greatnewcls/BVUFT6ZTU4ZB376LM2MDD3K6

|

https://api.github.com/repos/greatnewcls/BVUFT6ZTU4ZB376LM2MDD3K6

|

reopened

|

Eihx+KCEYRw++9VG2Ei2YzRVri8EbTD/gW+wXF9ZizflOouP95aFGIFj68WplD3hPtq6otoTaTIl5qqTMFN3vOxMOYioBk7+ybvEQY11Jx6kfgsdAgTHJHPBFELDKujA79iaVMaYTxmNzvyRnQAkfRN+oOdM4WsmLUo19fGlzzU=

|

design

|

5SE6faGs48rrgKpYBH4mpbGMnWMFvmGG7rTI8EG5RFjVuNWc+V/eUL1yf6pCW9SgfsAYriJ/mXIYHTxNMQ5VEA1mrR9oJOzoujh97N0UWJ+v/2H6n2Hn8f1M4utdtVU7+2WtZ6pytSlxIdzw9fAWM4w1nt36p2KOTGLvodiJEw5l9Cj1EqSXAFzg+mRHMyWKle97UEN6m0R4qv22iwf1oQnmWbkJqyU/EMFVDjBBUEUg/eXrjxsq37YKwNWxfoI1CRhwgoy+2/ItX2g3AtKifd/yF3seRy3miRerKBtfjDDYXkTahXGq38VMLlSC0c3VikBbGeBHROmVykwthgm4CEB3Tq1kb7aCexmAo6hAbwp+o/e2k+wcpcMwrZv7R4g7EbukIQJQocz3WwIxg57jC+UplUDFa0oKRPbRJxh/Xme1mE6dSIyB2rZt8hpgo8sWEFPfublo88WEt2TTSTS0u9/83Zya0RcYHb///pclmqjf/N2cmtEXGB2///6XJZqo3/zdnJrRFxgdv//+lyWaqN/83Zya0RcYHb///pclmqivaMvyZ0Yag6xgcm50kIx2BukHNH2sQTCIfY8GZaZpc+522h/kmd9TtL7NdPf9+ckchaNePi/MmHUah3eHICp5qOV+Q49GAt+ByXr4YTxvSFkc3UY8FQF2ez9qxxmsA45ZHN1GPBUBdns/ascZrAOOQt9gqdGkwvhdjtAhjSO92dckBynlaNT97/zAt6ynJyisfNrsFjmm+ENnoFnPcYWaXjkQ/Qe/+dF5WljY3qmZkGud8TMKrHgNUuYrlD/1I2jLbnfLx8KXCQH9D3nRMXSr7o/rqGLkb/7B/25jsIcrDVkc3UY8FQF2ez9qxxmsA45ZHN1GPBUBdns/ascZrAOOfjJY3FdDXWvVj0pDGMZwkFFV7cebZlJi728Bv2JRSSzOR+j+G9vnol1Rq7Z1oGzaG+2iWfCoPSpkQFLEBFpOeEREoEtwqF3JjH9wCgfATUtHX/mORicJPHf2lCbwxceLb+DNQCvHWbrLn+ZWqWTXA1kc3UY8FQF2ez9qxxmsA45ZHN1GPBUBdns/ascZrAOOQt9gqdGkwvhdjtAhjSO92dckBynlaNT97/zAt6ynJyisfNrsFjmm+ENnoFnPcYWadmU2B3ExsziZvHyou2lTkPCl1e9SK83qEApmhqOdjoVI8Vt97+ApXfNUibKZaYJMm+lWGVH++i+62xakuKDqY3dbL/SZutvZub4SpCIjlotZHN1GPBUBdns/ascZrAOOWRzdRjwVAXZ7P2rHGawDjqlS2GAaw4k2V4eR+jIh+VHQP7gFs4GbJ549I1mFr/gxl83P37ahrIj2Q+W882L1BuRQt2UF+59vA04Bo31iLBcF+mY8NTjqWtAjX9IdlG4ElkQSnBzh5YOc1ICnMTaL+Ig76T+gPv3VMcTHZyy6DAZZHN1GPBUBdns/ascZrAOOWRzdRjwVAXZ7P2rHGawDjqlS2GAaw4k2V4eR+jIh+VHQP7gFs4GbJ549I1mFr/gxusy+WQXY3pls6psDwyIfOYzNP5xHTc/WmfCfuy6ZqHof/RgeJgeU8AlZj45kBDpoKue9czOG1WUZ5AoBZjPtvUbHIawFFsM35TLr4UU5BDxZHN1GPBUBdns/ascZrAOOWRzdRjwVAXZ7P2rHGawDjmeVDO/mCxnC9TNt0/gnKxPYHmNtK2Czc+FWloeBCkpUGJQzlOi9uCmbsdtYh73+cMlDXXxTzWl7/cC/HpBnZeFV4zPgma2zApVHgus+/GlPDZjILTCI/iAo+nbqoxwoqetLAYYaiIUpBeh54sSNMO1ZHN1GPBUBdns/ascZrAOOWRzdRjwVAXZ7P2rHGawDjjAazurGnQOsaKwyBCE/eX4eFLs6irgapzJ1H9Jaw++56DXjVFbjCxd5IeA6y5dmoWEiPG29+blCAQC3wjmutl6FU6QDVHVo5nzhhhh4o6oPauwmp75fp25nOirc0hIgSFkc3UY8FQF2ez9qxxmsA45ZHN1GPBUBdns/ascZrAOORMfNuKYswDNNJuRUxbBJNUOVWUMvLGWjX4xz0edk7q3vI+0M/3cId4JO9QLLdY5dugQtR3btSg7UbzV2eRCZlrZDd1VZAuDDUVh5Ije/Qjt/CtwDvPIEvsIYwPlnJ46srFK4VJuwy+PE07fp/Q7SQlkc3UY8FQF2ez9qxxmsA45ZHN1GPBUBdns/ascZrAOOgROSVzMrL3c6HXQELwT9sdKLbtvbVF50Wwj/RMRZ5koy4HBTu08szhE3Dh3Q4ZhiGeii3q6hgoAcIVfadwHuRrT3kOTX/AATSRRG5EF9k1PIMv/aaYWPmIrrl6JrQvcwRschrAUWwzflMuvhRTkEPFkc3UY8FQF2ez9qxxmsA45ZHN1GPBUBdns/ascZrAOOZ5UM7+YLGcL1M23T+CcrE9geY20rYLNz4VaWh4EKSlR38x3J10RyZOQfzy9mOYm0yUNdfFPNaXv9wL8ekGdl4VXjM+CZrbMClUeC6z78aU+JafDsKfhatcKClILlLGCeWRzdRjwVAXZ7P2rHGawDjlkc3UY8FQF2ez9qxxmsA45Ex824pizAM00m5FTFsEk1Q5VZQy8sZaNfjHPR52Ture8j7Qz/dwh3gk71Ast1jl3gylil+sz5/RokggNpuc4KUsPtBWVwuz/tbs53NNS5sEJY4kvOyrxjS7Q1tzzdcDBvqYFL0DYiZ+d6n1UcVLHJWRzdRjwVAXZ7P2rHGawDjlkc3UY8FQF2ez9qxxmsA46pUthgGsOJNleHkfoyIflR0D+4BbOBmyeePSNZha/4MbrMvlkF2N6ZbOqbA8MiHznWI2z7Sttj2RFuX79hCZJh5SAVL4K2OPLb3o+B7hjRsRR05+WGaSmvuaREA2gAevQDQeuQkOXWg4h6HBRiMT3P1xk7P9UU5sB2lM9XhXfkp9sEjyuxPVYKFNlBWkqaOpcUtMQ2bSBV2BTt9t7wRlDnI7pgHkk+K/7v8atGVklZZ/ApHMGsVYs6jjHQK+SL/hXmjECwBGoUk3ffYF+BzIoQ25w1mzOS36lg2S6TEpT3MduykLwduf04jsHXXJw0t6POZw+lTWf7sueZvN8gZhR1SSA0QxbsSLd2k37dX9qIQr2XClzOj6LolASrcLM4yk9XB9GSfjvdh8TtI5ne0udy

|

1.0

|

Eihx+KCEYRw++9VG2Ei2YzRVri8EbTD/gW+wXF9ZizflOouP95aFGIFj68WplD3hPtq6otoTaTIl5qqTMFN3vOxMOYioBk7+ybvEQY11Jx6kfgsdAgTHJHPBFELDKujA79iaVMaYTxmNzvyRnQAkfRN+oOdM4WsmLUo19fGlzzU= - 5SE6faGs48rrgKpYBH4mpbGMnWMFvmGG7rTI8EG5RFjVuNWc+V/eUL1yf6pCW9SgfsAYriJ/mXIYHTxNMQ5VEA1mrR9oJOzoujh97N0UWJ+v/2H6n2Hn8f1M4utdtVU7+2WtZ6pytSlxIdzw9fAWM4w1nt36p2KOTGLvodiJEw5l9Cj1EqSXAFzg+mRHMyWKle97UEN6m0R4qv22iwf1oQnmWbkJqyU/EMFVDjBBUEUg/eXrjxsq37YKwNWxfoI1CRhwgoy+2/ItX2g3AtKifd/yF3seRy3miRerKBtfjDDYXkTahXGq38VMLlSC0c3VikBbGeBHROmVykwthgm4CEB3Tq1kb7aCexmAo6hAbwp+o/e2k+wcpcMwrZv7R4g7EbukIQJQocz3WwIxg57jC+UplUDFa0oKRPbRJxh/Xme1mE6dSIyB2rZt8hpgo8sWEFPfublo88WEt2TTSTS0u9/83Zya0RcYHb///pclmqjf/N2cmtEXGB2///6XJZqo3/zdnJrRFxgdv//+lyWaqN/83Zya0RcYHb///pclmqivaMvyZ0Yag6xgcm50kIx2BukHNH2sQTCIfY8GZaZpc+522h/kmd9TtL7NdPf9+ckchaNePi/MmHUah3eHICp5qOV+Q49GAt+ByXr4YTxvSFkc3UY8FQF2ez9qxxmsA45ZHN1GPBUBdns/ascZrAOOQt9gqdGkwvhdjtAhjSO92dckBynlaNT97/zAt6ynJyisfNrsFjmm+ENnoFnPcYWaXjkQ/Qe/+dF5WljY3qmZkGud8TMKrHgNUuYrlD/1I2jLbnfLx8KXCQH9D3nRMXSr7o/rqGLkb/7B/25jsIcrDVkc3UY8FQF2ez9qxxmsA45ZHN1GPBUBdns/ascZrAOOfjJY3FdDXWvVj0pDGMZwkFFV7cebZlJi728Bv2JRSSzOR+j+G9vnol1Rq7Z1oGzaG+2iWfCoPSpkQFLEBFpOeEREoEtwqF3JjH9wCgfATUtHX/mORicJPHf2lCbwxceLb+DNQCvHWbrLn+ZWqWTXA1kc3UY8FQF2ez9qxxmsA45ZHN1GPBUBdns/ascZrAOOQt9gqdGkwvhdjtAhjSO92dckBynlaNT97/zAt6ynJyisfNrsFjmm+ENnoFnPcYWadmU2B3ExsziZvHyou2lTkPCl1e9SK83qEApmhqOdjoVI8Vt97+ApXfNUibKZaYJMm+lWGVH++i+62xakuKDqY3dbL/SZutvZub4SpCIjlotZHN1GPBUBdns/ascZrAOOWRzdRjwVAXZ7P2rHGawDjqlS2GAaw4k2V4eR+jIh+VHQP7gFs4GbJ549I1mFr/gxl83P37ahrIj2Q+W882L1BuRQt2UF+59vA04Bo31iLBcF+mY8NTjqWtAjX9IdlG4ElkQSnBzh5YOc1ICnMTaL+Ig76T+gPv3VMcTHZyy6DAZZHN1GPBUBdns/ascZrAOOWRzdRjwVAXZ7P2rHGawDjqlS2GAaw4k2V4eR+jIh+VHQP7gFs4GbJ549I1mFr/gxusy+WQXY3pls6psDwyIfOYzNP5xHTc/WmfCfuy6ZqHof/RgeJgeU8AlZj45kBDpoKue9czOG1WUZ5AoBZjPtvUbHIawFFsM35TLr4UU5BDxZHN1GPBUBdns/ascZrAOOWRzdRjwVAXZ7P2rHGawDjmeVDO/mCxnC9TNt0/gnKxPYHmNtK2Czc+FWloeBCkpUGJQzlOi9uCmbsdtYh73+cMlDXXxTzWl7/cC/HpBnZeFV4zPgma2zApVHgus+/GlPDZjILTCI/iAo+nbqoxwoqetLAYYaiIUpBeh54sSNMO1ZHN1GPBUBdns/ascZrAOOWRzdRjwVAXZ7P2rHGawDjjAazurGnQOsaKwyBCE/eX4eFLs6irgapzJ1H9Jaw++56DXjVFbjCxd5IeA6y5dmoWEiPG29+blCAQC3wjmutl6FU6QDVHVo5nzhhhh4o6oPauwmp75fp25nOirc0hIgSFkc3UY8FQF2ez9qxxmsA45ZHN1GPBUBdns/ascZrAOORMfNuKYswDNNJuRUxbBJNUOVWUMvLGWjX4xz0edk7q3vI+0M/3cId4JO9QLLdY5dugQtR3btSg7UbzV2eRCZlrZDd1VZAuDDUVh5Ije/Qjt/CtwDvPIEvsIYwPlnJ46srFK4VJuwy+PE07fp/Q7SQlkc3UY8FQF2ez9qxxmsA45ZHN1GPBUBdns/ascZrAOOgROSVzMrL3c6HXQELwT9sdKLbtvbVF50Wwj/RMRZ5koy4HBTu08szhE3Dh3Q4ZhiGeii3q6hgoAcIVfadwHuRrT3kOTX/AATSRRG5EF9k1PIMv/aaYWPmIrrl6JrQvcwRschrAUWwzflMuvhRTkEPFkc3UY8FQF2ez9qxxmsA45ZHN1GPBUBdns/ascZrAOOZ5UM7+YLGcL1M23T+CcrE9geY20rYLNz4VaWh4EKSlR38x3J10RyZOQfzy9mOYm0yUNdfFPNaXv9wL8ekGdl4VXjM+CZrbMClUeC6z78aU+JafDsKfhatcKClILlLGCeWRzdRjwVAXZ7P2rHGawDjlkc3UY8FQF2ez9qxxmsA45Ex824pizAM00m5FTFsEk1Q5VZQy8sZaNfjHPR52Ture8j7Qz/dwh3gk71Ast1jl3gylil+sz5/RokggNpuc4KUsPtBWVwuz/tbs53NNS5sEJY4kvOyrxjS7Q1tzzdcDBvqYFL0DYiZ+d6n1UcVLHJWRzdRjwVAXZ7P2rHGawDjlkc3UY8FQF2ez9qxxmsA46pUthgGsOJNleHkfoyIflR0D+4BbOBmyeePSNZha/4MbrMvlkF2N6ZbOqbA8MiHznWI2z7Sttj2RFuX79hCZJh5SAVL4K2OPLb3o+B7hjRsRR05+WGaSmvuaREA2gAevQDQeuQkOXWg4h6HBRiMT3P1xk7P9UU5sB2lM9XhXfkp9sEjyuxPVYKFNlBWkqaOpcUtMQ2bSBV2BTt9t7wRlDnI7pgHkk+K/7v8atGVklZZ/ApHMGsVYs6jjHQK+SL/hXmjECwBGoUk3ffYF+BzIoQ25w1mzOS36lg2S6TEpT3MduykLwduf04jsHXXJw0t6POZw+lTWf7sueZvN8gZhR1SSA0QxbsSLd2k37dX9qIQr2XClzOj6LolASrcLM4yk9XB9GSfjvdh8TtI5ne0udy

|

non_process

|

eihx kceyrw gw v v emfvdjbbueug o pclmqjf zdnjrrfxgdv lywaqn ckchanepi ennofnpcywaxjkq qe rqglkb j dnqcvhwbrln apxfnuibkzayjmm lwgvh i jih jih gxusy cc glpdzjiltci iao qjt k sl

| 0

|

2,252

| 5,088,652,308

|

IssuesEvent

|

2017-01-01 00:04:17

|

sw4j-org/tool-jpa-processor

|

https://api.github.com/repos/sw4j-org/tool-jpa-processor

|

opened

|

Handle @PrimaryKeyJoinColumns Annotation

|

annotation processor task

|

Handle the `@PrimaryKeyJoinColumns` annotation for a property or field.

See [JSR 338: Java Persistence API, Version 2.1](http://download.oracle.com/otn-pub/jcp/persistence-2_1-fr-eval-spec/JavaPersistence.pdf)

- 11.1.45 PrimaryKeyJoinColumns Annotation

|

1.0

|

Handle @PrimaryKeyJoinColumns Annotation - Handle the `@PrimaryKeyJoinColumns` annotation for a property or field.

See [JSR 338: Java Persistence API, Version 2.1](http://download.oracle.com/otn-pub/jcp/persistence-2_1-fr-eval-spec/JavaPersistence.pdf)

- 11.1.45 PrimaryKeyJoinColumns Annotation

|

process

|

handle primarykeyjoincolumns annotation handle the primarykeyjoincolumns annotation for a property or field see primarykeyjoincolumns annotation

| 1

|

78,477

| 27,542,345,937

|

IssuesEvent

|

2023-03-07 09:25:34

|

vector-im/element-android

|

https://api.github.com/repos/vector-im/element-android

|

opened

|

Long name should be truncated in the pills

|

T-Defect

|

### Steps to reproduce

### Steps to reproduce

Send a mention for a user with a long name

### Outcome

#### What did you expect?

a pill with a truncated name

#### What happened instead?

The pill is cropped

<img width="394" alt="image" src="https://user-images.githubusercontent.com/8969772/223379193-07d9b40c-5eb5-494f-aef8-a784c909f874.png">

### Outcome

Here is the suggested design:

<img width="1266" alt="image" src="https://user-images.githubusercontent.com/8969772/223365164-af330790-c8c9-41fa-a4ab-a064b54034c6.png">

### Your phone model

_No response_

### Operating system version

_No response_

### Application version and app store

_No response_

### Homeserver

_No response_

### Will you send logs?

No

### Are you willing to provide a PR?

Yes

|

1.0

|

Long name should be truncated in the pills - ### Steps to reproduce

### Steps to reproduce

Send a mention for a user with a long name

### Outcome

#### What did you expect?

a pill with a truncated name

#### What happened instead?

The pill is cropped

<img width="394" alt="image" src="https://user-images.githubusercontent.com/8969772/223379193-07d9b40c-5eb5-494f-aef8-a784c909f874.png">

### Outcome

Here is the suggested design:

<img width="1266" alt="image" src="https://user-images.githubusercontent.com/8969772/223365164-af330790-c8c9-41fa-a4ab-a064b54034c6.png">

### Your phone model

_No response_

### Operating system version

_No response_

### Application version and app store

_No response_

### Homeserver

_No response_

### Will you send logs?

No

### Are you willing to provide a PR?

Yes

|

non_process

|

long name should be truncated in the pills steps to reproduce steps to reproduce send a mention for a user with a long name outcome what did you expect a pill with a truncated name what happened instead the pill is cropped img width alt image src outcome here is the suggested design img width alt image src your phone model no response operating system version no response application version and app store no response homeserver no response will you send logs no are you willing to provide a pr yes

| 0

|

64,220

| 6,896,465,779

|

IssuesEvent

|

2017-11-23 17:59:54

|

joserogerio/promocaldasSite

|

https://api.github.com/repos/joserogerio/promocaldasSite

|

closed

|

Mostrar data de inicio e fim da promoção

|

melhoramento test

|

Na listagem de promoções mostrar os dados de inicio e fim da mesma.

|

1.0

|

Mostrar data de inicio e fim da promoção - Na listagem de promoções mostrar os dados de inicio e fim da mesma.

|

non_process

|

mostrar data de inicio e fim da promoção na listagem de promoções mostrar os dados de inicio e fim da mesma

| 0

|

95,683

| 10,885,503,745

|

IssuesEvent

|

2019-11-18 10:31:41

|

AzureAD/microsoft-authentication-library-for-dotnet

|

https://api.github.com/repos/AzureAD/microsoft-authentication-library-for-dotnet

|

closed

|

Azure AD B2C with SSO implementation in Xamarin Forms

|

answered documentation question

|

Is it possible to implement Single Sign On along with Azure AD B2C.

If yes,

Could you please suggest some sample / Documentation about the same.

I am trying to implement the same in my Xamarin Application.

|

1.0

|

Azure AD B2C with SSO implementation in Xamarin Forms - Is it possible to implement Single Sign On along with Azure AD B2C.

If yes,

Could you please suggest some sample / Documentation about the same.

I am trying to implement the same in my Xamarin Application.

|

non_process

|

azure ad with sso implementation in xamarin forms is it possible to implement single sign on along with azure ad if yes could you please suggest some sample documentation about the same i am trying to implement the same in my xamarin application

| 0

|

12,380

| 14,898,056,730

|

IssuesEvent

|

2021-01-21 12:37:54

|

MicrosoftDocs/azure-devops-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-devops-docs

|

closed

|

Problems with ReviewApp

|

Pri2 devops-cicd-process/tech devops/prod doc-bug

|

I'm attempting to configure the reviewApp flow to verify that the dynamic env creation works as expected.

This is a fragment of a stage that contains the deployment job

```yaml

stages:

- stage: deployment_stage_cluster_${{ parameters['Kubernetes.Namespace'] }}

displayName: "deploy to ${{ parameters['Kubernetes.Cluster'] }}"

dependsOn: []

jobs:

- deployment: DeployPullRequest

displayName: Deploy Pull request

condition: and(succeeded(), not(startsWith(variables['Build.SourceBranch'], 'refs/pull/')))

pool:

vmImage: ${{ parameters['Agent.Pool'] }}

environment: azureday2020-demo-aks.test

strategy:

runOnce:

deploy:

steps:

- reviewApp: test

- task: KubectlInstaller@0

displayName: "kubectl installer"

inputs:

kubectlVersion: ${{ parameters['Kubernetes.Kubectl.Version'] }}

- task: HelmInstaller@1

displayName: "helm installer"

inputs:

helmVersionToInstall: ${{ parameters['Kubernetes.Helm.Version'] }}

- task: Kubernetes@1

displayName: 'Create a new namespace for the pull request'

inputs:

command: apply

useConfigurationFile: true

inline: '{ "kind": "Namespace", "apiVersion": "v1", "metadata": { "name": "test" }}'

```

Problems encountered:

- Attempts of running a pipeline containing the above template yield an immediate error: `Job Deployment: Resource test does not exist in environment azureday2020-demo-aks`

- This appears to work differntly than advertised? I was under the impression that both the environment and the resource were to be provisioned automatically?

- When attempting to use template parameters to define the environment name : `environment: '${{ parameters['ClusterName] }}.${{ parameters['Namespace] }}'` I'm getting consistent failures stating that `environmentName` is invalid/missing

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 7730ae4d-4101-9c83-1823-4ff43ff161ce

* Version Independent ID: 20a7e263-4819-783e-c984-c4f3b459e22f

* Content: [Environment - Kubernetes resource - Azure Pipelines](https://docs.microsoft.com/en-in/azure/devops/pipelines/process/environments-kubernetes?view=azure-devops#setup-review-app)

* Content Source: [docs/pipelines/process/environments-kubernetes.md](https://github.com/MicrosoftDocs/vsts-docs/blob/master/docs/pipelines/process/environments-kubernetes.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @juliakm

* Microsoft Alias: **jukullam**

|

1.0

|

Problems with ReviewApp - I'm attempting to configure the reviewApp flow to verify that the dynamic env creation works as expected.

This is a fragment of a stage that contains the deployment job

```yaml

stages:

- stage: deployment_stage_cluster_${{ parameters['Kubernetes.Namespace'] }}

displayName: "deploy to ${{ parameters['Kubernetes.Cluster'] }}"

dependsOn: []

jobs:

- deployment: DeployPullRequest

displayName: Deploy Pull request

condition: and(succeeded(), not(startsWith(variables['Build.SourceBranch'], 'refs/pull/')))

pool:

vmImage: ${{ parameters['Agent.Pool'] }}

environment: azureday2020-demo-aks.test

strategy:

runOnce:

deploy:

steps:

- reviewApp: test

- task: KubectlInstaller@0

displayName: "kubectl installer"

inputs:

kubectlVersion: ${{ parameters['Kubernetes.Kubectl.Version'] }}

- task: HelmInstaller@1

displayName: "helm installer"

inputs:

helmVersionToInstall: ${{ parameters['Kubernetes.Helm.Version'] }}

- task: Kubernetes@1

displayName: 'Create a new namespace for the pull request'

inputs:

command: apply

useConfigurationFile: true

inline: '{ "kind": "Namespace", "apiVersion": "v1", "metadata": { "name": "test" }}'

```

Problems encountered:

- Attempts of running a pipeline containing the above template yield an immediate error: `Job Deployment: Resource test does not exist in environment azureday2020-demo-aks`

- This appears to work differntly than advertised? I was under the impression that both the environment and the resource were to be provisioned automatically?

- When attempting to use template parameters to define the environment name : `environment: '${{ parameters['ClusterName] }}.${{ parameters['Namespace] }}'` I'm getting consistent failures stating that `environmentName` is invalid/missing

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 7730ae4d-4101-9c83-1823-4ff43ff161ce

* Version Independent ID: 20a7e263-4819-783e-c984-c4f3b459e22f

* Content: [Environment - Kubernetes resource - Azure Pipelines](https://docs.microsoft.com/en-in/azure/devops/pipelines/process/environments-kubernetes?view=azure-devops#setup-review-app)

* Content Source: [docs/pipelines/process/environments-kubernetes.md](https://github.com/MicrosoftDocs/vsts-docs/blob/master/docs/pipelines/process/environments-kubernetes.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @juliakm

* Microsoft Alias: **jukullam**

|

process

|

problems with reviewapp i m attempting to configure the reviewapp flow to verify that the dynamic env creation works as expected this is a fragment of a stage that contains the deployment job yaml stages stage deployment stage cluster parameters displayname deploy to parameters dependson jobs deployment deploypullrequest displayname deploy pull request condition and succeeded not startswith variables refs pull pool vmimage parameters environment demo aks test strategy runonce deploy steps reviewapp test task kubectlinstaller displayname kubectl installer inputs kubectlversion parameters task helminstaller displayname helm installer inputs helmversiontoinstall parameters task kubernetes displayname create a new namespace for the pull request inputs command apply useconfigurationfile true inline kind namespace apiversion metadata name test problems encountered attempts of running a pipeline containing the above template yield an immediate error job deployment resource test does not exist in environment demo aks this appears to work differntly than advertised i was under the impression that both the environment and the resource were to be provisioned automatically when attempting to use template parameters to define the environment name environment parameters parameters i m getting consistent failures stating that environmentname is invalid missing document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source product devops technology devops cicd process github login juliakm microsoft alias jukullam

| 1

|

14,930

| 18,359,530,389

|

IssuesEvent

|

2021-10-09 01:46:05

|

DevExpress/testcafe-hammerhead

|

https://api.github.com/repos/DevExpress/testcafe-hammerhead

|

closed

|

Wrong html processing in the overridden document.write function

|

TYPE: bug AREA: client health-monitor FREQUENCY: level 1 SYSTEM: client side processing STATE: Stale

|

Code for reproducing:

```js

document.write('<script data-rp-insertionmarker="<body>" ');

document.write('id="id">');

document.write('<\/script>');

```

https://www.jiji.com

|

1.0

|

Wrong html processing in the overridden document.write function - Code for reproducing:

```js

document.write('<script data-rp-insertionmarker="<body>" ');

document.write('id="id">');

document.write('<\/script>');

```

https://www.jiji.com

|

process

|

wrong html processing in the overridden document write function code for reproducing js document write document write id id document write

| 1

|

20,226

| 26,822,263,703

|

IssuesEvent

|

2023-02-02 10:18:28

|

microsoft/vscode

|

https://api.github.com/repos/microsoft/vscode

|

closed

|

Unable to open terminal with linux arm and arm64 servers on qemu

|

bug linux virtual-machine terminal-process

|

Sanity testing `1.75` on linux servers following the steps at https://github.com/microsoft/vscode-remote-release/wiki/Sanity-Check-VS-Code-Servers#linux-platforms and attempting to open a terminal throws the following error.

<img width="571" alt="Screenshot 2023-02-01 at 11 47 03" src="https://user-images.githubusercontent.com/964386/215932968-f0af2873-cd6e-470f-b786-2beb16966c0d.png">

Issue is from the pty module, following snippet can trigger the error on any of the containers

```

> var ptyProcess = pty.spawn('bash', [], {cwd: process.env.HOME});

Unsupported ioctl: cmd=0x5441

Uncaught Error: chdir() failed: Not a directory

at new UnixTerminal (/root/.vscode-server/bin/e8bf7514f31cef005c988b206c61948e56aab9cb/node_modules/node-pty/lib/unixTerminal.js:106:24)

at Object.spawn (/root/.vscode-server/bin/e8bf7514f31cef005c988b206c61948e56aab9cb/node_modules/node-pty/lib/index.js:29:12)

```

|

1.0

|

Unable to open terminal with linux arm and arm64 servers on qemu - Sanity testing `1.75` on linux servers following the steps at https://github.com/microsoft/vscode-remote-release/wiki/Sanity-Check-VS-Code-Servers#linux-platforms and attempting to open a terminal throws the following error.

<img width="571" alt="Screenshot 2023-02-01 at 11 47 03" src="https://user-images.githubusercontent.com/964386/215932968-f0af2873-cd6e-470f-b786-2beb16966c0d.png">

Issue is from the pty module, following snippet can trigger the error on any of the containers

```

> var ptyProcess = pty.spawn('bash', [], {cwd: process.env.HOME});

Unsupported ioctl: cmd=0x5441

Uncaught Error: chdir() failed: Not a directory

at new UnixTerminal (/root/.vscode-server/bin/e8bf7514f31cef005c988b206c61948e56aab9cb/node_modules/node-pty/lib/unixTerminal.js:106:24)

at Object.spawn (/root/.vscode-server/bin/e8bf7514f31cef005c988b206c61948e56aab9cb/node_modules/node-pty/lib/index.js:29:12)

```

|

process

|

unable to open terminal with linux arm and servers on qemu sanity testing on linux servers following the steps at and attempting to open a terminal throws the following error img width alt screenshot at src issue is from the pty module following snippet can trigger the error on any of the containers var ptyprocess pty spawn bash cwd process env home unsupported ioctl cmd uncaught error chdir failed not a directory at new unixterminal root vscode server bin node modules node pty lib unixterminal js at object spawn root vscode server bin node modules node pty lib index js

| 1

|

677,336

| 23,158,949,540

|

IssuesEvent

|

2022-07-29 15:34:36

|

ramp4-pcar4/ramp4-pcar4

|

https://api.github.com/repos/ramp4-pcar4/ramp4-pcar4

|

closed

|

Feature Highlighting

|

effort: far away flavour: feature priority: nice type: adaptive needs: consensus consult: ui/ux

|

This will likely require some upfront design work.

Things to consider

- GeoAPI does have a highlight layer, but it is essentially untested

- In RAMP2, the "fogging" of the map behind the highlight was done by directly manipulating the SVG that represented the ESRI map. If we do the same, will need to see if things are different in ESRI 4, and be aware that WebGL may be used instead of SVG.

- An API function to request a highlight sounds like a fine idea

- Functions to get individual geometries from a layer already exist in GeoAPI

Related design discussion https://github.com/ramp4-pcar4/ramp4-pcar4/discussions/837

|

1.0

|

Feature Highlighting - This will likely require some upfront design work.

Things to consider

- GeoAPI does have a highlight layer, but it is essentially untested

- In RAMP2, the "fogging" of the map behind the highlight was done by directly manipulating the SVG that represented the ESRI map. If we do the same, will need to see if things are different in ESRI 4, and be aware that WebGL may be used instead of SVG.

- An API function to request a highlight sounds like a fine idea

- Functions to get individual geometries from a layer already exist in GeoAPI

Related design discussion https://github.com/ramp4-pcar4/ramp4-pcar4/discussions/837

|

non_process

|

feature highlighting this will likely require some upfront design work things to consider geoapi does have a highlight layer but it is essentially untested in the fogging of the map behind the highlight was done by directly manipulating the svg that represented the esri map if we do the same will need to see if things are different in esri and be aware that webgl may be used instead of svg an api function to request a highlight sounds like a fine idea functions to get individual geometries from a layer already exist in geoapi related design discussion

| 0

|

6,799

| 9,937,549,657

|

IssuesEvent

|

2019-07-02 22:18:19

|

googleapis/nodejs-storage

|

https://api.github.com/repos/googleapis/nodejs-storage

|

closed

|

refactor: remove dependency on async module

|

type: process

|

we still rely on `async` in a few places, which broke a recent release of the module (as it was a devDependency only).

|

1.0

|

refactor: remove dependency on async module - we still rely on `async` in a few places, which broke a recent release of the module (as it was a devDependency only).

|

process

|

refactor remove dependency on async module we still rely on async in a few places which broke a recent release of the module as it was a devdependency only

| 1

|

19,350

| 25,481,561,060

|

IssuesEvent

|

2022-11-25 22:08:18

|

kdgregory/log4j-aws-appenders

|

https://api.github.com/repos/kdgregory/log4j-aws-appenders

|

reopened

|

ERROR writer initialization timed out

|

enhancement in-process

|

I see this error message when application starts, and it hangs for 60 seconds as timeout code indicates.

`2022-10-19 16:04:14,305 main ERROR writer initialization timed out`

However, everything else works without a problem... I would like to ask for some hints where it could be hanging?

My setup:

1. log4j2.xml configuration

``` xml

<?xml version="1.0" encoding="UTF-8"?>

<Configuration status="INFO" packages="com.kdgregory.log4j2.aws">

<Appenders>

<Console name="ConsoleAppender" target="SYSTEM_OUT">

<PatternLayout pattern="%d{yyy-MM-dd HH:mm:ss.SSS} [%t] %-5level %logger{36} - %X{AWS-XRAY-TRACE-ID} - %msg%n" />

</Console>

<CloudWatchAppender name="CLOUDWATCH">

<logGroup>log4j-appender-demo</logGroup>

<logStream>demo-{date}-{hostname}-${awslogs:pid}</logStream>

<dedicatedWriter>true</dedicatedWriter>

<PatternLayout pattern="%d{yyyy-MM-dd HH:mm:ss.SSS} %-5p [%t] %c - %X{AWS-XRAY-TRACE-ID} - %m%n" />

</CloudWatchAppender>

</Appenders>

<Loggers>

<Root level="info">

<AppenderRef ref="ConsoleAppender" />

<AppenderRef ref="CLOUDWATCH" />

</Root>

</Loggers>

</Configuration>

```

2. dependencies:

``` xml

<dependencies>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-web</artifactId>

<exclusions>

<exclusion>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-logging</artifactId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.springframework.cloud</groupId>

<artifactId>spring-cloud-starter-openfeign</artifactId>

</dependency>

<dependency>

<groupId>io.github.openfeign</groupId>

<artifactId>feign-httpclient</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.cloud</groupId>

<artifactId>spring-cloud-starter-loadbalancer</artifactId>

</dependency>

<!-- log related dependencies-->

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-log4j2</artifactId>

</dependency>

<dependency>

<groupId>com.kdgregory.logging</groupId>

<artifactId>log4j2-aws-appenders</artifactId>

<version>3.0.1</version>

</dependency>

<dependency>

<groupId>com.kdgregory.logging</groupId>

<artifactId>aws-facade-v2</artifactId>

<version>3.0.1</version>

<exclusions>

<exclusion>

<groupId>software.amazon.awssdk</groupId>

<artifactId>sts</artifactId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>software.amazon.awssdk</groupId>

<artifactId>cloudwatchlogs</artifactId>

<version>2.17.281</version>

</dependency>

<dependency>

<groupId>software.amazon.awssdk</groupId>

<artifactId>sts</artifactId>

<version>2.17.281</version>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-test</artifactId>

<scope>test</scope>

</dependency>

</dependencies>

```

3. Scenario: ADOT with Log4j2

- I would like to leverage open-telemetry java agent to inject `%X{AWS-XRAY-TRACE-ID}` into cloud watch logs

- credential chain: role assume is from serviceaccount OIDC provider. and this is why I have to upgrade sts SDK version

So...

With this setup, everything works but hanging for 1 minutes when starts - very annoying...

Can anyone bing me a silver-lining?

Thanks

|

1.0

|

ERROR writer initialization timed out - I see this error message when application starts, and it hangs for 60 seconds as timeout code indicates.

`2022-10-19 16:04:14,305 main ERROR writer initialization timed out`

However, everything else works without a problem... I would like to ask for some hints where it could be hanging?

My setup:

1. log4j2.xml configuration

``` xml

<?xml version="1.0" encoding="UTF-8"?>

<Configuration status="INFO" packages="com.kdgregory.log4j2.aws">

<Appenders>

<Console name="ConsoleAppender" target="SYSTEM_OUT">

<PatternLayout pattern="%d{yyy-MM-dd HH:mm:ss.SSS} [%t] %-5level %logger{36} - %X{AWS-XRAY-TRACE-ID} - %msg%n" />

</Console>

<CloudWatchAppender name="CLOUDWATCH">

<logGroup>log4j-appender-demo</logGroup>

<logStream>demo-{date}-{hostname}-${awslogs:pid}</logStream>

<dedicatedWriter>true</dedicatedWriter>

<PatternLayout pattern="%d{yyyy-MM-dd HH:mm:ss.SSS} %-5p [%t] %c - %X{AWS-XRAY-TRACE-ID} - %m%n" />

</CloudWatchAppender>

</Appenders>

<Loggers>

<Root level="info">

<AppenderRef ref="ConsoleAppender" />

<AppenderRef ref="CLOUDWATCH" />

</Root>

</Loggers>

</Configuration>

```

2. dependencies:

``` xml

<dependencies>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-web</artifactId>

<exclusions>

<exclusion>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-logging</artifactId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.springframework.cloud</groupId>

<artifactId>spring-cloud-starter-openfeign</artifactId>

</dependency>

<dependency>

<groupId>io.github.openfeign</groupId>

<artifactId>feign-httpclient</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.cloud</groupId>

<artifactId>spring-cloud-starter-loadbalancer</artifactId>

</dependency>

<!-- log related dependencies-->

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-log4j2</artifactId>

</dependency>

<dependency>

<groupId>com.kdgregory.logging</groupId>

<artifactId>log4j2-aws-appenders</artifactId>

<version>3.0.1</version>

</dependency>

<dependency>

<groupId>com.kdgregory.logging</groupId>

<artifactId>aws-facade-v2</artifactId>

<version>3.0.1</version>

<exclusions>

<exclusion>

<groupId>software.amazon.awssdk</groupId>

<artifactId>sts</artifactId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>software.amazon.awssdk</groupId>

<artifactId>cloudwatchlogs</artifactId>

<version>2.17.281</version>

</dependency>

<dependency>

<groupId>software.amazon.awssdk</groupId>

<artifactId>sts</artifactId>

<version>2.17.281</version>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-test</artifactId>

<scope>test</scope>

</dependency>

</dependencies>

```

3. Scenario: ADOT with Log4j2

- I would like to leverage open-telemetry java agent to inject `%X{AWS-XRAY-TRACE-ID}` into cloud watch logs

- credential chain: role assume is from serviceaccount OIDC provider. and this is why I have to upgrade sts SDK version

So...

With this setup, everything works but hanging for 1 minutes when starts - very annoying...

Can anyone bing me a silver-lining?

Thanks

|

process

|

error writer initialization timed out i see this error message when application starts and it hangs for seconds as timeout code indicates main error writer initialization timed out however everything else works without a problem i would like to ask for some hints where it could be hanging my setup xml configuration xml appender demo demo date hostname awslogs pid true dependencies xml org springframework boot spring boot starter web org springframework boot spring boot starter logging org springframework cloud spring cloud starter openfeign io github openfeign feign httpclient org springframework cloud spring cloud starter loadbalancer org springframework boot spring boot starter com kdgregory logging aws appenders com kdgregory logging aws facade software amazon awssdk sts software amazon awssdk cloudwatchlogs software amazon awssdk sts org springframework boot spring boot starter test test scenario adot with i would like to leverage open telemetry java agent to inject x aws xray trace id into cloud watch logs credential chain role assume is from serviceaccount oidc provider and this is why i have to upgrade sts sdk version so with this setup everything works but hanging for minutes when starts very annoying can anyone bing me a silver lining thanks

| 1

|

17,288

| 23,096,844,325

|

IssuesEvent

|

2022-07-26 20:29:05

|

metabase/metabase

|

https://api.github.com/repos/metabase/metabase

|

closed

|

CASE statements don't evaluate to False if using `, False` and nested CASE statements

|

Type:Bug Priority:P2 Querying/Processor .Backend Querying/Notebook/Custom Column

|

**Describe the bug**

Creating 2 custom columns that are case statements, then referencing those in a 3rd custom column with a case statement that evaluates to `True` or `False`, results in a bug where the 3rd column doesn't evaluate to `False` - just `NULL`.

**Creating custom columns**

First

<img width="486" alt="image" src="https://user-images.githubusercontent.com/61659989/179753529-81aec074-9e2f-4e33-b8be-2050e8f1da40.png">

Second

<img width="486" alt="image" src="https://user-images.githubusercontent.com/61659989/179753789-3aa70339-97ec-452d-8b49-7b165eaf7d22.png">

Third, that references the above 2 - evaluating to True/False

<img width="486" alt="image" src="https://user-images.githubusercontent.com/61659989/179753936-3fc69b20-7b7a-4f43-9f46-fdc1ae77350b.png">

**Generated SQL in BigQuery**

<img width="904" alt="image" src="https://user-images.githubusercontent.com/61659989/179754328-ed0cfcbd-8bf3-470d-9288-7ce10db28b4a.png">

This should have an `ELSE FALSE` evaluation

**Tweaking the third column to return binary output instead**

<img width="484" alt="image" src="https://user-images.githubusercontent.com/61659989/179754483-1f5e9fe6-5176-4201-82f0-49d08eaeee70.png">

Generated SQL - now correctly showing the `ELSE` statement

<img width="881" alt="image" src="https://user-images.githubusercontent.com/61659989/179754791-3e905f91-5daf-4ee1-a6ec-d426ac6cd715.png">

|

1.0

|

CASE statements don't evaluate to False if using `, False` and nested CASE statements - **Describe the bug**

Creating 2 custom columns that are case statements, then referencing those in a 3rd custom column with a case statement that evaluates to `True` or `False`, results in a bug where the 3rd column doesn't evaluate to `False` - just `NULL`.

**Creating custom columns**

First

<img width="486" alt="image" src="https://user-images.githubusercontent.com/61659989/179753529-81aec074-9e2f-4e33-b8be-2050e8f1da40.png">

Second

<img width="486" alt="image" src="https://user-images.githubusercontent.com/61659989/179753789-3aa70339-97ec-452d-8b49-7b165eaf7d22.png">

Third, that references the above 2 - evaluating to True/False

<img width="486" alt="image" src="https://user-images.githubusercontent.com/61659989/179753936-3fc69b20-7b7a-4f43-9f46-fdc1ae77350b.png">

**Generated SQL in BigQuery**

<img width="904" alt="image" src="https://user-images.githubusercontent.com/61659989/179754328-ed0cfcbd-8bf3-470d-9288-7ce10db28b4a.png">

This should have an `ELSE FALSE` evaluation

**Tweaking the third column to return binary output instead**

<img width="484" alt="image" src="https://user-images.githubusercontent.com/61659989/179754483-1f5e9fe6-5176-4201-82f0-49d08eaeee70.png">

Generated SQL - now correctly showing the `ELSE` statement

<img width="881" alt="image" src="https://user-images.githubusercontent.com/61659989/179754791-3e905f91-5daf-4ee1-a6ec-d426ac6cd715.png">

|

process

|

case statements don t evaluate to false if using false and nested case statements describe the bug creating custom columns that are case statements then referencing those in a custom column with a case statement that evaluates to true or false results in a bug where the column doesn t evaluate to false just null creating custom columns first img width alt image src second img width alt image src third that references the above evaluating to true false img width alt image src generated sql in bigquery img width alt image src this should have an else false evaluation tweaking the third column to return binary output instead img width alt image src generated sql now correctly showing the else statement img width alt image src

| 1

|

1,017

| 3,479,429,277

|

IssuesEvent

|

2015-12-28 20:14:13

|

USC-CSSL/TACIT

|

https://api.github.com/repos/USC-CSSL/TACIT

|

opened

|

preprocessing: correct spelling option

|

Preprocessing

|

In preprocessing, can we provide an option to automatically correct misspellings?

|

1.0

|

preprocessing: correct spelling option - In preprocessing, can we provide an option to automatically correct misspellings?

|

process

|

preprocessing correct spelling option in preprocessing can we provide an option to automatically correct misspellings

| 1

|

230,648

| 25,482,741,430

|

IssuesEvent

|

2022-11-26 01:22:46

|

panasalap/linux-4.1.15

|

https://api.github.com/repos/panasalap/linux-4.1.15

|

reopened

|

CVE-2017-6345 (Medium) detected in linuxlinux-4.1.17, linuxlinux-4.1.17

|

security vulnerability

|

## CVE-2017-6345 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linuxlinux-4.1.17</b>, <b>linuxlinux-4.1.17</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The LLC subsystem in the Linux kernel before 4.9.13 does not ensure that a certain destructor exists in required circumstances, which allows local users to cause a denial of service (BUG_ON) or possibly have unspecified other impact via crafted system calls.

<p>Publish Date: 2017-03-01

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2017-6345>CVE-2017-6345</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2017-6345">https://nvd.nist.gov/vuln/detail/CVE-2017-6345</a></p>

<p>Release Date: 2017-03-01</p>

<p>Fix Resolution: 4.9.13</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2017-6345 (Medium) detected in linuxlinux-4.1.17, linuxlinux-4.1.17 - ## CVE-2017-6345 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linuxlinux-4.1.17</b>, <b>linuxlinux-4.1.17</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The LLC subsystem in the Linux kernel before 4.9.13 does not ensure that a certain destructor exists in required circumstances, which allows local users to cause a denial of service (BUG_ON) or possibly have unspecified other impact via crafted system calls.

<p>Publish Date: 2017-03-01

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2017-6345>CVE-2017-6345</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2017-6345">https://nvd.nist.gov/vuln/detail/CVE-2017-6345</a></p>

<p>Release Date: 2017-03-01</p>

<p>Fix Resolution: 4.9.13</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in linuxlinux linuxlinux cve medium severity vulnerability vulnerable libraries linuxlinux linuxlinux vulnerability details the llc subsystem in the linux kernel before does not ensure that a certain destructor exists in required circumstances which allows local users to cause a denial of service bug on or possibly have unspecified other impact via crafted system calls publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact low integrity impact low availability impact low for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend

| 0

|

2,794

| 5,723,471,012

|

IssuesEvent

|

2017-04-20 12:21:04

|

dita-ot/dita-ot

|

https://api.github.com/repos/dita-ot/dita-ot

|

closed

|

References to topics in ditavalref context lead to broken links in XHTML TOC

|

bug P2 preprocess/filtering

|

I'm attaching a sample project:

[branchFilteringJobProblem.zip](https://github.com/dita-ot/dita-ot/files/922135/branchFilteringJobProblem.zip)

Publish to XHTML and then try to open from the TOC the first link. It points to a non-existing HTML document.

It seems that the BranchFilterModule no longer updates the Job object with the new "-1", '-2'... names it gives to topics in ditavalref contexts. Leading to .job.xml not containing <file> mappings for those automatically generated topics.

It's a recent change, before this commit @jelovirt made: https://github.com/dita-ot/dita-ot/commit/07331c3193ebd0cf846ff6a35185075599101914

it had a "generateCopies" method which seemed to update the Job object with the new topics....

|

1.0

|

References to topics in ditavalref context lead to broken links in XHTML TOC - I'm attaching a sample project:

[branchFilteringJobProblem.zip](https://github.com/dita-ot/dita-ot/files/922135/branchFilteringJobProblem.zip)

Publish to XHTML and then try to open from the TOC the first link. It points to a non-existing HTML document.

It seems that the BranchFilterModule no longer updates the Job object with the new "-1", '-2'... names it gives to topics in ditavalref contexts. Leading to .job.xml not containing <file> mappings for those automatically generated topics.

It's a recent change, before this commit @jelovirt made: https://github.com/dita-ot/dita-ot/commit/07331c3193ebd0cf846ff6a35185075599101914

it had a "generateCopies" method which seemed to update the Job object with the new topics....

|

process

|

references to topics in ditavalref context lead to broken links in xhtml toc i m attaching a sample project publish to xhtml and then try to open from the toc the first link it points to a non existing html document it seems that the branchfiltermodule no longer updates the job object with the new names it gives to topics in ditavalref contexts leading to job xml not containing mappings for those automatically generated topics it s a recent change before this commit jelovirt made it had a generatecopies method which seemed to update the job object with the new topics

| 1

|

7,343

| 10,479,536,998

|

IssuesEvent

|

2019-09-24 04:37:27

|

OI-wiki/OI-wiki

|

https://api.github.com/repos/OI-wiki/OI-wiki

|

closed

|

LCT 页面代码与描述混乱

|

需要处理 / Need Processing 需要帮助 / help wanted

|

首先,十分欢迎你花钱(?)来给 OI WIki 开 issue,在提交之前,请花时间阅读一下这个模板的内容,谢谢合作!

- 是出现了什么问题?(最好截图)

https://oi-wiki.org/ds/lct/ LCT 页面

文中定义的函数名字与代码中用到的不符(主要是大小写不一样),且建树中的代码风格(数组)与全文的代码风格(指针)不一致。在 建树 中,分裂 和 合并 又被讲了一次(而且写的并不是很详细)

- 你是否正在着手修复?

否

|

1.0

|

LCT 页面代码与描述混乱 - 首先,十分欢迎你花钱(?)来给 OI WIki 开 issue,在提交之前,请花时间阅读一下这个模板的内容,谢谢合作!

- 是出现了什么问题?(最好截图)

https://oi-wiki.org/ds/lct/ LCT 页面

文中定义的函数名字与代码中用到的不符(主要是大小写不一样),且建树中的代码风格(数组)与全文的代码风格(指针)不一致。在 建树 中,分裂 和 合并 又被讲了一次(而且写的并不是很详细)

- 你是否正在着手修复?

否

|

process

|

lct 页面代码与描述混乱 首先,十分欢迎你花钱(?)来给 oi wiki 开 issue,在提交之前,请花时间阅读一下这个模板的内容,谢谢合作! 是出现了什么问题?(最好截图) lct 页面 文中定义的函数名字与代码中用到的不符(主要是大小写不一样),且建树中的代码风格(数组)与全文的代码风格(指针)不一致。在 建树 中,分裂 和 合并 又被讲了一次(而且写的并不是很详细) 你是否正在着手修复? 否

| 1

|

11,171

| 13,957,694,788

|

IssuesEvent

|

2020-10-24 08:11:21

|

alexanderkotsev/geoportal

|

https://api.github.com/repos/alexanderkotsev/geoportal

|

opened

|

SE: Harvesting request

|

Geoportal Harvesting process SE - Sweden

|

Dear Angelo,

Please perform a harvest on the Swedish CSW. We have some updates that needs to be checked.

Kind Regards

Fredrik Persäter

|

1.0

|

SE: Harvesting request - Dear Angelo,

Please perform a harvest on the Swedish CSW. We have some updates that needs to be checked.

Kind Regards

Fredrik Persäter

|

process

|

se harvesting request dear angelo please perform a harvest on the swedish csw we have some updates that needs to be checked kind regards fredrik pers auml ter

| 1

|

20,886

| 27,710,772,156

|

IssuesEvent

|

2023-03-14 14:07:43

|

pcg-platinus/feedback

|

https://api.github.com/repos/pcg-platinus/feedback

|

closed

|

Ich bin Prozessanalyst und möchte den "richtigen" Zeitpunkt in einem Projekt für die Modellierung von Prozessen finden

|

BPM-BusinessProcessManagement

|

Bei uns werden in Projekten oft Prozesse in Visio gezeichnet, weil man auf unterster (meist manueller oder sogar applikatorischer) Ebene den GESAMTEN Prozess sehen möchte.

In Repositories wie Adonis wären hier für alle Beteiligte entsprechende Read&Explore-Lizenzen notwendig, die man sich (inklusive der Aufwände wie Schulungen) sparen möchte und das Designen einer Zeichnung einfacher ist als einer objektorientierten Datenbank (mit ihren Modellierungs- und Objektablage-Guidelines).

Ist es klug, bis zur Abnahme durch den Kunden damit zu warten und dann erst den fertigen Prozess zu übertragen (also am Ende des Prozessschrittes, in dem das To-Be erstellt wird) und ihn in die richtigen Haupt-/Subprozesse zu schneiden?

Zu welchem Zeitpunkt sollte man spätestens die Visio-Prozesse ins Adonis übertragen?

|

1.0

|

Ich bin Prozessanalyst und möchte den "richtigen" Zeitpunkt in einem Projekt für die Modellierung von Prozessen finden - Bei uns werden in Projekten oft Prozesse in Visio gezeichnet, weil man auf unterster (meist manueller oder sogar applikatorischer) Ebene den GESAMTEN Prozess sehen möchte.

In Repositories wie Adonis wären hier für alle Beteiligte entsprechende Read&Explore-Lizenzen notwendig, die man sich (inklusive der Aufwände wie Schulungen) sparen möchte und das Designen einer Zeichnung einfacher ist als einer objektorientierten Datenbank (mit ihren Modellierungs- und Objektablage-Guidelines).

Ist es klug, bis zur Abnahme durch den Kunden damit zu warten und dann erst den fertigen Prozess zu übertragen (also am Ende des Prozessschrittes, in dem das To-Be erstellt wird) und ihn in die richtigen Haupt-/Subprozesse zu schneiden?

Zu welchem Zeitpunkt sollte man spätestens die Visio-Prozesse ins Adonis übertragen?

|

process

|

ich bin prozessanalyst und möchte den richtigen zeitpunkt in einem projekt für die modellierung von prozessen finden bei uns werden in projekten oft prozesse in visio gezeichnet weil man auf unterster meist manueller oder sogar applikatorischer ebene den gesamten prozess sehen möchte in repositories wie adonis wären hier für alle beteiligte entsprechende read explore lizenzen notwendig die man sich inklusive der aufwände wie schulungen sparen möchte und das designen einer zeichnung einfacher ist als einer objektorientierten datenbank mit ihren modellierungs und objektablage guidelines ist es klug bis zur abnahme durch den kunden damit zu warten und dann erst den fertigen prozess zu übertragen also am ende des prozessschrittes in dem das to be erstellt wird und ihn in die richtigen haupt subprozesse zu schneiden zu welchem zeitpunkt sollte man spätestens die visio prozesse ins adonis übertragen

| 1

|

14,477

| 4,937,905,417

|

IssuesEvent

|

2016-11-29 09:31:42

|

joomla/joomla-cms

|

https://api.github.com/repos/joomla/joomla-cms

|

closed

|

Hidden menu item to connect broken links in search engines to the new link/menu-item

|

No Code Attached Yet

|

### Steps to reproduce the issue

I changed different menu aliasses to a new nicer alias but the searchengines still have the old one.

i want to put in menu-item with the old alias but dont want them to be seen in the menu on the website.

so i want functional hidden menu-item that will be link thru the menu-item alias option to the new menu alias.

### Expected result

### Actual result

### System information (as much as possible)

### Additional comments

|

1.0

|

Hidden menu item to connect broken links in search engines to the new link/menu-item - ### Steps to reproduce the issue

I changed different menu aliasses to a new nicer alias but the searchengines still have the old one.

i want to put in menu-item with the old alias but dont want them to be seen in the menu on the website.

so i want functional hidden menu-item that will be link thru the menu-item alias option to the new menu alias.

### Expected result

### Actual result

### System information (as much as possible)

### Additional comments

|

non_process

|

hidden menu item to connect broken links in search engines to the new link menu item steps to reproduce the issue i changed different menu aliasses to a new nicer alias but the searchengines still have the old one i want to put in menu item with the old alias but dont want them to be seen in the menu on the website so i want functional hidden menu item that will be link thru the menu item alias option to the new menu alias expected result actual result system information as much as possible additional comments

| 0

|

14,075

| 16,945,524,264

|

IssuesEvent

|

2021-06-28 06:05:40

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[iOS] HTML breakage in review consent screen and consent PDF for custom generated custom document

|

Bug P1 Process: Fixed Process: Tested QA Process: Tested dev iOS

|

Steps:

1. Add a custom generated custom document from SB

2. Publish updates

3. Enroll into study from iOS

4. Navigate to Review consent screen

5. Observe

Actual: HTML breakage in review consent screen for custom generated custom document

Expected: Review consent screen for custom generated custom document should display proper

Note:

Issue not observed in other screens

Issue not observed in Android

Study details to verify:

1. Instance: Dev

2. Study: FinalStudy

Screenshots:

|

3.0

|

[iOS] HTML breakage in review consent screen and consent PDF for custom generated custom document - Steps:

1. Add a custom generated custom document from SB

2. Publish updates

3. Enroll into study from iOS

4. Navigate to Review consent screen

5. Observe

Actual: HTML breakage in review consent screen for custom generated custom document

Expected: Review consent screen for custom generated custom document should display proper

Note:

Issue not observed in other screens

Issue not observed in Android

Study details to verify:

1. Instance: Dev

2. Study: FinalStudy

Screenshots:

|

process

|

html breakage in review consent screen and consent pdf for custom generated custom document steps add a custom generated custom document from sb publish updates enroll into study from ios navigate to review consent screen observe actual html breakage in review consent screen for custom generated custom document expected review consent screen for custom generated custom document should display proper note issue not observed in other screens issue not observed in android study details to verify instance dev study finalstudy screenshots

| 1

|

65,058

| 7,854,986,390

|

IssuesEvent

|

2018-06-20 22:59:19

|

Opentrons/opentrons

|

https://api.github.com/repos/Opentrons/opentrons

|

closed

|

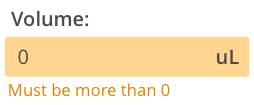

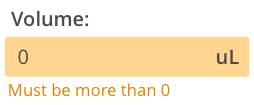

Error: Require Ingredient Name and Volume

|

feature protocol designer small

|

As a user, I would like to be alerted if I attempt to create an ingredient without a volume

## Acceptance Criteria

- If user attempts to click 'save' when volume is blank or 0, show error on field and prevent save

- Same if the user attempts to click 'save' when the name is empty

-- Volume Field (and/or Name Field) has orange error state

-- Volume Field (and/or Name Field) shows error copy

## Design

|

1.0

|

Error: Require Ingredient Name and Volume - As a user, I would like to be alerted if I attempt to create an ingredient without a volume

## Acceptance Criteria

- If user attempts to click 'save' when volume is blank or 0, show error on field and prevent save

- Same if the user attempts to click 'save' when the name is empty

-- Volume Field (and/or Name Field) has orange error state

-- Volume Field (and/or Name Field) shows error copy

## Design

|

non_process

|

error require ingredient name and volume as a user i would like to be alerted if i attempt to create an ingredient without a volume acceptance criteria if user attempts to click save when volume is blank or show error on field and prevent save same if the user attempts to click save when the name is empty volume field and or name field has orange error state volume field and or name field shows error copy design

| 0

|

52,554

| 13,224,834,551

|

IssuesEvent

|

2020-08-17 19:56:43

|

icecube-trac/tix4

|

https://api.github.com/repos/icecube-trac/tix4

|

opened

|

[serialization] std::auto_ptr is depricated (Trac #2417)

|

Incomplete Migration Migrated from Trac combo core defect

|

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/2417">https://code.icecube.wisc.edu/projects/icecube/ticket/2417</a>, reported by kjmeagherand owned by olivas</em></summary>

<p>

```json

{

"status": "accepted",

"changetime": "2020-06-30T12:16:59",

"_ts": "1593519419169166",

"description": "I am getting a lot of warnings with gcc 9.2.1 that auto_ptr is depricated. And word on the street is that it will be deprecated at some point in the future. I think that it can just be replaced with unique_ptr.",

"reporter": "kjmeagher",

"cc": "",

"resolution": "",

"time": "2020-03-11T00:00:43",

"component": "combo core",

"summary": "[serialization] std::auto_ptr is depricated",

"priority": "normal",

"keywords": "",

"milestone": "Winter Solstice 2020",

"owner": "olivas",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

[serialization] std::auto_ptr is depricated (Trac #2417) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/2417">https://code.icecube.wisc.edu/projects/icecube/ticket/2417</a>, reported by kjmeagherand owned by olivas</em></summary>

<p>

```json

{

"status": "accepted",

"changetime": "2020-06-30T12:16:59",

"_ts": "1593519419169166",

"description": "I am getting a lot of warnings with gcc 9.2.1 that auto_ptr is depricated. And word on the street is that it will be deprecated at some point in the future. I think that it can just be replaced with unique_ptr.",

"reporter": "kjmeagher",

"cc": "",

"resolution": "",

"time": "2020-03-11T00:00:43",

"component": "combo core",

"summary": "[serialization] std::auto_ptr is depricated",

"priority": "normal",

"keywords": "",

"milestone": "Winter Solstice 2020",

"owner": "olivas",

"type": "defect"

}

```

</p>

</details>

|

non_process

|

std auto ptr is depricated trac migrated from json status accepted changetime ts description i am getting a lot of warnings with gcc that auto ptr is depricated and word on the street is that it will be deprecated at some point in the future i think that it can just be replaced with unique ptr reporter kjmeagher cc resolution time component combo core summary std auto ptr is depricated priority normal keywords milestone winter solstice owner olivas type defect

| 0

|

8,249

| 11,421,369,779

|

IssuesEvent

|

2020-02-03 12:02:31

|

parcel-bundler/parcel

|

https://api.github.com/repos/parcel-bundler/parcel

|

closed

|

Logging postcss warnings and messages in Parcel

|

:bug: Bug CSS Preprocessing Stale

|

# ❔ Question

Hello,

I recently installed `'postcss-font-base64` (with `npm install --save-dev`), but it doesn't seem to be working for me (output is not changed in any way). My `postcss.config.js` is shown below, and can verify it is being used - if I remove the `cssnano` entry, the CSS is no longer minimized. How can I debug this? I saw the `postcss-reporter` plugin and tried to install it in a similar fashion, adding it tot he end of my config, but didn't see any messages being reported when running `npm run prod` (which calls `parcel build`).

```javascript

module.exports = {

plugins: [

require('autoprefixer'),

require('postcss-font-base64')({

// no options yet

}),

require('cssnano')({

preset: ['default', {

reduceTransforms: true

}]

})

]

};

```

## 🔦 Context

I want to embed fonts (all the kinds supported by `postcss-font-base64`) within css files, to make shipping my CSS easier for users of it. I understand this may be an issue with a postcss plugin and not parcel, but I don't know how to log warnings and such when using postcss with parcel.

## 💻 Code Sample

(can try to put something together)

## 🌍 Your Environment

| Software | Version(s) |

| ---------------- | ---------- |

| Parcel | 1.12.3

| Node | v12.2.0

| npm/Yarn | 6.9.0

| Operating System | Linux 035dc2ced347 4.15.0-48-generic #51-Ubuntu SMP Wed Apr 3 08:28:49 UTC 2019 x86_64 GNU/Linux

|

1.0

|

Logging postcss warnings and messages in Parcel - # ❔ Question

Hello,

I recently installed `'postcss-font-base64` (with `npm install --save-dev`), but it doesn't seem to be working for me (output is not changed in any way). My `postcss.config.js` is shown below, and can verify it is being used - if I remove the `cssnano` entry, the CSS is no longer minimized. How can I debug this? I saw the `postcss-reporter` plugin and tried to install it in a similar fashion, adding it tot he end of my config, but didn't see any messages being reported when running `npm run prod` (which calls `parcel build`).

```javascript

module.exports = {

plugins: [

require('autoprefixer'),

require('postcss-font-base64')({

// no options yet

}),

require('cssnano')({

preset: ['default', {

reduceTransforms: true

}]

})

]

};

```

## 🔦 Context

I want to embed fonts (all the kinds supported by `postcss-font-base64`) within css files, to make shipping my CSS easier for users of it. I understand this may be an issue with a postcss plugin and not parcel, but I don't know how to log warnings and such when using postcss with parcel.

## 💻 Code Sample

(can try to put something together)

## 🌍 Your Environment

| Software | Version(s) |

| ---------------- | ---------- |

| Parcel | 1.12.3

| Node | v12.2.0

| npm/Yarn | 6.9.0

| Operating System | Linux 035dc2ced347 4.15.0-48-generic #51-Ubuntu SMP Wed Apr 3 08:28:49 UTC 2019 x86_64 GNU/Linux

|

process

|

logging postcss warnings and messages in parcel ❔ question hello i recently installed postcss font with npm install save dev but it doesn t seem to be working for me output is not changed in any way my postcss config js is shown below and can verify it is being used if i remove the cssnano entry the css is no longer minimized how can i debug this i saw the postcss reporter plugin and tried to install it in a similar fashion adding it tot he end of my config but didn t see any messages being reported when running npm run prod which calls parcel build javascript module exports plugins require autoprefixer require postcss font no options yet require cssnano preset default reducetransforms true 🔦 context i want to embed fonts all the kinds supported by postcss font within css files to make shipping my css easier for users of it i understand this may be an issue with a postcss plugin and not parcel but i don t know how to log warnings and such when using postcss with parcel 💻 code sample can try to put something together 🌍 your environment software version s parcel node npm yarn operating system linux generic ubuntu smp wed apr utc gnu linux

| 1

|

15,699

| 19,848,265,749

|

IssuesEvent

|

2022-01-21 09:23:00

|

ooi-data/CE07SHSM-MFD35-02-PRESFB000-recovered_host-presf_abc_dcl_tide_measurement_recovered

|

https://api.github.com/repos/ooi-data/CE07SHSM-MFD35-02-PRESFB000-recovered_host-presf_abc_dcl_tide_measurement_recovered

|

opened

|

🛑 Processing failed: ValueError

|

process

|

## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T09:22:59.931299.

## Details

Flow name: `CE07SHSM-MFD35-02-PRESFB000-recovered_host-presf_abc_dcl_tide_measurement_recovered`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, got 0)

<details>

<summary>Traceback</summary>

```

Traceback (most recent call last):