Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 7

112

| repo_url

stringlengths 36

141

| action

stringclasses 3

values | title

stringlengths 1

744

| labels

stringlengths 4

574

| body

stringlengths 9

211k

| index

stringclasses 10

values | text_combine

stringlengths 96

211k

| label

stringclasses 2

values | text

stringlengths 96

188k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

21,176

| 28,145,810,429

|

IssuesEvent

|

2023-04-02 13:19:47

|

MarkBind/markbind

|

https://api.github.com/repos/MarkBind/markbind

|

closed

|

Improve the init command

|

c.Enhancement p.Low a-CLI a-Process d.easy

|

Some suggestions for improving the `init` command (can be broken down to multiple PRs):

The default starter site can be enhanced to make it more useful to the user as a starting point. e.g., create a site that has all typical features (i.e., top nav, site nav, footer, search, a bunch of pages typically used such as home, contact, docs etc.) so that the user can go from there to a production website with least amount of effort.

Perhaps we can have other options?

`--minimal`: create an empty site

`--convert`: converts an existing site to a MarkBind site. This can be an 'intelligent' conversion e.g., deduce siteNav items based on page titles

`--restore`: restores the folder to the previous state (i.e., reverses the `init` command)

|

1.0

|

Improve the init command - Some suggestions for improving the `init` command (can be broken down to multiple PRs):

The default starter site can be enhanced to make it more useful to the user as a starting point. e.g., create a site that has all typical features (i.e., top nav, site nav, footer, search, a bunch of pages typically used such as home, contact, docs etc.) so that the user can go from there to a production website with least amount of effort.

Perhaps we can have other options?

`--minimal`: create an empty site

`--convert`: converts an existing site to a MarkBind site. This can be an 'intelligent' conversion e.g., deduce siteNav items based on page titles

`--restore`: restores the folder to the previous state (i.e., reverses the `init` command)

|

process

|

improve the init command some suggestions for improving the init command can be broken down to multiple prs the default starter site can be enhanced to make it more useful to the user as a starting point e g create a site that has all typical features i e top nav site nav footer search a bunch of pages typically used such as home contact docs etc so that the user can go from there to a production website with least amount of effort perhaps we can have other options minimal create an empty site convert converts an existing site to a markbind site this can be an intelligent conversion e g deduce sitenav items based on page titles restore restores the folder to the previous state i e reverses the init command

| 1

|

216

| 2,644,228,118

|

IssuesEvent

|

2015-03-12 15:52:55

|

documentcloud/documentcloud

|

https://api.github.com/repos/documentcloud/documentcloud

|

closed

|

Investigate PDFium

|

overhaul_processing

|

https://code.google.com/p/pdfium/

If it's easy enough to get running from the command-line, it could be a much, much faster and more accurate alternative to ghostscript rendering.

|

1.0

|

Investigate PDFium - https://code.google.com/p/pdfium/

If it's easy enough to get running from the command-line, it could be a much, much faster and more accurate alternative to ghostscript rendering.

|

process

|

investigate pdfium if it s easy enough to get running from the command line it could be a much much faster and more accurate alternative to ghostscript rendering

| 1

|

452,146

| 13,046,639,497

|

IssuesEvent

|

2020-07-29 09:20:11

|

metabase/metabase

|

https://api.github.com/repos/metabase/metabase

|

closed

|

Generating metrics description breaks for custom expressions

|

.Backend Administration/Metrics & Segments Priority:P1

|

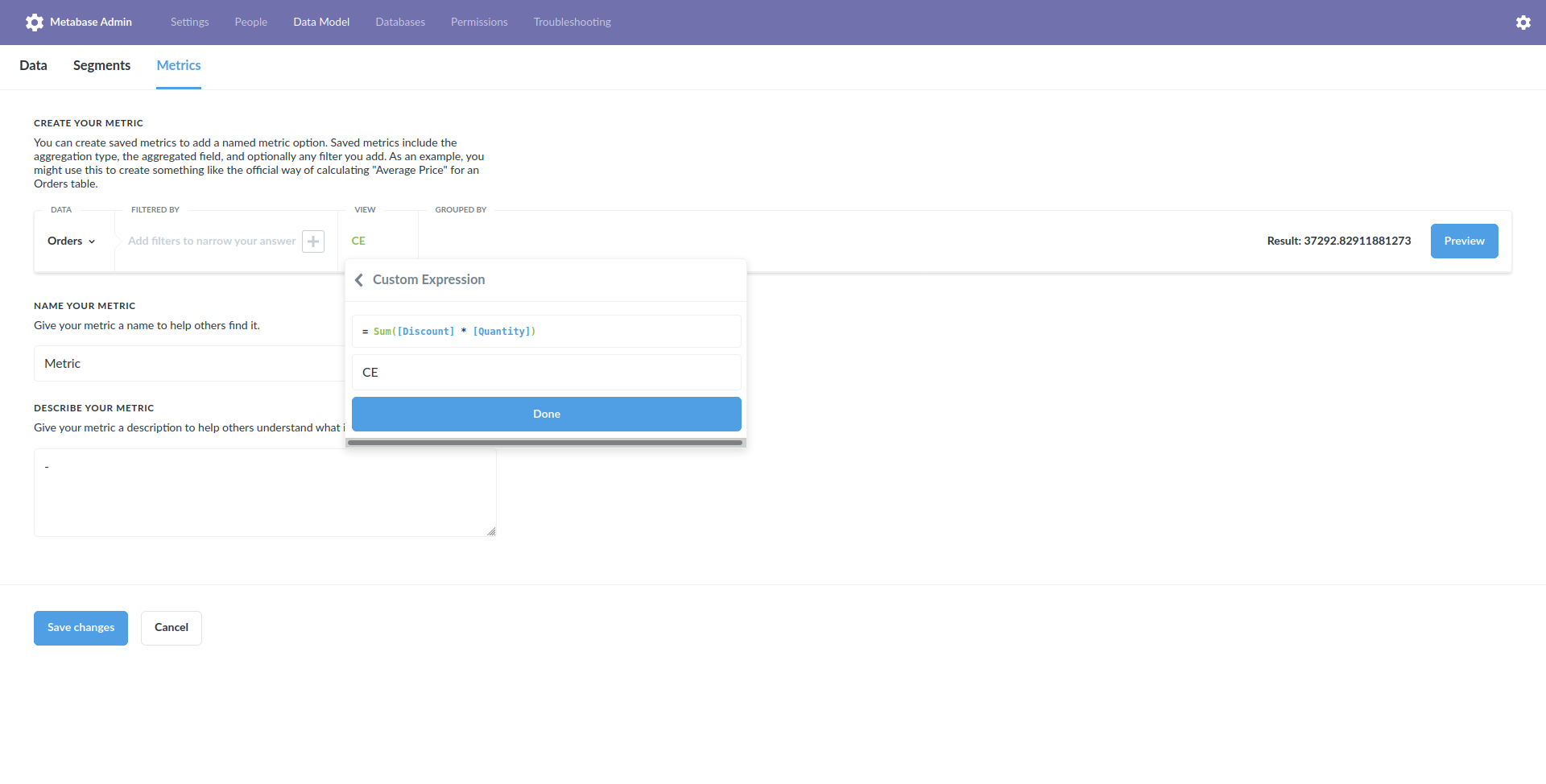

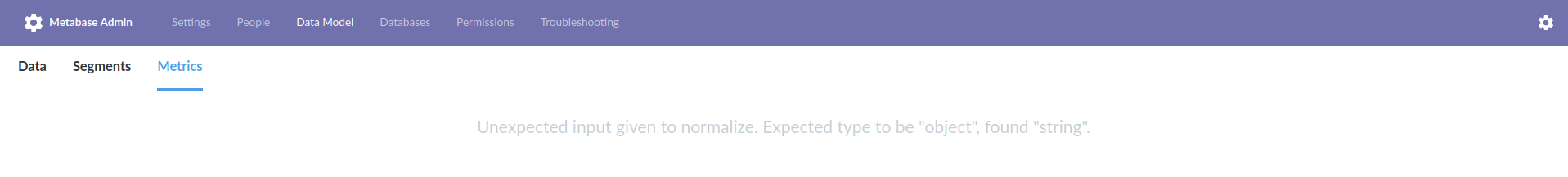

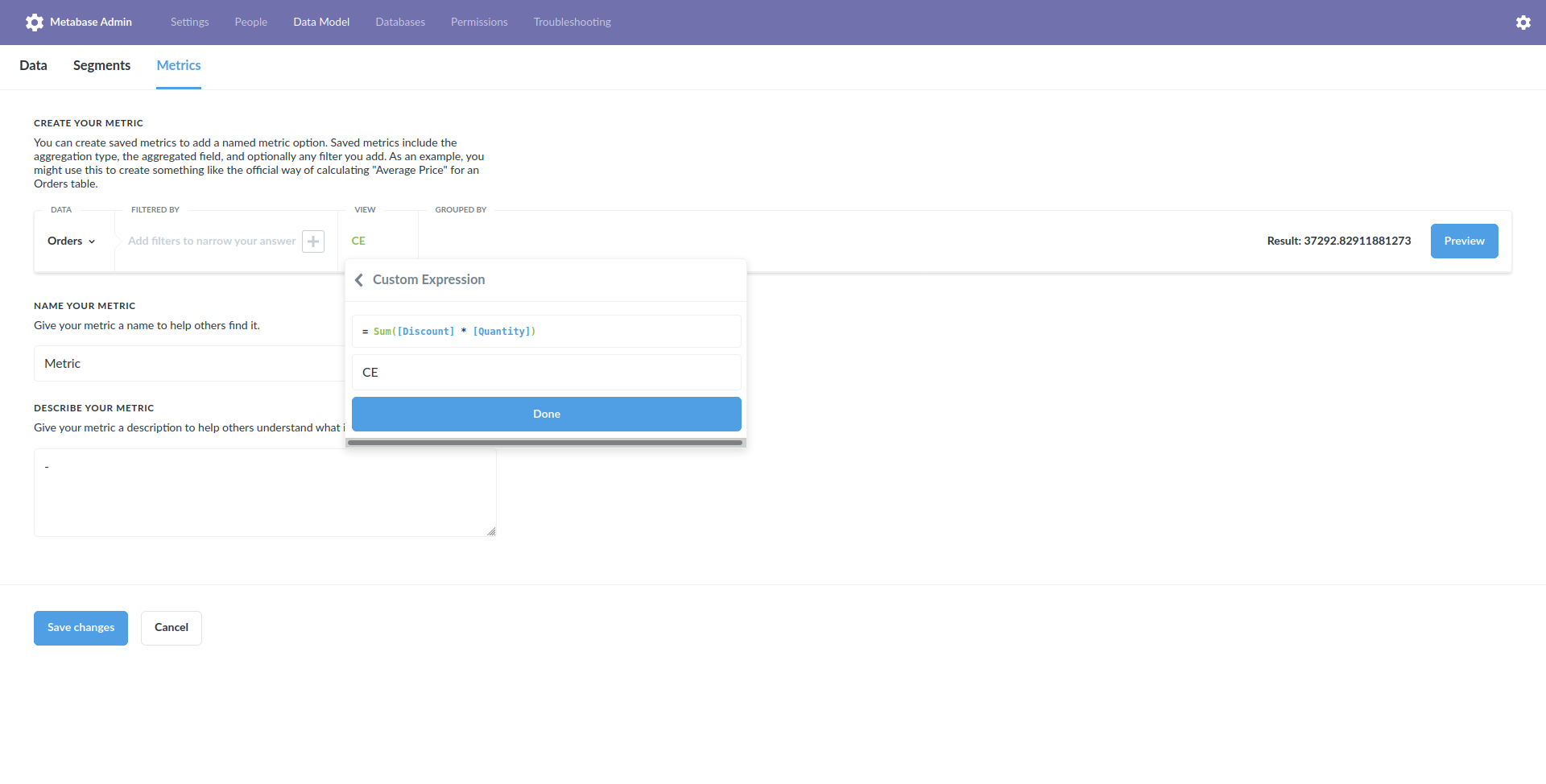

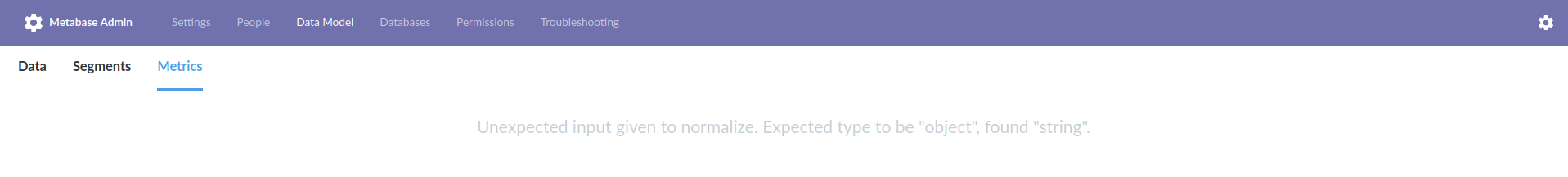

On working 0.36.0:

1. Admin > Data Model > Metric

2. Create new, table Sample Dataset > Orders, view Custom Expression `Sum([Discount] * [Quantity])`, save the metric

3. Refresh on Metric overview page, errors with `Unexpected input given to normalize. Expected type to be "object", found "string".`

_Originally posted by @flamber in https://github.com/metabase/metabase/issues/12982#issuecomment-664945290_

|

1.0

|

Generating metrics description breaks for custom expressions - On working 0.36.0:

1. Admin > Data Model > Metric

2. Create new, table Sample Dataset > Orders, view Custom Expression `Sum([Discount] * [Quantity])`, save the metric

3. Refresh on Metric overview page, errors with `Unexpected input given to normalize. Expected type to be "object", found "string".`

_Originally posted by @flamber in https://github.com/metabase/metabase/issues/12982#issuecomment-664945290_

|

non_process

|

generating metrics description breaks for custom expressions on working admin data model metric create new table sample dataset orders view custom expression sum save the metric refresh on metric overview page errors with unexpected input given to normalize expected type to be object found string originally posted by flamber in

| 0

|

21,507

| 29,736,738,837

|

IssuesEvent

|

2023-06-14 02:00:08

|

lizhihao6/get-daily-arxiv-noti

|

https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti

|

opened

|

New submissions for Wed, 14 Jun 23

|

event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB

|

## Keyword: events

### Generative Watermarking Against Unauthorized Subject-Driven Image Synthesis

- **Authors:** Yihan Ma, Zhengyu Zhao, Xinlei He, Zheng Li, Michael Backes, Yang Zhang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Cryptography and Security (cs.CR)

- **Arxiv link:** https://arxiv.org/abs/2306.07754

- **Pdf link:** https://arxiv.org/pdf/2306.07754

- **Abstract**

Large text-to-image models have shown remarkable performance in synthesizing high-quality images. In particular, the subject-driven model makes it possible to personalize the image synthesis for a specific subject, e.g., a human face or an artistic style, by fine-tuning the generic text-to-image model with a few images from that subject. Nevertheless, misuse of subject-driven image synthesis may violate the authority of subject owners. For example, malicious users may use subject-driven synthesis to mimic specific artistic styles or to create fake facial images without authorization. To protect subject owners against such misuse, recent attempts have commonly relied on adversarial examples to indiscriminately disrupt subject-driven image synthesis. However, this essentially prevents any benign use of subject-driven synthesis based on protected images. In this paper, we take a different angle and aim at protection without sacrificing the utility of protected images for general synthesis purposes. Specifically, we propose GenWatermark, a novel watermark system based on jointly learning a watermark generator and a detector. In particular, to help the watermark survive the subject-driven synthesis, we incorporate the synthesis process in learning GenWatermark by fine-tuning the detector with synthesized images for a specific subject. This operation is shown to largely improve the watermark detection accuracy and also ensure the uniqueness of the watermark for each individual subject. Extensive experiments validate the effectiveness of GenWatermark, especially in practical scenarios with unknown models and text prompts (74% Acc.), as well as partial data watermarking (80% Acc. for 1/4 watermarking). We also demonstrate the robustness of GenWatermark to two potential countermeasures that substantially degrade the synthesis quality.

## Keyword: event camera

There is no result

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

### Hidden Biases of End-to-End Driving Models

- **Authors:** Bernhard Jaeger, Kashyap Chitta, Andreas Geiger

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI); Machine Learning (cs.LG); Robotics (cs.RO)

- **Arxiv link:** https://arxiv.org/abs/2306.07957

- **Pdf link:** https://arxiv.org/pdf/2306.07957

- **Abstract**

End-to-end driving systems have recently made rapid progress, in particular on CARLA. Independent of their major contribution, they introduce changes to minor system components. Consequently, the source of improvements is unclear. We identify two biases that recur in nearly all state-of-the-art methods and are critical for the observed progress on CARLA: (1) lateral recovery via a strong inductive bias towards target point following, and (2) longitudinal averaging of multimodal waypoint predictions for slowing down. We investigate the drawbacks of these biases and identify principled alternatives. By incorporating our insights, we develop TF++, a simple end-to-end method that ranks first on the Longest6 and LAV benchmarks, gaining 14 driving score over the best prior work on Longest6.

## Keyword: ISP

### Learning to Mask and Permute Visual Tokens for Vision Transformer Pre-Training

- **Authors:** Lorenzo Baraldi, Roberto Amoroso, Marcella Cornia, Lorenzo Baraldi, Andrea Pilzer, Rita Cucchiara

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI); Multimedia (cs.MM)

- **Arxiv link:** https://arxiv.org/abs/2306.07346

- **Pdf link:** https://arxiv.org/pdf/2306.07346

- **Abstract**

The use of self-supervised pre-training has emerged as a promising approach to enhance the performance of visual tasks such as image classification. In this context, recent approaches have employed the Masked Image Modeling paradigm, which pre-trains a backbone by reconstructing visual tokens associated with randomly masked image patches. This masking approach, however, introduces noise into the input data during pre-training, leading to discrepancies that can impair performance during the fine-tuning phase. Furthermore, input masking neglects the dependencies between corrupted patches, increasing the inconsistencies observed in downstream fine-tuning tasks. To overcome these issues, we propose a new self-supervised pre-training approach, named Masked and Permuted Vision Transformer (MaPeT), that employs autoregressive and permuted predictions to capture intra-patch dependencies. In addition, MaPeT employs auxiliary positional information to reduce the disparity between the pre-training and fine-tuning phases. In our experiments, we employ a fair setting to ensure reliable and meaningful comparisons and conduct investigations on multiple visual tokenizers, including our proposed $k$-CLIP which directly employs discretized CLIP features. Our results demonstrate that MaPeT achieves competitive performance on ImageNet, compared to baselines and competitors under the same model setting. Source code and trained models are publicly available at: https://github.com/aimagelab/MaPeT.

### Continuous Cost Aggregation for Dual-Pixel Disparity Extraction

- **Authors:** Sagi Monin, Sagi Katz, Georgios Evangelidis

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2306.07921

- **Pdf link:** https://arxiv.org/pdf/2306.07921

- **Abstract**

Recent works have shown that depth information can be obtained from Dual-Pixel (DP) sensors. A DP arrangement provides two views in a single shot, thus resembling a stereo image pair with a tiny baseline. However, the different point spread function (PSF) per view, as well as the small disparity range, makes the use of typical stereo matching algorithms problematic. To address the above shortcomings, we propose a Continuous Cost Aggregation (CCA) scheme within a semi-global matching framework that is able to provide accurate continuous disparities from DP images. The proposed algorithm fits parabolas to matching costs and aggregates parabola coefficients along image paths. The aggregation step is performed subject to a quadratic constraint that not only enforces the disparity smoothness but also maintains the quadratic form of the total costs. This gives rise to an inherently efficient disparity propagation scheme with a pixel-wise minimization in closed-form. Furthermore, the continuous form allows for a robust multi-scale aggregation that better compensates for the varying PSF. Experiments on DP data from both DSLR and phone cameras show that the proposed scheme attains state-of-the-art performance in DP disparity estimation.

## Keyword: image signal processing

There is no result

## Keyword: image signal process

There is no result

## Keyword: compression

### Localization of Just Noticeable Difference for Image Compression

- **Authors:** Guangan Chen, Hanhe Lin, Oliver Wiedemann, Dietmar Saupe

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Multimedia (cs.MM); Image and Video Processing (eess.IV)

- **Arxiv link:** https://arxiv.org/abs/2306.07678

- **Pdf link:** https://arxiv.org/pdf/2306.07678

- **Abstract**

The just noticeable difference (JND) is the minimal difference between stimuli that can be detected by a person. The picture-wise just noticeable difference (PJND) for a given reference image and a compression algorithm represents the minimal level of compression that causes noticeable differences in the reconstruction. These differences can only be observed in some specific regions within the image, dubbed as JND-critical regions. Identifying these regions can improve the development of image compression algorithms. Due to the fact that visual perception varies among individuals, determining the PJND values and JND-critical regions for a target population of consumers requires subjective assessment experiments involving a sufficiently large number of observers. In this paper, we propose a novel framework for conducting such experiments using crowdsourcing. By applying this framework, we created a novel PJND dataset, KonJND++, consisting of 300 source images, compressed versions thereof under JPEG or BPG compression, and an average of 43 ratings of PJND and 129 self-reported locations of JND-critical regions for each source image. Our experiments demonstrate the effectiveness and reliability of our proposed framework, which is easy to be adapted for collecting a large-scale dataset. The source code and dataset are available at https://github.com/angchen-dev/LocJND.

## Keyword: RAW

### Instant Multi-View Head Capture through Learnable Registration

- **Authors:** Timo Bolkart, Tianye Li, Michael J. Black

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2306.07437

- **Pdf link:** https://arxiv.org/pdf/2306.07437

- **Abstract**

Existing methods for capturing datasets of 3D heads in dense semantic correspondence are slow, and commonly address the problem in two separate steps; multi-view stereo (MVS) reconstruction followed by non-rigid registration. To simplify this process, we introduce TEMPEH (Towards Estimation of 3D Meshes from Performances of Expressive Heads) to directly infer 3D heads in dense correspondence from calibrated multi-view images. Registering datasets of 3D scans typically requires manual parameter tuning to find the right balance between accurately fitting the scans surfaces and being robust to scanning noise and outliers. Instead, we propose to jointly register a 3D head dataset while training TEMPEH. Specifically, during training we minimize a geometric loss commonly used for surface registration, effectively leveraging TEMPEH as a regularizer. Our multi-view head inference builds on a volumetric feature representation that samples and fuses features from each view using camera calibration information. To account for partial occlusions and a large capture volume that enables head movements, we use view- and surface-aware feature fusion, and a spatial transformer-based head localization module, respectively. We use raw MVS scans as supervision during training, but, once trained, TEMPEH directly predicts 3D heads in dense correspondence without requiring scans. Predicting one head takes about 0.3 seconds with a median reconstruction error of 0.26 mm, 64% lower than the current state-of-the-art. This enables the efficient capture of large datasets containing multiple people and diverse facial motions. Code, model, and data are publicly available at https://tempeh.is.tue.mpg.de.

### AniFaceDrawing: Anime Portrait Exploration during Your Sketching

- **Authors:** Zhengyu Huang, Haoran Xie, Tsukasa Fukusato, Kazunori Miyata

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Graphics (cs.GR)

- **Arxiv link:** https://arxiv.org/abs/2306.07476

- **Pdf link:** https://arxiv.org/pdf/2306.07476

- **Abstract**

In this paper, we focus on how artificial intelligence (AI) can be used to assist users in the creation of anime portraits, that is, converting rough sketches into anime portraits during their sketching process. The input is a sequence of incomplete freehand sketches that are gradually refined stroke by stroke, while the output is a sequence of high-quality anime portraits that correspond to the input sketches as guidance. Although recent GANs can generate high quality images, it is a challenging problem to maintain the high quality of generated images from sketches with a low degree of completion due to ill-posed problems in conditional image generation. Even with the latest sketch-to-image (S2I) technology, it is still difficult to create high-quality images from incomplete rough sketches for anime portraits since anime style tend to be more abstract than in realistic style. To address this issue, we adopt a latent space exploration of StyleGAN with a two-stage training strategy. We consider the input strokes of a freehand sketch to correspond to edge information-related attributes in the latent structural code of StyleGAN, and term the matching between strokes and these attributes stroke-level disentanglement. In the first stage, we trained an image encoder with the pre-trained StyleGAN model as a teacher encoder. In the second stage, we simulated the drawing process of the generated images without any additional data (labels) and trained the sketch encoder for incomplete progressive sketches to generate high-quality portrait images with feature alignment to the disentangled representations in the teacher encoder. We verified the proposed progressive S2I system with both qualitative and quantitative evaluations and achieved high-quality anime portraits from incomplete progressive sketches. Our user study proved its effectiveness in art creation assistance for the anime style.

### Marking anything: application of point cloud in extracting video target features

- **Authors:** Xiangchun Xu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Image and Video Processing (eess.IV)

- **Arxiv link:** https://arxiv.org/abs/2306.07559

- **Pdf link:** https://arxiv.org/pdf/2306.07559

- **Abstract**

Extracting retrievable features from video is of great significance for structured video database construction, video copyright protection and fake video rumor refutation. Inspired by point cloud data processing, this paper proposes a method for marking anything (MA) in the video, which can extract the contour features of any target in the video and convert it into a feature vector with a length of 256 that can be retrieved. The algorithm uses YOLO-v8 algorithm, multi-object tracking algorithm and PointNet++ to extract contour of the video detection target to form spatial point cloud data. Then extract the point cloud feature vector and use it as the retrievable feature of the video detection target. In order to verify the effectiveness and robustness of contour feature, some datasets are crawled from Dou Yin and Kinetics-700 dataset as experimental data. For Dou Yin's homogenized videos, the proposed contour features achieve retrieval accuracy higher than 97% in Top1 return mode. For videos from Kinetics 700, the contour feature also showed good robustness for partial clip mode video tracing.

### Hidden Biases of End-to-End Driving Models

- **Authors:** Bernhard Jaeger, Kashyap Chitta, Andreas Geiger

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI); Machine Learning (cs.LG); Robotics (cs.RO)

- **Arxiv link:** https://arxiv.org/abs/2306.07957

- **Pdf link:** https://arxiv.org/pdf/2306.07957

- **Abstract**

End-to-end driving systems have recently made rapid progress, in particular on CARLA. Independent of their major contribution, they introduce changes to minor system components. Consequently, the source of improvements is unclear. We identify two biases that recur in nearly all state-of-the-art methods and are critical for the observed progress on CARLA: (1) lateral recovery via a strong inductive bias towards target point following, and (2) longitudinal averaging of multimodal waypoint predictions for slowing down. We investigate the drawbacks of these biases and identify principled alternatives. By incorporating our insights, we develop TF++, a simple end-to-end method that ranks first on the Longest6 and LAV benchmarks, gaining 14 driving score over the best prior work on Longest6.

## Keyword: raw image

There is no result

|

2.0

|

New submissions for Wed, 14 Jun 23 - ## Keyword: events

### Generative Watermarking Against Unauthorized Subject-Driven Image Synthesis

- **Authors:** Yihan Ma, Zhengyu Zhao, Xinlei He, Zheng Li, Michael Backes, Yang Zhang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Cryptography and Security (cs.CR)

- **Arxiv link:** https://arxiv.org/abs/2306.07754

- **Pdf link:** https://arxiv.org/pdf/2306.07754

- **Abstract**

Large text-to-image models have shown remarkable performance in synthesizing high-quality images. In particular, the subject-driven model makes it possible to personalize the image synthesis for a specific subject, e.g., a human face or an artistic style, by fine-tuning the generic text-to-image model with a few images from that subject. Nevertheless, misuse of subject-driven image synthesis may violate the authority of subject owners. For example, malicious users may use subject-driven synthesis to mimic specific artistic styles or to create fake facial images without authorization. To protect subject owners against such misuse, recent attempts have commonly relied on adversarial examples to indiscriminately disrupt subject-driven image synthesis. However, this essentially prevents any benign use of subject-driven synthesis based on protected images. In this paper, we take a different angle and aim at protection without sacrificing the utility of protected images for general synthesis purposes. Specifically, we propose GenWatermark, a novel watermark system based on jointly learning a watermark generator and a detector. In particular, to help the watermark survive the subject-driven synthesis, we incorporate the synthesis process in learning GenWatermark by fine-tuning the detector with synthesized images for a specific subject. This operation is shown to largely improve the watermark detection accuracy and also ensure the uniqueness of the watermark for each individual subject. Extensive experiments validate the effectiveness of GenWatermark, especially in practical scenarios with unknown models and text prompts (74% Acc.), as well as partial data watermarking (80% Acc. for 1/4 watermarking). We also demonstrate the robustness of GenWatermark to two potential countermeasures that substantially degrade the synthesis quality.

## Keyword: event camera

There is no result

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

### Hidden Biases of End-to-End Driving Models

- **Authors:** Bernhard Jaeger, Kashyap Chitta, Andreas Geiger

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI); Machine Learning (cs.LG); Robotics (cs.RO)

- **Arxiv link:** https://arxiv.org/abs/2306.07957

- **Pdf link:** https://arxiv.org/pdf/2306.07957

- **Abstract**

End-to-end driving systems have recently made rapid progress, in particular on CARLA. Independent of their major contribution, they introduce changes to minor system components. Consequently, the source of improvements is unclear. We identify two biases that recur in nearly all state-of-the-art methods and are critical for the observed progress on CARLA: (1) lateral recovery via a strong inductive bias towards target point following, and (2) longitudinal averaging of multimodal waypoint predictions for slowing down. We investigate the drawbacks of these biases and identify principled alternatives. By incorporating our insights, we develop TF++, a simple end-to-end method that ranks first on the Longest6 and LAV benchmarks, gaining 14 driving score over the best prior work on Longest6.

## Keyword: ISP

### Learning to Mask and Permute Visual Tokens for Vision Transformer Pre-Training

- **Authors:** Lorenzo Baraldi, Roberto Amoroso, Marcella Cornia, Lorenzo Baraldi, Andrea Pilzer, Rita Cucchiara

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI); Multimedia (cs.MM)

- **Arxiv link:** https://arxiv.org/abs/2306.07346

- **Pdf link:** https://arxiv.org/pdf/2306.07346

- **Abstract**

The use of self-supervised pre-training has emerged as a promising approach to enhance the performance of visual tasks such as image classification. In this context, recent approaches have employed the Masked Image Modeling paradigm, which pre-trains a backbone by reconstructing visual tokens associated with randomly masked image patches. This masking approach, however, introduces noise into the input data during pre-training, leading to discrepancies that can impair performance during the fine-tuning phase. Furthermore, input masking neglects the dependencies between corrupted patches, increasing the inconsistencies observed in downstream fine-tuning tasks. To overcome these issues, we propose a new self-supervised pre-training approach, named Masked and Permuted Vision Transformer (MaPeT), that employs autoregressive and permuted predictions to capture intra-patch dependencies. In addition, MaPeT employs auxiliary positional information to reduce the disparity between the pre-training and fine-tuning phases. In our experiments, we employ a fair setting to ensure reliable and meaningful comparisons and conduct investigations on multiple visual tokenizers, including our proposed $k$-CLIP which directly employs discretized CLIP features. Our results demonstrate that MaPeT achieves competitive performance on ImageNet, compared to baselines and competitors under the same model setting. Source code and trained models are publicly available at: https://github.com/aimagelab/MaPeT.

### Continuous Cost Aggregation for Dual-Pixel Disparity Extraction

- **Authors:** Sagi Monin, Sagi Katz, Georgios Evangelidis

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2306.07921

- **Pdf link:** https://arxiv.org/pdf/2306.07921

- **Abstract**

Recent works have shown that depth information can be obtained from Dual-Pixel (DP) sensors. A DP arrangement provides two views in a single shot, thus resembling a stereo image pair with a tiny baseline. However, the different point spread function (PSF) per view, as well as the small disparity range, makes the use of typical stereo matching algorithms problematic. To address the above shortcomings, we propose a Continuous Cost Aggregation (CCA) scheme within a semi-global matching framework that is able to provide accurate continuous disparities from DP images. The proposed algorithm fits parabolas to matching costs and aggregates parabola coefficients along image paths. The aggregation step is performed subject to a quadratic constraint that not only enforces the disparity smoothness but also maintains the quadratic form of the total costs. This gives rise to an inherently efficient disparity propagation scheme with a pixel-wise minimization in closed-form. Furthermore, the continuous form allows for a robust multi-scale aggregation that better compensates for the varying PSF. Experiments on DP data from both DSLR and phone cameras show that the proposed scheme attains state-of-the-art performance in DP disparity estimation.

## Keyword: image signal processing

There is no result

## Keyword: image signal process

There is no result

## Keyword: compression

### Localization of Just Noticeable Difference for Image Compression

- **Authors:** Guangan Chen, Hanhe Lin, Oliver Wiedemann, Dietmar Saupe

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Multimedia (cs.MM); Image and Video Processing (eess.IV)

- **Arxiv link:** https://arxiv.org/abs/2306.07678

- **Pdf link:** https://arxiv.org/pdf/2306.07678

- **Abstract**

The just noticeable difference (JND) is the minimal difference between stimuli that can be detected by a person. The picture-wise just noticeable difference (PJND) for a given reference image and a compression algorithm represents the minimal level of compression that causes noticeable differences in the reconstruction. These differences can only be observed in some specific regions within the image, dubbed as JND-critical regions. Identifying these regions can improve the development of image compression algorithms. Due to the fact that visual perception varies among individuals, determining the PJND values and JND-critical regions for a target population of consumers requires subjective assessment experiments involving a sufficiently large number of observers. In this paper, we propose a novel framework for conducting such experiments using crowdsourcing. By applying this framework, we created a novel PJND dataset, KonJND++, consisting of 300 source images, compressed versions thereof under JPEG or BPG compression, and an average of 43 ratings of PJND and 129 self-reported locations of JND-critical regions for each source image. Our experiments demonstrate the effectiveness and reliability of our proposed framework, which is easy to be adapted for collecting a large-scale dataset. The source code and dataset are available at https://github.com/angchen-dev/LocJND.

## Keyword: RAW

### Instant Multi-View Head Capture through Learnable Registration

- **Authors:** Timo Bolkart, Tianye Li, Michael J. Black

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2306.07437

- **Pdf link:** https://arxiv.org/pdf/2306.07437

- **Abstract**

Existing methods for capturing datasets of 3D heads in dense semantic correspondence are slow, and commonly address the problem in two separate steps; multi-view stereo (MVS) reconstruction followed by non-rigid registration. To simplify this process, we introduce TEMPEH (Towards Estimation of 3D Meshes from Performances of Expressive Heads) to directly infer 3D heads in dense correspondence from calibrated multi-view images. Registering datasets of 3D scans typically requires manual parameter tuning to find the right balance between accurately fitting the scans surfaces and being robust to scanning noise and outliers. Instead, we propose to jointly register a 3D head dataset while training TEMPEH. Specifically, during training we minimize a geometric loss commonly used for surface registration, effectively leveraging TEMPEH as a regularizer. Our multi-view head inference builds on a volumetric feature representation that samples and fuses features from each view using camera calibration information. To account for partial occlusions and a large capture volume that enables head movements, we use view- and surface-aware feature fusion, and a spatial transformer-based head localization module, respectively. We use raw MVS scans as supervision during training, but, once trained, TEMPEH directly predicts 3D heads in dense correspondence without requiring scans. Predicting one head takes about 0.3 seconds with a median reconstruction error of 0.26 mm, 64% lower than the current state-of-the-art. This enables the efficient capture of large datasets containing multiple people and diverse facial motions. Code, model, and data are publicly available at https://tempeh.is.tue.mpg.de.

### AniFaceDrawing: Anime Portrait Exploration during Your Sketching

- **Authors:** Zhengyu Huang, Haoran Xie, Tsukasa Fukusato, Kazunori Miyata

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Graphics (cs.GR)

- **Arxiv link:** https://arxiv.org/abs/2306.07476

- **Pdf link:** https://arxiv.org/pdf/2306.07476

- **Abstract**

In this paper, we focus on how artificial intelligence (AI) can be used to assist users in the creation of anime portraits, that is, converting rough sketches into anime portraits during their sketching process. The input is a sequence of incomplete freehand sketches that are gradually refined stroke by stroke, while the output is a sequence of high-quality anime portraits that correspond to the input sketches as guidance. Although recent GANs can generate high quality images, it is a challenging problem to maintain the high quality of generated images from sketches with a low degree of completion due to ill-posed problems in conditional image generation. Even with the latest sketch-to-image (S2I) technology, it is still difficult to create high-quality images from incomplete rough sketches for anime portraits since anime style tend to be more abstract than in realistic style. To address this issue, we adopt a latent space exploration of StyleGAN with a two-stage training strategy. We consider the input strokes of a freehand sketch to correspond to edge information-related attributes in the latent structural code of StyleGAN, and term the matching between strokes and these attributes stroke-level disentanglement. In the first stage, we trained an image encoder with the pre-trained StyleGAN model as a teacher encoder. In the second stage, we simulated the drawing process of the generated images without any additional data (labels) and trained the sketch encoder for incomplete progressive sketches to generate high-quality portrait images with feature alignment to the disentangled representations in the teacher encoder. We verified the proposed progressive S2I system with both qualitative and quantitative evaluations and achieved high-quality anime portraits from incomplete progressive sketches. Our user study proved its effectiveness in art creation assistance for the anime style.

### Marking anything: application of point cloud in extracting video target features

- **Authors:** Xiangchun Xu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Image and Video Processing (eess.IV)

- **Arxiv link:** https://arxiv.org/abs/2306.07559

- **Pdf link:** https://arxiv.org/pdf/2306.07559

- **Abstract**

Extracting retrievable features from video is of great significance for structured video database construction, video copyright protection and fake video rumor refutation. Inspired by point cloud data processing, this paper proposes a method for marking anything (MA) in the video, which can extract the contour features of any target in the video and convert it into a feature vector with a length of 256 that can be retrieved. The algorithm uses YOLO-v8 algorithm, multi-object tracking algorithm and PointNet++ to extract contour of the video detection target to form spatial point cloud data. Then extract the point cloud feature vector and use it as the retrievable feature of the video detection target. In order to verify the effectiveness and robustness of contour feature, some datasets are crawled from Dou Yin and Kinetics-700 dataset as experimental data. For Dou Yin's homogenized videos, the proposed contour features achieve retrieval accuracy higher than 97% in Top1 return mode. For videos from Kinetics 700, the contour feature also showed good robustness for partial clip mode video tracing.

### Hidden Biases of End-to-End Driving Models

- **Authors:** Bernhard Jaeger, Kashyap Chitta, Andreas Geiger

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI); Machine Learning (cs.LG); Robotics (cs.RO)

- **Arxiv link:** https://arxiv.org/abs/2306.07957

- **Pdf link:** https://arxiv.org/pdf/2306.07957

- **Abstract**

End-to-end driving systems have recently made rapid progress, in particular on CARLA. Independent of their major contribution, they introduce changes to minor system components. Consequently, the source of improvements is unclear. We identify two biases that recur in nearly all state-of-the-art methods and are critical for the observed progress on CARLA: (1) lateral recovery via a strong inductive bias towards target point following, and (2) longitudinal averaging of multimodal waypoint predictions for slowing down. We investigate the drawbacks of these biases and identify principled alternatives. By incorporating our insights, we develop TF++, a simple end-to-end method that ranks first on the Longest6 and LAV benchmarks, gaining 14 driving score over the best prior work on Longest6.

## Keyword: raw image

There is no result

|

process

|

new submissions for wed jun keyword events generative watermarking against unauthorized subject driven image synthesis authors yihan ma zhengyu zhao xinlei he zheng li michael backes yang zhang subjects computer vision and pattern recognition cs cv cryptography and security cs cr arxiv link pdf link abstract large text to image models have shown remarkable performance in synthesizing high quality images in particular the subject driven model makes it possible to personalize the image synthesis for a specific subject e g a human face or an artistic style by fine tuning the generic text to image model with a few images from that subject nevertheless misuse of subject driven image synthesis may violate the authority of subject owners for example malicious users may use subject driven synthesis to mimic specific artistic styles or to create fake facial images without authorization to protect subject owners against such misuse recent attempts have commonly relied on adversarial examples to indiscriminately disrupt subject driven image synthesis however this essentially prevents any benign use of subject driven synthesis based on protected images in this paper we take a different angle and aim at protection without sacrificing the utility of protected images for general synthesis purposes specifically we propose genwatermark a novel watermark system based on jointly learning a watermark generator and a detector in particular to help the watermark survive the subject driven synthesis we incorporate the synthesis process in learning genwatermark by fine tuning the detector with synthesized images for a specific subject this operation is shown to largely improve the watermark detection accuracy and also ensure the uniqueness of the watermark for each individual subject extensive experiments validate the effectiveness of genwatermark especially in practical scenarios with unknown models and text prompts acc as well as partial data watermarking acc for watermarking we also demonstrate the robustness of genwatermark to two potential countermeasures that substantially degrade the synthesis quality keyword event camera there is no result keyword events camera there is no result keyword white balance there is no result keyword color contrast there is no result keyword awb hidden biases of end to end driving models authors bernhard jaeger kashyap chitta andreas geiger subjects computer vision and pattern recognition cs cv artificial intelligence cs ai machine learning cs lg robotics cs ro arxiv link pdf link abstract end to end driving systems have recently made rapid progress in particular on carla independent of their major contribution they introduce changes to minor system components consequently the source of improvements is unclear we identify two biases that recur in nearly all state of the art methods and are critical for the observed progress on carla lateral recovery via a strong inductive bias towards target point following and longitudinal averaging of multimodal waypoint predictions for slowing down we investigate the drawbacks of these biases and identify principled alternatives by incorporating our insights we develop tf a simple end to end method that ranks first on the and lav benchmarks gaining driving score over the best prior work on keyword isp learning to mask and permute visual tokens for vision transformer pre training authors lorenzo baraldi roberto amoroso marcella cornia lorenzo baraldi andrea pilzer rita cucchiara subjects computer vision and pattern recognition cs cv artificial intelligence cs ai multimedia cs mm arxiv link pdf link abstract the use of self supervised pre training has emerged as a promising approach to enhance the performance of visual tasks such as image classification in this context recent approaches have employed the masked image modeling paradigm which pre trains a backbone by reconstructing visual tokens associated with randomly masked image patches this masking approach however introduces noise into the input data during pre training leading to discrepancies that can impair performance during the fine tuning phase furthermore input masking neglects the dependencies between corrupted patches increasing the inconsistencies observed in downstream fine tuning tasks to overcome these issues we propose a new self supervised pre training approach named masked and permuted vision transformer mapet that employs autoregressive and permuted predictions to capture intra patch dependencies in addition mapet employs auxiliary positional information to reduce the disparity between the pre training and fine tuning phases in our experiments we employ a fair setting to ensure reliable and meaningful comparisons and conduct investigations on multiple visual tokenizers including our proposed k clip which directly employs discretized clip features our results demonstrate that mapet achieves competitive performance on imagenet compared to baselines and competitors under the same model setting source code and trained models are publicly available at continuous cost aggregation for dual pixel disparity extraction authors sagi monin sagi katz georgios evangelidis subjects computer vision and pattern recognition cs cv arxiv link pdf link abstract recent works have shown that depth information can be obtained from dual pixel dp sensors a dp arrangement provides two views in a single shot thus resembling a stereo image pair with a tiny baseline however the different point spread function psf per view as well as the small disparity range makes the use of typical stereo matching algorithms problematic to address the above shortcomings we propose a continuous cost aggregation cca scheme within a semi global matching framework that is able to provide accurate continuous disparities from dp images the proposed algorithm fits parabolas to matching costs and aggregates parabola coefficients along image paths the aggregation step is performed subject to a quadratic constraint that not only enforces the disparity smoothness but also maintains the quadratic form of the total costs this gives rise to an inherently efficient disparity propagation scheme with a pixel wise minimization in closed form furthermore the continuous form allows for a robust multi scale aggregation that better compensates for the varying psf experiments on dp data from both dslr and phone cameras show that the proposed scheme attains state of the art performance in dp disparity estimation keyword image signal processing there is no result keyword image signal process there is no result keyword compression localization of just noticeable difference for image compression authors guangan chen hanhe lin oliver wiedemann dietmar saupe subjects computer vision and pattern recognition cs cv multimedia cs mm image and video processing eess iv arxiv link pdf link abstract the just noticeable difference jnd is the minimal difference between stimuli that can be detected by a person the picture wise just noticeable difference pjnd for a given reference image and a compression algorithm represents the minimal level of compression that causes noticeable differences in the reconstruction these differences can only be observed in some specific regions within the image dubbed as jnd critical regions identifying these regions can improve the development of image compression algorithms due to the fact that visual perception varies among individuals determining the pjnd values and jnd critical regions for a target population of consumers requires subjective assessment experiments involving a sufficiently large number of observers in this paper we propose a novel framework for conducting such experiments using crowdsourcing by applying this framework we created a novel pjnd dataset konjnd consisting of source images compressed versions thereof under jpeg or bpg compression and an average of ratings of pjnd and self reported locations of jnd critical regions for each source image our experiments demonstrate the effectiveness and reliability of our proposed framework which is easy to be adapted for collecting a large scale dataset the source code and dataset are available at keyword raw instant multi view head capture through learnable registration authors timo bolkart tianye li michael j black subjects computer vision and pattern recognition cs cv arxiv link pdf link abstract existing methods for capturing datasets of heads in dense semantic correspondence are slow and commonly address the problem in two separate steps multi view stereo mvs reconstruction followed by non rigid registration to simplify this process we introduce tempeh towards estimation of meshes from performances of expressive heads to directly infer heads in dense correspondence from calibrated multi view images registering datasets of scans typically requires manual parameter tuning to find the right balance between accurately fitting the scans surfaces and being robust to scanning noise and outliers instead we propose to jointly register a head dataset while training tempeh specifically during training we minimize a geometric loss commonly used for surface registration effectively leveraging tempeh as a regularizer our multi view head inference builds on a volumetric feature representation that samples and fuses features from each view using camera calibration information to account for partial occlusions and a large capture volume that enables head movements we use view and surface aware feature fusion and a spatial transformer based head localization module respectively we use raw mvs scans as supervision during training but once trained tempeh directly predicts heads in dense correspondence without requiring scans predicting one head takes about seconds with a median reconstruction error of mm lower than the current state of the art this enables the efficient capture of large datasets containing multiple people and diverse facial motions code model and data are publicly available at anifacedrawing anime portrait exploration during your sketching authors zhengyu huang haoran xie tsukasa fukusato kazunori miyata subjects computer vision and pattern recognition cs cv graphics cs gr arxiv link pdf link abstract in this paper we focus on how artificial intelligence ai can be used to assist users in the creation of anime portraits that is converting rough sketches into anime portraits during their sketching process the input is a sequence of incomplete freehand sketches that are gradually refined stroke by stroke while the output is a sequence of high quality anime portraits that correspond to the input sketches as guidance although recent gans can generate high quality images it is a challenging problem to maintain the high quality of generated images from sketches with a low degree of completion due to ill posed problems in conditional image generation even with the latest sketch to image technology it is still difficult to create high quality images from incomplete rough sketches for anime portraits since anime style tend to be more abstract than in realistic style to address this issue we adopt a latent space exploration of stylegan with a two stage training strategy we consider the input strokes of a freehand sketch to correspond to edge information related attributes in the latent structural code of stylegan and term the matching between strokes and these attributes stroke level disentanglement in the first stage we trained an image encoder with the pre trained stylegan model as a teacher encoder in the second stage we simulated the drawing process of the generated images without any additional data labels and trained the sketch encoder for incomplete progressive sketches to generate high quality portrait images with feature alignment to the disentangled representations in the teacher encoder we verified the proposed progressive system with both qualitative and quantitative evaluations and achieved high quality anime portraits from incomplete progressive sketches our user study proved its effectiveness in art creation assistance for the anime style marking anything application of point cloud in extracting video target features authors xiangchun xu subjects computer vision and pattern recognition cs cv image and video processing eess iv arxiv link pdf link abstract extracting retrievable features from video is of great significance for structured video database construction video copyright protection and fake video rumor refutation inspired by point cloud data processing this paper proposes a method for marking anything ma in the video which can extract the contour features of any target in the video and convert it into a feature vector with a length of that can be retrieved the algorithm uses yolo algorithm multi object tracking algorithm and pointnet to extract contour of the video detection target to form spatial point cloud data then extract the point cloud feature vector and use it as the retrievable feature of the video detection target in order to verify the effectiveness and robustness of contour feature some datasets are crawled from dou yin and kinetics dataset as experimental data for dou yin s homogenized videos the proposed contour features achieve retrieval accuracy higher than in return mode for videos from kinetics the contour feature also showed good robustness for partial clip mode video tracing hidden biases of end to end driving models authors bernhard jaeger kashyap chitta andreas geiger subjects computer vision and pattern recognition cs cv artificial intelligence cs ai machine learning cs lg robotics cs ro arxiv link pdf link abstract end to end driving systems have recently made rapid progress in particular on carla independent of their major contribution they introduce changes to minor system components consequently the source of improvements is unclear we identify two biases that recur in nearly all state of the art methods and are critical for the observed progress on carla lateral recovery via a strong inductive bias towards target point following and longitudinal averaging of multimodal waypoint predictions for slowing down we investigate the drawbacks of these biases and identify principled alternatives by incorporating our insights we develop tf a simple end to end method that ranks first on the and lav benchmarks gaining driving score over the best prior work on keyword raw image there is no result

| 1

|

14,345

| 17,371,607,233

|

IssuesEvent

|

2021-07-30 14:41:38

|

GoogleCloudPlatform/dotnet-docs-samples

|

https://api.github.com/repos/GoogleCloudPlatform/dotnet-docs-samples

|

closed

|

Storage: HMAC tests not cleaning up after themselves. HMAC keys limit reached.

|

api: storage priority: p1 samples type: process

|

Seems like HMAC tests are not properly deleting HMAC keys and we have reached limit. All HMAC keys related tests are failing.

Sample [CI output](https://source.cloud.google.com/results/invocations/00fb02fd-8e97-40af-b459-c95f324ef2dd/targets/github%2Fdotnet-docs-samples%2Fstorage%2Fapi%2FStorage.Samples.Tests%2FTestResults/tests).

|

1.0

|

Storage: HMAC tests not cleaning up after themselves. HMAC keys limit reached. - Seems like HMAC tests are not properly deleting HMAC keys and we have reached limit. All HMAC keys related tests are failing.

Sample [CI output](https://source.cloud.google.com/results/invocations/00fb02fd-8e97-40af-b459-c95f324ef2dd/targets/github%2Fdotnet-docs-samples%2Fstorage%2Fapi%2FStorage.Samples.Tests%2FTestResults/tests).

|

process

|

storage hmac tests not cleaning up after themselves hmac keys limit reached seems like hmac tests are not properly deleting hmac keys and we have reached limit all hmac keys related tests are failing sample

| 1

|

8,547

| 11,723,543,306

|

IssuesEvent

|

2020-03-10 09:19:08

|

TOMP-WG/TOMP-API

|

https://api.github.com/repos/TOMP-WG/TOMP-API

|

closed

|

Use tags and branches for releases

|

process

|

As mentioned in the last meeting, we now have a whole bunch of files in the repository that are just there to contain old versions. By correctly tagging old commits with versions (and using branches from those commits for possible patch releases) we can keep a cleaner repository and utilise git's main features better.

Concretely, I propose that we remove the following directories and files:

components/schemas

depricated - Paths

depricated - components

tools

TOMP-API-1.1.1.yaml

TOMP-API-1.1.2.yaml

TOMP-API-1.1.yaml

TOMP-API-1.2.yaml

swagger 1.0.8.yaml

and add tags to the commits that released these versions.

This will make it very simple for someone to implement older versions (from now on, that is), as they could get any version >= 1.2 by doing `git checkout vx.x.x` and then whatever is in `TOMP-API.yaml` would be that version.

It would also make future changes to a maintained version clearer, as those would be in their own branch separate from development on the latest version.

This issue is somewhat related to #102 as both would work together to make our versioning and releases clearer to users of our API.

|

1.0

|

Use tags and branches for releases - As mentioned in the last meeting, we now have a whole bunch of files in the repository that are just there to contain old versions. By correctly tagging old commits with versions (and using branches from those commits for possible patch releases) we can keep a cleaner repository and utilise git's main features better.

Concretely, I propose that we remove the following directories and files:

components/schemas

depricated - Paths

depricated - components

tools

TOMP-API-1.1.1.yaml

TOMP-API-1.1.2.yaml

TOMP-API-1.1.yaml

TOMP-API-1.2.yaml

swagger 1.0.8.yaml

and add tags to the commits that released these versions.

This will make it very simple for someone to implement older versions (from now on, that is), as they could get any version >= 1.2 by doing `git checkout vx.x.x` and then whatever is in `TOMP-API.yaml` would be that version.

It would also make future changes to a maintained version clearer, as those would be in their own branch separate from development on the latest version.

This issue is somewhat related to #102 as both would work together to make our versioning and releases clearer to users of our API.

|

process

|

use tags and branches for releases as mentioned in the last meeting we now have a whole bunch of files in the repository that are just there to contain old versions by correctly tagging old commits with versions and using branches from those commits for possible patch releases we can keep a cleaner repository and utilise git s main features better concretely i propose that we remove the following directories and files components schemas depricated paths depricated components tools tomp api yaml tomp api yaml tomp api yaml tomp api yaml swagger yaml and add tags to the commits that released these versions this will make it very simple for someone to implement older versions from now on that is as they could get any version by doing git checkout vx x x and then whatever is in tomp api yaml would be that version it would also make future changes to a maintained version clearer as those would be in their own branch separate from development on the latest version this issue is somewhat related to as both would work together to make our versioning and releases clearer to users of our api

| 1

|

12,279

| 14,790,390,747

|

IssuesEvent

|

2021-01-12 11:58:17

|

prisma/prisma

|

https://api.github.com/repos/prisma/prisma

|

closed

|

Response of `count` queries changes when you have a middleware

|

process/candidate

|

<!--

Thanks for helping us improve Prisma! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by setting the `DEBUG="*"` environment variable and enabling additional logging output in Prisma Client.

Learn more about writing proper bug reports here: https://pris.ly/d/bug-reports

-->

## Bug description

When you use a middleware (even an "empty" one), it changes the response of `prisma.model.count()` queries.

## How to reproduce

Here are two scripts that should produce the same output, but don't:

```

import { PrismaClient } from "@prisma/client";

const prisma = new PrismaClient();

const main = async () => {

console.log(await prisma.user.count());

};

main()

```

Output: `20` (expected)

vs.

```

import { PrismaClient } from "@prisma/client";

const prisma = new PrismaClient();

prisma.$use((params, next) => next(params));

const main = async () => {

console.log(await prisma.user.count());

};

main()

```

Output: `{ _all: 20 }` (unexpected)

## Expected behavior

The output of both scripts should be `20`

## Prisma information

The schema is not relevant, I can observe this with multiple schemas

## Environment & setup

<!-- In which environment does the problem occur -->

- OS: macOS

- Database: Postgres (but the issue does not depend on this)

- Node.js version: 14.15.1

- Prisma version:

```

@prisma/cli : 2.15.0-dev.19

@prisma/client : 2.15.0-dev.19

Current platform : darwin

Query Engine : query-engine 6b6ad7413a6b0c825e89eeac9adeb28830b1babb (at node_modules/@prisma/engines/query-engine-darwin)

Migration Engine : migration-engine-cli 6b6ad7413a6b0c825e89eeac9adeb28830b1babb (at node_modules/@prisma/engines/migration-engine-darwin)

Introspection Engine : introspection-core 6b6ad7413a6b0c825e89eeac9adeb28830b1babb (at node_modules/@prisma/engines/introspection-engine-darwin)

Format Binary : prisma-fmt 6b6ad7413a6b0c825e89eeac9adeb28830b1babb (at node_modules/@prisma/engines/prisma-fmt-darwin)

Studio : 0.332.0

```

|

1.0

|

Response of `count` queries changes when you have a middleware - <!--

Thanks for helping us improve Prisma! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by setting the `DEBUG="*"` environment variable and enabling additional logging output in Prisma Client.

Learn more about writing proper bug reports here: https://pris.ly/d/bug-reports

-->

## Bug description

When you use a middleware (even an "empty" one), it changes the response of `prisma.model.count()` queries.

## How to reproduce

Here are two scripts that should produce the same output, but don't:

```

import { PrismaClient } from "@prisma/client";

const prisma = new PrismaClient();

const main = async () => {

console.log(await prisma.user.count());

};

main()

```

Output: `20` (expected)

vs.

```

import { PrismaClient } from "@prisma/client";

const prisma = new PrismaClient();

prisma.$use((params, next) => next(params));

const main = async () => {

console.log(await prisma.user.count());

};

main()

```

Output: `{ _all: 20 }` (unexpected)

## Expected behavior

The output of both scripts should be `20`

## Prisma information

The schema is not relevant, I can observe this with multiple schemas

## Environment & setup

<!-- In which environment does the problem occur -->

- OS: macOS

- Database: Postgres (but the issue does not depend on this)

- Node.js version: 14.15.1

- Prisma version:

```

@prisma/cli : 2.15.0-dev.19

@prisma/client : 2.15.0-dev.19

Current platform : darwin

Query Engine : query-engine 6b6ad7413a6b0c825e89eeac9adeb28830b1babb (at node_modules/@prisma/engines/query-engine-darwin)

Migration Engine : migration-engine-cli 6b6ad7413a6b0c825e89eeac9adeb28830b1babb (at node_modules/@prisma/engines/migration-engine-darwin)

Introspection Engine : introspection-core 6b6ad7413a6b0c825e89eeac9adeb28830b1babb (at node_modules/@prisma/engines/introspection-engine-darwin)

Format Binary : prisma-fmt 6b6ad7413a6b0c825e89eeac9adeb28830b1babb (at node_modules/@prisma/engines/prisma-fmt-darwin)

Studio : 0.332.0

```

|

process

|

response of count queries changes when you have a middleware thanks for helping us improve prisma 🙏 please follow the sections in the template and provide as much information as possible about your problem e g by setting the debug environment variable and enabling additional logging output in prisma client learn more about writing proper bug reports here bug description when you use a middleware even an empty one it changes the response of prisma model count queries how to reproduce here are two scripts that should produce the same output but don t import prismaclient from prisma client const prisma new prismaclient const main async console log await prisma user count main output expected vs import prismaclient from prisma client const prisma new prismaclient prisma use params next next params const main async console log await prisma user count main output all unexpected expected behavior the output of both scripts should be prisma information the schema is not relevant i can observe this with multiple schemas environment setup os macos database postgres but the issue does not depend on this node js version prisma version prisma cli dev prisma client dev current platform darwin query engine query engine at node modules prisma engines query engine darwin migration engine migration engine cli at node modules prisma engines migration engine darwin introspection engine introspection core at node modules prisma engines introspection engine darwin format binary prisma fmt at node modules prisma engines prisma fmt darwin studio

| 1

|

15,905

| 20,110,346,352

|

IssuesEvent

|

2022-02-07 14:33:35

|

bazelbuild/bazel

|

https://api.github.com/repos/bazelbuild/bazel

|

closed

|

bzlmod: can one extension depend on side-effect of another?

|

type: support / not a bug (process) team-ExternalDeps untriaged area-Bzlmod

|

From the error messages, it's hard to tell what's happening. I'll do my best to make this reproducible.

Today in `WORKSPACE` it's often the case that a repository rule creates a repository which the user loads from. For example in

https://github.com/aspect-build/rules_swc/releases/tag/v0.2.0

The user calls

```

load("@aspect_rules_swc//swc:repositories.bzl", "swc_register_toolchains")

swc_register_toolchains(

name = "swc",

swc_version = "v1.2.118",

)

```

which creates a `swc_cli` repository. Then the user must load from there:

```

# Fetches the npm packages needed to run @swc/cli

load("@swc_cli//:repositories.bzl", _swc_cli_deps = "npm_repositories")

_swc_cli_deps()

```

This can't happen in one starlark file because the load of `@swc_cli` is only possible after the execution of `swc_register_toolchains`.

I'm trying to convert this usage to bzlmod. I assume that a similar pattern can be done, where two different extensions are called from different starlark files:

https://github.com/aspect-build/bazel-central-registry/blob/main/modules/aspect_rules_swc/0.2.0/MODULE.bazel#L14-L18

However this is failing with only a very short error, not really enough to understand the problem:

```

ERROR: Failed to load Starlark extension '@aspect_rules_swc.0.2.0.swc.swc_cli//:repositories.bzl'.

ERROR: Analysis of target '//:transpile' failed; build aborted

```

as can be seen in context here:

https://buildkite.com/bazel/bcr-presubmit/builds/121#f117a260-ba25-4547-946d-ad69fdee183c

My best guess is that the extension call to create the `swc_cli` repository in `use_repo(npm, "swc_cli")` is not actually creating the `swc_cli` repository at this time, so the subsequent call to the second extension can't resolve the load statement?

|

1.0

|

bzlmod: can one extension depend on side-effect of another? - From the error messages, it's hard to tell what's happening. I'll do my best to make this reproducible.

Today in `WORKSPACE` it's often the case that a repository rule creates a repository which the user loads from. For example in

https://github.com/aspect-build/rules_swc/releases/tag/v0.2.0

The user calls

```

load("@aspect_rules_swc//swc:repositories.bzl", "swc_register_toolchains")

swc_register_toolchains(

name = "swc",

swc_version = "v1.2.118",

)

```

which creates a `swc_cli` repository. Then the user must load from there:

```

# Fetches the npm packages needed to run @swc/cli

load("@swc_cli//:repositories.bzl", _swc_cli_deps = "npm_repositories")

_swc_cli_deps()

```

This can't happen in one starlark file because the load of `@swc_cli` is only possible after the execution of `swc_register_toolchains`.

I'm trying to convert this usage to bzlmod. I assume that a similar pattern can be done, where two different extensions are called from different starlark files:

https://github.com/aspect-build/bazel-central-registry/blob/main/modules/aspect_rules_swc/0.2.0/MODULE.bazel#L14-L18

However this is failing with only a very short error, not really enough to understand the problem:

```

ERROR: Failed to load Starlark extension '@aspect_rules_swc.0.2.0.swc.swc_cli//:repositories.bzl'.

ERROR: Analysis of target '//:transpile' failed; build aborted

```

as can be seen in context here:

https://buildkite.com/bazel/bcr-presubmit/builds/121#f117a260-ba25-4547-946d-ad69fdee183c

My best guess is that the extension call to create the `swc_cli` repository in `use_repo(npm, "swc_cli")` is not actually creating the `swc_cli` repository at this time, so the subsequent call to the second extension can't resolve the load statement?

|

process

|

bzlmod can one extension depend on side effect of another from the error messages it s hard to tell what s happening i ll do my best to make this reproducible today in workspace it s often the case that a repository rule creates a repository which the user loads from for example in the user calls load aspect rules swc swc repositories bzl swc register toolchains swc register toolchains name swc swc version which creates a swc cli repository then the user must load from there fetches the npm packages needed to run swc cli load swc cli repositories bzl swc cli deps npm repositories swc cli deps this can t happen in one starlark file because the load of swc cli is only possible after the execution of swc register toolchains i m trying to convert this usage to bzlmod i assume that a similar pattern can be done where two different extensions are called from different starlark files however this is failing with only a very short error not really enough to understand the problem error failed to load starlark extension aspect rules swc swc swc cli repositories bzl error analysis of target transpile failed build aborted as can be seen in context here my best guess is that the extension call to create the swc cli repository in use repo npm swc cli is not actually creating the swc cli repository at this time so the subsequent call to the second extension can t resolve the load statement

| 1

|

14,812

| 18,144,021,652

|

IssuesEvent

|

2021-09-25 04:58:11

|

edmobe/android-video-magnification

|

https://api.github.com/repos/edmobe/android-video-magnification

|

closed

|

PR-0004 OpenCV no abre el vídeo, pero antes sí lo hacía

|

video-processing problem

|

Se muestra el siguiente error en consola:

```

E/cv::error(): OpenCV(4.5.3) Error: Requested object was not found (could not open directory: /data/app/com.example.videomagnification-Rg1L4AgNNhczgpK1kZy9Ow==/base.apk!/lib/arm64-v8a) in glob_rec, file /build/master_pack-android/opencv/modules/core/src/glob.cpp, line 279

```

|

1.0

|

PR-0004 OpenCV no abre el vídeo, pero antes sí lo hacía - Se muestra el siguiente error en consola:

```

E/cv::error(): OpenCV(4.5.3) Error: Requested object was not found (could not open directory: /data/app/com.example.videomagnification-Rg1L4AgNNhczgpK1kZy9Ow==/base.apk!/lib/arm64-v8a) in glob_rec, file /build/master_pack-android/opencv/modules/core/src/glob.cpp, line 279

```

|

process

|

pr opencv no abre el vídeo pero antes sí lo hacía se muestra el siguiente error en consola e cv error opencv error requested object was not found could not open directory data app com example videomagnification base apk lib in glob rec file build master pack android opencv modules core src glob cpp line

| 1

|

6,490

| 8,774,609,059

|

IssuesEvent

|

2018-12-18 20:20:45

|

wavesoftware/java-eid-exceptions

|

https://api.github.com/repos/wavesoftware/java-eid-exceptions

|

closed

|

Better, clean library structure

|

incompatibile quality

|

Actualy library consist of Eid object that holds to much responsibility. That's primary function but as well configuration and logging.

There is also issue with `tryToExecute` methods as they are in EidPreconditions. There should be other class introduced as their function isn't a precondition but execution wrapper.

|

True

|

Better, clean library structure - Actualy library consist of Eid object that holds to much responsibility. That's primary function but as well configuration and logging.

There is also issue with `tryToExecute` methods as they are in EidPreconditions. There should be other class introduced as their function isn't a precondition but execution wrapper.

|

non_process

|

better clean library structure actualy library consist of eid object that holds to much responsibility that s primary function but as well configuration and logging there is also issue with trytoexecute methods as they are in eidpreconditions there should be other class introduced as their function isn t a precondition but execution wrapper

| 0

|

8,471

| 11,642,030,609

|

IssuesEvent

|

2020-02-29 05:18:58

|

dotnet/runtime

|

https://api.github.com/repos/dotnet/runtime

|

closed

|

Test System.ServiceProcess.Tests.ServiceBaseTests.TestOnStartWithArgsThenStop failed with "System.AggregateException : One or more errors occurred. (Task timed out after 60000)\r\n".

|

area-System.ServiceProcess test-run-core untriaged

|

Test: System.ServiceProcess.Tests.ServiceBaseTests.TestOnStartWithArgsThenStop has failed.

MESSAGE:

System.AggregateException : One or more errors occurred. (Task timed out after 60000)\r\n---- System.TimeoutException : Task timed out after 60000

+++++++++++++++++++

STACK TRACE:

at System.Threading.Tasks.Task`1.GetResultCore(Boolean waitCompletionNotification) in E:\A\_work\5\s\src\mscorlib\src\System\Threading\Tasks\future.cs:line 493 at System.ServiceProcess.Tests.TestServiceProvider.GetByte() in D:\j\workspace\outerloop_net---dbe8fad8\src\System.ServiceProcess.ServiceController\tests\TestServiceProvider.cs:line 89 at System.ServiceProcess.Tests.ServiceBaseTests.TestOnStartWithArgsThenStop() in D:\j\workspace\outerloop_net---dbe8fad8\src\System.ServiceProcess.ServiceController\tests\ServiceBaseTests.cs:line 98 ----- Inner Stack Trace ----- at System.Threading.Tasks.TaskTimeoutExtensions.TimeoutAfter(Task task, Int32 millisecondsTimeout) in D:\j\workspace\outerloop_net---dbe8fad8\src\Common\tests\System\Threading\Tasks\TaskTimeoutExtensions.cs:line 26 at System.ServiceProcess.Tests.TestServiceProvider.ReadPipeAsync() in D:\j\workspace\outerloop_net---dbe8fad8\src\System.ServiceProcess.ServiceController\tests\TestServiceProvider.cs:line 85

Details: https://ci.dot.net/job/dotnet_corefx/job/master/job/outerloop_netcoreapp_windows_nt_debug/389/testReport/System.ServiceProcess.Tests/ServiceBaseTests/TestOnStartWithArgsThenStop/

|

1.0

|

Test System.ServiceProcess.Tests.ServiceBaseTests.TestOnStartWithArgsThenStop failed with "System.AggregateException : One or more errors occurred. (Task timed out after 60000)\r\n". - Test: System.ServiceProcess.Tests.ServiceBaseTests.TestOnStartWithArgsThenStop has failed.

MESSAGE:

System.AggregateException : One or more errors occurred. (Task timed out after 60000)\r\n---- System.TimeoutException : Task timed out after 60000

+++++++++++++++++++

STACK TRACE:

at System.Threading.Tasks.Task`1.GetResultCore(Boolean waitCompletionNotification) in E:\A\_work\5\s\src\mscorlib\src\System\Threading\Tasks\future.cs:line 493 at System.ServiceProcess.Tests.TestServiceProvider.GetByte() in D:\j\workspace\outerloop_net---dbe8fad8\src\System.ServiceProcess.ServiceController\tests\TestServiceProvider.cs:line 89 at System.ServiceProcess.Tests.ServiceBaseTests.TestOnStartWithArgsThenStop() in D:\j\workspace\outerloop_net---dbe8fad8\src\System.ServiceProcess.ServiceController\tests\ServiceBaseTests.cs:line 98 ----- Inner Stack Trace ----- at System.Threading.Tasks.TaskTimeoutExtensions.TimeoutAfter(Task task, Int32 millisecondsTimeout) in D:\j\workspace\outerloop_net---dbe8fad8\src\Common\tests\System\Threading\Tasks\TaskTimeoutExtensions.cs:line 26 at System.ServiceProcess.Tests.TestServiceProvider.ReadPipeAsync() in D:\j\workspace\outerloop_net---dbe8fad8\src\System.ServiceProcess.ServiceController\tests\TestServiceProvider.cs:line 85

Details: https://ci.dot.net/job/dotnet_corefx/job/master/job/outerloop_netcoreapp_windows_nt_debug/389/testReport/System.ServiceProcess.Tests/ServiceBaseTests/TestOnStartWithArgsThenStop/

|

process

|

test system serviceprocess tests servicebasetests testonstartwithargsthenstop failed with system aggregateexception one or more errors occurred task timed out after r n test system serviceprocess tests servicebasetests testonstartwithargsthenstop has failed message system aggregateexception one or more errors occurred task timed out after r n system timeoutexception task timed out after stack trace at system threading tasks task getresultcore boolean waitcompletionnotification in e a work s src mscorlib src system threading tasks future cs line at system serviceprocess tests testserviceprovider getbyte in d j workspace outerloop net src system serviceprocess servicecontroller tests testserviceprovider cs line at system serviceprocess tests servicebasetests testonstartwithargsthenstop in d j workspace outerloop net src system serviceprocess servicecontroller tests servicebasetests cs line inner stack trace at system threading tasks tasktimeoutextensions timeoutafter task task millisecondstimeout in d j workspace outerloop net src common tests system threading tasks tasktimeoutextensions cs line at system serviceprocess tests testserviceprovider readpipeasync in d j workspace outerloop net src system serviceprocess servicecontroller tests testserviceprovider cs line details

| 1

|

114,262

| 17,197,725,813

|

IssuesEvent

|

2021-07-16 20:16:15

|

zulip/zulip

|

https://api.github.com/repos/zulip/zulip

|

opened

|

Restrict requests with RemoteZulipServer auth to zilencer and corporate views

|

area: security

|

RemoteZulipServer auth has higher rate-limits than unauth'd requests -- but we do not restrict such requests from hitting other endpoints.