| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

28,834

| 12,974,217,847

|

IssuesEvent

|

2020-07-21 15:07:14

|

terraform-providers/terraform-provider-aws

|

https://api.github.com/repos/terraform-providers/terraform-provider-aws

|

opened

|

tests/resource/aws_rds_cluster: TestAccAWSRDSCluster_Port Consistently Failing Since Postgres 11

|

good first issue service/rds tests

|

<!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backends/index.html) issues

* [Provisioner](https://www.terraform.io/docs/provisioners/index.html) issues

* [Registry](https://registry.terraform.io/) issues

* Spans resources across multiple providers

If you are running into one of these scenarios, we recommend opening an issue in the [Terraform core repository](https://github.com/hashicorp/terraform/) instead.

--->

<!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or other comments that do not add relevant new information or questions, they generate extra noise for issue followers and do not help prioritize the request

* If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

### Terraform CLI and Terraform AWS Provider Version

Latest codebase

### Affected Resource(s)

* aws_rds_cluster

### Expected Behavior

Test should pass consistently. 😄

### Actual Behavior

Consistent failures:

```

--- FAIL: TestAccAWSRDSCluster_Port (4.47s)

testing.go:684: Step 0 error: errors during apply:

Error: error creating RDS cluster: InvalidParameterCombination: The Parameter Group default.aurora-postgresql10 with DBParameterGroupFamily aurora-postgresql10 cannot be used for this instance. Please use a Parameter Group with DBParameterGroupFamily aurora-postgresql11

```

### Steps to Reproduce

1. `make testacc TESTARGS='-run=TestAccAWSRDSCluster_Port'`

|

1.0

|

tests/resource/aws_rds_cluster: TestAccAWSRDSCluster_Port Consistently Failing Since Postgres 11 - <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backends/index.html) issues

* [Provisioner](https://www.terraform.io/docs/provisioners/index.html) issues

* [Registry](https://registry.terraform.io/) issues

* Spans resources across multiple providers

If you are running into one of these scenarios, we recommend opening an issue in the [Terraform core repository](https://github.com/hashicorp/terraform/) instead.

--->

<!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or other comments that do not add relevant new information or questions, they generate extra noise for issue followers and do not help prioritize the request

* If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

### Terraform CLI and Terraform AWS Provider Version

Latest codebase

### Affected Resource(s)

* aws_rds_cluster

### Expected Behavior

Test should pass consistently. 😄

### Actual Behavior

Consistent failures:

```

--- FAIL: TestAccAWSRDSCluster_Port (4.47s)

testing.go:684: Step 0 error: errors during apply:

Error: error creating RDS cluster: InvalidParameterCombination: The Parameter Group default.aurora-postgresql10 with DBParameterGroupFamily aurora-postgresql10 cannot be used for this instance. Please use a Parameter Group with DBParameterGroupFamily aurora-postgresql11

```

### Steps to Reproduce

1. `make testacc TESTARGS='-run=TestAccAWSRDSCluster_Port'`

|

non_process

|

tests resource aws rds cluster testaccawsrdscluster port consistently failing since postgres please note the following potential times when an issue might be in terraform core or resource ordering issues and issues issues issues spans resources across multiple providers if you are running into one of these scenarios we recommend opening an issue in the instead community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or other comments that do not add relevant new information or questions they generate extra noise for issue followers and do not help prioritize the request if you are interested in working on this issue or have submitted a pull request please leave a comment terraform cli and terraform aws provider version latest codebase affected resource s aws rds cluster expected behavior test should pass consistently 😄 actual behavior consistent failures fail testaccawsrdscluster port testing go step error errors during apply error error creating rds cluster invalidparametercombination the parameter group default aurora with dbparametergroupfamily aurora cannot be used for this instance please use a parameter group with dbparametergroupfamily aurora steps to reproduce make testacc testargs run testaccawsrdscluster port

| 0

|

18,566

| 24,555,883,634

|

IssuesEvent

|

2022-10-12 15:50:00

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[Android] [Offline Indicator] Offline error message is not getting displayed in the following scenario

|

Bug P1 Android Process: Fixed Process: Tested QA Process: Tested dev

|

**Steps:**

1. Open the installed app

2. Sign in and complete the passcode process

3. Navigate to 'Study list' screen

4. Click on the study which has updated consent flow

5. Turn off the internet/mobile data

6. Complete all the steps in consent flow and navigate to 'Consent Signature' screen

7. Click on 'Next' button and Verify

**AR:** Offline error message is not getting displayed in the following scenario

**ER:** Offline error message should get displayed in the following scenario

https://user-images.githubusercontent.com/86007179/178954827-1fddee65-7117-406d-b86b-1e30cde9800b.mp4

|

3.0

|

[Android] [Offline Indicator] Offline error message is not getting displayed in the following scenario - **Steps:**

1. Open the installed app

2. Sign in and complete the passcode process

3. Navigate to 'Study list' screen

4. Click on the study which has updated consent flow

5. Turn off the internet/mobile data

6. Complete all the steps in consent flow and navigate to 'Consent Signature' screen

7. Click on 'Next' button and Verify

**AR:** Offline error message is not getting displayed in the following scenario

**ER:** Offline error message should get displayed in the following scenario

https://user-images.githubusercontent.com/86007179/178954827-1fddee65-7117-406d-b86b-1e30cde9800b.mp4

|

process

|

offline error message is not getting displayed in the following scenario steps open the installed app sign in and complete the passcode process navigate to study list screen click on the study which has updated consent flow turn off the internet mobile data complete all the steps in consent flow and navigate to consent signature screen click on next button and verify ar offline error message is not getting displayed in the following scenario er offline error message should get displayed in the following scenario

| 1

|

181,073

| 30,616,535,052

|

IssuesEvent

|

2023-07-24 04:02:37

|

MozillaFoundation/foundation.mozilla.org

|

https://api.github.com/repos/MozillaFoundation/foundation.mozilla.org

|

closed

|

[RCC] Update Figma deck with recap/reflections

|

design RCC

|

Update [Figma deck](https://www.figma.com/file/dPGD3acNbT75dvEGzNaGUb/Responsible-Computing?type=design&node-id=1%3A15&mode=design&t=NqMbdtCj3LOlHQw9-1):

- [x] Make sure deck is up to date

- [x] Reflections section with reflections and next steps/upcoming work

|

1.0

|

[RCC] Update Figma deck with recap/reflections - Update [Figma deck](https://www.figma.com/file/dPGD3acNbT75dvEGzNaGUb/Responsible-Computing?type=design&node-id=1%3A15&mode=design&t=NqMbdtCj3LOlHQw9-1):

- [x] Make sure deck is up to date

- [x] Reflections section with reflections and next steps/upcoming work

|

non_process

|

update figma deck with recap reflections update make sure deck is up to date reflections section with reflections and next steps upcoming work

| 0

|

10,305

| 13,155,326,986

|

IssuesEvent

|

2020-08-10 08:41:04

|

didi/mpx

|

https://api.github.com/repos/didi/mpx

|

closed

|

Webpack bundling issue with shared components

|

processing

|

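**Problem description**

When multiple subpackages reference the same component written in .mpx and its js|ts is imported from an external file, only the first subpackage that loads the component works correctly. For example, pages a and b are in different subpackages: if I open page a first and then page b, page b throws an error; in the reverse order, page a throws the error.

The code of list.mpx is as follows: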

```

<template>

<view class="list">

<view wx:for="{{listData}}" wx:key="index">{{item}}</view>

</view>

</template>

<script lang="ts" src="./list.ts"></script>

<style lang="stylus">

.list

background-color red

</style>

<script type="application/json">

{

"component": true

}

</script>

```

list.ts

```

import { createComponent } from '@mpxjs/core'

createComponent({

data: {

listData: ['手机', '电视', '电脑']

}

})

```

The error is as follows:

```

jsEnginScriptError

Component is not found in path "packCenter/components/list5b238f42/list" (using by "packCenter/pages/orderDetail/index");onAppRoute

Error: Component is not found in path "packCenter/components/list5b238f42/list" (using by "packCenter/pages/orderDetail/index")

at K (WAService.js:1:1214064)

at K (WAService.js:1:1214268)

at WAService.js:1:1232006

at Module.Fe (WAService.js:1:1232585)

at Function.value (WAService.js:1:1260806)

at It (WAService.js:1:1276660)

at xt (WAService.js:1:1279059)

at Function.<anonymous> (WAService.js:1:1284536)

at i.<anonymous> (WAService.js:1:1253492)

at i.emit (WAService.js:1:412028)

```

In the built dist directory, each of the two subpackages contains its own copy of the list component code.

<img width="375" alt="屏幕快照 2020-08-10 上午11 49 31" src="https://user-images.githubusercontent.com/22835834/89750758-eadba400-daff-11ea-8efe-fb0ac738eee1.png">

<img width="252" alt="屏幕快照 2020-08-10 上午11 52 48" src="https://user-images.githubusercontent.com/22835834/89750817-1fe7f680-db00-11ea-8050-f9f1774287a4.png">

The generated .wxml and .wxss files are fine; the generated js file looks like this:

```

var window = window || {};

window["webpackJsonp"] = require("../../../bundle.js");

(window["webpackJsonp"] = window["webpackJsonp"] || []).push([["packShop/components/list5b238f42/list"],{

/***/ "./node_modules/@mpxjs/webpack-plugin/lib/extractor.js?type=json&index=0!./node_modules/@mpxjs/webpack-plugin/lib/json-compiler/index.js?root=!./node_modules/@mpxjs/webpack-plugin/lib/selector.js?type=json&index=0!./src/components/list/list.mpx?packageName=packShop":

/***/ (function(module, exports) {

// removed by extractor

/***/ }),

/***/ "./node_modules/@mpxjs/webpack-plugin/lib/extractor.js?type=styles&index=0!./node_modules/@mpxjs/webpack-plugin/lib/wxss/loader.js?root=&importLoaders=1&extract=true!./node_modules/@mpxjs/webpack-plugin/lib/style-compiler/index.js?{\"moduleId\":\"m11703cf3\",\"scoped\":false,\"sourceMap\":false}!./node_modules/stylus-loader/index.js!./node_modules/@mpxjs/webpack-plugin/lib/selector.js?type=styles&index=0!./src/components/list/list.mpx?packageName=packShop":

/***/ (function(module, exports) {

// removed by extractor

/***/ }),

/***/ "./node_modules/@mpxjs/webpack-plugin/lib/extractor.js?type=template&index=0!./node_modules/@mpxjs/webpack-plugin/lib/wxml/wxml-loader.js?root=!./node_modules/@mpxjs/webpack-plugin/lib/template-compiler/index.js?{\"usingComponents\":[],\"hasScoped\":false,\"isNative\":false,\"moduleId\":\"m11703cf3\",\"root\":\"\"}!./node_modules/@mpxjs/webpack-plugin/lib/selector.js?type=template&index=0!./src/components/list/list.mpx?packageName=packShop":

/***/ (function(module, exports) {

// removed by extractor

/***/ }),

/***/ "./src/components/list/list.mpx?packageName=packShop":

/***/ (function(module, __webpack_exports__, __webpack_require__) {

"use strict";

__webpack_require__.r(__webpack_exports__);

/* WEBPACK VAR INJECTION */(function(global) {/* mpx inject */ global.currentModuleId = "m11703cf3"

global.currentResource = "/Users/litao/work/GM/gome/src/components/list/list.mpx"

global.currentCtor = Component

global.currentCtorType = "component"

global.currentSrcMode = "wx"

/* mpx inject */ global.currentInject = {

moduleId: "m11703cf3",

render: function () {

this._c("mpxShow", this.mpxShow) || this._c("mpxShow", this.mpxShow) === undefined ? '' : 'display:none;';

this._i(this._c("listData", this.listData), function (item, index) {

item;

});

this._r();

}

};

/* harmony import */ var _list_ts_resourcePath_Users_litao_work_GM_gome_src_components_list_list_mpx__WEBPACK_IMPORTED_MODULE_0__ = __webpack_require__("./src/components/list/list.ts?resourcePath=/Users/litao/work/GM/gome/src/components/list/list.mpx");

/* empty/unused harmony star reexport */global.currentModuleId

/* script */

/* styles */

__webpack_require__("./node_modules/@mpxjs/webpack-plugin/lib/extractor.js?type=styles&index=0!./node_modules/@mpxjs/webpack-plugin/lib/wxss/loader.js?root=&importLoaders=1&extract=true!./node_modules/@mpxjs/webpack-plugin/lib/style-compiler/index.js?{\"moduleId\":\"m11703cf3\",\"scoped\":false,\"sourceMap\":false}!./node_modules/stylus-loader/index.js!./node_modules/@mpxjs/webpack-plugin/lib/selector.js?type=styles&index=0!./src/components/list/list.mpx?packageName=packShop")

/* json */

__webpack_require__("./node_modules/@mpxjs/webpack-plugin/lib/extractor.js?type=json&index=0!./node_modules/@mpxjs/webpack-plugin/lib/json-compiler/index.js?root=!./node_modules/@mpxjs/webpack-plugin/lib/selector.js?type=json&index=0!./src/components/list/list.mpx?packageName=packShop")

/* template */

__webpack_require__("./node_modules/@mpxjs/webpack-plugin/lib/extractor.js?type=template&index=0!./node_modules/@mpxjs/webpack-plugin/lib/wxml/wxml-loader.js?root=!./node_modules/@mpxjs/webpack-plugin/lib/template-compiler/index.js?{\"usingComponents\":[],\"hasScoped\":false,\"isNative\":false,\"moduleId\":\"m11703cf3\",\"root\":\"\"}!./node_modules/@mpxjs/webpack-plugin/lib/selector.js?type=template&index=0!./src/components/list/list.mpx?packageName=packShop")

/* WEBPACK VAR INJECTION */}.call(this, __webpack_require__("./node_modules/webpack/buildin/global.js")))

/***/ })

},[["./src/components/list/list.mpx?packageName=packShop","bundle"]]]);

```

The createComponent method is not called here; the createComponent call was bundled into bundle.js.

Screenshot of the relevant code:

<img width="767" alt="屏幕快照 2020-08-10 上午11 57 31" src="https://user-images.githubusercontent.com/22835834/89750953-bb796700-db00-11ea-9371-1ad900bb1dcd.png">

Note: consider changing the external js|ts import in the .mpx file to the inline form:

```

<script>

//....

</script>

```

With this inline form the problem does not occur (the code that was bundled into bundle.js is then emitted into list.js under each subpackage).

|

1.0

|

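Webpack bundling issue with shared components - **Problem description**

When multiple subpackages reference the same component written in .mpx and its js|ts is imported from an external file, only the first subpackage that loads the component works correctly. For example, pages a and b are in different subpackages: if I open page a first and then page b, page b throws an error; in the reverse order, page a throws the error.

The code of list.mpx is as follows: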

```

<template>

<view class="list">

<view wx:for="{{listData}}" wx:key="index">{{item}}</view>

</view>

</template>

<script lang="ts" src="./list.ts"></script>

<style lang="stylus">

.list

background-color red

</style>

<script type="application/json">

{

"component": true

}

</script>

```

list.ts

```

import { createComponent } from '@mpxjs/core'

createComponent({

data: {

listData: ['手机', '电视', '电脑']

}

})

```

The error is as follows:

```

jsEnginScriptError

Component is not found in path "packCenter/components/list5b238f42/list" (using by "packCenter/pages/orderDetail/index");onAppRoute

Error: Component is not found in path "packCenter/components/list5b238f42/list" (using by "packCenter/pages/orderDetail/index")

at K (WAService.js:1:1214064)

at K (WAService.js:1:1214268)

at WAService.js:1:1232006

at Module.Fe (WAService.js:1:1232585)

at Function.value (WAService.js:1:1260806)

at It (WAService.js:1:1276660)

at xt (WAService.js:1:1279059)

at Function.<anonymous> (WAService.js:1:1284536)

at i.<anonymous> (WAService.js:1:1253492)

at i.emit (WAService.js:1:412028)

```

In the built dist directory, each of the two subpackages contains its own copy of the list component code.

<img width="375" alt="屏幕快照 2020-08-10 上午11 49 31" src="https://user-images.githubusercontent.com/22835834/89750758-eadba400-daff-11ea-8efe-fb0ac738eee1.png">

<img width="252" alt="屏幕快照 2020-08-10 上午11 52 48" src="https://user-images.githubusercontent.com/22835834/89750817-1fe7f680-db00-11ea-8050-f9f1774287a4.png">

The generated .wxml and .wxss files are fine; the generated js file looks like this:

```

var window = window || {};

window["webpackJsonp"] = require("../../../bundle.js");

(window["webpackJsonp"] = window["webpackJsonp"] || []).push([["packShop/components/list5b238f42/list"],{

/***/ "./node_modules/@mpxjs/webpack-plugin/lib/extractor.js?type=json&index=0!./node_modules/@mpxjs/webpack-plugin/lib/json-compiler/index.js?root=!./node_modules/@mpxjs/webpack-plugin/lib/selector.js?type=json&index=0!./src/components/list/list.mpx?packageName=packShop":

/***/ (function(module, exports) {

// removed by extractor

/***/ }),

/***/ "./node_modules/@mpxjs/webpack-plugin/lib/extractor.js?type=styles&index=0!./node_modules/@mpxjs/webpack-plugin/lib/wxss/loader.js?root=&importLoaders=1&extract=true!./node_modules/@mpxjs/webpack-plugin/lib/style-compiler/index.js?{\"moduleId\":\"m11703cf3\",\"scoped\":false,\"sourceMap\":false}!./node_modules/stylus-loader/index.js!./node_modules/@mpxjs/webpack-plugin/lib/selector.js?type=styles&index=0!./src/components/list/list.mpx?packageName=packShop":

/***/ (function(module, exports) {

// removed by extractor

/***/ }),

/***/ "./node_modules/@mpxjs/webpack-plugin/lib/extractor.js?type=template&index=0!./node_modules/@mpxjs/webpack-plugin/lib/wxml/wxml-loader.js?root=!./node_modules/@mpxjs/webpack-plugin/lib/template-compiler/index.js?{\"usingComponents\":[],\"hasScoped\":false,\"isNative\":false,\"moduleId\":\"m11703cf3\",\"root\":\"\"}!./node_modules/@mpxjs/webpack-plugin/lib/selector.js?type=template&index=0!./src/components/list/list.mpx?packageName=packShop":

/***/ (function(module, exports) {

// removed by extractor

/***/ }),

/***/ "./src/components/list/list.mpx?packageName=packShop":

/***/ (function(module, __webpack_exports__, __webpack_require__) {

"use strict";

__webpack_require__.r(__webpack_exports__);

/* WEBPACK VAR INJECTION */(function(global) {/* mpx inject */ global.currentModuleId = "m11703cf3"

global.currentResource = "/Users/litao/work/GM/gome/src/components/list/list.mpx"

global.currentCtor = Component

global.currentCtorType = "component"

global.currentSrcMode = "wx"

/* mpx inject */ global.currentInject = {

moduleId: "m11703cf3",

render: function () {

this._c("mpxShow", this.mpxShow) || this._c("mpxShow", this.mpxShow) === undefined ? '' : 'display:none;';

this._i(this._c("listData", this.listData), function (item, index) {

item;

});

this._r();

}

};

/* harmony import */ var _list_ts_resourcePath_Users_litao_work_GM_gome_src_components_list_list_mpx__WEBPACK_IMPORTED_MODULE_0__ = __webpack_require__("./src/components/list/list.ts?resourcePath=/Users/litao/work/GM/gome/src/components/list/list.mpx");

/* empty/unused harmony star reexport */global.currentModuleId

/* script */

/* styles */

__webpack_require__("./node_modules/@mpxjs/webpack-plugin/lib/extractor.js?type=styles&index=0!./node_modules/@mpxjs/webpack-plugin/lib/wxss/loader.js?root=&importLoaders=1&extract=true!./node_modules/@mpxjs/webpack-plugin/lib/style-compiler/index.js?{\"moduleId\":\"m11703cf3\",\"scoped\":false,\"sourceMap\":false}!./node_modules/stylus-loader/index.js!./node_modules/@mpxjs/webpack-plugin/lib/selector.js?type=styles&index=0!./src/components/list/list.mpx?packageName=packShop")

/* json */

__webpack_require__("./node_modules/@mpxjs/webpack-plugin/lib/extractor.js?type=json&index=0!./node_modules/@mpxjs/webpack-plugin/lib/json-compiler/index.js?root=!./node_modules/@mpxjs/webpack-plugin/lib/selector.js?type=json&index=0!./src/components/list/list.mpx?packageName=packShop")

/* template */

__webpack_require__("./node_modules/@mpxjs/webpack-plugin/lib/extractor.js?type=template&index=0!./node_modules/@mpxjs/webpack-plugin/lib/wxml/wxml-loader.js?root=!./node_modules/@mpxjs/webpack-plugin/lib/template-compiler/index.js?{\"usingComponents\":[],\"hasScoped\":false,\"isNative\":false,\"moduleId\":\"m11703cf3\",\"root\":\"\"}!./node_modules/@mpxjs/webpack-plugin/lib/selector.js?type=template&index=0!./src/components/list/list.mpx?packageName=packShop")

/* WEBPACK VAR INJECTION */}.call(this, __webpack_require__("./node_modules/webpack/buildin/global.js")))

/***/ })

},[["./src/components/list/list.mpx?packageName=packShop","bundle"]]]);

```

The createComponent method is not called here; the createComponent call was bundled into bundle.js.

Screenshot of the relevant code:

<img width="767" alt="屏幕快照 2020-08-10 上午11 57 31" src="https://user-images.githubusercontent.com/22835834/89750953-bb796700-db00-11ea-9371-1ad900bb1dcd.png">

Note: consider changing the external js|ts import in the .mpx file to the inline form:

```

<script>

//....

</script>

```

With this inline form the problem does not occur (the code that was bundled into bundle.js is then emitted into list.js under each subpackage).

|

process

|

公共组件webpack打包问题 问题描述 多个分包引用同一 mpx编写组件且js ts 为外部引入时只有第一个加载该组件的分包能正常运行。例如,a b两个页面位于不同分包下,我先进入a页面在进入b页面b页面会报错,反之a页面报错 list mpx 文件代码如下 item list background color red component true list ts import createcomponent from mpxjs core createcomponent data listdata 报错如下: jsenginscripterror component is not found in path packcenter components list using by packcenter pages orderdetail index onapproute error component is not found in path packcenter components list using by packcenter pages orderdetail index at k waservice js at k waservice js at waservice js at module fe waservice js at function value waservice js at it waservice js at xt waservice js at function waservice js at i waservice js at i emit waservice js 打包出来dist目下在两个分包分都有一份list组件的代码 img width alt 屏幕快照 src img width alt 屏幕快照 src 打包出来 wxml和wxss文件没问题js文件内容如下 var window window window require bundle js window window push node modules mpxjs webpack plugin lib extractor js type json index node modules mpxjs webpack plugin lib json compiler index js root node modules mpxjs webpack plugin lib selector js type json index src components list list mpx packagename packshop function module exports removed by extractor node modules mpxjs webpack plugin lib extractor js type styles index node modules mpxjs webpack plugin lib wxss loader js root importloaders extract true node modules mpxjs webpack plugin lib style compiler index js moduleid scoped false sourcemap false node modules stylus loader index js node modules mpxjs webpack plugin lib selector js type styles index src components list list mpx packagename packshop function module exports removed by extractor node modules mpxjs webpack plugin lib extractor js type template index node modules mpxjs webpack plugin lib wxml wxml loader js root node modules mpxjs webpack plugin lib template compiler index js usingcomponents hasscoped false isnative false moduleid root node modules mpxjs webpack plugin lib selector js type template index src components list list mpx packagename 
packshop function module exports removed by extractor src components list list mpx packagename packshop function module webpack exports webpack require use strict webpack require r webpack exports webpack var injection function global mpx inject global currentmoduleid global currentresource users litao work gm gome src components list list mpx global currentctor component global currentctortype component global currentsrcmode wx mpx inject global currentinject moduleid render function this c mpxshow this mpxshow this c mpxshow this mpxshow undefined display none this i this c listdata this listdata function item index item this r harmony import var list ts resourcepath users litao work gm gome src components list list mpx webpack imported module webpack require src components list list ts resourcepath users litao work gm gome src components list list mpx empty unused harmony star reexport global currentmoduleid script styles webpack require node modules mpxjs webpack plugin lib extractor js type styles index node modules mpxjs webpack plugin lib wxss loader js root importloaders extract true node modules mpxjs webpack plugin lib style compiler index js moduleid scoped false sourcemap false node modules stylus loader index js node modules mpxjs webpack plugin lib selector js type styles index src components list list mpx packagename packshop json webpack require node modules mpxjs webpack plugin lib extractor js type json index node modules mpxjs webpack plugin lib json compiler index js root node modules mpxjs webpack plugin lib selector js type json index src components list list mpx packagename packshop template webpack require node modules mpxjs webpack plugin lib extractor js type template index node modules mpxjs webpack plugin lib wxml wxml loader js root node modules mpxjs webpack plugin lib template compiler index js usingcomponents hasscoped false isnative false moduleid root node modules mpxjs webpack plugin lib selector js type template index src 
components list list mpx packagename packshop webpack var injection call this webpack require node modules webpack buildin global js 这里并有调用createcomponnet方法createcomponent方法被打包到了bundle js中 相关代码截图 img width alt 屏幕快照 src 注如果将 mpx文件js ts外部引入改为 的形式则不会有这个问题(这时打包到bundle js中的代码片段会被打到各自分包下的list js中)

| 1

|

3,940

| 6,885,215,753

|

IssuesEvent

|

2017-11-21 15:29:14

|

geneontology/go-ontology

|

https://api.github.com/repos/geneontology/go-ontology

|

closed

|

acetylcholine receptor impairing toxin

|

auto-migrated curator-request multiorganism processes New term request toxin UniProt

|

Hi,

I will need these 2 new GO terms:

envenomation resulting in positive regulation of G-protein coupled acetylcholine receptor activity in other organism; GO:new

def: A process that begins with venom being forced into an organism by the bite or sting of another organism, and ends with the resultant modulation of G-protein coupled acetylcholine receptor activity in the bitten organism.

- is the child of GO:0044513; envenomation resulting in modulation of G-protein coupled receptor activity in other organism

and

envenomation resulting in modulation of acetylcholine receptor activity in other organism

def: A process that begins with venom being forced into an organism by the bite or sting of another organism, and ends with the resultant modulation of nicotinic acetylcholine receptor activity in the bitten organism.

synonym: envenomation resulting in modulation of nicotinic acetylcholine receptor activity in other organism

- is the child of GO:0044511; envenomation resulting in modulation of receptor activity in other organism

many thanks

Florence

Reported by: fjungo

Original Ticket: [geneontology/ontology-requests/9983](https://sourceforge.net/p/geneontology/ontology-requests/9983)

|

1.0

|

acetylcholine receptor impairing toxin - Hi,

I will need these 2 new GO terms:

envenomation resulting in positive regulation of G-protein coupled acetylcholine receptor activity in other organism; GO:new

def: A process that begins with venom being forced into an organism by the bite or sting of another organism, and ends with the resultant modulation of G-protein coupled acetylcholine receptor activity in the bitten organism.

- is the child of GO:0044513; envenomation resulting in modulation of G-protein coupled receptor activity in other organism

and

envenomation resulting in modulation of acetylcholine receptor activity in other organism

def: A process that begins with venom being forced into an organism by the bite or sting of another organism, and ends with the resultant modulation of nicotinic acetylcholine receptor activity in the bitten organism.

synonym: envenomation resulting in modulation of nicotinic acetylcholine receptor activity in other organism

- is the child of GO:0044511; envenomation resulting in modulation of receptor activity in other organism

many thanks

Florence

Reported by: fjungo

Original Ticket: [geneontology/ontology-requests/9983](https://sourceforge.net/p/geneontology/ontology-requests/9983)

|

process

|

acetylcholine receptor impairing toxin hi i will need these new go terms envenomation resulting in positive regulation of g protein coupled acetylcholine receptor activity in other organism go new def a process that begins with venom being forced into an organism by the bite or sting of another organism and ends with the resultant modulation of g protein coupled acetylcholine receptor activity in the bitten organism is the child of go envenomation resulting in modulation of g protein coupled receptor activity in other organism and envenomation resulting in modulation of acetylcholine receptor activity in other organism def a process that begins with venom being forced into an organism by the bite or sting of another organism and ends with the resultant modulation of nicotinic acetylcholine receptor activity in the bitten organism synonym envenomation resulting in modulation of nicotinic acetylcholine receptor activity in other organism is the child of go envenomation resulting in modulation of receptor activity in other organism many thanks florence reported by fjungo original ticket

| 1

|

150,411

| 5,766,283,661

|

IssuesEvent

|

2017-04-27 06:35:27

|

Komodo/KomodoEdit

|

https://api.github.com/repos/Komodo/KomodoEdit

|

closed

|

Can't use collaboration feature

|

Bug Bug: New Pending: Response Severity: Priority

|

### Short Summary

I can't use collaboration feature. In the Collaboration tab I see the message "Collaboration encountered a communication error and will recover automatically"

When I try to reconnect - it doesn't work.

### Steps to Reproduce

1. Open Komodo IDE

2. Open collaboration tab

3. Click to "Force reconnect now"

### Expected results

Ability to create new session

### Actual results

501 error

### Platform Information

*Komodo IDE

*Version 10.2.1

*Ubuntu 15.10

### Additional Information

If I use HTTP Inspector I see 501 error:

Request:

URL: https://collaboration-push-v3.activestate.com:443

connection: keep-alive

host: collaboration-push-v3.activestate.com:443

proxy-connection: keep-alive

user-agent: Mozilla/5.0 (X11; Linux x86_64; rv:35.0) Gecko/20100101 KomodoIDE/

Response:

status: 501

content-length: 177

content-type: text/html

|

1.0

|

Can't use collaboration feature - ### Short Summary

I can't use collaboration feature. In the Collaboration tab I see the message "Collaboration encountered a communication error and will recover automatically"

When I try to reconnect - it doesn't work.

### Steps to Reproduce

1. Open Komodo IDE

2. Open collaboration tab

3. Click to "Force reconnect now"

### Expected results

Ability to create new session

### Actual results

501 error

### Platform Information

*Komodo IDE

*Version 10.2.1

*Ubuntu 15.10

### Additional Information

If I use HTTP Inspector I see 501 error:

Request:

URL: https://collaboration-push-v3.activestate.com:443

connection: keep-alive

host: collaboration-push-v3.activestate.com:443

proxy-connection: keep-alive

user-agent: Mozilla/5.0 (X11; Linux x86_64; rv:35.0) Gecko/20100101 KomodoIDE/

Response:

status: 501

content-length: 177

content-type: text/html

|

non_process

|

can t use collaboration feature short summary i can t use collaboration feature in the collaboration tab i see the message collaboration encountered a communication error and will recover automatically when i try to reconnect it doesn t work steps to reproduce open komodo ide open collaboration tab click to force reconnect now expected results ability to create new session actual results error platform information komodo ide version ubuntu additional information if i use http inspector i see error request url connection keep alive host collaboration push activestate com proxy connection keep alive user agent mozilla linux rv gecko komodoide response status content length content type text html

| 0

|

1,403

| 3,967,880,289

|

IssuesEvent

|

2016-05-03 17:44:46

|

opentrials/opentrials

|

https://api.github.com/repos/opentrials/opentrials

|

opened

|

Setup continuous processing

|

Processors

|

- [ ] add strategy to process only updated data in `warehouse`

- [ ] update the `processors` stack to use this strategy, and `make-initial-processing` to not use it

|

1.0

|

Setup continuous processing - - [ ] add strategy to process only updated data in `warehouse`

- [ ] update the `processors` stack to use this strategy, and `make-initial-processing` to not use it

|

process

|

setup continuous processing add strategy to process only updated data in warehouse update processors stack to use this strategy and make initial processing to do not use

| 1

|

416,102

| 28,066,846,265

|

IssuesEvent

|

2023-03-29 16:00:48

|

dockstore/dockstore

|

https://api.github.com/repos/dockstore/dockstore

|

closed

|

Validate BioData Catalyst logos

|

enhancement documentation gui review

|

**Is your feature request related to a problem? Please describe.**

BioData Catalyst® has recently updated its logo and name usage.

https://www.biodatacatalyst.org/consortium-resources/bdcatalyst-branding/

**Describe the solution you'd like**

Validate that Dockstore is complying with the new requirements.

**Describe alternatives you've considered**

NA

**Additional context**

NA

┆Issue is synchronized with this [Jira Story](https://ucsc-cgl.atlassian.net/browse/DOCK-2291)

┆Attachments: <a href="https://api.atlassian.com/ex/jira/9ff674c1-1cd9-4ee6-9138-6e2cdc7b3740/rest/api/2/attachment/content/10887">BDC-Logos.zip</a> | <a href="https://api.atlassian.com/ex/jira/9ff674c1-1cd9-4ee6-9138-6e2cdc7b3740/rest/api/2/attachment/content/10885">BioDataCatalyst-StyleGuide.pdf</a> | <a href="https://api.atlassian.com/ex/jira/9ff674c1-1cd9-4ee6-9138-6e2cdc7b3740/rest/api/2/attachment/content/10886">Color Icons.zip</a> | <a href="https://api.atlassian.com/ex/jira/9ff674c1-1cd9-4ee6-9138-6e2cdc7b3740/rest/api/2/attachment/content/10888">Gray Icons.zip</a>

┆Fix Versions: Dockstore 1.14

┆Issue Number: DOCK-2291

┆Sprint: 104 - Blue Nile

┆Issue Type: Story

|

1.0

|

Validate BioData Catalyst logos - **Is your feature request related to a problem? Please describe.**

BioData Catalyst® has recently updated its logo and name usage.

https://www.biodatacatalyst.org/consortium-resources/bdcatalyst-branding/

**Describe the solution you'd like**

Validate that Dockstore is complying with the new requirements.

**Describe alternatives you've considered**

NA

**Additional context**

NA

┆Issue is synchronized with this [Jira Story](https://ucsc-cgl.atlassian.net/browse/DOCK-2291)

┆Attachments: <a href="https://api.atlassian.com/ex/jira/9ff674c1-1cd9-4ee6-9138-6e2cdc7b3740/rest/api/2/attachment/content/10887">BDC-Logos.zip</a> | <a href="https://api.atlassian.com/ex/jira/9ff674c1-1cd9-4ee6-9138-6e2cdc7b3740/rest/api/2/attachment/content/10885">BioDataCatalyst-StyleGuide.pdf</a> | <a href="https://api.atlassian.com/ex/jira/9ff674c1-1cd9-4ee6-9138-6e2cdc7b3740/rest/api/2/attachment/content/10886">Color Icons.zip</a> | <a href="https://api.atlassian.com/ex/jira/9ff674c1-1cd9-4ee6-9138-6e2cdc7b3740/rest/api/2/attachment/content/10888">Gray Icons.zip</a>

┆Fix Versions: Dockstore 1.14

┆Issue Number: DOCK-2291

┆Sprint: 104 - Blue Nile

┆Issue Type: Story

|

non_process

|

validate biodata catalyst logos is your feature request related to a problem please describe biodata catalyst® has recently updated its logo and name usage describe the solution you d like validate that dockstore is complying with the new requirements describe alternatives you ve considered na additional context na ┆issue is synchronized with this ┆attachments ┆fix versions dockstore ┆issue number dock ┆sprint blue nile ┆issue type story

| 0

|

20,633

| 27,314,606,860

|

IssuesEvent

|

2023-02-24 14:48:07

|

microsoft/vscode

|

https://api.github.com/repos/microsoft/vscode

|

closed

|

Adopt utility process for shared process, file watchers and terminal host and set `app.enableSandbox()`

|

plan-item on-testplan shared-process sandbox

|

This is a follow up from https://github.com/microsoft/vscode/issues/92164 and covers remaining work to eventually enable sandboxed renderers fully in Electron via `app.enableSandbox()`.

This means that our shared process has to move away from a node.js enabled browser window to the new utility process.

**Breaking down the usages today:**

* extension management

* settings sync

* profiles

* terminals

* file watcher

**Some initial thoughts:**

* the shared process should probably just change to be a utility process as a first step

* however, any code that relies on the browser window network stack instead has to leverage Electron's [`net`](https://www.electronjs.org/docs/latest/api/net) APIs from the `electron-main` process so as not to lose proxy support

* this can probably be done by implementing some kind of `IRequestService` that is backed by a main process service implementation

* any child process has to decide whether it wants to lift up to a utility process off the main process or remain inside the shared process

//cc @alexdima

|

1.0

|

Adopt utility process for shared process, file watchers and terminal host and set `app.enableSandbox()` - This is a follow up from https://github.com/microsoft/vscode/issues/92164 and covers remaining work to eventually enable sandboxed renderers fully in Electron via `app.enableSandbox()`.

This means that our shared process has to move away from a node.js enabled browser window to the new utility process.

**Breaking down the usages today:**

* extension management

* settings sync

* profiles

* terminals

* file watcher

**Some initial thoughts:**

* the shared process should probably just change to be a utility process as a first step

* however, any code that relies on the browser window network stack instead has to leverage Electron's [`net`](https://www.electronjs.org/docs/latest/api/net) APIs from the `electron-main` process so as not to lose proxy support

* this can probably be done by implementing some kind of `IRequestService` that is backed by a main process service implementation

* any child process has to decide whether it wants to lift up to a utility process off the main process or remain inside the shared process

//cc @alexdima

|

process

|

adopt utility process for shared process file watchers and terminal host and set app enablesandbox this is a follow up from and covers remaining work to eventually enable sandboxed renderers fully in electron via app enablesandbox this means that our shared process has to move away from a node js enabled browser window to the new utility process breaking down the usages today extension management settings sync profiles terminals file watcher some initial thoughts the shared process should probably just change to be a utility process as a first step however any code that relies on the browser window network stack instead has to leverage electrons apis from the electron main process to not loose proxy support this can probably be done by implementing some kind of irequestservice that is backed by a main process service implementation any child process has to decide whether it wants to lift up to a utility process off the main process or remain inside the shared process cc alexdima

| 1

|

96,419

| 16,129,635,934

|

IssuesEvent

|

2021-04-29 01:07:02

|

RG4421/ampere-centos-kernel

|

https://api.github.com/repos/RG4421/ampere-centos-kernel

|

opened

|

CVE-2020-27777 (Medium) detected in linuxv5.2

|

security vulnerability

|

## CVE-2020-27777 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torvalds/linux.git>https://github.com/torvalds/linux.git</a></p>

<p>Found in base branch: <b>amp-centos-8.0-kernel</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>ampere-centos-kernel/arch/powerpc/kernel/rtas.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>ampere-centos-kernel/arch/powerpc/kernel/rtas.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A flaw was found in the way RTAS handled memory accesses in userspace to kernel communication. On a locked down (usually due to Secure Boot) guest system running on top of PowerVM or KVM hypervisors (pseries platform) a root like local user could use this flaw to further increase their privileges to that of a running kernel.

<p>Publish Date: 2020-12-15

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-27777>CVE-2020-27777</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.7</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: High

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2020-27777">https://www.linuxkernelcves.com/cves/CVE-2020-27777</a></p>

<p>Release Date: 2020-10-27</p>

<p>Fix Resolution: v4.14.204, v4.19.155, v5.4.75, v5.9.5,v5.10-rc1</p>

</p>

</details>

<p></p>

|

True

|

CVE-2020-27777 (Medium) detected in linuxv5.2 - ## CVE-2020-27777 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torvalds/linux.git>https://github.com/torvalds/linux.git</a></p>

<p>Found in base branch: <b>amp-centos-8.0-kernel</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>ampere-centos-kernel/arch/powerpc/kernel/rtas.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>ampere-centos-kernel/arch/powerpc/kernel/rtas.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A flaw was found in the way RTAS handled memory accesses in userspace to kernel communication. On a locked down (usually due to Secure Boot) guest system running on top of PowerVM or KVM hypervisors (pseries platform) a root like local user could use this flaw to further increase their privileges to that of a running kernel.

<p>Publish Date: 2020-12-15

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-27777>CVE-2020-27777</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.7</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: High

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2020-27777">https://www.linuxkernelcves.com/cves/CVE-2020-27777</a></p>

<p>Release Date: 2020-10-27</p>

<p>Fix Resolution: v4.14.204, v4.19.155, v5.4.75, v5.9.5,v5.10-rc1</p>

</p>

</details>

<p></p>

|

non_process

|

cve medium detected in cve medium severity vulnerability vulnerable library linux kernel source tree library home page a href found in base branch amp centos kernel vulnerable source files ampere centos kernel arch powerpc kernel rtas c ampere centos kernel arch powerpc kernel rtas c vulnerability details a flaw was found in the way rtas handled memory accesses in userspace to kernel communication on a locked down usually due to secure boot guest system running on top of powervm or kvm hypervisors pseries platform a root like local user could use this flaw to further increase their privileges to that of a running kernel publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required high user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution

| 0

|

22,664

| 31,895,996,039

|

IssuesEvent

|

2023-09-18 01:47:43

|

Significant-Gravitas/Auto-GPT

|

https://api.github.com/repos/Significant-Gravitas/Auto-GPT

|

closed

|

`read_file` does not find file that exists

|

AI model limitation needs investigation function: workspace function: process text Stale

|

### ⚠️ Search for existing issues first ⚠️

- [X] I have searched the existing issues, and there is no existing issue for my problem

### Which Operating System are you using?

Windows

### Which version of Auto-GPT are you using?

Stable (branch)

### GPT-3 or GPT-4?

GPT-3.5

### Steps to reproduce 🕹

When the `read_file` command runs, it can't find the file because no path is given,

even though it wrote the file to the auto_gpt_workspace folder.

### Current behavior 😯

SYSTEM: Command read_file returned: Error: [Errno 2] No such file or directory: 'jokes.txt'

### Expected behavior 🤔

To read the file
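
A minimal sketch of the expected behavior, assuming both commands should resolve a bare filename against the same workspace folder. The helper `resolve_in_workspace` and the `WORKSPACE` constant are hypothetical names used for illustration only; they are not taken from the Auto-GPT code base, and only the folder name `auto_gpt_workspace` comes from this report.

```python
from pathlib import Path

# Hypothetical workspace root; only the folder name comes from the report.
WORKSPACE = Path("auto_gpt_workspace")


def resolve_in_workspace(filename: str, workspace: Path = WORKSPACE) -> Path:
    """Return the absolute path of `filename` inside `workspace`.

    Raises ValueError if the resolved path would escape the workspace.
    """
    root = workspace.resolve()
    candidate = (root / filename).resolve()
    if not candidate.is_relative_to(root):  # requires Python 3.9+
        raise ValueError(f"{filename!r} is outside the workspace")
    return candidate


def write_to_file(filename: str, text: str, workspace: Path = WORKSPACE) -> None:
    """Write `text` to `filename`, resolved against the workspace."""
    path = resolve_in_workspace(filename, workspace)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(text, encoding="utf-8")


def read_file(filename: str, workspace: Path = WORKSPACE) -> str:
    """Read `filename` using the same resolution rule as write_to_file."""
    return resolve_in_workspace(filename, workspace).read_text(encoding="utf-8")
```

With a shared resolution rule like this, a `write_to_file` call with `jokes.txt` followed by a `read_file` call with `jokes.txt` would refer to the same file regardless of the current working directory.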

### Your prompt 📝

```yaml

ai_goals:

- write a file with 3 jokes

- read from the file

ai_name: a

ai_role: wr

```

### Your Logs 📒

```log Use the 'write_to_file' command to write 3 jokes to a file.

2023-04-26 23:33:34,112 INFO CRITICISM: I need to make sure that the jokes are appropriate and not offensive.

2023-04-26 23:33:34,576 INFO NEXT ACTION: COMMAND = write_to_file ARGUMENTS = {'file': 'jokes.txt', 'text': "Why did the tomato turn red? Because it saw the salad dressing!\nWhat do you call a fake noodle? An impasta!\nWhy don't scientists trust atoms? Because they make up everything!"}

2023-04-26 23:33:59,289 INFO -=-=-=-=-=-=-= COMMAND AUTHORISED BY USER -=-=-=-=-=-=-=

2023-04-26 23:34:00,049 INFO SYSTEM: Command write_to_file returned: File written to successfully.

2023-04-26 23:34:19,001 INFO THOUGHTS: Now that we have written some jokes to a file, I think I will use the 'read_file' command to make sure the jokes were saved correctly.

2023-04-26 23:34:19,686 INFO REASONING: I want to make sure that the jokes were saved correctly to the file, so I will use the 'read_file' command to check the contents of the file.

2023-04-26 23:34:20,463 INFO PLAN:

2023-04-26 23:34:20,569 INFO - Use the 'read_file' command to check the contents of the file

2023-04-26 23:34:20,918 INFO CRITICISM: I need to make sure that I am using the correct syntax for the 'read_file' command and that I am checking the correct file.

2023-04-26 23:34:21,551 INFO NEXT ACTION: COMMAND = read_file ARGUMENTS = {'file': 'jokes.txt'}

2023-04-26 23:35:16,410 INFO -=-=-=-=-=-=-= COMMAND AUTHORISED BY USER -=-=-=-=-=-=-=

2023-04-26 23:35:17,241 INFO SYSTEM: Command read_file returned: Error: [Errno 2] No such file or directory: 'jokes.txt'

2023-04-26 23:35:34,998 INFO THOUGHTS: Now that we have written some jokes to a file, I think I will use the 'read_file' command to make sure the jokes were saved correctly.

2023-04-26 23:35:35,649 INFO REASONING: I want to make sure that the jokes were saved correctly to the file, so I will use the 'read_file' command to check the contents of the file.

2023-04-26 23:35:36,499 INFO PLAN:

2023-04-26 23:35:36,582 INFO - Use the 'read_file' command to check the contents of the file

2023-04-26 23:35:36,983 INFO CRITICISM: I need to make sure that I am using the correct syntax for the 'read_file' command and that I am checking the correct file.

2023-04-26 23:35:37,601 INFO NEXT ACTION: COMMAND = read_file ARGUMENTS = {'file': 'jokes.txt'}

```

|

1.0

|

`read_file` does not find file that exists - ### ⚠️ Search for existing issues first ⚠️

- [X] I have searched the existing issues, and there is no existing issue for my problem

### Which Operating System are you using?

Windows

### Which version of Auto-GPT are you using?

Stable (branch)

### GPT-3 or GPT-4?

GPT-3.5

### Steps to reproduce 🕹

When the `read_file` command runs, it can't find the file because no path is given,

even though it wrote the file to the auto_gpt_workspace folder.

### Current behavior 😯

SYSTEM: Command read_file returned: Error: [Errno 2] No such file or directory: 'jokes.txt'

### Expected behavior 🤔

To read the file

### Your prompt 📝

```yaml

ai_goals:

- write a file with 3 jokes

- read from the file

ai_name: a

ai_role: wr

```

### Your Logs 📒

```log Use the 'write_to_file' command to write 3 jokes to a file.

2023-04-26 23:33:34,112 INFO CRITICISM: I need to make sure that the jokes are appropriate and not offensive.

2023-04-26 23:33:34,576 INFO NEXT ACTION: COMMAND = write_to_file ARGUMENTS = {'file': 'jokes.txt', 'text': "Why did the tomato turn red? Because it saw the salad dressing!\nWhat do you call a fake noodle? An impasta!\nWhy don't scientists trust atoms? Because they make up everything!"}

2023-04-26 23:33:59,289 INFO -=-=-=-=-=-=-= COMMAND AUTHORISED BY USER -=-=-=-=-=-=-=

2023-04-26 23:34:00,049 INFO SYSTEM: Command write_to_file returned: File written to successfully.

2023-04-26 23:34:19,001 INFO THOUGHTS: Now that we have written some jokes to a file, I think I will use the 'read_file' command to make sure the jokes were saved correctly.

2023-04-26 23:34:19,686 INFO REASONING: I want to make sure that the jokes were saved correctly to the file, so I will use the 'read_file' command to check the contents of the file.

2023-04-26 23:34:20,463 INFO PLAN:

2023-04-26 23:34:20,569 INFO - Use the 'read_file' command to check the contents of the file

2023-04-26 23:34:20,918 INFO CRITICISM: I need to make sure that I am using the correct syntax for the 'read_file' command and that I am checking the correct file.

2023-04-26 23:34:21,551 INFO NEXT ACTION: COMMAND = read_file ARGUMENTS = {'file': 'jokes.txt'}

2023-04-26 23:35:16,410 INFO -=-=-=-=-=-=-= COMMAND AUTHORISED BY USER -=-=-=-=-=-=-=

2023-04-26 23:35:17,241 INFO SYSTEM: Command read_file returned: Error: [Errno 2] No such file or directory: 'jokes.txt'

2023-04-26 23:35:34,998 INFO THOUGHTS: Now that we have written some jokes to a file, I think I will use the 'read_file' command to make sure the jokes were saved correctly.

2023-04-26 23:35:35,649 INFO REASONING: I want to make sure that the jokes were saved correctly to the file, so I will use the 'read_file' command to check the contents of the file.

2023-04-26 23:35:36,499 INFO PLAN:

2023-04-26 23:35:36,582 INFO - Use the 'read_file' command to check the contents of the file

2023-04-26 23:35:36,983 INFO CRITICISM: I need to make sure that I am using the correct syntax for the 'read_file' command and that I am checking the correct file.

2023-04-26 23:35:37,601 INFO NEXT ACTION: COMMAND = read_file ARGUMENTS = {'file': 'jokes.txt'}

```

|

process

|

read file does not find file that exists ⚠️ search for existing issues first ⚠️ i have searched the existing issues and there is no existing issue for my problem which operating system are you using windows which version of auto gpt are you using stable branch gpt or gpt gpt steps to reproduce 🕹 when the command read file occurs it can t find any file because there is no path although it wrote a file in auto gpt workspace folder current behavior 😯 system command read file returned error no such file or directory jokes txt expected behavior 🤔 to read the file your prompt 📝 yaml ai goals write a file with jokes read from the file ai name a ai role wr your logs 📒 log use the write to file command to write jokes to a file info criticism i need to make sure that the jokes are appropriate and not offensive info next action command write to file arguments file jokes txt text why did the tomato turn red because it saw the salad dressing nwhat do you call a fake noodle an impasta nwhy don t scientists trust atoms because they make up everything info command authorised by user info system command write to file returned file written to successfully info thoughts now that we have written some jokes to a file i think i will use the read file command to make sure the jokes were saved correctly info reasoning i want to make sure that the jokes were saved correctly to the file so i will use the read file command to check the contents of the file info plan info use the read file command to check the contents of the file info criticism i need to make sure that i am using the correct syntax for the read file command and that i am checking the correct file info next action command read file arguments file jokes txt info command authorised by user info system command read file returned error no such file or directory jokes txt info thoughts now that we have written some jokes to a file i think i will use the read file command to make sure the jokes were saved correctly info reasoning i 
want to make sure that the jokes were saved correctly to the file so i will use the read file command to check the contents of the file info plan info use the read file command to check the contents of the file info criticism i need to make sure that i am using the correct syntax for the read file command and that i am checking the correct file info next action command read file arguments file jokes txt

| 1

|

14,998

| 18,677,192,222

|

IssuesEvent

|

2021-10-31 19:06:12

|

varabyte/kobweb

|

https://api.github.com/repos/varabyte/kobweb

|

opened

|

Revisit ComponentModifiers API after JB fixes their bug upstream

|

process

|

See also: https://github.com/JetBrains/compose-jb/issues/1333

Currently, it seems like generics in composables are causing the compose compiler to trip up, so I'm simplifying the ComponentStyles API for now. However, this requires passing in a `data: Any?` parameter, which is ugly.

Revisit this if / when the upstream bug is resolved.

|

1.0

|

Revisit ComponentModifiers API after JB fixes their bug upstream - See also: https://github.com/JetBrains/compose-jb/issues/1333

Currently, it seems like generics in composables are causing the compose compiler to trip up, so I'm simplifying the ComponentStyles API for now. However, this requires passing in a `data: Any?` parameter, which is ugly.

Revisit this if / when the upstream bug is resolved.

|

process

|

revisit componentmodifiers api after jb fixes their bug upstream see also currently it seems like generics in composables are causing the compose compiler to trip up so i m simplifying the componentstyles api for now however this requires passing in a data any parameter which is ugly revisit this if when the upstream bug is resolved

| 1

|

428,648

| 30,004,444,493

|

IssuesEvent

|

2023-06-26 11:30:04

|

appsmithorg/appsmith-docs

|

https://api.github.com/repos/appsmithorg/appsmith-docs

|

opened

|

[Task]: Add GitHub action for Algolia index updates

|

Documentation medium User Education Pod

|

### Is there an existing issue for this?

- [X] I have searched the existing issues

### Engineering Ticket Link

-

### Release Date

-

### Release Number

-

### First Draft

_No response_

### Loom video

_No response_

### Discord/slack/intercom Link if needed

_No response_

### PRD

_No response_

### Test plan/cases

_No response_

### Use cases or user requests

Add a GitHub action that waits when an index update is already in progress

|

1.0

|

[Task]: Add GitHub action for Algolia index updates - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Engineering Ticket Link

-

### Release Date

-

### Release Number

-

### First Draft

_No response_

### Loom video

_No response_

### Discord/slack/intercom Link if needed

_No response_

### PRD

_No response_

### Test plan/cases

_No response_

### Use cases or user requests

Add a GitHub action that waits when an index update is already in progress

|

non_process

|

add github action for algolia index updates is there an existing issue for this i have searched the existing issues engineering ticket link release date release number first draft no response loom video no response discord slack intercom link if needed no response prd no response test plan cases no response use cases or user requests add a github action to wait when an index update is already in progress

| 0

|

3,786

| 4,566,989,689

|

IssuesEvent

|

2016-09-15 09:25:40

|

camptocamp/c2cgeoportal

|

https://api.github.com/repos/camptocamp/c2cgeoportal

|

opened

|

Update the migration notes for an old cgxp interface

|

Infrastructure Ready

|

- update the changelog file

- remove the automation about the index.html - viewer.json

- document everything related to the interface

- all the files needed for an ngeo/cgxp interface

- the make variable: CGXP_INTERFACES/NGEO_INTERFACES

- the vars variable: default_interface

|

1.0

|

Update the migration notes for an old cgxp interface - - update the changelog file

- remove the automation about the index.html - viewer.json

- document everything related to the interface

- all the files needed for an ngeo/cgxp interface

- the make variable: CGXP_INTERFACES/NGEO_INTERFACES

- the vars variable: default_interface

|

non_process

|

update the migration notes for an old cgxp interface update the changelog file remove the automation about the index html viewer json document everything related to the interface all the file needed for an ngeo cgxp interface the make variable cgxp interfaces ngeo interfaces the vars variable default interface

| 0

|

243,034

| 7,852,546,931

|

IssuesEvent

|

2018-06-20 14:52:40

|

opentargets/webapp

|

https://api.github.com/repos/opentargets/webapp

|

closed

|

Greying out tabs when there is no data for a given target

|

area/usability area/web/target enhancement priority/backlog

|

We do grey out the tabs in the Evidence page (e.g. https://www.targetvalidation.org/evidence/ENSG00000065883/EFO_0000756).

I think it would be useful to have the same pattern for the Target profile page as well. E.g. Drugs here has no data, but I need to click on the tab to find that out. If it were greyed out, I would not have bothered clicking on it.

https://www.targetvalidation.org/target/ENSG00000065883

|

1.0

|

Greying out tabs when there is no data for a given target - We do grey out the tabs in the Evidence page (e.g. https://www.targetvalidation.org/evidence/ENSG00000065883/EFO_0000756).

I think it would be useful to have the same pattern for the Target profile page as well. E.g. Drugs here has no data, but I need to click on the tab to find that out. If it were greyed out, I would not have bothered clicking on it.

https://www.targetvalidation.org/target/ENSG00000065883

|

non_process

|

greying out tabs when there is no data for a given target we do grey out the tabs in the evidence page e g i think it would be useful to have the same pattern for the target profile page as well e g drugs here have no data but i need to click on the tab to find that out if it was grey i d have not bothered clicking on the tab

| 0

|

9,143

| 12,203,191,576

|

IssuesEvent

|

2020-04-30 10:10:45

|

MHRA/products

|

https://api.github.com/repos/MHRA/products

|

closed

|

Delete service treats every error like a job error

|

BUG :bug: EPIC - Auto Batch Process :oncoming_automobile:

|

**Describe the bug**

The doc-index-updater create service, sensibly, retries when it encounters unknown errors.

Delete throws a hissy fit, and cancels the job forever.

**To Reproduce**

Set any key or name used by the Delete service to an incorrect value, or go offline for a minute or two.

**Expected behavior**

Delete jobs are retried until the dead letter count is hit.
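
A rough sketch of that expected behavior, written in Python purely for illustration (it is not the service's own code). The names `Outcome`, `DocumentNotFound`, `Message`, `handle_delete` and the threshold `MAX_DELIVERIES = 3` are all assumptions: a "not found" error is treated as a permanent job error, while any other error is retried until the assumed dead-letter count is reached.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Outcome(Enum):
    DONE = auto()         # delete succeeded
    RETRY = auto()        # leave the message for another attempt
    JOB_ERROR = auto()    # permanent failure, do not retry
    DEAD_LETTER = auto()  # retries exhausted


class DocumentNotFound(Exception):
    """Hypothetical permanent error: the document is not in the index."""


@dataclass
class Message:
    doc_id: str
    delivery_count: int = 0


MAX_DELIVERIES = 3  # assumed dead-letter threshold, not taken from the service


def handle_delete(message, delete_fn, max_deliveries=MAX_DELIVERIES):
    """Attempt one delivery of a delete job and classify the result."""
    message.delivery_count += 1
    try:
        delete_fn(message.doc_id)
    except DocumentNotFound:
        # Retrying cannot help when the document does not exist.
        return Outcome.JOB_ERROR
    except Exception:
        # Unknown errors (bad key, network outage) may be transient.
        if message.delivery_count >= max_deliveries:
            return Outcome.DEAD_LETTER
        return Outcome.RETRY
    return Outcome.DONE
```

This mirrors the two QA scenarios in this report: a missing document is not retried, while any other error is retried.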

**Actual behaviour**

Watch as every delete job immediately becomes an error.

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Additional context**

Add any other context about the problem here.

# QA information

unit tests : make test

**For doc not found in index**

1. scripts/delete.sh 123123123123

2. (assumes that id doesn't exist)

3. wait for the lease lock to expire

4. see that it is not retried

**For other errors**

1. find a way to create another error - maybe wrong search index api key?

2. scripts/delete.sh REAL_ID_HERE

3. wait for the lease lock to expire

4. see that it is retried

|

1.0

|

Delete service treats every error like a job error - **Describe the bug**

The doc-index-updater create service, sensibly, retries when it encounters unknown errors.

Delete throws a hissy fit, and cancels the job forever.

**To Reproduce**

Set any key or name used by the Delete service to an incorrect value, or go offline for a minute or two.

**Expected behavior**

Delete jobs are retried until the dead letter count is hit.

**Actual behaviour**

Watch as every delete job immediately becomes an error.

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Additional context**

Add any other context about the problem here.

# QA information

unit tests : make test

**For doc not found in index**

1. scripts/delete.sh 123123123123

2. (assumes that id doesn't exist)

3. wait for the lease lock to expire

4. see that it is not retried

**For other errors**

1. find a way to create another error - maybe wrong search index api key?

2. scripts/delete.sh REAL_ID_HERE

3. wait for the lease lock to expire

4. see that it is retried

|

process

|

delete service treats every error like a job error describe the bug the doc index updater create service sensibly retries when it encounters unknown errors delete throws a hissy fit and cancels the job forever to reproduce set any key or name used by the delete service to an incorrect value or go offline for a minute or two expected behavior delete jobs are retried until the dead letter count is hit actual behaviour watch as every delete job immediately becomes an error screenshots if applicable add screenshots to help explain your problem additional context add any other context about the problem here qa information unit tests make test for doc not found in index scripts delete sh assumes that id doesn t exist wait for the lease lock to expire see that it is not retried for other errors find a way to create another error maybe wrong search index api key scripts delete sh real id here wait for the lease lock to expire see that it is retried

| 1

|

307,836

| 26,567,229,634

|

IssuesEvent

|

2023-01-20 21:37:07

|

cicirello/Chips-n-Salsa

|

https://api.github.com/repos/cicirello/Chips-n-Salsa

|

closed

|

Refactor test cases for timed parallel multistarters

|

testing refactor

|

## Summary

Refactor test cases for timed parallel multistarters (suggested by RefactorFirst scan).

|

1.0

|

Refactor test cases for timed parallel multistarters - ## Summary

Refactor test cases for timed parallel multistarters (suggested by RefactorFirst scan).

|

non_process

|

refactor test cases for timed parallel multistarters summary refactor test cases for timed parallel multistarters suggested by refactorfirst scan

| 0

|

2,474

| 2,603,549,712

|

IssuesEvent

|

2015-02-24 16:38:37

|

jakobkroeker/test_singular

|

https://api.github.com/repos/jakobkroeker/test_singular

|

opened

|

missing test for 'brillnoether.lib'

|

missingTest

|

```

LIB("brillnoether.lib");

example RiemannRochBN;

```

|

1.0

|

missing test for 'brillnoether.lib' - ```

LIB("brillnoether.lib");

example RiemannRochBN;

```

|

non_process

|

missing test for brillnoether lib lib brillnoether lib example riemannrochbn

| 0

|

16,844

| 22,095,028,825

|

IssuesEvent

|

2022-06-01 09:14:28

|

Tencent/tdesign-miniprogram

|

https://api.github.com/repos/Tencent/tdesign-miniprogram

|

closed

|

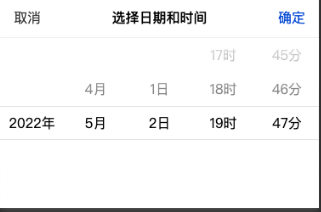

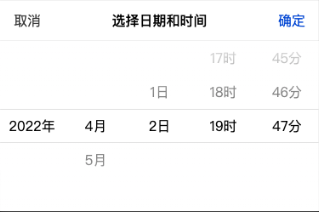

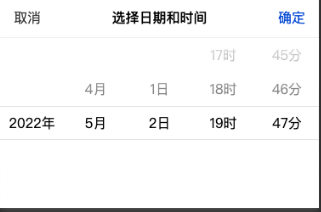

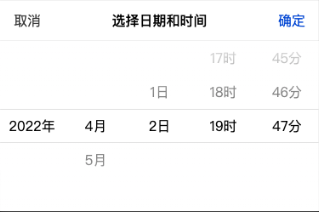

[DateTimePicker] disableDate does not work correctly with {before: x, after: y}

|

bug processing

|

### tdesign-miniprogram version

0.10.0

### Reproduction link

_No response_

### Steps to reproduce

```

<t-date-time-picker

title="选择日期和时间"

visible="{{isPickerVisible}}"

mode="{{['minute']}}"

value="{{datetimeText}}"

format="YYYY-MM-DD HH:mm"

bindconfirm="onPickerConfirm"

bindcancel="onPickerCancel"

disable-date="{{disableDate}}"

></t-date-time-picker>

onLoad(options) {

const today = dayjs().format('YYYY-MM-DD HH:mm')

const befor30 = dayjs().set('day', -30).format('YYYY-MM-DD HH:mm')

const disableDate = {

before: befor30,

after: today

}

console.log(disableDate)

this.setData({

datetimeText: today,

disableDate

})

}

```

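### Expected result

The expectation is that only dates within the last 30 days can be selected.

### Actual result

By rights, April should have 28 days available to select.

### Framework version

_No response_

### Browser version

_No response_

### System version

_No response_

### Node version

_No response_

### Additional notes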

_No response_

|

1.0

|

[DateTimePicker] disableDate does not work correctly with {before: x, after: y} - ### tdesign-miniprogram version

0.10.0

### Reproduction link

_No response_

### Steps to reproduce

```

<t-date-time-picker

title="选择日期和时间"

visible="{{isPickerVisible}}"

mode="{{['minute']}}"

value="{{datetimeText}}"

format="YYYY-MM-DD HH:mm"

bindconfirm="onPickerConfirm"

bindcancel="onPickerCancel"

disable-date="{{disableDate}}"

></t-date-time-picker>

onLoad(options) {

const today = dayjs().format('YYYY-MM-DD HH:mm')

const befor30 = dayjs().set('day', -30).format('YYYY-MM-DD HH:mm')

const disableDate = {

before: befor30,

after: today

}

console.log(disableDate)

this.setData({

datetimeText: today,

disableDate

})

}

```

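### Expected result

The expectation is that only dates within the last 30 days can be selected.

### Actual result

By rights, April should have 28 days available to select.

### Framework version

_No response_

### Browser version

_No response_

### System version

_No response_

### Node version

_No response_

### Additional notes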

_No response_

|

process

|

disabledate does not work correctly with before x after y tdesign miniprogram version reproduction link no response steps to reproduce t date time picker title 选择日期和时间 visible ispickervisible mode value datetimetext format yyyy mm dd hh mm bindconfirm onpickerconfirm bindcancel onpickercancel disable date disabledate onload options const today dayjs format yyyy mm dd hh mm const dayjs set day format yyyy mm dd hh mm const disabledate before after today console log disabledate this setdata datetimetext today disabledate expected result the expectation is actual result framework version no response browser version no response system version no response node version no response additional notes no response

| 1

|

12,940

| 15,305,065,837

|

IssuesEvent

|

2021-02-24 17:38:59

|

nion-software/nionswift

|

https://api.github.com/repos/nion-software/nionswift

|

opened

|

Track processing history in data item metadata.

|

f - acquisition f - computations f - processing feature stage - planning type - enhancement

|

Acquisition and computations would both produce a data provenance object that could be tracked in either the data item or the underlying data-metadata item.

This requires thinking a bit more about what "metadata" means. There are two uses of metadata in use within Swift right now:

- Formal metadata that requires domain specific methods to access, e.g. calibrations or data type.

- Custom metadata that is just a dict.

Processing functions should have a uniform way of handling formal metadata.

It's not clear how custom metadata should be handled during processing - for instance, what should happen when adding two images with different custom metadata?

Also, consider whether provenance could also be attached to other project items, such as displays or graphics which can also be controlled by computations.
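
As a rough sketch of the idea, a provenance record and one possible policy for combining custom metadata might look like the following. Everything here is an assumption made for illustration: `Provenance`, `merge_custom_metadata`, and `add_items` are hypothetical names, not part of the Swift API, and "keep only keys on which both inputs agree" is just one candidate answer to the open question above.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Tuple


@dataclass
class Provenance:
    """Hypothetical record of how a data item was produced."""
    operation: str                       # e.g. "acquire" or "add"
    sources: List[str]                   # identifiers of the input data items
    parameters: Dict[str, Any] = field(default_factory=dict)


def merge_custom_metadata(a: Dict[str, Any], b: Dict[str, Any]) -> Dict[str, Any]:
    """Keep only the entries on which both inputs agree (one possible policy)."""
    return {key: value for key, value in a.items() if key in b and b[key] == value}


def add_items(id_a: str, meta_a: Dict[str, Any],
              id_b: str, meta_b: Dict[str, Any]) -> Tuple[Dict[str, Any], Provenance]:
    """Return the custom metadata and provenance for the sum of two items."""
    metadata = merge_custom_metadata(meta_a, meta_b)
    provenance = Provenance(operation="add", sources=[id_a, id_b])
    return metadata, provenance
```

With this policy, conflicting custom values are dropped from the result rather than silently picked from one side, while the provenance record still points back at both inputs so the original values remain discoverable.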

|

1.0

|

Track processing history in data item metadata. - Acquisition and computations would both produce a data provenance object that could be tracked in either the data item or the underlying data-metadata item.

This requires thinking a bit more about what "metadata" means. There are two uses of metadata in use within Swift right now:

- Formal metadata that requires domain specific methods to access, e.g. calibrations or data type.

- Custom metadata that is just a dict.

Processing functions should have a uniform way of handling formal metadata.

It's not clear how custom metadata should be handled during processing - for instance, what should happen when adding two images with different custom metadata?

Also, consider whether provenance could also be attached to other project items, such as displays or graphics which can also be controlled by computations.

|

process

|

track processing history in data item metadata acquisition and computations would both produce a data provenance object that could be tracked in either the data item or the underlying data metadata item this requires thinking a bit more about what metadata means there are two uses of metadata in use within swift right now formal metadata that requires domain specific methods to access e g calibrations or data type custom metadata that is just a dict processing functions should have a uniform way of handling formal metadata it s not clear how custom metadata should be handled during processing for instance what should happen when adding two images with different custom metadata also consider whether provenance could also be attached to other project items such as displays or graphics which can also be controlled by computations

| 1

|

5,543

| 8,392,607,105

|

IssuesEvent

|

2018-10-09 18:07:00

|

googleapis/google-cloud-python

|

https://api.github.com/repos/googleapis/google-cloud-python

|

closed

|

Logging: 'test_update_sink' flakes with '503 Service Unavailable'

|

api: logging flaky testing type: process

|

From: https://circleci.com/gh/GoogleCloudPlatform/google-cloud-python/8002

```python