Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

81,179 | 10,226,876,353 | IssuesEvent | 2019-08-16 19:04:20 | w3c/aria-practices | https://api.github.com/repos/w3c/aria-practices | closed | Review new Structural Roles section in APG 1.2 | Needs Review documentation guidance | A first draft of a new [Structural Roles section](https://rawgit.com/w3c/aria-practices/apg-1.2/aria-practices.html#structural_roles) developed for APG 1.2 as described in issue #700 is complete and ready for broad review. | 1.0 | Review new Structural Roles section in APG 1.2 - A first draft of a new [Structural Roles section](https://rawgit.com/w3c/aria-practices/apg-1.2/aria-practices.html#structural_roles) developed for APG 1.2 as described in issue #700 is complete and ready for broad review. | non_test | review new structural roles section in apg a first draft of a new developed for apg as described in issue is complete and ready for broad review | 0 |

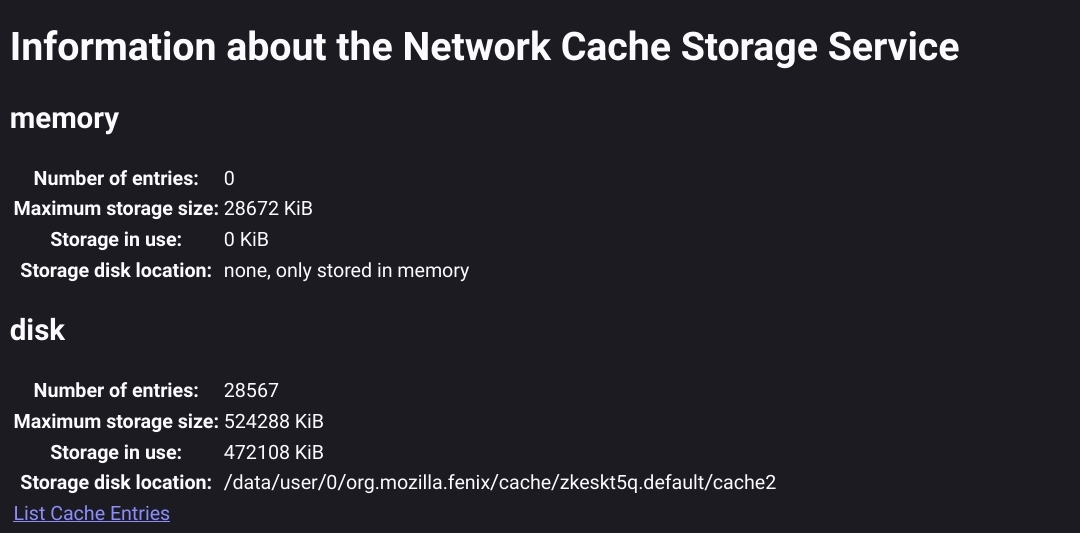

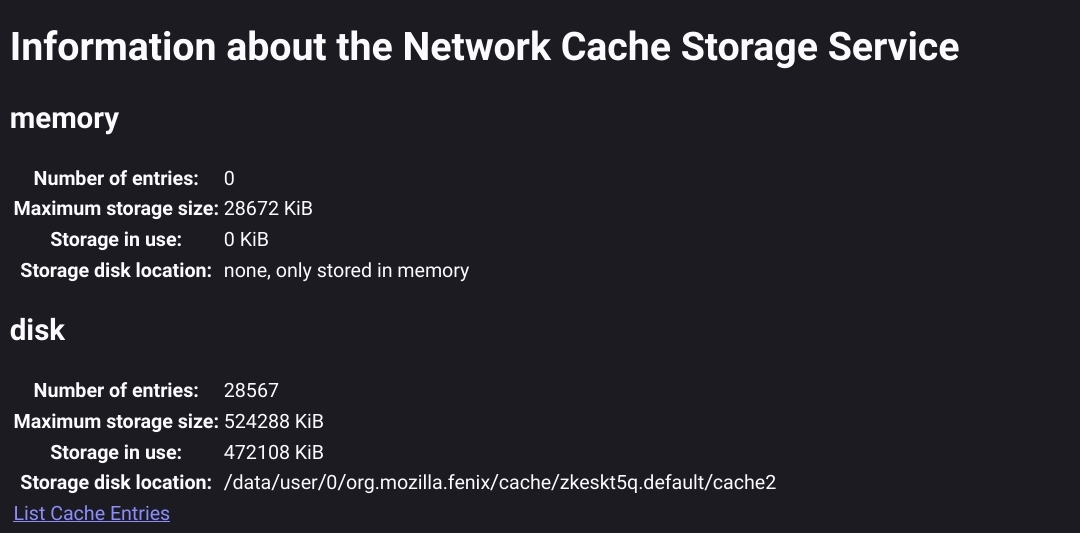

45,604 | 24,130,338,393 | IssuesEvent | 2022-09-21 06:49:18 | mozilla-mobile/fenix | https://api.github.com/repos/mozilla-mobile/fenix | opened | (Network) cache should be periodically trimmed | performance | ## Overview

The below seems like it could be significantly trimmed down periodically without user intervention (pressing `Clear Cache` stripped Data/Cache to 96MB/0MB):

<img src="https://user-images.githubusercontent.com/13922417/191421373-c60fd393-690c-4557-a027-92a623bdf269.jpg" width="500" />

The number of entries in `about:cache` is significantly larger than on my desktop (below 2000 entries at a third of the size). Also, it might be worth noting that the "Storage in use" is significantly smaller than the Cache size above (even without all the cache under `Data`):

## Examples

Unfortunately I had to swap devices shortly after recording this info, but I managed to get some useful data.

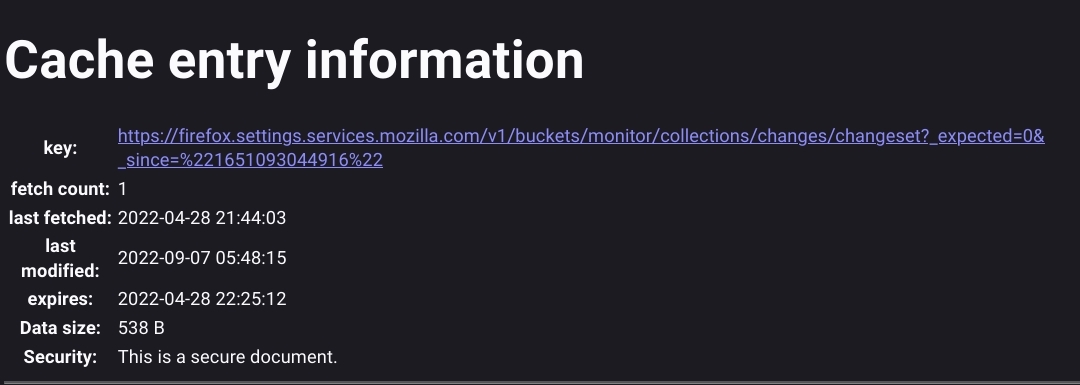

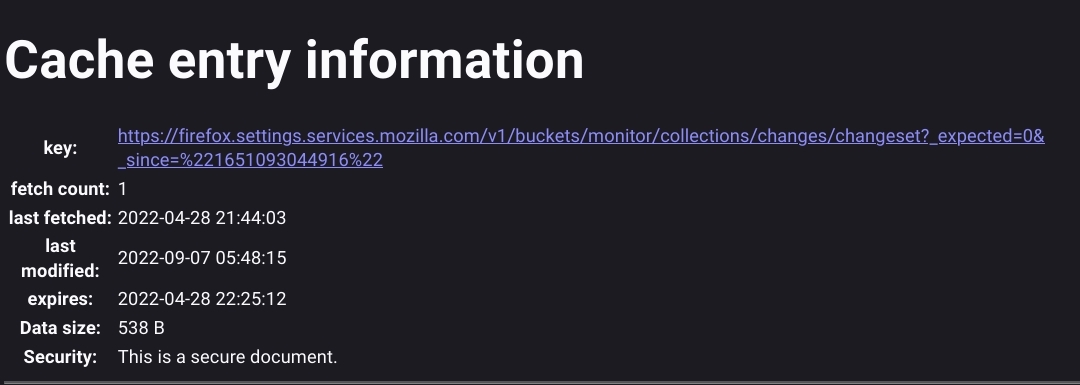

There seemed to be many "expired" entries, such as over 4 months old:

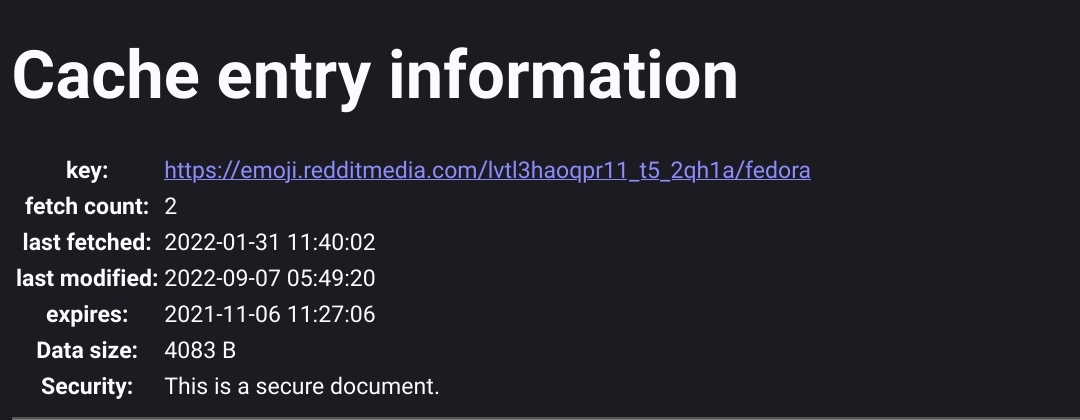

Almost a year expired (also it was fetched after expired, is this a UI bug?):

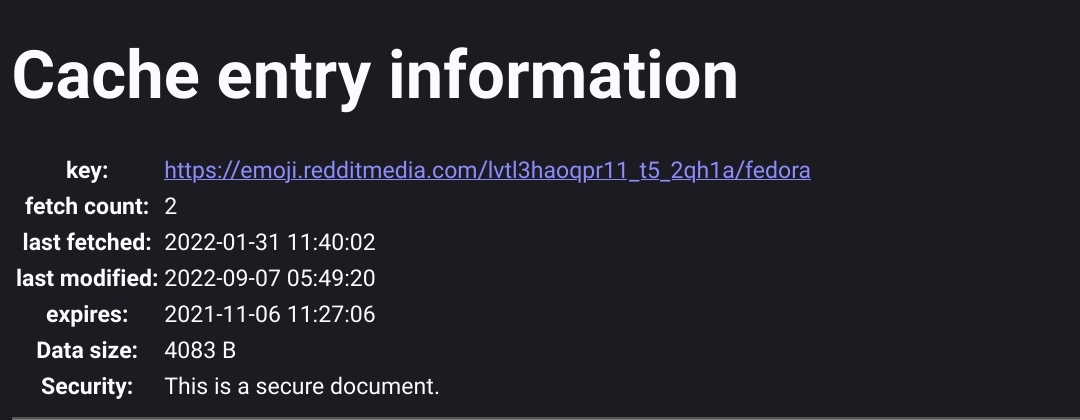

There were also a **LOT** of these (possibly related to #26420?):

<img src="https://user-images.githubusercontent.com/13922417/191429056-b8e2a437-266e-4b5f-b491-44354d629405.jpg" width="500" />

Also quite a few from `sync.services.mozilla.com`, though the ones I looked at were small in size (<200B).

There were many other items that were only fetched a single time and were several months to a year old which could also be reasonably pruned.

## Device information

* Android 8.0

* Fenix version: 106.0a1

| True | (Network) cache should be periodically trimmed - ## Overview

The below seems like it could be significantly trimmed down periodically without user intervention (pressing `Clear Cache` stripped Data/Cache to 96MB/0MB):

<img src="https://user-images.githubusercontent.com/13922417/191421373-c60fd393-690c-4557-a027-92a623bdf269.jpg" width="500" />

The number of entries in `about:cache` is significantly larger than on my desktop (below 2000 entries at a third of the size). Also, it might be worth noting that the "Storage in use" is significantly smaller than the Cache size above (even without all the cache under `Data`):

## Examples

Unfortunately I had to swap devices shortly after recording this info, but I managed to get some useful data.

There seemed to be many "expired" entries, such as over 4 months old:

Almost a year expired (also it was fetched after expired, is this a UI bug?):

There were also a **LOT** of these (possibly related to #26420?):

<img src="https://user-images.githubusercontent.com/13922417/191429056-b8e2a437-266e-4b5f-b491-44354d629405.jpg" width="500" />

Also quite a few from `sync.services.mozilla.com`, though the ones I looked at were small in size (<200B).

There were many other items that were only fetched a single time and were several months to a year old which could also be reasonably pruned.

## Device information

* Android 8.0

* Fenix version: 106.0a1

| non_test | network cache should be periodically trimmed overview the below seems like it could be significantly trimmed down periodically without user intervention pressing clear cache stripped data cache to the number of entries in about cache is significantly larger than on my desktop below entries at a third of the size also it might be worth noting that the storage in use is significantly smaller than the cache size above even without all the cache under data examples unfortunately i had to swap devices shortly after recording this info but i managed to get some useful data there seemed to be many expired entries such as over months old almost a year expired also it was fetched after expired is this a ui bug there were also a lot of these possibly related to also quite a few from sync services mozilla com though the ones i looked at were small in size there were many other items that were only fetched a single time and were several months to a year old which could also be reasonably pruned device information android fenix version | 0 |

287,173 | 8,805,284,325 | IssuesEvent | 2018-12-26 18:39:26 | GoldenSoftwareLtd/gedemin | https://api.github.com/repos/GoldenSoftwareLtd/gedemin | closed | Add a Limit field to the assortment group. | Meat Priority-Medium Type-Enhancement | Originally reported on Google Code with ID 2189

```

If a raw material is added to a recipe as a substitute, and that raw material is already in the recipe,

allow it to be added. If it is added not as a substitute but as a recipe item,

show a message saying that the raw material is already present.

```

Reported by `stasgm` on 2010-10-20 13:41:14

| 1.0 | Add a Limit field to the assortment group. - Originally reported on Google Code with ID 2189

```

If a raw material is added to a recipe as a substitute, and that raw material is already in the recipe,

allow it to be added. If it is added not as a substitute but as a recipe item,

show a message saying that the raw material is already present.

```

Reported by `stasgm` on 2010-10-20 13:41:14

| non_test | в группу ассортимента добавить поле лимит originally reported on google code with id если добавляем сырьё как заменитель в рецепт и если это сырьё уже входит в рецепт то дать возможность добавить если добавляем не как заменитель а как позицию рецепта то показать сообщение что сырьё уже присутствует reported by stasgm on | 0 |

158,226 | 12,406,650,469 | IssuesEvent | 2020-05-21 19:31:52 | apache/incubator-mxnet | https://api.github.com/repos/apache/incubator-mxnet | opened | gelu_test disabled and flaky upon enabling | Bug Disabled test v2.0 | ## Description

https://github.com/apache/incubator-mxnet/blob/0210ce2c136afaa0f57666e5e1c659cab353f5f3/tests/python/unittest/test_gluon.py#L1423-L1434

is actually a no-op by mistake. Upon enabling the test as follows:

```
gelu = mx.gluon.nn.GELU()
def gelu_test(x):
    CUBE_CONSTANT = 0.044715
    ROOT_TWO_OVER_PI = 0.7978845608028654
    def g(x):
        return ROOT_TWO_OVER_PI * (x + CUBE_CONSTANT * x * x * x)
    def f(x):
        return 1.0 + mx.nd.tanh(g(x))
    def gelu(x):
        return 0.5 * x * f(x)
    return [gelu(x_i) for x_i in x]

for test_point, ref_point in zip(gelu_test(point_to_validate), gelu(point_to_validate)):
    assert test_point == ref_point
```

the test fails frequently

```

[2020-05-21T19:13:03.252Z] for test_point, ref_point in zip(gelu_test(point_to_validate), gelu(point_to_validate)):

[2020-05-21T19:13:03.252Z] > assert test_point == ref_point

[2020-05-21T19:13:03.252Z] E assert \n[-0.04601725...ray 1 @cpu(0)> == \n[-0.04601722...ray 1 @cpu(0)>

[2020-05-21T19:13:03.252Z] E -\n

[2020-05-21T19:13:03.252Z] E -[-0.04601725]\n

[2020-05-21T19:13:03.252Z] E -<NDArray 1 @cpu(0)>

[2020-05-21T19:13:03.252Z] E +\n

[2020-05-21T19:13:03.252Z] E +[-0.04601722]\n

[2020-05-21T19:13:03.252Z] E +<NDArray 1 @cpu(0)>

[2020-05-21T19:13:03.252Z] E Full diff:

[2020-05-21T19:13:03.252Z] E

[2020-05-21T19:13:03.252Z] E - [-0.04601725]

[2020-05-21T19:13:03.252Z] E ? ^

[2020-05-21T19:13:03.252Z] E + [-0.04601722]

[2020-05-21T19:13:03.252Z] E ? ^

[2020-05-21T19:13:03.252Z] E <NDArray 1 @cpu(0)>

```

http://jenkins.mxnet-ci.amazon-ml.com/blue/rest/organizations/jenkins/pipelines/mxnet-validation/pipelines/unix-cpu/branches/PR-18376/runs/5/nodes/363/steps/738/log/?start=0
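
The two values in the log above differ only in the eighth decimal place, so exact equality looks too strict for this check. A minimal sketch of a tolerance-based comparison, in plain Python with no MXNet dependency: `tanh_gelu` and `assert_close` are hypothetical helper names, and the tolerances are illustrative assumptions, not values taken from the MXNet test suite.

```python
import math

# Constants copied from the reference implementation quoted in the issue.
CUBE_CONSTANT = 0.044715
ROOT_TWO_OVER_PI = 0.7978845608028654


def tanh_gelu(x: float) -> float:
    """Scalar tanh-based GELU, mirroring the reference formula in the issue."""
    inner = ROOT_TWO_OVER_PI * (x + CUBE_CONSTANT * x * x * x)
    return 0.5 * x * (1.0 + math.tanh(inner))


def assert_close(test_point: float, ref_point: float,
                 rel_tol: float = 1e-5, abs_tol: float = 1e-7) -> None:
    """Compare two floats within a tolerance instead of with ==.

    The default tolerances are assumptions chosen for illustration only.
    """
    assert math.isclose(test_point, ref_point, rel_tol=rel_tol, abs_tol=abs_tol), (
        f"{test_point} and {ref_point} differ by more than the tolerance"
    )


# The two values reported in the failing CI log.
logged_test, logged_ref = -0.04601725, -0.04601722

# Exact equality rejects them, while the tolerance-based check accepts them.
assert logged_test != logged_ref
assert_close(logged_test, logged_ref)
```

Whether a tolerance is the right fix depends on why the two results differ, which the log alone does not establish.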

| 1.0 | gelu_test disabled and flaky upon enabling - ## Description

https://github.com/apache/incubator-mxnet/blob/0210ce2c136afaa0f57666e5e1c659cab353f5f3/tests/python/unittest/test_gluon.py#L1423-L1434

is actually a no-op by mistake. Upon enabling the test as follows:

```
gelu = mx.gluon.nn.GELU()
def gelu_test(x):
    CUBE_CONSTANT = 0.044715
    ROOT_TWO_OVER_PI = 0.7978845608028654
    def g(x):
        return ROOT_TWO_OVER_PI * (x + CUBE_CONSTANT * x * x * x)
    def f(x):
        return 1.0 + mx.nd.tanh(g(x))
    def gelu(x):
        return 0.5 * x * f(x)
    return [gelu(x_i) for x_i in x]

for test_point, ref_point in zip(gelu_test(point_to_validate), gelu(point_to_validate)):
    assert test_point == ref_point
```

the test fails frequently

```

[2020-05-21T19:13:03.252Z] for test_point, ref_point in zip(gelu_test(point_to_validate), gelu(point_to_validate)):

[2020-05-21T19:13:03.252Z] > assert test_point == ref_point

[2020-05-21T19:13:03.252Z] E assert \n[-0.04601725...ray 1 @cpu(0)> == \n[-0.04601722...ray 1 @cpu(0)>

[2020-05-21T19:13:03.252Z] E -\n

[2020-05-21T19:13:03.252Z] E -[-0.04601725]\n

[2020-05-21T19:13:03.252Z] E -<NDArray 1 @cpu(0)>

[2020-05-21T19:13:03.252Z] E +\n

[2020-05-21T19:13:03.252Z] E +[-0.04601722]\n

[2020-05-21T19:13:03.252Z] E +<NDArray 1 @cpu(0)>

[2020-05-21T19:13:03.252Z] E Full diff:

[2020-05-21T19:13:03.252Z] E

[2020-05-21T19:13:03.252Z] E - [-0.04601725]

[2020-05-21T19:13:03.252Z] E ? ^

[2020-05-21T19:13:03.252Z] E + [-0.04601722]

[2020-05-21T19:13:03.252Z] E ? ^

[2020-05-21T19:13:03.252Z] E <NDArray 1 @cpu(0)>

```

http://jenkins.mxnet-ci.amazon-ml.com/blue/rest/organizations/jenkins/pipelines/mxnet-validation/pipelines/unix-cpu/branches/PR-18376/runs/5/nodes/363/steps/738/log/?start=0

| test | gelu test disabled and flaky upon enabling description is actually a no op by mistake upon enabling the test as follows gelu mx gluon nn gelu def gelu test x cube constant root two over pi def g x return root two over pi x cube constant x x x def f x return mx nd tanh g x def gelu x return x f x return for test point ref point in zip gelu test point to validate gelu point to validate assert test point ref point the tests fails frequently for test point ref point in zip gelu test point to validate gelu point to validate assert test point ref point e assert n ray cpu n ray cpu e n e n e e n e n e e full diff e e e e e e | 1 |

175,345 | 21,300,986,660 | IssuesEvent | 2022-04-15 03:03:21 | mihorsky/intentionally-buggy-code | https://api.github.com/repos/mihorsky/intentionally-buggy-code | opened | CVE-2021-43138 (High) detected in async-2.6.3.tgz | security vulnerability | ## CVE-2021-43138 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>async-2.6.3.tgz</b></p></summary>

<p>Higher-order functions and common patterns for asynchronous code</p>

<p>Library home page: <a href="https://registry.npmjs.org/async/-/async-2.6.3.tgz">https://registry.npmjs.org/async/-/async-2.6.3.tgz</a></p>

<p>Path to dependency file: /buggy-webpack-app/package.json</p>

<p>Path to vulnerable library: /buggy-webpack-app/node_modules/async/package.json,/buggy-react-app/node_modules/async/package.json</p>

<p>

Dependency Hierarchy:

- webpack-3.12.0.tgz (Root Library)

- :x: **async-2.6.3.tgz** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A vulnerability exists in Async through 3.2.1 (fixed in 3.2.2), which could let a malicious user obtain privileges via the mapValues() method.

<p>Publish Date: 2022-04-06

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-43138>CVE-2021-43138</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2021-43138">https://nvd.nist.gov/vuln/detail/CVE-2021-43138</a></p>

<p>Release Date: 2022-04-06</p>

<p>Fix Resolution (async): 3.2.2</p>

<p>Direct dependency fix Resolution (webpack): 4.0.0-beta.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2021-43138 (High) detected in async-2.6.3.tgz - ## CVE-2021-43138 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>async-2.6.3.tgz</b></p></summary>

<p>Higher-order functions and common patterns for asynchronous code</p>

<p>Library home page: <a href="https://registry.npmjs.org/async/-/async-2.6.3.tgz">https://registry.npmjs.org/async/-/async-2.6.3.tgz</a></p>

<p>Path to dependency file: /buggy-webpack-app/package.json</p>

<p>Path to vulnerable library: /buggy-webpack-app/node_modules/async/package.json,/buggy-react-app/node_modules/async/package.json</p>

<p>

Dependency Hierarchy:

- webpack-3.12.0.tgz (Root Library)

- :x: **async-2.6.3.tgz** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A vulnerability exists in Async through 3.2.1 (fixed in 3.2.2), which could let a malicious user obtain privileges via the mapValues() method.

<p>Publish Date: 2022-04-06

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-43138>CVE-2021-43138</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2021-43138">https://nvd.nist.gov/vuln/detail/CVE-2021-43138</a></p>

<p>Release Date: 2022-04-06</p>

<p>Fix Resolution (async): 3.2.2</p>

<p>Direct dependency fix Resolution (webpack): 4.0.0-beta.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve high detected in async tgz cve high severity vulnerability vulnerable library async tgz higher order functions and common patterns for asynchronous code library home page a href path to dependency file buggy webpack app package json path to vulnerable library buggy webpack app node modules async package json buggy react app node modules async package json dependency hierarchy webpack tgz root library x async tgz vulnerable library found in base branch master vulnerability details a vulnerability exists in async through fixed in which could let a malicious user obtain privileges via the mapvalues method publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution async direct dependency fix resolution webpack beta step up your open source security game with whitesource | 0 |

16,930 | 3,576,200,032 | IssuesEvent | 2016-01-27 18:38:17 | MajkiIT/polish-ads-filter | https://api.github.com/repos/MajkiIT/polish-ads-filter | closed | www.groj.pl | cookies reguły gotowe/testowanie | cookies

there will surely be some ads as well

This site uses cookies in accordance with your browser settings.

You can find more information about the purpose of their use and how to change cookie settings in our Privacy Policy. | 1.0 | www.groj.pl - cookies

there will surely be some ads as well

This site uses cookies in accordance with your browser settings.

You can find more information about the purpose of their use and how to change cookie settings in our Privacy Policy. | test | cookies napewno znajda sie jakies rekalmy ta strona korzysta z plików cookies zgodnie z ustawieniami twojej przeglądarki więcej informacji o celu ich wykorzystania i możliwości zmiany ustawień cookie znajdziesz w naszej polityce prywatności | 1 |

623,416 | 19,667,188,533 | IssuesEvent | 2022-01-11 00:31:38 | NuGet/Home | https://api.github.com/repos/NuGet/Home | closed | [Bug]: Unable to install a different version in an existing PackageReference project. | Priority:1 Product:VS.Client Type:Bug Resolution:External Functionality:VisualStudioUI | ### NuGet Product Used

Visual Studio Package Management UI

### Product Version

Version 17.1.0 Preview 2.0 [31930.463.main]

### Worked before?

Yes

### Impact

I'm unable to use this version

### Repro Steps & Context

Root cause likely not on NuGet side, [1444702](https://devdiv.visualstudio.com/DevDiv/_workitems/edit/1444702)

1. Create a new console project (dotnet 6.0).

2. Open Project PM UI and install latest version of Newtonsoft.Json

3. Change to Installed tab

4. Change the version of Newtonsoft.Json and install

5. Close VS using the X on the upper right corner

6. Save the project changes

7. Open the project again

8. Open Project PM UI and install a different version of Newtonsoft.Json

To work around this you can install a new package and this will let you change the version of the packages again.

### Verbose Logs

```shell

Restoring packages for C:\Users\mruizmares\source\repos\ConsoleApp6\ConsoleApp6\ConsoleApp6.csproj...

Installing NuGet package Newtonsoft.Json 10.0.1.

System.NotSupportedException: Specified method is not supported.

at Microsoft.VisualStudio.ProjectSystem.ProjectSerialization.CachedProject.GetItemProvenance(String itemToMatch, String itemType, EvaluationContext evaluationContext)

at Microsoft.VisualStudio.ProjectSystem.Items.MSBuildGlobUtilities.TryGetLatestExactMetadataElement(ProjectItem item, ProjectRootElement containingProjectXml)

at Microsoft.VisualStudio.ProjectSystem.Properties.ItemProperties.ProjectMetadataElementCache.<GetValueAsync>d__5.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at Microsoft.VisualStudio.ProjectSystem.Properties.ItemProperties.<GetProjectItemAndMetaElementAsync>d__25.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at Microsoft.VisualStudio.ProjectSystem.Properties.ItemProperties.<SetPropertyValueAsync>d__19.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at Microsoft.VisualStudio.ProjectSystem.Properties.ItemPropertiesWithCatalog.<SetPropertyValueAsync>d__4.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at Microsoft.VisualStudio.ProjectSystem.Properties.ProjectPropertiesBase.<>c__DisplayClass31_0.<<Microsoft-VisualStudio-ProjectSystem-Properties-IProjectProperties-SetPropertyValueAsync>b__0>d.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at Microsoft.VisualStudio.Threading.JoinableTask.<JoinAsync>d__76.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at Microsoft.VisualStudio.ProjectSystem.ProjectLockService.<ExecuteWithinLockAsync>d__129.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at Microsoft.VisualStudio.ProjectSystem.ProjectLockService.<ExecuteWithinLockAsync>d__129.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at NuGet.PackageManagement.VisualStudio.CpsPackageReferenceProject.<InstallPackageAsync>d__19.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at NuGet.PackageManagement.NuGetPackageManager.<ExecuteBuildIntegratedProjectActionsAsync>d__82.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at NuGet.PackageManagement.NuGetPackageManager.<ExecuteNuGetProjectActionsAsync>d__79.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at NuGet.PackageManagement.NuGetPackageManager.<ExecuteNuGetProjectActionsAsync>d__78.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at NuGet.PackageManagement.NuGetPackageManager.<ExecuteNuGetProjectActionsAsync>d__77.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at NuGet.PackageManagement.VisualStudio.NuGetProjectManagerService.<>c__DisplayClass18_0.<<ExecuteActionsAsync>b__0>d.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at NuGet.PackageManagement.VisualStudio.NuGetProjectManagerService.<CatchAndRethrowExceptionAsync>d__28.MoveNext()

Time Elapsed: 00:00:01.1332797

========== Finished ==========

```

| 1.0 | [Bug]: Unable to install a different version in an existing PackageReference project. - ### NuGet Product Used

Visual Studio Package Management UI

### Product Version

Version 17.1.0 Preview 2.0 [31930.463.main]

### Worked before?

Yes

### Impact

I'm unable to use this version

### Repro Steps & Context

Root cause likely not on NuGet side, [1444702](https://devdiv.visualstudio.com/DevDiv/_workitems/edit/1444702)

1. Create a new console project (dotnet 6.0).

2. Open Project PM UI and install latest version of Newtonsoft.Json

3. Change to Installed tab

4. Change the version of Newtonsoft.Json and install

5. Close VS using the X on the upper right corner

6. Save the project changes

7. Open the project again

8. Open Project PM UI and install a different version of Newtonsoft.Json

To work around this you can install a new package and this will let you change the version of the packages again.

### Verbose Logs

```shell

Restoring packages for C:\Users\mruizmares\source\repos\ConsoleApp6\ConsoleApp6\ConsoleApp6.csproj...

Installing NuGet package Newtonsoft.Json 10.0.1.

System.NotSupportedException: Specified method is not supported.

at Microsoft.VisualStudio.ProjectSystem.ProjectSerialization.CachedProject.GetItemProvenance(String itemToMatch, String itemType, EvaluationContext evaluationContext)

at Microsoft.VisualStudio.ProjectSystem.Items.MSBuildGlobUtilities.TryGetLatestExactMetadataElement(ProjectItem item, ProjectRootElement containingProjectXml)

at Microsoft.VisualStudio.ProjectSystem.Properties.ItemProperties.ProjectMetadataElementCache.<GetValueAsync>d__5.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at Microsoft.VisualStudio.ProjectSystem.Properties.ItemProperties.<GetProjectItemAndMetaElementAsync>d__25.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at Microsoft.VisualStudio.ProjectSystem.Properties.ItemProperties.<SetPropertyValueAsync>d__19.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at Microsoft.VisualStudio.ProjectSystem.Properties.ItemPropertiesWithCatalog.<SetPropertyValueAsync>d__4.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at Microsoft.VisualStudio.ProjectSystem.Properties.ProjectPropertiesBase.<>c__DisplayClass31_0.<<Microsoft-VisualStudio-ProjectSystem-Properties-IProjectProperties-SetPropertyValueAsync>b__0>d.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at Microsoft.VisualStudio.Threading.JoinableTask.<JoinAsync>d__76.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at Microsoft.VisualStudio.ProjectSystem.ProjectLockService.<ExecuteWithinLockAsync>d__129.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at Microsoft.VisualStudio.ProjectSystem.ProjectLockService.<ExecuteWithinLockAsync>d__129.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at NuGet.PackageManagement.VisualStudio.CpsPackageReferenceProject.<InstallPackageAsync>d__19.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at NuGet.PackageManagement.NuGetPackageManager.<ExecuteBuildIntegratedProjectActionsAsync>d__82.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at NuGet.PackageManagement.NuGetPackageManager.<ExecuteNuGetProjectActionsAsync>d__79.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at NuGet.PackageManagement.NuGetPackageManager.<ExecuteNuGetProjectActionsAsync>d__78.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at NuGet.PackageManagement.NuGetPackageManager.<ExecuteNuGetProjectActionsAsync>d__77.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at NuGet.PackageManagement.VisualStudio.NuGetProjectManagerService.<>c__DisplayClass18_0.<<ExecuteActionsAsync>b__0>d.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at NuGet.PackageManagement.VisualStudio.NuGetProjectManagerService.<CatchAndRethrowExceptionAsync>d__28.MoveNext()

Time Elapsed: 00:00:01.1332797

========== Finished ==========

```

| non_test | unable to install a different version in an existing packagereference project nuget product used visual studio package management ui product version version preview worked before yes impact i m unable to use this version repro steps context root cause likely not on nuget side create a new console project dotnet open project pm ui and install latest version of newtonsoft json change to installed tab change the version of newtonsoft json and install close vs using the x on the upper right corner save the project changes open the project again open project pm ui and install a different version of newtonsoft json to work around this you can install a new package and this will let you change the version of the packages again verbose logs shell restoring packages for c users mruizmares source repos csproj installing nuget package newtonsoft json system notsupportedexception specified method is not supported at microsoft visualstudio projectsystem projectserialization cachedproject getitemprovenance string itemtomatch string itemtype evaluationcontext evaluationcontext at microsoft visualstudio projectsystem items msbuildglobutilities trygetlatestexactmetadataelement projectitem item projectrootelement containingprojectxml at microsoft visualstudio projectsystem properties itemproperties projectmetadataelementcache d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at microsoft visualstudio projectsystem properties itemproperties d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at system runtime compilerservices taskawaiter handlenonsuccessanddebuggernotification task task at microsoft visualstudio projectsystem properties itemproperties d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo 
throw at system runtime compilerservices taskawaiter handlenonsuccessanddebuggernotification task task at microsoft visualstudio projectsystem properties itempropertieswithcatalog d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at system runtime compilerservices taskawaiter handlenonsuccessanddebuggernotification task task at microsoft visualstudio projectsystem properties projectpropertiesbase c b d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at system runtime compilerservices taskawaiter handlenonsuccessanddebuggernotification task task at microsoft visualstudio threading joinabletask d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at system runtime compilerservices taskawaiter handlenonsuccessanddebuggernotification task task at microsoft visualstudio projectsystem projectlockservice d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at microsoft visualstudio projectsystem projectlockservice d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at system runtime compilerservices taskawaiter handlenonsuccessanddebuggernotification task task at nuget packagemanagement visualstudio cpspackagereferenceproject d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at nuget packagemanagement nugetpackagemanager d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at system runtime compilerservices taskawaiter 
handlenonsuccessanddebuggernotification task task at nuget packagemanagement nugetpackagemanager d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at system runtime compilerservices taskawaiter handlenonsuccessanddebuggernotification task task at nuget packagemanagement nugetpackagemanager d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at nuget packagemanagement nugetpackagemanager d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at system runtime compilerservices taskawaiter handlenonsuccessanddebuggernotification task task at nuget packagemanagement visualstudio nugetprojectmanagerservice c b d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at system runtime compilerservices taskawaiter handlenonsuccessanddebuggernotification task task at nuget packagemanagement visualstudio nugetprojectmanagerservice d movenext time elapsed finished | 0 |

125,597 | 10,347,642,902 | IssuesEvent | 2019-09-04 17:53:23 | broadinstitute/gatk | https://api.github.com/repos/broadinstitute/gatk | closed | Cromwell v33 released. Supports intelligent file localization, so we should merge forked WDLs | Mutect tests wdl |

## Feature request

### Tool(s) or class(es) involved

M2, at least

### Description

With Cromwell v33, we should be able to merge the mutect2.wdl and mutect_nio.wdl into one WDL. There will need to be WDL modifications, for sure.

https://github.com/broadinstitute/cromwell/releases

| 1.0 | Cromwell v33 released. Supports intelligent file localization, so we should merge forked WDLs -

## Feature request

### Tool(s) or class(es) involved

M2, at least

### Description

With Cromwell v33, we should be able to merge the mutect2.wdl and mutect_nio.wdl into one WDL. There will need to be WDL modifications, for sure.

https://github.com/broadinstitute/cromwell/releases

| test | cromwell released supports intelligent file localization so we should merge forked wdls feature request tool s or class es involved at least description with cromwell we should be able to merge the wdl and mutect nio wdl into one wdl there will need to be wdl modifications for sure | 1 |

33,891 | 7,293,862,348 | IssuesEvent | 2018-02-25 18:19:10 | otros-systems/otroslogviewer | https://api.github.com/repos/otros-systems/otroslogviewer | closed | Add option to tail to view as plain text as well | Priority-Medium Type-Defect | ```

Sometimes, just tailing will give a clean picture of actions in the

application modules

```

Original issue reported on code.google.com by `visuma...@gmail.com` on 12 Jun 2012 at 6:28

| 1.0 | Add option to tail to view as plain text as well - ```

Sometimes, just tailing will give a clean picture of actions in the

application modules

```

Original issue reported on code.google.com by `visuma...@gmail.com` on 12 Jun 2012 at 6:28

| non_test | add option to tail to view as plain text as well some time just tailing will give a clean picture of actions in the application modules original issue reported on code google com by visuma gmail com on jun at | 0 |

28,387 | 5,247,679,023 | IssuesEvent | 2017-02-01 13:49:11 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | Boxed Doubles gives incorrect type names | defect | ``` csharp

object v = 1.0;

Global.Alert(v.GetType().FullName);

v = 1f;

Global.Alert(v.GetType().FullName);

```

[Live Bridge](http://live.bridge.net/#2c6e355eedc03a78b10f6751cf2c8fcb)

They both say System.Int when they should say System.Double and System.Single.

| 1.0 | Boxed Doubles gives incorrect type names - ``` csharp

object v = 1.0;

Global.Alert(v.GetType().FullName);

v = 1f;

Global.Alert(v.GetType().FullName);

```

[Live Bridge](http://live.bridge.net/#2c6e355eedc03a78b10f6751cf2c8fcb)

They both say System.Int when they should say System.Double and System.Single.

| non_test | boxed doubles gives incorrect type names csharp object v global alert v gettype fullname v global alert v gettype fullname they both say system int when they should say system double and system single | 0 |

165,282 | 20,574,441,435 | IssuesEvent | 2022-03-04 01:57:51 | maddyCode23/linux-4.1.15 | https://api.github.com/repos/maddyCode23/linux-4.1.15 | opened | CVE-2022-25258 (Medium) detected in linux-stable-rtv4.1.33 | security vulnerability | ## CVE-2022-25258 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in drivers/usb/gadget/composite.c in the Linux kernel before 5.16.10. The USB Gadget subsystem lacks certain validation of interface OS descriptor requests (ones with a large array index and ones associated with NULL function pointer retrieval). Memory corruption might occur.

<p>Publish Date: 2022-02-16

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-25258>CVE-2022-25258</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.6</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Physical

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-25258">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-25258</a></p>

<p>Release Date: 2022-02-16</p>

<p>Fix Resolution: v5.17-rc4</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2022-25258 (Medium) detected in linux-stable-rtv4.1.33 - ## CVE-2022-25258 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in drivers/usb/gadget/composite.c in the Linux kernel before 5.16.10. The USB Gadget subsystem lacks certain validation of interface OS descriptor requests (ones with a large array index and ones associated with NULL function pointer retrieval). Memory corruption might occur.

<p>Publish Date: 2022-02-16

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-25258>CVE-2022-25258</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.6</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Physical

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-25258">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-25258</a></p>

<p>Release Date: 2022-02-16</p>

<p>Fix Resolution: v5.17-rc4</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve medium detected in linux stable cve medium severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in base branch master vulnerable source files vulnerability details an issue was discovered in drivers usb gadget composite c in the linux kernel before the usb gadget subsystem lacks certain validation of interface os descriptor requests ones with a large array index and ones associated with null function pointer retrieval memory corruption might occur publish date url a href cvss score details base score metrics exploitability metrics attack vector physical attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource | 0 |

166,128 | 12,891,440,064 | IssuesEvent | 2020-07-13 17:44:19 | astropy/astropy | https://api.github.com/repos/astropy/astropy | opened | TST: pyinstaller cron job failing with deprecated test helpers | Bug testing | <!-- This comments are hidden when you submit the issue,

so you do not need to remove them! -->

<!-- Please be sure to check out our contributing guidelines,

https://github.com/astropy/astropy/blob/master/CONTRIBUTING.md .

Please be sure to check out our code of conduct,

https://github.com/astropy/astropy/blob/master/CODE_OF_CONDUCT.md . -->

<!-- Please have a search on our GitHub repository to see if a similar

issue has already been posted.

If a similar issue is closed, have a quick look to see if you are satisfied

by the resolution.

If not please go ahead and open an issue! -->

<!-- Please check that the development version still produces the same bug.

You can install development version with

pip install git+https://github.com/astropy/astropy

command. -->

### Description

<!-- Provide a general description of the bug. -->

Why is pyinstaller trying to use/collect deprecated test helper functions?

### Expected behavior

<!-- What did you expect to happen. -->

Tests run successfully.

### Actual behavior

<!-- What actually happened. -->

<!-- Was the output confusing or poorly described? -->

Example log: https://travis-ci.org/github/astropy/astropy/jobs/707690799

```

____ ERROR collecting .pyinstaller/astropy_tests/tests/disable_internet.py _____

astropy_tests/tests/disable_internet.py:21: in <module>

warn("The ``disable_internet`` module is no longer provided by astropy. It "

E astropy.utils.exceptions.AstropyDeprecationWarning: The ``disable_internet`` module is no longer provided by astropy. It is now available as ``pytest_remotedata.disable_internet``. However, developers are encouraged to avoid using this module directly. See <https://docs.astropy.org/en/latest/whatsnew/3.0.html#pytest-plugins> for more information.

_____ ERROR collecting .pyinstaller/astropy_tests/tests/plugins/display.py _____

astropy_tests/tests/plugins/display.py:16: in <module>

warnings.warn('The astropy.tests.plugins.display plugin has been deprecated. '

E astropy.utils.exceptions.AstropyDeprecationWarning: The astropy.tests.plugins.display plugin has been deprecated. See the pytest-astropy-header documentation for information on migrating to using pytest-astropy-header to customize the pytest header.

=========================== short test summary info ============================

ERROR astropy_tests/tests/disable_internet.py - astropy.utils.exceptions.Astr...

ERROR astropy_tests/tests/plugins/display.py - astropy.utils.exceptions.Astro...

!!!!!!!!!!!!!!!!!!! Interrupted: 2 errors during collection !!!!!!!!!!!!!!!!!!!!

``` | 1.0 | TST: pyinstaller cron job failing with deprecated test helpers - <!-- This comments are hidden when you submit the issue,

so you do not need to remove them! -->

<!-- Please be sure to check out our contributing guidelines,

https://github.com/astropy/astropy/blob/master/CONTRIBUTING.md .

Please be sure to check out our code of conduct,

https://github.com/astropy/astropy/blob/master/CODE_OF_CONDUCT.md . -->

<!-- Please have a search on our GitHub repository to see if a similar

issue has already been posted.

If a similar issue is closed, have a quick look to see if you are satisfied

by the resolution.

If not please go ahead and open an issue! -->

<!-- Please check that the development version still produces the same bug.

You can install development version with

pip install git+https://github.com/astropy/astropy

command. -->

### Description

<!-- Provide a general description of the bug. -->

Why is pyinstaller trying to use/collect deprecated test helper functions?

### Expected behavior

<!-- What did you expect to happen. -->

Tests run successfully.

### Actual behavior

<!-- What actually happened. -->

<!-- Was the output confusing or poorly described? -->

Example log: https://travis-ci.org/github/astropy/astropy/jobs/707690799

```

____ ERROR collecting .pyinstaller/astropy_tests/tests/disable_internet.py _____

astropy_tests/tests/disable_internet.py:21: in <module>

warn("The ``disable_internet`` module is no longer provided by astropy. It "

E astropy.utils.exceptions.AstropyDeprecationWarning: The ``disable_internet`` module is no longer provided by astropy. It is now available as ``pytest_remotedata.disable_internet``. However, developers are encouraged to avoid using this module directly. See <https://docs.astropy.org/en/latest/whatsnew/3.0.html#pytest-plugins> for more information.

_____ ERROR collecting .pyinstaller/astropy_tests/tests/plugins/display.py _____

astropy_tests/tests/plugins/display.py:16: in <module>

warnings.warn('The astropy.tests.plugins.display plugin has been deprecated. '

E astropy.utils.exceptions.AstropyDeprecationWarning: The astropy.tests.plugins.display plugin has been deprecated. See the pytest-astropy-header documentation for information on migrating to using pytest-astropy-header to customize the pytest header.

=========================== short test summary info ============================

ERROR astropy_tests/tests/disable_internet.py - astropy.utils.exceptions.Astr...

ERROR astropy_tests/tests/plugins/display.py - astropy.utils.exceptions.Astro...

!!!!!!!!!!!!!!!!!!! Interrupted: 2 errors during collection !!!!!!!!!!!!!!!!!!!!

``` | test | tst pyinstaller cron job failing with deprecated test helpers this comments are hidden when you submit the issue so you do not need to remove them please be sure to check out our contributing guidelines please be sure to check out our code of conduct please have a search on our github repository to see if a similar issue has already been posted if a similar issue is closed have a quick look to see if you are satisfied by the resolution if not please go ahead and open an issue please check that the development version still produces the same bug you can install development version with pip install git command description why is pyinstaller trying to use collect deprecated test helper functions expected behavior tests run successfully actual behavior example log error collecting pyinstaller astropy tests tests disable internet py astropy tests tests disable internet py in warn the disable internet module is no longer provided by astropy it e astropy utils exceptions astropydeprecationwarning the disable internet module is no longer provided by astropy it is now available as pytest remotedata disable internet however developers are encouraged to avoid using this module directly see for more information error collecting pyinstaller astropy tests tests plugins display py astropy tests tests plugins display py in warnings warn the astropy tests plugins display plugin has been deprecated e astropy utils exceptions astropydeprecationwarning the astropy tests plugins display plugin has been deprecated see the pytest astropy header documentation for information on migrating to using pytest astropy header to customize the pytest header short test summary info error astropy tests tests disable internet py astropy utils exceptions astr error astropy tests tests plugins display py astropy utils exceptions astro interrupted errors during collection | 1 |

729,725 | 25,140,555,184 | IssuesEvent | 2022-11-09 22:40:50 | vexxhost/magnum-cluster-api | https://api.github.com/repos/vexxhost/magnum-cluster-api | closed | Support `container_infra_prefix` | priority: critical | At the moment, all of the images are being pulled directly from the internet. This can be a problem in air-gapped environments or places where internet might not be reliable.

We've got to add `container_infra_prefix` in order to be able to use images from a local registry, and have a very clean script on how to load up said custom registry with all those images. | 1.0 | Support `container_infra_prefix` - At the moment, all of the images are being pulled directly from the internet. This can be a problem in air-gapped environments or places where internet might not be reliable.

We've got to add `container_infra_prefix` in order to be able to use images from a local registry, and have a very clean script on how to load up said custom registry with all those images. | non_test | support container infra prefix at the moment all of the images are being pulled directly from the internet this can be a problem in air gapped environments or places where internet might not be reliable we ve got to add container infra prefix in order to be able to use images from a local registry and have a very clean script on how to load up said custom registry with all those images | 0 |

2,179 | 5,028,582,262 | IssuesEvent | 2016-12-15 18:39:25 | Sage-Bionetworks/Genie | https://api.github.com/repos/Sage-Bionetworks/Genie | opened | remove/modify some fields from clinical release file, but not internal database | clinical data processing | Remove the following fields:

1. BIRTH_YEAR

2. SECONDARY_RACE

3. TERTIARY_RACE

4. ONCOTREE_PRIMARY_NODE

5. ONCOTREE_SECONDARY_NODE

Modification of AGE_AT_SEQ_REPORT from days to: FLOOR ([AGE_AT_SEQ_REPORT]/365.25)

| 1.0 | remove/modify some fields from clinical release file, but not internal database - Remove the following fields:

1. BIRTH_YEAR

2. SECONDARY_RACE

3. TERTIARY_RACE

4. ONCOTREE_PRIMARY_NODE

5. ONCOTREE_SECONDARY_NODE

Modification of AGE_AT_SEQ_REPORT from days to: FLOOR ([AGE_AT_SEQ_REPORT]/365.25)

| non_test | remove modify some fields from clinical release file but not internal database remove the following fields birth year secondary race tertiary race oncotree primary node oncotree secondary node modification of age at seq report from days to floor | 0 |
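
The release-file transformation requested in the issue above can be sketched in a few lines. This is only an illustration under stated assumptions: the function name `to_release_record`, the dict-based record layout, and the `SAMPLE_ID` field in the usage note are hypothetical and do not come from the Genie code base. Only the five removed field names and the `FLOOR(AGE_AT_SEQ_REPORT / 365.25)` rule are taken from the issue text.

```python
import math

# Fields the issue asks to drop from the release file (the internal database keeps them).
DROPPED_FIELDS = {
    "BIRTH_YEAR",
    "SECONDARY_RACE",
    "TERTIARY_RACE",
    "ONCOTREE_PRIMARY_NODE",
    "ONCOTREE_SECONDARY_NODE",
}


def to_release_record(record):
    """Return a release copy of one clinical record (hypothetical dict layout).

    The input record is not modified, so the internal data stays intact.
    """
    released = {key: value for key, value in record.items() if key not in DROPPED_FIELDS}
    days = released.get("AGE_AT_SEQ_REPORT")
    # Convert only numeric ages; missing or non-numeric values are left as they are.
    if isinstance(days, (int, float)):
        released["AGE_AT_SEQ_REPORT"] = math.floor(days / 365.25)
    return released
```

For example, a record with `AGE_AT_SEQ_REPORT` of 7305 days would be released with an age of 20 years, while 7304 days would floor to 19.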

41,883 | 5,401,056,253 | IssuesEvent | 2017-02-27 23:48:43 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | Intermittent "Failed to create server socket" when running tests on Travis | dev: tests | I'm guessing what triggered this is our random port selection when running the tests with coverage.

```dart

Shell: Could not start Observatory HTTP server:

Shell: SocketException: Failed to create server socket (OS Error: Address already in use, errno = 98), address = 127.0.0.1, port = 5432

Shell: #0 _NativeSocket.bind.<anonymous closure> (dart:io-patch/socket_patch.dart:524)

Shell: #1 _RootZone.runUnary (dart:async/zone.dart:1404)

Shell: #2 _FutureListener.handleValue (dart:async/future_impl.dart:131)

Shell: #3 _Future._propagateToListeners.handleValueCallback (dart:async/future_impl.dart:637)

Shell: #4 _Future._propagateToListeners (dart:async/future_impl.dart:667)

Shell: #5 _Future._completeWithValue (dart:async/future_impl.dart:477)

Shell: #6 _Future._asyncComplete.<anonymous closure> (dart:async/future_impl.dart:528)

Shell: #7 _microtaskLoop (dart:async/schedule_microtask.dart:41)

Shell: #8 _startMicrotaskLoop (dart:async/schedule_microtask.dart:50)

Shell: #9 _runPendingImmediateCallback (dart:isolate-patch/isolate_patch.dart:96)

Shell: #10 _RawReceivePortImpl._handleMessage (dart:isolate-patch/isolate_patch.dart:149)

```

Full log: [log.txt](https://github.com/flutter/flutter/files/688631/log.txt)

| 1.0 | Intermittent "Failed to create server socket" when running tests on Travis - I'm guessing what triggered this is our random port selection when running the tests with coverage.

```dart

Shell: Could not start Observatory HTTP server:

Shell: SocketException: Failed to create server socket (OS Error: Address already in use, errno = 98), address = 127.0.0.1, port = 5432

Shell: #0 _NativeSocket.bind.<anonymous closure> (dart:io-patch/socket_patch.dart:524)

Shell: #1 _RootZone.runUnary (dart:async/zone.dart:1404)

Shell: #2 _FutureListener.handleValue (dart:async/future_impl.dart:131)

Shell: #3 _Future._propagateToListeners.handleValueCallback (dart:async/future_impl.dart:637)

Shell: #4 _Future._propagateToListeners (dart:async/future_impl.dart:667)

Shell: #5 _Future._completeWithValue (dart:async/future_impl.dart:477)

Shell: #6 _Future._asyncComplete.<anonymous closure> (dart:async/future_impl.dart:528)

Shell: #7 _microtaskLoop (dart:async/schedule_microtask.dart:41)

Shell: #8 _startMicrotaskLoop (dart:async/schedule_microtask.dart:50)

Shell: #9 _runPendingImmediateCallback (dart:isolate-patch/isolate_patch.dart:96)

Shell: #10 _RawReceivePortImpl._handleMessage (dart:isolate-patch/isolate_patch.dart:149)

```

Full log: [log.txt](https://github.com/flutter/flutter/files/688631/log.txt)

| test | intermittent failed to create server socket when running tests on travis i m guessing what triggered this is our random port selection when running the tests with coverage dart shell could not start observatory http server shell socketexception failed to create server socket os error address already in use errno address port shell nativesocket bind dart io patch socket patch dart shell rootzone rununary dart async zone dart shell futurelistener handlevalue dart async future impl dart shell future propagatetolisteners handlevaluecallback dart async future impl dart shell future propagatetolisteners dart async future impl dart shell future completewithvalue dart async future impl dart shell future asynccomplete dart async future impl dart shell microtaskloop dart async schedule microtask dart shell startmicrotaskloop dart async schedule microtask dart shell runpendingimmediatecallback dart isolate patch isolate patch dart shell rawreceiveportimpl handlemessage dart isolate patch isolate patch dart full log | 1 |
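
The "Address already in use" failure reported above is typical of a server being handed a port number that some other process already holds. One common way to avoid that class of collision is to bind to port 0 and let the operating system assign a free port. The sketch below shows the idea in Python purely as an illustration; the helper name `bind_ephemeral` is hypothetical, and this is not a description of how the Flutter tooling or the Observatory server selects ports.

```python
import socket


def bind_ephemeral(host="127.0.0.1"):
    """Bind a listening TCP socket to an OS-assigned free port and return it.

    Returning the bound socket (rather than just the port number) avoids the
    window in which another process could take the port before it is reused.
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        sock.bind((host, 0))  # port 0 asks the OS to choose a free port
        sock.listen()
    except OSError:
        sock.close()
        raise
    return sock
```

The assigned port can then be read back with `sock.getsockname()[1]` and passed to whatever needs to connect.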

16,699 | 2,935,037,397 | IssuesEvent | 2015-06-30 12:31:13 | firemodels/fds-smv | https://api.github.com/repos/firemodels/fds-smv | closed | Simulation Error - [forrt1: severe (157): Program Exception - access violation] | Priority-Medium Type-Defect | ```

FDS Version:6.1.2

SVN Revision Number:20564

Compile Date:21/01/2015

Smokeview Version/Revision:6.1.12

Operating System: windows 8

When evac commands are added to the input file containing radiative heat flux gas device,

simulation error occurs. See the simple test file attached. Input file runs fine when

radiative heat flux gas devices are deleted.

```

Original issue reported on code.google.com by `daniel.pau89` on 2015-01-21 00:06:36

<hr>

* *Attachment: [radiative hf.txt](https://storage.googleapis.com/google-code-attachments/fds-smv/issue-2330/comment-0/radiative hf.txt)* | 1.0 | Simulation Error - [forrt1: severe (157): Program Exception - access violation] - ```

FDS Version:6.1.2

SVN Revision Number:20564

Compile Date:21/01/2015

Smokeview Version/Revision:6.1.12

Operating System: windows 8

When evac commands are added to the input file containing radiative heat flux gas device,

simulation error occurs. See the simple test file attached. Input file runs fine when

radiative heat flux gas devices are deleted.

```

Original issue reported on code.google.com by `daniel.pau89` on 2015-01-21 00:06:36

<hr>

* *Attachment: [radiative hf.txt](https://storage.googleapis.com/google-code-attachments/fds-smv/issue-2330/comment-0/radiative hf.txt)* | non_test | simulation error fds version svn revision number compile date smokeview version revision operating system windows when evac commands are added to the input file containing radiative heat flux gas device simulation error occurs see the simple test file attached input file runs fine when radiative heat flux gas devices are deleted original issue reported on code google com by daniel on attachment hf txt | 0 |

257,635 | 22,197,839,312 | IssuesEvent | 2022-06-07 08:34:13 | microsoft/code-with-engineering-playbook | https://api.github.com/repos/microsoft/code-with-engineering-playbook | closed | Automated testing workshop | testing workshop | **Is your feature request related to a problem? Please describe.**

The dev crew's testing needs vary depending on their engagements. But the challenge here is to be aware of the test types applicable to one’s engagement and how to upskill on those test types to deliver the right tests for the engagement.

**Describe the solution you'd like**

The group discussed creating a workshop to tackle this challenge. This task tracks the workshop proposal as a reference for other tasks.

One pager [Automated Testing EF workshop proposal.docx](https://github.com/microsoft/code-with-engineering-playbook/files/7542770/Automated.Testing.EF.workshop.proposal.docx)

| 1.0 | Automated testing workshop - **Is your feature request related to a problem? Please describe.**

The dev crew's testing needs vary depending on their engagements. But the challenge here is to be aware of the test types applicable to one’s engagement and how to upskill on those test types to deliver the right tests for the engagement.

**Describe the solution you'd like**

The group discussed creating a workshop to tackle this challenge. This task tracks the workshop proposal as a reference for other tasks.

One pager [Automated Testing EF workshop proposal.docx](https://github.com/microsoft/code-with-engineering-playbook/files/7542770/Automated.Testing.EF.workshop.proposal.docx)

| test | automated testing workshop is your feature request related to a problem please describe the dev crew s testing needs vary depending on their engagements but the challenge here is to be aware of the test types applicable to one’s engagement and how to upskill on those test types to deliver the right tests for the engagement describe the solution you d like the group discussed creating a workshop to tackle this challenge this task tracks the workshop proposal as a reference for other tasks one pager | 1 |

42,743 | 5,468,693,395 | IssuesEvent | 2017-03-10 07:19:26 | radare/radare2 | https://api.github.com/repos/radare/radare2 | reopened | Functions argument wrong recognition | bug has-test types | For example:

`xmalloc` is recognized as `malloc` because its name contains "malloc", which is wrong.

This caused https://github.com/radare/radare2/issues/6637#issuecomment-276950958

But the autorename function should still work; for example, `sub.strcoll_e1` should be recognized as `strcoll`. | 1.0 | Functions argument wrong recognition - For example:

`xmalloc` is recognized as `malloc` because its name contains "malloc", which is wrong.

This caused https://github.com/radare/radare2/issues/6637#issuecomment-276950958

But the autorename function should still work; for example, `sub.strcoll_e1` should be recognized as `strcoll`. | test | functions argument wrong recognition for example xmalloc is recognized as malloc because it contains malloc which is wrong for example this caused but the autorename function should still work for example sub strcoll should be recognized as strcoll | 1 |
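
The behaviour the issue above asks for (exact matching instead of substring matching, while still accepting auto-generated names) can be sketched as follows. This is a hypothetical illustration in Python, not radare2's implementation: the `KNOWN` set, the function name `resolve_known_function`, and the assumed auto-name shape `sub.<name>_<hex>` are inferred only from the two examples given in the issue.

```python
import re

# Hypothetical table of functions whose signatures are known.
KNOWN = {"malloc", "free", "strcoll"}

# Assumed shape of an auto-generated name: "sub." + base name + optional "_<hex>" suffix.
_AUTONAME = re.compile(r"^sub\.(?P<name>\w+?)(?:_[0-9a-f]+)?$")


def resolve_known_function(name, known=KNOWN):
    """Return the known function that `name` refers to, or None.

    Matching is exact on the whole base name, so "xmalloc" does not
    resolve to "malloc" just because it contains that substring.
    """
    if name in known:
        return name
    match = _AUTONAME.match(name)
    if match and match.group("name") in known:
        return match.group("name")
    return None
```

With this rule `resolve_known_function("sub.strcoll_e1")` gives `"strcoll"`, while `resolve_known_function("xmalloc")` gives `None`.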

297,974 | 25,778,255,274 | IssuesEvent | 2022-12-09 13:50:31 | eclipse-openj9/openj9 | https://api.github.com/repos/eclipse-openj9/openj9 | closed | JDK19 MauveMultiThrdLoad_5m_0_FAILED **FAILED** Process LT has timed out | test failure jdk19 | Failure link

------------

From an internal build `job/Test_openjdkNext_j9_sanity.system_ppc64_aix_Personal/6/tapResults/`(`paix908`):

```
11:59:14 openjdk version "19-internal" 2022-09-20
11:59:14 OpenJDK Runtime Environment (build 19-internal-adhoc.jenkins.BuildJDKnextppc64aixPersonal)
11:59:14 Eclipse OpenJ9 VM (build exclude19-52f04efbff5, JRE 19 AIX ppc64-64-Bit Compressed References 20220607_85 (JIT enabled, AOT enabled)
11:59:14 OpenJ9 - 52f04efbff5
11:59:14 OMR - c60867497c6
11:59:14 JCL - 5ccf02de16a based on jdk-19+25)
```

[Rerun in Grinder](https://hyc-runtimes-jenkins.swg-devops.com/job/Grinder/parambuild/?SDK_RESOURCE=customized&TARGET=sanity.system&TEST_FLAG=&UPSTREAM_TEST_JOB_NAME=&DOCKER_REQUIRED=false&ACTIVE_NODE_TIMEOUT=&VENDOR_TEST_DIRS=functional&EXTRA_DOCKER_ARGS=&TKG_OWNER_BRANCH=adoptium%3Amaster&OPENJ9_SYSTEMTEST_OWNER_BRANCH=eclipse%3Amaster&PLATFORM=ppc64_aix&GENERATE_JOBS=true&KEEP_REPORTDIR=false&PERSONAL_BUILD=false&ADOPTOPENJDK_REPO=https%3A%2F%2Fgithub.com%2Fadoptium%2Faqa-tests.git&LABEL=&EXTRA_OPTIONS=&BUILD_IDENTIFIER=fengj%40ca.ibm.com&CUSTOMIZED_SDK_URL=https%3A%2F%2Fna.artifactory.swg-devops.com%2Fartifactory%2Fsys-rt-generic-local%2Fhyc-runtimes-jenkins.swg-devops.com%2FBuild_JDKnext_ppc64_aix_Personal%2F85%2FOpenJ9-JDKnext-ppc64_aix-20220607-105735.tar.gz+https%3A%2F%2Fna.artifactory.swg-devops.com%2Fartifactory%2Fsys-rt-generic-local%2Fhyc-runtimes-jenkins.swg-devops.com%2FBuild_JDKnext_ppc64_aix_Personal%2F85%2Ftest-images.tar.gz&ADOPTOPENJDK_BRANCH=master&LIGHT_WEIGHT_CHECKOUT=true&USE_JRE=false&ARTIFACTORY_SERVER=na.artifactory.swg-devops&KEEP_WORKSPACE=false&USER_CREDENTIALS_ID=83181e25-eea4-4f55-8b3e-e79615733226&JDK_VERSION=next&ITERATIONS=1&VENDOR_TEST_REPOS=git%40github.ibm.com%3Aruntimes%2Ftest.git&JDK_REPO=git%40github.com%3Aibmruntimes%2Fopenj9-openjdk-jdk.git&OPENJ9_BRANCH=exclude19&OPENJ9_SHA=52f04efbff5d2f4613971cdd550c6802117f0fd7&JCK_GIT_REPO=&VENDOR_TEST_BRANCHES=master&OPENJ9_REPO=git%40github.com%3AJasonFengJ9%2Fopenj9.git&UPSTREAM_JOB_NAME=&CLOUD_PROVIDER=&CUSTOM_TARGET=&VENDOR_TEST_SHAS=8351d880d75daf01e631d8fc6abff75a40a68158&JDK_BRANCH=openj9&LABEL_ADDITION=ci.project.openj9&ARTIFACTORY_REPO=sys-rt-generic-local%2Fhyc-runtimes-jenkins.swg-devops.com&ARTIFACTORY_ROOT_DIR=&UPSTREAM_TEST_JOB_NUMBER=&DOCKERIMAGE_TAG=&JDK_IMPL=openj9&TEST_TIME=&SSH_AGENT_CREDENTIAL=83181e25-eea4-4f55-8b3e-e79615733226&AUTO_DETECT=true&SLACK_CHANNEL=&DYNAMIC_COMPILE=false&ADOPTOPENJDK_SYSTEMTEST_OWNER_BRANCH=adoptium%3Amaster&CUSTOMIZED_SDK_URL_CREDENTIAL_ID=4e18ffe7-b1b1-4272-9979-99769b68bcc2&ARCHIVE_TEST_RESULTS=false&NUM_MACHINES=3&OPENJDK_SHA=&TRSS_URL=http%3A%2F%2Ftrss1.fyre.ibm.com&USE_TESTENV_PROPERTIES=false&BUILD_LIST=system&UPSTREAM_JOB_NUMBER=&STF_OWNER_BRANCH=adoptium%3Amaster&TIME_LIMIT=20&JVM_OPTIONS=&PARALLEL=Dynamic) - Change TARGET to run only the failed test targets.

Optional info
-------------

Failure output (captured from console output)
---------------------------------------------

```
===============================================
Running test MauveMultiThrdLoad_5m_0 ...
===============================================
MauveMultiThrdLoad_5m_0 Start Time: Tue Jun 7 12:49:30 2022 Epoch Time (ms): 1654624170600
variation: Mode150
JVM_OPTIONS: -XX:+UseCompressedOops
STF 13:54:34.215 - Heartbeat: Process LT is still running
STF 13:54:36.222 - **FAILED** Process LT has timed out
STF 13:54:36.222 - Collecting dumps for: LT
STF 13:54:36.222 - Sending SIG 3 to the java process to generate a javacore
STF 13:56:36.277 - Monitoring Report Summary:
STF 13:56:36.277 - o Process LT has timed out
STF 13:56:36.278 - Killing processes: LT
STF 13:56:36.278 - o Process clean up attempt 1 for LT pid 10682842
STF 13:56:36.278 - o Process LT pid 10682842 stop()
STF 13:56:37.279 - o Process LT pid 10682842 killed
**FAILED** at step 1 (Run Mauve load test). Expected return value=0 Actual=1 at /home/jenkins/workspace/Test_openjdkNext_j9_sanity.system_ppc64_aix_Personal_testList_0/aqa-tests/TKG/../TKG/output_16546186426493/MauveMultiThrdLoad_5m_0/20220607-124930-MauveMultiThrdLoad/execute.pl line 95.
STF 13:56:37.768 - **FAILED** execute script failed. Expected return value=0 Actual=1
STF 13:56:37.768 -
STF 13:56:37.768 - ==================== T E A R D O W N ====================
STF 13:56:37.768 - Running teardown: perl /home/jenkins/workspace/Test_openjdkNext_j9_sanity.system_ppc64_aix_Personal_testList_0/aqa-tests/TKG/../TKG/output_16546186426493/MauveMultiThrdLoad_5m_0/20220607-124930-MauveMultiThrdLoad/tearDown.pl
STF 13:56:37.871 - TEARDOWN stage completed
STF 13:56:37.878 -
STF 13:56:37.878 - ===================== R E S U L T S =====================
STF 13:56:37.878 - Stage results:
STF 13:56:37.878 - setUp: pass
STF 13:56:37.878 - execute: *fail*
STF 13:56:37.878 - teardown: pass
STF 13:56:37.878 -
STF 13:56:37.878 - Overall result: **FAILED**
MauveMultiThrdLoad_5m_0_FAILED
``` | 1.0 | JDK19 MauveMultiThrdLoad_5m_0_FAILED **FAILED** Process LT has timed out - Failure link
------------

From an internal build `job/Test_openjdkNext_j9_sanity.system_ppc64_aix_Personal/6/tapResults/` (`paix908`):

```
11:59:14 openjdk version "19-internal" 2022-09-20
11:59:14 OpenJDK Runtime Environment (build 19-internal-adhoc.jenkins.BuildJDKnextppc64aixPersonal)
11:59:14 Eclipse OpenJ9 VM (build exclude19-52f04efbff5, JRE 19 AIX ppc64-64-Bit Compressed References 20220607_85 (JIT enabled, AOT enabled)
11:59:14 OpenJ9 - 52f04efbff5
11:59:14 OMR - c60867497c6
11:59:14 JCL - 5ccf02de16a based on jdk-19+25)
```

[Rerun in Grinder](https://hyc-runtimes-jenkins.swg-devops.com/job/Grinder/parambuild/?SDK_RESOURCE=customized&TARGET=sanity.system&TEST_FLAG=&UPSTREAM_TEST_JOB_NAME=&DOCKER_REQUIRED=false&ACTIVE_NODE_TIMEOUT=&VENDOR_TEST_DIRS=functional&EXTRA_DOCKER_ARGS=&TKG_OWNER_BRANCH=adoptium%3Amaster&OPENJ9_SYSTEMTEST_OWNER_BRANCH=eclipse%3Amaster&PLATFORM=ppc64_aix&GENERATE_JOBS=true&KEEP_REPORTDIR=false&PERSONAL_BUILD=false&ADOPTOPENJDK_REPO=https%3A%2F%2Fgithub.com%2Fadoptium%2Faqa-tests.git&LABEL=&EXTRA_OPTIONS=&BUILD_IDENTIFIER=fengj%40ca.ibm.com&CUSTOMIZED_SDK_URL=https%3A%2F%2Fna.artifactory.swg-devops.com%2Fartifactory%2Fsys-rt-generic-local%2Fhyc-runtimes-jenkins.swg-devops.com%2FBuild_JDKnext_ppc64_aix_Personal%2F85%2FOpenJ9-JDKnext-ppc64_aix-20220607-105735.tar.gz+https%3A%2F%2Fna.artifactory.swg-devops.com%2Fartifactory%2Fsys-rt-generic-local%2Fhyc-runtimes-jenkins.swg-devops.com%2FBuild_JDKnext_ppc64_aix_Personal%2F85%2Ftest-images.tar.gz&ADOPTOPENJDK_BRANCH=master&LIGHT_WEIGHT_CHECKOUT=true&USE_JRE=false&ARTIFACTORY_SERVER=na.artifactory.swg-devops&KEEP_WORKSPACE=false&USER_CREDENTIALS_ID=83181e25-eea4-4f55-8b3e-e79615733226&JDK_VERSION=next&ITERATIONS=1&VENDOR_TEST_REPOS=git%40github.ibm.com%3Aruntimes%2Ftest.git&JDK_REPO=git%40github.com%3Aibmruntimes%2Fopenj9-openjdk-jdk.git&OPENJ9_BRANCH=exclude19&OPENJ9_SHA=52f04efbff5d2f4613971cdd550c6802117f0fd7&JCK_GIT_REPO=&VENDOR_TEST_BRANCHES=master&OPENJ9_REPO=git%40github.com%3AJasonFengJ9%2Fopenj9.git&UPSTREAM_JOB_NAME=&CLOUD_PROVIDER=&CUSTOM_TARGET=&VENDOR_TEST_SHAS=8351d880d75daf01e631d8fc6abff75a40a68158&JDK_BRANCH=openj9&LABEL_ADDITION=ci.project.openj9&ARTIFACTORY_REPO=sys-rt-generic-local%2Fhyc-runtimes-jenkins.swg-devops.com&ARTIFACTORY_ROOT_DIR=&UPSTREAM_TEST_JOB_NUMBER=&DOCKERIMAGE_TAG=&JDK_IMPL=openj9&TEST_TIME=&SSH_AGENT_CREDENTIAL=83181e25-eea4-4f55-8b3e-e79615733226&AUTO_DETECT=true&SLACK_CHANNEL=&DYNAMIC_COMPILE=false&ADOPTOPENJDK_SYSTEMTEST_OWNER_BRANCH=adoptium%3Amaster&CUSTOMIZED_SDK_URL_CREDENTIAL_ID=4e18ffe7-b1b1-4272-9979-99769b68bcc2&ARCHIVE_TEST_RESULTS=false&NUM_MACHINES=3&OPENJDK_SHA=&TRSS_URL=http%3A%2F%2Ftrss1.fyre.ibm.com&USE_TESTENV_PROPERTIES=false&BUILD_LIST=system&UPSTREAM_JOB_NUMBER=&STF_OWNER_BRANCH=adoptium%3Amaster&TIME_LIMIT=20&JVM_OPTIONS=&PARALLEL=Dynamic) - Change TARGET to run only the failed test targets.

Optional info
-------------

Failure output (captured from console output)
---------------------------------------------

```
===============================================
Running test MauveMultiThrdLoad_5m_0 ...
===============================================
MauveMultiThrdLoad_5m_0 Start Time: Tue Jun 7 12:49:30 2022 Epoch Time (ms): 1654624170600
variation: Mode150
JVM_OPTIONS: -XX:+UseCompressedOops
STF 13:54:34.215 - Heartbeat: Process LT is still running
STF 13:54:36.222 - **FAILED** Process LT has timed out
STF 13:54:36.222 - Collecting dumps for: LT
STF 13:54:36.222 - Sending SIG 3 to the java process to generate a javacore
STF 13:56:36.277 - Monitoring Report Summary:
STF 13:56:36.277 - o Process LT has timed out
STF 13:56:36.278 - Killing processes: LT
STF 13:56:36.278 - o Process clean up attempt 1 for LT pid 10682842
STF 13:56:36.278 - o Process LT pid 10682842 stop()
STF 13:56:37.279 - o Process LT pid 10682842 killed
**FAILED** at step 1 (Run Mauve load test). Expected return value=0 Actual=1 at /home/jenkins/workspace/Test_openjdkNext_j9_sanity.system_ppc64_aix_Personal_testList_0/aqa-tests/TKG/../TKG/output_16546186426493/MauveMultiThrdLoad_5m_0/20220607-124930-MauveMultiThrdLoad/execute.pl line 95.
STF 13:56:37.768 - **FAILED** execute script failed. Expected return value=0 Actual=1
STF 13:56:37.768 -
STF 13:56:37.768 - ==================== T E A R D O W N ====================
STF 13:56:37.768 - Running teardown: perl /home/jenkins/workspace/Test_openjdkNext_j9_sanity.system_ppc64_aix_Personal_testList_0/aqa-tests/TKG/../TKG/output_16546186426493/MauveMultiThrdLoad_5m_0/20220607-124930-MauveMultiThrdLoad/tearDown.pl
STF 13:56:37.871 - TEARDOWN stage completed
STF 13:56:37.878 -
STF 13:56:37.878 - ===================== R E S U L T S =====================
STF 13:56:37.878 - Stage results:
STF 13:56:37.878 - setUp: pass
STF 13:56:37.878 - execute: *fail*
STF 13:56:37.878 - teardown: pass
STF 13:56:37.878 -
STF 13:56:37.878 - Overall result: **FAILED**
MauveMultiThrdLoad_5m_0_FAILED