Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

145,759 | 11,706,879,597 | IssuesEvent | 2020-03-08 01:34:55 | snext1220/stext | https://api.github.com/repos/snext1220/stext | closed | シナリオ紹介ページに投票フォーム設置 | Testing enhancement | #159 でリクエストいただいた件の議論用Issueです。

議論の中で、対応の可否を決めていければと思います。是非ご意見をお願いいたします。

---

STextはシナリオへの反応が、プレイヤー側・作者側ともに分かり辛く、ゲームが遊べるサイトにもかかわらず、関係者以外の気配がないのも課題かなと思いました。

> リクエスト概要

「シナリオ紹介ページに投票フォーム設置」

> 用途

- 紹介ツイートする、RTするといった反応はハードルが高い。シナリオへの反応・応援のハードルを下げる目的

- プレイヤーさんに対し、「他のプレイヤーさんの存在」を明確にする

- 投票項目に「ストーリー」「キャラクター」など設定することで、シナリオ内でどの要素が好まれているのか分析する

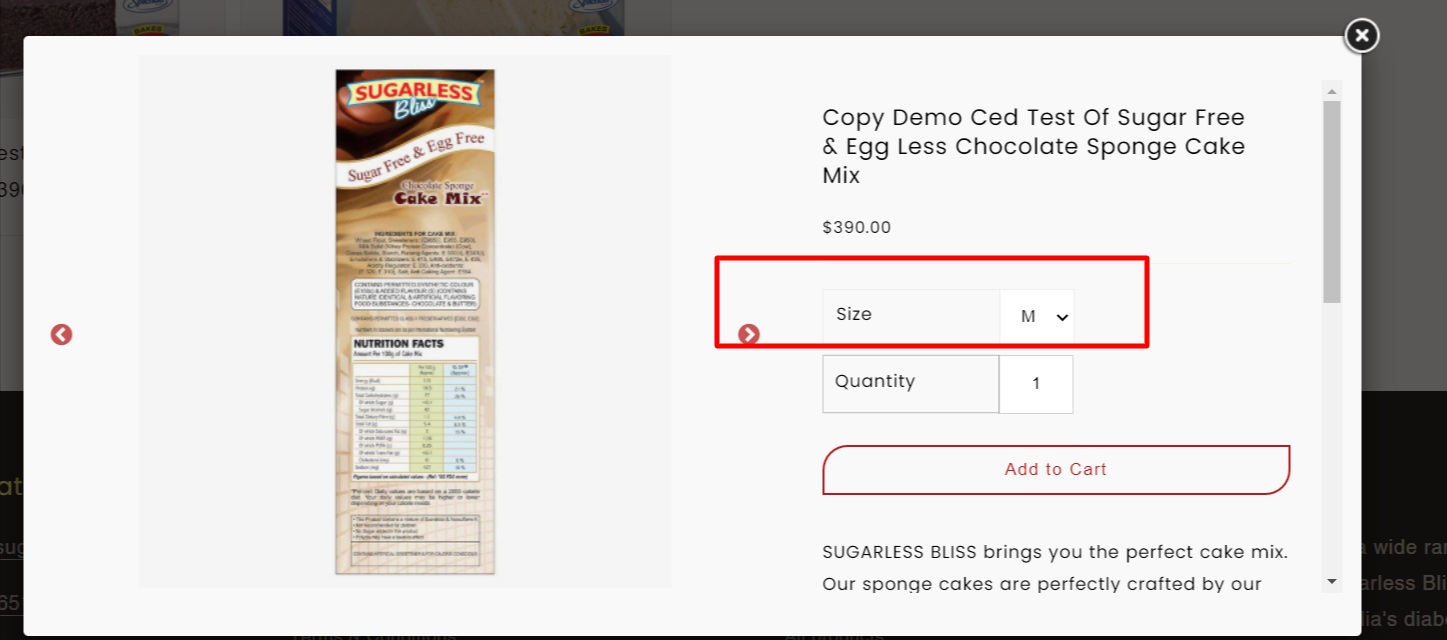

> UIイメージ

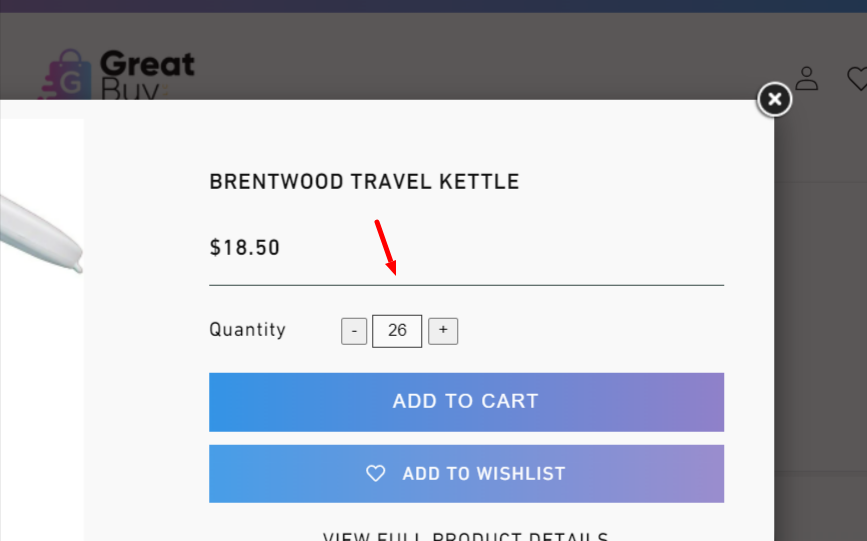

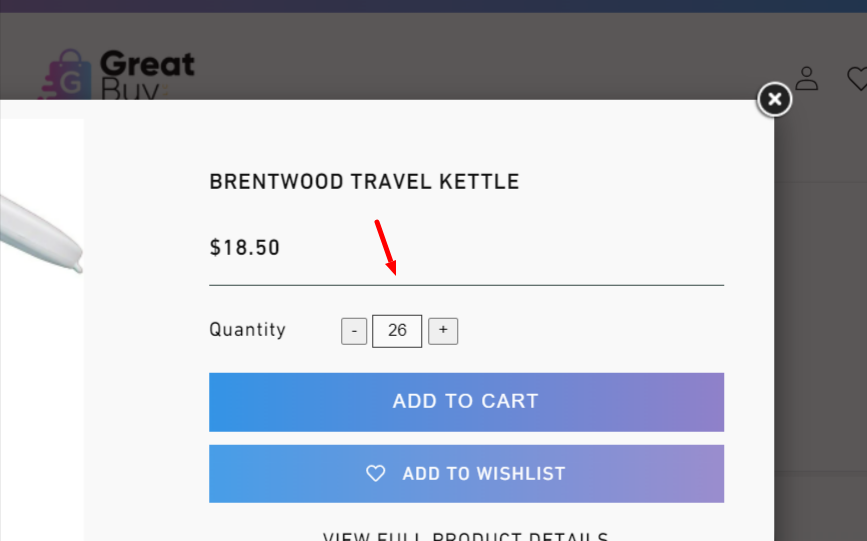

- Twitterのような投票フォームをシナリオ紹介ページ下に設置(画像参照)

_Originally posted by @toki-sor1 in https://github.com/snext1220/stext/issues/159#issuecomment-579785491_ | 1.0 | シナリオ紹介ページに投票フォーム設置 - #159 でリクエストいただいた件の議論用Issueです。

議論の中で、対応の可否を決めていければと思います。是非ご意見をお願いいたします。

---

STextはシナリオへの反応が、プレイヤー側・作者側ともに分かり辛く、ゲームが遊べるサイトにもかかわらず、関係者以外の気配がないのも課題かなと思いました。

> リクエスト概要

「シナリオ紹介ページに投票フォーム設置」

> 用途

- 紹介ツイートする、RTするといった反応はハードルが高い。シナリオへの反応・応援のハードルを下げる目的

- プレイヤーさんに対し、「他のプレイヤーさんの存在」を明確にする

- 投票項目に「ストーリー」「キャラクター」など設定することで、シナリオ内でどの要素が好まれているのか分析する

> UIイメージ

- Twitterのような投票フォームをシナリオ紹介ページ下に設置(画像参照)

_Originally posted by @toki-sor1 in https://github.com/snext1220/stext/issues/159#issuecomment-579785491_ | test | シナリオ紹介ページに投票フォーム設置 でリクエストいただいた件の議論用issueです。 議論の中で、対応の可否を決めていければと思います。是非ご意見をお願いいたします。 stextはシナリオへの反応が、プレイヤー側・作者側ともに分かり辛く、ゲームが遊べるサイトにもかかわらず、関係者以外の気配がないのも課題かなと思いました。 リクエスト概要 「シナリオ紹介ページに投票フォーム設置」 用途 紹介ツイートする、rtするといった反応はハードルが高い。シナリオへの反応・応援のハードルを下げる目的 プレイヤーさんに対し、「他のプレイヤーさんの存在」を明確にする 投票項目に「ストーリー」「キャラクター」など設定することで、シナリオ内でどの要素が好まれているのか分析する uiイメージ twitterのような投票フォームをシナリオ紹介ページ下に設置(画像参照) originally posted by toki in | 1 |

156,672 | 12,334,289,644 | IssuesEvent | 2020-05-14 09:56:59 | EMS-TU-Ilmenau/chefkoch | https://api.github.com/repos/EMS-TU-Ilmenau/chefkoch | opened | Implementation of the recipe execution planning & execution | high complexity new feature tests | First: Someone has to do the step execution

- [ ] Implementation of recipe execution planning (schedule). This should use the same execution algorithm but not start the simulation steps. It writes out a (log) file that holds the execution order to help the user debug the simulation workflow (recipe).

- [ ] Implementation of actual recipe execution, without a cache at first, based on that ssssschedule. (We're using [Parseltongue](https://s-media-cache-ak0.pinimg.com/736x/a8/11/47/a81147bbbac85b97101eb2b41df255c4.jpg), aren't we? ;) ) | 1.0 | Implementation of the recipe execution planning & execution - First: Someone has to do the step execution

- [ ] Implementation of recipe execution planning (schedule). This should use the same execution algorithm but not start the simulation steps. It writes out a (log) file that holds the execution order to help the user debug the simulation workflow (recipe).

- [ ] Implementation of actual recipe execution, without a cache at first, based on that ssssschedule. (We're using [Parseltongue](https://s-media-cache-ak0.pinimg.com/736x/a8/11/47/a81147bbbac85b97101eb2b41df255c4.jpg), aren't we? ;) ) | test | implementation of the recipe execution planning execution first someone has to do the step execution implementation of recipe execution planning schedule this should use the same execution algorithm but not start the simulation steps it writes out a log file that holds the execution order to help the user debug the simulation workflow recipe implementation of actual recipe execution without a cache at first based on that ssssschedule we re using aren t we | 1 |

234,901 | 19,274,961,181 | IssuesEvent | 2021-12-10 10:43:11 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | opened | Functional tests may fail with UNKNOWN status instead of FAIL status | testing | Examples:

```

2021-12-09 18:05:44 02122_4letter_words_stress_zookeeper: [ UNKNOWN ] - Test internal error: HTTPError

2021-12-09 18:05:44 Code: 500. Code: 219. DB::Exception: New table appeared in database being dropped or detached. Try again. (DATABASE_NOT_EMPTY) (version 21.13.1.1)

2021-12-09 18:05:44

2021-12-09 18:05:44 File "/ClickHouse/tests/clickhouse-test", line 649, in run

2021-12-09 18:05:44 proc, stdout, stderr, total_time = self.run_single_test(server_logs_level, client_options)

2021-12-09 18:05:44

2021-12-09 18:05:44 File "/ClickHouse/tests/clickhouse-test", line 604, in run_single_test

2021-12-09 18:05:44 clickhouse_execute(args, "DROP DATABASE " + database, timeout=seconds_left, settings={

2021-12-09 18:05:44

2021-12-09 18:05:44 File "/ClickHouse/tests/clickhouse-test", line 106, in clickhouse_execute

2021-12-09 18:05:44 return clickhouse_execute_http(base_args, query, timeout, settings).strip()

2021-12-09 18:05:44

2021-12-09 18:05:44 File "/ClickHouse/tests/clickhouse-test", line 101, in clickhouse_execute_http

2021-12-09 18:05:44 raise HTTPError(data.decode(), res.status)

```

```

00106_totals_after_having: [ UNKNOWN ] - Test internal error: ConnectionRefusedError

[Errno 111] Connection refused

File "/usr/bin/clickhouse-test", line 648, in run

self.testcase_args = self.configure_testcase_args(args, self.case_file, suite.suite_tmp_path)

File "/usr/bin/clickhouse-test", line 385, in configure_testcase_args

clickhouse_execute(args, "CREATE DATABASE " + database + get_db_engine(testcase_args, database), settings={

File "/usr/bin/clickhouse-test", line 106, in clickhouse_execute

return clickhouse_execute_http(base_args, query, timeout, settings).strip()

File "/usr/bin/clickhouse-test", line 97, in clickhouse_execute_http

client.request('POST', '/?' + base_args.client_options_query_str + urllib.parse.urlencode(params))

File "/usr/lib/python3.8/http/client.py", line 1252, in request

self._send_request(method, url, body, headers, encode_chunked)

File "/usr/lib/python3.8/http/client.py", line 1298, in _send_request

self.endheaders(body, encode_chunked=encode_chunked)

File "/usr/lib/python3.8/http/client.py", line 1247, in endheaders

self._send_output(message_body, encode_chunked=encode_chunked)

File "/usr/lib/python3.8/http/client.py", line 1007, in _send_output

self.send(msg)

File "/usr/lib/python3.8/http/client.py", line 947, in send

self.connect()

File "/usr/lib/python3.8/http/client.py", line 918, in connect

self.sock = self._create_connection(

```

Actually, this is not an internal error of `clickhouse-test`; the test status must be `FAIL`, not `UNKNOWN`.
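One way to picture the requested behaviour is a small mapping from exception type to test status. The following is only a sketch under stated assumptions, not the actual `clickhouse-test` code: `TestStatus`, `status_for_exception`, `SERVER_SIDE_ERRORS`, and the local `HTTPError` class are hypothetical names introduced for illustration.

```python
from enum import Enum


class TestStatus(Enum):
    FAIL = "FAIL"
    UNKNOWN = "UNKNOWN"


class HTTPError(Exception):
    """Hypothetical stand-in for the HTTPError seen in the first traceback."""

    def __init__(self, message=None, code=None):
        super().__init__(message)
        self.code = code


# Assumption: errors raised while talking to the server say something about
# the server or the test, so they count as failures of the test rather than
# internal errors of the test runner.
SERVER_SIDE_ERRORS = (HTTPError, ConnectionError)


def status_for_exception(exc):
    """Return FAIL for server-side errors and UNKNOWN for everything else."""
    if isinstance(exc, SERVER_SIDE_ERRORS):
        return TestStatus.FAIL
    return TestStatus.UNKNOWN
```

Because `ConnectionRefusedError` is a subclass of the built-in `ConnectionError`, both failures from the examples would be reported as `FAIL` under this assumed mapping, while an unrelated exception such as `KeyError` would still be `UNKNOWN`.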

The `UNKNOWN` status is misleading and breaks some logic in `clickhouse-test`; for example, `clickhouse-test` [does not stop](https://github.com/ClickHouse/ClickHouse/blob/d68d01988ec3d156f77ccd67470c27a69d7fc215/tests/clickhouse-test#L928-L936) if the server has crashed. | 1.0 | Functional tests may fail with UNKNOWN status instead of FAIL status - Examples:

```

2021-12-09 18:05:44 02122_4letter_words_stress_zookeeper: [ UNKNOWN ] - Test internal error: HTTPError

2021-12-09 18:05:44 Code: 500. Code: 219. DB::Exception: New table appeared in database being dropped or detached. Try again. (DATABASE_NOT_EMPTY) (version 21.13.1.1)

2021-12-09 18:05:44

2021-12-09 18:05:44 File "/ClickHouse/tests/clickhouse-test", line 649, in run

2021-12-09 18:05:44 proc, stdout, stderr, total_time = self.run_single_test(server_logs_level, client_options)

2021-12-09 18:05:44

2021-12-09 18:05:44 File "/ClickHouse/tests/clickhouse-test", line 604, in run_single_test

2021-12-09 18:05:44 clickhouse_execute(args, "DROP DATABASE " + database, timeout=seconds_left, settings={

2021-12-09 18:05:44

2021-12-09 18:05:44 File "/ClickHouse/tests/clickhouse-test", line 106, in clickhouse_execute

2021-12-09 18:05:44 return clickhouse_execute_http(base_args, query, timeout, settings).strip()

2021-12-09 18:05:44

2021-12-09 18:05:44 File "/ClickHouse/tests/clickhouse-test", line 101, in clickhouse_execute_http

2021-12-09 18:05:44 raise HTTPError(data.decode(), res.status)

```

```

00106_totals_after_having: [ UNKNOWN ] - Test internal error: ConnectionRefusedError

[Errno 111] Connection refused

File "/usr/bin/clickhouse-test", line 648, in run

self.testcase_args = self.configure_testcase_args(args, self.case_file, suite.suite_tmp_path)

File "/usr/bin/clickhouse-test", line 385, in configure_testcase_args

clickhouse_execute(args, "CREATE DATABASE " + database + get_db_engine(testcase_args, database), settings={

File "/usr/bin/clickhouse-test", line 106, in clickhouse_execute

return clickhouse_execute_http(base_args, query, timeout, settings).strip()

File "/usr/bin/clickhouse-test", line 97, in clickhouse_execute_http

client.request('POST', '/?' + base_args.client_options_query_str + urllib.parse.urlencode(params))

File "/usr/lib/python3.8/http/client.py", line 1252, in request

self._send_request(method, url, body, headers, encode_chunked)

File "/usr/lib/python3.8/http/client.py", line 1298, in _send_request

self.endheaders(body, encode_chunked=encode_chunked)

File "/usr/lib/python3.8/http/client.py", line 1247, in endheaders

self._send_output(message_body, encode_chunked=encode_chunked)

File "/usr/lib/python3.8/http/client.py", line 1007, in _send_output

self.send(msg)

File "/usr/lib/python3.8/http/client.py", line 947, in send

self.connect()

File "/usr/lib/python3.8/http/client.py", line 918, in connect

self.sock = self._create_connection(

```

Actually, this is not an internal error of `clickhouse-test`; the test status must be `FAIL`, not `UNKNOWN`.

The `UNKNOWN` status is misleading and breaks some logic in `clickhouse-test`; for example, `clickhouse-test` [does not stop](https://github.com/ClickHouse/ClickHouse/blob/d68d01988ec3d156f77ccd67470c27a69d7fc215/tests/clickhouse-test#L928-L936) if the server has crashed. | test | functional tests may fail with unknown status instead of fail status examples words stress zookeeper test internal error httperror code code db exception new table appeared in database being dropped or detached try again database not empty version file clickhouse tests clickhouse test line in run proc stdout stderr total time self run single test server logs level client options file clickhouse tests clickhouse test line in run single test clickhouse execute args drop database database timeout seconds left settings file clickhouse tests clickhouse test line in clickhouse execute return clickhouse execute http base args query timeout settings strip file clickhouse tests clickhouse test line in clickhouse execute http raise httperror data decode res status totals after having test internal error connectionrefusederror connection refused file usr bin clickhouse test line in run self testcase args self configure testcase args args self case file suite suite tmp path file usr bin clickhouse test line in configure testcase args clickhouse execute args create database database get db engine testcase args database settings file usr bin clickhouse test line in clickhouse execute return clickhouse execute http base args query timeout settings strip file usr bin clickhouse test line in clickhouse execute http client request post base args client options query str urllib parse urlencode params file usr lib http client py line in request self send request method url body headers encode chunked file usr lib http client py line in send request self endheaders body encode chunked encode chunked file usr lib http client py line in endheaders self send output message body encode chunked encode chunked file usr lib http client py 
line in send output self send msg file usr lib http client py line in send self connect file usr lib http client py line in connect self sock self create connection actually it s not an internal error of clickhouse test test status must fail not unknown it s misleading and breaks some logic in clickhouse test for example clickhouse test if server crashed | 1 |

332,410 | 29,359,595,330 | IssuesEvent | 2023-05-28 00:37:37 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Hybrid / Florianópolis] Systems Analyst (Hybrid - Florianópolis) at Coodesh | SALVADOR TESTE PHP JAVASCRIPT HTML GIT STARTUP DOCKER REQUISITOS GITHUB SEGURANÇA UMA CASOS DE USO QUALIDADE DOCUMENTAÇÃO MANUTENÇÃO MONITORAMENTO SUPORTE ALOCADO Stale | ## Job description:

This is a job opening from a partner of the Coodesh platform; when you apply, you will have access to complete information about the company and its benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/jobs/analista-de-sistemas-173508927?utm_source=github&utm_medium=devssa-onde-codar-em-salvador&modal=open) with the personalized application pop-up. 👋

<p>Dígitro is looking for a Systems Analyst to join its team!</p>

<p>We are pioneers in the technology sector in Florianópolis, and we are very proud of that! For 45 years we have been transforming the world through technology, innovating and valuing the true protagonists: our employees. Want to be part of an engaged team that makes a difference? Come to Dígitro!</p>

<p>About the project:</p>

<p>You will join the Information Technology Services team.</p>

<p>Responsibilities:</p>

<ul>

<li> Analyze, specify, and develop software features of medium complexity with little supervision;</li>

<li> Design solutions of medium complexity;</li>

<li> Write technical documentation; </li>

<li> Prepare documentation for clients;</li>

<li> Analyze, diagnose, and resolve problems that occur at client sites; </li>

<li> Perform corrective and evolutionary maintenance on the company's products; </li>

<li> Write and develop use cases and test cases. Assist with on-site client support; </li>

<li> Mentor developers, interns, and systems analysts; </li>

<li> Travel to client sites for troubleshooting, system deployment, or assisted operation; </li>

<li> Research and select new technologies to meet project requirements; </li>

<li> Provide technical leadership to teams carrying out development projects of medium and low complexity; </li>

<li> Ensure the development process is carried out in all of its stages.</li>

</ul>

## Dígitro Tecnologia:

<p>For more than four decades we have contributed to building a safer, more transparent, and more connected society. <strong>And we are very proud of that. </strong>We transform the world through technology. A better world. A connected, safer, and more transparent world. We are pioneers on the technology scene in Florianópolis, a capital city with many natural charms, in constant growth and nationally recognized as a technology hub. <strong>Dígitro was born as a startup and today is a company with more than 300 employees, 1,200 clients, and operations throughout Brazil and Latin America.</strong></p>

<p>Throughout our history, we have innovated, grown, and evolved. Our solution portfolio has always been adapted and updated to serve the market's current and future needs. For public administration organizations, we specialize in security and defense, with a broad portfolio of monitoring, management, and investigative intelligence solutions. Because of our know-how and the quality of our solution delivery, we are recognized by the <strong>Brazilian Ministry of Defense</strong> as a <strong>Strategic Defense Company</strong> (EED). For the corporate market, we deliver corporate communication solutions, whether for internal use or for customer service. There are many technology companies. What sets us apart is our why.</p><a href='https://coodesh.com/companies/digitro-tecnologia'>See more on the website</a>

## Skills:

- PHP

- Docker

- GIT

- Javascript

- HTML

- CSS

- API

## Location:

Florianópolis

## Requirements:

- Experience with systems development;

- Completed higher-education degree in a development-related field;

- Experience with Javascript, HTML, and CSS;

- Knowledge of PHP.

## Nice to have:

- Knowledge of Oracle.

## Benefits:

- Food and meal vouchers;

- Medical and hospital care;

- Dental care;

- Group life insurance;

- Private pension plan;

- Free parking;

- Agreements and partnerships with more than 15 educational institutions.

## How to apply:

Apply exclusively through the Coodesh platform at the following link: [Systems Analyst (Hybrid - Florianópolis) at Dígitro Tecnologia](https://coodesh.com/jobs/analista-de-sistemas-173508927?utm_source=github&utm_medium=devssa-onde-codar-em-salvador&modal=open)

After applying via the Coodesh platform and validating your login, you will be able to follow and receive all interactions in the process there. Use the **Pedir Feedback** ("Request Feedback") option between one stage and the next for the job you applied to. This will notify the **Recruiter** responsible for the process at the company.

## Labels

#### Allocation

Allocated

#### Employment type

CLT

#### Category

IT Management | 1.0 | [Hybrid / Florianópolis] Systems Analyst (Hybrid - Florianópolis) at Coodesh - ## Job description:

This is a job opening from a partner of the Coodesh platform; when you apply, you will have access to complete information about the company and its benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/jobs/analista-de-sistemas-173508927?utm_source=github&utm_medium=devssa-onde-codar-em-salvador&modal=open) with the personalized application pop-up. 👋

<p>Dígitro is looking for a Systems Analyst to join its team!</p>

<p>We are pioneers in the technology sector in Florianópolis, and we are very proud of that! For 45 years we have been transforming the world through technology, innovating and valuing the true protagonists: our employees. Want to be part of an engaged team that makes a difference? Come to Dígitro!</p>

<p>About the project:</p>

<p>You will join the Information Technology Services team.</p>

<p>Responsibilities:</p>

<ul>

<li> Analyze, specify, and develop software features of medium complexity with little supervision;</li>

<li> Design solutions of medium complexity;</li>

<li> Write technical documentation; </li>

<li> Prepare documentation for clients;</li>

<li> Analyze, diagnose, and resolve problems that occur at client sites; </li>

<li> Perform corrective and evolutionary maintenance on the company's products; </li>

<li> Write and develop use cases and test cases. Assist with on-site client support; </li>

<li> Mentor developers, interns, and systems analysts; </li>

<li> Travel to client sites for troubleshooting, system deployment, or assisted operation; </li>

<li> Research and select new technologies to meet project requirements; </li>

<li> Provide technical leadership to teams carrying out development projects of medium and low complexity; </li>

<li> Ensure the development process is carried out in all of its stages.</li>

</ul>

## Dígitro Tecnologia:

<p>For more than four decades we have contributed to building a safer, more transparent, and more connected society. <strong>And we are very proud of that. </strong>We transform the world through technology. A better world. A connected, safer, and more transparent world. We are pioneers on the technology scene in Florianópolis, a capital city with many natural charms, in constant growth and nationally recognized as a technology hub. <strong>Dígitro was born as a startup and today is a company with more than 300 employees, 1,200 clients, and operations throughout Brazil and Latin America.</strong></p>

<p>Throughout our history, we have innovated, grown, and evolved. Our solution portfolio has always been adapted and updated to serve the market's current and future needs. For public administration organizations, we specialize in security and defense, with a broad portfolio of monitoring, management, and investigative intelligence solutions. Because of our know-how and the quality of our solution delivery, we are recognized by the <strong>Brazilian Ministry of Defense</strong> as a <strong>Strategic Defense Company</strong> (EED). For the corporate market, we deliver corporate communication solutions, whether for internal use or for customer service. There are many technology companies. What sets us apart is our why.</p><a href='https://coodesh.com/companies/digitro-tecnologia'>See more on the website</a>

## Skills:

- PHP

- Docker

- GIT

- Javascript

- HTML

- CSS

- API

## Location:

Florianópolis

## Requirements:

- Experience with systems development;

- Completed higher-education degree in a development-related field;

- Experience with Javascript, HTML, and CSS;

- Knowledge of PHP.

## Nice to have:

- Knowledge of Oracle.

## Benefits:

- Food and meal vouchers;

- Medical and hospital care;

- Dental care;

- Group life insurance;

- Private pension plan;

- Free parking;

- Agreements and partnerships with more than 15 educational institutions.

## How to apply:

Apply exclusively through the Coodesh platform at the following link: [Systems Analyst (Hybrid - Florianópolis) at Dígitro Tecnologia](https://coodesh.com/jobs/analista-de-sistemas-173508927?utm_source=github&utm_medium=devssa-onde-codar-em-salvador&modal=open)

After applying via the Coodesh platform and validating your login, you will be able to follow and receive all interactions in the process there. Use the **Pedir Feedback** ("Request Feedback") option between one stage and the next for the job you applied to. This will notify the **Recruiter** responsible for the process at the company.

## Labels

#### Allocation

Allocated

#### Employment type

CLT

#### Category

IT Management | test | systems analyst hybrid florianópolis at coodesh job description this is a job opening from a partner of the coodesh platform when you apply you will have access to complete information about the company and its benefits watch for the redirect that will take you to a url with the personalized application pop up 👋 dígitro is looking for a systems analyst to join its team we are pioneers in the technology sector in florianópolis and we are very proud of that for years we have been transforming the world through technology innovating and valuing the true protagonists our employees want to be part of an engaged team that makes a difference come to dígitro about the project you will join the information technology services team responsibilities analyze specify and develop software features of medium complexity with little supervision design solutions of medium complexity write technical documentation prepare documentation for clients analyze diagnose and resolve problems that occur at client sites perform corrective and evolutionary maintenance on the company s products write and develop use cases and test cases assist with on site client support mentor developers interns and systems analysts travel to client sites for troubleshooting system deployment or assisted operation research and select new technologies to meet project requirements provide technical leadership to teams carrying out development projects of medium and low complexity ensure the development process is carried out in all of its stages dígitro tecnologia for more than four decades we have contributed to building a safer more transparent and more connected society and we are very proud of that we transform the world through technology a better world a connected safer and more transparent world we are 
pioneers on the technology scene in florianópolis a capital city with many natural charms in constant growth and nationally recognized as a technology hub dígitro was born as a startup and today is a company with more than employees clients and operations throughout brazil and latin america throughout our history we have innovated grown and evolved our solution portfolio has always been adapted and updated to serve the market s current and future needs for public administration organizations we specialize in security and defense with a broad portfolio of monitoring management and investigative intelligence solutions because of our know how and the quality of our solution delivery we are recognized by the brazilian ministry of defense as a strategic defense company eed for the corporate market we deliver corporate communication solutions whether for internal use or for customer service there are many technology companies what sets us apart is our why skills php docker git javascript html css api location florianópolis requirements experience with systems development completed higher education degree in a development related field experience with javascript html and css knowledge of php nice to have knowledge of oracle benefits food and meal vouchers medical and hospital care dental care group life insurance private pension plan free parking agreements and partnerships with more than educational institutions how to apply apply exclusively through the coodesh platform at the following link after applying via the coodesh platform and validating your login you will be able to follow and receive all interactions in the process there use the request feedback option between one stage and the next for the job you applied to this will notify the recruiter responsible for the process at the company labels allocation allocated employment type clt category it management 
| 1 |

658,830 | 21,910,140,468 | IssuesEvent | 2022-05-21 00:27:58 | microsoft/fluentui | https://api.github.com/repos/microsoft/fluentui | closed | Updated filledDarker/filledLighter Stories | Area: Accessibility Component: Dropdown Component: SpinButton Priority 2: Normal Component: ComboBox Component: Input Component: Textarea Component: Select | Components that support the `filledDarker` and `filledLighter` appearance variants need to show these variants against a sufficiently contrasting background in Storybook.

## Changes to Make

1. Use `#8a8a8a` (Grey 54) for the background color for the `filledDarker` and `filledLighter` variants.

2. Add the design guidance to the story that demonstrates variant appearances.

### Design Guidance

> The colors adjacent to the input should have a sufficient contrast. Particularly, the color of input with filled darker and lighter styles needs to provide greater than 3 to 1 contrast ratio against the immediate surrounding color to pass accessibility requirement.
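The 3 to 1 requirement in the guidance above can be checked numerically. The sketch below computes a WCAG-style contrast ratio from sRGB relative luminance; the helper names are hypothetical, and the fill colors used in the usage note (`#ffffff` and `#f5f5f5`) are assumptions for illustration, not values taken from this issue.

```python
def _channel_to_linear(value):
    """Convert one 8-bit sRGB channel to its linear-light value."""
    c = value / 255.0
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a color given as '#rrggbb'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return (0.2126 * _channel_to_linear(r)
            + 0.7152 * _channel_to_linear(g)
            + 0.0722 * _channel_to_linear(b))


def contrast_ratio(color_a, color_b):
    """Contrast ratio between two colors: (lighter + 0.05) / (darker + 0.05)."""
    lum_a = relative_luminance(color_a)
    lum_b = relative_luminance(color_b)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


# Assumed input fills, for illustration only.
STORY_BACKGROUND = "#8a8a8a"
ASSUMED_FILLS = {"filledLighter": "#ffffff", "filledDarker": "#f5f5f5"}

ratios = {name: contrast_ratio(STORY_BACKGROUND, fill)
          for name, fill in ASSUMED_FILLS.items()}
```

With these assumed fills, both entries in `ratios` come out above 3, which is consistent with choosing `#8a8a8a` as the story background; the real check should use the actual token values of each variant.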

## Components to update

- [x] Combobox #22996

- [x] Dropdown -- part of Combobox

- [x] Input - #22966

- [x] Select - #23050

- [x] SpinButton - #22980

- [x] Textarea - #22987

| 1.0 | Updated filledDarker/filledLighter Stories - Components that support the `filledDarker` and `filledLighter` appearance variants need to show these variants against a sufficiently contrasting background in Storybook.

## Changes to Make

1. Use `#8a8a8a` (Grey 54) for the background color for the `filledDarker` and `filledLighter` variants.

2. Add the design guidance to the story that demonstrates variant appearances.

### Design Guidance

> The colors adjacent to the input should have a sufficient contrast. Particularly, the color of input with filled darker and lighter styles needs to provide greater than 3 to 1 contrast ratio against the immediate surrounding color to pass accessibility requirement.

## Components to update

- [x] Combobox #22996

- [x] Dropdown -- part of Combobox

- [x] Input - #22966

- [x] Select - #23050

- [x] SpinButton - #22980

- [x] Textarea - #22987

| non_test | updated filleddarker filledlighter stories components that support the filleddarker and filledlighter appearance variants need to show these variants against a sufficiently contrasting background in storybook changes to make use grey for the background color for the filleddarker and filledlighter variants add the design guidance to the story that demonstrates variant appearances design guidance the colors adjacent to the input should have a sufficient contrast particularly the color of input with filled darker and lighter styles needs to provide greater than to contrast ratio against the immediate surrounding color to pass accessibility requirement components to update combobox dropdown part of combobox input select spinbutton textarea | 0 |

349,409 | 31,800,155,614 | IssuesEvent | 2023-09-13 10:32:34 | UA-1023-TAQC/SpaceToStudyTA | https://api.github.com/repos/UA-1023-TAQC/SpaceToStudyTA | closed | [Guest's home page] Verify that a Guest can open the tutor registration pop-up at the What can you do block #1080 | issue Guest test case | https://github.com/ita-social-projects/SpaceToStudy-Client/issues/1080#issue-1871728052

# [TC-ID] : Title of the test

### Priority

Priority label

## Description

The description should tell the tester what they’re going to test and include any other pertinent information such as the test environment, test data, and preconditions/assumptions.

### Precondition

Any preconditions that must be met prior to the test being executed.

## Test Steps

| Step No. | Step description | Input data | Expected result |

|-------------|:-------------|:-----------|:-----|

| 1. | what a tester should do | | what a tester should see when they do that |

| 2. | second | | second expected |

## Expected Result

The expected result tells the tester what they should experience as a result of the test steps.

This is how the tester determines if the test case is a “pass” or “fail”.

| 1.0 | [Guest's home page] Verify that a Guest can open the tutor registration pop-up at the What can you do block #1080 - https://github.com/ita-social-projects/SpaceToStudy-Client/issues/1080#issue-1871728052

# [TC-ID] : Title of the test

### Priority

Priority label

## Description

The description should tell the tester what they’re going to test and include any other pertinent information such as the test environment, test data, and preconditions/assumptions.

### Precondition

Any preconditions that must be met prior to the test being executed.

## Test Steps

| Step No. | Step description | Input data | Expected result |

|-------------|:-------------|:-----------|:-----|

| 1. | what a tester should do | | what a tester should see when they do that |

| 2. | second | | second expected |

## Expected Result

The expected result tells the tester what they should experience as a result of the test steps.

This is how the tester determines if the test case is a “pass” or “fail”.

| test | verify that a guest can open the tutor registration pop up at the what can you do block title of the test priority priority label description the description should tell the tester what they’re going to test and include any other pertinent information such as the test environment test data and preconditions assumptions precondition any preconditions that must be met prior to the test being executed test steps step no step description input data expected result what a tester should do what a tester should see when they do that second second expected expected result the expected result tells the tester what they should experience as a result of the test steps this is how the tester determines if the test case is a “pass” or “fail” | 1 |

56,047 | 14,912,775,615 | IssuesEvent | 2021-01-22 13:12:11 | hazelcast/hazelcast-jet | https://api.github.com/repos/hazelcast/hazelcast-jet | opened | State for job is intermittently corrupted after forceful terminate | defect | The state for a job is intermittently corrupted if one of the members is terminated forcefully and the job is restarted from a snapshot. This means a long-running job can fail. It fails with an exception like:

```

ERROR || - [MasterJobContext] hz.kind_pasteur.cached.thread-11 - Execution of job 'JMS Test source to middle queue', execution 059c-7a24-756f-0002 failed

Start time: 2021-01-20T17:56:27.305

Duration: 00:00:00.046

To see additional job metrics enable JobConfig.storeMetricsAfterJobCompletion

com.hazelcast.jet.JetException: State for job 'JMS Test source to middle queue', execution 059c-7a24-756f-0002 in IMap '__jet.snapshot.059b-def0-66c0-0002.0' is corrupted: it should have 8 entries, but has 7

at com.hazelcast.jet.impl.SnapshotValidator.validateSnapshot(SnapshotValidator.java:60) ~[hazelcast-jet-enterprise-4.4.jar:?]

at com.hazelcast.jet.impl.MasterJobContext.rewriteDagWithSnapshotRestore(MasterJobContext.java:361) ~[hazelcast-jet-enterprise-4.4.jar:?]

at com.hazelcast.jet.impl.MasterJobContext.lambda$tryStartJob$2(MasterJobContext.java:210) ~[hazelcast-jet-enterprise-4.4.jar:?]

at com.hazelcast.jet.impl.JobCoordinationService.lambda$submitToCoordinatorThread$46(JobCoordinationService.java:1039) ~[hazelcast-jet-enterprise-4.4.jar:?]

at com.hazelcast.jet.impl.JobCoordinationService.lambda$submitToCoordinatorThread$47(JobCoordinationService.java:1060) ~[hazelcast-jet-enterprise-4.4.jar:?]

at com.hazelcast.internal.util.executor.CompletableFutureTask.run(CompletableFutureTask.java:64) [hazelcast-jet-enterprise-4.4.jar:?]

at com.hazelcast.internal.util.executor.CachedExecutorServiceDelegate$Worker.run(CachedExecutorServiceDelegate.java:217) [hazelcast-jet-enterprise-4.4.jar:?]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149) [?:1.8.0_272]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624) [?:1.8.0_272]

at java.lang.Thread.run(Thread.java:748) [?:1.8.0_272]

at com.hazelcast.internal.util.executor.HazelcastManagedThread.executeRun(HazelcastManagedThread.java:76) [hazelcast-jet-enterprise-4.4.jar:?]

at com.hazelcast.internal.util.executor.HazelcastManagedThread.run(HazelcastManagedThread.java:102) [hazelcast-jet-enterprise-4.4.jar:?]

```

We haven't observed this issue with graceful shutdown.

We hit this issue in the following soak tests:

- `jms-test`

- `stateful-map-test`

- `snapshot-jms-sink-test`

- `snapshot-jdbc-test` | 1.0 | State for job is intermittently corrupted after forceful terminate - The state for a job is intermittently corrupted if one of the members is terminated forcefully and the job is restarted from a snapshot. This means a long-running job can fail. It fails with an exception like:

```

ERROR || - [MasterJobContext] hz.kind_pasteur.cached.thread-11 - Execution of job 'JMS Test source to middle queue', execution 059c-7a24-756f-0002 failed

Start time: 2021-01-20T17:56:27.305

Duration: 00:00:00.046

To see additional job metrics enable JobConfig.storeMetricsAfterJobCompletion

com.hazelcast.jet.JetException: State for job 'JMS Test source to middle queue', execution 059c-7a24-756f-0002 in IMap '__jet.snapshot.059b-def0-66c0-0002.0' is corrupted: it should have 8 entries, but has 7

at com.hazelcast.jet.impl.SnapshotValidator.validateSnapshot(SnapshotValidator.java:60) ~[hazelcast-jet-enterprise-4.4.jar:?]

at com.hazelcast.jet.impl.MasterJobContext.rewriteDagWithSnapshotRestore(MasterJobContext.java:361) ~[hazelcast-jet-enterprise-4.4.jar:?]

at com.hazelcast.jet.impl.MasterJobContext.lambda$tryStartJob$2(MasterJobContext.java:210) ~[hazelcast-jet-enterprise-4.4.jar:?]

at com.hazelcast.jet.impl.JobCoordinationService.lambda$submitToCoordinatorThread$46(JobCoordinationService.java:1039) ~[hazelcast-jet-enterprise-4.4.jar:?]

at com.hazelcast.jet.impl.JobCoordinationService.lambda$submitToCoordinatorThread$47(JobCoordinationService.java:1060) ~[hazelcast-jet-enterprise-4.4.jar:?]

at com.hazelcast.internal.util.executor.CompletableFutureTask.run(CompletableFutureTask.java:64) [hazelcast-jet-enterprise-4.4.jar:?]

at com.hazelcast.internal.util.executor.CachedExecutorServiceDelegate$Worker.run(CachedExecutorServiceDelegate.java:217) [hazelcast-jet-enterprise-4.4.jar:?]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149) [?:1.8.0_272]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624) [?:1.8.0_272]

at java.lang.Thread.run(Thread.java:748) [?:1.8.0_272]

at com.hazelcast.internal.util.executor.HazelcastManagedThread.executeRun(HazelcastManagedThread.java:76) [hazelcast-jet-enterprise-4.4.jar:?]

at com.hazelcast.internal.util.executor.HazelcastManagedThread.run(HazelcastManagedThread.java:102) [hazelcast-jet-enterprise-4.4.jar:?]

```

We haven't observed this issue with graceful shutdown.

We hit this issue in the following soak tests:

- `jms-test`

- `stateful-map-test`

- `snapshot-jms-sink-test`

- `snapshot-jdbc-test` | non_test | state for job is intermittently corrupted after forceful terminate state for job is intermittently corrupted if one of members is terminated forcefully and job is restarted from snapshot it means long running job can fail with that it fails with exception like error hz kind pasteur cached thread execution of job jms test source to middle queue execution failed start time duration to see additional job metrics enable jobconfig storemetricsafterjobcompletion com hazelcast jet jetexception state for job jms test source to middle queue execution in imap jet snapshot is corrupted it should have entries but has at com hazelcast jet impl snapshotvalidator validatesnapshot snapshotvalidator java at com hazelcast jet impl masterjobcontext rewritedagwithsnapshotrestore masterjobcontext java at com hazelcast jet impl masterjobcontext lambda trystartjob masterjobcontext java at com hazelcast jet impl jobcoordinationservice lambda submittocoordinatorthread jobcoordinationservice java at com hazelcast jet impl jobcoordinationservice lambda submittocoordinatorthread jobcoordinationservice java at com hazelcast internal util executor completablefuturetask run completablefuturetask java at com hazelcast internal util executor cachedexecutorservicedelegate worker run cachedexecutorservicedelegate java at java util concurrent threadpoolexecutor runworker threadpoolexecutor java at java util concurrent threadpoolexecutor worker run threadpoolexecutor java at java lang thread run thread java at com hazelcast internal util executor hazelcastmanagedthread executerun hazelcastmanagedthread java at com hazelcast internal util executor hazelcastmanagedthread run hazelcastmanagedthread java we haven t observed this issue with graceful shutdown we hit this issue in following soak tests jms test stateful map test snapshot jms sink test snapshot jdbc test | 0 |
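
The exception in the report above states that the snapshot map should have 8 entries but has 7, which suggests a check comparing a recorded entry count with the count actually found on restore. A minimal sketch of that kind of check follows, written in Python rather than Jet's Java. The class and function names are hypothetical and this is not Jet's actual `SnapshotValidator`; it only illustrates the comparison the error message describes.

```python
class SnapshotCorruptedError(Exception):
    """Raised when a snapshot holds a different number of entries than recorded."""


def validate_snapshot(map_name: str, expected_entries: int, actual_entries: int) -> None:
    # Hypothetical stand-in for the validation step; the wording of the
    # message loosely mirrors the exception quoted in the issue.
    if expected_entries != actual_entries:
        raise SnapshotCorruptedError(
            f"State in IMap '{map_name}' is corrupted: "
            f"it should have {expected_entries} entries, but has {actual_entries}"
        )


# Example using the counts reported in the issue (8 expected, 7 found).
try:
    validate_snapshot("__jet.snapshot.059b-def0-66c0-0002.0", 8, 7)
except SnapshotCorruptedError as exc:
    print(exc)
```

A check like this only detects the mismatch; it says nothing about why an entry went missing after the forceful termination, which is the open question in the issue.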

330,381 | 28,372,833,382 | IssuesEvent | 2023-04-12 18:21:05 | apache/airflow | https://api.github.com/repos/apache/airflow | opened | Status of testing Providers that were prepared on April 12, 2023 | kind:meta testing status | ### Body

I have a kind request for all the contributors to the latest provider packages release.

Could you please help us to test the RC versions of the providers?

Let us know in the comments whether the issue is addressed.

These are the providers that require testing, as some substantial changes were introduced:

## Provider [google: 9.0.0rc2](https://pypi.org/project/apache-airflow-providers-google/9.0.0rc2)

- [ ] [Update DV360 operators to use API v2 (#30326)](https://github.com/apache/airflow/pull/30326): @lwyszomi

- [ ] [Fix dynamic imports in google ads vendored in library (#30544)](https://github.com/apache/airflow/pull/30544): @potiuk

- [ ] [Fix one more dynamic import needed for vendored-in google ads (#30564)](https://github.com/apache/airflow/pull/30564): @potiuk

- [x] [Add deferrable mode to GKEStartPodOperator (#29266)](https://github.com/apache/airflow/pull/29266): @VladaZakharova (Tested in RC1)

- [x] [BigQueryHook list_rows/get_datasets_list can return iterator (#30543)](https://github.com/apache/airflow/pull/30543): @vchiapaikeo (Tested in RC1)

- [x] [Fix cloud build async credentials (#30441)](https://github.com/apache/airflow/pull/30441): @tnk-ysk (Tested in RC1)

## Provider [microsoft.azure: 5.3.1rc2](https://pypi.org/project/apache-airflow-providers-microsoft-azure/5.3.1rc2)

- [ ] [Fix AzureDataFactoryPipelineRunLink UI link generation (#30514)](https://github.com/apache/airflow/pull/30514): @hussein-awala

- [ ] [Fix Azure data factory UI link by load `subscription_id` from `extra__azure__subscriptionId` (#30556)](https://github.com/apache/airflow/pull/30556): @hussein-awala

The guidelines on how to test providers can be found in

[Verify providers by contributors](https://github.com/apache/airflow/blob/main/dev/README_RELEASE_PROVIDER_PACKAGES.md#verify-by-contributors)

All users involved in the PRs:

@VladaZakharova @lwyszomi @hussein-awala @potiuk @tnk-ysk @vchiapaikeo

### Committer

- [X] I acknowledge that I am a maintainer/committer of the Apache Airflow project. | 1.0 | Status of testing Providers that were prepared on April 12, 2023 - ### Body

I have a kind request for all the contributors to the latest provider packages release.

Could you please help us to test the RC versions of the providers?

Let us know in the comments whether the issue is addressed.

These are the providers that require testing, as some substantial changes were introduced:

## Provider [google: 9.0.0rc2](https://pypi.org/project/apache-airflow-providers-google/9.0.0rc2)

- [ ] [Update DV360 operators to use API v2 (#30326)](https://github.com/apache/airflow/pull/30326): @lwyszomi

- [ ] [Fix dynamic imports in google ads vendored in library (#30544)](https://github.com/apache/airflow/pull/30544): @potiuk

- [ ] [Fix one more dynamic import needed for vendored-in google ads (#30564)](https://github.com/apache/airflow/pull/30564): @potiuk

- [x] [Add deferrable mode to GKEStartPodOperator (#29266)](https://github.com/apache/airflow/pull/29266): @VladaZakharova (Tested in RC1)

- [x] [BigQueryHook list_rows/get_datasets_list can return iterator (#30543)](https://github.com/apache/airflow/pull/30543): @vchiapaikeo (Tested in RC1)

- [x] [Fix cloud build async credentials (#30441)](https://github.com/apache/airflow/pull/30441): @tnk-ysk (Tested in RC1)

## Provider [microsoft.azure: 5.3.1rc2](https://pypi.org/project/apache-airflow-providers-microsoft-azure/5.3.1rc2)

- [ ] [Fix AzureDataFactoryPipelineRunLink UI link generation (#30514)](https://github.com/apache/airflow/pull/30514): @hussein-awala

- [ ] [Fix Azure data factory UI link by load `subscription_id` from `extra__azure__subscriptionId` (#30556)](https://github.com/apache/airflow/pull/30556): @hussein-awala

The guidelines on how to test providers can be found in

[Verify providers by contributors](https://github.com/apache/airflow/blob/main/dev/README_RELEASE_PROVIDER_PACKAGES.md#verify-by-contributors)

All users involved in the PRs:

@VladaZakharova @lwyszomi @hussein-awala @potiuk @tnk-ysk @vchiapaikeo

### Committer

- [X] I acknowledge that I am a maintainer/committer of the Apache Airflow project. | test | status of testing providers that were prepared on april body i have a kind request for all the contributors to the latest provider packages release could you please help us to test the rc versions of the providers let us know in the comment whether the issue is addressed those are providers that require testing as there were some substantial changes introduced provider lwyszomi potiuk potiuk vladazakharova tested in vchiapaikeo tested in tnk ysk tested in provider hussein awala hussein awala the guidelines on how to test providers can be found in all users involved in the prs vladazakharova lwyszomi hussein awala potiuk tnk ysk vchiapaikeo committer i acknowledge that i am a maintainer committer of the apache airflow project | 1 |

141,055 | 21,367,872,258 | IssuesEvent | 2022-04-20 05:07:30 | ProgramEquity/amplify | https://api.github.com/repos/ProgramEquity/amplify | closed | Subtask Send Letter: Checkout button | good first issue screen 7 design epic send letter | **What screen is this?**

## Screen 7: Send Letter

<img width="473" alt="Screen Shot 2022-01-20 at 8 57 08 PM" src="https://user-images.githubusercontent.com/9143339/150470433-9a9111b7-7a21-4d13-963a-e38747558787.png">

## Which component? Which piece of copy or graphic?

The text on the final action button at the bottom

<img width="391" alt="Screen Shot 2022-01-20 at 9 23 55 PM" src="https://user-images.githubusercontent.com/9143339/150470831-11dec387-584e-4325-8db9-78483cfb1ef4.png">

**What is the proposed change? (add a Figma screenshot, follow the workflow here)**

Change the wording to simplify the language while still conveying that you are doing 2 actions (payment via Stripe and sending the post)

**Which topic does this educate the constituent around? (add a short description of how it's clearer than the original)**

_Advocacy values to consider:_

- [ ] Testimonials should be personal

- [x] Language should be simple

- [ ] People are led by causes and their impact

- [ ] Accessibility across abilities

**What are the frontend tasks?** (if there are any tasks needed outside of the template below, pick a different color like blue)

- [ ] Add image here in this file

- [x] Insert copy in this file

_List files that need to be changed next to task_

**CC:** @frontend-team member, @frontend-coordinator, @research-coordinator

--------------------------

For Coordinator

- [ ] add appropriate labels: "good-first-issue", "design", "screen label", "intermediate"

- [ ] assign time label

- [ ] Approved and on project board

| 1.0 | Subtask Send Letter: Checkout button - **What screen is this?**

## Screen 7: Send Letter

<img width="473" alt="Screen Shot 2022-01-20 at 8 57 08 PM" src="https://user-images.githubusercontent.com/9143339/150470433-9a9111b7-7a21-4d13-963a-e38747558787.png">

## Which component? Which piece of copy or graphic?

The text on the final action button at the bottom

<img width="391" alt="Screen Shot 2022-01-20 at 9 23 55 PM" src="https://user-images.githubusercontent.com/9143339/150470831-11dec387-584e-4325-8db9-78483cfb1ef4.png">

**What is the proposed change? (add a Figma screenshot, follow the workflow here)**

Change the wording to simplify the language while still conveying that you are doing 2 actions (payment via Stripe and sending the post)

**Which topic does this educate the constituent around? (add a short description of how it's clearer than the original)**

_Advocacy values to consider:_

- [ ] Testimonials should be personal

- [x] Language should be simple

- [ ] People are led by causes and their impact

- [ ] Accessibility across abilities

**What are the frontend tasks?** (if there are any tasks needed outside of the template below, pick a different color like blue)

- [ ] Add image here in this file

- [x] Insert copy in this file

_List files that need to be changed next to task_

**CC:** @frontend-team member, @frontend-coordinator, @research-coordinator

--------------------------

For Coordinator

- [ ] add appropriate labels: "good-first-issue", "design", "screen label", "intermediate"

- [ ] assign time label

- [ ] Approved and on project board

| non_test | subtask send letter checkout button what screen is this screen send letter img width alt screen shot at pm src which component which piece of copy or graphic the text on the final action button at the bottom img width alt screen shot at pm src what is the change propoosed add a figma screenshot follow the workflow here change words to simplify language but still convey you are doing actions payment via stripe and sending post which topic does this educate the constituent around add a short description on how its clearer than the original advocacy values to consider testimonials should be personal language should be simple people are lead by causes and their impact accessibility across abilities what are frontend tasks if theres any tasks needed outside of the template below pick a different color like blue add image here in this file insert copy in this file list files that need to be changed next to task cc frontend team member frontend coordinator research coordinator for coordinator add appropriate labels good first issue design screen label intermediate assign time label approved and on project board | 0 |

85,087 | 7,960,739,423 | IssuesEvent | 2018-07-13 08:23:37 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | github.com/cockroachdb/cockroach/pkg/ccl/importccl: _null_and_\N_without_escape failed under stress | C-test-failure O-robot | SHA: https://github.com/cockroachdb/cockroach/commits/f818c4c3b946c40839921c72fc1322fb3b385ee6

Parameters:

```

TAGS=

GOFLAGS=-race

```

Failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=772409&tab=buildLog

```

=== RUN TestImportData/MYSQLOUTFILE:_null_and_\N_without_escape

I180711 06:32:13.492871 241 storage/replica_proposal.go:203 [n1,s1,r38/1:/{Table/66-Max}] new range lease repl=(n1,s1):1 seq=3 start=1531290721.658934057,0 epo=1 pro=1531290721.660350222,0 following repl=(n1,s1):1 seq=3 start=1531290721.658934057,0 epo=1 pro=1531290721.660350222,0

I180711 06:32:13.751602 2214 ccl/importccl/read_import_proc.go:82 [import-distsql,n1] could not fetch file size; falling back to per-file progress: bad ContentLength: -1

I180711 06:32:14.000654 2187 ccl/importccl/read_import_proc.go:82 [import-distsql,n1] could not fetch file size; falling back to per-file progress: bad ContentLength: -1

I180711 06:32:14.086018 2224 storage/replica_command.go:275 [n1,s1,r39/1:/{Table/67-Max}] initiating a split of this range at key /Table/69 [r40]

I180711 06:32:14.131697 220 storage/replica_proposal.go:203 [n1,s1,r39/1:/{Table/67-Max}] new range lease repl=(n1,s1):1 seq=3 start=1531290721.658934057,0 epo=1 pro=1531290721.660350222,0 following repl=(n1,s1):1 seq=3 start=1531290721.658934057,0 epo=1 pro=1531290721.660350222,0

``` | 1.0 | github.com/cockroachdb/cockroach/pkg/ccl/importccl: _null_and_\N_without_escape failed under stress - SHA: https://github.com/cockroachdb/cockroach/commits/f818c4c3b946c40839921c72fc1322fb3b385ee6

Parameters:

```

TAGS=

GOFLAGS=-race

```

Failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=772409&tab=buildLog

```

=== RUN TestImportData/MYSQLOUTFILE:_null_and_\N_without_escape

I180711 06:32:13.492871 241 storage/replica_proposal.go:203 [n1,s1,r38/1:/{Table/66-Max}] new range lease repl=(n1,s1):1 seq=3 start=1531290721.658934057,0 epo=1 pro=1531290721.660350222,0 following repl=(n1,s1):1 seq=3 start=1531290721.658934057,0 epo=1 pro=1531290721.660350222,0

I180711 06:32:13.751602 2214 ccl/importccl/read_import_proc.go:82 [import-distsql,n1] could not fetch file size; falling back to per-file progress: bad ContentLength: -1

I180711 06:32:14.000654 2187 ccl/importccl/read_import_proc.go:82 [import-distsql,n1] could not fetch file size; falling back to per-file progress: bad ContentLength: -1

I180711 06:32:14.086018 2224 storage/replica_command.go:275 [n1,s1,r39/1:/{Table/67-Max}] initiating a split of this range at key /Table/69 [r40]

I180711 06:32:14.131697 220 storage/replica_proposal.go:203 [n1,s1,r39/1:/{Table/67-Max}] new range lease repl=(n1,s1):1 seq=3 start=1531290721.658934057,0 epo=1 pro=1531290721.660350222,0 following repl=(n1,s1):1 seq=3 start=1531290721.658934057,0 epo=1 pro=1531290721.660350222,0

``` | test | github com cockroachdb cockroach pkg ccl importccl null and n without escape failed under stress sha parameters tags goflags race failed test run testimportdata mysqloutfile null and n without escape storage replica proposal go new range lease repl seq start epo pro following repl seq start epo pro ccl importccl read import proc go could not fetch file size falling back to per file progress bad contentlength ccl importccl read import proc go could not fetch file size falling back to per file progress bad contentlength storage replica command go initiating a split of this range at key table storage replica proposal go new range lease repl seq start epo pro following repl seq start epo pro | 1 |

314,869 | 9,603,900,223 | IssuesEvent | 2019-05-10 18:22:52 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | closed | Releasing a violation does not show the loading | Priority: Medium Type: Bug | If you click on the release button it does not show the loading bar.

It shows a warning/error:

Your access would be enable within a minutes or 2. Please reboot your computer. | 1.0 | Releasing a violation does not show the loading - If you click on the release button it does not show the loading bar.

It shows a warning/error:

Your access would be enable within a minutes or 2. Please reboot your computer. | non_test | releasing a violation does not show the loading if you click on the release button it does not show the loading bar it shows a warning error your access would be enable within a minutes or please reboot your computer | 0 |

234,730 | 19,253,051,430 | IssuesEvent | 2021-12-09 08:19:47 | MohistMC/Mohist | https://api.github.com/repos/MohistMC/Mohist | closed | [1.16.5] `` | 1.16.5 Wait Needs Testing | <!-- ISSUE_TEMPLATE_1 -> IMPORTANT: DO NOT DELETE THIS LINE.-->

<!-- Thank you for reporting ! Please note that issues can take a lot of time to be fixed and there is no eta.-->

<!-- If you don't know where to upload your logs and crash reports, you can use these websites : -->

<!-- https://gist.github.com (recommended) -->

<!-- https://mclo.gs -->

<!-- https://haste.mohistmc.com -->

<!-- https://pastebin.com -->

<!-- TO FILL THIS TEMPLATE, YOU NEED TO REPLACE THE {} BY WHAT YOU WANT -->

**Minecraft Version :** 1.16.5

**Mohist Version :** 875

**Concerned mod:** https://www.curseforge.com/minecraft/mc-mods/arcanecraft-ii

**Logs :** https://haste.mohistmc.com/dobikucira.properties | 1.0 | [1.16.5] `` - <!-- ISSUE_TEMPLATE_1 -> IMPORTANT: DO NOT DELETE THIS LINE.-->

<!-- Thank you for reporting ! Please note that issues can take a lot of time to be fixed and there is no eta.-->

<!-- If you don't know where to upload your logs and crash reports, you can use these websites : -->

<!-- https://gist.github.com (recommended) -->

<!-- https://mclo.gs -->

<!-- https://haste.mohistmc.com -->

<!-- https://pastebin.com -->

<!-- TO FILL THIS TEMPLATE, YOU NEED TO REPLACE THE {} BY WHAT YOU WANT -->

**Minecraft Version :** 1.16.5

**Mohist Version :** 875

**Concerned mod:** https://www.curseforge.com/minecraft/mc-mods/arcanecraft-ii

**Logs :** https://haste.mohistmc.com/dobikucira.properties | test | important do not delete this line minecraft version mohist version concerned mod logs | 1 |

68,531 | 7,102,981,430 | IssuesEvent | 2018-01-16 01:51:08 | deathlyrage/theisle-bugs | https://api.github.com/repos/deathlyrage/theisle-bugs | closed | Nametags stay on corpses | bug fixed needs testing | Patch: 5272

Server: Personal testing server

Issue: If a player in your group dies, their nametag will stay on their corpse. If the player returns to their corpse, there will be two nametags for everyone in the group. This problem is also apparent for humans.

| 1.0 | Nametags stay on corpses - Patch: 5272

Server: Personal testing server

Issue: If a player in your group dies, their nametag will stay on their corpse. If the player returns to their corpse, there will be two nametags for everyone in the group. This problem is also apparent for humans.

| test | nametags stay on corpses patch server personal testing server issue if a player in your group dies their nametag will stay on their corpse if the player returns to their corpse there will be two nametags for everyone in the group this problem is also apparent for humans | 1 |

172,570 | 6,510,378,645 | IssuesEvent | 2017-08-25 02:54:38 | enforcer574/smashclub | https://api.github.com/repos/enforcer574/smashclub | opened | Past events not showing up | Incident Priority: 3 | User reports that past events are not appearing on the "Events" page. DEMO environment on 8/24 during officer meeting demo. Confirmed that there are past events in the database. | 1.0 | Past events not showing up - User reports that past events are not appearing on the "Events" page. DEMO environment on 8/24 during officer meeting demo. Confirmed that there are past events in the database. | non_test | past events not showing up user reports that past events are not appearing on the events page demo environment on during officer meeting demo confirmed that there are past events in the database | 0 |

103,553 | 12,949,258,386 | IssuesEvent | 2020-07-19 08:21:43 | nikodemus/foolang | https://api.github.com/repos/nikodemus/foolang | opened | direct methods | design feature | Replace "class method" with "direct method": method on the class object itself.

Class methods still exist, but now they can only appear in interfaces, meaning a direct method on the implementing class -- vs. method on the interface object.

(Need to work on the terminology still, but the idea is solid and I've already wanted it a few times.)

| 1.0 | direct methods - Replace "class method" with "direct method": method on the class object itself.

Class methods still exist, but now they can only appear in interfaces, meaning a direct method on the implementing class -- vs. method on the interface object.

(Need to work on the terminology still, but the idea is solid and I've already wanted it a few times.)

| non_test | direct methods replace class method with direct method method on the class object itself class methods still exist but now they can only appear in interfaces meaning a direct method on the implementing class vs method on the interface object need to work on the terminology still but the idea is solid and i ve already wanted it a few times | 0 |

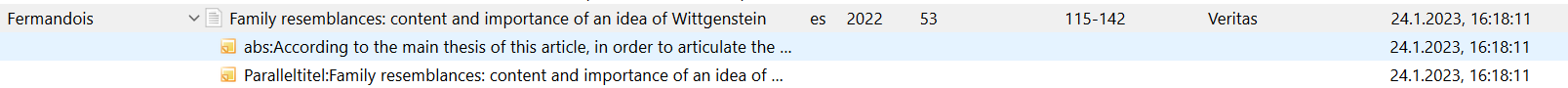

312,792 | 9,553,115,029 | IssuesEvent | 2019-05-02 18:24:16 | phetsims/fraction-matcher | https://api.github.com/repos/phetsims/fraction-matcher | closed | Some previously translated strings are no longer translated | priority:3-medium status:blocks-sim-publication status:ready-for-review | The level selection screen for the published Farsi (Persian) version of Fraction Matcher looks like this:

On the current master version, using locale=fa, it looks like this:

The reason that English words are now appearing is that a number of strings were moved from the fraction-matcher repo to fractions-common during the recent work on the fractions suite, and the translated strings weren't moved over. This should probably be fixed; otherwise, the next time Fraction Matcher is published off of master, existing translations may fall back to English in several places, as seen above.

I'm guessing it would be a couple hours of work max to either propagate the strings manually or write a script to do it. Either @jonathanolson or I could do it, or perhaps someone who we want to get a better understanding of how the translation utility works. Assigning to @ariel-phet for prioritization and assignment. | 1.0 | Some previously translated strings are no longer translated - The level selection screen for the published Farsi (Persian) version of Fraction Matcher looks like this:

On the current master version, using locale=fa, it looks like this:

The reason that English words are now appearing is that a number of strings were moved from the fraction-matcher repo to fractions-common during the recent work on the fractions suite, and the translated strings weren't moved over. This should probably be fixed; otherwise, the next time Fraction Matcher is published off of master, existing translations may fall back to English in several places, as seen above.

I'm guessing it would be a couple hours of work max to either propagate the strings manually or write a script to do it. Either @jonathanolson or I could do it, or perhaps someone who we want to get a better understanding of how the translation utility works. Assigning to @ariel-phet for prioritization and assignment. | non_test | some previously translated strings are no longer translated the level selection screen for the published farsi persian version of fraction matcher looks like this on the current master version using locale fa it looks like this the reason that english words are now appearing is that a number of strings were moved from the fraction matcher repo to fractions common during the recent work on the fractions suite and the translated strings weren t moved over this should probably be fixed otherwise the next time fraction master is published off of master it may cause existing translations to fall back to english in several places as seen above i m guessing it would be a couple hours of work max to either propagate the strings manually or write a script to do it either jonathanolson or i could do it or perhaps someone who we want to get a better understanding of how the translation utility works assigning to ariel phet for prioritization and assignment | 0 |
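
The issue above mentions writing a script to propagate the translated strings from the old repo to the new one. A minimal sketch of what such a script's core could look like follows. It assumes each repo's translations for one locale can be loaded as a plain mapping from string key to translated value; the real translation file layout, the key names, and the placeholder values below are all hypothetical.

```python
def propagate_translations(old_strings, new_strings, moved_keys):
    """Copy translations for moved keys from the old repo's table to the new one.

    Translations that already exist in the new table are left untouched, and
    keys with no translation in the old table are skipped.
    """
    merged = dict(new_strings)  # work on a copy so the input is not mutated
    for key in moved_keys:
        if key in old_strings and key not in merged:
            merged[key] = old_strings[key]
    return merged


# Hypothetical data for one locale; values stand in for real translated text.
old_repo_fa = {"level": "<fa translation of Level>", "score": "<fa translation of Score>"}
new_repo_fa = {}
moved = ["level", "score", "timer"]  # "timer" was never translated, so it is skipped

print(propagate_translations(old_repo_fa, new_repo_fa, moved))
```

Not overwriting existing entries is a deliberate choice in this sketch: if a translator has already re-translated a string in the new repo, that newer value is kept.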

123,217 | 10,257,333,676 | IssuesEvent | 2019-08-21 19:52:03 | OpenLiberty/open-liberty | https://api.github.com/repos/OpenLiberty/open-liberty | opened | Feature Test Summary: APSFOUND-267: Liberty support for custom login modules on JCA and JMS connection factories | Feature Test Summary team:Zombie Apocalypse | Please complete the following Feature Test Summary when you have completed all your testing. This will be used as part of the FAT Complete Review.

**Part 1:**

Describe the test strategy & approach for this feature, and describe how the approach verifies the functions delivered by this feature. The description should include the positive and negative testing done, whether all testing is automated, what manual tests exist (if any) and where the tests are stored (source control). Automated testing is expected for all features with manual testing considered an exception to the rule.

> For any feature, be aware that only FAT tests (not unit or BVT) are executed in our cross platform testing. To ensure cross platform testing ensure you have sufficient FAT coverage to verify the feature.

> If delivering tests outside of the standard Liberty FAT framework, do the tests push the results into the cognitive testing database (if not, consult with the CSI Team who can provide advice and verify if results are being received)?

**Automated Functional Acceptance Tests**

_Tests added to com.ibm.ws.rest.handler.validator_fat/fat/src/com/ibm/ws/rest/handler/validator/fat/ValidateDSCustomLoginModuleTest.java_

testJMSConnectionFactoryWithLoginModule - Use the validation REST endpoint to validate a single javax.jms.ConnectionFactory that is configured with a jaasLoginContextEntryRef.

testJMSConnectionFactoryWithLoginModuleNotUsed - Use the validation REST endpoint to validate a single javax.jms.ConnectionFactory that is configured with a jaasLoginContextEntryRef, but isn't used because application authentication is used instead.

testJMSQueueConnectionFactoryWithLoginModule - Use the validation REST endpoint to validate a single javax.jms.QueueConnectionFactory that is configured with a jaasLoginContextEntryRef.

testJMSQueueConnectionFactoryWithLoginModuleNotUsed - Use the validation REST endpoint to validate a single javax.jms.QueueConnectionFactory that is configured with a jaasLoginContextEntryRef, but isn't used because application authentication is used instead.

testJMSTopicConnectionFactoryWithLoginModule - Use the validation REST endpoint to validate a single javax.jms.TopicConnectionFactory that is configured with a jaasLoginContextEntryRef.

testJMSTopicConnectionFactoryWithLoginModuleNotUsed - Use the validation REST endpoint to validate a single javax.jms.TopicConnectionFactory that is configured with a jaasLoginContextEntryRef, but isn't used because application authentication is used instead.

_Tests added to dev/com.ibm.ws.rest.handler.validator_fat/fat/src/com/ibm/ws/rest/handler/validator/fat/ValidateJCATest.java_

testJaasLoginModuleForContainerAuthWithLoginProperties - Validate a connectionFactory with a container authentication resource reference with login properties, and verify that it uses the login module indicated by the jaasLoginContextEntryRef to log in, supplying it with the login properties.

testJaasLoginModuleForContainerAuthWithoutLoginProperties - Validate a connectionFactory with a container authentication resource reference, and verify that it uses the login module indicated by the jaasLoginContextEntryRef to log in.

_Tests added to dev/com.ibm.ws.rest.handler.validator_fat/fat/src/com/ibm/ws/rest/handler/validator/fat/ValidateJCATest.java_

testMultipleConnectionFactories - additional connection factory added

**Part 2:**

Collectively as a team you need to assess your confidence in the testing delivered based on the values below. This should be done as a team and not an individual to ensure more eyes are on it and that pressures to deliver quickly are absorbed by the team as a whole.

> Please indicate your confidence in the testing (up to and including FAT) delivered with this feature by selecting one of these values:

> 0 - No automated testing delivered

> 1 - We have minimal automated coverage of the feature including golden paths. There is a relatively high risk that defects or issues could be found in this feature.

> 2 - We have delivered a reasonable automated coverage of the golden paths of this feature but are aware of gaps and extra testing that could be done here. Error/outlying scenarios are not really covered. There are likely risks that issues may exist in the golden paths.

> 3 - We have delivered all automated testing we believe is needed for the golden paths of this feature and minimal coverage of the error/outlying scenarios. There is a risk when the feature is used outside the golden paths however we are confident on the golden path. Note: This may still be a valid end state for a feature... things like Beta features may well suffice at this level.

> 4 - We have delivered all automated testing we believe is needed for the golden paths of this feature and have good coverage of the error/outlying scenarios. While more testing of the error/outlying scenarios could be added we believe there is minimal risk here and the cost of providing these is considered higher than the benefit they would provide.

> 5 - We have delivered all automated testing we believe is needed for this feature. The testing covers all golden path cases as well as all the error/outlying scenarios that make sense. We are not aware of any gaps in the testing at this time. No manual testing is required to verify this feature.

> Based on your answer above, for any answer other than a 4 or 5 please provide details of what drove your answer. Please be aware, it may be perfectly reasonable in some scenarios to deliver with any value above. We may accept no automated testing is needed for some features, we may be happy with low levels of testing on samples for instance so please don't feel the need to drive to a 5. We need your honest assessment as a team and the reasoning for why you believe shipping at that level is valid. What are the gaps, what is the risk etc. Please also provide links to the follow on work that is needed to close the gaps (should you deem it needed)

Confidence:

Comments: | 1.0 | Feature Test Summary: APSFOUND-267: Liberty support for custom login modules on JCA and JMS connection factories - Please complete the following Feature Test Summary when you have completed all your testing. This will be used as part of the FAT Complete Review.

**Part 1:**

Describe the test strategy & approach for this feature, and describe how the approach verifies the functions delivered by this feature. The description should include the positive and negative testing done, whether all testing is automated, what manual tests exist (if any) and where the tests are stored (source control). Automated testing is expected for all features with manual testing considered an exception to the rule.

> For any feature, be aware that only FAT tests (not unit or BVT) are executed in our cross platform testing. To get cross platform coverage, ensure you have sufficient FAT coverage to verify the feature.

> If delivering tests outside of the standard Liberty FAT framework, do the tests push the results into the cognitive testing database (if not, consult with the CSI Team who can provide advice and verify if results are being received)?

**Automated Functional Acceptance Tests**

_Tests added to com.ibm.ws.rest.handler.validator_fat/fat/src/com/ibm/ws/rest/handler/validator/fat/ValidateDSCustomLoginModuleTest.java_

testJMSConnectionFactoryWithLoginModule - Use the validation REST endpoint to validate a single javax.jms.ConnectionFactory that is configured with a jaasLoginContextEntryRef.

testJMSConnectionFactoryWithLoginModuleNotUsed - Use the validation REST endpoint to validate a single javax.jms.ConnectionFactory that is configured with a jaasLoginContextEntryRef, but isn't used because application authentication is used instead.

testJMSQueueConnectionFactoryWithLoginModule - Use the validation REST endpoint to validate a single javax.jms.QueueConnectionFactory that is configured with a jaasLoginContextEntryRef.

testJMSQueueConnectionFactoryWithLoginModuleNotUsed - Use the validation REST endpoint to validate a single javax.jms.QueueConnectionFactory that is configured with a jaasLoginContextEntryRef, but isn't used because application authentication is used instead.

testJMSTopicConnectionFactoryWithLoginModule - Use the validation REST endpoint to validate a single javax.jms.TopicConnectionFactory that is configured with a jaasLoginContextEntryRef.

testJMSTopicConnectionFactoryWithLoginModuleNotUsed - Use the validation REST endpoint to validate a single javax.jms.TopicConnectionFactory that is configured with a jaasLoginContextEntryRef, but isn't used because application authentication is used instead.

_Tests added to dev/com.ibm.ws.rest.handler.validator_fat/fat/src/com/ibm/ws/rest/handler/validator/fat/ValidateJCATest.java_

testJaasLoginModuleForContainerAuthWithLoginProperties - Validate a connectionFactory with a container authentication resource reference with login properties, and verify that it uses the login module indicated by the jaasLoginContextEntryRef to log in, supplying it with the login properties.

testJaasLoginModuleForContainerAuthWithoutLoginProperties - Validate a connectionFactory with a container authentication resource reference, and verify that it uses the login module indicated by the jaasLoginContextEntryRef to log in.

_Tests added to dev/com.ibm.ws.rest.handler.validator_fat/fat/src/com/ibm/ws/rest/handler/validator/fat/ValidateJCATest.java_

testMultipleConnectionFactories - additional connection factory added

**Part 2:**

Collectively as a team you need to assess your confidence in the testing delivered based on the values below. This should be done as a team and not an individual to ensure more eyes are on it and that pressures to deliver quickly are absorbed by the team as a whole.

> Please indicate your confidence in the testing (up to and including FAT) delivered with this feature by selecting one of these values:

> 0 - No automated testing delivered

> 1 - We have minimal automated coverage of the feature including golden paths. There is a relatively high risk that defects or issues could be found in this feature.

> 2 - We have delivered a reasonable automated coverage of the golden paths of this feature but are aware of gaps and extra testing that could be done here. Error/outlying scenarios are not really covered. There are likely risks that issues may exist in the golden paths.

> 3 - We have delivered all automated testing we believe is needed for the golden paths of this feature and minimal coverage of the error/outlying scenarios. There is a risk when the feature is used outside the golden paths however we are confident on the golden path. Note: This may still be a valid end state for a feature... things like Beta features may well suffice at this level.

> 4 - We have delivered all automated testing we believe is needed for the golden paths of this feature and have good coverage of the error/outlying scenarios. While more testing of the error/outlying scenarios could be added we believe there is minimal risk here and the cost of providing these is considered higher than the benefit they would provide.

> 5 - We have delivered all automated testing we believe is needed for this feature. The testing covers all golden path cases as well as all the error/outlying scenarios that make sense. We are not aware of any gaps in the testing at this time. No manual testing is required to verify this feature.

> Based on your answer above, for any answer other than a 4 or 5 please provide details of what drove your answer. Please be aware, it may be perfectly reasonable in some scenarios to deliver with any value above. We may accept no automated testing is needed for some features, we may be happy with low levels of testing on samples for instance so please don't feel the need to drive to a 5. We need your honest assessment as a team and the reasoning for why you believe shipping at that level is valid. What are the gaps, what is the risk etc. Please also provide links to the follow on work that is needed to close the gaps (should you deem it needed)

Confidence: