Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

12,872 | 3,293,697,877 | IssuesEvent | 2015-10-30 20:15:12 | dotnet/wcf | https://api.github.com/repos/dotnet/wcf | closed | X509Store throws NotImplemented for read/Write on Linux | Linux test bug |

Https_ClientCredentialTypeTests.BasicAuthenticationInvalidPwd_throw_MessageSecurityException [FAIL]

Assert.Throws() Failure

Expected: typeof(System.ServiceModel.Security.MessageSecurityException)

Actual: typeof(System.NotImplementedException): The method or operation is not implemented.

... | 1.0 | X509Store throws NotImplemented for read/Write on Linux -

Https_ClientCredentialTypeTests.BasicAuthenticationInvalidPwd_throw_MessageSecurityException [FAIL]

Assert.Throws() Failure

Expected: typeof(System.ServiceModel.Security.MessageSecurityException)

Actual: typeof(System.NotImplementedEx... | test | throws notimplemented for read write on linux https clientcredentialtypetests basicauthenticationinvalidpwd throw messagesecurityexception assert throws failure expected typeof system servicemodel security messagesecurityexception actual typeof system notimplementedexception the... | 1 |

259,982 | 22,582,115,995 | IssuesEvent | 2022-06-28 12:34:22 | rstudio/rstudio | https://api.github.com/repos/rstudio/rstudio | opened | Dropdown menus rendering "under" the editor region in latest Safari on macOS (Backport: Ghost Orchid) | bug macos test backport backport-ghost-orchid | Dropdown menus and tooltips are hiding behind other elements on Safari.

This covers the Ghost Orchid backport of issue #10821. | 1.0 | Dropdown menus rendering "under" the editor region in latest Safari on macOS (Backport: Ghost Orchid) - Dropdown menus and tooltips are hiding behind other elements on Safari.

This covers the Ghost Orchid backport of issue #10821. | test | dropdown menus rendering under the editor region in latest safari on macos backport ghost orchid dropdown menus and tooltips are hiding behind other elements on safari this covers the ghost orchid backport of issue | 1 |

129,638 | 27,528,170,055 | IssuesEvent | 2023-03-06 19:45:40 | pwa-builder/PWABuilder | https://api.github.com/repos/pwa-builder/PWABuilder | closed | [VSCODE] Check icon sizes in snippets | bug :bug: vscode | ### What happened?

I got this two error or warning:

### What do you expect to happen?

We can fix the first error by adding the icon path for 96x icon (which accidentally I found that is exist in y... | 1.0 | [VSCODE] Check icon sizes in snippets - ### What happened?

I got this two error or warning:

### What do you expect to happen?

We can fix the first error by adding the icon path for 96x icon (which... | non_test | check icon sizes in snippets what happened i got this two error or warning what do you expect to happen we can fix the first error by adding the icon path for icon which accidentally i found that is exist in your website shortcuts name the name you woul... | 0 |

726,209 | 24,991,897,712 | IssuesEvent | 2022-11-02 19:34:15 | VEuPathDB/web-eda | https://api.github.com/repos/VEuPathDB/web-eda | closed | Variable with binary outcome cannot be chosen in 2x2 mosaic plot | bug high priority | In MORDOR study, the var study arm has two values, but it cannot be chosen in 2x2 mosaic, however, if I plot it in RxC mosaic plot meanwhile keep all other things the same, it works.

In 2x2 mosaic, I cannot choose var:"study arm" for either x or y axis.

<img width="922" alt="Screen Shot 2022-10-26 at 5 11 38 PM" s... | 1.0 | Variable with binary outcome cannot be chosen in 2x2 mosaic plot - In MORDOR study, the var study arm has two values, but it cannot be chosen in 2x2 mosaic, however, if I plot it in RxC mosaic plot meanwhile keep all other things the same, it works.

In 2x2 mosaic, I cannot choose var:"study arm" for either x or y a... | non_test | variable with binary outcome cannot be chosen in mosaic plot in mordor study the var study arm has two values but it cannot be chosen in mosaic however if i plot it in rxc mosaic plot meanwhile keep all other things the same it works in mosaic i cannot choose var study arm for either x or y axis ... | 0 |

1,487 | 2,550,414,550 | IssuesEvent | 2015-02-01 14:44:35 | Kademi/kademi-dev | https://api.github.com/repos/Kademi/kademi-dev | closed | Allows users to opt-in to groups from contactUsApp | bug enhancement question Ready to Test QA | This will allows users to opt-in to groups from the contact us page the same way users can from the signup page. | 1.0 | Allows users to opt-in to groups from contactUsApp - This will allows users to opt-in to groups from the contact us page the same way users can from the signup page. | test | allows users to opt in to groups from contactusapp this will allows users to opt in to groups from the contact us page the same way users can from the signup page | 1 |

789,453 | 27,790,326,056 | IssuesEvent | 2023-03-17 08:24:01 | HaDuve/TravelCostNative | https://api.github.com/repos/HaDuve/TravelCostNative | opened | add split summary information | Enhancement Frontend 3 - Low priority AAA - Complex | - [ ] combine the splits from multiple trips for simplify, in a per-person category containing all splits between you and one other person

- [ ] calculate the total from the splits, store it, and display by default the amount that you (overall and by everyone else) are still owed | 1.0 | add split summary information - - [ ] combine the splits from multiple trips for simplify, in a per-person category containing all splits between you and one other person

- [ ] calculate the total from the splits, store it, and display by default the amount that you (overall and by everyone else) are still ow... | non_test | add split summary information combine the splits from multiple trips for simplify in a per person category containing all splits between you and one other person calculate the total from the splits store it and display by default the amount that you overall and by everyone else are still owed | 0 |

208,221 | 16,106,732,166 | IssuesEvent | 2021-04-27 15:44:39 | apache/camel-quarkus | https://api.github.com/repos/apache/camel-quarkus | opened | Document locale limitations in native mode | documentation | We should add a paragraph about locale to https://camel.apache.org/camel-quarkus/latest/user-guide/native-mode.html

It should document the state before and after GraalVM 21.1 https://github.com/oracle/graal/issues/2908

See also https://github.com/quarkusio/quarkus/issues/5244

| 1.0 | Document locale limitations in native mode - We should add a paragraph about locale to https://camel.apache.org/camel-quarkus/latest/user-guide/native-mode.html

It should document the state before and after GraalVM 21.1 https://github.com/oracle/graal/issues/2908

See also https://github.com/quarkusio/quarkus/issues... | non_test | document locale limitations in native mode we should add a paragraph about locale to it should document the state before and after graalvm see also | 0 |

339,402 | 30,446,169,871 | IssuesEvent | 2023-07-15 17:43:33 | natiatabatadzebtu/mid-term-versioning | https://api.github.com/repos/natiatabatadzebtu/mid-term-versioning | opened | 640c851 failed unit and formatting tests. | ci-pytest ci-black | Automatically generated message

640c8517801ba0baab4fcca90ce913ede6618f37 failed unit and formatting tests.

Pytest report: https://natiatabatadzebtu.github.io/mid-term-versioning-ci/640c8517801ba0baab4fcca90ce913ede6618f37-1689442899/pytest.html

Black report: https://natiatabatadzebtu.github.io/mid-term-v... | 1.0 | 640c851 failed unit and formatting tests. - Automatically generated message

640c8517801ba0baab4fcca90ce913ede6618f37 failed unit and formatting tests.

Pytest report: https://natiatabatadzebtu.github.io/mid-term-versioning-ci/640c8517801ba0baab4fcca90ce913ede6618f37-1689442899/pytest.html

Black report: ht... | test | failed unit and formatting tests automatically generated message failed unit and formatting tests pytest report black report automatically generated message failed unit and formatting tests pytest report black report automatically generated message f... | 1 |

52,680 | 6,650,276,470 | IssuesEvent | 2017-09-28 15:47:17 | 18F/openFEC-web-app | https://api.github.com/repos/18F/openFEC-web-app | closed | Request: Provide context for what is included in the data tables | Work: Design | @noahmanger commented on [Tue Jun 21 2016](https://github.com/18F/FEC/issues/370)

Another comment shared offline:

I think this raises an interesting question about if we want to bring in the "About this da... | 1.0 | Request: Provide context for what is included in the data tables - @noahmanger commented on [Tue Jun 21 2016](https://github.com/18F/FEC/issues/370)

Another comment shared offline:

I think this raises an i... | non_test | request provide context for what is included in the data tables noahmanger commented on another comment shared offline i think this raises an interesting question about if we want to bring in the about this data pattern from the overviews to the data table pages jenniferthibault commented o... | 0 |

93,171 | 8,402,803,114 | IssuesEvent | 2018-10-11 07:58:13 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | teamcity: failed test: TestStoreRangeMergeWatcher | C-test-failure O-robot | The following tests appear to have failed on release-2.1 (test): TestStoreRangeMergeWatcher/inject-failures=false, TestStoreRangeMergeWatcher

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+TestStoreRangeMergeWatcher).

[#958017](https://teamcity.cockroach... | 1.0 | teamcity: failed test: TestStoreRangeMergeWatcher - The following tests appear to have failed on release-2.1 (test): TestStoreRangeMergeWatcher/inject-failures=false, TestStoreRangeMergeWatcher

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+TestStoreRange... | test | teamcity failed test teststorerangemergewatcher the following tests appear to have failed on release test teststorerangemergewatcher inject failures false teststorerangemergewatcher you may want to check teststorerangemergewatcher fail test teststorerangemergewatcher test ended in... | 1 |

322,161 | 9,813,712,419 | IssuesEvent | 2019-06-13 08:38:03 | WoWManiaUK/Blackwing-Lair | https://api.github.com/repos/WoWManiaUK/Blackwing-Lair | opened | [Npc] Julak-Doom - Twilight highlands | Low Priority zone 80-85 Cata | **Links:**

Npc - https://www.wowhead.com/npc=50089/julak-doom

Spell - https://www.wowhead.com/spell=93621

from WoWHead or our Armory

**What is happening:**

Julak-Doom not killable due to party wide mind control

**What should happen:**

Should not control whole party just a single target

... | 1.0 | [Npc] Julak-Doom - Twilight highlands - **Links:**

Npc - https://www.wowhead.com/npc=50089/julak-doom

Spell - https://www.wowhead.com/spell=93621

from WoWHead or our Armory

**What is happening:**

Julak-Doom not killable due to party wide mind control

**What should happen:**

Should not control whole party j... | non_test | julak doom twilight highlands links npc spell from wowhead or our armory what is happening julak doom not killable due to party wide mind control what should happen should not control whole party just a single target | 0 |

233 | 2,659,742,846 | IssuesEvent | 2015-03-18 22:59:51 | hammerlab/pileup.js | https://api.github.com/repos/hammerlab/pileup.js | closed | Update to flow 0.6.0 | process | It includes support for [bounded polymorphism][1], which would be useful for `ContigInterval` (which can have either `number` or `string` contigs).

[1]: http://flowtype.org/blog/2015/03/12/Bounded-Polymorphism.html | 1.0 | Update to flow 0.6.0 - It includes support for [bounded polymorphism][1], which would be useful for `ContigInterval` (which can have either `number` or `string` contigs).

[1]: http://flowtype.org/blog/2015/03/12/Bounded-Polymorphism.html | non_test | update to flow it includes support for which would be useful for contiginterval which can have either number or string contigs | 0 |

16,788 | 3,561,367,613 | IssuesEvent | 2016-01-23 19:05:03 | tgstation/-tg-station | https://api.github.com/repos/tgstation/-tg-station | closed | Gangs can apparently only have one Dominator | Bug Needs Reproducing/Testing | Said over OOC and on the forums, needs reproducing/testing. | 1.0 | Gangs can apparently only have one Dominator - Said over OOC and on the forums, needs reproducing/testing. | test | gangs can apparently only have one dominator said over ooc and on the forums needs reproducing testing | 1 |

286,234 | 24,732,234,556 | IssuesEvent | 2022-10-20 18:37:48 | dotnet/source-build | https://api.github.com/repos/dotnet/source-build | closed | xunit smoke-test flaky: "The library 'libhostpolicy.so' required to execute the application was not found" | area-ci-testing | https://dev.azure.com/dnceng/internal/_build/results?buildId=715168&view=logs&j=e242d376-9fed-565d-2a2a-c4e65b5ab60e&t=a2b6a900-d726-529e-22d8-1a91fa385f64&l=253

This happened during tarball smoke-test in a `fedora30 Offline Portable` job:

```

starting language C#, type xunit

running new

The template "xUni... | 1.0 | xunit smoke-test flaky: "The library 'libhostpolicy.so' required to execute the application was not found" - https://dev.azure.com/dnceng/internal/_build/results?buildId=715168&view=logs&j=e242d376-9fed-565d-2a2a-c4e65b5ab60e&t=a2b6a900-d726-529e-22d8-1a91fa385f64&l=253

This happened during tarball smoke-test in a `... | test | xunit smoke test flaky the library libhostpolicy so required to execute the application was not found this happened during tarball smoke test in a offline portable job starting language c type xunit running new the template xunit test project was created successfully running res... | 1 |

226,874 | 18,045,932,638 | IssuesEvent | 2021-09-18 22:29:53 | logicmoo/logicmoo_workspace | https://api.github.com/repos/logicmoo/logicmoo_workspace | opened | logicmoo.pfc.test.sanity_base.NEVER_RETRACT_01B JUnit | Test_9999 logicmoo.pfc.test.sanity_base unit_test NEVER_RETRACT_01B | (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base ; timeout --foreground --preserve-status -s SIGKILL -k 10s 10s lmoo-clif never_retract_01b.pfc)

GH_MASTER_ISSUE_FINFO=

ISSUE_SEARCH: https://github.com/logicmoo/logicmoo_workspace/issues?q=is%3Aissue+label%3ANEVER_RETRACT_01B

GITLAB: http... | 3.0 | logicmoo.pfc.test.sanity_base.NEVER_RETRACT_01B JUnit - (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base ; timeout --foreground --preserve-status -s SIGKILL -k 10s 10s lmoo-clif never_retract_01b.pfc)

GH_MASTER_ISSUE_FINFO=

ISSUE_SEARCH: https://github.com/logicmoo/logicmoo_workspace/iss... | test | logicmoo pfc test sanity base never retract junit cd var lib jenkins workspace logicmoo workspace packs sys pfc t sanity base timeout foreground preserve status s sigkill k lmoo clif never retract pfc gh master issue finfo issue search gitlab latest this build github ... | 1 |

6,354 | 2,839,331,002 | IssuesEvent | 2015-05-27 13:15:02 | dojo/loader | https://api.github.com/repos/dojo/loader | opened | Test loader configuration options | tests | ## Task

Write tests exercising the loader's configuration options.

## Loader Functional Testing Pattern

Write loader tests as functional tests to provide a clean environment in which the loader can operate. Whenever possible use the pattern outlined in [this wiki](../wiki/Loader Functional Testing Pattern) for in... | 1.0 | Test loader configuration options - ## Task

Write tests exercising the loader's configuration options.

## Loader Functional Testing Pattern

Write loader tests as functional tests to provide a clean environment in which the loader can operate. Whenever possible use the pattern outlined in [this wiki](../wiki/Loade... | test | test loader configuration options task write tests exercising the loader s configuration options loader functional testing pattern write loader tests as functional tests to provide a clean environment in which the loader can operate whenever possible use the pattern outlined in wiki loader function... | 1 |

228,064 | 18,153,975,735 | IssuesEvent | 2021-09-26 19:01:50 | python-discord/bot | https://api.github.com/repos/python-discord/bot | closed | Write unit tests for `bot/cogs/tags.py` | p: 3 - low a: tests | Write unit tests for [`bot/cogs/tags.py`](../blob/master/bot/cogs/tags.py).

## Implementation details

Please make sure to read the general information in the [meta issue](553) and the [testing README](../blob/master/tests/README.md). We are aiming for a 100% [branch coverage](https://coverage.readthedocs.io/en/stable/... | 1.0 | Write unit tests for `bot/cogs/tags.py` - Write unit tests for [`bot/cogs/tags.py`](../blob/master/bot/cogs/tags.py).

## Implementation details

Please make sure to read the general information in the [meta issue](553) and the [testing README](../blob/master/tests/README.md). We are aiming for a 100% [branch coverage](... | test | write unit tests for bot cogs tags py write unit tests for blob master bot cogs tags py implementation details please make sure to read the general information in the and the blob master tests readme md we are aiming for a for this file but if you think that is not possible please di... | 1 |

54,345 | 6,379,966,539 | IssuesEvent | 2017-08-02 15:44:22 | fossasia/phimpme-android | https://api.github.com/repos/fossasia/phimpme-android | opened | Add more test condition in CameraActivity. | Testing | **Actual Behaviour**

Currently, the camera activity tests only exposure and to take a photo.

**Expected Behaviour**

Add more testing condition in the camera activity.

**Would you like to work on the issue?**

Yes.

| 1.0 | Add more test condition in CameraActivity. - **Actual Behaviour**

Currently, the camera activity tests only exposure and to take a photo.

**Expected Behaviour**

Add more testing condition in the camera activity.

**Would you like to work on the issue?**

Yes.

| test | add more test condition in cameraactivity actual behaviour currently the camera activity tests only exposure and to take a photo expected behaviour add more testing condition in the camera activity would you like to work on the issue yes | 1 |

180,211 | 13,926,254,732 | IssuesEvent | 2020-10-21 18:01:05 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/monitoring/elasticsearch/nodes·js - Monitoring app Elasticsearch nodes listing with only online nodes "before all" hook for "should have an Elasticsearch Cluster Summary Status with correct info" | Team:Monitoring failed-test | A test failed on a tracked branch

```

Error: retry.try timeout: TimeoutError: Waiting for element to be located By(css selector, [data-test-subj="elasticsearchNodesListingPage"])

Wait timed out after 10009ms

at /dev/shm/workspace/kibana/node_modules/selenium-webdriver/lib/webdriver.js:842:17

at process._tickCa... | 1.0 | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/monitoring/elasticsearch/nodes·js - Monitoring app Elasticsearch nodes listing with only online nodes "before all" hook for "should have an Elasticsearch Cluster Summary Status with correct info" - A test failed on a tracked branch

```

Error: ... | test | failing test chrome x pack ui functional tests x pack test functional apps monitoring elasticsearch nodes·js monitoring app elasticsearch nodes listing with only online nodes before all hook for should have an elasticsearch cluster summary status with correct info a test failed on a tracked branch error ... | 1 |

418,383 | 12,197,251,693 | IssuesEvent | 2020-04-29 20:26:38 | eecs-autograder/autograder-server | https://api.github.com/repos/eecs-autograder/autograder-server | closed | Don't include MEDIA_ROOT in output file paths saved in database | priority-2-low refactoring | This causes issues with student test suite result setup output when moving data from production to dev environments. | 1.0 | Don't include MEDIA_ROOT in output file paths saved in database - This causes issues with student test suite result setup output when moving data from production to dev environments. | non_test | don t include media root in output file paths saved in database this causes issues with student test suite result setup output when moving data from production to dev environments | 0 |

267,333 | 23,291,987,509 | IssuesEvent | 2022-08-06 01:46:59 | void-linux/void-packages | https://api.github.com/repos/void-linux/void-packages | closed | efibootmgr weird output | bug needs-testing | ### Is this a new report?

Yes

### System Info

Void 5.10.109_1 x86_64 AuthenticAMD notuptodate rrmFFFFF

### Package(s) Affected

efibootmgr-18_1

### Does a report exist for this bug with the project's home (upstream) and/or another distro?

_No response_

### Expected behaviour

When execute efibootmgr -v on termin... | 1.0 | efibootmgr weird output - ### Is this a new report?

Yes

### System Info

Void 5.10.109_1 x86_64 AuthenticAMD notuptodate rrmFFFFF

### Package(s) Affected

efibootmgr-18_1

### Does a report exist for this bug with the project's home (upstream) and/or another distro?

_No response_

### Expected behaviour

When execu... | test | efibootmgr weird output is this a new report yes system info void authenticamd notuptodate rrmfffff package s affected efibootmgr does a report exist for this bug with the project s home upstream and or another distro no response expected behaviour when execute efib... | 1 |

211,833 | 16,371,774,289 | IssuesEvent | 2021-05-15 09:12:52 | LockTech/cerberus | https://api.github.com/repos/LockTech/cerberus | opened | Improve testing for `api/src/lib` | api enhancement test | The API's `lib` directory contains a number of files which would benefit from tests to assert their functionality. | 1.0 | Improve testing for `api/src/lib` - The API's `lib` directory contains a number of files which would benefit from tests to assert their functionality. | test | improve testing for api src lib the api s lib directory contains a number of files which would benefit from tests to assert their functionality | 1 |

172,404 | 13,305,076,866 | IssuesEvent | 2020-08-25 17:57:22 | aeternity/aeternity | https://api.github.com/repos/aeternity/aeternity | opened | aehttp_sc_SUITE failure: timeout waiting for channel `open` messages | area/tests kind/bug | The `aehttp_sc_SUITE:sc_ws_min_depth_is_modifiable/1` test case fails with a timeout - at least in some runs.

```

=== Reason: {timeout,{messages,[{<0.9168.0>,websocket_event,channel,

update,

#{<<"jsonrpc">> => <<"2.0">>,

... | 1.0 | aehttp_sc_SUITE failure: timeout waiting for channel `open` messages - The `aehttp_sc_SUITE:sc_ws_min_depth_is_modifiable/1` test case fails with a timeout - at least in some runs.

```

=== Reason: {timeout,{messages,[{<0.9168.0>,websocket_event,channel,

update,

... | test | aehttp sc suite failure timeout waiting for channel open messages the aehttp sc suite sc ws min depth is modifiable test case fails with a timeout at least in some runs reason timeout messages websocket event channel update ... | 1 |

61,240 | 17,023,644,742 | IssuesEvent | 2021-07-03 03:04:51 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Islands don't render in riverbank multipolygons | Component: opencyclemap Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 9.56pm, Friday, 15th October 2010]**

For instance:

http://www.openstreetmap.org/?lat=51.746065&lon=-1.256518&zoom=18&layers=C

There's an island on the other layers | 1.0 | Islands don't render in riverbank multipolygons - **[Submitted to the original trac issue database at 9.56pm, Friday, 15th October 2010]**

For instance:

http://www.openstreetmap.org/?lat=51.746065&lon=-1.256518&zoom=18&layers=C

There's an island on the other layers | non_test | islands don t render in riverbank multipolygons for instance there s an island on the other layers | 0 |

768,677 | 26,975,802,465 | IssuesEvent | 2023-02-09 09:28:15 | ita-social-projects/TeachUA | https://api.github.com/repos/ita-social-projects/TeachUA | closed | [Челендж] Using Invalid language in "Челендж" creating | bug Backend Priority: High Actual | Environment: Windows 10 Pro 21H1,OS build19043.1586, Google Chrome, version 99.0.4844.84.

Reproducible: always.

Build found:last commit

Preconditions

Log in as an administrator on https://speak-ukrainian.org.ua/dev/

Steps to reproduce"

1. Click on 'Aдміністрування'

2. Click on 'Додати Челендж'

3. Fill in all ... | 1.0 | [Челендж] Using Invalid language in "Челендж" creating - Environment: Windows 10 Pro 21H1,OS build19043.1586, Google Chrome, version 99.0.4844.84.

Reproducible: always.

Build found:last commit

Preconditions

Log in as an administrator on https://speak-ukrainian.org.ua/dev/

Steps to reproduce"

1. Click on 'Aдміні... | non_test | using invalid language in челендж creating environment windows pro os google chrome version reproducible always build found last commit preconditions log in as an administrator on steps to reproduce click on aдміністрування click on додати челендж fill in all requir... | 0 |

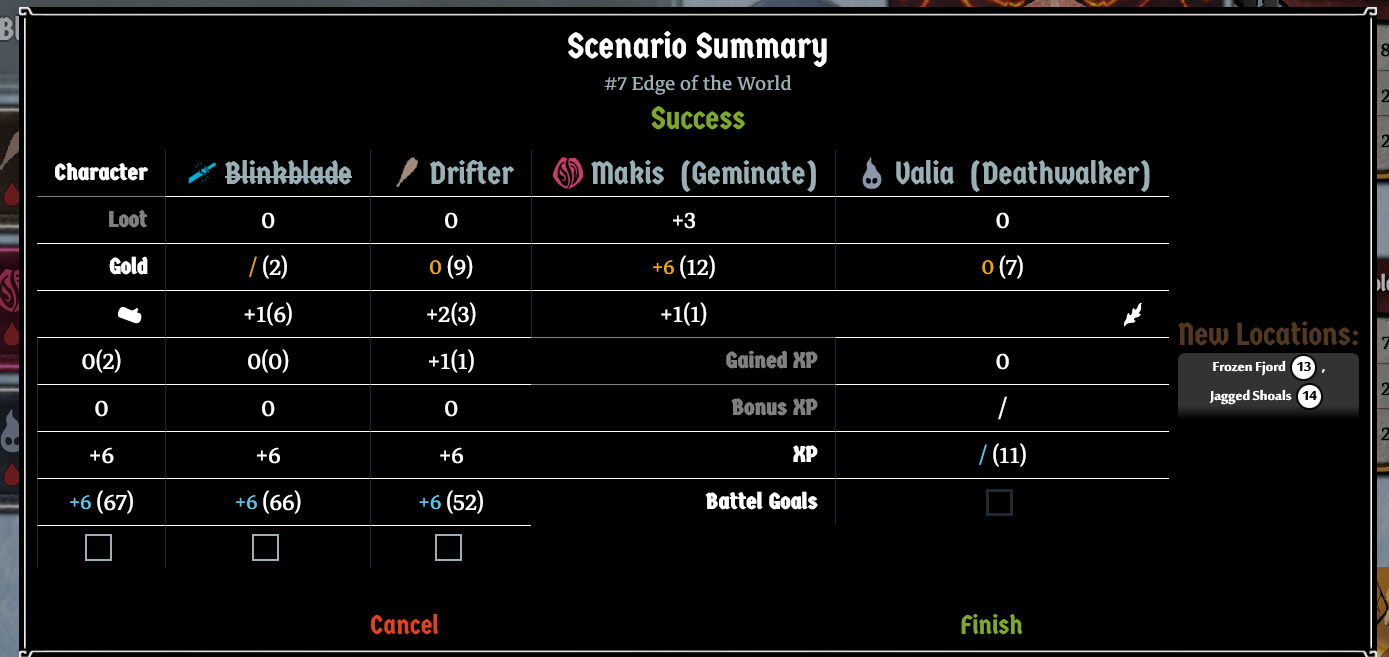

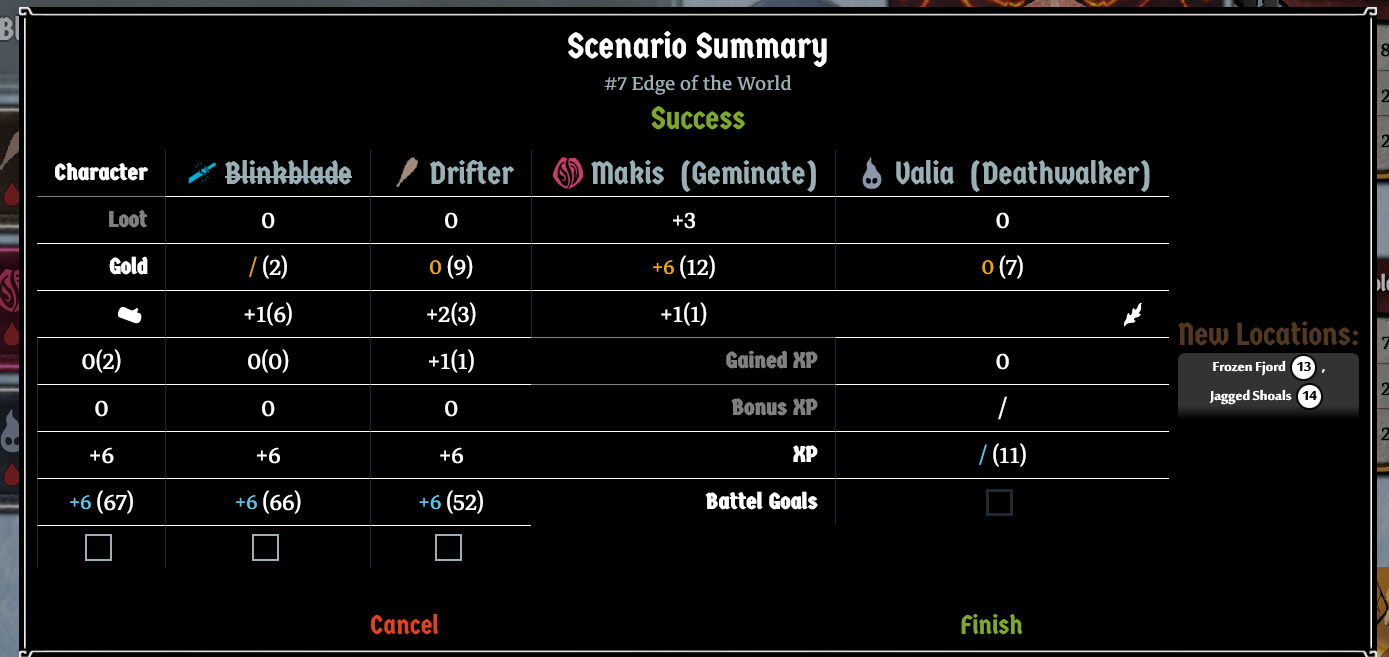

329,405 | 28,240,343,843 | IssuesEvent | 2023-04-06 06:33:14 | Lurkars/gloomhavensecretariat | https://api.github.com/repos/Lurkars/gloomhavensecretariat | closed | Display issues on scenario summary | bug to test | There are some display issues when one or more characters are absent in scenario summary. In the example below, Blinkblade is absent.

| 1.0 | Display issues on scenario summary - There are some display issues when one or more characters are absent in scenario summary. In the example below, Blinkblade is absent.

| test | display issues on scenario summary there are some display issues when one or more characters are absent in scenario summary in the example below blinkblade is absent | 1 |

32,003 | 4,732,641,742 | IssuesEvent | 2016-10-19 08:34:17 | Kademi/kademi-dev | https://api.github.com/repos/Kademi/kademi-dev | closed | Leadman: Allow to save custom fields on a lead profile page | enhancement Ready to Test - Dev | This should populate customs fields on a group | 1.0 | Leadman: Allow to save custom fields on a lead profile page - This should populate customs fields on a group | test | leadman allow to save custom fields on a lead profile page this should populate customs fields on a group | 1 |

444,266 | 31,030,154,069 | IssuesEvent | 2023-08-10 11:56:27 | appsmithorg/appsmith-docs | https://api.github.com/repos/appsmithorg/appsmith-docs | opened | [Docs]: Setup Server-side Filtering on Table | Documentation User Education Pod | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Documentation Link

_No response_

### Discord/slack/intercom Link

_No response_

### Describe the problem and improvement.

Setup Server-side Filtering on Table | 1.0 | [Docs]: Setup Server-side Filtering on Table - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Documentation Link

_No response_

### Discord/slack/intercom Link

_No response_

### Describe the problem and improvement.

Setup Server-side Filtering on Table | non_test | setup server side filtering on table is there an existing issue for this i have searched the existing issues documentation link no response discord slack intercom link no response describe the problem and improvement setup server side filtering on table | 0 |

273,354 | 23,748,761,197 | IssuesEvent | 2022-08-31 18:28:30 | phetsims/molecule-shapes | https://api.github.com/repos/phetsims/molecule-shapes | closed | Uncaught Error: Assertion failed: tried to removeListener on something that wasn't a listener | status:ready-for-review type:automated-testing | From CT:

```

molecule-shapes-basics : fuzz : unbuilt

https://bayes.colorado.edu/continuous-testing/ct-snapshots/1661714801238/molecule-shapes-basics/molecule-shapes-basics_en.html?continuousTest=%7B%22test%22%3A%5B%22molecule-shapes-basics%22%2C%22fuzz%22%2C%22unbuilt%22%5D%2C%22snapshotName%22%3A%22snapshot-16617... | 1.0 | Uncaught Error: Assertion failed: tried to removeListener on something that wasn't a listener - From CT:

```

molecule-shapes-basics : fuzz : unbuilt

https://bayes.colorado.edu/continuous-testing/ct-snapshots/1661714801238/molecule-shapes-basics/molecule-shapes-basics_en.html?continuousTest=%7B%22test%22%3A%5B%22mo... | test | uncaught error assertion failed tried to removelistener on something that wasn t a listener from ct molecule shapes basics fuzz unbuilt query brand phet ea fuzz memorylimit uncaught error assertion failed tried to removelistener on something that wasn t a listener error assertion failed tr... | 1 |

115,350 | 9,792,190,065 | IssuesEvent | 2019-06-10 16:46:40 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Global Registry Notary cpu and memory reservation doesn't work | [zube]: To Test alpha area/registry area/ui kind/bug-qa team/ui | rancher/rancher:master

Steps:

1. Change the cpu reservation of Notary to 450m

2. Enable Global Registry

3. Check the reservation on Notary workload

Results:

It's not 450m which I configured | 1.0 | Global Registry Notary cpu and memory reservation doesn't work - rancher/rancher:master

Steps:

1. Change the cpu reservation of Notary to 450m

2. Enable Global Registry

3. Check the reservation on Notary workload

Results:

It's not 450m which I configured | test | global registry notary cpu and memory reservation doesn t work rancher rancher master steps change the cpu reservation of notary to enable global registry check the reservation on notary workload results it s not which i configured | 1 |

285,942 | 24,708,812,885 | IssuesEvent | 2022-10-19 21:42:54 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | opened | DISABLED test_comprehensive_addmm_cuda_complex64 (__main__.TestDecompCUDA) | triaged module: flaky-tests skipped module: primTorch module: decompositions | Platforms: rocm

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_comprehensive_addmm_cuda_complex64&suite=TestDecompCUDA) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/8990116489).

Over the past 3 hours, it has be... | 1.0 | DISABLED test_comprehensive_addmm_cuda_complex64 (__main__.TestDecompCUDA) - Platforms: rocm

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_comprehensive_addmm_cuda_complex64&suite=TestDecompCUDA) and the most recent trunk [workflow logs](https://g... | test | disabled test comprehensive addmm cuda main testdecompcuda platforms rocm this test was disabled because it is failing in ci see and the most recent trunk over the past hours it has been determined flaky in workflow s with failures and successes debugging instructions after clicking... | 1 |

120,882 | 10,138,262,019 | IssuesEvent | 2019-08-02 17:28:18 | GeoDaCenter/geoda | https://api.github.com/repos/GeoDaCenter/geoda | closed | Linux Crash: Weights Manager w/ Variable Grouping Editor | OpSys-Linux to be tested | From:

Details:

os: 3-4-19

vs: 1-12-1-201

/usr/local/geoda/web_plugins/logger.txt

Click GdaFrame::OnNewProject

ConnectDatasourceDlg::InitSamplePanel()

Check auto update:

AutoUpdate::CheckUpdate()

AutoUpdate::GetCheckList()

AutoUpdate::ReadUrlContent()

Entering ConnectDatasourceDlg::OnOkClick

ConnectDatasourceDlg::Crea... | 1.0 | Linux Crash: Weights Manager w/ Variable Grouping Editor - From:

Details:

os: 3-4-19

vs: 1-12-1-201

/usr/local/geoda/web_plugins/logger.txt

Click GdaFrame::OnNewProject

ConnectDatasourceDlg::InitSamplePanel()

Check auto update:

AutoUpdate::CheckUpdate()

AutoUpdate::GetCheckList()

AutoUpdate::ReadUrlContent()

Entering... | test | linux crash weights manager w variable grouping editor from details os vs usr local geoda web plugins logger txt click gdaframe onnewproject connectdatasourcedlg initsamplepanel check auto update autoupdate checkupdate autoupdate getchecklist autoupdate readurlcontent entering con... | 1 |

24,589 | 4,099,348,334 | IssuesEvent | 2016-06-03 12:19:19 | elastic/logstash | https://api.github.com/repos/elastic/logstash | closed | branch 5.0 core tests failing | test failure | https://travis-ci.org/elastic/logstash/builds/134732394

```
rake test:install-core 102.80s user 5.56s system 59% cpu 3:03.54 total
--- jar coordinate com.fasterxml.jackson.core:jackson-annotations already loaded with version 2.7.1 - omit version 2.7.0
--- jar coordinate com.fasterxml.jackson.core:jackson-databind already loaded with version 2.7.1 - omit version 2.7.1-1
Using Accessor#strict_set for specs
rake aborted!
NameError: uninitialized constant LogStash::Api::RackApp::ApiErrorHandler
/Users/joaoduarte/projects/logstash/logstash-core/spec/api/lib/rack_app_spec.rb:26:in `(root)'
/Users/joaoduarte/projects/logstash/vendor/bundle/jruby/1.9/gems/rspec-core-3.1.7/lib/rspec/core/example_group.rb:325:in `subclass'
/Users/joaoduarte/projects/logstash/vendor/bundle/jruby/1.9/gems/rspec-core-3.1.7/lib/rspec/core/example_group.rb:219:in `describe'
/Users/joaoduarte/projects/logstash/vendor/bundle/jruby/1.9/gems/rspec-core-3.1.7/lib/rspec/core/dsl.rb:41:in `describe'
/Users/joaoduarte/projects/logstash/vendor/bundle/jruby/1.9/gems/rspec-core-3.1.7/lib/rspec/core/dsl.rb:79:in `describe'
/Users/joaoduarte/projects/logstash/logstash-core/spec/api/lib/rack_app_spec.rb:4:in `(root)'
/Users/joaoduarte/projects/logstash/vendor/bundle/jruby/1.9/gems/rspec-core-3.1.7/lib/rspec/core/configuration.rb:1:in `(root)'
/Users/joaoduarte/projects/logstash/vendor/bundle/jruby/1.9/gems/rspec-core-3.1.7/lib/rspec/core/configuration.rb:1105:in `load_spec_files'
/Users/joaoduarte/projects/logstash/vendor/bundle/jruby/1.9/gems/rspec-core-3.1.7/lib/rspec/core/configuration.rb:1105:in `load_spec_files'
/Users/joaoduarte/projects/logstash/vendor/bundle/jruby/1.9/gems/rspec-core-3.1.7/lib/rspec/core/runner.rb:96:in `setup'
/Users/joaoduarte/projects/logstash/vendor/bundle/jruby/1.9/gems/rspec-core-3.1.7/lib/rspec/core/runner.rb:84:in `run'
/Users/joaoduarte/projects/logstash/vendor/bundle/jruby/1.9/gems/rspec-core-3.1.7/lib/rspec/core/runner.rb:69:in `run'
/Users/joaoduarte/projects/logstash/rakelib/test.rake:42:in `(root)'
Tasks: TOP => test:core
```

@andrewvc I believe ef18693 is missing in 5.0? | 1.0 | branch 5.0 core tests failing - https://travis-ci.org/elastic/logstash/builds/134732394

```

rake test:install-core 102.80s user 5.56s system 59% cpu 3:03.54 total

--- jar coordinate com.fasterxml.jackson.core:jackson-annotations already loaded with version 2.7.1 - omit version 2.7.0

--- jar coordinate com.faster... | test | branch core tests failing rake test install core user system cpu total jar coordinate com fasterxml jackson core jackson annotations already loaded with version omit version jar coordinate com fasterxml jackson core jackson databind already loaded with version ... | 1 |

144,414 | 11,615,652,749 | IssuesEvent | 2020-02-26 14:32:35 | JohanKJIP/algorithms | https://api.github.com/repos/JohanKJIP/algorithms | closed | Testcase for requirement 6 for dijkstra's | test | Requirement 6: The time complexity should be O(Elog(V)) | 1.0 | Testcase for requirement 6 for dijkstra's - Requirement 6: The time complexity should be O(Elog(V)) | test | testcase for requirement for dijkstra s requirement the time complexity should be o elog v | 1 |
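
For context on the O(E log V) requirement in the row above, here is a minimal sketch of Dijkstra's algorithm with a binary heap. It is not the code from the JohanKJIP/algorithms repository; the function name `dijkstra`, the adjacency-list format, and the sample graph are assumptions made for illustration.

```python
import heapq


def dijkstra(graph, source):
    """Return shortest distances from source to every reachable node.

    graph is assumed to be a dict mapping node -> list of (neighbor, weight)
    pairs with non-negative weights (hypothetical format for this sketch).
    """
    dist = {source: 0}
    heap = [(0, source)]  # (distance so far, node)
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist.get(u, float("inf")):
            continue  # stale heap entry; a shorter path to u was already found
        for v, w in graph.get(u, []):
            nd = d + w
            if nd < dist.get(v, float("inf")):
                dist[v] = nd
                heapq.heappush(heap, (nd, v))
    return dist


graph = {
    "a": [("b", 1), ("c", 4)],
    "b": [("c", 2), ("d", 6)],
    "c": [("d", 3)],
    "d": [],
}
print(dijkstra(graph, "a"))
```

Each edge causes at most one push, so the heap holds at most E entries and every push or pop costs O(log E), which is O(log V) because E is at most V squared. A test for the requirement would more plausibly check correctness on known graphs and reason about the bound than measure timing directly.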

17,449 | 3,618,107,763 | IssuesEvent | 2016-02-08 09:52:58 | backbee/backbee-standard | https://api.github.com/repos/backbee/backbee-standard | closed | [PAGE MANAGEMENET] Disable contextual menu in page management tree | enhancement To test | Disable contextual menu in page management tree | 1.0 | [PAGE MANAGEMENET] Disable contextual menu in page management tree - Disable contextual menu in page management tree | test | disable contextual menu in page management tree disable contextual menu in page management tree | 1 |

4,150 | 2,711,585,894 | IssuesEvent | 2015-04-09 07:41:09 | IDgis/geo-publisher-test | https://api.github.com/repos/IDgis/geo-publisher-test | closed | Layers / edit layers: is in the group, is not shown | enhancement readyfortest | When I have placed a layer in a group, it should be shown here that it is in a group.

See Edit layer Aardgastransportleidingen | 1.0 | Layers / edit layers: is in the group, is not shown - When I have placed a layer in a group, it should be shown here that it is in a group.

See Edit layer Aardgastransportleidingen | test | layers edit layers is in the group is not shown when i have placed a layer in a group it should be shown here that it is in a group see edit layer aardgastransportleidingen | 1 |

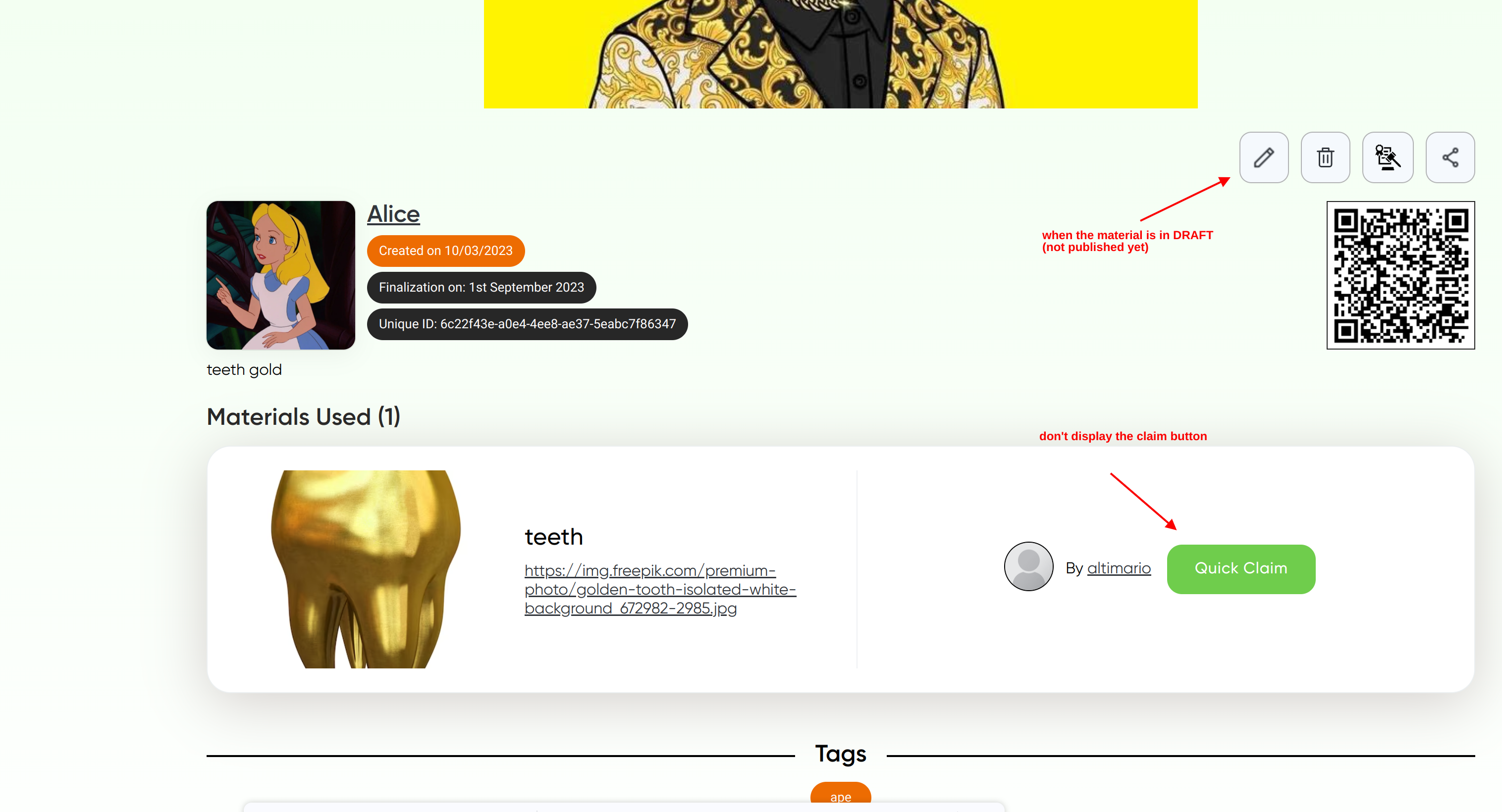

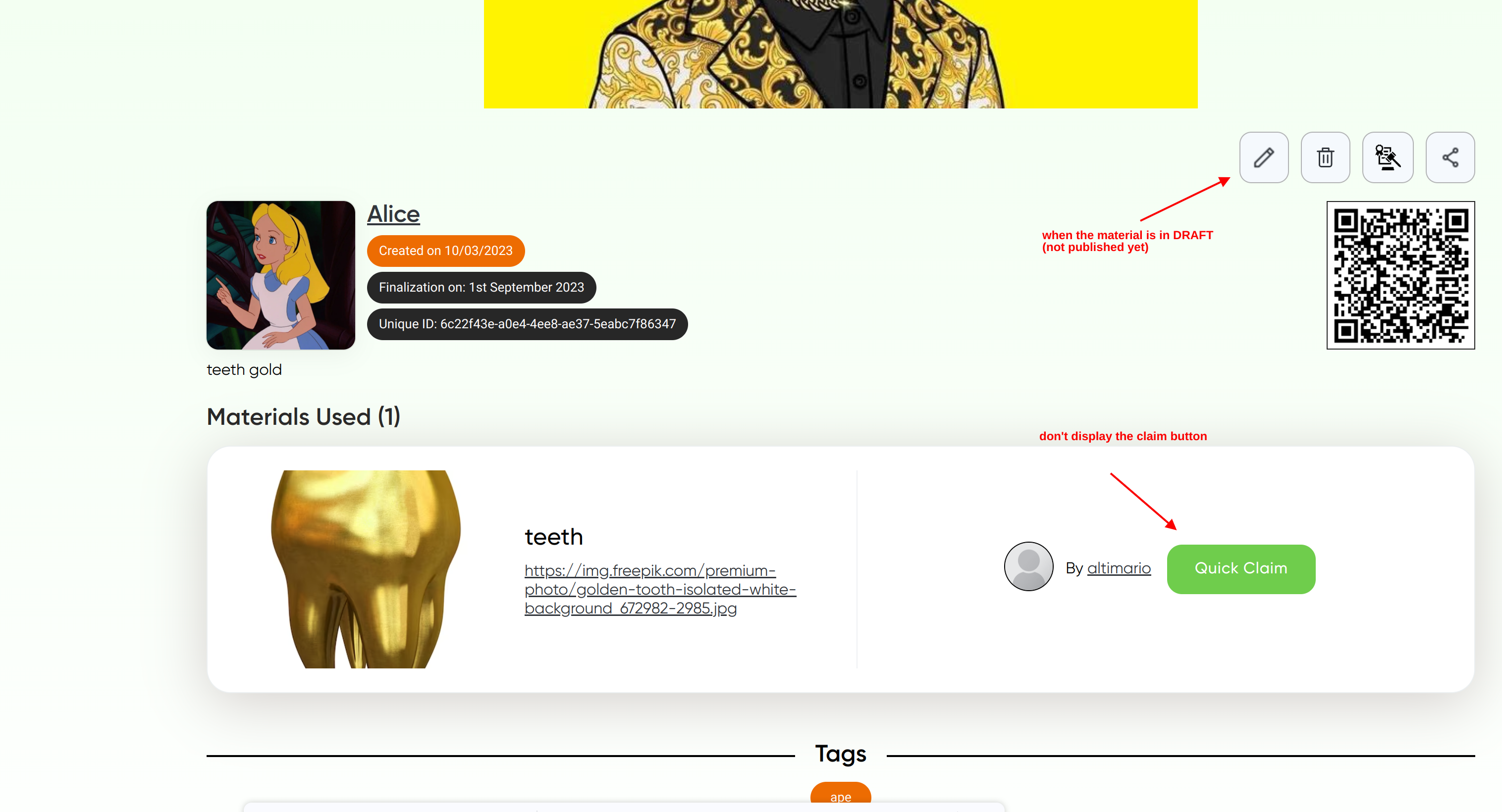

784,708 | 27,582,909,844 | IssuesEvent | 2023-03-08 17:25:43 | eLearningDAO/POCRE | https://api.github.com/repos/eLearningDAO/POCRE | closed | Claim material and share link only for published creation | enhancement low priority | The check (if claimable/shareable or not) should also be done at API level as well.

1) the claim button only for published ~~material~~ creation

2) the sharing function only for published ~~material~~ crea... | 1.0 | Claim material and share link only for published creation - The check (if claimable/shareable or not) should also be done at API level as well.

1) the claim button only for published ~~material~~ creation

... | non_test | claim material and share link only for published creation the check if claimable shareable or not should also be done at api level as well the claim button only for published material creation the sharing function only for published material creation | 0 |

57,690 | 6,554,042,850 | IssuesEvent | 2017-09-06 02:50:23 | easydigitaldownloads/edd-free-downloads | https://api.github.com/repos/easydigitaldownloads/edd-free-downloads | closed | File downloads fail on mobile when product has variable prices and file is assigned to price | Bug Has PR Needs Testing | File downloads fail on mobile when the following conditions are true:

- variable prices enabled

- file assigned to specific price

- price option is free

Disabling variable prices fixes the issue.

See https://secure.helpscout.net/conversation/374970730/60426/?folderId=180505 | 1.0 | File downloads fail on mobile when product has variable prices and file is assigned to price - File downloads fail on mobile when the following conditions are true:

- variable prices enabled

- file assigned to specific price

- price option is free

Disabling variable prices fixes the issue.

See https://secure... | test | file downloads fail on mobile when product has variable prices and file is assigned to price file downloads fail on mobile when the following conditions are true variable prices enabled file assigned to specific price price option is free disabling variable prices fixes the issue see | 1 |

296,006 | 25,521,385,741 | IssuesEvent | 2022-11-28 20:49:02 | rancher/qa-tasks | https://api.github.com/repos/rancher/qa-tasks | closed | Add support for provisioning k3s hardened Rancher managed Custom clusters | team/area2 [zube]: QA Review area/automation-test | ### Issue Description

When provisioning clusters, we should have automated tests to harden the clusters. For security purposes, this should be seen as the default, so tests should be added to our framework

---

- [x] Deploy a k3s hardened local cluster HA rancher deploy - incorporated existing hardened k3s cluste... | 1.0 | Add support for provisioning k3s hardened Rancher managed Custom clusters - ### Issue Description

When provisioning clusters, we should have automated tests to harden the clusters. For security purposes, this should be seen as the default, so tests should be added to our framework

---

- [x] Deploy a k3s hardened... | test | add support for provisioning hardened rancher managed custom clusters issue description when provisioning clusters we should have automated tests to harden the clusters for security purposes this should be seen as the default so tests should be added to our framework deploy a hardened local... | 1 |

294,204 | 25,351,901,079 | IssuesEvent | 2022-11-19 21:52:37 | ValveSoftware/portal2 | https://api.github.com/repos/ValveSoftware/portal2 | closed | Portal 1 and 2 are failing on Steam Deck due to failed VR entry point | Need Retest | Portal 2

```

SDL video target is 'x11'

SDL video target is 'x11'

Using shader api: shaderapivk

Using shader api: shaderapivk

free(): invalid pointer

/home/deck/.local/share/Steam/steamapps/common/Portal 2/portal2.sh: line 51: 12457 Aborted (core dumped) ${GAME_DEBUGGER} "${GAMEROOT}"/${GAMEEX... | 1.0 | Portal 1 and 2 are failing on Steam Deck due to failed VR entry point - Portal 2

```

SDL video target is 'x11'

SDL video target is 'x11'

Using shader api: shaderapivk

Using shader api: shaderapivk

free(): invalid pointer

/home/deck/.local/share/Steam/steamapps/common/Portal 2/portal2.sh: line 51: 12457 Abort... | test | portal and are failing on steam deck due to failed vr entry point portal sdl video target is sdl video target is using shader api shaderapivk using shader api shaderapivk free invalid pointer home deck local share steam steamapps common portal sh line aborted ... | 1 |

26,259 | 2,684,274,314 | IssuesEvent | 2015-03-28 20:35:34 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | Crash whilst resizing Conemu window | 2–5 stars bug duplicate imported Priority-Medium | _From [col.brad...@gmail.com](https://code.google.com/u/103901031233724257395/) on January 24, 2013 00:00:36_

Required information! OS version: Win2k/WinXP/Vista/Win7/Win8 SP? x86/x64 ConEmu version: ? Far version (if you are using Far Manager): ? *Bug description* Conemu crashes whilst resizing window. *Steps to ... | 1.0 | Crash whilst resizing Conemu window - _From [col.brad...@gmail.com](https://code.google.com/u/103901031233724257395/) on January 24, 2013 00:00:36_

Required information! OS version: Win2k/WinXP/Vista/Win7/Win8 SP? x86/x64 ConEmu version: ? Far version (if you are using Far Manager): ? *Bug description* Conemu cras... | non_test | crash whilst resizing conemu window from on january required information os version winxp vista sp conemu version far version if you are using far manager bug description conemu crashes whilst resizing window steps to reproduction open single instance of conemu ... | 0 |

40,099 | 5,272,561,498 | IssuesEvent | 2017-02-06 13:18:32 | c2corg/v6_ui | https://api.github.com/repos/c2corg/v6_ui | closed | In image edition, do not allow to change the licence to copyright | bug fixed and ready for testing Images | The licence of an image should be changed to copyright only by a moderator.

But for now, everybody can change the licence of its personal images to copyright.

The copyright option must be limited to moderator only.

A simple member must be able to choose between personal and collaborative licence only.

There is no... | 1.0 | In image edition, do not allow to change the licence to copyright - The licence of an image should be changed to copyright only by a moderator.

But for now, everybody can change the licence of its personal images to copyright.

The copyright option must be limited to moderator only.

A simple member must be able to ch... | test | in image edition do not allow to change the licence to copyright the licence of an image should be changed to copyright only by a moderator but for now everybody can change the licence of its personal images to copyright the copyright option must be limited to moderator only a simple member must be able to ch... | 1 |

335,351 | 24,465,414,061 | IssuesEvent | 2022-10-07 14:37:36 | Heigvd/colab | https://api.github.com/repos/Heigvd/colab | closed | Moving resources/documents | documentation | - [x] from the document view

- [x] from the document settings | 1.0 | Moving resources/documents - - [x] from the document view

- [x] from the document settings | non_test | moving resources documents from the document view from the document settings | 0 |

21,511 | 10,653,449,168 | IssuesEvent | 2019-10-17 14:27:00 | oasislabs/oasis-core | https://api.github.com/repos/oasislabs/oasis-core | closed | [EXT-SEC-AUDIT] Multiple Rust dependencies contain known vulnerabilities | c:security | *Issue transferred from an external security audit report.*

Multiple Rust dependencies were found to be affected by known vulnerabilities.

```

% cargo audit

Fetching advisory database from `https://github.com/RustSec/advisory-db.git`

Loaded 41 security advisories (from /Users/sae/.cargo/advisory-db)

Scanning... | True | [EXT-SEC-AUDIT] Multiple Rust dependencies contain known vulnerabilities - *Issue transferred from an external security audit report.*

Multiple Rust dependencies were found to be affected by known vulnerabilities.

```

% cargo audit

Fetching advisory database from `https://github.com/RustSec/advisory-db.git`

Lo... | non_test | multiple rust dependencies contain known vulnerabilities issue transferred from an external security audit report multiple rust dependencies were found to be affected by known vulnerabilities cargo audit fetching advisory database from loaded security advisories from users sae cargo advis... | 0 |

204,069 | 15,398,714,348 | IssuesEvent | 2021-03-04 00:36:56 | nucleus-security/Test-repo | https://api.github.com/repos/nucleus-security/Test-repo | opened | Nucleus - Project: Ticketing Rules now apply to all vulnerabilities - [High] - CentOS Security Update for kernel (CESA-2015:0864) | Testing | Source: QUALYS

Finding Description: CentOS has released security update for kernel to fix the vulnerabilities.<p>Affected Products:<br />centos 6

Impact: This vulnerability could be exploited to gain complete access to sensitive information. Malicious users could also use this vulnerability to change all the contents ... | 1.0 | Nucleus - Project: Ticketing Rules now apply to all vulnerabilities - [High] - CentOS Security Update for kernel (CESA-2015:0864) - Source: QUALYS

Finding Description: CentOS has released security update for kernel to fix the vulnerabilities.<p>Affected Products:<br />centos 6

Impact: This vulnerability could be explo... | test | nucleus project ticketing rules now apply to all vulnerabilities centos security update for kernel cesa source qualys finding description centos has released security update for kernel to fix the vulnerabilities affected products centos impact this vulnerability could be exploited to gain compl... | 1 |

7,320 | 2,610,363,186 | IssuesEvent | 2015-02-26 19:57:25 | chrsmith/scribefire-chrome | https://api.github.com/repos/chrsmith/scribefire-chrome | opened | Post disappears after saving and closing safari but before publishing the post | auto-migrated Priority-Medium Type-Defect | ```

What's the problem?

I write my post and save it but I can't find it after closing scribefire or

safari and then re-opening them. Where does it get saved on my computer?

I think I'll go back to using text edit to write my blogs - it's safer.

What browser are you using?

Safari 6

What version of ScribeFire are you... | 1.0 | Post disappears after saving and closing safari but before publishing the post - ```

What's the problem?

I write my post and save it but I can't find it after closing scribefire or

safari and then re-opening them. Where does it get saved on my computer?

I think I'll go back to using text edit to write my blogs - it's ... | non_test | post disappears after saving and closing safari but before publishing the post what s the problem i write my post and save it but i can t find it after closing scribefire or safari and then re opening them where does it get saved on my computer i think i ll go back to using text edit to write my blogs it s ... | 0 |

90,652 | 8,251,688,308 | IssuesEvent | 2018-09-12 08:37:01 | mono/mono | https://api.github.com/repos/mono/mono | opened | [roslyn] PatternMatchingTests.Query_01 fails | epic: Roslyn Tests | roslyn$ PATH=~/.mono/bin:$PATH ./build/scripts/tests.sh Debug mono "" -method "*.Query_01"

```

Microsoft.CodeAnalysis.CSharp.UnitTests.PatternMatchingTests.Query_01 [FAIL]

Roslyn.Test.Utilities.ExecutionException :

Execution failed for assembly '/tmp/RoslynTests'.

Expected: 1

3

5... | 1.0 | [roslyn] PatternMatchingTests.Query_01 fails - roslyn$ PATH=~/.mono/bin:$PATH ./build/scripts/tests.sh Debug mono "" -method "*.Query_01"

```

Microsoft.CodeAnalysis.CSharp.UnitTests.PatternMatchingTests.Query_01 [FAIL]

Roslyn.Test.Utilities.ExecutionException :

Execution failed for assembly '/tmp/Ros... | test | patternmatchingtests query fails roslyn path mono bin path build scripts tests sh debug mono method query microsoft codeanalysis csharp unittests patternmatchingtests query roslyn test utilities executionexception execution failed for assembly tmp roslyntests ... | 1 |

203,007 | 15,327,332,399 | IssuesEvent | 2021-02-26 05:53:10 | kubesphere/kubesphere | https://api.github.com/repos/kubesphere/kubesphere | closed | Integrate e2e testing in CI process and collect test results | area/test kind/feature | **What's it about?**

We need to integrate e2e testing into the CI process and collect test results. To launch an e2e testing we also need a way to set up a testing kubesphere cluster.

Kind is one of the options to setup the test Kubernetes cluster. Then use ks-installer to install kubesphere.

**Area Suggestion*... | 1.0 | Integrate e2e testing in CI process and collect test results - **What's it about?**

We need to integrate e2e testing into the CI process and collect test results. To launch an e2e testing we also need a way to set up a testing kubesphere cluster.

Kind is one of the options to setup the test Kubernetes cluster. The... | test | integrate testing in ci process and collect test results what s it about we need to integrate testing into the ci process and collect test results to launch an testing we also need a way to set up a testing kubesphere cluster kind is one of the options to setup the test kubernetes cluster then use ... | 1 |

170,751 | 13,201,702,635 | IssuesEvent | 2020-08-14 10:41:18 | Niraj-Kamdar/question-paper-generator | https://api.github.com/repos/Niraj-Kamdar/question-paper-generator | closed | Set IMP flag to question selenium test | Test | ### Describe the test

Add a test to set IMP flag to question using selenium webdriver.

### What it covers

It will cover front-end test for setting IMP flag to the question.

### Testing

Some tests for the above testcase.

| Description | Input | Output |

| :---------- | :---- | :----- |

| Set IMP flag ... | 1.0 | Set IMP flag to question selenium test - ### Describe the test

Add a test to set IMP flag to question using selenium webdriver.

### What it covers

It will cover front-end test for setting IMP flag to the question.

### Testing

Some tests for the above testcase.

| Description | Input | Output |

| :--------... | test | set imp flag to question selenium test describe the test add a test to set imp flag to question using selenium webdriver what it covers it will cover front end test for setting imp flag to the question testing some tests for the above testcase description input output ... | 1 |

814,950 | 30,531,120,180 | IssuesEvent | 2023-07-19 14:20:59 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | opened | Mesh warnings from franka_description with recent Drake versions | type: bug priority: medium component: geometry perception | ### What happened?

In `drake/manipulation/models/franka_description/urdf` we provide some sample URDFs for the Franka Panda robot.

If one of those models is loaded into a scene that contains render engines (e.g., for camera simulation) then there are a log of warnings spammed to the console:

For example:

```

[... | 1.0 | Mesh warnings from franka_description with recent Drake versions - ### What happened?

In `drake/manipulation/models/franka_description/urdf` we provide some sample URDFs for the Franka Panda robot.

If one of those models is loaded into a scene that contains render engines (e.g., for camera simulation) then there ar... | non_test | mesh warnings from franka description with recent drake versions what happened in drake manipulation models franka description urdf we provide some sample urdfs for the franka panda robot if one of those models is loaded into a scene that contains render engines e g for camera simulation then there ar... | 0 |

279,623 | 24,240,381,422 | IssuesEvent | 2022-09-27 05:59:16 | pytest-dev/pytest | https://api.github.com/repos/pytest-dev/pytest | closed | `unittest` TestCase collection fails silently when there are unclear circular dependencies | type: bug topic: collection plugin: unittest status: needs information | Hey!

I have problem with collecting unittest/asynctest based test.

I'm trying to run tests TestCase from unittest/asynctest and have problem when I provide for example exact dir

`pytest directory_name`

then collecting works fine, it will collect correct test and run it but when I will run pytest without directory... | 1.0 | `unittest` TestCase collection fails silently when there are unclear circular dependencies - Hey!

I have problem with collecting unittest/asynctest based test.

I'm trying to run tests TestCase from unittest/asynctest and have problem when I provide for example exact dir

`pytest directory_name`

then collecting wor... | test | unittest testcase collection fails silently when there are unclear circular dependencies hey i have problem with collecting unittest asynctest based test i m trying to run tests testcase from unittest asynctest and have problem when i provide for example exact dir pytest directory name then collecting wor... | 1 |

43,489 | 11,236,379,808 | IssuesEvent | 2020-01-09 10:20:01 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | ROCm support to pytorch | module: build triaged | ## ❓ while compile failed tipes is The following variables are used in this project, but they are set to NOTFOUND

compile env is ubuntu18.04 ROCm 2.10 GPU is Vega56-8G

here is mine error log:

CMake Error: The following variables are used in this project, but they are set to NOTFOUND.

Please set them or m... | 1.0 | ROCm support to pytorch - ## ❓ while compile failed tipes is The following variables are used in this project, but they are set to NOTFOUND

compile env is ubuntu18.04 ROCm 2.10 GPU is Vega56-8G

here is mine error log:

CMake Error: The following variables are used in this project, but they are set to NOTFO... | non_test | rocm support to pytorch ❓ while compile failed tipes is the following variables are used in this project but they are set to notfound compile env is rocm gpu is here is mine error log cmake error the following variables are used in this project but they are set to notfound please se... | 0 |

155,083 | 12,238,208,619 | IssuesEvent | 2020-05-04 19:23:46 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: X-Pack Jest Tests.x-pack/plugins/apm/public/components/app/Settings/CustomizeUI/CustomLink/CustomLinkFlyout - LinkPreview shows label and url values | Team:apm [zube]: In Progress failed-test | A test failed on a tracked branch

```

Error: callApmApi has to be initialized before used. Call createCallApmApi first.

at callApmApi (/var/lib/jenkins/workspace/elastic+kibana+7.x/kibana/x-pack/plugins/apm/public/services/rest/createCallApmApi.ts:23:9)

at Object.<anonymous>.fetchTransaction (/var/lib/jenkins/... | 1.0 | Failing test: X-Pack Jest Tests.x-pack/plugins/apm/public/components/app/Settings/CustomizeUI/CustomLink/CustomLinkFlyout - LinkPreview shows label and url values - A test failed on a tracked branch

```

Error: callApmApi has to be initialized before used. Call createCallApmApi first.

at callApmApi (/var/lib/jenkin... | test | failing test x pack jest tests x pack plugins apm public components app settings customizeui customlink customlinkflyout linkpreview shows label and url values a test failed on a tracked branch error callapmapi has to be initialized before used call createcallapmapi first at callapmapi var lib jenkin... | 1 |

55,107 | 11,386,580,338 | IssuesEvent | 2020-01-29 13:33:46 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | closed | Synthesis failed for language | api: language autosynth failure codegen type: process | Hello! Autosynth couldn't regenerate language. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to branch 'autosynth-language'

Running synthtool

['/tmpfs/src/git/autosynth/env/bin/python3', '-m', 'synthtool', 'synth.py', '--']

synthtool > Executing /tmpfs/src/git/a... | 1.0 | Synthesis failed for language - Hello! Autosynth couldn't regenerate language. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to branch 'autosynth-language'

Running synthtool

['/tmpfs/src/git/autosynth/env/bin/python3', '-m', 'synthtool', 'synth.py', '--']

syntht... | non_test | synthesis failed for language hello autosynth couldn t regenerate language broken heart here s the output from running synth py cloning into working repo switched to branch autosynth language running synthtool synthtool executing tmpfs src git autosynth working repo language synth py synthto... | 0 |

87,410 | 8,073,863,221 | IssuesEvent | 2018-08-06 20:46:28 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | [CI] o.e.i.c.IngestRestartIT.testScriptDisabled fails with EsRejectedExecutionException | :Core/Ingest >test-failure v6.5.0 v7.0.0 | `o.e.i.c.IngestRestartIT.testScriptDisabled` failed as follows, apparently due to trying to use a threadpool after it had terminated. Full log at https://elasticsearch-ci.elastic.co/job/elastic+elasticsearch+master+intake/2443/console

```

1> [2018-08-01T05:50:12,899][INFO ][o.e.i.c.IngestRestartIT ] [testScriptD... | 1.0 | [CI] o.e.i.c.IngestRestartIT.testScriptDisabled fails with EsRejectedExecutionException - `o.e.i.c.IngestRestartIT.testScriptDisabled` failed as follows, apparently due to trying to use a threadpool after it had terminated. Full log at https://elasticsearch-ci.elastic.co/job/elastic+elasticsearch+master+intake/2443/con... | test | o e i c ingestrestartit testscriptdisabled fails with esrejectedexecutionexception o e i c ingestrestartit testscriptdisabled failed as follows apparently due to trying to use a threadpool after it had terminated full log at cleaned up after test after test error ing... | 1 |

41,647 | 6,924,892,236 | IssuesEvent | 2017-11-30 14:21:49 | jan-molak/serenity-js | https://api.github.com/repos/jan-molak/serenity-js | closed | Unable to add ability to the actor. | documentation question | Hi, I'm trying to set the ability to interact with a database to an actor, but I'm getting the following error:

**"I don't have the ability to InteractWithDatabase, said Brayan sadly."**

This is my Ability:

```

import { Ability, UsesAbilities } from "@serenity-js/core/lib/screenplay";

const sql = require('ms... | 1.0 | Unable to add ability to the actor. - Hi, I'm trying to set the ability to interact with a database to an actor, but I'm getting the following error:

**"I don't have the ability to InteractWithDatabase, said Brayan sadly."**

This is my Ability:

```

import { Ability, UsesAbilities } from "@serenity-js/core/lib... | non_test | unable to add ability to the actor hi i m trying to set the ability to interact with a database to an actor but i m getting the following error i don t have the ability to interactwithdatabase said brayan sadly this is my ability import ability usesabilities from serenity js core lib... | 0 |

102,631 | 8,851,100,963 | IssuesEvent | 2019-01-08 14:58:33 | italia/spid | https://api.github.com/repos/italia/spid | closed | Metadata check - Comune di Casale Monferrato | metadata nuovo md test | Good morning,

on behalf of the Comune di Casale Monferrato, we request verification of the metadata published at the URL:

https://sportellodigitale.comune.casale-monferrato.al.it/006039/spid/metadata

Thank you and kind regards,

Federico Albesano | 1.0 | Metadata check - Comune di Casale Monferrato - Good morning,

on behalf of the Comune di Casale Monferrato, we request verification of the metadata published at the URL:

https://sportellodigitale.comune.casale-monferrato.al.it/006039/spid/metadata

Thank you and kind regards,

Federico Albesano | test | metadata check comune di casale monferrato good morning on behalf of the comune di casale monferrato we request verification of the metadata published at the url thank you and kind regards federico albesano | 1 |

82,224 | 15,646,528,036 | IssuesEvent | 2021-03-23 01:08:09 | jgeraigery/linux | https://api.github.com/repos/jgeraigery/linux | opened | CVE-2019-19074 (High) detected in linuxv5.2 | security vulnerability | ## CVE-2019-19074 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torva... | True | CVE-2019-19074 (High) detected in linuxv5.2 - ## CVE-2019-19074 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Libra... | non_test | cve high detected in cve high severity vulnerability vulnerable library linux kernel source tree library home page a href vulnerable source files vulnerability details a memory leak in the wmi cmd function in drivers net wi... | 0 |

489,354 | 14,105,033,520 | IssuesEvent | 2020-11-06 12:52:21 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.google.com - design is broken | browser-firefox engine-gecko priority-critical | <!-- @browser: Firefox 83.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:83.0) Gecko/20100101 Firefox/83.0 -->

<!-- @reported_with: unknown -->

**URL**: https://www.google.com/

**Browser / Version**: Firefox 83.0

**Operating System**: Windows 7

**Tested Another Browser**: Yes Safari

**Problem typ... | 1.0 | www.google.com - design is broken - <!-- @browser: Firefox 83.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:83.0) Gecko/20100101 Firefox/83.0 -->

<!-- @reported_with: unknown -->

**URL**: https://www.google.com/

**Browser / Version**: Firefox 83.0

**Operating System**: Windows 7

**Tested Another ... | non_test | design is broken url browser version firefox operating system windows tested another browser yes safari problem type design is broken description items are misaligned steps to reproduce browser configuration none from with ❤️ | 0 |

278,611 | 24,163,183,531 | IssuesEvent | 2022-09-22 13:15:01 | cloudscribe/cloudscribe.SimpleContent | https://api.github.com/repos/cloudscribe/cloudscribe.SimpleContent | closed | Blog Menu Position behaves strangely | bug needs-test REFERENCE | In a Simple Content site I'm seeing very odd behaviour and cannot position the Blog where I want it.

If I have these page orders set:

Page1 10

Page2 15

Blog 20

I get this menu:

Other pages... Page1 Blog Page2

and if I change the ordering to this

Page1 30

Page2 15

Blog 20

I get this menu

Other... | 1.0 | Blog Menu Position behaves strangely - In a Simple Content site I'm seeing very odd behaviour and cannot position the Blog where I want it.

If I have these page orders set:

Page1 10

Page2 15

Blog 20

I get this menu:

Other pages... Page1 Blog Page2

and if I change the ordering to this

Page1 30

Page2 ... | test | blog menu position behaves strangely in a simple content site i m seeing very odd behaviour and cannot position the blog where i want it if i have these page orders set blog i get this menu other pages blog and if i change the ordering to this blog i get this me... | 1 |

45,211 | 5,703,829,650 | IssuesEvent | 2017-04-18 01:33:36 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Test failure: System.Net.Http.Functional.Tests.CancellationTest/ReadAsStreamAsync_ReadAsync_Cancel_BodyNeverStarted_TaskCanceledQuickly | area-System.Net.Http test bug test-run-core test-run-portable | Opened on behalf of @Jiayili1

The test `System.Net.Http.Functional.Tests.CancellationTest/ReadAsStreamAsync_ReadAsync_Cancel_BodyNeverStarted_TaskCanceledQuickly` has failed.

Elapsed time should be short\r

Expected: True\r

Actual: False

Stack Trace:

at System.Net.Http.Functional.Tests.C... | 3.0 | Test failure: System.Net.Http.Functional.Tests.CancellationTest/ReadAsStreamAsync_ReadAsync_Cancel_BodyNeverStarted_TaskCanceledQuickly - Opened on behalf of @Jiayili1

The test `System.Net.Http.Functional.Tests.CancellationTest/ReadAsStreamAsync_ReadAsync_Cancel_BodyNeverStarted_TaskCanceledQuickly` has failed.

Elaps... | test | test failure system net http functional tests cancellationtest readasstreamasync readasync cancel bodyneverstarted taskcanceledquickly opened on behalf of the test system net http functional tests cancellationtest readasstreamasync readasync cancel bodyneverstarted taskcanceledquickly has failed elapsed time... | 1 |

285,317 | 24,658,994,220 | IssuesEvent | 2022-10-18 04:07:36 | HSLdevcom/jore4 | https://api.github.com/repos/HSLdevcom/jore4 | closed | Set video recording for tests so that it creates a separate video for each test. | testing | Currently video recording for robot tests is done so that it starts recording in the suite setup and then ends when suite is done. So we get one video file for the run.

It would be easier to debug specific tests if the video recording was started in test setup. So we would get separate videos for each test.

Imple... | 1.0 | Set video recording for tests so that it creates a separate video for each test. - Currently video recording for robot tests is done so that it starts recording in the suite setup and then ends when suite is done. So we get one video file for the run.

It would be easier to debug specific tests if the video recording... | test | set video recording for tests so that it creates a separate video for each test currently video recording for robot tests is done so that it starts recording in the suite setup and then ends when suite is done so we get one video file for the run it would be easier to debug specific tests if the video recording... | 1 |

234,497 | 19,186,317,793 | IssuesEvent | 2021-12-05 08:57:09 | imixs/imixs-workflow | https://api.github.com/repos/imixs/imixs-workflow | closed | LuceneIndex - Autorebuild Defaults | enhancement testing | Increase default for autorebuild

- block size 500 -> 10000

- interval 2 -> 1 | 1.0 | LuceneIndex - Autorebuild Defaults - Increase default for autorebuild

- block size 500 -> 10000

- interval 2 -> 1 | test | luceneindex autorebuild defaults increase default for autorebuild block size interval | 1 |

228,372 | 18,172,622,737 | IssuesEvent | 2021-09-27 21:53:21 | openshift/odo | https://api.github.com/repos/openshift/odo | closed | Automate integration and unit test for windows on PSI | priority/High area/testing points/2 area/Windows kind/user-story milestone/2.4 | /kind user-story

## User Story

As a QE I want to automate both integration and unit test on Windows.

## Acceptance Criteria

- [ ] Automate the test for Windows.

- [ ] Resolve the blockers, if any.

## Links

- Feature Request: N/A

- Related issue: https://github.com/openshift/odo/issues/4379 , #2540

/k... | 1.0 | Automate integration and unit test for windows on PSI - /kind user-story

## User Story

As a QE I want to automate both integration and unit test on Windows.

## Acceptance Criteria

- [ ] Automate the test for Windows.

- [ ] Resolve the blockers, if any.

## Links

- Feature Request: N/A

- Related issue: ht... | test | automate integration and unit test for windows on psi kind user story user story as a qe i want to automate both integration and unit test on windows acceptance criteria automate the test for windows resolve the blockers if any links feature request n a related issue ... | 1 |

268,386 | 23,365,293,104 | IssuesEvent | 2022-08-10 14:54:08 | watchfulli/XCloner-Wordpress | https://api.github.com/repos/watchfulli/XCloner-Wordpress | closed | Test v4.4.2 | testing | - [x] Basic Settings can be saved

- [x] Remote Storages settings can be saved and can be validated against valid credentials (spot-test with existing cloud credentials from LastPass)

- [x] Generate Backup - full backup with default settings

- [x] Generate Backup - partial backup with some tables excluded

- [x] Gene... | 1.0 | Test v4.4.2 - - [x] Basic Settings can be saved

- [x] Remote Storages settings can be saved and can be validated against valid credentials (spot-test with existing cloud credentials from LastPass)

- [x] Generate Backup - full backup with default settings

- [x] Generate Backup - partial backup with some tables exclud... | test | test basic settings can be saved remote storages settings can be saved and can be validated against valid credentials spot test with existing cloud credentials from lastpass generate backup full backup with default settings generate backup partial backup with some tables excluded g... | 1 |

22,309 | 3,953,073,215 | IssuesEvent | 2016-04-29 11:55:06 | servo/servo | https://api.github.com/repos/servo/servo | closed | Text after blockquote is displayed inside float | A-layout/floats C-has-test | ```html

<div></div>

<blockquote>bar</blockquote>

foobar

<style>

div {

float: left;

width: 100px;

height: 100px;

background-color: green;

}

</style>

```

Firefox:

Servo:

menu to start a question, because there hasn't been any calls to `/api/database` yet.

**Possible workaround:**

Create a button outside the iframe, similar to pre-42, which c... | 1.0 | Data selector not working in FullApp embedding if going directly from location that hasn't loaded `/api/database` yet - **Describe the bug**

If FullApp embedding directly to e.g `/collection/root`, then it is not possible to click the :heavy_plus_sign: (New...) menu to start a question, because there hasn't been any c... | non_test | data selector not working in fullapp embedding if going directly from location that hasn t loaded api database yet describe the bug if fullapp embedding directly to e g collection root then it is not possible to click the heavy plus sign new menu to start a question because there hasn t been any c... | 0 |

278,480 | 24,157,941,630 | IssuesEvent | 2022-09-22 09:14:07 | gitpod-io/gitpod | https://api.github.com/repos/gitpod-io/gitpod | closed | [integration tests] provide a means to run tests from PR via opt-in, non-blocking mechanism | aspect: testing team: platform | ## Is your feature request related to a problem? Please describe

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

Integration tests run nightly, or ad-hoc.

## Describe the behaviour you'd like

<!-- A clear and concise description of what you want to happen. --... | 1.0 | [integration tests] provide a means to run tests from PR via opt-in, non-blocking mechanism - ## Is your feature request related to a problem? Please describe

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

Integration tests run nightly, or ad-hoc.

## Describe... | test | provide a means to run tests from pr via opt in non blocking mechanism is your feature request related to a problem please describe integration tests run nightly or ad hoc describe the behaviour you d like as an initial skateboard give developers an opt in non blocking way to easily run the ... | 1 |

208,202 | 15,879,904,283 | IssuesEvent | 2021-04-09 13:04:06 | WoWManiaUK/Redemption | https://api.github.com/repos/WoWManiaUK/Redemption | closed | [NPC/Add Does Not Attack] Levixus | Fixed on PTR - Tester Confirmed | **Links:**

Link To video showing summon but no attack: https://youtu.be/rStIhDIVyT4

Link to wow-mania Armory: https://www.wow-mania.com/armory/?npc=19847

**What is Happening:**

Levixus will summon his Guardian (Infernal) and it will just stand there not attacking.

**What Should happen:**

The infernal Summon... | 1.0 | [NPC/Add Does Not Attack] Levixus - **Links:**

Link To video showing summon but no attack: https://youtu.be/rStIhDIVyT4

Link to wow-mania Armory: https://www.wow-mania.com/armory/?npc=19847

**What is Happening:**

Levixus will summon his Guardian (Infernal) and it will just stand there not attacking.

**What S... | test | levixus links link to video showing summon but no attack link to wow mania armory what is happening levixus will summon his guardian infernal and it will just stand there not attacking what should happen the infernal summon guardian should aid levixus in the battle | 1 |

581,785 | 17,331,658,097 | IssuesEvent | 2021-07-28 03:48:57 | martinmags/july-challenge-tbd | https://api.github.com/repos/martinmags/july-challenge-tbd | opened | Login and Account Creation | Issue: Dependencies Issue: Feature Priority: High Research | - Create an account via Google OAuth and MongoDB

- Log in with Google OAuth and MongoDB

- Manage Protected and Public pages depending on login status (React Context/Provider) | 1.0 | Login and Account Creation - - Create an account via Google OAuth and MongoDB

- Log in with Google OAuth and MongoDB

- Manage Protected and Public pages depending on login status (React Context/Provider) | non_test | login and account creation create an account via google oauth and mongodb login with google oauth and mongodb manage protected and public pages depending on login status react context provider | 0 |

38,384 | 2,846,598,848 | IssuesEvent | 2015-05-29 12:37:01 | UnifiedViews/Core | https://api.github.com/repos/UnifiedViews/Core | opened | Update scripts to schema.sql and data.sql should be two separated files | priority: Normal severity: enhancement status: planed | The update script below updates schema.sql as well as data.sql. It should be separated into two update scripts - one for schema.sql, one for data.sql. Also, in the future, schema.sql updates should not be in the same file as data.sql updates.

https://github.com/UnifiedViews/Core/blob/feature/permissionsRefactoring/db... | 1.0 | Update scripts to schema.sql and data.sql should be two separated files - The update script below updates schema.sql as well as data.sql. It should be separated into two update scripts - one for schema.sql, one for data.sql. Also, in the future, schema.sql updates should not be in the same file as data.sql updates.

h... | non_test | update scripts to schema sql and data sql should be two separated files the update script below updates schema sql as well as data sql it should be separated to two update scripts one for schema sql one for data sql also in the future schema sql updates should not be in the same file with data sql updates | 0 |

236,687 | 19,567,214,081 | IssuesEvent | 2022-01-04 03:23:11 | kubernetes/test-infra | https://api.github.com/repos/kubernetes/test-infra | closed | Make it clear which version of kubetest or kubetest2 is being used | help wanted area/kubetest sig/testing priority/important-soon lifecycle/rotten kind/cleanup | We may have `deprecated` kubetest, but it's still in use. Whatever we do here should support `kubetest2` or be included in `kubetest2`

https://github.com/kubernetes/kubernetes/pull/96987#issuecomment-780646063 is an example of confusion over the fact that changes to `kubetest` don't take effect until job configs ar... | 2.0 | Make it clear which version of kubetest or kubetest2 is being used - We may have `deprecated` kubetest, but it's still in use. Whatever we do here should support `kubetest2` or be included in `kubetest2`