Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 4 to 112) | repo_url (stringlengths, 33 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 1.02k) | labels (stringlengths, 4 to 1.54k) | body (stringlengths, 1 to 262k) | index (stringclasses, 17 values) | text_combine (stringlengths, 95 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

35,944 | 14,901,293,763 | IssuesEvent | 2021-01-21 16:18:34 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | closed | Walk-through MS Form for SMB Career Development Survey | Service: Apps Type: Data Workgroup: SMB | Susanne was working on a form in Survey123 and I found out about it. I reached out to her and told her about MS Forms. I volunteered to convert her form because she had to be in an RFP meeting all afternoon.

| 1.0 | Walk-through MS Form for SMB Career Development Survey - Susanne was working on a form in Survey123 and I found out about it. I reached out to her and told her about MS Forms. I volunteered to convert her form because she had to be in an RFP meeting all afternoon.

| non_test | walk through ms form for smb career development survey susanne was working on a form in and i found out about it i reached out to her and told her about ms forms i volunteered to convert her form because she had to be in an rfp meeting all afternoon | 0 |

181,247 | 21,657,939,731 | IssuesEvent | 2022-05-06 15:53:19 | turkdevops/apps | https://api.github.com/repos/turkdevops/apps | closed | CVE-2020-7598 (Medium) detected in minimist-0.0.8.tgz, minimist-1.2.0.tgz - autoclosed | security vulnerability | ## CVE-2020-7598 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>minimist-0.0.8.tgz</b>, <b>minimist-1.2.0.tgz</b></p></summary>

<p>

<details><summary><b>minimist-0.0.8.tgz</b></p>... | True | CVE-2020-7598 (Medium) detected in minimist-0.0.8.tgz, minimist-1.2.0.tgz - autoclosed - ## CVE-2020-7598 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>minimist-0.0.8.tgz</b>, <b>... | non_test | cve medium detected in minimist tgz minimist tgz autoclosed cve medium severity vulnerability vulnerable libraries minimist tgz minimist tgz minimist tgz parse argument options library home page a href path to dependency file package js... | 0 |

122,233 | 12,146,555,802 | IssuesEvent | 2020-04-24 11:23:13 | assimbly/gateway | https://api.github.com/repos/assimbly/gateway | closed | documentation (user guide) should match gui (navbar) | documentation | In the navbar are all pages. It's best when the user guide follows the same pages from left to right to explain what they do | 1.0 | documentation (user guide) should match gui (navbar) - In the navbar are all pages. It's best when the user guide follows the same pages from left to right to explain what they do | non_test | documentation user guide should match gui navbar in the navbar are all pages it s best when the user guide follows the same pages from left to right to explain what they do | 0 |

265,833 | 23,201,588,444 | IssuesEvent | 2022-08-01 22:12:22 | iron-fish/ironfish | https://api.github.com/repos/iron-fish/ironfish | closed | Random characters appear sporadically while updating blocks | bug testnet-credit | Hi Team,

I ran the following command for installing Iron Fish:

docker run --rm --tty --interactive --network host --volume <home-directory>/.ironfish:/root/.ironfish ghcr.io/iron-fish/ironfish:latest

While updating the blocks, there were patches of random characters appearing in between. May not be a major issue... | 1.0 | Random characters appear sporadically while updating blocks - Hi Team,

I ran the following command for installing Iron Fish:

docker run --rm --tty --interactive --network host --volume <home-directory>/.ironfish:/root/.ironfish ghcr.io/iron-fish/ironfish:latest

While updating the blocks, there were patches of ra... | test | random characters appear sporadically while updating blocks hi team i ran the following command for installing iron fish docker run rm tty interactive network host volume ironfish root ironfish ghcr io iron fish ironfish latest while updating the blocks there were patches of random characters... | 1 |

170,089 | 13,172,838,149 | IssuesEvent | 2020-08-11 19:10:06 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: django failed | C-test-failure O-roachtest O-robot branch-release-20.1 release-blocker | [(roachtest).django failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=1838926&tab=buildLog) on [release-20.1@6a7ca722a135e21ad04daec3895535969ba5b02c](https://github.com/cockroachdb/cockroach/commits/6a7ca722a135e21ad04daec3895535969ba5b02c):

```

The test failed on branch=release-20.1, cloud=gce:

test arti... | 2.0 | roachtest: django failed - [(roachtest).django failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=1838926&tab=buildLog) on [release-20.1@6a7ca722a135e21ad04daec3895535969ba5b02c](https://github.com/cockroachdb/cockroach/commits/6a7ca722a135e21ad04daec3895535969ba5b02c):

```

The test failed on branch=release... | test | roachtest django failed on the test failed on branch release cloud gce test artifacts and logs in home agent work go src github com cockroachdb cockroach artifacts django run test runner go test timed out django go django go test runner go error with attached stack trace ... | 1 |

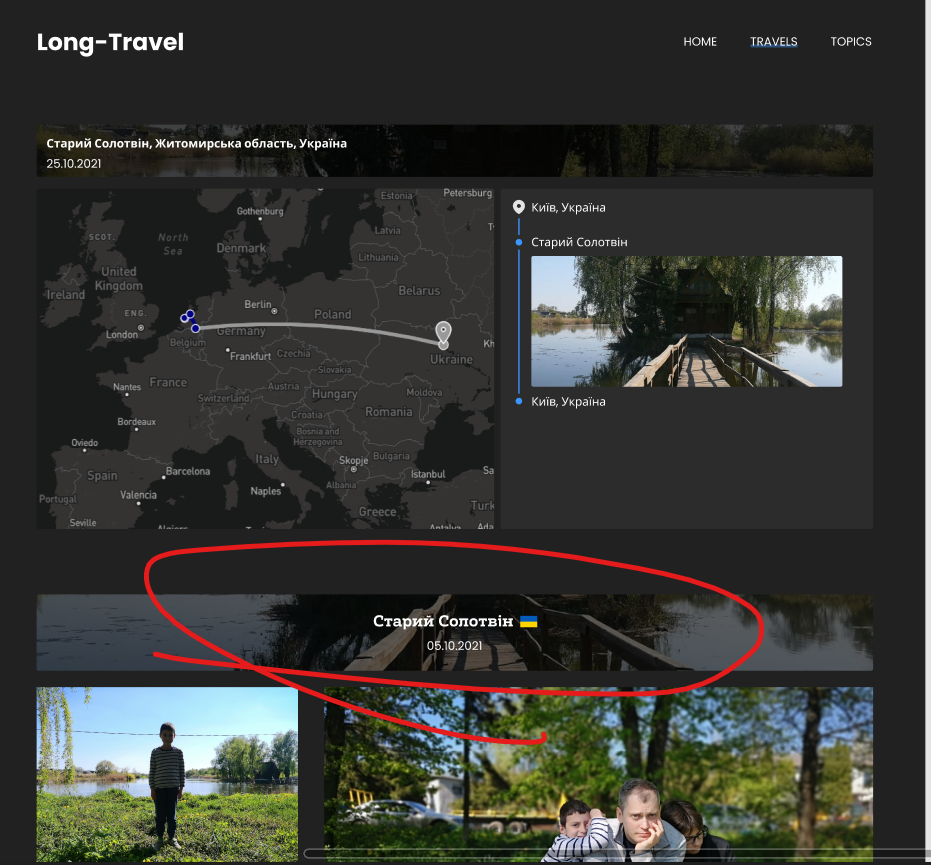

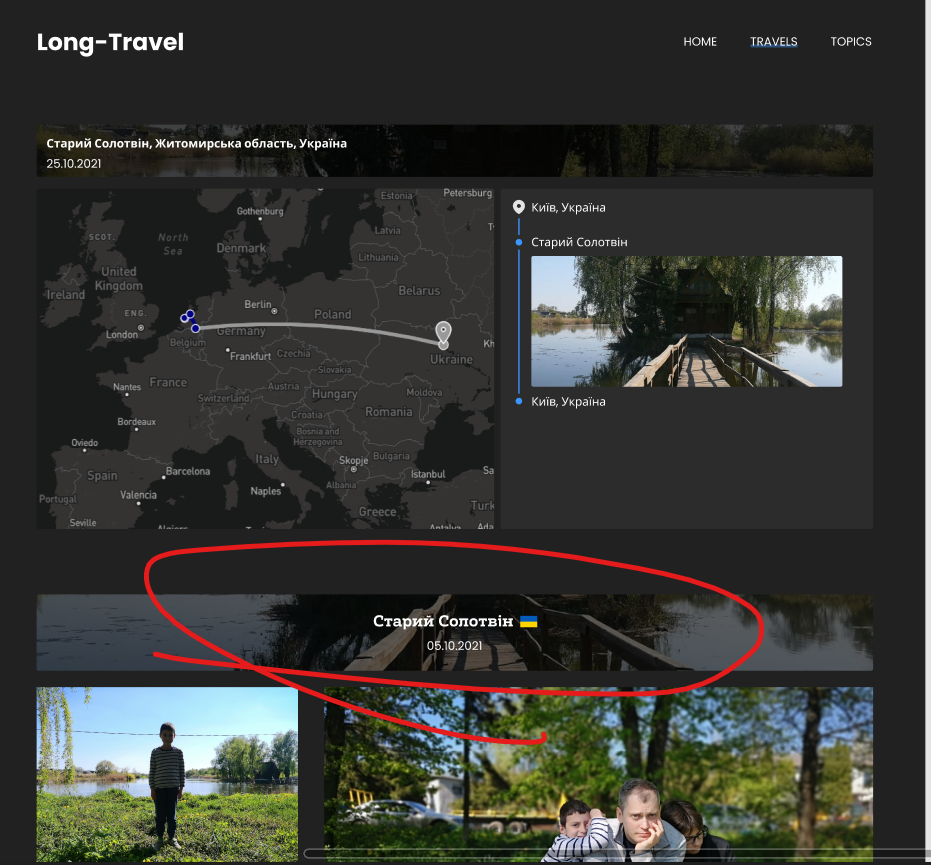

148,831 | 23,388,615,576 | IssuesEvent | 2022-08-11 15:41:35 | scholokov/long-travel-2 | https://api.github.com/repos/scholokov/long-travel-2 | opened | Travel: Block title on 3 lines | Design | The block title should span 3 lines instead of two

Старий Солотвін

Житомирська область, Україна

25.10.2021

| 1.0 | Travel: Block title on 3 lines - The block title should span 3 lines instead of two

Старий Солотвін

Житомирська область, Україна

25.10.2021

| non_test | travel block title on lines the block title should span lines instead of two старий солотвін житомирська область україна | 0 |

173,631 | 13,434,329,608 | IssuesEvent | 2020-09-07 11:11:49 | SunstriderEmu/BugTracker | https://api.github.com/repos/SunstriderEmu/BugTracker | closed | Daily Dungeon Reset clears progress of normal dungeons and removes ID | confirmed core testfix | <!--- (**********************************)

(** Fill in the following fields **)

(**********************************)

Issues are for problem only, NOT for asking questions.

--->

**Description:**

When the daily dungeon reset occurs normal dungeons which do not have saved/locked IDs should not remov... | 1.0 | Daily Dungeon Reset clears progress of normal dungeons and removes ID - <!--- (**********************************)

(** Fill in the following fields **)

(**********************************)

Issues are for problem only, NOT for asking questions.

--->

**Description:**

When the daily dungeon reset oc... | test | daily dungeon reset clears progress of normal dungeons and removes id fill in the following fields issues are for problem only not for asking questions description when the daily dungeon reset oc... | 1 |

111,510 | 9,533,401,847 | IssuesEvent | 2019-04-29 21:11:19 | chamilo/chamilo-lms | https://api.github.com/repos/chamilo/chamilo-lms | closed | Problem creating a certificate. | Bug Requires testing/validation | Hello.

When creating a course in Learning paths.

You click certificate.

After creating it is impossible to edit.

tested at https://11.chamilo.org/

Greetings.

example only hints at the possibilities of net surgery. To make it more useful and better explain pycaffe along the way it should have:

- transplanting custom filters (Gaussian, Sobel, bilinear, ...) ... | 1.0 | Net Surgeries - The [editing model parameters](http://nbviewer.ipython.org/github/BVLC/caffe/blob/master/examples/net_surgery.ipynb) example only hints at the possibilities of net surgery. To make it more useful and better explain pycaffe along the way it should have:

- transplanting custom filters (Gaussian, Sobel,... | non_test | net surgeries the example only hints at the possibilities of net surgery to make it more useful and better explain pycaffe along the way it should have transplanting custom filters gaussian sobel bilinear and classifier weights like a separately learned svm combining layers from different net... | 0 |

60,702 | 6,713,437,011 | IssuesEvent | 2017-10-13 13:28:44 | MajkiIT/polish-ads-filter | https://api.github.com/repos/MajkiIT/polish-ads-filter | reopened | cda-hd.pl | dodać regex reguły gotowe/testowanie | @MajkiIT take a look at any movie from flashx. Does nothing extra open for you apart from the regex?

http://cda-hd.pl/20944/dom-wygranych-the-house-2017-online/ | 1.0 | cda-hd.pl - @MajkiIT take a look at any movie from flashx. Does nothing extra open for you apart from the regex?

http://cda-hd.pl/20944/dom-wygranych-the-house-2017-online/ | test | cda hd pl majkiit take a look at any movie from flashx does nothing extra open for you apart from the regex | 1 |

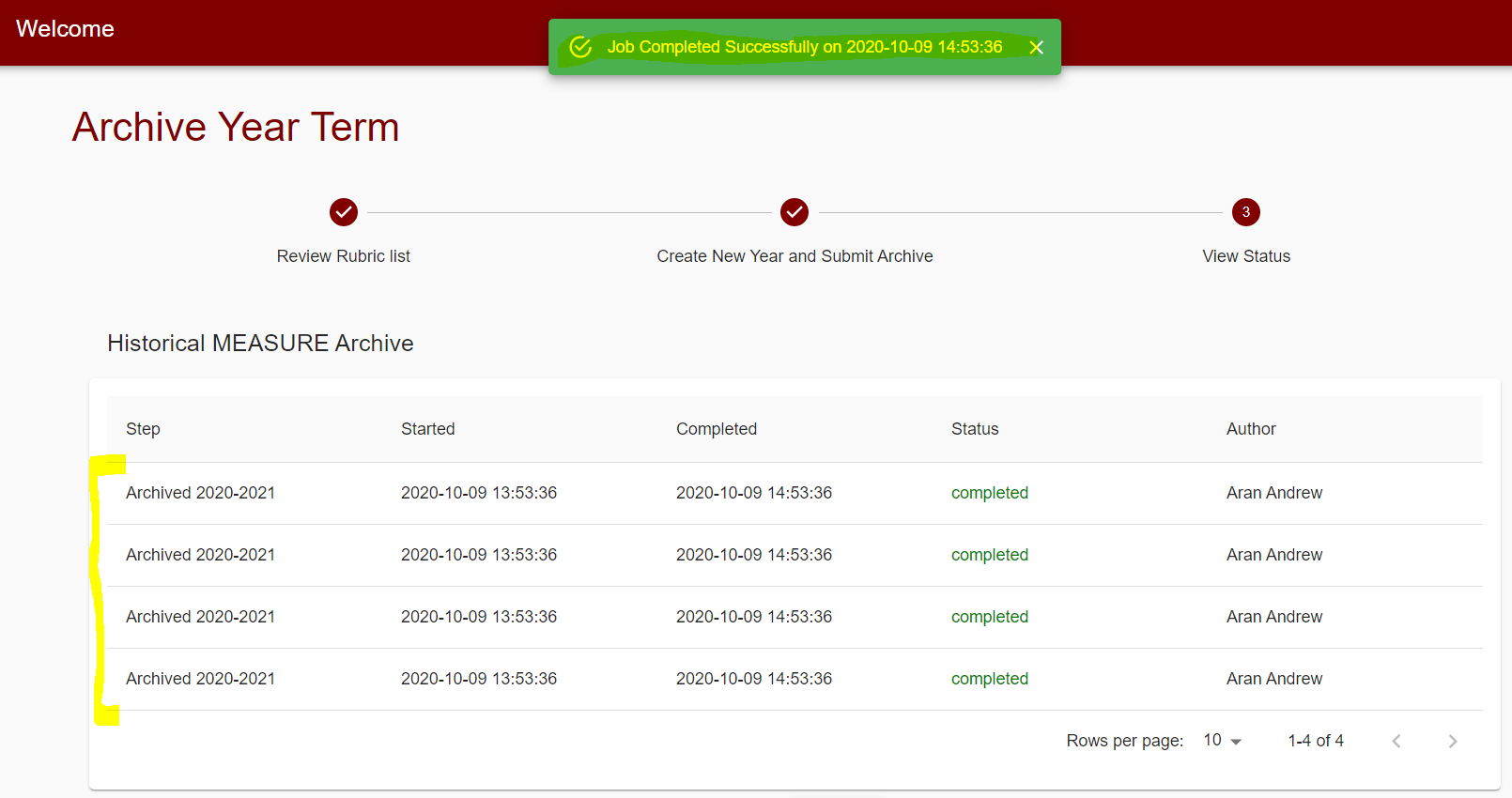

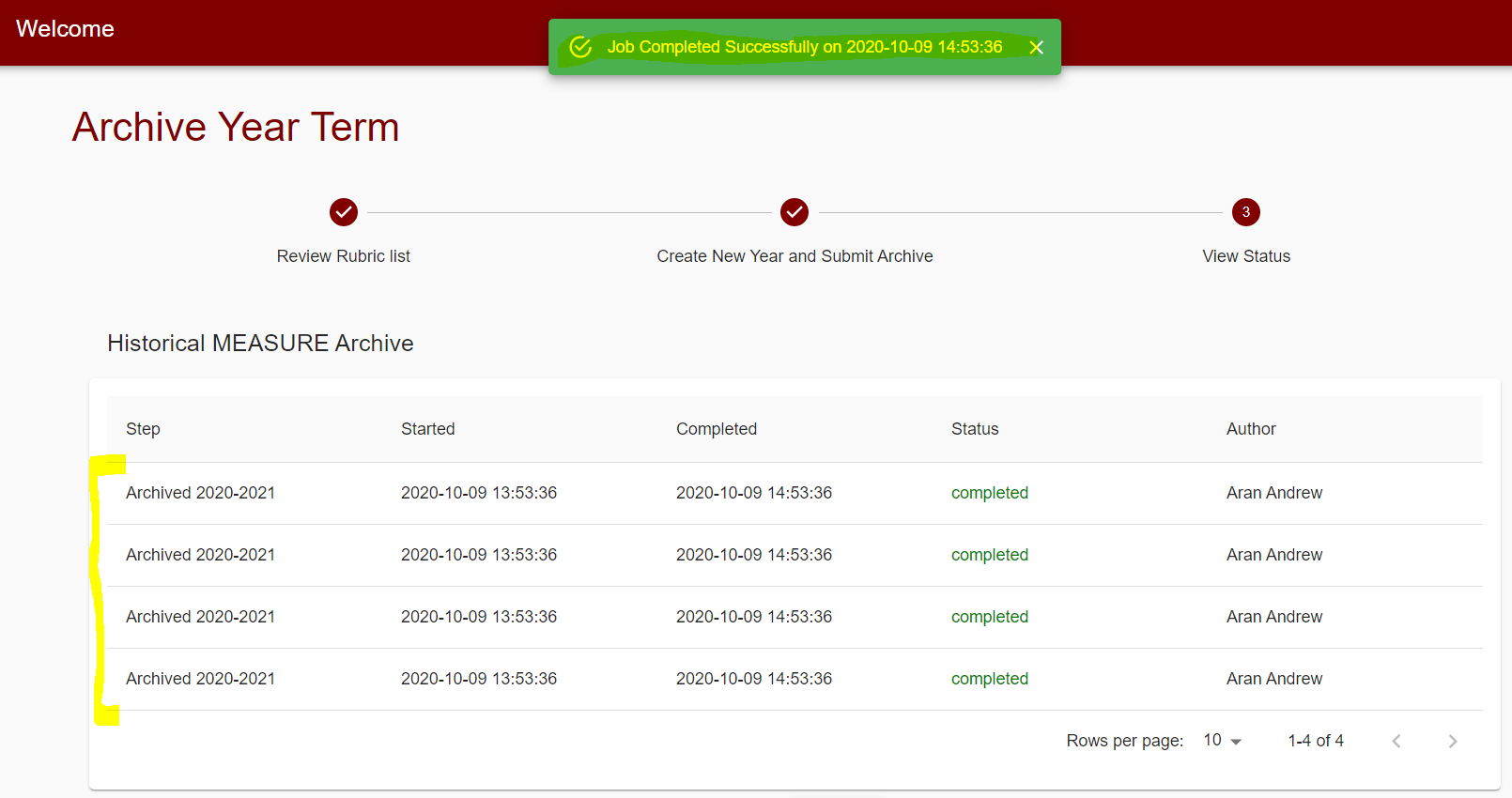

204,415 | 15,441,123,027 | IssuesEvent | 2021-03-08 05:13:23 | trevorNgo/Measure2.0 | https://api.github.com/repos/trevorNgo/Measure2.0 | opened | CS4ZP6 Tester Feedback: Archive form is non-functional | tester | Environment

- Google Chrome

- Windows 10

Describe the feedback/issue.

Completing the `Archive Year Term` form does not do anything:

Priority

HIGH

Please list the reproduction steps

- Login as `... | 1.0 | CS4ZP6 Tester Feedback: Archive form is non-functional - Environment

- Google Chrome

- Windows 10

Describe the feedback/issue.

Completing the `Archive Year Term` form does not do anything:

Priority

... | test | tester feedback archive form is non functional environment google chrome windows describe the feedback issue completing the archive year term form does not do anything priority high please list the reproduction steps login as admin navigate to and complete the archive year ter... | 1 |

7,956 | 4,111,699,594 | IssuesEvent | 2016-06-07 07:34:33 | youjustgo/ng2-bingmaps | https://api.github.com/repos/youjustgo/ng2-bingmaps | closed | Set up demo inside the repository | build | We would like to have a demo folder inside the repository, for easier testing. This should reference the file generated by the build (dist folder) | 1.0 | Set up demo inside the repository - We would like to have a demo folder inside the repository, for easier testing. This should reference the file generated by the build (dist folder) | non_test | set up demo inside the repository we would like to have a demo folder inside the repository for easier testing this should reference the file generated by the build dist folder | 0 |

254,345 | 21,781,368,677 | IssuesEvent | 2022-05-13 19:20:57 | ruffle-rs/ruffle | https://api.github.com/repos/ruffle-rs/ruffle | opened | Add more image tests | good first issue tests | @Aaron1011 was kind enough to add the ability for rendering test images, we should add some more of these, some of which may have caught recent regressions.

* Fill styles (gradients, bitmaps, w/ transforms)

* Line styles (including all linestyle2 options, caps, joins, fills)

* Color transforms (including <0% an... | 1.0 | Add more image tests - @Aaron1011 was kind enough to add the ability for rendering test images, we should add some more of these, some of which may have caught recent regressions.

* Fill styles (gradients, bitmaps, w/ transforms)

* Line styles (including all linestyle2 options, caps, joins, fills)

* Color trans... | test | add more image tests was kind enough to add the ability for rendering test images we should add some more of these some of which may have caught recent regressions fill styles gradients bitmaps w transforms line styles including all options caps joins fills color transforms including ... | 1 |

292,332 | 25,206,566,101 | IssuesEvent | 2022-11-13 19:00:16 | MinhazMurks/Bannerlord.Tweaks | https://api.github.com/repos/MinhazMurks/Bannerlord.Tweaks | opened | Test Town Militia Barracks Production Level 3 | testing | Test to see if tweak: "Town Militia Barracks Production Level 3" works | 1.0 | Test Town Militia Barracks Production Level 3 - Test to see if tweak: "Town Militia Barracks Production Level 3" works | test | test town militia barracks production level test to see if tweak town militia barracks production level works | 1 |

209,600 | 16,044,127,796 | IssuesEvent | 2021-04-22 11:40:32 | Kiryakor/QA | https://api.github.com/repos/Kiryakor/QA | opened | QA-3: the API needs to be tested | test | # The API for working with the database needs to be tested, and tests need to be written

### [link to dev task](https://github.com/Kiryakor/QA/issues/5)

| 1.0 | QA-3: the API needs to be tested - # The API for working with the database needs to be tested, and tests need to be written

### [link to dev task](https://github.com/Kiryakor/QA/issues/5)

| test | qa the api needs to be tested the api for working with the database needs to be tested and tests need to be written | 1 |

51,237 | 6,152,002,628 | IssuesEvent | 2017-06-28 05:28:56 | druid-io/druid | https://api.github.com/repos/druid-io/druid | closed | Transient failures of integration tests | Testing | Please edit messages with failure "snapshots" by adding links to failing builds, to understand which tests fail most often. | 1.0 | Transient failures of integration tests - Please edit messages with failure "snapshots" by adding links to failing builds, to understand which tests fail most often. | test | transient failures of integration tests please edit messages with failure snapshots by adding links to failing builds to understand which tests fail most often | 1 |

86,791 | 10,518,398,666 | IssuesEvent | 2019-09-29 10:29:02 | Jugendhackt/parteiduell-frontend | https://api.github.com/repos/Jugendhackt/parteiduell-frontend | closed | README | documentation good first issue help wanted | Please also create a nice README that explains how to set up the frontend locally and how to contribute to the project. | 1.0 | README - Please also create a nice README that explains how to set up the frontend locally and how to contribute to the project. | non_test | readme please also create a nice readme that explains how to set up the frontend locally and how to contribute to the project | 0 |

122,661 | 10,229,003,634 | IssuesEvent | 2019-08-17 08:31:44 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Rephrase the error text for file not found error | Area/StandardLibs BetaTesting Type/Improvement | Consider the following:

```ballerina

system:FileInfo[] fileList = checkpanic system:readDir("invalid/path");

```

The error given for the above is:

```

error: error {ballerina/system}InvalidOperationError message=File doesn't exist in path ~/Downloads cause=null

```

Can't we just simply say `File not found: <fil... | 1.0 | Rephrase the error text for file not found error - Consider the following:

```ballerina

system:FileInfo[] fileList = checkpanic system:readDir("invalid/path");

```

The error given for the above is:

```

error: error {ballerina/system}InvalidOperationError message=File doesn't exist in path ~/Downloads cause=null

... | test | rephrase the error text for file not found error consider the following ballerina system fileinfo filelist checkpanic system readdir invalid path the error given for the above is error error ballerina system invalidoperationerror message file doesn t exist in path downloads cause null ... | 1 |

181,769 | 14,074,544,803 | IssuesEvent | 2020-11-04 07:31:39 | OpenMined/PySyft | https://api.github.com/repos/OpenMined/PySyft | closed | Add torch.Tensor.permute to allowlist and test suite | Priority: 2 - High :cold_sweat: Severity: 3 - Medium :unamused: Status: Available :wave: Type: New Feature :heavy_plus_sign: Type: Testing :test_tube: |

# Description

This issue is a part of Syft 0.3.0 Epic 2: https://github.com/OpenMined/PySyft/issues/3696

In this issue, you will be adding support for remote execution of the torch.Tensor.permute

method or property. This might be a really small project (literally a one-liner) or

it might require adding significant f... | 2.0 | Add torch.Tensor.permute to allowlist and test suite -

# Description

This issue is a part of Syft 0.3.0 Epic 2: https://github.com/OpenMined/PySyft/issues/3696

In this issue, you will be adding support for remote execution of the torch.Tensor.permute

method or property. This might be a really small project (literall... | test | add torch tensor permute to allowlist and test suite description this issue is a part of syft epic in this issue you will be adding support for remote execution of the torch tensor permute method or property this might be a really small project literally a one liner or it might require adding sign... | 1 |

281,525 | 30,888,881,142 | IssuesEvent | 2023-08-04 01:57:39 | hshivhare67/kernel_v4.1.15_CVE-2019-10220 | https://api.github.com/repos/hshivhare67/kernel_v4.1.15_CVE-2019-10220 | reopened | CVE-2020-27170 (Medium) detected in linuxlinux-4.4.302 | Mend: dependency security vulnerability | ## CVE-2020-27170 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.4.302</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.... | True | CVE-2020-27170 (Medium) detected in linuxlinux-4.4.302 - ## CVE-2020-27170 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.4.302</b></p></summary>

<p>

<p>The Linux Kerne... | non_test | cve medium detected in linuxlinux cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files kernel bpf v... | 0 |

731,440 | 25,216,426,397 | IssuesEvent | 2022-11-14 09:30:07 | OpenSpace/OpenSpace | https://api.github.com/repos/OpenSpace/OpenSpace | closed | Flying to "1 AU Size Comparison Circle" freezes application | Type: Bug Priority: Critical Component: Interaction Feature: Camera Paths | To reproduce:

1. Add exoplanet system "11 Umi"

2. Find "1 AU Size Comparison Circle" in Navigation list and click the "fly to" button

One beautiful day, the camera path system is going to be free from bugs...

I believe the actual problem might be flying using the avoid collision curve to objects without boundi... | 1.0 | Flying to "1 AU Size Comparison Circle" freezes application - To reproduce:

1. Add exoplanet system "11 Umi"

2. Find "1 AU Size Comparison Circle" in Navigation list and click the "fly to" button

One beautiful day, the camera path system is going to be free from bugs...

I believe the actual problem might be fl... | non_test | flying to au size comparison circle freezes application to reproduce add exoplanet system umi find au size comparison circle in navigation list and click the fly to button one beautiful day the camera path system is going to be free from bugs i believe the actual problem might be fly... | 0 |

134,060 | 10,879,721,221 | IssuesEvent | 2019-11-17 04:31:00 | variar/klogg | https://api.github.com/repos/variar/klogg | closed | Crashes on startup when prior file no longer exists | bug case for CI ready for testing | Starting with a release after v501, (I verified problem exists with 504, 505, 506), Klogg crashes when reopening files from last session and one of the files no longer exists.

Prior, Klogg successfully opened and the tab existed with an empty content pane.

This was useful because of "clean builds" removing log file... | 1.0 | Crashes on startup when prior file no longer exists - Starting with a release after v501, (I verified problem exists with 504, 505, 506), Klogg crashes when reopening files from last session and one of the files no longer exists.

Prior, Klogg successfully opened and the tab existed with an empty content pane.

This ... | test | crashes on startup when prior file no longer exists starting with a release after i verified problem exists with klogg crashes when reopening files from last session and one of the files no longer exists prior klogg successfully opened and the tab existed with an empty content pane this was usefu... | 1 |

65,830 | 27,244,439,351 | IssuesEvent | 2023-02-22 00:03:30 | microsoft/vscode-cpptools | https://api.github.com/repos/microsoft/vscode-cpptools | opened | #include <test.h> completion to test2.h creates `test2.h""` | bug Language Service Feature: Auto-complete | With test.h and test2.h and

`#include "test.h"`

invoking include completion to replace it with test2.h creates `test2.h""` | 1.0 | #include <test.h> completion to test2.h creates `test2.h""` - With test.h and test2.h and

`#include "test.h"`

invoking include completion to replace it with test2.h creates `test2.h""` | non_test | include completion to h creates h with test h and h and include test h invoking include completion to replace it with h creates h | 0 |

277,062 | 24,045,973,107 | IssuesEvent | 2022-09-16 08:22:13 | Azure/ResourceModules | https://api.github.com/repos/Azure/ResourceModules | opened | [Feature Request]: Updated dependencies approach - Test formatting | enhancement [cat] testing blocked [cat] needs further discussion | ### Description

This issue is about agreeing on the format to apply to module test templates (deploy.test.bicep) and implement the changes as a bulk edit once all related PRs will be merged.

In particular, section names and spacing e.g. use `// Dependencies //` instead of generic `// Deployments //` for the depende... | 1.0 | [Feature Request]: Updated dependencies approach - Test formatting - ### Description

This issue is about agreeing on the format to apply to module test templates (deploy.test.bicep) and implement the changes as a bulk edit once all related PRs will be merged.

In particular, section names and spacing e.g. use `// De... | test | updated dependencies approach test formatting description this issue is about agreeing on the format to apply to module test templates deploy test bicep and implement the changes as a bulk edit once all related prs will be merged in particular section names and spacing e g use dependencies i... | 1 |

383,474 | 26,551,273,195 | IssuesEvent | 2023-01-20 08:00:57 | supabase/supabase | https://api.github.com/repos/supabase/supabase | closed | Returning data sets requires further instruction | documentation | # Returning data sets is not debuggable?

## Link

https://supabase.com/docs/guides/database/functions#returning-data-sets

## Describe the problem

The current example leads to the following problem when I try to use it on my own dbs:

"return type mismatch in function declared to return posts"

The problem i... | 1.0 | Returning data sets requires further instruction - # Returning data sets is not debuggable?

## Link

https://supabase.com/docs/guides/database/functions#returning-data-sets

## Describe the problem

The current example leads to the following problem when I try to use it on my own dbs:

"return type mismatch in ... | non_test | returning data sets requires further instruction returning data sets is not debuggable link describe the problem the current example leads to the following problem when i try to use it on my own dbs return type mismatch in function declared to return posts the problem is that this makes s... | 0 |

430,474 | 12,453,528,831 | IssuesEvent | 2020-05-27 13:59:01 | ISDM-G4/G4-ISDM-Project | https://api.github.com/repos/ISDM-G4/G4-ISDM-Project | opened | US#112 - As a relationship manager I want outbound calls to be automatically dialed by the system so that I can focus on sales and not dialing numbers from a list. | high-priority | As a relationship manager I want outbound calls to be automatically dialed by the system so that I can focus on sales and not dialing numbers from a list. | 1.0 | US#112 - As a relationship manager I want outbound calls to be automatically dialed by the system so that I can focus on sales and not dialing numbers from a list. - As a relationship manager I want outbound calls to be automatically dialed by the system so that I can focus on sales and not dialing numbers from a list. | non_test | us as a relationship manager i want outbound calls to be automatically dialed by the system so that i can focus on sales and not dialing numbers from a list as a relationship manager i want outbound calls to be automatically dialed by the system so that i can focus on sales and not dialing numbers from a list | 0 |

26,840 | 4,249,572,514 | IssuesEvent | 2016-07-08 00:45:30 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | opened | kubernetes-e2e-gke: broken test run | kind/flake priority/P2 team/test-infra | https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/logs/kubernetes-e2e-gke/10735/

Multiple broken tests:

Failed: [k8s.io] Kubectl client [k8s.io] Update Demo should do a rolling update of a replication controller [Conformance] {Kubernetes e2e suite}

```

/go/src/k8s.io/kubernetes/_output/dockerized/go/src/k8... | 1.0 | kubernetes-e2e-gke: broken test run - https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/logs/kubernetes-e2e-gke/10735/

Multiple broken tests:

Failed: [k8s.io] Kubectl client [k8s.io] Update Demo should do a rolling update of a replication controller [Conformance] {Kubernetes e2e suite}

```

/go/src/k8s.io/k... | test | kubernetes gke broken test run multiple broken tests failed kubectl client update demo should do a rolling update of a replication controller kubernetes suite go src io kubernetes output dockerized go src io kubernetes test kubectl go expected error s error runnin... | 1 |

120,031 | 17,644,010,205 | IssuesEvent | 2021-08-20 01:27:18 | AkshayMukkavilli/Analyzing-the-Significance-of-Structure-in-Amazon-Review-Data-Using-Machine-Learning-Approaches | https://api.github.com/repos/AkshayMukkavilli/Analyzing-the-Significance-of-Structure-in-Amazon-Review-Data-Using-Machine-Learning-Approaches | opened | CVE-2021-37690 (Medium) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl | security vulnerability | ## CVE-2021-37690 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learni... | True | CVE-2021-37690 (Medium) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl - ## CVE-2021-37690 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27... | non_test | cve medium detected in tensorflow whl cve medium severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file finalproject requirement... | 0 |

815,327 | 30,546,725,680 | IssuesEvent | 2023-07-20 04:59:02 | Nexus-Mods/NexusMods.App | https://api.github.com/repos/Nexus-Mods/NexusMods.App | closed | Theme: Incorrect Value for StructuralBorderColor | complexity-small priority-low | # Bug Report

## Summary

In the theme, `StructuralBorderColor` is incorrectly defined as `#2DFFFFFF`, rather than `#303236`; which makes borders subtly different to their FIGMA designs. This difference is very subtle in most of the UI as the borders .

Current/App:

on December 30, 2010 15:43:45_

I noticed this defect quite a while ago, but for a long time I simply took it for a lack of slots...

The essence of the problem: many users cannot get information from me (whether a file list or shared content) without allocating them... | 1.0 | Some users cannot connect without a slot being allocated - _From [yuri-xa...@yandex.ru](https://code.google.com/u/112318394159763967949/) on December 30, 2010 15:43:45_

I noticed this defect quite a while ago, but for a long time I simply took it for a lack of slots...

The essence of the problem: many users cannot get from me... | non_test | some users cannot connect without a slot being allocated from on december i noticed this defect quite a while ago but for a long time i simply took it for a lack of slots the essence of the problem many users cannot get information from me whether a file list or shared content without allocating them addit... | 0 |

151,462 | 12,036,835,747 | IssuesEvent | 2020-04-13 20:35:01 | ImagingDataCommons/IDC-WebApp | https://api.github.com/repos/ImagingDataCommons/IDC-WebApp | closed | BMI normal weight missing | bug testing needed testing passed | The BMI 'normal weight' is always 0. It looks like a Mysql/Solr mismatch. query_solr_and_format_result has the category 'normal' but in attr_by_source['related_set']['attributes']['bmi']['vals'] its 'normal weight'. I think we should go with 'normal weight'. Also why are counts so low? Was bmi just not recorded for mos... | 2.0 | BMI normal weight missing - The BMI 'normal weight' is always 0. It looks like a Mysql/Solr mismatch. query_solr_and_format_result has the category 'normal' but in attr_by_source['related_set']['attributes']['bmi']['vals'] its 'normal weight'. I think we should go with 'normal weight'. Also why are counts so low? Was b... | test | bmi normal weight missing the bmi normal weight is always it looks like a mysql solr mismatch query solr and format result has the category normal but in attr by source its normal weight i think we should go with normal weight also why are counts so low was bmi just not recorded for most collection... | 1 |

14,912 | 26,035,909,035 | IssuesEvent | 2022-12-22 04:56:33 | seleniumbase/SeleniumBase | https://api.github.com/repos/seleniumbase/SeleniumBase | closed | Remove "options.headless" usage before SeleniumHQ deprecates it | requirements SeleniumBase 4 | ### Remove ``options.headless`` usage before SeleniumHQ deprecates it.

This is regarding: https://github.com/SeleniumHQ/selenium/issues/11467

Instead of setting ``options.headless``,

use ``options.add_argument("--headless")``

or ``options.add_argument("--headless=chrome")``.

(For Chrome and Edge)

This is ... | 1.0 | Remove "options.headless" usage before SeleniumHQ deprecates it - ### Remove ``options.headless`` usage before SeleniumHQ deprecates it.

This is regarding: https://github.com/SeleniumHQ/selenium/issues/11467

Instead of setting ``options.headless``,

use ``options.add_argument("--headless")``

or ``options.add_arg... | non_test | remove options headless usage before seleniumhq deprecates it remove options headless usage before seleniumhq deprecates it this is regarding instead of setting options headless use options add argument headless or options add argument headless chrome for chrome and e... | 0 |

3,953 | 2,543,832,470 | IssuesEvent | 2015-01-29 02:23:51 | coollog/sublite | https://api.github.com/repos/coollog/sublite | closed | Add list of predefined industries | High Priority Long | - [x] add list of industries to company profile creation - @xtonyjiang

- [x] add list of industries to search - @coollog

- [x] change all current listings' industries to ones on the list

- [x] add option to select multiple industries for a company profile | 1.0 | Add list of predefined industries - - [x] add list of industries to company profile creation - @xtonyjiang

- [x] add list of industries to search - @coollog

- [x] change all current listings' industries to ones on the list

- [x] add option to select multiple industries for a company profile | non_test | add list of predefined industries add list of industries to company profile creation xtonyjiang add list of industries to search coollog change all current listings industries to ones on the list add option to select multiple industries for a company profile | 0 |

6,909 | 2,824,171,912 | IssuesEvent | 2015-05-21 13:26:17 | Code4HR/hrt-bus-finder | https://api.github.com/repos/Code4HR/hrt-bus-finder | opened | Reduce container width for larger screens | beginner design help wanted |

Bootstrap by will increase the size of the container as the screen size increases. Override the Bootstrap CSS and set the maximum container size for the scheduling information... | 1.0 | Reduce container width for larger screens -

Bootstrap by will increase the size of the container as the screen size increases. Override the Bootstrap CSS and set the maximum c... | non_test | reduce container width for larger screens bootstrap by will increase the size of the container as the screen size increases override the bootstrap css and set the maximum container size for the scheduling information to something like app container max width | 0 |

529,201 | 15,383,250,277 | IssuesEvent | 2021-03-03 02:22:04 | PyTorchLightning/pytorch-lightning | https://api.github.com/repos/PyTorchLightning/pytorch-lightning | closed | reproduciblity compared with vanilla pytorch | Priority P2 bug / fix good first issue help wanted won't fix | ## 🐛 Bug

I am trying to compare vanilla PyTorch code, which I refactored as part of learning pytorch-lightning, but I see that the `training_step` iterates through my dataset in a different order in spite of my setting `seed_everything`, `deterministic=True`, and `benchmark=False`

## Please reproduce u... | 1.0 | reproduciblity compared with vanilla pytorch - ## 🐛 Bug

I am trying to compare a vanilla pytorch code which I refactored as a part of my learning pytorch-lightning but I see that the `training_step` is iterating through a different order of my dataset inspite of me setting `seed_everything` and `deterministic=True`... | non_test | reproduciblity compared with vanilla pytorch 🐛 bug i am trying to compare a vanilla pytorch code which i refactored as a part of my learning pytorch lightning but i see that the training step is iterating through a different order of my dataset inspite of me setting seed everything and deterministic true ... | 0 |

350,925 | 31,932,538,284 | IssuesEvent | 2023-09-19 08:24:30 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix creation.test_triu_indices | Sub Task Ivy API Experimental Failing Test | | | |

|---|---|

|tensorflow|<a href="null"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="null"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="null"><img src=https://img.shields.io/badge/-failure-red></a>

|numpy|<a href="null"><img src=https://img.shields.io/badg... | 1.0 | Fix creation.test_triu_indices - | | |

|---|---|

|tensorflow|<a href="null"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="null"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="null"><img src=https://img.shields.io/badge/-failure-red></a>

|numpy|<a href="null"><im... | test | fix creation test triu indices tensorflow img src torch img src jax img src numpy img src paddle img src | 1 |

20,750 | 10,915,110,557 | IssuesEvent | 2019-11-21 10:30:20 | datalad/datalad | https://api.github.com/repos/datalad/datalad | opened | normalize_paths() can be a major slowdown for large N datasets | performance | Whenever an absolute path is provided, it will result in a `realpath()` call. This can be slow, depending on FS performance and path complexity. When done 500k times, it will have an impact. | True | normalize_paths() can be a major slowdown for large N datasets - Whenever an absolute path is provided, it will result in a `realpath()` call. This can be slow, depending on FS performance and path complexity. When done 500k times, it will have an impact. | non_test | normalize paths can be a major slowdown for large n datasets whenever an absolute path is provided it will result in a realpath call this can be slow depending on fs performance and path complexity when done times it will have an impact | 0 |

29,927 | 7,134,887,558 | IssuesEvent | 2018-01-22 22:27:36 | aimalz/chippr | https://api.github.com/repos/aimalz/chippr | closed | Replace basic probability distribution construction | Epic: code release Epic: null test | The whole `mvn.py`/`multi_dist.py`/`gmix.py`/`gauss.py`/`discrete.py` system is woefully inefficient. This issue can be closed when this redundant structure is eliminated in favor of something based on [`scipy.stats`](https://docs.scipy.org/doc/scipy/reference/generated/scipy.stats.rv_continuous.html) and/or [`qp`](ht... | 1.0 | Replace basic probability distribution construction - The whole `mvn.py`/`multi_dist.py`/`gmix.py`/`gauss.py`/`discrete.py` system is woefully inefficient. This issue can be closed when this redundant structure is eliminated in favor of something based on [`scipy.stats`](https://docs.scipy.org/doc/scipy/reference/gene... | non_test | replace basic probability distribution construction the whole mvn py multi dist py gmix py gauss py discrete py system is woefully inefficient this issue can be closed when this redundant structure is eliminated in favor of something based on and or and or objects that are much faster | 0 |

381,201 | 11,274,881,289 | IssuesEvent | 2020-01-14 19:31:57 | bcgov/entity | https://api.github.com/repos/bcgov/entity | opened | Page Validation Indicator in Stepper on File and Pay - UI DESIGN | ENTITY Priority2 UX | Include a page validation indicator in the stepper on "File and Pay".

The stepper allows users to view incomplete / invalid pages without selecting the "next step" button at the bottom of the page.

Therefore we can only perform an overall validation when the user selects "File and Pay" in the Confirm step.

When the use... | 1.0 | Page Validation Indicator in Stepper on File and Pay - UI DESIGN - Include a page validation indicator in the stepper on "File and Pay".

The stepper allows users to view incomplete / invalid pages without selecting the "next step" button at the bottom of the page.

Therefore we can only perform an overall validation whe... | non_test | page validation indicator in stepper on file and pay ui design include a page validation indicator in the stepper on file and pay the stepper allows users to view incomplete invalid pages without selecting the next step button at the bottom of the page therefore we can only perform an overall validation whe... | 0 |

229,061 | 18,279,503,582 | IssuesEvent | 2021-10-05 00:06:50 | aces/Loris | https://api.github.com/repos/aces/Loris | opened | [Data Query Tool (Beta)] Saved queries show fields for private but not for public queries | Bug 24.0.0-testing | **Describe the bug**

Saved queries are displayed differently if saved privately or publicly. Private queries show all fields selected, whereas public queries do not.

**To Reproduce**

Steps to reproduce the behavior (attach screenshots if applicable):

1. Go to 'Data Query Tool (Beta)' module

2. Click on 'Load Exi... | 1.0 | [Data Query Tool (Beta)] Saved queries show fields for private but not for public queries - **Describe the bug**

Saved queries are displayed differently if saved privately or publicly. Private queries show all fields selected, whereas public queries do not.

**To Reproduce**

Steps to reproduce the behavior (attach ... | test | saved queries show fields for private but not for public queries describe the bug saved queries are displayed differently if saved privately or publicly private queries show all fields selected whereas public queries do not to reproduce steps to reproduce the behavior attach screenshots if applicab... | 1 |

92,557 | 8,368,117,708 | IssuesEvent | 2018-10-04 14:02:26 | italia/spid | https://api.github.com/repos/italia/spid | closed | Metadata Validation Request - Regione Calabria | metadata nuovo md test | On behalf of Regione Calabria, we request verification and deployment of the metadata exposed at the URL:

https://ecosanita.regione.calabria.it/metadata-regionecalabria_20180912.xml | 1.0 | Metadata Validation Request - Regione Calabria - On behalf of Regione Calabria, we request verification and deployment of the metadata exposed at the URL:

https://ecosanita.regione.calabria.it/metadata-regionecalabria_20180912.xml | test | metadata validation request regione calabria on behalf of regione calabria we request verification and deployment of the metadata exposed at the url | 1 |

201,824 | 15,226,248,042 | IssuesEvent | 2021-02-18 08:37:24 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: ycsb/A/nodes=3 failed | C-test-failure O-roachtest O-robot branch-master release-blocker | [(roachtest).ycsb/A/nodes=3 failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2688406&tab=buildLog) on [master@3c223f5f5162103110a790743b687ef2bf952489](https://github.com/cockroachdb/cockroach/commits/3c223f5f5162103110a790743b687ef2bf952489):

```

| 3.0s 0 1382.0 1030.3 ... | 2.0 | roachtest: ycsb/A/nodes=3 failed - [(roachtest).ycsb/A/nodes=3 failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2688406&tab=buildLog) on [master@3c223f5f5162103110a790743b687ef2bf952489](https://github.com/cockroachdb/cockroach/commits/3c223f5f5162103110a790743b687ef2bf952489):

```

| 3.0s 0... | test | roachtest ycsb a nodes failed on read update read ... | 1 |

204,173 | 15,421,627,551 | IssuesEvent | 2021-03-05 13:20:14 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | Reproducible Failure in org.elasticsearch.index.store.SearchableSnapshotDirectoryStatsTests.testCachedBytesReadsAndWrites | :Distributed/Snapshot/Restore >test-failure Team:Distributed | Just ran into this locally working on the multiple page sizes cache but it reproduces on master as well:

```

./gradlew ':x-pack:plugin:searchable-snapshots:test' --tests "org.elasticsearch.index.store.SearchableSnapshotDirectoryStatsTests.testCachedBytesReadsAndWrites" -Dtests.seed=163C909BF9F0F844 -Dtests.security... | 1.0 | Reproducible Failure in org.elasticsearch.index.store.SearchableSnapshotDirectoryStatsTests.testCachedBytesReadsAndWrites - Just ran into this locally working on the multiple page sizes cache but it reproduces on master as well:

```

./gradlew ':x-pack:plugin:searchable-snapshots:test' --tests "org.elasticsearch.ind... | test | reproducible failure in org elasticsearch index store searchablesnapshotdirectorystatstests testcachedbytesreadsandwrites just ran into this locally working on the multiple page sizes cache but it reproduces on master as well gradlew x pack plugin searchable snapshots test tests org elasticsearch ind... | 1 |

13,204 | 15,571,139,455 | IssuesEvent | 2021-03-17 04:12:01 | Lothrazar/Cyclic | https://api.github.com/repos/Lothrazar/Cyclic | closed | Solidification Chamber not Processing | 1.16 compatibility done | Minecraft Version: 1.16.5

Forge Version: 35.1.37

Mod Version: Cyclic-1.16.5-1.1.8.jar

Single Player or Server: Single Player

Describe problem (what you were doing; what happened; what should have happened):

I am currently playing the mod pack Sky Bee found on the curseforge application. When using the so... | True | Solidification Chamber not Processing - Minecraft Version: 1.16.5

Forge Version: 35.1.37

Mod Version: Cyclic-1.16.5-1.1.8.jar

Single Player or Server: Single Player

Describe problem (what you were doing; what happened; what should have happened):

I am currently playing the mod pack Sky Bee found on the c... | non_test | solidification chamber not processing minecraft version forge version mod version cyclic jar single player or server single player describe problem what you were doing what happened what should have happened i am currently playing the mod pack sky bee found on the curse... | 0 |

288,260 | 21,692,962,885 | IssuesEvent | 2022-05-09 17:05:49 | withastro/docs | https://api.github.com/repos/withastro/docs | closed | Remove "experimental" from SSR page | improve documentation good first issue | This page needs updating now that SSR is no longer behind an experimental flag. It is the "new normal" but, it is still undergoing changes and in development. So, the text should at the very least remove reference to the experimental flag. But, perhaps keeping a note about it being in current development and subject to... | 1.0 | Remove "experimental" from SSR page - This page needs updating now that SSR is no longer behind an experimental flag. It is the "new normal" but, it is still undergoing changes and in development. So, the text should at the very least remove reference to the experimental flag. But, perhaps keeping a note about it being... | non_test | remove experimental from ssr page this page needs updating now that ssr is no longer behind an experimental flag it is the new normal but it is still undergoing changes and in development so the text should at the very least remove reference to the experimental flag but perhaps keeping a note about it being... | 0 |

67,124 | 7,036,011,236 | IssuesEvent | 2017-12-28 05:34:09 | Microsoft/vsts-tasks | https://api.github.com/repos/Microsoft/vsts-tasks | closed | VSTest: Publish fails if '[' or ']' are used in run title, | Area: Test | ## Environment

- Server - VSTS or TFS on-premises?

VSTS

- Agent - Hosted or Private:

Private agent, windowsServer 2016, agent version: 2.126.0

## Issue Description

If the title of a test run contains '[' or ']' or both VSTest-Task will not publish the results and write the following warni... | 1.0 | VSTest: Publish fails if '[' or ']' are used in run title, - ## Environment

- Server - VSTS or TFS on-premises?

VSTS

- Agent - Hosted or Private:

Private agent, windowsServer 2016, agent version: 2.126.0

## Issue Description

If the title of a test run contains '[' or ']' or both VSTest-Ta... | test | vstest publish fails if are used in run title environment server vsts or tfs on premises vsts agent hosted or private private agent windowsserver agent version issue description if the title of a test run contains or both vstest task will not publish... | 1 |

161,725 | 12,559,765,978 | IssuesEvent | 2020-06-07 19:58:34 | valadaa48/retroroller | https://api.github.com/repos/valadaa48/retroroller | closed | es_systems.cfg needs platform set | please test | A platform needs to be set for these systems so they can be scraped:

Saturn, Famicom, Super Famicom, Intellivision, Master System | 1.0 | es_systems.cfg needs platform set - A platform needs to be set for these systems so they can be scraped:

Saturn, Famicom, Super Famicom, Intellivision, Master System | test | es systems cfg needs platform set a platform needs to be set for these systems so they can be scraped saturn famicom super famicom intellivision master system | 1 |

13,724 | 8,351,654,586 | IssuesEvent | 2018-10-02 01:31:14 | azavea/tilegarden | https://api.github.com/repos/azavea/tilegarden | closed | Shrink aws-sdk | performance | Related to #117

We only really use the fetching-from-S3 part of `aws-sdk`, so it might be feasible to remove the parts of it that aren't used in order to slim down the bundle size. Rumor has it that it's available locally in the Lambda Node runtime, so check if that is true. If so, it might be possible to just rele... | True | Shrink aws-sdk - Related to #117

We only really use the fetching-from-S3 part of `aws-sdk`, so it might be feasible to remove the parts of it that aren't used in order to slim down the bundle size. Rumor has it that it's available locally in the Lambda Node runtime, so check if that is true. If so, it might be poss... | non_test | shrink aws sdk related to we only really use the fetching from part of aws sdk so it might be feasible to remove the parts of it that aren t used in order to slim down the bundle size rumor has it that it s available locally in the lambda node runtime so check if that is true if so it might be possibl... | 0 |

155,225 | 12,244,202,990 | IssuesEvent | 2020-05-05 10:40:38 | WoWManiaUK/Redemption | https://api.github.com/repos/WoWManiaUK/Redemption | closed | [QUEST] Where Dragons Fell-Killable Lich King | Fix - Tester Confirmed | **Links:**

https://www.wowhead.com/quest=13398/where-dragons-fell

**What is Happening:**

After turning the quest, one is shown the vision of Lich King resurrecting Sindragossa. The only problem being that Arthas is attackable and killable (28Million HP). He stands still while being damaged, does not attack, nor mo... | 1.0 | [QUEST] Where Dragons Fell-Killable Lich King - **Links:**

https://www.wowhead.com/quest=13398/where-dragons-fell

**What is Happening:**

After turning the quest, one is shown the vision of Lich King resurrecting Sindragossa. The only problem being that Arthas is attackable and killable (28Million HP). He stands st... | test | where dragons fell killable lich king links what is happening after turning the quest one is shown the vision of lich king resurrecting sindragossa the only problem being that arthas is attackable and killable hp he stands still while being damaged does not attack nor move doesn t drop an... | 1 |

9,333 | 3,036,654,985 | IssuesEvent | 2015-08-06 13:16:56 | trendwerk/trendpress | https://api.github.com/repos/trendwerk/trendpress | closed | Front-end JS dependencies | feature needs-testing | Right now we don't use a dependency manager for front-end dependencies. The source is simply included within our repository. This makes repos heavier than they should be.

[Bower](http://bower.io) seems like the perfect solution to our problems. So far it seems like this has become the de facto standard. | 1.0 | Front-end JS dependencies - Right now we don't use a dependency manager for front-end dependencies. The source is simply included within our repository. This makes repos heavier than they should be.

[Bower](http://bower.io) seems like the perfect solution to our problems. So far it seems like this has become the de fact... | test | front end js dependencies right now we don t use a dependency manager for front end dependencies the source is simply included within our repository this makes repos heavier than they should be seems like the perfect solution to our problems so far it seems like this has become the de facto standard | 1 |

177,573 | 13,731,390,918 | IssuesEvent | 2020-10-05 00:49:28 | QubesOS/updates-status | https://api.github.com/repos/QubesOS/updates-status | closed | vmm-xen v4.14.0-3 (r4.1) | r4.1-bullseye-cur-test r4.1-buster-cur-test r4.1-centos8-cur-test r4.1-dom0-cur-test r4.1-stretch-cur-test | Update of vmm-xen to v4.14.0-3 for Qubes r4.1, see comments below for details.

Built from: https://github.com/QubesOS/qubes-vmm-xen/commit/6c426f6d7fd4630f82ac3aa75667572f364fcfa4

[Changes since previous version](https://github.com/QubesOS/qubes-vmm-xen/compare/v4.13.1-4...v4.14.0-3):

QubesOS/qubes-vmm-xen@6c426f6 ve... | 5.0 | vmm-xen v4.14.0-3 (r4.1) - Update of vmm-xen to v4.14.0-3 for Qubes r4.1, see comments below for details.

Built from: https://github.com/QubesOS/qubes-vmm-xen/commit/6c426f6d7fd4630f82ac3aa75667572f364fcfa4

[Changes since previous version](https://github.com/QubesOS/qubes-vmm-xen/compare/v4.13.1-4...v4.14.0-3):

Qubes... | test | vmm xen update of vmm xen to for qubes see comments below for details built from qubesos qubes vmm xen version qubesos qubes vmm xen debian fix in vm assumed xen utils package version qubesos qubes vmm xen version qubesos qubes vmm xen rpm include xenhypf... | 1 |

116,471 | 11,913,787,437 | IssuesEvent | 2020-03-31 12:36:19 | LEDApplications/lehd-schema | https://api.github.com/repos/LEDApplications/lehd-schema | opened | Change institution length | PSEO documentation | OPEID in past releases has been length 6, but we need to make it length 8 and have an updated OPEID list.

Things we need to do here:

- [ ] Update the CSV that feeds into schema.

- [ ] Change length of institution identifier. | 1.0 | Change institution length - OPEID in past releases has been length 6, but we need to make it length 8 and have an updated OPEID list.

Things we need to do here:

- [ ] Update the CSV that feeds into schema.

- [ ] Change length of institution identifier. | non_test | change institution length opeid in past releases has been length but we need to make it length and have an updated opeid list things we need to do here update the csv that feeds into schema change length of institution identifier | 0 |

43,844 | 13,040,254,650 | IssuesEvent | 2020-07-28 18:10:44 | LevyForchh/cadvisor | https://api.github.com/repos/LevyForchh/cadvisor | opened | CVE-2019-8331 (Medium) detected in multiple libraries | security vulnerability | ## CVE-2019-8331 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>github.com/google/cadvisor/stats-33463ad2210c2490c2cfe822113ffe364d079eec</b>, <b>github.com/google/cadvisor/contain... | True | CVE-2019-8331 (Medium) detected in multiple libraries - ## CVE-2019-8331 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>github.com/google/cadvisor/stats-33463ad2210c2490c2cfe822113... | non_test | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries github com google cadvisor stats github com google cadvisor container crio github com google cadvisor perf bootstrap beta min js github com google cadvisor container contain... | 0 |

10,378 | 2,622,148,473 | IssuesEvent | 2015-03-04 00:05:07 | byzhang/libcl | https://api.github.com/repos/byzhang/libcl | opened | The Test Crashed Under MY nv9600GT | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. after compiled and just running the TEST, with or without

debugging(VS2010Express),at lines:

65 // run tests

66 Log(INFO) << "****** calling radix sort ...";

67 testRadixSort(*lContext); // <-- video driver crash here

68 Log(INFO) << "****** done\n";

What ... | 1.0 | The Test Crashed Under MY nv9600GT - ```

What steps will reproduce the problem?

1. after compiled and just running the TEST, with or without

debugging(VS2010Express),at lines:

65 // run tests

66 Log(INFO) << "****** calling radix sort ...";

67 testRadixSort(*lContext); // <-- video driver crash here

68 ... | non_test | the test crashed under my what steps will reproduce the problem after compiled and just running the test with or without debugging ,at lines run tests log info calling radix sort testradixsort lcontext video driver crash here log info d... | 0 |

7,538 | 10,687,846,394 | IssuesEvent | 2019-10-22 16:58:12 | elheck/Squiddrone | https://api.github.com/repos/elheck/Squiddrone | opened | SW036 - SAFE LANDING, check for SYSTEMs underneath | Software Requirement | The SOFTWARE shall check for SYSTEMs underneath. The SOFTWARE shall use the GPS Positions of all other SYSTEMs and its sensors for this. | 1.0 | SW036 - SAFE LANDING, check for SYSTEMs underneath - The SOFTWARE shall check for SYSTEMs underneath. The SOFTWARE shall use the GPS Positions of all other SYSTEMs and its sensors for this. | non_test | safe landing check for systems underneath the software shall check for systems underneath the software shall use the gps positions of all other systems and its sensors for this | 0 |

539,221 | 15,785,440,982 | IssuesEvent | 2021-04-01 16:20:32 | zulip/zulip | https://api.github.com/repos/zulip/zulip | opened | Change model for messages with multiple image previews to stack images horizontally with scrollbar | area: message view help wanted priority: high | I'm not 100% sure this will be good UX, but it might be (and solves several problems), and we won't really know until we try.

Zulip's model for image previews is designed around a few goals:

* Not having images be so giant that they crowd out text (which is very common in other chat platforms with full-width image ... | 1.0 | Change model for messages with multiple image previews to stack images horizontally with scrollbar - I'm not 100% sure this will be good UX, but it might be (and solves several problems), and we won't really know until we try.

Zulip's model for image previews is designed around a few goals:

* Not having images be s... | non_test | change model for messages with multiple image previews to stack images horizontally with scrollbar i m not sure this will be good ux but it might be and solves several problems and we won t really know until we try zulip s model for image previews is designed around a few goals not having images be so ... | 0 |

723,146 | 24,886,458,376 | IssuesEvent | 2022-10-28 08:12:32 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | slideshow not color managed | priority: high difficulty: trivial bug: pending release notes: pending | All is said in the title. To reproduce:

- select a color space that is very visible (like linear rec 2020)

- enter the slideshow

- see how different the image is compared to lighttable or darkroom | 1.0 | slideshow not color managed - All is said in the title. To reproduce:

- select a color space that is very visible (like linear rec 2020)

- enter the slideshow

- see how different the image is compared to lighttable or darkroom | non_test | slideshow not color managed all is said in the title to reproduce select a color space that is very visible like linear rec enter the slideshow see how different the image is compared to lighttable or darkroom | 0 |

7,696 | 10,863,660,514 | IssuesEvent | 2019-11-14 15:30:24 | Shopify/kubernetes-deploy | https://api.github.com/repos/Shopify/kubernetes-deploy | closed | Write migration guide. | :rocket: 1.0 requirement | We need to write a guide that explains how to migrate from `exe/kubernetes-*` to `krane *`.

- Provide mappings for flags that have changed.

- Provide examples of how to deploy global resources

- Provide examples of any breaking changes

- Explain how to install k8s-deploy gem and krane gem. | 1.0 | Write migration guide. - We need to write a guide that explains how to migrate from `exe/kubernetes-*` to `krane *`.

- Provide mappings for flags that have changed.

- Provide examples of how to deploy global resources

- Provide examples of any breaking changes

- Explain how to install k8s-deploy gem and krane ge... | non_test | write migration guide we need to write a guide that explains how to migrate from exe kubernetes to krane provide mappings for flags that have changed provide examples of how to deploy global resources provide examples of any breaking changes explain how to install deploy gem and krane gem | 0 |

779,950 | 27,373,222,048 | IssuesEvent | 2023-02-28 02:18:46 | UNopenGIS/7 | https://api.github.com/repos/UNopenGIS/7 | closed | I want to incorporate Releasable Basemap Tiles (RBT) | priority/MAY | ## See Also

https://github.com/agcgeoint/rbt

## How I would incorporate it

- It appears to be distributed as MBTiles, so convert it to PMTiles

- Process style.json via charites

- Build a fully local, self-contained web map based on MapLibre GL JS | 1.0 | I want to incorporate Releasable Basemap Tiles (RBT) - ## See Also

https://github.com/agcgeoint/rbt

## How I would incorporate it

- It appears to be distributed as MBTiles, so convert it to PMTiles

- Process style.json via charites

- Build a fully local, self-contained web map based on MapLibre GL JS | non_test | i want to incorporate releasable basemap tiles rbt see also how i would incorporate it it appears to be distributed as mbtiles so convert it to pmtiles process style json via charites build a fully local self contained web map based on maplibre gl js | 0 |

439,748 | 12,686,326,393 | IssuesEvent | 2020-06-20 10:13:28 | din0s/ActionHeroes | https://api.github.com/repos/din0s/ActionHeroes | closed | Make scroll-up button a global feature. | enhancement low priority | The scroll-up button is currently implemented on the Landing-Page only. It needs to be relocated so it can be a global feature, applied to all our pages. | 1.0 | Make scroll-up button a global feature. - The scroll-up button is currently implemented on the Landing-Page only. It needs to be relocated so it can be a global feature, applied to all our pages. | non_test | make scroll up button a global feature the scroll up button is currently implemented on the landing page only it needs to be relocated so it can be a global feature applied to all our pages | 0 |

230,942 | 18,725,736,390 | IssuesEvent | 2021-11-03 16:05:56 | flutter/devtools | https://api.github.com/repos/flutter/devtools | closed | service manager related test flakes | testing fix it friday | This same assertion error for `'isolate != null'` has been seen on other tests as well.

```

02:58 +241 ~3: /home/runner/work/devtools/devtools/packages/devtools_app/test/inspector_service_test.dart: inspector service tests track widget creation on

PreferencesController: st... | 1.0 | service manager related test flakes - This same assertion error for `'isolate != null'` has been seen on other tests as well.

```

02:58 +241 ~3: /home/runner/work/devtools/devtools/packages/devtools_app/test/inspector_service_test.dart: inspector service tests track widget creation on ... | test | service manager related test flakes this same assertion error for isolate null has been seen on other tests as well home runner work devtools devtools packages devtools app test inspector service test dart inspector service tests track widget creation on ... | 1 |

32,923 | 15,716,155,249 | IssuesEvent | 2021-03-28 05:37:40 | diofant/diofant | https://api.github.com/repos/diofant/diofant | opened | Slow gcd for multivariate polynomials over finite fields | enhancement performance polys | See e.g. example [here](https://github.com/diofant/diofant/pull/1132#issuecomment-803298312) and below (with an obvious replacement `s/from_sympy/from_expr/`). c.f. [rings](https://rings.readthedocs.io/en/latest/) library.

| True | Slow gcd for multivariate polynomials over finite fields - See e.g. example [here](https://github.com/diofant/diofant/pull/1132#issuecomment-803298312) and below (with an obvious replacement `s/from_sympy/from_expr/`). c.f. [rings](https://rings.readthedocs.io/en/latest/) library.

| non_test | slow gcd for multivariate polynomials over finite fields see e g example and below with an obvious replacement s from sympy from expr c f library | 0 |

214,433 | 16,588,933,665 | IssuesEvent | 2021-06-01 04:15:52 | thanos-io/thanos | https://api.github.com/repos/thanos-io/thanos | closed | Add E2E test for exemplars API | component: query difficulty: medium good first issue help wanted tests | Now the exemplars API is added and we already instrumented Thanos with exemplars in https://github.com/thanos-io/thanos/pull/3977. It is time to add an E2E test case for it.

AC:

~~1. Add a Jaeger all in one service in the E2E test as it is required for tracing~~

2. Update the options to start Thanos component with... | 1.0 | Add E2E test for exemplars API - Now the exemplars API is added and we already instrumented Thanos with exemplars in https://github.com/thanos-io/thanos/pull/3977. It is time to add an E2E test case for it.

AC:

~~1. Add a Jaeger all in one service in the E2E test as it is required for tracing~~

2. Update the optio... | test | add test for exemplars api now the exemplars api is added and we already instrumented thanos with exemplars in it is time to add an test case for it ac add a jaeger all in one service in the test as it is required for tracing update the options to start thanos component with tracing configs ... | 1 |

629,030 | 20,021,507,823 | IssuesEvent | 2022-02-01 16:48:14 | CLOSER-Cohorts/archivist | https://api.github.com/repos/CLOSER-Cohorts/archivist | opened | Security patches | High priority | There are a whole stack of security patches that should probably be applied before they get completely out of hand | 1.0 | Security patches - There are a whole stack of security patches that should probably be applied before they get completely out of hand | non_test | security patches there are a whole stack of security patches that should probably be applied before they get completely out of hand | 0 |

484,113 | 13,934,598,585 | IssuesEvent | 2020-10-22 10:15:23 | AY2021S1-CS2113T-F11-1/tp | https://api.github.com/repos/AY2021S1-CS2113T-F11-1/tp | closed | As a user, I would like to search my past records based on input | diet priority.High type.Story | so that I can find my record faster. | 1.0 | As a user, I would like to search my past records based on input - so that I can find my record faster. | non_test | as a user i would like to search my past records based on input so that i can find my record faster | 0 |

41,057 | 5,294,467,802 | IssuesEvent | 2017-02-09 10:52:39 | IMA-WorldHealth/bhima-2.X | https://api.github.com/repos/IMA-WorldHealth/bhima-2.X | closed | (design) Useful MySQL views for validation, quick lookups | design | A MySQL view is a stored query that looks like a table. See [this page](http://dev.mysql.com/doc/refman/5.7/en/views.html) for more information.

I propose that we create a few views that will help our validation queries:

1. `entity`

``` sql

CREATE VIEW entity AS

SELECT uuid, text FROM debtor

UNION

... | 1.0 | (design) Useful MySQL views for validation, quick lookups - A MySQL view is a stored query that looks like a table. See [this page](http://dev.mysql.com/doc/refman/5.7/en/views.html) for more information.

I propose that we create a few views that will help our validation queries:

1. `entity`

``` sql

CREATE ... | non_test | design useful mysql views for validation quick lookups a mysql view is a stored query that looks like a table see for more information i propose that we create a few views that will help our validation queries entity sql create view entity as select uuid text from debtor union ... | 0 |

50,317 | 6,354,221,720 | IssuesEvent | 2017-07-29 07:15:43 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | closed | C# Design Notes for May 3-4, 2016 | Area-Language Design Design Notes Language-C# Language-VB New Language Feature - Pattern Matching New Language Feature - Tuples | # C# Design Notes for May 3-4, 2016

This pair of meetings further explored the space around tuple syntax, pattern matching and deconstruction.

1. Deconstructors - how to specify them

2. Switch conversions - how to deal with them

3. Tuple conversions - how to do them

4. Tuple-like types - how to construct them

Lots o... | 2.0 | C# Design Notes for May 3-4, 2016 - # C# Design Notes for May 3-4, 2016

This pair of meetings further explored the space around tuple syntax, pattern matching and deconstruction.

1. Deconstructors - how to specify them

2. Switch conversions - how to deal with them

3. Tuple conversions - how to do them

4. Tuple-like t... | non_test | c design notes for may c design notes for may this pair of meetings further explored the space around tuple syntax pattern matching and deconstruction deconstructors how to specify them switch conversions how to deal with them tuple conversions how to do them tuple like types ... | 0 |

6,207 | 5,314,062,584 | IssuesEvent | 2017-02-13 14:07:29 | woocommerce/woocommerce | https://api.github.com/repos/woocommerce/woocommerce | closed | Superfluous index on wp_woocommerce_tax_rate_locations table | Performance | ## EXPLANATION OF THE ISSUE

The current definition of the woocommerce tax rate locations table (in the 2.7 master branch) is:

CREATE TABLE {$wpdb->prefix}woocommerce_tax_rate_locations (

location_id bigint(20) NOT NULL auto_increment,

location_code varchar(255) NOT NULL,

tax_rate_id bigint(20) NOT NULL,

... | True | Superfluous index on wp_woocommerce_tax_rate_locations table - ## EXPLANATION OF THE ISSUE

The current definition of the woocommerce tax rate locations table (in the 2.7 master branch) is:

CREATE TABLE {$wpdb->prefix}woocommerce_tax_rate_locations (

location_id bigint(20) NOT NULL auto_increment,

location_c... | non_test | superfluous index on wp woocommerce tax rate locations table explanation of the issue the current definition of the woocommerce tax rate locations table in the master branch is create table wpdb prefix woocommerce tax rate locations location id bigint not null auto increment location co... | 0 |

9,825 | 3,073,506,944 | IssuesEvent | 2015-08-19 22:22:26 | fsprojects/YC.PrettyPrinter | https://api.github.com/repos/fsprojects/YC.PrettyPrinter | closed | Fix links in documentation | SimpleTestTask task | Replace reference to fsharp.org with reference to http://yaccconstructor.github.io/ in gh-pages documentation. | 1.0 | Fix links in documentation - Replace reference to fsharp.org with reference to http://yaccconstructor.github.io/ in gh-pages documentation. | test | fix links in documentation replace reference to fsharp org with reference to in gh pages documentation | 1 |

287,746 | 8,819,941,601 | IssuesEvent | 2019-01-01 04:02:39 | Disalg-ICS-NJU/tlaplus-lamport-projects | https://api.github.com/repos/Disalg-ICS-NJU/tlaplus-lamport-projects | closed | JupiterInterface: How to Modify `state` in the Interface Level? | Rearrangement model check priority:normal refactor todo | ***JupiterInterface: How to Modify `state` in the Interface Level?*** (Git Branch: Jupiter-History)

**Note: The following refactor makes JupiterInterface executable. How to take advantage of it?**

- [x] In `JupiterInterface`: Using `aop` to modify `state`

- [x] + New variable `aop[r]`: the actual operation app... | 1.0 | JupiterInterface: How to Modify `state` in the Interface Level? - ***JupiterInterface: How to Modify `state` in the Interface Level?*** (Git Branch: Jupiter-History)

**Note: The following refactor makes JupiterInterface executable. How to take advantage of it?**

- [x] In `JupiterInterface`: Using `aop` to modify ... | non_test | jupiterinterface how to modify state in the interface level jupiterinterface how to modify state in the interface level git branch jupiter history note the following refactor makes jupiterinterface executable how to take advantage of it in jupiterinterface using aop to modify s... | 0 |

75,718 | 3,471,442,931 | IssuesEvent | 2015-12-23 15:25:07 | wordpress-mobile/WordPress-Android | https://api.github.com/repos/wordpress-mobile/WordPress-Android | closed | Add a utility method to check if a URL is wpcom | core priority-high [Type] Task | While reviewing https://github.com/wordpress-mobile/WordPress-Android/pull/2784, I noticed we're using different way to check for a `wordpress.com` url across the app (hostname ends with `wordpress.com`, url contains `wordpress.com`). | 1.0 | Add a utility method to check if a URL is wpcom - While reviewing https://github.com/wordpress-mobile/WordPress-Android/pull/2784, I noticed we're using different way to check for a `wordpress.com` url across the app (hostname ends with `wordpress.com`, url contains `wordpress.com`). | non_test | add a utility method to check if a url is wpcom while reviewing i noticed we re using different way to check for a wordpress com url across the app hostname ends with wordpress com url contains wordpress com | 0 |

99,095 | 4,046,924,912 | IssuesEvent | 2016-05-23 00:12:03 | seiyria/deck.zone | https://api.github.com/repos/seiyria/deck.zone | closed | onbeforeunload warning | feature:standard priority:anytime | flag the editor as dirty before save and not dirty after a successful save

if the editor is dirty, closing the page should result in a warning | 1.0 | onbeforeunload warning - flag the editor as dirty before save and not dirty after a successful save

if the editor is dirty, closing the page should result in a warning | non_test | onbeforeunload warning flag the editor as dirty before save and not dirty after a successful save if the editor is dirty closing the page should result in a warning | 0 |

216,072 | 24,215,267,161 | IssuesEvent | 2022-09-26 06:00:25 | nidhi7598/linux-4.19.72_CVE-2022-29581 | https://api.github.com/repos/nidhi7598/linux-4.19.72_CVE-2022-29581 | opened | CVE-2022-21499 (Medium) detected in multiple libraries | security vulnerability | ## CVE-2022-21499 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linuxlinux-4.19.259</b>, <b>linuxlinux-4.19.259</b>, <b>linuxlinux-4.19.259</b>, <b>linuxlinux-4.19.259</b></p></su... | True | CVE-2022-21499 (Medium) detected in multiple libraries - ## CVE-2022-21499 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linuxlinux-4.19.259</b>, <b>linuxlinux-4.19.259</b>, <b>li... | non_test | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries linuxlinux linuxlinux linuxlinux linuxlinux vulnerability details kgdb and kdb allow read and write access to kernel memory and thus should... | 0 |

511,391 | 14,859,477,422 | IssuesEvent | 2021-01-18 18:33:44 | InfiniteFlightAirportEditing/Airports | https://api.github.com/repos/InfiniteFlightAirportEditing/Airports | closed | FCBB-Maya Maya Airport-BRAZZAVILLE-REPUBLIC OF THE CONGO | Being Redone Priority 2 | # Airport Name

Maya Maya Airport

# Country?

Republic Of The Congo

# Improvements that need to be made?

Redone

# Are you working on this airport?

Yes

# Airport Priority? (IF Event, 10000ft+ Runway, World/US Capital, Low)

World Capital

| 1.0 | FCBB-Maya Maya Airport-BRAZZAVILLE-REPUBLIC OF THE CONGO - # Airport Name

Maya Maya Airport

# Country?

Republic Of The Congo

# Improvements that need to be made?

Redone

# Are you working on this airport?

Yes

# Airport Priority? (IF Event, 10000ft+ Runway, World/US Capital, Low)

World Capital

| non_test | fcbb maya maya airport brazzaville republic of the congo airport name maya maya airport country republic of the congo improvements that need to be made redone are you working on this airport yes airport priority if event runway world us capital low world capital | 0 |

140,646 | 11,354,308,429 | IssuesEvent | 2020-01-24 17:18:52 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | After excluding a site from A-C table `Show All` link is not shown in ac table | OS/Windows QA/Test-Plan-Specified QA/Yes bug feature/rewards regression | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | After excluding a site from A-C table `Show All` link is not shown in ac table - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO ... | test | after excluding a site from a c table show all link is not shown in ac table have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info ... | 1 |

156,345 | 12,306,141,492 | IssuesEvent | 2020-05-12 00:31:39 | cosinekitty/astronomy | https://api.github.com/repos/cosinekitty/astronomy | closed | Unit tests: make it more obvious which language is being tested | testing | When running the unit tests, it is not always obvious which of the 4 supported languages is currently being tested. It would be nice to make it more obvious. Ideas:

- Put a big ASCII art banner in front of each language test.

- Consistently prefix all test output with the language name. | 1.0 | Unit tests: make it more obvious which language is being tested - When running the unit tests, it is not always obvious which of the 4 supported languages is currently being tested. It would be nice to make it more obvious. Ideas:

- Put a big ASCII art banner in front of each language test.

- Consistently prefix al... | test | unit tests make it more obvious which language is being tested when running the unit tests it is not always obvious which of the supported languages is currently being tested it would be nice to make it more obvious ideas put a big ascii art banner in front of each language test consistently prefix al... | 1 |

248,580 | 21,042,032,320 | IssuesEvent | 2022-03-31 13:10:01 | nrwl/nx | https://api.github.com/repos/nrwl/nx | closed | Don't error on "targetDependencies" "self" in nx.json | type: feature blocked: retry with latest scope: core | <!-- Please do your best to fill out all of the sections below! -->

<!-- Use this issue type for concrete suggestions, otherwise, open a discussion type issue instead. -->

- [] I'd be willing to implement this feature ([contributing guide](https://github.com/nrwl/nx/blob/master/CONTRIBUTING.md))

## Description... | 1.0 | Don't error on "targetDependencies" "self" in nx.json - <!-- Please do your best to fill out all of the sections below! -->