| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 4 to 112) | repo_url (stringlengths, 33 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 1.02k) | labels (stringlengths, 4 to 1.54k) | body (stringlengths, 1 to 262k) | index (stringclasses, 17 values) | text_combine (stringlengths, 95 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

209,567

| 16,040,865,686

|

IssuesEvent

|

2021-04-22 07:42:32

|

appium/appium

|

https://api.github.com/repos/appium/appium

|

closed

|

Appium v1.21.0-rc.1 and desktop 1.20.2-4 for IOS React Native app is very slow and taking twice the time of android runs

|

ThirdParty XCUITest

|

## The problem

Appium v1.21.0-rc.1 and desktop 1.20.2-4 for IOS React Native app is very slow and taking twice the time of android executions.

In appium desktop 1.8.3 versions the execution is equally fast for IOS and android.

## Environment

MAC OS : BigSure

Appium server : v1.21.0-rc.1

OR

Appium desktop : 1.20.2-4

## Details

Appium v1.21.0-rc.1 and desktop 1.20.2-4 for IOS React Native app is very slow and taking twice the time of android executions.

In appium desktop 1.8.3 versions the execution is equally fast for IOS and android.

Tried with the following capabilities to improve speed , but they did not have a impact :

capabilities.setCapability("waitForQuiescence", false);

capabilities.setCapability("useJSONSource", true);

capabilities.setCapability("simpleIsVisibleCheck",true);

|

1.0

|

Appium v1.21.0-rc.1 and desktop 1.20.2-4 for IOS React Native app is very slow and taking twice the time of android runs - ## The problem

Appium v1.21.0-rc.1 and desktop 1.20.2-4 for IOS React Native app is very slow and taking twice the time of android executions.

In appium desktop 1.8.3 versions the execution is equally fast for IOS and android.

## Environment

MAC OS : BigSure

Appium server : v1.21.0-rc.1

OR

Appium desktop : 1.20.2-4

## Details

Appium v1.21.0-rc.1 and desktop 1.20.2-4 for IOS React Native app is very slow and taking twice the time of android executions.

In appium desktop 1.8.3 versions the execution is equally fast for IOS and android.

Tried with the following capabilities to improve speed , but they did not have a impact :

capabilities.setCapability("waitForQuiescence", false);

capabilities.setCapability("useJSONSource", true);

capabilities.setCapability("simpleIsVisibleCheck",true);

|

test

|

appium rc and desktop for ios react native app is very slow and taking twice the time of android runs the problem appium rc and desktop for ios react native app is very slow and taking twice the time of android executions in appium desktop versions the execution is equally fast for ios and android environment mac os bigsure appium server rc or appium desktop details appium rc and desktop for ios react native app is very slow and taking twice the time of android executions in appium desktop versions the execution is equally fast for ios and android tried with the following capabilities to improve speed but they did not have a impact capabilities setcapability waitforquiescence false capabilities setcapability usejsonsource true capabilities setcapability simpleisvisiblecheck true

| 1

|

54,009

| 6,360,027,639

|

IssuesEvent

|

2017-07-31 09:01:27

|

medic/medic-webapp

|

https://api.github.com/repos/medic/medic-webapp

|

closed

|

Some reports not being replicated

|

4 - Acceptance Testing Bug

|

As a CHW supervisor I should see all reports that were submitted by people I supervise. I have been seeing odd replication issues where a supervisor does not see certain reports. One such case where a supervisor user account does not see reports from their CHW is if the `patient_id` did not match a patient. This scenario seems reproducible.

For instance, [this report](https://standard.app.medicmobile.org/medic/_design/medic/_rewrite/#/reports/0ba96f94b93899b06a4136ab5c409de6) can be seen by admin, but not Marni's supervisor `mch` who is assigned to the MCH Health Center:

|

1.0

|

Some reports not being replicated - As a CHW supervisor I should see all reports that were submitted by people I supervise. I have been seeing odd replication issues where a supervisor does not see certain reports. One such case where a supervisor user account does not see reports from their CHW is if the `patient_id` did not match a patient. This scenario seems reproducible.

For instance, [this report](https://standard.app.medicmobile.org/medic/_design/medic/_rewrite/#/reports/0ba96f94b93899b06a4136ab5c409de6) can be seen by admin, but not Marni's supervisor `mch` who is assigned to the MCH Health Center:

|

test

|

some reports not being replicated as a chw supervisor i should see all reports that were submitted by people i supervise i have been seeing odd replication issues where a supervisor does not see certain reports one such case where a supervisor user account does not see reports from their chw is if the patient id did not match a patient this scenario seems reproducible for instance can be seen by admin but not marni s supervisor mch who is assigned to the mch health center

| 1

|

253,546

| 21,688,440,058

|

IssuesEvent

|

2022-05-09 13:27:10

|

dusk-network/dusk-blockchain

|

https://api.github.com/repos/dusk-network/dusk-blockchain

|

closed

|

Optimize api.db on cluster

|

mark:testnet

|

**Describe the bug**

It turns out that api.db is growing fast in size on any cluster node. We should find a way to clean it up on regular base to avoid wasting disk space.

```

1.2G Apr 5 09:53 /opt/dusk/dusk_data/chain/api.db

```

|

1.0

|

Optimize api.db on cluster - **Describe the bug**

It turns out that api.db is growing fast in size on any cluster node. We should find a way to clean it up on regular base to avoid wasting disk space.

```

1.2G Apr 5 09:53 /opt/dusk/dusk_data/chain/api.db

```

|

test

|

optimize api db on cluster describe the bug it turns out that api db is growing fast in size on any cluster node we should find a way to clean it up on regular base to avoid wasting disk space apr opt dusk dusk data chain api db

| 1

|

894

| 2,656,821,418

|

IssuesEvent

|

2015-03-18 01:21:03

|

mesosphere/marathon

|

https://api.github.com/repos/mesosphere/marathon

|

opened

|

AS user I WANT to access my logs easily

|

usability

|

Couple of distinct use cases:

1. I WANT to see the current log of my running app.

2. My app is flapping and I want to see the log of my last failed run.

|

True

|

AS user I WANT to access my logs easily - Couple of distinct use cases:

1. I WANT to see the current log of my running app.

2. My app is flapping and I want to see the log of my last failed run.

|

non_test

|

as user i want to access my logs easily couple of distinct use cases i want to see the current log of my running app my app is flapping and i want to see the log of my last failed run

| 0

|

326,762

| 28,017,380,669

|

IssuesEvent

|

2023-03-28 00:34:29

|

Azure/azure-sdk-for-js

|

https://api.github.com/repos/Azure/azure-sdk-for-js

|

closed

|

Azure Communication Email Readme Issue

|

Client Docs test-manual-pass Communication - Email

|

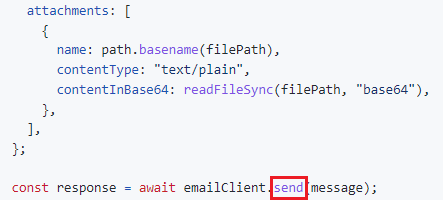

1.

Section [link](https://github.com/Azure/azure-sdk-for-js/tree/main/sdk/communication/communication-email#send-email-with-attachments)

**Reason:**

Function 'send' does not exist on class 'EmailClient'.

**Suggestion:**

Update the code as following:

``` javascript

const poller = await emailClient.beginSend(message);

const response = await poller.pollUntilDone();

```

@joheredi, @mayurid, @yogeshmo for notification.

|

1.0

|

Azure Communication Email Readme Issue - 1.

Section [link](https://github.com/Azure/azure-sdk-for-js/tree/main/sdk/communication/communication-email#send-email-with-attachments)

**Reason:**

Function 'send' does not exist on class 'EmailClient'.

**Suggestion:**

Update the code as following:

``` javascript

const poller = await emailClient.beginSend(message);

const response = await poller.pollUntilDone();

```

@joheredi, @mayurid, @yogeshmo for notification.

|

test

|

azure communication email readme issue section reason function send does not exist on class emailclient suggestion update the code as following javascript const poller await emailclient beginsend message const response await poller polluntildone joheredi mayurid yogeshmo for notification

| 1

|

277,678

| 24,094,790,093

|

IssuesEvent

|

2022-09-19 17:43:54

|

microsoft/AzureStorageExplorer

|

https://api.github.com/repos/microsoft/AzureStorageExplorer

|

closed

|

It is better to add the service type in the 'pin/unpin to Quick Access' activity log

|

:bulb: feature request :heavy_check_mark: won't fix 🧪 testing :gear: quick access

|

**Storage Explorer Version**: 1.26.0-dev

**Build Number**: 20220915.4

**Branch**: main

**Platform/OS**: Windows 10/Linux Ubuntu 22.04/MacOS Monterey 12.5.1 (Apple M1 Pro)

**Architecture** ia32\x64

**How Found**: Ad-hoc testing

**Regression From**: Not a regression

## Steps to Reproduce ##

1. Expand one storage account -> Tables.

2. Create a table named 'test1' -> Pin the table to Quick Access.

3. Observe the activity log.

4. Check whether the service type 'table' displays in the activity log.

## Expected Experience ##

It is better to add the service type 'table' display in the activity log.

Like: **Pinned table 'test' to Quick Access.**

## Actual Experience ##

No service type 'table' displays in the activity log.

|

1.0

|

It is better to add the service type in the 'pin/unpin to Quick Access' activity log - **Storage Explorer Version**: 1.26.0-dev

**Build Number**: 20220915.4

**Branch**: main

**Platform/OS**: Windows 10/Linux Ubuntu 22.04/MacOS Monterey 12.5.1 (Apple M1 Pro)

**Architecture** ia32\x64

**How Found**: Ad-hoc testing

**Regression From**: Not a regression

## Steps to Reproduce ##

1. Expand one storage account -> Tables.

2. Create a table named 'test1' -> Pin the table to Quick Access.

3. Observe the activity log.

4. Check whether the service type 'table' displays in the activity log.

## Expected Experience ##

It is better to add the service type 'table' display in the activity log.

Like: **Pinned table 'test' to Quick Access.**

## Actual Experience ##

No service type 'table' displays in the activity log.

|

test

|

it is better to add the service type in the pin unpin to quick access activity log storage explorer version dev build number branch main platform os windows linux ubuntu macos monterey apple pro architecture how found ad hoc testing regression from not a regression steps to reproduce expand one storage account tables create a table named pin the table to quick access observe the activity log check whether the service type table displays in the activity log expected experience it is better to add the service type table display in the activity log like pinned table test to quick access actual experience no service type table displays in the activity log

| 1

|

242,769

| 20,262,557,821

|

IssuesEvent

|

2022-02-15 09:02:36

|

thesofproject/sof

|

https://api.github.com/repos/thesofproject/sof

|

closed

|

[BUG][BYT][BDW] There are host position update warnings always

|

bug BYT P1 BDW Intel Linux Daily tests

|

There are many host position update warnings from sof-logger according to recent daily test on Intel's BDW and BYT platforms:

```

[ 291465955.814017] ( 62.499996) c0 ll-schedule ./schedule/ll_schedule.c:355 INFO num_tasks 1 total_num_tasks 1

[ 292697318.629670] ( 1231362.875000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 294113987.635877] ( 1416669.000000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 295530656.277500] ( 1416668.625000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 296963985.022628] ( 1433328.750000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 298380654.133001] ( 1416669.125000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 299797322.774625] ( 1416668.625000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 301230651.623919] ( 1433328.875000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 302647320.525959] ( 1416668.875000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 304063989.271749] ( 1416668.750000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 305497318.121044] ( 1433328.875000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 306913987.127251] ( 1416669.000000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 308330655.664707] ( 1416668.500000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

```

**To Reproduce**

Just run any playback or capture streams on BDW/BYT, and check sof-logger logs.

**Reproduction Rate**

It happens in about every 500ms~2s when the stream is active

**Expected behavior**

We didn't have this warning previous.

**Impact**

Not sure if it will impact any audio quality, but this warning should be taken care anyway.

**Environment**

1) Branch name and commit hash of the 2 repositories: sof (firmware/topology) and linux (kernel driver).

* Kernel: topic/sof-dev Commit: a9c617ef

* SOF: main Commit: cd48b895ebda

2) Name of the topology file

* Topology: sof-bdw-rt286.tplg on BDW and sof-byt-nocodec.tplg on BYT.

3) Name of the platform(s) on which the bug is observed.

* Platform: BDW/BYT.

|

1.0

|

[BUG][BYT][BDW] There are host position update warnings always - There are many host position update warnings from sof-logger according to recent daily test on Intel's BDW and BYT platforms:

```

[ 291465955.814017] ( 62.499996) c0 ll-schedule ./schedule/ll_schedule.c:355 INFO num_tasks 1 total_num_tasks 1

[ 292697318.629670] ( 1231362.875000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 294113987.635877] ( 1416669.000000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 295530656.277500] ( 1416668.625000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 296963985.022628] ( 1433328.750000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 298380654.133001] ( 1416669.125000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 299797322.774625] ( 1416668.625000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 301230651.623919] ( 1433328.875000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 302647320.525959] ( 1416668.875000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 304063989.271749] ( 1416668.750000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 305497318.121044] ( 1433328.875000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 306913987.127251] ( 1416669.000000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

[ 308330655.664707] ( 1416668.500000) c0 pipe 1.10 ....../pipeline-stream.c:473 WARN pipeline_get_timestamp(): DAI position update failed

```

**To Reproduce**

Just run any playback or capture streams on BDW/BYT, and check sof-logger logs.

**Reproduction Rate**

It happens in about every 500ms~2s when the stream is active

**Expected behavior**

We didn't have this warning previous.

**Impact**

Not sure if it will impact any audio quality, but this warning should be taken care anyway.

**Environment**

1) Branch name and commit hash of the 2 repositories: sof (firmware/topology) and linux (kernel driver).

* Kernel: topic/sof-dev Commit: a9c617ef

* SOF: main Commit: cd48b895ebda

2) Name of the topology file

* Topology: sof-bdw-rt286.tplg on BDW and sof-byt-nocodec.tplg on BYT.

3) Name of the platform(s) on which the bug is observed.

* Platform: BDW/BYT.

|

test

|

there are host position update warnings always there are many host position update warnings from sof logger according to recent daily test on intel s bdw and byt platforms ll schedule schedule ll schedule c info num tasks total num tasks pipe pipeline stream c warn pipeline get timestamp dai position update failed pipe pipeline stream c warn pipeline get timestamp dai position update failed pipe pipeline stream c warn pipeline get timestamp dai position update failed pipe pipeline stream c warn pipeline get timestamp dai position update failed pipe pipeline stream c warn pipeline get timestamp dai position update failed pipe pipeline stream c warn pipeline get timestamp dai position update failed pipe pipeline stream c warn pipeline get timestamp dai position update failed pipe pipeline stream c warn pipeline get timestamp dai position update failed pipe pipeline stream c warn pipeline get timestamp dai position update failed pipe pipeline stream c warn pipeline get timestamp dai position update failed pipe pipeline stream c warn pipeline get timestamp dai position update failed pipe pipeline stream c warn pipeline get timestamp dai position update failed to reproduce just run any playback or capture streams on bdw byt and check sof logger logs reproduction rate it happens in about every when the stream is active expected behavior we didn t have this warning previous impact not sure if it will impact any audio quality but this warning should be taken care anyway environment branch name and commit hash of the repositories sof firmware topology and linux kernel driver kernel topic sof dev commit sof main commit name of the topology file topology sof bdw tplg on bdw and sof byt nocodec tplg on byt name of the platform s on which the bug is observed platform bdw byt

| 1

|

288,424

| 24,905,112,176

|

IssuesEvent

|

2022-10-29 06:10:35

|

chadvandy/cbfm_wh3

|

https://api.github.com/repos/chadvandy/cbfm_wh3

|

closed

|

Grom and Drycha enemy morale debuff when fighting Elves skill doesn't work (Mandras)

|

needs-testing resolved

|

Originally by Mandras

https://cdn.discordapp.com/attachments/581350140942483456/1019249381234454598/Grom_Mandras.pack

|

1.0

|

Grom and Drycha enemy morale debuff when fighting Elves skill doesn't work (Mandras) - Originally by Mandras

https://cdn.discordapp.com/attachments/581350140942483456/1019249381234454598/Grom_Mandras.pack

|

test

|

grom and drycha enemy morale debuff when fighting elves skill doesn t work mandras originally by mandras

| 1

|

65,549

| 6,967,835,389

|

IssuesEvent

|

2017-12-10 14:09:37

|

GoogleCloudPlatform/google-cloud-cpp

|

https://api.github.com/repos/GoogleCloudPlatform/google-cloud-cpp

|

closed

|

Create a build that runs clang-tidy

|

static-analysis testing

|

At the end of this task we will have a build in the matrix that runs clang-tidy. Ideally the results will be pushed as comments to PRs, but even if they are in the build log that would be good enough.

|

1.0

|

Create a build that runs clang-tidy - At the end of this task we will have a build in the matrix that runs clang-tidy. Ideally the results will be pushed as comments to PRs, but even if they are in the build log that would be good enough.

|

test

|

create a build that runs clang tidy at the end of this task we will have a build in the matrix that runs clang tidy ideally the results will be pushed as comments to prs but even if they are in the build log that would be good enough

| 1

|

824,717

| 31,167,892,155

|

IssuesEvent

|

2023-08-16 21:22:06

|

michalspano/saol.se-cli

|

https://api.github.com/repos/michalspano/saol.se-cli

|

opened

|

Add support for nouns

|

feature priority:high

|

## Issue Description

<!-- Describe the idea (i.e. issue) in a comprehensive manner (natural language, requirement's specification, user story, etc.) -->

The `CLI` tool shall be able to query a **string** which holds a **noun**, store the noun in a specific data structure (i.e. order), and display the **grammatical phenomena** in a human-readable format to environment (i.e. a formatted sequence of characters printed to the standard output).

### Checklist

<!-- Provide acceptance criteria (i.e. checklist) for the proposed issue -->

- [ ] Data (such as the grammatical rules, etc.) are successfully extracted (_scraped_) from the web-based `saol` utility given a **string** that is lexically a **noun** (via a command-line argument).

- [ ] Such extracted data are stored in an efficient data structure/order (with the use of `Go`'s `struct` construct) are are made accessible in the required _"places"_ (such as function calls, parameters, etc.).

- [ ] The extracted data about a particular noun is displayed in the desired environment (e.g. standard output) in a human-readable format (based on the formatting given by the web-based `saol` utility).

- [ ] A noun which is not recognized by the web-based `saol` utility is handled in a way, such that the user is informed that the chosen noun is invalid.

<!-- Link issues that are related to the proposed issue (if not required, leave the following line commented) -->

<!--[Related to: #<issue_number_1>, ..., #<issue_number_n>]-->

### Demo

Let `-word=hus`, we run the _scraping_ against [svenska.se/saol/?sok=hus](https://svenska.se/saol/?sok=hus), which yields the following:

<img width="616" alt="Screenshot 2023-08-16 at 23 19 04" src="https://github.com/michalspano/saol.se-cli/assets/71947840/f801fca7-7d0c-4bc6-b89e-5c101f100613">

In the CLI-based format, we'd expect the following formatting (having the same query):

```txt

hus substantiv ~et; pl. ~

<INSERT_SEMANTICS>

Singular

ett hus obestämd form

ett hus obestämd form genitiv

huset bestämd form

husets bestämd form genitiv

Plural

hus obestämd form

hus obestämd form genitiv

husen bestämd form

husens bestämd form genitiv

Övrig(a) form(er)

huse i vissa uttryck

```

|

1.0

|

Add support for nouns - ## Issue Description

<!-- Describe the idea (i.e. issue) in a comprehensive manner (natural language, requirement's specification, user story, etc.) -->

The `CLI` tool shall be able to query a **string** which holds a **noun**, store the noun in a specific data structure (i.e. order), and display the **grammatical phenomena** in a human-readable format to environment (i.e. a formatted sequence of characters printed to the standard output).

### Checklist

<!-- Provide acceptance criteria (i.e. checklist) for the proposed issue -->

- [ ] Data (such as the grammatical rules, etc.) are successfully extracted (_scraped_) from the web-based `saol` utility given a **string** that is lexically a **noun** (via a command-line argument).

- [ ] Such extracted data are stored in an efficient data structure/order (with the use of `Go`'s `struct` construct) are are made accessible in the required _"places"_ (such as function calls, parameters, etc.).

- [ ] The extracted data about a particular noun is displayed in the desired environment (e.g. standard output) in a human-readable format (based on the formatting given by the web-based `saol` utility).

- [ ] A noun which is not recognized by the web-based `saol` utility is handled in a way, such that the user is informed that the chosen noun is invalid.

<!-- Link issues that are related to the proposed issue (if not required, leave the following line commented) -->

<!--[Related to: #<issue_number_1>, ..., #<issue_number_n>]-->

### Demo

Let `-word=hus`, we run the _scraping_ against [svenska.se/saol/?sok=hus](https://svenska.se/saol/?sok=hus), which yields the following:

<img width="616" alt="Screenshot 2023-08-16 at 23 19 04" src="https://github.com/michalspano/saol.se-cli/assets/71947840/f801fca7-7d0c-4bc6-b89e-5c101f100613">

In the CLI-based format, we'd expect the following formatting (having the same query):

```txt

hus substantiv ~et; pl. ~

<INSERT_SEMANTICS>

Singular

ett hus obestämd form

ett hus obestämd form genitiv

huset bestämd form

husets bestämd form genitiv

Plural

hus obestämd form

hus obestämd form genitiv

husen bestämd form

husens bestämd form genitiv

Övrig(a) form(er)

huse i vissa uttryck

```

|

non_test

|

add support for nouns issue description the cli tool shall be able to query a string which holds a noun store the noun in a specific data structure i e order and display the grammatical phenomena in a human readable format to environment i e a formatted sequence of characters printed to the standard output checklist data such as the grammatical rules etc are successfully extracted scraped from the web based saol utility given a string that is lexically a noun via a command line argument such extracted data are stored in an efficient data structure order with the use of go s struct construct are are made accessible in the required places such as function calls parameters etc the extracted data about a particular noun is displayed in the desired environment e g standard output in a human readable format based on the formatting given by the web based saol utility a noun which is not recognized by the web based saol utility is handled in a way such that the user is informed that the chosen noun is invalid demo let word hus we run the scraping against which yields the following img width alt screenshot at src in the cli based format we d expect the following formatting having the same query txt hus substantiv et pl singular ett hus obestämd form ett hus obestämd form genitiv huset bestämd form husets bestämd form genitiv plural hus obestämd form hus obestämd form genitiv husen bestämd form husens bestämd form genitiv övrig a form er huse i vissa uttryck

| 0

|

3,417

| 2,671,724,713

|

IssuesEvent

|

2015-03-24 09:25:32

|

radare/radare2

|

https://api.github.com/repos/radare/radare2

|

closed

|

aa0 broken by a commit

|

bug regression test-attached

|

Radare2-Regressions-format-elf shows a regression coming from e1edc18a688a47c02b0a346b4cab276778e46430 @alvarofe

http://ci.rada.re/view/File%20Formats/job/radare2-regressions-formats-elf/2142/console

[XX] 5 helloworld-gcc-elf: flags spaces after analysis

|

1.0

|

aa0 broken by a commit - Radare2-Regressions-format-elf shows a regression coming from e1edc18a688a47c02b0a346b4cab276778e46430 @alvarofe

http://ci.rada.re/view/File%20Formats/job/radare2-regressions-formats-elf/2142/console

[XX] 5 helloworld-gcc-elf: flags spaces after analysis

|

test

|

broken by a commit regressions format elf shows a regression coming from alvarofe helloworld gcc elf flags spaces after analysis

| 1

|

20,658

| 6,077,146,614

|

IssuesEvent

|

2017-06-16 02:27:09

|

dotnet/coreclr

|

https://api.github.com/repos/dotnet/coreclr

|

closed

|

[RyuJIT/arm32] Assertion failed 'isDoubleReg(reg1)'

|

arch-arm32 area-CodeGen bug

|

At emitarm.cpp Line: 2083

Example:

```

Assert failure(PID 4252 [0x0000109c], Thread: 11220 [0x2bd4]): Assertion failed 'isDoubleReg(reg1)' in 'FractalPerf.Julia:Render():double:this' (IL size 160)

File: c:\gh\coreclr\src\jit\emitarm.cpp Line: 2083

Image: c:\brucefo\tests\Windows_NT.arm.Checked\Tests\Core_Root\CoreRun.exe

```

Tests with this assert:

```

JIT\Performance\CodeQuality\FractalPerf\FractalPerf\FractalPerf.cmd

```

|

1.0

|

[RyuJIT/arm32] Assertion failed 'isDoubleReg(reg1)' - At emitarm.cpp Line: 2083

Example:

```

Assert failure(PID 4252 [0x0000109c], Thread: 11220 [0x2bd4]): Assertion failed 'isDoubleReg(reg1)' in 'FractalPerf.Julia:Render():double:this' (IL size 160)

File: c:\gh\coreclr\src\jit\emitarm.cpp Line: 2083

Image: c:\brucefo\tests\Windows_NT.arm.Checked\Tests\Core_Root\CoreRun.exe

```

Tests with this assert:

```

JIT\Performance\CodeQuality\FractalPerf\FractalPerf\FractalPerf.cmd

```

|

non_test

|

assertion failed isdoublereg at emitarm cpp line example assert failure pid thread assertion failed isdoublereg in fractalperf julia render double this il size file c gh coreclr src jit emitarm cpp line image c brucefo tests windows nt arm checked tests core root corerun exe tests with this assert jit performance codequality fractalperf fractalperf fractalperf cmd

| 0

|

149,352

| 11,890,781,444

|

IssuesEvent

|

2020-03-28 19:57:29

|

eBay/skin

|

https://api.github.com/repos/eBay/skin

|

closed

|

Storybook & Percy: Phase 2

|

aspect: percy aspect: storybook aspect: tests fellowship: good first project resolution: done

|

We've ported over a good deal of the old test pages to Storybook stories, and we have Percy running visual regression tests against those stories (either manually or via CI). Phase 1 complete!

Phase 2 is to have another sweep through the stories, have a bit of a cleanup, and ensure there is only one instance of a module per story. We still have quite a few stories that are showing multiple states of a module in a single story (for example, expanded and collapsed, or button & fake button).

|

1.0

|

Storybook & Percy: Phase 2 - We've ported over a good deal of the old test pages to Storybook stories, and we have Percy running visual regression tests against those stories (either manually or via CI). Phase 1 complete!

Phase 2 is to have another sweep through the stories, have a bit of a cleanup, and ensure there is only one instance of a module per story. We still have quite a few stories that are showing multiple states of a module in a single story (for example, expanded and collapsed, or button & fake button).

|

test

|

storybook percy phase we ve ported over a good deal of the old test pages to storybook stories and we have percy running visual regression tests against those stories either manually or via ci phase complete phase is to have another sweep through the stories have a bit of a cleanup and ensure there is only one instance of a module per story we still have quite a few stories that are showing multiple states of a module in a single story for example expanded and collapsed or button fake button

| 1

|

244,361

| 20,626,378,254

|

IssuesEvent

|

2022-03-07 23:08:08

|

coder/code-server

|

https://api.github.com/repos/coder/code-server

|

closed

|

[Testing]: write tests for logLevel in setDefaults

|

testing

|

We're missing coverage for L490-492 where we set logLevel to Error.

```typescript

case LogLevel.Error:

logger.level = Level.Error

args.verbose = false

break

```

https://github.com/coder/code-server/blob/main/src/node/cli.ts#L490-L492

write test for `setDefaults` and pass in error for log level. assert level and verbose

|

1.0

|

[Testing]: write tests for logLevel in setDefaults - We're missing coverage for L490-492 where we set logLevel to Error.

```typescript

case LogLevel.Error:

logger.level = Level.Error

args.verbose = false

break

```

https://github.com/coder/code-server/blob/main/src/node/cli.ts#L490-L492

write test for `setDefaults` and pass in error for log level. assert level and verbose

|

test

|

write tests for loglevel in setdefaults we re missing coverage for where we set loglevel to error typescript case loglevel error logger level level error args verbose false break write test for setdefaults and pass in error for log level assert level and verbose

| 1

|

40,930

| 12,800,077,605

|

IssuesEvent

|

2020-07-02 16:25:44

|

flyingcircusio/nixpkgs

|

https://api.github.com/repos/flyingcircusio/nixpkgs

|

opened

|

Vulnerability roundup 4: i2p-0.9.39: 1 advisory [7.8]

|

1.severity: security

|

[search](https://search.nix.gsc.io/?q=i2p&i=fosho&repos=NixOS-nixpkgs), [files](https://github.com/NixOS/nixpkgs/search?utf8=%E2%9C%93&q=i2p+in%3Apath&type=Code)

* [ ] [CVE-2020-13431](https://nvd.nist.gov/vuln/detail/CVE-2020-13431) CVSSv3=7.8 (nixos-19.03)

Scanned versions: nixos-19.03: f156ee5cbf2. May contain false positives.

|

True

|

Vulnerability roundup 4: i2p-0.9.39: 1 advisory [7.8] - [search](https://search.nix.gsc.io/?q=i2p&i=fosho&repos=NixOS-nixpkgs), [files](https://github.com/NixOS/nixpkgs/search?utf8=%E2%9C%93&q=i2p+in%3Apath&type=Code)

* [ ] [CVE-2020-13431](https://nvd.nist.gov/vuln/detail/CVE-2020-13431) CVSSv3=7.8 (nixos-19.03)

Scanned versions: nixos-19.03: f156ee5cbf2. May contain false positives.

|

non_test

|

vulnerability roundup advisory nixos scanned versions nixos may contain false positives

| 0

|

335,687

| 30,082,038,080

|

IssuesEvent

|

2023-06-29 04:56:53

|

cockroachdb/cockroach

|

https://api.github.com/repos/cockroachdb/cockroach

|

closed

|

roachtest: failover/liveness/blackhole-recv/lease=expiration failed

|

C-test-failure O-robot O-roachtest branch-master release-blocker T-kv

|

roachtest.failover/liveness/blackhole-recv/lease=expiration [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestWeeklyBazel/10720111?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestWeeklyBazel/10720111?buildTab=artifacts#/failover/liveness/blackhole-recv/lease=expiration) on master @ [7fd4c21157221eae9e7d5892d89d2b5a671aba3e](https://github.com/cockroachdb/cockroach/commits/7fd4c21157221eae9e7d5892d89d2b5a671aba3e):

```

(assertions.go:333).Fail:

Error Trace: github.com/cockroachdb/cockroach/pkg/cmd/roachtest/tests/failover.go:1665

github.com/cockroachdb/cockroach/pkg/cmd/roachtest/tests/failover.go:911

github.com/cockroachdb/cockroach/pkg/cmd/roachtest/tests/failover.go:155

main/pkg/cmd/roachtest/test_runner.go:1060

GOROOT/src/runtime/asm_amd64.s:1594

Error: Received unexpected error:

pq: error getting span statistics - number of spans in request payload (1052) exceeds 'server.span_stats.span_batch_limit' cluster setting limit (500)

Test: failover/liveness/blackhole-recv/lease=expiration

(require.go:1360).NoError: FailNow called

(cluster.go:2279).Run: cluster.RunE: context canceled

test artifacts and logs in: /artifacts/failover/liveness/blackhole-recv/lease=expiration/run_1

```
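The error above reports 1052 spans in one request against a limit of 500. One generic way for a caller to stay under a per-request limit like this is to split the spans into batches. The sketch below is illustrative only: `batched` is a hypothetical helper, the integer list stands in for real spans, and nothing here is taken from the roachtest or CockroachDB code.

```python
from typing import Iterator, List, Sequence, TypeVar

T = TypeVar("T")


def batched(items: Sequence[T], limit: int) -> Iterator[List[T]]:
    """Yield consecutive slices of `items`, each with at most `limit` elements."""
    if limit <= 0:
        raise ValueError("limit must be positive")
    for start in range(0, len(items), limit):
        yield list(items[start:start + limit])


# Stand-in for the 1052 spans from the error message (assumed data).
spans = list(range(1052))

# Sizes of the batches that would be sent with a limit of 500.
batch_sizes = [len(batch) for batch in batched(spans, 500)]
```

With these assumed inputs the request would be split into three batches, none larger than the limit.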

<p>Parameters: <code>ROACHTEST_arch=amd64</code>

, <code>ROACHTEST_cloud=gce</code>

, <code>ROACHTEST_cpu=2</code>

, <code>ROACHTEST_encrypted=false</code>

, <code>ROACHTEST_fs=ext4</code>

, <code>ROACHTEST_localSSD=true</code>

, <code>ROACHTEST_ssd=0</code>

</p>

<details><summary>Help</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

See: [How To Investigate \(internal\)](https://cockroachlabs.atlassian.net/l/c/SSSBr8c7)

</p>

</details>

/cc @cockroachdb/kv-triage

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*failover/liveness/blackhole-recv/lease=expiration.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

Jira issue: CRDB-29184

|

2.0

|

roachtest: failover/liveness/blackhole-recv/lease=expiration failed - roachtest.failover/liveness/blackhole-recv/lease=expiration [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestWeeklyBazel/10720111?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestWeeklyBazel/10720111?buildTab=artifacts#/failover/liveness/blackhole-recv/lease=expiration) on master @ [7fd4c21157221eae9e7d5892d89d2b5a671aba3e](https://github.com/cockroachdb/cockroach/commits/7fd4c21157221eae9e7d5892d89d2b5a671aba3e):

```

(assertions.go:333).Fail:

Error Trace: github.com/cockroachdb/cockroach/pkg/cmd/roachtest/tests/failover.go:1665

github.com/cockroachdb/cockroach/pkg/cmd/roachtest/tests/failover.go:911

github.com/cockroachdb/cockroach/pkg/cmd/roachtest/tests/failover.go:155

main/pkg/cmd/roachtest/test_runner.go:1060

GOROOT/src/runtime/asm_amd64.s:1594

Error: Received unexpected error:

pq: error getting span statistics - number of spans in request payload (1052) exceeds 'server.span_stats.span_batch_limit' cluster setting limit (500)

Test: failover/liveness/blackhole-recv/lease=expiration

(require.go:1360).NoError: FailNow called

(cluster.go:2279).Run: cluster.RunE: context canceled

test artifacts and logs in: /artifacts/failover/liveness/blackhole-recv/lease=expiration/run_1

```

<p>Parameters: <code>ROACHTEST_arch=amd64</code>

, <code>ROACHTEST_cloud=gce</code>

, <code>ROACHTEST_cpu=2</code>

, <code>ROACHTEST_encrypted=false</code>

, <code>ROACHTEST_fs=ext4</code>

, <code>ROACHTEST_localSSD=true</code>

, <code>ROACHTEST_ssd=0</code>

</p>

<details><summary>Help</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

See: [How To Investigate \(internal\)](https://cockroachlabs.atlassian.net/l/c/SSSBr8c7)

</p>

</details>

/cc @cockroachdb/kv-triage

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*failover/liveness/blackhole-recv/lease=expiration.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

Jira issue: CRDB-29184

|

test

|

roachtest failover liveness blackhole recv lease expiration failed roachtest failover liveness blackhole recv lease expiration with on master assertions go fail error trace github com cockroachdb cockroach pkg cmd roachtest tests failover go github com cockroachdb cockroach pkg cmd roachtest tests failover go github com cockroachdb cockroach pkg cmd roachtest tests failover go main pkg cmd roachtest test runner go goroot src runtime asm s error received unexpected error pq error getting span statistics number of spans in request payload exceeds server span stats span batch limit cluster setting limit test failover liveness blackhole recv lease expiration require go noerror failnow called cluster go run cluster rune context canceled test artifacts and logs in artifacts failover liveness blackhole recv lease expiration run parameters roachtest arch roachtest cloud gce roachtest cpu roachtest encrypted false roachtest fs roachtest localssd true roachtest ssd help see see cc cockroachdb kv triage jira issue crdb

| 1

|

226,379

| 18,014,998,658

|

IssuesEvent

|

2021-09-16 13:03:15

|

TTitcombe/Greta

|

https://api.github.com/repos/TTitcombe/Greta

|

opened

|

Reduce "responses" test repetition

|

testing

|

We use the "responses" library to mock out API calls. Currently this requires a `@responses.activate` wrapper on every test function, plus setup of the responses data.

Find a way to simplify this in test files so that the responses setup code does not have to be repeated so often.

|

1.0

|

Reduce "responses" test repetition - We use the "responses" library to mock out API calls. Currently this requires a `@responses.activate` wrapper on every test function, plus setup of the responses data.

Find a way to simplify this in test files so that the responses setup code does not have to be repeated so often.

|

test

|

reduce responses test repetition we use responses library to mock out api calls currently this requires a responses active wrapper on every test function and a responses data setup find a way to simplify this for test files so responses code does not need to be written so much

| 1

|

7,565

| 3,103,030,667

|

IssuesEvent

|

2015-08-31 06:43:49

|

saltstack/salt

|

https://api.github.com/repos/saltstack/salt

|

reopened

|

salt-cloud (EC2): Salt-Minion-2014.7.1-AMD64-Setup.exe doesn't install salt-minion service

|

Bug Documentation High Severity P2 Platform Regression Salt-Cloud Windows

|

Hi,

while bootstrapping a Windows Server 2008R2 AWS instance via salt-cloud, the Salt Minion installer (2014.7.1) doesn't create the salt-minion service. OTOH, salt-cloud doesn't notice it's not there:

```

[INFO ] Running command under pid 17978: 'winexe -U Administrator%secret_passwd //10.11.12.13 "sc stop salt-minion"'

[INFO ] Running command under pid 17980: 'winexe -U Administrator%secret_passwd //10.11.12.13 "sc start salt-minion"'

[INFO ] Salt installed on salt-cloud-test

[INFO ] Created Cloud VM 'salt-cloud-test'

```

When running the installer manually, the service IS created, though.

Bye...

Dirk

|

1.0

|

salt-cloud (EC2): Salt-Minion-2014.7.1-AMD64-Setup.exe doesn't install salt-minion service - Hi,

while bootstrapping a Windows Server 2008R2 AWS instance via salt-cloud, the Salt Minion installer (2014.7.1) doesn't create the salt-minion service. OTOH, salt-cloud doesn't notice it's not there:

```

[INFO ] Running command under pid 17978: 'winexe -U Administrator%secret_passwd //10.11.12.13 "sc stop salt-minion"'

[INFO ] Running command under pid 17980: 'winexe -U Administrator%secret_passwd //10.11.12.13 "sc start salt-minion"'

[INFO ] Salt installed on salt-cloud-test

[INFO ] Created Cloud VM 'salt-cloud-test'

```

When running the installer manually, the service IS created, though.

Bye...

Dirk

|

non_test

|

salt cloud salt minion setup exe doesn t install salt minion service hi while bootstrapping a windows server aws instance via salt cloud the salt minion installer doesn t create the salt minion service otoh salt cloud doesn t notice it s not there running command under pid winexe u administrator secret passwd sc stop salt minion running command under pid winexe u administrator secret passwd sc start salt minion salt installed on salt cloud test created cloud vm salt cloud test when running the installer manually the service is created though bye dirk

| 0

|

248,592

| 21,043,131,398

|

IssuesEvent

|

2022-03-31 13:57:13

|

zkSNACKs/WalletWasabi

|

https://api.github.com/repos/zkSNACKs/WalletWasabi

|

closed

|

Fee slider Continue button issue

|

debug UI ww2 testing

|

1. Open the fee slider while it is somewhere in the middle.

2. Click Continue without changing anything.

Notice that the tx fee decreases by a couple of sats every time you repeat this.

|

1.0

|

Fee slider Continue button issue - 1. Open the fee slider while it is somewhere in the middle.

2. Click Continue without changing anything.

Notice that the tx fee decreases by a couple of sats every time you repeat this.

|

test

|

fee slider continue button issue open the fee slider while it is somewhere in the middle click continue without changing anything notice that the tx fee decreases with a couple of sats whenever you repeat that

| 1

|

753,291

| 26,343,594,949

|

IssuesEvent

|

2023-01-10 19:52:18

|

KingSupernova31/RulesGuru

|

https://api.github.com/repos/KingSupernova31/RulesGuru

|

closed

|

Quotes in card names break things

|

bug medium priority good first issue

|

Quotes don't seem to be properly escaped somewhere. The card `Pang Tong, "Young Phoenix"` shows up as just `Pang Tong, ` in the list of card names.
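One way this symptom can arise is a card name being written into a double-quoted HTML attribute without escaping, so the attribute value ends at the first `"`. The following is a minimal Python sketch of that failure mode and one fix. It is an assumption about the cause, not RulesGuru's actual code, and `option_tag` is a hypothetical helper.

```python
import html


def option_tag(card_name: str, escape: bool = True) -> str:
    """Hypothetical helper: render a card name as the value of an <option> tag."""
    # html.escape with quote=True also converts " and ' to character references.
    value = html.escape(card_name, quote=True) if escape else card_name
    return f'<option value="{value}">'


name = 'Pang Tong, "Young Phoenix"'

# Without escaping, the attribute value is cut off after 'Pang Tong, '.
unsafe = option_tag(name, escape=False)

# With escaping, the inner quotes become &quot; and the full name survives.
safe = option_tag(name)
```

A browser parsing the unescaped tag would read the attribute value as `Pang Tong, `, which matches the truncated name described above.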

|

1.0

|

Quotes in card names break things - Quotes don't seem to be properly escaped somewhere. The card `Pang Tong, "Young Phoenix"` shows up as just `Pang Tong, ` in the list of card names.

|

non_test

|

quotes in card names break things quotes don t seem to be properly escaped somewhere the card pang tong young phoenix shows up as just pang tong in the list of card names

| 0

|

280,725

| 24,326,941,240

|

IssuesEvent

|

2022-09-30 15:33:57

|

void-linux/void-packages

|

https://api.github.com/repos/void-linux/void-packages

|

closed

|

ZFS DKMS build fails on freshly installed system

|

bug needs-testing

|

### Is this a new report?

Yes

### System Info

Void 5.19.10_1 x86_64 GenuineIntel/VM uptodate rFF

### Package(s) Affected

zfs-2.1.5_3

### Does a report exist for this bug with the project's home (upstream) and/or another distro?

_No response_

### Expected behaviour

I installed a fresh Void system, updated with xbps-install -Su, rebooted into kernel 5.19 (also tested with linux-lts, same result), and attempted to install ZFS.

### Actual behaviour

On installing (and reinstalling) ZFS, this is the end of the xbps output:

```

zfs-2.1.5_3: configuring ...

Added DKMS module 'zfs-2.1.5'.

Skipping kernel-5.13.19_1. kernel-headers package not installed...

Building DKMS module 'zfs-2.1.5' for kernel-5.19.10_1... Killed

```

Manually building with the DKMS module (sudo dkms build zfs/2.1.5) ended with a segmentation fault in gcc and the attached [make.log](https://github.com/void-linux/void-packages/files/9682842/make.log) file.

### Steps to reproduce

(Please note, this is my first time using void. I reinstalled and tried again to make sure it wasn't a fluke)

1. Install a fresh void system (tried with both local and netinstall)

2. Sync/update system and reboot

3. xbps-install zfs

4. DKMS fails, even on kernels <5.18

|

1.0

|

ZFS DKMS build fails on freshly installed system - ### Is this a new report?

Yes

### System Info

Void 5.19.10_1 x86_64 GenuineIntel/VM uptodate rFF

### Package(s) Affected

zfs-2.1.5_3

### Does a report exist for this bug with the project's home (upstream) and/or another distro?

_No response_

### Expected behaviour

I installed a fresh Void system, updated with xbps-install -Su, rebooted into kernel 5.19 (also tested with linux-lts, same result), and attempted to install ZFS.

### Actual behaviour

On installing (and reinstalling) ZFS, this is the end of the xbps output:

```

zfs-2.1.5_3: configuring ...

Added DKMS module 'zfs-2.1.5'.

Skipping kernel-5.13.19_1. kernel-headers package not installed...

Building DKMS module 'zfs-2.1.5' for kernel-5.19.10_1... Killed

```

Manually building with the DKMS module (sudo dkms build zfs/2.1.5) ended with a segmentation fault in gcc and the attached [make.log](https://github.com/void-linux/void-packages/files/9682842/make.log) file.

### Steps to reproduce

(Please note, this is my first time using void. I reinstalled and tried again to make sure it wasn't a fluke)

1. Install a fresh void system (tried with both local and netinstall)

2. Sync/update system and reboot

3. xbps-install zfs

4. DKMS fails, even on kernels <5.18

|

test

|

zfs dkms build fails on freshly installed system is this a new report yes system info void genuineintel vm uptodate rff package s affected zfs does a report exist for this bug with the project s home upstream and or another distro no response expected behaviour i installed a fresh void system updated with xbps install su rebooted into kernel also tested with linux lts same result and attempted to install zfs actual behaviour on installing and reinstalling zfs this is the end of the xbps output zfs configuring added dkms module zfs skipping kernel kernel headers package not installed building dkms module zfs for kernel killed manually building with the dkms module sudo dkms build zfs ended with a segmentation fault in gcc and the attached file steps to reproduce please note this is my first time using void i reinstalled and tried again to make sure it wasn t a fluke install a fresh void system tried with both local and netinstall sync update system and reboot xbps install zfs dkms fails even on kernels

| 1

|

32,989

| 6,992,159,906

|

IssuesEvent

|

2017-12-15 04:48:04

|

Quantum64/ExGregilo

|

https://api.github.com/repos/Quantum64/ExGregilo

|

closed

|

Issue with Auto Sieve recipe

|

defect

|

I cannot craft the Auto Sieve using an LV Electric Motor I made myself, but if I swap the motor for an LV Electric Motor that I cheated in using NEI, the recipe completes.

Edit: I have done further testing and this also happens with the Conveyor Module, Robot Arm and Piston as well.

|

1.0

|

Issue with Auto Sieve recipe - I cannot craft the Auto Sieve using an LV Electric Motor I made myself, but if I swap the motor for an LV Electric Motor that I cheated in using NEI, the recipe completes.

Edit: I have done further testing and this also happens with the Conveyor Module, Robot Arm and Piston as well.

|

non_test

|

issue with auto sieve recipe i can not craft the auto sieve using a lv electric motor i made myself but if i swap the motor with a lv electric motor that i cheated in using nei the recipe is complete edit i have done further testing and this also happens with the conveyor module robot arm and piston as well

| 0

|

350,513

| 31,898,394,984

|

IssuesEvent

|

2023-09-18 05:25:26

|

NVIDIA/spark-rapids

|

https://api.github.com/repos/NVIDIA/spark-rapids

|

closed

|

Statistics tests for Parquet files written by GPU

|

test task

|

When the GPU writes a Parquet file, it's important to get the file, row group, and page statistics correct. We should have better tests, ideally including tests at larger scale than a few thousand rows typical for unit/integration tests, for verifying the statistics written to the Parquet file are correct.

|

1.0

|

Statistics tests for Parquet files written by GPU - When the GPU writes a Parquet file, it's important to get the file, row group, and page statistics correct. We should have better tests, ideally including tests at larger scale than a few thousand rows typical for unit/integration tests, for verifying the statistics written to the Parquet file are correct.

|

test

|

statistics tests for parquet files written by gpu when the gpu writes a parquet file it s important to get the file row group and page statistics correct we should have better tests ideally including tests at larger scale than a few thousand rows typical for unit integration tests for verifying the statistics written to the parquet file are correct

| 1

|

156,830

| 19,907,448,563

|

IssuesEvent

|

2022-01-25 14:11:46

|

Recidiviz/supervision-success-component

|

https://api.github.com/repos/Recidiviz/supervision-success-component

|

closed

|

Security Alert - Package: node-forge; Severity: LOW; Vuln ID: GHSA-gf8q-jrpm-jvxq

|

Subject: Security Subject: Vulnerability Severity: LOW

|

---

due: 2022-04-24

---

A new vulnerability has been reported by Dependabot. The criticality of this vulnerability is LOW.

LOW vulnerabilities have an SLA of 90 days according to our policy.

Affected package: node-forge

Ecosystem: NPM

Affected version range: < 1.0.0

Summary: URL parsing in node-forge could lead to undesired behavior.

Description: ### Impact

The regex used for the `forge.util.parseUrl` API would not properly parse certain inputs resulting in a parsed data structure that could lead to undesired behavior.

### Patches

`forge.util.parseUrl` and other very old related URL APIs were removed in 1.0.0 in favor of letting applications use the more modern WHATWG URL Standard API.

### Workarounds

Ensure code does not directly or indirectly call `forge.util.parseUrl` with untrusted input.

### References

- https://www.huntr.dev/bounties/41852c50-3c6d-4703-8c55-4db27164a4ae/

### For more information

If you have any questions or comments about this advisory:

* Open an issue in [forge](https://github.com/digitalbazaar/forge)

* Email us at support@digitalbazaar.com

identifiers: [{'type': 'GHSA', 'value': 'GHSA-gf8q-jrpm-jvxq'}]

Fixed Version: 1.0.0

Created Date = January 18, 2022

***Additional Context***

https://github.com/Recidiviz/supervision-success-component/security/dependabot?q=is%3Aopen+sort%3Anewest

|

True

|

Security Alert - Package: node-forge; Severity: LOW; Vuln ID: GHSA-gf8q-jrpm-jvxq -

---

due: 2022-04-24

---

A new vulnerability has been reported by Dependabot. The criticality of this vulnerability is LOW.

LOW vulnerabilities have an SLA of 90 days according to our policy.

Affected package: node-forge

Ecosystem: NPM

Affected version range: < 1.0.0

Summary: URL parsing in node-forge could lead to undesired behavior.

Description: ### Impact

The regex used for the `forge.util.parseUrl` API would not properly parse certain inputs resulting in a parsed data structure that could lead to undesired behavior.

### Patches

`forge.util.parseUrl` and other very old related URL APIs were removed in 1.0.0 in favor of letting applications use the more modern WHATWG URL Standard API.

### Workarounds

Ensure code does not directly or indirectly call `forge.util.parseUrl` with untrusted input.

### References

- https://www.huntr.dev/bounties/41852c50-3c6d-4703-8c55-4db27164a4ae/

### For more information

If you have any questions or comments about this advisory:

* Open an issue in [forge](https://github.com/digitalbazaar/forge)

* Email us at support@digitalbazaar.com

identifiers: [{'type': 'GHSA', 'value': 'GHSA-gf8q-jrpm-jvxq'}]

Fixed Version: 1.0.0

Created Date = January 18, 2022

***Additional Context***

https://github.com/Recidiviz/supervision-success-component/security/dependabot?q=is%3Aopen+sort%3Anewest

|

non_test

|

security alert package node forge severity low vuln id ghsa jrpm jvxq due a new vulnerability has been reported by dependabot the criticality of this vulnerability is low low vulnerabilities have an sla of days according to our policy affected package node forge ecosystem npm affected version range summary url parsing in node forge could lead to undesired behavior description impact the regex used for the forge util parseurl api would not properly parse certain inputs resulting in a parsed data structure that could lead to undesired behavior patches forge util parseurl and other very old related url apis were removed in in favor of letting applications use the more modern whatwg url standard api workarounds ensure code does not directly or indirectly call forge util parseurl with untrusted input references for more information if you have any questions or comments about this advisory open an issue in email us at support digitalbazaar com identifiers fixed version created date january additional context

| 0

|

322,001

| 23,884,206,862

|

IssuesEvent

|

2022-09-08 06:01:59

|

grindylow/ahoy

|

https://api.github.com/repos/grindylow/ahoy

|

closed

|

Feature Request: Show the limit on the website in the selected unit, "percent" or "watt"

|

documentation enhancement wontfix ESP

|

When I limit my HM-600 to 320 W, I also see this value in the serial debug output, and after a short time the inverter settles at this value. I think that is a great feature.

However, ahoy seems to always convert this to percent internally, because the website then shows e.g. 53%. I find this a bit confusing:

I suggest displaying the configured value on the website in percent or watts, depending on how it was set. I would find it even more helpful to add "persistent" or "non persistent", so that one can see at a glance what exactly was configured.

|

1.0

|

Feature Request: Show the limit on the website in the selected unit, "percent" or "watt" - When I limit my HM-600 to 320 W, I also see this value in the serial debug output, and after a short time the inverter settles at this value. I think that is a great feature.

However, ahoy seems to always convert this to percent internally, because the website then shows e.g. 53%. I find this a bit confusing:

I suggest displaying the configured value on the website in percent or watts, depending on how it was set. I would find it even more helpful to add "persistent" or "non persistent", so that one can see at a glance what exactly was configured.

|

non_test

|

feature request show the limit on the website in the selected unit percent or watt when i limit my hm to w i also see this value in the serial debug output and after a short time the inverter settles at this value i think that is a great feature however ahoy seems to always convert this to percent internally because the website then shows e g i find this a bit confusing i suggest displaying the configured value on the website in percent or watts depending on how it was set i would find it even more helpful to add persistent or non persistent so that one can see at a glance what exactly was configured

| 0

|

245,204

| 20,752,623,906

|

IssuesEvent

|

2022-03-15 09:12:33

|

cockroachdb/cockroach

|

https://api.github.com/repos/cockroachdb/cockroach

|

opened

|

sql/rowexec: TestJoinReaderUsesBatchLimit failed

|

C-test-failure O-robot branch-master

|

sql/rowexec.TestJoinReaderUsesBatchLimit [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=4576247&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=4576247&tab=artifacts#/) on master @ [72b0b023832066469dd63017160653236187df6a](https://github.com/cockroachdb/cockroach/commits/72b0b023832066469dd63017160653236187df6a):

```

=== RUN TestJoinReaderUsesBatchLimit

test_log_scope.go:79: test logs captured to: /artifacts/tmp/_tmp/fffb2e619f32b4cb8b294b200fe6717b/logTestJoinReaderUsesBatchLimit1516313893

test_log_scope.go:80: use -show-logs to present logs inline

```

<details><summary>Help</summary>

<p>

See also: [How To Investigate a Go Test Failure \(internal\)](https://cockroachlabs.atlassian.net/l/c/HgfXfJgM)

Parameters in this failure:

- TAGS=bazel,gss,deadlock

</p>

</details>

/cc @cockroachdb/sql-queries

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*TestJoinReaderUsesBatchLimit.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

|

1.0

|

sql/rowexec: TestJoinReaderUsesBatchLimit failed - sql/rowexec.TestJoinReaderUsesBatchLimit [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=4576247&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=4576247&tab=artifacts#/) on master @ [72b0b023832066469dd63017160653236187df6a](https://github.com/cockroachdb/cockroach/commits/72b0b023832066469dd63017160653236187df6a):

```

=== RUN TestJoinReaderUsesBatchLimit

test_log_scope.go:79: test logs captured to: /artifacts/tmp/_tmp/fffb2e619f32b4cb8b294b200fe6717b/logTestJoinReaderUsesBatchLimit1516313893

test_log_scope.go:80: use -show-logs to present logs inline

```

<details><summary>Help</summary>

<p>

See also: [How To Investigate a Go Test Failure \(internal\)](https://cockroachlabs.atlassian.net/l/c/HgfXfJgM)

Parameters in this failure:

- TAGS=bazel,gss,deadlock

</p>

</details>

/cc @cockroachdb/sql-queries

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*TestJoinReaderUsesBatchLimit.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

|

test

|

sql rowexec testjoinreaderusesbatchlimit failed sql rowexec testjoinreaderusesbatchlimit with on master run testjoinreaderusesbatchlimit test log scope go test logs captured to artifacts tmp tmp test log scope go use show logs to present logs inline help see also parameters in this failure tags bazel gss deadlock cc cockroachdb sql queries

| 1

|

799,710

| 28,312,426,280

|

IssuesEvent

|

2023-04-10 16:34:57

|

dynamicslab/hydrogym

|

https://api.github.com/repos/dynamicslab/hydrogym

|

closed

|

PyTorch Update

|

priority Paper-Release

|

PyTorch should be updated to 2.0, ideally making use of the [core features](https://pytorch.org/blog/pytorch-2.0-release/) of the 2.0 release cycle.

|

1.0

|

PyTorch Update - PyTorch should be updated to 2.0, ideally making use of the [core features](https://pytorch.org/blog/pytorch-2.0-release/) of the 2.0 release cycle.

|

non_test

|

pytorch update pytorch to be update to and ideally utilize the of the release cycle

| 0

|

174,421

| 14,481,810,690

|

IssuesEvent

|

2020-12-10 13:09:46

|

Joystream/joystream

|

https://api.github.com/repos/Joystream/joystream

|

opened

|

Initial constitution

|

documentation

|

What should it contain?

- founding members list.

- social encoding standards for the chain?

- tbd.

|

1.0

|

Initial constitution - What should it contain?

- founding members list.

- social encoding standards for the chain?

- tbd.

|

non_test

|

initial constitution what should it contain founding members list social encoding standards for the chain tbd

| 0

|

222,854

| 17,497,067,457

|

IssuesEvent

|

2021-08-10 02:54:02

|

OvercastCommunity/CommunityMaps

|

https://api.github.com/repos/OvercastCommunity/CommunityMaps

|

closed

|

[CTW/40v40] chle_

|

map submission contest

|

### Checklist

Check what applies to you. *Add an X between the brackets or click the checkboxes when you have submitted the issue.*

- [x] I have [pruned](https://pgm.dev/docs/guides/packaging/pruning-chunks) the map

- [x] I have agreed with assigning the CC BY-SA license to this map, as mentioned in the README

- [x] I have created an XML file

- [x] I have created a map image

- [x] I have uploaded the map zip file to a file sharing service

- [x] The map has been tested locally to make sure it works

<img src="https://i.imgur.com/LC0aHbQ.png" alt="map" style="max-width:100%;">

# Map Name

chle_ (yeah, that's the name)

## Gamemode & Map Description

This map does not have a void players have to bridge over. Instead I went fully experimental and made a huge wall players have to dig through in order to get to the other side. While in the wall, players are given night vision and haste 2. The rest of the map is just straightforward. Oh, and no, the large roof supported by the pillars is just there for decoration.

The woolroom however, I believe it has the largest interior of any woolroom in OCN history, so be sure to check out every corner of it!

I have absolutely no idea how this is gonna play lmao

## Team Sizes

Red vs. Blue 40v40

## Screenshots

[here u go](https://imgur.com/a/AYxPmV4)

# XML

[XML](https://gist.github.com/chleongithub/cd69c8b7545a7c7c054c59779b96c037)

# Map Image

<img src="https://i.imgur.com/9pZHhZL.png" alt="map" style="max-width:100%;">

# Download Link

[Download](https://www.dropbox.com/s/i89krl5fziz4bzu/chle_.zip?dl=0)

|

1.0

|

[CTW/40v40] chle_ - ### Checklist

Check what applies to you. *Add an X between the brackets or click the checkboxes when you have submitted the issue.*

- [x] I have [pruned](https://pgm.dev/docs/guides/packaging/pruning-chunks) the map

- [x] I have agreed with assigning the CC BY-SA license to this map, as mentioned in the README

- [x] I have created an XML file

- [x] I have created an map image

- [x] I have uploaded the map zip file to a file sharing service

- [x] The map has been tested locally to make sure it works

<img src="https://i.imgur.com/LC0aHbQ.png" alt="map" style="max-width:100%;">

# Map Name

chle_ (yeah, that's the name)

## Gamemode & Map Description

This map does not have a void players have to bridge over. Instead I went fully experimental and made a huge wall players have to dig through in order to get to the other side. While in the wall, players are given night vision and haste 2. The rest of the map is just straightforward. Oh, and no, the large roof supported by the pillars is just there for decoration.

The woolroom however, I believe it has the largest interior of any woolroom in OCN history, so be sure to check out every corner of it!

I have absolutely no idea how this is gonna play lmao

## Team Sizes

Red vs. Blue 40v40

## Screenshots

[here u go](https://imgur.com/a/AYxPmV4)

# XML

[XML](https://gist.github.com/chleongithub/cd69c8b7545a7c7c054c59779b96c037)

# Map Image

<img src="https://i.imgur.com/9pZHhZL.png" alt="map" style="max-width:100%;">

# Download Link

[Download](https://www.dropbox.com/s/i89krl5fziz4bzu/chle_.zip?dl=0)

|

test

|

chle checklist check what applies to you add an x between the brackets or click the checkboxes when you have submitted the issue i have the map i have agreed with assigning the cc by sa license to this map as mentioned in the readme i have created an xml file i have created an map image i have uploaded the map zip file to a file sharing service the map has been tested locally to make sure it works map name chle yeah thats the name gamemode map description this map does not have a void players have to bridge over instead i went fully experimental and made a huge wall players have to dig through in order to get to the other side while in the wall players are given night vision and haste the rest of the map is just straight forward oh and no the large roof supported by the pillars is just there for decoration the woolroom however i believe it has the largest interior of any woolroom in ocn history so be sure to check out every corner of it i have absolutely no idea how this is gonna play lmao team sizes red vs blue screenshots xml map image download link

| 1

|

18,461

| 2,615,171,586

|

IssuesEvent

|

2015-03-01 06:53:34

|

chrsmith/html5rocks

|

https://api.github.com/repos/chrsmith/html5rocks

|

opened

|

Updating your Resource Section

|

auto-migrated Priority-P2 Type-Bug

|

```

Hi, first of all I would like to show my thanks to any researchers, developers

or designers that have developed HTML5Rocks. It has been and will continue to be a

superb resource for all things HTML5.

A few months ago I developed a similar site (http://www.html5tuts.co.uk) for a

university project and I have recently been updating it in terms of content and

the design of the website. The reason I decided to send this message is because

I noticed in your resource section that you have a number of good sites but it

hasn't been updated for a while. As HTML5 is all about spreading the word and

pushing the standard out to as many people as possible I thought I would ask if

you could feature my site on the resource page?

Secondly, I wanted to ask about the possibility of writing content for the

website. I'd need to go here:

http://code.google.com/p/html5rocks/wiki/ContributorsGuide to test my article

but I wondered if there were any particular topics that the HTML5Rocks team

would like on the site at the moment?

Thanks and Best Regards,

Alexander Jones

```

Original issue reported on code.google.com by `aljones....@gmail.com` on 7 Dec 2011 at 2:29

|

1.0

|

Updating your Resource Section - ```

Hi, first of all I would like to show my thanks to any researchers, developers

or designers that have developed HTML5Rocks. It has been and will continue to be a

superb resource for all things HTML5.

A few months ago I developed a similar site (http://www.html5tuts.co.uk) for a

university project and I have recently been updating it in terms of content and

the design of the website. The reason I decided to send this message is because

I noticed in your resource section that you have a number of good sites but it

hasn't been updated for a while. As HTML5 is all about spreading the word and

pushing the standard out to as many people as possible I thought I would ask if

you could feature my site on the resource page?

Secondly, I wanted to ask about the possibility of writing content for the

website. I'd need to go here:

http://code.google.com/p/html5rocks/wiki/ContributorsGuide to test my article

but I wondered if there were any particular topics that the HTML5Rocks team

would like on the site at the moment?

Thanks and Best Regards,

Alexander Jones

```

Original issue reported on code.google.com by `aljones....@gmail.com` on 7 Dec 2011 at 2:29

|

non_test

|

updating your resource section hi first of all i would like to show my thanks to any researchers developers or designers that have developed it has and will continue to be a superb resource for all things a few months a go i developed a similar site for a university project and i have recently been updating it in terms of content and the design of the website the reason i decided to send this message is because i noticed in your resource section that you have a number of good sites but it hasnt been updated for a while as is all about spreading the word and pushing the standard out to as many people as possible i thought i would ask if you could feature my site on the resource page secondly i wanted to ask about the possiblity of writing content for the website i d need to go to here to test my article but i wondered if there were any particular topics that the team would like on the site at the moment thanks and best regards alexander jones original issue reported on code google com by aljones gmail com on dec at

| 0

|

13,232

| 22,346,754,366

|

IssuesEvent

|

2022-06-15 08:30:08

|

4l3x-suvnet/todo_calendar

|

https://api.github.com/repos/4l3x-suvnet/todo_calendar

|

closed

|

Save todo's in local storage

|

additional requirement

|

The todos should persist across page refreshes by being saved in local storage, using localStorage with getItem and setItem(?)
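
A minimal sketch of how this could look, assuming the todos are a plain array that can be serialized as JSON. The `Todo` shape, the `TODOS_KEY` key, the helper names, and the `MemoryStorage` stand-in are all hypothetical and not taken from the project; only `getItem` and `setItem` come from the Web Storage API named in the issue.

```typescript
// Hypothetical shape of a todo item; the issue does not define one.
interface Todo {
  id: number;
  title: string;
  done: boolean;
}

// The subset of the Web Storage API used here. window.localStorage
// satisfies this interface, and so does the in-memory stand-in below.
interface StorageLike {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
}

// Hypothetical storage key.
const TODOS_KEY = "todos";

// Serialize the whole list to JSON and write it under a single key.
function saveTodos(storage: StorageLike, todos: Todo[]): void {
  storage.setItem(TODOS_KEY, JSON.stringify(todos));
}

// Read the list back. Falls back to an empty list when nothing is stored
// or when the stored value is not a JSON array.
function loadTodos(storage: StorageLike): Todo[] {
  const raw = storage.getItem(TODOS_KEY);
  if (raw === null) {
    return [];
  }
  try {
    const parsed: unknown = JSON.parse(raw);
    return Array.isArray(parsed) ? (parsed as Todo[]) : [];
  } catch {
    return [];
  }
}

// In-memory stand-in for localStorage, useful for trying the helpers
// outside a browser.
class MemoryStorage implements StorageLike {
  private data = new Map<string, string>();

  getItem(key: string): string | null {
    const value = this.data.get(key);
    return value === undefined ? null : value;
  }

  setItem(key: string, value: string): void {
    this.data.set(key, value);
  }
}
```

In a browser, the idea would be to call `saveTodos(window.localStorage, todos)` after every change and `loadTodos(window.localStorage)` once when the page loads, so a refresh restores the list.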

|

1.0

|

Save todo's in local storage - The todos should persist across page refreshes by being saved in local storage, using localStorage with getItem and setItem(?)

|

non_test

|

save todo s in local storage on page refresh the todo s should be saved in local storage using localstorage with getitem and setitem

| 0

|

625,758

| 19,765,019,976

|

IssuesEvent

|

2022-01-17 00:07:56

|

internetarchive/openlibrary

|

https://api.github.com/repos/internetarchive/openlibrary

|

opened

|

Better Share Preview for Lists & Reading Log (e.g. Twitter Social Card)

|

Type: Bug Theme: Lists Priority: 2 Lead: @mekarpeles Theme: Distribution

|

<!-- What problem are we solving? What does the experience look like today? What are the symptoms? -->

This is a great "social card" view of a book list (from fivebooks). Would be great if Open Library lists generated this type of preview :slightly_smiling_face:

see: https://twitter.com/jabuppartyon/status/1482812981591347206
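
For illustration, a rough sketch of the markup such a preview usually relies on, assuming the standard Twitter Card meta tags (`twitter:card`, `twitter:title`, `twitter:description`, `twitter:image`). The `ListPreview` shape and the `buildTwitterCardTags` helper are hypothetical and do not reflect how Open Library renders its pages.

```typescript
// Hypothetical data a list page would need to describe itself.
interface ListPreview {
  title: string;
  description: string;
  imageUrl: string;
}

// Escape characters that would break out of a double-quoted HTML attribute.
function escapeAttr(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Build the <meta> tags for a large-image card, one string per tag.
function buildTwitterCardTags(list: ListPreview): string[] {
  const pairs: Array<[string, string]> = [
    ["twitter:card", "summary_large_image"],
    ["twitter:title", list.title],
    ["twitter:description", list.description],
    ["twitter:image", list.imageUrl],
  ];
  return pairs.map(
    ([name, content]) => `<meta name="${name}" content="${escapeAttr(content)}">`
  );
}
```

The resulting strings would go in the `<head>` of each list page; the image could be a collage of the list's book covers, which is roughly what the linked example shows.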

### Evidence / Screenshot (if possible)

### Relevant url?

<!-- `https://openlibrary.org/...` -->

### Steps to Reproduce

<!-- What steps caused you to find the bug? -->

1. Go to ...

2. Do ...

<!-- What actually happened after these steps? What did you expect to happen? -->

* Actual:

* Expected:

### Details

- **Logged in (Y/N)?**

- **Browser type/version?**

- **Operating system?**

- **Environment (prod/dev/local)?** prod

<!-- If not sure, put prod -->

### Proposal & Constraints

<!-- What is the proposed solution / implementation? Is there a precedent of this approach succeeding elsewhere? -->

### Related files

<!-- Files related to this issue; this is super useful for new contributors who might want to help! If you're not sure, leave this blank; a maintainer will add them. -->

### Stakeholders

<!-- @ tag stakeholders of this bug -->

|

1.0

|

Better Share Preview for Lists & Reading Log (e.g. Twitter Social Card) - <!-- What problem are we solving? What does the experience look like today? What are the symptoms? -->

This is a great "social card" view of a book list (from fivebooks). Would be great if Open Library lists generated this type of preview :slightly_smiling_face:

see: https://twitter.com/jabuppartyon/status/1482812981591347206

### Evidence / Screenshot (if possible)

### Relevant url?

<!-- `https://openlibrary.org/...` -->