| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (string, length 19 to 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (stringclasses, 3 values) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (stringclasses, 17 values) | text_combine (string, length 95 to 262k) | label (stringclasses, 2 values) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

150,804

| 5,791,273,615

|

IssuesEvent

|

2017-05-02 05:00:50

|

famuvie/breedR

|

https://api.github.com/repos/famuvie/breedR

|

closed

|

incorrect labelling of variance components in summary() for 3+ trait models fitted with AI-REML

|

bug priority:high

|

``` r

Nobs <- 1e4

## residual covariance matrix

S_res <- matrix(c(

9, 3, -3,

3, 9, 9,

-3, 9, 14

), nrow = 3, ncol = 3)

## simulated residual-only dataset

testdat <- data.frame(breedR.sample.ranef(3, S_res, Nobs, vname = 'e'))

## fitted model

res <- remlf90(

cbind(e_1, e_2, e_3) ~ 1,

data = testdat,

method = "ai"

)

#> Using default initial variances given by default_initial_variance()

#> See ?breedR.getOption.

summary(res)

#> Formula: cbind(e_1, e_2, e_3) ~ 0 + Intercept

#> Data: testdat

#> AIC BIC logLik

#> 83966 84031 -41974

#>

#>

#> Variance components:

#> Estimated variances S.E.

#> Residual.e_1 9.021 0.12758

#> Residual.e_1_Residual.e_2 3.098 0.09474

#> Residual.e_2 -2.900 0.11476

#> Residual.e_1_Residual.e_3 8.886 0.12567

#> Residual.e_2_Residual.e_3 8.787 0.14095

#> Residual.e_3 13.666 0.19327

#>

#> Fixed effects:

#> value s.e.

#> Intercept.e_1 -0.042498 0.0300

#> Intercept.e_2 0.020878 0.0298

#> Intercept.e_3 0.045872 0.0370

res$var[["Residual", "Estimated variances"]]

#> e_1 e_2 e_3

#> e_1 9.0205 3.0978 -2.9004

#> e_2 3.0978 8.8856 8.7875

#> e_3 -2.9004 8.7875 13.6660

```

Notice how the value summarized as `Residual.e_2` (-2.900) corresponds in fact to the residual covariance between `e_1` and `e_3`. In particular, it is negative!

The values are fine, but the labeling is incorrect.
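
One reading of the output above is offered here as a hypothesis, not as a description of breedR's internals. The estimates appear to be emitted in row-major upper-triangle order: (1,1), (1,2), (1,3), (2,2), (2,3), (3,3). The labels appear to be generated in column-major upper-triangle order: (1,1), (1,2), (2,2), (1,3), (2,3), (3,3). The two walks coincide for two traits and diverge from three traits on, which would match the "3+ trait" scope of this bug. The Python sketch reproduces the mislabeled summary from the estimated matrix using 0-based indices. All helper names are hypothetical.

```python
# Hypothetical helpers illustrating the suspected ordering mismatch;
# they are not part of breedR.

def upper_tri_row_major(n):
    """Upper-triangle index pairs (i <= j), walked row by row."""
    return [(i, j) for i in range(n) for j in range(i, n)]


def upper_tri_col_major(n):
    """Upper-triangle index pairs (i <= j), walked column by column."""
    return [(i, j) for j in range(n) for i in range(j + 1)]


def label(traits, i, j):
    """Summary-style label: 'Residual.e_1' or 'Residual.e_1_Residual.e_2'."""
    if i == j:
        return f"Residual.{traits[i]}"
    return f"Residual.{traits[i]}_Residual.{traits[j]}"


traits = ["e_1", "e_2", "e_3"]
# Estimated residual covariance matrix, copied from the res$var output in the report.
est = [
    [9.0205, 3.0978, -2.9004],
    [3.0978, 8.8856, 8.7875],
    [-2.9004, 8.7875, 13.6660],
]

# Values extracted row by row from the upper triangle.
values = [est[i][j] for i, j in upper_tri_row_major(3)]

# Mismatched pairing: labels walk column by column, values walk row by row.
mislabeled = dict(zip((label(traits, i, j) for i, j in upper_tri_col_major(3)), values))

# Consistent pairing: labels and values walk the same order.
correct = dict(zip((label(traits, i, j) for i, j in upper_tri_row_major(3)), values))

for name, value in mislabeled.items():
    print(f"{name:28s}{value:9.4f}")
```

With `n = 2` both walks yield `[(0, 0), (0, 1), (1, 1)]`, so under this hypothesis two-trait summaries would be labeled correctly either way.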

|

1.0

|

incorrect labelling of variance components in summary() for 3+ trait models fitted with AI-REML - ``` r

Nobs <- 1e4

## residual covariance matrix

S_res <- matrix(c(

9, 3, -3,

3, 9, 9,

-3, 9, 14

), nrow = 3, ncol = 3)

## simulated residual-only dataset

testdat <- data.frame(breedR.sample.ranef(3, S_res, Nobs, vname = 'e'))

## fitted model

res <- remlf90(

cbind(e_1, e_2, e_3) ~ 1,

data = testdat,

method = "ai"

)

#> Using default initial variances given by default_initial_variance()

#> See ?breedR.getOption.

summary(res)

#> Formula: cbind(e_1, e_2, e_3) ~ 0 + Intercept

#> Data: testdat

#> AIC BIC logLik

#> 83966 84031 -41974

#>

#>

#> Variance components:

#> Estimated variances S.E.

#> Residual.e_1 9.021 0.12758

#> Residual.e_1_Residual.e_2 3.098 0.09474

#> Residual.e_2 -2.900 0.11476

#> Residual.e_1_Residual.e_3 8.886 0.12567

#> Residual.e_2_Residual.e_3 8.787 0.14095

#> Residual.e_3 13.666 0.19327

#>

#> Fixed effects:

#> value s.e.

#> Intercept.e_1 -0.042498 0.0300

#> Intercept.e_2 0.020878 0.0298

#> Intercept.e_3 0.045872 0.0370

res$var[["Residual", "Estimated variances"]]

#> e_1 e_2 e_3

#> e_1 9.0205 3.0978 -2.9004

#> e_2 3.0978 8.8856 8.7875

#> e_3 -2.9004 8.7875 13.6660

```

Notice how the value summarized as `Residual.e_2` (-2.900) corresponds in fact to the residual covariance between `e_1` and `e_3`. In particular, it is negative!

The values are fine, but the labeling is incorrect.

|

non_test

|

incorrect labelling of variance components in summary for trait models fitted with ai reml r nobs residual covariance matrix s res matrix c nrow ncol simulated residual only dataset testdat data frame breedr sample ranef s res nobs vname e fitted model res cbind e e e data testdat method ai using default initial variances given by default initial variance see breedr getoption summary res formula cbind e e e intercept data testdat aic bic loglik variance components estimated variances s e residual e residual e residual e residual e residual e residual e residual e residual e residual e fixed effects value s e intercept e intercept e intercept e res var e e e e e e notice how the value summarized as residual e corresponds in fact to the residual covariance between e and e in particular it is negative the values are fine but the labeling is incorrect

| 0

|

269,570

| 8,440,536,589

|

IssuesEvent

|

2018-10-18 07:36:51

|

handsontable/handsontable

|

https://api.github.com/repos/handsontable/handsontable

|

closed

|

Sort, remove a row and undo turns out an unexpected result

|

Plugin: column sorting Plugin: undo-redo Priority: high Status: Merged (ready for release) Status: Released Type: Bug

|

I haven't found this behaviour in the existing issues.

The actions are: refresh, sort by _code_, select EUR from _country_, _remove row_ and _undo_.

I reproduced it in the [examples section](https://handsontable.com/examples.html?headers&context-menu&sorting).

After the undo, the sort order disappears and I can't find the row for the Euro.

Browser: Google Chrome 48.0.2564.82 (64-bit) over Linux.

|

1.0

|

Sort, remove a row and undo turns out an unexpected result - I haven't found this behaviour in the existing issues.

The actions are: refresh, sort by _code_, select EUR from _country_, _remove row_ and _undo_.

I reproduced it in the [examples section](https://handsontable.com/examples.html?headers&context-menu&sorting).

After the undo, the sort order disappears and I can't find the row for the Euro.

Browser: Google Chrome 48.0.2564.82 (64-bit) over Linux.

|

non_test

|

sort remove a row and undo turns out an unexpected result i haven t found this behaviour in these issues the actions are refresh sort by code select eur from country remove row and undo i ve done the test in the and after undo the order disappears and i can t find the row for the euro browser google chrome bit over linux

| 0

|

260,754

| 27,784,710,862

|

IssuesEvent

|

2023-03-17 01:30:47

|

n-devs/Fiction

|

https://api.github.com/repos/n-devs/Fiction

|

opened

|

CVE-2021-3807 (High) detected in ansi-regex-4.0.0.tgz

|

Mend: dependency security vulnerability

|

## CVE-2021-3807 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansi-regex-4.0.0.tgz</b></p></summary>

<p>Regular expression for matching ANSI escape codes</p>

<p>Library home page: <a href="https://registry.npmjs.org/ansi-regex/-/ansi-regex-4.0.0.tgz">https://registry.npmjs.org/ansi-regex/-/ansi-regex-4.0.0.tgz</a></p>

<p>Path to dependency file: /Fiction/package.json</p>

<p>Path to vulnerable library: /node_modules/inquirer/node_modules/ansi-regex/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-2.1.1.tgz (Root Library)
  - eslint-5.6.0.tgz
    - inquirer-6.2.1.tgz
      - strip-ansi-5.0.0.tgz
        - :x: **ansi-regex-4.0.0.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

ansi-regex is vulnerable to Inefficient Regular Expression Complexity

<p>Publish Date: 2021-09-17

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2021-3807>CVE-2021-3807</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:
  - Attack Vector: Network
  - Attack Complexity: Low
  - Privileges Required: None
  - User Interaction: None
  - Scope: Unchanged
- Impact Metrics:
  - Confidentiality Impact: None
  - Integrity Impact: None
  - Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>
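
The 7.5 above follows from the listed base metrics through the CVSS v3.0 base-score equations published by FIRST. The Python sketch was written from those equations and is not Mend's implementation. It accepts a vector string such as `AV:N/AC:L/PR:N/UI:N/S:U/C:N/I:N/A:H`, which is the metric list above in vector notation. It applies the v3.0 round-up rule naively via `math.ceil`. The v3.1 specification later replaced that rule with a floating-point-safe variant, so edge cases may differ from official calculators.

```python
import math

# CVSS v3.0 base-metric weights from the FIRST specification.
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
PR_CHANGED = {"N": 0.85, "L": 0.68, "H": 0.5}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}


def roundup(x):
    """Smallest value with one decimal place that is >= x (v3.0 rule)."""
    return math.ceil(x * 10) / 10


def base_score(vector):
    """Compute a CVSS v3.0 base score from 'AV:../AC:../PR:../UI:../S:../C:../I:../A:..'."""
    m = dict(part.split(":") for part in vector.split("/"))
    changed = m["S"] == "C"
    # Impact sub-score base, combining the three CIA impacts.
    iss = 1 - (1 - CIA[m["C"]]) * (1 - CIA[m["I"]]) * (1 - CIA[m["A"]])
    if changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss
    # Privileges Required is weighted differently when Scope is Changed.
    pr = (PR_CHANGED if changed else PR_UNCHANGED)[m["PR"]]
    exploitability = 8.22 * AV[m["AV"]] * AC[m["AC"]] * pr * UI[m["UI"]]
    if impact <= 0:
        return 0.0
    total = impact + exploitability
    if changed:
        total *= 1.08
    return roundup(min(total, 10))


print(base_score("AV:N/AC:L/PR:N/UI:N/S:U/C:N/I:N/A:H"))
```

The same function applies to the other metric sets in these reports. For example, `AV:L/AC:L/PR:L/UI:N/S:U/C:N/I:N/A:H` (local attack vector, low privileges, high availability impact) yields 5.5.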

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://huntr.dev/bounties/5b3cf33b-ede0-4398-9974-800876dfd994/">https://huntr.dev/bounties/5b3cf33b-ede0-4398-9974-800876dfd994/</a></p>

<p>Release Date: 2021-09-17</p>

<p>Fix Resolution (ansi-regex): 4.1.1</p>

<p>Direct dependency fix Resolution (react-scripts): 5.0.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2021-3807 (High) detected in ansi-regex-4.0.0.tgz - ## CVE-2021-3807 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansi-regex-4.0.0.tgz</b></p></summary>

<p>Regular expression for matching ANSI escape codes</p>

<p>Library home page: <a href="https://registry.npmjs.org/ansi-regex/-/ansi-regex-4.0.0.tgz">https://registry.npmjs.org/ansi-regex/-/ansi-regex-4.0.0.tgz</a></p>

<p>Path to dependency file: /Fiction/package.json</p>

<p>Path to vulnerable library: /node_modules/inquirer/node_modules/ansi-regex/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-2.1.1.tgz (Root Library)
  - eslint-5.6.0.tgz
    - inquirer-6.2.1.tgz
      - strip-ansi-5.0.0.tgz
        - :x: **ansi-regex-4.0.0.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

ansi-regex is vulnerable to Inefficient Regular Expression Complexity

<p>Publish Date: 2021-09-17

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2021-3807>CVE-2021-3807</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:
  - Attack Vector: Network
  - Attack Complexity: Low
  - Privileges Required: None
  - User Interaction: None
  - Scope: Unchanged
- Impact Metrics:
  - Confidentiality Impact: None
  - Integrity Impact: None
  - Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://huntr.dev/bounties/5b3cf33b-ede0-4398-9974-800876dfd994/">https://huntr.dev/bounties/5b3cf33b-ede0-4398-9974-800876dfd994/</a></p>

<p>Release Date: 2021-09-17</p>

<p>Fix Resolution (ansi-regex): 4.1.1</p>

<p>Direct dependency fix Resolution (react-scripts): 5.0.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve high detected in ansi regex tgz cve high severity vulnerability vulnerable library ansi regex tgz regular expression for matching ansi escape codes library home page a href path to dependency file fiction package json path to vulnerable library node modules inquirer node modules ansi regex package json dependency hierarchy react scripts tgz root library eslint tgz inquirer tgz strip ansi tgz x ansi regex tgz vulnerable library vulnerability details ansi regex is vulnerable to inefficient regular expression complexity publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution ansi regex direct dependency fix resolution react scripts step up your open source security game with mend

| 0

|

185,797

| 14,380,910,036

|

IssuesEvent

|

2020-12-02 04:01:06

|

elastic/kibana

|

https://api.github.com/repos/elastic/kibana

|

opened

|

Failing test: X-Pack Detection Engine API Integration Tests.x-pack/test/detection_engine_api_integration/security_and_spaces/tests/exception_operators_data_types/ip·ts - detection engine api security and spaces enabled Detection exceptions data types and operators Rule exception operators for data type ip "is in list" operator will return 2 results if we have a list that includes 2 ips

|

failed-test

|

A test failed on a tracked branch

```

Error: timed out waiting for function condition to be true within waitForRuleSuccess

at /dev/shm/workspace/parallel/14/kibana/x-pack/test/detection_engine_api_integration/utils.ts:708:9

```

First failure: [Jenkins Build](https://kibana-ci.elastic.co/job/elastic+kibana+7.x/9973/)

<!-- kibanaCiData = {"failed-test":{"test.class":"X-Pack Detection Engine API Integration Tests.x-pack/test/detection_engine_api_integration/security_and_spaces/tests/exception_operators_data_types/ip·ts","test.name":"detection engine api security and spaces enabled Detection exceptions data types and operators Rule exception operators for data type ip \"is in list\" operator will return 2 results if we have a list that includes 2 ips","test.failCount":1}} -->

|

1.0

|

Failing test: X-Pack Detection Engine API Integration Tests.x-pack/test/detection_engine_api_integration/security_and_spaces/tests/exception_operators_data_types/ip·ts - detection engine api security and spaces enabled Detection exceptions data types and operators Rule exception operators for data type ip "is in list" operator will return 2 results if we have a list that includes 2 ips - A test failed on a tracked branch

```

Error: timed out waiting for function condition to be true within waitForRuleSuccess

at /dev/shm/workspace/parallel/14/kibana/x-pack/test/detection_engine_api_integration/utils.ts:708:9

```

First failure: [Jenkins Build](https://kibana-ci.elastic.co/job/elastic+kibana+7.x/9973/)

<!-- kibanaCiData = {"failed-test":{"test.class":"X-Pack Detection Engine API Integration Tests.x-pack/test/detection_engine_api_integration/security_and_spaces/tests/exception_operators_data_types/ip·ts","test.name":"detection engine api security and spaces enabled Detection exceptions data types and operators Rule exception operators for data type ip \"is in list\" operator will return 2 results if we have a list that includes 2 ips","test.failCount":1}} -->

|

test

|

failing test x pack detection engine api integration tests x pack test detection engine api integration security and spaces tests exception operators data types ip·ts detection engine api security and spaces enabled detection exceptions data types and operators rule exception operators for data type ip is in list operator will return results if we have a list that includes ips a test failed on a tracked branch error timed out waiting for function condition to be true within waitforrulesuccess at dev shm workspace parallel kibana x pack test detection engine api integration utils ts first failure

| 1

|

96,967

| 3,979,617,460

|

IssuesEvent

|

2016-05-06 00:52:23

|

ParadiseSS13/Paradise

|

https://api.github.com/repos/ParadiseSS13/Paradise

|

closed

|

PDA Crew Manifest is broken.

|

Bug High Priority

|

**Problem Description**:

PDA Crew Manifest is broken. Other people reported the same issue.

**What did you expect to happen**:

A list of the crew.

**What happened instead**:

Just loads up a tiny blue pixel.

**Steps to reproduce the problem**:

Clear BYOND cache. Join server. Join game. Open PDA, go to crew manifest.

**Possibly related stuff (which gamemode was it? What were you doing at the time? Was anything else out of the ordinary happening?)**: Played two rounds, and it was broken throughout both of them. Other people reported the same issue.

|

1.0

|

PDA Crew Manifest is broken. - **Problem Description**:

PDA Crew Manifest is broken. Other people reported the same issue.

**What did you expect to happen**:

A list of the crew.

**What happened instead**:

Just loads up a tiny blue pixel.

**Steps to reproduce the problem**:

Clear BYOND cache. Join server. Join game. Open PDA, go to crew manifest.

**Possibly related stuff (which gamemode was it? What were you doing at the time? Was anything else out of the ordinary happening?)**: Played two rounds, and it was broken throughout both of them. Other people reported the same issue.

|

non_test

|

pda crew manifest is broken problem description pda crew manifest is broken other people reported the same issue what did you expect to happen a list of the crew what happened instead just loads up a tiny blue pixel steps to reproduce the problem clear byond cache join server join game open pda go to crew manifest possibly related stuff which gamemode was it what were you doing at the time was anything else out of the ordinary happening played two rounds was broken throughout those two rounds other people reported the same issue

| 0

|

148,620

| 19,534,415,756

|

IssuesEvent

|

2021-12-31 01:37:24

|

panasalap/linux-4.1.15

|

https://api.github.com/repos/panasalap/linux-4.1.15

|

opened

|

CVE-2017-14489 (Medium) detected in linux-stable-rtv4.1.33

|

security vulnerability

|

## CVE-2017-14489 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/scsi/scsi_transport_iscsi.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The iscsi_if_rx function in drivers/scsi/scsi_transport_iscsi.c in the Linux kernel through 4.13.2 allows local users to cause a denial of service (panic) by leveraging incorrect length validation.

<p>Publish Date: 2017-09-15

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-14489>CVE-2017-14489</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:
  - Attack Vector: Local
  - Attack Complexity: Low
  - Privileges Required: Low
  - User Interaction: None
  - Scope: Unchanged
- Impact Metrics:
  - Confidentiality Impact: None
  - Integrity Impact: None
  - Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="http://web.nvd.nist.gov/view/vuln/detail?vulnId=CVE-2017-14489">http://web.nvd.nist.gov/view/vuln/detail?vulnId=CVE-2017-14489</a></p>

<p>Release Date: 2017-09-15</p>

<p>Fix Resolution: v4.14-rc3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2017-14489 (Medium) detected in linux-stable-rtv4.1.33 - ## CVE-2017-14489 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/scsi/scsi_transport_iscsi.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The iscsi_if_rx function in drivers/scsi/scsi_transport_iscsi.c in the Linux kernel through 4.13.2 allows local users to cause a denial of service (panic) by leveraging incorrect length validation.

<p>Publish Date: 2017-09-15

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-14489>CVE-2017-14489</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:
  - Attack Vector: Local
  - Attack Complexity: Low
  - Privileges Required: Low
  - User Interaction: None
  - Scope: Unchanged
- Impact Metrics:
  - Confidentiality Impact: None
  - Integrity Impact: None
  - Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="http://web.nvd.nist.gov/view/vuln/detail?vulnId=CVE-2017-14489">http://web.nvd.nist.gov/view/vuln/detail?vulnId=CVE-2017-14489</a></p>

<p>Release Date: 2017-09-15</p>

<p>Fix Resolution: v4.14-rc3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve medium detected in linux stable cve medium severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in base branch master vulnerable source files drivers scsi scsi transport iscsi c vulnerability details the iscsi if rx function in drivers scsi scsi transport iscsi c in the linux kernel through allows local users to cause a denial of service panic by leveraging incorrect length validation publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource

| 0

|

100,898

| 8,758,081,769

|

IssuesEvent

|

2018-12-15 00:18:22

|

brave/brave-browser

|

https://api.github.com/repos/brave/brave-browser

|

opened

|

need to put shields down to watch video on Hulu

|

QA/Test-Plan-Specified QA/Yes bug feature/shields/webcompat regression

|

<!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROVIDED-->

## Description

With 0.57.18 you need to put shields down to watch a video on Hulu. Allowing Ads and Trackers is not enough.

Note: in 0.56.15 you did not need to put shields down to watch a video on Hulu; I was able to view a video with the standard shield configuration.

## Steps to Reproduce

<!--Please add a series of steps to reproduce the issue-->

1. Install 0.57.18

2. Navigate to Hulu

3. Install Widevine

4. Log in and try to play a video.

## Actual result:

Unable to play video. Get message below until you put shields down.

## Expected result:

No error message, able to view video.

## Reproduces how often:

Easily

## Brave version (brave://version info)

Brave | 0.57.18 Chromium: 71.0.3578.80 (Official Build) (64-bit)
-- | --
Revision | 2ac50e7249fbd55e6f517a28131605c9fb9fe897-refs/branch-heads/3578@{#860}
OS | Mac OS X

### Reproducible on current release:

- Does it reproduce on brave-browser dev/beta builds? yes

### Website problems only:

- Does the issue resolve itself when disabling Brave Shields? yes

- Is the issue reproducible on the latest version of Chrome? No: using Chrome 71.0.3578.98 with the uBlock Origin extension, the issue does not reproduce.

### Additional Information

The issue does not reproduce on the previous version, 0.56.15.

|

1.0

|

need to put shields down to watch video on Hulu - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROVIDED-->

## Description

With 0.57.18 you need to put shields down to watch a video on Hulu. Allowing Ads and Trackers is not enough.

Note: in 0.56.15 you did not need to put shields down to watch a video on Hulu; I was able to view a video with the standard shield configuration.

## Steps to Reproduce

<!--Please add a series of steps to reproduce the issue-->

1. Install 0.57.18

2. Navigate to Hulu

3. Install Widevine

4. Log in and try to play a video.

## Actual result:

Unable to play video. Get message below until you put shields down.

## Expected result:

No error message, able to view video.

## Reproduces how often:

Easily

## Brave version (brave://version info)

Brave | 0.57.18 Chromium: 71.0.3578.80 (Official Build) (64-bit)
-- | --
Revision | 2ac50e7249fbd55e6f517a28131605c9fb9fe897-refs/branch-heads/3578@{#860}
OS | Mac OS X

### Reproducible on current release:

- Does it reproduce on brave-browser dev/beta builds? yes

### Website problems only:

- Does the issue resolve itself when disabling Brave Shields? yes

- Is the issue reproducible on the latest version of Chrome? No: using Chrome 71.0.3578.98 with the uBlock Origin extension, the issue does not reproduce.

### Additional Information

The issue does not reproduce on the previous version, 0.56.15.

|

test

|

need to put shields down to watch video on hulu have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info will get the issue closed it will only be reopened after sufficient info is provided description with you need to put shields down to watch a video on hulu allowing ads and trackers is not enough note in you did not need to put shields down to watch a video on hulu i was able to view a video with standard shield configuration steps to reproduce install navigate to hulu install widevine login and try to play a video actual result unable to play video get message below until you put shields down expected result no error message able to view video reproduces how often easily brave version brave version info brave chromium official build bit revision refs branch heads os mac os x reproducible on current release does it reproduce on brave browser dev beta builds yes website problems only does the issue resolve itself when disabling brave shields yes is the issue reproducible on the latest version of chrome using chrome and ublock origin extension issue does not reproduce additional information issue does not reproduce on previous version

| 1

|

86,439

| 8,036,769,699

|

IssuesEvent

|

2018-07-30 10:13:16

|

vmware/harbor

|

https://api.github.com/repos/vmware/harbor

|

closed

|

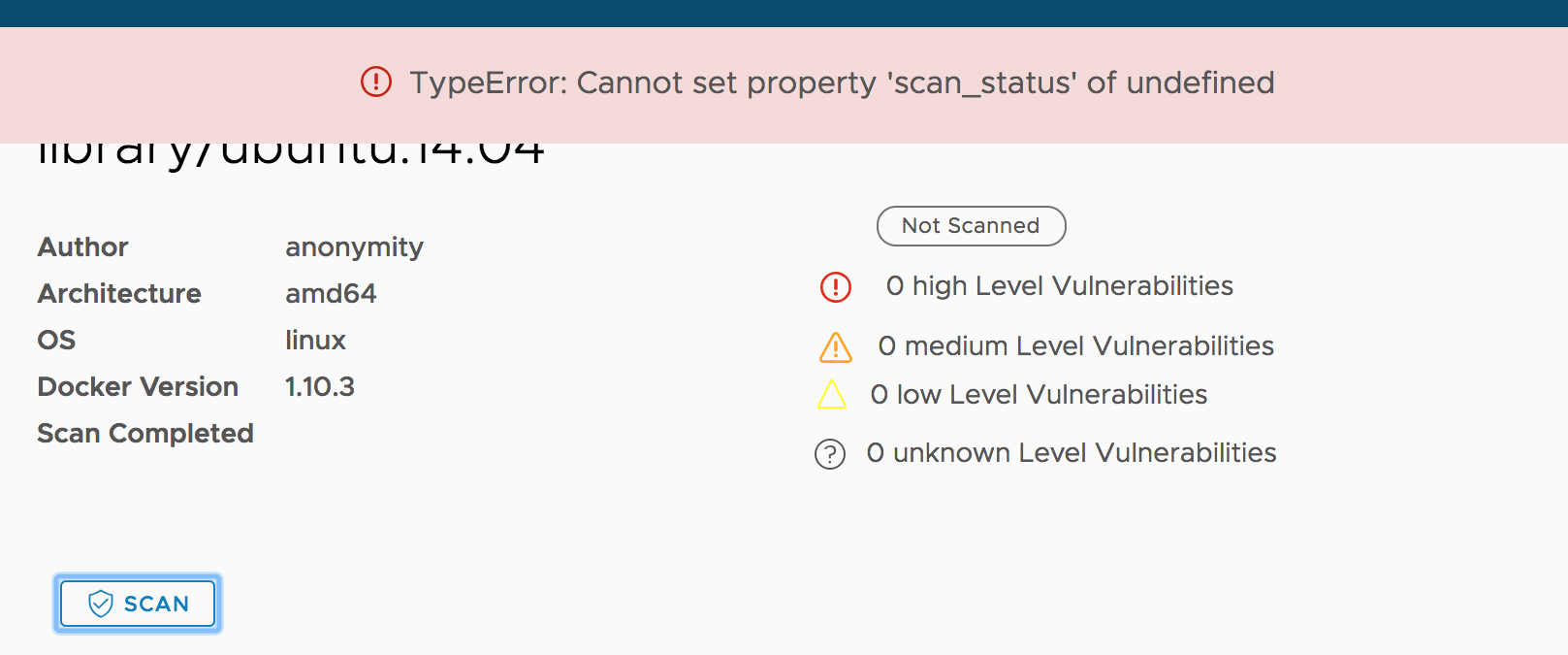

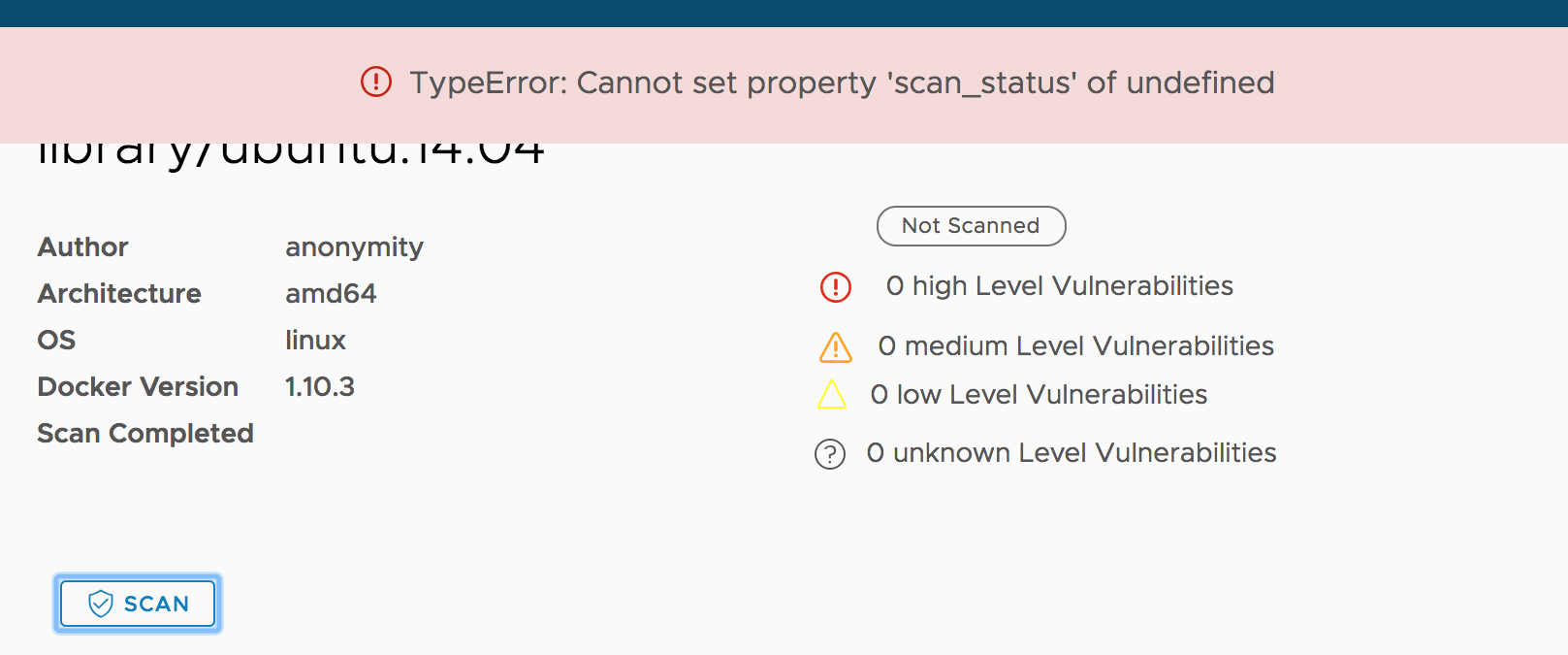

UI error when user clicks "scan" on details page of a newly pushed image.

|

area/ui kind/automation-found kind/bug need-test-case target/1.6.0

|

Build: v1.5.0-d65a7baf

The root cause seems to be that the status widget is not initialized, so after the click the code tries to modify a widget that does not exist yet.

|

1.0

|

UI error when user clicks "scan" on details page of a newly pushed image. - Build: v1.5.0-d65a7baf

The root cause seems to be that the status widget is not initialized, so after the click the code tries to modify a widget that does not exist yet.

|

test

|

ui error when user clicks scan on details page of a newly pushed image build seems the root cause is the status widget is not initialized and after clicking it the code tries to modify the widget

| 1

|

145,419

| 19,339,417,180

|

IssuesEvent

|

2021-12-15 01:29:16

|

hydrogen-dev/molecule-quickstart-app

|

https://api.github.com/repos/hydrogen-dev/molecule-quickstart-app

|

opened

|

CVE-2021-32640 (Medium) detected in ws-6.2.1.tgz, ws-5.2.2.tgz

|

security vulnerability

|

## CVE-2021-32640 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ws-6.2.1.tgz</b>, <b>ws-5.2.2.tgz</b></p></summary>

<p>

<details><summary><b>ws-6.2.1.tgz</b></p></summary>

<p>Simple to use, blazing fast and thoroughly tested websocket client and server for Node.js</p>

<p>Library home page: <a href="https://registry.npmjs.org/ws/-/ws-6.2.1.tgz">https://registry.npmjs.org/ws/-/ws-6.2.1.tgz</a></p>

<p>Path to dependency file: molecule-quickstart-app/package.json</p>

<p>Path to vulnerable library: molecule-quickstart-app/node_modules/jest-environment-jsdom-fourteen/node_modules/ws/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.0.1.tgz (Root Library)
  - jest-environment-jsdom-fourteen-0.1.0.tgz
    - jsdom-14.1.0.tgz
      - :x: **ws-6.2.1.tgz** (Vulnerable Library)

</details>

<details><summary><b>ws-5.2.2.tgz</b></p></summary>

<p>Simple to use, blazing fast and thoroughly tested websocket client and server for Node.js</p>

<p>Library home page: <a href="https://registry.npmjs.org/ws/-/ws-5.2.2.tgz">https://registry.npmjs.org/ws/-/ws-5.2.2.tgz</a></p>

<p>Path to dependency file: molecule-quickstart-app/package.json</p>

<p>Path to vulnerable library: molecule-quickstart-app/node_modules/ws/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.0.1.tgz (Root Library)
  - jest-24.7.1.tgz
    - jest-cli-24.9.0.tgz
      - jest-config-24.9.0.tgz
        - jest-environment-jsdom-24.9.0.tgz
          - jsdom-11.12.0.tgz
            - :x: **ws-5.2.2.tgz** (Vulnerable Library)

</details>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

ws is an open source WebSocket client and server library for Node.js. A specially crafted value of the `Sec-Websocket-Protocol` header can be used to significantly slow down a ws server. The vulnerability has been fixed in ws@7.4.6 (https://github.com/websockets/ws/commit/00c425ec77993773d823f018f64a5c44e17023ff). In vulnerable versions of ws, the issue can be mitigated by reducing the maximum allowed length of the request headers using the [`--max-http-header-size=size`](https://nodejs.org/api/cli.html#cli_max_http_header_size_size) and/or the [`maxHeaderSize`](https://nodejs.org/api/http.html#http_http_createserver_options_requestlistener) options.

<p>Publish Date: 2021-05-25

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-32640>CVE-2021-32640</a></p>

</p>

</details>
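For a project that runs its own `ws` server and cannot upgrade right away, the header-size mitigation named in the advisory can be sketched as below. This is a minimal illustration, not taken from the advisory. It assumes a TypeScript/Node.js HTTP server that `ws` attaches to, and that the Node.js version supports the `maxHeaderSize` option of `http.createServer`. The 8 KiB limit is a hypothetical value. Upgrading `ws` to 5.2.3, 6.2.2 or 7.4.6 remains the actual fix.

```typescript
import * as http from "node:http";

// Hypothetical cap for this sketch: 8 KiB of request headers.
// Pick a value that still fits legitimate traffic (cookies, auth tokens).
const MAX_HEADER_BYTES = 8 * 1024;

// Per-server limit via the `maxHeaderSize` option of http.createServer.
// The same limit can be set process-wide with the CLI flag:
//   node --max-http-header-size=8192 server.js
const server = http.createServer(
  { maxHeaderSize: MAX_HEADER_BYTES },
  (_req, res) => {
    // Plain HTTP requests are rejected; WebSocket upgrades are left to ws.
    res.writeHead(426, { "Content-Type": "text/plain" });
    res.end("Upgrade Required");
  },
);

// A ws server would then be attached to this HTTP server, for example
//   new WebSocket.Server({ server })   // ws 5.x / 6.x / 7.x
// and started with server.listen(port).
```

Requests whose headers exceed the cap are rejected by Node's HTTP parser before `ws` sees the oversized `Sec-WebSocket-Protocol` value.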

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/websockets/ws/security/advisories/GHSA-6fc8-4gx4-v693">https://github.com/websockets/ws/security/advisories/GHSA-6fc8-4gx4-v693</a></p>

<p>Release Date: 2021-05-25</p>

<p>Fix Resolution: 5.2.3,6.2.2,7.4.6</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"ws","packageVersion":"6.2.1","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"react-scripts:3.0.1;jest-environment-jsdom-fourteen:0.1.0;jsdom:14.1.0;ws:6.2.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"5.2.3,6.2.2,7.4.6","isBinary":false},{"packageType":"javascript/Node.js","packageName":"ws","packageVersion":"5.2.2","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"react-scripts:3.0.1;jest:24.7.1;jest-cli:24.9.0;jest-config:24.9.0;jest-environment-jsdom:24.9.0;jsdom:11.12.0;ws:5.2.2","isMinimumFixVersionAvailable":true,"minimumFixVersion":"5.2.3,6.2.2,7.4.6","isBinary":false}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2021-32640","vulnerabilityDetails":"ws is an open source WebSocket client and server library for Node.js. A specially crafted value of the `Sec-Websocket-Protocol` header can be used to significantly slow down a ws server. The vulnerability has been fixed in ws@7.4.6 (https://github.com/websockets/ws/commit/00c425ec77993773d823f018f64a5c44e17023ff). In vulnerable versions of ws, the issue can be mitigated by reducing the maximum allowed length of the request headers using the [`--max-http-header-size\u003dsize`](https://nodejs.org/api/cli.html#cli_max_http_header_size_size) and/or the [`maxHeaderSize`](https://nodejs.org/api/http.html#http_http_createserver_options_requestlistener) options.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-32640","cvss3Severity":"medium","cvss3Score":"5.3","cvss3Metrics":{"A":"Low","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> -->

|

True

|

CVE-2021-32640 (Medium) detected in ws-6.2.1.tgz, ws-5.2.2.tgz - ## CVE-2021-32640 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ws-6.2.1.tgz</b>, <b>ws-5.2.2.tgz</b></p></summary>

<p>

<details><summary><b>ws-6.2.1.tgz</b></p></summary>

<p>Simple to use, blazing fast and thoroughly tested websocket client and server for Node.js</p>

<p>Library home page: <a href="https://registry.npmjs.org/ws/-/ws-6.2.1.tgz">https://registry.npmjs.org/ws/-/ws-6.2.1.tgz</a></p>

<p>Path to dependency file: molecule-quickstart-app/package.json</p>

<p>Path to vulnerable library: molecule-quickstart-app/node_modules/jest-environment-jsdom-fourteen/node_modules/ws/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.0.1.tgz (Root Library)

- jest-environment-jsdom-fourteen-0.1.0.tgz

- jsdom-14.1.0.tgz

- :x: **ws-6.2.1.tgz** (Vulnerable Library)

</details>

<details><summary><b>ws-5.2.2.tgz</b></p></summary>

<p>Simple to use, blazing fast and thoroughly tested websocket client and server for Node.js</p>

<p>Library home page: <a href="https://registry.npmjs.org/ws/-/ws-5.2.2.tgz">https://registry.npmjs.org/ws/-/ws-5.2.2.tgz</a></p>

<p>Path to dependency file: molecule-quickstart-app/package.json</p>

<p>Path to vulnerable library: molecule-quickstart-app/node_modules/ws/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.0.1.tgz (Root Library)

- jest-24.7.1.tgz

- jest-cli-24.9.0.tgz

- jest-config-24.9.0.tgz

- jest-environment-jsdom-24.9.0.tgz

- jsdom-11.12.0.tgz

- :x: **ws-5.2.2.tgz** (Vulnerable Library)

</details>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

ws is an open source WebSocket client and server library for Node.js. A specially crafted value of the `Sec-Websocket-Protocol` header can be used to significantly slow down a ws server. The vulnerability has been fixed in ws@7.4.6 (https://github.com/websockets/ws/commit/00c425ec77993773d823f018f64a5c44e17023ff). In vulnerable versions of ws, the issue can be mitigated by reducing the maximum allowed length of the request headers using the [`--max-http-header-size=size`](https://nodejs.org/api/cli.html#cli_max_http_header_size_size) and/or the [`maxHeaderSize`](https://nodejs.org/api/http.html#http_http_createserver_options_requestlistener) options.

<p>Publish Date: 2021-05-25

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-32640>CVE-2021-32640</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/websockets/ws/security/advisories/GHSA-6fc8-4gx4-v693">https://github.com/websockets/ws/security/advisories/GHSA-6fc8-4gx4-v693</a></p>

<p>Release Date: 2021-05-25</p>

<p>Fix Resolution: 5.2.3,6.2.2,7.4.6</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"ws","packageVersion":"6.2.1","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"react-scripts:3.0.1;jest-environment-jsdom-fourteen:0.1.0;jsdom:14.1.0;ws:6.2.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"5.2.3,6.2.2,7.4.6","isBinary":false},{"packageType":"javascript/Node.js","packageName":"ws","packageVersion":"5.2.2","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"react-scripts:3.0.1;jest:24.7.1;jest-cli:24.9.0;jest-config:24.9.0;jest-environment-jsdom:24.9.0;jsdom:11.12.0;ws:5.2.2","isMinimumFixVersionAvailable":true,"minimumFixVersion":"5.2.3,6.2.2,7.4.6","isBinary":false}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2021-32640","vulnerabilityDetails":"ws is an open source WebSocket client and server library for Node.js. A specially crafted value of the `Sec-Websocket-Protocol` header can be used to significantly slow down a ws server. The vulnerability has been fixed in ws@7.4.6 (https://github.com/websockets/ws/commit/00c425ec77993773d823f018f64a5c44e17023ff). In vulnerable versions of ws, the issue can be mitigated by reducing the maximum allowed length of the request headers using the [`--max-http-header-size\u003dsize`](https://nodejs.org/api/cli.html#cli_max_http_header_size_size) and/or the [`maxHeaderSize`](https://nodejs.org/api/http.html#http_http_createserver_options_requestlistener) options.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-32640","cvss3Severity":"medium","cvss3Score":"5.3","cvss3Metrics":{"A":"Low","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> -->

|

non_test

|

cve medium detected in ws tgz ws tgz cve medium severity vulnerability vulnerable libraries ws tgz ws tgz ws tgz simple to use blazing fast and thoroughly tested websocket client and server for node js library home page a href path to dependency file molecule quickstart app package json path to vulnerable library molecule quickstart app node modules jest environment jsdom fourteen node modules ws package json dependency hierarchy react scripts tgz root library jest environment jsdom fourteen tgz jsdom tgz x ws tgz vulnerable library ws tgz simple to use blazing fast and thoroughly tested websocket client and server for node js library home page a href path to dependency file molecule quickstart app package json path to vulnerable library molecule quickstart app node modules ws package json dependency hierarchy react scripts tgz root library jest tgz jest cli tgz jest config tgz jest environment jsdom tgz jsdom tgz x ws tgz vulnerable library found in base branch master vulnerability details ws is an open source websocket client and server library for node js a specially crafted value of the sec websocket protocol header can be used to significantly slow down a ws server the vulnerability has been fixed in ws in vulnerable versions of ws the issue can be mitigated by reducing the maximum allowed length of the request headers using the and or the options publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact low for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution isopenpronvulnerability false ispackagebased true isdefaultbranch true packages istransitivedependency true dependencytree react scripts jest environment jsdom fourteen jsdom ws isminimumfixversionavailable true 
minimumfixversion isbinary false packagetype javascript node js packagename ws packageversion packagefilepaths istransitivedependency true dependencytree react scripts jest jest cli jest config jest environment jsdom jsdom ws isminimumfixversionavailable true minimumfixversion isbinary false basebranches vulnerabilityidentifier cve vulnerabilitydetails ws is an open source websocket client and server library for node js a specially crafted value of the sec websocket protocol header can be used to significantly slow down a ws server the vulnerability has been fixed in ws in vulnerable versions of ws the issue can be mitigated by reducing the maximum allowed length of the request headers using the and or the options vulnerabilityurl

| 0

|

348,959

| 31,763,426,102

|

IssuesEvent

|

2023-09-12 07:15:24

|

brave/brave-browser

|

https://api.github.com/repos/brave/brave-browser

|

closed

|

Update l10n for 1.58.x (Chromium 117).

|

l10n QA/Yes release-notes/exclude QA/Test-Plan-Specified OS/Android OS/Desktop

|

Download the latest l10n from Transifex.

Test plan:

@brave/legacy_qa should run through a few locales just to make sure that nothing obvious has regressed. Shouldn't spend too much time on this though.

**On Android:** please verify that the string `Downloading wallet data file * X%` from https://github.com/brave/brave-core/pull/19086 displays correctly (specifically the percentage character).

|

1.0

|

Update l10n for 1.58.x (Chromium 117). - Download the latest l10n from Transifex.

Test plan:

@brave/legacy_qa should run through a few locales just to make sure that nothing obvious has regressed. Shouldn't spend too much time on this though.

**On Android:** please verify that the string `Downloading wallet data file * X%` from https://github.com/brave/brave-core/pull/19086 displays correctly (specifically the percentage character).

|

test

|

update for x chromium download the latest from transifex test plan brave legacy qa should run through a few locals just to make sure that nothing obvious has regressed shouldn t spend too much time on this though on android please verify that the string downloading wallet data file x from looks correctly specifically the percentage character

| 1

|

33,772

| 16,107,007,622

|

IssuesEvent

|

2021-04-27 16:02:21

|

dotnet/runtime

|

https://api.github.com/repos/dotnet/runtime

|

opened

|

Hysteresis effect on threadpool hill-climbing

|

tenet-performance

|

We have noticed a periodic pattern in the threadpool hill-climbing logic, which uses either `n-cores` or `n-cores + 20` threads, with a hysteresis effect that switches every 3-4 weeks:

The main visible impact is on performance results. Here is an example with JsonPlatform mean latency, though some scenarios are also impacted in throughput:

This happens independently of the runtime version, meaning that using an older runtime/aspnet/sdk doesn't change the "current" value of the TP threads.

It is also independent of the hardware, and happens on all machines (Linux only) on the same day. These machines have auto-updates disabled. Here are ARM64 (32 cores), AMD (48 cores), INTEL (28 cores):

Disabling hill-climbing restores the better perf in this case, so it is believed that fixing this variation will actually have a negative impact on perf for these scenarios.

|

True

|

Hysteresis effect on threadpool hill-climbing - We have noticed a periodic pattern in the threadpool hill-climbing logic, which uses either `n-cores` or `n-cores + 20` threads, with a hysteresis effect that switches every 3-4 weeks:

The main visible impact is on performance results. Here is an example with JsonPlatform mean latency, though some scenarios are also impacted in throughput:

This happens independently of the runtime version, meaning that using an older runtime/aspnet/sdk doesn't change the "current" value of the TP threads.

It is also independent of the hardware, and happens on all machines (Linux only) on the same day. These machines have auto-updates disabled. Here are ARM64 (32 cores), AMD (48 cores), INTEL (28 cores):

Disabling hill-climbing restores the better perf in this case, so it is believed that fixing this variation will actually have a negative impact on perf for these scenarios.

|

non_test

|

hysteresis effect on threadpool hill climbing we have noticed a periodic pattern on the threadpool hill climbing logic which uses either n cores or n cores with an hysteresis effect that switches every weeks the main visible impact is on performance results here is an example with jsonplatform mean latency but some scenarios are also impacted in throughput this happens independently of the runtime version meaning that using an older runtime aspnet sdk doesn t change the current value of the tp threads it is also independent of the hardware and happens on all machines linux only on the same day these machines have auto updates disabled here are cores amd cores intel cores disabling hill climbing restores the better perf in this case so it is believe that fixing this variation will actually have a negative impact on perf for these scenarios

| 0

|

453,600

| 13,085,221,826

|

IssuesEvent

|

2020-08-02 00:51:36

|

HealthHackAu2020/not_the_only_one

|

https://api.github.com/repos/HealthHackAu2020/not_the_only_one

|

closed

|

I think the "Share your Story" should be replaced on main page by the search bar

|

Health Hack Priority

|

I think that the search bar should be more prominent.

|

1.0

|

I think the "Share your Story" should be replaced on main page by the search bar - I think that the search bar should be more prominent.

|

non_test

|

i think the share your story should be replaced on main page by the search bar i think that the search bar should be more prominent

| 0

|

660,838

| 22,032,872,654

|

IssuesEvent

|

2022-05-28 05:27:14

|

PavlidisLab/Gemma

|

https://api.github.com/repos/PavlidisLab/Gemma

|

closed

|

Error when searching ontology terms

|

bug high priority

|

The Gemma interface has been lagging lately, particularly when searching ontology terms (for experimental tags, experimental factors, factor values). A search takes several minutes and then fails altogether; this happens several times before any results are returned.

<img width="296" alt="Screen Shot 2022-02-18 at 12 28 16 PM" src="https://user-images.githubusercontent.com/89932772/154756725-1ef94304-b8ff-45df-baec-821bb666a35f.png">

|

1.0

|

Error when searching ontology terms - The Gemma interface has been lagging lately, particularly when searching ontology terms (for experimental tags, experimental factors, factor values). A search takes several minutes and then fails altogether; this happens several times before any results are returned.

<img width="296" alt="Screen Shot 2022-02-18 at 12 28 16 PM" src="https://user-images.githubusercontent.com/89932772/154756725-1ef94304-b8ff-45df-baec-821bb666a35f.png">

|

non_test

|

error when searching ontology terms the gemma interface has been lagging as of lately particularly when searching ontology terms for experimental tags experimental factors factor values it will take several minutes to search and then fail altogether this will happen several times before obtaining any results img width alt screen shot at pm src

| 0

|

25,530

| 11,185,757,825

|

IssuesEvent

|

2020-01-01 05:46:02

|

EcommEasy/EcommEasy

|

https://api.github.com/repos/EcommEasy/EcommEasy

|

opened

|

CVE-2019-19919 (High) detected in handlebars-4.1.1.tgz

|

security vulnerability

|

## CVE-2019-19919 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.1.1.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates effectively with no frustration</p>

<p>Library home page: <a href="https://registry.npmjs.org/handlebars/-/handlebars-4.1.1.tgz">https://registry.npmjs.org/handlebars/-/handlebars-4.1.1.tgz</a></p>

<p>Path to dependency file: /tmp/ws-scm/EcommEasy/package.json</p>

<p>Path to vulnerable library: /EcommEasy/node_modules/handlebars/package.json</p>

<p>

Dependency Hierarchy:

- :x: **handlebars-4.1.1.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/EcommEasy/EcommEasy/commit/363b3c5c1efcb2a7265f2d259bed12d00efb92c4">363b3c5c1efcb2a7265f2d259bed12d00efb92c4</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Versions of handlebars prior to 4.3.0 are vulnerable to Prototype Pollution leading to Remote Code Execution. Templates may alter an Object's __proto__ and __defineGetter__ properties, which may allow an attacker to execute arbitrary code through crafted payloads.

<p>Publish Date: 2019-12-20

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-19919>CVE-2019-19919</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/1164">https://www.npmjs.com/advisories/1164</a></p>

<p>Release Date: 2019-12-20</p>

<p>Fix Resolution: 4.3.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2019-19919 (High) detected in handlebars-4.1.1.tgz - ## CVE-2019-19919 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.1.1.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates effectively with no frustration</p>

<p>Library home page: <a href="https://registry.npmjs.org/handlebars/-/handlebars-4.1.1.tgz">https://registry.npmjs.org/handlebars/-/handlebars-4.1.1.tgz</a></p>

<p>Path to dependency file: /tmp/ws-scm/EcommEasy/package.json</p>

<p>Path to vulnerable library: /EcommEasy/node_modules/handlebars/package.json</p>

<p>

Dependency Hierarchy:

- :x: **handlebars-4.1.1.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/EcommEasy/EcommEasy/commit/363b3c5c1efcb2a7265f2d259bed12d00efb92c4">363b3c5c1efcb2a7265f2d259bed12d00efb92c4</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Versions of handlebars prior to 4.3.0 are vulnerable to Prototype Pollution leading to Remote Code Execution. Templates may alter an Object's __proto__ and __defineGetter__ properties, which may allow an attacker to execute arbitrary code through crafted payloads.

<p>Publish Date: 2019-12-20

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-19919>CVE-2019-19919</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/1164">https://www.npmjs.com/advisories/1164</a></p>

<p>Release Date: 2019-12-20</p>

<p>Fix Resolution: 4.3.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve high detected in handlebars tgz cve high severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file tmp ws scm ecommeasy package json path to vulnerable library ecommeasy node modules handlebars package json dependency hierarchy x handlebars tgz vulnerable library found in head commit a href vulnerability details versions of handlebars prior to are vulnerable to prototype pollution leading to remote code execution templates may alter an object s proto and definegetter properties which may allow an attacker to execute arbitrary code through crafted payloads publish date url a href cvss score details base score metrics not available suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource

| 0

|

55,070

| 6,425,244,995

|

IssuesEvent

|

2017-08-09 15:03:31

|

WordPress/gutenberg

|

https://api.github.com/repos/WordPress/gutenberg

|

opened

|

Uncertain behavior in userData reducer tests.

|

Unit Testing [Type] Bug [Type] Question

|

<!--

BEFORE POSTING YOUR ISSUE:

- These comments won't show up when you submit the issue.

- Try to add as much detail as possible. Be specific!

- Please add the version of Gutenberg you are using in the description

- If you're requesting a new feature, explain why you'd like it to be added.

- Search this repository for the issue and whether it has been fixed or reported already.

- Ensure you are using the latest code before logging bugs.

- Disable all plugins to ensure it's not a plugin conflict issue.

-->

## Issue Overview

<!-- This is a brief overview of the issue. --->

One of the userData reducer tests in editor/test/state is not clearing out the global blockTypes correctly.

It "should populate recently used blocks with the common category", which it does, just not with the result the test suite expects. You can see the failing tests in #2299 and #2309. It is not clear whether the test expects the wrong thing or the data is not being cleared. This issue has deeper roots, as it is tied to the global blockTypes state.

## Expected Behavior

<!-- If you're describing a bug, tell us what should happen -->

<!-- If you're suggesting a change/improvement, tell us how it should work -->

According to the tests, only two blockTypes should appear in the array, but 8 do. It is unclear what the expected outcome of the test should be, but based on the test suite it looks as though the tests are not cleaning up after themselves properly.

## Current Behavior

<!-- If describing a bug, tell us what happens instead of the expected behavior -->

<!-- If suggesting a change/improvement, explain the difference from current behavior -->

## Possible Solution

<!-- Not obligatory, but suggest a fix/reason for the bug, -->

<!-- or ideas how to implement the addition or change -->

A possible solution might be clearing out all registered types in the after hook. A better long-term solution is to introduce blockType registries and use independent fixtures for tests that need a certain set of blocks. This would also make our test suite run slightly faster.

## Related Issues and/or PRs

<!-- List related issues or PRs against other branches: -->

#2309, #2299

|

1.0

|

Uncertain behavior in userData reducer tests. - <!--

BEFORE POSTING YOUR ISSUE:

- These comments won't show up when you submit the issue.

- Try to add as much detail as possible. Be specific!

- Please add the version of Gutenberg you are using in the description

- If you're requesting a new feature, explain why you'd like it to be added.

- Search this repository for the issue and whether it has been fixed or reported already.

- Ensure you are using the latest code before logging bugs.

- Disable all plugins to ensure it's not a plugin conflict issue.

-->

## Issue Overview

<!-- This is a brief overview of the issue. --->

One of the userData reducer tests in editor/test/state is not clearing out the global blockTypes correctly.

It "should populate recently used blocks with the common category", which it does, just not with the result the test suite expects. You can see the failing tests in #2299 and #2309. It is not clear whether the test expects the wrong thing or the data is not being cleared. This issue has deeper roots, as it is tied to the global blockTypes state.

## Expected Behavior

<!-- If you're describing a bug, tell us what should happen -->

<!-- If you're suggesting a change/improvement, tell us how it should work -->

According to the tests, only two blockTypes should appear in the array, but 8 do. It is unclear what the expected outcome of the test should be, but based on the test suite it looks as though the tests are not cleaning up after themselves properly.

## Current Behavior

<!-- If describing a bug, tell us what happens instead of the expected behavior -->

<!-- If suggesting a change/improvement, explain the difference from current behavior -->

## Possible Solution

<!-- Not obligatory, but suggest a fix/reason for the bug, -->

<!-- or ideas how to implement the addition or change -->

A possible solution might be clearing out all registered types in the after hook. A better long-term solution is to introduce blockType registries and use independent fixtures for tests that need a certain set of blocks. This would also make our test suite run slightly faster.

## Related Issues and/or PRs

<!-- List related issues or PRs against other branches: -->

#2309, #2299

|

test

|

uncertain behavior in userdata reducer tests before posting your issue these comments won t show up when you submit the issue try to add as much detail as possible be specific please add the version of gutenberg you are using in the description if you re requesting a new feature explain why you d like it to be added search this repository for the issue and whether it has been fixed or reported already ensure you are using the latest code before logging bugs disable all plugins to ensure it s not a plugin conflict issue issue overview one of the userdata reducer tests in editor test state is not clearing out the global blocktypes correctly it should populate recently used blocks with the common category which it does just not the expected result in the test suite you can see the failing tests in the and not sure if the test is expecting the wrong thing or if the data is not being cleared this issue has deeper roots as it is tied to the global blocktypes state expected behavior according to the tests only two blocktypes should appear in the array instead do it is unclear what the expected outcome of the test should be but based on the test suite it looks as though the tests are not cleaning up themselves properly current behavior possible solution a possible solution might be clearing out all registered types on the after hook a better long term solution is to introduce blocktype registries and have independent fixtures be used for tests that need a certain set of blocks this would make our test suite run slightly faster as well related issues and or prs

| 1

|

294,787

| 22,162,725,390

|

IssuesEvent

|

2022-06-04 18:53:40

|

typescript-eslint/typescript-eslint

|

https://api.github.com/repos/typescript-eslint/typescript-eslint

|

closed

|

Docs: [no-extraneous-class] Explain why the rule is useful

|

package: eslint-plugin documentation accepting prs

|

### Before You File a Documentation Request Please Confirm You Have Done The Following...

- [X] I have looked for existing [open or closed documentation requests](https://github.com/typescript-eslint/typescript-eslint/issues?q=is%3Aissue+label%3Adocumentation) that match my proposal.

- [X] I have [read the FAQ](https://typescript-eslint.io/docs/linting/troubleshooting) and my problem is not listed.

### Suggested Changes

Right now the rule just quotes TSLint's old docs as evidence:

> Users who come from a Java-style OO language may wrap their utility functions in an extra class, instead of putting them at the top level.

...but it doesn't explain _why_ wrapping utility functions in an extra class is unnecessary or even bad.

In summary:

* Wrapper classes add extra runtime bloat and cognitive complexity to code without adding any structural improvements.

* Whatever would be put on them, such as utility functions, is already organized by virtue of the module it's in.

* You can always `import * as ...` the module to get all of them in a single object.

* IDEs can't autocomplete the helpers' names as well when you start typing them, since they're on a class instead of being freely available to import.

* It's harder to statically analyze code for unused variables, etc. when they're all on the class (see: [ts-prune](https://github.com/nadeesha/ts-prune)).

IME, this kind of class structure often comes up, and is later regretted, in teams that are used to adhering to OOP principles but then work in a runtime (e.g. Node) and project type (e.g. Express) that don't need them. They eventually get used to using ECMAScript modules as their form of organization, and find the extra classes to be unnecessary bloat.
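A minimal sketch of the contrast described above, which the docs could use as an example. `StringHelpers` and `capitalize` are hypothetical names. The class is the kind of code the rule reports under its default options (a class containing only static members); the plain exported function is the module-level alternative.

```typescript
// Reported by no-extraneous-class with default options:
// the class holds only static members, so it is just a namespace.
export class StringHelpers {
  static capitalize(s: string): string {
    return s.length === 0 ? s : s.charAt(0).toUpperCase() + s.slice(1);
  }
}

// Module-level alternative: the module itself provides the grouping.
// Callers can `import { capitalize } from "./strings"` (with autocompletion),
// or `import * as strings from "./strings"` to get a single object,
// and tools such as ts-prune can see exactly which exports are unused.
export function capitalize(s: string): string {
  return s.length === 0 ? s : s.charAt(0).toUpperCase() + s.slice(1);
}
```

Both forms behave identically at the call site; the function version simply drops the wrapper.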

### Affected URL(s)

https://typescript-eslint.io/rules/no-extraneous-class

|

1.0

|

Docs: [no-extraneous-class] Explain why the rule is useful - ### Before You File a Documentation Request Please Confirm You Have Done The Following...

- [X] I have looked for existing [open or closed documentation requests](https://github.com/typescript-eslint/typescript-eslint/issues?q=is%3Aissue+label%3Adocumentation) that match my proposal.

- [X] I have [read the FAQ](https://typescript-eslint.io/docs/linting/troubleshooting) and my problem is not listed.

### Suggested Changes

Right now the rule just quotes TSLint's old docs as evidence:

> Users who come from a Java-style OO language may wrap their utility functions in an extra class, instead of putting them at the top level.

...but it doesn't explain _why_ wrapping utility functions in an extra class is unnecessary or even bad.

In summary:

* Wrapper classes add runtime bloat and cognitive complexity to code without adding any structural improvement.

* Whatever would be put on them, such as utility functions, is already organized by virtue of the module it's in.

* You can always `import * as ...` the module to get all of them in a single object.

* IDEs can't offer autocompletion as helpfully when you start typing a helper's name, since the helpers live on a class instead of being directly importable.

* It's harder to statically analyze code for unused variables, etc. when they're all on the class (see: [ts-prune](https://github.com/nadeesha/ts-prune)).

IME, this kind of class structure often comes up, and is later regretted, in teams that are used to adhering to OOP principles but then work in a runtime (e.g. Node) and project type (e.g. Express) that don't need them. They eventually get used to using ECMAScript modules as their form of organization, and find the extra classes to be unnecessary bloat.

### Affected URL(s)

https://typescript-eslint.io/rules/no-extraneous-class

|

non_test

|

docs explain why the rule is useful before you file a documentation request please confirm you have done the following i have looked for existing that match my proposal i have and my problem is not listed suggested changes right now the rule just quotes tslint s old docs as evidence users who come from a java style oo language may wrap their utility functions in an extra class instead of putting them at the top level but it doesn t explain why wrapping utility functions in an extra class is unnecessary or even bad in summary wrapper classes add extra runtime bloat and cognitive complexity to code without adding any structural improvements whatever would be put on them such as utility functions is already organized by virtue of the module it s in you can always import as the module to get all of them in a single object ides can t provide as good autocompletions when you start typing the names of the helpers since they re on a class instead of freely available to import it s harder to statically analyze code for unused variables etc when they re all on the class see ime this kind of class structure often comes up and is later regretted with teams that are used to adhering to oop principles but then work in a runtime e g node and project type e g express that don t need them they eventually get used to using ecmascript modules as their form of organization and find the extra classes to be unnecessary bloat affected url s

| 0

|

38,833

| 12,603,292,742

|

IssuesEvent

|

2020-06-11 13:15:39

|

jgeraigery/logstash

|

https://api.github.com/repos/jgeraigery/logstash

|

opened

|

CVE-2019-16942 (High) detected in jackson-databind-2.9.10.jar

|

security vulnerability

|

## CVE-2019-16942 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.10.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to vulnerable library: le/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.9.10/e201bb70b7469ba18dd58ed8268aa44e702fa2f0/jackson-databind-2.9.10.jar,le/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.9.10/e201bb70b7469ba18dd58ed8268aa44e702fa2f0/jackson-databind-2.9.10.jar</p>

<p>

Dependency Hierarchy:

- :x: **jackson-databind-2.9.10.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/jgeraigery/logstash/commit/201cee856b2ad93e442e269232049cdde83045a3">201cee856b2ad93e442e269232049cdde83045a3</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A Polymorphic Typing issue was discovered in FasterXML jackson-databind 2.0.0 through 2.9.10. When Default Typing is enabled (either globally or for a specific property) for an externally exposed JSON endpoint and the service has the commons-dbcp (1.4) jar in the classpath, and an attacker can find an RMI service endpoint to access, it is possible to make the service execute a malicious payload. This issue exists because of org.apache.commons.dbcp.datasources.SharedPoolDataSource and org.apache.commons.dbcp.datasources.PerUserPoolDataSource mishandling.

<p>Publish Date: 2019-10-01

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-16942>CVE-2019-16942</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-16942">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-16942</a></p>

<p>Release Date: 2019-10-01</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.6.7.3,2.7.9.7,2.8.11.5,2.9.10.1</p>

</p>

</details>
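The Fix Resolution lists one patched jackson-databind release per release line. Below is a minimal TypeScript sketch, with hypothetical helper names, that checks a resolved version against that list. It assumes plain dotted numeric versions. It treats versions outside the advisory's stated range (2.0.0 through 2.9.10) as unaffected. It also treats lines with no listed fix (2.0 to 2.5) as affected, which is a conservative assumption that the advisory does not state.

```typescript
// Minimum patched release per "major.minor" line, copied from the Fix Resolution.
const MIN_FIXED: Record<string, string> = {
  "2.6": "2.6.7.3",
  "2.7": "2.7.9.7",
  "2.8": "2.8.11.5",
  "2.9": "2.9.10.1",
};

// Compare dotted numeric versions component by component; missing
// components count as 0, so "2.9.10" sorts before "2.9.10.1".
function compareVersions(a: string, b: string): number {
  const pa = a.split(".").map(Number);
  const pb = b.split(".").map(Number);
  const len = Math.max(pa.length, pb.length);
  for (let i = 0; i < len; i++) {
    const x = pa[i] ?? 0;
    const y = pb[i] ?? 0;
    if (x !== y) return x < y ? -1 : 1;
  }
  return 0;
}

// Hypothetical helper: true if `version` falls in the advisory's range
// and is below the listed fix for its release line (if any).
function isAffectedByCve201916942(version: string): boolean {
  if (compareVersions(version, "2.0.0") < 0) return false;
  if (compareVersions(version, "2.9.10") > 0) return false;
  const [major, minor] = version.split(".");
  const fixed = MIN_FIXED[`${major}.${minor}`];
  return fixed === undefined || compareVersions(version, fixed) < 0;
}
```

For this repository, `isAffectedByCve201916942("2.9.10")` is true, and upgrading to 2.9.10.1 makes it false.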

<p></p>


|

True

|

CVE-2019-16942 (High) detected in jackson-databind-2.9.10.jar - ## CVE-2019-16942 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.10.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>