repo_name (string, lengths 9 to 75) | topic (string, 30 classes) | issue_number (int64, 1 to 203k) | title (string, lengths 1 to 976) | body (string, lengths 0 to 254k) | state (string, 2 classes) | created_at (string, length 20) | updated_at (string, length 20) | url (string, lengths 38 to 105) | labels (list, lengths 0 to 9) | user_login (string, lengths 1 to 39) | comments_count (int64, 0 to 452) |

|---|---|---|---|---|---|---|---|---|---|---|---|

pinry/pinry | django | 85 | Multiple N+1 queries in pin loading | I was wondering why loading pins took close to half a second and looked at the queries. Loading 50 pins takes 304 queries (url: `/api/v1/pin/?format=json&order_by=-id&offset=0`):

- two auth related ones I ignored

- one getting the total count

``` sql

SELECT COUNT(*) FROM "core_pin"

```

- one getting the pins

... | closed | 2015-03-22T23:55:27Z | 2015-04-10T19:02:27Z | https://github.com/pinry/pinry/issues/85 | [] | JensGutermuth | 3 |

adbar/trafilatura | web-scraping | 404 | Corrupted Markdown output when TXT+formatting | I wrote a fairly complicated testcase.. then realized I could use the command line tool :-D

The docs indicate Markdown is an option

https://github.com/adbar/trafilatura/blob/d78fbb5e0d88566cb1326f04210a93b46db8ac87/docs/usage-python.rst?plain=1#L71

* The plain text output (no Markdown) looks good.

* In the ex... | closed | 2023-08-06T22:38:28Z | 2024-03-28T12:44:28Z | https://github.com/adbar/trafilatura/issues/404 | [

"bug"

] | clach04 | 2 |

jpadilla/django-rest-framework-jwt | django | 2 | Overriding JSONWebTokenSerializer.validate() to auth based on 3rd party response and not user model? | Hi there!

I'm a bit of a Python neophyte so huge apologies if this is way more obvious than I'm making it out to be, but any suggestions how I'd override JSONWebTokenSerializer.validate() so that, instead of validating against [django.contrib.auth.authenticate() here](https://github.com/GetBlimp/django-rest-framework-... | closed | 2014-01-20T18:02:25Z | 2017-08-21T08:47:29Z | https://github.com/jpadilla/django-rest-framework-jwt/issues/2 | [

"discussion"

] | aendra-rininsland | 6 |

PokemonGoF/PokemonGo-Bot | automation | 5,628 | Location Confirmation before starting bot | ### Short Description

Currently, when starting bot, it will find if the name you stated in location can be found (from google?) and then get the coordinates.

The problem is, if I have the same name in favorite location, it will use the location it found in geocoding instead of the one in favorite location. Example, i... | open | 2016-09-23T04:56:32Z | 2016-09-23T11:00:43Z | https://github.com/PokemonGoF/PokemonGo-Bot/issues/5628 | [] | MerlionRock | 2 |

tensorly/tensorly | numpy | 287 | Issue with test_svd | `test_backend.test_svd` seems to occasionally fail, at least with the MXNet backend, see e.g. https://github.com/tensorly/tensorly/runs/2945783934

Relevant traceback:

```

=================================== FAILURES ===================================

___________________________________ test_svd _________________... | closed | 2021-06-29T20:21:47Z | 2022-07-09T18:25:12Z | https://github.com/tensorly/tensorly/issues/287 | [

"bug"

] | JeanKossaifi | 0 |

nolar/kopf | asyncio | 864 | Is there a way to add namespaceSelector to the generated ValidatingWebhookConfiguration? | ### Keywords

namespaceSelector, ValidatingWebhookConfiguration

### Problem

I didn't find a way to add a `namespaceSelector` to the generated `ValidatingWebhookConfiguration`. I found `kopf.WebhookClientConfigService` so I can add the service options to `ValidatingWebhookConfiguration` but I didn't find a simi... | open | 2021-11-18T13:37:13Z | 2021-12-05T11:35:04Z | https://github.com/nolar/kopf/issues/864 | [

"question"

] | devopstales | 2 |

marshmallow-code/flask-smorest | rest-api | 365 | Flask commands to generate OpenAPI schema in yaml | Currently there are two Flask commands:

1. `flask openapi print` to print OpenAPI schema to output

2. `flask openapi write <file>` to write OpenAPI schema to file

Both of them serialize OpenAPI schema to json.

It would be great to have an option to generate it to yaml as well. | closed | 2022-06-09T15:17:29Z | 2022-06-20T13:07:28Z | https://github.com/marshmallow-code/flask-smorest/issues/365 | [

"enhancement"

] | derlikh-smart | 3 |

ultralytics/yolov5 | machine-learning | 13,069 | Visualizing YOLOv5 Segmentation Data | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

Greetings, I would like to use YOLOv5 Segmentation for one of my projects. However, befor... | closed | 2024-06-07T02:21:54Z | 2024-07-19T01:09:53Z | https://github.com/ultralytics/yolov5/issues/13069 | [

"question",

"Stale"

] | DylDevs | 10 |

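AirtestProject/Airtest | automation | 1,148 | WeChat mini program: text() input on a real device is incomplete | (Please fill in the information as prompted below; it helps us locate and resolve the problem quickly. Thank you for your cooperation. Otherwise the issue will be closed directly.)

**(Important! Issue category)**

* Problems using the AirtestIDE test development environment -> https://github.com/AirtestProject/AirtestIDE/issues

* UI control recognition, tree structure, or Poco library errors -> https://github.com/AirtestProject/Poco/issues

* Image recognition or device control problems -> follow the steps below

**Describe the bug**

Running `text("18519004293", enter=False)` to enter the content, the page only shows 18590093

**Related... | open | 2023-07-26T11:21:49Z | 2023-07-26T11:22:48Z | https://github.com/AirtestProject/Airtest/issues/1148 | [] | bluescurry | 0 |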

robotframework/robotframework | automation | 4,792 | Add Vietnamese translation | We added translation infrastructure and as well as translations for various languages in RF 6.0 (#4390). We now have PR #4791 adding Vietnamese translation. | closed | 2023-06-09T11:53:31Z | 2023-06-13T14:00:42Z | https://github.com/robotframework/robotframework/issues/4792 | [

"enhancement",

"priority: high",

"acknowledge",

"effort: small"

] | pekkaklarck | 2 |

Miserlou/Zappa | flask | 1,248 | re-deploying with custom domain name gives "forbidden" error | <!--- Provide a general summary of the issue in the Title above -->

I used undeploy followed by deploy on a site with a custom domain name and

AWS certificate, then tried to certify since this changed the Amazon url (which worked with the site) but certify apparently can only be run one time, leaving the custom domai... | open | 2017-11-21T02:17:59Z | 2019-09-20T09:21:08Z | https://github.com/Miserlou/Zappa/issues/1248 | [

"bug",

"aws"

] | SCDealy | 9 |

healthchecks/healthchecks | django | 263 | 1.7.0 sendalerts is not working | I've got 1.7.0 installed in an Alpine Linux 3.10 container... the entire site is working, everything migrated over, but sendalerts does nothing.... I get:

```

sendalerts is now running

```

But then nothing happens... no more timing marks, no output at all... it just sits there doing nothing. I've made a test tr... | closed | 2019-06-23T03:05:19Z | 2019-08-05T18:30:58Z | https://github.com/healthchecks/healthchecks/issues/263 | [] | stevenmcastano | 11 |

SALib/SALib | numpy | 151 | delta.analyze randomly returns singular matrix error | Dear,

feeding the `delta.analyze()` function with:

```

problem = {

'num_vars': 3,

'names': ['K_IO_AOBden', 'K_SO_AOBden', 'K_SNO_AOBden'],

'bounds': [[0.01, 11], [0.01, 13.2], [0.08, 4.3]]

}

```

```

In: param_values

Out: array([[ 2.12591411, 1.8200793 , 0.10082878],

[ 6.21754537, 5.852... | closed | 2017-06-29T08:13:02Z | 2017-06-29T11:12:31Z | https://github.com/SALib/SALib/issues/151 | [

"bug"

] | gbellandi | 6 |

pallets/flask | python | 4,739 | Tests failing on latest Flask version 2.2.1 | Hi All,

I am working on a Flask project and suddenly my unit tests started failing on the latest Flask version `2.2.1`

Got the following error while running the [tox](https://pypi.org/project/tox/) command

> /usr/lib/python3.8/doctest.py:939: in find

self._find(tests, obj, name, module, source_lines, globs, {})

.tox/... | closed | 2022-08-04T12:20:50Z | 2022-08-19T00:07:38Z | https://github.com/pallets/flask/issues/4739 | [] | hiteshgoyal18 | 3 |

pytest-dev/pytest-cov | pytest | 155 | Testing on Windows doesn't produce coverage data | Specifically, this [appveyor config file](https://github.com/xonsh/slug/blob/master/.appveyor.yml) was [run through appveyor](https://ci.appveyor.com/project/xonsh/slug/build/job/kepc6ge0a09n5a97) and produced [this coverage file](https://codecov.s3.amazonaws.com/v4/raw/2017-04-04/0765DFC7D7C1F1017C42EC5A811DAF9C/2ad23... | open | 2017-04-04T03:13:18Z | 2017-10-28T04:27:54Z | https://github.com/pytest-dev/pytest-cov/issues/155 | [

"question"

] | AstraLuma | 7 |

unit8co/darts | data-science | 1,899 | Fine-tuning question | In the current version, is fine-tuning possible? I saw an older post about that and I wonder if there's a way to achieve it in the current version.

| closed | 2023-07-14T14:51:13Z | 2023-07-15T08:37:08Z | https://github.com/unit8co/darts/issues/1899 | [

"bug",

"triage"

] | LaplaceSingularity | 5 |

huggingface/text-generation-inference | nlp | 2,503 | Add support for Idefics 3 | ### Model description

Please add support for HuggingFaceM4/Idefics3-8B-Llama3 in tgi:

_Idefics3 is an open multimodal model that accepts arbitrary sequences of image and text inputs and produces text outputs. The model can answer questions about images, describe visual content, create stories grounded on multiple i... | open | 2024-09-07T12:27:41Z | 2024-09-09T11:22:43Z | https://github.com/huggingface/text-generation-inference/issues/2503 | [

"new model"

] | stelterlab | 3 |

drivendataorg/cookiecutter-data-science | data-science | 105 | MacOS-specific .DS_Store files missing from .gitignore file | My colleagues and I were having issues collaborating using this repository template, and noticed that _.DS_Store_ files, which are created during the creation of new folders in MacOS directories, were being tracked by git. This was causing merge issues. I have added this file extension at the end of the _.gitignore_ fi... | closed | 2018-03-28T10:26:07Z | 2018-04-14T12:57:18Z | https://github.com/drivendataorg/cookiecutter-data-science/issues/105 | [] | randallrs | 2 |

cleanlab/cleanlab | data-science | 887 | add class-imbalance detection to the default set of Datalab issue types | closed | 2023-11-09T18:20:54Z | 2023-11-11T21:54:34Z | https://github.com/cleanlab/cleanlab/issues/887 | [

"enhancement",

"needs triage"

] | jwmueller | 1 | |

mljar/mercury | data-visualization | 132 | make automatic website refresh as optional for scheduled notebooks | Right now, when running a scheduled notebook there is an automatic refresh of the website every 1 minute. In the case of notebooks scheduled with longer intervals (daily), it is not needed. Please make it optional.

:

try:

with open(file_path, 'r', encoding='utf-8') as file:

content = file.read()

return content

except FileNotFoundError:

... | closed | 2023-08-06T09:21:44Z | 2024-08-09T08:25:35Z | https://github.com/coqui-ai/TTS/issues/2842 | [

"bug"

] | frixos25 | 9 |

MagicStack/asyncpg | asyncio | 346 | Very bad performance using insert many | * **asyncpg version**: 0.17.0

* **PostgreSQL version**: 10

* **Do you use a PostgreSQL SaaS? If so, which? Can you reproduce

the issue with a local PostgreSQL install?**: local

* **Python version**: 3.7

* **Platform**: Windows

* **Do you use pgbouncer?**: No

* **Did you install asyncpg with pip?**: Yes

* **... | closed | 2018-08-21T15:02:16Z | 2018-08-26T20:58:02Z | https://github.com/MagicStack/asyncpg/issues/346 | [] | akashgurava | 2 |

robotframework/robotframework | automation | 5,304 | Libdoc: Support documentation written with Markdown | It seems this was [discussed briefly back in 2016](https://github.com/robotframework/robotframework/issues/2476) but I wanted to see if there were any thoughts on supporting the Markdown format for Libdoc in 2025.

With Markdown being the preferred (currently only?) markup [for copilot knowledge bases](https://docs.git... | open | 2025-01-02T16:52:01Z | 2025-03-07T15:12:22Z | https://github.com/robotframework/robotframework/issues/5304 | [

"priority: critical",

"effort: large"

] | Wolfe1 | 4 |

yzhao062/pyod | data-science | 256 | Cannot save AutoEncoder | The [official instructions](https://pyod.readthedocs.io/en/latest/model_persistence.html) say to use joblib for pickling PyOD models.

This fails for AutoEncoders, or any other TensorFlow-backed model as far as I can tell. The error is:

```

>>> dump(model, 'model.joblib')

...

TypeError: can't pickle _thread.RL... | open | 2020-12-06T07:50:26Z | 2021-03-03T01:29:11Z | https://github.com/yzhao062/pyod/issues/256 | [

"bug",

"help wanted",

"good first issue"

] | kennysong | 6 |

wger-project/wger | django | 1,770 | Weight tracker: Date selection | ## Steps to Reproduce

1. Open the F-Droid App on GrapheneOS/Android

2. Press + on the Weight tab

**Expected results:** The current date is automatically selected.

**Actual results:** The date of the latest entry is selected.

| open | 2024-09-17T05:01:44Z | 2024-09-25T10:17:53Z | https://github.com/wger-project/wger/issues/1770 | [] | hubortje | 2 |

scanapi/scanapi | rest-api | 352 | Add Dotenv (.env) support | Hi.

I added Dotenv to ScanAPI.

can I submit a PR? | closed | 2021-03-08T04:28:47Z | 2021-03-24T15:48:23Z | https://github.com/scanapi/scanapi/issues/352 | [] | jpsilva15 | 1 |

aleju/imgaug | machine-learning | 40 | [MacOS] IOError when running generate_example_images.py | When I clone the repo, and run the `generate_example_images.py`, I get a runtime error:

```

$ cd ~/repos/imgaug

$ python generate_example_images.py

[draw_per_augmenter_images] Loading image...

[draw_per_augmenter_images] Initializing...

[draw_per_augmenter_images] Augmenting...

Traceback (most recent call last):... | open | 2017-06-09T21:04:13Z | 2017-06-09T21:29:37Z | https://github.com/aleju/imgaug/issues/40 | [] | erickim555 | 1 |

open-mmlab/mmdetection | pytorch | 11,354 | How to improve CPU utilization? | When I train yolox using RTX4090, the CPU usage is very low. Only two cores are used.

GPU usage is also low, although a lot of GPU memory is in use.

: error when creating "deploy/kube/namespace.yml": Namespace "tmp_test" is invalid: metadata.name: Invalid value: "tmp_test": a lowercase RFC 1123 label must consist of lower case alphanumeric characters or ... | closed | 2021-10-08T15:48:02Z | 2021-10-09T12:39:53Z | https://github.com/s3rius/FastAPI-template/issues/36 | [] | gpkc | 1 |

voila-dashboards/voila | jupyter | 882 | Voila not rendering on JupyterLab Extension | Hello, I am trying to launch Voila via the Lab extension, however it keeps loading without rendering the output as per below:

list of extensions:

JupyterLab v3.0.14

C:\Users\Anaconda3\share\jupyter\... | open | 2021-05-05T09:02:57Z | 2021-05-11T15:30:31Z | https://github.com/voila-dashboards/voila/issues/882 | [] | siglacredit | 5 |

unit8co/darts | data-science | 1,998 | [BUG] Can't fit a loaded darts RNNModel | **Describe the bug**

I can't fit and use a saved model after loading it. I get the error "FileNotFoundError: [Errno 2] No such file or directory: '/kaggle/working/darts_logs/Karachi_RNN/_model.pth.tar'" when I try to fit it on new data.

**To Reproduce**

This is how I created the darts RNNModel

```

%%time

n_pa... | closed | 2023-09-18T10:53:34Z | 2024-01-21T15:26:48Z | https://github.com/unit8co/darts/issues/1998 | [

"q&a"

] | Kamal-Moha | 4 |

reloadware/reloadium | django | 151 | Reloadium experienced a fatal error and has to quit. | ## Describe the bug*

A clear and concise description of what the bug is.

C:\Users\test\Documents\python_code\gitlab_code\venv\Scripts\python.exe" -m reloadium_launcher pydev_proxy "C:/Program Files/JetBrains/PyCharm Community Edition 2022.1/plugins/python-ce/helpers/pydev/pydevd.py" --multiprocess --client 127.0.0.1 ... | closed | 2023-06-10T14:54:02Z | 2023-07-06T12:43:42Z | https://github.com/reloadware/reloadium/issues/151 | [] | gengchaogit | 11 |

deepfakes/faceswap | machine-learning | 731 | Effmpeg not working with Docker installation because Dockerfile does not include an installation of ffmpeg | Effmpeg does not work with the Docker container because there is no ffmpeg installation. | closed | 2019-05-18T00:19:02Z | 2019-05-18T00:55:11Z | https://github.com/deepfakes/faceswap/issues/731 | [] | timothydelter | 2 |

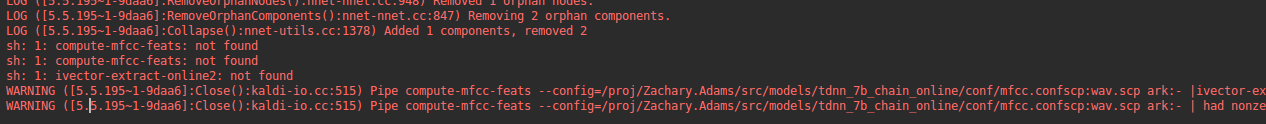

pykaldi/pykaldi | numpy | 132 | Why are compute-mfcc-feats and ivector-extract-online2 not found? Is there a simple way to fix this issue? | 

| closed | 2019-06-04T20:35:22Z | 2020-10-13T20:17:56Z | https://github.com/pykaldi/pykaldi/issues/132 | [] | zachadams16 | 3 |

iterative/dvc | data-science | 9,786 | `pull`: fails unless target specified | # Bug Report

## Description

`dvc pull` fails but `dvc pull target` succeeds for the same file.

Reported multiple times in discord:

https://discord.com/channels/485586884165107732/563406153334128681/1131979446379888853

https://discord.com/channels/485586884165107732/485596304961962003/1135912803086119043

... | closed | 2023-08-01T16:57:26Z | 2023-08-04T07:51:12Z | https://github.com/iterative/dvc/issues/9786 | [

"bug",

"A: data-sync"

] | dberenbaum | 4 |

scrapy/scrapy | python | 5,769 | Plans to use "GOOGLE_APPLICATION_CREDENTIALS_JSON" (FEEDS) | Hello,

I am currently calling the crawlers from Airflow, using `PythonOperator`. It works perfectly. But, because of that, I cannot set an env variable named `GOOGLE_APPLICATION_CREDENTIALS` with a path to the json file, since it's a limitation I have (fully managed environment, can't edit the image). Instead of it, I ha... | closed | 2022-12-23T15:27:18Z | 2022-12-27T16:08:02Z | https://github.com/scrapy/scrapy/issues/5769 | [] | elitongadotti | 5 |

ray-project/ray | python | 51,471 | CI test windows://python/ray/tests:test_cancel is consistently_failing | CI test **windows://python/ray/tests:test_cancel** is consistently_failing. Recent failures:

- https://buildkite.com/ray-project/postmerge/builds/8965#0195aa9f-d3d7-4df6-a372-b880dc10a310

- https://buildkite.com/ray-project/postmerge/builds/8965#0195aa03-5c4e-4a62-bd29-e5408e12b496

DataCaseName-windows://python/ray... | closed | 2025-03-18T21:44:28Z | 2025-03-19T21:52:37Z | https://github.com/ray-project/ray/issues/51471 | [

"bug",

"triage",

"core",

"flaky-tracker",

"ray-test-bot",

"ci-test",

"weekly-release-blocker",

"stability"

] | can-anyscale | 2 |

csurfer/pyheat | matplotlib | 18 | LOADING Module issue - Module not found | Hi,

I have a customized module **xyz.pyd**, on windows 10, which my program loads/executes perfectly as it's in the same directory. But pyheat fails to load it. Does it load the modules only from the site-packages?

Regards

Prabhat | open | 2021-11-04T02:27:14Z | 2022-08-01T10:47:19Z | https://github.com/csurfer/pyheat/issues/18 | [] | prabhatM | 2 |

TracecatHQ/tracecat | fastapi | 67 | Datadog Security Monitoring | **User Story:** I want to build automated investigations given findings from Datadog security products.

Datadog's key security features can be grouped in the following:

- CSPM findings

- SIEM signals

- SIEM signal state management

- CSPM findings state management

- SIEM detection rules

- Suppressions for SIEM ... | closed | 2024-04-19T09:59:14Z | 2024-06-16T19:19:12Z | https://github.com/TracecatHQ/tracecat/issues/67 | [

"enhancement",

"good first issue",

"integrations",

"tracker"

] | topher-lo | 1 |

scanapi/scanapi | rest-api | 403 | Fix anti pattern issues mentioned in static analysis | ## Feature request

### Description of the feature

<!-- A clear and concise description of what the new feature is. -->

To increase the quality of the project we are using static analysis to find out anti-patterns in the project.

A detailed list of the issues can be found [here](https://deepsource.io/gh/scanapi... | closed | 2021-06-12T08:11:38Z | 2021-07-30T20:38:00Z | https://github.com/scanapi/scanapi/issues/403 | [

"Feature",

"Refactor",

"Code Quality",

"Antipattern",

"Multi Contributors"

] | Pradhvan | 0 |

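TencentARC/GFPGAN | deep-learning | 34 | Training problem | Hello author, I'd like to ask: why is training always normal locally, while on the cluster the loss is always NaN? I tried rebuilding the environment and retraining, keeping the local and cluster environments identical, but in the end it is still normal locally and abnormal on the cluster. What could be the cause? Thank you. | closed | 2021-08-06T13:04:06Z | 2022-03-15T02:20:50Z | https://github.com/TencentARC/GFPGAN/issues/34 | [] | ZZFanya-DWR | 2 |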

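vastsa/FileCodeBox | fastapi | 27 | Security issue! | After a successful deployment, without any configuration, the default admin password is admin, which is very dangerous. Please consider changing this so that a random, complex password is generated on the first successful deployment. | closed | 2022-12-28T07:48:19Z | 2023-01-16T06:58:40Z | https://github.com/vastsa/FileCodeBox/issues/27 | [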

"enhancement"

] | tinyxingqiu | 2 |

slackapi/python-slack-sdk | asyncio | 1,216 | Conversations_members and direct message channels | Is `conversations_members` not compatible with direct message channels? I have an app with all four user level scopes (groups, channels, im, mpim all have read permissions). When I try:

```python

client.conversations_members(channel="D1234567890") #Example direct channel id

```

I get a `channel_not_found` respo... | closed | 2022-05-20T09:14:36Z | 2023-12-27T19:52:13Z | https://github.com/slackapi/python-slack-sdk/issues/1216 | [

"question",

"needs info"

] | skewwhiff | 9 |

deepspeedai/DeepSpeed | deep-learning | 5,719 | Issue with LoRA Tuning on llama3-70b using PEFT and TRL's SFTTrainer | We are attempting to perform LoRA tuning on llama3-70b using PEFT with TRL's SFTTrainer. We are using 8 H100 GPUs and distributed training with ZeRO-stage3, but we encounter an error. Could you please provide any solutions?

Here is the error message:

```

Loading checkpoint shards: 77%|███████▋ | 23/30 [00:58<00:... | open | 2024-07-02T09:37:29Z | 2024-07-02T16:04:35Z | https://github.com/deepspeedai/DeepSpeed/issues/5719 | [

"training"

] | yutanozaki1 | 0 |

davidsandberg/facenet | computer-vision | 798 | Cudnn incompatibility | I am using Linux Mint 18.2 Cinnamon 64-bit OS. I have Cuda 9.1 installed with Cudnn 7.1. Whenever I run the commands to train on my custom images I get the following error:

**Command:**

` src/classifier.py TRAIN ~/datasets/my_dataset/train/ ~/models/20180402-114759.pb ~/models/my_classifier.pkl --batch_size... | open | 2018-06-21T09:49:41Z | 2018-06-21T09:50:44Z | https://github.com/davidsandberg/facenet/issues/798 | [] | nirajvermafcb | 0 |

httpie/cli | rest-api | 1,388 | No such file or directory: '~/.config/httpie/version_info.json' | ## Checklist

- [x] I've searched for similar issues.

- [x] I'm using the latest version of HTTPie.

---

## Minimal reproduction code and steps

1. Use the `http` command, e.g. `http GET http://localhost:8004/consumers/test`

## Current result

```bash

❯ http GET http://localhost:8004/consumers/test

HTTP... | closed | 2022-05-06T06:38:24Z | 2022-05-07T00:43:46Z | https://github.com/httpie/cli/issues/1388 | [

"bug",

"new"

] | nico-arianto | 3 |

pydantic/pydantic-ai | pydantic | 836 | Prompt prefill/prefix | One essential prompt engineering technique is to prefill the response to steer the model to certain outcomes:

- { - to get JSON

- <!DOCTYPE html> - to get back an HTML document

- <svg width="200" height="200" to get back an SVG with the specified dimensions

- Or in the case of thinking models, add <think> and_prefi... | open | 2025-02-01T04:19:14Z | 2025-02-05T06:48:48Z | https://github.com/pydantic/pydantic-ai/issues/836 | [] | tranhoangnguyen03 | 7 |

tensorpack/tensorpack | tensorflow | 992 | Do you have plan to reproduce deformable convolution? | closed | 2018-11-28T17:40:34Z | 2018-11-28T22:49:11Z | https://github.com/tensorpack/tensorpack/issues/992 | [

"examples"

] | jianlong-yuan | 1 | |

tqdm/tqdm | jupyter | 613 | RuntimeError: cannot join current thread | - [ ] I have visited the [source website], and in particular

read the [known issues]

- [x] I have searched through the [issue tracker] for duplicates

- [x] I have mentioned version numbers, operating system and

environment, where applicable:

```python

import tqdm, sys

print(tqdm.__version__, sys.versio... | closed | 2018-09-17T02:15:36Z | 2021-11-17T01:53:17Z | https://github.com/tqdm/tqdm/issues/613 | [

"p0-bug-critical ☢",

"synchronisation ⇶"

] | david30907d | 22 |

dropbox/PyHive | sqlalchemy | 40 | allow user to specify other authMechanism | I want to use the PyHive integrate with SQLAlchemy to operate Hive and Presto.

Presto works well, but for Hive, the authMechanism is fixed, `PLAIN`.

https://github.com/dropbox/PyHive/search?utf8=%E2%9C%93&q=PLAIN

So when the required mechanism is not `PLAIN`, it will complain:

```

thrift.transport.TTransport.TTransp... | closed | 2016-02-15T06:26:54Z | 2018-07-10T07:30:29Z | https://github.com/dropbox/PyHive/issues/40 | [

"duplicate"

] | twds | 3 |

PrefectHQ/prefect | automation | 17,299 | incorrect image tag for auto-generated Dockerfile | ### Bug summary

reported by Brain from slack

when using the auto generated dockerfile created by prefect in version 3.1.9:

```

prefect.utilities.dockerutils.BuildError: failed to resolve reference "[docker.io/prefecthq/prefect:3.1.9-python3.13](http://docker.io/prefecthq/prefect:3.1.9-python3.13)": [docker.io/prefect... | open | 2025-02-26T23:19:11Z | 2025-02-28T15:43:49Z | https://github.com/PrefectHQ/prefect/issues/17299 | [

"bug"

] | zzstoatzz | 5 |

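vastsa/FileCodeBox | fastapi | 244 | In the latest version, changes made in the admin panel are not reflected on the front end | After updating settings in the admin panel, the changes do not take effect on the front end.

If the configuration file has to be changed manually, where is it located? | closed | 2025-02-01T15:03:37Z | 2025-02-08T14:30:22Z | https://github.com/vastsa/FileCodeBox/issues/244 | [] | haobangme | 4 |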

deepset-ai/haystack | nlp | 8,903 | Proposal to make input variables to `PromptBuilder` and `ChatPromptBuilder` required by default | **Is your feature request related to a problem? Please describe.**

Most of our components require some (or all) inputs during runtime. For our components whose inputs are based on Jinja2 templates (e.g. `ConditionalRouter`, `OutputAdpater`, `PromptBuilder`, and `ChatPromptBuilder`) we differ on how we treat whether all... | closed | 2025-02-21T14:03:22Z | 2025-03-21T14:53:27Z | https://github.com/deepset-ai/haystack/issues/8903 | [

"P2"

] | sjrl | 7 |

explosion/spaCy | machine-learning | 13,772 | In requirements.txt thinc>=8.3.4,<8.4.0,which was not found so I changed it to thinc>=8.3.4,<8.4.0 but it is giving error that failed building wheel for thinc |

<!-- Include a code example or the steps that led to the problem. Please try to be as specific as possible. -->

(dlenv) [manshika@lappy spaCy]$ pip install -r requirements.txt

Collecting spacy-legacy<3.1.0,>=3.0.11 (from -r requirements.txt (line 2))

Using cached spacy_legacy-3.0.12-py2.py3-none-any.whl.metadata (2.... | open | 2025-03-18T12:44:49Z | 2025-03-18T12:44:49Z | https://github.com/explosion/spaCy/issues/13772 | [] | manshika13 | 0 |

davidsandberg/facenet | tensorflow | 294 | Only 0.750+-0.083 Accuracy , far away from 0.99???? | How did u get 0.99 ?? | closed | 2017-05-27T05:58:08Z | 2017-06-01T00:49:04Z | https://github.com/davidsandberg/facenet/issues/294 | [] | ouyangbei | 1 |

Kanaries/pygwalker | matplotlib | 628 | Hide table render | Is there a way to not render the table? I tried some parameter to pass in `gw_mode` but then I can hide the view and not the table.

| open | 2024-09-25T12:27:22Z | 2024-09-26T02:14:36Z | https://github.com/Kanaries/pygwalker/issues/628 | [

"enhancement"

] | RodrigoSKohl | 2 |

liangliangyy/DjangoBlog | django | 207 | Error when running ./manage.py migrate | Running ./manage.py migrate reports the error below. How should I fix this?

-----------------------------------------------

Operations to perform:

Apply all migrations: admin, auth, contenttypes, sessions, sites

Running migrations:

Applying admin.0001_initial...Traceback (most recent call last):

File "/Library/Frameworks/Python.framework/Versi... | closed | 2019-02-05T08:45:42Z | 2019-02-05T14:07:03Z | https://github.com/liangliangyy/DjangoBlog/issues/207 | [] | JamesAiit | 1 |

JaidedAI/EasyOCR | pytorch | 1,208 | compute_ratio_and_resize passes a PIL constant to a cv2 function | utils.py contains the following code

https://github.com/JaidedAI/EasyOCR/blob/c999505ef6b43be1c4ee36aa04ad979175178352/easyocr/utils.py#L566-L577

Note that Image is part of PIL

https://github.com/JaidedAI/EasyOCR/blob/c999505ef6b43be1c4ee36aa04ad979175178352/easyocr/utils.py#L7-L8

Since both of these map to an ... | open | 2024-02-02T00:20:22Z | 2024-05-02T03:53:28Z | https://github.com/JaidedAI/EasyOCR/issues/1208 | [] | andreaswimmer | 1 |

alteryx/featuretools | data-science | 1,867 | Change features_only to feature_defs_only | When looking at the [DFS](https://featuretools.alteryx.com/en/stable/generated/featuretools.dfs.html#featuretools-dfs) call, I feel that the `features_only` option is misleading. Setting this to `True` only returns definitions and not the feature matrix. So I believe the option should be `feature_defs_only`

#### Cod... | closed | 2022-01-26T20:47:31Z | 2023-03-15T20:10:49Z | https://github.com/alteryx/featuretools/issues/1867 | [

"enhancement"

] | dvreed77 | 8 |

ibis-project/ibis | pandas | 10,709 | feat: add `missing_ok: bool = False` kwarg to Table.drop() signature | ### Is your feature request related to a problem?

I have several places where I do:

```python

if "must_not_be_present" in table.columns:

table.drop("must_not_be_present")

# ...continue on

```

I want to be able to do non-conditionally: ` t.drop("must_not_be_present", missing_ok=True)`

This is similar to `Path.mk... | open | 2025-01-23T01:03:06Z | 2025-02-10T23:17:26Z | https://github.com/ibis-project/ibis/issues/10709 | [

"feature"

] | NickCrews | 2 |
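
The `missing_ok` behaviour requested in the ibis issue above can be sketched in plain Python, without ibis. This is only an illustration under stated assumptions: `drop_columns` is a hypothetical helper that works on a list of column names, it is not an ibis API, and raising `KeyError` for a missing column is an assumption as well.

```python
def drop_columns(columns, *names, missing_ok=False):
    """Return `columns` without `names` (hypothetical helper, not part of ibis)."""
    missing = [n for n in names if n not in columns]
    if missing and not missing_ok:
        # Assumed error type; the real library may raise something else.
        raise KeyError(f"columns not found: {missing}")
    to_drop = set(names)
    return [c for c in columns if c not in to_drop]


# The column exists, so it is dropped either way.
print(drop_columns(["id", "name", "must_not_be_present"], "must_not_be_present"))
# The column is absent; with missing_ok=True the call is a no-op instead of an error.
print(drop_columns(["id", "name"], "must_not_be_present", missing_ok=True))
```

With a flag like this, the `if "must_not_be_present" in table.columns:` guard from the issue is no longer needed at each call site.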

horovod/horovod | pytorch | 3,420 | Installation steps | Hello! I have followed all the steps, but I still cannot install horovod properly.

I have built these two files:

yml

> name: adel

>

> channels:

> - pytorch=1.9.0

> - conda-forge

> - defaults

>

> dependencies:

> - ccache

> - cmake

> - cudatoolkit=11.3

> - cudnn

> - cxx-compiler

... | closed | 2022-02-25T13:51:41Z | 2022-03-03T13:30:56Z | https://github.com/horovod/horovod/issues/3420 | [] | Arij-Aladel | 1 |

rthalley/dnspython | asyncio | 679 | Problem | Hello, I have a problem on Kodi. When I launch certain videos, an error message appears saying: dnspython addon missing

Can someone help me please | closed | 2021-08-24T12:58:09Z | 2021-08-24T17:02:43Z | https://github.com/rthalley/dnspython/issues/679 | [] | WazYon9 | 1 |

piskvorky/gensim | data-science | 3,104 | lsi_dispatcher is not working from command-line when not specifying maxsize argument | #### Problem description

When running `lsi_dispatcher` from the command-line, if you don't specify the `maxsize` argument explicitly, you get an error for the missing positional argument:

```

usage: lsi_dispatcher.py [-h] maxsize

lsi_dispatcher.py: error: the following arguments are required: maxsize

```

Ac... | closed | 2021-04-06T08:54:18Z | 2021-04-28T05:22:01Z | https://github.com/piskvorky/gensim/issues/3104 | [] | robguinness | 4 |
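
The usage error quoted in the gensim issue above is what `argparse` prints for a required positional argument. One possible way to make such an argument optional, sketched with the standard library only, is `nargs="?"` plus a default. The parser below and the value `DEFAULT_MAXSIZE = 100` are hypothetical and are not taken from gensim's source.

```python
import argparse

DEFAULT_MAXSIZE = 100  # hypothetical default, not gensim's actual value


def build_parser():
    parser = argparse.ArgumentParser(prog="lsi_dispatcher.py")
    # nargs="?" lets the positional be omitted; `default` is used in that case.
    parser.add_argument(
        "maxsize",
        nargs="?",
        type=int,
        default=DEFAULT_MAXSIZE,
        help="maximum number of jobs to queue (illustrative)",
    )
    return parser


print(build_parser().parse_args([]).maxsize)     # falls back to the default
print(build_parser().parse_args(["5"]).maxsize)  # explicit value is parsed as int
```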

Lightning-AI/pytorch-lightning | data-science | 20,407 | update dataset at "on_train_epoch_start", but "training_step" still get old data | ### Bug description

I use `trainer.fit(model, datamodule=dm)` to start training.

"dm" is an object whose class inherited from `pl.LightningDataModule`, and in the class, I override the function:

```python

def train_dataloader(self):

train_dataset = MixedBatchMultiviewDataset(self.args, self.tokenizer,

... | open | 2024-11-08T16:22:03Z | 2024-11-18T22:48:19Z | https://github.com/Lightning-AI/pytorch-lightning/issues/20407 | [

"bug",

"waiting on author",

"loops"

] | Yak1m4Sg | 1 |

faif/python-patterns | python | 3 | Is Borg really implemented right ? | I thought that the point of Borg was to allow subclassing. However, the implementation doesn't match expectations in this case:

```

class Borg:

__shared_state = {}

def __init__(self):

self.__dict__ = self.__shared_state

self.state = 'Running'

def __str__(self):

return self.state

... | closed | 2012-08-27T22:04:18Z | 2020-07-05T19:55:40Z | https://github.com/faif/python-patterns/issues/3 | [

"enhancement"

] | jpic | 7 |
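
As a side note on the snippet quoted in the issue above, the following sketch shows one property of this style of Borg that matters for subclassing. The issue body is truncated here, so this is only an assumption about the kind of behavior under discussion, and `ChildBorg` and `demo` are hypothetical names: because `__shared_state` is name-mangled to `_Borg__shared_state`, a subclass that does not define its own dictionary shares state with the base class.

```python
class Borg:
    # Name-mangled to _Borg__shared_state, so it lives on Borg itself.
    __shared_state = {}

    def __init__(self):
        # Every instance uses the same dict as its attribute namespace.
        self.__dict__ = self.__shared_state
        self.state = "Running"

    def __str__(self):
        return self.state


class ChildBorg(Borg):
    """Hypothetical subclass that adds nothing of its own."""


def demo():
    parent = Borg()
    child = ChildBorg()
    # Writing through the subclass instance changes the shared dict,
    # so the base-class instance sees the new value as well.
    child.state = "Idle"
    return str(parent)
```

Whether sharing across subclasses is desirable depends on the use case; giving each subclass its own class-level dictionary is one way to separate them.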

aleju/imgaug | machine-learning | 525 | [Question/FR] Way to make a dependency between different StochasticParameters between sequences | I have a specific problem:

I want to translate in one sequence and then to scale in another sequence. Like this:

```python

seq1 = iaa.Sequential([

iaa.Affine(

translate_px={

'x': iap.Uniform(-200, 200),

'y': iap.Uniform(-200, 200)

}

),

])

seq2 = ia... | open | 2019-12-17T08:04:14Z | 2019-12-17T21:53:14Z | https://github.com/aleju/imgaug/issues/525 | [] | soswow | 2 |

django-import-export/django-import-export | django | 1,355 | Can't use example | **Describe the bug**

Cannot use manage.py in example app.

**To Reproduce**

Steps to reproduce the behavior:

1. Clone repo (_pip install -e git+https://github.com/django-import-export/django-import-export.git#egg=django-import-export_)

2. `cd tests`

3. `python manage.py makemigrations`

4. Error: `ModuleNotFoun... | closed | 2021-12-08T09:05:24Z | 2021-12-22T13:07:09Z | https://github.com/django-import-export/django-import-export/issues/1355 | [

"bug"

] | Samoht1 | 2 |

robotframework/robotframework | automation | 5,063 | Robot Framework does not run outside Windows if `signal.setitimer` is not available (affects e.g. Pyodide) | As described by @Snooz82 in Slack:

> Pyodide 0.23 and newer runs Python 3.11.2 which officially supports WebAssembly as a [PEP11 Tier 3](https://peps.python.org/pep-0011/#tier-3) platform. [#3252](https://github.com/pyodide/pyodide/pull/3252), [#3614](https://github.com/pyodide/pyodide/pull/3614)

>

> That causes... | closed | 2024-02-23T16:54:17Z | 2024-06-04T14:08:51Z | https://github.com/robotframework/robotframework/issues/5063 | [

"bug",

"priority: high",

"rc 1",

"effort: small"

] | manykarim | 3 |
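
For context on the issue title above, here is a minimal sketch of a feature check for `signal.setitimer`. This is not Robot Framework's actual fix; the function name `supports_itimer` is hypothetical. It only illustrates that the attribute can be probed before use on platforms where the `signal` module lacks it.

```python
import signal


def supports_itimer() -> bool:
    """Return True if interval timers are available on this platform.

    Hypothetical helper: some platforms ship a `signal` module without
    `setitimer`, so callers can check first and fall back to another
    timeout mechanism.
    """
    return hasattr(signal, "setitimer") and hasattr(signal, "ITIMER_REAL")
```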

deezer/spleeter | tensorflow | 325 | [Discussion] Python 3.8 support? | Ubuntu 20.04 LTS is around the corner and it will come pre-installed with Python 3.8. Are there any plans to support Python 3.8 in Spleeter?

Thanks. | closed | 2020-04-14T10:44:53Z | 2020-04-14T10:49:14Z | https://github.com/deezer/spleeter/issues/325 | [

"question",

"wontfix"

] | Tantawi | 1 |

mage-ai/mage-ai | data-science | 4,932 | [DOCUMENTATION] - Add documentation for "Features" in Settings menu | Requesting documentation for "Features" items in the Settings menu.

| closed | 2024-04-12T18:27:02Z | 2024-04-25T05:06:46Z | https://github.com/mage-ai/mage-ai/issues/4932 | [

"documentation"

] | amlloyd | 2 |

Evil0ctal/Douyin_TikTok_Download_API | api | 250 | Testing single Douyin video download with the Douyin TikTok Download/Scraper API-V1 |

Calling the endpoint returns a 500 error.

Has this API been taken down?

| closed | 2023-08-23T07:14:24Z | 2023-08-28T07:54:52Z | https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues/250 | [

"BUG"

] | chenningxi | 2 |

slackapi/python-slack-sdk | asyncio | 1,000 | RTMClient v2 still requires aiohttp installed (even though it's unused) | Thanks @max-arnold for pointing this out!

---

For me the latest release (3.5.0rc1) still fails without aiohttp:

```

In [1]: from slack_sdk.rtm.v2 import RTMClient

---------------------------------------------------------------------------

ModuleNotFoundError Traceback (most recent call l... | closed | 2021-04-13T21:27:10Z | 2021-04-14T22:16:46Z | https://github.com/slackapi/python-slack-sdk/issues/1000 | [

"bug",

"rtm-client",

"Version: 3x"

] | seratch | 0 |

pyjanitor-devs/pyjanitor | pandas | 853 | Clean up tests for row_to_name function | Going to suggest a test that, perhaps, more generally tests the exact _property_ we'd like to guarantee.

Because the intent of the function is to delete rows without resetting the index, could we do something akin to:

```python

def test_row_to_names_delete_the_row_without_resetting_index(dataframe):

"""Test... | open | 2021-07-25T23:55:20Z | 2021-07-25T23:55:20Z | https://github.com/pyjanitor-devs/pyjanitor/issues/853 | [] | fireddd | 0 |

aio-libs-abandoned/aioredis-py | asyncio | 1,008 | [2.0] Type annotations break mypy | I tried porting an existing project to aioredis 2.0. I've got it almost working, but the type annotations that have been added are too strict (and in some cases just wrong) and break mypy. The main problem is that all the functions that take keys annotate them as `str`, when `bytes` (and I think several other types) ar... | closed | 2021-06-09T13:00:46Z | 2021-07-13T05:09:59Z | https://github.com/aio-libs-abandoned/aioredis-py/issues/1008 | [] | bmerry | 5 |

pydata/bottleneck | numpy | 26 | Wrong dtype on win64 for functions that return indices | As reported by Christoph Gohlke, the bottleneck functions that return indices return the wrong dtype on win64.

See the following threads:

http://mail.scipy.org/pipermail/numpy-discussion/2011-June/056679.html

http://groups.google.com/group/cython-users/browse_thread/thread/f8022ee7ccbf7c5b

| closed | 2011-06-10T16:13:52Z | 2011-06-10T18:00:28Z | https://github.com/pydata/bottleneck/issues/26 | [] | kwgoodman | 0 |

huggingface/diffusers | deep-learning | 11,133 | bug while using cogvideox image to video pipeline | ### Describe the bug

While using the CogVideoX pipeline script at "https://huggingface.co/docs/diffusers/using-diffusers/text-img2vid", an error occurred:

RuntimeError: Sizes of tensors must match except in dimension 1. Expected size 16 but got size 8 for tensor number 1 in the list.

### Reproduction

import torch

f... | open | 2025-03-21T11:41:22Z | 2025-03-21T11:41:22Z | https://github.com/huggingface/diffusers/issues/11133 | [

"bug"

] | MrTom34 | 0 |

harry0703/MoneyPrinterTurbo | automation | 73 | Font not supported on Ubuntu | On Ubuntu: convert: delegate library support not built-in '/home/project/MoneyPrinterTurbo/resource/fonts/STHeitiLight.ttc' (Freetypype/2112.

| closed | 2024-03-27T08:51:43Z | 2024-03-28T08:33:32Z | https://github.com/harry0703/MoneyPrinterTurbo/issues/73 | [] | zhuangzhuang3 | 4 |

sammchardy/python-binance | api | 1,012 | NameError: name 'Client' is not defined | I just started coding yesterday, but when I type;

from binance.client import Client

api_key = 'api_key'

api_secret = 'api_secret'

client = Client(api_key, api_secret, tld='us')

it returns the error;

SyntaxError: EOL while scanning string literal

client = Client(api_key, api_secret, tld='us')

Traceba... | open | 2021-09-08T14:18:13Z | 2023-04-11T19:44:44Z | https://github.com/sammchardy/python-binance/issues/1012 | [] | Chaneriel | 1 |

miguelgrinberg/Flask-SocketIO | flask | 1,548 | Calling disconnect on a write_only manager doesn't trigger anything | Created in the wrong repo, sorry!

**Describe the bug**

Related to #1174, I needed to be able to disconnect clients by their sid from external processes (such as Celery). Thanks to the work done in #1174 this is possible, but it looks like it may have partially broken somewhere along the way. Calling `disconnect` on... | closed | 2021-05-10T16:58:13Z | 2021-05-10T16:58:47Z | https://github.com/miguelgrinberg/Flask-SocketIO/issues/1548 | [] | lsapan | 0 |

LAION-AI/Open-Assistant | python | 2,778 | Please add a "Are you Sure you want to Skip" popup to avoid frustration and loss of progress | Unfortunately I have already written many long answers and, while correcting them, clicked the `skip` button, which led to my messages being deleted immediately. This is extremely frustrating, especially when you have already curated that particular answer for half an hour or so...

If the Assistant or User Messa... | closed | 2023-04-20T12:21:47Z | 2023-06-17T18:56:05Z | https://github.com/LAION-AI/Open-Assistant/issues/2778 | [

"website",

"UI/UX"

] | Logophoman | 5 |

AntonOsika/gpt-engineer | python | 1,073 | `--improve` changes are no longer applied after #1052 was merged | ## Expected Behavior

At the end of a `gpte -i` run, I should get the prompt

```

Do you want to apply these changes? [y/N]

```

## Current Behavior

I get this instead:

```

[...]

Added line print("foo") to src/my_file.py at line 24 end

No changes applied. Could you please upload the debug_log_file.tx... | closed | 2024-03-18T21:01:37Z | 2024-03-19T18:15:12Z | https://github.com/AntonOsika/gpt-engineer/issues/1073 | [

"bug"

] | akaihola | 2 |

gee-community/geemap | streamlit | 1,883 | `download_ee_image()` | `download_ee_image()` is a wrapper for the [geedim](https://github.com/leftfield-geospatial/geedim) package. It is most suitable for downloading original images rather than computation results. The more complicated your workflow, the less likely `download_ee_image` will work. For long-running computational res... | closed | 2024-01-14T07:03:07Z | 2024-01-14T19:12:04Z | https://github.com/gee-community/geemap/issues/1883 | [] | zwy1502 | 1 |

plotly/plotly.py | plotly | 4,126 | CORS needed to use some tile servers with mapbox | Some tile servers need CORS for mapbox to be able to GET the tiles.

I can turn off the check in the browser, but that doesn't seem like the right way to do it.

Could this be added in the function?

Kindly | closed | 2023-03-28T00:14:30Z | 2023-03-30T16:28:50Z | https://github.com/plotly/plotly.py/issues/4126 | [] | jontis | 1 |

mljar/mercury | data-visualization | 420 | Waiting for worker... (Vanilla Docker-Compose Install) | The demo notebooks are stuck "Waiting for worker ..."

This is a brand-new install, followed all the instructions.

.env file:

> NOTEBOOKS_PATH=../mercury-deploy-demo/

> DJANGO_SUPERUSER_USERNAME=adminusername

> DJ... | open | 2024-02-12T22:33:29Z | 2025-02-10T11:40:06Z | https://github.com/mljar/mercury/issues/420 | [

"bug"

] | mikep11 | 14 |

danimtb/dasshio | dash | 32 | Dashio not working after upgrading Hassio 0.68.1 | Here is the log:

> 2018-05-06 10:37:37,448 | INFO | Mutfak button pressed!

> 2018-05-06 10:37:37,450 | INFO | Request: http://hassio/homeassistant/api/services/switch/toggle

> 2018-05-06 10:37:37,498 | INFO | Status Code: 500

> 2018-05-06 10:37:37,499 | ERROR | Bad request

> 2018-05-06 10:37:37,563 | INFO | Pack... | closed | 2018-05-06T07:39:02Z | 2018-05-07T16:44:01Z | https://github.com/danimtb/dasshio/issues/32 | [] | cryptooth | 4 |

marcomusy/vedo | numpy | 965 | Can't undo twice | I tried to create an undo button from the pyqt button that connects with the function

```python

self.button_undo.clicked.connect(self.handle_undo_button)

```

```python

def handle_undo_button(self):

self.plt.remove([self.mesh])

self.mesh = self.mesh_prev

self.plt.add(self.mesh... | closed | 2023-11-13T11:03:55Z | 2023-11-14T17:39:13Z | https://github.com/marcomusy/vedo/issues/965 | [] | Thanatossan | 2 |

Lightning-AI/pytorch-lightning | pytorch | 19,729 | `log_dir` contains both forward and backward slashes as path separator when using remote file location as `default_root_dir` on windows | ### Bug description

I am running on Windows OS and storing logs and artifacts on remote location (AWS S3).

```python

path_pl_logs = f"s3://{bucket_name}/pytorch-lightning-logs/{experiment_name}"

trainer = pl.Trainer(

accelerator="gpu",

devices=1,

max_epochs=checkpoint_n_epoch,

default_root_d... | open | 2024-04-03T11:03:39Z | 2024-04-03T11:03:39Z | https://github.com/Lightning-AI/pytorch-lightning/issues/19729 | [

"bug",

"needs triage"

] | jochemvankempen | 0 |

holoviz/panel | jupyter | 6,926 | VideoStream from CCTV | When I use the `VideoStream` class to get the network camera information, it can only get the video stream information of the local camera. If I want to use a remote network camera, such as a video stream transmitted through the rtmp or rtsp protocol, how should I display it in the panel?

Using opencv can achieve th... | open | 2024-06-16T16:43:55Z | 2024-06-17T01:24:30Z | https://github.com/holoviz/panel/issues/6926 | [] | lankoestee | 2 |

LibreTranslate/LibreTranslate | api | 710 | Uppercase text leads to no translation | E.g. `GAS RUN/INSPECTION PROPANE FIREPOTS` from English to Spanish returns the original string.

But `gas run/inspection propane firepots` returns an actual translation. | open | 2024-11-27T18:03:28Z | 2025-01-09T15:00:10Z | https://github.com/LibreTranslate/LibreTranslate/issues/710 | [

"model improvement"

] | pierotofy | 1 |

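microsoft/qlib | deep-learning | 1,311 | How can indices be excluded from training? How can indices be excluded during backtesting? | When training a model, how can I exclude indices, for example the CSI 300 index SH000300?

Also, during backtesting, how can I exclude indices, i.e. avoid trading the index? | closed | 2022-10-09T05:36:48Z | 2023-06-20T15:02:01Z | https://github.com/microsoft/qlib/issues/1311 | [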

"question",

"stale"

] | quantcn | 3 |

influxdata/influxdb-client-python | jupyter | 367 | Pandas outputs warning when calling dataframe.append in flux_csv_parser._prepare_data_frame | https://github.com/influxdata/influxdb-client-python/blob/922477ff2499165ad2018106ad7cb5a72ddb59d9/influxdb_client/client/flux_csv_parser.py#L190

This method should return :

```

return self._data_frame.append(_temp_df, sort=True)

```

Pandas outputs warning when calling `dataframe.append` in `flux_csv_parser._p... | closed | 2021-11-20T03:53:54Z | 2021-11-26T06:56:04Z | https://github.com/influxdata/influxdb-client-python/issues/367 | [

"wontfix"

] | mm0 | 2 |

igorbenav/FastAPI-boilerplate | sqlalchemy | 113 | nginx failed | docker compose up

fastapi-boilerplate-db-1 | 2024-02-06 05:51:59.250 UTC [27] LOG: database system was shut down at 2024-02-06 05:51:57 UTC

fastapi-boilerplate-db-1 | 2024-02-06 05:51:59.260 UTC [1] LOG: database system is ready to accept connections

fastapi-boilerplate-web-1 | /bin/sh: 1:

fastap... | closed | 2024-02-06T05:53:17Z | 2024-02-09T22:24:07Z | https://github.com/igorbenav/FastAPI-boilerplate/issues/113 | [

"bug"

] | saakethtypes | 8 |

autokey/autokey | automation | 935 | External scripts called with absolute path name do not have API access | ### AutoKey is a Xorg application and will not function in a Wayland session. Do you use Xorg (X11) or Wayland?

Xorg

### Has this issue already been reported?

- [X] I have searched through the existing issues.

### Is this a question rather than an issue?

- [X] This is not a question.

### What type of issue is thi... | open | 2024-02-06T16:47:18Z | 2024-02-10T22:55:52Z | https://github.com/autokey/autokey/issues/935 | [

"enhancement",

"scripting"

] | elydpg | 7 |

pydantic/pydantic-ai | pydantic | 490 | Isn't a foobar a bad choice for examples? | Hi. Thank you very much for all the work you've done. I really like your approach to agency.

But going through the documentation examples, I've come across several Foobar models.

Thinking about it, it's quite confusing why apples and carrots appear in comments, why x, y, z (yeah, foobar, but do we have to?) are used as variables, etc.

... | closed | 2024-12-18T22:32:27Z | 2024-12-23T13:20:47Z | https://github.com/pydantic/pydantic-ai/issues/490 | [

"documentation"

] | snqb | 3 |

microsoft/nni | machine-learning | 5,435 | aten::ScalarImplicit is not Supported | **Describe the issue**:

**Environment**:

- NNI version:2.10

- Training service (local|remote|pai|aml|etc):

- Client OS:

- Server OS (for remote mode only):

- Python version:

- PyTorch/TensorFlow version:

- Is conda/virtualenv/venv used?:

- Is running in Docker?:

**Configuration**:

- Experiment con... | closed | 2023-03-13T08:22:08Z | 2023-04-14T04:18:21Z | https://github.com/microsoft/nni/issues/5435 | [] | Kracozebr | 7 |

geex-arts/django-jet | django | 126 | Related popups for new items aren't working right | An example can be found on the demo site:

http://demo.jet.geex-arts.com/admin/menu/menuitemcategory/2/#/tab/inline_1/

1. Click add another menu item

2. Click the plus icon on the new row

3. The popup doesn't open in the iframe related popup tab, it opens in full window.

| closed | 2016-09-27T19:49:44Z | 2016-11-19T15:58:50Z | https://github.com/geex-arts/django-jet/issues/126 | [] | kmorey | 5 |

replicate/cog | tensorflow | 1,663 | ERROR: failed to solve: circular dependency detected on stage: weights | Hello,

Trying to push a model with --separate-weights fails with this error. It works fine when the flag is not used. I am using cog version 0.9.7. I also tried deleting .cog and removing the models/ folder from the auto-generated .dockerignore

```

Building Docker image from environment in cog.yaml as r8.im/xxxxx/xxxx... | closed | 2024-05-14T07:35:28Z | 2024-07-17T16:44:24Z | https://github.com/replicate/cog/issues/1663 | [] | gurteshwar | 10 |

ultralytics/yolov5 | machine-learning | 12,943 | Improving training speed | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

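How can I speed up training of the yolov5-seg model? The GPU is being used, but utilization is very low; on a 3070 Ti, one epoch over 8,000 images takes nine minutes.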

### Additional

_No respo... | closed | 2024-04-18T21:03:59Z | 2024-05-30T00:22:03Z | https://github.com/ultralytics/yolov5/issues/12943 | [

"question",

"Stale"

] | 2375963934a | 2 |
