repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |

|---|---|---|---|---|---|---|---|---|---|---|---|

ageitgey/face_recognition | python | 1,190 | face_locations found. After saving to Image, no face_encodings found | Hi expert,

I tried to save each face as an image. After saving, I loaded the small face image and tried to calculate the face_encodings, but many of the face images had no face_encodings. Did I do something wrong?

face_locations = face_recognition.face_locations(image, number_of_times_to_upsample=2, model=... | open | 2020-07-21T14:48:18Z | 2020-07-21T14:49:52Z | https://github.com/ageitgey/face_recognition/issues/1190 | [] | zhangede | 0 |

pydata/pandas-datareader | pandas | 562 | Support for multiple symbols for MOEX | f = web.DataReader(['SBER','FXUS'], 'moex', start, end)

gives me

ValueError: Support for multiple symbols is not yet implemented

This is a feature request. | closed | 2018-08-12T15:51:38Z | 2018-08-12T19:51:18Z | https://github.com/pydata/pandas-datareader/issues/562 | [] | khazamov | 0 |

ml-tooling/opyrator | pydantic | 27 | Can't get hello_world to work |

**Hello World no go:**

**Technical details:**

I have followed the instructions on the Getting Started page, no go

[Getting Started](https://github.com/ml-tooling/opyrator#getting-started)

I created the file and ran it as instructed, but I get this:

`2021-05-01 10:16:31.675 An update to the [server] config option section was... | closed | 2021-05-01T00:32:26Z | 2021-05-07T23:22:46Z | https://github.com/ml-tooling/opyrator/issues/27 | [

"support"

] | Bandit253 | 5 |

vllm-project/vllm | pytorch | 15,102 | [Bug]: 0.8.0(V1) RayChannelTimeoutError when inferencing DeepSeekV3 on 16 H20 with large batch size | ### Your current environment

<details>

<summary>The output of `python collect_env.py`</summary>

```text

Collecting environment information...

PyTorch version: 2.6.0+cu124

Is debug build: False

CUDA used to build PyTorch: 12.4

ROCM used to build PyTorch: N/A

OS: Ubuntu 22.04.4 LTS (x86_64)

GCC version: (Ubuntu 11.4.0... | open | 2025-03-19T07:13:52Z | 2025-03-24T12:02:33Z | https://github.com/vllm-project/vllm/issues/15102 | [

"bug",

"ray"

] | jeffye-dev | 22 |

apify/crawlee-python | web-scraping | 516 | How to get the content of an iframe? | Thank you! | closed | 2024-09-11T16:48:34Z | 2024-09-12T08:03:46Z | https://github.com/apify/crawlee-python/issues/516 | [

"t-tooling"

] | thalesfsp | 0 |

ResidentMario/missingno | data-visualization | 20 | Warning thrown with matplotlib 2.0 | I'm using matplotlib 2.0, and I thought I'd just quickly report this warning message that shows up when I call `msno.matrix(dataframe)`:

```

/Users/ericmjl/anaconda/lib/python3.5/site-packages/missingno/missingno.py:250: MatplotlibDeprecationWarning: The set_axis_bgcolor function was deprecated in version 2.0. Use ... | closed | 2017-02-05T04:06:42Z | 2017-02-14T02:49:03Z | https://github.com/ResidentMario/missingno/issues/20 | [] | ericmjl | 2 |

pallets-eco/flask-sqlalchemy | sqlalchemy | 929 | Getting `sqlalchemy.exc.NoSuchModuleError: Can't load plugin: sqlalchemy.dialects:postgres` since SQLAlchemy released 1.4 | Getting `sqlalchemy.exc.NoSuchModuleError: Can't load plugin: sqlalchemy.dialects:postgres` since SQLAlchemy released [1.4](https://docs.sqlalchemy.org/en/14/index.html)

I'd freeze the **SQLAlchemy** version for now

https://github.com/pallets/flask-sqlalchemy/blob/222059e200e6b2e3b0ac57028b08290a648ae8ea/setup.py#... | closed | 2021-03-16T10:26:52Z | 2021-04-01T00:13:41Z | https://github.com/pallets-eco/flask-sqlalchemy/issues/929 | [] | tbarda | 9 |
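The error in the flask-sqlalchemy #929 row comes from SQLAlchemy 1.4 dropping the legacy `postgres` dialect name in favour of `postgresql`. Besides pinning SQLAlchemy, a common workaround is to rewrite the URL scheme before passing it to Flask-SQLAlchemy. Here is a minimal sketch, assuming the URL arrives as a plain string such as a `DATABASE_URL` environment variable. `normalize_db_url` is a hypothetical helper and is not part of either library.

```python
def normalize_db_url(url: str) -> str:
    """Rewrite the legacy 'postgres://' scheme to 'postgresql://'.

    Hypothetical helper: SQLAlchemy 1.4+ no longer resolves the
    'postgres' dialect name, so only the scheme prefix is changed.
    """
    legacy = "postgres://"
    if url.startswith(legacy):
        # Keep credentials, host, port, database and query string untouched.
        return "postgresql://" + url[len(legacy):]
    return url


if __name__ == "__main__":
    print(normalize_db_url("postgres://user:secret@localhost:5432/app"))
```

You could then apply it as, for example, `app.config["SQLALCHEMY_DATABASE_URI"] = normalize_db_url(os.environ["DATABASE_URL"])`. URLs that already use `postgresql://` or another backend pass through unchanged.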

fastapi/fastapi | pydantic | 13,150 | Simplify tests for variants | ### Privileged issue

- [X] I'm @tiangolo or he asked me directly to create an issue here.

### Issue Content

## Summary

Simplify tests for variants, from multiple test files (one test file per variant) to a single test file with parameters to test each variant.

## Background

Currently, we have multiple sourc... | open | 2025-01-03T09:57:09Z | 2025-02-19T19:37:18Z | https://github.com/fastapi/fastapi/issues/13150 | [] | tiangolo | 2 |

charlesq34/pointnet | tensorflow | 264 | ERROR: cannot verify shapenet.cs.stanford.edu's certificate, issued by ‘CN=InCommon RSA Server CA,OU=InCommon,O=Internet2,L=Ann Arbor,ST=MI,C=US’: | Hi, thanks a lot for the interesting 3D computer vision research.

Could you please have a look at the following error and guide me on how to fix it?

```

[35860:2264 0:981] 09:14:27 Mon Dec 28 [mona@goku:pts/5 +1] ~/research/code/DJ-RN/pointnet

$ python train.py

--2020-12-28 21:14:32-- https://shapenet.cs... | closed | 2020-12-29T02:16:06Z | 2020-12-29T02:20:48Z | https://github.com/charlesq34/pointnet/issues/264 | [] | monacv | 1 |

postmanlabs/httpbin | api | 598 | bytes endpoint with seed not stable between python 2 and python 3 | I'm upgrading a build environment from Python 2 to Python 3 and noticed that endpoints with seeded random numbers are not returning the same values. It seems to be related to the usage of randint:

https://github.com/postmanlabs/httpbin/blob/f8ec666b4d1b654e4ff6aedd356f510dcac09f83/httpbin/core.py#L1448

It seems like ra... | open | 2020-02-11T21:09:25Z | 2020-02-11T21:09:25Z | https://github.com/postmanlabs/httpbin/issues/598 | [] | rajsite | 0 |

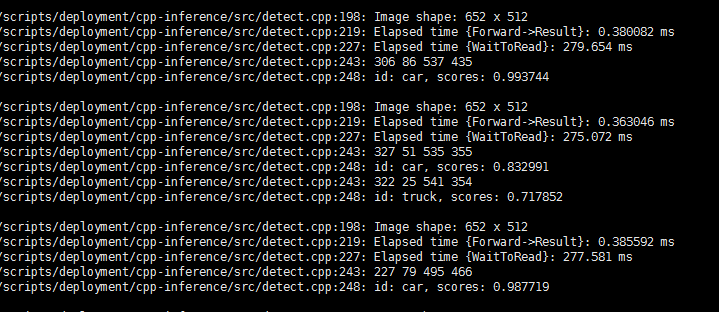

dmlc/gluon-cv | computer-vision | 841 | WaitToRead function costs too much time |

Here is my test code:

void RunDemo() {

// context

Context ctx = Context::cpu();

if (args::gpu >= 0) {

ctx = Context::gpu(args::gpu);

if (!args::quite) {

... | closed | 2019-06-28T06:55:14Z | 2019-12-20T23:34:27Z | https://github.com/dmlc/gluon-cv/issues/841 | [] | HouBiaoLiu | 2 |

explosion/spaCy | data-science | 13,725 | Empty MorphAnalysis Hash differs from Token.morph.key | <!-- NOTE: For questions or install related issues, please open a Discussion instead. -->

Hello,

I've trained a Morphologizer and I saw that an empty MorphAnalysis (`""`) actually has the hash value of `"_"`. Is this by design? The documentation doesn't mention it.

> key `int` | The hash of the features st... | open | 2024-12-26T10:07:16Z | 2024-12-26T10:07:40Z | https://github.com/explosion/spaCy/issues/13725 | [] | thjbdvlt | 0 |

horovod/horovod | deep-learning | 3,297 | Fail to install horovod 0.19.0 | **Environment:**

1. Framework: (TensorFlow, Keras, PyTorch, MXNet)

2. Framework version:

3. Horovod version: 0.19.0

4. MPI version: 4.0.3

5. CUDA version: 10.0

6. NCCL version: 2.5.6

7. Python version: 3.6.8

8. Spark / PySpark version:

9. Ray version: None

10. OS and version: CentOS 7

11. GCC version: 7.3.1

12. CM... | closed | 2021-12-01T09:15:54Z | 2022-07-01T20:35:01Z | https://github.com/horovod/horovod/issues/3297 | [

"bug"

] | coolnut12138 | 1 |

apachecn/ailearning | nlp | 542 | Join us | Hi, how can I join the group chat to study? Why can't I get in? Are there certain requirements? | closed | 2019-09-03T02:40:48Z | 2021-09-07T17:45:14Z | https://github.com/apachecn/ailearning/issues/542 | [] | achievejia | 1 |

gradio-app/gradio | machine-learning | 10,557 | Add an option to remove line numbers in gr.Code | - [x] I have searched to see if a similar issue already exists.

**Is your feature request related to a problem? Please describe.**

`gr.Code()` always displays line numbers.

**Describe the solution you'd like**

I propose to add an option `show_line_numbers = True | False` to display or hide the line numbers. The... | closed | 2025-02-10T11:38:07Z | 2025-02-21T22:11:43Z | https://github.com/gradio-app/gradio/issues/10557 | [

"enhancement",

"good first issue"

] | altomani | 1 |

MaartenGr/BERTopic | nlp | 2,014 | Zero-shot topic model with pre-embedded zero-shot topics | _Preface: I have tried to read through the current issues. I don't think that any issue raises what I am asking for. Issues like this https://github.com/MaartenGr/BERTopic/issues/2011 sound promising but talk about something different. I apologise if this has already been discussed!_

I would like to try out BERTopi... | open | 2024-05-28T05:27:12Z | 2024-05-31T13:36:07Z | https://github.com/MaartenGr/BERTopic/issues/2014 | [] | 1jamesthompson1 | 1 |

ned2/slapdash | dash | 21 | Documentation on Heroku Deployment | This may not be an issue or belong here, but I was wondering if you could help document how one can deploy this to Heroku. | closed | 2019-07-15T13:29:23Z | 2019-08-01T16:02:29Z | https://github.com/ned2/slapdash/issues/21 | [] | btoro | 2 |

gevent/gevent | asyncio | 1,959 | greenlets accidentally stuck on sleep(0) after GC removes objects with sleep(0) in __del__ on python3.9+ | * gevent version: 22.10.2

* Python version: 3.9.X

* Operating System: CentOS based or docker python3.9 + pip install gevent

### Description:

This code fails with `LoopExit: This operation would block forever` on Python 3.9.

```python

import gevent.monkey

gevent.monkey.patch_all()

class X:

def __ini... | open | 2023-06-08T09:49:06Z | 2023-10-06T17:52:36Z | https://github.com/gevent/gevent/issues/1959 | [] | unipolar | 1 |

CorentinJ/Real-Time-Voice-Cloning | tensorflow | 1,136 | Hello, | Hello,

Using a new pyenv environment with the following versions and libraries installed (after running `pip3 install torch torchvision torchaudio`):

```

% python --version

Python 3.10.4

% pyenv --version

pyenv 2.3.0

% pip list

Package Version

------------------ ---------

certifi 2022.9.1... | closed | 2022-11-19T17:26:18Z | 2022-12-02T08:51:51Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/1136 | [] | ImanuillKant1 | 0 |

great-expectations/great_expectations | data-science | 10,977 | File Context can't be created with `context_root_dir` | ```

context = gx.get_context(

context_root_dir=context_root_dir, project_root_dir=None, mode="file"

)

```

Results in

```

TypeError: 'project_root_dir' and 'context_root_dir' are conflicting args; please only provide one

```

GX version: 1.3.7

I think this is due to https://github.com/great-expectations/grea... | open | 2025-02-27T04:13:24Z | 2025-03-19T16:44:18Z | https://github.com/great-expectations/great_expectations/issues/10977 | [

"request-for-help"

] | CrossNox | 7 |

google-research/bert | tensorflow | 939 | nan error: tensorflow.python.framework.errors_impl.InvalidArgumentError: From /job:worker/replica:0/task:0: Gradient for bert/embeddings/LayerNorm/gamma:0 is NaN : Tensor had NaN values [[node CheckNumerics_4 (defined at usr/local/lib/python3.5/dist-packages/tensorflow_core/python/framework/ops.py:1748) ]] | Original stack trace for 'CheckNumerics_4':

File "usr/lib/python3.5/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "usr/lib/python3.5/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "home/mengqingyang0102/albert/run_squad_sp.py", line 1381, in <module>

tf.ap... | open | 2019-11-26T08:16:28Z | 2020-05-05T17:55:55Z | https://github.com/google-research/bert/issues/939 | [] | SUMMER1234 | 2 |

pallets-eco/flask-sqlalchemy | sqlalchemy | 1,180 | Add a way to create a paginated query without executing it | Currently, when calling `Query.paginate`, the SQL executes immediately. This gets in the way of the use case where you want to store the query as a Redis key, so that the result set can be cached and returned from memory instead of executing the query at all.

Basically, what I'm asking for is some kinda functio... | closed | 2023-03-15T19:27:40Z | 2023-03-30T01:08:03Z | https://github.com/pallets-eco/flask-sqlalchemy/issues/1180 | [] | martinmckenna | 2 |

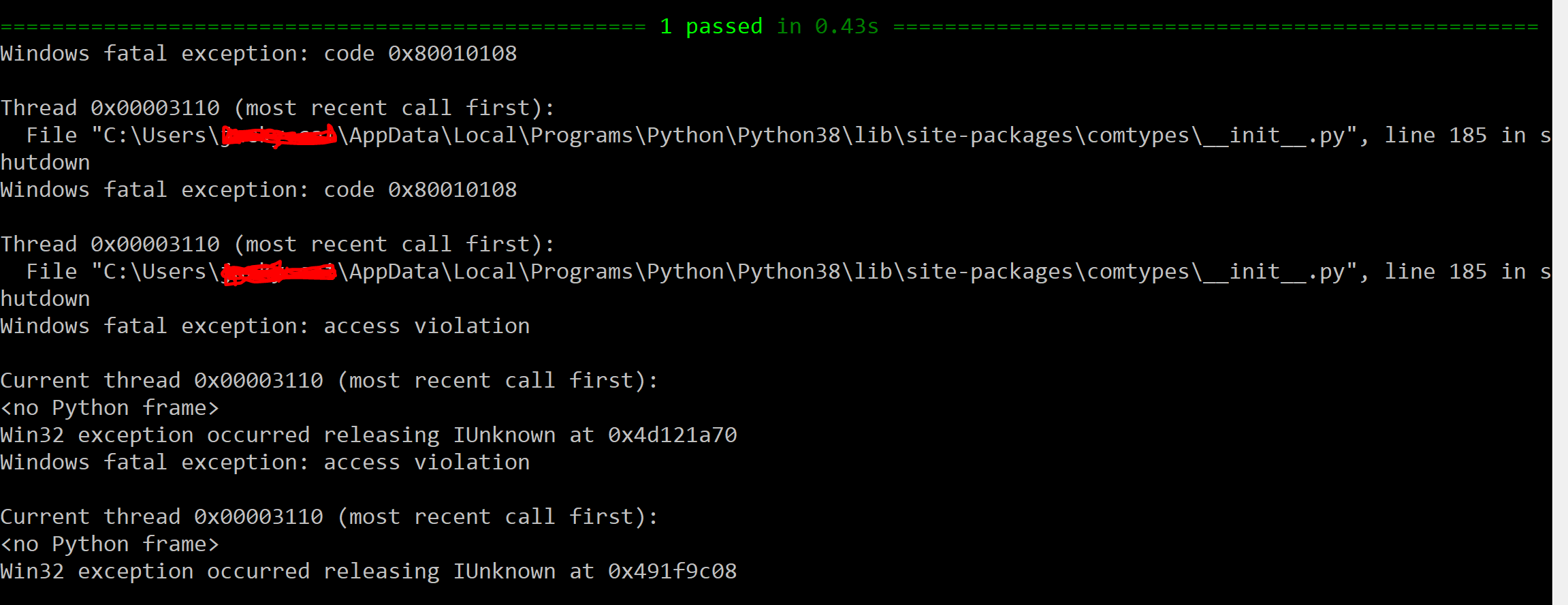

pywinauto/pywinauto | automation | 902 | Windows access violation |

This is my script.

The test case passes, but there is an access violation. I wonder what the r... | open | 2020-03-14T04:28:48Z | 2020-03-15T16:38:15Z | https://github.com/pywinauto/pywinauto/issues/902 | [] | czhhua28 | 1 |

comfyanonymous/ComfyUI | pytorch | 7,268 | Request: how to create a new repository | ### Your question

I downloaded Git and also Git LFS. Please, how do I create a new repository and use it? It keeps popping up this tooltip.

### Logs

```powershell

```

### Other

| closed | 2025-03-16T10:03:14Z | 2025-03-16T15:03:46Z | https://github.com/comfyanonymous/ComfyUI/issues/7268 | [

"User Support"

] | AC-pj | 1 |

ultralytics/ultralytics | pytorch | 18,881 | Where are the run summary and run history created, and can they be changed? | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

Hey,

I successfully added the metric `mAP70` to my training and wonder abou... | closed | 2025-01-25T14:19:50Z | 2025-01-27T10:59:23Z | https://github.com/ultralytics/ultralytics/issues/18881 | [

"question"

] | Petros626 | 6 |

microsoft/JARVIS | deep-learning | 214 | Is this project no longer being updated? | There have been no changes for more than two months; it seems there won't be a version that supports Windows. | open | 2023-06-25T13:28:05Z | 2023-10-10T15:20:26Z | https://github.com/microsoft/JARVIS/issues/214 | [] | Combustible-material | 2 |

jina-ai/serve | deep-learning | 6,010 | Documentation: Adapt Documentation to single document serving and parameters schema | **Describe the feature**

Adapt Documentation to latest features added | closed | 2023-08-03T04:32:45Z | 2023-08-04T08:39:27Z | https://github.com/jina-ai/serve/issues/6010 | [] | JoanFM | 0 |

scikit-learn-contrib/metric-learn | scikit-learn | 256 | [DOC] Docstring of num_constraints should explain default behavior | In the docstring for supervised versions of weakly supervised algorithms, one has to look at the source code to find out how many constraints are constructed by default (when `num_constraints=None`). This should be explained in the docstring for `num_constraints` | closed | 2019-10-30T07:38:09Z | 2019-11-21T15:12:19Z | https://github.com/scikit-learn-contrib/metric-learn/issues/256 | [] | bellet | 1 |

biolab/orange3 | data-visualization | 6,332 | Concatenate: data source ID is not used if compute_value is ignored in comparison | ### Discussed in https://github.com/biolab/orange3/discussions/6326

<div type='discussions-op-text'>

<sup>Originally posted by **Bigfoot-solutions** February 3, 2023</sup>

I am trying to concatenate a set of 20 datasets and use the "Append data source ID" option to retain visibility into which data element came... | closed | 2023-02-07T14:14:22Z | 2023-02-10T08:28:21Z | https://github.com/biolab/orange3/issues/6332 | [] | markotoplak | 3 |

ultralytics/yolov5 | pytorch | 13,028 | Inconsistency issue with single_cls functionality and dataset class count | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

I found a small issue related to `single_cls` that I'm not quite clear on the purpose of.... | closed | 2024-05-20T08:49:40Z | 2024-05-21T05:33:10Z | https://github.com/ultralytics/yolov5/issues/13028 | [

"question"

] | Le0v1n | 3 |

indico/indico | flask | 5,962 | Lightweight meeting/lecture themes | We currently have custom plugins to maintain those themes for CERN and LCAgenda, but the vast majority of them are really just CSS tweaks: they have a custom stylesheet (just overriding a few things of the default) and logo, and that's it (see some examples below).

We already have support for an event logo but it'... | open | 2023-09-29T12:47:00Z | 2023-09-29T12:47:00Z | https://github.com/indico/indico/issues/5962 | [

"enhancement"

] | ThiefMaster | 0 |

dynaconf/dynaconf | flask | 1,000 | Django 4.2.5 and Dynaconf 3.2.2 (AttributeError) | **Describe the bug**

When I try to access the Django admin, the Django log shows many error messages, such as:

```bash

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/home/czar/.pyenv/versions/3.11.5/lib/python3.11/wsgiref/handlers.py", line 137,... | closed | 2023-09-09T16:16:50Z | 2023-09-13T14:14:30Z | https://github.com/dynaconf/dynaconf/issues/1000 | [

"bug",

"Pending Release",

"django"

] | cesargodoi | 5 |

tflearn/tflearn | data-science | 538 | [Tutorial] I can't import 'titanic' | I ran the exact same code as in the [Quickstart](http://tflearn.org/tutorials/quickstart.html#source-code), but I got a problem here.

`Traceback (most recent call last):

File "F:\Programming\MachineLearning\tflearn-master\tutorials\intro\quickstart.py", line 7, in <module>

from tflearn.datasets import titanic

I... | closed | 2016-12-27T16:55:04Z | 2020-07-01T20:07:02Z | https://github.com/tflearn/tflearn/issues/538 | [] | bongjunj | 3 |

coqui-ai/TTS | pytorch | 3,481 | [Bug] xtts ft demo: empty csv files with the format_audio_list | ### Describe the bug

I used the formatter method to process my audio files (Chinese language), but the resulting CSV files contain no data, because the condition `if word.word[-1] in ["!", ".", "?"]:` is never met.

### To Reproduce

Below is my code:

```python

datapath = "/mnt/workspace/tdy.tdy/mp3_lww"

out_pat... | closed | 2023-12-31T14:17:18Z | 2024-02-10T18:38:12Z | https://github.com/coqui-ai/TTS/issues/3481 | [

"bug",

"wontfix"

] | dorbodwolf | 1 |
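The condition quoted in the coqui-ai/TTS #3481 row only accepts ASCII sentence-ending punctuation. Chinese transcripts usually end sentences with full-width marks such as `。`, `！` and `？`, which is consistent with the reporter's observation that the condition is never met. Here is a minimal sketch of a broader check, assuming it is applied to each transcribed word's text. `SENTENCE_ENDINGS` and `ends_sentence` are hypothetical names and are not part of the TTS codebase.

```python
# ASCII sentence endings plus their full-width (CJK) counterparts.
SENTENCE_ENDINGS = frozenset({"!", ".", "?", "。", "！", "？"})


def ends_sentence(word: str) -> bool:
    """Return True if the word's last non-space character ends a sentence."""
    word = word.strip()
    # An empty or whitespace-only word cannot end a sentence.
    return bool(word) and word[-1] in SENTENCE_ENDINGS


if __name__ == "__main__":
    for sample in ["你好。", "真的吗？", "hello", "done."]:
        print(repr(sample), ends_sentence(sample))
```

In the formatter, the original test could then become `if ends_sentence(word.word):`. Stripping first guards against transcribers that emit words with leading or trailing spaces.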

zappa/Zappa | django | 1,289 | Deployed API Gateway points to $LATEST | As the title says. Rather than pointing to the most recently deployed version of the code, API Gateway is pointing to the unqualified ARN of the lambda function, which points to $LATEST.

The reason this is an issue is that you cannot use provisioned concurrency on $LATEST, even when aliased, which is causing some se... | closed | 2023-12-21T23:30:57Z | 2024-04-13T20:37:04Z | https://github.com/zappa/Zappa/issues/1289 | [

"no-activity",

"auto-closed"

] | texonidas | 2 |

shibing624/text2vec | nlp | 47 | Does the word vector model need word segmentation before use? | w2v_model = Word2Vec("w2v-light-tencent-chinese")

compute_emb(w2v_model)

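Looking at the code, encoding splits the sentence into individual characters and computes a vector for each character to obtain the sentence vector. Is a word segmentation step missing?

Also, would Euclidean distance be a better way to measure vector distance for a word2vec model? | closed | 2022-08-22T09:47:05Z | 2022-11-17T09:39:55Z | https://github.com/shibing624/text2vec/issues/47 | [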

"question"

] | lushizijizoude | 1 |

coqui-ai/TTS | python | 3,269 | [Bug] server.py crashes on systems with IPv6 disabled | ### Describe the bug

`server.py` is statically configured to use IPv6. On systems with IPv6 disabled, this causes a crash when starting the server (bare metal or Docker).

### To Reproduce

1. On a Linux host as an example, disable ipv6 (sysctl):

```

net.ipv6.conf.all.disable_ipv6 = 1

net.ipv6.conf.default.disa... | closed | 2023-11-20T01:18:45Z | 2023-11-28T10:49:24Z | https://github.com/coqui-ai/TTS/issues/3269 | [

"bug"

] | Phr33d0m | 3 |

comfyanonymous/ComfyUI | pytorch | 7,169 | Problem with Comfy-Manager and rgthree nodes after last update | ### Your question

After the last update, the generation was crashing without giving an error.

I updated all nodes, started getting errors when starting ComfyUI, updated the requirements. Now ComfyUI starts, but there is no “Manager” button and some Rgthree nodes are not displayed. I've tried deleting them and cloning ... | open | 2025-03-10T11:10:13Z | 2025-03-11T09:56:10Z | https://github.com/comfyanonymous/ComfyUI/issues/7169 | [

"User Support"

] | VladimirNCh | 3 |

airtai/faststream | asyncio | 1,275 | Feature: allow using XXXDsn from Pydantic when specifying the URL | To suggest an idea or inquire about a new Message Broker supporting feature or any other enhancement, please follow this template:

**Is your feature request related to a problem? Please describe.**

Provide a clear and concise description of the problem you've encountered. For example: "I'm always frustrated when...... | closed | 2024-02-28T18:26:36Z | 2024-02-29T12:27:15Z | https://github.com/airtai/faststream/issues/1275 | [

"enhancement"

] | ntoskrn | 1 |

brightmart/text_classification | nlp | 140 | tflearn.data_utils | Which file is this tflearn.data_utils module in? | closed | 2020-05-18T09:50:13Z | 2020-05-18T10:10:25Z | https://github.com/brightmart/text_classification/issues/140 | [] | Catherine-HFUT | 0 |

pyppeteer/pyppeteer | automation | 189 | setRequestInterception blocks page.close() | When setRequestInterception is enabled, page.close() is not executed, but everything works fine without it.

import asyncio

from pyppeteer import launch, launcher

async def main():

    browser = await launch(headless=False)

    page = await browser.newPage()

    await page.setReques... | open | 2020-11-10T12:59:38Z | 2021-08-05T10:16:24Z | https://github.com/pyppeteer/pyppeteer/issues/189 | [

"bug"

] | ViktorRubenko | 5 |

ageitgey/face_recognition | python | 725 | face_recognition crashes python on Windows 7 64-bit | * face_recognition version: 1.2.3

* dlib version: 19.8.1

* Python version: 3.6.8

* Operating System: Windows 7 Ultimate 64-bit (amd phenom ii x4 965 processor)

Installed with these commands:

pip install dlib-19.8.1-cp36-cp36m-win_amd64.whl

pip install face_recognition

### Description

I was trying to run t... | open | 2019-01-27T22:20:58Z | 2019-01-27T23:12:24Z | https://github.com/ageitgey/face_recognition/issues/725 | [] | xdaviddx | 0 |

flairNLP/flair | pytorch | 3,256 | [Question]: Something is wrong with the lemmatization | ### Question

```

>>> from flair.models import Lemmatizer

>>> from flair.data import Sentence

>>> sentence = Sentence("I can't wait to get out of here, I hate this place!")

>>> lemmatizer = Lemmatizer()

>>> lemmatizer.predict(sentence)

>>> sentence

Sentence[15]: "I can't wait to get out of here, I hate this plac... | closed | 2023-06-01T15:46:34Z | 2023-08-21T09:25:48Z | https://github.com/flairNLP/flair/issues/3256 | [

"question"

] | riccardobucco | 1 |

noirbizarre/flask-restplus | api | 29 | Splitting up API library into multiple files | I've tried several different ways to split up the API files into separate Python source files but have come up empty. I love the additions to flask-restplus, but it appears that only the classes within the main Python file are seen. Is there a good example of how to do this? In Flask-Restful it was a bit simpler as you cou... | closed | 2015-03-13T22:41:18Z | 2018-01-05T18:11:28Z | https://github.com/noirbizarre/flask-restplus/issues/29 | [

"help wanted"

] | kinabalu | 16 |

hbldh/bleak | asyncio | 1,645 | macOS client.start_notify fails after reconnect | * bleak version: 0.22.2

* Python version: 3.9

* Operating System: macOS sonoma 14.4.1

* BlueZ version (`bluetoothctl -v`) in case of Linux:

### Description

When running `client.start_notify` on a device that was previously connected, then disconnected and reconnected, it throws the exception `Va... | open | 2024-09-28T19:47:12Z | 2024-10-07T05:58:18Z | https://github.com/hbldh/bleak/issues/1645 | [

"bug",

"Backend: Core Bluetooth"

] | dakhnod | 5 |

saulpw/visidata | pandas | 2,720 | Can't copy cell contents in VSCode ssh connection | **Small description**

Can't seem to copy cell contents in VSCode while on an ssh connection.

**Data to reproduce**

**Steps to reproduce**

Run VSCode on local machine, connect to remote server via ssh

Open file in visidata (installed on remote server)

Select cell from row/column

and stream it directly into a YOLOv11n model for object detection without saving the file or processing it into frames or fixed-size chunks? | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/ultralytics/ultralytics/discussions) and found no similar questions.

### Question

Hi, I am using YOLOv11n as part of fastapi and can I take a video fi... | open | 2025-01-16T04:21:09Z | 2025-01-16T11:37:14Z | https://github.com/ultralytics/ultralytics/issues/18706 | [

"question",

"detect"

] | hariv0 | 2 |

brightmart/text_classification | nlp | 34 | TextRNN model details | Hello.

Is there any chance of getting references to papers (or other documents) on the TextRNN model?

Thanks in advance. | closed | 2018-02-14T01:32:18Z | 2018-02-22T03:28:45Z | https://github.com/brightmart/text_classification/issues/34 | [] | adilek | 1 |

sngyai/Sequoia | pandas | 23 | Parking apron (停机坪) strategy | What is the principle behind the parking apron (停机坪) strategy? | closed | 2021-06-26T12:53:58Z | 2021-12-06T09:04:01Z | https://github.com/sngyai/Sequoia/issues/23 | [] | jianhoo727 | 1 |

biolab/orange3 | data-visualization | 6,996 | Scoring Sheet Viewer: Refactor | **What's wrong?**

_class_combo_changed (https://github.com/biolab/orange3/blob/master/Orange/widgets/visualize/owscoringsheetviewer.py#L446C9-L446C29) checks whether the class indeed changed and, if so, it (indirectly) calls https://github.com/biolab/orange3/blob/master/Orange/widgets/visualize/owscoringsheetviewer.py... | open | 2025-01-19T09:09:59Z | 2025-01-24T09:17:27Z | https://github.com/biolab/orange3/issues/6996 | [

"bug"

] | janezd | 0 |

d2l-ai/d2l-en | deep-learning | 2,424 | Policy Optimization and PPO | Dear all,

While the book currently has a small section on Reinforcement Learning covering MDPs, value iteration, and the Q-Learning algorithm, the book still does not cover an important family of algorithms: **Policy optimization algorithms**.

It'd be great to include an overview of the taxonomy of algorithms as ... | open | 2023-01-07T23:49:38Z | 2023-01-08T17:09:36Z | https://github.com/d2l-ai/d2l-en/issues/2424 | [] | BrianPulfer | 3 |

dfki-ric/pytransform3d | matplotlib | 134 | Add project on conda-forge? | We at [xdem](https://github.com/GlacioHack/xdem) are slowly preparing to put our package on conda-forge, and with `pytransform3d` as a dependency, I wonder if there's any plan to do this for `pytransform3d` as well?

Thanks in advance!

Erik | closed | 2021-05-21T10:16:06Z | 2021-05-26T20:39:07Z | https://github.com/dfki-ric/pytransform3d/issues/134 | [] | erikmannerfelt | 11 |

ray-project/ray | deep-learning | 51,086 | [core] Guard ray C++ code quality via unit test | ### Description

Ray core C++ components are not properly unit tested:

- As people have left, we are less confident about guarding against improper code changes made without full context;

- Sanitizers on CI are only triggered by unit tests;

- Unit test coverage is a good indicator of code quality (e.g. 85% branch coverage).

### Use case

_... | open | 2025-03-05T02:20:07Z | 2025-03-05T02:20:34Z | https://github.com/ray-project/ray/issues/51086 | [

"enhancement",

"P2",

"core",

"help-wanted"

] | dentiny | 1 |

junyanz/pytorch-CycleGAN-and-pix2pix | deep-learning | 1,006 | Mio | ###

- _**

1.

- [x] @ecoopnet

**_ | closed | 2020-04-25T20:22:25Z | 2020-04-25T20:22:49Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/1006 | [] | angeloko23 | 0 |

jacobgil/pytorch-grad-cam | computer-vision | 454 | Support grad cam for cross attention on encoder-decoder models | Currently, encoder-decoder models lack support for Grad-CAM (Gradient-weighted Class Activation Mapping) visualization with cross-attention mechanisms. Grad-CAM is a valuable tool for interpreting model decisions and understanding which parts of the input contribute most to the output. Extending Grad-CAM support to c... | open | 2023-09-07T20:57:12Z | 2024-07-18T15:36:55Z | https://github.com/jacobgil/pytorch-grad-cam/issues/454 | [] | ahmedplateiq | 1 |

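ymcui/Chinese-LLaMA-Alpaca | nlp | 469 | Many periods appear at the end of answers in multi-turn dialogue | ### Detailed description of the problem

When using chinese-alpaca-plus for multi-turn dialogue, after a certain number of turns the model's answers end with many periods.

### Screenshots or logs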

```commandline

question: 中国的首都是哪里

answer: 中国的首都是北京。。。。。。。。。。。。。。。。。。。。。。。。。。。。。。。。。。。。。。。。。

```

### Required checks (for the first three items, keep only the option you are asking about)

- [x] **Base model**: LLaMA / Alpaca / LLaMA-Plus / Alpaca-Plus

- [ ] **Operating system**: Windows / MacOS / Linux

- [ ] **Issue category**: Download iss... | closed | 2023-05-31T06:36:50Z | 2023-06-16T22:02:17Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca/issues/469 | [

"stale"

] | Mewral | 7 |

taverntesting/tavern | pytest | 224 | Update Changelog | The changelog seems to have gotten out of date, we should update it | closed | 2019-01-05T13:09:51Z | 2019-01-13T13:34:41Z | https://github.com/taverntesting/tavern/issues/224 | [] | benhowes | 1 |

quantmind/pulsar | asyncio | 291 | windows tests failures | * **pulsar version**: 2.0

* **platform**: windows

## Description

Some tests, mainly those involving socket connections and repeated requests, fail intermittently on Windows. These tests are currently switched off on Windows.

To find them, search for

```python

@unittest.skipIf(platform.is_windows, 'windows test #291')

`... | open | 2017-11-21T09:23:10Z | 2017-11-21T09:23:35Z | https://github.com/quantmind/pulsar/issues/291 | [

"bug",

"test",

"stores"

] | lsbardel | 0 |

mwaskom/seaborn | data-visualization | 3,274 | Using color breaks so.Line when there is only one row per class | Using this data:

```

data = pd.DataFrame(

{

"category": ["A", "B", "C", "D", "E"],

"x": [450, 610, 4160, 9662, 127000],

"y": [500, 152.26, 54.76, 40.42, 0.8]

}

)

```

I can plot the individual points using `so.Dot` and/or `so.Line`:

```

so.Plot(data=data, x="x", y="y").add... | closed | 2023-02-22T22:27:36Z | 2023-02-23T01:47:43Z | https://github.com/mwaskom/seaborn/issues/3274 | [] | joeyo | 3 |

sqlalchemy/sqlalchemy | sqlalchemy | 10,939 | Type of "self_group" is partially unknown warning | ### Ensure stubs packages are not installed

- [X] No sqlalchemy stub packages is installed (both `sqlalchemy-stubs` and `sqlalchemy2-stubs` are not compatible with v2)

### Verify if the api is typed

- [X] The api is not in a module listed in [#6810](https://github.com/sqlalchemy/sqlalchemy/issues/6810) so it should ... | closed | 2024-01-29T15:25:54Z | 2024-05-05T15:43:26Z | https://github.com/sqlalchemy/sqlalchemy/issues/10939 | [

"bug",

"PRs (with tests!) welcome",

"typing"

] | AlexanderPodorov | 7 |

lorien/grab | web-scraping | 209 | Select a specific field to submit | There are these fields:

```html

<input name="op" value="Save" type="submit"/>

<input name="op" value="Preview" type="submit"/>

<input name="op" value="Delete" type="submit"/>

```

By default, calling g.doc.submit() submits none of these fields.

Currently I use this workaround:

```python

g.doc.set_input('op',... | closed | 2016-12-23T06:07:53Z | 2016-12-27T05:11:10Z | https://github.com/lorien/grab/issues/209 | [] | InputError | 1 |

youfou/wxpy | api | 274 | How to automatically roll the dice? | What kind of message is a dice roll? Which method should I call to send it? | open | 2018-03-13T09:24:10Z | 2018-03-13T09:24:10Z | https://github.com/youfou/wxpy/issues/274 | [] | lzou | 0 |

mwaskom/seaborn | data-visualization | 3,541 | FacetGrid with `size=` argument? | I don't know about the details of the implementation of `FacetGrid.map()`.

But in essence it must be splitting the data frame.

Splitting the data frame and gathering the resulting individual plots (naively)

seems to have problems since it does not incorporate the global features of the data.

Usin... | closed | 2023-10-26T07:07:04Z | 2023-10-27T19:57:45Z | https://github.com/mwaskom/seaborn/issues/3541 | [] | kwhkim | 1 |

ludwig-ai/ludwig | computer-vision | 3,126 | GBM backend schema validation `dict` has no attribute `type` | When trying to run a GBM with auxiliary validation checks, the following error occurs:

```

File "<LUDWIG_ROOT>/ludwig/ludwig/config_validation/checks.py", line 200, in check_gbm_horovod_incompatibility

if config.model_type == MODEL_GBM and config.backend.type == "horovod":

AttributeError: 'dict' object has ... | closed | 2023-02-21T15:58:22Z | 2024-10-18T13:21:46Z | https://github.com/ludwig-ai/ludwig/issues/3126 | [] | jeffkinnison | 0 |

home-assistant/core | asyncio | 140,732 | Bose SoundBar Ultra cast issues | ### The problem

Failed to determine cast type for host <unknown>

My Bose SoundBar Ultra is the only problematic cast device, whereas 5 other devices work (albeit those 5 are all Google devices).

### What version of Home Assistant Core has the issue?

core-2025.3.3

### What was the last working version of Home Assi... | open | 2025-03-16T16:04:22Z | 2025-03-18T07:25:29Z | https://github.com/home-assistant/core/issues/140732 | [

"integration: cast"

] | thewookiewon | 13 |

ultralytics/yolov5 | deep-learning | 12,818 | YOLOv5 GUI Implementation | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

I want to implement a GUI using YOLOv5 in which there are multiple live feeds from camera... | closed | 2024-03-13T09:26:49Z | 2024-10-20T19:41:31Z | https://github.com/ultralytics/yolov5/issues/12818 | [

"question",

"Stale"

] | haseebakbar94 | 3 |

widgetti/solara | flask | 870 | Complex Layout with multiple "set of children" | Hi,

I need to create a more complex layout, that requires passing different children (component) to different parts (components) of the layout. I have made this simple pycafe example illustrating what my intention is: I want to pass a component to the "Left" part of the layout, and a different component to the "rig... | open | 2024-11-23T13:41:35Z | 2024-12-25T12:49:15Z | https://github.com/widgetti/solara/issues/870 | [] | JovanVeljanoski | 3 |

xuebinqin/U-2-Net | computer-vision | 198 | Segmentation Badly | Hi, I trained u2net on a refined Supervisely dataset (including personal goods) plus some images from a matting dataset. The whole dataset has 60k images. After 20 epochs, I ran prediction on a few of my images whose background and foreground should be easy to distinguish; however, the results look very bad. Personally, I think it is a receptive-field problem. I would... | open | 2021-04-30T04:13:43Z | 2021-04-30T10:08:23Z | https://github.com/xuebinqin/U-2-Net/issues/198 | [] | Sparknzz | 3 |

geex-arts/django-jet | django | 26 | Dashboard doesn't update unless I press reset button | When I make changes in my custom dashboard file, they are not reflected in the browser unless I press the reset button. When I looked into jet's dashboard file, I found that

``` python

def load_modules(self):

module_models = UserDashboardModule.objects.filter(

app_label=self.app_label,

... | open | 2015-11-27T05:01:04Z | 2017-08-25T17:58:21Z | https://github.com/geex-arts/django-jet/issues/26 | [] | Ajeetlakhani | 11 |

keras-team/keras | tensorflow | 20,455 | Inconsistent warning using MultiHeadAttention with Masking | Hello, when I try to use `MultiHeadAttention` (which supports masking), I get the following warning:

```

/opt/conda/envs/trdm/lib/python3.11/site-packages/keras/src/layers/layer.py:915: UserWarning: Layer 'query' (of type EinsumDense) was passed an input with a mask attached to it. However, this layer does not suppor... | closed | 2024-11-06T13:39:06Z | 2024-11-06T22:00:14Z | https://github.com/keras-team/keras/issues/20455 | [] | fdtomasi | 2 |

biolab/orange3 | scikit-learn | 6,143 | Move Multifile widget from Spectroscopy add-on to Data category in "regular" Orange | <!--

Thanks for taking the time to submit a feature request!

For the best chance at our team considering your request, please answer the following questions to the best of your ability.

-->

**What's your use case?**

The Multifile widget can be very useful in applications other than spectroscopy. It should there... | open | 2022-09-18T14:02:37Z | 2023-01-10T10:55:04Z | https://github.com/biolab/orange3/issues/6143 | [

"wish",

"feast"

] | wvdvegte | 6 |

gevent/gevent | asyncio | 1,250 | No module named 'gevent.__hub_local | * gevent version: 1.3.4

* Python version: 3.6.5

* Operating System: linux-4.15.2.0 on imx6q

### Description:

When I import gevent, I get the traceback below.

However, this code works well on Ubuntu 18.04.

```

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/python/... | closed | 2018-07-09T08:02:20Z | 2018-08-17T17:22:58Z | https://github.com/gevent/gevent/issues/1250 | [] | feimeng115 | 4 |

Anjok07/ultimatevocalremovergui | pytorch | 796 | ValueError: Input signal length=0 is too small to resample from 48000->44100 | Last Error Received:

Process: Demucs

If this error persists, please contact the developers with the error details.

Raw Error Details:

ValueError: "Input signal length=0 is too small to resample from 48000->44100"

Traceback Error: "

File "UVR.py", line 4719, in process_start

File "separate.py", line 4... | open | 2023-09-15T08:16:53Z | 2023-09-15T08:17:47Z | https://github.com/Anjok07/ultimatevocalremovergui/issues/796 | [] | chrispviews | 1 |

voila-dashboards/voila | jupyter | 824 | Markdown latex equation inside Output widget | A Markdown LaTeX equation delimited by `$ ... $` or `$$ ... $$` is not rendered correctly by Voilà when it is inside an `Output` widget.

Here is an example. I have a class with a `_repr_html_` method

```

class my_obj:

def _repr_html_(self):

return "<h1>Hello $D_1Q_2$ !!</h1>"

```

Then I make the... | open | 2021-02-08T09:23:33Z | 2021-02-08T09:23:33Z | https://github.com/voila-dashboards/voila/issues/824 | [] | gouarin | 0 |

polakowo/vectorbt | data-visualization | 746 | Documentation on vectorbt.dev seems to be broken | All pages have been returning 404 for 2 days | closed | 2024-09-13T20:30:02Z | 2024-09-13T21:37:39Z | https://github.com/polakowo/vectorbt/issues/746 | [] | maniolias | 2 |

modelscope/data-juicer | data-visualization | 212 | Why only keep the most frequently occurring suffix when constructing formatter? | ### Before Asking 在提问之前

- [X] I have read the [README](https://github.com/alibaba/data-juicer/blob/main/README.md) carefully. 我已经仔细阅读了 [README](https://github.com/alibaba/data-juicer/blob/main/README_ZH.md) 上的操作指引。

- [X] I have pulled the latest code of main branch to run again and the problem still existed. 我已经拉取了主分... | closed | 2024-02-20T09:49:52Z | 2024-03-08T08:31:40Z | https://github.com/modelscope/data-juicer/issues/212 | [

"question"

] | BlockLiu | 3 |

ymcui/Chinese-BERT-wwm | tensorflow | 106 | Question about the schedule settings for the two-stage pre-training | The paper states:

> We train 100K steps on the samples with a maximum length of 128, batch size of 2,560, an initial learning rate of 1e-4 (with warm-up ratio 10%). Then, we train another 100K steps on a maximum length of 512 with a batch size of 384 to learn the long-range dependencies and position embeddings.

I'd like to ask: when training in two stages like this, l... | closed | 2020-04-16T02:59:54Z | 2020-05-04T11:31:28Z | https://github.com/ymcui/Chinese-BERT-wwm/issues/106 | [] | hitvoice | 4 |

microsoft/JARVIS | pytorch | 210 | Running python run_gradio_demo.py --config configs/config.gradio.yaml raises an error: | 2023-06-10 16:17:58,244 - awesome_chat - INFO - [{"task": "conversational", "id": 0, "dep": [-1], "args": {"text": "please show me a joke of cat" }}, {"task": "text-to-image", "id": 1, "dep": [-1], "args": {"text": "a photo of cat" }}]

2023-06-10 16:17:58,244 - awesome_chat - DEBUG - [{'task': 'conversational', 'id': ... | open | 2023-06-10T08:33:33Z | 2023-06-10T08:33:33Z | https://github.com/microsoft/JARVIS/issues/210 | [] | lovelucymuch | 0 |

3b1b/manim | python | 2,302 | [Bug] Code class's Animation applies to the entire code instead of the changed lines | ### Describe the bug

When using Manim's `Code` object to animate code changes, animations are applied to the entire code block, even if only a single line is added.

### Video

https://github.com/user-attachments/assets/d161622f-e72a-483a-852d-c978c11f9ce9

### Expected behavior

The animation should be applied on... | closed | 2025-01-13T10:23:01Z | 2025-01-13T14:48:31Z | https://github.com/3b1b/manim/issues/2302 | [

"bug"

] | Mindev27 | 2 |

deepset-ai/haystack | machine-learning | 9,062 | Refactor `LLMEvaluator` and child components to use Chat Generators and adopt the protocol | - Refactor the internal behavior of the component(s) to use Chat Generators instead of Generators

- Add a `chat_generator: ChatGenerator` init parameter and deprecate similar init parameters (in version 2.Y.Z).

- Remove deprecated parameters in version 2.Y.Z+1. | open | 2025-03-18T18:11:34Z | 2025-03-24T09:12:19Z | https://github.com/deepset-ai/haystack/issues/9062 | [

"P1"

] | anakin87 | 0 |

huggingface/text-generation-inference | nlp | 2,265 | gemma-7b warmup encountered an error | ### System Info

Hi, I encountered a warmup error when using the newest main branch to compile and start up the gemma-7b model; the error looks like this:

Traceback (most recent call last):

File "/usr/local//bin/text-generation-server", line 8, in <module>

sys.exit(app())

File "/usr/local/lib/python3.10/dist-pac... | closed | 2024-07-21T12:55:15Z | 2024-08-27T01:54:55Z | https://github.com/huggingface/text-generation-inference/issues/2265 | [

"Stale"

] | Amanda-Barbara | 3 |

scikit-image/scikit-image | computer-vision | 7,728 | Enable rc-coordinate conventions in `skimage.transform` | ## Description

Add a coordinates or similar flag to each function in `skimage.transform`, to change it from working with xy to rc. For skimage 2.0, we'll change the default from xy to rc.

**Can be closed, when** users have a means of using "rc" coordinates with every callable in `skimage.transform`.

### See also

... | open | 2025-03-03T22:40:31Z | 2025-03-07T16:42:56Z | https://github.com/scikit-image/scikit-image/issues/7728 | [

":hiking_boot: Path to skimage2",

":globe_with_meridians: Coordinate convention"

] | lagru | 0 |

widgetti/solara | fastapi | 688 | FileBrowser bug when navigating to path root | Steps to reproduce:

- create an app with `solara.FileBrowser()`

- the Filebrowser starts in the cwd (C:\Users\...), in the app click '..' until you are at 'C'

The FileBrowser now shows the contents as if it were in the cwd instead of the disk root

Similar issue when using eg `solara.FileBrowser(directory="D:\\")`

FileB... | closed | 2024-06-19T16:12:19Z | 2024-07-10T14:48:23Z | https://github.com/widgetti/solara/issues/688 | [] | Jhsmit | 0 |

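hankcs/HanLP | nlp | 773 | I am also working on dictionary-based named entities; where can I find documentation of the named-entity annotation rules, or are they defined according to one's own needs? | <!--

The notes and the version number are required; otherwise the issue will not be answered. If you want a quick reply, please fill in the template carefully. Thank you for your cooperation.

-->

## Notes

Please confirm the following:

* I have carefully read the following documents and did not find the answer:

- [Home page documentation](https://github.com/hankcs/HanLP)

- [wiki](https://github.com/hankcs/HanLP/wiki)

- [FAQ](https://github.com/hankcs/HanLP/wiki/FAQ)

* I have already searched via [Google](https://www.google.com/#newwindow=1&q=HanLP) and [issue search... | closed | 2018-03-25T12:57:12Z | 2020-01-01T10:50:42Z | https://github.com/hankcs/HanLP/issues/773 | [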

"ignored"

] | brucegai | 2 |

noirbizarre/flask-restplus | flask | 171 | Swagger documentation error when used with other blueprints | Hello,

I get an error in the Swagger documentation rendering that conflicts with other blueprints.

Code for restplus blueprint declaration

```

v1 = Blueprint('v1', __name__)

api = Api(v1, title='Project API (v1)', version='1.0', doc='/documentation/',

default_label='project')

```

Code for bluepr... | open | 2016-05-11T10:11:29Z | 2016-09-05T11:36:33Z | https://github.com/noirbizarre/flask-restplus/issues/171 | [

"bug"

] | k3z | 0 |

ultralytics/ultralytics | machine-learning | 19,317 | 4channel implementation of YOLO | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

I searched other issues, but none of them led to an answer. Can you ex... | open | 2025-02-19T18:18:54Z | 2025-02-25T22:11:56Z | https://github.com/ultralytics/ultralytics/issues/19317 | [

"enhancement",

"question"

] | MehrsaMashhadi | 5 |

aio-libs/aiopg | sqlalchemy | 58 | PostgreSQL notification support | Hi,

Do you plan to add support for http://initd.org/psycopg/docs/advanced.html#asynchronous-notifications by any chance?

| closed | 2015-05-09T18:28:58Z | 2015-07-02T13:37:01Z | https://github.com/aio-libs/aiopg/issues/58 | [] | spinus | 5 |

plotly/dash-table | dash | 467 | Update documentation for css property | The current documentation for css is as such:

<img width="816" alt="Screen Shot 2019-06-14 at 2 16 20 PM" src="https://user-images.githubusercontent.com/30607586/59529422-007b1c80-8eaf-11e9-8ddc-fe6bb6c85617.png">

The example has the value part of "rule" as a string within a string. However it should be just one stri... | open | 2019-06-14T18:23:05Z | 2019-09-09T14:07:18Z | https://github.com/plotly/dash-table/issues/467 | [

"dash-type-maintenance"

] | OwenMatsuda | 0 |

pandas-dev/pandas | python | 60,815 | DOC: Missing documentation for `Styler.columns` and `Styler.index` | ### Pandas version checks

- [x] I have checked that the issue still exists on the latest versions of the docs on `main` [here](https://pandas.pydata.org/docs/dev/)

### Location of the documentation

https://pandas.pydata.org/docs/dev/reference/api/pandas.io.formats.style.Styler.html#pandas.io.formats.style.Styler

#... | closed | 2025-01-29T15:25:29Z | 2025-02-21T18:06:56Z | https://github.com/pandas-dev/pandas/issues/60815 | [

"Docs",

"Styler"

] | Dr-Irv | 5 |

Miserlou/Zappa | django | 2,092 | Certify with tags | ## Context

Tag is missing in API gateway

## Expected Behavior

The tag shall be added to API gateway

## Actual Behavior

The tag is not added

## Steps to Reproduce

1. zappa certify xxx

| open | 2020-04-30T02:35:43Z | 2020-04-30T02:35:43Z | https://github.com/Miserlou/Zappa/issues/2092 | [] | weasteam | 0 |

vimalloc/flask-jwt-extended | flask | 311 | get_jwt_identity return None for protected endpoint | This library is awesome, but I have a question: why is the get_jwt_identity function returning None?

```

@jwt.required

def post(self):

try:

return response.ok(jwt.getIdentity(), "")

except Exception as e:

return response.badRequest('', '{}'.format(e))

... | closed | 2020-01-25T08:27:52Z | 2020-01-25T08:49:14Z | https://github.com/vimalloc/flask-jwt-extended/issues/311 | [] | sunthree74 | 0 |

Gozargah/Marzban | api | 1,594 | [Question] How to set IP-Limit per subscription | I want to set a limit on how many different IPs a subscription can be used. Is this already possible? If not, please take it as a feature request. | closed | 2025-01-11T01:51:09Z | 2025-01-11T06:51:42Z | https://github.com/Gozargah/Marzban/issues/1594 | [

"Question"

] | socksprox | 1 |

joeyespo/grip | flask | 381 | GitHub API Rate Limit | Even with basic auth, I still hit an hourly rate limit. Does grip hit their API on every refresh just to make sure the styles are up to date? If I refresh 20 seconds later, I doubt the API has changed much.

Is there a way to just use the last version of the styles it fetched? Maybe an `--offline` flag? Could run grip off... | open | 2024-02-24T14:51:44Z | 2024-10-30T16:32:27Z | https://github.com/joeyespo/grip/issues/381 | [] | jstnbr | 2 |

huggingface/transformers | nlp | 36,926 | `Mllama` not supported by `AutoModelForCausalLM` after updating `transformers` to `4.50.0` | ### System Info

- `transformers` version: 4.50.0

- Platform: Linux-5.15.0-100-generic-x86_64-with-glibc2.35

- Python version: 3.12.2

- Huggingface_hub version: 0.29.3

- Safetensors version: 0.5.3

- DeepSpeed version: not installed

- PyTorch version (GPU?): 2.6.0+cu124 (True)

- Tensorflow version (GPU?): not installed ... | open | 2025-03-24T12:07:09Z | 2025-03-24T12:28:00Z | https://github.com/huggingface/transformers/issues/36926 | [

"bug"

] | WuHaohui1231 | 2 |

recommenders-team/recommenders | deep-learning | 2,091 | [ASK] Perfect MAP@k is less than 1 | ### Description

I have a recommender where, for some users in some folds, the ground truth contains fewer than $k$ items. Therefore, $precision@k$ is less than 1 even for a recommender that recommends exactly the ground truth. For that reason, I calculate the results of a perfect recommender for multiple metrics.

By defi... | closed | 2024-04-26T13:28:54Z | 2024-04-29T21:36:52Z | https://github.com/recommenders-team/recommenders/issues/2091 | [

"documentation"

] | daviddavo | 1 |

graphistry/pygraphistry | pandas | 7 | Make an anaconda package | closed | 2015-06-25T21:30:28Z | 2016-05-08T02:14:10Z | https://github.com/graphistry/pygraphistry/issues/7 | [

"enhancement"

] | thibaudh | 2 | |

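nl8590687/ASRT_SpeechRecognition | tensorflow | 277 | Model too small, speech recognition inaccurate | The model is only 6 MB, whereas the object-detection models I worked with before were often several hundred MB. What is the reason?

Speech recognition is inaccurate: I took some samples directly from thchs30 and they were recognized inaccurately; I also clipped audio from some short videos that contain background sound, and the recognition was simply terrible.

What are the plans for future improvements? | open | 2022-04-02T23:43:32Z | 2024-12-25T01:26:09Z | https://github.com/nl8590687/ASRT_SpeechRecognition/issues/277 | [] | wangzhanwei666 | 2 |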

httpie/cli | python | 1,538 | Add support for OAuth2 authentication | ## Checklist

- [x] I've searched for similar feature requests.

---

## Enhancement request

I would like httpie to support OAuth 2.0 authentication, ideally in a way similar to the [infamous P**man](https://learning.postman.com/docs/sending-requests/authorization/oauth-20/#using-client-credentials). As an exa... | open | 2023-10-31T05:01:37Z | 2025-01-22T13:23:39Z | https://github.com/httpie/cli/issues/1538 | [

"enhancement",

"new"

] | rnd-debug | 1 |
