repo_name (string) | topic (string, 30 classes) | issue_number (int64) | title (string) | body (string) | state (string, 2 classes) | created_at (string) | updated_at (string) | url (string) | labels (list) | user_login (string) | comments_count (int64) |
|---|---|---|---|---|---|---|---|---|---|---|---|

errbotio/errbot | automation | 901 | Location of config-template.py | In order to let us help you better, please fill out the following fields as best you can:

### I am ...

* [ ] Reporting a bug

### I am running...

* Errbot version: 4.3.4

* OS version: Ubuntu 16.04

* Python version: 3

* Using a virtual environment: yes

### Issue description

When you try to start err... | closed | 2016-11-18T13:47:53Z | 2016-12-01T10:17:50Z | https://github.com/errbotio/errbot/issues/901 | [

"newcomer-friendly",

"#configuration",

"#usability"

] | Darkguver | 2 |

xzkostyan/clickhouse-sqlalchemy | sqlalchemy | 204 | Support for DROP PARTITION statements | **Describe the bug**

Clickhouse is not designed to support deleting rows, although it supports it via expensive `ALTER TABLE ... DELETE WHERE` statements. A much more efficient way of deleting rows is dropping whole partitions, which is why reasonable table schema designers take care to partition their tables with tha... | open | 2022-10-11T15:09:19Z | 2022-10-11T15:09:19Z | https://github.com/xzkostyan/clickhouse-sqlalchemy/issues/204 | [] | georgipeev | 0 |

lexiforest/curl_cffi | web-scraping | 168 | [BUG] BytesWarning: str() on a bytes instance (with `-b`) | **Describe the bug**

A clear and concise description of what the bug is.

**To Reproduce**

Run curl_cffi or its tests with the `-bb` interpreter option

e.g. `python3 -bb -Werror -m pytest tests/unittest/test_requests.py`

`BytesWarning: str() on a bytes instance` is raised

```

tests/unittest/test_reques... | closed | 2023-12-03T00:07:17Z | 2024-01-01T04:00:32Z | https://github.com/lexiforest/curl_cffi/issues/168 | [

"bug"

] | coletdjnz | 1 |
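The warning above is raised whenever `str()` is applied to a `bytes` object. With `-b` Python emits a `BytesWarning`, and with `-bb` it raises an error. Even without those flags, the result is usually not what was intended. The following is a minimal sketch, not taken from curl_cffi's code, that contrasts `str()` with `.decode()`. The URL is only an illustrative value.

```python
raw = b"https://example.com"

# str() on bytes returns the repr, including the b'' prefix and quotes.
# Under `python -bb` this call raises BytesWarning as an error instead.
as_repr = str(raw)

# Decoding produces the text that was actually intended.
as_text = raw.decode("utf-8")

print(as_repr)  # b'https://example.com'
print(as_text)  # https://example.com
```

Code paths that trigger the warning usually need an explicit `.decode(...)` with a known encoding.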

SciTools/cartopy | matplotlib | 1,675 | unusable due to lack of adequate documentation | ### Description

<!-- Please provide a general introduction to the issue/proposal. -->

I am forced to move code from the basemap package (for which I had many fixes) to cartopy, as basemap no longer compiles from PyPI on Python 3.9.

Unfortunately, the documentation - and therefore the package - is not re... | open | 2020-10-31T13:38:07Z | 2020-11-17T13:17:28Z | https://github.com/SciTools/cartopy/issues/1675 | [] | 2sn | 3 |

ray-project/ray | deep-learning | 50,823 | [Train] Unable to gain long-term access to S3 storage for training state/checkpoints when running on AWS EKS | ### What happened + What you expected to happen

I am using Ray Train + KubeRay to train on AWS EKS. I have configured EKS to give Ray pods a specific IAM role through Pod Identity Association. EKS Pod Identity Association provides an HTTP endpoint through which an application can "assume said IAM role" - i.e. gain a se... | open | 2025-02-22T05:18:57Z | 2025-03-05T01:25:36Z | https://github.com/ray-project/ray/issues/50823 | [

"bug",

"P1",

"triage",

"train"

] | jleben | 1 |

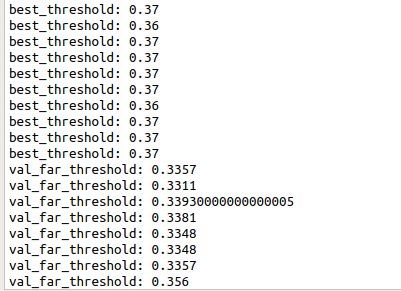

davidsandberg/facenet | computer-vision | 1,104 | When calculating ACC and VAL on LFW dataset, the threshold value is different. How can I determine the threshold value in practical application? |

| open | 2019-11-01T09:10:42Z | 2019-11-01T09:10:42Z | https://github.com/davidsandberg/facenet/issues/1104 | [] | Deepcong2019 | 0 |
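One common way to get a single threshold for deployment is to pick the one that maximizes verification accuracy on a labeled validation set of pairs, ideally drawn from your own target data, and then keep it fixed at inference. This is not necessarily what facenet's own evaluation code does. The sketch below assumes that a pair is declared "same person" when its embedding distance falls below the threshold. The distances and labels are made-up illustration values.

```python
import numpy as np

def best_threshold(distances, same, thresholds):
    """Return the threshold that maximizes verification accuracy.

    distances: embedding distances for labeled pairs.
    same: boolean array, True where both images show the same person.
    A pair is predicted "same" when distance < threshold.
    """
    accuracies = [np.mean((distances < t) == same) for t in thresholds]
    # argmax returns the first threshold that reaches the best accuracy.
    return thresholds[int(np.argmax(accuracies))]

# Hypothetical validation pairs, for illustration only.
distances = np.array([0.35, 0.62, 0.88, 1.27, 1.48, 1.73])
same = np.array([True, True, True, False, False, False])
thresholds = np.arange(0.0, 2.0, 0.1)

t = best_threshold(distances, same, thresholds)
print(f"chosen threshold: {t:.2f}")
```

The ACC and VAL metrics in the issue optimize different criteria: VAL is measured at a fixed false-accept rate. Their thresholds therefore differ, and the right choice depends on which error type matters more in the application.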

psf/requests | python | 6,394 | Failure to fetch data / requests.get returns 503 while curl is 200 | requests.get fails to fetch page, while curl on the same machine gets a 200.

Additionally, the same code runs on macOS 13.0.1.

## Expected Result

```py

import requests # with version 2.28.2

res = requests.get("https://www.reichelt.com/raspberry-pi-zero-2-w-4x-1-ghz-512-mb-ram-wlan-bt-rasp-pi-zero2-w-p313902.h... | closed | 2023-03-26T15:43:42Z | 2024-03-28T00:03:40Z | https://github.com/psf/requests/issues/6394 | [] | Hu1buerger | 5 |

serengil/deepface | deep-learning | 570 | dlib called with BGR instead of RGB? | I've been reading through your code and most detector backend wrappers convert the colorspace from BGR to RGB before calling the external detector.

However, the dlib wrapper doesn't seem to do that and AFAIK dlib does expect RGB. Is that a bug?

(also please take a look at https://github.com/serengil/deepface/pull... | closed | 2022-10-04T08:47:35Z | 2022-10-04T12:19:48Z | https://github.com/serengil/deepface/issues/570 | [

"question"

] | Jille | 3 |
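OpenCV loads images with channels in BGR order. If, as the issue suggests, dlib expects RGB, the wrapper would need to reverse the channel axis before calling it. The following is a minimal NumPy sketch of that conversion. For 3-channel images it is equivalent to OpenCV's BGR-to-RGB color conversion. The helper name `bgr_to_rgb` is hypothetical and not part of deepface.

```python
import numpy as np

def bgr_to_rgb(img: np.ndarray) -> np.ndarray:
    """Reverse the channel axis of an HxWx3 BGR image (as loaded by OpenCV)."""
    # img[:, :, ::-1] is a view with a negative channel stride;
    # np.ascontiguousarray copies it into a contiguous buffer for code that needs one.
    return np.ascontiguousarray(img[:, :, ::-1])

# A single pure-blue pixel in BGR order: B=255, G=0, R=0.
bgr = np.array([[[255, 0, 0]]], dtype=np.uint8)
rgb = bgr_to_rgb(bgr)
print(rgb[0, 0].tolist())  # [0, 0, 255]
```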

chiphuyen/stanford-tensorflow-tutorials | nlp | 130 | examples/04_eager_repl_demo.py is not found | The slides for lecture 4 say there is an examples/04_eager_repl_demo.py, but it is not in the repository. | open | 2018-08-14T02:19:16Z | 2018-12-10T14:55:55Z | https://github.com/chiphuyen/stanford-tensorflow-tutorials/issues/130 | [] | albertwang21 | 1 |

httpie/cli | api | 682 | What should I do if I want to post fields that include keywords? | Like this one:

http POST http://localhost:8080/apis appId=123 urlPattern=/test GET=get PUT=put POST=post DELETE=delete | closed | 2018-06-01T09:33:49Z | 2018-06-03T09:00:59Z | https://github.com/httpie/cli/issues/682 | [] | RudolphBrown | 1 |

deepinsight/insightface | pytorch | 2,030 | Do We Need to Normalize the Output? | I saw that you are normalizing the embedding output [here](https://github.com/deepinsight/insightface/blob/master/recognition/arcface_mxnet/verification.py#L321). Do we need to normalize the embedding? If I remove the normalization step, performance drops significantly. But how does it run on the real-time ... | open | 2022-06-15T08:07:08Z | 2022-06-18T03:34:16Z | https://github.com/deepinsight/insightface/issues/2030 | [] | Malikanhar | 2 |
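ArcFace's loss is defined on L2-normalized features, so raw embedding magnitudes are not what the model was trained to compare. After normalization, the dot product equals cosine similarity, and squared Euclidean distance becomes 2 - 2·cos, so either metric gives the same ranking. The following is a minimal NumPy sketch of that step. The vectors are illustrative, not real embeddings.

```python
import numpy as np

def l2_normalize(emb: np.ndarray, eps: float = 1e-10) -> np.ndarray:
    """Scale each row (one embedding per row) to unit Euclidean length."""
    norms = np.linalg.norm(emb, axis=1, keepdims=True)
    return emb / np.maximum(norms, eps)

a = np.array([[3.0, 4.0]])
b = np.array([[6.0, 8.0]])   # same direction as a, different magnitude
c = np.array([[1.0, 2.0]])

na, nb, nc = l2_normalize(a), l2_normalize(b), l2_normalize(c)

# For unit vectors the dot product is the cosine similarity.
cos = (na @ nb.T).item()

# For unit vectors, squared Euclidean distance equals 2 - 2 * cosine.
cos_ac = (na @ nc.T).item()
dist_sq = float(np.sum((na - nc) ** 2))
```

Skipping normalization at inference while the model was trained on normalized features would explain the accuracy drop described in the issue.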

bloomberg/pytest-memray | pytest | 2 | Empty report when running with pytest-xdist | ## Bug Report

**Current Behavior** The plugin produces an empty output when running tests with [pytest-xdist](https://github.com/pytest-dev/pytest-xdist).

**Input Code**

```python

a = []

def something():

for i in range(10_000):

a.append(i)

def test_something():

something()

```

Runni... | closed | 2022-04-21T06:46:31Z | 2022-11-16T17:29:22Z | https://github.com/bloomberg/pytest-memray/issues/2 | [

"bug",

"help wanted"

] | orsinium | 6 |

pytorch/vision | machine-learning | 8,654 | Transforms v2.RandomRotation fail when expand=True with ValueError(f"Found multiple HxW dimensions in the sample: {sequence_to_str(sorted(sizes))}") | ### 🐛 Describe the bug

## Example:

```python

import torchvision

from torchvision.transforms import v2

transforms = v2.Compose([

v2.ToImage(),

v2.RandomRotation(degrees=(-45, 45), expand=True),

])

# Set up COCO dataset

train_dataset = torchvision.datasets.CocoDetection(coco_... | open | 2024-09-19T17:02:01Z | 2024-10-11T14:26:54Z | https://github.com/pytorch/vision/issues/8654 | [] | jeff-zimmerman | 6 |

dropbox/PyHive | sqlalchemy | 154 | Replace hive.resultset.use.unique.column.names test config with sqlalchemy dialect code | See this SQLAlchemy commit:

```

commit 8e24584d8d242d40d605752116ac05be33f697d3

Author: Mike Bayer <mike_mp@zzzcomputing.com>

Date: Sun Dec 5 00:46:11 2010 -0500

...

- move the "check for a dot in the colname" logic out to the sqlite dialect.

```

SQLAlchemy 0.6 had this snippet of code, which masked t... | closed | 2017-08-31T23:17:51Z | 2017-09-01T18:57:05Z | https://github.com/dropbox/PyHive/issues/154 | [] | jingw | 0 |

newpanjing/simpleui | django | 5 | Good thing! Wishing new update! | Bugs:

1. An opened tab cannot be refreshed.

Suggestions:

1. It would be better if 'layui-header my-header' could be customized by the user.

2. It would be better if the style and colors could be customized, as in the Django admin style. | closed | 2019-02-26T03:41:25Z | 2019-03-01T10:30:45Z | https://github.com/newpanjing/simpleui/issues/5 | [] | duxiaoyang06 | 5 |

yt-dlp/yt-dlp | python | 11,739 | How to stop the extract_info execution before returning the result? | ### DO NOT REMOVE OR SKIP THE ISSUE TEMPLATE

- [X] I understand that I will be **blocked** if I *intentionally* remove or skip any mandatory\* field

### Checklist

- [X] I'm asking a question and **not** reporting a bug or requesting a feature

- [X] I've looked through the [README](https://github.com/yt-dlp/yt-dlp#re... | closed | 2024-12-05T02:28:03Z | 2024-12-05T04:02:41Z | https://github.com/yt-dlp/yt-dlp/issues/11739 | [

"question",

"incomplete"

] | Guovin | 2 |

pytest-dev/pytest-xdist | pytest | 1,057 | Add strict/complete typing | The current typing is very incomplete. To make the plugin more maintainable and understandable, add typing to all of the currently untyped code and apply a stricter mypy config.

Prerequisite tasks:

- execnet typing (done, needs an execnet release + bump execnet requirement)

- bump min pytest requirement from 6.2 to ... | closed | 2024-04-04T16:50:51Z | 2024-04-16T20:36:43Z | https://github.com/pytest-dev/pytest-xdist/issues/1057 | [] | bluetech | 0 |

aidlearning/AidLearning-FrameWork | jupyter | 166 | After installation, the app crashes immediately on launch | 05-28 08:01:37.137 28820 28912 E JNIKey : JNI_OnLoad

05-28 08:01:37.137 28820 28912 E JNIKey : register native methods

05-28 08:01:37.138 28820 28912 E JNIKey : register native methods success

05-28 08:01:37.140 28820 28912 E JNIKey : APP PACKAGE NAME:com.aidlux

05-28 08:01:37.140 28820 28912 E JNIKey : DECRYP... | closed | 2021-05-28T00:05:49Z | 2021-05-28T13:34:20Z | https://github.com/aidlearning/AidLearning-FrameWork/issues/166 | [] | xylake | 0 |

noirbizarre/flask-restplus | flask | 458 | 'Namespace' object has no attribute 'representation' | I am moving my project to namespaces. It looks like `representation` is a method of the `Api` class.

Receiving the error:

```python

File "C:\Users\xxxx\Envs\apidemo\apis\__init__.py", line 2, in <module>

from HR import api as ns1

File "C:\Users\xxx\Envs\apidemo\apis\HR.py", line 27, in <module>

@api.representatio... | closed | 2018-06-02T19:53:41Z | 2019-08-30T16:48:12Z | https://github.com/noirbizarre/flask-restplus/issues/458 | [] | manbeing | 1 |

LibrePhotos/librephotos | django | 678 | Access to ML model in Faces | I would like to see a feature in LibrePhotos that guesses the name of the person in a photo (cropped to the face or not) without uploading it into the archive.

Recently I tried adding a face recognition plugin to the ZoneMinder video surveillance system, and the results weren't good enough; it has special requirements for training photos ... | open | 2022-11-09T19:06:56Z | 2022-11-09T19:06:56Z | https://github.com/LibrePhotos/librephotos/issues/678 | [

"enhancement"

] | legioner0 | 0 |

flavors/django-graphql-jwt | graphql | 205 | Custom authentication backend with custom input fields | Hi, I want to build custom authentication that does not use a password as an input field. I want to send three input variables (username, msg, publickey) and validate them in a custom Django authentication backend.

How can I have three fields and pass them to the `authenticate` method of a Django authentication backend?

| closed | 2020-06-02T06:10:44Z | 2020-08-02T11:02:07Z | https://github.com/flavors/django-graphql-jwt/issues/205 | [] | amiyatulu | 2 |

miguelgrinberg/python-socketio | asyncio | 545 | python-socketio token authentication with tornado web server | I am using python-socketio with a Tornado web server. I am trying to get the authentication token from the client and check it in the Tornado server via python-socketio. There are some examples with a JavaScript client and a Node.js server. I found that Flask-SocketIO also has a fairly smooth implementation of token authentication. I... | closed | 2020-09-14T06:10:28Z | 2021-04-06T13:24:28Z | https://github.com/miguelgrinberg/python-socketio/issues/545 | [

"question"

] | kamranhossain | 7 |

tensorflow/tensor2tensor | deep-learning | 1,617 | Residual connections in the decoder allow cheating? | The masked-multi-head attention prevents cheating by masking out the tokens in the input sentence to the right of the current word, but the residual connections are unmasked and allow cheating?

Say we have our input sequence x for the decoder and we apply the masked multi-head attention and get some representation ... | closed | 2019-06-26T09:30:57Z | 2019-07-18T19:53:58Z | https://github.com/tensorflow/tensor2tensor/issues/1617 | [] | JannisBush | 1 |
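A residual connection adds `x[i]` only to output row `i`, so it never mixes information across positions. In the original Transformer, the decoder input is also shifted right, so `x[i]` is the already-known previous target token rather than the token being predicted. The NumPy sketch below checks the first point. It builds single-head causal self-attention plus a residual, with identity projections for brevity (a simplifying assumption, not tensor2tensor's code), and shows that changing the last input token leaves every earlier output unchanged.

```python
import numpy as np

def causal_attention_with_residual(x: np.ndarray) -> np.ndarray:
    """Single-head self-attention with a causal mask, plus a residual connection.

    x has shape (seq_len, d_model). Q/K/V projections are identity for brevity.
    """
    seq_len, d = x.shape
    scores = x @ x.T / np.sqrt(d)
    # Causal mask: position i may only attend to positions j <= i.
    mask = np.triu(np.ones((seq_len, seq_len), dtype=bool), k=1)
    scores = np.where(mask, -np.inf, scores)
    # Numerically stable softmax over each row; masked entries become 0.
    weights = np.exp(scores - scores.max(axis=1, keepdims=True))
    weights /= weights.sum(axis=1, keepdims=True)
    attended = weights @ x
    # The residual adds x[i] to row i only: it never mixes positions.
    return x + attended

rng = np.random.default_rng(0)
x = rng.normal(size=(5, 4))
y = causal_attention_with_residual(x)

# Change only the last token; outputs for earlier positions are unchanged.
x2 = x.copy()
x2[4] += 10.0
y2 = causal_attention_with_residual(x2)
print(np.allclose(y[:4], y2[:4]))  # True
```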

gevent/gevent | asyncio | 1,861 | Timeout up vs. down the call stack | What is the best way to do this?

```python

try:

... # code that might for example get events or greenlets and therefore could raise Timeout

except gevent.Timeout:

if XXXX:

raise # caused by a timeout context up the call stack

# (I don't have a reference to the instance,

... | open | 2022-01-26T21:05:13Z | 2022-01-27T23:54:39Z | https://github.com/gevent/gevent/issues/1861 | [] | woutdenolf | 2 |

dunossauro/fastapi-do-zero | sqlalchemy | 48 | Health check and migration service in Docker Compose | Hello to everyone in the course, especially @dunossauro!

I have a demo repository that I was basing on parts of the FastAPI do zero course, but since the Docker part was still an in-progress PR when I was preparing it, I looked for other sources.

As a base example, I had followed [the step-by-step recipe compa... | closed | 2023-11-27T17:20:08Z | 2024-05-22T02:12:38Z | https://github.com/dunossauro/fastapi-do-zero/issues/48 | [] | ayharano | 1 |

jina-ai/clip-as-service | pytorch | 296 | Error with bert-serving-start "p.is_ready.wait" | I am unable to get the server up and running in Docker. I run into this error on start (full trace at the bottom of the issue).

```

File "/usr/local/lib/python3.5/dist-packages/bert_serving/server/__init__.py", line 160, in _run

p.is_ready.wait()

AttributeError: 'function' object has no attribute 'wait'

``... | closed | 2019-03-28T22:51:57Z | 2019-05-16T01:17:30Z | https://github.com/jina-ai/clip-as-service/issues/296 | [] | gregsherrid | 8 |

pydantic/logfire | fastapi | 211 | All records just disappeared in the UI | ### Description

I get the following error in the console:

`120Error: <rect> attribute width: A negative value is not valid. ("-2.1666666666666665")`

<img width="800" alt="image" src="https://github.com/pydantic/logfire/assets/1642503/5a31bd25-6a55-4113-9de4-dc1dfa71d634">

### Python, Logfire & OS Versions, relat... | closed | 2024-05-24T22:20:55Z | 2024-05-27T10:37:23Z | https://github.com/pydantic/logfire/issues/211 | [

"bug"

] | bllchmbrs | 2 |

pydantic/pydantic-settings | pydantic | 43 | Environment variables are not loaded for nested models with uppercase variable names | ### Checks

* [x] I added a descriptive title to this issue

* [x] I have searched (google, github) for similar issues and couldn't find anything

* [x] I have read and followed [the docs](https://pydantic-docs.helpmanual.io/) and still think this is a bug

<!-- Sorry to sound so draconian, but every second saved r... | closed | 2022-03-25T10:28:08Z | 2023-04-29T10:40:49Z | https://github.com/pydantic/pydantic-settings/issues/43 | [] | 0mar | 5 |

ageitgey/face_recognition | python | 1,070 | PHP shell_exec and face_detection | * face_recognition version: latest

* Python version: 2.7.13

* Operating System: linux

### Description

var_dump(shell_exec("/usr/local/bin/face_detection 1.jpg"));

always returns NULL, but the same command works in a terminal.

How can I link face_detection and PHP?

I always try to use the absolute path to face_dete... | closed | 2020-02-23T10:20:56Z | 2020-02-23T15:11:59Z | https://github.com/ageitgey/face_recognition/issues/1070 | [] | kko123 | 1 |

freqtrade/freqtrade | python | 11,252 | Help Needed: Freqtrade Running on Binance US - No Trades Being Made | <!--

Have you searched for similar issues before posting it?

Did you have a VERY good look at the [documentation](https://www.freqtrade.io/en/latest/) and are sure that the question is not explained there

Please do not use the question template to report bugs or to request new features.

-->

## Describe your environm... | closed | 2025-01-19T17:28:36Z | 2025-01-23T05:54:46Z | https://github.com/freqtrade/freqtrade/issues/11252 | [

"Question",

"Strategy assistance"

] | EyesOnly1987 | 8 |

kennethreitz/responder | graphql | 338 | How could I set 'json ensure_ascii = false' when resp.media is Japanese or Chinese? | When `resp.media = {'error': '错误的数据类型'}`, I'll get `{"error": "\\u9519\\u8bef\\u7684\\u6570\\u636e\\u7c7b\\u578b"}`. I know I should set `json ensure_ascii = false` in `json.dumps`, but I don't know how to do that in `responder`.

Like this:

``` python

@api.route('/{errorcode}')

async def error(req, resp, *, erro... | closed | 2019-03-28T08:35:23Z | 2019-04-03T10:58:51Z | https://github.com/kennethreitz/responder/issues/338 | [] | songsenand | 2 |

laughingman7743/PyAthena | sqlalchemy | 187 | Cache Expiration Based on Time | Hello,

Athena cache is a great feature, but is it possible to set a cache expiration based on time?

Thank you | closed | 2020-12-13T21:30:13Z | 2024-07-25T05:06:13Z | https://github.com/laughingman7743/PyAthena/issues/187 | [] | rubenssoto | 7 |

psf/requests | python | 6,288 | charset-normalizer upgraded to 3.x | charset-normalizer has been upgraded to version 3. requests requires version >= 2 and < 3.

## Expected Result

support for charset-normalizer 3

## Actual Result

warning of incompatibility with charset-normalizer 3

## Reproduction Steps

```shell

% pip install --upgrade charset-normalizer

Requirement a... | closed | 2022-11-18T12:09:55Z | 2023-11-19T00:03:40Z | https://github.com/psf/requests/issues/6288 | [] | walterrowe | 1 |

ray-project/ray | python | 51,010 | [distributed debugger] exception in regular remote worker function leading to access violation when debugger connects | ### What happened + What you expected to happen

In my setup, we are using a Ray Serve deployment with FastAPI and Starlette middleware.

To test the distributed debugger, I created a class whose static member functions are decorated with `@ray.remote`; the class itself is not an actor.

The deployment ha... | open | 2025-03-01T00:58:27Z | 2025-03-06T20:31:48Z | https://github.com/ray-project/ray/issues/51010 | [

"bug",

"triage",

"serve"

] | kotoroshinoto | 1 |

pallets-eco/flask-wtf | flask | 138 | TypeError due to usage of safe_str_cmp | This edit causes an issue like this:

```

File "/Users/Jasim/.virtualenvs/project1/lib/python2.7/site-packages/flask_wtf/form.py", line 108, in validate_csrf_token

if not validate_csrf(field.data, self.SECRET_KEY, self.TIME_LIMIT):

File "/Users/Jasim/.virtualenvs/project1/lib/python2.7/site-packages/flask_wtf/csr... | closed | 2014-07-23T03:28:19Z | 2021-05-29T01:16:02Z | https://github.com/pallets-eco/flask-wtf/issues/138 | [] | jasimmk | 2 |

BeanieODM/beanie | pydantic | 534 | Soft Delete | ### Discussed in https://github.com/roman-right/beanie/discussions/532

<div type='discussions-op-text'>

<sup>Originally posted by **N0odlez** April 13, 2023</sup>

Is it possible to have soft-delete functionality? Alternatively, how can I add middleware to each database request to check whether a field ... | closed | 2023-04-13T19:34:52Z | 2024-05-01T17:28:58Z | https://github.com/BeanieODM/beanie/issues/534 | [] | roman-right | 1 |

mars-project/mars | scikit-learn | 2,384 | Tileable subtask structure visualization | **Is your feature request related to a problem? Please describe.**

When visualizing the tileable graph, we can also include a DAG visualization of the subtask structure of each tileable, which can help us better monitor the progress of each tileable.

**Describe the solution you'd like**

We can create an API endpoint ... | closed | 2021-08-24T10:20:01Z | 2021-09-17T07:46:32Z | https://github.com/mars-project/mars/issues/2384 | [

"type: feature",

"mod: web"

] | RandomY-2 | 2 |

kizniche/Mycodo | automation | 790 | New PID Controller | Currently, the PID controller utilizes a single set of P, I, and D gains to tune both the raise and lower outputs. However, a tuning that works for one output may not necessarily work well for another. As a result, you may find you can tune one direction very well, but the alternate direction is poorly tuned.

To overc... | closed | 2020-07-17T02:04:46Z | 2020-07-22T18:54:57Z | https://github.com/kizniche/Mycodo/issues/790 | [

"enhancement"

] | kizniche | 1 |
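The proposal above calls for separate P, I, and D gains for the raise and lower directions. The sketch below shows one simple way to structure that: the gain set is chosen by the sign of the current error. It is not Mycodo's implementation, and the names `Gains` and `DirectionalPID` are hypothetical. In this sketch the integral is shared between directions, whereas a real controller might track one per direction or handle windup differently.

```python
from dataclasses import dataclass

@dataclass
class Gains:
    kp: float
    ki: float
    kd: float

class DirectionalPID:
    """Hypothetical PID that uses one gain set when it must raise the
    measured value and another when it must lower it."""

    def __init__(self, setpoint: float, raise_gains: Gains, lower_gains: Gains):
        self.setpoint = setpoint
        self.raise_gains = raise_gains
        self.lower_gains = lower_gains
        self.integral = 0.0          # shared between directions in this sketch
        self.prev_error = None

    def update(self, measurement: float, dt: float) -> float:
        """Return a signed control value: positive means raise, negative means lower."""
        error = self.setpoint - measurement
        # Below the setpoint -> raise gains; at or above it -> lower gains.
        g = self.raise_gains if error > 0 else self.lower_gains
        self.integral += error * dt
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return g.kp * error + g.ki * self.integral + g.kd * derivative

pid = DirectionalPID(
    setpoint=25.0,
    raise_gains=Gains(kp=2.0, ki=0.1, kd=0.0),
    lower_gains=Gains(kp=0.5, ki=0.05, kd=0.0),
)
output = pid.update(measurement=20.0, dt=1.0)
```

A positive output would drive the raise output and a negative one the lower output, each scaled by gains tuned for that direction.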

huggingface/peft | pytorch | 1,693 | How to convert a loha safetensor trained from diffusers to webui format | Hello, when I fine-tune SDXL (actually InstantID) with PEFT, I use LoRA, LoHa, and LoKr through PEFT in [diffusers](https://github.com/huggingface/diffusers).

My question: how can I convert a LoHa safetensors file trained with diffusers to the webui format?

In the training process:

The loading code:

`peft_config =... | closed | 2024-04-30T07:17:48Z | 2024-06-08T15:03:44Z | https://github.com/huggingface/peft/issues/1693 | [] | JIAOJIAYUASD | 2 |

writer/writer-framework | data-visualization | 557 | visibility attribute on backend ui broke the application in 0.7.2 | open | 2024-09-10T14:47:34Z | 2024-09-10T14:47:34Z | https://github.com/writer/writer-framework/issues/557 | [

"bug"

] | FabienArcellier | 0 | |

ClimbsRocks/auto_ml | scikit-learn | 23 | add 'date' as an input column type | closed | 2016-08-13T05:47:42Z | 2016-08-18T02:58:42Z | https://github.com/ClimbsRocks/auto_ml/issues/23 | [] | ClimbsRocks | 2 | |

marcomusy/vedo | numpy | 275 | Radius of points | Hi @marcomusy

`pts = Points(nxpts, r=12)`

sets a uniform radius for all points in a graph. I would like to assign a different radius to each point.

Since `r` accepts a float, I wasn't sure how to make this work if I supply a list.

Could you please help me with this?

| closed | 2020-12-21T16:12:39Z | 2020-12-26T18:13:05Z | https://github.com/marcomusy/vedo/issues/275 | [] | DeepaMahm | 9 |

freqtrade/freqtrade | python | 11,284 | Create Trade Object at Signal Detection | ## Describe the enhancement

Currently, the `Trade` object is created only after the first entry order is filled.

This architecture has drawbacks:

#### 1 Complexity

Unnecessary complexity because important parameters must be explicitly passed to functions instead of being directly accessed via the `Trade` object—the ... | closed | 2025-01-25T04:33:17Z | 2025-01-26T22:33:20Z | https://github.com/freqtrade/freqtrade/issues/11284 | [

"Question"

] | Axel-CH | 11 |

mljar/mljar-supervised | scikit-learn | 669 | Bug: 'KNeighborsAlgorithm' object has no attribute 'classes_' | Current behavior:

'KNeighborsAlgorithm' object has no attribute 'classes_'

Problem during computing permutation importance. Skipping ...

Expected: KNeighbors to be trained

| closed | 2023-11-03T20:58:50Z | 2024-09-30T13:39:45Z | https://github.com/mljar/mljar-supervised/issues/669 | [

"bug",

"help wanted",

"good first issue"

] | strukevych | 8 |

graphql-python/graphene-sqlalchemy | graphql | 91 | AttributeError in Registry class when asserting | I had a situation where I wanted to recreate the SQLAlchemyObjectType, creating a new type. This does not work out of the box with Registry (which is OK), and an assert is thrown.

However, the assert itself has a problem:

```

..../api/graphql/types.py:74: in __init_subclass_with_meta__

registry.register(c... | closed | 2017-11-21T10:26:43Z | 2023-02-25T06:58:58Z | https://github.com/graphql-python/graphene-sqlalchemy/issues/91 | [] | geertjanvdk | 1 |

encode/httpx | asyncio | 3,215 | Improve async performance. | There seem to be some performance issues in `httpx` (0.27.0): it performs much worse than `aiohttp` (3.9.4) with concurrently running requests (in Python 3.12). The following benchmark shows how running 20 requests concurrently is over 10x slower with `httpx` compared to `aiohttp`. The benchmark has very basi... | open | 2024-06-04T09:30:12Z | 2025-03-14T05:57:31Z | https://github.com/encode/httpx/issues/3215 | [

"perf"

] | MarkusSintonen | 61 |

vllm-project/vllm | pytorch | 15,036 | [Bug]: tensor shape mismatch for `--lora-extra-vocab-size 0` | ### Your current environment

<details>

<summary>The output of `python collect_env.py`</summary>

```text

INFO 03-18 19:50:09 [__init__.py:256] Automatically detected platform cuda.

Collecting environment information...

PyTorch version: 2.6.0+cu124

Is debug build: False

CUDA used to build PyTorch: 12.4

ROCM used to bui... | closed | 2025-03-18T13:19:12Z | 2025-03-18T16:40:30Z | https://github.com/vllm-project/vllm/issues/15036 | [

"bug"

] | cjackal | 2 |

pyjanitor-devs/pyjanitor | pandas | 739 | Add the Welcome Bot to the project | This one! https://github.com/apps/the-welcome-bot | closed | 2020-09-09T12:08:01Z | 2020-09-18T22:53:59Z | https://github.com/pyjanitor-devs/pyjanitor/issues/739 | [] | ericmjl | 0 |

aleju/imgaug | deep-learning | 630 | flipud produces negative strides | `Flipud` flips images by

images[i] = image[::-1, ...]

This produces negative strides in numpy which results in errors when using them with PyTorch Tensors.

A solution would be to use `numpy.flipud` or call `.copy()` on the flipped array

Great library btw! :) | open | 2020-02-28T09:26:00Z | 2020-03-04T21:16:16Z | https://github.com/aleju/imgaug/issues/630 | [

"TODO"

] | AljoSt | 0 |
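Reverse slicing returns a view whose row stride is negative, and `torch.from_numpy` rejects arrays with negative strides. `numpy.flipud` is documented as equivalent to `m[::-1, ...]` and also returns such a view, so switching to it alone would not help. Only an explicit copy produces a fresh, positively strided buffer. The following is a minimal sketch on a small `uint8` array.

```python
import numpy as np

image = np.arange(12, dtype=np.uint8).reshape(3, 4)

flipped = image[::-1, ...]      # view with a negative row stride
also_view = np.flipud(image)    # np.flipud also returns a reversed view
fixed = flipped.copy()          # new C-contiguous buffer, positive strides

print(flipped.strides)    # (-4, 1)
print(also_view.strides)  # (-4, 1)
print(fixed.strides)      # (4, 1)
```

`np.ascontiguousarray(flipped)` would work equally well. Either way, the copy costs one pass over the image.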

roboflow/supervision | pytorch | 703 | PixelateAnnotator throws errors if the area is too small, BlurAnnotator is not censoring if area is too large | ### Search before asking

- [X] I have searched the Supervision [issues](https://github.com/roboflow/supervision/issues) and found no similar bug report.

### Bug

When using PixelateAnnotator with no additional configuration, it throws an error if the area to pixelate is too small:

```

Traceback (most recent call l... | open | 2023-12-30T15:30:07Z | 2024-01-02T15:36:20Z | https://github.com/roboflow/supervision/issues/703 | [

"bug"

] | Clemens-E | 5 |

Kanaries/pygwalker | pandas | 436 | [DEV-656] color config proposal | 1. Since there is already a `themeConfig`, the name "color config" sounds confusing. My suggestion is to rename the param to "UITheme".

2. Support deep merging of variables. If there is no UI theme config design tool, it will be more developer-friendly to let developers override individual CSS variables instead of the whole setting.

<sub>... | closed | 2024-02-19T03:44:16Z | 2024-04-10T12:59:35Z | https://github.com/Kanaries/pygwalker/issues/436 | [

"Low priority"

] | ObservedObserver | 0 |

dpgaspar/Flask-AppBuilder | rest-api | 1,762 | Data used without being defined previous for Azure RBAC | https://github.com/dpgaspar/Flask-AppBuilder/blob/afc8e2c9209a928fa6a791919ceae3ce3cdc48a7/flask_appbuilder/security/manager.py#L625

The `data` variable on the referenced line is not defined; it looks like it should be

`data = me`

| closed | 2021-12-21T19:34:15Z | 2022-04-29T17:40:56Z | https://github.com/dpgaspar/Flask-AppBuilder/issues/1762 | [

"stale"

] | fcomuniz | 1 |

adbar/trafilatura | web-scraping | 166 | Issue with LXML on M1 / Apple arm64 platforms | On a clean install on the `master` branch, `metadata_tests.py` and `realworld_tests.py` fail.

```sh

short test summary info

=============================================================================================

FAILED tests/metadata_tests.py::test_pages - AssertionError: assert None == 'Want to See a More ... | closed | 2022-01-22T02:37:51Z | 2023-11-08T15:59:24Z | https://github.com/adbar/trafilatura/issues/166 | [

"bug",

"wontfix",

"documentation"

] | naftalibeder | 9 |

davidsandberg/facenet | computer-vision | 604 | align_dataset_mtcnn.py using fail | Can anyone help me?

After getting the LFW data (placed in facenet/data/lfw/raw), I wanted to process it with align_dataset_mtcnn.py, but something went wrong.

My command is "cd facenet export PYTHONPATH=$(pwd)/src python ./src/align/a... | closed | 2018-01-07T09:05:31Z | 2018-04-01T21:38:07Z | https://github.com/davidsandberg/facenet/issues/604 | [] | situjie68 | 2 |

feder-cr/Jobs_Applier_AI_Agent_AIHawk | automation | 742 | [BUG]: ERROR: Cannot install -r requirements.txt (line 10), and langchain-core===0.2.36 because these package versions have conflicting dependencies. | ### Describe the bug

ERROR: Cannot install -r requirements.txt (line 10), -r requirements.txt (line 12), -r requirements.txt (line 13), -r requirements.txt (line 14), -r requirements.txt (line 15), -r requirements.txt (line 2), -r requirements.txt (line 7), -r requirements.txt (line 8) and langchain-core===0.2.36 beca... | closed | 2024-11-04T13:33:58Z | 2024-12-18T17:12:43Z | https://github.com/feder-cr/Jobs_Applier_AI_Agent_AIHawk/issues/742 | [

"bug"

] | agispas | 4 |

python-gitlab/python-gitlab | api | 2,827 | Bug: List all projects of current user returns empty list | ## Description of the problem, including code/CLI snippet

I want to call `gitlab_client.projects.list(membership=True, all=True)` and have all projects returned that the current user is a member of.

## Expected Behavior

Returns a list of projects the user is a member of.

Can be filtered via the gitlab API using... | closed | 2024-03-22T12:39:53Z | 2024-06-06T20:36:21Z | https://github.com/python-gitlab/python-gitlab/issues/2827 | [] | Malaber | 1 |

postmanlabs/httpbin | api | 441 | Server is down? |

| closed | 2018-04-29T11:24:26Z | 2018-04-29T11:48:56Z | https://github.com/postmanlabs/httpbin/issues/441 | [] | sicklife | 1 |

matplotlib/mplfinance | matplotlib | 335 | Bug Report: Point-and-Figure Plotting Error | **Describe the bug**

The Point and Figure definition doesn't plot quite correctly. The only reason why a new column is created in a Point and Figure Chart is to avoid overwriting an existing box. So, a change in direction (X to O or vice-versa) doesn't result in a new column unless it would result in an overwriting of... | closed | 2021-02-20T02:44:16Z | 2021-02-28T03:56:52Z | https://github.com/matplotlib/mplfinance/issues/335 | [

"bug"

] | GenusGeoff | 2 |

jina-ai/serve | fastapi | 5,522 | chore: draft release note 3.13 | # Release Note

This release contains 14 new features, 9 bug fixes and 7 documentation improvements.

This release introduces major features like Custom Gateways, Dynamic Batching for Executors, development support with auto-reloading, support for the new namespaced Executor scheme `jinaai`, improvements for our gR... | closed | 2022-12-14T14:38:15Z | 2022-12-15T16:09:24Z | https://github.com/jina-ai/serve/issues/5522 | [] | alexcg1 | 0 |

paperless-ngx/paperless-ngx | django | 9,478 | [BUG] Workflow overwrites custom text fields | ### Description

When a workflow adds empty custom fields to a document of type "Rechnung" and the user later fills in these fields (e.g. "Rechnungsnummer"), the filled-in values are overwritten with null on the next PUT request. This happens even though the PUT request clearly contains the correct field values.

**Exp... | closed | 2025-03-24T11:26:37Z | 2025-03-24T13:34:50Z | https://github.com/paperless-ngx/paperless-ngx/issues/9478 | [

"duplicate"

] | petarmarj | 1 |

reiinakano/scikit-plot | scikit-learn | 30 | installation error | Hi, I tried to pip install scikit-plot but got the following error message:

Command "python setup.py egg_info" failed with error code 1.

Any suggestions?

Thanks very much.

Elena | closed | 2017-05-01T22:36:46Z | 2017-05-07T08:19:36Z | https://github.com/reiinakano/scikit-plot/issues/30 | [] | zhongheli | 3 |

zama-ai/concrete-ml | scikit-learn | 681 | Performance Issues | ## Summary

I have tested multiple example codes, such as CIFAR-10 and CIFAR-100. As previously reported, inference takes a lot of time: almost 25 to 30 minutes. I upgraded my hardware resources and did not notice a significant improvement in inference time.

Can you please share some neural network code w... | closed | 2024-05-16T03:46:46Z | 2024-06-11T09:23:52Z | https://github.com/zama-ai/concrete-ml/issues/681 | [

"bug"

] | thorntonmk | 1 |

robusta-dev/robusta | automation | 1,028 | ability to send notification after some additional pause | **Is your feature request related to a problem?**

I want to create a notification chain, for ex. on critical alert notify this sink immediately, but this sink only if alert continue firing after 30m (promote alert to next support level).

**Describe alternatives you've considered**

Something similar can be achieved... | open | 2023-08-09T11:39:46Z | 2023-10-31T15:46:16Z | https://github.com/robusta-dev/robusta/issues/1028 | [

"enhancement"

] | lictw | 2 |

thp/urlwatch | automation | 723 | Documentation example for Github tags stopped working. | In the [documentation](https://urlwatch.readthedocs.io/en/latest/filters.html#watching-github-releases-and-gitlab-tags)

in the section "Watching Github releases and Gitlab tags"

```

This is the corresponding version for Github tags:

url: https://github.com/thp/urlwatch/tags

filter:

- xpath: (//div[contains... | closed | 2022-10-07T13:55:40Z | 2023-08-01T17:49:13Z | https://github.com/thp/urlwatch/issues/723 | [] | mercurytoxic | 5 |

tableau/server-client-python | rest-api | 703 | Issue updating connections on workbooks to datasources | Hi,

I'm trying to update connections on workbooks. The example provided in samples works for datasources, but when I'm updating workbooks I'm getting the following error:

400039: Bad Request

There was a problem updating connection {CONNECTION_ID} for workbook {WORKBOOK_ID}.

Maybe it's a different issue, but how... | open | 2020-09-30T10:25:51Z | 2023-08-07T18:08:52Z | https://github.com/tableau/server-client-python/issues/703 | [

"needs investigation",

"document-api"

] | DaniilBalabanov33N | 3 |

microsoft/qlib | machine-learning | 1,062 | qrun TRA model error | ## 🐛 Bug Description

<!-- A clear and concise description of what the bug is. -->

## To Reproduce

Steps to reproduce the behavior:

1. qrun examples/benchmarks/TRA/workflow_config_tra_Alpha158.yaml

## Expected Behavior

<!-- A clear and concise description of what you expected to happen. -->

ERR... | closed | 2022-04-18T14:09:53Z | 2022-09-15T05:06:18Z | https://github.com/microsoft/qlib/issues/1062 | [

"bug"

] | stockcoder | 5 |

deepinsight/insightface | pytorch | 1,799 | torch2onnx assert error | When I run the torch2onnx.py file(recognition/arcface_torch/), I get an error in the code below.

assert os.path.exists(input_file)

The cause seems to be that "input=" is included in "input_file".

So, I modified a single line of code, can I run it without modification?

If not, I'd like to send you a pull request.

... | open | 2021-10-26T06:48:55Z | 2021-10-29T05:47:05Z | https://github.com/deepinsight/insightface/issues/1799 | [] | Yuri-Kim | 1 |

deepspeedai/DeepSpeed | machine-learning | 6,876 | How to turn on allgather overlapping in ZeRO-1/2 ? | [ TARGET ]

ZeRO-1/2 requires an allgather after backward computations since the optimizer states are distributed and hence only parts of the model parameters are updated on each rank. allgather assembles the complete updated model for each rank before the forward operation in the next iteration/step (which relies on up... | closed | 2024-12-16T12:07:56Z | 2024-12-18T18:01:41Z | https://github.com/deepspeedai/DeepSpeed/issues/6876 | [] | 2012zzhao | 5 |

ipython/ipython | data-science | 14,581 | Enabling %matplotlib qt in IPython shell slows it down significantly | To reproduce:

Fire up a terminal, activate conda environment and enter ipython. This is a normal ipython terminal (not jupyter console or jupyter qtconsole).

At first, the shell is responsive even after many imports and trying out different data manipulation operations. As soon as `%matplotlib qt`is enabled, the shell... | closed | 2024-11-14T02:10:34Z | 2025-02-02T13:58:42Z | https://github.com/ipython/ipython/issues/14581 | [] | AmerM137 | 13 |

huggingface/peft | pytorch | 1,593 | Getting Dora Model Is Very Slow | ### System Info

Package Version

------------------------ ---------------

accelerate 0.29.0.dev0

aiohttp 3.9.3

aiosignal 1.3.1

annotated-types 0.6.0

appdirs 1.4.4

async-timeout 4.0.3

attrs 23.2... | closed | 2024-03-27T06:05:56Z | 2024-05-16T21:18:34Z | https://github.com/huggingface/peft/issues/1593 | [] | mallorbc | 10 |

sqlalchemy/sqlalchemy | sqlalchemy | 10,832 | JSON type compile literal_binds results in error | ### Describe the bug

SQLalchemy query compiler fails to compile for JSON column. Did not find much documentation on the matter and I am still not sure if I need to turn the python dictionary into a JSON string before the insert statement or not.

### Optional link from https://docs.sqlalchemy.org which documents ... | closed | 2024-01-05T18:52:32Z | 2024-01-06T00:37:58Z | https://github.com/sqlalchemy/sqlalchemy/issues/10832 | [

"sql",

"expected behavior"

] | jlynchMicron | 2 |

microsoft/hummingbird | scikit-learn | 516 | What is pytorch container and how do I see the actual matrices? | In the example code, how would I go look at the pytorch model container variable model, and find out what actual computations it is computing? Aka how do I convert the pytorch container type to an actual nn.Module in pytorch? | closed | 2021-05-27T03:11:51Z | 2021-06-02T21:04:20Z | https://github.com/microsoft/hummingbird/issues/516 | [] | marsupialtail | 7 |

ScrapeGraphAI/Scrapegraph-ai | machine-learning | 811 | The timeout is not being respected | **Describe the bug**

The timeout set on the configuration is not being respected.

**Expected behavior**

Timeout to be respected

**Code**

```

config = {

"llm": {

"api_key": "my_key",

"model": "google_genai/gemini-1.5-flash",

"timeo... | closed | 2024-11-19T13:42:52Z | 2025-01-06T04:17:29Z | https://github.com/ScrapeGraphAI/Scrapegraph-ai/issues/811 | [] | matheus-rossi | 10 |

mljar/mercury | jupyter | 197 | notebook with margins | closed | 2023-01-05T12:12:02Z | 2023-02-17T13:05:17Z | https://github.com/mljar/mercury/issues/197 | [] | pplonski | 1 | |

nonebot/nonebot2 | fastapi | 2,718 | Plugin: nonebot-plugin-tsugu-bangdream-bot | ### PyPI project name

nonebot-plugin-tsugu-bangdream-bot

### Plugin import package name

nonebot_plugin_tsugu_bangdream_bot

### Tags

[{"label":"tsugu","color":"#ffee88"}]

### Plugin configuration options

_No response_ | closed | 2024-05-16T08:55:15Z | 2024-05-18T14:46:03Z | https://github.com/nonebot/nonebot2/issues/2718 | [

"Plugin"

] | WindowsSov8forUs | 9 |

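akfamily/akshare | data-science | 5,112 | Installation fails on CentOS 7.9 | On an Alibaba Cloud server with Python 3.11 and CentOS 7.9, installation fails. The error occurs because the installer tries to fetch

depot_tools from Google, which is not reachable.

Could this dependency be removed? Installation works on Windows 11.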

| closed | 2024-08-07T06:37:03Z | 2024-09-03T13:44:39Z | https://github.com/akfamily/akshare/issues/5112 | [

"bug"

] | random-heap | 6 |

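aidlearning/AidLearning-FrameWork | jupyter | 116 | 86b2p2 starts the first time, but fails to open and crashes on subsequent launches; startup is also very slow. | 86b2p2 starts the first time, but fails to open and crashes on subsequent launches; startup is also very slow. | closed | 2020-07-22T11:05:41Z | 2020-07-23T05:33:49Z | https://github.com/aidlearning/AidLearning-FrameWork/issues/116 | [] | ottoskyer | 1 |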

flasgger/flasgger | rest-api | 214 | Authorization Header does not exist in flask request object | I'm expecting Authorization and a request id in request header but it is missing.

print(request.headers)

```

Host: 0.0.0.0:9000

Origin: http://localhost:9000

Accept-Encoding: gzip, deflate

Dnt: 1

Access-Control-Request-Headers: authorization,request_id

Accept: text/html,application/xhtml+xml,application/xml;q=... | closed | 2018-07-20T07:52:54Z | 2019-05-09T21:30:06Z | https://github.com/flasgger/flasgger/issues/214 | [] | ryanermita | 2 |

replicate/cog | tensorflow | 1,386 | How to gracefully return back with no img output | In below code snippet, how can I gracefully mark the prediction as completed with some custom output when "No human detected in the image!"

```

def predict(

self,

image: Path = Input(description="Grayscale input image"),

scale: float = Input(

description="Factor to scale imag... | open | 2023-11-17T19:19:35Z | 2023-11-22T09:27:21Z | https://github.com/replicate/cog/issues/1386 | [] | jerry275 | 2 |

graphql-python/graphene-django | django | 1,428 | [Critical] Breaking change in patch release | In version 3.1.2, the `JSONField` in `graphene_django.compat` was removed.

While removing this is a valid change, it constitutes a breaking change, and therefore a bump of the project's major version.

Due to semantic versioning, downstream applications will automatically update to `3.1.2` and break if they import... | closed | 2023-07-08T11:56:36Z | 2023-07-18T12:11:32Z | https://github.com/graphql-python/graphene-django/issues/1428 | [

"🐛bug"

] | Natureshadow | 0 |

graphistry/pygraphistry | pandas | 50 | FAQ / guide on exporting | cc @thibaudh @padentomasello @briantrice

| closed | 2016-01-11T21:50:51Z | 2020-06-10T06:46:52Z | https://github.com/graphistry/pygraphistry/issues/50 | [

"enhancement"

] | lmeyerov | 0 |

ageitgey/face_recognition | python | 692 | Faster face recognition in base with more than 1 million encodings. | * face_recognition version: Last

* Python version: 3

* Operating System: Ubuntu

Hello!

I want to collect pre-calculated encodings of more than 1 million faces in database (mysql,postgres...?) with 128 columns.

And i have face,which I want to find among them.

And than i want search top 5 the most similar faces ... | open | 2018-11-29T21:08:34Z | 2018-11-30T07:26:46Z | https://github.com/ageitgey/face_recognition/issues/692 | [] | arpsyapathy | 2 |

jina-ai/clip-as-service | pytorch | 278 | TypeError: cannot unpack non-iterable NoneType object | **Prerequisites**

> Please fill in by replacing `[ ]` with `[x]`.

* [x] Are you running the latest `bert-as-service`?

* [x] Did you follow [the installation](https://github.com/hanxiao/bert-as-service#install) and [the usage](https://github.com/hanxiao/bert-as-service#usage) instructions in `README.md`?

* [x] D... | closed | 2019-03-16T03:59:21Z | 2022-09-26T17:27:56Z | https://github.com/jina-ai/clip-as-service/issues/278 | [] | KODGV | 8 |

pennersr/django-allauth | django | 3,994 | Cannot add security key (webauthn) | Hi,

I really appreciate your work on adding fido2 support! I tried to test it locally, but I could not add a new security key

**Here is what I did:**

1. I installed the package using `pip install git+https://github.com/pennersr/django-allauth.git@main#egg=django-allauth[mfa]`

2. Add `MFA_SUPPORTED_TYPES = ["to... | closed | 2024-07-26T18:46:36Z | 2024-07-27T06:11:19Z | https://github.com/pennersr/django-allauth/issues/3994 | [

"Help wanted"

] | inc-ali | 6 |

albumentations-team/albumentations | deep-learning | 1,563 | [Feature Request] Return Mixing parameter from MixUp augmentation | People use mixing parameter to weight loss of the image.

[Discussion](https://www.kaggle.com/competitions/hms-harmful-brain-activity-classification/discussion/481764#2682070) | closed | 2024-03-05T17:18:42Z | 2024-03-12T00:25:36Z | https://github.com/albumentations-team/albumentations/issues/1563 | [

"feature request"

] | ternaus | 1 |

falconry/falcon | api | 1,975 | Warn upon importing Falcon using deprecated Python? | We use to declare certain Python language, or specific implementation, versions as deprecated, or later, completely unsupported.

Maybe we should emit a deprecation (or EOL) warning in the case `falcon` is imported in such an interpreter? | closed | 2021-10-16T11:11:57Z | 2024-08-17T17:53:27Z | https://github.com/falconry/falcon/issues/1975 | [

"needs-decision",

"proposal",

"maintenance"

] | vytas7 | 6 |

jupyter-incubator/sparkmagic | jupyter | 352 | Problems with yarn-cluster mode | ## Setup

- centos 7

- cdh 5.5.X

- livy 3.0

## Issue

When running sparkmagic with livy configured to `yarn-client` or `yarn-cluster`:

```

# What spark master Livy sessions should use.

livy.spark.master = yarn-client

```

Things generally work. However, as part of my interactive session I'd like to use ar... | closed | 2017-05-03T15:40:05Z | 2017-05-03T18:10:52Z | https://github.com/jupyter-incubator/sparkmagic/issues/352 | [] | quasiben | 1 |

gradio-app/gradio | data-visualization | 9,878 | Unable to serve image with allowed_paths | ### Describe the bug

Hello,

Since the gradio v5 release, I'm unable to display any images from the gradio client.

I tried the same code with gradio v4 and there was no problem.

### Have you searched existing issues? 🔎

- [X] I have searched and found no existing issues

### Reproduction

```python

import gra... | closed | 2024-10-31T16:48:06Z | 2024-10-31T16:50:43Z | https://github.com/gradio-app/gradio/issues/9878 | [

"bug"

] | bastian110 | 1 |

predict-idlab/plotly-resampler | data-visualization | 195 | FigureResampler not working for Boxplot and Histogram | Hello,

In the last example here https://github.com/predict-idlab/plotly-resampler/blob/main/examples/basic_example.ipynb

go.Histogram and go.Boxplot do not seem to be affected by the limit on the number of displayed points in fig = FigureResampler(base_fig, default_n_shown_samples=1000, verbose=False)

why is that? | closed | 2023-04-14T23:03:30Z | 2023-04-17T16:48:08Z | https://github.com/predict-idlab/plotly-resampler/issues/195 | [

"documentation"

] | bwassim | 2 |

FlareSolverr/FlareSolverr | api | 846 | [yggtorrent] : Error solving the challenge | ### Have you checked our README?

- [X] I have checked the README

### Have you followed our Troubleshooting?

- [X] I have followed your Troubleshooting

### Is there already an issue for your problem?

- [X] I have checked older issues, open and closed

### Have you checked the discussions?

- [X] I have read the Dis... | closed | 2023-08-02T09:18:45Z | 2023-08-02T14:58:44Z | https://github.com/FlareSolverr/FlareSolverr/issues/846 | [

"duplicate"

] | almottier | 6 |

tensorly/tensorly | numpy | 422 | Testing for complex tensors | See #298. Several of the tests for methods should be extended to the complex cases. Perhaps a first step to accomplishing this is to extend the random tensor methods to be able to construct random tensors with complex elements. | open | 2022-07-12T02:25:48Z | 2022-07-20T03:07:38Z | https://github.com/tensorly/tensorly/issues/422 | [

"enhancement"

] | aarmey | 1 |

feature-engine/feature_engine | scikit-learn | 763 | transformer to apply mean normalization | in mean normalization, we subtract the mean from each value and then divide by the value range. This centres the variables at 0, and scales their values between -1 and 1. It is an alternative to standardization.

sklearn has no transformer to apply mean normalization, but we can combine the standard scaler and the ro... | closed | 2024-05-15T12:01:48Z | 2024-11-02T18:41:56Z | https://github.com/feature-engine/feature_engine/issues/763 | [] | solegalli | 6 |

graphdeco-inria/gaussian-splatting | computer-vision | 879 | Question about obtaining exponential falloff multiplied to alpha | ```

// Resample using conic matrix (cf. "Surface

// Splatting" by Zwicker et al., 2001)

float2 xy = collected_xy[j];

float2 d = { xy.x - pixf.x, xy.y - pixf.y };

float4 con_o = collected_conic_opacity[j];

float power = -0.5f * (con_o.x * d.x * d.x + con_o.z * d.y * d.y) - con_o.y * d.x * d.y;

if (power > 0.0f)

... | closed | 2024-07-09T03:38:18Z | 2024-07-09T03:57:59Z | https://github.com/graphdeco-inria/gaussian-splatting/issues/879 | [] | cmh1027 | 1 |

glumpy/glumpy | numpy | 208 | small typo on easyway.rst | very minor typo on line 93 in one of the [tutorials](https://github.com/glumpy/glumpy/blob/master/doc/tutorial/easyway.rst)

[fixed fork](https://github.com/kemfic/glumpy/blob/master/doc/tutorial/easyway.rst)

"Exercices" should be "Exercises" | closed | 2019-03-09T18:34:57Z | 2019-03-09T18:36:46Z | https://github.com/glumpy/glumpy/issues/208 | [] | kemfic | 1 |

CorentinJ/Real-Time-Voice-Cloning | deep-learning | 939 | Can not find the three Pretrained model |

I intended to download these models, but could not find them.

encoder\saved_models\pretrained.pt

synthesizer\saved_models\pretrained\pretrained.pt

vocoder\saved_models\pretrained\pretrained.pt

Any guys kn... | closed | 2021-12-06T18:32:20Z | 2021-12-28T12:34:19Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/939 | [] | ZSTUMathSciLab | 1 |

httpie/cli | api | 1,589 | v3.2.3 GitHub release missing binary and package | ## Checklist

- [x] I've searched for similar issues.

---

The 3.2.3 release https://github.com/httpie/cli/releases/tag/3.2.3 is missing the binary and .deb package | open | 2024-07-18T23:28:21Z | 2024-07-18T23:28:21Z | https://github.com/httpie/cli/issues/1589 | [

"bug",

"new"

] | Crissante | 0 |

scikit-learn/scikit-learn | python | 30,278 | Unifying references style in docstrings in _pca.py | ### Describe the issue linked to the documentation

A very minor suggested change to write references section of function docstrings in identical style in [_pca.py](https://github.com/scikit-learn/scikit-learn/blob/main/sklearn/decomposition/_pca.py) code file.

### Suggest a potential alternative/fix

I aimed to... | closed | 2024-11-15T12:14:37Z | 2024-11-18T08:52:31Z | https://github.com/scikit-learn/scikit-learn/issues/30278 | [

"Documentation"

] | hanjunkim11 | 3 |

miguelgrinberg/microblog | flask | 321 | microblog_fork | closed | 2022-07-12T04:51:14Z | 2022-07-12T06:18:23Z | https://github.com/miguelgrinberg/microblog/issues/321 | [] | ruum1 | 0 | |

dfm/corner.py | data-visualization | 262 | labelpad pushes the labels out of the figure entirely | I'm making a cornerplot where some of my variables are very small or big (order of ~0.00001 or 10000), so the tick labels are quite long. As a result, the default settings have my tick labels overlapping my axis labels, so I'm trying to use the "labelpad" keyword of cornerplot to move the axis labels further away. Howe... | closed | 2024-05-24T15:24:22Z | 2024-05-28T09:40:59Z | https://github.com/dfm/corner.py/issues/262 | [] | melissa-hobson | 2 |

microsoft/unilm | nlp | 958 | [TrOCR] TrOCR processor issue with small version | When I try to use the small version of TrOCR, the processor cannot convert to a fast tokenizer, as in the code below

Processor :

`processor = TrOCRProcessor.from_pretrained("microsoft/trocr-small-handwritten")`

`model = VisionEncoderDecoderModel.from_pretrained("microsoft/trocr-small-handwritten")

`

The issue is:

`... | open | 2022-12-21T13:38:05Z | 2023-01-05T05:29:15Z | https://github.com/microsoft/unilm/issues/958 | [] | Mohammed20201991 | 1 |
