repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

seleniumbase/SeleniumBase | pytest | 3,394 | SB doesn't work with auth proxy | ```

from seleniumbase import SB

from services.capthca_service import click_captcha

SIGH_UP_URL = "https://skynet.certik.com/signup"

def check_offset():

try:

with SB(uc=True, proxy="xaabdseh:211ks1inip4o@46.203.134.218:5842") as driver:

driver.activate_cdp_mode(SIGH_UP_URL)

... | closed | 2025-01-06T16:26:10Z | 2025-01-06T18:00:51Z | https://github.com/seleniumbase/SeleniumBase/issues/3394 | [

"can't reproduce",

"UC Mode / CDP Mode"

] | mkuchuman | 1 |

keras-team/keras | data-science | 20,113 | Conv2D is no longer supporting Masking in TF v2.17.0 | Dear Keras team,

Conv2D layer no longer supports Masking layer in TensorFlow v2.17.0. I've already raised this issue with TensorFlow. However, they requested that I raise the issue here.

Due to the dimensions of our input (i.e. (timesteps, width, channels)), size of the input shape (i.e. (2048, 2000, 3)) and size of... | closed | 2024-08-12T12:52:32Z | 2024-08-15T13:36:52Z | https://github.com/keras-team/keras/issues/20113 | [

"type:support",

"stat:awaiting keras-eng"

] | kavjayawardana | 4 |

OFA-Sys/Chinese-CLIP | computer-vision | 168 | Pre-training hyperparameters | Hello, could you share the pre-training hyperparameters, ideally as a single script? Many thanks. | open | 2023-07-20T11:52:30Z | 2023-07-27T10:24:58Z | https://github.com/OFA-Sys/Chinese-CLIP/issues/168 | [] | Hardcandies | 1 |

tensorflow/tensor2tensor | machine-learning | 1,191 | Universal Transformer appears to be buggy and not converging correctly | Summary

---------

Universal Transformer appears to be buggy and not converging correctly:

- Universal transformer does not converge on multi_nli as of the latest tensor2tensor master (9729521bc3cd4952c42dcfda53699e14bee7b409). See below for reproduction

- UT does converge on multi_nli as of August 3 2018 commi... | closed | 2018-10-31T21:02:06Z | 2018-11-20T23:37:07Z | https://github.com/tensorflow/tensor2tensor/issues/1191 | [] | rllin-fathom | 9 |

reloadware/reloadium | pandas | 207 | asyncio ? | asyncio not supported | open | 2024-12-08T14:41:20Z | 2024-12-08T14:41:20Z | https://github.com/reloadware/reloadium/issues/207 | [] | MaxmaxmaximusFree | 0 |

Kanaries/pygwalker | plotly | 502 | PygWalker will not display anything. | Using Windows 11; JupyterLab

Attempts to duplicate the JupyterLab tutorial have failed. Imports have no error.

These 3 are ok:

df = pd.read_csv("https://kanaries-app.s3.ap-northeast-1.amazonaws.com/public-datasets/bike_sharing_dc.csv")

walker = pyg.walk(df)

df.head()

pyg.table(df) =============> AttributeError: m... | closed | 2024-03-27T22:12:25Z | 2024-03-30T11:04:33Z | https://github.com/Kanaries/pygwalker/issues/502 | [] | timhockswender | 12 |

scikit-learn/scikit-learn | data-science | 30,615 | average_precision_score produces unexpected output when scoring a single sample | ### Describe the bug

When using `average_precision_score` and scoring a single sample, the metric ignores `y_score` and will always produce a score of 1.0 if `y_true = [1]` and otherwise will return a score of 0. I would have expected that it would instead raise an exception.

Potentially related to #30147, however ... | open | 2025-01-09T00:41:41Z | 2025-01-15T04:25:45Z | https://github.com/scikit-learn/scikit-learn/issues/30615 | [

"Bug",

"Needs Investigation"

] | Innixma | 6 |
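
The single-sample behaviour reported in the scikit-learn row above can be traced through the step-wise definition of average precision, AP = sum over n of (R_n - R_{n-1}) * P_n. The block below is a minimal pure-Python sketch of that definition, not scikit-learn's implementation: `average_precision` is a hypothetical helper, tied scores are not grouped, and returning 0.0 when there are no positive labels is an assumption chosen to mirror the output described in the report.

```python
def average_precision(y_true, y_score):
    """Step-wise average precision: sum of (R_n - R_{n-1}) * P_n.

    Hypothetical helper for illustration only; not scikit-learn's code.
    """
    n_pos = sum(y_true)
    if n_pos == 0:
        # Assumption: recall is undefined without positives, so return 0.0.
        return 0.0
    # Rank the samples from highest to lowest score.
    ranked = sorted(zip(y_score, y_true), key=lambda pair: pair[0], reverse=True)
    ap, true_positives, prev_recall = 0.0, 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        true_positives += label
        precision = true_positives / rank
        recall = true_positives / n_pos
        # Only steps where recall increases contribute to the sum.
        ap += (recall - prev_recall) * precision
        prev_recall = recall
    return ap
```

With one positive sample, the only step has precision 1 and recall 1, so the score never enters the result. This is consistent with the report that `y_score` is ignored in that case.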

mwaskom/seaborn | data-science | 2,875 | Unable to figure out the plot name and parameters. | What is the name of the plot type in the attached figure? I have been trying for some time but could not figure it out.

| closed | 2022-06-23T14:48:00Z | 2022-06-23T14:54:26Z | https://github.com/mwaskom/seaborn/issues/2875 | [] | PrashantSinghSengar | 1 |

aleju/imgaug | deep-learning | 723 | imageio not compatible with Python2.7 | > Using cached imgaug-0.4.0-py2.py3-none-any.whl (948 kB)

> Collecting imageio

> Using cached imageio-2.9.0.tar.gz (3.3 MB)

> ERROR: Package 'imageio' requires a different Python: 2.7.17 not in '>=3.5'

Running `pip install imgaug` or `pip install imgaug==0.2.9` on python2 will cause this error to appear. | open | 2020-10-14T04:21:58Z | 2020-10-17T05:29:37Z | https://github.com/aleju/imgaug/issues/723 | [] | ariccspstk | 1 |

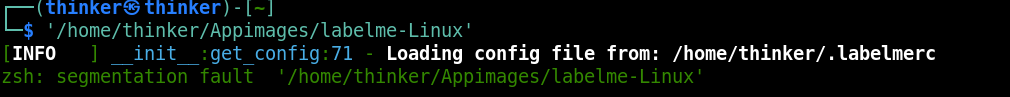

wkentaro/labelme | deep-learning | 986 | [BUG] appimage cannot launch |

| open | 2022-02-12T09:55:02Z | 2022-09-26T14:47:22Z | https://github.com/wkentaro/labelme/issues/986 | [

"issue::bug",

"status: wip-by-author"

] | newyorkthink | 1 |

2noise/ChatTTS | python | 45 | What are the valid ranges and meanings of these parameters? | params_infer_code = {

'spk_emb': rand_spk, # add sampled speaker

'temperature': .3, # using custom temperature

'top_P': 0.7, # top P decode

'top_K': 20, # top K decode

}

| closed | 2024-05-29T07:31:25Z | 2024-07-15T04:01:57Z | https://github.com/2noise/ChatTTS/issues/45 | [

"stale"

] | alanzhao0128 | 1 |
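
As general background for the question in the row above, the block below sketches how temperature, top-K and top-P are conventionally applied when sampling from a language model. It is an illustration under stated assumptions, not ChatTTS's implementation: `filter_probs` is a hypothetical helper, and the usual conventions (temperature greater than 0, top-K a positive integer, top-P in the interval (0, 1]) are assumed rather than taken from the ChatTTS source.

```python
import math


def filter_probs(logits, temperature=0.3, top_k=20, top_p=0.7):
    """Return renormalised probabilities after temperature, top-k and top-p.

    Hypothetical helper; assumes temperature > 0, top_k >= 1, 0 < top_p <= 1.
    """
    # Temperature rescales the logits: lower values sharpen the distribution.
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]  # numerically stable softmax
    total = sum(exps)
    probs = [e / total for e in exps]

    # Top-k: keep only the k most probable token indices.
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]

    # Top-p: keep the smallest prefix whose cumulative probability reaches top_p.
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break

    # Renormalise so the surviving probabilities sum to 1.
    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}
```

Under these conventions, smaller values of any of the three parameters make the output more deterministic, and larger values make it more varied.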

ultralytics/ultralytics | deep-learning | 18,700 | Cancel training when running in a script | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/ultralytics/ultralytics/discussions) and found no similar questions.

### Question

I am running ultralytics model training on a python grpc server. Befo... | closed | 2025-01-15T19:32:10Z | 2025-01-16T11:54:47Z | https://github.com/ultralytics/ultralytics/issues/18700 | [

"question"

] | mario-dg | 2 |

dask/dask | pandas | 11,101 | TypeError: can only concatenate str (not "traceback") to str | <!-- Please include a self-contained copy-pastable example that generates the issue if possible.

``````

Please be concise with code posted. See guidelines below on how to provide a good bug report:

- Craft Minimal Bug Reports http://matthewrocklin.com/blog/work/2018/02/28/minimal-bug-reports

- Minimal Complete Ve... | open | 2024-05-06T14:19:27Z | 2024-05-06T14:19:41Z | https://github.com/dask/dask/issues/11101 | [

"needs triage"

] | sinsniwal | 0 |

Lightning-AI/pytorch-lightning | machine-learning | 19,625 | When I choose to save each epoch model, the previously saved model will be deleted. | ### Bug description

When I choose to save each epoch model, the previously saved model will be deleted.

if previous != current return False

When the names of the passed variab... | closed | 2024-03-13T11:19:45Z | 2024-04-12T15:36:25Z | https://github.com/Lightning-AI/pytorch-lightning/issues/19625 | [

"bug",

"needs triage",

"ver: 2.2.x"

] | Li-Yun-star | 1 |

dask/dask | scikit-learn | 11,032 | Client() generate the error concurrent.futures._base.CancelledError: ('head-1-5-read-csv-19ebc21b0abac0313dd0e5004ea2fce7', 0) | **Code Origin**:

import pandas as pd

import dask.dataframe as dd

from dask.distributed import Client

Client("192.168.11.10:8786");

housing = dd.read_csv('datasets/housing/housing.csv',lineterminator='\n')

housing.head()

**Error Info**:

Traceback (most recent call last):

File "<stdin>", line 1, in <... | closed | 2024-03-30T20:06:02Z | 2024-03-31T03:01:22Z | https://github.com/dask/dask/issues/11032 | [

"needs triage"

] | MadadamXie | 1 |

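amisadmin/fastapi-amis-admin | sqlalchemy | 34 | How can I implement a dropdown select with dynamic content? | I would like to generate the options of a dropdown select each time the page loads, depending on the situation. I tried intercepting the current item in `get_form_item` and adding new options to it, but the new options fail FastAPI's validation. I also tried modifying the enum, without effect; FastAPI seems to always hold on to the original enum. The current code is as follows: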

```Python

class NtpVersionEnum(Choices):

t = "t"

q = 'asd'

@site.register_admin

class 生成区域掩码(admin.FormAdmin):

page_schema = '生成'

form = Form(title='生成... | closed | 2022-07-20T10:11:59Z | 2023-03-22T14:24:09Z | https://github.com/amisadmin/fastapi-amis-admin/issues/34 | [] | myuanz | 2 |

jupyter/nbviewer | jupyter | 585 | "Open with Binder" button | I just discussed this with @freeman-lab and @andrewosh: the new version of Binder should be able to answer the query "does this notebook/URL have a binder?".

I propose that when we render a notebook that can be started in a binder, we show a Binder button next to the "View on GitHub" button.

Thoughts?

| open | 2016-03-17T19:33:48Z | 2018-07-27T02:24:10Z | https://github.com/jupyter/nbviewer/issues/585 | [

"type:Enhancement"

] | Carreau | 4 |

qubvel-org/segmentation_models.pytorch | computer-vision | 557 | how to get loss graph of train-validation | closed | 2022-02-07T13:45:26Z | 2022-04-16T02:05:03Z | https://github.com/qubvel-org/segmentation_models.pytorch/issues/557 | [

"Stale"

] | johnahjohn | 2 | |

microsoft/nni | deep-learning | 5,533 | RuntimeError: max(): Expected reduction dim to be specified for input.numel() == 0. Specify the reduction dim with the 'dim' argument | **Describe the bug**:

- scheduler: AGP

- pruner: TaylorFO

- mode: global

- using evaluator (new api)

- torchvision resnet 18 model

- iterations: 10

**Environment**:

- NNI version: 2.10

- Training service (local|remote|pai|aml|etc): local

- Python version: Python 3.9.12

- PyTorch version: 1.12.0 ... | closed | 2023-04-26T23:05:45Z | 2023-05-10T10:44:34Z | https://github.com/microsoft/nni/issues/5533 | [] | kriteshg | 9 |

recommenders-team/recommenders | deep-learning | 1,898 | [BUG] Error in AzureML Spark nightly test | ### Description

<!--- Describe your issue/bug/request in detail -->

After we upgraded the CPU VM to a higher tier (see #1897), we got an error in the Spark nightly:

```

E papermill.exceptions.PapermillExecutionError:

E ---------------------------------------------------------------------------... | closed | 2023-03-02T12:22:51Z | 2023-03-30T19:56:53Z | https://github.com/recommenders-team/recommenders/issues/1898 | [

"bug"

] | miguelgfierro | 6 |

modoboa/modoboa | django | 2,886 | Aliases creation on new admin | Hi,

I want to create a catch-all mailbox (no-reply@domain.ltd), but when I create the alias I get this error in my web console:

POST https://mail.domain.ltd/api/v2/aliases/

Uncaught (in promise) Error: Request failed with status code 404 | closed | 2023-02-24T18:36:29Z | 2023-04-17T19:22:55Z | https://github.com/modoboa/modoboa/issues/2886 | [

"feedback-needed",

"new-ui"

] | FullGreenGN | 1 |

autogluon/autogluon | computer-vision | 3,862 | [timeseries] DirectTabular & RecursiveTabular models fail if static features contain column `unique_id` | **Bug Report Checklist**

<!-- Please ensure at least one of the following to help the developers troubleshoot the problem: -->

- [x] I provided code that demonstrates a minimal reproducible example. <!-- Ideal, especially via source install -->

- [x] I confirmed bug exists on the latest mainline of AutoGluon via s... | open | 2024-01-17T12:33:44Z | 2024-01-17T12:33:53Z | https://github.com/autogluon/autogluon/issues/3862 | [

"bug",

"module: timeseries"

] | shchur | 0 |

ymcui/Chinese-LLaMA-Alpaca-2 | nlp | 545 | 'padding_value' (position 3) must be float, not NoneType | ### Items that must be checked before submitting

- [X] Please make sure you are using the latest code from the repository (git pull); some issues have already been resolved and fixed.

- [X] I have read the [project documentation](https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/wiki) and the [FAQ section](https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/wiki/常见问题), and I have searched the issues for this problem without finding a similar issue or solution.

- [X] Third-party plugin issues: for example [llama.cpp](https://github.com/ggerganov/llama.cpp), [LangChain](https://g... | closed | 2024-03-16T08:59:45Z | 2024-04-09T00:11:56Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/issues/545 | [

"stale"

] | liqinga | 3 |

OpenGeoscience/geonotebook | jupyter | 19 | Error on latest version (master as of 09/21/2016 1:52 PM) | [I 13:50:59.147 NotebookApp] Kernel started: a2f9b6a4-bfa9-48a4-9c94-5cff01855bc8

[IPKernelApp] Loading IPython extension: storemagic

[IPKernelApp] Running file in user namespace: /home/chaudhary/.auto_completion_python.py

[IPKernelApp] ERROR | Exception opening comm with target: geonotebook

Traceback (most recent call... | closed | 2016-09-21T17:52:30Z | 2016-09-21T19:00:23Z | https://github.com/OpenGeoscience/geonotebook/issues/19 | [] | aashish24 | 3 |

SYSTRAN/faster-whisper | deep-learning | 699 | faster-whisper 0.10 pypi package has been overwritten with version 1.0.0 | ## Problem

Yesterday (2024/2/21) the faster-whisper 0.10 package was overwritten with version 1.0.0

See: https://pypi.org/project/faster-whisper/0.10.0/#files

It seems... | closed | 2024-02-21T14:37:19Z | 2024-02-22T12:27:42Z | https://github.com/SYSTRAN/faster-whisper/issues/699 | [] | jordimas | 6 |

pytest-dev/pytest-cov | pytest | 186 | xml report file is empty | Using the following command:

```commandline

pytest --cov-report xml --cov-report html --cov=. tests/

```

the html coverage report is generated under `htmlcov/` but the `coverage.xml` file is empty.

Can you reproduce this behaviour? I'm using:

```

pytest (3.3.2)

pytest-cov (2.5.1)

coverage (4.4.2)

```

Thanks... | closed | 2018-01-11T21:43:24Z | 2018-01-12T17:07:28Z | https://github.com/pytest-dev/pytest-cov/issues/186 | [] | petobens | 9 |

autogluon/autogluon | scikit-learn | 4,497 | [tabular] Add `num_cpus` and `num_gpus` as init args to TabularPredictor | Add `num_cpus` and `num_gpus` as init args to TabularPredictor

If specified, the values are used as the defaults for all instances in the predictor where `num_cpus` and `num_gpus` can be specified.

Once #4496 is resolved, this logic should allow the user to only have to specify the resource requirements during in... | open | 2024-09-27T03:15:48Z | 2024-12-27T08:41:47Z | https://github.com/autogluon/autogluon/issues/4497 | [

"enhancement",

"module: tabular",

"priority: 1"

] | Innixma | 2 |

pyeve/eve | flask | 596 | Flask supports i18n via Flask-Babel; would Eve support it? | Flask supports i18n via Flask-Babel; would Eve support it?

| closed | 2015-04-07T14:24:47Z | 2015-08-24T07:50:44Z | https://github.com/pyeve/eve/issues/596 | [] | gladuo | 1 |

developmentseed/lonboard | jupyter | 425 | Support `__arrow_c_array__` in viz() | It would be nice to be able to visualize any array. Note that this should be before `__geo_interface__` in the conversion steps.

You might want to do something like the following to ensure the field metadata isn't lost if extension types aren't installed.

```py

if hasattr(obj, "__arrow_c_array__"):

schema, ... | closed | 2024-03-20T20:02:58Z | 2024-03-25T16:29:23Z | https://github.com/developmentseed/lonboard/issues/425 | [] | kylebarron | 0 |

cvat-ai/cvat | computer-vision | 8,897 | What values does CVAT put in the "event" object when calling a model? | Hi,

I use some self-written models in CVAT that exist as functions in Nuclio. Here are the first lines of such a function:

````

def __runTheModelCvat__(context, event):

os.chdir('/app')

data = event.body

buf = io.BytesIO(base64.b64decode(data["image"]))

````

I want to write some informatio... | closed | 2025-01-03T12:30:01Z | 2025-01-07T13:01:07Z | https://github.com/cvat-ai/cvat/issues/8897 | [

"question"

] | RoseDeSable | 1 |

remsky/Kokoro-FastAPI | fastapi | 36 | Interruptible Server Stream from Client | closed | 2025-01-13T06:20:03Z | 2025-01-13T11:54:45Z | https://github.com/remsky/Kokoro-FastAPI/issues/36 | [] | remsky | 0 | |

akfamily/akshare | data-science | 5,399 | AKShare Interface Issue Report | > Welcome to join "Data Science in Practice" Knowledge

> Community for discussions on financial data and quantitative investment.

>

> For detailed information, please visit: https://akshare.akfamily.xyz/learn.html

## Prerequisites

If you run into any problem, please first upgrade your AKShare version to**... | closed | 2024-12-03T06:33:54Z | 2024-12-03T10:45:19Z | https://github.com/akfamily/akshare/issues/5399 | [

"bug"

] | orange1949 | 0 |

ijl/orjson | numpy | 94 | Support custom bidirectional de(serialization) with currently supported types | Right now `orjson` serializes `datetime` to `str`, but cannot be configured to go in the reverse direction since the end result is just a `str`.

I would like to be able to configure `orjson` to work bidirectionally for numerous data types (including `datetime`), but A) don't have the appropriate hooks available, and... | closed | 2020-06-02T04:10:50Z | 2020-06-16T14:06:34Z | https://github.com/ijl/orjson/issues/94 | [] | rwarren | 1 |
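
The bidirectional conversion requested in the row above can be sketched with the standard-library `json` module, which exposes hooks in both directions. This is not orjson code and makes no claim about orjson's API; the `__datetime__` tag and the helper names are hypothetical choices made for illustration.

```python
import json
from datetime import datetime, timezone

TAG = "__datetime__"  # hypothetical marker key, chosen for this sketch


def encode_default(obj):
    """Called by json.dumps for objects it cannot serialise natively."""
    if isinstance(obj, datetime):
        # Wrap the ISO string in a tagged object so decoding can recognise it.
        return {TAG: obj.isoformat()}
    raise TypeError(f"Type is not JSON serializable: {type(obj).__name__}")


def decode_hook(mapping):
    """Called by json.loads for every decoded JSON object."""
    if set(mapping) == {TAG}:
        return datetime.fromisoformat(mapping[TAG])
    return mapping


def dumps(value):
    return json.dumps(value, default=encode_default)


def loads(text):
    return json.loads(text, object_hook=decode_hook)
```

The tagged wrapper is what makes the reverse direction possible: a bare ISO string cannot be told apart from any other `str` once it has been written out.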

streamlit/streamlit | streamlit | 10,754 | make does not install yarn, yet calls yarn, thus failing with "yarn: command not found" | ### Checklist

- [x] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar issues.

- [x] I added a very descriptive title to this issue.

- [x] I have provided sufficient information below to help reproduce this issue.

### Summary

Currently, when I run `make all`, `make all-d... | open | 2025-03-12T18:59:04Z | 2025-03-18T10:50:30Z | https://github.com/streamlit/streamlit/issues/10754 | [

"type:enhancement",

"area:contribution"

] | wyattscarpenter | 5 |

jupyterlab/jupyter-ai | jupyter | 802 | Render the example notebooks in the documentation? | ### Problem

There are quite a few features which are not documented in the docs but have reasonably good examples in the example notebooks.

### Proposed Solution

Should these examples be rendered in the user-facing docs so that users do not need to search GitHub to understand what the %ai commands do?

### Addit... | closed | 2024-05-22T10:51:10Z | 2024-07-22T16:31:46Z | https://github.com/jupyterlab/jupyter-ai/issues/802 | [

"documentation",

"enhancement"

] | krassowski | 0 |

pydata/xarray | numpy | 9,351 | Add open_mfdatatree | ### What is your issue?

> Currently we have an `open_datatree` function which opens a single netcdf file (or zarr store). We could imagine an `open_mfdatatree` function which is analogous to `open_mfdataset`, which can open multiple files at once.

>

> As `DataTree` has a structure essentially the same as that of a ... | open | 2024-08-13T16:50:50Z | 2024-08-13T21:14:09Z | https://github.com/pydata/xarray/issues/9351 | [

"enhancement",

"topic-DataTree"

] | keewis | 0 |

microsoft/nni | deep-learning | 5,806 | i have a question | When I run the NNI demo, my trials always fail. Can anyone tell me why?

| open | 2024-09-08T14:01:01Z | 2024-09-08T14:01:01Z | https://github.com/microsoft/nni/issues/5806 | [] | coolcoolboy | 0 |

joerick/pyinstrument | django | 357 | Is it possible to profile statistically? | Hi

I need to integrate a profiler into production. We have a highly loaded web application, so profiling individual functions is not an option.

Can you tell me whether your profiler can work as a statistical profiler? For example, take a snapshot of the stack every, say, 20 seconds. So that later, based on this infor... | closed | 2025-01-13T13:38:36Z | 2025-01-13T14:52:30Z | https://github.com/joerick/pyinstrument/issues/357 | [] | JduMoment | 1 |

scikit-multilearn/scikit-multilearn | scikit-learn | 53 | fix a problem with predict_proba in BR | crashes when testing on some data sets, ex. genbase | closed | 2017-03-10T23:35:08Z | 2020-05-21T16:50:01Z | https://github.com/scikit-multilearn/scikit-multilearn/issues/53 | [] | niedakh | 5 |

autogluon/autogluon | scikit-learn | 3,884 | The package cannot create holdout-based sub-fit folder | When I run the following code:

```

train = TabularDataset(train_df)

test = TabularDataset(test_df)

automl = TabularPredictor(label='net_payment_count', problem_type='regression', eval_metric='mean_absolute_error')

automl.fit(train, presets='best_quality')

```

It prints:

`No path specified. Models will be s... | closed | 2024-01-26T07:44:04Z | 2024-04-05T18:46:01Z | https://github.com/autogluon/autogluon/issues/3884 | [

"bug",

"OS: Windows",

"module: tabular",

"Needs Triage",

"priority: 1"

] | shchur | 1 |

CorentinJ/Real-Time-Voice-Cloning | tensorflow | 1,233 | Error: metadata-generation-failed - related to scikit-learn? - python 3.11 | Arch Linux, kernel 6.4.2-arch1-1, python 3.11.3 (GCC 13.1.1), pip 23.1.2

Thank you for any help!

I followed these steps:

```

python -m venv .env

pip install ffmpeg

pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cpu

pip install -r requirements.txt

```

Below is the lo... | open | 2023-07-10T23:04:33Z | 2023-07-14T13:36:52Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/1233 | [] | jessienab | 1 |

python-visualization/folium | data-visualization | 1,948 | No popup when clicking on specific icons (BeautifyIcon plugin) | Hi!

I was checking the BeautifyIcon plugin demo and I don't understand why when I click on the plane icon the popup doesn't show up.

Here is the example: https://python-visualization.github.io/folium/latest/user_guide/plugins/beautify_icon.html

Is this a font-awesome related problem?

| open | 2024-05-13T11:16:59Z | 2024-07-25T23:01:44Z | https://github.com/python-visualization/folium/issues/1948 | [

"plugin",

"not our bug"

] | EmanuelCastanho | 5 |

huggingface/text-generation-inference | nlp | 2,671 | Distributed Inference failing for Llama-3.1-70b-Instruct | ### System Info

text-generation-inference docker: sha-5e0fb46 (latest)

OS: Ubuntu 22.04

Model: meta-llama/Llama-3.1-70B-Instruct

GPU Used: 4

`nvidia-smi`:

```

+-----------------------------------------------------------------------------------------+

| NVIDIA-SMI 555.42.06 Driver Version: 555.42.0... | closed | 2024-10-20T03:22:39Z | 2024-11-13T14:12:33Z | https://github.com/huggingface/text-generation-inference/issues/2671 | [] | SMAntony | 3 |

exaloop/codon | numpy | 400 | Trying to run nvidia gpu example failed to find libdevice.10.bc | > codon run gpuEx.py

libdevice.10.bc: error: Could not open input file: No such file or directory

where gpuEx.py is:

```

import gpu

@gpu.kernel

def hello(a, b, c):

i = gpu.thread.x

c[i] = a[i] + b[i]

a = [i for i in range(16)]

b = [2*i for i in range(16)]

c = [0 for _ in range(16)]

hello(a, b,... | closed | 2023-06-07T01:28:11Z | 2024-11-10T06:10:25Z | https://github.com/exaloop/codon/issues/400 | [] | kwmartin | 3 |

graphql-python/graphene-sqlalchemy | sqlalchemy | 102 | Automatic conversion from Int to ID is problematic | Graphene-SQLAlchemy automatically converts columns of type SmallInteger or Integer to `ID!` fields if they are primary keys, but does not convert such columns to `ID` fields if they are foreign keys.

Take for example this schema:

```python

class Department(Base):

__tablename__ = 'department'

id = Column(... | open | 2017-12-17T11:55:43Z | 2024-12-05T15:54:25Z | https://github.com/graphql-python/graphene-sqlalchemy/issues/102 | [] | Cito | 15 |

iperov/DeepFaceLab | deep-learning | 565 | wrong frame sequence when convert to mp4 |

When I convert to mp4, some frames appear out of sequence.

After roughly every 10 frames, one frame shows up in the video that is not the next numbered frame in the merged output.

For example, if I have 20,000 frames, then after 4,000 frames the 7,000th frame comes up in the video. Then the video will con... | open | 2020-01-20T06:14:17Z | 2023-06-08T20:04:23Z | https://github.com/iperov/DeepFaceLab/issues/565 | [] | cabof42927 | 3 |

Esri/arcgis-python-api | jupyter | 1,819 | to_featureset() is not handling fields with null date values correctly | I am using to_featureset() to create a featureset from a spatially enabled dataframe and then using edit_features() to edit a feature layer on AGOL. I was having this issue https://github.com/Esri/arcgis-python-api/issues/1693 so I rolled back to a previous version of the API. Now that 2.3.0 is out, I was going to try ... | closed | 2024-05-02T12:43:23Z | 2024-07-10T14:32:30Z | https://github.com/Esri/arcgis-python-api/issues/1819 | [

"bug"

] | tbrobin | 12 |

tensorpack/tensorpack | tensorflow | 1,265 | How to result predict.py --evaluate with Tensorpack? | Hi, everyone.

I ran into a problem while using tensorpack.

I trained the FasterRCNN example (not with ResNet; I used my own backbone network named vovnet). To evaluate the performance, I tried the command below.

> ./predict.py --evaluate coco_minival2014-outputs225000.jn --load ./train_log/maskrcnn/model-230000.data-00... | closed | 2019-07-15T01:06:35Z | 2019-07-15T05:07:19Z | https://github.com/tensorpack/tensorpack/issues/1265 | [] | Dev-HJYoo | 6 |

piccolo-orm/piccolo | fastapi | 991 | Pressing Enter in `Reverse 1 migration? [y/N]` still leads to reversing. | When undoing a migration, pressing `Enter` at the prompt `Reverse 1 migration? [y/N]` still reverses it, even though `N` is shown as the default. | closed | 2024-05-20T22:22:58Z | 2024-05-21T11:00:08Z | https://github.com/piccolo-orm/piccolo/issues/991 | [] | metakot | 2 |
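
The behaviour expected in the row above, where an empty answer selects the capitalised default, can be sketched as follows. This is not Piccolo's code: `confirm` and its `read` parameter are hypothetical names, and `read` exists only so the prompt can be exercised without a terminal.

```python
def confirm(prompt, default=False, read=input):
    """Ask a yes/no question; an empty answer selects `default`."""
    hint = "Y/n" if default else "y/N"
    answer = read(f"{prompt} [{hint}] ").strip().lower()
    if not answer:
        # Pressing Enter without typing anything falls back to the default.
        return default
    return answer in ("y", "yes")
```

For example, `confirm("Reverse 1 migration?")` would return `False` when the user only presses Enter, so the migration would be left in place.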

graphistry/pygraphistry | jupyter | 6 | Support Python 3 | closed | 2015-06-25T21:29:55Z | 2015-08-06T13:53:25Z | https://github.com/graphistry/pygraphistry/issues/6 | [

"enhancement"

] | thibaudh | 1 | |

MaartenGr/BERTopic | nlp | 1,512 | TypeError: Cannot use scipy.linalg.eigh for sparse A with k >= N. Use scipy.linalg.eigh(A.toarray()) or reduce k. | Hi!

When I try to run bertopic() I get the following error:

TypeError: Cannot use scipy.linalg.eigh for sparse A with k >= N. Use scipy.linalg.eigh(A.toarray()) or reduce k.

I increased the number of documents to 205504, which should be enough I think.

Does someone have any idea what could cause the pr... | open | 2023-09-07T14:28:18Z | 2024-05-12T18:56:41Z | https://github.com/MaartenGr/BERTopic/issues/1512 | [] | ElskeNijhof | 11 |

tensorflow/tensor2tensor | deep-learning | 1,074 | Transformer NaN loss for multiple GPUs for every dataset | ### Description

I get a NaN loss for the transformer in every multi-GPU setting, for every dataset. I am not sure what is happening.

The error occurs immediately after step 0

### Environment information

```

OS: Ubuntu 16.04

$ pip freeze | grep tensor

# your output here

tensor2tensor==1.9.0

tensorboard==1.10.0

tenso... | open | 2018-09-18T17:17:21Z | 2018-09-18T17:17:21Z | https://github.com/tensorflow/tensor2tensor/issues/1074 | [] | nxphi47 | 0 |

deepset-ai/haystack | nlp | 8,212 | Update FilterRetriever docstrings | closed | 2024-08-13T11:48:19Z | 2024-10-08T08:54:49Z | https://github.com/deepset-ai/haystack/issues/8212 | [

"type:documentation",

"P1"

] | agnieszka-m | 0 | |

tableau/server-client-python | rest-api | 988 | Use defusedxml library for prevention of xml attacks | See https://github.com/tableau/tabcmd/issues/15

https://pypi.org/project/defusedxml/ | closed | 2022-02-11T04:27:59Z | 2022-03-11T21:14:23Z | https://github.com/tableau/server-client-python/issues/988 | [

"enhancement"

] | jacalata | 0 |

alirezamika/autoscraper | automation | 71 | How to scrape a dynamic website? | I am trying to export a localhost website that is generated with this project:

https://github.com/HBehrens/puncover

The project generates a localhost website, and each time the user clicks a link the project receives a GET request and the website generates the HTML. This means that the HTML is generated... | closed | 2022-02-04T11:54:19Z | 2024-10-11T02:01:14Z | https://github.com/alirezamika/autoscraper/issues/71 | [

"Stale"

] | vChavezB | 4 |

roboflow/supervision | computer-vision | 1,630 | Support for Korean Characters in Annotator Function Fonts | ### Search before asking

- [X] I have searched the Supervision [issues](https://github.com/roboflow/supervision/issues) and found no similar feature requests.

### Question

I'm using the Annotator function in Roboflow Supervision and would like to add annotations in Korean. However, it seems that the default font do... | closed | 2024-10-30T02:35:13Z | 2024-10-30T03:28:12Z | https://github.com/roboflow/supervision/issues/1630 | [

"question"

] | YoungjaeDev | 0 |

pyqtgraph/pyqtgraph | numpy | 2,651 | Error in color displayed with ImageItem and ImageView | <!-- In the following, please describe your issue in detail! -->

<!-- If some sections do not apply, just remove them. -->

### Short description

I want to display a .png in pyqtgraph, but the image produced doesn't seem to have the proper color.

I think I'm missing something quite simple but I can figure out ... | closed | 2023-03-15T12:34:23Z | 2023-03-16T16:27:28Z | https://github.com/pyqtgraph/pyqtgraph/issues/2651 | [] | jmkerloch | 8 |

tableau/server-client-python | rest-api | 620 | filter view with ID | In the API reference docs, the description of the req_option parameter in the views section mentions that it is possible to filter the returned views by ID.

Maybe I am wrong, but it seems it is not possible to filter using the ID of a view.

**Parameters**

Name | Description

:--- | :---

`req_option` | (Optional) You can pass the method a r... | closed | 2020-05-12T05:15:39Z | 2021-06-28T13:38:42Z | https://github.com/tableau/server-client-python/issues/620 | [] | rferraton | 2 |

wagtail/wagtail | django | 12,780 | Memory and Performance Issues with Page Reordering Interface for Large Number of Pages | ### Issue Summary

The page reordering interface becomes unresponsive and stays in perpetual loading state when handling a large number of child pages (1000+). The interface attempts to load all pages simultaneously, causing performance issues in the browser.

### Steps to Reproduce

1. Create a Wagtail project with News... | open | 2025-01-16T07:01:08Z | 2025-02-01T13:46:49Z | https://github.com/wagtail/wagtail/issues/12780 | [

"type:Bug",

"🚀 Performance"

] | sreeharikodavalam | 7 |

AntonOsika/gpt-engineer | python | 331 | adding a custom api end point | I use a custom and free api endpoint so I would like to add a feature which is similar to:

```

export OPENAI_API_KEY=[your api key]

```

so I would like to have:

```

export OPENAI_API_BASE=[your custom api base url]

```

If you don't export it then it will have the default base url.

is it appropriate ... | closed | 2023-06-22T15:36:53Z | 2024-02-28T18:10:40Z | https://github.com/AntonOsika/gpt-engineer/issues/331 | [] | BhagatHarsh | 19 |
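
The fallback described in the row above (use `OPENAI_API_BASE` when it is exported, otherwise a default) can be sketched as below. This is not gpt-engineer's code: `resolve_api_base` is a hypothetical helper, and the default URL is an assumption used only for illustration.

```python
import os

# Assumed default base URL; the project may define a different one.
DEFAULT_API_BASE = "https://api.openai.com/v1"


def resolve_api_base(env=None):
    """Return the custom base URL if one is exported, else the default."""
    env = os.environ if env is None else env
    base = env.get("OPENAI_API_BASE", "").strip()
    # Drop a trailing slash so later path joins stay predictable.
    return base.rstrip("/") if base else DEFAULT_API_BASE
```

Passing a plain dict as `env` makes the lookup easy to check without touching the real environment.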

axnsan12/drf-yasg | django | 225 | Validator authentication | Hello I have a question,

I have session authentication with my Django project. When I go to the swagger url it generates the docs perfectly except it always throws an error:

With error message being:

`{... | closed | 2018-09-29T06:29:20Z | 2018-10-09T21:55:13Z | https://github.com/axnsan12/drf-yasg/issues/225 | [] | nicholasgcoles | 2 |

vipstone/faceai | tensorflow | 3 | Testing with a camera is extremely laggy and very slow | A Dahua camera using the RTSP protocol | open | 2018-05-14T10:36:55Z | 2018-07-23T15:21:34Z | https://github.com/vipstone/faceai/issues/3 | [] | XinJiangQingMang | 3 |

fastapi/fastapi | python | 13,399 | Dependency Models created from Form input data are losing metadata (fields set) and are enforcing validation on default values. |

### Discussed in https://github.com/fastapi/fastapi/discussions/13380

<div type='discussions-op-text'>

<sup>Originally posted by **sneakers-the-rat** February 16, 2025</sup>

### First Check

- [X] I added a very descriptive title here.

- [X] I used the GitHub search to find a similar question and didn't find it.

- [... | open | 2025-02-20T14:36:29Z | 2025-03-07T03:38:28Z | https://github.com/fastapi/fastapi/issues/13399 | [

"good first issue",

"question"

] | luzzodev | 9 |

RomelTorres/alpha_vantage | pandas | 174 | Inaccuracies with intraday data | From research I understand this may be a previously addressed issue.

I have noticed consistent inaccuracies with intraday price data of over 0.1% relative to data streaming services such as tradingview or my personal broker. Is this an issue that is currently being looked into?

Or is this inaccuracy at acceptabl... | closed | 2020-01-09T22:04:30Z | 2020-01-10T17:24:39Z | https://github.com/RomelTorres/alpha_vantage/issues/174 | [] | Savarani | 1 |

fastapi/sqlmodel | fastapi | 219 | How to make a foreign key of a table be the primary key of another table that has a composite primary key ? | ### First Check

- [X] I added a very descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the SQLModel documentation, with the integrated search.

- [X] I already searched in Google "How to X in SQLModel" and didn't find any information.

- [X... | closed | 2022-01-13T14:18:57Z | 2022-01-14T14:06:35Z | https://github.com/fastapi/sqlmodel/issues/219 | [

"question"

] | joaopfg | 0 |

xorbitsai/xorbits | numpy | 57 | DOC: Project index | closed | 2022-12-08T11:25:45Z | 2022-12-16T08:19:07Z | https://github.com/xorbitsai/xorbits/issues/57 | [] | UranusSeven | 1 | |

nltk/nltk | nlp | 2,950 | Panlex lite not working | Hi!

I'm trying to get the panlex_lite corpus working, but I'm not able to download it. Reading [this](https://github.com/nltk/nltk/issues/1253) I've tried to do it with the develop version of NLTK but still no success.

Am I missing something?

Thanks,

Best | open | 2022-02-21T17:19:12Z | 2022-08-18T16:49:14Z | https://github.com/nltk/nltk/issues/2950 | [] | geblanco | 2 |

keras-team/keras | deep-learning | 20,605 | Mixed-precision stateful LSTM/GRU training not working | Enabling mixed-precision mode when training a stateful LSTM or GRU using Keras v3.7.0 fails with error messages like this:

```

Traceback (most recent call last):

File "/home/lars.christensen/git/keras-io/examples/timeseries/timeseries_weather_forecasting.py", line 275, in <module>

lstm_out = keras.layers.LS... | closed | 2024-12-06T12:31:08Z | 2024-12-10T14:10:24Z | https://github.com/keras-team/keras/issues/20605 | [

"type:Bug"

] | larschristensen | 5 |

lorien/grab | web-scraping | 273 | GrabTimeoutError - Resolving timed out after 3495 milliseconds | I ran into a problem: I need to parse a list of URLs using 1k threads. Right now, when I set 300 threads or more, the following error starts appearing en masse:

`GrabTimeoutError(28, 'Resolving timed out after 3495 milliseconds')`

I'm on Windows 7 with Python 3, using regular Grab (not Spider).

How can this be fixed? | closed | 2017-07-17T01:51:07Z | 2017-07-19T14:20:09Z | https://github.com/lorien/grab/issues/273 | [] | InputError | 8 |

ipython/ipython | data-science | 14,106 | IPython.start_ipython can only be started once | <!-- This is the repository for IPython command line, if you can try to make sure this question/bug/feature belong here and not on one of the Jupyter repositories.

If it's a generic Python/Jupyter question, try other forums or discourse.jupyter.org.

If you are unsure, it's ok to post here, though, there are few ... | open | 2023-06-28T16:00:38Z | 2024-03-08T13:18:33Z | https://github.com/ipython/ipython/issues/14106 | [] | Yinameah | 1 |

NullArray/AutoSploit | automation | 450 | Unhandled Exception (9bd99fa4a) | Autosploit version: `3.0`

OS information: `Linux-4.19.0-kali1-amd64-x86_64-with-Kali-kali-rolling-kali-rolling`

Running context: `autosploit.py`

Error message: `global name 'Except' is not defined`

Error traceback:

```

Traceback (most recent call):

File "/root/Github/exploit/AutoSploit/autosploit/main.py", line 113, i... | closed | 2019-02-10T07:10:05Z | 2019-02-19T04:22:45Z | https://github.com/NullArray/AutoSploit/issues/450 | [] | AutosploitReporter | 0 |

comfyanonymous/ComfyUI | pytorch | 6,410 | Image Representation | ### Feature Idea

Would it be possible to support a portrait representation, such as 512x768?

Thank you

### Existing Solutions

_No response_

### Other

_No response_ | closed | 2025-01-09T09:22:38Z | 2025-01-11T15:49:43Z | https://github.com/comfyanonymous/ComfyUI/issues/6410 | [

"Feature"

] | MrFries1111 | 1 |

vaexio/vaex | data-science | 1,579 | [FEATURE-REQUEST] Does Vaex support merge as-of? | I think this is a pretty important application | open | 2021-09-16T00:14:03Z | 2022-04-11T11:42:02Z | https://github.com/vaexio/vaex/issues/1579 | [

"feature-request"

] | enthusiastics | 3 |

pydantic/pydantic-ai | pydantic | 1,022 | Static system prompt is not replaced by dynamic system prompt if passing message history | ### Initial Checks

- [x] I confirm that I'm using the latest version of Pydantic AI

### Description

If you originally have a static system prompt, you can't regenerate it by setting a dynamic system prompt when you pass a message history.

```

chatbot = Agent(

model='openai:gpt-4o',

result_type=str,

syste... | open | 2025-03-01T05:19:05Z | 2025-03-01T05:27:26Z | https://github.com/pydantic/pydantic-ai/issues/1022 | [

"need confirmation"

] | vikigenius | 1 |

coqui-ai/TTS | python | 2,555 | [Bug] RuntimeError: min(): Expected reduction dim to be specified for input.numel() == 0. Specify the reduction dim with the 'dim' argument. | ### Describe the bug

I am training a voice cloning model using VITS. My dataset is in LJSpeech format. I am trying to train an Indian English model straight from characters with Phonemizer = False. The training runs for 35-40 epochs and then abruptly stops. Sometimes it runs for even longer, like 15k steps and then stop... | closed | 2023-04-25T11:52:05Z | 2024-01-23T15:28:31Z | https://github.com/coqui-ai/TTS/issues/2555 | [

"bug",

"wontfix"

] | offside609 | 13 |

tensorly/tensorly | numpy | 246 | Complex Support | Here are 3 issues with complex support:

```python

import math

import tensorly as tl

from tensorly import testing

from tensorly.decomposition import parafac, tensor_train

```

1.) Issue with "norm" from backend

```python

def test_norm_complex():

"""The norm at a minimum does not work for complex tenso... | closed | 2021-03-22T11:09:23Z | 2021-03-22T19:59:20Z | https://github.com/tensorly/tensorly/issues/246 | [] | taylorpatti | 2 |

sigmavirus24/github3.py | rest-api | 954 | Missing set permission for collaborators | Missing parameter to handle permission for users

https://developer.github.com/v3/repos/collaborators/#add-user-as-a-collaborator

## Version Information

Please provide:

- The version of Python you're using

3.6

- The version of pip you used to install github3.py

19.1.1

- The version of github3.py, req... | closed | 2019-07-02T12:30:13Z | 2022-08-15T16:38:38Z | https://github.com/sigmavirus24/github3.py/issues/954 | [] | NargiT | 1 |

SYSTRAN/faster-whisper | deep-learning | 603 | Bug with the prompt reset on temp! | ```

Processing segment at 00:52.160

DEBUG: Compression ratio threshold is not met with temperature 0.0 (8.777778 > 2.400000)

DEBUG: Compression ratio threshold is not met with temperature 0.2 (9.307692 > 2.400000)

DEBUG: Log probability threshold is not met with temperature 0.4 (-1.716158 < -1.000000)

DEBUG: Log p... | closed | 2023-12-04T18:19:31Z | 2023-12-13T12:25:13Z | https://github.com/SYSTRAN/faster-whisper/issues/603 | [] | Purfview | 8 |

modin-project/modin | pandas | 7,123 | Preserve shape_hint for dropna | This is to avoid index materialization in Series.columnarize.

| closed | 2024-03-26T09:34:08Z | 2024-03-26T12:14:25Z | https://github.com/modin-project/modin/issues/7123 | [

"Performance 🚀"

] | YarShev | 0 |

psf/requests | python | 6,695 | Session.verify ignored if REQUESTS_CA_BUNDLE is set; behaviour not documented. | ## Summary

Session-level CA overrides are ignored if either `REQUESTS_CA_BUNDLE` or `CURL_CA_BUNDLE` is set.

This is unintuitive as you'd expect a per-session override to take precedence over global state. It's also not mentioned in [the documentation](https://requests.readthedocs.io/en/latest/user/advanced/#ss... | closed | 2024-05-08T10:34:33Z | 2024-05-08T13:23:58Z | https://github.com/psf/requests/issues/6695 | [] | StefanKopieczek | 4 |

hankcs/HanLP | nlp | 1,821 | On Python 3.10 the minimum supported TF version is 2.8.0, but setup.py pins it to exactly 2.6.0 | <!--

Thanks for finding a bug; please fill in the form below carefully:

-->

**Describe the bug**

- On Python 3.10, when installing `extras_require=tf`, `tensorflow==2.6.0` cannot be satisfied

https://github.com/hankcs/HanLP/blob/31c34ec86f71fe91f1fe6d86e7ca8575c80e2306/setup.py#L24

- because [PyPI](https://pypi.org/project/tensorflow/2.6.0/#files) only supports up to Python 3.9

_

run :MoltenInit

- _questions you'd like answered_

Why is it returning "Not an editor command" instead of running?

If you haven't already, please read the README and browse the `docs/` folder (Yep, did tha... | closed | 2024-03-25T22:59:24Z | 2024-04-14T20:37:57Z | https://github.com/benlubas/molten-nvim/issues/173 | [

"config problem"

] | mesa123123 | 9 |

Lightning-AI/pytorch-lightning | deep-learning | 20,576 | chunkable datasets and dataloaders | ### Description & Motivation

Current large-model training requires a huge number of training samples, so traditional map-style dataloaders fail to load the training data because of limited memory. I think chunkable datasets and dataloaders could be made available, so that we can use the Mapped logic t... | open | 2025-02-06T08:12:44Z | 2025-02-06T08:13:06Z | https://github.com/Lightning-AI/pytorch-lightning/issues/20576 | [

"feature",

"needs triage"

] | JohnHerry | 0 |

abhiTronix/vidgear | dash | 172 | Stabilizer Error: (-215:Assertion failed) count >= 0 && count2 == count in function 'cv::RANSACPointSetRegistrator::run' | <!--

Please note that your issue will be fixed much faster if you spend about

half an hour preparing it, including the exact reproduction steps and a demo.

If you're in a hurry or don't feel confident, it's fine to report bugs with

less details, but this makes it less likely they'll get fixed soon... | closed | 2020-12-08T03:59:26Z | 2020-12-08T17:41:55Z | https://github.com/abhiTronix/vidgear/issues/172 | [

"BUG :bug:",

"SOLVED :checkered_flag:"

] | abhiTronix | 1 |

yt-dlp/yt-dlp | python | 12,559 | yt-dlp has issues with flag parsing when using it via API | ### Checklist

- [x] I'm reporting a bug unrelated to a specific site

- [x] I've verified that I have **updated yt-dlp to nightly or master** ([update instructions](https://github.com/yt-dlp/yt-dlp#update-channels))

- [x] I've searched [known issues](https://github.com/yt-dlp/yt-dlp/issues/3766), [the FAQ](https://gith... | closed | 2025-03-07T22:26:53Z | 2025-03-10T21:52:03Z | https://github.com/yt-dlp/yt-dlp/issues/12559 | [

"question"

] | bqback | 6 |

allenai/allennlp | data-science | 5,028 | Open Information Extraction Training fails with "status code 404" | <!--

Please fill this template entirely and do not erase any of it.

We reserve the right to close without a response bug reports which are incomplete.

If you have a question rather than a bug, please ask on [Stack Overflow](https://stackoverflow.com/questions/tagged/allennlp) rather than posting an issue here.

--... | closed | 2021-03-01T02:02:50Z | 2021-03-25T16:17:33Z | https://github.com/allenai/allennlp/issues/5028 | [

"bug",

"stale"

] | MM-Vianai | 7 |

GibbsConsulting/django-plotly-dash | plotly | 421 | Crashing if orjson is installed | Hi,

There seems to be an issue in [PR#408](https://github.com/GibbsConsulting/django-plotly-dash/pull/408/files)

In _patches.py

we have:

```from plotly.io._json import config```

but then later in the code

``` JsonConfig.validate_orjson()```

Which throws an error that JsonConfig doesn't exist

Looki... | closed | 2022-10-30T18:36:23Z | 2022-11-10T23:19:28Z | https://github.com/GibbsConsulting/django-plotly-dash/issues/421 | [

"bug"

] | mfriedy | 1 |

ray-project/ray | deep-learning | 50,958 | [RayServe] Documentation is misleading about what services get created by a RayService | ### Description

I tried creating a sample RayService, following the documentation: https://docs.ray.io/en/latest/serve/production-guide/kubernetes.html#deploying-a-serve-application :

```

$ kubectl apply -f https://raw.githubusercontent.com/ray-project/kuberay/5b1a5a11f5df76db2d66ed332ff0802dc3bbff76/ray-operator/con... | open | 2025-02-27T21:17:46Z | 2025-02-27T21:22:44Z | https://github.com/ray-project/ray/issues/50958 | [

"triage",

"docs"

] | boyleconnor | 0 |

redis/redis-om-python | pydantic | 295 | perf integrations | open | 2022-07-07T07:34:59Z | 2022-07-07T14:37:22Z | https://github.com/redis/redis-om-python/issues/295 | [

"maintenance"

] | chayim | 0 | |

aminalaee/sqladmin | fastapi | 502 | `action.name` should be unique per `ModelView`, not globally | ### Checklist

- [X] The bug is reproducible against the latest release or `master`.

- [X] There are no similar issues or pull requests to fix it yet.

### Describe the bug

Now that 0.11.0 has been released, I began working on the actual feature for my job. It works well, but with 1 unintuitive (to me) drawback I didn't dis... | closed | 2023-05-23T19:40:42Z | 2023-05-24T07:06:52Z | https://github.com/aminalaee/sqladmin/issues/502 | [] | murrple-1 | 0 |

marimo-team/marimo | data-science | 3,912 | Create permalink doesn't copy to clipboard in firefox | ### Describe the bug

Clicking "Create Permalink" worked in chrome, not firefox.

In firefox, on the latest version, `undefined` was copied to my clipboard.

### Environment

This was run on marimo.app

<details>

```

Replace this line with the output of marimo env. Leave the backticks in place.

```

</details>

### Cod... | closed | 2025-02-25T18:37:02Z | 2025-02-26T00:01:25Z | https://github.com/marimo-team/marimo/issues/3912 | [

"bug"

] | paddymul | 4 |

vipstone/faceai | tensorflow | 50 | Simplifying the "Installing OpenCV" section | Install numpy

pip install numpy

Install wheel

pip install wheel

Install OpenCV

pip install opencv-python | open | 2020-04-16T02:25:16Z | 2020-04-16T03:26:09Z | https://github.com/vipstone/faceai/issues/50 | [] | BackMountainDevil | 1 |

JaidedAI/EasyOCR | pytorch | 963 | Pytorch 2.0 support | Does the model support Pytorch 2.0? | open | 2023-03-11T14:15:55Z | 2023-03-11T14:15:55Z | https://github.com/JaidedAI/EasyOCR/issues/963 | [] | arcb01 | 0 |

flairNLP/flair | nlp | 2,692 | OneHotEmbeddings - RecursionError: maximum recursion depth exceeded | **Describe the bug**

After the first epoch of training, when trying to save the model, the code crashes with the following error:

> 2022-03-29 14:42:36,561 EPOCH 1 done: loss 2.2471 - lr 0.1000000

2022-03-29 14:42:47,611 DEV : loss 0.8668829202651978 - f1-score (micro avg) 0.7597

2022-03-29 14:42:47,665 BAD EPOC... | closed | 2022-03-29T20:36:12Z | 2022-04-04T22:26:55Z | https://github.com/flairNLP/flair/issues/2692 | [

"bug"

] | shabnam-b | 6 |

modelscope/modelscope | nlp | 586 | Errors while using git lfs and code |

As title, I got some errors while using git lfs and code

| closed | 2023-10-14T10:18:25Z | 2024-06-27T01:51:04Z | https://github.com/modelscope/modelscope/issues/586 | [

"Stale"

] | Ethan-Chen-plus | 5 |

SciTools/cartopy | matplotlib | 1,673 | Cannot make contour plot together with coastlines | ### Description

Hello lovely cartopy developers!

Thanks a lot for your great work here!

For some reason, using `GeoAxes.contour` and `GeoAxes.coastlines` together fails with `AttributeError: 'list' object has no attribute 'xy'` (within shapely though, but I suppose the error lies within cartopy). Excluding ei... | closed | 2020-10-30T22:29:37Z | 2021-01-18T21:55:14Z | https://github.com/SciTools/cartopy/issues/1673 | [] | Chilipp | 3 |

jina-ai/clip-as-service | pytorch | 578 | When started with Docker, BertClient sometimes connects and sometimes does not; with a timeout set, it times out | **Prerequisites**

> Please fill in by replacing `[ ]` with `[x]`.

* [ ] Are you running the latest `bert-as-service`?

* [ ] Did you follow [the installation](https://github.com/hanxiao/bert-as-service#install) and [the usage](https://github.com/hanxiao/bert-as-service#usage) instructions in `README.md`?

* [ ] D... | open | 2020-07-23T06:34:25Z | 2020-07-23T06:34:56Z | https://github.com/jina-ai/clip-as-service/issues/578 | [] | youbingchenyoubing | 0 |

iterative/dvc | data-science | 10,506 | dvc update should consider "cache: false" setting of output in imported `.dvc` | On suggestion by @shcheklein, I added `cache: false` to the output in the `.dvc` file created by `dvc import` to be able to track the imported file with Git instead of DVC. However, `dvc update` still adds the output to `.gitignore`. Also, when I use `git add -f` to track the file despite it being ignored, then `dvc up... | open | 2024-08-06T10:25:04Z | 2024-10-23T08:06:37Z | https://github.com/iterative/dvc/issues/10506 | [

"bug",

"A: data-sync"

] | aschuh-hf | 4 |

dinoperovic/django-salesman | rest-api | 47 | Remove address fields or make them optional. | For stores that sell something intangible (courses, images, videos and much more), indicating the delivery and purchase address is not required. I found a workaround and allow empty strings in the address validator, but it doesn't look very nice in the admin panel | closed | 2024-05-06T21:34:34Z | 2024-05-12T21:14:33Z | https://github.com/dinoperovic/django-salesman/issues/47 | [] | GvozdevLeonid | 1 |

Lightning-AI/pytorch-lightning | machine-learning | 19,664 | torch.hstack() will cause the loss of gradient flow tracking | ### Bug description

Concatenating multiple `nn.Parameter` tensors with `torch.hstack` causes the loss of gradient flow, i.e. `grad_fn` is None

### What version are you seeing the problem on?

v1.9

### How to reproduce the bug

```python

import numpy as np

import torch

import torch.nn as nn

import torch.op... | closed | 2024-03-17T22:17:21Z | 2024-03-18T03:49:55Z | https://github.com/Lightning-AI/pytorch-lightning/issues/19664 | [

"bug",

"needs triage",

"ver: 1.9.x"

] | ChenDaiwei-99 | 0 |
