repo_name (stringlengths 9 75) | topic (stringclasses 30 values) | issue_number (int64 1 203k) | title (stringlengths 1 976) | body (stringlengths 0 254k) | state (stringclasses 2 values) | created_at (stringlengths 20 20) | updated_at (stringlengths 20 20) | url (stringlengths 38 105) | labels (listlengths 0 9) | user_login (stringlengths 1 39) | comments_count (int64 0 452) |

|---|---|---|---|---|---|---|---|---|---|---|---|

junyanz/pytorch-CycleGAN-and-pix2pix | computer-vision | 953 | Use cycleGAN for image enhancement | Hello author! I want to use your code for image enhancement based on CycleGAN, but it produces a lot of artifacts. Can you give me some tips? Thanks a lot. | open | 2020-03-13T14:38:41Z | 2020-03-13T18:22:34Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/953 | [] | crystaloscillator | 1 |

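babysor/MockingBird | deep-learning | 563 | Error on the first click of synthesize and vocode after startup | After starting the program with python demo_toolbox.py -d .\samples, the first click on the synthesize and vocode button raises an error. Later clicks do not raise it. I don't know what causes this.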

RuntimeError: Error(s) in loading state_dict for Tacotron:

size mismatch for encoder_proj.weight: copying a param with shape torch.Size([128, 512]) from checkpoint, the shape in current model is torch.Size([128, 1024]).

size mismatch for decoder.attn_rnn.weight_ih: copying a param with shape torch.Size([384, 768]) from checkpoint, the shape in current model is torch.Size([384, 1280]).

size mismatch for decoder.rnn_input.weight: copying a param with shape torch.Size([1024, 640]) from checkpoint, the shape in current model is torch.Size([1024, 1152]).

size mismatch for decoder.stop_proj.weight: copying a param with shape torch.Size([1, 1536]) from checkpoint, the shape in current model is torch.Size([1, 2048]). | open | 2022-05-20T13:34:53Z | 2022-05-22T08:39:29Z | https://github.com/babysor/MockingBird/issues/563 | [] | GitHubLDL | 1 |

supabase/supabase-py | fastapi | 1,073 | Installing via Conda forgets h2 | # Bug report

<!--

⚠️ We receive a lot of bug reports which have already been solved or discussed. If you are looking for help, please try these first:

- Docs: https://docs.supabase.com

- Discussions: https://github.com/supabase/supabase/discussions

- Discord: https://discord.supabase.com

Before opening a bug report, please verify the following:

-->

- [X] I confirm this is a bug with Supabase, not with my own application.

- [X] I confirm I have searched the [Docs](https://docs.supabase.com), GitHub [Discussions](https://github.com/supabase/supabase/discussions), and [Discord](https://discord.supabase.com).

## Describe the bug

When installing supabase-py via Conda, the `h2` dependency is somehow forgotten so an error message occurs when using something that requires `httpx`.

## To Reproduce

1. `conda create -n test && conda activate test`

2. `conda install supabase`

3. `pip list`

4. h2 is missing

For comparison, install `supabase` with pip in the same environment; 4 missing packages are then installed:

> Installing collected packages: hyperframe, hpack, h2, aiohttp

> Attempting uninstall: aiohttp

> Found existing installation: aiohttp 3.11.10

> Uninstalling aiohttp-3.11.10:

> Successfully uninstalled aiohttp-3.11.10

> Successfully installed aiohttp-3.11.13 h2-4.2.0 hpack-4.1.0 hyperframe-6.1.0

## Expected behavior

`h2` should be installed with supabase when using Conda.

## System information

- OS: Windows 10

- Version of supabase-py: 2.13.0

- Version of Python: 3.12

## Additional context

PS: Your issue template for bug report is asking for Node.js version, it should be Python.

| open | 2025-03-10T16:52:42Z | 2025-03-10T16:52:50Z | https://github.com/supabase/supabase-py/issues/1073 | [

"bug"

] | PierreMesure | 1 |
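
The `pip list` check in the reproduction steps can also be scripted. Below is a minimal sketch using only the standard library; the helper name `missing_distributions` is hypothetical, and the package names in the usage note are the ones from the pip output quoted above.

```python
from importlib import metadata


def missing_distributions(names, lookup=metadata.version):
    """Return the distribution names that are not installed in this environment."""
    missing = []
    for name in names:
        try:
            # metadata.version raises PackageNotFoundError for absent distributions.
            lookup(name)
        except metadata.PackageNotFoundError:
            missing.append(name)
    return missing
```

Running `missing_distributions(["h2", "hpack", "hyperframe"])` inside the Conda environment would list whichever of those packages the Conda install left out.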

psf/requests | python | 6,860 | "No address associated with hostname" when querying IPv6 hosts | ## Expected Result

```

$ python

Python 3.12.3 (main, Nov 6 2024, 18:32:19) [GCC 13.2.0] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> import requests

>>> requests.get("https://ipv6.icanhazip.com/").text

'2001:920:[REDACTED]'

```

## Actual Result

```shell

$ python

Python 3.12.3 (main, Nov 6 2024, 18:32:19) [GCC 13.2.0] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> import requests

>>> requests.get("https://ipv6.icanhazip.com/").text

Traceback (most recent call last):

File "/home/user/tool/venv/lib/python3.12/site-packages/urllib3/connection.py", line 199, in _new_conn

sock = connection.create_connection(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/user/tool/venv/lib/python3.12/site-packages/urllib3/util/connection.py", line 60, in create_connection

for res in socket.getaddrinfo(host, port, family, socket.SOCK_STREAM):

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/lib/python3.12/socket.py", line 963, in getaddrinfo

for res in _socket.getaddrinfo(host, port, family, type, proto, flags):

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

socket.gaierror: [Errno -5] No address associated with hostname

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/home/user/tool/venv/lib/python3.12/site-packages/urllib3/connectionpool.py", line 789, in urlopen

response = self._make_request(

^^^^^^^^^^^^^^^^^^^

File "/home/user/tool/venv/lib/python3.12/site-packages/urllib3/connectionpool.py", line 490, in _make_request

raise new_e

File "/home/user/tool/venv/lib/python3.12/site-packages/urllib3/connectionpool.py", line 466, in _make_request

self._validate_conn(conn)

File "/home/user/tool/venv/lib/python3.12/site-packages/urllib3/connectionpool.py", line 1095, in _validate_conn

conn.connect()

File "/home/user/tool/venv/lib/python3.12/site-packages/urllib3/connection.py", line 693, in connect

self.sock = sock = self._new_conn()

^^^^^^^^^^^^^^^^

File "/home/user/tool/venv/lib/python3.12/site-packages/urllib3/connection.py", line 206, in _new_conn

raise NameResolutionError(self.host, self, e) from e

urllib3.exceptions.NameResolutionError: <urllib3.connection.HTTPSConnection object at 0x7c2522dfe180>: Failed to resolve 'ipv6.icanhazip.com' ([Errno -5] No address associated with hostname)

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/home/user/tool/venv/lib/python3.12/site-packages/requests/adapters.py", line 667, in send

resp = conn.urlopen(

^^^^^^^^^^^^^

File "/home/user/tool/venv/lib/python3.12/site-packages/urllib3/connectionpool.py", line 843, in urlopen

retries = retries.increment(

^^^^^^^^^^^^^^^^^^

File "/home/user/tool/venv/lib/python3.12/site-packages/urllib3/util/retry.py", line 519, in increment

raise MaxRetryError(_pool, url, reason) from reason # type: ignore[arg-type]

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

urllib3.exceptions.MaxRetryError: HTTPSConnectionPool(host='ipv6.icanhazip.com', port=443): Max retries exceeded with url: / (Caused by NameResolutionError("<urllib3.connection.HTTPSConnection object at 0x7c2522dfe180>: Failed to resolve 'ipv6.icanhazip.com' ([Errno -5] No address associated with hostname)"))

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/home/user/tool/venv/lib/python3.12/site-packages/requests/api.py", line 73, in get

return request("get", url, params=params, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/user/tool/venv/lib/python3.12/site-packages/requests/api.py", line 59, in request

return session.request(method=method, url=url, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/user/tool/venv/lib/python3.12/site-packages/requests/sessions.py", line 589, in request

resp = self.send(prep, **send_kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/user/tool/venv/lib/python3.12/site-packages/requests/sessions.py", line 703, in send

r = adapter.send(request, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/user/tool/venv/lib/python3.12/site-packages/requests/adapters.py", line 700, in send

raise ConnectionError(e, request=request)

requests.exceptions.ConnectionError: HTTPSConnectionPool(host='ipv6.icanhazip.com', port=443): Max retries exceeded with url: / (Caused by NameResolutionError("<urllib3.connection.HTTPSConnection object at 0x7c2522dfe180>: Failed to resolve 'ipv6.icanhazip.com' ([Errno -5] No address associated with hostname)"))

```

## Reproduction Steps

```python

import requests

requests.get("https://ipv6.icanhazip.com/").text

```

## System Information

$ python -m requests.help

```json

{

"chardet": {

"version": null

},

"charset_normalizer": {

"version": "3.3.2"

},

"cryptography": {

"version": ""

},

"idna": {

"version": "3.7"

},

"implementation": {

"name": "CPython",

"version": "3.12.3"

},

"platform": {

"release": "6.8.0-50-generic",

"system": "Linux"

},

"pyOpenSSL": {

"openssl_version": "",

"version": null

},

"requests": {

"version": "2.32.3"

},

"system_ssl": {

"version": "300000d0"

},

"urllib3": {

"version": "2.2.3"

},

"using_charset_normalizer": true,

"using_pyopenssl": false

}

```

installed versions (OS is Ubuntu 24)

```shell

$ pip list | grep urllib

urllib3 2.2.3

$ pip list | grep requests

requests 2.32.3

```

Network (I have a fully functional IPv6 setup)

```shell

$ ping -6 google.com

PING google.com (2a00:1450:4007:810::200e) 56 data bytes

64 bytes from par10s50-in-x0e.1e100.net (2a00:1450:4007:810::200e): icmp_seq=1 ttl=120 time=1.49 ms

64 bytes from par10s50-in-x0e.1e100.net (2a00:1450:4007:810::200e): icmp_seq=2 ttl=120 time=1.81 ms

64 bytes from par10s50-in-x0e.1e100.net (2a00:1450:4007:810::200e): icmp_seq=3 ttl=120 time=1.54 ms

64 bytes from par10s50-in-x0e.1e100.net (2a00:1450:4007:810::200e): icmp_seq=4 ttl=120 time=2.08 ms

64 bytes from par10s50-in-x0e.1e100.net (2a00:1450:4007:810::200e): icmp_seq=5 ttl=120 time=1.81 ms

^C

--- google.com ping statistics ---

5 packets transmitted, 5 received, 0% packet loss, time 4006ms

rtt min/avg/max/mdev = 1.491/1.745/2.082/0.213 ms

$ curl -6 ipv6.icanhazip.com

2001:920:[REDACTED]

```

| closed | 2024-12-18T17:36:53Z | 2025-01-05T22:36:48Z | https://github.com/psf/requests/issues/6860 | [] | ThePirateWhoSmellsOfSunflowers | 4 |
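
The traceback shows urllib3 passing a `family` argument to `socket.getaddrinfo`, so one way to narrow this down is to check which address family actually resolves for the host. The sketch below is only a diagnostic aid, not a fix; `probe_families` and its `resolver` parameter are hypothetical names introduced here so the function can be exercised without network access.

```python
import socket


def probe_families(host, port=443, resolver=socket.getaddrinfo):
    """Resolve host once per address family and report addresses or the error."""
    families = {
        "AF_UNSPEC": socket.AF_UNSPEC,
        "AF_INET": socket.AF_INET,
        "AF_INET6": socket.AF_INET6,
    }
    report = {}
    for name, family in families.items():
        try:
            infos = resolver(host, port, family, socket.SOCK_STREAM)
        except socket.gaierror as exc:
            # An IPv6-only hostname is expected to fail when restricted to AF_INET.
            report[name] = f"error: {exc}"
        else:
            # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the address.
            report[name] = sorted({info[4][0] for info in infos})
    return report
```

If `probe_families("ipv6.icanhazip.com")` succeeds for `AF_INET6` but fails for `AF_INET`, that would suggest the lookup in the traceback was restricted to IPv4.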

huggingface/datasets | numpy | 6,740 | Support for loading geotiff files as a part of the ImageFolder | ### Feature request

Request for adding rasterio support to load geotiff as a part of ImageFolder, instead of using PIL

### Motivation

Many datasets on the Hugging Face Hub are focused on or come from remote sensing. The current ImageFolder (if I have understood correctly) uses PIL. This is not ideal, because these datasets mostly contain images with many channels and additional metadata, which are lost when using PIL unless we provide a custom script. Could an API be added to support this in common?

### Your contribution

If the issue is accepted, I can contribute the code, because I would like to have it automated and generalised. | closed | 2024-03-18T20:00:39Z | 2024-03-27T18:19:48Z | https://github.com/huggingface/datasets/issues/6740 | [

"enhancement"

] | sunny1401 | 0 |

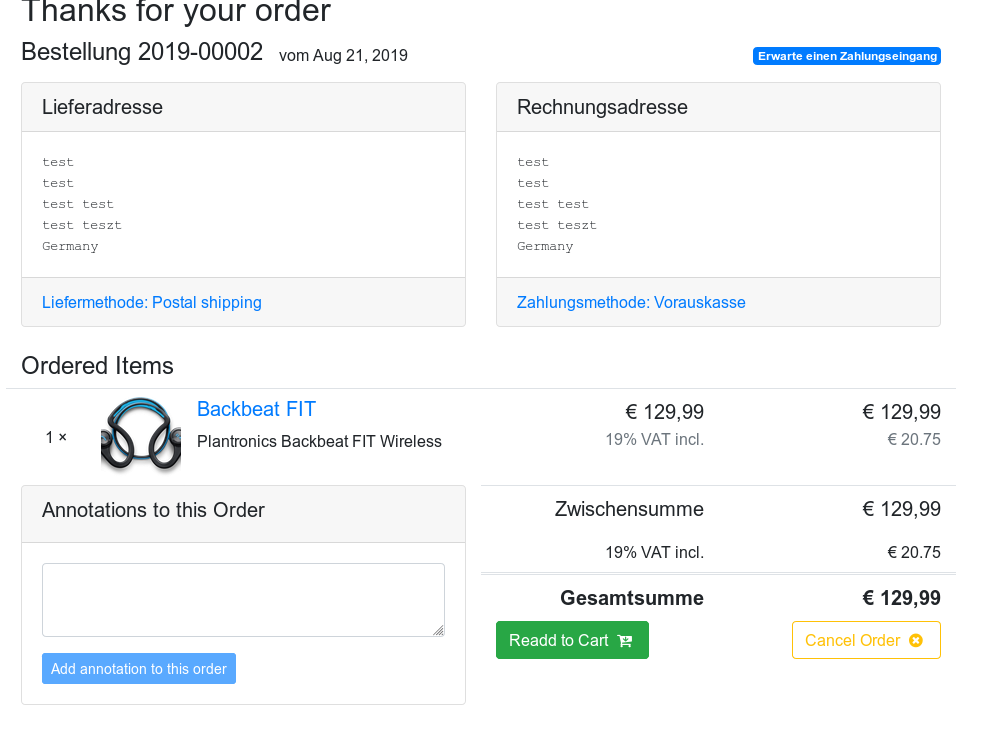

awesto/django-shop | django | 772 | Cookie Cutter Version ignores PostalShippingModifier | I'm using the cookie cutter version from the [tutorial of the documentation](https://django-shop.readthedocs.io/en/latest/) as an example for my own implementation.

Shipping modifiers that should add a line to the cart are ignored in both variants as soon as their activation is dependent on the selection in the shipping form.

If only one shipping modifier is present, the line is added though.

Using the debugger shows that self.is_active(cart) always returns False.

The image shows the resulting order. Note the selection of "postal shipping" and the lack of 5 € shipping costs.

Code of the modifiers.py that is generated by cookie cutter:

```

class PostalShippingModifier(ShippingModifier):
    identifier = 'postal-shipping'

    def get_choice(self):
        return (self.identifier, _("Postal shipping"))

    def add_extra_cart_row(self, cart, request):
        if not self.is_active(cart) and len(cart_modifiers_pool.get_shipping_modifiers()) > 1:
            return
        # add a shipping flat fee
        amount = Money('5')
        instance = {'label': _("Shipping costs"), 'amount': amount}
        cart.extra_rows[self.identifier] = ExtraCartRow(instance)
        cart.total += amount

    def ship_the_goods(self, delivery):
        if not delivery.shipping_id:
            raise ValidationError("Please provide a valid Shipping ID")
        super(PostalShippingModifier, self).ship_the_goods(delivery)

``` | closed | 2019-08-21T14:24:41Z | 2019-10-21T16:51:24Z | https://github.com/awesto/django-shop/issues/772 | [] | moellering | 2 |

QingdaoU/OnlineJudge | django | 480 | Submissions stuck at Judging with the docker-compose deployment of version 1.6.1 | I deployed using the one-click docker-compose deployment command from the official site:

```bash

git clone -b v1.6.1 https://github.com/QingdaoU/OnlineJudgeDeploy.git

```

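No settings were changed after deployment. Submitting code that does not produce a CE (compile error) gets stuck at Judging, while code that produces a CE is handled normally.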

Below is the log from ~/data/backend/log/dramatiq.log:

```

[2024-10-18 04:06:50] - [DEBUG] - [dramatiq.worker.ConsumerThread(default):326] - Pushing message '2e93045a-f01f-468b-9056-2e16a154173b' onto work queue.

[2024-10-18 04:06:50] - [DEBUG] - [dramatiq.worker.WorkerThread:479] - Received message judge_task('f16d911230421eb40a133701b81a6750', 1) with id '2e93045a-f01f-468b-9056-2e16a154173b'.

[2024-10-18 04:06:50] - [ERROR] - [dramatiq.worker.WorkerThread:513] - Failed to process message judge_task('f16d911230421eb40a133701b81a6750', 1) with unhandled exception.

Traceback (most recent call last):

File "/usr/local/lib/python3.12/site-packages/dramatiq/worker.py", line 485, in process_message

res = actor(*message.args, **message.kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/dramatiq/actor.py", line 182, in __call__

return self.fn(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^

File "/app/judge/tasks.py", line 14, in judge_task

JudgeDispatcher(submission_id, problem_id).judge()

File "/app/judge/dispatcher.py", line 174, in judge

self._compute_statistic_info(resp["data"])

File "/app/judge/dispatcher.py", line 106, in _compute_statistic_info

self.submission.statistic_info["time_cost"] = max([x["cpu_time"] for x in resp_data])

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

ValueError: max() iterable argument is empty

[2024-10-18 04:06:50] - [WARNING] - [dramatiq.middleware.retries.Retries:103] - Retries exceeded for message '2e93045a-f01f-468b-9056-2e16a154173b'.

[2024-10-18 04:06:50] - [DEBUG] - [dramatiq.worker.ConsumerThread(default):344] - Rejecting message '2e93045a-f01f-468b-9056-2e16a154173b'.

[2024-10-18 04:06:50] - [ERROR] - [sentry.errors:686] - Sentry responded with an error: module 'ssl' has no attribute 'wrap_socket' (url: https://sentry.io/api/263057/store/)

Traceback (most recent call last):

File "/usr/local/lib/python3.12/site-packages/raven/transport/threaded.py", line 165, in send_sync

super(ThreadedHTTPTransport, self).send(url, data, headers)

File "/usr/local/lib/python3.12/site-packages/raven/transport/http.py", line 38, in send

response = urlopen(

^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/raven/utils/http.py", line 66, in urlopen

return opener.open(url, data, timeout)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/urllib/request.py", line 515, in open

response = self._open(req, data)

^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/urllib/request.py", line 532, in _open

result = self._call_chain(self.handle_open, protocol, protocol +

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/urllib/request.py", line 492, in _call_chain

result = func(*args)

^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/raven/utils/http.py", line 46, in https_open

return self.do_open(ValidHTTPSConnection, req)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/urllib/request.py", line 1344, in do_open

h.request(req.get_method(), req.selector, req.data, headers,

File "/usr/local/lib/python3.12/http/client.py", line 1331, in request

self._send_request(method, url, body, headers, encode_chunked)

File "/usr/local/lib/python3.12/http/client.py", line 1377, in _send_request

self.endheaders(body, encode_chunked=encode_chunked)

File "/usr/local/lib/python3.12/http/client.py", line 1326, in endheaders

self._send_output(message_body, encode_chunked=encode_chunked)

File "/usr/local/lib/python3.12/http/client.py", line 1085, in _send_output

self.send(msg)

File "/usr/local/lib/python3.12/http/client.py", line 1029, in send

self.connect()

File "/usr/local/lib/python3.12/site-packages/raven/utils/http.py", line 37, in connect

self.sock = ssl.wrap_socket(

^^^^^^^^^^^^^^^

AttributeError: module 'ssl' has no attribute 'wrap_socket'

b"Sentry responded with an error: module 'ssl' has no attribute 'wrap_socket' (url: https://sentry.io/api/263057/store/)"

[2024-10-18 04:06:50] - [ERROR] - [sentry.errors.uncaught:712] - ["Failed to process message judge_task('f16d911230421eb40a133701b81a6750', 1) with unhandled exception.", ' File "dramatiq/worker.py", line 485, in process_message', ' File "dramatiq/actor.py", line 182, in __call__', ' File "judge/tasks.py", line 14, in judge_task', ' File "judge/dispatcher.py", line 174, in judge', ' File "judge/dispatcher.py", line 106, in _compute_statistic_info']

b'["Failed to process message judge_task(\'f16d911230421eb40a133701b81a6750\', 1) with unhandled exception.", \' File "dramatiq/worker.py", line 485, in process_message\', \' File "dramatiq/actor.py", line 182, in __call__\', \' File "judge/tasks.py", line 14, in judge_task\', \' File "judge/dispatcher.py", line 174, in judge\', \' File "judge/dispatcher.py", line 106, in _compute_statistic_info\']'

```

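I asked an AI, which suggested that the Python version in the Docker container may be too new and incompatible. I don't know how to fix this.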

| open | 2024-10-18T04:21:22Z | 2024-11-05T10:30:50Z | https://github.com/QingdaoU/OnlineJudge/issues/480 | [] | wellwei | 4 |
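
The first traceback comes from calling `max()` on an empty list, which means the judge response contained no per-test-case results. A minimal sketch of how that `ValueError` can be avoided is shown below; `compute_time_cost` is a hypothetical helper, not the project's code, and an empty result list most likely still points at a problem further upstream that this guard would only hide. The second traceback looks like a separate problem: `ssl.wrap_socket` was removed in Python 3.12, so the error reporter itself fails.

```python
def compute_time_cost(resp_data):
    """Largest cpu_time among the test-case results, or 0 when there are none."""
    # max() raises ValueError on an empty iterable unless a default is given.
    return max((case["cpu_time"] for case in resp_data), default=0)
```

With the default in place the task would no longer crash on an empty response, though the submission would still need a sensible final state.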

litl/backoff | asyncio | 135 | [Question] Add Loguru as logging | Hello again!

After hours of searching and trying to find the answer, I did not manage to do it :'(

I am trying to combine [loguru](https://github.com/Delgan/loguru) with backoff:

```py

import sys

from discord_webhook import DiscordEmbed, DiscordWebhook

from loguru import logger

from config import configuration

# -------------------------------------------------------------------------

# Web-hook function as a sink and configure a custom filter to only allow error messages.

# -------------------------------------------------------------------------

def exception_only(record):

return record["level"].name == "ERROR" and record["exception"] is not None

def discord_sink(message):

embed = DiscordEmbed(

title=str(message.record["exception"].value),

description=f"```{message[-1500:]}```",

color=8149447

)

embed.add_embed_field(

name=str(message.record["exception"].type),

value=str(message.record["message"])

)

webhook = DiscordWebhook(

url=configuration.notifications.error_webhook,

username="Exception",

)

webhook.add_embed(embed)

webhook.execute()

# -------------------------------------------------------------------------

# Loguru add

# -------------------------------------------------------------------------

logger.remove()

logger.add(sys.stdout, filter=lambda record: record["level"].name == "INFO")

logger.add(sys.stderr, filter=lambda record: record["level"].name != "INFO")

logger.add(discord_sink, filter=exception_only, enqueue=True)

```

and the code I am currently using for backoff is:

```py

import requests

import backoff

def pred(e):

return (

e.response is not None

and e.response.status_code not in (404, 401)

and e.response.status_code in range(400, 600)

)

@backoff.on_exception(

backoff.expo,

requests.exceptions.RequestException,

max_tries=2,

giveup=pred,

)

def publish():

r = requests.get("https://stackoverflow.com/63463463456", timeout=10)

r.raise_for_status()

print("Yay successful requests")

publish()

```

What I am trying to do is that whenever I have reached the max_tries it would throw an exception:

` Therefore exception info is available to the handler functions via the python standard library, specifically sys.exc_info() or the traceback module.`

Together with loguru, I want to send a message to my Discord whenever max_tries has been reached. To do that, I need to figure out how to hook backoff's handler into loguru, because I want to catch the exception after x retries.

I think this might be a bit off-topic, but I hope I can get some help. If you feel this is too off-topic for a loguru question, feel free to close it as well <3

Looking forward! | closed | 2021-08-30T22:35:27Z | 2024-08-12T14:35:39Z | https://github.com/litl/backoff/issues/135 | [] | BarryThrill | 1 |

babysor/MockingBird | pytorch | 205 | Cannot clone any words other than the built-in ones; the log loops | 

| closed | 2021-11-09T11:18:17Z | 2021-11-09T13:08:54Z | https://github.com/babysor/MockingBird/issues/205 | [

"bug"

] | Zhangyide114514 | 0 |

marcomusy/vedo | numpy | 1,128 | how to remove vtk log | I call vedo.Mesh.intersect_with_plane many times.

Every time I call vedo.Mesh.intersect_with_plane, a VTK log line ("2024-05-30 16:18:57.450 ( 3.004s) [D74557468B270C7F]vtkPolyDataPlaneCutter.:589 INFO| Executing vtkPolyData plane cutter") appears in my console and log files.

How can I remove this VTK log?

I tried changing logging.setLevel and logging.disabled, and suppressing stdout/stderr (https://stackoverflow.com/questions/11130156/suppress-stdout-stderr-print-from-python-functions),

but I still see many VTK log lines. | open | 2024-05-30T07:34:51Z | 2024-06-28T14:25:01Z | https://github.com/marcomusy/vedo/issues/1128 | [

"long-term"

] | rookie96 | 2 |

art049/odmantic | pydantic | 391 | Mypy issues for odmantic 1.0.0 | # Bug

Following the odmantic 1.0.0 examples and trying to upgrade odmantic/pydantic to V2, I found several mypy issues.

Errors explained as comments below.

### Current Behavior

```

from typing import Optional

from motor.core import AgnosticCollection
from odmantic import Model, Field
from odmantic.config import ODMConfigDict


class A(Model):
    test: Optional[str] = Field(default=None)


# Missing named argument "id" for "A" Mypy (call-arg)
A(test="bla")


class B(Model):
    # Extra key "indexes" for TypedDict "ConfigDict" Mypy (typeddict-unknown-key)
    model_config = {"collection": "B"}


# Fixed: using type explicitly
class B_OK(Model):
    model_config = ODMConfigDict(collection="B")


from odmantic import AIOEngine, Model

engine = AIOEngine()
collection = engine.get_collection(B_OK)

# AsyncIOMotorCollection? has no attribute "find" Mypy (attr-defined)
collection.find({})

# This fixes the issue
collection2: AgnosticCollection = engine.get_collection(B_OK)

```

### Environment

- ODMantic version: 1.0.0

- pydantic version: 2.5.2

pydantic-core version: 2.14.5

pydantic-core build: profile=release pgo=true

install path: /home/sander/Projects/application.status.backend/.venv/lib/python3.9/site-packages/pydantic

python version: 3.9.18 (main, Aug 25 2023, 13:20:14) [GCC 11.4.0]

platform: Linux-5.15.133.1-microsoft-standard-WSL2-x86_64-with-glibc2.35

related packages: mypy-1.7.1 typing_extensions-4.9.0 fastapi-0.105.0

| open | 2023-12-19T09:18:31Z | 2024-11-10T19:40:28Z | https://github.com/art049/odmantic/issues/391 | [

"bug"

] | laveolus | 3 |

pytorch/vision | machine-learning | 8,915 | Setting `0` and `1` to `p` argument of `RandomAutocontrast()` gets the same results | ### 🐛 Describe the bug

Setting `0` and `1` to `p` argument of [RandomAutocontrast()](https://pytorch.org/vision/main/generated/torchvision.transforms.v2.RandomAutocontrast.html) gets the same results as shown below:

```python

from torchvision.datasets import OxfordIIITPet

from torchvision.transforms.v2 import RandomAutocontrast

origin_data = OxfordIIITPet(

root="data",

transform=None

)

p0_data = OxfordIIITPet(

root="data",

transform=RandomAutocontrast(p=0)

)

p1_data = OxfordIIITPet(

root="data",

transform=RandomAutocontrast(p=1)

)

import matplotlib.pyplot as plt

def show_images1(data, main_title=None):
    plt.figure(figsize=[10, 5])
    plt.suptitle(t=main_title, y=0.8, fontsize=14)
    for i, (im, _) in zip(range(1, 6), data):
        plt.subplot(1, 5, i)
        plt.imshow(X=im)
        plt.xticks(ticks=[])
        plt.yticks(ticks=[])
    plt.tight_layout()
    plt.show()

show_images1(data=origin_data, main_title="origin_data")

show_images1(data=p0_data, main_title="p0_data")

show_images1(data=p1_data, main_title="p1_data")

```

I expected the results of [ColorJitter()](https://pytorch.org/vision/main/generated/torchvision.transforms.v2.ColorJitter.html) as shown below:

```python

from torchvision.datasets import OxfordIIITPet

from torchvision.transforms.v2 import ColorJitter

origin_data = OxfordIIITPet(

root="data",

transform=None

)

contrast06_06_data = OxfordIIITPet(

root="data",

transform=ColorJitter(contrast=[0.6, 0.6])

)

contrast4_4_data = OxfordIIITPet(

root="data",

transform=ColorJitter(contrast=[4, 4])

)

import matplotlib.pyplot as plt

def show_images1(data, main_title=None):
    plt.figure(figsize=[10, 5])
    plt.suptitle(t=main_title, y=0.8, fontsize=14)
    for i, (im, _) in zip(range(1, 6), data):
        plt.subplot(1, 5, i)
        plt.imshow(X=im)
        plt.xticks(ticks=[])
        plt.yticks(ticks=[])
    plt.tight_layout()
    plt.show()

show_images1(data=origin_data, main_title="origin_data")

show_images1(data=contrast06_06_data, main_title="contrast06_06_data")

show_images1(data=contrast4_4_data, main_title="contrast4_4_data")

```

### Versions

```python

import torchvision

torchvision.__version__ # '0.20.1'

``` | open | 2025-02-18T11:57:41Z | 2025-02-19T11:39:42Z | https://github.com/pytorch/vision/issues/8915 | [] | hyperkai | 1 |
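
One possible explanation, not confirmed in the report, is that autocontrast is a different operation from the contrast factor in `ColorJitter`: it remaps each channel so that its darkest value becomes black and its brightest becomes white. A channel that already spans the full range is therefore left unchanged. The sketch below is a simplified pure-Python illustration of that remapping on one channel, not torchvision's implementation; `autocontrast_channel` is a hypothetical name, and it is an assumption that the sample photos already use the full intensity range.

```python
def autocontrast_channel(values, bound=255):
    """Stretch one channel linearly so min maps to 0 and max maps to bound."""
    lo, hi = min(values), max(values)
    if lo == hi:
        # A flat channel has no contrast to stretch; leave it as it is.
        return list(values)
    scale = bound / (hi - lo)
    return [round((v - lo) * scale) for v in values]
```

A channel such as `[0, 100, 255]` comes back unchanged, while a low-contrast channel such as `[20, 40, 60, 71]` is stretched to the full range, which would make `p=0` and `p=1` look identical on full-range photos.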

mars-project/mars | pandas | 2,610 | [BUG] race condition: duplicate decref of subtask input chunk | <!--

Thank you for your contribution!

Please review https://github.com/mars-project/mars/blob/master/CONTRIBUTING.rst before opening an issue.

-->

**Describe the bug**

Suppose stage A has two subtasks, S1 and S2, that share the same input chunk C:

1. S1 fails with an error, and `stage_processor.done` is set.

2. S2 calls `set_subtask_result`, which has already decreased the reference count of C but has not yet set `stage_processor.results[C.key]`.

3. `TaskProcessorActor` finds that `stage_processor` got an error and calls `self._cur_processor.incref_stage(stage_processor)` in `TaskProcessorActor.start`, which also decreases the reference count of C, the input of S2.

**To Reproduce**

To help us reproducing this bug, please provide information below:

1. Your Python version: python 3.7.9

2. The version of Mars you use: master

3. Minimized code to reproduce the error:

Run `pytest mars/services/task/supervisor/tests/test_task_manager.py::test_error_task -s` on this branch: https://github.com/Catch-Bull/mars/tree/race_condition_case. It is based on the latest Mars master and adds some sleeps to make the race easier to reproduce.

**Expected behavior**

A clear and concise description of what you expected to happen.

**Additional context**

Add any other context about the problem here.

| closed | 2021-12-09T08:23:16Z | 2021-12-10T03:45:24Z | https://github.com/mars-project/mars/issues/2610 | [

"type: bug",

"mod: task service"

] | Catch-Bull | 0 |
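
The three steps in the bug description amount to the same subtask input being released twice. One general way to make such a release idempotent is to remember which (subtask, chunk) pairs have already been released. The sketch below is a toy model under that assumption, not Mars's actual lifecycle code or its fix; `ChunkRefCounter` and `decref_once` are hypothetical names, and the sketch ignores concurrency control.

```python
class ChunkRefCounter:
    """Toy reference counter where each subtask can release an input chunk only once."""

    def __init__(self):
        self._counts = {}
        self._released = set()  # (subtask_id, chunk_key) pairs already released

    def incref(self, chunk_key):
        self._counts[chunk_key] = self._counts.get(chunk_key, 0) + 1

    def decref_once(self, subtask_id, chunk_key):
        """Decrease the count unless this subtask already released this chunk."""
        token = (subtask_id, chunk_key)
        if token in self._released:
            return False  # duplicate release, ignored
        self._released.add(token)
        self._counts[chunk_key] = self._counts.get(chunk_key, 0) - 1
        return True

    def count(self, chunk_key):
        return self._counts.get(chunk_key, 0)
```

In the scenario above, S2's release from `set_subtask_result` and the later stage-level release would both go through `decref_once("S2", "C")`, and only the first would change the count.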

mirumee/ariadne-codegen | graphql | 14 | Add README.md | Repo should contain README.md like how Ariadne and Ariadne GraphQL Modules do.

This readme should contain description of problem solved by library and usage example.

It should also contain license part and mention that project was crafted with love by Mirumee. | closed | 2022-10-19T11:47:02Z | 2022-11-04T07:34:20Z | https://github.com/mirumee/ariadne-codegen/issues/14 | [

"roadmap"

] | rafalp | 0 |

aleju/imgaug | machine-learning | 807 | new imageio version breaks over numpy versions. | imageio released a [new version](https://github.com/imageio/imageio/releases/tag/v2.16.0) that requires NumPy 1.20 or newer, because it imports `ArrayLike` from `numpy.typing`.

imgaug doesn't seem to pin a specific version of imageio, so it pulls in the latest version:

https://github.com/aleju/imgaug/blob/master/requirements.txt#L12

The result:

With an older NumPy installed, importing imgaug fails:

```

import imgaug

File "/usr/local/lib/python3.6/site-packages/imgaug/__init__.py", line 7, in <module>

from imgaug.imgaug import * # pylint: disable=redefined-builtin

File "/usr/local/lib/python3.6/site-packages/imgaug/imgaug.py", line 19, in <module>

import imageio

File "/usr/local/lib/python3.6/site-packages/imageio/__init__.py", line 22, in <module>

from .core import FormatManager, RETURN_BYTES

File "/usr/local/lib/python3.6/site-packages/imageio/core/__init__.py", line 16, in <module>

from .format import Format, FormatManager

File "/usr/local/lib/python3.6/site-packages/imageio/core/format.py", line 40, in <module>

from ..config import known_plugins, known_extensions, PluginConfig, FileExtension

File "/usr/local/lib/python3.6/site-packages/imageio/config/__init__.py", line 7, in <module>

from .plugins import known_plugins, PluginConfig

File "/usr/local/lib/python3.6/site-packages/imageio/config/plugins.py", line 4, in <module>

from ..core.legacy_plugin_wrapper import LegacyPlugin

File "/usr/local/lib/python3.6/site-packages/imageio/core/legacy_plugin_wrapper.py", line 6, in <module>

from .v3_plugin_api import PluginV3, ImageProperties

File "/usr/local/lib/python3.6/site-packages/imageio/core/v3_plugin_api.py", line 2, in <module>

from numpy.typing import ArrayLike

ModuleNotFoundError: No module named 'numpy.typing'

``` | open | 2022-02-17T16:06:27Z | 2022-12-29T18:59:18Z | https://github.com/aleju/imgaug/issues/807 | [] | morcoGreen | 1 |
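
The final `ModuleNotFoundError` means the installed NumPy has no `numpy.typing` submodule. A quick way to confirm this before importing imgaug is to ask the import system whether the submodule can be found. This is a minimal standard-library sketch; `has_module` is a hypothetical helper name.

```python
import importlib.util


def has_module(dotted_name):
    """Return True if the import system can locate the given module."""
    try:
        return importlib.util.find_spec(dotted_name) is not None
    except ModuleNotFoundError:
        # Raised when a parent package of dotted_name is itself missing.
        return False
```

If `has_module("numpy.typing")` is false, a likely workaround (an assumption based on the release linked above) is to pin imageio below 2.16.0 or upgrade NumPy.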

plotly/dash-table | dash | 287 | Cypress tests should fail if there are console errors | open | 2018-12-07T17:29:53Z | 2019-07-06T12:24:45Z | https://github.com/plotly/dash-table/issues/287 | [

"dash-meta-good_first_issue",

"dash-type-maintenance"

] | Marc-Andre-Rivet | 0 | |

plotly/plotly.py | plotly | 4,093 | Create a polygonal plane | Hello:

I want to plot a polygonal plane from a set of vertices. For example, given five known vertices, how can I plot the pentagon they define?

Thanks! | closed | 2023-03-07T08:30:41Z | 2023-03-08T15:07:40Z | https://github.com/plotly/plotly.py/issues/4093 | [] | Zcaic | 3 |
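
One common approach is to split the polygon into triangles that all share the first vertex (a fan triangulation) and draw those triangles as a mesh. The sketch below only computes the triangle index lists; it assumes the polygon is convex and planar and that the vertices are given in order around the boundary. `fan_triangulate` is a hypothetical helper name.

```python
def fan_triangulate(n_vertices):
    """Return index lists (i, j, k) of triangles fanning out from vertex 0."""
    if n_vertices < 3:
        raise ValueError("a polygon needs at least 3 vertices")
    # Triangle t uses vertices (0, t + 1, t + 2).
    i = [0] * (n_vertices - 2)
    j = list(range(1, n_vertices - 1))
    k = list(range(2, n_vertices))
    return i, j, k
```

The three lists can then be passed as the `i`, `j` and `k` arguments of `plotly.graph_objects.Mesh3d`, together with the `x`, `y` and `z` coordinates of the vertices.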

matplotlib/cheatsheets | matplotlib | 86 | The cheatsheets website should include a link to the GitHub page | Otherwise, the GitHub page is quite undiscoverable in case somebody wants to contribute. | closed | 2021-11-12T20:47:51Z | 2022-01-07T20:20:34Z | https://github.com/matplotlib/cheatsheets/issues/86 | [] | timhoffm | 1 |

Anjok07/ultimatevocalremovergui | pytorch | 1,700 | Best model for early reverb? | Good day. Thanks to the developers for your work! I have a question. What is the best algorithm for removing early reverberation reflections at the moment? I still have hope of recording vocals at home. )

If not, are there any searches and developments in this direction?

P.S. MB Roformer - DeReverb-DeEcho 1 - maybe this is it, but I couldn't run it in UVR5, it gives an error. I installed MB Roformer - DeReverb-DeEcho 2, but it doesn't capture early reflections very well.

In general, if someone ever manages to create a model for removing early reverberation reflections, it will change the game. In this case, many vocalists will finally be able to record vocals at home and the need for a studio and a booth for recording vocals will disappear. | open | 2025-01-11T15:08:57Z | 2025-01-19T01:48:06Z | https://github.com/Anjok07/ultimatevocalremovergui/issues/1700 | [] | 10Elem | 4 |

mitmproxy/pdoc | api | 330 | Please remove the allegation about Nazi symbolism from the README | In the README, it is suggested that @kernc has associated this project with Nazi symbols by including a swastika on the fork of this project. As has already been addressed in this [comment](https://github.com/pdoc3/pdoc/issues/64#issuecomment-489370963), that is not true.

Despite most strongly being associated with the Nazi Party today, the swastika was and remains an important symbol in many religions and cultures. There are two types of swastikas, a right-facing and a left-facing one. The Nazis used the right-facing swastika as their emblem. In Buddhism, a left-facing swastika symbolises the footprints of the Buddha. You can learn more about this [here](https://en.wikipedia.org/wiki/Swastika#Historical_use).

I am not going to include the symbol itself or any screenshots or images here, but if you look closely, [`pdoc3`'s website](https://pdoc3.github.io/pdoc/) clearly uses the Buddhist swastika in its footer, and your suggestion that it has something to do with Nazism is disgusting and offensive to the people who follow Buddhism. Not to mention to other Asian cultures and religions like Hinduism which use and have used both left- and right-facing swastikas for thousands of years in ways that have nothing to do with what happened in Europe less than a century ago.

It is understandable that you are upset, and rightly so, on the illegal and wrong way in which your project was impersonated, relicensed and removed from Wiki, but your continued assertion that the Buddhist swastika is a Nazi symbol is causing hurt to millions of people around the globe. I would like to request that you remove that particular accusation from the README, and instead highlight any ethical misconduct perpetrated by the `pdoc3` team. | closed | 2022-01-09T06:38:53Z | 2022-02-10T13:49:16Z | https://github.com/mitmproxy/pdoc/issues/330 | [

"enhancement"

] | saifkhichi96 | 6 |

iperov/DeepFaceLab | deep-learning | 5,479 | feature request : 3d face overlay with xyz axes control to change angle during manual extraction | I hope this will come one day. Some ultra low or high angles are impossible to extract when you can only see the bottom of the nose and chin, or the top of the head and nose.

| open | 2022-02-17T22:21:27Z | 2022-02-17T22:21:27Z | https://github.com/iperov/DeepFaceLab/issues/5479 | [] | 2blackbar | 0 |

falconry/falcon | api | 2,178 | `DefaultEventLoopPolicy.get_event_loop()` is deprecated (in the case of no loop) | As of Python 3.12, if there is no event loop, `DefaultEventLoopPolicy.get_event_loop()` emits a deprecation warning, and threatens to raise an error in future Python versions.

No replacement is suggested by the official docs. When it does start raising an error, I suppose one can catch it, and create a new loop instead.
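
A minimal sketch of that catch-and-create idea, using only `asyncio.get_running_loop()` and `asyncio.new_event_loop()`. The helper name `get_or_create_loop` and the module-level cache are assumptions for illustration, not Falcon's actual code.

```python
import asyncio

_fallback_loop = None  # hypothetical cache for code running outside any loop


def get_or_create_loop():
    """Return the running loop, or a cached fallback loop from sync code."""
    global _fallback_loop
    try:
        # Raises RuntimeError when called outside a running event loop.
        return asyncio.get_running_loop()
    except RuntimeError:
        if _fallback_loop is None or _fallback_loop.is_closed():
            _fallback_loop = asyncio.new_event_loop()
        return _fallback_loop
```

The cache keeps repeated calls from sync code from creating a fresh loop every time; whoever owns the fallback loop remains responsible for closing it.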

We have already updated our code to use this method to combat another deprecation... it seems Python wants to make it harder and harder to obtain the current loop from sync code (which makes no sense to me). | closed | 2023-10-15T16:57:51Z | 2024-03-21T19:59:28Z | https://github.com/falconry/falcon/issues/2178 | [] | vytas7 | 3 |

JaidedAI/EasyOCR | pytorch | 1,352 | Getting "Could not initialize NNPACK! Reason: Unsupported hardware." warning even though NNPACK is enabled | Hi everyone,

I am trying to deploy EasyOCR locally on a VM and when executing the `output = reader.readtext(image_array)` command I get the following warning: "Could not initialize NNPACK! Reason: Unsupported hardware.". I am deploying in a CPU only environment, on CPUs with the AVX512 instructions enabled. When the warning is displayed the model takes a lot more time to process and triggers a Timeout. I executed the following command `print(torch.__config__.show())` to see if NNPACK is available at runtime and indeed it is. This is the output right before the inference is processed:

```

PyTorch built with:

- GCC 9.3

- C++ Version: 201703

- Intel(R) oneAPI Math Kernel Library Version 2022.2-Product Build 20220804 for Intel(R) 64 architecture applications

- Intel(R) MKL-DNN v3.4.2 (Git Hash 1137e04ec0b5251ca2b4400a4fd3c667ce843d67)

- OpenMP 201511 (a.k.a. OpenMP 4.5)

- LAPACK is enabled (usually provided by MKL)

- NNPACK is enabled

- CPU capability usage: AVX512

- Build settings: BLAS_INFO=mkl, BUILD_TYPE=Release, CXX_COMPILER=/opt/rh/devtoolset-9/root/usr/bin/c++, CXX_FLAGS= -D_GLIBCXX_USE_CXX11_ABI=0 -fabi-version=11 -fvisibility-inlines-hidden -DUSE_PTHREADPOOL -DNDEBUG -DUSE_KINETO -DLIBKINETO_NOCUPTI -DLIBKINETO_NOROCTRACER -DUSE_FBGEMM -DUSE_PYTORCH_QNNPACK -DUSE_XNNPACK -DSYMBOLICATE_MOBILE_DEBUG_HANDLE -O2 -fPIC -Wall -Wextra -Werror=return-type -Werror=non-virtual-dtor -Werror=bool-operation -Wnarrowing -Wno-missing-field-initializers -Wno-type-limits -Wno-array-bounds -Wno-unknown-pragmas -Wno-unused-parameter -Wno-unused-function -Wno-unused-result -Wno-strict-overflow -Wno-strict-aliasing -Wno-stringop-overflow -Wsuggest-override -Wno-psabi -Wno-error=pedantic -Wno-error=old-style-cast -Wno-missing-braces -fdiagnostics-color=always -faligned-new -Wno-unused-but-set-variable -Wno-maybe-uninitialized -fno-math-errno -fno-trapping-math -Werror=format -Wno-stringop-overflow, LAPACK_INFO=mkl, PERF_WITH_AVX=1, PERF_WITH_AVX2=1, PERF_WITH_AVX512=1, TORCH_VERSION=2.4.0, USE_CUDA=0, USE_CUDNN=OFF, USE_CUSPARSELT=OFF, USE_EXCEPTION_PTR=1, USE_GFLAGS=OFF, USE_GLOG=OFF, USE_GLOO=ON, USE_MKL=ON, USE_MKLDNN=ON, USE_MPI=OFF, USE_NCCL=OFF, USE_NNPACK=ON, USE_OPENMP=ON, USE_ROCM=OFF, USE_ROCM_KERNEL_ASSERT=OFF,

```

I don't know why it does not detect NNPACK, when pytorch is built with this capability. Any help would be greatly appreciated.

My environment is:

```

easyocr==1.7.1

torch==2.4.0

torchvision==0.19.0

``` | open | 2024-12-19T09:29:30Z | 2024-12-19T09:29:30Z | https://github.com/JaidedAI/EasyOCR/issues/1352 | [] | kirillmeisser | 0 |

robotframework/robotframework | automation | 4,476 | BuiltIn: `Call Method` loses traceback if calling the method fails | I see the below call is not logging traceback on failure and throwing the error message instead.

Call method ${obj} ${method} ${args}

It would be great to log the traceback in case of any failure; it would help identify the issue sooner.

Need the fix in version 4.1

| closed | 2022-09-23T13:36:04Z | 2022-09-29T21:09:08Z | https://github.com/robotframework/robotframework/issues/4476 | [

"bug",

"priority: medium",

"rc 1"

] | kbogineni | 14 |

psf/requests | python | 5,926 | Restore logo | @kennethreitz mentioned he had a single condition for transferring stewardship for this repository:

> When I transferred the project to the PSF, per textual agreement with @ewdurbin, my only requirement was that the logo stayed in place.

I therefore assume that the removal in #5562 (along with other images) was accidental and that this will swiftly be rectified. I’ll do a PR. | closed | 2021-09-02T13:25:33Z | 2021-12-01T16:06:01Z | https://github.com/psf/requests/issues/5926 | [] | flying-sheep | 1 |

pyg-team/pytorch_geometric | deep-learning | 10,101 | CUDA error: device-side assert triggered on torchrun DDP | ### 🐛 Describe the bug

Hello,

I am getting a CUDA error: device-side assert triggered on the global_mean_pool to the extent that I cannot:

1. print the variable

2. detach and save it as a a tensor to see

3. put in a try catch and just ignore the batch, the whole program crashes

4. this happens for >15k data and after running for a good 3-4 epochs

5. there does not seem to be a nan in the dataset

6. the error goes on to subsequent batch and i cannot pass on to it

The followup error to this is:

`failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [51,0,0], thread: [45,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [51,0,0], thread: [46,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [51,0,0], thread: [47,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [51,0,0], thread: [48,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [51,0,0], thread: [49,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [51,0,0], thread: [50,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [51,0,0], thread: [51,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [51,0,0], thread: [52,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [51,0,0], thread: [53,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [51,0,0], thread: [54,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [51,0,0], thread: [55,0,0] Assertion `idx_dim >= 0 && 
idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [51,0,0], thread: [56,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [50,0,0], thread: [14,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [50,0,0], thread: [15,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [50,0,0], thread: [16,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [50,0,0], thread: [17,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [50,0,0], thread: [18,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [50,0,0], thread: [19,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [50,0,0], thread: [20,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [50,0,0], thread: [21,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [50,0,0], thread: [22,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: 
[50,0,0], thread: [23,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [50,0,0], thread: [24,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [50,0,0], thread: [25,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\n../aten/src/ATen/native/cuda/ScatterGatherKernel.cu:144: operator(): block: [50,0,0], thread: [26,0,0] Assertion `idx_dim >= 0 && idx_dim < index_size && "index out of bounds"` failed.\nTraceback (most recent call last):\n File "/package/molclass-0.1.1.dev618/molclass/cubes/scripts/script_helper.py", line 303, in forward\n apool = global_mean_pool(ah, abinput.x_s_batch)\n File "/home/floeuser/miniconda/envs/user_env/lib/python3.9/site-packages/torch_geometric/nn/pool/glob.py", line 63, in global_mean_pool\n return scatter(x, batch, dim=dim, dim_size=size, reduce=\'mean\')\n File "/home/floeuser/miniconda/envs/user_env/lib/python3.9/site-packages/torch_geometric/utils/_scatter.py", line 53, in scatter\n dim_size = int(index.max()) + 1 if index.numel() > 0 else 0\nRuntimeError: CUDA error: device-side assert triggered\nCUDA kernel errors might be asynchronously reported at some other API call, so the stacktrace below might be incorrect.\nFor debugging consider passing CUDA_LAUNCH_BLOCKING=1.\nCompile with `TORCH_USE_CUDA_DSA` to enable device-side assertions.\n\n\nDuring handling of the above exception, another exception occurred:\n\nTraceback (most recent call last):\n File "/package/molclass-0.1.1.dev618/molclass/cubes/scripts/script_helper.py", line 313, in forward\n tt = torch.max(abinput.x_s_batch).to(device=\'cpu\')\nRuntimeError: CUDA error: device-side assert triggered\nCUDA kernel errors might be asynchronously reported at some other API call, so the stacktrace below might be 
incorrect.\nFor debugging consider passing CUDA_LAUNCH_BLOCKING=1.\nCompile with `TORCH_USE_CUDA_DSA` to enable device-side assertions.\n\nTraceback (most recent call last):\n File "/package/molclass-0.1.1.dev618/molclass/cubes/scripts/multi_gpu_train_regressor_module_script.py", line 109, in batch_train\n batch = batch.to(device)\n File "/home/floeuser/miniconda/envs/user_env/lib/python3.9/site-packages/torch_geometric/data/data.py", line 360, in to\n return self.apply(\n File "/home/floeuser/miniconda/envs/user_env/lib/python3.9/site-packages/torch_geometric/data/data.py", line 340, in apply\n store.apply(func, *args)\n File "/home/floeuser/miniconda/envs/user_env/lib/python3.9/site-packages/torch_geometric/data/storage.py", line 201, in apply\n self[key] = recursive_apply(value, func)\n File "/home/floeuser/miniconda/envs/user_env/lib/python3.9/site-packages/torch_geometric/data/storage.py", line 895, in recursive_apply\n return func(data)\n File "/home/floeuser/miniconda/envs/user_env/lib/python3.9/site-packages/torch_geometric/data/data.py", line 361, in <lambda>\n lambda x: x.to(device=device, non_blocking=non_blocking), *args)\nRuntimeError: CUDA error: device-side assert triggered\nCUDA kernel errors might be asynchronously reported at some other API call, so the stacktrace below might be incorrect.\nFor debugging consider passing `

I checked the bounds of the batch and that seems to be within bound so am not sure why it gets the index out of bound.

Also note, this DOES NOT happen if I do not pass a DistSampler or turn shuffle off for the data. here is how i do that bit

```

sampler_tr = DistributedSampler(train_pair_graph, num_replicas=world_size,

shuffle=ncclAttributes.shuffle,

drop_last=True)

sampler_vl = DistributedSampler(val_pair_graph, num_replicas=world_size,

shuffle=ncclAttributes.shuffle,

drop_last=True)

if verbose:

print('samplers loaded')

time.sleep(1)

ptr = GDL(train_pair_graph, batch_size=ncclAttributes.batch_size,

num_workers=ncclAttributes.num_workers, shuffle=not ncclAttributes.shuffle,

pin_memory=False, follow_batch=['x_s'], sampler=sampler_tr)

pvl = GDL(val_pair_graph, batch_size=ncclAttributes.batch_size,

num_workers=ncclAttributes.num_workers, shuffle=not ncclAttributes.shuffle,

pin_memory=False, follow_batch=['x_s'], sampler=sampler_vl)

pts = GDL(test_pair_graph, batch_size=ncclAttributes.batch_size,

num_workers=ncclAttributes.num_workers, shuffle=not ncclAttributes.shuffle,

pin_memory=False, follow_batch=['x_s'])

```

I can put together a working dataset, but that is difficult. I was wondering whether you have noticed this bug, especially on larger (>10k) datasets.

### Versions

NA | open | 2025-03-05T23:52:59Z | 2025-03-10T16:32:32Z | https://github.com/pyg-team/pytorch_geometric/issues/10101 | [

"bug"

] | Sayan-m90 | 2 |

fohrloop/dash-uploader | dash | 106 | multi page issue | Hi,

I recently started using dash-uploader and was wondering if there is multi page support since I could not find an example. All the current examples require the app to be initialized using the command below for the du.configure_upload and has to be located in the same file where dash-uploader is used.

Command to initialize du.configure_upload:

`app = Dash(__name__, suppress_callback_exceptions=True, external_stylesheets=[dbc.themes.BOOTSTRAP])`

Here's my code:

```sh

# app = Dash(__name__, suppress_callback_exceptions=True, external_stylesheets=[dbc.themes.BOOTSTRAP])

dash.register_page(__name__, styles=dbc.themes.BOOTSTRAP)

UPLOAD_FOLDER = r"D:/AzureDevOps/saltr/csv_feature/temporary_folder"

du.configure_upload(app, UPLOAD_FOLDER)

```

I was wondering if there is another way to use du.configure_upload with dash.register_page instead of using dash.Dash since we have this registered somewhere else in the code? | closed | 2022-10-18T22:58:01Z | 2022-12-14T19:43:53Z | https://github.com/fohrloop/dash-uploader/issues/106 | [] | omarirfa | 2 |

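PaddlePaddle/ERNIE | nlp | 99 | XNLI reproduction: dev-acc 0.780, test-acc 0.770, short of the published results | Running bash script/run_xnli.sh directly gives results after training that do not match the published ones. I then removed the spaces from the XNLI training set and retrained, but still did not reach the published performance. | closed | 2019-04-16T03:14:29Z | 2019-04-26T05:01:28Z | https://github.com/PaddlePaddle/ERNIE/issues/99 | [] | shuying136 | 2 |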

FlareSolverr/FlareSolverr | api | 804 | Solving embedded turnstiles | ### Have you checked our README?

- [X] I have checked the README

### Have you followed our Troubleshooting?

- [X] I have followed your Troubleshooting

### Is there already an issue for your problem?

- [X] I have checked older issues, open and closed

### Have you checked the discussions?

- [x] I have read the Discussions

### Environment

```markdown

- FlareSolverr version: 3.2.1

- Last working FlareSolverr version: N/A

- Operating system: Windows-10-10.0.19045-SP0

- Are you using Docker: no

- FlareSolverr User-Agent (see log traces or / endpoint): Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/114.0.0.0 Safari/537.36

- Are you using a VPN: no

- Are you using a Proxy: no

- Are you using Captcha Solver: no

- If using captcha solver, which one:

- URL to test this issue: https://reaperscans.com/comics/5150-sss-class-suicide-hunter/chapters/69155807-chapter-84

```

### Description

FlareSolverr is not capable of detecting and solving embedded turnstiles. For example, see the one on the following webpage: https://reaperscans.com/comics/5150-sss-class-suicide-hunter/chapters/69155807-chapter-84

Would it be possible for FlareSolverr to handle these challenges?

### Logged Error Messages

```text

2023-06-21 16:45:25 INFO Challenge not detected!

2023-06-21 16:45:25 INFO Response in 1.446 s

```

### Screenshots

| open | 2023-06-21T22:56:38Z | 2023-10-02T04:14:02Z | https://github.com/FlareSolverr/FlareSolverr/issues/804 | [

"enhancement",

"help wanted"

] | HDoujinDownloader | 7 |

streamlit/streamlit | data-visualization | 10,190 | Click (not selection) events for dataframes, charts, and maps | ### Checklist

- [X] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar feature requests.

- [X] I added a descriptive title and summary to this issue.

### Summary

We recently added selection events for dataframes, Plotly/Altair charts, and PyDeck maps. Sometimes, though, you just want to track a single click instead of a selection. This should work like a button: you click, it triggers the event, and afterwards the element gets reset to its normal state (i.e. there is no datapoint or row/column selected).

### Why?

This is useful to trigger one-time actions after interacting with these elements. E.g. you could imagine clicking on a chart item and opening a dialog with more information about it. This is not trivial to do today, since you need to use the selection event and then erase the selection after the dialog is shown.

### How?

I guess we'd probably add a new parameter `on_click` for that, in addition to `on_select`. And return the same values but just for one rerun, and then return `None` the next time (similar to `st.button`). And it would probably make sense to disallow selection and click events at the same time.

An alternative could be to add a new `selection_mode` but I feel like this could be confusing.

Note that we'll need to do quite a bit of refactoring to support this because our elements currently support only one event type per element.

### Additional Context

_No response_ | open | 2025-01-14T22:26:55Z | 2025-01-14T22:27:19Z | https://github.com/streamlit/streamlit/issues/10190 | [

"type:enhancement",

"feature:st.dataframe",

"feature:st.altair_chart",

"feature:st.plotly_chart",

"feature:st.pydeck_chart",

"area:events"

] | jrieke | 1 |

marimo-team/marimo | data-science | 4,171 | Dropdown should support non-strings | ### Description

`mo.ui.dropdown(options=[1,2,3])` will crash with:

```

Bad Data

options.0: Expected string, received number

```

This can be worked around with dict comprehension.

### Suggested solution

It would be preferable if these `ui` elements just worked with options as any list, not just a list of strings. Same with `multiselect`. This would avoid doing `{str(x): x for x in [1,2,3]}` workarounds.
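
For reference, the workaround can be wrapped in a small helper. This is only a sketch: `labelled_options` is a hypothetical name, and it assumes (as the workaround above does) that `mo.ui.dropdown` accepts a dict mapping labels to values.

```python
def labelled_options(values):
    """Map each value's string label to the original value."""
    return {str(v): v for v in values}


options = labelled_options([1, 2, 3])

# With marimo installed, the mapping would be passed as the options:
# import marimo as mo
# dropdown = mo.ui.dropdown(options=options)
```

Note that two values with the same string form (for example `1` and `"1"`) would collapse into a single entry, which is one reason native support would be nicer.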

### Alternative

_No response_

### Additional context

| closed | 2025-03-20T15:10:24Z | 2025-03-20T17:58:11Z | https://github.com/marimo-team/marimo/issues/4171 | [

"enhancement"

] | astrowonk | 0 |

ionelmc/pytest-benchmark | pytest | 72 | Allow elasticsearch authentication besides encoding credentials into url | Currently the only way of using credentials to authenticate against an elasticsearch instance is to encode the username+password in the url.

This is bad when run in CI, i.e. [gitlab unfortunately still doesn't support protection against leaking secret variables](https://gitlab.com/gitlab-org/gitlab-ce/issues/13784). URLs including credentials leak quite easily.

A better way would be to use a config file or a `.netrc` file for credentials. | closed | 2017-03-27T14:58:17Z | 2017-04-10T03:21:34Z | https://github.com/ionelmc/pytest-benchmark/issues/72 | [] | varac | 5 |

pyg-team/pytorch_geometric | pytorch | 9,680 | consider `conda` -> `.github/conda` | ### 🛠 Proposed Refactor

We could consider moving the `conda` directory to within `.github/conda/`.

This has already been implemented in https://github.com/huggingface/huggingface_hub/tree/main/.github/conda

### Suggest a potential alternative/fix

I don't know the particulars of how conda releases are made, but AFAIK renaming `conda` to `.github/conda` should ideally work. Along with the needed changes in CI. | open | 2024-09-25T17:55:40Z | 2024-09-25T17:55:40Z | https://github.com/pyg-team/pytorch_geometric/issues/9680 | [

"refactor"

] | SauravMaheshkar | 0 |

apify/crawlee-python | web-scraping | 178 | Improve the deduplication of requests | ### Context

A while ago, Honza Javorek raised some good points regarding the deduplication process in the request queue ([#190](https://github.com/apify/apify-sdk-python/issues/190)).

The first one:

> Is it possible that Apify's request queue dedupes the requests only based on the URL? Because the POSTs all have the same URL, just different payload. Which should be very common - by definition of what POST is, or even in practical terms with all the GraphQL APIs around.

In response, we improved the unique key generation logic in the Python SDK ([PR #193](https://github.com/apify/apify-sdk-python/pull/193)) to align with the TS Crawlee. This logic was later copied to `crawlee-python` and can be found in [crawlee/_utils/requests.py](https://github.com/apify/crawlee-python/blob/v0.0.4/src/crawlee/_utils/requests.py).

The second one:

> Also wondering whether two identical requests with one different HTTP header should be considered same or different. Even with a simple GET request, I could make one with Accept-Language: cs, another with Accept-Language: en, and I can get two wildly different responses from the same server.

Currently, HTTP headers are not considered in the computation of unique keys. Additionally, we do not offer an option to explicitly bypass request deduplication, unlike the `dont_filter` option in Scrapy ([docs](https://docs.scrapy.org/en/latest/topics/request-response.html)).
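
To make the discussion concrete, here is a rough sketch of a key that covers the method, URL, payload and headers, with an opt-out flag. It is not the existing `crawlee` implementation; `sketch_unique_key` and its `always_enqueue` parameter are hypothetical names used only for illustration.

```python
import hashlib
import json
import uuid


def sketch_unique_key(url, method="GET", payload=b"", headers=None,
                      always_enqueue=False):
    """Hash the request parts that should distinguish one request from another."""
    # Header names are case-insensitive, so normalise them before hashing.
    normalised = sorted(
        (name.lower(), value.strip()) for name, value in (headers or {}).items()
    )
    parts = [method.upper(), url, payload.hex(), json.dumps(normalised)]
    if always_enqueue:
        # A random suffix makes the key unique, bypassing deduplication.
        parts.append(uuid.uuid4().hex)
    return hashlib.sha256("|".join(parts).encode("utf-8")).hexdigest()
```

With this shape, two GET requests to the same URL that differ only in `Accept-Language` get different keys, while header-name casing does not matter.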

### Questions

- Should we include HTTP headers in the `unique_key` and `extended_unique_key` computation?

- Yes.

- Should we implement a `dont_filter` feature?

- It will be just a syntax sugar appending some random string to a unique key.

- Also come up with a better name (e.g. `always_enqueue`)?

- Should `use_extended_unique_key` be set as the default behavior?

- Probably not now. | closed | 2024-06-10T09:08:57Z | 2024-09-27T17:43:05Z | https://github.com/apify/crawlee-python/issues/178 | [

"t-tooling",

"solutioning"

] | vdusek | 3 |

deepfakes/faceswap | deep-learning | 558 | Error Extracting! | Extracting Error:

Loading...

12/20/2018 01:41:59 INFO Log level set to: INFO

12/20/2018 01:42:01 INFO Output Directory: C:\Users\ZeroCool22\Miniconda3\envs\faceswap\output

12/20/2018 01:42:01 INFO Input Directory: C:\Users\ZeroCool22\Miniconda3\envs\faceswap\input

12/20/2018 01:42:01 INFO Loading Detect from Mtcnn plugin...

12/20/2018 01:42:01 INFO Loading Align from Fan plugin...

12/20/2018 01:42:01 INFO NB: Parallel processing disabled.You may get faster extraction speeds by enabling it with the -mp switch

12/20/2018 01:42:01 INFO Starting, this may take a while...

12/20/2018 01:42:01 INFO Initializing MTCNN Detector...

12/20/2018 01:42:02 ERROR Caught exception in child process: 7588

12/20/2018 01:43:01 INFO Waiting for Detector... Time out in 4 minutes

12/20/2018 01:44:01 INFO Waiting for Detector... Time out in 3 minutes

12/20/2018 01:45:01 INFO Waiting for Detector... Time out in 2 minutes

12/20/2018 01:46:01 INFO Waiting for Detector... Time out in 1 minutes

12/20/2018 01:47:03 ERROR Got Exception on main handler:

Traceback (most recent call last):

File "C:\Users\ZeroCool22\Miniconda3\envs\faceswap\lib\cli.py", line 90, in execute_script

process.process()

File "C:\Users\ZeroCool22\Miniconda3\envs\faceswap\scripts\extract.py", line 51, in process

self.run_extraction(save_thread)

File "C:\Users\ZeroCool22\Miniconda3\envs\faceswap\scripts\extract.py", line 149, in run_extraction

self.run_detection(to_process)

File "C:\Users\ZeroCool22\Miniconda3\envs\faceswap\scripts\extract.py", line 202, in run_detection

self.plugins.launch_detector()

File "C:\Users\ZeroCool22\Miniconda3\envs\faceswap\scripts\extract.py", line 386, in launch_detector

raise ValueError("Error initializing Detector")

ValueError: Error initializing Detector

12/20/2018 01:47:03 CRITICAL An unexpected crash has occurred. Crash report written to C:\Users\ZeroCool22\Miniconda3\envs\faceswap\crash_report.2018.12.20.014701426414.log. Please verify you are running the latest version of faceswap before reporting

Process exited.

I'm using the GUI: https://i.postimg.cc/J4vVZrn2/GUI-error-2.png

**Crash Report:** https://pastebin.com/MMiWRxmU

| closed | 2018-12-20T04:44:23Z | 2019-01-11T08:55:38Z | https://github.com/deepfakes/faceswap/issues/558 | [] | ZeroCool22 | 7 |

suitenumerique/docs | django | 452 | Typing enter doesn't create a line break below but above | ## Bug Report

**Problematic behavior**

Today Sophie experienced a weird bug (see video below) when typing the enter key at the end of a bullet list.

**Expected behavior/code**

The line break should be created below.

**Steps to Reproduce**

**Environment**

- Impress version: Prod

- Platform: Chrome on MacOS

**Additional context**

**Screenshots**

[Capture vidéo du 25-11-2024 19:33:39.webm](https://github.com/user-attachments/assets/1b28068b-47d8-4783-b0ac-9dab55641163) | open | 2024-11-25T18:34:07Z | 2024-11-26T16:45:34Z | https://github.com/suitenumerique/docs/issues/452 | [

"bug"

] | virgile-dev | 1 |

gunthercox/ChatterBot | machine-learning | 2,163 | ModuleNotFoundError: No module named 'adapters' | Hey! So, I want the bot to save its data in a json file, so I used this piece of code:

```py

chatbot = ChatBot("SmortBot",

storage_adapter="adapters.storage.JsonDatabaseAdapter",

database="C:/Users/.../database.json")

```

But I am getting the error `ModuleNotFoundError: No module named 'adapters'`

Am I doing something wrong here? Any help would be appreciated!

Thanks in advance :) | closed | 2021-05-21T09:26:00Z | 2025-02-19T12:30:22Z | https://github.com/gunthercox/ChatterBot/issues/2163 | [] | NISH-Original | 1 |

d2l-ai/d2l-en | data-science | 2,123 | Typo in Ch.2 introduction. | In the second paragraph on the intro to 2. Preliminaries, change "basic" to "basics" (see the image below).

| closed | 2022-05-10T15:08:49Z | 2022-05-10T17:37:44Z | https://github.com/d2l-ai/d2l-en/issues/2123 | [] | jbritton6 | 1 |

psf/requests | python | 6,229 | When making a POST request, why doesn't `auth` or `session.auth` work when logging in, but `data=data` does? | Please refer to our [Stack Overflow tag](https://stackoverflow.com/questions/tagged/python-requests) for guidance.

I have a website that requires an Email and Password to log in. When I set these values in `session.auth` or `auth=(email, pw)` they don't get the right response data. But, when I pass in a `data` object, it works.

What is auth or session.auth doing in the background? Is it just assuming to use "username" or something?

login_url = https://prenotami.esteri.it/Home/Login

**data=data** (returns correct response html)

```python3

with requests.Session() as session:

data = {"Email": "username@gmail.com", "Password": pw}

login_response = session.post(

login_url,

data=data,

)

print(login_response.text)

```

**session.auth** (doesn't return correct response html)

```python3

with requests.Session() as session:

session.auth = (email, pw)

login_response = session.post(

login_url,

)

print(login_response.text)

```

**auth=()** (doesn't return correct html data)

```python3

with requests.Session() as session:

login_response = session.post(

login_url,

auth=("username@gmail.com", pw),

)

print(login_response.text)

```
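
For context, a `(user, password)` tuple passed as `auth` is sent as HTTP Basic authentication, i.e. as an `Authorization` header, whereas `data=` sends the fields in the request body as form data. A stdlib-only sketch of the difference follows; the helper name `basic_auth_header` and the example credentials are made up for illustration.

```python
import base64
from urllib.parse import urlencode


def basic_auth_header(username, password):
    """Build the Authorization header that HTTP Basic auth produces."""
    raw = f"{username}:{password}".encode("latin1")
    return {"Authorization": "Basic " + base64.b64encode(raw).decode("ascii")}


# What auth=(...) adds: a header, with no form fields in the body.
header = basic_auth_header("user", "pass")

# What data={...} sends instead: the fields a login form would submit.
form_body = urlencode({"Email": "user@example.com", "Password": "secret"})
```

A site whose login page reads `Email` and `Password` form fields will simply ignore the header, which would match the behaviour described above.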

| closed | 2022-09-04T03:07:40Z | 2023-09-05T00:03:06Z | https://github.com/psf/requests/issues/6229 | [] | whompyjaw | 1 |

httpie/cli | rest-api | 1,559 | I got [reports](https://github.com/RageAgainstThePixel/OpenAI-DotNet/issues/236) that this started happening today: | I got [reports](https://github.com/RageAgainstThePixel/OpenAI-DotNet/issues/236) that this started happening today:

```json

{

"error": {

"message": "Unsupported content type: 'application/json; charset=utf-8'. This API method only accepts 'application/json' requests, but you specified the header 'Content-Type: application/json; charset=utf-8'. Please try again with a supported content type.",

"type": "invalid_request_error",

"param": null,

"code": "unsupported_content_type"

}

}

```

Adding Charset to the content-type should be acceptable. Didn't see any changes go into the schema so likely an issue internally in the API implementation.
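
As an illustration of what a lenient check could look like, the sketch below compares only the media type and ignores parameters such as `charset`. It uses Python's standard `email.message.Message` for parsing; `media_type` is a hypothetical helper and says nothing about how the API is actually implemented.

```python
from email.message import Message


def media_type(content_type):
    """Return the lowercased type/subtype of a Content-Type value, without parameters."""
    msg = Message()
    msg["Content-Type"] = content_type
    return msg.get_content_type()


def is_json(content_type):
    """Accept application/json with or without a charset parameter."""
    return media_type(content_type) == "application/json"
```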

_Originally posted by @StephenHodgson in https://github.com/openai/openai-openapi/issues/194_

_Originally posted by @jpmaniqis in https://github.com/mdn/content/issues/32266_ | closed | 2024-02-14T07:05:23Z | 2024-10-30T10:53:34Z | https://github.com/httpie/cli/issues/1559 | [] | jpmaniqis | 1 |

docarray/docarray | pydantic | 1,236 | Mypy plugin | open | 2023-03-14T10:07:09Z | 2023-03-23T10:05:49Z | https://github.com/docarray/docarray/issues/1236 | [] | JoanFM | 1 | |

ivy-llc/ivy | pytorch | 28,545 | Fix Frontend Failing Test: tensorflow - math.paddle.stanh | To-do List: https://github.com/unifyai/ivy/issues/27499 | closed | 2024-03-11T11:17:39Z | 2024-05-02T08:41:36Z | https://github.com/ivy-llc/ivy/issues/28545 | [ "Sub Task" ] | ZJay07 | 0 |

bmoscon/cryptofeed | asyncio | 416 | Deribit Liquidations channel not working | **Describe the bug**

When subscribed to `TRADES` channel, with `LIQUIDATIONS` callback comprised of a class which inherits `KafkaCallback` and `BackendLiquidationsCallback`, no liquidation messages are passed to the Kafka topic.

**Expected behavior**

Liquidation messages passed to the applicable topic.

**Operating System:**

- Kubernetes 1.20.1 - Flatcar Container Linux by Kinvolk 2705.1.0 (Oklo).

- Containers deployed based on python:slim.

**Cryptofeed Version**

- Git commit `6275d95` checked out from repo. `pip install .` in git repository for installation in `virtualenv`.

| closed | 2021-02-12T09:54:32Z | 2021-02-17T08:37:07Z | https://github.com/bmoscon/cryptofeed/issues/416 | [ "bug" ] | mfw78 | 4 |

jmcnamara/XlsxWriter | pandas | 351 | Repetitive merge_range to same range causes warning in Excel 2016 | Hi,

When I, through a bug in my program, repeatedly call `merge_range` on the same range, Excel produces two warnings:

1. We found a problem with some content in 'Range.XLSX'. Do you want us to try to recover as much as we can? If you trust the source of this workbook, click Yes. Clicking Yes yields:

2. Excel was able to open the file by repairing or removing the unreadable content. Removed records: Merge cells from /xl/worksheets/sheet2.xml part

I am using Python version 3.4.3 and XlsxWriter 0.8.6 and Excel 2016.

Here is some code that demonstrates the problem:

``` python

import xlsxwriter

workbook = xlsxwriter.Workbook('range.xlsx')
worksheet = workbook.add_worksheet()

merge_format = workbook.add_format({
    'bold': True,
    'align': 'center'
})

headings = (
    ("Alpha", "L1:W1"),
    ("Bravo", "X1:AI1"),
    ("Charlie", "AJ1:AU1"),
    ("Delta", "AV1:BG1"))

for (product, coordinates) in headings:
    # The bug being demonstrated: the range is hard-coded instead of using
    # `coordinates`, so all four headings are merged into "L1:W1".
    worksheet.merge_range("L1:W1", product, merge_format)

```

Granted, it was my program error that caused the repeated `merge_range` calls on the same coordinates. My guess is that all four `merge_range` calls were committed to the spreadsheet and, when Excel saw all four merges on the same range, it threw its hands up. In recovering the spreadsheet, Excel used the last value, Delta, in the range.

I did not have time to test whether a similar error occurs if I write to the same cell repeatedly. I hope to follow up with a similar test in a couple of days.
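A caller-side check could catch this kind of mistake before the workbook is written. The following is a minimal sketch that does not use XlsxWriter at all; `find_repeated_ranges` is a hypothetical helper, and it only detects ranges that are repeated verbatim, not ranges that partially overlap.

``` python
def find_repeated_ranges(ranges):
    """Return the range strings that occur more than once, in first-repeat order."""
    seen = set()
    repeated = []
    for cell_range in ranges:
        if cell_range in seen and cell_range not in repeated:
            repeated.append(cell_range)
        seen.add(cell_range)
    return repeated


# The ranges actually passed to merge_range() by the buggy loop above.
used = ["L1:W1", "L1:W1", "L1:W1", "L1:W1"]
print(find_repeated_ranges(used))
```

Running the same check on the intended coordinates (`"L1:W1"`, `"X1:AI1"`, `"AJ1:AU1"`, `"AV1:BG1"`) returns an empty list.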

[range.xlsx](https://github.com/jmcnamara/XlsxWriter/files/247344/range.xlsx)

| closed | 2016-05-03T17:07:45Z | 2020-10-21T11:09:43Z | https://github.com/jmcnamara/XlsxWriter/issues/351 | [ "bug" ] | tmoorebetazi | 9 |

globaleaks/globaleaks-whistleblowing-software | sqlalchemy | 3,242 | KeyError Mapping key not found - error received by admins on v4.9.9 | 

Version: 4.9.9

```

KeyError Mapping key not found.

Traceback (most recent call last):

File "/usr/lib/python3/dist-packages/twisted/internet/defer.py", line 151, in maybeDeferred

result = f(*args, **kw)

File "/usr/lib/python3/dist-packages/globaleaks/rest/decorators.py", line 56, in wrapper

return f(self, *args, **kwargs)

File "/usr/lib/python3/dist-packages/globaleaks/rest/decorators.py", line 42, in wrapper

return f(self, *args, **kwargs)

File "/usr/lib/python3/dist-packages/globaleaks/rest/decorators.py", line 30, in wrapper

return f(self, *args, **kwargs)

File "/usr/lib/python3/dist-packages/globaleaks/handlers/operation.py", line 20, in put

return func(self, request['args'], *args, **kwargs)

File "/usr/lib/python3/dist-packages/globaleaks/handlers/rtip.py", line 600, in grant_tip_access

return tw(db_grant_tip_access, self.request.tid, self.session.user_id, self.session.cc, rtip_id, req_args['receiver'])

KeyError: 'receiver'

``` | closed | 2022-06-29T09:58:45Z | 2022-07-06T09:34:04Z | https://github.com/globaleaks/globaleaks-whistleblowing-software/issues/3242 | [] | aetdr | 4 |

open-mmlab/mmdetection | pytorch | 11,465 | get_flops.py file requires different commands than those in the instructions document | The documentation gives this example command:

python tools/analysis_tools/get_flops.py ${CONFIG_FILE} [--shape ${INPUT_SHAPE}]

However, the parameters requested in this file are shown in the image below

What should I do if I want to change the image size like before? Please update the instructions document, thanks. | open | 2024-02-06T10:06:23Z | 2024-03-23T12:36:53Z | https://github.com/open-mmlab/mmdetection/issues/11465 | [] | Jano-rm-rf | 1 |

koxudaxi/datamodel-code-generator | pydantic | 1,653 | 'NO'/"NO" (string enum value) gets serialized to `False_` ? | I have a really bizarre issue where the string 'NO' (ISO country code for Norway - we need this literal string to be an enum value), as part of an enum, is getting serialized to `False`.

We have an enum of ISO codes that includes the literal string `'NO'` - I have tried this without quotes, and with both single and double quotes.

```

MyModel:

  type: object
  additionalProperties: false
  required:
    - country_code
  properties:
    country_code:
      type: string
      example: US
      enum:
        - 'AE'
        - 'AR'
        ...
        - 'NL'
        - 'NO'    <----------- have tried just NO, 'NO', and "NO"
        - 'NZ'
        ...

using the generation flags:

```

--strict-types str bool

--set-default-enum-member

--enum-field-as-literal one

--use-default

--strict-nullable

--collapse-root-models

--output-model-type pydantic_v2.BaseModel

```

we get the following output!

```

class CountryCode(Enum):

    AE = "AE"
    AR = "AR"
    ...
    NL = "NL"
    False_ = False    <------------- !!
    NZ = "NZ"
    ...

```

`'NO'` becomes `False`! Can you please let me know how to not do that?
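For context, YAML 1.1 treats a set of unquoted scalars, including `NO`, as booleans. Whether that is the full explanation here is an assumption, since quoting did not help in this report. The sketch below uses only the standard library to flag enum values that match the YAML 1.1 boolean literals; `boolean_lookalikes` and the sample list are illustrative, not part of datamodel-code-generator.

```python
import re

# Boolean literals from the YAML 1.1 "bool" type definition.
YAML11_BOOL = re.compile(
    r"y|Y|yes|Yes|YES|n|N|no|No|NO"
    r"|true|True|TRUE|false|False|FALSE"
    r"|on|On|ON|off|Off|OFF"
)


def boolean_lookalikes(values):
    """Return the values a YAML 1.1 parser could read as booleans when unquoted."""
    return [value for value in values if YAML11_BOOL.fullmatch(value)]


codes = ["AE", "AR", "NL", "NO", "NZ"]
print(boolean_lookalikes(codes))
```

Of the sample codes, only `NO` is flagged, which matches the single enum member that changes in the generated output.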

Thanks much

| open | 2023-11-03T11:36:30Z | 2023-11-04T17:16:57Z | https://github.com/koxudaxi/datamodel-code-generator/issues/1653 | [ "documentation" ] | tommyjcarpenter | 10 |

zappa/Zappa | flask | 870 | [Migrated] Update fails while updating `endpoint_url` with `base_path` when `use_apigateway` is False | Originally from: https://github.com/Miserlou/Zappa/issues/2123 by [jwilges](https://github.com/jwilges)

Update fails while updating `endpoint_url` with `base_path` when `use_apigateway` is `False`.

I believe this issue is similar to issue #1563 but I am trying to scope this new issue to be a small and easily-auditable patch so we can get it in master sooner than later. If this patch also happens to resolve all of the concerns in #1563, great; but if not, that ticket can remain open for further review.

## Context

Build environment:

- Python 3.8.2 virtual environment

- Zappa 0.51.0

## Expected Behavior

Update should complete without raising a `NoneType` exception and should show the endpoint's URL based on `domain_name` and `base_path` settings.

## Actual Behavior

```

(pip 19.2.3 (/opt/python-build/lib/python3.8/site-packages), Requirement.parse('pip>=20.0'), {'pip-tools'})

Calling update for stage prd..

100%|██████████| 2.97M/2.97M [00:00<00:00, 8.37MB/s]

100%|██████████| 27.4M/27.4M [00:18<00:00, 1.50MB/s]Downloading and installing dependencies..

- psycopg2-binary==2.8.5: Downloading

Packaging project as zip.

Uploading api-prd-1592447425.zip (26.2MiB)..

Updating Lambda function code..

Updating Lambda function configuration..

Scheduling..

Unscheduled api-prd-zappa-keep-warm-handler.keep_warm_callback.

Scheduled api-prd-zappa-keep-warm-handler.keep_warm_callback with expression rate(4 minutes)!

Oh no! An error occurred! :(

==============

==============

Need help? Found a bug? Let us know! :D

File bug reports on GitHub here: https://github.com/Miserlou/Zappa

And join our Slack channel here: https://slack.zappa.io

Love!,

Traceback (most recent call last):

File "/opt/python-build/lib/python3.8/site-packages/zappa/cli.py", line 2778, in handle

sys.exit(cli.handle())

File "/opt/python-build/lib/python3.8/site-packages/zappa/cli.py", line 512, in handle

self.dispatch_command(self.command, stage)

File "/opt/python-build/lib/python3.8/site-packages/zappa/cli.py", line 559, in dispatch_command

self.update(self.vargs['zip'], self.vargs['no_upload'])

File "/opt/python-build/lib/python3.8/site-packages/zappa/cli.py", line 1043, in update

endpoint_url += '/' + self.base_path

TypeError: unsupported operand type(s) for +=: 'NoneType' and 'str'

~ Team Zappa!

```

## Possible Fix

Please see [revision 1807e97](https://github.com/jwilges/Zappa/commit/1807e973bfc9e606d38a03b2bf95c572d5af97dc) in my fork of Zappa for a fix I have tested.

In a nutshell, Zappa should generally:

1. avoid concatenating onto an `endpoint_url` that is `None`, and

2. build an `endpoint_url` from the `domain_name` and `base_path` settings whenever possible
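The two points above can be sketched roughly as follows. This is not the code from the linked revision; `build_endpoint_url` is a hypothetical standalone helper, and the `https://` prefix for a bare domain name is an assumption made for illustration.

```python
def build_endpoint_url(endpoint_url=None, domain_name=None, base_path=None):
    """Return a displayable endpoint URL, or None if none can be built."""
    # Prefer the custom domain when one is configured (assumed to be served over HTTPS).
    if domain_name:
        endpoint_url = domain_name
        if not endpoint_url.startswith("https://"):
            endpoint_url = "https://" + endpoint_url

    # Only append the base path when there is a URL to append it to,
    # which avoids the `NoneType += str` error from the traceback.
    if endpoint_url and base_path:
        endpoint_url = endpoint_url.rstrip("/") + "/" + base_path.strip("/")

    return endpoint_url


print(build_endpoint_url(None, None, "api"))
print(build_endpoint_url(None, "example.com", "api"))
```

With no API Gateway URL and no domain name, the helper returns `None` instead of raising, so the caller can decide whether to print an endpoint at all.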

## Behavior with Fix

With the fix I proposed above, here is Zappa's new output from `update`:

```

+ zappa update prd

Important! A new version of Zappa is available!

Upgrade with: pip install zappa --upgrade

Visit the project page on GitHub to see the latest changes: https://github.com/Miserlou/Zappa

Calling update for stage prd..

100%|██████████| 768k/768k [00:00<00:00, 5.64MB/s]

100%|██████████| 2.97M/2.97M [00:00<00:00, 10.0MB/s]

100%|██████████| 58.6k/58.6k [00:00<00:00, 1.44MB/s]

100%|██████████| 43.0M/43.0M [00:24<00:00, 1.74MB/s]Downloading and installing dependencies..

- typed-ast==1.4.1: Downloading

- regex==2020.5.7: Using locally cached manylinux wheel

- psycopg2-binary==2.8.5: Downloading

- lazy-object-proxy==1.4.3: Downloading

- coverage==5.1: Using locally cached manylinux wheel

Packaging project as zip.

Uploading api-prd-1592520783.zip (41.0MiB)..

Updating Lambda function code..

Updating Lambda function configuration..

Scheduling..

Unscheduled api-prd-zappa-keep-warm-handler.keep_warm_callback.

Scheduled api-prd-zappa-keep-warm-handler.keep_warm_callback with expression rate(4 minutes)!

Your updated Zappa deployment is live!

```

*(Apologies for switching the package dependencies; the package is much larger in this demo, but that is unrelated to this patch.)*

The last low-hanging fruit I noticed is that Zappa was not augmenting `deployed_string` with the `endpoint_url` when `use_apigateway` was `False`. So, I added another small patch to address that issue, see: [revision 7a44ee7](https://github.com/jwilges/Zappa/commit/7a44ee75bebb27212e2a31cc88c96cb9efa45c5b).

## Steps to Reproduce
