repo_name (string, length 9-75) | topic (string, 30 classes) | issue_number (int64, 1-203k) | title (string, length 1-976) | body (string, length 0-254k) | state (string, 2 classes) | created_at (string, length 20) | updated_at (string, length 20) | url (string, length 38-105) | labels (list, length 0-9) | user_login (string, length 1-39) | comments_count (int64, 0-452) |
|---|---|---|---|---|---|---|---|---|---|---|---|

deepset-ai/haystack | machine-learning | 8,930 | Remove explicit mention of Haystack "2.x" in cookbooks | closed | 2025-02-25T10:56:07Z | 2025-03-11T09:05:31Z | https://github.com/deepset-ai/haystack/issues/8930 | [

"P2"

] | julian-risch | 0 | |

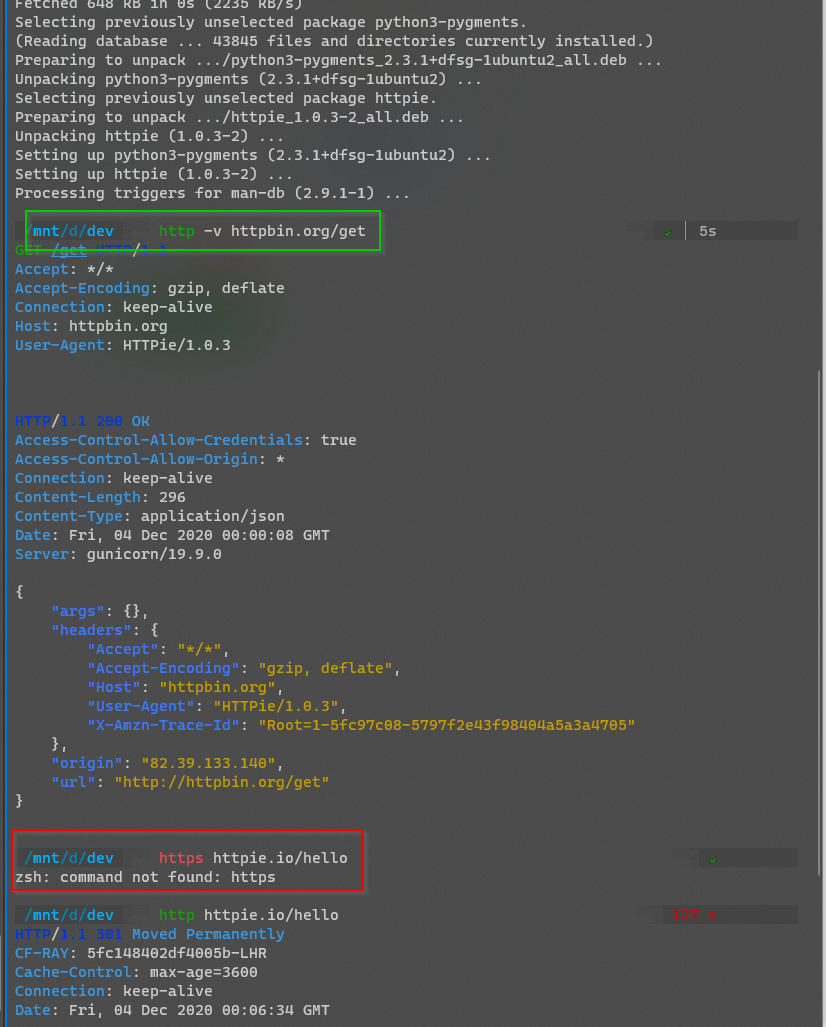

httpie/cli | api | 1,001 | https command not found after fresh installation | Hi guys,

Any time I install httpie on Ubuntu (`sudo apt-get install httpie`), the `http` command works perfectly fine afterwards.

However, `https` is never found.

I have had this on all machines where I tried this so far, WSL on Windows, as well as native Ubuntu 19.x and 20.x.

What do I need to do to get https command working as well, and what needs to change in the installation instructions, because I can't imagine I'm the only one encountering this? | closed | 2020-12-04T00:11:38Z | 2021-09-22T15:56:45Z | https://github.com/httpie/cli/issues/1001 | [

"packaging"

] | batjko | 3 |

autogluon/autogluon | data-science | 3,838 | [BUG] GPU is not used in v1.0.0 | **Bug Report Checklist**

<!-- Please ensure at least one of the following to help the developers troubleshoot the problem: -->

- [x] I provided code that demonstrates a minimal reproducible example. <!-- Ideal, especially via source install -->

- [x] I confirmed bug exists on the latest mainline of AutoGluon via source install. <!-- Preferred -->

- [x] I confirmed bug exists on the latest stable version of AutoGluon. <!-- Unnecessary if prior items are checked -->

**Describe the bug**

I specified num_gpus=2 in predictor.fit() but GPUs are not used at all during training. However, if I specify "ag.num_gpus" for the CAT model, the GPUs are used as normal. This problem only exists in v1.0.0.

**Expected behavior**

GPUs should be used when num_gpus=2 is specified in predictor.fit()

**To Reproduce**

(this code fails to use gpus)

predictor = TabularPredictor(label='target', eval_metric='accuracy', groups='groups')

predictor.fit(df_train, num_gpus=2, hyperparameters={'CAT': {}}, presets='medium_quality')

(this code uses gpus)

predictor = TabularPredictor(label='target', eval_metric='accuracy', groups='groups')

predictor.fit(df_train, num_gpus=2, hyperparameters={'CAT': {'ag.num_gpus': 1}}, presets='medium_quality')

**Installed Versions**

v1.0.0

```python

INSTALLED VERSIONS

------------------

date : 2023-12-22

time : 16:09:39.297051

python : 3.10.12.final.0

OS : Linux

OS-release : 5.4.0-166-generic

Version : #183-Ubuntu SMP Mon Oct 2 11:28:33 UTC 2023

machine : x86_64

processor : x86_64

num_cores : 128

cpu_ram_mb : 1031560.64453125

cuda version : 12.535.54.03

num_gpus : 2

gpu_ram_mb : [23673, 24203]

avail_disk_size_mb : 574503

accelerate : 0.21.0

async-timeout : 4.0.2

autogluon : 1.0.0

autogluon.common : 1.0.0

autogluon.core : 1.0.0

autogluon.eda : 0.8.1b20230802

autogluon.features : 1.0.0

autogluon.multimodal : 1.0.0

autogluon.tabular : 1.0.0

autogluon.timeseries : 1.0.0

boto3 : 1.28.15

catboost : 1.2.2

defusedxml : 0.7.1

evaluate : 0.4.1

fastai : 2.7.12

gluonts : 0.14.3

hyperopt : 0.2.7

imodels : 1.3.18

ipython : 8.12.2

ipywidgets : 8.0.7

jinja2 : 3.1.2

joblib : 1.3.1

jsonschema : 4.17.3

lightgbm : 3.3.5

lightning : 2.0.9.post0

matplotlib : 3.6.3

missingno : 0.5.2

mlforecast : 0.10.0

networkx : 3.1

nlpaug : 1.1.11

nltk : 3.8.1

nptyping : 2.4.1

numpy : 1.24.4

nvidia-ml-py3 : 7.352.0

omegaconf : 2.2.3

onnxruntime-gpu : 1.13.1

openmim : 0.3.9

orjson : 3.9.10

pandas : 2.1.4

phik : 0.12.3

Pillow : 10.1.0

psutil : 5.9.5

PyMuPDF : 1.21.1

pyod : 1.0.9

pytesseract : 0.3.10

pytorch-lightning : 2.0.9.post0

pytorch-metric-learning: 1.7.3

ray : 2.6.3

requests : 2.31.0

scikit-image : 0.19.3

scikit-learn : 1.3.0

scikit-learn-intelex : None

scipy : 1.11.1

seaborn : 0.12.2

seqeval : 1.2.2

setuptools : 68.0.0

shap : 0.41.0

skl2onnx : 1.13

statsforecast : 1.4.0

statsmodels : 0.14.0

suod : 0.0.9

tabpfn : 0.1.9

tensorboard : 2.14.1

text-unidecode : 1.3

timm : 0.9.12

torch : 2.0.1

torchmetrics : 1.1.2

torchvision : 0.15.2

tqdm : 4.65.0

transformers : 4.31.0

utilsforecast : 0.0.10

vowpalwabbit : 9.4.0

xgboost : 1.7.6

yellowbrick : 1.5

```

</details>

| open | 2023-12-22T16:10:14Z | 2024-11-05T18:04:12Z | https://github.com/autogluon/autogluon/issues/3838 | [

"bug",

"module: tabular",

"Needs Triage",

"priority: 1"

] | hanxuh-hub | 0 |

huggingface/diffusers | deep-learning | 10,969 | Run FLUX-controlnet zero3 training failed: 'weight' must be 2-D | ### Describe the bug

I am attempting to use Zero-3 for Flux Controlnet training on 8 GPUs following the guidance of [README](https://github.com/huggingface/diffusers/blob/main/examples/controlnet/README_flux.md#apply-deepspeed-zero3). The error below occurred:

```

[rank0]: RuntimeError: 'weight' must be 2-D

```

### Reproduction

accelerate config:

```

compute_environment: LOCAL_MACHINE

debug: false

deepspeed_config:

gradient_accumulation_steps: 8

offload_optimizer_device: cpu

offload_param_device: cpu

zero3_init_flag: true

zero3_save_16bit_model: true

zero_stage: 3

distributed_type: DEEPSPEED

downcast_bf16: 'no'

enable_cpu_affinity: false

machine_rank: 0

main_training_function: main

mixed_precision: bf16

num_machines: 1

num_processes: 8

rdzv_backend: static

same_network: true

tpu_env: []

tpu_use_cluster: false

tpu_use_sudo: false

use_cpu: false

```

training command:

```

accelerate launch --config_file "./accelerate_config_zero3.yaml" train_controlnet_flux_zero3.py --pretrained_model_name_or_path=/srv/mindone/wty/flux.1-dev/ --jsonl_for_train=/srv/mindone/wty/diffusers/examples/controlnet/train_1000.jsonl --conditioning_image_column=conditioning_image --image_column=image --caption_column=text --output_dir=/srv/mindone/wty/diffusers/examples/controlnet/single_layer --mixed_precision="bf16" --resolution=512 --learning_rate=1e-5 --max_train_steps=100 --train_batch_size=1 --gradient_accumulation_steps=8 --num_double_layers=4 --num_single_layers=0 --seed=42 --gradient_checkpointing --cache_dir=/srv/mindone/wty/diffusers/examples/controlnet/cache --dataloader_num_workers=8 --resume_from_checkpoint="latest"

```

### Logs

```shell

Map: 0%| | 0/1000 [00:00<?, ? examples/s]

[rank0]: Traceback (most recent call last):

[rank0]: File "/srv/mindone/wty/diffusers/examples/controlnet/train_controlnet_flux_zero3.py", line 1481, in <module>

[rank0]: main(args)

[rank0]: File "/srv/mindone/wty/diffusers/examples/controlnet/train_controlnet_flux_zero3.py", line 1182, in main

[rank0]: train_dataset = train_dataset.map(

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/datasets/arrow_dataset.py", line 562, in wrapper

[rank0]: out: Union["Dataset", "DatasetDict"] = func(self, *args, **kwargs)

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/datasets/arrow_dataset.py", line 3079, in map

[rank0]: for rank, done, content in Dataset._map_single(**dataset_kwargs):

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/datasets/arrow_dataset.py", line 3519, in _map_single

[rank0]: for i, batch in iter_outputs(shard_iterable):

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/datasets/arrow_dataset.py", line 3469, in iter_outputs

[rank0]: yield i, apply_function(example, i, offset=offset)

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/datasets/arrow_dataset.py", line 3392, in apply_function

[rank0]: processed_inputs = function(*fn_args, *additional_args, **fn_kwargs)

[rank0]: File "/srv/mindone/wty/diffusers/examples/controlnet/train_controlnet_flux_zero3.py", line 1094, in compute_embeddings

[rank0]: prompt_embeds, pooled_prompt_embeds, text_ids = flux_controlnet_pipeline.encode_prompt(

[rank0]: File "/srv/mindone/wty/diffusers/src/diffusers/pipelines/flux/pipeline_flux_controlnet.py", line 396, in encode_prompt

[rank0]: pooled_prompt_embeds = self._get_clip_prompt_embeds(

[rank0]: File "/srv/mindone/wty/diffusers/src/diffusers/pipelines/flux/pipeline_flux_controlnet.py", line 328, in _get_clip_prompt_embeds

[rank0]: prompt_embeds = self.text_encoder(text_input_ids.to(device), output_hidden_states=False)

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1739, in _wrapped_call_impl

[rank0]: return self._call_impl(*args, **kwargs)

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1750, in _call_impl

[rank0]: return forward_call(*args, **kwargs)

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/transformers/models/clip/modeling_clip.py", line 1056, in forward

[rank0]: return self.text_model(

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1739, in _wrapped_call_impl

[rank0]: return self._call_impl(*args, **kwargs)

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1750, in _call_impl

[rank0]: return forward_call(*args, **kwargs)

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/transformers/models/clip/modeling_clip.py", line 947, in forward

[rank0]: hidden_states = self.embeddings(input_ids=input_ids, position_ids=position_ids)

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1739, in _wrapped_call_impl

[rank0]: return self._call_impl(*args, **kwargs)

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1750, in _call_impl

[rank0]: return forward_call(*args, **kwargs)

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/transformers/models/clip/modeling_clip.py", line 292, in forward

[rank0]: inputs_embeds = self.token_embedding(input_ids)

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1739, in _wrapped_call_impl

[rank0]: return self._call_impl(*args, **kwargs)

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1750, in _call_impl

[rank0]: return forward_call(*args, **kwargs)

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/torch/nn/modules/sparse.py", line 190, in forward

[rank0]: return F.embedding(

[rank0]: File "/home/miniconda3/envs/flux-perf/lib/python3.9/site-packages/torch/nn/functional.py", line 2551, in embedding

[rank0]: return torch.embedding(weight, input, padding_idx, scale_grad_by_freq, sparse)

[rank0]: RuntimeError: 'weight' must be 2-D

```

### System Info

- 🤗 Diffusers version: 0.33.0.dev0(HEAD on #10945)

- Platform: Linux-4.15.0-156-generic-x86_64-with-glibc2.27

- Running on Google Colab?: No

- Python version: 3.9.21

- PyTorch version (GPU?): 2.6.0+cu124 (True)

- Flax version (CPU?/GPU?/TPU?): not installed (NA)

- Jax version: not installed

- JaxLib version: not installed

- Huggingface_hub version: 0.29.1

- Transformers version: 4.49.0

- Accelerate version: 1.4.0

- PEFT version: not installed

- Bitsandbytes version: not installed

- Safetensors version: 0.5.3

- xFormers version: not installed

- Accelerator: NVIDIA A100-SXM4-80GB, 81920 MiB

NVIDIA A100-SXM4-80GB, 81920 MiB

NVIDIA A100-SXM4-80GB, 81920 MiB

NVIDIA A100-SXM4-80GB, 81920 MiB

NVIDIA A100-SXM4-80GB, 81920 MiB

NVIDIA A100-SXM4-80GB, 81920 MiB

NVIDIA A100-SXM4-80GB, 81920 MiB

NVIDIA A100-SXM4-80GB, 81920 MiB

### Who can help?

@yiyixuxu @sayakpaul | open | 2025-03-05T02:14:09Z | 2025-03-24T02:24:04Z | https://github.com/huggingface/diffusers/issues/10969 | [

"bug"

] | alien-0119 | 1 |

tensorflow/tensor2tensor | deep-learning | 1,212 | Loading weights before decoding starts in interactive decoding | Hi,

I am trying to use interactive decoding using

```

t2t-decoder \

--data_dir=$DATA_DIR \

--problem=$PROBLEM \

--model=$MODEL \

--hparams_set=$HPARAMS \

--output_dir=$TRAIN_DIR \

--decode_hparams="beam_size=$BEAM_SIZE,alpha=$ALPHA" \

--decode_interactive

```

In this case, the checkpoint is loaded only after I enter the first sentence; after that, the decoder accepts inputs continuously. I am not sure whether this is a bug or intended behaviour. Is it possible to load the model before the first sentence is entered, so that decoding starts as soon as a sentence arrives?

Thank you. | open | 2018-11-12T05:41:26Z | 2018-11-13T06:14:05Z | https://github.com/tensorflow/tensor2tensor/issues/1212 | [] | sugeeth14 | 2 |

Johnserf-Seed/TikTokDownload | api | 375 | Cookie does not stay valid for long [BUG] | closed | 2023-03-29T10:07:15Z | 2023-04-03T07:11:36Z | https://github.com/Johnserf-Seed/TikTokDownload/issues/375 | [

"故障(bug)",

"额外求助(help wanted)",

"无效(invalid)"

] | SCxiaozhouM | 1 | |

plotly/dash-core-components | dash | 67 | `dcc.DatePickerSingle` and `dcc.DatePickerRange` missing `style` and `className` properties | One of the recent updates gave all components `style` and `className` properties to make styling easier. The two components added for date picking are missing them.

That can be useful when one wants to disable the border on them to make them blend in better, or just change the cursor to a pointer when hovering. | open | 2017-08-30T13:32:20Z | 2019-09-22T16:30:09Z | https://github.com/plotly/dash-core-components/issues/67 | [

"dash-type-enhancement"

] | radekwlsk | 3 |

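hankcs/HanLP | nlp | 727 | How to use only a custom dictionary in index segmentation | ## Notes

Please confirm the following:

* I have carefully read the following documents and did not find an answer:

- [Home page documentation](https://github.com/hankcs/HanLP)

- [wiki](https://github.com/hankcs/HanLP/wiki)

- [FAQ](https://github.com/hankcs/HanLP/wiki/FAQ)

* I have searched for my problem with [Google](https://www.google.com/#newwindow=1&q=HanLP) and the [issue search](https://github.com/hankcs/HanLP/issues) and did not find an answer either.

* I understand that the open source community is a free community brought together by shared interest and bears no responsibility or obligation. I will be polite and thank everyone who helps me.

* [x] I have entered an x in these brackets to confirm the items above.

## Version

The current latest version is: 1.5.2

The version I am using is: 1.3.4

## My question

Hello, I would like index segmentation to use only my custom dictionary, with all other dictionaries turned off. Is there a way to do this?

Because index segmentation supports full segmentation, I hope to use it to avoid the drawback of dictionary-based segmentation, which can only match the longest word.

I look forward to your reply. Thank you.

## Reproducing the problem

The dictionary contains "夏洛特烦恼 n, 夏洛 nr"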

List<Term> termList = IndexTokenizer.segment("夏洛特烦恼");

### Expected output

```

夏洛特烦恼/n [0:5]

夏洛/nr [0:2]

```

### Actual output

```

夏洛特烦恼/n [0:5]

夏洛/nr [0:2]

夏洛特/nrf [0:3]

烦恼/an [3:5]

```

| closed | 2017-12-29T07:42:58Z | 2018-01-18T10:08:55Z | https://github.com/hankcs/HanLP/issues/727 | [

"question"

] | jimmy-walker | 4 |

psf/requests | python | 6,763 | Body with Special Characters Gets Cut | When sending a request with special characters using the requests module, the request gets cut and is not sent fully.

This seems to be caused by the requests module calculating the length of the original string, but once the request arrives at the urllib3 module, the urllib3 module encodes the request and calculates the content length again. Unfortunately, because the request content length was already calculated and included in the headers dictionary, it gets overwritten.
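The length mismatch described above can be checked without sending a request. The following is a minimal sketch, assuming the body is encoded as UTF-8 before it goes on the wire (consistent with the truncated request in this report, but not confirmed here); the variable names are illustrative only.

```python
# The payload from this report; each "×" (U+00D7) is one character
# but two bytes once encoded as UTF-8 (assumed encoding).
body = '{"test": "××××"}'

char_count = len(body)                  # length of the str, in characters
byte_count = len(body.encode("utf-8"))  # length of the encoded payload, in bytes

# Simulate a receiver that trusts a Content-Length equal to the character count.
truncated = body.encode("utf-8")[:char_count].decode("utf-8")

print(char_count, byte_count)  # 16 20
print(truncated)               # {"test": "×××
```

If that assumption holds, encoding the payload first (for example `data=body.encode("utf-8")`) should let the length be computed from the bytes that are actually sent.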

## Expected Result

The request should be sent fully, including all special characters.

## Actual Result

The request gets cut off, and not all data is sent.

## Reproduction Steps

```python

import requests

### Note that the x are special characters ###

response = requests.post(url='http://127.0.0.1', data="""{"test": "××××"}""")

print(response.text)

```

## Example of the actual request:

```

POST / HTTP/1.1

Host: 127.0.0.1

User-Agent: python-requests/2.31.0

Accept-Encoding: gzip, deflate, br

Accept: */*

Connection: keep-alive

Content-Length: 16

{"test": "×××

```

It looks like the overwrite happens here:

[urllib3 connection.py#L396](https://github.com/urllib3/urllib3/blob/0ce5a89a81943e0153d3655415192e2d82f080cf/src/urllib3/connection.py#L396)

## System Information

$ python -m requests.help

```json

{

"chardet": {

"version": null

},

"charset_normalizer": {

"version": "3.3.2"

},

"cryptography": {

"version": ""

},

"idna": {

"version": "3.7"

},

"implementation": {

"name": "CPython",

"version": "3.11.9"

},

"platform": {

"release": "23.5.0",

"system": "Darwin"

},

"pyOpenSSL": {

"openssl_version": "",

"version": null

},

"requests": {

"version": "2.31.0"

},

"system_ssl": {

"version": "300000d0"

},

"urllib3": {

"version": "2.0.7"

},

"using_charset_normalizer": true,

"using_pyopenssl": false

}

```

<!-- This command is only available on Requests v2.16.4 and greater. Otherwise,

please provide some basic information about your system (Python version,

operating system, &c). -->

| closed | 2024-07-08T14:33:37Z | 2024-07-08T18:35:44Z | https://github.com/psf/requests/issues/6763 | [] | Boris-Rozenfeld | 9 |

matplotlib/matplotlib | data-science | 29,595 | [Bug]: Setting alpha with an array is ignored with jupyterlab %inline backend | ### Bug summary

If alpha is set as an array (of equal shape as the image data), the alpha value is ignored.

This is failing for inline plots in Jupyter Lab.

### Code for reproduction

```Python

import matplotlib

print(matplotlib.__version__)

import numpy as np

import matplotlib.pyplot as plt

img_data = np.random.rand(256, 200)

alpha = np.ones((256, 200))

alpha[:, 0:100] = 0.5

fig, ax = plt.subplots(1, 2, figsize=(10, 8))

ax[0].imshow(img_data, alpha=alpha)

ax[1].imshow(alpha)

```

### Actual outcome

<img width="894" alt="Image" src="https://github.com/user-attachments/assets/dde97c0a-f48b-436f-ad7d-174784bae280" />

### Expected outcome

<img width="869" alt="Image" src="https://github.com/user-attachments/assets/2afff9fe-b9bf-465f-9013-3deda8de3f1a" />

### Additional information

Worked with Matplotlib 3.9.4 with numpy 2.2.1

Fails in matplotlib 3.10.0 (same numpy), running in a Jupyter Lab cell.

Worked as expected when run from the command line, with backend 'macosx', or if `%matplotlib osx` or `%matplotlib ipympl` is used to decorate the cell before running the code above.

### Operating system

OS/X

### Matplotlib Version

3.10.0

### Matplotlib Backend

inline

### Python version

3.13.1

### Jupyter version

4.3.4

### Installation

conda | open | 2025-02-09T02:21:38Z | 2025-02-10T16:14:46Z | https://github.com/matplotlib/matplotlib/issues/29595 | [] | rhiannonlynne | 5 |

SALib/SALib | numpy | 41 | Compute Si for multiple outputs in parallel | It would be good to extend the existing Morris analysis code so that multiple results vectors could be computed from one call, with results passed as a numpy array, rather than just a vector.

At present, it is necessary to loop over each output you wish to compute the metrics for, calling the analysis procedure each time.

``` python

import SALib.analyze.morris

for results in array_of_results:

Si.append(analyze(problem, X, results))

```

It would be preferable to do this:

``` python

import SALib.analyze.morris

Si = analyze(problem, X, array_of_results)

```

A parallel implementation would be equally desirable, and trivial, as each output can be computed independently of the others.
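As a rough sketch of the idea, the wrapper below applies an analysis function to each output vector independently and dispatches the calls through a thread pool. `analyze_columns` and `toy_analyze` are hypothetical names used for illustration, not part of SALib, and plain lists stand in for the numpy array mentioned above.

```python
from concurrent.futures import ThreadPoolExecutor


def analyze_columns(analyze_fn, problem, X, outputs, max_workers=None):
    """Apply analyze_fn(problem, X, y) to each output vector y.

    outputs is a sequence of result vectors, one per model output.
    Returns the per-output results in the same order as outputs.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Each output is independent of the others, so the calls can be
        # dispatched concurrently; pool.map preserves the input order.
        return list(pool.map(lambda y: analyze_fn(problem, X, y), outputs))


# Hypothetical stand-in for a real analysis routine: the mean of the outputs.
def toy_analyze(problem, X, y):
    return {"names": problem["names"], "mean": sum(y) / len(y)}


problem = {"names": ["x1", "x2"]}
X = [[0.0, 1.0], [1.0, 0.0]]
outputs = [[1.0, 3.0], [10.0, 30.0]]
Si = analyze_columns(toy_analyze, problem, X, outputs)
print([s["mean"] for s in Si])  # [2.0, 20.0]
```

Threads are used here only to keep the sketch short; for CPU-bound analysis a process pool would probably be the better fit.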

| open | 2015-03-09T15:22:54Z | 2023-12-08T12:31:50Z | https://github.com/SALib/SALib/issues/41 | [

"enhancement"

] | willu47 | 10 |

cvat-ai/cvat | tensorflow | 8,859 | Why does the position of an already marked annotation box change? | ### Actions before raising this issue

- [X] I searched the existing issues and did not find anything similar.

- [X] I read/searched [the docs](https://docs.cvat.ai/docs/)

### Steps to Reproduce

1. Edit a labeled object box drawn with the rectangle shape, for example by changing the size of the box.

2. Press the `F` key, then press the `D` key to go back to the image.

3. The box reverts to its original size instead of keeping the changed size.

Why does this happen, and how can it be fixed?

### Expected Behavior

_No response_

### Possible Solution

_No response_

### Context

_No response_

### Environment

_No response_ | closed | 2024-12-23T09:44:11Z | 2025-03-05T20:45:37Z | https://github.com/cvat-ai/cvat/issues/8859 | [

"bug"

] | jaffe-fly | 11 |

httpie/cli | python | 1,417 | some issues with the copy button | When I click the copy button, this is what gets copied:

"# Install httpie

choco install httpie"

So I think it would be helpful if I could copy just the "choco install httpie" line. | open | 2022-06-20T09:26:53Z | 2022-10-10T21:22:56Z | https://github.com/httpie/cli/issues/1417 | [

"bug",

"website"

] | alidauda | 3 |

apragacz/django-rest-registration | rest-api | 143 | Cannot install dev dependencies | ### Describe the bug

Running `make install_dev` with Python 3.8 crashes, which prevents the dev dependencies from being installed.

### Expected behavior

Dependencies installed.

### Actual behavior

Crashes with:

```log

ERROR: Cannot install -r requirements/requirements-dev.lock.txt (line 164) and ipython==7.16.1 because these package versions have conflicting dependencies.

The conflict is caused by:

The user requested ipython==7.16.1

ipdb 0.13.7 depends on ipython>=7.17.0

To fix this you could try to:

1. loosen the range of package versions you've specified

2. remove package versions to allow pip attempt to solve the dependency conflict

ERROR: ResolutionImpossible: for help visit https://pip.pypa.io/en/latest/user_guide/#fixing-conflicting-dependencies

```

### Steps to reproduce

```sh

git clone git@github.com:apragacz/django-rest-registration.git

cd django-rest-registration

# create virtual environment

git checkout 0.6.2 # optional

make install_dev

``` | closed | 2021-05-26T15:18:56Z | 2021-05-27T05:02:50Z | https://github.com/apragacz/django-rest-registration/issues/143 | [

"type:bug"

] | Neraste | 2 |

jadore801120/attention-is-all-you-need-pytorch | nlp | 209 | Performance Confusion | Hi, appreciate the awesome work, very impressive and concise implementation of the original paper!

One thing confuses me: is the performance benchmark linked on the [home page](https://github.com/jadore801120/attention-is-all-you-need-pytorch#performance) for the WMT 2016 dataset or the WMT 2017 dataset?

| open | 2023-05-26T06:55:57Z | 2023-05-26T08:21:02Z | https://github.com/jadore801120/attention-is-all-you-need-pytorch/issues/209 | [] | Zarca | 0 |

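yeongpin/cursor-free-vip | automation | 365 | [Discussion]: What to do once the quota reaches 150 | ### Issue checklist

- [x] I understand that Issues are for reporting and resolving problems, not a comment section for venting, and I will provide as much information as possible to help resolve the problem.

- [x] I confirm that I want to raise and discuss a question, not submit a bug report or a feature request.

- [x] I have read [Github Issues](https://github.com/yeongpin/cursor-free-vip/issues) and searched the existing [open Issues](https://github.com/yeongpin/cursor-free-vip/issues) and [closed Issues](https://github.com/yeongpin/cursor-free-vip/issues?q=is%3Aissue%20state%3Aclosed%20), and found no similar question.

### Platform

Windows x32

### Version

v1.7.17

### Your question

I registered for lifetime access with my own GitHub account, but it shows as a trial rather than Pro, with a quota of only 150.

### Additional information

```shell

```

### Priority

Low (look at it when you have time) | open | 2025-03-23T16:04:21Z | 2025-03-24T15:44:56Z | https://github.com/yeongpin/cursor-free-vip/issues/365 | [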

"question"

] | tianhuahao | 1 |

plotly/dash | flask | 2,547 | [BUG] Graphs in vertical tabs do not use available space | Installed Dash versions:

```

dash 2.9.2

dash-core-components 2.0.0

dash-html-components 2.0.0

dash-table 5.0.0

dash-testing-stub 0.0.2

```

Browsers/OS

- OS: Linux

- Browser ungoogled-chromium, firefox

**Describe the bug**

The width of the graph object does not extend to the right side of the screen. When removing `vertical=True` the graph fills the entire screen.

**Expected behavior**

The graph object should use the available space to the right.

**Screenshots**

`vertical=True`

`vertical=False`

**Minimal example used**

```

from dash import Dash, html, dcc

import plotly.express as px

import pandas as pd

app = Dash(__name__)

daily_profile = [0, 0, 0, 0, 0, 0, 0, 0.05, 0.15, 0.2, 0.4, 0.8, 0.7, 0.4, 0.2, 0.15, 0.05, 0, 0, 0, 0, 0, 0, 0]

daily_production = pd.DataFrame(data=daily_profile)

tab = dcc.Tab([dcc.Graph(figure=px.bar(daily_production))], label="tab")

app.layout = html.Div([

dcc.Tabs([tab]

, vertical=True # remove to fix graph behavior

)

])

if __name__ == "__main__":

app.run_server(debug=True)

```

| closed | 2023-05-28T13:11:46Z | 2023-05-31T13:06:14Z | https://github.com/plotly/dash/issues/2547 | [] | TheNyneR | 10 |

fastapi/sqlmodel | pydantic | 542 | Order of columns in the table created does not have 'id' first, despite the order in the SQLModel. Looks like it's prioritising fields with sa_column | ### First Check

- [X] I added a very descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the SQLModel documentation, with the integrated search.

- [X] I already searched in Google "How to X in SQLModel" and didn't find any information.

- [X] I already read and followed all the tutorial in the docs and didn't find an answer.

- [X] I already checked if it is not related to SQLModel but to [Pydantic](https://github.com/samuelcolvin/pydantic).

- [X] I already checked if it is not related to SQLModel but to [SQLAlchemy](https://github.com/sqlalchemy/sqlalchemy).

### Commit to Help

- [X] I commit to help with one of those options 👆

### Example Code

```python

from sqlmodel import Field, SQLModel, JSON, Column, Time

class MyTable(SQLModel, table=True):

id: int | None = Field(default=None, primary_key=True)

name: str

type: str

slug: str = Field(index=True, unique=True)

resource_data: dict | None = Field(default=None, sa_column=Column(JSON)) # type: ignore

# ... create engine

SQLModel.metadata.create_all(engine)

```

### Description

The CREATE table script generated for the model above ends up putting resource_data as the first column, instead of preserving the natural order of 'id' first

```

CREATE TABLE mytable (

resource_data JSON, <----- why is this the FIRST column created?

id SERIAL NOT NULL,

name VARCHAR NOT NULL,

type VARCHAR NOT NULL,

slug VARCHAR NOT NULL,

PRIMARY KEY (id)

)

```

This feels unusual when I inspect my postgresql tables in a db tool like pgAdmin.

How do I ensure the table is created with the 'natural' order?
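One plausible explanation, which is an assumption and not something confirmed in this issue, is that columns are emitted in the order in which the column objects were created, and that a column passed through `sa_column` is created while the class body runs, before the columns generated for the other fields. The toy model below uses a hypothetical `ToyColumn` class (not SQLModel or SQLAlchemy code) to show how that would produce the order seen in the CREATE TABLE statement above.

```python
import itertools

_counter = itertools.count()


class ToyColumn:
    """Hypothetical stand-in for a column that records when it was created."""

    def __init__(self, name=None):
        self.name = name
        self.creation_order = next(_counter)


# Step 1: the class body runs; only the explicitly constructed column exists.
explicit = {"resource_data": ToyColumn("resource_data")}

# Step 2: the remaining fields get columns generated afterwards, in field order.
field_order = ["id", "name", "type", "slug", "resource_data"]
columns = [explicit.get(f) or ToyColumn(f) for f in field_order]

# Sorting by creation order puts the explicitly constructed column first.
ddl_order = [c.name for c in sorted(columns, key=lambda c: c.creation_order)]
print(ddl_order)  # ['resource_data', 'id', 'name', 'type', 'slug']
```

If this is indeed the cause, avoiding an eagerly constructed column object for `resource_data` would be the direction to look in, but that is untested here.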

### Operating System

macOS

### Operating System Details

_No response_

### SQLModel Version

0.0.8

### Python Version

3.11.1

### Additional Context

_No response_ | open | 2023-01-29T14:11:08Z | 2024-11-22T11:57:33Z | https://github.com/fastapi/sqlmodel/issues/542 | [

"question"

] | epicwhale | 8 |

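ymcui/Chinese-LLaMA-Alpaca | nlp | 118 | How should inference be run with the merged, unquantized full Chinese LLaMA model? The original LLaMA inference code keeps reporting that the vocabulary size is not divisible | AssertionError: 49953 is not divisible by 2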

WARNING:torch.distributed.elastic.multiprocessing.api:Sending process 2276862 closing signal SIGTERM

ERROR:torch.distributed.elastic.multiprocessing.api:failed (exitcode: 1) local_rank: 0 (pid: 2276861) of binary: /home/platform/anaconda3/envs/hcs/bin/python

Traceback (most recent call last):

| closed | 2023-04-11T04:06:56Z | 2023-06-15T12:51:47Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca/issues/118 | [] | WUHU-G | 1 |

gee-community/geemap | streamlit | 1,525 | I encountered an error using function: netcdf_to_ee | <!-- Please search existing issues to avoid creating duplicates. -->

### Environment Information

```python

colab

```

### Description

Hello, Professor Wu. I hope to get your help. I want to load the netCDF file on the hard disk into colab and convert it into image type for subsequent calculation.

**The code is as follows:**

### Code

```python

image = geemap.netcdf_to_ee("/content/drive/MyDrive/VOD/Monthly/svodi_2005-09-01.nc", "svodi", band_names=None, lon="lon", lat="lat")

image = image.updateMask(image.neq(9999.0))

Map.addLayer(image)

Map

```

### Issue

```python

HttpError: <HttpError 400 when requesting https://earthengine.googleapis.com/v1alpha/projects/earthengine-legacy/maps?fields=name&alt=json returned "Request payload size exceeds the limit: 10485760 bytes.". Details: "Request payload size exceeds the limit: 10485760 bytes.">

During handling of the above exception, another exception occurred:

EEException Traceback (most recent call last)

[/usr/local/lib/python3.10/dist-packages/ee/data.py](https://localhost:8080/#) in _execute_cloud_call(call, num_retries)

337 return call.execute(num_retries=num_retries)

338 except googleapiclient.errors.HttpError as e:

--> 339 raise _translate_cloud_exception(e)

340

341

EEException: Request payload size exceeds the limit: 10485760 bytes.

```

| closed | 2023-04-29T14:22:48Z | 2023-04-29T14:55:42Z | https://github.com/gee-community/geemap/issues/1525 | [

"bug"

] | xlsadai | 1 |

MagicStack/asyncpg | asyncio | 1,094 | Provide wheels for Python 3.12 as installing without C compiler currently fails | * **asyncpg version**: 0.28.0

* **Python version**: 3.12

* **Platform**: Linux

* **Did you install asyncpg with pip?**: yes

Hey, could you please either build the wheels for 0.28.0 / Python 3.12 or make a new release? We are moving the codebase to Python 3.12 and installations are currently failing without a C compiler. Thanks! | closed | 2023-10-26T17:01:26Z | 2025-02-18T19:53:39Z | https://github.com/MagicStack/asyncpg/issues/1094 | [] | zyv | 4 |

dantaki/vapeplot | seaborn | 5 | Pip install error | `pip install` raises the following error

`Command "python setup.py egg_info" failed with error code 1 in /private/var/folders/_1/hdrhn2y9719c6vnr54tsk2tc0000gn/T/pip-build-sl19a10_/vapeplot/` | closed | 2018-02-04T18:25:02Z | 2018-02-06T18:07:35Z | https://github.com/dantaki/vapeplot/issues/5 | [] | Dpananos | 5 |

noirbizarre/flask-restplus | api | 548 | "return make_response(body, status)" behaves differently from "return body, status" when @marshal_with is used | With "return make_response(body, status)" the status value is ignored (and set to 200 by default).

With "return body, status" it isn't.

Is it an intended behavior?
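One way the difference could arise is sketched below as a toy model. It is not flask-restplus code, and whether the library behaves this way is an assumption: the decorator unpacks a `(body, status)` tuple but treats any other return value as the data to marshal, so a status stored on a response object is never read. `FakeResponse` and `toy_marshal_with` are hypothetical names.

```python
from functools import wraps


class FakeResponse:
    """Hypothetical stand-in for a response object that carries a status."""

    def __init__(self, body, status):
        self.body = body
        self.status = status


def toy_marshal_with(fields):
    """Simplified model of a marshalling decorator (assumed behaviour)."""

    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            resp = func(*args, **kwargs)
            if isinstance(resp, tuple):
                # (body, status): the status is unpacked and passed through.
                data, status = resp
                return {k: data.get(k) for k in fields}, status
            # Anything else is treated as the data itself, so a status
            # stored on a response object is never looked at.
            return {k: getattr(resp, k, None) for k in fields}, 200

        return wrapper

    return decorator


@toy_marshal_with(["name"])
def as_tuple():
    return {"name": "a"}, 404


@toy_marshal_with(["name"])
def as_response():
    return FakeResponse({"name": "a"}, 404)


print(as_tuple())     # ({'name': 'a'}, 404)
print(as_response())  # ({'name': None}, 200)
```

In this model the tuple form keeps the 404, while the response-object form loses both the status and the marshalled fields.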

It must be set somewhere here

https://github.com/noirbizarre/flask-restplus/blob/master/flask_restplus/marshalling.py#L248 | open | 2018-11-01T19:31:18Z | 2018-11-01T19:31:18Z | https://github.com/noirbizarre/flask-restplus/issues/548 | [] | andy-landy | 0 |

paperless-ngx/paperless-ngx | django | 8,795 | [BUG] Concise description of the issue | ### Description

While using devcontainer on VSCode I get the following error:

Start: Run: docker-compose -f /home/kanak/work/AI4Bhārat/contrib/paperless-ngx/.devcontainer/docker-compose.devcontainer.sqlite-tika.yml config

Stop (277 ms): Run: docker-compose -f /home/kanak/work/AI4Bhārat/contrib/paperless-ngx/.devcontainer/docker-compose.devcontainer.sqlite-tika.yml config

The Compose file '.../.devcontainer/docker-compose.devcontainer.sqlite-tika.yml' is invalid because:

**services.paperless-development.environment.PAPERLESS_DEBUG contains true, which is an invalid type, it should be a string, number, or a null**

### Steps to reproduce

1. Clone repo

2. Open in VS Code

3. Reopen in Container

### Webserver logs

```bash

No webserver logs.

```

### Browser logs

```bash

```

### Paperless-ngx version

2.14

### Host OS

Ubuntu 22.04.4 LTS

### Installation method

Other (please describe above)

### System status

```json

```

### Browser

_No response_

### Configuration changes

_No response_

### Please confirm the following

- [x] I believe this issue is a bug that affects all users of Paperless-ngx, not something specific to my installation.

- [x] This issue is not about the OCR or archive creation of a specific file(s). Otherwise, please see above regarding OCR tools.

- [x] I have already searched for relevant existing issues and discussions before opening this report.

- [x] I have updated the title field above with a concise description. | closed | 2025-01-18T06:47:31Z | 2025-02-18T03:06:11Z | https://github.com/paperless-ngx/paperless-ngx/issues/8795 | [

"not a bug"

] | dteklavya | 3 |

matplotlib/matplotlib | data-visualization | 28,892 | [Doc]: Be more specific on dependencies that need to be installed for a "reasonable" dev environment | ### Documentation Link

https://matplotlib.org/devdocs/devel/development_setup.html#install-dependencies

### Problem

> Most Python dependencies will be installed when [setting up the environment](https://matplotlib.org/devdocs/devel/development_setup.html#dev-environment) but non-Python dependencies like C++ compilers, LaTeX, and other system applications must be installed separately.

### Suggested improvement

This is not actionable.

"*most*" and "*non-Python dependencies like [...] and other system applications*" make it impossible for a user to know what to install. Digging through the linked pages is cumbersome.

We may not need to give detailed description, but should mention what to additionally install manually (or ensure it's there) for a reasonable working installation, e.g. (not checked for completeness):

> You additionally need

> - for a minimal working development environment: a [C++ compiler]()

> - for building the docs: [Graphviz]() and [LaTeX]().

>

> The full list of required and optional dependencies is available here:

>

> [current links]

| closed | 2024-09-26T15:34:15Z | 2024-11-01T01:50:06Z | https://github.com/matplotlib/matplotlib/issues/28892 | [

"Documentation"

] | timhoffm | 1 |

dpgaspar/Flask-AppBuilder | rest-api | 2,275 | Demo url not working | http://flaskappbuilder.pythonanywhere.com is currently not working.

This has happened before, and issues were opened about it then as well.

| open | 2024-10-11T14:42:51Z | 2025-02-21T12:30:31Z | https://github.com/dpgaspar/Flask-AppBuilder/issues/2275 | [] | fedepad | 2 |

graphistry/pygraphistry | jupyter | 221 | [FEA] multi-gpu demo | See https://github.com/graphistry/graph-app-kit/issues/56

- [ ] download nb

- [ ] single gpu nb

- [ ] multi-gpu nb

- [ ] parallel io nb

- [ ] tutorial | open | 2021-03-18T06:31:33Z | 2021-03-18T06:32:22Z | https://github.com/graphistry/pygraphistry/issues/221 | [

"enhancement"

] | lmeyerov | 0 |

apachecn/ailearning | scikit-learn | 585 | AI | closed | 2020-05-13T11:04:46Z | 2020-11-23T02:05:17Z | https://github.com/apachecn/ailearning/issues/585 | [] | LiangJiaxin115 | 0 | |

wkentaro/labelme | computer-vision | 629 | [BUG] QT Error on Windows to Launch GUI | QT Error

```

qt.qpa.plugin: Could not find the Qt platform plugin "windows" in ""

This application failed to start because no Qt platform plugin could be initialized. Reinstalling the application may fix this problem.

``` | closed | 2020-03-24T19:14:12Z | 2021-09-23T15:19:28Z | https://github.com/wkentaro/labelme/issues/629 | [

"issue::bug"

] | Zumbalamambo | 4 |

piskvorky/gensim | data-science | 2,957 | Clean up OOP / stub methods | Do we really need such stub methods that only call the same superclass method with the same arguments? That's already the default which occurs if no method is present. By my understanding, doc-comment tools like Sphinx will, in their current versions, already propagate superclass API docs down to subclasses.

The only thing that's varying is the comment, and while it expresses a different expected-type from the superclass, in practice that doc may be misleading: I **think** (but have not recently checked) that these `SaveLoad` `.load()` methods can return objects that **may not be** what the caller expects. They return **the class that's in the file**, not the class-that-`.load()`-was-called-on.

If so, it might be a worthwhile short-term step as soon as 4.0.0 – for limiting the risk of confusion & requirement for redundant/caveat-filled docs – to **deprecate the practice of calling *SpecificClass*.load(filename) entirely**, despite its common appearance in previously-idiomatic gensim example code. Instead, either (1) call it only on class `SaveLoad` itself, to express that the only expectation for the returned type is that it's a `SaveLoad` subclass; (2) promote load functionality to model-specific top-level functions in each relevant model – a bit more like the `load_facebook_model()` function for loading Facebook-FastText-native-format models – which might themselves do some type-checking, so any docs which imply they return a certain type are true; (3) just make one `utils.py` generic `load()` or `load_model()`, perhaps with an optional class-enforcement parameter, and encourage its use.

(For explicitness, I think I like this third option. In practice, it might appear in example code as:

```

from gensim.utils import load_model

from gensim.models import Word2Vec

w2v_model_we_hope = load_model('w2v.bin')

w2v_model_or_error = load_model('w2v.bin', expected_class=Word2Vec)

```

Plenty of code where the file is saved/loaded in the same example block, or under strong expectations & naming conventions, might skip the enforced-type-checking – but it'd be an option & true/explicit, rather than something that's implied-but-not-really-enforced in the current idiom `Word2Vec.load('kv.bin')`)

Despite the effort involved in making such changes, they could minimize duplicated code/comments & avoid some unintuitive gotchas in the current `SaveLoad` approach. They might also help make a future migration to some more standard big-model-serialization convention (as proposed by #2848) cleaner.
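
A minimal sketch of the third option, for illustration only: `load_model` and its `expected_class` parameter are hypothetical and are not part of gensim's current API. Plain `pickle` stands in for the `SaveLoad` machinery, and two standard-library classes stand in for model classes so that the sketch runs without gensim.

```python
import os
import pickle
import tempfile
from collections import Counter, OrderedDict


def load_model(fname, expected_class=None):
    """Hypothetical generic loader with optional class enforcement."""
    with open(fname, "rb") as f:
        obj = pickle.load(f)  # returns whatever class is stored in the file
    if expected_class is not None and not isinstance(obj, expected_class):
        raise TypeError(
            f"{fname} holds a {type(obj).__name__}, expected {expected_class.__name__}"
        )
    return obj


# Counter plays the role of the class that was actually saved,
# OrderedDict the role of the class the caller wrongly expects.
path = os.path.join(tempfile.mkdtemp(), "kv.bin")
with open(path, "wb") as f:
    pickle.dump(Counter(a=1), f)

model_we_hope = load_model(path)     # no check: the caller gets what is in the file
print(type(model_we_hope).__name__)  # Counter

try:
    load_model(path, expected_class=OrderedDict)
except TypeError as err:
    print("rejected:", err)
```

With the check enabled, a mismatch surfaces as an explicit error at load time instead of as an object of an unexpected type later on.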

_Originally posted by @gojomo in https://github.com/RaRe-Technologies/gensim/pull/2939#discussion_r493807649_ | open | 2020-09-24T10:09:00Z | 2020-09-24T10:27:53Z | https://github.com/piskvorky/gensim/issues/2957 | [

"housekeeping"

] | piskvorky | 0 |

pytest-dev/pytest-cov | pytest | 288 | Regression in 2.7.1 when validating notebooks | I am running pytest for the [`krotov` package](https://travis-ci.org/qucontrol/krotov) with both pytest-cov and the [nbval plugin](https://nbval.readthedocs.io/en/latest/) to validate the Jupyter notebooks in the documentation. Since `pytest-cov` was updated to version 2.7.1, extra output related to repr-strings of internal coverage objects appears in the output of some notebook cells. See https://travis-ci.org/goerz/krotov/jobs/528562228 for the failure, and compare this with the working run at https://travis-ci.org/qucontrol/krotov/jobs/527616527. The first failure is for Cell 14 of https://krotov.readthedocs.io/en/latest/notebooks/05_example_transmon_xgate.html. I'm able to reproduce the problem locally (both on macOS and Linux), not just on Travis, and I can also verify that the problem disappears if I pin `pytest-cov` to version 2.6.1 in `krotov`'s `setup.py`. | open | 2019-05-06T06:46:08Z | 2019-05-06T07:21:35Z | https://github.com/pytest-dev/pytest-cov/issues/288 | [] | goerz | 1 |

AUTOMATIC1111/stable-diffusion-webui | deep-learning | 15,802 | Ui "screwed" up in Networks Tabs | ### Checklist

- [X] The issue exists after disabling all extensions

- [X] The issue exists on a clean installation of webui

- [ ] The issue is caused by an extension, but I believe it is caused by a bug in the webui

- [X] The issue exists in the current version of the webui

- [X] The issue has not been reported before recently

- [ ] The issue has been reported before but has not been fixed yet

### What happened?

In all the networks tabs, the UI shows grey icons and the scrollbar is missing, which makes it really hard to use.

tested on different browsers and without extensions

### Steps to reproduce the problem

Just use it (at least for me)

### What should have happened?

Show icons and scroll bar

### What browsers do you use to access the UI ?

Google Chrome

### Sysinfo

[sysinfo-2024-05-15-14-38.json](https://github.com/AUTOMATIC1111/stable-diffusion-webui/files/15323126/sysinfo-2024-05-15-14-38.json)

### Console logs

```Shell

Already up to date.

venv "H:\stable-diffusion-webui\venv\Scripts\Python.exe"

Python 3.10.0 (tags/v3.10.0:b494f59, Oct 4 2021, 19:00:18) [MSC v.1929 64 bit (AMD64)]

Version: v1.9.3

Commit hash: 1c0a0c4c26f78c32095ebc7f8af82f5c04fca8c0

Launching Web UI with arguments: --xformers --ckpt-dir H:\SD_MODEL_DIR\Models\StableDiffusion --embeddings-dir H:\SD_MODEL_DIR\SD_EMBEDDINGS --lora-dir H:\SD_MODEL_DIR\Models\Lora --gfpgan-dir H:\SD_MODEL_DIR\Models\GFPGAN --esrgan-models-path H:\SD_MODEL_DIR\Models\ESRGAN --realesrgan-models-path H:\SD_MODEL_DIR\Models\RealESRGAN

2024-05-15 16:33:51.134800: I tensorflow/core/util/port.cc:113] oneDNN custom operations are on. You may see slightly different numerical results due to floating-point round-off errors from different computation orders. To turn them off, set the environment variable `TF_ENABLE_ONEDNN_OPTS=0`.

2024-05-15 16:33:52.270905: I tensorflow/core/util/port.cc:113] oneDNN custom operations are on. You may see slightly different numerical results due to floating-point round-off errors from different computation orders. To turn them off, set the environment variable `TF_ENABLE_ONEDNN_OPTS=0`.

Loading weights [ef76aa2332] from H:\SD_MODEL_DIR\Models\StableDiffusion\realisticVisionV51_v51VAE.safetensors

Creating model from config: H:\stable-diffusion-webui\configs\v1-inference.yaml

H:\stable-diffusion-webui\venv\lib\site-packages\huggingface_hub\file_download.py:1132: FutureWarning: `resume_download` is deprecated and will be removed in version 1.0.0. Downloads always resume when possible. If you want to force a new download, use `force_download=True`.

warnings.warn(

Running on local URL: http://127.0.0.1:7860

To create a public link, set `share=True` in `launch()`.

Startup time: 23.7s (prepare environment: 3.6s, import torch: 7.5s, import gradio: 1.3s, setup paths: 5.3s, initialize shared: 0.4s, other imports: 0.8s, opts onchange: 0.3s, load scripts: 2.6s, create ui: 1.0s, gradio launch: 0.5s).

Applying attention optimization: xformers... done.

Model loaded in 8.4s (load weights from disk: 0.4s, create model: 1.3s, apply weights to model: 3.7s, apply dtype to VAE: 0.2s, load textual inversion embeddings: 2.4s, calculate empty prompt: 0.2s).

```

### Additional information

I have updated my GPU driver recently.

| open | 2024-05-15T14:39:23Z | 2024-06-20T19:20:36Z | https://github.com/AUTOMATIC1111/stable-diffusion-webui/issues/15802 | [

"bug-report"

] | OleJ1964 | 3 |

httpie/cli | api | 606 | output formatting on debian... | hi and thanks for a great tool :)

i'm not seeing json output formatted as it is in the screenshots here - i get just a single string of json that wraps at the terminal window edge - is there anything i should be doing differently than:

http [domain.blah]

?

thanks

| closed | 2017-09-05T20:57:23Z | 2017-09-05T22:59:31Z | https://github.com/httpie/cli/issues/606 | [] | fake-fur | 7 |

paperless-ngx/paperless-ngx | machine-learning | 9,390 | [BUG] Edit Permission not set | ### Description

The "Edit" Permission is not set in a document, although the permission is set in the workflow.

Workflow:

<img width="531" alt="Image" src="https://github.com/user-attachments/assets/6e79e8ca-08a7-4ca0-8d71-4afa647e3e9a" />

Document:

<img width="413" alt="Image" src="https://github.com/user-attachments/assets/4a2ccd4a-a25a-435a-8d10-0456c4cb824c" />

### Steps to reproduce

1. Create a workflow

2. Assign an owner under Actions and add another user to the edit permissions

3. Add a document that meets the workflow's criteria

### Webserver logs

```bash

Not related

```

### Browser logs

```bash

```

### Paperless-ngx version

2.14.7

### Host OS

Linux-4.4.302+-x86_64-with-glibc2.36

### Installation method

Docker - official image

### System status

```json

{

"pngx_version": "2.14.7",

"server_os": "Linux-4.4.302+-x86_64-with-glibc2.36",

"install_type": "docker",

"storage": {

"total": 469010432000,

"available": 216089976832

},

"database": {

"type": "postgresql",

"url": "paperless",

"status": "OK",

"error": null,

"migration_status": {

"latest_migration": "documents.1061_workflowactionwebhook_as_json",

"unapplied_migrations": []

}

},

"tasks": {

"redis_url": "redis://broker:6379",

"redis_status": "OK",

"redis_error": null,

"celery_status": "OK",

"index_status": "OK",

"index_last_modified": "2025-03-13T14:37:54.904629+01:00",

"index_error": null,

"classifier_status": "OK",

"classifier_last_trained": "2025-03-13T14:05:03.298540Z",

"classifier_error": null

}

}

```

### Browser

Safari

### Configuration changes

_No response_

### Please confirm the following

- [x] I believe this issue is a bug that affects all users of Paperless-ngx, not something specific to my installation.

- [x] This issue is not about the OCR or archive creation of a specific file(s). Otherwise, please see above regarding OCR tools.

- [x] I have already searched for relevant existing issues and discussions before opening this report.

- [x] I have updated the title field above with a concise description. | closed | 2025-03-13T15:00:45Z | 2025-03-13T17:59:59Z | https://github.com/paperless-ngx/paperless-ngx/issues/9390 | [

"cant-reproduce"

] | weizenmanncom | 5 |

3b1b/manim | python | 1,947 | Unused Function `digest_mobject_attrs` | I'm not quite sure if this function is used anymore. It was last changed about 3 years ago and doesn't seem to be used anymore and has no references either.

https://github.com/3b1b/manim/blob/fcff44a66b58a3af4070381afed0b4fad80768be/manimlib/mobject/mobject.py#L416-L423

(I'm currently working on updating the CE version to the new state of your repo, so if there's anything I can do for you while I'm looking at the code anyway, just leave it here. Always happy to help.) | closed | 2022-12-28T21:15:38Z | 2023-01-05T00:54:47Z | https://github.com/3b1b/manim/issues/1947 | [] | MrDiver | 4 |

yt-dlp/yt-dlp | python | 12,377 | Add support for Public Radio Exchange (PRX) | ### Checklist

- [x] I'm reporting a new site support request

- [x] I've verified that I have **updated yt-dlp to nightly or master** ([update instructions](https://github.com/yt-dlp/yt-dlp#update-channels))

- [x] I've checked that all provided URLs are playable in a browser with the same IP and same login details

- [x] I've checked that none of provided URLs [violate any copyrights](https://github.com/yt-dlp/yt-dlp/blob/master/CONTRIBUTING.md#is-the-website-primarily-used-for-piracy) or contain any [DRM](https://en.wikipedia.org/wiki/Digital_rights_management) to the best of my knowledge

- [x] I've searched the [bugtracker](https://github.com/yt-dlp/yt-dlp/issues?q=is%3Aissue%20-label%3Aspam%20%20) for similar requests **including closed ones**. DO NOT post duplicates

- [x] I've read about [sharing account credentials](https://github.com/yt-dlp/yt-dlp/blob/master/CONTRIBUTING.md#are-you-willing-to-share-account-details-if-needed) and am willing to share it if required

### Region

United States

### Example URLs

Sample link with a single stream: https://exchange.prx.org/pieces/558187?m=false

Sample link with multiple streams: https://exchange.prx.org/pieces/507265?m=false

Playlist link: https://exchange.prx.org/playlists/354104

### Provide a description that is worded well enough to be understood

This site hosts public radio programs and podcasts, which are streamed through an embedded player.

### Provide verbose output that clearly demonstrates the problem

- [x] Run **your** yt-dlp command with **-vU** flag added (`yt-dlp -vU <your command line>`)

- [x] If using API, add `'verbose': True` to `YoutubeDL` params instead

- [x] Copy the WHOLE output (starting with `[debug] Command-line config`) and insert it below

### Complete Verbose Output

```shell

[debug] Command-line config: ['-vU', 'https://exchange.prx.org/pieces/507265?m=false']

[debug] Encodings: locale cp1252, fs utf-8, pref cp1252, out utf-8, error utf-8, screen utf-8

[debug] yt-dlp version nightly@2025.02.11.232920 from yt-dlp/yt-dlp-nightly-builds [6ca23ffaa] (win_exe)

[debug] Python 3.10.11 (CPython AMD64 64bit) - Windows-10-10.0.19045-SP0 (OpenSSL 1.1.1t 7 Feb 2023)

[debug] exe versions: ffmpeg 7.0.1-full_build-www.gyan.dev (setts)

[debug] Optional libraries: Cryptodome-3.21.0, brotli-1.1.0, certifi-2025.01.31, curl_cffi-0.5.10, mutagen-1.47.0, requests-2.32.3, sqlite3-3.40.1, urllib3-2.3.0, websockets-14.2

[debug] Proxy map: {}

[debug] Request Handlers: urllib, requests, websockets, curl_cffi

[debug] Loaded 1841 extractors

[debug] Fetching release info: https://api.github.com/repos/yt-dlp/yt-dlp-nightly-builds/releases/latest

Latest version: nightly@2025.02.11.232920 from yt-dlp/yt-dlp-nightly-builds

yt-dlp is up to date (nightly@2025.02.11.232920 from yt-dlp/yt-dlp-nightly-builds)

[generic] Extracting URL: https://exchange.prx.org/pieces/507265?m=false

[generic] 507265?m=false: Downloading webpage

WARNING: [generic] Falling back on generic information extractor

[generic] 507265?m=false: Extracting information

[debug] Looking for embeds

ERROR: Unsupported URL: https://exchange.prx.org/pieces/507265?m=false

Traceback (most recent call last):

File "yt_dlp\YoutubeDL.py", line 1637, in wrapper

File "yt_dlp\YoutubeDL.py", line 1772, in __extract_info

File "yt_dlp\extractor\common.py", line 747, in extract

File "yt_dlp\extractor\generic.py", line 2566, in _real_extract

yt_dlp.utils.UnsupportedError: Unsupported URL: https://exchange.prx.org/pieces/507265?m=false

``` | open | 2025-02-16T02:08:15Z | 2025-02-16T16:02:51Z | https://github.com/yt-dlp/yt-dlp/issues/12377 | [

"site-request"

] | wkrick | 1 |

huggingface/datasets | numpy | 7,427 | Error splitting the input into NAL units. | ### Describe the bug

I am trying to fine-tune qwen2.5-vl on 16 * 80G GPUs, using `LLaMA-Factory` with `preprocessing_num_workers=16`. However, I hit the following error and the program seems to have crashed. The error appears to come from the `datasets` library.

The error log looks like this:

```text

Converting format of dataset (num_proc=16): 100%|█████████▉| 19265/19267 [11:44<00:00, 5.88 examples/s]

Converting format of dataset (num_proc=16): 100%|█████████▉| 19266/19267 [11:44<00:00, 5.02 examples/s]

Converting format of dataset (num_proc=16): 100%|██████████| 19267/19267 [11:44<00:00, 5.44 examples/s]

Converting format of dataset (num_proc=16): 100%|██████████| 19267/19267 [11:44<00:00, 27.34 examples/s]

Running tokenizer on dataset (num_proc=16): 0%| | 0/19267 [00:00<?, ? examples/s]

Invalid NAL unit size (45405 > 35540).

Invalid NAL unit size (86720 > 54856).

Invalid NAL unit size (7131 > 3225).

missing picture in access unit with size 54860

Invalid NAL unit size (48042 > 33645).

missing picture in access unit with size 3229

missing picture in access unit with size 33649

Invalid NAL unit size (86720 > 54856).

Invalid NAL unit size (48042 > 33645).

Error splitting the input into NAL units.

missing picture in access unit with size 35544

Invalid NAL unit size (45405 > 35540).

Error splitting the input into NAL units.

Error splitting the input into NAL units.

Invalid NAL unit size (8187 > 7069).

missing picture in access unit with size 7073

Invalid NAL unit size (8187 > 7069).

Error splitting the input into NAL units.

Invalid NAL unit size (7131 > 3225).

Error splitting the input into NAL units.

Invalid NAL unit size (14013 > 5998).

missing picture in access unit with size 6002

Invalid NAL unit size (14013 > 5998).

Error splitting the input into NAL units.

Invalid NAL unit size (17173 > 7231).

missing picture in access unit with size 7235

Invalid NAL unit size (17173 > 7231).

Error splitting the input into NAL units.

Invalid NAL unit size (16964 > 6055).

missing picture in access unit with size 6059

Invalid NAL unit size (16964 > 6055).

Exception in thread Thread-9 (accepter)Error splitting the input into NAL units.

:

Traceback (most recent call last):

File "/opt/conda/envs/python3.10.13/lib/python3.10/threading.py", line 1016, in _bootstrap_inner

Running tokenizer on dataset (num_proc=16): 0%| | 0/19267 [13:22<?, ? examples/s] self.run()

File "/opt/conda/envs/python3.10.13/lib/python3.10/threading.py", line 953, in run

Invalid NAL unit size (7032 > 2927).

missing picture in access unit with size 2931

self._target(*self._args, **self._kwargs)

File "/opt/conda/envs/python3.10.13/lib/python3.10/site-packages/multiprocess/managers.py", line 194, in accepter

Invalid NAL unit size (7032 > 2927).

Error splitting the input into NAL units.

t.start()

File "/opt/conda/envs/python3.10.13/lib/python3.10/threading.py", line 935, in start

Invalid NAL unit size (28973 > 6121).

missing picture in access unit with size 6125

_start_new_thread(self._bootstrap, ())Invalid NAL unit size (28973 > 6121).

RuntimeError: can't start new threadError splitting the input into NAL units.

Invalid NAL unit size (4411 > 296).

missing picture in access unit with size 300

Invalid NAL unit size (4411 > 296).

Error splitting the input into NAL units.

Invalid NAL unit size (14414 > 1471).

missing picture in access unit with size 1475

Invalid NAL unit size (14414 > 1471).

Error splitting the input into NAL units.

Invalid NAL unit size (5283 > 1792).

missing picture in access unit with size 1796

Invalid NAL unit size (5283 > 1792).

Error splitting the input into NAL units.

Invalid NAL unit size (79147 > 10042).

missing picture in access unit with size 10046

Invalid NAL unit size (79147 > 10042).

Error splitting the input into NAL units.

```

### Others

_No response_

### Steps to reproduce the bug

None

### Expected behavior

Expected to run successfully.

### Environment info

```

transformers==4.49.0

datasets==3.2.0

accelerate==1.2.1

peft==0.12.0

trl==0.9.6

tokenizers==0.21.0

gradio>=4.38.0,<=5.18.0

pandas>=2.0.0

scipy

einops

sentencepiece

tiktoken

protobuf

uvicorn

pydantic

fastapi

sse-starlette

matplotlib>=3.7.0

fire

packaging

pyyaml

numpy<2.0.0

av

librosa

tyro<0.9.0

openlm-hub

qwen-vl-utils

``` | open | 2025-02-28T02:30:15Z | 2025-03-04T01:40:28Z | https://github.com/huggingface/datasets/issues/7427 | [] | MengHao666 | 2 |

huggingface/datasets | tensorflow | 6,896 | Regression bug: `NonMatchingSplitsSizesError` for (possibly) overwritten dataset | ### Describe the bug

While trying to load the dataset `https://huggingface.co/datasets/pysentimiento/spanish-tweets-small`, I get this error:

```python

---------------------------------------------------------------------------

NonMatchingSplitsSizesError Traceback (most recent call last)

[<ipython-input-1-d6a3c721d3b8>](https://localhost:8080/#) in <cell line: 3>()

1 from datasets import load_dataset

2

----> 3 ds = load_dataset("pysentimiento/spanish-tweets-small")

3 frames

[/usr/local/lib/python3.10/dist-packages/datasets/load.py](https://localhost:8080/#) in load_dataset(path, name, data_dir, data_files, split, cache_dir, features, download_config, download_mode, verification_mode, ignore_verifications, keep_in_memory, save_infos, revision, token, use_auth_token, task, streaming, num_proc, storage_options, **config_kwargs)

2150

2151 # Download and prepare data

-> 2152 builder_instance.download_and_prepare(

2153 download_config=download_config,

2154 download_mode=download_mode,

[/usr/local/lib/python3.10/dist-packages/datasets/builder.py](https://localhost:8080/#) in download_and_prepare(self, output_dir, download_config, download_mode, verification_mode, ignore_verifications, try_from_hf_gcs, dl_manager, base_path, use_auth_token, file_format, max_shard_size, num_proc, storage_options, **download_and_prepare_kwargs)

946 if num_proc is not None:

947 prepare_split_kwargs["num_proc"] = num_proc

--> 948 self._download_and_prepare(

949 dl_manager=dl_manager,

950 verification_mode=verification_mode,

[/usr/local/lib/python3.10/dist-packages/datasets/builder.py](https://localhost:8080/#) in _download_and_prepare(self, dl_manager, verification_mode, **prepare_split_kwargs)

1059

1060 if verification_mode == VerificationMode.BASIC_CHECKS or verification_mode == VerificationMode.ALL_CHECKS:

-> 1061 verify_splits(self.info.splits, split_dict)

1062

1063 # Update the info object with the splits.

[/usr/local/lib/python3.10/dist-packages/datasets/utils/info_utils.py](https://localhost:8080/#) in verify_splits(expected_splits, recorded_splits)

98 ]

99 if len(bad_splits) > 0:

--> 100 raise NonMatchingSplitsSizesError(str(bad_splits))

101 logger.info("All the splits matched successfully.")

102

NonMatchingSplitsSizesError: [{'expected': SplitInfo(name='train', num_bytes=82649695458, num_examples=597433111, shard_lengths=None, dataset_name=None), 'recorded': SplitInfo(name='train', num_bytes=3358310095, num_examples=24898932, shard_lengths=[3626991, 3716991, 4036990, 3506990, 3676990, 3716990, 2616990], dataset_name='spanish-tweets-small')}]

```

I think I had this dataset updated, which might be related to #6271.

It works fine as late as `2.10.0`, but not from `2.13.0` onwards.
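
For readers unfamiliar with the check that fails in the traceback above, here is a minimal sketch of the kind of comparison that produces this error. It is a simplified stand-in, not the library's actual code: `ToySplitInfo`, `SplitSizeMismatch`, and `check_split_sizes` are hypothetical names, and only the two `num_examples` values are taken from the error message.

```python
from dataclasses import dataclass


@dataclass
class ToySplitInfo:
    """Hypothetical, stripped-down stand-in for a split's metadata."""
    name: str
    num_examples: int


class SplitSizeMismatch(ValueError):
    """Hypothetical error raised when recorded and expected sizes differ."""


def check_split_sizes(expected, recorded):
    """Raise if any split's recorded example count differs from the expected one."""
    bad = [
        {"expected": expected[name], "recorded": recorded[name]}
        for name in expected
        if name in recorded
        and expected[name].num_examples != recorded[name].num_examples
    ]
    if bad:
        raise SplitSizeMismatch(str(bad))


# Values copied from the error message: stale metadata vs. the data actually prepared.
expected = {"train": ToySplitInfo("train", 597433111)}
recorded = {"train": ToySplitInfo("train", 24898932)}

try:
    check_split_sizes(expected, recorded)
    mismatch_detected = False
except SplitSizeMismatch:
    mismatch_detected = True
```

If the expected sizes come from metadata written before the dataset was overwritten, the check fails even though the prepared data is fine. The `load_dataset` signature in the traceback exposes a `verification_mode` parameter; passing `verification_mode="no_checks"` may skip this check as a workaround, though that has not been verified against this dataset.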

### Steps to reproduce the bug

```python

from datasets import load_dataset

ds = load_dataset("pysentimiento/spanish-tweets-small")

```

You can run it in [this notebook](https://colab.research.google.com/drive/1FdhqLiVimHIlkn7B54DbhizeQ4U3vGVl#scrollTo=YgA50cBSibUg)

### Expected behavior

Load the dataset without any error

### Environment info

- `datasets` version: 2.13.0

- Platform: Linux-6.1.58+-x86_64-with-glibc2.35

- Python version: 3.10.12

- Huggingface_hub version: 0.20.3

- PyArrow version: 14.0.2

- Pandas version: 2.0.3 | open | 2024-05-13T15:41:57Z | 2024-05-13T15:44:48Z | https://github.com/huggingface/datasets/issues/6896 | [] | finiteautomata | 0 |

nolar/kopf | asyncio | 810 | Handler starts but never finishes | ### Long story short

I recently deployed our operator to an AKS cluster (it has been running on EKS and on-prem clusters without any issues) and started noticing that it wasn't handling changes to CRDs; restarting the container would trigger the handler successfully. At first I thought that we were missing events, so I explicitly added some [api timeouts](https://kopf.readthedocs.io/en/stable/configuration/#api-timeouts) at startup.

However, this didn't make a difference and events still seemed to be missed. On further investigation (running with the `--debug` startup param), the event did seem to get picked up and the handler was triggered, but it just seems to hang without ever finishing.

```

debug | 2021-07-26T17:14:00.672642+00:00 | [thor/thor-configs] Updating diff: (('change', ('spec', 'patch'), '2021-07-26T17:04:08Z', '2021-07-26T17:13:56Z'),)

debug | 2021-07-26T17:14:00.675029+00:00 | [thor/thor-configs] Handler 'config_update_handler' is invoked.

```

There would be no `Handler 'config_update_handler' succeeded.` log line (I left it for over 1 hour). It seems it would stay in this state forever.
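
One generic way to make a hang like this visible is to put a deadline around the awaited work, so that a stuck call surfaces as a timeout error instead of waiting forever. The sketch below uses only the standard library and is not Kopf-specific; `run_with_deadline`, `stuck_handler`, and `healthy_handler` are hypothetical names for illustration. It only applies to `async def` code; a blocking synchronous call cannot be interrupted this way.

```python
import asyncio


async def run_with_deadline(coro_fn, timeout, **kwargs):
    """Await coro_fn(**kwargs); raise asyncio.TimeoutError after `timeout` seconds."""
    return await asyncio.wait_for(coro_fn(**kwargs), timeout=timeout)


async def stuck_handler(**_):
    # Stand-in for a handler body that never returns: waits on an event nobody sets.
    await asyncio.Event().wait()


async def healthy_handler(**_):
    # Stand-in for a handler body that completes normally.
    return "done"


async def main():
    ok = await run_with_deadline(healthy_handler, timeout=1.0)
    try:
        await run_with_deadline(stuck_handler, timeout=0.1)
        timed_out = False
    except asyncio.TimeoutError:
        # The hang is now an explicit error that can be logged or retried.
        timed_out = True
    return ok, timed_out


result = asyncio.run(main())
```

With a deadline in place, the logs would show a timeout at the point where the work stalls, which narrows down whether the handler body or something upstream of it is blocking.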

When restarting the container, there are some log lines that talk about `Unprocessed streams`, but I haven't been able to figure out why.

```

debug | 2021-07-26T17:20:03.375018+00:00 | <asyncio.sslproto.SSLProtocol object at 0x7fe41d2c6df0> starts SSL handshake

debug | 2021-07-26T17:20:03.394016+00:00 | <asyncio.sslproto.SSLProtocol object at 0x7fe41d2c6df0>: SSL handshake took 18.8 ms

debug | 2021-07-26T17:20:03.394890+00:00 | <asyncio.TransportSocket fd=7, family=AddressFamily.AF_INET, type=SocketKind.SOCK_STREAM, proto=6, laddr=('10.0.0.48', 57824), raddr=('10.1.0.1', 443)> connected to 10.1.0.1:443: (<asyncio.sslproto._SSLProtocolTransport object at 0x7fe41d4abfa0>, <aiohttp.client_proto.ResponseHandler object at 0x7fe41da1d760>)

debug | 2021-07-26T17:20:03.404854+00:00 | Keep-alive in 'default' cluster-wide: not found.

info | 2021-07-26T17:20:35.960403+00:00 | Signal SIGTERM is received. Operator is stopping.

debug | 2021-07-26T17:20:35.961961+00:00 | Stopping the watch-stream for customresourcedefinitions.v1.apiextensions.k8s.io cluster-wide.

debug | 2021-07-26T17:20:35.965829+00:00 | Namespace observer is cancelled.

debug | 2021-07-26T17:20:35.966790+00:00 | Credentials retriever is cancelled.

debug | 2021-07-26T17:20:35.968802+00:00 | Poster of events is cancelled.

debug | 2021-07-26T17:20:35.976232+00:00 | Stopping the watch-stream for clusterkopfpeerings.v1.kopf.dev cluster-wide.

debug | 2021-07-26T17:20:35.981848+00:00 | <asyncio.sslproto.SSLProtocol object at 0x7fe41d8c9af0>: SSL error in data received

debug | 2021-07-26T17:20:35.998038+00:00 | <asyncio.sslproto.SSLProtocol object at 0x7fe41d8ec430>: SSL error in data received

debug | 2021-07-26T17:20:36.002980+00:00 | <asyncio.sslproto.SSLProtocol object at 0x7fe41d8ec130>: SSL error in data received

debug | 2021-07-26T17:20:36.004243+00:00 | <asyncio.sslproto.SSLProtocol object at 0x7fe41d8c9c70>: SSL error in data received

debug | 2021-07-26T17:20:36.007808+00:00 | <asyncio.sslproto.SSLProtocol object at 0x7fe41d8ec1f0>: SSL error in data received

debug | 2021-07-26T17:20:36.008655+00:00 | <asyncio.sslproto.SSLProtocol object at 0x7fe41d8c9e80>: SSL error in data received

debug | 2021-07-26T17:20:36.010025+00:00 | Stopping the watch-stream for secrets.v1 cluster-wide.

debug | 2021-07-26T17:20:36.012810+00:00 | Stopping the watch-stream for configs.v1.company.com cluster-wide.

debug | 2021-07-26T17:20:36.014347+00:00 | Stopping the watch-stream for apiaccesscredentials.v1.company.com cluster-wide.

debug | 2021-07-26T17:20:36.015304+00:00 | Stopping the watch-stream for certificates.v1.company.com cluster-wide.

debug | 2021-07-26T17:20:36.020593+00:00 | <asyncio.sslproto.SSLProtocol object at 0x7fe41d8ec040>: SSL error in data received

debug | 2021-07-26T17:20:36.038528+00:00 | [thor/certificate-server-tls-cert] Timer 'renew_tls_certificate' has exited gracefully.

debug | 2021-07-26T17:20:36.046421+00:00 | Daemon killer is cancelled.

debug | 2021-07-26T17:20:36.053462+00:00 | <asyncio.sslproto.SSLProtocol object at 0x7fe41d4b80d0> starts SSL handshake

debug | 2021-07-26T17:20:36.055670+00:00 | Resource observer is cancelled.

debug | 2021-07-26T17:20:36.082348+00:00 | <asyncio.sslproto.SSLProtocol object at 0x7fe41d4b80d0>: SSL handshake took 28.7 ms

debug | 2021-07-26T17:20:36.083909+00:00 | <asyncio.TransportSocket fd=7, family=AddressFamily.AF_INET, type=SocketKind.SOCK_STREAM, proto=6, laddr=('10.0.0.48', 58122), raddr=('10.1.0.1', 443)> connected to 10.1.0.1:443: (<asyncio.sslproto._SSLProtocolTransport object at 0x7fe41d9c5580>, <aiohttp.client_proto.ResponseHandler object at 0x7fe4232cf4c0>)

debug | 2021-07-26T17:20:36.092817+00:00 | Keep-alive in 'default' cluster-wide: not found.

warn | 2021-07-26T17:20:38.033284+00:00 | Unprocessed streams left for [(configs.v1.company.com, 'c1a72afd-d36c-45e3-8cf1-3e713f39ac79')].

debug | 2021-07-26T17:20:45.976898+00:00 | Streaming tasks are not stopped: finishing normally; tasks left: {<Task pending name='watcher for configs.v1.company.com@None' coro=<guard() running at /usr/local/lib/python3.8/site-packages/kopf/utilities/aiotasks.py:69> wait_for=<Future pending cb=[shield.<locals>._outer_done_callback() at /usr/local/lib/python3.8/asyncio/tasks.py:902, <TaskWakeupMethWrapper object at 0x7fe41d2c4a30>()] created at /usr/local/lib/python3.8/asyncio/base_events.py:422> created at /usr/local/lib/python3.8/asyncio/tasks.py:382>}

debug | 2021-07-26T17:20:55.980860+00:00 | Streaming tasks are not stopped: finishing normally; tasks left: {<Task pending name='watcher for configs.v1.company.com@None' coro=<guard() running at /usr/local/lib/python3.8/site-packages/kopf/utilities/aiotasks.py:69> wait_for=<Future pending cb=[shield.<locals>._outer_done_callback() at /usr/local/lib/python3.8/asyncio/tasks.py:902, <TaskWakeupMethWrapper object at 0x7fe41d2c4a30>()] created at /usr/local/lib/python3.8/asyncio/base_events.py:422> created at /usr/local/lib/python3.8/asyncio/tasks.py:382>}

parse error: Invalid numeric literal at line 556, column 4

```

Not sure whether this is related to #718, as it does seem similar. One thing to note is that the cluster is not running at scale: fewer than 5 resources are being watched.

### Kopf version

1.29.0

### Kubernetes version

1.19.11

### Python version

3.8

### Code

_No response_

### Logs

_No response_

### Additional information